license | tags | is_nc | readme_section | hash |
|---|---|---|---|---|

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 3.5280 | 3.4936 | 0 | | 3.4633 | 3.2513 | 1 | | 3.4649 | 3.3503 | 2 | | 3.4537 | 3.2847 | 3 | | 3.3745 | 3.3207 | 4 | | 3.3546 | 3.1687 | 5 | | 3.3208 | 3.0532 | 6 | | 3.1858 | 3.2573 | 7 | | 3.2212 | 3.0786 | 8 | | 3.1136 | 2.9661 | 9 | | 3.1065 | 3.1472 | 10 | | 2.9766 | 3.0139 | 11 | | 2.9592 | 3.0047 | 12 | | 2.9163 | 3.0109 | 13 | | 2.8840 | 2.9384 | 14 | | 2.8533 | 3.0551 | 15 | | 2.8657 | 3.0014 | 16 | | 2.8383 | 3.0040 | 17 | | 2.8457 | 3.0526 | 18 | | 2.8306 | 3.0281 | 19 | | 040034eb305ad020320c9b8aa7b7bab1 |

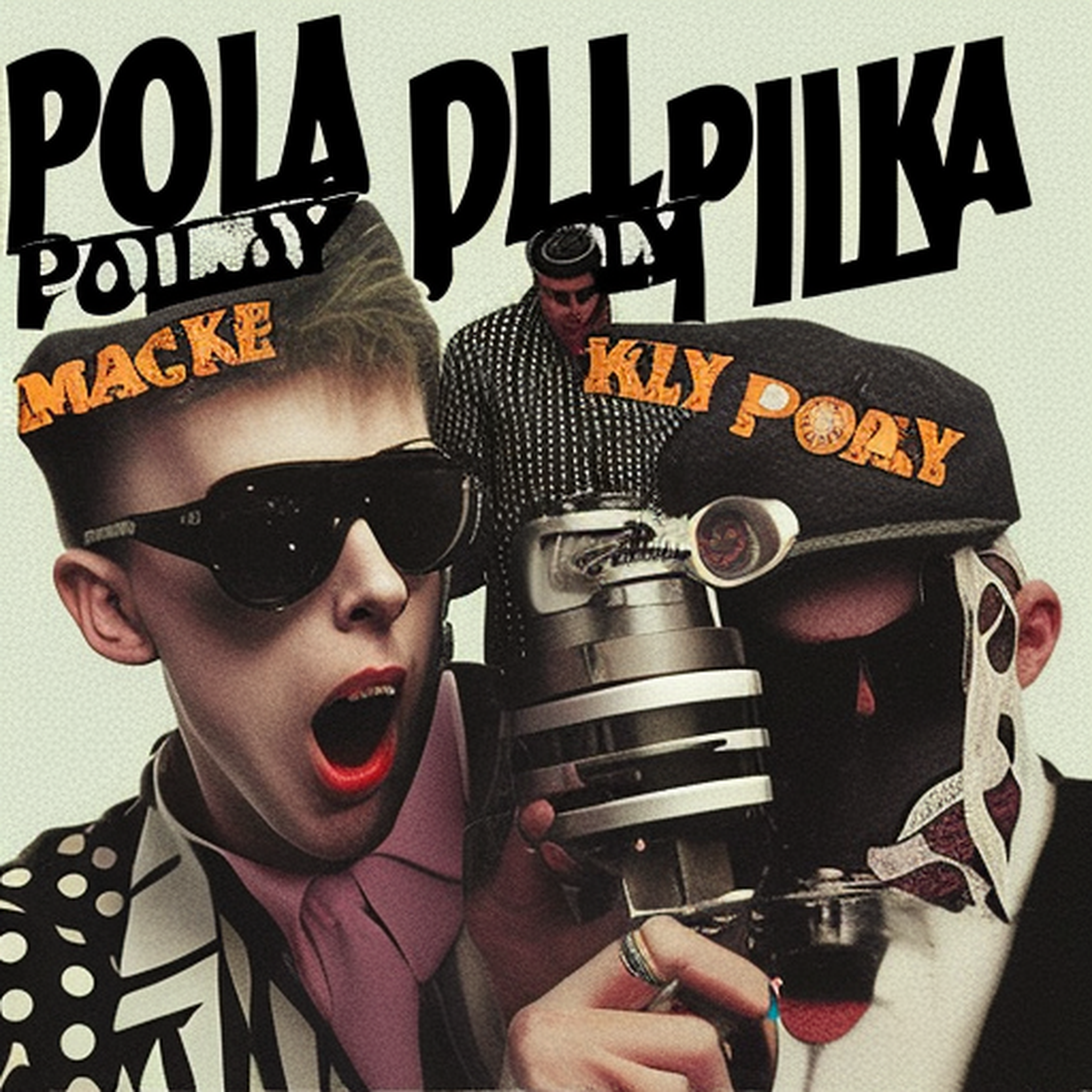

mit | [] | false | Plen-Ki-Mun on Stable Diffusion This is the `<plen-ki-mun>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:       | f80708e8737043f066fe4aaf489b8fc6 |

afl-3.0 | [] | false | Validation Metrics - Loss: 0.230 - Accuracy: 0.936 - Macro F1: 0.927 - Micro F1: 0.936 - Weighted F1: 0.936 - Macro Precision: 0.929 - Micro Precision: 0.936 - Weighted Precision: 0.936 - Macro Recall: 0.925 - Micro Recall: 0.936 - Weighted Recall: 0.936 ```python from transformers import AutoModelForSequenceClassification, AutoTokenizer model = AutoModelForSequenceClassification.from_pretrained("thothai/turkce-kufur-tespiti", use_auth_token=True) tokenizer = AutoTokenizer.from_pretrained("thothai/turkce-kufur-tespiti", use_auth_token=True) inputs = tokenizer("Merhaba", return_tensors="pt") outputs = model(**inputs) ``` | f77e94c143618efc17f5f911f4a45683 |

apache-2.0 | ['automatic-speech-recognition', 'fr'] | false | exp_w2v2r_fr_vp-100k_age_teens-2_sixties-8_s869 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (fr)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 13ecf16b457e55f700a629beacae427f |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2r_de_xls-r_age_teens-8_sixties-2_s338 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 1ce01aba45d31ebb6c646073818fb340 |

apache-2.0 | ['generated_from_trainer'] | false | final-squad-bn-qgen-mt5-small-all-metric-v2 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6559 - Rouge1 Precision: 31.143 - Rouge1 Recall: 24.8687 - Rouge1 Fmeasure: 26.7861 - Rouge2 Precision: 12.1721 - Rouge2 Recall: 9.3907 - Rouge2 Fmeasure: 10.1945 - Rougel Precision: 29.2741 - Rougel Recall: 23.4105 - Rougel Fmeasure: 25.196 - Rougelsum Precision: 29.2488 - Rougelsum Recall: 23.3873 - Rougelsum Fmeasure: 25.1783 - Bleu-1: 20.2844 - Bleu-2: 11.7083 - Bleu-3: 7.2251 - Bleu-4: 4.6646 - Meteor: 0.1144 | e52cf5bef3afbadec20fa6258cdb2901 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | bc52befe34269a945d48276629e65058 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 Precision | Rouge1 Recall | Rouge1 Fmeasure | Rouge2 Precision | Rouge2 Recall | Rouge2 Fmeasure | Rougel Precision | Rougel Recall | Rougel Fmeasure | Rougelsum Precision | Rougelsum Recall | Rougelsum Fmeasure | Bleu-1 | Bleu-2 | Bleu-3 | Bleu-4 | Meteor | |:-------------:|:-----:|:-----:|:---------------:|:----------------:|:-------------:|:---------------:|:----------------:|:-------------:|:---------------:|:----------------:|:-------------:|:---------------:|:-------------------:|:----------------:|:------------------:|:-------:|:-------:|:------:|:------:|:------:| | 0.9251 | 1.0 | 6769 | 0.7237 | 26.4973 | 20.6282 | 22.3983 | 9.3138 | 6.9928 | 7.6534 | 24.9538 | 19.4635 | 21.1113 | 24.9713 | 19.4608 | 21.119 | 17.5414 | 9.5172 | 5.6104 | 3.4646 | 0.097 | | 0.8214 | 2.0 | 13538 | 0.6804 | 29.524 | 23.4125 | 25.2574 | 11.2954 | 8.6345 | 9.3841 | 27.8173 | 22.1005 | 23.8164 | 27.7939 | 22.0878 | 23.801 | 19.2368 | 10.9056 | 6.6821 | 4.2702 | 0.1074 | | 0.7914 | 3.0 | 20307 | 0.6600 | 30.7136 | 24.5527 | 26.4259 | 11.8743 | 9.1634 | 9.9452 | 28.8725 | 23.1161 | 24.859 | 28.8566 | 23.1018 | 24.8457 | 19.9315 | 11.4473 | 7.0613 | 4.5701 | 0.1119 | | 0.7895 | 4.0 | 27076 | 0.6559 | 31.1568 | 24.8787 | 26.8004 | 12.1685 | 9.3879 | 10.1929 | 29.2804 | 23.3999 | 25.1925 | 29.2554 | 23.3891 | 25.1818 | 20.2844 | 11.7083 | 7.2251 | 4.6646 | 0.1144 | | a191ea811faebac84b38a8734c73d973 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-multilingual-cased-finetuned-misogyny-multilingual This model is a fine-tuned version of [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.9917 - Accuracy: 0.8808 - F1: 0.7543 - Precision: 0.7669 - Recall: 0.7421 - Mae: 0.1192 | abb606d955fffd6233771ee3138c2fd9 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | Mae | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|:---------:|:------:|:------:| | 0.3366 | 1.0 | 1407 | 0.3297 | 0.8630 | 0.6862 | 0.7886 | 0.6073 | 0.1370 | | 0.2371 | 2.0 | 2814 | 0.3423 | 0.8802 | 0.7468 | 0.7802 | 0.7161 | 0.1198 | | 0.1714 | 3.0 | 4221 | 0.4373 | 0.8749 | 0.7351 | 0.7693 | 0.7039 | 0.1251 | | 0.1161 | 4.0 | 5628 | 0.5584 | 0.8699 | 0.7525 | 0.7089 | 0.8019 | 0.1301 | | 0.0646 | 5.0 | 7035 | 0.7005 | 0.8788 | 0.7357 | 0.7961 | 0.6837 | 0.1212 | | 0.0539 | 6.0 | 8442 | 0.7866 | 0.8710 | 0.7465 | 0.7243 | 0.7702 | 0.1290 | | 0.0336 | 7.0 | 9849 | 0.8967 | 0.8783 | 0.7396 | 0.7828 | 0.7010 | 0.1217 | | 0.0202 | 8.0 | 11256 | 0.9053 | 0.8810 | 0.7472 | 0.7845 | 0.7133 | 0.1190 | | 0.018 | 9.0 | 12663 | 0.9785 | 0.8792 | 0.7478 | 0.7706 | 0.7262 | 0.1208 | | 0.0069 | 10.0 | 14070 | 0.9917 | 0.8808 | 0.7543 | 0.7669 | 0.7421 | 0.1192 | | 293f2550c8dffe228d3f8c29a825802d |
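The final-epoch row of the table above can be sanity-checked by hand. F1 is the harmonic mean of precision and recall, and, assuming the task uses binary 0/1 labels (the card does not state the label set), the mean absolute error is simply 1 minus accuracy. A minimal sketch; the helper name is hypothetical and not part of the original card:

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final-epoch values copied from the results table (epoch 10.0, step 14070).
precision, recall, accuracy = 0.7669, 0.7421, 0.8808

f1 = f1_from_precision_recall(precision, recall)

# Assumption: labels are 0/1, so every misclassification has absolute error 1
# and MAE collapses to the error rate.
mae_if_binary = 1 - accuracy

print(round(f1, 4))             # 0.7543, matching the F1 column
print(round(mae_if_binary, 4))  # 0.1192, matching the Mae column
```

The same relation between Accuracy and Mae holds for the other rows of the table, which is consistent with the binary-label assumption.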

cc-by-4.0 | ['question generation'] | false | Model Card of `lmqg/bart-large-squadshifts-nyt-qg` This model is a fine-tuned version of [lmqg/bart-large-squad](https://huggingface.co/lmqg/bart-large-squad) for the question generation task on the [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) dataset (dataset_name: nyt) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | c10d2dc870a1c784a2b2ed91818958b6 |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [lmqg/bart-large-squad](https://huggingface.co/lmqg/bart-large-squad) - **Language:** en - **Training data:** [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) (nyt) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 3b58b4128745107dc1b2d733289f2e67 |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="William Turner was an English painter who specialised in watercolour landscapes", list_answer="William Turner") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/bart-large-squadshifts-nyt-qg") output = pipe("<hl> Beyonce <hl> further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | f92b255216a067f7916010742a4f644e |

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/lmqg/bart-large-squadshifts-nyt-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_squadshifts.nyt.json) | | Score | Type | Dataset | |:-----------|--------:|:-------|:---------------------------------------------------------------------------| | BERTScore | 93.04 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_1 | 25.82 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_2 | 17.11 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_3 | 12.03 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_4 | 8.74 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | METEOR | 25.08 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | MoverScore | 65.02 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | ROUGE_L | 25.28 | nyt | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | aab014e69e713ec3ad8adbb32b40ea52 |

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_squadshifts - dataset_name: nyt - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: None - model: lmqg/bart-large-squad - max_length: 512 - max_length_output: 32 - epoch: 1 - batch: 32 - lr: 5e-05 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 4 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/bart-large-squadshifts-nyt-qg/raw/main/trainer_config.json). | 1908469c9b5d8612d1118b8b507205e1 |

apache-2.0 | ['generated_from_trainer'] | false | sd-smallmol-roles-v2 This model is a fine-tuned version of [michiyasunaga/BioLinkBERT-large](https://huggingface.co/michiyasunaga/BioLinkBERT-large) on the source_data_nlp dataset. It achieves the following results on the evaluation set: - Loss: 0.0015 - Accuracy Score: 0.9995 - Precision: 0.9628 - Recall: 0.9716 - F1: 0.9672 | 950a0b3549d29d6e3795f0b1fab98ef7 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 256 - seed: 42 - optimizer: Adafactor - lr_scheduler_type: linear - num_epochs: 1.0 | 561ebd706a9200ee74315491b89ebc8e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy Score | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------------:|:---------:|:------:|:------:| | 0.0013 | 1.0 | 1569 | 0.0015 | 0.9995 | 0.9628 | 0.9716 | 0.9672 | | 087cb26882a2ef3bcf4b6d0a30fcffbb |

apache-2.0 | ['image-classification'] | false | resnet152 Implementation of ResNet proposed in [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385) ``` python ResNet.resnet18() ResNet.resnet26() ResNet.resnet34() ResNet.resnet50() ResNet.resnet101() ResNet.resnet152() ResNet.resnet200() Variants (d) proposed in `Bag of Tricks for Image Classification with Convolutional Neural Networks <https://arxiv.org/pdf/1812.01187.pdf>`_ ResNet.resnet26d() ResNet.resnet34d() ResNet.resnet50d() | 290ed5bee93003b0c4bf84dfff2c4569 |

apache-2.0 | ['image-classification'] | false | You can construct your own by changing `stem` and `block` resnet101d = ResNet.resnet101(stem=ResNetStemC, block=partial(ResNetBottleneckBlock, shortcut=ResNetShorcutD)) ``` Examples: ``` python | 4a936186601f20f648622c5ec62331e1 |

apache-2.0 | ['generated_from_keras_callback'] | false | market_positivity_model This model is a fine-tuned version of [hfl/chinese-roberta-wwm-ext](https://huggingface.co/hfl/chinese-roberta-wwm-ext) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.5776 - Train Sparse Categorical Accuracy: 0.7278 - Validation Loss: 0.6460 - Validation Sparse Categorical Accuracy: 0.6859 - Epoch: 2 | 74c9d6f4d10006f1bab1229f2687ea82 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Sparse Categorical Accuracy | Validation Loss | Validation Sparse Categorical Accuracy | Epoch | |:----------:|:---------------------------------:|:---------------:|:--------------------------------------:|:-----:| | 0.7207 | 0.6394 | 0.6930 | 0.6811 | 0 | | 0.6253 | 0.7033 | 0.6549 | 0.6872 | 1 | | 0.5776 | 0.7278 | 0.6460 | 0.6859 | 2 | | a59235e7967e396f7857c13c837aea13 |

creativeml-openrail-m | [] | false | What is this This is a hypernetwork trained to make pictures of Re-l Mayer from Ergo Proxy. Trained on Atlers mix (758f6d9b). Usable on other anime-aware models. Limited usefulness on real-life models. | b35b742c756b6519ec260295cecba0d6 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | sentence-transformers/bert-base-nli-stsb-mean-tokens This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search. | e954def5fdda7c56ef92db934cf1ecb0 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["This is an example sentence", "Each sentence is converted"] model = SentenceTransformer('sentence-transformers/bert-base-nli-stsb-mean-tokens') embeddings = model.encode(sentences) print(embeddings) ``` | d7b521e1d24da0592d8b5f14085e393e |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Load model from HuggingFace Hub tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/bert-base-nli-stsb-mean-tokens') model = AutoModel.from_pretrained('sentence-transformers/bert-base-nli-stsb-mean-tokens') | 831c6ed303fbb43b81ce1bfdb10ead4e |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Evaluation Results For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/bert-base-nli-stsb-mean-tokens) | 6ef31463687c43868ed7a5be05c6ccd2 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Full Model Architecture ``` SentenceTransformer( (0): Transformer({'max_seq_length': 128, 'do_lower_case': False}) with Transformer model: BertModel (1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False}) ) ``` | 057fe064b79ff1dc2ccecfd7962770fd |
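The architecture above enables only `pooling_mode_mean_tokens`, meaning the sentence embedding is the average of the token embeddings. The sketch below illustrates that idea in plain Python on a toy 2-dimensional example (the real model uses 768 dimensions); it is an illustration under the assumption that padded positions are excluded via an attention mask, not the library's own implementation, and `mean_pool` is a hypothetical helper name.

```python
from typing import List


def mean_pool(token_embeddings: List[List[float]], attention_mask: List[int]) -> List[float]:
    """Average the token vectors, skipping positions where the mask is 0."""
    dim = len(token_embeddings[0])
    total = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            count += 1
            for i, value in enumerate(vector):
                total[i] += value
    count = max(count, 1)  # avoid division by zero for an all-padding input
    return [value / count for value in total]


# Two real tokens followed by one padding token (mask 0).
tokens = [[1.0, 2.0], [3.0, 4.0], [9.0, 9.0]]
mask = [1, 1, 0]

sentence_vector = mean_pool(tokens, mask)
print(sentence_vector)  # [2.0, 3.0]
```

Masking matters because padded positions would otherwise pull the average toward whatever values the padding tokens happen to produce.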

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Medium ID - FLEURS-CV-LBV - Augmented This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on the following datasets: - [mozilla-foundation/common_voice_11_0](https://huggingface.co/datasets/mozilla-foundation/common_voice_11_0) - [google/fleurs](https://huggingface.co/datasets/google/fleurs) - [indonesian-nlp/librivox-indonesia](https://huggingface.co/datasets/indonesian-nlp/librivox-indonesia) It achieves the following results on the evaluation set (Common Voice 11.0): - Loss: 0.2788 - Wer: 7.6132 - Cer: 2.3332 | 295349cdc6f7374ca5b0a50b53be962a |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training and evaluation data Training: - [mozilla-foundation/common_voice_11_0](https://huggingface.co/datasets/mozilla-foundation/common_voice_11_0) (train+validation) - [google/fleurs](https://huggingface.co/datasets/google/fleurs) (train+validation) - [indonesian-nlp/librivox-indonesia](https://huggingface.co/datasets/indonesian-nlp/librivox-indonesia) (train) Evaluation: - [mozilla-foundation/common_voice_11_0](https://huggingface.co/datasets/mozilla-foundation/common_voice_11_0) (test) - [google/fleurs](https://huggingface.co/datasets/google/fleurs) (test) - [indonesian-nlp/librivox-indonesia](https://huggingface.co/datasets/indonesian-nlp/librivox-indonesia) (test) | e4ddc79df779a69b89c59c08454fefaf |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training procedure Datasets were augmented on-the-fly using [audiomentations](https://github.com/iver56/audiomentations) via PitchShift, AddGaussianNoise and TimeStretch transformations at `p=0.3`. | f8c371f80c3ee6172a143d5d3d2ab7e0 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 10000 - mixed_precision_training: Native AMP | e7cb3865f8feaa8c4aae7f5db7b9f42e |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:-----:|:---------------:|:------:|:------:| | 0.3002 | 1.9 | 1000 | 0.1659 | 8.1850 | 2.5333 | | 0.0514 | 3.8 | 2000 | 0.1818 | 8.0559 | 2.5244 | | 0.0145 | 5.7 | 3000 | 0.2150 | 7.8945 | 2.5281 | | 0.0037 | 7.6 | 4000 | 0.2248 | 7.7100 | 2.3738 | | 0.0016 | 9.51 | 5000 | 0.2402 | 7.6224 | 2.3591 | | 0.0009 | 11.41 | 6000 | 0.2525 | 7.7654 | 2.3952 | | 0.0005 | 13.31 | 7000 | 0.2609 | 7.5994 | 2.3487 | | 0.0008 | 15.21 | 8000 | 0.2682 | 7.5855 | 2.3347 | | 0.0002 | 17.11 | 9000 | 0.2756 | 7.6178 | 2.3288 | | 0.0002 | 19.01 | 10000 | 0.2788 | 7.6132 | 2.3332 | | 13a3c266dee428f34af372ebe8180017 |
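The Wer and Cer columns above appear to be percentages. As a rough illustration of what they measure, the sketch below computes word and character error rates from a Levenshtein edit distance in plain Python. It is not the evaluation code used for this model: the card does not say which implementation or text normalization was applied, and the function names and example sentences here are hypothetical.

```python
from typing import List, Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Minimum number of substitutions, insertions and deletions turning ref into hyp."""
    prev: List[int] = list(range(len(hyp) + 1))
    for i, ref_item in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_item in enumerate(hyp, start=1):
            cost = 0 if ref_item == hyp_item else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def error_rate(reference: str, hypothesis: str, unit: str = "word") -> float:
    """Error rate in percent; unit is 'word' for WER or 'char' for CER."""
    ref = reference.split() if unit == "word" else list(reference)
    hyp = hypothesis.split() if unit == "word" else list(hypothesis)
    if not ref:
        raise ValueError("reference must not be empty")
    return 100.0 * edit_distance(ref, hyp) / len(ref)


reference = "the cat sat on the mat"
hypothesis = "the cat sit on mat"

# One substitution (sat -> sit) and one deletion (the) over six reference words.
wer = error_rate(reference, hypothesis, unit="word")
cer = error_rate(reference, hypothesis, unit="char")
print(round(wer, 2))  # 33.33
```

Character-level scoring here counts spaces as characters, which is one of several possible conventions.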

apache-2.0 | [] | false | This model is a fine-tuned checkpoint of [T5-small](https://huggingface.co/t5-small), fine-tuned on the [Wiki Neutrality Corpus (WNC)](https://github.com/rpryzant/neutralizing-bias), a labeled dataset composed of 180,000 biased and neutralized sentence pairs that are generated from Wikipedia edits tagged for “neutral point of view”. This model reaches an accuracy of 0.32 on a dev split of the WNC. For more details about T5, check out this [model card](https://huggingface.co/t5-small). | 0e1081d7dd2f2a17b10dcf6cc2d6239c |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Introduction ptt5-base-msmarco-pt-100k-v2 is a T5-based model pretrained on the BrWac corpus and fine-tuned on a Portuguese-translated version of the MS MARCO passage dataset. In the v2 version, the Portuguese dataset was translated using Google Translate. This model was fine-tuned for 100k steps. Further information about the dataset and the translation method can be found in our paper, [**mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset**](https://arxiv.org/abs/2108.13897), and in the [mMARCO](https://github.com/unicamp-dl/mMARCO) repository. | 9a692baa3b4d4b6c3ebaa838d5bb718c |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Usage ```python from transformers import T5Tokenizer, T5ForConditionalGeneration model_name = 'unicamp-dl/ptt5-base-msmarco-pt-100k-v2' tokenizer = T5Tokenizer.from_pretrained(model_name) model = T5ForConditionalGeneration.from_pretrained(model_name) ``` | b5eb0e59d2381722be63c3d9acf53006 |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Citation If you use ptt5-base-msmarco-pt-100k-v2, please cite: @misc{bonifacio2021mmarco, title={mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset}, author={Luiz Henrique Bonifacio and Vitor Jeronymo and Hugo Queiroz Abonizio and Israel Campiotti and Marzieh Fadaee and Roberto Lotufo and Rodrigo Nogueira}, year={2021}, eprint={2108.13897}, archivePrefix={arXiv}, primaryClass={cs.CL} } | 31e06faa4da13740601c5e4bcf18f74b |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-misogyny-sexism-fr-indomain-bal This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.9526 - Accuracy: 0.8690 - F1: 0.0079 - Precision: 0.1053 - Recall: 0.0041 - Mae: 0.1310 | 51bc5cbe4372d5a6dc2b2590cfd25335 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | Mae | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:---------:|:------:|:------:| | 0.3961 | 1.0 | 1613 | 0.7069 | 0.8648 | 0.0171 | 0.1125 | 0.0093 | 0.1352 | | 0.338 | 2.0 | 3226 | 0.7963 | 0.8659 | 0.0172 | 0.125 | 0.0093 | 0.1341 | | 0.2794 | 3.0 | 4839 | 0.8851 | 0.8656 | 0.0134 | 0.1 | 0.0072 | 0.1344 | | 0.2345 | 4.0 | 6452 | 0.9526 | 0.8690 | 0.0079 | 0.1053 | 0.0041 | 0.1310 | | 286c195bd9a39ca0af8759dfeed34081 |

mit | ['generated_from_trainer'] | false | roberta-large-finetuned-TRAC-DS This model is a fine-tuned version of [roberta-large](https://huggingface.co/roberta-large) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.8198 - Accuracy: 0.7190 - Precision: 0.6955 - Recall: 0.6979 - F1: 0.6963 | 9172481e861a95231cb10c4eb9d83b9c |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.9538 | 1.0 | 612 | 0.8083 | 0.6111 | 0.6192 | 0.6164 | 0.5994 | | 0.7924 | 2.0 | 1224 | 0.7594 | 0.6601 | 0.6688 | 0.6751 | 0.6424 | | 0.6844 | 3.0 | 1836 | 0.6986 | 0.7042 | 0.6860 | 0.6969 | 0.6858 | | 0.5715 | 3.99 | 2448 | 0.7216 | 0.7075 | 0.6957 | 0.6978 | 0.6925 | | 0.45 | 4.99 | 3060 | 0.7963 | 0.7288 | 0.7126 | 0.7074 | 0.7073 | | 0.352 | 5.99 | 3672 | 1.0824 | 0.7141 | 0.6999 | 0.6774 | 0.6818 | | 0.2546 | 6.99 | 4284 | 1.0884 | 0.7230 | 0.7006 | 0.7083 | 0.7028 | | 0.1975 | 7.99 | 4896 | 1.5338 | 0.7337 | 0.7090 | 0.7063 | 0.7074 | | 0.1656 | 8.99 | 5508 | 1.8182 | 0.7100 | 0.6882 | 0.6989 | 0.6896 | | 0.1358 | 9.98 | 6120 | 2.1623 | 0.7173 | 0.6917 | 0.6959 | 0.6934 | | 0.1235 | 10.98 | 6732 | 2.3249 | 0.7141 | 0.6881 | 0.6914 | 0.6888 | | 0.1003 | 11.98 | 7344 | 2.3474 | 0.7124 | 0.6866 | 0.6920 | 0.6887 | | 0.0826 | 12.98 | 7956 | 2.3574 | 0.7083 | 0.6853 | 0.6959 | 0.6874 | | 0.0727 | 13.98 | 8568 | 2.4989 | 0.7116 | 0.6858 | 0.6934 | 0.6883 | | 0.0553 | 14.98 | 9180 | 2.8090 | 0.7026 | 0.6747 | 0.6710 | 0.6725 | | 0.0433 | 15.97 | 9792 | 2.6647 | 0.7255 | 0.7010 | 0.7028 | 0.7018 | | 0.0449 | 16.97 | 10404 | 2.6568 | 0.7247 | 0.7053 | 0.6997 | 0.7010 | | 0.0373 | 17.97 | 11016 | 2.7632 | 0.7149 | 0.6888 | 0.6938 | 0.6909 | | 0.0278 | 18.97 | 11628 | 2.8245 | 0.7124 | 0.6866 | 0.6930 | 0.6889 | | 0.0288 | 19.97 | 12240 | 2.8198 | 0.7190 | 0.6955 | 0.6979 | 0.6963 | | ff20d08e2b5cb0e36664f5bffb4b1d29 |

mit | ['code'] | false | Model Description Content-based filtering recommends movies to a user based on the content (e.g. genre, actors) of the movies that user has previously voted for. Collaborative filtering finds users with similar interests and recommends movies that those users have voted for to users who have not yet voted for them. It does not depend on the content and needs no domain knowledge. The ensemble model combines these two methods to give better recommendations: the algorithm finds similar people, then recommends films based on their votes, filtered by content preferences. - Developed by: Aida Aminian, Mohammadreza Mohammadzadeh Asl <!--- Shared by [optional]: [More Information Needed]--> - Model type: content-based filtering, collaborative filtering, and an ensemble of the two - Language(s) (NLP): not used; only TF-IDF for keywords is used - License: MIT License | af4dfc6e7e7a13c13f759ae5b176c51b |
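To make the content-based half of the description above concrete, here is a minimal sketch of TF-IDF keyword vectors compared by cosine similarity. It is written in plain Python so that it runs without dependencies; it is not the authors' code, the smoothed IDF formula is an assumption, and the movie titles and keywords are hypothetical toy data.

```python
import math
from collections import Counter
from typing import Dict, List


def tfidf_vectors(keywords: Dict[str, List[str]]) -> Dict[str, Dict[str, float]]:
    """Build one sparse TF-IDF vector (term -> weight) per movie."""
    n_movies = len(keywords)
    # Document frequency: in how many movies each keyword appears.
    doc_freq = Counter(term for terms in keywords.values() for term in set(terms))
    vectors = {}
    for title, terms in keywords.items():
        counts = Counter(terms)
        vectors[title] = {
            # Smoothed IDF (an assumption), so ubiquitous keywords keep a weight > 0.
            term: count * (math.log((1 + n_movies) / (1 + doc_freq[term])) + 1.0)
            for term, count in counts.items()
        }
    return vectors


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical toy catalogue; the real project reads MovieLens data instead.
movie_keywords = {
    "Movie A": ["space", "robot", "war"],
    "Movie B": ["space", "robot", "romance"],
    "Movie C": ["wedding", "romance", "comedy"],
}
vectors = tfidf_vectors(movie_keywords)

# Rank the other movies by similarity to one the user voted for.
liked = "Movie A"
ranking = sorted(
    (title for title in vectors if title != liked),
    key=lambda title: cosine(vectors[liked], vectors[title]),
    reverse=True,
)
print(ranking)  # ['Movie B', 'Movie C']
```

In an ensemble of the kind described, a ranking like this could be used to filter or re-order candidates proposed by the collaborative step.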

mit | ['code'] | false | How to Get Started with the Model Install the scikit-learn, pandas and NumPy libraries for Python. Download the MovieLens dataset and place it in the 'content/IMDB' path in the project directory. Run the code with the Python interpreter. | bbdae393565f3b4570d72844a620124a |

mit | ['code'] | false | Environmental Impact <!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly --> <!-- Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact | af4c2b266bceee2872f9af88028c57c2 |

mit | ['code'] | false | compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). --> <!-- - Hardware Type: [More Information Needed] --> <!-- - Hours used: [More Information Needed] --> <!-- - Cloud Provider: [More Information Needed] --> <!-- - Compute Region: [More Information Needed] --> <!-- - Carbon Emitted: [More Information Needed] --> | 673c19ececbbff2c621fc0ed26082f5f |

apache-2.0 | ['t5-small', 'text2text-generation', 'natural language understanding', 'conversational system', 'task-oriented dialog'] | false | t5-small-nlu-multiwoz21-context3 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on [MultiWOZ 2.1](https://huggingface.co/datasets/ConvLab/multiwoz21) with context window size == 3. Refer to [ConvLab-3](https://github.com/ConvLab/ConvLab-3) for model description and usage. | 545a7da70718003f917e5f5e40844128 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 2 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | c58875c32aafb6f3e4fb3aa4d1ce471d |

apache-2.0 | ['generated_from_keras_callback'] | false | nlp-esg-scoring/bert-base-finetuned-esg-gri-clean This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.9511 - Validation Loss: 1.5293 - Epoch: 9 | 35ca291688f3b710a6a2173e280b6435 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -797, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | 2b2ba7511fe28a5d16a6ade709286ff7 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 1.9468 | 1.5190 | 0 | | 1.9433 | 1.5186 | 1 | | 1.9569 | 1.4843 | 2 | | 1.9510 | 1.5563 | 3 | | 1.9451 | 1.5308 | 4 | | 1.9576 | 1.5209 | 5 | | 1.9464 | 1.5324 | 6 | | 1.9525 | 1.5168 | 7 | | 1.9488 | 1.5340 | 8 | | 1.9511 | 1.5293 | 9 | | 0acdaf0a62de7d6d41cf2347172a3728 |

apache-2.0 | ['generated_from_trainer'] | false | resnet-50-finetuned-resnet50_0831 This model is a fine-tuned version of [microsoft/resnet-50](https://huggingface.co/microsoft/resnet-50) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.0862 - Accuracy: 0.9764 | b4df57e66b6ffb122ff95ed45c8dfb5c |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 20 | 4d2211465a6b8afdadcacc0ed6b1f1a8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.9066 | 1.0 | 223 | 0.8770 | 0.6659 | | 0.5407 | 2.0 | 446 | 0.4251 | 0.7867 | | 0.3614 | 3.0 | 669 | 0.2009 | 0.9390 | | 0.3016 | 4.0 | 892 | 0.1362 | 0.9582 | | 0.2358 | 5.0 | 1115 | 0.1139 | 0.9676 | | 0.247 | 6.0 | 1338 | 0.1081 | 0.9698 | | 0.2135 | 7.0 | 1561 | 0.1027 | 0.9720 | | 0.2043 | 8.0 | 1784 | 0.1026 | 0.9695 | | 0.2165 | 9.0 | 2007 | 0.0957 | 0.9733 | | 0.1983 | 10.0 | 2230 | 0.0936 | 0.9736 | | 0.2116 | 11.0 | 2453 | 0.0949 | 0.9736 | | 0.2341 | 12.0 | 2676 | 0.0905 | 0.9755 | | 0.2004 | 13.0 | 2899 | 0.0901 | 0.9739 | | 0.1956 | 14.0 | 3122 | 0.0877 | 0.9755 | | 0.1668 | 15.0 | 3345 | 0.0847 | 0.9764 | | 0.1855 | 16.0 | 3568 | 0.0850 | 0.9755 | | 0.18 | 17.0 | 3791 | 0.0897 | 0.9745 | | 0.1772 | 18.0 | 4014 | 0.0852 | 0.9755 | | 0.1881 | 19.0 | 4237 | 0.0845 | 0.9764 | | 0.2145 | 20.0 | 4460 | 0.0862 | 0.9764 | | 98d11ecd883c9a09a5c87290ea9d9630 |
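The hyperparameters listed for this run can be tied to the Step column above with a little arithmetic: a per-device batch of 32 with 4 gradient-accumulation steps gives the stated total batch size of 128, and a warmup ratio of 0.1 over 4460 optimizer steps gives roughly 446 warmup steps. The sketch below assumes the common "linear warmup, then linear decay to zero" shape for `lr_scheduler_type: linear`; the function name is hypothetical and the exact rounding of the warmup step count in the training framework may differ.

```python
def linear_schedule_with_warmup(step: int, total_steps: int,
                                warmup_steps: int, peak_lr: float) -> float:
    """Learning rate at a given optimizer step: linear ramp up, then linear decay to 0."""
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


train_batch_size = 32
gradient_accumulation_steps = 4
total_train_batch_size = train_batch_size * gradient_accumulation_steps  # 128

peak_lr = 5e-05
total_steps = 4460                       # last Step value in the results table
warmup_steps = round(0.1 * total_steps)  # 446, from lr_scheduler_warmup_ratio: 0.1

lr_mid_warmup = linear_schedule_with_warmup(223, total_steps, warmup_steps, peak_lr)
lr_peak = linear_schedule_with_warmup(warmup_steps, total_steps, warmup_steps, peak_lr)
lr_end = linear_schedule_with_warmup(total_steps, total_steps, warmup_steps, peak_lr)

print(total_train_batch_size, warmup_steps)  # 128 446
print(lr_mid_warmup, lr_end)
```

Under these assumptions the learning rate is still ramping up during the first two epochs (steps 0 to 446), which is one plausible reading of the slow accuracy gains in the first rows of the table.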

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Hindi Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Hindi using the following datasets: - [Common Voice](https://huggingface.co/datasets/common_voice), - [Indic TTS- IITM](https://www.iitm.ac.in/donlab/tts/index.php) and - [IIITH - Indic Speech Datasets](http://speech.iiit.ac.in/index.php/research-svl/69.html) The Indic datasets are well balanced across gender and accents. However, the CommonVoice dataset is skewed towards male voices. Fine-tuned on facebook/wav2vec2-large-xlsr-53 using Hindi dataset :: 60 epochs >> 17.05% WER. When using this model, make sure that your speech input is sampled at 16kHz. | a6e472de2113c7349289988dbd2754fd |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "hi", split="test") processor = Wav2Vec2Processor.from_pretrained("skylord/wav2vec2-large-xlsr-hindi") model = Wav2Vec2ForCTC.from_pretrained("skylord/wav2vec2-large-xlsr-hindi") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 909cdb90317e50b5068ed8a778e40676 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) inputs = processor(test_dataset["speech"][:2], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits predicted_ids = torch.argmax(logits, dim=-1) print("Prediction:", processor.batch_decode(predicted_ids)) print("Reference:", test_dataset["sentence"][:2]) ``` | 2521542e331a1dc6d0384d88d8adfb33 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Predictions *Some good ones ..... * | Predictions | Reference | |-------|-------| |फिर वो सूरज तारे पहाड बारिश पदछड़ दिन रात शाम नदी बर्फ़ समुद्र धुंध हवा कुछ भी हो सकती है | फिर वो सूरज तारे पहाड़ बारिश पतझड़ दिन रात शाम नदी बर्फ़ समुद्र धुंध हवा कुछ भी हो सकती है | | इस कारण जंगल में बडी दूर स्थित राघव के आश्रम में लोघ कम आने लगे और अधिकांश भक्त सुंदर के आश्रम में जाने लगे | इस कारण जंगल में बड़ी दूर स्थित राघव के आश्रम में लोग कम आने लगे और अधिकांश भक्त सुन्दर के आश्रम में जाने लगे | | अपने बचन के अनुसार शुभमूर्त पर अनंत दक्षिणी पर्वत गया और मंत्रों का जप करके सरोवर में उतरा | अपने बचन के अनुसार शुभमुहूर्त पर अनंत दक्षिणी पर्वत गया और मंत्रों का जप करके सरोवर में उतरा | *Some crappy stuff .... * | Predictions | Reference | |-------|-------| | वस गनिल साफ़ है। | उसका दिल साफ़ है। | | चाय वा एक कुछ लैंगे हब | चायवाय कुछ लेंगे आप | | टॉम आधे है स्कूल हें है | टॉम अभी भी स्कूल में है | | 598d43f17e870a7d61d9efdda5c969c5 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated on the following two datasets: 1. Custom dataset created from 20% of Indic, IIITH and CV (test): WER 17.xx% 2. CommonVoice Hindi test dataset: WER 56.xx% Links to the datasets are provided above (see the start of the README). Train-test CSV files are shared at the following links: a. IIITH [train](https://storage.googleapis.com/indic-dataset/train_test_splits/iiit_hi_train.csv) [test](https://storage.googleapis.com/indic-dataset/train_test_splits/iiit_hi_test.csv) b. Indic TTS [train](https://storage.googleapis.com/indic-dataset/train_test_splits/indic_train_full.csv) [test](https://storage.googleapis.com/indic-dataset/train_test_splits/indic_test_full.csv) Update the audio_path as per your local file structure. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re | 47cf107db57853744ef123fd4c24c8a8 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Load the datasets test_dataset = load_dataset("common_voice", "hi", split="test") indic = load_dataset("csv", data_files= {'train':"/workspace/data/hi2/indic_train_full.csv", "test": "/workspace/data/hi2/indic_test_full.csv"}, download_mode="force_redownload") iiith = load_dataset("csv", data_files= {"train": "/workspace/data/hi2/iiit_hi_train.csv", "test": "/workspace/data/hi2/iiit_hi_test.csv"}, download_mode="force_redownload") | bb64201ecb7a0b87d12a7337bc2243e4 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Drop columns of common_voice split = ['train', 'test', 'validation', 'other', 'invalidated'] for sp in split: common_voice[sp] = common_voice[sp].remove_columns(['client_id', 'up_votes', 'down_votes', 'age', 'gender', 'accent', 'locale', 'segment']) common_voice = common_voice.rename_column('path', 'audio_path') common_voice = common_voice.rename_column('sentence', 'target_text') train_dataset = datasets.concatenate_datasets([indic['train'], iiith['train'], common_voice['train']]) test_dataset = datasets.concatenate_datasets([indic['test'], iiith['test'], common_voice['test'], common_voice['validation']]) | ac9c9b03e0a9316945bc4557ad49eb66 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Load model from HF hub wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("skylord/wav2vec2-large-xlsr-hindi") model = Wav2Vec2ForCTC.from_pretrained("skylord/wav2vec2-large-xlsr-hindi") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\'\;\:\"\“\%\‘\”\�Utrnle\_]' unicode_ignore_regex = r'[dceMaWpmFui\xa0\u200d]' | 6db0944466548ca2cca6bf1930c839c0 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["target_text"] = re.sub(chars_to_ignore_regex, '', batch["target_text"]) batch["target_text"] = re.sub(unicode_ignore_regex, '', batch["target_text"]) speech_array, sampling_rate = torchaudio.load(batch["audio_path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | 2322c4278e92cd35a891222a7d786dc6 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result on custom dataset**: 17.23 % ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "hi", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("skylord/wav2vec2-large-xlsr-hindi") model = Wav2Vec2ForCTC.from_pretrained("skylord/wav2vec2-large-xlsr-hindi") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\'\;\:\"\“\%\‘\”\�Utrnle\_]' unicode_ignore_regex = r'[dceMaWpmFui\xa0\u200d]' | e94b359e9fb1688cb10d4317efdaff91 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["sentence"] = re.sub(unicode_ignore_regex, '', re.sub(chars_to_ignore_regex, '', batch["sentence"])) speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | 71baa630154a9135dbcbb5a92e2f3f8c |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result on CommonVoice**: 56.46 % | 0b737d41fd864aa422dcd59a9d00cb20 |
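The WER figures above come from the `wer` metric loaded with `load_metric`. As a rough illustration of what that number measures, here is a minimal pure-Python sketch of corpus-level word error rate. The function name `word_error_rate` is hypothetical and this is not the library's implementation, only the standard word-level edit-distance definition.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edits divided by total reference words."""
    total_edits = 0
    total_words = 0
    for ref, hyp in zip(references, predictions):
        ref_words, hyp_words = ref.split(), hyp.split()
        # Word-level edit distance via dynamic programming, one row at a time.
        previous = list(range(len(hyp_words) + 1))
        for i, ref_word in enumerate(ref_words, start=1):
            current = [i]
            for j, hyp_word in enumerate(hyp_words, start=1):
                substitution = previous[j - 1] + (ref_word != hyp_word)
                deletion = previous[j] + 1
                insertion = current[j - 1] + 1
                current.append(min(substitution, deletion, insertion))
            previous = current
        total_edits += previous[-1]
        total_words += len(ref_words)
    return total_edits / total_words


# Two substituted words out of five reference words.
print(word_error_rate(["tom is still at school"], ["tom was still in school"]))
```

Multiplying the returned fraction by 100 gives the percentage form reported in the card.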

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Training The Common Voice `train` and `validation` datasets were used for training, together with the Indic TTS and IIITH datasets linked above. The script used for training and the wandb dashboard can be found [here](https://wandb.ai/thinkevolve/huggingface/reports/Project-Hindi-XLSR-Large--Vmlldzo2MTI2MTQ) | ca5bec8b69a9da0ead25d1b4c82a9b31 |

apache-2.0 | [] | false | bert-base-zh-cased We are sharing smaller versions of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) that handle a custom number of languages. Unlike [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased), our versions give exactly the same representations produced by the original model which preserves the original accuracy. For more information please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf). | d9380340c177f379a2e152c5c4e48f1c |

apache-2.0 | [] | false | How to use ```python from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("Geotrend/bert-base-zh-cased") model = AutoModel.from_pretrained("Geotrend/bert-base-zh-cased") ``` To generate other smaller versions of multilingual transformers please visit [our Github repo](https://github.com/Geotrend-research/smaller-transformers). | 88154858e489a3842f902432ca5068a2 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0008 - train_batch_size: 64 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.01 - training_steps: 50354 - mixed_precision_training: Native AMP | e4f4cd7fb11acc893e87e8c0d13e0792 |

apache-2.0 | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['kejian/codeparrot-train-more-filter-3.3b-cleaned'], 'is_split_by_sentences': True}, 'generation': {'batch_size': 128, 'metrics_configs': [{}, {'n': 1}, {}], 'scenario_configs': [{'display_as_html': True, 'generate_kwargs': {'do_sample': True, 'eos_token_id': 0, 'max_length': 640, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 512}, {'display_as_html': True, 'generate_kwargs': {'do_sample': True, 'eos_token_id': 0, 'max_length': 272, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'functions', 'num_samples': 512, 'prompts_path': 'resources/functions_csnet.jsonl', 'use_prompt_for_scoring': True}], 'scorer_config': {}}, 'kl_gpt3_callback': {'gpt3_kwargs': {'model_name': 'code-cushman-001'}, 'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': True, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'path_or_name': 'codeparrot/codeparrot-small'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'codeparrot/codeparrot-small'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'kejian/final-mle-again', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0008, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000.0, 'output_dir': 'training_output', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 5000, 'save_strategy': 'steps', 'seed': 42, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | 121d3c4abd7ded631da8bf44ccf41aea |
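The `generate_kwargs` in the config above combine `temperature: 0.7`, `top_k: 0` and `top_p: 0.9`, which amounts to temperature-scaled nucleus sampling with no top-k cutoff. The following is a minimal sketch of that filtering step on a plain list of logits; `nucleus_filter` is a hypothetical helper and not the code path used by the generation library, whose tie-breaking and edge cases may differ.

```python
import math


def nucleus_filter(logits, temperature=0.7, top_p=0.9):
    """Return {token_index: probability} for the smallest top-p set, renormalized."""
    # Temperature below 1 sharpens the distribution before the softmax.
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Keep the most probable tokens until their cumulative mass reaches top_p.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for index in ranked:
        kept.append(index)
        cumulative += probs[index]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


print(nucleus_filter([2.0, 1.0, 0.0, -5.0]))
```

Sampling then draws the next token from the returned distribution, so low-probability tail tokens are never chosen.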

mit | [] | false | Mizkif on Stable Diffusion This is the `<mizkif>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). <br> <h3>here are some images i rendered with this model</h3> <span>graffiti wall</span> <img src="https://i.imgur.com/PIq7Y0w.png" alt="graffiti wall" width="200"/> <span>stained glass</span> <img src="https://i.imgur.com/QcwB5GF.png" alt="stained glass" width="200"/> <br> <h3>here are the images i used to train the model</h3>    | 84bc95417286676508c269ce64f156d1 |

cc-by-4.0 | ['spoken-language-understanding', 'speech-intent-classification', 'speech-slot-filling', 'SLURP', 'Conformer', 'Transformer', 'pytorch', 'NeMo'] | false | Model Overview This model performs joint intent classification and slot filling, directly from audio input. The model treats the problem as an audio-to-text problem, where the output text is the flattened string representation of the semantics annotation. The model is trained on the SLURP dataset [1]. | ba8e91f05502562d786f35f6d7ccdf15 |

cc-by-4.0 | ['spoken-language-understanding', 'speech-intent-classification', 'speech-slot-filling', 'SLURP', 'Conformer', 'Transformer', 'pytorch', 'NeMo'] | false | Model Architecture The model has an encoder-decoder architecture, where the encoder is a Conformer-Large model [2], and the decoder is a three-layer Transformer Decoder [3]. We use the Conformer encoder pretrained on NeMo ASR-Set (details [here](https://ngc.nvidia.com/models/nvidia:nemo:stt_en_conformer_ctc_large)), while the decoder is trained from scratch. A start-of-sentence (BOS) token and an end-of-sentence (EOS) token are added to each sentence. The model is trained end-to-end by minimizing the negative log-likelihood loss with teacher forcing. During inference, the prediction is generated by beam search, where a BOS token is used to trigger the generation process. | b53ba8c2db6153d818275682d7650fe6 |

cc-by-4.0 | ['spoken-language-understanding', 'speech-intent-classification', 'speech-slot-filling', 'SLURP', 'Conformer', 'Transformer', 'pytorch', 'NeMo'] | false | Training The NeMo toolkit [4] was used for training the models for around 100 epochs. These models are trained with this [example script](https://github.com/NVIDIA/NeMo/blob/main/examples/slu/slurp/run_slurp_train.py) and this [base config](https://github.com/NVIDIA/NeMo/blob/main/examples/slu/slurp/configs/conformer_transformer_large_bpe.yaml). The tokenizers for these models were built using the semantics annotations of the train set with this [script](https://github.com/NVIDIA/NeMo/blob/main/scripts/tokenizers/process_asr_text_tokenizer.py). We use a vocabulary size of 58, including the BOS, EOS and padding tokens. Details on how to train the model can be found [here](https://github.com/NVIDIA/NeMo/blob/main/examples/slu/speech_intent_slot/README.md). | da816205eb57c9d3033283c00bdd7e1e |

cc-by-4.0 | ['spoken-language-understanding', 'speech-intent-classification', 'speech-slot-filling', 'SLURP', 'Conformer', 'Transformer', 'pytorch', 'NeMo'] | false | Performance | | | | | **Intent (Scenario_Action)** | | **Entity** | | | **SLURP Metrics** | | |-------|--------------------------------------------------|----------------|--------------------------|------------------------------|---------------|------------|--------|--------------|-------------------|---------------------| |**Version**| **Model** | **Params (M)** | **Pretrained** | **Accuracy** | **Precision** | **Recall** | **F1** | **Precision** | **Recall** | **F1** | |1.13.0| Conformer-Transformer-Large | 127 | NeMo ASR-Set 3.0 | 90.14 | 78.95 | 74.93 | 76.89 | 84.31 | 80.33 | 82.27 | |Baseline| Conformer-Transformer-Large | 127 | None | 72.56 | 43.19 | 43.5 | 43.34 | 53.59 | 53.92 | 53.76 | Note: during inference, we use a beam size of 32 and a temperature of 1.25. | 6efc4c37b186941fa885cc765422b1c1 |
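The F1 columns in the table above are consistent with the usual harmonic mean of precision and recall. A small sketch, assuming that definition (the helper name `f1_score` is ours, not part of the NeMo evaluation code), reproduces the 1.13.0 row to within rounding:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall, in the same units as the inputs."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Entity and SLURP precision/recall pairs from the 1.13.0 row.
print(round(f1_score(78.95, 74.93), 2))
print(round(f1_score(84.31, 80.33), 2))
```

Because the published precision and recall are themselves rounded, a recomputed F1 can differ from the table in the last digit.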

cc-by-4.0 | ['spoken-language-understanding', 'speech-intent-classification', 'speech-slot-filling', 'SLURP', 'Conformer', 'Transformer', 'pytorch', 'NeMo'] | false | Automatically load the model from NGC ```python import nemo.collections.asr as nemo_asr asr_model = nemo_asr.models.SLUIntentSlotBPEModel.from_pretrained(model_name="slu_conformer_transformer_large_slurp") ``` | 744bd3fa2f47aa3dadefa1c1a8bc6bb3 |

cc-by-4.0 | ['spoken-language-understanding', 'speech-intent-classification', 'speech-slot-filling', 'SLURP', 'Conformer', 'Transformer', 'pytorch', 'NeMo'] | false | Predict intents and slots with this model ```shell python [NEMO_GIT_FOLDER]/examples/slu/speech_intent_slot/eval_utils/inference.py \ pretrained_name="slu_conformer_transformer_slurp" \ audio_dir="<DIRECTORY CONTAINING AUDIO FILES>" \ sequence_generator.type="<'beam' OR 'greedy' FOR BEAM/GREEDY SEARCH>" \ sequence_generator.beam_size="<SIZE OF BEAM>" \ sequence_generator.temperature="<TEMPERATURE FOR BEAM SEARCH>" ``` | b0efe9aafdc4247a3af36797a1ccee2c |

cc-by-4.0 | ['spoken-language-understanding', 'speech-intent-classification', 'speech-slot-filling', 'SLURP', 'Conformer', 'Transformer', 'pytorch', 'NeMo'] | false | References [1] [SLURP: A Spoken Language Understanding Resource Package](https://arxiv.org/abs/2011.13205) [2] [Conformer: Convolution-augmented Transformer for Speech Recognition](https://arxiv.org/abs/2005.08100) [3] [Attention Is All You Need](https://arxiv.org/abs/1706.03762?context=cs) [4] [NVIDIA NeMo Toolkit](https://github.com/NVIDIA/NeMo) | 237060722282d1f0a6d2ea6800f4cab9 |

apache-2.0 | ['generated_from_trainer'] | false | bart-mlm-paraphrasing This model is a fine-tuned version of [gayanin/bart-mlm-pubmed](https://huggingface.co/gayanin/bart-mlm-pubmed) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4617 - Rouge2 Precision: 0.8361 - Rouge2 Recall: 0.6703 - Rouge2 Fmeasure: 0.7304 | 8e87bd8a4545e2af572db02636143e11 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge2 Precision | Rouge2 Recall | Rouge2 Fmeasure | |:-------------:|:-----:|:-----:|:---------------:|:----------------:|:-------------:|:---------------:| | 0.4845 | 1.0 | 1325 | 0.4270 | 0.8332 | 0.6701 | 0.7294 | | 0.3911 | 2.0 | 2650 | 0.4195 | 0.8358 | 0.6713 | 0.7313 | | 0.328 | 3.0 | 3975 | 0.4119 | 0.8355 | 0.6706 | 0.7304 | | 0.2783 | 4.0 | 5300 | 0.4160 | 0.8347 | 0.6678 | 0.7284 | | 0.2397 | 5.0 | 6625 | 0.4329 | 0.8411 | 0.6747 | 0.7351 | | 0.2155 | 6.0 | 7950 | 0.4389 | 0.8382 | 0.6716 | 0.7321 | | 0.1888 | 7.0 | 9275 | 0.4432 | 0.838 | 0.6718 | 0.7323 | | 0.1724 | 8.0 | 10600 | 0.4496 | 0.8381 | 0.6714 | 0.7319 | | 0.1586 | 9.0 | 11925 | 0.4575 | 0.8359 | 0.6704 | 0.7303 | | 0.1496 | 10.0 | 13250 | 0.4617 | 0.8361 | 0.6703 | 0.7304 | | 7f1685c8adccae5f27c6fdf4c813726e |

mit | ['object-detection', 'object-tracking', 'video', 'video-object-segmentation'] | false | Model Details Unicorn accomplishes the great unification of the network architecture and the learning paradigm for four tracking tasks. Unicorn puts forward new state-of-the-art performance on many challenging tracking benchmarks using the same model parameters. This model has an input size of 800x1280. - License: This model is licensed under the MIT license - Resources for more information: - [Research Paper](https://arxiv.org/abs/2111.12085) - [GitHub Repo](https://github.com/MasterBin-IIAU/Unicorn) </model_details> <uses> | 321c3a25c38b6bed965f3dd1b2f759f0 |

mit | ['object-detection', 'object-tracking', 'video', 'video-object-segmentation'] | false | Direct Use This model can be used for: * Single Object Tracking (SOT) * Multiple Object Tracking (MOT) * Video Object Segmentation (VOS) * Multi-Object Tracking and Segmentation (MOTS) <Eval_Results> | e645958d1e35fc68d2a77ece1356f991 |

mit | ['object-detection', 'object-tracking', 'video', 'video-object-segmentation'] | false | Citation Information ```bibtex @inproceedings{unicorn, title={Towards Grand Unification of Object Tracking}, author={Yan, Bin and Jiang, Yi and Sun, Peize and Wang, Dong and Yuan, Zehuan and Luo, Ping and Lu, Huchuan}, booktitle={ECCV}, year={2022} } ``` </Cite> | 25651beb0ce129ab6ece8162670cb8e2 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3861 - Accuracy: 0.8675 - F1: 0.8704 | e15cfa1251f661e59ebc8b6a2a7773dd |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | 6bc3a286de27d01bc0541d4d489c24a5 |

apache-2.0 | ['generated_from_trainer'] | false | openai/whisper-small This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5636 - Wer: 13.4646 | 4d077549c632dfaece09de0270e367bb |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 64 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 4000 - mixed_precision_training: Native AMP | 977c0c360c3f2761a0cf2dbc8ece296d |
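The hyperparameters above describe a linear scheduler with 500 warmup steps over 4000 training steps and a peak learning rate of 1e-05. As a sketch of what that schedule looks like numerically, assuming the common "ramp up linearly, then decay linearly to zero" shape (the function `linear_schedule_lr` is illustrative, not the trainer's own code):

```python
def linear_schedule_lr(step, base_lr=1e-05, warmup_steps=500, total_steps=4000):
    """Learning rate at a given optimizer step for linear warmup plus linear decay."""
    if step < warmup_steps:
        # Ramp from 0 up to base_lr over the warmup phase.
        return base_lr * step / max(1, warmup_steps)
    # Decay from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 2250, 4000):
    print(step, linear_schedule_lr(step))
```

Under these assumptions the rate peaks at step 500 and is halved again at step 2250, the midpoint of the decay phase.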

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.1789 | 4.02 | 1000 | 0.3421 | 13.1199 | | 0.0264 | 8.04 | 2000 | 0.4579 | 13.5155 | | 0.0023 | 13.01 | 3000 | 0.5479 | 13.6539 | | 0.0011 | 17.03 | 4000 | 0.5636 | 13.4646 | | a436991ec9445c3b23ab88ba2abcdc73 |

apache-2.0 | ['automatic-speech-recognition', 'timit_asr', 'generated_from_trainer'] | false | sew-small-100k-timit This model is a fine-tuned version of [asapp/sew-small-100k](https://huggingface.co/asapp/sew-small-100k) on the TIMIT_ASR - NA dataset. It achieves the following results on the evaluation set: - Loss: 0.4926 - Wer: 0.2988 | 82800aff4b8e7e5d86c292e5625c5397 |

apache-2.0 | ['automatic-speech-recognition', 'timit_asr', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 32 - eval_batch_size: 1 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 20.0 - mixed_precision_training: Native AMP | 6abb44d76a6479b8c931b14ecd9f6eed |

apache-2.0 | ['automatic-speech-recognition', 'timit_asr', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.071 | 0.69 | 100 | 3.0262 | 1.0 | | 2.9304 | 1.38 | 200 | 2.9297 | 1.0 | | 2.8823 | 2.07 | 300 | 2.8367 | 1.0 | | 1.5668 | 2.76 | 400 | 1.2310 | 0.8807 | | 0.7422 | 3.45 | 500 | 0.7080 | 0.5957 | | 0.4121 | 4.14 | 600 | 0.5829 | 0.5073 | | 0.3981 | 4.83 | 700 | 0.5153 | 0.4461 | | 0.5038 | 5.52 | 800 | 0.4908 | 0.4151 | | 0.2899 | 6.21 | 900 | 0.5122 | 0.4111 | | 0.2198 | 6.9 | 1000 | 0.4908 | 0.3803 | | 0.2129 | 7.59 | 1100 | 0.4668 | 0.3789 | | 0.3007 | 8.28 | 1200 | 0.4788 | 0.3562 | | 0.2264 | 8.97 | 1300 | 0.5113 | 0.3635 | | 0.1536 | 9.66 | 1400 | 0.4950 | 0.3441 | | 0.1206 | 10.34 | 1500 | 0.5062 | 0.3421 | | 0.2021 | 11.03 | 1600 | 0.4900 | 0.3283 | | 0.1458 | 11.72 | 1700 | 0.5019 | 0.3307 | | 0.1151 | 12.41 | 1800 | 0.4989 | 0.3270 | | 0.0985 | 13.1 | 1900 | 0.4925 | 0.3173 | | 0.1412 | 13.79 | 2000 | 0.4868 | 0.3125 | | 0.1579 | 14.48 | 2100 | 0.4983 | 0.3147 | | 0.1043 | 15.17 | 2200 | 0.4914 | 0.3091 | | 0.0773 | 15.86 | 2300 | 0.4858 | 0.3102 | | 0.1327 | 16.55 | 2400 | 0.5084 | 0.3064 | | 0.1281 | 17.24 | 2500 | 0.5017 | 0.3025 | | 0.0845 | 17.93 | 2600 | 0.5001 | 0.3012 | | 0.0717 | 18.62 | 2700 | 0.4894 | 0.3004 | | 0.0835 | 19.31 | 2800 | 0.4963 | 0.2998 | | 0.1181 | 20.0 | 2900 | 0.4926 | 0.2988 | | af41e32f423bbb065dd4fda4a9ca4b8f |
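The Epoch and Step columns above are linked by the number of optimizer steps per epoch. A short worked check follows, assuming roughly 4620 training utterances, a single device, and no gradient accumulation; the 4620 figure is our assumption and is not stated in the card.

```python
import math


def steps_per_epoch(num_examples, batch_size):
    """Optimizer steps needed to see every example once (last batch may be partial)."""
    return math.ceil(num_examples / batch_size)


per_epoch = steps_per_epoch(4620, 32)  # 4620 is an assumed training-set size
print(per_epoch)                       # steps in one epoch
print(per_epoch * 20)                  # total steps for num_epochs = 20
print(round(100 / per_epoch, 2))       # epoch value logged at step 100
```

With those assumptions the arithmetic lines up with the table: 20 epochs end at step 2900, and step 100 falls at about epoch 0.69.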

mit | ['generated_from_trainer'] | false | elegant_galileo This model was trained from scratch on the tomekkorbak/pii-pile-chunk3-0-50000, the tomekkorbak/pii-pile-chunk3-50000-100000, the tomekkorbak/pii-pile-chunk3-100000-150000, the tomekkorbak/pii-pile-chunk3-150000-200000, the tomekkorbak/pii-pile-chunk3-200000-250000, the tomekkorbak/pii-pile-chunk3-250000-300000, the tomekkorbak/pii-pile-chunk3-300000-350000, the tomekkorbak/pii-pile-chunk3-350000-400000, the tomekkorbak/pii-pile-chunk3-400000-450000, the tomekkorbak/pii-pile-chunk3-450000-500000, the tomekkorbak/pii-pile-chunk3-500000-550000, the tomekkorbak/pii-pile-chunk3-550000-600000, the tomekkorbak/pii-pile-chunk3-600000-650000, the tomekkorbak/pii-pile-chunk3-650000-700000, the tomekkorbak/pii-pile-chunk3-700000-750000, the tomekkorbak/pii-pile-chunk3-750000-800000, the tomekkorbak/pii-pile-chunk3-800000-850000, the tomekkorbak/pii-pile-chunk3-850000-900000, the tomekkorbak/pii-pile-chunk3-900000-950000, the tomekkorbak/pii-pile-chunk3-950000-1000000, the tomekkorbak/pii-pile-chunk3-1000000-1050000, the tomekkorbak/pii-pile-chunk3-1050000-1100000, the tomekkorbak/pii-pile-chunk3-1100000-1150000, the tomekkorbak/pii-pile-chunk3-1150000-1200000, the tomekkorbak/pii-pile-chunk3-1200000-1250000, the tomekkorbak/pii-pile-chunk3-1250000-1300000, the tomekkorbak/pii-pile-chunk3-1300000-1350000, the tomekkorbak/pii-pile-chunk3-1350000-1400000, the tomekkorbak/pii-pile-chunk3-1400000-1450000, the tomekkorbak/pii-pile-chunk3-1450000-1500000, the tomekkorbak/pii-pile-chunk3-1500000-1550000, the tomekkorbak/pii-pile-chunk3-1550000-1600000, the tomekkorbak/pii-pile-chunk3-1600000-1650000, the tomekkorbak/pii-pile-chunk3-1650000-1700000, the tomekkorbak/pii-pile-chunk3-1700000-1750000, the tomekkorbak/pii-pile-chunk3-1750000-1800000, the tomekkorbak/pii-pile-chunk3-1800000-1850000, the tomekkorbak/pii-pile-chunk3-1850000-1900000 and the tomekkorbak/pii-pile-chunk3-1900000-1950000 datasets. 
| 8f5c818dbab7a9093dfb0eb05dabeed9 |

mit | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['tomekkorbak/pii-pile-chunk3-0-50000', 'tomekkorbak/pii-pile-chunk3-50000-100000', 'tomekkorbak/pii-pile-chunk3-100000-150000', 'tomekkorbak/pii-pile-chunk3-150000-200000', 'tomekkorbak/pii-pile-chunk3-200000-250000', 'tomekkorbak/pii-pile-chunk3-250000-300000', 'tomekkorbak/pii-pile-chunk3-300000-350000', 'tomekkorbak/pii-pile-chunk3-350000-400000', 'tomekkorbak/pii-pile-chunk3-400000-450000', 'tomekkorbak/pii-pile-chunk3-450000-500000', 'tomekkorbak/pii-pile-chunk3-500000-550000', 'tomekkorbak/pii-pile-chunk3-550000-600000', 'tomekkorbak/pii-pile-chunk3-600000-650000', 'tomekkorbak/pii-pile-chunk3-650000-700000', 'tomekkorbak/pii-pile-chunk3-700000-750000', 'tomekkorbak/pii-pile-chunk3-750000-800000', 'tomekkorbak/pii-pile-chunk3-800000-850000', 'tomekkorbak/pii-pile-chunk3-850000-900000', 'tomekkorbak/pii-pile-chunk3-900000-950000', 'tomekkorbak/pii-pile-chunk3-950000-1000000', 'tomekkorbak/pii-pile-chunk3-1000000-1050000', 'tomekkorbak/pii-pile-chunk3-1050000-1100000', 'tomekkorbak/pii-pile-chunk3-1100000-1150000', 'tomekkorbak/pii-pile-chunk3-1150000-1200000', 'tomekkorbak/pii-pile-chunk3-1200000-1250000', 'tomekkorbak/pii-pile-chunk3-1250000-1300000', 'tomekkorbak/pii-pile-chunk3-1300000-1350000', 'tomekkorbak/pii-pile-chunk3-1350000-1400000', 'tomekkorbak/pii-pile-chunk3-1400000-1450000', 'tomekkorbak/pii-pile-chunk3-1450000-1500000', 'tomekkorbak/pii-pile-chunk3-1500000-1550000', 'tomekkorbak/pii-pile-chunk3-1550000-1600000', 'tomekkorbak/pii-pile-chunk3-1600000-1650000', 'tomekkorbak/pii-pile-chunk3-1650000-1700000', 'tomekkorbak/pii-pile-chunk3-1700000-1750000', 'tomekkorbak/pii-pile-chunk3-1750000-1800000', 'tomekkorbak/pii-pile-chunk3-1800000-1850000', 'tomekkorbak/pii-pile-chunk3-1850000-1900000', 'tomekkorbak/pii-pile-chunk3-1900000-1950000'], 'filter_threshold': 0.000286, 'is_split_by_sentences': True, 'skip_tokens': 1649999872}, 'generation': {'force_call_on': [25177], 
'metrics_configs': [{}, {'n': 1}, {'n': 2}, {'n': 5}], 'scenario_configs': [{'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 2048}], 'scorer_config': {}}, 'kl_gpt3_callback': {'force_call_on': [25177], 'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': False, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'model_kwargs': {'revision': '9e6c78543a6ff1e4089002c38864d5a9cf71ec90'}, 'path_or_name': 'tomekkorbak/nervous_wozniak'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'gpt2'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 128, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'elegant_galileo', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0001, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000, 'output_dir': 'training_output2', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25177, 'save_strategy': 'steps', 'seed': 42, 'tokens_already_seen': 1649999872, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | d012646fd4a84babdfc2a8515c999e0a |

mit | ['pytorch', 'feature-extraction'] | false | Model Card: VinVL VisualBackbone Disclaimer: The model is taken from the official repository, it can be found here: [microsoft/scene_graph_benchmark](https://github.com/microsoft/scene_graph_benchmark) | 5a9b6fe4fb09fdcfe6e3f88dfdf11199 |

mit | ['pytorch', 'feature-extraction'] | false | Quick start: Feature extraction ```python from scene_graph_benchmark.wrappers import VinVLVisualBackbone img_file = "scene_graph_bechmark/demo/woman_fish.jpg" detector = VinVLVisualBackbone() dets = detector(img_file) ``` `dets` contains the following keys: ["boxes", "classes", "scores", "features", "spatial_features"] You can obtain VinVL's full visual features by concatenating the "features" and the "spatial_features": ```python import numpy as np v_feats = np.concatenate((dets['features'], dets['spatial_features']), axis=1) ``` | c153ab1b85d14077618a2366ba4670fa |

mit | ['pytorch', 'feature-extraction'] | false | Citations Please consider citing the original project and the VinVL paper ```BibTeX @misc{han2021image, title={Image Scene Graph Generation (SGG) Benchmark}, author={Xiaotian Han and Jianwei Yang and Houdong Hu and Lei Zhang and Jianfeng Gao and Pengchuan Zhang}, year={2021}, eprint={2107.12604}, archivePrefix={arXiv}, primaryClass={cs.CV} } @inproceedings{zhang2021vinvl, title={Vinvl: Revisiting visual representations in vision-language models}, author={Zhang, Pengchuan and Li, Xiujun and Hu, Xiaowei and Yang, Jianwei and Zhang, Lei and Wang, Lijuan and Choi, Yejin and Gao, Jianfeng}, booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition}, pages={5579--5588}, year={2021} } ``` | 29cb001cb0fe6d91f8f9f6b3d86cc325 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Album-Cover-Style Dreambooth model > trained by lckidwell with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Trained on ~80 album covers, mostly from the 50s and 60s, a mix of Jazz, pop, polka, religious, children's and other genres. | 9f800f51c28bcf339fdf31de5da508c2 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Sample Prompts: * Kanye plays jazz, albumcover style * Swingin' with Henry Kissinger, albumcover style * Jay Z Children's album, albumcover style * Polka Party with Machine Gun Kelly, albumcover style | cc58d6b346d97154960596f1d8e03609 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Sample pictures of this concept:               | 7469b3400cc7430fa7e1d5a1da449f48 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Moar Samples      | 9a6fdb7151de27ec254d3ebed1734300 |
