license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['flair', 'Text Classification', 'token-classification', 'sequence-tagger-model'] | false | Arabic NER Model using Flair Embeddings Training was conducted over 94 epochs, using a linear decaying learning rate of 2e-05, starting from 0.225 and a batch size of 32 with GloVe and Flair forward and backward embeddings. | b16a7237d7a54488d5963276e0735abd |

apache-2.0 | ['flair', 'Text Classification', 'token-classification', 'sequence-tagger-model'] | false | Results: - F1-score (micro) 0.8666 - F1-score (macro) 0.8488 | | Named Entity Type | True Posititves | False Positives | False Negatives | Precision | Recall | class-F1 | |------|-|----|----|----|-----------|--------|----------| | LOC | Location| 539 | 51 | 68 | 0.9136 | 0.8880 | 0.9006 | | MISC | Miscellaneous|408 | 57 | 89 | 0.8774 | 0.8209 | 0.8482 | | ORG | Organisation|167 | 43 | 64 | 0.7952 | 0.7229 | 0.7574 | | PER | Person (no title)|501 | 65 | 60 | 0.8852 | 0.8930 | 0.8891 | --- | 557b3269c06c5eacd57c4ae198a904d8 |

apache-2.0 | ['flair', 'Text Classification', 'token-classification', 'sequence-tagger-model'] | false | Usage ```python from flair.data import Sentence from flair.models import SequenceTagger import pyarabic.araby as araby from icecream import ic tagger = SequenceTagger.load("julien-c/flair-ner") arTagger = SequenceTagger.load('megantosh/flair-arabic-multi-ner') sentence = Sentence('George Washington went to Washington .') arSentence = Sentence('عمرو عادلي أستاذ للاقتصاد السياسي المساعد في الجامعة الأمريكية بالقاهرة .') | 6b1ae613893b0c2971069926479a80c6 |

apache-2.0 | ['flair', 'Text Classification', 'token-classification', 'sequence-tagger-model'] | false | Example ```bash 2021-07-07 14:30:59,649 loading file /Users/mega/.flair/models/flair-ner/f22eb997f66ae2eacad974121069abaefca5fe85fce71b49e527420ff45b9283.941c7c30b38aef8d8a4eb5c1b6dd7fe8583ff723fef457382589ad6a4e859cfc 2021-07-07 14:31:04,654 loading file /Users/mega/.flair/models/flair-arabic-multi-ner/c7af7ddef4fdcc681fcbe1f37719348afd2862b12aa1cfd4f3b93bd2d77282c7.242d030cb106124f7f9f6a88fb9af8e390f581d42eeca013367a86d585ee6dd6 ic| sentence.to_tagged_string: <bound method Sentence.to_tagged_string of Sentence: "George Washington went to Washington ." [− Tokens: 6 − Token-Labels: "George <B-PER> Washington <E-PER> went to Washington <S-LOC> ."]> ic| arSentence.to_tagged_string: <bound method Sentence.to_tagged_string of Sentence: "عمرو عادلي أستاذ للاقتصاد السياسي المساعد في الجامعة الأمريكية بالقاهرة ." [− Tokens: 11 − Token-Labels: "عمرو <B-PER> عادلي <I-PER> أستاذ للاقتصاد السياسي المساعد في الجامعة <B-ORG> الأمريكية <I-ORG> بالقاهرة <B-LOC> ."]> ic| entity: <PER-span (1,2): "George Washington"> ic| entity: <LOC-span (5): "Washington"> ic| entity: <PER-span (1,2): "عمرو عادلي"> ic| entity: <ORG-span (8,9): "الجامعة الأمريكية"> ic| entity: <LOC-span (10): "بالقاهرة"> ic| sentence.to_dict(tag_type='ner'): {"text":"عمرو عادلي أستاذ للاقتصاد السياسي المساعد في الجامعة الأمريكية بالقاهرة .", "labels":[], {"entities":[{{{ "text":"عمرو عادلي", "start_pos":0, "end_pos":10, "labels":[PER (0.9826)]}, {"text":"الجامعة الأمريكية", "start_pos":45, "end_pos":62, "labels":[ORG (0.7679)]}, {"text":"بالقاهرة", "start_pos":64, "end_pos":72, "labels":[LOC (0.8079)]}]} "text":"George Washington went to Washington .", "labels":[], "entities":[{ {"text":"George Washington", "start_pos":0, "end_pos":17, "labels":[PER (0.9968)]}, {"text":"Washington""start_pos":26, "end_pos":36, "labels":[LOC (0.9994)]}}]} ``` | 295d10c663bbff482b195a0c3f8b6e5d |

apache-2.0 | ['flair', 'Text Classification', 'token-classification', 'sequence-tagger-model'] | false | Model Configuration ```python SequenceTagger( (embeddings): StackedEmbeddings( (list_embedding_0): WordEmbeddings('glove') (list_embedding_1): FlairEmbeddings( (lm): LanguageModel( (drop): Dropout(p=0.1, inplace=False) (encoder): Embedding(7125, 100) (rnn): LSTM(100, 2048) (decoder): Linear(in_features=2048, out_features=7125, bias=True) ) ) (list_embedding_2): FlairEmbeddings( (lm): LanguageModel( (drop): Dropout(p=0.1, inplace=False) (encoder): Embedding(7125, 100) (rnn): LSTM(100, 2048) (decoder): Linear(in_features=2048, out_features=7125, bias=True) ) ) ) (word_dropout): WordDropout(p=0.05) (locked_dropout): LockedDropout(p=0.5) (embedding2nn): Linear(in_features=4196, out_features=4196, bias=True) (rnn): LSTM(4196, 256, batch_first=True, bidirectional=True) (linear): Linear(in_features=512, out_features=15, bias=True) (beta): 1.0 (weights): None (weight_tensor) None ``` Due to the right-to-left in left-to-right context, some formatting errors might occur. and your code might appear like [this](https://ibb.co/ky20Lnq), (link accessed on 2020-10-27) | 1f2dd069f053c7a4cb6fcf36272d2203 |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-work-1-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3586 - Accuracy: 0.3689 | fe7776a761b2a1140d3fcd9f1895384b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 2.8058 | 1.0 | 1 | 2.6169 | 0.2356 | | 2.3524 | 2.0 | 2 | 2.5215 | 0.2978 | | 1.9543 | 3.0 | 3 | 2.4427 | 0.3422 | | 1.5539 | 4.0 | 4 | 2.3874 | 0.36 | | 1.4133 | 5.0 | 5 | 2.3586 | 0.3689 | | 774cfb0140f7f85f61f8da255620eb29 |

apache-2.0 | ['generated_from_trainer'] | false | Learning-sentiment-analysis-through-imdb-ds This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3419 - Accuracy: 0.8767 - F1: 0.8818 | ae526531f27d5e531f39e5b417769b87 |

other | ['vision', 'image-segmentation'] | false | Mask2Former Mask2Former model trained on Cityscapes semantic segmentation (base-IN21k, Swin backbone). It was introduced in the paper [Masked-attention Mask Transformer for Universal Image Segmentation ](https://arxiv.org/abs/2112.01527) and first released in [this repository](https://github.com/facebookresearch/Mask2Former/). Disclaimer: The team releasing Mask2Former did not write a model card for this model so this model card has been written by the Hugging Face team. | 65b3aa1c89bb08fbe2ee4dfbe5ceac30 |

other | ['vision', 'image-segmentation'] | false | load Mask2Former fine-tuned on Cityscapes semantic segmentation processor = AutoImageProcessor.from_pretrained("facebook/mask2former-swin-base-IN21k-cityscapes-semantic") model = Mask2FormerForUniversalSegmentation.from_pretrained("facebook/mask2former-swin-base-IN21k-cityscapes-semantic") url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) inputs = processor(images=image, return_tensors="pt") with torch.no_grad(): outputs = model(**inputs) | 07e61fe09606b74d6bda24f4ad04a829 |

apache-2.0 | ['generated_from_keras_callback'] | false | edgertej/poebert-eras-balanced This model is a fine-tuned version of [edgertej/poebert-eras-balanced](https://huggingface.co/edgertej/poebert-eras-balanced) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 3.5715 - Validation Loss: 3.3710 - Epoch: 6 | 3f3dcc4d4b59ad561d1a71354bec9548 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adam', 'learning_rate': 3e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False} - training_precision: float32 | 6c09c39e4f8c05ebc00a0a30f84bff0f |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 3.8002 | 3.5259 | 0 | | 3.7486 | 3.4938 | 1 | | 3.7053 | 3.4520 | 2 | | 3.7315 | 3.4211 | 3 | | 3.6226 | 3.4031 | 4 | | 3.6021 | 3.3968 | 5 | | 3.5715 | 3.3710 | 6 | | 49d2e3825087b59889f99549dcf4306a |

apache-2.0 | ['automatic-speech-recognition', 'zh-CN'] | false | exp_w2v2t_zh-cn_vp-es_s408 Fine-tuned [facebook/wav2vec2-large-es-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-es-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (zh-CN)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 0d0cbb6e2df9a1589374ca40ba964d5a |

apache-2.0 | [] | false | Model description **CAMeLBERT-DA NER Model** is a Named Entity Recognition (NER) model that was built by fine-tuning the [CAMeLBERT Dialectal Arabic (DA)](https://huggingface.co/CAMeL-Lab/bert-base-arabic-camelbert-da/) model. For the fine-tuning, we used the [ANERcorp](https://camel.abudhabi.nyu.edu/anercorp/) dataset. Our fine-tuning procedure and the hyperparameters we used can be found in our paper *"[The Interplay of Variant, Size, and Task Type in Arabic Pre-trained Language Models](https://arxiv.org/abs/2103.06678)." * Our fine-tuning code can be found [here](https://github.com/CAMeL-Lab/CAMeLBERT). | 71c00bb322da3ba7de521800a14ed50c |

apache-2.0 | [] | false | Intended uses You can use the CAMeLBERT-DA NER model directly as part of our [CAMeL Tools](https://github.com/CAMeL-Lab/camel_tools) NER component (*recommended*) or as part of the transformers pipeline. | b1d1a652b87b8f2601929e0b512aef9b |

apache-2.0 | [] | false | How to use To use the model with the [CAMeL Tools](https://github.com/CAMeL-Lab/camel_tools) NER component: ```python >>> from camel_tools.ner import NERecognizer >>> from camel_tools.tokenizers.word import simple_word_tokenize >>> ner = NERecognizer('CAMeL-Lab/bert-base-arabic-camelbert-da-ner') >>> sentence = simple_word_tokenize('إمارة أبوظبي هي إحدى إمارات دولة الإمارات العربية المتحدة السبع') >>> ner.predict_sentence(sentence) >>> ['O', 'B-LOC', 'O', 'O', 'O', 'O', 'B-LOC', 'I-LOC', 'I-LOC', 'O'] ``` You can also use the NER model directly with a transformers pipeline: ```python >>> from transformers import pipeline >>> ner = pipeline('ner', model='CAMeL-Lab/bert-base-arabic-camelbert-da-ner') >>> ner("إمارة أبوظبي هي إحدى إمارات دولة الإمارات العربية المتحدة السبع") [{'word': 'أبوظبي', 'score': 0.9895730018615723, 'entity': 'B-LOC', 'index': 2, 'start': 6, 'end': 12}, {'word': 'الإمارات', 'score': 0.8156259655952454, 'entity': 'B-LOC', 'index': 8, 'start': 33, 'end': 41}, {'word': 'العربية', 'score': 0.890906810760498, 'entity': 'I-LOC', 'index': 9, 'start': 42, 'end': 49}, {'word': 'المتحدة', 'score': 0.8169114589691162, 'entity': 'I-LOC', 'index': 10, 'start': 50, 'end': 57}] ``` *Note*: to download our models, you would need `transformers>=3.5.0`. Otherwise, you could download the models manually. | 2530b7275a6486d08934ce3b491bf4bf |

apache-2.0 | [] | false | Citation ```bibtex @inproceedings{inoue-etal-2021-interplay, title = "The Interplay of Variant, Size, and Task Type in {A}rabic Pre-trained Language Models", author = "Inoue, Go and Alhafni, Bashar and Baimukan, Nurpeiis and Bouamor, Houda and Habash, Nizar", booktitle = "Proceedings of the Sixth Arabic Natural Language Processing Workshop", month = apr, year = "2021", address = "Kyiv, Ukraine (Online)", publisher = "Association for Computational Linguistics", abstract = "In this paper, we explore the effects of language variants, data sizes, and fine-tuning task types in Arabic pre-trained language models. To do so, we build three pre-trained language models across three variants of Arabic: Modern Standard Arabic (MSA), dialectal Arabic, and classical Arabic, in addition to a fourth language model which is pre-trained on a da of the three. We also examine the importance of pre-training data size by building additional models that are pre-trained on a scaled-down set of the MSA variant. We compare our different models to each other, as well as to eight publicly available models by fine-tuning them on five NLP tasks spanning 12 datasets. Our results suggest that the variant proximity of pre-training data to fine-tuning data is more important than the pre-training data size. We exploit this insight in defining an optimized system selection model for the studied tasks.", } ``` | e73bf6464746aadfb42b99cc9442cfb6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | No log | 1.0 | 125 | 2.8679 | 23.1742 | 9.8716 | 18.5896 | 20.7943 | 19.0 | | 21552394fa84873a28aa8b569e47c272 |

mit | ['generated_from_trainer'] | false | tluo_xml_roberta_base_amazon_review_sentiment_v2 This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.9630 - Accuracy: 0.6057 | 577f6d46215daa96e95cb1dad6b56d85 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 123 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 - mixed_precision_training: Native AMP | 35ca8b9ac397eeba07c79092ff3bc6d1 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 1.0561 | 0.33 | 5000 | 0.9954 | 0.567 | | 0.948 | 0.67 | 10000 | 0.9641 | 0.5862 | | 0.9557 | 1.0 | 15000 | 0.9605 | 0.589 | | 0.8891 | 1.33 | 20000 | 0.9420 | 0.5875 | | 0.8889 | 1.67 | 25000 | 0.9397 | 0.592 | | 0.8777 | 2.0 | 30000 | 0.9236 | 0.6042 | | 0.778 | 2.33 | 35000 | 0.9612 | 0.5972 | | 0.7589 | 2.67 | 40000 | 0.9728 | 0.5995 | | 0.7593 | 3.0 | 45000 | 0.9630 | 0.6057 | | 13d22810d6bde6625d15134933ccdd94 |

mit | ['generated_from_keras_callback'] | false | huynhdoo/camembert-base-finetuned-jva-missions-report This model is a fine-tuned version of [camembert-base](https://huggingface.co/camembert-base) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0542 - Train Accuracy: 0.9844 - Validation Loss: 0.5073 - Validation Accuracy: 0.8436 - Epoch: 4 | fe4b1c2da64b2c553748acc10c989c67 |

mit | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': True, 'is_legacy_optimizer': False, 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5e-05, 'decay_steps': 1005, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False} - training_precision: float32 | 4a0660c724643cb6dff43005366b40f5 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch | |:----------:|:--------------:|:---------------:|:-------------------:|:-----:| | 0.4753 | 0.7890 | 0.3616 | 0.8547 | 0 | | 0.3120 | 0.8799 | 0.3702 | 0.8492 | 1 | | 0.1824 | 0.9340 | 0.3928 | 0.8547 | 2 | | 0.0972 | 0.9714 | 0.4849 | 0.8436 | 3 | | 0.0542 | 0.9844 | 0.5073 | 0.8436 | 4 | | 57cf7274388b4f4c2e582a3ebab873bb |

creativeml-openrail-m | ['text-to-image'] | false | Sonic06-Diffusion on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook | 8e72c85e3fce191f8e9d1d8d639bb950 |

creativeml-openrail-m | ['text-to-image'] | false | Model by Laughify This is a fine-tuned Stable Diffusion model trained on screenshots from Sonic The Hedgehog 2006 game. Use saisikwrd in your prompts for the effect. **A4FD8847-3EFF-4770-BDDA-A9404B5D436E.png, EDCBECAC-0116-4170-8A43-16F2F8FCC119.png, 815527F3-1658-4655-B0A9-6F0C73CED7D7.png** You can also train your own concepts and upload them to the library by using [the fast-DremaBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). You can run your new concept via A1111 Colab :[Fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Sample pictures of this concept: 815527F3-1658-4655-B0A9-6F0C73CED7D7.png EDCBECAC-0116-4170-8A43-16F2F8FCC119.png A4FD8847-3EFF-4770-BDDA-A9404B5D436E.png    | 6504eef67f78b8539ff32d5a188937fa |

apache-2.0 | [] | false | Example Usage ```python from transformers import AutoTokenizer, T5ForConditionalGeneration tokenizer = AutoTokenizer.from_pretrained("laituan245/molt5-small", model_max_length=512) model = T5ForConditionalGeneration.from_pretrained('laituan245/molt5-small') ``` | f658dd43953802cd9183434d4a8996dd |

apache-2.0 | ['summarization'] | false | Longformer Encoder-Decoder (LED) fine-tuned on Billsum This model is a fine-tuned version of [led-base-16384](https://huggingface.co/allenai/led-base-16384) on the [billsum](https://huggingface.co/datasets/billsum) dataset. As described in [Longformer: The Long-Document Transformer](https://arxiv.org/pdf/2004.05150.pdf) by Iz Beltagy, Matthew E. Peters, Arman Cohan, *led-base-16384* was initialized from [*bart-base*](https://huggingface.co/facebook/bart-base) since both models share the exact same architecture. To be able to process 16K tokens, *bart-base*'s position embedding matrix was simply copied 16 times. | 1a00b335bb985a0f53d6e699b5dc714c |

apache-2.0 | ['summarization'] | false | How to use ```Python from transformers import AutoModelForSeq2SeqLM, AutoTokenizer device = "cuda" if torch.cuda.is_available() else "cpu" tokenizer = AutoTokenizer.from_pretrained("d0r1h/LEDBill") model = AutoModelForSeq2SeqLM.from_pretrained("d0r1h/LEDBill", return_dict_in_generate=True).to(device) case = "......." input_ids = tokenizer(case, return_tensors="pt").input_ids.to(device) global_attention_mask = torch.zeros_like(input_ids) global_attention_mask[:, 0] = 1 sequences = model.generate(input_ids, global_attention_mask=global_attention_mask).sequences summary = tokenizer.batch_decode(sequences, skip_special_tokens=True) ``` | d65e3c637256df6fc4a9180e53bc7e81 |

apache-2.0 | ['summarization'] | false | Evaluation results When the model is used for summarizing Billsum documents(10 sample), it achieves the following results: | Model | rouge1-f | rouge1-p | rouge2-f | rouge2-p | rougeL-f | rougeL-p | |:-----------:|:-----:|:-----:|:------:|:-----:|:------:|:-----:| | LEDBill | **34** | **37** | **15** | **16** | **30** | **32** | | led-base | 2 | 15 | 0 | 0 | 2 | 15 | [This notebook](https://colab.research.google.com/drive/1iEEFbWeTGUSDesmxHIU2QDsPQM85Ka1K?usp=sharing) shows how *led* can effectively be used for downstream task such summarization. | b876d5f90b4609648d18968e2faeb882 |

apache-2.0 | ['diffusion model', 'stable diffusion', 'v1.4'] | false | This repository hosts the TFLite version of `diffusion model` part of [KerasCV Stable Diffusion](https://github.com/keras-team/keras-cv/tree/master/keras_cv/models/stable_diffusion). Stable Diffusion consists of `text encoder`, `diffusion model`, `decoder`, and some glue codes to handl inputs and outputs of each part. The TFLite version of `diffusion model` in this repository is built not only with the `diffusion model` itself but also TensorFlow operations that takes `context`, `unconditional context` from `text encoder` and generates `latent`. The `latent` output should be passed down to the `decoder` which is hosted in [this repository](https://huggingface.co/keras-sd/decoder-tflite/tree/main). TFLite conversion was based on the `SavedModel` from [this repository](https://huggingface.co/keras-sd/tfs-text-encoder/tree/main), and TensorFlow version `>= 2.12-nightly` was used. - NOTE: [Dynamic range quantization](https://www.tensorflow.org/lite/performance/post_training_quant | 6e5b2df271c455f488125bcf0df93688 |

apache-2.0 | ['diffusion model', 'stable diffusion', 'v1.4'] | false | optimizing_an_existing_model) was used. - NOTE: TensorFlow version `< 2.12-nightly` will fail for the conversion process. - NOTE: For those who wonder how `SavedModel` is constructed, find it in [keras-sd-serving repository](https://github.com/deep-diver/keras-sd-serving). | 5a0e9d7f907a92ae3d52ff3b7b1cd62a |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 3 | 11a9b29b23e3e96b2e5123fa03d9b4dd |

apache-2.0 | ['generated_from_trainer'] | false | sentiment-model-sample-ekman-emotion This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.4963 - Accuracy: 0.6713 | c028b5db08bf3310a51c01bcd72cec55 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 2 - eval_batch_size: 1 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | 0fd578ec9506afdc01dec93a3495019f |

mit | [] | false | klance on Stable Diffusion This is the `<klance>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:       | 473fcda1004021e85d1bee1c80fa8388 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased__hate_speech_offensive__train-8-1 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.1013 - Accuracy: 0.0915 | 7bf32452e8f5b23c976bb5981cd9397b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.0866 | 1.0 | 5 | 1.1363 | 0.0 | | 1.0439 | 2.0 | 10 | 1.1803 | 0.0 | | 1.0227 | 3.0 | 15 | 1.2162 | 0.2 | | 0.9111 | 4.0 | 20 | 1.2619 | 0.0 | | 0.8243 | 5.0 | 25 | 1.2929 | 0.2 | | 0.7488 | 6.0 | 30 | 1.3010 | 0.2 | | 0.62 | 7.0 | 35 | 1.3011 | 0.2 | | 0.5054 | 8.0 | 40 | 1.2931 | 0.4 | | 0.4191 | 9.0 | 45 | 1.3274 | 0.4 | | 0.4107 | 10.0 | 50 | 1.3259 | 0.4 | | 0.3376 | 11.0 | 55 | 1.2800 | 0.4 | | 37f431c16211bbf0a0c9fa5f6e659049 |

cc-by-4.0 | ['TTS', 'audio', 'synthesis', 'VITS', 'speech', 'coqui.ai', 'pytorch'] | false | Model description This model was trained from scratch using the [Coqui TTS](https://github.com/coqui-ai/TTS) toolkit on a combination of 3 datasets: [Festcat](http://festcat.talp.cat/devel.php), high quality open speech dataset of [Google](http://openslr.org/69/) (can be found in [OpenSLR 69](https://huggingface.co/datasets/openslr/viewer/SLR69/train)) and [Common Voice v8](https://commonvoice.mozilla.org/ca). For the training, 101460 utterances consisting of 257 speakers were used, which corresponds to nearly 138 hours of speech. A live inference demo can be found in our spaces, [here](https://huggingface.co/spaces/projecte-aina/tts-ca-coqui-vits-multispeaker). | b14125b885b71726889d458329886133 |

cc-by-4.0 | ['TTS', 'audio', 'synthesis', 'VITS', 'speech', 'coqui.ai', 'pytorch'] | false | egg=TTS ``` Synthesize a speech using python: ```bash import tempfile import gradio as gr import numpy as np import os import json from typing import Optional from TTS.config import load_config from TTS.utils.manage import ModelManager from TTS.utils.synthesizer import Synthesizer model_path = | 0f1ca2c80cc7dc60f62a383f7da11c1d |

cc-by-4.0 | ['TTS', 'audio', 'synthesis', 'VITS', 'speech', 'coqui.ai', 'pytorch'] | false | Absolute path to speakers.pth file text = "Text to synthetize" speaker_idx = "Speaker ID" synthesizer = Synthesizer( model_path, config_path, speakers_file_path, None, None, None, ) wavs = synthesizer.tts(text, speaker_idx) ``` | 45fb86f256832509a4b8a633b3f87a3d |

cc-by-4.0 | ['TTS', 'audio', 'synthesis', 'VITS', 'speech', 'coqui.ai', 'pytorch'] | false | Data preparation The data has been processed using the script [process_data.sh](https://huggingface.co/projecte-aina/tts-ca-coqui-vits-multispeaker/blob/main/data_processing/process_data.sh), which reduces the sampling frequency of the audios, eliminates silences, adds padding and structures the data in the format accepted by the framework. You can find more information [here](https://huggingface.co/projecte-aina/tts-ca-coqui-vits-multispeaker/blob/main/data_processing/README.md). | 9d67de99f1b4c5a90349ec0409d66158 |

cc-by-4.0 | ['TTS', 'audio', 'synthesis', 'VITS', 'speech', 'coqui.ai', 'pytorch'] | false | Hyperparameter The model is based on VITS proposed by [Kim et al](https://arxiv.org/abs/2106.06103). The following hyperparameters were set in the coqui framework. | Hyperparameter | Value | |------------------------------------|----------------------------------| | Model | vits | | Batch Size | 16 | | Eval Batch Size | 8 | | Mixed Precision | false | | Window Length | 1024 | | Hop Length | 256 | | FTT size | 1024 | | Num Mels | 80 | | Phonemizer | espeak | | Phoneme Lenguage | ca | | Text Cleaners | multilingual_cleaners | | Formatter | vctk_old | | Optimizer | adam | | Adam betas | (0.8, 0.99) | | Adam eps | 1e-09 | | Adam weight decay | 0.01 | | Learning Rate Gen | 0.0001 | | Lr. schedurer Gen | ExponentialLR | | Lr. schedurer Gamma Gen | 0.999875 | | Learning Rate Disc | 0.0001 | | Lr. schedurer Disc | ExponentialLR | | Lr. schedurer Gamma Disc | 0.999875 | The model was trained for 730962 steps. | a83d1e6ec01159e4b6a2201c5eff5526 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad-seed-420 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.9590 | ca14f687877240eb7c806b203e0e3e91 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 2.4491 | 1.0 | 8248 | 2.1014 | | 2.1388 | 2.0 | 16496 | 1.9590 | | 7df26cc2877335a20af2c9633233183e |

apache-2.0 | ['translation'] | false | eng-zlw * source group: English * target group: West Slavic languages * OPUS readme: [eng-zlw](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-zlw/README.md) * model: transformer * source language(s): eng * target language(s): ces csb_Latn dsb hsb pol * model: transformer * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus2m-2020-08-02.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-zlw/opus2m-2020-08-02.zip) * test set translations: [opus2m-2020-08-02.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-zlw/opus2m-2020-08-02.test.txt) * test set scores: [opus2m-2020-08-02.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-zlw/opus2m-2020-08-02.eval.txt) | 380116f6d28bf19ef8e1696ef8dccd9d |

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | newssyscomb2009-engces.eng.ces | 20.6 | 0.488 | | news-test2008-engces.eng.ces | 18.3 | 0.466 | | newstest2009-engces.eng.ces | 19.8 | 0.483 | | newstest2010-engces.eng.ces | 19.8 | 0.486 | | newstest2011-engces.eng.ces | 20.6 | 0.489 | | newstest2012-engces.eng.ces | 18.6 | 0.464 | | newstest2013-engces.eng.ces | 22.3 | 0.495 | | newstest2015-encs-engces.eng.ces | 21.7 | 0.502 | | newstest2016-encs-engces.eng.ces | 24.5 | 0.521 | | newstest2017-encs-engces.eng.ces | 20.1 | 0.480 | | newstest2018-encs-engces.eng.ces | 19.9 | 0.483 | | newstest2019-encs-engces.eng.ces | 21.2 | 0.490 | | Tatoeba-test.eng-ces.eng.ces | 43.7 | 0.632 | | Tatoeba-test.eng-csb.eng.csb | 1.2 | 0.188 | | Tatoeba-test.eng-dsb.eng.dsb | 1.5 | 0.167 | | Tatoeba-test.eng-hsb.eng.hsb | 5.7 | 0.199 | | Tatoeba-test.eng.multi | 42.8 | 0.632 | | Tatoeba-test.eng-pol.eng.pol | 43.2 | 0.641 | | bab4c843da62a10463fe24d094d1ebe5 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: eng-zlw - source_languages: eng - target_languages: zlw - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-zlw/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['en', 'pl', 'cs', 'zlw'] - src_constituents: {'eng'} - tgt_constituents: {'csb_Latn', 'dsb', 'hsb', 'pol', 'ces'} - src_multilingual: False - tgt_multilingual: True - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-zlw/opus2m-2020-08-02.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-zlw/opus2m-2020-08-02.test.txt - src_alpha3: eng - tgt_alpha3: zlw - short_pair: en-zlw - chrF2_score: 0.632 - bleu: 42.8 - brevity_penalty: 0.973 - ref_len: 65397.0 - src_name: English - tgt_name: West Slavic languages - train_date: 2020-08-02 - src_alpha2: en - tgt_alpha2: zlw - prefer_old: False - long_pair: eng-zlw - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 8eabcb5fa74a2ee1f23ecac08d28d32c |

creativeml-openrail-m | [] | false | Science Fiction space station textual embedding for Stable Diffusion 2.0. This embedding is trained on 42 images from Marcel Deneuve's Artstation (https://www.artstation.com/marceldeneuve), then further tuned with an expanded dataset that includes 96 additional images generated with the initial embedding alongside specific prompting tailored to improving the quality. Example generations:  _Prompt: "Deneuve Station" Steps: 10, Sampler: DPM++ SDE Karras, CFG scale: 5, Seed: 461940410, Size: 768x768, Model hash: 2c02b20a_  _Prompt: "Deneuve Station" Steps: 10, Sampler: DPM++ SDE Karras, CFG scale: 5, Seed: 2907310488, Size: 768x768, Model hash: 2c02b20a_  _Prompt: "Deneuve Station" Steps: 10, Sampler: DPM++ SDE Karras, CFG scale: 5, Seed: 2937662716, Size: 768x768, Model hash: 2c02b20a_ | 842331694da10aeadea077c58eb31925 |

mit | ['generated_from_trainer'] | false | xlm-roberta-profane-final This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.3272 - Accuracy: 0.9087 - Precision: 0.8411 - Recall: 0.8441 - F1: 0.8426 | 52c193c48ca0d911e1bac14ad41a724e |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | No log | 1.0 | 296 | 0.2705 | 0.9030 | 0.8368 | 0.8192 | 0.8276 | | 0.3171 | 2.0 | 592 | 0.2174 | 0.9192 | 0.8847 | 0.8204 | 0.8476 | | 0.3171 | 3.0 | 888 | 0.2250 | 0.9202 | 0.8658 | 0.8531 | 0.8593 | | 0.2162 | 4.0 | 1184 | 0.2329 | 0.9106 | 0.8422 | 0.8538 | 0.8478 | | 0.2162 | 5.0 | 1480 | 0.2260 | 0.9183 | 0.8584 | 0.8584 | 0.8584 | | 0.1766 | 6.0 | 1776 | 0.2638 | 0.9116 | 0.8409 | 0.8651 | 0.8522 | | 0.146 | 7.0 | 2072 | 0.3088 | 0.9125 | 0.8494 | 0.8464 | 0.8478 | | 0.146 | 8.0 | 2368 | 0.2873 | 0.9154 | 0.8568 | 0.8459 | 0.8512 | | 0.1166 | 9.0 | 2664 | 0.3227 | 0.9144 | 0.8518 | 0.8518 | 0.8518 | | 0.1166 | 10.0 | 2960 | 0.3272 | 0.9087 | 0.8411 | 0.8441 | 0.8426 | | 9038f7be195d3b1f6120834da388333f |

apache-2.0 | ['generated_from_keras_callback'] | false | jiseong/mt5-small-finetuned-news This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1208 - Validation Loss: 0.1012 - Epoch: 2 | 22aff77ecaba62376ba4e449bcc7f10a |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 0.1829 | 0.1107 | 0 | | 0.1421 | 0.1135 | 1 | | 0.1208 | 0.1012 | 2 | | cd48fed4bb5fa140106f6716e876d3c1 |

apache-2.0 | ['translation'] | false | opus-mt-es-csn * source languages: es * target languages: csn * OPUS readme: [es-csn](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/es-csn/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/es-csn/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-csn/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-csn/opus-2020-01-16.eval.txt) | 1f1625370fd77950eefbe56bbab709a0 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2270 - Accuracy: 0.9245 - F1: 0.9249 | ebd3434a6fa7ed7d6e377b4039fe84b0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8398 | 1.0 | 250 | 0.3276 | 0.9005 | 0.8966 | | 0.2541 | 2.0 | 500 | 0.2270 | 0.9245 | 0.9249 | | fd14e62a1248ae07efc4adc47edcee17 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-it This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.2380 - F1 Score: 0.8289 | 1e110fab938d095809f5fe439581479a |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 Score | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.7058 | 1.0 | 70 | 0.3183 | 0.7480 | | 0.2808 | 2.0 | 140 | 0.2647 | 0.8070 | | 0.1865 | 3.0 | 210 | 0.2380 | 0.8289 | | 27d91a59a1c4eb80fd117ae3eb925562 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2057 - Accuracy: 0.9255 - F1: 0.9257 | d4462fcf4d4c8fc41adc3d7b39fc664a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8084 | 1.0 | 250 | 0.2883 | 0.9125 | 0.9110 | | 0.2371 | 2.0 | 500 | 0.2057 | 0.9255 | 0.9257 | | 3423e92e4d97c235066b7aba9ba5ac06 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_add_GLUE_Experiment_qqp This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE QQP dataset. It achieves the following results on the evaluation set: - Loss: 0.4050 - Accuracy: 0.8320 - F1: 0.7639 - Combined Score: 0.7979 | 8346f9f3e38356af7ba0df2a6071234e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Combined Score | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|:--------------:| | 0.5406 | 1.0 | 1422 | 0.4844 | 0.7648 | 0.6276 | 0.6962 | | 0.4161 | 2.0 | 2844 | 0.4451 | 0.8044 | 0.6939 | 0.7491 | | 0.3079 | 3.0 | 4266 | 0.4050 | 0.8320 | 0.7639 | 0.7979 | | 0.2338 | 4.0 | 5688 | 0.4633 | 0.8388 | 0.7715 | 0.8052 | | 0.1801 | 5.0 | 7110 | 0.5597 | 0.8346 | 0.7489 | 0.7918 | | 0.1433 | 6.0 | 8532 | 0.5641 | 0.8460 | 0.7774 | 0.8117 | | 0.1155 | 7.0 | 9954 | 0.5940 | 0.8481 | 0.7889 | 0.8185 | | 0.0963 | 8.0 | 11376 | 0.6896 | 0.8438 | 0.7670 | 0.8054 | | 60ac771d0f245b233e9efa68f1942b9d |

apache-2.0 | ['part-of-speech', 'token-classification'] | false | XLM-RoBERTa base Universal Dependencies v2.8 POS tagging: Basque This model is part of our paper called: - Make the Best of Cross-lingual Transfer: Evidence from POS Tagging with over 100 Languages Check the [Space](https://huggingface.co/spaces/wietsedv/xpos) for more details. | aa3da65c3f198e73cc2f796567ade582 |

apache-2.0 | ['part-of-speech', 'token-classification'] | false | Usage ```python from transformers import AutoTokenizer, AutoModelForTokenClassification tokenizer = AutoTokenizer.from_pretrained("wietsedv/xlm-roberta-base-ft-udpos28-eu") model = AutoModelForTokenClassification.from_pretrained("wietsedv/xlm-roberta-base-ft-udpos28-eu") ``` | 72411e5f67a480e3dd2dddd7728c544f |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0602 - Precision: 0.9274 - Recall: 0.9370 - F1: 0.9322 - Accuracy: 0.9839 | d5e7babcb8efa580d694d41a23102e01 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2431 | 1.0 | 878 | 0.0690 | 0.9174 | 0.9214 | 0.9194 | 0.9811 | | 0.0525 | 2.0 | 1756 | 0.0606 | 0.9251 | 0.9348 | 0.9299 | 0.9830 | | 0.0299 | 3.0 | 2634 | 0.0602 | 0.9274 | 0.9370 | 0.9322 | 0.9839 | | 3d7d51b644cd07356281db7e226c7fac |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-turkish-colab This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.3873 - Wer: 0.3224 | b2af6f97da4368aabd82bfd937ec73d3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.0846 | 3.67 | 400 | 0.7488 | 0.7702 | | 0.4487 | 7.34 | 800 | 0.4428 | 0.5255 | | 0.1926 | 11.01 | 1200 | 0.4218 | 0.4667 | | 0.1302 | 14.68 | 1600 | 0.3957 | 0.4269 | | 0.0989 | 18.35 | 2000 | 0.4321 | 0.4085 | | 0.0748 | 22.02 | 2400 | 0.4067 | 0.3904 | | 0.0615 | 25.69 | 2800 | 0.3914 | 0.3557 | | 0.0485 | 29.36 | 3200 | 0.3873 | 0.3224 | | b7bd9fad834d6ecf9a7d07c585505305 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de-fr This model is a fine-tuned version of [tkubotake/xlm-roberta-base-finetuned-panx-de](https://huggingface.co/tkubotake/xlm-roberta-base-finetuned-panx-de) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1829 - F1: 0.8671 | 00408ee240434c78ef710be54f432d2c |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.158 | 1.0 | 715 | 0.1689 | 0.8471 | | 0.099 | 2.0 | 1430 | 0.1781 | 0.8576 | | 0.0599 | 3.0 | 2145 | 0.1829 | 0.8671 | | 116fa555059d30d901bee948c4f1097a |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-fr This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.2661 - F1: 0.8422 | 423cb710611a42a89a5b200323dea425 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.5955 | 1.0 | 191 | 0.3344 | 0.7932 | | 0.2556 | 2.0 | 382 | 0.2923 | 0.8252 | | 0.1741 | 3.0 | 573 | 0.2661 | 0.8422 | | 4edbd29dc9748b92cf78c4c7220e5c89 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | opus-mt-tc-big-it-en Neural machine translation model for translating from Italian (it) to English (en). This model is part of the [OPUS-MT project](https://github.com/Helsinki-NLP/Opus-MT), an effort to make neural machine translation models widely available and accessible for many languages in the world. All models are originally trained using the amazing framework of [Marian NMT](https://marian-nmt.github.io/), an efficient NMT implementation written in pure C++. The models have been converted to pyTorch using the transformers library by huggingface. Training data is taken from [OPUS](https://opus.nlpl.eu/) and training pipelines use the procedures of [OPUS-MT-train](https://github.com/Helsinki-NLP/Opus-MT-train). * Publications: [OPUS-MT – Building open translation services for the World](https://aclanthology.org/2020.eamt-1.61/) and [The Tatoeba Translation Challenge – Realistic Data Sets for Low Resource and Multilingual MT](https://aclanthology.org/2020.wmt-1.139/) (Please, cite if you use this model.) ``` @inproceedings{tiedemann-thottingal-2020-opus, title = "{OPUS}-{MT} {--} Building open translation services for the World", author = {Tiedemann, J{\"o}rg and Thottingal, Santhosh}, booktitle = "Proceedings of the 22nd Annual Conference of the European Association for Machine Translation", month = nov, year = "2020", address = "Lisboa, Portugal", publisher = "European Association for Machine Translation", url = "https://aclanthology.org/2020.eamt-1.61", pages = "479--480", } @inproceedings{tiedemann-2020-tatoeba, title = "The Tatoeba Translation Challenge {--} Realistic Data Sets for Low Resource and Multilingual {MT}", author = {Tiedemann, J{\"o}rg}, booktitle = "Proceedings of the Fifth Conference on Machine Translation", month = nov, year = "2020", address = "Online", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2020.wmt-1.139", pages = "1174--1182", } ``` | adbd19df10d344107656dc98e0e8d95b |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Model info * Release: 2022-02-25 * source language(s): ita * target language(s): eng * model: transformer-big * data: opusTCv20210807+bt ([source](https://github.com/Helsinki-NLP/Tatoeba-Challenge)) * tokenization: SentencePiece (spm32k,spm32k) * original model: [opusTCv20210807+bt_transformer-big_2022-02-25.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/ita-eng/opusTCv20210807+bt_transformer-big_2022-02-25.zip) * more information released models: [OPUS-MT ita-eng README](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/ita-eng/README.md) | accb81141d479a2a94c86548478abfe2 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Usage A short example code: ```python from transformers import MarianMTModel, MarianTokenizer src_text = [ "So chi è il mio nemico.", "Tom è illetterato; non capisce assolutamente nulla." ] model_name = "pytorch-models/opus-mt-tc-big-it-en" tokenizer = MarianTokenizer.from_pretrained(model_name) model = MarianMTModel.from_pretrained(model_name) translated = model.generate(**tokenizer(src_text, return_tensors="pt", padding=True)) for t in translated: print( tokenizer.decode(t, skip_special_tokens=True) ) | 4b6dd705194064213264c267021f9cc6 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Tom is illiterate; he understands absolutely nothing. ``` You can also use OPUS-MT models with the transformers pipelines, for example: ```python from transformers import pipeline pipe = pipeline("translation", model="Helsinki-NLP/opus-mt-tc-big-it-en") print(pipe("So chi è il mio nemico.")) | 143fbf5f56614b20d13a8c608e72be98 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Benchmarks * test set translations: [opusTCv20210807+bt_transformer-big_2022-02-25.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/ita-eng/opusTCv20210807+bt_transformer-big_2022-02-25.test.txt) * test set scores: [opusTCv20210807+bt_transformer-big_2022-02-25.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/ita-eng/opusTCv20210807+bt_transformer-big_2022-02-25.eval.txt) * benchmark results: [benchmark_results.txt](benchmark_results.txt) * benchmark output: [benchmark_translations.zip](benchmark_translations.zip) | langpair | testset | chr-F | BLEU | | efa1b2fe10bee963eb8364dc85d95495 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | words | |----------|---------|-------|-------|-------|--------| | ita-eng | tatoeba-test-v2021-08-07 | 0.82288 | 72.1 | 17320 | 119214 | | ita-eng | flores101-devtest | 0.62115 | 32.8 | 1012 | 24721 | | ita-eng | newssyscomb2009 | 0.59822 | 34.4 | 502 | 11818 | | ita-eng | newstest2009 | 0.59646 | 34.3 | 2525 | 65399 | | 9d5d468c89746a53ae481406507c91ae |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-teacher-base-student-en-asr-timit This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 73.5882 - Wer: 0.3422 | 8b814ec60cb260b12b24f383068048c0 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 30 - mixed_precision_training: Native AMP | 2c5a42b0e967e3cda08761effd18e118 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 920.6083 | 3.17 | 200 | 1256.0675 | 1.0 | | 660.5993 | 6.35 | 400 | 717.6098 | 0.9238 | | 336.5288 | 9.52 | 600 | 202.0025 | 0.5306 | | 131.3178 | 12.7 | 800 | 108.0701 | 0.4335 | | 73.4232 | 15.87 | 1000 | 90.2797 | 0.3728 | | 54.9439 | 19.05 | 1200 | 76.9043 | 0.3636 | | 44.6595 | 22.22 | 1400 | 79.2443 | 0.3550 | | 38.6381 | 25.4 | 1600 | 73.6277 | 0.3493 | | 35.074 | 28.57 | 1800 | 73.5882 | 0.3422 | | 0ddbb2d9792a1a4e905a03fc08386927 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab66 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.2675 - Wer: 1.0 | a09241ceb3f9c835798fcc08e0ee26dc |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 5.3521 | 7.04 | 500 | 3.3666 | 1.0 | | 3.1768 | 14.08 | 1000 | 3.3977 | 1.0 | | 3.1576 | 21.13 | 1500 | 3.2332 | 1.0 | | 3.1509 | 28.17 | 2000 | 3.2686 | 1.0 | | 3.149 | 35.21 | 2500 | 3.2550 | 1.0 | | 3.1478 | 42.25 | 3000 | 3.2689 | 1.0 | | 3.1444 | 49.3 | 3500 | 3.2848 | 1.0 | | 3.1442 | 56.34 | 4000 | 3.2675 | 1.0 | | 207dba3cc1683d9a35e7eca83b4c01ac |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-10epochs-3e3 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.7024 - Wer: 0.6481 | cca3f81e0717f6c7c50c070ebe121777 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.003 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 8 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 10 - mixed_precision_training: Native AMP | b1f3a55100500831f2abcdeea74a48f9 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.0938 | 0.36 | 100 | 0.4842 | 0.3227 | | 0.67 | 0.72 | 200 | 0.7219 | 0.5669 | | 0.7133 | 1.08 | 300 | 1.0698 | 0.7080 | | 1.0312 | 1.44 | 400 | 1.2692 | 0.8953 | | 1.2162 | 1.8 | 500 | 1.4763 | 1.0443 | | 1.2401 | 2.16 | 600 | 1.4906 | 0.8694 | | 1.2022 | 2.52 | 700 | 1.3686 | 0.9518 | | 1.154 | 2.88 | 800 | 1.1618 | 0.9109 | | 1.0467 | 3.24 | 900 | 1.2007 | 0.8602 | | 1.1785 | 3.6 | 1000 | 1.2000 | 0.9160 | | 0.979 | 3.96 | 1100 | 1.1464 | 0.8852 | | 1.1421 | 4.32 | 1200 | 1.1117 | 0.9018 | | 0.9622 | 4.68 | 1300 | 1.0976 | 0.8602 | | 1.0939 | 5.04 | 1400 | 1.1126 | 0.8831 | | 0.9414 | 5.4 | 1500 | 1.0134 | 0.8448 | | 0.9433 | 5.76 | 1600 | 0.9320 | 0.7977 | | 0.8389 | 6.12 | 1700 | 0.9013 | 0.7742 | | 0.8838 | 6.47 | 1800 | 0.9088 | 0.7509 | | 0.7907 | 6.83 | 1900 | 0.8581 | 0.7382 | | 0.7704 | 7.19 | 2000 | 0.8300 | 0.7481 | | 0.667 | 7.55 | 2100 | 0.8221 | 0.7349 | | 0.6111 | 7.91 | 2200 | 0.7803 | 0.7102 | | 0.5555 | 8.27 | 2300 | 0.8198 | 0.7314 | | 0.4947 | 8.63 | 2400 | 0.8127 | 0.7036 | | 0.4697 | 8.99 | 2500 | 0.7514 | 0.6805 | | 0.402 | 9.35 | 2600 | 0.7348 | 0.6606 | | 0.3682 | 9.71 | 2700 | 0.7024 | 0.6481 | | 6808b1ab93178c6a711073ac958bfbbc |

apache-2.0 | ['generated_from_keras_callback'] | false | long-t5-tglobal-large This model is a fine-tuned version of [google/long-t5-tglobal-large](https://huggingface.co/google/long-t5-tglobal-large) on an unknown dataset. It achieves the following results on the evaluation set: | 590863f58ce888268d11d689f2988684 |

apache-2.0 | ['setfit', 'sentence-transformers', 'text-classification'] | false | fathyshalab/massive_social-roberta-large-v1-3-7 This is a [SetFit model](https://github.com/huggingface/setfit) that can be used for text classification. The model has been trained using an efficient few-shot learning technique that involves: 1. Fine-tuning a [Sentence Transformer](https://www.sbert.net) with contrastive learning. 2. Training a classification head with features from the fine-tuned Sentence Transformer. | c1d5be0b2297256cd7c94bfe8d5a09e7 |

mit | ['text-classification'] | false | MiniLM: Small and Fast Pre-trained Models for Language Understanding and Generation MiniLM is a distilled model from the paper "[MiniLM: Deep Self-Attention Distillation for Task-Agnostic Compression of Pre-Trained Transformers](https://arxiv.org/abs/2002.10957)". Please find the information about preprocessing, training and full details of the MiniLM in the [original MiniLM repository](https://github.com/microsoft/unilm/blob/master/minilm/). Please note: This checkpoint can be an inplace substitution for BERT and it needs to be fine-tuned before use! | eb55f115c92dd334ba623afee7d5adcf |

mit | ['text-classification'] | false | English Pre-trained Models We release the **uncased** **12**-layer model with **384** hidden size distilled from an in-house pre-trained [UniLM v2](/unilm) model in BERT-Base size. - MiniLMv1-L12-H384-uncased: 12-layer, 384-hidden, 12-heads, 33M parameters, 2.7x faster than BERT-Base | 700cc3082e500d8de5751cb2e4c0d8f9 |

mit | ['text-classification'] | false | Param | SQuAD 2.0 | MNLI-m | SST-2 | QNLI | CoLA | RTE | MRPC | QQP | |---------------------------------------------------|--------|-----------|--------|-------|------|------|------|------|------| | [BERT-Base](https://arxiv.org/pdf/1810.04805.pdf) | 109M | 76.8 | 84.5 | 93.2 | 91.7 | 58.9 | 68.6 | 87.3 | 91.3 | | **MiniLM-L12xH384** | 33M | 81.7 | 85.7 | 93.0 | 91.5 | 58.5 | 73.3 | 89.5 | 91.3 | | 591a2c37066fcb3bee292b0d5c97a0a1 |

mit | ['text-classification'] | false | Citation If you find MiniLM useful in your research, please cite the following paper: ``` latex @misc{wang2020minilm, title={MiniLM: Deep Self-Attention Distillation for Task-Agnostic Compression of Pre-Trained Transformers}, author={Wenhui Wang and Furu Wei and Li Dong and Hangbo Bao and Nan Yang and Ming Zhou}, year={2020}, eprint={2002.10957}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` | 0546a76d1786ba08f059e595a1f6d04e |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-marc-en This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the amazon_reviews_multi dataset. It achieves the following results on the evaluation set: - Loss: 0.8945 - Mae: 0.5 | f83c187ca1a60e3de8786a5158d25681 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mae | |:-------------:|:-----:|:----:|:---------------:|:---:| | 1.1411 | 1.0 | 235 | 0.9358 | 0.5 | | 0.9653 | 2.0 | 470 | 0.8945 | 0.5 | | 7ea89baa330a35f0da8cc37d37082145 |

mit | ['pytorch', 'diffusers', 'unconditional-image-generation', 'diffusion-models-class'] | false | Model Card for a model trained based on the Unit 1 of the [Diffusion Models Class 🧨](https://github.com/huggingface/diffusion-models-class), not using accelarate yet. This model is a diffusion model for unconditional image generation of cute but small 🦋. The model was trained with 1000 images using the [DDPM](https://arxiv.org/abs/2006.11239) architecture. Images generated are of 64x64 pixel size. The model was trained for 50 epochs with a batch size of 64, using around 11 GB of GPU memory. | fb2aba10dc8a85712f7116d4b5d7b7a4 |

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-SMALL-DM1000 (Deep-Narrow version) T5-Efficient-SMALL-DM1000 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | 27e8cab70a71dbdabfb1d120caef3016 |

apache-2.0 | ['deep-narrow'] | false | Details model architecture This model checkpoint - **t5-efficient-small-dm1000** - is of model type **Small** with the following variations: - **dm** is **1000** It has **121.03** million parameters and thus requires *ca.* **484.11 MB** of memory in full precision (*fp32*) or **242.05 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | af5cbea91831057a787f532d4a06a182 |

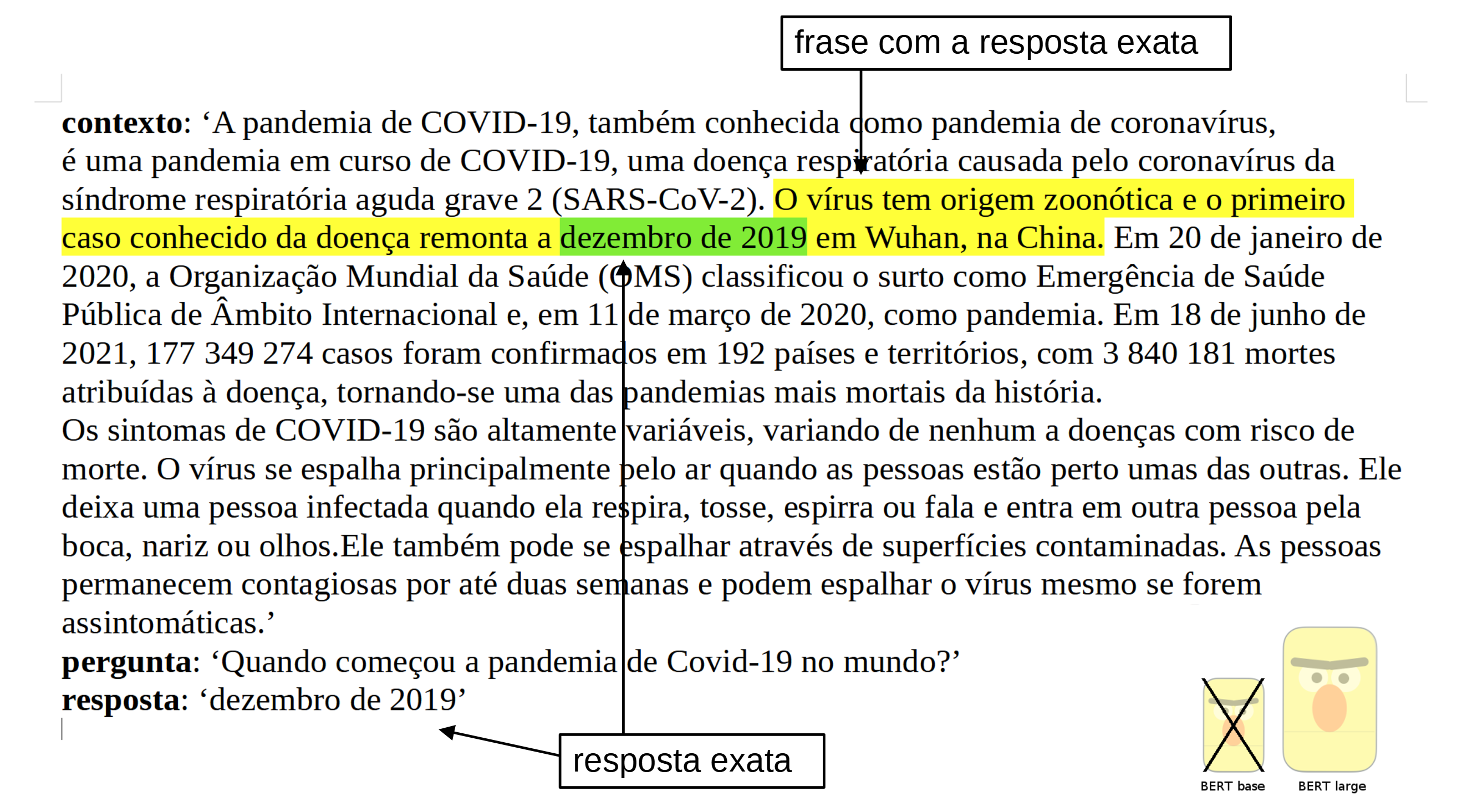

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | Portuguese BERT large cased QA (Question Answering), finetuned on SQUAD v1.1  | 3320c99e94fcf6e631e8dcd5a256fa82 |

mit | ['question-answering', 'bert', 'bert-large', 'pytorch'] | false | Introduction The model was trained on the dataset SQUAD v1.1 in portuguese from the [Deep Learning Brasil group](http://www.deeplearningbrasil.com.br/). The language model used is the [BERTimbau Large](https://huggingface.co/neuralmind/bert-large-portuguese-cased) (aka "bert-large-portuguese-cased") from [Neuralmind.ai](https://neuralmind.ai/): BERTimbau is a pretrained BERT model for Brazilian Portuguese that achieves state-of-the-art performances on three downstream NLP tasks: Named Entity Recognition, Sentence Textual Similarity and Recognizing Textual Entailment. It is available in two sizes: Base and Large. | 95c42223e0811036729c883cb1a4d39a |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.