Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

110,201 | 11,694,119,216 | IssuesEvent | 2020-03-06 02:53:37 | AGHSEagleRobotics/frc1388-2020 | https://api.github.com/repos/AGHSEagleRobotics/frc1388-2020 | closed | Wiring List (spreadsheet) | documentation | The wiring and placement of electronic components and sensors needs to be tracked. This will be done with a spreadsheet linked here: https://docs.google.com/spreadsheets/d/1-_TZ13xsfVZVFzq8rJBhIH7Ht_RBKVlgj4VI8T67ISo/edit#gid=0

This needs to be updated regularly to ensure good communication and planning. | 1.0 | Wiring List (spreadsheet) - The wiring and placement of electronic components and sensors needs to be tracked. This will be done with a spreadsheet linked here: https://docs.google.com/spreadsheets/d/1-_TZ13xsfVZVFzq8rJBhIH7Ht_RBKVlgj4VI8T67ISo/edit#gid=0

This needs to be updated regularly to ensure good communicati... | non_defect | wiring list spreadsheet the wiring and placement of electronic components and sensors needs to be tracked this will be done with a spreadsheet linked here this needs to be updated regularly to ensure good communication and planning | 0 |

22,842 | 3,711,518,664 | IssuesEvent | 2016-03-02 10:40:19 | OpenMS/OpenMS | https://api.github.com/repos/OpenMS/OpenMS | closed | MzTabExporter crash on idXML export | defect major | The `MzTabExporter` tool crashes on this `idXML` [1] with

```

Error: Unexpected internal error (Could not convert non-StringList DataValue to StringList)

```

[1] https://gist.github.com/lars20070/adfebc5fc6c44c641601 | 1.0 | MzTabExporter crash on idXML export - The `MzTabExporter` tool crashes on this `idXML` [1] with

```

Error: Unexpected internal error (Could not convert non-StringList DataValue to StringList)

```

[1] https://gist.github.com/lars20070/adfebc5fc6c44c641601 | defect | mztabexporter crash on idxml export the mztabexporter tool crashes on this idxml with error unexpected internal error could not convert non stringlist datavalue to stringlist | 1 |

279,400 | 24,222,497,929 | IssuesEvent | 2022-09-26 12:04:10 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Add tests for Console's context menu and its actions | Feature:Console Feature:Dev Tools Team:Deployment Management test-coverage | ## Summary

Console offers a context menu per request, which enables the user to perform a number of actions with the relevant request. Let's add functional tests to document and verify this behavior:

- [ ] Request-specific context menu

- [ ] Copy the request as cURL

- [ ] Open a documentation link for the relev... | 1.0 | Add tests for Console's context menu and its actions - ## Summary

Console offers a context menu per request, which enables the user to perform a number of actions with the relevant request. Let's add functional tests to document and verify this behavior:

- [ ] Request-specific context menu

- [ ] Copy the request... | non_defect | add tests for console s context menu and its actions summary console offers a context menu per request which enables the user to perform a number of actions with the relevant request let s add functional tests to document and verify this behavior request specific context menu copy the request as ... | 0 |

15,352 | 19,522,523,109 | IssuesEvent | 2021-12-29 21:41:53 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | Error shown for certain column types when getting results from cache | Type:Bug Priority:P2 Database/Redshift Querying/Processor .Backend | **Describe the bug**

When returning an `interval` column type via cached results, then an error is shown in the frontend instead of the results.

I'm unsure if this is specific to Redshift or generally for unknown column types. I've not been able to reproduce on Postgres.

**To Reproduce**

1. Admin > Settings > C... | 1.0 | Error shown for certain column types when getting results from cache - **Describe the bug**

When returning an `interval` column type via cached results, then an error is shown in the frontend instead of the results.

I'm unsure if this is specific to Redshift or generally for unknown column types. I've not been able... | non_defect | error shown for certain column types when getting results from cache describe the bug when returning an interval column type via cached results then an error is shown in the frontend instead of the results i m unsure if this is specific to redshift or generally for unknown column types i ve not been able... | 0 |

23,731 | 3,851,867,304 | IssuesEvent | 2016-04-06 05:28:43 | GPF/imame4all | https://api.github.com/repos/GPF/imame4all | closed | Not issue, just Thanks for your new 0.134 | auto-migrated Priority-Medium Type-Defect | ```

Thanks! Thanks! Thanks!

Great Man!

hope can see you keep update!

```

Original issue reported on code.google.com by `fatcatma...@gmail.com` on 5 Mar 2012 at 6:14 | 1.0 | Not issue, just Thanks for your new 0.134 - ```

Thanks! Thanks! Thanks!

Great Man!

hope can see you keep update!

```

Original issue reported on code.google.com by `fatcatma...@gmail.com` on 5 Mar 2012 at 6:14 | defect | not issue just thanks for your new thanks thanks thanks great man hope can see you keep update original issue reported on code google com by fatcatma gmail com on mar at | 1 |

79,776 | 10,141,769,542 | IssuesEvent | 2019-08-03 17:12:26 | microsoft/Requirements | https://api.github.com/repos/microsoft/Requirements | opened | Better Docs | documentation | Topics that need to be covered

* What is a Requirement?

* What does a single Requirement look like?

* What are all the properties on a Requirement?

* Which properties are mandatory/optional?

* What are the major patterns for building Requirements?

* Dynamically generating Requirements using contr... | 1.0 | Better Docs - Topics that need to be covered

* What is a Requirement?

* What does a single Requirement look like?

* What are all the properties on a Requirement?

* Which properties are mandatory/optional?

* What are the major patterns for building Requirements?

* Dynamically generating Requiremen... | non_defect | better docs topics that need to be covered what is a requirement what does a single requirement look like what are all the properties on a requirement which properties are mandatory optional what are the major patterns for building requirements dynamically generating requiremen... | 0 |

532,867 | 15,572,387,535 | IssuesEvent | 2021-03-17 06:57:47 | datastax/cassandra-quarkus | https://api.github.com/repos/datastax/cassandra-quarkus | closed | Cannot disable metrics in native mode | priority:critical type:bug | Steps to reproduce:

* Create a quickstart project on quarkus.io with the C* extension;

* Disable metrics in application.properties;

* Run the native tests with `mvn clean verify -Dnative`.

Expected outcome: the tests pass.

Observed outcome:

```

ERROR [io.qua.run.Application] (main) Failed to start appl... | 1.0 | Cannot disable metrics in native mode - Steps to reproduce:

* Create a quickstart project on quarkus.io with the C* extension;

* Disable metrics in application.properties;

* Run the native tests with `mvn clean verify -Dnative`.

Expected outcome: the tests pass.

Observed outcome:

```

ERROR [io.qua.run.... | non_defect | cannot disable metrics in native mode steps to reproduce create a quickstart project on quarkus io with the c extension disable metrics in application properties run the native tests with mvn clean verify dnative expected outcome the tests pass observed outcome error main fai... | 0 |

65,146 | 12,533,998,156 | IssuesEvent | 2020-06-04 18:36:51 | pi-hole/AdminLTE | https://api.github.com/repos/pi-hole/AdminLTE | opened | Remove `initCheckboxRadioStyle()` | Code maintenance | footer.js is loaded synchronously, which means that `initCheckboxRadioStyle()` fires up after the DOM has loaded.

This should be removed and the logic moved to PHP. | 1.0 | Remove `initCheckboxRadioStyle()` - footer.js is loaded synchronously, which means that `initCheckboxRadioStyle()` fires up after the DOM has loaded.

This should be removed and the logic moved to PHP. | non_defect | remove initcheckboxradiostyle footer js is loaded synchronously which means that initcheckboxradiostyle fires up after the dom has loaded this should be removed and the logic moved to php | 0 |

46,693 | 13,055,960,432 | IssuesEvent | 2020-07-30 03:14:32 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | building multiple copies of HTML docs (Trac #1723) | Incomplete Migration Migrated from Trac defect other | Migrated from https://code.icecube.wisc.edu/ticket/1723

```json

{

"status": "closed",

"changetime": "2016-06-09T14:50:36",

"description": "with the addition of sphinx-apidoc in r2580/IceTray we're now building two copies of the docs that step on each other and barf warnings everywhere",

"reporter": "... | 1.0 | building multiple copies of HTML docs (Trac #1723) - Migrated from https://code.icecube.wisc.edu/ticket/1723

```json

{

"status": "closed",

"changetime": "2016-06-09T14:50:36",

"description": "with the addition of sphinx-apidoc in r2580/IceTray we're now building two copies of the docs that step on each ot... | defect | building multiple copies of html docs trac migrated from json status closed changetime description with the addition of sphinx apidoc in icetray we re now building two copies of the docs that step on each other and barf warnings everywhere reporter nega... | 1 |

29,277 | 5,632,301,722 | IssuesEvent | 2017-04-05 16:16:31 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | Call to a member function schema() on null ... AclComponent.php on line 298 | Defect On hold | This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 2.0.5

* Platform and Target: Windows 10 xampp

### What you did

this is de 298 line in AclComponent:

`$permKeys = $this->_getAcoKeys($this->Aro->Permission->schema());

`

### What happened

after l... | 1.0 | Call to a member function schema() on null ... AclComponent.php on line 298 - This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 2.0.5

* Platform and Target: Windows 10 xampp

### What you did

this is de 298 line in AclComponent:

`$permKeys = $this... | defect | call to a member function schema on null aclcomponent php on line this is a multiple allowed bug enhancement feature discussion rfc cakephp version platform and target windows xampp what you did this is de line in aclcomponent permkeys this getacoke... | 1 |

34,244 | 12,258,886,846 | IssuesEvent | 2020-05-06 15:45:36 | DbugTech/dbugtech.github.io | https://api.github.com/repos/DbugTech/dbugtech.github.io | opened | CVE-2020-11022 (Medium) detected in jquery-2.1.4.min.js, jquery-1.11.3.min.js | security vulnerability | ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-2.1.4.min.js</b>, <b>jquery-1.11.3.min.js</b></p></summary>

<p>

<details><summary><b>jquery-2.1.4.min.js</b... | True | CVE-2020-11022 (Medium) detected in jquery-2.1.4.min.js, jquery-1.11.3.min.js - ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-2.1.4.min.js</b>, <b>jquery-... | non_defect | cve medium detected in jquery min js jquery min js cve medium severity vulnerability vulnerable libraries jquery min js jquery min js jquery min js javascript library for dom operations library home page a href path to dependency file tmp... | 0 |

777,638 | 27,289,102,780 | IssuesEvent | 2023-02-23 15:25:31 | PIP-Technical-Team/pipapi | https://api.github.com/repos/PIP-Technical-Team/pipapi | closed | review potential issue with ui_cp_download | Priority: 2-High Type: 1-Bug | `ui_cp_download_single` sometimes returns data.frame with duplciated rows l594 of `ui_functions.R`

Need to figure out why this is happening. | 1.0 | review potential issue with ui_cp_download - `ui_cp_download_single` sometimes returns data.frame with duplciated rows l594 of `ui_functions.R`

Need to figure out why this is happening. | non_defect | review potential issue with ui cp download ui cp download single sometimes returns data frame with duplciated rows of ui functions r need to figure out why this is happening | 0 |

688,786 | 23,596,307,596 | IssuesEvent | 2022-08-23 19:36:01 | GoogleChrome/lighthouse | https://api.github.com/repos/GoogleChrome/lighthouse | closed | PageSpeed Insights showing as same resource(file) loaded multiple times | needs-priority PSI/LR | Site URL: https://getgist.com/

I have tried analysing the performance of our site in the [PageSpeed Insights developers site](https://developers.google.com/speed/pagespeed/insights/) and found that the results showing as same resource loaded multiple times and the **total requests count as 422** with around **10MB**... | 1.0 | PageSpeed Insights showing as same resource(file) loaded multiple times - Site URL: https://getgist.com/

I have tried analysing the performance of our site in the [PageSpeed Insights developers site](https://developers.google.com/speed/pagespeed/insights/) and found that the results showing as same resource loaded m... | non_defect | pagespeed insights showing as same resource file loaded multiple times site url i have tried analysing the performance of our site in the and found that the results showing as same resource loaded multiple times and the total requests count as with around of data transferred which doesn t looks... | 0 |

3,053 | 2,607,970,564 | IssuesEvent | 2015-02-26 00:44:23 | chrsmithdemos/leveldb | https://api.github.com/repos/chrsmithdemos/leveldb | opened | corruption_test segfaults on ARM | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Compile leveldb's corruption test for armv7a

2. Execute on some such lower-powered device (e.g. beaglebone)

3. Segmentation fault!

What is the expected output? What do you see instead?

>>EXPECTED>>

==== Test CorruptionTest.Recovery

expected=100..100; got=100; ba... | 1.0 | corruption_test segfaults on ARM - ```

What steps will reproduce the problem?

1. Compile leveldb's corruption test for armv7a

2. Execute on some such lower-powered device (e.g. beaglebone)

3. Segmentation fault!

What is the expected output? What do you see instead?

>>EXPECTED>>

==== Test CorruptionTest.Recovery... | defect | corruption test segfaults on arm what steps will reproduce the problem compile leveldb s corruption test for execute on some such lower powered device e g beaglebone segmentation fault what is the expected output what do you see instead expected test corruptiontest recovery ... | 1 |

70,276 | 23,091,115,953 | IssuesEvent | 2022-07-26 15:17:24 | SAP/fundamental-ngx | https://api.github.com/repos/SAP/fundamental-ngx | closed | Defect Hunting: Wizard | bug Defect Hunting wizard | #### Is this a bug, enhancement, or feature request?

bug/doc

#### Briefly describe your proposal.

- [ ] Wizard mobile examples - I am not familiar with any cell phone screens this tall, let's make these examples shorter

### Reproducer

```

<h:form id="form">

<div style="width: 500px">

<p:tree id="testTree" value="#{indexControlle... | 1.0 | Tree: Nodes with long text are displaced - ### Describe the bug

Nodes with long text are displaced

### Reproducer

```

<h:form id="form">

<div style="width: 500px">

<p... | defect | tree nodes with long text are displaced describe the bug nodes with long text are displaced reproducer named ... | 1 |

42,999 | 11,423,166,462 | IssuesEvent | 2020-02-03 15:27:05 | extnet/Ext.NET | https://api.github.com/repos/extnet/Ext.NET | closed | Desktop.addModule()'s added shortcut position is reset on window resize | 5.x defect | Found: 5.1.0

Ext.NET Forum Thread: [Desktop module icon overlap after window resize](https://forums.ext.net/showthread.php?62833)

No Sencha thread - feature is exclusive to Ext.NET.

Desktop window resize calls desktop's `arrangeShortcuts(ignorePosition: false, ignoreTemp: true)`, thus icons just added via `addModu... | 1.0 | Desktop.addModule()'s added shortcut position is reset on window resize - Found: 5.1.0

Ext.NET Forum Thread: [Desktop module icon overlap after window resize](https://forums.ext.net/showthread.php?62833)

No Sencha thread - feature is exclusive to Ext.NET.

Desktop window resize calls desktop's `arrangeShortcuts(ign... | defect | desktop addmodule s added shortcut position is reset on window resize found ext net forum thread no sencha thread feature is exclusive to ext net desktop window resize calls desktop s arrangeshortcuts ignoreposition false ignoretemp true thus icons just added via addmodule position wo... | 1 |

64,825 | 18,939,725,761 | IssuesEvent | 2021-11-18 00:28:53 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | opened | Custom Jitsi server support | T-Defect | ### Steps to reproduce

I have setup a custom Jitsi server according to [this](https://github.com/vector-im/element-web/blob/develop/docs/jitsi.md#configuring-element-to-use-your-self-hosted-jitsi-server) documentation. I setup a videocall using the 'Camera' call button but the client used jitsi.riot.im rather than my... | 1.0 | Custom Jitsi server support - ### Steps to reproduce

I have setup a custom Jitsi server according to [this](https://github.com/vector-im/element-web/blob/develop/docs/jitsi.md#configuring-element-to-use-your-self-hosted-jitsi-server) documentation. I setup a videocall using the 'Camera' call button but the client used... | defect | custom jitsi server support steps to reproduce i have setup a custom jitsi server according to documentation i setup a videocall using the camera call button but the client used jitsi riot im rather than my custom server here is my nginx config which sets the jitsi domain name server ... | 1 |

51,559 | 13,207,526,832 | IssuesEvent | 2020-08-14 23:27:20 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | can't find python libs > python 2.6.x (Trac #627) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/627">https://code.icecube.wisc.edu/projects/icecube/ticket/627</a>, reported by negaand owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2011-05-11T19:50:31",

"_ts": "1305143431... | 1.0 | can't find python libs > python 2.6.x (Trac #627) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/627">https://code.icecube.wisc.edu/projects/icecube/ticket/627</a>, reported by negaand owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"change... | defect | can t find python libs python x trac migrated from json status closed changetime ts description cmake x only has support for python x in its pythonfindx cmake modules hard coded values this seems to have been fixed in cmake ... | 1 |

31,623 | 7,430,523,144 | IssuesEvent | 2018-03-25 02:56:41 | dsherret/ts-simple-ast | https://api.github.com/repos/dsherret/ts-simple-ast | closed | Remove "Base" variables from declaration file | code improvement | The declaration file for this project shouldn't have the "Base" variable declarations in it. | 1.0 | Remove "Base" variables from declaration file - The declaration file for this project shouldn't have the "Base" variable declarations in it. | non_defect | remove base variables from declaration file the declaration file for this project shouldn t have the base variable declarations in it | 0 |

150,269 | 11,954,067,218 | IssuesEvent | 2020-04-03 22:26:03 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | System.Net.Sockets.Tests.DualModeAcceptAsync.AcceptAsyncV4BoundToSpecificV6_CantConnect test failed in CI | area-System.Net.Sockets test bug test-run-core | https://dnceng.visualstudio.com/public/_build/results?buildId=393221&view=ms.vss-test-web.build-test-results-tab&runId=12222448&resultId=177679&paneView=debug

Configuration: `netcoreapp-Windows_NT-Release-x86-Windows.10.Amd64.Client19H1.Open`

```

Assert.Throws() Failure\r\nExpected: typeof(System.Net.Sockets.Soc... | 2.0 | System.Net.Sockets.Tests.DualModeAcceptAsync.AcceptAsyncV4BoundToSpecificV6_CantConnect test failed in CI - https://dnceng.visualstudio.com/public/_build/results?buildId=393221&view=ms.vss-test-web.build-test-results-tab&runId=12222448&resultId=177679&paneView=debug

Configuration: `netcoreapp-Windows_NT-Release-x86-... | non_defect | system net sockets tests dualmodeacceptasync cantconnect test failed in ci configuration netcoreapp windows nt release windows open assert throws failure r nexpected typeof system net sockets socketexception r nactual typeof system timeoutexception timed out while waiting for either o... | 0 |

23,251 | 3,783,890,464 | IssuesEvent | 2016-03-19 12:46:04 | VladSerdobintsev/zfcore | https://api.github.com/repos/VladSerdobintsev/zfcore | closed | Login issue with "Remember me" flag. | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.Login with "Remember me" flag.

2.Logout

3.Login without "Remember me" flag.

4.Close and open browser.

Issue : User still logged in.

Caused by:

When user logging out session id and expires date doesn't change after logout.

Resolve:

Add to class Users_Model_User_Manager fun... | 1.0 | Login issue with "Remember me" flag. - ```

What steps will reproduce the problem?

1.Login with "Remember me" flag.

2.Logout

3.Login without "Remember me" flag.

4.Close and open browser.

Issue : User still logged in.

Caused by:

When user logging out session id and expires date doesn't change after logout.

Resolve:

Ad... | defect | login issue with remember me flag what steps will reproduce the problem login with remember me flag logout login without remember me flag close and open browser issue user still logged in caused by when user logging out session id and expires date doesn t change after logout resolve ad... | 1 |

342,643 | 30,633,022,011 | IssuesEvent | 2023-07-24 15:42:17 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | schemachanger,sctest: backup tests are slightly broken | C-bug C-test-failure A-testing T-sql-foundations A-schema-changer-impl | In an effort to understand how the `TestBackup*` cumulative tests for the declarative schema changer work, I noticed a subtle bug: we back up _after_ executing each post-commit stage, which is fine but means we never test backups after the stmt txn commits but before the job runs, which is arguably the most important c... | 2.0 | schemachanger,sctest: backup tests are slightly broken - In an effort to understand how the `TestBackup*` cumulative tests for the declarative schema changer work, I noticed a subtle bug: we back up _after_ executing each post-commit stage, which is fine but means we never test backups after the stmt txn commits but be... | non_defect | schemachanger sctest backup tests are slightly broken in an effort to understand how the testbackup cumulative tests for the declarative schema changer work i noticed a subtle bug we back up after executing each post commit stage which is fine but means we never test backups after the stmt txn commits but be... | 0 |

46,822 | 13,055,982,575 | IssuesEvent | 2020-07-30 03:18:07 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | [codereview] grbllh (Trac #1935) | Incomplete Migration Migrated from Trac cmake defect | Migrated from https://code.icecube.wisc.edu/ticket/1935

```json

{

"status": "closed",

"changetime": "2019-02-13T14:12:55",

"description": "",

"reporter": "kjmeagher",

"cc": "",

"resolution": "fixed",

"_ts": "1550067175380821",

"component": "cmake",

"summary": "[codereview] gr... | 1.0 | [codereview] grbllh (Trac #1935) - Migrated from https://code.icecube.wisc.edu/ticket/1935

```json

{

"status": "closed",

"changetime": "2019-02-13T14:12:55",

"description": "",

"reporter": "kjmeagher",

"cc": "",

"resolution": "fixed",

"_ts": "1550067175380821",

"component": "cmake... | defect | grbllh trac migrated from json status closed changetime description reporter kjmeagher cc resolution fixed ts component cmake summary grbllh priority normal keywords ... | 1 |

59,511 | 17,023,148,139 | IssuesEvent | 2021-07-03 00:35:26 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Website using ISO-8859-1 encoding conflicts with UTF-8 data. | Component: website Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 5.27am, Monday, 19th March 2007]**

The website uses iso-8859-1 (default www) encoding, which results in all kinds of issues when using the browser to read data from or input data into the OSM project.

This is no problem when handling ASCII-data but using the applet... | 1.0 | Website using ISO-8859-1 encoding conflicts with UTF-8 data. - **[Submitted to the original trac issue database at 5.27am, Monday, 19th March 2007]**

The website uses iso-8859-1 (default www) encoding, which results in all kinds of issues when using the browser to read data from or input data into the OSM project.

T... | defect | website using iso encoding conflicts with utf data the website uses iso default www encoding which results in all kinds of issues when using the browser to read data from or input data into the osm project this is no problem when handling ascii data but using the applet to edit things the inpu... | 1 |

61,400 | 17,023,684,731 | IssuesEvent | 2021-07-03 03:17:31 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Keyboard commands not responsive | Component: potlatch2 Priority: minor Resolution: invalid Type: defect | **[Submitted to the original trac issue database at 2.28pm, Thursday, 3rd March 2011]**

(Andy, potlatch-dev, 4 mar 2011)

"I still find the shift key stopping working every now and then, but like Richard I don't have steps to reproduce this one."

Fwiw, I haven't seen this. Does it also happen with other modifiers? ... | 1.0 | Keyboard commands not responsive - **[Submitted to the original trac issue database at 2.28pm, Thursday, 3rd March 2011]**

(Andy, potlatch-dev, 4 mar 2011)

"I still find the shift key stopping working every now and then, but like Richard I don't have steps to reproduce this one."

Fwiw, I haven't seen this. Does it... | defect | keyboard commands not responsive andy potlatch dev mar i still find the shift key stopping working every now and then but like richard i don t have steps to reproduce this one fwiw i haven t seen this does it also happen with other modifiers does it stay out of action for the whole session ... | 1 |

473,467 | 13,642,868,207 | IssuesEvent | 2020-09-25 16:10:29 | dwyl/smart-home-auth-server | https://api.github.com/repos/dwyl/smart-home-auth-server | opened | Locks aren't implemented with a mode through GUI | bug priority-3 | While testing the new lock GUI for RBAC, I noticed that new locks aren't set a default mode, resulting in the error:

`16:08:04.856 [info] Unimplemented mode: nil/Lock not configured`

This should be an easy fix.

Through the API this works fine. | 1.0 | Locks aren't implemented with a mode through GUI - While testing the new lock GUI for RBAC, I noticed that new locks aren't set a default mode, resulting in the error:

`16:08:04.856 [info] Unimplemented mode: nil/Lock not configured`

This should be an easy fix.

Through the API this works fine. | non_defect | locks aren t implemented with a mode through gui while testing the new lock gui for rbac i noticed that new locks aren t set a default mode resulting in the error unimplemented mode nil lock not configured this should be an easy fix through the api this works fine | 0 |

98,869 | 30,206,655,843 | IssuesEvent | 2023-07-05 09:53:02 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [mono][ios] Unknown format in import in System.Security.Cryptography.Tests | area-System.Security disabled-test os-ios in-pr Known Build Error | ## Build Information

Build: https://dev.azure.com/dnceng-public/public/_build/results?buildId=320749&view=results

Build error leg or test failing: iossimulator-x64 Release AllSubsets_Mono

Pull request: https://github.com/dotnet/runtime/pull/88042

<!-- Error message template -->

## Error Message

Fill the error ... | 1.0 | [mono][ios] Unknown format in import in System.Security.Cryptography.Tests - ## Build Information

Build: https://dev.azure.com/dnceng-public/public/_build/results?buildId=320749&view=results

Build error leg or test failing: iossimulator-x64 Release AllSubsets_Mono

Pull request: https://github.com/dotnet/runtime/pull... | non_defect | unknown format in import in system security cryptography tests build information build build error leg or test failing iossimulator release allsubsets mono pull request error message fill the error message using json errormessage interop applecrypto applecommoncryp... | 0 |

465,448 | 13,385,994,963 | IssuesEvent | 2020-09-02 14:10:45 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | Strapi admin roles&permissions no longer able to click anywhere on row to get into edit screen | priority: high source: admin status: confirmed type: bug | Version 3.1.0, worked in 3.0.5.

Now being forced to click on the small edit icon which is next to delete icon - each time feels intimidating. In Collection Types clicking anywhere on row still works to edit.

Maybe this bug should be filled for plugin? Don't know which part is responsible for UI behavior.

| 1.0 | Strapi admin roles&permissions no longer able to click anywhere on row to get into edit screen - Version 3.1.0, worked in 3.0.5.

Now being forced to click on the small edit icon which is next to delete icon - each time feels intimidating. In Collection Types clicking anywhere on row still works to edit.

Maybe this bu... | non_defect | strapi admin roles permissions no longer able to click anywhere on row to get into edit screen version worked in now being forced to click on the small edit icon which is next to delete icon each time feels intimidating in collection types clicking anywhere on row still works to edit maybe this bu... | 0 |

71,954 | 23,867,521,731 | IssuesEvent | 2022-09-07 12:22:09 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Some Hazelcast log statements missing when used via Spring Boot [HZ-822] | Type: Defect Team: Core Source: Internal Module: Spring to-jira | **Describe the bug**

When Hazelcast is used via Spring Boot not all log statements are printed. All debug/trace logs are missing, some info statements are missing.

**Expected behavior**

All log statements are visible according to Spring Boot Logging configuration

**To Reproduce**

Use any starter, e.g. ht... | 1.0 | Some Hazelcast log statements missing when used via Spring Boot [HZ-822] - **Describe the bug**

When Hazelcast is used via Spring Boot not all log statements are printed. All debug/trace logs are missing, some info statements are missing.

**Expected behavior**

All log statements are visible according to Spring... | defect | some hazelcast log statements missing when used via spring boot describe the bug when hazelcast is used via spring boot not all log statements are printed all debug trace logs are missing some info statements are missing expected behavior all log statements are visible according to spring boot l... | 1 |

19,037 | 3,129,397,136 | IssuesEvent | 2015-09-09 00:53:33 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | opened | VM Service: null instances don't have a class field | Area-Observatory Type-Defect | According to `Instance`'s documentation, "`Instance` references always include their class". However, for a null instance I get the following JSON:

```json

{

"type": "@Instance",

"_vmType": "null",

"kind": "Null",

"fixedId": true,

"id": "objects/null",

"valueAsString": "null"

}

``` | 1.0 | VM Service: null instances don't have a class field - According to `Instance`'s documentation, "`Instance` references always include their class". However, for a null instance I get the following JSON:

```json

{

"type": "@Instance",

"_vmType": "null",

"kind": "Null",

"fixedId": true,

"id": "objects/n... | defect | vm service null instances don t have a class field according to instance s documentation instance references always include their class however for a null instance i get the following json json type instance vmtype null kind null fixedid true id objects n... | 1 |

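The mismatch described in the report above can be checked mechanically. The following is a minimal sketch in Python, assuming the JSON quoted in the issue is the complete reference and that the documented field is keyed `class`; the helper name `missing_class_field` is hypothetical and not part of any Dart tooling.

```python
import json

# The reference quoted in the report for a null instance.
NULL_INSTANCE_JSON = """
{
  "type": "@Instance",
  "_vmType": "null",
  "kind": "Null",
  "fixedId": true,
  "id": "objects/null",
  "valueAsString": "null"
}
"""


def missing_class_field(ref: dict) -> bool:
    """Return True when an @Instance reference has no "class" key.

    Assumption: the documentation's "always include their class"
    corresponds to a top-level "class" key in the JSON object.
    """
    return ref.get("type") == "@Instance" and "class" not in ref


null_ref = json.loads(NULL_INSTANCE_JSON)
print(missing_class_field(null_ref))
```

A check like this could be run against every `@Instance` reference a client receives, to see whether the null instance is the only case that omits the field.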
8,252 | 2,611,473,470 | IssuesEvent | 2015-02-27 05:17:35 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | team name disappears when switching to fullscreen in OS X Lion | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. start a game (windowed by default)

2. switch to fullscreen (a team name disappears)

3. go back to windowed mode (the problem persists)

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

0.9.16 OS X L... | 1.0 | team name disappears when switching to fullscreen in OS X Lion - ```

What steps will reproduce the problem?

1. start a game (windowed by default)

2. switch to fullscreen (a team name disappears)

3. go back to windowed mode (the problem persists)

What is the expected output? What do you see instead?

What version of t... | defect | team name disappears when switching to fullscreen in os x lion what steps will reproduce the problem start a game windowed by default switch to fullscreen a team name disappears go back to windowed mode the problem persists what is the expected output what do you see instead what version of t... | 1 |

176,162 | 14,565,334,478 | IssuesEvent | 2020-12-17 07:04:09 | MarkEdmondson1234/googleAnalyticsR | https://api.github.com/repos/MarkEdmondson1234/googleAnalyticsR | closed | [DOCS] | documentation | Dear Mark,

Thanks for your package.

When reading the [tutorials section](https://code.markedmondson.me/googleAnalyticsR/#tutorials) of the package's documentation, I found some broken links:

* Bert uses googleAnalyticsR to detect bot traffic

* Fredrik forecasts sales from Google Analytics and sends it to you... | 1.0 | [DOCS] - Dear Mark,

Thanks for your package.

When reading the [tutorials section](https://code.markedmondson.me/googleAnalyticsR/#tutorials) of the package's documentation, I found some broken links:

* Bert uses googleAnalyticsR to detect bot traffic

* Fredrik forecasts sales from Google Analytics and sends... | non_defect | dear mark thanks for your package when reading the of the package s documentation i found some broken links bert uses googleanalyticsr to detect bot traffic fredrik forecasts sales from google analytics and sends it to your own slack bot omar shows some heatmaps and using googleanalyt... | 0 |

42,310 | 10,960,782,758 | IssuesEvent | 2019-11-27 14:14:09 | idaholab/moose | https://api.github.com/repos/idaholab/moose | opened | MacOS Catalina requires all PKGs to be notarized | C: Documentation T: defect | ## Bug Description

MacOS Catalina requires all .pkg bundles to be notarized. Ref: https://developer.apple.com/documentation/xcode/notarizing_macos_software_before_distribution

## Steps to Reproduce

Download the app using a web browser. This will apply a quarantine attribute to the downloaded file. Which, triggers ... | 1.0 | MacOS Catalina requires all PKGs to be notarized - ## Bug Description

MacOS Catalina requires all .pkg bundles to be notarized. Ref: https://developer.apple.com/documentation/xcode/notarizing_macos_software_before_distribution

## Steps to Reproduce

Download the app using a web browser. This will apply a quarantine... | defect | macos catalina requires all pkgs to be notarized bug description macos catalina requires all pkg bundles to be notarized ref steps to reproduce download the app using a web browser this will apply a quarantine attribute to the downloaded file which triggers the notarizing halt impact withou... | 1 |

57,883 | 16,115,783,561 | IssuesEvent | 2021-04-28 07:12:45 | line/armeria | https://api.github.com/repos/line/armeria | closed | `ClosedStreamException` triggered by `ArmeriaServerCall.doSendMessage()` not handled by Armeria | defect | We got the following exception report from a user at our Slack workspace:

```

2021-04-12 14:14:02.858 WARN 8324 --- [-worker-nio-2-8] i.n.u.concurrent.AbstractEventExecutor : A task raised an exception. Task: com.linecorp.armeria.common.RequestContext$$Lambda$1068/66855464@6d21f471

com.linecorp.armeria.common.s... | 1.0 | `ClosedStreamException` triggered by `ArmeriaServerCall.doSendMessage()` not handled by Armeria - We got the following exception report from a user at our Slack workspace:

```

2021-04-12 14:14:02.858 WARN 8324 --- [-worker-nio-2-8] i.n.u.concurrent.AbstractEventExecutor : A task raised an exception. Task: com.li... | defect | closedstreamexception triggered by armeriaservercall dosendmessage not handled by armeria we got the following exception report from a user at our slack workspace warn i n u concurrent abstracteventexecutor a task raised an exception task com linecorp armeria common request... | 1 |

153,658 | 12,156,448,111 | IssuesEvent | 2020-04-25 17:22:38 | SAP/spartacus | https://api.github.com/repos/SAP/spartacus | closed | E2E: additional test case | applied-promotions e2e-tests promotions | Test scenario:

-> add two products from the price range 100-150$ to the cart

-> test if you see the order promotion for order with total sum of > 200$

-> remove one of the products

-> test that you should not see this order promotion anymore | 1.0 | E2E: additional test case - Test scenario:

-> add two products from the price range 100-150$ to the cart

-> test if you see the order promotion for order with total sum of > 200$

-> remove one of the products

-> test that you should not see this order promotion anymore | non_defect | additional test case test scenario add two products from the price range to the cart test if you see the order promotion for order with total sum of remove one of the product test that you should not see this order promotion anymore | 0 |
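
The scenario in this record can be modelled in a few lines. This is only a sketch in Python, not the project's actual end-to-end test: the sample prices and the names `ORDER_PROMOTION_THRESHOLD` and `order_promotion_visible` are assumptions made for illustration, and the rule "total strictly greater than 200" is read from the scenario text.

```python
# Assumed rule: the order promotion is shown only when the cart total
# is strictly greater than this amount.
ORDER_PROMOTION_THRESHOLD = 200


def order_promotion_visible(cart_prices):
    """Return True when the cart total exceeds the promotion threshold."""
    return sum(cart_prices) > ORDER_PROMOTION_THRESHOLD


# Step 1: add two products from the 100-150 price range (sample prices).
cart = [120, 130]

# Step 2: the promotion should be visible, since 250 > 200.
assert order_promotion_visible(cart)

# Step 3: remove one of the products.
cart.pop()

# Step 4: the promotion should no longer be visible, since 120 <= 200.
assert not order_promotion_visible(cart)
```

Note that two products priced at exactly 100 each would total 200 and would not trigger the promotion under this reading, so a real test would need prices chosen to clear the threshold.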

15,296 | 2,850,599,479 | IssuesEvent | 2015-05-31 18:21:31 | damonkohler/sl4a | https://api.github.com/repos/damonkohler/sl4a | opened | SL4A Force Close on droid.startActivityIntent(chooserIntent) | auto-migrated Priority-Medium Type-Defect | _From @GoogleCodeExporter on May 31, 2015 11:30_

```

What device(s) are you experiencing the problem on?

Samsung Vibrant (SGH-T959)

What firmware version are you running on the device?

2.2

What steps will reproduce the problem?

1. Run the attached Python script on an Android device with SL4Ar4

-or-

1. Make an intent... | 1.0 | SL4A Force Close on droid.startActivityIntent(chooserIntent) - _From @GoogleCodeExporter on May 31, 2015 11:30_

```

What device(s) are you experiencing the problem on?

Samsung Vibrant (SGH-T959)

What firmware version are you running on the device?

2.2

What steps will reproduce the problem?

1. Run the attached Python... | defect | force close on droid startactivityintent chooserintent from googlecodeexporter on may what device s are you experiencing the problem on samsung vibrant sgh what firmware version are you running on the device what steps will reproduce the problem run the attached python script on a... | 1 |

69,661 | 22,597,048,163 | IssuesEvent | 2022-06-29 04:58:34 | unascribed/Fabrication | https://api.github.com/repos/unascribed/Fabrication | opened | [REQUEST] Would You like to add KEYBIND to instantly open Fabrication Settings menu? | k: Defect n: Fabric s: New | Instead of opening ModMenu first. Nice idea cool isn't?

THANK YOU! :) | 1.0 | [REQUEST] Would You like to add KEYBIND to instantly open Fabrication Settings menu? - Instead of opening ModMenu first. Nice idea cool isn't?

THANK YOU! :) | defect | would you like to add keybind to instantly open fabrication settings menu instead of opening modmenu first nice idea cool isn t thank you | 1 |

294,752 | 22,162,120,910 | IssuesEvent | 2022-06-04 16:56:03 | Irishbecky91/TheWaggingTailor | https://api.github.com/repos/Irishbecky91/TheWaggingTailor | opened | TASK: README Requirements | documentation | - [ ] - Evidence of either a real Facebook site or a mockup of one for digital marketing purposes.

- [ ] - A description of the e-commerce business model including marketing strategies in the README file.

- [ ] - Detailed testing write-ups, beyond results of validation tools.

- [ ] - Detailed ERD image displayed on ... | 1.0 | TASK: README Requirements - - [ ] - Evidence of either a real Facebook site or a mockup of one for digital marketing purposes.

- [ ] - A description of the e-commerce business model including marketing strategies in the README file.

- [ ] - Detailed testing write-ups, beyond results of validation tools.

- [ ] - Deta... | non_defect | task readme requirements evidence of either a real facebook site or a mockup of one for digital marketing purposes a description of the e commerce business model including marketing strategies in the readme file detailed testing write ups beyond results of validation tools detailed erd... | 0 |

78,754 | 27,746,229,984 | IssuesEvent | 2023-03-15 17:13:24 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Warning about inviting unknown users is not shown when creating a room via "Start chat" | T-Defect | ### Steps to reproduce

1. Click the + "Start chat" button next to the header of the People section of the room list.

2. Attempt to start a chat with a user that you know does not exist (and alternatively, on a homeserver you know does exist).

3. Click "Go"

4. You are let through to the next stage without any warnin... | 1.0 | Warning about inviting unknown users is not shown when creating a room via "Start chat" - ### Steps to reproduce

1. Click the + "Start chat" button next to the header of the People section of the room list.

2. Attempt to start a chat with a user that you know does not exist (and alternatively, on a homeserver you kno... | defect | warning about inviting unknown users is not shown when creating a room via start chat steps to reproduce click the start chat button next to the header of the people section of the room list attempt to start a chat with a user that you know does not exist and alternatively on a homeserver you kno... | 1 |

26,687 | 4,777,576,680 | IssuesEvent | 2016-10-27 16:40:31 | wheeler-microfluidics/microdrop | https://api.github.com/repos/wheeler-microfluidics/microdrop | opened | gstreamer help message overriding argparse message (Trac #166) | defect Incomplete Migration microdrop Migrated from Trac | Migrated from http://microfluidics.utoronto.ca/microdrop/ticket/166

```json

{

"status": "new",

"changetime": "2015-01-06T14:23:40",

"description": "{{{\n#!md\nSee [here][1].\n\n[1]: http://stackoverflow.com/questions/12059806/gstreamer-help-message-overriding-my-argparse-message#answer-12417626\n}}}",

... | 1.0 | gstreamer help message overriding argparse message (Trac #166) - Migrated from http://microfluidics.utoronto.ca/microdrop/ticket/166

```json

{

"status": "new",

"changetime": "2015-01-06T14:23:40",

"description": "{{{\n#!md\nSee [here][1].\n\n[1]: http://stackoverflow.com/questions/12059806/gstreamer-help-... | defect | gstreamer help message overriding argparse message trac migrated from json status new changetime description n md nsee n n reporter cfobel cc resolution ts component microdrop summary ... | 1 |

2,885 | 2,607,964,509 | IssuesEvent | 2015-02-26 00:41:43 | chrsmithdemos/leveldb | https://api.github.com/repos/chrsmithdemos/leveldb | closed | Support for DragonFlyBSD/NetBSD/OpenBSD | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Try to compile on one of the above operating systems

2. The compile will fail because of missing includes and missing fdatasync, etc

What is the expected output? What do you see instead?

leveldb should compile correctly, instead it fails.

Please provide any additional i... | 1.0 | Support for DragonFlyBSD/NetBSD/OpenBSD - ```

What steps will reproduce the problem?

1. Try to compile on one of the above operating systems

2. The compile will fail because of missing includes and missing fdatasync, etc

What is the expected output? What do you see instead?

leveldb should compile correctly, instead i... | defect | support for dragonflybsd netbsd openbsd what steps will reproduce the problem try to compile on one of the above operating systems the compile will fail because of missing includes and missing fdatasync etc what is the expected output what do you see instead leveldb should compile correctly instead i... | 1 |

84,716 | 3,670,937,163 | IssuesEvent | 2016-02-22 02:46:33 | coollog/sublite | https://api.github.com/repos/coollog/sublite | opened | Show most popular job listings | 2 Difficulty 3 Length 5 Priority Category: Jobs Type: Feature | Not much detail on this yet, so need a design for where it should go first. | 1.0 | Show most popular job listings - Not much detail on this yet, so need a design for where it should go first. | non_defect | show most popular job listings not much detail on this yet so need a design for where it should go first | 0 |

414,309 | 27,983,938,134 | IssuesEvent | 2023-03-26 13:32:49 | OpenTabletDriver/OpenTabletDriver.Web | https://api.github.com/repos/OpenTabletDriver/OpenTabletDriver.Web | opened | Document DE's that handle monitor mapping (KDE, more?) | documentation | Problem: DE sees tablet and maps tablet for you. However, since OTD also maps the tablet for you, the common problem is along the lines of "tablet only reachable in the upper left" or similar.

The fix is to either map KDE to the full monitor layout (OTD devs: can we work around this?), or mapping to the entire monit... | 1.0 | Document DE's that handle monitor mapping (KDE, more?) - Problem: DE sees tablet and maps tablet for you. However, since OTD also maps the tablet for you, the common problem is along the lines of "tablet only reachable in the upper left" or similar.

The fix is to either map KDE to the full monitor layout (OTD devs: ... | non_defect | document de s that handle monitor mapping kde more problem de sees tablet and maps tablet for you however since otd also maps the tablet for you the common problem is along the lines of tablet only reachable in the upper left or similar the fix is to either map kde to the full monitor layout otd devs ... | 0 |

70,904 | 23,366,122,153 | IssuesEvent | 2022-08-10 15:29:55 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Subspaces aren't indented | T-Defect X-Regression S-Minor X-Release-Blocker A-Spaces O-Frequent | ### Steps to reproduce

1. Expand the space panel

2. Click the 'expand' arrow next to a top-level space to reveal its subspaces

### Outcome

#### What did you expect?

The subspaces should be indented further than the top-level space

#### What happened instead?

They have matching indentation

![Screenshot 2... | 1.0 | Subspaces aren't indented - ### Steps to reproduce

1. Expand the space panel

2. Click the 'expand' arrow next to a top-level space to reveal its subspaces

### Outcome

#### What did you expect?

The subspaces should be indented further than the top-level space

#### What happened instead?

They have matching i... | defect | subspaces aren t indented steps to reproduce expand the space panel click the expand arrow next to a top level space to reveal its subspaces outcome what did you expect the subspaces should be indented further than the top level space what happened instead they have matching i... | 1 |

23,253 | 3,783,890,467 | IssuesEvent | 2016-03-19 12:46:04 | VladSerdobintsev/zfcore | https://api.github.com/repos/VladSerdobintsev/zfcore | closed | Windows Install Process | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

Try to install ZFCore on WAMP

What is the expected output? What do you see instead?

Installation on Windows completed with errors:

1. Unlike install.sh, install.bat does not

rename .htaccess.sample to .htaccess; I had to do it

manually, after which the web

installer started

2. ap... | 1.0 | Windows Install Process - ```

What steps will reproduce the problem?

Try to install ZFCore on WAMP

What is the expected output? What do you see instead?

Installation on Windows completed with errors:

1. Unlike install.sh, install.bat does not

rename .htaccess.sample to .htaccess; I had to do it

manually, after which it launch... | defect | windows install process what steps will reproduce the problem try to install zfcore on wamp what is the expected output what do you see instead installation on windows completed with errors unlike install sh install bat does not rename htaccess sample to htaccess i had to do it manually after which it launch... | 1 |

50,773 | 13,187,734,787 | IssuesEvent | 2020-08-13 04:24:14 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | [topsimulator] I3TimeShiffter (Trac #1345) | Migrated from Trac combo simulation defect | The segment still uses the C++ version of I3TimeShifter, which was removed. Why is the segment using this at all? Why not just use the I3TriggerSim segment?

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1345">https://code.icecube.wisc.edu/ticket/1345</a>, reported by olivas and ... | 1.0 | [topsimulator] I3TimeShiffter (Trac #1345) - The segment still uses the C++ version of I3TimeShifter, which was removed. Why is the segment using this at all? Why not just use the I3TriggerSim segment?

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1345">https://code.icecube.wisc... | defect | trac the segment still uses the c version of which was removed why is the segment using this at all why not just use the segment migrated from json status closed changetime description the segment still uses the c version of which was ... | 1 |

595,500 | 18,067,678,174 | IssuesEvent | 2021-09-20 21:13:18 | OpenMandrivaAssociation/test2 | https://api.github.com/repos/OpenMandrivaAssociation/test2 | closed | ruby 2.0 and rpm macro (Bugzilla Bug 241) | bug high priority major | This issue was created automatically with bugzilla2github

# Bugzilla Bug 241

Date: 2013-10-28 13:35:59 +0000

From: Alexander <<nobodydead@gmail.com>>

To: OpenMandriva QA <<bugs@openmandriva.org>>

CC: @berolinux, @cris-b, @itchka, nix.or.die@gmail.com, raul.liota@gmail.com, @robxu9, @tpgxyz

Last updated... | 1.0 | ruby 2.0 and rpm macro (Bugzilla Bug 241) - This issue was created automatically with bugzilla2github

# Bugzilla Bug 241

Date: 2013-10-28 13:35:59 +0000

From: Alexander <<nobodydead@gmail.com>>

To: OpenMandriva QA <<bugs@openmandriva.org>>

CC: @berolinux, @cris-b, @itchka, nix.or.die@gmail.com, raul.lio... | non_defect | ruby and rpm macro bugzilla bug this issue was created automatically with bugzilla bug date from alexander lt gt to openmandriva qa lt gt cc berolinux cris b itchka nix or die gmail com raul liota gmail com tpgxyz last updated comment d... | 0 |

699,927 | 24,037,515,966 | IssuesEvent | 2022-09-15 20:40:58 | plexiondev/plexiondev.github.io | https://api.github.com/repos/plexiondev/plexiondev.github.io | closed | make project types clearer | area:project-library priority:1 type:styling type:accessibility | it is currently not the most obvious what project type these are:

(or even that the icons represent anything)

related to #78 | 1.0 | make project types clearer - it is currently not the most obvious what project type these are:

(or even that the icons represent anything)

related to #78 | non_defect | make project types clearer it is currently not the most obvious what project type these are or even that the icons represent anything related to | 0 |

68,645 | 21,775,565,465 | IssuesEvent | 2022-05-13 13:30:16 | matrix-org/synapse | https://api.github.com/repos/matrix-org/synapse | closed | Server notice rooms are created rapidly if triggered by maybe_send_server_notice_to_user | S-Minor T-Defect | In the case where a server has reached its MAU limit, the ResourceLimitsServerNotices class is meant to send a notice to users informing them of this. However, it ends up creating a ton of duplicate rooms *and* never actually invites the target for the notice to the room.

- `ServerNoticesManager.get_or_create_notic... | 1.0 | Server notice rooms are created rapidly if triggered by maybe_send_server_notice_to_user - In the case where a server has reached its MAU limit, the ResourceLimitsServerNotices class is meant to send a notice to users informing them of this. However, it ends up creating a ton of duplicate rooms *and* never actually in... | defect | server notice rooms are created rapidly if triggered by maybe send server notice to user in the case where a server has reached it s mau limit the resourcelimitsservernotices class is meant to send a notice to users informing them of this however it ends up creating a ton of duplicate rooms and never actually in... | 1 |

108,912 | 4,363,688,708 | IssuesEvent | 2016-08-03 01:55:52 | idevelopment/Hcrm | https://api.github.com/repos/idevelopment/Hcrm | closed | Add Password routes | bug enhancement High Priority | The routes for the password reset system are not yet in the routes file. We need to add them. | 1.0 | Add Password routes - The routes for the password reset system are not yet in the routes file. We need to add them. | non_defect | add password routes for now the routes for the password reset system are not in the routes file we need to add them | 0 |

55,223 | 14,285,480,370 | IssuesEvent | 2020-11-23 13:58:42 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | JSON_TABLE emulation in PostgreSQL broken | T: Defect | jOOQ 3.14.3

openjdk version "11.0.8" 2020-07-14

Postgres 13

Using **_exactly_** the example from https://www.jooq.org/doc/3.14/manual/sql-building/table-expressions/json-table-function/:

```java

// For more information on imports and data types, click on the "Help" icon

// These imports, and possibly others, ... | 1.0 | JSON_TABLE emulation in PostgreSQL broken - jOOQ 3.14.3

openjdk version "11.0.8" 2020-07-14

Postgres 13

Using **_exactly_** the example from https://www.jooq.org/doc/3.14/manual/sql-building/table-expressions/json-table-function/:

```java

// For more information on imports and data types, click on the "Help" i... | defect | json table emulation in postgresql broken jooq openjdk version postgres using exactly the example from java for more information on imports and data types click on the help icon these imports and possibly others are implied import static org jooq impl dsl im... | 1 |

615,973 | 19,287,131,068 | IssuesEvent | 2021-12-11 05:48:53 | Vyxal/Vyxal | https://api.github.com/repos/Vyxal/Vyxal | closed | Integers can't be sorted | bug difficulty: easy priority:medium | When trying to sort numbers with the `s` command, it errors.

Example: [Try it Online!](https://vyxal.pythonanywhere.com/#WyIiLCIiLCIxMjMgcyAiLCIiLCIiXQ==) | 1.0 | Integers can't be sorted - When trying to sort numbers with the `s` command, it errors.

Example: [Try it Online!](https://vyxal.pythonanywhere.com/#WyIiLCIiLCIxMjMgcyAiLCIiLCIiXQ==) | non_defect | integers can t be sorted when trying to sort numbers with the s command it errors example | 0 |

81,404 | 30,829,673,525 | IssuesEvent | 2023-08-01 23:56:01 | dotCMS/core | https://api.github.com/repos/dotCMS/core | closed | LogViewer connection times out. | Type : Defect QA : Approved Merged QA : Passed Internal LTS: Next Team : Scout Triage Release : 23.06 | ### Parent Issue

_No response_

### Problem Statement

The log viewer disconnects when the load balancer times out.

The old implementation used a servlet to feed the front end and used a keep-alive strategy sending a blank space every 20 seconds. That implementation got replaced by a much more modern Server-Sent Eve... | 1.0 | LogViewer connection times out. - ### Parent Issue

_No response_

### Problem Statement

The log viewer disconnects when the load balancer times out.

The old implementation used a servlet to feed the front end and used a keep-alive strategy sending a blank space every 20 seconds. That implementation got replaced by ... | defect | logviewer connection times out parent issue no response problem statement the log viewer disconnects when the load balancer times out the old implementation used a servlet to feed the front end and used a keep alive strategy sending a blank space every seconds that implementation got replaced by a... | 1 |

70,898 | 23,363,709,762 | IssuesEvent | 2022-08-10 13:47:58 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: WebdriverJS FluentWait | C-nodejs I-defect | ### What happened?

WebdriverJS FluentWait works in Javascript as per the example in the [documentation](https://www.selenium.dev/documentation/webdriver/waits/#fluentwait). However in TypeScript we are missing the types for the 4th parameter (the frequency at which it polls).

Working JavaScript example (which is ... | 1.0 | [🐛 Bug]: WebdriverJS FluentWait - ### What happened?

WebdriverJS FluentWait works in Javascript as per the example in the [documentation](https://www.selenium.dev/documentation/webdriver/waits/#fluentwait). However in TypeScript we are missing the types for the 4th parameter (the frequency at which it polls).

Wo... | defect | webdriverjs fluentwait what happened webdriverjs fluentwait works in javascript as per the example in the however in typescript we are missing the types for the parameter the frequency at which it polls working javascript example which is polling and including the frequency at which it polls ... | 1 |

356,093 | 25,176,107,653 | IssuesEvent | 2022-11-11 09:24:12 | jeromepui/pe | https://api.github.com/repos/jeromepui/pe | opened | [User Guide] Did not say all kinds of roles in description | severity.Low type.DocumentationBug | There are multiple roles for the professor such as Coordinator, Lecturer, etc. but these were not mentioned in the description for the command.

<!--session: 1668153057787-9e... | 1.0 | [User Guide] Did not say all kinds of roles in description - There are multiple roles for the professor such as Coordinator, Lecturer, etc. but these were not mentioned in the description for the command.

**

_Thursday Aug 02, 2018 at 09:05 GMT_

_Originally opened as https://github.com/gizafoundation/api-layer/pull/22_... | 2.0 | [CLOSED] Setup idea local params - <a href="https://github.com/vojoup"><img src="https://avatars2.githubusercontent.com/u/24936972?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [vojoup](https://github.com/vojoup)**

_Thursday Aug 02, 2018 at 09:05 GMT_

_Originally opened as https://github.co... | non_defect | setup idea local params issue by thursday aug at gmt originally opened as this pr should make it easy to use local config for services while using run dashboard in intellij included the following code | 0 |

34,659 | 7,458,528,222 | IssuesEvent | 2018-03-30 10:49:10 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Design and functionality changes for dev-mini | C: AVAR P: highest R: fixed T: defect | **Reported by mehis muldma on 12 Jan 2015 10:15 UTC**

'''Object'''

Make design and functionality changes in the application for the dev-mini environment.

'''TODO'''

1) Indicate in large red text in the header that this is a small database

2) During the import, lock the site and show a notice saying "Import in progress"

3) index... | 1.0 | Design and functionality changes for dev-mini - **Reported by mehis muldma on 12 Jan 2015 10:15 UTC**

'''Object'''

Make design and functionality changes in the application for the dev-mini environment.

'''TODO'''

1) Indicate in large red text in the header that this is a small database

2) During the import, put the site ... | defect | design and functionality changes for dev mini reported by mehis muldma on jan utc object make design and functionality changes in the application for the dev mini environment todo indicate in large red text in the header that this is a small database during the import lock the site ... | 1 |

320,797 | 23,825,929,720 | IssuesEvent | 2022-09-05 14:51:47 | osism/issues | https://api.github.com/repos/osism/issues | closed | Neutron Upgrade failing due to missing Neutron SSH key | bug documentation | Neutron Upgrade Failed, because the Neutron SSH key was undefined. We had to create the key and the ansible var/dict ourselves.

Traceback (most recent call last):

File "/usr/local/lib/python3.8/dist-packages/ansible/template/__init__.py", line 1160, in do_template

res = j2_concat(rf)

File "<template>", li... | 1.0 | Neutron Upgrade failing due to missing Neutron SSH key - Neutron Upgrade Failed, because the Neutron SSH key was undefined. We had to create the key and the ansible var/dict ourselves.

Traceback (most recent call last):

File "/usr/local/lib/python3.8/dist-packages/ansible/template/__init__.py", line 1160, in do_t... | non_defect | neutron upgrade failing due to missing neutron ssh key neutron upgrade failed because the neutron ssh key was undefined we had to create the key and the ansible var dict ourselves traceback most recent call last file usr local lib dist packages ansible template init py line in do template ... | 0 |

505,002 | 14,625,780,446 | IssuesEvent | 2020-12-23 09:10:56 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.netflix.com - video or audio doesn't play | browser-firefox engine-gecko ml-needsdiagnosis-false priority-critical | <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile VR; rv:81.0) Gecko/81.0 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64176 -->

**URL**: https://www.netflix.com/browse

**Browser / Version**: Firefox 81.0

**Ope... | 1.0 | www.netflix.com - video or audio doesn't play - <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile VR; rv:81.0) Gecko/81.0 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64176 -->

**URL**: https://www.netflix.com/b... | non_defect | video or audio doesn t play url browser version firefox operating system android tested another browser no problem type video or audio doesn t play description there is no video steps to reproduce inop browser configuration gfx webrender all false ... | 0 |

213,209 | 16,515,775,573 | IssuesEvent | 2021-05-26 09:35:31 | AlexanderNorup/SemesterProjekt-2 | https://api.github.com/repos/AlexanderNorup/SemesterProjekt-2 | closed | PLPGSQL functionTypeByName & categoryByName | documentation | I am putting the database functions here so we do not forget where they are.

## PLPGSQL Functions

functionTypeByName:

```

CREATE OR REPLACE FUNCTION functionTypeByName(nameToFind varchar)

RETURNS integer

AS $$

DECLARE

functionTypeId integer := 42; -- Unknown

BEGIN

SELECT FT.id INTO fun... | 1.0 | PLPGSQL functionTypeByName & categoryByName - I'm just putting the database functions here so we don't forget where they are.

## PLPGSQL Functions

functionTypeByName:

```

CREATE OR REPLACE FUNCTION functionTypeByName(nameToFind varchar)

RETURNS integer

AS $$

DECLARE

functionTypeId integer := 42; -- Uk... | non_defect | plpgsql functiontypebyname categorybyname i m just putting the database functions here so we don t forget where they are plpgsql functions functiontypebyname create or replace function functiontypebyname nametofind varchar returns integer as declare functiontypeid integer ukn... | 0 |

50,361 | 12,503,084,741 | IssuesEvent | 2020-06-02 06:27:20 | microsoft/fluentui | https://api.github.com/repos/microsoft/fluentui | closed | unable to build | Area: Build System Status: In PR Type: Bug :bug: | Repro steps:

$ git clone <repo>

$ yarn

$ yarn build

Expected: built repo

Actual:

```

@fluentui/ability-attributes: [11:32:40] Requiring external module @uifabric/build/babel/register

@fluentui/ability-attributes: internal/modules/cjs/loader.js:492

@fluentui/ability-attributes: throw new E... | 1.0 | unable to build - Repro steps:

$ git clone <repo>

$ yarn

$ yarn build

Expected: built repo

Actual:

```

@fluentui/ability-attributes: [11:32:40] Requiring external module @uifabric/build/babel/register

@fluentui/ability-attributes: internal/modules/cjs/loader.js:492

@fluentui/ability-attribu... | non_defect | unable to build repro steps git clone yarn yarn build expected built repo actual fluentui ability attributes requiring external module uifabric build babel register fluentui ability attributes internal modules cjs loader js fluentui ability attributes throw new... | 0 |

153,522 | 13,508,363,042 | IssuesEvent | 2020-09-14 07:36:12 | kyma-project/control-plane | https://api.github.com/repos/kyma-project/control-plane | closed | Create and align existing documentation about upgrade | area/control-plane documentation | <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Create and align existing documentation about the upgrade:

- how to make an upgrade via KEB tutorial

- any specific info about upgrade... | 1.0 | Create and align existing documentation about upgrade - <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Create and align existing documentation about the upgrade:

- how to make an upg... | non_defect | create and align existing documentation about upgrade thank you for your contribution before you submit the issue search open and closed issues for duplicates read the contributing guidelines description create and align existing documentation about the upgrade how to make an upg... | 0 |

175,908 | 14,544,857,340 | IssuesEvent | 2020-12-15 18:47:25 | bounswe/bounswe2020group4 | https://api.github.com/repos/bounswe/bounswe2020group4 | opened | (BKND) Api documentation | Backend Effort: Medium Priority: High Status: In-Progress Type: Documentation | An api documentation should be created for the endpoints.

Deadline: 22/12/20 | 1.0 | (BKND) Api documentation - An api documentation should be created for the endpoints.

Deadline: 22/12/20 | non_defect | bknd api documentation an api documentation should be created for the endpoints deadline | 0 |

86,068 | 10,473,149,832 | IssuesEvent | 2019-09-23 11:58:35 | Denmads/3-semesterprojekt-fitness | https://api.github.com/repos/Denmads/3-semesterprojekt-fitness | closed | Create Project Foundation Structure document | documentation | Start up the Project Foundation Structure document | 1.0 | Create Project Foundation Structure document - Start up the Project Foundation Structure document | non_defect | create project foundation structure document start up the project foundation structure document | 0 |

76,648 | 26,535,450,434 | IssuesEvent | 2023-01-19 15:20:53 | SasView/sasview | https://api.github.com/repos/SasView/sasview | closed | 5.x: Parameter limits in the Fit Page are not respected. | Defect | This defect was originally reported by User AdrianR using 5.0.3 on 28 Dec 2020 but I cannot find a corresponding issue (@krzywon might...). However, User GregS has now reported the same issue using 5.0.4 which suggests no fix was implemented. So am logging the issue here.

Adrian said:

>I have been using SasView 5.0... | 1.0 | 5.x: Parameter limits in the Fit Page are not respected. - This defect was originally reported by User AdrianR using 5.0.3 on 28 Dec 2020 but I cannot find a corresponding issue (@krzywon might...). However, User GregS has now reported the same issue using 5.0.4 which suggests no fix was implemented. So am logging the ... | defect | x parameter limits in the fit page are not respected this defect was originally reported by user adrianr using on dec but i cannot find a corresponding issue krzywon might however user gregs has now reported the same issue using which suggests no fix was implemented so am logging the issu... | 1 |

132,453 | 18,722,817,426 | IssuesEvent | 2021-11-03 13:37:57 | CDCgov/prime-reportstream | https://api.github.com/repos/CDCgov/prime-reportstream | opened | Research & Design - Organizations Can Request Support Online | design research experience | As the Experience Team, we need some research and design for the feature to allow Organizations to request support online as outlined in this Epic [https://app.zenhub.com/workspaces/experience-607d9d5e68b95200150fec37/issues/cdcgov/prime-reportstream/2906](url). | 1.0 | Research & Design - Organizations Can Request Support Online - As the Experience Team, we need some research and design for the feature to allow Organizations to request support online as outlined in this Epic [https://app.zenhub.com/workspaces/experience-607d9d5e68b95200150fec37/issues/cdcgov/prime-reportstream/2906](... | non_defect | research design organizations can request support online as the experience team we need some research and design for the feature to allow organizations to request support online as outlined in this epic url | 0 |
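
In the visible rows, `text_combine` looks like the title and body joined by " - ", and `binary_label` is 1 for `defect` and 0 for `non_defect`. The sketch below illustrates that relationship; `build_row` is a hypothetical helper name, and the join rule is inferred from the rows shown here rather than taken from any documented pipeline.

```python
def build_row(title: str, body: str, label: str) -> dict:
    """Assemble the derived columns for one issue (inferred, not authoritative)."""
    # Assumption: title and body are joined with " - ", as the visible rows suggest.
    text_combine = f"{title} - {body}"
    # Assumption: only the string "defect" maps to 1; everything else maps to 0.
    binary_label = 1 if label == "defect" else 0
    return {
        "title": title,
        "body": body,
        "text_combine": text_combine,
        "label": label,
        "binary_label": binary_label,
    }


if __name__ == "__main__":
    row = build_row(
        "(BKND) Api documentation",
        "An api documentation should be created for the endpoints.",
        "non_defect",
    )
    print(row["text_combine"], row["binary_label"])
```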

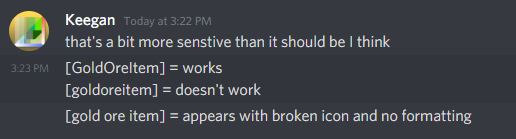

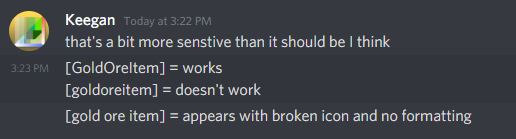

411,731 | 12,031,152,786 | IssuesEvent | 2020-04-13 09:01:27 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Ecopedia Item linking is overly sensitive and has a weird broken version | Priority: Low Status: Fixed Week Task |

Ideally we can make it match the sensitivity level in admin commands, so you can just do [coppergolditem] and have it correctly link the item with the friendly name. | 1.0 | Ecopedia Item linking is overly sensitive and has a weird broken version -

Ideally we can make it match the sensitivity level in admin commands, so you can just do [coppergolditem] and have it correctly link ... | non_defect | ecopedia item linking is overly sensitive and has a weird broken version ideally we can make it match the sensitivity level in admin commands so you can just do and have it correctly link the item with the friendly name | 0 |
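
The lenient matching requested in this issue could be sketched as a lookup that ignores case, spaces, and brackets. Everything here is hypothetical: the registry contents, the identifier `CopperGoldItem`, its friendly name, and the function names are placeholders for illustration, not Eco's actual API or item data.

```python
def link_key(name: str) -> str:
    """Reduce a tag or identifier to lowercase letters and digits only."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def build_index(items: dict) -> dict:
    """Map the lenient key of each item identifier to its friendly name."""
    return {link_key(identifier): friendly for identifier, friendly in items.items()}


def resolve_link(tag: str, index: dict):
    """Return the friendly name for a tag such as "[coppergolditem]", or None."""
    return index.get(link_key(tag))


if __name__ == "__main__":
    # Hypothetical registry: identifier -> friendly name.
    index = build_index({"CopperGoldItem": "Copper Gold (placeholder name)"})
    print(resolve_link("[coppergolditem]", index))
    print(resolve_link("[Copper Gold Item]", index))
```

Stripping everything except letters and digits keeps the key stable whether the author types brackets, spaces, or mixed case, which is the behaviour the issue asks for.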

24,295 | 4,074,882,028 | IssuesEvent | 2016-05-28 19:36:57 | vertigo17/Cerberus | https://api.github.com/repos/vertigo17/Cerberus | closed | [TestCase] Countries checked by default when property is created | enhancement Perim : GUITest | In the TestCase.jsp page, when a property is created, all countries should be checked by default, unless there is a property with the same name already defined, in which case no countries will be checked. | 1.0 | [TestCase] Countries checked by default when property is created - In the TestCase.jsp page, when a property is created, all countries should be checked by default, unless there is a property with the same name already defined, in which case no countries will be checked. | non_defect | countries checked by default when property is created in the testcase jsp page when a property is created all countries should be checked by default unless there is a property with the same name already defined in which case no countries will be checked | 0 |
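
The rule described in this issue can be written as a small function. This is a sketch of the requested behaviour only; the function name, the argument names, and the sample country codes are assumptions, and it does not reflect how Cerberus implements the page.

```python
def default_checked_countries(new_property, existing_properties, all_countries):
    """Return the set of countries to pre-check for a newly created property."""
    # If a property with the same name is already defined, check nothing.
    if new_property in existing_properties:
        return set()
    # Otherwise every country is checked by default.
    return set(all_countries)


if __name__ == "__main__":
    countries = ["BE", "FR", "PT"]  # illustrative country codes
    print(default_checked_countries("login", {"password"}, countries))
    print(default_checked_countries("login", {"login"}, countries))
```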

529,611 | 15,392,367,515 | IssuesEvent | 2021-03-03 15:33:18 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | CustomStats with numeric name do not work | Category: Laws Category: Refactoring Priority: Low Staging Type: Bug | While debugging https://github.com/StrangeLoopGames/Eco/pull/7948, I found out that attempting to register a custom stat with an all-numeric name fails to set up the database. It can happen with any name starting with a number, and may even happen if the name contains a number anywhere.

It's related with StatInfo.Shor... | 1.0 | CustomStats with numeric name do not work - While debugging https://github.com/StrangeLoopGames/Eco/pull/7948, I found out that attempting to register a custom stat with all numeric name will fail to setup database. It can happen with any name starting with a number and can even be possible if the name contains number ... | non_defect | customstats with numeric name do not work while debugging i found out that attempting to register a custom stat with all numeric name will fail to setup database it can happen with any name starting with a number and can even be possible if the name contains number anywhere it s related with statinfo shortname... | 0 |
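
The three cases named in this report (all-numeric names, names starting with a digit, names containing a digit anywhere) could be flagged before registration with a check like the one below. This is a hypothetical guard written for illustration; it does not reproduce Eco's stat registration or database code, and the function name and return values are assumptions.

```python
def numeric_name_risk(name: str) -> str:
    """Classify a custom stat name by the digit-related cases in the report."""
    if name.isdigit():
        return "all_numeric"      # reported to fail database setup
    if name[:1].isdigit():
        return "leading_digit"    # reported as able to fail
    if any(ch.isdigit() for ch in name):
        return "contains_digit"   # reported as possibly failing
    return "none"


if __name__ == "__main__":
    for candidate in ["12345", "7days", "stat2", "TreesPlanted"]:
        print(candidate, numeric_name_risk(candidate))
```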

160,371 | 12,509,192,610 | IssuesEvent | 2020-06-02 16:44:01 | rancher/k3s | https://api.github.com/repos/rancher/k3s | closed | Addressing K8S CVE - CVE 2020-10749 and CVE-2020-8555 | [zube]: To Test | We are working to patch k3s to address the following CVEs:

- [CVE-2020-10749](https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-10749) - IPv4 only clusters susceptible to MitM attacks via IPv6 rogue router advertisements

- [CVE-2020-8555](https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-8555): kube-contr... | 1.0 | Addressing K8S CVE - CVE 2020-10749 and CVE-2020-8555 - We are working to patch k3s to address the following CVEs:

- [CVE-2020-10749](https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-10749) - IPv4 only clusters susceptible to MitM attacks via IPv6 rogue router advertisements

- [CVE-2020-8555](https://cve.mitre... | non_defect | addressing cve cve and cve we are working to patch to address the following cves only clusters susceptible to mitm attacks via rogue router advertisements kube controller manager ssrf cve is being addressed by upgrading the version of the library used by to see the... | 0 |

237,067 | 26,078,775,048 | IssuesEvent | 2022-12-25 01:10:25 | arabaske/Ceres | https://api.github.com/repos/arabaske/Ceres | opened | CVE-2022-23540 (Medium) detected in jsonwebtoken-8.1.0.tgz | security vulnerability | ## CVE-2022-23540 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsonwebtoken-8.1.0.tgz</b></p></summary>

<p>JSON Web Token implementation (symmetric and asymmetric)</p>

<p>Library ... | True | CVE-2022-23540 (Medium) detected in jsonwebtoken-8.1.0.tgz - ## CVE-2022-23540 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsonwebtoken-8.1.0.tgz</b></p></summary>

<p>JSON Web To... | non_defect | cve medium detected in jsonwebtoken tgz cve medium severity vulnerability vulnerable library jsonwebtoken tgz json web token implementation symmetric and asymmetric library home page a href path to dependency file ceres package json path to vulnerable library nod... | 0 |

76,305 | 26,354,683,100 | IssuesEvent | 2023-01-11 08:48:11 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | Incorrect leadership rebalancing after remove CP member, add a new member and add new CP groups | Type: Defect Team: Core Source: Internal Module: CP Subsystem | **Description:**

I saw incorrect leadership rebalancing for the following scenario:

- `cp-size=5` and `group-size=3`, 3 nodes with priority 1, 2 nodes with priority 2.

- 3 CP groups for every possible combination of groups are added (i.e. there are 30 groups; only 2 new CP groups for combination which is used for METADA... | 1.0 | Incorrect leadership rebalancing after remove CP member, add a new member and add new CP groups - **Description:**

I saw incorrect leadership rebalancing for the following scenario:

- `cp-size=5` and `group-size=3`, 3 nodes with priority 1, 2 nodes with priority 2.