Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 distinct value) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 distinct values) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 distinct values) | text_combine (string, length 96 to 261k) | label (string, 2 distinct values) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
201,086 | 15,173,147,647 | IssuesEvent | 2021-02-13 12:47:17 | apisofshit/apisofshit.github.io | https://api.github.com/repos/apisofshit/apisofshit.github.io | opened | test | 飞翔小站 | /post/test/ Gitalk | https://freene.tk/post/test/ test test test test test www.github.com test test test #test# ##test#3 test test test
detected in netty-codec-http-4.1.39.Final.jar - autoclosed | security vulnerability | ## CVE-2021-21295 - Medium Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.39.Final.jar</b></p></summary> <p>Netty is an asynchronous event-driven network application fra... | True | CVE-2021-21295 (Medium) detected in netty-codec-http-4.1.39.Final.jar - autoclosed - ## CVE-2021-21295 - Medium Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.39.Final.ja... | non_defect | cve medium detected in netty codec http final jar autoclosed cve medium severity vulnerability vulnerable library netty codec http final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol ... | 0 |
6,939 | 24,042,198,796 | IssuesEvent | 2022-09-16 03:42:51 | AdamXweb/awesome-aussie | https://api.github.com/repos/AdamXweb/awesome-aussie | closed | [ADDITION] Deputy | Awaiting Review Added to Airtable Automation from Airtable | ### Category HR ### Software to be added Deputy ### Supporting Material URL: https://www.deputy.com/ Description: Deputy is an employee management tool, simplifying scheduling, timesheets, tasks and workplace communication. Size: HQ: Sydney LinkedIn: https://www.linkedin.com/company/deputyapp/ #### See Record on Airt... | 1.0 | [ADDITION] Deputy - ### Category HR ### Software to be added Deputy ### Supporting Material URL: https://www.deputy.com/ Description: Deputy is an employee management tool, simplifying scheduling, timesheets, tasks and workplace communication. Size: HQ: Sydney LinkedIn: https://www.linkedin.com/company/deputyapp/ ###... | non_defect | deputy category hr software to be added deputy supporting material url description deputy is an employee management tool simplifying scheduling timesheets tasks and workplace communication size hq sydney linkedin see record on airtable | 0 |
87,172 | 10,881,108,997 | IssuesEvent | 2019-11-17 15:40:01 | bounswe/bounswe2019group5 | https://api.github.com/repos/bounswe/bounswe2019group5 | opened | Removing listening question body limit | Backend Status: Available Type: Design | While I am adding listening questions from admin panel, I have encountered string character limit as 1001 chars. Since we are adding base64 converted version of any audio files, their string lengths are much longer than 1001 chars. In our database, we should be able to keep longer strings in order to fetch the audio fi... | 1.0 | Removing listening question body limit - While I am adding listening questions from admin panel, I have encountered string character limit as 1001 chars. Since we are adding base64 converted version of any audio files, their string lengths are much longer than 1001 chars. In our database, we should be able to keep long... | non_defect | removing listening question body limit while i am adding listening questions from admin panel i have encountered string character limit as chars since we are adding converted version of any audio files their string lengths are much longer than chars in our database we should be able to keep longer strings ... | 0 |
8,607 | 6,587,234,960 | IssuesEvent | 2017-09-13 20:17:25 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Proposed: C# compiler could use flow analysis to track types in variables permitting the use of a `constrained` instruction on virtual calls. | Area-Compilers Feature Request Tenet-Performance | Boxing for `isinst` aside https://github.com/dotnet/coreclr/issues/12877 it would be nice if Pattern Matching issued a `constrained` instruction prior to `callvirt` where a generic type was matched to an interface **Version Used**: > Microsoft Visual Studio Enterprise 2017 Version 15.3.4 VisualStudio.15.Release... | True | Proposed: C# compiler could use flow analysis to track types in variables permitting the use of a `constrained` instruction on virtual calls. - Boxing for `isinst` aside https://github.com/dotnet/coreclr/issues/12877 it would be nice if Pattern Matching issued a `constrained` instruction prior to `callvirt` where a gen... | non_defect | proposed c compiler could use flow analysis to track types in variables permitting the use of a constrained instruction on virtual calls boxing for isinst aside it would be nice if pattern matching issued a constrained instruction prior to callvirt where a generic type was matched to an interface ve... | 0 |
42,661 | 11,205,188,491 | IssuesEvent | 2020-01-05 12:32:35 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | CI failing: Travis CI Py36 refguide and Linux_Python_36_32bit_full scipy.{optimize,special} | defect scipy.sparse | CI testing unexpectedly fails for some recent PRs gh-9719 and gh-11267. #### Error message: <s> * Travis-CI fails building the refguide using Py3.6 with 4 `scipy.sparse` errors involving int64 and longlong such as ``` Expected: <2x3 sparse matrix of type '<class 'numpy.int64'>' with 2 stored... | 1.0 | CI failing: Travis CI Py36 refguide and Linux_Python_36_32bit_full scipy.{optimize,special} - CI testing unexpectedly fails for some recent PRs gh-9719 and gh-11267. #### Error message: <s> * Travis-CI fails building the refguide using Py3.6 with 4 `scipy.sparse` errors involving int64 and longlong such as ``... | defect | ci failing travis ci refguide and linux python full scipy optimize special ci testing unexpectedly fails for some recent prs gh and gh error message travis ci fails building the refguide using with scipy sparse errors involving and longlong such as expected ... | 1 |
54,442 | 13,688,742,364 | IssuesEvent | 2020-09-30 12:11:55 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | GitHub Actions don't perform documentation tests and show submodule error | Defect DoNotPublish NotIDDChange | Issue overview -------------- Two issues need to be resolved when using GitHub actions: 1. They were not set up to test the documentation (or create annotations from the LaTeX warnings/issues) 2. There was a submodule error issued post-checkout that was triggered by submodules in Penumbra | 1.0 | GitHub Actions don't perform documentation tests and show submodule error - Issue overview -------------- Two issues need to be resolved when using GitHub actions: 1. They were not set up to test the documentation (or create annotations from the LaTeX warnings/issues) 2. There was a submodule error issued post-ch... | defect | github actions don t perform documentation tests and show submodule error issue overview two issues need to be resolved when using github actions they were not set up to test the documentation or create annotations from the latex warnings issues there was a submodule error issued post ch... | 1 |
63,576 | 17,773,894,551 | IssuesEvent | 2021-08-30 16:38:57 | idaholab/raven | https://api.github.com/repos/idaholab/raven | closed | [DEFECT] Dymola interface does not show error | priority_normal defect | -------- Defect Description -------- I tried to run dymola from RAVEN using the Code Models ##### What did you expect to see happen? An explanation about why the Dymola executable could not be launched ##### What did you see instead? The job failed without any explanation. The dslog is printed in one of th... | 1.0 | [DEFECT] Dymola interface does not show error - -------- Defect Description -------- I tried to run dymola from RAVEN using the Code Models ##### What did you expect to see happen? An explanation about why the Dymola executable could not be launched ##### What did you see instead? The job failed without an... | defect | dymola interface does not show error defect description i tried to run dymola from raven using the code models what did you expect to see happen an explanation about why the dymola executable could not be launched what did you see instead the job failed without any expla... | 1 |
33,436 | 7,122,987,147 | IssuesEvent | 2018-01-19 13:58:35 | p5n/archlinux-stuff | https://api.github.com/repos/p5n/archlinux-stuff | closed | xdg_menu license | Priority-Medium Type-Defect auto-migrated | ``` Arch Linux xdg_menu package states "GPL", but source code contains only obscure "All rights reserved". Is it GPL-licensed after all? Was author permission to freely use the code ever received or do I have yet contact him? ``` Original issue reported on code.google.com by `Rvach...@nxt.ru` on 24 Oct 2012 at 7:51... | 1.0 | xdg_menu license - ``` Arch Linux xdg_menu package states "GPL", but source code contains only obscure "All rights reserved". Is it GPL-licensed after all? Was author permission to freely use the code ever received or do I have yet contact him? ``` Original issue reported on code.google.com by `Rvach...@nxt.ru` on ... | defect | xdg menu license arch linux xdg menu package states gpl but source code contains only obscure all rights reserved is it gpl licensed after all was author permission to freely use the code ever received or do i have yet contact him original issue reported on code google com by rvach nxt ru on ... | 1 |
40,445 | 9,998,864,478 | IssuesEvent | 2019-07-12 09:14:06 | contao/contao | https://api.github.com/repos/contao/contao | closed | Visible fieldset and empty legend with template member_grouped on captcha field | defect | **Affected version(s)** 4.4.40 **Description** Das Template member_grouped erzeugt folgende Ausgabe bei dem Captcha ```html <fieldset> <legend></legend> <div class="widget widget-captcha mandatory" style="display: none;"> <label for="ctrl_registration"> <span class="invisible">Mandatory f... | 1.0 | Visible fieldset and empty legend with template member_grouped on captcha field - **Affected version(s)** 4.4.40 **Description** Das Template member_grouped erzeugt folgende Ausgabe bei dem Captcha ```html <fieldset> <legend></legend> <div class="widget widget-captcha mandatory" style="display: none;... | defect | visible fieldset and empty legend with template member grouped on captcha field affected version s description das template member grouped erzeugt folgende ausgabe bei dem captcha html mandatory field security question please calculate... | 1 |
508,086 | 14,689,536,786 | IssuesEvent | 2021-01-02 10:22:22 | ChrisNZL/Tallowmere2 | https://api.github.com/repos/ChrisNZL/Tallowmere2 | opened | Create Nintendo Switch™ version | ⚠ priority++ | My Switch devkit is sitting pretty. Need to get the Switch version going. | 1.0 | Create Nintendo Switch™ version - My Switch devkit is sitting pretty. Need to get the Switch version going. | non_defect | create nintendo switch™ version my switch devkit is sitting pretty need to get the switch version going | 0 |
138,976 | 20,751,572,212 | IssuesEvent | 2022-03-15 08:10:41 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Remove unnecessary scroll bar on shields v2 for details view | bug feature/shields design QA/Yes release-notes/exclude feature/shields/panel OS/Desktop | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue. PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE. INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Remove unnecessary scroll bar on shields v2 for details view - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue. PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE. INSUFFICIENT INFO WILL GET THE ISSUE... | non_defect | remove unnecessary scroll bar on shields for details view have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue ... | 0 |
17,057 | 2,974,405,920 | IssuesEvent | 2015-07-15 00:18:02 | davidhabib/Volunteers-for-Salesforce | https://api.github.com/repos/davidhabib/Volunteers-for-Salesforce | closed | Avoid job signups while one in progress, to avoid duplicate contacts being created | Defect High Priority | hub thread: https://powerofus.force.com/0D580000028Q8KH I was finally able to reproduce this by simply clicking Signup on the popup dialog, and then quickly clicking another signup link and ok'ing the dialog. Solution is to probably disable the page until it refreshes from the server, to avoid this race condition. | 1.0 | Avoid job signups while one in progress, to avoid duplicate contacts being created - hub thread: https://powerofus.force.com/0D580000028Q8KH I was finally able to reproduce this by simply clicking Signup on the popup dialog, and then quickly clicking another signup link and ok'ing the dialog. Solution is to probabl... | defect | avoid job signups while one in progress to avoid duplicate contacts being created hub thread i was finally able to reproduce this by simply clicking signup on the popup dialog and then quickly clicking another signup link and ok ing the dialog solution is to probably disable the page until it refreshes from... | 1 |
28,019 | 4,077,241,694 | IssuesEvent | 2016-05-30 07:03:29 | V-Squared/v2-Production | https://api.github.com/repos/V-Squared/v2-Production | closed | E: Design ViPanel & ViSurge 05.05. | m.size.epic m.stage.2.design m.type.E.pcb m.type.E.schematic | - [x] Fix → [Safety Issue of Fuse Holder](#issuecomment-219592890) - [x] Create snap shot of new design, send to HC to publish in Issue for review by BC - [x] @bcaswelch Review new design - [x] Complete Design - [x] Trigger review to HC | 1.0 | E: Design ViPanel & ViSurge 05.05. - - [x] Fix → [Safety Issue of Fuse Holder](#issuecomment-219592890) - [x] Create snap shot of new design, send to HC to publish in Issue for review by BC - [x] @bcaswelch Review new design - [x] Complete Design - [x] Trigger review to HC | non_defect | e design vipanel visurge fix → issuecomment create snap shot of new design send to hc to publish in issue for review by bc bcaswelch review new design complete design trigger review to hc | 0 |
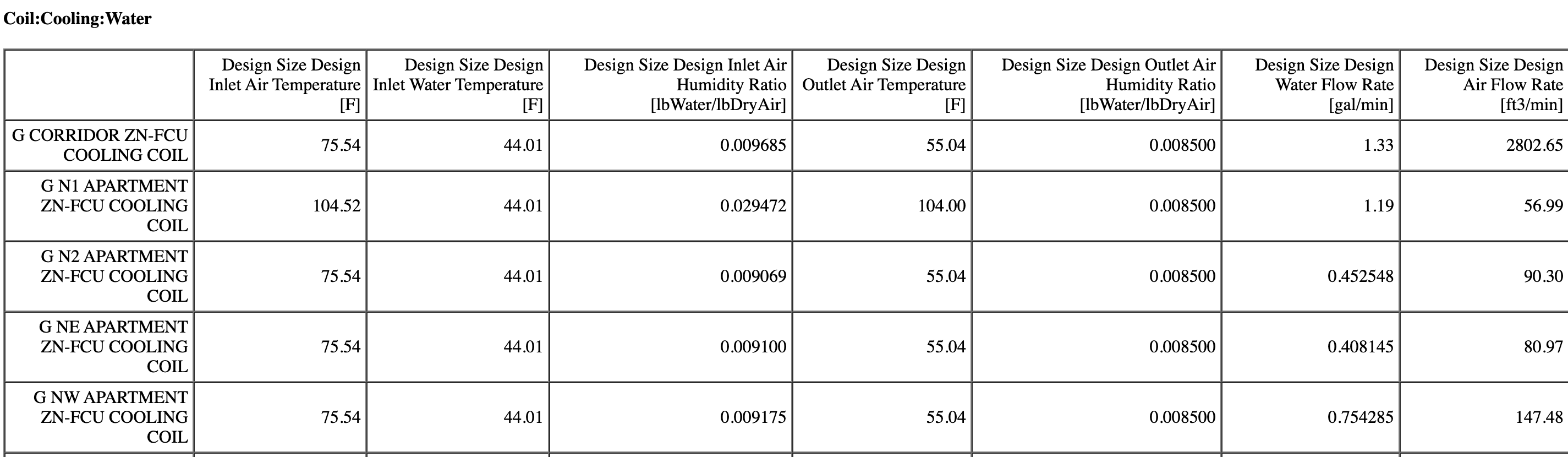

58,401 | 16,525,826,585 | IssuesEvent | 2021-05-26 19:58:14 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | opened | Component Sizing Summary Report table headers are redundant in using the word "Design" | Defect NotIDDChange | Issue overview -------------- The column headers for a number of tables in the Component Sizing Summary Report is redundant in using the word "Design". Examples of this are shown below:
| Migrated from Trac cmake defect | In testing PnF for pole deployment (using make tarball to create a deployable object): We found 'make tarball' was failing on spts-access: LZMA_LIBRARIES-NOTFOUND => jeb.trunk.r154481.Linux-x86_64.gcc-4.4.6/lib/tools realpath: /scratch/tschmidt/new/icecube/icetray/work/jeb/build/LZMA_LIBRARIES-NOTFOUND Traceback (most... | 1.0 | make tarball fails when libarchive is missing xv-devel package (Trac #1977) - In testing PnF for pole deployment (using make tarball to create a deployable object): We found 'make tarball' was failing on spts-access: LZMA_LIBRARIES-NOTFOUND => jeb.trunk.r154481.Linux-x86_64.gcc-4.4.6/lib/tools realpath: /scratch/tschm... | defect | make tarball fails when libarchive is missing xv devel package trac in testing pnf for pole deployment using make tarball to create a deployable object we found make tarball was failing on spts access lzma libraries notfound jeb trunk linux gcc lib tools realpath scratch tschmidt new icec... | 1 |
11,156 | 16,532,723,157 | IssuesEvent | 2021-05-27 08:12:22 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | `separateMajorMinor` flag in package rule doesn't override the global one for grouped dependencies | priority-5-triage status:requirements type:bug | **How are you running Renovate?** - [x] WhiteSource Renovate hosted app on github.com - [ ] Self hosted Renovate version: 25.31.2 **Describe the bug** `separateMajorMinor` flag in package rules doesn't seem to be overridden the global value for grouped dependencies. For instance, ``` "separateMajorM... | 1.0 | `separateMajorMinor` flag in package rule doesn't override the global one for grouped dependencies - **How are you running Renovate?** - [x] WhiteSource Renovate hosted app on github.com - [ ] Self hosted Renovate version: 25.31.2 **Describe the bug** `separateMajorMinor` flag in package rules doesn't seem... | non_defect | separatemajorminor flag in package rule doesn t override the global one for grouped dependencies how are you running renovate whitesource renovate hosted app on github com self hosted renovate version describe the bug separatemajorminor flag in package rules doesn t seem to be... | 0 |
46,623 | 2,963,550,492 | IssuesEvent | 2015-07-10 11:13:35 | thexerteproject/xerteonlinetoolkits | https://api.github.com/repos/thexerteproject/xerteonlinetoolkits | closed | Workspace doesn't refresh if popups are blocked when creating new LO | bug high priority v3 Release | It often results in multiple clicks and then when Workspace is refreshed there are loads of LOs.. We can fix this by clearing name after click and refreshing Workspace differently... | 1.0 | Workspace doesn't refresh if popups are blocked when creating new LO - It often results in multiple clicks and then when Workspace is refreshed there are loads of LOs.. We can fix this by clearing name after click and refreshing Workspace differently... | non_defect | workspace doesn t refresh if popups are blocked when creating new lo it often results in multiple clicks and then when workspace is refreshed there are loads of los we can fix this by clearing name after click and refreshing workspace differently | 0 |
59,659 | 17,023,194,169 | IssuesEvent | 2021-07-03 00:48:08 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Order of layers added causes old permalinks to show maplint layer incorrectly | Component: mapnik Priority: minor Resolution: wontfix Type: defect | **[Submitted to the original trac issue database at 5.07pm, Wednesday, 2nd January 2008]** When the maplint layer was added, maplint replaced the position of marker in the permalink layers parameter. Old permalinks that had the markers layer set to show, will cause the maplint layer to show. One solution is to swa... | 1.0 | Order of layers added causes old permalinks to show maplint layer incorrectly - **[Submitted to the original trac issue database at 5.07pm, Wednesday, 2nd January 2008]** When the maplint layer was added, maplint replaced the position of marker in the permalink layers parameter. Old permalinks that had the markers la... | defect | order of layers added causes old permalinks to show maplint layer incorrectly when the maplint layer was added maplint replaced the position of marker in the permalink layers parameter old permalinks that had the markers layer set to show will cause the maplint layer to show one solution is to swap the... | 1 |
144,757 | 19,298,878,833 | IssuesEvent | 2021-12-13 01:02:19 | snowdensb/job-dsl-plugin | https://api.github.com/repos/snowdensb/job-dsl-plugin | closed | CVE-2021-44228 (High) detected in log4j-1.2.9.jar, log4j-1.2.12.jar - autoclosed | security vulnerability | ## CVE-2021-44228 - High Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>log4j-1.2.9.jar</b>, <b>log4j-1.2.12.jar</b></p></summary> <p> <details><summary><b>log4j-1.2.9.jar</b></p></summary... | True | CVE-2021-44228 (High) detected in log4j-1.2.9.jar, log4j-1.2.12.jar - autoclosed - ## CVE-2021-44228 - High Severity Vulnerability <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>log4j-1.2.9.jar</b>, <b>log4j-1.2.... | non_defect | cve high detected in jar jar autoclosed cve high severity vulnerability vulnerable libraries jar jar jar path to dependency file job dsl plugin job dsl plugin build gradle path to vulnerable library home wss scanner gradle cach... | 0 |
33,835 | 7,267,154,752 | IssuesEvent | 2018-02-20 02:52:40 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | closed | Navision Nota de crédito - Marca error en número de pedimento, y pareciera que está OK | bug defect | PARA LA NOTA DE CRÉDITO: 18AVMX005 SI VEO EN EL LOG, MANDA QUE EL PEDIMENTO ES, ES DECIR CON 1 ESPACIO: 17 43 4325 7003170 PERO SI CONSULTO EN LA TABLA CORRESPONDIENTE, SI TIENE LOS 2 ESPACIOS REQUERIDOS POR EL SAT: select [Document No_] as "no. factura" ,[No_] as "referencia" ,[Nº pedimento] from [IPNav... | 1.0 | Navision Nota de crédito - Marca error en número de pedimento, y pareciera que está OK - PARA LA NOTA DE CRÉDITO: 18AVMX005 SI VEO EN EL LOG, MANDA QUE EL PEDIMENTO ES, ES DECIR CON 1 ESPACIO: 17 43 4325 7003170 PERO SI CONSULTO EN LA TABLA CORRESPONDIENTE, SI TIENE LOS 2 ESPACIOS REQUERIDOS POR EL SAT: selec... | defect | navision nota de crédito marca error en número de pedimento y pareciera que está ok para la nota de crédito si veo en el log manda que el pedimento es es decir con espacio pero si consulto en la tabla correspondiente si tiene los espacios requeridos por el sat select as no factu... | 1 |
328,971 | 10,010,582,965 | IssuesEvent | 2019-07-15 08:25:37 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | mail.google.com - site is not usable | browser-fenix engine-gecko priority-critical | <!-- @browser: Firefox Mobile 68.0 --> <!-- @ua_header: Mozilla/5.0 (Android 5.1.1; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 --> <!-- @reported_with: --> <!-- @extra_labels: browser-fenix --> **URL**: https://mail.google.com **Browser / Version**: Firefox Mobile 68.0 **Operating System**: Android 5.1.1 **Tested Anot... | 1.0 | mail.google.com - site is not usable - <!-- @browser: Firefox Mobile 68.0 --> <!-- @ua_header: Mozilla/5.0 (Android 5.1.1; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 --> <!-- @reported_with: --> <!-- @extra_labels: browser-fenix --> **URL**: https://mail.google.com **Browser / Version**: Firefox Mobile 68.0 **Operatin... | non_defect | mail google com site is not usable url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description could not attach file steps to reproduce was composing mail clicked on attach file sele... | 0 |
14,663 | 17,786,556,680 | IssuesEvent | 2021-08-31 11:48:39 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Parcel V2 sandbox issue | type: support / not a bug (process) untriaged team-Local-Exec | ### Description of the problem / feature request: Bazel sandbox on Linux is causing problems with parcel V2 RC ### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible. I trying to run parcel V2 similiar to the example for parcel V1 at https://github.c... | 1.0 | Parcel V2 sandbox issue - ### Description of the problem / feature request: Bazel sandbox on Linux is causing problems with parcel V2 RC ### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible. I trying to run parcel V2 similiar to the example for parcel... | non_defect | parcel sandbox issue description of the problem feature request bazel sandbox on linux is causing problems with parcel rc bugs what s the simplest easiest way to reproduce this bug please provide a minimal example if possible i trying to run parcel similiar to the example for parcel ... | 0 |
61,195 | 14,943,463,768 | IssuesEvent | 2021-01-25 23:06:55 | NVIDIA/TensorRT | https://api.github.com/repos/NVIDIA/TensorRT | closed | NvInfer.h not found | Component: OSS Build Component: Plugins triaged | Dear all, I manage to install TensorRT using a tar file by referring to the [official NVIDIA site](https://docs.nvidia.com/deeplearning/tensorrt/install-guide/index.html#installing-tar) and try to convert PyTorch model to TensorRT model. The example codes for the TensorRT are [TensorRT-RetinaFace](https://github.com... | 1.0 | NvInfer.h not found - Dear all, I manage to install TensorRT using a tar file by referring to the [official NVIDIA site](https://docs.nvidia.com/deeplearning/tensorrt/install-guide/index.html#installing-tar) and try to convert PyTorch model to TensorRT model. The example codes for the TensorRT are [TensorRT-RetinaFa... | non_defect | nvinfer h not found dear all i manage to install tensorrt using a tar file by referring to the and try to convert pytorch model to tensorrt model the example codes for the tensorrt are however i m having a problem with a nvinfer h i think the nvinfer h is not existed in my machine i installed bel... | 0 |
170,370 | 14,257,538,521 | IssuesEvent | 2020-11-20 03:56:03 | post-grad-beta-test/remote-startups | https://api.github.com/repos/post-grad-beta-test/remote-startups | opened | Project needs a README | documentation | As a developer who is new to the project, I want to see an informative README, so that I can get started with the code right away. # Acceptance Criteria * README includes the following information: * Overview / description of the project. * How to install and configure. * Includes instructions to... | 1.0 | Project needs a README - As a developer who is new to the project, I want to see an informative README, so that I can get started with the code right away. # Acceptance Criteria * README includes the following information: * Overview / description of the project. * How to install and configure. *... | non_defect | project needs a readme as a developer who is new to the project i want to see an informative readme so that i can get started with the code right away acceptance criteria readme includes the following information overview description of the project how to install and configure ... | 0 |
333,258 | 10,119,590,919 | IssuesEvent | 2019-07-31 11:53:13 | JuliaMV/culture-portal | https://api.github.com/repos/JuliaMV/culture-portal | closed | Create Author page template | priority: mediocre | Create author page template with fields for the following information (no content or draft content if desired): - [x] name and photo - [x] years of life - [x] timeline - [x] list of artist's works with the date of creation - [x] video - [x] geotag - [x] photo gallery with author's picture and pictures of ... | 1.0 | Create Author page template - Create author page template with fields for the following information (no content or draft content if desired): - [x] name and photo - [x] years of life - [x] timeline - [x] list of artist's works with the date of creation - [x] video - [x] geotag - [x] photo gallery with aut... | non_defect | create author page template create author page template with fields for the following information no content or draft content if desired name and photo years of life timeline list of artist s works with the date of creation video geotag photo gallery with author s picture ... | 0 |
119,316 | 15,498,334,127 | IssuesEvent | 2021-03-11 06:17:46 | purnima143/Kurakoo | https://api.github.com/repos/purnima143/Kurakoo | closed | Design: Signup & login page on figma | design good first issue gssoc21 help wanted level2 medium ui/ux | - **UI Prototyping** with figma tool [figma design](https://www.figma.com/file/1gYZlafa8bUZu61ji10unF/Kurakoo?node-id=0%3A1). - **Please follow the 3 primary colours - #FFBB00 ,#FF6F00 ,#411818 for designing the pages** ## Content for signup - Name - Email id - Password - confirm password -... | 1.0 | Design: Signup & login page on figma - - **UI Prototyping** with figma tool [figma design](https://www.figma.com/file/1gYZlafa8bUZu61ji10unF/Kurakoo?node-id=0%3A1). - **Please follow the 3 primary colours - #FFBB00 ,#FF6F00 ,#411818 for designing the pages** ## Content for signup - Name - Email id - ... | non_defect | design signup login page on figma ui prototyping with figma tool please follow the primary colours for designing the pages content for signup name email id password confirm password year sem college signup button con... | 0 |
3,390 | 2,610,061,818 | IssuesEvent | 2015-02-26 18:18:10 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | 路桥检查不育哪里正规 | auto-migrated Priority-Medium Type-Defect | ``` 路桥检查不育哪里正规【台州五洲生殖医院】24小时健康咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地址:台州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐104、108、118、198及椒江一金清公交车直达枫南小区,乘坐107、105、109、112、901、 902公交车到星星广场下车,步行即可到院。诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,���精,无精。包皮包茎,精索静脉曲张,淋病等。台州五洲生殖医院是台州最大的男科医院,权威专家在线免���咨询,拥有专业完善的男科检查治疗设备,严格按照国家标���收费。尖端医疗设备,与世界同步。权威专... | 1.0 | 路桥检查不育哪里正规 - ``` 路桥检查不育哪里正规【台州五洲生殖医院】24小时健康咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地址:台州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐104、108、118、198及椒江一金清公交车直达枫南小区,乘坐107、105、109、112、901、 902公交车到星星广场下车,步行即可到院。诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,���精,无精。包皮包茎,精索静脉曲张,淋病等。台州五洲生殖医院是台州最大的男科医院,权威专家在线免���咨询,拥有专业完善的男科检查治疗设备,严格按照国家标���收费。尖端医... | defect | 路桥检查不育哪里正规 路桥检查不育哪里正规【台州五洲生殖医院】 热线 微信号tzwzszyy 医院地址 台州市 (枫南大转盘旁)乘车线路 、 、 、 , 、 、 、 、 、 ,步行即可到院。 诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,�� �精,无精。包皮包茎,精索静脉曲张,淋病等。 台州五洲生殖医院是台州最大的男科医院,权威专家在线免�� �咨询,拥有专业完善的男科检查治疗设备,严格按照国家标� ��收费。尖端医疗设备,与世界同步。权威专家,成就专业典 范。人性化服务,一切以患者为中心。 看男科就选台州五洲生殖医院,专业男科为男人。 original i... | 1 |
27,794 | 5,104,700,225 | IssuesEvent | 2017-01-05 02:39:05 | STEllAR-GROUP/hpx | https://api.github.com/repos/STEllAR-GROUP/hpx | closed | Mismatch between #if/#endif and namespace scope brackets in this_thread_executers.hpp | type: defect | https://github.com/STEllAR-GROUP/hpx/blob/master/hpx/runtime/threads/executors/this_thread_executors.hpp Note that the namespaces are declared inside a #if/#endif construct but the closing brackets are outside any such preprocessor scope. | 1.0 | Mismatch between #if/#endif and namespace scope brackets in this_thread_executers.hpp - https://github.com/STEllAR-GROUP/hpx/blob/master/hpx/runtime/threads/executors/this_thread_executors.hpp Note that the namespaces are declared inside a #if/#endif construct but the closing brackets are outside any such preprocess... | defect | mismatch between if endif and namespace scope brackets in this thread executers hpp note that the namespaces are declared inside a if endif construct but the closing brackets are outside any such preprocessor scope | 1 |
1,860 | 2,603,972,592 | IssuesEvent | 2015-02-24 19:00:38 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | 沈阳病毒性疣能治好吗 | auto-migrated Priority-Medium Type-Defect | ``` 沈阳病毒性疣能治好吗〓沈陽軍區政治部醫院性病〓TEL:024-31023308〓成立于1946年,68年專注于性傳播疾病的研究和治療。���于沈陽市沈河區二緯路32號。是一所與新中國同建立共輝煌���歷史悠久、設備精良、技術權威、專家云集,是預防、保健、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲���部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、���南大學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體���等功。 ``` ----- Original issue reported on code.google.com by `q964105... | 1.0 | 沈阳病毒性疣能治好吗 - ``` 沈阳病毒性疣能治好吗〓沈陽軍區政治部醫院性病〓TEL:024-31023308〓成立于1946年,68年專注于性傳播疾病的研究和治療。���于沈陽市沈河區二緯路32號。是一所與新中國同建立共輝煌���歷史悠久、設備精良、技術權威、專家云集,是預防、保健、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲���部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、���南大學等知名高等院校的教學醫院。曾被中國人民解放軍空軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體���等功。 ``` ----- Original issue reported on code.google.co... | defect | 沈阳病毒性疣能治好吗 沈阳病毒性疣能治好吗〓沈陽軍區政治部醫院性病〓tel: 〓 , 。� �� 。是一所與新中國同建立共輝煌� ��歷史悠久、設備精良、技術權威、專家云集,是預防、保健 、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲�� �部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、� ��南大學等知名高等院校的教學醫院。曾被中國人民解放軍空 軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體�� �等功。 original issue reported on code google com by gmail com on jun at | 1 |
34,057 | 7,781,665,688 | IssuesEvent | 2018-06-06 01:43:02 | BBAD-Furniture/BBAD-furniture | https://api.github.com/repos/BBAD-Furniture/BBAD-furniture | closed | View the full list of all products | Code Review | The product catalog, so that I can see everything that's available

API Routes needed:

Reviews, Products, Users, Cart

and Axios, in Redux Store. | 1.0 | View the full list of all products - The product catalog, so that I can see everything that's available

API Routes needed:

Reviews, Products, Users, Cart

and Axios, in Redux Store. | non_defect | view the full list of all products the product catalog so that i can see everything that s available api routes needed reviews products users cart and axios in redux store | 0 |

60,606 | 17,023,469,878 | IssuesEvent | 2021-07-03 02:11:40 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Last commit to es.yml broke all utf-8 non-ascii characters | Component: website Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 9.36pm, Thursday, 27th August 2009]**

The latest commit http://trac.openstreetmap.org/changeset/17284 to file http://trac.openstreetmap.org/browser/sites/rails_port/config/locales/es.yml?rev=17284 replaced all UTF-8 characters with some other encoding (probably broke... | 1.0 | Last commit to es.yml broke all utf-8 non-ascii characters - **[Submitted to the original trac issue database at 9.36pm, Thursday, 27th August 2009]**

The latest commit http://trac.openstreetmap.org/changeset/17284 to file http://trac.openstreetmap.org/browser/sites/rails_port/config/locales/es.yml?rev=17284 replaced ... | defect | last commit to es yml broke all utf non ascii characters the latest commit to file replaced all utf characters with some other encoding probably broken or misconfigured text editor used | 1 |

25,759 | 4,440,865,261 | IssuesEvent | 2016-08-19 06:40:18 | pcolby/bipolar | https://api.github.com/repos/pcolby/bipolar | closed | windows Bipolar-0.5.2.297.exe fails to install / start - MSVCP140.dll missing | defect | Hello,

just tried to install version Bipolar-0.5.2.297.exe on Win7 64Bit, Polar Flow Sync 2.6.2

During the install provess a message appeared that the hook could not be installed.

By trying to do that step manually "bipolar.exe -install-hook" am message box stated that "MSVCP140.dll" is missing

MSVCP120.dll i... | 1.0 | windows Bipolar-0.5.2.297.exe fails to install / start - MSVCP140.dll missing - Hello,

just tried to install version Bipolar-0.5.2.297.exe on Win7 64Bit, Polar Flow Sync 2.6.2

During the install provess a message appeared that the hook could not be installed.

By trying to do that step manually "bipolar.exe -inst... | defect | windows bipolar exe fails to install start dll missing hello just tried to install version bipolar exe on polar flow sync during the install provess a message appeared that the hook could not be installed by trying to do that step manually bipolar exe install hook am messa... | 1 |

223,566 | 24,711,923,095 | IssuesEvent | 2022-10-20 02:00:00 | alpersonalwebsite/react-mobx-redux | https://api.github.com/repos/alpersonalwebsite/react-mobx-redux | closed | WS-2020-0042 (High) detected in acorn-5.7.4.tgz - autoclosed | security vulnerability | ## WS-2020-0042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>acorn-5.7.4.tgz</b></p></summary>

<p>ECMAScript parser</p>

<p>Library home page: <a href="https://registry.npmjs.org/aco... | True | WS-2020-0042 (High) detected in acorn-5.7.4.tgz - autoclosed - ## WS-2020-0042 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>acorn-5.7.4.tgz</b></p></summary>

<p>ECMAScript parser</p... | non_defect | ws high detected in acorn tgz autoclosed ws high severity vulnerability vulnerable library acorn tgz ecmascript parser library home page a href path to dependency file package json path to vulnerable library node modules jsdom node modules acorn package json ... | 0 |

66,275 | 20,110,484,411 | IssuesEvent | 2022-02-07 14:40:20 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | Compact Serialization / invalid field names ? | Type: Defect | **Context**

Running server 5.1-SNAPSHOT as downloaded from Maven on Feb. 7th.

**Describe the bug**

Create a compact schema with one field named `value` and push the schema to the cluster with `ClientSendSchemaCodec`. Works fine, no error is reported. Try to fetch the schema back with `ClientFetchSchemaCodec`... | 1.0 | Compact Serialization / invalid field names ? - **Context**

Running server 5.1-SNAPSHOT as downloaded from Maven on Feb. 7th.

**Describe the bug**

Create a compact schema with one field named `value` and push the schema to the cluster with `ClientSendSchemaCodec`. Works fine, no error is reported. Try to fet... | defect | compact serialization invalid field names context running server snapshot as downloaded from maven on feb describe the bug create a compact schema with one field named value and push the schema to the cluster with clientsendschemacodec works fine no error is reported try to fetch... | 1 |

319,956 | 27,410,717,570 | IssuesEvent | 2023-03-01 10:20:16 | openPMD/openPMD-api | https://api.github.com/repos/openPMD/openPMD-api | opened | Examples: Fix Windows PDB | bug tests machine/system | With the `dev` branch pre 0.15.0:

```

C:\Users\runneradmin\AppData\Local\Temp\ci-pU5qdA784w\src\examples\10_streaming_write.cpp : fatal error C1041: cannot open program database 'C:\Users\runneradmin\AppData\Local\Temp\ci-pU5qdA784w\build\bin\RelWithDebInfo\vc143.pdb'; if multiple CL.EXE write to the same .PDB file,... | 1.0 | Examples: Fix Windows PDB - With the `dev` branch pre 0.15.0:

```

C:\Users\runneradmin\AppData\Local\Temp\ci-pU5qdA784w\src\examples\10_streaming_write.cpp : fatal error C1041: cannot open program database 'C:\Users\runneradmin\AppData\Local\Temp\ci-pU5qdA784w\build\bin\RelWithDebInfo\vc143.pdb'; if multiple CL.EXE ... | non_defect | examples fix windows pdb with the dev branch pre c users runneradmin appdata local temp ci src examples streaming write cpp fatal error cannot open program database c users runneradmin appdata local temp ci build bin relwithdebinfo pdb if multiple cl exe write to the same pdb file ... | 0 |

177,769 | 21,509,178,221 | IssuesEvent | 2022-04-28 01:12:59 | amccool/AngularASPNETCore2WebApiAuth | https://api.github.com/repos/amccool/AngularASPNETCore2WebApiAuth | closed | WS-2019-0291 (High) detected in handlebars-4.0.11.tgz - autoclosed | security vulnerability | ## WS-2019-0291 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.0.11.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates e... | True | WS-2019-0291 (High) detected in handlebars-4.0.11.tgz - autoclosed - ## WS-2019-0291 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.0.11.tgz</b></p></summary>

<p>Handleba... | non_defect | ws high detected in handlebars tgz autoclosed ws high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file ... | 0 |

20,206 | 3,315,219,189 | IssuesEvent | 2015-11-06 10:46:05 | akvo/akvo-flow-mobile | https://api.github.com/repos/akvo/akvo-flow-mobile | opened | Question Group translations | Defect | For forms that have translated question groups, the question group translations do not show up when languages are switched. | 1.0 | Question Group translations - For forms that have translated question groups, the question group translations do not show up when languages are switched. | defect | question group translations for forms that have translated question groups the question group translations do not show up when languages are switched | 1 |

12,469 | 2,700,656,343 | IssuesEvent | 2015-04-04 12:14:07 | MKergall/osmbonuspack | https://api.github.com/repos/MKergall/osmbonuspack | closed | git migration | auto-migrated Priority-Medium Type-Defect | ```

Hello, thanks for this library.

Do you want to migrate on git? Seems, it's much better to develop in it.

Also, I already fork osmbonuspack on github and add my implementation for

clickable/closeable bubbles. You can see it here, if interesting.

https://github.com/Sash0k/osmbonuspack/tree/clickable-bubbles

```

O... | 1.0 | git migration - ```

Hello, thanks for this library.

Do you want to migrate on git? Seems, it's much better to develop in it.

Also, I already fork osmbonuspack on github and add my implementation for

clickable/closeable bubbles. You can see it here, if interesting.

https://github.com/Sash0k/osmbonuspack/tree/clickabl... | defect | git migration hello thanks for this library do you want to migrate on git seems it s much better to develop in it also i already fork osmbonuspack on github and add my implementation for clickable closeable bubbles you can see it here if interesting original issue reported on code google com by... | 1 |

232,270 | 18,855,030,680 | IssuesEvent | 2021-11-12 04:23:30 | Tencent/bk-ci | https://api.github.com/repos/Tencent/bk-ci | closed | feat: 对BatchScript脚本执行过程加锁,防止被系统清理掉 | stage/uat stage/test kind/enhancement area/ci/agent test/passed uat/passed priority/critical-urgent | <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

windows 执行batch脚本的特点

假设batch插件内容为

```batch

set extScriptName=a.bat

call :LOG_INFO Start call %extScriptName%

call %extScriptName%

if errorlevel 1 (

call :LOG_INFO Run "%extScriptName%" Err... | 2.0 | feat: 对BatchScript脚本执行过程加锁,防止被系统清理掉 - <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

windows 执行batch脚本的特点

假设batch插件内容为

```batch

set extScriptName=a.bat

call :LOG_INFO Start call %extScriptName%

call %extScriptName%

if errorlevel 1 (

ca... | non_defect | feat 对batchscript脚本执行过程加锁,防止被系统清理掉 what would you like to be added windows 执行batch脚本的特点 假设batch插件内容为 batch set extscriptname a bat call log info start call extscriptname call extscriptname if errorlevel call log info run extscriptname error goto failure log info... | 0 |

20,218 | 3,317,272,531 | IssuesEvent | 2015-11-06 20:50:06 | spockframework/spock | https://api.github.com/repos/spockframework/spock | closed | IllegalArgumentException when mocking java.io.PrintStream | Module-Core Status-New Type-Defect | Originally reported on Google Code with ID 384

```

When I attempt to run a spec that mocks java.io.PrintStream I get an IllegalArgumentException

thrown:

class PrintSpec extends Specification {

private PrintStream printStream = Mock()

}

spock-core version - 0.7-groovy-2.0

cglib-nodep version - 3.1

objenesi... | 1.0 | IllegalArgumentException when mocking java.io.PrintStream - Originally reported on Google Code with ID 384

```

When I attempt to run a spec that mocks java.io.PrintStream I get an IllegalArgumentException

thrown:

class PrintSpec extends Specification {

private PrintStream printStream = Mock()

}

spock-core v... | defect | illegalargumentexception when mocking java io printstream originally reported on google code with id when i attempt to run a spec that mocks java io printstream i get an illegalargumentexception thrown class printspec extends specification private printstream printstream mock spock core ver... | 1 |

251,305 | 21,468,764,163 | IssuesEvent | 2022-04-26 07:35:00 | redhat-developer/odo | https://api.github.com/repos/redhat-developer/odo | closed | ibmcloud command failing on windows | area/testing | /area testing

I've noticed that `ibmcloud` command that is being executed as part of our test suite on windows tests are failing

https://cloud.ibm.com/devops/pipelines/929da3ed-6cad-4026-a847-7a8485f20fa4/582614a3-b910-4659-ac27-6288668c5fe6/a1d74ffa-3d39-4dbb-8583-b0a34f87f3bd?env_id=ibm:yp:eu-de

This is pro... | 1.0 | ibmcloud command failing on windows - /area testing

I've noticed that `ibmcloud` command that is being executed as part of our test suite on windows tests are failing

https://cloud.ibm.com/devops/pipelines/929da3ed-6cad-4026-a847-7a8485f20fa4/582614a3-b910-4659-ac27-6288668c5fe6/a1d74ffa-3d39-4dbb-8583-b0a34f87f... | non_defect | ibmcloud command failing on windows area testing i ve noticed that ibmcloud command that is being executed as part of our test suite on windows tests are failing this is probably due to the use of posix style path and not using windows path ibmcloud cos upload bucket odo tests openshift l... | 0 |

375,407 | 26,161,276,590 | IssuesEvent | 2022-12-31 15:22:40 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | Roadmap 2022 (discussion) | feature comp-documentation | This is ClickHouse open-source roadmap 2022.

Descriptions and links to be filled.

This roadmap does not cover the tasks related to infrastructure, orchestration, documentation, marketing, integrations, SaaS, drivers, etc.

See also:

Roadmap 2021: [#17623](https://github.com/ClickHouse/ClickHouse/issues/17623)

... | 1.0 | Roadmap 2022 (discussion) - This is ClickHouse open-source roadmap 2022.

Descriptions and links to be filled.

This roadmap does not cover the tasks related to infrastructure, orchestration, documentation, marketing, integrations, SaaS, drivers, etc.

See also:

Roadmap 2021: [#17623](https://github.com/ClickHou... | non_defect | roadmap discussion this is clickhouse open source roadmap descriptions and links to be filled this roadmap does not cover the tasks related to infrastructure orchestration documentation marketing integrations saas drivers etc see also roadmap roadmap main tasks ... | 0 |

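3,497 | 2,610,063,777 | IssuesEvent | 2015-02-26 18:18:45 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | Which clinic in Huangyan gives the best results for infertility treatment | auto-migrated Priority-Medium Type-Defect | ```

Which clinic in Huangyan gives the best results for infertility treatment [Taizhou Wuzhou Reproductive Hospital] 24-hour health consultation

Hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address: No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104, 108, 118, 198, or the Jiaojiang–Jinqing bus directly to Fengnan Residential Area, or take bus 107, 105, 109, 112, 901, or 902 to Xingxing Square and walk to the hospital.

Services: impotence, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis, [illegible] sperm, azoospermia, redundant prepuce and phimosis, varicocele, gonorrhea, etc.

Taizhou Wuzhou Reproductive Hospital is the largest andrology hospital in Taizhou, with authoritative experts offering free online consultation, comprehensive professional andrology examination and treatment equipment, and fees charged strictly according to national standards. Cutting-edge medical equipment, in step with the world. Authoritative exp... | 1.0 | Which clinic in Huangyan gives the best results for infertility treatment - ```

Which clinic in Huangyan gives the best results for infertility treatment [Taizhou Wuzhou Reproductive Hospital] 24-hour health consultation

Hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address: No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104, 108, 118, 198, or the Jiaojiang–Jinqing bus directly to Fengnan Residential Area, or take bus 107, 105, 109, 112, 901, or 902 to Xingxing Square and walk to the hospital.

Services: impotence, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis, [illegible] sperm, azoospermia, redundant prepuce and phimosis, varicocele, gonorrhea, etc.

Taizhou Wuzhou Reproductive Hospital is the largest andrology hospital in Taizhou, with authoritative experts offering free online consultation, comprehensive professional andrology examination and treatment equipment, and fees charged strictly according to national standards. Cutting-edge me... | defect | which clinic in huangyan gives the best results for infertility treatment which clinic in huangyan gives the best results for infertility treatment taizhou wuzhou reproductive hospital hour health consultation hotline qq wechat id tzwzszyy hospital address no fengnan road jiaojiang district taizhou next to the fengnan roundabout bus routes take bus or the jiaojiang jinqing bus directly to fengnan residential area or take bus or to xingxing square and walk to the hospital services impotence premature ejaculation prostatitis prostatic hyperplasia balanitis illegible sperm azoospermia redundant prepuce and phimosis varicocele gonorrhea etc taizhou wuzhou reproductive hospital is the largest andrology hospital in taizhou with authoritative experts offering free online consultation comprehensive professional andrology examination and treatment equipment and fees charged strictly according to national standards cutting edge medical equipment in step with the world authoritative experts setting the standard for professionalism patient friendly service with the patient at the center of everything for andrology choose taizhou wuzhou reproductive hospital professional andrology for men original i... | 1 |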

52,674 | 13,224,896,720 | IssuesEvent | 2020-08-17 20:04:07 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | lazy frame needs docs (Trac #136) | Migrated from Trac defect documentation |

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/136">https://code.icecube.wisc.edu/projects/icecube/ticket/136</a>, reported by troyand owned by blaufuss</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:56",

"_ts": "1416... | 1.0 | lazy frame needs docs (Trac #136) -

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/136">https://code.icecube.wisc.edu/projects/icecube/ticket/136</a>, reported by troyand owned by blaufuss</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "20... | defect | lazy frame needs docs trac migrated from json status closed changetime ts description reporter troy cc resolution wont or cant fix time component documentation summary lazy... | 1 |

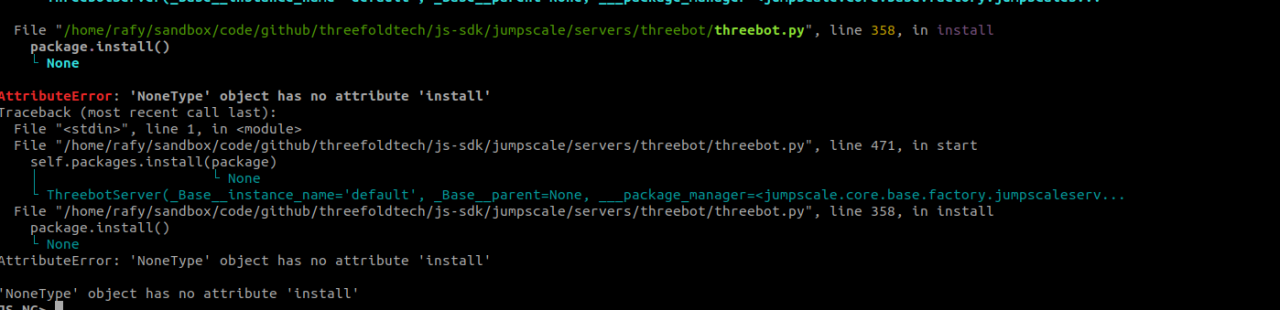

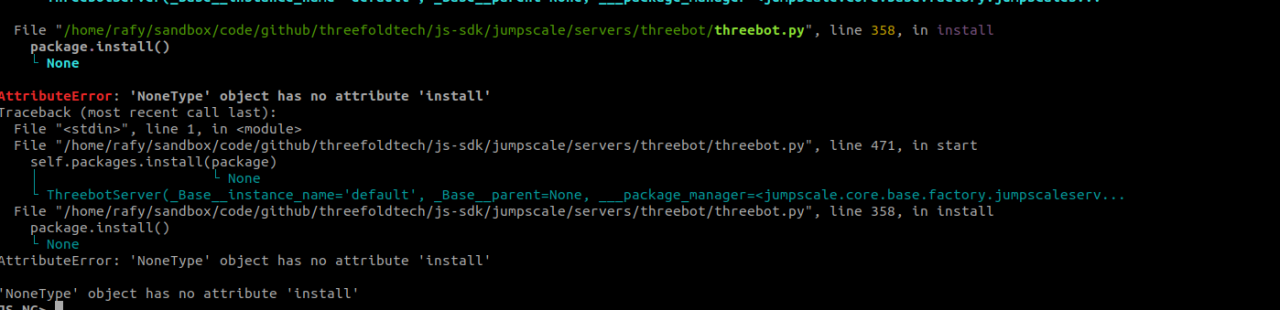

440,229 | 12,696,140,680 | IssuesEvent | 2020-06-22 09:33:51 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | can't start threebot server | priority_critical | ```python3

server = j.servers.threebot.get("default")

server.start()

```

| 1.0 | can't start threebot server - ```python3

server = j.servers.threebot.get("default")

server.start()

```

| non_defect | can t start threebot server server j servers threebot get default server start | 0 |

523,525 | 15,184,321,586 | IssuesEvent | 2021-02-15 09:25:05 | online-judge-tools/oj | https://api.github.com/repos/online-judge-tools/oj | closed | Show a hint message when the cookie.jar is broken | difficulty:low enhancement good first issue priority:low | ## Description / 説明

The cookie.jar may be broken, and the workaround for such cases is just removing the cookie.jar. Showing a hint message for this help users.

## Motivation / 動機

There is a user who is confused by the broken cookie.jar.

<blockquote class="twitter-tweet"><p lang="ja" dir="ltr">online judge ... | 1.0 | Show a hint message when the cookie.jar is broken - ## Description / 説明

The cookie.jar may be broken, and the workaround for such cases is just removing the cookie.jar. Showing a hint message for this help users.

## Motivation / 動機

There is a user who is confused by the broken cookie.jar.

<blockquote class=... | non_defect | show a hint message when the cookie jar is broken description 説明 the cookie jar may be broken and the workaround for such cases is just removing the cookie jar showing a hint message for this help users motivation 動機 there is a user who is confused by the broken cookie jar online judge too... | 0 |

42,753 | 11,254,366,457 | IssuesEvent | 2020-01-11 23:05:10 | hasse69/rar2fs | https://api.github.com/repos/hasse69/rar2fs | closed | Regression: Reduce memory footprint during archive scan | Defect Priority-High | rar2fs currently does not list archive files anymore. Prior to this commit is good - this is the commit that breaks my archives.

```

rar2fs#/mnt/Web /mnt/WebU fuse ro,allow_other,uid=kyle,gid=storage,umask=0222,kernel_cache,--seek-length=2,--date-rar 0 0

```

```

FileServer /tmp/rar2fs # git bisect bad

4bc904... | 1.0 | Regression: Reduce memory footprint during archive scan - rar2fs currently does not list archive files anymore. Prior to this commit is good - this is the commit that breaks my archives.

```

rar2fs#/mnt/Web /mnt/WebU fuse ro,allow_other,uid=kyle,gid=storage,umask=0222,kernel_cache,--seek-length=2,--date-rar 0 0

`... | defect | regression reduce memory footprint during archive scan currently does not list archive files anymore prior to this commit is good this is the commit that breaks my archives mnt web mnt webu fuse ro allow other uid kyle gid storage umask kernel cache seek length date rar fi... | 1 |

37,835 | 8,530,278,363 | IssuesEvent | 2018-11-03 20:48:59 | ralsina/devicenzo | https://api.github.com/repos/ralsina/devicenzo | closed | seg fault | Priority-Medium Type-Defect auto-migrated | ```

i downloaded

http://code.google.com/p/devicenzo/source/browse/trunk/devicenzo.py and when i

run it i get a seg fault... might wanna look into to it.

patx@patx-desktop:~/Desktop$ python devicenzo.py.py

Traceback (most recent call last):

File "devicenzo.py.py", line 168, in <module>

wb.addTab(QtCore.QUrl('ht... | 1.0 | seg fault - ```

i downloaded

http://code.google.com/p/devicenzo/source/browse/trunk/devicenzo.py and when i

run it i get a seg fault... might wanna look into to it.

patx@patx-desktop:~/Desktop$ python devicenzo.py.py

Traceback (most recent call last):

File "devicenzo.py.py", line 168, in <module>

wb.addTab(QtC... | defect | seg fault i downloaded and when i run it i get a seg fault might wanna look into to it patx patx desktop desktop python devicenzo py py traceback most recent call last file devicenzo py py line in wb addtab qtcore qurl file devicenzo py py line in addtab self tabs setcu... | 1 |

21,225 | 28,311,099,999 | IssuesEvent | 2023-04-10 15:27:50 | cse442-at-ub/project_s23-cinco | https://api.github.com/repos/cse442-at-ub/project_s23-cinco | closed | Retrieve and load events from database to feed | Processing Task Sprint 3 | Task Tests

Test 1:

1. Go to https://www-student.cse.buffalo.edu/CSE442-542/2023-Spring/cse-442b/build

2. Verify you can see events that are different and unique.

3. Click on an event and verify you can see... | 1.0 | Retrieve and load events from database to feed - Task Tests

Test 1:

1. Go to https://www-student.cse.buffalo.edu/CSE442-542/2023-Spring/cse-442b/build

2. Verify you can see events that are different and unique.

4. Select OK

5. Save file

What is the expected output?

http://

What do you see instead?

http://localhost:8080/forms/call.html... | 1.0 | Set custom forms - once a form is set it is not possible to go back to automatic form generation - ```

What steps will reproduce the problem?

1. Open the attached file in the editor

2. Select "Set custom form" for the task "Call for papers"

3. Remove the http reference (leave "http://")

4. Select OK

5. Save file

Wha... | defect | set custom forms once a form is set it is not possible to go back to automatic form generation what steps will reproduce the problem open the attached file in the editor select set custom form for the task call for papers remove the http reference leave select ok save file what is the... | 1 |

244,582 | 7,876,998,893 | IssuesEvent | 2018-06-26 04:37:46 | Dallas-Makerspace/tracker | https://api.github.com/repos/Dallas-Makerspace/tracker | closed | [Feature] Members directory / Jobboard | CR/enhancement Committee/Public Relations Priority/LOW Volunteer/help wanted wontfix | Profiles:

- name

- user portraits

- bios

- telephone ( optional )

- email (optional )

- talk link / discord talk

- Areas of interest

- SME tags

Integration with talk and discord apis

Skill sets

- contact mailing list per skill tag ( notifices on new posting )

- AD groups?

... | 1.0 | [Feature] Members directory / Jobboard - Profiles:

- name

- user portraits

- bios

- telephone ( optional )

- email (optional )

- talk link / discord talk

- Areas of interest

- SME tags

Integration with talk and discord apis

Skill sets

- contact mailing list per skill tag ( notifi... | non_defect | members directory jobboard profiles name user portraits bios telephone optional email optional talk link discord talk areas of interest sme tags integration with talk and discord apis skill sets contact mailing list per skill tag notifices on n... | 0 |

19,224 | 5,825,945,598 | IssuesEvent | 2017-05-08 01:43:03 | houtan1/PortOpt-WebApp | https://api.github.com/repos/houtan1/PortOpt-WebApp | opened | Expand and update hard-coded capabilities | V1(hard-coded) | Add 2 more stocks and update all number for stocks, allowing the user to have more than 1 choice for pairing.

Error checking and alerting if choosing non-negatively correlating stocks. | 1.0 | Expand and update hard-coded capabilities - Add 2 more stocks and update all number for stocks, allowing the user to have more than 1 choice for pairing.

Error checking and alerting if choosing non-negatively correlating stocks. | non_defect | expand and update hard coded capabilities add more stocks and update all number for stocks allowing the user to have more than choice for pairing error checking and alerting if choosing non negatively correlating stocks | 0 |

16,258 | 2,886,043,599 | IssuesEvent | 2015-06-12 04:10:24 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | 3.0.6 - Subquery w/hasMany creating bad SQL | Defect ORM | Hi guys. When defining a `hasMany` relationship with a `strategy` of `subquery` it tries to use an undefined column as the `foreignKey` (ignoring the `foreignKey` I'm trying to use). As I understand it, either way should work without having to change anything. I'd prefer to use `subquery` since I'm dealing with a lot ... | 1.0 | 3.0.6 - Subquery w/hasMany creating bad SQL - Hi guys. When defining a `hasMany` relationship with a `strategy` of `subquery` it tries to use an undefined column as the `foreignKey` (ignoring the `foreignKey` I'm trying to use). As I understand it, either way should work without having to change anything. I'd prefer t... | defect | subquery w hasmany creating bad sql hi guys when defining a hasmany relationship with a strategy of subquery it tries to use an undefined column as the foreignkey ignoring the foreignkey i m trying to use as i understand it either way should work without having to change anything i d prefer t... | 1 |

37,356 | 4,804,338,843 | IssuesEvent | 2016-11-02 13:16:53 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | Create UI for GeoSearch Component | addition: feature experience: design priority: medium | Create the UI component to implement GeoSearch based on mockup created by https://github.com/fgpv-vpgf/fgpv-vpgf/issues/1163.

| 1.0 | Create UI for GeoSearch Component - Create the UI component to implement GeoSearch based on mockup created by https://github.com/fgpv-vpgf/fgpv-vpgf/issues/1163.

| non_defect | create ui for geosearch component create the ui component to implement geosearch based on mockup created by | 0 |

17,973 | 3,013,836,656 | IssuesEvent | 2015-07-29 11:35:31 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | resourceService does not communicate properly with data source (postgresql service) | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.Download and install PostgreSQL 9.3.5 using the

postgresql-9.3.5-3-windows-x64 installer and setting user postgres with passwd

yawl and creating DB yawl with pgAdmin III

2.Use tomcat 7.0.56

3.Try to login to the resourceService

(http://localhost:8080/resourceService/fa... | 1.0 | resourceService does not communicate properly with data source (postgresql service) - ```

What steps will reproduce the problem?

1.Download and install PostgreSQL 9.3.5 using the

postgresql-9.3.5-3-windows-x64 installer and setting user postgres with passwd

yawl and creating DB yawl with pgAdmin III

2.Use tomcat 7.... | defect | resourceservice does not communicate properly with data source postgresql service what steps will reproduce the problem download and install postgresql using the postgresql windows installer and setting user postgres with passwd yawl and creating db yawl with pgadmin iii use tomcat ... | 1 |

233,977 | 25,793,357,534 | IssuesEvent | 2022-12-10 09:31:41 | turkdevops/karma-jasmine | https://api.github.com/repos/turkdevops/karma-jasmine | closed | CVE-2021-27292 (High) detected in ua-parser-js-0.7.21.tgz - autoclosed | security vulnerability | ## CVE-2021-27292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ua-parser-js-0.7.21.tgz</b></p></summary>

<p>Lightweight JavaScript-based user-agent string parser</p>

<p>Library home... | True | CVE-2021-27292 (High) detected in ua-parser-js-0.7.21.tgz - autoclosed - ## CVE-2021-27292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ua-parser-js-0.7.21.tgz</b></p></summary>

<p>... | non_defect | cve high detected in ua parser js tgz autoclosed cve high severity vulnerability vulnerable library ua parser js tgz lightweight javascript based user agent string parser library home page a href path to dependency file package json path to vulnerable library nod... | 0 |

13,095 | 2,732,897,005 | IssuesEvent | 2015-04-17 10:04:07 | tiku01/oryx-editor | https://api.github.com/repos/tiku01/oryx-editor | closed | repo2: fields in Modelinfo should resize automatically (and must be resizable manually) | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. select model in repo

2. move modelinfo frame on the right

3.

What is the expected output?

- the fields should resize when moving the frame

What do you see instead?

- fields have fixed size

Please provide any additional information below.

- ideally the editing field could... | 1.0 | repo2: fields in Modelinfo should resize automatically (and must be resizable manually) - ```

What steps will reproduce the problem?

1. select model in repo

2. move modelinfo frame on the right

3.

What is the expected output?

- the fields should resize when moving the frame

What do you see instead?

- fields have fixe... | defect | fields in modelinfo should resize automatically and must be resizable manually what steps will reproduce the problem select model in repo move modelinfo frame on the right what is the expected output the fields should resize when moving the frame what do you see instead fields have fixed si... | 1 |

161,653 | 25,378,411,925 | IssuesEvent | 2022-11-21 15:42:16 | metatablecat/Tabby | https://api.github.com/repos/metatablecat/Tabby | opened | Projects | enhancement status: needs design | Projects would be a new way of Tabby loading in plugin runtimes. Right now, Tabby does all of this in the backend with no developer input into the loading order (minus `Priority`) and configuration of the loader.

The solution?

## Projects

Projects would provide developers with the ability to load in fragments of... | 1.0 | Projects - Projects would be a new way of Tabby loading in plugin runtimes. Right now, Tabby does all of this in the backend with no developer input into the loading order (minus `Priority`) and configuration of the loader.

The solution?

## Projects

Projects would provide developers with the ability to load in f... | non_defect | projects projects would be a new way of tabby loading in plugin runtimes right now tabby does all of this in the backend with no developer input into the loading order minus priority and configuration of the loader the solution projects projects would provide developers with the ability to load in f... | 0 |

699,145 | 24,006,499,044 | IssuesEvent | 2022-09-14 15:10:15 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Log spew on rocksdb init | kind/enhancement good first issue area/docdb priority/medium | Jira Link: [DB-581](https://yugabyte.atlassian.net/browse/DB-581)

### Description

As part of https://phabricator.dev.yugabyte.com/D10932 / c9aee058dde24a08d669a98e996b0f8368c6cd86, a probably unintended side effect is that we are no longer actually updating the default values of some auto-tune flags, such as compacti... | 1.0 | [DocDB] Log spew on rocksdb init - Jira Link: [DB-581](https://yugabyte.atlassian.net/browse/DB-581)

### Description

As part of https://phabricator.dev.yugabyte.com/D10932 / c9aee058dde24a08d669a98e996b0f8368c6cd86, a probably unintended side effect is that we are no longer actually updating the default values of som... | non_defect | log spew on rocksdb init jira link description as part of a probably unintended side effect is that we are no longer actually updating the default values of some auto tune flags such as compaction threads this leads to some log spew on rocksdb init eg tablet creation truncate etc | 0 |

411,248 | 27,816,678,547 | IssuesEvent | 2023-03-18 19:13:05 | Gobidev/pfetch-rs | https://api.github.com/repos/Gobidev/pfetch-rs | closed | Metrics wrong in readme | documentation | In the first line of the benchmark table, the mean is larger than the min and the max, and the max is smaller than the min.

https://github.com/Gobidev/pfetch-rs/blame/main/README.md#L62 | 1.0 | Metrics wrong in readme - In the first line of the benchmark table, the mean is larger than the min and the max, and the max is smaller than the min.

https://github.com/Gobidev/pfetch-rs/blame/main/README.md#L62 | non_defect | metrics wrong in readme in the first line of the benchmark table the mean is larger than the min and the max and the max is smaller than the min | 0 |

26,948 | 4,839,659,068 | IssuesEvent | 2016-11-09 10:15:39 | google/google-authenticator | https://api.github.com/repos/google/google-authenticator | closed | Specific google_authenticator key generation options do not allow auth | bug libpam Priority-Medium Type-Defect | Original [issue 394](https://code.google.com/p/google-authenticator/issues/detail?id=394) created by acesmythe on 2014-06-27T00:31:33.000Z:

<b>What steps will reproduce the problem?</b>

1. (CentOS 6.5) Install via the steps listed at http://www.techrepublic.com/blog/linux-and-open-source/two-factor-ssh-authentication-... | 1.0 | Specific google_authenticator key generation options do not allow auth - Original [issue 394](https://code.google.com/p/google-authenticator/issues/detail?id=394) created by acesmythe on 2014-06-27T00:31:33.000Z:

<b>What steps will reproduce the problem?</b>

1. (CentOS 6.5) Install via the steps listed at http://www.t... | defect | specific google authenticator key generation options do not allow auth original created by acesmythe on what steps will reproduce the problem centos install via the steps listed at short version get the git compile edit the necessary pam and sshd configs restart sshd generate ... | 1 |

6,299 | 2,610,239,994 | IssuesEvent | 2015-02-26 19:16:32 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | 台州割包茎手术到哪家医院比较好 | auto-migrated Priority-Medium Type-Defect | ```

台州割包茎手术到哪家医院比较好【台州五洲生殖医院】24小

时健康咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地

址:台州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐

104、108、118、198及椒江一金清公交车直达枫南小区,乘坐107

、105、109、112、901、

902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,遗

精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免费

咨询,拥有专业完善的男科检查治疗设备,严格按照国家标准

收费。尖端医疗设备... | 1.0 | 台州割包茎手术到哪家医院比较好 - ```

台州割包茎手术到哪家医院比较好【台州五洲生殖医院】24小

时健康咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地

址:台州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐

104、108、118、198及椒江一金清公交车直达枫南小区,乘坐107

、105、109、112、901、

902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,遗

精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免��

�咨询,拥有专业完善的男科检查治疗设备,严格... | defect | 台州割包茎手术到哪家医院比较好 台州割包茎手术到哪家医院比较好【台州五洲生殖医院】 时健康咨询热线 微信号tzwzszyy 医院� ��址 (枫南大转盘旁)乘车线路 乘� �� 、 、 、 , 、 、 、 、 、 ,步行即可到院。 诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,�� �精,无精。包皮包茎,精索静脉曲张,淋病等。 台州五洲生殖医院是台州最大的男科医院,权威专家在线免�� �咨询,拥有专业完善的男科检查治疗设备,严格按照国家标� ��收费。尖端医疗设备,与世界同步。权威专家,成就专业典 范。人性化服务,一切以患者为中心。 看男科就选台州五洲生殖医院,专业男科为男人。 ... | 1 |

208,336 | 15,886,398,581 | IssuesEvent | 2021-04-09 22:32:07 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | The translations of the string 'Blob Container' are inconsistent between strings 'Blob Container' and 'Blob Container (ADLS Gen2)' on the 'Attach with Azure AD' dialog | 🌐 localization 🧪 testing | **Storage Explorer Version:** 1.15.1

**Build**: 20200904.2

**Branch**: main

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04 / MacOS Catalina

**Architecture**: ia32/x64

**Language:** German

**Regression From:** Not a regression

**Steps to reproduce:**

1. Launch Storage Explorer.

2. Open 'Settings' -> Applicat... | 1.0 | The translations of the string 'Blob Container' are inconsistent between strings 'Blob Container' and 'Blob Container (ADLS Gen2)' on the 'Attach with Azure AD' dialog - **Storage Explorer Version:** 1.15.1

**Build**: 20200904.2

**Branch**: main

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04 / MacOS Catalina

**Arc... | non_defect | the translations of the string blob container are inconsistent between strings blob container and blob container adls on the attach with azure ad dialog storage explorer version build branch main platform os windows linux ubuntu macos catalina architecture ... | 0 |

22,546 | 3,665,357,520 | IssuesEvent | 2016-02-19 15:47:13 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Exception if dart:async not specified in _embedder.yaml | analyzer-stability area-analyzer priority-high Type-Defect | Given the following ```_embedder.yaml``` file

```

embedder_libs:

'dart:core': '/absolute/path/to/fletch-sdk/internal/dart_lib/lib/core/core.dart'

# 'dart:async': '/absolute/path/to/any/dart-sdk/async/async.dart'

'dart:fletch': '/absolute/path/to/fletch-sdk/internal/fletch_lib/lib/fletch/fletch.dart'

ana... | 1.0 | Exception if dart:async not specified in _embedder.yaml - Given the following ```_embedder.yaml``` file

```

embedder_libs:

'dart:core': '/absolute/path/to/fletch-sdk/internal/dart_lib/lib/core/core.dart'

# 'dart:async': '/absolute/path/to/any/dart-sdk/async/async.dart'

'dart:fletch': '/absolute/path/to/f... | defect | exception if dart async not specified in embedder yaml given the following embedder yaml file embedder libs dart core absolute path to fletch sdk internal dart lib lib core core dart dart async absolute path to any dart sdk async async dart dart fletch absolute path to f... | 1 |

799,555 | 28,309,281,615 | IssuesEvent | 2023-04-10 14:03:04 | status-im/status-mobile | https://api.github.com/repos/status-im/status-mobile | opened | The message can't be edited/replied/removed on the 'Pinned messages' section on the 'group detailed' page | bug low-priority group-chat | #### Steps to reproduce:

1. Create a group chat

2. Send the message inside the current chat

3. Pin the message

4. Go to the message list

5. Long tap on the group chat -> tap the 'Group details' option

6. Open the 'Pinned message' section

7. Long tap on the message

8. Select the following options:

- Edit mess... | 1.0 | The message can't be edited/replied/removed on the 'Pinned messages' section on the 'group detailed' page - #### Steps to reproduce:

1. Create a group chat

2. Send the message inside the current chat

3. Pin the message

4. Go to the message list

5. Long tap on the group chat -> tap the 'Group details' option

6. Op... | non_defect | the message can t be edited replied removed on the pinned messages section on the group detailed page steps to reproduce create a group chat send the message inside the current chat pin the message go to the message list long tap on the group chat tap the group details option op... | 0 |

48,693 | 13,184,719,448 | IssuesEvent | 2020-08-12 19:58:12 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | cmake goodies from trunk for V01-11-02 (Trac #66) | Incomplete Migration Migrated from Trac defect offline-software | <details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/66

, reported by blaufuss and owned by _</summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51:18",

"description": "esp. stuff from rev #33235 (svn info in ENGLISH, por favor)",

"reporter": "blaufuss",

... | 1.0 | cmake goodies from trunk for V01-11-02 (Trac #66) - <details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/66

, reported by blaufuss and owned by _</summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51:18",

"description": "esp. stuff from rev #33235 (svn info in ENG... | defect | cmake goodies from trunk for trac migrated from reported by blaufuss and owned by json status closed changetime description esp stuff from rev svn info in english por favor reporter blaufuss cc resolution f... | 1 |

38,032 | 8,638,487,236 | IssuesEvent | 2018-11-23 14:55:46 | contao/contao | https://api.github.com/repos/contao/contao | closed | Wartungsmodus führt zu "Es ist ein Fehler aufgetreten" | defect | Ich habe das Problem von dem geschlossenen Issue https://github.com/contao/core-bundle/issues/1307 auch in Contao 4.6:

Bei mir unter Contao 4.6.7, PHP7.2 tritt das Problem auch auf.

Der letzte Eintrag aus der log-Datei ist folgender:

`[2018-11-08 21:13:32] request.CRITICAL: Uncaught PHP Exception RuntimeExcepti... | 1.0 | Wartungsmodus führt zu "Es ist ein Fehler aufgetreten" - Ich habe das Problem von dem geschlossenen Issue https://github.com/contao/core-bundle/issues/1307 auch in Contao 4.6:

Bei mir unter Contao 4.6.7, PHP7.2 tritt das Problem auch auf.

Der letzte Eintrag aus der log-Datei ist folgender:

`[2018-11-08 21:13:32... | defect | wartungsmodus führt zu es ist ein fehler aufgetreten ich habe das problem von dem geschlossenen issue auch in contao bei mir unter contao tritt das problem auch auf der letzte eintrag aus der log datei ist folgender request critical uncaught php exception runtimeexception error whe... | 1 |

186,806 | 6,742,777,974 | IssuesEvent | 2017-10-20 09:12:48 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | closed | Post Redesign Cleanup | priority: minor status: issue: on hold type: website improvement | The redesign will happen in the `develop` branch so the old client will stay as long as it's not complete, after it's we'll have to do a bit of cleanup.

This list is not complete, we'll probably add new items:

- [ ] Remove Bower (and relative code, post install scripts, ...)

- [ ] Remove unused assets

- [ ] Remove com... | 1.0 | Post Redesign Cleanup - The redesign will happen in the `develop` branch so the old client will stay as long as it's not complete, after it's we'll have to do a bit of cleanup.

This list is not complete, we'll probably add new items:

- [ ] Remove Bower (and relative code, post install scripts, ...)

- [ ] Remove unused... | non_defect | post redesign cleanup the redesign will happen in the develop branch so the old client will stay as long as it s not complete after it s we ll have to do a bit of cleanup this list is not complete we ll probably add new items remove bower and relative code post install scripts remove unused ass... | 0 |

357,537 | 10,608,258,940 | IssuesEvent | 2019-10-11 07:02:51 | canonical-web-and-design/maas-ui | https://api.github.com/repos/canonical-web-and-design/maas-ui | closed | Client stuck on loading if issue parsing csrf token on server | Bug 🐛 Priority: High | If maas server has csrf authentication enabled, the client will sit in a "loading" state indefinitely without an error.

The client should be raising a PROTOCOL_ERROR with the error "Invalid CSRF token", but this is not surfaced to the client. | 1.0 | Client stuck on loading if issue parsing csrf token on server - If maas server has csrf authentication enabled, the client will sit in a "loading" state indefinitely without an error.

The client should be raising a PROTOCOL_ERROR with the error "Invalid CSRF token", but this is not surfaced to the client. | non_defect | client stuck on loading if issue parsing csrf token on server if maas server has csrf authentication enabled the client will sit in a loading state indefinitely without an error the client should be raising a protocol error with the error invalid csrf token but this is not surfaced to the client | 0 |