Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

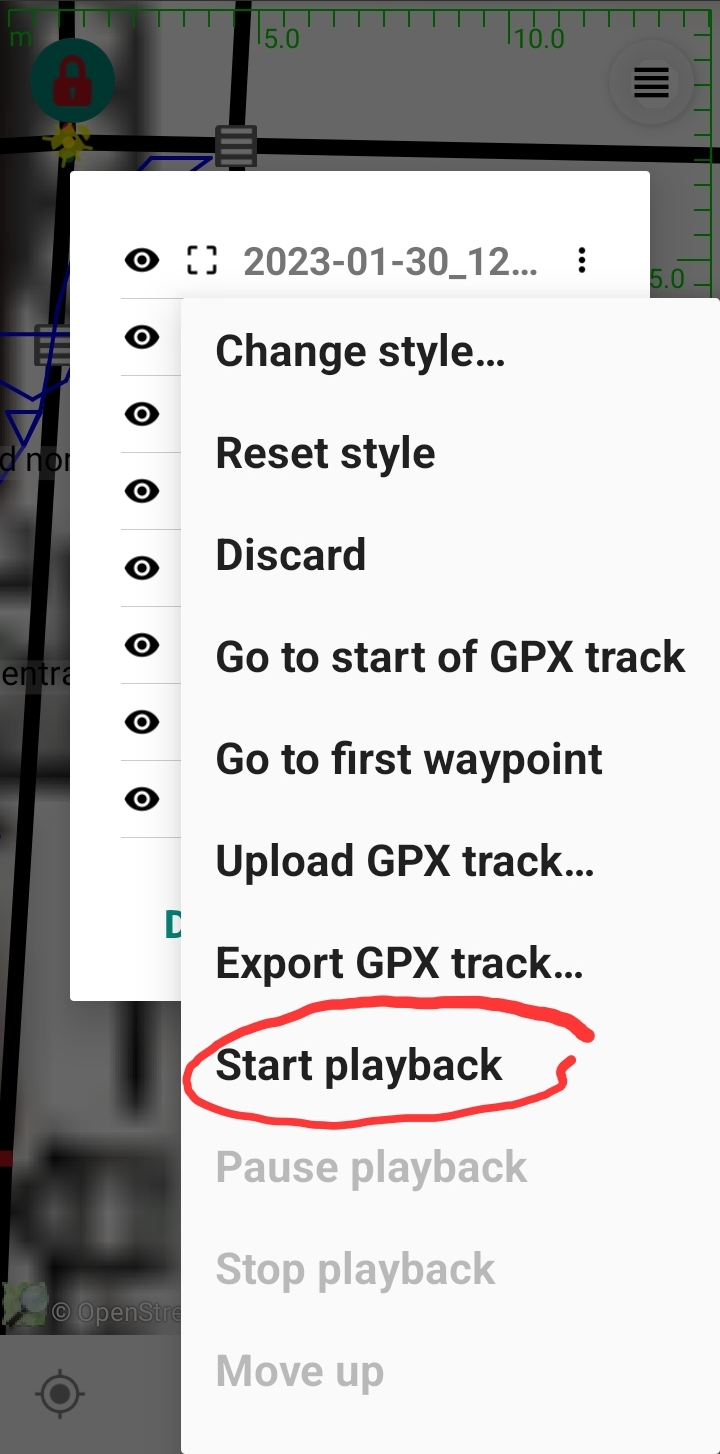

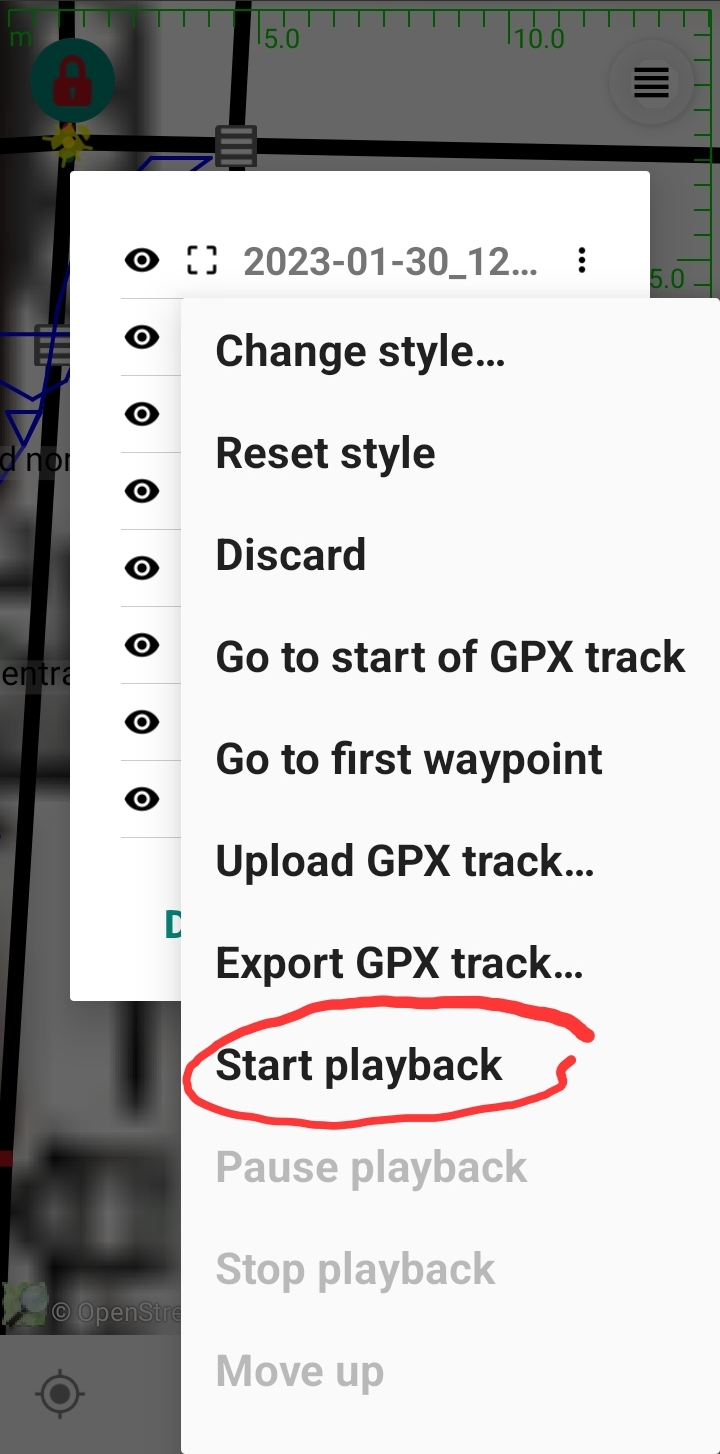

77,133 | 26,792,798,936 | IssuesEvent | 2023-02-01 09:44:56 | MarcusWolschon/osmeditor4android | https://api.github.com/repos/MarcusWolschon/osmeditor4android | closed | No playback when adding layer from GPX file | Defect Minor | Today I tested using

- Add layer from GPX file, then

- Start playback.

I found no playback ensued.

All I know is I had to remember to push Stop Playback later otherwise... | 1.0 | No playback when adding layer from GPX file - Today I tested using

- Add layer from GPX file, then

- Start playback.

I found no playback ensued.

All I know is I had to ... | defect | no playback when adding layer from gpx file today i tested using add layer from gpx file then start playback i found no playback ensued all i know is i had to remember to push stop playback later otherwise i feared that something was going on that i could not see using up cpu cycles i was using ... | 1 |

187,950 | 14,434,932,377 | IssuesEvent | 2020-12-07 07:58:10 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: disk-stalled/log=false,data=false failed | C-test-failure O-roachtest O-robot branch-release-20.2 release-blocker | [(roachtest).disk-stalled/log=false,data=false failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2500126&tab=buildLog) on [release-20.2@c15afb605a18772689caf46c3dd74c4aca33badd](https://github.com/cockroachdb/cockroach/commits/c15afb605a18772689caf46c3dd74c4aca33badd):

```

| | ```

| | set -exuo... | 2.0 | roachtest: disk-stalled/log=false,data=false failed - [(roachtest).disk-stalled/log=false,data=false failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2500126&tab=buildLog) on [release-20.2@c15afb605a18772689caf46c3dd74c4aca33badd](https://github.com/cockroachdb/cockroach/commits/c15afb605a18772689caf46c3dd... | non_defect | roachtest disk stalled log false data false failed on set exuo pipefail thrift dir opt thrift if then sudo apt get update sudo apt get install qy automake bison flex g git libboost all dev libevent dev libssl dev... | 0 |

14,241 | 2,795,455,128 | IssuesEvent | 2015-05-11 22:07:27 | revelc/formatter-maven-plugin | https://api.github.com/repos/revelc/formatter-maven-plugin | closed | plugin ignores line wrapping settings | auto-migrated Priority-Medium Type-Defect | ```

Expected output:

private static EntityManagerFactory emf = Persistence.createEntityManagerFactory("myHappyService", Collections.emptyMap());

Actual output:

private static EntityManagerFactory emf = Persistence

.createEntityManagerFactory("myHappyService",

... | 1.0 | plugin ignores line wrapping settings - ```

Expected output:

private static EntityManagerFactory emf = Persistence.createEntityManagerFactory("myHappyService", Collections.emptyMap());

Actual output:

private static EntityManagerFactory emf = Persistence

.createEntityManagerFactory("my... | defect | plugin ignores line wrapping settings expected output private static entitymanagerfactory emf persistence createentitymanagerfactory myhappyservice collections emptymap actual output private static entitymanagerfactory emf persistence createentitymanagerfactory my... | 1 |

56,617 | 15,222,546,771 | IssuesEvent | 2021-02-18 00:32:01 | naev/naev | https://api.github.com/repos/naev/naev | closed | Gprof lists planet_exists as the most expensive function in the game | Priority-Medium Type-Defect | ```

Flat profile:

Each sample counts as 0.01 seconds.

% cumulative self self total

time seconds seconds calls ms/call ms/call name

14.63 1.34 1.34 449585 0.00 0.00 planet_exists

13.65 2.59 1.25 1911404 0.00 0.00 pilot_update

...

```

... | 1.0 | Gprof lists planet_exists as the most expensive function in the game - ```

Flat profile:

Each sample counts as 0.01 seconds.

% cumulative self self total

time seconds seconds calls ms/call ms/call name

14.63 1.34 1.34 449585 0.00 0.00 planet_exists

13.65 ... | defect | gprof lists planet exists as the most expensive function in the game flat profile each sample counts as seconds cumulative self self total time seconds seconds calls ms call ms call name planet exists ... | 1 |

742,727 | 25,867,292,055 | IssuesEvent | 2022-12-13 22:07:59 | solgenomics/sgn | https://api.github.com/repos/solgenomics/sgn | closed | Genotyping "shift" issue when using "." in vcf upload/download | Priority: Critical Type: Bug | Expected Behavior <!-- Describe the desired or expected behavour here. -->

--------------------------------------------------------------------------

As reported by Jeffrey Endelman:

To clarify, the problem is not that we are missing markers. The markers are there, but Breedbase removed the two fields with “.” w... | 1.0 | Genotyping "shift" issue when using "." in vcf upload/download - Expected Behavior <!-- Describe the desired or expected behavour here. -->

--------------------------------------------------------------------------

As reported by Jeffrey Endelman:

To clarify, the problem is not that we are missing markers. The m... | non_defect | genotyping shift issue when using in vcf upload download expected behavior as reported by jeffrey endelman to clarify the problem is not that we are missing markers the markers are there but breedbase removed the two fields ... | 0 |

65,026 | 19,028,716,349 | IssuesEvent | 2021-11-24 08:19:58 | scipy/scipy | https://api.github.com/repos/scipy/scipy | opened | BUG: Implementation of Bessel Functions in Scipy | defect | ### Describe your issue.

According to the documentation in scipy, the spherical bessel function (https://docs.scipy.org/doc/scipy/reference/generated/scipy.special.spherical_jn.html#r1a410864550e-3)

is implemented using a recursion relation provided in the documentation:

(https://dlmf.nist.gov/10.51#E1)

However... | 1.0 | BUG: Implementation of Bessel Functions in Scipy - ### Describe your issue.

According to the documentation in scipy, the spherical bessel function (https://docs.scipy.org/doc/scipy/reference/generated/scipy.special.spherical_jn.html#r1a410864550e-3)

is implemented using a recursion relation provided in the documenta... | defect | bug implementation of bessel functions in scipy describe your issue according to the documentation in scipy the spherical bessel function is implemented using a recursion relation provided in the documentation however there are two issues i seem to have with this the documentation says t... | 1 |

515,217 | 14,952,509,243 | IssuesEvent | 2021-01-26 15:37:11 | airshipit/treasuremap | https://api.github.com/repos/airshipit/treasuremap | closed | Create Elasticsearch-Data composite in airshipctl/treasuremap | priority/critical | Description :

As a developer I need the ability to to create the Elasticsearch-Data composite in airshipctl/treasuremap

This will include instance of the Elasticsearch function, configured to deploy a set of nodes configured with the Data role. These nodes connect to the Elasticsearch-ingest nodes to form the Elast... | 1.0 | Create Elasticsearch-Data composite in airshipctl/treasuremap - Description :

As a developer I need the ability to to create the Elasticsearch-Data composite in airshipctl/treasuremap

This will include instance of the Elasticsearch function, configured to deploy a set of nodes configured with the Data role. These n... | non_defect | create elasticsearch data composite in airshipctl treasuremap description as a developer i need the ability to to create the elasticsearch data composite in airshipctl treasuremap this will include instance of the elasticsearch function configured to deploy a set of nodes configured with the data role these n... | 0 |

89,561 | 10,604,060,366 | IssuesEvent | 2019-10-10 17:20:19 | ggerganov/diff-challenge | https://api.github.com/repos/ggerganov/diff-challenge | opened | Automatic PR merging on successful submission | documentation | Making this repo to automatically test the submitted PRs and potentially merge them if they satisfy the challenge requirements was kind of interesting to me so here is a quick summary:

1. Got a cheap [Linode](https://www.linode.com) server

2. Installed [Jenkins](https://jenkins.io) on it

3. In Jenkins - installed ... | 1.0 | Automatic PR merging on successful submission - Making this repo to automatically test the submitted PRs and potentially merge them if they satisfy the challenge requirements was kind of interesting to me so here is a quick summary:

1. Got a cheap [Linode](https://www.linode.com) server

2. Installed [Jenkins](https... | non_defect | automatic pr merging on successful submission making this repo to automatically test the submitted prs and potentially merge them if they satisfy the challenge requirements was kind of interesting to me so here is a quick summary got a cheap server installed on it in jenkins installed the ... | 0 |

391,865 | 11,579,602,269 | IssuesEvent | 2020-02-21 18:17:23 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.morele.net - Unable to apply filters | browser-focus-geckoview engine-gecko priority-important severity-important | <!-- @browser: Firefox Mobile 71.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:71.0) Gecko/71.0 Firefox/71.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/48723 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.morele.net/komputery/moni... | 1.0 | www.morele.net - Unable to apply filters - <!-- @browser: Firefox Mobile 71.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:71.0) Gecko/71.0 Firefox/71.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/48723 -->

<!-- @extra_labels: browser-focus-geckoview -->

**U... | non_defect | unable to apply filters url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description filtering doesnt load steps to reproduce unblocking trackers fixes browser configuration non... | 0 |

146,372 | 13,180,046,259 | IssuesEvent | 2020-08-12 12:06:40 | simphony/osp-core | https://api.github.com/repos/simphony/osp-core | closed | Update the documentation of YAML ontologies, especially the documentation | documentation simple fix | In GitLab by @urbanmatthias on Jan 16, 2020, 10:07

| 1.0 | Update the documentation of YAML ontologies, especially the documentation - In GitLab by @urbanmatthias on Jan 16, 2020, 10:07

| non_defect | update the documentation of yaml ontologies especially the documentation in gitlab by urbanmatthias on jan | 0 |

76,144 | 26,264,017,091 | IssuesEvent | 2023-01-06 10:42:03 | scipy/scipy | https://api.github.com/repos/scipy/scipy | opened | BUG: SciPy requires OpenBLAS even when building against a different library | defect | ### Describe your issue.

I tried to build SciPy against Arm Performance Libraries (ArmPL); however, the build fails because of missing OpenBLAS.

For context, I was able to build NumPy against ArmPL using the same `site.cfg`.

### Reproducing Code Example

```python

I used the following `site.cfg` file:

# ArmPL

... | 1.0 | BUG: SciPy requires OpenBLAS even when building against a different library - ### Describe your issue.

I tried to build SciPy against Arm Performance Libraries (ArmPL); however, the build fails because of missing OpenBLAS.

For context, I was able to build NumPy against ArmPL using the same `site.cfg`.

### Reprodu... | defect | bug scipy requires openblas even when building against a different library describe your issue i tried to build scipy against arm performance libraries armpl however the build fails because of missing openblas for context i was able to build numpy against armpl using the same site cfg reprodu... | 1 |

71,893 | 23,843,870,427 | IssuesEvent | 2022-09-06 12:37:34 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | opened | dnsdist: 'Fake' entries inserted into the ring buffers on timeouts have wrong flags | defect dnsdist backport to dnsdist-1.7.x |

- Program: dnsdist <!-- delete the ones that do not apply -->

- Issue type: Bug report

### Short description

When we detect a timeout while waiting for a UDP response from a backend, we insert a 'fake' entry into the ring buffers as a placeholder. This is useful to be able to look for which queries are causing... | 1.0 | dnsdist: 'Fake' entries inserted into the ring buffers on timeouts have wrong flags -

- Program: dnsdist <!-- delete the ones that do not apply -->

- Issue type: Bug report

### Short description

When we detect a timeout while waiting for a UDP response from a backend, we insert a 'fake' entry into the ring buf... | defect | dnsdist fake entries inserted into the ring buffers on timeouts have wrong flags program dnsdist issue type bug report short description when we detect a timeout while waiting for a udp response from a backend we insert a fake entry into the ring buffers as a placeholder this is useful to ... | 1 |

436,113 | 30,538,023,268 | IssuesEvent | 2023-07-19 18:55:48 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | GitHub Access Changes | platform-content-team documentation-support pw-footer-feedback | ### Description

It has been shared with me that some teams have trouble using our GitHub access information when teams are moving into the VA.gov space for the first time. These teams do not have a program manager who has access to GitHub so they cannot create a request for access because those managers don't have a... | 1.0 | GitHub Access Changes - ### Description

It has been shared with me that some teams have trouble using our GitHub access information when teams are moving into the VA.gov space for the first time. These teams do not have a program manager who has access to GitHub so they cannot create a request for access because tho... | non_defect | github access changes description it has been shared with me that some teams have trouble using our github access information when teams are moving into the va gov space for the first time these teams do not have a program manager who has access to github so they cannot create a request for access because tho... | 0 |

24,026 | 3,900,273,384 | IssuesEvent | 2016-04-18 04:32:15 | catmaid/CATMAID | https://api.github.com/repos/catmaid/CATMAID | closed | Volume manager creates box selection layer, but doesn't remove it | status: done type: defect | This happens when an existing volume is edited and then the type is changed (which shouldn't be possible in the first place). | 1.0 | Volume manager creates box selection layer, but doesn't remove it - This happens when an existing volume is edited and then the type is changed (which shouldn't be possible in the first place). | defect | volume manager creates box selection layer but doesn t remove it this happens when an existing volume is edited and then the type is changed which shouldn t be possible in the first place | 1 |

4,690 | 2,610,141,050 | IssuesEvent | 2015-02-26 18:44:28 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Players need manual/documentation/ so MOSTLY an EDITABLE wiki! | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Log-in into Hedgewars.org

2. Click wiki

3. Found you can do nothing about it.

What is the expected output? What do you see instead?

Talk page and edit button.

What version of the product are you using? On what operating system?

Current ALL

Please provide any additional... | 1.0 | Players need manual/documentation/ so MOSTLY an EDITABLE wiki! - ```

What steps will reproduce the problem?

1. Log-in into Hedgewars.org

2. Click wiki

3. Found you can do nothing about it.

What is the expected output? What do you see instead?

Talk page and edit button.

What version of the product are you using? On w... | defect | players need manual documentation so mostly an editable wiki what steps will reproduce the problem log in into hedgewars org click wiki found you can do nothing about it what is the expected output what do you see instead talk page and edit button what version of the product are you using on w... | 1 |

406,153 | 11,887,455,723 | IssuesEvent | 2020-03-28 01:55:30 | Thorium-Sim/thorium | https://api.github.com/repos/Thorium-Sim/thorium | opened | Crew Positions | priority/medium type/feature | ### Requested By: Lissa Hadfield

### Priority: Medium

### Version: 2.8.0

As we are creating things for the new CMSC ships, we want a wider range of Damage Control/Security Officer positions (we want specialists instead of "Security" lol). But when we add a Security Guard/Damage Control officer and change their job it ... | 1.0 | Crew Positions - ### Requested By: Lissa Hadfield

### Priority: Medium

### Version: 2.8.0

As we are creating things for the new CMSC ships, we want a wider range of Damage Control/Security Officer positions (we want specialists instead of "Security" lol). But when we add a Security Guard/Damage Control officer and cha... | non_defect | crew positions requested by lissa hadfield priority medium version as we are creating things for the new cmsc ships we want a wider range of damage control security officer positions we want specialists instead of security lol but when we add a security guard damage control officer and cha... | 0 |

275,056 | 20,904,931,919 | IssuesEvent | 2022-03-24 00:22:24 | RainwayApp/node-clangffi | https://api.github.com/repos/RainwayApp/node-clangffi | closed | Move to absolute urls in README | bug documentation good first issue | Now that this repo is public, we should move to absolute URLs for links in the `README.md` files, so that they work from npm as well. | 1.0 | Move to absolute urls in README - Now that this repo is public, we should move to absolute URLs for links in the `README.md` files, so that they work from npm as well. | non_defect | move to absolute urls in readme now that this repo is public we should move to absolute urls for links in the readme md files so that they work from npm as well | 0 |

43,781 | 11,845,138,589 | IssuesEvent | 2020-03-24 07:42:44 | line/armeria | https://api.github.com/repos/line/armeria | opened | DNS resolution timeout may take longer | defect | Let's say that `/etc/resolve.conf` contains:

```

a.svc.cluster.local svc.cluster.local cluster.local

```

If `queryTimeoutMillis` is 5 seconds and the client tries to find the address of `a.com`, it sends DNS queries sequencially as follow:

```

a.com.a.svc.cluster.local. // 5 seconds timeout.

a.com.svc.cluster.l... | 1.0 | DNS resolution timeout may take longer - Let's say that `/etc/resolve.conf` contains:

```

a.svc.cluster.local svc.cluster.local cluster.local

```

If `queryTimeoutMillis` is 5 seconds and the client tries to find the address of `a.com`, it sends DNS queries sequencially as follow:

```

a.com.a.svc.cluster.local. /... | defect | dns resolution timeout may take longer let s say that etc resolve conf contains a svc cluster local svc cluster local cluster local if querytimeoutmillis is seconds and the client tries to find the address of a com it sends dns queries sequencially as follow a com a svc cluster local ... | 1 |

7,472 | 2,610,387,959 | IssuesEvent | 2015-02-26 20:05:31 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Patch for /share/hedgewars/Data/Locale/missions_it.txt | auto-migrated Type-Defect | ```

Fixed error in line 11 of file missions_it.txt

```

-----

Original issue reported on code.google.com by `chipho...@yahoo.it` on 21 Dec 2011 at 5:38

Attachments:

* [missions_it.txt.patch](https://storage.googleapis.com/google-code-attachments/hedgewars/issue-340/comment-0/missions_it.txt.patch)

| 1.0 | Patch for /share/hedgewars/Data/Locale/missions_it.txt - ```

Fixed error in line 11 of file missions_it.txt

```

-----

Original issue reported on code.google.com by `chipho...@yahoo.it` on 21 Dec 2011 at 5:38

Attachments:

* [missions_it.txt.patch](https://storage.googleapis.com/google-code-attachments/hedgewars/issue-... | defect | patch for share hedgewars data locale missions it txt fixed error in line of file missions it txt original issue reported on code google com by chipho yahoo it on dec at attachments | 1 |

161,146 | 13,806,941,496 | IssuesEvent | 2020-10-11 19:48:36 | Bloceducare/LeaderBoard | https://api.github.com/repos/Bloceducare/LeaderBoard | opened | Setup repo and write up documentation | documentation | Setup repository with all required integrations for testing, deployment and issue tracking. Also write up some documentation | 1.0 | Setup repo and write up documentation - Setup repository with all required integrations for testing, deployment and issue tracking. Also write up some documentation | non_defect | setup repo and write up documentation setup repository with all required integrations for testing deployment and issue tracking also write up some documentation | 0 |

269,029 | 28,959,972,356 | IssuesEvent | 2023-05-10 01:04:45 | dpteam/RK3188_TABLET | https://api.github.com/repos/dpteam/RK3188_TABLET | reopened | CVE-2013-7271 (Medium) detected in multiple libraries | Mend: dependency security vulnerability | ## CVE-2013-7271 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>randomv3.0.66</b>, <b>linuxv3.0.70</b>, <b>linuxv3.0</b>, <b>linuxv3.0</b></p></summary>

<p>

</p>

</details>

<p></... | True | CVE-2013-7271 (Medium) detected in multiple libraries - ## CVE-2013-7271 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>randomv3.0.66</b>, <b>linuxv3.0.70</b>, <b>linuxv3.0</b>, <b... | non_defect | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries vulnerability details the recvmsg function in net af c in the linux kernel before updates a certain length value without ensuring th... | 0 |

79,838 | 29,351,335,758 | IssuesEvent | 2023-05-27 00:54:50 | scipy/scipy | https://api.github.com/repos/scipy/scipy | opened | BUG: `rv_discrete` fails when support is unbounded below | defect | ### Describe your issue.

I am trying to create a discrete distribution on the integers (both positive and negative). The documentation states that `rv_discrete` takes an "optional" lower bound parameter `a`. It does not, however, seem to allow for an unbounded distribution.

The `rv_discrete` class should probably s... | 1.0 | BUG: `rv_discrete` fails when support is unbounded below - ### Describe your issue.

I am trying to create a discrete distribution on the integers (both positive and negative). The documentation states that `rv_discrete` takes an "optional" lower bound parameter `a`. It does not, however, seem to allow for an unbounded... | defect | bug rv discrete fails when support is unbounded below describe your issue i am trying to create a discrete distribution on the integers both positive and negative the documentation states that rv discrete takes an optional lower bound parameter a it does not however seem to allow for an unbounded... | 1 |

400,866 | 27,303,967,137 | IssuesEvent | 2023-02-24 06:08:47 | purpleclay/gitz | https://api.github.com/repos/purpleclay/gitz | closed | [Docs]: include posthog support for capturing documentation analytics | documentation | ### Describe your edit

Include posthog support within the existing documentation to capture analytics.

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct | 1.0 | [Docs]: include posthog support for capturing documentation analytics - ### Describe your edit

Include posthog support within the existing documentation to capture analytics.

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct | non_defect | include posthog support for capturing documentation analytics describe your edit include posthog support within the existing documentation to capture analytics code of conduct i agree to follow this project s code of conduct | 0 |

289,045 | 31,931,118,903 | IssuesEvent | 2023-09-19 07:29:57 | Trinadh465/linux-4.1.15_CVE-2023-4128 | https://api.github.com/repos/Trinadh465/linux-4.1.15_CVE-2023-4128 | opened | CVE-2019-19052 (High) detected in linux-stable-rtv4.1.33 | Mend: dependency security vulnerability | ## CVE-2019-19052 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home pag... | True | CVE-2019-19052 (High) detected in linux-stable-rtv4.1.33 - ## CVE-2019-19052 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartw... | non_defect | cve high detected in linux stable cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch main vulnerable source files ... | 0 |

212,375 | 23,882,164,781 | IssuesEvent | 2022-09-08 02:58:06 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] Israeli Defence Minister's Cleaner Sentenced for Spying Attempt | SecurityWeek Stale |

**A man employed as a cleaner in Israeli Defence Minister Benny Gantz's home was sentenced to three years' prison for attempting to spy for Iran-linked hackers, the justice ministry said Tuesday.**

[read more](https://www.securityweek.com/israeli-defence-ministers-cleaner-sentenced-spying-attempt)

<https://www.se... | True | [SecurityWeek] Israeli Defence Minister's Cleaner Sentenced for Spying Attempt -

**A man employed as a cleaner in Israeli Defence Minister Benny Gantz's home was sentenced to three years' prison for attempting to spy for Iran-linked hackers, the justice ministry said Tuesday.**

[read more](https://www.securityweek.c... | non_defect | israeli defence minister s cleaner sentenced for spying attempt a man employed as a cleaner in israeli defence minister benny gantz s home was sentenced to three years prison for attempting to spy for iran linked hackers the justice ministry said tuesday | 0 |

294,433 | 22,151,058,672 | IssuesEvent | 2022-06-03 16:50:17 | jenkinsci/packaging | https://api.github.com/repos/jenkinsci/packaging | closed | Current documentation does not work for me despite using Docker | documentation | ### Describe your use-case which is not covered by existing documentation.

Hi there 👋

I have started to modify the [documentation](https://github.com/gounthar/packaging/tree/documentation-update) in the hope of submitting a PR shortly.

I am using `docker` as it is the [only supported platform](https://github.c... | 1.0 | Current documentation does not work for me despite using Docker - ### Describe your use-case which is not covered by existing documentation.

Hi there 👋

I have started to modify the [documentation](https://github.com/gounthar/packaging/tree/documentation-update) in the hope of submitting a PR shortly.

I am usin... | non_defect | current documentation does not work for me despite using docker describe your use case which is not covered by existing documentation hi there 👋 i have started to modify the in the hope of submitting a pr shortly i am using docker as it is the following the existing documentation after se... | 0 |

224,597 | 7,471,938,912 | IssuesEvent | 2018-04-03 10:55:39 | ballerina-lang/composer | https://api.github.com/repos/ballerina-lang/composer | closed | Errors when opening routing service sample | 0.94-pre-release Imported Priority/Highest Severity/Major component/Composer | Following error was observed.

```

Oct 12, 2017 3:34:45 PM org.ballerinalang.composer.service.workspace.rest.datamodel.BLangFileRestService generateJSON

SEVERE: [json]

Oct 12, 2017 3:34:45 PM org.ballerinalang.composer.service.workspace.rest.datamodel.BLangFileRestService generateJSON

SEVERE: [string, TypeCastE... | 1.0 | Errors when opening routing service sample - Following error was observed.

```

Oct 12, 2017 3:34:45 PM org.ballerinalang.composer.service.workspace.rest.datamodel.BLangFileRestService generateJSON

SEVERE: [json]

Oct 12, 2017 3:34:45 PM org.ballerinalang.composer.service.workspace.rest.datamodel.BLangFileRestServ... | non_defect | errors when opening routing service sample following error was observed oct pm org ballerinalang composer service workspace rest datamodel blangfilerestservice generatejson severe oct pm org ballerinalang composer service workspace rest datamodel blangfilerestservice generatejson ... | 0 |

77,508 | 27,027,956,226 | IssuesEvent | 2023-02-11 21:05:43 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | MAINT: optimize.shgo: returns incorrect solution to Rosenbrock problem | defect scipy.optimize | <!--

Thank you for taking the time to file a bug report.

Please fill in the fields below, deleting the sections that

don't apply to your issue. You can view the final output

by clicking the preview button above.

Note: This is a comment, and won't appear in the output.

-->

My issue is about optimize.shgo. It... | 1.0 | MAINT: optimize.shgo: returns incorrect solution to Rosenbrock problem - <!--

Thank you for taking the time to file a bug report.

Please fill in the fields below, deleting the sections that

don't apply to your issue. You can view the final output

by clicking the preview button above.

Note: This is a comment, an... | defect | maint optimize shgo returns incorrect solution to rosenbrock problem thank you for taking the time to file a bug report please fill in the fields below deleting the sections that don t apply to your issue you can view the final output by clicking the preview button above note this is a comment an... | 1 |

34,115 | 7,346,691,360 | IssuesEvent | 2018-03-07 21:36:20 | prettydiff/prettydiff | https://api.github.com/repos/prettydiff/prettydiff | closed | Beautification erase code when it meets several Twig `if` statements in the same line | Defect Not started Parsing | # Description

Like title says, beautification erase code in lines that contains several Twig `if` statements.

Seems like, from second `endif` tag beautification deletes everything after it, including the second `endif` tag.

# Input Before Beautification

This is what the code looked like before:

```

<a ... | 1.0 | Beautification erase code when it meets several Twig `if` statements in the same line - # Description

Like title says, beautification erase code in lines that contains several Twig `if` statements.

Seems like, from second `endif` tag beautification deletes everything after it, including the second `endif` tag.

... | defect | beautification erase code when it meets several twig if statements in the same line description like title says beautification erase code in lines that contains several twig if statements seems like from second endif tag beautification deletes everything after it including the second endif tag ... | 1 |

53,140 | 13,261,006,577 | IssuesEvent | 2020-08-20 19:12:50 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | tableio - doxygen errors, missing class members (Trac #799) | Migrated from Trac combo core defect |

```text

/data/i3home/nega/i3/offline-software/src/tableio/private/tableio/converter/dataclasses_container_convert.cxx:19: warning: no matching class member found for

void convert::I3Trigger::AddFields(I3TableRowDescriptionPtr desc, const booked_type &)

/data/i3home/nega/i3/offline-software/src/tableio/private/tabl... | 1.0 | tableio - doxygen errors, missing class members (Trac #799) -

```text

/data/i3home/nega/i3/offline-software/src/tableio/private/tableio/converter/dataclasses_container_convert.cxx:19: warning: no matching class member found for

void convert::I3Trigger::AddFields(I3TableRowDescriptionPtr desc, const booked_type &)

... | defect | tableio doxygen errors missing class members trac text data nega offline software src tableio private tableio converter dataclasses container convert cxx warning no matching class member found for void convert addfields desc const booked type data nega offline software src tabl... | 1 |

86,225 | 15,755,442,409 | IssuesEvent | 2021-03-31 01:47:19 | ervin210/LIVE-ROOM-1485590319891 | https://api.github.com/repos/ervin210/LIVE-ROOM-1485590319891 | opened | CVE-2021-21298 (Medium) detected in runtime-0.20.8.tgz | security vulnerability | ## CVE-2021-21298 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>runtime-0.20.8.tgz</b></p></summary>

<p>@node-red/runtime ====================</p>

<p>Library home page: <a href="ht... | True | CVE-2021-21298 (Medium) detected in runtime-0.20.8.tgz - ## CVE-2021-21298 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>runtime-0.20.8.tgz</b></p></summary>

<p>@node-red/runtime =... | non_defect | cve medium detected in runtime tgz cve medium severity vulnerability vulnerable library runtime tgz node red runtime library home page a href path to dependency file live room package json path to vulnerable library live room node modules n... | 0 |

220,030 | 24,548,548,878 | IssuesEvent | 2022-10-12 10:42:11 | Vonage/vonage-python-code-snippets | https://api.github.com/repos/Vonage/vonage-python-code-snippets | closed | PyYAML-5.1.tar.gz: 3 vulnerabilities (highest severity is: 9.8) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>PyYAML-5.1.tar.gz</b></p></summary>

<p>YAML parser and emitter for Python</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/9f/2c/9417b5c7747... | True | PyYAML-5.1.tar.gz: 3 vulnerabilities (highest severity is: 9.8) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>PyYAML-5.1.tar.gz</b></p></summary>

<p>YAML parser and emitter for Python</p>

<p>Librar... | non_defect | pyyaml tar gz vulnerabilities highest severity is autoclosed vulnerable library pyyaml tar gz yaml parser and emitter for python library home page a href path to dependency file requirements txt path to vulnerable library requirements txt requirements txt found in ... | 0 |

21,985 | 3,587,538,262 | IssuesEvent | 2016-01-30 11:14:48 | ariya/phantomjs | https://api.github.com/repos/ariya/phantomjs | closed | phantomjs crashed o Lion 10.7.5, .dmp attached | old.Priority-Medium old.Status-New old.Type-Defect | _**[hora...@gmail.com](http://code.google.com/u/106972801875058279253/) commented:**_

> <b>Which version of PhantomJS are you using? Tip: run 'phantomjs --version'.</b>

> <b>What steps will reproduce the problem?</b>

1. /usr/local/bin/phantomjs iphonereserve.coffee "firstname" "lastname" "na... | 1.0 | phantomjs crashed o Lion 10.7.5, .dmp attached - _**[hora...@gmail.com](http://code.google.com/u/106972801875058279253/) commented:**_

> <b>Which version of PhantomJS are you using? Tip: run 'phantomjs --version'.</b>

> <b>What steps will reproduce the problem?</b>

1. /usr/local/bin/phantomjs iphonereserve.coffee &q... | defect | phantomjs crashed o lion dmp attached commented which version of phantomjs are you using tip run phantomjs version what steps will reproduce the problem usr local bin phantomjs iphonereserve coffee quot firstname quot quot lastname quot quot name mail com quot quot ... | 1 |

295,392 | 25,472,605,286 | IssuesEvent | 2022-11-25 11:31:36 | peviitor-ro/ui-js | https://api.github.com/repos/peviitor-ro/ui-js | closed | The briefcase icon width is 20px | bug TestQuality | ## Precondition

URL: https://beta.peviitor.ro/

Device: Samsung Galaxy S21 Ultra

Browser: Chrome

Platform: Android 12

## Steps to Reproduce:

### Step 1 <span style="color:#58b880"> **[Pass]** </span>

Open URL in browser

#### Expected Result

Website is loaded without any issues

### Step 2 <span style="color:#ff5538"... | 1.0 | The briefcase icon width is 20px - ## Precondition

URL: https://beta.peviitor.ro/

Device: Samsung Galaxy S21 Ultra

Browser: Chrome

Platform: Android 12

## Steps to Reproduce:

### Step 1 <span style="color:#58b880"> **[Pass]** </span>

Open URL in browser

#### Expected Result

Website is loaded without any issues

###... | non_defect | the briefcase icon width is precondition url device samsung galaxy ultra browser chrome platform android steps to reproduce step open url in browser expected result website is loaded without any issues step inspect witdh for briefcase icon on quot alătură ... | 0 |

291,929 | 25,185,936,028 | IssuesEvent | 2022-11-11 17:58:11 | microsoft/playwright | https://api.github.com/repos/microsoft/playwright | closed | [Question] Specific test retries | feature-test-runner v1.28 | Hi!

Is there any way I could set the maximum number of retries to a specific test function?

My case is the following: in my test, I create an object via a POST request and then I want to verify that the response body of a GET request contains some info about it. The matter is that our backend will return no info ... | 1.0 | [Question] Specific test retries - Hi!

Is there any way I could set the maximum number of retries to a specific test function?

My case is the following: in my test, I create an object via a POST request and then I want to verify that the response body of a GET request contains some info about it. The matter is th... | non_defect | specific test retries hi is there any way i could set the maximum number of retries to a specific test function my case is the following in my test i create an object via a post request and then i want to verify that the response body of a get request contains some info about it the matter is that our ba... | 0 |

94,905 | 11,940,352,116 | IssuesEvent | 2020-04-02 16:34:30 | carbon-design-system/ibm-dotcom-library | https://api.github.com/repos/carbon-design-system/ibm-dotcom-library | closed | Profile developers are requesting adding back the "IBMid" to new IBM.com L0 mastheads (post-login) | Airtable Done Feature request design design: research | <!-- replace _{{...}}_ with your own words -->

### The problem

While redesigning the MyIBM Profile, the developers from the Profile team have noticed the new IBM.com L0/L1 no longer retain the IBMid navigation link on far right. This IBMid only appears once a user has logged in and inside MyIBM environment.

### ... | 2.0 | Profile developers are requesting adding back the "IBMid" to new IBM.com L0 mastheads (post-login) - <!-- replace _{{...}}_ with your own words -->

### The problem

While redesigning the MyIBM Profile, the developers from the Profile team have noticed the new IBM.com L0/L1 no longer retain the IBMid navigation link ... | non_defect | profile developers are requesting adding back the ibmid to new ibm com mastheads post login the problem while redesigning the myibm profile the developers from the profile team have noticed the new ibm com no longer retain the ibmid navigation link on far right this ibmid only appears once a use... | 0 |

421,662 | 28,351,973,005 | IssuesEvent | 2023-04-12 03:33:55 | oforiwaasam/converterpro | https://api.github.com/repos/oforiwaasam/converterpro | opened | Set up a static documentation website | documentation backlog | As demonstrated in class, setup a static website for your project. You are allowed to use any framework you like, but I recommend sticking to something tried-and-true like Sphinx. Your project’s website should have the following elements:

- How to install your library

- How to use your library

- Autodocumentation ... | 1.0 | Set up a static documentation website - As demonstrated in class, setup a static website for your project. You are allowed to use any framework you like, but I recommend sticking to something tried-and-true like Sphinx. Your project’s website should have the following elements:

- How to install your library

- How t... | non_defect | set up a static documentation website as demonstrated in class setup a static website for your project you are allowed to use any framework you like but i recommend sticking to something tried and true like sphinx your project’s website should have the following elements how to install your library how t... | 0 |

7,943 | 2,611,067,995 | IssuesEvent | 2015-02-27 00:31:48 | alistairreilly/andors-trail | https://api.github.com/repos/alistairreilly/andors-trail | closed | Physical keyboards. | auto-migrated Type-Defect | ```

An option to use physical keyboard as controls. (Can be bound to any key).

Some devices don't all match up eg: HTC Vision WASD aren't as comfortable as

WASZ.

Would be used primarily as movement and quick use.

```

Original issue reported on code.google.com by `erik.the.lion@gmail.com` on 12 Oct 2011 at 11:34 | 1.0 | Physical keyboards. - ```

An option to use physical keyboard as controls. (Can be bound to any key).

Some devices don't all match up eg: HTC Vision WASD aren't as comfortable as

WASZ.

Would be used primarily as movement and quick use.

```

Original issue reported on code.google.com by `erik.the.lion@gmail.com` on... | defect | physical keyboards an option to use physical keyboard as controls can be bound to any key some devices don t all match up eg htc vision wasd aren t as comfortable as wasz would be used primarily as movement and quick use original issue reported on code google com by erik the lion gmail com on... | 1 |

11,155 | 2,641,231,470 | IssuesEvent | 2015-03-11 16:41:04 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Interactive presentation should provide link back to the main page | Milestone-3 Priority-Medium Slides Type-Defect | Original [issue 16](https://code.google.com/p/html5rocks/issues/detail?id=16) created by chrsmith on 2010-06-22T23:51:00.000Z:

...either at the end, or below the slides all the time. | 1.0 | Interactive presentation should provide link back to the main page - Original [issue 16](https://code.google.com/p/html5rocks/issues/detail?id=16) created by chrsmith on 2010-06-22T23:51:00.000Z:

...either at the end, or below the slides all the time. | defect | interactive presentation should provide link back to the main page original created by chrsmith on either at the end or below the slides all the time | 1 |

17,385 | 9,744,183,696 | IssuesEvent | 2019-06-03 05:59:05 | KazDragon/terminalpp | https://api.github.com/repos/KazDragon/terminalpp | closed | Remove usage of boost::format when constructing terminal output | Improvement Performance | For whatever reason, boost::format is taking up some 50% of the time it takes to generate element differences (generating the element differences in total accounts for about 25% of the time of munin-acceptance) | True | Remove usage of boost::format when constructing terminal output - For whatever reason, boost::format is taking up some 50% of the time it takes to generate element differences (generating the element differences in total accounts for about 25% of the time of munin-acceptance) | non_defect | remove usage of boost format when constructing terminal output for whatever reason boost format is taking up some of the time it takes to generate element differences generating the element differences in total accounts for about of the time of munin acceptance | 0 |

76,470 | 26,445,146,911 | IssuesEvent | 2023-01-16 06:21:24 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: can not launch chrome when proxy environment variables are set | R-awaiting answer I-defect | ### What happened?

if the proxy is set in bash:

```bash

export http_proxy=http://127.0.0.1:1080

export https_proxy=http://127.0.0.1:1080

```

I can not launch chrome:

```python

from selenium import webdriver

browser = webdriver.Chrome()

```

raise error:

```python

Traceback (most recent call last):... | 1.0 | [🐛 Bug]: can not launch chrome when proxy environment variables are set - ### What happened?

if the proxy is set in bash:

```bash

export http_proxy=http://127.0.0.1:1080

export https_proxy=http://127.0.0.1:1080

```

I can not launch chrome:

```python

from selenium import webdriver

browser = webdriver.Chr... | defect | can not launch chrome when proxy environment variables are set what happened if the proxy is set in bash bash export http proxy export https proxy i can not launch chrome python from selenium import webdriver browser webdriver chrome raise error python traceb... | 1 |

66,668 | 8,037,829,696 | IssuesEvent | 2018-07-30 13:49:33 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | closed | Tip Management: New Tip Required Error | WIP feature protocol designer stale | As a user, I would like to be told if I accidentally create a first step without a tip.

Note this could happen if your second step has 'change tip: use tip from previous step', and you delete the first step.

## Acceptance Criteria

- [ ] If first step has 'change tip: use tip from previous step', display a form-... | 1.0 | Tip Management: New Tip Required Error - As a user, I would like to be told if I accidentally create a first step without a tip.

Note this could happen if your second step has 'change tip: use tip from previous step', and you delete the first step.

## Acceptance Criteria

- [ ] If first step has 'change tip: use... | non_defect | tip management new tip required error as a user i would like to be told if i accidentally create a first step without a tip note this could happen if your second step has change tip use tip from previous step and you delete the first step acceptance criteria if first step has change tip use t... | 0 |

653,173 | 21,574,553,970 | IssuesEvent | 2022-05-02 12:25:22 | trimble-oss/website-modus-react-bootstrap.trimble.com | https://api.github.com/repos/trimble-oss/website-modus-react-bootstrap.trimble.com | closed | Content Tree React - Keyboard integrations | 5 story priority:medium content-tree | * Refer to the screenshot for how to navigate through Tree items

* Tree Item selection:

- [ ] ** Shift + Click should select a range (see first example). Ctrl + click selects multiple individual items (see 2nd example).

- [ ] #160

| 1.0 | Content Tree React - Keyboard integrations - * Refer to the screenshot for how to navigate through Tree items

* Tree Item selection:

- [ ] ** Shift + Click should select a range (see first example). Ctrl + click selects multiple individual items (see 2nd example).

- [ ] #160

| non_defect | content tree react keyboard integrations refer to the screenshot for how to navigate through tree items tree item selection shift click should select a range see first example ctrl click selects multiple individual items see example | 0 |

47,168 | 13,056,045,701 | IssuesEvent | 2020-07-30 03:29:25 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | glshovel doesn't compile with gcc v3.2.2 (Trac #88) | Migrated from Trac defect glshovel | I have tried to compile the icesim V02-00-04cand, but it stopped at the compilation of glshovel.

This is probably due to our old gcc version at Chiba (v3.2.2).

So, I need to change log(1+nhits) to something like log(static_cast<double>(1+nhits)) or so

at line 129 of render/StartTime.cxx.

Could you please change this, a... | 1.0 | glshovel doesn't compile with gcc v3.2.2 (Trac #88) - I have tried to compile the icesim V02-00-04cand, but it stopped at the compilation of glshovel.

This is probably due to our old gcc version at Chiba (v3.2.2).

So, I need to change log(1+nhits) to something like log(static_cast<double>(1+nhits)) or so

at line 129 of... | defect | glshovel doesn t compile with gcc trac i have tried to compile the icesim but it stopped at the compilation of glshovel this is probably due to our old gcc version at chiba so i need to change log nhits to something like log static cast nhits or so at line of render starttime cx... | 1 |

45,471 | 12,814,902,370 | IssuesEvent | 2020-07-04 21:53:10 | mestrade/k8shell | https://api.github.com/repos/mestrade/k8shell | closed | Dede | defectdojo security / info | *Dede*

*Severity:* Info

*Cve:*

*Product/Engagement:* k8shell / Ad Hoc Engagement

*Systems*:

*Description*:

dede

*Mitigation*:

de

*Impact*:

de

*References*:No references given | 1.0 | Dede - *Dede*

*Severity:* Info

*Cve:*

*Product/Engagement:* k8shell / Ad Hoc Engagement

*Systems*:

*Description*:

dede

*Mitigation*:

de

*Impact*:

de

*References*:No references given | defect | dede dede severity info cve product engagement ad hoc engagement systems description dede mitigation de impact de references no references given | 1 |

57,846 | 16,101,985,983 | IssuesEvent | 2021-04-27 10:27:59 | snowplow/snowplow-android-tracker | https://api.github.com/repos/snowplow/snowplow-android-tracker | closed | Fix crash on demo app on API 30 | priority:high status:completed type:defect | The DemoApp crashes at the moment when using API 30. It looks like this is a OkHttp3.

Bumping to 4.9 fixes the issue.

Also, StrictMode shows a couple of minor alerts related to demo app code. | 1.0 | Fix crash on demo app on API 30 - The DemoApp crashes at the moment when using API 30. It looks like this is a OkHttp3.

Bumping to 4.9 fixes the issue.

Also, StrictMode shows a couple of minor alerts related to demo app code. | defect | fix crash on demo app on api the demoapp crashes at the moment when using api it looks like this is a bumping to fixes the issue also strictmode shows a couple of minor alerts related to demo app code | 1 |

593,085 | 17,937,468,019 | IssuesEvent | 2021-09-10 17:14:02 | kubernetes/ingress-nginx | https://api.github.com/repos/kubernetes/ingress-nginx | closed | The new v1.0.0 IngressClass handling logic makes a zero-downtime Ingress controller upgrade hard for the users | kind/bug needs-triage needs-priority | **NGINX Ingress controller version**: v1.0.0 vs 0.4x

**Kubernetes version** (use `kubectl version`):

1.19, 1.20, 1.21, 1.22

**Environment**:

- **Cloud provider or hardware configuration**: Not relevant, the problem is generic for all users

- **OS** (e.g. from /etc/os-release): Not relevant

- **Kernel** (e.g... | 1.0 | The new v1.0.0 IngressClass handling logic makes a zero-downtime Ingress controller upgrade hard for the users - **NGINX Ingress controller version**: v1.0.0 vs 0.4x

**Kubernetes version** (use `kubectl version`):

1.19, 1.20, 1.21, 1.22

**Environment**:

- **Cloud provider or hardware configuration**: Not rele... | non_defect | the new ingressclass handling logic makes a zero downtime ingress controller upgrade hard for the users nginx ingress controller version vs kubernetes version use kubectl version environment cloud provider or hardware configuration not relevant t... | 0 |

80,493 | 30,307,409,243 | IssuesEvent | 2023-07-10 10:25:23 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: selenium-manager webdriver doesn't support Edge browser version is 114.0.1823.67 | R-awaiting answer I-defect | ### What happened?

I am using selenium version 4.10.0.0 and recently Edge version updated from 112 to 114 and now getting an error -

selenium.common.exceptions.SessionNotCreatedException: Message: session not created: This version of Microsoft Edge WebDriver only supports Microsoft Edge version 112 Current browser v... | 1.0 | [🐛 Bug]: selenium-manager webdriver doesn't support Edge browser version is 114.0.1823.67 - ### What happened?

I am using selenium version 4.10.0.0 and recently Edge version updated from 112 to 114 and now getting an error -

selenium.common.exceptions.SessionNotCreatedException: Message: session not created: This v... | defect | selenium manager webdriver doesn t support edge browser version is what happened i am using selenium version and recently edge version updated from to and now getting an error selenium common exceptions sessionnotcreatedexception message session not created this version of microsof... | 1 |

7,065 | 2,610,324,942 | IssuesEvent | 2015-02-26 19:44:45 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Typo | auto-migrated Priority-Low Type-Defect | ```

There is a problem, like someone forgot something in the Acclamator description

after "squadrons and."

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 6 Jun 2011 at 10:27 | 1.0 | Typo - ```

There is a problem, like someone forgot something in the Acclamator description

after "squadrons and."

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 6 Jun 2011 at 10:27 | defect | typo there is a problem like someone forgot something in the acclamator description after squadrons and original issue reported on code google com by gmail com on jun at | 1 |

259,812 | 22,553,027,515 | IssuesEvent | 2022-06-27 07:47:59 | meveo-org/meveo | https://api.github.com/repos/meveo-org/meveo | closed | crosstorage - postgres - filter based on Pagination - lowercase | bug test | When building queries with Pagination object, the result may not be correct in some very particular case:

If we use the filter PersistenceService.SEARCH_WILDCARD_OR_IGNORE_CAS, the results are not OK, when we search specific Cyrilic characters. It's because the result of the lower case function is not the same in ja... | 1.0 | crosstorage - postgres - filter based on Pagination - lowercase - When building queries with Pagination object, the result may not be correct in some very particular case:

If we use the filter PersistenceService.SEARCH_WILDCARD_OR_IGNORE_CAS, the results are not OK, when we search specific Cyrilic characters. It's b... | non_defect | crosstorage postgres filter based on pagination lowercase when building queries with pagination object the result may not be correct in some very particular case if we use the filter persistenceservice search wildcard or ignore cas the results are not ok when we search specific cyrilic characters it s b... | 0 |

788,002 | 27,739,348,581 | IssuesEvent | 2023-03-15 13:24:09 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | opened | The toggle function of overflow buttons in Toolbar is not triggering | Bug C: ToolBar SEV: Medium jQuery Priority 5 | ### Bug report

The toggle functions of buttons that are in overflow in the Toolbar is not triggering

**Regression introduced with R1 2023**

### Reproduction of the problem

1. Open this example - https://dojo.telerik.com/OPebOsEC/12

2. Open the browser console

3. Click on one of the overflow buttons

###... | 1.0 | The toggle function of overflow buttons in Toolbar is not triggering - ### Bug report

The toggle functions of buttons that are in overflow in the Toolbar is not triggering

**Regression introduced with R1 2023**

### Reproduction of the problem

1. Open this example - https://dojo.telerik.com/OPebOsEC/12

2. O... | non_defect | the toggle function of overflow buttons in toolbar is not triggering bug report the toggle functions of buttons that are in overflow in the toolbar is not triggering regression introduced with reproduction of the problem open this example open the browser console click on on... | 0 |

878 | 2,594,260,033 | IssuesEvent | 2015-02-20 01:13:21 | BALL-Project/ball | https://api.github.com/repos/BALL-Project/ball | closed | BALLView VRML exporter produces wrong extension | C: VIEW P: minor R: fixed T: defect | **Reported by odin on 13 Dec 39385592 02:13 UTC**

VRML files should have .wrl not .vrml extension | 1.0 | BALLView VRML exporter produces wrong extension - **Reported by odin on 13 Dec 39385592 02:13 UTC**

VRML files should have .wrl not .vrml extension | defect | ballview vrml exporter produces wrong extension reported by odin on dec utc vrml files should have wrl not vrml extension | 1 |

47,198 | 13,056,052,013 | IssuesEvent | 2020-07-30 03:30:33 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | hdf5merge can't handle FilterMasks (Trac #121) | Migrated from Trac booking defect | There is something funky there about merging hdf5 generated

from experimental data.

Migrated from https://code.icecube.wisc.edu/ticket/121

```json

{

"status": "closed",

"changetime": "2011-04-14T19:17:19",

"description": "There is something funky there about merging hdf5 generated \nfrom experimental ... | 1.0 | hdf5merge can't handle FilterMasks (Trac #121) - There is something funky there about merging hdf5 generated

from experimental data.

Migrated from https://code.icecube.wisc.edu/ticket/121

```json

{

"status": "closed",

"changetime": "2011-04-14T19:17:19",

"description": "There is something funky there ... | defect | can t handle filtermasks trac there is something funky there about merging generated from experimental data migrated from json status closed changetime description there is something funky there about merging generated nfrom experimental data ... | 1 |

12,245 | 2,685,530,678 | IssuesEvent | 2015-03-30 02:16:15 | IssueMigrationTest/Test5 | https://api.github.com/repos/IssueMigrationTest/Test5 | closed | cannot locate module: twisted | auto-migrated Priority-Medium Type-Defect | **Issue by Conrad.C...@gmail.com**

_7 Dec 2012 at 11:28 GMT_

_Originally opened on Google Code_

----

```

What steps will reproduce the problem?

1. Add the following to a python file.

from twisted.internet import pollreactor

pollreactor.install()

reactor = pollreactor.PollReactor()

from twisted.internet import protoc... | 1.0 | cannot locate module: twisted - **Issue by Conrad.C...@gmail.com**

_7 Dec 2012 at 11:28 GMT_

_Originally opened on Google Code_

----

```

What steps will reproduce the problem?

1. Add the following to a python file.

from twisted.internet import pollreactor

pollreactor.install()

reactor = pollreactor.PollReactor()

fro... | defect | cannot locate module twisted issue by conrad c gmail com dec at gmt originally opened on google code what steps will reproduce the problem add the following to a python file from twisted internet import pollreactor pollreactor install reactor pollreactor pollreactor from twi... | 1 |

87,635 | 10,934,365,137 | IssuesEvent | 2019-11-24 11:04:01 | MarlinFirmware/Marlin | https://api.github.com/repos/MarlinFirmware/Marlin | closed | [Discussion] Handling of junction speeds and jerk | T: Design Concept T: Development | This is a follow-up to a topic which already was here a long time ago, but I can't find it anymore. If someone remebers it and can find the issue, you might link it here.

During the research for strange jerk behaviour today I stumbled around the suboptimal handling of junction speeds again. In one sentence: Marlins ... | 1.0 | [Discussion] Handling of junction speeds and jerk - This is a follow-up to a topic which already was here a long time ago, but I can't find it anymore. If someone remebers it and can find the issue, you might link it here.

During the research for strange jerk behaviour today I stumbled around the suboptimal handling... | non_defect | handling of junction speeds and jerk this is a follow up to a topic which already was here a long time ago but i can t find it anymore if someone remebers it and can find the issue you might link it here during the research for strange jerk behaviour today i stumbled around the suboptimal handling of junctio... | 0 |

31,578 | 5,960,953,309 | IssuesEvent | 2017-05-29 15:33:27 | webpack/webpack.js.org | https://api.github.com/repos/webpack/webpack.js.org | closed | Document the concept and value value range of LimitChunkCountPlugin. | Documentation: Plugins | Looks like there is a nice PR opportunity with low hanging fruit for someone in webpack/webpack#4178. I'm going to leave this issue here as a stub so that once we stamp down the desired behavior then we can log the defaults and a PR here can accompany a PR in the original issue | 1.0 | Document the concept and value value range of LimitChunkCountPlugin. - Looks like there is a nice PR opportunity with low hanging fruit for someone in webpack/webpack#4178. I'm going to leave this issue here as a stub so that once we stamp down the desired behavior then we can log the defaults and a PR here can accompa... | non_defect | document the concept and value value range of limitchunkcountplugin looks like there is a nice pr opportunity with low hanging fruit for someone in webpack webpack i m going to leave this issue here as a stub so that once we stamp down the desired behavior then we can log the defaults and a pr here can accompany ... | 0 |

622,339 | 19,622,014,223 | IssuesEvent | 2022-01-07 08:13:53 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | apnews.com - see bug description | browser-firefox-mobile priority-normal engine-gecko | <!-- @browser: Firefox Mobile 95.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:95.0) Gecko/95.0 Firefox/95.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/97859 -->

**URL**: https://apnews.com/article/immigration-coronavirus-pandemic... | 1.0 | apnews.com - see bug description - <!-- @browser: Firefox Mobile 95.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:95.0) Gecko/95.0 Firefox/95.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/97859 -->

**URL**: https://apnews.com/artic... | non_defect | apnews com see bug description url browser version firefox mobile operating system android tested another browser yes chrome problem type something else description popup x blocked steps to reproduce because of your layout not minimizing the address bar when... | 0 |

50,144 | 13,187,346,414 | IssuesEvent | 2020-08-13 03:07:15 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | pnf to Icetray v3: check v3 thread safety (Trac #196) | Migrated from Trac defect jeb + pnf | Check for any non-thread-safe stuff in IceTray v3 and clean them

up for pnf support.

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/196

, reported by blaufuss and owned by tschmidt_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:57",

"description": "... | 1.0 | pnf to Icetray v3: check v3 thread safety (Trac #196) - Check for any non-thread-safe stuff in IceTray v3 and clean them

up for pnf support.

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/196

, reported by blaufuss and owned by tschmidt_</summary>

<p>

```json

{

"status": "closed",

"... | defect | pnf to icetray check thread safety trac check for any non thread safe stuff in icetray and clean them up for pnf support migrated from reported by blaufuss and owned by tschmidt json status closed changetime description check for any non thread... | 1 |

367,634 | 10,860,140,549 | IssuesEvent | 2019-11-14 08:23:01 | Porkins97/DinoNuggetsGame | https://api.github.com/repos/Porkins97/DinoNuggetsGame | opened | Player Quits Scene When Button Held Down | Priority Medium bug | When playing, if the player holds down the A/X button (Xbox/Playstation) on scene load, it opens the menu and causes them to quit to the main scene | 1.0 | Player Quits Scene When Button Held Down - When playing, if the player holds down the A/X button (Xbox/Playstation) on scene load, it opens the menu and causes them to quit to the main scene | non_defect | player quits scene when button held down when playing if the player holds down the a x button xbox playstation on scene load it opens the menu and causes them to quit to the main scene | 0 |

64,055 | 18,158,850,811 | IssuesEvent | 2021-09-27 07:12:13 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | mvn install -DskipTests failed in hazelcast@5.0 on centos8_aarch64 | Type: Defect | Hello, I ran into a problem: mvn install -DskipTests failed in hazelcast@5.0 on centos8_aarch64

```console

bug:

[INFO] Attaching shaded artifact.

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary for Hazelcast Root 5.1-SNAPSHOT:

[INFO]

[INFO] Hazelcast Root ........... | 1.0 | mvn install -DskipTests failed in hazelcast@5.0 on centos8_aarch64 - Hello, I ran into a problem: mvn install -DskipTests failed in hazelcast@5.0 on centos8_aarch64

```console

bug:

[INFO] Attaching shaded artifact.

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary for... | defect | mvn install dskiptests failed in hazelcast on hello i meet a problem mvn install dskiptests failed in hazelcast on console bug: attaching shaded artifact reactor summary for hazelcast root snapshot haz... | 1 |

24,801 | 4,104,662,172 | IssuesEvent | 2016-06-05 14:40:29 | bwu-dart/bwu_datagrid | https://api.github.com/repos/bwu-dart/bwu_datagrid | closed | Column Headers are ~8px wider in FF/Safari | type:defect | I'm not sure if this is a duplicate ticket/issue. But, in FF/Safari the column headers seem to be wider than Chrome/Chromium. Removing the checkbox column has no effect.

Column headers in Chrome/Chromium:

? | design discussion front-end mac | Hey, nice player! I've come to know about it just minutes ago! :)

Would you be interested in having a better UI integration in macOS? I might be able to help design and theme your app in macOS but I need help.

| 1.0 | macOS theme(s)? - Hey, nice player! I've come to know about it just minutes ago! :)

Would you be interested in having a better UI integration in macOS? I might be able to help design and theme your app in macOS but I need help.

| non_defect | macos theme s hey nice player i ve come to know about it just minutes ago would you be interested in having a better ui integration in macos i might be able to help design and theme your app in macos but i need help | 0 |

831,992 | 32,068,301,283 | IssuesEvent | 2023-09-25 05:58:36 | GoogleCloudPlatform/golang-samples | https://api.github.com/repos/GoogleCloudPlatform/golang-samples | closed | spanner/spanner_snippets/spanner: TestUpdateDatabaseSample failed | type: bug priority: p1 api: spanner samples flakybot: issue flakybot: flaky | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 993a6162d95844e06564b429034b39f6da7dff72

b... | 1.0 | spanner/spanner_snippets/spanner: TestUpdateDatabaseSample failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop ... | non_defect | spanner spanner snippets spanner testupdatedatabasesample failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output integration test go del... | 0 |

72,139 | 23,956,710,870 | IssuesEvent | 2022-09-12 15:29:02 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | closed | QR code view broken when no display name is set | T-Defect help wanted A-Invite S-Minor O-Uncommon Z-WTF | ### Steps to reproduce

1. Unset your display name (in Settings -> General)

2. Left panel -> miniature QR code next to your name

### Outcome

#### What did you expect?

Avatar does not cover MXID

2. Left panel -> miniature QR code next to your name

### Outcome

#### What did you expect?

Avatar does not cover MXID

MPI: aborti... | 1.0 | mpi problem with pyronoise - ```

I'm trying to run PyroNoise on a 64bit linux system:-

mpirun -np 8 $an_home/PyroNoiseM -din $out_dir/flows.dat -lin

$out_dir/denoised.list -rin $amplicon_dat_file -v >$out_dir/denoised3.fout

This is collected in my error file:-

MPI: MPI_COMM_WORLD rank 0 has terminated without calli... | defect | mpi problem with pyronoise i m trying to run pyronoise on a linux system mpirun np an home pyronoisem din out dir flows dat lin out dir denoised list rin amplicon dat file v out dir fout this is collected in my error file mpi mpi comm world rank has terminated without calling mpi final... | 1 |

31,826 | 6,642,969,490 | IssuesEvent | 2017-09-27 09:29:28 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | BUG: scipy.sparse.linalg.linsolve() + scikits.umfpack 0.3.0 on win-amd64 | defect scipy.sparse.linalg | scikits.umfpack 0.3.0 has many failures on win-amd64, caused by other bit-width chars for the same types than on linux - see https://github.com/scikit-umfpack/scikit-umfpack/issues/36. Some of the tests just call `scipy.sparse.linalg.linsolve()`, which has to be fixed in the same spirit as in the PR https://github.com/... | 1.0 | BUG: scipy.sparse.linalg.linsolve() + scikits.umfpack 0.3.0 on win-amd64 - scikits.umfpack 0.3.0 has many failures on win-amd64, caused by other bit-width chars for the same types than on linux - see https://github.com/scikit-umfpack/scikit-umfpack/issues/36. Some of the tests just call `scipy.sparse.linalg.linsolve()`... | defect | bug scipy sparse linalg linsolve scikits umfpack on win scikits umfpack has many failures on win caused by other bit width chars for the same types than on linux see some of the tests just call scipy sparse linalg linsolve which has to be fixed in the same spirit as in the pr when it... | 1 |

8,124 | 2,611,453,296 | IssuesEvent | 2015-02-27 05:00:44 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Playing with 48 hedgehogs and Per Hedgehog Ammo is not possible | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Select game mode with Per Hedgehog Ammo.

2. Add 6 teams with 8 players each.

3. Run the fight.

What is the expected output? What do you see instead?

The fight doesn't run; I get an error window:

"Last two engine messages:

Establishing IPC connection... ok

Ammo stores overflow"

Wha... | 1.0 | Playing with 48 hedgehogs and Per Hedgehog Ammo is not possible - ```

What steps will reproduce the problem?

1. Select game mode with Per Hedgehog Ammo.

2. Add 6 teams with 8 players each.

3. Run the fight.

What is the expected output? What do you see instead?

The fight doesn't run; I get an error window:

"Last two engine mess... | defect | playing with hedgehogs and per hedgehog ammo is not possible what steps will reproduce the problem select game mode with per hedgehog ammo add team with player each run fight what is the expected output what do you see instead the fight don t run i get a error window last two engine messa... | 1 |

39,419 | 9,449,233,880 | IssuesEvent | 2019-04-16 00:56:30 | STEllAR-GROUP/phylanx | https://api.github.com/repos/STEllAR-GROUP/phylanx | closed | `fmap` does not accept NumPy arrays | category: primitives submodule: backend type: compatibility issue type: defect | Having:

```py

import numpy as np

from phylanx import Phylanx

@Phylanx

def map_fn(fn, elems):

return fmap(fn, elems)

x = np.array([1,2,3,4])

map_fn(lambda a:a+1,x)

```

results in:

```pytb

Traceback (most recent call last):

File "test51.py", line 64, in <module>

print(map_fn(lambda a:a+1,x))

... | 1.0 | `fmap` does not accept NumPy arrays - Having:

```py

import numpy as np

from phylanx import Phylanx

@Phylanx

def map_fn(fn, elems):

return fmap(fn, elems)

x = np.array([1,2,3,4])

map_fn(lambda a:a+1,x)

```

results in:

```pytb

Traceback (most recent call last):

File "test51.py", line 64, in <module... | defect | fmap does not accept numpy arrays having py import numpy as np from phylanx import phylanx phylanx def map fn fn elems return fmap fn elems x np array map fn lambda a a x results in pytb traceback most recent call last file py line in print map fn l... | 1 |

23,627 | 3,851,864,888 | IssuesEvent | 2016-04-06 05:27:36 | GPF/imame4all | https://api.github.com/repos/GPF/imame4all | closed | Autosave feature request | auto-migrated Priority-Medium Type-Defect | ```

Any chance it would be a simple addition to add autosave to the configuration

options? I use it on my PC version by simply adding the -autosave switch to the

shortcut. This would be a great addition to this great work!

```

Original issue reported on code.google.com by `2systema...@gmail.com` on 12 Mar 2013 at 8:... | 1.0 | Autosave feature request - ```

Any chance it would be a simple addition to add autosave to the configuration

options? I use it on my PC version by simply adding the -autosave switch to the

shortcut. This would be a great addition to this great work!

```

Original issue reported on code.google.com by `2systema...@gmai... | defect | autosave feature request any chance it would be a simple addition to add autosave to the configuration options i use it on my pc version by simply adding the autosave switch to the shortcut this would be a great addition to this great work original issue reported on code google com by gmail com ... | 1 |

7,092 | 10,239,466,172 | IssuesEvent | 2019-08-19 18:19:11 | RIOT-OS/RIOT | https://api.github.com/repos/RIOT-OS/RIOT | closed | core: API: RTC interface should not use struct tm | Discussion: RFC Process: API change State: stale | While collecting implementation ideas for a new timer subsystem, I stumbled upon the fact that our real-time clock interface can only be used with the `struct tm` time representation.

While that might sound natural for an RTC, it seems inefficient:

- `struct tm` is defined using at least 9 integers in newlib (-> 36 bytes)... | 1.0 | core: API: RTC interface should not use struct tm - While collecting implementation ideas for a new timer subsystem, I stumbled upon the fact that our real-time clock interface can only be used with the `struct tm` time representation.

While that might sound natural for an RTC, it seems inefficient:

- `struct tm` is defi... | non_defect | core api rtc interface should not use struct tm while collecting implementation ideas for a new timer subsystem i stumbled about the fact that our real time clock interface can only be used using struct tm time representation while that might sound natural for a rtc it seems inefficient struct tm is defi... | 0 |

10,537 | 2,622,171,354 | IssuesEvent | 2015-03-04 00:14:38 | byzhang/rapidjson | https://api.github.com/repos/byzhang/rapidjson | closed | Linking Error in VS 2010 | auto-migrated Priority-Medium Type-Defect | ```

Getting a linker error when I try to compile my code on VS 2010 with rapidjson

included.

Apparently msvc doesn't like the fact that an unused private class member is

only declared.

In document.h, line 54:

GenericValue(const GenericValue& rhs);

change to:

GenericValue(const GenericValue& rhs) {}

and it links co... | 1.0 | Linking Error in VS 2010 - ```

Getting a linker error when I try to compile my code on VS 2010 with rapidjson

included.

Apparently msvc doesn't like the fact that an unused private class member is

only declared.

In document.h, line 54:

GenericValue(const GenericValue& rhs);

change to: