Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

411,256 | 12,016,095,535 | IssuesEvent | 2020-04-10 15:22:35 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | opened | Bell message missing value for claim winnings | Bug Needed for V2 launch Priority: High | Just claimed roughly $250 from claim proceeds....$115 was profit

The bell message only shows a dash and not the value...

| 1.0 | Bell message missing value for claim winnings - Just claimed roughly $250 from claim proceeds....$115 was profit

The bell message only shows a dash and not the value...

| priority | bell message missing value for claim winnings just claimed roughly from claim proceeds was profit the bell message only shows a dash and not the value | 1 |

199,048 | 6,980,266,951 | IssuesEvent | 2017-12-13 00:43:45 | steemit/hivemind | https://api.github.com/repos/steemit/hivemind | closed | finalize db schema | priority/high WIP | todo:

- bool fields

consider:

- use INT ids instead of varchar(16) account names

- use block_num instead of timestamp

hive_posts_cache:

- few missing fields: `depth`, `get_post_stats` vals

- bonus: distinguish simple vote updates (payout/ranking fields) from body/thread updates | 1.0 | finalize db schema - todo:

- bool fields

consider:

- use INT ids instead of varchar(16) account names

- use block_num instead of timestamp

hive_posts_cache:

- few missing fields: `depth`, `get_post_stats` vals

- bonus: distinguish simple vote updates (payout/ranking fields) from body/thread updates | priority | finalize db schema todo bool fields consider use int ids instead of varchar account names use block num instead of timestamp hive posts cache few missing fields depth get post stats vals bonus distinguish simple vote updates payout ranking fields from body thread updates | 1 |

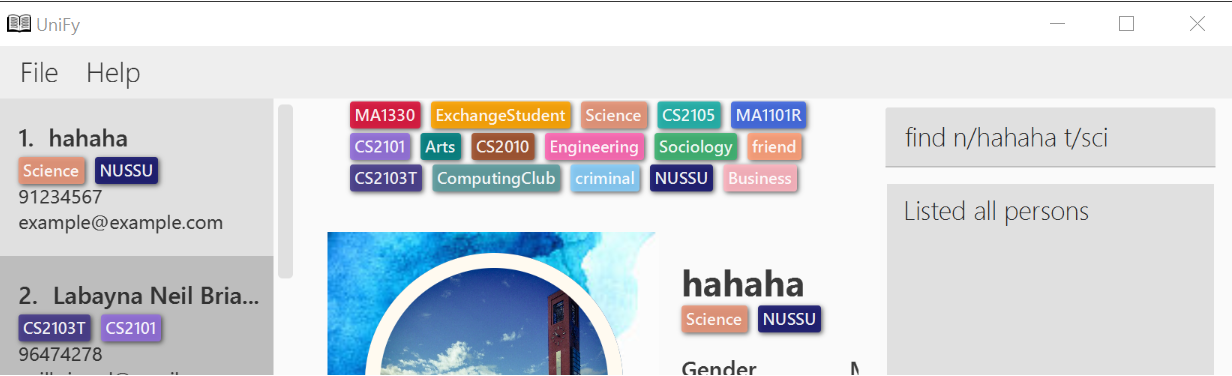

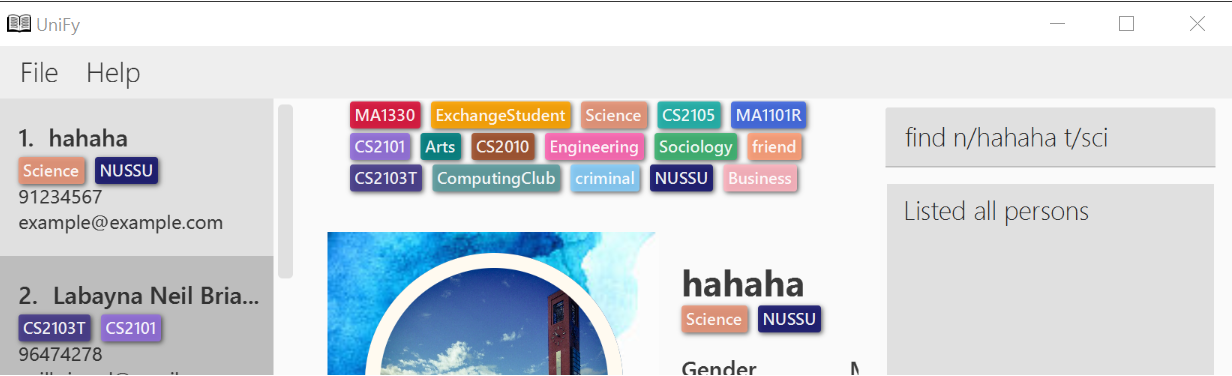

646,265 | 21,042,647,840 | IssuesEvent | 2022-03-31 13:36:38 | AY2122S2-CS2103T-W12-1/tp | https://api.github.com/repos/AY2122S2-CS2103T-W12-1/tp | closed | As a Teaching Assistant, I can “tag” students with various tags | type.Story priority.High | ... so that I can keep track of who to follow up on, who to check up on more often etc | 1.0 | As a Teaching Assistant, I can “tag” students with various tags - ... so that I can keep track of who to follow up on, who to check up on more often etc | priority | as a teaching assistant i can “tag” students with various tags so that i can keep track of who to follow up on who to check up on more often etc | 1 |

654,967 | 21,674,876,453 | IssuesEvent | 2022-05-08 14:53:39 | emredermann/453_Test | https://api.github.com/repos/emredermann/453_Test | opened | Dummy function complexity enhancement | bug enhancement good first issue High priority | Dummy function in dummy.py file must be reimplemented in order to get better complexity. | 1.0 | Dummy function complexity enhancement - Dummy function in dummy.py file must be reimplemented in order to get better complexity. | priority | dummy function complexity enhancement dummy function in dummy py file must be reimplemented in order to get better complexity | 1 |

96,114 | 3,964,556,367 | IssuesEvent | 2016-05-03 01:42:13 | donejs/donejs | https://api.github.com/repos/donejs/donejs | closed | Run server throws error for undefined version | bug Priority - High | I have created app with `donejs add app anniv`.

and changed port to avoid conflicts with another app I run.

and run application with `donejs develop`

```sh

> anniv@0.0.0 develop /Users/mshin/Workspace/github/anniv

> done-serve --develop --port 7000

done-serve starting on http://localhost:7000

Potentially unhandled rejection [8] TypeError: Error loading "anniv@0.0.0#index.stache!done-autorender@0.8.0#autorender" at <unknown>

Error loading "can@2.3.23#util/vdom/document/document" at file:/Users/mshin/Workspace/github/anniv/node_modules/can/util/vdom/document/document.js

Error loading "can@2.3.23#util/vdom/document/document" from "done-autorender@0.8.0#autorender" at file:/Users/mshin/Workspace/github/anniv/node_modules/done-autorender/src/autorender.js

Cannot read property 'version' of undefined

at createModuleNameAndNormalize (file:/Users/mshin/Workspace/github/anniv/node_modules/steal/ext/npm-extension.js:179:37)

at tryCatchReject (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1183:30)

at runContinuation1 (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1142:4)

at Fulfilled.when (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:930:4)

at Pending.run (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:821:13)

at Scheduler._drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:97:19)

at Scheduler.drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:62:9)

at nextTickCallbackWith0Args (node.js:415:9)

at process._tickCallback (node.js:344:13)

Potentially unhandled rejection [7] TypeError: Error loading "can@2.3.23#util/vdom/document/document" at file:/Users/mshin/Workspace/github/anniv/node_modules/can/util/vdom/document/document.js

Error loading "can@2.3.23#util/vdom/document/document" from "anniv@0.0.0#index.stache!done-autorender@0.8.0#autorender" at file:/Users/mshin/Workspace/github/anniv/src/index.stache

Cannot read property 'version' of undefined

at createModuleNameAndNormalize (file:/Users/mshin/Workspace/github/anniv/node_modules/steal/ext/npm-extension.js:179:37)

at tryCatchReject (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1183:30)

at runContinuation1 (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1142:4)

at Fulfilled.when (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:930:4)

at Pending.run (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:821:13)

at Scheduler._drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:97:19)

at Scheduler.drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:62:9)

at nextTickCallbackWith0Args (node.js:415:9)

at process._tickCallback (node.js:344:13)

``` | 1.0 | Run server throws error for undefined version - I have created app with `donejs add app anniv`.

and changed port to avoid conflicts with another app I run.

and run application with `donejs develop`

```sh

> anniv@0.0.0 develop /Users/mshin/Workspace/github/anniv

> done-serve --develop --port 7000

done-serve starting on http://localhost:7000

Potentially unhandled rejection [8] TypeError: Error loading "anniv@0.0.0#index.stache!done-autorender@0.8.0#autorender" at <unknown>

Error loading "can@2.3.23#util/vdom/document/document" at file:/Users/mshin/Workspace/github/anniv/node_modules/can/util/vdom/document/document.js

Error loading "can@2.3.23#util/vdom/document/document" from "done-autorender@0.8.0#autorender" at file:/Users/mshin/Workspace/github/anniv/node_modules/done-autorender/src/autorender.js

Cannot read property 'version' of undefined

at createModuleNameAndNormalize (file:/Users/mshin/Workspace/github/anniv/node_modules/steal/ext/npm-extension.js:179:37)

at tryCatchReject (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1183:30)

at runContinuation1 (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1142:4)

at Fulfilled.when (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:930:4)

at Pending.run (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:821:13)

at Scheduler._drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:97:19)

at Scheduler.drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:62:9)

at nextTickCallbackWith0Args (node.js:415:9)

at process._tickCallback (node.js:344:13)

Potentially unhandled rejection [7] TypeError: Error loading "can@2.3.23#util/vdom/document/document" at file:/Users/mshin/Workspace/github/anniv/node_modules/can/util/vdom/document/document.js

Error loading "can@2.3.23#util/vdom/document/document" from "anniv@0.0.0#index.stache!done-autorender@0.8.0#autorender" at file:/Users/mshin/Workspace/github/anniv/src/index.stache

Cannot read property 'version' of undefined

at createModuleNameAndNormalize (file:/Users/mshin/Workspace/github/anniv/node_modules/steal/ext/npm-extension.js:179:37)

at tryCatchReject (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1183:30)

at runContinuation1 (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:1142:4)

at Fulfilled.when (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:930:4)

at Pending.run (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:821:13)

at Scheduler._drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:97:19)

at Scheduler.drain (/Users/mshin/Workspace/github/anniv/node_modules/steal/node_modules/steal-systemjs/node_modules/steal-es6-module-loader/dist/es6-module-loader.src.js:62:9)

at nextTickCallbackWith0Args (node.js:415:9)

at process._tickCallback (node.js:344:13)

``` | priority | run server throws error for undefined version i have created app with donejs add app anniv and changed port to avoid conflicts with another app i run and run application with donejs develop sh anniv develop users mshin workspace github anniv done serve develop port done serve starting on potentially unhandled rejection typeerror error loading anniv index stache done autorender autorender at error loading can util vdom document document at file users mshin workspace github anniv node modules can util vdom document document js error loading can util vdom document document from done autorender autorender at file users mshin workspace github anniv node modules done autorender src autorender js cannot read property version of undefined at createmodulenameandnormalize file users mshin workspace github anniv node modules steal ext npm extension js at trycatchreject users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at fulfilled when users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at pending run users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at scheduler drain users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at scheduler drain users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at node js at process tickcallback node js potentially unhandled rejection typeerror error loading can util vdom document document at file users mshin workspace github anniv node modules can 
util vdom document document js error loading can util vdom document document from anniv index stache done autorender autorender at file users mshin workspace github anniv src index stache cannot read property version of undefined at createmodulenameandnormalize file users mshin workspace github anniv node modules steal ext npm extension js at trycatchreject users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at fulfilled when users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at pending run users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at scheduler drain users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at scheduler drain users mshin workspace github anniv node modules steal node modules steal systemjs node modules steal module loader dist module loader src js at node js at process tickcallback node js | 1 |

302,437 | 9,259,594,156 | IssuesEvent | 2019-03-18 00:44:59 | CosmiQ/cw-geodata | https://api.github.com/repos/CosmiQ/cw-geodata | opened | Implement unit tests for vector.graph | Difficulty: Medium Priority: High Type: Maintenance | @avanetten, do you think you could do this? If not, can you drop me a quick example file to build a graph from alongside a pickled graph object to compare it to? Ideally from a low-density tile or something like that so that I can include the files in the testing submodule.

Thanks! | 1.0 | Implement unit tests for vector.graph - @avanetten, do you think you could do this? If not, can you drop me a quick example file to build a graph from alongside a pickled graph object to compare it to? Ideally from a low-density tile or something like that so that I can include the files in the testing submodule.

Thanks! | priority | implement unit tests for vector graph avanetten do you think you could do this if not can you drop me a quick example file to build a graph from alongside a pickled graph object to compare it to ideally from a low density tile or something like that so that i can include the files in the testing submodule thanks | 1 |

719,131 | 24,747,860,525 | IssuesEvent | 2022-10-21 11:16:50 | ChildMindInstitute/mindlogger-applet-builder | https://api.github.com/repos/ChildMindInstitute/mindlogger-applet-builder | closed | Reordering the report components is not considered a change in the applet | bug Config Report High EK-High Priority | **Steps to reproduce**

1. Open a site and log in: https://admin.mindlogger.org/

2. Open the activity with a configured report and at least two components

3. Reorder components

4. Click "Save"

5. Pay attention to the warning pop-up

**Actual result**

Reordering the report components is not considered a change in the applet

**Expected result**

Reordering the report components is considered a change in the applet

**Notes**: If there will be any other changes the reordering will be saved and applied.

**Video**: https://www.screencast.com/t/coGnguabIv

**Environment:**

https://admin.mindlogger.org/

https://admin-staging.mindlogger.org/

Win 10 / Chrome 103

prod account:

test-user1@com.us / qwerty

my applet 6 / Edit test

Applet password: Qwe123!!! | 1.0 | Reordering the report components is not considered a change in the applet - **Steps to reproduce**

1. Open a site and log in: https://admin.mindlogger.org/

2. Open the activity with a configured report and at least two components

3. Reorder components

4. Click "Save"

5. Pay attention to the warning pop-up

**Actual result**

Reordering the report components is not considered a change in the applet

**Expected result**

Reordering the report components is considered a change in the applet

**Notes**: If there will be any other changes the reordering will be saved and applied.

**Video**: https://www.screencast.com/t/coGnguabIv

**Environment:**

https://admin.mindlogger.org/

https://admin-staging.mindlogger.org/

Win 10 / Chrome 103

prod account:

test-user1@com.us / qwerty

my applet 6 / Edit test

Applet password: Qwe123!!! | priority | reordering the report components is not considered a change in the applet steps to reproduce open a site and log in open the activity with a configured report and at least two components reorder components click save pay attention to the warning pop up actual result reordering the report components is not considered a change in the applet expected result reordering the report components is considered a change in the applet notes if there will be any other changes the reordering will be saved and applied video environment win chrome prod account test com us qwerty my applet edit test applet password | 1 |

177,870 | 6,588,041,544 | IssuesEvent | 2017-09-14 00:20:18 | gravityview/GravityView | https://api.github.com/repos/gravityview/GravityView | opened | Editing an entry stips the labels from product calculation fields | Bug Core: Edit Entry Core: Fields Difficulty: Medium Priority: High | The labels get stripped from the receipt table after editing in Edit Entry; probably due to bad serialization?

Also look into whether it's the deleting of the entry meta on `GravityView_Field_Product::clear_product_info_cache()` method

See [HS#10931](https://secure.helpscout.net/conversation/430351819/10931/). | 1.0 | Editing an entry stips the labels from product calculation fields - The labels get stripped from the receipt table after editing in Edit Entry; probably due to bad serialization?

Also look into whether it's the deleting of the entry meta on `GravityView_Field_Product::clear_product_info_cache()` method

See [HS#10931](https://secure.helpscout.net/conversation/430351819/10931/). | priority | editing an entry stips the labels from product calculation fields the labels get stripped from the receipt table after editing in edit entry probably due to bad serialization also look into whether it s the deleting of the entry meta on gravityview field product clear product info cache method see | 1 |

666,892 | 22,390,978,152 | IssuesEvent | 2022-06-17 07:39:41 | nexB/scancode.io | https://api.github.com/repos/nexB/scancode.io | closed | DiscoveredPackage matching query does not exist. | bug high priority | Input: https://github.com/ballerina-platform/ballerina-lang/archive/refs/tags/v1.2.29.tar.gz

Pipeline: `scan_package`

```

DiscoveredPackage matching query does not exist.

Traceback:

File "/app/scanpipe/pipelines/__init__.py", line 115, in execute

step(self)

File "/app/scanpipe/pipelines/scan_package.py", line 119, in build_inventory_from_scan

scancode.create_inventory_from_scan(self.project, self.scan_output_location)

File "/app/scanpipe/pipes/scancode.py", line 504, in create_inventory_from_scan

create_codebase_resources(project, scanned_codebase)

File "/app/scanpipe/pipes/scancode.py", line 398, in create_codebase_resources

package = DiscoveredPackage.objects.get(package_uid=package_uid)

File "/usr/local/lib/python3.9/site-packages/django/db/models/manager.py", line 85, in manager_method

return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/django/db/models/query.py", line 496, in get

raise self.model.DoesNotExist(

```

The culprit is the `for_packages` attribute on the `codebase/ballerina-lang-1.2.29/tool-plugins/theia/yarn.lock` resource that returns a list containing a `None` value as the `package_uid`, `for_packages == [None]`.

`None` cannot be matched in the DB thus the model.DoesNotExist exception.

```

from commoncode.resource import VirtualCodebase

scanned_codebase = VirtualCodebase("scancode-2022-06-15-04-55-57.json")

resource = scanned_codebase.get_resource('codebase/ballerina-lang-1.2.29/tool-plugins/theia/yarn.lock')

resource.for_packages # -> [None]

```

1. This is likely an issue in the way the `for_packages` value is generated and needs to be fixed.

2. The package QuerySet is not scoped with the current project in create_codebase_resources in https://github.com/nexB/scancode.io/blob/main/scanpipe/pipes/scancode.py#L398

3. The `package_uid` field is not enforced to be unique in a project, also it is not indexed at the moment and it should as the code now heavily relies on it to fetch DiscoveredPackage instances https://github.com/nexB/scancode.io/blob/main/scanpipe/models.py#L1729 | 1.0 | DiscoveredPackage matching query does not exist. - Input: https://github.com/ballerina-platform/ballerina-lang/archive/refs/tags/v1.2.29.tar.gz

Pipeline: `scan_package`

```

DiscoveredPackage matching query does not exist.

Traceback:

File "/app/scanpipe/pipelines/__init__.py", line 115, in execute

step(self)

File "/app/scanpipe/pipelines/scan_package.py", line 119, in build_inventory_from_scan

scancode.create_inventory_from_scan(self.project, self.scan_output_location)

File "/app/scanpipe/pipes/scancode.py", line 504, in create_inventory_from_scan

create_codebase_resources(project, scanned_codebase)

File "/app/scanpipe/pipes/scancode.py", line 398, in create_codebase_resources

package = DiscoveredPackage.objects.get(package_uid=package_uid)

File "/usr/local/lib/python3.9/site-packages/django/db/models/manager.py", line 85, in manager_method

return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/django/db/models/query.py", line 496, in get

raise self.model.DoesNotExist(

```

The culprit is the `for_packages` attribute on the `codebase/ballerina-lang-1.2.29/tool-plugins/theia/yarn.lock` resource that returns a list containing a `None` value as the `package_uid`, `for_packages == [None]`.

`None` cannot be matched in the DB thus the model.DoesNotExist exception.

```

from commoncode.resource import VirtualCodebase

scanned_codebase = VirtualCodebase("scancode-2022-06-15-04-55-57.json")

resource = scanned_codebase.get_resource('codebase/ballerina-lang-1.2.29/tool-plugins/theia/yarn.lock')

resource.for_packages # -> [None]

```

1. This is likely an issue in the way the `for_packages` value is generated and needs to be fixed.

2. The package QuerySet is not scoped with the current project in create_codebase_resources in https://github.com/nexB/scancode.io/blob/main/scanpipe/pipes/scancode.py#L398

3. The `package_uid` field is not enforced to be unique in a project, also it is not indexed at the moment and it should as the code now heavily relies on it to fetch DiscoveredPackage instances https://github.com/nexB/scancode.io/blob/main/scanpipe/models.py#L1729 | priority | discoveredpackage matching query does not exist input pipeline scan package discoveredpackage matching query does not exist traceback file app scanpipe pipelines init py line in execute step self file app scanpipe pipelines scan package py line in build inventory from scan scancode create inventory from scan self project self scan output location file app scanpipe pipes scancode py line in create inventory from scan create codebase resources project scanned codebase file app scanpipe pipes scancode py line in create codebase resources package discoveredpackage objects get package uid package uid file usr local lib site packages django db models manager py line in manager method return getattr self get queryset name args kwargs file usr local lib site packages django db models query py line in get raise self model doesnotexist the culprit is the for packages attribute on the codebase ballerina lang tool plugins theia yarn lock resource that returns a list containing a none value as the package uid for packages none cannot be matched in the db thus the model doesnotexist exception from commoncode resource import virtualcodebase scanned codebase virtualcodebase scancode json resource scanned codebase get resource codebase ballerina lang tool plugins theia yarn lock resource for packages this is likely an issue in the way the for packages value is generated and needs to be fixed the package queryset is not scoped with the current project in create codebase resources in the package uid field is not enforced to be unique in a project also it is not indexed at the moment and it should as the code now heavily relies on it to fetch discoveredpackage instances | 1 |

347,457 | 10,430,184,635 | IssuesEvent | 2019-09-17 05:56:42 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Need to allow the user to navigate if user is using iframe | NEED FAST ACTION NEXT UPDATE [Priority: HIGH] bug | Console Error: Unsafe JavaScript attempt to initiate navigation for frame with origin 'https://www.thecorporatethiefbeats.com' from frame with URL 'https://corporatethief.infinity.airbit.com/?config_id=2325&embed=1#amp=1'. The frame attempting navigation of the top-level window is sandboxed, but the flag of 'allow-top-navigation' or 'allow-top-navigation-by-user-activation' is not set.

HelpScout Link: https://secure.helpscout.net/conversation/940768169/79433?folderId=1060554

Need to add this attribute in the sandbox to allow the user to navigate properly:

"allow-top-navigation"

| 1.0 | Need to allow the user to navigate if user is using iframe - Console Error: Unsafe JavaScript attempt to initiate navigation for frame with origin 'https://www.thecorporatethiefbeats.com' from frame with URL 'https://corporatethief.infinity.airbit.com/?config_id=2325&embed=1#amp=1'. The frame attempting navigation of the top-level window is sandboxed, but the flag of 'allow-top-navigation' or 'allow-top-navigation-by-user-activation' is not set.

HelpScout Link: https://secure.helpscout.net/conversation/940768169/79433?folderId=1060554

Need to add this attribute in the sandbox to allow the user to navigate properly:

"allow-top-navigation"

| priority | need to allow the user to navigate if user is using iframe console error unsafe javascript attempt to initiate navigation for frame with origin from frame with url the frame attempting navigation of the top level window is sandboxed but the flag of allow top navigation or allow top navigation by user activation is not set helpscout link need to add this attribute in the sandbox to allow the user to navigate properly allow top navigation | 1 |

392,541 | 11,592,719,963 | IssuesEvent | 2020-02-24 12:07:15 | bryntum/support | https://api.github.com/repos/bryntum/support | closed | Context menu differs in schedule vs grid | bug high-priority resolved | Open advanced demo

Right click a task

Right click a row in the locked section. Note the difference

In Basic demo, context menus are identical | 1.0 | Context menu differs in schedule vs grid - Open advanced demo

Right click a task

Right click a row in the locked section. Note the difference

In Basic demo, context menus are identical | priority | context menu differs in schedule vs grid open advanced demo right click a task right click a row in the locked section note the difference in basic demo context menus are identical | 1 |

794,924 | 28,054,759,649 | IssuesEvent | 2023-03-29 08:39:30 | inlang/inlang | https://api.github.com/repos/inlang/inlang | closed | validate config | type: feature scope: core priority: high | ## Problem

1. Programatically testing the config is not possible e.g. "Does the `readResources` function work?", "Are my lints correct?".

2. Plugin authors are starting to "hack" the inlang config. That's great. But will lead to unintended consequences and breaking changes in the future.

## Proposal

Provide a module (`inlang/core/test` ?) that can validate the config schema and functionality. That module can be used by, for example, the CLI that provides an `inlang config validate` command and the editor that checks the config on the fly.

- [ ] Validate the config schema with something like `zod`.

- [ ] "Test run" functions that are defined in the config

- [ ] Provide a CLI command like `inlang config validate` to test the config file programmatically

- [ ] Use regex to ban code in the config file that won't run in the browser like `import`, node globals, etc.

## Additional information

Using zod for the validation seems to make sense.

| 1.0 | validate config - ## Problem

1. Programatically testing the config is not possible e.g. "Does the `readResources` function work?", "Are my lints correct?".

2. Plugin authors are starting to "hack" the inlang config. That's great. But will lead to unintended consequences and breaking changes in the future.

## Proposal

Provide a module (`inlang/core/test` ?) that can validate the config schema and functionality. That module can be used by, for example, the CLI that provides an `inlang config validate` command and the editor that checks the config on the fly.

- [ ] Validate the config schema with something like `zod`.

- [ ] "Test run" functions that are defined in the config

- [ ] Provide a CLI command like `inlang config validate` to test the config file programmatically

- [ ] Use regex to ban code in the config file that won't run in the browser like `import`, node globals, etc.

## Additional information

Using zod for the validation seems to make sense.

| priority | validate config problem programatically testing the config is not possible e g does the readresources function work are my lints correct plugin authors are starting to hack the inlang config that s great but will lead to unintended consequences and breaking changes in the future proposal provide a module inlang core test that can validate the config schema and functionality that module can be used by for example the cli that provides an inlang config validate command and the editor that checks the config on the fly validate the config schema with something like zod test run functions that are defined in the config provide a cli command like inlang config validate to test the config file programmatically use regex to ban code in the config file that won t run in the browser like import node globals etc additional information using zod for the validation seems to make sense | 1 |

584,466 | 17,455,549,834 | IssuesEvent | 2021-08-06 00:14:53 | zulip/zulip | https://api.github.com/repos/zulip/zulip | opened | Add infrastructure to prevent double-sending of custom emails | help wanted priority: high area: emails | The `manage.py send_custom_email` system is very useful for sending custom emails to portions of the userbase for a Zulip server. However, one flaw in its design is that if it encounters an error that crashes the job, there isn't a convenient way to continue (without emailing users twice). While it is possible to do so with string-parsing `/var/log/zulip/send_email.log`, it'd be much better to track this information in the Zulip database.

I think the right way to do this is to create a `RealmAuditLog` entry when custom emails are sent to a given user; we can number it as `CUSTOM_EMAIL_SENT = 800` in `AbstractRealmAuditLog` (picked to not conflict with the 700 in https://github.com/zulip/zulip/issues/19528) with the full ID for the custom email (the long thing in the template path) included in the `extra_data` key.

And then we can have the `send_custom_email` function exclude users who have a RealmAuditLog entry for the current email's ID. We will want to do this exclusion carefully to ensure that logic like the `--marketing` option, which is designed to only email an address once even if there are multiple accounts for that email address, will avoid sending the email if any UserProfile with that `delivery_email` has such a RealmAuditLog entry.

This won't be a perfect system, in that custom email ID changes if the Markdown template passed into it changes, but that can be addressed by hand by excluding additional email IDs.
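
The exclusion logic described above can be sketched in plain Python, without Django. Everything here is a stand-in: `User`, `users_still_to_email`, and the `(user_id, email_id)` pairs are hypothetical names that only model `UserProfile` rows and `RealmAuditLog` entries, and the sketch assumes addresses are already normalized.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    """Hypothetical stand-in for a UserProfile row."""
    id: int
    delivery_email: str


def users_still_to_email(users, sent_log, email_id, dedupe_by_address=False):
    """Return the users who should still receive the custom email.

    sent_log is an iterable of (user_id, email_id) pairs standing in for
    audit log entries written after each successful send.
    """
    sent_user_ids = {uid for uid, eid in sent_log if eid == email_id}
    if not dedupe_by_address:
        return [u for u in users if u.id not in sent_user_ids]

    # Marketing-style behaviour: an address counts as done if ANY account
    # with that delivery_email already has a log entry for this email.
    seen = {u.delivery_email for u in users if u.id in sent_user_ids}
    remaining = []
    for user in users:
        if user.delivery_email in seen:
            continue
        seen.add(user.delivery_email)
        remaining.append(user)
    return remaining
```

Rerunning a crashed job with the same email ID would then skip everyone who already has an entry, which is the property the issue asks for.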

| 1.0 | Add infrastructure to prevent double-sending of custom emails - The `manage.py send_custom_email` system is very useful for sending custom emails to portions of the userbase for a Zulip server. However, one flaw in its design is that if it encounters an error that crashes the job, there isn't a convenient way to continue (without emailing users twice). While it is possible to do so with string-parsing `/var/log/zulip/send_email.log`, it'd be much better to track this information in the Zulip database.

I think the right way to do this is to create a `RealmAuditLog` entry when custom emails are sent to a given user; we can number it as `CUSTOM_EMAIL_SENT = 800` in `AbstractRealmAuditLog` (picked to not conflict with the 700 in https://github.com/zulip/zulip/issues/19528) with the full ID for the custom email (the long thing in the template path) included in the `extra_data` key.

And then we can have the `send_custom_email` function exclude users who have a RealmAuditLog entry for the current email's ID. We will want to do this exclusion carefully to ensure that logic like the `--marketing` option, which is designed to only email an address once even if there are multiple accounts for that email address, will avoid sending the email if any UserProfile with that `delivery_email` has such a RealmAuditLog entry.

This won't be a perfect system, in that custom email ID changes if the Markdown template passed into it changes, but that can be addressed by hand by excluding additional email IDs.

| priority | add infrastructure to prevent double sending of custom emails the manage py send custom email system is very useful for sending custom emails to portions of the userbase for a zulip server however one flaw in its design is that if it encounters an error that crashes the job there isn t a convenient way to continue without emailing users twice while it is possible to do so with string parsing var log zulip send email log it d be much better to track this information in the zulip database i think the right way to do this is to create a realmauditlog entry when custom emails are sent to a given user we can number it as custom email sent in abtractrealmauditlog picked to not conflict with the in with the full id for the custom email the long thing in the template path included in the extra data key and then we can have the send custom email function exclude users who have a realmauditlog entry for the current email s id we will want to do this exclusion carefully to ensure that logic like the marketing option which are designed to only email an address once even if they have multiple accounts for their email address will avoid sending the email if any userprofile with that delivery email has such a realmauditlog entry this won t be a perfect system in that custom email id changes if the markdown template passed into it changes but that can be addressed by hand by excluding additional email ids | 1 |

776,271 | 27,254,137,120 | IssuesEvent | 2023-02-22 10:15:50 | AY2223S2-CS2103T-T09-4/tp | https://api.github.com/repos/AY2223S2-CS2103T-T09-4/tp | closed | Add client management features to the User Guide for v1.1 | priority.High User Guide type.Task | As a user, I can see the client management features in the User Guide. | 1.0 | Add client management features to the User Guide for v1.1 - As a user, I can see the client management features in the User Guide. | priority | add client management features to the user guide for as a user i can see the client management features in the user guide | 1 |

628,141 | 19,976,732,596 | IssuesEvent | 2022-01-29 07:35:39 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Need to make proper compatibility with amp analytics function when infinite scroll is used. | bug Urgent [Priority: HIGH] | Ref:- https://secure.helpscout.net/conversation/1752450699/222045?folderId=4874234

Need to make proper compatibility with amp analytics function when infinite scroll is used and analytics only register the first pageview. | 1.0 | Need to make proper compatibility with amp analytics function when infinite scroll is used. - Ref:- https://secure.helpscout.net/conversation/1752450699/222045?folderId=4874234

Need to make proper compatibility with amp analytics function when infinite scroll is used and analytics only register the first pageview. | priority | need to make proper compatibility with amp analytics function when infinite scroll is used ref need to make proper compatibility with amp analytics function when infinite scroll is used and analytics only register the first pageview | 1 |

822,315 | 30,864,807,042 | IssuesEvent | 2023-08-03 07:15:51 | kubebb/components | https://api.github.com/repos/kubebb/components | closed | deploy kubebb stack in private cluster | enhancement priority-high difficulty-medium | When a Kubernetes cluster cannot access the public network, we have to use kubebb differently.

## Steps to deploy kubebb stack in a private cluster

1. deploy k8s cluster

2. deploy a private image registry(optional)

3. push all images to private image registry

- kubebb/core

- buildingbase images

- chartmuseum image

- ...

4. deploy kubebb core

5. deploy a private component repository

- use chartmuseum

- only internal usage

6. push all official components into this private repository

7. create a `Repository` in `kubebb/core`

- with image registry override

## Steps to deploy a component in private cluster

1. push component into private repository

2. push required images into private image registry

3. update `componentplan.yaml` to use private image registry and private component repository

4. apply `componentplan.yaml` and check status

| 1.0 | deploy kubebb stack in private cluster - When a Kubernetes cluster cannot access the public network, we have to use kubebb differently.

## Steps to deploy kubebb stack in a private cluster

1. deploy k8s cluster

2. deploy a private image registry(optional)

3. push all images to private image registry

- kubebb/core

- buildingbase images

- chartmuseum image

- ...

4. deploy kubebb core

5. deploy a private component repository

- use chartmuseum

- only internal usage

6. push all official components into this private repository

7. create a `Repository` in `kubebb/core`

- with image registry override

## Steps to deploy a component in private cluster

1. push component into private repository

2. push required images into private image registry

3. update `componentplan.yaml` to use private image registry and private component repository

4. apply `componentplan.yaml` and check status

| priority | deploy kubebb stack in private cluster when kubernetes cluster can not access public network we have to use kubebb differently steps to deploy kubbb stack in a private cluster deploy cluster deploy a private image registry optional push all images to private image registry kubebb core buildingbase images chartmuseum image deploy kubebb core deploy a private component repository use chartmuseum only internal usage push all official components into this private repository create repository into kubebb core with image registry override steps to deploy a component in private cluster push component into private repository push required images into private image registry update componentplan yaml to use private image registry and private component repository apply componentplan yaml and check status | 1 |

694,631 | 23,822,023,238 | IssuesEvent | 2022-09-05 12:07:54 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | [bug][conan 2] Reference install/download fails - conan.tools.scm not available | priority: high bug | When downloading or installing the `zlib/1.2.11` (latest revision of the recipe should be prepared to work with conan 2) recipe from conan center it fails the command when loading the recipe contents saying `ModuleNotFoundError: No module named 'conan.tools.scm'`

### Environment Details (include every applicable attribute)

* Operating System+version: Windows 10

* Compiler+version: VS 2020

* Conan version: 2.0.0.beta2

* Python version: 3.10.2

### Steps to reproduce (Include if Applicable)

```

conan install --reference zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -r conancenter

```

or

```

conan download zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -p os=Windows -r conancenter

```

### Logs (Executed commands with output) (Include/Attach if Applicable)

```

(conan2) λ conan install --reference zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -r conancenter

-------- Input profiles ----------

Profile host:

[settings]

arch=x86_64

build_type=Release

compiler=msvc

compiler.cppstd=14

compiler.runtime=dynamic

compiler.runtime_type=Release

compiler.version=192

os=Windows

[options]

[tool_requires]

[env]

Profile build:

[settings]

arch=x86_64

build_type=Release

compiler=msvc

compiler.cppstd=14

compiler.runtime=dynamic

compiler.runtime_type=Release

compiler.version=192

os=Windows

[options]

[tool_requires]

[env]

-------- Computing dependency graph ----------

Graph root

virtual

-------- Computing necessary packages ----------

ERROR: Package 'zlib/1.2.11' not resolved: zlib/1.2.11: Cannot load recipe.

Error loading conanfile at 'C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py': Unable to load conanfile in C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py

File "<frozen importlib._bootstrap_external>", line 883, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py", line 5, in <module>

from conan.tools.scm import Version

ModuleNotFoundError: No module named 'conan.tools.scm'

```

or

```

(conan2) λ conan download zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -p os=Windows -r conancenter

Downloading zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea

Downloading conanmanifest.txt

Downloading conanfile.py

Downloading conan_export.tgz

Decompressing conan_export.tgz

ERROR: Error loading conanfile at 'C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py': Unable to load conanfile in C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py

File "<frozen importlib._bootstrap_external>", line 883, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py", line 5, in <module>

from conan.tools.scm import Version

ModuleNotFoundError: No module named 'conan.tools.scm'

```
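
To check whether the installed Conan client ships the module that the recipe imports, a small diagnostic like the one below can be run in the same Python environment. It is only a sketch: `module_available` is a helper name invented here, and it uses nothing beyond the standard library.

```python
import importlib.util


def module_available(dotted_name):
    """Return True if the dotted module path can be located, False otherwise."""
    try:
        return importlib.util.find_spec(dotted_name) is not None
    except ModuleNotFoundError:
        # find_spec imports parent packages first and raises if one is missing.
        return False
```

Calling `module_available("conan.tools.scm")` inside the `conan2` environment would show whether the import on line 5 of the recipe can succeed with that client version.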

| 1.0 | [bug][conan 2] Reference install/download fails - conan.tools.scm not available - When downloading or installing the `zlib/1.2.11` (latest revision of the recipe should be prepared to work with conan 2) recipe from conan center it fails the command when loading the recipe contents saying `ModuleNotFoundError: No module named 'conan.tools.scm'`

### Environment Details (include every applicable attribute)

* Operating System+version: Windows 10

* Compiler+version: VS 2020

* Conan version: 2.0.0.beta2

* Python version: 3.10.2

### Steps to reproduce (Include if Applicable)

```

conan install --reference zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -r conancenter

```

or

```

conan download zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -p os=Windows -r conancenter

```

### Logs (Executed commands with output) (Include/Attach if Applicable)

```

(conan2) λ conan install --reference zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -r conancenter

-------- Input profiles ----------

Profile host:

[settings]

arch=x86_64

build_type=Release

compiler=msvc

compiler.cppstd=14

compiler.runtime=dynamic

compiler.runtime_type=Release

compiler.version=192

os=Windows

[options]

[tool_requires]

[env]

Profile build:

[settings]

arch=x86_64

build_type=Release

compiler=msvc

compiler.cppstd=14

compiler.runtime=dynamic

compiler.runtime_type=Release

compiler.version=192

os=Windows

[options]

[tool_requires]

[env]

-------- Computing dependency graph ----------

Graph root

virtual

-------- Computing necessary packages ----------

ERROR: Package 'zlib/1.2.11' not resolved: zlib/1.2.11: Cannot load recipe.

Error loading conanfile at 'C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py': Unable to load conanfile in C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py

File "<frozen importlib._bootstrap_external>", line 883, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py", line 5, in <module>

from conan.tools.scm import Version

ModuleNotFoundError: No module named 'conan.tools.scm'

```

or

```

(conan2) λ conan download zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea -p os=Windows -r conancenter

Downloading zlib/1.2.11#d77ee68739fcbe5bf37b8a4690eea6ea

Downloading conanmanifest.txt

Downloading conanfile.py

Downloading conan_export.tgz

Decompressing conan_export.tgz

ERROR: Error loading conanfile at 'C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py': Unable to load conanfile in C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py

File "<frozen importlib._bootstrap_external>", line 883, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "C:\Users\danimtb\.conan2\p\92eecd812928ae0c\e\conanfile.py", line 5, in <module>

from conan.tools.scm import Version

ModuleNotFoundError: No module named 'conan.tools.scm'

```

| priority | reference install download fails conan tools scm not available when downloading or installing the zlib latest revision of the recipe should be prepared to work with conan recipe from conan center it fails the command when loading the recipe contents saying modulenotfounderror no module named conan tools scm environment details include every applicable attribute operating system version windows compiler version vs conan version python version steps to reproduce include if applicable conan install reference zlib r conancenter or conan download zlib p os windows r conancenter logs executed commands with output include attach if applicable λ conan install reference zlib r conancenter input profiles profile host arch build type release compiler msvc compiler cppstd compiler runtime dynamic compiler runtime type release compiler version os windows profile build arch build type release compiler msvc compiler cppstd compiler runtime dynamic compiler runtime type release compiler version os windows computing dependency graph graph root virtual computing necessary packages error package zlib not resolved zlib cannot load recipe error loading conanfile at c users danimtb p e conanfile py unable to load conanfile in c users danimtb p e conanfile py file line in exec module file line in call with frames removed file c users danimtb p e conanfile py line in from conan tools scm import version modulenotfounderror no module named conan tools scm or λ conan download zlib p os windows r conancenter downloading zlib downloading conanmanifest txt downloading conanfile py downloading conan export tgz decompressing conan export tgz error error loading conanfile at c users danimtb p e conanfile py unable to load conanfile in c users danimtb p e conanfile py file line in exec module file line in call with frames removed file c users danimtb p e conanfile py line in from conan tools scm import version modulenotfounderror no module named conan tools scm | 1 |

458,301 | 13,172,808,868 | IssuesEvent | 2020-08-11 19:06:53 | infiniteautomation/ma-core-public | https://api.github.com/repos/infiniteautomation/ma-core-public | opened | Role Dao - Get Inherited should be done in a transaction | High Priority Item | This would cater for changes in the structure during this recursive call. | 1.0 | Role Dao - Get Inherited should be done in a transaction - This would cater for changes in the structure during this recursive call. | priority | role dao get inherited should be done in a transaction this would cater for changes in the structure during this recursive call | 1 |

265,389 | 8,353,752,617 | IssuesEvent | 2018-10-02 11:07:49 | handsontable/handsontable | https://api.github.com/repos/handsontable/handsontable | closed | [Column sorting] New, inserted columns breaks the table | Plugin: column sorting Priority: high Regression Status: Released Type: Bug | ### Description

After creating few columns behind the viewport, Handsontable throws an exception.

### Steps to reproduce

<!--- Provide steps to reproduce this issue -->

1. Insert two columns behind the viewport when the `columnSorting` plugin is enabled.

2. You can see an exception.

### Your environment

* Handsontable version: 6.0.0

| 1.0 | [Column sorting] New, inserted columns breaks the table - ### Description

After creating few columns behind the viewport, Handsontable throws an exception.

### Steps to reproduce

<!--- Provide steps to reproduce this issue -->

1. Insert two columns behind the viewport when the `columnSorting` plugin is enabled.

2. You can see an exception.

### Your environment

* Handsontable version: 6.0.0

| priority | new inserted columns breaks the table description after creating few columns behind the viewport handsontable throws an exception steps to reproduce insert two columns behind the viewport when the columnsorting plugin is enabled you can see an exception your environment handsontable version | 1 |

195,088 | 6,902,572,912 | IssuesEvent | 2017-11-25 22:24:16 | wlandau-lilly/drake | https://api.github.com/repos/wlandau-lilly/drake | closed | Require 'config' to be supplied to all user-side functions except make() and drake_config() | high priority | This will simplify and fortify the code base. Functions like `vis_drake_graph()` take a bunch of arguments and then construct a `drake_config()` list if one is not already supplied. This generates a lot of confusion, repeat code, and room for error. Only `make()` and `drake_config()` should take arguments like `parallelism` and `jobs` and `verbose` in the usual way. The others should be required to get them from a `drake_config()` list. | 1.0 | Require 'config' to be supplied to all user-side functions except make() and drake_config() - This will simplify and fortify the code base. Functions like `vis_drake_graph()` take a bunch of arguments and then construct a `drake_config()` list if one is not already supplied. This generates a lot of confusion, repeat code, and room for error. Only `make()` and `drake_config()` should take arguments like `parallelism` and `jobs` and `verbose` in the usual way. The others should be required to get them from a `drake_config()` list. | priority | require config to be supplied to all user side functions except make and drake config this will simplify and fortify the code base functions like vis drake graph take a bunch of arguments and then construct a drake config list if one is not already supplied this generates a lot of confusion repeat code and room for error only make and drake config should take arguments like parallelism and jobs and verbose in the usual way the others should be required to get them from a drake config list | 1 |

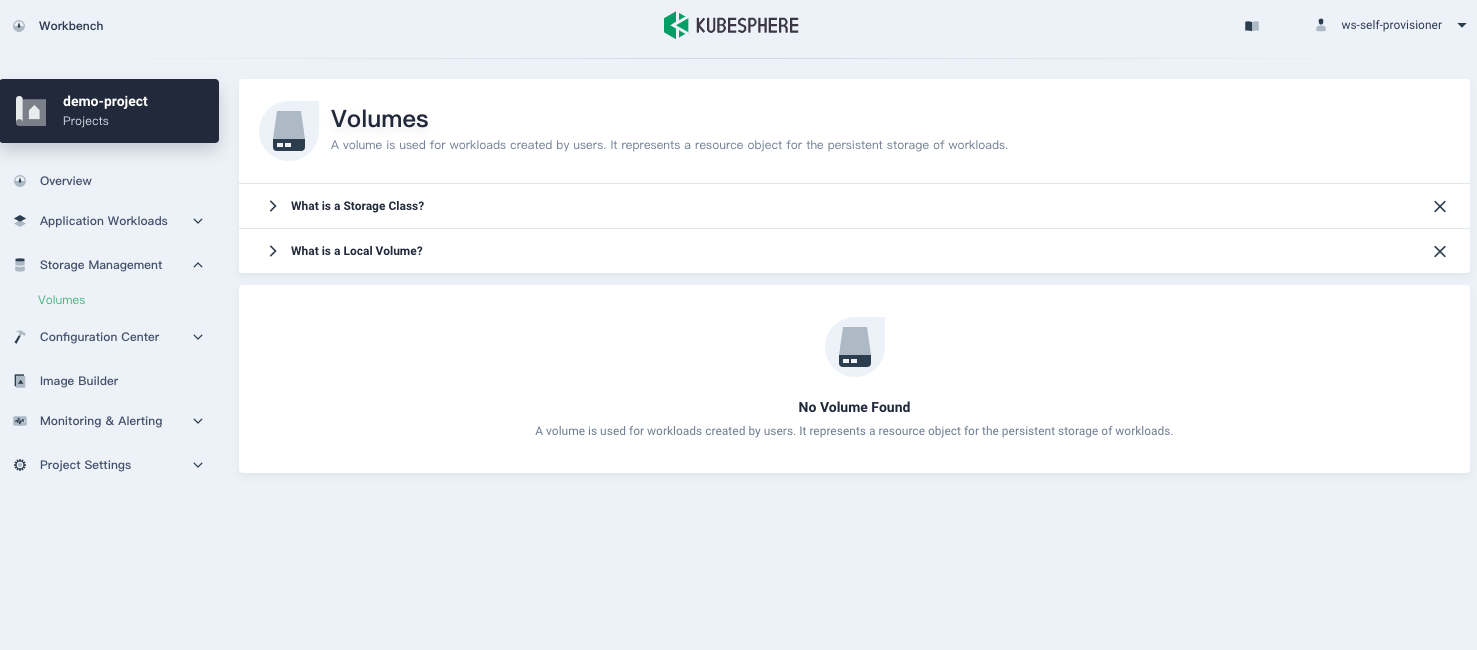

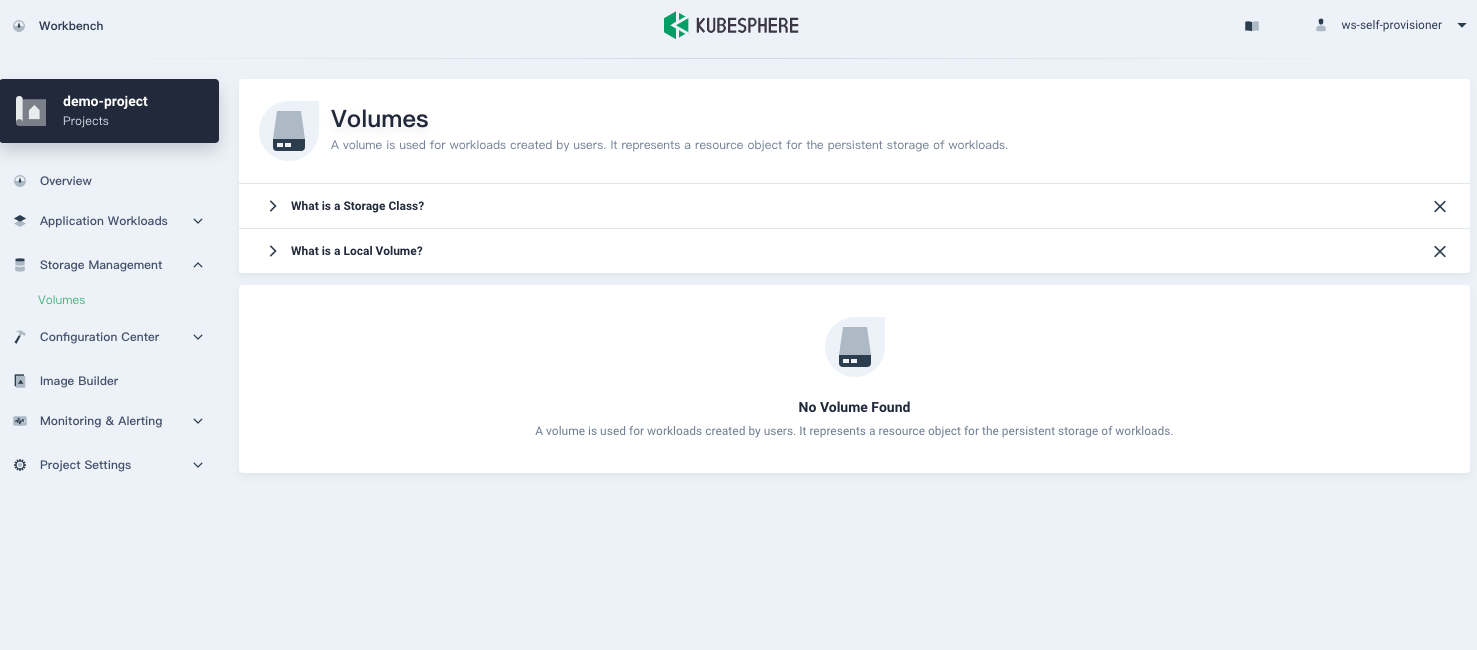

451,975 | 13,044,277,013 | IssuesEvent | 2020-07-29 04:10:10 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | self provisioner storage permission problems | area/console area/iam kind/bug kind/need-to-verify priority/high | **Describe the Bug**

Self provisioner can't create volume, and snapshot not shown up

**Versions Used**

KubeSphere: 3.0.0-dev

| 1.0 | self provisioner storage permission problems - **Describe the Bug**

Self provisioner can't create volume, and snapshot not shown up

**Versions Used**

KubeSphere: 3.0.0-dev

| priority | self provisioner storage permission problems describe the bug self provisioner can t create volume and snapshot not shown up versions used kubesphere dev | 1 |

117,907 | 4,728,897,636 | IssuesEvent | 2016-10-18 17:11:15 | MRN-Code/penny-collector | https://api.github.com/repos/MRN-Code/penny-collector | closed | Drop “globals” language | enhancement high priority | There’s a lot of “globals” required (_src/utils/globals.js_). However, these are *shared* components, not items on the global object. Change the language to make this clearer. | 1.0 | Drop “globals” language - There’s a lot of “globals” required (_src/utils/globals.js_). However, these are *shared* components, not items on the global object. Change the language to make this clearer. | priority | drop “globals” language there’s a lot of “globals” required src utils globals js however these are shared components not items on the global object change the language to make this clearer | 1 |

712,339 | 24,492,019,984 | IssuesEvent | 2022-10-10 03:48:31 | AlphaWallet/alpha-wallet-android | https://api.github.com/repos/AlphaWallet/alpha-wallet-android | closed | Make a build so that the artifacts (jars for Android, frameworks for iOS) and their dependencies can be included in another project | High Priority | Details might change, so refer to https://github.com/AlphaWallet/alpha-wallet-ios/issues/5234, at least for now | 1.0 | Make a build so that the artifacts (jars for Android, frameworks for iOS) and their dependencies can be included in another project - Details might change, so refer to https://github.com/AlphaWallet/alpha-wallet-ios/issues/5234, at least for now | priority | make a build so that the artifacts jars for android frameworks for ios and their dependencies can be included in another project details might change so refer to at least for now | 1 |

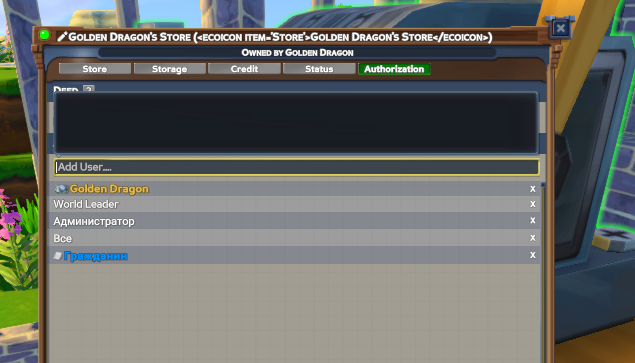

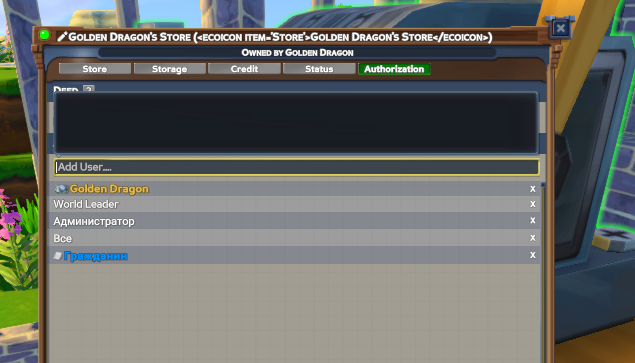

265,712 | 8,357,838,220 | IssuesEvent | 2018-10-02 23:15:31 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Master: adding world leader in store Authorization prevent server from startup | Fixed High Priority |

save

shut down server

try to launch it again

then in debugger

> Obj.data.Government = 'Obj.data.Government' threw an exception of type 'System.NullReferenceException'

@johnkslg ? | 1.0 | Master: adding world leader in store Authorization prevent server from startup -

save

shut down server

try to launch it again

then in debugger

> Obj.data.Government = 'Obj.data.Government' threw an exception of type 'System.NullReferenceException'

@johnkslg ? | priority | master adding world leader in store authorization prevent server from startup save shut down server try to launch it again then in debugger obj data government obj data government threw an exception of type system nullreferenceexception johnkslg | 1 |

124,300 | 4,894,640,766 | IssuesEvent | 2016-11-19 11:47:22 | dalaranwow/dalaran-wow | https://api.github.com/repos/dalaranwow/dalaran-wow | closed | Rogue: Blade Flurry | Class - Rogue Mechanics On PTR Priority - High | Ok so basically the idea behind this ability is to transfer 100% of the damage you deal to target x onto target y. The damage you deal to target x is being reduced by armor, but the damage you deal to target y with blade flurry is being reduced again by armor, and therefore gets double armor penalty. This shouldnt be the case, the transferred damage should do exactly as much and bypass armor.
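
The arithmetic behind the report can be sketched as follows. The function names and the 25% armor reduction are made-up illustration values, not numbers taken from the game or the server code.

```python
def after_armor(raw_damage, armor_reduction):
    """Apply a fractional armor reduction (0.25 means 25% less damage)."""
    return raw_damage * (1.0 - armor_reduction)


def blade_flurry_reported(raw_damage, reduction_x, reduction_y):
    """Reported behaviour: the copied hit is reduced by armor a second time."""
    hit_on_x = after_armor(raw_damage, reduction_x)
    return after_armor(hit_on_x, reduction_y)


def blade_flurry_requested(raw_damage, reduction_x):
    """Requested behaviour: target y takes exactly what target x took."""
    return after_armor(raw_damage, reduction_x)
```

With a raw hit of 1000 and 25% reduction on both targets, target x takes 750; the requested behaviour also deals 750 to target y, while the reported behaviour deals only 562.5.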

| 1.0 | Rogue: Blade Flurry - Ok so basically the idea behind this ability is to transfer 100% of the damage you deal to target x onto target y. The damage you deal to target x is being reduced by armor, but the damage you deal to target y with blade flurry is being reduced again by armor, and therefore gets double armor penalty. This shouldnt be the case, the transferred damage should do exactly as much and bypass armor.

| priority | rogue blade flurry ok so basically the idea behind this ability is to transfer of the damage you deal to target x onto target y the damage you deal to target x is being reduced by armor but the damage you deal to target y with blade flurry is being reduced again by armor and therefore gets double armor penalty this shouldnt be the case the transferred damage should do exactly as much and bypass armor | 1 |

543,502 | 15,883,107,256 | IssuesEvent | 2021-04-09 16:55:38 | sopra-fs21-group-26/client | https://api.github.com/repos/sopra-fs21-group-26/client | opened | Screen for lobby transition | high priority task | <h1>Story #7 Lobby </h1>

<h2>Sub-Tasks:</h2>

- [ ] CSS

- [ ] Join Lobby Button

- [ ] Create Lobby Button

- [ ] Back to Menu Button

- [ ] Click Functionality & Routing

<h2>Estimate: 0.5h</h2> | 1.0 | Screen for lobby transition - <h1>Story #7 Lobby </h1>

<h2>Sub-Tasks:</h2>

- [ ] CSS

- [ ] Join Lobby Button

- [ ] Create Lobby Button

- [ ] Back to Menu Button

- [ ] Click Functionality & Routing

<h2>Estimate: 0.5h</h2> | priority | screen for lobby transition story lobby sub tasks css join lobby button create lobby button back to menu button click functionality routing estimate | 1 |

727,598 | 25,041,140,498 | IssuesEvent | 2022-11-04 20:55:12 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | closed | [AQ40] Ouro can have no targets even if attacked | Confirmed Priority-High 60 Instance - Raid - Vanilla | ### Current Behaviour

If pulled at max range or Ouro can't find another target, it will not cast spells or Submerge

### Expected Blizzlike Behaviour

Ouro will always target another player

### Source

_No response_

### Steps to reproduce the problem

1. `.gm on`

2. `.go xyz -9144.474609 2126.168701 -64.607368 531 3.682328`

3. `.gm off`

4. see if Ouro has a target. Does not submerge, does not cast spells

### Extra Notes

https://github.com/chromiecraft/chromiecraft/issues/4391

### AC rev. hash/commit

https://github.com/azerothcore/azerothcore-wotlk/commit/93622ccb481e3484b90971f3450b4e3c9c902c9d

### Operating system

Windows 10

### Custom changes or Modules

_No response_ | 1.0 | [AQ40] Ouro can have no targets even if attacked - ### Current Behaviour

If pulled at max range or Ouro can't find another target, it will not cast spells or Submerge

### Expected Blizzlike Behaviour

Ouro will always target another player

### Source

_No response_

### Steps to reproduce the problem

1. `.gm on`

2. `.go xyz -9144.474609 2126.168701 -64.607368 531 3.682328`

3. `.gm off`

4. see if Ouro has a target. Does not submerge, does not cast spells

### Extra Notes

https://github.com/chromiecraft/chromiecraft/issues/4391

### AC rev. hash/commit

https://github.com/azerothcore/azerothcore-wotlk/commit/93622ccb481e3484b90971f3450b4e3c9c902c9d

### Operating system

Windows 10

### Custom changes or Modules

_No response_ | priority | ouro can have no targets even if attacked current behaviour if pulled at max range or ouro can t find another target it will not cast spells or submerge expected blizzlike behaviour ouro will always target another player source no response steps to reproduce the problem gm on go xyz gm off see if ouro has a target does not submerge does not cast spells extra notes ac rev hash commit operating system windows custom changes or modules no response | 1 |

793,588 | 28,003,216,745 | IssuesEvent | 2023-03-27 13:54:17 | CivMC/SimpleAdminHacks | https://api.github.com/repos/CivMC/SimpleAdminHacks | closed | Add config option to prevent breaking diamond ore without a silk touch pickaxe | Category: Feature Priority: High | Players often mine with a normal pickaxe and save a silk touch for mining diamonds. But if there's block lag, or hiddenore decides the block you're breaking *right now* should be a diamond, you frustratingly break with the wrong pickaxe.

One option is having a player /config option that will prevent you from breaking an ore without a silk touch. That way a player can prevent themselves from accidentally breaking it with the wrong pickaxe.

This will help keep players away from using bots/scripts for these scenarios, or rule breaking environmental reading. | 1.0 | Add config option to prevent breaking diamond ore without a silk touch pickaxe - Players often mine with a normal pickaxe and save a silk touch for mining diamonds. But if there's block lag, or hiddenore decides the block you're breaking *right now* should be a diamond, you frustratingly break with the wrong pickaxe.

One option is having a player /config option that will prevent you from breaking an ore without a silk touch. That way a player can prevent themselves from accidentally breaking it with the wrong pickaxe.

This will help keep players away from using bots/scripts for these scenarios, or rule breaking environmental reading. | priority | add config option to prevent breaking diamond ore without a silk touch pickaxe players often mine with a normal pickaxe and save a silk touch for mining diamonds but if there s block lag or hiddenore decides the block you re breaking right now should be a diamond you frustratingly break with the wrong pickaxe one option is having a player config option that will prevent you from breaking an ore without a silk touch that way a player can prevent themselves from accidentally breaking it with the wrong pickaxe this will help keep players away from using bots scripts for these scenarios or rule breaking environmental reading | 1 |

328,100 | 9,986,036,507 | IssuesEvent | 2019-07-10 18:04:23 | MarchWorks/AniTV | https://api.github.com/repos/MarchWorks/AniTV | closed | Show subtitles while playing a video | bug priority: hight | The subtitles don't work. They need to be extracted and then added with the `track` tag, but they still won't work because of the following warning: `Resource interpreted as TextTrack but transferred with MIME type text/plain`

**Solutions**

- Solve `Resource interpreted as TextTrack but transferred with MIME type text/plain` warning somehow.

- Use the native web media player to play videos | 1.0 | Show subtitles while playing a video - The subtitles don't work. They need to be extracted and then added with the `track` tag, but they still won't work because of the following warning: `Resource interpreted as TextTrack but transferred with MIME type text/plain`

**Solutions**

- Solve `Resource interpreted as TextTrack but transferred with MIME type text/plain` warning somehow.

- Use the native web media player to play videos | priority | show subtitles while playing a video the subtitles doesn t work they need to be extracted then get added with the track tag but they wouldn t work yet because of the following warning resource interpreted as texttrack but transferred with mime type text plain solutions solve resource interpreted as texttrack but transferred with mime type text plain warning somehow use the native web media player to play videos | 1 |

643,497 | 20,958,364,960 | IssuesEvent | 2022-03-27 12:32:11 | isawnyu/pleiades-gazetteer | https://api.github.com/repos/isawnyu/pleiades-gazetteer | closed | ensure URIs in TTL export files are valid and well-formed: 3pts | bug priority: high | @ryanfb reports:

Working with Pleiades RDF dumps, I get some issues with URLs in the TTL files not being URL-encoded, which winds up needing hand-fixing before parsing in some things, e.g. angle brackets, square brackets, percent signs, etc. not being %-escaped.

https://gist.github.com/ryanfb/6796a5e2d77a4c1423b4 for specific instances

Steps to reproduce:

current flow encountering them is using jena rdfcat to concatenate the places ttl and convert to rdfxm

Migrated from http://pleiades.stoa.org/docs/site-issue-tracker/60

| 1.0 | ensure URIs in TTL export files are valid and well-formed: 3pts - @ryanfb reports:

Working with Pleiades RDF dumps, I get some issues with URLs in the TTL files not being URL-encoded, which winds up needing hand-fixing before parsing in some things, e.g. angle brackets, square brackets, percent signs, etc. not being %-escaped.

https://gist.github.com/ryanfb/6796a5e2d77a4c1423b4 for specific instances

Steps to reproduce:

current flow encountering them is using jena rdfcat to concatenate the places ttl and convert to rdfxm

Migrated from http://pleiades.stoa.org/docs/site-issue-tracker/60

| priority | ensure uris in ttl export files are valid and well formed ryanfb reports working with pleiades rdf dumps i get some issues with url s in the ttl files not being url encoded that winds up needing hand fixing before parsing in some things…e g angle brackets square brackets percent signs etc not being escaped for specific instances steps to reproduce current flow encountering them is using jena rdfcat to concatenate the places ttl and convert to rdfxm migrated from | 1 |

440,301 | 12,697,519,187 | IssuesEvent | 2020-06-22 11:58:33 | verdaccio/verdaccio | https://api.github.com/repos/verdaccio/verdaccio | reopened | /-/all endpoint doesn't use access groups | dev: high priority issue: bug | **Describe the bug**

We're using the simple htpasswd auth plugin currently. Tried using https://github.com/btshj-snail/snail-verdaccio-group/ but unfortunately couldn't get it to work yet, so we ended up with just regular lists of users instead of groups.

However, it seems that the /-/all endpoint stopped working - expectation here would be that authenticated users get all packages they are authenticated for when using that endpoint, but instead no packages are returned for anyone now (even if that person has explicit access to all packages).

**To Reproduce**

Set up explicit access instead of using $all or $authenticated:

```
'**':
  # scoped packages
  access: user-01 user-02
  publish: user-01
  unpublish: user-01
```

- Log in via the web backend or through npm

- Use the /-/all endpoint and see that it's empty

The same happens with explicit package access. The web interface lists the correct results; only the /-/all endpoint isn't working, which matters because we're using this with Unity Package Manager, which accesses that endpoint to determine which packages it allows to download.

**Expected behavior**

user-01 and user-02 see all packages in /-/all endpoint

**Actual behavior**

/-/all endpoint is empty

**EDIT:** it seems the search endpoint, on the other hand, always returns all packages, also ignoring any auth! This is so weird.

**EDIT 2:** to summarize:

- current auth does not influence the outcome of search and /all

- search always returns all packages

- /all always returns 0 packages (unless for packages where $all is used)

- web interface lists the correct packages the user has auth for | 1.0 | /-/all endpoint doesn't use access groups - **Describe the bug**

We're using the simple htpasswd auth plugin currently. Tried using https://github.com/btshj-snail/snail-verdaccio-group/ but unfortunately couldn't get it to work yet, so we ended up with just regular lists of users instead of groups.

However, it seems that the /-/all endpoint stopped working - expectation here would be that authenticated users get all packages they are authenticated for when using that endpoint, but instead no packages are returned for anyone now (even if that person has explicit access to all packages).

**To Reproduce**

Set up explicit access instead of using $all or $authenticated:

```
'**':
  # scoped packages
  access: user-01 user-02
  publish: user-01
  unpublish: user-01
```

- Log in via the web backend or through npm

- Use the /-/all endpoint and see that it's empty

The same happens with explicit package access. The web interface lists the correct results; only the /-/all endpoint isn't working, which matters because we're using this with Unity Package Manager, which accesses that endpoint to determine which packages it allows to download.

**Expected behavior**

user-01 and user-02 see all packages in /-/all endpoint

**Actual behavior**

/-/all endpoint is empty

**EDIT:** it seems the search endpoint, on the other hand, always returns all packages, also ignoring any auth! This is so weird.

**EDIT 2:** to summarize:

- current auth does not influence the outcome of search and /all

- search always returns all packages

- /all always returns 0 packages (unless for packages where $all is used)

- web interface lists the correct packages the user has auth for | priority | all endpoint doesn t use access groups describe the bug we re using the simple htpasswd auth plugin currently tried using but unfortunately couldn t get it to work yet so we ended up with just regular lists of users instead of groups however it seems that the all endpoint stopped working expectation here would be that authenticated users get all packages they are authenticated for when using that endpoint but instead no packages are returned for anyone now even if that person has explicit access to all packages to reproduce set up explicit access instead of using all or authenticated scoped packages access user user publish user unpublish user log in in the web backend or through npm use the all endpoint and see that it s empty the same happens with explicit package access the web interface lists the correct results just that important all endpoint isn t working which is important since we re using this with unity package manager which accesses that endpoint to determine which packages it allows to download expected behavior user and user see all packages in all endpoint actual behavior all endpoint is empty edit it seems the search endpoint on the other hand always returns all packages also ignoring any auth this is so weird edit to summarize current auth does not influence the outcome of search and all search always returns all packages all always returns packages unless for packages where all is used web interface lists the correct packages the user has auth for | 1 |

147,055 | 5,633,015,181 | IssuesEvent | 2017-04-05 17:55:08 | mPowering/django-orb | https://api.github.com/repos/mPowering/django-orb | closed | Integrity error when adding a new resource in Spanish | bug high priority | Adding a new resource when the ORB language is set to 'en' is working fine, but if I try to add a resource when the language is set to 'es' I get an integrity error (see below).

I was using 'English' as the text entered in the language field and presume that this is then trying to add a new tag (in name_es) for the language, rather than using the existing one that's causing the error?

The error also occurs on the staging server...

--------------------

IntegrityError at /resource/create/1/

(1062, "Duplicate entry 'English-9' for key 'orb_tag_name_797cef049b536205_uniq'")

Environment:

Request Method: POST

Request URL: http://localhost:8000/resource/create/1/

Django Version: 1.8.17

Python Version: 2.7.12

Installed Applications:

('django.contrib.admin',

'django.contrib.auth',

'django.contrib.contenttypes',

'django.contrib.sessions',

'django.contrib.messages',

'django.contrib.staticfiles',

'django.contrib.sites',

'orb',

'crispy_forms',

'tastypie',

'tinymce',

'django_wysiwyg',

'haystack',

'sorl.thumbnail',

'orb.analytics',

'orb.review',

'django.contrib.humanize',

'modeltranslation',

'modeltranslation_exim',

'orb.peers')

Installed Middleware:

('django.contrib.sessions.middleware.SessionMiddleware',

'django.middleware.locale.LocaleMiddleware',

'django.middleware.common.CommonMiddleware',

'django.middleware.csrf.CsrfViewMiddleware',

'django.contrib.auth.middleware.AuthenticationMiddleware',

'django.contrib.messages.middleware.MessageMiddleware',

'django.middleware.clickjacking.XFrameOptionsMiddleware',

'orb.middleware.SearchFormMiddleware')

Traceback:

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/core/handlers/base.py" in get_response

132. response = wrapped_callback(request, *callback_args, **callback_kwargs)

File "/home/alex/data/Digital-Campus/development/mpowering/django-mpowering/orb/views.py" in resource_create_step1_view

229. resource, form.cleaned_data.get('languages'), request.user, 'language')

File "/home/alex/data/Digital-Campus/development/mpowering/django-mpowering/orb/views.py" in resource_add_free_text_tags

894. 'update_user': user,

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/manager.py" in manager_method

127. return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/modeltranslation/manager.py" in get_or_create

363. return super(MultilingualQuerySet, self).get_or_create(**kwargs)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/query.py" in get_or_create

407. return self._create_object_from_params(lookup, params)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/query.py" in _create_object_from_params

447. six.reraise(*exc_info)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/query.py" in _create_object_from_params

439. obj = self.create(**params)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/modeltranslation/manager.py" in create

355. return super(MultilingualQuerySet, self).create(**kwargs)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/query.py" in create

348. obj.save(force_insert=True, using=self.db)

File "/home/alex/data/Digital-Campus/development/mpowering/django-mpowering/orb/models.py" in save

540. super(Tag, self).save(*args, **kwargs)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/base.py" in save

734. force_update=force_update, update_fields=update_fields)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/base.py" in save_base

762. updated = self._save_table(raw, cls, force_insert, force_update, using, update_fields)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/base.py" in _save_table

846. result = self._do_insert(cls._base_manager, using, fields, update_pk, raw)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/base.py" in _do_insert

885. using=using, raw=raw)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/manager.py" in manager_method

127. return getattr(self.get_queryset(), name)(*args, **kwargs)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/query.py" in _insert

920. return query.get_compiler(using=using).execute_sql(return_id)

File "/home/alex/data/Digital-Campus/development/mpowering/mpowering_core/env/local/lib/python2.7/site-packages/django/db/models/sql/compiler.py" in execute_sql