Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64)

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

337,916 | 10,221,001,085 | IssuesEvent | 2019-08-15 23:27:42 | onaio/reveal-frontend | https://api.github.com/repos/onaio/reveal-frontend | closed | IRS Tables only showing 20 rows | Priority: High bug has pr | For some reason the tables in IRS plan list page, IRS Plan Jurisdiction selection page, and IRS Finalized Plan page only render 20 rows of data. | 1.0 | IRS Tables only showing 20 rows - For some reason the tables in IRS plan list page, IRS Plan Jurisdiction selection page, and IRS Finalized Plan page only render 20 rows of data. | priority | irs tables only showing rows for some reason the tables in irs plan list page irs plan jurisdiction selection page and irs finalized plan page only render rows of data | 1 |

118,267 | 4,733,613,020 | IssuesEvent | 2016-10-19 11:48:39 | armadito/armadito-glpi | https://api.github.com/repos/armadito/armadito-glpi | closed | Add massive action for adding a new Job for a selected computer | feature high priority | For example, create a new scan for selected computers.

Check if selected computer is well associated with an armadito-agent id | 1.0 | Add massive action for adding a new Job for a selected computer - For example, create a new scan for selected computers.

Check if selected computer is well associated with an armadito-agent id | priority | add massive action for adding a new job for a selected computer for example create a new scan for selected computers check if selected computer is well associated with an armadito agent id | 1 |

207,984 | 7,134,969,491 | IssuesEvent | 2018-01-22 22:47:18 | noavish/24event | https://api.github.com/repos/noavish/24event | opened | Create routes | high-priority server-side | - [ ] main page

- [ ] add event

- [ ] delete event

- [ ] join event

- [ ] search event | 1.0 | Create routes - - [ ] main page

- [ ] add event

- [ ] delete event

- [ ] join event

- [ ] search event | priority | create routes main page add event delete event join event search event | 1 |

504,828 | 14,622,568,602 | IssuesEvent | 2020-12-23 00:40:05 | BenJeau/react-native-draw | https://api.github.com/repos/BenJeau/react-native-draw | closed | Be able to draw dots | bug priority: high | I think this is related to my implementation of creating the SVG path strings, in the following function

https://github.com/BenJeau/react-native-draw/blob/f87103fc126f2773557c3f6d577ef8463b0316c8/src/utils/svg.ts#L3-L10

I could either

- modify this function to cover edge cases

- implement #2 with a way that takes this in mind | 1.0 | Be able to draw dots - I think this is related to my implementation of creating the SVG path strings, in the following function

https://github.com/BenJeau/react-native-draw/blob/f87103fc126f2773557c3f6d577ef8463b0316c8/src/utils/svg.ts#L3-L10

I could either

- modify this function to cover edge cases

- implement #2 with a way that takes this in mind | priority | be able to draw dots i think this is related to my implementation of creating the svg path strings in the following function i could either modify this function to cover edge cases implement with a way that takes this in mind | 1 |

66,655 | 3,256,934,564 | IssuesEvent | 2015-10-20 15:44:30 | ceylon/ceylon.language | https://api.github.com/repos/ceylon/ceylon.language | closed | confusing error message for parseFloat() failures | BUG high priority | `parseFloat(2.7182818284590452354)` on the JVM results in:

```

ceylon compile-dart: 8736074210880900738 cannot be coerced into a 64 bit floating point value

ceylon.language.OverflowException "8736074210880900738 cannot be coerced into a 64 bit floating point value"

at ceylon.language.Integer.getFloat(Integer.java:442)

at ceylon.language.parseFloat_.parseFloat(parseFloat.ceylon:86)

```

which complicates troubleshooting (trying to find source data that aligns with `8736074210880900738`). I'm guessing this is an unintended consequence of https://github.com/ceylon/ceylon.language/pull/756.

cc @ePaul

| 1.0 | confusing error message for parseFloat() failures - `parseFloat(2.7182818284590452354)` on the JVM results in:

```

ceylon compile-dart: 8736074210880900738 cannot be coerced into a 64 bit floating point value

ceylon.language.OverflowException "8736074210880900738 cannot be coerced into a 64 bit floating point value"

at ceylon.language.Integer.getFloat(Integer.java:442)

at ceylon.language.parseFloat_.parseFloat(parseFloat.ceylon:86)

```

which complicates troubleshooting (trying to find source data that aligns with `8736074210880900738`). I'm guessing this is an unintended consequence of https://github.com/ceylon/ceylon.language/pull/756.

cc @ePaul

| priority | confusing error message for parsefloat failures parsefloat on the jvm results in ceylon compile dart cannot be coerced into a bit floating point value ceylon language overflowexception cannot be coerced into a bit floating point value at ceylon language integer getfloat integer java at ceylon language parsefloat parsefloat parsefloat ceylon which complicates troubleshooting trying to find source data that aligns with i m guessing this is an unintended consequence of cc epaul | 1 |

181,369 | 6,659,063,332 | IssuesEvent | 2017-10-01 05:23:05 | WazeDev/WME-Place-Harmonizer | https://api.github.com/repos/WazeDev/WME-Place-Harmonizer | closed | Ignore duplicate matching names inside parentheses | Bug: Mild Priority: High | For instance... **Starbucks (inside Target)** should not flag a duplicate if a Target is nearby. | 1.0 | Ignore duplicate matching names inside parentheses - For instance... **Starbucks (inside Target)** should not flag a duplicate if a Target is nearby. | priority | ignore duplicate matching names inside parentheses for instance starbucks inside target should not flag a duplicate if a target is nearby | 1 |

689,373 | 23,618,180,980 | IssuesEvent | 2022-08-24 17:48:57 | ATTPC/ATTPCROOTv2 | https://api.github.com/repos/ATTPC/ATTPCROOTv2 | opened | Covariance matrix of initial cluster | high priority | The initial track cluster used for fitting has an undefined covariance matrix. This might be affecting the fitting perfomance. | 1.0 | Covariance matrix of initial cluster - The initial track cluster used for fitting has an undefined covariance matrix. This might be affecting the fitting perfomance. | priority | covariance matrix of initial cluster the initial track cluster used for fitting has an undefined covariance matrix this might be affecting the fitting perfomance | 1 |

107,405 | 4,307,979,440 | IssuesEvent | 2016-07-21 11:07:41 | laurencedawson/reddit-sync-development | https://api.github.com/repos/laurencedawson/reddit-sync-development | closed | Load more than 9 child comments | bug High priority | [https://www.reddit.com/r/circlejerk/comments/2nuwma/an_ice_cube_after_15_minutes_on_my_keychain/cmh55tf](https://www.reddit.com/r/circlejerk/comments/2nuwma/an_ice_cube_after_15_minutes_on_my_keychain/cmh55tf)

Clicking on load more in the above thread do not bring up any further child comments | 1.0 | Load more than 9 child comments - [https://www.reddit.com/r/circlejerk/comments/2nuwma/an_ice_cube_after_15_minutes_on_my_keychain/cmh55tf](https://www.reddit.com/r/circlejerk/comments/2nuwma/an_ice_cube_after_15_minutes_on_my_keychain/cmh55tf)

Clicking on load more in the above thread do not bring up any further child comments | priority | load more than child comments clicking on load more in the above thread do not bring up any further child comments | 1 |

565,897 | 16,772,289,198 | IssuesEvent | 2021-06-14 16:08:22 | tomvothecoder/xcdat | https://api.github.com/repos/tomvothecoder/xcdat | opened | Analyze regridding requirements and third-party libraries | Priority: High | This will help us determine the complexity and time estimate for [horizontal](#44) and [vertical](#45) regridding.

1) Analyze our regridding requirements

2) Determine if existing third-party libraries are sufficient in meeting requirements

a. If sufficient, should we include or not include library in dependencies? Should users just install them separately in their environment?

b. If not sufficient, should we extend library or write regridder from scratch? | 1.0 | Analyze regridding requirements and third-party libraries - This will help us determine the complexity and time estimate for [horizontal](#44) and [vertical](#45) regridding.

1) Analyze our regridding requirements

2) Determine if existing third-party libraries are sufficient in meeting requirements

a. If sufficient, should we include or not include library in dependencies? Should users just install them separately in their environment?

b. If not sufficient, should we extend library or write regridder from scratch? | priority | analyze regridding requirements and third party libraries this will help us determine the complexity and time estimate for and regridding analyze our regridding requirements determine if existing third party libraries are sufficient in meeting requirements a if sufficient should we include or not include library in dependencies should users just install them separately in their environment b if not sufficient should we extend library or write regridder from scratch | 1 |

533,628 | 15,595,383,873 | IssuesEvent | 2021-03-18 14:50:24 | onaio/reveal-frontend | https://api.github.com/repos/onaio/reveal-frontend | closed | BCC Data not Showing on Thailand Production Monitor Page | Priority: High | The plan `A1 เขาแก้ว (2207010601) 2021-02-25` ID: `7dbf2406-1191-5c5b-80d5-da2328fe3f33` has data submitted on the android device and marked as synced to the server. Checks on the OpenSRP server confirms that that data has synced. However, the plan does not show the BCC data on the WebUI under the Monitor page [here](https://mhealth.ddc.moph.go.th/focus-investigation/map/301d72b4-0946-50ea-8897-3cf4ff0acea6).

The same issue applied to the plan `A1 ผักกาด (2204100301) 2021-02-27` ID: `5ba50d6b-4687-5a5a-84a8-3cd846340eed` accessible [here](https://mhealth.ddc.moph.go.th/focus-investigation/map/1a9ccc6b-38e3-5b03-82ec-2172fc1e3ced).

| 1.0 | BCC Data not Showing on Thailand Production Monitor Page - The plan `A1 เขาแก้ว (2207010601) 2021-02-25` ID: `7dbf2406-1191-5c5b-80d5-da2328fe3f33` has data submitted on the android device and marked as synced to the server. Checks on the OpenSRP server confirms that that data has synced. However, the plan does not show the BCC data on the WebUI under the Monitor page [here](https://mhealth.ddc.moph.go.th/focus-investigation/map/301d72b4-0946-50ea-8897-3cf4ff0acea6).

The same issue applied to the plan `A1 ผักกาด (2204100301) 2021-02-27` ID: `5ba50d6b-4687-5a5a-84a8-3cd846340eed` accessible [here](https://mhealth.ddc.moph.go.th/focus-investigation/map/1a9ccc6b-38e3-5b03-82ec-2172fc1e3ced).

| priority | bcc data not showing on thailand production monitor page the plan เขาแก้ว id has data submitted on the android device and marked as synced to the server checks on the opensrp server confirms that that data has synced however the plan does not show the bcc data on the webui under the monitor page the same issue applied to the plan ผักกาด id accessible | 1 |

283,601 | 8,721,128,809 | IssuesEvent | 2018-12-08 19:44:49 | SunwellWoW/Sunwell-TBC-Bugtracker | https://api.github.com/repos/SunwellWoW/Sunwell-TBC-Bugtracker | closed | Quest - City of Light | High Priority duplicate |

Quest still wont complete, tried twice now... both times the npc despawns randomly right after the Scryers section.

| 1.0 | Quest - City of Light -

Quest still wont complete, tried twice now... both times the npc despawns randomly right after the Scryers section.

| priority | quest city of light quest still wont complete tried twice now both times the npc despawns randomly right after the scryers section | 1 |

163,557 | 6,200,778,594 | IssuesEvent | 2017-07-06 02:43:11 | chrisblakley/Nebula | https://api.github.com/repos/chrisblakley/Nebula | closed | Theme update email version numbers are backwards | Backend (Server) Bug High Priority Parent / Child Theme | The theme update email version number is backwards for some reason...

```

The parent Nebula theme has been updated from version 5.0.27 (Committed: May 27, 2017) to 5.0.24 for...

``` | 1.0 | Theme update email version numbers are backwards - The theme update email version number is backwards for some reason...

```

The parent Nebula theme has been updated from version 5.0.27 (Committed: May 27, 2017) to 5.0.24 for...

``` | priority | theme update email version numbers are backwards the theme update email version number is backwards for some reason the parent nebula theme has been updated from version committed may to for | 1 |

550,225 | 16,107,256,395 | IssuesEvent | 2021-04-27 16:19:05 | TEAM-SUITS/Suits | https://api.github.com/repos/TEAM-SUITS/Suits | closed | feat: 답변에 대한 좋아요 기능 구현 | :orange_circle: Priority: High :sparkling_heart: feature | # ✨Feature Request

## 답변에 대한 좋아요 기능 구현

- 답변 좋아요 클릭 시 좋아요 상태를 업데이트하는 기능 구현 필요

| 1.0 | feat: 답변에 대한 좋아요 기능 구현 - # ✨Feature Request

## 답변에 대한 좋아요 기능 구현

- 답변 좋아요 클릭 시 좋아요 상태를 업데이트하는 기능 구현 필요

| priority | feat 답변에 대한 좋아요 기능 구현 ✨feature request 답변에 대한 좋아요 기능 구현 답변 좋아요 클릭 시 좋아요 상태를 업데이트하는 기능 구현 필요 | 1 |

97,551 | 3,995,592,930 | IssuesEvent | 2016-05-10 15:58:09 | WikiEducationFoundation/WikiEduDashboard | https://api.github.com/repos/WikiEducationFoundation/WikiEduDashboard | closed | dashboard.wikiedu.org interactions with wikimedia API is extremely slow | high-importance bug top priority WINTR | As of this morning, it takes longer than 30 seconds to do queries against the MediaWiki. This means that login and editing actions on the dashboard time out.

I'm able to get data back *eventually* when making queries to Commons or Wikipedia on the rails console, but it takes a very long time. Staging and other instances are not affected, just dashboard.wikiedu.org. | 1.0 | dashboard.wikiedu.org interactions with wikimedia API is extremely slow - As of this morning, it takes longer than 30 seconds to do queries against the MediaWiki. This means that login and editing actions on the dashboard time out.

I'm able to get data back *eventually* when making queries to Commons or Wikipedia on the rails console, but it takes a very long time. Staging and other instances are not affected, just dashboard.wikiedu.org. | priority | dashboard wikiedu org interactions with wikimedia api is extremely slow as of this morning it takes longer than seconds to do queries against the mediawiki this means that login and editing actions on the dashboard time out i m able to get data back eventually when making queries to commons or wikipedia on the rails console but it takes a very long time staging and other instances are not affected just dashboard wikiedu org | 1 |

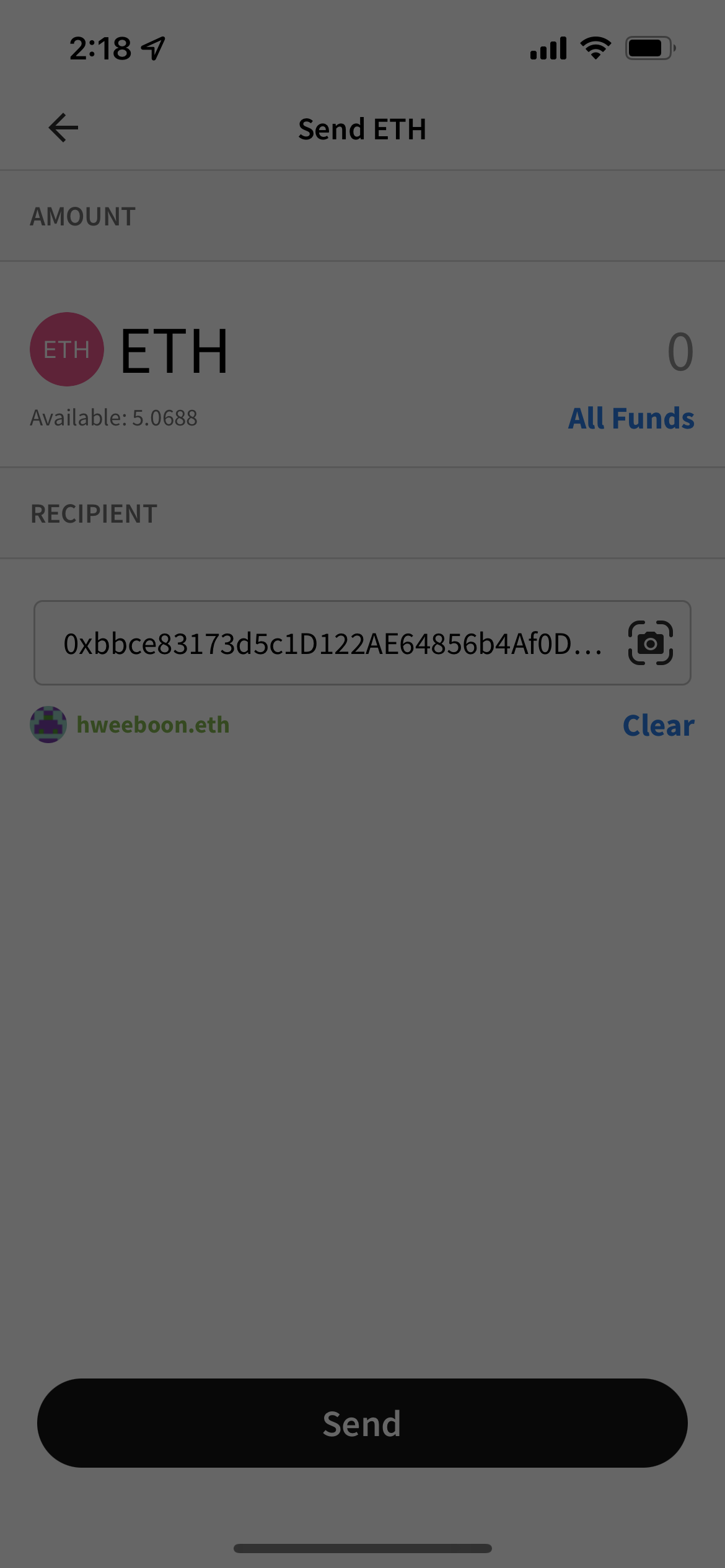

641,915 | 20,844,052,813 | IssuesEvent | 2022-03-21 06:20:27 | AlphaWallet/alpha-wallet-ios | https://api.github.com/repos/AlphaWallet/alpha-wallet-ios | opened | Freeze with grayed out screen after sending ETH on Ropsten successfully | High Priority | This is right after the actionsheet is closed. The transaction was sent successfully (confirmed in Etherscan).

| 1.0 | Freeze with grayed out screen after sending ETH on Ropsten successfully - This is right after the actionsheet is closed. The transaction was sent successfully (confirmed in Etherscan).

| priority | freeze with grayed out screen after sending eth on ropsten successfully this is right after the actionsheet is closed the transaction was sent successfully confirmed in etherscan | 1 |

316,737 | 9,654,309,222 | IssuesEvent | 2019-05-19 13:06:29 | WoWManiaUK/Blackwing-Lair | https://api.github.com/repos/WoWManiaUK/Blackwing-Lair | closed | [Dungeon] Deadmines -loot table- (issue 2) | Dungeon/Raid Exploit Priority-High | **Links:**

http://i66.tinypic.com/20nor5.jpg

http://i63.tinypic.com/2hi9qig.jpg

http://i65.tinypic.com/2dih9n7.jpg

http://i64.tinypic.com/28a3qti.jpg

**What is happening:**

The problem is all the bosses from Deadmines (lvl 15 one) drop Justice Points

**What should happen:**

Its a low level dungeon and it should not drop Justice Points | 1.0 | [Dungeon] Deadmines -loot table- (issue 2) - **Links:**

http://i66.tinypic.com/20nor5.jpg

http://i63.tinypic.com/2hi9qig.jpg

http://i65.tinypic.com/2dih9n7.jpg

http://i64.tinypic.com/28a3qti.jpg

**What is happening:**

The problem is all the bosses from Deadmines (lvl 15 one) drop Justice Points

**What should happen:**

Its a low level dungeon and it should not drop Justice Points | priority | deadmines loot table issue links what is happening the problem is all the bosses from deadmines lvl one drop justice points what should happen its a low level dungeon and it should not drop justice points | 1 |

276,506 | 8,599,481,732 | IssuesEvent | 2018-11-16 02:20:14 | QuantEcon/lecture-source-jl | https://api.github.com/repos/QuantEcon/lecture-source-jl | opened | Remaining Slate of Changes | high-priority | A lot of the checklists and such were out of date so I figured I would reconsolidate. This binds for the non-Jupinx/build changes.

### Interpolations

The only thing missing is the use of `Dierckx` in `amss`, which @Nosferican is rewriting in #159.

### Fixed Point and NLsolve

We use `compute_fixed_point` in `career, ifp, odu`. Xiaojun was having trouble converting these over. @Nosferican and I said we'd take a look --- I'll assign myself to this while he's working on the `amss`, etc.

### Expectations

Done all the ones we can without a major rewrite. The last thing we might do is replace the two instances of `do_quad` in `odu`.

### Structs and Mutable Structs

Mutable Structs:

- [ ] `amss` (#159)

- [ ] `odu`

- [ ] `opt_tax_recur`

- [ ] `uncertainty_traps`

- [ ] `lake_model` (almost done)

Structs:

- [ ] `amss` (#159)

- [ ] `hist_dep_policies`

- [ ] `jv`

- [ ] `lqramsey`

- [ ] `odu`

- [ ] `opt_tax_recur`

- [ ] `lake_model` (above)

I took out the type parameters etc. from a lot of these, just need to rip them out.

### Plots

We still call `pyplot()` in

- [ ] `linear_models`

- [ ] `hist_dep_policies`

- [ ] `amss` (#159)

| 1.0 | Remaining Slate of Changes - A lot of the checklists and such were out of date so I figured I would reconsolidate. This binds for the non-Jupinx/build changes.

### Interpolations

The only thing missing is the use of `Dierckx` in `amss`, which @Nosferican is rewriting in #159.

### Fixed Point and NLsolve

We use `compute_fixed_point` in `career, ifp, odu`. Xiaojun was having trouble converting these over. @Nosferican and I said we'd take a look --- I'll assign myself to this while he's working on the `amss`, etc.

### Expectations

Done all the ones we can without a major rewrite. The last thing we might do is replace the two instances of `do_quad` in `odu`.

### Structs and Mutable Structs

Mutable Structs:

- [ ] `amss` (#159)

- [ ] `odu`

- [ ] `opt_tax_recur`

- [ ] `uncertainty_traps`

- [ ] `lake_model` (almost done)

Structs:

- [ ] `amss` (#159)

- [ ] `hist_dep_policies`

- [ ] `jv`

- [ ] `lqramsey`

- [ ] `odu`

- [ ] `opt_tax_recur`

- [ ] `lake_model` (above)

I took out the type parameters etc. from a lot of these, just need to rip them out.

### Plots

We still call `pyplot()` in

- [ ] `linear_models`

- [ ] `hist_dep_policies`

- [ ] `amss` (#159)

| priority | remaining slate of changes a lot of the checklists and such were out of date so i figured i would reconsolidate this binds for the non jupinx build changes interpolations the only thing missing is the use of dierckx in amss which nosferican is rewriting in fixed point and nlsolve we use compute fixed point in career ifp odu xiaojun was having trouble converting these over nosferican and i said we d take a look i ll assign myself to this while he s working on the amss etc expectations done all the ones we can without a major rewrite the last thing we might do is replace the two instances of do quad in odu structs and mutable structs mutable structs amss odu opt tax recur uncertainty traps lake model almost done structs amss hist dep policies jv lqramsey odu opt tax recur lake model above i took out the type parameters etc from a lot of these just need to rip them out plots we still call pyplot in linear models hist dep policies amss | 1 |

304,882 | 9,337,168,364 | IssuesEvent | 2019-03-28 23:52:41 | bcgov/ols-router | https://api.github.com/repos/bcgov/ols-router | closed | in route planner, add a resource called ping that checks node health | api enhancement functional route planner high priority | @mraross commented on [Fri Jun 01 2018](https://github.com/bcgov/api-specs/issues/336)

The resource should perform a simple distance along road network calculation between two known points and return an HTTP 200 response code with no body.

Example:

router.api.gov.bc.ca/ping

ping will be used by a watchdog timer in the API Gateway to determine node health.

| 1.0 | in route planner, add a resource called ping that checks node health - @mraross commented on [Fri Jun 01 2018](https://github.com/bcgov/api-specs/issues/336)

The resource should perform a simple distance along road network calculation between two known points and return an HTTP 200 response code with no body.

Example:

router.api.gov.bc.ca/ping

ping will be used by a watchdog timer in the API Gateway to determine node health.

| priority | in route planner add a resource called ping that checks node health mraross commented on the resource should perform a simple distance along road network calculation between two known points and return an http response code with no body example router api gov bc ca ping ping will be used by a watchdog timer in the api gateway to determine node health | 1 |

646,182 | 21,040,133,388 | IssuesEvent | 2022-03-31 11:32:48 | AY2122S2-CS2103T-T12-1/tp | https://api.github.com/repos/AY2122S2-CS2103T-T12-1/tp | closed | Batch update for student activities | type.AdvancedFeatures priority.High type.Enhancement v1.3 | Current implementation is only for `ClassCode`, v1.3 needs for activities as well | 1.0 | Batch update for student activities - Current implementation is only for `ClassCode`, v1.3 needs for activities as well | priority | batch update for student activities current implementation is only for classcode needs for activities as well | 1 |

563,292 | 16,679,412,057 | IssuesEvent | 2021-06-07 20:49:12 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | opened | [studio] Create site and site listing fail due to missing publish_status permission | bug priority: high | ## Describe the bug

Site creation and site listing fail due to missing `publish_status` permission.

## To Reproduce

Steps to reproduce the behavior:

1. Create a site from Editorial BP

2. Watch the logs and the site listing screen

## Expected behavior

Site creation and site listing should work. Additionally, we should consider that `publish_status` is a must permission for those viewing the site listing.

## Screenshots

## Logs

```logs

[ERROR] 2021-06-07T16:46:49,863 [http-nio-8080-exec-3] [v2.ExceptionHandlers] | API endpoint http://localhost:8080/studio/api/2/publish/status?siteId=ed failed with response: ApiResponse{code=2001, message='Unauthorized', remedialAction='You don't have permission to perform this task, please contact your administrator', documentationUrl=''}

org.craftercms.commons.security.exception.ActionDeniedException: Current subject does not have permission to execute action "publish_status" on {siteId=ed}

```

## Specs

### Version

4.0.0-SNAPSHOT on 6/7/2021

### OS

Linux

### Browser

Chrome

## Additional context

N/A | 1.0 | [studio] Create site and site listing fail due to missing publish_status permission - ## Describe the bug

Site creation and site listing fail due to missing `publish_status` permission.

## To Reproduce

Steps to reproduce the behavior:

1. Create a site from Editorial BP

2. Watch the logs and the site listing screen

## Expected behavior

Site creation and site listing should work. Additionally, we should consider that `publish_status` is a must permission for those viewing the site listing.

## Screenshots

## Logs

```logs

[ERROR] 2021-06-07T16:46:49,863 [http-nio-8080-exec-3] [v2.ExceptionHandlers] | API endpoint http://localhost:8080/studio/api/2/publish/status?siteId=ed failed with response: ApiResponse{code=2001, message='Unauthorized', remedialAction='You don't have permission to perform this task, please contact your administrator', documentationUrl=''}

org.craftercms.commons.security.exception.ActionDeniedException: Current subject does not have permission to execute action "publish_status" on {siteId=ed}

```

## Specs

### Version

4.0.0-SNAPSHOT on 6/7/2021

### OS

Linux

### Browser

Chrome

## Additional context

N/A | priority | create site and site listing fail due to missing publish status permission describe the bug site creation and site listing fail due to missing publish status permission to reproduce steps to reproduce the behavior create a site from editorial bp watch the logs and the site listing screen expected behavior site creation and site listing should work additionally we should consider that publish status is a must permission for those viewing the site listing screenshots logs logs api endpoint failed with response apiresponse code message unauthorized remedialaction you don t have permission to perform this task please contact your administrator documentationurl org craftercms commons security exception actiondeniedexception current subject does not have permission to execute action publish status on siteid ed specs version snapshot on os linux browser chrome additional context n a | 1 |

227,073 | 7,526,636,058 | IssuesEvent | 2018-04-13 14:35:25 | USGCRP/gcis | https://api.github.com/repos/USGCRP/gcis | closed | CSSR Postmortem | priority high type question | Review how CSSR went. Talk about what went well, and what we wish had gone better. Figure out what we can do to improve those things before we do this again for NCA4! | 1.0 | CSSR Postmortem - Review how CSSR went. Talk about what went well, and what we wish had gone better. Figure out what we can do to improve those things before we do this again for NCA4! | priority | cssr postmortem review how cssr went talk about what went well and what we wish had gone better figure out what we can do to improve those things before we do this again for | 1 |

760,049 | 26,625,727,329 | IssuesEvent | 2023-01-24 14:26:10 | ITISFoundation/osparc-simcore | https://api.github.com/repos/ITISFoundation/osparc-simcore | opened | Pulling images not always works as expected | bug High Priority | I have a new machine and just published s4l. Pulling the service failed 2/3 times with the following error.

```

WARNING: [2023-01-24 13:59:30,813/MainProcess] [servicelib.long_running_tasks._task:get_task_result_old(257)] - Task simcore_service_dynamic_sidecar.modules.long_running_tasks.task_create_service_containers.68c5562c-a737-417a-8cb5-449637b8e784 finished with error: Task simcore_service_dynamic_sidecar.modules.long_running_tasks.task_create_service_containers.68c5562c-a737-417a-8cb5-449637b8e784 finished with exception: ''93dac263047b''

File "/home/scu/.venv/lib/python3.9/site-packages/servicelib/long_running_tasks/_task.py", line 414, in _progress_task

return await handler(progress, **task_kwargs)

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/modules/long_running_tasks.py", line 119, in task_create_service_containers

await docker_compose_pull(app, shared_store.compose_spec)

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_compose_utils.py", line 110, in docker_compose_pull

await pull_images(list_of_images, registry_settings, _progress_cb, _log_cb)

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_utils.py", line 82, in pull_images

await asyncio.gather(

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_utils.py", line 191, in _pull_image_with_progress

if _parse_docker_pull_progress(

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_utils.py", line 129, in _parse_docker_pull_progress

_, layer_total_size = all_image_pulling_data[image_name][layer_id]

```

Feels like a concurrency issue to me, but I might be wrong. I see you are sharing an object. | 1.0 | Pulling images not always works as expected - I have a new machine and just published s4l. Pulling the service failed 2/3 times with the following error.

```

WARNING: [2023-01-24 13:59:30,813/MainProcess] [servicelib.long_running_tasks._task:get_task_result_old(257)] - Task simcore_service_dynamic_sidecar.modules.long_running_tasks.task_create_service_containers.68c5562c-a737-417a-8cb5-449637b8e784 finished with error: Task simcore_service_dynamic_sidecar.modules.long_running_tasks.task_create_service_containers.68c5562c-a737-417a-8cb5-449637b8e784 finished with exception: ''93dac263047b''

File "/home/scu/.venv/lib/python3.9/site-packages/servicelib/long_running_tasks/_task.py", line 414, in _progress_task

return await handler(progress, **task_kwargs)

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/modules/long_running_tasks.py", line 119, in task_create_service_containers

await docker_compose_pull(app, shared_store.compose_spec)

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_compose_utils.py", line 110, in docker_compose_pull

await pull_images(list_of_images, registry_settings, _progress_cb, _log_cb)

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_utils.py", line 82, in pull_images

await asyncio.gather(

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_utils.py", line 191, in _pull_image_with_progress

if _parse_docker_pull_progress(

dy-sidecar_51fb7bc0-6141-46e9-a873-f0be3f6aacea.1.w53j60bwxpkk@testmachine2 |

File "/home/scu/.venv/lib/python3.9/site-packages/simcore_service_dynamic_sidecar/core/docker_utils.py", line 129, in _parse_docker_pull_progress

_, layer_total_size = all_image_pulling_data[image_name][layer_id]

```

Feels like a concurrency issue to me, but I might be wrong. I see you are sharing an object. | priority | pulling images not always works as expected i have a new machine and just published pulling the service failed times with the following error warning task simcore service dynamic sidecar modules long running tasks task create service containers finished with error task simcore service dynamic sidecar modules long running tasks task create service containers finished with exception file home scu venv lib site packages servicelib long running tasks task py line in progress task return await handler progress task kwargs dy sidecar file home scu venv lib site packages simcore service dynamic sidecar modules long running tasks py line in task create service containers await docker compose pull app shared store compose spec dy sidecar file home scu venv lib site packages simcore service dynamic sidecar core docker compose utils py line in docker compose pull await pull images list of images registry settings progress cb log cb dy sidecar file home scu venv lib site packages simcore service dynamic sidecar core docker utils py line in pull images await asyncio gather dy sidecar file home scu venv lib site packages simcore service dynamic sidecar core docker utils py line in pull image with progress if parse docker pull progress dy sidecar file home scu venv lib site packages simcore service dynamic sidecar core docker utils py line in parse docker pull progress layer total size all image pulling data feels like a concurrency issue to me but i might be wrong i see you are sharing an object | 1 |

454,894 | 13,108,984,748 | IssuesEvent | 2020-08-04 17:50:17 | digital-dream-labs/vector-web-setup | https://api.github.com/repos/digital-dream-labs/vector-web-setup | closed | Fix usage string on unconfigured projects | QA Ready bug priority - high | When I run `vector-web-setup serve` without doing anything, I get this:

```

Seems like you have missed this step 'configure'!

E.g. 'npm run vector-setup configure'

```

The command listed is wrong now. It should be `vector-web-setup configure` | 1.0 | Fix usage string on unconfigured projects - When I run `vector-web-setup serve` without doing anything, I get this:

```

Seems like you have missed this step 'configure'!

E.g. 'npm run vector-setup configure'

```

The command listed is wrong now. It should be `vector-web-setup configure` | priority | fix usage string on unconfigured projects when i run vector web setup serve without doing anything i get this seems like you have missed this step configure e g npm run vector setup configure the command listed is wrong now it should be vector web setup configure | 1 |

191,015 | 6,824,830,209 | IssuesEvent | 2017-11-08 08:16:58 | xcodeswift/xcproj | https://api.github.com/repos/xcodeswift/xcproj | opened | Wrong build phase name | difficulty:easy good first issue priority:high status:ready-development type:bug | ## Context 🕵️♀️

I opened a project with `xcproj` and after saving it, some build files that belonged to a copy files build phase had the wrong comment.

## What 🌱

```

// Before

04EDB7B51F3B446100411A92 /* Features.framework in Embed Local Frameworks */ = {isa = PBXBuildFile; fileRef = 23616E711DD1CE9700250513 /* Features.framework */; settings = {ATTRIBUTES = (CodeSignOnCopy, RemoveHeadersOnCopy, ); }; };

// After

04EDB7B51F3B446100411A92 /* Features.framework in CopyFiles */ = {isa = PBXBuildFile; fileRef = 23616E711DD1CE9700250513 /* Features.framework */; settings = {ATTRIBUTES = (CodeSignOnCopy, RemoveHeadersOnCopy, ); }; };

```

**Notice the `Features.framework` in CopyFiles` comment. `CopyFiles` should be the build phase name.**

## Proposal 🎉

Update `PBXBuildFile` to generate the right comment.

<!-- Love xcproj? Please consider supporting our collective:

👉 https://opencollective.com/xcproj/donate --> | 1.0 | Wrong build phase name - ## Context 🕵️♀️

I opened a project with `xcproj` and after saving it, some build files that belonged to a copy files build phase had the wrong comment.

## What 🌱

```

// Before

04EDB7B51F3B446100411A92 /* Features.framework in Embed Local Frameworks */ = {isa = PBXBuildFile; fileRef = 23616E711DD1CE9700250513 /* Features.framework */; settings = {ATTRIBUTES = (CodeSignOnCopy, RemoveHeadersOnCopy, ); }; };

// After

04EDB7B51F3B446100411A92 /* Features.framework in CopyFiles */ = {isa = PBXBuildFile; fileRef = 23616E711DD1CE9700250513 /* Features.framework */; settings = {ATTRIBUTES = (CodeSignOnCopy, RemoveHeadersOnCopy, ); }; };

```

**Notice the `Features.framework` in CopyFiles` comment. `CopyFiles` should be the build phase name.**

## Proposal 🎉

Update `PBXBuildFile` to generate the right comment.

<!-- Love xcproj? Please consider supporting our collective:

👉 https://opencollective.com/xcproj/donate --> | priority | wrong build phase name context 🕵️♀️ i opened a project with xcproj and after saving it some build files that belonged to a copy files build phase had the wrong comment what 🌱 before features framework in embed local frameworks isa pbxbuildfile fileref features framework settings attributes codesignoncopy removeheadersoncopy after features framework in copyfiles isa pbxbuildfile fileref features framework settings attributes codesignoncopy removeheadersoncopy notice the features framework in copyfiles comment copyfiles should be the build phase name proposal 🎉 update pbxbuildfile to generate the right comment love xcproj please consider supporting our collective 👉 | 1 |

210,636 | 7,191,774,417 | IssuesEvent | 2018-02-02 22:27:19 | OpenEnergyDashboard/OED | https://api.github.com/repos/OpenEnergyDashboard/OED | closed | colors on compare day chart | bug high priority | The colors for the Day comparison is different from the other two choices. I think this one is wrong. First, the projected usage is not lighter for today. Second, yesterday and today actual usage to that time are not the same color. Note, the color for yesterday are reversed from the other compares and for today the projected is the color for actual in the other charts. | 1.0 | colors on compare day chart - The colors for the Day comparison is different from the other two choices. I think this one is wrong. First, the projected usage is not lighter for today. Second, yesterday and today actual usage to that time are not the same color. Note, the color for yesterday are reversed from the other compares and for today the projected is the color for actual in the other charts. | priority | colors on compare day chart the colors for the day comparison is different from the other two choices i think this one is wrong first the projected usage is not lighter for today second yesterday and today actual usage to that time are not the same color note the color for yesterday are reversed from the other compares and for today the projected is the color for actual in the other charts | 1 |

646,076 | 21,036,636,478 | IssuesEvent | 2022-03-31 08:28:29 | AY2122S2-CS2103-F11-2/tp | https://api.github.com/repos/AY2122S2-CS2103-F11-2/tp | closed | Fix profile picture not showing for lowercase letters | type.Bug priority.High | Currently the profile picture in the focus card crashes when user enters a name with lower case.

Let's add a check to enable profile picture to be shown for names with lower case. | 1.0 | Fix profile picture not showing for lowercase letters - Currently the profile picture in the focus card crashes when user enters a name with lower case.

Let's add a check to enable profile picture to be shown for names with lower case. | priority | fix profile picture not showing for lowercase letters currently the profile picture in the focus card crashes when user enters a name with lower case let s add a check to enable profile picture to be shown for names with lower case | 1 |

771,911 | 27,098,795,354 | IssuesEvent | 2023-02-15 06:39:55 | xKDR/Survey.jl | https://api.github.com/repos/xKDR/Survey.jl | closed | Registration of package in Julia Repository | high priority | This thread to start the process of registeration of `Survey.jl`.

@ayushpatnaikgit you said that `Survey.jl` is very close to existing package [`Curves.jl`](https://juliapackages.com/p/curves).

Lets proceed to registration? | 1.0 | Registration of package in Julia Repository - This thread to start the process of registeration of `Survey.jl`.

@ayushpatnaikgit you said that `Survey.jl` is very close to existing package [`Curves.jl`](https://juliapackages.com/p/curves).

Lets proceed to registration? | priority | registration of package in julia repository this thread to start the process of registeration of survey jl ayushpatnaikgit you said that survey jl is very close to existing package lets proceed to registration | 1 |

53,705 | 3,044,228,274 | IssuesEvent | 2015-08-10 07:51:25 | UnifiedViews/Core | https://api.github.com/repos/UnifiedViews/Core | closed | Description field is always empty for root DPU templates | priority: High resolution: fixed severity: bug | After https://github.com/UnifiedViews/Core/pull/491 is accepted, name and description of root DPU templates (second level items in the DPU template tree, just under "Extractors", "Transformers", and "Loader") cannot be changed. Thus, for every root DPU template, the general tab looks as follows:

<img width="1410" alt="screen shot 2015-08-06 at 11 12 22" src="https://cloud.githubusercontent.com/assets/3014917/9108490/d42812e0-3c2e-11e5-8822-3f37daa99cee.png">

Description is taken from pom.xml and put to "Description of JAR". So "Description" field is always empty for root DPU templates (2nd level items in the DPU tree) which is a bit confusing.

Proposed solution:

1) the description field should contain fixed text "See DPU Template Configuration, About tab". Such text should be localized for SK version (with the corresponding names of the tabs for SK version).

| 1.0 | Description field is always empty for root DPU templates - After https://github.com/UnifiedViews/Core/pull/491 is accepted, name and description of root DPU templates (second level items in the DPU template tree, just under "Extractors", "Transformers", and "Loader") cannot be changed. Thus, for every root DPU template, the general tab looks as follows:

<img width="1410" alt="screen shot 2015-08-06 at 11 12 22" src="https://cloud.githubusercontent.com/assets/3014917/9108490/d42812e0-3c2e-11e5-8822-3f37daa99cee.png">

Description is taken from pom.xml and put to "Description of JAR". So "Description" field is always empty for root DPU templates (2nd level items in the DPU tree) which is a bit confusing.

Proposed solution:

1) the description field should contain fixed text "See DPU Template Configuration, About tab". Such text should be localized for SK version (with the corresponding names of the tabs for SK version).

| priority | description field is always empty for root dpu templates after is accepted name and description of root dpu templates second level items in the dpu template tree just under extractors transformers and loader cannot be changed thus for every root dpu template the general tab looks as follows img width alt screen shot at src description is taken from pom xml and put to description of jar so description field is always empty for root dpu templates level items in the dpu tree which is a bit confusing proposed solution the description field should contain fixed text see dpu template configuration about tab such text should be localized for sk version with the corresponding names of the tabs for sk version | 1 |

555,899 | 16,472,387,860 | IssuesEvent | 2021-05-23 17:19:38 | lorenzwalthert/precommit | https://api.github.com/repos/lorenzwalthert/precommit | closed | {renv} hook dependencies should be auto-updated | Complexity: Medium Priority: High Status: WIP | We can build a GitHub action to send a PR once a month. | 1.0 | {renv} hook dependencies should be auto-updated - We can build a GitHub action to send a PR once a month. | priority | renv hook dependencies should be auto updated we can build a github action to send a pr once a month | 1 |

563,061 | 16,675,514,187 | IssuesEvent | 2021-06-07 15:43:02 | 10up/ElasticPress | https://api.github.com/repos/10up/ElasticPress | closed | Image block and post_mime_type | confirmed bug high priority wip | **Describe the bug**

The Gutenberg image block adds `'post_mime_type' => 'image'` to the media modal's search queries (the `query-attachments` AJAX action). The classic editor didn't add this parameter.

ElasticPress translates post_mime_type to an exact match, but in `wp_post_mime_type_where()`, which WP_Query uses to parse that argument, if there's no `/` in the post_mime_type, the resulting SQL wildcards the second half of the mime type, so `LIKE 'image/%'` (for this specific case).

While admin-side functionality isn't the default for this plugin, updating this logic would make it more seamless.

**Steps to Reproduce**

1. Install/configure ElasticPress, Classic Editor plugin

2. Enable document indexing

3. Enable admin and AJAX via `ep_admin_wp_query_integration` and `ep_ajax_wp_query_integration`

4. Add an image to the media library

5. Create a new post

6. Edit with classic editor

7. Use the "Add Media" button to open the media modal

8. See your image

9. Use the search bar to search for your image

10. Still see your image

11. Close out. Edit a post with the block editor.

12. Add a new image block to bring up the media modal

13. See your image

14. Use the search bar to search for your image

15. See no results returned

**Expected behavior**

Image search results in the admin should be consistent.

**Environment information**

- Device: ThinkPad

- OS: Windows

- Browser and version: Firefox

- Plugins and version: ElasticPress, latest

- Theme and version: Custom

- Other installed plugin(s) and version(s): I can provide this if the above steps fail to reproduce

**Additional context**

Current workaround is a `pre_get_posts` filter:

```

public function query_attachments( &$query ) {

if( !wp_doing_ajax() || $_POST['action'] != 'query-attachments' ) {

return;

}

$query->set('post_mime_type', '');

}

``` | 1.0 | Image block and post_mime_type - **Describe the bug**

The Gutenberg image block adds `'post_mime_type' => 'image'` to the media modal's search queries (the `query-attachments` AJAX action). The classic editor didn't add this parameter.

ElasticPress translates post_mime_type to an exact match, but in `wp_post_mime_type_where()`, which WP_Query uses to parse that argument, if there's no `/` in the post_mime_type, the resulting SQL wildcards the second half of the mime type, so `LIKE 'image/%'` (for this specific case).

While admin-side functionality isn't the default for this plugin, updating this logic would make it more seamless.

**Steps to Reproduce**

1. Install/configure ElasticPress, Classic Editor plugin

2. Enable document indexing

3. Enable admin and AJAX via `ep_admin_wp_query_integration` and `ep_ajax_wp_query_integration`

4. Add an image to the media library

5. Create a new post

6. Edit with classic editor

7. Use the "Add Media" button to open the media modal

8. See your image

9. Use the search bar to search for your image

10. Still see your image

11. Close out. Edit a post with the block editor.

12. Add a new image block to bring up the media modal

13. See your image

14. Use the search bar to search for your image

15. See no results returned

**Expected behavior**

Image search results in the admin should be consistent.

**Environment information**

- Device: ThinkPad

- OS: Windows

- Browser and version: Firefox

- Plugins and version: ElasticPress, latest

- Theme and version: Custom

- Other installed plugin(s) and version(s): I can provide this if the above steps fail to reproduce

**Additional context**

Current workaround is a `pre_get_posts` filter:

```

public function query_attachments( &$query ) {

if( !wp_doing_ajax() || $_POST['action'] != 'query-attachments' ) {

return;

}

$query->set('post_mime_type', '');

}

``` | priority | image block and post mime type describe the bug the gutenberg image block adds post mime type image to the media modal s search queries the query attachments ajax action the classic editor didn t add this parameter elasticpress translates post mime type to an exact match but in wp post mime type where which wp query uses to parse that argument if there s no in the post mime type the resulting sql wildcards the second half of the mime type so like image for this specific case while admin side functionality isn t the default for this plugin updating this logic would make it more seamless steps to reproduce install configure elasticpress classic editor plugin enable document indexing enable admin and ajax via ep admin wp query integration and ep ajax wp query integration add an image to the media library create a new post edit with classic editor use the add media button to open the media modal see your image use the search bar to search for your image still see your image close out edit a post with the block editor add a new image block to bring up the media modal see your image use the search bar to search for your image see no results returned expected behavior image search results in the admin should be consistent environment information device thinkpad os windows browser and version firefox plugins and version elasticpress latest theme and version custom other installed plugin s and version s i can provide this if the above steps fail to reproduce additional context current workaround is a pre get posts filter public function query attachments query if wp doing ajax post query attachments return query set post mime type | 1 |

827,409 | 31,771,413,802 | IssuesEvent | 2023-09-12 12:03:19 | svthalia/concrexit | https://api.github.com/repos/svthalia/concrexit | closed | Fix moneybird pagination problem in get_or_create_project | priority: high bug moneybirdsynchronization | ### Describe the bug

https://thalia.sentry.io/issues/4111947935/?project=1463433&query=is%3Aunresolved+issue.category%3Aerror&referrer=issue-stream&statsPeriod=14d&stream_index=5

Moneybird stuff nearly always fails when getting an existing project, because only 25 projects are returned by default (iirc 100 is the max that can be set).

So we need to either handle pagination, or find out if we can filter by name in https://github.com/svthalia/concrexit/blob/d04b57585e58d692916d4a5225eeea4bfa4cde4c/website/moneybirdsynchronization/moneybird.py#L23-L25.

### How to reproduce

Steps to reproduce the behaviour:

1. Try moneybird if over 25 projects exist.

| 1.0 | Fix moneybird pagination problem in get_or_create_project - ### Describe the bug

https://thalia.sentry.io/issues/4111947935/?project=1463433&query=is%3Aunresolved+issue.category%3Aerror&referrer=issue-stream&statsPeriod=14d&stream_index=5

Moneybird stuff nearly always fails when getting an existing project, because only 25 projects are returned by default (iirc 100 is the max that can be set).

So we need to either handle pagination, or find out if we can filter by name in https://github.com/svthalia/concrexit/blob/d04b57585e58d692916d4a5225eeea4bfa4cde4c/website/moneybirdsynchronization/moneybird.py#L23-L25.

### How to reproduce

Steps to reproduce the behaviour:

1. Try moneybird if over 25 projects exist.

| priority | fix moneybird pagination problem in get or create project describe the bug moneybird stuff nearly always fails when getting an existing project because only projects are returned by default iirc is the max that can be set so we need to either handle pagination or find out if we can filter by name in how to reproduce steps to reproduce the behaviour try moneybird if over projects exist | 1 |

386,840 | 11,451,432,819 | IssuesEvent | 2020-02-06 11:35:41 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | While editing a post from WPbakery builder,there is an error in Console (Uncaught TypeError: Cannot read property 'on' of null) | NEXT UPDATE [Priority: HIGH] bug | Ref:- https://secure.helpscout.net/conversation/1065294226/108105?folderId=1060556

https://monosnap.com/file/QoVWO8Gknb5UHWVp4SOcauwuQi80fx

| 1.0 | While editing a post from WPbakery builder,there is an error in Console (Uncaught TypeError: Cannot read property 'on' of null) - Ref:- https://secure.helpscout.net/conversation/1065294226/108105?folderId=1060556

https://monosnap.com/file/QoVWO8Gknb5UHWVp4SOcauwuQi80fx

| priority | while editing a post from wpbakery builder there is an error in console uncaught typeerror cannot read property on of null ref | 1 |

830,239 | 31,997,118,382 | IssuesEvent | 2023-09-21 09:52:58 | EBISPOT/goci | https://api.github.com/repos/EBISPOT/goci | closed | Implement process to detect and update obsoleted EFO terms prior to data release | Priority: High | EFO sometimes obsoletes terms and replaces them with new ones. When obsolete terms have been used in the GWAS Catalog and not updated, this causes the data release to fail.

We need a process to to detect and update obsolete terms to be run prior to data release.

EFO release notes are here:

https://github.com/EBISPOT/efo/blob/master/ExFactor%20Ontology%20release%20notes.txt

See also these tickets:

gwas-utils#15

goci#366 (wider issue of propogating changes in EFO eg term name, definition, to the GWAS catalog, not required now but we should plan for this in the future)

| 1.0 | Implement process to detect and update obsoleted EFO terms prior to data release - EFO sometimes obsoletes terms and replaces them with new ones. When obsolete terms have been used in the GWAS Catalog and not updated, this causes the data release to fail.

We need a process to to detect and update obsolete terms to be run prior to data release.

EFO release notes are here:

https://github.com/EBISPOT/efo/blob/master/ExFactor%20Ontology%20release%20notes.txt

See also these tickets:

gwas-utils#15

goci#366 (wider issue of propogating changes in EFO eg term name, definition, to the GWAS catalog, not required now but we should plan for this in the future)

| priority | implement process to detect and update obsoleted efo terms prior to data release efo sometimes obsoletes terms and replaces them with new ones when obsolete terms have been used in the gwas catalog and not updated this causes the data release to fail we need a process to to detect and update obsolete terms to be run prior to data release efo release notes are here see also these tickets gwas utils goci wider issue of propogating changes in efo eg term name definition to the gwas catalog not required now but we should plan for this in the future | 1 |

541,223 | 15,823,560,705 | IssuesEvent | 2021-04-06 01:04:08 | AY2021S2-CS2103T-T12-4/tp | https://api.github.com/repos/AY2021S2-CS2103T-T12-4/tp | closed | [PE-D] Valid Tag Parameters | priority.High severity.VeryLow type.Bug | valid tag examples:

nature

nature123

invalid tag examples

nature 123

nature nature

error msg for these invalid tag -> tag names should be alphanumeric.

Perhaps the error msg could be more specific? or mention that tag names should not contain space within

<!--session: 1617429946124-258a37f6-cce5-4442-9226-410ccb423b7f-->

-------------

Labels: `severity.Low` `type.FunctionalityBug`

original: Nanxi-Huang/ped#3 | 1.0 | [PE-D] Valid Tag Parameters - valid tag examples:

nature

nature123

invalid tag examples

nature 123

nature nature

error msg for these invalid tag -> tag names should be alphanumeric.

Perhaps the error msg could be more specific? or mention that tag names should not contain space within

<!--session: 1617429946124-258a37f6-cce5-4442-9226-410ccb423b7f-->

-------------

Labels: `severity.Low` `type.FunctionalityBug`

original: Nanxi-Huang/ped#3 | priority | valid tag parameters valid tag examples nature invalid tag examples nature nature nature error msg for these invalid tag tag names should be alphanumeric perhaps the error msg could be more specific or mention that tag names should not contain space within labels severity low type functionalitybug original nanxi huang ped | 1 |

607,270 | 18,778,557,860 | IssuesEvent | 2021-11-08 01:29:06 | Pr47/Pr47 | https://api.github.com/repos/Pr47/Pr47 | closed | Unify `Combustor` and `AsyncCombustor` | C: feature P: high-priority E: medium A: engine K: al31f A: async-await | `Serializer` can also be of good use when doing something tricky. | 1.0 | Unify `Combustor` and `AsyncCombustor` - `Serializer` can also be of good use when doing something tricky. | priority | unify combustor and asynccombustor serializer can also be of good use when doing something tricky | 1 |

809,057 | 30,122,848,806 | IssuesEvent | 2023-06-30 16:37:38 | netlify/next-runtime | https://api.github.com/repos/netlify/next-runtime | closed | Support Custom Route Handlers | priority: high Ecosystem: Frameworks | Next.js 13.2 introduces custom route handlers. See https://nextjs.org/blog/next-13-2#custom-route-handlers. We currently handle routes in pages/api, but we'll need to support this as well for Next 13 users.

For the most part, this should work out of the box because it will be reflected in the routes manifest, but we may need to tweak our logic a bit to support this. | 1.0 | Support Custom Route Handlers - Next.js 13.2 introduces custom route handlers. See https://nextjs.org/blog/next-13-2#custom-route-handlers. We currently handle routes in pages/api, but we'll need to support this as well for Next 13 users.

For the most part, this should work out of the box because it will be reflected in the routes manifest, but we may need to tweak our logic a bit to support this. | priority | support custom route handlers next js introduces custom route handlers see we currently handle routes in pages api but we ll need to support this as well for next users for the most part this should work out of the box because it will be reflected in the routes manifest but we may need to tweak our logic a bit to support this | 1 |

204,463 | 7,087,965,628 | IssuesEvent | 2018-01-11 19:43:34 | SilentChaos512/ScalingHealth | https://api.github.com/repos/SilentChaos512/ScalingHealth | closed | Blight equipment seems pretty good | bug priority: high |

Caption: "U fukin wot, m8?"

The enchantments go off the page for this blight's gear. I believe it came from a golden blight skelly that dropped into my grinder. My difficulty wasn't even that high. was only like 12 and then it went down to zero with his death.

Mod version is ScalingHealth-1.12-1.3.7-87. | 1.0 | Blight equipment seems pretty good -

Caption: "U fukin wot, m8?"

The enchantments go off the page for this blight's gear. I believe it came from a golden blight skelly that dropped into my grinder. My difficulty wasn't even that high. was only like 12 and then it went down to zero with his death.

Mod version is ScalingHealth-1.12-1.3.7-87. | priority | blight equipment seems pretty good caption u fukin wot the enchantments go off the page for this blight s gear i believe it came from a golden blight skelly that dropped into my grinder my difficulty wasn t even that high was only like and then it went down to zero with his death mod version is scalinghealth | 1 |

283,420 | 8,719,452,712 | IssuesEvent | 2018-12-08 00:58:01 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | VisIt crashes on startup on Windows Vista | bug crash likelihood high priority reviewed severity high wrong results | When starting VisIt on Windows Vista, the mdserver crashes on startup.

A few users have reported this workaround: to run VisIt with compatibility mode set to NT 4 (service pack 5).

I verified this work-around on my version of Vista, which is Vista Business 64 bit, SP2.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 192

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: High

Subject: VisIt crashes on startup on Windows Vista

Assigned to: Kathleen Biagas

Category:

Target version: 2.1

Author: Kathleen Biagas

Start: 06/30/2010

Due date:

% Done: 0

Estimated time:

Created: 06/30/2010 08:19 pm

Updated: 08/27/2010 05:34 pm

Likelihood: 4 - Common

Severity: 4 - Crash / Wrong Results

Found in version: 2.0.0

Impact:

Expected Use:

OS: Windows

Support Group: Any

Description:

When starting VisIt on Windows Vista, the mdserver crashes on startup.

A few users have reported this workaround: to run VisIt with compatibility mode set to NT 4 (service pack 5).

I verified this work-around on my version of Vista, which is Vista Business 64 bit, SP2.

Comments:

Assignment from LLNL VisIt 2.1 Release Meeting

I downloaded Microsoft's 'Application Compatibility Toolkit' in order to track down why Vista is flagging VisIt as needing to be run in compatibility mode (especially an NT-4 compat mode!)First few passes with the tool appear to indicate that VisIt is attempting to WRITE to HKLM registry files, which should notbe happening. That's the only compatibility issues that cropped up. The registry write does not appear in VisIt's source code.I ran VisIt through a a tool that generates call-stack information to discover the source of the Registry write operations, andit appears to be happening down in GL calls. (wglSwapMultipleBuffers). If this is truly the case, I'm not sure what we can doto mitigate this issue on Vista.

Binaries built with Visual Studio 9.0 (2008) do not have the same issue.Also, binaries built on Vista using Visual Studio 8 do not have this issue.Starting with VisIt 2.1, we will distribute binaries built with Visual Studio 9.

| 1.0 | VisIt crashes on startup on Windows Vista - When starting VisIt on Windows Vista, the mdserver crashes on startup.

A few users have reported this workaround: to run VisIt with compatibility mode set to NT 4 (service pack 5).

I verified this work-around on my version of Vista, which is Vista Business 64 bit, SP2.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 192

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: High

Subject: VisIt crashes on startup on Windows Vista

Assigned to: Kathleen Biagas

Category:

Target version: 2.1

Author: Kathleen Biagas

Start: 06/30/2010

Due date:

% Done: 0

Estimated time:

Created: 06/30/2010 08:19 pm

Updated: 08/27/2010 05:34 pm

Likelihood: 4 - Common

Severity: 4 - Crash / Wrong Results

Found in version: 2.0.0

Impact:

Expected Use:

OS: Windows

Support Group: Any

Description:

When starting VisIt on Windows Vista, the mdserver crashes on startup.

A few users have reported this workaround: to run VisIt with compatibility mode set to NT 4 (service pack 5).

I verified this work-around on my version of Vista, which is Vista Business 64 bit, SP2.

Comments:

Assignment from LLNL VisIt 2.1 Release Meeting

I downloaded Microsoft's 'Application Compatibility Toolkit' in order to track down why Vista is flagging VisIt as needing to be run in compatibility mode (especially an NT-4 compat mode!)First few passes with the tool appear to indicate that VisIt is attempting to WRITE to HKLM registry files, which should notbe happening. That's the only compatibility issues that cropped up. The registry write does not appear in VisIt's source code.I ran VisIt through a a tool that generates call-stack information to discover the source of the Registry write operations, andit appears to be happening down in GL calls. (wglSwapMultipleBuffers). If this is truly the case, I'm not sure what we can doto mitigate this issue on Vista.

Binaries built with Visual Studio 9.0 (2008) do not have the same issue.Also, binaries built on Vista using Visual Studio 8 do not have this issue.Starting with VisIt 2.1, we will distribute binaries built with Visual Studio 9.

| priority | visit crashes on startup on windows vista when starting visit on windows vista the mdserver crashes on startup a few users have reported this workaround to run visit with compatibility mode set to nt service pack i verified this work around on my version of vista which is vista business bit redmine migration this ticket was migrated from redmine as such not all information was able to be captured in the transition below is a complete record of the original redmine ticket ticket number status resolved project visit tracker bug priority high subject visit crashes on startup on windows vista assigned to kathleen biagas category target version author kathleen biagas start due date done estimated time created pm updated pm likelihood common severity crash wrong results found in version impact expected use os windows support group any description when starting visit on windows vista the mdserver crashes on startup a few users have reported this workaround to run visit with compatibility mode set to nt service pack i verified this work around on my version of vista which is vista business bit comments assignment from llnl visit release meeting i downloaded microsoft s application compatibility toolkit in order to track down why vista is flagging visit as needing to be run in compatibility mode especially an nt compat mode first few passes with the tool appear to indicate that visit is attempting to write to hklm registry files which should notbe happening that s the only compatibility issues that cropped up the registry write does not appear in visit s source code i ran visit through a a tool that generates call stack information to discover the source of the registry write operations andit appears to be happening down in gl calls wglswapmultiplebuffers if this is truly the case i m not sure what we can doto mitigate this issue on vista binaries built with visual studio do not have the same issue also binaries built on vista using visual studio do not have 
this issue starting with visit we will distribute binaries built with visual studio | 1 |

374,871 | 11,096,472,535 | IssuesEvent | 2019-12-16 11:13:37 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.gutenberg.org - see bug description | browser-focus-geckoview engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox Mobile 71.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:71.0) Gecko/71.0 Firefox/71.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.gutenberg.org/ebooks/17731

**Browser / Version**: Firefox Mobile 71.0

**Operating System**: Android 7.0

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: I can't back out of this page

**Steps to Reproduce**:

I was led to this page, nothing on there apply to my situation, so I tried to back out. Can't be none.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.gutenberg.org - see bug description - <!-- @browser: Firefox Mobile 71.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:71.0) Gecko/71.0 Firefox/71.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.gutenberg.org/ebooks/17731

**Browser / Version**: Firefox Mobile 71.0

**Operating System**: Android 7.0

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: I can't back out of this page

**Steps to Reproduce**:

I was led to this page, nothing on there apply to my situation, so I tried to back out. Can't be none.

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | see bug description url browser version firefox mobile operating system android tested another browser no problem type something else description i can t back out of this page steps to reproduce i was led to this page nothing on there apply to my situation so i tried to back out can t be none browser configuration none from with ❤️ | 1 |

227,782 | 7,542,697,901 | IssuesEvent | 2018-04-17 13:42:56 | EdwardHinkle/indigenous-ios | https://api.github.com/repos/EdwardHinkle/indigenous-ios | closed | Send access_token in the POST parameters | Micropub Support enhancement high priority | Wordpress and some other sites require access_token to be sent in post parameters. | 1.0 | Send access_token in the POST parameters - Wordpress and some other sites require access_token to be sent in post parameters. | priority | send access token in the post parameters wordpress and some other sites require access token to be sent in post parameters | 1 |

285,706 | 8,773,553,694 | IssuesEvent | 2018-12-18 17:09:57 | Automattic/simplenote-electron | https://api.github.com/repos/Automattic/simplenote-electron | closed | Note Sync Nudge Could Cause Constant Websocket Reconnect | bug priority-high | I'm still seeing a higher amount of websocket `init` traffic from this app. I think we may have an issue with the `ActivityHooks` code repeatedly reconnecting because it thinks it has an unsynced note.

I can cause many repeated reconnects by adding `v = 0;` [here](https://github.com/Automattic/simplenote-electron/blob/master/lib/utils/sync/nudge-unsynced.js#L21-L26), but I'm not certain this is exactly what is causing this. Still, the way that we connect the client [here](https://github.com/Automattic/simplenote-electron/blob/master/lib/utils/sync/nudge-unsynced.js#L42), and it was added in 1.3.0 when this started occurring, makes me believe that something like this is happening for some users.

Perhaps we should get rid of `ActivityHooks` for now, and just reconnect the websocket during the import process only so we still solve the issue of unsynced notes for large imports?

| 1.0 | Note Sync Nudge Could Cause Constant Websocket Reconnect - I'm still seeing a higher amount of websocket `init` traffic from this app. I think we may have an issue with the `ActivityHooks` code repeatedly reconnecting because it thinks it has an unsynced note.

I can cause many repeated reconnects by adding `v = 0;` [here](https://github.com/Automattic/simplenote-electron/blob/master/lib/utils/sync/nudge-unsynced.js#L21-L26), but I'm not certain this is exactly what is causing this. Still, the way that we connect the client [here](https://github.com/Automattic/simplenote-electron/blob/master/lib/utils/sync/nudge-unsynced.js#L42), and it was added in 1.3.0 when this started occurring, makes me believe that something like this is happening for some users.

Perhaps we should get rid of `ActivityHooks` for now, and just reconnect the websocket during the import process only so we still solve the issue of unsynced notes for large imports?

| priority | note sync nudge could cause constant websocket reconnect i m still seeing a higher amount of websocket init traffic from this app i think we may have an issue with the activityhooks code repeatedly reconnecting because it thinks it has an unsynced note i can cause many repeated reconnects by adding v but i m not certain this is exactly what is causing this still the way that we connect the client and it was added in when this started occurring makes me believe that something like this is happening for some users perhaps we should get rid of activityhooks for now and just reconnect the websocket during the import process only so we still solve the issue of unsynced notes for large imports | 1 |

423,239 | 12,293,256,063 | IssuesEvent | 2020-05-10 18:09:54 | cdnjs/cdnjs | https://api.github.com/repos/cdnjs/cdnjs | closed | [Request] Add jasmine-marbles | :rotating_light: High Priority 🏷 Library Request | **Library name:** jasmine-marbles

**Library description:** Marble testing helpers for RxJS and Jasmine

**Git repository url:** https://github.com/synapse-wireless-labs/jasmine-marbles

**npm package name or url** (if there is one): https://www.npmjs.com/package/jasmine-marbles

**License (List them all if it's multiple):** MIT License

**Official homepage:** https://github.com/synapse-wireless-labs/jasmine-marbles

**Wanna say something? Leave message here:** 55,626 downloads in the last month

<bountysource-plugin>

---

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/56349519-request-add-jasmine-marbles?utm_campaign=plugin&utm_content=tracker%2F32893&utm_medium=issues&utm_source=github)** We accept bounties via [Bountysource](https://www.bountysource.com/?utm_campaign=plugin&utm_content=tracker%2F32893&utm_medium=issues&utm_source=github).

</bountysource-plugin> | 1.0 | [Request] Add jasmine-marbles - **Library name:** jasmine-marbles

**Library description:** Marble testing helpers for RxJS and Jasmine

**Git repository url:** https://github.com/synapse-wireless-labs/jasmine-marbles

**npm package name or url** (if there is one): https://www.npmjs.com/package/jasmine-marbles

**License (List them all if it's multiple):** MIT License

**Official homepage:** https://github.com/synapse-wireless-labs/jasmine-marbles

**Wanna say something? Leave message here:** 55,626 downloads in the last month

<bountysource-plugin>

---

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/56349519-request-add-jasmine-marbles?utm_campaign=plugin&utm_content=tracker%2F32893&utm_medium=issues&utm_source=github)** We accept bounties via [Bountysource](https://www.bountysource.com/?utm_campaign=plugin&utm_content=tracker%2F32893&utm_medium=issues&utm_source=github).

</bountysource-plugin> | priority | add jasmine marbles library name jasmine marbles library description marble testing helpers for rxjs and jasmine git repository url npm package name or url if there is one license list them all if it s multiple mit license official homepage wanna say something leave message here downloads in the last month want to back this issue we accept bounties via | 1 |

825,051 | 31,240,826,297 | IssuesEvent | 2023-08-20 21:04:33 | bigcapitalhq/bigcapital | https://api.github.com/repos/bigcapitalhq/bigcapital | closed | [BIG-56] Should not write GL entries when save transaction as draft. | bug High priority | If you saved a new sale invoice as draft the system shouldn't write associated GL entries of the invoice unless you publish the invoice out.

* Sale invoice

* Sale Receipt

* Credit Note

* Bill

* Vendor Credit

<sub>From [SyncLinear.com](https://synclinear.com) | [BIG-56](https://linear.app/bigcaptial/issue/BIG-56/should-not-write-gl-entries-when-save-transaction-as-draft)</sub>

[BIG-56]: https://bigcapital.atlassian.net/browse/BIG-56?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | 1.0 | [BIG-56] Should not write GL entries when save transaction as draft. - If you saved a new sale invoice as draft the system shouldn't write associated GL entries of the invoice unless you publish the invoice out.

* Sale invoice

* Sale Receipt

* Credit Note

* Bill

* Vendor Credit

<sub>From [SyncLinear.com](https://synclinear.com) | [BIG-56](https://linear.app/bigcaptial/issue/BIG-56/should-not-write-gl-entries-when-save-transaction-as-draft)</sub>

[BIG-56]: https://bigcapital.atlassian.net/browse/BIG-56?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | priority | should not write gl entries when save transaction as draft if you saved a new sale invoice as draft the system shouldn t write associated gl entries of the invoice unless you publish the invoice out sale invoice sale receipt credit note bill vendor credit from | 1 |

594,711 | 18,051,826,322 | IssuesEvent | 2021-09-19 21:48:40 | EdwinParra35/MinTIC_g02_TicDigitalG8 | https://api.github.com/repos/EdwinParra35/MinTIC_g02_TicDigitalG8 | closed | Realizar el wireframe utilizando la herramienta Figma | High priority | El wireframe lo debes de realizar utilizando la herramienta Figma, puedes ingresar al sitio: https://www.figma.com/. Recuerda: Incluye la capacitación en el manejo de la herramienta, como tiempo que utilizas en desarrollo de tu actividad en el proyecto. | 1.0 | Realizar el wireframe utilizando la herramienta Figma - El wireframe lo debes de realizar utilizando la herramienta Figma, puedes ingresar al sitio: https://www.figma.com/. Recuerda: Incluye la capacitación en el manejo de la herramienta, como tiempo que utilizas en desarrollo de tu actividad en el proyecto. | priority | realizar el wireframe utilizando la herramienta figma el wireframe lo debes de realizar utilizando la herramienta figma puedes ingresar al sitio recuerda incluye la capacitación en el manejo de la herramienta como tiempo que utilizas en desarrollo de tu actividad en el proyecto | 1 |

173,016 | 6,519,051,889 | IssuesEvent | 2017-08-28 10:56:10 | VirtoCommerce/vc-platform | https://api.github.com/repos/VirtoCommerce/vc-platform | closed | Not work order products by created date | bug Priority: High | Please provide detailed information about your issue, thank you!

Version info:

- Browser version: All