Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

113,115 | 4,543,414,948 | IssuesEvent | 2016-09-10 04:05:59 | codeforamerica/intake | https://api.github.com/repos/codeforamerica/intake | closed | Large PDF requests can break Heroku's 30 second limit | partner-SFC priority-high | This morning a user couldn't access an 18-page generated PDF. When they tried, the request timed out after 30 seconds, a hard stop imposed by Heroku. There is no way to increase this time limit for request timeouts on Heroku. Accessing the same pdf later did not result in an error.

I was able to recreate the error by refreshing the page while it was loading.

Here are options that could prevent this in the future:

* creating a separate background process that allows the web request to complete in a short amount of time.

* create PDFs ahead of time, and serve them as static files

* moving off of Heroku and allowing PDF requests to take longer. | 1.0 | Large PDF requests can break Heroku's 30 second limit - This morning a user couldn't access an 18-page generated PDF. When they tried, the request timed out after 30 seconds, a hard stop imposed by Heroku. There is no way to increase this time limit for request timeouts on Heroku. Accessing the same pdf later did not result in an error.

I was able to recreate the error by refreshing the page while it was loading.

Here are options that could prevent this in the future:

* creating a separate background process that allows the web request to complete in a short amount of time.

* create PDFs ahead of time, and serve them as static files

* moving off of Heroku and allowing PDF requests to take longer. | priority | large pdf requests can break heroku s second limit this morning a user couldn t access an page generated pdf when they tried the request timed out after seconds a hard stop imposed by heroku there is no way to increase this time limit for request timeouts on heroku accessing the same pdf later did not result in an error i was able to recreate the error by refreshing the page while it was loading here are options that could prevent this in the future creating a separate background process that allows the web request to complete in a short amount of time create pdfs ahead of time and serve them as static files moving off of heroku and allowing pdf requests to take longer | 1 |

469,740 | 13,524,751,035 | IssuesEvent | 2020-09-15 12:02:48 | gnosis/conditional-tokens-explorer | https://api.github.com/repos/gnosis/conditional-tokens-explorer | opened | Partition section - Edit partition bugs | High priority bug | Related to #237 , #66 , #67

1. Empty rows are displayed in the section when drag and drop all the outcomes and then click on the reset button (see the video)

https://drive.google.com/file/d/1DJeNOQ5emlvRIEjTfz2HK1-7UDe_p-ta/view

2. Empty rows are displayed in the section when replace an outcome into Outcomes section by **clicking on the outcome circle** and then click on the reset button

3. The system allows to save a partition with only one collection in it: repeat steps 1 or 2 of the current issue, an then, then there is only one outcome and 1 empty line, click on the Save button (see the video). The transaction for positions creating will be finished with failure

https://drive.google.com/file/d/1-hYTJCH9QatnUD1HNiUX7jaDeXPk9PkO/view

4. Numbers do not fit the circle area when a condition contains outcomes more than 100 outcomes (3-digits outcomes)

5. The edited partitioning before login is not saved after a user logs in (see the video) Note: the issue with amount field on the video is described here: #252

https://drive.google.com/file/d/1HscxRWRKrSb8_e1AOgjIt_QYhaMJo04-/view

6. Bun icon is still displayed when drag and drop a position (should not be displayed according to the mock-up (https://zpl.io/aR5Z76K)

7. a Circle in the New collection prevoew field should be in red when hover a mouse on it according to the mock-up (https://zpl.io/aR5Z76K)

8. No horizontal lines should be displayed in the partition when it contains a lot of outcomes, placed one after another according to the mock-up (https://zpl.io/aR5Z76K)

9. 'Move' and 'Bin' icons should be top-aligned when outcomes exceed 1 line (mock-up https://zpl.io/aR5Z76K)

10. New collection preview should contain the lines between the outcomes according to the mock-up https://zpl.io/V039zl9

11. There is no confirmation before removing New Collection Preview. It should be according to the mock-up https://zpl.io/a3WXQZx

| 1.0 | Partition section - Edit partition bugs - Related to #237 , #66 , #67

1. Empty rows are displayed in the section when drag and drop all the outcomes and then click on the reset button (see the video)

https://drive.google.com/file/d/1DJeNOQ5emlvRIEjTfz2HK1-7UDe_p-ta/view

2. Empty rows are displayed in the section when replace an outcome into Outcomes section by **clicking on the outcome circle** and then click on the reset button

3. The system allows to save a partition with only one collection in it: repeat steps 1 or 2 of the current issue, an then, then there is only one outcome and 1 empty line, click on the Save button (see the video). The transaction for positions creating will be finished with failure

https://drive.google.com/file/d/1-hYTJCH9QatnUD1HNiUX7jaDeXPk9PkO/view

4. Numbers do not fit the circle area when a condition contains outcomes more than 100 outcomes (3-digits outcomes)

5. The edited partitioning before login is not saved after a user logs in (see the video) Note: the issue with amount field on the video is described here: #252

https://drive.google.com/file/d/1HscxRWRKrSb8_e1AOgjIt_QYhaMJo04-/view

6. Bun icon is still displayed when drag and drop a position (should not be displayed according to the mock-up (https://zpl.io/aR5Z76K)

7. a Circle in the New collection prevoew field should be in red when hover a mouse on it according to the mock-up (https://zpl.io/aR5Z76K)

8. No horizontal lines should be displayed in the partition when it contains a lot of outcomes, placed one after another according to the mock-up (https://zpl.io/aR5Z76K)

9. 'Move' and 'Bin' icons should be top-aligned when outcomes exceed 1 line (mock-up https://zpl.io/aR5Z76K)

10. New collection preview should contain the lines between the outcomes according to the mock-up https://zpl.io/V039zl9

11. There is no confirmation before removing New Collection Preview. It should be according to the mock-up https://zpl.io/a3WXQZx

| priority | partition section edit partition bugs related to empty rows are displayed in the section when drag and drop all the outcomes and then click on the reset button see the video empty rows are displayed in the section when replace an outcome into outcomes section by clicking on the outcome circle and then click on the reset button the system allows to save a partition with only one collection in it repeat steps or of the current issue an then then there is only one outcome and empty line click on the save button see the video the transaction for positions creating will be finished with failure numbers do not fit the circle area when a condition contains outcomes more than outcomes digits outcomes the edited partitioning before login is not saved after a user logs in see the video note the issue with amount field on the video is described here bun icon is still displayed when drag and drop a position should not be displayed according to the mock up a circle in the new collection prevoew field should be in red when hover a mouse on it according to the mock up no horizontal lines should be displayed in the partition when it contains a lot of outcomes placed one after another according to the mock up move and bin icons should be top aligned when outcomes exceed line mock up new collection preview should contain the lines between the outcomes according to the mock up there is no confirmation before removing new collection preview it should be according to the mock up | 1 |

179,389 | 6,624,619,396 | IssuesEvent | 2017-09-22 12:30:41 | YetiForceCompany/YetiForceCRM | https://api.github.com/repos/YetiForceCompany/YetiForceCRM | closed | Błąd skrzynki pocztowej po włączeniu podglądu wiadomości | Category::Bug Subcategory::HighPriority | Po zaznaczeniu w ustawieniach poczty Pokaż podgląd wiadomości w folderze Odebrane w skrzynce pocztowej nie wyświetlają się żadne wiadomości. Wyłączenie podglądu przywraca widok wiadomości.

Błąd wywołany w YetiForce 4.0.0. w wersji testowej zamieszczonej na stronie yetiforce.com

<!--- Before you create a new issue, please check out our [manual] (https://yetiforce.com/en/github/issues/126-issues.html) --->

#### Issue

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug. Descriptions can be provided in English or Polish (remember to add [PL] for Polish in the title). -->

#### Actual Behavior

<!--- Describe the result -->

#### Expected Behavior

<!--- Describe what you would want the result to be -->

#### How to trigger the error

<!--- If possible, please make a video using [ScreenToGif] (https://screentogif.codeplex.com/) or any other program used for recording actions from your desktop. -->

1.

2.

3.

#### Your Environment

<!---Describe the environment -->

* YetiForce Version used:

* Browser name and version:

* Environment name and version:

* Operating System and version:

<!--- Please check on your issue from time to time, in case we have questions or need some extra information. --->

| 1.0 | Błąd skrzynki pocztowej po włączeniu podglądu wiadomości - Po zaznaczeniu w ustawieniach poczty Pokaż podgląd wiadomości w folderze Odebrane w skrzynce pocztowej nie wyświetlają się żadne wiadomości. Wyłączenie podglądu przywraca widok wiadomości.

Błąd wywołany w YetiForce 4.0.0. w wersji testowej zamieszczonej na stronie yetiforce.com

<!--- Before you create a new issue, please check out our [manual] (https://yetiforce.com/en/github/issues/126-issues.html) --->

#### Issue

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug. Descriptions can be provided in English or Polish (remember to add [PL] for Polish in the title). -->

#### Actual Behavior

<!--- Describe the result -->

#### Expected Behavior

<!--- Describe what you would want the result to be -->

#### How to trigger the error

<!--- If possible, please make a video using [ScreenToGif] (https://screentogif.codeplex.com/) or any other program used for recording actions from your desktop. -->

1.

2.

3.

#### Your Environment

<!---Describe the environment -->

* YetiForce Version used:

* Browser name and version:

* Environment name and version:

* Operating System and version:

<!--- Please check on your issue from time to time, in case we have questions or need some extra information. --->

| priority | błąd skrzynki pocztowej po włączeniu podglądu wiadomości po zaznaczeniu w ustawieniach poczty pokaż podgląd wiadomości w folderze odebrane w skrzynce pocztowej nie wyświetlają się żadne wiadomości wyłączenie podglądu przywraca widok wiadomości błąd wywołany w yetiforce w wersji testowej zamieszczonej na stronie yetiforce com issue actual behavior expected behavior how to trigger the error your environment yetiforce version used browser name and version environment name and version operating system and version | 1 |

707,570 | 24,309,969,361 | IssuesEvent | 2022-09-29 21:10:00 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | Counties of Kenya | Priority-High (Needed for work) Function-Locality/Event/Georeferencing | ### First Step: Explain what geography needs created.

It looks like Kenya has undergone some revision. Some may be in Arctos, some not.

[Counties of Kenya](https://en.wikipedia.org/wiki/Counties_of_Kenya) needed for CSULB data migration include [Machakos](https://en.wikipedia.org/wiki/Machakos_County), [Makueni](https://en.wikipedia.org/wiki/Makueni_County) and [Nyeri](https://en.wikipedia.org/wiki/Nyeri_County)

We will respond with a CSV template, or request more information.

| 1.0 | Counties of Kenya - ### First Step: Explain what geography needs created.

It looks like Kenya has undergone some revision. Some may be in Arctos, some not.

[Counties of Kenya](https://en.wikipedia.org/wiki/Counties_of_Kenya) needed for CSULB data migration include [Machakos](https://en.wikipedia.org/wiki/Machakos_County), [Makueni](https://en.wikipedia.org/wiki/Makueni_County) and [Nyeri](https://en.wikipedia.org/wiki/Nyeri_County)

We will respond with a CSV template, or request more information.

| priority | counties of kenya first step explain what geography needs created it looks like kenya has undergone some revision some may be in arctos some not needed for csulb data migration include and we will respond with a csv template or request more information | 1 |

800,502 | 28,368,744,845 | IssuesEvent | 2023-04-12 15:28:06 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | search results: add "fields" to FLAT | Priority-High (Needed for work) Enhancement Bug | **Is your feature request related to a problem? Please describe.**

https://github.com/ArctosDB/arctos/issues/6017 is causing pain for users and glitches in the system and I think cannot be ignored any longer even though the identifier discussions have stalled. I have to remove the many and mostly unused identifiers options from results ASAP in the name of stability.

I'd like to compensate by adding the things that are most used, and some loose ends that have been floating around. Here's what I think needs to happen, please let me know ASAP if anything else is regularly necessary in results.

* superorder (for @DerekSikes via https://github.com/ArctosDB/arctos/issues/6035)

* Preparator Number (see https://github.com/ArctosDB/arctos/issues/6031, I think this is stable)

* AF

* NK

above from https://github.com/ArctosDB/arctos/issues/5460 @jebrad did I miss anything?

**Describe what you're trying to accomplish**

Cache whatever's necessary to make Arctos performant, without making any new messes.

**Describe the solution you'd like**

Input from anyone who wants to include stuff in search results. (Perhaps excepting Attributes, which is a bit of a monster and needs a dedicated discussion.)

**Describe alternatives you've considered**

Things that a few users occasionally need can be quickly added dynamically, and there are download options for most of this; not adding something here does not mean it's not available.

**Additional context**

**Priority**

This is already smouldering, I'll make the changes and run the updates this weekend unless someone has a compelling reason not to.

Other things can be added at any time, but (depending on the specifics) it can take several days of CPU to complete; anything that can be done now should be done now for the sake of efficiency.

| 1.0 | search results: add "fields" to FLAT - **Is your feature request related to a problem? Please describe.**

https://github.com/ArctosDB/arctos/issues/6017 is causing pain for users and glitches in the system and I think cannot be ignored any longer even though the identifier discussions have stalled. I have to remove the many and mostly unused identifiers options from results ASAP in the name of stability.

I'd like to compensate by adding the things that are most used, and some loose ends that have been floating around. Here's what I think needs to happen, please let me know ASAP if anything else is regularly necessary in results.

* superorder (for @DerekSikes via https://github.com/ArctosDB/arctos/issues/6035)

* Preparator Number (see https://github.com/ArctosDB/arctos/issues/6031, I think this is stable)

* AF

* NK

above from https://github.com/ArctosDB/arctos/issues/5460 @jebrad did I miss anything?

**Describe what you're trying to accomplish**

Cache whatever's necessary to make Arctos performant, without making any new messes.

**Describe the solution you'd like**

Input from anyone who wants to include stuff in search results. (Perhaps excepting Attributes, which is a bit of a monster and needs a dedicated discussion.)

**Describe alternatives you've considered**

Things that a few users occasionally need can be quickly added dynamically, and there are download options for most of this; not adding something here does not mean it's not available.

**Additional context**

**Priority**

This is already smouldering, I'll make the changes and run the updates this weekend unless someone has a compelling reason not to.

Other things can be added at any time, but (depending on the specifics) it can take several days of CPU to complete; anything that can be done now should be done now for the sake of efficiency.

| priority | search results add fields to flat is your feature request related to a problem please describe is causing pain for users and glitches in the system and i think cannot be ignored any longer even though the identifier discussions have stalled i have to remove the many and mostly unused identifiers options from results asap in the name of stability i d like to compensate by adding the things that are most used and some loose ends that have been floating around here s what i think needs to happen please let me know asap if anything else is regularly necessary in results superorder for dereksikes via preparator number see i think this is stable af nk above from jebrad did i miss anything describe what you re trying to accomplish cache whatever s necessary to make arctos performant without making any new messes describe the solution you d like input from anyone who wants to include stuff in search results perhaps excepting attributes which is a bit of a monster and needs a dedicated discussion describe alternatives you ve considered things that a few users occasionally need can be quickly added dynamically and there are download options for most of this not adding something here does not mean it s not available additional context priority this is already smouldering i ll make the changes and run the updates this weekend unless someone has a compelling reason not to other things can be added at any time but depending on the specifics it can take several days of cpu to complete anything that can be done now should be done now for the sake of efficiency | 1 |

487,021 | 14,017,938,687 | IssuesEvent | 2020-10-29 16:13:35 | wazuh/wazuh-documentation | https://api.github.com/repos/wazuh/wazuh-documentation | opened | Imrpove the OD upgrade section | priority: highest type: refactor | Hello team! This issue aims to improve the upgrade guide section for Open Distro.

We may face the situation where a user has the Elastic or Open Distro repository and this may lead to unwanted upgrades and broken installations.

We should disable those repositories in order to prevent accidental upgrades.

Regards,

David | 1.0 | Imrpove the OD upgrade section - Hello team! This issue aims to improve the upgrade guide section for Open Distro.

We may face the situation where a user has the Elastic or Open Distro repository and this may lead to unwanted upgrades and broken installations.

We should disable those repositories in order to prevent accidental upgrades.

Regards,

David | priority | imrpove the od upgrade section hello team this issue aims to improve the upgrade guide section for open distro we may face the situation where a user has the elastic or open distro repository and this may lead to unwanted upgrades and broken installations we should disable those repositories in order to prevent accidental upgrades regards david | 1 |

392,715 | 11,594,906,818 | IssuesEvent | 2020-02-24 16:05:38 | malaquiasdev/mamae-eu-quero-service | https://api.github.com/repos/malaquiasdev/mamae-eu-quero-service | closed | Some properties of Baby model should use required true | 🙇 good first issue 🚧 Status: WIP 🛠 Type: Minor 🤘 Priority: High | **Is your feature request related to a problem? Please describe.**

The properties below should not be optional.

- [x] motherMail

- [x] sexy

- [x] name

**Describe the solution you'd like**

We need put the schema property like below:

```

name: {

type: String,

required: true

}

```

After this we will need catch the error in the src/functions/client/create.js module.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| 1.0 | Some properties of Baby model should use required true - **Is your feature request related to a problem? Please describe.**

The properties below should not be optional.

- [x] motherMail

- [x] sexy

- [x] name

**Describe the solution you'd like**

We need put the schema property like below:

```

name: {

type: String,

required: true

}

```

After this we will need catch the error in the src/functions/client/create.js module.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| priority | some properties of baby model should use required true is your feature request related to a problem please describe the properties below should not be optional mothermail sexy name describe the solution you d like we need put the schema property like below name type string required true after this we will need catch the error in the src functions client create js module describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered additional context add any other context or screenshots about the feature request here | 1 |

830,412 | 32,007,185,989 | IssuesEvent | 2023-09-21 15:30:24 | The-Aether-Team/The-Aether | https://api.github.com/repos/The-Aether-Team/The-Aether | opened | Feature: Active Moa Skin textures | priority/high status/in-progress feat/art | Need to produce Moa skin textures for active patreon pledges

- [ ] Ascentan Tier Moa skins

- [ ] Valkyrie Tier Moa skins

| 1.0 | Feature: Active Moa Skin textures - Need to produce Moa skin textures for active patreon pledges

- [ ] Ascentan Tier Moa skins

- [ ] Valkyrie Tier Moa skins

| priority | feature active moa skin textures need to produce moa skin textures for active patreon pledges ascentan tier moa skins valkyrie tier moa skins | 1 |

130,444 | 5,116,064,414 | IssuesEvent | 2017-01-07 00:22:39 | TheTyee/design-article.thetyee.ca | https://api.github.com/repos/TheTyee/design-article.thetyee.ca | closed | Homepage nav broken on ipad | Priority: High Status: Bug Type: CSS & presentation | Here's a screenshot from ipad mini Safair.

I can't reproduce this issue on desktop Safari.

| 1.0 | Homepage nav broken on ipad - Here's a screenshot from ipad mini Safair.

I can't reproduce this issue on desktop Safari.

| priority | homepage nav broken on ipad here s a screenshot from ipad mini safair i can t reproduce this issue on desktop safari | 1 |

783,070 | 27,517,486,341 | IssuesEvent | 2023-03-06 12:59:44 | AY2223S2-CS2113-T12-2/tp | https://api.github.com/repos/AY2223S2-CS2113-T12-2/tp | opened | Load and store component data from JSON files | priority.High | Add DataStorage class which loads and stores all data to be accessed | 1.0 | Load and store component data from JSON files - Add DataStorage class which loads and stores all data to be accessed | priority | load and store component data from json files add datastorage class which loads and stores all data to be accessed | 1 |

289,902 | 8,880,035,223 | IssuesEvent | 2019-01-14 03:05:22 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | ldap: Add support for providing the "full name" field split across two attributes | area: authentication in progress priority: high | In some LDAP databases, they only have separate "first name" and "last name" fields; we should offer a way to do that (inspired by comments in https://github.com/zulip/zulip/issues/9710).

I think a reasonable approach for this is to support users setting the `first_name` and `last_name` keys in `AUTH_LDAP_ATTR_MAP`, and then just have some custom code similar to what we have in 5dd646f33fa154ea98df1366b53e8904e5adf762 for combining together the two values in the two `get_or_build_user` functions. | 1.0 | ldap: Add support for providing the "full name" field split across two attributes - In some LDAP databases, they only have separate "first name" and "last name" fields; we should offer a way to do that (inspired by comments in https://github.com/zulip/zulip/issues/9710).

I think a reasonable approach for this is to support users setting the `first_name` and `last_name` keys in `AUTH_LDAP_ATTR_MAP`, and then just have some custom code similar to what we have in 5dd646f33fa154ea98df1366b53e8904e5adf762 for combining together the two values in the two `get_or_build_user` functions. | priority | ldap add support for providing the full name field split across two attributes in some ldap databases they only have separate first name and last name fields we should offer a way to do that inspired by comments in i think a reasonable approach for this is to support users setting the first name and last name keys in auth ldap attr map and then just have some custom code similar to what we have in for combining together the two values in the two get or build user functions | 1 |

425,045 | 12,334,793,745 | IssuesEvent | 2020-05-14 10:49:37 | EBISPOT/goci | https://api.github.com/repos/EBISPOT/goci | opened | Submission template validator should validate that study tags provided by submitter are unique within the submission | Blocker Priority: High Type: Enhancement | Submission template validator should validate that study tags provided by submitter are unique within the submission. As study tags are used to identify the study they must be unique within a particular submission. | 1.0 | Submission template validator should validate that study tags provided by submitter are unique within the submission - Submission template validator should validate that study tags provided by submitter are unique within the submission. As study tags are used to identify the study they must be unique within a particular submission. | priority | submission template validator should validate that study tags provided by submitter are unique within the submission submission template validator should validate that study tags provided by submitter are unique within the submission as study tags are used to identify the study they must be unique within a particular submission | 1 |

107,505 | 4,309,869,194 | IssuesEvent | 2016-07-21 17:22:33 | isawnyu/isaw.web | https://api.github.com/repos/isawnyu/isaw.web | closed | calendar buttons and grid need tweaks (from isaw.theme) | bug deploy high priority style | Copying this issue over from isaw.theme issue #46:

In calendar view, the Month and Week buttons look unfinished (missing a side border), and the calendar grid is misaligned in Week view.

I think this may be fixed, but I can't tell at the moment (can't see events). I've copied this issue here to remind myself to check. | 1.0 | calendar buttons and grid need tweaks (from isaw.theme) - Copying this issue over from isaw.theme issue #46:

In calendar view, the Month and Week buttons look unfinished (missing a side border), and the calendar grid is misaligned in Week view.

I think this may be fixed, but I can't tell at the moment (can't see events). I've copied this issue here to remind myself to check. | priority | calendar buttons and grid need tweaks from isaw theme copying this issue over from isaw theme issue in calendar view the month and week buttons look unfinished missing a side border and the calendar grid is misaligned in week view i think this may be fixed but i can t tell at the moment can t see events i ve copied this issue here to remind myself to check | 1 |

492,259 | 14,199,317,794 | IssuesEvent | 2020-11-16 01:59:36 | nhn/tui.grid | https://api.github.com/repos/nhn/tui.grid | closed | "Cannot read property 'rowSpanMap' of undefined" occurs when deleting rows | Bug Priority: High | **Describe the bug**

Error occurs when the raw data is recalled and the row is deleted by recalling the data back to ajax.

**To Reproduce**

Steps to reproduce the behavior:

1. Create Grid (Initialize Data)

2. Recall data using ajax (approximately 700 results)

3. Select 400th from first row (click + shift used, Scroll down during this process)

4. tui-grid.js:2388 Uncaused TypeError: Unable to read property 'rowSpanMap' for undefined (Occurs when the scroll position is more than half of the total position.)

5. Recall data using ajax (approximately 700 results)

6. tui-grid.js:2777 Uncaught TypeError: Cannot read property 'forEach' of undefined

**Desktop (please complete the following information):**

- OS: Window10

- Browser: Chrome

- Version 85.0.4183.121

| 1.0 | "Cannot read property 'rowSpanMap' of undefined" occurs when deleting rows - **Describe the bug**

Error occurs when the raw data is recalled and the row is deleted by recalling the data back to ajax.

**To Reproduce**

Steps to reproduce the behavior:

1. Create Grid (Initialize Data)

2. Recall data using ajax (approximately 700 results)

3. Select 400th from first row (click + shift used, Scroll down during this process)

4. tui-grid.js:2388 Uncaused TypeError: Unable to read property 'rowSpanMap' for undefined (Occurs when the scroll position is more than half of the total position.)

5. Recall data using ajax (approximately 700 results)

6. tui-grid.js:2777 Uncaught TypeError: Cannot read property 'forEach' of undefined

**Desktop (please complete the following information):**

- OS: Window10

- Browser: Chrome

- Version 85.0.4183.121

| priority | cannot read property rowspanmap of undefined occurs when deleting rows describe the bug error occurs when the raw data is recalled and the row is deleted by recalling the data back to ajax to reproduce steps to reproduce the behavior create grid initialize data recall data using ajax approximately results select from first row click shift used scroll down during this process tui grid js uncaused typeerror unable to read property rowspanmap for undefined occurs when the scroll position is more than half of the total position recall data using ajax approximately results tui grid js uncaught typeerror cannot read property foreach of undefined desktop please complete the following information os browser chrome version | 1 |

221,767 | 7,395,968,817 | IssuesEvent | 2018-03-18 05:53:35 | chimano/SOEN341_SA2 | https://api.github.com/repos/chimano/SOEN341_SA2 | closed | User's should not be able to vote on their answer | bug priority: high | The system should not allow users to vote on their own question and answer. (~30 minutes) | 1.0 | User's should not be able to vote on their answer - The system should not allow users to vote on their own question and answer. (~30 minutes) | priority | user s should not be able to vote on their answer the system should not allow users to vote on their own question and answer minutes | 1 |

492,998 | 14,224,225,541 | IssuesEvent | 2020-11-17 19:21:15 | ampproject/amphtml | https://api.github.com/repos/ampproject/amphtml | opened | [Story Player] Improve player build/layout strategy | P1: High Priority Type: Bug WG: stories | Opening most pages using a player ([example](https://www.greenschemetv.net/gluten-free-cream-cheese-brownies/)) with a very fast computer/network speed, I'd expect the story to be loaded before it gets in the viewport. We should consider making our build/layout strategy more aggressive.

cc @ampproject/wg-stories | 1.0 | [Story Player] Improve player build/layout strategy - Opening most pages using a player ([example](https://www.greenschemetv.net/gluten-free-cream-cheese-brownies/)) with a very fast computer/network speed, I'd expect the story to be loaded before it gets in the viewport. We should consider making our build/layout strategy more aggressive.

cc @ampproject/wg-stories | priority | improve player build layout strategy opening most pages using a player with a very fast computer network speed i d expect the story to be loaded before it gets in the viewport we should consider making our build layout strategy more aggressive cc ampproject wg stories | 1 |

140,601 | 5,412,974,039 | IssuesEvent | 2017-03-01 15:45:00 | gwt-plugins/gwt-eclipse-plugin | https://api.github.com/repos/gwt-plugins/gwt-eclipse-plugin | closed | GWT plug-ins check for updates and do analytics ping whenever a Java build occurs (on a GWT Project) | enhancement High Priority | I do not think it is sensible for the GWT plug-ins to check for GWT plug-in updates on every java compile of any project with a GWT nature. This can be detrimental to performance.

I know that update checking can be turned off in the preferences, but perhaps just checking on Eclipse start-up or something (or once a day or whatever) would be more sensible (just a thought)?

Much more annoying than the updates is the compilation analytics ping request as there doesn't seem to be a way of turning this off, and (again) it happens on every Java build of a GWT natured project!

It should at least respect the general error reporting preferences (e.g.org.eclipse.epp.logging.aeri.ide/sendMode=NEVER) and enable me to opt out of sending information back etc. Also, it does of course fill up my logs with (what to me at least is) not useful information (although it is not alone in that of course).

`/**

* A compilation participant that is used to trigger an update check of the GWT Plugin's feature

* whenever a Java build is triggered on a GWT project.

*/

public class UpdateTriggerCompilationParticipant extends CompilationParticipant {

@Override

public boolean isActive(IJavaProject project) {

if (!project.exists()) {

return false;

}

if (GWTNature.isGWTProject(project.getProject())) {

GdtExtPlugin.getFeatureUpdateManager().checkForUpdates();

GdtExtPlugin.getAnalyticsPingManager().sendCompilationPing();

return true;

} else {

return false;

}

}

}

` | 1.0 | GWT plug-ins check for updates and do analytics ping whenever a Java build occurs (on a GWT Project) - I do not think it is sensible for the GWT plug-ins to check for GWT plug-in updates on every java compile of any project with a GWT nature. This can be detrimental to performance.

I know that update checking can be turned off in the preferences, but perhaps just checking on Eclipse start-up or something (or once a day or whatever) would be more sensible (just a thought)?

Much more annoying than the updates is the compilation analytics ping request as there doesn't seem to be a way of turning this off, and (again) it happens on every Java build of a GWT natured project!

It should at least respect the general error reporting preferences (e.g.org.eclipse.epp.logging.aeri.ide/sendMode=NEVER) and enable me to opt out of sending information back etc. Also, it does of course fill up my logs with (what to me at least is) not useful information (although it is not alone in that of course).

`/**

* A compilation participant that is used to trigger an update check of the GWT Plugin's feature

* whenever a Java build is triggered on a GWT project.

*/

public class UpdateTriggerCompilationParticipant extends CompilationParticipant {

@Override

public boolean isActive(IJavaProject project) {

if (!project.exists()) {

return false;

}

if (GWTNature.isGWTProject(project.getProject())) {

GdtExtPlugin.getFeatureUpdateManager().checkForUpdates();

GdtExtPlugin.getAnalyticsPingManager().sendCompilationPing();

return true;

} else {

return false;

}

}

}

` | priority | gwt plug ins check for updates and do analytics ping whenever a java build occurs on a gwt project i do not think it is sensible for the gwt plug ins to check for gwt plug in updates on every java compile of any project with a gwt nature this can be detrimental to performance i know that update checking can be turned off in the preferences but perhaps just checking on eclipse start up or something or once a day or whatever would be more sensible just a thought much more annoying than the updates is the compilation analytics ping request as there doesn t seem to be a way of turning this off and again it happens on every java build of a gwt natured project it should at least respect the general error reporting preferences e g org eclipse epp logging aeri ide sendmode never and enable me to opt out of sending information back etc also it does of course fill up my logs with what to me at least is not useful information although it is not alone in that of course a compilation participant that is used to trigger an update check of the gwt plugin s feature whenever a java build is triggered on a gwt project public class updatetriggercompilationparticipant extends compilationparticipant override public boolean isactive ijavaproject project if project exists return false if gwtnature isgwtproject project getproject gdtextplugin getfeatureupdatemanager checkforupdates gdtextplugin getanalyticspingmanager sendcompilationping return true else return false | 1 |

118,186 | 4,732,888,962 | IssuesEvent | 2016-10-19 09:21:56 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Simpler way to remove existing layers from the layer tree | enhancement pending review Priority: High task | The standard way for removing layers from the layer tree is too complex, see image below:

I would like to have something similar to what we have done for SIRA, se below:

| 1.0 | Simpler way to remove existing layers from the layer tree - The standard way for removing layers from the layer tree is too complex, see image below:

I would like to have something similar to what we have done for SIRA, se below:

| priority | simpler way to remove existing layers from the layer tree the standard way for removing layers from the layer tree is too complex see image below i would like to have something similar to what we have done for sira se below | 1 |

519,095 | 15,044,078,814 | IssuesEvent | 2021-02-03 02:08:37 | Psychoanalytic-Electronic-Publishing/OpenPubArchive-Content-Server | https://api.github.com/repos/Psychoanalytic-Electronic-Publishing/OpenPubArchive-Content-Server | opened | DevOps Build Process Optimizations | optimization priority-high | Today may have been a bit unusual, with an error from solr seeming to repeatedly happen, while meanwhile the Development solr database appears to be updated, as does the Development RDS database. Maybe I'm missing something.

It's not acceptable that a full build is still running, 20 hours later. I ran the entire process here in about 4 hours on my PC. But the worst part of this is that if I were really building a production set with a deadline, this would really extend the time...especially if I had to run it more than once. Even incremental builds don't solve the problem...I don't have timing data, but as I recall the last time I manually ran one, the incremental process takes many hours as well...when in fact, it may only be a handful of files being loaded.

We need to do better.

Some ideas for improving build performance:

1) In a rebuild scenario, once we see the crossing point of the forward and reverse runs, we should stop or at least let then ext step (build images) proceed...that takes far too long on AWS to finish even though the work is actually done. And the fact that the process can't "complete" until each direction has looked at all the files, makes me wait hours more.

So I've added a flag: --halfway which can be used for both the forward and reverse processes--and they will quit after each processing half of the files. Hopefully, that will help.

2) We could add more processes to the full build...my 4 hour timing is running forward, reverse, and targeting some of the bigger journals separately (only works when resetting the cores, so it's not set to "all" but rather checks if each file is in the database, so overlap with two main processes isn't a problem, and they just zip past when they are already processed (of course it doesn't seem to zip on AWS). I sometimes run as many as 6 or so processes here on my Intel I9 9900K 8-core PC to optimize the timing.

3) For smaller changes it may be a good idea to make another processing option...where I can make more targeted changes to development solr and development RDS quickly...and then generate what's needed for stage. I can run my builds remotely on those, and for smaller updates, I think it may run more quickly than the current update process. Then we'd have a script to create what's needed to create the AMI's to push to stage and eventually production (after testing). This could even run off of stage, as an option...so after checking stage, if there are some issues, it can be dealt with there, then the AMIs generated and pushed to production.

Though we can discuss why incremental also seems to takes so long before going down that road. One thing that's odd--I noticed when running incremental builds, it's falsely detecting changes and processing some items, where it shouldn't have to. Not sure why that happens--it doesn't happen when run locally. Perhaps something about comparing times between S3 and Solr?

I do have another ace up my sleeve to make it faster...but I'm hesitant because it involves a loss of an interesting search feature (even though the client isn't using it) and requires changes to the server as well. Still I'm considering it if we can't speed things up. I'm open to ideas. I am ok with a 12 hour total for a full build--one I can start in the evening and have it ready in the morning. I'd prefer faster but I can live with that. Incremental builds should take very little time, anywhere from 15 minutes at most for a few new files to an hour or two, for example, to rerun the whole PEPCurrent folder (<7000 files).

But I don't think a full build that takes over 20 hours, like the current one is taking, works for starters. Maybe that's an anomaly, but it's certainly holding me up today...and I don't want to stop it to try again, because it seems to take 14 or more hours to rebuild in any case.

| 1.0 | DevOps Build Process Optimizations - Today may have been a bit unusual, with an error from solr seeming to repeatedly happen, while meanwhile the Development solr database appears to be updated, as does the Development RDS database. Maybe I'm missing something.

It's not acceptable that a full build is still running, 20 hours later. I ran the entire process here in about 4 hours on my PC. But the worst part of this is that if I were really building a production set with a deadline, this would really extend the time...especially if I had to run it more than once. Even incremental builds don't solve the problem...I don't have timing data, but as I recall the last time I manually ran one, the incremental process takes many hours as well...when in fact, it may only be a handful of files being loaded.

We need to do better.

Some ideas for improving build performance:

1) In a rebuild scenario, once we see the crossing point of the forward and reverse runs, we should stop or at least let then ext step (build images) proceed...that takes far too long on AWS to finish even though the work is actually done. And the fact that the process can't "complete" until each direction has looked at all the files, makes me wait hours more.

So I've added a flag: --halfway which can be used for both the forward and reverse processes--and they will quit after each processing half of the files. Hopefully, that will help.

2) We could add more processes to the full build...my 4 hour timing is running forward, reverse, and targeting some of the bigger journals separately (only works when resetting the cores, so it's not set to "all" but rather checks if each file is in the database, so overlap with two main processes isn't a problem, and they just zip past when they are already processed (of course it doesn't seem to zip on AWS). I sometimes run as many as 6 or so processes here on my Intel I9 9900K 8-core PC to optimize the timing.

3) For smaller changes it may be a good idea to make another processing option...where I can make more targeted changes to development solr and development RDS quickly...and then generate what's needed for stage. I can run my builds remotely on those, and for smaller updates, I think it may run more quickly than the current update process. Then we'd have a script to create what's needed to create the AMI's to push to stage and eventually production (after testing). This could even run off of stage, as an option...so after checking stage, if there are some issues, it can be dealt with there, then the AMIs generated and pushed to production.

Though we can discuss why incremental also seems to takes so long before going down that road. One thing that's odd--I noticed when running incremental builds, it's falsely detecting changes and processing some items, where it shouldn't have to. Not sure why that happens--it doesn't happen when run locally. Perhaps something about comparing times between S3 and Solr?

I do have another ace up my sleeve to make it faster...but I'm hesitant because it involves a loss of an interesting search feature (even though the client isn't using it) and requires changes to the server as well. Still I'm considering it if we can't speed things up. I'm open to ideas. I am ok with a 12 hour total for a full build--one I can start in the evening and have it ready in the morning. I'd prefer faster but I can live with that. Incremental builds should take very little time, anywhere from 15 minutes at most for a few new files to an hour or two, for example, to rerun the whole PEPCurrent folder (<7000 files).

But I don't think a full build that takes over 20 hours, like the current one is taking, works for starters. Maybe that's an anomaly, but it's certainly holding me up today...and I don't want to stop it to try again, because it seems to take 14 or more hours to rebuild in any case.

| priority | devops build process optimizations today may have been a bit unusual with an error from solr seeming to repeatedly happen while meanwhile the development solr database appears to be updated as does the development rds database maybe i m missing something it s not acceptable that a full build is still running hours later i ran the entire process here in about hours on my pc but the worst part of this is that if i were really building a production set with a deadline this would really extend the time especially if i had to run it more than once even incremental builds don t solve the problem i don t have timing data but as i recall the last time i manually ran one the incremental process takes many hours as well when in fact it may only be a handful of files being loaded we need to do better some ideas for improving build performance in a rebuild scenario once we see the crossing point of the forward and reverse runs we should stop or at least let then ext step build images proceed that takes far too long on aws to finish even though the work is actually done and the fact that the process can t complete until each direction has looked at all the files makes me wait hours more so i ve added a flag halfway which can be used for both the forward and reverse processes and they will quit after each processing half of the files hopefully that will help we could add more processes to the full build my hour timing is running forward reverse and targeting some of the bigger journals separately only works when resetting the cores so it s not set to all but rather checks if each file is in the database so overlap with two main processes isn t a problem and they just zip past when they are already processed of course it doesn t seem to zip on aws i sometimes run as many as or so processes here on my intel core pc to optimize the timing for smaller changes it may be a good idea to make another processing option where i can make more targeted changes to development solr and development rds quickly and then generate what s needed for stage i can run my builds remotely on those and for smaller updates i think it may run more quickly than the current update process then we d have a script to create what s needed to create the ami s to push to stage and eventually production after testing this could even run off of stage as an option so after checking stage if there are some issues it can be dealt with there then the amis generated and pushed to production though we can discuss why incremental also seems to takes so long before going down that road one thing that s odd i noticed when running incremental builds it s falsely detecting changes and processing some items where it shouldn t have to not sure why that happens it doesn t happen when run locally perhaps something about comparing times between and solr i do have another ace up my sleeve to make it faster but i m hesitant because it involves a loss of an interesting search feature even though the client isn t using it and requires changes to the server as well still i m considering it if we can t speed things up i m open to ideas i am ok with a hour total for a full build one i can start in the evening and have it ready in the morning i d prefer faster but i can live with that incremental builds should take very little time anywhere from minutes at most for a few new files to an hour or two for example to rerun the whole pepcurrent folder files but i don t think a full build that takes over hours like the current one is taking works for starters maybe that s an anomaly but it s certainly holding me up today and i don t want to stop it to try again because it seems to take or more hours to rebuild in any case | 1 |

280,948 | 8,688,557,861 | IssuesEvent | 2018-12-03 16:23:46 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | opened | Indirect - ConvFit crash when selecting Fit from different buttons | Component: Indirect Inelastic Misc: Bug Priority: High | **Found by:** Unscripted testing

### Expected behavior

No crash - the relevant buttons should be disabled

### Steps to reproduce the behavior

1. `Interfaces`->`Indirect`->`Data Analysis`->`ConvFit` Tab

3. Load the data below

3. Fit Type is `Teixeira Water`

4. Click the 'Fit' combobox above the FitPropertyBrowser and then click 'Sequential Fit'

5. Quickly click 'Run' while it is still fitting

crash!

**Data Files**

[irs26176_graphite002_red.zip](https://github.com/mantidproject/mantid/files/2640253/irs26176_graphite002_red.zip)

[irs26173_graphite002_res.zip](https://github.com/mantidproject/mantid/files/2640254/irs26173_graphite002_res.zip)

### Platforms affected

All | 1.0 | Indirect - ConvFit crash when selecting Fit from different buttons - **Found by:** Unscripted testing

### Expected behavior

No crash - the relevant buttons should be disabled

### Steps to reproduce the behavior

1. `Interfaces`->`Indirect`->`Data Analysis`->`ConvFit` Tab

3. Load the data below

3. Fit Type is `Teixeira Water`

4. Click the 'Fit' combobox above the FitPropertyBrowser and then click 'Sequential Fit'

5. Quickly click 'Run' while it is still fitting

crash!

**Data Files**

[irs26176_graphite002_red.zip](https://github.com/mantidproject/mantid/files/2640253/irs26176_graphite002_red.zip)

[irs26173_graphite002_res.zip](https://github.com/mantidproject/mantid/files/2640254/irs26173_graphite002_res.zip)

### Platforms affected

All | priority | indirect convfit crash when selecting fit from different buttons found by unscripted testing expected behavior no crash the relevant buttons should be disabled steps to reproduce the behavior interfaces indirect data analysis convfit tab load the data below fit type is teixeira water click the fit combobox above the fitpropertybrowser and then click sequential fit quickly click run while it is still fitting crash data files platforms affected all | 1 |

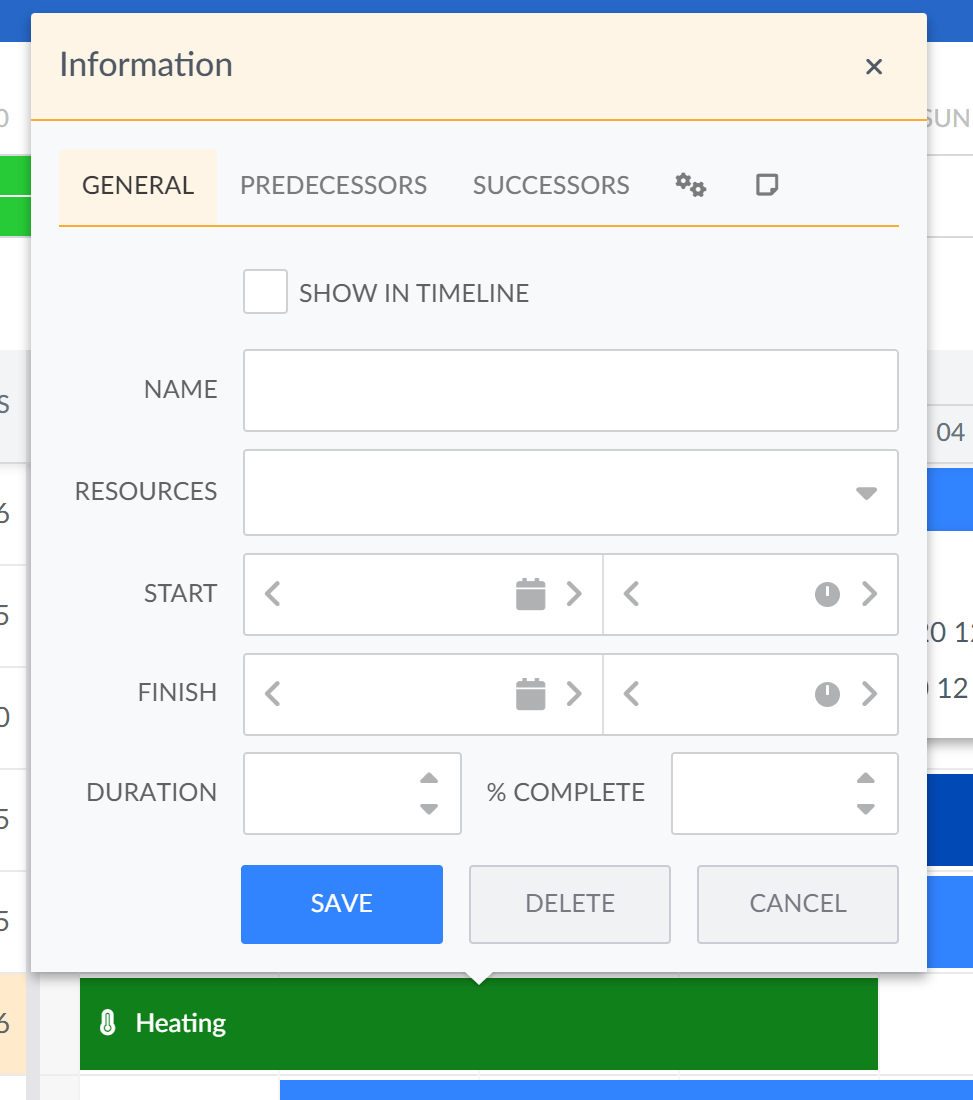

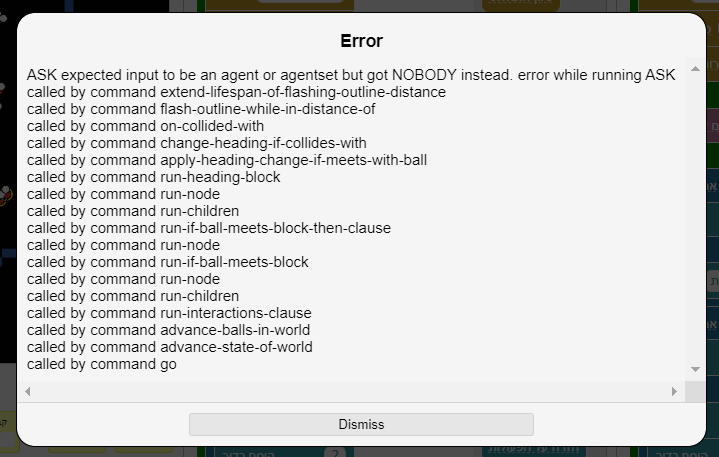

707,147 | 24,297,091,096 | IssuesEvent | 2022-09-29 10:59:00 | bryntum/support | https://api.github.com/repos/bryntum/support | closed | Task editor in Scheduler Pro gets empty when opened too fast after closing | bug resolved high-priority OEM | Reproducible on timeline demo:

1. select event

2. press Enter to open the editor

3. press Escape to close and immediately Enter once again

Editor fields get empty. This is much easier to reproduce on slower environnments like Salesforce

| 1.0 | Task editor in Scheduler Pro gets empty when opened too fast after closing - Reproducible on timeline demo:

1. select event

2. press Enter to open the editor

3. press Escape to close and immediately Enter once again

Editor fields get empty. This is much easier to reproduce on slower environnments like Salesforce

| priority | task editor in scheduler pro gets empty when opened too fast after closing reproducible on timeline demo select event press enter to open the editor press escape to close and immediately enter once again editor fields get empty this is much easier to reproduce on slower environnments like salesforce | 1 |

201,479 | 7,032,063,673 | IssuesEvent | 2017-12-26 23:31:33 | woborschilde/fluentlogin | https://api.github.com/repos/woborschilde/fluentlogin | closed | User App Login | kind: feature request priority: high status: done time span: long-term | Parts:

- Login

- Logout

- Register

- Password Lost

_This issue is archived and was originally created on 03 Aug 2017._ | 1.0 | User App Login - Parts:

- Login

- Logout

- Register

- Password Lost

_This issue is archived and was originally created on 03 Aug 2017._ | priority | user app login parts login logout register password lost this issue is archived and was originally created on aug | 1 |

748,677 | 26,132,664,411 | IssuesEvent | 2022-12-29 07:55:55 | NomicFoundation/hardhat | https://api.github.com/repos/NomicFoundation/hardhat | closed | `estimateGas` issue with hardhat network when autoMine is off | type:bug priority:high | We are having some issue with `estimateGas` when autoMine is off.

Hardhat version: version "2.6.1"

hardhat.config.js

```

localhost: {

hardfork: "istanbul",

},

hardhat: {

hardfork: "istanbul",

},

```

The `gasLimit` of the `hardhat` network is greatly larger than the `localhost` one, causing the transaction being dropped by the next block due to the block gasLimit constraint. The next block being mined can only hold 2 transactions.

The transaction data when sending to a `localhost` network:

```

{

hash: '0x72d6362e91291c311012d9cef61b9c9f3ddcaa52b8706e2f0286550cc3a51aa6',

type: null,

accessList: null,

blockHash: null,

blockNumber: null,

transactionIndex: null,

confirmations: 0,

from: '0x3C44CdDdB6a900fa2b585dd299e03d12FA4293BC',

gasPrice: BigNumber { _hex: '0x01dcd65000', _isBigNumber: true },

gasLimit: BigNumber { _hex: '0x0ea7fd', _isBigNumber: true },

to: '0x9A9f2CCfdE556A7E9Ff0848998Aa4a0CFD8863AE',

value: BigNumber { _hex: '0x00', _isBigNumber: true },

nonce: 12,

data: '0x42e77284000000000000000000000000000000000000000000000000000000000000002000000000000000000000000000000000000000000000000000000000000000010000000000000000000000003c44cdddb6a900fa2b585dd299e03d12fa4293bc',

r: '0xf24fb81b34cffd9463e22786632605125630dae1d9042d6b6def669353c9ea15',

s: '0x0c77da00a7191abe556898036b6fb1c849107e39be70e2244943e5239f19730f',

v: 62710,

creates: null,

chainId: 31337,

wait: [Function (anonymous)]

}

```

The transaction data when sending to the default `hardhat` network:

```

{

hash: '0x5dce93e43d33274b720399bb9e659b042990b4556980db79c0686affd5607bb8',

type: null,

accessList: null,

blockHash: null,

blockNumber: null,

transactionIndex: null,

confirmations: 0,

from: '0x3C44CdDdB6a900fa2b585dd299e03d12FA4293BC',

gasPrice: BigNumber { _hex: '0x01dcd65000', _isBigNumber: true },

gasLimit: BigNumber { _hex: '0x01badb18', _isBigNumber: true },

to: '0x9A9f2CCfdE556A7E9Ff0848998Aa4a0CFD8863AE',

value: BigNumber { _hex: '0x00', _isBigNumber: true },

nonce: 12,

data: '0x42e77284000000000000000000000000000000000000000000000000000000000000002000000000000000000000000000000000000000000000000000000000000000010000000000000000000000003c44cdddb6a900fa2b585dd299e03d12fa4293bc',

r: '0xeb2a0c24521571805b031ef169984919de500112daee1a5008f05192580c7286',

s: '0x7d3f542d5ca41c628f4e807fa1bc3b148db62a32b8e9933ead16a9a00f340034',

v: 62710,

creates: null,

chainId: 31337,

wait: [Function (anonymous)]

}

```

| 1.0 | `estimateGas` issue with hardhat network when autoMine is off - We are having some issue with `estimateGas` when autoMine is off.

Hardhat version: version "2.6.1"

hardhat.config.js

```

localhost: {

hardfork: "istanbul",

},

hardhat: {

hardfork: "istanbul",

},

```

The `gasLimit` of the `hardhat` network is greatly larger than the `localhost` one, causing the transaction being dropped by the next block due to the block gasLimit constraint. The next block being mined can only hold 2 transactions.

The transaction data when sending to a `localhost` network:

```

{

hash: '0x72d6362e91291c311012d9cef61b9c9f3ddcaa52b8706e2f0286550cc3a51aa6',

type: null,

accessList: null,

blockHash: null,

blockNumber: null,

transactionIndex: null,

confirmations: 0,

from: '0x3C44CdDdB6a900fa2b585dd299e03d12FA4293BC',

gasPrice: BigNumber { _hex: '0x01dcd65000', _isBigNumber: true },

gasLimit: BigNumber { _hex: '0x0ea7fd', _isBigNumber: true },

to: '0x9A9f2CCfdE556A7E9Ff0848998Aa4a0CFD8863AE',

value: BigNumber { _hex: '0x00', _isBigNumber: true },

nonce: 12,

data: '0x42e77284000000000000000000000000000000000000000000000000000000000000002000000000000000000000000000000000000000000000000000000000000000010000000000000000000000003c44cdddb6a900fa2b585dd299e03d12fa4293bc',

r: '0xf24fb81b34cffd9463e22786632605125630dae1d9042d6b6def669353c9ea15',

s: '0x0c77da00a7191abe556898036b6fb1c849107e39be70e2244943e5239f19730f',

v: 62710,

creates: null,

chainId: 31337,

wait: [Function (anonymous)]

}

```

The transaction data when sending to the default `hardhat` network:

```

{

hash: '0x5dce93e43d33274b720399bb9e659b042990b4556980db79c0686affd5607bb8',

type: null,

accessList: null,

blockHash: null,

blockNumber: null,

transactionIndex: null,

confirmations: 0,

from: '0x3C44CdDdB6a900fa2b585dd299e03d12FA4293BC',

gasPrice: BigNumber { _hex: '0x01dcd65000', _isBigNumber: true },

gasLimit: BigNumber { _hex: '0x01badb18', _isBigNumber: true },

to: '0x9A9f2CCfdE556A7E9Ff0848998Aa4a0CFD8863AE',

value: BigNumber { _hex: '0x00', _isBigNumber: true },

nonce: 12,

data: '0x42e77284000000000000000000000000000000000000000000000000000000000000002000000000000000000000000000000000000000000000000000000000000000010000000000000000000000003c44cdddb6a900fa2b585dd299e03d12fa4293bc',

r: '0xeb2a0c24521571805b031ef169984919de500112daee1a5008f05192580c7286',

s: '0x7d3f542d5ca41c628f4e807fa1bc3b148db62a32b8e9933ead16a9a00f340034',

v: 62710,

creates: null,

chainId: 31337,

wait: [Function (anonymous)]

}

```

| priority | estimategas issue with hardhat network when automine is off we are having some issue with estimategas when automine is off hardhat version version hardhat config js localhost hardfork istanbul hardhat hardfork istanbul the gaslimit of the hardhat network is greatly larger than the localhost one causing the transaction being dropped by the next block due to the block gaslimit constraint the next block being mined can only hold transactions the transaction data when sending to a localhost network hash type null accesslist null blockhash null blocknumber null transactionindex null confirmations from gasprice bignumber hex isbignumber true gaslimit bignumber hex isbignumber true to value bignumber hex isbignumber true nonce data r s v creates null chainid wait the transaction data when sending to the default hardhat network hash type null accesslist null blockhash null blocknumber null transactionindex null confirmations from gasprice bignumber hex isbignumber true gaslimit bignumber hex isbignumber true to value bignumber hex isbignumber true nonce data r s v creates null chainid wait | 1 |

266,619 | 8,372,759,590 | IssuesEvent | 2018-10-05 08:12:32 | IBM/watson-assistant-workbench | https://api.github.com/repos/IBM/watson-assistant-workbench | closed | Importing nodes reverse their order | Priority: high XML bug | Due to changes in #130 the order of imported nodes is reverted. | 1.0 | Importing nodes reverse their order - Due to changes in #130 the order of imported nodes is reverted. | priority | importing nodes reverse their order due to changes in the order of imported nodes is reverted | 1 |

561,861 | 16,626,085,632 | IssuesEvent | 2021-06-03 09:43:41 | nhost/hasura-backend-plus | https://api.github.com/repos/nhost/hasura-backend-plus | closed | Is there a way to dynamically pass a redirect_url to an auth provider? | Priority: High Scope: Authentication Type: Feature Request | As we have several frontends with different domains and only one hasura-backend-plus instance, we have different redirect URL depending on the calling frontend app.

Would there be a way to dynamically pass a redirect_url to an auth provider if given, and fallback to the standard environment variable `PROVIDER_SUCCESS_REDIRECT` otherwise?

Example: //hasura-backend-plus/auth/providers/google?redirect_url_success=http://my-front-end-1/home | 1.0 | Is there a way to dynamically pass a redirect_url to an auth provider? - As we have several frontends with different domains and only one hasura-backend-plus instance, we have different redirect URL depending on the calling frontend app.

Would there be a way to dynamically pass a redirect_url to an auth provider if given, and fallback to the standard environment variable `PROVIDER_SUCCESS_REDIRECT` otherwise?

Example: //hasura-backend-plus/auth/providers/google?redirect_url_success=http://my-front-end-1/home | priority | is there a way to dynamically pass a redirect url to an auth provider as we have several frontends with different domains and only one hasura backend plus instance we have different redirect url depending on the calling frontend app would there be a way to dynamically pass a redirect url to an auth provider if given and fallback to the standard environment variable provider success redirect otherwise example hasura backend plus auth providers google redirect url success | 1 |

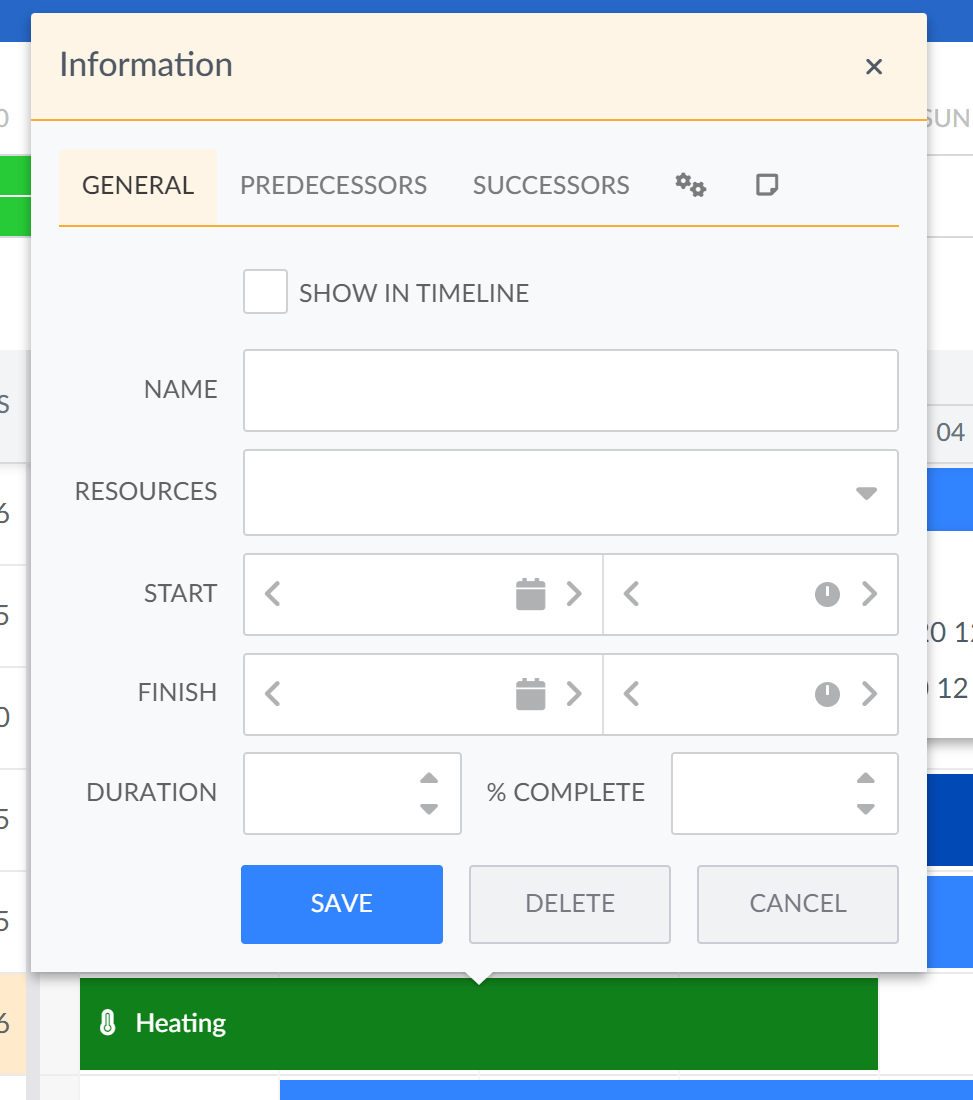

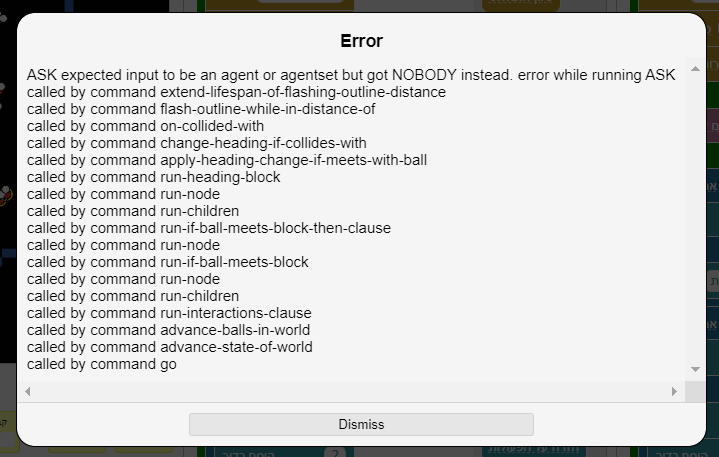

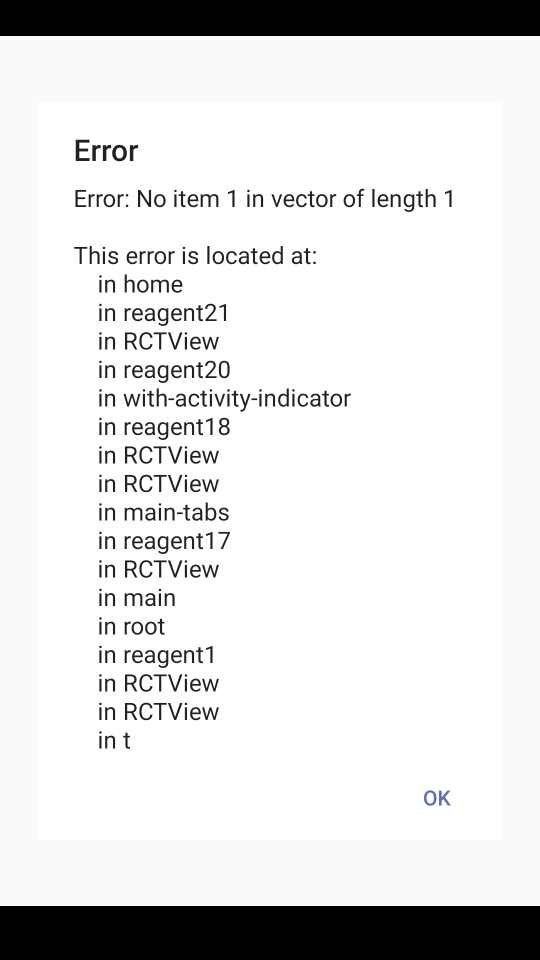

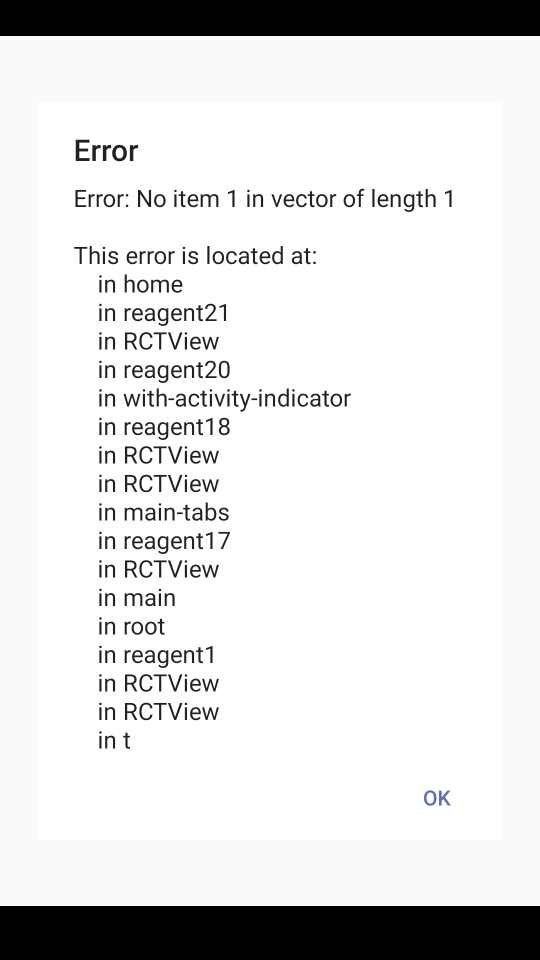

583,768 | 17,398,055,062 | IssuesEvent | 2021-08-02 15:42:14 | Systems-Learning-and-Development-Lab/MMM | https://api.github.com/repos/Systems-Learning-and-Development-Lab/MMM | closed | Error | priority-high |

, אני יודעת שאמרת שזה לא קשור אבל זה קרה אחרי שהוספתי אוכלוסייה שלישית

בעצם זה קורה די הרבה, גם כשמריצה מולקולות מתנגשות זו בזו | 1.0 | Error -

, אני יודעת שאמרת שזה לא קשור אבל זה קרה אחרי שהוספתי אוכלוסייה שלישית

בעצם זה קורה די הרבה, גם כשמריצה מולקולות מתנגשות זו בזו | priority | error אני יודעת שאמרת שזה לא קשור אבל זה קרה אחרי שהוספתי אוכלוסייה שלישית בעצם זה קורה די הרבה גם כשמריצה מולקולות מתנגשות זו בזו | 1 |

405,830 | 11,883,187,968 | IssuesEvent | 2020-03-27 15:33:32 | redhat-developer/vscode-tekton | https://api.github.com/repos/redhat-developer/vscode-tekton | closed | Add support for conditions | enhancement in progress priority/high | [Tekton conditions](https://github.com/tektoncd/pipeline/blob/master/docs/conditions.md) enable conditional execution of tasks in a pipeline. `Condition`s should be added in the Tekton window in the tree to allow user to view and edit them. | 1.0 | Add support for conditions - [Tekton conditions](https://github.com/tektoncd/pipeline/blob/master/docs/conditions.md) enable conditional execution of tasks in a pipeline. `Condition`s should be added in the Tekton window in the tree to allow user to view and edit them. | priority | add support for conditions enable conditional execution of tasks in a pipeline condition s should be added in the tekton window in the tree to allow user to view and edit them | 1 |

737,985 | 25,540,320,531 | IssuesEvent | 2022-11-29 14:53:57 | opendatahub-io/odh-dashboard | https://api.github.com/repos/opendatahub-io/odh-dashboard | closed | [Bug]: Dashboard cannot launch 300 user notebooks with 1s or 2s delay | kind/bug feature/notebook-controller priority/high | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

Follow up of #627

## Details

Perform a scale test of notebook creation at user-level

* 300 users

* 1s or 2s delay between each user

* 2 consecutive runs

[300 users, 1s delay](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/512/pull-ci-openshift-psap-ci-artifacts-master-ods-nb-ux-on-ocp/1574135534509363200/artifacts/nb-ux-on-ocp/test/artifacts/test_run_1/plotting/report_00_report:_error_report.html) 0/300 successes

[300 users, 2s delay](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/512/pull-ci-openshift-psap-ci-artifacts-master-ods-plot-nb-ux-on-ocp/1574305203211997184/artifacts/plot-nb-ux-on-ocp/test/artifacts/report_00_report:_error_report.html) 31/300 successes

And similar results with m5a.2xlarge masters instead of m6a.xlarge masters:

[300 users, 1s delay, m5a.2xlarge masters](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/515/pull-ci-openshift-psap-ci-artifacts-master-ods-nb-ux-on-ocp/1575154323908726784/artifacts/nb-ux-on-ocp/test/artifacts/000_prepare/001_test_run_1/plotting/report_00_report:_error_report.html) 3/300 successes (first run)

[second run](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/515/pull-ci-openshift-psap-ci-artifacts-master-ods-nb-ux-on-ocp/1575154323908726784/artifacts/nb-ux-on-ocp/test/artifacts/000_prepare/002_test_run_2/plotting/report_00_report:_error_report.html) 215/300 successes

We got that some of these performance issues are related to `LIST` k8s calls. We have already addressed the `nb-events` endpoint in #627 and now we need to find all the bottlenecks left and fix them.

### Expected Behavior

No issues with multiple users spawning notebooks at the same time.

### Steps To Reproduce

Perform a scale test of notebook creation at user-level

* 300 users

* 1s or 2s delay between each user

* 2 consecutive runs

### Workaround (if any)

_No response_

### OpenShift Infrastructure Version

_No response_

### Openshift Version

_No response_

### What browsers are you seeing the problem on?

_No response_

### Open Data Hub Version

_No response_

### Relevant log output

_No response_ | 1.0 | [Bug]: Dashboard cannot launch 300 user notebooks with 1s or 2s delay - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

Follow up of #627

## Details

Perform a scale test of notebook creation at user-level

* 300 users

* 1s or 2s delay between each user

* 2 consecutive runs

[300 users, 1s delay](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/512/pull-ci-openshift-psap-ci-artifacts-master-ods-nb-ux-on-ocp/1574135534509363200/artifacts/nb-ux-on-ocp/test/artifacts/test_run_1/plotting/report_00_report:_error_report.html) 0/300 successes

[300 users, 2s delay](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/512/pull-ci-openshift-psap-ci-artifacts-master-ods-plot-nb-ux-on-ocp/1574305203211997184/artifacts/plot-nb-ux-on-ocp/test/artifacts/report_00_report:_error_report.html) 31/300 successes

And similar results with m5a.2xlarge masters instead of m6a.xlarge masters:

[300 users, 1s delay, m5a.2xlarge masters](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/515/pull-ci-openshift-psap-ci-artifacts-master-ods-nb-ux-on-ocp/1575154323908726784/artifacts/nb-ux-on-ocp/test/artifacts/000_prepare/001_test_run_1/plotting/report_00_report:_error_report.html) 3/300 successes (first run)

[second run](https://gcsweb-ci.apps.ci.l2s4.p1.openshiftapps.com/gcs/origin-ci-test/pr-logs/pull/openshift-psap_ci-artifacts/515/pull-ci-openshift-psap-ci-artifacts-master-ods-nb-ux-on-ocp/1575154323908726784/artifacts/nb-ux-on-ocp/test/artifacts/000_prepare/002_test_run_2/plotting/report_00_report:_error_report.html) 215/300 successes

We got that some of these performance issues are related to `LIST` k8s calls. We have already addressed the `nb-events` endpoint in #627 and now we need to find all the bottlenecks left and fix them.

### Expected Behavior

No issues with multiple users spawning notebooks at the same time.

### Steps To Reproduce

Perform a scale test of notebook creation at user-level

* 300 users

* 1s or 2s delay between each user

* 2 consecutive runs

### Workaround (if any)

_No response_

### OpenShift Infrastructure Version

_No response_

### Openshift Version

_No response_

### What browsers are you seeing the problem on?

_No response_

### Open Data Hub Version

_No response_

### Relevant log output

_No response_ | priority | dashboard cannot launch user notebooks with or delay is there an existing issue for this i have searched the existing issues current behavior follow up of details perform a scale test of notebook creation at user level users or delay between each user consecutive runs successes successes and similar results with masters instead of xlarge masters successes first run successes we got that some of these performance issues are related to list calls we have already addressed the nb events endpoint in and now we need to find all the bottlenecks left and fix them expected behavior no issues with multiple users spawning notebooks at the same time steps to reproduce perform a scale test of notebook creation at user level users or delay between each user consecutive runs workaround if any no response openshift infrastructure version no response openshift version no response what browsers are you seeing the problem on no response open data hub version no response relevant log output no response | 1 |

193,237 | 6,882,849,599 | IssuesEvent | 2017-11-21 06:45:38 | ppy/osu-framework | https://api.github.com/repos/ppy/osu-framework | opened | Fix cursor not hiding | high priority | Code is currently removed (https://github.com/smoogipoo/osu-framework/blob/netstandard/osu.Framework/Platform/GameWindow.cs#L81-L98), because it caused low-level exception on Windows. This needs to be investigated at an OpenTK level. | 1.0 | Fix cursor not hiding - Code is currently removed (https://github.com/smoogipoo/osu-framework/blob/netstandard/osu.Framework/Platform/GameWindow.cs#L81-L98), because it caused low-level exception on Windows. This needs to be investigated at an OpenTK level. | priority | fix cursor not hiding code is currently removed because it caused low level exception on windows this needs to be investigated at an opentk level | 1 |

514,177 | 14,934,904,565 | IssuesEvent | 2021-01-25 11:10:05 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Allow overriding default serializers with a property | Module: Serialization Priority: High Source: Internal Team: Client Team: Core Type: Enhancement good first issue | We have added default serializers to the 4.2 series here:

https://github.com/hazelcast/hazelcast/pull/17934

There is a problem with backward compatibility. If a user had CustomSerializer for optional in 4.1, in 4.2 there is no way to use their serializers, and Hazelcast will throw `java.lang.IllegalArgumentException: [class java.util.Optional] serializer cannot be overridden`

Users can basically remove the Optional serializer and continue but this does not play well with Rolling Upgrade. For Rolling Upgrade to work, the user should be able to continue to use the same serializer that is used in the old version.

The proposal is to add a way to override default serializers to cover this scenario.

1. It should be enabled with a property explicitly. Something like `hazelcast.serialization.allowOverrideDefaultSerializers` in ClusterProperty with default value `false`.

2. When the instance does not start and throws the `IllegalArgumentException`, we should mention the new property and the implications on a log.

3. This new property should be documented in release notes and reference manual.

| 1.0 | Allow overriding default serializers with a property - We have added default serializers to the 4.2 series here:

https://github.com/hazelcast/hazelcast/pull/17934

There is a problem with backward compatibility. If a user had CustomSerializer for optional in 4.1, in 4.2 there is no way to use their serializers, and Hazelcast will throw `java.lang.IllegalArgumentException: [class java.util.Optional] serializer cannot be overridden`

Users can basically remove the Optional serializer and continue but this does not play well with Rolling Upgrade. For Rolling Upgrade to work, the user should be able to continue to use the same serializer that is used in the old version.

The proposal is to add a way to override default serializers to cover this scenario.

1. It should be enabled with a property explicitly. Something like `hazelcast.serialization.allowOverrideDefaultSerializers` in ClusterProperty with default value `false`.

2. When the instance does not start and throws the `IllegalArgumentException`, we should mention the new property and the implications on a log.

3. This new property should be documented in release notes and reference manual.

| priority | allow overriding default serializers with a property we have added default serializers to the series here there is a problem with backward compatibility if a user had customserializer for optional in in there is no way to use their serializers and hazelcast will throw java lang illegalargumentexception serializer cannot be overridden users can basically remove the optional serializer and continue but this does not play well with rolling upgrade for rolling upgrade to work the user should be able to continue to use the same serializer that is used in the old version the proposal is to add a way to override default serializers to cover this scenario it should be enabled with a property explicitly something like hazelcast serialization allowoverridedefaultserializers in clusterproperty with default value false when the instance does not start and throws the illegalargumentexception we should mention the new property and the implications on a log this new property should be documented in release notes and reference manual | 1 |

28,366 | 2,701,180,998 | IssuesEvent | 2015-04-05 00:41:32 | TypeStrong/atom-typescript | https://api.github.com/repos/TypeStrong/atom-typescript | opened | External modules Dependency Diagram | priority:high | This is one of the things I promised when I suggest that you *must* use external modules.

This is a problem for which people have asked for a solution before. Specifically doing cyclic checks is also useful. | 1.0 | External modules Dependency Diagram - This is one of the things I promised when I suggest that you *must* use external modules.

This is a problem for which people have asked for a solution before. Specifically doing cyclic checks is also useful. | priority | external modules dependency diagram this is one of the things i promised when i suggest that you must use external modules this is a problem for which people have asked for a solution before specifically doing cyclic checks is also useful | 1 |

680,605 | 23,279,558,593 | IssuesEvent | 2022-08-05 10:37:23 | chakra-ui/chakra-ui | https://api.github.com/repos/chakra-ui/chakra-ui | closed | vite:dep-pre-bundle cannot resolve entry for some Chakra UI packages | Priority: High 🚨 | ### Description

I have a Vite SPA using React and Typescript template from Vite. After installing dependencies and running "yarn dev" which starts the development server, errors like this pop up: "[plugin vite:dep-pre-bundle] Failed to resolve entry for package "@chakra-ui/react-utils". The package may have incorrect main/module/exports specified in its package.json."

### Link to Reproduction

https://github.com/frle10/chakra-ui-package-versions-bug

### Steps to reproduce

1. Clone repo from link to reproduction