Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

747,402 | 26,083,275,573 | IssuesEvent | 2022-12-25 18:15:53 | bounswe/bounswe2022group1 | https://api.github.com/repos/bounswe/bounswe2022group1 | opened | Adding 'upvote' Feature for a Learning Space | Priority: High Type: Task Status: In Progress Frontend | **Issue Description:**

I will add `upvote` feature for a learning space and create a pull request for this new feature if it will be completed.

**Tasks to Do:**

- [ ] add issue labels

- [ ] add reviewer

- [ ] add related links

- [ ] search for `upvote` feature

- [ ] implement `upvote` feature

- [ ] create a PR

*Task Deadline: 25/12/2022 11:59 pm*

*Final Situation:*

*Reviewer: @kamilkorkut*

| 1.0 | Adding 'upvote' Feature for a Learning Space - **Issue Description:**

I will add `upvote` feature for a learning space and create a pull request for this new feature if it will be completed.

**Tasks to Do:**

- [ ] add issue labels

- [ ] add reviewer

- [ ] add related links

- [ ] search for `upvote` feature

- [ ] implement `upvote` feature

- [ ] create a PR

*Task Deadline: 25/12/2022 11:59 pm*

*Final Situation:*

*Reviewer: @kamilkorkut*

| priority | adding upvote feature for a learning space issue description i will add upvote feature for a learning space and create a pull request for this new feature if it will be completed tasks to do add issue labels add reviewer add related links search for upvote feature implement upvote feature create a pr task deadline pm final situation reviewer kamilkorkut | 1 |

764,348 | 26,796,608,963 | IssuesEvent | 2023-02-01 12:21:37 | SuddenDevelopment/StopMotion | https://api.github.com/repos/SuddenDevelopment/StopMotion | opened | Keyframing | enhancement Priority High | Let's have a chat about this.

**Some things to think about?**

1. Process of keyframing.

2. Hotkeys.

3. Setting the first key.

4. Storing a default pose for keys. | 1.0 | Keyframing - Let's have a chat about this.

**Some things to think about?**

1. Process of keyframing.

2. Hotkeys.

3. Setting the first key.

4. Storing a default pose for keys. | priority | keyframing let s have a chat about this some things to think about process of keyframing hotkeys setting the first key storing a default pose for keys | 1 |

805,875 | 29,670,855,630 | IssuesEvent | 2023-06-11 11:44:43 | OJ-lab/judger | https://api.github.com/repos/OJ-lab/judger | closed | Relation fix with judge-test-collection | good first issue high priority | https://github.com/OJ-lab/judger-test-collection/issues/2

After this issue fixed, we should also apply related changes for judger. | 1.0 | Relation fix with judge-test-collection - https://github.com/OJ-lab/judger-test-collection/issues/2

After this issue fixed, we should also apply related changes for judger. | priority | relation fix with judge test collection after this issue fixed we should also apply related changes for judger | 1 |

112,626 | 4,535,183,856 | IssuesEvent | 2016-09-08 16:33:06 | openml/openml-r | https://api.github.com/repos/openml/openml-r | closed | Diagnosing data download issues | bug high priority | I encountered some problems while downloading a few datasets, e.g.:

```

Downloading from 'http://www.openml.org/api/v1/data/1223' to '/Users/joa/.openml/cache/datasets/1223/description.xml'.

Downloading from 'http://www.openml.org/data/download/249042/letter-challenge-labeled.arff' to '/Users/joa/.openml/cache/datasets/1223/dataset.arff'

Error in parseHeader(path) :

Invalid column specification line found in ARFF header:

<!doctype html>

```

It looks like the server is returning an error/warning instead of the actual dataset. However, since only the first line is shown (I'm using verbosity=2) I cannot read the error message. I also cannot reproduce the error because it works fine when I use the REST API directly. There must be something different with the request made by the R interface.

How do I get the full message returned by the server to the R interface?

Thanks!

Joaquin

| 1.0 | Diagnosing data download issues - I encountered some problems while downloading a few datasets, e.g.:

```

Downloading from 'http://www.openml.org/api/v1/data/1223' to '/Users/joa/.openml/cache/datasets/1223/description.xml'.

Downloading from 'http://www.openml.org/data/download/249042/letter-challenge-labeled.arff' to '/Users/joa/.openml/cache/datasets/1223/dataset.arff'

Error in parseHeader(path) :

Invalid column specification line found in ARFF header:

<!doctype html>

```

It looks like the server is returning an error/warning instead of the actual dataset. However, since only the first line is shown (I'm using verbosity=2) I cannot read the error message. I also cannot reproduce the error because it works fine when I use the REST API directly. There must be something different with the request made by the R interface.

How do I get the full message returned by the server to the R interface?

Thanks!

Joaquin

| priority | diagnosing data download issues i encountered some problems while downloading a few datasets e g downloading from to users joa openml cache datasets description xml downloading from to users joa openml cache datasets dataset arff error in parseheader path invalid column specification line found in arff header it looks like the server is returning an error warning instead of the actual dataset however since only the first line is shown i m using verbosity i cannot read the error message i also cannot reproduce the error because it works fine when i use the rest api directly there must be something different with the request made by the r interface how do i get the full message returned by the server to the r interface thanks joaquin | 1 |
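
A note on the OpenML download report above: the ARFF parser fails on `<!doctype html>`, which suggests the downloaded file is an HTML page rather than a dataset. The following is a minimal sketch, in Python rather than R, of checking a downloaded payload before handing it to a parser and surfacing more than the first line of the server response. The function names `looks_like_html` and `check_arff_payload` are hypothetical and are not part of the OpenML R package.

```python
def looks_like_html(text):
    """Return True if the payload appears to be an HTML page, not ARFF."""
    head = text.lstrip()[:200].lower()
    return head.startswith("<!doctype html") or head.startswith("<html")


def check_arff_payload(text, preview_chars=500):
    """Raise with a longer preview of the response when it is HTML.

    Hypothetical helper: a real client would call this right after the
    download and before parsing the ARFF header.
    """
    if looks_like_html(text):
        preview = text[:preview_chars]
        raise ValueError(
            "Expected ARFF data but received HTML. Start of response:\n" + preview
        )
    return text


if __name__ == "__main__":
    try:
        check_arff_payload("<!doctype html>\n<html><body>Server error</body></html>")
    except ValueError as err:
        print(err)
```

Showing a longer preview instead of only the first line would let the reporter read the actual server message.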

28,076 | 2,699,293,984 | IssuesEvent | 2015-04-03 15:54:52 | CenterForOpenScience/osf.io | https://api.github.com/repos/CenterForOpenScience/osf.io | closed | Email links not logged to console when running `invoke server` | 5 - Pending Review Bug: Staging Community Priority - High | h/t @cosenal for helping us find this issue on IRC.

When `USE_EMAIL` is set to `False` in `website.settings` and a new user is registered, the email that would have been sent to the user is printed to the console as part of the log output. This is important for development, as copying and pasting this link is how a developer can confirm the registration of an account on their local copy.

This log no longer prints. Upon investigation, @HarryRybacki and I discovered that while it doesn't print when the server is run through `invoke server`, it does print when it is invoked through `python main.py`. The log level for the email is `DEBUG`, and it appears that the logger's threshold is being changed somewhere when `inv server` is run, as no logs of that level are being printed.

Since our documentation tells devs to run the OSF locally through Invoke, by running `inv server`, this issue is preventing community developers from creating and registering accounts locally. | 1.0 | Email links not logged to console when running `invoke server` - h/t @cosenal for helping us find this issue on IRC.

When `USE_EMAIL` is set to `False` in `website.settings` and a new user is registered, the email that would have been sent to the user is printed to the console as part of the log output. This is important for development, as copying and pasting this link is how a developer can confirm the registration of an account on their local copy.

This log no longer prints. Upon investigation, @HarryRybacki and I discovered that while it doesn't print when the server is run through `invoke server`, it does print when it is invoked through `python main.py`. The log level for the email is `DEBUG`, and it appears that the logger's threshold is being changed somewhere when `inv server` is run, as no logs of that level are being printed.

Since our documentation tells devs to run the OSF locally through Invoke, by running `inv server`, this issue is preventing community developers from creating and registering accounts locally. | priority | email links not logged to console when running invoke server h t cosenal for helping us find this issue on irc when use email is set to false in website settings and a new user is registered the email that would have been sent to the user is printed to the console as part of the log output this is important for development as copying and pasting this link is how a developer can confirm the registration of an account on their local copy this log no longer prints upon investigation harryrybacki and i discovered that while it doesn t print when the server is run through invoke server it does print when it is invoke through python main py the log lever for the email is debug and it appears that the logger s threshold is being changed somewhere when inv server is run as no logs of that level are being printed since our documentation tells devs to run the osf locally through invoke by running inv server this issue is preventing community developers from creating and registering accounts locally | 1 |
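
A note on the OSF logging report above: the described symptom (a `DEBUG` message that disappears under one launch path) matches how Python's standard `logging` module drops records below a logger's threshold. The sketch below only illustrates that mechanism. The logger name, the function `emit_debug_message`, and the confirmation URL are hypothetical and are not taken from the OSF code base.

```python
import io
import logging


def emit_debug_message(threshold):
    """Log one DEBUG record and return the text that reached the handler."""
    stream = io.StringIO()
    # Hypothetical logger name; one logger per threshold keeps runs isolated.
    logger = logging.getLogger(f"email_demo_{threshold}")
    logger.handlers.clear()
    logger.propagate = False
    logger.addHandler(logging.StreamHandler(stream))
    logger.setLevel(threshold)
    # Hypothetical confirmation link, standing in for the emailed one.
    logger.debug("confirmation link: http://localhost/confirm/example-token")
    return stream.getvalue()


if __name__ == "__main__":
    print(repr(emit_debug_message(logging.INFO)))   # record is filtered out
    print(repr(emit_debug_message(logging.DEBUG)))  # record is emitted
```

If something in the `inv server` path raises the threshold above `DEBUG`, the email link would be filtered in exactly this way.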

378,815 | 11,209,146,276 | IssuesEvent | 2020-01-06 09:47:57 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Using torch.multiprocessing along with torch.distributed (mpi-backend) | high priority module: distributed module: mpi triage review triaged | ## 🐛 Bug

Trying to communicate between spawned sub-processes using Pytorch's multiprocessing class within an Openmpi distributed Backend process group fails.

## To Reproduce

Steps to reproduce the behavior:

1. Install Openmpi

2. Build / install Pytorch from source

3. Run the following code using "mpirun -np 2 python test_code.py"

```

import torch
import torch.distributed as dist
import torch.multiprocessing as mp


def run(i, *args):
    # args[0] is the rank of the parent MPI process that spawned this child
    print("I WAS SPAWNED BY:", args[0])
    tsr = torch.zeros(1)
    if args[0] == 0:
        tsr += 100
        dist.send(tsr, dst=1)
    else:
        dist.recv(tsr)
        print("RECEIVED VALUE =", tsr)


if __name__ == '__main__':
    # Initialize Process Group
    dist.init_process_group(backend="mpi")
    mp.set_start_method('spawn')

    # get current process information
    world_size = dist.get_world_size()
    rank = dist.get_rank()

    # spawn sub-processes
    mp.spawn(run, args=(rank, world_size,), nprocs=1)

```

Following exception is raised "Default process group is not initialized"

```

Traceback (most recent call last):

File "test_code_2.py", line 29, in <module>

mp.spawn(run, args=(rank, world_size,), nprocs=1)

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/multiprocessing/spawn.py", line 167, in spawn

while not spawn_context.join():

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/multiprocessing/spawn.py", line 114, in join

raise Exception(msg)

Exception:

-- Process 0 terminated with the following error:

Traceback (most recent call last):

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/multiprocessing/spawn.py", line 19, in _wrap

fn(i, *args)

File "/media/usama/Personal/Study/LAB/experiments/codes/test_code_2.py", line 12, in run

dist.send(tsr, dst=1)

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/distributed/distributed_c10d.py", line 660, in send

_check_default_pg()

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/distributed/distributed_c10d.py", line 185, in _check_default_pg

"Default process group is not initialized"

AssertionError: Default process group is not initialized

```

## Expected behavior

Each sub-process should be able to communicate not only with other sub-processes but also with parent processes.

## Environment

PyTorch version: 1.1.0a0+8f0603b

Is debug build: No

CUDA used to build PyTorch: 10.1.105

OS: Ubuntu 18.04.3 LTS

GCC version: (Ubuntu 7.4.0-1ubuntu1~18.04.1) 7.4.0

CMake version: version 3.14.3

Python version: 3.7

Is CUDA available: Yes

CUDA runtime version: Could not collect

GPU models and configuration: GPU 0: GeForce GTX 960M

Nvidia driver version: 430.64

cuDNN version: /usr/local/cuda-10.1/targets/x86_64-linux/lib/libcudnn.so.7

Versions of relevant libraries:

[pip] numpy==1.17.4

[pip] numpydoc==0.9.1

[pip] torch==1.1.0a0+c182824

[pip] torchvision==0.2.2

[conda] _tflow_select 2.3.0 mkl

[conda] blas 1.0 mkl

[conda] mkl 2019.4 243

[conda] mkl-service 2.3.0 py37he904b0f_0

[conda] mkl_fft 1.0.15 py37ha843d7b_0

[conda] mkl_random 1.1.0 py37hd6b4f25_0

[conda] pytorch 1.0.1 cuda100py37he554f03_0

[conda] tensorflow 1.15.0 mkl_py37h28c19af_0

[conda] tensorflow-base 1.15.0 mkl_py37he1670d9_0

[conda] torch 1.1.0a0+c182824 pypi_0 pypi

[conda] torchvision 0.2.2 py_3 pytorch

## Additional context

Is it even possible to communicate between sub-process to two different parent processes?

cc @ezyang @gchanan @zou3519 @pietern @mrshenli @pritamdamania87 @zhaojuanmao @satgera @rohan-varma @gqchen @aazzolini @xush6528 | 1.0 | Using torch.multiprocessing along with torch.distributed (mpi-backend) - ## 🐛 Bug

Trying to communicate between spawned sub-processes using Pytorch's multiprocessing class within an Openmpi distributed Backend process group fails.

## To Reproduce

Steps to reproduce the behavior:

1. Install Openmpi

2. Build / install Pytorch from source

3. Run the following code using "mpirun -np 2 python test_code.py"

```

import torch
import torch.distributed as dist
import torch.multiprocessing as mp


def run(i, *args):
    # args[0] is the rank of the parent MPI process that spawned this child
    print("I WAS SPAWNED BY:", args[0])
    tsr = torch.zeros(1)
    if args[0] == 0:
        tsr += 100
        dist.send(tsr, dst=1)
    else:
        dist.recv(tsr)
        print("RECEIVED VALUE =", tsr)


if __name__ == '__main__':
    # Initialize Process Group
    dist.init_process_group(backend="mpi")
    mp.set_start_method('spawn')

    # get current process information
    world_size = dist.get_world_size()
    rank = dist.get_rank()

    # spawn sub-processes
    mp.spawn(run, args=(rank, world_size,), nprocs=1)

```

Following exception is raised "Default process group is not initialized"

```

Traceback (most recent call last):

File "test_code_2.py", line 29, in <module>

mp.spawn(run, args=(rank, world_size,), nprocs=1)

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/multiprocessing/spawn.py", line 167, in spawn

while not spawn_context.join():

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/multiprocessing/spawn.py", line 114, in join

raise Exception(msg)

Exception:

-- Process 0 terminated with the following error:

Traceback (most recent call last):

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/multiprocessing/spawn.py", line 19, in _wrap

fn(i, *args)

File "/media/usama/Personal/Study/LAB/experiments/codes/test_code_2.py", line 12, in run

dist.send(tsr, dst=1)

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/distributed/distributed_c10d.py", line 660, in send

_check_default_pg()

File "/home/usama/anaconda3/lib/python3.7/site-packages/torch/distributed/distributed_c10d.py", line 185, in _check_default_pg

"Default process group is not initialized"

AssertionError: Default process group is not initialized

```

## Expected behavior

Each sub-process should be able to communicate not only with other sub-processes but also with parent processes.

## Environment

PyTorch version: 1.1.0a0+8f0603b

Is debug build: No

CUDA used to build PyTorch: 10.1.105

OS: Ubuntu 18.04.3 LTS

GCC version: (Ubuntu 7.4.0-1ubuntu1~18.04.1) 7.4.0

CMake version: version 3.14.3

Python version: 3.7

Is CUDA available: Yes

CUDA runtime version: Could not collect

GPU models and configuration: GPU 0: GeForce GTX 960M

Nvidia driver version: 430.64

cuDNN version: /usr/local/cuda-10.1/targets/x86_64-linux/lib/libcudnn.so.7

Versions of relevant libraries:

[pip] numpy==1.17.4

[pip] numpydoc==0.9.1

[pip] torch==1.1.0a0+c182824

[pip] torchvision==0.2.2

[conda] _tflow_select 2.3.0 mkl

[conda] blas 1.0 mkl

[conda] mkl 2019.4 243

[conda] mkl-service 2.3.0 py37he904b0f_0

[conda] mkl_fft 1.0.15 py37ha843d7b_0

[conda] mkl_random 1.1.0 py37hd6b4f25_0

[conda] pytorch 1.0.1 cuda100py37he554f03_0

[conda] tensorflow 1.15.0 mkl_py37h28c19af_0

[conda] tensorflow-base 1.15.0 mkl_py37he1670d9_0

[conda] torch 1.1.0a0+c182824 pypi_0 pypi

[conda] torchvision 0.2.2 py_3 pytorch

## Additional context

Is it even possible to communicate between sub-process to two different parent processes?

cc @ezyang @gchanan @zou3519 @pietern @mrshenli @pritamdamania87 @zhaojuanmao @satgera @rohan-varma @gqchen @aazzolini @xush6528 | priority | using torch multiprocessing along with torch distributed mpi backend 🐛 bug trying to communicate between spawned sub processes using pytorch s multiprocessing class within an openmpi distributed backend process group fails to reproduce steps to reproduce the behavior install openmpi build install pytorch from source run the following code using mpirun np python test code py def run i args print i was spawned by args tsr torch zeros if args tsr dist send tsr dst else dist recv tsr print received value tsr if name main initialize process group dist init process group backend mpi mp set start method spawn get current process information world size dist get world size rank dist get rank spawn sub processes mp spawn run args rank world size nprocs following exception is raised default process group is not initialized traceback most recent call last file test code py line in mp spawn run args rank world size nprocs file home usama lib site packages torch multiprocessing spawn py line in spawn while not spawn context join file home usama lib site packages torch multiprocessing spawn py line in join raise exception msg exception process terminated with the following error traceback most recent call last file home usama lib site packages torch multiprocessing spawn py line in wrap fn i args file media usama personal study lab experiments codes test code py line in run dist send tsr dst file home usama lib site packages torch distributed distributed py line in send check default pg file home usama lib site packages torch distributed distributed py line in check default pg default process group is not initialized assertionerror default process group is not initialized expected behavior each sub process should be able to communicate not only with other sub processes but also with parent processes environment pytorch version is debug 
build no cuda used to build pytorch os ubuntu lts gcc version ubuntu cmake version version python version is cuda available yes cuda runtime version could not collect gpu models and configuration gpu geforce gtx nvidia driver version cudnn version usr local cuda targets linux lib libcudnn so versions of relevant libraries numpy numpydoc torch torchvision tflow select mkl blas mkl mkl mkl service mkl fft mkl random pytorch tensorflow mkl tensorflow base mkl torch pypi pypi torchvision py pytorch additional context is it even possible to communicate between sub process to two different parent processes cc ezyang gchanan pietern mrshenli zhaojuanmao satgera rohan varma gqchen aazzolini | 1 |

594,960 | 18,058,092,471 | IssuesEvent | 2021-09-20 10:50:25 | cusodede/dpl | https://api.github.com/repos/cusodede/dpl | opened | Move to GitLab | priority:high | Planned for the end of this week or the start of next week.

GitHub stays as a backup platform. We finish all ongoing work here; from the moment of the move, all new activity happens in GitLab.

What needs to be done:

- [ ] All PRs: merge or close.

- [ ] All tickets: bring up to date. Close what is done; move what is needed but not yet implemented to Jira; handle side tasks case by case (ideally, complete and merge them).

- [ ] All branches: review. Delete merged ones; for unmerged ones, decide whether they are still needed. If not, delete them; if so, keep them here to be finished. | 1.0 | Move to GitLab - Planned for the end of this week or the start of next week.

GitHub stays as a backup platform. We finish all ongoing work here; from the moment of the move, all new activity happens in GitLab.

What needs to be done:

- [ ] All PRs: merge or close.

- [ ] All tickets: bring up to date. Close what is done; move what is needed but not yet implemented to Jira; handle side tasks case by case (ideally, complete and merge them).

- [ ] All branches: review. Delete merged ones; for unmerged ones, decide whether they are still needed. If not, delete them; if so, keep them here to be finished. | priority | move to gitlab planned for the end of this week or the start of next week github stays as a backup platform we finish all ongoing work here from the moment of the move all new activity happens in gitlab what needs to be done all prs merge or close all tickets bring up to date close what is done move what is needed but not yet implemented to jira handle side tasks case by case ideally complete and merge them all branches review delete merged ones for unmerged ones decide whether they are still needed if not delete them if so keep them here to be finished | 1 |

271,137 | 8,476,585,831 | IssuesEvent | 2018-10-24 22:32:24 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [beta 7.8.0 b7709677] Sorting for match does not work | Fixed High Priority | When you sort for the "match" of an server this will not work, the other sorting features do work. | 1.0 | [beta 7.8.0 b7709677] Sorting for match does not work - When you sort for the "match" of an server this will not work, the other sorting features do work. | priority | sorting for match does not work when you sort for the match of an server this will not work the other sorting features do work | 1 |

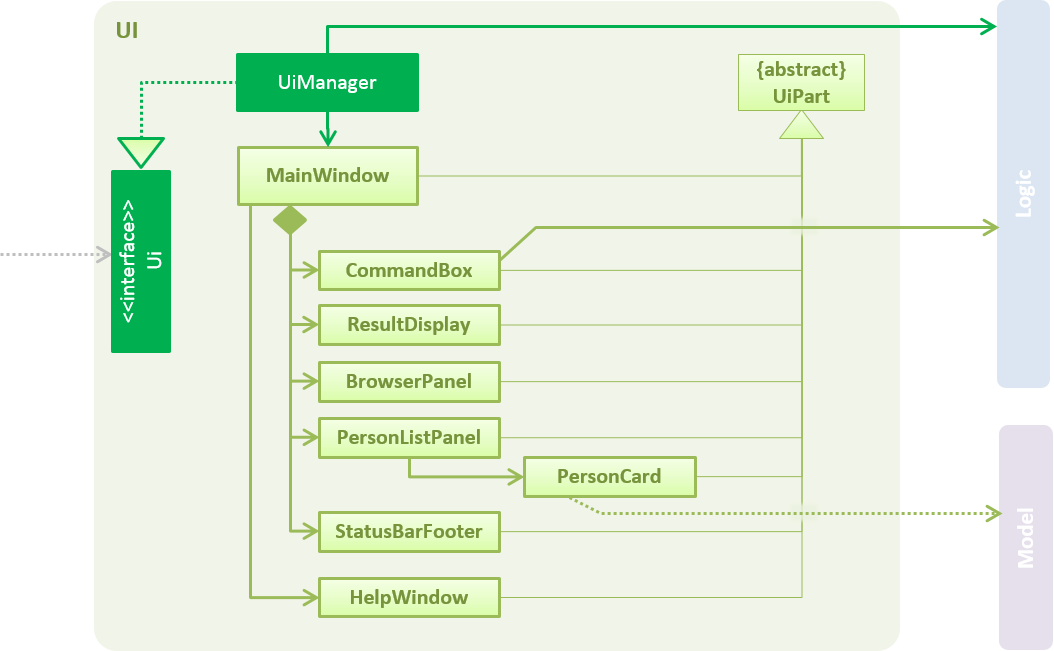

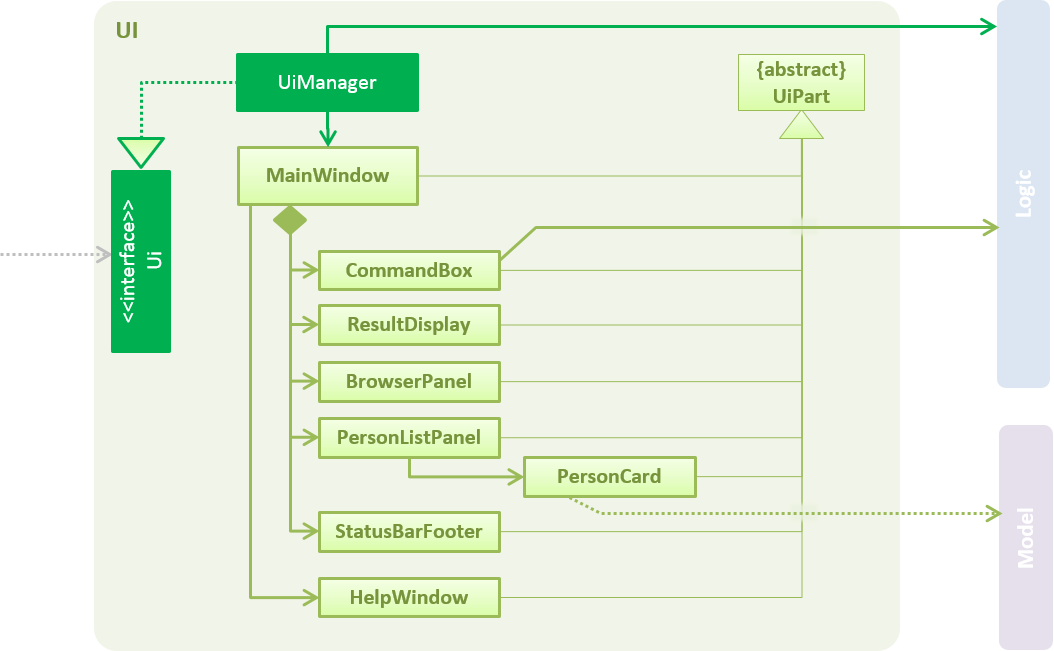

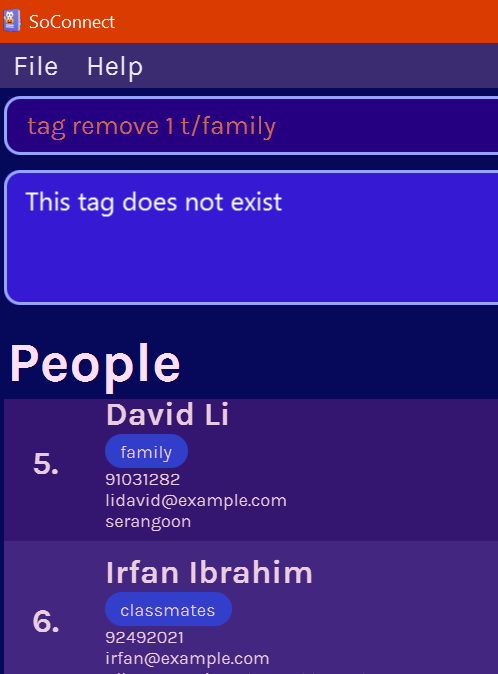

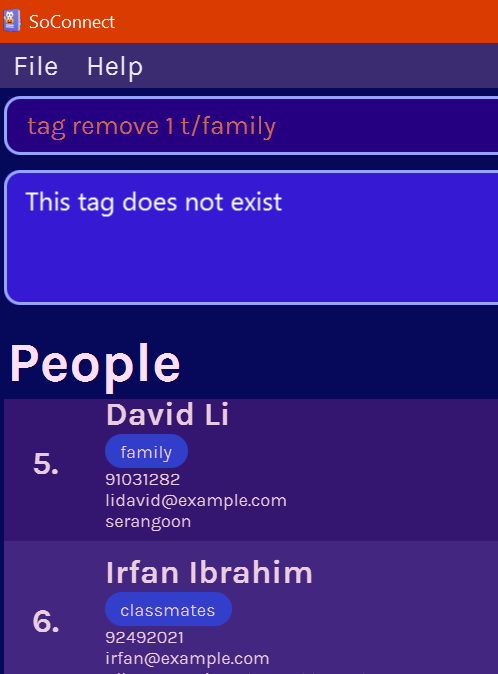

274,868 | 8,568,910,621 | IssuesEvent | 2018-11-11 03:33:47 | CS2103-AY1819S1-F10-4/main | https://api.github.com/repos/CS2103-AY1819S1-F10-4/main | closed | UI component mentions Person | component.docs priority.high type.bug | **Describe the bug**

As illustrated, it still mentions Person.

**Expected behavior**

Should be updated to fit the state of our application. | 1.0 | UI component mentions Person - **Describe the bug**

As illustrated, it still mentions Person.

**Expected behavior**

Should be updated to fit the state of our application. | priority | ui component mentions person describe the bug as illustrated it still mentions person expected behavior should be updated to fit the state of our application | 1 |

745,752 | 25,999,163,552 | IssuesEvent | 2022-12-20 14:03:21 | nf-core/taxprofiler | https://api.github.com/repos/nf-core/taxprofiler | closed | Revert back to python samplesheet checking | bug high-priority | ### Description of the bug

We need a release very soon, and unfortunately the current version of `eido` is not producing good enough errors.

We should retain the current `eido` implementation in a separate branch, however, so that it can perhaps be re-integrated at a future date.

### Command used and terminal output

_No response_

### Relevant files

_No response_

### System information

_No response_ | 1.0 | Revert back to python samplesheet checking - ### Description of the bug

We need a release very soon, and unfortunately the current version of `eido` is not producing good enough errors.

We should retain the current `eido` implementation in a separate branch, however, so that it can perhaps be re-integrated at a future date.

### Command used and terminal output

_No response_

### Relevant files

_No response_

### System information

_No response_ | priority | revert back to python samplesheet checking description of the bug we need a release very soon and unfortunately the current version of eido is not producing good enough errors we should retain the current eido implementation in a separte branch however to maybe re integrate it at a future date command used and terminal output no response relevant files no response system information no response | 1 |

368,410 | 10,878,381,829 | IssuesEvent | 2019-11-16 17:15:41 | bounswe/bounswe2019group10 | https://api.github.com/repos/bounswe/bounswe2019group10 | closed | Fix evaluation of own writing | Priority: High Relation: Backend Type: Bug | Currently a user can evaluate his/her own quiz. This needs to be fixed. | 1.0 | Fix evaluation of own writing - Currently a user can evaluate his/her own quiz. This needs to be fixed. | priority | fix evaluation of own writing currently a user can evaluate his her own quiz this needs to be fixed | 1 |

584,563 | 17,458,354,899 | IssuesEvent | 2021-08-06 06:47:35 | kubesphere/ks-devops | https://api.github.com/repos/kubesphere/ks-devops | closed | Correct repository owner comparison condition in GitHub action configuration | kind/bug priority/high | Recently, we have transferred organization of ks-devops from `kubesphere-sigs` to `kubesphere`.

But, [in GitHub action configuration](https://github.com/kubesphere/ks-devops/blob/7714e9c334ad2f2c68bad2260415047eea71b7e2/.github/workflows/build.yaml), `kubesphere-sigs` is still be used to compare with `github.repository_owner`.

I'm not sure if we are supposed to label this issue as `good-first-issue`, because this problem will affect docker image build triggered by subsequent commit. | 1.0 | Correct repository owner comparison condition in GitHub action configuration - Recently, we have transferred organization of ks-devops from `kubesphere-sigs` to `kubesphere`.

But, [in GitHub action configuration](https://github.com/kubesphere/ks-devops/blob/7714e9c334ad2f2c68bad2260415047eea71b7e2/.github/workflows/build.yaml), `kubesphere-sigs` is still be used to compare with `github.repository_owner`.

I'm not sure if we are supposed to label this issue as `good-first-issue`, because this problem will affect docker image build triggered by subsequent commit. | priority | correct repository owner comparison condition in github action configuration recently we have transferred organization of ks devops from kubesphere sigs to kubesphere but kubesphere sigs is still be used to compare with github repository owner i m not sure if we are supposed to label this issue as good first issue because this problem will affect docker image build triggered by subsequent commit | 1 |

301,342 | 9,219,243,362 | IssuesEvent | 2019-03-11 15:02:51 | TrinityCore/TrinityCore | https://api.github.com/repos/TrinityCore/TrinityCore | closed | [3.3.5] Core/Spell: Negative auras displayed as buff instead of debuff | Branch-3.3.5a Comp-Core Priority-High Sub-Spells | **Description:**

Spell: Leeching Swarm (https://www.wowhead.com/spell=53468)

Leeching Swarm is a buff that Deals 2 Nature damage per second

**Current behaviour:**

It appears like a buff, instead of a debuff

**Expected behaviour:**

It should be a debuff.

**Steps to reproduce the problem:**

1. .go c id 29120

2. Start the encounter and Anub'arak will cast this on the character.

**Branch(es):** 3.3.5

**TC rev. hash/commit:** 7b40303a488ef3edcee0930aee5171bb3df97688

**Operating system:** Win 8.1 | 1.0 | [3.3.5] Core/Spell: Negative auras displayed as buff instead of debuff - **Description:**

Spell: Leeching Swarm (https://www.wowhead.com/spell=53468)

Leeching Swarm is a buff that Deals 2 Nature damage per second

**Current behaviour:**

It appears like a buff, instead of a debuff

**Expected behaviour:**

It should be a debuff.

**Steps to reproduce the problem:**

1. .go c id 29120

2. Start the encounter and Anub'arak will cast this on the character.

**Branch(es):** 3.3.5

**TC rev. hash/commit:** 7b40303a488ef3edcee0930aee5171bb3df97688

**Operating system:** Win 8.1 | priority | core spell negative auras displayed as buff instead of debuff description spell leeching swarm leeching swarm is a buff that deals nature damage per second current behaviour it appears like a buff instead of a debuff expected behaviour it should be a debuff steps to reproduce the problem go c id start the encounter and anub arak will cast this on the character branch es tc rev hash commit operating system win | 1 |

832,060 | 32,071,131,852 | IssuesEvent | 2023-09-25 08:05:42 | EnMAP-Box/enmap-box | https://api.github.com/repos/EnMAP-Box/enmap-box | closed | [Spectral resampling algos] add option for linear interpolation | feature request priority: high | _Requested by Akpona and discussed with @jakimowb and Sebastian van der Linden._

Currently all spectral resampling algos are using SRF for resampling.

It is proposed to add an option that uses simple linear interpolation instead.

| 1.0 | [Spectral resampling algos] add option for linear interpolation - _Requested by Akpona and discussed with @jakimowb and Sebastian van der Linden._

Currently all spectral resampling algos are using SRF for resampling.

It is proposed to add an option that uses simple linear interpolation instead.

| priority | add option for linear interpolation requested by akpona and discussed with jakimowb and sebastian van der linden currently all spectral resampling algos are using srf for resampling it is proposed to add an option that uses simple linear interpolation instead | 1 |
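
A note on the EnMAP-Box request above: the issue asks for simple linear interpolation as an alternative to SRF-based resampling. As a rough illustration of what that could mean for a single spectrum, here is a minimal pure-Python sketch. The function name `resample_linear` is hypothetical and is not EnMAP-Box API, and clamping to the edge values outside the source wavelength range is an assumption made for this example.

```python
from bisect import bisect_left


def resample_linear(src_wavelengths, src_values, target_wavelengths):
    """Linearly interpolate one spectrum onto new band center wavelengths.

    src_wavelengths must be sorted ascending. Targets outside the source
    range are clamped to the nearest edge value (an assumption here).
    """
    resampled = []
    for w in target_wavelengths:
        if w <= src_wavelengths[0]:
            resampled.append(src_values[0])
            continue
        if w >= src_wavelengths[-1]:
            resampled.append(src_values[-1])
            continue
        # Index of the first source wavelength that is >= w
        j = bisect_left(src_wavelengths, w)
        x0, x1 = src_wavelengths[j - 1], src_wavelengths[j]
        y0, y1 = src_values[j - 1], src_values[j]
        t = (w - x0) / (x1 - x0)
        resampled.append(y0 + t * (y1 - y0))
    return resampled


if __name__ == "__main__":
    print(resample_linear([400, 500, 600], [0.1, 0.3, 0.2], [450, 500, 550]))
```

Unlike SRF-based resampling, this uses only the two neighbouring source bands for each target wavelength and ignores band widths.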

248,353 | 7,929,578,230 | IssuesEvent | 2018-07-06 15:31:32 | canonical-websites/www.ubuntu.com | https://api.github.com/repos/canonical-websites/www.ubuntu.com | opened | misspelled Support on MOBILE - DEV | Priority: High |

<img width="702" alt="screen shot 2018-07-06 at 16 23 17" src="https://user-images.githubusercontent.com/36884067/42387348-f4e3f1c2-8139-11e8-8a08-aab35e41a0e5.png">

---

*Reported from: http://mongoose.staging.ubuntu.com/* | 1.0 | misspelled Support on MOBILE - DEV -

<img width="702" alt="screen shot 2018-07-06 at 16 23 17" src="https://user-images.githubusercontent.com/36884067/42387348-f4e3f1c2-8139-11e8-8a08-aab35e41a0e5.png">

---

*Reported from: http://mongoose.staging.ubuntu.com/* | priority | misspelled support on mobile dev img width alt screen shot at src reported from | 1 |

410,614 | 11,994,483,788 | IssuesEvent | 2020-04-08 13:46:59 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | closed | Update All In app links to new help center URL | Bug Needed for V2 launch Priority: High | All Help Cneter links need to be updated to new urls

https://docs.google.com/spreadsheets/d/1UHaFfDF9LBNgDcQx4U9ZyQXW8F6OD0MQfnpAvt_O0JI/edit#gid=603506167 | 1.0 | Update All In app links to new help center URL - All Help Cneter links need to be updated to new urls

https://docs.google.com/spreadsheets/d/1UHaFfDF9LBNgDcQx4U9ZyQXW8F6OD0MQfnpAvt_O0JI/edit#gid=603506167 | priority | update all in app links to new help center url all help cneter links need to be updated to new urls | 1 |

283,800 | 8,723,523,243 | IssuesEvent | 2018-12-09 22:33:35 | noamross/redoc | https://api.github.com/repos/noamross/redoc | closed | Improve word -> md critic markup lua filter comment handling | high-priority | The lua filter that creates Critic Markup comments from docx is not complete, as it inserts comments at the start location of where they are in the document, but does not properly wrap the selected area with `{==` `==}`. | 1.0 | Improve word -> md critic markup lua filter comment handling - The lua filter that creates Critic Markup comments from docx is not complete, as it inserts comments at the start location of where they are in the document, but does not properly wrap the selected area with `{==` `==}`. | priority | improve word md critic markup lua filter comment handling the lua filter that creates critic markup comments from docx is not complete as it inserts comments at the start location of where they are in the document but does not properly wrap the selected area with | 1 |

810,931 | 30,268,609,493 | IssuesEvent | 2023-07-07 13:45:07 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | opened | Multiple time the same attribute raise an error on the portal | Type: Bug Priority: High | **Describe the bug**

When, for example, you try to hit the portal with this URL:

http://10.0.0.1/Cisco::WLC/sidbef01b?&redirect=http://fireoscaptiveportal.com/generate_204&redirect=http://fireoscaptiveportal.com/generate_204

PacketFence triggers an error:

httpd.portal-docker-wrapper[289117]: [Fri Jul 07 09:37:10.467422 2023] [perl:error] [pid 16] [client 100.64.0.1:49106] param(): usage error. Invalid syntax at /usr/local/pf/lib/pf/web/externalportal.pm line 208.\n

| 1.0 | Multiple time the same attribute raise an error on the portal - **Describe the bug**

When, for example, you try to hit the portal with this URL:

http://10.0.0.1/Cisco::WLC/sidbef01b?&redirect=http://fireoscaptiveportal.com/generate_204&redirect=http://fireoscaptiveportal.com/generate_204

PacketFence triggers an error:

httpd.portal-docker-wrapper[289117]: [Fri Jul 07 09:37:10.467422 2023] [perl:error] [pid 16] [client 100.64.0.1:49106] param(): usage error. Invalid syntax at /usr/local/pf/lib/pf/web/externalportal.pm line 208.\n

| priority | multiple time the same attribute raise an error on the portal describe the bug when for example you try to hit the portal with this url packetfence triggers an error httpd portal docker wrapper param usage error invalid syntax at usr local pf lib pf web externalportal pm line n | 1 |

67,037 | 3,265,501,950 | IssuesEvent | 2015-10-22 16:29:13 | CoderDojo/community-platform | https://api.github.com/repos/CoderDojo/community-platform | opened | Capacity missing from Dojo | bug high priority | The capacity is empty for all events on the NSC Mahon Dojo. The Docklands one is working fine so I don't know why this is.

This Dojo cannot use the events system because of the error.

| 1.0 | Capacity missing from Dojo - The capacity is empty for all events on the NSC Mahon Dojo. The Docklands one is working fine so I don't know why this is.

This Dojo cannot use the events system because of the error.

| priority | capacity missing from dojo the capacity is empty for all events on the nsc mahon dojo the docklands one is working fine so i don t know why this is this dojo cannot use the events system because of the error | 1 |

660,802 | 22,031,395,007 | IssuesEvent | 2022-05-28 00:14:46 | depub-team/depub.space | https://api.github.com/repos/depub-team/depub.space | reopened | Edit and Delete feature of a single tweet | enhancement high priority | - Add [Edit] (pen icon) below a tweet for the author

- Add [Share] (standard iOS share icon) below all tweets

- Clicking Edit allows editing the tweet. When edited and reposted, the ISCN will stay the same, with the version number incremented by 1.

- A [Delete Tweet] button is available in Edit mode. When used, a set of pre-defined words will be used as the new version of the original tweet. A parameter will also be added to the ISCN, e.g. Deleted: yes (to be discussed). depub.SPACE will not display tweets with such a parameter enabled.

- The predefined tweet can be:

> This tweet is removed from depub.SPACE frontend by the author.

- [ ] Edit a tweet

- [ ] Delete a tweet | 1.0 | Edit and Delete feature of a single tweet - - Add [Edit] (pen icon) below a tweet for the author

- Add [Share] (standard iOS share icon) below all tweets

- Clicking Edit allows editing the tweet. When edited and reposted, the ISCN will stay the same, with the version number incremented by 1.

- A [Delete Tweet] button is available in Edit mode. When used, a set of pre-defined words will be used as the new version of the original tweet. A parameter will also be added to the ISCN, e.g. Deleted: yes (to be discussed). depub.SPACE will not display tweets with such a parameter enabled.

- The predefined tweet can be:

> This tweet is removed from depub.SPACE frontend by the author.

- [ ] Edit a tweet

- [ ] Delete a tweet | priority | edit and delete feature of a single tweet add pen icon below a tweet for the author add standard ios share icon below all tweets clicking edit allows editing the tweet when edited and reposted the iscn will stay same with the version number plus a button is available in edit mode when used a set of pre defined words will be used as the new version of the original tweet also a parameter will be added to the iscn will be added e g deleted yes to be discussed depub space will not display tweets with such a parameter enabled the predefined tweet can be this tweet is removed from depub space frontend by the author edit a tweet delete a tweet | 1 |

268,747 | 8,411,186,580 | IssuesEvent | 2018-10-12 13:11:03 | Kademi/kademi-dev | https://api.github.com/repos/Kademi/kademi-dev | opened | kcom2: possibility to passthrough without shipping provider | High priority bug | When you do it quickly it will allow you to select a shipping provider, but the checkout form will not be updated

https://drive.google.com/open?id=19vES5rHmKgMZsj0LxGAHXJ7rEHJExJWS

http://vladtest54bweb-vladtest54b.kademi-ci.co/vladtest54bweb-ecomstore/cart | 1.0 | kcom2: possibility to passthrough without shipping provider - When you do it quickly it will allow you to select a shipping provider, but the checkout form will not be updated

https://drive.google.com/open?id=19vES5rHmKgMZsj0LxGAHXJ7rEHJExJWS

http://vladtest54bweb-vladtest54b.kademi-ci.co/vladtest54bweb-ecomstore/cart | priority | possibility to passthrough without shipping provider when you do it quickly it will allow you to select a shipping provider but the checkout form will not be updated | 1 |

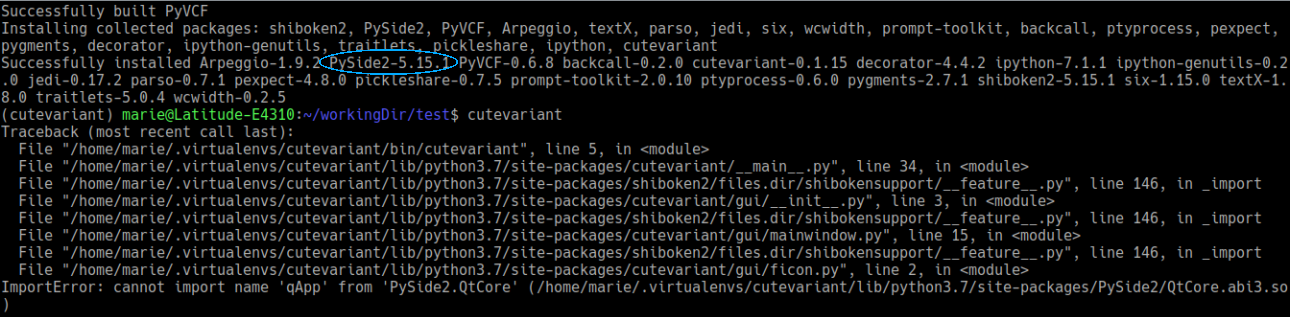

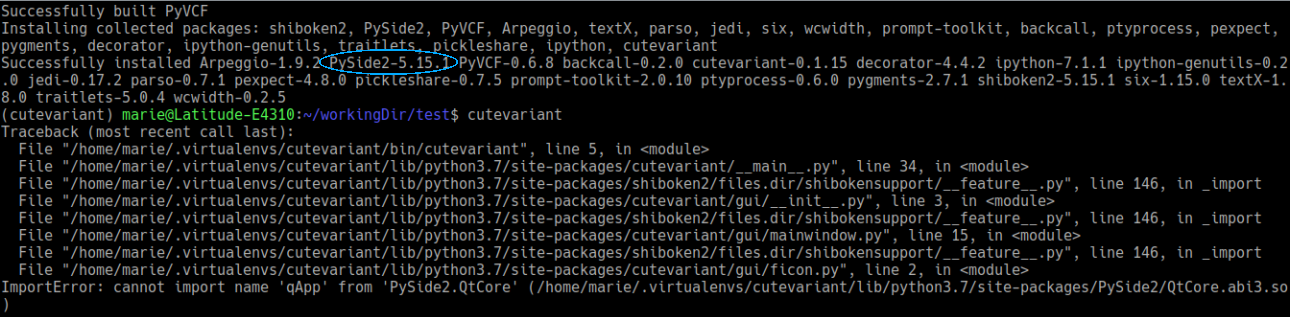

474,044 | 13,651,368,843 | IssuesEvent | 2020-09-27 00:43:23 | labsquare/cutevariant | https://api.github.com/repos/labsquare/cutevariant | closed | Cutevariant not working when installed via pip | bug devel high-priority master | I installed cutevariant via pip (without any error) but when I tried to launch cutevariant I got the following error :

Requirements allow PySide2>=5.11.2 but cutevariant is not working with the last version of PySide2 (5.15.1). The error disappears if you force the installation of PySide2 == 5.11.2. | 1.0 | Cutevariant not working when installed via pip - I installed cutevariant via pip (without any error) but when I tried to launch cutevariant I got the following error :

Requirements allow PySide2>=5.11.2 but cutevariant is not working with the last version of PySide2 (5.15.1). The error disappears if you force the installation of PySide2 == 5.11.2. | priority | cutevariant not working when installed via pip i installed cutevariant via pip without any error but when i tried to launch cutevariant i got the following error requirements allow but cutevariant is not working with the last version of the error disappears if you force the installation of | 1 |

613,260 | 19,085,107,339 | IssuesEvent | 2021-11-29 04:15:22 | pycaret/pycaret | https://api.github.com/repos/pycaret/pycaret | closed | Add Prediction Interval coverage of the test set | enhancement time_series metrics priority_high | **Is your feature request related to a problem? Please describe.**

Per this [comment on LinkedIn](https://www.linkedin.com/feed/update/urn:li:ugcPost:6814933350713741312?commentUrn=urn%3Ali%3Acomment%3A%28ugcPost%3A6814933350713741312%2C6814934502796779520%29&replyUrn=urn%3Ali%3Acomment%3A%28ugcPost%3A6814933350713741312%2C6815038277939273728%29) from @RamiKrispin

**Describe the solution you'd like**

If you are running a horse race between multiple models with backtesting, it is nice to add the PI coverage rate on the testing partitions. | 1.0 | Add Prediction Interval coverage of the test set - **Is your feature request related to a problem? Please describe.**

Per this [comment on LinkedIn](https://www.linkedin.com/feed/update/urn:li:ugcPost:6814933350713741312?commentUrn=urn%3Ali%3Acomment%3A%28ugcPost%3A6814933350713741312%2C6814934502796779520%29&replyUrn=urn%3Ali%3Acomment%3A%28ugcPost%3A6814933350713741312%2C6815038277939273728%29) from @RamiKrispin

**Describe the solution you'd like**

If you are running a horse race between multiple models with backtesting, it is nice to add the PI coverage rate on the testing partitions. | priority | add prediction interval coverage of the test set is your feature request related to a problem please describe per this from ramikrispin describe the solution you d like if you are running a horse race between multiple models with backtesting it is nice to add the pi coverage rate on the testing partitions | 1 |
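
The coverage rate requested in the issue above can be illustrated with a minimal sketch. It assumes coverage means the fraction of test-set observations that fall inside their prediction interval; the function name `pi_coverage` and its parameters are hypothetical and are not part of the pycaret API.

```python
from typing import Sequence


def pi_coverage(
    y_true: Sequence[float],
    lower: Sequence[float],
    upper: Sequence[float],
) -> float:
    """Return the fraction of observations inside [lower, upper] (bounds inclusive).

    Hypothetical helper for illustration only; not a pycaret function.
    """
    if not (len(y_true) == len(lower) == len(upper)):
        raise ValueError("y_true, lower and upper must have the same length")
    if len(y_true) == 0:
        raise ValueError("at least one observation is required")
    # Count the observations that land inside their own interval.
    hits = sum(1 for y, lo, hi in zip(y_true, lower, upper) if lo <= y <= hi)
    return hits / len(y_true)
```

In a backtest, such a value would be computed per test partition and compared with the nominal level of the interval (for example, 0.9 for a 90% interval).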

765,007 | 26,828,365,520 | IssuesEvent | 2023-02-02 14:25:52 | NIAEFEUP/tts-revamp-fe | https://api.github.com/repos/NIAEFEUP/tts-revamp-fe | closed | Hotfix: schedule editing after importing not applying | bug high priority medium effort | After importing a schedule, if the schedule is then edited, the changes are not permanent: after switching to another schedule and then back to the imported schedule, it's possible to notice that the changes were not applied.

Note: this only happens once; after switching back and forth once, the schedule can be edited without any problems. | 1.0 | Hotfix: schedule editing after importing not applying - After importing a schedule, if the schedule is then edited, the changes are not permanent: after switching to another schedule and then back to the imported schedule, it's possible to notice that the changes were not applied.

Note: this only happens once; after switching back and forth once, the schedule can be edited without any problems. | priority | hotfix schedule editing after importing not applying after importing a schedule if the schedule is then edited the changes are not permanent after switching to another schedule and then back to the imported schedule it s possible to notice that the changes were not applied note this only happens once after switching back and forth once the schedule can be edited without any problems | 1 |

664,752 | 22,287,075,428 | IssuesEvent | 2022-06-11 20:06:26 | red-hat-storage/ocs-ci | https://api.github.com/repos/red-hat-storage/ocs-ci | closed | Fail to pull FIO image, due to pull rate limit | bug High Priority lifecycle/stale | tests with io on background may fail if pull rate limit reached from docker.io

test report [here](http://magna002.ceph.redhat.com/ocsci-jenkins/openshift-clusters/j002aud1c33-ua/j002aud1c33-ua_20210720T192204/logs/test_report_1626808655.html), see `test_add_capacity` | 1.0 | Fail to pull FIO image, due to pull rate limit - tests with io on background may fail if pull rate limit reached from docker.io

test report [here](http://magna002.ceph.redhat.com/ocsci-jenkins/openshift-clusters/j002aud1c33-ua/j002aud1c33-ua_20210720T192204/logs/test_report_1626808655.html), see `test_add_capacity` | priority | fail to pull fio image due to pull rate limit tests with io on background may fail if pull rate limit reached from docker io test report see test add capacity | 1 |

584,293 | 17,411,024,143 | IssuesEvent | 2021-08-03 12:21:37 | OceanDataTools/openrvdas | https://api.github.com/repos/OceanDataTools/openrvdas | opened | Fix logger_manager stderr listening | bug high priority | **Describe the bug**

Right now, the logger_manager sets up threads to listen to each logger's stderr file to relay those lines to the console and cached data server. When one or more loggers is generating a lot of stderr output (say, because of a bad parse format), the logger manager gets overloaded and starts timing out on other tasks. This causes cascading failures.

The problem seems to be exacerbated when the logger_manager starts up if logger stderr files are already large.

| 1.0 | Fix logger_manager stderr listening - **Describe the bug**

Right now, the logger_manager sets up threads to listen to each logger's stderr file to relay those lines to the console and cached data server. When one or more loggers is generating a lot of stderr output (say, because of a bad parse format), the logger manager gets overloaded and starts timing out on other tasks. This causes cascading failures.

The problem seems to be exacerbated when the logger_manager starts up if logger stderr files are already large.

| priority | fix logger manager stderr listening describe the bug right now the logger manager sets up threads to listen to each logger s stderr file to relay those lines to the console and cached data server when one or more loggers is generating a lot of stderr output say because of a bad parse format the logger manager gets overloaded and starts timing out on other tasks this causes cascading failures the problem seems to be exacerbated when the logger manager starts up if logger stderr files are already large | 1 |

743,231 | 25,892,074,481 | IssuesEvent | 2022-12-14 18:49:53 | dmwm/CRABServer | https://api.github.com/repos/dmwm/CRABServer | opened | add support for user containers | Type: Enhancement Type: Question Priority: High Status: Available | all we know about this so far:

Katy had a meeting with convenors of new Common Analysis Tools (CAT) group. They already have questions about CRAB, one is: Running in an ad-hoc container instead of CMSSW standard singularity image.

This may be possible already, Dario has info which he will share although the container needs to be on CVMFS (quite a limitation in a way). Dario passed this config https://gitlab.cern.ch/-/snippets/2139 to Shahzad some time ago

We need to understand the use case and decide whether to support it.

We need to verify and understand details and decide how to document/offer this to users.

Since the request came from the CAT convenors, priority is set to high, at least meaning that we need to understand more and clarify before we define exactly what to do | 1.0 | add support for user containers - all we know about this so far:

Katy had a meeting with convenors of new Common Analysis Tools (CAT) group. They already have questions about CRAB, one is: Running in an ad-hoc container instead of CMSSW standard singularity image.

This may be possible already, Dario has info which he will share although the container needs to be on CVMFS (quite a limitation in a way). Dario passed this config https://gitlab.cern.ch/-/snippets/2139 to Shahzad some time ago

We need to understand the use case and decide whether to support it.

We need to verify and understand details and decide how to document/offer this to users.

Since the request came from the CAT convenors, priority is set to high, at least meaning that we need to understand more and clarify before we define exactly what to do | priority | add support for user containers all we know about this so far katy had a meeting with convenors of new common analysis tools cat group they already have questions about crab one is running in an ad hoc container instead of cmssw standard singularity image this may be possible already dario has info which he will share although the container needs to be on cvmfs quite a limitation in a way dario passed this config to shahzad some time ago we need to understand the use case and decide whether to support it we need to verify and understand details and decide how to document offer this to users since the request came from the cat convenors priority is set to high at least meaning that we need to understand more and clarify before we define exactly what to do | 1 |

64,393 | 3,211,199,108 | IssuesEvent | 2015-10-06 09:23:16 | music-encoding/music-encoding | https://api.github.com/repos/music-encoding/music-encoding | closed | Permanent links to the schema | Component: Core Schema Priority: High Status: Needs Discussion | Since the Google Code repository will be dropped, the links to the schema previously hosted on Google Code will need to be changed to the new url. This is actually a pretty bad practice and I think we should avoid it in the future. So we should have a permanent url for the schema that does not rely on a repository system. We can use music-encoding.org and either copy each release there or redirect to GitHub. It would also be good to have the schema without version number referring to the latest version but also each version numbered explicitly for users who want to refer to a specific release. That is, something like:

http://www.music-encoding.org/schema/mei-all.rng

http://www.music-encoding.org/schema/mei-all-3.0.0.rng

Any thoughts? | 1.0 | Permanent links to the schema - Since the Google Code repository will be dropped, the links to the schema previously hosted on Google Code will need to be changed to the new url. This is actually a pretty bad practice and I think we should avoid it in the future. So we should have a permanent url for the schema that does not rely on a repository system. We can use music-encoding.org and either copy each release there or redirect to GitHub. It would also be good to have the schema without version number referring to the latest version but also each version numbered explicitly for users who want to refer to a specific release. That is, something like:

http://www.music-encoding.org/schema/mei-all.rng

http://www.music-encoding.org/schema/mei-all-3.0.0.rng

Any thoughts? | priority | permanent links to the schema since the google code repository will be dropped the links to the schema previously hosted on google code will need to be changed to the new url this is actually a pretty bad practice and i think we should avoid it in the future so we should have a permanent url for the schema that does not rely on a repository system we can use music encoding org and either copy each release there or redirect to github it would also be good to have the schema without version number referring to the latest version but also each version numbered explicitly for users who want to refer to a specific release that is something like any thoughts | 1 |

638,308 | 20,721,097,226 | IssuesEvent | 2022-03-13 12:03:59 | AY2122S2-CS2103T-W15-2/tp | https://api.github.com/repos/AY2122S2-CS2103T-W15-2/tp | opened | Update GUI Icon and Name | priority.High type.Task | Update Icon and name displayed in the GUI to match with the project name and icon. | 1.0 | Update GUI Icon and Name - Update Icon and name displayed in the GUI to match with the project name and icon. | priority | update gui icon and name update icon and name displayed in the gui to match with the project name and icon | 1 |

504,320 | 14,616,756,193 | IssuesEvent | 2020-12-22 13:45:54 | SAP/ownid-webapp | https://api.github.com/repos/SAP/ownid-webapp | closed | Reset password notify that phone already associated with an account | Priority: High Type: Bug | Reset password should overwrite the existing data.

| 1.0 | Reset password notify that phone already associated with an account - Reset password should overwrite the existing data.

| priority | reset password notify that phone already associated with an account reset password should overwrite the existing data | 1 |

4,861 | 2,564,696,700 | IssuesEvent | 2015-02-06 21:42:29 | prey/prey-android-client | https://api.github.com/repos/prey/prey-android-client | closed | Passwords stored in plaintext in com.prey_references.xml | bug priority: high | The preferences `PREFS_ADMIN_DEVICE_REVOKED_PASSWORD`, `PASSWORD`, `UNLOCK_PASS` store passwords in plaintext. Please encrypt these, using one-way hashes like sha1 if possible. Particularly, the file seems to contain enough information to log in to my prey account. And if someone uses the same password for prey.com as the email they use to log in, that email address is right there too.

This file can probably be opened by root only, but root access on an android device is usually a matter of selecting "allow" - or not doing anything at all if the app was used before. So basically, anyone smart enough who steals my phone could avoid changing the SIM card (so prey doesn't activate itself), read that file, get the password, and detach prey / uninstall it / take over my prey account. Fun.

(Of course this previous argument isn't very valid since root access with no auth also lets you remove prey with a `rm` and maybe a `kill`. Or factory reset right after stealing it, no root or passwords required. The fact that it's trivial to identify that a phone runs prey annoys me too. But I digress, this ticket isn't meant to cover all of my paranoia sources.) | 1.0 | Passwords stored in plaintext in com.prey_references.xml - The preferences `PREFS_ADMIN_DEVICE_REVOKED_PASSWORD`, `PASSWORD`, `UNLOCK_PASS` store passwords in plaintext. Please encrypt these, using one-way hashes like sha1 if possible. Particularly, the file seems to contain enough information to log in to my prey account. And if someone uses the same password for prey.com as the email they use to log in, that email address is right there too.

This file can probably be opened by root only, but root access on an android device is usually a matter of selecting "allow" - or not doing anything at all if the app was used before. So basically, anyone smart enough who steals my phone could avoid changing the SIM card (so prey doesn't activate itself), read that file, get the password, and detach prey / uninstall it / take over my prey account. Fun.

(Of course this previous argument isn't very valid since root access with no auth also lets you remove prey with a `rm` and maybe a `kill`. Or factory reset right after stealing it, no root or passwords required. The fact that it's trivial to identify that a phone runs prey annoys me too. But I digress, this ticket isn't meant to cover all of my paranoia sources.) | priority | passwords stored in plaintext in com prey references xml the preferences prefs admin device revoked password password unlock pass store passwords in plaintext please encrypt these using one way hashes like if possible particularly the file seems to contain enough information to log in to my prey account and if someone uses the same password for prey com as the email they use to log in that email address is right there too this file can probably be opened by root only but root access on an android device is usually a matter of selecting allow or not doing anything at all if the app was used before so basically anyone smart enough who steals my phone could avoid changing the sim card so prey doesn t activate itself read that file get the password and detach prey uninstall it take over my prey account fun of course this previous argument isn t very valid since root access with no auth also lets you remove prey with a rm and maybe a kill or factory reset right after stealing it no root or passwords required the fact that it s trivial to identify that a phone runs prey annoys me too but i digress this ticket isn t meant to cover all of my paranoia sources | 1 |

517,869 | 15,020,503,843 | IssuesEvent | 2021-02-01 14:47:20 | ansible/galaxy_ng | https://api.github.com/repos/ansible/galaxy_ng | closed | Remove legacy v3/_ui/ endpoint | area/api priority/high status/new type/bug | Remove old `v3/_ui/` endpoint once the UI on cloud.redhat.com has been updated to not require it anymore.

This should happen after we push our current api to cloud.redhat.com | 1.0 | Remove legacy v3/_ui/ endpoint - Remove old `v3/_ui/` endpoint once the UI on cloud.redhat.com has been updated to not require it anymore.

This should happen after we push our current api to cloud.redhat.com | priority | remove legacy ui endpoint remove old ui endpoint once the ui on cloud redhat com has been updated to not require it anymore this should happen after we push our current api to cloud redhat com | 1 |

586,543 | 17,580,421,179 | IssuesEvent | 2021-08-16 06:31:13 | ivpn/android-app | https://api.github.com/repos/ivpn/android-app | closed | Default trust status is always reverted to None | type: bug Network Protection priority: high | ### Description:

On version 2.4.1, when setting the default trust status to Untrusted/Trusted, the trust status is always reverted back to None. The issue does not happen the first time the trust status is changed, but when logging out and logging back in, from this moment on, it is not possible to change the default to Untrusted or Trusted.

**Note:**

The issue happens in all devices, Android OS.

This issue might be related to the changes implemented on https://github.com/ivpn/android-app/issues/48

### Actual result:

Default trust status is always reverted to None.

### Expected result:

User should always be able to change the trust status of default, mobile and current network.

### Steps to reproduce:

1. Install 2.4.1.

2. Login.

3. Enable Network Protection.

4. Set default to untrusted - app connects automatically.

5. Disconnect from VPN.

6. Enable KillSwitch.

7. Logout.

8. Log back in.

9. Set default to Untrusted - nothing happens.

10. Access network protection again, default is reverted to none.

### Environment:

IVPN: 2.4.1

Devices: Samsung Galaxy S10/Android 11, Xiaomi Redmi 5/Android 8.1 | 1.0 | Default trust status is always reverted to None - ### Description:

On version 2.4.1, when setting the default trust status to Untrusted/Trusted, the trust status is always reverted back to None. The issue does not happen the first time the trust status is changed, but when logging out and logging back in, from this moment on, it is not possible to change the default to Untrusted or Trusted.

**Note:**

The issue happens in all devices, Android OS.

This issue might be related to the changes implemented on https://github.com/ivpn/android-app/issues/48

### Actual result:

Default trust status is always reverted to None.

### Expected result:

User should always be able to change the trust status of default, mobile and current network.

### Steps to reproduce:

1. Install 2.4.1.

2. Login.

3. Enable Network Protection.

4. Set default to untrusted - app connects automatically.

5. Disconnect from VPN.

6. Enable KillSwitch.

7. Logout.

8. Log back in.

9. Set default to Untrusted - nothing happens.

10. Access network protection again, default is reverted to none.

### Environment:

IVPN: 2.4.1

Devices: Samsung Galaxy S10/Android 11, Xiaomi Redmi 5/Android 8.1 | priority | default trust status is always reverted to none description on version when setting the default trust status to untrusted trusted the trust status is always reverted back to none the issue does not happen the first time the trust status is changed but when logging out and logging back in from this moment on it is not possible to change the default to untrusted or trusted note the issue happens in all devices android os this issue might be related to the changes implemented on actual result default trust status is always reverted to none expected result user should always be able to change the trust status of default mobile and current network steps to reproduce install login enable network protection set default to untrusted app connects automatically disconnect from vpn enable killswitch logout log back in set default to untrusted nothing happens access network protection again default is reverted to none environment ivpn devices samsung galaxy android xiaomi redmi android | 1 |

506,045 | 14,657,130,731 | IssuesEvent | 2020-12-28 14:56:41 | dmwm/CRABServer | https://api.github.com/repos/dmwm/CRABServer | closed | PublisherMaster print final message too soon | Area: StandalonePublish/ASOless Priority: High Type: Bug | e.g. here PublisherMaster prints "iteration completed" before Process 58 stopped.

I was looking at things and noticed that, after the log had this content, `ps fux` was still showing one slave running

```

2020-12-26 18:25:19,570:INFO:master:Starting process <Process(Process-56, started)> pid=1071

2020-12-26 18:25:29,581:INFO:master:Terminated: <Process(Process-55, stopped)> pid=1047

2020-12-26 18:25:29,585:INFO:master:Starting process <Process(Process-57, started)> pid=1078

2020-12-26 18:25:39,597:INFO:master:Terminated: <Process(Process-56, stopped)> pid=1071

2020-12-26 18:25:39,601:INFO:master:Starting process <Process(Process-58, started)> pid=1090

2020-12-26 18:25:49,613:INFO:master:Terminated: <Process(Process-58, stopped)> pid=1090

2020-12-26 18:25:49,617:INFO:master:Starting process <Process(Process-59, started)> pid=1204

2020-12-26 18:25:59,629:INFO:master:Terminated: <Process(Process-57, stopped)> pid=1078

2020-12-26 18:25:59,629:INFO:master:Algorithm iteration completed

2020-12-26 18:25:59,630:INFO:master:Wait 1498 sec for next cycle

2020-12-26 18:25:59,630:INFO:master:Next cycle will start at 18:50:57

[crab3@crab-prod-tw02 processes]$

``` | 1.0 | PublisherMaster print final message too soon - e.g. here PublisherMaster prints "iteration completed" before Process 58 stopped.

I was looking at things and noticed that, after the log had this content, `ps fux` was still showing one slave running

```

2020-12-26 18:25:19,570:INFO:master:Starting process <Process(Process-56, started)> pid=1071

2020-12-26 18:25:29,581:INFO:master:Terminated: <Process(Process-55, stopped)> pid=1047

2020-12-26 18:25:29,585:INFO:master:Starting process <Process(Process-57, started)> pid=1078

2020-12-26 18:25:39,597:INFO:master:Terminated: <Process(Process-56, stopped)> pid=1071

2020-12-26 18:25:39,601:INFO:master:Starting process <Process(Process-58, started)> pid=1090

2020-12-26 18:25:49,613:INFO:master:Terminated: <Process(Process-58, stopped)> pid=1090

2020-12-26 18:25:49,617:INFO:master:Starting process <Process(Process-59, started)> pid=1204

2020-12-26 18:25:59,629:INFO:master:Terminated: <Process(Process-57, stopped)> pid=1078

2020-12-26 18:25:59,629:INFO:master:Algorithm iteration completed

2020-12-26 18:25:59,630:INFO:master:Wait 1498 sec for next cycle

2020-12-26 18:25:59,630:INFO:master:Next cycle will start at 18:50:57

[crab3@crab-prod-tw02 processes]$

``` | priority | publishermaster print final message too soon e g here publishermaster prints iteration completed before process stopped i was looking at things and noticed that after the log had this content ps fux was still showing one slave running info master starting process pid info master terminated pid info master starting process pid info master terminated pid info master starting process pid info master terminated pid info master starting process pid info master terminated pid info master algorithm iteration completed info master wait sec for next cycle info master next cycle will start at | 1 |

788,049 | 27,741,736,054 | IssuesEvent | 2023-03-15 14:41:36 | nlbdev/nordic-epub3-dtbook-migrator | https://api.github.com/repos/nlbdev/nordic-epub3-dtbook-migrator | closed | Add check for backlink presence in footnotes/endnotes | High priority validator-revision | Currently, we don't have a rule that checks backlink presence for footnotes/endnotes, so this should be added.

The [current text of the Nordic Guidelines](https://format.mtm.se/nordic_epub/2020-1#notes-and-note-references) is not exact on which of the two methods for backlinks from the DAISY KB should be preferred in 2020-1, so to begin with, we should check both or any method (always including `@role="doc-backlink"`). Is link integrity check enough, or should we implement a cross-reference check that ensures that the backlink target is equal to the referencing noteref of the present footnote?

| 1.0 | Add check for backlink presence in footnotes/endnotes - Currently, we don't have a rule that checks backlink presence for footnotes/endnotes, so this should be added.

The [current text of the Nordic Guidelines](https://format.mtm.se/nordic_epub/2020-1#notes-and-note-references) is not exact on which of the two methods for backlinks from the DAISY KB should be preferred in 2020-1, so to begin with, we should check both or any method (always including `@role="doc-backlink"`). Is link integrity check enough, or should we implement a cross-reference check that ensures that the backlink target is equal to the referencing noteref of the present footnote?

| priority | add check for backlink presence in footnotes endnotes currently we don t have a rule that checks backlink presence for footnotes endnotes so this should be added the is not exact on which of the two methods for backlinks from the daisy kb should be preferred in so to begin with we should check both or any method always including role doc backlink is link integrity check enough or should we implement a cross reference check that ensures that the backlink target is equal to the referencing noteref of the present footnote | 1 |

739,739 | 25,715,736,790 | IssuesEvent | 2022-12-07 10:11:54 | DataDog/guarddog | https://api.github.com/repos/DataDog/guarddog | closed | GuardDog may fail on `verify` if trying to unzip a file | bug high-priority | Can be reproduced with

```

guarddog verify requirements.txt

```

With the requirements file from the project (see [PR](https://github.com/DataDog/guarddog/pull/103))

| 1.0 | GuardDog may fail on `verify` if trying to unzip a file - Can be reproduced with

```

guarddog verify requirements.txt

```

With the requirements file from the project (see [PR](https://github.com/DataDog/guarddog/pull/103))

| priority | guarddog may fail on verify if trying to unzip a file can be reproduced with guarddog verify requirements txt with the requirements file from the project see | 1 |

117,774 | 4,727,637,441 | IssuesEvent | 2016-10-18 14:02:02 | zoonproject/zoon | https://api.github.com/repos/zoonproject/zoon | closed | User defined attributes in df not propagated | bug Priority - high | User defined attributes (as opposed to hard-coded attributes like `covCols`) don't propagate through the workflow.

In line 387 (zoonHelpers.R), `cbind` strips the attributes, and user-defined attributes set in occurrence or process modules never get recovered (only the `covCols` attribute gets recovered).

I'll write up a pull request for this today or tomorrow, as fixing this is necessary for allowing support of detection models. | 1.0 | User defined attributes in df not propagated - User defined attributes (as opposed to hard-coded attributes like `covCols`) don't propagate through the workflow.

In line 387 (zoonHelpers.R), `cbind` strips the attributes, and user-defined attributes set in occurrence or process modules never get recovered (only the `covCols` attribute gets recovered).

I'll write up a pull request for this today or tomorrow, as fixing this is necessary for allowing support of detection models. | priority | user defined attributes in df not propagated user defined attributes as opposed to hard coded attributes like covcols don t propagate through the workflow in line zoonhelpers r cbind strips the attributes and user defined attributes set in occurrence or process modules never get recovered only the covcols attribute gets recovered i ll write up a pull request for this today or tomorrow as fixing this is necessary for allowing support of detection models | 1 |

559,060 | 16,549,103,221 | IssuesEvent | 2021-05-28 06:11:33 | bryntum/support | https://api.github.com/repos/bryntum/support | closed | Cannot assign multiple resources when creating new event | bug high-priority resolved | https://bryntum.com/examples/calendar/multiassign/

double click to create a new event

notice the resource picker is single select (should be multi) | 1.0 | Cannot assign multiple resources when creating new event - https://bryntum.com/examples/calendar/multiassign/

double click to create a new event

notice the resource picker is single select (should be multi) | priority | cannot assign multiple resources when creating new event double click to create a new event notice the resource picker is single select should be multi | 1 |

311,647 | 9,537,031,686 | IssuesEvent | 2019-04-30 11:23:04 | aartiukh/sph | https://api.github.com/repos/aartiukh/sph | opened | Set up coverage measure using Travis CI and codecov | area: cmake priority: high type: enhancement | **Is your feature request related to a problem? Please describe.**

Add coverage measure and badge to README | 1.0 | Set up coverage measure using Travis CI and codecov - **Is your feature request related to a problem? Please describe.**

Add coverage measure and badge to README | priority | set up coverage measure using travis ci and codecov is your feature request related to a problem please describe add coverage measure and badge to readme | 1 |

533,729 | 15,597,683,962 | IssuesEvent | 2021-03-18 17:11:50 | AY2021S2-CS2113-F10-3/tp | https://api.github.com/repos/AY2021S2-CS2113-F10-3/tp | closed | As a user, I can store all my data locally | priority.High type.Epic | so that my saved schedules can be loaded whenever I load the application | 1.0 | As a user, I can store all my data locally - so that my saved schedules can be loaded whenever I load the application | priority | as a user i can store all my data locally so that my saved schedules can be loaded whenever i load the application | 1 |

253,203 | 8,052,773,985 | IssuesEvent | 2018-08-01 20:25:57 | children-of-gazimba/companion-qt-tool | https://api.github.com/repos/children-of-gazimba/companion-qt-tool | closed | visualize volume change when hover scrolling sound tiles | enhancement high priority on it! | The hover scrolling volume change has seen some good use, yet it is still difficult to make precise changes. We need visual feedback to aid this feature. It might make most sense to have a slider pop up and visualize the change whenever a tile is hover scrolled. | 1.0 | visualize volume change when hover scrolling sound tiles - The hover scrolling volume change has seen some good use, yet it is still difficult to make precise changes. We need visual feedback to aid this feature. It might make most sense to have a slider pop up and visualize the change whenever a tile is hover scrolled. | priority | visualize volume change when hover scrolling sound tiles the hover scrolling volume change has seen some good use yet it is still difficult to make precise changes we need visual feedback to aid this feature it might make most sense to have a slider pop up and visualize the change whenever a tile is hover scrolled | 1 |

689,684 | 23,630,463,839 | IssuesEvent | 2022-08-25 08:53:23 | apluslms/a-plus | https://api.github.com/repos/apluslms/a-plus | closed | Assignments using custom JavaScript stopped working in v1.16 | type: bug priority: high effort: days experience: moderate requester: CS area: javascript | A+ v1.16 has a bug that breaks assignments that use custom JavaScript code in the assignment description/instructions. Clicking the submit button does not seem to do anything: it does not upload the submission, no data is saved and nothing is graded since there is no submission.

> The "submit" button does not submit any data anymore, instead it just makes the button disabled.

Private ticket:

https://rt.cs.aalto.fi/Ticket/Display.html?id=21819

One affected course and assignment are listed in the RT ticket. | 1.0 | Assignments using custom JavaScript stopped working in v1.16 - A+ v1.16 has a bug that breaks assignments that use custom JavaScript code in the assignment description/instructions. Clicking the submit button does not seem to do anything: it does not upload the submission, no data is saved and nothing is graded since there is no submission.

> The "submit" button does not submit any data anymore, instead it just makes the button disabled.

Private ticket:

https://rt.cs.aalto.fi/Ticket/Display.html?id=21819

One affected course and assignment are listed in the RT ticket. | priority | assignments using custom javascript stopped working in a has a bug that breaks assignments that use custom javascript code in the assignment description instructions clicking the submit button does not seem to do anything it does not upload the submission no data is saved and nothing is graded since there is no submission the submit button does not submit any data anymore instead it just makes the button disabled private ticket one affected course and assignment are listed in the rt ticket | 1 |

594,710 | 18,051,826,273 | IssuesEvent | 2021-09-19 21:48:39 | EdwinParra35/MinTIC_g02_TicDigitalG8 | https://api.github.com/repos/EdwinParra35/MinTIC_g02_TicDigitalG8 | closed | Create a form for managing movies | High priority | Create a form for managing the movies that are currently showing and available to watch in theaters. What information is relevant? Before putting it up for discussion with the working group, create your own interface proposal. Afterwards, you can produce a new version of the design. | 1.0 | Create a form for managing movies - Create a form for managing the movies that are currently showing and available to watch in theaters. What information is relevant? Before putting it up for discussion with the working group, create your own interface proposal. Afterwards, you can produce a new version of the design. | priority | create a form for managing movies create a form for managing the movies that are currently showing and available to watch in theaters what information is relevant before putting it up for discussion with the working group create your own interface proposal afterwards you can produce a new version of the design | 1 |

596,026 | 18,094,793,600 | IssuesEvent | 2021-09-22 07:51:13 | turbot/steampipe-plugin-aws | https://api.github.com/repos/turbot/steampipe-plugin-aws | closed | Handling exceptions aws_macie2_classification_job table | bug priority:high | **Describe the bug**

The `aws_macie2_classification_job` table returns an AccessDeniedException if any one of the regions configured in the `aws.spc` file does not have Macie enabled.

Also, provide the Macie service enabled/disabled status as part of this table.

**Steampipe version (`steampipe -v`)**

Example: v0.3.0

**Plugin version (`steampipe plugin list`)**

Example: v0.5.0

**To reproduce**

**Error when IAM user with all required permissions and only 1 region is enabled with Macie**

```

> select * from aws_aab.aws_macie2_classification_job

Error: AccessDeniedException: Macie is not enabled.

> select * from aws_aab.aws_macie2_classification_job where region = 'us-east-1'

+--------------+----------------------------------+-------------------------------------------------------------------------------------------+------------+----------+--------------------------------------+------

| name | job_id | arn | job_status | job_type | client_token | creat

+--------------+----------------------------------+-------------------------------------------------------------------------------------------+------------+----------+--------------------------------------+------

| bucket-audit | f9d91733ace0a9sdfdsfsdfsdfsd98916e | arn:aws:macie2:us-east-1:123493682495:classification-job/f9d91733ace0a93c09e8da13f598916e | COMPLETE | ONE_TIME | cf6b6918-9c3f-4a33-887b-f4f834a1046d | 2021-

+--------------+----------------------------------+-------------------------------------------------------------------------------------------+------------+----------+--------------------------------------+------

```

**The exception remains the same, with a different message, when a user with no IAM privileges tries to query**

```

> select * from aws_macie2_classification_job

Error: AccessDeniedException: User: arn:aws:iam::533793682495:user/macie-test is not authorized to perform: macie2:ListClassificationJobs on resource: arn:aws:macie2:ap-south-1:533793682495:*

> .exit

```

**CLI Output**

```

turbot-macpro-raj:steampipe-mod-aws-top10 raj$ aws macie2 list-classification-jobs --profile devaab --region us-east-1

{

"items": [

{

"bucketDefinitions": [

{

"accountId": "123453682495",

"buckets": [

"andrew-turbot-test-bucket",

"aws-logs-123453682495-us-east-1"

]

}

],

"createdAt": "2021-09-06T18:13:46.335482+00:00",

"jobId": "f9d91733ace0a93c09e8da13f598916e",

"jobStatus": "COMPLETE",

"jobType": "ONE_TIME",

"lastRunErrorStatus": {

"code": "NONE"

},

"name": "bucket-audit"

}

]

}

turbot-macpro-raj:steampipe-mod-aws-top10 raj$ aws macie2 list-classification-jobs --profile devaab --region ap-south-1

An error occurred (AccessDeniedException) when calling the ListClassificationJobs operation: Macie is not enabled.

turbot-macpro-raj:steampipe-mod-aws-top10 raj$ aws macie2 list-classification-jobs --profile devaab-onlyrds --region ap-south-1

An error occurred (AccessDeniedException) when calling the ListClassificationJobs operation: User: arn:aws:iam::123453682495:user/macie-test is not authorized to perform: macie2:ListClassificationJobs on resource: arn:aws:macie2:ap-south-1:123453682495:*

turbot-macpro-raj:steampipe-mod-aws-top10 raj$

```

**Expected behavior**

Do we need to handle the message, not the exception?

**Additional context**

Add any other context about the problem here.

| 1.0 | Handling exceptions aws_macie2_classification_job table - **Describe the bug**

The `aws_macie2_classification_job` table returns an AccessDeniedException if any one of the regions configured in the `aws.spc` file does not have Macie enabled.

Also, provide the Macie service enabled/disabled status as part of this table.

**Steampipe version (`steampipe -v`)**

Example: v0.3.0

**Plugin version (`steampipe plugin list`)**

Example: v0.5.0

**To reproduce**

**Error when IAM user with all required permissions and only 1 region is enabled with Macie**

```