Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

625,974 | 19,783,535,242 | IssuesEvent | 2022-01-18 01:58:24 | tracer-protocol/pools-client | https://api.github.com/repos/tracer-protocol/pools-client | closed | Release 1.2 - Bugs from Testing | bug Priority: High | Based on current testing:

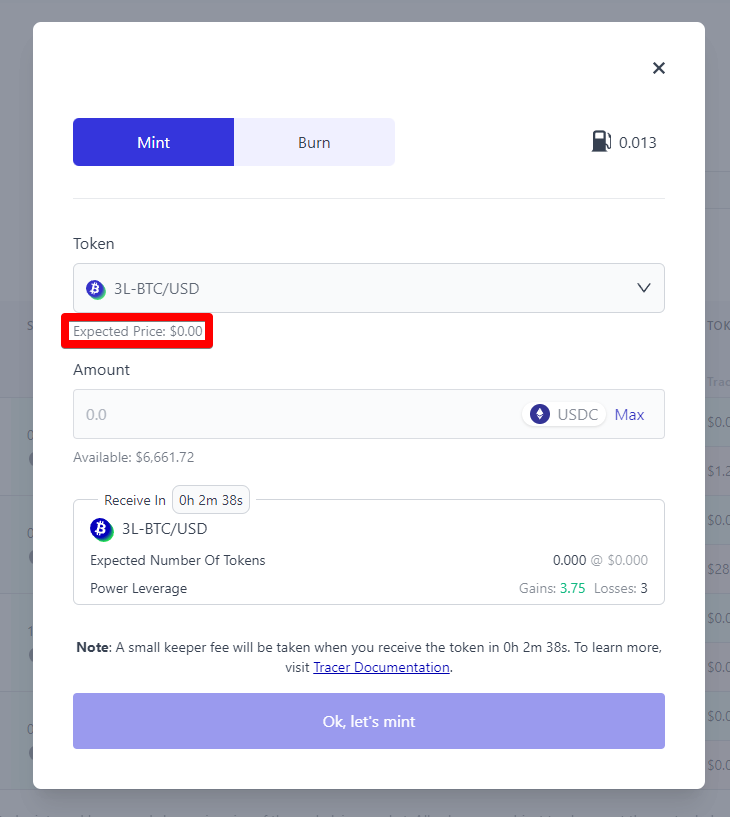

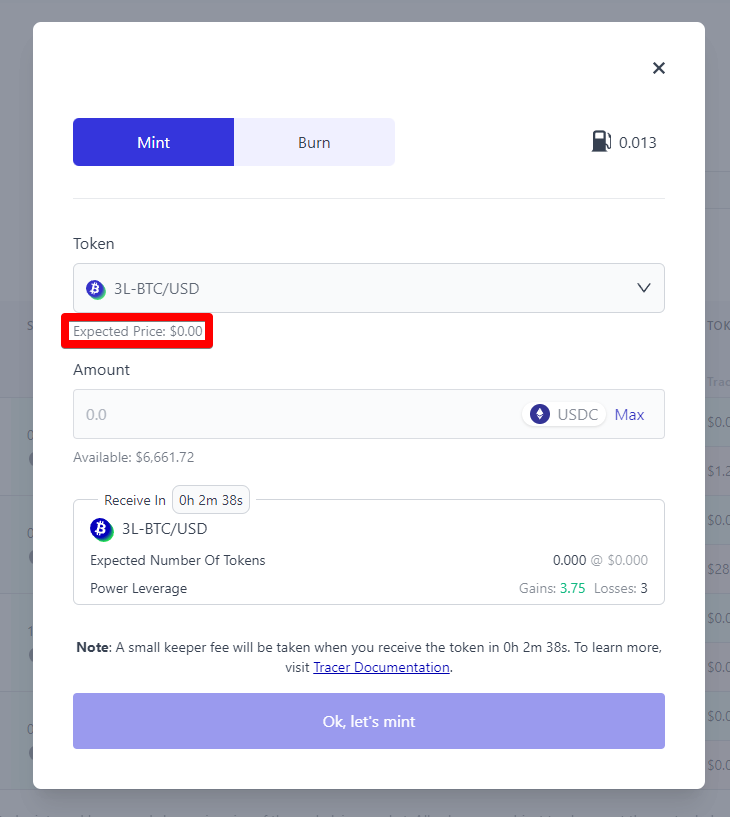

- [ ] Countdown timers don't match between toasts and table view

- [ ] Expected Price is usually $0, which affects the price through the whole process.

- [ ] 'View on Arbiscan' only appears on toast prior to confirming in web3 wallet, then changes to view order. It shouldn't disappear.

No toast notifications after queued toaster reaches 0 and disappears.

- [ ] No toast for minting in progress (user story is missing, I need to confirm with @KittyLomas)

- [ ] No toast for success

**To Reproduce**

1. Go to https://deploy-preview-380--tracer-pools.netlify.app

2. Follow gifs

**Desktop (please complete the following information):**

- OS: Windows 11

- Browser: Brave

- Version: 1.34.80

| 1.0 | Release 1.2 - Bugs from Testing - Based on current testing:

- [ ] Countdown timers don't match between toasts and table view

- [ ] Expected Price is usually $0, which affects the price through the whole process.

- [ ] 'View on Arbiscan' only appears on toast prior to confirming in web3 wallet, then changes to view order. It shouldn't disappear.

No toast notifications after queued toaster reaches 0 and disappears.

- [ ] No toast for minting in progress (user story is missing, I need to confirm with @KittyLomas)

- [ ] No toast for success

**To Reproduce**

1. Go to https://deploy-preview-380--tracer-pools.netlify.app

2. Follow gifs

**Desktop (please complete the following information):**

- OS: Windows 11

- Browser: Brave

- Version: 1.34.80

| priority | release bugs from testing based on current testing countdown timers don t match between toasts and table view expected price is usually which affects the price through the whole process view on arbiscan only appears on toast prior to confirming in wallet then changes to view order it shouldn t disappear no toast notifications after queued toaster reaches and disappears no toast for minting in progress user story is missing i need to confirm with kittylomas no toast for success to reproduce go to follow gifs desktop please complete the following information os windows browser brave version | 1 |

554,964 | 16,443,928,844 | IssuesEvent | 2021-05-20 17:13:23 | LBNL-ETA/BEDES-Manager | https://api.github.com/repos/LBNL-ETA/BEDES-Manager | closed | Warn user of duplicates when creating new composite term | bug high priority | Check if existing BEDES approved composite term exists and give an alert to the user. | 1.0 | Warn user of duplicates when creating new composite term - Check if existing BEDES approved composite term exists and give an alert to the user. | priority | warn user of duplicates when creating new composite term check if existing bedes approved composite term exists and give an alert to the user | 1 |

620,130 | 19,553,436,145 | IssuesEvent | 2022-01-03 04:05:05 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Resource path that precedes with / results in Missing identifier | Type/Improvement Priority/High Team/CompilerFE Area/Diagnostics Area/Parser | **Description:**

<!-- Give a brief description of the improvement -->

When the resource path is preceded by a `/`, it results in a `Missing identifier` diagnostic error. This causes confusion in the Choreo resource form, as we can't identify the issue. The error diagnostic should be more meaningful so that the exact error can be identified.

<img width="552" alt="Screenshot 2021-12-13 at 12 48 12" src="https://user-images.githubusercontent.com/5234623/145768863-a3beeede-6a46-4759-99ee-dad21d8dc56f.png">

**Describe your problem(s)**

```

import ballerina/http;

service / on new http:Listener(8080) {
    resource function get /hello(string name) returns json|error? {
        return error("");
    }
}

```

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| 1.0 | Resource path that precedes with / results in Missing identifier - **Description:**

<!-- Give a brief description of the improvement -->

When the resource path is preceded by a `/`, it results in a `Missing identifier` diagnostic error. This causes confusion in the Choreo resource form, as we can't identify the issue. The error diagnostic should be more meaningful so that the exact error can be identified.

<img width="552" alt="Screenshot 2021-12-13 at 12 48 12" src="https://user-images.githubusercontent.com/5234623/145768863-a3beeede-6a46-4759-99ee-dad21d8dc56f.png">

**Describe your problem(s)**

```

import ballerina/http;

service / on new http:Listener(8080) {
    resource function get /hello(string name) returns json|error? {
        return error("");
    }
}

```

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| priority | resource path that precedes with results in missing identifier description then the resource path precedes with a it results in missing identifier diagnostic error this causes confusion in the choreo resource form as we can t identify the issue the error diagnostic should ne more meaningful to identify the exact error img width alt screenshot at src describe your problem s import ballerina http service on new http listener resource function get hello string name returns json error return error describe your solution s related issues optional suggested labels optional suggested assignees optional | 1 |

450,298 | 13,001,671,303 | IssuesEvent | 2020-07-24 00:28:49 | UC-Davis-molecular-computing/scadnano-python-package | https://api.github.com/repos/UC-Davis-molecular-computing/scadnano-python-package | opened | add CI checks for API docs building and PyPI tar.gz file building | enhancement high priority | On each commit to dev, there should be a GitHub action that tries to build the API docs and the scadnano-x.x.x.tar.gz file that is uploaded to PyPI. This way, if either of these fails, we know that the docs or PyPI package action will fail when committed to master.

The checks could pass and the action on commit to master could still fail, but at least this way it would not be because the files simply could not be generated. | 1.0 | add CI checks for API docs building and PyPI tar.gz file building - On each commit to dev, there should be a GitHub action that tries to build the API docs and the scadnano-x.x.x.tar.gz file that is uploaded to PyPI. This way, if either of these fails, we know that the docs or PyPI package action will fail when committed to master.

The checks could pass and the action on commit to master could still fail, but at least this way it would not be because the files simply could not be generated. | priority | add ci checks for api docs building and pypi tar gz file building on each commit to dev there should be a github action that tries to build the api docs and the scadnano x x x tar gz file that is uploaded to pypi this way if either of these fails we know that the docs or pypi package action will fail when committed to master the checks could pass and the action on commit to master could still fail but at least this way it would not be because the files simply could not be generated | 1 |

34,766 | 2,787,472,776 | IssuesEvent | 2015-05-08 06:13:37 | CheckiO/checkio-empire-battle | https://api.github.com/repos/CheckiO/checkio-empire-battle | closed | Initial parameter "Size" should be a size of square, not a radius (2 time bigger) | complex:simple priority:high refactoring | Building sizes are not clear. | 1.0 | Initial parameter "Size" should be a size of square, not a radius (2 time bigger) - Building sizes are not clear. | priority | initial parameter size should be a size of square not a radius time bigger building sizes are not clear | 1 |

526,276 | 15,285,176,454 | IssuesEvent | 2021-02-23 13:13:52 | carbon-design-system/carbon-for-ibm-dotcom | https://api.github.com/repos/carbon-design-system/carbon-for-ibm-dotcom | closed | CTA section: Same height by CSS | Airtable Done dev package: react priority: high | ### The problem

Currently the CTA section uses JavaScript code to ensure that all copy contents have the same height. This causes a flash of unstyled content (FOUC), which in turn causes false positives in Percy depending on when the screenshot is taken.

#### Additional Information

- scope includes content item horizontal, link list and button group

### The solution

Change it to CSS-based, e.g. `grid-auto-rows: 1fr` in CSS grid.

#### Acceptance Criteria

- [ ] No JavaScript is used to enforce the same height

- [ ] No user observable delay to apply the same height | 1.0 | CTA section: Same height by CSS - ### The problem

Currently the CTA section uses JavaScript code to ensure that all copy contents have the same height. This causes a flash of unstyled content (FOUC), which in turn causes false positives in Percy depending on when the screenshot is taken.

#### Additional Information

- scope includes content item horizontal, link list and button group

### The solution

Change it to CSS-based, e.g. `grid-auto-rows: 1fr` in CSS grid.

#### Acceptance Criteria

- [ ] No JavaScript is used to enforce the same height

- [ ] No user observable delay to apply the same height | priority | cta section same height by css the problem currently cta section attempts to ensure all copy contents have the same height by javascript code it caused fouc fouc causes false positives in percy depending on when the screenshot is taken additional information scope includes content item horizontal link list and button group the solution change it to css based e g grid auto rows in css grid acceptance criteria no java script limitation for same height no user observable delay to apply the same height | 1 |

120,634 | 4,792,640,655 | IssuesEvent | 2016-10-31 16:02:13 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | classification creation | Enhancement Priority-High | When creating taxon names, species, scientific name, etc., are often not populated, and are somewhat painful to generate manually. Find interface magic.

| 1.0 | classification creation - When creating taxon names, species, scientific name, etc., are often not populated, and are somewhat painful to generate manually. Find interface magic.

| priority | classification creation when creating taxon names species scientific name etc are often not populated and are somewhat painful to generate manually find interface magic | 1 |

787,232 | 27,711,349,911 | IssuesEvent | 2023-03-14 14:26:06 | AY2223S2-CS2113-T13-1/tp | https://api.github.com/repos/AY2223S2-CS2113-T13-1/tp | closed | "create-account" command | type.Story priority.High | As a user, I can create multiple financial accounts so that I can better categorise my expenses and budgets.

### Acceptance Criteria

- Creates an account for the specified currency.

- For now, throw error if an account with the specified currency already exists

- Format: `create-account $/CURRENCY`

- Examples:

```java

>> create-account $/EUR

// Creates a $EUR account

``` | 1.0 | "create-account" command - As a user, I can create multiple financial accounts so that I can better categorise my expenses and budgets.

### Acceptance Criteria

- Creates an account for the specified currency.

- For now, throw error if an account with the specified currency already exists

- Format: `create-account $/CURRENCY`

- Examples:

```java

>> create-account $/EUR

// Creates a $EUR account

``` | priority | create account command as a user i can create multiple financial accounts so that i can better categorise my expenses and budgets acceptance criteria creates an account for the specified currency for now throw error if an account with the specified currency already exists format create account currency examples java create account eur creates a eur account | 1 |

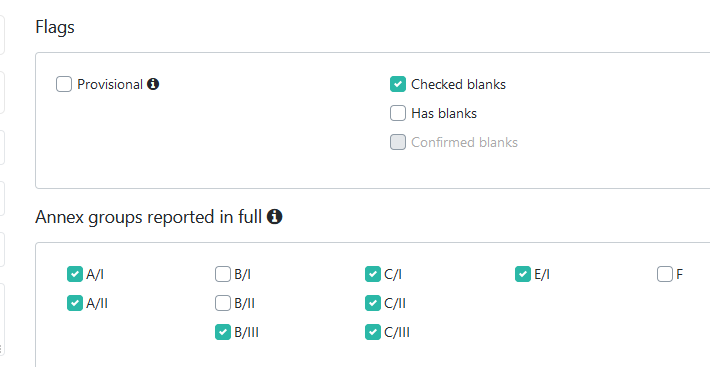

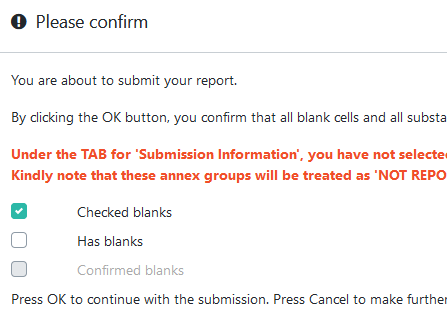

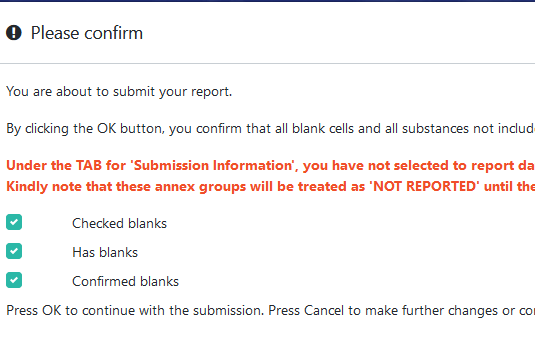

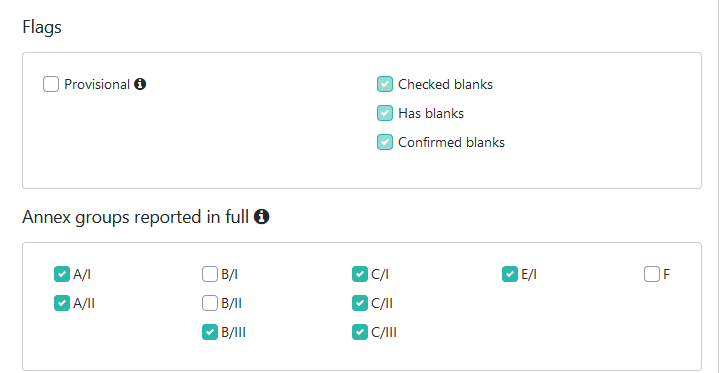

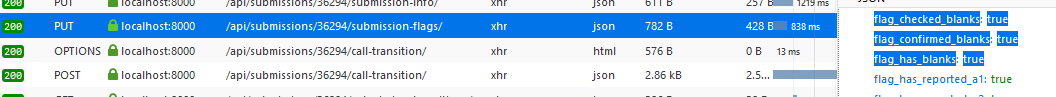

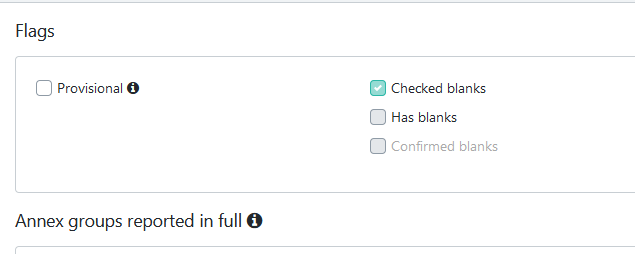

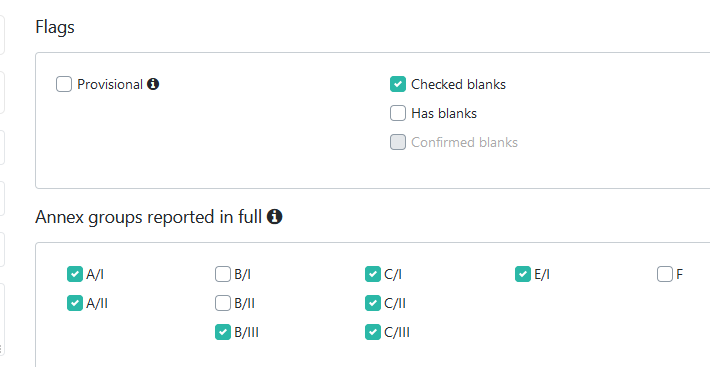

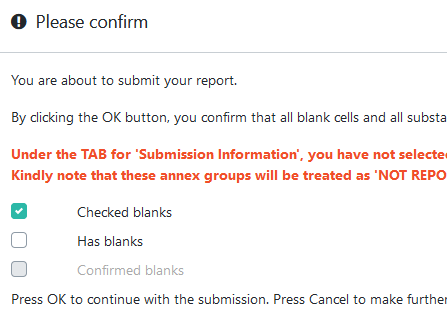

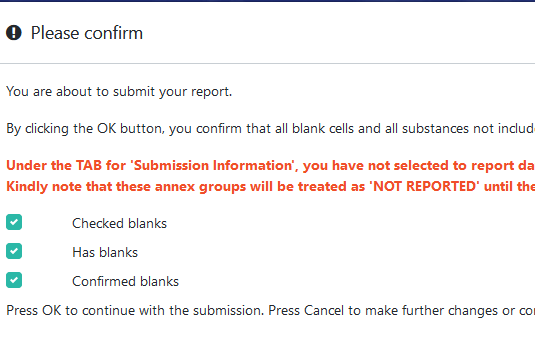

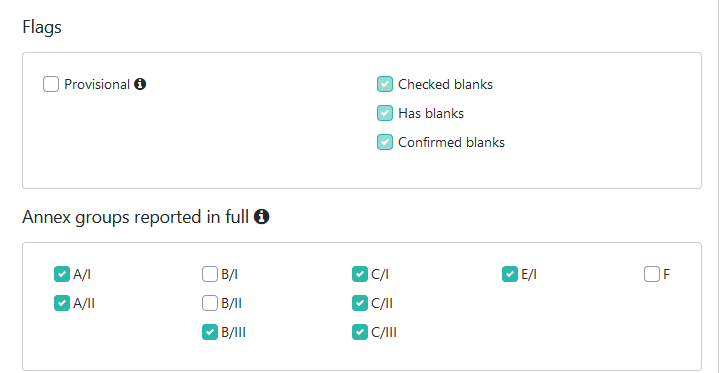

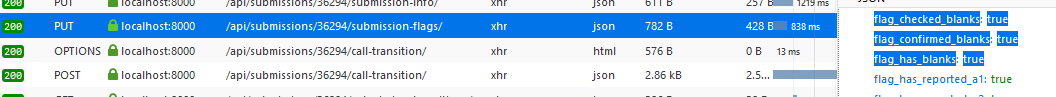

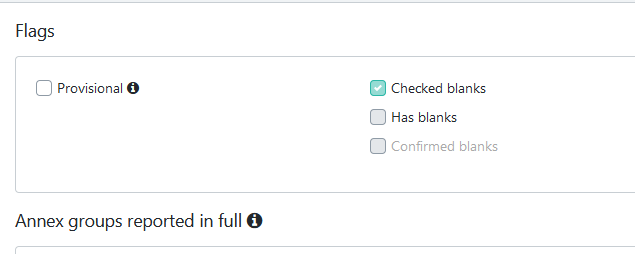

352,632 | 10,544,332,336 | IssuesEvent | 2019-10-02 16:41:58 | eaudeweb/ozone | https://api.github.com/repos/eaudeweb/ozone | closed | Art7 - saving flags | Component: Backend Feature: Art7 Priority: High Status: In progress | related to #1354, copying last comment here, as this seems to be a backend issue:

When creating a submission as secretariat, before Submit there is a popup which allows changing the has_blanks flag:

Check some previously unchecked flags and submit:

After submit, the flags seem to be correctly updated:

The PUT request seems to have the right values:

but after refresh, the flags are reverted to the initial values:

| 1.0 | Art7 - saving flags - related to #1354, copying last comment here, as this seems to be a backend issue:

When creating a submission as secretariat, before Submit there is a popup which allows changing the has_blanks flag:

Check some previously unchecked flags and submit:

After submit, the flags seem to be correctly updated:

The PUT request seems to have the right values:

but after refresh, the flags are reverted to the initial values:

| priority | saving flags related to copying last comment here as this seems to be a backend issue when creating a submission as secretariat before submit there is a popup which allows changing the has blanks flag check some previously unchecked flags and submit after submit the flags seem to be correctly updated the put request seems to have the right values but after refresh the flags are reverted to the initial values | 1 |

606,278 | 18,759,032,629 | IssuesEvent | 2021-11-05 14:26:46 | wasmerio/wasmer | https://api.github.com/repos/wasmerio/wasmer | closed | Upgrade Inkwell to `0.1.0-beta.4` | 🎉 enhancement 📦 lib-compiler-llvm priority-high | Latest inkwell supports the metadata PR wasmer depends on. So we no longer need to depend on `wasmer-inkwell`

This will close #2433 and allow us to release a new version of Wasmer that supports up to LLVM 13.

It should be a relatively easy change (just a version bump) | 1.0 | Upgrade Inkwell to `0.1.0-beta.4` - Latest inkwell supports the metadata PR wasmer depends on. So we no longer need to depend on `wasmer-inkwell`

This will close #2433 and allow us to release a new version of Wasmer that supports up to LLVM 13.

It should be a relatively easy change (just a version bump) | priority | upgrade inkwell to beta latest inkwell supports the metadata pr wasmer depends on so we no longer need to depend on wasmer inkwell this will close the and allow us to release a new version of wasmer supporting up to llvm it should be a relatively easy change just a version bump | 1 |

755,722 | 26,437,827,103 | IssuesEvent | 2023-01-15 16:03:46 | Thorfusion/Mekanism-1.7.10-Community-Edition | https://api.github.com/repos/Thorfusion/Mekanism-1.7.10-Community-Edition | closed | [BUG]: crash report | TYPE: BUG PRIORITY: HIGH STATUS: FINISHED MC: 1.7.10 | ### Describe the bug

I don't know why the game won't start with the IC2.

### Expected behavior

Start the game normally.

### Mekanism Version

9.10.23-ALL

### Minecraft Version is this regarding?

1.7.10

### What OS are you seeing the problem on?

Windows

### Name of modpack if applicable

_No response_

### Version of said modpack if applicable

_No response_

### Screenshots

### The crash report in folder ./crash-reports (both server and client logs)

crash-2023-01-15_20.46.39-client.txt : [https://pastebin.com/qCr6SWw8](https://pastebin.com/qCr6SWw8)

### Please provide the following other files

Use default configuration file.

latest.txt : [https://pastebin.com/qFmSRLTV](https://pastebin.com/qFmSRLTV)

| 1.0 | [BUG]: crash report - ### Describe the bug

I don't know why the game won't start with the IC2.

### Expected behavior

Start the game normally.

### Mekanism Version

9.10.23-ALL

### Minecraft Version is this regarding?

1.7.10

### What OS are you seeing the problem on?

Windows

### Name of modpack if applicable

_No response_

### Version of said modpack if applicable

_No response_

### Screenshots

### The crash report in folder ./crash-reports (both server and client logs)

crash-2023-01-15_20.46.39-client.txt : [https://pastebin.com/qCr6SWw8](https://pastebin.com/qCr6SWw8)

### Please provide the following other files

Use default configuration file.

latest.txt : [https://pastebin.com/qFmSRLTV](https://pastebin.com/qFmSRLTV)

| priority | crash report describe the bug i don t know why the game won t start with the expected behavior start the game normally mekanism version all minecraft version is this regarding what os are you seeing the problem on windows name of modpack if applicable no response version of said modpack if applicable no response screenshots the crash report in folder crash reports both server and client logs crash client txt please provide the following other files use default configuration file latest txt | 1 |

326,828 | 9,961,591,617 | IssuesEvent | 2019-07-07 06:30:32 | orbs-network/orbs-network-go | https://api.github.com/repos/orbs-network/orbs-network-go | opened | TestSendSameTransactionFastToTwoNodes is flaky | flakiness high priority | ```

t.go:28: info 2019-07-06T23:06:39.866969Z service sync node=a32884 service=block-storage entry-point=state-storage-sync request-id=state-storage-sync-1562454398690812451 block-height=488 function=servicesync.syncOneBlock source=services/blockstorage/servicesync/service_sync.go:62 _test=acceptance _test-id=acc-TestSendSameTransactionFastTwiceToSameNode-1562454398-65-BENCHMARK_CONSENSUS
t.go:28: info 2019-07-06T23:06:39.867052Z trying to commit state diff node=a32884 service=state-storage entry-point=state-storage-sync request-id=state-storage-sync-1562454398690812451 block-height=488 number-of-state-diffs=0 function=statestorage.(*service).CommitStateDiff source=services/statestorage/service.go:85 _test=acceptance _test-id=acc-TestSendSameTransactionFastTwiceToSameNode-1562454398-65-BENCHMARK_CONSENSUS
require.go:157:
    Error Trace:    duplicate_tx_test.go:124
                    duplicate_tx_test.go:88
                    network_harness_builder.go:159
                    context.go:23
                    network_harness_builder.go:143
                    supervisor.go:60
                    supervisor.go:54
                    network_harness_builder.go:139
                    network_harness_builder.go:123
    Error:          Not equal:
                    expected: 1
                    actual  : 0
    Test:           TestSendSameTransactionFastTwiceToSameNode/CONSENSUS_ALGO_TYPE_BENCHMARK_CONSENSUS
    Messages:       blocks should include tx exactly once

```

https://circleci.com/gh/orbs-network/orbs-network-go/16170#tests/containers/3

| 1.0 | TestSendSameTransactionFastToTwoNodes is flaky - ```

t.go:28: info 2019-07-06T23:06:39.866969Z service sync node=a32884 service=block-storage entry-point=state-storage-sync request-id=state-storage-sync-1562454398690812451 block-height=488 function=servicesync.syncOneBlock source=services/blockstorage/servicesync/service_sync.go:62 _test=acceptance _test-id=acc-TestSendSameTransactionFastTwiceToSameNode-1562454398-65-BENCHMARK_CONSENSUS
t.go:28: info 2019-07-06T23:06:39.867052Z trying to commit state diff node=a32884 service=state-storage entry-point=state-storage-sync request-id=state-storage-sync-1562454398690812451 block-height=488 number-of-state-diffs=0 function=statestorage.(*service).CommitStateDiff source=services/statestorage/service.go:85 _test=acceptance _test-id=acc-TestSendSameTransactionFastTwiceToSameNode-1562454398-65-BENCHMARK_CONSENSUS
require.go:157:
    Error Trace:    duplicate_tx_test.go:124
                    duplicate_tx_test.go:88
                    network_harness_builder.go:159
                    context.go:23
                    network_harness_builder.go:143
                    supervisor.go:60
                    supervisor.go:54
                    network_harness_builder.go:139
                    network_harness_builder.go:123
    Error:          Not equal:
                    expected: 1
                    actual  : 0
    Test:           TestSendSameTransactionFastTwiceToSameNode/CONSENSUS_ALGO_TYPE_BENCHMARK_CONSENSUS
    Messages:       blocks should include tx exactly once

```

https://circleci.com/gh/orbs-network/orbs-network-go/16170#tests/containers/3

| priority | testsendsametransactionfasttotwonodes is flaky t go � service sync node service block storage entry point state storage sync request id state storage sync block height function servicesync synconeblock source services blockstorage servicesync service sync go test acceptance test id acc testsendsametransactionfasttwicetosamenode benchmark consensus t go � trying to commit state diff node service state storage entry point state storage sync request id state storage sync block height number of state diffs function statestorage service commitstatediff source services statestorage service go test acceptance test id acc testsendsametransactionfasttwicetosamenode benchmark consensus require go error trace duplicate tx test go duplicate tx test go network harness builder go context go network harness builder go supervisor go supervisor go network harness builder go network harness builder go error not equal expected actual test testsendsametransactionfasttwicetosamenode consensus algo type benchmark consensus messages blocks should include tx exactly once | 1 |

282,979 | 8,712,431,870 | IssuesEvent | 2018-12-06 22:12:34 | DaedalusGame/BetterWithAddons | https://api.github.com/repos/DaedalusGame/BetterWithAddons | closed | Book of Single example pictures broken after installation of BWA | high priority | Even the regular BWM example pics of the kiln structures or the crash course manual pics of the windmills end up as dead red links. Have tried rebooting, but to no avail. Were showing up just fine before inclusion of BWA into pack.

Using the following versions of the mods: BetterWithMods-1.12-2.3.16 & BetterWithLib-1.12-1.5 & Better+With+Addons-0.46

| 1.0 | Book of Single example pictures broken after installation of BWA - Even the regular BWM example pics of the kiln structures or the crash course manual pics of the windmills end up as dead red links. Have tried rebooting, but to no avail. Were showing up just fine before inclusion of BWA into pack.

Using the following versions of the mods: BetterWithMods-1.12-2.3.16 & BetterWithLib-1.12-1.5 & Better+With+Addons-0.46

| priority | book of single example pictures broken after installation of bwa even the regular bwm example pics of the kiln structures or the crash course manual pics of the windmills end up as dead red links have tried rebooting but to no avail were showing up just fine before inclusion of bwa into pack using the following versions of the mods betterwithmods betterwithlib better with addons | 1 |

474,485 | 13,670,906,422 | IssuesEvent | 2020-09-29 05:58:55 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.ebay-kleinanzeigen.de - site is not usable | browser-firefox-mobile engine-gecko ml-needsdiagnosis-false ml-probability-high priority-important | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/58983 -->

**URL**: https://www.ebay-kleinanzeigen.de/

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android

**Tested Another Browser**: Yes Other

**Problem type**: Site is not usable

**Description**: Missing items

**Steps to Reproduce**:

The site always directs you to download an app. Desktop mode therefore has to be enabled in order to view the page.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/e9f97b56-8fdc-40d2-b0ec-7f56cd78a731.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200827194101</li><li>channel: default</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/ccf949b2-368f-4520-9099-f2ade45c3e6a)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.ebay-kleinanzeigen.de - site is not usable - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/58983 -->

**URL**: https://www.ebay-kleinanzeigen.de/

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android

**Tested Another Browser**: Yes Other

**Problem type**: Site is not usable

**Description**: Missing items

**Steps to Reproduce**:

The site always directs you to download an app. Desktop mode therefore has to be enabled in order to view the page.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/9/e9f97b56-8fdc-40d2-b0ec-7f56cd78a731.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200827194101</li><li>channel: default</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/9/ccf949b2-368f-4520-9099-f2ade45c3e6a)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | site is not usable url browser version firefox mobile operating system android tested another browser yes other problem type site is not usable description missing items steps to reproduce verweist immer darauf eine app herunter zu laden daher muss der desktopmodus aktiviert werden um die seite anzeigen zu können view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel default hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

327,420 | 9,975,406,361 | IssuesEvent | 2019-07-09 13:01:39 | python/mypy | https://api.github.com/repos/python/mypy | closed | New semantic analyzer: crash on assignment to sqlalchemy @hybrid_property | crash new-semantic-analyzer priority-0-high | I'm testing out the new semantic analyzer, but it crashes on my codebase. Trying to assign to a [SQLAlchemy `@hybrid_property`](https://docs.sqlalchemy.org/en/13/orm/extensions/hybrid.html) results in a `Cannot assign to a method` error followed by a crash.

Here's a fairly minimal reproduction for it:

```python3

from sqlalchemy import Base, Column, String
from sqlalchemy.ext.hybrid import hybrid_property


class FirstNameOnly(Base):
    first_name = Column(String)

    @hybrid_property
    def name(self) -> str:
        return self.first_name

    @name.setter  # type: ignore
    def name(self, value: str) -> None:
        self.first_name = value

    def __init__(self, name: str):
        self.name = name

```

The `# type: ignore` comment on the setter is a workaround for this issue I reported last year: https://github.com/python/mypy/issues/4430

Here's the full output:

```

(tildes) vagrant@ubuntu-xenial:/opt/tildes$ mypy --new-semantic-analyzer --show-traceback test_mypy.py
test_mypy.py:16: error: Cannot assign to a method
test_mypy.py:16: error: INTERNAL ERROR -- Please try using mypy master on Github:
https://mypy.rtfd.io/en/latest/common_issues.html#using-development-mypy-build
Please report a bug at https://github.com/python/mypy/issues
version: 0.720+dev.48916e63403645730a584d6898fbe925d513a841
Traceback (most recent call last):
  File "/opt/venvs/tildes/bin/mypy", line 10, in <module>
    sys.exit(console_entry())
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/__main__.py", line 8, in console_entry
    main(None, sys.stdout, sys.stderr)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/main.py", line 83, in main
    res = build.build(sources, options, None, flush_errors, fscache, stdout, stderr)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 164, in build
    result = _build(sources, options, alt_lib_path, flush_errors, fscache, stdout, stderr)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 224, in _build
    graph = dispatch(sources, manager, stdout)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2567, in dispatch
    process_graph(graph, manager)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2880, in process_graph
    process_stale_scc(graph, scc, manager)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2987, in process_stale_scc
    graph[id].type_check_first_pass()
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2096, in type_check_first_pass
    self.type_checker().check_first_pass()
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 281, in check_first_pass
    self.accept(d)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept
    stmt.accept(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 913, in accept
    return visitor.visit_class_def(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1596, in visit_class_def
    self.accept(defn.defs)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept
    stmt.accept(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 978, in accept
    return visitor.visit_block(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1785, in visit_block
    self.accept(s)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept
    stmt.accept(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 655, in accept
    return visitor.visit_func_def(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 703, in visit_func_def
    self._visit_func_def(defn)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 707, in _visit_func_def
    self.check_func_item(defn, name=defn.name())
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 769, in check_func_item
    self.check_func_def(defn, typ, name)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 935, in check_func_def
    self.accept(item.body)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept
    stmt.accept(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 978, in accept
    return visitor.visit_block(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1785, in visit_block
    self.accept(s)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept
    stmt.accept(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 1036, in accept
    return visitor.visit_assignment_stmt(self)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1793, in visit_assignment_stmt
    self.check_assignment(s.lvalues[-1], s.rvalue, s.type is None, s.new_syntax)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1834, in check_assignment
    lvalue_type, index_lvalue, inferred = self.check_lvalue(lvalue)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 2479, in check_lvalue
    True)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkexpr.py", line 1766, in analyze_ordinary_member_access
    in_literal_context=self.is_literal_context())
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkmember.py", line 103, in analyze_member_access
    result = _analyze_member_access(name, typ, mx, override_info)
  File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkmember.py", line 117, in _analyze_member_access

return analyze_instance_member_access(name, typ, mx, override_info)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkmember.py", line 179, in analyze_instance_member_access

signature = function_type(method, mx.builtin_type('builtins.function'))

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/types.py", line 2188, in function_type

assert isinstance(func, mypy.nodes.FuncItem), str(func)

AssertionError: OverloadedFuncDef:7(

Decorator:11(

Var(name)

MemberExpr:11(

NameExpr(name [test_mypy.FirstNameOnly.name])

setter)

FuncDef:12(

name

Args(

Var(self)

Var(value))

def (self: test_mypy.FirstNameOnly, value: builtins.str)

Block:12(

AssignmentStmt:13(

MemberExpr:13(

NameExpr(self [l])

first_name)

NameExpr(value [l]))))))

``` | 1.0 | New semantic analyzer: crash on assignment to sqlalchemy @hybrid_property - I'm testing out the new semantic analyzer, but it crashes on my codebase. Trying to assign to a [SQLAlchemy `@hybrid_property`](https://docs.sqlalchemy.org/en/13/orm/extensions/hybrid.html) results in a `Cannot assign to a method` error followed by a crash.

Here's a fairly minimal reproduction for it:

```python3
from sqlalchemy import Base, Column, String
from sqlalchemy.ext.hybrid import hybrid_property

class FirstNameOnly(Base):
    first_name = Column(String)

    @hybrid_property
    def name(self) -> str:
        return self.first_name

    @name.setter  # type: ignore
    def name(self, value: str) -> None:
        self.first_name = value

    def __init__(self, name: str):
        self.name = name
```

The `# type: ignore` comment on the setter is a workaround for this issue I reported last year: https://github.com/python/mypy/issues/4430

Here's the full output:

```

(tildes) vagrant@ubuntu-xenial:/opt/tildes$ mypy --new-semantic-analyzer --show-traceback test_mypy.py

test_mypy.py:16: error: Cannot assign to a method

test_mypy.py:16: error: INTERNAL ERROR -- Please try using mypy master on Github:

https://mypy.rtfd.io/en/latest/common_issues.html#using-development-mypy-build

Please report a bug at https://github.com/python/mypy/issues

version: 0.720+dev.48916e63403645730a584d6898fbe925d513a841

Traceback (most recent call last):

File "/opt/venvs/tildes/bin/mypy", line 10, in <module>

sys.exit(console_entry())

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/__main__.py", line 8, in console_entry

main(None, sys.stdout, sys.stderr)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/main.py", line 83, in main

res = build.build(sources, options, None, flush_errors, fscache, stdout, stderr)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 164, in build

result = _build(sources, options, alt_lib_path, flush_errors, fscache, stdout, stderr)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 224, in _build

graph = dispatch(sources, manager, stdout)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2567, in dispatch

process_graph(graph, manager)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2880, in process_graph

process_stale_scc(graph, scc, manager)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2987, in process_stale_scc

graph[id].type_check_first_pass()

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/build.py", line 2096, in type_check_first_pass

self.type_checker().check_first_pass()

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 281, in check_first_pass

self.accept(d)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept

stmt.accept(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 913, in accept

return visitor.visit_class_def(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1596, in visit_class_def

self.accept(defn.defs)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept

stmt.accept(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 978, in accept

return visitor.visit_block(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1785, in visit_block

self.accept(s)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept

stmt.accept(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 655, in accept

return visitor.visit_func_def(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 703, in visit_func_def

self._visit_func_def(defn)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 707, in _visit_func_def

self.check_func_item(defn, name=defn.name())

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 769, in check_func_item

self.check_func_def(defn, typ, name)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 935, in check_func_def

self.accept(item.body)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept

stmt.accept(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 978, in accept

return visitor.visit_block(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1785, in visit_block

self.accept(s)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 392, in accept

stmt.accept(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/nodes.py", line 1036, in accept

return visitor.visit_assignment_stmt(self)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1793, in visit_assignment_stmt

self.check_assignment(s.lvalues[-1], s.rvalue, s.type is None, s.new_syntax)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 1834, in check_assignment

lvalue_type, index_lvalue, inferred = self.check_lvalue(lvalue)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checker.py", line 2479, in check_lvalue

True)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkexpr.py", line 1766, in analyze_ordinary_member_access

in_literal_context=self.is_literal_context())

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkmember.py", line 103, in analyze_member_access

result = _analyze_member_access(name, typ, mx, override_info)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkmember.py", line 117, in _analyze_member_access

return analyze_instance_member_access(name, typ, mx, override_info)

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/checkmember.py", line 179, in analyze_instance_member_access

signature = function_type(method, mx.builtin_type('builtins.function'))

File "/opt/venvs/tildes/lib/python3.7/site-packages/mypy/types.py", line 2188, in function_type

assert isinstance(func, mypy.nodes.FuncItem), str(func)

AssertionError: OverloadedFuncDef:7(

Decorator:11(

Var(name)

MemberExpr:11(

NameExpr(name [test_mypy.FirstNameOnly.name])

setter)

FuncDef:12(

name

Args(

Var(self)

Var(value))

def (self: test_mypy.FirstNameOnly, value: builtins.str)

Block:12(

AssignmentStmt:13(

MemberExpr:13(

NameExpr(self [l])

first_name)

NameExpr(value [l]))))))

``` | priority | new semantic analyzer crash on assignment to sqlalchemy hybrid property i m testing out the new semantic analyzer but it crashes on my codebase trying to assign to a results in a cannot assign to a method error followed by a crash here s a fairly minimal reproduction for it from sqlalchemy import base column string from sqlalchemy ext hybrid import hybrid property class firstnameonly base first name column string hybrid property def name self str return self first name name setter type ignore def name self value str none self first name value def init self name str self name name the type ignore comment on the setter is a workaround for this issue i reported last year here s the full output tildes vagrant ubuntu xenial opt tildes mypy new semantic analyzer show traceback test mypy py test mypy py error cannot assign to a method test mypy py error internal error please try using mypy master on github please report a bug at version dev traceback most recent call last file opt venvs tildes bin mypy line in sys exit console entry file opt venvs tildes lib site packages mypy main py line in console entry main none sys stdout sys stderr file opt venvs tildes lib site packages mypy main py line in main res build build sources options none flush errors fscache stdout stderr file opt venvs tildes lib site packages mypy build py line in build result build sources options alt lib path flush errors fscache stdout stderr file opt venvs tildes lib site packages mypy build py line in build graph dispatch sources manager stdout file opt venvs tildes lib site packages mypy build py line in dispatch process graph graph manager file opt venvs tildes lib site packages mypy build py line in process graph process stale scc graph scc manager file opt venvs tildes lib site packages mypy build py line in process stale scc graph type check first pass file opt venvs tildes lib site packages mypy build py line in type check first pass self type checker check first pass file 
opt venvs tildes lib site packages mypy checker py line in check first pass self accept d file opt venvs tildes lib site packages mypy checker py line in accept stmt accept self file opt venvs tildes lib site packages mypy nodes py line in accept return visitor visit class def self file opt venvs tildes lib site packages mypy checker py line in visit class def self accept defn defs file opt venvs tildes lib site packages mypy checker py line in accept stmt accept self file opt venvs tildes lib site packages mypy nodes py line in accept return visitor visit block self file opt venvs tildes lib site packages mypy checker py line in visit block self accept s file opt venvs tildes lib site packages mypy checker py line in accept stmt accept self file opt venvs tildes lib site packages mypy nodes py line in accept return visitor visit func def self file opt venvs tildes lib site packages mypy checker py line in visit func def self visit func def defn file opt venvs tildes lib site packages mypy checker py line in visit func def self check func item defn name defn name file opt venvs tildes lib site packages mypy checker py line in check func item self check func def defn typ name file opt venvs tildes lib site packages mypy checker py line in check func def self accept item body file opt venvs tildes lib site packages mypy checker py line in accept stmt accept self file opt venvs tildes lib site packages mypy nodes py line in accept return visitor visit block self file opt venvs tildes lib site packages mypy checker py line in visit block self accept s file opt venvs tildes lib site packages mypy checker py line in accept stmt accept self file opt venvs tildes lib site packages mypy nodes py line in accept return visitor visit assignment stmt self file opt venvs tildes lib site packages mypy checker py line in visit assignment stmt self check assignment s lvalues s rvalue s type is none s new syntax file opt venvs tildes lib site packages mypy checker py line in check 
assignment lvalue type index lvalue inferred self check lvalue lvalue file opt venvs tildes lib site packages mypy checker py line in check lvalue true file opt venvs tildes lib site packages mypy checkexpr py line in analyze ordinary member access in literal context self is literal context file opt venvs tildes lib site packages mypy checkmember py line in analyze member access result analyze member access name typ mx override info file opt venvs tildes lib site packages mypy checkmember py line in analyze member access return analyze instance member access name typ mx override info file opt venvs tildes lib site packages mypy checkmember py line in analyze instance member access signature function type method mx builtin type builtins function file opt venvs tildes lib site packages mypy types py line in function type assert isinstance func mypy nodes funcitem str func assertionerror overloadedfuncdef decorator var name memberexpr nameexpr name setter funcdef name args var self var value def self test mypy firstnameonly value builtins str block assignmentstmt memberexpr nameexpr self first name nameexpr value | 1 |

697,789 | 23,952,898,564 | IssuesEvent | 2022-09-12 12:59:05 | benicamera/SupplyManager | https://api.github.com/repos/benicamera/SupplyManager | opened | Implement Item delete and Item create | good first issue Priority: High models business logic | # Tasks

- [ ] Create Item and add to list

- [ ] Remove Item from list

- [ ] Update view

## Create Item and add to list

- [ ] Creation form (maybe with image select)

- [ ] Add to list

## Remove Item from list

- [ ] Swipe or long press

- [ ] Confirm question | 1.0 | Implement Item delete and Item create - # Tasks

- [ ] Create Item and add to list

- [ ] Remove Item from list

- [ ] Update view

## Create Item and add to list

- [ ] Creation form (maybe with image select)

- [ ] Add to list

## Remove Item from list

- [ ] Swipe or long press

- [ ] Confirm question | priority | implement item delete and item create tasks create item and add to list remove item from list update view create item and add to list creation form maybe with image select add to list remove item from list swipe or long press confirm question | 1 |

347,216 | 10,426,653,488 | IssuesEvent | 2019-09-16 18:05:30 | jetrails/magento-cloudflare | https://api.github.com/repos/jetrails/magento-cloudflare | closed | Verify Zone ID Is Valid For Domain | priority: high request | Currently, only token is validated, but we should also check to see if zone id corresponds to the domain name in the current scope. | 1.0 | Verify Zone ID Is Valid For Domain - Currently, only token is validated, but we should also check to see if zone id corresponds to the domain name in the current scope. | priority | verify zone id is valid for domain currently only token is validated but we should also check to see if zone id corresponds to the domain name in the current scope | 1 |

239,841 | 7,800,088,230 | IssuesEvent | 2018-06-09 04:36:41 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0007142:

sometimes we filter too much html content | Bug Felamimail Mantis high priority | **Reported by pschuele on 25 Sep 2012 14:02**

**Version:** Joey (2012.10.1~beta2)

sometimes we filter too much html content -> empty mail

| 1.0 | 0007142:

sometimes we filter too much html content - **Reported by pschuele on 25 Sep 2012 14:02**

**Version:** Joey (2012.10.1~beta2)

sometimes we filter too much html content -> empty mail

| priority | sometimes we filter too much html content reported by pschuele on sep version joey sometimes we filter too much html content gt empty mail | 1 |

85,957 | 3,700,957,823 | IssuesEvent | 2016-02-29 10:57:08 | uds-datalab/PDBF | https://api.github.com/repos/uds-datalab/PDBF | closed | On Ubuntu with old version of TexLive mvn verify results in error | 1-high-priority bug wontfix | If you encounter an error message similar to this one:

> The file is not valid, error(s) :

> 1.2.1 : Body Syntax error, Single space expected [offset=2786901; key=2786901; line=5 0 obj <<; object=COSObject{5, 0}]

> 1.2.1 : Body Syntax error, Single space expected [offset=2786635; key=2786635; line=11 0 obj <<; object=COSObject{11, 0}]

> 1.2.1 : Body Syntax error, EOL expected before the 'endobj' keyword at offset 2786894

> 1.2.1 : Body Syntax error, Single space expected [offset=2761071; key=2761071; line=3 0 obj <<; object=COSObject{3, 0}]

> 1.2.1 : Body Syntax error, Single space expected [offset=2761433; key=2761433; line=8 0 obj <<; object=COSObject{8, 0}]

> 1.2.1 : Body Syntax error, Single space expected [offset=2787764; key=2787764; line=12 0 obj <<; object=COSObject{12, 0}]

then don't worry. This is a known issue with old versions of TexLive. You can safely ignore it or upgrade your TexLive to an up-to-date version (it should be fixed in TexLive 2015). | 1.0 | On Ubuntu with old version of TexLive mvn verify results in error - If you encounter an error message similar to this one:

> The file is not valid, error(s) :

> 1.2.1 : Body Syntax error, Single space expected [offset=2786901; key=2786901; line=5 0 obj <<; object=COSObject{5, 0}]

> 1.2.1 : Body Syntax error, Single space expected [offset=2786635; key=2786635; line=11 0 obj <<; object=COSObject{11, 0}]

> 1.2.1 : Body Syntax error, EOL expected before the 'endobj' keyword at offset 2786894

> 1.2.1 : Body Syntax error, Single space expected [offset=2761071; key=2761071; line=3 0 obj <<; object=COSObject{3, 0}]

> 1.2.1 : Body Syntax error, Single space expected [offset=2761433; key=2761433; line=8 0 obj <<; object=COSObject{8, 0}]

> 1.2.1 : Body Syntax error, Single space expected [offset=2787764; key=2787764; line=12 0 obj <<; object=COSObject{12, 0}]

then don't worry. This is a known issue with old versions of TexLive. You can safely ignore it or upgrade your TexLive to an up-to-date version (it should be fixed in TexLive 2015). | priority | on ubuntu with old version of texlive mvn verify results in error if you encounter an error message similar to this one the file is not valid error s body syntax error single space expected body syntax error single space expected body syntax error eol expected before the endobj keyword at offset body syntax error single space expected body syntax error single space expected body syntax error single space expected then dont worry this is a known issue with old versions of texlive you can safely ignore it or upgrade your texlive to an up to date version should be fixed in texlive | 1 |

84,180 | 3,654,789,606 | IssuesEvent | 2016-02-17 14:06:23 | emoncms/MyHomeEnergyPlanner | https://api.github.com/repos/emoncms/MyHomeEnergyPlanner | closed | Fabric Measures | feature High priority | Hi Carlos,

I've created a spreadsheet with some text measures in it as requested.

I've also had a think about the labels for the form fields - see notes in the spreadsheet.

Hopefully self-explanatory, and enough for you to be getting on with.

Thanks,

[20160208_Test Measures List.xlsx](https://github.com/emoncms/MyHomeEnergyPlanner/files/121593/20160208_Test.Measures.List.xlsx)

| 1.0 | Fabric Measures - Hi Carlos,

I've created a spreadsheet with some text measures in it as requested.

I've also had a think about the labels for the form fields - see notes in the spreadsheet.

Hopefully self-explanatory, and enough for you to be getting on with.

Thanks,

[20160208_Test Measures List.xlsx](https://github.com/emoncms/MyHomeEnergyPlanner/files/121593/20160208_Test.Measures.List.xlsx)

| priority | fabric measures hi carlos i ve created a spreadsheet with some text measures in it as requested i ve also had a think about the labels for the form fields see notes in the spreadsheet hopefully self explanatory and enough for you to be getting on with thanks | 1 |

710,806 | 24,435,502,110 | IssuesEvent | 2022-10-06 11:10:55 | hackforla/expunge-assist | https://api.github.com/repos/hackforla/expunge-assist | reopened | Review auto-generated text for repetition [from usability testing] | priority: high role: UX content writing feature: figma content writing size: 5pt | ### Overview

Auto-generated text needs to be reviewed and updated. For example, one user pointed out that several responses began with "Since my conviction…" Another user noticed repetitive sentences under the "Involvement: Job" section.

### Action Items

- [x] Review auto-generated text

- [x] Identify areas of repetition

- [x] Create new copy for those areas

- [x] Collaborate with Dev regarding the creation of randomly selected text (i.e. one sentence could be written in 3 ways and each user gets a randomly selected text inserted - this helps with creating variation in the letters that only 1-2 judges will see). (Answer from Dev: Cannot do right now)

- [x] Consider creating multiple sentence starters/fragments/etc. that users could choose from (personalize/make more authentic to each user). (Not for this iteration - revisit next)

- [x] Discuss in Content/iterate

- [x] Finalize

- [x] Link all appropriate documents/figma pages/etc. in the resource section below

- [ ] Hand over to Dev https://github.com/hackforla/expunge-assist/issues/705
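
The "randomly selected text" idea from the action items above could be sketched roughly as follows. This is only an illustration under stated assumptions: the `VARIANTS` table, the `pick_sentence` helper, and the sample sentences are hypothetical and are not taken from the Expunge Assist codebase or its actual copy.

```python
import random
from typing import Dict, List, Optional

# Hypothetical placeholder copy: several wordings for the same sentence.
VARIANTS: Dict[str, List[str]] = {
    "job_intro": [
        "Since my conviction, I have worked as a {job}.",
        "I have been employed as a {job}.",
        "My current position is {job}.",
    ],
}


def pick_sentence(key: str, rng: Optional[random.Random] = None, **fields: str) -> str:
    """Pick one wording for `key` at random and fill in the user's answers."""
    if rng is None:
        rng = random.Random()
    template = rng.choice(VARIANTS[key])
    return template.format(**fields)


# Each call may return a different wording, so letters vary between users.
print(pick_sentence("job_intro", job="cook"))
```

Passing a seeded `random.Random` makes the choice repeatable, which may be useful if the same user should see the same wording every time the letter is regenerated.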

### Resources/Instructions

This is for Form Fields Inconsistencies and Repetitiveness [Google Doc](https://docs.google.com/document/d/1UAjwLopUswtOleJrwB-oyuqyUR4mhOF08x8jR9AfJnk/edit?usp=sharing)

Continuing this work directly in Figma under the WIP LG page | 1.0 | Review auto-generated text for repetition [from usability testing] - ### Overview

Auto-generated text needs to be reviewed and updated. For example, one user pointed out that several responses began with "Since my conviction…" Another user noticed repetitive sentences under the "Involvement: Job" section.

### Action Items

- [x] Review auto-generated text

- [x] Identify areas of repetition

- [x] Create new copy for those areas

- [x] Collaborate with Dev regarding the creation of randomly selected text (i.e. one sentence could be written in 3 ways and each user gets a randomly selected text inserted - this helps with creating variation in the letters that only 1-2 judges will see). (Answer from Dev: Cannot do right now)

- [x] Consider creating multiple sentence starters/fragments/etc. that users could choose from (personalize/make more authentic to each user). (Not for this iteration - revisit next)

- [x] Discuss in Content/iterate

- [x] Finalize

- [x] Link all appropriate documents/figma pages/etc. in the resource section below

- [ ] Hand over to Dev https://github.com/hackforla/expunge-assist/issues/705

### Resources/Instructions

This is for Form Fields Inconsistencies and Repetitiveness [Google Doc](https://docs.google.com/document/d/1UAjwLopUswtOleJrwB-oyuqyUR4mhOF08x8jR9AfJnk/edit?usp=sharing)

Continuing this work directly in Figma under the WIP LG page | priority | review auto generated text for repetition overview auto generated text needs to be reviewed and updated for example one user pointed out that several responses began with since my conviction… another user noticed repetitive sentences under the involvement job section action items review auto generated text identify areas of repetition create new copy for those areas collaborate with dev regarding the creation of randomly selected text i e one sentence could be written in ways and each user gets a randomly selected text inserted this helps with creating variation in the letters that only judges will see answer from dev cannot do right now consider creating multiple sentence starters fragments etc that users could choose from personalize make more authentic to each user not for this iteration revisit next discuss in content iterate finalize link all appropriate documents figma pages etc in the resource section below hand over to dev resources instructions this is for form fields inconsistencies and repetitiveness continuing this work directly in figma under the wip lg page | 1 |

175,675 | 6,552,937,745 | IssuesEvent | 2017-09-05 20:22:08 | envistaInteractive/itagroup-ecommerce-template | https://api.github.com/repos/envistaInteractive/itagroup-ecommerce-template | opened | Events: Category / Search Page | High Priority Page Layout | ### Summary

Layout contents of Events: Category / Search page as specified on Events: Category / Search in Zeplin.

We do not have color mockups. The top bar is the blue that is also used on the checkout pages. Use those same classes and html. We will move that out of the checkout to be more generic later.

Use a mobile first approach to adjust the layout using responsive design as the screen gets larger.

**Use branch**: feature/events

**Layout file**: templates/events/list.liquid (file does not exist)

**Url for testing**: http://localhost:1337/events

**Delivery Date**: Sept 7th | 1.0 | Events: Category / Search Page - ### Summary

Layout contents of Events: Category / Search page as specified on Events: Category / Search in Zeplin.

We do not have color mockups. The top bar is the blue that is also used on the checkout pages. Use those same classes and html. We will move that out of the checkout to be more generic later.

Use a mobile first approach to adjust the layout using responsive design as the screen gets larger.

**Use branch**: feature/events

**Layout file**: templates/events/list.liquid (file does not exist)

**Url for testing**: http://localhost:1337/events

**Delivery Date**: Sept 7th | priority | events category search page summary layout contents of events category search page as specified on events category search in zeplin we do not have color mockups the top bar is the blue that is also used on the checkout pages use those same classes and html we will move that out of the checkout to be more generic later use a mobile first approach to adjust the layout using responsive design as the screen gets larger use branch feature events layout file templates events list liquid file does not exist url for testing delivery date sept | 1 |

347,031 | 10,423,479,679 | IssuesEvent | 2019-09-16 11:32:36 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Server always crashes/doesn't load | High Priority | When trying to start a server, it always crashes and I get the message that errors occurred loading users. I already tried uninstalling both Eco and Eco Server as well as restarting multiple times. Nothing seems to help, and I can't play on the server with my friends at the moment.

The log with error message is attached. Might need some help!

[log_190915072601.log](https://github.com/StrangeLoopGames/EcoIssues/files/3615405/log_190915072601.log)

| 1.0 | Server always crashes/doesn't load - When trying to start a server, it always crashes and I get the message that errors occurred loading users. I already tried uninstalling both Eco and Eco Server as well as restarting multiple times. Nothing seems to help, and I can't play on the server with my friends at the moment.

The log with error message is attached. Might need some help!

[log_190915072601.log](https://github.com/StrangeLoopGames/EcoIssues/files/3615405/log_190915072601.log)

| priority | server always crashes doesn t load when trying to start a server it always crashes and i get the message that errors occurred loading users already tried to uninstall both eco and eco server as well as restart multiple times nothing seems to help can t play on the server with my friends at the moment the log with error message is attached might need some help | 1 |

831,773 | 32,060,525,832 | IssuesEvent | 2023-09-24 15:57:01 | oncokb/oncokb | https://api.github.com/repos/oncokb/oncokb | opened | Some OncoKB genes do not have ensembl gene curated | bug high priority | All OncoKB genes should have ensembl gene transcript, otherwise the genomic change will be filtered out for annotation(missing chromosome/start/end). Therefore the genomic change and hgvsg annotation will not work. | 1.0 | Some OncoKB genes do not have ensembl gene curated - All OncoKB genes should have ensembl gene transcript, otherwise the genomic change will be filtered out for annotation(missing chromosome/start/end). Therefore the genomic change and hgvsg annotation will not work. | priority | some oncokb genes do not have ensembl gene curated all oncokb genes should have ensembl gene transcript otherwise the genomic change will be filtered out for annotation missing chromosome start end therefore the genomic change and hgvsg annotation will not work | 1 |

345,629 | 10,370,688,866 | IssuesEvent | 2019-09-08 14:41:29 | byaka/VombatiDB | https://api.github.com/repos/byaka/VombatiDB | opened | Add a `wide` index storage mode in which a cell is allocated for storing a reference to the data | high-priority improvement optimization | In this mode, all index access methods will unpack a node into 3 objects (`..,data`) instead of 2 (`props, childs`). The unpacked third object will then be passed to `_getData()` and similar methods.

**It looks like implementing this as a separate core operating mode will be difficult, because it would complicate the argument-passing code and bloat the codebase with many near-identical checks. In that case it is better to drop the old mode.** | 1.0 | Add a `wide` index storage mode in which a cell is allocated for storing a reference to the data - In this mode, all index access methods will unpack a node into 3 objects (`..,data`) instead of 2 (`props, childs`). The unpacked third object will then be passed to `_getData()` and similar methods.

**It looks like implementing this as a separate core operating mode will be difficult, because it would complicate the argument-passing code and bloat the codebase with many near-identical checks. In that case it is better to drop the old mode.** | priority | add a wide index storage mode in which a cell is allocated for storing a reference to the data in this mode all index access methods will unpack a node into objects data instead of objects props childs the unpacked third object will then be passed to getdata and similar methods it looks like implementing this as a separate core operating mode will be difficult because it would complicate the argument passing code and bloat the codebase with many near identical checks in that case it is better to drop the old mode | 1 |

67,302 | 3,268,440,533 | IssuesEvent | 2015-10-23 11:30:51 | pakalbekim/armaldia | https://api.github.com/repos/pakalbekim/armaldia | opened | Rebalance gym once again | High Priority | When increasing energy limit - use a lot of stamina;

when increasing stamina limit - use a lot of energy;

when increasing health limit - use moderate amounts of both; | 1.0 | Rebalance gym once again - When increasing energy limit - use a lot of stamina;

when increasing stamina limit - use a lot of energy;

when increasing health limit - use moderate amounts of both; | priority | rebalance gym once again when increasing energy limit use a lot of stamina when increasing stamina limit use a lot of energy when increasing health limit use moderate amounts of both | 1 |

487,329 | 14,040,547,886 | IssuesEvent | 2020-11-01 03:18:34 | xournalpp/xournalpp | https://api.github.com/repos/xournalpp/xournalpp | closed | Recently used file with "no such device" causes Xournal++ to crash at start. | Crash bug priority: high | **Affects versions :**

- OS: Arch Linux

- Desktop environment: Gnome-Wayland

- Version of Xournal++: 45a619d83f97205c92ac146f13d5ae00af83af7e

- Installation method: AUR ([xournalpp-git](https://aur.archlinux.org/packages/xournalpp-git/))

**Describe the bug**

When the "recently used files" list contains a file whose device is not available (e.g. a Samba mount), Xournal++ crashes at start.

**To Reproduce**

Steps to reproduce the behavior:

1. Mount a samba drive using a VPN interface

2. Open the file in Xournal++, close it

3. Make sure the VPN interface is not available without unmounting the Samba drive (`no such device`)

4. Re-open Xournal++

**Expected behavior**

Xournal++ ignores the unavailable file.

**Additional context**

Crash:

```

terminate called after throwing an instance of 'std::filesystem::__cxx11::filesystem_error'

what(): filesystem error: status: No such device [/mnt/samba/mount/that/is/not/available/file.pdf]

** (com.github.xournalpp.xournalpp:26191): WARNING **: 12:39:29.368: [Crash Handler] Crashed with signal 6

** (com.github.xournalpp.xournalpp:26191): WARNING **: 12:39:29.369: [Crash Handler] Wrote crash log to: $HOME/.cache/com.github.xournalpp.xournalpp/errorlogs/errorlog.20200908-123929.log

```

Error log: https://fb.hash.works/HMqP0W

File path is from `./.local/share/recently-used.xbel`:

```

<bookmark href="file:///mnt/samba/mount/that/is/not/available/file.pdf" added="2020-09-02T23:54:55Z" modified="2020-09-03T00:00:03Z" visited="1969-12-31T23:59:59Z">

```

This sounds like something that should've been fixed with #1730, but as I stated above I'm using the latest version in master. | 1.0 | Recently used file with "no such device" causes Xournal++ to crash at start. - **Affects versions :**

- OS: Arch Linux

- Desktop environment: Gnome-Wayland

- Version of Xournal++: 45a619d83f97205c92ac146f13d5ae00af83af7e

- Installation method: AUR ([xournalpp-git](https://aur.archlinux.org/packages/xournalpp-git/))

**Describe the bug**

When the "recently used files" list contains a file whose device is not available (e.g. a Samba mount), Xournal++ crashes at start.

**To Reproduce**

Steps to reproduce the behavior:

1. Mount a samba drive using a VPN interface

2. Open the file in Xournal++, close it

3. Make sure the VPN interface is not available without unmounting the Samba drive (`no such device`)

4. Re-open Xournal++

**Expected behavior**

Xournal++ ignores the unavailable file.

**Additional context**

Crash:

```

terminate called after throwing an instance of 'std::filesystem::__cxx11::filesystem_error'

what(): filesystem error: status: No such device [/mnt/samba/mount/that/is/not/available/file.pdf]

** (com.github.xournalpp.xournalpp:26191): WARNING **: 12:39:29.368: [Crash Handler] Crashed with signal 6

** (com.github.xournalpp.xournalpp:26191): WARNING **: 12:39:29.369: [Crash Handler] Wrote crash log to: $HOME/.cache/com.github.xournalpp.xournalpp/errorlogs/errorlog.20200908-123929.log

```

Error log: https://fb.hash.works/HMqP0W

File path is from `./.local/share/recently-used.xbel`:

```

<bookmark href="file:///mnt/samba/mount/that/is/not/available/file.pdf" added="2020-09-02T23:54:55Z" modified="2020-09-03T00:00:03Z" visited="1969-12-31T23:59:59Z">

```

This sounds like something that should've been fixed with #1730, but as I stated above I'm using the latest version in master. | priority | recently used file with no such device causes xournal to crash at start affects versions os arch linux desktop environment gnome wayland version of xournal installation method aur describe the bug when a file exists in the recently used files where the device is not available f e a samba mount xournal crashes at start to reproduce steps to reproduce the behavior mount a samba drive using a vpn interface open the file in xournal close it make sure the vpn interface is not available without unmounting the samba drive no such device re open xournal expected behavior xournal ignores the unavailable file additional context crash terminate called after throwing an instance of std filesystem filesystem error what filesystem error status no such device com github xournalpp xournalpp warning crashed with signal com github xournalpp xournalpp warning wrote crash log to home cache com github xournalpp xournalpp errorlogs errorlog log error log file path is from local share recently used xbel this sounds like something that should ve been fixed with but as i stated above i m using the latest version in master | 1 |
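
The expected behavior in this record (ignore a recent file that cannot be reached) can be illustrated with a short, language-neutral sketch in Python. It is not Xournal++ code: `available_recent_files` is a hypothetical name. In C++, the same idea is usually expressed with the `std::filesystem::status(path, ec)` overload that reports failure through a `std::error_code` instead of throwing; whether Xournal++ adopted that approach is not stated in the report.

```python
import os


def available_recent_files(paths):
    """Return only the paths that can be stat'ed right now.

    Any OSError (missing file, "No such device", stale mount, ...) is
    treated as "unavailable" instead of being allowed to propagate.
    """
    usable = []
    for path in paths:
        try:
            os.stat(path)
        except OSError:
            continue  # skip the entry rather than crash at startup
        usable.append(path)
    return usable
```

Catching the whole `OSError` family is a deliberate choice here: for a recent-files list, any reason a path cannot be inspected is a reason to hide it rather than abort.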

2,438 | 2,525,857,317 | IssuesEvent | 2015-01-21 06:51:08 | graybeal/ont | https://api.github.com/repos/graybeal/ont | closed | Allow tab delimiter when importing data in voc2rdf | 1 star enhancement imported Milestone-Release1.2 Priority-High voc2rdf | _From [caru...@gmail.com](https://code.google.com/u/113886747689301365533/) on April 06, 2009 11:51:45_

(thanks John for this feedback)

What capability do you want added or improved? Allow tab delimiter when importing data in voc2rdf

Where do you want this capability to be accessible? voc2rdf

What sort of input/command mechanism do you want? In the CSV dialog, have a checkbox or something to indicate that the contents are tab-delimited columns

_Original issue: http://code.google.com/p/mmisw/issues/detail?id=115_ | 1.0 | Allow tab delimiter when importing data in voc2rdf - _From [caru...@gmail.com](https://code.google.com/u/113886747689301365533/) on April 06, 2009 11:51:45_

(thanks John for this feedback)

What capability do you want added or improved? Allow tab delimiter when importing data in voc2rdf

Where do you want this capability to be accessible? voc2rdf

What sort of input/command mechanism do you want? In the CSV dialog, have a checkbox or something to indicate that the contents are tab-delimited columns

_Original issue: http://code.google.com/p/mmisw/issues/detail?id=115_ | priority | allow tab delimiter when importing data in from on april thanks john for this feedback what capability do you want added or improved allow tab delimiter when importing data in where do you want this capability to be accessible what sort of input command mechanism do you want in the csv dialog have a checkbox or something to indicate that the contents are tab delimited columns original issue | 1 |
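
The request in this record amounts to making the column delimiter selectable at import time. A minimal sketch in Python, assuming the imported data arrives as text; `parse_vocabulary` and `tab_delimited` are hypothetical names standing in for the requested checkbox, not part of voc2rdf.

```python
import csv
import io


def parse_vocabulary(text, tab_delimited=False):
    """Parse delimited text into a list of rows (lists of strings).

    `tab_delimited` plays the role of the checkbox requested in the issue:
    when set, columns are split on tabs instead of commas.
    """
    delimiter = "\t" if tab_delimited else ","
    return list(csv.reader(io.StringIO(text), delimiter=delimiter))


rows = parse_vocabulary("name\tdefinition\nsst\tsea surface temperature\n",
                        tab_delimited=True)
print(rows)
```

With a tab delimiter, commas inside a definition no longer need quoting, which is one common reason users ask for this option.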

421,761 | 12,261,138,679 | IssuesEvent | 2020-05-06 19:32:34 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | configurator: MariaDB doesn't use timezone setting until reboot | Priority: High Type: Bug | **Describe the bug**

When you set a timezone in configurator, MariaDB is not configured with that timezone until you reboot.

Consequently, the admin user is created in the DB with a `valid_from` value based on the **default** timezone. Depending on your timezone, this means your account might not be valid yet after you reboot.

**To Reproduce**

Steps to reproduce the behavior:

1. Install a PacketFence ZEN

2. Set timezone to EST at step 2 of configurator

3. Set admin password

4. Check value in DB:

```sql

SELECT valid_from FROM password WHERE pid='admin'\G

```

5. Finish configurator

6. Log in on web admin with `admin` user

=> It works.

7. Reboot

8. Log in on web admin with `admin` user

=> It fails because account is not yet valid.

**Expected behavior**

MariaDB should be configured using timezone defined at step 2. | 1.0 | configurator: MariaDB doesn't use timezone setting until reboot - **Describe the bug**

When you set a timezone in configurator, MariaDB is not configured with that timezone until you reboot.

Consequently, the admin user is created in the DB with a `valid_from` value based on the **default** timezone. Depending on your timezone, this means your account might not be valid yet after you reboot.

**To Reproduce**

Steps to reproduce the behavior:

1. Install a PacketFence ZEN

2. Set timezone to EST at step 2 of configurator

3. Set admin password

4. Check value in DB:

```sql

SELECT valid_from FROM password WHERE pid='admin'\G

```

5. Finish configurator

6. Log in on web admin with `admin` user

=> It works.

7. Reboot

8. Log in on web admin with `admin` user

=> It fails because account is not yet valid.

**Expected behavior**

MariaDB should be configured using timezone defined at step 2. | priority | configurator mariadb doesn t use timezone setting until reboot describe the bug when you set a timezone in configurator mariadb is not configured with that timezone until you reboot consequently admin user created in db is created with a valid from value based on default timezone depending on your timezone it means that your account could be not valid after you reboot to reproduce steps to reproduce the behavior install a packetfence zen set timezone to est at step of configurator set admin password check value in db sql select valid from from password where pid admin g finish configurator log in on web admin with admin user it works reboot log in on web admin with admin user it fails because account is not yet valid expected behavior mariadb should be configured using timezone defined at step | 1 |
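
The failure at step 8 of this record can be reproduced on paper with a small Python sketch. It rests on assumptions not stated in the issue: that the default timezone is UTC, that `valid_from` is stored as a wall-clock value without zone information, and that the validity check compares it with the server's current local time. `wall_clock` and `is_valid` are hypothetical helpers, and EST is modeled as a fixed UTC-5 offset.

```python
from datetime import datetime, timedelta, timezone

UTC = timezone.utc
EST = timezone(timedelta(hours=-5))  # fixed offset, for illustration only


def wall_clock(instant, tz):
    """Naive local time a server running in `tz` would report for `instant`."""
    return instant.astimezone(tz).replace(tzinfo=None)


def is_valid(valid_from, instant, server_tz):
    """Account is valid once the server's local clock has reached valid_from."""
    return valid_from <= wall_clock(instant, server_tz)


created = datetime(2020, 5, 6, 15, 0, tzinfo=UTC)
valid_from = wall_clock(created, UTC)           # stamped under the default zone
ten_minutes_later = created + timedelta(minutes=10)

print(is_valid(valid_from, ten_minutes_later, UTC))  # before reboot
print(is_valid(valid_from, ten_minutes_later, EST))  # after reboot
```

Under these assumptions the account becomes valid again only once the EST wall clock catches up with the stored value, about five hours after creation.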

636,792 | 20,609,351,789 | IssuesEvent | 2022-03-07 06:34:22 | harvester/harvester | https://api.github.com/repos/harvester/harvester | closed | [FEATURE] Soft reboot/shutdown | enhancement area/ui priority/1 highlight area/kubevirt | We should support a graceful soft reboot/shutdown from the UI, so that the VM and its filesystem have a chance to shut down properly.

Guest agent might be required. | 1.0 | [FEATURE] Soft reboot/shutdown - We should support a graceful soft reboot/shutdown from the UI, so that the VM and its filesystem have a chance to shut down properly.

Guest agent might be required. | priority | soft reboot shutdown we should support a graceful soft reboot shutdown from the ui to allow the vm and filesystem on it to have a chance to shutdown properly guest agent might be required | 1 |

129,076 | 5,088,229,403 | IssuesEvent | 2016-12-31 16:50:06 | zulip/zulip-electron | https://api.github.com/repos/zulip/zulip-electron | closed | Can not find module 'debug/browser' | bug help wanted Priority: High | Something is broken and I'm not able to figure it out 😭

Because of the above error, the preload script can't be injected, and hence the spellchecker won't work 😢

| 1.0 | Can not find module 'debug/browser' - Something is broken and I'm not able to figure it out 😭

Because of the above error, the preload script can't be injected, and hence the spellchecker won't work 😢

| priority | can not find module debug browser something is broken and i m not able to figure it out 😭 because of above error preload script can t be injected and hence spellchecker won t work 😢 | 1 |

283,027 | 8,713,246,379 | IssuesEvent | 2018-12-07 01:40:51 | aowen87/TicketTester | https://api.github.com/repos/aowen87/TicketTester | closed | visit2.9.2 release tarball[s?] are doubly-compressed | bug likelihood high priority reviewed severity medium wontfix | The release tarball:

http://portal.nersc.gov/project/visit/releases/2.9.2/visit2_9_2.linux-x86_64-ubuntu14.tar.gz

is gzipped *twice*. That is, to extract one must:

$ gunzip visit2_9_2.linux-x86_64-ubuntu14.tar.gz

$ mv visit2_9_2.linux-x86_64-ubuntu14.tar visit2_9_2.linux-x86_64-ubuntu14.tar.gz

$ tar zxvf visit2_9_2.linux-x86_64-ubuntu14.tar.gz

I have a vague recollection that I hit this with 2.9.1 as well. Perhaps there is a packaging script bug?

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 2307

Status: Rejected

Project: VisIt

Tracker: Bug

Priority: High

Subject: visit2.9.2 release tarball[s?] are doubly-compressed

Assigned to:

Category:

Target version: 2.10

Author: Tom Fogal

Start: 06/23/2015

Due date:

% Done: 0

Estimated time: