Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

855

| labels

stringlengths 4

721

| body

stringlengths 1

261k

| index

stringclasses 13

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

240k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

548,667

| 16,068,726,891

|

IssuesEvent

|

2021-04-24 01:50:04

|

KATO-Hiro/AtCoderClans

|

https://api.github.com/repos/KATO-Hiro/AtCoderClans

|

closed

|

Googleサイト内検索を導入したが、レイアウトがイマイチよくない気がする

|

help wanted priority high

|

## WHY

- 作者がHTML・CSSをあまり理解していないせいで、見づらい感じ

- 余白が不自然に多い気がする

- タブレットやスマホだとあまり気にならないが、PCだと野暮ったい印象を受ける

- Clansのユーザの2/3はPCユーザであるため、これはマズい

|

1.0

|

Googleサイト内検索を導入したが、レイアウトがイマイチよくない気がする - ## WHY

- 作者がHTML・CSSをあまり理解していないせいで、見づらい感じ

- 余白が不自然に多い気がする

- タブレットやスマホだとあまり気にならないが、PCだと野暮ったい印象を受ける

- Clansのユーザの2/3はPCユーザであるため、これはマズい

|

priority

|

googleサイト内検索を導入したが、レイアウトがイマイチよくない気がする why 作者がhtml・cssをあまり理解していないせいで、見づらい感じ 余白が不自然に多い気がする タブレットやスマホだとあまり気にならないが、pcだと野暮ったい印象を受ける 、これはマズい

| 1

|

77,393

| 3,506,368,530

|

IssuesEvent

|

2016-01-08 06:10:55

|

OregonCore/OregonCore

|

https://api.github.com/repos/OregonCore/OregonCore

|

closed

|

Error C2065 / C2070 (BB #439)

|

duplicate migrated Priority: High Type: Bug

|

This issue was migrated from bitbucket.

**Original Reporter:** sh1fty88

**Original Date:** 11.03.2013 17:05:55 GMT+0000

**Original Priority:** blocker

**Original Type:** bug

**Original State:** duplicate

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/439

<hr>

While building/compiling the last 3 core's i get the following error:

using: Windows server 2008R2 / Visual Studio 2010 Express / Cmake 2.8.10.2

13> Creating library C:/build/src/oregonrealm/Debug/oregon-realm.lib and object C:/build/src/oregonrealm/Debug/oregon-realm.exp

14> WheatyExceptionReport.cpp

13> oregon-realm.vcxproj -> C:\build\bin\Debug\oregon-realm.exe

14>..\..\..\OregonCore\src\oregoncore\CliRunnable.cpp(701): error C2065: 'commandbuf' : undeclared identifier

14>..\..\..\OregonCore\src\oregoncore\CliRunnable.cpp(701): error C2065: 'commandbuf' : undeclared identifier

14>..\..\..\OregonCore\src\oregoncore\CliRunnable.cpp(701): error C2070: ''unknown-type'': illegal sizeof operand

15>------ Build started: Project: ALL_BUILD, Configuration: Debug Win32 ------

15> Building Custom Rule C:/OregonCore/CMakeLists.txt

15> CMake does not need to re-run because C:\build\CMakeFiles\generate.stamp is up-to-date.

15> Build all projects

16>------ Skipped Build: Project: INSTALL, Configuration: Debug Win32 ------

16>Project not selected to build for this solution configuration

========== Build: 14 succeeded, 1 failed, 0 up-to-date, 1 skipped ==========

|

1.0

|

Error C2065 / C2070 (BB #439) - This issue was migrated from bitbucket.

**Original Reporter:** sh1fty88

**Original Date:** 11.03.2013 17:05:55 GMT+0000

**Original Priority:** blocker

**Original Type:** bug

**Original State:** duplicate

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/439

<hr>

While building/compiling the last 3 core's i get the following error:

using: Windows server 2008R2 / Visual Studio 2010 Express / Cmake 2.8.10.2

13> Creating library C:/build/src/oregonrealm/Debug/oregon-realm.lib and object C:/build/src/oregonrealm/Debug/oregon-realm.exp

14> WheatyExceptionReport.cpp

13> oregon-realm.vcxproj -> C:\build\bin\Debug\oregon-realm.exe

14>..\..\..\OregonCore\src\oregoncore\CliRunnable.cpp(701): error C2065: 'commandbuf' : undeclared identifier

14>..\..\..\OregonCore\src\oregoncore\CliRunnable.cpp(701): error C2065: 'commandbuf' : undeclared identifier

14>..\..\..\OregonCore\src\oregoncore\CliRunnable.cpp(701): error C2070: ''unknown-type'': illegal sizeof operand

15>------ Build started: Project: ALL_BUILD, Configuration: Debug Win32 ------

15> Building Custom Rule C:/OregonCore/CMakeLists.txt

15> CMake does not need to re-run because C:\build\CMakeFiles\generate.stamp is up-to-date.

15> Build all projects

16>------ Skipped Build: Project: INSTALL, Configuration: Debug Win32 ------

16>Project not selected to build for this solution configuration

========== Build: 14 succeeded, 1 failed, 0 up-to-date, 1 skipped ==========

|

priority

|

error bb this issue was migrated from bitbucket original reporter original date gmt original priority blocker original type bug original state duplicate direct link while building compiling the last core s i get the following error using windows server visual studio express cmake creating library c build src oregonrealm debug oregon realm lib and object c build src oregonrealm debug oregon realm exp wheatyexceptionreport cpp oregon realm vcxproj c build bin debug oregon realm exe oregoncore src oregoncore clirunnable cpp error commandbuf undeclared identifier oregoncore src oregoncore clirunnable cpp error commandbuf undeclared identifier oregoncore src oregoncore clirunnable cpp error unknown type illegal sizeof operand build started project all build configuration debug building custom rule c oregoncore cmakelists txt cmake does not need to re run because c build cmakefiles generate stamp is up to date build all projects skipped build project install configuration debug project not selected to build for this solution configuration build succeeded failed up to date skipped

| 1

|

305,924

| 9,378,350,433

|

IssuesEvent

|

2019-04-04 12:42:14

|

AugurProject/augur

|

https://api.github.com/repos/AugurProject/augur

|

closed

|

Total Cost column needs to display unrealized cost

|

Bug Priority: High

|

steps to reproduce....buy 3 shares of an outcome at one price...sell 1 share higher to get a realized and unrealized pnl. Then sell another 1 share at a different price. Total cost is still displaying on the original purchase amount and not on the remaining open balance.

|

1.0

|

Total Cost column needs to display unrealized cost - steps to reproduce....buy 3 shares of an outcome at one price...sell 1 share higher to get a realized and unrealized pnl. Then sell another 1 share at a different price. Total cost is still displaying on the original purchase amount and not on the remaining open balance.

|

priority

|

total cost column needs to display unrealized cost steps to reproduce buy shares of an outcome at one price sell share higher to get a realized and unrealized pnl then sell another share at a different price total cost is still displaying on the original purchase amount and not on the remaining open balance

| 1

|

235,539

| 7,739,852,894

|

IssuesEvent

|

2018-05-28 17:58:29

|

gnebehay/schoselwette

|

https://api.github.com/repos/gnebehay/schoselwette

|

closed

|

make baseUrl stuff configurable

|

high priority

|

Currently you have to adapt

package.json

App.vue

router.js

in order to be able to set all paths correctly. Can this configuration somehow be centralized so that only one file has to be changed? (preferably a separate config file). I need that for automatic deployment.

|

1.0

|

make baseUrl stuff configurable - Currently you have to adapt

package.json

App.vue

router.js

in order to be able to set all paths correctly. Can this configuration somehow be centralized so that only one file has to be changed? (preferably a separate config file). I need that for automatic deployment.

|

priority

|

make baseurl stuff configurable currently you have to adapt package json app vue router js in order to be able to set all paths correctly can this configuration somehow be centralized so that only one file has to be changed preferably a separate config file i need that for automatic deployment

| 1

|

95,297

| 3,941,705,782

|

IssuesEvent

|

2016-04-27 08:55:52

|

raml-org/raml-js-parser-2

|

https://api.github.com/repos/raml-org/raml-js-parser-2

|

closed

|

map type expression not supported

|

bug priority:high

|

When trying to use **map** type expression, as stated [here](http://docs.raml.org/specs/1.0/#raml-10-spec-type-expressions)

I get *"Syntax error:Expected \"|\" or end of input but \"{\" found.*

Example:

```

#%RAML 1.0

title: My Api

/maps:

get:

responses:

200:

body:

type: number{}

```

|

1.0

|

map type expression not supported - When trying to use **map** type expression, as stated [here](http://docs.raml.org/specs/1.0/#raml-10-spec-type-expressions)

I get *"Syntax error:Expected \"|\" or end of input but \"{\" found.*

Example:

```

#%RAML 1.0

title: My Api

/maps:

get:

responses:

200:

body:

type: number{}

```

|

priority

|

map type expression not supported when trying to use map type expression as stated i get syntax error expected or end of input but found example raml title my api maps get responses body type number

| 1

|

681,425

| 23,310,733,928

|

IssuesEvent

|

2022-08-08 08:01:49

|

okTurtles/group-income

|

https://api.github.com/repos/okTurtles/group-income

|

closed

|

Proposals to change voting threshold don't show reason [$25 bounty]

|

Kind:Bug Note:Up-for-grabs App:Frontend Level:Starter Priority:High Note:UI/UX Note:Bounty Note:Contracts

|

### Problem

The reason I gave for this proposal isn't appearing, even though reasons do appear on some other proposals (for example, to remove a member):

<img width="782" alt="Screen Shot 2022-04-28 at 1 51 38 PM" src="https://user-images.githubusercontent.com/138706/165843599-6d6bc8d6-9d64-4d3f-a0f5-dd7c6e70af1c.png">

### Solution

Find out why it's not appearing for changing the voting threshold and fix.

### Bounty

$25 bounty for a clean solution to this (paid in cryptocurrency).

|

1.0

|

Proposals to change voting threshold don't show reason [$25 bounty] - ### Problem

The reason I gave for this proposal isn't appearing, even though reasons do appear on some other proposals (for example, to remove a member):

<img width="782" alt="Screen Shot 2022-04-28 at 1 51 38 PM" src="https://user-images.githubusercontent.com/138706/165843599-6d6bc8d6-9d64-4d3f-a0f5-dd7c6e70af1c.png">

### Solution

Find out why it's not appearing for changing the voting threshold and fix.

### Bounty

$25 bounty for a clean solution to this (paid in cryptocurrency).

|

priority

|

proposals to change voting threshold don t show reason problem the reason i gave for this proposal isn t appearing even though reasons do appear on some other proposals for example to remove a member img width alt screen shot at pm src solution find out why it s not appearing for changing the voting threshold and fix bounty bounty for a clean solution to this paid in cryptocurrency

| 1

|

65,192

| 3,226,986,489

|

IssuesEvent

|

2015-10-10 19:42:28

|

chocolatey/chocolatey.org

|

https://api.github.com/repos/chocolatey/chocolatey.org

|

reopened

|

New packages are not able to be accessed on the package cache

|

0 - Backlog Bug Priority_HIGH

|

https://gitter.im/chocolatey/choco?at=56194f121b0e279854bdbdf2

> i think something is broken for the newly pushed packages. download the newest nupkgs manually:

https://chocolatey.org/packages/chromium

https://chocolatey.org/packages/qbittorrent

https://chocolatey.org/packages/kvrt

i get this:

https://packages.chocolatey.org/chromium.48.0.2533.0.nupkg

I think there is some issue in the S3 bucket resolution for things that are pushed after the policy is created. They are explicitly setting permissions on the package, but even removing those did not appear to work.

I've found the closest person that has this problem as the last message at https://forums.aws.amazon.com/thread.jspa?messageID=555932

|

1.0

|

New packages are not able to be accessed on the package cache - https://gitter.im/chocolatey/choco?at=56194f121b0e279854bdbdf2

> i think something is broken for the newly pushed packages. download the newest nupkgs manually:

https://chocolatey.org/packages/chromium

https://chocolatey.org/packages/qbittorrent

https://chocolatey.org/packages/kvrt

i get this:

https://packages.chocolatey.org/chromium.48.0.2533.0.nupkg

I think there is some issue in the S3 bucket resolution for things that are pushed after the policy is created. They are explicitly setting permissions on the package, but even removing those did not appear to work.

I've found the closest person that has this problem as the last message at https://forums.aws.amazon.com/thread.jspa?messageID=555932

|

priority

|

new packages are not able to be accessed on the package cache i think something is broken for the newly pushed packages download the newest nupkgs manually i get this i think there is some issue in the bucket resolution for things that are pushed after the policy is created they are explicitly setting permissions on the package but even removing those did not appear to work i ve found the closest person that has this problem as the last message at

| 1

|

756,093

| 26,456,634,927

|

IssuesEvent

|

2023-01-16 14:49:09

|

woocommerce/woocommerce

|

https://api.github.com/repos/woocommerce/woocommerce

|

closed

|

[COT/HPOS] WooCommerce API calls not behaving as expected for orders with search param `page`

|

type: bug needs: author feedback priority: high focus: wc rest api focus: custom order tables plugin: woocommerce

|

### Prerequisites

- [X] I have carried out troubleshooting steps and I believe I have found a bug.

- [X] I have searched for similar bugs in both open and closed issues and cannot find a duplicate.

### Describe the bug

The `page` search param is no longer taking effect so, say we had 10 orders and we called the api to GET orders and return the first page using search params as follows:

```

params: {

per_page: 4,

page: 1

},

```

it is returning the same results as below:

```

params: {

per_page: 4,

page: 2

},

```

i.e. both only returning the first page values

### Expected behavior

The `page` search param should have an effect on the results returned in the GET orders call

i.e. If we had 10 orders and we called the api to GET orders and return the first page using search params as follows:

```

params: {

per_page: 4,

page: 1

},

```

It should returning different results to below:

```

params: {

per_page: 4,

page: 2

},

```

i.e. the first call should return the first 4 orders and the second call should return the next 4 (different) orders

### Actual behavior

The `page` search param is no longer taking effect so, say we had 10 orders and we called the api to GET orders and return the first page using search params as follows:

```

params: {

per_page: 4,

page: 1

},

```

it is returning the same results as below:

```

params: {

per_page: 4,

page: 2

},

```

i.e. both only returning the first page values

### Steps to reproduce

1. Enable COT/HPOS

2. Use the WooCommerce API to create 8 orders

3. Attempt to search on orders and get the first page of results (given 4 results per page) with search param as follows:

```

{

per_page: 4,

page: 1

}

```

4. Attempt to search on orders and get the second page of results (given 4 results per page) with search param as follows:

```

{

per_page: 4,

page: 2

}

```

5. The same results are returned for each

### WordPress Environment

`

### WordPress Environment ###

WordPress address (URL): http://localhost:8086

Site address (URL): http://localhost:8086

WC Version: 7.0.0

REST API Version: ✔ 7.0.0

WC Blocks Version: ✔ 8.5.1

Action Scheduler Version: ✔ 3.4.0

Log Directory Writable: ✔

WP Version: 6.0.2

WP Multisite: –

WP Memory Limit: 256 MB

WP Debug Mode: –

WP Cron: ✔

Language: en_US

External object cache: –

### Server Environment ###

Server Info: Apache/2.4.54 (Debian)

PHP Version: 7.4.32

PHP Post Max Size: 8 MB

PHP Time Limit: 30

PHP Max Input Vars: 1000

cURL Version: 7.74.0

OpenSSL/1.1.1n

SUHOSIN Installed: –

MySQL Version: 5.5.5-10.9.3-MariaDB-1:10.9.3+maria~ubu2204

Max Upload Size: 2 MB

Default Timezone is UTC: ✔

fsockopen/cURL: ✔

SoapClient: ❌ Your server does not have the SoapClient class enabled - some gateway plugins which use SOAP may not work as expected.

DOMDocument: ✔

GZip: ✔

Multibyte String: ✔

Remote Post: ✔

Remote Get: ✔

### Database ###

WC Database Version: 7.0.0

WC Database Prefix: wp_

Total Database Size: 5.19MB

Database Data Size: 3.52MB

Database Index Size: 1.67MB

wp_woocommerce_sessions: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_api_keys: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_attribute_taxonomies: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_downloadable_product_permissions: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_order_items: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_order_itemmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_tax_rates: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_tax_rate_locations: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_shipping_zones: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_shipping_zone_locations: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_shipping_zone_methods: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_payment_tokens: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_payment_tokenmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_log: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_actions: Data: 0.02MB + Index: 0.11MB + Engine InnoDB

wp_actionscheduler_claims: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_groups: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_logs: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_commentmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_comments: Data: 0.02MB + Index: 0.09MB + Engine InnoDB

wp_links: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_options: Data: 2.52MB + Index: 0.03MB + Engine InnoDB

wp_postmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_posts: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_termmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_terms: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_term_relationships: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_term_taxonomy: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_usermeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_users: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_admin_notes: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_admin_note_actions: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_category_lookup: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_customer_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_download_log: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_orders: Data: 0.02MB + Index: 0.11MB + Engine InnoDB

wp_wc_orders_meta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_addresses: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_wc_order_coupon_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_operational_data: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_product_lookup: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_wc_order_stats: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_order_tax_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_product_attributes_lookup: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_product_download_directories: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_product_meta_lookup: Data: 0.02MB + Index: 0.09MB + Engine InnoDB

wp_wc_rate_limits: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_reserved_stock: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_tax_rate_classes: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_webhooks: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wpml_mails: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

### Post Type Counts ###

attachment: 1

page: 7

post: 2

### Security ###

Secure connection (HTTPS): ❌

Your store is not using HTTPS. Learn more about HTTPS and SSL Certificates.

Hide errors from visitors: ✔

### Active Plugins (5) ###

WooCommerce Enable COT: by – 0.0.2

JSON Basic Authentication: by WordPress API Team – 0.1

WooCommerce Reset: by WooCommerce – 0.1.0

WooCommerce: by Automattic – 7.0.0-dev

WP Mail Logging: by Wysija – 1.10.4

### Inactive Plugins (2) ###

Akismet Anti-Spam: by Automattic – 5.0

Hello Dolly: by Matt Mullenweg – 1.7.2

### Settings ###

API Enabled: –

Force SSL: –

Currency: USD ($)

Currency Position: left

Thousand Separator: ,

Decimal Separator: .

Number of Decimals: 2

Taxonomies: Product Types: external (external)

grouped (grouped)

simple (simple)

variable (variable)

Taxonomies: Product Visibility: exclude-from-catalog (exclude-from-catalog)

exclude-from-search (exclude-from-search)

featured (featured)

outofstock (outofstock)

rated-1 (rated-1)

rated-2 (rated-2)

rated-3 (rated-3)

rated-4 (rated-4)

rated-5 (rated-5)

Connected to WooCommerce.com: –

Enforce Approved Product Download Directories: ✔

### WC Pages ###

Shop base: #5 - /shop/

Cart: #6 - /cart/

Checkout: #7 - /checkout/

My account: #8 - /my-account/

Terms and conditions: ❌ Page not set

### Theme ###

Name: Twenty Nineteen

Version: 2.3

Author URL: https://wordpress.org/

Child Theme: ❌ – If you are modifying WooCommerce on a parent theme that you did not build personally we recommend using a child theme. See: How to create a child theme

WooCommerce Support: ✔

### Templates ###

Overrides: –

### Admin ###

Enabled Features: activity-panels

analytics

coupons

customer-effort-score-tracks

experimental-products-task

experimental-import-products-task

experimental-fashion-sample-products

experimental-product-tour

shipping-smart-defaults

shipping-setting-tour

homescreen

marketing

multichannel-marketing

mobile-app-banner

navigation

onboarding

onboarding-tasks

remote-inbox-notifications

remote-free-extensions

payment-gateway-suggestions

shipping-label-banner

subscriptions

store-alerts

transient-notices

woo-mobile-welcome

wc-pay-promotion

wc-pay-welcome-page

Disabled Features: minified-js

new-product-management-experience

settings

Daily Cron: ✔ Next scheduled: 2022-10-04 15:10:05 +00:00

Options: ✔

Notes: 2

Onboarding: -

### Action Scheduler ###

Pending: 2

Oldest: 2022-10-04 15:11:10 +0000

Newest: 2022-10-04 15:11:11 +0000

### Status report information ###

Generated at: 2022-10-04 15:11:26 +00:00

`

### Isolating the problem

- [X] I have deactivated other plugins and confirmed this bug occurs when only WooCommerce plugin is active.

- [X] This bug happens with a default WordPress theme active, or [Storefront](https://woocommerce.com/storefront/).

- [X] I can reproduce this bug consistently using the steps above.

|

1.0

|

[COT/HPOS] WooCommerce API calls not behaving as expected for orders with search param `page` - ### Prerequisites

- [X] I have carried out troubleshooting steps and I believe I have found a bug.

- [X] I have searched for similar bugs in both open and closed issues and cannot find a duplicate.

### Describe the bug

The `page` search param is no longer taking effect so, say we had 10 orders and we called the api to GET orders and return the first page using search params as follows:

```

params: {

per_page: 4,

page: 1

},

```

it is returning the same results as below:

```

params: {

per_page: 4,

page: 2

},

```

i.e. both only returning the first page values

### Expected behavior

The `page` search param should have an effect on the results returned in the GET orders call

i.e. If we had 10 orders and we called the api to GET orders and return the first page using search params as follows:

```

params: {

per_page: 4,

page: 1

},

```

It should returning different results to below:

```

params: {

per_page: 4,

page: 2

},

```

i.e. the first call should return the first 4 orders and the second call should return the next 4 (different) orders

### Actual behavior

The `page` search param is no longer taking effect so, say we had 10 orders and we called the api to GET orders and return the first page using search params as follows:

```

params: {

per_page: 4,

page: 1

},

```

it is returning the same results as below:

```

params: {

per_page: 4,

page: 2

},

```

i.e. both only returning the first page values

### Steps to reproduce

1. Enable COT/HPOS

2. Use the WooCommerce API to create 8 orders

3. Attempt to search on orders and get the first page of results (given 4 results per page) with search param as follows:

```

{

per_page: 4,

page: 1

}

```

4. Attempt to search on orders and get the second page of results (given 4 results per page) with search param as follows:

```

{

per_page: 4,

page: 2

}

```

5. The same results are returned for each

### WordPress Environment

`

### WordPress Environment ###

WordPress address (URL): http://localhost:8086

Site address (URL): http://localhost:8086

WC Version: 7.0.0

REST API Version: ✔ 7.0.0

WC Blocks Version: ✔ 8.5.1

Action Scheduler Version: ✔ 3.4.0

Log Directory Writable: ✔

WP Version: 6.0.2

WP Multisite: –

WP Memory Limit: 256 MB

WP Debug Mode: –

WP Cron: ✔

Language: en_US

External object cache: –

### Server Environment ###

Server Info: Apache/2.4.54 (Debian)

PHP Version: 7.4.32

PHP Post Max Size: 8 MB

PHP Time Limit: 30

PHP Max Input Vars: 1000

cURL Version: 7.74.0

OpenSSL/1.1.1n

SUHOSIN Installed: –

MySQL Version: 5.5.5-10.9.3-MariaDB-1:10.9.3+maria~ubu2204

Max Upload Size: 2 MB

Default Timezone is UTC: ✔

fsockopen/cURL: ✔

SoapClient: ❌ Your server does not have the SoapClient class enabled - some gateway plugins which use SOAP may not work as expected.

DOMDocument: ✔

GZip: ✔

Multibyte String: ✔

Remote Post: ✔

Remote Get: ✔

### Database ###

WC Database Version: 7.0.0

WC Database Prefix: wp_

Total Database Size: 5.19MB

Database Data Size: 3.52MB

Database Index Size: 1.67MB

wp_woocommerce_sessions: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_api_keys: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_attribute_taxonomies: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_downloadable_product_permissions: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_order_items: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_order_itemmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_tax_rates: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_tax_rate_locations: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_shipping_zones: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_shipping_zone_locations: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_shipping_zone_methods: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_payment_tokens: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_payment_tokenmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_log: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_actions: Data: 0.02MB + Index: 0.11MB + Engine InnoDB

wp_actionscheduler_claims: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_groups: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_logs: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_commentmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_comments: Data: 0.02MB + Index: 0.09MB + Engine InnoDB

wp_links: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_options: Data: 2.52MB + Index: 0.03MB + Engine InnoDB

wp_postmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_posts: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_termmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_terms: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_term_relationships: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_term_taxonomy: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_usermeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_users: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_admin_notes: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_admin_note_actions: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_category_lookup: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_customer_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_download_log: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_orders: Data: 0.02MB + Index: 0.11MB + Engine InnoDB

wp_wc_orders_meta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_addresses: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_wc_order_coupon_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_operational_data: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_product_lookup: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_wc_order_stats: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_order_tax_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_product_attributes_lookup: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_product_download_directories: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_product_meta_lookup: Data: 0.02MB + Index: 0.09MB + Engine InnoDB

wp_wc_rate_limits: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_reserved_stock: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_tax_rate_classes: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_webhooks: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wpml_mails: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

### Post Type Counts ###

attachment: 1

page: 7

post: 2

### Security ###

Secure connection (HTTPS): ❌

Your store is not using HTTPS. Learn more about HTTPS and SSL Certificates.

Hide errors from visitors: ✔

### Active Plugins (5) ###

WooCommerce Enable COT: by – 0.0.2

JSON Basic Authentication: by WordPress API Team – 0.1

WooCommerce Reset: by WooCommerce – 0.1.0

WooCommerce: by Automattic – 7.0.0-dev

WP Mail Logging: by Wysija – 1.10.4

### Inactive Plugins (2) ###

Akismet Anti-Spam: by Automattic – 5.0

Hello Dolly: by Matt Mullenweg – 1.7.2

### Settings ###

API Enabled: –

Force SSL: –

Currency: USD ($)

Currency Position: left

Thousand Separator: ,

Decimal Separator: .

Number of Decimals: 2

Taxonomies: Product Types: external (external)

grouped (grouped)

simple (simple)

variable (variable)

Taxonomies: Product Visibility: exclude-from-catalog (exclude-from-catalog)

exclude-from-search (exclude-from-search)

featured (featured)

outofstock (outofstock)

rated-1 (rated-1)

rated-2 (rated-2)

rated-3 (rated-3)

rated-4 (rated-4)

rated-5 (rated-5)

Connected to WooCommerce.com: –

Enforce Approved Product Download Directories: ✔

### WC Pages ###

Shop base: #5 - /shop/

Cart: #6 - /cart/

Checkout: #7 - /checkout/

My account: #8 - /my-account/

Terms and conditions: ❌ Page not set

### Theme ###

Name: Twenty Nineteen

Version: 2.3

Author URL: https://wordpress.org/

Child Theme: ❌ – If you are modifying WooCommerce on a parent theme that you did not build personally we recommend using a child theme. See: How to create a child theme

WooCommerce Support: ✔

### Templates ###

Overrides: –

### Admin ###

Enabled Features: activity-panels

analytics

coupons

customer-effort-score-tracks

experimental-products-task

experimental-import-products-task

experimental-fashion-sample-products

experimental-product-tour

shipping-smart-defaults

shipping-setting-tour

homescreen

marketing

multichannel-marketing

mobile-app-banner

navigation

onboarding

onboarding-tasks

remote-inbox-notifications

remote-free-extensions

payment-gateway-suggestions

shipping-label-banner

subscriptions

store-alerts

transient-notices

woo-mobile-welcome

wc-pay-promotion

wc-pay-welcome-page

Disabled Features: minified-js

new-product-management-experience

settings

Daily Cron: ✔ Next scheduled: 2022-10-04 15:10:05 +00:00

Options: ✔

Notes: 2

Onboarding: -

### Action Scheduler ###

Pending: 2

Oldest: 2022-10-04 15:11:10 +0000

Newest: 2022-10-04 15:11:11 +0000

### Status report information ###

Generated at: 2022-10-04 15:11:26 +00:00

`

### Isolating the problem

- [X] I have deactivated other plugins and confirmed this bug occurs when only WooCommerce plugin is active.

- [X] This bug happens with a default WordPress theme active, or [Storefront](https://woocommerce.com/storefront/).

- [X] I can reproduce this bug consistently using the steps above.

|

priority

|

woocommerce api calls not behaving as expected for orders with search param page prerequisites i have carried out troubleshooting steps and i believe i have found a bug i have searched for similar bugs in both open and closed issues and cannot find a duplicate describe the bug the page search param is no longer taking effect so say we had orders and we called the api to get orders and return the first page using search params as follows params per page page it is returning the same results as below params per page page i e both only returning the first page values expected behavior the page search param should have an effect on the results returned in the get orders call i e if we had orders and we called the api to get orders and return the first page using search params as follows params per page page it should returning different results to below params per page page i e the first call should return the first orders and the second call should return the next different orders actual behavior the page search param is no longer taking effect so say we had orders and we called the api to get orders and return the first page using search params as follows params per page page it is returning the same results as below params per page page i e both only returning the first page values steps to reproduce enable cot hpos use the woocommerce api to create orders attempt to search on orders and get the first page of results given results per page with search param as follows per page page attempt to search on orders and get the second page of results given results per page with search param as follows per page page the same results are returned for each wordpress environment wordpress environment wordpress address url site address url wc version rest api version ✔ wc blocks version ✔ action scheduler version ✔ log directory writable ✔ wp version wp multisite – wp memory limit mb wp debug mode – wp cron ✔ language en us external object cache – server environment server info apache debian php version php post max size mb php time limit php max input vars curl version openssl suhosin installed – mysql version mariadb maria max upload size mb default timezone is utc ✔ fsockopen curl ✔ soapclient ❌ your server does not have the soapclient class enabled some gateway plugins which use soap may not work as expected domdocument ✔ gzip ✔ multibyte string ✔ remote post ✔ remote get ✔ database wc database version wc database prefix wp total database size database data size database index size wp woocommerce sessions data index engine innodb wp woocommerce api keys data index engine innodb wp woocommerce attribute taxonomies data index engine innodb wp woocommerce downloadable product permissions data index engine innodb wp woocommerce order items data index engine innodb wp woocommerce order itemmeta data index engine innodb wp woocommerce tax rates data index engine innodb wp woocommerce tax rate locations data index engine innodb wp woocommerce shipping zones data index engine innodb wp woocommerce shipping zone locations data index engine innodb wp woocommerce shipping zone methods data index engine innodb wp woocommerce payment tokens data index engine innodb wp woocommerce payment tokenmeta data index engine innodb wp woocommerce log data index engine innodb wp actionscheduler actions data index engine innodb wp actionscheduler claims data index engine innodb wp actionscheduler groups data index engine innodb wp actionscheduler logs data index engine innodb wp commentmeta data index engine innodb wp comments data index engine innodb wp links data index engine innodb wp options data index engine innodb wp postmeta data index engine innodb wp posts data index engine innodb wp termmeta data index engine innodb wp terms data index engine innodb wp term relationships data index engine innodb wp term taxonomy data index engine innodb wp usermeta data index engine innodb wp users data index engine innodb wp wc admin notes data index engine innodb wp wc admin note actions data index engine innodb wp wc category lookup data index engine innodb wp wc customer lookup data index engine innodb wp wc download log data index engine innodb wp wc orders data index engine innodb wp wc orders meta data index engine innodb wp wc order addresses data index engine innodb wp wc order coupon lookup data index engine innodb wp wc order operational data data index engine innodb wp wc order product lookup data index engine innodb wp wc order stats data index engine innodb wp wc order tax lookup data index engine innodb wp wc product attributes lookup data index engine innodb wp wc product download directories data index engine innodb wp wc product meta lookup data index engine innodb wp wc rate limits data index engine innodb wp wc reserved stock data index engine innodb wp wc tax rate classes data index engine innodb wp wc webhooks data index engine innodb wp wpml mails data index engine innodb post type counts attachment page post security secure connection https ❌ your store is not using https learn more about https and ssl certificates hide errors from visitors ✔ active plugins woocommerce enable cot by – json basic authentication by wordpress api team – woocommerce reset by woocommerce – woocommerce by automattic – dev wp mail logging by wysija – inactive plugins akismet anti spam by automattic – hello dolly by matt mullenweg – settings api enabled – force ssl – currency usd currency position left thousand separator decimal separator number of decimals taxonomies product types external external grouped grouped simple simple variable variable taxonomies product visibility exclude from catalog exclude from catalog exclude from search exclude from search featured featured outofstock outofstock rated rated rated rated rated rated rated rated rated rated connected to woocommerce com – enforce approved product download directories ✔ wc pages shop base shop cart cart checkout checkout my account my account terms and conditions ❌ page not set theme name twenty nineteen version author url child theme ❌ – if you are modifying woocommerce on a parent theme that you did not build personally we recommend using a child theme see how to create a child theme woocommerce support ✔ templates overrides – admin enabled features activity panels analytics coupons customer effort score tracks experimental products task experimental import products task experimental fashion sample products experimental product tour shipping smart defaults shipping setting tour homescreen marketing multichannel marketing mobile app banner navigation onboarding onboarding tasks remote inbox notifications remote free extensions payment gateway suggestions shipping label banner subscriptions store alerts transient notices woo mobile welcome wc pay promotion wc pay welcome page disabled features minified js new product management experience settings daily cron ✔ next scheduled options ✔ notes onboarding action scheduler pending oldest newest status report information generated at isolating the problem i have deactivated other plugins and confirmed this bug occurs when only woocommerce plugin is active this bug happens with a default wordpress theme active or i can reproduce this bug consistently using the steps above

| 1

|

772,299

| 27,115,371,433

|

IssuesEvent

|

2023-02-15 18:08:38

|

NIAEFEUP/tts-revamp-fe

|

https://api.github.com/repos/NIAEFEUP/tts-revamp-fe

|

closed

|

Add courses outside of major

|

low priority high effort

|

- [x] Add extra courses button in modal

- [x] Extra courses Combobox with all available courses

- [ ] Selecting a course from the Combobox adds it to the selected courses

|

1.0

|

Add courses outside of major - - [x] Add extra courses button in modal

- [x] Extra courses Combobox with all available courses

- [ ] Selecting a course from the Combobox adds it to the selected courses

|

priority

|

add courses outside of major add extra courses button in modal extra courses combobox with all available courses selecting a course from the combobox adds it to the selected courses

| 1

|

267,858

| 8,393,845,751

|

IssuesEvent

|

2018-10-09 21:50:50

|

prettier/prettier

|

https://api.github.com/repos/prettier/prettier

|

opened

|

YAML: Incorrect quotes are used when string contains mixed quotes

|

lang:yaml priority:high type:bug

|

**Prettier 1.14.2**

[Playground link](https://prettier.io/playground/#N4Igxg9gdgLgprEAuEAdEByAhq3IBG6qUIANCBAA4wCW0AzsqFgE4sQDuACqwoylgBuEGgBMyBFljABrODADKlaTSgBzZDBYBXOOVX04LGFylqAtlmQAzLABtD5AFb0AHgCEps+Qqzm4ADKqcDb2jiDKLIYsyCAAnn52EpQsqjAA6mIwABbIABwADOQpEIbpUpSxKXDRgiHkLHAAjto0jaZYFlZItg56IIbmNJo6-fSqanZwAIraEPChfeQwWPiZojnIAEzLUjR2EwDCEOaWsVDQ9SDahgAqq-y9hgC+z0A)

```sh

--parser yaml

```

**Input:**

```yaml

"'a\"b"

```

**Output:**

```yaml

''a"b'

```

**Second Output:**

```yaml

SyntaxError: Document is not valid YAML (bad indentation?) (1:3)

> 1 | ''a"b'

| ^^^

> 2 |

| ^

```

**Expected behavior:**

|

1.0

|

YAML: Incorrect quotes are used when string contains mixed quotes - **Prettier 1.14.2**

[Playground link](https://prettier.io/playground/#N4Igxg9gdgLgprEAuEAdEByAhq3IBG6qUIANCBAA4wCW0AzsqFgE4sQDuACqwoylgBuEGgBMyBFljABrODADKlaTSgBzZDBYBXOOVX04LGFylqAtlmQAzLABtD5AFb0AHgCEps+Qqzm4ADKqcDb2jiDKLIYsyCAAnn52EpQsqjAA6mIwABbIABwADOQpEIbpUpSxKXDRgiHkLHAAjto0jaZYFlZItg56IIbmNJo6-fSqanZwAIraEPChfeQwWPiZojnIAEzLUjR2EwDCEOaWsVDQ9SDahgAqq-y9hgC+z0A)

```sh

--parser yaml

```

**Input:**

```yaml

"'a\"b"

```

**Output:**

```yaml

''a"b'

```

**Second Output:**

```yaml

SyntaxError: Document is not valid YAML (bad indentation?) (1:3)

> 1 | ''a"b'

| ^^^

> 2 |

| ^

```

**Expected behavior:**

|

priority

|

yaml incorrect quotes are used when string contains mixed quotes prettier sh parser yaml input yaml a b output yaml a b second output yaml syntaxerror document is not valid yaml bad indentation a b expected behavior

| 1

|

795,361

| 28,070,704,005

|

IssuesEvent

|

2023-03-29 18:50:28

|

ucb-rit/coldfront

|

https://api.github.com/repos/ucb-rit/coldfront

|

closed

|

Gracefully handle case when CILogon does not provide user first/last name fields

|

bug high priority lrc-only

|

In general, when a user logs in with CILogon, it provides information about the user, including email, first name, last name, etc.

However, when CILogon fails to provide some of these fields (e.g., first name), the [custom application logic](https://github.com/ucb-rit/coldfront/blob/master/coldfront/core/socialaccount/adapter.py#L54) for populating a user from the provided info fails:

```

ERROR middleware.process_exception: AnonymousUser encountered an uncaught exception at /accounts/cilogon/login/callback/. Details:

ERROR middleware.process_exception: null value in column "first_name" violates not-null constraint

```

Update the code to default to an empty string instead of `None`, like the [base class](https://github.com/pennersr/django-allauth/blob/master/allauth/socialaccount/adapter.py#L110) in `django-allauth` does.

|

1.0

|

Gracefully handle case when CILogon does not provide user first/last name fields - In general, when a user logs in with CILogon, it provides information about the user, including email, first name, last name, etc.

However, when CILogon fails to provide some of these fields (e.g., first name), the [custom application logic](https://github.com/ucb-rit/coldfront/blob/master/coldfront/core/socialaccount/adapter.py#L54) for populating a user from the provided info fails:

```

ERROR middleware.process_exception: AnonymousUser encountered an uncaught exception at /accounts/cilogon/login/callback/. Details:

ERROR middleware.process_exception: null value in column "first_name" violates not-null constraint

```

Update the code to default to an empty string instead of `None`, like the [base class](https://github.com/pennersr/django-allauth/blob/master/allauth/socialaccount/adapter.py#L110) in `django-allauth` does.

|

priority

|

gracefully handle case when cilogon does not provide user first last name fields in general when a user logs in with cilogon it provides information about the user including email first name last name etc however when cilogon fails to provide some of these fields e g first name the for populating a user from the provided info fails error middleware process exception anonymoususer encountered an uncaught exception at accounts cilogon login callback details error middleware process exception null value in column first name violates not null constraint update the code to default to an empty string instead of none like the in django allauth does

| 1

|

667,226

| 22,422,739,984

|

IssuesEvent

|

2022-06-20 06:06:12

|

thewca/worldcubeassociation.org

|

https://api.github.com/repos/thewca/worldcubeassociation.org

|

closed

|

Competitors now able to delete their registration when accepted

|

bug registration good second issue high-priority

|

When you enable competitors being able to edit their events it seems they are also able to delete their registrations after they have been accepted. This is undesirable for now, since there are further actions that need to be taken for an accepted registration (e.g. processing a refund if it is due, and accepting the next competitor on the waiting list). While competitors being able to edit their events is great, it would be good if the ability to change their status was not an option for them. Obviously this can be re-enabled when there is automated waiting list handling!

|

1.0

|

Competitors now able to delete their registration when accepted - When you enable competitors being able to edit their events it seems they are also able to delete their registrations after they have been accepted. This is undesirable for now, since there are further actions that need to be taken for an accepted registration (e.g. processing a refund if it is due, and accepting the next competitor on the waiting list). While competitors being able to edit their events is great, it would be good if the ability to change their status was not an option for them. Obviously this can be re-enabled when there is automated waiting list handling!

|

priority

|

competitors now able to delete their registration when accepted when you enable competitors being able to edit their events it seems they are also able to delete their registrations after they have been accepted this is undesirable for now since there are further actions that need to be taken for an accepted registration e g processing a refund if it is due and accepting the next competitor on the waiting list while competitors being able to edit their events is great it would be good if the ability to change their status was not an option for them obviously this can be re enabled when there is automated waiting list handling

| 1

|

670,961

| 22,715,244,250

|

IssuesEvent

|

2022-07-06 00:58:31

|

apache/incubator-devlake

|

https://api.github.com/repos/apache/incubator-devlake

|

closed

|

Apache compliance

|

type/docs priority/high epic

|

## Documentation Scope

ALL

## Describe the Change

1. #1910

2. #1911

3. #1912

4. #1897

|

1.0

|

Apache compliance - ## Documentation Scope

ALL

## Describe the Change

1. #1910

2. #1911

3. #1912

4. #1897

|

priority

|

apache compliance documentation scope all describe the change

| 1

|

243,970

| 7,868,826,650

|

IssuesEvent

|

2018-06-24 05:04:54

|

PaddlePaddle/Paddle

|

https://api.github.com/repos/PaddlePaddle/Paddle

|

closed

|

Error when transpile program with piecewise_decay to distributed program

|

Bug high priority

|

When `piecewise_decay` is defined the pserver side program will have a wrong `conditional_block` refer to a block id that doesn't exist on the pserver program.

This error was first met by @kolinwei

```python

optimizer = fluid.optimizer.Momentum(

learning_rate=fluid.layers.piecewise_decay(

boundaries=bd, values=lr),

momentum=0.9,

regularization=fluid.regularizer.L2Decay(1e-4))

```

|

1.0

|

Error when transpile program with piecewise_decay to distributed program - When `piecewise_decay` is defined the pserver side program will have a wrong `conditional_block` refer to a block id that doesn't exist on the pserver program.

This error was first met by @kolinwei

```python

optimizer = fluid.optimizer.Momentum(

learning_rate=fluid.layers.piecewise_decay(

boundaries=bd, values=lr),

momentum=0.9,

regularization=fluid.regularizer.L2Decay(1e-4))

```

|

priority

|

error when transpile program with piecewise decay to distributed program when piecewise decay is defined the pserver side program will have a wrong conditional block refer to a block id that doesn t exist on the pserver program this error was first met by kolinwei python optimizer fluid optimizer momentum learning rate fluid layers piecewise decay boundaries bd values lr momentum regularization fluid regularizer

| 1

|

692,202

| 23,726,031,578

|

IssuesEvent

|

2022-08-30 19:42:12

|

archesproject/arches

|

https://api.github.com/repos/archesproject/arches

|

closed

|

ElasticSearch throws an error on any page where it's used

|

Priority: High bug

|

**Describe the bug**

<!--- By fully explaining what you are encountering, you can help us understand and reproduce the issue. -->

<!--- Often times, a screenshot or animated GIF can help show what you are encountering. -->

```

elastic_transport.TlsError: TLS error caused by: TlsError(TLS error caused by: SSLError([SSL: WRONG_VERSION_NUMBER] wrong version number...)

```

**To Reproduce**

Steps to reproduce the behavior:

1. Load dev/7.0.x in core and arches-her

2. visit the search page

|

1.0

|

ElasticSearch throws an error on any page where it's used - **Describe the bug**

<!--- By fully explaining what you are encountering, you can help us understand and reproduce the issue. -->

<!--- Often times, a screenshot or animated GIF can help show what you are encountering. -->

```

elastic_transport.TlsError: TLS error caused by: TlsError(TLS error caused by: SSLError([SSL: WRONG_VERSION_NUMBER] wrong version number...)

```

**To Reproduce**

Steps to reproduce the behavior:

1. Load dev/7.0.x in core and arches-her

2. visit the search page

|

priority

|

elasticsearch throws an error on any page where it s used describe the bug elastic transport tlserror tls error caused by tlserror tls error caused by sslerror wrong version number to reproduce steps to reproduce the behavior load dev x in core and arches her visit the search page

| 1

|

200,874

| 7,017,824,519

|

IssuesEvent

|

2017-12-21 11:07:18

|

bleenco/abstruse

|

https://api.github.com/repos/bleenco/abstruse

|

closed

|

[bug]: echo $ENV_VARIABLE

|

Priority: High Status: In Progress Type: Question

|

Check what happens if someone put `echo $ENV_VARIABLE` command in `.abstruse.yml`.

Encrypted data should not show at any cost

|

1.0

|

[bug]: echo $ENV_VARIABLE - Check what happens if someone put `echo $ENV_VARIABLE` command in `.abstruse.yml`.

Encrypted data should not show at any cost

|

priority

|

echo env variable check what happens if someone put echo env variable command in abstruse yml encrypted data should not show at any cost

| 1

|

551,008

| 16,136,149,228

|

IssuesEvent

|

2021-04-29 12:07:11

|

Neural-Systems-at-UIO/VisuAlign

|

https://api.github.com/repos/Neural-Systems-at-UIO/VisuAlign

|

closed

|

MeshView v0.8 - user defined sections and hidden outline

|

High Priority enhancement

|

We like to request the following changes to MeshView v0.8 (https://www.nesys.uio.no/MeshView/meshview.html?atlas=ABA_mouse_v3_2017_full ):

1. An option to cut imported point clouds in user-defined sections with a user-defined thickness (in the newest version of MeshView). This option is available in a previous version of MeshView (https://www.nesys.uio.no/MeshView/meshviewx.html?atlas=ABA_mouse_v3_2017_full ). Here it is done by cutting in "Cloud only" and adjusting the thickness of the slice by a slider. See attached image for explanation of what function we want to implement from previous version of MeshView.

2. View imported point clouds without structural outlines, or the option to make it "invisible" for the eye (turn off structural outline) while still keeping the bounding box.

|

1.0

|

MeshView v0.8 - user defined sections and hidden outline - We like to request the following changes to MeshView v0.8 (https://www.nesys.uio.no/MeshView/meshview.html?atlas=ABA_mouse_v3_2017_full ):

1. An option to cut imported point clouds in user-defined sections with a user-defined thickness (in the newest version of MeshView). This option is available in a previous version of MeshView (https://www.nesys.uio.no/MeshView/meshviewx.html?atlas=ABA_mouse_v3_2017_full ). Here it is done by cutting in "Cloud only" and adjusting the thickness of the slice by a slider. See attached image for explanation of what function we want to implement from previous version of MeshView.

2. View imported point clouds without structural outlines, or the option to make it "invisible" for the eye (turn off structural outline) while still keeping the bounding box.

|

priority

|

meshview user defined sections and hidden outline we like to request the following changes to meshview an option to cut imported point clouds in user defined sections with a user defined thickness in the newest version of meshview this option is available in a previous version of meshview here it is done by cutting in cloud only and adjusting the thickness of the slice by a slider see attached image for explanation of what function we want to implement from previous version of meshview view imported point clouds without structural outlines or the option to make it invisible for the eye turn off structural outline while still keeping the bounding box

| 1

|

357,460

| 10,606,644,544

|

IssuesEvent

|

2019-10-11 00:14:47

|

carbon-design-system/carbon

|

https://api.github.com/repos/carbon-design-system/carbon

|

closed

|

Tag (tag) component has insufficient contrast on Gray 100 theme

|

Severity 1 🚨 priority: high type: a11y ♿

|

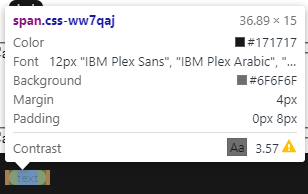

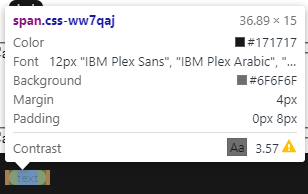

<!-- Feel free to remove sections that aren't relevant.

## Title line template: [Title]: Brief description

-->

## Environment

Windows 10

Chrome Version 76.0.3809.132 (Official Build) (64-bit)

## Detailed description

Carbon v10 - React

Component: Tag (filter), http://themes.carbondesignsystem.com/?nav=tag

The component is using,

`background-color: interactive-02, color: inverse-01`

When switching to the Gray 100 theme, the contrast ratio has dropped to 3.57 (WCAG AA: Fail).

I used WebAIM (https://webaim.org/resources/contrastchecker/) to check the colour contrast.

## Additional information

|

1.0

|

Tag (tag) component has insufficient contrast on Gray 100 theme - <!-- Feel free to remove sections that aren't relevant.

## Title line template: [Title]: Brief description

-->

## Environment

Windows 10

Chrome Version 76.0.3809.132 (Official Build) (64-bit)

## Detailed description

Carbon v10 - React

Component: Tag (filter), http://themes.carbondesignsystem.com/?nav=tag

The component is using,

`background-color: interactive-02, color: inverse-01`

When switching to the Gray 100 theme, the contrast ratio has dropped to 3.57 (WCAG AA: Fail).

I used WebAIM (https://webaim.org/resources/contrastchecker/) to check the colour contrast.

## Additional information

|

priority

|

tag tag component has insufficient contrast on gray theme feel free to remove sections that aren t relevant title line template brief description environment windows chrome version official build bit detailed description carbon react component tag filter the component is using background color interactive color inverse when switching to the gray theme the contrast ratio has dropped to wcag aa fail i used webaim to check the colour contrast additional information

| 1

|

168,501

| 6,376,697,955

|

IssuesEvent

|

2017-08-02 08:12:39

|

morpho-os/framework

|

https://api.github.com/repos/morpho-os/framework

|

closed

|

Use integers for priority of events in ModuleManager, accept the `-` sign.

|

high priority (4)

|

Fix DB column type if necessary.

Fix range - `0..100` and the default priority.

|

1.0

|

Use integers for priority of events in ModuleManager, accept the `-` sign. - Fix DB column type if necessary.

Fix range - `0..100` and the default priority.

|

priority

|

use integers for priority of events in modulemanager accept the sign fix db column type if necessary fix range and the default priority

| 1

|

386,941

| 11,453,492,579

|

IssuesEvent

|

2020-02-06 15:29:36

|

woocommerce/woocommerce-gateway-paypal-express-checkout

|

https://api.github.com/repos/woocommerce/woocommerce-gateway-paypal-express-checkout

|

closed

|

Double stock reduce when IPN and PDT notifications are enable

|

Priority: High [Type] Cannot reproduce

|

When IPN and PDT notifications are enabled , the stock is reduced twice.

Stock levels reduced: High-waist wide leg trousers – S (1904LAG60240154B) 0→-1, Fringes short sleeve t-shirt – S (1904SIX58760129B) 1→0

July 28, 2019 at 9:28 am Delete note

Stock levels reduced: High-waist wide leg trousers – S (1904LAG60240154B) 1→0, Fringes short sleeve t-shirt – S (1904SIX58760129B) 2→1

July 28, 2019 at 9:28 am Delete note

PDT payment completed

July 28, 2019 at 9:27 am Delete note

IPN payment completed

July 28, 2019 at 9:27 am Delete note

|

1.0

|

Double stock reduce when IPN and PDT notifications are enable - When IPN and PDT notifications are enabled , the stock is reduced twice.

Stock levels reduced: High-waist wide leg trousers – S (1904LAG60240154B) 0→-1, Fringes short sleeve t-shirt – S (1904SIX58760129B) 1→0

July 28, 2019 at 9:28 am Delete note

Stock levels reduced: High-waist wide leg trousers – S (1904LAG60240154B) 1→0, Fringes short sleeve t-shirt – S (1904SIX58760129B) 2→1

July 28, 2019 at 9:28 am Delete note

PDT payment completed

July 28, 2019 at 9:27 am Delete note

IPN payment completed

July 28, 2019 at 9:27 am Delete note

|

priority

|

double stock reduce when ipn and pdt notifications are enable when ipn and pdt notifications are enabled the stock is reduced twice stock levels reduced high waist wide leg trousers – s → fringes short sleeve t shirt – s → july at am delete note stock levels reduced high waist wide leg trousers – s → fringes short sleeve t shirt – s → july at am delete note pdt payment completed july at am delete note ipn payment completed july at am delete note

| 1

|

277,488

| 8,629,027,702

|

IssuesEvent

|

2018-11-21 19:17:11

|

Polymer/lit-html

|

https://api.github.com/repos/Polymer/lit-html

|

closed

|

Update when directive to handle any number of cases.

|

Area: API Priority: High Status: Accepted Type: Enhancement

|

We have two feature requests related to conditional rendering:

- #511 - the false value should be optional

- A `switch` directive.

We can resolve both of these requests by generalizing `when` to introspect it's arguments to operate in two modes:

### `if` mode

When the second argument is a function or TemplateResult, we treat the condition as a truthy value and render either the second or third argument.

```ts

html`${when(condition,

() => html`condition is true`,

() => html`condition is false`

)}`

```

### `switch` mode

When the second argument is an object, we treat the condition as simple value, and the second argument as a map of cases:

```ts

html`${when(value, {

'one': () => html`value is one`,

'two': () => html`value is two`,

default: () => html`value is neither`,

})}`

```

In switch mode, cases can only be keyed by strings, number and symbols. If in the future we want to lift this restriction, we can accept arrays:

```ts

html`${when(value,

['one', () => html`value is one`],

['two': () => html`value is two`]

)}`

```

But this seems better to leave off for now.

One of the benefits of handling both the `if` and `switch` use cases in one directive is that we don't have to choose two new names, since we can't name a variable `if`, `switch` or `case`. It should also simplify choices.

### Caching

Currently `when` caches the DOM created by the true and false cases. This should increase performance on fast/frequently changing values, but this might not always be the case, and it increases complexity, code size and overhead. To make complexity pay-for-play we should remove caching from the `when` directive and add a separate `cachingWhen` directive. `cachingWhen` can locally be renamed to `when` when imported:

```ts

import {cachingWhen as when} from 'lit-html/directives/caching-when.js';

```

|

1.0

|

Update when directive to handle any number of cases. - We have two feature requests related to conditional rendering:

- #511 - the false value should be optional

- A `switch` directive.

We can resolve both of these requests by generalizing `when` to introspect it's arguments to operate in two modes:

### `if` mode

When the second argument is a function or TemplateResult, we treat the condition as a truthy value and render either the second or third argument.

```ts

html`${when(condition,

() => html`condition is true`,

() => html`condition is false`

)}`

```

### `switch` mode

When the second argument is an object, we treat the condition as simple value, and the second argument as a map of cases:

```ts

html`${when(value, {

'one': () => html`value is one`,

'two': () => html`value is two`,

default: () => html`value is neither`,

})}`

```

In switch mode, cases can only be keyed by strings, number and symbols. If in the future we want to lift this restriction, we can accept arrays:

```ts

html`${when(value,

['one', () => html`value is one`],

['two': () => html`value is two`]

)}`

```

But this seems better to leave off for now.

One of the benefits of handling both the `if` and `switch` use cases in one directive is that we don't have to choose two new names, since we can't name a variable `if`, `switch` or `case`. It should also simplify choices.

### Caching

Currently `when` caches the DOM created by the true and false cases. This should increase performance on fast/frequently changing values, but this might not always be the case, and it increases complexity, code size and overhead. To make complexity pay-for-play we should remove caching from the `when` directive and add a separate `cachingWhen` directive. `cachingWhen` can locally be renamed to `when` when imported:

```ts

import {cachingWhen as when} from 'lit-html/directives/caching-when.js';

```

|

priority

|

update when directive to handle any number of cases we have two feature requests related to conditional rendering the false value should be optional a switch directive we can resolve both of these requests by generalizing when to introspect it s arguments to operate in two modes if mode when the second argument is a function or templateresult we treat the condition as a truthy value and render either the second or third argument ts html when condition html condition is true html condition is false switch mode when the second argument is an object we treat the condition as simple value and the second argument as a map of cases ts html when value one html value is one two html value is two default html value is neither in switch mode cases can only be keyed by strings number and symbols if in the future we want to lift this restriction we can accept arrays ts html when value but this seems better to leave off for now one of the benefits of handling both the if and switch use cases in one directive is that we don t have to choose two new names since we can t name a variable if switch or case it should also simplify choices caching currently when caches the dom created by the true and false cases this should increase performance on fast frequently changing values but this might not always be the case and it increases complexity code size and overhead to make complexity pay for play we should remove caching from the when directive and add a separate cachingwhen directive cachingwhen can locally be renamed to when when imported ts import cachingwhen as when from lit html directives caching when js

| 1

|

157,991

| 6,019,910,749

|

IssuesEvent

|

2017-06-07 15:23:53

|

cytoscape/cytoscape.js

|

https://api.github.com/repos/cytoscape/cytoscape.js

|

closed

|

Bottom/top roundrect shapes (`shape: top-roundrectangle | bottom-roundrectangle`)

|

priority-1-high

|

`shape: top-roundrectangle | bottom-roundrectangle`

|

1.0

|

Bottom/top roundrect shapes (`shape: top-roundrectangle | bottom-roundrectangle`) -

`shape: top-roundrectangle | bottom-roundrectangle`

|

priority

|

bottom top roundrect shapes shape top roundrectangle bottom roundrectangle shape top roundrectangle bottom roundrectangle

| 1

|

327,725

| 9,979,616,508

|

IssuesEvent

|

2019-07-09 23:39:08

|

MolSnoo/Alter-Ego

|

https://api.github.com/repos/MolSnoo/Alter-Ego

|

opened

|

Bot crashes when trying to load a player with no status effects

|

bug high priority

|

If a player with no status effects is loaded, the bot will crash when trying to generate the player's statusString.

|

1.0

|

Bot crashes when trying to load a player with no status effects - If a player with no status effects is loaded, the bot will crash when trying to generate the player's statusString.

|

priority

|

bot crashes when trying to load a player with no status effects if a player with no status effects is loaded the bot will crash when trying to generate the player s statusstring

| 1

|

295,217

| 9,083,230,576

|

IssuesEvent

|

2019-02-17 18:49:50

|

sul-dlss/preservation_catalog

|

https://api.github.com/repos/sul-dlss/preservation_catalog

|

reopened

|

zip segment names are being incorrectly generated(?)

|

bug high priority

|

Consider druid vb008fc5700

AWS S3 bucket contains the following files for this druid:

```

vb/008/fc/5700/vb008fc5700.v0001.z01

vb/008/fc/5700/vb008fc5700.v0001.z05

vb/008/fc/5700/vb008fc5700.v0001.z06

vb/008/fc/5700/vb008fc5700.v0001.z07

vb/008/fc/5700/vb008fc5700.v0001.z08

vb/008/fc/5700/vb008fc5700.v0001.z09

vb/008/fc/5700/vb008fc5700.v0001.z10

vb/008/fc/5700/vb008fc5700.v0001.z11

vb/008/fc/5700/vb008fc5700.v0001.z12

vb/008/fc/5700/vb008fc5700.v0001.z13

vb/008/fc/5700/vb008fc5700.v0001.z14

vb/008/fc/5700/vb008fc5700.v0001.z15

vb/008/fc/5700/vb008fc5700.v0001.z16

vb/008/fc/5700/vb008fc5700.v0001.z17

vb/008/fc/5700/vb008fc5700.v0001.z18

vb/008/fc/5700/vb008fc5700.v0001.z19

vb/008/fc/5700/vb008fc5700.v0001.z20

vb/008/fc/5700/vb008fc5700.v0001.z21

vb/008/fc/5700/vb008fc5700.v0001.z22

vb/008/fc/5700/vb008fc5700.v0001.z23

vb/008/fc/5700/vb008fc5700.v0001.z26

vb/008/fc/5700/vb008fc5700.v0001.z27

vb/008/fc/5700/vb008fc5700.v0001.z28

vb/008/fc/5700/vb008fc5700.v0001.z29

vb/008/fc/5700/vb008fc5700.v0001.zip

vb/008/fc/5700/vb008fc5700.v0002.zip

```

Note: missing order (z02, z03 and z04) and a total of 27 uploaded segments for version 1.

Metadata for the upload says:

```

{

"AcceptRanges": "bytes",

"ContentType": "",

"LastModified": "Mon, 17 Sep 2018 10:08:05 GMT",

"ContentLength": 7405818770,

"ETag": "\"b024db8b693879360086f845c0dbcb8d-1413\"",

"StorageClass": "GLACIER",

"Metadata": {

"size": "7405818770",

"parts_count": "27",

"zip_version": "Zip 3.0 (July 5th 2008)",

"checksum_md5": "e2d64ef108bfd463c8cf71478063c7f7",

"zip_cmd": "zip -r0X -s 10g /sdr-transfers/vb/008/fc/5700/vb008fc5700.v0001.zip vb008fc5700/v0001"

}

}

```

Note parts_count: 27, which implies the final segment should be z26, *not* z29.

There are 3 unexpected segments present: z27, z28 and z29.

There are 3 expected segments missing: z02, z03, z04.

This *suggests* that the unexpected segments are, in fact, the missing z02, z03 and z04. But even if that is the case, this is broken.

|

1.0

|

zip segment names are being incorrectly generated(?) - Consider druid vb008fc5700

AWS S3 bucket contains the following files for this druid:

```

vb/008/fc/5700/vb008fc5700.v0001.z01

vb/008/fc/5700/vb008fc5700.v0001.z05

vb/008/fc/5700/vb008fc5700.v0001.z06

vb/008/fc/5700/vb008fc5700.v0001.z07

vb/008/fc/5700/vb008fc5700.v0001.z08

vb/008/fc/5700/vb008fc5700.v0001.z09

vb/008/fc/5700/vb008fc5700.v0001.z10

vb/008/fc/5700/vb008fc5700.v0001.z11

vb/008/fc/5700/vb008fc5700.v0001.z12

vb/008/fc/5700/vb008fc5700.v0001.z13

vb/008/fc/5700/vb008fc5700.v0001.z14

vb/008/fc/5700/vb008fc5700.v0001.z15

vb/008/fc/5700/vb008fc5700.v0001.z16

vb/008/fc/5700/vb008fc5700.v0001.z17

vb/008/fc/5700/vb008fc5700.v0001.z18

vb/008/fc/5700/vb008fc5700.v0001.z19

vb/008/fc/5700/vb008fc5700.v0001.z20

vb/008/fc/5700/vb008fc5700.v0001.z21

vb/008/fc/5700/vb008fc5700.v0001.z22

vb/008/fc/5700/vb008fc5700.v0001.z23

vb/008/fc/5700/vb008fc5700.v0001.z26

vb/008/fc/5700/vb008fc5700.v0001.z27

vb/008/fc/5700/vb008fc5700.v0001.z28

vb/008/fc/5700/vb008fc5700.v0001.z29

vb/008/fc/5700/vb008fc5700.v0001.zip

vb/008/fc/5700/vb008fc5700.v0002.zip

```

Note: missing order (z02, z03 and z04) and a total of 27 uploaded segments for version 1.

Metadata for the upload says:

```

{

"AcceptRanges": "bytes",

"ContentType": "",

"LastModified": "Mon, 17 Sep 2018 10:08:05 GMT",

"ContentLength": 7405818770,

"ETag": "\"b024db8b693879360086f845c0dbcb8d-1413\"",

"StorageClass": "GLACIER",

"Metadata": {

"size": "7405818770",

"parts_count": "27",

"zip_version": "Zip 3.0 (July 5th 2008)",

"checksum_md5": "e2d64ef108bfd463c8cf71478063c7f7",

"zip_cmd": "zip -r0X -s 10g /sdr-transfers/vb/008/fc/5700/vb008fc5700.v0001.zip vb008fc5700/v0001"

}

}

```

Note parts_count: 27, which implies the final segment should be z26, *not* z29.

There are 3 unexpected segments present: z27, z28 and z29.

There are 3 expected segments missing: z02, z03, z04.

This *suggests* that the unexpected segments are, in fact, the missing z02, z03 and z04. But even if that is the case, this is broken.

|

priority

|