Dataset columns (each record below gives its fields in this order, separated by `|`):

| Column | Type | Statistics |
|---|---|---|
| Unnamed: 0 | int64 | 0 to 832k |
| id | float64 | 2.49B to 32.1B |
| type | string (classes) | 1 value |
| created_at | string | length 19 to 19 |
| repo | string | length 5 to 112 |
| repo_url | string | length 34 to 141 |
| action | string (classes) | 3 values |
| title | string | length 1 to 855 |
| labels | string | length 4 to 721 |
| body | string | length 1 to 261k |
| index | string (classes) | 13 values |
| text_combine | string | length 96 to 261k |
| label | string (classes) | 2 values |
| text | string | length 96 to 240k |
| binary_label | int64 | 0 to 1 |
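The `text` column appears to be a normalized copy of `text_combine`. It is lowercased, and URLs, HTML tags and comments, and `[bracketed]` prefixes are removed. Punctuation becomes spaces, and any token containing a digit is dropped. For example, `s0.2mdn.net` becomes `net`, and `WSO2 IAM v5.10.0` becomes `iam`. The pipeline that produced the dataset is not documented here. What follows is only a minimal Python sketch that approximates these rules; the function name `normalize` and the exact regular expressions are assumptions.

```python
import re


def normalize(text: str) -> str:
    """Approximate the `text` column from `text_combine` (assumed rules, not the original pipeline)."""
    text = re.sub(r"<!--.*?-->", " ", text, flags=re.DOTALL)  # HTML comments
    text = re.sub(r"<[^>]+>", " ", text)                      # HTML tags such as <p>
    text = re.sub(r"https?://\S+|www\.\S+", " ", text)         # URLs
    text = re.sub(r"\[[^\]]*\]", " ", text)                   # [PE-D], [x], [WindowsSettings]
    text = re.sub(r"[\W_]+", " ", text.lower())               # punctuation and underscores -> space
    # Drop every token that contains a digit (wso2, oauth2, 3d, f5, ...).
    return " ".join(t for t in text.split() if not any(c.isdigit() for c in t))
```

The approximation is not exact. For instance, `¿` survives in the Spanish record's `text` value but is removed by this sketch.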

87,075

| 3,736,717,427

|

IssuesEvent

|

2016-03-08 16:48:35

|

Fermat-ORG/fermat-org

|

https://api.github.com/repos/Fermat-ORG/fermat-org

|

closed

|

Update developer list

|

Priority: HIGH question server

|

Case study:

Miguelcldn is a new developer who wants to join Fermat; he has never been assigned a tile.

- Goes to the page

- Logs in

- Creates a component

- Enters "miguelcldn" as the developer name

- The tile is created and placed in its position, but... will Miguelcldn appear in the developer list after pressing F5?

|

1.0

|

Update developer list - Case study:

Miguelcldn is a new developer who wants to join Fermat; he has never been assigned a tile.

- Goes to the page

- Logs in

- Creates a component

- Enters "miguelcldn" as the developer name

- The tile is created and placed in its position, but... will Miguelcldn appear in the developer list after pressing F5?

|

priority

|

update developer list case study miguelcldn is a new developer who wants to join fermat he has never been assigned a tile goes to the page logs in creates a component enters miguelcldn as the developer name the tile is created and placed in its position but will miguelcldn appear in the developer list after pressing

| 1

|

403,934

| 11,849,278,852

|

IssuesEvent

|

2020-03-24 15:01:02

|

Monika-After-Story/MonikaModDev

|

https://api.github.com/repos/Monika-After-Story/MonikaModDev

|

closed

|

In-game explanation of gifting mechanic

|

enhancement high priority

|

A quick search of code shows no instance of "characters folder" or "characters/" in any dialogue relating to gifting, which implies there is no in-game explanation of the gifting mechanic. This is probably why new players don't understand or miss out on things as it was initially introduced in a 9-22 event as an external file.

We should have some in-game, repeatable explanation of this mechanic (story-events, with a say prompt like "How do I gift you things?"). This is especially important given consumables.

This should also be noted in the intro, like how we explain hotkeys and games.

Additionally, I'd say an update script to queue the topic is unnecessary; a new prompt will draw attention in unseen anyway.

@multimokia I am assigning this to you but you can triage as you see fit. Just get this in before next release.

|

1.0

|

In-game explanation of gifting mechanic - A quick search of code shows no instance of "characters folder" or "characters/" in any dialogue relating to gifting, which implies there is no in-game explanation of the gifting mechanic. This is probably why new players don't understand or miss out on things as it was initially introduced in a 9-22 event as an external file.

We should have some in-game, repeatable explanation of this mechanic (story-events, with a say prompt like "How do I gift you things?"). This is especially important given consumables.

This should also be noted in the intro, like how we explain hotkeys and games.

Additionally, I'd say an update script to queue the topic is unnecessary; a new prompt will draw attention in unseen anyway.

@multimokia I am assigning this to you but you can triage as you see fit. Just get this in before next release.

|

priority

|

in game explanation of gifting mechanic a quick search of code shows no instance of characters folder or characters in any dialogue relating to gifting which implies there is no in game explanation of the gifting mechanic this is probably why new players don t understand or miss out on things as it was initially introduced in a event as an external file we should have some in game repeatable explanation of this mechanic story events with a say prompt like how do i gift you things this is especially important given consumables this should also be noted in the intro like how we explain hotkeys and games additionally i d say an update script to queue the topic is unnecessary a new prompt will draw attention in unseen anyway multimokia i am assigning this to you but you can triage as you see fit just get this in before next release

| 1

|

321,773

| 9,808,860,529

|

IssuesEvent

|

2019-06-12 16:31:52

|

wso2/product-is

|

https://api.github.com/repos/wso2/product-is

|

opened

|

IS : Custom claims lost when updating user profile

|

Complexity/High Component/OAuth Priority/High

|

Moved from: https://wso2.org/jira/browse/IDENTITY-7335

Hi WSO2 team,

WSO2 Identity Server offers some extension points, for instance the ability to add custom claims.

This is normally done by writing a custom claim handler, as described in a WSO2 post:

http://pushpalankajaya.blogspot.com/2014/07/adding-custom-claims-to-saml-response.html

When using the WSO2 OAuth2.0 Playground sample with the OAuth2.0 authorization code grant type, the custom claim handler is invoked as expected when the resource owner is authenticated.

At the end of the process you get an access token that lets you retrieve, for instance, user info including the custom claims (computed earlier, when the custom claim handler was invoked).

If you then update some information in the user profile and request user info again from the user info endpoint with the access_token, all the *custom* claims are missing from the response.

When you update the user profile, the previously cached information is cleared. When the user info endpoint is then called with the access_token, the user claims (and their values) are computed from the userstore only. That is why the custom claims are lost.

In this scenario, where claims are retrieved from the userstore, would it be possible to also call the custom claim handler so that the custom claims are available in the response?

Regards,

Franck

|

1.0

|

IS : Custom claims lost when updating user profile - Moved from: https://wso2.org/jira/browse/IDENTITY-7335

Hi WSO2 team,

WSO2 Identity Server offers some extension points, for instance the ability to add custom claims.

This is normally done by writing a custom claim handler, as described in a WSO2 post:

http://pushpalankajaya.blogspot.com/2014/07/adding-custom-claims-to-saml-response.html

When using the WSO2 OAuth2.0 Playground sample with the OAuth2.0 authorization code grant type, the custom claim handler is invoked as expected when the resource owner is authenticated.

At the end of the process you get an access token that lets you retrieve, for instance, user info including the custom claims (computed earlier, when the custom claim handler was invoked).

If you then update some information in the user profile and request user info again from the user info endpoint with the access_token, all the *custom* claims are missing from the response.

When you update the user profile, the previously cached information is cleared. When the user info endpoint is then called with the access_token, the user claims (and their values) are computed from the userstore only. That is why the custom claims are lost.

In this scenario, where claims are retrieved from the userstore, would it be possible to also call the custom claim handler so that the custom claims are available in the response?

Regards,

Franck

|

priority

|

is custom claims lost when updating user profile moved from hi team identity server offers some extension points for instance the ability to add custom claims this is normally done by writing a custom claim handler as described in a post when using the playground sample with the authorization code grant type the custom claim handler is invoked as expected when the resource owner is authenticated at the end of the process you get an access token that lets you retrieve for instance user info including the custom claims computed earlier when the custom claim handler was invoked if you then update some information in the user profile and request user info again from the user info endpoint with the access token all the custom claims are missing from the response when you update the user profile the previously cached information is cleared when the user info endpoint is then called with the access token the user claims and their values are computed from the userstore only that is why the custom claims are lost in this scenario where claims are retrieved from the userstore would it be possible to also call the custom claim handler so that the custom claims are available in the response regards franck

| 1

|
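The behaviour Franck asks for can be modelled simply. On a cache miss, rebuild the claims from the userstore *and* re-run the custom claim handler, instead of returning userstore claims only. This is a conceptual model written in Python, not WSO2 Identity Server code. `UserInfoResolver`, `update_profile`, `user_info`, and the handler signature are hypothetical names.

```python
from typing import Callable, Dict

Claims = Dict[str, str]


class UserInfoResolver:
    """Hypothetical model: claim cache in front of a userstore plus a custom claim handler."""

    def __init__(self, userstore: Dict[str, Claims],
                 custom_handler: Callable[[str, Claims], Claims]) -> None:
        self.userstore = userstore
        self.custom_handler = custom_handler
        self.cache: Dict[str, Claims] = {}

    def update_profile(self, user: str, changes: Claims) -> None:
        self.userstore[user].update(changes)
        self.cache.pop(user, None)  # a profile update clears the cached claims

    def user_info(self, user: str) -> Claims:
        if user not in self.cache:
            claims = dict(self.userstore[user])
            # Requested behaviour: also run the custom handler when rebuilding from the userstore.
            claims.update(self.custom_handler(user, claims))
            self.cache[user] = claims
        return self.cache[user]
```

With this ordering, a profile update only invalidates the cache. The next `user_info` call recomputes both the userstore claims and the custom ones.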

567,375

| 16,857,149,375

|

IssuesEvent

|

2021-06-21 08:19:34

|

FEDMix/eshmun

|

https://api.github.com/repos/FEDMix/eshmun

|

closed

|

Orthogonal view to view DICOM Images

|

Brachytherapy FEDmix High Priority Modir User Story

|

## Story

As a Clinician

I want to view DICOM images of a selected patient

So that I can see a preview of the choice I am trying to make

## Proposed work

Within the application it should be possible to view DICOM images.

There should be 3 views that are orthogonal to each other (x, y and z directions).

The user should be able to pan and zoom the views.

## Acceptance Criteria

- [x] 3 Orthogonal Views

- [x] Panning & Zooming

## Designs

|

1.0

|

Orthogonal view to view DICOM Images - ## Story

As a Clinician

I want to view DICOM images of a selected patient

So that I can see a preview of the choice I am trying to make

## Proposed work

Within the application it should be possible to view DICOM images.

There should be 3 views that are orthogonal to each other (x, y and z directions).

The user should be able to pan and zoom the views.

## Acceptance Criteria

- [x] 3 Orthogonal Views

- [x] Panning & Zooming

## Designs

|

priority

|

orthogonal view to view dicom images story as a clinician i want to view dicom images of a selected patient so that i can see a preview of the choice i am trying to make proposed work within the application it should be possible to view dicom images there should be views that are orthogonal to each other x y and z directions the user should be able to pan and zoom the views acceptance criteria orthogonal views panning zooming designs

| 1

|
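The three orthogonal views amount to taking one 2D plane through the volume along each axis at the currently selected voxel. This is a minimal sketch in plain Python. It assumes the DICOM slices have already been decoded and stacked into a `volume[z][y][x]` array. `orthogonal_slices` is a hypothetical helper, and DICOM decoding and pan/zoom are out of scope.

```python
from typing import List, Tuple

Volume = List[List[List[int]]]  # assumed layout: volume[z][y][x]
Plane = List[List[int]]


def orthogonal_slices(volume: Volume, x: int, y: int, z: int) -> Tuple[Plane, Plane, Plane]:
    """Return the three orthogonal planes through voxel (x, y, z)."""
    plane_z = [row[:] for row in volume[z]]                       # fixed z, indexed [y][x]
    plane_y = [volume[k][y][:] for k in range(len(volume))]       # fixed y, indexed [z][x]
    plane_x = [[volume[k][j][x] for j in range(len(volume[k]))]   # fixed x, indexed [z][y]
               for k in range(len(volume))]
    return plane_z, plane_y, plane_x
```

Which plane is labelled axial, coronal, or sagittal depends on the series orientation, so the sketch names the planes by their fixed axis only.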

692,450

| 23,735,308,479

|

IssuesEvent

|

2022-08-31 07:33:57

|

vignetteapp/sekai

|

https://api.github.com/repos/vignetteapp/sekai

|

closed

|

Implement a basic rendering system

|

enhancement priority:high

|

As our rendering engine is primarily for 3D, we'd need a basic rendering system that shows 3D models with lighting and shadows.

|

1.0

|

Implement a basic rendering system - As our rendering engine is primarily for 3D, we'd need a basic rendering system that shows 3D models with lighting and shadows.

|

priority

|

implement a basic rendering system as our rendering engine is primarily for we d need a basic rendering system that shows models with lighting and shadows

| 1

|

404,490

| 11,858,245,224

|

IssuesEvent

|

2020-03-25 11:04:21

|

AY1920S2-CS2103T-F10-2/main

|

https://api.github.com/repos/AY1920S2-CS2103T-F10-2/main

|

closed

|

Implement Reminder feature

|

priority.High type.Epic

|

Update the default list view of the Internship Diary to be shown according to urgency of the application, in terms of the application deadline followed by interview(s) date(s).

|

1.0

|

Implement Reminder feature - Update the default list view of the Internship Diary to be shown according to urgency of the application, in terms of the application deadline followed by interview(s) date(s).

|

priority

|

implement reminder feature update the default list view of the internship diary to be shown according to urgency of the application in terms of the application deadline followed by interview s date s

| 1

|
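The requested default ordering is by application deadline first, then by interview date. It maps naturally onto a tuple sort key. This is a minimal sketch; the `Application` record and `by_urgency` are hypothetical. It also assumes that applications without any interview sort after those that have one for the same deadline.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class Application:
    company: str
    deadline: date
    interviews: List[date] = field(default_factory=list)


def by_urgency(apps: List[Application]) -> List[Application]:
    """Sort by application deadline, then by the earliest interview date."""
    def key(app: Application):
        # No interview yet: treat it as the latest possible date.
        earliest = min(app.interviews) if app.interviews else date.max
        return (app.deadline, earliest)
    return sorted(apps, key=key)
```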

229,631

| 7,582,167,904

|

IssuesEvent

|

2018-04-25 02:29:17

|

Myoats/preprod

|

https://api.github.com/repos/Myoats/preprod

|

opened

|

The nav on small and medium devices should just be the hamburger

|

Highest Priority

|

It should look like this

|

1.0

|

The nav on small and medium devices should just be the hamburger - It should look like this

|

priority

|

the nav on small and medium devices should just be the hamburger it should look like this

| 1

|

359,071

| 10,659,685,809

|

IssuesEvent

|

2019-10-18 08:17:45

|

AY1920S1-CS2113T-W13-2/main

|

https://api.github.com/repos/AY1920S1-CS2113T-W13-2/main

|

opened

|

Create Review function for Quiz

|

priority.High type.Task

|

Allows user to review their answers and the correct answer at the end of a quiz session (CLI).

|

1.0

|

Create Review function for Quiz - Allows user to review their answers and the correct answer at the end of a quiz session (CLI).

|

priority

|

create review function for quiz allows user to review their answers and the correct answer at the end of a quiz session cli

| 1

|
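The review step described above pairs each question with the user's answer and the correct one once the session ends. This is a minimal sketch; `review` is a hypothetical helper, and the case-insensitive, whitespace-trimmed comparison is an assumption rather than the project's actual matching rule.

```python
from typing import List


def review(questions: List[str], given: List[str], expected: List[str]) -> List[str]:
    """Build one review line per question for display at the end of a quiz session."""
    lines = []
    for number, (question, answer, correct) in enumerate(zip(questions, given, expected), start=1):
        verdict = "correct" if answer.strip().lower() == correct.strip().lower() else "wrong"
        lines.append(
            f"{number}. {question} | your answer: {answer} | correct answer: {correct} | {verdict}"
        )
    return lines
```

In a CLI, the caller would simply print each returned line.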

86,978

| 3,735,649,127

|

IssuesEvent

|

2016-03-08 13:08:54

|

asterics/AsTeRICS

|

https://api.github.com/repos/asterics/AsTeRICS

|

closed

|

JNativeHook error message when starting two instances of AsTeRICS

|

high priority

|

When starting two instances of AsTeRICS the jnativehook service bundle cannot be activated and an error message is thrown.

The debug console shows the following root exception:

Caused by: java.lang.RuntimeException: C:\Users\mad\AppData\Local\Temp\JNativeHook-1.2.RC2.dll (The process cannot access the file because it is being used by another process)

at org.jnativehook.GlobalScreen.loadNativeLibrary(GlobalScreen.java:609)

at org.jnativehook.GlobalScreen.<init>(GlobalScreen.java:86)

at org.jnativehook.GlobalScreen.<clinit>(GlobalScreen.java:67)

... 28 more

Caused by: java.io.FileNotFoundException: C:\Users\mad\AppData\Local\Temp\JNativeHook-1.2.RC2.dll (The process cannot access the file because it is being used by another process)

at java.io.FileOutputStream.open(Native Method)

at java.io.FileOutputStream.<init>(FileOutputStream.java:221)

at java.io.FileOutputStream.<init>(FileOutputStream.java:171)

at org.jnativehook.GlobalScreen.loadNativeLibrary(GlobalScreen.java:570)

... 30 more

Apparently the native DLL file is locked by the first instance and cannot be opened a second time.

Possible solutions:

Upgrade to a newer version of JNativeHook; maybe it's already fixed?

Workaround:

Manually delete the .dll file after the bundle has started.

|

1.0

|

JNativeHook error message when starting two instances of AsTeRICS - When starting two instances of AsTeRICS the jnativehook service bundle cannot be activated and an error message is thrown.

The debug console shows the following root exception:

Caused by: java.lang.RuntimeException: C:\Users\mad\AppData\Local\Temp\JNativeHook-1.2.RC2.dll (The process cannot access the file because it is being used by another process)

at org.jnativehook.GlobalScreen.loadNativeLibrary(GlobalScreen.java:609)

at org.jnativehook.GlobalScreen.<init>(GlobalScreen.java:86)

at org.jnativehook.GlobalScreen.<clinit>(GlobalScreen.java:67)

... 28 more

Caused by: java.io.FileNotFoundException: C:\Users\mad\AppData\Local\Temp\JNativeHook-1.2.RC2.dll (The process cannot access the file because it is being used by another process)

at java.io.FileOutputStream.open(Native Method)

at java.io.FileOutputStream.<init>(FileOutputStream.java:221)

at java.io.FileOutputStream.<init>(FileOutputStream.java:171)

at org.jnativehook.GlobalScreen.loadNativeLibrary(GlobalScreen.java:570)

... 30 more

Apparently the native DLL file is locked by the first instance and cannot be opened a second time.

Possible solutions:

Upgrade to a newer version of JNativeHook; maybe it's already fixed?

Workaround:

Manually delete the .dll file after the bundle has started.

|

priority

|

jnativehook error message when starting two instances of asterics when starting two instances of asterics the jnativehook service bundle cannot be activated and an error message is thrown the debug console shows the following root exception caused by java lang runtimeexception c users mad appdata local temp jnativehook dll the process cannot access the file because it is being used by another process at org jnativehook globalscreen loadnativelibrary globalscreen java at org jnativehook globalscreen globalscreen java at org jnativehook globalscreen globalscreen java more caused by java io filenotfoundexception c users mad appdata local temp jnativehook dll the process cannot access the file because it is being used by another process at java io fileoutputstream open native method at java io fileoutputstream fileoutputstream java at java io fileoutputstream fileoutputstream java at org jnativehook globalscreen loadnativelibrary globalscreen java more apparently the native dll file is locked by the first instance and cannot be opened a second time possible solutions upgrade to a newer version of jnativehook maybe it s already fixed workaround manually delete the dll file after the bundle has started

| 1

|
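The trace shows both instances writing the same fixed path, `C:\Users\mad\AppData\Local\Temp\JNativeHook-1.2.RC2.dll`, while the first instance still holds it open. One general way to avoid this kind of collision is to extract the bundled library to a unique per-process file. This is not necessarily what newer JNativeHook releases do. The Python sketch below only illustrates the idea with `tempfile.mkstemp`; `extract_native_library` is a hypothetical helper, and a real fix would live on the JVM side.

```python
import os
import tempfile


def extract_native_library(payload: bytes, base_name: str) -> str:
    """Write a bundled native library to a unique temp file and return its path.

    mkstemp picks a fresh file name per call, so two processes never collide on
    one fixed name such as JNativeHook-1.2.RC2.dll in the temp directory.
    """
    stem, ext = os.path.splitext(base_name)
    fd, path = tempfile.mkstemp(prefix=stem + "-", suffix=ext)
    with os.fdopen(fd, "wb") as handle:
        handle.write(payload)
    return path
```

The trade-off is cleanup. Each process should delete its own copy on shutdown, which parallels the manual workaround of deleting the `.dll` above.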

725,264

| 24,956,556,151

|

IssuesEvent

|

2022-11-01 12:18:11

|

AY2223S1-CS2113-T18-1b/tp

|

https://api.github.com/repos/AY2223S1-CS2113-T18-1b/tp

|

closed

|

[PE-D][Tester C] sort should come with configurable ordering (i.e. asc or desc) to make sense. Otherwise, fix the current defaults, as some orderings do not make sense

|

priority.High

|

For example, the current sort for review is ascending, which is unusual, as most people sort shows by descending rating.


-------------

Labels: `type.FeatureFlaw` `severity.Low`

original: winston-lim/ped#7

|

1.0

|

[PE-D][Tester C] sort should come with configurable ordering (i.e. asc or desc) to make sense. Otherwise, fix the current defaults, as some orderings do not make sense - For example, the current sort for review is ascending, which is unusual, as most people sort shows by descending rating.


-------------

Labels: `type.FeatureFlaw` `severity.Low`

original: winston-lim/ped#7

|

priority

|

sort should come with configurable ordering i e asc or desc to make sense otherwise fix the current defaults as some orderings do not make sense for example the current sort for review is ascending which is unusual as most people sort shows by descending rating labels type featureflaw severity low original winston lim ped

| 1

|
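Making the order configurable, with the descending default the tester expects for ratings, only needs a direction flag passed through to the sort. This is a minimal sketch; `Show` and `sort_by_rating` are hypothetical and not the project's actual command classes.

```python
from typing import List, NamedTuple


class Show(NamedTuple):
    name: str
    rating: float


def sort_by_rating(shows: List[Show], descending: bool = True) -> List[Show]:
    """Sort by rating: highest first by default, ascending only when asked."""
    return sorted(shows, key=lambda show: show.rating, reverse=descending)
```

A command parser could map a hypothetical `asc`/`desc` argument onto the `descending` flag.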

351,271

| 10,514,749,872

|

IssuesEvent

|

2019-09-28 03:17:59

|

xournalpp/xournalpp

|

https://api.github.com/repos/xournalpp/xournalpp

|

closed

|

Crash on opening files.

|

Crash PR available bug confirmed difficulty:easy priority: high

|

**Affects versions :**

- OS: [Linux Mint 19.2 Cinnamon]

- Version of Xournal++ [current master]

**Describe the bug**

Pressing the open button crashes the software

**To Reproduce**

Compile with clang (in Debug mode), open Xournal++, and try to open a file.

**Expected behavior**

Do not crash.

Already fixed in a local branch: all `std::string` values in `Settings` are returned by value, so each returned temporary lives only until the end of the full expression unless it is assigned. There was a `.c_str()` call on such a temporary, which is destroyed immediately, leaving a dangling pointer.

Replaced all returns of a `std::string` by value in `Settings` with a `string const&`.

PR following.

|

1.0

|

Crash on opening files. - **Affects versions :**

- OS: [Linux Mint 19.2 Cinnamon]

- Version of Xournal++ [current master]

**Describe the bug**

Pressing the open button crashes the software

**To Reproduce**

Compile with clang (in Debug mode), open Xournal++, and try to open a file.

**Expected behavior**

Do not crash.

Already fixed in a local branch: all `std::string` values in `Settings` are returned by value, so each returned temporary lives only until the end of the full expression unless it is assigned. There was a `.c_str()` call on such a temporary, which is destroyed immediately, leaving a dangling pointer.

Replaced all returns of a `std::string` by value in `Settings` with a `string const&`.

PR following.

|

priority

|

crash on opening files affects versions os version of xournal describe the bug pressing the open button crashes the software to reproduce compile with clang in debug mode open xournal and try to open a file expected behavior do not crash already fixed in a local branch all std string values in settings are returned by value so each returned temporary lives only until the end of the full expression unless it is assigned there was a c str call on such a temporary which is destroyed immediately leaving a dangling pointer replaced all returns of a std string by value in settings with a string const pr following

| 1

|

204,129

| 7,084,095,754

|

IssuesEvent

|

2018-01-11 04:32:06

|

EFForg/privacybadger

|

https://api.github.com/repos/EFForg/privacybadger

|

reopened

|

Error reports for www.youtube.com

|

broken site high priority

|

We are getting lots of error reports for `www.youtube.com`; it's our most-reported-on domain for the latest Privacy Badger (version 2017.7.24).

Here is some of what our users say:

>video won't load

>Youtube video shows: 'Request blocked by extension'. Fixed by switching on s0.2mdn.net

>video said an extension blocked requests to the server

>Videos don't load at first - message "Requests to the server were blocked by an extension"

>Ads don't appear instead it says an extension is blocking the ad content. Ad blocks still appear just without the actual ad.

>preroll Advertisement on Youtube "blocked by an extension".

|

1.0

|

Error reports for www.youtube.com - We are getting lots of error reports for `www.youtube.com`; it's our most-reported-on domain for the latest Privacy Badger (version 2017.7.24).

Here is some of what our users say:

>video won't load

>Youtube video shows: 'Request blocked by extension'. Fixed by switching on s0.2mdn.net

>video said an extension blocked requests to the server

>Videos don't load at first - message "Requests to the server were blocked by an extension"

>Ads don't appear instead it says an extension is blocking the ad content. Ad blocks still appear just without the actual ad.

>preroll Advertisement on Youtube "blocked by an extension".

|

priority

|

error reports for we are getting lots of error reports for it s our most reported on domain for the latest privacy badger version here is some of what our users say video won t load youtube video shows request blocked by extension fixed by switching on net video said an extension blocked requests to the server videos don t load at first message requests to the server were blocked by an extension ads don t appear instead it says an extension is blocking the ad content ad blocks still appear just without the actual ad preroll advertisement on youtube blocked by an extension

| 1

|

810,681

| 30,254,112,094

|

IssuesEvent

|

2023-07-07 00:00:59

|

hwgilbert16/scholarsome

|

https://api.github.com/repos/hwgilbert16/scholarsome

|

closed

|

Exclude HTML tags from written response answers

|

high priority

|

Written response quiz questions check for exact strings. Cards created with the new rich text editor include `<p>` tags, which are included in that comparison.

These tags need to be removed before creating a quiz, or we need to filter the set beforehand so that such cards are excluded from the quiz.

|

1.0

|

Exclude HTML tags from written response answers - Written response quiz questions check for exact strings. Cards created with the new rich text editor include `<p>` tags, which are included in that comparison.

These tags need to be removed before creating a quiz, or we need to filter the set beforehand so that such cards are excluded from the quiz.

|

priority

|

exclude html tags from written response answers written response quiz questions check for exact strings cards created with the new rich text editor include tags which are included in that comparison these tags need to be removed before creating a quiz or we need to filter the set beforehand so that such cards are excluded from the quiz

| 1

|
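Removing the tags before the exact-string check amounts to extracting the card's text content and comparing that instead. The sketch uses Python's standard `html.parser.HTMLParser`, with its default `convert_charrefs=True`, so `&amp;` becomes `&`. `plain_text` and `is_correct` are hypothetical helpers, and collapsing whitespace is an assumption beyond the exact match described above.

```python
from html.parser import HTMLParser
from typing import List


class _TextExtractor(HTMLParser):
    """Collect only the text nodes of an HTML fragment."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: List[str] = []

    def handle_data(self, data: str) -> None:
        self.parts.append(data)


def plain_text(html: str) -> str:
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()  # flush any buffered text
    return " ".join("".join(parser.parts).split())


def is_correct(answer: str, card_html: str) -> bool:
    """Exact match against the card text once tags are stripped."""
    return " ".join(answer.split()) == plain_text(card_html)
```

Because text nodes are concatenated without a separator, `<p>one</p><p>two</p>` yields `onetwo`. Multi-paragraph cards would need a rule of their own.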

727,942

| 25,060,630,893

|

IssuesEvent

|

2022-11-07 00:56:45

|

AY2223S1-CS2103T-W15-4/tp

|

https://api.github.com/repos/AY2223S1-CS2103T-W15-4/tp

|

closed

|

[PE-D][Tester A] Mark INDEX marked 2 students

|

type.Bug priority.High severity.High

|

1. add n/DavId Li

2. add n/Davlid Lin

3. sort t/d

4. mark 1 will pass mastery check for both

5. unmark similarly removes pass for both

<i><video controls><source src="https://raw.githubusercontent.com/KSHan29/ped/main/files/024d6dca-4e30-48ff-a3c0-15dbe2a8bf03.mov" type="video/mp4">Your browser does not support the video tag.</video><br>video:https://raw.githubusercontent.com/KSHan29/ped/main/files/024d6dca-4e30-48ff-a3c0-15dbe2a8bf03.mov</i>


-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: KSHan29/ped#1

|

1.0

|

[PE-D][Tester A] Mark INDEX marked 2 students - 1. add n/DavId Li

2. add n/Davlid Lin

3. sort t/d

4. mark 1 will pass mastery check for both

5. unmark similarly removes pass for both

<i><video controls><source src="https://raw.githubusercontent.com/KSHan29/ped/main/files/024d6dca-4e30-48ff-a3c0-15dbe2a8bf03.mov" type="video/mp4">Your browser does not support the video tag.</video><br>video:https://raw.githubusercontent.com/KSHan29/ped/main/files/024d6dca-4e30-48ff-a3c0-15dbe2a8bf03.mov</i>


-------------

Labels: `severity.Medium` `type.FunctionalityBug`

original: KSHan29/ped#1

|

priority

|

mark index marked students add n david li add n davlid lin sort t d mark will pass mastery check for both unmark similarly removes pass for both your browser does not support the video tag video labels severity medium type functionalitybug original ped

| 1

|

402,408

| 11,809,307,977

|

IssuesEvent

|

2020-03-19 14:46:01

|

wso2/docker-is

|

https://api.github.com/repos/wso2/docker-is

|

closed

|

Use WSO2 product pack downloadable links to binaries available at GitHub release pages

|

Priority/Highest Type/Task

|

**Description:**

Currently, WSO2 product binaries available at JFrog Bintray are used for the Docker image builds.

It has been suggested to use WSO2 product pack downloadable links to binaries available at GitHub product release pages, as the default.

**Affected Product Version:**

Docker resources for WSO2 IAM v5.10.0 and beyond

**Sub Tasks:**

- [x] Integrate into Alpine-based Docker resources

- [x] Integrate into CentOS-based Docker resources

- [x] Integrate into Ubuntu-based Docker resources

- [x] Code/Peer review

|

1.0

|

Use WSO2 product pack downloadable links to binaries available at GitHub release pages - **Description:**

Currently, WSO2 product binaries available at JFrog Bintray are used for the Docker image builds.

It has been suggested to use WSO2 product pack downloadable links to binaries available at GitHub product release pages, as the default.

**Affected Product Version:**

Docker resources for WSO2 IAM v5.10.0 and beyond

**Sub Tasks:**

- [x] Integrate into Alpine-based Docker resources

- [x] Integrate into CentOS-based Docker resources

- [x] Integrate into Ubuntu-based Docker resources

- [x] Code/Peer review

|

priority

|

use product pack downloadable links to binaries available at github release pages description currently product binaries available at jfrog bintray are used for the docker image builds it has been suggested to use product pack downloadable links to binaries available at github product release pages as the default affected product version docker resources for iam and beyond sub tasks integrate into alpine based docker resources integrate into centos based docker resources integrate into ubuntu based docker resources code peer review

| 1

|

205,670

| 7,104,589,060

|

IssuesEvent

|

2018-01-16 10:29:33

|

AnSyn/ansyn

|

https://api.github.com/repos/AnSyn/ansyn

|

closed

|

Bug- shadow mouse- cursor on inactive screen

|

Bug Priority: High Severity: Medium

|

**Current behavior**

When hovering over an inactive screen with the cursor, the user can see both the shadow mouse cross and the cursor on the same screen.

**Expected behavior**

The shadow mouse cross should not appear, so the user won't be confused.

**Minimal reproduction of the problem with instructions**

Open more than one screen

Activate shadow mouse

Hover over the inactive screen

|

1.0

|

Bug- shadow mouse- cursor on inactive screen -

**Current behavior**

When hovering over an inactive screen with the cursor, the user can see both the shadow mouse cross and the cursor on the same screen.

**Expected behavior**

The shadow mouse cross should not appear, so the user won't be confused.

**Minimal reproduction of the problem with instructions**

Open more than one screen

Activate shadow mouse

Hover over the inactive screen

|

priority

|

bug shadow mouse cursor on inactive screen current behavior when hovering over an inactive screen with the cursor the user can see both the shadow mouse cross and the cursor on the same screen expected behavior the shadow mouse cross should not appear so the user won t be confused minimal reproduction of the problem with instructions open more than one screen activate shadow mouse hover over the inactive screen

| 1

|
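The expected behavior can be stated as a small visibility rule: draw the shadow cross on every screen except the one the real cursor is over. This is a minimal sketch of that rule only. `shows_shadow_cross` and its parameters are hypothetical and do not correspond to the project's code.

```python
from typing import Optional


def shows_shadow_cross(screen_id: int, hovered_screen_id: Optional[int], shadow_mouse_on: bool) -> bool:
    """Draw the shadow cross only on screens the real cursor is not over."""
    if not shadow_mouse_on or hovered_screen_id is None:
        return False
    # The hovered screen already shows the real cursor, so skip the cross there.
    return screen_id != hovered_screen_id
```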

30,980

| 2,730,528,396

|

IssuesEvent

|

2015-04-16 15:20:01

|

Esri/briefing-book

|

https://api.github.com/repos/Esri/briefing-book

|

closed

|

Comment the codebase

|

bug develop21drop High Priority

|

Aside from the config.js, the majority of the code base isn't commented.

Please provide quality commenting within the code base.

|

1.0

|

Comment the codebase - Aside from the config.js, the majority of the code base isn't commented.

Please provide quality commenting within the code base.

|

priority

|

comment the codebase aside from the config js the majority of the code base isn t commented please provide quality commenting within the code base

| 1

|

481,243

| 13,882,518,034

|

IssuesEvent

|

2020-10-18 07:23:11

|

gileli121/WindowTop

|

https://api.github.com/repos/gileli121/WindowTop

|

closed

|

Modern toolbar UI is not working after saving window configuration on window with Shrink mode

|

bug fixed high-priority

|

A user reported that the new toolbar UI does not show even when the modern toolbar feature is enabled.

I asked the user for the WindowTop.settings file, and he sent it.

I found that the configuration saved in this file reproduced the issue.

While debugging the issue, I found that the configuration key that reproduces it is:

```
[WindowsSettings]
=ArrowXPos=0.45
```

It should not look like that; it should be:

```
[WindowsSettings]
some_process.exe=ArrowXPos=0.45
```

Based on a code review, this configuration issue is caused by the following scenario:

1. Disable the modern toolbar

2. Shrink some window

3. Resize the shrink box so that the arrow button appears when you move to the top area

4. Drag the arrow button to another position

5. Unshrink the window or exit WindowTop

At this point, the configuration issue is reproduced.

Because this configuration issue affects only the modern toolbar UI, you will need to enable the modern toolbar UI feature to reproduce it.

It seems that the user performed this scenario in an older version, or before enabling the modern toolbar feature.

|

1.0

|

Modern toolbar UI is not working after saving window configuration on window with Shrink mode - A user reported that the new toolbar UI does not show even when the modern toolbar feature is enabled.

I asked the user for the WindowTop.settings file, and he sent it.

I found that the configuration saved in this file reproduced the issue.

While debugging the issue, I found that the configuration key that reproduces it is:

```

[WindowsSettings]

=ArrowXPos=0.45

```

It should not look like that. It should be:

```

[WindowsSettings]

some_process.exe=ArrowXPos=0.45

```

I reviewed the code, and according to that review, this configuration issue is caused by the following scenario:

1. Disable the modern toolbar

2. Shrink some window

3. Resize the shrink box so the arrow button will show when you move to the top area...

4. Drag the arrow button to another position

5. Unshrink the window or exit WindowTop

At this point, the configuration issue occurs.

Because this configuration issue affects only the modern toolbar UI, you will need to enable the modern toolbar UI feature to reproduce it.

It seems that the user went through this scenario in an older version, or before enabling the modern toolbar feature.

|

priority

|

modern toolbar ui is not working after saving window configuration on window with shrink mode a user reported that the new toolbar ui is not showing even when enabling the modern toolbar feature i asked the user for the windowtop settings file and he sent it i found that the configuration saved in this file reproduced the issue while debugging the issue i found that the configuration key that reproduces it is arrowxpos it should not look like that it should be some process exe arrowxpos i reviewed the code and according to that review this configuration issue is caused by the following scenario disable the modern toolbar shrink some window resize the shrink box so the arrow button will show when you move to the top area drag the arrow button to another position unshrink the window or exit windowtop at this point the configuration issue occurs because this configuration issue affects only the modern toolbar ui you will need to enable the modern toolbar ui feature to reproduce it it seems that the user went through this scenario in an older version or before enabling the modern toolbar feature

| 1

|

804,372

| 29,485,251,542

|

IssuesEvent

|

2023-06-02 09:14:22

|

Avaiga/taipy-core

|

https://api.github.com/repos/Avaiga/taipy-core

|

closed

|

Event: fire an event when scenario name is modified

|

🟧 Priority: High 📈 Improvement

|

**Description**

An event should be fired when a scenario name is modified.

|

1.0

|

Event: fire an event when scenario name is modified - **Description**

An event should be fired when a scenario name is modified.

|

priority

|

event fire an event when scenario name is modified description an event should be fired when a scenario name is modified

| 1

|

708,856

| 24,357,537,377

|

IssuesEvent

|

2022-10-03 08:49:19

|

NethermindEth/nethermind

|

https://api.github.com/repos/NethermindEth/nethermind

|

closed

|

[Peers] When Peers number drop to 0, nethermind have problems to recover

|

high priority stability

|

**Describe the bug**

After some network issues, the number of peers dropped to 0. For about 20 minutes, it kept logging "Waiting for Peers" and nothing else happened. After restarting the Nethermind app, peers came back properly.

**To Reproduce**

Steps to reproduce the behavior:

Disconnect the network for a moment, check whether the peer count drops to 0, and then restore the network connection.

**Expected behavior**

After reconnecting, the node should recover and find peers again.

**Additional context**

Add any other context about the problem here.

|

1.0

|

[Peers] When Peers number drop to 0, nethermind have problems to recover - **Describe the bug**

After some network issues, the number of peers dropped to 0. For about 20 minutes, it kept logging "Waiting for Peers" and nothing else happened. After restarting the Nethermind app, peers came back properly.

**To Reproduce**

Steps to reproduce the behavior:

Disconnect the network for a moment, check whether the peer count drops to 0, and then restore the network connection.

**Expected behavior**

After reconnecting, the node should recover and find peers again.

**Additional context**

Add any other context about the problem here.

|

priority

|

when peers number drop to nethermind have problems to recover describe the bug after some network issues the number of peers dropped to for about minutes it kept logging waiting for peers and nothing else happened after restarting the nethermind app peers came back properly to reproduce steps to reproduce the behavior disconnect the network for a moment check whether the peer count drops to and then restore the network connection expected behavior after reconnecting the node should recover and find peers again additional context add any other context about the problem here

| 1

|

321,249

| 9,795,819,627

|

IssuesEvent

|

2019-06-11 05:36:34

|

apache/skywalking

|

https://api.github.com/repos/apache/skywalking

|

opened

|

Time series ElasticSearch implementation bug of record type

|

OAP-backend bug high priority

|

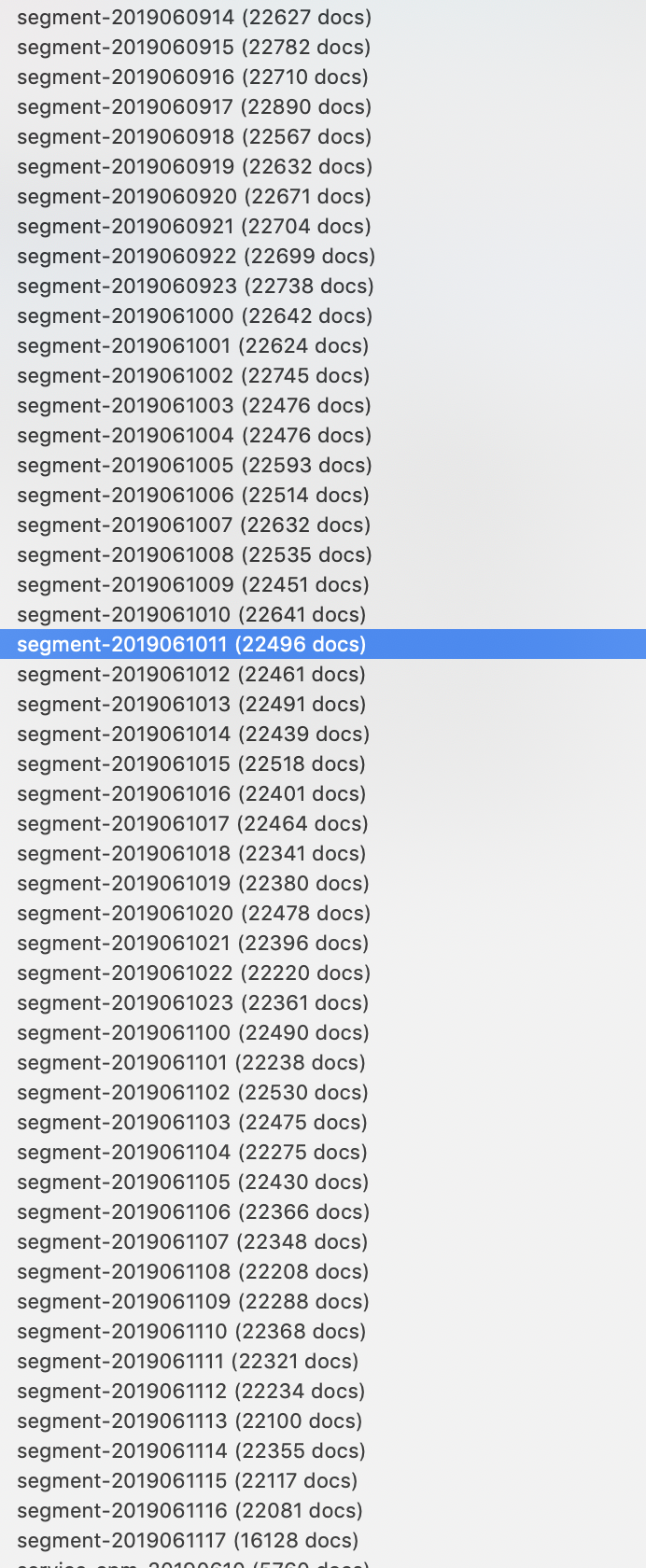

As the following screenshot shows, the segment indices (as well as those for alarm and other record types) are wrongly created per hour instead of per day.

This needs to be fixed, and we must make sure the TTL works for it (it does not work today, possibly because of the wrong table name).

|

1.0

|

Time series ElasticSearch implementation bug of record type - As the following screenshot shows, the segment indices (as well as those for alarm and other record types) are wrongly created per hour instead of per day.

This needs to be fixed, and we must make sure the TTL works for it (it does not work today, possibly because of the wrong table name).

|

priority

|

time series elasticsearch implementation bug of record type as the following screenshot shows the segment indices as well as those for alarm and other record types are wrongly created per hour instead of per day this needs to be fixed and we must make sure the ttl works for it it does not work today possibly because of the wrong table name

| 1

|

409,446

| 11,962,589,959

|

IssuesEvent

|

2020-04-05 13:01:11

|

traffic-control-fyp-aub/ns3-gym

|

https://api.github.com/repos/traffic-control-fyp-aub/ns3-gym

|

closed

|

System Avg Speed [ Performance ]

|

High Priority

|

Study the effect of different vehicle control percentages (incorporating human driver control) on the system-wide average speed, and whether it increases or decreases.

|

1.0

|

System Avg Speed [ Performance ] - Study the effect of different vehicle control percentages (incorporating human driver control) on the system-wide average speed, and whether it increases or decreases.

|

priority

|

system avg speed study the effect of different vehicle control percentages incorporating human driver control on the system wide average speed and whether it increases or decreases

| 1

|

440,283

| 12,697,315,600

|

IssuesEvent

|

2020-06-22 11:36:19

|

chiyadev/MudaeFarm

|

https://api.github.com/repos/chiyadev/MudaeFarm

|

closed

|

Bot breaks and spams chat

|

bug high priority

|

At some point, the farm falls apart and fails to parse anything Mudaebot sends, even normal rolls. It seems insane to me that MudaeFarm would retry every ~30 seconds indefinitely and create hundreds of messages. Unsanitized logs are below; the timestamp is 2020-06-19T21:59:41.2842398-05:00.

[log_2020-06-19 06.22.24Z-20200619.txt](https://github.com/chiyadev/MudaeFarm/files/4807768/log_2020-06-19.06.22.24Z-20200619.txt)

EDIT: I just realized these logs aren't verbose. Since it's been happening every night, I'll have verbose logs soon.

|

1.0

|

Bot breaks and spams chat - At some point, the farm falls apart and fails to parse anything Mudaebot sends, even normal rolls. It seems insane to me that MudaeFarm would retry every ~30 seconds indefinitely and create hundreds of messages. Unsanitized logs are below; the timestamp is 2020-06-19T21:59:41.2842398-05:00.

[log_2020-06-19 06.22.24Z-20200619.txt](https://github.com/chiyadev/MudaeFarm/files/4807768/log_2020-06-19.06.22.24Z-20200619.txt)

EDIT: I just realized these logs aren't verbose. Since it's been happening every night, I'll have verbose logs soon.

|

priority

|

bot breaks and spams chat at some point the farm falls apart and fails to parse anything mudaebot sends even normal rolls it seems insane to me that mudaefarm would retry every seconds indefinitely and create hundreds of messages unsanitized logs are below the timestamp is edit i just realized these logs aren t verbose since it s been happening every night i ll have verbose logs soon

| 1

|

678,068

| 23,186,038,094

|

IssuesEvent

|

2022-08-01 08:28:08

|

netdata/netdata-cloud

|

https://api.github.com/repos/netdata/netdata-cloud

|

closed

|

Enhance the Alerts drawer / modal in the Active alerts and Alerts Configuration Page

|

priority/high cloud-frontend cloud-backend alerts-team Q2 GOAL feature request

|

### Problem

The current Alerts drawer / modal does not display the details of node instances where this alert has been raised and how it has been aggregated at the node level.

### Description

The alert drawer is currently used in the Active Alerts tab and will also need to be used in the "Alerts Configuration" / "Manage Alerts" tab. The drawer / modal needs to be enhanced to provide clearer information on the alert itself:

- The Chart relevant to the alert (already available)

- Details of the alert event as a vertical bar with its value, for the Clear state as well (already available for Warning and Critical).

- Table with Node instances where the alert was raised (with criticality)

- Alert configurations in a more easily readable and understandable way for each node instance

- CTA to view the alert configuration at the node level (on a dedicated page?)

This can possibly be extended to also show historical logs relevant to the specific alert within the drawer.

cc: @ktsaou @amalkov @vinnygats @car12o @YaroslavDev @novykh @jacekkolasa

### Importance

must have

### Value proposition

1. The current alert drawer does not convey a clear description of the alert

2. The user will need to see details at the node instance level

3. Possible future extension to also show the historical logs relevant to the alert

...

### Proposed implementation

_No response_

|

1.0

|

Enhance the Alerts drawer / modal in the Active alerts and Alerts Configuration Page - ### Problem

The current Alerts drawer / modal does not display the details of node instances where this alert has been raised and how it has been aggregated at the node level.

### Description

The alert drawer is currently used in the Active Alerts tab and will also need to be used in the "Alerts Configuration" / "Manage Alerts" tab. The drawer / modal needs to be enhanced to provide clearer information on the alert itself:

- The Chart relevant to the alert (already available)

- Details of the alert event as a vertical bar with its value, for the Clear state as well (already available for Warning and Critical).

- Table with Node instances where the alert was raised (with criticality)

- Alert configurations in a more easily readable and understandable way for each node instance

- CTA to view the alert configuration at the node level (on a dedicated page?)

This can possibly be extended to also show historical logs relevant to the specific alert within the drawer.

cc: @ktsaou @amalkov @vinnygats @car12o @YaroslavDev @novykh @jacekkolasa

### Importance

must have

### Value proposition

1. The current alert drawer does not convey a clear description of the alert

2. The user will need to see details at the node instance level

3. Possible future extension to also show the historical logs relevant to the alert

...

### Proposed implementation

_No response_

|

priority

|

enhance the alerts drawer modal in the active alerts and alerts configuration page problem the current alerts drawer modal does not display the details of node instances where this alert has been raised and how it has been aggregated at the node level description the alert drawer is currently used in the active alerts tab and will also need to be used in the alerts configuration manage alerts tab the drawer modal needs to be enhanced to provide clearer information on the alert itself the chart relevant to the alert already available details of the alert event as a vertical bar with its value for the clear state as well already available for warning and critical table with node instances where the alert was raised with criticality alert configurations in a more easily readable and understandable way for each node instance cta to view the alert configuration at the node level on a dedicated page this can possibly be extended to also show historical logs relevant to the specific alert within the drawer cc ktsaou amalkov vinnygats yaroslavdev novykh jacekkolasa importance must have value proposition the current alert drawer does not convey a clear description of the alert the user will need to see details at the node instance level possible future extension to also show the historical logs relevant to the alert proposed implementation no response

| 1

|

528,619

| 15,370,896,137

|

IssuesEvent

|

2021-03-02 09:22:16

|

enthought/enable

|

https://api.github.com/repos/enthought/enable

|

closed

|

draw_rect behaves weirdly with QPainter backend

|

priority: high type: bug

|

|agg|QPainter|

|---|---|

|<img width="256" alt="kiva agg draw_rect 2x" src="https://user-images.githubusercontent.com/1926457/109619792-1623f000-7b31-11eb-90e4-b5590aba5d9c.png">|<img width="256" alt="qpainter draw_rect 2x" src="https://user-images.githubusercontent.com/1926457/109619815-18864a00-7b31-11eb-900d-cae1da311d98.png">|

The above images have been generated using the new `enable.gcbench` on Windows with PyQt5.

|

1.0

|

draw_rect behaves weirdly with QPainter backend - |agg|QPainter|

|---|---|

|<img width="256" alt="kiva agg draw_rect 2x" src="https://user-images.githubusercontent.com/1926457/109619792-1623f000-7b31-11eb-90e4-b5590aba5d9c.png">|<img width="256" alt="qpainter draw_rect 2x" src="https://user-images.githubusercontent.com/1926457/109619815-18864a00-7b31-11eb-900d-cae1da311d98.png">|

The above images have been generated using the new `enable.gcbench` on Windows with PyQt5.

|

priority

|

draw rect behaves weirdly with qpainter backend agg qpainter img width alt kiva agg draw rect src width alt qpainter draw rect src the above images have been generated using the new enable gcbench on windows with

| 1

|

413,788

| 12,092,155,253

|

IssuesEvent

|

2020-04-19 14:35:40

|

lorenzwalthert/precommit

|

https://api.github.com/repos/lorenzwalthert/precommit

|

closed

|

Allow to choose installation environment

|

Complexity: Medium Priority: High Status: WIP Type: Enhancement

|

As mentioned in [#113](https://github.com/lorenzwalthert/precommit/issues/113#issuecomment-603808455), I recently tried to run `keras::install_keras()` inside a Docker image where precommit was already installed. It looks like both keras and tensorflow are now [installed by default into `r-reticulate`](https://github.com/rstudio/keras/issues/1014).

This results in the following error:

>Collecting package metadata (current_repodata.json): ...working... done

Solving environment: ...working... failed with initial frozen solve. Retrying with flexible solve.

Solving environment: ...working... failed with repodata from current_repodata.json, will retry with next repodata source.

Collecting package metadata (repodata.json): ...working... done

Solving environment: ...working... failed with initial frozen solve. Retrying with flexible solve.

Examining setuptools: 7%|▋ | 2/30 [00:00<00:00, 2603.54it/s]

Comparing specs that have this dependency: 0%| | 0/15 [00:00<?, ?it/s]

Finding conflict paths: 0%| | 0/2 [00:00<?, ?it/s]

Finding shortest conflict path for setuptools: 0%| | 0/2 [00:00<?, ?it/s]

Finding shortest conflict path for setuptools: 50%|█████ | 1/2 [00:01<00:01, 1.99s/it]

Finding shortest conflict path for setuptools: 100%|██████████| 2/2 [00:01<00:00, 1.01it/s]

...truncated...

|

1.0

|

Allow to choose installation environment - As mentioned in [#113](https://github.com/lorenzwalthert/precommit/issues/113#issuecomment-603808455), I recently tried to run `keras::install_keras()` inside a Docker image where precommit was already installed. It looks like both keras and tensorflow are now [installed by default into `r-reticulate`](https://github.com/rstudio/keras/issues/1014).

This results in the following error:

>Collecting package metadata (current_repodata.json): ...working... done

Solving environment: ...working... failed with initial frozen solve. Retrying with flexible solve.

Solving environment: ...working... failed with repodata from current_repodata.json, will retry with next repodata source.

Collecting package metadata (repodata.json): ...working... done

Solving environment: ...working... failed with initial frozen solve. Retrying with flexible solve.

Examining setuptools: 7%|▋ | 2/30 [00:00<00:00, 2603.54it/s]

Comparing specs that have this dependency: 0%| | 0/15 [00:00<?, ?it/s]

Finding conflict paths: 0%| | 0/2 [00:00<?, ?it/s]

Finding shortest conflict path for setuptools: 0%| | 0/2 [00:00<?, ?it/s]

Finding shortest conflict path for setuptools: 50%|█████ | 1/2 [00:01<00:01, 1.99s/it]

Finding shortest conflict path for setuptools: 100%|██████████| 2/2 [00:01<00:00, 1.01it/s]

...truncated...

|

priority

|

allow to choose installation environment as mentioned in i recently tried to run keras install keras inside a docker image where precommit was already installed it looks like both keras and tensorflow are now this results in the following error collecting package metadata current repodata json working done solving environment working failed with initial frozen solve retrying with flexible solve solving environment working failed with repodata from current repodata json will retry with next repodata source collecting package metadata repodata json working done solving environment working failed with initial frozen solve retrying with flexible solve examining setuptools ▋ comparing specs that have this dependency finding conflict paths finding shortest conflict path for setuptools finding shortest conflict path for setuptools █████ finding shortest conflict path for setuptools ██████████ truncated

| 1

|

813,717

| 30,469,039,169

|

IssuesEvent

|

2023-07-17 12:29:53

|

tinkoff-ai/etna

|

https://api.github.com/repos/tinkoff-ai/etna

|

opened

|

LimitTransform

|

enhancement priority/high

|

### 🚀 Feature Request

Create a transform that limits the values of a feature to lie between given bounds.

### Proposal

Create `LimitTransform`.

Parameters:

- `in_column`: column to transform;

- `lower_bound`: lower bound for the value of the column; -infty by default;

- `upper_bound`: upper bound for the value of the column; +infty by default;

- If a value is out of bounds, an exception should be raised.

- NaNs should be ignored.

Reference: [Ensure time series forecasts stay within limits](https://datasciencestunt.com/time-series-forecasting-within-limits/).

To discuss:

- Should this transform work for non-target column?

- Should this transform have an `inplace` parameter to support non-inplace mode?

### Test cases

What should be checked:

- Working on target / non-target column

- Working with set/unset lower/upper values

- Exception on out-of-limit value

- Full pipeline that predicts some arbitrary values can be used

Don't forget to add inference tests into `tests/test_models/test_inference/`.

### Additional context

_No response_

|

1.0

|

LimitTransform - ### 🚀 Feature Request

Create a transform that limits the values of a feature to lie between given bounds.

### Proposal

Create `LimitTransform`.

Parameters:

- `in_column`: column to transform;

- `lower_bound`: lower bound for the value of the column; -infty by default;

- `upper_bound`: upper bound for the value of the column; +infty by default;

- If a value is out of bounds, an exception should be raised.

- NaNs should be ignored.

Reference: [Ensure time series forecasts stay within limits](https://datasciencestunt.com/time-series-forecasting-within-limits/).

To discuss:

- Should this transform work for non-target column?

- Should this transform have an `inplace` parameter to support non-inplace mode?

### Test cases

What should be checked:

- Working on target / non-target column

- Working with set/unset lower/upper values

- Exception on out-of-limit value

- Full pipeline that predicts some arbitrary values can be used

Don't forget to add inference tests into `tests/test_models/test_inference/`.

### Additional context

_No response_

|

priority

|

limittransform 🚀 feature request create a transform that limits the values of a feature to lie between given bounds proposal create limittransform parameters in column column to transform lower bound lower bound for the value of the column infty by default upper bound upper bound for the value of the column infty by default if a value is out of bounds an exception should be raised nans should be ignored reference to discuss should this transform work for non target column should this transform have an inplace parameter to support non inplace mode test cases what should be checked working on target non target column working with set unset lower upper values exception on out of limit value full pipeline that predicts some arbitrary values can be used don t forget to add inference tests into tests test models test inference additional context no response

| 1

|

480,829

| 13,876,853,552

|

IssuesEvent

|

2020-10-17 01:02:37

|

webiny/webiny-js

|

https://api.github.com/repos/webiny/webiny-js

|

closed

|

Headless CMS - Access Token unable to access listContentModels

|

bug cms no-issue-activity priority: high

|

This is:

- Bug

## Expected Behavior

Using an Access Token with the /read API should allow me to use the `listContentModels` field.

## Actual Behavior

It throws a `Not Authorized` error.

|

1.0

|

Headless CMS - Access Token unable to access listContentModels - This is:

- Bug

## Expected Behavior

Using an Access Token with the /read API should allow me to use the `listContentModels` field.

## Actual Behavior

It throws a `Not Authorized` error.

|

priority

|

headless cms access token unable to access listcontentmodels this is bug expected behavior using an access token with the read api should allow me to use the listcontentmodels field actual behavior it throws a not authorized error

| 1

|

420,768

| 12,243,764,121

|

IssuesEvent

|

2020-05-05 09:50:43

|

pokt-network/pocket-core

|

https://api.github.com/repos/pokt-network/pocket-core

|

opened

|

Remove HTTP Round Trip for Queries

|

enhancement high priority optimization performance

|

**Is your feature request related to a problem? Please describe.**

Remove HTTP calls for queries and switch to a programmatic interface: replace all QueryWithData calls with direct programmatic calls.

**This is high priority for relays/dispatches only**

|

1.0

|

Remove HTTP Round Trip for Queries - **Is your feature request related to a problem? Please describe.**

Remove HTTP calls for queries and switch to a programmatic interface: replace all QueryWithData calls with direct programmatic calls.

**This is high priority for relays/dispatches only**

|

priority

|

remove http round trip for queries is your feature request related to a problem please describe remove http calls for queries and switch to a programmatic interface replace all querywithdata calls with direct programmatic calls this is high priority for relays dispatches only

| 1

|

443,215

| 12,761,475,610

|

IssuesEvent

|

2020-06-29 11:32:29

|

getkirby/kirby

|

https://api.github.com/repos/getkirby/kirby

|

closed

|

CURLOPT_SSL_VERIFYPEER disabled in Remote::fetch()

|

priority: high 🔥 type: bug 🐛

|

Hello,

I just took a look at `Remote::fetch()` because of a forum question, and I noticed that `CURLOPT_SSL_VERIFYPEER` is set to `FALSE` by default. Is there a reason why this security feature is disabled by default? I would have expected SSL certificate validation to be enabled by default, or at least some explanation of why it is disabled, but I found nothing about this.

See this line: https://github.com/getkirby/kirby/blob/6bd14bc8099d6c9dbe67ef0582b8c0a2d4c0888c/src/Http/Remote.php#L152

I'm sorry if this should have been posted in the forum or if it's an obvious misunderstanding on my side.

Best Regards,

|

1.0

|

CURLOPT_SSL_VERIFYPEER disabled in Remote::fetch() - Hello,

I just took a look at `Remote::fetch()` because of a forum question, and I noticed that `CURLOPT_SSL_VERIFYPEER` is set to `FALSE` by default. Is there a reason why this security feature is disabled by default? I would have expected SSL certificate validation to be enabled by default, or at least some explanation of why it is disabled, but I found nothing about this.

See this line: https://github.com/getkirby/kirby/blob/6bd14bc8099d6c9dbe67ef0582b8c0a2d4c0888c/src/Http/Remote.php#L152

I'm sorry if this should have been posted in the forum or if it's an obvious misunderstanding on my side.

Best Regards,

|

priority

|

curlopt ssl verifypeer disabled in remote fetch hello i just took a look at remote fetch because of a forum question and i noticed that curlopt ssl verifypeer is set to false by default is there a reason why this security feature is disabled by default i would have expected ssl certificate validation to be enabled by default or at least some explanation of why it is disabled but i found nothing about this see this line i m sorry if this should have been posted in the forum or if it s an obvious misunderstanding on my side best regards

| 1

|

684,578

| 23,422,835,725

|

IssuesEvent

|

2022-08-14 00:28:54

|

ChaosInitiative/Portal-2-Community-Edition

|

https://api.github.com/repos/ChaosInitiative/Portal-2-Community-Edition

|

closed

|

Bug: Multiplayer players spawn at info_player_start instead of info_coop_spawn

|

Type: bug Component: gameplay Priority 2: High Status: resolved Focus: Co-Op Size 5: Tiny

|

### Describe the bug

Self-explanatory...

This is in the most recent nightly build. Despite the issue being very obvious, I haven't heard anyone talk about it yet, so I wanted to open an issue for it.

Happens regardless of player team.

### Issue Map

All maps.

### To Reproduce

1. Set ConVar **mp_dev_wait_for_other_player** to 0

2. Start a multiplayer game through **map mp_coop_2paints_1bridge**

3. Player spawns at info_player_start

### Operating System

Tested on Windows 10

|

1.0

|

Bug: Multiplayer players spawn at info_player_start instead of info_coop_spawn - ### Describe the bug

Self-explanatory...

This is in the most recent nightly build. Despite the issue being very obvious, I haven't heard anyone talk about it yet, so I wanted to open an issue for it.

Happens regardless of player team.

### Issue Map

All maps.

### To Reproduce

1. Set ConVar **mp_dev_wait_for_other_player** to 0

2. Start a multiplayer game through **map mp_coop_2paints_1bridge**

3. Player spawns at info_player_start

### Operating System

Tested on Windows 10

|

priority

|

bug multiplayer players spawn at info player start instead of info coop spawn describe the bug self explanatory this is in the most recent nightly build despite the issue being very obvious i haven t heard anyone talk about it yet so i wanted to open an issue for it happens regardless of player team issue map all maps to reproduce set convar mp dev wait for other player to start a multiplayer game through map mp coop player spawns at info player start operating system tested on windows

| 1

|

312,831

| 9,553,708,880

|

IssuesEvent

|

2019-05-02 20:00:17

|

eJourn-al/eJournal

|

https://api.github.com/repos/eJourn-al/eJournal

|

closed

|

Non-required file upload throws an error when left empty

|

Priority: High Status: Review Needed Type: Bug Workload: Low

|

**Describe the bug**

The back end always denies requests that contain `None` for a file upload field.

**To Reproduce**

Steps to reproduce the behavior:

1. Make a template with a non-required file upload field

2. Try to post it as a student

3. Observe that the server returns a bad request stating `One of your files was not correctly uploaded, please try gain.`

**Expected behavior**

Non-required fields should be optional: leaving them empty should not cause an error.

|

1.0

|

Non-required file upload throws an error when left empty - **Describe the bug**

The back end always denies requests that contain `None` for a file upload field.

**To Reproduce**

Steps to reproduce the behavior:

1. Make a template with a non-required file upload field

2. Try to post it as a student

3. Observe that the server returns a bad request stating `One of your files was not correctly uploaded, please try gain.`

**Expected behavior**

Non-required fields should be optional: leaving them empty should not cause an error.

|

priority

|

non required file upload throws an error when left empty describe the bug the back end always denies requests that contain none for a file upload field to reproduce steps to reproduce the behavior make a template with a non required file upload field try to post it as a student observe that the server returns a bad request stating one of your files was not correctly uploaded please try gain expected behavior non required fields should be optional leaving them empty should not cause an error

| 1

|

668,301

| 22,577,064,654

|

IssuesEvent

|

2022-06-28 08:19:23

|

freesewing/freesewing

|

https://api.github.com/repos/freesewing/freesewing

|

closed

|

Ursula is not drafting in 2.21.0

|

:bug: bug :rotating_light: high priority :package: ursula

|

With either input measurements or the "standard measurements", Ursula is not drafting in 2.21.0. I'm not 100% sure, but I suspect this is caused by the splitting of the packages folders.

|

1.0

|

Ursula is not drafting in 2.21.0 - With either input measurements or the "standard measurements", Ursula is not drafting in 2.21.0. I'm not 100% sure, but I suspect this is caused by the splitting of the packages folders.

|

priority

|

ursula is not drafting in with either input measurements or the standard measurements ursula is not drafting in i m not sure but i suspect this is caused by the splitting of the packages folders

| 1

|

259,855

| 8,200,702,870

|

IssuesEvent

|

2018-09-01 08:05:08

|

marvinlabs/customer-area

|

https://api.github.com/repos/marvinlabs/customer-area

|

opened

|

Bug in reset password

|

Priority - high bug

|

Some keys are generated with special characters (e.g. `1535687074:$P$Bdz168aJBFAFUOaQpZZHK6oArvdxDk0`), but the add-on code seems to remove those characters **before** comparing the key with the user key stored in the database.

|

1.0

|

Bug in reset password - Some keys are generated with special characters (e.g. `1535687074:$P$Bdz168aJBFAFUOaQpZZHK6oArvdxDk0`), but the add-on code seems to remove those characters **before** comparing the key with the user key stored in the database.

|

priority

|

bug in reset password some keys are generated with special characters e g p but the add on code seems to remove those characters before comparing the key with the user key stored in the database

| 1

|

619,297

| 19,521,364,126

|

IssuesEvent

|

2021-12-29 19:09:51

|

Cotalker/documentation

|

https://api.github.com/repos/Cotalker/documentation

|

closed

|

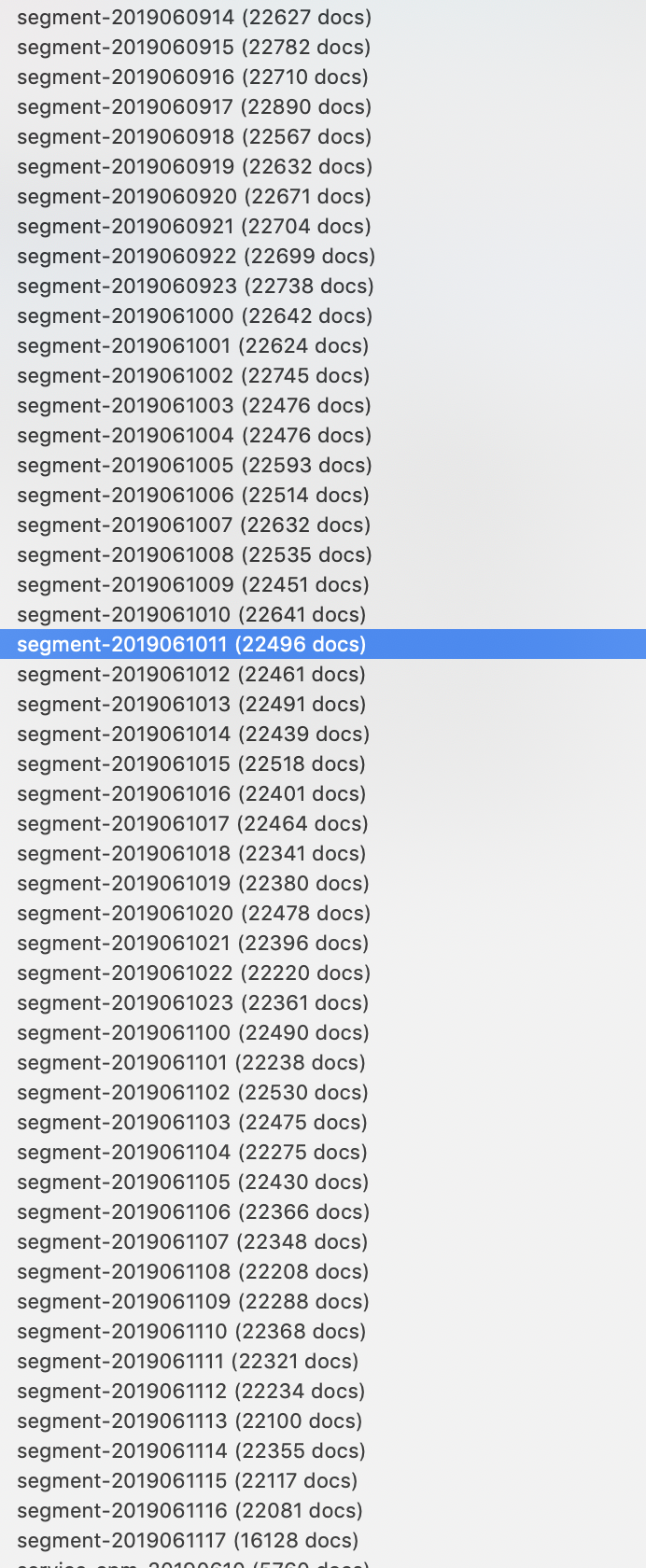

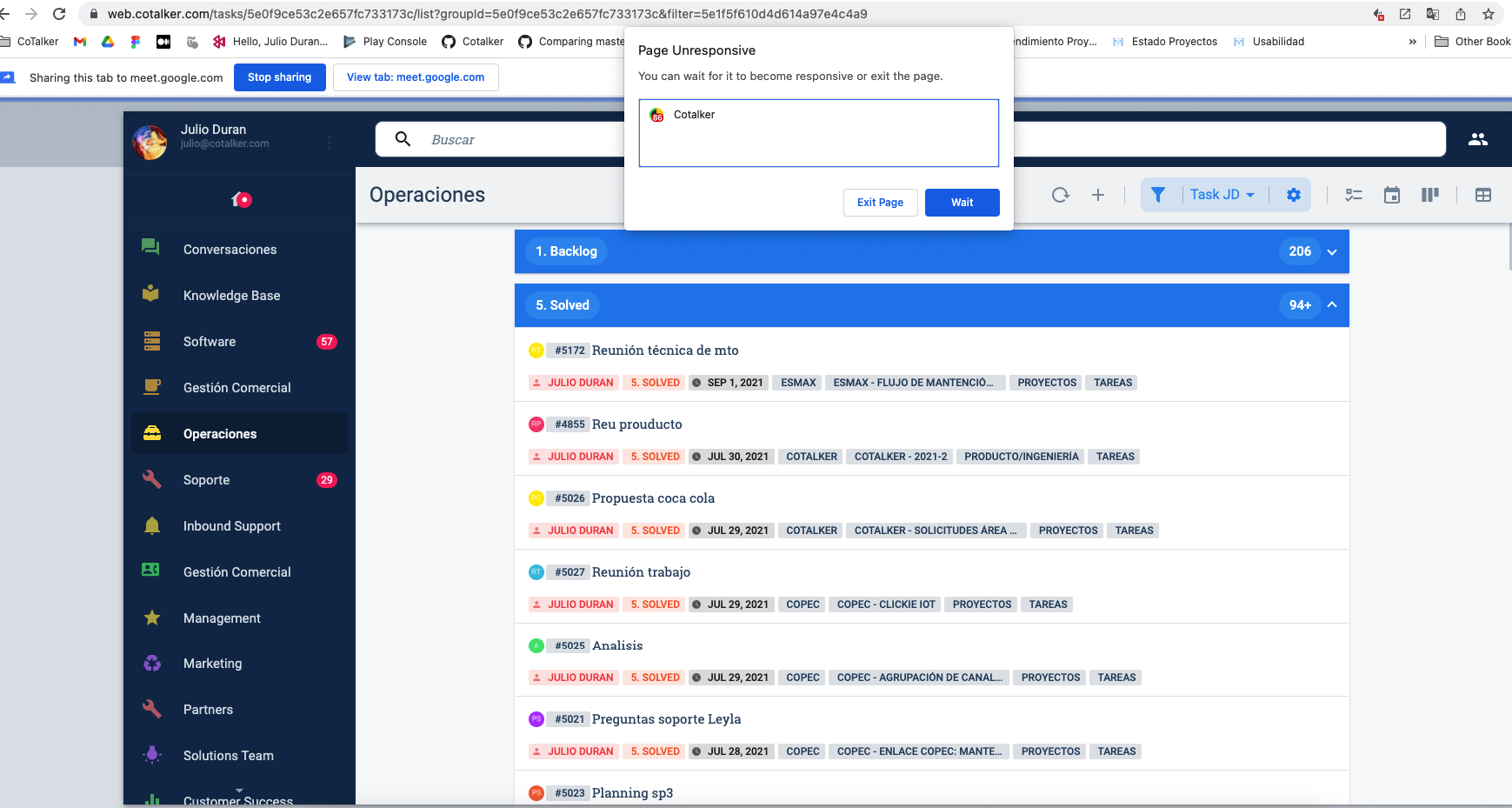

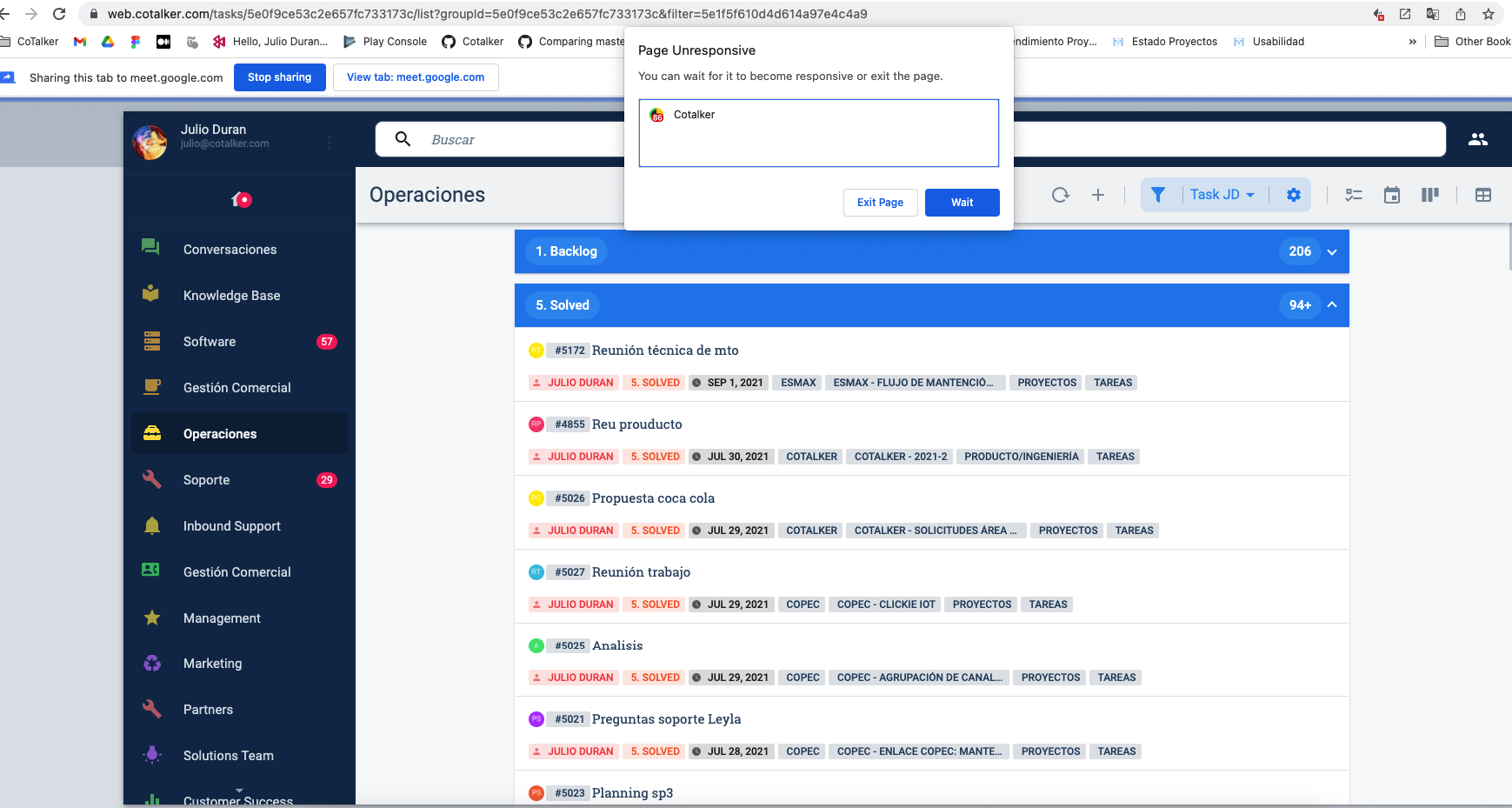

Bug report: Task view freezes when loading

|

Bug report Bug high priority Bug rejected

|

### Affected system

Cotalker Web Application

### Affected system (other)

_No response_

### Affected environment

Production

### Affected environment (other)

_No response_

### App version

17.5.8

### Details

When loading the task view, the Chrome tab freezes.

**The section cannot be expanded.**

### Steps to reproduce

Open the task view in a workflow that has multiple tasks.

### Expected result

The page should not freeze, and the tasks should load correctly.

### Additional data

- Company: Cotalker

- Group: Operaciones (optask sub-workflow)

|

1.0

|

Bug report: Task view freezes when loading - ### Affected system

Cotalker Web Application

### Affected system (other)

_No response_

### Affected environment

Production

### Affected environment (other)

_No response_

### App version

17.5.8

### Details

When the task view loads, the Chrome tab freezes.

**The section cannot be expanded.**

### Steps to reproduce

Open the task view in a workflow that has multiple tasks.

### Expected result

The page does not freeze and the tasks load correctly.

### Additional data

- Company: Cotalker

- Group: Operaciones (optask sub-workflow)

|

priority

|

bug report task view freezes on load affected system cotalker web application affected system other no response affected environment production affected environment other no response app version details when the task view loads the chrome tab freezes the section cannot be expanded steps to reproduce open the task view in a workflow that has multiple tasks expected result the page does not freeze and the tasks load correctly additional data company cotalker group operaciones optask sub workflow

| 1

|

105,749

| 4,241,211,507

|

IssuesEvent

|

2016-07-06 15:40:25

|

ccswbs/hjckrrh

|

https://api.github.com/repos/ccswbs/hjckrrh

|

closed

|

G- Establish default front page layouts for pages

|

feature: general (G) priority: high type: enhancement request

|

This should be specified (documented requirements); see Trello card G14-#240.

https://trello.com/c/ZkCKfdnw

|

1.0

|

G- Establish default front page layouts for pages - This should be specified (documented requirements); see Trello card G14-#240.

https://trello.com/c/ZkCKfdnw

|

priority

|

g establish default front page layouts for pages this should be specified documented requirements see trello card

| 1

|

667,161

| 22,420,269,662

|

IssuesEvent

|

2022-06-20 01:45:26

|

portefaix/portefaix

|

https://api.github.com/repos/portefaix/portefaix

|

closed

|

AKS: Ingress Application Gateway

|

priority/high kind/feature area/terraform lifecycle/stale lifecycle/frozen cloud/azure todo

|

AKS: Ingress Application Gateway

- https://github.com/Azure/terraform-azurerm-aks/pull/99

enable_ingress_application_gateway = true

ingress_application_gateway_gateway_name =

ingress_application_gateway_subnet_cidr =

ingress_application_gateway_subnet_id =

https://github.com/portefaix/portefaix/blob/739c1d3f62ac9ddb95d4c2cfd684529098216e8c/terraform/azure/aks/modules/aks/aks.tf#L53

```hcl

# rbac_aad_managed = false

# rbac_aad_admin_group_object_ids = var.admin_group_object_ids

enable_log_analytics_workspace = false

enable_auto_scaling = var.enable_auto_scaling

enable_kube_dashboard = var.enable_kube_dashboard

enable_azure_policy = var.enable_azure_policy

enable_http_application_routing = var.enable_http_application_routing

# TODO: AKS: Ingress Application Gateway

# labels: kind/feature, priority/high, lifecycle/frozen, area/terraform, cloud/azure

# https://github.com/Azure/terraform-azurerm-aks/pull/99

# enable_ingress_application_gateway = true

# ingress_application_gateway_gateway_name =

# ingress_application_gateway_subnet_cidr =

# ingress_application_gateway_subnet_id =

os_disk_size_gb = var.os_disk_size_gb

agents_min_count = var.agents_min_count

```

afce5cfdca8a7775d0e82c8e7e9387f68d5e7736

|

1.0

|

AKS: Ingress Application Gateway - AKS: Ingress Application Gateway

- https://github.com/Azure/terraform-azurerm-aks/pull/99

enable_ingress_application_gateway = true

ingress_application_gateway_gateway_name =

ingress_application_gateway_subnet_cidr =

ingress_application_gateway_subnet_id =

https://github.com/portefaix/portefaix/blob/739c1d3f62ac9ddb95d4c2cfd684529098216e8c/terraform/azure/aks/modules/aks/aks.tf#L53

```hcl

# rbac_aad_managed = false

# rbac_aad_admin_group_object_ids = var.admin_group_object_ids

enable_log_analytics_workspace = false

enable_auto_scaling = var.enable_auto_scaling

enable_kube_dashboard = var.enable_kube_dashboard

enable_azure_policy = var.enable_azure_policy

enable_http_application_routing = var.enable_http_application_routing

# TODO: AKS: Ingress Application Gateway

# labels: kind/feature, priority/high, lifecycle/frozen, area/terraform, cloud/azure

# https://github.com/Azure/terraform-azurerm-aks/pull/99

# enable_ingress_application_gateway = true

# ingress_application_gateway_gateway_name =

# ingress_application_gateway_subnet_cidr =

# ingress_application_gateway_subnet_id =

os_disk_size_gb = var.os_disk_size_gb

agents_min_count = var.agents_min_count

```

afce5cfdca8a7775d0e82c8e7e9387f68d5e7736

|

priority

|

aks ingress application gateway aks ingress application gateway enable ingress application gateway true ingress application gateway gateway name ingress application gateway subnet cidr ingress application gateway subnet id ruby rbac aad managed false rbac aad admin group object ids var admin group object ids enable log analytics workspace false enable auto scaling var enable auto scaling enable kube dashboard var enable kube dashboard enable azure policy var enable azure policy enable http application routing var enable http application routing todo aks ingress application gateway labels kind feature priority high lifecycle frozen area terraform cloud azure enable ingress application gateway true ingress application gateway gateway name ingress application gateway subnet cidr ingress application gateway subnet id os disk size gb var os disk size gb agents min count var agents min count

| 1

|

6,304

| 2,587,112,727

|

IssuesEvent

|

2015-02-17 16:30:01

|

civio/quienmanda.es

|

https://api.github.com/repos/civio/quienmanda.es

|

closed

|

Implement 'thematic pages'

|

high_priority in progress

|

We want to group a set of photos and articles into a "thematic page" that compiles all the information on a topic and presents it in an appealing way.

First use: compile the articles about the Colegio de El Pilar. We will start by simply showing a set of photos and/or articles tagged with a keyword X, but an introductory photo and/or text could be added to provide context. Later, this will apply to topics such as 'energy', 'banking'...

|

1.0

|

Implement 'thematic pages' - We want to group a set of photos and articles into a "thematic page" that compiles all the information on a topic and presents it in an appealing way.

First use: compile the articles about the Colegio de El Pilar. We will start by simply showing a set of photos and/or articles tagged with a keyword X, but an introductory photo and/or text could be added to provide context. Later, this will apply to topics such as 'energy', 'banking'...

|

priority

|

implement thematic pages we want to group a set of photos and articles into a thematic page that compiles all the information on a topic and presents it in an appealing way first use compile the articles about the colegio de el pilar we will start by simply showing a set of photos and or articles tagged with a keyword x but an introductory photo and or text could be added to provide context later this will apply to topics such as energy banking

| 1

|

283,012

| 8,712,895,467

|

IssuesEvent

|

2018-12-06 23:59:53

|