| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 855) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 13 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 240k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

432,686

| 12,496,729,755

|

IssuesEvent

|

2020-06-01 15:18:35

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

range.second - range.first == t.size() INTERNAL ASSERT FAILED

|

high priority module: autograd triage review triaged

|

This is my first entry about a problem. So, please feel free to ask anything to clarify the problem.

## 🐛 Bug

I ran the same code on three different machines separately. On two of them, I get an error when calling the "loss.backward()" function. The networks never learn.

## To Reproduce

Steps to reproduce the behavior:

1. Run the code for dueling double deep q-learning (Dueling DDQN) and/or DDQNs.

2. The simulation runs until the training sample buffer holds enough samples to train the networks.

3. The backpropagation function is called and the code crashes.

The error message is as below:

dueling_ddqn_agent.py in learn(self)

149

150 loss = self.q_eval.loss(q_target, q_pred).to(self.q_eval.device)

--> 151 loss.backward()

152 self.q_eval.optimizer.step()

153 self.learn_step_counter += 1

~\Anaconda3\lib\site-packages\torch\tensor.py in backward(self, gradient, retain_graph, create_graph)

196 products. Defaults to ``False``.

197 """

--> 198 torch.autograd.backward(self, gradient, retain_graph, create_graph)

199

200 def register_hook(self, hook):

~\Anaconda3\lib\site-packages\torch\autograd\__init__.py in backward(tensors, grad_tensors, retain_graph, create_graph, grad_variables)

98 Variable._execution_engine.run_backward(

99 tensors, grad_tensors, retain_graph, create_graph,

--> 100 allow_unreachable=True) # allow_unreachable flag

101

102

RuntimeError: range.second - range.first == t.size() INTERNAL ASSERT FAILED at ..\torch\csrc\autograd\generated\Functions.cpp:57, please report a bug to PyTorch. inconsistent range for TensorList output (copy_range at ..\torch\csrc\autograd\generated\Functions.cpp:57)

(no backtrace available)

## Expected behavior

On one of the computers, the agents learn as expected. The same code runs without any errors.

## Environment

PyTorch version: 1.5.0

Is debug build: No

CUDA used to build PyTorch: 10.2

OS: Microsoft Windows 10 Home Single Language

GCC version: Could not collect

CMake version: Could not collect

Python version: 3.7

Is CUDA available: Yes

CUDA runtime version: Could not collect

GPU models and configuration: GPU 0: GeForce GTX 750 Ti

Nvidia driver version: 441.12

cuDNN version: Could not collect

Versions of relevant libraries:

[pip] numpy==1.17.4

[pip] numpydoc==0.9.1

[pip] torch==1.5.0

[pip] torchvision==0.6.0

[conda] blas 1.0 mkl

[conda] mkl 2019.4 245

[conda] mkl-service 2.3.0 py37hb782905_0

[conda] mkl_fft 1.0.15 py37h14836fe_0

[conda] mkl_random 1.1.0 py37h675688f_0

[conda] numpy 1.17.4 py37h4320e6b_0

[conda] numpy-base 1.17.4 py37hc3f5095_0

[conda] numpydoc 0.9.1 py_0

[conda] torch 1.5.0 pypi_0 pypi

[conda] torchvision 0.6.0 pypi_0 pypi

## Additional context

The same problem occurs with Linear and Conv2d layers.

cc @ezyang @gchanan @zou3519 @SsnL @albanD @gqchen

|

1.0

|

range.second - range.first == t.size() INTERNAL ASSERT FAILED - This is my first entry about a problem. So, please feel free to ask anything to clarify the problem.

## 🐛 Bug

I ran the same code on three different machines separately. On two of them, I get an error when calling the "loss.backward()" function. The networks never learn.

## To Reproduce

Steps to reproduce the behavior:

1. Run the code for dueling double deep q-learning (Dueling DDQN) and/or DDQNs.

2. The simulation runs until the training sample buffer holds enough samples to train the networks.

3. The backpropagation function is called and the code crashes.

The error message is as below:

dueling_ddqn_agent.py in learn(self)

149

150 loss = self.q_eval.loss(q_target, q_pred).to(self.q_eval.device)

--> 151 loss.backward()

152 self.q_eval.optimizer.step()

153 self.learn_step_counter += 1

~\Anaconda3\lib\site-packages\torch\tensor.py in backward(self, gradient, retain_graph, create_graph)

196 products. Defaults to ``False``.

197 """

--> 198 torch.autograd.backward(self, gradient, retain_graph, create_graph)

199

200 def register_hook(self, hook):

~\Anaconda3\lib\site-packages\torch\autograd\__init__.py in backward(tensors, grad_tensors, retain_graph, create_graph, grad_variables)

98 Variable._execution_engine.run_backward(

99 tensors, grad_tensors, retain_graph, create_graph,

--> 100 allow_unreachable=True) # allow_unreachable flag

101

102

RuntimeError: range.second - range.first == t.size() INTERNAL ASSERT FAILED at ..\torch\csrc\autograd\generated\Functions.cpp:57, please report a bug to PyTorch. inconsistent range for TensorList output (copy_range at ..\torch\csrc\autograd\generated\Functions.cpp:57)

(no backtrace available)

## Expected behavior

On one of the computers, the agents learn as expected. The same code runs without any errors.

## Environment

PyTorch version: 1.5.0

Is debug build: No

CUDA used to build PyTorch: 10.2

OS: Microsoft Windows 10 Home Single Language

GCC version: Could not collect

CMake version: Could not collect

Python version: 3.7

Is CUDA available: Yes

CUDA runtime version: Could not collect

GPU models and configuration: GPU 0: GeForce GTX 750 Ti

Nvidia driver version: 441.12

cuDNN version: Could not collect

Versions of relevant libraries:

[pip] numpy==1.17.4

[pip] numpydoc==0.9.1

[pip] torch==1.5.0

[pip] torchvision==0.6.0

[conda] blas 1.0 mkl

[conda] mkl 2019.4 245

[conda] mkl-service 2.3.0 py37hb782905_0

[conda] mkl_fft 1.0.15 py37h14836fe_0

[conda] mkl_random 1.1.0 py37h675688f_0

[conda] numpy 1.17.4 py37h4320e6b_0

[conda] numpy-base 1.17.4 py37hc3f5095_0

[conda] numpydoc 0.9.1 py_0

[conda] torch 1.5.0 pypi_0 pypi

[conda] torchvision 0.6.0 pypi_0 pypi

## Additional context

The same problem occurs with Linear and Conv2d layers.

cc @ezyang @gchanan @zou3519 @SsnL @albanD @gqchen

|

priority

|

range second range first t size internal assert failed this is my first entry about a problem so please feel free to ask anything to clarify the problem 🐛 bug i run the same code in three different machines separately in two of them i encounter an error when running loss backward function the networks never learn to reproduce steps to reproduce the behavior run the code for dueling double deep q learning dueling ddqn and or ddqns simulation runs until the number of samples in training sample buffer is enough to train the networks backpropagation function is called and code crashed the error message is as below dueling ddqn agent py in learn self loss self q eval loss q target q pred to self q eval device loss backward self q eval optimizer step self learn step counter lib site packages torch tensor py in backward self gradient retain graph create graph products defaults to false torch autograd backward self gradient retain graph create graph def register hook self hook lib site packages torch autograd init py in backward tensors grad tensors retain graph create graph grad variables variable execution engine run backward tensors grad tensors retain graph create graph allow unreachable true allow unreachable flag runtimeerror range second range first t size internal assert failed at torch csrc autograd generated functions cpp please report a bug to pytorch inconsistent range for tensorlist output copy range at torch csrc autograd generated functions cpp no backtrace available expected behavior in one of the computers agents learn as expected the same code runs without any errors environment pytorch version is debug build no cuda used to build pytorch os microsoft windows home single language gcc version could not collect cmake version could not collect python version is cuda available yes cuda runtime version could not collect gpu models and configuration gpu geforce gtx ti nvidia driver version cudnn version could not collect versions of relevant libraries numpy 
numpydoc torch torchvision blas mkl mkl mkl service mkl fft mkl random numpy numpy base numpydoc py torch pypi pypi torchvision pypi pypi additional context the same problem occurs with linear and layers cc ezyang gchanan ssnl alband gqchen

| 1

|

412,163

| 12,036,020,384

|

IssuesEvent

|

2020-04-13 18:59:30

|

ASbeletsky/TimeOffTracker

|

https://api.github.com/repos/ASbeletsky/TimeOffTracker

|

closed

|

Organize vacation approving process: client-form part

|

done enhancement high priority mutable

|

## Overview

Currently the app has no way to organise a chain of approvals for a vacation request, in which accountants and other managers can approve or decline the request and see who has already approved it, while regular employees can track the current state of the approval. The employee should also be able to choose the people who will approve the request and to review the history of their own requests. Managers, on the other hand, should have access to all requests.

## Requirements

Implement the following client-side pages:

- a request application page, where the employee chooses the type, date and duration of the vacation and the managers who must approve the request;

- a vacation timeline page, where the employee sees the current and previous states of the request;

- a request history table page, where the employee chooses one of their submitted requests to review, or the manager chooses an approved/declined request to review;

- a vacation details page, where the manager or the employee sees the details of the request (and, if the request has not been reviewed yet, can accept or decline it).

Estimate: 60 hours

Deadline: 10.04.2020

|

1.0

|

Organize vacation approving process: client-form part - ## Overview

Currently the app has no way to organise a chain of approvals for a vacation request, in which accountants and other managers can approve or decline the request and see who has already approved it, while regular employees can track the current state of the approval. The employee should also be able to choose the people who will approve the request and to review the history of their own requests. Managers, on the other hand, should have access to all requests.

## Requirements

Implement the following client-side pages:

- a request application page, where the employee chooses the type, date and duration of the vacation and the managers who must approve the request;

- a vacation timeline page, where the employee sees the current and previous states of the request;

- a request history table page, where the employee chooses one of their submitted requests to review, or the manager chooses an approved/declined request to review;

- a vacation details page, where the manager or the employee sees the details of the request (and, if the request has not been reviewed yet, can accept or decline it).

Estimate: 60 hours

Deadline: 10.04.2020

|

priority

|

organize vacation approving process client form part overview nowadays the app doesn t support a possibility to organise a chain of vacation approvement request where accountants and other managers could approve or decline the request and see who already had approved one and usual employees could track a current state of the request approvement also the employee should have a possibility to choose people who will apply the request and have an access to review the history of his requests on the other hand all managers should have an access to all requests requirements implement the following pages from client side to apply the request where the employee chooses type date and duration of vacation with required managers who should approve the request a timeline vacation page where the employee watches a current and previous states of the request a table history request page where the employees chooses a needed applying request to review or the manager chooses a needed approved declined request to review vacation detalis page where the manager or the employee watches details of the request and if the request not watched yet add a possibility to accept or decline it estimate hours deadline

| 1

|

294,104

| 9,013,381,676

|

IssuesEvent

|

2019-02-05 19:21:10

|

zephyrproject-rtos/west

|

https://api.github.com/repos/zephyrproject-rtos/west

|

closed

|

out-of-installation use of west

|

bug priority: high

|

User feedback noted that it's not possible by default to use west commands outside of the installation (i.e. not in a subdirectory of the directory containing .west).

Options:

1. One way to do this is to allow the user to specify the default west installation in a system- or user-level west configuration file. Their locations are described in this comment: https://github.com/zephyrproject-rtos/west/blob/master/src/west/config.py#L27

2. We may also be able to use the WEST_DIR environment variable to cover this case, so build, flash, etc. work normally even if things like the build directory are outside of the installation.

3. Another alternative if WEST_DIR is missing and west is invoked from outside an installation is to fall back on searching inside ZEPHYR_BASE, should that be defined.

Some notes for context:

- you can already build with something like `west build -s /tmp/app-source -d /tmp/build` and flash with `west flash -d /tmp/build` even if `/tmp/app-source` and `/tmp/build` are outside of the west installation. Just west itself has to be run from inside the installation to find the `build` and `flash` commands themselves.

- the use of a west installation, which is found by looking for a `.west` directory, is a deliberate design decision which allows users to keep parallel installations, cd around between them, and have them 'just work', without requiring environment variables which are cumbersome for some, and error-prone in general (since they can point to the wrong place).
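
The lookup order discussed in options 2 and 3 could be sketched roughly as follows. This is only an illustration, not west's actual implementation: `find_west_topdir` is a hypothetical helper, and it assumes that `WEST_DIR` and `ZEPHYR_BASE` each point at, or somewhere inside, an installation.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def find_west_topdir(start: Path, env: Mapping[str, str] = os.environ) -> Optional[Path]:
    """Return the directory containing .west, or None (hypothetical helper)."""

    def search_upward(path: Path) -> Optional[Path]:
        # Walk from `path` up to the filesystem root looking for a .west directory.
        path = path.resolve()
        for candidate in (path, *path.parents):
            if (candidate / ".west").is_dir():
                return candidate
        return None

    # Current behavior: the starting directory is inside an installation.
    found = search_upward(start)
    if found is not None:
        return found

    # Assumed fallbacks: try WEST_DIR first (option 2), then ZEPHYR_BASE (option 3).
    for var in ("WEST_DIR", "ZEPHYR_BASE"):
        value = env.get(var)
        if value:
            found = search_upward(Path(value))
            if found is not None:
                return found
    return None
```

Searching from the current directory first keeps the "parallel installations just work" property described above; the environment variables only matter when west is invoked from outside every installation.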

|

1.0

|

out-of-installation use of west - User feedback noted that it's not possible by default to use west commands outside of the installation (i.e. not in a subdirectory of the directory containing .west).

Options:

1. One way to do this is to allow the user to specify the default west installation in a system- or user-level west configuration file. Their locations are described in this comment: https://github.com/zephyrproject-rtos/west/blob/master/src/west/config.py#L27

2. We may also be able to use the WEST_DIR environment variable to cover this case, so build, flash, etc. work normally even if things like the build directory are outside of the installation.

3. Another alternative if WEST_DIR is missing and west is invoked from outside an installation is to fall back on searching inside ZEPHYR_BASE, should that be defined.

Some notes for context:

- you can already build with something like `west build -s /tmp/app-source -d /tmp/build` and flash with `west flash -d /tmp/build` even if `/tmp/app-source` and `/tmp/build` are outside of the west installation. Just west itself has to be run from inside the installation to find the `build` and `flash` commands themselves.

- the use of a west installation, which is found by looking for a `.west` directory, is a deliberate design decision which allows users to keep parallel installations, cd around between them, and have them 'just work', without requiring environment variables which are cumbersome for some, and error-prone in general (since they can point to the wrong place).

|

priority

|

out of installation use of west user feedback noted that it s not possible by default to use west commands outside of the installation i e not in a subdirectory of the directory containing west options one way to do this is to allow the user to specify the default west installation in a system or user level west configuration file their locations are described in this comment we may also be able to use the west dir environment variable to cover this case so build flash etc work normally even if things like the build directory are outside of the installation another alternative if west dir is missing and west is invoked from outside an installation is to fall back on searching inside zephyr base should that be defined some notes for context you can already build with something like west build s tmp app source d tmp build and flash with west flash d tmp build even if tmp app source and tmp build are outside of the west installation just west itself has to be run from inside the installation to find the build and flash commands themselves the use of a west installation which is found by looking for a west directory is a deliberate design decision which allows users to keep parallel installations cd around between them and have them just work without requiring environment variables which are cumbersome for some and error prone in general since they can point to the wrong place

| 1

|

305,711

| 9,375,821,845

|

IssuesEvent

|

2019-04-04 05:57:14

|

nateraw/Lda2vec-Tensorflow

|

https://api.github.com/repos/nateraw/Lda2vec-Tensorflow

|

closed

|

Reproducible working example in new version of Lda2Vec

|

high priority in progress

|

I've made TONS of changes the last few weeks. This has caused things to break and has made it so my working example no longer works :cry: . So, a new reproducible example needs to be made. This is highly related to #8 , where you can see that we ended up with a working example. However, with the new changes, we should be able to remake this reliably, straight from running the run_20newsgroups.py file.

|

1.0

|

Reproducible working example in new version of Lda2Vec - I've made TONS of changes the last few weeks. This has caused things to break and has made it so my working example no longer works :cry: . So, a new reproducible example needs to be made. This is highly related to #8 , where you can see that we ended up with a working example. However, with the new changes, we should be able to remake this reliably, straight from running the run_20newsgroups.py file.

|

priority

|

reproducible working example in new version of i ve made tons of changes the last few weeks this has caused things to break and has made it so my working example no longer works cry so a new reproducible example needs to be made this is highly related to where you can see that we ended up with a working example however with the new changes we should be able to remake this reliably straight from running the run py file

| 1

|

316,882

| 9,658,173,021

|

IssuesEvent

|

2019-05-20 10:18:55

|

nim-lang/Nim

|

https://api.github.com/repos/nim-lang/Nim

|

closed

|

return NimNode from macro causes type mismatch

|

High Priority Macros

|

```Nim
import macros

macro testA: string =
  result = newLit("testA")

macro testB: untyped =
  newLit("testB")

macro testC: untyped =
  return newLit("testC")

macro testD: string =
  newLit("testD")

macro testE: string =
  return newLit("testE")
```

compilation output:

```

scratch.nim(14, 3) Error: type mismatch: got (NimNode) but expected 'string'

Compilation exited abnormally with code 1 at Fri May 19 14:16:02

```

initially I posted the problem in the forum:

https://forum.nim-lang.org/t/2963

|

1.0

|

return NimNode from macro causes type mismatch -

```Nim
import macros

macro testA: string =
  result = newLit("testA")

macro testB: untyped =
  newLit("testB")

macro testC: untyped =
  return newLit("testC")

macro testD: string =
  newLit("testD")

macro testE: string =
  return newLit("testE")
```

compilation output:

```

scratch.nim(14, 3) Error: type mismatch: got (NimNode) but expected 'string'

Compilation exited abnormally with code 1 at Fri May 19 14:16:02

```

initially I posted the problem in the forum:

https://forum.nim-lang.org/t/2963

|

priority

|

return nimnode from macro causes type mismatch nim import macros macro testa string result newlit testa macro testb untyped newlit testb macro testc untyped return newlit testc macro testd string newlit testd macro teste string return newlit teste compilation output scratch nim error type mismatch got nimnode but expected string compilation exited abnormally with code at fri may initially i posted the problem in the forum

| 1

|

238,478

| 7,779,857,967

|

IssuesEvent

|

2018-06-05 18:09:37

|

daily-bruin/meow

|

https://api.github.com/repos/daily-bruin/meow

|

closed

|

"Post Now button" with Confirmation Dialog

|

enhancement high priority low hanging fruit

|

Posts which are Readied should have a button that allows them to post immediately, with a confirmation dialog showing the message and everything to be sent out. The permission also needs to be set to Copy only.

### Tasks

- [ ] Post now button

- [ ] Confirmation Dialog

- [ ] Give permissions to Copy

|

1.0

|

"Post Now button" with Confirmation Dialog - Posts which are Readied should have a button that allows them to post immediately, with a confirmation dialog showing the message and everything to be sent out. The permission also needs to be set to Copy only.

### Tasks

- [ ] Post now button

- [ ] Confirmation Dialog

- [ ] Give permissions to Copy

|

priority

|

post now button with confirmation dialog posts which are readied should have a button that allows them to post immediately with a confirmation dialog showing the message and everything to be sent out the permission also needs to be set to copy only tasks post now button confirmation dialog give permissions to copy

| 1

|

4,330

| 2,550,285,799

|

IssuesEvent

|

2015-02-01 10:59:23

|

Araq/Nim

|

https://api.github.com/repos/Araq/Nim

|

closed

|

calling a large number of macros doing some computation fails

|

Easy High Priority VM

|

Consider the following code

```nim
import pegs, macros

proc parse*(fmt: string): int {.nosideeffect.} =
  let p =
    sequence(capture(?sequence(anyRune(), &charSet({'<', '>', '=', '^'}))),
             capture(?charSet({'<', '>', '=', '^'})),
             capture(?charSet({'-', '+', ' '})),
             capture(?charSet({'#'})),
             capture(?(+digits())),
             capture(?charSet({','})),
             capture(?sequence(charSet({'.'}), +digits())),
             capture(?charSet({'b', 'c', 'd', 'e', 'E', 'f', 'F', 'g', 'G', 'n', 'o', 's', 'x', 'X', '%'})),
             capture(?sequence(charSet({'a'}), *pegs.any())))
  var caps: Captures
  return fmt.rawmatch(p, 0, caps)

macro test(s: string{lit}): expr =
  result = newIntLitNode(parse($s))

# the following line is repeated 7463 times
echo test("abc")
echo test("abc")
echo test("abc")
...
```

Compiling this code with nim 10.2 fails with the following error message:

```

nim c test

Hint: used config file '/path/Nim/config/nim.cfg' [Conf]

Hint: system [Processing]

Hint: test [Processing]

Hint: pegs [Processing]

Hint: strutils [Processing]

Hint: parseutils [Processing]

Hint: unicode [Processing]

Hint: macros [Processing]

stack trace: (most recent call last)

test.nim(18) test

test.nim(15) parse

lib/pure/pegs.nim(647) rawMatch

test.nim(7482, 9) Info: instantiation from here

lib/pure/pegs.nim(647, 22) Error: interpretation requires too many iterations

```

The compilation does not fail if the line `echo test("abc")` is repeated only 7462 times.

I assume this problem is caused by some safeguard so that calling macros cannot lead to infinite loops. However, each single call is fast and I would expect that the count starts again from 0 each time a new toplevel macro is called.

The code above is only an example. I ran into the issue in some real code using the (unofficial) `strfmt` package, which used `pegs` in very much the same way. In that case, far fewer than 7463 calls to a strfmt macro are required to get this error. Is there a way to enlarge the iteration bound?

|

1.0

|

calling a large number of macros doing some computation fails - Consider the following code

```nim
import pegs, macros

proc parse*(fmt: string): int {.nosideeffect.} =
  let p =
    sequence(capture(?sequence(anyRune(), &charSet({'<', '>', '=', '^'}))),
             capture(?charSet({'<', '>', '=', '^'})),
             capture(?charSet({'-', '+', ' '})),
             capture(?charSet({'#'})),
             capture(?(+digits())),
             capture(?charSet({','})),
             capture(?sequence(charSet({'.'}), +digits())),
             capture(?charSet({'b', 'c', 'd', 'e', 'E', 'f', 'F', 'g', 'G', 'n', 'o', 's', 'x', 'X', '%'})),
             capture(?sequence(charSet({'a'}), *pegs.any())))
  var caps: Captures
  return fmt.rawmatch(p, 0, caps)

macro test(s: string{lit}): expr =
  result = newIntLitNode(parse($s))

# the following line is repeated 7463 times
echo test("abc")
echo test("abc")
echo test("abc")
...
```

Compiling this code with nim 10.2 fails with the following error message:

```

nim c test

Hint: used config file '/path/Nim/config/nim.cfg' [Conf]

Hint: system [Processing]

Hint: test [Processing]

Hint: pegs [Processing]

Hint: strutils [Processing]

Hint: parseutils [Processing]

Hint: unicode [Processing]

Hint: macros [Processing]

stack trace: (most recent call last)

test.nim(18) test

test.nim(15) parse

lib/pure/pegs.nim(647) rawMatch

test.nim(7482, 9) Info: instantiation from here

lib/pure/pegs.nim(647, 22) Error: interpretation requires too many iterations

```

The compilation does not fail if the line `echo test("abc")` is repeated only 7462 times.

I assume this problem is caused by some safeguard so that calling macros cannot lead to infinite loops. However, each single call is fast and I would expect that the count starts again from 0 each time a new toplevel macro is called.

The code above is only an example. I ran into the issue in some real code using the (unofficial) `strfmt` package, which used `pegs` in very much the same way. In that case, far fewer than 7463 calls to a strfmt macro are required to get this error. Is there a way to enlarge the iteration bound?

|

priority

|

calling a large number of macros doing some computation fails consider the following code nim import pegs macros proc parse fmt string int nosideeffect let p sequence capture sequence anyrune charset capture charset capture charset capture charset capture digits capture charset capture sequence charset digits capture charset b c d e e f f g g n o s x x capture sequence charset a pegs any var caps captures return fmt rawmatch p caps macro test s string lit expr result newintlitnode parse s the following line is repeated times echo test abc echo test abc echo test abc compiling this code with nim failes with the following error message nim c test hint used config file path nim config nim cfg hint system hint test hint pegs hint strutils hint parseutils hint unicode hint macros stack trace most recent call last test nim test test nim parse lib pure pegs nim rawmatch test nim info instantiation from here lib pure pegs nim error interpretation requires too many iterations the compilation does not fail if the line echo test abc is repeated only times i assume this problem is caused by some safeguard so that calling macros cannot lead to infinite loops however each single call is fast and i would expect that the count starts again from each time a new toplevel macro is called the code above is only an example i ran into the issue in some real code using the inofficial strfmt package which used pegs in very much the same way in this case far less than calls to a strfmt macro are required in order to get this error is there a way to enlarge the iteration bound

| 1

|

828,567

| 31,834,791,521

|

IssuesEvent

|

2023-09-14 12:55:18

|

filamentphp/filament

|

https://api.github.com/repos/filamentphp/filament

|

closed

|

canViewForRecord() in RelationManager Not change properly

|

bug unconfirmed high priority

|

### Package

filament/filament

### Package Version

v3.0.19

### Laravel Version

v10.19.0

### Livewire Version

_No response_

### PHP Version

PHP 8.1.21

### Problem description

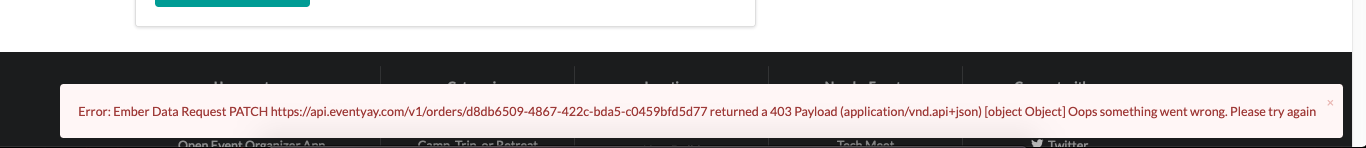

After i implement [this](https://filamentphp.com/docs/3.x/panels/resources/relation-managers#conditionally-showing-relation-managers)

```php
public static function canViewForRecord(Model $ownerRecord, string $pageClass): bool
{
    return $ownerRecord->type === FoodPackageType::PACKAGE;
}
```

the relation in edit page not changed properly

### Expected behavior

The relation is changed according to record set in canViewForRecord() method

### Steps to reproduce

1. Choose a food package.

2. Open the edit page of the food package.

3. Change the type to 'snack'; the relation is gone.

4. The console gives me this error.

5. If I refresh the page, the relation is back to normal and is shown according to canViewForRecord().

### Reproduction repository

https://github.com/chickgit/filament-canViewForRecord-bug

### Relevant log output

_No response_

|

1.0

|

canViewForRecord() in RelationManager Not change properly - ### Package

filament/filament

### Package Version

v3.0.19

### Laravel Version

v10.19.0

### Livewire Version

_No response_

### PHP Version

PHP 8.1.21

### Problem description

After i implement [this](https://filamentphp.com/docs/3.x/panels/resources/relation-managers#conditionally-showing-relation-managers)

```php
public static function canViewForRecord(Model $ownerRecord, string $pageClass): bool
{
    return $ownerRecord->type === FoodPackageType::PACKAGE;
}
```

the relation in edit page not changed properly

### Expected behavior

The relation is changed according to record set in canViewForRecord() method

### Steps to reproduce

1. Choose a food package.

2. Open the edit page of the food package.

3. Change the type to 'snack'; the relation is gone.

4. The console gives me this error.

5. If I refresh the page, the relation is back to normal and is shown according to canViewForRecord().

### Reproduction repository

https://github.com/chickgit/filament-canViewForRecord-bug

### Relevant log output

_No response_

|

priority

|

canviewforrecord in relationmanager not change properly package filament filament package version laravel version livewire version no response php version php problem description after i implement php public static function canviewforrecord model ownerrecord string pageclass bool return ownerrecord type foodpackagetype package the relation in edit page not changed properly expected behavior the relation is changed according to record set in canviewforrecord method steps to reproduce choose a food package open edit page of a food package change type to snack and the relation is gone the console give me this error but if i refresh the page the relation is back to normal and show according to canviewforrecord reproduction repository relevant log output no response

| 1

|

4,335

| 2,550,401,613

|

IssuesEvent

|

2015-02-01 14:25:49

|

JasperHorn/GoodSuite

|

https://api.github.com/repos/JasperHorn/GoodSuite

|

closed

|

Proper API for getting object by id

|

bug high priority

|

I removed createDummy with a comment that there would soon be a different way to achieve its main purpose. And then that other way didn't arrive.

Currently, my tests use the `setId()` function, which works, but is definitely not part of the *public* API. There should be a better way, perhaps something like a `getById` function on a storage.
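
One possible shape for such a lookup, sketched in Python purely for illustration (GoodSuite's real storage API is not shown here; `Storable`, `InMemoryStorage`, `insert` and `get_by_id` are all hypothetical names):

```python
from typing import Dict, Optional


class Storable:
    """Hypothetical stored object; only the storage assigns its id."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._id: Optional[int] = None

    @property
    def id(self) -> Optional[int]:
        # Read-only from the outside, so no public setId() is needed.
        return self._id


class InMemoryStorage:
    """Hypothetical storage exposing a public lookup by id."""

    def __init__(self) -> None:
        self._objects: Dict[int, Storable] = {}
        self._next_id = 1

    def insert(self, obj: Storable) -> int:
        # The storage hands out ids; callers never set them directly.
        obj._id = self._next_id
        self._objects[obj._id] = obj
        self._next_id += 1
        return obj._id

    def get_by_id(self, object_id: int) -> Storable:
        # Public replacement for poking at ids through setId() in tests.
        try:
            return self._objects[object_id]
        except KeyError:
            raise LookupError(f"no object with id {object_id}") from None
```

With something like this, a test can insert an object, keep the returned id, and fetch the object back through the public API instead of calling `setId()`.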

|

1.0

|

Proper API for getting object by id - I removed createDummy with a comment that there would soon be a different way to achieve its main purpose. And then that other way didn't arrive.

Currently, my tests use the `setId()` function, which works, but is definitely not part of the *public* API. There should be a better way, perhaps something like a `getById` function on a storage.

|

priority

|

proper api for getting object by id i removed createdummy with a comment that there would soon be a different way to achieve its main purpose and then that other way didn t arrive currently my tests use the setid function which works but is definitely not part of the public api there should be a better way perhaps something like a getbyid function on a storage

| 1

|

499,025

| 14,437,760,815

|

IssuesEvent

|

2020-12-07 12:02:35

|

StrangeLoopGames/EcoIssues

|

https://api.github.com/repos/StrangeLoopGames/EcoIssues

|

closed

|

[0.9.2 staging-1862] Blackout background that blocks all UIs

|

Category: Laws Priority: High

|

Steps to reproduce:

- open court (I guess you can use any such civic objects):

- create any law:

- and press 'Add New Law to an Election', add to new election, submit:

- press ok:

- I get a blackout background and can't use any UI. I need to restart the game to fix it.

[Player.log](https://github.com/StrangeLoopGames/EcoIssues/files/5635158/Player.log)

|

1.0

|

[0.9.2 staging-1862] Blackout background that blocks all UIs - Steps to reproduce:

- open court (I guess you can use any such civic objects):

- create any law:

- and press 'Add New Law to an Election', add to new election, submit:

- press ok:

- I get a blackout background and can't use any UI. I need to restart the game to fix it.

[Player.log](https://github.com/StrangeLoopGames/EcoIssues/files/5635158/Player.log)

|

priority

|

blackout background that blocks all uis step to reproduce open court i guess you can use any such civic objects create any law and press add new law to an election add to new election submit press ok i have blackout background and can t use any ui need to restart game to fix it

| 1

|

259,912

| 8,201,590,394

|

IssuesEvent

|

2018-09-01 19:36:49

|

keepassxreboot/keepassxc

|

https://api.github.com/repos/keepassxreboot/keepassxc

|

closed

|

Database corruption on merging to a locked database

|

bug high priority security

|

<!--- Provide a general summary of the issue in the title above -->

I just lost my database. Fingers were faster than they should've been, attempted a merge while my db was locked. That just opened the db I'm merging. Manually opening the db I merged into shows it's corrupt.

I guess it was because the db was locked, looking at similar corruption issues in the past. This is the second corruption I had resulting in loss of some data.

## Expected Behavior

<!--- If you're describing a bug, tell us what should happen -->

Merging into a locked database should not corrupt it.

<!--- If you're suggesting a change/improvement, tell us how it should work -->

## Steps to Reproduce (for bugs)

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug. Include code to reproduce, if relevant -->

1. Open database, set to auto lock after delay. Wait for db to be locked.

2. Select Database -> Merge from KeepassXC database

3. Poof. DB gets corrupt

## Debug Info

<!--- Paste debug info from Help → About here -->

KeePassXC - Version 2.3.1

Revision: 2fcaeea

Libraries:

- Qt 5.10.1

- libgcrypt 1.8.2

Operating system: Arch Linux

CPU architecture: x86_64

Kernel: linux 4.15.15-1-ARCH

Enabled extensions:

- Auto-Type

- Browser Integration

- Legacy Browser Integration (KeePassHTTP)

- SSH Agent

- YubiKey

|

1.0

|

Database corruption on merging to a locked database - <!--- Provide a general summary of the issue in the title above -->

I just lost my database. Fingers were faster than they should've been, attempted a merge while my db was locked. That just opened the db I'm merging. Manually opening the db I merged into shows it's corrupt.

I guess it was because the db was locked, looking at similar corruption issues in the past. This is the second corruption I had resulting in loss of some data.

## Expected Behavior

<!--- If you're describing a bug, tell us what should happen -->

Merging into locked database should not corrupt it.

<!--- If you're suggesting a change/improvement, tell us how it should work -->

## Steps to Reproduce (for bugs)

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug. Include code to reproduce, if relevant -->

1. Open database, set to auto lock after delay. Wait for db to be locked.

2. Select Database -> Merge from KeepassXC database

3. Poof. DB gets corrupt

## Debug Info

<!--- Paste debug info from Help → About here -->

KeePassXC - Version 2.3.1

Revision: 2fcaeea

Libraries:

- Qt 5.10.1

- libgcrypt 1.8.2

Operating system: Arch Linux

CPU architecture: x86_64

Kernel: linux 4.15.15-1-ARCH

Enabled extensions:

- Auto-Type

- Browser Integration

- Legacy Browser Integration (KeePassHTTP)

- SSH Agent

- YubiKey

|

priority

|

database corruption on merging to a locked database i just lost my database fingers were faster than they should ve been attempted a merge while my db was locked that just opened the db i m merging manually opening the db i merged into shows it s corrupt i guess it was because the db was locked looking at similar corruption issues in the past this is the second corruption i had resulting in loss of some data expected behavior merging into locked database should not corrupt it steps to reproduce for bugs open database set to auto lock after delay wait for db to be locked select database merge from keepassxc database poof db gets corrupt debug info keepassxc version revision libraries qt libgcrypt operating system arch linux cpu architecture kernel linux arch enabled extensions auto type browser integration legacy browser integration keepasshttp ssh agent yubikey

| 1

|

223,713

| 7,460,058,875

|

IssuesEvent

|

2018-03-30 17:58:38

|

EvictionLab/eviction-maps

|

https://api.github.com/repos/EvictionLab/eviction-maps

|

closed

|

Add county level filing data to S3 interface

|

enhancement high priority

|

Not really map related but putting here to track it. Data should come in tomorrow (March 28)

|

1.0

|

Add county level filing data to S3 interface - Not really map related but putting here to track it. Data should come in tomorrow (March 28)

|

priority

|

add county level filing data to interface not really map related but putting here to track it data should come in tomorrow march

| 1

|

639,315

| 20,751,295,917

|

IssuesEvent

|

2022-03-15 07:52:05

|

AY2122s2-CS2113-F12-3/tp

|

https://api.github.com/repos/AY2122s2-CS2113-F12-3/tp

|

opened

|

Edit staff details

|

type.Story priority.High

|

As a user, I can add new staff, modify staff details and delete staff that has left the company.

|

1.0

|

Edit staff details - As a user, I can add new staff, modify staff details and delete staff that has left the company.

|

priority

|

edit staff details as a user i can add new staff modify staff details and delete staff that has left the company

| 1

|

186,927

| 6,743,660,916

|

IssuesEvent

|

2017-10-20 13:02:17

|

ActivityWatch/activitywatch

|

https://api.github.com/repos/ActivityWatch/activitywatch

|

closed

|

Fixing packaging on macOS

|

area: ci platform: macos priority: high size: small type: bug

|

There has been this annoying bug with the macOS builds:

```

Error loading Python lib '/Applications/activitywatch/.Python': dlopen(/Applications/activitywatch/.Python, 10): image not found

```

@jwiese had [the same issue](https://github.com/ActivityWatch/activitywatch/issues/78#issuecomment-325065686)

Then [someone on reddit had the same issue](https://www.reddit.com/r/Entrepreneur/comments/76qnbq/tell_us_about_your_startupbuisness/dojs27u/).

I thought a bit about it, and the fix might be stupidly easy: `cp src/* dest/` doesn't copy files beginning with a dot.
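The globbing behaviour can be reproduced outside the shell: Python's `glob` module, like an unquoted `src/*` in most shells, skips names that begin with a dot. The following is a minimal sketch under assumptions, not the project's actual build script: `copy_all`, `demo` and the file name `aw-server` are hypothetical, and `dirs_exist_ok` requires Python 3.8 or later.

```python
import glob
import os
import shutil
import tempfile


def copy_all(src, dest):
    """Copy every entry of src into dest, including names that start with a dot."""
    os.makedirs(dest, exist_ok=True)
    for name in os.listdir(src):  # os.listdir() also returns dotfiles
        source = os.path.join(src, name)
        target = os.path.join(dest, name)
        if os.path.isdir(source) and not os.path.islink(source):
            shutil.copytree(source, target, symlinks=True, dirs_exist_ok=True)
        else:
            # follow_symlinks=False copies a symlink as a symlink
            shutil.copy2(source, target, follow_symlinks=False)


def demo():
    """Return (names matched by 'src/*', names copied by copy_all)."""
    with tempfile.TemporaryDirectory() as tmp:
        src = os.path.join(tmp, "src")
        dest = os.path.join(tmp, "dest")
        os.makedirs(src)
        for name in (".Python", "aw-server"):  # "aw-server" is a placeholder name
            open(os.path.join(src, name), "w").close()
        # The '*' pattern does not match names beginning with a dot
        globbed = sorted(os.path.basename(p) for p in glob.glob(os.path.join(src, "*")))
        copy_all(src, dest)
        copied = sorted(os.listdir(dest))
    return globbed, copied
```

Calling `demo()` shows that the glob misses `.Python` while the directory listing picks it up, which matches the suspected cause of the missing library.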

|

1.0

|

Fixing packaging on macOS - There has been this annoying bug with the macOS builds:

```

Error loading Python lib '/Applications/activitywatch/.Python': dlopen(/Applications/activitywatch/.Python, 10): image not found

```

@jwiese had [the same issue](https://github.com/ActivityWatch/activitywatch/issues/78#issuecomment-325065686)

Then [someone on reddit had the same issue](https://www.reddit.com/r/Entrepreneur/comments/76qnbq/tell_us_about_your_startupbuisness/dojs27u/).

I thought a bit about it, and the fix might be stupidly easy: `cp src/* dest/` doesn't copy files beginning with a dot.

|

priority

|

fixing packaging on macos there has been this annoying bug with the macos builds error loading python lib applications activitywatch python dlopen applications activitywatch python image not found jwiese had then i thought a bit about it and the fix might be stupidly easy cp src dest doesn t copy files beginning with a dot

| 1

|

22,756

| 2,650,829,285

|

IssuesEvent

|

2015-03-16 05:22:05

|

Glavin001/atom-beautify

|

https://api.github.com/repos/Glavin001/atom-beautify

|

closed

|

Atom.Object.defineProperty.get is deprecated.

|

high priority

|

Bug report from Atom:

> atom.workspaceView is no longer available.

> In most cases you will not need the view. See the Workspace docs for

> alternatives: https://atom.io/docs/api/latest/Workspace.

> If you do need the view, please use `atom.views.getView(atom.workspace)`,

> which returns an HTMLElement.

> ```

> Atom.Object.defineProperty.get (c:\Users\xxx\AppData\Local\atom\app-0.174.0\resources\app\src\atom.js:55:11)

> LoadingView.module.exports.LoadingView.show (c:\Users\xxx\.atom\packages\atom-beautify\lib\loading-view.coffee:38:11)

> ```

|

1.0

|

Atom.Object.defineProperty.get is deprecated. - Bug report from Atom:

> atom.workspaceView is no longer available.

> In most cases you will not need the view. See the Workspace docs for

> alternatives: https://atom.io/docs/api/latest/Workspace.

> If you do need the view, please use `atom.views.getView(atom.workspace)`,

> which returns an HTMLElement.

> ```

> Atom.Object.defineProperty.get (c:\Users\xxx\AppData\Local\atom\app-0.174.0\resources\app\src\atom.js:55:11)

> LoadingView.module.exports.LoadingView.show (c:\Users\xxx\.atom\packages\atom-beautify\lib\loading-view.coffee:38:11)

> ```

|

priority

|

atom object defineproperty get is deprecated bug report from atom atom workspaceview is no longer available in most cases you will not need the view see the workspace docs for alternatives if you do need the view please use atom views getview atom workspace which returns an htmlelement atom object defineproperty get c users xxx appdata local atom app resources app src atom js loadingview module exports loadingview show c users xxx atom packages atom beautify lib loading view coffee

| 1

|

745,598

| 25,991,126,576

|

IssuesEvent

|

2022-12-20 07:38:05

|

ballerina-platform/ballerina-lang

|

https://api.github.com/repos/ballerina-platform/ballerina-lang

|

closed

|

Fix runtime type APIs to support type reference types

|

Type/Task Priority/High Team/jBallerina Points/7

|

**Description:**

$subject

**Describe your task(s)**

Currently, the following runtime APIs are modified to provide `TypeReferenceType` as a return type.

```

typedesc.getDescribingType()

recordField.getFieldType()

arrayValue.getElementType()

arrayType.getElementType()

```

But, we still have several APIs that need to be fixed for this support.

```

getMemberTypes()

getRestType()

getRestFieldType()

getReturnType()

getConstrainedType()

getEffectiveType()

getParamValueType()

getCompletionType()

getKeyType()

getImmutableType()

```

etc.

**Related Issues (optional):**

https://github.com/ballerina-platform/ballerina-lang/issues/35270

|

1.0

|

Fix runtime type APIs to support type reference types - **Description:**

$subject

**Describe your task(s)**

Currently, the following runtime APIs are modified to provide `TypeReferenceType` as a return type.

```

typedesc.getDescribingType()

recordField.getFieldType()

arrayValue.getElementType()

arrayType.getElementType()

```

But, we still have several APIs that need to be fixed for this support.

```

getMemberTypes()

getRestType()

getRestFieldType()

getReturnType()

getConstrainedType()

getEffectiveType()

getParamValueType()

getCompletionType()

getKeyType()

getImmutableType()

```

etc.

**Related Issues (optional):**

https://github.com/ballerina-platform/ballerina-lang/issues/35270

|

priority

|

fix runtime type apis to support type reference types description subject describe your task s currently the following runtime apis are modified to provide typereferencetype as a return type typedesc getdescribingtype recordfield getfieldtype arrayvalue getelementtype arraytype getelementtype but we still have several apis that need to be fixed for this support getmembertypes getresttype getrestfieldtype getreturntype getconstrainedtype geteffectivetype getparamvaluetype getcompletiontype getkeytype getimmutabletype etc related issues optional

| 1

|

291,324

| 8,923,416,639

|

IssuesEvent

|

2019-01-21 15:36:26

|

OpenNebula/one

|

https://api.github.com/repos/OpenNebula/one

|

opened

|

Distributed port groups not working in unmanaged nic

|

Category: vCenter Priority: High Status: Accepted Type: Bug

|

**Description**

It is not possible to import vCenter templates that use virtual distributed port groups

**To Reproduce**

In vSphere, convert a virtual machine attached to a vdportgroup into a template.

Import into OpenNebula.

**Expected behavior**

The import completes without any problem.

**Details**

- Affected Component: vmm

- Hypervisor: vCenter

- Version: development

**Additional context**

Add any other context about the problem here.

<!--////////////////////////////////////////////-->

<!-- THIS SECTION IS FOR THE DEVELOPMENT TEAM -->

<!-- BOTH FOR BUGS AND ENHANCEMENT REQUESTS -->

<!-- PROGRESS WILL BE REFLECTED HERE -->

<!--////////////////////////////////////////////-->

## Progress Status

- [ ] Branch created

- [ ] Code committed to development branch

- [ ] Testing - QA

- [ ] Documentation

- [ ] Release notes - resolved issues, compatibility, known issues

- [ ] Code committed to upstream release/hotfix branches

- [ ] Documentation committed to upstream release/hotfix branches

|

1.0

|

Distributed port groups not working in unmanaged nic - **Description**

It is not possible to import vCenter templates that use virtual distributed port groups

**To Reproduce**

In vSphere, convert a virtual machine attached to a vdportgroup into a template.

Import into OpenNebula.

**Expected behavior**

The import completes without any problem.

**Details**

- Affected Component: vmm

- Hypervisor: vCenter

- Version: development

**Additional context**

Add any other context about the problem here.

<!--////////////////////////////////////////////-->

<!-- THIS SECTION IS FOR THE DEVELOPMENT TEAM -->

<!-- BOTH FOR BUGS AND ENHANCEMENT REQUESTS -->

<!-- PROGRESS WILL BE REFLECTED HERE -->

<!--////////////////////////////////////////////-->

## Progress Status

- [ ] Branch created

- [ ] Code committed to development branch

- [ ] Testing - QA

- [ ] Documentation

- [ ] Release notes - resolved issues, compatibility, known issues

- [ ] Code committed to upstream release/hotfix branches

- [ ] Documentation committed to upstream release/hotfix branches

|

priority

|

distributed port groups not working in unmanaged nic description it is not possible to import vcenter templates that use virtual distributed port groups to reproduce in vsphere convert to template a virtual machine attached to vdportgroup import into opennebula expected behavior importation ends without any problem details affected component vmm hypervisor vcenter version development additional context add any other context about the problem here progress status branch created code committed to development branch testing qa documentation release notes resolved issues compatibility known issues code committed to upstream release hotfix branches documentation committed to upstream release hotfix branches

| 1

|

807,313

| 29,994,706,978

|

IssuesEvent

|

2023-06-26 03:57:35

|

HackerN64/HackerSM64

|

https://api.github.com/repos/HackerN64/HackerSM64

|

closed

|

Get rid of all inline asm in the repo

|

bug HOW high priority monkaS

|

Inline asm seems to potentially cause instruction scheduling issues in GCC, so get rid of all of it. Pretty much every instance can be replaced with a GCC builtin to generate the same or similar codegen, so this isn't an issue. The `construct_float` in the mtxf_to_mtx function can remain since we know that function has correct codegen.

|

1.0

|

Get rid of all inline asm in the repo - Inline asm seems to potentially cause instruction scheduling issues in GCC, so get rid of all of it. Pretty much every instance can be replaced with a GCC builtin to generate the same or similar codegen, so this isn't an issue. The `construct_float` in the mtxf_to_mtx function can remain since we know that function has correct codegen.

|

priority

|

get rid of all inline asm in the repo inline asm seems to potentially cause instruction scheduling issues in gcc so get rid of all of it pretty much every instance can be replaced with a gcc builtin to generate the same or similar codegen so this isn t an issue the construct float in the mtxf to mtx function can remain since we know that function has correct codegen

| 1

|

509,646

| 14,741,023,629

|

IssuesEvent

|

2021-01-07 09:59:06

|

quickcase/node-toolkit

|

https://api.github.com/repos/quickcase/node-toolkit

|

closed

|

Case: createCase(httpClient)(caseType)(event)(payload)

|

priority:high type:feature

|

A function to create new cases for a given case type using the provided event and case data.

### Example

```javascript

import {createCase, httpClient} from '@quickcase/node-toolkit';

// A configured `httpClient` is required to create case

const client = httpClient('http://data-store:4452')(() => Promise.resolve('access-token'));

const aCase = await createCase(client)('CaseType1')('CreateEvent')({

data: {

field1: 'value1',

field2: 'value2',

},

summary: 'Short text',

description: 'Longer description',

});

/*

{

id: '1234123412341238',

state: 'Created',

data: {

field1: 'value1',

field2: 'value2',

},

...

}

*/

```

|

1.0

|

Case: createCase(httpClient)(caseType)(event)(payload) - A function to create new cases for a given case type using the provided event and case data.

### Example

```javascript

import {createCase, httpClient} from '@quickcase/node-toolkit';

// A configured `httpClient` is required to create case

const client = httpClient('http://data-store:4452')(() => Promise.resolve('access-token'));

const aCase = await createCase(client)('CaseType1')('CreateEvent')({

data: {

field1: 'value1',

field2: 'value2',

},

summary: 'Short text',

description: 'Longer description',

});

/*

{

id: '1234123412341238',

state: 'Created',

data: {

field1: 'value1',

field2: 'value2',

},

...

}

*/

```

|

priority

|

case createcase httpclient casetype event payload a function to create new cases for a given case type using the provided event and case data example javascript import createcase httpclient from quickcase node toolkit a configured httpclient is required to create case const client httpclient promise resolve access token const acase await createcase client createevent data summary short text description longer description id state created data

| 1

|

493,890

| 14,240,381,126

|

IssuesEvent

|

2020-11-18 21:36:56

|

bounswe/bounswe2020group9

|

https://api.github.com/repos/bounswe/bounswe2020group9

|

closed

|

Add bootstrap to frontend project

|

Priority - High Type: Enhancement

|

Bootstrap and react-bootstrap design libraries should be added to the repo. I will try to add by tomorrow evening.

|

1.0

|

Add bootstrap to frontend project - Bootstrap and react-bootstrap design libraries should be added to the repo. I will try to add by tomorrow evening.

|

priority

|

add bootstrap to frontend project bootstrap and react bootstrap design libraries should be added to the repo i will try to add by tomorrow evening

| 1

|

67,489

| 3,274,502,794

|

IssuesEvent

|

2015-10-26 11:15:48

|

OCHA-DAP/hdx-ckan

|

https://api.github.com/repos/OCHA-DAP/hdx-ckan

|

opened

|

Geopreview: Everything looks ok with this one in QGIS, but geopreview has a crazy extent

|

GeoPreview Priority-High

|

Extents of the file look fine in QGIS: xMin,yMin 61.0015,23.9622 : xMax,yMax 79.2384,37.0316

But from our api: BOX(62.8094901807575 **-345.137507041407**,77.24811554 37.0315789417147)

When geopreview is enabled, the extent is global and the data doesn't display. I've updated the geopreview twice with the same result.

It would be good to know whether this is a problem at our end or in the file (more likely), and to consider how we handle these failures.
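One way such failures could be caught early is to sanity-check the extent before using it for the preview. The sketch below is only an illustration: it assumes the `BOX(xmin ymin,xmax ymax)` string holds longitude/latitude in degrees, which the report does not state, and the names `parse_box` and `is_plausible_lonlat_extent` are hypothetical rather than part of the HDX code.

```python
import re

# A signed decimal number such as -345.137507041407
_NUM = r"[-+]?\d+(?:\.\d+)?"
_BOX_RE = re.compile(
    rf"BOX\(\s*({_NUM})\s+({_NUM})\s*,\s*({_NUM})\s+({_NUM})\s*\)"
)


def parse_box(text):
    """Parse 'BOX(xmin ymin,xmax ymax)' into a tuple of four floats."""
    match = _BOX_RE.fullmatch(text.strip())
    if match is None:
        raise ValueError(f"not a BOX string: {text!r}")
    return tuple(float(value) for value in match.groups())


def is_plausible_lonlat_extent(box):
    """Check that the extent fits inside valid longitude/latitude ranges."""
    xmin, ymin, xmax, ymax = box
    return -180 <= xmin <= xmax <= 180 and -90 <= ymin <= ymax <= 90


reported = parse_box("BOX(62.8094901807575 -345.137507041407,77.24811554 37.0315789417147)")
qgis = (61.0015, 23.9622, 79.2384, 37.0316)
```

With these values, `is_plausible_lonlat_extent(reported)` is false because the minimum y value lies far outside the latitude range, while the QGIS extent passes the check.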

|

1.0

|

Geopreview: Everything looks ok with this one in QGIS, but geopreview has a crazy extent - Extents of the file look fine in QGIS: xMin,yMin 61.0015,23.9622 : xMax,yMax 79.2384,37.0316

But from our api: BOX(62.8094901807575 **-345.137507041407**,77.24811554 37.0315789417147)

When geopreview is enabled, the extent is global and the data doesn't display. I've updated the geopreview twice with the same result.

It would be good to know whether this is a problem at our end or in the file (more likely), and to consider how we handle these failures.

|

priority

|

geopreview everything looks ok with this one in qgis but geopreview has a crazy extent extents of the file look fine in qgis xmin ymin xmax ymax but from our api box when enabling geopreview the exent is global and the data doesn t display i ve updated the geopreview twice with the same result it would be good to know if this is a problem at our end or in the file more likely and to consider how we handle this failures

| 1

|

441,496

| 12,718,883,245

|

IssuesEvent

|

2020-06-24 08:20:48

|

Wirlie/EnhancedBungeeList

|

https://api.github.com/repos/Wirlie/EnhancedBungeeList

|

closed

|

Configuration file corruption

|

bug high-priority

|

Someone has reported that the configuration file (Config.yml) has been corrupted, so I need to investigate the cause of the issue.

Reference:

https://www.spigotmc.org/threads/enhancedbungeelist.303250/page-3#post-3773965

|

1.0

|

Configuration file corruption - Someone has reported that the configuration file (Config.yml) has been corrupted, so I need to investigate the cause of the issue.

Reference:

https://www.spigotmc.org/threads/enhancedbungeelist.303250/page-3#post-3773965

|

priority

|

configuration file corruption someone have reported that the configuration file config yml have been corrupted so i need to investigate the cause of the issue reference

| 1

|

80,754

| 3,574,107,314

|

IssuesEvent

|

2016-01-27 10:17:21

|

restlet/restlet-framework-java

|

https://api.github.com/repos/restlet/restlet-framework-java

|

closed

|

Method value caching broken depending on class initialization order

|

Module: Restlet API Priority: high State: waiting for input Type: bug Version: 2.3

|

The method `org.restlet.data.Method.valueOf(String)` usually returns cached values for common HTTP methods (GET etc.). In a particular application test case, I noticed the caching was not working, i.e., `valueOf` was *always* returning new instances.

The reason is the way the caching depends on class initialization order (and a tiny bug in Method.java).

- When Engine.getEngine() is called before class `Method` is being initialized, the caching works (I guess this is the case often)

- When class `Method` is loaded/initialized before Engine.getEngine() is called, the caching breaks.

The root cause is the initialization block in [Method.java#L.240](https://github.com/restlet/restlet-framework-java/blob/master/modules/org.restlet/src/org/restlet/data/Method.java#L240). This block is *supposed* to be called after the class constants (GET, etc.) have been initialized and assigned. But it is missing the `static {}` keyword, so it is not actually a class initialization block, but an object initialization block. It is thus called when the first constant instance is initialized, before the constants are assigned. This means that the constants read by `HttpProtocolHelper.registerMethods` are still `null`, and no Methods get registered.

I will provide a unit test and a patch in a pull request.

|

1.0

|

Method value caching broken depending on class initialization order - The method `org.restlet.data.Method.valueOf(String)` usually returns cached values for common HTTP methods (GET etc.). In a particular application test case, I noticed the caching was not working, i.e., `valueOf` was *always* returning new instances.

The reason is the way the caching depends on class initialization order (and a tiny bug in Method.java).

- When Engine.getEngine() is called before class `Method` is being initialized, the caching works (I guess this is the case often)

- When class `Method` is loaded/initialized before Engine.getEngine() is called, the caching breaks.

The root cause is the initialization block in [Method.java#L.240](https://github.com/restlet/restlet-framework-java/blob/master/modules/org.restlet/src/org/restlet/data/Method.java#L240). This block is *supposed* to be called after the class constants (GET, etc.) have been initialized and assigned. But it is missing the `static {}` keyword, so it is not actually a class initialization block, but an object initialization block. It is thus called when the first constant instance is initialized, before the constants are assigned. This means that the constants read by `HttpProtocolHelper.registerMethods` are still `null`, and no Methods get registered.

I will provide a unit test and a patch in a pull request.

|

priority

|

method value caching broken depending on class initialization order the method org restlet data method valueof string usually returns cached values for common http methods get etc in a particular application test case i noticed the caching was not working i e valueof was always returning new instances the reason is the way the caching depends on class initialization order and a tiny bug in method java when engine getengine is called before class method is being initialized the caching works i guess this is the case often when class method is loaded initialized before engine getengine is called the caching breaks the root cause is the initialization block in this block is supposed to be called after the class constants get etc have been initialized and assigned but it is missing the static keyword so it is not actually a class initialization block but an object initialization block it is thus called when the first constant instance is initialized before the constants are assigned this means that the constants read by httpprotocolhelper registermethods are still null and no methods get registered i will provide a unit test and a patch in a pull request

| 1

|

805,535

| 29,524,188,590

|

IssuesEvent

|

2023-06-05 06:14:30

|

ballerina-platform/ballerina-standard-library

|

https://api.github.com/repos/ballerina-platform/ballerina-standard-library

|

opened

|

Receive runtime error when query, path, and header parameters have subtype of integer

|

Priority/High Type/Bug module/http

|

**Description:**

We receive the runtime error below [2] when executing `bal run` for the Ballerina sample below [1], but `bal build` does not report any compilation error.

**Steps to reproduce:**

[1] Ballerina code

```ballerina

import ballerina/http;

listener http:Listener ep0 = new (9090, config = {host: "localhost"});

service / on ep0 {

resource function get correspondence/listByPatientId/[int:Signed32 patientId]/out(int:Signed32 locationId, @http:Header int:Signed32 header) returns string {

return "Hello World!";

}

}

```

[2] error

```

Running executable

error: invalid query parameter type 'lang.int:Signed32'

error: invalid path parameter type 'lang.int:Signed32'

error: invalid header parameter type 'lang.int:Signed32'

```

**Affected Versions:**

Ballerina Swanlake 2201.6.0

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

|

1.0

|

Receive runtime error when query, path, and header parameters have subtype of integer - **Description:**

We receive the runtime error below [2] when executing `bal run` for the Ballerina sample below [1], but `bal build` does not report any compilation error.

**Steps to reproduce:**

[1] Ballerina code

```ballerina

import ballerina/http;

listener http:Listener ep0 = new (9090, config = {host: "localhost"});

service / on ep0 {

resource function get correspondence/listByPatientId/[int:Signed32 patientId]/out(int:Signed32 locationId, @http:Header int:Signed32 header) returns string {

return "Hello World!";

}

}

```

[2] error

```

Running executable

error: invalid query parameter type 'lang.int:Signed32'

error: invalid path parameter type 'lang.int:Signed32'

error: invalid header parameter type 'lang.int:Signed32'

```

**Affected Versions:**

Ballerina Swanlake 2201.6.0

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

|

priority

|

receive runtime error when query path and header parameters have subtype of integer description we receive the below runtime error while executing bal run for below ballerina sample but this didn t give any compilation error while executing bal build steps to reproduce ballerina code ballerina import ballerina http listener http listener new config host localhost service on resource function get correspondence listbypatientid out int locationid http header int header returns string return hello world error running executable error invalid query parameter type lang int error invalid path parameter type lang int error invalid header parameter type lang int affected versions ballerina swanlake os db other environment details and versions related issues optional suggested labels optional suggested assignees optional

| 1

|

176,506

| 6,560,366,101

|

IssuesEvent

|

2017-09-07 09:01:39

|

salesagility/SuiteCRM

|

https://api.github.com/repos/salesagility/SuiteCRM

|

closed

|

Possible opportunity for SQL injection attack in file modules/Emails/EmailUIAjax.php

|

bug Fix Proposed High Priority Resolved: Next Release

|

inside:

`case "getTemplateAttachments":`

line:

`$where = "parent_id='{$_REQUEST['parent_id']}'";`

All user inputs must be used after validation/sanitisation/escaping in SQL commands.

Refer to usage of DBManager::quote() function
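The risk of interpolating request input directly into a `WHERE` clause can be illustrated with a small sketch. It is written in Python with `sqlite3` as a stand-in, not in PHP against SuiteCRM's `DBManager`; the `notes` table, its columns and the function names are hypothetical, and a bound parameter is used here in place of a quoting helper.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with two attachment rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE notes (id TEXT, parent_id TEXT)")
    conn.executemany(
        "INSERT INTO notes VALUES (?, ?)",
        [("n1", "t1"), ("n2", "t2")],
    )
    return conn


def find_attachments_unsafe(conn, parent_id):
    """Vulnerable: the input is pasted into the SQL text unchanged."""
    query = f"SELECT id FROM notes WHERE parent_id='{parent_id}' ORDER BY id"
    return [row[0] for row in conn.execute(query)]


def find_attachments_safe(conn, parent_id):
    """Safer: the input is passed as a bound parameter, never as SQL text."""
    query = "SELECT id FROM notes WHERE parent_id = ? ORDER BY id"
    return [row[0] for row in conn.execute(query, (parent_id,))]


conn = make_demo_db()
payload = "x' OR '1'='1"  # attacker-controlled value for parent_id
leaked = find_attachments_unsafe(conn, payload)
filtered = find_attachments_safe(conn, payload)
```

With the payload above, the interpolated query returns every row, whereas the parameterised query returns none because no row has that literal `parent_id`.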

|

1.0

|

Possible opportunity for SQL injection attack in file modules/Emails/EmailUIAjax.php - inside:

`case "getTemplateAttachments":`

line:

`$where = "parent_id='{$_REQUEST['parent_id']}'";`

All user input must be validated, sanitised, or escaped before it is used in SQL commands.

Refer to usage of DBManager::quote() function

|

priority

|

possible opportunity for sql injection attack in file modules emails emailuiajax php inside case gettemplateattachments line where parent id request all user inputs must be used after validation sanitisation escaping in sql commands refer to usage of dbmanager quote function

| 1

|

170,139

| 6,424,876,188

|

IssuesEvent

|

2017-08-09 14:23:43

|

oneOCT3T/SARPbugs

|

https://api.github.com/repos/oneOCT3T/SARPbugs

|

closed

|

Calling CreateObjects to lift object in LS beach apartment

|

bug high priority

|

I don't have to explain it; a GMX can fix it, but we need a proper solution. Octet mainly wrote that script, if I am not wrong.

Requesting that Octet check the issue and fix the bug, since it concerns the movable lift rather than the mapping objects(?)

|

1.0

|

Calling CreateObjects to lift object in LS beach apartment - I don't have to explain it; a GMX can fix it, but we need a proper solution. Octet mainly wrote that script, if I am not wrong.

Requesting that Octet check the issue and fix the bug, since it concerns the movable lift rather than the mapping objects(?)

|

priority

|

calling createobjects to lift object in ls beach apartment i don t have to explain it a gmx can fix but we need a proper solution mainly octet did that script if i am not wrong request to octet for checking the issue and fix the bug since it s not for the mapping objects but lift moveable bug

| 1

|

214,565

| 7,274,444,352

|

IssuesEvent

|

2018-02-21 10:01:58

|

ballerina-lang/language-server

|

https://api.github.com/repos/ballerina-lang/language-server

|

closed

|

Go to variable definition support

|

Priority/High Type/Task

|

**Description:**

Currently, we only support go to definition for functions, structs and enums. We need to support variables, connectors and actions in the future.

|

1.0

|

Go to variable definition support - **Description:**

Currently, we only support go to definition for functions, structs and enums. We need to support variables, connectors and actions in the future.

|

priority

|

go to variable definition support description currently we only support go to definition for functions structs and enums we need to support variables connectors and actions in future

| 1

|

748,217

| 26,112,398,550

|

IssuesEvent

|

2022-12-27 22:22:40

|

yugabyte/yugabyte-db

|

https://api.github.com/repos/yugabyte/yugabyte-db

|

closed

|

Tserver Registration hazard - UUID can be blank

|

kind/bug area/docdb priority/high 2.12 Backport Required jira-originated 2.14 Backport Required 2.16 Backport Required

|

Jira Link: [DB-3832](https://yugabyte.atlassian.net/browse/DB-3832)

At startup, the tserver may have difficulty reading the instance file and then try to register with an empty UUID, as in the case below:

```1008 02:07:23.531638 26967 ts_manager.cc:140] Registered new tablet server { permanent_uuid: "" instance_seqno: 1665194843459852 start_time_us: 1665194843459852 } with Master, full list: [{22f43dd4c90a4f2bbb6ebd1516c25616, 0x000000001d472010 -> { permanent_uuid: 22f43dd4c90a4f2bbb6ebd1516c25616 registration: common { private_rpc_addresses { host: "10.88.16.80" port: 9100 } http_addresses { host: "10.88.16.80" ...```

The server should protect itself by verifying that a reasonable UUID is available, before attempting to register.
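
A minimal sketch of such a guard, written in Python for illustration rather than taken from the YugabyteDB code base. It assumes that a "reasonable" UUID is 32 lowercase hexadecimal characters, as in the `permanent_uuid` shown in the log above; `register_tserver` and the `registry` set are hypothetical names.

```python
import re

# Assumption: a valid permanent UUID is 32 lowercase hex characters,
# matching the format seen in the log line above.
_UUID_RE = re.compile(r"[0-9a-f]{32}")


def is_reasonable_uuid(value):
    """Return True only for a non-empty, well-formed UUID string."""
    return isinstance(value, str) and _UUID_RE.fullmatch(value) is not None


def register_tserver(permanent_uuid, registry):
    """Hypothetical registration step that refuses blank or malformed UUIDs."""
    if not is_reasonable_uuid(permanent_uuid):
        raise ValueError(f"refusing to register with UUID {permanent_uuid!r}")
    registry.add(permanent_uuid)
```

Failing before the registration call keeps an entry with an empty `permanent_uuid` from ever reaching the master's list.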

|

1.0

|

Tserver Registration hazard - UUID can be blank - Jira Link: [DB-3832](https://yugabyte.atlassian.net/browse/DB-3832)

At startup, the tserver may have difficulty reading the instance file and then try to register with an empty UUID, as in the case below:

```1008 02:07:23.531638 26967 ts_manager.cc:140] Registered new tablet server { permanent_uuid: "" instance_seqno: 1665194843459852 start_time_us: 1665194843459852 } with Master, full list: [{22f43dd4c90a4f2bbb6ebd1516c25616, 0x000000001d472010 -> { permanent_uuid: 22f43dd4c90a4f2bbb6ebd1516c25616 registration: common { private_rpc_addresses { host: "10.88.16.80" port: 9100 } http_addresses { host: "10.88.16.80" ...```

The server should protect itself by verifying that a reasonable UUID is available, before attempting to register.

|

priority

|

tserver registration hazard uuid can be blank jira link at tserver startup it may encounter difficulty reading the instance file and try to register with an empty uuid as in the case below ts manager cc registered new tablet server permanent uuid instance seqno start time us with master full list permanent uuid registration common private rpc addresses host port http addresses host the server should protect itself by verifying that a reasonable uuid is available before attempting to register

| 1

|

514,441

| 14,939,294,169

|

IssuesEvent

|

2021-01-25 16:46:32

|

diyabc/diyabcGUI

|

https://api.github.com/repos/diyabc/diyabcGUI

|

closed

|

Add specific abcranger output prefix to allow different runs in a single project

|

enhancement high priority

|

Include the parameter name or the candidate models in the output prefix of each abcranger run.

abcranger option to do so:

```

-o, --output arg Prefix output (modelchoice_out or estimparam_out by

default)

```

Interest: run multiple parameter estimation or multiple model choice procedures in a single project.
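
One way the prefix could be derived, as a rough sketch only. The helper names and the `<analysis>_<run name>` naming scheme are assumptions, not part of diyabcGUI or abcranger; the only abcranger detail relied on is the `-o` option quoted above.

```python
import re


def output_prefix(analysis, run_name):
    """Build a per-run prefix such as 'estimparam_N1'.

    `analysis` is expected to be 'modelchoice' or 'estimparam', mirroring
    the default prefixes; characters unsuitable for file names are
    replaced with underscores.
    """
    safe = re.sub(r"[^A-Za-z0-9_-]+", "_", run_name.strip()).strip("_")
    if not safe:
        raise ValueError("run name must contain at least one usable character")
    return f"{analysis}_{safe}"


def abcranger_output_args(analysis, run_name):
    """Return the command-line fragment that sets the output prefix."""
    return ["-o", output_prefix(analysis, run_name)]
```

Because each run gets its own prefix, two parameter estimations in the same project directory would no longer overwrite each other's `estimparam_out` files.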

|

1.0

|

Add specific abcranger output prefix to allow different runs in a single project - Include the parameter name or the candidate models in the output prefix of each abcranger run.

abcranger option to do so:

```

-o, --output arg Prefix output (modelchoice_out or estimparam_out by

default)

```

Interest: run multiple parameter estimation or multiple model choice procedures in a single project.

|

priority

|

add specific abcranger output prefix to allow different runs in a single project specify name parameter or candidate models in prefix output for abcranger run abcranger option to do so o output arg prefix output modelchoice out or estimparam out by default interest run multiple parameter estimation or multiple model choice procedure in a single project

| 1

|

746,079

| 26,014,734,203

|

IssuesEvent

|

2022-12-21 07:15:13

|

akvo/akvo-rsr

|

https://api.github.com/repos/akvo/akvo-rsr

|

closed

|

Endpoint performance

|

Bug Type: Performance Priority: High python Epic stale

|

Endpoint performance has been a long-standing issue with some endpoints taking multiple seconds to complete.

This epic will track the most problematic endpoints and try to find either targeted or general improvements.

|

1.0

|

Endpoint performance - Endpoint performance has been a long-standing issue with some endpoints taking multiple seconds to complete.

This epic will track the most problematic endpoints and try to find either targeted or general improvements.

|

priority

|

endpoint performance endpoint performance has been a long standing issue with some endpoints taking multiple seconds to complete this epic will track the most problematic endpoints and try to find either targeted or general improvements

| 1

|

678,475

| 23,198,966,250

|

IssuesEvent

|

2022-08-01 19:20:08

|

azerothcore/azerothcore-wotlk

|

https://api.github.com/repos/azerothcore/azerothcore-wotlk

|

closed

|