Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

855

| labels

stringlengths 4

721

| body

stringlengths 1

261k

| index

stringclasses 13

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

240k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

646,684

| 21,056,310,707

|

IssuesEvent

|

2022-04-01 03:56:32

|

oasis-engine/engine

|

https://api.github.com/repos/oasis-engine/engine

|

closed

|

undelete _currentEvents in physics packages

|

bug Physical high priority

|

when two collider collide together, the script delete the component, info still exit in _currentEvents which will cause the undefined. To fix this problem, we should consider two things:

1. clear relevant index in _currentEvents after destroy entity.

2. delete all object after one frame(not in the middle of frame).

|

1.0

|

undelete _currentEvents in physics packages - when two collider collide together, the script delete the component, info still exit in _currentEvents which will cause the undefined. To fix this problem, we should consider two things:

1. clear relevant index in _currentEvents after destroy entity.

2. delete all object after one frame(not in the middle of frame).

|

priority

|

undelete currentevents in physics packages when two collider collide together the script delete the component info still exit in currentevents which will cause the undefined to fix this problem we should consider two things clear relevant index in currentevents after destroy entity delete all object after one frame not in the middle of frame

| 1

|

443,247

| 12,768,979,354

|

IssuesEvent

|

2020-06-30 02:10:18

|

qlcchain/go-qlc

|

https://api.github.com/repos/qlcchain/go-qlc

|

closed

|

enable the RPC module according to the configuration file

|

Priority: High Type: Enhancement

|

- enable the RPC module according to the configuration file

- by default, enable all RPC modules in test network mode

- by default, disable all RPC modules which for enterprise application in main net mode

|

1.0

|

enable the RPC module according to the configuration file - - enable the RPC module according to the configuration file

- by default, enable all RPC modules in test network mode

- by default, disable all RPC modules which for enterprise application in main net mode

|

priority

|

enable the rpc module according to the configuration file enable the rpc module according to the configuration file by default enable all rpc modules in test network mode by default disable all rpc modules which for enterprise application in main net mode

| 1

|

661,920

| 22,095,864,580

|

IssuesEvent

|

2022-06-01 10:03:45

|

mantidproject/mantid

|

https://api.github.com/repos/mantidproject/mantid

|

closed

|

Project recovery on linux uses wrong checkpoint

|

High Priority Bug ISIS Team: Core

|

**Describe the bug**

Project recovery on IDAaaS always seems to use the previous recovery file

**To Reproduce**

(1) Set project recovery to save every ~5s (File > Settings > General)

(2) Make a workspace

`

CreateWorkspace(DataX=range(12), DataY=range(12), DataE=range(12), NSpec=4, OutputWorkspace='NewWorkspace')

`

(3) Wait for it to save project (see this in log at debug level)

(4) Crash mantid with `Segfault `algorithm with `DryRun=False`

(5) Open workbench again (project recovery should give a pop-up askign to restore workspace but not on IDAaaS)

(6) Make 2 workspaces

```

CreateWorkspace(DataX=range(12), DataY=range(12), DataE=range(12), NSpec=4, OutputWorkspace='NewWorkspace')

CreateWorkspace(DataX=range(12), DataY=range(12), DataE=range(12), NSpec=4, OutputWorkspace='NewWorkspace2')

```

(7) Repeat steps (3-5) - this time I see a project recovery pop-up but it only restores one workspace

**Screenshots**

<!--If applicable/possible, add screenshots to help explain your problem. -->

**Platform/Version (please complete the following information):**

- Mantid nightly 9th May on IDAaaS

**Additional context**

<!--Add any other context about the problem here.-->

|

1.0

|

Project recovery on linux uses wrong checkpoint - **Describe the bug**

Project recovery on IDAaaS always seems to use the previous recovery file

**To Reproduce**

(1) Set project recovery to save every ~5s (File > Settings > General)

(2) Make a workspace

`

CreateWorkspace(DataX=range(12), DataY=range(12), DataE=range(12), NSpec=4, OutputWorkspace='NewWorkspace')

`

(3) Wait for it to save project (see this in log at debug level)

(4) Crash mantid with `Segfault `algorithm with `DryRun=False`

(5) Open workbench again (project recovery should give a pop-up askign to restore workspace but not on IDAaaS)

(6) Make 2 workspaces

```

CreateWorkspace(DataX=range(12), DataY=range(12), DataE=range(12), NSpec=4, OutputWorkspace='NewWorkspace')

CreateWorkspace(DataX=range(12), DataY=range(12), DataE=range(12), NSpec=4, OutputWorkspace='NewWorkspace2')

```

(7) Repeat steps (3-5) - this time I see a project recovery pop-up but it only restores one workspace

**Screenshots**

<!--If applicable/possible, add screenshots to help explain your problem. -->

**Platform/Version (please complete the following information):**

- Mantid nightly 9th May on IDAaaS

**Additional context**

<!--Add any other context about the problem here.-->

|

priority

|

project recovery on linux uses wrong checkpoint describe the bug project recovery on idaaas always seems to use the previous recovery file to reproduce set project recovery to save every file settings general make a workspace createworkspace datax range datay range datae range nspec outputworkspace newworkspace wait for it to save project see this in log at debug level crash mantid with segfault algorithm with dryrun false open workbench again project recovery should give a pop up askign to restore workspace but not on idaaas make workspaces createworkspace datax range datay range datae range nspec outputworkspace newworkspace createworkspace datax range datay range datae range nspec outputworkspace repeat steps this time i see a project recovery pop up but it only restores one workspace screenshots platform version please complete the following information mantid nightly may on idaaas additional context

| 1

|

791,814

| 27,878,473,396

|

IssuesEvent

|

2023-03-21 17:33:21

|

vscentrum/vsc-software-stack

|

https://api.github.com/repos/vscentrum/vsc-software-stack

|

opened

|

funannotate

|

difficulty: easy new priority: high Python site:ugent

|

* link to support ticket: [#2023031360001037](https://otrsdict.ugent.be/otrs/index.pl?Action=AgentTicketZoom;TicketID=113595)

* website: https://funannotate.readthedocs.io

* installation docs: https://funannotate.readthedocs.io/en/latest/install.html

* toolchain: `foss/2021b`

* easyblock to use: `...`

* required dependencies:

* see https://github.com/nextgenusfs/funannotate/blob/master/setup.py

* notes:

* requires Python 3.9?

* effort: *(TBD)*

|

1.0

|

funannotate - * link to support ticket: [#2023031360001037](https://otrsdict.ugent.be/otrs/index.pl?Action=AgentTicketZoom;TicketID=113595)

* website: https://funannotate.readthedocs.io

* installation docs: https://funannotate.readthedocs.io/en/latest/install.html

* toolchain: `foss/2021b`

* easyblock to use: `...`

* required dependencies:

* see https://github.com/nextgenusfs/funannotate/blob/master/setup.py

* notes:

* requires Python 3.9?

* effort: *(TBD)*

|

priority

|

funannotate link to support ticket website installation docs toolchain foss easyblock to use required dependencies see notes requires python effort tbd

| 1

|

119,994

| 4,778,910,555

|

IssuesEvent

|

2016-10-27 20:46:22

|

OneNoteDev/WebClipper

|

https://api.github.com/repos/OneNoteDev/WebClipper

|

closed

|

We will create a blank page if an existing user tries clipping a PDF but does not complete the permissions process

|

bug high-priority

|

New PDF mode scenario:

1. A pre-3.2.9 Clipper user attempts to clip a PDF.

2. We have permission to create a new page for the clip, and so we do.

3. We don't have permission to read and update that page, however.

4. We tell them, "We've added features to the Web Clipper that require new permissions. To accept them, please sign out and sign back in."

5. They decide, "Eh, no, thanks."

Broken experience: a page with just a citation in the user's notebook, created by the Clipper.

Better experience: that page never gets created.

We need a permissions check before creating the new page.

|

1.0

|

We will create a blank page if an existing user tries clipping a PDF but does not complete the permissions process - New PDF mode scenario:

1. A pre-3.2.9 Clipper user attempts to clip a PDF.

2. We have permission to create a new page for the clip, and so we do.

3. We don't have permission to read and update that page, however.

4. We tell them, "We've added features to the Web Clipper that require new permissions. To accept them, please sign out and sign back in."

5. They decide, "Eh, no, thanks."

Broken experience: a page with just a citation in the user's notebook, created by the Clipper.

Better experience: that page never gets created.

We need a permissions check before creating the new page.

|

priority

|

we will create a blank page if an existing user tries clipping a pdf but does not complete the permissions process new pdf mode scenario a pre clipper user attempts to clip a pdf we have permission to create a new page for the clip and so we do we don t have permission to read and update that page however we tell them we ve added features to the web clipper that require new permissions to accept them please sign out and sign back in they decide eh no thanks broken experience a page with just a citation in the user s notebook created by the clipper better experience that page never gets created we need a permissions check before creating the new page

| 1

|

689,035

| 23,604,782,616

|

IssuesEvent

|

2022-08-24 07:17:18

|

1ForeverHD/TopbarPlus

|

https://api.github.com/repos/1ForeverHD/TopbarPlus

|

opened

|

Improve VR compatibility

|

Type: Enhancement Type: Bug Scope: Core Priority: High

|

Ignore VR devices within ``guiService.MenuOpened`` and ``guiService.MenuClosed`` (line 1225 and below of IconController) because their menu button doesn't upon the escape menu, it makes the GUI interactable (therefore we don't want to hide their topbar icons)

i.e.

Credit to @cl1ents (ievnnnnnnnnnnnnnnnnn) for this

|

1.0

|

Improve VR compatibility - Ignore VR devices within ``guiService.MenuOpened`` and ``guiService.MenuClosed`` (line 1225 and below of IconController) because their menu button doesn't upon the escape menu, it makes the GUI interactable (therefore we don't want to hide their topbar icons)

i.e.

Credit to @cl1ents (ievnnnnnnnnnnnnnnnnn) for this

|

priority

|

improve vr compatibility ignore vr devices within guiservice menuopened and guiservice menuclosed line and below of iconcontroller because their menu button doesn t upon the escape menu it makes the gui interactable therefore we don t want to hide their topbar icons i e credit to ievnnnnnnnnnnnnnnnnn for this

| 1

|

251,483

| 8,015,981,908

|

IssuesEvent

|

2018-07-25 11:55:49

|

BEXIS2/Core

|

https://api.github.com/repos/BEXIS2/Core

|

closed

|

A User with read rights can download files.

|

Priority: High Status: Completed Type: Bug

|

**Describe the bug**

a user with read rights can download files. but in the system there is a download right.

|

1.0

|

A User with read rights can download files. - **Describe the bug**

a user with read rights can download files. but in the system there is a download right.

|

priority

|

a user with read rights can download files describe the bug a user with read rights can download files but in the system there is a download right

| 1

|

718,322

| 24,712,101,439

|

IssuesEvent

|

2022-10-20 02:14:44

|

AY2223S1-CS2103T-W08-3/tp

|

https://api.github.com/repos/AY2223S1-CS2103T-W08-3/tp

|

closed

|

As a user, I can find contacts by any field I want

|

priority.High type.Enhancement

|

...so that I can narrow down my search as much as I want and save even more time.

|

1.0

|

As a user, I can find contacts by any field I want - ...so that I can narrow down my search as much as I want and save even more time.

|

priority

|

as a user i can find contacts by any field i want so that i can narrow down my search as much as i want and save even more time

| 1

|

479,475

| 13,797,409,131

|

IssuesEvent

|

2020-10-09 22:09:44

|

aws/aws-app-mesh-roadmap

|

https://api.github.com/repos/aws/aws-app-mesh-roadmap

|

closed

|

Bug: X-Ray trace regression in Envoy image v1.15.0.0-prod

|

Bug Envoy Docker Image Phase: Working on it Priority: High

|

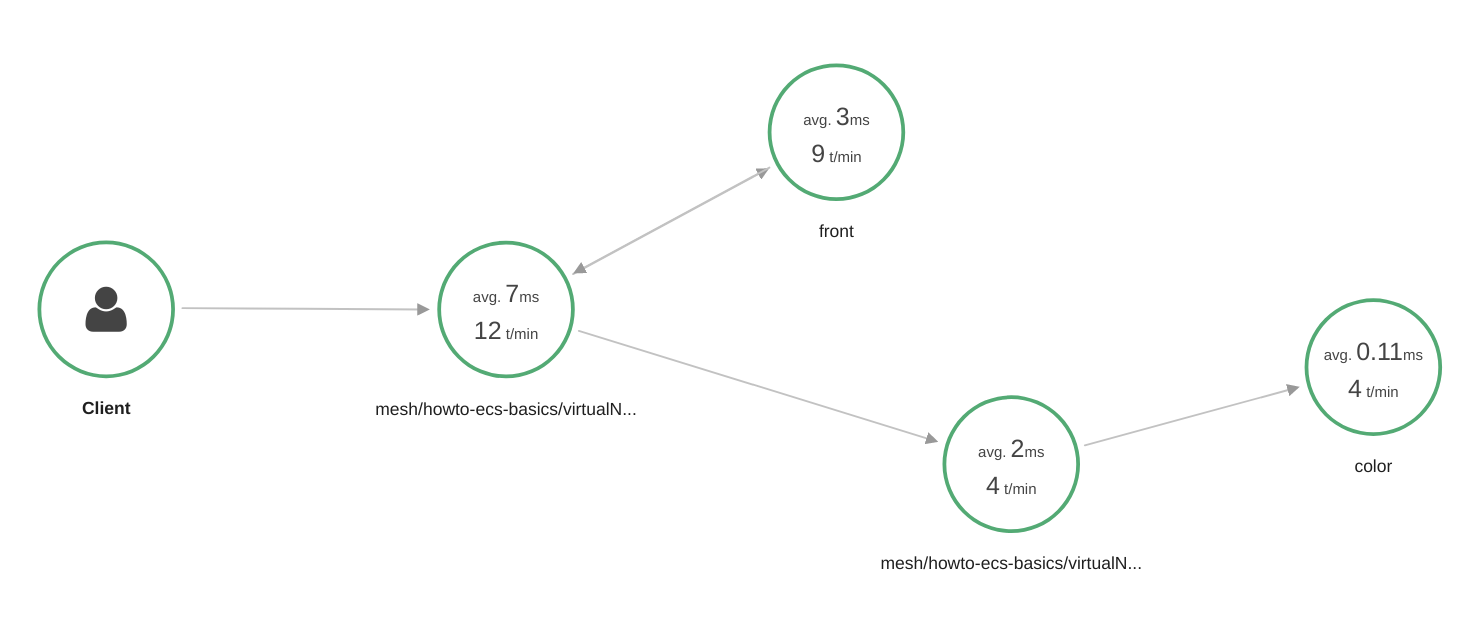

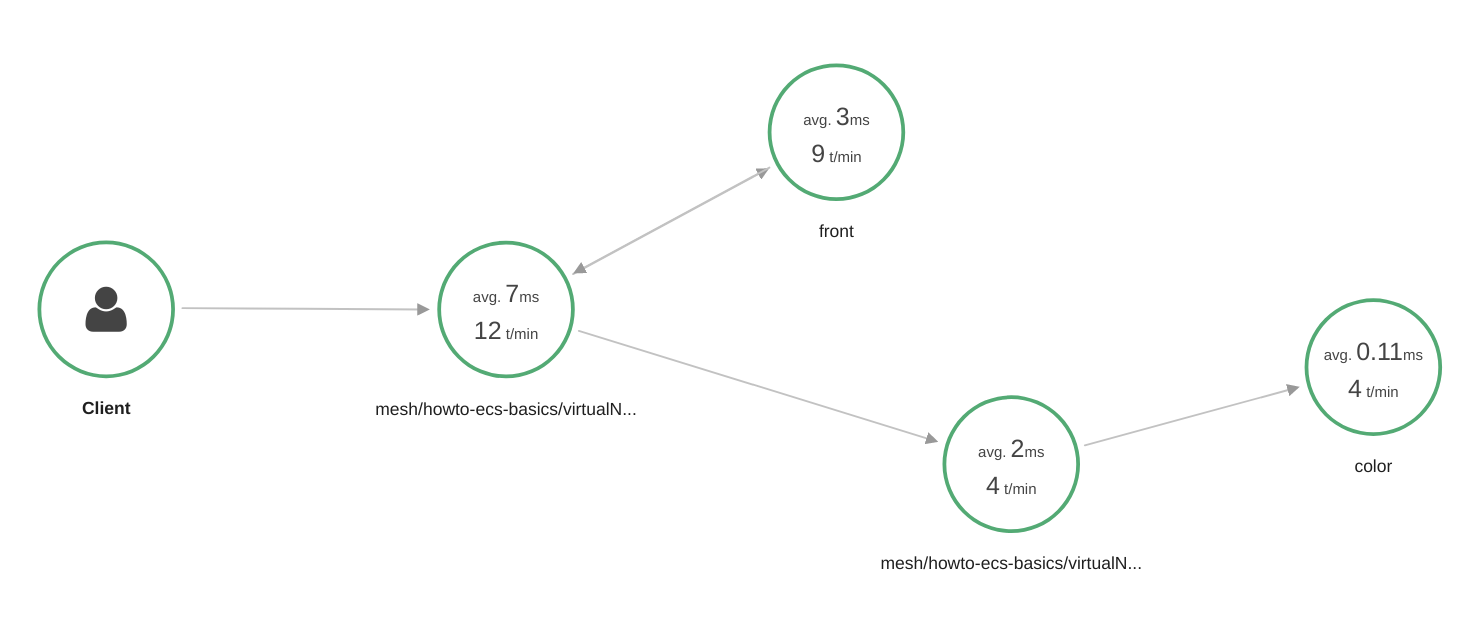

**Summary**

The X-Ray traces emitted by Envoy 1.15 are different than previous releases.

**Steps to Reproduce**

*You can use https://github.com/aws/aws-app-mesh-examples/tree/master/walkthroughs/howto-ecs-basics as a test application*

* Set `ENVOY_IMAGE` to `840364872350.dkr.ecr.us-west-2.amazonaws.com/aws-appmesh-envoy:v1.15.0.0-prod`.

* Follow instructions at least through chapter 3 to set up the mesh.

* Observe that:

* The nodes in the service map are missing the type (segment origin): `AWS::AppMesh::Proxy`.

* The segment names are the full VirtualNode name: `mesh/howto-ecs-basics/virtualNode/howto-ecs-basics-front-node` instead of `howto-ecs-basics/howto-ecs-basics-front-node`.

* The [segment documents](https://docs.aws.amazon.com/xray/latest/devguide/xray-api-segmentdocuments.html) are missing the `aws` metadata:

```

"aws": {

"app_mesh": {

"mesh_name": "howto-ecs-basics",

"virtual_node_name": "howto-ecs-basics-front-node"

}

}

```

**Are you currently working around this issue?**

Using the older Envoy image: `840364872350.dkr.ecr.<region>.amazonaws.com/aws-appmesh-envoy:v1.12.5.0-prod` does not reproduce this behavior.

|

1.0

|

Bug: X-Ray trace regression in Envoy image v1.15.0.0-prod - **Summary**

The X-Ray traces emitted by Envoy 1.15 are different than previous releases.

**Steps to Reproduce**

*You can use https://github.com/aws/aws-app-mesh-examples/tree/master/walkthroughs/howto-ecs-basics as a test application*

* Set `ENVOY_IMAGE` to `840364872350.dkr.ecr.us-west-2.amazonaws.com/aws-appmesh-envoy:v1.15.0.0-prod`.

* Follow instructions at least through chapter 3 to set up the mesh.

* Observe that:

* The nodes in the service map are missing the type (segment origin): `AWS::AppMesh::Proxy`.

* The segment names are the full VirtualNode name: `mesh/howto-ecs-basics/virtualNode/howto-ecs-basics-front-node` instead of `howto-ecs-basics/howto-ecs-basics-front-node`.

* The [segment documents](https://docs.aws.amazon.com/xray/latest/devguide/xray-api-segmentdocuments.html) are missing the `aws` metadata:

```

"aws": {

"app_mesh": {

"mesh_name": "howto-ecs-basics",

"virtual_node_name": "howto-ecs-basics-front-node"

}

}

```

**Are you currently working around this issue?**

Using the older Envoy image: `840364872350.dkr.ecr.<region>.amazonaws.com/aws-appmesh-envoy:v1.12.5.0-prod` does not reproduce this behavior.

|

priority

|

bug x ray trace regression in envoy image prod summary the x ray traces emitted by envoy are different than previous releases steps to reproduce you can use as a test application set envoy image to dkr ecr us west amazonaws com aws appmesh envoy prod follow instructions at least through chapter to set up the mesh observe that the nodes in the service map are missing the type segment origin aws appmesh proxy the segment names are the full virtualnode name mesh howto ecs basics virtualnode howto ecs basics front node instead of howto ecs basics howto ecs basics front node the are missing the aws metadata aws app mesh mesh name howto ecs basics virtual node name howto ecs basics front node are you currently working around this issue using the older envoy image dkr ecr amazonaws com aws appmesh envoy prod does not reproduce this behavior

| 1

|

525,224

| 15,241,126,929

|

IssuesEvent

|

2021-02-19 07:56:08

|

StrangeLoopGames/EcoIssues

|

https://api.github.com/repos/StrangeLoopGames/EcoIssues

|

opened

|

[0.9.3] Craft station UI can be broken

|

Category: UI Priority: High Type: Bug

|

Step to reproduce:

- place workbench (or any Craft station)

- fly away to a distance when Workbench dissapears but chunk which contains this workbeck will not unload.

- fly back to workbench and open it. I don't have cursor (tab mode) when I open workbench UI and have a lot of exceptions in log file:

```

NullReferenceException: Object reference not set to an instance of an object.

at UnityEngine.Component.GetComponent[T] () [0x00000] in <00000000000000000000000000000000>:0

at UI.CraftingUI.OnShow () [0x00000] in <00000000000000000000000000000000>:0

at UI.WorldObjectUI+PanelData.OnShow () [0x00000] in <00000000000000000000000000000000>:0

at UI.WorldObjectUI.Open (Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at UI.UIManager.Open (System.String guiName, Eco.Shared.Serialization.BSONObject bson, UI.UILayer layer, System.Boolean singleInstance) [0x00000] in <00000000000000000000000000000000>:0

at GenericClient`1[T].OpenUI (System.String uiName, Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x00000] in <00000000000000000000000000000000>:0

at System.Reflection.MethodBase.Invoke (System.Object obj, System.Object[] parameters) [0x00000] in <00000000000000000000000000000000>:0

at System.Comparison`1[T].Invoke (T x, T y) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.TryInvoke (Eco.Shared.Networking.INetClient client, System.Object target, System.String methodname, Eco.Shared.Serialization.BSONArray bsonArgs, System.Object& result) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.InvokeOn (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson, System.Object target, System.String methodname) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.HandleReceiveRPC (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at NetworkManager.Eco.Shared.Networking.INetworkEventHandler.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.NetObject.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketQueueHandler.TryFetchNextClientUpdate (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketHandler.HandleNetworkEvents (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at System.Action`1[T].Invoke (T obj) [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.PlannerGroup.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.FramePlannerSystem.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystemGroup.UpdateAllSystems () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at System.Action.Invoke () [0x00000] in <00000000000000000000000000000000>:0

Rethrow as TargetInvocationException: Exception has been thrown by the target of an invocation.

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x00000] in <00000000000000000000000000000000>:0

at System.Reflection.MethodBase.Invoke (System.Object obj, System.Object[] parameters) [0x00000] in <00000000000000000000000000000000>:0

at System.Comparison`1[T].Invoke (T x, T y) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.TryInvoke (Eco.Shared.Networking.INetClient client, System.Object target, System.String methodname, Eco.Shared.Serialization.BSONArray bsonArgs, System.Object& result) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.InvokeOn (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson, System.Object target, System.String methodname) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.HandleReceiveRPC (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at NetworkManager.Eco.Shared.Networking.INetworkEventHandler.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.NetObject.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketQueueHandler.TryFetchNextClientUpdate (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketHandler.HandleNetworkEvents (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at System.Action`1[T].Invoke (T obj) [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.PlannerGroup.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.FramePlannerSystem.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystemGroup.UpdateAllSystems () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at System.Action.Invoke () [0x00000] in <00000000000000000000000000000000>:0

UnityEngine.Logger:LogException(Exception, Object)

UnityEngine.Debug:LogException(Exception)

FramePlanner.PlannerGroup:OnUpdate()

FramePlanner.FramePlannerSystem:OnUpdate()

Unity.Entities.ComponentSystem:Update()

Unity.Entities.ComponentSystemGroup:UpdateAllSystems()

Unity.Entities.ComponentSystem:Update()

System.Action:Invoke()

```

[Player.log](https://github.com/StrangeLoopGames/EcoIssues/files/6008441/Player.log)

If you don't have it first time, just try to repeat it.

Video: https://drive.google.com/file/d/1ZglupNjl5F9cYCu745Y_P0G9Jpjqxzrk/view?usp=sharing

|

1.0

|

[0.9.3] Craft station UI can be broken - Step to reproduce:

- place workbench (or any Craft station)

- fly away to a distance when Workbench dissapears but chunk which contains this workbeck will not unload.

- fly back to workbench and open it. I don't have cursor (tab mode) when I open workbench UI and have a lot of exceptions in log file:

```

NullReferenceException: Object reference not set to an instance of an object.

at UnityEngine.Component.GetComponent[T] () [0x00000] in <00000000000000000000000000000000>:0

at UI.CraftingUI.OnShow () [0x00000] in <00000000000000000000000000000000>:0

at UI.WorldObjectUI+PanelData.OnShow () [0x00000] in <00000000000000000000000000000000>:0

at UI.WorldObjectUI.Open (Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at UI.UIManager.Open (System.String guiName, Eco.Shared.Serialization.BSONObject bson, UI.UILayer layer, System.Boolean singleInstance) [0x00000] in <00000000000000000000000000000000>:0

at GenericClient`1[T].OpenUI (System.String uiName, Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x00000] in <00000000000000000000000000000000>:0

at System.Reflection.MethodBase.Invoke (System.Object obj, System.Object[] parameters) [0x00000] in <00000000000000000000000000000000>:0

at System.Comparison`1[T].Invoke (T x, T y) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.TryInvoke (Eco.Shared.Networking.INetClient client, System.Object target, System.String methodname, Eco.Shared.Serialization.BSONArray bsonArgs, System.Object& result) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.InvokeOn (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson, System.Object target, System.String methodname) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.HandleReceiveRPC (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at NetworkManager.Eco.Shared.Networking.INetworkEventHandler.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.NetObject.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketQueueHandler.TryFetchNextClientUpdate (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketHandler.HandleNetworkEvents (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at System.Action`1[T].Invoke (T obj) [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.PlannerGroup.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.FramePlannerSystem.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystemGroup.UpdateAllSystems () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at System.Action.Invoke () [0x00000] in <00000000000000000000000000000000>:0

Rethrow as TargetInvocationException: Exception has been thrown by the target of an invocation.

at System.Reflection.MonoMethod.Invoke (System.Object obj, System.Reflection.BindingFlags invokeAttr, System.Reflection.Binder binder, System.Object[] parameters, System.Globalization.CultureInfo culture) [0x00000] in <00000000000000000000000000000000>:0

at System.Reflection.MethodBase.Invoke (System.Object obj, System.Object[] parameters) [0x00000] in <00000000000000000000000000000000>:0

at System.Comparison`1[T].Invoke (T x, T y) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.TryInvoke (Eco.Shared.Networking.INetClient client, System.Object target, System.String methodname, Eco.Shared.Serialization.BSONArray bsonArgs, System.Object& result) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.InvokeOn (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson, System.Object target, System.String methodname) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.RPCManager.HandleReceiveRPC (Eco.Shared.Networking.INetClient client, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at NetworkManager.Eco.Shared.Networking.INetworkEventHandler.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bson) [0x00000] in <00000000000000000000000000000000>:0

at Eco.Shared.Networking.NetObject.ReceiveEvent (Eco.Shared.Networking.INetClient client, Eco.Shared.Networking.NetworkEvent netEvent, Eco.Shared.Serialization.BSONObject bsonObj) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketQueueHandler.TryFetchNextClientUpdate (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at ClientPacketHandler.HandleNetworkEvents (Eco.Shared.Time.TimeLimit timeLimit) [0x00000] in <00000000000000000000000000000000>:0

at System.Action`1[T].Invoke (T obj) [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.PlannerGroup.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at FramePlanner.FramePlannerSystem.OnUpdate () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystemGroup.UpdateAllSystems () [0x00000] in <00000000000000000000000000000000>:0

at Unity.Entities.ComponentSystem.Update () [0x00000] in <00000000000000000000000000000000>:0

at System.Action.Invoke () [0x00000] in <00000000000000000000000000000000>:0

UnityEngine.Logger:LogException(Exception, Object)

UnityEngine.Debug:LogException(Exception)

FramePlanner.PlannerGroup:OnUpdate()

FramePlanner.FramePlannerSystem:OnUpdate()

Unity.Entities.ComponentSystem:Update()

Unity.Entities.ComponentSystemGroup:UpdateAllSystems()

Unity.Entities.ComponentSystem:Update()

System.Action:Invoke()

```

[Player.log](https://github.com/StrangeLoopGames/EcoIssues/files/6008441/Player.log)

If you don't have it first time, just try to repeat it.

Video: https://drive.google.com/file/d/1ZglupNjl5F9cYCu745Y_P0G9Jpjqxzrk/view?usp=sharing

|

priority

|

craft station ui can be broken step to reproduce place workbench or any craft station fly away to a distance when workbench dissapears but chunk which contains this workbeck will not unload fly back to workbench and open it i don t have cursor tab mode when i open workbench ui and have a lot of exceptions in log file nullreferenceexception object reference not set to an instance of an object at unityengine component getcomponent in at ui craftingui onshow in at ui worldobjectui paneldata onshow in at ui worldobjectui open eco shared serialization bsonobject bsonobj in at ui uimanager open system string guiname eco shared serialization bsonobject bson ui uilayer layer system boolean singleinstance in at genericclient openui system string uiname eco shared serialization bsonobject bsonobj in at system reflection monomethod invoke system object obj system reflection bindingflags invokeattr system reflection binder binder system object parameters system globalization cultureinfo culture in at system reflection methodbase invoke system object obj system object parameters in at system comparison invoke t x t y in at eco shared networking rpcmanager tryinvoke eco shared networking inetclient client system object target system string methodname eco shared serialization bsonarray bsonargs system object result in at eco shared networking rpcmanager invokeon eco shared networking inetclient client eco shared serialization bsonobject bson system object target system string methodname in at eco shared networking rpcmanager handlereceiverpc eco shared networking inetclient client eco shared serialization bsonobject bson in at networkmanager eco shared networking inetworkeventhandler receiveevent eco shared networking inetclient client eco shared networking networkevent netevent eco shared serialization bsonobject bson in at eco shared networking netobject receiveevent eco shared networking inetclient client eco shared networking networkevent netevent eco shared serialization bsonobject bsonobj in at clientpacketqueuehandler tryfetchnextclientupdate eco shared time timelimit timelimit in at clientpackethandler handlenetworkevents eco shared time timelimit timelimit in at system action invoke t obj in at frameplanner plannergroup onupdate in at frameplanner frameplannersystem onupdate in at unity entities componentsystem update in at unity entities componentsystemgroup updateallsystems in at unity entities componentsystem update in at system action invoke in rethrow as targetinvocationexception exception has been thrown by the target of an invocation at system reflection monomethod invoke system object obj system reflection bindingflags invokeattr system reflection binder binder system object parameters system globalization cultureinfo culture in at system reflection methodbase invoke system object obj system object parameters in at system comparison invoke t x t y in at eco shared networking rpcmanager tryinvoke eco shared networking inetclient client system object target system string methodname eco shared serialization bsonarray bsonargs system object result in at eco shared networking rpcmanager invokeon eco shared networking inetclient client eco shared serialization bsonobject bson system object target system string methodname in at eco shared networking rpcmanager handlereceiverpc eco shared networking inetclient client eco shared serialization bsonobject bson in at networkmanager eco shared networking inetworkeventhandler receiveevent eco shared networking inetclient client eco shared networking networkevent netevent eco shared serialization bsonobject bson in at eco shared networking netobject receiveevent eco shared networking inetclient client eco shared networking networkevent netevent eco shared serialization bsonobject bsonobj in at clientpacketqueuehandler tryfetchnextclientupdate eco shared time timelimit timelimit in at clientpackethandler handlenetworkevents eco shared time timelimit timelimit in at system action invoke t obj in at frameplanner plannergroup onupdate in at frameplanner frameplannersystem onupdate in at unity entities componentsystem update in at unity entities componentsystemgroup updateallsystems in at unity entities componentsystem update in at system action invoke in unityengine logger logexception exception object unityengine debug logexception exception frameplanner plannergroup onupdate frameplanner frameplannersystem onupdate unity entities componentsystem update unity entities componentsystemgroup updateallsystems unity entities componentsystem update system action invoke if you don t have it first time just try to repeat it video

| 1

|

387,447

| 11,461,542,778

|

IssuesEvent

|

2020-02-07 12:11:48

|

robotframework/robotframework

|

https://api.github.com/repos/robotframework/robotframework

|

opened

|

Remove Python 2 and Python 3.5 support

|

backwards incompatible enhancement priority: high

|

Python 2 [will be officially retired in April, 2020](https://www.python.org/psf/press-release/pr20191220/). Robot Framework continuing its support much long does not make sense, and the [Robot Framework Foundation](https://robotframework.org/foundation/) has decided that it will not sponsor Robot Framework development targeting Python 2 anymore in 2021. That means the following:

- Robot Framework 4.0 will not support Python 2 anymore. Its development will most likely start sometime in H2/2020 and the final release is expected for H1/2021.

- Robot Framework 3.2 (currently in beta) and all its minor releases will support Python 2.7. If there would be Robot Framework 3.3, also it would support Python 2.7.

- When Python 2 support is removed, also support for Python 3.5 and older will be removed. This eases development by making it possible to take into use newer Python features, most notably f-strings. Python 3.5 will also [reach its end-of-life in H2/2020](https://devguide.python.org/#status-of-python-branches), a lot before the expected Robot Framework 4.0 final release.

|

1.0

|

Remove Python 2 and Python 3.5 support - Python 2 [will be officially retired in April, 2020](https://www.python.org/psf/press-release/pr20191220/). Robot Framework continuing its support much long does not make sense, and the [Robot Framework Foundation](https://robotframework.org/foundation/) has decided that it will not sponsor Robot Framework development targeting Python 2 anymore in 2021. That means the following:

- Robot Framework 4.0 will not support Python 2 anymore. Its development will most likely start sometime in H2/2020 and the final release is expected for H1/2021.

- Robot Framework 3.2 (currently in beta) and all its minor releases will support Python 2.7. If there would be Robot Framework 3.3, also it would support Python 2.7.

- When Python 2 support is removed, also support for Python 3.5 and older will be removed. This eases development by making it possible to take into use newer Python features, most notably f-strings. Python 3.5 will also [reach its end-of-life in H2/2020](https://devguide.python.org/#status-of-python-branches), a lot before the expected Robot Framework 4.0 final release.

|

priority

|

remove python and python support python robot framework continuing its support much long does not make sense and the has decided that it will not sponsor robot framework development targeting python anymore in that means the following robot framework will not support python anymore its development will most likely start sometime in and the final release is expected for robot framework currently in beta and all its minor releases will support python if there would be robot framework also it would support python when python support is removed also support for python and older will be removed this eases development by making it possible to take into use newer python features most notably f strings python will also a lot before the expected robot framework final release

| 1

|

755,512

| 26,431,045,311

|

IssuesEvent

|

2023-01-14 20:28:44

|

bats-core/bats-core

|

https://api.github.com/repos/bats-core/bats-core

|

closed

|

Include all support libraries in the Docker image

|

Type: Enhancement Priority: High Component: Docker Component: Packaging Size: Large

|

**Is your feature request related to a problem? Please describe.**

I am trying to setup a Docker-based validation process using `bats`. Our tests make use of `bats-assert` and `bast-support`, and those are not included in the `bats/bats` Docker image.

**Describe the solution you'd like**

Include all optional libraries in the Docker image. Alternatively, provide an additional image with those included, if adding more libraries to the base one is not desirable.

**Describe alternatives you've considered**

We considered building our own image, but having all libraries in the official Docker image is cleaner and has less potential of becoming stale.

**Additional context**

Thanks for an awesome tool!

|

1.0

|

Include all support libraries in the Docker image - **Is your feature request related to a problem? Please describe.**

I am trying to setup a Docker-based validation process using `bats`. Our tests make use of `bats-assert` and `bast-support`, and those are not included in the `bats/bats` Docker image.

**Describe the solution you'd like**

Include all optional libraries in the Docker image. Alternatively, provide an additional image with those included, if adding more libraries to the base one is not desirable.

**Describe alternatives you've considered**

We considered building our own image, but having all libraries in the official Docker image is cleaner and has less potential of becoming stale.

**Additional context**

Thanks for an awesome tool!

|

priority

|

include all support libraries in the docker image is your feature request related to a problem please describe i am trying to setup a docker based validation process using bats our tests make use of bats assert and bast support and those are not included in the bats bats docker image describe the solution you d like include all optional libraries in the docker image alternatively provide an additional image with those included if adding more libraries to the base one is not desirable describe alternatives you ve considered we considered building our own image but having all libraries in the official docker image is cleaner and has less potential of becoming stale additional context thanks for an awesome tool

| 1

|

610,025

| 18,892,534,736

|

IssuesEvent

|

2021-11-15 14:41:18

|

craftercms/craftercms

|

https://api.github.com/repos/craftercms/craftercms

|

closed

|

[studio] [studio-ui] Studio search breaks when updating the search term while in a > 1 page

|

bug priority: high CI

|

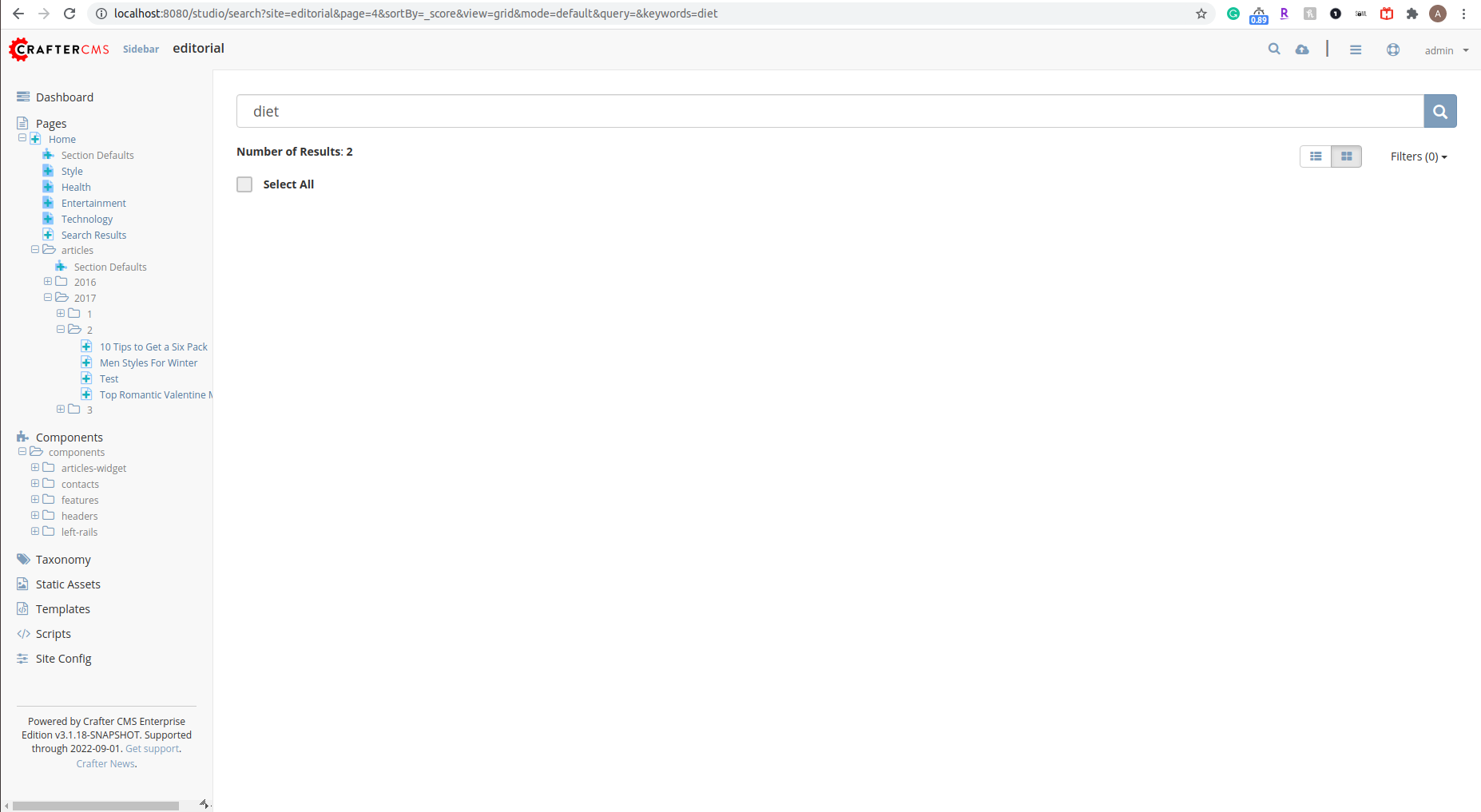

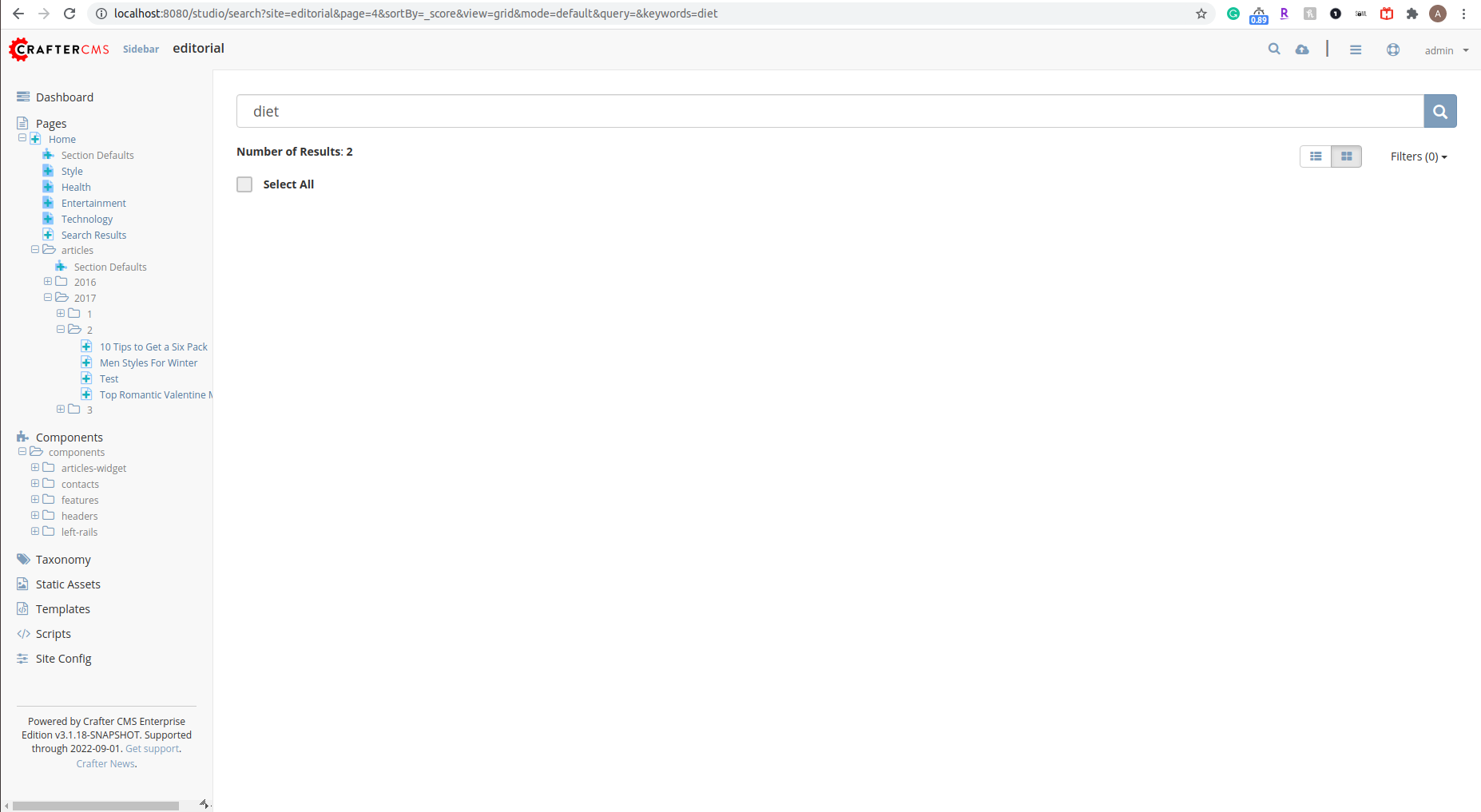

### Bug Report

#### Crafter CMS Version

3.1.15 and latest 3.1.18 build

#### Date of Build

11/08/2021

#### Describe the bug

Studio search breaks when updating the search term while in a > 1 page: no results are shown and in some instances the page becomes unresponsive.

#### To Reproduce

Steps to reproduce the behavior:

1. Create a site based on Editorial

2. Click on the magnifying glass to go to Studio search

3. Pick the last page in the results

4. Enter diet in the search terms

You'll notice that the UI says that there are 3 search results but none is displayed. In some client repos the page becomes unresponsive too.

#### Logs

N/A

#### Screenshots

|

1.0

|

[studio] [studio-ui] Studio search breaks when updating the search term while in a > 1 page - ### Bug Report

#### Crafter CMS Version

3.1.15 and latest 3.1.18 build

#### Date of Build

11/08/2021

#### Describe the bug

Studio search breaks when updating the search term while in a > 1 page: no results are shown and in some instances the page becomes unresponsive.

#### To Reproduce

Steps to reproduce the behavior:

1. Create a site based on Editorial

2. Click on the magnifying glass to go to Studio search

3. Pick the last page in the results

4. Enter diet in the search terms

You'll notice that the UI says that there are 3 search results but none is displayed. In some client repos the page becomes unresponsive too.

#### Logs

N/A

#### Screenshots

|

priority

|

studio search breaks when updating the search term while in a page bug report crafter cms version and latest build date of build describe the bug studio search breaks when updating the search term while in a page no results are shown and in some instances the page becomes unresponsive to reproduce steps to reproduce the behavior create a site based on editorial click on the magnifying glass to go to studio search pick the last page in the results enter diet in the search terms you ll notice that the ui says that there are search results but none is displayed in some client repos the page becomes unresponsive too logs n a screenshots

| 1

|

321,464

| 9,798,887,873

|

IssuesEvent

|

2019-06-11 13:22:26

|

EricssonResearch/scott-eu

|

https://api.github.com/repos/EricssonResearch/scott-eu

|

closed

|

Figure out the extensibility mechanism for the Gateway Backend

|

Comp: Gateway Priority: High Status: Review Needed Type: Feature Xtra: Fix Verified

|

I initially thought of OSGi.

Leo has suggested loading a JAR at runtime: https://stackoverflow.com/questions/60764/how-should-i-load-jars-dynamically-at-runtime But this approach would require to scan the whole JAR, load its each of its classes etc: https://stackoverflow.com/questions/45166757/loading-classes-and-resources-in-java-9 and https://stackoverflow.com/questions/41932635/scanning-classpath-modulepath-in-runtime-in-java-9

Then I have recalled of https://docs.oracle.com/javase/9/docs/api/java/util/ServiceLoader.html class and found the guide https://docs.oracle.com/javase/tutorial/ext/basics/spi.html#introduction. The guide only requires to put a JAR on the classpath at the application startup. Why not? We can put the JAR into a Docker volume that gets copied to the `/lib/ext` and Jetty will put it on the classpath at the startup: https://www.eclipse.org/jetty/documentation/9.4.x/startup-classpath.html

|

1.0

|

Figure out the extensibility mechanism for the Gateway Backend - I initially thought of OSGi.

Leo has suggested loading a JAR at runtime: https://stackoverflow.com/questions/60764/how-should-i-load-jars-dynamically-at-runtime But this approach would require to scan the whole JAR, load its each of its classes etc: https://stackoverflow.com/questions/45166757/loading-classes-and-resources-in-java-9 and https://stackoverflow.com/questions/41932635/scanning-classpath-modulepath-in-runtime-in-java-9

Then I have recalled of https://docs.oracle.com/javase/9/docs/api/java/util/ServiceLoader.html class and found the guide https://docs.oracle.com/javase/tutorial/ext/basics/spi.html#introduction. The guide only requires to put a JAR on the classpath at the application startup. Why not? We can put the JAR into a Docker volume that gets copied to the `/lib/ext` and Jetty will put it on the classpath at the startup: https://www.eclipse.org/jetty/documentation/9.4.x/startup-classpath.html

|

priority

|

figure out the extensibility mechanism for the gateway backend i initially thought of osgi leo has suggested loading a jar at runtime but this approach would require to scan the whole jar load its each of its classes etc and then i have recalled of class and found the guide the guide only requires to put a jar on the classpath at the application startup why not we can put the jar into a docker volume that gets copied to the lib ext and jetty will put it on the classpath at the startup

| 1

|

104,088

| 4,195,052,517

|

IssuesEvent

|

2016-06-25 13:38:53

|

neuropoly/spinalcordtoolbox

|

https://api.github.com/repos/neuropoly/spinalcordtoolbox

|

reopened

|

sct_testing fails on neurodebian

|

bug priority: high

|

~~~

Spinal Cord Toolbox (version dev-484e5905c7fa148da396e8ccc58414ca1802df0e)

Running /home/brain/sct/scripts/sct_testing.py -d 1

Downloading testing data...

sct_download_data -d sct_testing_data

Path to testing data: /home/brain/sct_testing_data/data/

Checking test_sct_apply_transfo.....................[OK]

Checking test_sct_check_atlas_integrity.............[OK]

Checking test_sct_compute_mtr.......................[OK]

Checking test_sct_concat_transfo....................[OK]

Checking test_sct_convert...........................[OK]

Checking test_sct_create_mask.......................[OK]

Checking test_sct_crop_image........................[OK]

Checking test_sct_dmri_compute_dti..................[OK]

Checking test_sct_dmri_get_bvalue...................[OK]

Checking test_sct_dmri_transpose_bvecs..............[OK]

Checking test_sct_dmri_moco.........................[OK]

Checking test_sct_dmri_separate_b0_and_dwi..........[OK]

Checking test_sct_extract_metric....................[OK]

Checking test_sct_fmri_compute_tsnr.................[OK]

Checking test_sct_fmri_moco.........................[OK]

Checking test_sct_image.............................[OK]

Checking test_sct_label_utils.......................[OK]

Checking test_sct_label_vertebrae...................Running /home/brain/sct/scripts/sct_label_vertebrae.py -laplacian 0 -o t2_seg_labeled.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 1 -denoise 0 -ofolder sct_label_vertebrae_data_160625093012_560151/ -initz 34,3

Check folder existence...

Create temporary folder...

Create temporary folder...

mkdir tmp.160625093012_893250/

Copying input data to tmp folder...

sct_convert -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -o tmp.160625093012_893250/data.nii

sct_convert -i /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -o tmp.160625093012_893250/segmentation.nii.gz

Create label to identify disc...

Intel MKL FATAL ERROR: Cannot load libmkl_avx.so or libmkl_def.so.

/home/brain/sct/scripts/sct_utils.py, line 104

[FAIL]

====================================================================================================

sct_label_vertebrae -laplacian 0 -o t2_seg_labeled.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 1 -denoise 0 -ofolder sct_label_vertebrae_data_160625093012_560151/ -initz 34,3

====================================================================================================

ERROR: Function crashed!

Checking test_sct_maths.............................[OK]

Checking test_sct_process_segmentation..............[OK]

Checking test_sct_propseg...........................[OK]

Checking test_sct_register_graymatter...............[OK]

Checking test_sct_register_multimodal...............[OK]

Checking test_sct_register_to_template..............Running /home/brain/sct/scripts/sct_register_to_template.py -c t2 -l /home/brain/sct_testing_data/data/t2/labels.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -t /home/brain/sct_testing_data/data/template/ -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 0 -param step=1,type=seg,algo=slicereg,metric=MeanSquares,iter=5:step=2,type=seg,algo=bsplinesyn,iter=3,metric=MI:step=3,iter=0 -ofolder sct_register_to_template_data_160625093041_76113/

Check folder existence...

Check folder existence...

Check template files...

OK: /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_T2.nii.gz

OK: /home/brain/sct_testing_data/data/template/template/landmarks_center.nii.gz

OK: /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_cord.nii.gz

Check parameters:

.. Data: /home/brain/sct_testing_data/data/t2/t2.nii.gz

.. Landmarks: /home/brain/sct_testing_data/data/t2/labels.nii.gz

.. Segmentation: /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz

.. Path template: /home/brain/sct_testing_data/data/template/template

.. Path output: sct_register_to_template_data_160625093041_76113/

.. Output type: 1

.. Remove temp files: 0

Parameters for registration:

Step #1

.. Type #seg

.. Algorithm................ slicereg

.. Metric................... MeanSquares

.. Number of iterations..... 5

.. Shrink factor............ 1

.. Smoothing factor......... 5

.. Gradient step............ 0.5

.. Degree of polynomial..... 3

Step #2

.. Type #seg

.. Algorithm................ bsplinesyn

.. Metric................... MI

.. Number of iterations..... 3

.. Shrink factor............ 1

.. Smoothing factor......... 1

.. Gradient step............ 0.5

.. Degree of polynomial..... 3

Step #3

.. Type #im

.. Algorithm................ syn

.. Metric................... CC

.. Number of iterations..... 0

.. Shrink factor............ 1

.. Smoothing factor......... 0

.. Gradient step............ 0.5

.. Degree of polynomial..... 3

Check if data, segmentation and landmarks are in the same space...

Check input labels...

Create temporary folder...

mkdir tmp.160625093041_218218/

Copying input data to tmp folder and convert to nii...

sct_convert -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -o tmp.160625093041_218218/data.nii

sct_convert -i /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -o tmp.160625093041_218218/seg.nii.gz

sct_convert -i /home/brain/sct_testing_data/data/t2/labels.nii.gz -o tmp.160625093041_218218/label.nii.gz

sct_convert -i /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_T2.nii.gz -o tmp.160625093041_218218/template.nii

sct_convert -i /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_cord.nii.gz -o tmp.160625093041_218218/template_seg.nii.gz

sct_convert -i /home/brain/sct_testing_data/data/template/template/landmarks_center.nii.gz -o tmp.160625093041_218218/template_label.nii.gz

Smooth segmentation...

sct_maths -i seg.nii.gz -smooth 0 -o seg_smooth.nii.gz

Resample data to 1mm isotropic...

sct_resample -i data.nii -mm 1.0x1.0x1.0 -x linear -o data_1mm.nii

sct_resample -i seg_smooth.nii.gz -mm 1.0x1.0x1.0 -x linear -o seg_smooth_1mm.nii.gz

Position=(31,44,26) -- Value= 3

Position=(32,9,26) -- Value= 5

Useful notation:

31,44,26,3:32,9,26,5

sct_label_utils -i data_1mm.nii -create 31,44,26,3:32,9,26,5 -v 1 -o label_1mm.nii.gz

Change orientation of input images to RPI...

sct_image -i data_1mm.nii -setorient RPI -o data_1mm_rpi.nii

sct_image -i seg_smooth_1mm.nii.gz -setorient RPI -o seg_smooth_1mm_rpi.nii.gz

sct_image -i label_1mm.nii.gz -setorient RPI -o label_1mm_rpi.nii.gz

sct_crop_image -i seg_smooth_1mm_rpi.nii.gz -o seg_smooth_1mm_rpi_crop.nii.gz -dim 2 -bzmax

Straighten the spinal cord using centerline/segmentation...

sct_straighten_spinalcord -i seg_smooth_1mm_rpi_crop.nii.gz -s seg_smooth_1mm_rpi_crop.nii.gz -o seg_smooth_1mm_rpi_crop_straight.nii.gz -qc 0 -r 0 -v 1

sct_concat_transfo -w warp_straight2curve.nii.gz -d data_1mm_rpi.nii -o warp_straight2curve.nii.gz

Remove unused label on template. Keep only label present in the input label image...

sct_label_utils -i template_label.nii.gz -o template_label.nii.gz -remove label_1mm_rpi.nii.gz

Dilating input labels using 3vox ball radius

sct_maths -i label_1mm_rpi.nii.gz -o label_1mm_rpi_dilate.nii.gz -dilate 3

Running /home/brain/sct/scripts/sct_maths.py -i label_1mm_rpi.nii.gz -o label_1mm_rpi_dilate.nii.gz -dilate 3

Intel MKL FATAL ERROR: Cannot load libmkl_avx.so or libmkl_def.so.

/home/brain/sct/scripts/sct_utils.py, line 104

/home/brain/sct/scripts/sct_utils.py, line 104

[FAIL]

====================================================================================================

sct_register_to_template -c t2 -l /home/brain/sct_testing_data/data/t2/labels.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -t /home/brain/sct_testing_data/data/template/ -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 0 -param step=1,type=seg,algo=slicereg,metric=MeanSquares,iter=5:step=2,type=seg,algo=bsplinesyn,iter=3,metric=MI:step=3,iter=0 -ofolder sct_register_to_template_data_160625093041_76113/

====================================================================================================

ERROR: Function crashed!

Checking test_sct_resample..........................[OK]

Checking test_sct_segment_graymatter................[OK]

Checking test_sct_smooth_spinalcord.................[OK]

Checking test_sct_straighten_spinalcord.............[OK]

Checking test_sct_warp_template.....................[OK]

Checking test_sct_documentation.....................[OK]

Checking test_sct_dmri_create_noisemask.............[OK]

status: [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0]

Finished! Elapsed time: 141s

~~~

|

1.0

|

sct_testing fails on neurodebian - ~~~

Spinal Cord Toolbox (version dev-484e5905c7fa148da396e8ccc58414ca1802df0e)

Running /home/brain/sct/scripts/sct_testing.py -d 1

Downloading testing data...

sct_download_data -d sct_testing_data

Path to testing data: /home/brain/sct_testing_data/data/

Checking test_sct_apply_transfo.....................[OK]

Checking test_sct_check_atlas_integrity.............[OK]

Checking test_sct_compute_mtr.......................[OK]

Checking test_sct_concat_transfo....................[OK]

Checking test_sct_convert...........................[OK]

Checking test_sct_create_mask.......................[OK]

Checking test_sct_crop_image........................[OK]

Checking test_sct_dmri_compute_dti..................[OK]

Checking test_sct_dmri_get_bvalue...................[OK]

Checking test_sct_dmri_transpose_bvecs..............[OK]

Checking test_sct_dmri_moco.........................[OK]

Checking test_sct_dmri_separate_b0_and_dwi..........[OK]

Checking test_sct_extract_metric....................[OK]

Checking test_sct_fmri_compute_tsnr.................[OK]

Checking test_sct_fmri_moco.........................[OK]

Checking test_sct_image.............................[OK]

Checking test_sct_label_utils.......................[OK]

Checking test_sct_label_vertebrae...................Running /home/brain/sct/scripts/sct_label_vertebrae.py -laplacian 0 -o t2_seg_labeled.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 1 -denoise 0 -ofolder sct_label_vertebrae_data_160625093012_560151/ -initz 34,3

Check folder existence...

Create temporary folder...

Create temporary folder...

mkdir tmp.160625093012_893250/

Copying input data to tmp folder...

sct_convert -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -o tmp.160625093012_893250/data.nii

sct_convert -i /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -o tmp.160625093012_893250/segmentation.nii.gz

Create label to identify disc...

Intel MKL FATAL ERROR: Cannot load libmkl_avx.so or libmkl_def.so.

/home/brain/sct/scripts/sct_utils.py, line 104

[FAIL]

====================================================================================================

sct_label_vertebrae -laplacian 0 -o t2_seg_labeled.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 1 -denoise 0 -ofolder sct_label_vertebrae_data_160625093012_560151/ -initz 34,3

====================================================================================================

ERROR: Function crashed!

Checking test_sct_maths.............................[OK]

Checking test_sct_process_segmentation..............[OK]

Checking test_sct_propseg...........................[OK]

Checking test_sct_register_graymatter...............[OK]

Checking test_sct_register_multimodal...............[OK]

Checking test_sct_register_to_template..............Running /home/brain/sct/scripts/sct_register_to_template.py -c t2 -l /home/brain/sct_testing_data/data/t2/labels.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -t /home/brain/sct_testing_data/data/template/ -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 0 -param step=1,type=seg,algo=slicereg,metric=MeanSquares,iter=5:step=2,type=seg,algo=bsplinesyn,iter=3,metric=MI:step=3,iter=0 -ofolder sct_register_to_template_data_160625093041_76113/

Check folder existence...

Check folder existence...

Check template files...

OK: /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_T2.nii.gz

OK: /home/brain/sct_testing_data/data/template/template/landmarks_center.nii.gz

OK: /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_cord.nii.gz

Check parameters:

.. Data: /home/brain/sct_testing_data/data/t2/t2.nii.gz

.. Landmarks: /home/brain/sct_testing_data/data/t2/labels.nii.gz

.. Segmentation: /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz

.. Path template: /home/brain/sct_testing_data/data/template/template

.. Path output: sct_register_to_template_data_160625093041_76113/

.. Output type: 1

.. Remove temp files: 0

Parameters for registration:

Step #1

.. Type #seg

.. Algorithm................ slicereg

.. Metric................... MeanSquares

.. Number of iterations..... 5

.. Shrink factor............ 1

.. Smoothing factor......... 5

.. Gradient step............ 0.5

.. Degree of polynomial..... 3

Step #2

.. Type #seg

.. Algorithm................ bsplinesyn

.. Metric................... MI

.. Number of iterations..... 3

.. Shrink factor............ 1

.. Smoothing factor......... 1

.. Gradient step............ 0.5

.. Degree of polynomial..... 3

Step #3

.. Type #im

.. Algorithm................ syn

.. Metric................... CC

.. Number of iterations..... 0

.. Shrink factor............ 1

.. Smoothing factor......... 0

.. Gradient step............ 0.5

.. Degree of polynomial..... 3

Check if data, segmentation and landmarks are in the same space...

Check input labels...

Create temporary folder...

mkdir tmp.160625093041_218218/

Copying input data to tmp folder and convert to nii...

sct_convert -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -o tmp.160625093041_218218/data.nii

sct_convert -i /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -o tmp.160625093041_218218/seg.nii.gz

sct_convert -i /home/brain/sct_testing_data/data/t2/labels.nii.gz -o tmp.160625093041_218218/label.nii.gz

sct_convert -i /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_T2.nii.gz -o tmp.160625093041_218218/template.nii

sct_convert -i /home/brain/sct_testing_data/data/template/template/MNI-Poly-AMU_cord.nii.gz -o tmp.160625093041_218218/template_seg.nii.gz

sct_convert -i /home/brain/sct_testing_data/data/template/template/landmarks_center.nii.gz -o tmp.160625093041_218218/template_label.nii.gz

Smooth segmentation...

sct_maths -i seg.nii.gz -smooth 0 -o seg_smooth.nii.gz

Resample data to 1mm isotropic...

sct_resample -i data.nii -mm 1.0x1.0x1.0 -x linear -o data_1mm.nii

sct_resample -i seg_smooth.nii.gz -mm 1.0x1.0x1.0 -x linear -o seg_smooth_1mm.nii.gz

Position=(31,44,26) -- Value= 3

Position=(32,9,26) -- Value= 5

Useful notation:

31,44,26,3:32,9,26,5

sct_label_utils -i data_1mm.nii -create 31,44,26,3:32,9,26,5 -v 1 -o label_1mm.nii.gz

Change orientation of input images to RPI...

sct_image -i data_1mm.nii -setorient RPI -o data_1mm_rpi.nii

sct_image -i seg_smooth_1mm.nii.gz -setorient RPI -o seg_smooth_1mm_rpi.nii.gz

sct_image -i label_1mm.nii.gz -setorient RPI -o label_1mm_rpi.nii.gz

sct_crop_image -i seg_smooth_1mm_rpi.nii.gz -o seg_smooth_1mm_rpi_crop.nii.gz -dim 2 -bzmax

Straighten the spinal cord using centerline/segmentation...

sct_straighten_spinalcord -i seg_smooth_1mm_rpi_crop.nii.gz -s seg_smooth_1mm_rpi_crop.nii.gz -o seg_smooth_1mm_rpi_crop_straight.nii.gz -qc 0 -r 0 -v 1

sct_concat_transfo -w warp_straight2curve.nii.gz -d data_1mm_rpi.nii -o warp_straight2curve.nii.gz

Remove unused label on template. Keep only label present in the input label image...

sct_label_utils -i template_label.nii.gz -o template_label.nii.gz -remove label_1mm_rpi.nii.gz

Dilating input labels using 3vox ball radius

sct_maths -i label_1mm_rpi.nii.gz -o label_1mm_rpi_dilate.nii.gz -dilate 3

Running /home/brain/sct/scripts/sct_maths.py -i label_1mm_rpi.nii.gz -o label_1mm_rpi_dilate.nii.gz -dilate 3

Intel MKL FATAL ERROR: Cannot load libmkl_avx.so or libmkl_def.so.

/home/brain/sct/scripts/sct_utils.py, line 104

/home/brain/sct/scripts/sct_utils.py, line 104

[FAIL]

====================================================================================================

sct_register_to_template -c t2 -l /home/brain/sct_testing_data/data/t2/labels.nii.gz -i /home/brain/sct_testing_data/data/t2/t2.nii.gz -t /home/brain/sct_testing_data/data/template/ -v 1 -s /home/brain/sct_testing_data/data/t2/t2_seg.nii.gz -r 0 -param step=1,type=seg,algo=slicereg,metric=MeanSquares,iter=5:step=2,type=seg,algo=bsplinesyn,iter=3,metric=MI:step=3,iter=0 -ofolder sct_register_to_template_data_160625093041_76113/

====================================================================================================

ERROR: Function crashed!

Checking test_sct_resample..........................[OK]

Checking test_sct_segment_graymatter................[OK]

Checking test_sct_smooth_spinalcord.................[OK]

Checking test_sct_straighten_spinalcord.............[OK]

Checking test_sct_warp_template.....................[OK]

Checking test_sct_documentation.....................[OK]

Checking test_sct_dmri_create_noisemask.............[OK]

status: [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0]

Finished! Elapsed time: 141s

~~~

|

priority

|

sct testing fails on neurodebian spinal cord toolbox version dev running home brain sct scripts sct testing py d downloading testing data sct download data d sct testing data path to testing data home brain sct testing data data checking test sct apply transfo checking test sct check atlas integrity checking test sct compute mtr checking test sct concat transfo checking test sct convert checking test sct create mask checking test sct crop image checking test sct dmri compute dti checking test sct dmri get bvalue checking test sct dmri transpose bvecs checking test sct dmri moco checking test sct dmri separate and dwi checking test sct extract metric checking test sct fmri compute tsnr checking test sct fmri moco checking test sct image checking test sct label utils checking test sct label vertebrae running home brain sct scripts sct label vertebrae py laplacian o seg labeled nii gz i home brain sct testing data data nii gz v s home brain sct testing data data seg nii gz r denoise ofolder sct label vertebrae data initz check folder existence create temporary folder create temporary folder mkdir tmp copying input data to tmp folder sct convert i home brain sct testing data data nii gz o tmp data nii sct convert i home brain sct testing data data seg nii gz o tmp segmentation nii gz create label to identify disc intel mkl fatal error cannot load libmkl avx so or libmkl def so home brain sct scripts sct utils py line sct label vertebrae laplacian o seg labeled nii gz i home brain sct testing data data nii gz v s home brain sct testing data data seg nii gz r denoise ofolder sct label vertebrae data initz error function crashed checking test sct maths checking test sct process segmentation checking test sct propseg checking test sct register graymatter checking test sct register multimodal checking test sct register to template running home brain sct scripts sct register to template py c l home brain sct testing data data labels nii gz i home brain sct testing data data nii gz t home brain sct testing data data template v s home brain sct testing data data seg nii gz r param step type seg algo slicereg metric meansquares iter step type seg algo bsplinesyn iter metric mi step iter ofolder sct register to template data check folder existence check folder existence check template files ok home brain sct testing data data template template mni poly amu nii gz ok home brain sct testing data data template template landmarks center nii gz ok home brain sct testing data data template template mni poly amu cord nii gz check parameters data home brain sct testing data data nii gz landmarks home brain sct testing data data labels nii gz segmentation home brain sct testing data data seg nii gz path template home brain sct testing data data template template path output sct register to template data output type remove temp files parameters for registration step type seg algorithm slicereg metric meansquares number of iterations shrink factor smoothing factor gradient step degree of polynomial step type seg algorithm bsplinesyn metric mi number of iterations shrink factor smoothing factor gradient step degree of polynomial step type im algorithm syn metric cc number of iterations shrink factor smoothing factor gradient step degree of polynomial check if data segmentation and landmarks are in the same space check input labels create temporary folder mkdir tmp copying input data to tmp folder and convert to nii sct convert i home brain sct testing data data nii gz o tmp data nii sct convert i home brain sct testing data data seg nii gz o tmp seg nii gz sct convert i home brain sct testing data data labels nii gz o tmp label nii gz sct convert i home brain sct testing data data template template mni poly amu nii gz o tmp template nii sct convert i home brain sct testing data data template template mni poly amu cord nii gz o tmp template seg nii gz sct convert i home brain sct testing data data template template landmarks center nii gz o tmp template label nii gz smooth segmentation sct maths i seg nii gz smooth o seg smooth nii gz resample data to isotropic sct resample i data nii mm x linear o data nii sct resample i seg smooth nii gz mm x linear o seg smooth nii gz position value position value useful notation sct label utils i data nii create v o label nii gz change orientation of input images to rpi sct image i data nii setorient rpi o data rpi nii sct image i seg smooth nii gz setorient rpi o seg smooth rpi nii gz sct image i label nii gz setorient rpi o label rpi nii gz sct crop image i seg smooth rpi nii gz o seg smooth rpi crop nii gz dim bzmax straighten the spinal cord using centerline segmentation sct straighten spinalcord i seg smooth rpi crop nii gz s seg smooth rpi crop nii gz o seg smooth rpi crop straight nii gz qc r v sct concat transfo w warp nii gz d data rpi nii o warp nii gz remove unused label on template keep only label present in the input label image sct label utils i template label nii gz o template label nii gz remove label rpi nii gz dilating input labels using ball radius sct maths i label rpi nii gz o label rpi dilate nii gz dilate running home brain sct scripts sct maths py i label rpi nii gz o label rpi dilate nii gz dilate intel mkl fatal error cannot load libmkl avx so or libmkl def so home brain sct scripts sct utils py line home brain sct scripts sct utils py line sct register to template c l home brain sct testing data data labels nii gz i home brain sct testing data data nii gz t home brain sct testing data data template v s home brain sct testing data data seg nii gz r param step type seg algo slicereg metric meansquares iter step type seg algo bsplinesyn iter metric mi step iter ofolder sct register to template data error function crashed checking test sct resample checking test sct segment graymatter checking test sct smooth spinalcord checking test sct straighten spinalcord checking test sct warp template checking test sct documentation checking test sct dmri create noisemask status finished elapsed time

| 1

|

517,655

| 15,017,718,910

|

IssuesEvent

|

2021-02-01 11:13:16

|

Conjurinc-workato-dev/ldap-sync

|

https://api.github.com/repos/Conjurinc-workato-dev/ldap-sync

|

reopened

|

jira bug 11

|

Bugtype/Functionality ONYX-6559 Severity/High kind/bug priority/Default team/Jason

|

##description

Steps to reproduce:

Current Results: 222

Expected Results:

Error Messages:

Logs: fff

Other Symptoms:

Tenant ID / Pod Number:

##Found in version

11.5

##Workaround Complexity

There's a complex workaround

##Workaround Description

ssss

##Link to JIRA bug

https://ca-il-jira-test.il.cyber-ark.com/browse/ONYX-6559

|

1.0

|

jira bug 11 - ##description

Steps to reproduce:

Current Results: 222

Expected Results:

Error Messages:

Logs: fff

Other Symptoms:

Tenant ID / Pod Number:

##Found in version

11.5

##Workaround Complexity

There's a complex workaround

##Workaround Description

ssss

##Link to JIRA bug

https://ca-il-jira-test.il.cyber-ark.com/browse/ONYX-6559

|

priority

|

jira bug description steps to reproduce current results expected results error messages logs fff other symptoms tenant id pod number found in version workaround complexity there s a complex workaround workaround description ssss link to jira bug

| 1

|

649,243

| 21,260,373,650

|

IssuesEvent

|

2022-04-13 03:04:58

|

RiceShelley/EtherNIC

|

https://api.github.com/repos/RiceShelley/EtherNIC

|

closed

|

Need cocotb RMII Test

|

High Priority sim

|

cocotb doesn't have a testbench for RMII already made.

We'll likely need to create one ourselves

|

1.0

|

Need cocotb RMII Test - cocotb doesn't have a testbench for RMII already made.

We'll likely need to create one ourselves

|

priority

|

need cocotb rmii test cocotb doesn t have a testbench for rmii already made we ll likely need to create one ourselves

| 1

|

604,042

| 18,676,000,013

|

IssuesEvent

|

2021-10-31 15:17:28

|

CMPUT301F21T26/Habit-Tracker

|

https://api.github.com/repos/CMPUT301F21T26/Habit-Tracker

|

closed

|

02.01.01 - Habit Event Core

|

Priority: High Base

|

**Focus**

Habit Events

**Partial US**

As a doer, I want to denote a habit event when I have done a habit as planned.

**Reason**

These instances help hold users accountable, and also act as a means of potentially making memories

**Story Points**

3

**Risk Level**

Low

|

1.0

|

02.01.01 - Habit Event Core - **Focus**

Habit Events

**Partial US**

As a doer, I want to denote a habit event when I have done a habit as planned.

**Reason**

These instances help hold users accountable, and also act as a means of potentially making memories

**Story Points**

3

**Risk Level**

Low

|

priority

|

habit event core focus habit events partial us as a doer i want to denote a habit event when i have done a habit as planned reason these instances help hold users accountable and also act as a means of potentially making memories story points risk level low

| 1

|

586,904

| 17,599,593,185

|

IssuesEvent

|

2021-08-17 10:06:37

|

margaritahumanitarian/helpafamily

|

https://api.github.com/repos/margaritahumanitarian/helpafamily

|

closed

|

DeepSource checks are failing incorrectly

|

bug good first issue help wanted high priority

|

DeepSource is a really amazing tool. Working on this issue is an opportunity to learn how to work with it. I'm happy to grant any additional access permissions needed to contributor(s) interested in working on this.

---

When contributors submit a PR, there is a DeepSource check that appears to fail incorrectly.

The PRs where this occurred are #25 #26 #28 #30 #31 #42.

Some ideas of how to get started:

- [ ] Study PR #25 which is where the check first failed

- [ ] See if fixing any of the other issues identified by DeepSource causes the DeepSource checks to pass