| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 855) | labels (stringlengths, 4 to 721) | body (stringlengths, 1 to 261k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 240k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

47,743

| 2,984,925,863

|

IssuesEvent

|

2015-07-18 13:52:09

|

AmatCoder/mednaffe

|

https://api.github.com/repos/AmatCoder/mednaffe

|

reopened

|

[BUG] XInput maps always Axis - deadzones required

|

bug Priority-High

|

Microsoft gamepads are crappy so they need deadzone for axis and triggers, Xinput.h defines them:

#define XINPUT_GAMEPAD_LEFT_THUMB_DEADZONE 7849

#define XINPUT_GAMEPAD_RIGHT_THUMB_DEADZONE 8689

#define XINPUT_GAMEPAD_TRIGGER_THRESHOLD 30

I have mapping problem because of this ie. axis movement is reported as 'key pressed' in PSX Input mapping.

|

1.0

|

[BUG] XInput maps always Axis - deadzones required - Microsoft gamepads are crappy so they need deadzone for axis and triggers, Xinput.h defines them:

#define XINPUT_GAMEPAD_LEFT_THUMB_DEADZONE 7849

#define XINPUT_GAMEPAD_RIGHT_THUMB_DEADZONE 8689

#define XINPUT_GAMEPAD_TRIGGER_THRESHOLD 30

I have mapping problem because of this ie. axis movement is reported as 'key pressed' in PSX Input mapping.

|

priority

|

xinput maps always axis deadzones required microsoft gamepads are crappy so they need deadzone for axis and triggers xinput h defines them define xinput gamepad left thumb deadzone define xinput gamepad right thumb deadzone define xinput gamepad trigger threshold i have mapping problem because of this ie axis movement is reported as key pressed in psx input mapping

| 1

|

221,707

| 7,394,393,840

|

IssuesEvent

|

2018-03-17 10:32:38

|

glutanimate/image-occlusion-enhanced

|

https://api.github.com/repos/glutanimate/image-occlusion-enhanced

|

closed

|

Switching to a different note type from I/O causing the first field to be hidden

|

anki2.1 high priority regression

|

Steps to reproduce:

1. Open Anki's "Add" screen

2. Change the note type to "Image Occlusion Enhanced"

3. Switch to a different note type (e.g. basic)

Expected behavior (present under Anki 2.0):

- Image Occlusion Enhanced note type: first field ("ID (hidden)" is hidden from user)

- Basic note type: All fields visible

Observed behavior (present under Anki 2.1):

- Image Occlusion Enhanced note type: first field ("ID (hidden)" is hidden from user)

- Basic note type: **First field hidden**

Might be a good opportunity to discuss whether Anki should offer a way for add-on authors to mark specific note type fields as hidden (could be useful for any note type that is generated programmatically and contains fields that should not be user-editable).

|

1.0

|

Switching to a different note type from I/O causing the first field to be hidden - Steps to reproduce:

1. Open Anki's "Add" screen

2. Change the note type to "Image Occlusion Enhanced"

3. Switch to a different note type (e.g. basic)

Expected behavior (present under Anki 2.0):

- Image Occlusion Enhanced note type: first field ("ID (hidden)" is hidden from user)

- Basic note type: All fields visible

Observed behavior (present under Anki 2.1):

- Image Occlusion Enhanced note type: first field ("ID (hidden)" is hidden from user)

- Basic note type: **First field hidden**

Might be a good opportunity to discuss whether Anki should offer a way for add-on authors to mark specific note type fields as hidden (could be useful for any note type that is generated programmatically and contains fields that should not be user-editable).

|

priority

|

switching to a different note type from i o causing the first field to be hidden steps to reproduce open anki s add screen change the note type to image occlusion enhanced switch to a different note type e g basic expected behavior present under anki image occlusion enhanced note type first field id hidden is hidden from user basic note type all fields visible observed behavior present under anki image occlusion enhanced note type first field id hidden is hidden from user basic note type first field hidden might be a good opportunity to discuss whether anki should offer a way for add on authors to mark specific note type fields as hidden could be useful for any note type that is generated programmatically and contains fields that should not be user editable

| 1

|

24,836

| 2,673,783,993

|

IssuesEvent

|

2015-03-24 21:10:55

|

pufexi/multiorder

|

https://api.github.com/repos/pufexi/multiorder

|

closed

|

!!! second !!!! Shipment number (tracking)

|

high priority

|

We'll do this right along with the import of the shipment numbers... so we don't have to fiddle with it several times.

Attached is zasilkyPodaniExport.csv.png; delete the .png and you have a CSV. The first position (Excel column A) holds the shipment number, and column W holds 1413996795, which is the variable symbol, for us the order number; the whole file is in UTF-8. You will match records on this.

I would put the shipment number itself after the country, i.e. "Frýdek-Místek, CZ , BA6950905034M", and it would link to https://www.postaonline.cz/trackandtrace/-/zasilka/cislo?parcelNumbers=BA6950905034M in a new window. No editing is needed anywhere; it happens rarely and I would edit it in the DB through phpMyAdmin, which is how I'm used to fixing edge cases anyway.

Then there must be a form somewhere where I will upload the import file... put it, say, on the left where the open Orders are, with a double line or something, and label it "Import tracking Čpost".

During import an email is also sent to the customer; prepare for that somehow, but we'll price it in a separate Issue where we'll handle customer notification.

SEE FILE: zasilkypodaniexport.csv

|

1.0

|

!!! second !!!! Shipment number (tracking) - We'll do this right along with the import of the shipment numbers... so we don't have to fiddle with it several times.

Attached is zasilkyPodaniExport.csv.png; delete the .png and you have a CSV. The first position (Excel column A) holds the shipment number, and column W holds 1413996795, which is the variable symbol, for us the order number; the whole file is in UTF-8. You will match records on this.

I would put the shipment number itself after the country, i.e. "Frýdek-Místek, CZ , BA6950905034M", and it would link to https://www.postaonline.cz/trackandtrace/-/zasilka/cislo?parcelNumbers=BA6950905034M in a new window. No editing is needed anywhere; it happens rarely and I would edit it in the DB through phpMyAdmin, which is how I'm used to fixing edge cases anyway.

Then there must be a form somewhere where I will upload the import file... put it, say, on the left where the open Orders are, with a double line or something, and label it "Import tracking Čpost".

During import an email is also sent to the customer; prepare for that somehow, but we'll price it in a separate Issue where we'll handle customer notification.

SEE FILE: zasilkypodaniexport.csv

|

priority

|

second shipment number tracking we ll do this right along with the import of the shipment numbers so we don t have to fiddle with it several times attached is zasilkypodaniexport csv png delete the png and you have a csv the first position excel column a holds the shipment number and column w holds which is the variable symbol for us the order number the whole file is in utf you will match records on this i would put the shipment number itself after the country i e frýdek místek cz and it would link to in a new window no editing is needed anywhere it happens rarely and i would edit it in the db through phpmyadmin which is how i m used to fixing edge cases anyway then there must be a form somewhere where i will upload the import file put it say on the left where the open orders are with a double line or something and label it import tracking čpost during import an email is also sent to the customer prepare for that somehow but we ll price it in a separate issue where we ll handle customer notification see file zasilkypodaniexport csv

| 1

|

539,050

| 15,782,695,630

|

IssuesEvent

|

2021-04-01 13:07:17

|

eksperimental/ex_doc

|

https://api.github.com/repos/eksperimental/ex_doc

|

closed

|

add "(type)" to link title in menu

|

Priority:High enhancement

|

search for "has_key?/2"

and two `Dict.has_key?/2` will be displayed, without being able to know what is the difference.

MAybe we should put in parenthesis the type of anything that is not a macro or a function. (ie, callbacks)

|

1.0

|

add "(type)" to link title in menu - search for "has_key?/2"

and two `Dict.has_key?/2` will be displayed, without being able to know what is the difference.

MAybe we should put in parenthesis the type of anything that is not a macro or a function. (ie, callbacks)

|

priority

|

add type to link title in menu search for has key and two dict has key will be displayed without being able to know what is the difference maybe we should put in parenthesis the type of anything that is not a macro or a function ie callbacks

| 1

|

308,732

| 9,449,220,143

|

IssuesEvent

|

2019-04-16 00:52:33

|

smacademic/project-cgkm

|

https://api.github.com/repos/smacademic/project-cgkm

|

closed

|

Arc is missing due dates

|

priority - high severity - major type - planned feature

|

Arcs do not currently have due dates. This would be a simple addition in the arc.dart file and the databasehelper.dart file.

|

1.0

|

Arc is missing due dates - Arcs do not currently have due dates. This would be a simple addition in the arc.dart file and the databasehelper.dart file.

|

priority

|

arc is missing due dates arcs do not currently have due dates this would be a simple addition in the arc dart file and the databasehelper dart file

| 1

|

465,200

| 13,358,551,349

|

IssuesEvent

|

2020-08-31 11:54:55

|

DIAGNijmegen/website-content

|

https://api.github.com/repos/DIAGNijmegen/website-content

|

closed

|

Build redirects for transition from old to new website

|

Priority: High enhancement

|

- [x] People pages

- [x] Publication pages for people

- [x] Publications

- [x] Main pages

|

1.0

|

Build redirects for transition from old to new website - - [x] People pages

- [x] Publication pages for people

- [x] Publications

- [x] Main pages

|

priority

|

build redirects for transition from old to new website people pages publication pages for people publications main pages

| 1

|

135,336

| 5,246,900,348

|

IssuesEvent

|

2017-02-01 11:07:40

|

DigitalCampus/oppia-mobile-android

|

https://api.github.com/repos/DigitalCampus/oppia-mobile-android

|

opened

|

App crashing issues on password reset and video download

|

bug High priority

|

On both these tasks, the app crashes. Very similar to the login carshing we had the other day - so suspect that it may be the same cause/issue

|

1.0

|

App crashing issues on password reset and video download - On both these tasks, the app crashes. Very similar to the login carshing we had the other day - so suspect that it may be the same cause/issue

|

priority

|

app crashing issues on password reset and video download on both these tasks the app crashes very similar to the login carshing we had the other day so suspect that it may be the same cause issue

| 1

|

613,919

| 19,101,539,576

|

IssuesEvent

|

2021-11-29 23:22:06

|

CMPUT301F21T21/detes

|

https://api.github.com/repos/CMPUT301F21T21/detes

|

closed

|

US 05.01.01 - Habit Following and Sharing

|

Final checkpoint High Risk Medium Priority Updated

|

As a doer, I want to ask another doer to follow all their **public** habits.

**Clarification:** The user would like to ask another user to follow their progress on their publicly viewable habits

Story Points: 4

|

1.0

|

US 05.01.01 - Habit Following and Sharing - As a doer, I want to ask another doer to follow all their **public** habits.

**Clarification:** The user would like to ask another user to follow their progress on their publicly viewable habits

Story Points: 4

|

priority

|

us habit following and sharing as a doer i want to ask another doer to follow all their public habits clarification the user would like to ask another user to follow their progress on their publicly viewable habits story points

| 1

|

399,200

| 11,744,496,462

|

IssuesEvent

|

2020-03-12 07:51:54

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

support.mozilla.org - see bug description

|

browser-fenix engine-gecko ml-needsdiagnosis-false ml-probability-high priority-important

|

<!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/50052 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://support.mozilla.org/zh-CN/kb/firefox-preview-upgrade-faqs

**Browser / Version**: Firefox Mobile 75.0

**Operating System**: Android

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: JS and UA

**Steps to Reproduce**:

Could you add controls for setting a custom per-site UA and for disabling or enabling JavaScript?

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

support.mozilla.org - see bug description - <!-- @browser: Firefox Mobile 75.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:75.0) Gecko/75.0 Firefox/75.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/50052 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://support.mozilla.org/zh-CN/kb/firefox-preview-upgrade-faqs

**Browser / Version**: Firefox Mobile 75.0

**Operating System**: Android

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: JS and UA

**Steps to Reproduce**:

Could you add controls for setting a custom per-site UA and for disabling or enabling JavaScript?

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

priority

|

support mozilla org see bug description url browser version firefox mobile operating system android tested another browser no problem type something else description js and ua steps to reproduce could you add controls for setting a custom per site ua and for disabling or enabling javascript browser configuration none from with ❤️

| 1

|

511,805

| 14,882,023,910

|

IssuesEvent

|

2021-01-20 11:17:45

|

StrangeLoopGames/EcoIssues

|

https://api.github.com/repos/StrangeLoopGames/EcoIssues

|

opened

|

[0.9.2 staging-1907] Creating civics articles in Amendments breaks all Capitol articles , not only delete one.

|

Category: Laws Priority: High

|

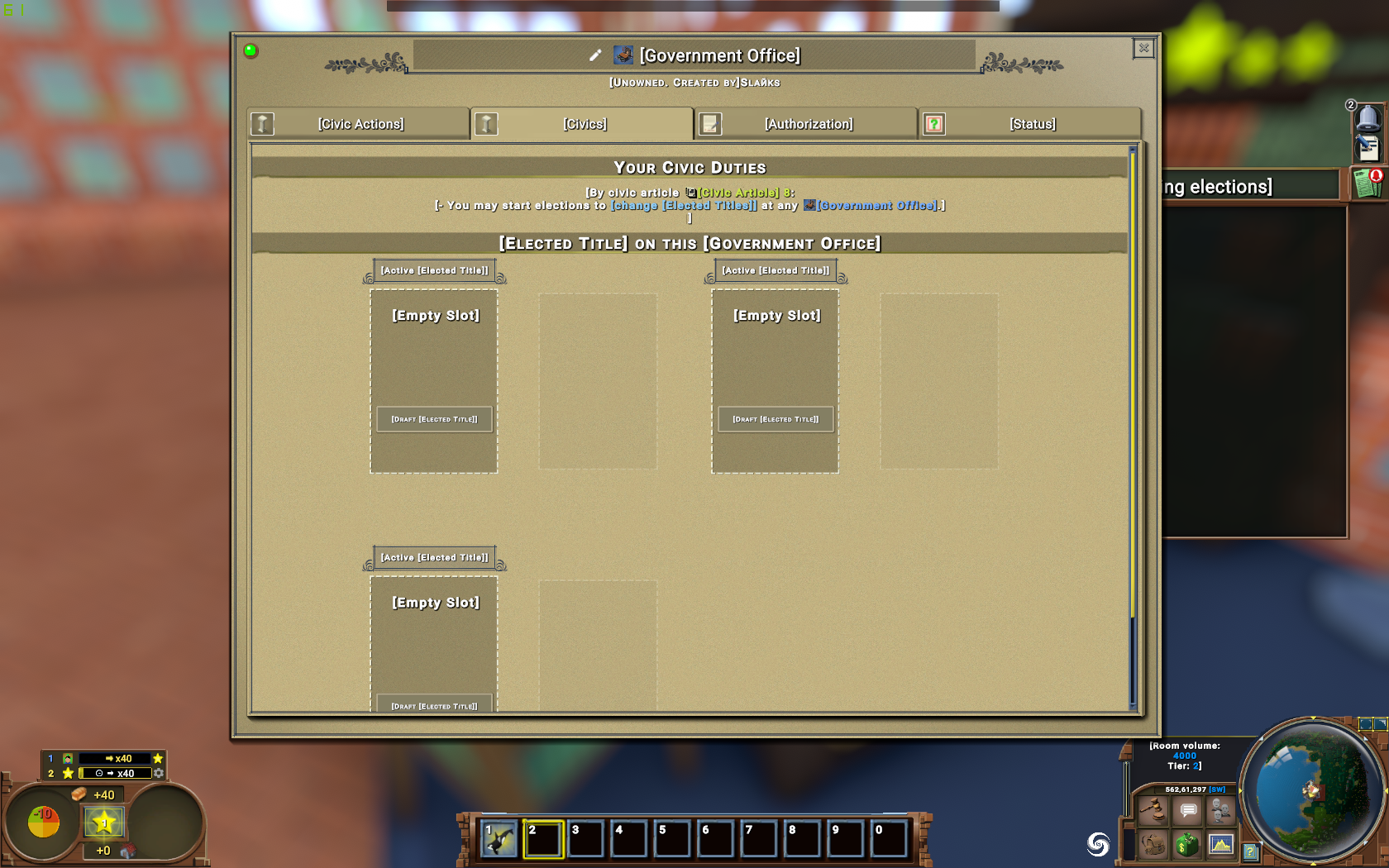

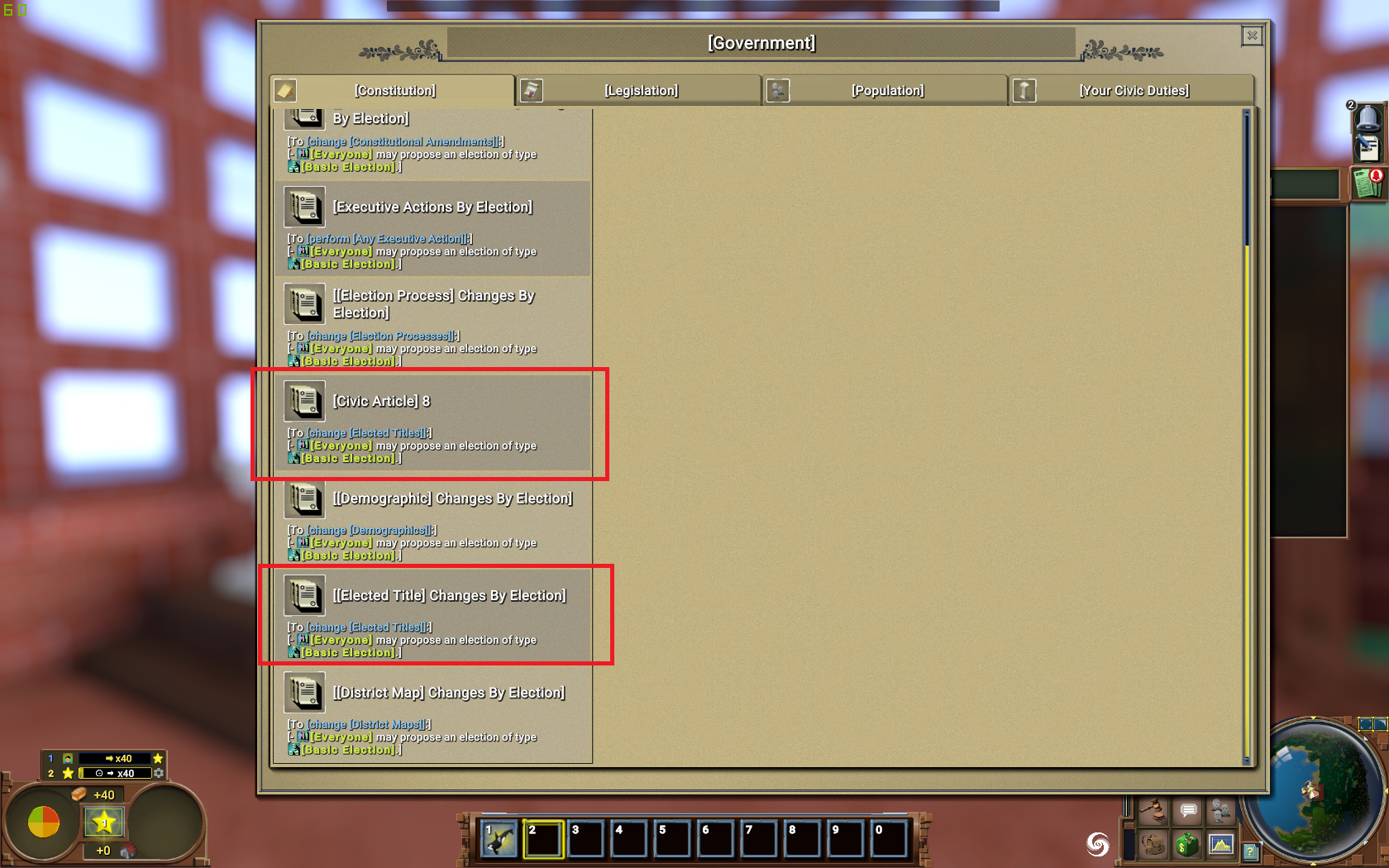

Step to reproduce:

- create a new world, ratify constitution:

- check court to see tha I can create laws:

- place amedndments and start to create another article:

- /civics winelection. So now all articles instead of the new one were broken:

- I only can create Elected titles:

In government window I have all articles are active, and removed article is active one too:

|

1.0

|

[0.9.2 staging-1907] Creating civics articles in Amendments breaks all Capitol articles , not only delete one. - Step to reproduce:

- create a new world, ratify constitution:

- check court to see tha I can create laws:

- place amedndments and start to create another article:

- /civics winelection. So now all articles instead of the new one were broken:

- I only can create Elected titles:

In government window I have all articles are active, and removed article is active one too:

|

priority

|

creating civics articles in amendments breaks all capitol articles not only delete one step to reproduce create a new world ratify constitution check court to see tha i can create laws place amedndments and start to create another article civics winelection so now all articles instead of the new one were broken i only can create elected titles in government window i have all articles are active and removed article is active one too

| 1

|

798,169

| 28,238,656,753

|

IssuesEvent

|

2023-04-06 04:30:01

|

AY2223S2-CS2113-T15-4/tp

|

https://api.github.com/repos/AY2223S2-CS2113-T15-4/tp

|

closed

|

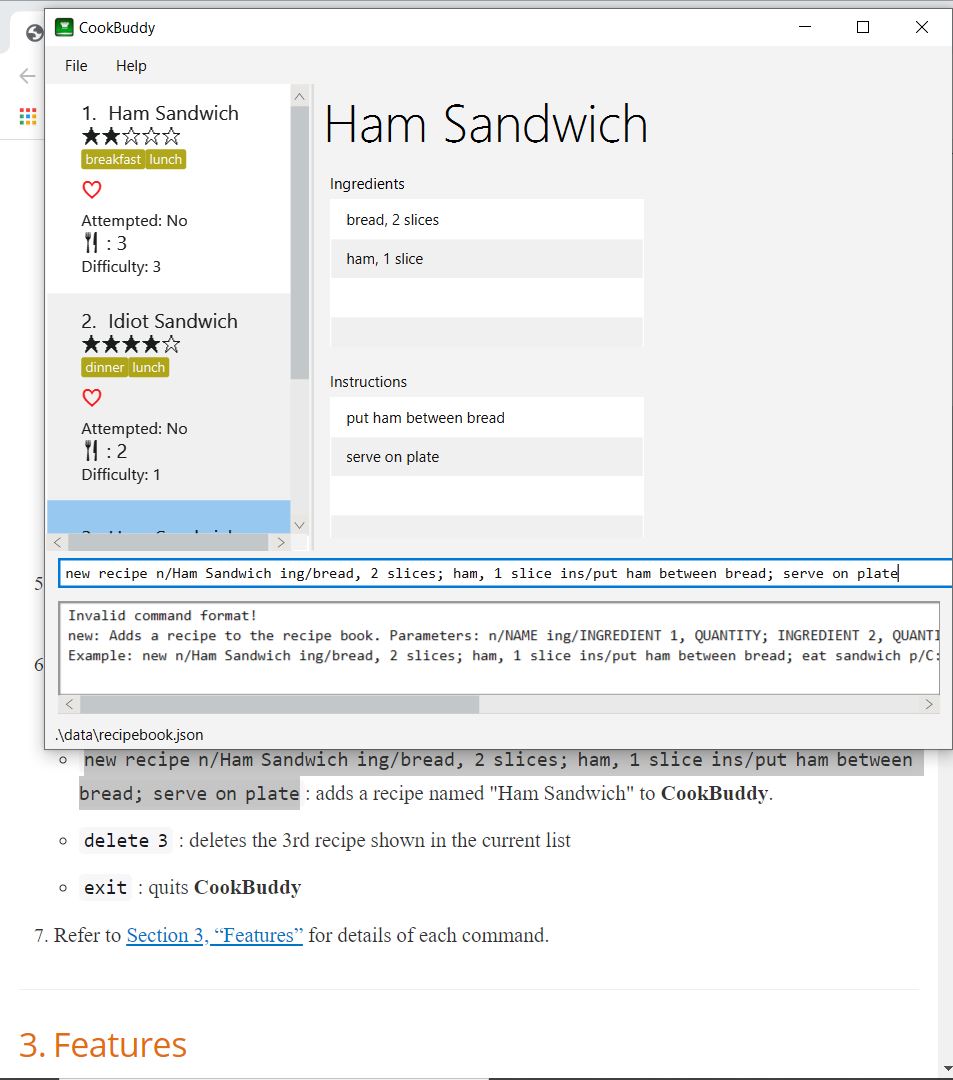

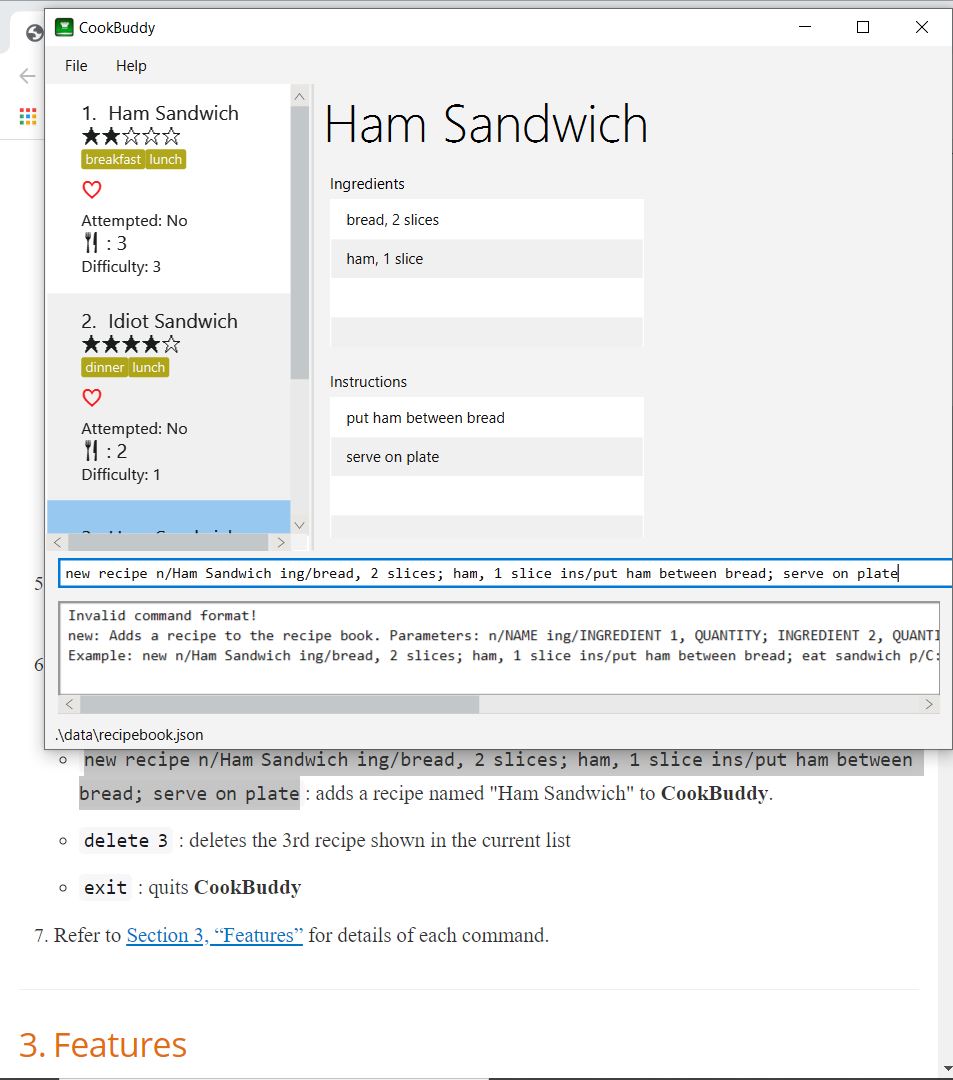

[PE-D][Tester C] Trying to update the question to one with a whitespace results in wrong error message

|

type.Bug priority.High severity.Medium

|

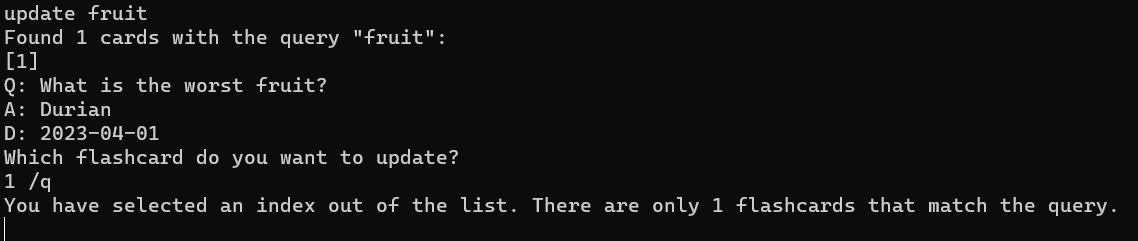

Throwing an error is correct in this case, however, the name of the error is misleading.

Steps to reproduce: key in `add /q What is the worst fruit? /a Durian` and then key in `update fruit`, press enter and then key in `1 /q ` with a whitespace.

Expected: Unable to update the question to one that has an empty input!

Actual:

This can mislead the user thinking that the selected index is wrong, but '1' is actually in fact correct.

<!--session: 1680252405098-9e85e652-f0de-43e6-b248-5a883a5bc5ba-->

<!--Version: Web v3.4.7-->

-------------

Labels: `severity.VeryLow` `type.FunctionalityBug`

original: denzelcjy/ped#4

|

1.0

|

[PE-D][Tester C] Trying to update the question to one with a whitespace results in wrong error message - Throwing an error is correct in this case, however, the name of the error is misleading.

Steps to reproduce: key in `add /q What is the worst fruit? /a Durian` and then key in `update fruit`, press enter and then key in `1 /q ` with a whitespace.

Expected: Unable to update the question to one that has an empty input!

Actual:

This can mislead the user thinking that the selected index is wrong, but '1' is actually in fact correct.

<!--session: 1680252405098-9e85e652-f0de-43e6-b248-5a883a5bc5ba-->

<!--Version: Web v3.4.7-->

-------------

Labels: `severity.VeryLow` `type.FunctionalityBug`

original: denzelcjy/ped#4

|

priority

|

trying to update the question to one with a whitespace results in wrong error message throwing an error is correct in this case however the name of the error is misleading steps to reproduce key in add q what is the worst fruit a durian and then key in update fruit press enter and then key in q with a whitespace expected unable to update the question to one that has an empty input actual this can mislead the user thinking that the selected index is wrong but is actually in fact correct labels severity verylow type functionalitybug original denzelcjy ped

| 1

|

191,197

| 6,826,811,446

|

IssuesEvent

|

2017-11-08 15:15:33

|

GRIS-UdeM/ServerGRIS

|

https://api.github.com/repos/GRIS-UdeM/ServerGRIS

|

closed

|

Add HRTF

|

enhancement High priority

|

For the record, following the September 11 meeting, add HRTF before the possible release date, November 1.

|

1.0

|

Add HRTF - For the record, following the September 11 meeting, add HRTF before the possible release date, November 1.

|

priority

|

add hrtf for the record following the september meeting add hrtf before the possible release date november

| 1

|

439,031

| 12,676,194,051

|

IssuesEvent

|

2020-06-19 04:19:45

|

ocaml/ocaml

|

https://api.github.com/repos/ocaml/ocaml

|

closed

|

ocamlmklib always adds -L (absolute) directories also the run-time linker path.

|

Stale bug high-priority tools

|

**Original bug ID:** 5943

**Reporter:** is

**Status:** acknowledged (set by @damiendoligez on 2013-06-19T18:34:33Z)

**Resolution:** open

**Priority:** high

**Severity:** minor

**Platform:** any

**OS:** any

**OS Version:** any

**Version:** 4.00.1

**Category:** tools (ocaml{lex,yacc,dep,debug,...})

**Tags:** patch

**Monitored by:** is @ygrek @hcarty

## Bug description

ocamlmklib contains this snippet:

else if starts_with s "-L" then

(c_Lopts := s :: !c_Lopts;

let l = chop_prefix s "-L" in

if not (Filename.is_relative l) then rpath := l :: !rpath)

This results in absolute paths always added to the run-time-path. This is wrong in any build environment where the object directory is accessed through an absolute path; when using -R, the wrong path is added along the right one.

Contrary, ELF linker tools always require explicit specification of the run-time path, even when the same.

I suggest removing

let l = chop_prefix s "-L" in

if not (Filename.is_relative l) then rpath := l :: !rpath)

If this behaviour is deemed necessary for backwards compatibility, the new one should at least be selectable by a global option to ocamlmklib.

## File attachments

- [patch-tools_ocamlmklib](https://gist.githubusercontent.com/vicuna/1562f94183302316bea164bbb7db9014/raw/c9a1b7bcfb09e724b4100fe8a4143def7b6ab471/patch-tools_ocamlmklib)

|

1.0

|

ocamlmklib always adds -L (absolute) directories also the run-time linker path. - **Original bug ID:** 5943

**Reporter:** is

**Status:** acknowledged (set by @damiendoligez on 2013-06-19T18:34:33Z)

**Resolution:** open

**Priority:** high

**Severity:** minor

**Platform:** any

**OS:** any

**OS Version:** any

**Version:** 4.00.1

**Category:** tools (ocaml{lex,yacc,dep,debug,...})

**Tags:** patch

**Monitored by:** is @ygrek @hcarty

## Bug description

ocamlmklib contains this snippet:

else if starts_with s "-L" then

(c_Lopts := s :: !c_Lopts;

let l = chop_prefix s "-L" in

if not (Filename.is_relative l) then rpath := l :: !rpath)

This results in absolute paths always added to the run-time-path. This is wrong in any build environment where the object directory is accessed through an absolute path; when using -R, the wrong path is added along the right one.

Contrary, ELF linker tools always require explicit specification of the run-time path, even when the same.

I suggest removing

let l = chop_prefix s "-L" in

if not (Filename.is_relative l) then rpath := l :: !rpath)

If this behaviour is deemed necessary for backwards compatibility, the new one should at least be selectable by a global option to ocamlmklib.

## File attachments

- [patch-tools_ocamlmklib](https://gist.githubusercontent.com/vicuna/1562f94183302316bea164bbb7db9014/raw/c9a1b7bcfb09e724b4100fe8a4143def7b6ab471/patch-tools_ocamlmklib)

|

priority

|

ocamlmklib always adds l absolute directories also the run time linker path original bug id reporter is status acknowledged set by damiendoligez on resolution open priority high severity minor platform any os any os version any version category tools ocaml lex yacc dep debug tags patch monitored by is ygrek hcarty bug description ocamlmklib contains this snippet else if starts with s l then c lopts s c lopts let l chop prefix s l in if not filename is relative l then rpath l rpath this results in absolute paths always added to the run time path this is wrong in any build environment where the object directory is accessed through an absolute path when using r the wrong path is added along the right one contrary elf linker tools always require explicit specification of the run time path even when the same i suggest removing let l chop prefix s l in if not filename is relative l then rpath l rpath if this behaviour is deemed necessary for backwards compatibility the new one should at least be selectable by a global option to ocamlmklib file attachments

| 1

|

333,315

| 10,120,459,467

|

IssuesEvent

|

2019-07-31 13:47:02

|

jncc/topcat

|

https://api.github.com/repos/jncc/topcat

|

closed

|

Providing users with links to Data Provider Agreements and License

|

high priority

|

For third party data, the Marine team is working towards the use of a _'Data Provider Agreement'_ which will capture specific restrictions information on the dataset as requested by the original owner/supplier. The aim is for this procedure to be rolled out across JNCC's marine team (and potentially JNCC-wide) in the coming months.

Whilst restrictions can be captured generally in the _usage_ section of the metadata, it would be worthwhile to have a standard place for metadata creators to link to the DPA for the dataset in question for auditing and information etc.

This doesn't necessarily have to be a new field, but could instead be written into the topcat/metadata protocol to instruct the user to include the information in an existing field (e.g. the Notes section).

|

1.0

|

Providing users with links to Data Provider Agreements and License - For third party data, the Marine team is working towards the use of a _'Data Provider Agreement'_ which will capture specific restrictions information on the dataset as requested by the original owner/supplier. The aim is for this procedure to be rolled out across JNCC's marine team (and potentially JNCC-wide) in the coming months.

Whilst restrictions can be captured generally in the _usage_ section of the metadata, it would be worthwhile to have a standard place for metadata creators to link to the DPA for the dataset in question for auditing and information etc.

This doesn't necessarily have to be a new field, but could instead be written into the topcat/metadata protocol to instruct the user to include the information in an existing field (e.g. the Notes section).

|

priority

|

providing users with links to data provider agreements and license for third party data the marine team is working towards the use of a data provider agreement which will capture specific restrictions information on the dataset as requested by the original owner supplier the aim is for this procedure to be rolled out across jncc s marine team and potentially jncc wide in the coming months whilst restrictions can be captured generally in the usage section of the metadata it would be worthwhile to have a standard place for metadata creators to link to the dpa for the dataset in question for auditing and information etc this doesn t necessarily have to be a new field but could instead be written into the topcat metadata protocol to instruct the user to include the information in an existing field e g the notes section

| 1

|

90,301

| 3,814,201,535

|

IssuesEvent

|

2016-03-28 11:38:01

|

Esri/coordinate-conversion-addin-dotnet

|

https://api.github.com/repos/Esri/coordinate-conversion-addin-dotnet

|

closed

|

Use the "Flash" Call Inherent to ArcMap for Flash button

|

4 - Verify priority - high

|

Customer Feedback from on-site visit on 18FEB2016. Currently the Flash button pans-to and creates a graphic at the coordinate location. What if the graphic is the same symbology as a feature at the same location? When clicking the "Flash" button, the user thought it would act just like the inherent "Flash" call in ArcMap.

This isn't identical to Issue #67, but does overlap when speaking about the graphic being placed in the Data Frame.

|

1.0

|

Use the "Flash" Call Inherent to ArcMap for Flash button - Customer Feedback from on-site visit on 18FEB2016. Currently the Flash button pans-to and creates a graphic at the coordinate location. What if the graphic is the same symbology as a feature at the same location? When clicking the "Flash" button, the user thought it would act just like the inherent "Flash" call in ArcMap.

This isn't identical to Issue #67, but does overlap when speaking about the graphic being placed in the Data Frame.

|

priority

|

use the flash call inherent to arcmap for flash button customer feedback from on site visit on currently the flash button pans to and creates a graphic at the coordinate location what if the graphic is the same symbology as a feature at the same location when clicking the flash button the user thought it would act just like the inherent flash call in arcmap this isn t identical to issue but does overlap when speaking about the graphic being placed in the data frame

| 1

|

27,815

| 2,696,335,559

|

IssuesEvent

|

2015-04-02 13:30:37

|

alexeyxo/protobuf-swift

|

https://api.github.com/repos/alexeyxo/protobuf-swift

|

closed

|

Errors while compiling: does not conform to protocol 'GeneratedMessageProtocol' and is not a member type of

|

bug high priority

|

I have several protobuf files which are actively used in a project with some other languages, and now need swift versions. I can build .pb.swift files successfully but when I add them to Xcode, I am receiving tons of bugs mainly composed of:

* Type 1 'Foo' does not conform to protocol 'GeneratedMessageProtocol'"

* 'Foo' is not a member type of 'Bar'

I prepared a minimal example showing this issue:

```

message PStatus {

optional int64 battery_usage = 1;

optional int64 max_battery_usage = 2;

message PCardVoltageStatus

{

message PCard

{

optional string card_name = 1;

optional string no = 2;

};

repeated PCard card = 1;

};

};

```

If you build .pb.swift file from this example and add to xcode, you should also get those errors.

|

1.0

|

Errors while compiling: does not conform to protocol 'GeneratedMessageProtocol' and is not a member type of - I have several protobuf files which are actively used in a project with some other languages, and now need swift versions. I can build .pb.swift files successfully but when I add them to Xcode, I am receiving tons of bugs mainly composed of:

* Type 1 'Foo' does not conform to protocol 'GeneratedMessageProtocol'"

* 'Foo' is not a member type of 'Bar'

I prepared a minimal example showing this issue:

```

message PStatus {

optional int64 battery_usage = 1;

optional int64 max_battery_usage = 2;

message PCardVoltageStatus

{

message PCard

{

optional string card_name = 1;

optional string no = 2;

};

repeated PCard card = 1;

};

};

```

If you build .pb.swift file from this example and add to xcode, you should also get those errors.

|

priority

|

errors while compiling does not conform to protocol generatedmessageprotocol and is not a member type of i have several protobuf files which are actively used in a project with some other languages and now need swift versions i can build pb swift files successfully but when i add them to xcode i am receiving tons of bugs mainly composed of type foo does not conform to protocol generatedmessageprotocol foo is not a member type of bar i prepared a minimal example showing this issue message pstatus optional battery usage optional max battery usage message pcardvoltagestatus message pcard optional string card name optional string no repeated pcard card if you build pb swift file from this example and add to xcode you should also get those errors

| 1

|

279,362

| 8,664,452,354

|

IssuesEvent

|

2018-11-28 20:12:49

|

supergiant/control

|

https://api.github.com/repos/supergiant/control

|

closed

|

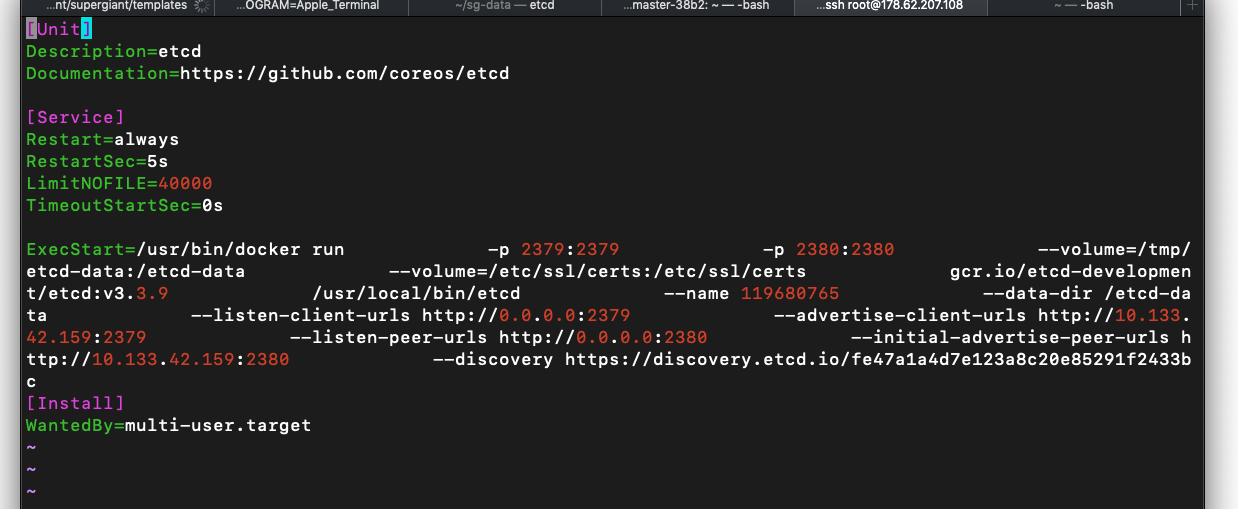

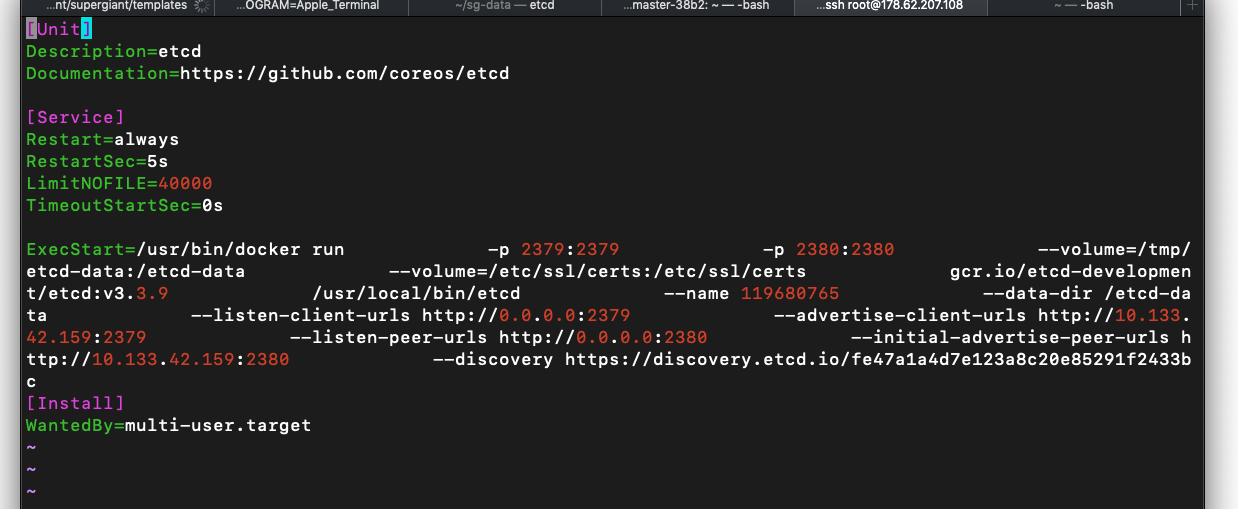

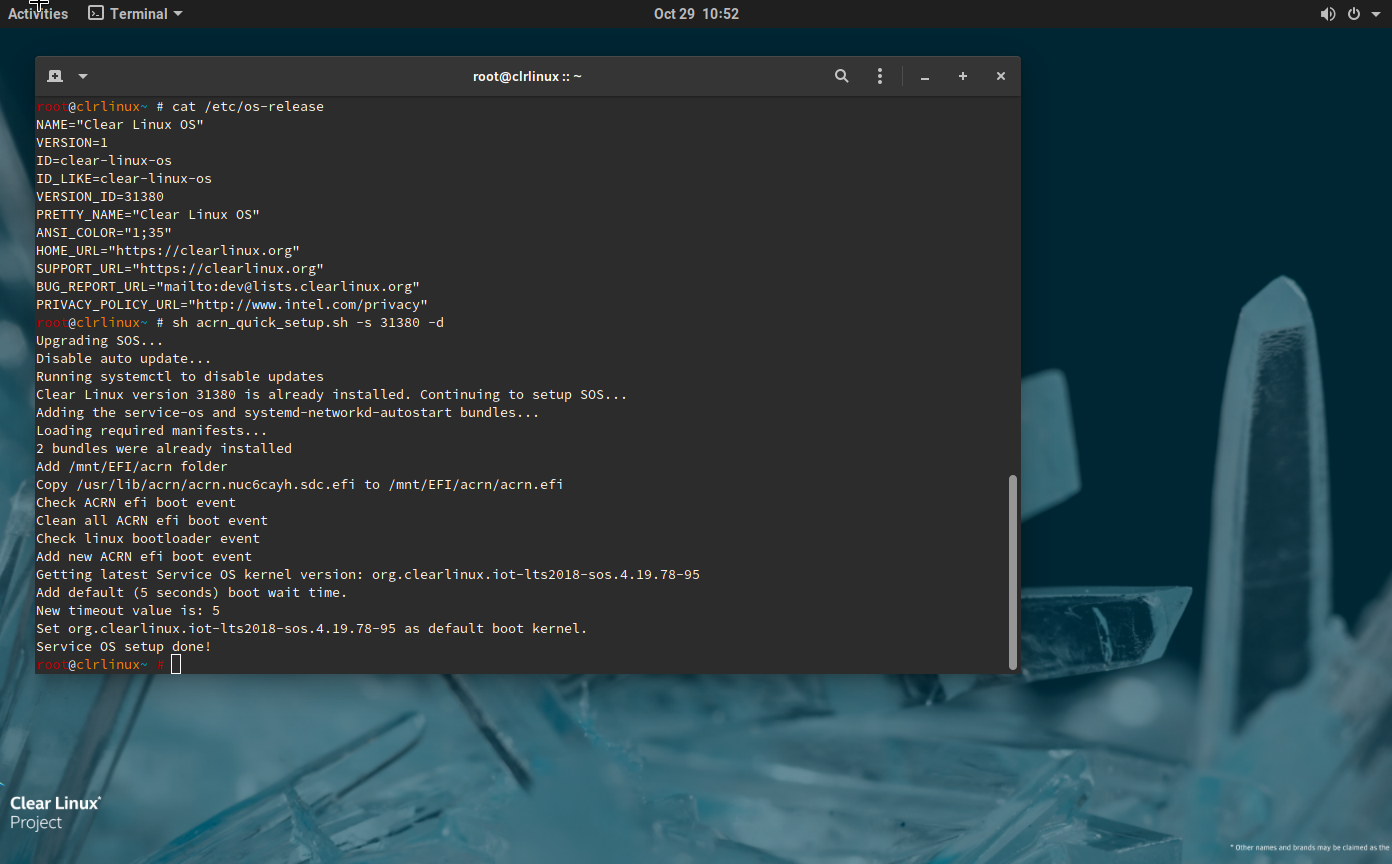

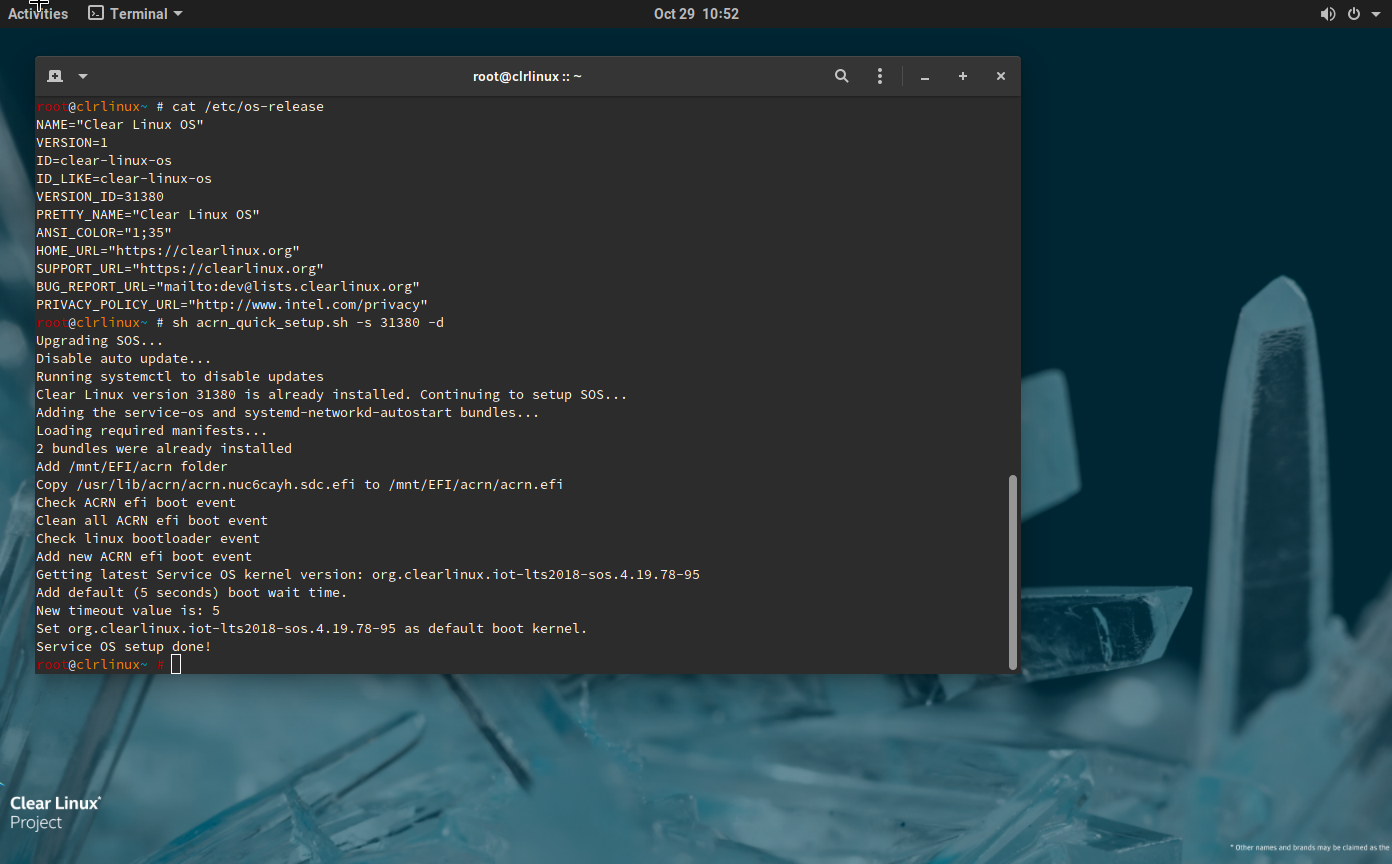

2.0: API - After rebooting the master, the etcd database can not be found.

|

High Priority

|

**Short Summary:**

After rebooting the master, the master can not join the cluster due to the etcd db volume being stored in the /tmp directory.

**Steps to Reproduce:**

1. Spin up a cluster

2. ssh into the master and issue a reboot

**Expected Results:**

The box reboots and the cluster is working correctly

**Actual Results:**

The box reboots and the etcd database can not be found. It shows as started when checking systemctl status. However, nothing is happening. After restarting etcd with systemctl stop and restart, the etcd will not run.

Please see the attached screen shot to show that the volume is located at /tmp.

**Dev Info:** (add links to log files)

1. Output of `go version`: 1.10

2. Commit hash or release tag used (`git log`): bfa60ad35ccef78c4e51b19bd01eb2a1e06d2d3f

3. Number of Masters and Nodes: Any

4. Cloud Provider: DO and AWS

|

1.0

|

2.0: API - After rebooting the master, the etcd database can not be found. - **Short Summary:**

After rebooting the master, the master can not join the cluster due to the etcd db volume being stored in the /tmp directory.

**Steps to Reproduce:**

1. Spin up a cluster

2. ssh into the master and issue a reboot

**Expected Results:**

The box reboots and the cluster is working correctly

**Actual Results:**

The box reboots and the etcd database can not be found. It shows as started when checking systemctl status. However, nothing is happening. After restarting etcd with systemctl stop and restart, the etcd will not run.

Please see the attached screen shot to show that the volume is located at /tmp.

**Dev Info:** (add links to log files)

1. Output of `go version`: 1.10

2. Commit hash or release tag used (`git log`): bfa60ad35ccef78c4e51b19bd01eb2a1e06d2d3f

3. Number of Masters and Nodes: Any

4. Cloud Provider: DO and AWS

|

priority

|

api after rebooting the master the etcd database can not be found short summary after rebooting the master the master can not join the cluster due to the etcd db volume being stored in the tmp directory steps to reproduce spin up a cluster ssh into the master and issue a reboot expected results the box reboots and the cluster is working correctly actual results the box reboots and the etcd database can not be found it shows as started when checking systemctl status however nothing is happening after restarting etcd with systemctl stop and restart the etcd will not run please see the attached screen shot to show that the volume is located at tmp dev info add links to log files output of go version commit hash or release tag used git log number of masters and nodes any cloud provider do and aws

| 1

|

639,705

| 20,762,585,956

|

IssuesEvent

|

2022-03-15 17:28:23

|

project-pareto/project-pareto

|

https://api.github.com/repos/project-pareto/project-pareto

|

opened

|

Fix image location warnings in Sphinx/ReadTheDocs

|

Priority:High

|

- [ ] Fix warnings

- [ ] Set `fail_on_warning: true` in `.readthedocs.yaml`

|

1.0

|

Fix image location warnings in Sphinx/ReadTheDocs - - [ ] Fix warnings

- [ ] Set `fail_on_warning: true` in `.readthedocs.yaml`

|

priority

|

fix image location warnings in sphinx readthedocs fix warnings set fail on warning true in readthedocs yaml

| 1

|

717,068

| 24,659,795,236

|

IssuesEvent

|

2022-10-18 05:15:44

|

AY2223S1-CS2103T-T10-1/tp

|

https://api.github.com/repos/AY2223S1-CS2103T-T10-1/tp

|

closed

|

As a student, I can edit module

|

type.Story priority.High

|

So that I do not have to delete module data and re-create in case I mess up.

|

1.0

|

As a student, I can edit module - So that I do not have to delete module data and re-create in case I mess up.

|

priority

|

as a student i can edit module so that i do not have to delete module data and re create in case i mess up

| 1

|

695,951

| 23,877,712,097

|

IssuesEvent

|

2022-09-07 20:49:20

|

ClassicLootManager/ClassicLootManager

|

https://api.github.com/repos/ClassicLootManager/ClassicLootManager

|

closed

|

Player rename not working due to GUID check

|

bug Priority::High

|

Existence check in AddProfile is wrong and checks the conditions improperly thus blocking rename support

|

1.0

|

Player rename not working due to GUID check - Existence check in AddProfile is wrong and checks the conditions improperly thus blocking rename support

|

priority

|

player rename not working due to guid check existence check in addprofile is wrong and checks the conditions improperly thus blocking rename support

| 1

|

74,282

| 3,437,296,982

|

IssuesEvent

|

2015-12-13 03:11:09

|

jakev/dtf

|

https://api.github.com/repos/jakev/dtf

|

closed

|

Export fails when modules lack version

|

bug priority-high

|

```

06:38:58 /DevTesting$ dtf pm export test.zip
Traceback (most recent call last):
  File "/usr/local/bin/dtf", line 185, in <module>
    sys.exit(main())
  File "/usr/local/bin/dtf", line 149, in main
    return pkg.launch_builtin_module('pm', sys.argv)
  File "/usr/local/lib/python2.7/dist-packages/dtf/packages.py", line 145, in launch_builtin_module
    return __launch_python_module(launch_path, cmd, args)
  File "/usr/local/lib/python2.7/dist-packages/dtf/packages.py", line 97, in __launch_python_module
    return mod_inst.run(args)
  File "/usr/local/lib/python2.7/dist-packages/dtf/module.py", line 62, in run
    result = getattr(self, 'execute')(args)
  File "/usr/local/lib/python2.7/dist-packages/dtf/core/cmds/pm.py", line 723, in execute
    rtn = self.do_export(args)
  File "/usr/local/lib/python2.7/dist-packages/dtf/core/cmds/pm.py", line 235, in do_export
    rtn = self.generate_export_xml(export_items, export_manifest)
  File "/usr/local/lib/python2.7/dist-packages/dtf/core/cmds/pm.py", line 390, in generate_export_xml
    item_xml.attrib['majorVersion'] = item.major_version
  File "lxml.etree.pyx", line 2245, in lxml.etree._Attrib.__setitem__ (src/lxml/lxml.etree.c:58775)
  File "apihelpers.pxi", line 547, in lxml.etree._setAttributeValue (src/lxml/lxml.etree.c:19025)
  File "apihelpers.pxi", line 1393, in lxml.etree._utf8 (src/lxml/lxml.etree.c:26460)
TypeError: Argument must be bytes or unicode, got 'NoneType'

```
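The traceback suggests that `item.major_version` was `None` when it was assigned to an XML attribute. The following is only a minimal sketch of one possible guard, not the project's actual fix: the helper name `set_optional_attr` and the fallback value `"0"` are assumptions, and the standard library's `xml.etree.ElementTree` stands in for lxml so the example is self-contained.

```python
import xml.etree.ElementTree as ET


def set_optional_attr(element, name, value, default=None):
    """Set an XML attribute only when a usable value exists (hypothetical helper)."""
    if value is None:
        value = default
    if value is None:
        return False  # nothing to write, so the attribute is left off
    element.set(name, str(value))
    return True


item_xml = ET.Element("Item")
set_optional_attr(item_xml, "majorVersion", None)        # skipped, no default given
set_optional_attr(item_xml, "minorVersion", None, "0")   # falls back to the assumed default
set_optional_attr(item_xml, "name", "example_module")    # written as-is
```

Whether a missing version should be skipped or replaced by a default is a design decision for the exporter; the sketch only shows that the `None` case needs to be handled before the attribute is set.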

|

1.0

|

Export fails when modules lack version - ```

06:38:58 /DevTesting$ dtf pm export test.zip

Traceback (most recent call last):

File "/usr/local/bin/dtf", line 185, in <module>

sys.exit(main())

File "/usr/local/bin/dtf", line 149, in main

return pkg.launch_builtin_module('pm', sys.argv)

File "/usr/local/lib/python2.7/dist-packages/dtf/packages.py", line 145, in launch_builtin_module

return __launch_python_module(launch_path, cmd, args)

File "/usr/local/lib/python2.7/dist-packages/dtf/packages.py", line 97, in __launch_python_module

return mod_inst.run(args)

File "/usr/local/lib/python2.7/dist-packages/dtf/module.py", line 62, in run

result = getattr(self, 'execute')(args)

File "/usr/local/lib/python2.7/dist-packages/dtf/core/cmds/pm.py", line 723, in execute

rtn = self.do_export(args)

File "/usr/local/lib/python2.7/dist-packages/dtf/core/cmds/pm.py", line 235, in do_export

rtn = self.generate_export_xml(export_items, export_manifest)

File "/usr/local/lib/python2.7/dist-packages/dtf/core/cmds/pm.py", line 390, in generate_export_xml

item_xml.attrib['majorVersion'] = item.major_version

File "lxml.etree.pyx", line 2245, in lxml.etree._Attrib.__setitem__ (src/lxml/lxml.etree.c:58775)

File "apihelpers.pxi", line 547, in lxml.etree._setAttributeValue (src/lxml/lxml.etree.c:19025)

File "apihelpers.pxi", line 1393, in lxml.etree._utf8 (src/lxml/lxml.etree.c:26460)

TypeError: Argument must be bytes or unicode, got 'NoneType'

```

|

priority

|

export fails when modules lack version devtesting dtf pm export test zip traceback most recent call last file usr local bin dtf line in sys exit main file usr local bin dtf line in main return pkg launch builtin module pm sys argv file usr local lib dist packages dtf packages py line in launch builtin module return launch python module launch path cmd args file usr local lib dist packages dtf packages py line in launch python module return mod inst run args file usr local lib dist packages dtf module py line in run result getattr self execute args file usr local lib dist packages dtf core cmds pm py line in execute rtn self do export args file usr local lib dist packages dtf core cmds pm py line in do export rtn self generate export xml export items export manifest file usr local lib dist packages dtf core cmds pm py line in generate export xml item xml attrib item major version file lxml etree pyx line in lxml etree attrib setitem src lxml lxml etree c file apihelpers pxi line in lxml etree setattributevalue src lxml lxml etree c file apihelpers pxi line in lxml etree src lxml lxml etree c typeerror argument must be bytes or unicode got nonetype

| 1

|

420,091

| 12,233,183,891

|

IssuesEvent

|

2020-05-04 11:08:13

|

bedita/bedita

|

https://api.github.com/repos/bedita/bedita

|

closed

|

Links (model, table, entity)

|

Priority - High Topic - Core Topic - ORM

|

Provide data modelling in BEdita/Core for "links".

Links extend object. They should have also the following properties:

- http status

- last update date
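As a language-neutral illustration of the requested model, here is a small Python sketch. It is not BEdita code: the class names `BaseObject` and `Link`, the `url` field, and the `record_check` method are assumptions made only to show a link type that extends a generic object with an HTTP status and a last update date.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class BaseObject:
    """Stand-in for the generic object type that links would extend."""
    id: int
    title: str


@dataclass
class Link(BaseObject):
    url: str = ""
    http_status: Optional[int] = None       # None until the URL has been checked
    last_update: Optional[datetime] = None  # when the status was last refreshed

    def record_check(self, status: int, when: datetime) -> None:
        """Store the result of checking the link."""
        self.http_status = status
        self.last_update = when


link = Link(id=1, title="Example", url="https://example.com")
link.record_check(200, datetime(2020, 5, 4, 11, 8, 13))
```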

|

1.0

|

Links (model, table, entity) - Provide data modelling in BEdita/Core for "links".

Links extend object. They should have also the following properties:

- http status

- last update date

|

priority

|

links model table entity provide data modelling in bedita core for links links extend object they should have also the following properties http status last update date

| 1

|

590,716

| 17,785,768,822

|

IssuesEvent

|

2021-08-31 10:50:59

|

GEWIS/gewisweb

|

https://api.github.com/repos/GEWIS/gewisweb

|

closed

|

`./web` is broken, which causes the cronjobs to fail

|

Type: Bug Priority: High For: Backend Status: Confirmed

|

`./web` fails because the script is unaware of any environment variables (primarily `APP_ENV`).

|

1.0

|

`./web` is broken, which causes the cronjobs to fail - `./web` fails because the script is unaware of any environment variables (primarily `APP_ENV`).

|

priority

|

web is broken which causes the cronjobs to fail web fails because the script is unaware of any environment variables primarily app env

| 1

|

121,116

| 4,805,127,192

|

IssuesEvent

|

2016-11-02 15:19:17

|

windupmicheal/Tackle-Trading

|

https://api.github.com/repos/windupmicheal/Tackle-Trading

|

closed

|

New header design + modification to stylesheet

|

1. High Priority!

|

Notice:

- The new all-white logo

- New menu font

- The location of LOGIN | SIGNUP so that the P in SIGNUP is vertically aligned with the magnifying glass of the search bar

- New Login/signup/logout font

- New "Active Menu" locator - the grey highlight oval surrounding the currently expanded menu

- The font and light-grey color of the SEARCH text

- The location of the magnifying glass in the SEARCH bar

You'll notice that the menu headers are using the "MUSEO SLAB" font, which is available from typekit.com. I have Noah's credentials for this. Ask me for them in private when you are ready.

According to a wpengine.com scan, we're using FIVE fonts on the site. That is two too many. I would like to adjust the stylesheet to use MUSEO SLAB font for all headers, and HELVETICA NEUE for paragraph text. The only other font that should be used is AERO, which is the logo font and the font for headers on the LEARN MORE page. No other fonts should be used on the site. Where OPEN SANS is used in the footer, let's use HELVETICA NEUE.

You should be able to pull graphic assets from this PDF file. If not, let me know what you need and I'll get it to you.

[new front page w video bg.pdf](https://github.com/windupmicheal/Tackle-Trading/files/546130/new.front.page.w.video.bg.pdf)

|

1.0

|

New header design + modification to stylesheet - Notice:

- The new all-white logo

- New menu font

- The location of LOGIN | SIGNUP so that the P in SIGNUP is vertically aligned with the magnifying glass of the search bar

- New Login/signup/logout font

- New "Active Menu" locator - the grey highlight oval surrounding the currently expanded menu

- The font and light-grey color of the SEARCH text

- The location of the magnifying glass in the SEARCH bar

You'll notice that the menu headers are using the "MUSEO SLAB" font, which is available from typekit.com. I have Noah's credentials for this. Ask me for them in private when you are ready.

According to a wpengine.com scan, we're using FIVE fonts on the site. That is two too many. I would like to adjust the stylesheet to use MUSEO SLAB font for all headers, and HELVETICA NEUE for paragraph text. The only other font that should be used is AERO, which is the logo font and the font for headers on the LEARN MORE page. No other fonts should be used on the site. Where OPEN SANS is used in the footer, let's use HELVETICA NEUE.

You should be able to pull graphic assets from this PDF file. If not, let me know what you need and I'll get it to you.

[new front page w video bg.pdf](https://github.com/windupmicheal/Tackle-Trading/files/546130/new.front.page.w.video.bg.pdf)

|

priority

|

new header design modification to stylesheet notice the new all white logo new menu font the location of login signup so that the p in signup is virtically aligned with the magnifying glass of the search bar new login signup logout font new active menu locator the grey highlight oval surrounding the currently expanded menu the font and light grey color of the search text the location of the magnifying glass in the search bar you ll notice that the menu headers are using the museo slab font which is available from typekit com i have noah s credentials for this ask me for them in private when you are ready according to a wpengine com scan we re using five fonts on the site that is two too many i would like to adjust the stylesheet to use museo slab font for all headers and helvetica neue for paragraph text the only other font that should be used is aero which is the logo font and the font for headers on the learn more page no other fonts should be used on the site where open sans is used in the footer let s use helvetica neue you should be able to pull graphic assets from this pdf file if not let me know what you need and i ll get it to you

| 1

|

115,838

| 4,682,939,155

|

IssuesEvent

|

2016-10-09 14:42:45

|

CS2103AUG2016-T15-C3/main

|

https://api.github.com/repos/CS2103AUG2016-T15-C3/main

|

opened

|

As a user I want to modify the information of a task

|

priority.high type.story

|

So that I can update the details, requirements and deadline of a task if they are changed

|

1.0

|

As a user I want to modify the information of a task - So that I can update the details, requirements and deadline of a task if they are changed

|

priority

|

as a user i want to modify the information of a task so that i can update the details requirements and deadline of a task if they are changed

| 1

|

409,100

| 11,956,787,907

|

IssuesEvent

|

2020-04-04 12:04:45

|

weso/shex-lite

|

https://api.github.com/repos/weso/shex-lite

|

closed

|

Travis CI is completely unstable for Scala builds

|

affects: repository dificulty: low priority: high status: accepted type: bug

|

Travis CI has been unstable all day for Scala builds. Sometimes it misses some libraries, sometimes it misses others, and the same test executed twice can fail the first time and pass the second.

In my opinion we should be looking for another CI tool.

|

1.0

|

Travis CI is completely unstable for Scala builds - Travis CI has been unstable all day for Scala builds. Sometimes it misses some libraries, sometimes it misses others, and the same test executed twice can fail the first time and pass the second.

In my opinion we should be looking for another CI tool.

|

priority

|

travis ci is completly unstable for scala builds travis ci has been unstable during all day for scala builds this is related to that sometimes it misses some libraries sometimes it misses other libraries and then the same test executed twice fails first time and passes second time in my opinion we should be looking for another ci tool

| 1

|

97,244

| 3,987,523,488

|

IssuesEvent

|

2016-05-09 04:29:18

|

rfbonett/CSCi435-ODBR

|

https://api.github.com/repos/rfbonett/CSCi435-ODBR

|

closed

|

Hierarchy Dump : Can no longer use Accessibility Service to get root Node

|

bug High Priority

|

We need a new way to get a handle to the root node's AccessibilityNodeInfo if we are to use the current implementation. Otherwise, we can use uiautomator dump via bash.

|

1.0

|

Hierarchy Dump : Can no longer use Accessibility Service to get root Node - We need a new way to get a handle to the root node's AccessibilityNodeInfo if we are to use the current implementation. Otherwise, we can use uiautomator dump via bash.

|

priority

|

hierarchy dump can no longer use accessibility service to get root node we need a new way to get a handle to the root node s accessibilitynodeinfo if we are to use the current implementation otherwise we can use uiautomator dump via bash

| 1

|

248,647

| 7,934,659,443

|

IssuesEvent

|

2018-07-08 21:51:45

|

BananiumLabs/AtomBlast.io

|

https://api.github.com/repos/BananiumLabs/AtomBlast.io

|

closed

|

Split Powerup into Atom and Compound

|

enhancement high priority

|

Currently the Powerup structure is created in a way that the item you pick up is the same item that you will use. However, this is not how our game will be structured.

Instead, we will split the functionality into two different classes, Atom and Compound.

Atom is what you pick up, so it will inherit most of the current Powerup functionality (spawning, pickup, etc). However, you cannot use Atoms by themselves.

Atoms must be turned into Compounds before they are useful. Compounds use Atoms as basic building blocks, just like in real life. Players will be able to select (or unlock??) Blueprints which will allow for different configurations of Compounds to be created and deployed.

We will likely create several slots in the HUD where players can select which blueprints they want in-game from a large collection of different blueprints. During the game, players cannot change which blueprints they can use; this will be done in the Main Menu and between games.
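The proposed split can be sketched roughly as follows. This is an illustration in Python rather than the game's own code, and every name in it (`Blueprint`, `Compound`, `Player`, `pick_up`, `craft`) is hypothetical: atoms are collected into an inventory, and a blueprint consumes a fixed recipe of atoms to produce a usable compound.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass(frozen=True)
class Blueprint:
    """Recipe for a compound: pairs of (atom symbol, required count)."""
    name: str
    recipe: Tuple[Tuple[str, int], ...]


@dataclass(frozen=True)
class Compound:
    """The usable item produced from a blueprint."""
    name: str


@dataclass
class Player:
    atoms: Counter = field(default_factory=Counter)

    def pick_up(self, symbol: str) -> None:
        """Atoms are only collected; they cannot be used directly."""
        self.atoms[symbol] += 1

    def craft(self, blueprint: Blueprint) -> Optional[Compound]:
        """Consume atoms per the blueprint, or return None if any are missing."""
        needed = Counter(dict(blueprint.recipe))
        if any(self.atoms[sym] < count for sym, count in needed.items()):
            return None
        self.atoms -= needed  # in-place subtraction keeps only positive counts
        return Compound(blueprint.name)


water = Blueprint("H2O", (("H", 2), ("O", 1)))
player = Player()
for symbol in ("H", "H", "O"):
    player.pick_up(symbol)
first = player.craft(water)    # succeeds and uses up the atoms
second = player.craft(water)   # fails, the inventory is now empty
```

Keeping the recipe on the blueprint rather than on the compound means the set of blueprints a player selects in the menu fully determines what can be built during a game.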

|

1.0

|

Split Powerup into Atom and Compound - Currently the Powerup structure is created in a way that the item you pick up is the same item that you will use. However, this is not how our game will be structured.

Instead, we will split the functionality into two different classes, Atom and Compound.

Atom is what you pick up, so it will inherit most of the current Powerup functionality (spawning, pickup, etc). However, you cannot use Atoms by themselves.

Atoms must be turned into Compounds before they are useful. Compounds use Atoms as basic building blocks, just like in real life. Players will be able to select (or unlock??) Blueprints which will allow for different configurations of Compounds to be created and deployed.

We will likely create several slots in the HUD where players can select which blueprints they want in-game from a large collection of different blueprints. During the game, players cannot change which blueprints they can use; this will be done in the Main Menu and between games.

|

priority

|

split powerup into atom and compound currently the powerup structure is created in a way that the item you pick up is the same item that you will use however this is not how our game will be structured instead we will split the functionality into two different classes atom and compound atom is what you pick up so it will inherit most of the current powerup functionality spawning pickup etc however you cannot use atoms by themselves atoms must be turned into compounds before they are useful compounds use atoms as basic building blocks just like in real life players will be able to select or unlock blueprints which will allow for different configurations of compounds to be created and deployed we will likely create several slots in the hud where players can select what several blueprints they want ingame out of a large collection of different blueprints during the game players cannot change what blueprints they can use this will be done in the main menu and between games

| 1

|

168,623

| 6,379,292,135

|

IssuesEvent

|

2017-08-02 14:29:00

|

canonical-websites/vanillaframework.io

|

https://api.github.com/repos/canonical-websites/vanillaframework.io

|

opened

|

Update link of CTA button 'Get started'

|

Priority: High

|

## Summary

Update 'Get started' CTA button to point to Vanilla documentation rather than anchor scrolling down one row on homepage.

## Current and expected result

Current result - 'Get started' anchor scrolls to 'Quick start' on same page.

Expected result - 'Get started' to send users to Vanilla documentation.

|

1.0

|

Update link of CTA button 'Get started' - ## Summary

Update 'Get started' CTA button to point to Vanilla documentation rather than anchor scrolling down one row on homepage.

## Current and expected result

Current result - 'Get started' anchor scrolls to 'Quick start' on same page.

Expected result - 'Get started' to send users to Vanilla documentation.

|

priority

|

update link of cta button get started summary update get started cta button to point to vanilla documentation rather than anchor scrolling down one row on homepage current and expected result current result get started anchor scrolls to quick start on same page expected result get started to send users to vanilla documentation

| 1

|

586,440

| 17,577,733,599

|

IssuesEvent

|

2021-08-15 23:16:33

|

PlanktonTeam/planktonr

|

https://api.github.com/repos/PlanktonTeam/planktonr

|

closed

|

Importing commonly used packages

|

enhancement high priority

|

I think I should switch to attaching the whole `dplyr` package to minimise the `dplyr::` calls.

This can be done just once (suggest a function in utils.R). I should also adopt this approach for magrittr.

|

1.0

|

Importing commonly used packages - I think I should switch to attaching the whole `dplyr` package to minimise the `dplyr::` calls.

This can be done just once (suggest a function in utils.R). I should also adopt this approach for magrittr.

|

priority

|

importing commonly used packages i think i should switch to attaching the whole dplyr package to minimise the dplyr calls this can be done just once suggest a function in utils r i should also adopt this approach for magrittr

| 1

|

352,894

| 10,546,929,425

|

IssuesEvent

|

2019-10-02 23:00:51

|

emory-libraries/ezpaarse-platforms

|

https://api.github.com/repos/emory-libraries/ezpaarse-platforms

|

closed

|

Nurimedia (DBpia & KRpia)

|

Add Parser High Priority Stakeholder Priority

|

### Example:star::star: :

http://www.dbpia.co.kr.proxy.library.emory.edu/

http://www.krpia.co.kr.proxy.library.emory.edu/

### Priority:

High

### Subscriber (Library):

Woodruff

### ezPAARSE

Analysis: N/A

Trello: N/A

|

2.0

|

Nurimedia (DBpia & KRpia) - ### Example:star::star: :

http://www.dbpia.co.kr.proxy.library.emory.edu/

http://www.krpia.co.kr.proxy.library.emory.edu/

### Priority:

High

### Subscriber (Library):

Woodruff

### ezPAARSE

Analysis: N/A

Trello: N/A

|

priority

|

nurimedia dbpia krpia example star star priority high subscriber library woodruff ezpaarse analysis n a trello n a

| 1

|

96,140

| 3,964,945,508

|

IssuesEvent

|

2016-05-03 04:59:37

|

meumobi/infomobi

|

https://api.github.com/repos/meumobi/infomobi

|

opened

|

Prevent vote if no option is selected

|

bug high-priority polls

|

see logs on api

```bash

[2016-05-03 07:04:44] sitebuilder.INFO: http request {"method":"POST","url":"/api/alcon.meumobi.com/items/57282f7a9a645da03e22a204/poll","data":[],"component":"api"}

```
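The logged request carries an empty `data` array. A guard against that case could look like the sketch below, written in Python for illustration only. It assumes that `data` holds the selected option identifiers, which the log line suggests but does not confirm, and the function name `validate_vote` is hypothetical.

```python
def validate_vote(payload):
    """Return the selected options, or raise ValueError if none were chosen."""
    options = payload.get("data") if isinstance(payload, dict) else None
    if not options:
        raise ValueError("at least one poll option must be selected")
    return list(options)


# Mirrors the logged request body, where "data" is an empty list.
try:
    validate_vote({"data": []})
    rejected = False
except ValueError:
    rejected = True

accepted = validate_vote({"data": ["option-1"]})
```

The same check is worth applying on both sides: the client can disable the vote button until an option is chosen, and the API can reject an empty selection.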

|

1.0

|

Prevent vote if no option is selected - see logs on api

```bash

[2016-05-03 07:04:44] sitebuilder.INFO: http request {"method":"POST","url":"/api/alcon.meumobi.com/items/57282f7a9a645da03e22a204/poll","data":[],"component":"api"}

```

|

priority

|

prevent vote if none option is selected see logs on api bash sitebuilder info http request method post url api alcon meumobi com items poll data component api

| 1

|

747,855

| 26,101,183,410

|

IssuesEvent

|

2022-12-27 07:15:54

|

bounswe/bounswe2022group7

|

https://api.github.com/repos/bounswe/bounswe2022group7

|

closed

|

[FE] Display following users on profile page

|

Status: Completed Priority: High Type: Implementation Target: Frontend

|

When the following button on the profile page is clicked, the list of followed users should be displayed.

|

1.0

|

[FE] Display following users on profile page - When the following button on the profile page is clicked, the list of followed users should be displayed.

|

priority

|

display following users on profile page when the following button on the profile page is clicked the list of followed users should be displayed

| 1

|

774,403

| 27,195,452,142

|

IssuesEvent

|

2023-02-20 04:32:02

|

openmsupply/open-msupply

|

https://api.github.com/repos/openmsupply/open-msupply

|

closed

|

Postgres running out of memory

|

bug back-end sync v5 Priority: High

|

When running in development with postgres DB I cannot initialise modest sized sites. In my tests I am initialising and integrating about 180K records, at about 10 mins into integration I get this error and integration stops:

```

2022-12-01 17:57:26.443497 WARN service::sync::translation_and_integration - DBError { msg: "UNKNOWN", extra: "\"\\\"out of shared memory\\\"\"" } "6C2C13CE7735AB4CA5E002AF1B533E61" "requisition_line" service/src/sync/translation_and_integration.rs:125

```

Likely unrelated, but during initialisation I get many, many errors for unsupported records.

**Interestingly this is totally fine when running sqlite.**

Preliminary googling suggests this is likely due to having too many transactions within a transaction.
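If the cause is indeed one very large transaction wrapping many smaller ones, one possible mitigation is to commit the integration in bounded batches. The sketch below only illustrates that idea and is not the project's code: it uses Python's built-in `sqlite3` purely so that it runs anywhere, and the table name `sync_buffer`, the function names, and the batch size are all assumptions.

```python
import sqlite3
from itertools import islice


def batched(iterable, size):
    """Yield lists of at most `size` items from `iterable`."""
    iterator = iter(iterable)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def integrate(conn, records, batch_size=1000):
    """Insert records in separate transactions; return the number of commits."""
    commits = 0
    for chunk in batched(records, batch_size):
        with conn:  # commits on success, rolls back if the batch raises
            conn.executemany(
                "INSERT INTO sync_buffer (id, payload) VALUES (?, ?)", chunk
            )
        commits += 1
    return commits


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sync_buffer (id INTEGER PRIMARY KEY, payload TEXT)")
commits = integrate(conn, ((i, "record") for i in range(2500)), batch_size=1000)
row_count = conn.execute("SELECT COUNT(*) FROM sync_buffer").fetchone()[0]
```

The trade-off is that a failure part-way through leaves earlier batches committed, so the integration step would need to be safe to resume.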

|

1.0

|

Postgres running out of memory - When running in development with postgres DB I cannot initialise modest sized sites. In my tests I am initialising and integrating about 180K records, at about 10 mins into integration I get this error and integration stops:

```

2022-12-01 17:57:26.443497 WARN service::sync::translation_and_integration - DBError { msg: "UNKNOWN", extra: "\"\\\"out of shared memory\\\"\"" } "6C2C13CE7735AB4CA5E002AF1B533E61" "requisition_line" service/src/sync/translation_and_integration.rs:125

```

Likely unrelated, but during initialisation I get many, many errors for unsupported records.

**Interestingly this is totally fine when running sqlite.**

Preliminary googling suggests this is likely due to having too many transactions within a transaction.

|

priority

|

postgres running out of memory when running in development with postgres db i cannot initialise modest sized sites in my tests i am initialising and integrating about records at about mins into integration i get this error and integration stops warn service sync translation and integration dberror msg unknown extra out of shared memory requisition line service src sync translation and integration rs likely unrelated but during initialisation i get many many errors for unsupported records interestingly this is totally fine when running sqlite preliminary googling suggests this is likely due to having too many transactions within a transaction

| 1

|

117,337

| 4,713,972,026

|

IssuesEvent

|

2016-10-14 22:00:54

|

mulesoft/api-workbench

|

https://api.github.com/repos/mulesoft/api-workbench

|

closed

|

DataType Examples are not parsed

|

bug priority:high

|

In attempting to use dataTypes in place of schemas I have defined a type with an example. The example in the type is ignored by the console or mocking service unless the example is explicitly defined in the response's specific media type.

I raised this issue on the api-designer repo as well, and it was confirmed a bug this morning.

Example:

Type Definition:

```RAML

types:
  Member:
    properties:
      firstName: string
      lastName: string
      age: integer
    examples:
      Bob:
        value:
          firstName: "Bob"
          lastName: "Slidell"
          age: 42
      Bill:
        value:
          firstName: "Bill"
          lastName: "Lumberg"
          age: 41

````

If the response is defined through a resource type, the example is not included:

````RAML

resourceTypes:
  collection:
    usage: Use this resourceType to represent a collection of <<resourcePathName|!singularize>> items
    description: A collection of <<resourcePathName>>
    get:
      description: |
        Get all <<resourcePathName>>,
        optionally filtered
      responses:
        200:
          body:
            type: <<typeName|!singularize>>[]

````

Resource:

````RAML

/members:
  type:
    collection:
      typeName: <<resourcePathName|!uppercamelcase>>
  get:

````

This will show the Object type and its properties but not the example. If the resource type explicitly defines the example it will be included in the console and returned by the Mock service.

````RAML

resourceTypes:
  collection:
    usage: Use this resourceType to represent a collection of <<resourcePathName|!singularize>> items
    description: A collection of <<resourcePathName>>
    get:
      description: |
        Get all <<resourcePathName>>,
        optionally filtered
      responses:
        200:
          body:
            type: <<typeName|!singularize>>[]
            application/json:
              examples:
                Bob:
                  value:
                    firstName: "Bob"
                    lastName: "Slidell"
                    age: 42
                Bill:
                  value:
                    firstName: "Bill"
                    lastName: "Lumberg"
                    age: 41

````

The description of Examples in the 1.0 spec seems to indicate this would be a supported usage. Full RAML below:

````RAML

#%RAML 1.0
title: TestRAML
baseUri: http://someapi.com/api
mediaType: application/json

resourceTypes:
  collection:
    usage: Use this resourceType to represent a collection of <<resourcePathName|!singularize>> items
    description: A collection of <<resourcePathName>>
    get:
      description: |
        Get all <<resourcePathName>>,
        optionally filtered
      responses:
        200:
          body:
            type: <<typeName|!singularize>>[]

types:
  Member:
    properties:
      firstName: string
      lastName: string
      age: integer
    examples:
      Bob:
        value:
          firstName: "Bob"
          lastName: "Slidell"
          age: 42
      Bill:
        value:
          firstName: "Bill"
          lastName: "Lumberg"
          age: 41

/members:
  type:
    collection:
      typeName: <<resourcePathName|!uppercamelcase>>
  get:

````

|

1.0

|

DataType Examples are not parsed - In attempting to use dataTypes in place of schemas I have defined a type with an example. The example in the type is ignored by the console or mocking service unless the example is explicitly defined in the response's specific media type.

I raised this issue on the api-designer repo as well, and it was confirmed a bug this morning.

Example:

Type Definition:

```RAML

types:

Member:

properties:

firstName: string

lastName: string

age: integer

examples:

Bob:

value:

firstName: "Bob"

lastName: "Slidell"

age: 42

Bill:

value:

firstName: "Bill"

lastName: "Lumberg"

age: 41

````

If the response is defined through a resource type, the example is not included:

````RAML

resourceTypes:

collection:

usage: Use this resourceType to represent a collection of <<resourcePathName|!singularize>> items

description: A collection of <<resourcePathName>>

get:

description: |

Get all <<resourcePathName>>,

optionally filtered

responses:

200:

body:

type: <<typeName|!singularize>>[]

````

Resource:

````RAML

/members:

type:

collection:

typeName: <<resourcePathName|!uppercamelcase>>

get:

````

This will show the Object type and its properties but not the example. If the resource type explicitly defines the example it will be included in the console and returned by the Mock service.

````RAML

resourceTypes:

collection:

usage: Use this resourceType to represent a collection of <<resourcePathName|!singularize>> items

description: A collection of <<resourcePathName>>

get:

description: |

Get all <<resourcePathName>>,

optionally filtered

responses:

200:

body:

type: <<typeName|!singularize>>[]

application/json:

examples:

Bob:

value:

firstName: "Bob"

lastName: "Slidell"

age: 42

Bill:

value:

firstName: "Bill"

lastName: "Lumberg"

age: 41

````

The description of Examples in the 1.0 spec seems to indicate this would be a supported usage. Full RAML below:

````RAML

#%RAML 1.0

title: TestRAML

baseUri: http://someapi.com/api

mediaType: application/json

resourceTypes:

collection:

usage: Use this resourceType to represent a collection of <<resourcePathName|!singularize>> items

description: A collection of <<resourcePathName>>

get:

description: |

Get all <<resourcePathName>>,

optionally filtered

responses:

200:

body:

type: <<typeName|!singularize>>[]

types:

Member:

properties:

firstName: string

lastName: string

age: integer

examples:

Bob:

value:

firstName: "Bob"

lastName: "Slidell"

age: 42

Bill:

value:

firstName: "Bill"

lastName: "Lumberg"

age: 41

/members:

type:

collection:

typeName: <<resourcePathName|!uppercamelcase>>

get:

````

|

priority

|

datatype examples are not parsed in attempting to use datatypes in place of schemas i have defined a type with an example the example in the type is ignored by the console or mocking service unless the example is explicitly defined in the response s specific media type i raised this issue on the api designer repo as well and it was confirmed a bug this morning example type definition raml types member properties firstname string lastname string age integer examples bob value firstname bob lastname slidell age bill value firstname bill lastname lumberg age if a response is resource type the example is not included raml resourcetypes collection usage use this resourcetype to represent a collection of items description a collection of get description get all optionally filtered responses body type resource raml members type collection typename get this will show the object type and its properties but not the example if the resource type explicitly defines the example it will be included in the console and returned by the mock service raml resourcetypes collection usage use this resourcetype to represent a collection of items description a collection of get description get all optionally filtered responses body type application json examples bob value firstname bob lastname slidell age bill value firstname bill lastname lumberg age the description of examples in the spec seems to indicate this would be a supported usage full raml below raml raml title testraml baseuri mediatype application json resourcetypes collection usage use this resourcetype to represent a collection of items description a collection of get description get all optionally filtered responses body type types member properties firstname string lastname string age integer examples bob value firstname bob lastname slidell age bill value firstname bill lastname lumberg age members type collection typename get

| 1

|

80,453

| 3,561,747,325

|

IssuesEvent

|

2016-01-24 00:43:35

|

godotengine/godot

|

https://api.github.com/repos/godotengine/godot

|

closed

|

Subscenes no longer update variable values in parent scenes

|

bug high priority topic:editor

|

Subscenes no longer update their values in parent scenes. The easiest way to reproduce this is to use an **exported** variable, since for those it happens every time.

That means if you change properties in the subscene file, the instanced ones will never be updated, even if you never touch these variables in the parent scene.

Short video proof: https://youtu.be/A8Dbk8LaT2A

Tested in: 1bc91848e3cee91eccaf2150a74deaf1cd84be13

The bug is quite recent; I'm almost 100% sure it was not present in the build from the beginning of December.

It's quite serious and takes away one of the reasons to use subscenes; I think it should be fixed for 2.0.

Edit: **Sample in this comment: https://github.com/godotengine/godot/issues/3127#issuecomment-171634970**

|

1.0

|

Subscenes no longer update variable values in parent scenes - Subscenes no longer update their values in parent scenes. The easiest way to reproduce this is to use an **exported** variable, since for those it happens every time.

That means if you change properties in the subscene file, the instanced ones will never be updated, even if you never touch these variables in the parent scene.

Short video proof: https://youtu.be/A8Dbk8LaT2A

Tested in: 1bc91848e3cee91eccaf2150a74deaf1cd84be13

The bug is quite recent; I'm almost 100% sure it was not present in the build from the beginning of December.

It's quite serious and takes away one of the reasons to use subscenes; I think it should be fixed for 2.0.

Edit: **Sample in this comment: https://github.com/godotengine/godot/issues/3127#issuecomment-171634970**

|

priority

|

subscenes no longer updates variables values in parent scenes subscenes no longer updates their values in parent scenes the easiest way to reproduce it is to use exported variable since for those it behaves like this every time that means if you change properties in subscene file the instanced ones will never be updated even if you never touch these variables in parent scene short video proof tested in bug is quite fresh i m almost sure it was not with us on the build from the beginning of december it s quite serious and takes away one of the reasons to use subscene think it should be fixed for edit sample in this comment

| 1

|

348,319

| 10,440,880,427

|

IssuesEvent

|

2019-09-18 09:36:59

|

geosolutions-it/MapStore2

|

https://api.github.com/repos/geosolutions-it/MapStore2

|

closed

|

Switching CRS, circle annotations change size

|

Accepted CRS Selector Priority: High annotations bug

|

### Description

When switching CRS using the CRS selector, **circle annotations** (if present) change their size.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

(use this site: https://www.whatsmybrowser.org/ for non-expert users)

- [x] Internet Explorer

- [x] Chrome

- [x] Firefox

- [x] Safari

*Browser Version Affected*

Last

*Steps to reproduce*

- Open a map

- Add a layer

- Add a circle annotation

- Switch the CRS through the CRS selector

*Expected Result*

The circle annotation doesn't change size.

*Current Result*

The circle annotation changes size.

### Other useful information (optional):

|

1.0

|

Switching CRS, circle annotations change size - ### Description

When switching CRS using the CRS selector, **circle annotations** (if present) change their size.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

(use this site: https://www.whatsmybrowser.org/ for non-expert users)

- [x] Internet Explorer

- [x] Chrome

- [x] Firefox

- [x] Safari

*Browser Version Affected*

Last

*Steps to reproduce*

- Open a map

- Add a layer

- Add a circle annotation

- Switch the CRS through the CRS selector

*Expected Result*

The circle annotation doesn't change size.

*Current Result*

The circle annotation changes size.

### Other useful information (optional):

|

priority

|

switching crs circle annotations change size description when switching crs using the crs selector circle annotations if present change their size in case of bug otherwise remove this paragraph browser affected use this site for non expert users internet explorer chrome firefox safari browser version affected last steps to reproduce open a map add some layer add a circle annotation switch crs through the crs selector expected result the circle annotation doesn t change size current result the circle annotation changes size other useful information optional

| 1

|

554,923

| 16,442,517,241

|

IssuesEvent

|

2021-05-20 15:46:05

|

DSpace/dspace-angular

|

https://api.github.com/repos/DSpace/dspace-angular

|

closed

|

Edit of an eperson doesn't work

|

bug e/2 high priority

|