| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

154,121

| 5,909,773,769

|

IssuesEvent

|

2017-05-20 03:12:36

|

neuropoly/spinalcordtoolbox

|

https://api.github.com/repos/neuropoly/spinalcordtoolbox

|

closed

|

AttributeError: 'str' object has no attribute 'change_orientation'

|

bug priority:HIGH sct_propseg wontfix

|

data: 20170519_issue1335

~~~

sct_propseg -i mt0.nii.gz -c t2s -qc ~/qc_test1

--

Spinal Cord Toolbox (master/5f1beeea11d952cc36ad0c40b1cebd600e4ee21e)

Running /Users/julien/code/sct/scripts/sct_propseg.py -i mt0.nii.gz -c t2s -qc /Users/julien/qc_test1

Check folder existence...

Detecting the spinal cord using OptiC

isct_propseg -i "/Users/julien/data/temp/mt/mt0.nii.gz" -t t2 -o "./" -verbose -init-centerline ./mt0_centerline_optic.nii.gz -centerline-binary

Initialization - using given centerline

Total propagation length = 110.967 mm

Segmentation finished. To view results, type:

fslview /Users/julien/data/temp/mt/mt0.nii.gz ./mt0_seg.nii.gz &

Check consistency of segmentation...

Create temporary folder...

sct_image -i tmp.segmentation.nii.gz -setorient RPI -o tmp.segmentation_RPI.nii.gz

Running /Users/julien/code/sct/scripts/sct_image.py -i tmp.segmentation.nii.gz -setorient RPI -o tmp.segmentation_RPI.nii.gz

tmp.segmentation.nii.gz

Get dimensions of data...

256 x 256 x 15 x 1

Change orientation...

Generate output files...

WARNING: File tmp.segmentation_RPI.nii.gz already exists. Deleting it.

Created file(s):

--> tmp.segmentation_RPI.nii.gz

sct_image -i tmp.centerline.nii.gz -setorient RPI -o tmp.centerline_RPI.nii.gz

Running /Users/julien/code/sct/scripts/sct_image.py -i tmp.centerline.nii.gz -setorient RPI -o tmp.centerline_RPI.nii.gz

tmp.centerline.nii.gz

Get dimensions of data...

256 x 256 x 15 x 1

Change orientation...

Generate output files...

WARNING: File tmp.centerline_RPI.nii.gz already exists. Deleting it.

Created file(s):

--> tmp.centerline_RPI.nii.gz

Get data dimensions...

sct_image -i tmp.segmentation_RPI_c.nii.gz -setorient RPI -o ../mt0_seg.nii.gz

Running /Users/julien/code/sct/scripts/sct_image.py -i tmp.segmentation_RPI_c.nii.gz -setorient RPI -o ../mt0_seg.nii.gz

tmp.segmentation_RPI_c.nii.gz

Get dimensions of data...

256 x 256 x 15 x 1

Change orientation...

rm -f tmp.segmentation_RPI_c_RPI.nii.gz

Generate output files...

WARNING: File ../mt0_seg.nii.gz already exists. Deleting it.

Created file(s):

--> ../mt0_seg.nii.gz

Remove temporary files...

WARNING: File mt0_seg.nii.gz already exists. Deleting it.

Remove temporary files...

Traceback (most recent call last):

File "/Users/julien/code/sct/scripts/sct_propseg.py", line 599, in <module>

test(qcslice.Axial(fname_input_data, fname_seg))

File "/Users/julien/code/sct/python/lib/python2.7/site-packages/spinalcordtoolbox/reports/slice.py", line 43, in __init__

self.image.change_orientation('SAL')

AttributeError: 'str' object has no attribute 'change_orientation'

~~~
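The traceback suggests that `qcslice.Axial` received a file name where it expected an image object. A minimal sketch of that failure mode and one possible guard follows. The `Image` class, `as_image` helper and this `Axial` are hypothetical stand-ins written for illustration, not the actual spinalcordtoolbox code.

```python
class Image:
    """Hypothetical stand-in for an image object (not the SCT class)."""

    def __init__(self, path):
        self.path = path
        self.orientation = "RPI"

    def change_orientation(self, orientation):
        self.orientation = orientation
        return self


def as_image(image_or_path):
    """Return an Image, wrapping the argument first when it is a file name."""
    if isinstance(image_or_path, str):
        return Image(image_or_path)
    return image_or_path


class Axial:
    """Hypothetical slice report that accepts either an Image or a path."""

    def __init__(self, image, seg):
        self.image = as_image(image)
        self.seg = as_image(seg)
        self.image.change_orientation("SAL")
        self.seg.change_orientation("SAL")


def reproduce_error():
    """Call the method on a plain string, as the traceback indicates."""
    try:
        "mt0.nii.gz".change_orientation("SAL")
    except AttributeError as err:
        return str(err)
```

Coercing at the constructor boundary is only one option; the caller in `sct_propseg.py` could equally load the images before passing them in.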

|

1.0

|

AttributeError: 'str' object has no attribute 'change_orientation' - data: 20170519_issue1335

~~~

sct_propseg -i mt0.nii.gz -c t2s -qc ~/qc_test1

--

Spinal Cord Toolbox (master/5f1beeea11d952cc36ad0c40b1cebd600e4ee21e)

Running /Users/julien/code/sct/scripts/sct_propseg.py -i mt0.nii.gz -c t2s -qc /Users/julien/qc_test1

Check folder existence...

Detecting the spinal cord using OptiC

isct_propseg -i "/Users/julien/data/temp/mt/mt0.nii.gz" -t t2 -o "./" -verbose -init-centerline ./mt0_centerline_optic.nii.gz -centerline-binary

Initialization - using given centerline

Total propagation length = 110.967 mm

Segmentation finished. To view results, type:

fslview /Users/julien/data/temp/mt/mt0.nii.gz ./mt0_seg.nii.gz &

Check consistency of segmentation...

Create temporary folder...

sct_image -i tmp.segmentation.nii.gz -setorient RPI -o tmp.segmentation_RPI.nii.gz

Running /Users/julien/code/sct/scripts/sct_image.py -i tmp.segmentation.nii.gz -setorient RPI -o tmp.segmentation_RPI.nii.gz

tmp.segmentation.nii.gz

Get dimensions of data...

256 x 256 x 15 x 1

Change orientation...

Generate output files...

WARNING: File tmp.segmentation_RPI.nii.gz already exists. Deleting it.

Created file(s):

--> tmp.segmentation_RPI.nii.gz

sct_image -i tmp.centerline.nii.gz -setorient RPI -o tmp.centerline_RPI.nii.gz

Running /Users/julien/code/sct/scripts/sct_image.py -i tmp.centerline.nii.gz -setorient RPI -o tmp.centerline_RPI.nii.gz

tmp.centerline.nii.gz

Get dimensions of data...

256 x 256 x 15 x 1

Change orientation...

Generate output files...

WARNING: File tmp.centerline_RPI.nii.gz already exists. Deleting it.

Created file(s):

--> tmp.centerline_RPI.nii.gz

Get data dimensions...

sct_image -i tmp.segmentation_RPI_c.nii.gz -setorient RPI -o ../mt0_seg.nii.gz

Running /Users/julien/code/sct/scripts/sct_image.py -i tmp.segmentation_RPI_c.nii.gz -setorient RPI -o ../mt0_seg.nii.gz

tmp.segmentation_RPI_c.nii.gz

Get dimensions of data...

256 x 256 x 15 x 1

Change orientation...

rm -f tmp.segmentation_RPI_c_RPI.nii.gz

Generate output files...

WARNING: File ../mt0_seg.nii.gz already exists. Deleting it.

Created file(s):

--> ../mt0_seg.nii.gz

Remove temporary files...

WARNING: File mt0_seg.nii.gz already exists. Deleting it.

Remove temporary files...

Traceback (most recent call last):

File "/Users/julien/code/sct/scripts/sct_propseg.py", line 599, in <module>

test(qcslice.Axial(fname_input_data, fname_seg))

File "/Users/julien/code/sct/python/lib/python2.7/site-packages/spinalcordtoolbox/reports/slice.py", line 43, in __init__

self.image.change_orientation('SAL')

AttributeError: 'str' object has no attribute 'change_orientation'

~~~

|

priority

|

attributeerror str object has no attribute change orientation data sct propseg i nii gz c qc qc spinal cord toolbox master running users julien code sct scripts sct propseg py i nii gz c qc users julien qc check folder existence detecting the spinal cord using optic isct propseg i users julien data temp mt nii gz t o verbose init centerline centerline optic nii gz centerline binary initialization using given centerline total propagation length mm segmentation finished to view results type fslview users julien data temp mt nii gz seg nii gz check consistency of segmentation create temporary folder sct image i tmp segmentation nii gz setorient rpi o tmp segmentation rpi nii gz running users julien code sct scripts sct image py i tmp segmentation nii gz setorient rpi o tmp segmentation rpi nii gz tmp segmentation nii gz get dimensions of data x x x change orientation generate output files warning file tmp segmentation rpi nii gz already exists deleting it created file s tmp segmentation rpi nii gz sct image i tmp centerline nii gz setorient rpi o tmp centerline rpi nii gz running users julien code sct scripts sct image py i tmp centerline nii gz setorient rpi o tmp centerline rpi nii gz tmp centerline nii gz get dimensions of data x x x change orientation generate output files warning file tmp centerline rpi nii gz already exists deleting it created file s tmp centerline rpi nii gz get data dimensions sct image i tmp segmentation rpi c nii gz setorient rpi o seg nii gz running users julien code sct scripts sct image py i tmp segmentation rpi c nii gz setorient rpi o seg nii gz tmp segmentation rpi c nii gz get dimensions of data x x x change orientation rm f tmp segmentation rpi c rpi nii gz generate output files warning file seg nii gz already exists deleting it created file s seg nii gz remove temporary files warning file seg nii gz already exists deleting it remove temporary files traceback most recent call last file users julien code sct scripts sct propseg py 
line in test qcslice axial fname input data fname seg file users julien code sct python lib site packages spinalcordtoolbox reports slice py line in init self image change orientation sal attributeerror str object has no attribute change orientation

| 1

|

542,991

| 15,875,833,937

|

IssuesEvent

|

2021-04-09 07:34:14

|

Project-Books/book-project

|

https://api.github.com/repos/Project-Books/book-project

|

closed

|

Fix transient object exception

|

bug hibernate high-priority

|

**Describe the bug**

The test `createJsonRepresentationForBooks()` in `BookServiceTest.java` is failing because a 'tag' is not saved before flushing.

**To Reproduce**

Steps to reproduce the behaviour:

1. Run the `createJsonRepresentationForBooks()` test

**Expected behaviour**

Test should pass.

**Additional context**

Stack trace: https://gist.github.com/knjk04/f0fb2886214dfeb8c3139943bda80189

Branch: `0.2.0`. In your pull request, set the destination branch as `0.2.0`.

|

1.0

|

Fix transient object exception - **Describe the bug**

The test `createJsonRepresentationForBooks()` in `BookServiceTest.java` is failing because a 'tag' is not saved before flushing.

**To Reproduce**

Steps to reproduce the behaviour:

1. Run the `createJsonRepresentationForBooks()` test

**Expected behaviour**

Test should pass.

**Additional context**

Stack trace: https://gist.github.com/knjk04/f0fb2886214dfeb8c3139943bda80189

Branch: `0.2.0`. In your pull request, set the destination branch as `0.2.0`.

|

priority

|

fix transient object exception describe the bug the test createjsonrepresentationforbooks in bookservicetest java is failing because a tag is not saved before flushing to reproduce steps to reproduce the behaviour run the createjsonrepresentationforbooks test expected behaviour test should pass additional context stack trace branch in your pull request set the destination branch as

| 1

|

617,480

| 19,358,763,784

|

IssuesEvent

|

2021-12-16 00:55:43

|

UC-Davis-molecular-computing/scadnano

|

https://api.github.com/repos/UC-Davis-molecular-computing/scadnano

|

closed

|

make scadnano pitch angle agree with oxDNA

|

invalid high priority closed in dev

|

**Note:** This is a breaking change since it will change how oxDNA output works.

Currently, scadnano interprets the pitch angle as a clockwise rotation in the Y-Z plane, following SVG convention. The following design has a helix group (containing helix 1) with pitch=45 (clockwise, away from the single strand on helix 0):

However, exporting to oxDNA rotates the helix in the opposite direction (counter-clockwise, towards the single strand on helix 0):

The two conventions should match, either by rotating counter-clockwise in the scadnano main view, or by changing the oxDNA export code to rotate clockwise in the Y-Z plane. **UPDATE:** We changed the oxDNA export to rotate clockwise in the Y-Z plane.
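The mismatch comes down to the sign of the rotation angle. A rough sketch, assuming y is treated as the horizontal coordinate and z as the vertical one pointing up, so that a positive angle turns counter-clockwise; the function name and axis layout are assumptions made for illustration and are not taken from the scadnano or oxDNA code.

```python
import math


def rotate_yz(y, z, degrees, clockwise=False):
    """Rotate the point (y, z) about the origin of the Y-Z plane.

    Assumption: positive angles are counter-clockwise; a clockwise
    rotation is the same rotation with the angle negated.
    """
    theta = math.radians(-degrees if clockwise else degrees)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    return (y * cos_t - z * sin_t, y * sin_t + z * cos_t)


# The same pitch value lands on opposite sides depending on the convention.
ccw = rotate_yz(1.0, 0.0, 45)
cw = rotate_yz(1.0, 0.0, 45, clockwise=True)
```

Under these assumptions the two results differ only in the sign of the second coordinate, so making one side negate the angle is enough to bring the views into agreement.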

|

1.0

|

make scadnano pitch angle agree with oxDNA - **Note:** This is a breaking change since it will change how oxDNA output works.

Currently, scadnano interprets the pitch angle as a clockwise rotation in the Y-Z plane, following SVG convention. The following design has a helix group (containing helix 1) with pitch=45 (clockwise, away from the single strand on helix 0):

However, exporting to oxDNA rotates the helix in the opposite direction (counter-clockwise, towards the single strand on helix 0):

The two conventions should match, either by rotating counter-clockwise in the scadnano main view, or by changing the oxDNA export code to rotate clockwise in the Y-Z plane. **UPDATE:** We changed the oxDNA export to rotate clockwise in the Y-Z plane.

|

priority

|

make scadnano pitch angle agree with oxdna note this is a breaking change since it will change how oxdna output works currently scadnano interprets the pitch angle as a clockwise rotation in the y z plane following svg convention the following design has a helix group containing helix with pitch clockwise away from the single strand on helix however exporting to oxdna rotates the helix in the opposite direction counter clockwise towards the single strand on helix the two conventions should match either by rotating counter clockwise in the scadnano main view or by changing the oxdna export code to rotate clockwise in the y z plane update we changed the oxdna export to rotate clockwise in the y z plane

| 1

|

800,861

| 28,436,057,293

|

IssuesEvent

|

2023-04-15 10:21:26

|

svthalia/concrexit

|

https://api.github.com/repos/svthalia/concrexit

|

closed

|

Thabloid preview is broken

|

priority: high thabloid bug request-for-comments

|

### Describe the bug

The preview of thabloids does not work since S3:

- https://thalia.nu/members/thabloid/pages/2022/3/ returns `[]` (see https://github.com/svthalia/concrexit/blob/ce784be158c2e26afa9d389d67065db1cb1a716c/website/thabloid/models.py#L82)

- The 'pages' idea of thabloid is in general pretty broken. It seems like pages are getting created when requesting https://thalia.nu/members/thabloid/ because it's incredibly slow.

### How to reproduce

Open https://thalia.nu/members/thabloid/.

It's probably a good idea to rework the thabloid saving logic quite thoroughly. It might be good to add a `ThabloidPage` model just to keep track of the files that are being created more clearly.

The hacky ghostscript used to thumbnail the pages is quite ugly anyway. So personally I wouldn't mind dropping the page viewer altogether. Then we still need to get the front pages somehow for the cover, but it would be great if we can get rid of ghostscript entirely.

|

1.0

|

Thabloid preview is broken - ### Describe the bug

The preview of thabloids does not work since S3:

- https://thalia.nu/members/thabloid/pages/2022/3/ returns `[]` (see https://github.com/svthalia/concrexit/blob/ce784be158c2e26afa9d389d67065db1cb1a716c/website/thabloid/models.py#L82)

- The 'pages' idea of thabloid is in general pretty broken. It seems like pages are getting created when requesting https://thalia.nu/members/thabloid/ because it's incredibly slow.

### How to reproduce

Open https://thalia.nu/members/thabloid/.

It's probably a good idea to rework the thabloid saving logic quite thoroughly. It might be good to add a `ThabloidPage` model just to keep track of the files that are being created more clearly.

The hacky ghostscript used to thumbnail the pages is quite ugly anyway. So personally I wouldn't mind dropping the page viewer altogether. Then we still need to get the front pages somehow for the cover, but it would be great if we can get rid of ghostscript entirely.

|

priority

|

thabloid preview is broken describe the bug the preview of thabloids does not work since returns see the pages idea of thabloid is in general pretty broken it seems like pages are getting created when requesting because it s incredibly slow how to reproduce open it s probably a good idea to rework the thabloid saving logic quite thoroughly it might be good to add a thabloidpage model just to keep track of the files that are being created more clearly the hacky ghostscript used to thumbnail the pages is quite ugly anyway so personally i wouldn t mind dropping the page viewer alltogether then we still need to get the frontpages somehow for the cover but it would be great if we can get rid of ghostscript entirely

| 1

|

53,833

| 3,051,658,058

|

IssuesEvent

|

2015-08-12 10:00:50

|

Metaswitch/sprout

|

https://api.github.com/repos/Metaswitch/sprout

|

closed

|

Assert in pjsip timer when connection fails to SIP peer.

|

bug high-priority

|

See lots of log spam like the following when running stress.

```

25-06-2015 18:03:07.317 UTC Error pjsip: Assert failed: ../src/pj/timer.c:492 entry->_timer_id < 1

25-06-2015 18:03:07.317 UTC Error pjsip: tcpc0x7f85748f TCP connect() error: Connection refused [code=120111]

```

I suspect this is related to https://github.com/Metaswitch/pjsip-upstream/pull/35, but I can't really see how. I suspect the next step will be to get the stack when we hit this.

|

1.0

|

Assert in pjsip timer when connection fails to SIP peer. - See lots of log spam like the following when running stress.

```

25-06-2015 18:03:07.317 UTC Error pjsip: Assert failed: ../src/pj/timer.c:492 entry->_timer_id < 1

25-06-2015 18:03:07.317 UTC Error pjsip: tcpc0x7f85748f TCP connect() error: Connection refused [code=120111]

```

I suspect this is related to https://github.com/Metaswitch/pjsip-upstream/pull/35, but I can't really see how. I suspect the next step will be to get the stack when we hit this.

|

priority

|

assert in pjsip timer when connection fails to sip peer see lots of log spam like the following when running stress utc error pjsip assert failed src pj timer c entry timer id utc error pjsip tcp connect error connection refused i suspect this is related to but i can t really see how i suspect the next step will be to get the stack when we hit this

| 1

|

304,220

| 9,328,953,474

|

IssuesEvent

|

2019-03-28 00:08:18

|

Wraithaven/WraithEngine3

|

https://api.github.com/repos/Wraithaven/WraithEngine3

|

closed

|

Packet Protocol Encryption

|

enhancement high priority security

|

**Is your feature request related to a problem? Please describe.**

With the current developed implementation of the packet protocol for server networking, information is sent over the network unencrypted. This is a huge issue for certain bits of information being sent. This is highly vulnerable to cracks, information theft, and information modification. This is a huge issue and needs to be addressed. It can also make it extremely easy for users to fake the identity of other users.

**Describe the solution you'd like**

Packets should be encrypted before being sent over the network. This also ties into user authentication, which is highly related. Packets should be read by the server and the client, no one else.

**Steps to Solve**

An official authentication server should be set up to allow users to log in and have their identity verified. Afterward, a secure connection must be established between the user server and the client to allow for packets to be sent through.

|

1.0

|

Packet Protocol Encryption - **Is your feature request related to a problem? Please describe.**

With the current developed implementation of the packet protocol for server networking, information is sent over the network unencrypted. This is a huge issue for certain bits of information being sent. This is highly vulnerable to cracks, information theft, and information modification. This is a huge issue and needs to be addressed. It can also make it extremely easy for users to fake the identity of other users.

**Describe the solution you'd like**

Packets should be encrypted before being sent over the network. This also ties into user authentication, which is highly related. Packets should be read by the server and the client, no one else.

**Steps to Solve**

An official authentication server should be set up to allow users to log in and have their identity verified. Afterward, a secure connection must be established between the user server and the client to allow for packets to be sent through.

|

priority

|

packet protocol encryption is your feature request related to a problem please describe with the current developed implementation of the packet protocol for server networking information is sent over the network unencrypted this is a huge issue for certain bits of information being sent this is highly vulnerable to cracks information theft and information modification this is a huge issue and needs to be addressed it can also make it extremely easy for users to fake the identity of other users describe the solution you d like packets should be encrypted before being sent over the network this also ties into user authentication which is highly related packets should be read by the server and the client no one else steps to solve an official authentication server should be set up to allow users to log in and have their identity verified afterward a secure connection must be established between the user server and the client to allow for packets to be sent through

| 1

|

605,752

| 18,740,168,992

|

IssuesEvent

|

2021-11-04 12:44:34

|

vignetteapp/vignette

|

https://api.github.com/repos/vignetteapp/vignette

|

closed

|

Live2D binaries are not included by default in Portable Distributions.

|

bug priority:high

|

For some reason we are no longer supplying Live2DCubism with our ZIP files. This should be included regardless since we expect the user downloading these ZIPs to have them immediately. Did something change during the restructuring?

|

1.0

|

Live2D binaries are not included by default in Portable Distributions. - For some reason we are no longer supplying Live2DCubism with our ZIP files. This should be included regardless since we expect the user downloading these ZIPs to have them immediately. Did something change during the restructuring?

|

priority

|

binaries are not included by default in portable distributions for some reason we are no longer supplying with our zip files this should be included regardless since we expect the user downloading this zips to have them immediately did something change during the restructuring

| 1

|

734,977

| 25,372,967,931

|

IssuesEvent

|

2022-11-21 12:00:30

|

CLOSER-Cohorts/archivist

|

https://api.github.com/repos/CLOSER-Cohorts/archivist

|

closed

|

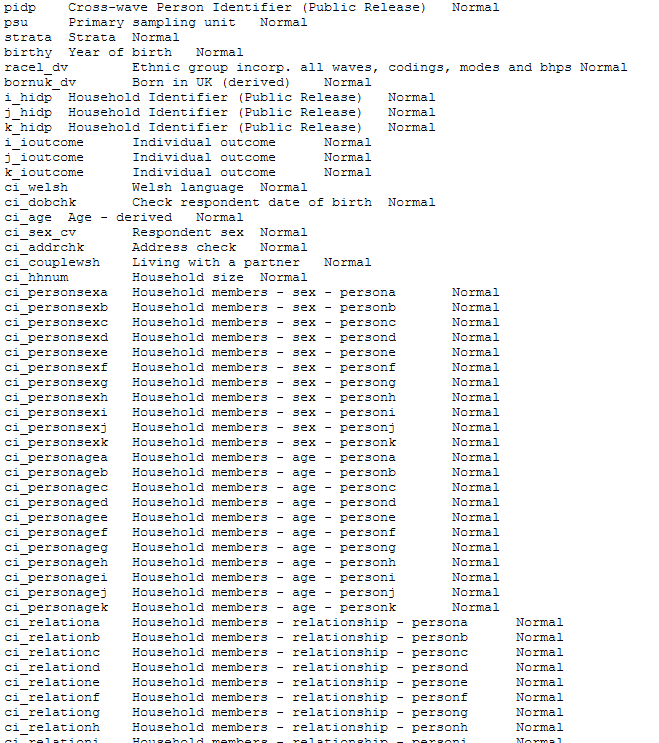

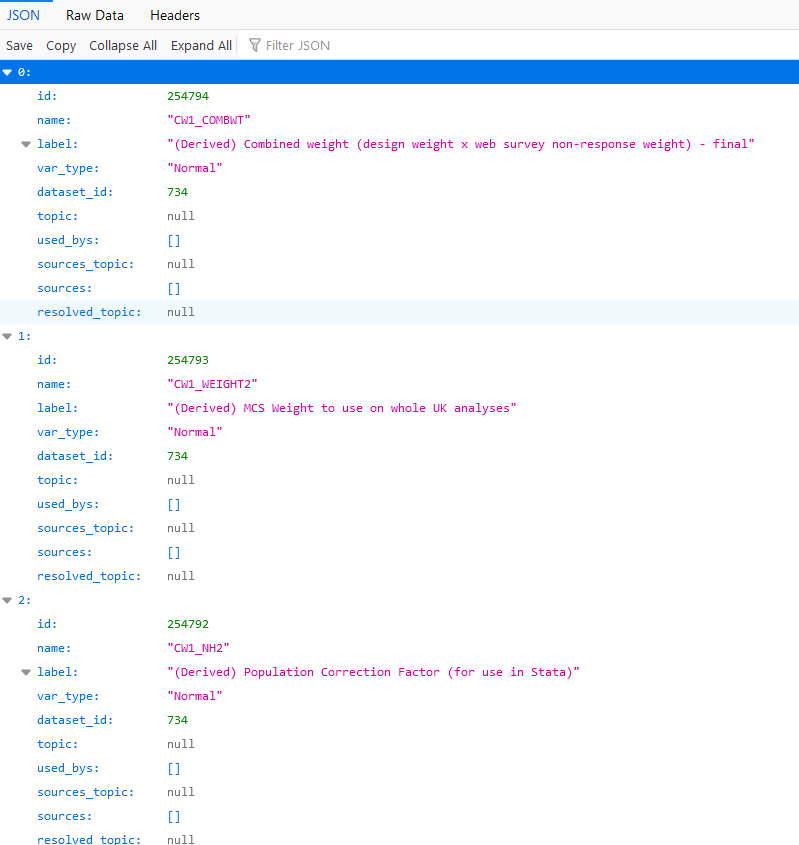

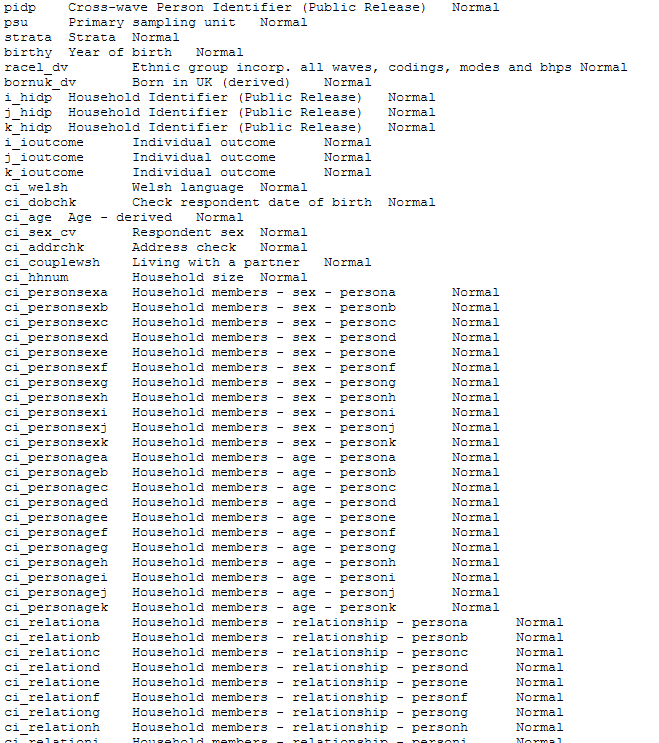

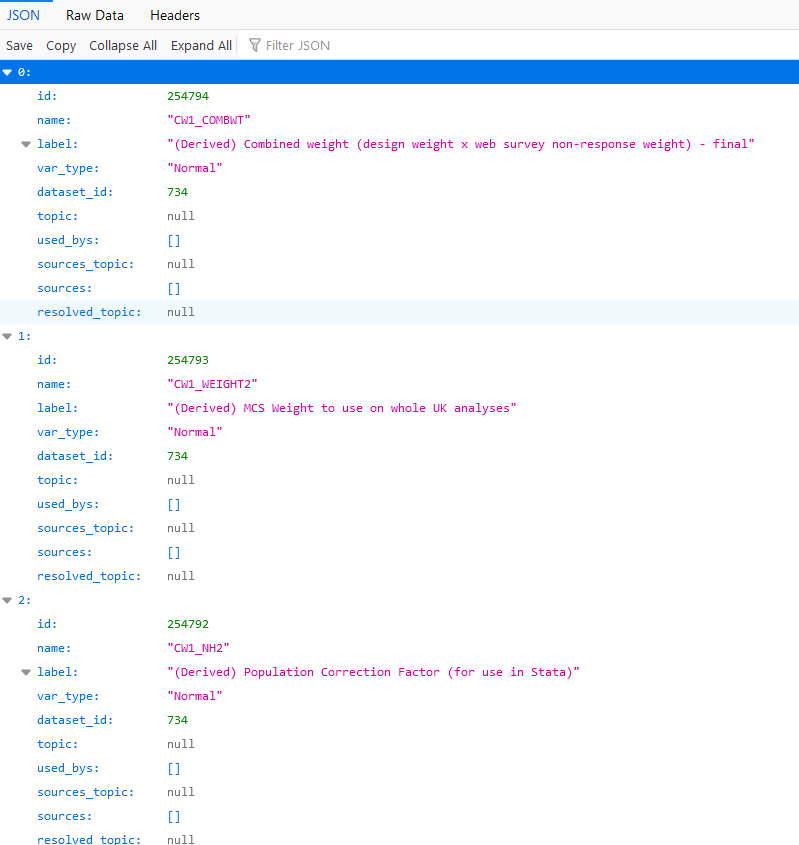

REACT: variables.txt is not loading correctly

|

bug High priority

|

e.g. https://closer-archivist-staging.herokuapp.com/datasets/734/variables.txt?token=eyJhbGciOiJIUzI1NiJ9.eyJpZCI6NjcsImFwaV9rZXkiOiJjNWFhZTg4MjUzOTM0NjllODU5NSJ9.donSPbb8rCNs5S0tZDujkix-awvn_qaDj9ZW2aFiqu4

It should look something like this

But it looks like this

I can get the variables from the tv.txt and the backend so not high priority.

|

1.0

|

REACT: variables.txt is not loading correctly - e.g. https://closer-archivist-staging.herokuapp.com/datasets/734/variables.txt?token=eyJhbGciOiJIUzI1NiJ9.eyJpZCI6NjcsImFwaV9rZXkiOiJjNWFhZTg4MjUzOTM0NjllODU5NSJ9.donSPbb8rCNs5S0tZDujkix-awvn_qaDj9ZW2aFiqu4

It should look something like this

But it looks like this

I can get the variables from the tv.txt and the backend so not high priority.

|

priority

|

react variables txt is not loading correctly e g it should look something like this but it looks like this i can get the variables from the tv txt and the backend so not high priority

| 1

|

616,596

| 19,306,961,391

|

IssuesEvent

|

2021-12-13 12:35:55

|

ballerina-platform/ballerina-lang

|

https://api.github.com/repos/ballerina-platform/ballerina-lang

|

closed

|

Parser does not support nested ternary expressions

|

Type/Bug Priority/High Team/CompilerFE Area/Parser Error/TypeK Lang/Expressions/ConditionalExpr

|

**Description:**

$title.

**Steps to reproduce:**

```ballerina

boolean cond = true;

int x5 = 1;

int y5 = 10;

int b11 = cond? cond? cond? y5 : x5 : x5 : x5; // not support

int b12 = cond? cond? (cond? y5 : x5) : x5 : x5; // support

```

**Affected Versions:**

SL Beta3

|

1.0

|

Parser does not support nested ternary expressions - **Description:**

$title.

**Steps to reproduce:**

```ballerina

boolean cond = true;

int x5 = 1;

int y5 = 10;

int b11 = cond? cond? cond? y5 : x5 : x5 : x5; // not support

int b12 = cond? cond? (cond? y5 : x5) : x5 : x5; // support

```

**Affected Versions:**

SL Beta3

|

priority

|

parser does not support nested ternary expressions description title steps to reproduce ballerina boolean cond true int int int cond cond cond not support int cond cond cond support affected versions sl

| 1

|

208,488

| 7,155,060,350

|

IssuesEvent

|

2018-01-26 11:04:54

|

metasfresh/metasfresh-webui-frontend

|

https://api.github.com/repos/metasfresh/metasfresh-webui-frontend

|

closed

|

editable views: sometimes the view's editable fields are PATCHed after the view was DELETEd

|

priority:high type:bug

|

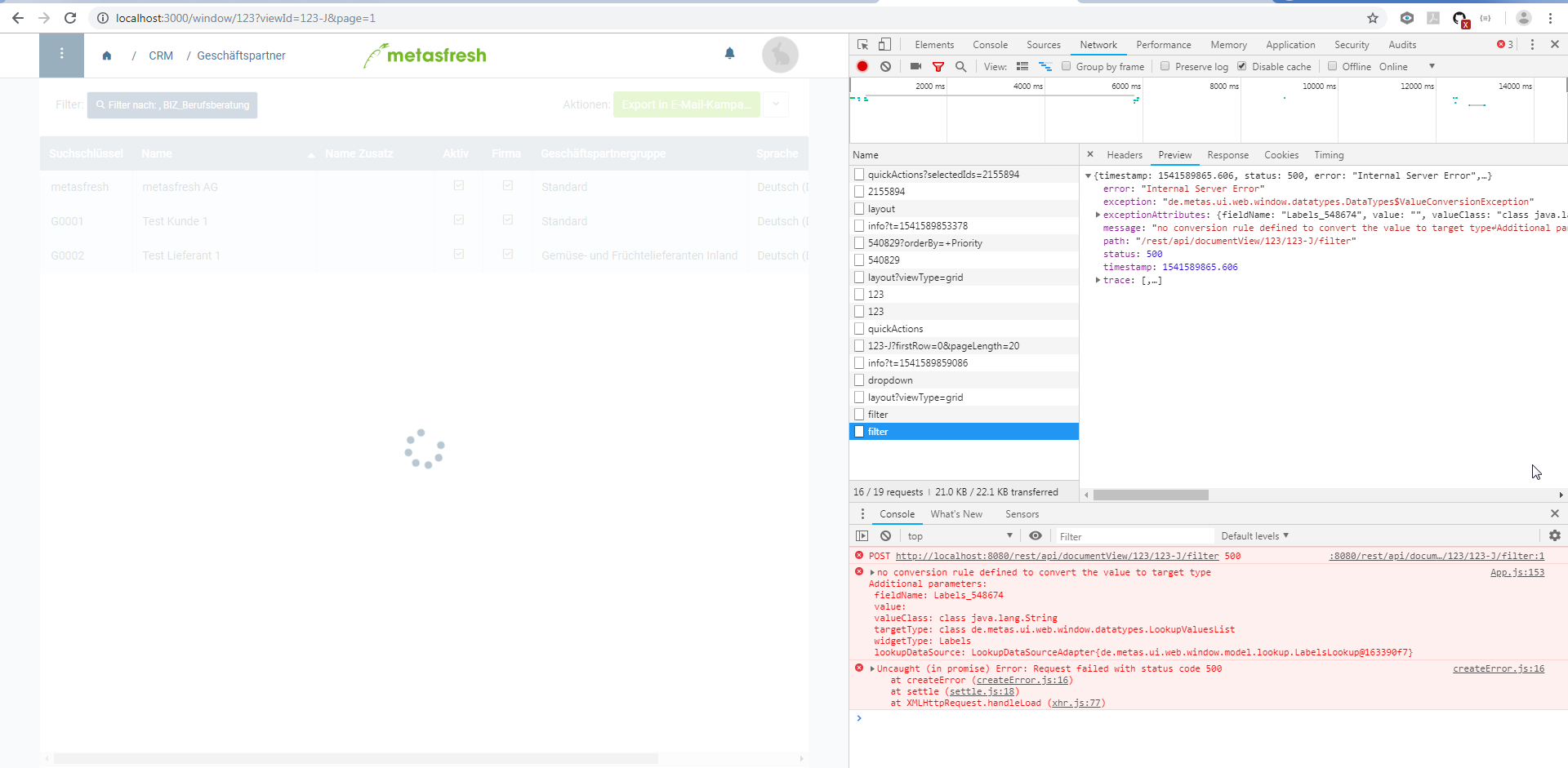

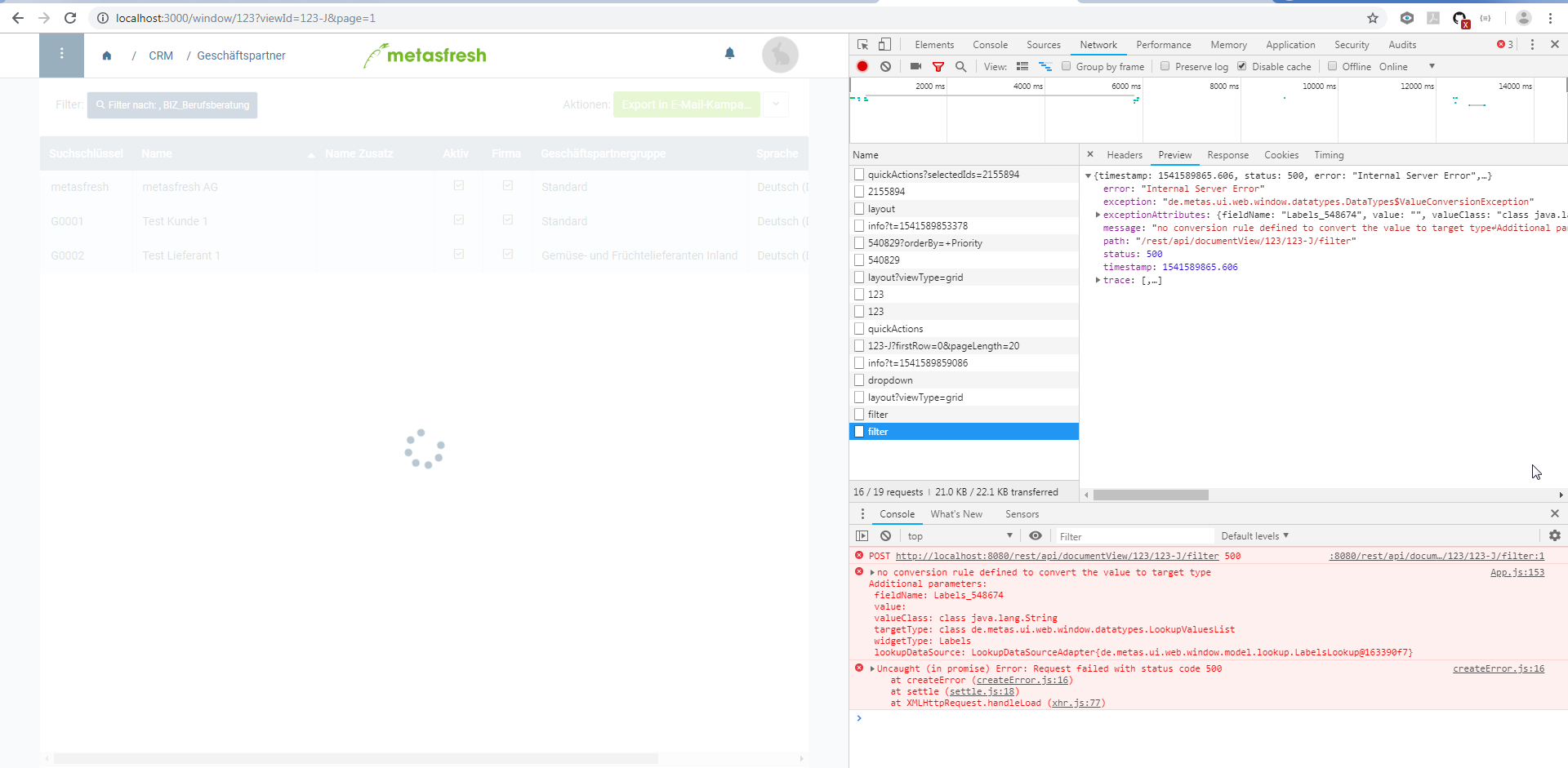

### Is this a bug or feature request?

### What is the current behavior?

#### Which are the steps to reproduce?

* open sales order:

* select some lines and call "Create purchase order"

* press OK

When pressing OK, the frontend shall send back all the changed fields and then it shall delete the view.

Sometimes (NOT always!) this happens in reversed order (i.e. first the view is deleted), which of course will trigger an issue on the backend side.

### What is the expected or desired behavior?

ALWAYS, do the view patching BEFORE deleting the view.
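The required ordering can be sketched as follows: the delete request is only issued once the patch request has completed. This is an illustration in Python with `asyncio`, assuming a fake in-memory backend; `FakeBackend`, `close_view` and the view id are hypothetical names and do not come from the metasfresh frontend.

```python
import asyncio


class FakeBackend:
    """In-memory stand-in for the backend; records the order of calls."""

    def __init__(self):
        self.views = {"view-1": {}}
        self.log = []

    async def patch(self, view_id, changes, delay=0.02):
        # Simulate a slow PATCH request.
        await asyncio.sleep(delay)
        if view_id not in self.views:
            raise KeyError(f"view {view_id} was already deleted")
        self.views[view_id].update(changes)
        self.log.append("PATCH")

    async def delete(self, view_id, delay=0.0):
        # Simulate a fast DELETE request.
        await asyncio.sleep(delay)
        self.views.pop(view_id, None)
        self.log.append("DELETE")


async def close_view(backend, view_id, changes):
    """Send the changed fields first, then delete the view."""
    await backend.patch(view_id, changes)  # wait for PATCH to finish
    await backend.delete(view_id)          # only then issue DELETE


def run_ordered():
    backend = FakeBackend()
    asyncio.run(close_view(backend, "view-1", {"qty": 5}))
    return backend.log
```

Firing both requests without awaiting the first lets the faster DELETE win the race, which matches the intermittent failure described above.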

|

1.0

|

editable views: sometimes the view's editable fields are PATCHed after the view was DELETEd - ### Is this a bug or feature request?

### What is the current behavior?

#### Which are the steps to reproduce?

* open sales order:

* select some lines and call "Create purchase order"

* press OK

When pressing OK, the frontend shall send back all the changed fields and then it shall delete the view.

Sometimes (NOT always!) this happens in reversed order (i.e. first the view is deleted), which of course will trigger an issue on the backend side.

### What is the expected or desired behavior?

ALWAYS, do the view patching BEFORE deleting the view.

|

priority

|

editable views sometimes the view s editable fields are patched after the view was deleted is this a bug or feature request what is the current behavior which are the steps to reproduce open sales order select some lines and call create purchase order press ok when pressing ok the frontend shall send back all the changed fields and then it shall delete the view sometimes not always this happens in reversed order i e first the view is deleted which ofc will trigger an issue on backend side what is the expected or desired behavior always do the view patching before deleting the view

| 1

|

788,224

| 27,747,752,386

|

IssuesEvent

|

2023-03-15 18:14:12

|

AY2223S2-CS2103T-T13-2/tp

|

https://api.github.com/repos/AY2223S2-CS2103T-T13-2/tp

|

closed

|

V1.2: Refactor Parser for "Edit", "Delete", "Find", "Help" and their commands

|

type.Enhancement priority.High

|

Change functionality to target our new Recipe model

|

1.0

|

V1.2: Refactor Parser for "Edit", "Delete", "Find", "Help" and their commands - Change functionality to target our new Recipe model

|

priority

|

refactor parser for edit delete find help and their commands change functionality to target our new recipe model

| 1

|

23,670

| 2,660,217,751

|

IssuesEvent

|

2015-03-19 04:02:42

|

cs2103jan2015-t11-2c/main

|

https://api.github.com/repos/cs2103jan2015-t11-2c/main

|

closed

|

UI: Create Main Menu

|

priority.high type.task

|

_From @limtheckyee on March 11, 2015 7:48_

_Copied from original issue: jasqxl/cs2103jan2015-t11-2c#17_

|

1.0

|

UI: Create Main Menu - _From @limtheckyee on March 11, 2015 7:48_

_Copied from original issue: jasqxl/cs2103jan2015-t11-2c#17_

|

priority

|

ui create main menu from limtheckyee on march copied from original issue jasqxl

| 1

|

473,642

| 13,645,366,187

|

IssuesEvent

|

2020-09-25 20:38:52

|

spacetelescope/mirage

|

https://api.github.com/repos/spacetelescope/mirage

|

closed

|

Linearized darks for the 2 FGS detectors are mixed in dark_prep

|

Bug High Priority dark_prep

|

For FGS exposures that contain more than one integration, it is possible for Mirage to use a guider1 dark for a guider2 exposure and vice versa. This is because the list of possible darks is a simple glob of the fits files in the dark directory of MIRAGE_DATA. For exposures with a single integration, a dark from the correct detector will be used because there is logic in the yaml_generator to separate the darks from the two detectors. This logic needs to be added to dark_prep when it creates its lindark_list.
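The filtering step could look roughly like the sketch below, which keeps only the darks whose file name mentions the requested detector. The function name and the file names are hypothetical; the real naming scheme of the files in MIRAGE_DATA is not shown here, so the substring match is an assumption.

```python
from pathlib import Path


def darks_for_detector(dark_files, detector):
    """Keep only the dark files whose name contains the detector name.

    Assumption: the detector name (e.g. "guider1") appears somewhere
    in each file name; matching is case-insensitive.
    """
    token = detector.lower()
    return sorted(f for f in dark_files if token in Path(f).name.lower())


# Hypothetical result of globbing the dark directory.
globbed = [
    "darks/guider1_linearized_dark_001.fits",
    "darks/guider2_linearized_dark_001.fits",
    "darks/guider1_linearized_dark_002.fits",
]
lindark_list = darks_for_detector(globbed, "GUIDER2")
```

Applying a filter like this to the globbed list before building `lindark_list` would keep multi-integration exposures on darks from a single detector.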

|

1.0

|

Linearized darks for the 2 FGS detectors are mixed in dark_prep - For FGS exposures that contain more than one integration, it is possible for Mirage to use a guider1 dark for a guider2 exposure and vice versa. This is because the list of possible darks is a simple glob of the fits files in the dark directory of MIRAGE_DATA. For exposures with a single integration, a dark from the correct detector will be used because there is logic in the yaml_generator to separate the darks from the two detectors. This logic needs to be added to dark_prep when it creates its lindark_list.

|

priority

|

linearized darks for the fgs detectors are mixed in dark prep for fgs exposures that contain more than one integration it is possible for mirage to use a dark for a exposures and vice versa this is because the list of possible darks is a simple glob of the fits files in the dark directory of mirage data for exposures with a single integration a dark from the correct detector will be used because there is logic in the yaml generator to separate the darks from the two detectors this logic needs to be added to dark prep when it creates its lindark list

| 1

|

65,724

| 3,238,070,655

|

IssuesEvent

|

2015-10-14 14:46:52

|

neuropoly/spinalcordtoolbox_web

|

https://api.github.com/repos/neuropoly/spinalcordtoolbox_web

|

opened

|

create an iSCT version that works with minimal functionalities

|

bug priority: high

|

Current problems are:

- not possible to upload data

|

1.0

|

create an iSCT version that works with minimal functionalities - Current problems are:

- not possible to upload data

|

priority

|

create an isct version that works with minimal functionalities current problems are not possible to upload data

| 1

|

498,276

| 14,404,978,507

|

IssuesEvent

|

2020-12-03 18:01:49

|

DistrictDataLabs/yellowbrick

|

https://api.github.com/repos/DistrictDataLabs/yellowbrick

|

closed

|

Yellowbrick v1.2 Conda Package

|

priority: high type: task

|

Version 1.2 has been released, once it's been verified we need to [upload it to conda](http://www.scikit-yb.org/en/develop/contributing/advanced_development_topics.html#deploying-to-anaconda-cloud)!

|

1.0

|

Yellowbrick v1.2 Conda Package - Version 1.2 has been released, once it's been verified we need to [upload it to conda](http://www.scikit-yb.org/en/develop/contributing/advanced_development_topics.html#deploying-to-anaconda-cloud)!

|

priority

|

yellowbrick conda package version has been released once it s been verified we need to

| 1

|

428,788

| 12,416,805,692

|

IssuesEvent

|

2020-05-22 19:03:23

|

juntofoundation/junto-mobile

|

https://api.github.com/repos/juntofoundation/junto-mobile

|

closed

|

Various notification caching optimizations

|

High Priority

|

So, when you receive a pack request you will get both a connection and a pack request notification in your inbox. What we have set up right now is that responding to one of those requests deletes only that particular notification from cache. However, in the case of the pack request, if I accept it *before* I respond to the connection request, it removes both of them from the API (since joining someone's pack automatically connects you with them). The issue we have then is that the connection request remains in cache as only the pack request is removed.

Would it be better for us to simply have one 'update notification cache' function to use? @orestesgaolin I know you're working on this for removing comment notifications, and I wonder if we should just implement this for all areas that need to update cache to reduce the amount of edge cases we need to account for and ensure we're always up to date with what the API returns. We won't ever have more than 100 notifs so I don't think it would be a big performance issue.

@Nash0x7E2 if this is the route we take then we could just implement this function when responding to requests in the relations drawer + packs requests in the Packs section of the app.

|

1.0

|

Various notification caching optimizations - So, when you receive a pack request, you will get both a connection and a pack request notification in your inbox. What we have set up right now is that responding to one of those requests deletes only that particular notification from cache. However, in the case of the pack request, if I accept it *before* I respond to the connection request, it removes both of them from the API (since joining someone's pack automatically connects you with them). The issue we have then is that the connection request remains in cache, as only the pack request is removed.

Would it be better for us to simply have one 'update notification cache' function to use? @orestesgaolin I know you're working on this for removing comment notifications, and I wonder if we should just implement this for all areas that need to update cache to reduce the amount of edge cases we need to account for and ensure we're always up to date with what the API returns. We won't ever have more than 100 notifs so I don't think it would be a big performance issue.

@Nash0x7E2 if this is the route we take then we could just implement this function when responding to requests in the relations drawer + packs requests in the Packs section of the app.

|

priority

|

various notification caching optimizations so when you receive a pack request you will get both a connection and a pack request notification in your inbox what we have set up right now is that responding to one of those requests deletes only that particular notification from cache however in the case of the pack request if i accept it before i respond to the connection request it removes both of them from the api since joining someone s pack automatically connects you with them the issue we have then is that the connection request remains in cache as only the pack request is removed would it be better for us to simply have one update notification cache function to use orestesgaolin i know you re working on this for removing comment notifications and i wonder if we should just implement this for all areas that need to update cache to reduce the amount of edge cases we need to account for and ensure we re always up to date with what the api returns we won t ever have more than notifs so i don t think it would be a big performance issue if this is the route we take then we could just implement this function when responding to requests in the relations drawer packs requests in the packs section of the app

| 1

|

167,058

| 6,331,572,780

|

IssuesEvent

|

2017-07-26 10:16:34

|

CraftAcademy/ca_course

|

https://api.github.com/repos/CraftAcademy/ca_course

|

opened

|

Create screencasts for week 5

|

ca-course course material high priority ready

|

We need screencasts on the following topics. Add more if I missed something.

- [ ] Rails routing

- [ ] Active Record basics

- [ ] Rails Helpers

- [ ] Nested Routes (I have a recording of this, will check it out) - Might need to make a short one though.. 🤔

|

1.0

|

Create screencasts for week 5 - We need screencasts on the following topics. Add more if I missed something.

- [ ] Rails routing

- [ ] Active Record basics

- [ ] Rails Helpers

- [ ] Nested Routes (I have a recording of this, will check it out) - Might need to make a short one though.. 🤔

|

priority

|

create screencasts for week we need screencasts on the following topics add more if i missed something rails routing active record basics rails helpers nested routes i have a recording of this will check it out might need to make a short one though 🤔

| 1

|

239,949

| 7,800,186,756

|

IssuesEvent

|

2018-06-09 06:06:52

|

MrBlizzard/RCAdmins-Tracker

|

https://api.github.com/repos/MrBlizzard/RCAdmins-Tracker

|

closed

|

[RCV] Constant/Common RCV Rollbacks

|

awaiting developer bug priority:high

|

RC Vaults don't appear to save on open/close and are subject to rollback without warning.

|

1.0

|

[RCV] Constant/Common RCV Rollbacks - RC Vaults don't appear to save on open/close and are subject to rollback without warning.

|

priority

|

constant common rcv rollbacks rc vaults don t appear to save on open close and are subject to rollback without warning

| 1

|

202,548

| 7,048,884,364

|

IssuesEvent

|

2018-01-02 19:40:06

|

unfoldingWord-dev/translationCore

|

https://api.github.com/repos/unfoldingWord-dev/translationCore

|

closed

|

Need a build with full NT for alignment

|

Epic Priority/High QA/Pass

|

- [ ] The aligners need a stable build that has the whole NT so that they can keep working on aligning the NT.

- [ ] Go back to before the project refactor, create a release branch (0.8.1)

- [ ] Remove the Titus restriction

|

1.0

|

Need a build with full NT for alignment - - [ ] The aligners need a stable build that has the whole NT so that they can keep working on aligning the NT.

- [ ] Go back to before the project refactor, create a release branch (0.8.1)

- [ ] Remove the Titus restriction

|

priority

|

need a build with full nt for alignment the aligners need a stable build that has the whole nt so that they can keep working on aligning the nt go back to before the project refactor create a release branch remove the titus restriction

| 1

|

230,790

| 7,613,982,873

|

IssuesEvent

|

2018-05-01 23:53:23

|

StrangeLoopGames/EcoIssues

|

https://api.github.com/repos/StrangeLoopGames/EcoIssues

|

closed

|

Version 7.4 stagged

|

High Priority

|

The Machinist Table is not designed, so we can't craft things like the gearbox. That means we can't destroy the meteor...

|

1.0

|

Version 7.4 stagged - The Machinist Table is not designed, so we can't craft things like the gearbox. That means we can't destroy the meteor...

|

priority

|

version stagged the machinist table is not designed so we can t craft things like the gearbox that means we can t destroy the meteor

| 1

|

49,159

| 3,001,742,965

|

IssuesEvent

|

2015-07-24 13:29:52

|

centreon/centreon

|

https://api.github.com/repos/centreon/centreon

|

closed

|

[Hosts] Can't remove last Template

|

Category: Centreon - Configuration Component: Affect Version Component: Resolution Priority: High Status: Rejected Tracker: Bug

|

---

Author Name: **Florian Asche** (Florian Asche)

Original Redmine Issue: 5368, https://forge.centreon.com/issues/5368

Original Date: 2014-03-15

Original Assignee: remi werquin

---

Hello,

If I want to delete the last template that is associated with a host, Centreon doesn't delete it when saving.

|

1.0

|

[Hosts] Can't remove last Template - ---

Author Name: **Florian Asche** (Florian Asche)

Original Redmine Issue: 5368, https://forge.centreon.com/issues/5368

Original Date: 2014-03-15

Original Assignee: remi werquin

---

Hello,

If I want to delete the last template that is associated with a host, Centreon doesn't delete it when saving.

|

priority

|

can t remove last template author name florian asche florian asche original redmine issue original date original assignee remi werquin hello if i want to delete the last template that is associated with a host centreon doesn t delete it when saving

| 1

|

112,475

| 4,533,240,301

|

IssuesEvent

|

2016-09-08 10:50:00

|

japanesemediamanager/MyAnime3

|

https://api.github.com/repos/japanesemediamanager/MyAnime3

|

closed

|

Resume video file doesn't work, plays from beginning always

|

Bug - High Priority

|

**Reported by ignaciogarciaaguirre, Oct 22, 2013**

*What steps will reproduce the problem?*

1. Play video.

2. Stop video.

3. Replay video.

*What is the expected output? What do you see instead?*

Option to resume or start from the beginning.

Starts from the beginning always.

*What version of the product are you using? On what operating system?*

MA3 3.1.32.0

MediaPortal 1.5.0 Release

Titan Skin

```

00000005 - 22-10-2013 12:26:43 - ImageLoad: Finished

00000001 - 22-10-2013 12:26:43 - SetFacade List Mode: Episode

00000001 - 22-10-2013 12:26:43 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000024 - 22-10-2013 12:26:43 - GOT FANART details in: 5.0003 ms (.hack//G.U. Returner)

00000024 - 22-10-2013 12:26:43 - LOADING FANART: .hack//G.U. Returner - C:\ProgramData\Team MediaPortal\MediaPortal\Thumbs\Anime3\TvDB\fanart\original\79099-10.jpg

00000024 - 22-10-2013 12:26:43 - Report ItemToAutoSelect: 0

00000024 - 22-10-2013 12:26:43 - SetFacade List Mode: Episode

00000024 - 22-10-2013 12:26:43 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000001 - 22-10-2013 12:26:43 - [Anime2:\anime3_DVDDIVX.png]

00000001 - 22-10-2013 12:26:51 - Selected to play: 1 - OVA

00000001 - 22-10-2013 12:26:51 - Filetoplay: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:26:51 - Getting time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:26:51 - Time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 0

00000001 - 22-10-2013 12:26:52 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:26:52 - Playback started for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:26 - OnPlayBackStopped: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 33 - Video

00000001 - 22-10-2013 12:27:26 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:27:26 - Playback stopped for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:26 - Checking for set watched

00000001 - 22-10-2013 12:27:26 - Starting page load...

00000001 - 22-10-2013 12:27:26 - Adding hook to hook event handler

00000001 - 22-10-2013 12:27:26 - SetFacade List Mode: Episode

00000001 - 22-10-2013 12:27:26 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000001 - 22-10-2013 12:27:26 - C:\ProgramData\Team MediaPortal\MediaPortal\Skin\Titan\Anime3_SkinSettings.xml

00000001 - 22-10-2013 12:27:26 - Loading Logos

00000001 - 22-10-2013 12:27:26 - Thumbs Setting Folder: C:\ProgramData\Team MediaPortal\MediaPortal\Thumbs

00000001 - 22-10-2013 12:27:26 - SetFacade List Mode: Episode

00000001 - 22-10-2013 12:27:26 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000024 - 22-10-2013 12:27:26 - GOT FANART details in: 4.0002 ms (.hack//G.U. Returner)

00000024 - 22-10-2013 12:27:26 - LOADING FANART: .hack//G.U. Returner - C:\ProgramData\Team MediaPortal\MediaPortal\Thumbs\Anime3\TvDB\fanart\original\79099-5.jpg

00000024 - 22-10-2013 12:27:26 - Report ItemToAutoSelect: 0

00000024 - 22-10-2013 12:27:26 - SetFacade List Mode: Episode

00000024 - 22-10-2013 12:27:26 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000001 - 22-10-2013 12:27:32 - Selected to play: 1 - OVA

00000001 - 22-10-2013 12:27:32 - Filetoplay: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:32 - Getting time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:32 - Time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 0

00000001 - 22-10-2013 12:27:32 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:27:32 - Playback started for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:36 - OnPlayBackStopped: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 3 - Video

00000001 - 22-10-2013 12:27:36 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:27:36 - Playback stopped for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

```

|

1.0

|

Resume video file doesn't work, plays from beginning always - **Reported by ignaciogarciaaguirre, Oct 22, 2013**

*What steps will reproduce the problem?*

1. Play video.

2. Stop video.

3. Replay video.

*What is the expected output? What do you see instead?*

Option to resume or start from the beginning.

Starts from the beginning always.

*What version of the product are you using? On what operating system?*

MA3 3.1.32.0

MediaPortal 1.5.0 Release

Titan Skin

```

00000005 - 22-10-2013 12:26:43 - ImageLoad: Finished

00000001 - 22-10-2013 12:26:43 - SetFacade List Mode: Episode

00000001 - 22-10-2013 12:26:43 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000024 - 22-10-2013 12:26:43 - GOT FANART details in: 5.0003 ms (.hack//G.U. Returner)

00000024 - 22-10-2013 12:26:43 - LOADING FANART: .hack//G.U. Returner - C:\ProgramData\Team MediaPortal\MediaPortal\Thumbs\Anime3\TvDB\fanart\original\79099-10.jpg

00000024 - 22-10-2013 12:26:43 - Report ItemToAutoSelect: 0

00000024 - 22-10-2013 12:26:43 - SetFacade List Mode: Episode

00000024 - 22-10-2013 12:26:43 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000001 - 22-10-2013 12:26:43 - [Anime2:\anime3_DVDDIVX.png]

00000001 - 22-10-2013 12:26:51 - Selected to play: 1 - OVA

00000001 - 22-10-2013 12:26:51 - Filetoplay: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:26:51 - Getting time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:26:51 - Time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 0

00000001 - 22-10-2013 12:26:52 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:26:52 - Playback started for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:26 - OnPlayBackStopped: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 33 - Video

00000001 - 22-10-2013 12:27:26 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:27:26 - Playback stopped for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:26 - Checking for set watched

00000001 - 22-10-2013 12:27:26 - Starting page load...

00000001 - 22-10-2013 12:27:26 - Adding hook to hook event handler

00000001 - 22-10-2013 12:27:26 - SetFacade List Mode: Episode

00000001 - 22-10-2013 12:27:26 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000001 - 22-10-2013 12:27:26 - C:\ProgramData\Team MediaPortal\MediaPortal\Skin\Titan\Anime3_SkinSettings.xml

00000001 - 22-10-2013 12:27:26 - Loading Logos

00000001 - 22-10-2013 12:27:26 - Thumbs Setting Folder: C:\ProgramData\Team MediaPortal\MediaPortal\Thumbs

00000001 - 22-10-2013 12:27:26 - SetFacade List Mode: Episode

00000001 - 22-10-2013 12:27:26 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000024 - 22-10-2013 12:27:26 - GOT FANART details in: 4.0002 ms (.hack//G.U. Returner)

00000024 - 22-10-2013 12:27:26 - LOADING FANART: .hack//G.U. Returner - C:\ProgramData\Team MediaPortal\MediaPortal\Thumbs\Anime3\TvDB\fanart\original\79099-5.jpg

00000024 - 22-10-2013 12:27:26 - Report ItemToAutoSelect: 0

00000024 - 22-10-2013 12:27:26 - SetFacade List Mode: Episode

00000024 - 22-10-2013 12:27:26 - SetFacade: Filters: False - Groups: False - Series: False - Episodes: True

00000001 - 22-10-2013 12:27:32 - Selected to play: 1 - OVA

00000001 - 22-10-2013 12:27:32 - Filetoplay: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:32 - Getting time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:32 - Time stopped for : Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 0

00000001 - 22-10-2013 12:27:32 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:27:32 - Playback started for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

00000001 - 22-10-2013 12:27:36 - OnPlayBackStopped: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - 3 - Video

00000001 - 22-10-2013 12:27:36 - PlayBackOpIsOfConcern: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv - Video - MyAnimePlugin3.ViewModel.AnimeEpisodeVM

00000001 - 22-10-2013 12:27:36 - Playback stopped for: Y:\anime\.hackG.U. Returner\[LuPerry]_dot_hack_GU_-_returner[496A700E].mkv

```

|

priority

|

resume video file doesn t work plays from beginning always reported by ignaciogarciaaguirre oct what steps will reproduce the problem play video stop video replay video what is the expected output what do you see instead option to resume or start from the beginning starts from the beginning always what version of the product are you using on what operating system mediaportal release titan skin imageload finished setfacade list mode episode setfacade filters false groups false series false episodes true got fanart details in ms hack g u returner loading fanart hack g u returner c programdata team mediaportal mediaportal thumbs tvdb fanart original jpg report itemtoautoselect setfacade list mode episode setfacade filters false groups false series false episodes true selected to play ova filetoplay y anime hackg u returner dot hack gu returner mkv getting time stopped for y anime hackg u returner dot hack gu returner mkv time stopped for y anime hackg u returner dot hack gu returner mkv playbackopisofconcern y anime hackg u returner dot hack gu returner mkv video viewmodel animeepisodevm playback started for y anime hackg u returner dot hack gu returner mkv onplaybackstopped y anime hackg u returner dot hack gu returner mkv video playbackopisofconcern y anime hackg u returner dot hack gu returner mkv video viewmodel animeepisodevm playback stopped for y anime hackg u returner dot hack gu returner mkv checking for set watched starting page load adding hook to hook event handler setfacade list mode episode setfacade filters false groups false series false episodes true c programdata team mediaportal mediaportal skin titan skinsettings xml loading logos thumbs setting folder c programdata team mediaportal mediaportal thumbs setfacade list mode episode setfacade filters false groups false series false episodes true got fanart details in ms hack g u returner loading fanart hack g u returner c programdata team mediaportal mediaportal thumbs tvdb fanart original jpg report 
itemtoautoselect setfacade list mode episode setfacade filters false groups false series false episodes true selected to play ova filetoplay y anime hackg u returner dot hack gu returner mkv getting time stopped for y anime hackg u returner dot hack gu returner mkv time stopped for y anime hackg u returner dot hack gu returner mkv playbackopisofconcern y anime hackg u returner dot hack gu returner mkv video viewmodel animeepisodevm playback started for y anime hackg u returner dot hack gu returner mkv onplaybackstopped y anime hackg u returner dot hack gu returner mkv video playbackopisofconcern y anime hackg u returner dot hack gu returner mkv video viewmodel animeepisodevm playback stopped for y anime hackg u returner dot hack gu returner mkv

| 1

|

650,972

| 21,445,652,377

|

IssuesEvent

|

2022-04-25 05:54:50

|

rosekamallove/youtemy

|

https://api.github.com/repos/rosekamallove/youtemy

|

opened

|

Create: Landing Page

|

enhancement high-priority

|

Currently, when a user is not logged in, it goes to the landing page which doesn't have anything on it other than the SignIn and Contribute Button.

We need to create a beautiful Landing Page containing a Call to Action and possibly animations explaining the various features in our web app.

|

1.0

|

Create: Landing Page - Currently, when a user is not logged in, it goes to the landing page which doesn't have anything on it other than the SignIn and Contribute Button.

We need to create a beautiful Landing Page containing a Call to Action and possibly animations explaining the various features in our web app.

|

priority

|

create landing page currently when a user is not logged in it goes to the landing page which doesn t have anything on it other than the signin and contribute button we need to create a beautiful landing page containing a call to action and possibly animations explaining the various features in our web app

| 1

|

479,327

| 13,794,788,946

|

IssuesEvent

|

2020-10-09 16:53:14

|

ooni/explorer

|

https://api.github.com/repos/ooni/explorer

|

closed

|

Some measurements display the ASN in the report ID / OONI Explorer measurement URLs, but not in the raw data

|

bug effort/XL interrupt priority/high

|

In some measurements (for example in WhatsApp and Telegram test results) the ASN is annotated as AS0, but it is possible to retrieve the actual ASN from OONI Explorer measurement URLs.

For example, in this measurement I can see from the measurement URL that the ASN is AS57293 (even though it is annotated as AS0 in the raw data): https://explorer.ooni.org/measurement/20200927T053618Z_AS57293_SZU6APrRIoL4pcWrIwmACx7ewQRL5pCqy5tEztueu4Tc5THkeX

Why does the measurement say AS0, while an ASN is displayed in the measurement URL?

|

1.0

|

Some measurements display the ASN in the report ID / OONI Explorer measurement URLs, but not in the raw data - In some measurements (for example in WhatsApp and Telegram test results) the ASN is annotated as AS0, but it is possible to retrieve the actual ASN from OONI Explorer measurement URLs.

For example, in this measurement I can see from the measurement URL that the ASN is AS57293 (even though it is annotated as AS0 in the raw data): https://explorer.ooni.org/measurement/20200927T053618Z_AS57293_SZU6APrRIoL4pcWrIwmACx7ewQRL5pCqy5tEztueu4Tc5THkeX

Why does the measurement say AS0, while an ASN is displayed in the measurement URL?

|

priority

|

some measurements display the asn in the report id ooni explorer measurement urls but not in the raw data in some measurements for example in whatsapp and telegram test results the asn is annotated as but it is possible to retrieve the actual asn from ooni explorer measurement urls for example in this measurement i can see from the measurement url that the asn is even though it is annotated as in the raw data why does the measurement say while an asn is displayed in the measurement url

| 1

|

510,275

| 14,787,764,872

|

IssuesEvent

|

2021-01-12 08:11:37

|

Disfactory/Disfactory

|

https://api.github.com/repos/Disfactory/Disfactory

|

closed

|

Backend: export the count of each display status for each county/city

|

Backend high priority

|

**Is your feature request related to a problem? Please describe.**

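Every month, Citizen of the Earth (地公) sends out a monthly report updating everyone on each county/city's progress, so we need to export these counts.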

**Describe the solution you'd like**

Export a CSV with counties/cities along one axis and display status along the other:

<img width="546" alt="Screenshot 2020-12-09 8 43 02 PM" src="https://user-images.githubusercontent.com/60970217/101631353-2a3ee080-3a5f-11eb-955a-94a13ae8af17.png">

**Describe alternatives you've considered**

Counting them manually, one by one QQ

|

1.0

|

Backend: export the count of each display status for each county/city - **Is your feature request related to a problem? Please describe.**

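Every month, Citizen of the Earth (地公) sends out a monthly report updating everyone on each county/city's progress, so we need to export these counts.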

**Describe the solution you'd like**

Export a CSV with counties/cities along one axis and display status along the other:

<img width="546" alt="Screenshot 2020-12-09 8 43 02 PM" src="https://user-images.githubusercontent.com/60970217/101631353-2a3ee080-3a5f-11eb-955a-94a13ae8af17.png">

**Describe alternatives you've considered**

Counting them manually, one by one QQ

|

priority

|

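backend export the count of each display status for each county city is your feature request related to a problem please describe every month citizen of the earth 地公 sends out a monthly report updating everyone on each county city s progress so we need to export these counts describe the solution you d like export a csv with counties cities along one axis and display status along the other img width alt screenshot src describe alternatives you ve considered counting them manually one by one qq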

| 1

|

29,401

| 2,715,484,832

|

IssuesEvent

|

2015-04-10 13:28:55

|

OpenConceptLab/oclapi

|

https://api.github.com/repos/OpenConceptLab/oclapi

|

opened

|

Setup celery environment on dev server / fabric scripts

|

enhancement high-priority

|

Celery scripts will be used to run exports as a background process.

Confirm design with Aaron.

|

1.0

|

Setup celery environment on dev server / fabric scripts - Celery scripts will be used to run exports as a background process.

Confirm design with Aaron.

|

priority

|

setup celery environment on dev server fabric scripts celery scripts will be used to run exports as a background process confirm design with aaron

| 1

|

720,359

| 24,789,410,327

|

IssuesEvent

|

2022-10-24 12:39:18

|

KinsonDigital/Velaptor

|

https://api.github.com/repos/KinsonDigital/Velaptor

|

closed

|

🚧Build app settings system

|

✨new feature high priority preview

|

### I have done the items below . . .

- [X] I have updated the title by replacing the '**_<title_**>' section.

### Description

Build an application settings system. This will be used to turn on and off various application behaviors. The system should automatically check if app settings exist. If the application settings do not exist, the file will be auto-created and all application settings with default settings will be added to the file.

This should be set up as a service with the name `AppSettingsService` with an interface and full unit testing.

The settings will be simple key-value pairs in JSON format.

Create 2 app settings for the width and height of the application. Refactor/add code to make use of these 2 settings.

Add an overload of the `CreateWindow()` method to the `App` class. This overload will create an instance of the singleton app settings object and check if the settings exist. If the settings do not exist, use a default width and height of **1500 x 800**.

The default width and height should be constants in the `App` class.

Change the method used in the `Program.cs` file for the **VelaptorTesting** project to use the new `CreateWindow()` method overload.

### Acceptance Criteria

**This issue is finished when:**

- [x] The settings should be saved in JSON format

- [x] The check for file existence and creation with default settings should only be done one time upon creation of the service singleton. This means once upon application startup.

- If this was done every time, then that could be stress on the system by checking and loading file data.

- [x] ~The settings service should hold all of the app setting names and values as a `record` type.~

- [x] ~The settings service should have a method that returns the settings for evaluation and use by other parts of the application~

- [x] The settings service will be a singleton in the IoC container

- [x] Overload method named `CreateWindow()` added to the `App` class.

- This method will pull the window width and height app settings for users to set the size of the application.

- [x] The app settings are set up to be used as default instead of using hard-coded values using the `CreateWindow(unit, unit)` method

- [x] Unit tests added

- [x] All unit tests pass

### ToDo Items

- [x] Draft pull request created and linked to this issue

- [X] Priority label added to issue (**_low priority_**, **_medium priority_**, or **_high priority_**)

- [x] Issue linked to the proper project

- [X] Issue linked to proper milestone

### Issue Dependencies

_No response_

### Related Work

- #247

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct

|

1.0

|

🚧Build app settings system - ### I have done the items below . . .

- [X] I have updated the title by replacing the '**_<title_**>' section.

### Description

Build an application settings system. This will be used to turn on and off various application behaviors. The system should automatically check if app settings exist. If the application settings do not exist, the file will be auto-created and all application settings with default settings will be added to the file.

This should be set up as a service with the name `AppSettingsService` with an interface and full unit testing.

The settings will be simple key-value pairs in JSON format.

Create 2 app settings for the width and height of the application. Refactor/add code to make use of these 2 settings.

Add an overload of the `CreateWindow()` method to the `App` class. This overload will create an instance of the singleton app settings object and check if the settings exist. If the settings do not exist, use a default width and height of **1500 x 800**.

The default width and height should be constants in the `App` class.

Change the method used in the `Program.cs` file for the **VelaptorTesting** project to use the new `CreateWindow()` method overload.

### Acceptance Criteria

**This issue is finished when:**

- [x] The settings should be saved in JSON format

- [x] The check for file existence and creation with default settings should only be done one time upon creation of the service singleton. This means once upon application startup.

- If this was done every time, then that could be stress on the system by checking and loading file data.

- [x] ~The settings service should hold all of the app setting names and values as a `record` type.~

- [x] ~The settings service should have a method that returns the settings for evaluation and use by other parts of the application~

- [x] The settings service will be a singleton in the IoC container

- [x] Overload method named `CreateWindow()` added to the `App` class.

- This method will pull the window width and height app settings for users to set the size of the application.

- [x] The app settings are set up to be used as default instead of using hard-coded values using the `CreateWindow(unit, unit)` method

- [x] Unit tests added

- [x] All unit tests pass

### ToDo Items

- [x] Draft pull request created and linked to this issue

- [X] Priority label added to issue (**_low priority_**, **_medium priority_**, or **_high priority_**)

- [x] Issue linked to the proper project

- [X] Issue linked to proper milestone

### Issue Dependencies

_No response_

### Related Work

- #247

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct

|

priority

|

🚧build app settings system i have done the items below i have updated the title by replacing the section description build an application settings system this will be used to turn on and off various application behaviors the system should automatically check if app settings exist if the application settings do not exist the file will be auto created and all application settings with default settings will be added to the file this should be set up as a service with the name appsettingsservice with an interface and full unit testing the settings will be simple key value pairs in json format create app settings for the width and height of the application refactor add code to make use of these settings add a method overload to the app class of the method createwindow this class will create an instance of the singleton app settings object and check if the settings exist if the settings do not exist use a default width and height of x the default width and height should be constants in the app class change the method used in the program cs file for the velaptortesting project to use the new createwindow method overload acceptance criteria this issue is finished when the settings should be saved in json format the check for file existence and creation with default settings should only be done one time upon creation of the service singleton this means once upon application startup if this was done every time then that could be stress on the system by checking and loading file data the settings service should hold all of the app setting names and values as a record type the settings service should have a method that returns the settings for evaluation and use by other parts of the application the settings service will be a singleton in the ioc container overload method named createwindow added to the app class this method will pull the window width and height app settings for users to set the size of the application the app settings are set up to be used as default instead 
of using hard coded values using the createwindow unit unit method unit tests added all unit tests pass todo items draft pull request created and linked to this issue priority label added to issue low priority medium priority or high priority issue linked to the proper project issue linked to proper milestone issue dependencies no response related work code of conduct i agree to follow this project s code of conduct

| 1

|

317,339

| 9,663,585,451

|

IssuesEvent

|

2019-05-21 01:23:56

|

NCIOCPL/cgov-digital-platform

|

https://api.github.com/repos/NCIOCPL/cgov-digital-platform

|

closed

|

Metadata tags are missing for video and infographic

|

High priority

|

The metadata is missing from video and infographic pages. Please fix.

|

1.0

|

Metadata tags are missing for video and infographic - The metadata is missing from video and infographic pages. Please fix.

|

priority

|

metadata tags are missing for video and infographic the metadata is missing from video and infographic pages please fix

| 1

|

472,803

| 13,631,565,346

|

IssuesEvent

|

2020-09-24 18:12:56

|

cloudfour/lighthouse-parade

|

https://api.github.com/repos/cloudfour/lighthouse-parade

|

closed

|

Convert to TypeScript

|

High priority enhancement

|

- [x] Set up TS https://github.com/cloudfour/lighthouse-parade/pull/11

- [x] Convert to TS: `utilities` https://github.com/cloudfour/lighthouse-parade/pull/15

- [x] Convert to TS: `reportToRow` https://github.com/cloudfour/lighthouse-parade/pull/17

- [x] Convert to TS: `combine` https://github.com/cloudfour/lighthouse-parade/pull/19

- [ ] Convert to TS: `combine_task` https://github.com/cloudfour/lighthouse-parade/pull/23

- [x] Convert to TS: `lighthouse` https://github.com/cloudfour/lighthouse-parade/pull/20

- [ ] Convert to TS: `lighthouse_task` https://github.com/cloudfour/lighthouse-parade/pull/23

- [x] Convert to TS: `url_csv_maker` https://github.com/cloudfour/lighthouse-parade/pull/22

- [ ] Convert to TS: `scan_task` https://github.com/cloudfour/lighthouse-parade/pull/23

- [ ] Convert to TS: `urls_task` https://github.com/cloudfour/lighthouse-parade/pull/23

|

1.0

|

Convert to TypeScript - - [x] Set up TS https://github.com/cloudfour/lighthouse-parade/pull/11

- [x] Convert to TS: `utilities` https://github.com/cloudfour/lighthouse-parade/pull/15

- [x] Convert to TS: `reportToRow` https://github.com/cloudfour/lighthouse-parade/pull/17

- [x] Convert to TS: `combine` https://github.com/cloudfour/lighthouse-parade/pull/19

- [ ] Convert to TS: `combine_task` https://github.com/cloudfour/lighthouse-parade/pull/23

- [x] Convert to TS: `lighthouse` https://github.com/cloudfour/lighthouse-parade/pull/20

- [ ] Convert to TS: `lighthouse_task` https://github.com/cloudfour/lighthouse-parade/pull/23

- [x] Convert to TS: `url_csv_maker` https://github.com/cloudfour/lighthouse-parade/pull/22

- [ ] Convert to TS: `scan_task` https://github.com/cloudfour/lighthouse-parade/pull/23

- [ ] Convert to TS: `urls_task` https://github.com/cloudfour/lighthouse-parade/pull/23

|

priority

|

convert to typescript set up ts convert to ts utilities convert to ts reporttorow convert to ts combine convert to ts combine task convert to ts lighthouse convert to ts lighthouse task convert to ts url csv maker convert to ts scan task convert to ts urls task

| 1

|

228,350

| 7,550,007,123

|

IssuesEvent

|

2018-04-18 15:39:20

|

EyeSeeTea/QAApp

|

https://api.github.com/repos/EyeSeeTea/QAApp

|

reopened

|

Improve, feedback: - Indent feedback content

|

complexity - low (1hr) priority - high type - maintenance

|

Please, use different colors for the background of Questions and Feedback scripts. Maybe a different shade of grey? This will help users to distinguish between Questions and Feedback scripts more easily.

|

1.0

|

Improve, feedback: - Indent feedback content - Please, use different colors for the background of Questions and Feedback scripts. Maybe a different shade of grey? This will help users to distinguish between Questions and Feedback scripts more easily.

|

priority

|

improve feedback indent feedback content please use different colors for the background of questions and feedback scripts maybe a different shade of grey this will help users to distinguish between questions and feedback scripts more easily

| 1

|

783,219

| 27,523,136,638

|

IssuesEvent

|

2023-03-06 16:14:47

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

tests/bluetooth/bsim/audio unicast_audio broken in main blocking CI

|

bug priority: high area: Bluetooth area: Bluetooth Audio

|

**Describe the bug**

tests/bluetooth/bsim/audio unicast_audio is broken in main and is currently blocking CI

**To Reproduce**

Look at bluetooth's CI workflow

Or:

1. fetch latest main locally

2. tests/bluetooth/bsim/audio/compile.sh

3. tests/bluetooth/bsim/audio/test_scripts/unicast_audio.sh

**Expected behavior**

The test passes

**Impact**

CI blocked for BT tests

**Logs and console output**

https://github.com/zephyrproject-rtos/zephyr/actions/runs/4344560229/jobs/7588045210

**Environment (please complete the following information):**

- Zephyr's CI

- Local Linux host

|

1.0

|

tests/bluetooth/bsim/audio unicast_audio broken in main blocking CI - **Describe the bug**

tests/bluetooth/bsim/audio unicast_audio is broken in main and is currently blocking CI

**To Reproduce**

Look at bluetooth's CI workflow

Or:

1. fetch latest main locally

2. tests/bluetooth/bsim/audio/compile.sh

3. tests/bluetooth/bsim/audio/test_scripts/unicast_audio.sh

**Expected behavior**

The test passes

**Impact**

CI blocked for BT tests

**Logs and console output**

https://github.com/zephyrproject-rtos/zephyr/actions/runs/4344560229/jobs/7588045210

**Environment (please complete the following information):**

- Zephyr's CI

- Local Linux host

|

priority

|

tests bluetooth bsim audio unicast audio broken in main blocking ci describe the bug tests bluetooth bsim audio unicast audio is broken in main and is currently blocking ci to reproduce look at bluetooth s ci workflow or fetch latest main locally tests bluetooth bsim audio compile sh tests bluetooth bsim audio test scripts unicast audio sh expected behavior the test passes impact ci blocked for bt tests logs and console output environment please complete the following information zephyr s ci local linux host

| 1

|

162,324

| 6,150,882,429

|

IssuesEvent

|

2017-06-28 00:08:55

|

Codewars/codewars.com

|

https://api.github.com/repos/Codewars/codewars.com

|

closed

|

Codewars Red streak stats misbehaving again

|

bug high priority

|

For some days now my profile has said this --

-- even though I did some katas on the mornings (California time) of April 30, May 1, etc.

|

1.0

|

Codewars Red streak stats misbehaving again - For some days now my profile has said this --

-- even though I did some katas on the mornings (California time) of April 30, May 1, etc.

|

priority

|

codewars red streak stats misbehaving again for some days now my profile has said this even though i did some katas on the mornings california time of april may etc

| 1

|

480,244

| 13,838,412,012

|

IssuesEvent

|

2020-10-14 06:11:50

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

cse.google.com - design is broken

|