Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 665 | labels stringlengths 4 554 | body stringlengths 3 235k | index stringclasses 6 values | text_combine stringlengths 96 235k | label stringclasses 2 values | text stringlengths 96 196k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

174,248 | 27,606,003,106 | IssuesEvent | 2023-03-09 13:02:56 | Kotlin/kotlinx.coroutines | https://api.github.com/repos/Kotlin/kotlinx.coroutines | closed | Consider deprecating or changing the behaviour of CoroutineContext.isActive | enhancement design breaking change for 1.7 | According to the [doc](https://kotlin.github.io/kotlinx.coroutines/kotlinx-coroutines-core/kotlinx.coroutines/is-active.html), `isActive` has the following property:

>The coroutineContext.isActive expression is a shortcut for coroutineContext[Job]?.isActive == true. See [Job.isActive](https://kotlin.github.io/kotlinx.coroutines/kotlinx-coroutines-core/kotlinx.coroutines/-job/is-active.html).

It means that, if the `Job` is not present, `isActive` always returns `false`.

We have multiple reports that such behaviour can be error-prone when used with non-`kotlinx.coroutines` entry points, such as Ktor and `suspend fun main`, because it is inconsistent with the overall contract:

>(Job) has not been completed and was not cancelled yet

`CoroutineContext.isActive` predates both `CoroutineScope` (which should always have a `Job` in it, if it's not `GlobalScope`) and `job` extension, so it may be the case that it can be safely deprecated.

Basically, we have three options:

* Do nothing, leave things as is. It doesn't solve the original issue, but also doesn't introduce any potentially breaking changes

* Deprecate `CoroutineContext.isActive`. Such a change has multiple potential downsides

* Its only possible replacement is `this.job.isActive`, but this replacement is not equivalent to the original method -- `.job` throws an exception for contexts without a `Job`. The absence of an equivalent replacement can be too disruptive, as [a lot of code](https://grep.app/search?q=context.isActive&filter[lang][0]=Kotlin) relies on a perfectly fine `ctxWithJob.isActive`

* Code that relies on `.job.isActive` no longer can be called from such entry points safely

* Change the default behaviour -- return `true`. It also "fixes" such patterns as `GlobalScope.isActive` but basically is a breaking change | 1.0 | Consider deprecating or changing the behaviour of CoroutineContext.isActive - According to the [doc](https://kotlin.github.io/kotlinx.coroutines/kotlinx-coroutines-core/kotlinx.coroutines/is-active.html), `isActive` has the following property:

>The coroutineContext.isActive expression is a shortcut for coroutineContext[Job]?.isActive == true. See [Job.isActive](https://kotlin.github.io/kotlinx.coroutines/kotlinx-coroutines-core/kotlinx.coroutines/-job/is-active.html).

It means that, if the `Job` is not present, `isActive` always returns `false`.

We have multiple reports that such behaviour can be error-prone when used with non-`kotlinx.coroutines` entry points, such as Ktor and `suspend fun main`, because it is inconsistent with the overall contract:

>(Job) has not been completed and was not cancelled yet

`CoroutineContext.isActive` predates both `CoroutineScope` (which should always have a `Job` in it, if it's not `GlobalScope`) and `job` extension, so it may be the case that it can be safely deprecated.

Basically, we have three options:

* Do nothing, leave things as is. It doesn't solve the original issue, but also doesn't introduce any potentially breaking changes

* Deprecate `CoroutineContext.isActive`. Such a change has multiple potential downsides

* Its only possible replacement is `this.job.isActive`, but this replacement is not equivalent to the original method -- `.job` throws an exception for contexts without a `Job`. The absence of an equivalent replacement can be too disruptive, as [a lot of code](https://grep.app/search?q=context.isActive&filter[lang][0]=Kotlin) relies on a perfectly fine `ctxWithJob.isActive`

* Code that relies on `.job.isActive` no longer can be called from such entry points safely

* Change the default behaviour -- return `true`. It also "fixes" such patterns as `GlobalScope.isActive` but basically is a breaking change | non_infrastructure | consider deprecating or changing the behaviour of coroutinecontext isactive according to the isactive has the following property the coroutinecontext isactive expression is a shortcut for coroutinecontext isactive true see it means that if the job is not present isactive always returns false we have multiple reports that such behaviour can be error prone when used with non kotlinx coroutines entry points such as ktor and suspend fun main because it is inconsistent with the overall contract job has not been completed and was not cancelled yet coroutinecontext isactive predates both coroutinescope which should always have a job in it if it s not globalscope and job extension so it may be the case that it can be safely deprecated basically we have three options do nothing left things as is it doesn t solve the original issue but also doesn t introduce any potentially breaking changes deprecate coroutinecontext isactive such change has multiple potential downsides its only possible replacement is this job isactive but this replacement is not equivalent to the original method job throws an exception for contexts without a job an absence of replacement can be too disturbing as kotlin rely on a perfectly fine ctxwithjob isactive code that relies on job isactive no longer can be called from such entry points safely change the default behaviour return true it also fixes such patterns as globalscope isactive but basically is a breaking change | 0 |

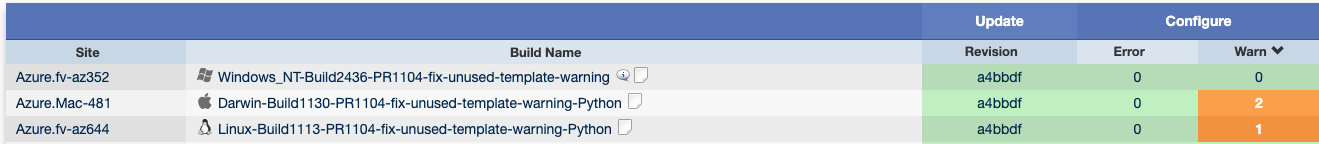

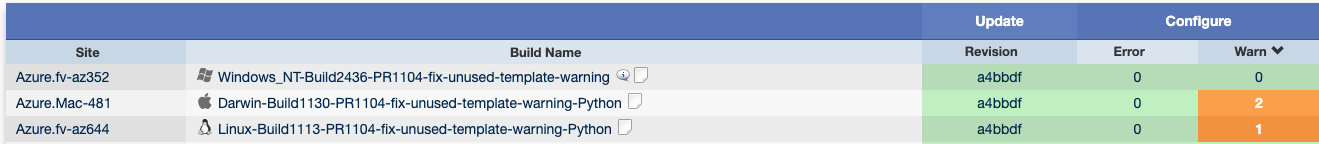

31,905 | 26,230,972,289 | IssuesEvent | 2023-01-05 00:08:34 | iree-org/iree | https://api.github.com/repos/iree-org/iree | closed | Schedule release candidate CI workflow picks up commits with failed checks | bug 🐞 infrastructure | ### What happened?

https://github.com/iree-org/iree/actions/runs/3469376607/jobs/5796297215

picked a failing commit (https://github.com/iree-org/iree/commit/3ff9d517054313a63d20012dcf9510e307915df1) as the "last green commit"

The code to pick up the commit is at https://github.com/talentpair/last-green-commit-action/blob/d95cfa836b22ef047dd0a8ddb1e6d9567982d702/src/main.ts#L34

The GitHub REST API returns the `check_suites` via https://docs.github.com/en/rest/checks/suites#list-check-suites-for-a-git-reference

In the example above, it returns a list of check_suites with 13 checks; some of them have the status `queued` and some have the status `completed`

https://api.github.com/repos/iree-org/iree/check-suites/9307573321

https://api.github.com/repos/iree-org/iree/check-suites/9316620900

### Steps to reproduce your issue

_No response_

### What component(s) does this issue relate to?

Other

### Version information

_No response_

### Additional context

_No response_ | 1.0 | Schedule release candidate CI workflow picks up commits with failed checks - ### What happened?

https://github.com/iree-org/iree/actions/runs/3469376607/jobs/5796297215

picked a failing commit (https://github.com/iree-org/iree/commit/3ff9d517054313a63d20012dcf9510e307915df1) as the "last green commit"

The code to pick up the commit is at https://github.com/talentpair/last-green-commit-action/blob/d95cfa836b22ef047dd0a8ddb1e6d9567982d702/src/main.ts#L34

The GitHub REST API returns the `check_suites` via https://docs.github.com/en/rest/checks/suites#list-check-suites-for-a-git-reference

In the example above, it returns a list of check_suites with 13 checks; some of them have the status `queued` and some have the status `completed`

https://api.github.com/repos/iree-org/iree/check-suites/9307573321

https://api.github.com/repos/iree-org/iree/check-suites/9316620900

### Steps to reproduce your issue

_No response_

### What component(s) does this issue relate to?

Other

### Version information

_No response_

### Additional context

_No response_ | infrastructure | schedule release candidate ci workflow picks up commits with failed checks what happened picked a failing commit as the last green commit the code to pick up the commit is at github rest api returns the check suites via in the example above it returns a list of check suites with checks and some of them are with status queued and some of them are with the status completed steps to reproduce your issue no response what component s does this issue relate to other version information no response additional context no response | 1 |

9,232 | 7,879,406,414 | IssuesEvent | 2018-06-26 13:20:20 | APSIMInitiative/ApsimX | https://api.github.com/repos/APSIMInitiative/ApsimX | closed | Grid copy and paste seems to be broken | bug interface/infrastructure | Attempts to paste into a grid cause ApsimX to crash. The problem appears to be a null reference exception. This should be fixed, and we should also have a more robust exception handling mechanism, to try to handle such failures more gracefully (and ideally without data loss). | 1.0 | Grid copy and paste seems to be broken - Attempts to paste into a grid cause ApsimX to crash. The problem appears to be a null reference exception. This should be fixed, and we should also have a more robust exception handling mechanism, to try to handle such failures more gracefully (and ideally without data loss). | infrastructure | grid copy and paste seems to be broken attempts to paste into a grid cause apsimx to crash the problem appears to be a null reference exception this should be fixed and we should also have a more robust exception handling mechanism to try to handle such failures more gracefully and ideally without data loss | 1 |

17,369 | 12,321,682,131 | IssuesEvent | 2020-05-13 09:06:35 | microsoft/WindowsTemplateStudio | https://api.github.com/repos/microsoft/WindowsTemplateStudio | closed | VS Emulator: Recreate app | Can Close Out Soon Infrastructure enhancement | Add a button in the generation details that recreates the same app (user selection and folder destination).

This will be very useful because when we are creating templates there are times when you fix something in the template and you want to generate the same app to check if the issue is fixed or not and you always have to open the wizard and set the same user selection. | 1.0 | VS Emulator: Recreate app - Add a button in the generation details that recreates the same app (user selection and folder destination).

This will be very useful because when we are creating templates there are times when you fix something in the template and you want to generate the same app to check if the issue is fixed or not and you always have to open the wizard and set the same user selection. | infrastructure | vs emulator recreate app add a button in the generation details that recreates the same app user selection and folder destination this will be very useful because when we are creating templates there are times when you fix something in the template and you want to generate the same app to check if the issue is fixed or not and you always have to open the wizard and set the same user selection | 1 |

11,330 | 9,104,771,880 | IssuesEvent | 2019-02-20 19:02:27 | square/misk-web | https://api.github.com/repos/square/misk-web | closed | Rework misk-web repo to have a single Rush managed directory | enhancement infrastructure | Currently we have 2 Rush managed directories

- `examples`

- `misk-web/web/packages`

A single one will reduce the legwork of bumping and publishing packages, since version updates will be applied across the `@misk/*` packages and the example code.

| 1.0 | Rework misk-web repo to have a single Rush managed directory - Currently we have 2 Rush managed directories

- `examples`

- `misk-web/web/packages`

A single one will reduce the legwork of bumping and publishing packages, since version updates will be applied across the `@misk/*` packages and the example code.

| infrastructure | rework misk web repo to have a single rush managed directory currently we have rush managed directories examples misk web web packages a single one will reduce the legwork to bumping and publishing packages since version updates will be updated across the misk packages and the example code | 1 |

14,962 | 11,272,264,892 | IssuesEvent | 2020-01-14 14:35:26 | approvals/ApprovalTests.cpp | https://api.github.com/repos/approvals/ApprovalTests.cpp | closed | Naming of targets in third_party is non-standard | bug infrastructure | If the Catch2 project is included via CMake's `add_subdirectory()` or `FetchContent`, then the following targets are created, as far as I can tell:

* `Catch2`

* `Catch2::Catch2`

Unfortunately I didn't appreciate the significance of this when creating third_party/catch2/CMakeLists.txt - which creates the target `catch`

The name `Catch2::Catch2` is preferred, as if that is missing, a warning is issued when CMake runs, making it easier to track down missing dependencies.

I think it should be possible to create aliases in the CMake files in third_party to retain the old naming, in case any users are already depending on the third_party target-names I created earlier. | 1.0 | Naming of targets in third_party is non-standard - If the Catch2 project is included via CMake's `add_subdirectory()` or `FetchContent`, then the following targets are created, as far as I can tell:

* `Catch2`

* `Catch2::Catch2`

Unfortunately I didn't appreciate the significance of this when creating third_party/catch2/CMakeLists.txt - which creates the target `catch`

The name `Catch2::Catch2` is preferred, as if that is missing, a warning is issued when CMake runs, making it easier to track down missing dependencies.

I think it should be possible to create aliases in the CMake files in third_party to retain the old naming, in case any users are already depending on the third_party target-names I created earlier. | infrastructure | naming of targets in third party is non standard if the project is included via cmake s add subdirectory or fetchcontent then the following targets are created as far as i can tell unfortunately i didn t appreciate the significance of this when creating third party cmakelists txt which creates the target catch the name is preferred as if that is missing a warning is issued when cmake runs making it easier to track down missing dependencies i think it should be possible to create aliases in the cmake files in third party to retain the old naming in case any users are already depending on the third party target names i created earlier | 1 |

18,068 | 12,748,414,342 | IssuesEvent | 2020-06-26 20:04:17 | hyphacoop/organizing | https://api.github.com/repos/hyphacoop/organizing | opened | Re-evaluate external services | [priority-★☆☆] wg:infrastructure | <sup>_This initial comment is collaborative and open to modification by all._</sup>

## Task Summary

🎟️ **Re-ticketed from:** #

🗣 **Loomio:** N/A

📅 **Due date:** end-Oct

🎯 **Success criteria:** Have a plan for continued hosting (or deprecation) of each service listed below.

In our discussions, we planned to not migrate these services:

>- VM8: email 💧💾💾💾

>- VM9: matrix + whatsapp bridge + chatbot 💧💧💾

>- VM4: nextcloud + onlyoffice 💧💧💾💾

>- VM10: android vm 💧

We should re-evaluate the long-term plan for these services, and deprecate ones that are no longer important.

## To Do

- [ ] Discuss and have a plan for each service

| 1.0 | Re-evaluate external services - <sup>_This initial comment is collaborative and open to modification by all._</sup>

## Task Summary

🎟️ **Re-ticketed from:** #

🗣 **Loomio:** N/A

📅 **Due date:** end-Oct

🎯 **Success criteria:** Have a plan for continued hosting (or deprecation) of each service listed below.

In our discussions, we planned to not migrate these services:

>- VM8: email 💧💾💾💾

>- VM9: matrix + whatsapp bridge + chatbot 💧💧💾

>- VM4: nextcloud + onlyoffice 💧💧💾💾

>- VM10: android vm 💧

We should re-evaluate the long-term plan for these services, and deprecate ones that are no longer important.

## To Do

- [ ] Discuss and have a plan for each service

| infrastructure | re evaluate external services this initial comment is collaborative and open to modification by all task summary 🎟️ re ticketed from 🗣 loomio n a 📅 due date end oct 🎯 success criteria have a plan for continued hosting or deprecation of each service listed below in our discussions we planned to not migrate these services email 💧💾💾💾 matrix whatsapp bridge chatbot 💧💧💾 nextcloud onlyoffice 💧💧💾💾 android vm 💧 we should re evaluate the long term plan for these services and deprecate ones that are no longer important to do discuss and have a plan for each service | 1 |

831,539 | 32,051,978,481 | IssuesEvent | 2023-09-23 17:10:02 | Hamlib/Hamlib | https://api.github.com/repos/Hamlib/Hamlib | opened | FT-DX101MP ST command fix | bug priority | To maintain compatibility with older firmware need to test if ST command is available.

Send ST; and if ?; is received command is not available.

| 1.0 | FT-DX101MP ST command fix - To maintain compatibility with older firmware need to test if ST command is available.

Send ST; and if ?; is received command is not available.

| non_infrastructure | ft st command fix to maintain compatibility with older firmware need to test if st command is available send st and if is received command is not available | 0 |

28,656 | 23,422,211,677 | IssuesEvent | 2022-08-13 21:53:45 | oppia/oppia-android | https://api.github.com/repos/oppia/oppia-android | closed | [A11Y Advanced] Lessons tab flow needs to be improved | Type: Improvement Priority: Essential issue_type_infrastructure issue_user_impact_low user_team | Lessons tab flow needs to be improved. Current experience is shown below:

https://user-images.githubusercontent.com/9396084/136244615-eb6b4f3f-e676-485d-9477-579822845039.mp4

| 1.0 | [A11Y Advanced] Lessons tab flow needs to be improved - Lessons tab flow needs to be improved. Current experience is shown below:

https://user-images.githubusercontent.com/9396084/136244615-eb6b4f3f-e676-485d-9477-579822845039.mp4

| infrastructure | lessons tab flow needs to be improved lessons tab flow needs to be improved current experience is shown below | 1 |

417,795 | 12,179,342,906 | IssuesEvent | 2020-04-28 10:30:33 | web-platform-tests/wpt | https://api.github.com/repos/web-platform-tests/wpt | closed | Commits touching many files fail to run in Taskcluster | Taskcluster infra priority:roadmap | https://wpt.fyi/runs?max-count=100&label=beta shows these runs so far this year:

Beta runs are triggered weekly, so many dates are missing here, like all of July, Aug 5 and Aug 19.

To figure out what went wrong, one has to know what the weekly SHA was, and https://wpt.fyi/api/revisions/list?epochs=weekly&num_revisions=100 lists them going back in time.

Then a URL like https://api.github.com/repos/web-platform-tests/wpt/statuses/8561d630fb3c4ede85b33df61f91847a21c1989e will lead to the task group:

https://tools.taskcluster.net/groups/WfP5NQ-ISzahOnNbiJugEQ

This last time, it looks like all tasks failed like this:

```

[taskcluster 2019-08-19 00:00:58.151Z] === Task Starting ===

standard_init_linux.go:190: exec user process caused "argument list too long"

[taskcluster 2019-08-19 00:00:59.121Z] === Task Finished ===

```

For [Aug 5](https://tools.taskcluster.net/groups/Dq8CaooRRreUDE1TBSPcBg) it was the same.

@jgraham any idea why this happens, and if it's disproportionately affecting Beta runs?

Related: https://github.com/web-platform-tests/wpt/issues/14210 | 1.0 | Commits touching many files fail to run in Taskcluster - https://wpt.fyi/runs?max-count=100&label=beta shows these runs so far this year:

Beta runs are triggered weekly, so many dates are missing here, like all of July, Aug 5 and Aug 19.

To figure out what went wrong, one has to know what the weekly SHA was, and https://wpt.fyi/api/revisions/list?epochs=weekly&num_revisions=100 lists them going back in time.

Then a URL like https://api.github.com/repos/web-platform-tests/wpt/statuses/8561d630fb3c4ede85b33df61f91847a21c1989e will lead to the task group:

https://tools.taskcluster.net/groups/WfP5NQ-ISzahOnNbiJugEQ

This last time, it looks like all tasks failed like this:

```

[taskcluster 2019-08-19 00:00:58.151Z] === Task Starting ===

standard_init_linux.go:190: exec user process caused "argument list too long"

[taskcluster 2019-08-19 00:00:59.121Z] === Task Finished ===

```

For [Aug 5](https://tools.taskcluster.net/groups/Dq8CaooRRreUDE1TBSPcBg) it was the same.

@jgraham any idea why this happens, and if it's disproportionately affecting Beta runs?

Related: https://github.com/web-platform-tests/wpt/issues/14210 | non_infrastructure | commits touching many files fail to run in taskcluster shows these runs so far this year beta runs are triggered weekly so many dates are missing here like all of july aug and aug to figure out what went wrong one has to know what the weekly sha was and lists them going back in time then a url like will lead to the task group this last time it looks like all tasks failed like this task starting standard init linux go exec user process caused argument list too long task finished for it was the same jgraham any idea why this happens and if it s disproportionately affecting beta runs related | 0 |

183,174 | 21,714,470,828 | IssuesEvent | 2022-05-10 16:30:13 | svg-GHC-2/test_django.nv | https://api.github.com/repos/svg-GHC-2/test_django.nv | closed | CVE-2016-2513 (Low) detected in Django-1.8.3-py2.py3-none-any.whl - autoclosed | security vulnerability | ## CVE-2016-2513 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.8.3-py2.py3-none-any.whl</b></p></summary>

<p>A high-level Python Web framework that encourages rapid development and clean, pragmatic design.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/a3/e1/0f3c17b1caa559ba69513ff72e250377c268d5bd3e8ad2b22809c7e2e907/Django-1.8.3-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/a3/e1/0f3c17b1caa559ba69513ff72e250377c268d5bd3e8ad2b22809c7e2e907/Django-1.8.3-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /requirements.txt</p>

<p>Path to vulnerable library: /requirements.txt</p>

<p>

Dependency Hierarchy:

- :x: **Django-1.8.3-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/svg-GHC-2/test_django.nv/commit/9c82557a12ed8d1bf704180a7d351aa1518ef16c">9c82557a12ed8d1bf704180a7d351aa1518ef16c</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The password hasher in contrib/auth/hashers.py in Django before 1.8.10 and 1.9.x before 1.9.3 allows remote attackers to enumerate users via a timing attack involving login requests.

<p>Publish Date: 2016-04-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-2513>CVE-2016-2513</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>3.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-2513">https://nvd.nist.gov/vuln/detail/CVE-2016-2513</a></p>

<p>Release Date: 2016-04-08</p>

<p>Fix Resolution: 1.8.10,1.9.3</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Python","packageName":"Django","packageVersion":"1.8.3","packageFilePaths":["/requirements.txt"],"isTransitiveDependency":false,"dependencyTree":"Django:1.8.3","isMinimumFixVersionAvailable":true,"minimumFixVersion":"1.8.10,1.9.3","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2016-2513","vulnerabilityDetails":"The password hasher in contrib/auth/hashers.py in Django before 1.8.10 and 1.9.x before 1.9.3 allows remote attackers to enumerate users via a timing attack involving login requests.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-2513","cvss3Severity":"low","cvss3Score":"3.1","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"Low","UI":"Required","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | True | CVE-2016-2513 (Low) detected in Django-1.8.3-py2.py3-none-any.whl - autoclosed - ## CVE-2016-2513 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.8.3-py2.py3-none-any.whl</b></p></summary>

<p>A high-level Python Web framework that encourages rapid development and clean, pragmatic design.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/a3/e1/0f3c17b1caa559ba69513ff72e250377c268d5bd3e8ad2b22809c7e2e907/Django-1.8.3-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/a3/e1/0f3c17b1caa559ba69513ff72e250377c268d5bd3e8ad2b22809c7e2e907/Django-1.8.3-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /requirements.txt</p>

<p>Path to vulnerable library: /requirements.txt</p>

<p>

Dependency Hierarchy:

- :x: **Django-1.8.3-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/svg-GHC-2/test_django.nv/commit/9c82557a12ed8d1bf704180a7d351aa1518ef16c">9c82557a12ed8d1bf704180a7d351aa1518ef16c</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The password hasher in contrib/auth/hashers.py in Django before 1.8.10 and 1.9.x before 1.9.3 allows remote attackers to enumerate users via a timing attack involving login requests.

<p>Publish Date: 2016-04-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-2513>CVE-2016-2513</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>3.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-2513">https://nvd.nist.gov/vuln/detail/CVE-2016-2513</a></p>

<p>Release Date: 2016-04-08</p>

<p>Fix Resolution: 1.8.10,1.9.3</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Python","packageName":"Django","packageVersion":"1.8.3","packageFilePaths":["/requirements.txt"],"isTransitiveDependency":false,"dependencyTree":"Django:1.8.3","isMinimumFixVersionAvailable":true,"minimumFixVersion":"1.8.10,1.9.3","isBinary":false}],"baseBranches":["main"],"vulnerabilityIdentifier":"CVE-2016-2513","vulnerabilityDetails":"The password hasher in contrib/auth/hashers.py in Django before 1.8.10 and 1.9.x before 1.9.3 allows remote attackers to enumerate users via a timing attack involving login requests.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-2513","cvss3Severity":"low","cvss3Score":"3.1","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"Low","UI":"Required","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | non_infrastructure | cve low detected in django none any whl autoclosed cve low severity vulnerability vulnerable library django none any whl a high level python web framework that encourages rapid development and clean pragmatic design library home page a href path to dependency file requirements txt path to vulnerable library requirements txt dependency hierarchy x django none any whl vulnerable library found in head commit a href found in base branch main vulnerability details the password hasher in contrib auth hashers py in django before and x before allows remote attackers to enumerate users via a timing attack involving login requests publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction required scope unchanged impact metrics confidentiality impact low integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution check this box 
to open an automated fix pr isopenpronvulnerability false ispackagebased true isdefaultbranch true packages istransitivedependency false dependencytree django isminimumfixversionavailable true minimumfixversion isbinary false basebranches vulnerabilityidentifier cve vulnerabilitydetails the password hasher in contrib auth hashers py in django before and x before allows remote attackers to enumerate users via a timing attack involving login requests vulnerabilityurl | 0 |

22,043 | 14,972,682,530 | IssuesEvent | 2021-01-27 23:20:53 | neopragma/cobol-check | https://api.github.com/repos/neopragma/cobol-check | closed | Build approval test into the gradle build | enhancement infrastructure | Due to the fact this is a batch process, we can't catch enough errors with unit checks only. Need to set up an approval test using sample Cobol source and test suites and include it in the Gradle build with a dependency on the integration test step. | 1.0 | Build approval test into the gradle build - Due to the fact this is a batch process, we can't catch enough errors with unit checks only. Need to set up an approval test using sample Cobol source and test suites and include it in the Gradle build with a dependency on the integration test step. | infrastructure | build approval test into the gradle build due to the fact this is a batch process we can t catch enough errors with unit checks only need to set up an approval test using sample cobol source and test suites and include it in the gradle build with a dependency on the integration test step | 1 |

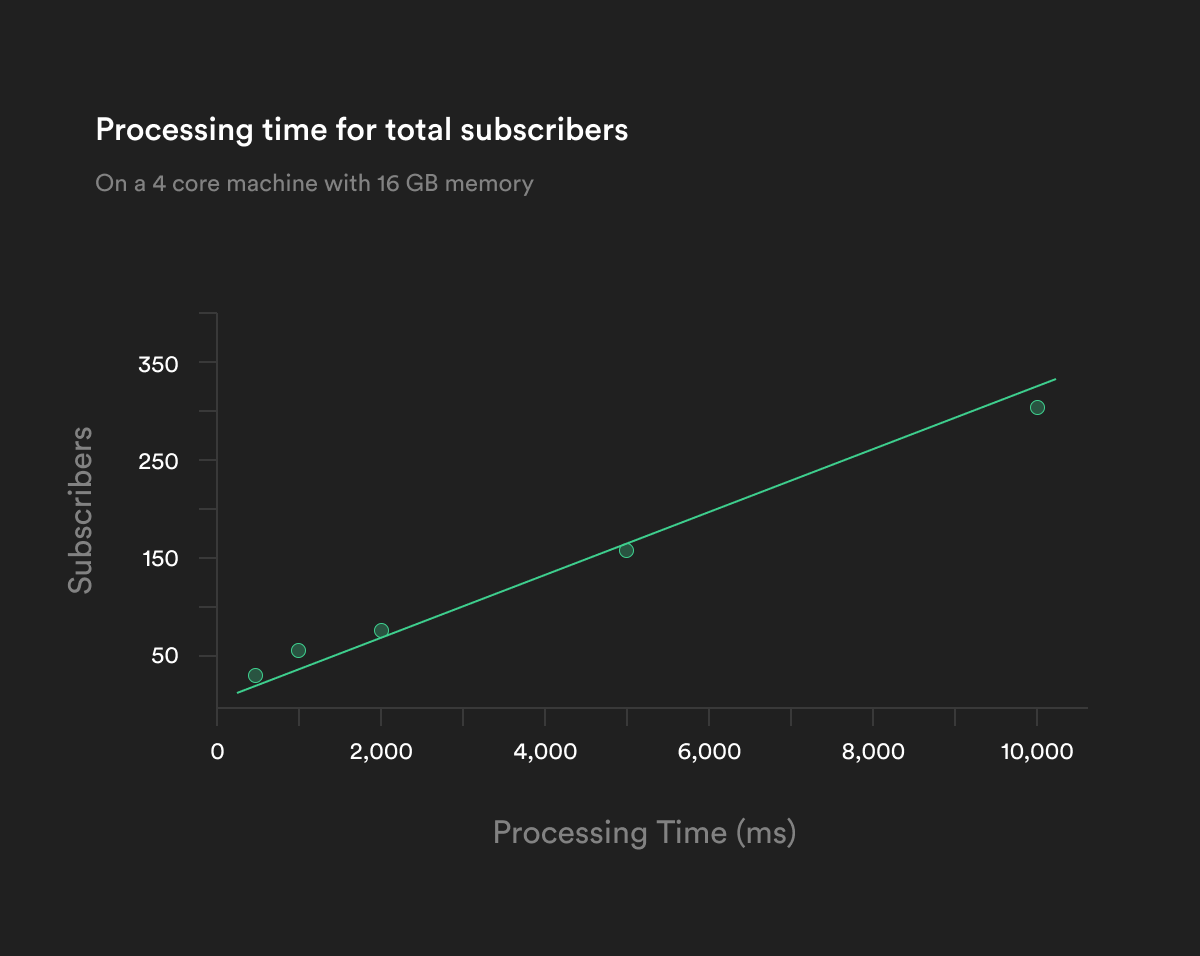

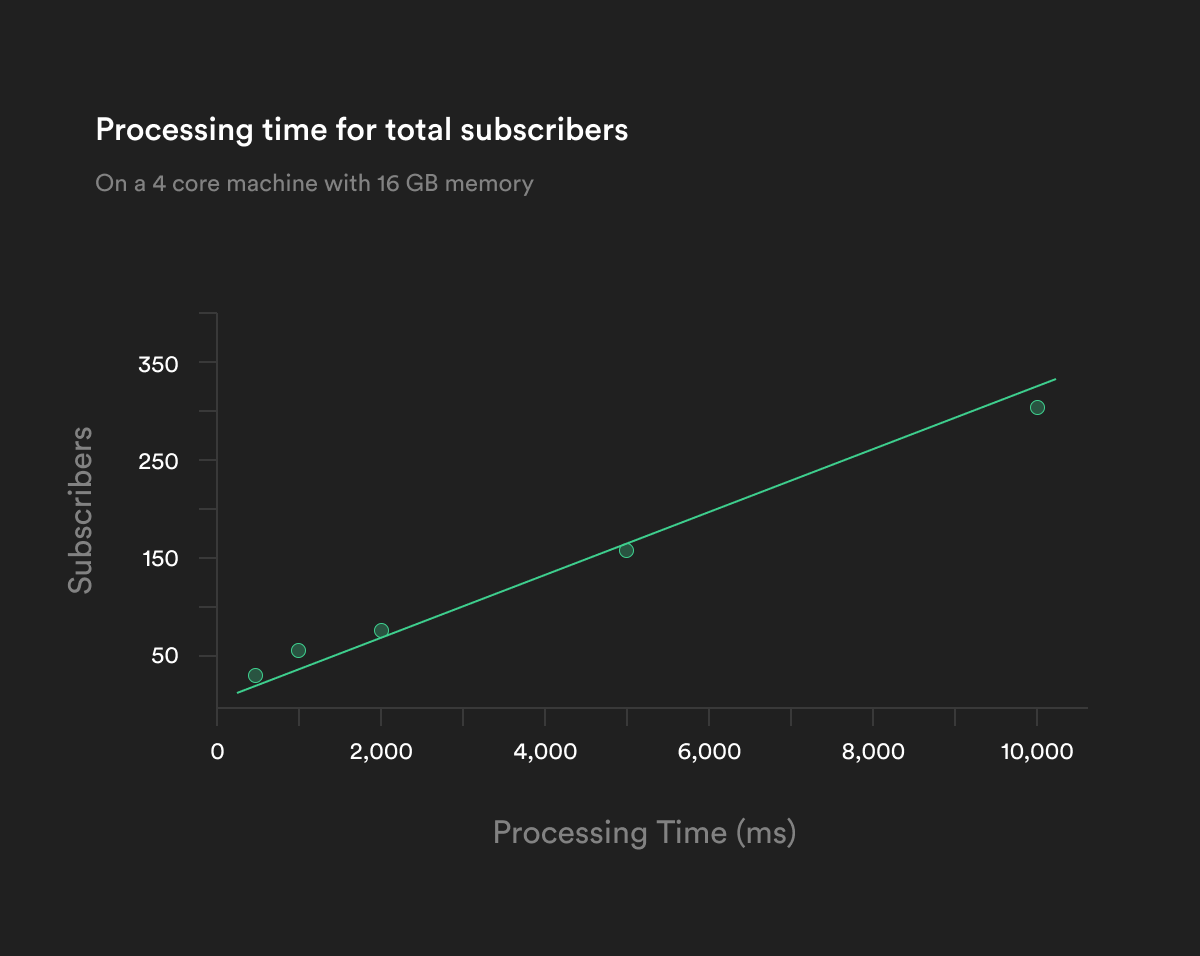

253,596 | 19,142,761,689 | IssuesEvent | 2021-12-02 02:02:17 | supabase/supabase | https://api.github.com/repos/supabase/supabase | closed | Realtime Blog Post 12/1/2021 has very bad chart | documentation | # Improve documentation

## Link

https://supabase.com/blog/2021/12/01/realtime-row-level-security-in-postgresql

## Describe the problem

I was quite scared when I saw this graph....

https://supabase.com/images/blog/launch-week-three/realtime-row-level-security-in-postgresql/supabase-realtime-processing-per-subscription.png

## Describe the improvement

Swap the X and Y axis labels (hopefully...)

| 1.0 | Realtime Blog Post 12/1/2021 has very bad chart - # Improve documentation

## Link

https://supabase.com/blog/2021/12/01/realtime-row-level-security-in-postgresql

## Describe the problem

I was quite scared when I saw this graph....

https://supabase.com/images/blog/launch-week-three/realtime-row-level-security-in-postgresql/supabase-realtime-processing-per-subscription.png

## Describe the improvement

Swap the X and Y axis labels (hopefully...)

| non_infrastructure | realtime blog post has very bad chart improve documentation link describe the problem i was quite scared when i saw this graph describe the improvement swap the x and y axis labels hopefully | 0 |

22,642 | 6,278,591,108 | IssuesEvent | 2017-07-18 14:39:39 | eclipse/che | https://api.github.com/repos/eclipse/che | closed | No error notification when trying to create a workspace with wrong name | kind/bug severity/P2 status/code-review team/plugin | While creating a new workspace, add a "-" symbol to the workspace name. After clicking the Save button, nothing changes.

| 1.0 | No error notification when trying to create a workspace with wrong name - While creating a new workspace, add a "-" symbol to the workspace name. After clicking the Save button, nothing changes.

| non_infrastructure | no error notification when trying to create a workspace with wrong name while creating a new workspace add to the workspace name symbol after clicking the save button nothing changes | 0 |

18,309 | 12,889,250,172 | IssuesEvent | 2020-07-13 14:15:53 | ansible/galaxy_ng | https://api.github.com/repos/ansible/galaxy_ng | closed | RPMs: Decide how to package the UI | area/infrastructure priority/high sprint/2 status/ready-for-QE type/enhancement | - Determine the best strategy for packaging the UI

- Produce PyPi and/or NPM packages that can be consumed by the RPM build process

- Coordinate with Evgeni

Subtask of #145 | 1.0 | RPMs: Decide how to package the UI - - Determine the best strategy for packaging the UI

- Produce PyPi and/or NPM packages that can be consumed by the RPM build process

- Coordinate with Evgeni

Subtask of #145 | infrastructure | rpms decide how to package the ui determine the best strategy for packaging the ui produce pypi and or npm packages that can be consumed by the rpm build process coordinate with evgeni subtask of | 1 |

788 | 2,904,916,276 | IssuesEvent | 2015-06-18 20:40:28 | openEXO/cloud-kepler | https://api.github.com/repos/openEXO/cloud-kepler | opened | First draft of Output FITS Structure | in progress infrastructure | There is no documentation for how the output file is structured. | 1.0 | First draft of Output FITS Structure - There is no documentation for how the output file is structured. | infrastructure | first draft of output fits structure there is no documentation for how the output file is structured | 1 |

13,910 | 10,543,505,078 | IssuesEvent | 2019-10-02 15:05:54 | fablabbcn/fablabs.io | https://api.github.com/repos/fablabbcn/fablabs.io | opened | Backstage search differs from frontend view | Infrastructure bug | **Describe the bug**

Some approved labs under the backstage are not appearing as they are on the frontend.

**To Reproduce**

Steps to reproduce the behavior:

Shown in screenshots!

**Expected behavior**

For example, for Denmark the frontend shows 9 fablabs as approved, but the backstage shows only 6, and there is no way to find the others. I tried every possible search method, and the backstage basically tells me that the labs don't exist, even though their links work on the platform.

**Screenshots**

frontend:

<img width="594" alt="Screen Shot 2019-10-02 at 9 55 31 AM" src="https://user-images.githubusercontent.com/24419466/66055958-fdf31000-e4fb-11e9-8071-6f2f0aa8e124.png">

backstage:

<img width="560" alt="Screen Shot 2019-10-02 at 9 57 31 AM" src="https://user-images.githubusercontent.com/24419466/66055970-03505a80-e4fc-11e9-9607-ac99d68ffd77.png">

**Desktop (please complete the following information):**

- OS: macOS 10.14.6

- Browser: Chrome

- Version: Version 77.0.3865.90 (Official Build) (64-bit)

**Additional context**

not helping with actual metrics.

| 1.0 | Backstage search differs from frontend view - **Describe the bug**

Some approved labs under the backstage are not appearing as they are on the frontend.

**To Reproduce**

Steps to reproduce the behavior:

Shown in screenshots!

**Expected behavior**

For example, for Denmark the frontend shows 9 fablabs as approved, but the backstage shows only 6, and there is no way to find the others. I tried every possible search method, and the backstage basically tells me that the labs don't exist, even though their links work on the platform.

**Screenshots**

frontend:

<img width="594" alt="Screen Shot 2019-10-02 at 9 55 31 AM" src="https://user-images.githubusercontent.com/24419466/66055958-fdf31000-e4fb-11e9-8071-6f2f0aa8e124.png">

backstage:

<img width="560" alt="Screen Shot 2019-10-02 at 9 57 31 AM" src="https://user-images.githubusercontent.com/24419466/66055970-03505a80-e4fc-11e9-9607-ac99d68ffd77.png">

**Desktop (please complete the following information):**

- OS: macOS 10.14.6

- Browser: Chrome

- Version: Version 77.0.3865.90 (Official Build) (64-bit)

**Additional context**

not helping with actual metrics.

| infrastructure | backstage search differs from frontend view describe the bug some approved labs under the backstage are not appearing as they are on the frontend to reproduce steps to reproduce the behavior shown in screenshots expected behavior example for denmark on the front end you see fablabs as approved on the backstage you only see and there is no way to find them try all possible search methods and it basically tells me that the labs don t exist even though their link is working on the platform screenshots frontend img width alt screen shot at am src backstage img width alt screen shot at am src desktop please complete the following information os macos browser chrome version version official build bit additional context not helping with actual metrics | 1 |

238,134 | 18,234,578,319 | IssuesEvent | 2021-10-01 04:22:57 | CoinAlpha/hummingbot | https://api.github.com/repos/CoinAlpha/hummingbot | closed | Documentation for `HangingOrdersTracker` class | documentation | ## Why

The `HangingOrdersTracker` class is one of the two clearly separated components (the other is `APIThrottler`) that we have isolated in the code. Both are going to be used as they are, and we want to make them usable by the community.

## What

Add to developer documentation and include as much information as possible about **how to use** this class. | 1.0 | Documentation for `HangingOrdersTracker` class - ## Why

The `HangingOrdersTracker` class is one of the two clearly separated components (the other is `APIThrottler`) that we have isolated in the code. Both are going to be used as they are, and we want to make them usable by the community.

## What

Add to developer documentation and include as much information as possible about **how to use** this class. | non_infrastructure | documentation for hangingorderstracker class why the hangingorderstracker class is one of the two clear components the other is apithrottler that we have individualized even in the code that are going to be used as they are and something that we want to make it usable by the community what add to developer documentation and include as much information as possible about how to use this class | 0 |

35,645 | 31,930,956,722 | IssuesEvent | 2023-09-19 07:23:20 | SonarSource/sonarlint-visualstudio | https://api.github.com/repos/SonarSource/sonarlint-visualstudio | closed | Fix MEF importing constructors - calls GetService | Type: Task Infrastructure Threading | Sub Group of Ticket https://github.com/SonarSource/sonarlint-visualstudio/issues/4512

Depends on #4859

TODO

- [x] ActiveDocumentLocator

- [x] VsInfoService

- [x] AbsoluteFilePathLocator

- [x] ToolWindowService

- [x] TeamExplorerController

- [x] VcxRequestFactory

- [x] StatusBarNotifier

- [x] InfoBarManager -> Only needs a test to confirm it is free threaded

- [x] IssueLocationActionsSourceProvider

- [x] StatusRequestHandler

- [x] ErrorListHelper

- [x] TaintIssuesSynchronizer | 1.0 | Fix MEF importing constructors - calls GetService - Sub Group of Ticket https://github.com/SonarSource/sonarlint-visualstudio/issues/4512

Depends on #4859

TODO

- [x] ActiveDocumentLocator

- [x] VsInfoService

- [x] AbsoluteFilePathLocator

- [x] ToolWindowService

- [x] TeamExplorerController

- [x] VcxRequestFactory

- [x] StatusBarNotifier

- [x] InfoBarManager -> Only needs a test to confirm it is free threaded

- [x] IssueLocationActionsSourceProvider

- [x] StatusRequestHandler

- [x] ErrorListHelper

- [x] TaintIssuesSynchronizer | infrastructure | fix mef importing constructors calls getservice sub group of ticket depends on todo activedocumentlocator vsinfoservice absolutefilepathlocator toolwindowservice teamexplorercontroller vcxrequestfactory statusbarnotifier infobarmanager only needs a test to confirm it is free threaded issuelocationactionssourceprovider statusrequesthandler errorlisthelper taintissuessynchronizer | 1 |

231,302 | 25,499,103,928 | IssuesEvent | 2022-11-28 01:07:00 | joshbnewton31080/NodeGoat | https://api.github.com/repos/joshbnewton31080/NodeGoat | closed | CVE-2017-16137 (Medium) detected in debug-2.2.0.tgz - autoclosed | security vulnerability | ## CVE-2017-16137 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>debug-2.2.0.tgz</b></p></summary>

<p>small debugging utility</p>

<p>Library home page: <a href="https://registry.npmjs.org/debug/-/debug-2.2.0.tgz">https://registry.npmjs.org/debug/-/debug-2.2.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/connect/node_modules/debug/package.json</p>

<p>

Dependency Hierarchy:

- helmet-2.3.0.tgz (Root Library)

- connect-3.4.1.tgz

- :x: **debug-2.2.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/joshbnewton31080/NodeGoat/commit/a3c66c1e0636f4caeff5096ac64c1f21ebad3387">a3c66c1e0636f4caeff5096ac64c1f21ebad3387</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The debug module is vulnerable to regular expression denial of service when untrusted user input is passed into the o formatter. It takes around 50k characters to block for 2 seconds making this a low severity issue.

<p>Publish Date: 2018-06-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-16137>CVE-2017-16137</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-16137">https://nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-16137</a></p>

<p>Release Date: 2018-06-07</p>

<p>Fix Resolution (debug): 2.6.9</p>

<p>Direct dependency fix Resolution (helmet): 3.8.2</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue | True | CVE-2017-16137 (Medium) detected in debug-2.2.0.tgz - autoclosed - ## CVE-2017-16137 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>debug-2.2.0.tgz</b></p></summary>

<p>small debugging utility</p>

<p>Library home page: <a href="https://registry.npmjs.org/debug/-/debug-2.2.0.tgz">https://registry.npmjs.org/debug/-/debug-2.2.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/connect/node_modules/debug/package.json</p>

<p>

Dependency Hierarchy:

- helmet-2.3.0.tgz (Root Library)

- connect-3.4.1.tgz

- :x: **debug-2.2.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/joshbnewton31080/NodeGoat/commit/a3c66c1e0636f4caeff5096ac64c1f21ebad3387">a3c66c1e0636f4caeff5096ac64c1f21ebad3387</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The debug module is vulnerable to regular expression denial of service when untrusted user input is passed into the o formatter. It takes around 50k characters to block for 2 seconds making this a low severity issue.

<p>Publish Date: 2018-06-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-16137>CVE-2017-16137</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-16137">https://nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-16137</a></p>

<p>Release Date: 2018-06-07</p>

<p>Fix Resolution (debug): 2.6.9</p>

<p>Direct dependency fix Resolution (helmet): 3.8.2</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue | non_infrastructure | cve medium detected in debug tgz autoclosed cve medium severity vulnerability vulnerable library debug tgz small debugging utility library home page a href path to dependency file package json path to vulnerable library node modules connect node modules debug package json dependency hierarchy helmet tgz root library connect tgz x debug tgz vulnerable library found in head commit a href found in base branch master vulnerability details the debug module is vulnerable to regular expression denial of service when untrusted user input is passed into the o formatter it takes around characters to block for seconds making this a low severity issue publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution debug direct dependency fix resolution helmet rescue worker helmet automatic remediation is available for this issue | 0 |

33,730 | 27,759,902,765 | IssuesEvent | 2023-03-16 07:20:10 | nilearn/nilearn | https://api.github.com/repos/nilearn/nilearn | closed | Document all GitHub Actions workflows | Infrastructure Developer Experience | The [README.md](https://github.com/nilearn/nilearn/blob/main/.github/workflows/README.md) in `.github/workflows` only documents the documentation build workflow. It can be useful to comprehensively document all the GitHub Actions workflows here.

Linking comments: https://github.com/nilearn/nilearn/pull/3536#pullrequestreview-1315426922 and https://github.com/nilearn/nilearn/pull/3536#issuecomment-1446297374 | 1.0 | Document all GitHub Actions workflows - The [README.md](https://github.com/nilearn/nilearn/blob/main/.github/workflows/README.md) in `.github/workflows` only documents the documentation build workflow. It can be useful to comprehensively document all the GitHub Actions workflows here.

Linking comments: https://github.com/nilearn/nilearn/pull/3536#pullrequestreview-1315426922 and https://github.com/nilearn/nilearn/pull/3536#issuecomment-1446297374 | infrastructure | document all github actions workflows the in github workflows only documents the documentation build workflow it can be useful to comprehensively document all the github actions workflows here linking comments and | 1 |

75,175 | 25,569,390,607 | IssuesEvent | 2022-11-30 16:30:22 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | CMS: Explore behavior where banners can be published but not assigned to a system. | Defect Needs refining | ## Describe the defect

There are currently ~100 banner alerts for "Website coming soon" that are published but not assigned to a system. (Note: I've only checked a few; the assumption is that this is the case for all of them.) This shouldn't be possible, because the assigned system is a required field.

A couple of examples:

http://prod.cms.va.gov/va-hines-health-care/vamc-banner-alert/2021-09-22/website-coming-soon-not-the-official-va-hines-health-care-website

http://prod.cms.va.gov/va-pittsburgh-health-care/vamc-banner-alert/2021-04-07/website-coming-soon-not-the-official-va-houston-health-care-website

> Hunch from Swirt:

> Then my next hunch is that it is actually set, but something about the winnower or the view is not making it appear as set..... though if it were truly set, it would show on the FE. This definitely needs a ticket for more investigation. There is some logic on that select list that disables items that are not within your section. It may run afoul for admins who have no section.

## To Reproduce

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

## AC / Expected behavior

A clear and concise description of what you expected to happen.

## Screenshots

If applicable, add screenshots to help explain your problem.

## Additional context

Add any other context about the problem here. Reach out to the Product Managers to determine if it should be escalated as critical (prevents users from accomplishing their work with no known workaround and needs to be addressed within 2 business days).

## Desktop (please complete the following information if relevant, or delete)

- OS: [e.g. iOS]

- Browser [e.g. chrome, safari]

- Version [e.g. 22]

## Labels

(You can delete this section once it's complete)

- [x] Issue type (red) (defaults to "Defect")

- [ ] CMS subsystem (green)

- [ ] CMS practice area (blue)

- [x] CMS workstream (orange) (not needed for bug tickets)

- [ ] CMS-supported product (black)

### CMS Team

Please check the team(s) that will do this work.

- [ ] `Program`

- [ ] `Platform CMS Team`

- [ ] `Sitewide Crew`

- [ ] `⭐️ Sitewide CMS`

- [ ] `⭐️ Public Websites`

- [x] `⭐️ Facilities`

- [ ] `⭐️ User support`

| 1.0 | CMS: Explore behavior where banners can be published but not assigned to a system. - ## Describe the defect

There are currently ~100 banner alerts for "Website coming soon" that are published but not assigned to a system. (Note: I've only checked a few; the assumption is that this is the case for all of them.) This shouldn't be possible, because the assigned system is a required field.

A couple of examples:

http://prod.cms.va.gov/va-hines-health-care/vamc-banner-alert/2021-09-22/website-coming-soon-not-the-official-va-hines-health-care-website

http://prod.cms.va.gov/va-pittsburgh-health-care/vamc-banner-alert/2021-04-07/website-coming-soon-not-the-official-va-houston-health-care-website

> Hunch from Swirt:

> Then my next hunch is that it is actually set, but something about the winnower or the view is not making it appear as set..... though if it were truly set, it would show on the FE. This definitely needs a ticket for more investigation. There is some logic on that select list that disables items that are not within your section. It may run afoul for admins who have no section.

## To Reproduce

Steps to reproduce the behavior:

1. Go to '...'

2. Click on '....'

3. Scroll down to '....'

4. See error

## AC / Expected behavior

A clear and concise description of what you expected to happen.

## Screenshots

If applicable, add screenshots to help explain your problem.

## Additional context

Add any other context about the problem here. Reach out to the Product Managers to determine if it should be escalated as critical (prevents users from accomplishing their work with no known workaround and needs to be addressed within 2 business days).

## Desktop (please complete the following information if relevant, or delete)

- OS: [e.g. iOS]

- Browser [e.g. chrome, safari]

- Version [e.g. 22]

## Labels

(You can delete this section once it's complete)

- [x] Issue type (red) (defaults to "Defect")

- [ ] CMS subsystem (green)

- [ ] CMS practice area (blue)

- [x] CMS workstream (orange) (not needed for bug tickets)

- [ ] CMS-supported product (black)

### CMS Team

Please check the team(s) that will do this work.

- [ ] `Program`

- [ ] `Platform CMS Team`

- [ ] `Sitewide Crew`

- [ ] `⭐️ Sitewide CMS`

- [ ] `⭐️ Public Websites`

- [x] `⭐️ Facilities`

- [ ] `⭐️ User support`

| non_infrastructure | cms explore behavior where banners can be published but not assigned to a system describe the defect there are currently banner alerts for website coming soon that are published but that are not assigned to a system note i ve only checked a few the assumption is that this is the case for all this shouldn t be possible the assigned system is a required field a couple example hunch from swirt then my next hunch is that it is actually set but something about the winnower or the view is not making it appear as set though if it were truly set it would show on the fe this definitely needs a ticket for more investigation there is some logic on that select list that disables items that are not within your section it may run afoul for admins who have no section to reproduce steps to reproduce the behavior go to click on scroll down to see error ac expected behavior a clear and concise description of what you expected to happen screenshots if applicable add screenshots to help explain your problem additional context add any other context about the problem here reach out to the product managers to determine if it should be escalated as critical prevents users from accomplishing their work with no known workaround and needs to be addressed within business days desktop please complete the following information if relevant or delete os browser version labels you can delete this section once it s complete issue type red defaults to defect cms subsystem green cms practice area blue cms workstream orange not needed for bug tickets cms supported product black cms team please check the team s that will do this work program platform cms team sitewide crew ⭐️ sitewide cms ⭐️ public websites ⭐️ facilities ⭐️ user support | 0 |

20,873 | 14,222,468,590 | IssuesEvent | 2020-11-17 16:55:26 | pulibrary/dspace-cli | https://api.github.com/repos/pulibrary/dspace-cli | closed | Update the git repository URLs on updatespace to no longer use bitbucket repositories | infrastructure | Currently there are repositories using bitbucket remote URLs on the server environment. | 1.0 | Update the git repository URLs on updatespace to no longer use bitbucket repositories - Currently there are repositories using bitbucket remote URLs on the server environment. | infrastructure | update the git repository urls on updatespace to no longer use bitbucket repositories currently there are repositories using bitbucket remote urls on the server environment | 1 |

13,091 | 10,119,601,226 | IssuesEvent | 2019-07-31 11:54:48 | raiden-network/raiden-services | https://api.github.com/repos/raiden-network/raiden-services | opened | Add withdraw support to register-service script | Enhancement :star2: Infrastructure :office: | We should add support for withdrawing the deposit from the ServiceRegistry. | 1.0 | Add withdraw support to register-service script - We should add support for withdrawing the deposit from the ServiceRegistry. | infrastructure | add withdraw support to register service script we should add support for withdrawing the deposit from the serviceregistry | 1 |

14,381 | 10,776,195,323 | IssuesEvent | 2019-11-03 19:11:58 | xxks-kkk/shuati | https://api.github.com/repos/xxks-kkk/shuati | opened | Add test infrastructure for python code | infrastructure | Add test infrastructure code for python to allow push-button test on all python programs | 1.0 | Add test infrastructure for python code - Add test infrastructure code for python to allow push-button test on all python programs | infrastructure | add test infrastructure for python code add test infrastructure code for python to allow push button test on all python programs | 1 |

17,971 | 23,983,657,651 | IssuesEvent | 2022-09-13 17:03:54 | mdsreq-fga-unb/2022.1-Capita-C | https://api.github.com/repos/mdsreq-fga-unb/2022.1-Capita-C | closed | Requirements Process | requisito ProcessoRequisitos REQ Comentários Professor | As was done in Unit 1, a set of activities is being listed: when they will be done, by whom, etc. Where are these activities positioned, or where will they be positioned, in the work process?

**Source**

https://mdsreq-fga-unb.github.io/2022.1-Capita-C/processoER/

| 1.0 | Requirements Process - As was done in Unit 1, a set of activities is being listed: when they will be done, by whom, etc. Where are these activities positioned, or where will they be positioned, in the work process?

**Source**

https://mdsreq-fga-unb.github.io/2022.1-Capita-C/processoER/

| non_infrastructure | requirements process as was done in unit a set of activities is being listed when they will be done by whom etc where are these activities positioned or where will they be positioned in the work process source | 0 |

752,863 | 26,329,740,628 | IssuesEvent | 2023-01-10 09:53:40 | swhustla/comparison_of_time_series_methods | https://api.github.com/repos/swhustla/comparison_of_time_series_methods | closed | Prophet error for Straight line | bug High priority | It looks like seasonality for the straight line data returns an empty list, which then causes the error. | 1.0 | Prophet error for Straight line - It looks like seasonality for the straight line data returns an empty list, which then causes the error. | non_infrastructure | prophet error for straight line looks like seasonality for the straight line data returns an empty list that causes the further error | 0 |

18,827 | 13,129,291,116 | IssuesEvent | 2020-08-06 13:41:18 | bootstrapworld/curriculum | https://api.github.com/repos/bootstrapworld/curriculum | opened | In printed pyret workbooks where students write code for functions the word "end" hangs far below the last line | Infrastructure | Could it be moved up?

For example on p. 26 of the algebra workbook... | 1.0 | In printed pyret workbooks where students write code for functions the word "end" hangs far below the last line - Could it be moved up?

For example on p. 26 of the algebra workbook... | infrastructure | in printed pyret workbooks where students write code for functions the word end hangs far below the last line could it be moved up for example on p of the algebra workbook | 1 |

24,445 | 17,268,961,541 | IssuesEvent | 2021-07-22 17:05:06 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | opened | [wasm] wasm-tools workload installation fails randomly | area-Infrastructure-mono | The installation for the performance CI builds is failing randomly, like here: https://helixri8s23ayyeko0k025g8.blob.core.windows.net/dotnet-runtime-refs-heads-alicial-wasmaotmicr448c314f6f6941daa4/x64.micro.net6.0.Partition11/console.dbe24a6e.log?sv=2019-07-07&se=2021-10-19T17%3A57%3A11Z&sr=c&sp=rl&sig=uFl%2FQ3RhxB9G%2BIQx120Qtws8L1maPMrQIg4vLmt8auM%3D

It usually fails even after a retry.

```

[2021/07/21 11:53:11][INFO] $ dotnet --info

[2021/07/21 11:53:11][INFO] .NET SDK (reflecting any global.json):

[2021/07/21 11:53:11][INFO] Version: 6.0.100-rc.1.21371.4

[2021/07/21 11:53:11][INFO] Commit: ebd2d1d607

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] Runtime Environment:

[2021/07/21 11:53:11][INFO] OS Name: ubuntu

[2021/07/21 11:53:11][INFO] OS Version: 18.04

[2021/07/21 11:53:11][INFO] OS Platform: Linux

[2021/07/21 11:53:11][INFO] RID: ubuntu.18.04-x64

[2021/07/21 11:53:11][INFO] Base Path: /home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/sdk/6.0.100-rc.1.21371.4/

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] Host (useful for support):

[2021/07/21 11:53:11][INFO] Version: 6.0.0-rc.1.21369.14

[2021/07/21 11:53:11][INFO] Commit: bd35632892

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] .NET SDKs installed:

[2021/07/21 11:53:11][INFO] 6.0.100-rc.1.21371.4 [/home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/sdk]

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] .NET runtimes installed:

[2021/07/21 11:53:11][INFO] Microsoft.AspNetCore.App 6.0.0-rc.1.21370.12 [/home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/shared/Microsoft.AspNetCore.App]

[2021/07/21 11:53:11][INFO] Microsoft.NETCore.App 6.0.0-rc.1.21369.14 [/home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/shared/Microsoft.NETCore.App]

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] To install additional .NET runtimes or SDKs:

[2021/07/21 11:53:11][INFO] https://aka.ms/dotnet-download

[2021/07/21 11:53:11][INFO] $ pushd "/home/helixbot/work/A7280930/p/performance/src/benchmarks/micro/wasmaot"

[2021/07/21 11:53:11][INFO] $ dotnet workload install wasm-tools

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:12][INFO] Skip NuGet package signing validation. NuGet signing validation is not available on Linux or macOS https://aka.ms/workloadskippackagevalidation .

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.android.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.ios.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.maui.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.macos.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.workload.emscripten.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.workload.mono.toolchain.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.tvos.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.maccatalyst.

[2021/07/21 11:53:12][INFO] Installing workload manifest microsoft.net.sdk.macos version 12.0.100-preview.7183.

[2021/07/21 11:53:13][INFO] Installing workload manifest microsoft.net.sdk.ios version 15.0.100-preview.7183.

[2021/07/21 11:53:13][INFO] Installing workload manifest microsoft.net.sdk.maui version 6.0.100-preview.6.1003+sha.5c159aabf-azdo.4977641.

[2021/07/21 11:53:14][INFO] Installing workload manifest microsoft.net.sdk.android version 30.0.100-preview.7.91.

[2021/07/21 11:53:14][INFO] Installing workload manifest microsoft.net.sdk.maccatalyst version 15.0.100-preview.7183.

[2021/07/21 11:53:14][INFO] Installing workload manifest microsoft.net.workload.mono.toolchain version 6.0.0-rc.1.21371.7.

[2021/07/21 11:53:15][INFO] Installing workload manifest microsoft.net.sdk.tvos version 15.0.100-preview.7183.

[2021/07/21 11:53:16][INFO] Installing pack Microsoft.NET.Runtime.WebAssembly.Sdk version 6.0.0-rc.1.21371.7...

[2021/07/21 11:53:17][INFO] Writing workload pack installation record for Microsoft.NET.Runtime.WebAssembly.Sdk version 6.0.0-rc.1.21371.7...

[2021/07/21 11:53:17][INFO] Installing pack Microsoft.NETCore.App.Runtime.Mono.browser-wasm version 6.0.0-rc.1.21371.7...

[2021/07/21 11:53:18][INFO] Workload installation failed, rolling back installed packs...

[2021/07/21 11:53:18][INFO] Installing workload manifest microsoft.net.sdk.macos version 11.3.100-ci.main.723.

[2021/07/21 11:53:18][INFO] Installation roll back failed: Failed to install manifest microsoft.net.sdk.macos version 11.3.100-ci.main.723: The transaction has aborted..

[2021/07/21 11:53:18][INFO] Rolling back pack Microsoft.NET.Runtime.WebAssembly.Sdk installation...

[2021/07/21 11:53:18][INFO] Uninstalling workload pack Microsoft.NET.Runtime.WebAssembly.Sdk version 6.0.0-rc.1.21371.7.

[2021/07/21 11:53:18][INFO] Rolling back pack Microsoft.NETCore.App.Runtime.Mono.browser-wasm installation...

[2021/07/21 11:53:18][INFO] Workload installation failed: Downloading microsoft.netcore.app.runtime.mono.browser-wasm version 6.0.0-rc.1.21371.7 failed

[2021/07/21 11:53:18][INFO] install

[2021/07/21 11:53:18][INFO] Install a workload.

[2021/07/21 11:53:18][INFO]

[2021/07/21 11:53:18][INFO] Usage:

[2021/07/21 11:53:18][INFO] dotnet [options] workload install [<WORKLOAD_ID>...]

[2021/07/21 11:53:18][INFO]

[2021/07/21 11:53:18][INFO] Arguments:

[2021/07/21 11:53:18][INFO] <WORKLOAD_ID> The NuGet Package Id of the workload to install.

[2021/07/21 11:53:18][INFO]

[2021/07/21 11:53:18][INFO] Options:

[2021/07/21 11:53:18][INFO] --sdk-version <VERSION> The version of the SDK.

[2021/07/21 11:53:18][INFO] --configfile <FILE> The NuGet configuration file to use.

[2021/07/21 11:53:18][INFO] -s, --source <SOURCE> The NuGet package source to use

[2021/07/21 11:53:18][INFO] during the restore.

[2021/07/21 11:53:18][INFO] --skip-manifest-update Skip updating the workload manifests.

[2021/07/21 11:53:18][INFO] --from-cache <from-cache> Complete the operation from cache

[2021/07/21 11:53:18][INFO] (offline).

[2021/07/21 11:53:18][INFO] --download-to-cache Download packages needed to install a

[2021/07/21 11:53:18][INFO] <download-to-cache> workload to a folder which can be

[2021/07/21 11:53:18][INFO] used for offline installation.

[2021/07/21 11:53:18][INFO] --include-previews Allow prerelease workload manifests.

[2021/07/21 11:53:18][INFO] --temp-dir <temp-dir> Configure the temporary directory

[2021/07/21 11:53:18][INFO] used for this command (must be

[2021/07/21 11:53:18][INFO] secure).

[2021/07/21 11:53:18][INFO] --disable-parallel Prevent restoring multiple projects

[2021/07/21 11:53:18][INFO] in parallel.

[2021/07/21 11:53:18][INFO] --ignore-failed-sources Treat package source failures as

[2021/07/21 11:53:18][INFO] warnings.

[2021/07/21 11:53:18][INFO] --no-cache Do not cache packages and http

[2021/07/21 11:53:18][INFO] requests.

[2021/07/21 11:53:18][INFO] --interactive Allows the command to stop and wait

[2021/07/21 11:53:18][INFO] for user input or action (for example

[2021/07/21 11:53:18][INFO] to complete authentication).

[2021/07/21 11:53:18][INFO] -v, --verbosity Set the MSBuild verbosity level.

[2021/07/21 11:53:18][INFO] <d|detailed|diag|diagnostic|m|minimal| Allowed values are q[uiet],

[2021/07/21 11:53:18][INFO] n|normal|q|quiet> m[inimal], n[ormal], d[etailed], and

[2021/07/21 11:53:18][INFO] diag[nostic].

[2021/07/21 11:53:18][INFO] -?, -h, --help Show command line help.

[2021/07/21 11:53:18][INFO]

[2021/07/21 11:53:18][INFO] $ popd

[2021/07/21 11:53:18][INFO] $ pushd "/home/helixbot/work/A7280930/p/performance/src/benchmarks/micro/wasmaot"

[2021/07/21 11:53:18][INFO] $ dotnet workload install wasm-tools

[2021/07/21 11:53:18][INFO]

[2021/07/21 11:53:18][INFO] Skip NuGet package signing validation. NuGet signing validation is not available on Linux or macOS https://aka.ms/workloadskippackagevalidation .

[2021/07/21 11:53:18][INFO] Updated advertising manifest microsoft.net.sdk.maui.

[2021/07/21 11:53:18][INFO] Updated advertising manifest microsoft.net.workload.emscripten.

[2021/07/21 11:53:18][INFO] Updated advertising manifest microsoft.net.sdk.android.

[2021/07/21 11:53:19][INFO] Updated advertising manifest microsoft.net.sdk.maccatalyst.

[2021/07/21 11:53:19][INFO] Updated advertising manifest microsoft.net.sdk.macos.

[2021/07/21 11:53:19][INFO] Updated advertising manifest microsoft.net.sdk.tvos.

[2021/07/21 11:53:19][INFO] Updated advertising manifest microsoft.net.workload.mono.toolchain.

[2021/07/21 11:53:19][INFO] Updated advertising manifest microsoft.net.sdk.ios.

[2021/07/21 11:53:19][INFO] Installing pack Microsoft.NET.Runtime.WebAssembly.Sdk version 6.0.0-rc.1.21371.7...

[2021/07/21 11:53:20][INFO] Writing workload pack installation record for Microsoft.NET.Runtime.WebAssembly.Sdk version 6.0.0-rc.1.21371.7...

[2021/07/21 11:53:20][INFO] Installing pack Microsoft.NETCore.App.Runtime.Mono.browser-wasm version 6.0.0-rc.1.21371.7...

[2021/07/21 11:53:20][INFO] Workload installation failed, rolling back installed packs...

[2021/07/21 11:53:20][INFO] Uninstalling workload pack Microsoft.NET.Runtime.WebAssembly.Sdk version 6.0.0-rc.1.21371.7.

[2021/07/21 11:53:20][INFO] Rolling back pack Microsoft.NET.Runtime.WebAssembly.Sdk installation...

[2021/07/21 11:53:20][INFO] Rolling back pack Microsoft.NETCore.App.Runtime.Mono.browser-wasm installation...

[2021/07/21 11:53:20][INFO] Workload installation failed: Downloading microsoft.netcore.app.runtime.mono.browser-wasm version 6.0.0-rc.1.21371.7 failed

[2021/07/21 11:53:20][INFO] install

[2021/07/21 11:53:20][INFO] Install a workload.

[2021/07/21 11:53:20][INFO]

[2021/07/21 11:53:20][INFO] Usage:

[2021/07/21 11:53:20][INFO] dotnet [options] workload install [<WORKLOAD_ID>...]

[2021/07/21 11:53:20][INFO]

[2021/07/21 11:53:20][INFO] Arguments:

[2021/07/21 11:53:20][INFO] <WORKLOAD_ID> The NuGet Package Id of the workload to install.

[2021/07/21 11:53:20][INFO]

[2021/07/21 11:53:20][INFO] Options:

[2021/07/21 11:53:20][INFO] --sdk-version <VERSION> The version of the SDK.

[2021/07/21 11:53:20][INFO] --configfile <FILE> The NuGet configuration file to use.

[2021/07/21 11:53:20][INFO] -s, --source <SOURCE> The NuGet package source to use

[2021/07/21 11:53:20][INFO] during the restore.

[2021/07/21 11:53:20][INFO] --skip-manifest-update Skip updating the workload manifests.

[2021/07/21 11:53:20][INFO] --from-cache <from-cache> Complete the operation from cache

[2021/07/21 11:53:20][INFO] (offline).

[2021/07/21 11:53:20][INFO] --download-to-cache Download packages needed to install a

[2021/07/21 11:53:20][INFO] <download-to-cache> workload to a folder which can be

[2021/07/21 11:53:20][INFO] used for offline installation.

[2021/07/21 11:53:20][INFO] --include-previews Allow prerelease workload manifests.

[2021/07/21 11:53:20][INFO] --temp-dir <temp-dir> Configure the temporary directory

[2021/07/21 11:53:20][INFO] used for this command (must be

[2021/07/21 11:53:20][INFO] secure).

[2021/07/21 11:53:20][INFO] --disable-parallel Prevent restoring multiple projects

[2021/07/21 11:53:20][INFO] in parallel.

[2021/07/21 11:53:20][INFO] --ignore-failed-sources Treat package source failures as

[2021/07/21 11:53:20][INFO] warnings.

[2021/07/21 11:53:20][INFO] --no-cache Do not cache packages and http

[2021/07/21 11:53:20][INFO] requests.

[2021/07/21 11:53:20][INFO] --interactive Allows the command to stop and wait

[2021/07/21 11:53:20][INFO] for user input or action (for example

[2021/07/21 11:53:20][INFO] to complete authentication).

[2021/07/21 11:53:20][INFO] -v, --verbosity Set the MSBuild verbosity level.

[2021/07/21 11:53:20][INFO] <d|detailed|diag|diagnostic|m|minimal| Allowed values are q[uiet],

[2021/07/21 11:53:20][INFO] n|normal|q|quiet> m[inimal], n[ormal], d[etailed], and

[2021/07/21 11:53:20][INFO] diag[nostic].

[2021/07/21 11:53:20][INFO] -?, -h, --help Show command line help.

``` | 1.0 | [wasm] wasm-tools workload installation fails randomly - The installation for the performance CI builds is failing randomly, as in this log: https://helixri8s23ayyeko0k025g8.blob.core.windows.net/dotnet-runtime-refs-heads-alicial-wasmaotmicr448c314f6f6941daa4/x64.micro.net6.0.Partition11/console.dbe24a6e.log?sv=2019-07-07&se=2021-10-19T17%3A57%3A11Z&sr=c&sp=rl&sig=uFl%2FQ3RhxB9G%2BIQx120Qtws8L1maPMrQIg4vLmt8auM%3D

It usually fails even after a retry.

```

[2021/07/21 11:53:11][INFO] $ dotnet --info

[2021/07/21 11:53:11][INFO] .NET SDK (reflecting any global.json):

[2021/07/21 11:53:11][INFO] Version: 6.0.100-rc.1.21371.4

[2021/07/21 11:53:11][INFO] Commit: ebd2d1d607

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] Runtime Environment:

[2021/07/21 11:53:11][INFO] OS Name: ubuntu

[2021/07/21 11:53:11][INFO] OS Version: 18.04

[2021/07/21 11:53:11][INFO] OS Platform: Linux

[2021/07/21 11:53:11][INFO] RID: ubuntu.18.04-x64

[2021/07/21 11:53:11][INFO] Base Path: /home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/sdk/6.0.100-rc.1.21371.4/

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] Host (useful for support):

[2021/07/21 11:53:11][INFO] Version: 6.0.0-rc.1.21369.14

[2021/07/21 11:53:11][INFO] Commit: bd35632892

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] .NET SDKs installed:

[2021/07/21 11:53:11][INFO] 6.0.100-rc.1.21371.4 [/home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/sdk]

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] .NET runtimes installed:

[2021/07/21 11:53:11][INFO] Microsoft.AspNetCore.App 6.0.0-rc.1.21370.12 [/home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/shared/Microsoft.AspNetCore.App]

[2021/07/21 11:53:11][INFO] Microsoft.NETCore.App 6.0.0-rc.1.21369.14 [/home/helixbot/work/A7280930/p/performance/tools/dotnet/x64/shared/Microsoft.NETCore.App]

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:11][INFO] To install additional .NET runtimes or SDKs:

[2021/07/21 11:53:11][INFO] https://aka.ms/dotnet-download

[2021/07/21 11:53:11][INFO] $ pushd "/home/helixbot/work/A7280930/p/performance/src/benchmarks/micro/wasmaot"

[2021/07/21 11:53:11][INFO] $ dotnet workload install wasm-tools

[2021/07/21 11:53:11][INFO]

[2021/07/21 11:53:12][INFO] Skip NuGet package signing validation. NuGet signing validation is not available on Linux or macOS https://aka.ms/workloadskippackagevalidation .

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.android.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.ios.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.maui.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.macos.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.workload.emscripten.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.workload.mono.toolchain.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.tvos.

[2021/07/21 11:53:12][INFO] Updated advertising manifest microsoft.net.sdk.maccatalyst.

[2021/07/21 11:53:12][INFO] Installing workload manifest microsoft.net.sdk.macos version 12.0.100-preview.7183.