Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 665) | labels (string, length 4 to 554) | body (string, length 3 to 235k) | index (string, 6 classes) | text_combine (string, length 96 to 235k) | label (string, 2 classes) | text (string, length 96 to 196k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

106,168 | 9,115,897,779 | IssuesEvent | 2019-02-22 07:09:44 | scylladb/scylla | https://api.github.com/repos/scylladb/scylla | closed | cql SELECT limit broken | CQL bug dtest | Scylla version: 84465c23c4d74ad5f2e12d6092427d4c157c964f

Broken dtests:

[consistency_test.TestConsistency.short_read_reversed_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/consistency_test/TestConsistency/short_read_reversed_test/)

[consistency_test.TestConsistency.short_read_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/consistency_test/TestConsistency/short_read_test/)

[cql_additional_tests.CQLAdditionalTests.limit_date_value_out_of_range_upper_limit_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/CQLAdditionalTests/limit_date_value_out_of_range_upper_limit_test/)

[cql_additional_tests.TestCQL.exclusive_slice_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/exclusive_slice_test/)

[cql_additional_tests.TestCQL.limit_bugs_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/limit_bugs_test)

[cql_additional_tests.TestCQL.limit_sparse_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/limit_sparse_test)

[cql_additional_tests.TestCQL.range_with_deletes_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/range_with_deletes_test)

[cql_additional_tests.TestCQL.static_with_limit_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/static_with_limit_test)

[cqlsh_tests.cqlsh_tests.CqlshSmokeTest.select_all_cl_quorum_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cqlsh_tests/cqlsh_tests.CqlshSmokeTest/select_all_cl_quorum_test)

[paging_test.TestPagingWithModifiers.test_with_limit](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/paging_test/TestCQL/TestPagingWithModifiers/test_with_limit)

@psarna wrote:

> in one or two places the per_partition_limit is compared against numeric_limits<>::max() to decide whether we need post-processing or not, and I see that it's sometimes set to <int32_t>::max() instead of <uint32_t>::max() | 1.0 | cql SELECT limit broken - Scylla version: 84465c23c4d74ad5f2e12d6092427d4c157c964f

Broken dtests:

[consistency_test.TestConsistency.short_read_reversed_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/consistency_test/TestConsistency/short_read_reversed_test/)

[consistency_test.TestConsistency.short_read_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/consistency_test/TestConsistency/short_read_test/)

[cql_additional_tests.CQLAdditionalTests.limit_date_value_out_of_range_upper_limit_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/CQLAdditionalTests/limit_date_value_out_of_range_upper_limit_test/)

[cql_additional_tests.TestCQL.exclusive_slice_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/exclusive_slice_test/)

[cql_additional_tests.TestCQL.limit_bugs_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/limit_bugs_test)

[cql_additional_tests.TestCQL.limit_sparse_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/limit_sparse_test)

[cql_additional_tests.TestCQL.range_with_deletes_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/range_with_deletes_test)

[cql_additional_tests.TestCQL.static_with_limit_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cql_additional_tests/TestCQL/static_with_limit_test)

[cqlsh_tests.cqlsh_tests.CqlshSmokeTest.select_all_cl_quorum_test](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/cqlsh_tests/cqlsh_tests.CqlshSmokeTest/select_all_cl_quorum_test)

[paging_test.TestPagingWithModifiers.test_with_limit](http://jenkins.cloudius-systems.com:8080/view/master/job/scylla-master/job/dtest-release/36/testReport/paging_test/TestCQL/TestPagingWithModifiers/test_with_limit)

@psarna wrote:

> in one or two places the per_partition_limit is compared against numeric_limits<>::max() to decide whether we need post-processing or not, and I see that it's sometimes set to <int32_t>::max() instead of <uint32_t>::max() | non_infrastructure | cql select limit broken scylla version broken dtests psarna wrote in one or two places the per partition limit is compared against numeric limits max to decide whether we need post processing or not and i see that it s sometimes set to max instead of max | 0 |

20,452 | 13,927,835,011 | IssuesEvent | 2020-10-21 20:28:09 | cmu-db/noisepage | https://api.github.com/repos/cmu-db/noisepage | closed | jemalloc and ASAN / Valgrind don't get along | deferred infrastructure | ASAN and Valgrind currently do not catch any memory issues in tests.

As the title suggests, linking in jemalloc results in some wonky issues where ASAN and Valgrind stop being able to detect leaks. The Internet suggests that either jemalloc doesn't expose the necessary interface or the dynamic linking interferes with instrumentation of the two tools. A quick search did not turn up any widely accepted explanation or fix.

See #56 for more information. | 1.0 | jemalloc and ASAN / Valgrind don't get along - ASAN and Valgrind currently do not catch any memory issues in tests.

As the title suggests, linking in jemalloc results in some wonky issues where ASAN and Valgrind stop being able to detect leaks. The Internet suggests that either jemalloc doesn't expose the necessary interface or the dynamic linking interferes with instrumentation of the two tools. A quick search did not turn up any widely accepted explanation or fix.

See #56 for more information. | infrastructure | jemalloc and asan valgrind don t get along asan and valgrind currently do not catch any memory issues in tests as the title suggests linking in jemalloc results in some wonky issues where asan and valgrind stop being able to detect leaks the internet suggests that either jemalloc doesn t expose the necessary interface or the dynamic linking interferes with instrumentation of the two tools a quick search did not turn up any widely accepted explanation or fix see for more information | 1 |

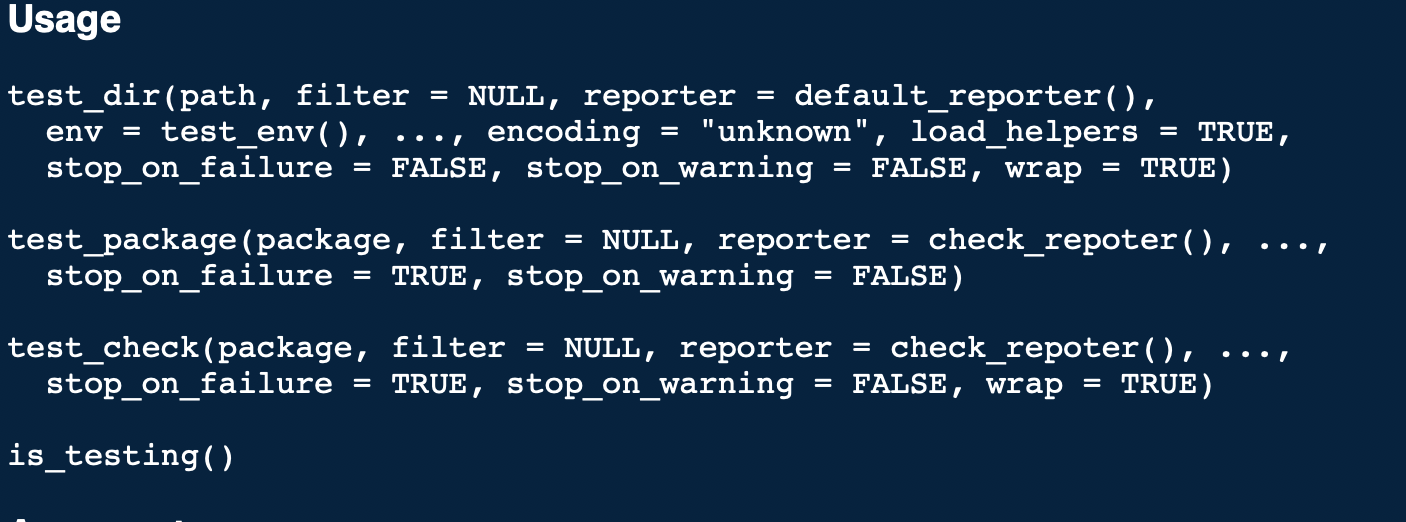

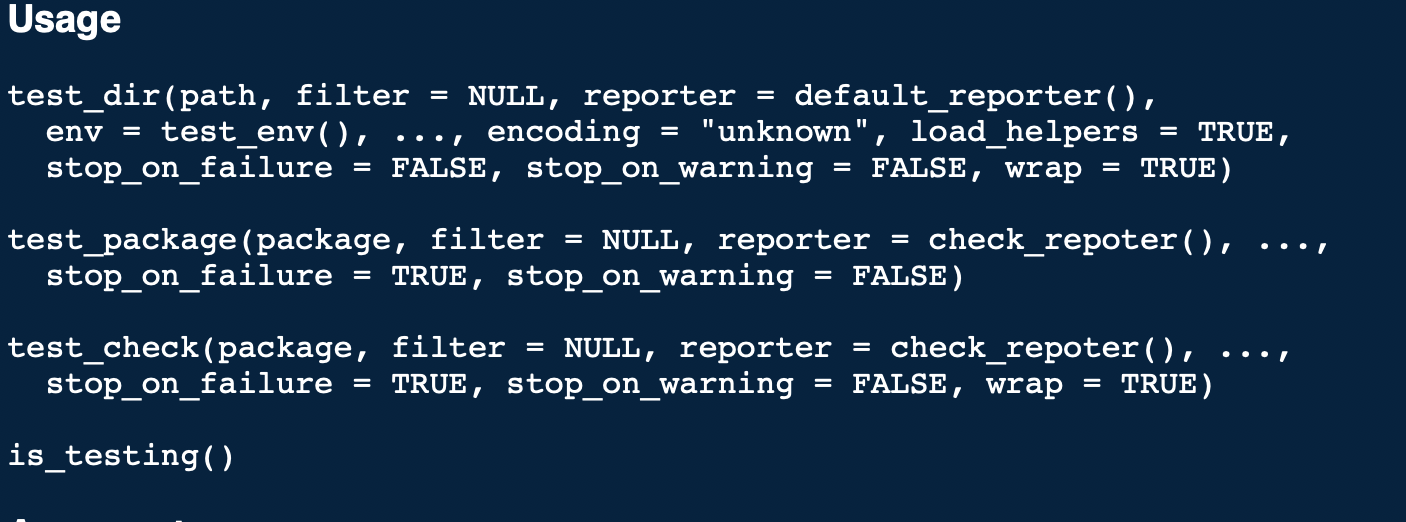

83,505 | 10,330,386,776 | IssuesEvent | 2019-09-02 14:33:13 | r-lib/testthat | https://api.github.com/repos/r-lib/testthat | closed | question on stop_on_failure default | documentation | I see the following in `?testthat::test_dir`

Why is the default for `stop_on_failure` in `test_dir()` `FALSE` when it is `TRUE` for `test_package()` and `test_check()`? Is that intentional or an oversight?

In my opinion, the default behavior of a testing framework should be to fail (cause a non-zero exit code) if any tests fail. If you agree with me and this is an oversight, please let me know and I'd be happy to make a PR to change it.

Thanks! | 1.0 | question on stop_on_failure default - I see the following in `?testthat::test_dir`

Why is the default for `stop_on_failure` in `test_dir()` `FALSE` when it is `TRUE` for `test_package()` and `test_check()`? Is that intentional or an oversight?

In my opinion, the default behavior of a testing framework should be to fail (cause a non-zero exit code) if any tests fail. If you agree with me and this is an oversight, please let me know and I'd be happy to make a PR to change it.

Thanks! | non_infrastructure | question on stop on failure default i see the following in testthat test dir why is the default for stop on failure in test dir false when it is true for test package and test check is that intentional or an oversight in my opinion the default behavior of a testing framework should be to fail cause a non zero exit code if any tests fail if you agree with me and this is an oversight please let me know and i d be happy to make a pr to change it thanks | 0 |

260,798 | 19,685,839,718 | IssuesEvent | 2022-01-11 22:02:53 | suaraujo/DeepSpyce | https://api.github.com/repos/suaraujo/DeepSpyce | opened | Documentation | documentation | 1. Put together the documentation for the repo.

2. Upload the finished documentation to [Read the Docs](https://readthedocs.org/) and configure it there. | 1.0 | Documentation - 1. Put together the documentation for the repo.

2. Upload the finished documentation to [Read the Docs](https://readthedocs.org/) and configure it there. | non_infrastructure | documentation put together the documentation for the repo upload the finished documentation to and configure it there | 0 |

23,353 | 16,088,036,367 | IssuesEvent | 2021-04-26 13:39:20 | gnosis/safe-ios | https://api.github.com/repos/gnosis/safe-ios | closed | Regenerate new distribution certificate before 21 April 2021 | No QA infrastructure | Distribution Certificate will no longer be valid. To generate a new certificate, sign in and visit [Certificates, Identifiers & Profiles](https://developer.apple.com/account/).

| 1.0 | Regenerate new distribution certificate before 21 April 2021 - Distribution Certificate will no longer be valid. To generate a new certificate, sign in and visit [Certificates, Identifiers & Profiles](https://developer.apple.com/account/).

| infrastructure | regenerate new distribution certificate before april distribution certificate will no longer be valid to generate a new certificate sign in and visit | 1 |

235,055 | 19,294,851,640 | IssuesEvent | 2021-12-12 12:18:24 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | reopened | Nightly Integration Testing Report | nightly-testing | ### ✅ [build against repo] Integration test succeeded!

Requested by @DellaBitta on commit 483c74a4047a46a676204dc9f03893302bbcc81d

Last updated: Sun Dec 12 03:33 PST 2021

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/1569113726)**

<hidden value="integration-test-status-comment"></hidden>

***

### ✅ [build against SDK] Integration test succeeded!

Requested by @firebase-workflow-trigger[bot] on commit 483c74a4047a46a676204dc9f03893302bbcc81d

Last updated: Sat Dec 11 04:11 PST 2021

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/1566837664)**

| 1.0 | Nightly Integration Testing Report - ### ✅ [build against repo] Integration test succeeded!

Requested by @DellaBitta on commit 483c74a4047a46a676204dc9f03893302bbcc81d

Last updated: Sun Dec 12 03:33 PST 2021

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/1569113726)**

<hidden value="integration-test-status-comment"></hidden>

***

### ✅ [build against SDK] Integration test succeeded!

Requested by @firebase-workflow-trigger[bot] on commit 483c74a4047a46a676204dc9f03893302bbcc81d

Last updated: Sat Dec 11 04:11 PST 2021

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/1566837664)**

| non_infrastructure | nightly integration testing report ✅ nbsp integration test succeeded requested by dellabitta on commit last updated sun dec pst ✅ nbsp integration test succeeded requested by firebase workflow trigger on commit last updated sat dec pst | 0 |

28,830 | 23,513,499,391 | IssuesEvent | 2022-08-18 18:57:50 | google/docsy | https://api.github.com/repos/google/docsy | closed | Google search console should be registered as an Open Source project | admin e0-minutes e1-hours infrastructure search p2-medium | Google search console account should be registered as ~nonprofit~ open source project so that we can turn off ads. | 1.0 | Google search console should be registered as an Open Source project - Google search console account should be registered as ~nonprofit~ open source project so that we can turn off ads. | infrastructure | google search console should be registered as an open source project google search console account should be registered as nonprofit open source project so that we can turn off ads | 1 |

19,000 | 13,184,852,858 | IssuesEvent | 2020-08-12 20:13:31 | Kemmey/Kemmey-TeslaWatch-Public | https://api.github.com/repos/Kemmey/Kemmey-TeslaWatch-Public | closed | Download issue | AppStore infrastructure issue | Have purchased app 3 times and will not download. Shows purchased in App Store but doesn’t show up on watch or iPhone. Purchased notation shows up subdued on App Store. Won’t show up purchased on account in App Store on watch. Have latest watch is installed. | 1.0 | Download issue - Have purchased app 3 times and will not download. Shows purchased in App Store but doesn’t show up on watch or iPhone. Purchased notation shows up subdued on App Store. Won’t show up purchased on account in App Store on watch. Have latest watch is installed. | infrastructure | download issue have purchased app times and will not download shows purchased in app store but doesn’t show up on watch or iphone purchased notation shows up subdued on app store won’t show up purchased on account in app store on watch have latest watch is installed | 1 |

170,629 | 20,883,788,729 | IssuesEvent | 2022-03-23 01:12:59 | mattdanielbrown/primed | https://api.github.com/repos/mattdanielbrown/primed | opened | CVE-2021-33502 (High) detected in normalize-url-2.0.1.tgz, normalize-url-3.3.0.tgz | security vulnerability | ## CVE-2021-33502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>normalize-url-2.0.1.tgz</b>, <b>normalize-url-3.3.0.tgz</b></p></summary>

<p>

<details><summary><b>normalize-url-2.0.1.tgz</b></p></summary>

<p>Normalize a URL</p>

<p>Library home page: <a href="https://registry.npmjs.org/normalize-url/-/normalize-url-2.0.1.tgz">https://registry.npmjs.org/normalize-url/-/normalize-url-2.0.1.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/cacheable-request/node_modules/normalize-url/package.json</p>

<p>

Dependency Hierarchy:

- gulp-imagemin-6.2.0.tgz (Root Library)

- imagemin-gifsicle-6.0.1.tgz

- gifsicle-4.0.1.tgz

- bin-wrapper-4.1.0.tgz

- download-7.1.0.tgz

- got-8.3.2.tgz

- cacheable-request-2.1.4.tgz

- :x: **normalize-url-2.0.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>normalize-url-3.3.0.tgz</b></p></summary>

<p>Normalize a URL</p>

<p>Library home page: <a href="https://registry.npmjs.org/normalize-url/-/normalize-url-3.3.0.tgz">https://registry.npmjs.org/normalize-url/-/normalize-url-3.3.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/normalize-url/package.json</p>

<p>

Dependency Hierarchy:

- cssnano-4.1.10.tgz (Root Library)

- cssnano-preset-default-4.0.7.tgz

- postcss-normalize-url-4.0.1.tgz

- :x: **normalize-url-3.3.0.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The normalize-url package before 4.5.1, 5.x before 5.3.1, and 6.x before 6.0.1 for Node.js has a ReDoS (regular expression denial of service) issue because it has exponential performance for data: URLs.

<p>Publish Date: 2021-05-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33502>CVE-2021-33502</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502</a></p>

<p>Release Date: 2021-05-24</p>

<p>Fix Resolution (normalize-url): 4.5.1</p>

<p>Direct dependency fix Resolution (cssnano): 5.0.0-rc.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-33502 (High) detected in normalize-url-2.0.1.tgz, normalize-url-3.3.0.tgz - ## CVE-2021-33502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>normalize-url-2.0.1.tgz</b>, <b>normalize-url-3.3.0.tgz</b></p></summary>

<p>

<details><summary><b>normalize-url-2.0.1.tgz</b></p></summary>

<p>Normalize a URL</p>

<p>Library home page: <a href="https://registry.npmjs.org/normalize-url/-/normalize-url-2.0.1.tgz">https://registry.npmjs.org/normalize-url/-/normalize-url-2.0.1.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/cacheable-request/node_modules/normalize-url/package.json</p>

<p>

Dependency Hierarchy:

- gulp-imagemin-6.2.0.tgz (Root Library)

- imagemin-gifsicle-6.0.1.tgz

- gifsicle-4.0.1.tgz

- bin-wrapper-4.1.0.tgz

- download-7.1.0.tgz

- got-8.3.2.tgz

- cacheable-request-2.1.4.tgz

- :x: **normalize-url-2.0.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>normalize-url-3.3.0.tgz</b></p></summary>

<p>Normalize a URL</p>

<p>Library home page: <a href="https://registry.npmjs.org/normalize-url/-/normalize-url-3.3.0.tgz">https://registry.npmjs.org/normalize-url/-/normalize-url-3.3.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/normalize-url/package.json</p>

<p>

Dependency Hierarchy:

- cssnano-4.1.10.tgz (Root Library)

- cssnano-preset-default-4.0.7.tgz

- postcss-normalize-url-4.0.1.tgz

- :x: **normalize-url-3.3.0.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The normalize-url package before 4.5.1, 5.x before 5.3.1, and 6.x before 6.0.1 for Node.js has a ReDoS (regular expression denial of service) issue because it has exponential performance for data: URLs.

<p>Publish Date: 2021-05-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33502>CVE-2021-33502</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502</a></p>

<p>Release Date: 2021-05-24</p>

<p>Fix Resolution (normalize-url): 4.5.1</p>

<p>Direct dependency fix Resolution (cssnano): 5.0.0-rc.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_infrastructure | cve high detected in normalize url tgz normalize url tgz cve high severity vulnerability vulnerable libraries normalize url tgz normalize url tgz normalize url tgz normalize a url library home page a href path to dependency file package json path to vulnerable library node modules cacheable request node modules normalize url package json dependency hierarchy gulp imagemin tgz root library imagemin gifsicle tgz gifsicle tgz bin wrapper tgz download tgz got tgz cacheable request tgz x normalize url tgz vulnerable library normalize url tgz normalize a url library home page a href path to dependency file package json path to vulnerable library node modules normalize url package json dependency hierarchy cssnano tgz root library cssnano preset default tgz postcss normalize url tgz x normalize url tgz vulnerable library found in base branch master vulnerability details the normalize url package before x before and x before for node js has a redos regular expression denial of service issue because it has exponential performance for data urls publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution normalize url direct dependency fix resolution cssnano rc step up your open source security game with whitesource | 0 |

226,452 | 17,352,296,056 | IssuesEvent | 2021-07-29 10:13:48 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | [Request] Add return type info to doc for FocusOnKeyCallback | d: api docs documentation easy fix framework passed first triage proposal | This is the DartDoc & declaration of `FocusOnKeyCallback`:

```

/// Signature of a callback used by [Focus.onKey] and [FocusScope.onKey]

/// to receive key events.

///

/// The [node] is the node that received the event.

typedef FocusOnKeyCallback = bool Function(FocusNode node, RawKeyEvent event);

```

The callback has a return type of `bool`, but how that value will be used is not clear. (Sorry if this is an obvious one :p) | 1.0 | [Request] Add return type info to doc for FocusOnKeyCallback - This is the DartDoc & declaration of `FocusOnKeyCallback`:

```

/// Signature of a callback used by [Focus.onKey] and [FocusScope.onKey]

/// to receive key events.

///

/// The [node] is the node that received the event.

typedef FocusOnKeyCallback = bool Function(FocusNode node, RawKeyEvent event);

```

The callback has a return type of `bool`, but how that value will be used is not clear. (Sorry if this is an obvious one :p) | non_infrastructure | add return type info to doc for focusonkeycallback this is the dartdoc declaration of focusonkeycallback signature of a callback used by and to receive key events the is the node that received the event typedef focusonkeycallback bool function focusnode node rawkeyevent event the callback has a return type of bool but how that value will be used is not clear sorry if this is an obvious one p | 0 |

16,310 | 11,907,758,472 | IssuesEvent | 2020-03-30 23:04:37 | breadware/registrant | https://api.github.com/repos/breadware/registrant | opened | Structure the project according to Gitflow | infrastructure | Structure the project's branches according to the [Gitflow model](https://www.atlassian.com/git/tutorials/comparing-workflows/gitflow-workflow). This will make it easier to manage branches and issues. | 1.0 | Structure the project according to Gitflow - Structure the project's branches according to the [Gitflow model](https://www.atlassian.com/git/tutorials/comparing-workflows/gitflow-workflow). This will make it easier to manage branches and issues. | infrastructure | structure the project according to gitflow structure the project s branches according to the this will make it easier to manage branches and issues | 1 |

148,693 | 5,694,538,949 | IssuesEvent | 2017-04-15 14:14:19 | Radarr/Radarr | https://api.github.com/repos/Radarr/Radarr | closed | [API] SizeOnDisk is 0 on Request by movieId | bug priority:low | As the Title says. The Field SizeOnDisk is correct if the whole MovieList is requested.

If the Movie is requested by id, the Field SizeOnDisk is 0.

List Request:

Single Request:

| 1.0 | [API] SizeOnDisk is 0 on Request by movieId - As the Title says. The Field SizeOnDisk is correct if the whole MovieList is requested.

If the Movie is requested by id, the Field SizeOnDisk is 0.

List Request:

Single Request:

| non_infrastructure | sizeondisk is on request by movieid as the title says the field sizeondisk is correct if the whole movielist is requested if the movie is requested by id the field sizeondisk is list request single request | 0 |

11,703 | 9,380,699,298 | IssuesEvent | 2019-04-04 17:44:50 | ressec/kakoo-gaming | https://api.github.com/repos/ressec/kakoo-gaming | opened | FEAT - Create the Gaming Server project | Act.: Infrastructure Env.: Development Task Typ.: Feature | ## Description

This project is containing the server part of the game. | 1.0 | FEAT - Create the Gaming Server project - ## Description

This project is containing the server part of the game. | infrastructure | feat create the gaming server project description this project is containing the server part of the game | 1 |

313,130 | 9,557,521,268 | IssuesEvent | 2019-05-03 11:50:10 | fritzing/fritzing-app | https://api.github.com/repos/fritzing/fritzing-app | closed | real cables? | Priority-Low enhancement imported | _From [irasc...@gmail.com](https://code.google.com/u/104729248032245122687/) on April 17, 2013 04:00:34_

feature idea: off-board components like displays and speakers, connectable with "real" cables to the various jacks

_Original issue: http://code.google.com/p/fritzing/issues/detail?id=2516_

| 1.0 | real cables? - _From [irasc...@gmail.com](https://code.google.com/u/104729248032245122687/) on April 17, 2013 04:00:34_

feature idea: off-board components like displays and speakers, connectable with "real" cables to the various jacks

_Original issue: http://code.google.com/p/fritzing/issues/detail?id=2516_

| non_infrastructure | real cables from on april feature idea off board components like displays and speakers connectable with real cables to the various jacks original issue | 0 |

287,223 | 31,827,556,516 | IssuesEvent | 2023-09-14 08:31:23 | gardener/gardener | https://api.github.com/repos/gardener/gardener | closed | ☂️ Improve Gardener Operator | kind/enhancement area/dev-productivity area/security area/delivery area/open-source area/high-availability area/ipcei | **How to categorize this issue?**

<!--

Please select area, kind, and priority for this issue. This helps the community categorizing it.

Replace below TODOs or exchange the existing identifiers with those that fit best in your opinion.

If multiple identifiers make sense you can also state the commands multiple times, e.g.

/area control-plane

/area auto-scaling

...

"/area" identifiers: audit-logging|auto-scaling|backup|certification|control-plane-migration|control-plane|cost|delivery|dev-productivity|disaster-recovery|documentation|high-availability|logging|metering|monitoring|networking|open-source|ops-productivity|os|performance|quality|robustness|scalability|security|storage|testing|usability|user-management

"/kind" identifiers: api-change|bug|cleanup|discussion|enhancement|epic|impediment|poc|post-mortem|question|regression|task|technical-debt|test

-->

/area dev-productivity delivery high-availability security open-source

/kind enhancement

As of today, Gardener has no notion of managing any components running in the garden cluster. As a consequence, human operators have to manually deploy the "garden system components" (`vertical-pod-autoscaler`, `hvpa-controller`, `etcd-druid`, `nginx-ingress`) and the "virtual garden control plane components" (`etcd`/`backup-restore`, `kube-apiserver`, `kube-controller-manager`) as well as the "Gardener control plane components".

However, logically these processes are quite similar to what we have already implemented in the `gardenlet` for seed or shoot clusters.

The idea of the `gardener-operator` component is to reuse existing code and share it with `gardenlet`, so that the needed components can be made available more easily in all environments.

As part of this, `gardener-resource-manager` is becoming a central "garden system component" as well since it has a lot of features like token invalidation, seccomp defaulting, token requesting, HA config injection, etc.

## Tasks

- Initial scaffolding and introduction of the new component

- [x] https://github.com/gardener/gardener/pull/7009

- Manage Garden System Components

- [x] Fine-grained `PriorityClass`es: https://github.com/gardener/gardener/pull/7009

- [x] `gardener-resource-manager`: https://github.com/gardener/gardener/pull/7009

- [x] `vertical-pod-autoscaler`: https://github.com/gardener/gardener/pull/7009

- [x] `hvpa-controller`: https://github.com/gardener/gardener/pull/7048

- [x] `etcd-druid`: https://github.com/gardener/gardener/pull/7048

- [x] `istio`: https://github.com/gardener/gardener/pull/7817

- Manage Virtual Garden Control Plane Components

- [x] `etcd`/`backup-restore` (via `Etcd` custom resource): https://github.com/gardener/gardener/pull/7067

- [x] `kube-apiserver` exposure

- [x] via `LoadBalancer` service: https://github.com/gardener/gardener/pull/7238

- [x] via `Istio`: https://github.com/gardener/gardener/pull/7953

- [x] https://github.com/gardener/gardener/pull/8156

- [x] https://github.com/gardener/gardener/pull/8302

- [x] `kube-apiserver`: https://github.com/gardener/gardener/pull/7730

- Prerequisites / related upfront work:

- [x] https://github.com/gardener/gardener/pull/7242

- [x] https://github.com/gardener/gardener/pull/7243

- [x] https://github.com/gardener/gardener/pull/7710

- [x] https://github.com/gardener/gardener/pull/7258

- [x] https://github.com/gardener/gardener/pull/7498

- [x] https://github.com/gardener/gardener/pull/7518

- [x] https://github.com/gardener/gardener/pull/7558

- [x] https://github.com/gardener/gardener/pull/7567

- [x] https://github.com/gardener/gardener/pull/7573

- [x] https://github.com/gardener/gardener/pull/7687

- [x] https://github.com/gardener/gardener/pull/7682

- [x] https://github.com/gardener/gardener/pull/7693

- Follow-up work:

- [x] https://github.com/gardener/gardener/pull/7734

- [x] https://github.com/gardener/gardener/pull/7735

- [x] https://github.com/gardener/gardener/pull/7877

- [x] `virtual-garden-gardener-resource-manager`: https://github.com/gardener/gardener/pull/7881

- [x] `kube-controller-manager`: https://github.com/gardener/gardener/pull/7931

- Prerequisites / related upfront work:

- [x] https://github.com/gardener/gardener/pull/7858

- [x] https://github.com/gardener/gardener/pull/7887

- Manage Gardener Control Plane Components

- [x] `gardener-{apiserver,controller-manager,...}`: https://github.com/gardener/gardener/pull/8309

- Prerequisites / related upfront work:

- [x] https://github.com/gardener/gardener/pull/7998

- [x] https://github.com/gardener/gardener/pull/8234

- [x] https://github.com/gardener/gardener/pull/8215

- [x] https://github.com/gardener/gardener/pull/8235

- [x] https://github.com/gardener/gardener/pull/8244

- [x] https://github.com/gardener/gardener/pull/8251

- [x] https://github.com/gardener/gardener/pull/8282

- [x] https://github.com/gardener/gardener/pull/8283

- [x] https://github.com/gardener/gardener/pull/8262

- [x] https://github.com/gardener/gardener/pull/8265

- [x] https://github.com/gardener/gardener/pull/8276

- [x] https://github.com/gardener/gardener/pull/8396

- Manage Garden Observability Components

- [x] `nginx-ingress-controller` (~[or ideally `istio` only](https://github.com/gardener/gardener/issues/7232)~): https://github.com/gardener/gardener/pull/7945

- [x] `kube-state-metrics`: https://github.com/gardener/gardener/pull/7836

- [x] `fluent-operator`: https://github.com/gardener/gardener/pull/8240

- [x] `vali`: https://github.com/gardener/gardener/pull/8240

- [x] `plutono`: https://github.com/gardener/gardener/pull/8301

- [x] `gardener-metrics-exporter`: https://github.com/gardener/gardener/pull/8419

- Miscellaneous

- [x] https://github.com/gardener/gardener/pull/7859

- [x] https://github.com/gardener/gardener/pull/8158

- [x] https://github.com/gardener/gardener/pull/8346

- [x] https://github.com/gardener/gardener/pull/8439

- [x] Add support for credentials rotation (similar to how it works for [`Shoot`s](https://github.com/gardener/gardener/blob/master/docs/usage/shoot_credentials_rotation.md)): https://github.com/gardener/gardener/pull/7144

- [x] https://github.com/gardener/gardener/pull/8393

- [x] Extended validation (deletion protection, etc.): https://github.com/gardener/gardener/pull/7144

- [x] https://github.com/gardener/gardener/pull/7225

- [x] https://github.com/gardener/gardener/issues/6896

- [x] https://github.com/gardener/gardener/pull/8238

- [x] https://github.com/gardener/gardener/pull/8279

- [x] https://github.com/gardener/gardener/pull/8413

- [x] https://github.com/gardener/gardener/pull/8433

❗️ Please note ❗️

- It is NOT planned (at least for the foreseeable future until this component graduates) to manage any additional addons deployed to the garden cluster (Gardener dashboard, audit log components, ...) or any extensions.

- Managing the `gardenlet` via `gardener-operator` is NOT planned either, but could be done. However, in production scenarios, the garden cluster is typically not a seed cluster at the same time, and generally we cannot assume that `gardener-operator` has network connectivity to the clusters where a `gardenlet` should be deployed. Hence, managing the `gardenlet` has a low priority (if any at all). | True | ☂️ Improve Gardener Operator - **How to categorize this issue?**

<!--

Please select area, kind, and priority for this issue. This helps the community categorizing it.

Replace below TODOs or exchange the existing identifiers with those that fit best in your opinion.

If multiple identifiers make sense you can also state the commands multiple times, e.g.

/area control-plane

/area auto-scaling

...

"/area" identifiers: audit-logging|auto-scaling|backup|certification|control-plane-migration|control-plane|cost|delivery|dev-productivity|disaster-recovery|documentation|high-availability|logging|metering|monitoring|networking|open-source|ops-productivity|os|performance|quality|robustness|scalability|security|storage|testing|usability|user-management

"/kind" identifiers: api-change|bug|cleanup|discussion|enhancement|epic|impediment|poc|post-mortem|question|regression|task|technical-debt|test

-->

/area dev-productivity delivery high-availability security open-source

/kind enhancement

As of today, Gardener has no notion of managing any components running in the garden cluster. As a consequence, human operators have to manually deploy the "garden system components" (`vertical-pod-autoscaler`, `hvpa-controller`, `etcd-druid`, `nginx-ingress`) and the "virtual garden control plane components" (`etcd`/`backup-restore`, `kube-apiserver`, `kube-controller-manager`) as well as the "Gardener control plane components".

However, logically these processes are quite similar to what we have already implemented in the `gardenlet` for seed or shoot clusters.

The idea of the `gardener-operator` component is re-using existing code and sharing it with `gardenlet` so that the needed components can be made available more easily in all environments.

As part of this, `gardener-resource-manager` is becoming a central "garden system component" as well since it has a lot of features like token invalidation, seccomp defaulting, token requesting, HA config injection, etc.

## Tasks

- Initial scaffolding and introduction of new component

- [x] https://github.com/gardener/gardener/pull/7009

- Manage Garden System Components

- [x] Fine-grained `PriorityClass`es: https://github.com/gardener/gardener/pull/7009

- [x] `gardener-resource-manager`: https://github.com/gardener/gardener/pull/7009

- [x] `vertical-pod-autoscaler`: https://github.com/gardener/gardener/pull/7009

- [x] `hvpa-controller`: https://github.com/gardener/gardener/pull/7048

- [x] `etcd-druid`: https://github.com/gardener/gardener/pull/7048

- [x] `istio`: https://github.com/gardener/gardener/pull/7817

- Manage Virtual Garden Control Plane Components

- [x] `etcd`/`backup-restore` (via `Etcd` custom resource): https://github.com/gardener/gardener/pull/7067

- [x] `kube-apiserver` exposure

- [x] via `LoadBalancer` service: https://github.com/gardener/gardener/pull/7238

- [x] via `Istio`: https://github.com/gardener/gardener/pull/7953

- [x] https://github.com/gardener/gardener/pull/8156

- [x] https://github.com/gardener/gardener/pull/8302

- [x] `kube-apiserver`: https://github.com/gardener/gardener/pull/7730

- Prerequisites / related upfront work:

- [x] https://github.com/gardener/gardener/pull/7242

- [x] https://github.com/gardener/gardener/pull/7243

- [x] https://github.com/gardener/gardener/pull/7710

- [x] https://github.com/gardener/gardener/pull/7258

- [x] https://github.com/gardener/gardener/pull/7498

- [x] https://github.com/gardener/gardener/pull/7518

- [x] https://github.com/gardener/gardener/pull/7558

- [x] https://github.com/gardener/gardener/pull/7567

- [x] https://github.com/gardener/gardener/pull/7573

- [x] https://github.com/gardener/gardener/pull/7687

- [x] https://github.com/gardener/gardener/pull/7682

- [x] https://github.com/gardener/gardener/pull/7693

- Follow-up work:

- [x] https://github.com/gardener/gardener/pull/7734

- [x] https://github.com/gardener/gardener/pull/7735

- [x] https://github.com/gardener/gardener/pull/7877

- [x] `virtual-garden-gardener-resource-manager`: https://github.com/gardener/gardener/pull/7881

- [x] `kube-controller-manager`: https://github.com/gardener/gardener/pull/7931

- Prerequisites / related upfront work:

- [x] https://github.com/gardener/gardener/pull/7858

- [x] https://github.com/gardener/gardener/pull/7887

- Manage Gardener Control Plane Components

- [x] `gardener-{apiserver,controller-manager,...}`: https://github.com/gardener/gardener/pull/8309

- Prerequisites / related upfront work:

- [x] https://github.com/gardener/gardener/pull/7998

- [x] https://github.com/gardener/gardener/pull/8234

- [x] https://github.com/gardener/gardener/pull/8215

- [x] https://github.com/gardener/gardener/pull/8235

- [x] https://github.com/gardener/gardener/pull/8244

- [x] https://github.com/gardener/gardener/pull/8251

- [x] https://github.com/gardener/gardener/pull/8282

- [x] https://github.com/gardener/gardener/pull/8283

- [x] https://github.com/gardener/gardener/pull/8262

- [x] https://github.com/gardener/gardener/pull/8265

- [x] https://github.com/gardener/gardener/pull/8276

- [x] https://github.com/gardener/gardener/pull/8396

- Manage Garden Observability Components

- [x] `nginx-ingress-controller` (~[or ideally `istio` only](https://github.com/gardener/gardener/issues/7232)~): https://github.com/gardener/gardener/pull/7945

- [x] `kube-state-metrics`: https://github.com/gardener/gardener/pull/7836

- [x] `fluent-operator`: https://github.com/gardener/gardener/pull/8240

- [x] `vali`: https://github.com/gardener/gardener/pull/8240

- [x] `plutono`: https://github.com/gardener/gardener/pull/8301

- [x] `gardener-metrics-exporter`: https://github.com/gardener/gardener/pull/8419

- Miscellaneous

- [x] https://github.com/gardener/gardener/pull/7859

- [x] https://github.com/gardener/gardener/pull/8158

- [x] https://github.com/gardener/gardener/pull/8346

- [x] https://github.com/gardener/gardener/pull/8439

- [x] Add support for credentials rotation (similar to how it works for [`Shoot`s](https://github.com/gardener/gardener/blob/master/docs/usage/shoot_credentials_rotation.md)): https://github.com/gardener/gardener/pull/7144

- [x] https://github.com/gardener/gardener/pull/8393

- [x] Extended validation (deletion protection, etc.): https://github.com/gardener/gardener/pull/7144

- [x] https://github.com/gardener/gardener/pull/7225

- [x] https://github.com/gardener/gardener/issues/6896

- [x] https://github.com/gardener/gardener/pull/8238

- [x] https://github.com/gardener/gardener/pull/8279

- [x] https://github.com/gardener/gardener/pull/8413

- [x] https://github.com/gardener/gardener/pull/8433

❗️ Please note ❗️

- It is NOT planned (at least for the foreseeable future until this component graduates) to manage any additional addons deployed to the garden cluster (Gardener dashboard, audit log components, ...) or any extensions.

- Managing the `gardenlet` via `gardener-operator` is NOT planned either, but could be done. However, in production scenarios, the garden cluster is typically not a seed cluster at the same time, and generally we cannot assume that `gardener-operator` has network connectivity to the clusters where a `gardenlet` should be deployed. Hence, managing the `gardenlet` has a low priority (if any at all). | non_infrastructure | ☂️ improve gardener operator how to categorize this issue please select area kind and priority for this issue this helps the community categorizing it replace below todos or exchange the existing identifiers with those that fit best in your opinion if multiple identifiers make sense you can also state the commands multiple times e g area control plane area auto scaling area identifiers audit logging auto scaling backup certification control plane migration control plane cost delivery dev productivity disaster recovery documentation high availability logging metering monitoring networking open source ops productivity os performance quality robustness scalability security storage testing usability user management kind identifiers api change bug cleanup discussion enhancement epic impediment poc post mortem question regression task technical debt test area dev productivity delivery high availability security open source kind enhancement as of today gardener has no notion of managing any components running in the garden cluster as a consequence human operators have to manually deploy the garden system components vertical pod autoscaler hvpa controller etcd druid nginx ingress and the virtual garden control plane components etcd backup restore kube apiserver kube controller manager as well as the gardener control plane components however logically these processes are quite similar to what we have already implemented in the gardenlet for seed or shoot clusters the idea of the gardener operator component is re using existing code and sharing it with 
gardenlet so that the needed components can be made available more easily in all environments as part of this gardener resource manager is becoming a central garden system component as well since it has a lot of features like token invalidation seccomp defaulting token requesting ha config injection etc tasks initial skaffolding and introduction of new component manage garden system components fine grained priorityclass es gardener resource manager vertical pod autoscaler hvpa controller etcd druid istio manage virtual garden control plane components etcd backup restore via etcd custom resource kube apiserver exposure via loadbalancer service via istio kube apiserver prerequisites related upfront work follow up work virtual garden gardener resource manager kube controller manager prerequisites related upfront work manage gardener control plane components gardener apiserver controller manager prerequisites related upfront work manage garden observability components nginx ingress controller kube state metrics fluent operator vali plutono gardener metrics exporter miscellaneous add support for credentials rotation similar to how it works for extended validation deletion protection etc ❗️ please note ❗️ it is not planned at least for the foreseeable future until this component graduates to manage any additional addons deployed to the garden cluster gardener dashboard audit log components or any extensions managing the gardenlet via gardener operator is not planned as well but could be done however in production scenarios the garden cluster is typically not a seed cluster at a same time and generally we cannot assume that gardener operator has network connectivity to the clusters where a gardenlet should be deployed to hence managing the gardenlet has a low priority if any at all | 0 |

16,323 | 3,518,045,552 | IssuesEvent | 2016-01-12 10:53:29 | I2PC/scipion | https://api.github.com/repos/I2PC/scipion | opened | Check failing tests of Significant and Compare-Reprojections | bug test | scipion test tests.em.protocols.test_protocols_xmipp_2d.TestXmippCompareReprojections

scipion test tests.em.workflows.test_workflow_initialvolume.TestSignificant | 1.0 | Check failing tests of Significant and Compare-Reprojections - scipion test tests.em.protocols.test_protocols_xmipp_2d.TestXmippCompareReprojections

scipion test tests.em.workflows.test_workflow_initialvolume.TestSignificant | non_infrastructure | check failing tests of significant and compare reprojections scipion test tests em protocols test protocols xmipp testxmippcomparereprojections scipion test tests em workflows test workflow initialvolume testsignificant | 0 |

82,114 | 15,646,505,639 | IssuesEvent | 2021-03-23 01:04:51 | jgeraigery/linux | https://api.github.com/repos/jgeraigery/linux | opened | CVE-2019-8980 (High) detected in linuxv5.2 | security vulnerability | ## CVE-2019-8980 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torvalds/linux.git>https://github.com/torvalds/linux.git</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux/fs/exec.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux/fs/exec.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A memory leak in the kernel_read_file function in fs/exec.c in the Linux kernel through 4.20.11 allows attackers to cause a denial of service (memory consumption) by triggering vfs_read failures.

<p>Publish Date: 2019-02-21

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-8980>CVE-2019-8980</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-8980">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-8980</a></p>

<p>Release Date: 2019-02-21</p>

<p>Fix Resolution: v5.1-rc1</p>

</p>

</details>

<p></p>

| True | CVE-2019-8980 (High) detected in linuxv5.2 - ## CVE-2019-8980 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torvalds/linux.git>https://github.com/torvalds/linux.git</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux/fs/exec.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux/fs/exec.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A memory leak in the kernel_read_file function in fs/exec.c in the Linux kernel through 4.20.11 allows attackers to cause a denial of service (memory consumption) by triggering vfs_read failures.

<p>Publish Date: 2019-02-21

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-8980>CVE-2019-8980</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-8980">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-8980</a></p>

<p>Release Date: 2019-02-21</p>

<p>Fix Resolution: v5.1-rc1</p>

</p>

</details>

<p></p>

| non_infrastructure | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href vulnerable source files linux fs exec c linux fs exec c vulnerability details a memory leak in the kernel read file function in fs exec c in the linux kernel through allows attackers to cause a denial of service memory consumption by triggering vfs read failures publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution | 0 |

108,220 | 23,579,862,613 | IssuesEvent | 2022-08-23 06:37:31 | UnitTestBot/UTBotJava | https://api.github.com/repos/UnitTestBot/UTBotJava | closed | Unnecessary reflections in code generated for arrays | codegen | **Description**

In some cases codegen works with arrays using reflection even if there is no need for it.

**To Reproduce**

Run plugin on the following code:

```Java
package rndpkg;

class C {
    int x;

    public C(int x) { this.x = x; }
}

public class SomeClass {
    public int f(C[] c) {
        c[0].x -= 1;
        return c[0].x;
    }
}
```

**Expected behavior**

Produced tests don't use reflection.
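
For illustration only, a reflection-free test for the empty-array case might look roughly like the sketch below. This is not actual UTBot output. It assumes the generated test lives in the same package as `C`, so the package-private class is directly accessible. The class `DirectArrayTestSketch` and its helper methods are hypothetical names, `C` and `SomeClass` are copied from the reproduction above (without the `package` line and `public` modifiers so the snippet compiles as a single file), and plain `try`/`catch` stands in for the JUnit assertions a real generated test would use.

```java
// Copies of the classes from the reproduction, kept in one file for self-containment.
class C {
    int x;

    C(int x) { this.x = x; }
}

class SomeClass {
    public int f(C[] c) {
        c[0].x -= 1;
        return c[0].x;
    }
}

// Hypothetical name; sketches what a test without reflection could do.
class DirectArrayTestSketch {
    // Returns true if f throws ArrayIndexOutOfBoundsException for an empty array.
    static boolean throwsOnEmptyArray() {
        SomeClass someClass = new SomeClass();
        C[] c = new C[0];      // typed array instead of createArray("rndpkg.C", 0)
        try {
            someClass.f(c);    // direct call instead of Method.invoke
            return false;
        } catch (ArrayIndexOutOfBoundsException e) {
            return true;
        }
    }

    // Calls f on a one-element array and returns the decremented field value.
    static int decrementsFirstElement() {
        C[] c = { new C(5) };
        return new SomeClass().f(c);
    }

    public static void main(String[] args) {
        System.out.println(throwsOnEmptyArray());
        System.out.println(decrementsFirstElement());
    }
}
```

Since `SomeClass.f` is public and `C` is visible from within the package, neither `Class.forName` nor `setAccessible` appears to be needed here.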

**Actual behavior**

Tests look like this:

```Java
@Test
@DisplayName("f: c = C[0] -> throw ArrayIndexOutOfBoundsException")
public void testFThrowsAIOOBEWithEmptyObjectArray() throws Throwable {
    SomeClass someClass = new SomeClass();
    Object[] c = createArray("rndpkg.C", 0);

    /* This test fails because method [rndpkg.SomeClass.f] produces [java.lang.ArrayIndexOutOfBoundsException: 0]
        rndpkg.SomeClass.f(SomeClass.java:10) */
    Class someClassClazz = Class.forName("rndpkg.SomeClass");
    Class cType = Class.forName("[Lrndpkg.C;");
    Method fMethod = someClassClazz.getDeclaredMethod("f", cType);
    fMethod.setAccessible(true);
    Object[] fMethodArguments = new Object[1];
    fMethodArguments[0] = c;
    try {
        fMethod.invoke(someClass, fMethodArguments);
    } catch (InvocationTargetException invocationTargetException) {
        throw invocationTargetException.getTargetException();
    }
}
```

| 1.0 | Unnecessary reflections in code generated for arrays - **Description**

In some cases codegen works with arrays using reflection even if there is no need for it.

**To Reproduce**

Run plugin on the following code:

```Java
package rndpkg;

class C {
    int x;

    public C(int x) { this.x = x; }
}

public class SomeClass {
    public int f(C[] c) {
        c[0].x -= 1;
        return c[0].x;
    }
}
```

**Expected behavior**

Produced tests don't use reflection.

**Actual behavior**

Tests look like this:

```Java
@Test
@DisplayName("f: c = C[0] -> throw ArrayIndexOutOfBoundsException")
public void testFThrowsAIOOBEWithEmptyObjectArray() throws Throwable {
    SomeClass someClass = new SomeClass();
    Object[] c = createArray("rndpkg.C", 0);

    /* This test fails because method [rndpkg.SomeClass.f] produces [java.lang.ArrayIndexOutOfBoundsException: 0]
        rndpkg.SomeClass.f(SomeClass.java:10) */
    Class someClassClazz = Class.forName("rndpkg.SomeClass");
    Class cType = Class.forName("[Lrndpkg.C;");
    Method fMethod = someClassClazz.getDeclaredMethod("f", cType);
    fMethod.setAccessible(true);
    Object[] fMethodArguments = new Object[1];
    fMethodArguments[0] = c;
    try {
        fMethod.invoke(someClass, fMethodArguments);
    } catch (InvocationTargetException invocationTargetException) {
        throw invocationTargetException.getTargetException();
    }
}
```

| non_infrastructure | unnecessary reflections in code generated for arrays description in some cases codegen works with arrays using reflection even if there is no need for it to reproduce run plugin on the following code java package rndpkg class c int x public c int x this x x public class someclass public int f c c c x return c x expected behavior produced tests don t use reflection actual behavior tests look like this java test displayname f c c throw arrayindexoutofboundsexception public void testfthrowsaioobewithemptyobjectarray throws throwable someclass someclass new someclass object c createarray rndpkg c this test fails because method produces rndpkg someclass f someclass java class someclassclazz class forname rndpkg someclass class ctype class forname lrndpkg c method fmethod someclassclazz getdeclaredmethod f ctype fmethod setaccessible true object fmethodarguments new object fmethodarguments c try fmethod invoke someclass fmethodarguments catch invocationtargetexception invocationtargetexception throw invocationtargetexception gettargetexception | 0 |

24,171 | 16,986,655,319 | IssuesEvent | 2021-06-30 15:04:52 | celo-org/celo-blockchain | https://api.github.com/repos/celo-org/celo-blockchain | closed | Kubernetes Service session-affinity not working for multiple ports in the same service | blockchain current-sprint theme: infrastructure | Kubernetes service may not forward rpc calls (port 8545) and ws traffic (port 8546) from the same client when `sessionAffinity: ClientIP`. This causes multiple blockscout errors in mainnet now that the load that the indexer generates is higher and there are multiple service endpoints.

Issue in Kubernetes repository: https://github.com/kubernetes/kubernetes/issues/103000

Tentative solution will be setting up an internal nginx proxy inside each pod that handles the redirection to rpc or websocket port. | 1.0 | Kubernetes Service session-affinity not working for multiple ports in the same service - Kubernetes service may not forward rpc calls (port 8545) and ws traffic (port 8546) from the same client when `sessionAffinity: ClientIP`. This causes multiple blockscout errors in mainnet now that the load that the indexer generates is higher and there are multiple service endpoints.

Issue in Kubernetes repository: https://github.com/kubernetes/kubernetes/issues/103000

Tentative solution will be setting up an internal nginx proxy inside each pod that handles the redirection to rpc or websocket port. | infrastructure | kubernetes service session affinity not working for multiple ports in the same service kubernetes service may not forward rpc calls port and ws traffic port from the same client when sessionaffinity clientip this causes multiple blockscout errors in mainnet now that the load that the indexer generates is higher and there are multiple service endpoints issue in kubernetes repository tentative solution will be setting up an internal nginx proxy inside each pod that handles the redirection to rpc or websocket port | 1 |

76,497 | 26,459,780,175 | IssuesEvent | 2023-01-16 16:33:48 | zed-industries/feedback | https://api.github.com/repos/zed-industries/feedback | closed | Typescript support doesn't work | defect typescript language | ### Check for existing issues

- [X] Completed

### Describe the bug

Hey zed team!

This looks super promising and I'm excited to see where it goes from here.

Now, for the issue at hand - it looks like the typescript language server simply doesn't work.

Here's my config, just in case I misconfigured something on my end:

```json
// Zed settings
//
// For information on how to configure Zed, see the Zed
// documentation: https://zed.dev/docs/configuring-zed
//
// To see all of Zed's default settings without changing your
// custom settings, run the `open default settings` command
// from the command palette or from `Zed` application menu.
{
  "buffer_font_size": 15,
  "buffer_font_family": "CaskaydiaCove Nerd Font",
  "autosave": "on_focus_change",
  "lsp": {},
  "terminal": {
    "font_family": "CaskaydiaCove Nerd Font"
  },
  "enable_language_server": true,
  // "vim_mode": true,
  "tab_size": 2,
  "language_overrides": {
    "JavaScript": {
      "format_on_save": {
        "external": {
          "command": "prettier",
          "arguments": [
            "--stdin-filepath",
            "{buffer_path}"
          ]
        }
      }
    },
    "TypeScript": {
      "format_on_save": {
        "external": {
          "command": "prettier",
          "arguments": [
            "--stdin-filepath",
            "{buffer_path}"
          ]
        }
      }
    }
  },
  "languages": {
    "TypeScript": {
      "format_on_save": "language_server",
      "enable_language_server": true
    },
    "JavaScript": {
      "format_on_save": "language_server",
      "enable_language_server": true
    }
  }
}
```

### To reproduce

Open any typescript project

### Expected behavior

Autocompletion, go to definition, etc. should work

### Environment

Zed 0.50.0 – /Applications/Zed.app

macOS 12.5

architecture x86_64

### If applicable, add mockups / screenshots to help present your vision of the feature

_No response_

### If applicable, attach your `~/Library/Logs/Zed/Zed.log` file to this issue

18:26:14 [INFO] ========== starting zed ==========

18:26:17 [INFO] set environment variables from shell:/bin/zsh, path:/Users/lev/.pyenv/shims:/Users/lev/.nvm/versions/node/v12.16.1/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/Library/Apple/usr/bin:/Users/lev/.cargo/bin:/Users/lev/.fig/bin:/Users/lev/.local/bin:/Users/lev/go/bin:/Users/lev/.deno/bin

18:27:09 [ERROR] Unhandled method completionItem/resolve

18:27:42 [ERROR] no worktree found for diagnostics

18:27:57 [ERROR] Os { code: 2, kind: NotFound, message: "No such file or directory" }

18:28:03 [INFO] set status on client 0: Authenticating

18:28:07 [INFO] set status on client 0: Connecting

18:28:07 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

18:28:08 [INFO] add connection to peer

18:28:08 [INFO] add_connection;

18:28:08 [INFO] set status to connected 0

18:28:08 [INFO] set status on client 0: Connected { connection_id: ConnectionId(0) }

18:28:34 [INFO] open paths ["/Users/lev/Projects/zencity/export-service"]

18:29:04 [ERROR] no worktree found for diagnostics

18:29:30 [INFO] Editor::page_down

18:29:30 [INFO] Editor::page_down

18:29:30 [INFO] Editor::page_down

18:29:32 [INFO] Editor::page_down

18:29:32 [INFO] Editor::page_down

18:29:32 [INFO] Editor::page_down

18:29:32 [INFO] Editor::page_down

18:29:33 [INFO] Editor::page_down

18:29:33 [INFO] Editor::page_down

18:29:33 [INFO] Editor::page_down

18:29:41 [ERROR] Unhandled method completionItem/resolve

18:29:51 [ERROR] Unhandled method completionItem/resolve

18:29:56 [ERROR] Unhandled method completionItem/resolve

18:30:06 [ERROR] Unhandled method completionItem/resolve

18:30:10 [ERROR] Unhandled method completionItem/resolve

18:30:16 [ERROR] Unhandled method completionItem/resolve

18:30:21 [ERROR] Unhandled method completionItem/resolve

18:30:24 [ERROR] Unhandled method completionItem/resolve

18:30:34 [ERROR] Unhandled method completionItem/resolve

18:30:37 [ERROR] Unhandled method completionItem/resolve

18:30:58 [ERROR] trailing comma at line 15 column 1

18:31:01 [ERROR] trailing comma at line 15 column 1

18:31:20 [ERROR] Unhandled method completionItem/resolve

18:31:25 [ERROR] Unhandled method completionItem/resolve

18:32:13 [ERROR] invalid header

18:32:13 [ERROR] oneshot canceled

18:32:13 [ERROR] Broken pipe (os error 32)

18:32:13 [ERROR] oneshot canceled

18:32:43 [ERROR] Unhandled method workspace/symbol

18:32:45 [ERROR] Unhandled method workspace/symbol

18:33:40 [ERROR] Unhandled method workspace/symbol

18:33:43 [INFO] Editor::page_up

18:33:43 [INFO] Editor::page_up

18:33:43 [INFO] Editor::page_up

18:33:43 [INFO] Editor::page_up

18:33:51 [ERROR] Unhandled method completionItem/resolve

18:33:53 [ERROR] Unhandled method completionItem/resolve

18:33:55 [ERROR] trailing comma at line 19 column 1

18:34:23 [ERROR] no worktree found for diagnostics

18:34:34 [ERROR] invalid header

18:34:34 [ERROR] oneshot canceled

18:34:34 [ERROR] Broken pipe (os error 32)

18:34:34 [ERROR] oneshot canceled

18:34:40 [INFO] Editor::page_down

18:34:40 [INFO] Editor::page_down

18:34:40 [INFO] Editor::page_down

18:34:40 [INFO] Editor::page_down

18:34:40 [INFO] Editor::page_down

18:34:40 [INFO] Editor::page_down

18:34:41 [INFO] Editor::page_down

18:34:41 [INFO] Editor::page_down

18:34:41 [INFO] Editor::page_down

18:34:41 [INFO] Editor::page_up

18:34:42 [INFO] Editor::page_up

18:34:47 [INFO] Editor::page_down

18:34:47 [INFO] Editor::page_up

18:34:47 [INFO] Editor::page_up

18:34:48 [INFO] Editor::page_up

18:34:48 [INFO] Editor::page_up

18:34:48 [INFO] Editor::page_up

18:34:48 [INFO] Editor::page_up

18:34:48 [INFO] Editor::page_up

18:34:48 [INFO] Editor::page_up

18:34:48 [INFO] Editor::page_up

18:34:49 [INFO] Editor::page_up

18:34:49 [INFO] Editor::page_up

18:34:49 [INFO] Editor::page_down

18:38:37 [WARN] incoming response: unknown request connection_id=0 message_id=15 responding_to=835

18:38:41 [ERROR] no such worktree

18:38:52 [ERROR] oneshot canceled

18:39:09 [INFO] ========== starting zed ==========

18:39:09 [INFO] open paths ["/Users/lev/Projects/zencity/export-service"]

18:39:09 [INFO] set status on client 0: Authenticating

18:39:09 [INFO] set status on client 0: Connecting

18:39:09 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

18:39:10 [INFO] add connection to peer

18:39:10 [INFO] add_connection;

18:39:10 [INFO] set status to connected 0

18:39:10 [INFO] set status on client 0: Connected { connection_id: ConnectionId(0) }

18:39:12 [INFO] set environment variables from shell:/bin/zsh, path:/Users/lev/.pyenv/shims:/Users/lev/.nvm/versions/node/v12.16.1/bin:/Users/lev/.nvm/versions/node/v12.16.1/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/Library/Apple/usr/bin:/Users/lev/.nvm/versions/node/v16.15.0/bin:/Users/lev/.cargo/bin:/Applications/kitty.app/Contents/MacOS:/Users/lev/.fig/bin:/Users/lev/.local/bin:/Users/lev/go/bin:/Users/lev/.deno/bin:/Users/lev/go/bin:/Users/lev/.deno/bin

18:41:07 [ERROR] invalid header

18:41:07 [ERROR] oneshot canceled

18:41:07 [ERROR] oneshot canceled

18:41:07 [ERROR] Broken pipe (os error 32)

18:41:24 [ERROR] Unhandled method completionItem/resolve

18:41:44 [ERROR] Unhandled method completionItem/resolve

18:41:55 [ERROR] no worktree found for diagnostics

18:41:59 [INFO] Editor::page_down

18:41:59 [INFO] Editor::page_down

18:41:59 [INFO] Editor::page_down

18:42:07 [INFO] Editor::page_down

18:42:07 [INFO] Editor::page_down

18:42:07 [INFO] Editor::page_down

18:42:09 [INFO] Editor::page_down

18:42:09 [INFO] Editor::page_down

18:42:09 [INFO] Editor::page_down

18:43:37 [INFO] Editor::page_down

18:43:37 [INFO] Editor::page_down

18:43:39 [ERROR] oneshot canceled

18:43:39 [ERROR] oneshot canceled

05:11:48 [INFO] ========== starting zed ==========

05:11:49 [INFO] set status on client 0: Authenticating

05:11:49 [INFO] set status on client 0: Connecting

05:11:50 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

05:11:50 [INFO] add connection to peer

05:11:50 [INFO] add_connection;

05:11:50 [INFO] set status to connected 0

05:11:50 [INFO] set status on client 0: Connected { connection_id: ConnectionId(0) }

05:11:51 [INFO] set environment variables from shell:/bin/zsh, path:/Users/lev/.pyenv/shims:/Users/lev/.nvm/versions/node/v12.16.1/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/Library/Apple/usr/bin:/Users/lev/.cargo/bin:/Users/lev/.fig/bin:/Users/lev/.local/bin:/Users/lev/go/bin:/Users/lev/.deno/bin

05:22:35 [INFO] ========== starting zed ==========

05:22:36 [INFO] open paths ["/Users/lev/Projects/zencity/export-service"]

05:22:36 [INFO] set status on client 0: Authenticating

05:22:36 [INFO] set status on client 0: Connecting

05:22:37 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

05:22:37 [INFO] add connection to peer

05:22:37 [INFO] add_connection;

05:22:37 [INFO] set status to connected 0

05:22:37 [INFO] set status on client 0: Connected { connection_id: ConnectionId(0) }

05:22:39 [INFO] set environment variables from shell:/bin/zsh, path:/Users/lev/.pyenv/shims:/Users/lev/.nvm/versions/node/v12.16.1/bin:/Users/lev/.nvm/versions/node/v12.16.1/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/Library/Apple/usr/bin:/Users/lev/.nvm/versions/node/v16.15.0/bin:/Users/lev/.cargo/bin:/Applications/kitty.app/Contents/MacOS:/Users/lev/.fig/bin:/Users/lev/.local/bin:/Users/lev/go/bin:/Users/lev/.deno/bin:/Users/lev/go/bin:/Users/lev/.deno/bin

05:22:51 [ERROR] invalid header

05:22:51 [ERROR] Broken pipe (os error 32)

05:22:51 [ERROR] oneshot canceled

05:22:51 [ERROR] oneshot canceled

05:23:03 [INFO] Editor::page_down

05:23:03 [INFO] Editor::page_down

05:23:03 [INFO] Editor::page_down

05:23:03 [INFO] Editor::page_down

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:04 [INFO] Editor::page_up

05:23:19 [INFO] Editor::page_down

05:23:20 [INFO] Editor::page_down

05:23:20 [INFO] Editor::page_down

05:23:20 [INFO] Editor::page_down

05:24:56 [ERROR] Unhandled method completionItem/resolve

05:25:01 [ERROR] Unhandled method completionItem/resolve

05:25:03 [ERROR] Unhandled method completionItem/resolve

05:25:12 [ERROR] duplicate field `languages` at line 33 column 14

05:25:23 [ERROR] oneshot canceled

05:25:27 [INFO] ========== starting zed ==========

05:25:27 [ERROR] duplicate field `languages` at line 33 column 14

05:25:28 [INFO] open paths ["/Users/lev/Projects/zencity/export-service"]

05:25:28 [INFO] set status on client 0: Authenticating

05:25:28 [INFO] set status on client 0: Connecting

05:25:28 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

05:25:29 [INFO] add connection to peer

05:25:29 [INFO] add_connection;

05:25:29 [INFO] set status to connected 0

05:25:29 [INFO] set status on client 0: Connected { connection_id: ConnectionId(0) }

05:25:30 [INFO] set environment variables from shell:/bin/zsh, path:/Users/lev/.pyenv/shims:/Users/lev/.nvm/versions/node/v12.16.1/bin:/Users/lev/.nvm/versions/node/v12.16.1/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/Library/Apple/usr/bin:/Users/lev/.nvm/versions/node/v16.15.0/bin:/Users/lev/.cargo/bin:/Applications/kitty.app/Contents/MacOS:/Users/lev/.fig/bin:/Users/lev/.local/bin:/Users/lev/go/bin:/Users/lev/.deno/bin:/Users/lev/go/bin:/Users/lev/.deno/bin

05:25:33 [ERROR] invalid header

05:25:33 [ERROR] oneshot canceled

05:25:33 [ERROR] Broken pipe (os error 32)

05:25:33 [ERROR] oneshot canceled

05:25:37 [INFO] Editor::page_down

05:25:37 [INFO] Editor::page_down

05:25:38 [INFO] Editor::page_down

05:25:38 [INFO] Editor::page_down

05:25:38 [INFO] Editor::page_down

05:25:38 [INFO] Editor::page_down

05:25:38 [INFO] Editor::page_down

05:25:38 [INFO] Editor::page_down

05:25:41 [ERROR] duplicate field `languages` at line 33 column 16

05:25:44 [ERROR] duplicate field `languages` at line 32 column 16

05:26:13 [ERROR] Unhandled method completionItem/resolve

05:26:20 [ERROR] duplicate field `languages` at line 32 column 16

05:26:33 [ERROR] oneshot canceled

05:26:35 [INFO] ========== starting zed ==========

05:26:35 [ERROR] duplicate field `languages` at line 32 column 16

05:26:35 [INFO] open paths ["/Users/lev/Projects/zencity/export-service"]

05:26:35 [INFO] set status on client 0: Authenticating

05:26:35 [INFO] set status on client 0: Connecting

05:26:36 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

05:26:36 [INFO] add connection to peer

05:26:36 [INFO] add_connection;

05:26:36 [INFO] set status to connected 0

05:26:36 [INFO] set status on client 0: Connected { connection_id: ConnectionId(0) }

05:26:37 [INFO] set environment variables from shell:/bin/zsh, path:/Users/lev/.pyenv/shims:/Users/lev/.nvm/versions/node/v12.16.1/bin:/Users/lev/.nvm/versions/node/v12.16.1/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/Library/Apple/usr/bin:/Users/lev/.nvm/versions/node/v16.15.0/bin:/Users/lev/.cargo/bin:/Applications/kitty.app/Contents/MacOS:/Users/lev/.fig/bin:/Users/lev/.local/bin:/Users/lev/go/bin:/Users/lev/.deno/bin:/Users/lev/go/bin:/Users/lev/.deno/bin

05:27:11 [ERROR] no worktree found for diagnostics

05:27:14 [ERROR] invalid header

05:27:14 [ERROR] oneshot canceled

05:27:14 [ERROR] oneshot canceled

05:27:14 [ERROR] Broken pipe (os error 32)

05:27:25 [INFO] Editor::page_down

05:27:25 [INFO] Editor::page_down

05:27:25 [INFO] Editor::page_down

05:27:36 [ERROR] Unhandled method completionItem/resolve

05:27:40 [ERROR] Unhandled method completionItem/resolve

05:27:41 [ERROR] duplicate field `languages` at line 32 column 16

05:27:44 [ERROR] duplicate field `languages` at line 32 column 16

05:27:59 [ERROR] Unhandled method completionItem/resolve

05:28:01 [ERROR] duplicate field `languages` at line 43 column 16

05:28:55 [ERROR] Unhandled method completionItem/resolve

05:32:58 [ERROR] no worktree found for diagnostics

05:32:58 [ERROR] duplicate field `languages` at line 44 column 16

05:33:41 [INFO] Editor::page_down

05:33:41 [INFO] Editor::page_down

05:33:41 [INFO] Editor::page_down

05:33:42 [INFO] Editor::page_down

05:33:43 [INFO] Editor::page_down

05:33:43 [INFO] Editor::page_down

05:33:44 [INFO] Editor::page_down

05:33:44 [INFO] Editor::page_down

05:35:09 [ERROR] oneshot canceled

05:35:09 [ERROR] oneshot canceled

05:35:09 [ERROR] oneshot canceled

05:35:10 [INFO] ========== starting zed ==========

05:35:10 [ERROR] duplicate field `languages` at line 44 column 16

05:35:11 [INFO] set status on client 0: Authenticating

05:35:11 [INFO] set status on client 0: Connecting

05:35:11 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

05:35:13 [INFO] add connection to peer

05:35:13 [INFO] add_connection;

05:35:13 [INFO] set status to connected 0

05:35:13 [INFO] set status on client 0: Connected { connection_id: ConnectionId(0) }

05:35:13 [INFO] set environment variables from shell:/bin/zsh, path:/Users/lev/.pyenv/shims:/Users/lev/.nvm/versions/node/v12.16.1/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/Library/Apple/usr/bin:/Users/lev/.cargo/bin:/Users/lev/.fig/bin:/Users/lev/.local/bin:/Users/lev/go/bin:/Users/lev/.deno/bin

05:35:16 [INFO] open paths ["/Users/lev/Projects/zencity"]

05:35:21 [INFO] ========== starting zed ==========

05:35:21 [ERROR] duplicate field `languages` at line 44 column 16

05:35:22 [INFO] set status on client 0: Authenticating

05:35:22 [INFO] set status on client 0: Connecting

05:35:22 [INFO] connected to rpc endpoint https://collab.zed.dev/rpc

05:35:24 [INFO] add connection to peer

05:35:24 [INFO] add_connection;

05:35:24 [INFO] set status to connected 0