Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 665 | labels stringlengths 4 554 | body stringlengths 3 235k | index stringclasses 6 values | text_combine stringlengths 96 235k | label stringclasses 2 values | text stringlengths 96 196k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6,562 | 8,824,462,452 | IssuesEvent | 2019-01-02 17:05:10 | ionic-team/capacitor | https://api.github.com/repos/ionic-team/capacitor | closed | Cordova Plugin: Casts to CordovaActivity will fail | known incompatible cordova plugin | `((CordovaActivity)this.cordova.getActivity())` will fail with an exception:

```

D/Cordova Intents Shim: Action: registerBroadcastReceiver

E/PluginManager: Uncaught exception from plugin

java.lang.ClassCastException: de.test.example.MainActivity cannot be cast to ```

org.apache.cordova.CordovaActivity

at com.darryncampbell.cordova.plugin.intent.IntentShim.execute(IntentShim.java:118)

at org.apache.cordova.CordovaPlugin.execute(CordovaPlugin.java:98)

at org.apache.cordova.PluginManager.exec(PluginManager.java:132)

at com.getcapacitor.MessageHandler.callCordovaPluginMethod(MessageHandler.java:73)

at com.getcapacitor.MessageHandler.postMessage(MessageHandler.java:46)

at android.os.MessageQueue.nativePollOnce(Native Method)

at android.os.MessageQueue.next(MessageQueue.java:323)

at android.os.Looper.loop(Looper.java:136)

at android.os.HandlerThread.run(HandlerThread.java:61)

```

`this.cordova.getActivity()` works. The problem is due to MainActivity not extending [CordovaActivity](https://github.com/apache/cordova-android/blob/master/framework/src/org/apache/cordova/CordovaActivity.java). This may be intended and a nofix issue.

Might be implemented by extending CordovaActivity and wrapping an [AppCompatDelegate ](https://developer.android.com/reference/android/support/v7/app/AppCompatDelegate) instead of [AppCompatActivity ](https://developer.android.com/reference/android/support/v7/app/AppCompatActivity) as a base class.

See this issue:

https://github.com/darryncampbell/darryncampbell-cordova-plugin-intent/issues/64

See changes neccessary for the plugin:

https://github.com/darryncampbell/darryncampbell-cordova-plugin-intent/pull/65/files

That line is not uncommon:

https://github.com/search?q=%28%28CordovaActivity%29this.cordova.getActivity%28%29%29&type=Code | True | Cordova Plugin: Casts to CordovaActivity will fail - `((CordovaActivity)this.cordova.getActivity())` will fail with an exception:

```

D/Cordova Intents Shim: Action: registerBroadcastReceiver

E/PluginManager: Uncaught exception from plugin

java.lang.ClassCastException: de.test.example.MainActivity cannot be cast to ```

org.apache.cordova.CordovaActivity

at com.darryncampbell.cordova.plugin.intent.IntentShim.execute(IntentShim.java:118)

at org.apache.cordova.CordovaPlugin.execute(CordovaPlugin.java:98)

at org.apache.cordova.PluginManager.exec(PluginManager.java:132)

at com.getcapacitor.MessageHandler.callCordovaPluginMethod(MessageHandler.java:73)

at com.getcapacitor.MessageHandler.postMessage(MessageHandler.java:46)

at android.os.MessageQueue.nativePollOnce(Native Method)

at android.os.MessageQueue.next(MessageQueue.java:323)

at android.os.Looper.loop(Looper.java:136)

at android.os.HandlerThread.run(HandlerThread.java:61)

```

`this.cordova.getActivity()` works. The problem is due to MainActivity not extending [CordovaActivity](https://github.com/apache/cordova-android/blob/master/framework/src/org/apache/cordova/CordovaActivity.java). This may be intended and a nofix issue.

Might be implemented by extending CordovaActivity and wrapping an [AppCompatDelegate ](https://developer.android.com/reference/android/support/v7/app/AppCompatDelegate) instead of [AppCompatActivity ](https://developer.android.com/reference/android/support/v7/app/AppCompatActivity) as a base class.

See this issue:

https://github.com/darryncampbell/darryncampbell-cordova-plugin-intent/issues/64

See changes neccessary for the plugin:

https://github.com/darryncampbell/darryncampbell-cordova-plugin-intent/pull/65/files

That line is not uncommon:

https://github.com/search?q=%28%28CordovaActivity%29this.cordova.getActivity%28%29%29&type=Code | non_infrastructure | cordova plugin casts to cordovaactivity will fail cordovaactivity this cordova getactivity will fail with an exception d cordova intents shim action registerbroadcastreceiver e pluginmanager uncaught exception from plugin java lang classcastexception de test example mainactivity cannot be cast to org apache cordova cordovaactivity at com darryncampbell cordova plugin intent intentshim execute intentshim java at org apache cordova cordovaplugin execute cordovaplugin java at org apache cordova pluginmanager exec pluginmanager java at com getcapacitor messagehandler callcordovapluginmethod messagehandler java at com getcapacitor messagehandler postmessage messagehandler java at android os messagequeue nativepollonce native method at android os messagequeue next messagequeue java at android os looper loop looper java at android os handlerthread run handlerthread java this cordova getactivity works the problem is due to mainactivity not extending this may be intended and a nofix issue might be implemented by extending cordovaactivity and wrapping an instead of as a base class see this issue see changes neccessary for the plugin that line is not uncommon | 0 |

797,729 | 28,153,471,161 | IssuesEvent | 2023-04-03 04:56:50 | Team-Ampersand/Dotori-client-v2 | https://api.github.com/repos/Team-Ampersand/Dotori-client-v2 | closed | Fix massage chair toast message | 3️⃣ Priority: Low ⚡Type: Simple | <img width="327" alt="Screenshot 2023-04-03 at 12 15 14 PM" src="https://user-images.githubusercontent.com/80191860/229403449-ec5fb261-43ce-4495-b5f9-1568dfd4aa3c.png">

The massage chair sign-up hours are displayed incorrectly.

8:00 ~ 10:00 -> 8:20 ~ 9:00 | 1.0 | Fix massage chair toast message - <img width="327" alt="Screenshot 2023-04-03 at 12 15 14 PM" src="https://user-images.githubusercontent.com/80191860/229403449-ec5fb261-43ce-4495-b5f9-1568dfd4aa3c.png">

The massage chair sign-up hours are displayed incorrectly.

8:00 ~ 10:00 -> 8:20 ~ 9:00 | non_infrastructure | fix massage chair toast message img width alt screenshot pm src massage chair sign up hours are displayed incorrectly | 0 |

14,237 | 10,720,853,310 | IssuesEvent | 2019-10-26 20:47:14 | dart-lang/site-angulardart | https://api.github.com/repos/dart-lang/site-angulardart | closed | CI: simplify build process | infrastructure | E.g., having stages complicates the build process but in our case buys us very little (less than originally expected).

https://github.com/dart-lang/site-webdev/pull/1476 introduced stages into [.travis.yml](https://github.com/dart-lang/site-webdev/pull/1476/files#diff-354f30a63fb0907d4ad57269548329e3) | 1.0 | CI: simplify build process - E.g., having stages complicates the build process but in our case buys us very little (less than originally expected).

https://github.com/dart-lang/site-webdev/pull/1476 introduced stages into [.travis.yml](https://github.com/dart-lang/site-webdev/pull/1476/files#diff-354f30a63fb0907d4ad57269548329e3) | infrastructure | ci simplify build process e g having stages complicates the build process but in our case buys us very little less than originally expected introduced stages into | 1 |

774,848 | 27,214,182,979 | IssuesEvent | 2023-02-20 19:37:59 | az-digital/az_quickstart | https://api.github.com/repos/az-digital/az_quickstart | closed | Broken time zone conversion for imported Trellis events | bug high priority 2.6.x only | ## Problem/Motivation

While testing the new experimental AZ Events - Trellis Event Importer module with the Trellis team last week, we discovered that there is a problem with the timezone conversion happening on events when they are imported from Trellis.

### Describe the bug

The datetime values included in Trellis events API responses are UTC values but we're treating them as if they are whatever the timezone for the event is.

## Proposed resolution

Process Trellis event datetime values as UTC. Decide whether or not we need to save the timezone value at all.

| 1.0 | Broken time zone conversion for imported Trellis events - ## Problem/Motivation

While testing the new experimental AZ Events - Trellis Event Importer module with the Trellis team last week, we discovered that there is a problem with the timezone conversion happening on events when they are imported from Trellis.

### Describe the bug

The datetime values included in Trellis events API responses are UTC values but we're treating them as if they are whatever the timezone for the event is.

## Proposed resolution

Process Trellis event datetime values as UTC. Decide whether or not we need to save the timezone value at all.

| non_infrastructure | broken time zone conversion for imported trellis events problem motivation while testing the new experimental az events trellis event importer module with the trellis team last week we discovered that there is a problem with the timezone conversion happening on events when they are imported from trellis describe the bug the datetime values included in trellis events api responses are utc values but we re treating them as if they are whatever the timezone for the event is proposed resolution process trellis event datetime values as utc decide whether or not we need to save the timezone value at all | 0 |

373,705 | 11,047,716,333 | IssuesEvent | 2019-12-09 19:34:43 | LongTailBio/pangea-server | https://api.github.com/repos/LongTailBio/pangea-server | closed | Handle invalidation of Analysis Results | low priority middleware | Middleware results for queries will be accurate at the time of the query. If the owner of an included Sample later updates a Tool Result for that Sample then the middleware results for the query could potentially change. We should either note that the Query results are no longer accurate or should recalculate them.

I'm leaning towards tagging it as invalid which would manifest as a warning and a 'Rerun Middleware' button for the user. This allows them to hold onto the query-time results if they so choose. | 1.0 | Handle invalidation of Analysis Results - Middleware results for queries will be accurate at the time of the query. If the owner of an included Sample later updates a Tool Result for that Sample then the middleware results for the query could potentially change. We should either note that the Query results are no longer accurate or should recalculate them.

I'm leaning towards tagging it as invalid which would manifest as a warning and a 'Rerun Middleware' button for the user. This allows them to hold onto the query-time results if they so choose. | non_infrastructure | handle invalidation of analysis results middleware results for queries will be accurate at the time of the query if the owner of an included sample later updates a tool result for that sample then the middleware results for the query could potentially change we should either note that the query results are no longer accurate or should recalculate them i m leaning towards tagging it as invalid which would manifest as a warning and a rerun middleware button for the user this allows them to hold onto the query time results if they so choose | 0 |

5,517 | 5,717,603,822 | IssuesEvent | 2017-04-19 17:37:33 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | Generic update doesn't support exception_handler kwarg | bug Infrastructure | While trying to add a custom exception handler to his PR (#2821) @alfantp found that `cli_generic_update_command` does not support the `exception_handler` kwarg.

- [x] add support for exception_handler to generic update and generic wait commands.

- [x] hook up custom exception handler for `redis update` | 1.0 | Generic update doesn't support exception_handler kwarg - While trying to add a custom exception handler to his PR (#2821) @alfantp found that `cli_generic_update_command` does not support the `exception_handler` kwarg.

- [x] add support for exception_handler to generic update and generic wait commands.

- [x] hook up custom exception handler for `redis update` | infrastructure | generic update doesn t support exception handler kwarg while trying to add a custom exception handler to his pr alfantp found that cli generic update command does not support the exception handler kwarg add support for exception handler to generic update and generic wait commands hook up custom exception handler for redis update | 1 |

802,503 | 28,964,840,018 | IssuesEvent | 2023-05-10 07:07:39 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | opened | [YSQL][Wait-on-conflict]: Serialization error not being observed for lock-modification conflict | kind/bug area/ysql QA status/awaiting-triage priority/highest | ### Description

Steps to reproduce:

1. Create universe on `2.19.0.0-b148` with enable_wait_queus and enable_deadlock_detection gflags set to true.

2. `CREATE TABLE tb(k int primary key, v int);`

3. `INSERT INTO tb VALUES (1,1), (2,2), (3,3);`

4. Transaction-1: `BEGIN TRANSACTION ISOLATION LEVEL REPEATABLE READ;`

5. Transaction-1: `UPDATE tb SET v=22 WHERE k=1;`

6. Tansaction-2: `BEGIN TRANSACTION ISOLATION LEVEL REPEATABLE READ;`

7. Transaction-2: `SELECT * FROM tb WHERE k=1 FOR SHARE;`

8. Transaction-1: `COMMIT;`

Notice that Transaction-2 doesn't through any SE and outputs the updated value from the table.

Recording: https://drive.google.com/file/d/1aha080nzcq6b5siU6zCAswkt7yLFnbyW/view?usp=sharing

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information. | 1.0 | [YSQL][Wait-on-conflict]: Serialization error not being observed for lock-modification conflict - ### Description

Steps to reproduce:

1. Create universe on `2.19.0.0-b148` with enable_wait_queus and enable_deadlock_detection gflags set to true.

2. `CREATE TABLE tb(k int primary key, v int);`

3. `INSERT INTO tb VALUES (1,1), (2,2), (3,3);`

4. Transaction-1: `BEGIN TRANSACTION ISOLATION LEVEL REPEATABLE READ;`

5. Transaction-1: `UPDATE tb SET v=22 WHERE k=1;`

6. Tansaction-2: `BEGIN TRANSACTION ISOLATION LEVEL REPEATABLE READ;`

7. Transaction-2: `SELECT * FROM tb WHERE k=1 FOR SHARE;`

8. Transaction-1: `COMMIT;`

Notice that Transaction-2 doesn't through any SE and outputs the updated value from the table.

Recording: https://drive.google.com/file/d/1aha080nzcq6b5siU6zCAswkt7yLFnbyW/view?usp=sharing

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information. | non_infrastructure | serialization error not being observed for lock modification conflict description steps to reproduce create universe on with enable wait queus and enable deadlock detection gflags set to true create table tb k int primary key v int insert into tb values transaction begin transaction isolation level repeatable read transaction update tb set v where k tansaction begin transaction isolation level repeatable read transaction select from tb where k for share transaction commit notice that transaction doesn t through any se and outputs the updated value from the table recording warning please confirm that this issue does not contain any sensitive information i confirm this issue does not contain any sensitive information | 0 |

22,715 | 15,395,062,099 | IssuesEvent | 2021-03-03 18:43:15 | dotnet/dotnet-docker | https://api.github.com/repos/dotnet/dotnet-docker | closed | Create tests for the monitor images | area-infrastructure enhancement | A set of unit tests should be created for the `monitor` images. To conform with the existing tests, two basic tests come to mind.

1. Validate the static state such as the `ENVs` defined in the image.

1. A basic scenario test that ensures dotnet-monitor is installed correctly and is usable.

I consider these tests to be a requirement before merging the images to the master branch. | 1.0 | Create tests for the monitor images - A set of unit tests should be created for the `monitor` images. To conform with the existing tests, two basic tests come to mind.

1. Validate the static state such as the `ENVs` defined in the image.

1. A basic scenario test that ensures dotnet-monitor is installed correctly and is usable.

I consider these tests to be a requirement before merging the images to the master branch. | infrastructure | create tests for the monitor images a set of unit tests should be created for the monitor images to conform with the existing tests two basic tests come to mind validate the static state such as the envs defined in the image a basic scenario test that ensures dotnet monitor is installed correctly and is usable i consider these tests to be a requirement before merging the images to the master branch | 1 |

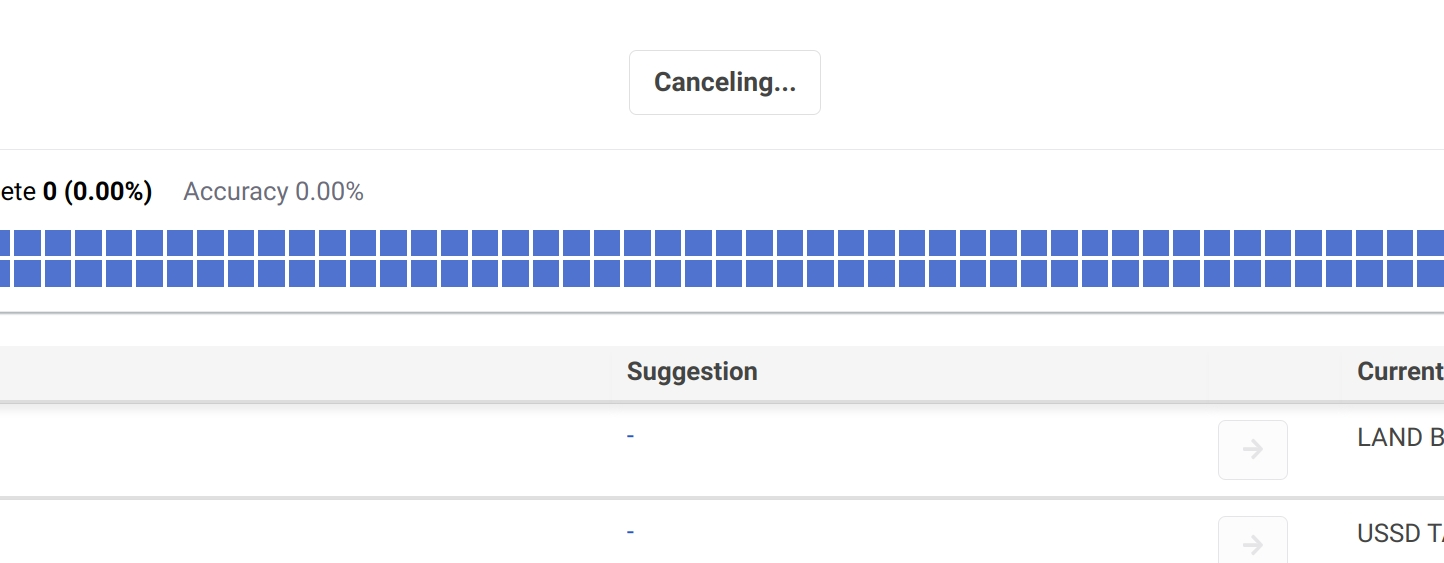

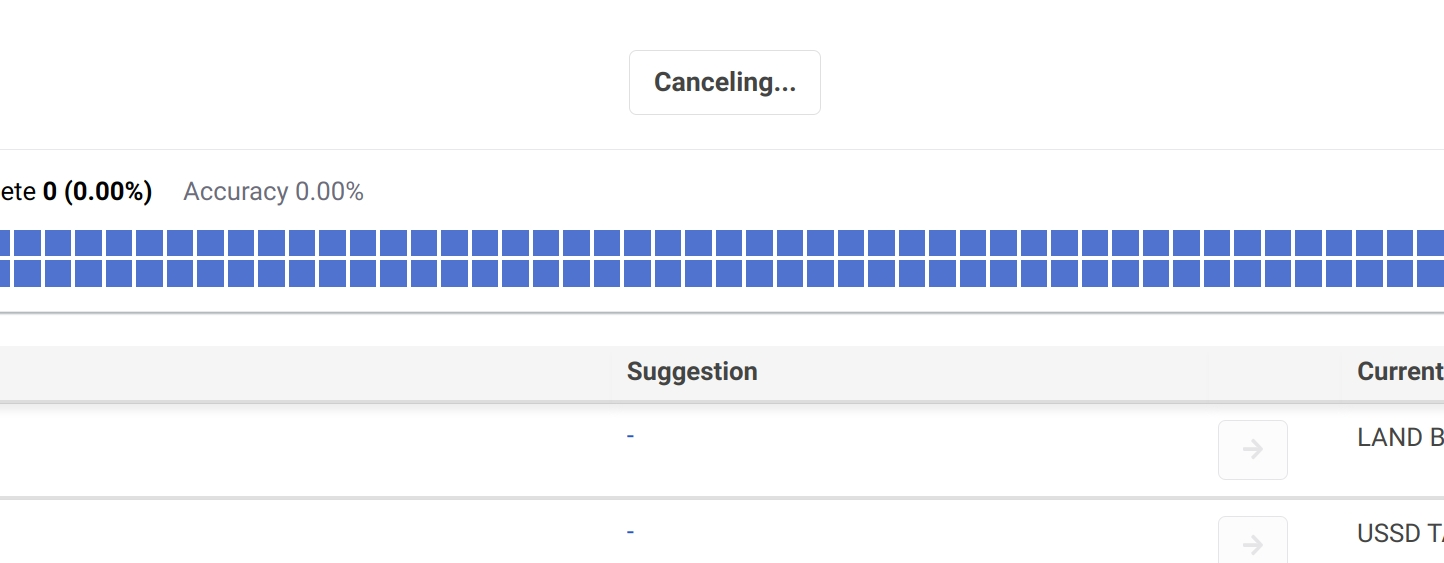

10,553 | 8,630,887,740 | IssuesEvent | 2018-11-22 04:45:04 | APSIMInitiative/ApsimX | https://api.github.com/repos/APSIMInitiative/ApsimX | closed | Multi-process Job Runner | bug interface/infrastructure | There are currently several issues with the multi-process job runner. Now that this runner seems more stable, it would be good to fix some of these issues:

- Progress bar doesn't work

- Exceptions thrown after the job finishes (e.g. when running post-simulation tools) are unhandled, and will kill the application

- Exceptions thrown inside runner processes are passed back as a string to the main process, which means that the exception is not nicely formatted in the status bar | 1.0 | Multi-process Job Runner - There are currently several issues with the multi-process job runner. Now that this runner seems more stable, it would be good to fix some of these issues:

- Progress bar doesn't work

- Exceptions thrown after the job finishes (e.g. when running post-simulation tools) are unhandled, and will kill the application

- Exceptions thrown inside runner processes are passed back as a string to the main process, which means that the exception is not nicely formatted in the status bar | infrastructure | multi process job runner there are currently several issues with the multi process job runner now that this runner seems more stable it would be good to fix some of these issues progress bar doesn t work exceptions thrown after the job finishes e g when running post simulation tools are unhandled and will kill the application exceptions thrown inside runner processes are passed back as a string to the main process which means that the exception is not nicely formatted in the status bar | 1 |

98,599 | 4,028,786,471 | IssuesEvent | 2016-05-18 08:07:10 | Taeir/Test-Codecov | https://api.github.com/repos/Taeir/Test-Codecov | opened | Game design document - Overview | Priority A | Create the GDD Overview.

This is a subtask of #7 - Game design document | 1.0 | Game design document - Overview - Create the GDD Overview.

This is a subtask of #7 - Game design document | non_infrastructure | game design document overview create the gdd overview this is a subtask of game design document | 0 |

38,364 | 12,536,736,487 | IssuesEvent | 2020-06-05 01:05:04 | jgeraigery/DDWatch | https://api.github.com/repos/jgeraigery/DDWatch | opened | CVE-2019-17359 (High) detected in bcprov-jdk15on-1.52.jar | security vulnerability | ## CVE-2019-17359 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bcprov-jdk15on-1.52.jar</b></p></summary>

<p>The Bouncy Castle Crypto package is a Java implementation of cryptographic algorithms. This jar contains JCE provider and lightweight API for the Bouncy Castle Cryptography APIs for JDK 1.5 to JDK 1.8.</p>

<p>Library home page: <a href="http://www.bouncycastle.org/java.html">http://www.bouncycastle.org/java.html</a></p>

<p>Path to dependency file: /tmp/ws-scm/DDWatch/pom.xml</p>

<p>Path to vulnerable library: /tmp/ws-ua_20200428152211_JHBTJH/downloadResource_QIVTYB/20200428152549/bcprov-jdk15on-1.52.jar</p>

<p>

Dependency Hierarchy:

- jasperreports-6.6.0.jar (Root Library)

- itext-2.1.7.js6.jar

- :x: **bcprov-jdk15on-1.52.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The ASN.1 parser in Bouncy Castle Crypto (aka BC Java) 1.63 can trigger a large attempted memory allocation, and resultant OutOfMemoryError error, via crafted ASN.1 data. This is fixed in 1.64.

<p>Publish Date: 2019-10-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-17359>CVE-2019-17359</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-17359">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-17359</a></p>

<p>Release Date: 2019-10-08</p>

<p>Fix Resolution: org.bouncycastle:bcprov-jdk15on:1.64</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"org.bouncycastle","packageName":"bcprov-jdk15on","packageVersion":"1.52","isTransitiveDependency":true,"dependencyTree":"net.sf.jasperreports:jasperreports:6.6.0;com.lowagie:itext:2.1.7.js6;org.bouncycastle:bcprov-jdk15on:1.52","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.bouncycastle:bcprov-jdk15on:1.64"}],"vulnerabilityIdentifier":"CVE-2019-17359","vulnerabilityDetails":"The ASN.1 parser in Bouncy Castle Crypto (aka BC Java) 1.63 can trigger a large attempted memory allocation, and resultant OutOfMemoryError error, via crafted ASN.1 data. This is fixed in 1.64.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-17359","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | True | CVE-2019-17359 (High) detected in bcprov-jdk15on-1.52.jar - ## CVE-2019-17359 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bcprov-jdk15on-1.52.jar</b></p></summary>

<p>The Bouncy Castle Crypto package is a Java implementation of cryptographic algorithms. This jar contains JCE provider and lightweight API for the Bouncy Castle Cryptography APIs for JDK 1.5 to JDK 1.8.</p>

<p>Library home page: <a href="http://www.bouncycastle.org/java.html">http://www.bouncycastle.org/java.html</a></p>

<p>Path to dependency file: /tmp/ws-scm/DDWatch/pom.xml</p>

<p>Path to vulnerable library: /tmp/ws-ua_20200428152211_JHBTJH/downloadResource_QIVTYB/20200428152549/bcprov-jdk15on-1.52.jar</p>

<p>

Dependency Hierarchy:

- jasperreports-6.6.0.jar (Root Library)

- itext-2.1.7.js6.jar

- :x: **bcprov-jdk15on-1.52.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The ASN.1 parser in Bouncy Castle Crypto (aka BC Java) 1.63 can trigger a large attempted memory allocation, and resultant OutOfMemoryError error, via crafted ASN.1 data. This is fixed in 1.64.

<p>Publish Date: 2019-10-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-17359>CVE-2019-17359</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-17359">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-17359</a></p>

<p>Release Date: 2019-10-08</p>

<p>Fix Resolution: org.bouncycastle:bcprov-jdk15on:1.64</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"org.bouncycastle","packageName":"bcprov-jdk15on","packageVersion":"1.52","isTransitiveDependency":true,"dependencyTree":"net.sf.jasperreports:jasperreports:6.6.0;com.lowagie:itext:2.1.7.js6;org.bouncycastle:bcprov-jdk15on:1.52","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.bouncycastle:bcprov-jdk15on:1.64"}],"vulnerabilityIdentifier":"CVE-2019-17359","vulnerabilityDetails":"The ASN.1 parser in Bouncy Castle Crypto (aka BC Java) 1.63 can trigger a large attempted memory allocation, and resultant OutOfMemoryError error, via crafted ASN.1 data. This is fixed in 1.64.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-17359","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | non_infrastructure | cve high detected in bcprov jar cve high severity vulnerability vulnerable library bcprov jar the bouncy castle crypto package is a java implementation of cryptographic algorithms this jar contains jce provider and lightweight api for the bouncy castle cryptography apis for jdk to jdk library home page a href path to dependency file tmp ws scm ddwatch pom xml path to vulnerable library tmp ws ua jhbtjh downloadresource qivtyb bcprov jar dependency hierarchy jasperreports jar root library itext jar x bcprov jar vulnerable library vulnerability details the asn parser in bouncy castle crypto aka bc java can trigger a large attempted memory allocation and resultant outofmemoryerror error via crafted asn data this is fixed in publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none 
integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org bouncycastle bcprov isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails the asn parser in bouncy castle crypto aka bc java can trigger a large attempted memory allocation and resultant outofmemoryerror error via crafted asn data this is fixed in vulnerabilityurl | 0 |

21,025 | 14,282,183,283 | IssuesEvent | 2020-11-23 09:14:26 | pymor/pymor | https://api.github.com/repos/pymor/pymor | closed | Broken MatplotlibPatchWidget yields no CI errors | bug infrastructure | The tests for #1017 currently succeed with a `MatplotlibPatchWidget` that should fail in its init due to changes in `MatplotlibPatchAxes` | 1.0 | Broken MatplotlibPatchWidget yields no CI errors - The tests for #1017 currently succeed with a `MatplotlibPatchWidget` that should fail in its init due to changes in `MatplotlibPatchAxes` | infrastructure | broken matplotlibpatchwidget yields no ci errors the tests for currently succeed with a matplotlibpatchwidget that should fail in its init due to changes in matplotlibpatchaxes | 1 |

67,033 | 7,033,165,775 | IssuesEvent | 2017-12-27 09:15:40 | MajkiIT/polish-ads-filter | https://api.github.com/repos/MajkiIT/polish-ads-filter | closed | medianarodowe.com | dodać reguły gotowe/testowanie reklama |

`https://medianarodowe.com/papiez-franciszek-prawdziwy-duch-bozego-narodzenia-radosc-tego-ze-bog-nas-kocha/`

elementy textowe

moje filtry

easylist + polskie filtry

Nano Adblocker 1.0.0.21

Nano Defender 13.16

Chrome 63.0.3239.108 | 1.0 | medianarodowe.com -

`https://medianarodowe.com/papiez-franciszek-prawdziwy-duch-bozego-narodzenia-radosc-tego-ze-bog-nas-kocha/`

elementy textowe

moje filtry

easylist + polskie filtry

Nano Adblocker 1.0.0.21

Nano Defender 13.16

Chrome 63.0.3239.108 | non_infrastructure | medianarodowe com elementy textowe moje filtry easylist polskie filtry nano adblocker nano defender chrome | 0 |

9,781 | 8,154,708,596 | IssuesEvent | 2018-08-23 04:56:13 | hashmapinc/Tempus | https://api.github.com/repos/hashmapinc/Tempus | closed | Configure authorization from Jenkins to Github | infrastructure/issue next | Configure OAuth 2 so that Jenkins will use GitHub for authentication and authorization. | 1.0 | Configure authorization from Jenkins to Github - Configure OAuth 2 so that Jenkins will use GitHub for authentication and authorization. | infrastructure | configure authorization from jenkins to github configure oauth so that jenkins will use github for authentication and authorization | 1 |

7,782 | 7,092,419,342 | IssuesEvent | 2018-01-12 16:29:49 | sociomantic-tsunami/turtle | https://api.github.com/repos/sociomantic-tsunami/turtle | closed | Try switching turtle to CircleCI as a pilot project | type-infrastructure | As turtle has low development activity, it should be fine with 1500 minute limit of unpaid CircleCI subscription. We can see how it goes after.

Looking for green light from @leandro-lucarella-sociomantic (== enabling CircleCI for the repo administratively)

FYI @sociomantic-tsunami/core-team | 1.0 | Try switching turtle to CircleCI as a pilot project - As turtle has low development activity, it should be fine with 1500 minute limit of unpaid CircleCI subscription. We can see how it goes after.

Looking for green light from @leandro-lucarella-sociomantic (== enabling CircleCI for the repo administratively)

FYI @sociomantic-tsunami/core-team | infrastructure | try switching turtle to circleci as a pilot project as turtle has low development activity it should be fine with minute limit of unpaid circleci subscription we can see how it goes after looking for green light from leandro lucarella sociomantic enabling circleci for the repo administratively fyi sociomantic tsunami core team | 1 |

353,547 | 10,554,152,743 | IssuesEvent | 2019-10-03 18:48:57 | gitthermal/thermal | https://api.github.com/repos/gitthermal/thermal | opened | Selected repo path not showing in input field | difficulty: easy good first issue hacktoberfest 🐞 Bug 🚶🏻♀️ Priority low | ## Description

Typing some text in input field and then selecting a folder the path _(text)_ doesn't replace the value inside the input field.

## To Reproduce

Steps to reproduce the behavior:

1. Click on `Add new repo` button

2. Type something in input field _(optional: remove the text from the input field)_

3. Click on select button to select a folder

4. The folder path will not be added to input field

## Expected behavior

Whatever text is typed in the input field it should be replaced by the newly selected folder path.

## Screenshots

**Desktop (please complete the following information):**

- OS: Windows 10

- Version: 0.0.4

| 1.0 | Selected repo path not showing in input field - ## Description

Typing some text in input field and then selecting a folder the path _(text)_ doesn't replace the value inside the input field.

## To Reproduce

Steps to reproduce the behavior:

1. Click on `Add new repo` button

2. Type something in input field _(optional: remove the text from the input field)_

3. Click on select button to select a folder

4. The folder path will not be added to input field

## Expected behavior

Whatever text is typed in the input field it should be replaced by the newly selected folder path.

## Screenshots

**Desktop (please complete the following information):**

- OS: Windows 10

- Version: 0.0.4

| non_infrastructure | selected repo path not showing in input field description typing some text in input field and then selecting a folder the path text doesn t replace the value inside the input field to reproduce steps to reproduce the behavior click on add new repo button type something in input field optional remove the text from the input field click on select button to select a folder the folder path will not be added to input field expected behavior whatever text is typed in the input field it should be replaced by the newly selected folder path screenshots desktop please complete the following information os windows version | 0 |

71,221 | 9,485,119,443 | IssuesEvent | 2019-04-22 09:10:43 | mindsdb/mindsdb | https://api.github.com/repos/mindsdb/mindsdb | closed | Add descriptions to each of the scores inside the light metadata | documentation enhancement | The descriptions should be a more detailed version of what we explain to the user in the logged messages. Maybe make it something like:

```python

'description': {

'short': 'blah' # <--- presented in the logs

'long': 'blah blah' # <---- sent to the mindsdb-server to be presented in the UI and maybe displayed in some situations or if the debug flag is enabled

}

```

Granted, this may be a bit of an overkill, I'll chew on it | 1.0 | Add descriptions to each of the scores inside the light metadata - The descriptions should be a more detailed version of what we explain to the user in the logged messages. Maybe make it something like:

```python

'description': {

'short': 'blah' # <--- presented in the logs

'long': 'blah blah' # <---- sent to the mindsdb-server to be presented in the UI and maybe displayed in some situations or if the debug flag is enabled

}

```

Granted, this may be a bit of an overkill, I'll chew on it | non_infrastructure | add descriptions to each of the scores inside the light metadata the descriptions should be a more detailed version of what we explain to the user in the logged messages maybe make it something like python description short blah presented in the logs long blah blah sent to the mindsdb server to be presented in the ui and maybe displayed in some situations or if the debug flag is enabled granted this may be a bit of an overkill i ll chew on it | 0 |

29,208 | 23,803,262,696 | IssuesEvent | 2022-09-03 16:31:36 | celeritas-project/celeritas | https://api.github.com/repos/celeritas-project/celeritas | opened | Add support for NVHPC `-stdpar` | enhancement infrastructure | Explore auto-parallelization using Nvidia's PGI-derived NVHPC tool suite. We can track development issues on here.

Our initial path is just to modify the host code pathways so that they always run on device, and later we'll cleanly support both hose and device dispatch.

- [x] Install geant4

- [x] unsupported procedure

- [ ] ...

# Issues (newest first)

## unsupported procedure

- @paulromano got errors while trying to build InitTracks.cc: `NVC++-F-0155-Compiler failed to translate accelerator region (see -Minfo messages): Unsupported procedure`

- @mcolg tracked this down to a `CELER_VALIDATE`

- I've updated `CELER_DEVICE_COMPILE` to act as though we're in "device compile" mode when using `-stdpar` 98122dc9952f3790a3ebb079b4732585a05a3ed5

## Geant4 build

- Geant4 threads are incompatible (nvhpc doesn't like `static thread_local` in template classes)

- Recursive template instantiation depth is too small

- Patched spack with https://github.com/spack/spack/pull/32185

- Fixed upstream geant4 as `emdna-V11-00-25`

# Warnings

Fixed numerous warnings in https://github.com/celeritas-project/celeritas/pull/486

# Test failures

@pcanal dug down on some slight floating point differences between vanilla GCC and stdpar: we're making incorrectly strict assumptions about floating point behavior in a couple of our unit tests: 2e04478ea9831b5222d6ac53374f333d1cfa7677 | 1.0 | Add support for NVHPC `-stdpar` - Explore auto-parallelization using Nvidia's PGI-derived NVHPC tool suite. We can track development issues on here.

Our initial path is just to modify the host code pathways so that they always run on device, and later we'll cleanly support both hose and device dispatch.

- [x] Install geant4

- [x] unsupported procedure

- [ ] ...

# Issues (newest first)

## unsupported procedure

- @paulromano got errors while trying to build InitTracks.cc: `NVC++-F-0155-Compiler failed to translate accelerator region (see -Minfo messages): Unsupported procedure`

- @mcolg tracked this down to a `CELER_VALIDATE`

- I've updated `CELER_DEVICE_COMPILE` to act as though we're in "device compile" mode when using `-stdpar` 98122dc9952f3790a3ebb079b4732585a05a3ed5

## Geant4 build

- Geant4 threads are incompatible (nvhpc doesn't like `static thread_local` in template classes)

- Recursive template instantiation depth is too small

- Patched spack with https://github.com/spack/spack/pull/32185

- Fixed upstream geant4 as `emdna-V11-00-25`

# Warnings

Fixed numerous warnings in https://github.com/celeritas-project/celeritas/pull/486

# Test failures

@pcanal dug down on some slight floating point differences between vanilla GCC and stdpar: we're making incorrectly strict assumptions about floating point behavior in a couple of our unit tests: 2e04478ea9831b5222d6ac53374f333d1cfa7677 | infrastructure | add support for nvhpc stdpar explore auto parallelization using nvidia s pgi derived nvhpc tool suite we can track development issues on here our initial path is just to modify the host code pathways so that they always run on device and later we ll cleanly support both hose and device dispatch install unsupported procedure issues newest first unsupported procedure paulromano got errors while trying to build inittracks cc nvc f compiler failed to translate accelerator region see minfo messages unsupported procedure mcolg tracked this down to a celer validate i ve updated celer device compile to act as though we re in device compile mode when using stdpar build threads are incompatible nvhpc doesn t like static thread local in template classes recursive template instantiation depth is too small patched spack with fixed upstream as emdna warnings fixed numerous warnings in test failures pcanal dug down on some slight floating point differences between vanilla gcc and stdpar we re making incorrectly strict assumptions about floating point behavior in a couple of our unit tests | 1 |

18,400 | 12,968,426,959 | IssuesEvent | 2020-07-21 05:45:04 | radareorg/radare2 | https://api.github.com/repos/radareorg/radare2 | closed | Kill Jenkins, setup Concourse CI | infrastructure | It is easier, configuration stored in the files, like Travis & Co, written in Go, faster: https://concourse-ci.org/

See https://www.digitalocean.com/community/tutorials/how-to-install-concourse-ci-on-ubuntu-16-04

https://concourse-ci.org/install.html | 1.0 | Kill Jenkins, setup Concourse CI - It is easier, configuration stored in the files, like Travis & Co, written in Go, faster: https://concourse-ci.org/

See https://www.digitalocean.com/community/tutorials/how-to-install-concourse-ci-on-ubuntu-16-04

https://concourse-ci.org/install.html | infrastructure | kill jenkins setup concourse ci it is easier configuration stored in the files like travis co written in go faster see | 1 |

66,767 | 20,624,227,346 | IssuesEvent | 2022-03-07 20:37:59 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | opened | [🐛 Bug]: | I-defect needs-triaging | ### What happened?

I am following an example on microsofts website for selenium 4. I am using the msEdgeDriver.exe for 99.0. I am using edge 99.0. it is a simple basic script. I am running in VsTools. The browser will launch, and it goes to the site. In the debugger though I am getting an error. "Exception has occurred: NoSuchElementException

Message: no such element: Unable to locate element: {"method":"css selector","selector":"[id="sb_form_q"]"}

(Session info: MicrosoftEdge=99.0.1150.30)"

Any help would be appreciated Here is the example I followed https://docs.microsoft.com/en-us/microsoft-edge/webdriver-chromium/?tabs=c-sharp.

### How can we reproduce the issue?

```shell

Should not be hard. VSCODE, MS Edge, are both default installs.

#Testing new webdriver

from selenium import webdriver

from selenium.webdriver.common.by import By

import time

from selenium.webdriver.edge.service import Service

dPath = '.\msedgedriver99.exe'

driver = webdriver.Edge(dPath)

service = Service(executable_path=dPath)

driver.get('https://www.google.com')

element = driver.find_element(By.ID, 'sb_form_q')

element.send_keys('WebDriver')

element.submit()

time.sleep(5)

driver.quit()

```

### Relevant log output

```shell

Message: no such element: Unable to locate element: {"method":"css selector","selector":"[id="sb_form_q"]"}

(Session info: MicrosoftEdge=99.0.1150.30)

Stacktrace:

Backtrace:

Microsoft::Applications::Events::EventProperties::unpack [0x005A4E63+58211]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004837C1+1400481]

Microsoft::Applications::Events::ILogConfiguration::operator* [0x0027406E+3470]

Microsoft::Applications::Events::GUID_t::GUID_t [0x0029DC30+100304]

Microsoft::Applications::Events::GUID_t::GUID_t [0x0029DDB0+100688]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002C1252+245234]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002B1D34+182484]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002BFAD3+239219]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002B1A66+181766]

Microsoft::Applications::Events::GUID_t::GUID_t [0x00294C66+63494]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002959F6+66966]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x0049D895+1507189]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x007212E2+115298]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x00721046+114630]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x00724D60+130272]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x0072197C+116988]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x00495237+1472791]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004A0078+1517400]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004A0202+1517794]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004B1EB2+1590674]

BaseThreadInitThunk [0x75A5FA29+25]

RtlGetAppContainerNamedObjectPath [0x77177A9E+286]

RtlGetAppContainerNamedObjectPath [0x77177A6E+238]

File "C:\Users\TJ423JZ\OneDrive - EY\Documents\VSCode\Workspaces\Selenium 4 Test\seleniumTest.py", line 11, in <module>

element = driver.find_element(By.ID, 'sb_form_q')

```

### Operating System

Windows 10

### Selenium version

Python 3.10.2 VScode v1.64.2

### What are the browser(s) and version(s) where you see this issue?

Edge 99.0

### What are the browser driver(s) and version(s) where you see this issue?

EdgeDriver99

### Are you using Selenium Grid?

4.0 | 1.0 | [🐛 Bug]: - ### What happened?

I am following an example on microsofts website for selenium 4. I am using the msEdgeDriver.exe for 99.0. I am using edge 99.0. it is a simple basic script. I am running in VsTools. The browser will launch, and it goes to the site. In the debugger though I am getting an error. "Exception has occurred: NoSuchElementException

Message: no such element: Unable to locate element: {"method":"css selector","selector":"[id="sb_form_q"]"}

(Session info: MicrosoftEdge=99.0.1150.30)"

Any help would be appreciated Here is the example I followed https://docs.microsoft.com/en-us/microsoft-edge/webdriver-chromium/?tabs=c-sharp.

### How can we reproduce the issue?

```shell

Should not be hard. VSCODE, MS Edge, are both default installs.

#Testing new webdriver

from selenium import webdriver

from selenium.webdriver.common.by import By

import time

from selenium.webdriver.edge.service import Service

dPath = '.\msedgedriver99.exe'

driver = webdriver.Edge(dPath)

service = Service(executable_path=dPath)

driver.get('https://www.google.com')

element = driver.find_element(By.ID, 'sb_form_q')

element.send_keys('WebDriver')

element.submit()

time.sleep(5)

driver.quit()

```

### Relevant log output

```shell

Message: no such element: Unable to locate element: {"method":"css selector","selector":"[id="sb_form_q"]"}

(Session info: MicrosoftEdge=99.0.1150.30)

Stacktrace:

Backtrace:

Microsoft::Applications::Events::EventProperties::unpack [0x005A4E63+58211]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004837C1+1400481]

Microsoft::Applications::Events::ILogConfiguration::operator* [0x0027406E+3470]

Microsoft::Applications::Events::GUID_t::GUID_t [0x0029DC30+100304]

Microsoft::Applications::Events::GUID_t::GUID_t [0x0029DDB0+100688]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002C1252+245234]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002B1D34+182484]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002BFAD3+239219]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002B1A66+181766]

Microsoft::Applications::Events::GUID_t::GUID_t [0x00294C66+63494]

Microsoft::Applications::Events::GUID_t::GUID_t [0x002959F6+66966]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x0049D895+1507189]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x007212E2+115298]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x00721046+114630]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x00724D60+130272]

Microsoft::Applications::Events::ILogManager::DispatchEventBroadcast [0x0072197C+116988]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x00495237+1472791]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004A0078+1517400]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004A0202+1517794]

Microsoft::Applications::Events::ISemanticContext::SetCommonField [0x004B1EB2+1590674]

BaseThreadInitThunk [0x75A5FA29+25]

RtlGetAppContainerNamedObjectPath [0x77177A9E+286]

RtlGetAppContainerNamedObjectPath [0x77177A6E+238]

File "C:\Users\TJ423JZ\OneDrive - EY\Documents\VSCode\Workspaces\Selenium 4 Test\seleniumTest.py", line 11, in <module>

element = driver.find_element(By.ID, 'sb_form_q')

```

### Operating System

Windows 10

### Selenium version

Python 3.10.2 VScode v1.64.2

### What are the browser(s) and version(s) where you see this issue?

Edge 99.0

### What are the browser driver(s) and version(s) where you see this issue?

EdgeDriver99

### Are you using Selenium Grid?

4.0 | non_infrastructure | what happened i am following an example on microsofts website for selenium i am using the msedgedriver exe for i am using edge it is a simple basic script i am running in vstools the browser will launch and it goes to the site in the debugger though i am getting an error exception has occurred nosuchelementexception message no such element unable to locate element method css selector selector session info microsoftedge any help would be appreciated here is the example i followed how can we reproduce the issue shell should not be hard vscode ms edge are both default installs testing new webdriver from selenium import webdriver from selenium webdriver common by import by import time from selenium webdriver edge service import service dpath exe driver webdriver edge dpath service service executable path dpath driver get element driver find element by id sb form q element send keys webdriver element submit time sleep driver quit relevant log output shell message no such element unable to locate element method css selector selector session info microsoftedge stacktrace backtrace microsoft applications events eventproperties unpack microsoft applications events isemanticcontext setcommonfield microsoft applications events ilogconfiguration operator microsoft applications events guid t guid t microsoft applications events guid t guid t microsoft applications events guid t guid t microsoft applications events guid t guid t microsoft applications events guid t guid t microsoft applications events guid t guid t microsoft applications events guid t guid t microsoft applications events guid t guid t microsoft applications events isemanticcontext setcommonfield microsoft applications events ilogmanager dispatcheventbroadcast microsoft applications events ilogmanager dispatcheventbroadcast microsoft applications events ilogmanager dispatcheventbroadcast microsoft applications events ilogmanager dispatcheventbroadcast microsoft applications events 
isemanticcontext setcommonfield microsoft applications events isemanticcontext setcommonfield microsoft applications events isemanticcontext setcommonfield microsoft applications events isemanticcontext setcommonfield basethreadinitthunk rtlgetappcontainernamedobjectpath rtlgetappcontainernamedobjectpath file c users onedrive ey documents vscode workspaces selenium test seleniumtest py line in element driver find element by id sb form q operating system windows selenium version python vscode what are the browser s and version s where you see this issue edge what are the browser driver s and version s where you see this issue are you using selenium grid | 0 |

18,148 | 12,809,392,273 | IssuesEvent | 2020-07-03 15:32:53 | pysal/pysal | https://api.github.com/repos/pysal/pysal | closed | Coverage tests lines in `__main__`. | Good First PR Infrastructure | We should probably not consider the code contained below `if __name__ == '__main__'` blocks in our coverage statistics.

If you [look at coveralls](https://coveralls.io/jobs/16444253/source_files/954095238#L452), this might actually reflect a large part of the current uncovered code.

| 1.0 | Coverage tests lines in `__main__`. - We should probably not consider the code contained below `if __name__ == '__main__'` blocks in our coverage statistics.

If you [look at coveralls](https://coveralls.io/jobs/16444253/source_files/954095238#L452), this might actually reflect a large part of the current uncovered code.

| infrastructure | coverage tests lines in main we should probably not consider the code contained below if name main blocks in our coverage statistics if you this might actually reflect a large part of the current uncovered code | 1 |

15,922 | 11,770,079,608 | IssuesEvent | 2020-03-15 17:39:56 | hackforla/website | https://api.github.com/repos/hackforla/website | closed | Prototype a Project Home Page | Hack Night Projects UI enhancement front end good first issue infrastructure | ### Overview

We want to start prototyping a dedicated page for each Project. These are already being rendered by Jekyll, but we need to add more details. This task will eventually roll up into #14.

### Action Items

1. Edit the existing Project layout under `_layouts/project.html`

2. Fill out details, assets, etc.

3. Render links as cards.

| 1.0 | Prototype a Project Home Page - ### Overview

We want to start prototyping a dedicated page for each Project. These are already being rendered by Jekyll, but we need to add more details. This task will eventually roll up into #14.

### Action Items

1. Edit the existing Project layout under `_layouts/project.html`

2. Fill out details, assets, etc.

3. Render links as cards.

| infrastructure | prototype a project home page overview we want to start prototyping a dedicated page for each project these are already being rendered by jekyll but we need to add more details this task will eventually roll up into action items edit the existing project layout under layouts project html fill out details assets etc render links as cards | 1 |

629,592 | 20,047,757,990 | IssuesEvent | 2022-02-03 00:08:21 | monarch-initiative/mondo | https://api.github.com/repos/monarch-initiative/mondo | closed | Map Orphanet's non-rare diseases to Mondo (list included) | mapping high priority | Orphanet seems to have several non-rare diseases in it's ontology, simply labeled as e.g. "_NON-RARE IN EUROPE: Melanoma_" (Which is just regular Melanoma then).

Seems like none of these diseases are mapped to any other ontology and no other equivalency mapping out there seems to cover these.

Would it be possible for MONDO to cover/map these? Or is there a reason to leave these diseases as-is?

Our team's already gone over the list of these Orphanet resources and added the appropriate MONDO IDs where possible.

Mind you that there were some Orphanet resources we were not able to map to any MONDO equivalent.

I've left those out of the list below (I'll create another ticket if necessary to add those diseases to MONDO).

It's quite the list, I know :sweat_smile:

Orphanet ID | Mondo ID | Label (from Orphanet) | Synonym (from Orphanet)

-- | -- | -- | --

Orphanet:924 | MONDO:0007035 | NON RARE IN EUROPE: Acanthosis nigricans |

Orphanet:464463 | MONDO:0005036 | NON RARE IN EUROPE: Adenocarcinoma of stomach |

Orphanet:415268 | MONDO:0005061 | NON RARE IN EUROPE: Adenocarcinoma of the lung |

Orphanet:3153 | MONDO:0005488 | NON RARE IN EUROPE: Adolescent idiopathic scoliosis |

Orphanet:99888 | MONDO:0003924 | NON RARE IN EUROPE: Adrenocortical adenoma |

Orphanet:85142 | MONDO:0014200 | NON RARE IN EUROPE: Aldosterone-producing adenoma | Primary aldosteronism due to Conn adenoma \| Aldosterone-secreting adenoma \| Aldosteronoma \| Conn adenoma

Orphanet:238616 | MONDO:0004975 | NON RARE IN EUROPE: Alzheimer disease |

Orphanet:825 | MONDO:0005306 | NON RARE IN EUROPE: Ankylosing spondylitis | Ankylosing spondylarthritis \| Bechterew syndrome

Orphanet:36297 | MONDO:0005351 | NON RARE IN EUROPE: Anorexia nervosa |

Orphanet:80 | MONDO:0007140 | NON RARE IN EUROPE: Antiphospholipid syndrome | Hughes syndrome \| Antiphospholipid antibody syndrome \| Familial lupus anticoagulant

Orphanet:1162 | MONDO:0005259 | NON RARE IN EUROPE: Asperger syndrome |

Orphanet:625 | MONDO:0009755 | NON RARE IN EUROPE: Atypical mole | Dysplastic nevus \| Clark nevus

Orphanet:106 | MONDO:0005258 | NON RARE IN EUROPE: Autism |

Orphanet:462 | MONDO:0007810 | NON RARE IN EUROPE: Autosomal dominant ichthyosis vulgaris |

Orphanet:1232 | MONDO:0013662 | NON RARE IN EUROPE: Barrett esophagus |

Orphanet:97562 | MONDO:0007709 | NON RARE IN EUROPE: Benign familial hematuria |

Orphanet:34145 | MONDO:0005342 | NON RARE IN EUROPE: Berger disease | IgA nephropathy

Orphanet:1244 | MONDO:0007194 | NON RARE IN EUROPE: Bicuspid aortic valve |

Orphanet:157980 | MONDO:0001187 | NON RARE IN EUROPE: Bladder cancer |

Orphanet:93393 | MONDO:0007217 | NON RARE IN EUROPE: Brachydactyly type A3 | Brachymesophalangy V \| Brachydactyly-clinodactyly

Orphanet:93385 | MONDO:0007222 | NON RARE IN EUROPE: Brachydactyly type D |

Orphanet:50838 | MONDO:0007275 | NON RARE IN EUROPE: Carpal tunnel syndrome |

Orphanet:555 | MONDO:0005130 | NON RARE IN EUROPE: Celiac disease | Celiac sprue \| Gluten intolerance \| Gluten-induced enteropathy \| Coeliac disease \| Gluten-sensitive enteropathy \| Idiopathic steatorrhea \| Nontropical sprue \| Coeliac sprue

Orphanet:164 | MONDO:0000820 | NON RARE IN EUROPE: Cerebral cavernous malformations | Brain cavernous hemangioma

Orphanet:1983 | MONDO:0005404 | NON RARE IN EUROPE: Chronic fatigue syndrome | Myalgic encephalomyelitis \| Chronic fatigue immune dysfunction syndrome

Orphanet:1002 | MONDO:0043537 | NON RARE IN EUROPE: Cluster headache | Erythroprosopalgia of Bing \| Ciliary neuralgia \| Red migraine \| Horton headache \| Erythromelalgia of the head \| Histaminic cephalalgia \| Histamine headache \| Histamine cephalalgia \| Migrainous neuralgia \| Cluster migraine

Orphanet:466667 | MONDO:0005575 | NON RARE IN EUROPE: Colorectal cancer |

Orphanet:206 | MONDO:0005011 | NON RARE IN EUROPE: Crohn disease |

Orphanet:1648 | MONDO:0007488 | NON RARE IN EUROPE: Dementia with Lewy body | Lewy body dementia \| DLB \| Diffuse Lewy body disease \| Cortical Lewy body disease

Orphanet:243377 | MONDO:0005147 | NON RARE IN EUROPE: Diabetes mellitus type 1 | Insulin-dependent diabetes mellitus

Orphanet:243761 | MONDO:0001134 | NON RARE IN EUROPE: Essential hypertension |

Orphanet:529819 | MONDO:0008327 | NON RARE IN EUROPE: Exfoliation syndrome | Pseudoexfoliation syndrome \| XFS

Orphanet:276271 | MONDO:0014448 | NON RARE IN EUROPE: Familial dysalbuminemic hyperthyroxinemia | Bisalbuminemia

Orphanet:426 | MONDO:0017774 | NON RARE IN EUROPE: Familial hypobetalipoproteinemia |

Orphanet:155 | MONDO:0024573 | NON RARE IN EUROPE: Familial isolated hypertrophic cardiomyopathy | Familila or idiopathic hypertrophic obstructive cardiomyopathy

Orphanet:2794 | MONDO:0005349 | NON RARE IN EUROPE: Familial otosclerosis |

Orphanet:336 | MONDO:0006761 | NON RARE IN EUROPE: Fibromuscular dysplasia of arteries |

Orphanet:41842 | MONDO:0005546 | NON RARE IN EUROPE: Fibromyalgia |

Orphanet:459690 | MONDO:0001153 | NON RARE IN EUROPE: Gender dysphoria |

Orphanet:357 | MONDO:0007745 | NON RARE IN EUROPE: Gilbert syndrome | Hyperbilirubinemia type 1 \| Familial cholemia

Orphanet:362 | MONDO:0040671 | NON RARE IN EUROPE: Glucose-6-phosphate-dehydrogenase deficiency | Favism \| G6PD deficiency

Orphanet:100642 | MONDO:0004277 | NON RARE IN EUROPE: Gonorrhea |

Orphanet:855 | MONDO:0007699 | NON RARE IN EUROPE: Hashimoto thyroiditis | Hashimoto hypothyroidism

Orphanet:139498 | MONDO:0021001 | NON RARE IN EUROPE: Hemochromatosis type 1 | C282Y/C282Y hemochromatosis \| Classic hemochromatosis \| HFE-related hemochromatosis

Orphanet:862 | MONDO:0003233 | NON RARE IN EUROPE: Hereditary essential tremor |

Orphanet:387 | MONDO:0006559 | NON RARE IN EUROPE: Hidradenitis suppurativa | Fox den disease \| Ectopic acne \| Pyoderma fistulans significa \| Verneuil disease \| Acne inversa

Orphanet:89939 | MONDO:0100161 | NON RARE IN EUROPE: Hyperkalemic renal tubular acidosis | Renal tubular acidosis type 4

Orphanet:413 | MONDO:0007761 | NON RARE IN EUROPE: Hyperlipoproteinemia type 4 | HLP type 4 \| Familial hypertriglyceridemia

Orphanet:2227 | MONDO:0005486 | NON RARE IN EUROPE: Hypodontia | Tooth agenesis

Orphanet:2810 | MONDO:0005665 | NON RARE IN EUROPE: Idiopathic facial palsy | Bell palsy

Orphanet:651 | MONDO:0005712 | NON RARE IN EUROPE: Idiopathic infantile nystagmus | Congenital idiopathic nystagmus \| Motor congenital nystagmus

Orphanet:69127 | MONDO:0001341 | NON RARE IN EUROPE: Immunoglobulin A deficiency | SIgAD \| Selective immunoglobulin A deficiency

Orphanet:83449 | MONDO:0006802 | NON RARE IN EUROPE: Inappropriate antidiuretic hormone secretion syndrome | SIADH

Orphanet:464293 | MONDO:0011191 | NON RARE IN EUROPE: Infantile capillary hemangioma |

Orphanet:319684 | MONDO:0013461 | NON RARE IN EUROPE: Inosine triphosphate pyrophosphatase deficiency |

Orphanet:2335 | MONDO:0015486 | NON RARE IN EUROPE: Isolated keratoconus |

Orphanet:459696 | MONDO:0100076 | NON RARE IN EUROPE: Juvenile idiopathic scoliosis |

Orphanet:484 | MONDO:0006823 | NON RARE IN EUROPE: Klinefelter syndrome | 47,XXY syndrome

Orphanet:319681 | MONDO:0006065 | NON RARE IN EUROPE: Lactase non-persistence in adulthood |

Orphanet:33409 | MONDO:0007899 | NON RARE IN EUROPE: Lichen sclerosus | Lichen sclerosus et atrophicus

Orphanet:411533 | MONDO:0005105 | NON RARE IN EUROPE: Melanoma |

Orphanet:45360 | MONDO:0007972 | NON RARE IN EUROPE: Menière disease |

Orphanet:411969 | MONDO:0004955 | NON RARE IN EUROPE: Metabolic syndrome |

Orphanet:802 | MONDO:0005301 | NON RARE IN EUROPE: Multiple sclerosis |

Orphanet:521399 | MONDO:0011122 | NON RARE IN EUROPE: Non rare obesity |

Orphanet:64738 | MONDO:0002305 | NON RARE IN EUROPE: Non rare thrombophilia |

Orphanet:33271 | MONDO:0013209 | NON RARE IN EUROPE: Non-alcoholic fatty liver disease | NAFLD

Orphanet:415300 | MONDO:0000499 | NON RARE IN EUROPE: Non-arteritic anterior ischemic optic neuropathy | NAION

Orphanet:488201 | MONDO:0005233 | NON RARE IN EUROPE: Non-small cell lung cancer | NSCLC

Orphanet:280110 | MONDO:0005382 | NON RARE IN EUROPE: Paget disease of bone | Osteitis deformans

Orphanet:319705 | MONDO:0005180 | NON RARE IN EUROPE: Parkinson disease |

Orphanet:319698 | MONDO:0010564 | NON RARE IN EUROPE: Partial color blindness, deutan type | Partial achromatopsia, deutan type \| Deuteranopia

Orphanet:319691 | MONDO:0010565 | NON RARE IN EUROPE: Partial color blindness, protan type | Partial achromatopsia, protan type

Orphanet:706 | MONDO:0011827 | NON RARE IN EUROPE: Patent arterial duct | Patent ductus arteriosus \| Persistent patency of the arterial duct

Orphanet:58208 | MONDO:0005904 | NON RARE IN EUROPE: Pericarditis |

Orphanet:120 | MONDO:0008228 | NON RARE IN EUROPE: Pernicious anemia | Acquired pernicious anemia \| Biermer anemia \| Biermer disease \| Addison-Biermer anemia \| Juvenile onset pernicious anemia

Orphanet:2870 | MONDO:0008231 | NON RARE IN EUROPE: Peyronie syndrome | Induratio penis plastica

Orphanet:26823 | MONDO:0010896 | NON RARE IN EUROPE: Pigment-dispersion syndrome |

Orphanet:3185 | MONDO:0008487 | NON RARE IN EUROPE: Polycystic ovary syndrome | PCOS \| Stein-Leventhal syndrome

Orphanet:466673 | MONDO:0041052 | NON RARE IN EUROPE: Post-herpetic neuralgia |

Orphanet:449262 | MONDO:0013214 | NON RARE IN EUROPE: Primary bile acid malabsorption |

Orphanet:619 | MONDO:0005387 | NON RARE IN EUROPE: Primary ovarian failure | Premature ovarian failure

Orphanet:40050 | MONDO:0011849 | NON RARE IN EUROPE: Psoriatic arthritis |

Orphanet:284130 | MONDO:0008383 | NON RARE IN EUROPE: Rheumatoid arthritis |

Orphanet:3140 | MONDO:0005090 | NON RARE IN EUROPE: Schizophrenia |

Orphanet:378 | MONDO:0010030 | NON RARE IN EUROPE: Sjögren syndrome | Sjögren-Gougerot syndrome \| Sicca syndrome

Orphanet:458713 | MONDO:0000724 | NON RARE IN EUROPE: Specific language impairment |

Orphanet:489 | MONDO:0006460 | NON RARE IN EUROPE: Thyroglossal duct cyst | Thyroglossal tract cyst

Orphanet:856 | MONDO:0007661 | NON RARE IN EUROPE: Tourette syndrome | Gilles de la Tourette syndrome \| Tourette disease \| GTS

Orphanet:35056 | MONDO:0011182 | NON RARE IN EUROPE: Trimethylaminuria | Fish-odor syndrome

Orphanet:771 | MONDO:0005101 | NON RARE IN EUROPE: Ulcerative colitis | Ulcerative proctosigmoiditis

Orphanet:319658 | MONDO:0001071 | NON RARE IN EUROPE: Unexplained intellectual disability |

Orphanet:1480 | MONDO:0002070 | NON RARE IN EUROPE: Ventricular septal defect | Interventricular communication \| VSD

Orphanet:3435 | MONDO:0008661 | NON RARE IN EUROPE: Vitiligo |

Orphanet:97354 | MONDO:0007020 | NON RARE IN EUROPE: Wernicke encephalopathy | Dementia due to thiamine deficiency

Orphanet:907 | MONDO:0008685 | NON RARE IN EUROPE: Wolff-Parkinson-White syndrome | Ventricular familial preexcitation syndrome

ORCID iD, in case it's necessary:

0000-0002-9584-9618 | 1.0 | Map Orphanet's non-rare diseases to Mondo (list included) - Orphanet seems to have several non-rare diseases in its ontology, simply labeled as e.g. "_NON RARE IN EUROPE: Melanoma_" (which is just regular melanoma).

It seems none of these diseases are mapped to any other ontology, and no other equivalence mapping out there seems to cover them.

Would it be possible for MONDO to cover/map these? Or is there a reason to leave these diseases as-is?

Our team's already gone over the list of these Orphanet resources and added the appropriate MONDO IDs where possible.

Mind you, there were some Orphanet resources we were not able to map to any MONDO equivalent.

I've left those out of the list below (I'll create another ticket if necessary to add those diseases to MONDO).

It's quite the list, I know :sweat_smile:

Orphanet ID | Mondo ID | Label (from Orphanet) | Synonym (from Orphanet)
-- | -- | -- | --
Orphanet:924 | MONDO:0007035 | NON RARE IN EUROPE: Acanthosis nigricans |
Orphanet:464463 | MONDO:0005036 | NON RARE IN EUROPE: Adenocarcinoma of stomach |
Orphanet:415268 | MONDO:0005061 | NON RARE IN EUROPE: Adenocarcinoma of the lung |
Orphanet:3153 | MONDO:0005488 | NON RARE IN EUROPE: Adolescent idiopathic scoliosis |
Orphanet:99888 | MONDO:0003924 | NON RARE IN EUROPE: Adrenocortical adenoma |
Orphanet:85142 | MONDO:0014200 | NON RARE IN EUROPE: Aldosterone-producing adenoma | Primary aldosteronism due to Conn adenoma \| Aldosterone-secreting adenoma \| Aldosteronoma \| Conn adenoma
Orphanet:238616 | MONDO:0004975 | NON RARE IN EUROPE: Alzheimer disease |
Orphanet:825 | MONDO:0005306 | NON RARE IN EUROPE: Ankylosing spondylitis | Ankylosing spondylarthritis \| Bechterew syndrome
Orphanet:36297 | MONDO:0005351 | NON RARE IN EUROPE: Anorexia nervosa |
Orphanet:80 | MONDO:0007140 | NON RARE IN EUROPE: Antiphospholipid syndrome | Hughes syndrome \| Antiphospholipid antibody syndrome \| Familial lupus anticoagulant
Orphanet:1162 | MONDO:0005259 | NON RARE IN EUROPE: Asperger syndrome |
Orphanet:625 | MONDO:0009755 | NON RARE IN EUROPE: Atypical mole | Dysplastic nevus \| Clark nevus
Orphanet:106 | MONDO:0005258 | NON RARE IN EUROPE: Autism |
Orphanet:462 | MONDO:0007810 | NON RARE IN EUROPE: Autosomal dominant ichthyosis vulgaris |
Orphanet:1232 | MONDO:0013662 | NON RARE IN EUROPE: Barrett esophagus |
Orphanet:97562 | MONDO:0007709 | NON RARE IN EUROPE: Benign familial hematuria |
Orphanet:34145 | MONDO:0005342 | NON RARE IN EUROPE: Berger disease | IgA nephropathy
Orphanet:1244 | MONDO:0007194 | NON RARE IN EUROPE: Bicuspid aortic valve |
Orphanet:157980 | MONDO:0001187 | NON RARE IN EUROPE: Bladder cancer |
Orphanet:93393 | MONDO:0007217 | NON RARE IN EUROPE: Brachydactyly type A3 | Brachymesophalangy V \| Brachydactyly-clinodactyly
Orphanet:93385 | MONDO:0007222 | NON RARE IN EUROPE: Brachydactyly type D |
Orphanet:50838 | MONDO:0007275 | NON RARE IN EUROPE: Carpal tunnel syndrome |
Orphanet:555 | MONDO:0005130 | NON RARE IN EUROPE: Celiac disease | Celiac sprue \| Gluten intolerance \| Gluten-induced enteropathy \| Coeliac disease \| Gluten-sensitive enteropathy \| Idiopathic steatorrhea \| Nontropical sprue \| Coeliac sprue
Orphanet:164 | MONDO:0000820 | NON RARE IN EUROPE: Cerebral cavernous malformations | Brain cavernous hemangioma
Orphanet:1983 | MONDO:0005404 | NON RARE IN EUROPE: Chronic fatigue syndrome | Myalgic encephalomyelitis \| Chronic fatigue immune dysfunction syndrome
Orphanet:1002 | MONDO:0043537 | NON RARE IN EUROPE: Cluster headache | Erythroprosopalgia of Bing \| Ciliary neuralgia \| Red migraine \| Horton headache \| Erythromelalgia of the head \| Histaminic cephalalgia \| Histamine headache \| Histamine cephalalgia \| Migrainous neuralgia \| Cluster migraine
Orphanet:466667 | MONDO:0005575 | NON RARE IN EUROPE: Colorectal cancer |
Orphanet:206 | MONDO:0005011 | NON RARE IN EUROPE: Crohn disease |
Orphanet:1648 | MONDO:0007488 | NON RARE IN EUROPE: Dementia with Lewy body | Lewy body dementia \| DLB \| Diffuse Lewy body disease \| Cortical Lewy body disease
Orphanet:243377 | MONDO:0005147 | NON RARE IN EUROPE: Diabetes mellitus type 1 | Insulin-dependent diabetes mellitus
Orphanet:243761 | MONDO:0001134 | NON RARE IN EUROPE: Essential hypertension |
Orphanet:529819 | MONDO:0008327 | NON RARE IN EUROPE: Exfoliation syndrome | Pseudoexfoliation syndrome \| XFS
Orphanet:276271 | MONDO:0014448 | NON RARE IN EUROPE: Familial dysalbuminemic hyperthyroxinemia | Bisalbuminemia
Orphanet:426 | MONDO:0017774 | NON RARE IN EUROPE: Familial hypobetalipoproteinemia |
Orphanet:155 | MONDO:0024573 | NON RARE IN EUROPE: Familial isolated hypertrophic cardiomyopathy | Familial or idiopathic hypertrophic obstructive cardiomyopathy
Orphanet:2794 | MONDO:0005349 | NON RARE IN EUROPE: Familial otosclerosis |
Orphanet:336 | MONDO:0006761 | NON RARE IN EUROPE: Fibromuscular dysplasia of arteries |
Orphanet:41842 | MONDO:0005546 | NON RARE IN EUROPE: Fibromyalgia |
Orphanet:459690 | MONDO:0001153 | NON RARE IN EUROPE: Gender dysphoria |
Orphanet:357 | MONDO:0007745 | NON RARE IN EUROPE: Gilbert syndrome | Hyperbilirubinemia type 1 \| Familial cholemia
Orphanet:362 | MONDO:0040671 | NON RARE IN EUROPE: Glucose-6-phosphate-dehydrogenase deficiency | Favism \| G6PD deficiency
Orphanet:100642 | MONDO:0004277 | NON RARE IN EUROPE: Gonorrhea |
Orphanet:855 | MONDO:0007699 | NON RARE IN EUROPE: Hashimoto thyroiditis | Hashimoto hypothyroidism
Orphanet:139498 | MONDO:0021001 | NON RARE IN EUROPE: Hemochromatosis type 1 | C282Y/C282Y hemochromatosis \| Classic hemochromatosis \| HFE-related hemochromatosis
Orphanet:862 | MONDO:0003233 | NON RARE IN EUROPE: Hereditary essential tremor |
Orphanet:387 | MONDO:0006559 | NON RARE IN EUROPE: Hidradenitis suppurativa | Fox den disease \| Ectopic acne \| Pyoderma fistulans significa \| Verneuil disease \| Acne inversa
Orphanet:89939 | MONDO:0100161 | NON RARE IN EUROPE: Hyperkalemic renal tubular acidosis | Renal tubular acidosis type 4
Orphanet:413 | MONDO:0007761 | NON RARE IN EUROPE: Hyperlipoproteinemia type 4 | HLP type 4 \| Familial hypertriglyceridemia
Orphanet:2227 | MONDO:0005486 | NON RARE IN EUROPE: Hypodontia | Tooth agenesis
Orphanet:2810 | MONDO:0005665 | NON RARE IN EUROPE: Idiopathic facial palsy | Bell palsy
Orphanet:651 | MONDO:0005712 | NON RARE IN EUROPE: Idiopathic infantile nystagmus | Congenital idiopathic nystagmus \| Motor congenital nystagmus
Orphanet:69127 | MONDO:0001341 | NON RARE IN EUROPE: Immunoglobulin A deficiency | SIgAD \| Selective immunoglobulin A deficiency
Orphanet:83449 | MONDO:0006802 | NON RARE IN EUROPE: Inappropriate antidiuretic hormone secretion syndrome | SIADH
Orphanet:464293 | MONDO:0011191 | NON RARE IN EUROPE: Infantile capillary hemangioma |
Orphanet:319684 | MONDO:0013461 | NON RARE IN EUROPE: Inosine triphosphate pyrophosphatase deficiency |
Orphanet:2335 | MONDO:0015486 | NON RARE IN EUROPE: Isolated keratoconus |
Orphanet:459696 | MONDO:0100076 | NON RARE IN EUROPE: Juvenile idiopathic scoliosis |
Orphanet:484 | MONDO:0006823 | NON RARE IN EUROPE: Klinefelter syndrome | 47,XXY syndrome
Orphanet:319681 | MONDO:0006065 | NON RARE IN EUROPE: Lactase non-persistence in adulthood |
Orphanet:33409 | MONDO:0007899 | NON RARE IN EUROPE: Lichen sclerosus | Lichen sclerosus et atrophicus
Orphanet:411533 | MONDO:0005105 | NON RARE IN EUROPE: Melanoma |
Orphanet:45360 | MONDO:0007972 | NON RARE IN EUROPE: Menière disease |
Orphanet:411969 | MONDO:0004955 | NON RARE IN EUROPE: Metabolic syndrome |
Orphanet:802 | MONDO:0005301 | NON RARE IN EUROPE: Multiple sclerosis |
Orphanet:521399 | MONDO:0011122 | NON RARE IN EUROPE: Non rare obesity |
Orphanet:64738 | MONDO:0002305 | NON RARE IN EUROPE: Non rare thrombophilia |
Orphanet:33271 | MONDO:0013209 | NON RARE IN EUROPE: Non-alcoholic fatty liver disease | NAFLD
Orphanet:415300 | MONDO:0000499 | NON RARE IN EUROPE: Non-arteritic anterior ischemic optic neuropathy | NAION
Orphanet:488201 | MONDO:0005233 | NON RARE IN EUROPE: Non-small cell lung cancer | NSCLC
Orphanet:280110 | MONDO:0005382 | NON RARE IN EUROPE: Paget disease of bone | Osteitis deformans
Orphanet:319705 | MONDO:0005180 | NON RARE IN EUROPE: Parkinson disease |
Orphanet:319698 | MONDO:0010564 | NON RARE IN EUROPE: Partial color blindness, deutan type | Partial achromatopsia, deutan type \| Deuteranopia
Orphanet:319691 | MONDO:0010565 | NON RARE IN EUROPE: Partial color blindness, protan type | Partial achromatopsia, protan type
Orphanet:706 | MONDO:0011827 | NON RARE IN EUROPE: Patent arterial duct | Patent ductus arteriosus \| Persistent patency of the arterial duct
Orphanet:58208 | MONDO:0005904 | NON RARE IN EUROPE: Pericarditis |
Orphanet:120 | MONDO:0008228 | NON RARE IN EUROPE: Pernicious anemia | Acquired pernicious anemia \| Biermer anemia \| Biermer disease \| Addison-Biermer anemia \| Juvenile onset pernicious anemia
Orphanet:2870 | MONDO:0008231 | NON RARE IN EUROPE: Peyronie syndrome | Induratio penis plastica
Orphanet:26823 | MONDO:0010896 | NON RARE IN EUROPE: Pigment-dispersion syndrome |
Orphanet:3185 | MONDO:0008487 | NON RARE IN EUROPE: Polycystic ovary syndrome | PCOS \| Stein-Leventhal syndrome
Orphanet:466673 | MONDO:0041052 | NON RARE IN EUROPE: Post-herpetic neuralgia |
Orphanet:449262 | MONDO:0013214 | NON RARE IN EUROPE: Primary bile acid malabsorption |
Orphanet:619 | MONDO:0005387 | NON RARE IN EUROPE: Primary ovarian failure | Premature ovarian failure
Orphanet:40050 | MONDO:0011849 | NON RARE IN EUROPE: Psoriatic arthritis |
Orphanet:284130 | MONDO:0008383 | NON RARE IN EUROPE: Rheumatoid arthritis |
Orphanet:3140 | MONDO:0005090 | NON RARE IN EUROPE: Schizophrenia |
Orphanet:378 | MONDO:0010030 | NON RARE IN EUROPE: Sjögren syndrome | Sjögren-Gougerot syndrome \| Sicca syndrome
Orphanet:458713 | MONDO:0000724 | NON RARE IN EUROPE: Specific language impairment |
Orphanet:489 | MONDO:0006460 | NON RARE IN EUROPE: Thyroglossal duct cyst | Thyroglossal tract cyst
Orphanet:856 | MONDO:0007661 | NON RARE IN EUROPE: Tourette syndrome | Gilles de la Tourette syndrome \| Tourette disease \| GTS
Orphanet:35056 | MONDO:0011182 | NON RARE IN EUROPE: Trimethylaminuria | Fish-odor syndrome
Orphanet:771 | MONDO:0005101 | NON RARE IN EUROPE: Ulcerative colitis | Ulcerative proctosigmoiditis
Orphanet:319658 | MONDO:0001071 | NON RARE IN EUROPE: Unexplained intellectual disability |
Orphanet:1480 | MONDO:0002070 | NON RARE IN EUROPE: Ventricular septal defect | Interventricular communication \| VSD
Orphanet:3435 | MONDO:0008661 | NON RARE IN EUROPE: Vitiligo |
Orphanet:97354 | MONDO:0007020 | NON RARE IN EUROPE: Wernicke encephalopathy | Dementia due to thiamine deficiency
Orphanet:907 | MONDO:0008685 | NON RARE IN EUROPE: Wolff-Parkinson-White syndrome | Ventricular familial preexcitation syndrome

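In case it helps whoever picks this up, the table above can be turned into mapping records mechanically. The sketch below is a hypothetical helper (`parse_row` and `to_sssom_tsv` are illustrative names, not part of any Mondo or Orphanet tooling). It parses rows in the `Orphanet ID | Mondo ID | Label | Synonym` format, drops the `NON RARE IN EUROPE: ` prefix, splits the `\|`-escaped synonyms, and emits SSSOM-style TSV. Using `skos:exactMatch` for every pair and `semapv:ManualMappingCuration` as the justification are assumptions; some pairs may need a broader or narrower match after review.

```python
import re

# Hypothetical helper, not part of any Mondo or Orphanet tooling.
PREFIX = "NON RARE IN EUROPE: "


def parse_row(row):
    """Parse one 'Orphanet:N | MONDO:N | label | synonyms' table row."""
    # Split on unescaped pipes only; an escaped '\|' separates synonyms inside a cell.
    cells = [c.strip() for c in re.split(r"(?<!\\)\|", row)]
    orpha_id, mondo_id, label = cells[0], cells[1], cells[2]
    synonym_cell = cells[3] if len(cells) > 3 else ""
    # Drop Orphanet's prefix so only the plain disease name remains.
    if label.startswith(PREFIX):
        label = label[len(PREFIX):]
    synonyms = [s.strip() for s in synonym_cell.split("\\|") if s.strip()]
    return orpha_id, mondo_id, label, synonyms


def to_sssom_tsv(rows):
    """Render table rows as SSSOM-style TSV (exact matches assumed)."""
    header = ["subject_id", "subject_label", "predicate_id",
              "object_id", "mapping_justification"]
    lines = ["\t".join(header)]
    for row in rows:
        orpha_id, mondo_id, label, _ = parse_row(row)
        # Assumption: every curated pair is an exact match; review before use.
        lines.append("\t".join([orpha_id, label, "skos:exactMatch",
                                mondo_id, "semapv:ManualMappingCuration"]))
    return "\n".join(lines)


if __name__ == "__main__":
    sample = [
        "Orphanet:3435 | MONDO:0008661 | NON RARE IN EUROPE: Vitiligo |",
        "Orphanet:35056 | MONDO:0011182 | NON RARE IN EUROPE: Trimethylaminuria | Fish-odor syndrome",
    ]
    print(to_sssom_tsv(sample))
```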
ORCID iD, in case it's necessary: