Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 665) | labels (string, length 4 to 554) | body (string, length 3 to 235k) | index (string, 6 classes) | text_combine (string, length 96 to 235k) | label (string, 2 classes) | text (string, length 96 to 196k) | binary_label (int64, 0 or 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4,485 | 5,110,358,838 | IssuesEvent | 2017-01-05 23:56:37 | sketch-city/project-ideas | https://api.github.com/repos/sketch-city/project-ideas | closed | Regional Server Network for Regional Apps | infrastructure | Please describe your project, the problem you're solving, and why it's important. Keep it brief! Link to further reading if necessary.

Local code irrespective of device downloadable from local servers / as close to backbone as possible.

| 1.0 | Regional Server Network for Regional Apps - Please describe your project, the problem you're solving, and why it's important. Keep it brief! Link to further reading if necessary.

Local code irrespective of device downloadable from local servers / as close to backbone as possible.

| infrastructure | regional server network for regional apps please describe your project the problem you re solving and why it s important keep it brief link to further reading if necessary local code irrespective of device downloadable from local servers as close to backbone as possible | 1 |

21,754 | 14,786,431,571 | IssuesEvent | 2021-01-12 05:29:43 | pol-is/polis | https://api.github.com/repos/pol-is/polis | opened | Use GitHub Container Registry to store pre-built containers for test workflows | ⚒️ infrastructure | Right now, we're pushing nightly builds to Docker Hub. In theory, this makes deploying quicker for new people, since they can pull instead of building. In practice, I don't think it's used much.

Having said that, we're also building containers in order to run cypress tests. If we start building them for cross-browser testing of old browsers on BrowserStack, then we'll be building the containers at least twice per commit. This expends twice as many build minutes as we need, and each docker build takes about 8 minutes.

We could instead build the containers in one workflow, and push them to GitHub Container Registry. These would be set to private, so just for internal tests. We could then pull them in the workflows that need to spin up an instance, without rebuilding them each time (e.g. cypress tests, browserstack tests, etc).

GitHub Container Registry vs GitHub Docker Registry: https://docs.github.com/en/free-pro-team@latest/packages/guides/migrating-to-github-container-registry-for-docker-images (main thing is that GHCR has more fine-grained permissions)

Enabling: https://docs.github.com/en/free-pro-team@latest/packages/guides/enabling-improved-container-support | 1.0 | Use GitHub Container Registry to store pre-built containers for test workflows - Right now, we're pushing nightly builds to Docker Hub. In theory, this makes deploying quicker for new people, since they can pull instead of building. In practice, I don't think it's used much.

Having said that, we're also building containers in order to run cypress tests. If we start building them for cross-browser testing of old browsers on BrowserStack, then we'll be building the containers at least twice per commit. This expends twice as many build minutes as we need, and each docker build takes about 8 minutes.

We could instead build the containers in one workflow, and push them to GitHub Container Registry. These would be set to private, so just for internal tests. We could then pull them in the workflows that need to spin up an instance, without rebuilding them each time (e.g. cypress tests, browserstack tests, etc).

GitHub Container Registry vs GitHub Docker Registry: https://docs.github.com/en/free-pro-team@latest/packages/guides/migrating-to-github-container-registry-for-docker-images (main thing is that GHCR has more fine-grained permissions)

Enabling: https://docs.github.com/en/free-pro-team@latest/packages/guides/enabling-improved-container-support | infrastructure | use github container registry to store pre built containers for test workflows right now we re pushing nightly builds to docker hub in theory this makes deploying quicker for new people since can pull instead of building in practice i don t think it s used much having said that we re also building containers in order to run cypress tests if we start building them for cross browser testing of old browsers on browserstack then we ll be building the containers at least twice per commit this expends twice as many build minutes as we need and each docker build takes about minutes we could instead build the containers in one workflow and push them to github container registry these would be set to private so just for internal tests we could then pull them in the workflows that needs to spin up an instance without rebuilding them each time e g cypress tests browserstack tests etc github container registry vs github docker registry main thing is that ghcr has more fine grained permissions enabling | 1 |

14,160 | 10,678,268,586 | IssuesEvent | 2019-10-21 16:56:24 | aspnet/AspNetCore | https://api.github.com/repos/aspnet/AspNetCore | closed | Unable to .\restore.cmd - TagBuilderWebSite.csproj | area-infrastructure | ### Describe the bug

Cannot restore from a clean master

### To Reproduce

Steps to reproduce the behavior:

1. Using this version of ASP.NET Core '418e35c4396682aea6ee6e9833ff3bd3bfe624eb'

2. Run this code '.\restore.cmd'

3. See error

### Expected behavior

Successful restore

### Screenshots

If applicable, add screenshots to help explain your problem.

### Additional context

dotnet --info:

```

C:\dev\AspNetCore>dotnet --info

A compatible installed .NET Core SDK for global.json version [5.0.100-alpha1-014696] from [C:\dev\AspNetCore\global.json] was not found

Install the [5.0.100-alpha1-014696] .NET Core SDK or update [C:\dev\AspNetCore\global.json] with an installed .NET Core SDK:

1.0.0-preview4-004233 [C:\Program Files\dotnet\sdk]

1.0.0 [C:\Program Files\dotnet\sdk]

1.0.4 [C:\Program Files\dotnet\sdk]

2.1.4 [C:\Program Files\dotnet\sdk]

2.1.103 [C:\Program Files\dotnet\sdk]

2.1.104 [C:\Program Files\dotnet\sdk]

2.1.201 [C:\Program Files\dotnet\sdk]

2.1.202 [C:\Program Files\dotnet\sdk]

2.1.300-preview1-008174 [C:\Program Files\dotnet\sdk]

2.1.300-rc1-008673 [C:\Program Files\dotnet\sdk]

2.1.300 [C:\Program Files\dotnet\sdk]

2.1.401 [C:\Program Files\dotnet\sdk]

2.1.402 [C:\Program Files\dotnet\sdk]

2.1.403 [C:\Program Files\dotnet\sdk]

2.1.502 [C:\Program Files\dotnet\sdk]

2.1.503 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009426 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009472 [C:\Program Files\dotnet\sdk]

2.1.602 [C:\Program Files\dotnet\sdk]

2.1.800 [C:\Program Files\dotnet\sdk]

2.2.101 [C:\Program Files\dotnet\sdk]

3.0.100-preview6-012264 [C:\Program Files\dotnet\sdk]

3.0.100 [C:\Program Files\dotnet\sdk]

3.1.100-preview1-014459 [C:\Program Files\dotnet\sdk]

Host (useful for support):

Version: 5.0.0-alpha1.19514.1

Commit: 4ace84dbf9

.NET Core SDKs installed:

1.0.0-preview4-004233 [C:\Program Files\dotnet\sdk]

1.0.0 [C:\Program Files\dotnet\sdk]

1.0.4 [C:\Program Files\dotnet\sdk]

2.1.4 [C:\Program Files\dotnet\sdk]

2.1.103 [C:\Program Files\dotnet\sdk]

2.1.104 [C:\Program Files\dotnet\sdk]

2.1.201 [C:\Program Files\dotnet\sdk]

2.1.202 [C:\Program Files\dotnet\sdk]

2.1.300-preview1-008174 [C:\Program Files\dotnet\sdk]

2.1.300-rc1-008673 [C:\Program Files\dotnet\sdk]

2.1.300 [C:\Program Files\dotnet\sdk]

2.1.401 [C:\Program Files\dotnet\sdk]

2.1.402 [C:\Program Files\dotnet\sdk]

2.1.403 [C:\Program Files\dotnet\sdk]

2.1.502 [C:\Program Files\dotnet\sdk]

2.1.503 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009426 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009472 [C:\Program Files\dotnet\sdk]

2.1.602 [C:\Program Files\dotnet\sdk]

2.1.800 [C:\Program Files\dotnet\sdk]

2.2.101 [C:\Program Files\dotnet\sdk]

3.0.100-preview6-012264 [C:\Program Files\dotnet\sdk]

3.0.100 [C:\Program Files\dotnet\sdk]

3.1.100-preview1-014459 [C:\Program Files\dotnet\sdk]

.NET Core runtimes installed:

Microsoft.AspNetCore.All 2.1.0-preview1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.0-rc1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.2 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.3 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.4 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.5 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.6 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.7 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.9 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.12 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.2.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.App 2.1.0-preview1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.0-rc1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.2 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.3 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.4 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.5 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.6 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.7 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.9 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.12 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.2.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 3.0.0-preview6.19307.2 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 3.0.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 3.1.0-preview1.19508.20 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 5.0.0-dev [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.NETCore.App 1.0.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.0.4 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.0.5 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.1.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.1.2 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.5 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.6 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.7 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.9 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.0-preview1-26216-03 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.0-rc1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.3-servicing-26724-03 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.3 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.4 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.5 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.6 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.7 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.9 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.12 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.2.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 3.0.0-preview6-27804-01 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 3.0.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 3.1.0-preview1.19506.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 5.0.0-alpha1.19514.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.WindowsDesktop.App 3.0.0-preview6-27804-01 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 3.0.0 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 3.1.0-preview1.19506.1 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

To install additional .NET Core runtimes or SDKs:

https://aka.ms/dotnet-download

```

Console output:

```

C:\dev\AspNetCore>git status

On branch master

Your branch is up to date with 'origin/master'.

nothing to commit, working tree clean

C:\dev\AspNetCore>.\restore.cmd

Building of C# project is enabled and has dependencies on NodeJS projects. Building of NodeJS projects is enabled since node is detected in C:\Program Files.

Restore completed in 41.94 ms for C:\Users\admin\.nuget\packages\microsoft.dotnet.arcade.sdk\1.0.0-beta.19462.4\tools\Tools.proj.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\". Note: This change will not be visible if PowerShell was run as a child process.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\x86\". Note: This change will not be visible if PowerShell was run as a child process.

Restore completed in 14.54 ms for C:\dev\AspNetCore\eng\tools\RepoTasks\RepoTasks.csproj.

RepoTasks -> C:\dev\AspNetCore\artifacts\bin\RepoTasks\Release\netcoreapp5.0\RepoTasks.dll

RepoTasks -> C:\dev\AspNetCore\artifacts\bin\RepoTasks\Release\net472\RepoTasks.dll

Restore completed in 41.11 ms for C:\Users\admin\.nuget\packages\microsoft.dotnet.arcade.sdk\1.0.0-beta.19462.4\tools\Tools.proj.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\". Note: This change will not be visible if PowerShell was run as a child process.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\x86\". Note: This change will not be visible if PowerShell was run as a child process.

C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\MSBuild\Current\Bin\Microsoft.Common.CurrentVersion.targets(31,3): error MSB4024: The imported project file "C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj.user" could not be loaded. Root element is missing. [C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj]

Build FAILED.

C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\MSBuild\Current\Bin\Microsoft.Common.CurrentVersion.targets(31,3): error MSB4024: The imported project file "C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj.user" could not be loaded. Root element is missing. [C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj]

0 Warning(s)

1 Error(s)

Time Elapsed 00:00:15.58

Build failed.

```

I am new here and have tried A LOT of things. I got the restore and build running once, but then installed VS 2019 Preview in an attempt to target the locally built artifacts. I have since uninstalled it, but I am still getting these errors.

How does the build pipeline decide which version of VS to use, and why is it that as soon as I have any other version of VS, it will always break? | 1.0 | Unable to .\restore.cmd - TagBuilderWebSite.csproj - ### Describe the bug

Cannot restore from a clean master

### To Reproduce

Steps to reproduce the behavior:

1. Using this version of ASP.NET Core '418e35c4396682aea6ee6e9833ff3bd3bfe624eb'

2. Run this code '.\restore.cmd'

3. See error

### Expected behavior

Successful restore

### Screenshots

If applicable, add screenshots to help explain your problem.

### Additional context

dotnet --info:

```

C:\dev\AspNetCore>dotnet --info

A compatible installed .NET Core SDK for global.json version [5.0.100-alpha1-014696] from [C:\dev\AspNetCore\global.json] was not found

Install the [5.0.100-alpha1-014696] .NET Core SDK or update [C:\dev\AspNetCore\global.json] with an installed .NET Core SDK:

1.0.0-preview4-004233 [C:\Program Files\dotnet\sdk]

1.0.0 [C:\Program Files\dotnet\sdk]

1.0.4 [C:\Program Files\dotnet\sdk]

2.1.4 [C:\Program Files\dotnet\sdk]

2.1.103 [C:\Program Files\dotnet\sdk]

2.1.104 [C:\Program Files\dotnet\sdk]

2.1.201 [C:\Program Files\dotnet\sdk]

2.1.202 [C:\Program Files\dotnet\sdk]

2.1.300-preview1-008174 [C:\Program Files\dotnet\sdk]

2.1.300-rc1-008673 [C:\Program Files\dotnet\sdk]

2.1.300 [C:\Program Files\dotnet\sdk]

2.1.401 [C:\Program Files\dotnet\sdk]

2.1.402 [C:\Program Files\dotnet\sdk]

2.1.403 [C:\Program Files\dotnet\sdk]

2.1.502 [C:\Program Files\dotnet\sdk]

2.1.503 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009426 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009472 [C:\Program Files\dotnet\sdk]

2.1.602 [C:\Program Files\dotnet\sdk]

2.1.800 [C:\Program Files\dotnet\sdk]

2.2.101 [C:\Program Files\dotnet\sdk]

3.0.100-preview6-012264 [C:\Program Files\dotnet\sdk]

3.0.100 [C:\Program Files\dotnet\sdk]

3.1.100-preview1-014459 [C:\Program Files\dotnet\sdk]

Host (useful for support):

Version: 5.0.0-alpha1.19514.1

Commit: 4ace84dbf9

.NET Core SDKs installed:

1.0.0-preview4-004233 [C:\Program Files\dotnet\sdk]

1.0.0 [C:\Program Files\dotnet\sdk]

1.0.4 [C:\Program Files\dotnet\sdk]

2.1.4 [C:\Program Files\dotnet\sdk]

2.1.103 [C:\Program Files\dotnet\sdk]

2.1.104 [C:\Program Files\dotnet\sdk]

2.1.201 [C:\Program Files\dotnet\sdk]

2.1.202 [C:\Program Files\dotnet\sdk]

2.1.300-preview1-008174 [C:\Program Files\dotnet\sdk]

2.1.300-rc1-008673 [C:\Program Files\dotnet\sdk]

2.1.300 [C:\Program Files\dotnet\sdk]

2.1.401 [C:\Program Files\dotnet\sdk]

2.1.402 [C:\Program Files\dotnet\sdk]

2.1.403 [C:\Program Files\dotnet\sdk]

2.1.502 [C:\Program Files\dotnet\sdk]

2.1.503 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009426 [C:\Program Files\dotnet\sdk]

2.1.600-preview-009472 [C:\Program Files\dotnet\sdk]

2.1.602 [C:\Program Files\dotnet\sdk]

2.1.800 [C:\Program Files\dotnet\sdk]

2.2.101 [C:\Program Files\dotnet\sdk]

3.0.100-preview6-012264 [C:\Program Files\dotnet\sdk]

3.0.100 [C:\Program Files\dotnet\sdk]

3.1.100-preview1-014459 [C:\Program Files\dotnet\sdk]

.NET Core runtimes installed:

Microsoft.AspNetCore.All 2.1.0-preview1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.0-rc1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.2 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.3 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.4 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.5 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.6 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.7 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.9 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.1.12 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.All 2.2.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.All]

Microsoft.AspNetCore.App 2.1.0-preview1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.0-rc1-final [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.2 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.3 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.4 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.5 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.6 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.7 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.9 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.1.12 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 2.2.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 3.0.0-preview6.19307.2 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 3.0.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 3.1.0-preview1.19508.20 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 5.0.0-dev [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.NETCore.App 1.0.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.0.4 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.0.5 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.1.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 1.1.2 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.5 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.6 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.7 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.0.9 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.0-preview1-26216-03 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.0-rc1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.3-servicing-26724-03 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.3 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.4 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.5 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.6 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.7 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.9 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.1.12 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 2.2.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 3.0.0-preview6-27804-01 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 3.0.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 3.1.0-preview1.19506.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 5.0.0-alpha1.19514.1 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.WindowsDesktop.App 3.0.0-preview6-27804-01 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 3.0.0 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 3.1.0-preview1.19506.1 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

To install additional .NET Core runtimes or SDKs:

https://aka.ms/dotnet-download

```

Console output:

```

C:\dev\AspNetCore>git status

On branch master

Your branch is up to date with 'origin/master'.

nothing to commit, working tree clean

C:\dev\AspNetCore>.\restore.cmd

Building of C# project is enabled and has dependencies on NodeJS projects. Building of NodeJS projects is enabled since node is detected in C:\Program Files.

Restore completed in 41.94 ms for C:\Users\admin\.nuget\packages\microsoft.dotnet.arcade.sdk\1.0.0-beta.19462.4\tools\Tools.proj.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\". Note: This change will not be visible if PowerShell was run as a child process.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\x86\". Note: This change will not be visible if PowerShell was run as a child process.

Restore completed in 14.54 ms for C:\dev\AspNetCore\eng\tools\RepoTasks\RepoTasks.csproj.

RepoTasks -> C:\dev\AspNetCore\artifacts\bin\RepoTasks\Release\netcoreapp5.0\RepoTasks.dll

RepoTasks -> C:\dev\AspNetCore\artifacts\bin\RepoTasks\Release\net472\RepoTasks.dll

Restore completed in 41.11 ms for C:\Users\admin\.nuget\packages\microsoft.dotnet.arcade.sdk\1.0.0-beta.19462.4\tools\Tools.proj.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\". Note: This change will not be visible if PowerShell was run as a child process.

dotnet-install: .NET Core Runtime version 5.0.0-alpha1.19514.1 is already installed.

dotnet-install: Adding to current process PATH: "C:\dev\AspNetCore\.dotnet\x86\". Note: This change will not be visible if PowerShell was run as a child process.

C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\MSBuild\Current\Bin\Microsoft.Common.CurrentVersion.targets(31,3): error MSB4024: The imported project file "C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj.user" could not be loaded. Root element is missing. [C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj]

Build FAILED.

C:\Program Files (x86)\Microsoft Visual Studio\2019\Enterprise\MSBuild\Current\Bin\Microsoft.Common.CurrentVersion.targets(31,3): error MSB4024: The imported project file "C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj.user" could not be loaded. Root element is missing. [C:\dev\AspNetCore\src\Mvc\test\WebSites\TagHelpersWebSite\TagHelpersWebSite.csproj]

0 Warning(s)

1 Error(s)

Time Elapsed 00:00:15.58

Build failed.

```

I am new here and have tried A LOT of things. I got the restore and build running once, but then installed VS 2019 Preview in an attempt to target the locally built artifacts. I have since uninstalled it, but I am still getting these errors.

How does the build pipeline decide which version of VS to use, and why is it that as soon as I have any other version of VS, it will always break? | infrastructure | unable to restore cmd tagbuilderwebsite csproj describe the bug cannot restore from from clean master to reproduce steps to reproduce the behavior using this version of asp net core run this code restore cmd see error expected behavior successful restore screenshots if applicable add screenshots to help explain your problem additional context dotnet info c dev aspnetcore dotnet info a compatible installed net core sdk for global json version from was not found install the net core sdk or update with an installed net core sdk preview preview host useful for support version commit net core sdks installed preview preview net core runtimes installed microsoft aspnetcore all final microsoft aspnetcore all final microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore all microsoft aspnetcore app final microsoft aspnetcore app final microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app dev microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app servicing microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app 
microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft windowsdesktop app microsoft windowsdesktop app microsoft windowsdesktop app to install additional net core runtimes or sdks console output c dev aspnetcore git status on branch master your branch is up to date with origin master nothing to commit working tree clean c dev aspnetcore restore cmd building of c project is enabled and has dependencies on nodejs projects building of nodejs projects is enabled since node is detected in c program files wiederherstellung in ms für c users admin nuget packages microsoft dotnet arcade sdk beta tools tools proj abgeschlossen dotnet install net core runtime version is already installed dotnet install adding to current process path c dev aspnetcore dotnet note this change will not be visible if powershell was run as a child process dotnet install net core runtime version is already installed dotnet install adding to current process path c dev aspnetcore dotnet note this change will not be visible if powershell was run as a child process wiederherstellung in ms für c dev aspnetcore eng tools repotasks repotasks csproj abgeschlossen repotasks c dev aspnetcore artifacts bin repotasks release repotasks dll repotasks c dev aspnetcore artifacts bin repotasks release repotasks dll wiederherstellung in ms für c users admin nuget packages microsoft dotnet arcade sdk beta tools tools proj abgeschlossen dotnet install net core runtime version is already installed dotnet install adding to current process path c dev aspnetcore dotnet note this change will not be visible if powershell was run as a child process dotnet install net core runtime version is already installed dotnet install adding to current process path c dev aspnetcore dotnet note this change will not be visible if powershell was run as a child process c program files microsoft visual studio enterprise msbuild current bin microsoft common currentversion 
targets error die importierte projektdatei c dev aspn etcore src mvc test websites taghelperswebsite taghelperswebsite csproj user konnte nicht ge laden werden das stammelement ist nicht vorhanden c dev aspnetcore src mvc test websites t aghelperswebsite taghelperswebsite csproj fehler beim buildvorgang c program files microsoft visual studio enterprise msbuild current bin microsoft common currentversion targets error die importierte projektdatei c dev aspn etcore src mvc test websites taghelperswebsite taghelperswebsite csproj user konnte nicht ge laden werden das stammelement ist nicht vorhanden c dev aspnetcore src mvc test websites t aghelperswebsite taghelperswebsite csproj warnung en fehler verstrichene zeit build failed i am new here and have tried a lot of things i ve got the restore and build running once but installed vs preview in an attempt to get targeting the locally built artifacts i ve uninstalled already but am still getting these errors now how does the build pipeline decide which version of vs to use and why is it that as soon as i have any other version of vs it will always break | 1 |

10,253 | 8,453,125,979 | IssuesEvent | 2018-10-20 12:33:09 | TeamBravo2018/cloned-rfid-card-detection | https://api.github.com/repos/TeamBravo2018/cloned-rfid-card-detection | opened | Setup MQTT Simulator | backlog item infrastructure messaging test production | ### Description ###

Simulate messaging between the applications by using an MQTT simulator.

<https://dzone.com/articles/top-3-online-tools-to-simulate-an-mqtt-client>

| 1.0 | Setup MQTT Simulator - ### Description ###

Simulate messaging between the applications by using an MQTT simulator.

<https://dzone.com/articles/top-3-online-tools-to-simulate-an-mqtt-client>

| infrastructure | setup mqtt simulator description simulate messaging between the applications by using mqtt simulator | 1 |

813,125 | 30,446,199,738 | IssuesEvent | 2023-07-15 17:48:03 | ncssar/radiolog | https://api.github.com/repos/ncssar/radiolog | opened | generated log PDFs should be searchable | enhancement Priority:Medium | not so important for generated clue report PDFs, but all others - radio log, team logs, clue log - should be searchable (using e.g. acrobat reader); surprised to see that they are currently not searchable | 1.0 | generated log PDFs should be searchable - not so important for generated clue report PDFs, but all others - radio log, team logs, clue log - should be searchable (using e.g. acrobat reader); surprised to see that they are currently not searchable | non_infrastructure | generated log pdfs should be searchable not so important for generated clue report pdfs but all others radio log team logs clue log should be searchable using e g acrobat reader surprised to see that they are currently not searchable | 0 |

716,244 | 24,626,321,471 | IssuesEvent | 2022-10-16 15:11:39 | AY2223S1-CS2103T-T10-1/tp | https://api.github.com/repos/AY2223S1-CS2103T-T10-1/tp | opened | As a forgetful student, I want to know which of my friends take a common module | type.Story priority.High | so that I know who to approach when I need help with that module's work. | 1.0 | As a forgetful student, I want to know which of my friends take a common module - so that I know who to approach when I need help with that module's work. | non_infrastructure | as a forgetful student i want to know which of my friends take a common module so that i know who to approach when i need help with that module s work | 0 |

293,730 | 25,318,877,925 | IssuesEvent | 2022-11-18 00:54:22 | dotnet/sdk | https://api.github.com/repos/dotnet/sdk | closed | dotnet test fail to pass MSBuild properties | Area-DotNet Test untriaged | ### Describe the bug

Until .NET 6.x, we were able to run

`dotnet test App.sln -p Property=Value`

and the property was correctly passed down to MSBuild, interpreted by project files and the like.

On upgrading to .NET 7.x, we found the property was no longer passed down. However, we have a workaround: place the option before the solution file.

`dotnet test -p Property=Value App.sln`

### To Reproduce

<!--

We ❤ code! Point us to a minimalistic repro project hosted in a GitHub repo, Gist snippet, or other means to see the isolated behavior.

We may close this issue if:

- the repro project you share with us is complex. We can't investigate custom projects, so don't point us to such, please.

- if we will not be able to repro the behavior you're reporting

-->

Fail - runs only one test

`dotnet test TestProject1.csproj -p:CIBuild=Integration`

Correct - runs only one test

`dotnet test -p:CIBuild=Unit TestProject1.csproj`

Correct - runs the two tests

`dotnet test -p:CIBuild=Integration TestProject1.csproj`

**TestProject1.csproj**

```xml

<Project Sdk="Microsoft.NET.Sdk">

<PropertyGroup>

<TargetFramework>net7.0</TargetFramework>

<ImplicitUsings>enable</ImplicitUsings>

<Nullable>enable</Nullable>

<IsPackable>false</IsPackable>

<ApplicationManifest>app.manifest</ApplicationManifest>

</PropertyGroup>

<ItemGroup>

<PackageReference Include="Microsoft.NET.Test.Sdk" Version="17.3.2" />

<PackageReference Include="MSTest.TestAdapter" Version="2.2.10" />

<PackageReference Include="MSTest.TestFramework" Version="2.2.10" />

<PackageReference Include="coverlet.collector" Version="3.1.2" />

</ItemGroup>

</Project>

```

**Directory.Build.props**

```xml

<Project>

<PropertyGroup>

<LangVersion>11.0</LangVersion>

<TargetFramework>net7.0</TargetFramework>

<RunSettingsFilePath>$(MSBuildThisFileDirectory)Unit.runsettings</RunSettingsFilePath>

<RunSettingsFilePath Condition="$(CIBuild) != ''">$(MSBuildThisFileDirectory)$(CIBuild).runsettings</RunSettingsFilePath>

</PropertyGroup>

</Project>

```

**Unit.runsettings**

```xml

<RunSettings>

<RunConfiguration>

<TestCaseFilter>TestCategory=Unit</TestCaseFilter>

</RunConfiguration>

</RunSettings>

```

**Integration.runsettings**

```xml

<RunSettings>

<RunConfiguration>

<TestCaseFilter>TestCategory=Unit|TestCategory=Integration</TestCaseFilter>

</RunConfiguration>

</RunSettings>

```

**UnitTest1.cs**

```csharp

namespace TestProject1

{

[TestClass]

public class UnitTest1

{

[TestMethod, TestCategory("Unit")]

public void TestMethod1()

{

}

[TestMethod, TestCategory("Integration")]

public void TestMethod2()

{

}

}

}

```

### Exceptions (if any)

No exceptions

### Further technical details

- Include the output of `dotnet --info`

`

.NET SDK:

Version: 7.0.100

Commit: e12b7af219

Runtime Environment:

OS Name: Windows

OS Version: 10.0.22621

OS Platform: Windows

RID: win10-x64

Base Path: C:\Program Files\dotnet\sdk\7.0.100\

Host:

Version: 7.0.0

Architecture: x64

Commit: d099f075e4

.NET SDKs installed:

3.1.425 [C:\Program Files\dotnet\sdk]

6.0.202 [C:\Program Files\dotnet\sdk]

6.0.306 [C:\Program Files\dotnet\sdk]

7.0.100 [C:\Program Files\dotnet\sdk]

.NET runtimes installed:

Microsoft.AspNetCore.App 3.1.31 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 6.0.11 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 7.0.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.NETCore.App 3.1.31 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 6.0.11 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 7.0.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.WindowsDesktop.App 3.1.31 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 6.0.11 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 7.0.0 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Other architectures found:

x86 [C:\Program Files (x86)\dotnet]

registered at [HKLM\SOFTWARE\dotnet\Setup\InstalledVersions\x86\InstallLocation]

Environment variables:

Not set

global.json file:

Not found

Learn more:

https://aka.ms/dotnet/info

Download .NET:

https://aka.ms/dotnet/download

`

- VS 17.4

### Related

Passing properties seems to have worked in earlier versions, but it is not well documented.

#23198

| 1.0 | dotnet test fail to pass MSBuild properties - ### Describe the bug

Until .NET 6.x, we were able to run

`dotnet test App.sln -p Property=Value`

and the property was correctly passed down to MSBuild, interpreted by project files and the like.

On upgrading to .NET 7.x, we found the property was no longer passed down. However, we have a workaround: place the option before the solution file.

`dotnet test -p Property=Value App.sln`

### To Reproduce

<!--

We ❤ code! Point us to a minimalistic repro project hosted in a GitHub repo, Gist snippet, or other means to see the isolated behavior.

We may close this issue if:

- the repro project you share with us is complex. We can't investigate custom projects, so don't point us to such, please.

- if we will not be able to repro the behavior you're reporting

-->

Fail - runs only one test

`dotnet test TestProject1.csproj -p:CIBuild=Integration`

Correct - runs only one test

`dotnet test -p:CIBuild=Unit TestProject1.csproj`

Correct - runs the two tests

`dotnet test -p:CIBuild=Integration TestProject1.csproj`

**TestProject1.csproj**

```xml

<Project Sdk="Microsoft.NET.Sdk">

<PropertyGroup>

<TargetFramework>net7.0</TargetFramework>

<ImplicitUsings>enable</ImplicitUsings>

<Nullable>enable</Nullable>

<IsPackable>false</IsPackable>

<ApplicationManifest>app.manifest</ApplicationManifest>

</PropertyGroup>

<ItemGroup>

<PackageReference Include="Microsoft.NET.Test.Sdk" Version="17.3.2" />

<PackageReference Include="MSTest.TestAdapter" Version="2.2.10" />

<PackageReference Include="MSTest.TestFramework" Version="2.2.10" />

<PackageReference Include="coverlet.collector" Version="3.1.2" />

</ItemGroup>

</Project>

```

**Directory.Build.props**

```xml

<Project>

<PropertyGroup>

<LangVersion>11.0</LangVersion>

<TargetFramework>net7.0</TargetFramework>

<RunSettingsFilePath>$(MSBuildThisFileDirectory)Unit.runsettings</RunSettingsFilePath>

<RunSettingsFilePath Condition="$(CIBuild) != ''">$(MSBuildThisFileDirectory)$(CIBuild).runsettings</RunSettingsFilePath>

</PropertyGroup>

</Project>

```

**Unit.runsettings**

```xml

<RunSettings>

<RunConfiguration>

<TestCaseFilter>TestCategory=Unit</TestCaseFilter>

</RunConfiguration>

</RunSettings>

```

**Integration.runsettings**

```xml

<RunSettings>

<RunConfiguration>

<TestCaseFilter>TestCategory=Unit|TestCategory=Integration</TestCaseFilter>

</RunConfiguration>

</RunSettings>

```

**UnitTest1.cs**

```csharp

namespace TestProject1

{

[TestClass]

public class UnitTest1

{

[TestMethod, TestCategory("Unit")]

public void TestMethod1()

{

}

[TestMethod, TestCategory("Integration")]

public void TestMethod2()

{

}

}

}

```

### Exceptions (if any)

No exceptions

### Further technical details

- Include the output of `dotnet --info`

`

.NET SDK:

Version: 7.0.100

Commit: e12b7af219

Runtime Environment:

OS Name: Windows

OS Version: 10.0.22621

OS Platform: Windows

RID: win10-x64

Base Path: C:\Program Files\dotnet\sdk\7.0.100\

Host:

Version: 7.0.0

Architecture: x64

Commit: d099f075e4

.NET SDKs installed:

3.1.425 [C:\Program Files\dotnet\sdk]

6.0.202 [C:\Program Files\dotnet\sdk]

6.0.306 [C:\Program Files\dotnet\sdk]

7.0.100 [C:\Program Files\dotnet\sdk]

.NET runtimes installed:

Microsoft.AspNetCore.App 3.1.31 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 6.0.11 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.AspNetCore.App 7.0.0 [C:\Program Files\dotnet\shared\Microsoft.AspNetCore.App]

Microsoft.NETCore.App 3.1.31 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 6.0.11 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.NETCore.App 7.0.0 [C:\Program Files\dotnet\shared\Microsoft.NETCore.App]

Microsoft.WindowsDesktop.App 3.1.31 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 6.0.11 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Microsoft.WindowsDesktop.App 7.0.0 [C:\Program Files\dotnet\shared\Microsoft.WindowsDesktop.App]

Other architectures found:

x86 [C:\Program Files (x86)\dotnet]

registered at [HKLM\SOFTWARE\dotnet\Setup\InstalledVersions\x86\InstallLocation]

Environment variables:

Not set

global.json file:

Not found

Learn more:

https://aka.ms/dotnet/info

Download .NET:

https://aka.ms/dotnet/download

`

- VS 17.4

### Related

Passing properties seems to have worked in earlier versions, but it is not well documented.

#23198

| non_infrastructure | dotnet test fail to pass msbuild properties describe the bug until net x we were able to run dotnet test app sln p property value and the property was adequately passed down to msbuild interpreted by project files and the like on upgrading to net x we found the property was no longer passed down however we have a workaround dotnet test p property value app sln to reproduce we ❤ code point us to a minimalistic repro project hosted in a github repo gist snippet or other means to see the isolated behavior we may close this issue if the repro project you share with us is complex we can t investigate custom projects so don t point us to such please if we will not be able to repro the behavior you re reporting fail runs only one test dotnet test csproj p cibuild integration correct runs only one test dotnet test p cibuild unit csproj correct runs the two tests dotnet test p cibuild integration csproj csproj xml enable enable false app manifest directory build props xml msbuildthisfiledirectory unit runsettings msbuildthisfiledirectory cibuild runsettings unit runsettings xml testcategory unit integration runsettings xml testcategory unit testcategory integration cs xml namespace public class public void public void exceptions if any no exceptions further technical details include the output of dotnet info net sdk version commit runtime environment os name windows os version os platform windows rid base path c program files dotnet sdk host version architecture commit net sdks installed net runtimes installed microsoft aspnetcore app microsoft aspnetcore app microsoft aspnetcore app microsoft netcore app microsoft netcore app microsoft netcore app microsoft windowsdesktop app microsoft windowsdesktop app microsoft windowsdesktop app other architectures found registered at environment variables not set global json file not found learn more download net vs related passing properties seem to have worked since earlier versions but it is not well 
documented | 0 |

6,370 | 6,361,319,796 | IssuesEvent | 2017-07-31 12:37:23 | warg-lang/warg | https://api.github.com/repos/warg-lang/warg | opened | Generate accessible static analysis diagnostics | ci enhancement infrastructure | [neovim](https://github.com/neovim/neovim) provides a nice [diagnostics overview](https://neovim.io/doc/reports/clang/) using Clang Static Analysis. While it is not entirely clear how to do that, making a similar page would be great. | 1.0 | Generate accessible static analysis diagnostics - [neovim](https://github.com/neovim/neovim) provides a nice [diagnostics overview](https://neovim.io/doc/reports/clang/) using Clang Static Analysis. While it is not entirely clear how to do that, making a similar page would be great. | infrastructure | generate accessible static analysis diagnostics provides a nice using clang static analysis while it is not completely transparent how to do that making similar page would be great | 1 |

6,826 | 6,657,418,989 | IssuesEvent | 2017-09-30 05:13:33 | nathantspencer/AtLAS-mobile | https://api.github.com/repos/nathantspencer/AtLAS-mobile | closed | UI + Request for Sign In/Out | Client-Server Security UI | Sign in request will require username and password. Sign out request will require username and authentication key. | True | UI + Request for Sign In/Out - Sign in request will require username and password. Sign out request will require username and authentication key. | non_infrastructure | ui request for sign in out sign in request will require username and password sign out request will require username and authentication key | 0 |

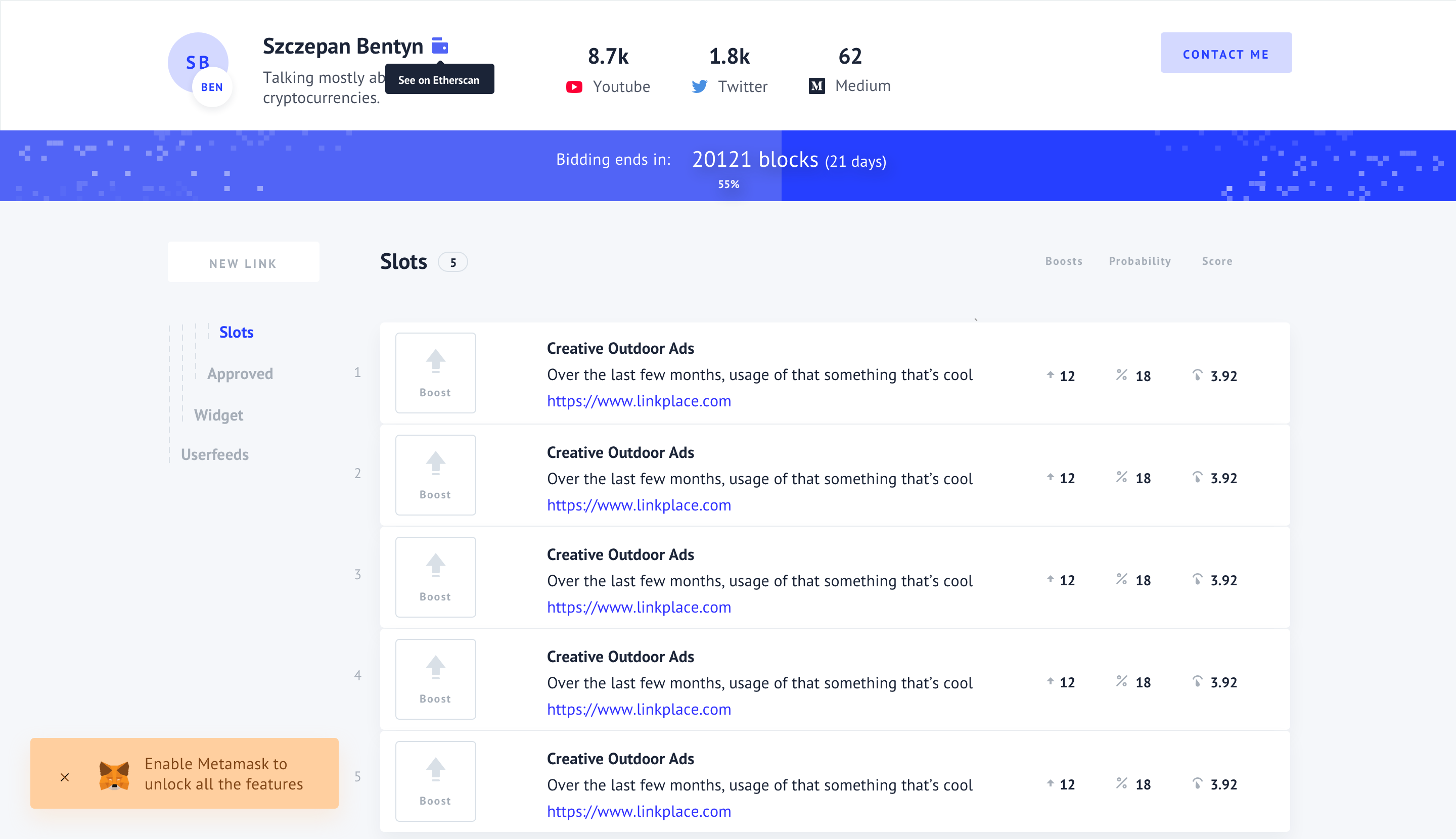

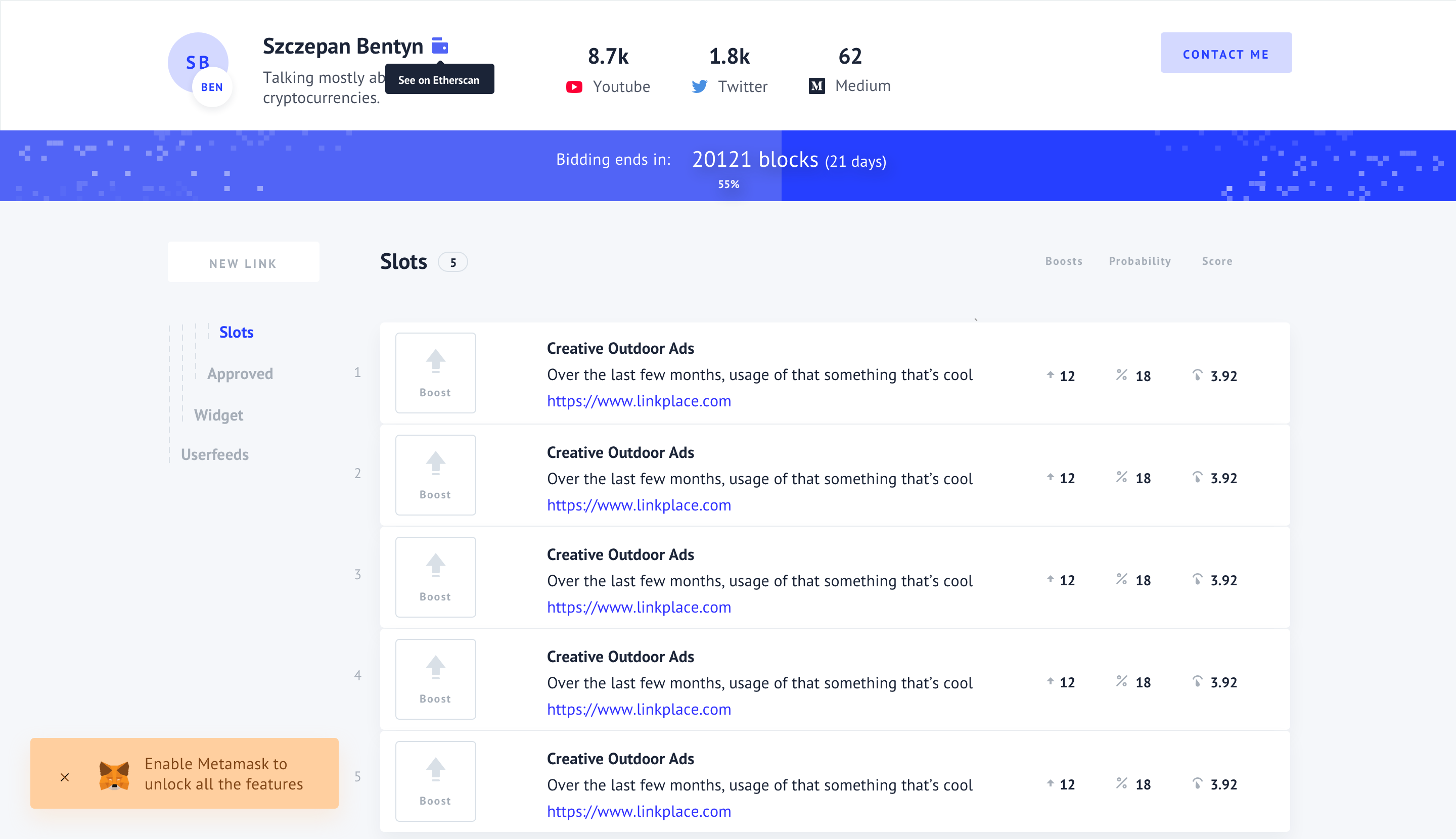

57,982 | 7,110,431,567 | IssuesEvent | 2018-01-17 10:36:10 | Userfeeds/Apps | https://api.github.com/repos/Userfeeds/Apps | closed | Inform that Metamask is disabled | design | Currently, whenever my Metamask is not logged in, I can't see any functional buttons. I'd rather have them disabled and inform the user about this issue.

| 1.0 | Inform that Metamask is disabled - Currently, whenever my Metamask is not logged in, I can't see any functional buttons. I'd rather have them disabled and inform the user about this issue.

| non_infrastructure | inform that metamask is disabled currently whenever my metamask is not logged i can t see any functional buttons i d rather have them disabled and inform the user about this issue | 0 |

2,631 | 2,699,148,226 | IssuesEvent | 2015-04-03 14:47:24 | itgsod-lukas-michanek/Neocache | https://api.github.com/repos/itgsod-lukas-michanek/Neocache | opened | Project cleanup | documentation | We are soon about to leave the stage of database modelling, but before we do, make sure that everything is properly commented, formatted and everything else. | 1.0 | Project cleanup - We are soon about to leave the stage of database modelling, but before we do, make sure that everything is properly commented, formatted and everything else. | non_infrastructure | project cleanup we are soon about to leave the stage of database modelling but before we do make sure that everything is properly commented formatted and everything else | 0 |

179,745 | 21,580,319,605 | IssuesEvent | 2022-05-02 17:59:45 | vincenzodistasio97/excel-to-json | https://api.github.com/repos/vincenzodistasio97/excel-to-json | opened | CVE-2020-28498 (Medium) detected in elliptic-6.4.0.tgz | security vulnerability | ## CVE-2020-28498 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.4.0.tgz</b></p></summary>

<p>EC cryptography</p>

<p>Library home page: <a href="https://registry.npmjs.org/elliptic/-/elliptic-6.4.0.tgz">https://registry.npmjs.org/elliptic/-/elliptic-6.4.0.tgz</a></p>

<p>Path to dependency file: /client/package.json</p>

<p>Path to vulnerable library: /client/node_modules/elliptic/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-1.1.1.tgz (Root Library)

- webpack-3.8.1.tgz

- node-libs-browser-2.1.0.tgz

- crypto-browserify-3.12.0.tgz

- create-ecdh-4.0.0.tgz

- :x: **elliptic-6.4.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/vincenzodistasio97/excel-to-json/commit/e367d4db4134dc676344b2b9fb2443300bd3c9c7">e367d4db4134dc676344b2b9fb2443300bd3c9c7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package elliptic before 6.5.4 is vulnerable to Cryptographic Issues via the secp256k1 implementation in elliptic/ec/key.js. There is no check to confirm that the public key point passed into the derive function actually exists on the secp256k1 curve. This results in the potential for the private key used in this implementation to be revealed after a number of ECDH operations are performed.

<p>Publish Date: 2021-02-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-28498>CVE-2020-28498</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-28498">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-28498</a></p>

<p>Release Date: 2021-02-02</p>

<p>Fix Resolution (elliptic): 6.5.4</p>

<p>Direct dependency fix Resolution (react-scripts): 1.1.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-28498 (Medium) detected in elliptic-6.4.0.tgz - ## CVE-2020-28498 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.4.0.tgz</b></p></summary>

<p>EC cryptography</p>

<p>Library home page: <a href="https://registry.npmjs.org/elliptic/-/elliptic-6.4.0.tgz">https://registry.npmjs.org/elliptic/-/elliptic-6.4.0.tgz</a></p>

<p>Path to dependency file: /client/package.json</p>

<p>Path to vulnerable library: /client/node_modules/elliptic/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-1.1.1.tgz (Root Library)

- webpack-3.8.1.tgz

- node-libs-browser-2.1.0.tgz

- crypto-browserify-3.12.0.tgz

- create-ecdh-4.0.0.tgz

- :x: **elliptic-6.4.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/vincenzodistasio97/excel-to-json/commit/e367d4db4134dc676344b2b9fb2443300bd3c9c7">e367d4db4134dc676344b2b9fb2443300bd3c9c7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package elliptic before 6.5.4 is vulnerable to Cryptographic Issues via the secp256k1 implementation in elliptic/ec/key.js. There is no check to confirm that the public key point passed into the derive function actually exists on the secp256k1 curve. This results in the potential for the private key used in this implementation to be revealed after a number of ECDH operations are performed.

<p>Publish Date: 2021-02-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-28498>CVE-2020-28498</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-28498">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-28498</a></p>

<p>Release Date: 2021-02-02</p>

<p>Fix Resolution (elliptic): 6.5.4</p>

<p>Direct dependency fix Resolution (react-scripts): 1.1.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_infrastructure | cve medium detected in elliptic tgz cve medium severity vulnerability vulnerable library elliptic tgz ec cryptography library home page a href path to dependency file client package json path to vulnerable library client node modules elliptic package json dependency hierarchy react scripts tgz root library webpack tgz node libs browser tgz crypto browserify tgz create ecdh tgz x elliptic tgz vulnerable library found in head commit a href found in base branch master vulnerability details the package elliptic before are vulnerable to cryptographic issues via the implementation in elliptic ec key js there is no check to confirm that the public key point passed into the derive function actually exists on the curve this results in the potential for the private key used in this implementation to be revealed after a number of ecdh operations are performed publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope changed impact metrics confidentiality impact high integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution elliptic direct dependency fix resolution react scripts step up your open source security game with whitesource | 0 |

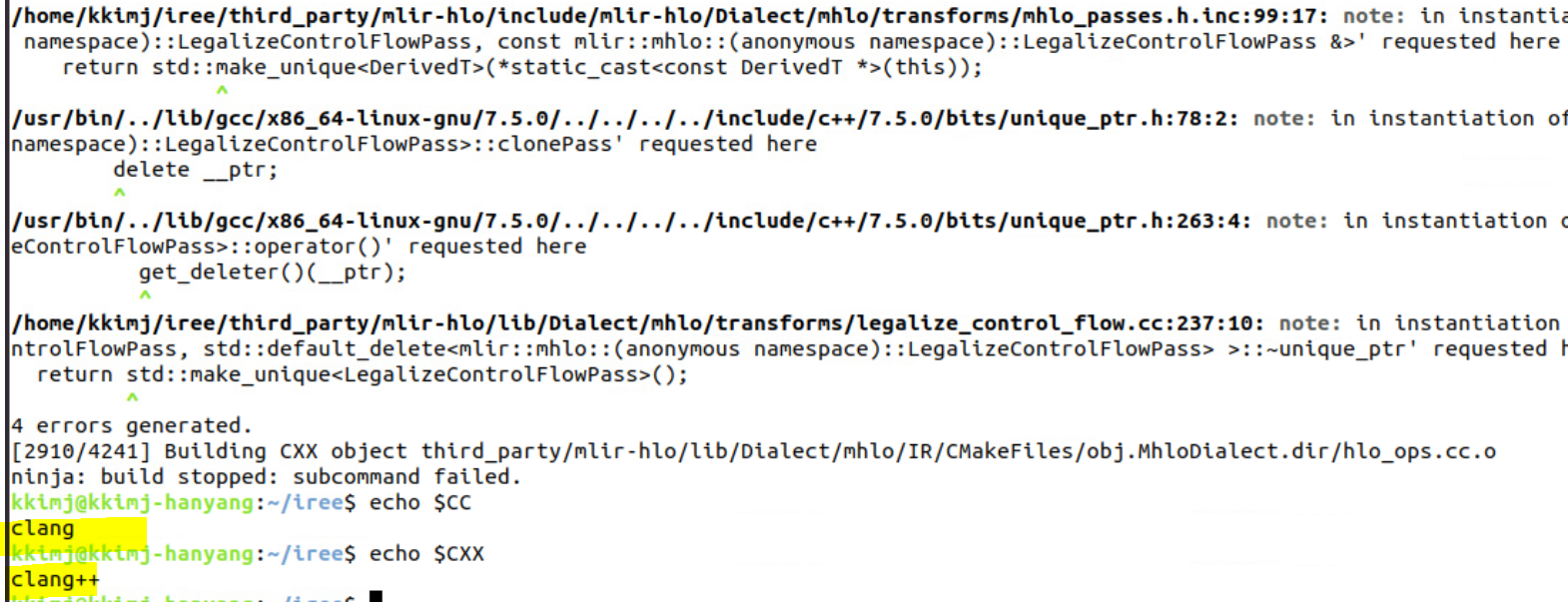

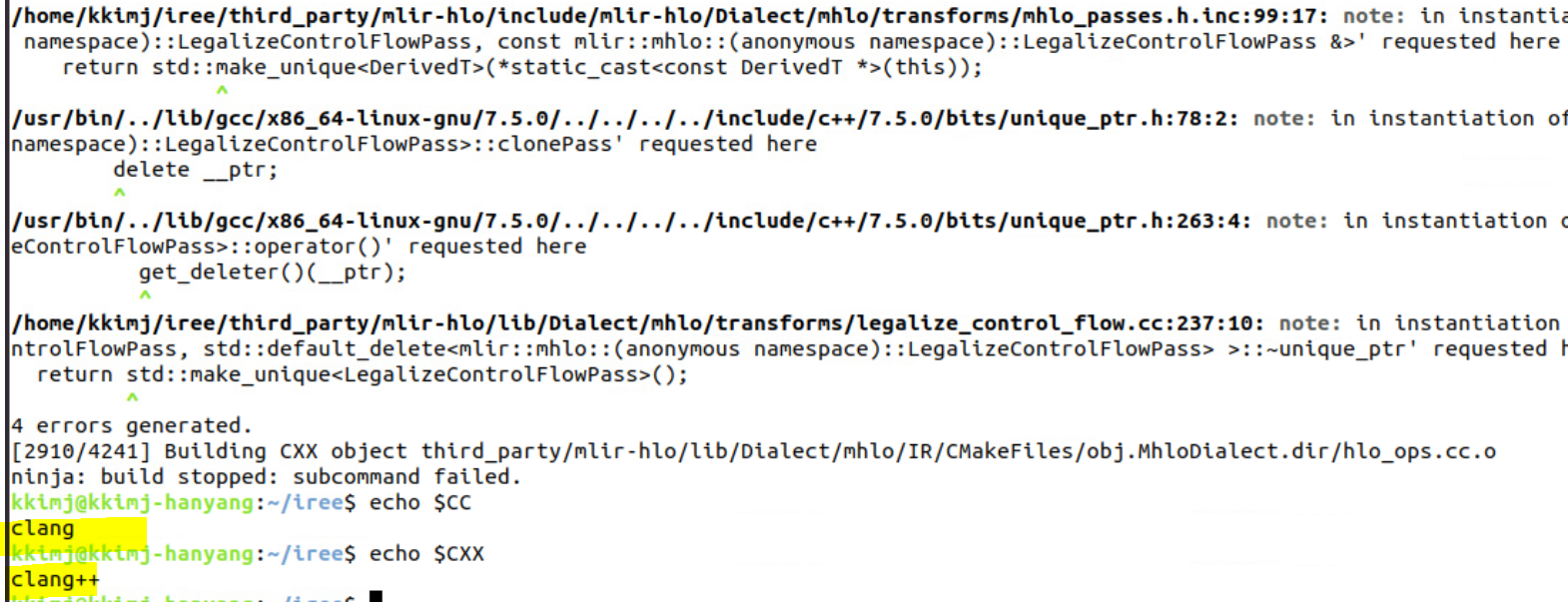

24,255 | 17,046,813,829 | IssuesEvent | 2021-07-06 00:59:33 | google/iree | https://api.github.com/repos/google/iree | closed | [build] cant build iree | bug 🐞 help wanted infrastructure 🛠️ support 🤗 | Hello,

I ran into some problems and errors while building IREE.

I just followed the ```getting started``` guide to build:

https://google.github.io/iree/building-from-source/getting-started/

Could you give some hints or instructions for these problems?

Thanks!

## Trials

```

$ sudo apt-get install clang lld

$ sudo apt-get install clang++

$ sudo apt install python-clang-4.0

$ sudo apt install python-clang-5.0

$ sudo apt install python-clang-6.0

$ sudo apt install python-clang-7

$ sudo apt install python-clang-8

$ sudo apt install python-clang-9

$ sudo apt install python3-clang-10

$ sudo apt update

$ sudo apt upgrade

$ sudo apt autoremove

# g++ to clang++

$ sudo update-alternatives --config c++

# gcc to clang

$ sudo update-alternatives --config cc

```

```

$ export CC=clang

$ export CXX=clang++

$ sudo rm -r ../iree-build

$ cmake -B ../iree-build/ -DCMAKE_BUILD_TYPE=RelWithDebInfo . -GNinja

$ cmake --build ../iree-build/ -j6

```

## Machine Spec

- OS : Ubuntu 18.04.5 LTS

- CPU : Intel(R) Core(TM) i7-10700K CPU @ 3.80GHz

- RAM : 16GB

- SSD : nvme, samsung

## Verbose log

### Results of checking ```$CC```, ```$CXX``` after the build failed with errors

```

$ cmake --build ../iree-build/ -j6

[0/2] Re-checking globbed directories...

[2905/4241] Building CXX object third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/mhlo_control_flow_to_scf.cc.o

FAILED: third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/mhlo_control_flow_to_scf.cc.o

/usr/bin/clang++ -DGTEST_HAS_RTTI=0 -D__STDC_CONSTANT_MACROS -D__STDC_FORMAT_MACROS -D__STDC_LIMIT_MACROS -I/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms -I/home/kkimj/iree-build/third_party/mlir-hlo/lib/Dialect/mhlo/transforms -I/home/kkimj/iree/third_party/llvm-project/llvm/include -I/home/kkimj/iree-build/third_party/llvm-project/llvm/include -I/home/kkimj/iree/third_party/llvm-project/mlir/include -I/home/kkimj/iree-build/third_party/llvm-project/llvm/tools/mlir/include -I/home/kkimj/iree/third_party/mlir-hlo/include -I/home/kkimj/iree-build/third_party/mlir-hlo/include -I/home/kkimj/iree-build/third_party/mlir-hlo -fPIC -fvisibility-inlines-hidden -Werror=date-time -Werror=unguarded-availability-new -w -fdiagnostics-color -ffunction-sections -fdata-sections -O2 -g -DNDEBUG -fPIC -fno-exceptions -fno-rtti -std=gnu++14 -MD -MT third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/mhlo_control_flow_to_scf.cc.o -MF third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/mhlo_control_flow_to_scf.cc.o.d -o third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/mhlo_control_flow_to_scf.cc.o -c /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/mhlo_control_flow_to_scf.cc

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/mhlo_control_flow_to_scf.cc:47:24: error: only virtual member functions can be marked 'override'

void runOnFunction() override {

^~~~~~~~~

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/mhlo_control_flow_to_scf.cc:48:5: error: use of undeclared identifier 'getFunction'

getFunction().walk([&](WhileOp whileOp) { MatchAndRewrite(whileOp); });

^

In file included from /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/mhlo_control_flow_to_scf.cc:16:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/Support/Casting.h:20:

In file included from /usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/memory:80:

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:821:34: error: allocating an object of abstract class type 'mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass'

{ return unique_ptr<_Tp>(new _Tp(std::forward<_Args>(__args)...)); }

^

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/mhlo_control_flow_to_scf.cc:202:15: note: in instantiation of function template specialization 'std::make_unique<mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass>' requested here

return std::make_unique<ControlFlowToScfPass>();

^

/home/kkimj/iree/third_party/llvm-project/mlir/include/mlir/Pass/Pass.h:177:16: note: unimplemented pure virtual method 'runOnOperation' in 'ControlFlowToScfPass'

virtual void runOnOperation() = 0;

^

In file included from /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/mhlo_control_flow_to_scf.cc:16:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/Support/Casting.h:20:

In file included from /usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/memory:80:

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:821:34: error: allocating an object of abstract class type 'mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass'

{ return unique_ptr<_Tp>(new _Tp(std::forward<_Args>(__args)...)); }

^

/home/kkimj/iree/third_party/mlir-hlo/include/mlir-hlo/Dialect/mhlo/transforms/mhlo_passes.h.inc:125:17: note: in instantiation of function template specialization 'std::make_unique<mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass, const mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass &>' requested here

return std::make_unique<DerivedT>(*static_cast<const DerivedT *>(this));

^

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:78:2: note: in instantiation of member function 'mlir::mhlo::LegalizeControlFlowToScfPassBase<mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass>::clonePass' requested here

delete __ptr;

^

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:263:4: note: in instantiation of member function 'std::default_delete<mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass>::operator()' requested here

get_deleter()(__ptr);

^

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/mhlo_control_flow_to_scf.cc:202:10: note: in instantiation of member function 'std::unique_ptr<mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass, std::default_delete<mlir::mhlo::(anonymous namespace)::ControlFlowToScfPass> >::~unique_ptr' requested here

return std::make_unique<ControlFlowToScfPass>();

^

4 errors generated.

[2906/4241] Building CXX object third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_to_standard.cc.o

FAILED: third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_to_standard.cc.o

/usr/bin/clang++ -DGTEST_HAS_RTTI=0 -D__STDC_CONSTANT_MACROS -D__STDC_FORMAT_MACROS -D__STDC_LIMIT_MACROS -I/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms -I/home/kkimj/iree-build/third_party/mlir-hlo/lib/Dialect/mhlo/transforms -I/home/kkimj/iree/third_party/llvm-project/llvm/include -I/home/kkimj/iree-build/third_party/llvm-project/llvm/include -I/home/kkimj/iree/third_party/llvm-project/mlir/include -I/home/kkimj/iree-build/third_party/llvm-project/llvm/tools/mlir/include -I/home/kkimj/iree/third_party/mlir-hlo/include -I/home/kkimj/iree-build/third_party/mlir-hlo/include -I/home/kkimj/iree-build/third_party/mlir-hlo -fPIC -fvisibility-inlines-hidden -Werror=date-time -Werror=unguarded-availability-new -w -fdiagnostics-color -ffunction-sections -fdata-sections -O2 -g -DNDEBUG -fPIC -fno-exceptions -fno-rtti -std=gnu++14 -MD -MT third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_to_standard.cc.o -MF third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_to_standard.cc.o.d -o third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_to_standard.cc.o -c /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_to_standard.cc

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_to_standard.cc:187:24: error: only virtual member functions can be marked 'override'

void runOnFunction() override;

^~~~~~~~

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_to_standard.cc:205:38: error: use of undeclared identifier 'getFunction'

(void)applyPatternsAndFoldGreedily(getFunction(), std::move(patterns));

^

In file included from /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_to_standard.cc:18:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/StringSwitch.h:15:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/StringRef.h:12:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/STLExtras.h:19:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/Optional.h:24:

In file included from /usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/memory:80:

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:821:34: error: allocating an object of abstract class type 'mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass'

{ return unique_ptr<_Tp>(new _Tp(std::forward<_Args>(__args)...)); }

^

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_to_standard.cc:192:15: note: in instantiation of function template specialization 'std::make_unique<mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass>' requested here

return std::make_unique<LegalizeToStandardPass>();

^

/home/kkimj/iree/third_party/llvm-project/mlir/include/mlir/Pass/Pass.h:177:16: note: unimplemented pure virtual method 'runOnOperation' in 'LegalizeToStandardPass'

virtual void runOnOperation() = 0;

^

In file included from /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_to_standard.cc:18:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/StringSwitch.h:15:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/StringRef.h:12:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/STLExtras.h:19:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/Optional.h:24:

In file included from /usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/memory:80:

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:821:34: error: allocating an object of abstract class type 'mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass'

{ return unique_ptr<_Tp>(new _Tp(std::forward<_Args>(__args)...)); }

^

/home/kkimj/iree/third_party/mlir-hlo/include/mlir-hlo/Dialect/mhlo/transforms/mhlo_passes.h.inc:229:17: note: in instantiation of function template specialization 'std::make_unique<mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass, const mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass &>' requested here

return std::make_unique<DerivedT>(*static_cast<const DerivedT *>(this));

^

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:78:2: note: in instantiation of member function 'mlir::mhlo::LegalizeToStandardPassBase<mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass>::clonePass' requested here

delete __ptr;

^

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:263:4: note: in instantiation of member function 'std::default_delete<mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass>::operator()' requested here

get_deleter()(__ptr);

^

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_to_standard.cc:192:10: note: in instantiation of member function 'std::unique_ptr<mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass, std::default_delete<mlir::mhlo::(anonymous namespace)::LegalizeToStandardPass> >::~unique_ptr' requested here

return std::make_unique<LegalizeToStandardPass>();

^

4 errors generated.

[2907/4241] Building CXX object third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_control_flow.cc.o

FAILED: third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_control_flow.cc.o

/usr/bin/clang++ -DGTEST_HAS_RTTI=0 -D__STDC_CONSTANT_MACROS -D__STDC_FORMAT_MACROS -D__STDC_LIMIT_MACROS -I/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms -I/home/kkimj/iree-build/third_party/mlir-hlo/lib/Dialect/mhlo/transforms -I/home/kkimj/iree/third_party/llvm-project/llvm/include -I/home/kkimj/iree-build/third_party/llvm-project/llvm/include -I/home/kkimj/iree/third_party/llvm-project/mlir/include -I/home/kkimj/iree-build/third_party/llvm-project/llvm/tools/mlir/include -I/home/kkimj/iree/third_party/mlir-hlo/include -I/home/kkimj/iree-build/third_party/mlir-hlo/include -I/home/kkimj/iree-build/third_party/mlir-hlo -fPIC -fvisibility-inlines-hidden -Werror=date-time -Werror=unguarded-availability-new -w -fdiagnostics-color -ffunction-sections -fdata-sections -O2 -g -DNDEBUG -fPIC -fno-exceptions -fno-rtti -std=gnu++14 -MD -MT third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_control_flow.cc.o -MF third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_control_flow.cc.o.d -o third_party/mlir-hlo/lib/Dialect/mhlo/transforms/CMakeFiles/obj.MhloToStandard.dir/legalize_control_flow.cc.o -c /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_control_flow.cc

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_control_flow.cc:43:24: error: only virtual member functions can be marked 'override'

void runOnFunction() override;

^~~~~~~~

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_control_flow.cc:216:15: error: use of undeclared identifier 'getFunction'

auto func = getFunction();

^

In file included from /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_control_flow.cc:18:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/STLExtras.h:19:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/Optional.h:24:

In file included from /usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/memory:80:

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:821:34: error: allocating an object of abstract class type 'mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass'

{ return unique_ptr<_Tp>(new _Tp(std::forward<_Args>(__args)...)); }

^

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_control_flow.cc:237:15: note: in instantiation of function template specialization 'std::make_unique<mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass>' requested here

return std::make_unique<LegalizeControlFlowPass>();

^

/home/kkimj/iree/third_party/llvm-project/mlir/include/mlir/Pass/Pass.h:177:16: note: unimplemented pure virtual method 'runOnOperation' in 'LegalizeControlFlowPass'

virtual void runOnOperation() = 0;

^

In file included from /home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_control_flow.cc:18:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/STLExtras.h:19:

In file included from /home/kkimj/iree/third_party/llvm-project/llvm/include/llvm/ADT/Optional.h:24:

In file included from /usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/memory:80:

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:821:34: error: allocating an object of abstract class type 'mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass'

{ return unique_ptr<_Tp>(new _Tp(std::forward<_Args>(__args)...)); }

^

/home/kkimj/iree/third_party/mlir-hlo/include/mlir-hlo/Dialect/mhlo/transforms/mhlo_passes.h.inc:99:17: note: in instantiation of function template specialization 'std::make_unique<mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass, const mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass &>' requested here

return std::make_unique<DerivedT>(*static_cast<const DerivedT *>(this));

^

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:78:2: note: in instantiation of member function 'mlir::mhlo::LegalizeControlFlowPassBase<mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass>::clonePass' requested here

delete __ptr;

^

/usr/bin/../lib/gcc/x86_64-linux-gnu/7.5.0/../../../../include/c++/7.5.0/bits/unique_ptr.h:263:4: note: in instantiation of member function 'std::default_delete<mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass>::operator()' requested here

get_deleter()(__ptr);

^

/home/kkimj/iree/third_party/mlir-hlo/lib/Dialect/mhlo/transforms/legalize_control_flow.cc:237:10: note: in instantiation of member function 'std::unique_ptr<mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass, std::default_delete<mlir::mhlo::(anonymous namespace)::LegalizeControlFlowPass> >::~unique_ptr' requested here

return std::make_unique<LegalizeControlFlowPass>();

^

4 errors generated.

[2910/4241] Building CXX object third_party/mlir-hlo/lib/Dialect/mhlo/IR/CMakeFiles/obj.MhloDialect.dir/hlo_ops.cc.o

ninja: build stopped: subcommand failed.

``` | 1.0 | [build] can't build iree - Hello,

I have some problems and errors while building IREE