Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 665 | labels stringlengths 4 554 | body stringlengths 3 235k | index stringclasses 6 values | text_combine stringlengths 96 235k | label stringclasses 2 values | text stringlengths 96 196k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

23,169 | 15,876,515,460 | IssuesEvent | 2021-04-09 08:28:14 | ManimCommunity/manim | https://api.github.com/repos/ManimCommunity/manim | closed | Nondeterministic build failures in `Tex('The horse does not eat cucumber salad.')` | infrastructure | ## Description of bug / unexpected behavior

<!-- Add a clear and concise description of the problem you encountered. -->

As I've been figuring out the test coverage toolset for Manim, I've done quite a lot of automated builds on a copied (not forked) repository (https://github.com/MrMallIronmaker/manim-cov/). For some reason, occasionally one of the Ubuntu builds fails while I've been playing around with coverage settings. Whether it's 3.7, 3.8, or 3.9 is apparently random.

All of them fail in the Tex doctest.

## Expected behavior

It should pass the test.

## How to reproduce the issue

<!-- Provide a piece of code illustrating the undesired behavior. -->

<details><summary>Code for reproducing the problem</summary>

In theory the problematic code is here:

```py

Tex('The horse does not eat cucumber salad.')

```

However, I've not been able to replicate this issue.

</details>

## Additional media files

<!-- Paste in the files manim produced on rendering the code above. Note that GitHub doesn't allow posting videos, so you may need to convert it to a GIF or use the `-i` rendering option. -->

<details><summary>Build output</summary>

```

Run poetry run pytest --cov-append --doctest-modules manim

poetry run pytest --cov-append --doctest-modules manim

shell: /usr/bin/bash -e {0}

env:

POETRY_VIRTUALENVS_CREATE: false

pythonLocation: /opt/hostedtoolcache/Python/3.8.8/x64

LD_LIBRARY_PATH: /opt/hostedtoolcache/Python/3.8.8/x64/lib

Skipping virtualenv creation, as specified in config file.

============================= test session starts ==============================

platform linux -- Python 3.8.8, pytest-6.2.2, py-1.10.0, pluggy-0.13.1

rootdir: /home/runner/work/manim-cov/manim-cov, configfile: pyproject.toml

plugins: cov-2.11.1

collected 30 items

manim/_config/__init__.py . [ 3%]

manim/_config/utils.py .. [ 10%]

manim/animation/animation.py . [ 13%]

manim/mobject/geometry.py ......... [ 43%]

manim/mobject/mobject.py ...... [ 63%]

manim/mobject/svg/tex_mobject.py ..F [ 73%]

manim/mobject/svg/text_mobject.py ... [ 83%]

manim/mobject/types/vectorized_mobject.py .... [ 96%]

manim/utils/color.py . [100%]

=================================== FAILURES ===================================

_________________ [doctest] manim.mobject.svg.tex_mobject.Tex __________________

491 A string compiled with LaTeX in normal mode.

492

493 Tests

494 -----

495

496 Check whether writing a LaTeX string works::

497

498 >>> Tex('The horse does not eat cucumber salad.')

UNEXPECTED EXCEPTION: IndexError('list index out of range')

Traceback (most recent call last):

File "/opt/hostedtoolcache/Python/3.8.8/x64/lib/python3.8/doctest.py", line 1336, in __run

exec(compile(example.source, filename, "single",

File "<doctest manim.mobject.svg.tex_mobject.Tex[0]>", line 1, in <module>

File "/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py", line 506, in __init__

MathTex.__init__(

File "/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py", line 392, in __init__

self.break_up_by_substrings()

File "/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py", line 434, in break_up_by_substrings

sub_tex_mob.move_to(self.submobjects[last_submob_index], RIGHT)

IndexError: list index out of range

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py:498: UnexpectedException

----------------------------- Captured stdout call -----------------------------

INFO Writing "The horse does not tex_file_writing.py:81

eat cucumber salad." to medi

a/Tex/3ecc83aec1683253.tex

------------------------------ Captured log call -------------------------------

INFO manim:tex_file_writing.py:81 Writing "The horse does not eat cucumber salad." to media/Tex/3ecc83aec1683253.tex

=============================== warnings summary ===============================

manim/mobject/mobject.py::manim.mobject.mobject.Mobject.set

<doctest manim.mobject.mobject.Mobject.set[1]>:1: DeprecationWarning: This method is not guaranteed to stay around. Please prefer setting the attribute normally or with Mobject.set().

manim/mobject/mobject.py::manim.mobject.mobject.Mobject.set

<doctest manim.mobject.mobject.Mobject.set[2]>:1: DeprecationWarning: This method is not guaranteed to stay around. Please prefer getting the attribute normally.

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-84, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-104, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-101, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-111, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-114, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-115, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-100, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-110, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-116, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-97, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-99, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-117, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-109, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-98, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-108, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-46, which is not recognized

warnings.warn(warning_text)

-- Docs: https://docs.pytest.org/en/stable/warnings.html

----------- coverage: platform linux, python 3.8.8-final-0 -----------

Coverage XML written to file coverage.xml

=========================== short test summary info ============================

FAILED manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

================== 1 failed, 29 passed, 18 warnings in 6.56s ===================

Error: Process completed with exit code 1.

```

</details> | 1.0 | Nondeterministic build failures in `Tex('The horse does not eat cucumber salad.')` - ## Description of bug / unexpected behavior

<!-- Add a clear and concise description of the problem you encountered. -->

As I've been figuring out the test coverage toolset for Manim, I've done quite a lot of automated builds on a copied (not forked) repository (https://github.com/MrMallIronmaker/manim-cov/). For some reason, occasionally one of the Ubuntu builds fails while I've been playing around with coverage settings. Whether it's 3.7, 3.8, or 3.9 is apparently random.

All of them fail in the Tex doctest.

## Expected behavior

It should pass the test.

## How to reproduce the issue

<!-- Provide a piece of code illustrating the undesired behavior. -->

<details><summary>Code for reproducing the problem</summary>

In theory the problematic code is here:

```py

Tex('The horse does not eat cucumber salad.')

```

However, I've not been able to replicate this issue.

</details>

## Additional media files

<!-- Paste in the files manim produced on rendering the code above. Note that GitHub doesn't allow posting videos, so you may need to convert it to a GIF or use the `-i` rendering option. -->

<details><summary>Build output</summary>

```

Run poetry run pytest --cov-append --doctest-modules manim

poetry run pytest --cov-append --doctest-modules manim

shell: /usr/bin/bash -e {0}

env:

POETRY_VIRTUALENVS_CREATE: false

pythonLocation: /opt/hostedtoolcache/Python/3.8.8/x64

LD_LIBRARY_PATH: /opt/hostedtoolcache/Python/3.8.8/x64/lib

Skipping virtualenv creation, as specified in config file.

============================= test session starts ==============================

platform linux -- Python 3.8.8, pytest-6.2.2, py-1.10.0, pluggy-0.13.1

rootdir: /home/runner/work/manim-cov/manim-cov, configfile: pyproject.toml

plugins: cov-2.11.1

collected 30 items

manim/_config/__init__.py . [ 3%]

manim/_config/utils.py .. [ 10%]

manim/animation/animation.py . [ 13%]

manim/mobject/geometry.py ......... [ 43%]

manim/mobject/mobject.py ...... [ 63%]

manim/mobject/svg/tex_mobject.py ..F [ 73%]

manim/mobject/svg/text_mobject.py ... [ 83%]

manim/mobject/types/vectorized_mobject.py .... [ 96%]

manim/utils/color.py . [100%]

=================================== FAILURES ===================================

_________________ [doctest] manim.mobject.svg.tex_mobject.Tex __________________

491 A string compiled with LaTeX in normal mode.

492

493 Tests

494 -----

495

496 Check whether writing a LaTeX string works::

497

498 >>> Tex('The horse does not eat cucumber salad.')

UNEXPECTED EXCEPTION: IndexError('list index out of range')

Traceback (most recent call last):

File "/opt/hostedtoolcache/Python/3.8.8/x64/lib/python3.8/doctest.py", line 1336, in __run

exec(compile(example.source, filename, "single",

File "<doctest manim.mobject.svg.tex_mobject.Tex[0]>", line 1, in <module>

File "/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py", line 506, in __init__

MathTex.__init__(

File "/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py", line 392, in __init__

self.break_up_by_substrings()

File "/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py", line 434, in break_up_by_substrings

sub_tex_mob.move_to(self.submobjects[last_submob_index], RIGHT)

IndexError: list index out of range

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/tex_mobject.py:498: UnexpectedException

----------------------------- Captured stdout call -----------------------------

INFO Writing "The horse does not tex_file_writing.py:81

eat cucumber salad." to medi

a/Tex/3ecc83aec1683253.tex

------------------------------ Captured log call -------------------------------

INFO manim:tex_file_writing.py:81 Writing "The horse does not eat cucumber salad." to media/Tex/3ecc83aec1683253.tex

=============================== warnings summary ===============================

manim/mobject/mobject.py::manim.mobject.mobject.Mobject.set

<doctest manim.mobject.mobject.Mobject.set[1]>:1: DeprecationWarning: This method is not guaranteed to stay around. Please prefer setting the attribute normally or with Mobject.set().

manim/mobject/mobject.py::manim.mobject.mobject.Mobject.set

<doctest manim.mobject.mobject.Mobject.set[2]>:1: DeprecationWarning: This method is not guaranteed to stay around. Please prefer getting the attribute normally.

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-84, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-104, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-101, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-111, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-114, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-115, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-100, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-110, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-116, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-97, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-99, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-117, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-109, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-98, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-108, which is not recognized

warnings.warn(warning_text)

manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

/home/runner/work/manim-cov/manim-cov/manim/mobject/svg/svg_mobject.py:261: UserWarning: media/Tex/3ecc83aec1683253.svg contains a reference to id #g0-46, which is not recognized

warnings.warn(warning_text)

-- Docs: https://docs.pytest.org/en/stable/warnings.html

----------- coverage: platform linux, python 3.8.8-final-0 -----------

Coverage XML written to file coverage.xml

=========================== short test summary info ============================

FAILED manim/mobject/svg/tex_mobject.py::manim.mobject.svg.tex_mobject.Tex

================== 1 failed, 29 passed, 18 warnings in 6.56s ===================

Error: Process completed with exit code 1.

```

</details> | infrastructure | nondeterministic build failures in tex the horse does not eat cucumber salad description of bug unexpected behavior as i ve been figuring out the test coverage toolset for manim i ve done quite a lot of automated builds on a copied not forked repository for some reason occasionally one of the ubuntu builds fails while i ve been playing around with coverage settings whether it s or is apparently random all of them fail in the tex doctest expected behavior it should pass the test how to reproduce the issue code for reproducing the problem in theory the problematic code is here py tex the horse does not eat cucumber salad however i ve not been able to replicate this issue additional media files build output run poetry run pytest cov append doctest modules manim poetry run pytest cov append doctest modules manim shell usr bin bash e env poetry virtualenvs create false pythonlocation opt hostedtoolcache python ld library path opt hostedtoolcache python lib skipping virtualenv creation as specified in config file test session starts platform linux python pytest py pluggy rootdir home runner work manim cov manim cov configfile pyproject toml plugins cov collected items manim config init py manim config utils py logs terminal output paste here or provide link to or similar system specifications system details os with version e g windows or macos catalina ram python version python py version installed modules provide output from pip list paste here latex details latex distribution e g tex live installed latex packages ffmpeg output of ffmpeg version paste here additional comments manim animation animation py manim mobject geometry py manim mobject mobject py manim mobject svg tex mobject py f manim mobject svg text mobject py manim mobject types vectorized mobject py manim utils color py failures manim mobject svg tex mobject tex a string compiled with latex in normal mode tests check whether writing a latex string works tex the horse does not 
eat cucumber salad unexpected exception indexerror list index out of range traceback most recent call last file opt hostedtoolcache python lib doctest py line in run exec compile example source filename single file line in file home runner work manim cov manim cov manim mobject svg tex mobject py line in init mathtex init file home runner work manim cov manim cov manim mobject svg tex mobject py line in init self break up by substrings file home runner work manim cov manim cov manim mobject svg tex mobject py line in break up by substrings sub tex mob move to self submobjects right indexerror list index out of range home runner work manim cov manim cov manim mobject svg tex mobject py unexpectedexception captured stdout call info writing the horse does not tex file writing py eat cucumber salad to medi a tex tex captured log call info manim tex file writing py writing the horse does not eat cucumber salad to media tex tex warnings summary manim mobject mobject py manim mobject mobject mobject set deprecationwarning this method is not guaranteed to stay around please prefer setting the attribute normally or with mobject set manim mobject mobject py manim mobject mobject mobject set deprecationwarning this method is not guaranteed to stay around please prefer getting the attribute normally manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not 
recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py 
manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text manim mobject svg tex mobject py manim mobject svg tex mobject tex home runner work manim cov manim cov manim mobject svg svg mobject py userwarning media tex svg contains a reference to id which is not recognized warnings warn warning text docs coverage platform linux python final coverage xml written to file coverage xml short test summary info failed manim mobject svg tex mobject py manim mobject svg tex mobject tex failed passed warnings in error process completed with exit code | 1 |

25,136 | 18,157,222,918 | IssuesEvent | 2021-09-27 04:21:59 | happy-travel/agent-app-project | https://api.github.com/repos/happy-travel/agent-app-project | closed | Check all connector updaters to have stdout logger and sentry connected | backend infrastructure static-data updater npt-testable | Sentry project management is handled by @evm-andrey.

This task is to check the code. | 1.0 | Check all connector updaters to have stdout logger and sentry connected - Sentry project management is handled by @evm-andrey.

This task is to check the code. | infrastructure | check all connector updaters to have stdout logger and sentry connected sentry projects management is on evm andrey side in this task it is needed to check the code | 1 |

26,305 | 19,978,409,779 | IssuesEvent | 2022-01-29 13:40:16 | angular/material.angular.io | https://api.github.com/repos/angular/material.angular.io | closed | Update Stackblitz package.json to use Angular ^13.0.0 | P1: urgent infrastructure | <!--------

🛑

Use the Angular Components repository (https://github.com/angular/components/issues/new/choose)

to report issues.

The Angular team can't provide general troubleshooting help. This is especially true when the

problem is specific to your app and cannot be reproduced in a StackBlitz demo.

However, the extended community of users may be able to provide help via the following channels:

- StackOverflow: https://stackoverflow.com/questions/tagged/angular-material2

- Gitter: https://gitter.im/angular/material2

- Google Groups: https://groups.google.com/forum/#!forum/angular-material2

-------->

Currently the [package.json](https://github.com/angular/material.angular.io/blob/master/src/assets/stack-blitz/package.json) for Stackblitz demos uses Angular version `~13.0.0-next.0` and it results in buggy projects like this: https://github.com/angular/components/issues/24173. | 1.0 | Update Stackblitz package.json to use Angular ^13.0.0 - <!--------

🛑

Use the Angular Components repository (https://github.com/angular/components/issues/new/choose)

to report issues.

The Angular team can't provide general troubleshooting help. This is especially true when the

problem is specific to your app and cannot be reproduced in a StackBlitz demo.

However, the extended community of users may be able to provide help via the following channels:

- StackOverflow: https://stackoverflow.com/questions/tagged/angular-material2

- Gitter: https://gitter.im/angular/material2

- Google Groups: https://groups.google.com/forum/#!forum/angular-material2

-------->

Currently the [package.json](https://github.com/angular/material.angular.io/blob/master/src/assets/stack-blitz/package.json) for Stackblitz demos uses Angular version `~13.0.0-next.0` and it results in buggy projects like this: https://github.com/angular/components/issues/24173. | infrastructure | update stackblitz package json to use angular 🛑 use the angular components repository to report issues the angular team can t provide general troubleshooting help this is especially true when the problem is specific to your app and cannot be reproduced in a stackblitz demo however the extended community of users may be able to provide help via the following channels stackoverflow gitter google groups currently the for stackblitz demos uses angular version next and it results in buggy projects like this | 1 |

25,175 | 18,239,297,706 | IssuesEvent | 2021-10-01 10:53:42 | tskit-dev/tskit | https://api.github.com/repos/tskit-dev/tskit | closed | warnings building tskit with Ming64 on Windows | C API Infrastructure and tools | @rdinnager reports the following warnings when building tskit (actually tskit's code inside SLiM, but it's the same code) on Windows using the Ming64 compiler:

```

F:/SLiM/treerec/tskit/core.c: In function 'get_random_bytes':

F:/SLiM/treerec/tskit/core.c:47:29: warning: initialization of 'HCRYPTPROV' {aka 'long long unsigned int'} from 'void *' makes integer from pointer without a cast [-Wint-conversion]

47 | HCRYPTPROV hCryptProv = NULL;

| ^~~~

F:/SLiM/treerec/tskit/core.c:57:20: warning: assignment to 'HCRYPTPROV' {aka 'long long unsigned int'} from 'void *' makes integer from pointer without a cast [-Wint-conversion]

57 | hCryptProv = NULL;

| ^

F:/SLiM/treerec/tskit/core.c:60:16: warning: assignment to 'HCRYPTPROV' {aka 'long long unsigned int'} from 'void *' makes integer from pointer without a cast [-Wint-conversion]

60 | hCryptProv = NULL;

| ^

F:/SLiM/treerec/tskit/core.c:63:20: warning: comparison between pointer and integer

63 | if (hCryptProv != NULL) {

| ^~

```

According to the Microsoft docs (https://docs.microsoft.com/en-us/windows/win32/seccrypto/hcryptprov) `HCRYPTPROV` is a `ULONG_PTR`, which is an unsigned integer type wide enough to hold a pointer, not a pointer itself:

`typedef ULONG_PTR HCRYPTPROV;`

On a 64-bit target that is a 64-bit unsigned integer, which matches the `long long unsigned int` that the headers from Russell's toolchain report, so using `NULL` (a `void *`) with it produces the warnings. I would suggest that maybe tskit ought to change the four uses of `NULL` above (the initialization, the two assignments, and the comparison) to `(HCRYPTPROV)NULL` so that it builds without warnings regardless of what type `HCRYPTPROV` is defined to be. Should be a trivial fix. Thanks!

This was originally discussed in https://github.com/MesserLab/SLiM/issues/66 but there's no need to read through that, it just says the same things I say above. :-> | 1.0 | warnings building tskit with Ming64 on Windows - @rdinnager reports the following warnings when building tskit (actually tskit's code inside SLiM, but it's the same code) on Windows using the Ming64 compiler:

```

F:/SLiM/treerec/tskit/core.c: In function 'get_random_bytes':

F:/SLiM/treerec/tskit/core.c:47:29: warning: initialization of 'HCRYPTPROV' {aka 'long long unsigned int'} from 'void *' makes integer from pointer without a cast [-Wint-conversion]

47 | HCRYPTPROV hCryptProv = NULL;

| ^~~~

F:/SLiM/treerec/tskit/core.c:57:20: warning: assignment to 'HCRYPTPROV' {aka 'long long unsigned int'} from 'void *' makes integer from pointer without a cast [-Wint-conversion]

57 | hCryptProv = NULL;

| ^

F:/SLiM/treerec/tskit/core.c:60:16: warning: assignment to 'HCRYPTPROV' {aka 'long long unsigned int'} from 'void *' makes integer from pointer without a cast [-Wint-conversion]

60 | hCryptProv = NULL;

| ^

F:/SLiM/treerec/tskit/core.c:63:20: warning: comparison between pointer and integer

63 | if (hCryptProv != NULL) {

| ^~

```

According to the Microsoft docs (https://docs.microsoft.com/en-us/windows/win32/seccrypto/hcryptprov) `HCRYPTPROV` is a `ULONG_PTR`, which is an unsigned integer type wide enough to hold a pointer, not a pointer itself:

`typedef ULONG_PTR HCRYPTPROV;`

On a 64-bit target that is a 64-bit unsigned integer, which matches the `long long unsigned int` that the headers from Russell's toolchain report, so using `NULL` (a `void *`) with it produces the warnings. I would suggest that maybe tskit ought to change the four uses of `NULL` above (the initialization, the two assignments, and the comparison) to `(HCRYPTPROV)NULL` so that it builds without warnings regardless of what type `HCRYPTPROV` is defined to be. Should be a trivial fix. Thanks!

This was originally discussed in https://github.com/MesserLab/SLiM/issues/66 but there's no need to read through that, it just says the same things I say above. :-> | infrastructure | warnings building tskit with on windows rdinnager reports the following warnings when building tskit actually tskit s code inside slim but it s the same code on windows using the compiler f slim treerec tskit core c in function get random bytes f slim treerec tskit core c warning initialization of hcryptprov aka long long unsigned int from void makes integer from pointer without a cast hcryptprov hcryptprov null f slim treerec tskit core c warning assignment to hcryptprov aka long long unsigned int from void makes integer from pointer without a cast hcryptprov null f slim treerec tskit core c warning assignment to hcryptprov aka long long unsigned int from void makes integer from pointer without a cast hcryptprov null f slim treerec tskit core c warning comparison between pointer and integer if hcryptprov null according to the microsoft docs hcryptprov is supposed to be a pointer to unsigned long typedef ulong ptr hcryptprov it looks like whatever headers are coming from russell s toolchain define it as long long unsigned int instead i have no idea why i would suggest that maybe tskit ought to change those four assignments above of null to be assignments of hcryptprov null so that it builds without warnings regardless of what type hcryptprov is defined to be should be a trivial fix thanks this was originally discussed in but there s no need to read through that it just says the same things i say above | 1 |

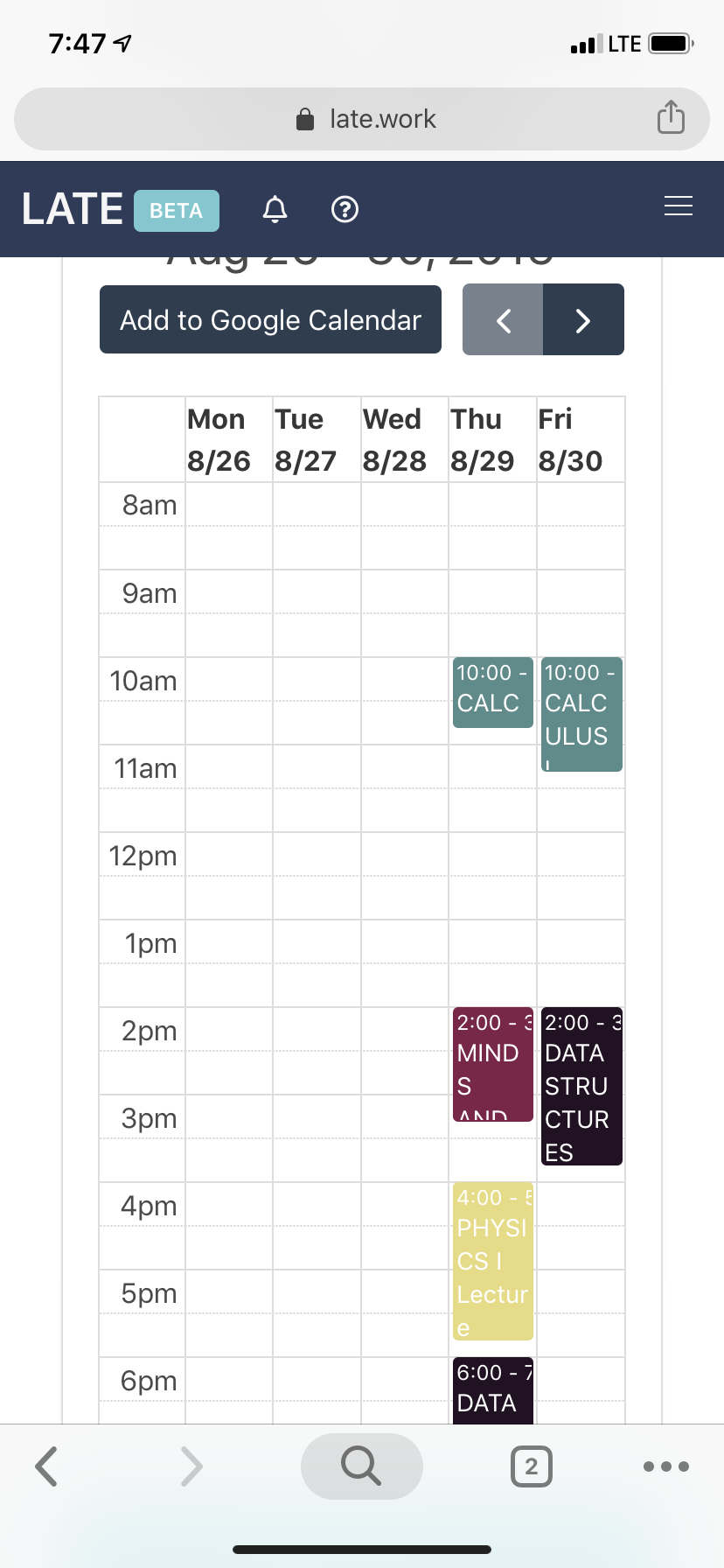

339,665 | 10,257,558,875 | IssuesEvent | 2019-08-21 20:25:39 | Apexal/late | https://api.github.com/repos/Apexal/late | closed | Calendar Blocks | Enhancement Front End Priority: Low | **Describe the bug**

Calendar blocks are too small to be viewed.

**To Reproduce** *optional*

Steps to reproduce the behavior:

Login and view the calendar on your phone

**Expected behavior**

**Screenshots** *optional*

**Device**

iPhone XR | 1.0 | Calendar Blocks - **Describe the bug**

Calendar blocks are too small to be viewed.

**To Reproduce** *optional*

Steps to reproduce the behavior:

Login and view the calendar on your phone

**Expected behavior**

**Screenshots** *optional*

**Device**

iPhone XR | non_infrastructure | calendar blocks describe the bug calendar blocks are too small to be viewed to reproduce optional steps to reproduce the behavior login and view the calendar on your phone expected behavior screenshots optional device iphone xr | 0 |

7,804 | 7,104,241,126 | IssuesEvent | 2018-01-16 09:18:21 | eclipse/vorto | https://api.github.com/repos/eclipse/vorto | closed | Automated Web Site Deployment | Infrastructure Website | When a PR for web site change is accepted and merged into the codebase, the Vorto CI (Hudson) builds the website with Jekyll and pushes it to Eclipse web site git repository, so that these changes are immediately visible on www.eclipse.org/vorto

| 1.0 | Automated Web Site Deployment - When a PR for web site change is accepted and merged into the codebase, the Vorto CI (Hudson) builds the website with Jekyll and pushes it to Eclipse web site git repository, so that these changes are immediately visible on www.eclipse.org/vorto

| infrastructure | automated web site deployment when a pr for web site change is accepted and merged into the codebase the vorto ci hudson builds the website with jekyll and pushes it to eclipse web site git repository so that these changes are immediately visible on | 1 |

4,427 | 5,068,325,493 | IssuesEvent | 2016-12-24 15:11:48 | Hackerfleet/meta | https://api.github.com/repos/Hackerfleet/meta | opened | Set up c3 RPi | events infrastructure | * [ ] Attach where it cannot be de-attached

* [ ] Make sure it works

* [ ] Tighten security as required | 1.0 | Set up c3 RPi - * [ ] Attach where it cannot be de-attached

* [ ] Make sure it works

* [ ] Tighten security as required | infrastructure | set up rpi attach where it cannot be de attached make sure it works tighten security as required | 1 |

31,021 | 25,259,630,120 | IssuesEvent | 2022-11-15 21:25:54 | dotnet/razor | https://api.github.com/repos/dotnet/razor | closed | Build source code produce: "The variable '$vsMajorVersion' cannot be retrieved because it has not been set." | area-infrastructure | **Describe the bug:**

Cannot build the source code using the command line on **Windows** Docker image, but the same error occurs also on my machine

**Version used:**

PowerShell Core 7.2

**To reproduce:**

1. Fresh Windows servercore machine

2. Install Git 2.38.1

3. Install NodeJs 19

4. Install Python39

5. Globally install yarn -> `npm i -g yarn`

6. clone repo

7. launch build.cmd

**Expected behavior:**

Build Successfully

**Actual behavior:**

```

Using xcopy-msbuild version of 17.2.1 since VS version 16.3 provided in global.json is not compatible

The variable '$vsMajorVersion' cannot be retrieved because it has not been set.

```

**Additional context:**

I have tried both Linux(ubuntu) and Windows(servercore:ltsc2022)

Linux: build OK

Windows: Error above

I added the Dockerfiles used

## Linux Dockerfile

```

FROM ubuntu

# Add required packages

RUN apt update && \

apt install curl \

-y

# Add nodejs source

RUN curl -s https://deb.nodesource.com/setup_16.x | bash

# Install nodejs 16 dotnet6, python3 is already installed

RUN apt install nodejs dotnet6 wget git -y

# Run the machine and clone/build repo

```

## Win Dockerfile

```

FROM mcr.microsoft.com/windows/servercore:ltsc2022

# Download software

WORKDIR c:/temp

# -L means allow redirect, -O same name specified on URL

RUN curl -L -O https://github.com/PowerShell/PowerShell/releases/download/v7.2.6/PowerShell-7.2.6-win-x64.zip

RUN curl -L -O https://www.7-zip.org/a/7z2201-x64.exe

RUN curl -L -O https://github.com/git-for-windows/git/releases/download/v2.38.1.windows.1/PortableGit-2.38.1-64-bit.7z.exe

# Extract all

RUN 7z2201-x64.exe /S /D=c:/apps/7zip

RUN c:/apps/7zip/7z.exe x c:/temp/PowerShell-7.2.6-win-x64.zip -oc:/apps/PowerShell

RUN c:/apps/7zip/7z.exe x c:/temp/PortableGit-2.38.1-64-bit.7z.exe -oc:/apps/git

RUN setx path "%path%;C:\apps\PowerShell;c:\apps\git\bin;c:\apps\7zip"

RUN del c:\temp /q

RUN net user user01 /ADD

USER user01

RUN setx path "%path%;C:\apps\PowerShell;c:\apps\git\bin;c:\apps\7zip"

RUN pwsh -command Set-ExecutionPolicy RemoteSigned -Scope CurrentUser

RUN ["pwsh", "-command", "irm get.scoop.sh | iex"]

RUN scoop bucket add versions

RUN scoop bucket add extras

RUN scoop install nodejs

RUN scoop install python39

RUN npm i -g yarn

# Run the machine and clone/build repo

```

| 1.0 | Build source code produce: "The variable '$vsMajorVersion' cannot be retrieved because it has not been set." - **Describe the bug:**

Cannot build the source code using the command line on **Windows** Docker image, but the same error occurs also on my machine

**Version used:**

PowerShell Core 7.2

**To reproduce:**

1. Fresh Windows servercore machine

2. Install Git 2.38.1

3. Install NodeJs 19

4. Install Python39

5. Globally install yarn -> `npm i -g yarn`

6. clone repo

7. launch build.cmd

**Expected behavior:**

Build Successfully

**Actual behavior:**

```

Using xcopy-msbuild version of 17.2.1 since VS version 16.3 provided in global.json is not compatible

The variable '$vsMajorVersion' cannot be retrieved because it has not been set.

```

**Additional context:**

I have tried both Linux(ubuntu) and Windows(servercore:ltsc2022)

Linux: build OK

Windows: Error above

I added the Dockerfiles used

## Linux Dockerfile

```

FROM ubuntu

# Add required packages

RUN apt update && \

apt install curl \

-y

# Add nodejs source

RUN curl -s https://deb.nodesource.com/setup_16.x | bash

# Install nodejs 16 dotnet6, python3 is already installed

RUN apt install nodejs dotnet6 wget git -y

# Run the machine and clone/build repo

```

## Win Dockerfile

```

FROM mcr.microsoft.com/windows/servercore:ltsc2022

# Download software

WORKDIR c:/temp

# -L means allow redirect, -O same name specified on URL

RUN curl -L -O https://github.com/PowerShell/PowerShell/releases/download/v7.2.6/PowerShell-7.2.6-win-x64.zip

RUN curl -L -O https://www.7-zip.org/a/7z2201-x64.exe

RUN curl -L -O https://github.com/git-for-windows/git/releases/download/v2.38.1.windows.1/PortableGit-2.38.1-64-bit.7z.exe

# Extract all

RUN 7z2201-x64.exe /S /D=c:/apps/7zip

RUN c:/apps/7zip/7z.exe x c:/temp/PowerShell-7.2.6-win-x64.zip -oc:/apps/PowerShell

RUN c:/apps/7zip/7z.exe x c:/temp/PortableGit-2.38.1-64-bit.7z.exe -oc:/apps/git

RUN setx path "%path%;C:\apps\PowerShell;c:\apps\git\bin;c:\apps\7zip"

RUN del c:\temp /q

RUN net user user01 /ADD

USER user01

RUN setx path "%path%;C:\apps\PowerShell;c:\apps\git\bin;c:\apps\7zip"

RUN pwsh -command Set-ExecutionPolicy RemoteSigned -Scope CurrentUser

RUN ["pwsh", "-command", "irm get.scoop.sh | iex"]

RUN scoop bucket add versions

RUN scoop bucket add extras

RUN scoop install nodejs

RUN scoop install python39

RUN npm i -g yarn

# Run the machine and clone/build repo

```

| infrastructure | build source code produce the variable vsmajorversion cannot be retrieved because it has not been set describe the bug cannot build the source code using the command line on windows docker image but the same error occurs also on my machine version used powershell core to reproduce fresh windows servercore machine install git install nodejs install globally install yarn npm i g yarn clone repo launch build cmd expected behavior build successfully actual behavior using xcopy msbuild version of since vs version provided in global json is not compatible the variable vsmajorversion cannot be retrieved because it has not been set additional context i have tried both linux ubuntu and windows servercore linux build ok windows error above i added the dockerfiles used linux dockerfile from ubuntu add required packages run apt update install curl y add nodejs source run curl s bash install nodejs is already installed run apt install nodejs wget git y run the machine and clone build repo win dockerfile from mcr microsoft com windows servercore download software workdir c temp l means allow redirect o same name specified on url run curl l o run curl l o run curl l o extract all run exe s d c apps run c apps exe x c temp powershell win zip oc apps powershell run c apps exe x c temp portablegit bit exe oc apps git run setx path path c apps powershell c apps git bin c apps run del c temp q run net user add user run setx path path c apps powershell c apps git bin c apps run pwsh command set executionpolicy remotesigned scope currentuser run run scoop bucket add versions run scoop bucket add extras run scoop install nodejs run scoop install run npm i g yarn run the machine and clone build repo | 1 |

26,300 | 19,974,804,101 | IssuesEvent | 2022-01-29 00:34:07 | acm-toce/documentation | https://api.github.com/repos/acm-toce/documentation | closed | Revise author guidelines | infrastructure | Some suggested revisions:

* Add a set of contribution types (like the broad set often discussed in HCI) to help authors understand how their submissions will be evaluated.

* Add a section on reporting standards, link to the CSEdResearch page on how to report studies.

* Clarify anonymization practices (e.g., anonymize self-citations w/ third person)

* Clarify whether dissertations are prior publications (e.g., an author wrote: "I have a paper recently published under the ACM Transactions for Computing Education for which you oversee. The paper is part of my PhD thesis. So, I wonder with the copy right contract that I had signed, would I be able to use the content word-by-word in my thesis along with an acknowledge and citation? I want to make sure I have the approval before including the article as one of my chapters" -> https://www.acm.org/publications/policies/copyright-policy#permanent%20rights

* Explain how to handle overlapping prior publications (30% rule, link to ACM)

* Explain to authors how to interpret the reviews they receive (e.g., AE's have discretion, point to AE and reviewer guidelines) | 1.0 | Revise author guidelines - Some suggested revisions:

* Add a set of contribution types (like the broad set often discussed in HCI) to help authors understand how their submissions will be evaluated.

* Add a section on reporting standards, link to the CSEdResearch page on how to report studies.

* Clarify anonymization practices (e.g., anonymize self-citations w/ third person)

* Clarify whether dissertations are prior publications (e.g., an author wrote: "I have a paper recently published under the ACM Transactions for Computing Education for which you oversee. The paper is part of my PhD thesis. So, I wonder with the copy right contract that I had signed, would I be able to use the content word-by-word in my thesis along with an acknowledge and citation? I want to make sure I have the approval before including the article as one of my chapters" -> https://www.acm.org/publications/policies/copyright-policy#permanent%20rights

* Explain how to handle overlapping prior publications (30% rule, link to ACM)

* Explain to authors how to interpret the reviews they receive (e.g., AE's have discretion, point to AE and reviewer guidelines) | infrastructure | revise author guidelines some suggested revisions add a set of contribution types like the broad set often discussed in hci to help authors understand how their submissions will be evaluated add a section on reporting standards link to the csedresearch page on how to report studies clarify anonymization practices e g anonymize self citations w third person clarify whether dissertations are prior publications e g an author wrote i have a paper recently published under the acm transactions for computing education for which you oversee the paper is part of my phd thesis so i wonder with the copy right contract that i had signed would i be able to use the content word by word in my thesis along with an acknowledge and citation i want to make sure i have the approval before including the article as one of my chapters explain how to handle overlapping prior publications rule link to acm explain to authors how to interpret the reviews they receive e g ae s have discretion point to ae and reviewer guidelines | 1 |

11,303 | 9,087,175,093 | IssuesEvent | 2019-02-18 13:03:33 | eventespresso/event-espresso-core | https://api.github.com/repos/eventespresso/event-espresso-core | closed | REST API cant filter datetimes by deleted field | category:models-and-data-infrastructure status:stale type:bug 🐞 | <!--

BEFORE POSTING YOUR ISSUE:

- These comments won't show up when you submit the issue.

- Please ensure that what you are reporting is specific to this project.

- Try to add as much detail as possible. Be specific!

- Make sure you read the README.md for the project regarding posting issues.

- Search this repository for issues and pull requests and whether it has been fixed or reported already.

- Ensure you are using the latest code before reporting bugs (unless you are reporting an issue discovered in a branch).

- Disable all plugins and switch to a default theme to ensure it's not a plugin/theme conflict issue.

- To report a security issue, please visit this page: https://eventespresso.com/report-a-security-vulnerability/

-->

## Issue Overview

<!-- Describe what this issue is about. -->

Queries like this always return an empty set: `/datetimes?where[DTT_deleted]=false` returns an empty set (even when there are definitely non-trashed datetimes) and so does `/datetimes?where[DTT_deleted]=true`.

## Bug report or feature request?

* [x] Bug

* [ ] Feature

* [ ] Neither

## Environment Data:

Version of EE: <!-- Can be a branch name or the version of EE the issue happened in. -->

4.9.71

Version of WordPress:

4.9.7

PHP Version: <!-- if known, add your php version here -->

7.1

Browser used: <!-- also include your browser version if possible -->

Firefox/postman

## Steps to Reproduce (for bugs)

<!-- If possible provide any links to a live example, or an unambiguous set of steps to reproduce this bug -->

<!-- Feel free to include code to reproduce if relevant. -->

1. Create an event with two datetimes, and trash one.

2. Send a request to `wp-json/ee/v4.8.36/datetimes`; you should see only the untrashed datetime. Then add `?where[DTT_deleted]=false` and somehow you'll get an empty set. Then change it to `?where[DTT_deleted]=true` and it's still empty. Weirdness.
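
The requests in step 2 can be written out explicitly. The following is a minimal Python sketch, assuming a hypothetical site at `https://example.com`; it only builds the URLs and sends nothing, and the helper name `datetimes_url` is illustrative, not part of Event Espresso.

```python
from urllib.parse import urlencode

# Hypothetical base URL; replace the host with the site under test.
BASE = "https://example.com/wp-json/ee/v4.8.36/datetimes"


def datetimes_url(deleted=None):
    """Return the datetimes endpoint URL, optionally filtered on DTT_deleted."""
    if deleted is None:
        return BASE
    # The issue passes the literal strings "true" / "false" as the filter value.
    query = urlencode({"where[DTT_deleted]": "true" if deleted else "false"})
    return f"{BASE}?{query}"


# Unfiltered request first, then the two filtered variants from step 2.
for flag in (None, False, True):
    print(datetimes_url(flag))
```

`urlencode` percent-encodes the square brackets (`%5B`, `%5D`); the server is expected to decode that back to the same `where[DTT_deleted]` key as the bracketed form typed into a browser.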

## Expected Behaviour

<!-- If you're describing a bug, tell us what should happen -->

<!-- If you're describing a feature/enhancement, explain the difference from current behaviour -->

`/wp-json/ee/v4.8.36/datetimes?where[DTT_deleted]=false` should return undeleted datetimes. And instead using `where[DTT_deleted]=true` should... probably tell you you're not allowed to see deleted datetimes, as they're not visible on the frontend; or maybe show them. It's up for debate, but probably an error would be better.

## Current Behaviour

<!-- If describing a bug, what is the current behaviour and how does it differ from expected behaviour? -->

<!-- If describing a feature, describe what the current behaviour is in the part of the application that you want your feature suggestion to improve on. -->

See steps to reproduce.

## Related Information:

<!--

- If you were directed to create an issue here by the EE support team, you can include the link to your original EE support forum thread.

- You can also include any other links you think may be useful (related issues and/or Pull Requests)

- Any screenshots or screencasts that help illustrate what you are describing is always useful.

-->

| 1.0 | REST API cant filter datetimes by deleted field - <!--

BEFORE POSTING YOUR ISSUE:

- These comments won't show up when you submit the issue.

- Please ensure that what you are reporting is specific to this project.

- Try to add as much detail as possible. Be specific!

- Make sure you read the README.md for the project regarding posting issues.

- Search this repository for issues and pull requests and whether it has been fixed or reported already.

- Ensure you are using the latest code before reporting bugs (unless you are reporting an issue discovered in a branch).

- Disable all plugins and switch to a default theme to ensure it's not a plugin/theme conflict issue.

- To report a security issue, please visit this page: https://eventespresso.com/report-a-security-vulnerability/

-->

## Issue Overview

<!-- Describe what this issue is about. -->

Queries like this always return an empty set: `/datetimes?where[DTT_deleted]=false` returns an empty set (even when there are definitely non-trashed datetimes) and so does `/datetimes?where[DTT_deleted]=true`.

## Bug report or feature request?

* [x] Bug

* [ ] Feature

* [ ] Neither

## Environment Data:

Version of EE: <!-- Can be a branch name or the version of EE the issue happened in. -->

4.9.71

Version of WordPress:

4.9.7

PHP Version: <!-- if known, add your php version here -->

7.1

Browser used: <!-- also include your browser version if possible -->

Firefox/postman

## Steps to Reproduce (for bugs)

<!-- If possible provide any links to a live example, or an unambiguous set of steps to reproduce this bug -->

<!-- Feel free to include code to reproduce if relevant. -->

1. Create an event with two datetimes, and trash one.

2. Send a request to `wp-json/ee/v4.8.36/datetimes`; you should see only the untrashed datetime. Then add `?where[DTT_deleted]=false` and somehow you'll get an empty set. Then change it to `?where[DTT_deleted]=true` and it's still empty. Weirdness.

## Expected Behaviour

<!-- If you're describing a bug, tell us what should happen -->

<!-- If you're describing a feature/enhancement, explain the difference from current behaviour -->

`/wp-json/ee/v4.8.36/datetimes?where[DTT_deleted]=false` should return undeleted datetimes. And instead using `where[DTT_deleted]=true` should... probably tell you you're not allowed to see deleted datetimes, as they're not visible on the frontend; or maybe show them. It's up for debate, but probably an error would be better.

## Current Behaviour

<!-- If describing a bug, what is the current behaviour and how does it differ from expected behaviour? -->

<!-- If describing a feature, describe what the current behaviour is in the part of the application that you want your feature suggestion to improve on. -->

See steps to reproduce.

## Related Information:

<!--

- If you were directed to create an issue here by the EE support team, you can include the link to your original EE support forum thread.

- You can also include any other links you think may be useful (related issues and/or Pull Requests)

- Any screenshots or screencasts that help illustrate what you are describing is always useful.

-->

| infrastructure | rest api cant filter datetimes by deleted field before posting your issue these comments won t show up when you submit the issue please ensure that what you are reporting is specific to this project try to add as much detail as possible be specific make sure you read the readme md for the project regarding posting issues search this repository for issues and pull requests and whether it has been fixed or reported already ensure you are using the latest code before reporting bugs unless you are reporting an issue disovered in a branch disable all plugins and switch to a default theme to ensure its not a plugin theme conflict issue to report a security issue please visit this page issue overview queries like this always return an empty set datetimes where false returns an empty set even when there are definetely non trashed datetimes and so does datetimes where true bug report or feature request bug feature neither environment data version of ee version of wordpress php version browser used firefox postman steps to reproduce for bugs create an event with two datetimes and trash one send a request to wp json ee datetimes you should see the one untrashed one then add where false and somehow you ll get an empty set then change it to where true and it s still empty weirdness expected behaviour wp json ee datetimes where false should return undeleted datetimes and instead using where true should probably tell you you re not allowed to see deleted datetimes as they re not visible on the frontend or maybe show them it s up for debate but probably an error would be better current behaviour see steps to reproduce related information if you were directed to create an issue here by the ee support team you can include the link to your original ee support forum thread you can also include any other links you think may be useful related issues and or pull requests any screenshots or screencasts that help illustrate what you are describing is always useful | 1 |

96,683 | 12,150,381,169 | IssuesEvent | 2020-04-24 17:52:27 | tesshucom/jpsonic | https://api.github.com/repos/tesshucom/jpsonic | opened | Add an option to specify the genre handled as audiobook | in : search status: pending-design-work type: enhancement | There is often song data that is not music, such as the first intro of 10 songs in the album.

These are treated as AUDIOBOOK by specifying the genre and excluded from the shuffle.

There are some notes.

- Should it be included in the genre master

- Whether to increase the MediaType (although it is difficult to verify).

| 1.0 | Add an option to specify the genre handled as audiobook - There is often song data that is not music, such as the first intro of 10 songs in the album.

These are treated as AUDIOBOOK by specifying the genre and excluded from the shuffle.

There are some notes.

- Should it be included in the genre master

- Whether to increase the MediaType (although it is difficult to verify).

| non_infrastructure | add an option to specify the genre handled as audiobook there is often song data that is not music such as the first intro of songs in the album these are treated as audiobook by specifying the genre and excluded from the shuffle there are some notes should it be included in the genre master whether to increase the mediatype although it is difficult to verify | 0 |

433,257 | 30,320,507,290 | IssuesEvent | 2023-07-10 18:51:17 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | opened | Install instructions for rhel-like distros fail | documentation | ## Applies To

https://goteleport.com/docs/installation/#linux

## Details

The instructions in the `Amazon Linux 2023/RHEL 8+ (dnf)` tab in the Enterprise section don't work on RHEL.

```

[root@rheltest /]# source os-release && dnf config-manager --add-repo "$(rpm --eval https://yum.releases.teleport.dev/${ID}/${VERSION_ID}/Teleport/%{_arch}/stable/v13/teleport.repo)"

Adding repo from: https://yum.releases.teleport.dev/rhel/8.8/Teleport/x86_64/stable/v13/teleport.repo

Status code: 404 for https://yum.releases.teleport.dev/rhel/8.8/Teleport/x86_64/stable/v13/teleport.repo (IP: 18.238.4.22)

Error: Configuration of repo failed

```

Additionally, I would expect that our instructions would work for centos stream, rockylinux 7/8, and almalinux 7/8.

As far as I can tell, the valid values for `${ID}` and `${VERSION_ID}` are as follows:

* centos stream 8 -> centos 8

* rocky linux 8 -> rhel 8

* alma linux 8 -> rhel 8

* rhel 8.x -> rhel 8

rocky, alma, and rhel seem to set `VERSION_ID` to 8.6 or 8.8 or similar, which doesn't work.

The docs advise that it may be necessary to use `ID_LIKE` instead of `ID`, but rocky and alma set that to `rhel centos fedora`, which would not be valid.

It might be feasible to write a command that tries to map these values to the necessary values based on what's in the repository. Another approach would be to list the valid values in our repo and let the end-user pick which values are appropriate.
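
The mapping idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `REPO_ID` table is taken from the list of valid values earlier in this issue, the URL layout is copied from the failing command, and the function names `parse_os_release` and `teleport_repo_url` are hypothetical. Whether each resulting path actually exists in the repository would still need to be checked.

```python
def parse_os_release(text):
    """Parse KEY=value lines (os-release format) into a dict, dropping quotes."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key] = value.strip().strip('"').strip("'")
    return values


# Assumed mapping from os-release ID to the repository directory name,
# based on the valid values listed in this issue.
REPO_ID = {
    "rhel": "rhel",
    "centos": "centos",
    "rocky": "rhel",
    "almalinux": "rhel",
}


def teleport_repo_url(os_release, arch="x86_64", channel="stable/v13"):
    """Build a repo URL using the mapped ID and only the major version."""
    distro = os_release.get("ID", "")
    if distro not in REPO_ID:
        raise ValueError(f"no known repository mapping for ID={distro!r}")
    # VERSION_ID such as "8.8" is reduced to its major component "8".
    major = os_release.get("VERSION_ID", "").split(".")[0]
    if not major:
        raise ValueError("VERSION_ID is missing from os-release")
    return (
        f"https://yum.releases.teleport.dev/{REPO_ID[distro]}/{major}"
        f"/Teleport/{arch}/{channel}/teleport.repo"
    )


sample = 'ID="rocky"\nID_LIKE="rhel centos fedora"\nVERSION_ID="8.8"\n'
print(teleport_repo_url(parse_os_release(sample)))
```

Distributions that are not in the assumed table, such as Oracle Linux (`ol`), raise an error here rather than guessing, which matches the alternative of letting the end user pick the right values.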

Here is a table of various rhel-like distros and what their ID, ID_LIKE, and VERSION_ID values are:

| distro | id | id_like | version_id |

|--------------------------|------------|-----------------------|------------|

| rockylinux 8 | rocky | rhel centos fedora | 8.8 |

| rockylinux 9 | rocky | rhel centos fedora | 9.2 |

| almalinux 8 | almalinux | rhel centos fedora | 8.8 |

| almalinux 9 | almalinux | rhel centos fedora | 9.2 |

| oraclelinux 7 | ol | fedora | 7.9 |

| oraclelinux 8 | ol | fedora | 8.8 |

| oraclelinux 9 | ol | fedora | 9.2 |

| rhel7 | rhel | fedora | 7.9 |

| rhel8 | rhel | fedora | 8.8 |

| rhel9 | rhel | fedora | 9.2 |

| centos stream 8 | centos | rhel fedora | 8 |

| centos stream 9 | centos | rhel fedora | 9 |

| centos 7 | centos | rhel fedora | 7 |

| centos 8 (EOL/Canceled) | centos | rhel fedora | 8 |

## How will we know this is resolved?

Instructions on rhel and clones will work without modification.

## Related Issues

| 1.0 | Install instructions for rhel-like distros fail - ## Applies To

https://goteleport.com/docs/installation/#linux

## Details

The instructions in the `Amazon Linux 2023/RHEL 8+ (dnf)` tab in the Enterprise section don't work on RHEL.

```

[root@rheltest /]# source os-release && dnf config-manager --add-repo "$(rpm --eval https://yum.releases.teleport.dev/${ID}/${VERSION_ID}/Teleport/%{_arch}/stable/v13/teleport.repo)"

Adding repo from: https://yum.releases.teleport.dev/rhel/8.8/Teleport/x86_64/stable/v13/teleport.repo

Status code: 404 for https://yum.releases.teleport.dev/rhel/8.8/Teleport/x86_64/stable/v13/teleport.repo (IP: 18.238.4.22)

Error: Configuration of repo failed

```

Additionally, I would expect that our instructions would work for centos stream, rockylinux 7/8, and almalinux 7/8.

As far as I can tell, the valid values for `${ID}` and `${VERSION_ID}` are as follows:

* centos stream 8 -> centos 8

* rocky linux 8 -> rhel 8

* alma linux 8 -> rhel 8

* rhel 8.x -> rhel 8

rocky, alma, and rhel seem to set `VERSION_ID` to 8.6 or 8.8 or similar, which doesn't work.

The docs advise that it may be necessary to use `ID_LIKE` instead of `ID`, but rocky and alma set that to `rhel centos fedora`, which would not be valid.

It might be feasible to write a command that tries to map these values to the necessary values based on what's in the repository. Another approach would be to list the valid values in our repo and let the end-user pick which values are appropriate.

Here is a table of various rhel-like distros and what their ID, ID_LIKE, and VERSION_ID values are:

| distro | id | id_like | version_id |

|--------------------------|------------|-----------------------|------------|

| rockylinux 8 | rocky | rhel centos fedora | 8.8 |

| rockylinux 9 | rocky | rhel centos fedora | 9.2 |

| almalinux 8 | almalinux | rhel centos fedora | 8.8 |

| almalinux 9 | almalinux | rhel centos fedora | 9.2 |

| oraclelinux 7 | ol | fedora | 7.9 |

| oraclelinux 8 | ol | fedora | 8.8 |

| oraclelinux 9 | ol | fedora | 9.2 |

| rhel7 | rhel | fedora | 7.9 |

| rhel8 | rhel | fedora | 8.8 |

| rhel9 | rhel | fedora | 9.2 |

| centos stream 8 | centos | rhel fedora | 8 |

| centos stream 9 | centos | rhel fedora | 9 |

| centos 7 | centos | rhel fedora | 7 |

| centos 8 (EOL/Canceled) | centos | rhel fedora | 8 |
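
The mapping command suggested above could look roughly like the following. This is only a sketch, not part of Teleport's tooling: the function names `teleport_repo_id` and `teleport_repo_version` are hypothetical, and it assumes the only valid repo IDs are `rhel` and `centos` with a major-only version, as listed earlier in this issue. Distros whose `ID_LIKE` does not include `rhel` (for example the `ol` rows in the table) are deliberately left unmapped.

```shell
#!/bin/sh
# Hypothetical helpers (not an official Teleport script): map os-release
# values onto the repo path components this issue lists as valid.

# $1 = ID, $2 = ID_LIKE. Prints the repo ID, or returns 1 if none is known.
teleport_repo_id() {
    case "$1" in
        rhel|centos)
            # These IDs are assumed to exist in the repo as-is.
            echo "$1"
            return 0
            ;;
    esac
    # Otherwise fall back to ID_LIKE: a distro that lists rhel is treated as rhel.
    for like in $2; do
        if [ "$like" = "rhel" ]; then
            echo "rhel"
            return 0
        fi
    done
    return 1
}

# $1 = VERSION_ID. Keeps only the major version (8.8 becomes 8).
teleport_repo_version() {
    echo "${1%%.*}"
}
```

After sourcing `/etc/os-release`, the results of `teleport_repo_id "$ID" "$ID_LIKE"` and `teleport_repo_version "$VERSION_ID"` would replace `${ID}` and `${VERSION_ID}` in the `dnf config-manager` command. Whether the repo actually publishes a path for every resulting pair would still need to be checked.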

## How will we know this is resolved?

Instructions on rhel and clones will work without modification.

## Related Issues

| non_infrastructure | install instructions for rhel like distros fail applies to details the instructions in the amazon linux rhel dnf tab in the enterprise section don t work on rhel source os release dnf config manager add repo rpm eval adding repo from status code for ip error configuration of repo failed additionally i would expect that our instructions would work for centos stream rockylinux and almalinux as far as i can tell the valid values for id and version id are as follows centos stream centos rocky linux rhel alma linux rhel rhel x rhel rocky alma and rhel seem to set version id to or or similar which doesn t work the docs advise that it may be necessary to use id like instead of id but rocky and alma set that to rhel centos fedora which would not be valid it might be feasible to write a command that tries to map these values to the necessary values based on what s in the repository another approach would be to list the valid values in our repo and let the end user pick which values are appropriate here is a table of various rhel like distros and what their id id like and version id values are distro id id like version id rockylinux rocky rhel centos fedora rockylinux rocky rhel centos fedora almalinux almalinux rhel centos fedora almalinux almalinux rhel centos fedora oraclelinux ol fedora oraclelinux ol fedora oraclelinux ol fedora rhel fedora rhel fedora rhel fedora centos stream centos rhel fedora centos stream centos rhel fedora centos centos rhel fedora centos eol canceled centos rhel fedora how will we know this is resolved instructions on rhel and clones will work without modification related issues | 0 |

16,640 | 12,086,248,457 | IssuesEvent | 2020-04-18 08:58:23 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | CI unable to install dotnet-ef | area-infrastructure | Win7 and Win8 agents are failing in ProjectTemplates.Tests trying to install dotnet-ef.

```

Running 'C:\h\w\A3FF092E\p\sdk\x64/dotnet tool install dotnet-ef --global --version 5.0.0-preview.4.20215.1'

'C:\h\w\A3FF092E\p\sdk\x64/dotnet tool install dotnet-ef --global --version 5.0.0-preview.4.20215.1' completed with exit code '1'

Exception in InstallAspNetAppIfNeeded: System.InvalidOperationException: Command C:\h\w\A3FF092E\p\sdk\x64/dotnet tool install dotnet-ef --global --version 5.0.0-preview.4.20215.1 returned exit code 1

at RunTests.ProcessUtil.RunAsync(String filename, String arguments, String workingDirectory, Boolean throwOnError, IDictionary`2 environmentVariables, Action`1 outputDataReceived, Action`1 errorDataReceived, Action`1 onStart, CancellationToken cancellationToken) in C:\h\w\A3FF092E\w\B32B0A1A\e\RunTests\ProcessUtil.cs:line 144

at RunTests.ProcessUtil.RunAsync(String filename, String arguments, String workingDirectory, Boolean throwOnError, IDictionary`2 environmentVariables, Action`1 outputDataReceived, Action`1 errorDataReceived, Action`1 onStart, CancellationToken cancellationToken) in C:\h\w\A3FF092E\w\B32B0A1A\e\RunTests\ProcessUtil.cs:line 144

at RunTests.TestRunner.InstallAspNetAppIfNeededAsync() in C:\h\w\A3FF092E\w\B32B0A1A\e\RunTests\TestRunner.cs:line 136

```

@HaoK is working on adding more logs here.

https://dev.azure.com/dnceng/internal/_build/results?buildId=603871&view=ms.vss-test-web.build-test-results-tab

https://dev.azure.com/dnceng/internal/_build/results?buildId=604194&view=ms.vss-test-web.build-test-results-tab&runId=18998130&resultId=120299&paneView=debug

https://dev.azure.com/dnceng/internal/_build/results?buildId=604848&view=ms.vss-test-web.build-test-results-tab

https://dev.azure.com/dnceng/internal/_build/results?buildId=605130&view=ms.vss-test-web.build-test-results-tab&runId=19026724&resultId=120297&paneView=attachments

https://dev.azure.com/dnceng/public/_build/results?buildId=604460&view=ms.vss-test-web.build-test-results-tab

This one is a bit different:

`Tool 'dotnet-ef' is already installed.`

https://dev.azure.com/dnceng/public/_build/results?buildId=605452&view=ms.vss-test-web.build-test-results-tab&runId=19034852&resultId=120299&paneView=attachments | 1.0 | CI unable to install dotnet-ef - Win7 and Win8 agents are failing in ProjectTemplates.Tests trying to install dotnet-ef.

```

Running 'C:\h\w\A3FF092E\p\sdk\x64/dotnet tool install dotnet-ef --global --version 5.0.0-preview.4.20215.1'

'C:\h\w\A3FF092E\p\sdk\x64/dotnet tool install dotnet-ef --global --version 5.0.0-preview.4.20215.1' completed with exit code '1'

Exception in InstallAspNetAppIfNeeded: System.InvalidOperationException: Command C:\h\w\A3FF092E\p\sdk\x64/dotnet tool install dotnet-ef --global --version 5.0.0-preview.4.20215.1 returned exit code 1

at RunTests.ProcessUtil.RunAsync(String filename, String arguments, String workingDirectory, Boolean throwOnError, IDictionary`2 environmentVariables, Action`1 outputDataReceived, Action`1 errorDataReceived, Action`1 onStart, CancellationToken cancellationToken) in C:\h\w\A3FF092E\w\B32B0A1A\e\RunTests\ProcessUtil.cs:line 144

at RunTests.ProcessUtil.RunAsync(String filename, String arguments, String workingDirectory, Boolean throwOnError, IDictionary`2 environmentVariables, Action`1 outputDataReceived, Action`1 errorDataReceived, Action`1 onStart, CancellationToken cancellationToken) in C:\h\w\A3FF092E\w\B32B0A1A\e\RunTests\ProcessUtil.cs:line 144

at RunTests.TestRunner.InstallAspNetAppIfNeededAsync() in C:\h\w\A3FF092E\w\B32B0A1A\e\RunTests\TestRunner.cs:line 136

```

@HaoK is working on adding more logs here.

https://dev.azure.com/dnceng/internal/_build/results?buildId=603871&view=ms.vss-test-web.build-test-results-tab

https://dev.azure.com/dnceng/internal/_build/results?buildId=604194&view=ms.vss-test-web.build-test-results-tab&runId=18998130&resultId=120299&paneView=debug

https://dev.azure.com/dnceng/internal/_build/results?buildId=604848&view=ms.vss-test-web.build-test-results-tab

https://dev.azure.com/dnceng/internal/_build/results?buildId=605130&view=ms.vss-test-web.build-test-results-tab&runId=19026724&resultId=120297&paneView=attachments

https://dev.azure.com/dnceng/public/_build/results?buildId=604460&view=ms.vss-test-web.build-test-results-tab

This one is a bit different:

`Tool 'dotnet-ef' is already installed.`

https://dev.azure.com/dnceng/public/_build/results?buildId=605452&view=ms.vss-test-web.build-test-results-tab&runId=19034852&resultId=120299&paneView=attachments | infrastructure | ci unable to install dotnet ef and agents are failing in projecttemplates tests trying to install dotnet ef running c h w p sdk dotnet tool install dotnet ef global version preview c h w p sdk dotnet tool install dotnet ef global version preview completed with exit code exception in installaspnetappifneeded system invalidoperationexception command c h w p sdk dotnet tool install dotnet ef global version preview returned exit code at runtests processutil runasync string filename string arguments string workingdirectory boolean throwonerror idictionary environmentvariables action outputdatareceived action errordatareceived action onstart cancellationtoken cancellationtoken in c h w w e runtests processutil cs line at runtests processutil runasync string filename string arguments string workingdirectory boolean throwonerror idictionary environmentvariables action outputdatareceived action errordatareceived action onstart cancellationtoken cancellationtoken in c h w w e runtests processutil cs line at runtests testrunner installaspnetappifneededasync in c h w w e runtests testrunner cs line haok is working on adding more logs here this one is a bit different tool dotnet ef is already installed | 1 |

597,994 | 18,233,684,465 | IssuesEvent | 2021-10-01 02:27:07 | AY2122S1-CS2103T-W12-3/tp | https://api.github.com/repos/AY2122S1-CS2103T-W12-3/tp | closed | Update UG for v1.1 | type.Task priority.High | Ensure that UG details are correct and move it into the repo

- [x] Table of contents

- [x] Quick start

- [x] Features

- [x] Add command

- [x] Edit command

- [x] Delete command

- [x] List command

- [x] View command

- [x] Save data

- [x] Edit data directly

- [x] Exit command

- [x] Archive data

- [x] Command summary

- [x] Param constraints

- [x] Glossary

- [x] FAQ | 1.0 | Update UG for v1.1 - Ensure that UG details are correct and move it into the repo

- [x] Table of contents

- [x] Quick start

- [x] Features

- [x] Add command

- [x] Edit command

- [x] Delete command

- [x] List command

- [x] View command

- [x] Save data

- [x] Edit data directly

- [x] Exit command

- [x] Archive data

- [x] Command summary

- [x] Param constraints

- [x] Glossary

- [x] FAQ | non_infrastructure | update ug for ensure that ug details are correct and move it into the repo table of contents quick start features add command edit command delete command list command view command save data edit data directly exit command archive data command summary param constraints glossary faq | 0 |

51,115 | 10,587,531,314 | IssuesEvent | 2019-10-08 22:26:18 | TauCetiStation/TauCetiClassic | https://api.github.com/repos/TauCetiStation/TauCetiClassic | closed | [Proposal]Morons on the server, on the forum | ÿ Admin Awaiting Author Bad Issue Description Balance Bug Code Improvements Contentious Could Not Reproduce DO NOT MERGE Duplicate Issue Experimental Exploit FEATURE FREEZE Feature Fix Global Problems Good First Issue HONK Help Wanted I ded MAP FREEZE Maintainability Improvements Map Edit Map Issue Map PR With No Screenshot Mapmerge/Mapedit Fail Merge Conflict Merge Ready Not a Bug Performance Port Request Proposal Refactor Resprite Revert / Removal Sprite Needs Sprites Stalled PR Test Feedback Test Merge Candidate Tools Tweak Work In Progress | #### Detailed description of the problem

Shit-eaters are moderating the server

#### What should have happened

Shit-eaters should not be moderating the server

#### What actually happened

The administration is nothing but shit-eaters

#### How to reproduce

Put a corpse-ocracy on the throne

#### Additional information:

I don't give a fuck, I'm a colonel

https://pastebin.com/fqkgx3mG

https://i.imgur.com/CKH6SEn.png | 1.0 | [Proposal]Morons on the server, on the forum - #### Detailed description of the problem

Shit-eaters are moderating the server

#### What should have happened

Shit-eaters should not be moderating the server

#### What actually happened

The administration is nothing but shit-eaters

#### How to reproduce

Put a corpse-ocracy on the throne

#### Additional information:

I don't give a fuck, I'm a colonel

https://pastebin.com/fqkgx3mG