| Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 844 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 12 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 248k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

728,372 | 25,076,402,734 | IssuesEvent | 2022-11-07 15:48:29 | OpenTabletDriver/OpenTabletDriver | https://api.github.com/repos/OpenTabletDriver/OpenTabletDriver | closed | Aux Buttons should not have Pen Passthrough as an option | enhancement linux/gtk priority:low desktop | ## Description

Aux buttons with pen passthrough does not make sense. I tried implementing it with stuff like BTN_0 etc, but applications do not seem to know what to do with this. Until we know it makes sense, it's probably smarter to hide it entirely.

## System Information:

<!-- Please fill out this information --... | 1.0 | Aux Buttons should not have Pen Passthrough as an option - ## Description

Aux buttons with pen passthrough does not make sense. I tried implementing it with stuff like BTN_0 etc, but applications do not seem to know what to do with this. Until we know it makes sense, it's probably smarter to hide it entirely.

## Sy... | priority | aux buttons should not have pen passthrough as an option description aux buttons with pen passthrough does not make sense i tried implementing it with stuff like btn etc but applications do not seem to know what to do with this until we know it makes sense it s probably smarter to hide it entirely sy... | 1 |

533,682 | 15,596,673,272 | IssuesEvent | 2021-03-18 16:06:11 | open-telemetry/opentelemetry-specification | https://api.github.com/repos/open-telemetry/opentelemetry-specification | closed | Update YAML files for semantic conventions once supported by markdown generator | area:semantic-conventions priority:p3 release:allowed-for-ga spec:miscellaneous | YAML model for attributes seems to only have number type. But we have int or double as possible attribute types.

https://github.com/open-telemetry/opentelemetry-specification/blob/master/specification/common/common.md#attributes

https://github.com/open-telemetry/opentelemetry-specification/blob/master/semantic_conv... | 1.0 | Update YAML files for semantic conventions once supported by markdown generator - YAML model for attributes seems to only have number type. But we have int or double as possible attribute types.

https://github.com/open-telemetry/opentelemetry-specification/blob/master/specification/common/common.md#attributes

https... | priority | update yaml files for semantic conventions once supported by markdown generator yaml model for attributes seems to only have number type but we have int or double as possible attribute types once the yaml syntax and md generator are updated we need to update our yaml files here accordingly | 1 |

755,870 | 26,444,504,099 | IssuesEvent | 2023-01-16 05:30:46 | codersforcauses/wadl | https://api.github.com/repos/codersforcauses/wadl | closed | Cleanup component filenames | difficulty::easy priority::low | ## Basic Information

all files are using index.vue, this can be confusing. Give proper filenames | 1.0 | Cleanup component filenames - ## Basic Information

all files are using index.vue, this can be confusing. Give proper filenames | priority | cleanup component filenames basic information all files are using index vue this can be confusing give proper filenames | 1 |

303,206 | 9,303,794,239 | IssuesEvent | 2019-03-24 20:08:57 | bounswe/bounswe2019group3 | https://api.github.com/repos/bounswe/bounswe2019group3 | opened | (for Egemen, Bartu, Orkan, Ekrem) Document your research about GitHub under GitHub Research wiki page | Priority: Low Status: Pending Type: Assignment | - [ ] Egemen Kaplan

- [ ] Bartu Ören

- [ ] Orkan Akısü

- [ ] Muhammet Ekrem Gezgen

Document the following research you will perform:

• Explore GitHub repositories to discover repos that you like. Document them by

giving their references and describing what you liked.

• Study git as a version management s... | 1.0 | (for Egemen, Bartu, Orkan, Ekrem) Document your research about GitHub under GitHub Research wiki page - - [ ] Egemen Kaplan

- [ ] Bartu Ören

- [ ] Orkan Akısü

- [ ] Muhammet Ekrem Gezgen

Document the following research you will perform:

• Explore GitHub repositories to discover repos that you like. Documen... | priority | for egemen bartu orkan ekrem document your research about github under github research wiki page egemen kaplan bartu ören orkan akısü muhammet ekrem gezgen document the following research you will perform • explore github repositories to discover repos that you like document them b... | 1 |

80,992 | 3,587,083,923 | IssuesEvent | 2016-01-30 02:52:29 | onyxfish/csvkit | https://api.github.com/repos/onyxfish/csvkit | closed | Add ability to modify or friendly format numerical values | feature Low Priority | It would be useful to have an option that friendly formatted or applied modifiers to numbers.

For example, if you had the float:

```

5081998.9369165478

```

It would print it out as:

```

5,081,998.9369165478

```

I'm currently printing out a large table of numbers using `csvlook`, and it would be much ... | 1.0 | Add ability to modify or friendly format numerical values - It would be useful to have an option that friendly formatted or applied modifiers to numbers.

For example, if you had the float:

```

5081998.9369165478

```

It would print it out as:

```

5,081,998.9369165478

```

I'm currently printing out a l... | priority | add ability to modify or friendly format numerical values it would be useful to have an option that friendly formatted or applied modifiers to numbers for example if you had the float it would print it out as i m currently printing out a large table of numbers using ... | 1 |

324,685 | 9,907,574,965 | IssuesEvent | 2019-06-27 16:06:19 | MontrealCorpusTools/PolyglotDB | https://api.github.com/repos/MontrealCorpusTools/PolyglotDB | opened | Query module refactoring | lower priority query | The query modules are a bit messy and could use cleaning up/refactoring, with some overlapping methods, inconsistent naming schemes from various additions of code and general lack of clarity in how pieces are structured and fit together. | 1.0 | Query module refactoring - The query modules are a bit messy and could use cleaning up/refactoring, with some overlapping methods, inconsistent naming schemes from various additions of code and general lack of clarity in how pieces are structured and fit together. | priority | query module refactoring the query modules are a bit messy and could use cleaning up refactoring with some overlapping methods inconsistent naming schemes from various additions of code and general lack of clarity in how pieces are structured and fit together | 1 |

556,082 | 16,474,063,001 | IssuesEvent | 2021-05-24 00:29:58 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Multiple vlan interfaces on same interface not working | Stale area: Networking bug priority: low | **Describe the bug**

This issue was already hinted at in issue #26235, but the author was asked to create a separate issue. I could not find such a followup issue, if I missed it please link it here and close this one.

I want to run two vlan interfaces on the same ethernet interface in addition to the normal interf... | 1.0 | Multiple vlan interfaces on same interface not working - **Describe the bug**

This issue was already hinted at in issue #26235, but the author was asked to create a separate issue. I could not find such a followup issue, if I missed it please link it here and close this one.

I want to run two vlan interfaces on the... | priority | multiple vlan interfaces on same interface not working describe the bug this issue was already hinted at in issue but the author was asked to create a separate issue i could not find such a followup issue if i missed it please link it here and close this one i want to run two vlan interfaces on the sam... | 1 |

67,504 | 3,274,630,515 | IssuesEvent | 2015-10-26 12:01:04 | ManoSeimas/manoseimas.lt | https://api.github.com/repos/ManoSeimas/manoseimas.lt | closed | Lobbyist list pagination overlaps HR | bug priority: 3 - low | **Steps to reproduce**:

- Go to manoseimas.lt

- Click "Lobistai"

- Click on the name of a Lobbyist, e.g. http://www.manoseimas.lt/lobbyists/lobbyist/uab-vento-nuovo

**Expected results**:

Pagination buttons show up above the horizontal rule.

**Actual results**:

Pagination buttons overlap the horizontal r... | 1.0 | Lobbyist list pagination overlaps HR - **Steps to reproduce**:

- Go to manoseimas.lt

- Click "Lobistai"

- Click on the name of a Lobbyist, e.g. http://www.manoseimas.lt/lobbyists/lobbyist/uab-vento-nuovo

**Expected results**:

Pagination buttons show up above the horizontal rule.

**Actual results**:

Pagin... | priority | lobbyist list pagination overlaps hr steps to reproduce go to manoseimas lt click lobistai click on the name of a lobbyist e g expected results pagination buttons show up above the horizontal rule actual results pagination buttons overlap the horizontal rule see image b... | 1 |

375,810 | 11,134,875,177 | IssuesEvent | 2019-12-20 13:01:00 | nanopb/nanopb | https://api.github.com/repos/nanopb/nanopb | closed | Checking nanopb for Windows into Git results in missing files since .gitignore in the zip ignores .pyc etc. | FixedInGit Priority-Low Type-Review | nanopb-windows-x86\generator-bin>protoc.exe --nanopb_out=source --proto_path=myfile.proto

*************************************************************

*** Could not import the Google protobuf Python libraries ***

*** Try installing package 'python-protobuf' or similar. ***

**... | 1.0 | Checking nanopb for Windows into Git results in missing files since .gitignore in the zip ignores .pyc etc. - nanopb-windows-x86\generator-bin>protoc.exe --nanopb_out=source --proto_path=myfile.proto

*************************************************************

*** Could not import the Google prot... | priority | checking nanopb for windows into git results in missing files since gitignore in the zip ignores pyc etc nanopb windows generator bin protoc exe nanopb out source proto path myfile proto could not import the google protob... | 1 |

520,500 | 15,087,081,443 | IssuesEvent | 2021-02-05 21:27:43 | vmware/clarity | https://api.github.com/repos/vmware/clarity | closed | The action pop-up in the single row action data-grid is not positioned correctly | @clr/angular priority: 1 low status: needs info type: enhancement | ## Describe the bug

The position of the action pop-up in single action data-grid is not calculated correctly when the height/width of the window changes.

As a result the pop-up is not aligned to the selected grid element.

## How to reproduce

https://stackblitz.com/edit/clarity-light-theme-v2-owaezn

In a m... | 1.0 | The action pop-up in the single row action data-grid is not positioned correctly - ## Describe the bug

The position of the action pop-up in single action data-grid is not calculated correctly when the height/width of the window changes.

As a result the pop-up is not aligned to the selected grid element.

## How ... | priority | the action pop up in the single row action data grid is not positioned correctly describe the bug the position of the action pop up in single action data grid is not calculated correctly when the height width of the window changes as a result the pop up is not aligned to the selected grid element how ... | 1 |

261,709 | 8,245,239,678 | IssuesEvent | 2018-09-11 09:07:12 | nlbdev/nordic-epub3-dtbook-migrator | https://api.github.com/repos/nlbdev/nordic-epub3-dtbook-migrator | closed | Reconsider whether rearnotes should have linear="no" | 0 - Low priority guidelines revision question | You may want to read the rearnotes when you're done reading its chapter. Using linear="no" would cause reading systems to skip that document unless you explicitly click a noteref that links to it or if you select it in the toc.

<!---

@huboard:{"order":0.0009765625}

-->

| 1.0 | Reconsider whether rearnotes should have linear="no" - You may want to read the rearnotes when you're done reading its chapter. Using linear="no" would cause reading systems to skip that document unless you explicitly click a noteref that links to it or if you select it in the toc.

<!---

@huboard:{"order":0.0009765625... | priority | reconsider whether rearnotes should have linear no you may want to read the rearnotes when you re done reading its chapter using linear no would cause reading systems to skip that document unless you explicitly click a noteref that links to it or if you select it in the toc huboard order | 1 |

35,055 | 2,789,775,138 | IssuesEvent | 2015-05-08 21:24:58 | google/google-visualization-api-issues | https://api.github.com/repos/google/google-visualization-api-issues | opened | Vertical label wrap for Line Charts | Priority-Low Type-Enhancement | Original [issue 91](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=91) created by orwant on 2009-10-16T14:32:44.000Z:

IT would be nice to have the option to wrap vertical labels on Line Charts

(and anywhere else wrapping might make sense). Often have just a few X-axis

points but the cli... | 1.0 | Vertical label wrap for Line Charts - Original [issue 91](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=91) created by orwant on 2009-10-16T14:32:44.000Z:

IT would be nice to have the option to wrap vertical labels on Line Charts

(and anywhere else wrapping might make sense). Often have ... | priority | vertical label wrap for line charts original created by orwant on it would be nice to have the option to wrap vertical labels on line charts and anywhere else wrapping might make sense often have just a few x axis points but the client wants non abbreviated descriptions even with them on ... | 1 |

85,900 | 3,700,080,409 | IssuesEvent | 2016-02-29 05:53:42 | olpeh/wht | https://api.github.com/repos/olpeh/wht | opened | More features when using the commandline arguments | Feature request low-priority | ## Todo

- [x] Starting

- [x] Stopping

- [ ] Possibility to select project

- [ ] Possibility to select task

- [ ] Possibility to set description

- [ ] Possibility to save using last entered specs

- [ ] Fix an issue that causes breakduration to become -1

- [ ] Allow only one instance of the app to run | 1.0 | More features when using the commandline arguments - ## Todo

- [x] Starting

- [x] Stopping

- [ ] Possibility to select project

- [ ] Possibility to select task

- [ ] Possibility to set description

- [ ] Possibility to save using last entered specs

- [ ] Fix an issue that causes breakduration to become -1

- [ ] ... | priority | more features when using the commandline arguments todo starting stopping possibility to select project possibility to select task possibility to set description possibility to save using last entered specs fix an issue that causes breakduration to become allow only one i... | 1 |

258,822 | 8,179,968,504 | IssuesEvent | 2018-08-28 17:59:40 | kjohnsen/MMAPPR2 | https://api.github.com/repos/kjohnsen/MMAPPR2 | closed | Auto-index BAM files | complexity-low enhancement priority-medium | This already works somewhat, but only at the time the param is created. If an already-created param references a BAM file without an index, for example, MMAPPR will crash. | 1.0 | Auto-index BAM files - This already works somewhat, but only at the time the param is created. If an already-created param references a BAM file without an index, for example, MMAPPR will crash. | priority | auto index bam files this already works somewhat but only at the time the param is created if an already created param references a bam file without an index for example mmappr will crash | 1 |

301,508 | 9,221,094,452 | IssuesEvent | 2019-03-11 19:05:36 | ME-ICA/tedana | https://api.github.com/repos/ME-ICA/tedana | closed | PCA on wavelet-transformed data is failing | bug low-priority | Performing PCA on the wavelet-transformed data (i.e., using the `wvpca` flag) is currently raising an error. It's possible that the shape checks I added for the inputs to various functions are too strict, in which case we will need to adjust those and improve the parameter documentation for affect functions.

Given t... | 1.0 | PCA on wavelet-transformed data is failing - Performing PCA on the wavelet-transformed data (i.e., using the `wvpca` flag) is currently raising an error. It's possible that the shape checks I added for the inputs to various functions are too strict, in which case we will need to adjust those and improve the parameter d... | priority | pca on wavelet transformed data is failing performing pca on the wavelet transformed data i e using the wvpca flag is currently raising an error it s possible that the shape checks i added for the inputs to various functions are too strict in which case we will need to adjust those and improve the parameter d... | 1 |

264,220 | 8,306,616,100 | IssuesEvent | 2018-09-22 20:46:54 | electerious/Lychee | https://api.github.com/repos/electerious/Lychee | closed | Only previeus button available when 'Photo Info' is opened. | enhancement help wanted low priority | Only the button to navigate to the previous image is available when the 'Photo Info' sidebar is opened.

| 1.0 | Only previeus button available when 'Photo Info' is opened. - Only the button to navigate to the previous image is available when the 'Photo Info' sidebar is opened.

| priority | only previeus button available when photo info is opened only the button to navigate to the previous image is available when the photo info sidebar is opened | 1 |

104,165 | 4,197,367,788 | IssuesEvent | 2016-06-27 01:19:06 | johnthagen/stardust-rpg | https://api.github.com/repos/johnthagen/stardust-rpg | opened | Fix cyclomatic complexity issues | priority/low refactoring | Fix cyclomatic complexity issues

Several functions have over 10 cyclomatic complexity. Should be cleaned up if possible. | 1.0 | Fix cyclomatic complexity issues - Fix cyclomatic complexity issues

Several functions have over 10 cyclomatic complexity. Should be cleaned up if possible. | priority | fix cyclomatic complexity issues fix cyclomatic complexity issues several functions have over cyclomatic complexity should be cleaned up if possible | 1 |

488,842 | 14,087,343,338 | IssuesEvent | 2020-11-05 06:12:22 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | When sync-log=false, fsync raftdb before kvdb persists data | component/rocksdb difficulty/medium priority/low severity/Minor sig/engine status/discussion type/bug | There are value in using sync-log=false when small data loss on edge case can be tolerate, and bare metal performance is desired. However, current a TiKV node may not be able to recovery from power off, if kvdb have newer data than raftdb. This can be fixed by fsync raftdb before every time kvdb persists it's data (e.g... | 1.0 | When sync-log=false, fsync raftdb before kvdb persists data - There are value in using sync-log=false when small data loss on edge case can be tolerate, and bare metal performance is desired. However, current a TiKV node may not be able to recovery from power off, if kvdb have newer data than raftdb. This can be fixed ... | priority | when sync log false fsync raftdb before kvdb persists data there are value in using sync log false when small data loss on edge case can be tolerate and bare metal performance is desired however current a tikv node may not be able to recovery from power off if kvdb have newer data than raftdb this can be fixed ... | 1 |

457,811 | 13,162,737,589 | IssuesEvent | 2020-08-10 22:18:45 | osrf/romi-dashboard | https://api.github.com/repos/osrf/romi-dashboard | opened | Migrate from ExpansionPanel to Accordions | low priority | ## pre-requisite

Upgrade material-UI #76

Accordions: https://material-ui.com/components/accordion/

| 1.0 | Migrate from ExpansionPanel to Accordions - ## pre-requisite

Upgrade material-UI #76

Accordions: https://material-ui.com/components/accordion/

| priority | migrate from expansionpanel to accordions pre requisite upgrade material ui accordions | 1 |

170,813 | 6,472,150,012 | IssuesEvent | 2017-08-17 13:24:22 | arquillian/smart-testing | https://api.github.com/repos/arquillian/smart-testing | closed | Git properties | Component: Core Priority: Low Type: Chore Type: Feature | We should rename git properties to make them easier to understand. Having `git.commit` and `git.previous.commit` does not clearly explain their purpose nor it's easy to guess.

I would suggest following changes:

* `git.commit` -> `scm.range.head`

* `git.previous.commit` -> `scm.range.tail`

* `git.last.com... | 1.0 | Git properties - We should rename git properties to make them easier to understand. Having `git.commit` and `git.previous.commit` does not clearly explain their purpose nor it's easy to guess.

I would suggest following changes:

* `git.commit` -> `scm.range.head`

* `git.previous.commit` -> `scm.range.tail`

... | priority | git properties we should rename git properties to make them easier to understand having git commit and git previous commit does not clearly explain their purpose nor it s easy to guess i would suggest following changes git commit scm range head git previous commit scm range tail ... | 1 |

188,716 | 6,781,478,796 | IssuesEvent | 2017-10-30 01:11:03 | StuPro-TOSCAna/TOSCAna | https://api.github.com/repos/StuPro-TOSCAna/TOSCAna | opened | Exclude resources folders from Codacy | enhancement low priority | Codacy should not look at artifacts residing in our resources dirs. | 1.0 | Exclude resources folders from Codacy - Codacy should not look at artifacts residing in our resources dirs. | priority | exclude resources folders from codacy codacy should not look at artifacts residing in our resources dirs | 1 |

490,038 | 14,114,923,709 | IssuesEvent | 2020-11-07 18:16:41 | Sphereserver/Source-X | https://api.github.com/repos/Sphereserver/Source-X | closed | Item stacking ini setting glitch the dupelist | Priority: Low Status-Bug: Confirmed | When activating this option:

`// EF_ItemStacking 00000004 // Enable item stacking feature when drop items on ground`

Item stack when we drop them on the ground. But when this option is activate. the... | 1.0 | Item stacking ini setting glitch the dupelist - When activating this option:

`// EF_ItemStacking 00000004 // Enable item stacking feature when drop items on ground`

Item stack when we drop them on t... | priority | item stacking ini setting glitch the dupelist when activating this option ef itemstacking enable item stacking feature when drop items on ground item stack when we drop them on the ground but when this option is activate there no way to change the dupelist on an item for exemple i dagger... | 1 |

722,432 | 24,861,853,299 | IssuesEvent | 2022-10-27 08:53:40 | input-output-hk/cardano-node | https://api.github.com/repos/input-output-hk/cardano-node | closed | [FR] - build cmd to check whether read-only reference input is in ledger state | enhancement priority low Vasil type: enhancement user type: internal comp: cardano-cli era: babbage | **Internal/External**

*Internal* if an IOHK staff member.

**Area**

*Other* Any other topic (Delegation, Ranking, ...).

**Describe the feature you'd like**

When using `build` cmd's `--read-only-tx-in-reference` with a utxo that is not in the ledger state there is no error until `submit` when you see somethi... | 1.0 | [FR] - build cmd to check whether read-only reference input is in ledger state - **Internal/External**

*Internal* if an IOHK staff member.

**Area**

*Other* Any other topic (Delegation, Ranking, ...).

**Describe the feature you'd like**

When using `build` cmd's `--read-only-tx-in-reference` with a utxo that... | priority | build cmd to check whether read only reference input is in ledger state internal external internal if an iohk staff member area other any other topic delegation ranking describe the feature you d like when using build cmd s read only tx in reference with a utxo that is... | 1 |

458,404 | 13,174,672,593 | IssuesEvent | 2020-08-11 23:09:00 | shimming-toolbox/shimming-toolbox-py | https://api.github.com/repos/shimming-toolbox/shimming-toolbox-py | opened | Improve masking capabilities | Priority: LOW enhancement | ## Context

The initial issue #47 which was partially fixed by PR #60 added some basic capabilities to mask some data (threshold, shape: square, cube). More masking capability could be implemented to segment the brain and spinal cord as well as other shapes.

Algorithms that could help

- SCT: for spinal cord + simpl... | 1.0 | Improve masking capabilities - ## Context

The initial issue #47 which was partially fixed by PR #60 added some basic capabilities to mask some data (threshold, shape: square, cube). More masking capability could be implemented to segment the brain and spinal cord as well as other shapes.

Algorithms that could help

... | priority | improve masking capabilities context the initial issue which was partially fixed by pr added some basic capabilities to mask some data threshold shape square cube more masking capability could be implemented to segment the brain and spinal cord as well as other shapes algorithms that could help ... | 1 |

31,719 | 2,736,540,011 | IssuesEvent | 2015-04-19 14:55:47 | cs2103jan2015-t16-3c/Main | https://api.github.com/repos/cs2103jan2015-t16-3c/Main | closed | A user can group the to-do list according to day, week and month | priority.low | so that the user can see his schedule easier | 1.0 | A user can group the to-do list according to day, week and month - so that the user can see his schedule easier | priority | a user can group the to do list according to day week and month so that the user can see his schedule easier | 1 |

593,885 | 18,019,373,056 | IssuesEvent | 2021-09-16 17:22:08 | Azure/autorest.java | https://api.github.com/repos/Azure/autorest.java | closed | LRO implementation for protocol methods | priority-0 v4 low-level-client | This task tracks the changes needed in autorest to generate LRO protocol methods. This task depends on #1044 | 1.0 | LRO implementation for protocol methods - This task tracks the changes needed in autorest to generate LRO protocol methods. This task depends on #1044 | priority | lro implementation for protocol methods this task tracks the changes needed in autorest to generate lro protocol methods this task depends on | 1 |

727,474 | 25,036,566,715 | IssuesEvent | 2022-11-04 16:30:39 | tempesta-tech/tempesta | https://api.github.com/repos/tempesta-tech/tempesta | opened | Limit the size of loaded certificates | low priority TLS good to start | # Motivation

With large certificate chains we might exceed the 16KB TLS record limit and need to fragment Certificate message, what we're unable to do for now. See https://techcommunity.microsoft.com/t5/ask-the-directory-services-team/ssl-tls-record-fragmentation-support/ba-p/395743

# Scope

`tfw_tls_set_cert()... | 1.0 | Limit the size of loaded certificates - # Motivation

With large certificate chains we might exceed the 16KB TLS record limit and need to fragment Certificate message, what we're unable to do for now. See https://techcommunity.microsoft.com/t5/ask-the-directory-services-team/ssl-tls-record-fragmentation-support/ba-p/... | priority | limit the size of loaded certificates motivation with large certificate chains we might exceed the tls record limit and need to fragment certificate message what we re unable to do for now see scope tfw tls set cert should verify the file size and limit it by overhead to make sure that th... | 1 |

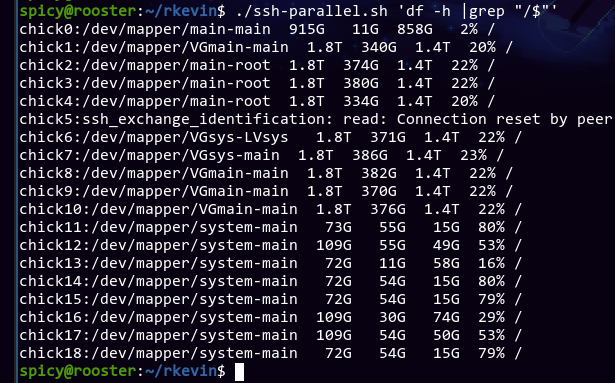

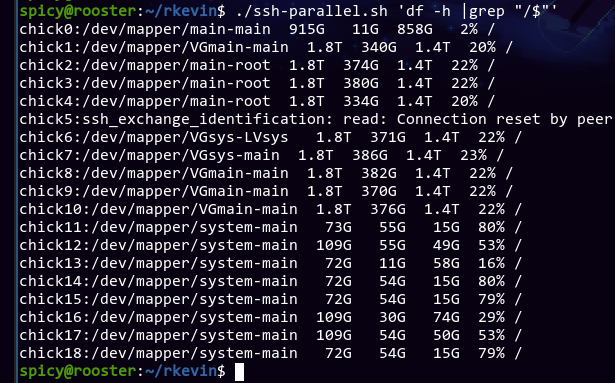

456,186 | 13,146,546,867 | IssuesEvent | 2020-08-08 10:32:25 | LibreTexts/metalc | https://api.github.com/repos/LibreTexts/metalc | closed | Chicks 11~18 have low disk storage | flock cluster low priority |

Not an issue for now, but pods scheduled on those nodes might get some out of disk space issues when pulling images. | 1.0 | Chicks 11~18 have low disk storage -

Not an issue for now, but pods scheduled on those nodes might get some out of disk space issues when pulling images. | priority | chicks have low disk storage not an issue for now but pods scheduled on those nodes might get some out of disk space issues when pulling images | 1 |

176,403 | 6,559,107,027 | IssuesEvent | 2017-09-07 01:33:32 | copperhead/bugtracker | https://api.github.com/repos/copperhead/bugtracker | closed | give system_server different ASLR bases than the zygote | enhancement priority-low | It breaks if preloading isn't used, which is what is blocking this from being done. This is unimportant on CopperheadOS since _everything else_ is spawned from the Zygote via `exec`, but it would be nice to cover this too just in case something was missed. This subset is also something that could be upstreamed, as spaw... | 1.0 | give system_server different ASLR bases than the zygote - It breaks if preloading isn't used, which is what is blocking this from being done. This is unimportant on CopperheadOS since _everything else_ is spawned from the Zygote via `exec`, but it would be nice to cover this too just in case something was missed. This ... | priority | give system server different aslr bases than the zygote it breaks if preloading isn t used which is what is blocking this from being done this is unimportant on copperheados since everything else is spawned from the zygote via exec but it would be nice to cover this too just in case something was missed this ... | 1 |

783,283 | 27,525,385,143 | IssuesEvent | 2023-03-06 17:39:41 | strategitica/strategitica | https://api.github.com/repos/strategitica/strategitica | opened | Daily time averages for balancing workload | low priority | I want to be able to see the average duration of all tasks for each day of the week so I can reschedule tasks to make things more balanced. I'd add a link in the menu called, idk, "Stats" or "Averages" or something, and it'd open a modal with the averages and maybe a table showing the total tasks duration for each day ... | 1.0 | Daily time averages for balancing workload - I want to be able to see the average duration of all tasks for each day of the week so I can reschedule tasks to make things more balanced. I'd add a link in the menu called, idk, "Stats" or "Averages" or something, and it'd open a modal with the averages and maybe a table s... | priority | daily time averages for balancing workload i want to be able to see the average duration of all tasks for each day of the week so i can reschedule tasks to make things more balanced i d add a link in the menu called idk stats or averages or something and it d open a modal with the averages and maybe a table s... | 1 |

577,748 | 17,117,969,530 | IssuesEvent | 2021-07-11 18:58:06 | momentum-mod/game | https://api.github.com/repos/momentum-mod/game | closed | Check Triggers Touch Logic | Outcome: Resolved Priority: Low Size: Medium Type: Enhancement Where: Game | Check if triggers are properly checking if the activator is passing trigger filters. `OnStartTouch` overrides automatically guarantee this, but things like `Touch`, or even `StartTouch` need to manually check filters in the function. Consider converting `StartTouch` to be `OnStartTouch` to simplify the filter checks as... | 1.0 | Check Triggers Touch Logic - Check if triggers are properly checking if the activator is passing trigger filters. `OnStartTouch` overrides automatically guarantee this, but things like `Touch`, or even `StartTouch` need to manually check filters in the function. Consider converting `StartTouch` to be `OnStartTouch` to ... | priority | check triggers touch logic check if triggers are properly checking if the activator is passing trigger filters onstarttouch overrides automatically guarantee this but things like touch or even starttouch need to manually check filters in the function consider converting starttouch to be onstarttouch to ... | 1 |

460,947 | 13,221,193,557 | IssuesEvent | 2020-08-17 13:41:02 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | samples/subsys/canbus/isotp/sample.subsys.canbus.isotp fails on FRDM-K64F | area: CAN bug platform: NXP priority: low | When trying to run this sample on a FRDM-K64F I get:

```

*** Booting Zephyr OS build zephyr-v2.3.0-667-g766bb84a95e2 ***

Start sending data

TX complete cb [-2]

Error while sending data to ID 384 [-2]

Receiving error [-14]

Got 0 bytes in total

Receiving erreor [-14]

[00:00:02.007,000] <err> isotp: Reception ... | 1.0 | samples/subsys/canbus/isotp/sample.subsys.canbus.isotp fails on FRDM-K64F - When trying to run this sample on a FRDM-K64F I get:

```

*** Booting Zephyr OS build zephyr-v2.3.0-667-g766bb84a95e2 ***

Start sending data

TX complete cb [-2]

Error while sending data to ID 384 [-2]

Receiving error [-14]

Got 0 bytes ... | priority | samples subsys canbus isotp sample subsys canbus isotp fails on frdm when trying to run this sample on a frdm i get booting zephyr os build zephyr start sending data tx complete cb error while sending data to id receiving error got bytes in total receiving erreor ... | 1 |

782,568 | 27,500,113,343 | IssuesEvent | 2023-03-05 15:51:18 | concretecms/concretecms | https://api.github.com/repos/concretecms/concretecms | closed | [v9] bug: Datetime picker widget in Dashboard has no Next/Prev icons and not loading in frontend | Type:Bug Status:Proposal Status:Blocked Bug Priority:Low | 1. Datetime picker widget in Dashboard has no Next/Prev icons. The mouse and tooltip are not captured by the screen capture program, but you can see there are now next/prev icons on hover:

created by orwant on 2010-05-27T10:21:46.000Z:

<b>What would you like to see us add to this API?</b>

To be able to set the width of the columns in a column chart, and to set

the width of the legend (which is cut off... | 1.0 | Set the column width and legend width - Original [issue 295](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=295) created by orwant on 2010-05-27T10:21:46.000Z:

<b>What would you like to see us add to this API?</b>

To be able to set the width of the columns in a column chart, and to set

t... | priority | set the column width and legend width original created by orwant on what would you like to see us add to this api to be able to set the width of the columns in a column chart and to set the width of the legend which is cut off if the chart widht is not long what component is this issue... | 1 |

431,994 | 12,487,434,939 | IssuesEvent | 2020-05-31 09:03:58 | parzh/xrange | https://api.github.com/repos/parzh/xrange | opened | Remove all deprecated entities and functionality | Change: major Domain: main Priority: low Type: improvement | Remove all deprecated entities and functionality | 1.0 | Remove all deprecated entities and functionality - Remove all deprecated entities and functionality | priority | remove all deprecated entities and functionality remove all deprecated entities and functionality | 1 |

334,728 | 10,144,492,535 | IssuesEvent | 2019-08-04 21:26:13 | labsquare/cutevariant | https://api.github.com/repos/labsquare/cutevariant | closed | ColumnWidget as a list | low-priority question | Actually, columnWidget display columns as a Tree within 3 categories ( variants, annotations, samples).

I propose to have a simple list with 2 columns ( field name, field description) , category as icon and a search bar to perform search on boith 2 columns.

Something like this :

.

I propose to have a simple list with 2 columns ( field name, field description) , category as icon and a search bar to perform search on boith 2 columns.

Something like this :

1. Collapse one or more of the subCategory groups

1. Collapse the category Group

1. Expand Category

### Current behavior

The aggregate rows are missing and the locked and unlocked content is misaligned

### Expected/desire... | 1.0 | Rows are misaligned with multi-level grouping and locked columns - ### Reproduction of the problem

1. Run [this dojo](https://dojo.telerik.com/IDEDUCIq)

1. Collapse one or more of the subCategory groups

1. Collapse the category Group

1. Expand Category

### Current behavior

The aggregate rows are missing and t... | priority | rows are misaligned with multi level grouping and locked columns reproduction of the problem run collapse one or more of the subcategory groups collapse the category group expand category current behavior the aggregate rows are missing and the locked and unlocked content is misaligne... | 1 |

460,973 | 13,221,558,234 | IssuesEvent | 2020-08-17 14:14:08 | tempesta-tech/tempesta | https://api.github.com/repos/tempesta-tech/tempesta | closed | KASAN: Errors on starting Tempesta | bug invalid low priority | It's hard to say, how much this issue is really apply to Tempesta, I've tried to run kernel with slightly different configs, where KASAN was enabled, but I couldn't start Tempesta, when the KASAN is enabled. In the same time other teammates has no issues with running KASAN-enabled kernels and starting Tempesta on them.... | 1.0 | KASAN: Errors on starting Tempesta - It's hard to say, how much this issue is really apply to Tempesta, I've tried to run kernel with slightly different configs, where KASAN was enabled, but I couldn't start Tempesta, when the KASAN is enabled. In the same time other teammates has no issues with running KASAN-enabled k... | priority | kasan errors on starting tempesta it s hard to say how much this issue is really apply to tempesta i ve tried to run kernel with slightly different configs where kasan was enabled but i couldn t start tempesta when the kasan is enabled in the same time other teammates has no issues with running kasan enabled k... | 1 |

798,699 | 28,292,689,071 | IssuesEvent | 2023-04-09 12:15:00 | bounswe/bounswe2023group8 | https://api.github.com/repos/bounswe/bounswe2023group8 | closed | Add Project Requirements to Milestone 1 Report Page | status: to-do priority: high effort: low | Milestone 1 report page has already been created. There is a "Software Requirement Specification" section. Take the project requirements from "Requirements" page and add them to corresponding section. | 1.0 | Add Project Requirements to Milestone 1 Report Page - Milestone 1 report page has already been created. There is a "Software Requirement Specification" section. Take the project requirements from "Requirements" page and add them to corresponding section. | priority | add project requirements to milestone report page milestone report page has already been created there is a software requirement specification section take the project requirements from requirements page and add them to corresponding section | 1 |

401,597 | 11,795,200,749 | IssuesEvent | 2020-03-18 08:28:26 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | Improve messages for exam/summary upload | education priority: low technical change | In GitLab by @se-bastiaan on Jan 14, 2020, 11:39

### One-sentence description

Improve messages for exam/summary upload

### Why?

They're unclear?. It does not say that the exam will be added to the approval queue. And that causes confusion because people often upload the document again.

Edit: I did not get any mes... | 1.0 | Improve messages for exam/summary upload - In GitLab by @se-bastiaan on Jan 14, 2020, 11:39

### One-sentence description

Improve messages for exam/summary upload

### Why?

They're unclear?. It does not say that the exam will be added to the approval queue. And that causes confusion because people often upload the d... | priority | improve messages for exam summary upload in gitlab by se bastiaan on jan one sentence description improve messages for exam summary upload why they re unclear it does not say that the exam will be added to the approval queue and that causes confusion because people often upload the documen... | 1 |

299,427 | 9,205,486,705 | IssuesEvent | 2019-03-08 10:42:47 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | PostGIS Add Layer does not support non-TCP/IP connections | Category: Data Provider Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **Frank Warmerdam -** (Frank Warmerdam -)

Original Redmine Issue: 817, https://issues.qgis.org/issues/817

Original Assignee: nobody -

---

There is no mechanism to access a local postgres server using named pipes instead of tcp/ip sockets.

In PQconnectdb() all that is needed to support this case i... | 1.0 | PostGIS Add Layer does not support non-TCP/IP connections - ---

Author Name: **Frank Warmerdam -** (Frank Warmerdam -)

Original Redmine Issue: 817, https://issues.qgis.org/issues/817

Original Assignee: nobody -

---

There is no mechanism to access a local postgres server using named pipes instead of tcp/ip sockets.... | priority | postgis add layer does not support non tcp ip connections author name frank warmerdam frank warmerdam original redmine issue original assignee nobody there is no mechanism to access a local postgres server using named pipes instead of tcp ip sockets in pqconnectdb all that is need... | 1 |

581,676 | 17,314,826,360 | IssuesEvent | 2021-07-27 03:39:54 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | desktop anki can't import ankidroid apkg backup files due to missing media file | Bug Help Wanted Priority-Low Reproduced Stale | ```

Import failed.

Traceback (most recent call last):

File "C:\cygwin\home\dae\win\build\pyi.win32\anki\outPYZ1.pyz/aqt.importing", line 327, in importFile

File "C:\cygwin\home\dae\win\build\pyi.win32\anki\outPYZ1.pyz/anki.importing.apkg", line 22, in run

File "C:\cygwin\home\dae\win\build\pyi.win32\anki\outPYZ1.... | 1.0 | desktop anki can't import ankidroid apkg backup files due to missing media file - ```

Import failed.

Traceback (most recent call last):

File "C:\cygwin\home\dae\win\build\pyi.win32\anki\outPYZ1.pyz/aqt.importing", line 327, in importFile

File "C:\cygwin\home\dae\win\build\pyi.win32\anki\outPYZ1.pyz/anki.importing.a... | priority | desktop anki can t import ankidroid apkg backup files due to missing media file import failed traceback most recent call last file c cygwin home dae win build pyi anki pyz aqt importing line in importfile file c cygwin home dae win build pyi anki pyz anki importing apkg line in run ... | 1 |

646,278 | 21,043,037,059 | IssuesEvent | 2022-03-31 13:53:18 | thesaurus-linguae-aegyptiae/tla-web | https://api.github.com/repos/thesaurus-linguae-aegyptiae/tla-web | closed | Belegstellenseite: case-Permuationen als solche markieren | feature request low priority | **Hintergrund**

Einzelne Sätze eines Texts existieren ggf. mehrfach, in einem Set von möglichen Permuationen von Lesevarianten (dazu https://github.com/thesaurus-linguae-aegyptiae/tla-datentransformation/issues/43).

Diese sind daran erkennbar, dass hinter der eigentlichen Satz-ID ein Index "-00", "-01", ... steht,... | 1.0 | Belegstellenseite: case-Permuationen als solche markieren - **Hintergrund**

Einzelne Sätze eines Texts existieren ggf. mehrfach, in einem Set von möglichen Permuationen von Lesevarianten (dazu https://github.com/thesaurus-linguae-aegyptiae/tla-datentransformation/issues/43).

Diese sind daran erkennbar, dass hinter... | priority | belegstellenseite case permuationen als solche markieren hintergrund einzelne sätze eines texts existieren ggf mehrfach in einem set von möglichen permuationen von lesevarianten dazu diese sind daran erkennbar dass hinter der eigentlichen satz id ein index steht z b ... | 1 |

343,446 | 10,330,512,015 | IssuesEvent | 2019-09-02 14:51:26 | MajorCooke/Doom4Doom | https://api.github.com/repos/MajorCooke/Doom4Doom | opened | 5:4 Hud Clipping | Low Priority bug | 5:4 resolutions have some hud clipping issues that will eventually need sorting out.

Low priority at the moment. | 1.0 | 5:4 Hud Clipping - 5:4 resolutions have some hud clipping issues that will eventually need sorting out.

Low priority at the moment. | priority | hud clipping resolutions have some hud clipping issues that will eventually need sorting out low priority at the moment | 1 |

149,109 | 5,711,677,293 | IssuesEvent | 2017-04-19 00:05:01 | playasoft/laravel-voldb | https://api.github.com/repos/playasoft/laravel-voldb | opened | Allow username or email when logging in | enhancement priority: low | Related issue: https://github.com/playasoft/weightlifter/issues/78

Many users in the Art Grant Database project seem to forget their username after registering, so we should allow users to sign in by using either their username or their email address to prevent confusion. | 1.0 | Allow username or email when logging in - Related issue: https://github.com/playasoft/weightlifter/issues/78

Many users in the Art Grant Database project seem to forget their username after registering, so we should allow users to sign in by using either their username or their email address to prevent confusion. | priority | allow username or email when logging in related issue many users in the art grant database project seem to forget their username after registering so we should allow users to sign in by using either their username or their email address to prevent confusion | 1 |

655,328 | 21,685,844,550 | IssuesEvent | 2022-05-09 11:11:41 | canonical-web-and-design/charmed-osm.com | https://api.github.com/repos/canonical-web-and-design/charmed-osm.com | closed | Contact modal should be NPM module | Priority: Low | The `dynamic-contact-form.js` is a file for the contact pop up modal used in multiple repos - it should be made into an NPM module which can be installed. | 1.0 | Contact modal should be NPM module - The `dynamic-contact-form.js` is a file for the contact pop up modal used in multiple repos - it should be made into an NPM module which can be installed. | priority | contact modal should be npm module the dynamic contact form js is a file for the contact pop up modal used in multiple repos it should be made into an npm module which can be installed | 1 |

745,722 | 25,997,681,560 | IssuesEvent | 2022-12-20 12:58:52 | pendulum-chain/spacewalk | https://api.github.com/repos/pendulum-chain/spacewalk | opened | Add `clippy --fix` to pre-commit script | priority:low | It might be a good idea to add a `clippy --fix` command to the existing pre-commit script. It can automatically apply some best practices to the code, which the CI is likely to complain about anyways. | 1.0 | Add `clippy --fix` to pre-commit script - It might be a good idea to add a `clippy --fix` command to the existing pre-commit script. It can automatically apply some best practices to the code, which the CI is likely to complain about anyways. | priority | add clippy fix to pre commit script it might be a good idea to add a clippy fix command to the existing pre commit script it can automatically apply some best practices to the code which the ci is likely to complain about anyways | 1 |

4,947 | 2,566,459,924 | IssuesEvent | 2015-02-08 15:34:17 | cs2103jan2015-t13-2c/main | https://api.github.com/repos/cs2103jan2015-t13-2c/main | opened | As a user I can categorise my tasks (work, family, etc.) | priority.low | so that I can view tasks of the same type together. | 1.0 | As a user I can categorise my tasks (work, family, etc.) - so that I can view tasks of the same type together. | priority | as a user i can categorise my tasks work family etc so that i can view tasks of the same type together | 1 |

123,250 | 4,859,278,536 | IssuesEvent | 2016-11-13 15:34:40 | choderalab/yank | https://api.github.com/repos/choderalab/yank | opened | SMILES with uncertain stereochemistry | enhancement Priority low | Our SMILES-based setup pipeline will fail if the stereochemistry is not specified for molecules with chiral atoms or bonds. We should probably either:

* Require users specify molecules with certain stereochemistry and issue a failure quickly with a clear error message about how to correct this. We can use the [OpenEye... | 1.0 | SMILES with uncertain stereochemistry - Our SMILES-based setup pipeline will fail if the stereochemistry is not specified for molecules with chiral atoms or bonds. We should probably either:

* Require users specify molecules with certain stereochemistry and issue a failure quickly with a clear error message about how ... | priority | smiles with uncertain stereochemistry our smiles based setup pipeline will fail if the stereochemistry is not specified for molecules with chiral atoms or bonds we should probably either require users specify molecules with certain stereochemistry and issue a failure quickly with a clear error message about how ... | 1 |

290,248 | 8,883,652,465 | IssuesEvent | 2019-01-14 16:10:25 | vuejs/rollup-plugin-vue | https://api.github.com/repos/vuejs/rollup-plugin-vue | closed | Add documentation for vue-template-compiler options | Priority: Low Status: Available Type: Maintenance | I used the compileOptions -> modules,

I basically copied it from vue-loader.

Do you maybe want to add it to the documentation?

| 1.0 | Add documentation for vue-template-compiler options - I used the compileOptions -> modules,

I basically copied it from vue-loader.

Do you maybe want to add it to the documentation?

| priority | add documentation for vue template compiler options i used the compileoptions modules i basically copied it from vue loader do you maybe want to add it to the documentation | 1 |

413,289 | 12,064,499,749 | IssuesEvent | 2020-04-16 08:23:50 | minetest/minetest | https://api.github.com/repos/minetest/minetest | closed | testStreamRead and testBufReader failures | Bug Low priority | Is this a real bug or just because the values were set to 53.53467f and tested as 53.534f?

```

Test assertion failed: readF1000(is) == 53.534f

at test_serialization.cpp:305

[FAIL] testStreamRead - 0ms

Test assertion failed: buf.getF1000() == 53.534f

at test_serialization.cpp:472

[FAIL] testBufReader - 0ms

```... | 1.0 | testStreamRead and testBufReader failures - Is this a real bug or just because the values were set to 53.53467f and tested as 53.534f?

```

Test assertion failed: readF1000(is) == 53.534f

at test_serialization.cpp:305

[FAIL] testStreamRead - 0ms

Test assertion failed: buf.getF1000() == 53.534f

at test_serializa... | priority | teststreamread and testbufreader failures is this a real bug or just because the values were set to and tested as test assertion failed is at test serialization cpp teststreamread test assertion failed buf at test serialization cpp testbufreader minet... | 1 |

243,491 | 7,858,503,868 | IssuesEvent | 2018-06-21 14:06:19 | alinaciuysal/OEDA | https://api.github.com/repos/alinaciuysal/OEDA | closed | Incoming data type selection using dropdown list | low priority | A small bug exists in successful & running experiment pages. Dropdown lists in these pages do not reflect the change in the incoming data type at initialization. However, plots are generated successfully.

it's related with [(ngValue)] and [selected] attributes of Angular:

[see](https://stackoverflow.com/questions/4... | 1.0 | Incoming data type selection using dropdown list - A small bug exists in successful & running experiment pages. Dropdown lists in these pages do not reflect the change in the incoming data type at initialization. However, plots are generated successfully.

it's related with [(ngValue)] and [selected] attributes of An... | priority | incoming data type selection using dropdown list a small bug exists in successful running experiment pages dropdown lists in these pages do not reflect the change in the incoming data type at initialization however plots are generated successfully it s related with and attributes of angular | 1 |

655,149 | 21,678,611,860 | IssuesEvent | 2022-05-09 02:20:07 | darwinia-network/apps | https://api.github.com/repos/darwinia-network/apps | closed | 切换网络后,Staking里当前的Stash账户地址和Controller地址,未自动更新成对应地址格式 | low-priority | <img width="1463" alt="image" src="https://user-images.githubusercontent.com/102211220/165881193-d3e50794-ba0c-4747-ab1f-6a19a3acd90c.png">

<img width="1468" alt="image" src="https://user-images.githubusercontent.com/102211220/165881266-d5cc3321-efb7-4bbe-bd01-575d7cade615.png">

| 1.0 | 切换网络后,Staking里当前的Stash账户地址和Controller地址,未自动更新成对应地址格式 - <img width="1463" alt="image" src="https://user-images.githubusercontent.com/102211220/165881193-d3e50794-ba0c-4747-ab1f-6a19a3acd90c.png">

<img width="1468" alt="image" src="https://user-images.githubusercontent.com/102211220/165881266-d5cc3321-efb7-4bbe-bd01-575... | priority | 切换网络后,staking里当前的stash账户地址和controller地址,未自动更新成对应地址格式 img width alt image src img width alt image src | 1 |

737,273 | 25,509,285,609 | IssuesEvent | 2022-11-28 11:54:10 | nanoframework/Home | https://api.github.com/repos/nanoframework/Home | closed | Failure to flash nanoframework onto ESP32 Lilygo | Type: Feature request Area: Tools Priority: Low | ### Tool

nanoff

### Description

Trying to update ESP32 Lilygo module with nanoframework version 1.8.0.581 using nanoff version nanoff 2.4.2+c4df2f6716.

Flashing device is successful but looking at ESP32 on TeraTerm shows a continual reboot cycle with the following debug output.

```

rst:0x7 (TG0WDT_SYS_RESET),b... | 1.0 | Failure to flash nanoframework onto ESP32 Lilygo - ### Tool

nanoff

### Description

Trying to update ESP32 Lilygo module with nanoframework version 1.8.0.581 using nanoff version nanoff 2.4.2+c4df2f6716.

Flashing device is successful but looking at ESP32 on TeraTerm shows a continual reboot cycle with the following... | priority | failure to flash nanoframework onto lilygo tool nanoff description trying to update lilygo module with nanoframework version using nanoff version nanoff flashing device is successful but looking at on teraterm shows a continual reboot cycle with the following debug output ... | 1 |

349,653 | 10,471,565,185 | IssuesEvent | 2019-09-23 08:11:03 | garden-io/garden | https://api.github.com/repos/garden-io/garden | reopened | Hot reload tasks wait for last batch of tasks to complete before running. | bug enhancement priority:low | ## Bug

Because the `TaskGraph` currently sequences `processTasks` calls (and thus implicitly batches the tasks that were in the graph at the time of that `processTasks` call), `HotReloadTask`s that are added while a `processTasks` batch is in progress aren't run until that batch is completed.

This isn't really a ... | 1.0 | Hot reload tasks wait for last batch of tasks to complete before running. - ## Bug

Because the `TaskGraph` currently sequences `processTasks` calls (and thus implicitly batches the tasks that were in the graph at the time of that `processTasks` call), `HotReloadTask`s that are added while a `processTasks` batch is i... | priority | hot reload tasks wait for last batch of tasks to complete before running bug because the taskgraph currently sequences processtasks calls and thus implicitly batches the tasks that were in the graph at the time of that processtasks call hotreloadtask s that are added while a processtasks batch is i... | 1 |

405,776 | 11,882,538,968 | IssuesEvent | 2020-03-27 14:33:42 | ntop/ntopng | https://api.github.com/repos/ntop/ntopng | closed | Unexpected Shutdown & List 'name' has 0 rules | in progress low-priority bug | Feb 28 00:00:05 ntop ntopng[1722]: 28/Feb/2020 00:00:05 [MySQLDB.cpp:824] Attempting to connect to MySQL for interface tcp://127.0.0.1:5556...

Feb 28 00:00:05 ntop ntopng[1722]: 28/Feb/2020 00:00:05 [MySQLDB.cpp:850] Successfully connected to MySQL [root@localhost:3306] for interface tcp://127.0.0.1:5556

Feb 28 00:00... | 1.0 | Unexpected Shutdown & List 'name' has 0 rules - Feb 28 00:00:05 ntop ntopng[1722]: 28/Feb/2020 00:00:05 [MySQLDB.cpp:824] Attempting to connect to MySQL for interface tcp://127.0.0.1:5556...

Feb 28 00:00:05 ntop ntopng[1722]: 28/Feb/2020 00:00:05 [MySQLDB.cpp:850] Successfully connected to MySQL [root@localhost:3306] ... | priority | unexpected shutdown list name has rules feb ntop ntopng feb attempting to connect to mysql for interface tcp feb ntop ntopng feb successfully connected to mysql for interface tcp feb ntop ntopng feb shutting down ... | 1 |

270,802 | 8,470,414,346 | IssuesEvent | 2018-10-24 04:09:16 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | opened | Warn if uploading configuration will overwrite someone elses changes | Configuration Priority: 3 - Low Status: 1 - Triaged Type: Improvement medic-conf | Technical users use medic-conf to make configuration changes to instances and hopefully remember to commit the changes to git to track, back up, and share their work.

This can overwrite other configuration changes if...

1. a user has made changes through the admin app,

2. a user has made changes directly to the ... | 1.0 | Warn if uploading configuration will overwrite someone elses changes - Technical users use medic-conf to make configuration changes to instances and hopefully remember to commit the changes to git to track, back up, and share their work.

This can overwrite other configuration changes if...

1. a user has made chan... | priority | warn if uploading configuration will overwrite someone elses changes technical users use medic conf to make configuration changes to instances and hopefully remember to commit the changes to git to track back up and share their work this can overwrite other configuration changes if a user has made chan... | 1 |

720,753 | 24,805,365,106 | IssuesEvent | 2022-10-25 03:39:55 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | it8xxx2_evb: The testcase tests/kernel/sleep/failed to run. | bug priority: low platform: ITE | **Describe the bug**

The testcase tests/kernel/sleep/ failed to run on the it8xxx2_evb

**To Reproduce**

twister -p it8xxx2_evb --device-testing --west-flash="../../itetool/loader.sh" --device-serial=/dev/ttyUSB0 -T tests/kernel/sleep/ -v

**Logs and console output**

```

***** delaying boot 1ms (per build co... | 1.0 | it8xxx2_evb: The testcase tests/kernel/sleep/failed to run. - **Describe the bug**

The testcase tests/kernel/sleep/ failed to run on the it8xxx2_evb

**To Reproduce**

twister -p it8xxx2_evb --device-testing --west-flash="../../itetool/loader.sh" --device-serial=/dev/ttyUSB0 -T tests/kernel/sleep/ -v

**Logs and ... | priority | evb the testcase tests kernel sleep failed to run describe the bug the testcase tests kernel sleep failed to run on the evb to reproduce twister p evb device testing west flash itetool loader sh device serial dev t tests kernel sleep v logs and console output ... | 1 |

727,300 | 25,030,379,962 | IssuesEvent | 2022-11-04 11:50:15 | YangCatalog/sdo_analysis | https://api.github.com/repos/YangCatalog/sdo_analysis | closed | `check_archived_drafts.py` attemting to use nonexistent directory | bug Priority: Low | The `check_archived_drafts.py` script constructs it's output path like [this](https://github.com/YangCatalog/sdo_analysis/blob/ebfcc6c24251de4ef5ad9bd2f218a6ab2fa64a09/bin/check_archived_drafts.py#L64):

```python3

all_yang_path = os.path.join(temp_dir, 'YANG-ALL')

```

Usually this will resolve to `/var/yang/tmp... | 1.0 | `check_archived_drafts.py` attemting to use nonexistent directory - The `check_archived_drafts.py` script constructs it's output path like [this](https://github.com/YangCatalog/sdo_analysis/blob/ebfcc6c24251de4ef5ad9bd2f218a6ab2fa64a09/bin/check_archived_drafts.py#L64):

```python3

all_yang_path = os.path.join(tem... | priority | check archived drafts py attemting to use nonexistent directory the check archived drafts py script constructs it s output path like all yang path os path join temp dir yang all usually this will resolve to var yang tmp yang all such a directory doesn t ever seem to be created this... | 1 |

122,281 | 4,833,059,243 | IssuesEvent | 2016-11-08 09:46:19 | MiT-HEP/ChargedHiggs | https://api.github.com/repos/MiT-HEP/ChargedHiggs | opened | CombineTools | low priority | make a standardize set of tools to write datacards.

and use them in the two scripts. | 1.0 | CombineTools - make a standardize set of tools to write datacards.

and use them in the two scripts. | priority | combinetools make a standardize set of tools to write datacards and use them in the two scripts | 1 |

772,109 | 27,106,637,451 | IssuesEvent | 2023-02-15 12:36:32 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | [bug] Symbolic links are not properly copied when importing on Linux | type: bug good first issue stage: queue priority: medium complex: low component: ux | ### Environment Details (include every applicable attribute)

* Operating System+version: Ubuntu 19.10

* Compiler+version: GCC 9.2.1

* Conan version: Conan 1.21.0

* Python version: Python 3.7.5

### Steps to reproduce (Include if Applicable)

`conanfile.txt`:

```

[requires]

expat/2.2.8

[imports]

b... | 1.0 | [bug] Symbolic links are not properly copied when importing on Linux - ### Environment Details (include every applicable attribute)

* Operating System+version: Ubuntu 19.10

* Compiler+version: GCC 9.2.1

* Conan version: Conan 1.21.0

* Python version: Python 3.7.5

### Steps to reproduce (Include if Applic... | priority | symbolic links are not properly copied when importing on linux environment details include every applicable attribute operating system version ubuntu compiler version gcc conan version conan python version python steps to reproduce include if applicable ... | 1 |

689,934 | 23,640,840,622 | IssuesEvent | 2022-08-25 16:54:18 | WordPress/openverse-frontend | https://api.github.com/repos/WordPress/openverse-frontend | opened | Playwright logs are too verbose | 🟩 priority: low 🛠 goal: fix 🤖 aspect: dx | ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

Currently, it is very difficult to read through the CI Playwright logs because they are to... | 1.0 | Playwright logs are too verbose - ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

Currently, it is very difficult to read through the CI P... | priority | playwright logs are too verbose description currently it is very difficult to read through the ci playwright logs because they are too verbose there are talkback proxy logs for each tape that is found unfortunatly it is not possible to only log the not found tapes so all of the tapes are being lo... | 1 |

81,392 | 3,590,450,271 | IssuesEvent | 2016-02-01 05:55:58 | ESAPI/esapi-java-legacy | https://api.github.com/repos/ESAPI/esapi-java-legacy | closed | Patch for /trunk/src/main/java/org/owasp/esapi/reference/crypto/JavaEncryptor.java | Component-Encryptor imported Maintainability Milestone-Release2.1 OpSys-All Priority-Low Type-Patch | _From [noloa...@gmail.com](https://code.google.com/u/114558122492435650190/) on October 08, 2011 17:20:41_

Removed all those damn errant UTF-8 BOMs (EF BB BF) which made it impossible to format the source

**Attachment:** [JavaEncryptor.java.patch](http://code.google.com/p/owasp-esapi-java/issues/detail?id=247)

_Orig... | 1.0 | Patch for /trunk/src/main/java/org/owasp/esapi/reference/crypto/JavaEncryptor.java - _From [noloa...@gmail.com](https://code.google.com/u/114558122492435650190/) on October 08, 2011 17:20:41_

Removed all those damn errant UTF-8 BOMs (EF BB BF) which made it impossible to format the source

**Attachment:** [JavaEncrypt... | priority | patch for trunk src main java org owasp esapi reference crypto javaencryptor java from on october removed all those damn errant utf boms ef bb bf which made it impossible to format the source attachment original issue | 1 |

501,008 | 14,518,419,746 | IssuesEvent | 2020-12-13 23:34:31 | godaddy-wordpress/coblocks | https://api.github.com/repos/godaddy-wordpress/coblocks | opened | Cover block - style should not apply when there's no image selected | [Priority] Low [Type] Bug | ### Describe the bug:

ISBAT see what I'm doing.

When you apply top or bottom wave to the cover block and you still didn't select an image, the block is covered by the wave.

It also happens in WP.com, and it's specially problematic when you use full width, as the block is almost covered.

### To reproduce:

<!-- St... | 1.0 | Cover block - style should not apply when there's no image selected - ### Describe the bug:

ISBAT see what I'm doing.

When you apply top or bottom wave to the cover block and you still didn't select an image, the block is covered by the wave.

It also happens in WP.com, and it's specially problematic when you use ful... | priority | cover block style should not apply when there s no image selected describe the bug isbat see what i m doing when you apply top or bottom wave to the cover block and you still didn t select an image the block is covered by the wave it also happens in wp com and it s specially problematic when you use ful... | 1 |

741,992 | 25,831,493,601 | IssuesEvent | 2022-12-12 16:23:04 | HEPData/hepdata | https://api.github.com/repos/HEPData/hepdata | opened | search: check for `error` in `query_result` before returning JSON | type: bug priority: medium complexity: low | If search results are requested in JSON format, the current code tries to calculate `query_result['hits']` and return the JSON results without checking if there is an `error` in the `query_result`:

https://github.com/HEPData/hepdata/blob/3dcdfe62dede1fb28752f31cf863da42ff2aacde/hepdata/modules/search/views.py#L249-L... | 1.0 | search: check for `error` in `query_result` before returning JSON - If search results are requested in JSON format, the current code tries to calculate `query_result['hits']` and return the JSON results without checking if there is an `error` in the `query_result`:

https://github.com/HEPData/hepdata/blob/3dcdfe62ded... | priority | search check for error in query result before returning json if search results are requested in json format the current code tries to calculate query result and return the json results without checking if there is an error in the query result an invalid query like q gives an exception ... | 1 |

510,631 | 14,813,379,869 | IssuesEvent | 2021-01-14 01:55:03 | onicagroup/runway | https://api.github.com/repos/onicagroup/runway | opened | Binary builds require glibc >= 2.29, breaking compatibility with older linux distros (e.g. Amazon Linux 2) | bug priority:low status:review_needed | It appears that GitHub Actions update bumped the glibc version used in binary builds, causing errors like:

```

155] Error loading Python lib '/codebuild/output/src662356390/src/github.com/devmohammedothman/runway-cloudformation/node_modules/@onica/runway/src/runway/libpython3.7m.so.1.0': dlopen: /lib64/libm.so.6: v... | 1.0 | Binary builds require glibc >= 2.29, breaking compatibility with older linux distros (e.g. Amazon Linux 2) - It appears that GitHub Actions update bumped the glibc version used in binary builds, causing errors like:

```

155] Error loading Python lib '/codebuild/output/src662356390/src/github.com/devmohammedothman/r... | priority | binary builds require glibc breaking compatibility with older linux distros e g amazon linux it appears that github actions update bumped the glibc version used in binary builds causing errors like error loading python lib codebuild output src github com devmohammedothman runway cloudfor... | 1 |

716,535 | 24,638,283,126 | IssuesEvent | 2022-10-17 09:37:12 | ibissource/frank-flow | https://api.github.com/repos/ibissource/frank-flow | closed | You should see a clear error message when you have a backend jar without frontend | feature priority:low | **Is your feature request related to a problem? Please describe.**

The Maven build of the frank-flow project produces a .jar that should be deployed on the server. There is a profile `frontend`. Only if this profile is enabled, then the frontend is added to this .jar file. We expect that developers will sometimes prod... | 1.0 | You should see a clear error message when you have a backend jar without frontend - **Is your feature request related to a problem? Please describe.**

The Maven build of the frank-flow project produces a .jar that should be deployed on the server. There is a profile `frontend`. Only if this profile is enabled, then th... | priority | you should see a clear error message when you have a backend jar without frontend is your feature request related to a problem please describe the maven build of the frank flow project produces a jar that should be deployed on the server there is a profile frontend only if this profile is enabled then th... | 1 |

631,302 | 20,150,102,768 | IssuesEvent | 2022-02-09 11:30:12 | ita-social-projects/horondi_client_fe | https://api.github.com/repos/ita-social-projects/horondi_client_fe | closed | (SP: 3) "Sell" registration on buy as a guest page | FrontEnd part priority: low | Create mockup and "Selling" text and realise it on the checkout page

Show benefits on checkout, like you will see and manage your orders.

| 1.0 | (SP: 3) "Sell" registration on buy as a guest page - Create mockup and "Selling" text and realise it on the checkout page

Show benefits on checkout, like you will see and manage your orders.

| priority | sp sell registration on buy as a guest page create mockup and selling text and realise it on the checkout page show benefits on checkout like you will see and manage your orders | 1 |

484,229 | 13,936,566,362 | IssuesEvent | 2020-10-22 13:06:24 | cds-snc/report-a-cybercrime | https://api.github.com/repos/cds-snc/report-a-cybercrime | closed | Self Harm Words Incorrectly indicated in Server Console. | bug low priority | ## Summary

When a report is submitted the we scan the contents for words that indicate the user is at risk for self harm. Any matches are flagged in the analyst report and printed in the server console. When no self harm words are found we still print an empty list in the console.

meaning all over the code base there is explicit conversion of date in months to the actual month something like `actual_months = 1 + ((months - 1) mod 12)` i.e.

- months = 12 = actual_m... | 1.0 | Counting dates in CorsixTH - Currently world.lua and possibly other files track the date in months (this stems from the original level files operating in months) meaning all over the code base there is explicit conversion of date in months to the actual month something like `actual_months = 1 + ((months - 1) mod 12)` i... | priority | counting dates in corsixth currently world lua and possibly other files track the date in months this stems from the original level files operating in months meaning all over the code base there is explicit conversion of date in months to the actual month something like actual months months mod i ... | 1 |

407,248 | 11,911,133,258 | IssuesEvent | 2020-03-31 08:06:12 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 Staging 1489] Large/Small Ashlar Stone fountain values wrong way around | Priority: Low Status: Fixed | 1. 0.9.0 Staging 1489

2. Create Large or Small Ashlar Stone fountin

3. Small fountain should cost less than a large fountain

4. Large fountain is 40 Ashlar, Small is 60

Resource use is also very high for the item

Original Redmine Issue: 56, https://issues.qgis.org/issues/56

Original Assignee: Gary Sherman

---

In the map composer the width&height in General and Item tabs have different meaning - but it's hard to tell which one is right. Anyway, width field in Ge... | 1.0 | Map composer: width&height swapped - ---

Author Name: **werchowyna-epf-pl -** (werchowyna-epf-pl -)

Original Redmine Issue: 56, https://issues.qgis.org/issues/56

Original Assignee: Gary Sherman

---

In the map composer the width&height in General and Item tabs have different meaning - but it's hard to tell which on... | priority | map composer width height swapped author name werchowyna epf pl werchowyna epf pl original redmine issue original assignee gary sherman in the map composer the width height in general and item tabs have different meaning but it s hard to tell which one is right anyway width field i... | 1 |

793,351 | 27,991,956,382 | IssuesEvent | 2023-03-27 05:03:39 | chaotic-aur/packages | https://api.github.com/repos/chaotic-aur/packages | closed | [Request] fairy-stockfish (and -git) | request:new-pkg priority:low | ### Link to the package base(s) in the AUR

[fairy-stockfish](https://aur.archlinux.org/packages/fairy-stockfish)

[fairy-stockfish-git](https://aur.archlinux.org/packages/fairy-stockfish-git)

### Utility this package has for you

Chess variants are fun. Why not be able to play against a computer?

### Do you consider... | 1.0 | [Request] fairy-stockfish (and -git) - ### Link to the package base(s) in the AUR

[fairy-stockfish](https://aur.archlinux.org/packages/fairy-stockfish)

[fairy-stockfish-git](https://aur.archlinux.org/packages/fairy-stockfish-git)

### Utility this package has for you

Chess variants are fun. Why not be able to play a... | priority | fairy stockfish and git link to the package base s in the aur utility this package has for you chess variants are fun why not be able to play against a computer do you consider the package s to be useful for every chaotic aur user no but for a great amount do you consider th... | 1 |

199,804 | 6,994,374,368 | IssuesEvent | 2017-12-15 15:09:30 | rathena/rathena | https://api.github.com/repos/rathena/rathena | closed | Chain Lightning vs icewall | component:skill mode:prerenewal mode:renewal priority:low status:confirmed type:bug | <!-- NOTE: Anything within these brackets will be hidden on the preview of the Issue. -->

* **rAthena Hash**: [b2d904b](https://github.com/rathena/rathena/commit/b2d904b764f43137547e5ed689af2b5983b8b234)

<!-- Please specify the rAthena [GitHub hash](https://help.github.com/articles/autolinked-references-and-urls/... | 1.0 | Chain Lightning vs icewall - <!-- NOTE: Anything within these brackets will be hidden on the preview of the Issue. -->

* **rAthena Hash**: [b2d904b](https://github.com/rathena/rathena/commit/b2d904b764f43137547e5ed689af2b5983b8b234)

<!-- Please specify the rAthena [GitHub hash](https://help.github.com/articles/au... | priority | chain lightning vs icewall rathena hash please specify the rathena on which you encountered this issue how to get your github hash cd your rathena directory git rev parse short head copy the resulting hash client date server mode renewal ... | 1 |

804,251 | 29,481,494,901 | IssuesEvent | 2023-06-02 06:12:36 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | rocket_clean_post() - The whole cache is cleared under certain conditions | type: bug module: cache priority: low needs: grooming severity: moderate | **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version ✅

- Used the search feature to ensure that the bug hasn’t been reported before ✅

**Describe the bug**

When the cache of a post is cleared, we are using `rocket_clean_post()` which call... | 1.0 | rocket_clean_post() - The whole cache is cleared under certain conditions - **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version ✅

- Used the search feature to ensure that the bug hasn’t been reported before ✅

**Describe the bug**

When t... | priority | rocket clean post the whole cache is cleared under certain conditions before submitting an issue please check that you’ve completed the following steps made sure you’re on the latest version ✅ used the search feature to ensure that the bug hasn’t been reported before ✅ describe the bug when t... | 1 |

532,853 | 15,572,047,720 | IssuesEvent | 2021-03-17 06:18:33 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | closed | docker image linux/arm/v7 platform request | Priority: Low Status: Available Type: Enhancement | **Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

I choose the `armhf-latest` tag to run on my raspberry and got the warning

```shell

docker run -d \

--name watchtower \

-v /var/run/docke... | 1.0 | docker image linux/arm/v7 platform request - **Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

I choose the `armhf-latest` tag to run on my raspberry and got the warning

```shell

docker run -d \