Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 844) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 12 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 248k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

410,255 | 11,985,489,682 | IssuesEvent | 2020-04-07 17:35:54 | melonproject/protocol | https://api.github.com/repos/melonproject/protocol | closed | More sophisticated MLN premium pricing function in Engine | idea low priority | Right now the `premiumPercent` function in Engine is just stepwise and applies to the entire supply of eth in the contract (@SeanJCasey brought attention to this in chat a while ago).

This was a rough preliminary approach since the goal was just to be a sink, but we could come up with something that applies to the s... | 1.0 | More sophisticated MLN premium pricing function in Engine - Right now the `premiumPercent` function in Engine is just stepwise and applies to the entire supply of eth in the contract (@SeanJCasey brought attention to this in chat a while ago).

This was a rough preliminary approach since the goal was just to be a sin... | priority | more sophisticated mln premium pricing function in engine right now the premiumpercent function in engine is just stepwise and applies to the entire supply of eth in the contract seanjcasey brought attention to this in chat a while ago this was a rough preliminary approach since the goal was just to be a sin... | 1 |

496,635 | 14,350,725,579 | IssuesEvent | 2020-11-29 22:09:07 | GrandDynamo/OneDrive-Cloud-Player | https://api.github.com/repos/GrandDynamo/OneDrive-Cloud-Player | closed | Sanitize file names | low priority | Who thinks of these names... VideoPlayerPageViewModel.cs already resides in the ViewModels folder, so IMO it's unnecessary to have these long names. | 1.0 | Sanitize file names - Who thinks of these names... VideoPlayerPageViewModel.cs already resides in the ViewModels folder, so IMO it's unnecessary to have these long names. | priority | sanitize file names who thinks of these names videoplayerpageviewmodel cs already resides in the viewmodels folder so imo it s unnecessary to have these long names | 1 |

586,967 | 17,600,710,019 | IssuesEvent | 2021-08-17 11:29:37 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth-2 | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth-2 | opened | Change tooltip in magic lifestyles about traits | suggestion :question: priority low :grey_exclamation: | <!--

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

**Describe your suggestion in full detail below:**

@ValianBlue suggestion

> I would personally change it to "Remaining [Magic] Perks to get [Level]: [X]." ... | 1.0 | Change tooltip in magic lifestyles about traits - <!--

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

**Describe your suggestion in full detail below:**

@ValianBlue suggestion

> I would personally change it ... | priority | change tooltip in magic lifestyles about traits do not remove pre existing lines describe your suggestion in full detail below valianblue suggestion i would personally change it ... | 1 |

504,416 | 14,618,153,963 | IssuesEvent | 2020-12-22 15:49:10 | super-cooper/memebot | https://api.github.com/repos/super-cooper/memebot | closed | Include user's name in !hello output | feature low-priority | **What do you dislike about the feature in its current state?**

!hello is too generic. I want the bot to say hello *to me*.

**Describe the solution you'd like**

Output is instead "Hello, [user]!" where [user] is the nickname of the user who called the command. If the user has no nickname, use their username.

**... | 1.0 | Include user's name in !hello output - **What do you dislike about the feature in its current state?**

!hello is too generic. I want the bot to say hello *to me*.

**Describe the solution you'd like**

Output is instead "Hello, [user]!" where [user] is the nickname of the user who called the command. If the user has... | priority | include user s name in hello output what do you dislike about the feature in its current state hello is too generic i want the bot to say hello to me describe the solution you d like output is instead hello where is the nickname of the user who called the command if the user has no nickna... | 1 |

539,194 | 15,784,861,137 | IssuesEvent | 2021-04-01 15:37:41 | faktaoklimatu/web-core | https://api.github.com/repos/faktaoklimatu/web-core | opened | Non-existent authors on explainers also link to "about us" | 1: low priority free-to-take | The corals explainer is written by Tereza Jarníková, the name links to `/o-nas#clenove` but she is not listed. What should be done?

1. Nothing.

2. Only create a link if the person exists (logistically somewhat involved).

3. Ask all explainer creators to add a profile.

Any opinions, @jankrcal, @mgrabovsky? | 1.0 | Non-existent authors on explainers also link to "about us" - The corals explainer is written by Tereza Jarníková, the name links to `/o-nas#clenove` but she is not listed. What should be done?

1. Nothing.

2. Only create a link if the person exists (logistically somewhat involved).

3. Ask all explainer creators to ad... | priority | non existent authors on explainers also link to about us the corals explainer is written by tereza jarníková the name links to o nas clenove but she is not listed what should be done nothing only create a link if the person exists logistically somewhat involved ask all explainer creators to ad... | 1 |

765,485 | 26,848,454,133 | IssuesEvent | 2023-02-03 09:11:47 | canonical/ubuntu.com | https://api.github.com/repos/canonical/ubuntu.com | closed | /advantage table row anchors hide the purpose of the table | Priority: Low | 1\. Go to any of these URLs:

- https://ubuntu.com/advantage/#esm

- https://ubuntu.com/advantage/#livepatch

- https://ubuntu.com/advantage/#fips

What happens:

👍 The relevant row in the “Plans for enterprise use” table is highlighted.

👎 The page is scrolled so that nothing is visible above that table row.

Wh... | 1.0 | /advantage table row anchors hide the purpose of the table - 1\. Go to any of these URLs:

- https://ubuntu.com/advantage/#esm

- https://ubuntu.com/advantage/#livepatch

- https://ubuntu.com/advantage/#fips

What happens:

👍 The relevant row in the “Plans for enterprise use” table is highlighted.

👎 The page is sc... | priority | advantage table row anchors hide the purpose of the table go to any of these urls what happens 👍 the relevant row in the “plans for enterprise use” table is highlighted 👎 the page is scrolled so that nothing is visible above that table row what’s wrong with this linking to an anc... | 1 |

133,289 | 5,200,303,837 | IssuesEvent | 2017-01-23 23:24:41 | IBMDataScience/datascix | https://api.github.com/repos/IBMDataScience/datascix | closed | Add the ability to validate URL syntax for "image_url", "blog_url" properties | priority-low type-enhancement |

e.g. Bad:

```

"image_url" : "https://github.com/IBMDataScience/datascix/blob/master/public/prod/changelog/img/github.png?raw=true?raw=true",

``` | 1.0 | Add the ability to validate URL syntax for "image_url", "blog_url" properties -

e.g. Bad:

```

"image_url" : "https://github.com/IBMDataScience/datascix/blob/master/public/prod/changelog/img/github.png?raw=true?raw=true",

``` | priority | add the ability to validate url syntax for image url blog url properties e g bad image url | 1 |

175,830 | 6,554,336,001 | IssuesEvent | 2017-09-06 05:07:41 | hacksu/2017-kenthackenough-ui-main | https://api.github.com/repos/hacksu/2017-kenthackenough-ui-main | closed | Powered by Hacksu Icon @ the bottom of the site | Low Priority | Add Powered by Hacksu logo to the bottom of the site (Or somewhere) | 1.0 | Powered by Hacksu Icon @ the bottom of the site - Add Powered by Hacksu logo to the bottom of the site (Or somewhere) | priority | powered by hacksu icon the bottom of the site add powered by hacksu logo to the bottom of the site or somewhere | 1 |

276,941 | 8,614,771,282 | IssuesEvent | 2018-11-19 18:29:29 | MontrealCorpusTools/iscan-server | https://api.github.com/repos/MontrealCorpusTools/iscan-server | opened | Add default stop subsets to phone subset enrichment | UI enrichment low priority | Currently the phone subset enrichment has defaults for syllabics, etc. There should also be buttons for voiced and voiceless stops just to save time. Maybe this could be generalised to just add a few more phonetic categories in general to the subset enrichment. | 1.0 | Add default stop subsets to phone subset enrichment - Currently the phone subset enrichment has defaults for syllabics, etc. There should also be buttons for voiced and voiceless stops just to save time. Maybe this could be generalised to just add a few more phonetic categories in general to the subset enrichment. | priority | add default stop subsets to phone subset enrichment currently the phone subset enrichment has defaults for syllabics etc there should also be buttons for voiced and voiceless stops just to save time maybe this could be generalised to just add a few more phonetic categories in general to the subset enrichment | 1 |

700,958 | 24,080,496,823 | IssuesEvent | 2022-09-19 05:55:57 | tensorchord/envd | https://api.github.com/repos/tensorchord/envd | closed | enhancement(network error): Make error message more informative | priority/3-low 💙 type/enhancement 💭 | ## Description

When the image pulling process is affected by the network issue, the error message is not friendly.

| 1.0 | enhancement(network error): Make error message more informative - ## Description

When the image pulling process is affected by the network issue, the error message is not friendly.

| priority | enhancement network error make error message more informative description when the image pulling process is affected by the network issue the error message is not friendly | 1 |

172,797 | 6,516,315,421 | IssuesEvent | 2017-08-27 06:55:12 | python/mypy | https://api.github.com/repos/python/mypy | closed | Type alias problems with typeshed's `bytes` | crash priority-2-low | Running mypy on typeshed's stdlib/2/builtins.pyi violates the assertion in semanal.py:931 - `sym.node` is a Var instead of a TypeInfo for `builtins.bytes`. This is related to the statement `bytes = str` and type promotion of `str` to `bytes`.

```

...

File ".../mypy/mypy/build.py", line 1425, in semantic_analy... | 1.0 | Type alias problems with typeshed's `bytes` - Running mypy on typeshed's stdlib/2/builtins.pyi violates the assertion in semanal.py:931 - `sym.node` is a Var instead of a TypeInfo for `builtins.bytes`. This is related to the statement `bytes = str` and type promotion of `str` to `bytes`.

```

...

File ".../myp... | priority | type alias problems with typeshed s bytes running mypy on typeshed s stdlib builtins pyi violates the assertion in semanal py sym node is a var instead of a typeinfo for builtins bytes this is related to the statement bytes str and type promotion of str to bytes file mypy ... | 1 |

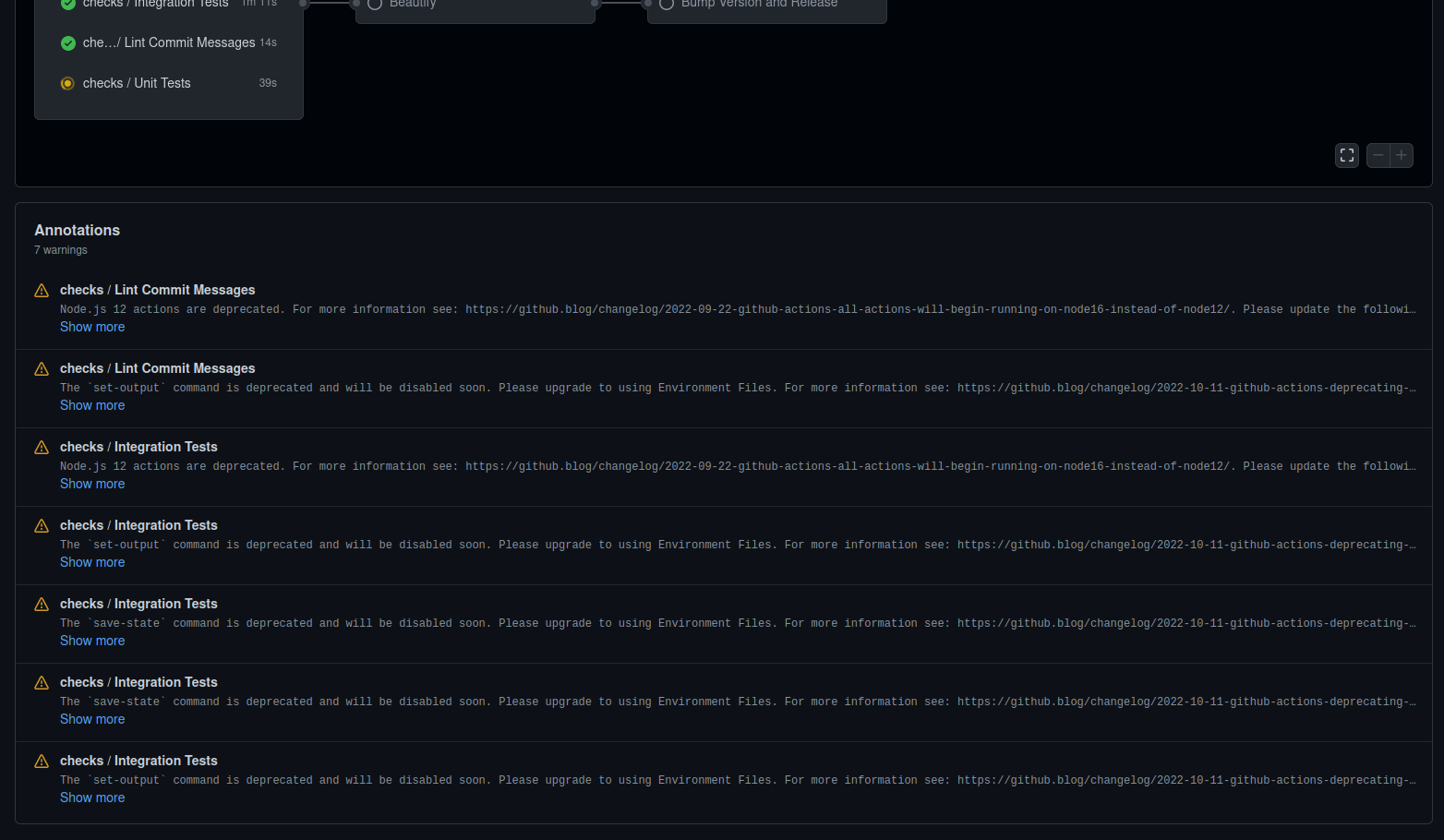

734,451 | 25,349,726,614 | IssuesEvent | 2022-11-19 16:19:05 | es-ude/elastic-ai.creator | https://api.github.com/repos/es-ude/elastic-ai.creator | closed | Check GitHub Workflow deprecation warnings | major priority gh-workflow | During the runtime of our GitHub workflow, we receive the following [deprecation warnings](https://github.com/es-ude/elastic-ai.creator/actions/runs/3454812472):

I guess we need to fix th... | 1.0 | Check GitHub Workflow deprecation warnings - During the runtime of our GitHub workflow, we receive the following [deprecation warnings](https://github.com/es-ude/elastic-ai.creator/actions/runs/3454812472):

| 1.0 | Error in Installing Open Babel - I wanted to install Open Babel but everytime I tried installing, a window came up stating as the attachment. I hope someone could help me with this issue. Please..

Reported by: wannursyakilla

Original Ticket: [openbabel/bugs/978](https://sourceforge.net/p/openbabel/bugs/978) | priority | error in installing open babel i wanted to install open babel but everytime i tried installing a window came up stating as the attachment i hope someone could help me with this issue please reported by wannursyakilla original ticket | 1 |

504,921 | 14,623,683,276 | IssuesEvent | 2020-12-23 04:08:00 | AtlasOfLivingAustralia/volunteer-portal | https://api.github.com/repos/AtlasOfLivingAustralia/volunteer-portal | closed | Tutorials link to ///data/volunteer//tutorials | Priority - low | The tutorial page links to ``///data/volunteer//tutorials``. These links manage to render, but it makes it more difficult to setup a useful ``robots.txt`` file to tell bots which paths under ``/data`` (and ``/``) they should and shouldn't index. The links on the tutorial page seem to work if changed to remove the redun... | 1.0 | Tutorials link to ///data/volunteer//tutorials - The tutorial page links to ``///data/volunteer//tutorials``. These links manage to render, but it makes it more difficult to setup a useful ``robots.txt`` file to tell bots which paths under ``/data`` (and ``/``) they should and shouldn't index. The links on the tutorial... | priority | tutorials link to data volunteer tutorials the tutorial page links to data volunteer tutorials these links manage to render but it makes it more difficult to setup a useful robots txt file to tell bots which paths under data and they should and shouldn t index the links on the tutorial... | 1 |

506,841 | 14,674,204,400 | IssuesEvent | 2020-12-30 14:50:19 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth-2 | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth-2 | opened | Adapt Horse Archers and Camels | :books: lore :books: :grey_exclamation: priority low :ice_cream: vanilla modification :icecream: :question: suggestion :question: | <!--

DO NOT REMOVE PRE-EXISTING LINES

IF YOU WANT TO SUGGEST A FEW THINGS, OPEN A NEW ISSUE PER EVERY SUGGESTION

----------------------------------------------------------------------------------------------------------

-->

**Describe your suggestion in full detail below:**

We should adapt vanilla horse archers a... | 1.0 | Adapt Horse Archers and Camels - <!--

DO NOT REMOVE PRE-EXISTING LINES

IF YOU WANT TO SUGGEST A FEW THINGS, OPEN A NEW ISSUE PER EVERY SUGGESTION

----------------------------------------------------------------------------------------------------------

-->

**Describe your suggestion in full detail below:**

We sho... | priority | adapt horse archers and camels do not remove pre existing lines if you want to suggest a few things open a new issue per every suggestion describe your suggestion in full detail below we sho... | 1 |

724,469 | 24,931,607,455 | IssuesEvent | 2022-10-31 12:07:25 | ignite/cli | https://api.github.com/repos/ignite/cli | opened | Update Module Dependencies | request priority/low | This issue tracks recently updated PRs for updating `ignite`'s module dependencies:

- [ ] #3004

- [ ] #3005

- [ ] #3006

- [ ] #3007

- [ ] #3008 | 1.0 | Update Module Dependencies - This issue tracks recently updated PRs for updating `ignite`'s module dependencies:

- [ ] #3004

- [ ] #3005

- [ ] #3006

- [ ] #3007

- [ ] #3008 | priority | update module dependencies this issue tracks recently updated prs for updating ignite s module dependencies | 1 |

82,530 | 3,614,580,509 | IssuesEvent | 2016-02-06 03:51:44 | MenoData/Time4J | https://api.github.com/repos/MenoData/Time4J | closed | Add equals/hashCode-support for platform formatter | bug fixed priority: low | Following test fails if the i18n-module is not available:

```java

assertThat(

PlainDate.localFormatter(DisplayMode.FULL),

is(PlainDate.formatter(DisplayMode.FULL, Locale.getDefault()))

);

``` | 1.0 | Add equals/hashCode-support for platform formatter - Following test fails if the i18n-module is not available:

```java

assertThat(

PlainDate.localFormatter(DisplayMode.FULL),

is(PlainDate.formatter(DisplayMode.FULL, Locale.getDefault()))

);

``` | priority | add equals hashcode support for platform formatter following test fails if the module is not available java assertthat plaindate localformatter displaymode full is plaindate formatter displaymode full locale getdefault | 1 |

413,711 | 12,090,831,874 | IssuesEvent | 2020-04-19 08:41:27 | Matteas-Eden/roll-for-reaction | https://api.github.com/repos/Matteas-Eden/roll-for-reaction | closed | Hotkey to equip items | Low Priority usability | **User Story**

As a gamer, I'd like to be able to press 'E' to equip an item, so that I can equip items without usig the mouse

**Acceptance Criteria**

- When the notification for receiving a new item comes up, you can press 'E' to eqip it

- Pressing 'E' also removes the notification

---

**Why is this feat... | 1.0 | Hotkey to equip items - **User Story**

As a gamer, I'd like to be able to press 'E' to equip an item, so that I can equip items without usig the mouse

**Acceptance Criteria**

- When the notification for receiving a new item comes up, you can press 'E' to eqip it

- Pressing 'E' also removes the notification

-... | priority | hotkey to equip items user story as a gamer i d like to be able to press e to equip an item so that i can equip items without usig the mouse acceptance criteria when the notification for receiving a new item comes up you can press e to eqip it pressing e also removes the notification ... | 1 |

129,143 | 5,089,262,109 | IssuesEvent | 2017-01-01 13:38:47 | Geeklog-Core/geeklog | https://api.github.com/repos/Geeklog-Core/geeklog | closed | [Feature Requests] Eliminate the need for COM_stripslashes | low priority minor | **Reported by jmucchiello on 4 Jul 2008 18:08**

**Version:** Future

**Description:**

This was discussed on the mailing list last summer/fall:

http://eight.pairlist.net/pipermail/geeklog-devel/2007-September/002318.html

Not sure how this interacts with Web Services which for some reason call COM_applyBasicFilter whic... | 1.0 | [Feature Requests] Eliminate the need for COM_stripslashes - **Reported by jmucchiello on 4 Jul 2008 18:08**

**Version:** Future

**Description:**

This was discussed on the mailing list last summer/fall:

http://eight.pairlist.net/pipermail/geeklog-devel/2007-September/002318.html

Not sure how this interacts with Web ... | priority | eliminate the need for com stripslashes reported by jmucchiello on jul version future description this was discussed on the mailing list last summer fall not sure how this interacts with web services which for some reason call com applybasicfilter which just doesn t have com stripslashes... | 1 |

755,131 | 26,418,351,345 | IssuesEvent | 2023-01-13 17:52:41 | geopm/geopm | https://api.github.com/repos/geopm/geopm | closed | geopmd hanging on shutdown | bug bug-priority-high bug-exposure-high bug-quality-low | **Describe the bug**

I tried to shut the service down with systemctl and I expected it to shutdown gracefully instead it hangs, and will eventually be killed with SIGKILL.

**GEOPM version**

5e829c4c2

**Expected behavior**

```

$ sudo systemctl stop geopm

$ journalctl -u geopm

...

Jan 12 16:52:42 mcfly1 syst... | 1.0 | geopmd hanging on shutdown - **Describe the bug**

I tried to shut the service down with systemctl and I expected it to shutdown gracefully instead it hangs, and will eventually be killed with SIGKILL.

**GEOPM version**

5e829c4c2

**Expected behavior**

```

$ sudo systemctl stop geopm

$ journalctl -u geopm

...... | priority | geopmd hanging on shutdown describe the bug i tried to shut the service down with systemctl and i expected it to shutdown gracefully instead it hangs and will eventually be killed with sigkill geopm version expected behavior sudo systemctl stop geopm journalctl u geopm jan ... | 1 |

538,495 | 15,770,035,522 | IssuesEvent | 2021-03-31 18:59:50 | vacuumlabs/adalite | https://api.github.com/repos/vacuumlabs/adalite | closed | Separate circleCI into separate jobs | low priority | Currently, the workflow doesn't allow other checks to run if one fails, which might be annoying for development | 1.0 | Separate circleCI into separate jobs - Currently, the workflow doesn't allow other checks to run if one fails, which might be annoying for development | priority | separate circleci into separate jobs currently the workflow doesn t allow other checks to run if one fails which might be annoying for development | 1 |

167,411 | 6,337,618,176 | IssuesEvent | 2017-07-27 00:39:25 | redox-os/ion | https://api.github.com/repos/redox-os/ion | closed | Parsing Issue w/ && Operator | bug high-priority low-hanging fruit | I'm not sure why this is at the moment, but:

```sh

matches Foo '([A-Z])\w+' && echo true

```

Gives the following output:

```

ion: syntax error: '(' at position 13 is out of place

```

But this:

```sh

matches Foo '([A-Z])\w+'; echo true

```

Gives:

```

true

```

I've also noticed that this:

... | 1.0 | Parsing Issue w/ && Operator - I'm not sure why this is at the moment, but:

```sh

matches Foo '([A-Z])\w+' && echo true

```

Gives the following output:

```

ion: syntax error: '(' at position 13 is out of place

```

But this:

```sh

matches Foo '([A-Z])\w+'; echo true

```

Gives:

```

true

```

... | priority | parsing issue w operator i m not sure why this is at the moment but sh matches foo w echo true gives the following output ion syntax error at position is out of place but this sh matches foo w echo true gives true i ve al... | 1 |

718,420 | 24,716,532,825 | IssuesEvent | 2022-10-20 07:26:39 | eth-cscs/DLA-Future | https://api.github.com/repos/eth-cscs/DLA-Future | opened | Should `reference_wrapper` and other things be automatically be unwrapped in more contexts than `transform` and `transformMPI` | enhancement Task Priority:Low | We currently automatically unwrap futures and reference wrappers in `transform` and `transformMPI`. @albestro had a use case where he expected e.g. `withTemporaryTile` to do the same. One might expect this to happen in `let_value`, and probably many other places. Should we expand the number of places where we do it aut... | 1.0 | Should `reference_wrapper` and other things be automatically be unwrapped in more contexts than `transform` and `transformMPI` - We currently automatically unwrap futures and reference wrappers in `transform` and `transformMPI`. @albestro had a use case where he expected e.g. `withTemporaryTile` to do the same. One mig... | priority | should reference wrapper and other things be automatically be unwrapped in more contexts than transform and transformmpi we currently automatically unwrap futures and reference wrappers in transform and transformmpi albestro had a use case where he expected e g withtemporarytile to do the same one mig... | 1 |

612,420 | 19,012,268,902 | IssuesEvent | 2021-11-23 10:35:58 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | Install One CLI Tools on Mac OS | Category: CLI Community Type: Bug Status: Accepted Priority: Low | **Description**

It would be great to be able to install the CLI Tools on Mac OS.

**Use case**

I want to use some of the CLI binaries to execute API calls against the OpenNebula API.

I'm using a Mac laptop and it's annoying to have to connect to a Linux machine in order to be able to use the CLI Tools.

**Change... | 1.0 | Install One CLI Tools on Mac OS - **Description**

It would be great to be able to install the CLI Tools on Mac OS.

**Use case**

I want to use some of the CLI binaries to execute API calls against the OpenNebula API.

I'm using a Mac laptop and it's annoying to have to connect to a Linux machine in order to be able... | priority | install one cli tools on mac os description it would be great to be able to install the cli tools on mac os use case i want to use some of the cli binaries to execute api calls against the opennebula api i m using a mac laptop and it s annoying to have to connect to a linux machine in order to be able... | 1 |

97,078 | 3,984,919,665 | IssuesEvent | 2016-05-07 14:36:57 | Brickimedia/brickimedia | https://api.github.com/repos/Brickimedia/brickimedia | closed | Browsers, operating systems, and device | [feedback] Question [feedback] RFC [priority] Mid-low | @Brickimedia/developers

Can we get a real quick list of what everyone uses mainly? Its nice to know so when there's a problem that pertains to one of the specific things we can ping that certain dev, and also to know what our full testing environment as a whole team looks like as a whole, and see if we need to expa... | 1.0 | Browsers, operating systems, and device - @Brickimedia/developers

Can we get a real quick list of what everyone uses mainly? Its nice to know so when there's a problem that pertains to one of the specific things we can ping that certain dev, and also to know what our full testing environment as a whole team looks l... | priority | browsers operating systems and device brickimedia developers can we get a real quick list of what everyone uses mainly its nice to know so when there s a problem that pertains to one of the specific things we can ping that certain dev and also to know what our full testing environment as a whole team looks l... | 1 |

149,659 | 5,723,108,219 | IssuesEvent | 2017-04-20 11:17:26 | pmem/issues | https://api.github.com/repos/pmem/issues | closed | unit tests: pmempool_info/TEST18: SETUP (all/pmem/debug) fails | Exposure: Low OS: Linux Priority: 4 low Type: Bug | Found on a492d408739b33ba79681e74c8942ce1c4f62167:

> pmempool_info/TEST18: SETUP (all/pmem/debug)

> [MATCHING FAILED, COMPLETE FILE (out18.log) BELOW]

> Poolset structure:

> Number of replicas : 1

> Replica 0 (master) - local, 1 part(s):

> part 0:

> path : /dev/dax0.0

> type ... | 1.0 | unit tests: pmempool_info/TEST18: SETUP (all/pmem/debug) fails - Found on a492d408739b33ba79681e74c8942ce1c4f62167:

> pmempool_info/TEST18: SETUP (all/pmem/debug)

> [MATCHING FAILED, COMPLETE FILE (out18.log) BELOW]

> Poolset structure:

> Number of replicas : 1

> Replica 0 (master) - local, 1 part(s):

> ... | priority | unit tests pmempool info setup all pmem debug fails found on pmempool info setup all pmem debug poolset structure number of replicas replica master local part s part path dev type device dax size ... | 1 |

715,087 | 24,586,134,673 | IssuesEvent | 2022-10-13 19:54:11 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Malformed Page-URL's can pass through Studio's validation check by using the browsers auto-correct | bug priority: low CI validate | ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [x] The issue is in the latest released 4.0.x

- [X] The issue is in the latest released 3.1.x

### Describe the issue

Modifying the Page-URL in the content form to a name that suggests the name be capitalized passes through studio's check... | 1.0 | [studio-ui] Malformed Page-URL's can pass through Studio's validation check by using the browsers auto-correct - ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [x] The issue is in the latest released 4.0.x

- [X] The issue is in the latest released 3.1.x

### Describe the issue

Modify... | priority | malformed page url s can pass through studio s validation check by using the browsers auto correct duplicates i have searched the existing issues latest version the issue is in the latest released x the issue is in the latest released x describe the issue modifying the page url... | 1 |

587,363 | 17,613,959,242 | IssuesEvent | 2021-08-18 07:21:14 | BAMWelDX/weldx | https://api.github.com/repos/BAMWelDX/weldx | closed | add weldx extension manifest | ASDF low priority | while I don't think we have to switch over to the new manifest style of loading our extension, maybe it would still be good to create such a manifest for the weldx extension as a place to collect the relevant extension metadata (and prepare for switching later)

reference: https://asdf.readthedocs.io/en/al-503-docume... | 1.0 | add weldx extension manifest - while I don't think we have to switch over to the new manifest style of loading our extension, maybe it would still be good to create such a manifest for the weldx extension as a place to collect the relevant extension metadata (and prepare for switching later)

reference: https://asdf.... | priority | add weldx extension manifest while i don t think we have to switch over to the new manifest style of loading our extension maybe it would still be good to create such a manifest for the weldx extension as a place to collect the relevant extension metadata and prepare for switching later reference | 1 |

192,353 | 6,848,989,056 | IssuesEvent | 2017-11-13 20:27:05 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Spinner/state doesn't work for dependencies | bug priority: low | When publishing items the spinner appears on the items indicating they're being published. However, this doesn't appear on items that are dependencies of other items.

Steps to Reproduce

===============

* Create a site using Editorial BP

* Create an article called `one` under `articles`

* Copy `articles/2017` and... | 1.0 | [studio-ui] Spinner/state doesn't work for dependencies - When publishing items the spinner appears on the items indicating they're being published. However, this doesn't appear on items that are dependencies of other items.

Steps to Reproduce

===============

* Create a site using Editorial BP

* Create an article... | priority | spinner state doesn t work for dependencies when publishing items the spinner appears on the items indicating they re being published however this doesn t appear on items that are dependencies of other items steps to reproduce create a site using editorial bp create an article called o... | 1 |

403,098 | 11,835,737,233 | IssuesEvent | 2020-03-23 11:10:34 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | missing redraw for local copy | bug: pending priority: low scope: UI understood: clear | <!-- IMPORTANT

Bug reports that do not make an effort to help the developers will be closed without notice.

Make sure that this bug has not already been opened and/or closed by searching the issues on GitHub, as duplicate bug reports will be closed.

A bug report simply stating that Darktable crashes is unhelpful, so... | 1.0 | missing redraw for local copy - <!-- IMPORTANT

Bug reports that do not make an effort to help the developers will be closed without notice.

Make sure that this bug has not already been opened and/or closed by searching the issues on GitHub, as duplicate bug reports will be closed.

A bug report simply stating that Da... | priority | missing redraw for local copy important bug reports that do not make an effort to help the developers will be closed without notice make sure that this bug has not already been opened and or closed by searching the issues on github as duplicate bug reports will be closed a bug report simply stating that da... | 1 |

624,462 | 19,698,572,439 | IssuesEvent | 2022-01-12 14:35:16 | BeccaLyria/discord-documentation | https://api.github.com/repos/BeccaLyria/discord-documentation | closed | [DOC] - Add documentation for the "Ban Appeal config" feature | 🏁 status: ready for dev 🟩 priority: low ⭐ goal: addition 📄 aspect: text good first issue | ### What work needs to be performed?

This would involve adding details similar to this on the ["Configure your Server" page](https://docs.beccalyria.com/#/configure-server):

| Setting | Value | Description |

| :-: | :-: | :-: |

| Ban Appeal Config | appeal_link: string | You can provide a link to a Google Form (or ... | 1.0 | [DOC] - Add documentation for the "Ban Appeal config" feature - ### What work needs to be performed?

This would involve adding details similar to this on the ["Configure your Server" page](https://docs.beccalyria.com/#/configure-server):

| Setting | Value | Description |

| :-: | :-: | :-: |

| Ban Appeal Config | ap... | priority | add documentation for the ban appeal config feature what work needs to be performed this would involve adding details similar to this on the setting value description ban appeal config appeal link string you can provide a link to a google form or another service ... | 1 |

540,190 | 15,802,435,994 | IssuesEvent | 2021-04-03 09:43:44 | DeadlyBossMods/DBM-TBC | https://api.github.com/repos/DeadlyBossMods/DBM-TBC | closed | [TASK] Convert classic mods back to spellId | Low Priority On Hold | Switch classic raids back to spellIds. Not a lazy retail mod merge in either, there are still some differences in a few places, especially since retail mods fell behind sync of classic ones quite a bit later on.

This is a super low priority task.

- [x] AQ20

- [x] AQ40

- [x] Azeroth

- [x] BWL

- [x] MC

- [x] N... | 1.0 | [TASK] Convert classic mods back to spellId - Switch classic raids back to spellIds. Not a lazy retail mod merge in either, there are still some differences in a few places, especially since retail mods fell behind sync of classic ones quite a bit later on.

This is a super low priority task.

- [x] AQ20

- [x] AQ4... | priority | convert classic mods back to spellid switch classic raids back to spellids not a lazy retail mod merge in either there are still some differences in a few places especially since retail mods fell behind sync of classic ones quite a bit later on this is a super low priority task azeroth ... | 1 |

812,014 | 30,311,911,563 | IssuesEvent | 2023-07-10 13:20:31 | IGS/gEAR | https://api.github.com/repos/IGS/gEAR | opened | Remove toggle for enabling/disabling projection learning | Low Priority | Sometime last year, @hertzron had requested we have a toggle to enable or disable displaying of the "Transfer Learning" link until stability issues were worked out. I feel we are at a point where the toggle can be removed and the "Transfer Learning" link is permanent. One reason is also that @carlocolantuoni noted the... | 1.0 | Remove toggle for enabling/disabling projection learning - Sometime last year, @hertzron had requested we have a toggle to enable or disable displaying of the "Transfer Learning" link until stability issues were worked out. I feel we are at a point where the toggle can be removed and the "Transfer Learning" link is per... | priority | remove toggle for enabling disabling projection learning sometime last year hertzron had requested we have a toggle to enable or disable displaying of the transfer learning link until stability issues were worked out i feel we are at a point where the toggle can be removed and the transfer learning link is per... | 1 |

144,779 | 5,545,816,783 | IssuesEvent | 2017-03-22 22:37:45 | ponylang/ponyc | https://api.github.com/repos/ponylang/ponyc | closed | Identity comparison for boxed types is weird | bug: 4 - in progress difficulty: 2 - medium priority: 1 - low | ```pony

actor Main

new create(env: Env) =>

let a = U8(2)

let b = U8(2)

env.out.print((a is b).string())

foo(env, a, b)

fun foo(env: Env, a: Any, b: Any) =>

env.out.print((a is b).string())

```

This code prints `true` then `false`, but I'd expect it to print `true` then `true`. ... | 1.0 | Identity comparison for boxed types is weird - ```pony

actor Main

new create(env: Env) =>

let a = U8(2)

let b = U8(2)

env.out.print((a is b).string())

foo(env, a, b)

fun foo(env: Env, a: Any, b: Any) =>

env.out.print((a is b).string())

```

This code prints `true` then `false`, ... | priority | identity comparison for boxed types is weird pony actor main new create env env let a let b env out print a is b string foo env a b fun foo env env a any b any env out print a is b string this code prints true then false bu... | 1 |

539,549 | 15,790,635,956 | IssuesEvent | 2021-04-02 02:04:36 | AH-64D-Apache-Official-Project/AH-64D | https://api.github.com/repos/AH-64D-Apache-Official-Project/AH-64D | closed | AFM rotor brake | enhancement low priority | https://github.com/SachaOropeza/AH64D-Project/issues/53

The rotor brake switch doesn't actually enable the brake on the aircraft.

Shouldn't be too many lines of code:

[Here](https://community.bistudio.com/wiki/setRotorBrakeRTD) is the function for it | 1.0 | AFM rotor brake - https://github.com/SachaOropeza/AH64D-Project/issues/53

The rotor brake switch doesn't actually enable the brake on the aircraft.

Shouldn't be too many lines of code:

[Here](https://community.bistudio.com/wiki/setRotorBrakeRTD) is the function for it | priority | afm rotor brake the rotor brake switch doesn t actually enable the brake on the aircraft shouldn t be too many lines of code is the function for it | 1 |

778,949 | 27,334,380,791 | IssuesEvent | 2023-02-26 02:09:52 | noisy/portfolio | https://api.github.com/repos/noisy/portfolio | opened | "How I bankrupt" images have fixed sizes 640px | bug Priority: Low | Images in the blog post "How I bankrupt" have a fixed size of 640px and don't resize responsively.

Steps:

1. Open page https://krzysztofszumny.com/post/how-i-bankrupt-my-first-startup-by-not-understanding-the-definition-of-mvp-minimum-viable-product

2. Change the page's width to below 640px

Expected result: Images... | 1.0 | "How I bankrupt" images have fixed sizes 640px - Images in blog's post "How I bankrupt" have fixed sizes 640px and don't react to responsive changing.

Steps:

1. Open page https://krzysztofszumny.com/post/how-i-bankrupt-my-first-startup-by-not-understanding-the-definition-of-mvp-minimum-viable-product

2. Change pag... | priority | how i bankrupt images have fixed sizes images in blog s post how i bankrupt have fixed sizes and don t react to responsive changing steps open page change pages s width below expected result images are changing their sizes to fit the page actual result images don t change their sizes ... | 1 |

247,967 | 7,926,166,757 | IssuesEvent | 2018-07-06 00:13:39 | UTAS-HealthSciences/mylo-mate | https://api.github.com/repos/UTAS-HealthSciences/mylo-mate | closed | Manage Files nav bar icon disappearance | bug low priority |

Disappears when in Unit Admin screen.

| 1.0 | Manage Files nav bar icon disappearance -

Disappears when in Unit Admin screen.

| priority | manage files nav bar icon disappearance disappears when in unit admin screen | 1 |

331,220 | 10,062,071,525 | IssuesEvent | 2019-07-22 23:31:29 | lightingft/appinventor-sources | https://api.github.com/repos/lightingft/appinventor-sources | opened | Scatter Chart Point Style | Part: Designer Priority: Low Status: To Do Type: Feature | The Scatter Chart should have a style option to select the circle shape of the Scatter Chart points. | 1.0 | Scatter Chart Point Style - The Scatter Chart should have a style option to select the circle shape of the Scatter Chart points. | priority | scatter chart point style the scatter chart should have a style option to select the circle shape of the scatter chart points | 1 |

410,649 | 11,995,017,980 | IssuesEvent | 2020-04-08 14:35:15 | GoSecure/pyrdp | https://api.github.com/repos/GoSecure/pyrdp | closed | 24bpp makes the PyRDP player crash | bug low-priority | Tested with Liveplayer with FreeRDP --> win10 and win8 --> win10.

It's a free() error, so most likely due to a bug in rle.c when decompressing 24bpp bitmaps.

Crashes after an undefined amount of time (seconds to minutes) | 1.0 | 24bpp makes the PyRDP player crash - Tested with Liveplayer with FreeRDP --> win10 and win8 --> win10.

It's a free() error, so most likely due to a bug in rle.c when decompressing 24bpp bitmaps.

Crashes after an undefined amount of time (seconds to minutes) | priority | makes the pyrdp player crash tested with liveplayer with freerdp and its a free error so most likely due to a bug in rle c when decompressing bitmaps crashes after an undefined amount of time seconds to minutes | 1 |

776,135 | 27,248,343,901 | IssuesEvent | 2023-02-22 05:19:06 | curiouslearning/FeedTheMonsterJS | https://api.github.com/repos/curiouslearning/FeedTheMonsterJS | closed | PWA app update | Low Priority | How should we handle if there is a newer version of the app available to install? | 1.0 | PWA app update - How should we handle if there is a newer version of the app available to install? | priority | pwa app update how should we handle if there is a newer version of the app available to install | 1 |

627,994 | 19,958,692,800 | IssuesEvent | 2022-01-28 04:37:06 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | [UX] APIM carbon console - Add user flow - UI issues | Priority/Low Affected/2.1.0 | **Description:**

***Not fulfilling [checklist items](https://docs.google.com/spreadsheets/d/1l6YKXSbmtykvvn_NvX6uJbXSsZvpT8jn72Qoi_FoJq8/edit#gid=1221574205):***

Error recognition - Does it precisely indicate the problem

Flexibility and efficiency of use - Does the task cater to both experienced and inexperienced ... | 1.0 | [UX] APIM carbon console - Add user flow - UI issues - **Description:**

***Not fulfilling [checklist items](https://docs.google.com/spreadsheets/d/1l6YKXSbmtykvvn_NvX6uJbXSsZvpT8jn72Qoi_FoJq8/edit#gid=1221574205):***

Error recognition - Does it precisely indicate the problem

Flexibility and efficiency of use - Do... | priority | apim carbon console add user flow ui issues description not fulfilling error recognition is it precisely indicate the problem flexibility and efficiency of use does the task cater to both experienced and inexperienced users match between system and the real world is design match with ... | 1 |

24,542 | 2,668,835,714 | IssuesEvent | 2015-03-23 11:52:38 | Araq/Nim | https://api.github.com/repos/Araq/Nim | closed | Compiler SIGSEGV on illegal 'type.name' usage. | Low Priority Semcheck | The following incorrect code causes the compiler to crash:

```Nimrod

echo type.name

```

The complete traceback:

```

Traceback (most recent call last)

nimrod.nim(91) nimrod

nimrod.nim(55) handleCmdLine

main.nim(308) mainCommand

main.nim(73) commandCompileToC

module... | 1.0 | Compiler SIGSEGV on illegal 'type.name' usage. - The following incorrect code causes the compiler to crash:

```Nimrod

echo type.name

```

The complete traceback:

```

Traceback (most recent call last)

nimrod.nim(91) nimrod

nimrod.nim(55) handleCmdLine

main.nim(308) mainCommand

m... | priority | compiler sigsegv on illegal type name usage the following incorrect code causes the compiler to crash nimrod echo type name the complete traceback traceback most recent call last nimrod nim nimrod nimrod nim handlecmdline main nim maincommand main ... | 1 |

719,207 | 24,751,262,388 | IssuesEvent | 2022-10-21 13:55:17 | qutebrowser/qutebrowser | https://api.github.com/repos/qutebrowser/qutebrowser | opened | More advanced session commands | priority: 2 - low | Some inspiration from [tab-manager/tab-manager.py at master - tab-manager - Codeberg.org](https://codeberg.org/mister_monster/tab-manager/src/branch/master/tab-manager.py):

- Adding a tab to a session (#3853, #4346)

- Removing a tab from a session

- Renaming a session

- Exporting a session to HTML

- Merging mult... | 1.0 | More advanced session commands - Some inspiration from [tab-manager/tab-manager.py at master - tab-manager - Codeberg.org](https://codeberg.org/mister_monster/tab-manager/src/branch/master/tab-manager.py):

- Adding a tab to a session (#3853, #4346)

- Removing a tab from a session

- Renaming a session

- Exporting ... | priority | more advanced session commands some inspiration from adding a tab to a session removing a tab from a session renaming a session exporting a session to html merging multiple sessions | 1 |

265,924 | 8,360,555,516 | IssuesEvent | 2018-10-03 11:57:15 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | typeChecking: false has no effect | effort1: easy (hours) freq1: low priority: 2 (required) severity3: broken | <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request (mark with an `x`)

```

- [x] bug report -> please search issues before submitting

- [ ] feature request

```

### Versions.

<!--

Output from: `ng --version`.

If nothing,... | 1.0 | typeChecking: false has no effect - <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request (mark with an `x`)

```

- [x] bug report -> please search issues before submitting

- [ ] feature request

```

### Versions.

<!--

Outpu... | priority | typechecking false has no effect if you don t fill out the following information your issue might be closed without investigating bug report or feature request mark with an x bug report please search issues before submitting feature request versions output fr... | 1 |

213,892 | 7,261,311,721 | IssuesEvent | 2018-02-18 19:31:53 | SmartlyDressedGames/Unturned-4.x-Community | https://api.github.com/repos/SmartlyDressedGames/Unturned-4.x-Community | closed | Splash screen | Priority: Low Status: Complete Type: Cleanup | One requirement for UE4 is the splash screen, so we need the SDG + UE4 intro during loading | 1.0 | Splash screen - One requirement for UE4 is the splash screen, so we need the SDG + UE4 intro during loading | priority | splash screen one requirement for is the splash screen so we need the sdg intro during loading | 1 |

738,059 | 25,543,670,647 | IssuesEvent | 2022-11-29 17:01:59 | SlimeVR/SlimeVR-Server | https://api.github.com/repos/SlimeVR/SlimeVR-Server | opened | OpenXR Support | Type: Feature Request Priority: Low | OpenXR support for SlimeVR would be nice for future-proofing and extended compatibility. | 1.0 | OpenXR Support - OpenXR support for SlimeVR would be nice for future-proofing and extended compatibility. | priority | openxr support openxr support for slimevr would be nice for future proofing and extended compatibility | 1 |

221,969 | 7,404,099,131 | IssuesEvent | 2018-03-20 02:37:25 | QueensRideshare/Qshare | https://api.github.com/repos/QueensRideshare/Qshare | closed | Filter: Text Search Bar 💡 | enhancement priority: nice-to-have (low) | • Add text filter at top of ride table

• Filter against ride parameters ```name, origin, destination, description```

• Keep list of **all** rides in state, but only show rides matching filter in table

• Live update table with each new character typed | 1.0 | Filter: Text Search Bar 💡 - • Add text filter at top of ride table

• Filter against ride parameters ```name, origin, destination, description```

• Keep list of **all** rides in state, but only show rides matching filter in table

• Live update table with each new character typed | priority | filter text search bar 💡 • add text filter at top of ride table • filter against ride parameters name origin destination description • keep list of all rides in state but only show rides matching filter in table • live update table with each new character typed | 1 |
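A minimal sketch of the filtering behaviour described in the Qshare issue above, written in TypeScript. Only the four field names (`name`, `origin`, `destination`, `description`) come from the issue; the `Ride` interface, the `filterRides` helper, and the sample data are hypothetical and not taken from the Qshare codebase. The idea is that the full ride list stays in state unchanged and the table renders a derived, filtered view that is recomputed on every keystroke.

```typescript
// Hypothetical ride shape: the four field names come from the issue text;
// everything else in this block is an illustrative assumption.
interface Ride {
  name: string;
  origin: string;
  destination: string;
  description: string;
}

// Return the rides whose name, origin, destination, or description
// contains the query (case-insensitive). An empty query matches every ride.
function filterRides(allRides: readonly Ride[], query: string): Ride[] {
  const needle = query.trim().toLowerCase();
  if (needle === "") {
    return [...allRides];
  }
  return allRides.filter((ride) =>
    [ride.name, ride.origin, ride.destination, ride.description].some((field) =>
      field.toLowerCase().includes(needle)
    )
  );
}

// The full list is never mutated; only the derived view changes as the user types.
const allRides: Ride[] = [
  { name: "Alex", origin: "Kingston", destination: "Toronto", description: "Leaving Friday" },
  { name: "Sam", origin: "Kingston", destination: "Ottawa", description: "Room for two" },
];
const visibleRides = filterRides(allRides, "tor");
console.log(visibleRides.map((ride) => ride.name));
```

In a component, `filterRides` would presumably be called from the text input's change handler, with `allRides` kept in state and only the result passed to the table.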

415,279 | 12,127,291,507 | IssuesEvent | 2020-04-22 18:28:22 | Reese2596/CPW215-Spring2020-DataTracker | https://api.github.com/repos/Reese2596/CPW215-Spring2020-DataTracker | opened | Fill out Features paragraph | interface low priority | The "Features" paragraph on the home page was not filled out because we don't have/know all the features that will be implemented. Near the end of our project this quarter, I believe that we will be able to properly fill this out with what features we have implemented. | 1.0 | Fill out Features paragraph - The "Features" paragraph on the home page was not filled out because we don't have/know all the features that will be implemented. Near the end of our project this quarter, I believe that we will be able to properly fill this out with what features we have implemented. | priority | fill out features paragraph the features paragraph on the home page was not filled out because we don t have know all the features that will be implemented near the end of our project this quarter i believe that we will be able to properly fill this out with what features we have implemented | 1 |

596,364 | 18,104,126,786 | IssuesEvent | 2021-09-22 17:12:45 | NOAA-GSL/VxLegacyIngest | https://api.github.com/repos/NOAA-GSL/VxLegacyIngest | closed | Verify sub-hourly RTMA | Type: Task Priority: Low | ---

Author Name: **jeffrey.a.hamilton** (jeffrey.a.hamilton)

Original Redmine Issue: 72725, https://vlab.ncep.noaa.gov/redmine/issues/72725

Original Date: 2019-12-19

Original Assignee: jeffrey.a.hamilton

---

Guoqing has requested verification of the sub-hourly RTMA fields in the ceiling and visibility apps. Work on... | 1.0 | Verify sub-hourly RTMA - ---

Author Name: **jeffrey.a.hamilton** (jeffrey.a.hamilton)

Original Redmine Issue: 72725, https://vlab.ncep.noaa.gov/redmine/issues/72725

Original Date: 2019-12-19

Original Assignee: jeffrey.a.hamilton

---

Guoqing has requested verification of the sub-hourly RTMA fields in the ceiling and... | priority | verify sub hourly rtma author name jeffrey a hamilton jeffrey a hamilton original redmine issue original date original assignee jeffrey a hamilton guoqing has requested verification of the sub hourly rtma fields in the ceiling and visibility apps work on making this happen the grids... | 1 |

460,732 | 13,217,463,594 | IssuesEvent | 2020-08-17 06:48:31 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | The source address is not fully displayed | area/console kind/bug kind/need-to-verify priority/low |

**Describe the Bug**

The source address is not fully displayed, and the full information is not displayed when the mouse moves to this point

<img width="823" alt="display" src="https://user-images.githubusercontent.com/36271543/87771392-de429380-c852-11ea-9416-11f2deac662e.png">

**Versions Used**

KubeSphere:3... | 1.0 | The source address is not fully displayed -

**Describe the Bug**

The source address is not fully displayed, and the full information is not displayed when the mouse moves to this point

<img width="823" alt="display" src="https://user-images.githubusercontent.com/36271543/87771392-de429380-c852-11ea-9416-11f2deac662... | priority | the source address is not fully displayed describe the bug the source address is not fully displayed and the full information is not displayed when the mouse moves to this point img width alt display src versions used kubesphere kubernetes host envi... | 1 |

551,242 | 16,165,284,469 | IssuesEvent | 2021-05-01 11:07:57 | SunstriderEmu/BugTracker | https://api.github.com/repos/SunstriderEmu/BugTracker | closed | Auction House Mail Bug | confirmed low priority | **Describe the bug**

<!--- A clear and concise description of what the bug is. -->

As an Alliance player, I am receiving mail that says "Horde Auction House".

**To Reproduce**

<!--- Steps to reproduce the behavior. Note that providing as much details as possible will help us fix it faster! -->

1. Receive mail from... | 1.0 | Auction House Mail Bug - **Describe the bug**

<!--- A clear and concise description of what the bug is. -->

As an Alliance player, I am receiving mail that says "Horde Auction House".

**To Reproduce**

<!--- Steps to reproduce the behavior. Note that providing as much details as possible will help us fix it faster! ... | priority | auction house mail bug describe the bug as an alliance player i am receiving mail that says horde auction house to reproduce receive mail from the alliance auction house notice that it says horde auction house mail sent from the alliance auction house expected behavior m... | 1 |

621,221 | 19,580,474,262 | IssuesEvent | 2022-01-04 20:34:45 | oxen-io/lokinet | https://api.github.com/repos/oxen-io/lokinet | closed | Network Level PoW? | enhancement question low priority | Popular Hidden services on Tor often experience DoS and DDoS attacks, unlike traditional web services, hidden services cannot deploy intermediate services like Cloudflare which can check browser fingerprints (Tor browser prevents this) and IP address reputation. Tor Hidden services also lack the ability to block spamme... | 1.0 | Network Level PoW? - Popular Hidden services on Tor often experience DoS and DDoS attacks, unlike traditional web services, hidden services cannot deploy intermediate services like Cloudflare which can check browser fingerprints (Tor browser prevents this) and IP address reputation. Tor Hidden services also lack the ab... | priority | network level pow popular hidden services on tor often experience dos and ddos attacks unlike traditional web services hidden services cannot deploy intermediate services like cloudflare which can check browser fingerprints tor browser prevents this and ip address reputation tor hidden services also lack the ab... | 1 |

132,047 | 5,168,651,838 | IssuesEvent | 2017-01-17 22:10:14 | TechReborn/TechReborn | https://api.github.com/repos/TechReborn/TechReborn | closed | Config Missing Some Formatting | LOW PRIORITY | **Mod version:** [2.0.6.71](https://minecraft.curseforge.com/projects/techreborn/files/2356177)

**Forge version:** [1.11-13.19.1.2188](http://files.minecraftforge.net/maven/net/minecraftforge/forge/1.11-13.19.1.2188/forge-1.11-13.19.1.2188-changelog.txt)

There is an entry with missing localization in `main.cfg`.

... | 1.0 | Config Missing Some Formatting - **Mod version:** [2.0.6.71](https://minecraft.curseforge.com/projects/techreborn/files/2356177)

**Forge version:** [1.11-13.19.1.2188](http://files.minecraftforge.net/maven/net/minecraftforge/forge/1.11-13.19.1.2188/forge-1.11-13.19.1.2188-changelog.txt)

There is an entry with missi... | priority | config missing some formatting mod version forge version there is an entry with missing localization in main cfg there is also an entry with inconsistent formatting that s all there is to this issue really | 1 |

189,346 | 6,796,843,221 | IssuesEvent | 2017-11-01 20:27:54 | techx/quill | https://api.github.com/repos/techx/quill | closed | Autocomplete / dropdown school name | contributor friendly Priority: Low Status: Available Type: Enhancement | Forcing standard school names would make it easier for organizers to search for schools. Semantic-UI has the perfect [autocomplete dropdown](https://semantic-ui.com/modules/dropdown.html#search-selection) module for this, so this should be a relatively simple change. | 1.0 | Autocomplete / dropdown school name - Forcing standard school names would make it easier for organizers to search for schools. Semantic-UI has the perfect [autocomplete dropdown](https://semantic-ui.com/modules/dropdown.html#search-selection) module for this, so this should be a relatively simple change. | priority | autocomplete dropdown school name forcing standard school names would make it easier for organizers to search for schools semantic ui has the perfect module for this so this should be a relatively simple change | 1 |

34,408 | 2,780,302,957 | IssuesEvent | 2015-05-06 02:53:15 | broadinstitute/hellbender | https://api.github.com/repos/broadinstitute/hellbender | closed | See which Picard tools can be easily converted to ReadWalkers | enhancement Picard PRIORITY_LOW question ReadWalker tools | To help unify things, we should start moving Picard tools to ReadWalkers, where possible. Most of the tools that came from picard.sam should fall under this category.

For tools that can't easily be converted, we should make a note of the traversal pattern so we can consider how to implement it later. | 1.0 | See which Picard tools can be easily converted to ReadWalkers - To help unify things, we should start moving Picard tools to ReadWalkers, where possible. Most of the tools that came from picard.sam should fall under this category.

For tools that can't easily be converted, we should make a note of the traversal patte... | priority | see which picard tools can be easily converted to readwalkers to help unify things we should start moving picard tools to readwalkers where possible most of the tools that came from picard sam should fall under this category for tools that can t easily be converted we should make a note of the traversal patte... | 1 |

398,894 | 11,742,475,149 | IssuesEvent | 2020-03-12 00:55:45 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | Change mentions of 'supporter' to 'benefactor' | priority: low technical change | In GitLab by @se-bastiaan on Sep 8, 2018, 16:40

### One-sentence description

Change mentions of 'supporter' to 'benefactor' for begunstigers

### Why?

It is the translation we use in all official documents that was decided upon by the Translacie.

### Current implementation

We use several different names for the 'b... | 1.0 | Change mentions of 'supporter' to 'benefactor' - In GitLab by @se-bastiaan on Sep 8, 2018, 16:40

### One-sentence description

Change mentions of 'supporter' to 'benefactor' for begunstigers

### Why?

It is the translation we use in all official documents that was decided upon by the Translacie.

### Current implemen... | priority | change mentions of supporter to benefactor in gitlab by se bastiaan on sep one sentence description change mentions of supporter to benefactor for begunstigers why it is the translation we use in all official documents that was decided upon by the translacie current implementatio... | 1 |

490,516 | 14,135,332,136 | IssuesEvent | 2020-11-10 01:26:20 | drashland/sinco | https://api.github.com/repos/drashland/sinco | opened | Add Support For Web Scraping | Priority: Low Type: Enhancement | ## Summary

What:

Unsure what this entails, but one use case for using these tools for scraping is running a script on a browser

Why:

There definitely seems a use case for this feature

## Acceptance Criteria

Below is a list of tasks that must be completed before this issue can be closed.

- [ ] Write... | 1.0 | Add Support For Web Scraping - ## Summary

What:

Unsure what this entails, but one use case for using these tools for scraping is running a script on a browser

Why:

There definitely seems a use case for this feature

## Acceptance Criteria

Below is a list of tasks that must be completed before this issu... | priority | add support for web scraping summary what unsure what this entails but one use case for using these tools for scraping is running a script on a browser why there definitely seems a use case for this feature acceptance criteria below is a list of tasks that must be completed before this issu... | 1 |

527,094 | 15,308,460,010 | IssuesEvent | 2021-02-24 22:30:04 | konveyor/forklift-ui | https://api.github.com/repos/konveyor/forklift-ui | closed | Determine and show the default migration network for hosts before they are configured | blocked enhancement low-priority | Related RHBZ: https://bugzilla.redhat.com/show_bug.cgi?id=1908034

From the discussion here: https://github.com/konveyor/virt-ui/pull/244#issuecomment-731192433

Right now there is no way for the UI to know what network will be used for a host unless it was specified by the user (the default is determined in the ba... | 1.0 | Determine and show the default migration network for hosts before they are configured - Related RHBZ: https://bugzilla.redhat.com/show_bug.cgi?id=1908034

From the discussion here: https://github.com/konveyor/virt-ui/pull/244#issuecomment-731192433

Right now there is no way for the UI to know what network will be ... | priority | determine and show the default migration network for hosts before they are configured related rhbz from the discussion here right now there is no way for the ui to know what network will be used for a host unless it was specified by the user the default is determined in the backend at migration time so... | 1 |

728,464 | 25,080,297,848 | IssuesEvent | 2022-11-07 18:42:03 | kubermatic/kubeone | https://api.github.com/repos/kubermatic/kubeone | opened | Spike: integrate Terraform CLI in the KubeOne binary | kind/feature priority/low sig/cluster-management | ### Description of the feature you would like to add / User story

As part of our single binary experience improvements, we might want to consider integrating Terraform CLI in the KubeOne binary. This should improve the getting started experience in a way that users don't have to download and install Terraform themse... | 1.0 | Spike: integrate Terraform CLI in the KubeOne binary - ### Description of the feature you would like to add / User story

As part of our single binary experience improvements, we might want to consider integrating Terraform CLI in the KubeOne binary. This should improve the getting started experience in a way that us... | priority | spike integrate terraform cli in the kubeone binary description of the feature you would like to add user story as part of our single binary experience improvements we might want to consider integrating terraform cli in the kubeone binary this should improve the getting started experience in a way that us... | 1 |

347,297 | 10,428,195,311 | IssuesEvent | 2019-09-16 21:50:14 | clearlinux/clr-installer | https://api.github.com/repos/clearlinux/clr-installer | closed | Creating ISO can fail silently | bug duplicate low priority | **Describe the bug**

If you attempt to create an ISO image and run out of disk space, the image generation will claim success though no ISO is found.

**To Reproduce**

Steps to reproduce the behavior:

1. Ensure your /tmp is nearly full

2. create a live desktop image

3. clr-installer -c scripts/live-desktop.... | 1.0 | Creating ISO can fail silently - **Describe the bug**

If you attempt to create an ISO image and run out of disk space, the image generation will claim success though no ISO is found.

**To Reproduce**

Steps to reproduce the behavior:

1. Ensure your /tmp is nearly full

2. create a live desktop image

3. clr-i... | priority | creating iso can fail silently describe the bug if you attempt to create an iso image and run out of disk space the image generation will claim success though no iso is found to reproduce steps to reproduce the behavior ensure you tmp is nearly all in use create a live desktop image clr i... | 1 |

505,713 | 14,644,110,213 | IssuesEvent | 2020-12-25 21:00:40 | SupremeObsidian/ProjectManager | https://api.github.com/repos/SupremeObsidian/ProjectManager | opened | Daily token take config | Low Priority enhancement | Make a config option for configuring the number of tokens taken per day | 1.0 | Daily token take config - Make a config option for configuring the number of tokens taken per day | priority | daily token take config make a config option for configuring the number of tokens taken per day | 1 |

211,346 | 7,200,491,432 | IssuesEvent | 2018-02-05 19:14:29 | haskell/cabal | https://api.github.com/repos/haskell/cabal | opened | cabal init: "getDirectoryContents:openDirStream: resource exhausted (Too many open files)" | cabal-install: cmd/init priority: low | Ran into this when I ran init accidentally in the wrong directory (with a lot of subdirectories with a lot of contents). The crawl for module contents combined with lazy IO clearly ran into the usual sorts of resource limits.

This is a corner case, but in general it is better to defensively code around these things. | 1.0 | cabal init: "getDirectoryContents:openDirStream: resource exhausted (Too many open files)" - Ran into this when I ran init accidentally in the wrong directory (with a lot of subdirectories with a lot of contents). The crawl for module contents combined with lazy IO clearly ran into the usual sorts of resource limits.

... | priority | cabal init getdirectorycontents opendirstream resource exhausted too many open files ran into this when i ran init accidentally in the wrong directory with a lot of subdirectories with a lot of contents the crawl for module contents combined with lazy io clearly ran into the usual sorts of resource limits ... | 1 |

258,681 | 8,178,903,103 | IssuesEvent | 2018-08-28 15:00:42 | spacetelescope/webbpsf | https://api.github.com/repos/spacetelescope/webbpsf | closed | Update filter profiles, for NIRISS and others | priority:low | <a href="https://github.com/mperrin"><img src="https://avatars0.githubusercontent.com/u/1151745?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [mperrin](https://github.com/mperrin)**

_Thursday Jan 24, 2013 at 20:42 GMT_

_Originally opened as https://github.com/mperrin/webbpsf/issues/7_

----... | 1.0 | Update filter profiles, for NIRISS and others - <a href="https://github.com/mperrin"><img src="https://avatars0.githubusercontent.com/u/1151745?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [mperrin](https://github.com/mperrin)**

_Thursday Jan 24, 2013 at 20:42 GMT_

_Originally opened as ht... | priority | update filter profiles for niriss and others issue by thursday jan at gmt originally opened as need to work with vicki loic et al to ensure we have updated filter profiles | 1 |

317,967 | 9,672,201,925 | IssuesEvent | 2019-05-22 02:23:41 | ReliefApplications/bms_front | https://api.github.com/repos/ReliefApplications/bms_front | closed | Uniformity of Function - Magnifying Glass - Low | In progress Low Priority | On the Distribution Validated page, the magnifying modal should display the same data as ‘Household Information Summary’ from Add New Beneficiary step 4. | 1.0 | Uniformity of Function - Magnifying Glass - Low - On the Distribution Validated page, the magnifying modal should display the same data as ‘Household Information Summary’ from Add New Beneficiary step 4. | priority | uniformity of function magnifying glass low on distribution validated page the magnifying modal should display same data as ‘household information summary’ from add new beneficiary step | 1 |

681,182 | 23,299,829,262 | IssuesEvent | 2022-08-07 06:22:16 | zot4plan/Zot4Plan | https://api.github.com/repos/zot4plan/Zot4Plan | opened | INS-19 About us Page | Priority: low Type: feature request | **Story**

A new page for team members

**Requirement**

Friendly & creative design | 1.0 | INS-19 About us Page - **Story**

A new page for team members

**Requirement**

Friendly & creative design | priority | ins about us page story a new page for team members requirement friendly creative design | 1 |

253,536 | 8,057,437,366 | IssuesEvent | 2018-08-02 15:23:25 | openfaas/faas-cli | https://api.github.com/repos/openfaas/faas-cli | closed | RPi: Kubernetes error function stuck in ImageInspectError state | priority/low support | Hi, I'm trying to deploy a homemade function, but every time I do, the pod that runs that function goes into the ImageInspectError state...

When I deploy a test function like `figlet`, everything works, but when it comes to something I write myself, it fails.

I think I'm maybe missing something in the functioning because I'm pret... | 1.0 | RPi: Kubernetes error function stuck in ImageInspectError state - Hi, I'm trying to deploy a homemade function, but every time I do, the pod that runs that function goes into the ImageInspectError state...

When I deploy a test function like `figlet`, everything works, but when it comes to something I write myself, it fails.

I thi... | priority | rpi kubernetes error function stuck in imageinspecterror state hi i trying to deploy a home made function but every time i the pod that run that function goes in imageinspecterror state when i deploy a test function like figlet everything work but when it come to something i write myself it fails i thi... | 1 |

709,318 | 24,373,508,544 | IssuesEvent | 2022-10-03 21:37:53 | raceintospace/raceintospace | https://api.github.com/repos/raceintospace/raceintospace | closed | Job review gets worse while prestige rises? | bug Low Priority | A user wrote me last night to remark that in the game, his prestige was doing very well, but his job rating was progressively bad. Anyone know why this might be? He can be emailed directly at shch0003@yandex.ru if you need details.

In fheroes2 we have 1Black, 2 Red and 3 Green Dragons.

In fheroes2 we have 1Black, 2 Red and 3 Green Dragons.

in the password field after the previous password was not accepted, I tap erase on the keyboard and all previously entered letters are removed

#### Expected behavior

I should be able to remove symbols one by o... | 1.0 | All letters are removed when tapping erase button one time in password field after incorrect attempt [develop] - ### Description

*Type*: Bug

*Summary*: When I am trying to edit the password (like correct 1 last symbol) in the password field after previous password was not accepted I am tapping erase on the keybo... | priority | all letters are removed when tapping erase button one time in password field after incorrect attempt description type bug summary when i am trying to edit the password like correct last symbol in the password field after previous password was not accepted i am taping erase on the keyboard and ... | 1 |

322,999 | 9,834,904,167 | IssuesEvent | 2019-06-17 10:52:35 | tud-zih-energy/lo2s | https://api.github.com/repos/tud-zih-energy/lo2s | closed | Look into PEBS | low priority | Can we use PEBS through perf_event with recent kernels?

Or maybe manually use PEBS? (https://github.com/andikleen/pmu-tools/tree/master/simple-pebs) | 1.0 | Look into PEBS - Can we use PEBS through perf_event with recent kernels?

Or maybe manually use PEBS? (https://github.com/andikleen/pmu-tools/tree/master/simple-pebs) | priority | look into pebs can we use pebs through perf event with recent kernels or maybe manually use pebs | 1 |

242,763 | 7,846,603,758 | IssuesEvent | 2018-06-19 15:54:58 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | closed | RAMP election | Bug: we allow users to create EPs even if there are no issues to close | Triage bug-high-priority caseflow-intake sierra | There was a situation where an NOD was mistakenly dated one day later than the RAMP opt-in. The CA was able to create the EP, but no VACOLS appeal issues were closed. We should tell users when there are no valid issues on the "finish" step. | 1.0 | RAMP election | Bug: we allow users to create EPs even if there are no issues to close - There was a situation where an NOD was mistakenly dated one day later than the RAMP opt-in. The CA was able to create the EP, but no VACOLS appeal issues were closed. We should tell users when there are no valid issues on the "fini... | priority | ramp election bug we allow users to create eps even if there are no issues to close there was a situation where an nod was mistakenly dated one day later than the ramp opt in the ca was able to create the ep but no vacols appeal issues were closed we should tell users when there are no valid issues on the fini... | 1 |

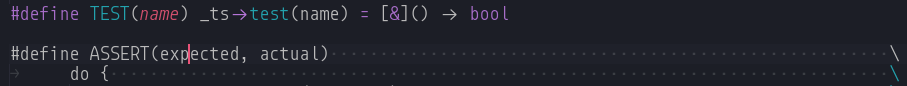

310,832 | 9,524,706,678 | IssuesEvent | 2019-04-28 06:08:13 | jeff-hykin/cpp-textmate-grammar | https://api.github.com/repos/jeff-hykin/cpp-textmate-grammar | opened | partial lambda breaks subsequent macro | Hard low priority 🐛 Bug | example code

```c++

#define TEST(name) _ts->test(name) = [&]() -> bool

#define ASSERT(expected, actual) \

```

image:

... | 1.0 | partial lambda breaks subsequent macro - example code

```c++

#define TEST(name) _ts->test(name) = [&]() -> bool

#define ASSERT(expected, actual) \

```

image:

they are allowed to enter the staff if necessary, and (2) position themselves between the two notes of the turn (when the turn is delayed).

Here is an example from Bee... | 1.0 | Delayed turn placement enhancements - Turns try to position themselves above the top line of a staff, and move upwards if they detected a collision.

Instead, it would be better if: (1) they are allowed to enter the staff if necessary, and (2) position themselves between the two notes of the turn (when the turn is de... | priority | delayed turn placement enhancements turns try to position themselves above the top line of a staff and move upwards if they detected a collision instead it would be better if they are allowed to enter the staff if necessary and position themselves between the two notes of the turn when the turn is de... | 1 |

108,724 | 4,349,597,559 | IssuesEvent | 2016-07-30 17:31:13 | JustArchi/ArchiSteamFarm | https://api.github.com/repos/JustArchi/ArchiSteamFarm | closed | Investigate if DistributeKeys is still needed | Discussion Feedback welcome Low priority | To me it seems redundant now, as ```ForwardKeysToOtherBots``` got significant improvements - https://github.com/JustArchi/ArchiSteamFarm/releases/tag/2.1.3.2

CC @Ryzhehvost @Pandiora - do you think that option still makes sense?

More info - https://github.com/JustArchi/ArchiSteamFarm/commit/a90573e0ea95ab89a632c8... | 1.0 | Investigate if DistributeKeys is still needed - To me it seems redundant now, as ```ForwardKeysToOtherBots``` got significant improvements - https://github.com/JustArchi/ArchiSteamFarm/releases/tag/2.1.3.2

CC @Ryzhehvost @Pandiora - do you think that option still makes sense?

More info - https://github.com/JustAr... | priority | investigate if distributekeys is still needed to me it seems redundant now as forwardkeystootherbots got significant improvements cc ryzhehvost pandiora do you think that option still makes sense more info | 1 |

298,585 | 9,200,554,655 | IssuesEvent | 2019-03-07 17:19:55 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | Last column of Postgis table is missing | Category: Data Provider Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **Redmine Admin** (Redmine Admin)

Original Redmine Issue: 153, https://issues.qgis.org/issues/153

Original Assignee: Gavin Macaulay -

---

When opening the attribute table of a postgis layer the last column of the postgis table is empty.

After identifying the same object with the identify tool, the... | 1.0 | Last column of Postgis table is missing - ---

Author Name: **Redmine Admin** (Redmine Admin)

Original Redmine Issue: 153, https://issues.qgis.org/issues/153

Original Assignee: Gavin Macaulay -

---

When opening the attribute table of a postgis layer the last column of the postgis table is empty.

After identifying t... | priority | last column of postgis table is missing author name redmine admin redmine admin original redmine issue original assignee gavin macaulay when opening the attribut table of a postgis layer the last column of the postgis table is empty after identifying the same object with the identify to... | 1 |

508,976 | 14,709,896,704 | IssuesEvent | 2021-01-05 03:37:57 | input-output-hk/cardano-ledger-specs | https://api.github.com/repos/input-output-hk/cardano-ledger-specs | closed | Invariants section at beginning | formal-spec :scroll: priority low shelley era | Bring out the invariants up front in a separate section. Make it clear what should be proved.

We could even have side-conditions in various rules where there are key properties, which can be injected into the executable spec | 1.0 | Invariants section at beginning - Bring out the invariants up front in a separate section. Make it clear what should be proved.

We could even have side-conditions in various rules where there are key properties, which can be injected into the executable spec | priority | invariants section at begining bring out the invariants up front in a separate section make it clear what should be proved we could even have side conditions in various rules where there are key properties which can be injected into the executable spec | 1 |

144,236 | 5,537,474,856 | IssuesEvent | 2017-03-21 22:11:35 | technomancers/2017SteamWorks | https://api.github.com/repos/technomancers/2017SteamWorks | closed | Add rumble while gear subsystem is deployed | Priority: Low Type: Enhancement | While the gear subsystem is open we should rumble the controller to let Ryan know that it is open and he should close it! | 1.0 | Add rumble while gear subsystem is deployed - While the gear subsystem is open we should rumble the controller to let Ryan know that it is open and he should close it! | priority | add rumble while gear subsystem is deployed while the gear subsystem is open we should rumble the controller to let ryan know that it is open and he should close it | 1 |