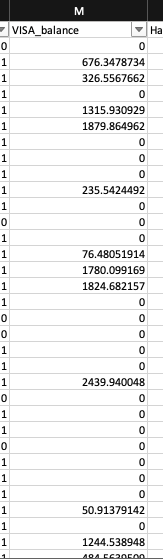

| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 844) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 12 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 248k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

38,244 | 19,042,369,260 | IssuesEvent | 2021-11-25 00:27:49 | earthly/earthly | https://api.github.com/repos/earthly/earthly | closed | SAVE IMAGE is slow, even when there's no work to be done | type:performance | I was observing that for highly optimized builds the slowest part can be saving images. For example, [here](https://github.com/jazzdan/earthly-save-image)’s a repo where `earth +all` takes 12s if everything is cached (like I run `earth +all` twice). Yet if I comment the `SAVE IMAGE` lines out the total time drops to 2s. This implies that `SAVE IMAGE` is doing a lot of work, even when nothing has changed.

Is there anything I can do speed up SAVE IMAGE in instances like this? I’m surprised that SAVE IMAGE does anything if the image hasn’t changed, is it possible for it do some more sophisticated content negotiation with the layers?

After [talking with](https://earthlycommunity.slack.com/archives/C01DL2928RM/p1605112044036800) @agbell in Slack I hypothesized that it might not be possible for Earthly to what images/layers the host has. This is all conjecture on my part, but:

If Earth is running in a container then it doesn’t know the state of the registry on the host machine, and what layers it has. It’s only option is to export the entire image to the host, which on a Mac could be slow because containers on a mac are actually running in a VM.

Maybe if Earth could mount/be aware of the host docker registry it could just do docker push?

This reminds me of similar problems that are being solved in the Kubernetes local cluster space https://github.com/kubernetes/enhancements/tree/master/keps/sig-cluster-lifecycle/generic/1755-communicating-a-local-registry | True | SAVE IMAGE is slow, even when there's no work to be done - I was observing that for highly optimized builds the slowest part can be saving images. For example, [here](https://github.com/jazzdan/earthly-save-image)’s a repo where `earth +all` takes 12s if everything is cached (like I run `earth +all` twice). Yet if I comment the `SAVE IMAGE` lines out the total time drops to 2s. This implies that `SAVE IMAGE` is doing a lot of work, even when nothing has changed.

Is there anything I can do speed up SAVE IMAGE in instances like this? I’m surprised that SAVE IMAGE does anything if the image hasn’t changed, is it possible for it do some more sophisticated content negotiation with the layers?

After [talking with](https://earthlycommunity.slack.com/archives/C01DL2928RM/p1605112044036800) @agbell in Slack I hypothesized that it might not be possible for Earthly to what images/layers the host has. This is all conjecture on my part, but:

If Earth is running in a container then it doesn’t know the state of the registry on the host machine, and what layers it has. It’s only option is to export the entire image to the host, which on a Mac could be slow because containers on a mac are actually running in a VM.

Maybe if Earth could mount/be aware of the host docker registry it could just do docker push?

This reminds me of similar problems that are being solved in the Kubernetes local cluster space https://github.com/kubernetes/enhancements/tree/master/keps/sig-cluster-lifecycle/generic/1755-communicating-a-local-registry | non_priority | save image is slow even when there s no work to be done i was observing that for highly optimized builds the slowest part can be saving images for example a repo where earth all takes if everything is cached like i run earth all twice yet if i comment the save image lines out the total time drops to this implies that save image is doing a lot of work even when nothing has changed is there anything i can do speed up save image in instances like this i’m surprised that save image does anything if the image hasn’t changed is it possible for it do some more sophisticated content negotiation with the layers after agbell in slack i hypothesized that it might not be possible for earthly to what images layers the host has this is all conjecture on my part but if earth is running in a container then it doesn’t know the state of the registry on the host machine and what layers it has it’s only option is to export the entire image to the host which on a mac could be slow because containers on a mac are actually running in a vm maybe if earth could mount be aware of the host docker registry it could just do docker push this reminds me of similar problems that are being solved in the kubernetes local cluster space | 0 |

163,510 | 12,733,284,737 | IssuesEvent | 2020-06-25 12:01:32 | DiSSCo/ELViS | https://api.github.com/repos/DiSSCo/ELViS | closed | Possibility for requesters and VA Coordinators to filter own/other requests | enhancement resolved to test | #### Description

Since requesters and VA Coordinators now have the possibility to see all requests from others (either other requesters or requests related to other institutions) in the main menu option "Requests", it is necessary to offer them a filter via which they can choose to either see their own requests and requests related to their own institution, or other requests, from other requesters (except the ones still in the draft status ofc) or only related to other institution than their own.

Sprint target for sprint 13 | 1.0 | Possibility for requesters and VA Coordinators to filter own/other requests - #### Description

Since requesters and VA Coordinators now have the possibility to see all requests from others (either other requesters or requests related to other institutions) in the main menu option "Requests", it is necessary to offer them a filter via which they can choose to either see their own requests and requests related to their own institution, or other requests, from other requesters (except the ones still in the draft status ofc) or only related to other institution than their own.

Sprint target for sprint 13 | non_priority | possibility for requesters and va coordinators to filter own other requests description since requesters and va coordinators now have the possibility to see all requests from others either other requesters or requests related to other institutions in the main menu option requests it is necessary to offer them a filter via which they can choose to either see their own requests and requests related to their own institution or other requests from other requesters except the ones still in the draft status ofc or only related to other institution than their own sprint target for sprint | 0 |

69,521 | 22,409,673,274 | IssuesEvent | 2022-06-18 14:27:19 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | The left margin of display name on reply tiles on TimelineCard should be removed | T-Defect | ### Steps to reproduce

1. Open a room

1. Enable a widget

2. Maximize the widget

3. Open a chat panel

4. Send a message

5. Reply to the message

### Outcome

#### What did you expect?

The left margin should not be added to the display name inside the reply tile.

#### What happened instead?

There is the left margin next to the display name.

### Operating system

Debian

### Browser information

Firefox ESR 91

### URL for webapp

localhost

### Application version

develop branch

### Homeserver

_No response_

### Will you send logs?

No | 1.0 | The left margin of display name on reply tiles on TimelineCard should be removed - ### Steps to reproduce

1. Open a room

1. Enable a widget

2. Maximize the widget

3. Open a chat panel

4. Send a message

5. Reply to the message

### Outcome

#### What did you expect?

The left margin should not be added to the display name inside the reply tile.

#### What happened instead?

There is the left margin next to the display name.

### Operating system

Debian

### Browser information

Firefox ESR 91

### URL for webapp

localhost

### Application version

develop branch

### Homeserver

_No response_

### Will you send logs?

No | non_priority | the left margin of display name on reply tiles on timelinecard should be removed steps to reproduce open a room enable a widget maximize the widget open a chat panel send a message reply to the message outcome what did you expect the left margin should not be added to the display name inside the reply tile what happened instead there is the left margin next to the display name operating system debian browser information firefox esr url for webapp localhost application version develop branch homeserver no response will you send logs no | 0 |

11,500 | 30,768,013,328 | IssuesEvent | 2023-07-30 14:45:30 | SuperCowPowers/sageworks | https://api.github.com/repos/SuperCowPowers/sageworks | opened | Anomaly Detection: Sliding Window | algorithm data_source architecture athena research | Can we do a 'sliding window' based anomaly detection, meaning that we can't (or don't want to) process all the data so we use a sliding window (1 day, 3 days, 1 week, etc) and we run anomaly detection on just that window of data. | 1.0 | Anomaly Detection: Sliding Window - Can we do a 'sliding window' based anomaly detection, meaning that we can't (or don't want to) process all the data so we use a sliding window (1 day, 3 days, 1 week, etc) and we run anomaly detection on just that window of data. | non_priority | anomaly detection sliding window can we do a sliding window based anomaly detection meaning that we can t or don t want to process all the data so we use a sliding window day days week etc and we run anomaly detection on just that window of data | 0 |
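
The sliding-window idea in the record above can be sketched roughly as follows. This is only an illustration under stated assumptions, not SageWorks code: the function name `window_anomalies`, the `(timestamp, value)` row shape, and the z-score rule are all hypothetical choices made for the example. A real data source would more likely apply the time filter in the query itself, so that only the window is ever read.

```python
from datetime import datetime, timedelta
from statistics import mean, stdev


def window_anomalies(rows, now, window=timedelta(days=3), threshold=3.0):
    """Flag rows inside the trailing window that sit far from the window mean.

    rows: iterable of (timestamp, value) pairs (hypothetical shape).
    Only rows with now - window <= timestamp <= now are examined, so older
    data is never processed, which is the point of the sliding window.
    """
    start = now - window
    recent = [(ts, v) for ts, v in rows if start <= ts <= now]
    if len(recent) < 3:
        return []  # too few points to estimate a spread
    values = [v for _, v in recent]
    mu, sigma = mean(values), stdev(values)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    # Note: with few points a single outlier cannot reach a large z-score,
    # so small windows may need a lower threshold.
    return [(ts, v) for ts, v in recent if abs(v - mu) / sigma > threshold]
```

Calling it with `window=timedelta(days=1)`, `timedelta(days=3)`, or `timedelta(weeks=1)` corresponds to the window sizes mentioned in the issue. The z-score rule is a stand-in; any detector that accepts a bounded slice of data could take its place.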

285,756 | 24,694,709,444 | IssuesEvent | 2022-10-19 11:11:41 | wpfoodmanager/wp-food-manager | https://api.github.com/repos/wpfoodmanager/wp-food-manager | closed | Icon size is decrease when food title name is long | In Testing | Icon size is decrease when food title name is long.

| 1.0 | Icon size is decrease when food title name is long - Icon size is decrease when food title name is long.

| non_priority | icon size is decrease when food title name is long icon size is decrease when food title name is long | 0 |

192,345 | 15,343,510,333 | IssuesEvent | 2021-02-27 20:37:04 | wprig/wprig | https://api.github.com/repos/wprig/wprig | closed | Update documentation re: child themes | documentation | State in `README.md` that WP Rig should not be used to build a child theme. See #260. | 1.0 | Update documentation re: child themes - State in `README.md` that WP Rig should not be used to build a child theme. See #260. | non_priority | update documentation re child themes state in readme md that wp rig should not be used to build a child theme see | 0 |

189,315 | 14,497,725,417 | IssuesEvent | 2020-12-11 14:36:38 | rancher/harvester | https://api.github.com/repos/rancher/harvester | reopened | create default admin cause cannot add indexers to running index | area/authentication bug to-test | cannot add indexers to running index of the default admin

```

time="2020-11-02T02:52:31Z" level=info msg="Listening on :8443"

time="2020-11-02T02:52:32Z" level=info msg="Starting harvester.cattle.io/v1alpha1, Kind=VirtualMachineImage controller"

time="2020-11-02T02:52:32Z" level=info msg="Starting /v1, Kind=Secret controller"

time="2020-11-02T02:52:32Z" level=info msg="Active TLS secret serving-cert (ver=1209280) (count 6): map[listener.cattle.io/cn-10.42.0.155:10.42.0.155 listener.cattle.io/cn-10.42.0.95:10.42.0.95 listener.cattle.io/cn-10.42.3.13:10.42.3.13 listener.cattle.io/cn-10.42.3.9:10.42.3.9 listener.cattle.io/cn-10.42.4.11:10.42.4.11 listener.cattle.io/cn-172.16.0.63:172.16.0.63 listener.cattle.io/hash:b9226b4a0f99901e63c93d7eb6ea5b7067e5460e32552bdccd53945329c169fa]"

I1102 02:53:14.056000 7 leaderelection.go:252] successfully acquired lease kube-system/harvester-controllers

time="2020-11-02T02:53:14Z" level=error msg="error syncing 'user-gdj99': handler user-rbac-controller: Index with name auth.harvester.cattle.io/crb-by-role-and-subject does not exist, requeuing"

time="2020-11-02T02:53:14Z" level=error msg="error syncing 'user-gdj99': handler user-rbac-controller: Index with name auth.harvester.cattle.io/crb-by-role-and-subject does not exist, requeuing"

time="2020-11-02T02:53:14Z" level=info msg="Default admin already created, skip create admin step"

panic: cannot add indexers to running index

goroutine 2457 [running]:

github.com/rancher/harvester/vendor/k8s.io/apimachinery/pkg/util/runtime.Must(...)

/go/src/github.com/rancher/harvester/vendor/k8s.io/apimachinery/pkg/util/runtime/runtime.go:171

github.com/rancher/harvester/vendor/github.com/rancher/wrangler-api/pkg/generated/controllers/rbac/v1.(*clusterRoleBindingCache).AddIndexer(0xc002bae1b0, 0x1af2d7a, 0x30, 0x1b7cc18)

/go/src/github.com/rancher/harvester/vendor/github.com/rancher/wrangler-api/pkg/generated/controllers/rbac/v1/clusterrolebinding.go:239 +0x11d

github.com/rancher/harvester/pkg/indexeres.RegisterManagementIndexers(0xc000314380)

/go/src/github.com/rancher/harvester/pkg/indexeres/indexer.go:22 +0xa4

github.com/rancher/harvester/pkg/controller/master.register(0x1d688a0, 0xc0010bca80, 0xc000134000, 0xc000314380, 0x0, 0x0)

/go/src/github.com/rancher/harvester/pkg/controller/master/controller.go:39 +0xe6

github.com/rancher/harvester/pkg/controller/master.Setup.func1(0x1d688a0, 0xc0010bca80)

/go/src/github.com/rancher/harvester/pkg/controller/master/setup.go:14 +0x54

created by github.com/rancher/harvester/vendor/github.com/rancher/wrangler/pkg/leader.run.func1

/go/src/github.com/rancher/harvester/vendor/github.com/rancher/wrangler/pkg/leader/leader.go:58 +0x46

``` | 1.0 | create default admin cause cannot add indexers to running index - cannot add indexers to running index of the default admin

```

time="2020-11-02T02:52:31Z" level=info msg="Listening on :8443"

time="2020-11-02T02:52:32Z" level=info msg="Starting harvester.cattle.io/v1alpha1, Kind=VirtualMachineImage controller"

time="2020-11-02T02:52:32Z" level=info msg="Starting /v1, Kind=Secret controller"

time="2020-11-02T02:52:32Z" level=info msg="Active TLS secret serving-cert (ver=1209280) (count 6): map[listener.cattle.io/cn-10.42.0.155:10.42.0.155 listener.cattle.io/cn-10.42.0.95:10.42.0.95 listener.cattle.io/cn-10.42.3.13:10.42.3.13 listener.cattle.io/cn-10.42.3.9:10.42.3.9 listener.cattle.io/cn-10.42.4.11:10.42.4.11 listener.cattle.io/cn-172.16.0.63:172.16.0.63 listener.cattle.io/hash:b9226b4a0f99901e63c93d7eb6ea5b7067e5460e32552bdccd53945329c169fa]"

I1102 02:53:14.056000 7 leaderelection.go:252] successfully acquired lease kube-system/harvester-controllers

time="2020-11-02T02:53:14Z" level=error msg="error syncing 'user-gdj99': handler user-rbac-controller: Index with name auth.harvester.cattle.io/crb-by-role-and-subject does not exist, requeuing"

time="2020-11-02T02:53:14Z" level=error msg="error syncing 'user-gdj99': handler user-rbac-controller: Index with name auth.harvester.cattle.io/crb-by-role-and-subject does not exist, requeuing"

time="2020-11-02T02:53:14Z" level=info msg="Default admin already created, skip create admin step"

panic: cannot add indexers to running index

goroutine 2457 [running]:

github.com/rancher/harvester/vendor/k8s.io/apimachinery/pkg/util/runtime.Must(...)

/go/src/github.com/rancher/harvester/vendor/k8s.io/apimachinery/pkg/util/runtime/runtime.go:171

github.com/rancher/harvester/vendor/github.com/rancher/wrangler-api/pkg/generated/controllers/rbac/v1.(*clusterRoleBindingCache).AddIndexer(0xc002bae1b0, 0x1af2d7a, 0x30, 0x1b7cc18)

/go/src/github.com/rancher/harvester/vendor/github.com/rancher/wrangler-api/pkg/generated/controllers/rbac/v1/clusterrolebinding.go:239 +0x11d

github.com/rancher/harvester/pkg/indexeres.RegisterManagementIndexers(0xc000314380)

/go/src/github.com/rancher/harvester/pkg/indexeres/indexer.go:22 +0xa4

github.com/rancher/harvester/pkg/controller/master.register(0x1d688a0, 0xc0010bca80, 0xc000134000, 0xc000314380, 0x0, 0x0)

/go/src/github.com/rancher/harvester/pkg/controller/master/controller.go:39 +0xe6

github.com/rancher/harvester/pkg/controller/master.Setup.func1(0x1d688a0, 0xc0010bca80)

/go/src/github.com/rancher/harvester/pkg/controller/master/setup.go:14 +0x54

created by github.com/rancher/harvester/vendor/github.com/rancher/wrangler/pkg/leader.run.func1

/go/src/github.com/rancher/harvester/vendor/github.com/rancher/wrangler/pkg/leader/leader.go:58 +0x46

``` | non_priority | create default admin cause cannot add indexers to running index cannot add indexers to running index of the default admin time level info msg listening on time level info msg starting harvester cattle io kind virtualmachineimage controller time level info msg starting kind secret controller time level info msg active tls secret serving cert ver count map leaderelection go successfully acquired lease kube system harvester controllers time level error msg error syncing user handler user rbac controller index with name auth harvester cattle io crb by role and subject does not exist requeuing time level error msg error syncing user handler user rbac controller index with name auth harvester cattle io crb by role and subject does not exist requeuing time level info msg default admin already created skip create admin step panic cannot add indexers to running index goroutine github com rancher harvester vendor io apimachinery pkg util runtime must go src github com rancher harvester vendor io apimachinery pkg util runtime runtime go github com rancher harvester vendor github com rancher wrangler api pkg generated controllers rbac clusterrolebindingcache addindexer go src github com rancher harvester vendor github com rancher wrangler api pkg generated controllers rbac clusterrolebinding go github com rancher harvester pkg indexeres registermanagementindexers go src github com rancher harvester pkg indexeres indexer go github com rancher harvester pkg controller master register go src github com rancher harvester pkg controller master controller go github com rancher harvester pkg controller master setup go src github com rancher harvester pkg controller master setup go created by github com rancher harvester vendor github com rancher wrangler pkg leader run go src github com rancher harvester vendor github com rancher wrangler pkg leader leader go | 0 |

23,155 | 3,771,338,787 | IssuesEvent | 2016-03-16 17:17:37 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Emit issue when using += or -= operator on dictionary values | defect | Related to forum post:

http://forums.bridge.net/forum/bridge-net-pro/bugs/1783

Live Bridge sample:

http://live.bridge.net/#82f9b2ca69a1505af3f9

### Expected

not sure

### Actual

```javascript

dict.set(0, +1);

```

### Steps To Reproduce

```csharp

public class App

{

[Ready]

public static void Main()

{

var dict = new Dictionary<int, int>();

dict.Add(0, 5);

dict[0] += 1;

Global.alert(dict[0]);

}

}

``` | 1.0 | Emit issue when using += or -= operator on dictionary values - Related to forum post:

http://forums.bridge.net/forum/bridge-net-pro/bugs/1783

Live Bridge sample:

http://live.bridge.net/#82f9b2ca69a1505af3f9

### Expected

not sure

### Actual

```javascript

dict.set(0, +1);

```

### Steps To Reproduce

```csharp

public class App

{

[Ready]

public static void Main()

{

var dict = new Dictionary<int, int>();

dict.Add(0, 5);

dict[0] += 1;

Global.alert(dict[0]);

}

}

``` | non_priority | emit issue when using or operator on dictionary values related to forum post live bridge sample expected not sure actual javascript dict set steps to reproduce csharp public class app public static void main var dict new dictionary dict add dict global alert dict | 0 |

122,028 | 10,210,493,726 | IssuesEvent | 2019-08-14 14:54:15 | internetarchive/openlibrary | https://api.github.com/repos/internetarchive/openlibrary | opened | Run flake8 checks in Travis on modified code | Theme: Development Theme: Testing Type: Feature | ### Is your feature request related to a problem? Please describe.

We can currently introduce flake8 errors into our code and have no linting/error checking.

### Describe the solution you'd like

This site shows how to run flake8 tests on the modified code. It's in the context of pre-commit hooks, but could we use the code in our Travis build?

- https://consideratecode.com/2016/10/15/check-code-changes-with-flake8-before-committing/

Code from site:

```sh

git diff --cached -U0 | flake8 --diff

```

It notes that this won't catch _all_ issues, so another check that could be performed would be to perform a full check on master and on the PR, and ensure the outputs are the same (i.e. no new errors).

### Stakeholders

@cclauss @hornc | 1.0 | Run flake8 checks in Travis on modified code - ### Is your feature request related to a problem? Please describe.

We can currently introduce flake8 errors into our code and have no linting/error checking.

### Describe the solution you'd like

This site shows how to run flake8 tests on the modified code. It's in the context of pre-commit hooks, but could we use the code in our Travis build?

- https://consideratecode.com/2016/10/15/check-code-changes-with-flake8-before-committing/

Code from site:

```sh

git diff --cached -U0 | flake8 --diff

```

It notes that this won't catch _all_ issues, so another check that could be performed would be to perform a full check on master and on the PR, and ensure the outputs are the same (i.e. no new errors).

### Stakeholders

@cclauss @hornc | non_priority | run checks in travis on modified code is your feature request related to a problem please describe we can currently introduce errors into our code and have no linting error checking describe the solution you d like this site shows how to run tests on the modified code it s in the context of pre commit hooks but could we use the code in our travis build code from site sh git diff cached diff it notes that this won t catch all issues so another check that could be performed would be to perform a full check on master and on the pr and ensure the outputs are the same i e no new errors stakeholders cclauss hornc | 0 |
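
The second check proposed in the record above (run flake8 on master and on the PR, then make sure no new errors appear) could look something like the sketch below. It is an assumption-laden illustration rather than the project's CI code: the helper names `count_violations` and `new_violations` are hypothetical, and it assumes flake8's default `path:row:col: CODE message` output format. Violations are counted per file and error code instead of compared line by line, because unrelated edits shift line numbers.

```python
import re
from collections import Counter

# Matches flake8's default output, e.g. "a.py:3:1: F401 'os' imported but unused".
_LINE = re.compile(r"^(?P<path>[^:]+):\d+:\d+: (?P<code>[A-Z]+\d+) ")


def count_violations(flake8_output):
    """Count violations per (path, code), ignoring row and column numbers."""
    counts = Counter()
    for line in flake8_output.splitlines():
        match = _LINE.match(line)
        if match:
            counts[(match["path"], match["code"])] += 1
    return counts


def new_violations(base_output, pr_output):
    """Return {(path, code): extra_count} for violations the PR adds over the base."""
    base = count_violations(base_output)
    pr = count_violations(pr_output)
    return {key: n - base[key] for key, n in pr.items() if n > base[key]}
```

In a CI job, the two inputs would be the captured output of a full flake8 run on each branch, and the build would fail when `new_violations(...)` is non-empty. Counting per file and code is a deliberate simplification: a PR that fixes one `E501` and adds another in the same file would pass.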

212,213 | 16,476,730,576 | IssuesEvent | 2021-05-24 06:38:38 | metoppv/improver | https://api.github.com/repos/metoppv/improver | opened | One page summary diagram per diagnostic | Type:Documentation blue_team | As a X I want Y so that Z

Related issues: #I, #J

Optional extra information text goes here

Acceptance criteria:

* A

* B

* C

| 1.0 | One page summary diagram per diagnostic - As a X I want Y so that Z

Related issues: #I, #J

Optional extra information text goes here

Acceptance criteria:

* A

* B

* C

| non_priority | one page summary diagram per diagnostic as a x i want y so that z related issues i j optional extra information text goes here acceptance criteria a b c | 0 |

86,608 | 15,755,696,280 | IssuesEvent | 2021-03-31 02:14:07 | SmartBear/ready-msazure-plugin | https://api.github.com/repos/SmartBear/ready-msazure-plugin | opened | CVE-2021-21350 (High) detected in xstream-1.3.1.jar | security vulnerability | ## CVE-2021-21350 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.3.1.jar</b></p></summary>

<p></p>

<p>Path to dependency file: ready-msazure-plugin/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/thoughtworks/xstream/1.3.1/xstream-1.3.1.jar</p>

<p>

Dependency Hierarchy:

- ready-api-soapui-pro-1.3.0.jar (Root Library)

- ready-api-soapui-1.3.0.jar

- :x: **xstream-1.3.1.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

XStream is a Java library to serialize objects to XML and back again. In XStream before version 1.4.16, there is a vulnerability which may allow a remote attacker to execute arbitrary code only by manipulating the processed input stream. No user is affected, who followed the recommendation to setup XStream's security framework with a whitelist limited to the minimal required types. If you rely on XStream's default blacklist of the Security Framework, you will have to use at least version 1.4.16.

<p>Publish Date: 2021-03-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21350>CVE-2021-21350</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/x-stream/xstream/security/advisories/GHSA-43gc-mjxg-gvrq">https://github.com/x-stream/xstream/security/advisories/GHSA-43gc-mjxg-gvrq</a></p>

<p>Release Date: 2021-03-23</p>

<p>Fix Resolution: com.thoughtworks.xstream:xstream:1.4.16</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.thoughtworks.xstream","packageName":"xstream","packageVersion":"1.3.1","packageFilePaths":["/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"com.smartbear:ready-api-soapui-pro:1.3.0;com.smartbear:ready-api-soapui:1.3.0;com.thoughtworks.xstream:xstream:1.3.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.thoughtworks.xstream:xstream:1.4.16"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-21350","vulnerabilityDetails":"XStream is a Java library to serialize objects to XML and back again. In XStream before version 1.4.16, there is a vulnerability which may allow a remote attacker to execute arbitrary code only by manipulating the processed input stream. No user is affected, who followed the recommendation to setup XStream\u0027s security framework with a whitelist limited to the minimal required types. If you rely on XStream\u0027s default blacklist of the Security Framework, you will have to use at least version 1.4.16.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21350","cvss3Severity":"high","cvss3Score":"9.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | True | CVE-2021-21350 (High) detected in xstream-1.3.1.jar - ## CVE-2021-21350 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.3.1.jar</b></p></summary>

<p></p>

<p>Path to dependency file: ready-msazure-plugin/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/thoughtworks/xstream/1.3.1/xstream-1.3.1.jar</p>

<p>

Dependency Hierarchy:

- ready-api-soapui-pro-1.3.0.jar (Root Library)

- ready-api-soapui-1.3.0.jar

- :x: **xstream-1.3.1.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

XStream is a Java library to serialize objects to XML and back again. In XStream before version 1.4.16, there is a vulnerability which may allow a remote attacker to execute arbitrary code only by manipulating the processed input stream. No user is affected, who followed the recommendation to setup XStream's security framework with a whitelist limited to the minimal required types. If you rely on XStream's default blacklist of the Security Framework, you will have to use at least version 1.4.16.

<p>Publish Date: 2021-03-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21350>CVE-2021-21350</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/x-stream/xstream/security/advisories/GHSA-43gc-mjxg-gvrq">https://github.com/x-stream/xstream/security/advisories/GHSA-43gc-mjxg-gvrq</a></p>

<p>Release Date: 2021-03-23</p>

<p>Fix Resolution: com.thoughtworks.xstream:xstream:1.4.16</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.thoughtworks.xstream","packageName":"xstream","packageVersion":"1.3.1","packageFilePaths":["/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"com.smartbear:ready-api-soapui-pro:1.3.0;com.smartbear:ready-api-soapui:1.3.0;com.thoughtworks.xstream:xstream:1.3.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.thoughtworks.xstream:xstream:1.4.16"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-21350","vulnerabilityDetails":"XStream is a Java library to serialize objects to XML and back again. In XStream before version 1.4.16, there is a vulnerability which may allow a remote attacker to execute arbitrary code only by manipulating the processed input stream. No user is affected, who followed the recommendation to setup XStream\u0027s security framework with a whitelist limited to the minimal required types. If you rely on XStream\u0027s default blacklist of the Security Framework, you will have to use at least version 1.4.16.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21350","cvss3Severity":"high","cvss3Score":"9.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | non_priority | cve high detected in xstream jar cve high severity vulnerability vulnerable library xstream jar path to dependency file ready msazure plugin pom xml path to vulnerable library home wss scanner repository thoughtworks xstream xstream jar dependency hierarchy ready api soapui pro jar root library ready api soapui jar x xstream jar vulnerable library found in base branch master vulnerability details xstream is a java library to serialize objects to xml and back again in xstream before version there is a vulnerability which may allow a remote attacker to execute arbitrary code only by 
manipulating the processed input stream no user is affected who followed the recommendation to setup xstream s security framework with a whitelist limited to the minimal required types if you rely on xstream s default blacklist of the security framework you will have to use at least version publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com thoughtworks xstream xstream isopenpronvulnerability false ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree com smartbear ready api soapui pro com smartbear ready api soapui com thoughtworks xstream xstream isminimumfixversionavailable true minimumfixversion com thoughtworks xstream xstream basebranches vulnerabilityidentifier cve vulnerabilitydetails xstream is a java library to serialize objects to xml and back again in xstream before version there is a vulnerability which may allow a remote attacker to execute arbitrary code only by manipulating the processed input stream no user is affected who followed the recommendation to setup xstream security framework with a whitelist limited to the minimal required types if you rely on xstream default blacklist of the security framework you will have to use at least version vulnerabilityurl | 0 |

47,451 | 7,327,808,040 | IssuesEvent | 2018-03-04 14:33:06 | nbuchwitz/icingaweb2-module-map | https://api.github.com/repos/nbuchwitz/icingaweb2-module-map | opened | Documentation for marker icons | documentation | Provide documentation for marker icons (e.g. `vars.map_icon = "print"`) | 1.0 | Documentation for marker icons - Provide documentation for marker icons (e.g. `vars.map_icon = "print"`) | non_priority | documentation for marker icons provide documentation for marker icons e g vars map icon print | 0 |

55,030 | 6,423,317,571 | IssuesEvent | 2017-08-09 10:41:36 | mautic/mautic | https://api.github.com/repos/mautic/mautic | closed | Custom fields value in detail of contact | Bug Ready To Test | What type of report is this:

| Q | A
| --- | ---
| Bug report? | Y
| Feature request? | N
| Enhancement? | N

## Description:

I created a new custom field of type boolean. When I edit this custom field in the contact detail view, it doesn't save, and I have to set and save it several times.

Video of this problem is here: https://www.youtube.com/watch?v=O3EJkf8Mdwo&feature=youtu.be

## If a bug:

| Q | A
| --- | ---
| Mautic version | 2.2.1
| PHP version | PHP Version 7.0.10-1~dotdeb+8.1

### Steps to reproduce:

In Video

### Log errors:

No error in logs. | 1.0 | Custom fields value in detail of contact - What type of report is this:

| Q | A
| --- | ---
| Bug report? | Y
| Feature request? | N
| Enhancement? | N

## Description:

I created a new custom field of type boolean. When I edit this custom field in the contact detail view, it doesn't save, and I have to set and save it several times.

Video of this problem is here: https://www.youtube.com/watch?v=O3EJkf8Mdwo&feature=youtu.be

## If a bug:

| Q | A
| --- | ---
| Mautic version | 2.2.1
| PHP version | PHP Version 7.0.10-1~dotdeb+8.1

### Steps to reproduce:

In Video

### Log errors:

No error in logs. | non_priority | custom fields value in detail of contact what type of report is this q a bug report y feature request n enhancement n description i create new custon fields type boolean when i edit this coustom fieltds in detail of contacts it don t save and i must set and save it more times video of this problem is here if a bug q a mautic version php version php version dotdeb steps to reproduce in video log errors no error in logs | 0 |

55,986 | 23,657,693,405 | IssuesEvent | 2022-08-26 12:52:05 | microsoft/SynapseML | https://api.github.com/repos/microsoft/SynapseML | closed | HealthCareSDK Returns NullPointerException on Synapse Spark | bug awaiting response area/cognitive-service | **Describe the bug**

When using the HealthCareSDK class in SynapseML, I get a NullPointerException when running on a dataset of 1,000+ rows.

**To Reproduce**

On a medium amount of data (1,000+ rows) with a StringType field between 250 and 4,000 characters long, execute the following code:

```
%%configure -f
{
    "name": "nerHealthExtract",
    "conf": {
        "spark.jars.packages": "com.microsoft.azure:synapseml_2.12:0.9.5-13-d1b51517-SNAPSHOT",
        "spark.jars.repositories": "https://mmlspark.azureedge.net/maven",
        "spark.jars.excludes": "org.scala-lang:scala-reflect,org.apache.spark:spark-tags_2.12,org.scalactic:scalactic_2.12,org.scalatest:scalatest_2.12",
        "spark.yarn.user.classpath.first": "true"
    }
}

# Run the rest in a separate cell, once the session has been configured.
# Import path assumed from the SynapseML Python package layout for this build.
from synapse.ml.cognitive import HealthcareSDK

# Input DataFrame with the text column to analyze
df_text_aggregated = spark.read.parquet("path/to/something")

healthcareService = (HealthcareSDK()
    .setSubscriptionKey("API_KEY")
    .setLocation("centralus")
    .setErrorCol("nerHealthError")
    .setLanguage("en")
    .setOutputCol("nerHealthOutput"))

df_ner = healthcareService.transform(df_text_aggregated)
df_ner.cache()

# The NullPointerException surfaces here, when the write triggers the job
df_ner.write.mode("overwrite").parquet("path/to/somewhere/else")
```

During the write, I receive the StackTrace below.

**Expected behavior**

I would expect to receive the healthcare output across all rows and NOT a NullPointerException.
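
To narrow down which rows trigger the exception, one option is to bisect the input and re-run the failing call on progressively smaller batches. The following is only a minimal sketch, not part of the original reproduction: `find_failing_rows` and `fake_process` are hypothetical names, and `fake_process` merely simulates a failure so the helper can run without a Spark session. It also assumes the failure is deterministic and tied to individual rows, which this report does not confirm.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def find_failing_rows(rows: Sequence[T],
                      process: Callable[[Sequence[T]], object]) -> List[T]:
    """Return the individual rows for which `process` raises, found by bisection."""
    if not rows:
        return []
    try:
        process(rows)
        return []  # the whole batch succeeded, nothing to report
    except Exception:
        if len(rows) == 1:
            return [rows[0]]  # isolated a single failing row
        mid = len(rows) // 2
        # Recurse into both halves; a batch can contain several bad rows.
        return (find_failing_rows(rows[:mid], process)
                + find_failing_rows(rows[mid:], process))


def fake_process(batch: Sequence[str]) -> int:
    """Hypothetical stand-in for the real service call; fails on a marker value."""
    if any("<bad>" in text for text in batch):
        raise ValueError("simulated failure")
    return len(batch)


sample = ["note a", "<bad> note", "note b", "note c", "another <bad> note"]
failing = find_failing_rows(sample, fake_process)
print(failing)
```

In a real session, `process` would wrap whatever call fails (for example, building a small DataFrame from the batch, transforming it, and collecting the result). If the error depends on how rows are batched together rather than on single rows, bisection may not isolate it.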

**Info (please complete the following information):**

- SynapseML Version: com.microsoft.azure:synapseml_2.12:0.9.5-13-d1b51517-SNAPSHOT

- Spark Version: 3.1

- Spark Platform: Synapse Spark

**Stacktrace**

```

Error: An error occurred while calling o1115.parquet.

: org.apache.spark.SparkException: Job aborted.

at org.apache.spark.sql.execution.datasources.FileFormatWriter$.write(FileFormatWriter.scala:231)

at org.apache.spark.sql.execution.datasources.InsertIntoHadoopFsRelationCommand.run(InsertIntoHadoopFsRelationCommand.scala:188)

at org.apache.spark.sql.execution.command.DataWritingCommandExec.sideEffectResult$lzycompute(commands.scala:108)

at org.apache.spark.sql.execution.command.DataWritingCommandExec.sideEffectResult(commands.scala:106)

at org.apache.spark.sql.execution.command.DataWritingCommandExec.doExecute(commands.scala:131)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$execute$1(SparkPlan.scala:218)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$executeQuery$1(SparkPlan.scala:256)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)

at org.apache.spark.sql.execution.SparkPlan.executeQuery(SparkPlan.scala:253)

at org.apache.spark.sql.execution.SparkPlan.execute(SparkPlan.scala:214)

at org.apache.spark.sql.execution.QueryExecution.toRdd$lzycompute(QueryExecution.scala:148)

at org.apache.spark.sql.execution.QueryExecution.toRdd(QueryExecution.scala:147)

at org.apache.spark.sql.DataFrameWriter.$anonfun$runCommand$1(DataFrameWriter.scala:995)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$5(SQLExecution.scala:107)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:181)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:94)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:68)

at org.apache.spark.sql.DataFrameWriter.runCommand(DataFrameWriter.scala:995)

at org.apache.spark.sql.DataFrameWriter.saveToV1Source(DataFrameWriter.scala:444)

at org.apache.spark.sql.DataFrameWriter.saveInternal(DataFrameWriter.scala:416)

at org.apache.spark.sql.DataFrameWriter.save(DataFrameWriter.scala:294)

at org.apache.spark.sql.DataFrameWriter.parquet(DataFrameWriter.scala:880)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244)

at py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:357)

at py4j.Gateway.invoke(Gateway.java:282)

at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)

at py4j.commands.CallCommand.execute(CallCommand.java:79)

at py4j.GatewayConnection.run(GatewayConnection.java:238)

at java.lang.Thread.run(Thread.java:748)

Caused by: org.apache.spark.SparkException: Job aborted due to stage failure: Task 33 in stage 76.0 failed 4 times, most recent failure: Lost task 33.3 in stage 76.0 (TID 3877) (vm-89521530 executor 1): java.lang.NullPointerException

at com.azure.ai.textanalytics.implementation.Utility.toRecognizeHealthcareEntitiesResults(Utility.java:510)

at com.azure.ai.textanalytics.AnalyzeHealthcareEntityAsyncClient.toTextAnalyticsPagedResponse(AnalyzeHealthcareEntityAsyncClient.java:179)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onNext(FluxMapFuseable.java:113)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber.onNext(FluxSwitchIfEmpty.java:74)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onSubscribe(MonoFlatMap.java:238)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber.onNext(FluxSwitchIfEmpty.java:74)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.Operators$MultiSubscriptionSubscriber.set(Operators.java:2194)

at reactor.core.publisher.Operators$MultiSubscriptionSubscriber.onSubscribe(Operators.java:2068)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:64)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoCacheTime$CoordinatorSubscriber.signalCached(MonoCacheTime.java:337)

at reactor.core.publisher.MonoCacheTime$CoordinatorSubscriber.onNext(MonoCacheTime.java:354)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onSubscribe(MonoFlatMap.java:110)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:64)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.MonoCacheTime.subscribeOrReturn(MonoCacheTime.java:143)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:57)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.FluxContextWrite$ContextWriteSubscriber.onNext(FluxContextWrite.java:107)

at reactor.core.publisher.FluxDoOnEach$DoOnEachSubscriber.onNext(FluxDoOnEach.java:173)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.FluxMap$MapSubscriber.onNext(FluxMap.java:120)

at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onNext(FluxOnErrorResume.java:79)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.SerializedSubscriber.onNext(SerializedSubscriber.java:99)

at reactor.core.publisher.FluxRetryWhen$RetryWhenMainSubscriber.onNext(FluxRetryWhen.java:174)

at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onNext(FluxOnErrorResume.java:79)

at reactor.core.publisher.Operators$MonoInnerProducerBase.complete(Operators.java:2664)

at reactor.core.publisher.MonoSingle$SingleSubscriber.onComplete(MonoSingle.java:180)

at reactor.core.publisher.MonoFlatMapMany$FlatMapManyInner.onComplete(MonoFlatMapMany.java:260)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onComplete(FluxMapFuseable.java:150)

at reactor.core.publisher.FluxDoFinally$DoFinallySubscriber.onComplete(FluxDoFinally.java:145)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onComplete(FluxMapFuseable.java:150)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1817)

at reactor.core.publisher.MonoCollect$CollectSubscriber.onComplete(MonoCollect.java:159)

at reactor.core.publisher.FluxHandle$HandleSubscriber.onComplete(FluxHandle.java:213)

at reactor.core.publisher.FluxMap$MapConditionalSubscriber.onComplete(FluxMap.java:269)

at reactor.netty.channel.FluxReceive.onInboundComplete(FluxReceive.java:400)

at reactor.netty.channel.ChannelOperations.onInboundComplete(ChannelOperations.java:419)

at reactor.netty.channel.ChannelOperations.terminate(ChannelOperations.java:473)

at reactor.netty.http.client.HttpClientOperations.onInboundNext(HttpClientOperations.java:684)

at reactor.netty.channel.ChannelOperationsHandler.channelRead(ChannelOperationsHandler.java:93)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.channel.CombinedChannelDuplexHandler$DelegatingChannelHandlerContext.fireChannelRead(CombinedChannelDuplexHandler.java:436)

at io.netty.handler.codec.ByteToMessageDecoder.fireChannelRead(ByteToMessageDecoder.java:324)

at io.netty.handler.codec.ByteToMessageDecoder.channelRead(ByteToMessageDecoder.java:296)

at io.netty.channel.CombinedChannelDuplexHandler.channelRead(CombinedChannelDuplexHandler.java:251)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.handler.ssl.SslHandler.unwrap(SslHandler.java:1372)

at io.netty.handler.ssl.SslHandler.decodeJdkCompatible(SslHandler.java:1235)

at io.netty.handler.ssl.SslHandler.decode(SslHandler.java:1284)

at io.netty.handler.codec.ByteToMessageDecoder.decodeRemovalReentryProtection(ByteToMessageDecoder.java:507)

at io.netty.handler.codec.ByteToMessageDecoder.callDecode(ByteToMessageDecoder.java:446)

at io.netty.handler.codec.ByteToMessageDecoder.channelRead(ByteToMessageDecoder.java:276)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.channel.DefaultChannelPipeline$HeadContext.channelRead(DefaultChannelPipeline.java:1410)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.DefaultChannelPipeline.fireChannelRead(DefaultChannelPipeline.java:919)

at io.netty.channel.epoll.AbstractEpollStreamChannel$EpollStreamUnsafe.epollInReady(AbstractEpollStreamChannel.java:795)

at io.netty.channel.epoll.EpollEventLoop.processReady(EpollEventLoop.java:480)

at io.netty.channel.epoll.EpollEventLoop.run(EpollEventLoop.java:378)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:986)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.lang.Thread.run(Thread.java:748)

Suppressed: java.lang.Exception: #block terminated with an error

at reactor.core.publisher.BlockingSingleSubscriber.blockingGet(BlockingSingleSubscriber.java:99)

at reactor.core.publisher.Flux.blockLast(Flux.java:2644)

at com.azure.core.util.paging.ContinuablePagedByIteratorBase.requestPage(ContinuablePagedByIteratorBase.java:94)

at com.azure.core.util.paging.ContinuablePagedByItemIterable$ContinuablePagedByItemIterator.<init>(ContinuablePagedByItemIterable.java:50)

at com.azure.core.util.paging.ContinuablePagedByItemIterable.iterator(ContinuablePagedByItemIterable.java:37)

at com.azure.core.util.paging.ContinuablePagedIterable.iterator(ContinuablePagedIterable.java:106)

at scala.collection.convert.Wrappers$JIterableWrapper.iterator(Wrappers.scala:55)

at scala.collection.IterableLike.foreach(IterableLike.scala:74)

at scala.collection.IterableLike.foreach$(IterableLike.scala:73)

at scala.collection.AbstractIterable.foreach(Iterable.scala:56)

at scala.collection.TraversableLike.flatMap(TraversableLike.scala:245)

at scala.collection.TraversableLike.flatMap$(TraversableLike.scala:242)

at scala.collection.AbstractTraversable.flatMap(Traversable.scala:108)

at com.microsoft.azure.synapse.ml.cognitive.HealthcareSDK.invokeTextAnalytics(TextAnalyticsSDK.scala:339)

at com.microsoft.azure.synapse.ml.cognitive.TextAnalyticsSDKBase.$anonfun$transformTextRows$4(TextAnalyticsSDK.scala:128)

at scala.concurrent.Future$.$anonfun$apply$1(Future.scala:659)

at scala.util.Success.$anonfun$map$1(Try.scala:255)

at scala.util.Success.map(Try.scala:213)

at scala.concurrent.Future.$anonfun$map$1(Future.scala:292)

at scala.concurrent.impl.Promise.liftedTree1$1(Promise.scala:33)

at scala.concurrent.impl.Promise.$anonfun$transform$1(Promise.scala:33)

at scala.concurrent.impl.CallbackRunnable.run(Promise.scala:64)

at java.util.concurrent.ForkJoinTask$RunnableExecuteAction.exec(ForkJoinTask.java:1402)

at java.util.concurrent.ForkJoinTask.doExec(ForkJoinTask.java:289)

at java.util.concurrent.ForkJoinPool$WorkQueue.runTask(ForkJoinPool.java:1056)

at java.util.concurrent.ForkJoinPool.runWorker(ForkJoinPool.java:1692)

at java.util.concurrent.ForkJoinWorkerThread.run(ForkJoinWorkerThread.java:175)

Driver stacktrace:

at org.apache.spark.scheduler.DAGScheduler.failJobAndIndependentStages(DAGScheduler.scala:2263)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2(DAGScheduler.scala:2212)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2$adapted(DAGScheduler.scala:2211)

at scala.collection.mutable.ResizableArray.foreach(ResizableArray.scala:62)

at scala.collection.mutable.ResizableArray.foreach$(ResizableArray.scala:55)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:49)

at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:2211)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1(DAGScheduler.scala:1082)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1$adapted(DAGScheduler.scala:1082)

at scala.Option.foreach(Option.scala:407)

at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:1082)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:2450)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2392)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2381)

at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:49)

at org.apache.spark.scheduler.DAGScheduler.runJob(DAGScheduler.scala:869)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2282)

at org.apache.spark.sql.execution.datasources.FileFormatWriter$.write(FileFormatWriter.scala:200)

... 33 more

Caused by: java.lang.NullPointerException

at com.azure.ai.textanalytics.implementation.Utility.toRecognizeHealthcareEntitiesResults(Utility.java:510)

at com.azure.ai.textanalytics.AnalyzeHealthcareEntityAsyncClient.toTextAnalyticsPagedResponse(AnalyzeHealthcareEntityAsyncClient.java:179)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onNext(FluxMapFuseable.java:113)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber.onNext(FluxSwitchIfEmpty.java:74)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onSubscribe(MonoFlatMap.java:238)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber.onNext(FluxSwitchIfEmpty.java:74)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.Operators$MultiSubscriptionSubscriber.set(Operators.java:2194)

at reactor.core.publisher.Operators$MultiSubscriptionSubscriber.onSubscribe(Operators.java:2068)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:64)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoCacheTime$CoordinatorSubscriber.signalCached(MonoCacheTime.java:337)

at reactor.core.publisher.MonoCacheTime$CoordinatorSubscriber.onNext(MonoCacheTime.java:354)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onSubscribe(MonoFlatMap.java:110)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:64)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.MonoCacheTime.subscribeOrReturn(MonoCacheTime.java:143)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:57)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.FluxContextWrite$ContextWriteSubscriber.onNext(FluxContextWrite.java:107)

at reactor.core.publisher.FluxDoOnEach$DoOnEachSubscriber.onNext(FluxDoOnEach.java:173)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.FluxMap$MapSubscriber.onNext(FluxMap.java:120)

at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onNext(FluxOnErrorResume.java:79)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.SerializedSubscriber.onNext(SerializedSubscriber.java:99)

at reactor.core.publisher.FluxRetryWhen$RetryWhenMainSubscriber.onNext(FluxRetryWhen.java:174)

at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onNext(FluxOnErrorResume.java:79)

at reactor.core.publisher.Operators$MonoInnerProducerBase.complete(Operators.java:2664)

at reactor.core.publisher.MonoSingle$SingleSubscriber.onComplete(MonoSingle.java:180)

at reactor.core.publisher.MonoFlatMapMany$FlatMapManyInner.onComplete(MonoFlatMapMany.java:260)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onComplete(FluxMapFuseable.java:150)

at reactor.core.publisher.FluxDoFinally$DoFinallySubscriber.onComplete(FluxDoFinally.java:145)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onComplete(FluxMapFuseable.java:150)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1817)

at reactor.core.publisher.MonoCollect$CollectSubscriber.onComplete(MonoCollect.java:159)

at reactor.core.publisher.FluxHandle$HandleSubscriber.onComplete(FluxHandle.java:213)

at reactor.core.publisher.FluxMap$MapConditionalSubscriber.onComplete(FluxMap.java:269)

at reactor.netty.channel.FluxReceive.onInboundComplete(FluxReceive.java:400)

at reactor.netty.channel.ChannelOperations.onInboundComplete(ChannelOperations.java:419)

at reactor.netty.channel.ChannelOperations.terminate(ChannelOperations.java:473)

at reactor.netty.http.client.HttpClientOperations.onInboundNext(HttpClientOperations.java:684)

at reactor.netty.channel.ChannelOperationsHandler.channelRead(ChannelOperationsHandler.java:93)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.channel.CombinedChannelDuplexHandler$DelegatingChannelHandlerContext.fireChannelRead(CombinedChannelDuplexHandler.java:436)

at io.netty.handler.codec.ByteToMessageDecoder.fireChannelRead(ByteToMessageDecoder.java:324)

at io.netty.handler.codec.ByteToMessageDecoder.channelRead(ByteToMessageDecoder.java:296)

at io.netty.channel.CombinedChannelDuplexHandler.channelRead(CombinedChannelDuplexHandler.java:251)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.handler.ssl.SslHandler.unwrap(SslHandler.java:1372)

at io.netty.handler.ssl.SslHandler.decodeJdkCompatible(SslHandler.java:1235)

at io.netty.handler.ssl.SslHandler.decode(SslHandler.java:1284)

at io.netty.handler.codec.ByteToMessageDecoder.decodeRemovalReentryProtection(ByteToMessageDecoder.java:507)

at io.netty.handler.codec.ByteToMessageDecoder.callDecode(ByteToMessageDecoder.java:446)

at io.netty.handler.codec.ByteToMessageDecoder.channelRead(ByteToMessageDecoder.java:276)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.channel.DefaultChannelPipeline$HeadContext.channelRead(DefaultChannelPipeline.java:1410)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.DefaultChannelPipeline.fireChannelRead(DefaultChannelPipeline.java:919)

at io.netty.channel.epoll.AbstractEpollStreamChannel$EpollStreamUnsafe.epollInReady(AbstractEpollStreamChannel.java:795)

at io.netty.channel.epoll.EpollEventLoop.processReady(EpollEventLoop.java:480)

at io.netty.channel.epoll.EpollEventLoop.run(EpollEventLoop.java:378)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:986)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

... 1 more

Suppressed: java.lang.Exception: #block terminated with an error

at reactor.core.publisher.BlockingSingleSubscriber.blockingGet(BlockingSingleSubscriber.java:99)

at reactor.core.publisher.Flux.blockLast(Flux.java:2644)

at com.azure.core.util.paging.ContinuablePagedByIteratorBase.requestPage(ContinuablePagedByIteratorBase.java:94)

at com.azure.core.util.paging.ContinuablePagedByItemIterable$ContinuablePagedByItemIterator.<init>(ContinuablePagedByItemIterable.java:50)

at com.azure.core.util.paging.ContinuablePagedByItemIterable.iterator(ContinuablePagedByItemIterable.java:37)

at com.azure.core.util.paging.ContinuablePagedIterable.iterator(ContinuablePagedIterable.java:106)

at scala.collection.convert.Wrappers$JIterableWrapper.iterator(Wrappers.scala:55)

at scala.collection.IterableLike.foreach(IterableLike.scala:74)

at scala.collection.IterableLike.foreach$(IterableLike.scala:73)

at scala.collection.AbstractIterable.foreach(Iterable.scala:56)

at scala.collection.TraversableLike.flatMap(TraversableLike.scala:245)

at scala.collection.TraversableLike.flatMap$(TraversableLike.scala:242)

at scala.collection.AbstractTraversable.flatMap(Traversable.scala:108)

at com.microsoft.azure.synapse.ml.cognitive.HealthcareSDK.invokeTextAnalytics(TextAnalyticsSDK.scala:339)

at com.microsoft.azure.synapse.ml.cognitive.TextAnalyticsSDKBase.$anonfun$transformTextRows$4(TextAnalyticsSDK.scala:128)

at scala.concurrent.Future$.$anonfun$apply$1(Future.scala:659)

at scala.util.Success.$anonfun$map$1(Try.scala:255)

at scala.util.Success.map(Try.scala:213)

at scala.concurrent.Future.$anonfun$map$1(Future.scala:292)

at scala.concurrent.impl.Promise.liftedTree1$1(Promise.scala:33)

at scala.concurrent.impl.Promise.$anonfun$transform$1(Promise.scala:33)

at scala.concurrent.impl.CallbackRunnable.run(Promise.scala:64)

at java.util.concurrent.ForkJoinTask$RunnableExecuteAction.exec(ForkJoinTask.java:1402)

at java.util.concurrent.ForkJoinTask.doExec(ForkJoinTask.java:289)

at java.util.concurrent.ForkJoinPool$WorkQueue.runTask(ForkJoinPool.java:1056)

at java.util.concurrent.ForkJoinPool.runWorker(ForkJoinPool.java:1692)

at java.util.concurrent.ForkJoinWorkerThread.run(ForkJoinWorkerThread.java:175)

Traceback (most recent call last):

  File "/opt/spark/python/lib/pyspark.zip/pyspark/sql/readwriter.py", line 1250, in parquet

    self._jwrite.parquet(path)

  File "/home/trusted-service-user/cluster-env/env/lib/python3.8/site-packages/py4j/java_gateway.py", line 1304, in __call__

    return_value = get_return_value(

  File "/opt/spark/python/lib/pyspark.zip/pyspark/sql/utils.py", line 111, in deco

    return f(*a, **kw)

  File "/home/trusted-service-user/cluster-env/env/lib/python3.8/site-packages/py4j/protocol.py", line 326, in get_return_value

    raise Py4JJavaError(

py4j.protocol.Py4JJavaError: An error occurred while calling o1115.parquet.

: org.apache.spark.SparkException: Job aborted.

at org.apache.spark.sql.execution.datasources.FileFormatWriter$.write(FileFormatWriter.scala:231)

at org.apache.spark.sql.execution.datasources.InsertIntoHadoopFsRelationCommand.run(InsertIntoHadoopFsRelationCommand.scala:188)

at org.apache.spark.sql.execution.command.DataWritingCommandExec.sideEffectResult$lzycompute(commands.scala:108)

at org.apache.spark.sql.execution.command.DataWritingCommandExec.sideEffectResult(commands.scala:106)

at org.apache.spark.sql.execution.command.DataWritingCommandExec.doExecute(commands.scala:131)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$execute$1(SparkPlan.scala:218)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$executeQuery$1(SparkPlan.scala:256)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)

at org.apache.spark.sql.execution.SparkPlan.executeQuery(SparkPlan.scala:253)

at org.apache.spark.sql.execution.SparkPlan.execute(SparkPlan.scala:214)

at org.apache.spark.sql.execution.QueryExecution.toRdd$lzycompute(QueryExecution.scala:148)

at org.apache.spark.sql.execution.QueryExecution.toRdd(QueryExecution.scala:147)

at org.apache.spark.sql.DataFrameWriter.$anonfun$runCommand$1(DataFrameWriter.scala:995)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$5(SQLExecution.scala:107)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:181)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:94)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:68)

at org.apache.spark.sql.DataFrameWriter.runCommand(DataFrameWriter.scala:995)

at org.apache.spark.sql.DataFrameWriter.saveToV1Source(DataFrameWriter.scala:444)

at org.apache.spark.sql.DataFrameWriter.saveInternal(DataFrameWriter.scala:416)

at org.apache.spark.sql.DataFrameWriter.save(DataFrameWriter.scala:294)

at org.apache.spark.sql.DataFrameWriter.parquet(DataFrameWriter.scala:880)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244)

at py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:357)

at py4j.Gateway.invoke(Gateway.java:282)

at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)

at py4j.commands.CallCommand.execute(CallCommand.java:79)

at py4j.GatewayConnection.run(GatewayConnection.java:238)

at java.lang.Thread.run(Thread.java:748)

Caused by: org.apache.spark.SparkException: Job aborted due to stage failure: Task 33 in stage 76.0 failed 4 times, most recent failure: Lost task 33.3 in stage 76.0 (TID 3877) (vm-89521530 executor 1): java.lang.NullPointerException

at com.azure.ai.textanalytics.implementation.Utility.toRecognizeHealthcareEntitiesResults(Utility.java:510)

at com.azure.ai.textanalytics.AnalyzeHealthcareEntityAsyncClient.toTextAnalyticsPagedResponse(AnalyzeHealthcareEntityAsyncClient.java:179)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onNext(FluxMapFuseable.java:113)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber.onNext(FluxSwitchIfEmpty.java:74)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onNext(MonoFlatMap.java:249)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.MonoFlatMap$FlatMapInner.onSubscribe(MonoFlatMap.java:238)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.FluxSwitchIfEmpty$SwitchIfEmptySubscriber.onNext(FluxSwitchIfEmpty.java:74)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.Operators$MultiSubscriptionSubscriber.set(Operators.java:2194)

at reactor.core.publisher.Operators$MultiSubscriptionSubscriber.onSubscribe(Operators.java:2068)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:64)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoCacheTime$CoordinatorSubscriber.signalCached(MonoCacheTime.java:337)

at reactor.core.publisher.MonoCacheTime$CoordinatorSubscriber.onNext(MonoCacheTime.java:354)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.Operators$ScalarSubscription.request(Operators.java:2398)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onSubscribe(MonoFlatMap.java:110)

at reactor.core.publisher.MonoJust.subscribe(MonoJust.java:55)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:64)

at reactor.core.publisher.MonoDefer.subscribe(MonoDefer.java:52)

at reactor.core.publisher.MonoCacheTime.subscribeOrReturn(MonoCacheTime.java:143)

at reactor.core.publisher.InternalMonoOperator.subscribe(InternalMonoOperator.java:57)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:157)

at reactor.core.publisher.FluxContextWrite$ContextWriteSubscriber.onNext(FluxContextWrite.java:107)

at reactor.core.publisher.FluxDoOnEach$DoOnEachSubscriber.onNext(FluxDoOnEach.java:173)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.FluxMap$MapSubscriber.onNext(FluxMap.java:120)

at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onNext(FluxOnErrorResume.java:79)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1816)

at reactor.core.publisher.MonoFlatMap$FlatMapMain.onNext(MonoFlatMap.java:151)

at reactor.core.publisher.SerializedSubscriber.onNext(SerializedSubscriber.java:99)

at reactor.core.publisher.FluxRetryWhen$RetryWhenMainSubscriber.onNext(FluxRetryWhen.java:174)

at reactor.core.publisher.FluxOnErrorResume$ResumeSubscriber.onNext(FluxOnErrorResume.java:79)

at reactor.core.publisher.Operators$MonoInnerProducerBase.complete(Operators.java:2664)

at reactor.core.publisher.MonoSingle$SingleSubscriber.onComplete(MonoSingle.java:180)

at reactor.core.publisher.MonoFlatMapMany$FlatMapManyInner.onComplete(MonoFlatMapMany.java:260)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onComplete(FluxMapFuseable.java:150)

at reactor.core.publisher.FluxDoFinally$DoFinallySubscriber.onComplete(FluxDoFinally.java:145)

at reactor.core.publisher.FluxMapFuseable$MapFuseableSubscriber.onComplete(FluxMapFuseable.java:150)

at reactor.core.publisher.Operators$MonoSubscriber.complete(Operators.java:1817)

at reactor.core.publisher.MonoCollect$CollectSubscriber.onComplete(MonoCollect.java:159)

at reactor.core.publisher.FluxHandle$HandleSubscriber.onComplete(FluxHandle.java:213)

at reactor.core.publisher.FluxMap$MapConditionalSubscriber.onComplete(FluxMap.java:269)

at reactor.netty.channel.FluxReceive.onInboundComplete(FluxReceive.java:400)

at reactor.netty.channel.ChannelOperations.onInboundComplete(ChannelOperations.java:419)

at reactor.netty.channel.ChannelOperations.terminate(ChannelOperations.java:473)

at reactor.netty.http.client.HttpClientOperations.onInboundNext(HttpClientOperations.java:684)

at reactor.netty.channel.ChannelOperationsHandler.channelRead(ChannelOperationsHandler.java:93)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.channel.CombinedChannelDuplexHandler$DelegatingChannelHandlerContext.fireChannelRead(CombinedChannelDuplexHandler.java:436)

at io.netty.handler.codec.ByteToMessageDecoder.fireChannelRead(ByteToMessageDecoder.java:324)

at io.netty.handler.codec.ByteToMessageDecoder.channelRead(ByteToMessageDecoder.java:296)

at io.netty.channel.CombinedChannelDuplexHandler.channelRead(CombinedChannelDuplexHandler.java:251)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.handler.ssl.SslHandler.unwrap(SslHandler.java:1372)

at io.netty.handler.ssl.SslHandler.decodeJdkCompatible(SslHandler.java:1235)

at io.netty.handler.ssl.SslHandler.decode(SslHandler.java:1284)

at io.netty.handler.codec.ByteToMessageDecoder.decodeRemovalReentryProtection(ByteToMessageDecoder.java:507)

at io.netty.handler.codec.ByteToMessageDecoder.callDecode(ByteToMessageDecoder.java:446)

at io.netty.handler.codec.ByteToMessageDecoder.channelRead(ByteToMessageDecoder.java:276)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:357)

at io.netty.channel.DefaultChannelPipeline$HeadContext.channelRead(DefaultChannelPipeline.java:1410)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:379)

at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:365)

at io.netty.channel.DefaultChannelPipeline.fireChannelRead(DefaultChannelPipeline.java:919)

at io.netty.channel.epoll.AbstractEpollStreamChannel$EpollStreamUnsafe.epollInReady(AbstractEpollStreamChannel.java:795)

at io.netty.channel.epoll.EpollEventLoop.processReady(EpollEventLoop.java:480)

at io.netty.channel.epoll.EpollEventLoop.run(EpollEventLoop.java:378)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:986)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.lang.Thread.run(Thread.java:748)

Suppressed: java.lang.Exception: #block terminated with an error

at reactor.core.publisher.BlockingSingleSubscriber.blockingGet(BlockingSingleSubscriber.java:99)

at reactor.core.publisher.Flux.blockLast(Flux.java:2644)

at com.azure.core.util.paging.ContinuablePagedByIteratorBase.requestPage(ContinuablePagedByIteratorBase.java:94)

at com.azure.core.util.paging.ContinuablePagedByItemIterable$ContinuablePagedByItemIterator.<init>(ContinuablePagedByItemIterable.java:50)

at com.azure.core.util.paging.ContinuablePagedByItemIterable.iterator(ContinuablePagedByItemIterable.java:37)

at com.azure.core.util.paging.ContinuablePagedIterable.iterator(ContinuablePagedIterable.java:106)