Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 844 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 12 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 248k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

310,771 | 26,741,904,856 | IssuesEvent | 2023-01-30 13:33:35 | hotosm/fAIr | https://api.github.com/repos/hotosm/fAIr | closed | Create API endpoint to gather the folder structure of training along with its no of files | component ; backend status : testing Feature Request | - Info included on #47 | 1.0 | Create API endpoint to gather the folder structure of training along with its no of files - - Info included on #47 | non_priority | create api endpoint to gather the folder structure of training along with its no of files info included on | 0 |

62,850 | 26,186,576,280 | IssuesEvent | 2023-01-03 01:46:16 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | Cannot create App plan in sub A for ASEv3 in Sub B | Service Attention App Services app-service-ase | ### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az appservice plan create`

**Errors:**

```

App service environment 'subscriptions/<subB>/resourceGroups/ase-rg/providers/Microsoft.Web/hostingEnvironments/begimase' not found in subscription.

```

## To Reproduce:

Steps to reproduce the behavior. Note that argument values have been redacted, as they may contain sensitive information.

- _Put any pre-requisite steps here..._

- `az appservice plan create --name {} --resource-group {} --app-service-environment {} --location {} --number-of-workers {} --sku {}`

## Expected Behavior

## Environment Summary

```

Windows-10-10.0.22000-SP0

Python 3.8.9

Installer: MSI

azure-cli 2.29.0

Extensions:

aks-preview 0.5.34

```

## Additional Context

<!--Please don't remove this:-->

<!--auto-generated-->

| 3.0 | Cannot create App plan in sub A for ASEv3 in Sub B - ### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az appservice plan create`

**Errors:**

```

App service environment 'subscriptions/<subB>/resourceGroups/ase-rg/providers/Microsoft.Web/hostingEnvironments/begimase' not found in subscription.

```

## To Reproduce:

Steps to reproduce the behavior. Note that argument values have been redacted, as they may contain sensitive information.

- _Put any pre-requisite steps here..._

- `az appservice plan create --name {} --resource-group {} --app-service-environment {} --location {} --number-of-workers {} --sku {}`

## Expected Behavior

## Environment Summary

```

Windows-10-10.0.22000-SP0

Python 3.8.9

Installer: MSI

azure-cli 2.29.0

Extensions:

aks-preview 0.5.34

```

## Additional Context

<!--Please don't remove this:-->

<!--auto-generated-->

| non_priority | cannot create app plan in sub a for in sub b this is autogenerated please review and update as needed describe the bug command name az appservice plan create errors app service environment subscriptions resourcegroups ase rg providers microsoft web hostingenvironments begimase not found in subscription to reproduce steps to reproduce the behavior note that argument values have been redacted as they may contain sensitive information put any pre requisite steps here az appservice plan create name resource group app service environment location number of workers sku expected behavior environment summary windows python installer msi azure cli extensions aks preview additional context | 0 |

316,205 | 23,619,439,177 | IssuesEvent | 2022-08-24 19:00:27 | tidymodels/probably | https://api.github.com/repos/tidymodels/probably | closed | Update vignette for recent releases of probably and yardstick | documentation | The [`where-to-use` vignette](https://github.com/tidymodels/probably/blob/master/vignettes/where-to-use.Rmd) is now a bit out-of-date in how it discusses probably and yardstick working together and needs to be updated, both in code and narrative.

For example, comments like this:

https://github.com/tidymodels/probably/blob/98fbe4acd5a75c16a2ef5b1aa1303e904484fb1f/vignettes/where-to-use.Rmd#L137-L138 | 1.0 | Update vignette for recent releases of probably and yardstick - The [`where-to-use` vignette](https://github.com/tidymodels/probably/blob/master/vignettes/where-to-use.Rmd) is now a bit out-of-date in how it discusses probably and yardstick working together and needs to be updated, both in code and narrative.

For example, comments like this:

https://github.com/tidymodels/probably/blob/98fbe4acd5a75c16a2ef5b1aa1303e904484fb1f/vignettes/where-to-use.Rmd#L137-L138 | non_priority | update vignette for recent releases of probably and yardstick the is now a bit out of date in how it discusses probably and yardstick working together and needs to be updated both in code and narrative for example comments like this | 0 |

253,209 | 19,095,478,014 | IssuesEvent | 2021-11-29 16:15:12 | nss-day-cohort-50/bangazon-api-cameronpwhite | https://api.github.com/repos/nss-day-cohort-50/bangazon-api-cameronpwhite | opened | TEST: Delete payment type | documentation enhancement | Add a test to the `tests/payment.py` module that verifies that a payment type can be deleted.

| 1.0 | TEST: Delete payment type - Add a test to the `tests/payment.py` module that verifies that a payment type can be deleted.

| non_priority | test delete payment type add a test to the tests payment py module that verifies that a payment type can be deleted | 0 |

139,861 | 31,799,311,067 | IssuesEvent | 2023-09-13 10:02:01 | arduino/arduino-cli | https://api.github.com/repos/arduino/arduino-cli | opened | Log `Required tool` with `debug` severity instead of `info` | type: enhancement topic: code | ### Describe the request

Please relax the daemon logger and print the `Required tool` with the `debug` level.

Currently, I have the `info` level set in the CLI config, and when the LS is running, touching the editor once triggers so many tools log messages—then formatting the code once more. Can you relax and print these messages with a `debug` severity?

### Describe the current behavior

Currently, it's with `info` level.

https://github.com/arduino/arduino-cli/assets/1405703/74b90b75-a4f6-4bb0-8ff4-e805d9566630

### Arduino CLI version

3f5c0eb2

### Operating system

macOS

### Operating system version

13.5.2

### Additional context

_No response_

### Issue checklist

- [X] I searched for previous requests in [the issue tracker](https://github.com/arduino/arduino-cli/issues?q=)

- [X] I verified the feature was still missing when using the [nightly build](https://arduino.github.io/arduino-cli/dev/installation/#nightly-builds)

- [X] My request contains all necessary details | 1.0 | Log `Required tool` with `debug` severity instead of `info` - ### Describe the request

Please relax the daemon logger and print the `Required tool` with the `debug` level.

Currently, I have the `info` level set in the CLI config, and when the LS is running, touching the editor once triggers so many tools log messages—then formatting the code once more. Can you relax and print these messages with a `debug` severity?

### Describe the current behavior

Currently, it's with `info` level.

https://github.com/arduino/arduino-cli/assets/1405703/74b90b75-a4f6-4bb0-8ff4-e805d9566630

### Arduino CLI version

3f5c0eb2

### Operating system

macOS

### Operating system version

13.5.2

### Additional context

_No response_

### Issue checklist

- [X] I searched for previous requests in [the issue tracker](https://github.com/arduino/arduino-cli/issues?q=)

- [X] I verified the feature was still missing when using the [nightly build](https://arduino.github.io/arduino-cli/dev/installation/#nightly-builds)

- [X] My request contains all necessary details | non_priority | log required tool with debug severity instead of info describe the request please relax the daemon logger and print the required tool with the debug level currently i have the info level set in the cli config and when the ls is running touching the editor once triggers so many tools log messages—then formatting the code once more can you relax and print these messages with a debug severity describe the current behavior currently it s with info level arduino cli version operating system macos operating system version additional context no response issue checklist i searched for previous requests in i verified the feature was still missing when using the my request contains all necessary details | 0 |

313,000 | 26,893,974,935 | IssuesEvent | 2023-02-06 10:59:47 | TestIntegrations/TestForwarding | https://api.github.com/repos/TestIntegrations/TestForwarding | opened | Hav | forwardeddddddTest ddw | # :clipboard: Bug Details

>Hav

key | value

--|--

Reported At | 2023-02-06 10:59:31 UTC

Email | shussein@instabug.com

Categories | Report a bug, sssss, jj

Tags | forwardeddddddTest, ddw

App Version | 1.0 (4)

Session Duration | 77479

Device | Simulator, iOS 15.5

Display | 414x736 (@3x)

## :point_right: [View Full Bug Report on Instabug](https://dashboard.instabug.com/applications/birdy-demo-app/beta/bugs/9301?utm_source=github&utm_medium=integrations) :point_left:

___

# :chart_with_downwards_trend: Session Profiler

Here is what the app was doing right before the bug was reported:

Key | Value

--|--

CPU Load | 13.1%

Used Memory | 100.0% - 0.36/0.36 GB

Used Storage | 96.8% - 225.93/233.47 GB

Connectivity | WiFi

Battery | 100% - unplugged

Orientation | portrait

Find all the changes that happened in the parameters mentioned above during the last 60 seconds before the bug was reported here: :point_right: **[View Full Session Profiler](https://dashboard.instabug.com/applications/birdy-demo-app/beta/bugs/9301?show-session-profiler=true&utm_source=github&utm_medium=integrations)** :point_left:

___

# :bust_in_silhouette: User Info

### User Attributes

```

Age: 18

Logged in: True

```

___

# :mag_right: Logs

### User Steps

Here are the last 10 steps done by the user right before the bug was reported:

```

10:59:23 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:35:39 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:30:30 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:28:56 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:28:04 Top View: ViewController

13:28:04 Application: DidBecomeActive

13:28:04 Application: SceneDidActivate

13:28:04 Application: WillEnterForeground

13:28:04 Application: SceneWillConnect

```

Find all the user steps done by the user throughout the session here: :point_right: **[View All User Steps](https://dashboard.instabug.com/applications/birdy-demo-app/beta/bugs/9301?show-logs=user_steps&utm_source=github&utm_medium=integrations)** :point_left:

___

# :camera: Images

[](https://d38gnqwzxziyyy.cloudfront.net/attachments/bugs/19578560/e6ee4debf29c65fe31bb93c49a043fb2_original/28794530/2023020612592774539400.jpg?Expires=4831354786&Signature=XPp416wl7gu3SXpzqwCo0G~zXB06BWt0O2aF3A3lr6LVte92EFPNKkjO8S9UBe6Xgy86ILVtJIQXy3nGJKDDXqF-6DMq7fUUuPhxQjoOFr5ogvCb-P17kADgla9y7LUumwxhc2dZL1QE2FtTIPqQtfzHObNreA4FBEPx5aLZkZWhuYQeQ0A2xhWJoc787vlu9-1kZF39EKt0MYd8olr4Ih~4pMPaXV~KjxemnA9Z6AZdWqOAH76LKS6XAejtow5gaVknJEv2n4xwytp9j~qzwSQx-sK~6U~hSfxH1c9bdxHI7W9qAcmZNaWizW3AFk4~6EPdon3W~OFU36jcNBSb6w__&Key-Pair-Id=APKAIXAG65U6UUX7JAQQ)

___

# :warning: Looking for More Details?

1. **Network Log**: we are unable to capture your network requests automatically. If you are using AFNetworking or Alamofire, [**check the details mentioned here**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations#section-requests-not-appearing-in-logs).

2. **User Events**: start capturing custom User Events to send them along with each report. [**Find all the details in the docs**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations).

3. **Instabug Log**: start adding Instabug logs to see them right inside each report you receive. [**Find all the details in the docs**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations).

4. **Console Log**: when enabled you will see them right inside each report you receive. [**Find all the details in the docs**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations). | 1.0 | Hav - # :clipboard: Bug Details

>Hav

key | value

--|--

Reported At | 2023-02-06 10:59:31 UTC

Email | shussein@instabug.com

Categories | Report a bug, sssss, jj

Tags | forwardeddddddTest, ddw

App Version | 1.0 (4)

Session Duration | 77479

Device | Simulator, iOS 15.5

Display | 414x736 (@3x)

## :point_right: [View Full Bug Report on Instabug](https://dashboard.instabug.com/applications/birdy-demo-app/beta/bugs/9301?utm_source=github&utm_medium=integrations) :point_left:

___

# :chart_with_downwards_trend: Session Profiler

Here is what the app was doing right before the bug was reported:

Key | Value

--|--

CPU Load | 13.1%

Used Memory | 100.0% - 0.36/0.36 GB

Used Storage | 96.8% - 225.93/233.47 GB

Connectivity | WiFi

Battery | 100% - unplugged

Orientation | portrait

Find all the changes that happened in the parameters mentioned above during the last 60 seconds before the bug was reported here: :point_right: **[View Full Session Profiler](https://dashboard.instabug.com/applications/birdy-demo-app/beta/bugs/9301?show-session-profiler=true&utm_source=github&utm_medium=integrations)** :point_left:

___

# :bust_in_silhouette: User Info

### User Attributes

```

Age: 18

Logged in: True

```

___

# :mag_right: Logs

### User Steps

Here are the last 10 steps done by the user right before the bug was reported:

```

10:59:23 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:35:39 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:30:30 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:28:56 Tap in Floating Button of type IBGInvocationFloatingView in ViewController

13:28:04 Top View: ViewController

13:28:04 Application: DidBecomeActive

13:28:04 Application: SceneDidActivate

13:28:04 Application: WillEnterForeground

13:28:04 Application: SceneWillConnect

```

Find all the user steps done by the user throughout the session here: :point_right: **[View All User Steps](https://dashboard.instabug.com/applications/birdy-demo-app/beta/bugs/9301?show-logs=user_steps&utm_source=github&utm_medium=integrations)** :point_left:

___

# :camera: Images

[](https://d38gnqwzxziyyy.cloudfront.net/attachments/bugs/19578560/e6ee4debf29c65fe31bb93c49a043fb2_original/28794530/2023020612592774539400.jpg?Expires=4831354786&Signature=XPp416wl7gu3SXpzqwCo0G~zXB06BWt0O2aF3A3lr6LVte92EFPNKkjO8S9UBe6Xgy86ILVtJIQXy3nGJKDDXqF-6DMq7fUUuPhxQjoOFr5ogvCb-P17kADgla9y7LUumwxhc2dZL1QE2FtTIPqQtfzHObNreA4FBEPx5aLZkZWhuYQeQ0A2xhWJoc787vlu9-1kZF39EKt0MYd8olr4Ih~4pMPaXV~KjxemnA9Z6AZdWqOAH76LKS6XAejtow5gaVknJEv2n4xwytp9j~qzwSQx-sK~6U~hSfxH1c9bdxHI7W9qAcmZNaWizW3AFk4~6EPdon3W~OFU36jcNBSb6w__&Key-Pair-Id=APKAIXAG65U6UUX7JAQQ)

___

# :warning: Looking for More Details?

1. **Network Log**: we are unable to capture your network requests automatically. If you are using AFNetworking or Alamofire, [**check the details mentioned here**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations#section-requests-not-appearing-in-logs).

2. **User Events**: start capturing custom User Events to send them along with each report. [**Find all the details in the docs**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations).

3. **Instabug Log**: start adding Instabug logs to see them right inside each report you receive. [**Find all the details in the docs**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations).

4. **Console Log**: when enabled you will see them right inside each report you receive. [**Find all the details in the docs**](https://docs.instabug.com/docs/ios-logging?utm_source=github&utm_medium=integrations). | non_priority | hav clipboard bug details hav key value reported at utc email shussein instabug com categories report a bug sssss jj tags forwardeddddddtest ddw app version session duration device simulator ios display point right point left chart with downwards trend session profiler here is what the app was doing right before the bug was reported key value cpu load used memory gb used storage gb connectivity wifi battery unplugged orientation portrait find all the changes that happened in the parameters mentioned above during the last seconds before the bug was reported here point right point left bust in silhouette user info user attributes age logged in true mag right logs user steps here are the last steps done by the user right before the bug was reported tap in floating button of type ibginvocationfloatingview in viewcontroller tap in floating button of type ibginvocationfloatingview in viewcontroller tap in floating button of type ibginvocationfloatingview in viewcontroller tap in floating button of type ibginvocationfloatingview in viewcontroller top view viewcontroller application didbecomeactive application scenedidactivate application willenterforeground application scenewillconnect find all the user steps done by the user throughout the session here point right point left camera images warning looking for more details network log we are unable to capture your network requests automatically if you are using afnetworking or alamofire user events start capturing custom user events to send them along with each report instabug log start adding instabug logs to see them right inside each report you receive console log when enabled you will see them right inside each report you receive | 0 |

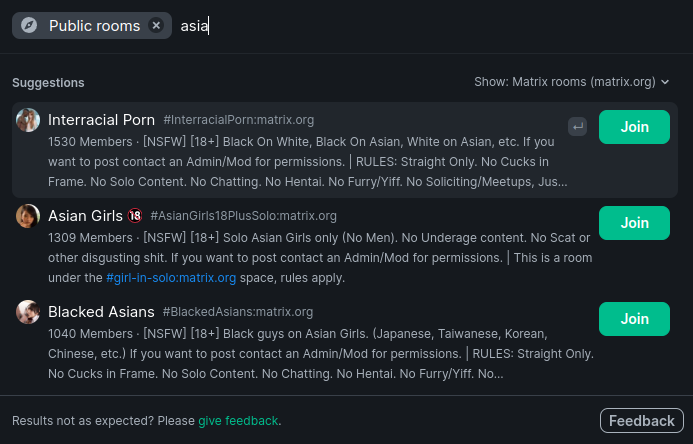

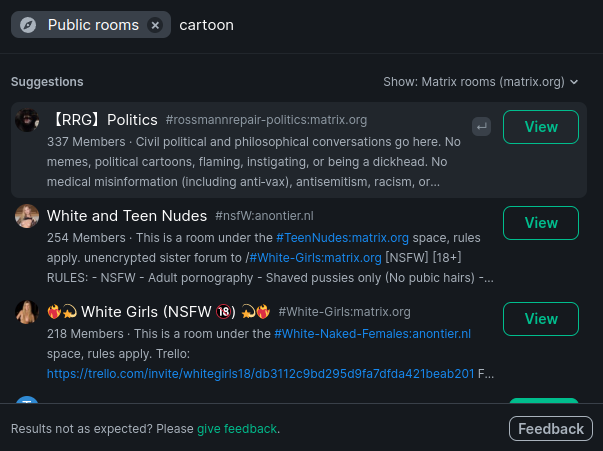

78,411 | 27,511,429,855 | IssuesEvent | 2023-03-06 09:06:25 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Remove Sexual Content from the Room Directory (NSFW) | T-Defect S-Critical O-Frequent A-Safety | ### Your use case

Matrix.org homeserver seems to not have its own issue tracker and my message in Matrix HQ has been ignored so I will report here as that's what the Element app suggests. I ask you to remove sexual content from the public room search as there is a high probability of users discovering such content unintentionally and the content that does get discovered is just a pure PR disaster waiting to happen.

For example I wouldn't want an Asian person to feel objectified the moment they join matrix:

Or a minor to discover sex related rooms the moment they search "cartoon":

Those innocent search queries returning a slew of fetishistic content is utterly inappropriate for a network that strives to be inclusive for all kinds of people. Therefore I propose to ban NSFW content from the public room search on Matrix.org homeserver.

### Have you considered any alternatives?

Offer a toggle that is off by default if it is technically possible

### Additional context

_No response_ | 1.0 | Remove Sexual Content from the Room Directory (NSFW) - ### Your use case

Matrix.org homeserver seems to not have its own issue tracker and my message in Matrix HQ has been ignored so I will report here as that's what the Element app suggests. I ask you to remove sexual content from the public room search as there is a high probability of users discovering such content unintentionally and the content that does get discovered is just a pure PR disaster waiting to happen.

For example I wouldn't want an Asian person to feel objectified the moment they join matrix:

Or a minor to discover sex related rooms the moment they search "cartoon":

Those innocent search queries returning a slew of fetishistic content is utterly inappropriate for a network that strives to be inclusive for all kinds of people. Therefore I propose to ban NSFW content from the public room search on Matrix.org homeserver.

### Have you considered any alternatives?

Offer a toggle that is off by default if it is technically possible

### Additional context

_No response_ | non_priority | remove sexual content from the room directory nsfw your use case matrix org homeserver seems to not have its own issue tracker and my message in matrix hq has been ignored so i will report here as that s what the element app suggests i ask you to remove sexual content from the public room search as there is a high probability of users discovering such content unintentionally and the content that does get discovered is just a pure pr disaster waiting to happen for example i wouldn t want an asian person to feel objectified the moment they join matrix or a minor to discover sex related rooms the moment they search cartoon those innocent search queries returning a slew of fetishistic content is utterly inappropriate for a network that strives to be inclusive for all kinds of people therefore i propose to ban nsfw content from the public room search on matrix org homeserver have you considered any alternatives offer a toggle that is off by default if it is technically possible additional context no response | 0 |

146,354 | 23,051,587,252 | IssuesEvent | 2022-07-24 18:04:44 | penumbra-zone/penumbra | https://api.github.com/repos/penumbra-zone/penumbra | closed | NCT frontier snapshot endpoint for `pd` | A-node A-client C-enhancement E-med C-design A-TCT | **Is your feature request related to a problem? Please describe.**

Motivation is the same as #1128, solution desired is simpler.

If you absolutely know that you don't have any transactions before a certain date (say, you generated your spendkey on that date), it would be nice to not have to sync the entirety of the chain, but rather to start processing at that date.

**Describe the solution you'd like**

`pd` could track every epoch-boundary NCT frontier in sidecar non-consensus storage, and provide a gRPC endpoint to let you request the one for a given epoch, alongside a proof of inclusion of that frontier's root in the JMT.

(some details copied from #1128)

Implementing this would require writing a non-incremental (de)serialization function for solely the frontier of the NCT, into a protobuf representation. Some details about this are discussed in https://github.com/penumbra-zone/penumbra/issues/1082, which I previously closed as low-priority and negligible impact. Because this would not be consensus critical information, we could choose to include or not include cached internal node hashes in the frontier; either choice is fine, and can be altered at will.

On the view-client side, when the view service is started in this checkpointed mode, it should request the checkpoint for the most recent epoch prior to the block desired to start at, and proceed synchronizing from there. In order to prevent leaking information about which height is desired, the client could issue an additional number of random chaff requests to the endpoint, distributed so that the desired request is randomly ordered in the chaff, and cannot be reasonably distinguished in the distribution.

To make this process automatic, we can have key generation store in the custody file the block height at which the keys were generated (check the block height, then generate the keys, to ensure that they only existed before that moment). This way, if you reset your view state, this extra data is present in the custody file and can still be used to resume from that moment in time rather than zero.

**Describe alternatives you've considered**

#1128 is an alternative, but it's not necessary to store this in consensus, as pointed out by @hdevalence.

On the point about automating this via custody, we could instead choose to disable this feature by default and require the user to explicitly opt-in when initializing `pviewd` or `pcli` for the first time.

| 1.0 | NCT frontier snapshot endpoint for `pd` - **Is your feature request related to a problem? Please describe.**

Motivation is the same as #1128, solution desired is simpler.

If you absolutely know that you don't have any transactions before a certain date (say, you generated your spendkey on that date), it would be nice to not have to sync the entirety of the chain, but rather to start processing at that date.

**Describe the solution you'd like**

`pd` could track every epoch-boundary NCT frontier in sidecar non-consensus storage, and provide a gRPC endpoint to let you request the one for a given epoch, alongside a proof of inclusion of that frontier's root in the JMT.

(some details copied from #1128)

Implementing this would require writing a non-incremental (de)serialization function for solely the frontier of the NCT, into a protobuf representation. Some details about this are discussed in https://github.com/penumbra-zone/penumbra/issues/1082, which I previously closed as low-priority and negligible impact. Because this would not be consensus critical information, we could choose to include or not include cached internal node hashes in the frontier; either choice is fine, and can be altered at will.

On the view-client side, when the view service is started in this checkpointed mode, it should request the checkpoint for the most recent epoch prior to the block desired to start at, and proceed synchronizing from there. In order to prevent leaking information about which height is desired, the client could issue an additional number of random chaff requests to the endpoint, distributed so that the desired request is randomly ordered in the chaff, and cannot be reasonably distinguished in the distribution.

To make this process automatic, we can have key generation store in the custody file the block height at which the keys were generated (check the block height, then generate the keys, to ensure that they only existed before that moment). This way, if you reset your view state, this extra data is present in the custody file and can still be used to resume from that moment in time rather than zero.

**Describe alternatives you've considered**

#1128 is an alternative, but it's not necessary to store this in consensus, as pointed out by @hdevalence.

On the point about automating this via custody, we could instead choose to disable this feature by default and require the user to explicitly opt-in when initializing `pviewd` or `pcli` for the first time.

| non_priority | nct frontier snapshot endpoint for pd is your feature request related to a problem please describe motivation is the same as solution desired is simpler if you absolutely know that you don t have any transactions before a certain date say you generated your spendkey on that date it would be nice to not have to sync the entirety of the chain but rather to start processing at that date describe the solution you d like pd could track every epoch boundary nct frontier in sidecar non consensus storage and provide a grpc endpoint to let you request the one for a given epoch alongside a proof of inclusion of that frontier s root in the jmt some details copied from implementing this would require writing a non incremental de serialization function for solely the frontier of the nct into a protobuf representation some details about this are discussed in which i previously closed as low priority and negligible impact because this would not be consensus critical information we could choose to include or not include cached internal node hashes in the frontier either choice is fine and can be altered at will on the view client side when the view service is started in this checkpointed mode it should request the checkpoint for the most recent epoch prior to the block desired to start at and proceed synchronizing from there in order to prevent leaking information about which height is desired the client could issue an additional number of random chaff requests to the endpoint distributed so that the desired request is randomly ordered in the chaff and cannot be reasonably distinguished in the distribution to make this process automatic we can have key generation store in the custody file the block height at which the keys were generated check the block height then generate the keys to ensure that they only existed before that moment this way if you reset your view state this extra data is present in the custody file and can still be used to resume from that 
moment in time rather than zero describe alternatives you ve considered is an alternative but it s not necessary to store this in consensus as pointed out by hdevalence on the point about automating this via custody we could instead choose to disable this feature by default and require the user to explicitly opt in when initializing pviewd or pcli for the first time | 0 |
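The chaff-request idea in the issue above can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the function name `chaff_epochs`, its parameters, and the choice of uniform sampling are hypothetical and are not part of `pd`, `pviewd`, or `pcli`. The issue only asks that the real request be indistinguishable within the chaff; uniform decoys are the simplest stand-in and may not be the distribution the authors would choose.

```python
import random


def chaff_epochs(wanted_epoch, latest_epoch, chaff_count, rng=None):
    """Return the wanted epoch mixed into randomly chosen decoy epochs.

    Hypothetical helper: decoys are drawn uniformly from every other epoch
    in [0, latest_epoch], and the combined list is shuffled so the position
    of the real request carries no information.
    """
    rng = rng or random.SystemRandom()
    candidates = [e for e in range(latest_epoch + 1) if e != wanted_epoch]
    decoys = rng.sample(candidates, min(chaff_count, len(candidates)))
    requests = decoys + [wanted_epoch]
    rng.shuffle(requests)
    return requests
```

A client following this sketch would issue one frontier request per returned epoch and keep only the response for `wanted_epoch`.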

297,218 | 25,710,231,151 | IssuesEvent | 2022-12-07 05:47:47 | dotnet/msbuild | https://api.github.com/repos/dotnet/msbuild | opened | DeleteFiles function doesn't delete first file directory when second file is in the subfolder of first file | bug needs-triage test | ### Issue Description

There's a bug in the cleanup logic here. Specifically, it creates the source and dest files, and at the end of the test, it calls Helpers.DeleteFiles(sourceFile, destFile); That method loops through each file and deletes it if it exists, then deletes the directory containing it if it's empty...but when we delete the source file, the directory isn't empty; it has the destination folder/file. When we delete the destination file, its folder just contains the destination file, so we delete that. Afterwards, the source folder never gets deleted. That means we can't write to it.

https://github.com/dotnet/msbuild/blob/c5532da3a3c99817e70d95fe9e07302ba72ee523/src/Shared/UnitTests/ObjectModelHelpers.cs#L1818-L1833 | 1.0 | DeleteFiles function doesn't delete first file directory when second file is in the subfolder of first file - ### Issue Description

There's a bug in the cleanup logic here. Specifically, it creates the source and dest files, and at the end of the test, it calls Helpers.DeleteFiles(sourceFile, destFile); That method loops through each file and deletes it if it exists, then deletes the directory containing it if it's empty...but when we delete the source file, the directory isn't empty; it has the destination folder/file. When we delete the destination file, its folder just contains the destination file, so we delete that. Afterwards, the source folder never gets deleted. That means we can't write to it.

https://github.com/dotnet/msbuild/blob/c5532da3a3c99817e70d95fe9e07302ba72ee523/src/Shared/UnitTests/ObjectModelHelpers.cs#L1818-L1833 | non_priority | deletefiles function doesn t delete first file directory when second file is in the subfolder of first file issue description there s a bug in the cleanup logic here specifically it creates the source and dest files and at the end of the test it calls helpers deletefiles sourcefile destfile that method loops through each file and deletes it if it exists then deletes the directory containing it if it s empty but when we delete the source file the directory isn t empty it has the destination folder file when we delete the destination file its folder just contains the destination file so we delete that afterwards the source folder never gets deleted that means we can t write to it | 0 |
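The ordering problem described in the issue above can be sketched outside MSBuild. The following is a Python illustration of one possible fix, not the actual C# `DeleteFiles` helper: delete every file first, then remove the now-empty parent directories deepest first, so a destination folder nested inside the source folder is gone before the source folder is checked. The function name `delete_files` and the demo paths are hypothetical.

```python
import os
import tempfile


def delete_files(*paths):
    """Delete each file, then remove empty parent directories, deepest first."""
    parents = set()
    for path in paths:
        if os.path.isfile(path):
            os.remove(path)
        parents.add(os.path.dirname(os.path.abspath(path)))
    # Sort by path depth so nested directories are removed before their
    # parents; otherwise the parent still looks non-empty when it is checked.
    for directory in sorted(parents, key=lambda d: d.count(os.sep), reverse=True):
        if os.path.isdir(directory) and not os.listdir(directory):
            os.rmdir(directory)


if __name__ == "__main__":
    # Reproduce the layout from the issue: the destination file lives in a
    # subfolder of the source file's folder.
    root = tempfile.mkdtemp()
    source_dir = os.path.join(root, "source")
    dest_dir = os.path.join(source_dir, "dest")
    os.makedirs(dest_dir)
    source_file = os.path.join(source_dir, "a.txt")
    dest_file = os.path.join(dest_dir, "b.txt")
    for name in (source_file, dest_file):
        open(name, "w").close()
    delete_files(source_file, dest_file)
    print(os.path.exists(source_dir))
    os.rmdir(root)
```

Collecting the directories and removing them in a second pass is one design choice; deleting in a single loop, as the issue describes, leaves the outer folder behind whenever the files are passed in source-then-destination order.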

12,594 | 4,506,156,255 | IssuesEvent | 2016-09-02 01:55:58 | Jeremy-Barnes/Critters | https://api.github.com/repos/Jeremy-Barnes/Critters | opened | Server: Personal Messages | Code feature | Create server endpoint to allow users to send a message to other users.

Add new messages to the long polling notification system. | 1.0 | Server: Personal Messages - Create server endpoint to allow users to send a message to other users.

Add new messages to the long polling notification system. | non_priority | server personal messages create server endpoint to allow users to send a message to other users add new messages to the long polling notification system | 0 |

141,458 | 21,524,084,867 | IssuesEvent | 2022-04-28 16:37:32 | dotnet/iot | https://api.github.com/repos/dotnet/iot | closed | [Proposal] Support OpenDrain and OpenCollector options for GPIO PinMode | Design Discussion api-suggestion area-System.Device.Gpio | GPIO `PinMode` currently only supports Input, Output, InputPullDown and InputPullUp. There are other scenarios where outputs are open-drain and open-collector types and the API should accommodate.

## Rationale and Usage

I/O expanders are a good example: devices within a particular family have different types of I/O and are differentiated by part number. In many cases, a user's hardware will be configured with the basic Input or Output `PinMode` values, with external resistors attached for pull-up/down functionality. However, you can use the internal circuitry in binding devices to do this instead. For example, the MCP23X09/MCP23X18 devices have open-drain outputs, so you could update the GPIO Pull-up Resistor Configuration Register (GPPU) when opening pins while using the device as a controller.

## Example

You could have something similar below based on the [IGpioControllerProvider proposal](https://github.com/dotnet/iot/issues/125).

```csharp

var connectionSettings = new SpiConnectionSettings(0, 0);

var spiDevice = new UnixSpiDevice(connectionSettings);

var mcp23Sxx = new Mcp23Sxx(0, spiDevice);

GpioController mcp23SxxController = mcp23Sxx.GetDefaultGpioController();

// Now when you call the GpioController methods, the master controller will send

// the respective SPI commands to the binding behind the scenes to update its I/O.

//

// The code below would interact with the MCP23XXX related registers (IODIR, GPIO, GPPU, etc.)

// to configure the pin as an output and write the value.

mcp23SxxController.SetPinMode(1, PinMode.OutputOpenDrainPullUp);

mcp23SxxController.Write(1, PinValue.High);

```

## Proposed Change

Update the GPIO `PinMode` to include the new values. Summaries were left out for simplicity.

```csharp

public enum PinMode

{

Input,

Output,

InputPullDown,

InputPullUp,

// The following are the proposed additions.

OutputOpenDrain,

OutputOpenDrainPullUp,

OutputOpenCollector,

OutputOpenCollectorPullDown

}

```

I have not checked throughout the API, but logic that checks whether the PinMode is of a specific type will also need updating. For example, the GpioController.Write methods verify that the mode is equal to Output before writing.

```csharp

// From GpioController.cs file.

if (_driver.GetPinMode(pinNumber) != PinMode.Output)

{

throw new InvalidOperationException("Can not write to a pin that is not set to Output mode.");

}

```
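One way to generalize that check is to test membership in a set of output-capable modes instead of comparing against a single value. The sketch below is language-neutral and written in Python rather than C#; the `PinMode` members copy the proposed enum, while `OUTPUT_MODES` and `ensure_writable` are hypothetical names used only for illustration and are not part of System.Device.Gpio.

```python
from enum import Enum, auto


class PinMode(Enum):
    """Python stand-in for the proposed C# enum (same member names)."""
    Input = auto()
    Output = auto()
    InputPullDown = auto()
    InputPullUp = auto()
    OutputOpenDrain = auto()
    OutputOpenDrainPullUp = auto()
    OutputOpenCollector = auto()
    OutputOpenCollectorPullDown = auto()


# Hypothetical helper set: every mode that should accept writes.
OUTPUT_MODES = frozenset({
    PinMode.Output,
    PinMode.OutputOpenDrain,
    PinMode.OutputOpenDrainPullUp,
    PinMode.OutputOpenCollector,
    PinMode.OutputOpenCollectorPullDown,
})


def ensure_writable(mode):
    """Raise if the pin mode is not one of the output-capable modes."""
    if mode not in OUTPUT_MODES:
        raise ValueError(
            "Can not write to a pin that is not set to an output mode.")
```

Keeping the set in one place means a newly added output mode only has to be registered once, rather than in every method that validates the mode.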

## Open Questions

1. [Windows.Devices.Gpio.GpioPinDriveMode](https://docs.microsoft.com/en-us/uwp/api/windows.devices.gpio.gpiopindrivemode) is similar to `System.Device.Gpio.PinMode`. It already includes the current `PinMode` values and the values being proposed. However, the names are slightly different for the open-collector types. The question is: should we stay consistent with Windows.Devices.Gpio (OutputOpenSource and OutputOpenSourcePullDown) or use OutputOpenCollector and OutputOpenCollectorPullDown? I've mainly seen references to open-collector rather than open-source while researching related content. I'm not sure what the best approach would be in this case and would be content either way. Open-source is mentioned during [this Libgpiod session](https://youtu.be/cdTLewJCL1Y?list=PLbzoR-pLrL6pISWAq-1cXP4_UZAyRtesk&t=14m).

## References

https://blog.digilentinc.com/open-collector-vs-open-drain/

**Section 1.5**: http://ww1.microchip.com/downloads/en/DeviceDoc/20002121C.pdf

https://docs.microsoft.com/en-us/uwp/api/windows.devices.gpio.gpiopindrivemode

| 1.0 | [Proposal] Support OpenDrain and OpenCollector options for GPIO PinMode - GPIO `PinMode` currently only supports Input, Output, InputPullDown and InputPullUp. There are other scenarios where outputs are open-drain and open-collector types and the API should accommodate.

## Rationale and Usage

I/O expanders are a good example: devices within a particular family have different types of I/O and are differentiated by part number. In many cases, a user's hardware will be configured with the basic Input or Output `PinMode` values, with external resistors attached for pull-up/down functionality. However, you can use the internal circuitry in binding devices to do this instead. For example, the MCP23X09/MCP23X18 devices have open-drain outputs, so you could update the GPIO Pull-up Resistor Configuration Register (GPPU) when opening pins while using the device as a controller.

## Example

You could have something similar below based on the [IGpioControllerProvider proposal](https://github.com/dotnet/iot/issues/125).

```csharp

var connectionSettings = new SpiConnectionSettings(0, 0);

var spiDevice = new UnixSpiDevice(connectionSettings);

var mcp23Sxx = new Mcp23Sxx(0, spiDevice);

GpioController mcp23SxxController = mcp23Sxx.GetDefaultGpioController();

// Now when you call the GpioController methods, the master controller will send

// the respective SPI commands to the binding behind the scenes to update its I/O.

//

// The code below would interact with the MCP23XXX related registers (IODIR, GPIO, GPPU, etc.)

// to configure the pin as an output and write the value.

mcp23SxxController.SetPinMode(1, PinMode.OutputOpenDrainPullUp);

mcp23SxxController.Write(1, PinValue.High);

```

## Proposed Change

Update the GPIO `PinMode` to include the new values. Summaries were left out for simplicity.

```csharp

public enum PinMode

{

Input,

Output,

InputPullDown,

InputPullUp,

// The following are the proposed additions.

OutputOpenDrain,

OutputOpenDrainPullUp,

OutputOpenCollector,

OutputOpenCollectorPullDown

}

```

I have not checked throughout the API, but logic that checks whether the PinMode is of a specific type will also need updating. For example, the GpioController.Write methods verify that the mode is equal to Output before writing.

```csharp

// From GpioController.cs file.

if (_driver.GetPinMode(pinNumber) != PinMode.Output)

{

throw new InvalidOperationException("Can not write to a pin that is not set to Output mode.");

}

```

## Open Questions

1. [Windows.Devices.Gpio.GpioPinDriveMode](https://docs.microsoft.com/en-us/uwp/api/windows.devices.gpio.gpiopindrivemode) is similar to `System.Device.Gpio.PinMode`. It already includes the current `PinMode` values and the values being proposed. However, the names are slightly different for the open-collector types. The question is: should we stay consistent with Windows.Devices.Gpio (OutputOpenSource and OutputOpenSourcePullDown) or use OutputOpenCollector and OutputOpenCollectorPullDown? I've mainly seen references to open-collector rather than open-source while researching related content. I'm not sure what the best approach would be in this case and would be content either way. Open-source is mentioned during [this Libgpiod session](https://youtu.be/cdTLewJCL1Y?list=PLbzoR-pLrL6pISWAq-1cXP4_UZAyRtesk&t=14m).

## References

https://blog.digilentinc.com/open-collector-vs-open-drain/

**Section 1.5**: http://ww1.microchip.com/downloads/en/DeviceDoc/20002121C.pdf

https://docs.microsoft.com/en-us/uwp/api/windows.devices.gpio.gpiopindrivemode

| non_priority | support opendrain and opencollector options for gpio pinmode gpio pinmode currently only supports input output inputpulldown and inputpullup there are other scenarios where outputs are open drain and open collector types and the api should accommodate rationale and usage i o expanders are a good example where they have different types of i o within a particular family and are differentiated by part numbers for the family in many cases a user s hardware will be configured with the basic input or output pinmode values along with attaching external resistors for pull up down functionality however you can utilize internal circuitry in binding devices to perform this for example the devices have open drain outputs therefore you could update the gpio pull up resistor configuration register gppu when opening pins when using as a controller example you could have something similar below based on the csharp var connectionsettings new spiconnectionsettings var spidevice new unixspidevice connectionsettings var new spidevice gpiocontroller getdefaultgpiocontroller now when you call the gpiocontroller methods the master controller will send the respective spi commands to the binding behind the scenes to update its i o the code below would interact with the related registers iodir gpio gppu etc to configure the pin as an output and write the value setpinmode pinmode opendrainpullup write pinvalue high proposed change update the gpio pinmode to include the new values summaries were left out for simplicity csharp public enum pinmode input output inputpulldown inputpullup the following are the proposed additions outputopendrain outputopendrainpullup outputopencollector outputopencollectorpulldown i have not checked throughout api but will also need to update logic that checks if pinmode is of specific type for example the gpiocontroller write methods verify the mode is equal to output before writing csharp from gpiocontroller cs file if driver getpinmode pinnumber 
pinmode output throw new invalidoperationexception can not write to a pin that is not set to output mode open questions is similar to system device gpio pinmode it already includes current pinmode values and the values being proposed however the names are slightly different for the open collector types the question is should we stay consistent to windows devices gpio outputopensource and outputopensourcepulldown or use outputopencollector and outputopencollectorpulldown i ve mainly seen references to open collector and not open source while researching related content i m not sure what the best approach would be in this case and would be content either way open source mentioned here during references section | 0 |

57,642 | 7,086,941,784 | IssuesEvent | 2018-01-11 16:16:30 | 18F/dol-whd-14c | https://api.github.com/repos/18F/dol-whd-14c | closed | As a WHD Security Officer, I would like each User's session to timeout from inactivity set to WHD policies | Milestone 1 Security Visual Design WHD Provide Requirements required to go live | Refer to prototype here for behavior: https://preview.uxpin.com/6dc6e8912fdaeb32cdfefa6e237f0d300d960588#/pages/77031627/simulate/no-panels?mode=i

**Note:** On the first page, click anywhere on the dark modal background to simulate the session expiring.

Description:

In reference to NIST Control AC-12, the WHD Organization Defined Parameter (ODP) will be provided so that it can be enforced by the information system: 15 minutes of inactivity.

TODO WHD Provide clarification on 15 min vs 60 min (DIT); WHD business needs to provide clarification on what should happen with "Application Status". Also what UX behavior do we expect in terms of a popup or something to remind user that session is about to timeout?

Tasks:

- [ ] User session timeout value is configured in web.config and provided to UI through some existing means (API?)

- [ ] Implement web server session timeout

- [ ] Create running task to track timeout

- [ ] Create alert/notification to user at a set interval before the timeout occurs, following 508 standards

- [x] Alert should go by USWDS

Acceptance Criteria: After 60 minutes of inactivity, any logged-in user should be automatically logged out and their session terminated. At a set interval before that timeout, an alert should warn the currently logged-in user that in x minutes they will be logged out and their session terminated.
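The acceptance criteria can be sketched as a small inactivity tracker. This is a minimal Python illustration and not the application's implementation: `SessionTimer`, its method names, and the 5-minute warning interval used in the usage note are assumptions, and the timeout itself (15 vs 60 minutes) is still an open question in this issue. The current time is passed in explicitly so the logic is easy to test.

```python
class SessionTimer:
    """Hypothetical inactivity tracker for one logged-in user."""

    def __init__(self, timeout_seconds, warning_seconds):
        # warning_seconds: how long before the timeout the alert starts.
        self.timeout_seconds = timeout_seconds
        self.warning_seconds = warning_seconds
        self.last_activity = 0.0

    def touch(self, now):
        """Record user activity, which restarts the inactivity window."""
        self.last_activity = now

    def state(self, now):
        """Return 'active', 'warning', or 'expired' for the given time."""
        idle = now - self.last_activity
        if idle >= self.timeout_seconds:
            return "expired"
        if idle >= self.timeout_seconds - self.warning_seconds:
            return "warning"
        return "active"
```

For example, `SessionTimer(3600, 300)` would report `warning` from minute 55 of inactivity and `expired` at minute 60, and any call to `touch` in between would return the session to `active`.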

Considerations:

1. Application Status

WHD Business position: Filled out Application Status values must be saved.

IT position: Currently, Application Status values are saved when moving to the next page. Hence it is fair to expect that the values will not be saved. In addition, saving values in partially completed states left by the user is a complex problem that needs an internal workflow/context implementation, and it is not recommended.

2. Role: Any logged in User

| 1.0 | As a WHD Security Officer, I would like each User's session to timeout from inactivity set to WHD policies - Refer to prototype here for behavior: https://preview.uxpin.com/6dc6e8912fdaeb32cdfefa6e237f0d300d960588#/pages/77031627/simulate/no-panels?mode=i

**Note:** On the first page, click anywhere on the dark modal background to simulate the session expiring.

Description:

In reference to NIST Control AC-12, the WHD Organization Defined Parameter (ODP) will be provided so that it can be enforced by the information system: 15 minutes of inactivity.

TODO WHD Provide clarification on 15 min vs 60 min (DIT); WHD business needs to provide clarification on what should happen with "Application Status". Also what UX behavior do we expect in terms of a popup or something to remind user that session is about to timeout?

Tasks:

- [ ] User session timeout value is configured in web.config and provided to UI through some existing means (API?)

- [ ] Implement web server session timeout

- [ ] Create running task to track timeout

- [ ] Create alert/notification to user at a set interval before the timeout occurs, following 508 standards

- [x] Alert should go by USWDS

Acceptance Criteria: After 60 minutes of inactivity, any logged-in user should be automatically logged out and their session terminated. At a set interval before that timeout, an alert should warn the currently logged-in user that in x minutes they will be logged out and their session terminated.

Considerations:

1. Application Status

WHD Business position: Filled out Application Status values must be saved.

IT position: Currently, Application Status values are saved when moving to the next page. Hence it is fair to expect that the values will not be saved. In addition, saving values in partially completed states left by the user is a complex problem that needs an internal workflow/context implementation, and it is not recommended.

2. Role: Any logged in User

| non_priority | as a whd security officer i would like each user s session to timeout from inactivity set to whd policies refer to prototype here for behavior note on the first page click anywhere on the dark modal background to simulate the session expiring descripition in ref to nist control ac the whd organiation defined perameter odp will be provided so that it can be enforced by the information system mins of inactivity todo whd provide clarification on min vs min dit whd business needs to provide clarification on what should happen with application status also what ux behavior do we expect in terms of a popup or something to remind user that session is about to timeout tasks user session timeout value is configured in web config and provided to ui through some existing means api implement web server session timeout create running task to track timeout create alert notification to user at a set interval before the timeout occurs following standards alert should go by uswds acceptance criteria after minutes of inactivity any logged in user should be automatically logged out and their session should be terminated at a set interval before that timeout alert should be thrown to current logged in user that in x interval minutes they will be logged out and their session terminated considerations application status whd business position filled out application status values must be saved it position currently application status values are saved when moving to the next page hence fair to expect values must not be saved in addition saving values in weird states left by the user is a complex problem and needs workflow context implementation internally and not recommended role any logged in user | 0 |

70,695 | 9,437,948,388 | IssuesEvent | 2019-04-13 19:09:25 | django-mptt/django-mptt | https://api.github.com/repos/django-mptt/django-mptt | closed | Order of insertion causes new roots to get the same tree_id when order_insertion_by is defined | Broken Tree Documentation | Using `django-mptt 0.8.4`

With this model:

```

from django.db import models

from mptt.models import MPTTModel, TreeForeignKey

class Node(MPTTModel):

name = models.CharField(max_length=50)

parent = TreeForeignKey('self', null=True, blank=True, related_name='children', db_index=True)

class MPTTMeta:

order_insertion_by = ['name']

```

And these tests:

```

from django.test import TestCase

from .models import Node

class NodeTest(TestCase):

def test_multiple_roots_in_order(self):

r1 = Node.objects.create(name='A', parent=None)

r2 = Node.objects.create(name='B', parent=None)

self.assertNotEqual(r1.tree_id, r2.tree_id)

def test_multiple_roots_in_reverse_order(self):

r1 = Node.objects.create(name='B', parent=None)

r2 = Node.objects.create(name='A', parent=None)

self.assertNotEqual(r1.tree_id, r2.tree_id)

```

When the nodes are inserted "in order" (A before B), they go into separate trees. When the nodes are inserted in reverse order, they go into the same tree:

```

Creating test database for alias 'default'...

.F

======================================================================

FAIL: test_multiple_roots_in_reverse_order (my_app.tests.NodeTest)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/Users/cmermingas/django_projects/mptt_test/my_app/tests.py", line 16, in test_multiple_roots_in_reverse_order

self.assertNotEqual(r1.tree_id, r2.tree_id)

AssertionError: 1 == 1

----------------------------------------------------------------------

Ran 2 tests in 0.007s

FAILED (failures=1)

Destroying test database for alias 'default'...

```

If someone is willing to coach me a bit, I am willing to create a pull request and work on this.

Please let me know if I am doing something wrong. I'm pretty new here.

- Edited: I had a "suspected problem" and a potential fix here. I removed it after discovering that I was totally wrong.

| 1.0 | Order of insertion causes new roots to get the same tree_id when order_insertion_by is defined - Using `django-mptt 0.8.4`

With this model:

```

from django.db import models

from mptt.models import MPTTModel, TreeForeignKey

class Node(MPTTModel):

name = models.CharField(max_length=50)

parent = TreeForeignKey('self', null=True, blank=True, related_name='children', db_index=True)

class MPTTMeta:

order_insertion_by = ['name']

```

And these tests:

```

from django.test import TestCase

from .models import Node

class NodeTest(TestCase):

def test_multiple_roots_in_order(self):

r1 = Node.objects.create(name='A', parent=None)

r2 = Node.objects.create(name='B', parent=None)

self.assertNotEqual(r1.tree_id, r2.tree_id)

def test_multiple_roots_in_reverse_order(self):

r1 = Node.objects.create(name='B', parent=None)

r2 = Node.objects.create(name='A', parent=None)

self.assertNotEqual(r1.tree_id, r2.tree_id)

```

When the nodes are inserted "in order" (A before B), they go into separate trees. When the nodes are inserted in reverse order, they go into the same tree:

```

Creating test database for alias 'default'...

.F

======================================================================

FAIL: test_multiple_roots_in_reverse_order (my_app.tests.NodeTest)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/Users/cmermingas/django_projects/mptt_test/my_app/tests.py", line 16, in test_multiple_roots_in_reverse_order

self.assertNotEqual(r1.tree_id, r2.tree_id)

AssertionError: 1 == 1

----------------------------------------------------------------------

Ran 2 tests in 0.007s

FAILED (failures=1)

Destroying test database for alias 'default'...

```

If someone is willing to coach me a bit, I am willing to create a pull request and work on this.

Please let me know if I am doing something wrong. I'm pretty new here.

- Edited: I had a "suspected problem" and a potential fix here. I removed it after discovering that I was totally wrong.

| non_priority | order of insertion causes new roots to get the same tree id when order insertion by is defined using django mptt with this model from django db import models from mptt models import mpttmodel treeforeignkey class node mpttmodel name models charfield max length parent treeforeignkey self null true blank true related name children db index true class mpttmeta order insertion by and these tests from django test import testcase from models import node class nodetest testcase def test multiple roots in order self node objects create name a parent none node objects create name b parent none self assertnotequal tree id tree id def test multiple roots in reverse order self node objects create name b parent none node objects create name a parent none self assertnotequal tree id tree id when the nodes are inserted in order a before b they go into separate trees when the nodes are inserted in reverse order they go into the same tree creating test database for alias default f fail test multiple roots in reverse order my app tests nodetest traceback most recent call last file users cmermingas django projects mptt test my app tests py line in test multiple roots in reverse order self assertnotequal tree id tree id assertionerror ran tests in failed failures destroying test database for alias default if someone is willing to coach me a bit i am willing to create a pull request and work on this please let me know if i am doing something wrong i m pretty new here edited i had a suspected problem and a potential fix here i removed it after discovering that i was totally wrong | 0 |

159,978 | 20,086,694,626 | IssuesEvent | 2022-02-05 04:03:08 | jtimberlake/rei-cedar | https://api.github.com/repos/jtimberlake/rei-cedar | opened | CVE-2021-32803 (High) detected in tar-6.1.0.tgz | security vulnerability | ## CVE-2021-32803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-6.1.0.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/tar-6.1.0.tgz">https://registry.npmjs.org/tar/-/tar-6.1.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/tar/package.json</p>

<p>

Dependency Hierarchy:

- node-sass-6.0.0.tgz (Root Library)

- node-gyp-7.1.2.tgz

- :x: **tar-6.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/jtimberlake/rei-cedar/commit/9c0c2cadda2965ff0d2cb956635474ae9161ddfe">9c0c2cadda2965ff0d2cb956635474ae9161ddfe</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The npm package "tar" (aka node-tar) before versions 6.1.2, 5.0.7, 4.4.15, and 3.2.3 has an arbitrary File Creation/Overwrite vulnerability via insufficient symlink protection. `node-tar` aims to guarantee that any file whose location would be modified by a symbolic link is not extracted. This is, in part, achieved by ensuring that extracted directories are not symlinks. Additionally, in order to prevent unnecessary `stat` calls to determine whether a given path is a directory, paths are cached when directories are created. This logic was insufficient when extracting tar files that contained both a directory and a symlink with the same name as the directory. This order of operations resulted in the directory being created and added to the `node-tar` directory cache. When a directory is present in the directory cache, subsequent calls to mkdir for that directory are skipped. However, this is also where `node-tar` checks for symlinks occur. By first creating a directory, and then replacing that directory with a symlink, it was thus possible to bypass `node-tar` symlink checks on directories, essentially allowing an untrusted tar file to symlink into an arbitrary location and subsequently extracting arbitrary files into that location, thus allowing arbitrary file creation and overwrite. This issue was addressed in releases 3.2.3, 4.4.15, 5.0.7 and 6.1.2.

<p>Publish Date: 2021-08-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-32803>CVE-2021-32803</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/npm/node-tar/security/advisories/GHSA-r628-mhmh-qjhw">https://github.com/npm/node-tar/security/advisories/GHSA-r628-mhmh-qjhw</a></p>

<p>Release Date: 2021-08-03</p>

<p>Fix Resolution: tar - 3.2.3, 4.4.15, 5.0.7, 6.1.2</p>

</p>

</details>

<p></p>
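The fix resolution above lists one patched release per major line, so whether an installed `tar` version is affected depends on which line it belongs to. The following Python sketch shows that comparison; `FIXED`, `parse_version`, and `is_affected` are hypothetical names, and two choices are assumptions rather than statements from the advisory: versions below 3.x are treated as affected, and versions above 6.x are treated as already fixed. Pre-release suffixes are not handled.

```python
# First fixed release for each major line, taken from the fix resolution.
FIXED = {3: (3, 2, 3), 4: (4, 4, 15), 5: (5, 0, 7), 6: (6, 1, 2)}


def parse_version(text):
    """Turn 'X.Y.Z' into a tuple of integers for comparison."""
    return tuple(int(part) for part in text.split("."))


def is_affected(version_text):
    """Return True if the given tar version predates its line's fix."""
    version = parse_version(version_text)
    major = version[0]
    if major < 3:
        return True   # assumption: older lines never received the fix
    if major > 6:
        return False  # assumption: later lines already include the fix
    return version < FIXED[major]
```

Under these assumptions, `is_affected("6.1.0")` is true for the version reported in this issue, and `is_affected("6.1.2")` is false.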

| True | CVE-2021-32803 (High) detected in tar-6.1.0.tgz - ## CVE-2021-32803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-6.1.0.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/tar-6.1.0.tgz">https://registry.npmjs.org/tar/-/tar-6.1.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/tar/package.json</p>

<p>

Dependency Hierarchy:

- node-sass-6.0.0.tgz (Root Library)

- node-gyp-7.1.2.tgz

- :x: **tar-6.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/jtimberlake/rei-cedar/commit/9c0c2cadda2965ff0d2cb956635474ae9161ddfe">9c0c2cadda2965ff0d2cb956635474ae9161ddfe</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The npm package "tar" (aka node-tar) before versions 6.1.2, 5.0.7, 4.4.15, and 3.2.3 has an arbitrary File Creation/Overwrite vulnerability via insufficient symlink protection. `node-tar` aims to guarantee that any file whose location would be modified by a symbolic link is not extracted. This is, in part, achieved by ensuring that extracted directories are not symlinks. Additionally, in order to prevent unnecessary `stat` calls to determine whether a given path is a directory, paths are cached when directories are created. This logic was insufficient when extracting tar files that contained both a directory and a symlink with the same name as the directory. This order of operations resulted in the directory being created and added to the `node-tar` directory cache. When a directory is present in the directory cache, subsequent calls to mkdir for that directory are skipped. However, this is also where `node-tar` checks for symlinks occur. By first creating a directory, and then replacing that directory with a symlink, it was thus possible to bypass `node-tar` symlink checks on directories, essentially allowing an untrusted tar file to symlink into an arbitrary location and subsequently extracting arbitrary files into that location, thus allowing arbitrary file creation and overwrite. This issue was addressed in releases 3.2.3, 4.4.15, 5.0.7 and 6.1.2.

<p>Publish Date: 2021-08-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-32803>CVE-2021-32803</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/npm/node-tar/security/advisories/GHSA-r628-mhmh-qjhw">https://github.com/npm/node-tar/security/advisories/GHSA-r628-mhmh-qjhw</a></p>

<p>Release Date: 2021-08-03</p>

<p>Fix Resolution: tar - 3.2.3, 4.4.15, 5.0.7, 6.1.2</p>

</p>

</details>

<p></p>

| non_priority | cve high detected in tar tgz cve high severity vulnerability vulnerable library tar tgz tar for node library home page a href path to dependency file package json path to vulnerable library node modules tar package json dependency hierarchy node sass tgz root library node gyp tgz x tar tgz vulnerable library found in head commit a href vulnerability details the npm package tar aka node tar before versions and has an arbitrary file creation overwrite vulnerability via insufficient symlink protection node tar aims to guarantee that any file whose location would be modified by a symbolic link is not extracted this is in part achieved by ensuring that extracted directories are not symlinks additionally in order to prevent unnecessary stat calls to determine whether a given path is a directory paths are cached when directories are created this logic was insufficient when extracting tar files that contained both a directory and a symlink with the same name as the directory this order of operations resulted in the directory being created and added to the node tar directory cache when a directory is present in the directory cache subsequent calls to mkdir for that directory are skipped however this is also where node tar checks for symlinks occur by first creating a directory and then replacing that directory with a symlink it was thus possible to bypass node tar symlink checks on directories essentially allowing an untrusted tar file to symlink into an arbitrary location and subsequently extracting arbitrary files into that location thus allowing arbitrary file creation and overwrite this issue was addressed in releases and publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact high availability impact high for more information on scores click a href 
suggested fix type upgrade version origin a href release date fix resolution tar | 0 |

216,578 | 24,281,576,575 | IssuesEvent | 2022-09-28 17:54:09 | liorzilberg/struts | https://api.github.com/repos/liorzilberg/struts | opened | CVE-2016-1000027 (High) detected in spring-web-4.3.13.RELEASE.jar | security vulnerability | ## CVE-2016-1000027 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-4.3.13.RELEASE.jar</b></p></summary>

<p>Spring Web</p>

<p>Library home page: <a href="https://github.com/spring-projects/spring-framework">https://github.com/spring-projects/spring-framework</a></p>

<p>Path to dependency file: /plugins/async/pom.xml</p>

<p>Path to vulnerable library: /.m2/repository/org/springframework/spring-web/4.3.13.RELEASE/spring-web-4.3.13.RELEASE.jar,/home/wss-scanner/.m2/repository/org/springframework/spring-web/4.3.13.RELEASE/spring-web-4.3.13.RELEASE.jar</p>

<p>

Dependency Hierarchy:

- :x: **spring-web-4.3.13.RELEASE.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/liorzilberg/struts/commit/6950763af860884188f4080d19a18c5ede16cd74">6950763af860884188f4080d19a18c5ede16cd74</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Pivotal Spring Framework through 5.3.16 suffers from a potential remote code execution (RCE) issue if used for Java deserialization of untrusted data. Depending on how the library is implemented within a product, this issue may or may not occur, and authentication may be required. NOTE: the vendor's position is that untrusted data is not an intended use case. The product's behavior will not be changed because some users rely on deserialization of trusted data.

<p>Publish Date: 2020-01-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-1000027>CVE-2016-1000027</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-1000027">https://nvd.nist.gov/vuln/detail/CVE-2016-1000027</a></p>

<p>Release Date: 2020-01-02</p>

<p>Fix Resolution: 4.3.26.RELEASE</p>

</p>

</details>

<p></p>
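
A minimal sketch of what the suggested upgrade might look like in `/plugins/async/pom.xml`, assuming `spring-web` is declared there directly rather than inherited from a parent POM or BOM (the report does not say which). The coordinates are taken from the library path above and the version from the scanner's fix resolution. Per the vendor note in the vulnerability details, whether a version bump alone removes the exposure depends on how deserialization is used in the product.

```xml
<!-- Hypothetical placement: adjust if the version is managed in a parent POM or a property -->
<dependency>
  <groupId>org.springframework</groupId>
  <artifactId>spring-web</artifactId>
  <!-- Version named in the scanner's "Fix Resolution" field -->
  <version>4.3.26.RELEASE</version>
</dependency>
```
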

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| True | CVE-2016-1000027 (High) detected in spring-web-4.3.13.RELEASE.jar - ## CVE-2016-1000027 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-4.3.13.RELEASE.jar</b></p></summary>

<p>Spring Web</p>

<p>Library home page: <a href="https://github.com/spring-projects/spring-framework">https://github.com/spring-projects/spring-framework</a></p>

<p>Path to dependency file: /plugins/async/pom.xml</p>

<p>Path to vulnerable library: /.m2/repository/org/springframework/spring-web/4.3.13.RELEASE/spring-web-4.3.13.RELEASE.jar,/home/wss-scanner/.m2/repository/org/springframework/spring-web/4.3.13.RELEASE/spring-web-4.3.13.RELEASE.jar</p>

<p>

Dependency Hierarchy:

- :x: **spring-web-4.3.13.RELEASE.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/liorzilberg/struts/commit/6950763af860884188f4080d19a18c5ede16cd74">6950763af860884188f4080d19a18c5ede16cd74</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Pivotal Spring Framework through 5.3.16 suffers from a potential remote code execution (RCE) issue if used for Java deserialization of untrusted data. Depending on how the library is implemented within a product, this issue may or may not occur, and authentication may be required. NOTE: the vendor's position is that untrusted data is not an intended use case. The product's behavior will not be changed because some users rely on deserialization of trusted data.

<p>Publish Date: 2020-01-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-1000027>CVE-2016-1000027</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-1000027">https://nvd.nist.gov/vuln/detail/CVE-2016-1000027</a></p>

<p>Release Date: 2020-01-02</p>

<p>Fix Resolution: 4.3.26.RELEASE</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| non_priority | cve high detected in spring web release jar cve high severity vulnerability vulnerable library spring web release jar spring web library home page a href path to dependency file plugins async pom xml path to vulnerable library repository org springframework spring web release spring web release jar home wss scanner repository org springframework spring web release spring web release jar repository org springframework spring web release spring web release jar repository org springframework spring web release spring web release jar repository org springframework spring web release spring web release jar repository org springframework spring web release spring web release jar repository org springframework spring web release spring web release jar home wss scanner repository org springframework spring web release spring web release jar home wss scanner repository org springframework spring web release spring web release jar repository org springframework spring web release spring web release jar dependency hierarchy x spring web release jar vulnerable library found in head commit a href found in base branch master vulnerability details pivotal spring framework through suffers from a potential remote code execution rce issue if used for java deserialization of untrusted data depending on how the library is implemented within a product this issue may or not occur and authentication may be required note the vendor s position is that untrusted data is not an intended use case the product s behavior will not be changed because some users rely on deserialization of trusted data publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release 
date fix resolution release check this box to open an automated fix pr | 0 |

8,134 | 2,868,985,749 | IssuesEvent | 2015-06-05 22:23:10 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Building project results in dart packages not being in appropriate location | AsDesigned bug | _Originally opened as dart-lang/sdk#21127_

*This issue was originally filed by daniel.robinson.open...@gmail.com*

_____

1. Get latest from https://github.com/0xor1/purity_oauth2/tree/integration-test

2. run pub build

3. run build/test/integration/bin/host.dart

I expect the program to start running, but it fails because it cannot find the packages directory. If I manually copy the packages folder from build/test to build/test/integration/bin, it works. The error is:

Unhandled exception:

Uncaught Error: FileSystemException: Cannot open file, path = 'D:\Projects\purity_oauth2\build\test\integration\bin\packages\purity\host.dart' (OS Error: The system cannot find the path specified.

Dart Editor version 1.6.0.release (STABLE)

Dart SDK version 1.6.0

Windows 8.1 x64 | 1.0 | Building project results in dart packages not being in appropriate location - _Originally opened as dart-lang/sdk#21127_

*This issue was originally filed by daniel.robinson.open...@gmail.com*

_____

1. Get latest from https://github.com/0xor1/purity_oauth2/tree/integration-test

2. run pub build

3. run build/test/integration/bin/host.dart

I expect the program to start running, but it fails because it cannot find the packages directory. If I manually copy the packages folder from build/test to build/test/integration/bin, it works. The error is:

Unhandled exception:

Uncaught Error: FileSystemException: Cannot open file, path = 'D:\Projects\purity_oauth2\build\test\integration\bin\packages\purity\host.dart' (OS Error: The system cannot find the path specified.

Dart Editor version 1.6.0.release (STABLE)

Dart SDK version 1.6.0

Windows 8.1 x64 | non_priority | building project results in dart packages not being in appropriate location originally opened as dart lang sdk this issue was originally filed by daniel robinson open gmail com get latest from run pub build run build test integration bin host dart expect the program to start running but it fails as it cannot find the packages directory if i manually copy the packages folder from build test to build test integration bin then it does work unhandled exception uncaught error filesystemexception cannot open file path d projects purity build test integration bin packages purity host dart os error the system cannot find the path specified dart editor version release stable dart sdk version windows | 0 |

169,719 | 20,841,884,031 | IssuesEvent | 2022-03-21 01:45:43 | violasarah2000/satx2 | https://api.github.com/repos/violasarah2000/satx2 | opened | CVE-2022-24773 (Medium) detected in node-forge-0.9.0.tgz | security vulnerability | ## CVE-2022-24773 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-forge-0.9.0.tgz</b></p></summary>