Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 844) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 12 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 248k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

163,907 | 13,929,632,140 | IssuesEvent | 2020-10-22 00:10:47 | aquiles23/mangahost_navbar_fix | https://api.github.com/repos/aquiles23/mangahost_navbar_fix | closed | Create README | documentation good first issue hacktoberfest português | I'm honestly too lazy to write a README, so any help is welcome. Since this is a pt-BR site, the plugin's usage instructions need to be in Portuguese, optionally translated into English as well. | 1.0 | Create README - I'm honestly too lazy to write a README, so any help is welcome. Since this is a pt-BR site, the plugin's usage instructions need to be in Portuguese, optionally translated into English as well. | non_priority | create readme i m honestly too lazy to write a readme so any help is welcome since this is a pt br site the plugin s usage instructions need to be in portuguese optionally translated into english as well | 0 |

164,853 | 13,961,396,384 | IssuesEvent | 2020-10-25 03:02:12 | patrick204nqh/linux | https://api.github.com/repos/patrick204nqh/linux | opened | linux command | documentation good first issue | > https://www.hostinger.com/tutorials/linux-commands

1. **pwd** (find out the path of the current working directory (folder) you’re in)

2. **cd**

3. **ls**

4. **cat**

5. **cp**

6. **mv**

7. **mkdir**

8. **rmdir** (rmdir only allows you to delete empty directories)

9. **rm**

10. **touch**

11. **locate** ( l... | 1.0 | linux command - > https://www.hostinger.com/tutorials/linux-commands

1. **pwd** (find out the path of the current working directory (folder) you’re in)

2. **cd**

3. **ls**

4. **cat**

5. **cp**

6. **mv**

7. **mkdir**

8. **rmdir** (rmdir only allows you to delete empty directories)

9. **rm**

10. **touch**

11... | non_priority | linux command pwd find out the path of the current working directory folder you’re in cd ls cat cp mv mkdir rmdir rmdir only allows you to delete empty directories rm touch locate like the search command in windows ... | 0 |

86,552 | 10,761,930,139 | IssuesEvent | 2019-10-31 22:00:31 | SharePoint/sp-dev-docs | https://api.github.com/repos/SharePoint/sp-dev-docs | closed | removeNavLink doesn't work | area:docs-comment area:other area:site-design status:answered | I create script with following two actions:

{

"verb": "removeNavLink",

"displayName": "Site contents",

"isWebRelative": true

},

{

"verb": "removeNavLink",

"displayName": "Pages",

"isWebRelative": true

}

And, right now there are no specific permissions ... | 1.0 | removeNavLink doesn't work - I create script with following two actions:

{

"verb": "removeNavLink",

"displayName": "Site contents",

"isWebRelative": true

},

{

"verb": "removeNavLink",

"displayName": "Pages",

"isWebRelative": true

}

And, right now there... | non_priority | removenavlink doesn t work i create script with following two actions verb removenavlink displayname site contents iswebrelative true verb removenavlink displayname pages iswebrelative true and right now there... | 0 |

154,705 | 24,329,920,711 | IssuesEvent | 2022-09-30 18:22:06 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | closed | Update transfer volume tooltip copy to be inclusive of 1 to many transfers | protocol designer design | ## Background

Our current tooltip copy only works if a user is building a 1-to-many or an n:n transfer.

## Acceptance criteria

Update copy to read "Volume to dispense in each well in a 1-to-many or n-... | 2.0 | Update transfer volume tooltip copy to be inclusive of 1 to many transfers - ## Background

Our current tooltip copy only works if a user is building a 1-to-many or an n:n transfer.

## Acceptance criter... | non_priority | update transfer volume tooltip copy to be inclusive of to many transfers background our current tooltip copy only works if a user is building a to many or an n n transfer acceptance criteria update copy to read volume to dispense in each well in a to many or n to n transfer volume to aspi... | 0 |

230,354 | 17,613,741,939 | IssuesEvent | 2021-08-18 07:03:10 | ContextLab/davos | https://api.github.com/repos/ContextLab/davos | closed | add brief "How it works" section to the docs | documentation | - overview/basic/non-context-specific info

- context-dependent info

- google colab

- jupyter notebooks

- Pure (non-interactive) Python | 1.0 | add brief "How it works" section to the docs - - overview/basic/non-context-specific info

- context-dependent info

- google colab

- jupyter notebooks

- Pure (non-interactive) Python | non_priority | add brief how it works section to the docs overview basic non context specific info context dependent info google colab jupyter notebooks pure non interactive python | 0 |

20,480 | 4,552,925,143 | IssuesEvent | 2016-09-13 01:32:18 | dcjones/Gadfly.jl | https://api.github.com/repos/dcjones/Gadfly.jl | opened | Docs are down | documentation | Both https://dcjones.github.io/Gadfly.jl/latest and http://gadflyjl.org are stuck in redirect loops. Not much that I can do until @dcjones fixes this. In the mean time a somewhat functionally complete copy is available at http://tamasnagy.com/Gadfly.jl/

| 1.0 | Docs are down - Both https://dcjones.github.io/Gadfly.jl/latest and http://gadflyjl.org are stuck in redirect loops. Not much that I can do until @dcjones fixes this. In the mean time a somewhat functionally complete copy is available at http://tamasnagy.com/Gadfly.jl/

| non_priority | docs are down both and are stuck in redirect loops not much that i can do until dcjones fixes this in the mean time a somewhat functionally complete copy is available at | 0 |

280,736 | 30,849,849,509 | IssuesEvent | 2023-08-02 15:56:56 | autismdrive/autismdrive | https://api.github.com/repos/autismdrive/autismdrive | opened | Update backend (pypi) packages | Chore Security | Update backend (pypi) packages and fix any resulting breaking changes | True | Update backend (pypi) packages - Update backend (pypi) packages and fix any resulting breaking changes | non_priority | update backend pypi packages update backend pypi packages and fix any resulting breaking changes | 0 |

63,056 | 12,278,906,238 | IssuesEvent | 2020-05-08 10:59:31 | Pokecube-Development/Pokecube-Issues-and-Wiki | https://api.github.com/repos/Pokecube-Development/Pokecube-Issues-and-Wiki | closed | villages, trainers, scattered/ruin structures and mirage spots spawning in dimensions | 1.14.x 1.15.2 Bug - Code Bug - Resources Fixed | #### Issue Description:

I can find villages, trainers, scattered/ruin structures and mirage spots all spawn in the end and nether

Same for the Ultra wormhole, except they also have meteors spawning in there, might be spawning in the end and nether too, but I haven't seen any yet.

#### What happens:

I go into ... | 1.0 | villages, trainers, scattered/ruin structures and mirage spots spawning in dimensions - #### Issue Description:

I can find villages, trainers, scattered/ruin structures and mirage spots all spawn in the end and nether

Same for the Ultra wormhole, except they also have meteors spawning in there, might be spawning in... | non_priority | villages trainers scattered ruin structures and mirage spots spawning in dimensions issue description i can find villages trainers scattered ruin structures and mirage spots all spawn in the end and nether same for the ultra wormhole except they also have meteors spawning in there might be spawning in... | 0 |

290,157 | 25,040,486,791 | IssuesEvent | 2022-11-04 20:10:38 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: restoreTPCCIncLatest/nodes=10 failed | C-test-failure O-robot O-roachtest T-disaster-recovery branch-release-22.2 | roachtest.restoreTPCCIncLatest/nodes=10 [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6495720?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6495720?buildTab=artifacts#/restoreTPC... | 2.0 | roachtest: restoreTPCCIncLatest/nodes=10 failed - roachtest.restoreTPCCIncLatest/nodes=10 [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6495720?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNigh... | non_priority | roachtest restoretpccinclatest nodes failed roachtest restoretpccinclatest nodes with on release wraps output in run cockroach sql wraps cockroach sql insecure e restore from in gs cockroach fixtures tpcc incrementals auth... | 0 |

101,323 | 21,649,777,303 | IssuesEvent | 2022-05-06 08:08:31 | ProteinsWebTeam/interpro7-client | https://api.github.com/repos/ProteinsWebTeam/interpro7-client | closed | Menu highlight issue in InterProScan result page | bug On code review | The menu for InterProScan search results (Overview / Entries /Sequence) makes the current selected tab name unreadable.

| 1.0 | Menu highlight issue in InterProScan result page - The menu for InterProScan search results (Overview / Entries /Sequence) makes the current selected tab name unreadable.

| non_priority | menu highlight issue in interproscan result page the menu for interproscan search results overview entries sequence makes the current selected tab name unreadable | 0 |

7,304 | 9,552,274,979 | IssuesEvent | 2019-05-02 16:14:34 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | reopened | incompatible_use_python_toolchains: The Python runtime is obtained from a toolchain rather than a flag | breaking-change-0.26 incompatible-change migration-0.25 team-Rules-Python | **Flag:** `--incompatible_use_python_toolchains`

**Available since:** 0.25

**Will be flipped in:** ???

**Feature tracking issue:** #7375

## Motivation

For background on toolchains, see [here](https://docs.bazel.build/versions/master/toolchains.html).

Previously, the Python runtime (i.e., the interpreter use... | True | incompatible_use_python_toolchains: The Python runtime is obtained from a toolchain rather than a flag - **Flag:** `--incompatible_use_python_toolchains`

**Available since:** 0.25

**Will be flipped in:** ???

**Feature tracking issue:** #7375

## Motivation

For background on toolchains, see [here](https://docs.b... | non_priority | incompatible use python toolchains the python runtime is obtained from a toolchain rather than a flag flag incompatible use python toolchains available since will be flipped in feature tracking issue motivation for background on toolchains see previously the py... | 0 |

237,085 | 18,152,327,099 | IssuesEvent | 2021-09-26 13:32:34 | AlirizaSari/4S_Handleidingen | https://api.github.com/repos/AlirizaSari/4S_Handleidingen | closed | Adjust a brand's page. (Ticket_2a) | documentation | All type numbers and product numbers are shown in one long list, which is not practical given the screen size; I would like to see them in a grid. | 1.0 | Adjust a brand's page. (Ticket_2a) - All type numbers and product numbers are shown in one long list, which is not practical given the screen size; I would like to see them in a grid. | non_priority | adjust a brand s page ticket all type numbers and product numbers are shown in one long list which is not practical given the screen size i would like to see them in a grid | 0 |

101,371 | 16,507,858,938 | IssuesEvent | 2021-05-25 21:52:11 | idonthaveafifaaddiction/ember-css-modules | https://api.github.com/repos/idonthaveafifaaddiction/ember-css-modules | opened | CVE-2021-23337 (High) detected in lodash-4.17.20.tgz | security vulnerability | ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.20.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.... | True | CVE-2021-23337 (High) detected in lodash-4.17.20.tgz - ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.20.tgz</b></p></summary>

<p>Lodash modular utilitie... | non_priority | cve high detected in lodash tgz cve high severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file ember css modules package json path to vulnerable library ember css modules node modules lodash pa... | 0 |

68,840 | 29,738,078,871 | IssuesEvent | 2023-06-14 03:50:43 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | reopened | Kubectl apply command is throwing an error | triaged cxp product-issue Pri2 awaiting-customer-response azure-kubernetes-service/svc |

[Enter feedback here]

The command:

kubectl apply -f nvidia-device-plugin-ds.yaml

returns an error when using AKS with version 1.25.6:

error: unable to decode "nvidia-device-plugin-ds.yaml": no kind "DaemonSet" is registered for version "apps/v1"

---

#### Document Details

⚠ *Do not edit this section. It is ... | 1.0 | Kubectl apply command is throwing an error -

[Enter feedback here]

The command:

kubectl apply -f nvidia-device-plugin-ds.yaml

returns an error when using AKS with version 1.25.6:

error: unable to decode "nvidia-device-plugin-ds.yaml": no kind "DaemonSet" is registered for version "apps/v1"

---

#### Document D... | non_priority | kubectl apply command is throwing an error the command kubectl apply f nvidia device plugin ds yaml returns an error when using aks with version error unable to decode nvidia device plugin ds yaml no kind daemonset is registered for version apps document details ⚠ do not ed... | 0 |

110,524 | 11,704,992,818 | IssuesEvent | 2020-03-07 13:12:41 | lkno0705/MatrixMultiplication | https://api.github.com/repos/lkno0705/MatrixMultiplication | opened | New test runs | documentation | Just to track. Whenever you have time @lkno0705

Needed are:

- [ ] x64 NASM re-run

- [ ] SIMD C++ | 1.0 | New test runs - Just to track. Whenever you have time @lkno0705

Needed are:

- [ ] x64 NASM re-run

- [ ] SIMD C++ | non_priority | new test runs just to track whenever you have time needed are nasm re run simd c | 0 |

30,896 | 4,226,657,419 | IssuesEvent | 2016-07-02 16:16:45 | berkmancenter/bookanook | https://api.github.com/repos/berkmancenter/bookanook | opened | Add filter for Nook/location related reservation statistics | design feature | Admin will be able to choose the nooks/locations for showing the statistics. | 1.0 | Add filter for Nook/location related reservation statistics - Admin will be able to choose the nooks/locations for showing the statistics. | non_priority | add filter for nook location related reservation statistics admin will be able to choose the nooks locations for showing the statistics | 0 |

9,818 | 13,962,451,298 | IssuesEvent | 2020-10-25 09:35:13 | ISISScientificComputing/autoreduce | https://api.github.com/repos/ISISScientificComputing/autoreduce | closed | Tidy up run Summary page for skipped runs | :exclamation: Release requirement :key: WebApp | Issue raised by: [developer]

### What?

From #844 originally

When a run is skipped the run summary page should:

- Start time: Not run

- End time: Not run

- Duration: Not run

- Rerun section: Not visible

- Logs: Not shown

### Where?

`WebApp/autoreduce_webapp/templates/run_summary.html`

### How?

Smoke tes... | 1.0 | Tidy up run Summary page for skipped runs - Issue raised by: [developer]

### What?

From #844 originally

When a run is skipped the run summary page should:

- Start time: Not run

- End time: Not run

- Duration: Not run

- Rerun section: Not visible

- Logs: Not shown

### Where?

`WebApp/autoreduce_webapp/templa... | non_priority | tidy up run summary page for skipped runs issue raised by what from originally when a run is skipped the run summary page should start time not run end time not run duration not run rerun section not visible logs not shown where webapp autoreduce webapp templates run summ... | 0 |

55,155 | 7,963,857,473 | IssuesEvent | 2018-07-13 19:07:18 | wedeploy/wedeploy.com | https://api.github.com/repos/wedeploy/wedeploy.com | closed | Add documentation on the new approach of assigning custom domains to services | documentation | @ipeychev commented on [Sat Nov 04 2017](https://github.com/wedeploy/ideas/issues/181)

Once we apply the changes to all repositories, we have to update the documentation too.

---

@jonnilundy commented on [Tue Jan 30 2018](https://github.com/wedeploy/ideas/issues/181#issuecomment-361785704)

Hey @ipeychev, is this is... | 1.0 | Add documentation on the new approach of assigning custom domains to services - @ipeychev commented on [Sat Nov 04 2017](https://github.com/wedeploy/ideas/issues/181)

Once we apply the changes to all repositories, we have to update the documentation too.

---

@jonnilundy commented on [Tue Jan 30 2018](https://github.... | non_priority | add documentation on the new approach of assigning custom domains to services ipeychev commented on once we apply the changes to all repositories we have to update the documentation too jonnilundy commented on hey ipeychev is this issue completed if not can you move it to the appropriate rep... | 0 |

42,913 | 5,546,738,706 | IssuesEvent | 2017-03-23 02:15:36 | UniGDC/unigdc.github.io | https://api.github.com/repos/UniGDC/unigdc.github.io | closed | Columned designing | Design | Using simple column grid defined in _sass/_layout.scss, columned website should be implemented.

Columned website might allow us to make the website not only more organized but also more beautiful.

2:1 for index page.

| 1.0 | Columned designing - Using simple column grid defined in _sass/_layout.scss, columned website should be implemented.

Columned website might allow us to make the website not only more organized but also more beautiful.

2:1 for index page.

| non_priority | columned designing using simple column grid defined in sass layout scss columned website should be implemented columned website might allow us to make the website not only more organized but also more beautiful for index page | 0 |

9,476 | 7,992,605,333 | IssuesEvent | 2018-07-20 02:33:59 | APSIMInitiative/ApsimX | https://api.github.com/repos/APSIMInitiative/ApsimX | opened | Minor documentation errors | interface/infrastructure | A few files have syntax errors in the XML documentation. This is another dummy issue after I accidentally resolved the previous one. | 1.0 | Minor documentation errors - A few files have syntax errors in the XML documentation. This is another dummy issue after I accidentally resolved the previous one. | non_priority | minor documentation errors a few files have syntax errors in the xml documentation this is another dummy issue after i accidentally resolved the previous one | 0 |

64,155 | 8,713,020,767 | IssuesEvent | 2018-12-07 00:34:56 | Microsoft/WindowsTemplateStudio | https://api.github.com/repos/Microsoft/WindowsTemplateStudio | closed | docs/mvvmbasic.md Not sure what this means | Documentation | "You can see examples of this being used in many of the pages that can be included as part of project generation"

I am not sure what this project generation refers to, this needs to be more clear.

| 1.0 | docs/mvvmbasic.md Not sure what this means - "You can see examples of this being used in many of the pages that can be included as part of project generation"

I am not sure what this project generation refers to, this needs to be more clear.

| non_priority | docs mvvmbasic md not sure what this means you can see examples of this being used in many of the pages that can be included as part of project generation i am not sure what this project generation refers to this needs to be more clear | 0 |

47,335 | 12,015,614,785 | IssuesEvent | 2020-04-10 14:22:18 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Image Classification not working in Local System | type:build/install | **System information**

**### - OS Platform and Distribution (MacOs Catalina):**

### **- TensorFlow version:2.1.0**

### **- Python version: 3.6.9**

### **- Platform - docker with jupyter notebook**

### **During The Image Classification, I got An error like this,

It working properly in google colab notebook**

... | 1.0 | Image Classification not working in Local System - **System information**

**### - OS Platform and Distribution (MacOs Catalina):**

### **- TensorFlow version:2.1.0**

### **- Python version: 3.6.9**

### **- Platform - docker with jupyter notebook**

### **During The Image Classification, I got An error like this, ... | non_priority | image classification not working in local system system information os platform and distribution macos catalina tensorflow version python version platform docker with jupyter notebook during the image classification i got an error like this ... | 0 |

20,396 | 3,591,252,553 | IssuesEvent | 2016-02-01 10:50:29 | vigour-io/vjs | https://api.github.com/repos/vigour-io/vjs | closed | [Subscribe] Spec "downward" and "deep" subscribe API, and add tests | new:design | This would search downward to fulfil subscription: | 1.0 | [Subscribe] Spec "downward" and "deep" subscribe API, and add tests - This would search downward to fulfil subscription: | non_priority | spec downward and deep subscribe api and add tests this would search downward to fulfil subscription | 0 |

33,459 | 6,205,581,489 | IssuesEvent | 2017-07-06 16:26:47 | edamontology/edamontology | https://api.github.com/repos/edamontology/edamontology | closed | Improve license info on GitHub | documentation partially fixed | From BOSC 2017 review by @peterjc: "From the GitHub repository alone, it is not obvious how EDAM is licensed. Please add this to the README and/or as a top level LICENSE file (using .md etc as you prefer)." | 1.0 | Improve license info on GitHub - From BOSC 2017 review by @peterjc: "From the GitHub repository alone, it is not obvious how EDAM is licensed. Please add this to the README and/or as a top level LICENSE file (using .md etc as you prefer)." | non_priority | improve license info on github from bosc review by peterjc from the github repository alone it is not obvious how edam is licensed please add this to the readme and or as a top level license file using md etc as you prefer | 0 |

21,919 | 14,934,738,052 | IssuesEvent | 2021-01-25 10:56:23 | sanger/print_my_barcode | https://api.github.com/repos/sanger/print_my_barcode | closed | GPL-622 Move pmb into psd-deployment on TRAINING FCE | Infrastructure | Description

PMB is currently running in the old machines and the config changes need to be added manually. We would like to move the application into TRAINING FCE and configure it to use the printers.

Who the primary contacts are for this work

Harriet

Eduardo | 1.0 | GPL-622 Move pmb into psd-deployment on TRAINING FCE - Description

PMB is currently running in the old machines and the config changes need to be added manually. We would like to move the application into TRAINING FCE and configure it to use the printers.

Who the primary contacts are for this work

Harriet

Eduardo | non_priority | gpl move pmb into psd deployment on training fce description pmb is currently running in the old machines and the config changes need to be added manually we would like to move the application into training fce and configure it to use the printers who the primary contacts are for this work harriet eduardo | 0 |

99,829 | 12,479,667,933 | IssuesEvent | 2020-05-29 18:40:26 | hbersey/BenBonk-Game-Jam-1-Entry | https://api.github.com/repos/hbersey/BenBonk-Game-Jam-1-Entry | closed | Platformer Boiler Plate | Backend Game Design | - [x] NPC

- [x] Player Controls

- [x] Scoring and High Score

- [x] Health and Damage

- [ ] Player

- [ ] Enemies

- [ ] Example | 1.0 | Platformer Boiler Plate - - [x] NPC

- [x] Player Controls

- [x] Scoring and High Score

- [x] Health and Damage

- [ ] Player

- [ ] Enemies

- [ ] Example | non_priority | platformer boiler plate npc player controls scoring and high score health and damage player enemies example | 0 |

67,042 | 14,839,496,024 | IssuesEvent | 2021-01-16 01:00:09 | GooseWSS/gogs | https://api.github.com/repos/GooseWSS/gogs | opened | WS-2020-0163 (Medium) detected in marked-0.8.1.min.js | security vulnerability | ## WS-2020-0163 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>marked-0.8.1.min.js</b></p></summary>

<p>A markdown parser built for speed</p>

<p>Library home page: <a href="https://... | True | WS-2020-0163 (Medium) detected in marked-0.8.1.min.js - ## WS-2020-0163 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>marked-0.8.1.min.js</b></p></summary>

<p>A markdown parser bui... | non_priority | ws medium detected in marked min js ws medium severity vulnerability vulnerable library marked min js a markdown parser built for speed library home page a href path to vulnerable library gogs public plugins marked marked min js dependency hierarchy x ... | 0 |

175,863 | 14,543,407,383 | IssuesEvent | 2020-12-15 16:50:32 | thenewboston-developers/Website | https://api.github.com/repos/thenewboston-developers/Website | closed | Update Docs for Bank - Invalid Blocks | PR Reward - 1000 documentation engineering | Update documentation and sample code for Bank - Invalid Blocks

- ensure all available endpoints (`GET`, `POST`, `PATCH`, and `DELETE`) are documented

- update request and response examples from real API data (2 items for `GET` list responses)

- JSON should be formatted with 2 space indents

- ensure any/all params a... | 1.0 | Update Docs for Bank - Invalid Blocks - Update documentation and sample code for Bank - Invalid Blocks

- ensure all available endpoints (`GET`, `POST`, `PATCH`, and `DELETE`) are documented

- update request and response examples from real API data (2 items for `GET` list responses)

- JSON should be formatted with 2 ... | non_priority | update docs for bank invalid blocks update documentation and sample code for bank invalid blocks ensure all available endpoints get post patch and delete are documented update request and response examples from real api data items for get list responses json should be formatted with ... | 0 |

93,321 | 8,408,981,572 | IssuesEvent | 2018-10-12 05:00:33 | nodejs/node | https://api.github.com/repos/nodejs/node | opened | Investigate flaky parallel/test-repl-tab-complete (debug build) | CI / flaky test repl | * **Version**: master

* **Platform**: x64 Linux Debug build

* **Subsystem**: repl

https://ci.nodejs.org/job/node-test-commit-linux-containered/7719/nodes=ubuntu1604_sharedlibs_debug_x64/console

```

14:10:10 not ok 1615 parallel/test-repl-tab-complete

14:10:10 ---

14:10:10 duration_ms: 62.152

14:10:10 ... | 1.0 | Investigate flaky parallel/test-repl-tab-complete (debug build) - * **Version**: master

* **Platform**: x64 Linux Debug build

* **Subsystem**: repl

https://ci.nodejs.org/job/node-test-commit-linux-containered/7719/nodes=ubuntu1604_sharedlibs_debug_x64/console

```

14:10:10 not ok 1615 parallel/test-repl-tab-com... | non_priority | investigate flaky parallel test repl tab complete debug build version master platform linux debug build subsystem repl not ok parallel test repl tab complete duration ms severity fail stack ... | 0 |

62,385 | 25,979,754,137 | IssuesEvent | 2022-12-19 17:42:29 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | closed | aws_autoscaling_group - setting suspended processes to empty list attaches all processes instead | bug service/autoscaling stale | ### Terraform Version

v0.10.4

### Affected Resource(s)

Please list the resources as a list, for example:

- aws_autoscaling_group

If this issue appears to affect multiple resources, it may be an issue with Terraform's core, so please mention this.

### Terraform Configuration Files

```hcl

variable "load_bal... | 1.0 | aws_autoscaling_group - setting suspended processes to empty list attaches all processes instead - ### Terraform Version

v0.10.4

### Affected Resource(s)

Please list the resources as a list, for example:

- aws_autoscaling_group

If this issue appears to affect multiple resources, it may be an issue with Terrafo... | non_priority | aws autoscaling group setting suspended processes to empty list attaches all processes instead terraform version affected resource s please list the resources as a list for example aws autoscaling group if this issue appears to affect multiple resources it may be an issue with terraform... | 0 |

1,006 | 2,569,635,093 | IssuesEvent | 2015-02-10 00:09:29 | webgme/webgme | https://api.github.com/repos/webgme/webgme | closed | Add documentation and script for setting up DSML repository. | Documentation Enhancement Major | The main ReadMe should have a new section and modify the src/bin/generate_config.js to put a configuration file and app.js file in the root (cwd) of the repository using webgme. | 1.0 | Add documentation and script for setting up DSML repository. - The main ReadMe should have a new section and modify the src/bin/generate_config.js to put a configuration file and app.js file in the root (cwd) of the repository using webgme. | non_priority | add documentation and script for setting up dsml repository the main readme should have a new section and modify the src bin generate config js to put a configuration file and app js file in the root cwd of the repository using webgme | 0 |

80,356 | 15,586,279,825 | IssuesEvent | 2021-03-18 01:34:50 | attesch/myretail | https://api.github.com/repos/attesch/myretail | opened | CVE-2020-5398 (High) detected in spring-web-5.0.4.RELEASE.jar | security vulnerability | ## CVE-2020-5398 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-5.0.4.RELEASE.jar</b></p></summary>

<p>Spring Web</p>

<p>Library home page: <a href="https://github.com/spri... | True | CVE-2020-5398 (High) detected in spring-web-5.0.4.RELEASE.jar - ## CVE-2020-5398 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-5.0.4.RELEASE.jar</b></p></summary>

<p>Sprin... | non_priority | cve high detected in spring web release jar cve high severity vulnerability vulnerable library spring web release jar spring web library home page a href path to dependency file myretail build gradle path to vulnerable library myretail build gradle dependency hi... | 0 |

321,601 | 23,863,016,009 | IssuesEvent | 2022-09-07 08:42:42 | vercel/next.js | https://api.github.com/repos/vercel/next.js | opened | Docs: `output: "standalone"` conflicts with discouraged usage of Custom Servers | template: documentation | ### What is the improvement or update you wish to see?

The [Custom Server documentation](https://nextjs.org/docs/advanced-features/custom-server) highlights

> A custom server will remove important performance optimizations, like serverless functions and [Automatic Static Optimization](https://nextjs.org/docs/advanc... | 1.0 | Docs: `output: "standalone"` conflicts with discouraged usage of Custom Servers - ### What is the improvement or update you wish to see?

The [Custom Server documentation](https://nextjs.org/docs/advanced-features/custom-server) highlights

> A custom server will remove important performance optimizations, like serve... | non_priority | docs output standalone conflicts with discouraged usage of custom servers what is the improvement or update you wish to see the highlights a custom server will remove important performance optimizations like serverless functions and however builds a custom server inside next stand... | 0 |

82,584 | 15,648,359,139 | IssuesEvent | 2021-03-23 05:33:40 | YJSoft/namuhub | https://api.github.com/repos/YJSoft/namuhub | closed | CVE-2019-16792 (High) detected in waitress-0.8.9.tar.gz - autoclosed | security vulnerability | ## CVE-2019-16792 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>waitress-0.8.9.tar.gz</b></p></summary>

<p>Waitress WSGI server</p>

<p>Library home page: <a href="https://files.pytho... | True | CVE-2019-16792 (High) detected in waitress-0.8.9.tar.gz - autoclosed - ## CVE-2019-16792 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>waitress-0.8.9.tar.gz</b></p></summary>

<p>Wait... | non_priority | cve high detected in waitress tar gz autoclosed cve high severity vulnerability vulnerable library waitress tar gz waitress wsgi server library home page a href path to dependency file namuhub requirements txt path to vulnerable library namuhub requirements txt ... | 0 |

43,667 | 23,326,840,960 | IssuesEvent | 2022-08-08 22:18:00 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Regressions in System.Tests.Perf_UInt64 | area-System.Runtime tenet-performance tenet-performance-benchmarks refs/heads/main RunKind=micro Windows 10.0.19041 Regression CoreClr arm64 | ### Run Information

Architecture | arm64

-- | --

OS | Windows 10.0.19041

Baseline | [b92de6bf0351280cd36221f3232b2964a4e61e88](https://github.com/dotnet/runtime/commit/b92de6bf0351280cd36221f3232b2964a4e61e88)

Compare | [d4a9ade2dfbee1ef532e7793ea9c330c51b5c028](https://github.com/dotnet/runtime/commit/d4a9ade2d... | True | Regressions in System.Tests.Perf_UInt64 - ### Run Information

Architecture | arm64

-- | --

OS | Windows 10.0.19041

Baseline | [b92de6bf0351280cd36221f3232b2964a4e61e88](https://github.com/dotnet/runtime/commit/b92de6bf0351280cd36221f3232b2964a4e61e88)

Compare | [d4a9ade2dfbee1ef532e7793ea9c330c51b5c028](https://... | non_priority | regressions in system tests perf run information architecture os windows baseline compare diff regressions in system collections containskeyfalse lt string string gt benchmark baseline test test base test quality edge detector baseline ir ... | 0 |

48,121 | 13,301,491,813 | IssuesEvent | 2020-08-25 13:02:07 | rammatzkvosky/jdb | https://api.github.com/repos/rammatzkvosky/jdb | opened | CVE-2019-16335 (High) detected in jackson-databind-2.8.8.jar | security vulnerability | ## CVE-2019-16335 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-16335 (High) detected in jackson-databind-2.8.8.jar - ## CVE-2019-16335 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.8.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm jdb pom xml path ... | 0 |

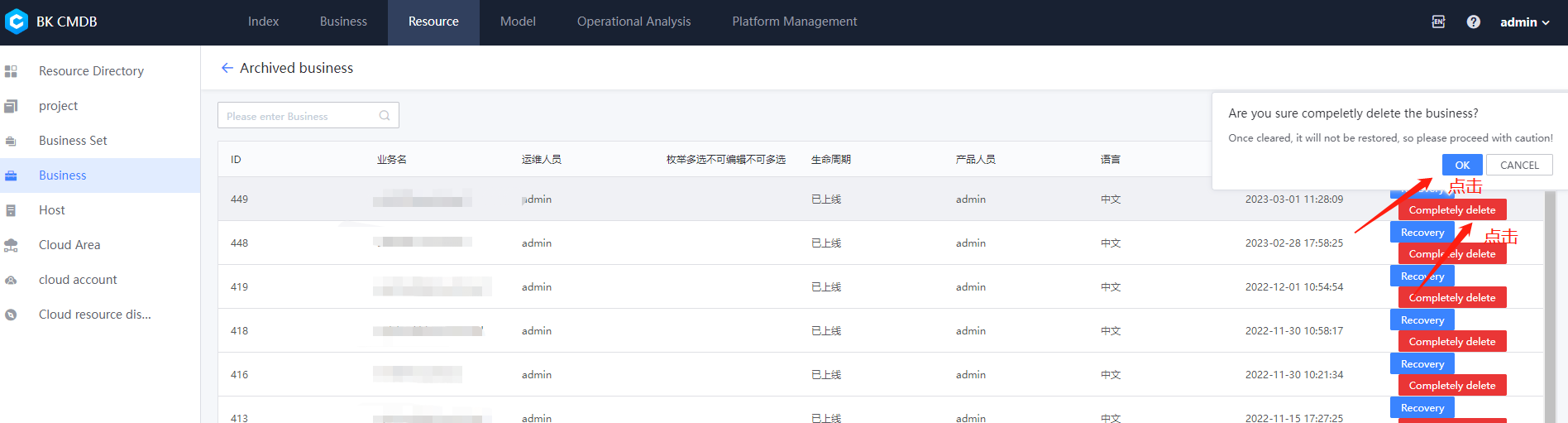

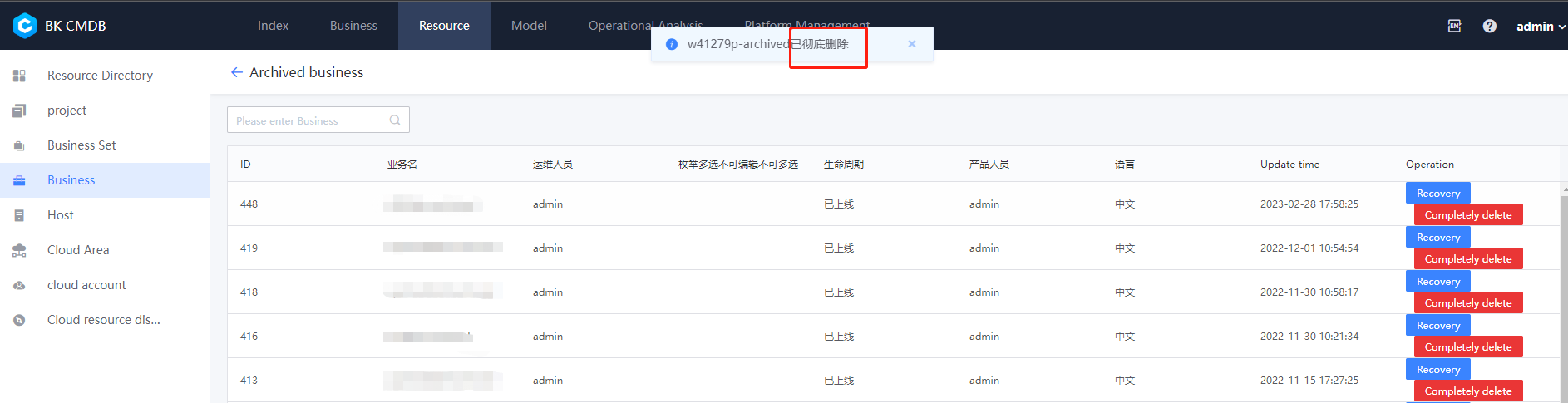

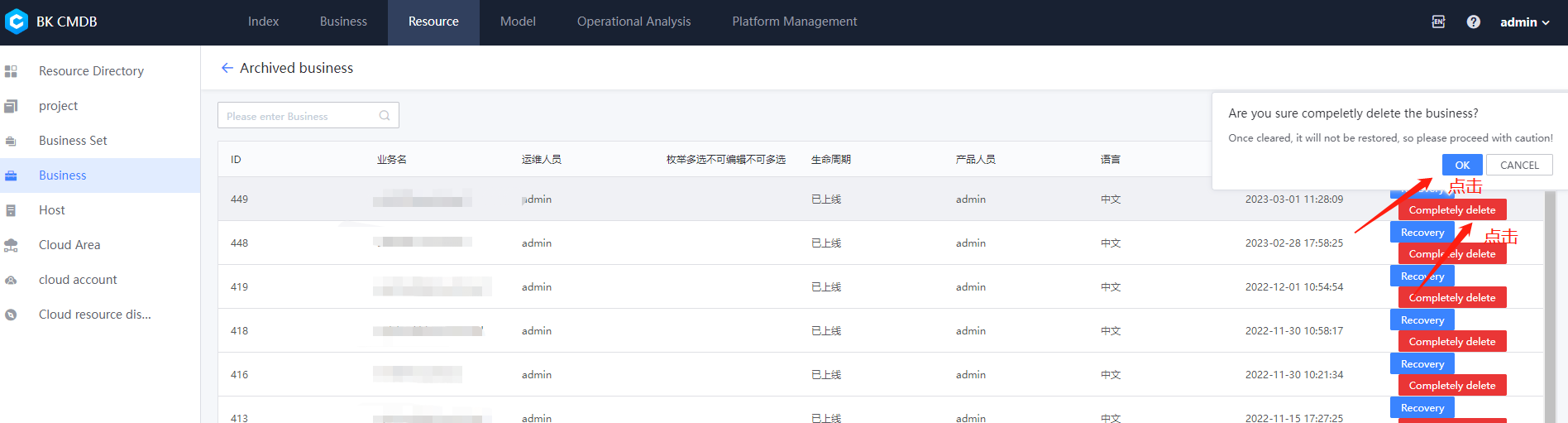

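334,854 | 29,993,598,322 | IssuesEvent | 2023-06-26 02:15:58 | TencentBlueKing/bk-cmdb | https://api.github.com/repos/TencentBlueKing/bk-cmdb | closed | [3.10.24-alpha1] When deleting a business, the deletion success message and the searchable fields in the search box are not internationalized | grayed tested | 1. Preconditions

Use the Chinese/English language switch to set the language to English or Chinese

2. Steps to reproduce

Delete a business as shown in the figure below

After confirming the deletion, the success message contains the Chinese text 已彻底删除 (permanently deleted)

The searchable fields in the search box are not internationalized

After confirming the deletion, the success message contains the Chinese text 已彻底删除 (permanently deleted)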

that documents your project including:

Wireframes

Technologies used

Features

Future work

| 1.0 | Write README that documents the project - Write a README (using Markdown) that documents your project including:

Wireframes

Technologies used

Features

Future work

| non_priority | write readme that documents the project write a readme using markdown that documents your project including wireframes technologies used features future work | 0 |

4,729 | 2,871,346,919 | IssuesEvent | 2015-06-08 01:31:34 | tjchambers32/Embedded-Reflex-Test | https://api.github.com/repos/tjchambers32/Embedded-Reflex-Test | closed | Document Original Dev Board | documentation | It would be good to document the hardware platform this was originally designed on (the ECEn 330 Dev Board). We should get a picture at least. | 1.0 | Document Original Dev Board - It would be good to document the hardware platform this was originally designed on (the ECEn 330 Dev Board). We should get a picture at least. | non_priority | document original dev board it would be good to document the hardware platform this was originally designed on the ecen dev board we should get a picture at least | 0 |

300,542 | 22,685,832,915 | IssuesEvent | 2022-07-04 14:01:13 | nf-core/eager | https://api.github.com/repos/nf-core/eager | closed | Clarify "very short reads" in helptext of `clip_readlength` | documentation next-patch | The current helptext reads:

>Defines the minimum read length that is required for reads after merging to be considered for downstream analysis after read merging. Default is 30.

>Note that performing read length filtering at this step is not reliable for correct endogenous DNA calculation, when you have a large perce... | 1.0 | Clarify "very short reads" in helptext of `clip_readlength` - The current helptext reads:

>Defines the minimum read length that is required for reads after merging to be considered for downstream analysis after read merging. Default is 30.

>Note that performing read length filtering at this step is not reliable for c... | non_priority | clarify very short reads in helptext of clip readlength the current helptext reads defines the minimum read length that is required for reads after merging to be considered for downstream analysis after read merging default is note that performing read length filtering at this step is not reliable for co... | 0 |
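To make the helptext above concrete, here is a minimal sketch of what a minimum-read-length filter does. It assumes reads are plain strings; `filter_by_min_length` and the sample reads are hypothetical and are not taken from nf-core/eager or its underlying tools. Reads dropped at this point never reach later steps, which is presumably why the note warns about downstream endogenous DNA calculations.

```python
def filter_by_min_length(reads, min_length=30):
    """Keep only reads whose length is at least min_length (default 30)."""
    return [read for read in reads if len(read) >= min_length]


# Hypothetical merged reads of length 12, 30 and 45.
reads = ["A" * 12, "C" * 30, "G" * 45]
kept = filter_by_min_length(reads)

# Reads shorter than the threshold are discarded before any later analysis.
print(len(kept), "of", len(reads), "reads kept")
```

Lowering `min_length` keeps more very short reads in the data set; raising it removes them before any later percentage is computed.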

221,800 | 24,659,153,978 | IssuesEvent | 2022-10-18 04:21:29 | valtech-ch/microservice-kubernetes-cluster | https://api.github.com/repos/valtech-ch/microservice-kubernetes-cluster | reopened | CVE-2019-16335 (High) detected in jackson-databind-2.9.8.jar | security vulnerability | ## CVE-2019-16335 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-16335 (High) detected in jackson-databind-2.9.8.jar - ## CVE-2019-16335 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file functions build gradle path ... | 0 |

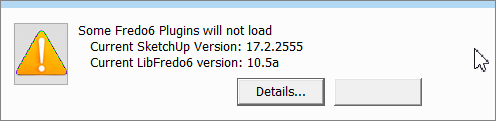

179,484 | 14,704,652,331 | IssuesEvent | 2021-01-04 16:49:18 | SketchUp/api-issue-tracker | https://api.github.com/repos/SketchUp/api-issue-tracker | closed | UI::Notification - The two buttons always appears | Ruby API SketchUp documentation | If you use **only one** button via _#on_accept_ or _#on_dismiss_, BOTH buttons always appear in the notification box, the second one being empty.

| 1.0 | UI::Notification - The two buttons always appears - If you use **only one** button via _#on_accept_ or _#on_dismiss_, BOTH buttons always appear in the notification box, the second one being empty.

| non_priority | ui notification the two buttons always appears if you use only one button via on accept or on dismiss both buttons always appear in the notification box the second one being empty | 0 |

17,936 | 5,535,189,948 | IssuesEvent | 2017-03-21 16:51:55 | phetsims/masses-and-springs | https://api.github.com/repos/phetsims/masses-and-springs | closed | Adjust approach to ToolboxPanel.js start event. | dev:code-review | As per the suggestion of @samreid, this code should be revised for clarity. It seems to be finding the parent ScreenView before starting the "start" event. It isn't clear whether this code is necessary and should be revised.

```js

if ( !timerParentScreenView2 ) {

  var testNode = self;

... | 1.0 | Adjust approach to ToolboxPanel.js start event. - As per the suggestion of @samreid, this code should be revised for clarity. It seems to be finding the parent ScreenView before starting the "start" event. It isn't clear whether this code is necessary and should be revised.

```js

if ( !timerParentScreenVi... | non_priority | adjust approach to toolboxpanel js start event as per the suggestion of samreid this code should be revised for clarity it seems to be finding the parent screenview before starting the start event it isn t clear whether this code is necessary and should be revised js if ... | 0 |

130,422 | 18,072,996,785 | IssuesEvent | 2021-09-21 06:27:14 | BitcoinDesign/Guide | https://api.github.com/repos/BitcoinDesign/Guide | opened | Add ⚡️ content to `Designing Bitcoin Products` > `Design resources` | copy Design Bitcoin Products | Add Lightning-related content to the [Design resources](https://bitcoin.design/guide/designing-products/design-resources/) page, as appropriate. This page likely needs only minor tweaks.

Secondary, do a general review of this page (check overlap with other pages, add relevant cross-links...) and check open issues fo... | 1.0 | Add ⚡️ content to `Designing Bitcoin Products` > `Design resources` - Add Lightning-related content to the [Design resources](https://bitcoin.design/guide/designing-products/design-resources/) page, as appropriate. This page likely needs only minor tweaks.

Secondary, do a general review of this page (check overlap w... | non_priority | add ⚡️ content to designing bitcoin products design resources add lightning related content to the page as appropriate this page likely needs only minor tweaks secondary do a general review of this page check overlap with other pages add relevant cross links and check open issues for changes on... | 0 |

6,680 | 3,040,446,607 | IssuesEvent | 2015-08-07 15:27:03 | emberjs/ember.js | https://api.github.com/repos/emberjs/ember.js | closed | Document `unbound` | Documentation Good for New Contributors | Changes to the behavior of `unbound` were made in: https://github.com/emberjs/ember.js/pull/11965

This helper has no docs, but we should add some now that it is roughly sane. | 1.0 | Document `unbound` - Changes to the behavior of `unbound` were made in: https://github.com/emberjs/ember.js/pull/11965

This helper has no docs, but we should add some now that it is roughly sane. | non_priority | document unbound changes to the behavior of unbound were made in this helper has no docs but we should add some now that it is roughly sane | 0 |

5,505 | 7,189,844,847 | IssuesEvent | 2018-02-02 15:22:02 | ga4gh/dockstore | https://api.github.com/repos/ga4gh/dockstore | opened | Refresh of source files should record last modification date | enhancement web service | ## Feature Request

### Desired behaviour

Source files should pull down modification dates, these are files for tools, workflows, dockerfiles, etc. | 1.0 | Refresh of source files should record last modification date - ## Feature Request

### Desired behaviour

Source files should pull down modification dates, these are files for tools, workflows, dockerfiles, etc. | non_priority | refresh of source files should record last modification date feature request desired behaviour source files should pull down modification dates these are files for tools workflows dockerfiles etc | 0 |

89,651 | 11,271,355,744 | IssuesEvent | 2020-01-14 12:53:35 | functional-streams-for-scala/fs2 | https://api.github.com/repos/functional-streams-for-scala/fs2 | closed | Improve Stream#observe | design/refactoring | Find a way to improve the current semantics of observe and, potentially, its performance | 1.0 | Improve Stream#observe - Find a way to improve the current semantics of observe and, potentially, its performance | non_priority | improve stream observe find the way how to improve current observe semantics and potentially its performance | 0 |

149,692 | 13,299,441,272 | IssuesEvent | 2020-08-25 09:44:34 | smsdigital/styleguide-variables | https://api.github.com/repos/smsdigital/styleguide-variables | closed | Proper readme | documentation help wanted | Create a proper readme file.

This should include examples of the output of the converted files but also usage-examples. | 1.0 | Proper readme - Create a proper readme file.

This should include examples of the output of the converted files but also usage-examples. | non_priority | proper readme create a proper readme file this should include examples of the output of the converted files but also usage examples | 0 |

12,250 | 14,767,694,787 | IssuesEvent | 2021-01-10 08:16:30 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Fork start method is susceptible to deadlocks | feature module: multiprocessing module: multithreading todo triaged | ## Versions

```

Ubuntu 16.04

Python 3.6.2

pytorch 0.1.12_2

```

## Issue description

```python

import torch

import torch.multiprocessing as mp

import torch.functional as f

import threading

import numpy as np

from timeit import timeit

def build(cuda=False):

    nn = torch.nn.Sequential(

torch... | 1.0 | Fork start method is susceptible to deadlocks - ## Versions

```

Ubuntu 16.04

Python 3.6.2

pytorch 0.1.12_2

```

## Issue description

```python

import torch

import torch.multiprocessing as mp

import torch.functional as f

import threading

import numpy as np

from timeit import timeit

def build(cuda=False... | non_priority | fork start method is susceptible to deadlocks versions ubuntu python pytorch issue description python import torch import torch multiprocessing as mp import torch functional as f import threading import numpy as np from timeit import timeit def build cuda false ... | 0 |
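The issue title describes a general hazard: a child created with the fork start method inherits any locks that other threads held in the parent at fork time, and nothing in the child will ever release them. One commonly used way to sidestep this class of problem is to request the "spawn" start method. The sketch below is an illustration under assumptions, not the fix adopted in PyTorch: it uses only the standard-library `multiprocessing` module rather than `torch.multiprocessing`, and `square` and `run_with_spawn` are hypothetical names.

```python
import multiprocessing as mp


def square(x):
    """Trivial worker that stands in for real per-process work."""
    return x * x


def run_with_spawn(values):
    """Map square over values using worker processes started with spawn."""
    # "spawn" starts each worker as a fresh interpreter, so the child does not
    # inherit locks that other threads held in the parent process.
    ctx = mp.get_context("spawn")
    with ctx.Pool(processes=2) as pool:
        return pool.map(square, values)
```

When running this as a script, call `run_with_spawn([1, 2, 3])` from inside an `if __name__ == "__main__":` guard, because spawned workers re-import the main module.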

72,601 | 19,345,901,426 | IssuesEvent | 2021-12-15 10:45:58 | icsharpcode/AvalonEdit | https://api.github.com/repos/icsharpcode/AvalonEdit | closed | Fix Unit Test for .NET Core 3.1 & .NET 6.0 | Issue-Bug Area-Build | ```

Log level is set to Informational (Default).

Connected to test environment '< Local Windows Environment >'

Test data store opened in 0.018 sec.

========== Starting test discovery ==========

NUnit Adapter 4.1.0.0: Test discovery starting

NUnit Adapter 4.1.0.0: Test discovery complete

No test is available in D... | 1.0 | Fix Unit Test for .NET Core 3.1 & .NET 6.0 - ```

Log level is set to Informational (Default).

Connected to test environment '< Local Windows Environment >'

Test data store opened in 0.018 sec.

========== Starting test discovery ==========

NUnit Adapter 4.1.0.0: Test discovery starting

NUnit Adapter 4.1.0.0: Test ... | non_priority | fix unit test for net core net log level is set to informational default connected to test environment test data store opened in sec starting test discovery nunit adapter test discovery starting nunit adapter test discovery complete no test is... | 0 |

260,985 | 19,692,932,654 | IssuesEvent | 2022-01-12 09:11:13 | dreemurrs-embedded/Pine64-Arch | https://api.github.com/repos/dreemurrs-embedded/Pine64-Arch | closed | The Barebones Image Quick Start wiki page should be updated for the Pinephone keyboard | documentation | The Pinephone Keyboard is a third option besides a USB keyboard or SSH.

[The page in question](https://github.com/dreemurrs-embedded/Pine64-Arch/wiki/Barebone-Image-Quick-Start) | 1.0 | The Barebones Image Quick Start wiki page should be updated for the Pinephone keyboard - The Pinephone Keyboard is a third option besides a USB keyboard or SSH.

[The page in question](https://github.com/dreemurrs-embedded/Pine64-Arch/wiki/Barebone-Image-Quick-Start) | non_priority | the barebones image quick start wiki page should be updated for the pinephone keyboard the pinephone keyboard is a third option besides a usb keyboard or ssh | 0 |

66,429 | 20,194,757,055 | IssuesEvent | 2022-02-11 09:38:17 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Settings.parseRetainCommentsBetweenQueries doesn't work for the last comment | T: Defect P: Medium E: All Editions C: Parser | The new feature https://github.com/jOOQ/jOOQ/issues/12538 implemented in jOOQ 3.16 doesn't work for trailing comments:

```sql

-- ==========================================

-- header

-- created 2000-01-01

-- ==========================================

-- Change Request #14813

-- -------------------------------... | 1.0 | Settings.parseRetainCommentsBetweenQueries doesn't work for the last comment - The new feature https://github.com/jOOQ/jOOQ/issues/12538 implemented in jOOQ 3.16 doesn't work for trailing comments:

```sql

-- ==========================================

-- header

-- created 2000-01-01

-- ===========================... | non_priority | settings parseretaincommentsbetweenqueries doesn t work for the last comment the new feature implemented in jooq doesn t work for trailing comments sql header created change request ... | 0 |

55,708 | 6,489,357,128 | IssuesEvent | 2017-08-21 01:09:03 | FireFly-WoW/FireFly-IssueTracker | https://api.github.com/repos/FireFly-WoW/FireFly-IssueTracker | closed | Icemist village bug | Status: Needs Testing | **Description:**

There's a Star's Rest Sentinel following me around in Icemist Village.

**Current behaviour:**

This one keeps following me around here. It also keeps evading my attacks, and it's a level 75 elite.

**Expected behaviour:**

It shouldn't happen.

**Steps to reproduce the problem:**

Go to Icemis... | 1.0 | Icemist village bug - **Description:**

There's a Star's Rest Sentinel following me around in Icemist Village.

**Current behaviour:**

This one keeps following me around here. It also keeps evading my attacks, and it's a level 75 elite.

**Expected behaviour:**

It shouldn't happen.

**Steps to reproduce the prob... | non_priority | icemist village bug description theres an star s rest sentinel following me around in icemist village current behaviour this one keeps following me around here it also keeps evading my attacks and its lvl elite expected behaviour it shouldnt happen steps to reproduce the probl... | 0 |

89,887 | 10,618,334,719 | IssuesEvent | 2019-10-13 03:27:48 | sharyuwu/optimum-tilt-of-solar-panels | https://api.github.com/repos/sharyuwu/optimum-tilt-of-solar-panels | closed | SRS Review: Internal Document Links Not Working | bug documentation | Some of the references in the document aren't being called correctly in the LaTeX file, which causes them to show up as ?? in the pdf.

| 1.0 | SRS Review: Internal Document Links Not Working - Some of the references in the document aren't being called correctly in the LaTeX file, which causes them to show up as ?? in the pdf.

| non_priority | srs review internal document links not working some of the references in the document aren t being called correctly in the latex file which causes them to show up as in the pdf | 0 |

96,388 | 16,129,628,621 | IssuesEvent | 2021-04-29 01:05:55 | RG4421/ampere-centos-kernel | https://api.github.com/repos/RG4421/ampere-centos-kernel | opened | CVE-2019-19079 (High) detected in linuxv5.2 | security vulnerability | ## CVE-2019-19079 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torva... | True | CVE-2019-19079 (High) detected in linuxv5.2 - ## CVE-2019-19079 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Libra... | non_priority | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files ampere centos kernel net qrtr tun c ampere cento... | 0 |

83,755 | 16,361,719,124 | IssuesEvent | 2021-05-14 10:30:14 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.0] Unused modal ? | No Code Attached Yet | Can anyone tell me where `administrator\components\com_fields\tmpl\field\modal.php` is used | 1.0 | [4.0] Unused modal ? - Can anyone tell me where `administrator\components\com_fields\tmpl\field\modal.php` is used | non_priority | unused modal can anyone tell me where administrator components com fields tmpl field modal php is used | 0 |

84,485 | 10,542,113,533 | IssuesEvent | 2019-10-02 12:29:36 | Automattic/woocommerce-services | https://api.github.com/repos/Automattic/woocommerce-services | closed | [Labels UI] UI for selecting rates from multiple carriers | Shipping Labels Shipping Rates [Status] Needs Design | In the "Rates" step of the "Purchase Label" modal, for each package we'll show *all* the available services that the merchant can use. Obviously there won't be rates from all the carriers (if there's a US domestic shipment I doubt we'll get rates from New Zealand Post), but there will be more than now.

We need to fi... | 1.0 | [Labels UI] UI for selecting rates from multiple carriers - In the "Rates" step of the "Purchase Label" modal, for each package we'll show *all* the available services that the merchant can use. Obviously there won't be rates from all the carriers (if there's a US domestic shipment I doubt we'll get rates from New Zeal... | non_priority | ui for selecting rates from multiple carriers in the rates step of the purchase label modal for each package we ll show all the available services that the merchant can use obviously there won t be rates from all the carriers if there s a us domestic shipment i doubt we ll get rates from new zealand post ... | 0 |

40,473 | 8,793,715,758 | IssuesEvent | 2018-12-21 21:10:13 | PegaSysEng/artemis | https://api.github.com/repos/PegaSysEng/artemis | closed | member variables should not be public | code style 💅 | ### Description

use accessors/mutators when appropriate

| 1.0 | member variables should not be public - ### Description

use accessors/mutators when appropriate

| non_priority | member variables should not be public description use accessors mutators when appropriate | 0 |

34,581 | 7,457,533,031 | IssuesEvent | 2018-03-30 05:14:29 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Error generating RDFs | C: AIS P: highest R: fixed T: defect | **Reported by sven syld on 23 Apr 2014 08:43 UTC**

Start aggregate RDF generation for DESCRIPTION_UNIT, FNS EAA.2502

[ Catchable Fatal Error: Argument 1 passed to Dira\OpendataBundle\Component\PuriResolver\PuriResolver::getPuriUrls() must implement interface Dira\OpendataBundle\Component\Behavior\Puriable,... | 1.0 | Error generating RDFs - **Reported by sven syld on 23 Apr 2014 08:43 UTC**

Start aggregate RDF generation for DESCRIPTION_UNIT, FNS EAA.2502

[ Catchable Fatal Error: Argument 1 passed to Dira\OpendataBundle\Component\PuriResolver\PuriResolver::getPuriUrls() must implement interface Dira\OpendataBundle... | non_priority | viga rdfide genereerimisel reported by sven syld on apr utc start aggregate rdf generation for description unit fns eaa catchable fatal error argument passed to dira opendatabundle component p uriresolver puriresolver getpuriurls must implement interface dira opend atabundle componen... | 0 |

19,337 | 11,200,350,352 | IssuesEvent | 2020-01-03 21:29:22 | terraform-providers/terraform-provider-aws | https://api.github.com/repos/terraform-providers/terraform-provider-aws | closed | aws_cloudwatch_event_rule is_enabled flag is not working | bug service/cloudwatch service/cloudwatchevents | <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backen... | 2.0 | aws_cloudwatch_event_rule is_enabled flag is not working - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.htm... | non_priority | aws cloudwatch event rule is enabled flag is not working please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are running into one of thes... | 0 |

9,334 | 6,848,317,137 | IssuesEvent | 2017-11-13 18:05:25 | explosion/spaCy | https://api.github.com/repos/explosion/spaCy | closed | Tokenizer: -ing contraction parsed incorrectly | help wanted help wanted (easy) language / english performance | Spacy doesn't properly tokenize words with contracted '-ing' ending:

```python

import en_core_web_sm

nlp = en_core_web_sm.load()

doc = nlp("I'm lovin' it")

print(doc[1])

# 'm – CORRECT!

print(doc[1].lemma_)

# be – CORRECT!

print(doc[2])

# lovin – INCORRECT!

print(doc[2].lemma_)

# lovin – INCORRECT!

print(d... | True | Tokenizer: -ing contraction parsed incorrectly - Spacy doesn't properly tokenize words with contracted '-ing' ending:

```python

import en_core_web_sm

nlp = en_core_web_sm.load()

doc = nlp("I'm lovin' it")

print(doc[1])

# 'm – CORRECT!

print(doc[1].lemma_)

# be – CORRECT!

print(doc[2])

# lovin – INCORRECT!

pr... | non_priority | tokenizer ing contraction parsed incorrectly spacy doesn t properly tokenize words with contracted ing ending python import en core web sm nlp en core web sm load doc nlp i m lovin it print doc m – correct print doc lemma be – correct print doc lovin – incorrect print do... | 0 |
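The report above expects "lovin'" to be treated as a contracted form of "loving". As a rough illustration of that expectation, the sketch below rewrites a trailing -in' as -ing with a regular expression. It is an assumption-laden toy, not spaCy's tokenizer or its exception mechanism: `expand_ing_contraction` is a hypothetical helper, and the pattern is naive enough to misfire on a single-quoted word that really ends in "in" (for example 'begin').

```python
import re

# A word ending in "in" followed by an apostrophe that is not inside a longer
# token, e.g. "lovin'" (but not "ain't", where a letter follows the apostrophe).
_ING_CONTRACTION = re.compile(r"\b(\w+in)'(?!\w)")


def expand_ing_contraction(text):
    """Rewrite contracted -in' endings as -ing (naive, for illustration only)."""
    return _ING_CONTRACTION.sub(r"\1g", text)


print(expand_ing_contraction("I'm lovin' it"))  # prints: I'm loving it
```

A normalization step like this would run before tokenization; whether that is the right place for the fix in spaCy itself is not something the report settles.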

79,990 | 7,735,746,275 | IssuesEvent | 2018-05-27 18:30:56 | kubernetes/test-infra | https://api.github.com/repos/kubernetes/test-infra | closed | Prow job status icons are not showing up correctly in Firefox for Android on Marshmallow | area/prow kind/bug sig/testing | Firefox version: 59.0.2

Android version: 6.0.1

/area prow

/kind bug

@kubernetes/sig-testing-bugs | 1.0 | Prow job status icons are not showing up correctly in Firefox for Android on Marshmallow - Firefox version: 59.0.2

Android version: 6.0.1

/area prow

/kind bug

@kubernetes/sig-testi... | non_priority | prow job status icons are not showing up correctly in firefox for android on marshmallow firefox version android version area prow kind bug kubernetes sig testing bugs | 0 |

312,796 | 26,877,146,689 | IssuesEvent | 2023-02-05 06:39:03 | sebastianbergmann/phpunit | https://api.github.com/repos/sebastianbergmann/phpunit | opened | Allow test runner extensions to disable default progress and result printing | type/enhancement feature/test-runner feature/events | Starting with [PHPUnit 10](https://phpunit.de/announcements/phpunit-10.html) and its [event system](https://github.com/sebastianbergmann/phpunit/issues/4676), the [test runner can be extended](https://phpunit.readthedocs.io/en/10.0/extending-phpunit.html#extending-the-test-runner).

To extend PHPUnit's test runner, y... | 1.0 | Allow test runner extensions to disable default progress and result printing - Starting with [PHPUnit 10](https://phpunit.de/announcements/phpunit-10.html) and its [event system](https://github.com/sebastianbergmann/phpunit/issues/4676), the [test runner can be extended](https://phpunit.readthedocs.io/en/10.0/extending... | non_priority | allow test runner extensions to disable default progress and result printing starting with and its the to extend phpunit s test runner you implement the interface this interface requires a bootstrap method to implemented this method is called by phpunit s test runner to bootstrap a configure... | 0 |

148,566 | 19,534,403,093 | IssuesEvent | 2021-12-31 01:34:29 | panasalap/linux-4.1.15 | https://api.github.com/repos/panasalap/linux-4.1.15 | opened | CVE-2015-8787 (High) detected in linux-stable-rtv4.1.33 | security vulnerability | ## CVE-2015-8787 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page... | True | CVE-2015-8787 (High) detected in linux-stable-rtv4.1.33 - ## CVE-2015-8787 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwri... | non_priority | cve high detected in linux stable cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in base branch master vulnerable source files net netfilter nf... | 0 |

404,629 | 27,491,957,622 | IssuesEvent | 2023-03-04 18:28:34 | ItKlubBozoLagan/kontestis | https://api.github.com/repos/ItKlubBozoLagan/kontestis | opened | Improve documentation | documentation | - [ ] Make a nicer front page

- [ ] Document all new features

- [ ] Add more technical details | 1.0 | Improve documentation - - [ ] Make a nicer front page

- [ ] Document all new features

- [ ] Add more technical details | non_priority | improve documentation make a nicer front page document all new features add more technical details | 0 |

59,031 | 11,939,567,557 | IssuesEvent | 2020-04-02 15:23:35 | magento/magento2-phpstorm-plugin | https://api.github.com/repos/magento/magento2-phpstorm-plugin | opened | Code generation. Module XML template description | code generation distributed-contribution-day-2020 good first issue | ### Description (*)

Describe the purpose of the module.xml file template and containing variables.

See the example here #68

| 1.0 | Code generation. Module XML template description - ### Description (*)

Describe the purpose of the module.xml file template and containing variables.

See the example here #68

| non_priority | code generation module xml template description description describe the purpose of the module xml file template and containing variables see the example here | 0 |

208,814 | 23,655,800,150 | IssuesEvent | 2022-08-26 11:05:31 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [Security Solution] Error "Invalid IP" occurs if each decimal number of the IP address starts with zero and the end is eight or nine | bug impact:low Team: SecuritySolution Team:Onboarding and Lifecycle Mgt v8.5.0 | **Description:**

Error "Invalid IP" occurs if each decimal number of the IP address starts with zero and the end is eight or nine

**Build Details:**

```

VERSION: 8.5.0

BUILD: 55792

COMMIT: 2f65e138d75bf940d12318008b21f440da90fecf

ARTIFACT PAGE: https://snapshots.elastic.co/8.5.0-17b8a62d/summary-8.5.0-SNAPSH... | True | [Security Solution] Error "Invalid IP" occurs if each decimal number of the IP address starts with zero and the end is eight or nine - **Description:**

Error "Invalid IP" occurs if each decimal number of the IP address starts with zero and the end is eight or nine

**Build Details:**

```

VERSION: 8.5.0

BUILD: 5... | non_priority | error invalid ip occurs if each decimal number of the ip address starts with zero and the end is eight or nine description error invalid ip occurs if each decimal number of the ip address starts with zero and the end is eight or nine build details version build commit artif... | 0 |

26,525 | 20,192,701,893 | IssuesEvent | 2022-02-11 07:38:34 | DestinyItemManager/DIM | https://api.github.com/repos/DestinyItemManager/DIM | opened | Automatically run yarn i18n | Infrastructure: i18n | For PRs, it'd be handy if we had a workflow that detected changes to `config/i18n.json`, ran `yarn i18n`, and then committed to the branch if there were any changes. This is especially useful when committing suggestion changes. | 1.0 | Automatically run yarn i18n - For PRs, it'd be handy if we had a workflow that detected changes to `config/i18n.json`, ran `yarn i18n`, and then committed to the branch if there were any changes. This is especially useful when committing suggestion changes. | non_priority | automatically run yarn for prs it d be handy if we had a workflow that detected changes to config json ran yarn and then committed to the branch if there were any changes this is especially useful when committing suggestion changes | 0 |

241,256 | 20,113,131,358 | IssuesEvent | 2022-02-07 16:48:00 | ChainSafe/gossamer | https://api.github.com/repos/ChainSafe/gossamer | closed | lib/trie: refactor and add tests for trie and database related code | tests Type: Chore w3f approved | ## Task summary

- [x] Database code fully tested; then

- [x] Database code refactored

- [x] Trie code (lib/trie/trie.go) fully tested

- [ ] Trie code refactored | 1.0 | lib/trie: refactor and add tests for trie and database related code - ## Task summary

- [x] Database code fully tested; then

- [x] Database code refactored

- [x] Trie code (lib/trie/trie.go) fully tested

- [ ] Trie code refactored | non_priority | lib trie refactor and add tests for trie and database related code task summary database code fully tested then database code refactored trie code lib trie trie go fully tested trie code refactored | 0 |

67,108 | 14,853,869,177 | IssuesEvent | 2021-01-18 10:31:52 | kadirselcuk/sanity-nuxt-events | https://api.github.com/repos/kadirselcuk/sanity-nuxt-events | closed | CVE-2020-8175 (Medium) detected in jpeg-js-0.3.4.tgz - autoclosed | security vulnerability | ## CVE-2020-8175 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jpeg-js-0.3.4.tgz</b></p></summary>

<p>A pure javascript JPEG encoder and decoder</p>

<p>Library home page: <a href="... | True | CVE-2020-8175 (Medium) detected in jpeg-js-0.3.4.tgz - autoclosed - ## CVE-2020-8175 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jpeg-js-0.3.4.tgz</b></p></summary>

<p>A pure jav... | non_priority | cve medium detected in jpeg js tgz autoclosed cve medium severity vulnerability vulnerable library jpeg js tgz a pure javascript jpeg encoder and decoder library home page a href path to dependency file sanity nuxt events web package json path to vulnerable library ... | 0 |

53,065 | 10,981,407,735 | IssuesEvent | 2019-11-30 21:35:15 | AgileVentures/sfn-client | https://api.github.com/repos/AgileVentures/sfn-client | opened | Adding a how it works section to home page | code design enhancement feature help wanted | <!--- Provide a general summary of the issue in the Title above -->

We need to add a how it works section on the home page how Sing for Needs works. Optimistically with little illustrations showing the process what the charity does, what the fans are getting and how the artist is helping within.

## Expected Behavio... | 1.0 | Adding a how it works section to home page - <!--- Provide a general summary of the issue in the Title above -->

We need to add a how it works section on the home page how Sing for Needs works. Optimistically with little illustrations showing the process what the charity does, what the fans are getting and how the art... | non_priority | adding a how it works section to home page we need to add a how it works section on the home page how sing for needs works optimistically with little illustrations showing the process what the charity does what the fans are getting and how the artist is helping within expected behavior illustration... | 0 |

267,859 | 20,248,206,443 | IssuesEvent | 2022-02-14 15:32:06 | Xcov19/mycovidconnect | https://api.github.com/repos/Xcov19/mycovidconnect | closed | @codecakes Outline and refine Docker setup and add documentation and Gotchas steps; | bug documentation good first issue up-for-grabs | _Originally posted by @jayeclark in https://github.com/Xcov19/mycovidconnect/issues/233#issuecomment-987194703_

First timers to the project get confused when running the project. It is better to document and outline which **scopes** of the project they can run locally (for now)

Documentation is required to limit ... | 1.0 | @codecakes Outline and refine Docker setup and add documentation and Gotchas steps; - _Originally posted by @jayeclark in https://github.com/Xcov19/mycovidconnect/issues/233#issuecomment-987194703_

First timers to the project get confused when running the project. It is better to document and outline which **scopes*... | non_priority | codecakes outline and refine docker setup and add documentation and gotchas steps originally posted by jayeclark in first timers to the project get confused when running the project it is better to document and outline which scopes of the project they can run locally for now documentation is requir... | 0 |

148,810 | 13,248,081,257 | IssuesEvent | 2020-08-19 18:20:40 | zillow/luminaire | https://api.github.com/repos/zillow/luminaire | closed | Add documentation link to the "About" section of this repo | documentation | This is the one consistent location people usually find links to docs; we should link to it there as well. | 1.0 | Add documentation link to the "About" section of this repo - This is the one consistent location people usually find links to docs; we should link to it there as well. | non_priority | add documentation link to the about section of this repo this is the one consistent location people usually find links to docs we should link to it there as well | 0 |

125,246 | 16,749,466,356 | IssuesEvent | 2021-06-11 20:24:47 | woocommerce/woocommerce-android | https://api.github.com/repos/woocommerce/woocommerce-android | opened | Login: can the background color of the the system indicator be the same as the rest of the screen? | category: design feature: login good first issue type: enhancement | > Is it possible to make the background color of the system indicator the same as the rest of the screen? (Gray 0)

<sup>— from @Garance91540 as part of 6.8 beta testing 👍</sup>

<img src="https://user-images.githubusercontent.com/1119271/121737117-b2a54900-cab5-11eb-97fa-03367113031a.png" width="270" alt="image">

... | 1.0 | Login: can the background color of the the system indicator be the same as the rest of the screen? - > Is it possible to make the background color of the system indicator the same as the rest of the screen? (Gray 0)

<sup>— from @Garance91540 as part of 6.8 beta testing 👍</sup>

<img src="https://user-images.github... | non_priority | login can the background color of the the system indicator be the same as the rest of the screen is it possible to make the background color of the system indicator the same as the rest of the screen gray — from as part of beta testing 👍 internal reference comment testing ... | 0 |

15,501 | 5,969,786,588 | IssuesEvent | 2017-05-30 21:03:55 | dotnet/buildtools | https://api.github.com/repos/dotnet/buildtools | closed | Add property for specifying XUnit method/class to test | 0 - Backlog area-buildtools-code | To specify a method or class to run when running tests for a project using the BuildTools `Test` target, one needs to specify `"/p:XunitOptions=-method <method_name>"` or `"/p:XunitOptions=-class <class_name>"`. As mentioned in https://github.com/dotnet/buildtools/pull/1272#discussion_r93084987, this is unintuitive.

... | 1.0 | Add property for specifying XUnit method/class to test - To specify a method or class to run when running tests for a project using the BuildTools `Test` target, one needs to specify `"/p:XunitOptions=-method <method_name>"` or `"/p:XunitOptions=-class <class_name>"`. As mentioned in https://github.com/dotnet/buildtoo... | non_priority | add property for specifying xunit method class to test to specify a method or class to run when running tests for a project using the buildtools test target one needs to specify p xunitoptions method or p xunitoptions class as mentioned in this is unintuitive in addition specifying it usin... | 0 |

12,515 | 7,895,627,820 | IssuesEvent | 2018-06-29 04:38:48 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Using Dataset api with Estimator in MirroredStrategy, Non-DMA-safe string tensor error | type:bug/performance |

### System information

- **Have I written custom code (as opposed to using a stock example script provided in TensorFlow)**:

- **OS Platform and Distribution (e.g., Linux Ubuntu 16.04)**: centos

- **TensorFlow installed from (source or binary)**: pip install tensorflow-gpu

- **TensorFlow version (use command belo... | True | Using Dataset api with Estimator in MirroredStrategy, Non-DMA-safe string tensor error -

### System information

- **Have I written custom code (as opposed to using a stock example script provided in TensorFlow)**:

- **OS Platform and Distribution (e.g., Linux Ubuntu 16.04)**: centos

- **TensorFlow installed from ... | non_priority | using dataset api with estimator in mirroredstrategy non dma safe string tensor error system information have i written custom code as opposed to using a stock example script provided in tensorflow os platform and distribution e g linux ubuntu centos tensorflow installed from s... | 0 |

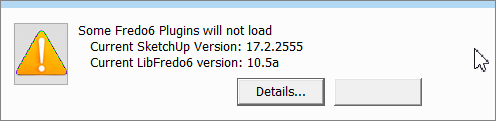

145,447 | 22,689,569,137 | IssuesEvent | 2022-07-04 18:01:19 | Joystream/atlas | https://api.github.com/repos/Joystream/atlas | reopened | Improve "No node connection banner" | design ux discussion | When I sign out and have no connection - I can see only a notification saying that there is no node connection but I don't have a feeling that I'm waiting for something - currently it looks like the screen is frozen

Moreover, the latter formatting does not provide additional value (i.e. what does `2022-11-07` mean in this case? is it a date? or something else?)

<!--session: 1668154065008-52adc948-2a6b-43f0-ae21-9d8... | 1.0 | Inconsistent formatting of dates in UG -

Moreover, the latter formatting does not provide additional value (i.e. what does `2022-11-07` mean in this case? is it a date? or something else?)

<!--session: ... | non_priority | inconsistent formatting of dates in ug moreover the latter formatting does not provide additional value i e what does mean in this case is it a date or something else | 0 |

101,506 | 12,691,573,827 | IssuesEvent | 2020-06-21 17:44:38 | zinc-collective/support | https://api.github.com/repos/zinc-collective/support | closed | Using Support. | design documentation question | Some questions!

It looks like a license is required to use Support. Do you offer a free trial? How does an interested party know that this product is ideal for their use case? (On the website, sending an email to Zinc is mentioned. I'm guessing this is so you can chat further to discuss whether the product is a good... | 1.0 | Using Support. - Some questions!