Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 844) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 12 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 248k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

122,277 | 12,148,110,954 | IssuesEvent | 2020-04-24 14:05:45 | RakipInitiative/ModelRepository | https://api.github.com/repos/RakipInitiative/ModelRepository | opened | Improve Feedback for errors (esp. OpenBUGS) | documentation question | - if a model execution fails due to changes in the parameter settings, there should be an error message specifying the cause, e.g:

- parameters out of bounds

- parameters are the wrong type

- for OpenBUGS:

- if the simulation in the software fails, is there a way to get the error message from R? | 1.0 | Improve Feedback for errors (esp. OpenBUGS) - - if a model execution fails due to changes in the parameter settings, there should be an error message specifying the cause, e.g:

- parameters out of bounds

- parameters are the wrong type

- for OpenBUGS:

- if the simulation in the software fails, is there a way... | non_priority | improve feedback for errors esp openbugs if a model execution fails due to changes in the parameter settings there should be an error message specifying the cause e g parameters out of bounds parameters are the wrong type for openbugs if the simulation in the software fails is there a way... | 0 |

184,564 | 21,784,912,473 | IssuesEvent | 2022-05-14 01:46:53 | jinuem/Shopping-Cart-POC | https://api.github.com/repos/jinuem/Shopping-Cart-POC | closed | WS-2019-0333 (High) detected in handlebars-4.1.0.tgz - autoclosed | security vulnerability | ## WS-2019-0333 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.0.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ef... | True | WS-2019-0333 (High) detected in handlebars-4.1.0.tgz - autoclosed - ## WS-2019-0333 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.0.tgz</b></p></summary>

<p>Handlebars... | non_priority | ws high detected in handlebars tgz autoclosed ws high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file ... | 0 |

65,922 | 27,278,951,799 | IssuesEvent | 2023-02-23 08:34:31 | Epitech-Nantes-Tek3/A-equals-l-squared | https://api.github.com/repos/Epitech-Nantes-Tek3/A-equals-l-squared | closed | Add Calendar Service | enhancement Service Front feature | **Short description:**

Add the google calendar service.

**Describe the solution you'd like**

First i want to be able to create a meeting in my google calendar, then in the google calendar of the logged user,

and then i want to be able to have an action when it's the time of the new meeting.

| 1.0 | Add Calendar Service - **Short description:**

Add the google calendar service.

**Describe the solution you'd like**

First i want to be able to create a meeting in my google calendar, then in the google calendar of the logged user,

and then i want to be able to have an action when it's the time of the new meeting.... | non_priority | add calendar service short description add the google calendar service describe the solution you d like first i want to be able to create a meeting in my google calendar then in the google calendar of the logged user and then i want to be able to have an action when it s the time of the new meeting ... | 0 |

102,213 | 21,933,004,250 | IssuesEvent | 2022-05-23 11:27:24 | Onelinerhub/onelinerhub | https://api.github.com/repos/Onelinerhub/onelinerhub | closed | Short solution needed: "chrome headless user agent" (chrome-headless) | help wanted good first issue code chrome-headless | Please help us write most modern and shortest code solution for this issue:

**chrome headless user agent** (technology: [chrome-headless](https://onelinerhub.com/chrome-headless))

### Fast way

Just write the code solution in the comments.

### Prefered way

1. Create [pull request](https://github.com/Onelinerhub/onelin... | 1.0 | Short solution needed: "chrome headless user agent" (chrome-headless) - Please help us write most modern and shortest code solution for this issue:

**chrome headless user agent** (technology: [chrome-headless](https://onelinerhub.com/chrome-headless))

### Fast way

Just write the code solution in the comments.

### Pre... | non_priority | short solution needed chrome headless user agent chrome headless please help us write most modern and shortest code solution for this issue chrome headless user agent technology fast way just write the code solution in the comments prefered way create with a new code file inside ... | 0 |

227,212 | 18,053,998,117 | IssuesEvent | 2021-09-20 04:42:11 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.pfc.test.sanity_base.TML_01B JUnit | Test_9999 logicmoo.pfc.test.sanity_base unit_test TML_01B Passing | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif tml_01b.pfc)

% ISSUE: https://github.com/logicmoo/logicmoo_workspace/issues/

% EDIT: https://github.com/log... | 3.0 | logicmoo.pfc.test.sanity_base.TML_01B JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif tml_01b.pfc)

% ISSUE: https://github.com/logicmoo/logicmoo_wor... | non_priority | logicmoo pfc test sanity base tml junit cd var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base timeout foreground preserve status s sigkill k swipl x var lib jenkins workspace logicmoo workspace bin lmoo clif tml pfc issue edit jenkins issue search ... | 0 |

48,421 | 25,519,673,116 | IssuesEvent | 2022-11-28 19:18:28 | rubymonsters/speakerinnen_liste | https://api.github.com/repos/rubymonsters/speakerinnen_liste | closed | Cache docker images in Travis | performance | Once we merge #971, the build will be slower than now. This can be improved with some caching. | True | Cache docker images in Travis - Once we merge #971, the build will be slower than now. This can be improved with some caching. | non_priority | cache docker images in travis once we merge the build will be slower than now this can be improved with some caching | 0 |

246,789 | 18,853,962,138 | IssuesEvent | 2021-11-12 02:06:10 | plutoniumpw/landing | https://api.github.com/repos/plutoniumpw/landing | closed | Update T6 Server Guide | documentation | New server.cfg zip has a `localappdata` folder, yet guide hasn't been updated.

https://plutonium.pw/docs/server/t6/setting-up-a-server/#1-preparation

Screenshots n such | 1.0 | Update T6 Server Guide - New server.cfg zip has a `localappdata` folder, yet guide hasn't been updated.

https://plutonium.pw/docs/server/t6/setting-up-a-server/#1-preparation

Screenshots n such | non_priority | update server guide new server cfg zip has a localappdata folder yet guide hasn t been updated screenshots n such | 0 |

52,748 | 13,225,001,742 | IssuesEvent | 2020-08-17 20:17:24 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | test scripts should be moved to resources/test (Trac #278) | Migrated from Trac combo reconstruction defect | Currently a lot of test scripts in icerec projects reside in resources/scripts. For consistency with offline-software they should be moved to resources/test (or is it tests?).

Here's a list of currently affected projects created by

```text

grep resources/scripts */CMakeLists.txt

```

in icerec trunk source:

BadDom... | 1.0 | test scripts should be moved to resources/test (Trac #278) - Currently a lot of test scripts in icerec projects reside in resources/scripts. For consistency with offline-software they should be moved to resources/test (or is it tests?).

Here's a list of currently affected projects created by

```text

grep resources... | non_priority | test scripts should be moved to resources test trac currently a lot of test scripts in icerec projects reside in resources scripts for consistency with offline software they should be moved to resources test or is it tests here s a list of currently affected projects created by text grep resources s... | 0 |

21,056 | 10,568,858,623 | IssuesEvent | 2019-10-06 15:47:45 | gkueny/gkueny.github.io | https://api.github.com/repos/gkueny/gkueny.github.io | closed | WS-2018-0021 Medium Severity Vulnerability detected by WhiteSource | security vulnerability | ## WS-2018-0021 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.6-3.3.6.js</b></p></summary>

<p>Google-styled theme for Bootstrap.</p>

<p>path: /gkueny.github.... | True | WS-2018-0021 Medium Severity Vulnerability detected by WhiteSource - ## WS-2018-0021 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.6-3.3.6.js</b></p></summary... | non_priority | ws medium severity vulnerability detected by whitesource ws medium severity vulnerability vulnerable library bootstrap js google styled theme for bootstrap path gkueny github io css js bootstrap js library home page a href dependency hierarchy x bootstrap ... | 0 |

57,321 | 24,098,982,932 | IssuesEvent | 2022-09-19 21:43:25 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Unsetting a field format is not possible | bug Team:AppServicesSv duplicate impact:medium | **Kibana version:** 8.3.3

**Elasticsearch version:** 8.3.3

**Server OS version:** Linux

**Browser version:** Chrome 104

**Browser OS version:** macOS

**Original install method (e.g. download page, yum, from source, etc.):** Docker image

**Describe the bug:**

When I set a specific format for a field... | 1.0 | Unsetting a field format is not possible - **Kibana version:** 8.3.3

**Elasticsearch version:** 8.3.3

**Server OS version:** Linux

**Browser version:** Chrome 104

**Browser OS version:** macOS

**Original install method (e.g. download page, yum, from source, etc.):** Docker image

**Describe the bug:**

... | non_priority | unsetting a field format is not possible kibana version elasticsearch version server os version linux browser version chrome browser os version macos original install method e g download page yum from source etc docker image describe the bug ... | 0 |

55,448 | 11,431,441,852 | IssuesEvent | 2020-02-04 12:09:32 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | [0.9.702] Sub Editor - Toggle Visibility icon, doesn't really look like an icon. | Bug Code | - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Unless you stare really hard next to the generate waypoint button, the toggle visibility button doesn't really look like it existed. Make it an eye icon?

**Version**

0.9.702 | 1.0 | [0.9.702] Sub Editor - Toggle Visibility icon, doesn't really look like an icon. - - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Unless you stare really hard next to the generate waypoint button, the toggle visibility button doesn't really look like it exis... | non_priority | sub editor toggle visibility icon doesn t really look like an icon i have searched the issue tracker to check if the issue has already been reported description unless you stare really hard next to the generate waypoint button the toggle visibility button doesn t really look like it existed make ... | 0 |

170,407 | 14,259,467,781 | IssuesEvent | 2020-11-20 08:19:47 | ExploreASL/ExploreASL | https://api.github.com/repos/ExploreASL/ExploreASL | closed | Improve internal documentation | documentation | Goal: Create a documentation similar to [NIFTYTORCH](https://niftytorch.github.io/doc/) or [MONAI](https://docs.monai.io/en/latest/) ?

* Add **README** files to subfolders to improve the orientation within the ExploreASL project.

* Improve code readability and overall access for new developers.

* Integrate all the... | 1.0 | Improve internal documentation - Goal: Create a documentation similar to [NIFTYTORCH](https://niftytorch.github.io/doc/) or [MONAI](https://docs.monai.io/en/latest/) ?

* Add **README** files to subfolders to improve the orientation within the ExploreASL project.

* Improve code readability and overall access for new... | non_priority | improve internal documentation goal create a documentation similar to or add readme files to subfolders to improve the orientation within the exploreasl project improve code readability and overall access for new developers integrate all the readme files into an interactive documentati... | 0 |

74,005 | 15,298,926,531 | IssuesEvent | 2021-02-24 10:18:50 | rsoreq/kendo-ui-core | https://api.github.com/repos/rsoreq/kendo-ui-core | opened | CVE-2020-15256 (High) detected in object-path-0.9.2.tgz | security vulnerability | ## CVE-2020-15256 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>object-path-0.9.2.tgz</b></p></summary>

<p>Access deep properties using a path</p>

<p>Library home page: <a href="http... | True | CVE-2020-15256 (High) detected in object-path-0.9.2.tgz - ## CVE-2020-15256 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>object-path-0.9.2.tgz</b></p></summary>

<p>Access deep prope... | non_priority | cve high detected in object path tgz cve high severity vulnerability vulnerable library object path tgz access deep properties using a path library home page a href path to dependency file kendo ui core package json path to vulnerable library kendo ui core node modul... | 0 |

85,652 | 24,649,147,063 | IssuesEvent | 2022-10-17 17:06:40 | dotnet/arcade | https://api.github.com/repos/dotnet/arcade | closed | Build failed: dotnet-arcade-validation-official/main #20221016.1 | Build Failed | Build [#20221016.1](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_build/results?buildId=2022543) partiallySucceeded

## :warning: : internal / dotnet-arcade-validation-official partiallySucceeded

### Summary

**Finished** - Mon, 17 Oct 2022 01:48:35 GMT

**Duration** - 98 minutes

**Requested ... | 1.0 | Build failed: dotnet-arcade-validation-official/main #20221016.1 - Build [#20221016.1](https://dev.azure.com/dnceng/7ea9116e-9fac-403d-b258-b31fcf1bb293/_build/results?buildId=2022543) partiallySucceeded

## :warning: : internal / dotnet-arcade-validation-official partiallySucceeded

### Summary

**Finished** - Mo... | non_priority | build failed dotnet arcade validation official main build partiallysucceeded warning internal dotnet arcade validation official partiallysucceeded summary finished mon oct gmt duration minutes requested for microsoft visualstudio services tfs reason ... | 0 |

24,434 | 12,103,869,128 | IssuesEvent | 2020-04-20 19:10:51 | terraform-providers/terraform-provider-aws | https://api.github.com/repos/terraform-providers/terraform-provider-aws | closed | aws_lambda_alias is recreated when function_name changes from function name to ARN and viceversa | enhancement service/lambda | <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backen... | 1.0 | aws_lambda_alias is recreated when function_name changes from function name to ARN and viceversa - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https... | non_priority | aws lambda alias is recreated when function name changes from function name to arn and viceversa please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple provider... | 0 |

60,642 | 6,712,162,659 | IssuesEvent | 2017-10-13 08:18:32 | daisy/ace | https://api.github.com/repos/daisy/ace | opened | Add integration tests for concurrent runs of Ace | tests | Ace should now be able to process several EPUBs concurrently. We should add a couple integration tests for this. | 1.0 | Add integration tests for concurrent runs of Ace - Ace should now be able to process several EPUBs concurrently. We should add a couple integration tests for this. | non_priority | add integration tests for concurrent runs of ace ace should now be able to process several epubs concurrently we should add a couple integration tests for this | 0 |

11,781 | 4,290,203,340 | IssuesEvent | 2016-07-18 08:43:55 | Outernet-Project/librarian | https://api.github.com/repos/Outernet-Project/librarian | closed | Javascript errors in console when opening hamburger menu (which also doesn't open) | bug UI code (JS/CSS) | Possibly during the merge of bundles, either the order or some of the files were left out, these errors appear:

Name elements.Element already defined with value undefined

Name elements.ExpandableBox already defined with value undefined

Name widgets.PulldownMenubar already defined with value undefined

... | 1.0 | Javascript errors in console when opening hamburger menu (which also doesn't open) - Possibly during the merge of bundles, either the order or some of the files were left out, these errors appear:

Name elements.Element already defined with value undefined

Name elements.ExpandableBox already defined with val... | non_priority | javascript errors in console when opening hamburger menu which also doesn t open possibly during the merge of bundles either the order or some of the files were left out these errors appear name elements element already defined with value undefined name elements expandablebox already defined with val... | 0 |

61,625 | 25,578,152,076 | IssuesEvent | 2022-12-01 00:41:44 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [REMOTO] Engenheiro de Software na [SOCIAL MINER] | BIG DATA MYSQL PYTHON MONGODB JAVASCRIPT C# TESTE AUTOMATIZADO NODE.JS DOCKER KUBERNETES NOSQL AWS ETL REMOTO ELASTIC APACHE KAFKA .NET CORE AZURE MICROSERVICES HADOOP ECOSYSTEM REDSHIFT SERVERLESS SCIKIT-LEARN TENSORFLOW KINESIS Stale | ## Engenheiro de Software

Nos preocupamos com o que você entrega e não como você se veste. Sabe como é uma Startup, né? Pessoas criativas, ambiente dinâmico, despojado, horário flexível, pouca burocracia e formalidades... Dá uma olhada nas fotos para sentir a vibe dos nerds :P

## Atividades

Estamos procuran... | 1.0 | [REMOTO] Engenheiro de Software na [SOCIAL MINER] - ## Engenheiro de Software

Nos preocupamos com o que você entrega e não como você se veste. Sabe como é uma Startup, né? Pessoas criativas, ambiente dinâmico, despojado, horário flexível, pouca burocracia e formalidades... Dá uma olhada nas fotos para sentir a vibe ... | non_priority | engenheiro de software na engenheiro de software nos preocupamos com o que você entrega e não como você se veste sabe como é uma startup né pessoas criativas ambiente dinâmico despojado horário flexível pouca burocracia e formalidades dá uma olhada nas fotos para sentir a vibe dos nerds p ... | 0 |

131,274 | 10,687,078,810 | IssuesEvent | 2019-10-22 15:29:07 | imixs/imixs-melman | https://api.github.com/repos/imixs/imixs-melman | closed | JWTAuthenticator - set jwt as header property instead of a query string | feature testing | set the jwt as a header property

See also https://github.com/imixs/imixs-jwt/issues/9 | 1.0 | JWTAuthenticator - set jwt as header property instead of a query string - set the jwt as a header property

See also https://github.com/imixs/imixs-jwt/issues/9 | non_priority | jwtauthenticator set jwt as header property instead of a query string set the jwt as a header property see also | 0 |

9,735 | 13,854,794,530 | IssuesEvent | 2020-10-15 10:00:24 | alessandrasonsini/PeakLand | https://api.github.com/repos/alessandrasonsini/PeakLand | opened | FR - Visited list | Functional Requirement | The system shall provide a list of all visited itineraries for each logged user. | 1.0 | FR - Visited list - The system shall provide a list of all visited itineraries for each logged user. | non_priority | fr visited list the system shall provide a list of all visited itineraries for each logged user | 0 |

96,968 | 8,638,745,576 | IssuesEvent | 2018-11-23 15:47:34 | EyeSeeTea/dhis2-core | https://api.github.com/repos/EyeSeeTea/dhis2-core | closed | App for notifications settings | testing | For DHIS 2.30

- [x] Create skeleton app: dhis2-app-skeleton. Check existing apps for best reference.:

- [x] d2 (30.x.x)

- [x] d2-ui

- [x] d2-i18n

- [x] material-ui v3

- [x] style checks

- [x] build infrastructure (manifest + webapp).

- [x] testing: jest + enzyme

- [x] App: notifications-app, Notificatio... | 1.0 | App for notifications settings - For DHIS 2.30

- [x] Create skeleton app: dhis2-app-skeleton. Check existing apps for best reference.:

- [x] d2 (30.x.x)

- [x] d2-ui

- [x] d2-i18n

- [x] material-ui v3

- [x] style checks

- [x] build infrastructure (manifest + webapp).

- [x] testing: jest + enzyme

- [x] Ap... | non_priority | app for notifications settings for dhis create skeleton app app skeleton check existing apps for best reference x x ui material ui style checks build infrastructure manifest webapp testing jest enzyme app notifications app notificat... | 0 |

299,674 | 25,917,275,129 | IssuesEvent | 2022-12-15 18:27:20 | apache/beam | https://api.github.com/repos/apache/beam | closed | testTwoTimersSettingEachOtherWithCreateAsInputBounded flaky | java runners dataflow P1 bug failing test flake beam-fixit | beam_PostCommit_Java_VR_Dataflow_V2_Streaming flakes on org.apache.beam.sdk.transforms.ParDoTest$TimerTests.testTwoTimersSettingEachOtherWithCreateAsInputBounded

java.lang.RuntimeException: generic::unknown: org.apache.beam.sdk.util.UserCodeException: java.lang.AssertionError: ParDoTest.TimerTests.TwoTimerTest/ParDo... | 1.0 | testTwoTimersSettingEachOtherWithCreateAsInputBounded flaky - beam_PostCommit_Java_VR_Dataflow_V2_Streaming flakes on org.apache.beam.sdk.transforms.ParDoTest$TimerTests.testTwoTimersSettingEachOtherWithCreateAsInputBounded

java.lang.RuntimeException: generic::unknown: org.apache.beam.sdk.util.UserCodeException: jav... | non_priority | testtwotimerssettingeachotherwithcreateasinputbounded flaky beam postcommit java vr dataflow streaming flakes on org apache beam sdk transforms pardotest timertests testtwotimerssettingeachotherwithcreateasinputbounded java lang runtimeexception generic unknown org apache beam sdk util usercodeexception java... | 0 |

18,189 | 10,024,275,356 | IssuesEvent | 2019-07-16 21:24:23 | ampproject/amphtml | https://api.github.com/repos/ampproject/amphtml | closed | Introduce new tick event cls (Cumulative Layout Shift) | Type: Feature Request WG: performance | ## Describe the new feature or change to an existing feature you'd like to see

We introduced support for viewers to read a new tick event `lj` (layout jank) in #21060. Layout jank was a new, experimental metric behind a Chrome Origin Trial. Since then, the metric has matured a bit. It is now a [draft](https://wicg.g... | True | Introduce new tick event cls (Cumulative Layout Shift) - ## Describe the new feature or change to an existing feature you'd like to see

We introduced support for viewers to read a new tick event `lj` (layout jank) in #21060. Layout jank was a new, experimental metric behind a Chrome Origin Trial. Since then, the met... | non_priority | introduce new tick event cls cumulative layout shift describe the new feature or change to an existing feature you d like to see we introduced support for viewers to read a new tick event lj layout jank in layout jank was a new experimental metric behind a chrome origin trial since then the metric ... | 0 |

311,213 | 26,777,122,209 | IssuesEvent | 2023-01-31 18:00:06 | Kong/kubernetes-ingress-controller | https://api.github.com/repos/Kong/kubernetes-ingress-controller | closed | E2E tests shouldn't use cleaner.Cleanup | area/tests | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Problem Statement

As E2E tests create their own clusters, it doesn't make sense to use `cleaner.Cleanup` (cleaning up in-cluster resources created by the test) on their tear down. Instead, we should simply `cluster.Cleanup` ... | 1.0 | E2E tests shouldn't use cleaner.Cleanup - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Problem Statement

As E2E tests create their own clusters, it doesn't make sense to use `cleaner.Cleanup` (cleaning up in-cluster resources created by the test) on their tear down. In... | non_priority | tests shouldn t use cleaner cleanup is there an existing issue for this i have searched the existing issues problem statement as tests create their own clusters it doesn t make sense to use cleaner cleanup cleaning up in cluster resources created by the test on their tear down instead ... | 0 |

64,411 | 14,665,072,382 | IssuesEvent | 2020-12-29 13:28:28 | turkdevops/karma-jasmine | https://api.github.com/repos/turkdevops/karma-jasmine | opened | CVE-2020-28282 (High) detected in getobject-0.1.0.tgz | security vulnerability | ## CVE-2020-28282 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>getobject-0.1.0.tgz</b></p></summary>

<p>get.and.set.deep.objects.easily = true</p>

<p>Library home page: <a href="htt... | True | CVE-2020-28282 (High) detected in getobject-0.1.0.tgz - ## CVE-2020-28282 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>getobject-0.1.0.tgz</b></p></summary>

<p>get.and.set.deep.obje... | non_priority | cve high detected in getobject tgz cve high severity vulnerability vulnerable library getobject tgz get and set deep objects easily true library home page a href path to dependency file karma jasmine package json path to vulnerable library karma jasmine node module... | 0 |

2,469 | 2,733,194,748 | IssuesEvent | 2015-04-17 12:27:06 | Doola/elmenusFeed | https://api.github.com/repos/Doola/elmenusFeed | closed | SS Import reviews Of Restaurants from mysql to neo4j | Code reviewed Documentation reviewed | SS Import reviews Of Restaurants from mysql to neo4j #72 | 1.0 | SS Import reviews Of Restaurants from mysql to neo4j - SS Import reviews Of Restaurants from mysql to neo4j #72 | non_priority | ss import reviews of restaurants from mysql to ss import reviews of restaurants from mysql to | 0 |

228,909 | 17,484,459,745 | IssuesEvent | 2021-08-09 09:09:05 | oscar-system/Oscar.jl | https://api.github.com/repos/oscar-system/Oscar.jl | opened | docs: disable doctests by default, allow enabling | documentation | I am not at a computer right now, else I'd do this right away, but I don't want to forget about this (again): we wanted to disable doctests when building the docs by default, and add a keyword argument to `Oscar.build_doc` to allow enabling them again. Perhaps also add other KW args to it, e.g. for controlling the `str... | 1.0 | docs: disable doctests by default, allow enabling - I am not at a computer right now, else I'd do this right away, but I don't want to forget about this (again): we wanted to disable doctests when building the docs by default, and add a keyword argument to `Oscar.build_doc` to allow enabling them again. Perhaps also ad... | non_priority | docs disable doctests by default allow enabling i am not at a computer right now else i d do this right away but i don t want to forget about this again we wanted to disable doctests when building the docs by default and add a keyword argument to oscar build doc to allow enabling them again perhaps also ad... | 0 |

73,105 | 9,645,734,079 | IssuesEvent | 2019-05-17 09:24:16 | cselab/YMeRo | https://api.github.com/repos/cselab/YMeRo | closed | add Tutorials | documentation | Add a section with tutorials in the docs.

Should include several sections, with commented scripts.

The scripts must be part of the tests for maintainability reasons.

- [x] a simple "hello world" setup

- [x] a basic setup with plugins example

- [x] walls creation

- [x] object belonging: membranes with inner/outer

... | 1.0 | add Tutorials - Add a section with tutorials in the docs.

Should include several sections, with commented scripts.

The scripts must be part of the tests for maintainability reasons.

- [x] a simple "hello world" setup

- [x] a basic setup with plugins example

- [x] walls creation

- [x] object belonging: membranes w... | non_priority | add tutorials add a section with tutorials in the docs should include several sections with commented scripts the scripts must be part of the tests for maintainability reasons a simple hello world setup a basic setup with plugins example walls creation object belonging membranes with inne... | 0 |

154,012 | 19,710,213,549 | IssuesEvent | 2022-01-13 03:56:33 | CanarysPlayground/Sample45 | https://api.github.com/repos/CanarysPlayground/Sample45 | closed | CVE-2020-25649 (High) detected in jackson-databind-2.2.3.jar | security vulnerability | ## CVE-2020-25649 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.2.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-25649 (High) detected in jackson-databind-2.2.3.jar - ## CVE-2020-25649 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.2.3.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to vulnerable library lib jackson databind jar dependency hierarchy ... | 0 |

257,138 | 19,488,672,209 | IssuesEvent | 2021-12-26 22:39:53 | PandaHugMonster/php-simputils | https://api.github.com/repos/PandaHugMonster/php-simputils | opened | Prepare lots of documentation | documentation | After finalizing initial architecture. Prepare extensive amount of detailed documentation to cover lot's of cases. | 1.0 | Prepare lots of documentation - After finalizing initial architecture. Prepare extensive amount of detailed documentation to cover lot's of cases. | non_priority | prepare lots of documentation after finalizing initial architecture prepare extensive amount of detailed documentation to cover lot s of cases | 0 |

215,389 | 16,601,485,461 | IssuesEvent | 2021-06-01 20:07:53 | krautzource/aria-tree-walker | https://api.github.com/repos/krautzource/aria-tree-walker | closed | add music score example | documentation | Another low hanging fruit.

Some useful links:

* examples: https://w3c.github.io/mnx/docs/comparisons/musicxml/

* svg: https://opensheetmusicdisplay.github.io/demo/

* speech: http://iceb.org/DictatingMusic.pdf

* braille output:

* https://code.google.com/archive/p/freedots/ // http://musicxml2braille.appspo... | 1.0 | add music score example - Another low hanging fruit.

Some useful links:

* examples: https://w3c.github.io/mnx/docs/comparisons/musicxml/

* svg: https://opensheetmusicdisplay.github.io/demo/

* speech: http://iceb.org/DictatingMusic.pdf

* braille output:

* https://code.google.com/archive/p/freedots/ // http... | non_priority | add music score example another low hanging fruit some useful links examples svg speech braille output | 0 |

193,269 | 15,372,318,390 | IssuesEvent | 2021-03-02 11:05:40 | GAA-UAM/scikit-fda | https://api.github.com/repos/GAA-UAM/scikit-fda | opened | Add sphinx-bibtex extension | documentation enhancement | **Is your feature request related to a problem? Please describe.**

The references used in this package are not uniform; they use different citation styles.

**Describe the solution you'd like**

We should use the [sphinx-bibtex extension](https://sphinxcontrib-bibtex.readthedocs.io/en/latest/). This would allow... | 1.0 | Add sphinx-bibtex extension - **Is your feature request related to a problem? Please describe.**

The references used in this package are not uniform; they use different citation styles.

**Describe the solution you'd like**

We should use the [sphinx-bibtex extension](https://sphinxcontrib-bibtex.readthedocs.io... | non_priority | add sphinx bibtex extension is your feature request related to a problem please describe the references used in this packages are not uniform but they use different citation styles describe the solution you d like we should use the this would allow us to put our references in a bibtex file and se... | 0 |
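For the sphinx-bibtex request above, a minimal sketch of the relevant `conf.py` settings might look like the following. The extension name `sphinxcontrib.bibtex` and the `bibtex_bibfiles` and `bibtex_default_style` options belong to the sphinxcontrib-bibtex project; the file name `refs.bib` and the chosen style are assumptions for illustration and are not taken from scikit-fda.

```python
# Sketch of a Sphinx conf.py fragment (file name and style are assumptions).
extensions = [
    "sphinxcontrib.bibtex",  # provides the :cite: role and the bibliography directive
]

# BibTeX files to load, relative to the docs source directory (hypothetical name).
bibtex_bibfiles = ["refs.bib"]

# A single citation style applied across the whole documentation.
bibtex_default_style = "plain"
```

With settings like these, references would live in one BibTeX file and be rendered in one style, which is the uniformity the issue asks for.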

76,854 | 7,547,198,284 | IssuesEvent | 2018-04-18 07:08:52 | vaadin/beverage-starter-flow | https://api.github.com/repos/vaadin/beverage-starter-flow | closed | Test on Apache Tomcat 8.0.x, 8.5, 9 | testing | Test manually with the following Apache Tomcat versions and use cases:

- [x] 8.0.x (newest)

- [x] development

- [x] production

- [x] push in production

- [x] 8.5

- [x] development

- [x] production

- [x] push in production

- [x] 9

- [x] development

- [x] production

- [x] push in product... | 1.0 | Test on Apache Tomcat 8.0.x, 8.5, 9 - Test manually with the following Apache Tomcat versions and use cases:

- [x] 8.0.x (newest)

- [x] development

- [x] production

- [x] push in production

- [x] 8.5

- [x] development

- [x] production

- [x] push in production

- [x] 9

- [x] development

- [... | non_priority | test on apache tomcat x test manually with the following apache tomcat versions and use cases x newest devevelopent production push in production devevelopent production push in production devevelopent production p... | 0 |

108,168 | 11,582,032,583 | IssuesEvent | 2020-02-22 00:53:16 | keep-network/tbtc | https://api.github.com/repos/keep-network/tbtc | closed | Revisit interlinking between sections | :book: documentation tbtc | We're doing some parallel work right now on multiple sections; we'll have to do a pass where we revisit how the sections are interlinked and see if there are more natural ways to handle them. | 1.0 | Revisit interlinking between sections - We're doing some parallel work right now on multiple sections; we'll have to do a pass where we revisit how the sections are interlinked and see if there are more natural ways to handle them. | non_priority | revisit interlinking between sections we re doing some parallel work right now on multiple sections we ll have to do a pass where we revisit how the sections are interlinked and see if there are more natural ways to handle them | 0 |

6,838 | 6,625,700,743 | IssuesEvent | 2017-09-22 16:24:38 | dotnet/coreclr | https://api.github.com/repos/dotnet/coreclr | closed | [Windows Arm64] CI Trigger words should match job name to eliminate confusion | area-Infrastructure bug | The current trigger `@dotnet-bot test Windows_NT arm64 Checked` is confusing

@jashook | 1.0 | [Windows Arm64] CI Trigger words should match job name to eliminate confusion - The current trigger `@dotnet-bot test Windows_NT arm64 Checked` is confusing

@jashook | non_priority | ci trigger words should match job name to eliminate confusion the current trigger dotnet bot test windows nt checked is confusing jashook | 0 |

10,435 | 12,396,198,870 | IssuesEvent | 2020-05-20 20:04:52 | facebook/hhvm | https://api.github.com/repos/facebook/hhvm | closed | DateTime: issue with timestamps and timezones | php5 incompatibility | I think I may have encountered a bug in DateTime behavior in HHVM - here's the code:

``` PHP

echo "\nDocumenting a bug in HHVM:\n\n";

$date = date_create(null, new DateTimeZone('Europe/Copenhagen'));

$date->setTimestamp(173919600);

echo "expected: 1975-07-07 00:00:00\n result: " . $date->format('Y-m-d H:i:s') . "\n\n... | True | DateTime: issue with timestamps and timezones - I think I may have encountered a bug in DateTime behavior in HHVM - here's the code:

``` PHP

echo "\nDocumenting a bug in HHVM:\n\n";

$date = date_create(null, new DateTimeZone('Europe/Copenhagen'));

$date->setTimestamp(173919600);

echo "expected: 1975-07-07 00:00:00\n ... | non_priority | datetime issue with timestamps and timezones i think i may have encountered a bug i datetime behavior in hhvm here s the code php echo ndocumenting a bug in hhvm n n date date create null new datetimezone europe copenhagen date settimestamp echo expected n result dat... | 0 |
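The expected value in the DateTime report above can be checked independently of PHP or HHVM. The sketch below is Python rather than the reporter's code, and it assumes a fixed UTC+1 offset for Europe/Copenhagen on that date, which is what the report's expected output implies; `format_timestamp` is a hypothetical helper name.

```python
from datetime import datetime, timedelta, timezone


def format_timestamp(ts, offset_hours):
    """Format a Unix timestamp as local wall-clock time at a fixed UTC offset."""
    tz = timezone(timedelta(hours=offset_hours))
    return datetime.fromtimestamp(ts, tz).strftime("%Y-%m-%d %H:%M:%S")


# Timestamp taken from the issue; the offset of +1 hour is an assumption.
print(format_timestamp(173919600, 1))
```

A runtime that formats the same timestamp one hour earlier is effectively ignoring the time zone attached to the object and formatting in UTC.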

182,461 | 30,851,934,661 | IssuesEvent | 2023-08-02 17:24:28 | bcgov/cloud-pathfinder | https://api.github.com/repos/bcgov/cloud-pathfinder | closed | Baseline our KPIs for future reporting to governance bodies | Service Design | **Describe the issue**

Our business case for building out the Public Cloud Accelerator Service goes to Digital Investment Board on October 21. It includes several KPIs that will measure our progress towards realizing the desired benefits, and we'll report on these to several governance bodies (ED cloud group (tri-weekl... | 1.0 | Baseline our KPIs for future reporting to governance bodies - **Describe the issue**

Our business case for building out the Public Cloud Accelerator Service goes to Digital Investment Board on October 21. It includes several KPIs that will measure our progress towards realizing the desired benefits, and we'll report on... | non_priority | baseline our kpis for future reporting to governance bodies describe the issue our business case for building out the public cloud accelerator service goes to digital investment board on october it includes several kpis that will measure our progress towards realizing the desired benefits and we ll report on ... | 0 |

53,778 | 13,206,548,760 | IssuesEvent | 2020-08-14 20:29:40 | spack/spack | https://api.github.com/repos/spack/spack | opened | Installation issue: rocm-opencl | build-error | <!-- Thanks for taking the time to report this build failure. To proceed with the report please:

1. Title the issue "Installation issue: <name-of-the-package>".

2. Provide the information required below.

We encourage you to try, as much as possible, to reduce your problem to the minimal example that still reprod... | 1.0 | Installation issue: rocm-opencl - <!-- Thanks for taking the time to report this build failure. To proceed with the report please:

1. Title the issue "Installation issue: <name-of-the-package>".

2. Provide the information required below.

We encourage you to try, as much as possible, to reduce your problem to the... | non_priority | installation issue rocm opencl thanks for taking the time to report this build failure to proceed with the report please title the issue installation issue provide the information required below we encourage you to try as much as possible to reduce your problem to the minimal example tha... | 0 |

121,957 | 10,208,267,511 | IssuesEvent | 2019-08-14 09:42:28 | maidsafe/safe_client_libs | https://api.github.com/repos/maidsafe/safe_client_libs | closed | Add tests for Unpublished Unsequenced AppendOnly data | testing | Similar to [this test module](https://github.com/maidsafe/safe_client_libs/blob/experimental/safe_app/src/tests/unpublished_mutable_data.rs) there should be extensive tests to test the various scenarios of Unpublished Unsequenced AppendOnly data. | 1.0 | Add tests for Unpublished Unsequenced AppendOnly data - Similar to [this test module](https://github.com/maidsafe/safe_client_libs/blob/experimental/safe_app/src/tests/unpublished_mutable_data.rs) there should be extensive tests to test the various scenarios of Unpublished Unsequenced AppendOnly data. | non_priority | add tests for unpublished unsequenced appendonly data similar to there should be extensive tests to test the various scenarios of unpublished unsequenced appendonly data | 0 |

337,656 | 30,253,303,752 | IssuesEvent | 2023-07-06 22:51:57 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix manipulation.test_squeeze | Sub Task Failing Test | | | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5412847572/jobs/9837524581"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5412847572/jobs/9837524581"><img src=https://img.shields.io/badge/-success-success></a>

|t... | 1.0 | Fix manipulation.test_squeeze - | | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5412847572/jobs/9837524581"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5412847572/jobs/9837524581"><img src=https://img.shields.io... | non_priority | fix manipulation test squeeze jax a href src numpy a href src tensorflow a href src torch a href src paddle a href src | 0 |

138,542 | 11,204,914,251 | IssuesEvent | 2020-01-05 10:19:18 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | HTTPRequest fails on sites with IPv6 | bug needs testing topic:network | <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:** 3.0.2 Stable

<!-- Specify commit hash if non-official. -->

**OS/device including version:** Windows 10. I have IPv6 enabled.

<!-- Specify GPU model... | 1.0 | HTTPRequest fails on sites with IPv6 - <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:** 3.0.2 Stable

<!-- Specify commit hash if non-official. -->

**OS/device including version:** Windows 10. I hav... | non_priority | httprequest fails on sites with please search existing issues for potential duplicates before filing yours godot version stable os device including version windows i have enabled issue description accessing some sites with httprequest fails with error ... | 0 |

392,905 | 26,964,531,807 | IssuesEvent | 2023-02-08 21:04:38 | py-why/dodiscover | https://api.github.com/repos/py-why/dodiscover | opened | [DOC] Relevant in-depth tutorial on FCI | documentation help wanted | Brought up in https://github.com/py-why/dodiscover/pull/106#discussion_r1100661794, we want to develop a good example (or set of examples) of causal graphs that we then generate data from that we then feed into FCI.

This can be then used to illustrate how FCI applies its rules and that selection bias (i.e. undirecte... | 1.0 | [DOC] Relevant in-depth tutorial on FCI - Brought up in https://github.com/py-why/dodiscover/pull/106#discussion_r1100661794, we want to develop a good example (or set of examples) of causal graphs that we then generate data from that we then feed into FCI.

This can be then used to illustrate how FCI applies its rul... | non_priority | relevant in depth tutorial on fci brought up in we want to develop a good example or set of examples of causal graphs that we then generate data from that we then feed into fci this can be then used to illustrate how fci applies its rules and that selection bias i e undirected edges bidirected edges and... | 0 |

98,130 | 29,489,013,681 | IssuesEvent | 2023-06-02 12:06:03 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Task]: Refactor sticky canvas Arena to avoid unnecessary forced reflow | Performance Pod UI Builders Pod Task Drag & Drop Canvas / Grid Performance | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### SubTasks

Refactor sticky canvas Arena to avoid unnecessary forced reflow. This is done by enabling the canvas to observe only when required, i.e. only while the user is performing one of the following:

- [ ] Drag to select

- [ ] Dragging widgets | 1.0 | [Task]: Refactor sticky canvas Arena to avoid unnecessary forced reflow - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### SubTasks

Refactor sticky canvas Arena to avoid unnecessary forced reflow. This is done by enabling the canvas to observe only when required i.e, only when u... | non_priority | refactor sticky canvas arena to avoid unnecessary forced reflow is there an existing issue for this i have searched the existing issues subtasks refactor sticky canvas arena to avoid unnecessary forced reflow this is done by enabling the canvas to observe only when required i e only when user is ... | 0 |

186,117 | 15,047,653,346 | IssuesEvent | 2021-02-03 09:12:26 | apache/buildstream | https://api.github.com/repos/apache/buildstream | closed | Follow-up from "Refer readers to our tutorial before referring them to existing bst projects" | bug documentation | [See original issue on GitLab](https://gitlab.com/BuildStream/buildstream/-/issues/608)

In GitLab by [[Gitlab user @tristanvb]](https://gitlab.com/tristanvb) on Aug 25, 2018, 10:53

The following discussion from !578 should be addressed:

- [x] [[Gitlab user @tristanvb]](https://gitlab.com/tristanvb) started a [discuss... | 1.0 | Follow-up from "Refer readers to our tutorial before referring them to existing bst projects" - [See original issue on GitLab](https://gitlab.com/BuildStream/buildstream/-/issues/608)

In GitLab by [[Gitlab user @tristanvb]](https://gitlab.com/tristanvb) on Aug 25, 2018, 10:53

The following discussion from !578 should ... | non_priority | follow up from refer readers to our tutorial before referring them to existing bst projects in gitlab by on aug the following discussion from should be addressed started a just noticed this in the install guide today why are we recommending that windows us... | 0 |

133,458 | 29,181,422,691 | IssuesEvent | 2023-05-19 12:14:24 | AllYarnsAreBeautiful/ayab-desktop | https://api.github.com/repos/AllYarnsAreBeautiful/ayab-desktop | closed | API version sent by device is not used by host | code quality | `control.api_version` is set in state `VERSION_CHECK` but `control.operate()` does not use the value: instead, it uses a default value of 6. | 1.0 | API version sent by device is not used by host - `control.api_version` is set in state `VERSION_CHECK` but `control.operate()` does not use the value: instead, it uses a default value of 6. | non_priority | api version sent by device is not used by host control api version is set in state version check but control operate does not use the value instead it uses a default value of | 0 |

43,055 | 11,456,005,222 | IssuesEvent | 2020-02-06 20:17:09 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | Correlation between map pin and cards in search results | 508-defect-0 functional vsa | ## User Story:

As a Veteran, I need to be able to see correlation between location on map and list

## Tasks

[ ] Style map pins to correlate between map and list

## Acceptance Criteria:

[ ] Map pi... | 1.0 | Correlation between map pin and cards in search results - ## User Story:

As a Veteran, I need to be able to see correlation between location on map and list

## Tasks

[ ] Style map pins to correlate between map and list

detected in linuxv3.10 | security vulnerability | ## CVE-2018-1000028 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.10</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/to... | True | CVE-2018-1000028 (High) detected in linuxv3.10 - ## CVE-2018-1000028 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.10</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p... | non_priority | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch xsentinel experimental vulnerable source files android kernel ... | 0 |

254,898 | 19,276,345,678 | IssuesEvent | 2021-12-10 12:19:22 | Gaius-Augustus/clamsa | https://api.github.com/repos/Gaius-Augustus/clamsa | opened | Warning for out-of-phase inputs | documentation | Users should be warned if input appears to be a genome alignment without an assumed ORF in the same phase for all alignment rows. | 1.0 | Warning for out-of-phase inputs - Users should be warned if input appears to be a genome alignment without an assumed ORF in the same phase for all alignment rows. | non_priority | warning for out of phase inputs users should be warned if input appears to be a genome alignment without an assumed orf in the same phase for all alignment rows | 0 |

27,811 | 8,037,782,094 | IssuesEvent | 2018-07-30 13:41:45 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | Rebuilding or serving library project | comp: devkit/build-angular type: feature | <!--

We will close this issue if you don't provide the needed information.

For feature requests, delete the form below and describe the requirements and use case.

-->

### Feature Request

Some interface that would rebuild library project that is generated with `ng g library niceLib`.

Of Course the best option wo... | 1.0 | Rebuilding or serving library project - <!--

We will close this issue if you don't provide the needed information.

For feature requests, delete the form below and describe the requirements and use case.

-->

### Feature Request

Some interface that would rebuild library project that is generated with `ng g library... | non_priority | rebuilding or serving library project we will close this issue if you don t provide the needed information for feature requests delete the form below and describe the requirements and use case feature request some interface that would rebuild library project that is generated with ng g library... | 0 |

262,152 | 19,762,291,821 | IssuesEvent | 2022-01-16 15:59:28 | degawa/dictos | https://api.github.com/repos/degawa/dictos | opened | remove 0 from stencil and table for the staggered grid | documentation | value at `0` is not used.

`0` breaks the consistency of the finite difference equation on the staggered grid. | 1.0 | remove 0 from stencil and table for the staggered grid - value at `0` is not used.

`0` breaks the consistency of the finite difference equation on the staggered grid. | non_priority | remove from stencil and table for the staggered grid value at is not used breaks the consistency of the finite difference equation on the staggered grid | 0 |

7,277 | 24,564,481,610 | IssuesEvent | 2022-10-13 00:42:08 | Roche/rtables | https://api.github.com/repos/Roche/rtables | closed | Add pkgdown publishing to repo automation | automation | @cicdguy would you mind doing this?

If you can add a multi-version documentation that would be awesome, otherwise you can just use the root (CRAN release) and `dev/` (`main`) method that `pkgdown` supports. | 1.0 | Add pkgdown publishing to repo automation - @cicdguy would you mind doing this?

If you can add a multi-version documentation that would be awesome, otherwise you can just use the root (CRAN release) and `dev/` (`main`) method that `pkgdown` supports. | non_priority | add pkgdown publishing to repo automation cicdguy would you mind doing this if you can add a multi version documentation that would be awesome otherwise you can just use the root cran release and dev main method that pkgdown supports | 0 |

66,711 | 14,798,942,185 | IssuesEvent | 2021-01-13 01:02:27 | jtimberlake/cloud-inquisitor | https://api.github.com/repos/jtimberlake/cloud-inquisitor | opened | CVE-2020-24025 (Medium) detected in node-sass-4.14.1.tgz | security vulnerability | ## CVE-2020-24025 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry... | True | CVE-2020-24025 (Medium) detected in node-sass-4.14.1.tgz - ## CVE-2020-24025 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around ... | non_priority | cve medium detected in node sass tgz cve medium severity vulnerability vulnerable library node sass tgz wrapper around libsass library home page a href path to dependency file cloud inquisitor frontend package json path to vulnerable library cloud inquisitor frontend... | 0 |

104,444 | 22,669,309,610 | IssuesEvent | 2022-07-03 11:20:25 | codechef-org/status | https://api.github.com/repos/codechef-org/status | closed | 🛑 CodeChef Goodies is down | status code-chef-goodies | In [`c4f21fa`](https://github.com/codechef-org/status/commit/c4f21fa2ca32e740310e26aae8eb35178b000a08

), CodeChef Goodies (https://goodies.codechef.com) was **down**:

- HTTP code: 0

- Response time: 0 ms

| 1.0 | 🛑 CodeChef Goodies is down - In [`c4f21fa`](https://github.com/codechef-org/status/commit/c4f21fa2ca32e740310e26aae8eb35178b000a08

), CodeChef Goodies (https://goodies.codechef.com) was **down**:

- HTTP code: 0

- Response time: 0 ms

| non_priority | 🛑 codechef goodies is down in codechef goodies was down http code response time ms | 0 |

109,797 | 23,824,318,564 | IssuesEvent | 2022-09-05 13:45:08 | aimhubio/aim | https://api.github.com/repos/aimhubio/aim | closed | Handle deprecations in PyTorch Lightning 1.7 API | area / integrations type / code-health phase / shipped | ## Proposed refactoring or deprecation

Change imports in `aim.sdk.adaptors.pytorch_lightning` to handle deprecations in PyTorch Lightning API.

* `pytorch_lightning.loggers.base.rank_zero_experiment` -> `pytorch_lightning.loggers.logger.rank_zero_experiment`

* `pytorch_lightning.loggers.base.LightningLoggerBase` ... | 1.0 | Handle deprecations in PyTorch Lightning 1.7 API - ## Proposed refactoring or deprecation

Change imports in `aim.sdk.adaptors.pytorch_lightning` to handle deprecations in PyTorch Lightning API.

* `pytorch_lightning.loggers.base.rank_zero_experiment` -> `pytorch_lightning.loggers.logger.rank_zero_experiment`

* `p... | non_priority | handle deprecations in pytorch lightning api proposed refactoring or deprecation change imports in aim sdk adaptors pytorch lightning to handle deprecations in pytorch lightning api pytorch lightning loggers base rank zero experiment pytorch lightning loggers logger rank zero experiment p... | 0 |
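One common way to handle a moved import like the `rank_zero_experiment` rename above is to try the new location first and fall back to the old one. The sketch below is a generic illustration, not Aim's actual code: `import_first` is a hypothetical helper, and only the two module paths named in the issue are assumed to exist in the respective PyTorch Lightning versions.

```python
import importlib


def import_first(name, module_paths):
    """Return attribute `name` from the first importable module that defines it."""
    for path in module_paths:
        try:
            module = importlib.import_module(path)
        except ImportError:
            continue  # this location does not exist in the installed version
        if hasattr(module, name):
            return getattr(module, name)
    raise ImportError(f"{name!r} not found in any of {module_paths}")


# Intended usage (requires pytorch_lightning to be installed):
# rank_zero_experiment = import_first(
#     "rank_zero_experiment",
#     ["pytorch_lightning.loggers.logger", "pytorch_lightning.loggers.base"],
# )
```

Listing the new path first means newer releases never touch the deprecated module, so no deprecation warning is triggered there.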

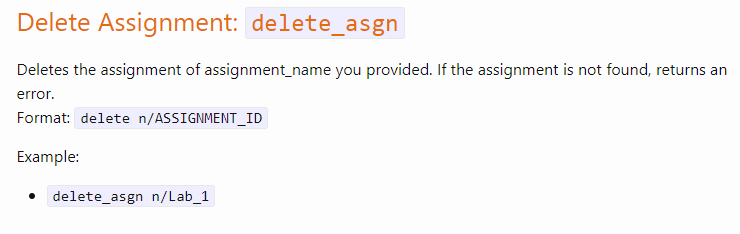

417,552 | 28,110,620,689 | IssuesEvent | 2023-03-31 06:50:59 | slackernoob/ped | https://api.github.com/repos/slackernoob/ped | opened | Delete Assignment given in User Guide not working | type.DocumentationBug severity.High | Delete Assignment command example given in the User Guide does not work.

`delete_asgn n/Lab_1`

`delete_asgn n/Lab_1`

| security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>vonage/client-2.4.0</b></p></summary>

<p></p>

<p>

</details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/... | True | vonage/client-2.4.0: 6 vulnerabilities (highest severity is: 8.1) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>vonage/client-2.4.0</b></p></summary>

<p></p>

<p>

</details>

## Vulnerabilities

| CVE | Severit... | non_priority | vonage client vulnerabilities highest severity is vulnerable library vonage client vulnerabilities cve severity cvss dependency type fixed in remediation available high ... | 0 |

20,207 | 6,827,658,068 | IssuesEvent | 2017-11-08 17:44:17 | zooniverse/Panoptes-Front-End | https://api.github.com/repos/zooniverse/Panoptes-Front-End | closed | Subject exists and show in the classification interface but can't be previewed under the subject set project builder page | bug project builder | I'm making a project for my summer student to mark boulders in Planet Four images (thanks for making a create toolset!) so it's a private project at the moment. I had trouble uploading images. Finally got it work, but they're not showing up in the preview on the project builder but the images are showing up in the proj... | 1.0 | Subject exists and show in the classification interface but can't be previewed under the subject set project builder page - I'm making a project for my summer student to mark boulders in Planet Four images (thanks for making a create toolset!) so it's a private project at the moment. I had trouble uploading images. Fin... | non_priority | subject exists and show in the classification interface but can t be previewed under the subject set project builder page i m making a project for my summer student to mark boulders in planet four images thanks for making a create toolset so it s a private project at the moment i had trouble uploading images fin... | 0 |

55,186 | 14,262,327,163 | IssuesEvent | 2020-11-20 12:47:52 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Parser cannot look up column from tables by same name from different schemas | C: Parser E: All Editions P: Medium T: Defect | Given a database like this:

```sql

create table a.t (i int);

create table b.t (j int);

```

The following statement:

```sql

select i, j from a.t, b.t

```

Fails to parse with this error:

```

org.jooq.impl.ParserException: Unknown field identifier: [1:9] select i[*], j from a.t, b.t

at org.jooq.impl... | 1.0 | Parser cannot look up column from tables by same name from different schemas - Given a database like this:

```sql

create table a.t (i int);

create table b.t (j int);

```

The following statement:

```sql

select i, j from a.t, b.t

```

Fails to parse with this error:

```

org.jooq.impl.ParserException: ... | non_priority | parser cannot look up column from tables by same name from different schemas given a database like this sql create table a t i int create table b t j int the following statement sql select i j from a t b t fails to parse with this error org jooq impl parserexception ... | 0 |
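To show that the statement in the parser report above is unambiguous SQL, the sketch below runs the same schema and query against SQLite from Python. SQLite is only a stand-in engine here and says nothing about jOOQ's internals; attached in-memory databases play the role of schemas `a` and `b`, and `query_two_schemas` and the inserted row values are made up for the example.

```python
import sqlite3


def query_two_schemas():
    """Create a.t(i) and b.t(j) in separate schemas and select both columns."""
    conn = sqlite3.connect(":memory:")
    # Attached in-memory databases act as the schemas "a" and "b".
    conn.execute("ATTACH DATABASE ':memory:' AS a")
    conn.execute("ATTACH DATABASE ':memory:' AS b")
    conn.execute("CREATE TABLE a.t (i INT)")
    conn.execute("CREATE TABLE b.t (j INT)")
    conn.execute("INSERT INTO a.t VALUES (1)")
    conn.execute("INSERT INTO b.t VALUES (2)")
    # Both tables are named "t", but each unqualified column exists in only one.
    rows = conn.execute("SELECT i, j FROM a.t, b.t").fetchall()
    conn.close()
    return rows


print(query_two_schemas())
```

The point of the comparison is that column lookup has to distinguish tables by their qualified name, not by the bare table name alone.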

126,368 | 17,023,385,216 | IssuesEvent | 2021-07-03 01:45:18 | USACE/cumulus | https://api.github.com/repos/USACE/cumulus | closed | [New UI] Overview | design enhancement | ### New Features

- [x] Left Navigation menu (mobile friendly)

- [x] **Home**

- [x] Design is more mobile friendly, but subject to change

- [x] Latest Updates Section to keep users informed of new products/changes

- [x] **Products**

- [x] Product names are more descriptive and human friendly

- [x] Produ... | 1.0 | [New UI] Overview - ### New Features

- [x] Left Navigation menu (mobile friendly)

- [x] **Home**

- [x] Design is more mobile friendly, but subject to change

- [x] Latest Updates Section to keep users informed of new products/changes

- [x] **Products**

- [x] Product names are more descriptive and human fri... | non_priority | overview new features left navigation menu mobile friendly home design is more mobile friendly but subject to change latest updates section to keep users informed of new products changes products product names are more descriptive and human friendly produc... | 0 |

95,186 | 10,868,310,410 | IssuesEvent | 2019-11-15 03:13:24 | IntelPython/sdc | https://api.github.com/repos/IntelPython/sdc | opened | [SDC] Update license.md | Documentation | Present [license](https://github.com/IntelPython/sdc/blob/master/LICENSE.md) file does not look right. Need to change it to the correct one | 1.0 | [SDC] Update license.md - Present [license](https://github.com/IntelPython/sdc/blob/master/LICENSE.md) file does not look right. Need to change it to the correct one | non_priority | update license md present file does not look right need to change it to the correct one | 0 |

26,592 | 13,061,725,168 | IssuesEvent | 2020-07-30 14:15:51 | ppy/osu | https://api.github.com/repos/ppy/osu | closed | Performance issues (high consumption of CPU and RAM) | type:performance | Stable Osu! (the other one) runs quite well on my notebook (it is old, but still works):

- Intel Celeron Bay Trail N2806 @ 1.6 Ghz;

- 2 GB RAM;

- Intel HD graphics 4400;

- Windows 8.1 Single Language;

The next screenshot shows the hardware usage when osu! is in stand-by (neither playing nor downloading beatmaps...):

... | True | Perfomance issues (High consume of CPU and RAM) - Stable Osu! (the other one) runs quite well on my notebook (it is old, but still works):

- Intel Celeron Bay Trail N2806 @ 1.6 Ghz;

- 2 GB RAM;

- Intel HD graphics 4400;

- Windows 8.1 Single Language;

The next screenshot show the hardware usage when osu! is in st... | non_priority | perfomance issues high consume of cpu and ram stable osu the other one runs quite well on my notebook it is old but still works intel celeron bay trail ghz gb ram intel hd graphics windows single language the next screenshot show the hardware usage when osu is in stand by ... | 0 |

226,875 | 18,045,932,799 | IssuesEvent | 2021-09-18 22:29:56 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.pfc.test.sanity_base.NEG_01E JUnit | Test_9999 logicmoo.pfc.test.sanity_base unit_test NEG_01E | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif neg_01e.pfc)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/issues?q=is%3Aissue+label%3ANEG_01E

GITLAB: https://logicmoo.org:208... | 3.0 | logicmoo.pfc.test.sanity_base.NEG_01E JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif neg_01e.pfc)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/issues?q=is%3Aissue+lab... | non_priority | logicmoo pfc test sanity base neg junit cd var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base timeout foreground preserve status s sigkill k lmoo clif neg pfc gh master issue finfo issue search gitlab latest this build github running v... | 0 |

29,020 | 13,923,694,393 | IssuesEvent | 2020-10-21 14:42:47 | ManageIQ/manageiq-api | https://api.github.com/repos/ManageIQ/manageiq-api | closed | API Performance | performance | Issues related to improving the performance of the ManageIQ Rest API

- [x] [Slow but small response for virtual columns seemingly due to N+1 queries](https://github.com/ManageIQ/manageiq-api/issues/869)

- [x] _[renderer.rb] Don't re-Rbac: part deux:_ #874

- [x] _AR VirtualAttribute queries + introspection:_ ~... | True | API Performance - Issues related to improving the performance of the ManageIQ Rest API

- [x] [Slow but small response for virtual columns seemingly due to N+1 queries](https://github.com/ManageIQ/manageiq-api/issues/869)

- [x] _[renderer.rb] Don't re-Rbac: part deux:_ #874

- [x] _AR VirtualAttribute queries +... | non_priority | api performance issues related to improving the performance of the manageiq rest api don t re rbac part deux ar virtualattribute queries introspection improve user login timings use update attribute over save for user login man... | 0 |

157,727 | 13,721,774,179 | IssuesEvent | 2020-10-03 00:15:05 | SJSU-Robotic/ros-curriculum | https://api.github.com/repos/SJSU-Robotic/ros-curriculum | opened | RE: lecture1.pdf, `roscore` behavior requires further elaboration | documentation | ## Problem Description

Regarding [lecture1.pdf](https://github.com/SJSU-Robotic/ros-curriculum/blob/master/readings/lecture1.pdf)

On slide 10, after running `roscore` in `terminal`, end users are presented with various links:

![image_from_ios]... | non_priority | re pdf roscore behavior requires further elaboration problem description regarding on slide after running roscore in terminal end users are presented with various links users unfamiliar with roscore s architecture are likely to assume that these links need to be opened and int... | 0 |

227,118 | 17,374,574,596 | IssuesEvent | 2021-07-30 18:49:53 | arnaudmillergoupil/veganrecipe | https://api.github.com/repos/arnaudmillergoupil/veganrecipe | opened | Web Design | documentation enhancement | - [ ] Overall site tree structure

- [ ] UI: what the site will look like, its main characteristics, and its

- [ ] Template for a recipe | 1.0 | Web Design - - [ ] Overall site tree structure

- [ ] UI: what the site will look like, its main characteristics, and its

- [ ] Template for a recipe | non_priority | web design overall site tree structure ui what the site will look like its main characteristics and its template for a recipe | 0 |

189,751 | 14,521,181,217 | IssuesEvent | 2020-12-14 06:55:52 | HumanBrainProject/interactive-viewer | https://api.github.com/repos/HumanBrainProject/interactive-viewer | closed | [Bug] After change connectivity source, if new source does not have data it breaks | bug needs test v2.3.0 | Steps to reproduce:

1. Load Cytoarchitectonic maps - v1.18

2. Select region "Area STS2 (STS) - right hemisphere"

3. Expand the connectivity

4. change connectivity from "1000BRAINS study" to "Averaged_FC_JuBrain_184Regions"

Expected Behavior

Instead of a connectivity diagram, it should return a message that co... | 1.0 | [Bug] After change connectivity source, if new source does not have data it breaks - Steps to reproduce:

1. Load Cytoarchitectonic maps - v1.18

2. Select region "Area STS2 (STS) - right hemisphere"

3. Expand the connectivity

4. change connectivity from "1000BRAINS study" to "Averaged_FC_JuBrain_184Regions"

Exp... | non_priority | after change connectivity source if new source does not have data it breaks steps to reproduce load cytoarchitectonic maps select region area sts right hemisphere expand the connectivity change connectivity from study to averaged fc jubrain expected behavior instead of ... | 0 |

21,332 | 4,704,099,070 | IssuesEvent | 2016-10-13 10:13:42 | Sylius/Sylius | https://api.github.com/repos/Sylius/Sylius | closed | [Docs] Models are not in bundle, but in component | Bug Documentation | For example, in this file `docs/bundles/SyliusPromotionBundle/models.rst` we have: All the models of this bundle are defined in Sylius\Bundle\PromotionBundle\Model.

But in PromotionBundle there is no Model folder...

are failing**:

https://prow.k8s.io/view/gcs/kubernetes-jenkins/pr-logs/directory/pull-kubernetes-integration/1172176898202013696

... | 1.0 | flake: TestWebhookAdmission - <!-- Please only use this template for submitting reports about failing tests in Kubernetes CI jobs -->

**Which jobs are failing**:

pull-kubernetes-integration

**Which test(s) are failing**:

https://prow.k8s.io/view/gcs/kubernetes-jenkins/pr-logs/directory/pull-kubernetes-integ... | non_priority | flake testwebhookadmission which jobs are failing pull kubernetes integration which test s are failing run testwebhookadmissionwithwatchcache apiextensions io customresourcedefinitions delete client go parsed scheme endpoint endpoint go ... | 0 |

180,969 | 21,629,319,936 | IssuesEvent | 2022-05-05 08:02:45 | druidfi/security-checker-action | https://api.github.com/repos/druidfi/security-checker-action | opened | Pending security updates in production! | security | ## Security updates available

- `drupal/core` from 9.3.9 to [9.3.12](https://www.drupal.org/project/drupal/releases/9.3.12)

- `drupal/ctools` from 3.4.0 to [3.7.0](https://www.drupal.org/project/ctools/releases/8.x-3.7)

These updates are pending and were found with scanning `composer.lock` and checking for available ... | True | Pending security updates in production! - ## Security updates available

- `drupal/core` from 9.3.9 to [9.3.12](https://www.drupal.org/project/drupal/releases/9.3.12)

- `drupal/ctools` from 3.4.0 to [3.7.0](https://www.drupal.org/project/ctools/releases/8.x-3.7)

These updates are pending and were found with scanning `... | non_priority | pending security updates in production security updates available drupal core from to drupal ctools from to these updates are pending and were found with scanning composer lock and checking for available security updates branch refs heads main | 0 |

135,130 | 18,667,101,553 | IssuesEvent | 2021-10-30 02:14:59 | eclipse/rdf4j | https://api.github.com/repos/eclipse/rdf4j | closed | XML-based parsers should not load external DTDs by default | 🐞 bug 📦 rio security | ### Problem description

We recently received a improvement request for Any23 to [optionally disable remote HTTP connections when resolving XML entities](https://issues.apache.org/jira/browse/ANY23-504). Any23 utilizes rdf4j 3.1.2. The stack trace provided by the reporter indicates that `org.eclipse.rdf4j.rio.trix.TriX... | True | XML-based parsers should not load external DTDs by default - ### Problem description

We recently received a improvement request for Any23 to [optionally disable remote HTTP connections when resolving XML entities](https://issues.apache.org/jira/browse/ANY23-504). Any23 utilizes rdf4j 3.1.2. The stack trace provided b... | non_priority | xml based parsers should not load external dtds by default problem description we recently received a improvement request for to utilizes the stack trace provided by the reporter indicates that org eclipse rio trix trixparser parsing can lead to a hung thread for about two minutes with an... | 0 |

55,609 | 13,647,449,462 | IssuesEvent | 2020-09-26 03:22:45 | TerryCavanagh/diceydungeonsbeta | https://api.github.com/repos/TerryCavanagh/diceydungeonsbeta | closed | Suggestion/Ideas: Silence for Robot and Jester | v0.5: 21st June Build | for robot:

* only the calculate button is visible

or

* no jackpot

for jester:

* only the discard/snap button is visible (like multicard with less multi)

or

* can't discard/snap | 1.0 | Suggestion/Ideas: Silence for Robot and Jester - for robot:

* only the calculate button is visible

or

* no jackpot

for jester:

* only the discard/snap button is visible (like multicard with less multi)

or

* can't discard/snap | non_priority | suggestion ideas silence for robot and jester for robot only the calculate button is visible or no jackpot for jester only the discard snap button is visible like multicard with less multi or can t discard snap | 0 |

306,229 | 23,150,457,214 | IssuesEvent | 2022-07-29 07:47:40 | damdalf/Personal-Projects | https://api.github.com/repos/damdalf/Personal-Projects | closed | Remove all 'TODO' comments from stock.py and make them Git issues | documentation | **Requirements**

All existing 'TODO' comments shall be converted into Git issues and removed from stock.py. | 1.0 | Remove all 'TODO' comments from stock.py and make them Git issues - **Requirements**

All existing 'TODO' comments shall be converted into Git issues and removed from stock.py. | non_priority | remove all todo comments from stock py and make them git issues requirements all existing todo comments shall be converted into git issues and removed from stock py | 0 |

313,753 | 23,490,420,022 | IssuesEvent | 2022-08-17 18:08:51 | astropy/astroquery | https://api.github.com/repos/astropy/astroquery | opened | DOC: identify smaller datasets to plug into documentation examples | Documentation esa.esa_hubble esa | Some of the code examples in the ESA modules are being skipped for testing as they are pulling largish datasets. It would be wonderful to identify much smaller datasets to include the examples in the testing.

cc @jespinosaar | 1.0 | DOC: identify smaller datasets to plug into documentation examples - Some of the code examples in the ESA modules are being skipped for testing as they are pulling largish datasets. It would be wonderful to identify much smaller datasets to include the examples in the testing.

cc @jespinosaar | non_priority | doc identify smaller datasets to plug into documentation examples some of the code examples in the esa modules are being skipped for testing as they are pulling largish datasets it would be wonderful to identify much smaller datasets to include the examples in the testing cc jespinosaar | 0 |

245,290 | 18,778,801,636 | IssuesEvent | 2021-11-08 02:03:37 | AY2122S1-CS2103T-T15-1/tp | https://api.github.com/repos/AY2122S1-CS2103T-T15-1/tp | closed | [DOC] Rama Update DG | type.Documentation | Each member should describe the implementation of at least one enhancement she/he has added (or planning to add).

Expected length: 1+ page per person | 1.0 | [DOC] Rama Update DG - Each member should describe the implementation of at least one enhancement she/he has added (or planning to add).

Expected length: 1+ page per person | non_priority | rama update dg each member should describe the implementation of at least one enhancement she he has added or planning to add expected length page per person | 0 |

62,928 | 7,657,573,833 | IssuesEvent | 2018-05-10 20:07:48 | endangereddataweek/resources | https://api.github.com/repos/endangereddataweek/resources | opened | Revise existing workshop material to be more generalizable | brainstorming design enhancement mozsprint review | On a suggestion by @chadsansing, I'll work on assessing and redesigning my existing workshop slides to make them more flexible for adaptability. Any review of the slides for what's working vs. what's not would be helpful! | 1.0 | Revise existing workshop material to be more generalizable - On a suggestion by @chadsansing, I'll work on assessing and redesigning my existing workshop slides to make them more flexible for adaptability. Any review of the slides for what's working vs. what's not would be helpful! | non_priority | revise existing workshop material to be more generalizable on a suggestion by chadsansing i ll work on assessing and redesigning my existing workshop slides to make them more flexible for adaptability any review of the slides for what s working vs what s not would be helpful | 0 |

128,193 | 18,040,476,659 | IssuesEvent | 2021-09-18 01:19:55 | Reid-Turner/uppy | https://api.github.com/repos/Reid-Turner/uppy | opened | CVE-2021-3801 (Medium) detected in prismjs-1.22.0.tgz | security vulnerability | ## CVE-2021-3801 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>prismjs-1.22.0.tgz</b></p></summary>

<p>Lightweight, robust, elegant syntax highlighting. A spin-off project from Dab... | True | CVE-2021-3801 (Medium) detected in prismjs-1.22.0.tgz - ## CVE-2021-3801 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>prismjs-1.22.0.tgz</b></p></summary>

<p>Lightweight, robust, ... | non_priority | cve medium detected in prismjs tgz cve medium severity vulnerability vulnerable library prismjs tgz lightweight robust elegant syntax highlighting a spin off project from dabblet library home page a href dependency hierarchy uppy io file website tgz root libr... | 0 |