| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (string length 19) | repo (string length 5 to 112) | repo_url (string length 34 to 141) | action (stringclasses, 3 values) | title (string length 1 to 844) | labels (string length 4 to 721) | body (string length 1 to 261k) | index (stringclasses, 12 values) | text_combine (string length 96 to 261k) | label (stringclasses, 2 values) | text (string length 96 to 248k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

270,314 | 20,597,409,039 | IssuesEvent | 2022-03-05 18:25:54 | ReactiveX/RxPY | https://api.github.com/repos/ReactiveX/RxPY | closed | Getting Started out of date | documentation | The Getting Started in the notebooks uses Observer and from_iterable, which are not used anymore by the library.

The getting started should be simple to do and the current one asks for digging into the documentation. | 1.0 | Getting Started out of date - The Getting Started in the notebooks uses Observer and from_iterable, which are not used anymore by the library.

The getting started should be simple to do and the current one asks for digging into the documentation. | non_priority | getting started out of date the getting started in the notebooks uses observer and from iterable which are not used anymore by the library the getting started should be simple to do and the current one asks for digging into the documentation | 0 |

314,617 | 27,013,901,649 | IssuesEvent | 2023-02-10 17:32:57 | AFM-SPM/TopoStats | https://api.github.com/repos/AFM-SPM/TopoStats | closed | Remove unnecessary images from tests | testing | Tests take ages to run, I introduced comparing Matplotlib generated images to many of the unittests but I think there are too many in there now.

We should leave those in for explicit testing of `plottingfuncs` and `plotting` (work in progress!) but all the other arrays are checked by their sums and so the graphs the... | 1.0 | Remove unnecessary images from tests - Tests take ages to run, I introduced comparing Matplotlib generated images to many of the unittests but I think there are too many in there now.

We should leave those in for explicit testing of `plottingfuncs` and `plotting` (work in progress!) but all the other arrays are chec... | non_priority | remove unnecessary images from tests tests take ages to run i introduced comparing matplotlib generated images to many of the unittests but i think there are too many in there now we should leave those in for explicit testing of plottingfuncs and plotting work in progress but all the other arrays are chec... | 0 |

378,978 | 26,346,843,589 | IssuesEvent | 2023-01-10 23:04:10 | gr0vity-dev/nano-node-tracker | https://api.github.com/repos/gr0vity-dev/nano-node-tracker | opened | Break up and document node source | documentation quality improvements | 2018-08-28T16:21:23Z cryptocodeA common feedback from new devs looking at the code is the large and mostly undocumented source files.Breaking it up makes navigating easier and will reduce compile times, esp. when editing headers which many files depend on due to their scope. is already done.(This issue is work in prog... | 1.0 | Break up and document node source - 2018-08-28T16:21:23Z cryptocodeA common feedback from new devs looking at the code is the large and mostly undocumented source files.Breaking it up makes navigating easier and will reduce compile times, esp. when editing headers which many files depend on due to their scope. is alre... | non_priority | break up and document node source cryptocodea common feedback from new devs looking at the code is the large and mostly undocumented source files breaking it up makes navigating easier and will reduce compile times esp when editing headers which many files depend on due to their scope is already done t... | 0 |

86,892 | 10,850,140,545 | IssuesEvent | 2019-11-13 08:08:16 | vaadin/flow | https://api.github.com/repos/vaadin/flow | closed | Change generated TS types for nullable Java types | ccdm needs design | The TS type wrappers generated by Connect should fix the DX issue with nullability: https://github.com/vaadin/vaadin-connect/issues/404.

Current implementation:

Java:

```java

/* Category.java */

public class Category {

private Long id;

private String name

}

/* CategoryService.java */

@VaadinServic... | 1.0 | Change generated TS types for nullable Java types - The TS type wrappers generated by Connect should fix the DX issue with nullability: https://github.com/vaadin/vaadin-connect/issues/404.

Current implementation:

Java:

```java

/* Category.java */

public class Category {

private Long id;

private String ... | non_priority | change generated ts types for nullable java types the ts type wrappers generated by connect should fix the dx issue with nullability current implementation java java category java public class category private long id private string name categoryservice java vaadinservi... | 0 |

356,094 | 25,176,107,693 | IssuesEvent | 2022-11-11 09:24:12 | totsukatomofumi/pe | https://api.github.com/repos/totsukatomofumi/pe | opened | Missing punctuation | type.DocumentationBug severity.Low |

The missing comma made this hard to read at first. Page 16 of User Guide.

<!--session: 1668154060078-7154a181-3c17-4a5d-8442-00e667ee7736-->

<!--Version: Web v3.4.4--> | 1.0 | Missing punctuation -

The missing comma made this hard to read at first. Page 16 of User Guide.

<!--session: 1668154060078-7154a181-3c17-4a5d-8442-00e667ee7736-->

<!--V... | non_priority | missing punctuation the missing comma made this hard to read at first page of user guide | 0 |

4,781 | 5,289,808,618 | IssuesEvent | 2017-02-08 18:17:30 | hydroshare/hydroshare | https://api.github.com/repos/hydroshare/hydroshare | closed | Add requires.io badge to Github | in progress SECURITY | Allow easy checking for out of date Python dependencies

- [x] rework [hs_docker_base](https://github.com/hydroshare/hs_docker_base) to comply to requires.io formatting.

- Existing requirements are not brought in by a file, but rather by direct definition in the Dockerfile

- [ ] ~update Celery to 4.0.0 to allow for... | True | Add requires.io badge to Github - Allow easy checking for out of date Python dependencies

- [x] rework [hs_docker_base](https://github.com/hydroshare/hs_docker_base) to comply to requires.io formatting.

- Existing requirements are not brought in by a file, but rather by direct definition in the Dockerfile

- [ ] ~u... | non_priority | add requires io badge to github allow easy checking for out of date python dependencies rework to comply to requires io formatting existing requirements are not brought in by a file but rather by direct definition in the dockerfile update celery to to allow for the removal of pickle refe... | 0 |

182,004 | 21,664,473,871 | IssuesEvent | 2022-05-07 01:28:11 | rzr/rzr-presentation-gstreamer | https://api.github.com/repos/rzr/rzr-presentation-gstreamer | closed | WS-2016-0031 (High) detected in ws-0.8.0.tgz - autoclosed | security vulnerability | ## WS-2016-0031 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ws-0.8.0.tgz</b></p></summary>

<p>simple to use, blazing fast and thoroughly tested websocket client, server and console... | True | WS-2016-0031 (High) detected in ws-0.8.0.tgz - autoclosed - ## WS-2016-0031 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ws-0.8.0.tgz</b></p></summary>

<p>simple to use, blazing fas... | non_priority | ws high detected in ws tgz autoclosed ws high severity vulnerability vulnerable library ws tgz simple to use blazing fast and thoroughly tested websocket client server and console for node js up to date against rfc library home page a href path to dependency file ... | 0 |

10,630 | 2,962,553,209 | IssuesEvent | 2015-07-10 01:34:15 | TypeStrong/atom-typescript | https://api.github.com/repos/TypeStrong/atom-typescript | closed | Typescript Plugin Reload | by-design | Switching git branches doesn't prompt a reload of atom-typescript

After switching between branches, compilation errors will appear because of inconsistent state. I need to close and re-open atom for these errors to go away. | 1.0 | Typescript Plugin Reload - Switching git branches doesn't prompt a reload of atom-typescript

After switching between branches, compilation errors will appear because of inconsistent state. I need to close and re-open atom for these errors to go away. | non_priority | typescript plugin reload switching git branches doesn t prompt a reload of atom typescript after switching between branches compilation errors will appear because of inconsistent state i need to close and re open atom for these errors to go away | 0 |

233,263 | 25,758,329,224 | IssuesEvent | 2022-12-08 18:12:00 | whitesource-ps/ws-cleanup-tool | https://api.github.com/repos/whitesource-ps/ws-cleanup-tool | opened | certifi-2022.6.15-py3-none-any.whl: 1 vulnerabilities (highest severity is: 6.8) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>certifi-2022.6.15-py3-none-any.whl</b></p></summary>

<p>Python package for providing Mozilla's CA Bundle.</p>

<p>Library home page: <a href="https://files.pythonhoste... | True | certifi-2022.6.15-py3-none-any.whl: 1 vulnerabilities (highest severity is: 6.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>certifi-2022.6.15-py3-none-any.whl</b></p></summary>

<p>Python package for providin... | non_priority | certifi none any whl vulnerabilities highest severity is vulnerable library certifi none any whl python package for providing mozilla s ca bundle library home page a href path to dependency file requirements txt path to vulnerable library requirements txt tmp ws s... | 0 |

223,090 | 24,711,644,089 | IssuesEvent | 2022-10-20 01:35:57 | raindigi/site-preview | https://api.github.com/repos/raindigi/site-preview | closed | CVE-2021-3805 (High) detected in object-path-0.11.4.tgz - autoclosed | security vulnerability | ## CVE-2021-3805 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>object-path-0.11.4.tgz</b></p></summary>

<p>Access deep object properties using a path</p>

<p>Library home page: <a hre... | True | CVE-2021-3805 (High) detected in object-path-0.11.4.tgz - autoclosed - ## CVE-2021-3805 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>object-path-0.11.4.tgz</b></p></summary>

<p>Acce... | non_priority | cve high detected in object path tgz autoclosed cve high severity vulnerability vulnerable library object path tgz access deep object properties using a path library home page a href path to dependency file package json path to vulnerable library node modules obj... | 0 |

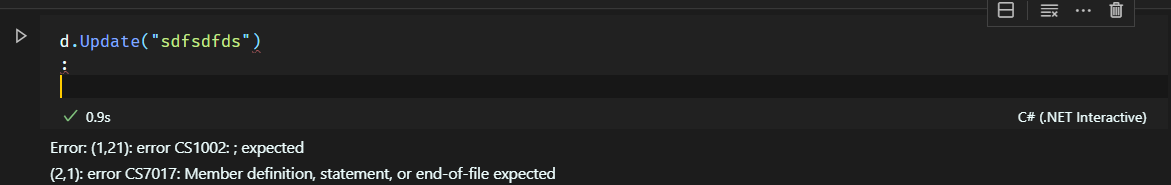

70,565 | 13,492,754,775 | IssuesEvent | 2020-09-11 18:32:23 | dotnet/interactive | https://api.github.com/repos/dotnet/interactive | closed | Failed execution in vscode notebook display green tick | Area-VS Code Extension Impact-Medium bug | The cell state is success even if the submission failed

| 1.0 | Failed execution in vscode notebook display green tick - The cell state is success even if the submission failed

| non_priority | failed execution in vscode notebook display green tick the cell state is success even if the submission failed | 0 |

8,950 | 7,516,991,102 | IssuesEvent | 2018-04-12 00:57:48 | LighthouseBlog/Blog | https://api.github.com/repos/LighthouseBlog/Blog | opened | Authentication not redirecting after a certain amount of time | bug security | <!--

PLEASE HELP US PROCESS GITHUB ISSUES FASTER BY PROVIDING THE FOLLOWING INFORMATION.

ISSUES MISSING IMPORTANT INFORMATION MAY BE CLOSED WITHOUT INVESTIGATION.

-->

## I'm submitting a...

<!-- Check one of the following options with "x" -->

<pre><code>

[ ] Regression (a behavior that used to work and stopp... | True | Authentication not redirecting after a certain amount of time - <!--

PLEASE HELP US PROCESS GITHUB ISSUES FASTER BY PROVIDING THE FOLLOWING INFORMATION.

ISSUES MISSING IMPORTANT INFORMATION MAY BE CLOSED WITHOUT INVESTIGATION.

-->

## I'm submitting a...

<!-- Check one of the following options with "x" -->

<pr... | non_priority | authentication not redirecting after a certain amount of time please help us process github issues faster by providing the following information issues missing important information may be closed without investigation i m submitting a regression a behavior that used to work and sto... | 0 |

175,231 | 27,811,241,651 | IssuesEvent | 2023-03-18 06:00:16 | HHS/OPRE-OPS | https://api.github.com/repos/HHS/OPRE-OPS | closed | Experiment on Figma Components | design | ### Goals

* Determine if Figma components ease the iterative and creative design process

* Determine how much current reusability there is with our design

* Assess what tasks will be on future stories, or totally net-new stories should result

### Tasks

- [x] Meet with and learn from other Flexioneers about design sys... | 1.0 | Experiment on Figma Components - ### Goals

* Determine if Figma components ease the iterative and creative design process

* Determine how much current reusability there is with our design

* Assess what tasks will be on future stories, or totally net-new stories should result

### Tasks

- [x] Meet with and learn from o... | non_priority | experiment on figma components goals determine if figma components ease the iterative and creative design process determine how much current reusability there is with our design assess what tasks will be on future stories or totally net new stories should result tasks meet with and learn from oth... | 0 |

244,959 | 20,734,563,084 | IssuesEvent | 2022-03-14 12:36:34 | hyperledger/cactus | https://api.github.com/repos/hyperledger/cactus | closed | test: fix yarn test:all on GCP instances | bug Flaky-Test-Automation Tests | **Describe the bug**

I spawn an Ubuntu 20 VM, installed java 8, latest docker and node 16.13, run configure (sucess) and then npm run test:all. There are tests that fail

**To Reproduce**

Spawn a VM on Google Cloud ( c2-standard-8, 70gb SSD 8 cores, 32gb ram), run npm configure. After that run npm run test:all

... | 2.0 | test: fix yarn test:all on GCP instances - **Describe the bug**

I spawn an Ubuntu 20 VM, installed java 8, latest docker and node 16.13, run configure (sucess) and then npm run test:all. There are tests that fail

**To Reproduce**

Spawn a VM on Google Cloud ( c2-standard-8, 70gb SSD 8 cores, 32gb ram), run npm co... | non_priority | test fix yarn test all on gcp instances describe the bug i spawn an ubuntu vm installed java latest docker and node run configure sucess and then npm run test all there are tests that fail to reproduce spawn a vm on google cloud standard ssd cores ram run npm configure a... | 0 |

15,702 | 19,848,397,889 | IssuesEvent | 2022-01-21 09:31:07 | ooi-data/RS03ASHS-MJ03B-09-BOTPTA304-streamed-botpt_nano_sample | https://api.github.com/repos/ooi-data/RS03ASHS-MJ03B-09-BOTPTA304-streamed-botpt_nano_sample | opened | 🛑 Processing failed: ValueError | process | ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T09:31:06.652844.

## Details

Flow name: `RS03ASHS-MJ03B-09-BOTPTA304-streamed-botpt_nano_sample`

Task name: `processing_task`

Error type: `ValueError`

Error message: cannot reshape array of size 1209600 into shape (25000000,)

<d... | 1.0 | 🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T09:31:06.652844.

## Details

Flow name: `RS03ASHS-MJ03B-09-BOTPTA304-streamed-botpt_nano_sample`

Task name: `processing_task`

Error type: `ValueError`

Error message: cannot reshape array of size ... | non_priority | 🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name streamed botpt nano sample task name processing task error type valueerror error message cannot reshape array of size into shape traceback ... | 0 |

40,209 | 9,908,257,073 | IssuesEvent | 2019-06-27 17:49:22 | idaholab/moose | https://api.github.com/repos/idaholab/moose | opened | MOOSEDocs: links are same color as note box heading background | T: defect | ## Bug Description

There is at least one instance where a link is used in a note box header: the "Core Extension" page of the MOOSEDocs documentation. The link "Markdown" is invisible in the first note. The fix may just be to not use links in note headers, rather than change colors.

## Steps to Reproduce

See above... | 1.0 | MOOSEDocs: links are same color as note box heading background - ## Bug Description

There is at least one instance where a link is used in a note box header: the "Core Extension" page of the MOOSEDocs documentation. The link "Markdown" is invisible in the first note. The fix may just be to not use links in note header... | non_priority | moosedocs links are same color as note box heading background bug description there is at least one instance where a link is used in a note box header the core extension page of the moosedocs documentation the link markdown is invisible in the first note the fix may just be to not use links in note header... | 0 |

326,532 | 24,089,774,175 | IssuesEvent | 2022-09-19 13:55:36 | DaveyJH/ci-portfolio-four | https://api.github.com/repos/DaveyJH/ci-portfolio-four | closed | Docs: Add live site links and repo link | documentation 1 sprint-12 | **Details:**

Add live site links and repo link

- Render

- Heroku | 1.0 | Docs: Add live site links and repo link - **Details:**

Add live site links and repo link

- Render

- Heroku | non_priority | docs add live site links and repo link details add live site links and repo link render heroku | 0 |

15,990 | 20,188,203,128 | IssuesEvent | 2022-02-11 01:17:42 | savitamittalmsft/WAS-SEC-TEST | https://api.github.com/repos/savitamittalmsft/WAS-SEC-TEST | opened | Automatically remove/obfuscate personally identifiable information (PII) for this workload | WARP-Import WAF FEB 2021 Security Performance and Scalability Capacity Management Processes Health Modeling & Monitoring Application Level Monitoring | <a href="https://docs.microsoft.com/azure/search/cognitive-search-skill-pii-detection">Automatically remove/obfuscate personally identifiable information (PII) for this workload</a>

<p><b>Why Consider This?</b></p>

Extra care should be taken around logging of sensitive application areas. PII (contact informatio... | 1.0 | Automatically remove/obfuscate personally identifiable information (PII) for this workload - <a href="https://docs.microsoft.com/azure/search/cognitive-search-skill-pii-detection">Automatically remove/obfuscate personally identifiable information (PII) for this workload</a>

<p><b>Why Consider This?</b></p>

Extr... | non_priority | automatically remove obfuscate personally identifiable information pii for this workload why consider this extra care should be taken around logging of sensitive application areas pii contact information payment information etc should not be stored in any application logs and protective measures... | 0 |

175,753 | 21,327,565,329 | IssuesEvent | 2022-04-18 02:16:38 | fluent/fluent-bit | https://api.github.com/repos/fluent/fluent-bit | closed | Security Vulnerabilities for fluent-bit:1.8.10 | Stale security | ## Bug Report

**Describe the bug**

Hi team,

Security-scanning the latest release version (`v1.8.10`) for our fluent-bit image, we have found two vulnerabilities (high and medium severity) that got in via the fluent-bit binary installation.

Would be highly appreciated if you could either rescore them based on your... | True | Security Vulnerabilities for fluent-bit:1.8.10 - ## Bug Report

**Describe the bug**

Hi team,

Security-scanning the latest release version (`v1.8.10`) for our fluent-bit image, we have found two vulnerabilities (high and medium severity) that got in via the fluent-bit binary installation.

Would be highly appreciat... | non_priority | security vulnerabilities for fluent bit bug report describe the bug hi team security scanning the latest release version for our fluent bit image we have found two vulnerabilities high and medium severity that got in via the fluent bit binary installation would be highly appreciated ... | 0 |

30,736 | 8,583,555,625 | IssuesEvent | 2018-11-13 20:04:10 | travis-ci/travis-ci | https://api.github.com/repos/travis-ci/travis-ci | closed | Passing build artifacts across stages | build stages feature-request important locked | We want to achieve a 2 stage CI:

* A `build` stage that compiles a program. 2 builds: mac and linux.

* A `test` stage that use the compiled program. Multiple builds: 2 OSes * number of different language versions (e.g. `python: 2.7` and `python: 3.5`).

It is hard to pass the compiled program to the `test` stage.... | 1.0 | Passing build artifacts across stages - We want to achieve a 2 stage CI:

* A `build` stage that compiles a program. 2 builds: mac and linux.

* A `test` stage that use the compiled program. Multiple builds: 2 OSes * number of different language versions (e.g. `python: 2.7` and `python: 3.5`).

It is hard to pass t... | non_priority | passing build artifacts across stages we want to achieve a stage ci a build stage that compiles a program builds mac and linux a test stage that use the compiled program multiple builds oses number of different language versions e g python and python it is hard to pass t... | 0 |

7,999 | 4,120,203,029 | IssuesEvent | 2016-06-08 17:08:44 | Azure/azure-iot-gateway-sdk | https://api.github.com/repos/Azure/azure-iot-gateway-sdk | closed | Building on Raspbian - Raspberry PI 3 - Errors and workaround | build | Trying to build the gateway sources on a PI 3, using the **build.sh** script, fails with the following error:

`[ 43%] Building C object deps/azure-iot-sdks/c/azure-uamqp-c/tests/session_unittests/CMakeFiles/session_unittests_exe.dir/main.c.o

Linking CXX executable session_unittests_exe

Linking CXX executable mqtt_... | 1.0 | Building on Raspbian - Raspberry PI 3 - Errors and workaround - Trying to build the gateway sources on a PI 3, using the **build.sh** script, fails with the following error:

`[ 43%] Building C object deps/azure-iot-sdks/c/azure-uamqp-c/tests/session_unittests/CMakeFiles/session_unittests_exe.dir/main.c.o

Linking CX... | non_priority | building on raspbian raspberry pi errors and workaround trying to build the gateway sources on a pi using the build sh script fails with the following error building c object deps azure iot sdks c azure uamqp c tests session unittests cmakefiles session unittests exe dir main c o linking cxx exe... | 0 |

58,421 | 8,257,946,063 | IssuesEvent | 2018-09-13 07:35:42 | sebcrozet/ncollide | https://api.github.com/repos/sebcrozet/ncollide | closed | Update the section about event handling on the user guide. | documentation | In particular, the callbacks for event handling are no longer present on ncollide 0.14. | 1.0 | Update the section about event handling on the user guide. - In particular, the callbacks for event handling are no longer present on ncollide 0.14. | non_priority | update the section about event handling on the user guide in particular the callbacks for event handling are no longer present on ncollide | 0 |

52,381 | 22,171,451,792 | IssuesEvent | 2022-06-06 01:28:25 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | reopened | Support for adding service account permissions while setting up azurerm_storage_sync_cloud_endpoint | question service/storage | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to th... | 1.0 | Support for adding service account permissions while setting up azurerm_storage_sync_cloud_endpoint - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [r... | non_priority | support for adding service account permissions while setting up azurerm storage sync cloud endpoint is there an existing issue for this i have searched the existing issues community note please vote on this issue by adding a thumbsup to the original issue to help the community and m... | 0 |

155,982 | 12,289,317,639 | IssuesEvent | 2020-05-09 20:53:52 | openshift/openshift-azure | https://api.github.com/repos/openshift/openshift-azure | closed | readiness failed on DeploymentConfig | kind/test-flake lifecycle/rotten | /kind test-flake

During scale up/down readiness failed during sanity check

`time="2019-12-10T13:48:45Z" level=error msg="templateinstance e2e-test-h3dty/e2e-test-h3dty failed: Failed (readiness failed on DeploymentConfig e2e-test-h3dty/django-psql-persistent)" func="github.com/openshift/openshift-azure/test/e2e/sta... | 1.0 | readiness failed on DeploymentConfig - /kind test-flake

During scale up/down readiness failed during sanity check

`time="2019-12-10T13:48:45Z" level=error msg="templateinstance e2e-test-h3dty/e2e-test-h3dty failed: Failed (readiness failed on DeploymentConfig e2e-test-h3dty/django-psql-persistent)" func="github.co... | non_priority | readiness failed on deploymentconfig kind test flake during scale up down readiness failed during sanity check time level error msg templateinstance test test failed failed readiness failed on deploymentconfig test django psql persistent func github com openshift openshift azure t... | 0 |

191 | 4,513,527,470 | IssuesEvent | 2016-09-04 10:20:46 | backdrop-ops/backdropcms.org | https://api.github.com/repos/backdrop-ops/backdropcms.org | opened | Project release node title not updated when GitHub release is changed. | type - github-automation | @docwilmot has made an initial release of https://github.com/backdrop-contrib/block_disabler and made the common mistake to tag the release as 1.x-1.x (https://github.com/backdrop-contrib/block_disabler/issues/1). Even though the release was edited and changed to 1.x-1.0.0, the respective project node on b.org still sh... | 1.0 | Project release node title not updated when GitHub release is changed. - @docwilmot has made an initial release of https://github.com/backdrop-contrib/block_disabler and made the common mistake to tag the release as 1.x-1.x (https://github.com/backdrop-contrib/block_disabler/issues/1). Even though the release was edite... | non_priority | project release node title not updated when github release is changed docwilmot has made an initial release of and made the common mistake to tag the release as x x even though the release was edited and changed to x the respective project node on b org still shows x x and points to the origin... | 0 |

28,449 | 12,840,675,958 | IssuesEvent | 2020-07-07 21:30:28 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | azurerm_api_management_identity_provider_aad keeps updating client_secret | service/api-management | ### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or "me too" comments, they generate extra no... | 1.0 | azurerm_api_management_identity_provider_aad keeps updating client_secret - ### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this requ... | non_priority | azurerm api management identity provider aad keeps updating client secret community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue follo... | 0 |

41,577 | 6,919,579,185 | IssuesEvent | 2017-11-29 15:51:26 | samvera-labs/valkyrie | https://api.github.com/repos/samvera-labs/valkyrie | opened | Document how to create an array with its order preserved | documentation | I want to do something like:

``` ruby

class ThingWithAnOrderedAttribute < Valkyrie::Resource

attribute :ordered_array, Valkyrie::Types::Array

end

```

And know that the elements in `ordered_array` have their ordered preserved. It would also be doubly-nice if we could use something like:

``` ruby

class ThingWit... | 1.0 | Document how to create an array with its order preserved - I want to do something like:

``` ruby

class ThingWithAnOrderedAttribute < Valkyrie::Resource

attribute :ordered_array, Valkyrie::Types::Array

end

```

And know that the elements in `ordered_array` have their ordered preserved. It would also be doubly-nic... | non_priority | document how to create an array with its order preserved i want to do something like ruby class thingwithanorderedattribute valkyrie resource attribute ordered array valkyrie types array end and know that the elements in ordered array have their ordered preserved it would also be doubly nic... | 0 |

310,240 | 26,707,711,241 | IssuesEvent | 2023-01-27 19:50:25 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Teste de generalizacao para a tag Informações Institucionais - Leis Municipais - Brumadinho | generalization test development template - ABO (21) subtag - Registro das Competências subtag - Leis Municipais | DoD: Realizar o teste de Generalização do validador da tag Informações Institucionais - Leis Municipais para o Município de Brumadinho. | 1.0 | Teste de generalizacao para a tag Informações Institucionais - Leis Municipais - Brumadinho - DoD: Realizar o teste de Generalização do validador da tag Informações Institucionais - Leis Municipais para o Município de Brumadinho. | non_priority | teste de generalizacao para a tag informações institucionais leis municipais brumadinho dod realizar o teste de generalização do validador da tag informações institucionais leis municipais para o município de brumadinho | 0 |

190,955 | 14,589,514,239 | IssuesEvent | 2020-12-19 02:20:52 | red/red | https://api.github.com/repos/red/red | closed | unix-style LF + read/lines = trash being read | status.built status.tested test.written type.bug | **Describe the bug**

```

Red []

recycle/off

make-dir %tmp$

files: []

repeat i 100 [

append files f: rejoin [%tmp$/drw i ".red"]

write/binary f append/dup copy {^/} "11 " i

]

foreach f files [read/lines probe f]

```

Running this script I'm getting:

```

%tmp$/drw1.red

%tmp$/drw2.red

%tmp$/drw3.red

... | 2.0 | unix-style LF + read/lines = trash being read - **Describe the bug**

```

Red []

recycle/off

make-dir %tmp$

files: []

repeat i 100 [

append files f: rejoin [%tmp$/drw i ".red"]

write/binary f append/dup copy {^/} "11 " i

]

foreach f files [read/lines probe f]

```

Running this script I'm getting:

```

... | non_priority | unix style lf read lines trash being read describe the bug red recycle off make dir tmp files repeat i append files f rejoin write binary f append dup copy i foreach f files running this script i m getting tmp red tmp red tmp red tmp... | 0 |

404,282 | 27,456,952,938 | IssuesEvent | 2023-03-02 22:16:38 | pfmc-assessments/pfmc_assessment_handbook | https://api.github.com/repos/pfmc-assessments/pfmc_assessment_handbook | opened | add more info on reasons for choosing discard approach? | documentation question | In two independent settings today I was asked about approaches to modeling discards. The section in our handbook on discards, which @chantelwetzel-noaa recently expanded, has lots of really valuable detail about the different ways to model them: https://pfmc-assessments.github.io/pfmc_assessment_handbook/01-data-source... | 1.0 | add more info on reasons for choosing discard approach? - In two independent settings today I was asked about approaches to modeling discards. The section in our handbook on discards, which @chantelwetzel-noaa recently expanded, has lots of really valuable detail about the different ways to model them: https://pfmc-ass... | non_priority | add more info on reasons for choosing discard approach in two independent settings today i was asked about approaches to modeling discards the section in our handbook on discards which chantelwetzel noaa recently expanded has lots of really valuable detail about the different ways to model them however i ... | 0 |

32,057 | 8,790,658,315 | IssuesEvent | 2018-12-21 09:50:12 | openshiftio/openshift.io | https://api.github.com/repos/openshiftio/openshift.io | closed | Build Service: Connect with fabric8-environement | area/pipelines sprint/current team/build-cd type/task |

Get json schema from fabric8-environement service

Fabric8-Build issue: https://github.com/fabric8-services/fabric8-build-service/issues/41 | 1.0 | Build Service: Connect with fabric8-environement -

Get json schema from fabric8-environement service

Fabric8-Build issue: https://github.com/fabric8-services/fabric8-build-service/issues/41 | non_priority | build service connect with environement get json schema from environement service build issue | 0 |

431,692 | 30,246,766,241 | IssuesEvent | 2023-07-06 17:06:09 | NetAppDocs/storagegrid-116 | https://api.github.com/repos/NetAppDocs/storagegrid-116 | closed | SSE Feedback on Remove Fibre Channel HBA | documentation | Page: [Remove Fibre Channel HBA](https://docs.netapp.com/us-en/storagegrid-116/sg6000/removing-fibre-channel-hba.html)

the link under "about this task" for monitoring node connections is broken

"See the information about [monitoring node connection states]" (https://docs.netapp.com/us-en/storagegrid-116/monitor/m... | 1.0 | SSE Feedback on Remove Fibre Channel HBA - Page: [Remove Fibre Channel HBA](https://docs.netapp.com/us-en/storagegrid-116/sg6000/removing-fibre-channel-hba.html)

the link under "about this task" for monitoring node connections is broken

"See the information about [monitoring node connection states]" (https://docs... | non_priority | sse feedback on remove fibre channel hba page the link under about this task for monitoring node connections is broken see the information about that link routes to a | 0 |

151,113 | 19,648,495,301 | IssuesEvent | 2022-01-10 01:52:18 | Thezone1975/tabliss | https://api.github.com/repos/Thezone1975/tabliss | opened | WS-2019-0605 (Medium) detected in opennmsopennms-source-23.0.0-1 | security vulnerability | ## WS-2019-0605 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-23.0.0-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>Li... | True | WS-2019-0605 (Medium) detected in opennmsopennms-source-23.0.0-1 - ## WS-2019-0605 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-23.0.0-1</b></p></summary>

<p>... | non_priority | ws medium detected in opennmsopennms source ws medium severity vulnerability vulnerable library opennmsopennms source a java based fault and performance management system library home page a href vulnerable source files tabliss node module... | 0 |

150,039 | 13,308,180,018 | IssuesEvent | 2020-08-26 00:11:21 | nwg-piotr/autotiling | https://api.github.com/repos/nwg-piotr/autotiling | closed | Wrong manual install instructions and AUR package | documentation packaging | Manual install is not possible according to instructions because of #14

```

==> Checking for dependencies

==> Making package: autotiling-git r64.126c07d-1 (Ne 23. srpna 2020, 00:15:53)

==> Checking runtime dependencies...

==> Checking buildtime dependencies...

==> Retrieving sources...

-> Updating autotilin... | 1.0 | Wrong manual install instructions and AUR package - Manual install is not possible according to instructions because of #14

```

==> Checking for dependencies

==> Making package: autotiling-git r64.126c07d-1 (Ne 23. srpna 2020, 00:15:53)

==> Checking runtime dependencies...

==> Checking buildtime dependencies...... | non_priority | wrong manual install instructions and aur package manual install is not possible according to instructions because of checking for dependencies making package autotiling git ne srpna checking runtime dependencies checking buildtime dependencies retrieving... | 0 |

26,231 | 19,759,597,487 | IssuesEvent | 2022-01-16 07:08:14 | WordPress/performance | https://api.github.com/repos/WordPress/performance | closed | Implement GitHub action to ensure necessary labels and milestone are present on pull requests | [Type] Enhancement Infrastructure no milestone | Based on https://github.com/WordPress/performance/issues/41#issuecomment-994746944, we should have a GitHub workflow that ensures that every pull request has the necessary labels and milestones. This can later be set up as a requirement that has to be met before a pull request can be merged.

Here's the specification... | 1.0 | Implement GitHub action to ensure necessary labels and milestone are present on pull requests - Based on https://github.com/WordPress/performance/issues/41#issuecomment-994746944, we should have a GitHub workflow that ensures that every pull request has the necessary labels and milestones. This can later be set up as a... | non_priority | implement github action to ensure necessary labels and milestone are present on pull requests based on we should have a github workflow that ensures that every pull request has the necessary labels and milestones this can later be set up as a requirement that has to be met before a pull request can be merged h... | 0 |

118,963 | 15,383,336,899 | IssuesEvent | 2021-03-03 02:31:53 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Getting started: NVDA does not allow up / down navigation | *as-designed accessibility | Refs: #117313

1. Turn on NVDA screen reader on windows

2. VS Code - > getting started

3. Try to use up / down to navigate between getting started buttons -> does not work 🐛

NVDA seems to be eating up the up and down keys.

I am not sure why that is the case. Probably because NVDA thinks you are in Browse mode... | 1.0 | Getting started: NVDA does not allow up / down navigation - Refs: #117313

1. Turn on NVDA screen reader on windows

2. VS Code - > getting started

3. Try to use up / down to navigate between getting started buttons -> does not work 🐛

NVDA seems to be eating up the up and down keys.

I am not sure why that is t... | non_priority | getting started nvda does not allow up down navigation refs turn on nvda screen reader on windows vs code getting started try to use up down to navigate between getting started buttons does not work 🐛 nvda seems to be eating up the up and down keys i am not sure why that is the ca... | 0 |

40,108 | 5,183,251,492 | IssuesEvent | 2017-01-20 00:03:33 | codeforamerica/syracuse_biz_portal | https://api.github.com/repos/codeforamerica/syracuse_biz_portal | closed | Account page wireframes | design specs feature ready | These are designs for the account page. We've decided that the other stuff (notebooks/checklists/etc) will live on its own page.

# On load

## Mobile

## Desktop

will live on its own page.

# On load

## Mobile

## Desktop... | non_priority | account page wireframes these are designs for the account page we ve decided that the other stuff notebooks checklists etc will live on its own page on load mobile desktop display name display email button to change password desktop is using the column grid for... | 0 |

102,860 | 12,827,681,416 | IssuesEvent | 2020-07-06 18:59:03 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | Assigning departments to topics and topic collections? | Content type: Topic Page Content type: Topic collection page Feature: Staff Permissions & Roles Joplin Alpha Site Content Team: Content Team: Design | We're implemented department permissions that make it so that a user selects a department when creating a page, so that it has departmental permissions. But we're thinking of limiting the creation of topic and topic collections to admins, who will decide the direction of the IA. Does this mean we don't need this sectio... | 1.0 | Assigning departments to topics and topic collections? - We're implemented department permissions that make it so that a user selects a department when creating a page, so that it has departmental permissions. But we're thinking of limiting the creation of topic and topic collections to admins, who will decide the dire... | non_priority | assigning departments to topics and topic collections we re implemented department permissions that make it so that a user selects a department when creating a page so that it has departmental permissions but we re thinking of limiting the creation of topic and topic collections to admins who will decide the dire... | 0 |

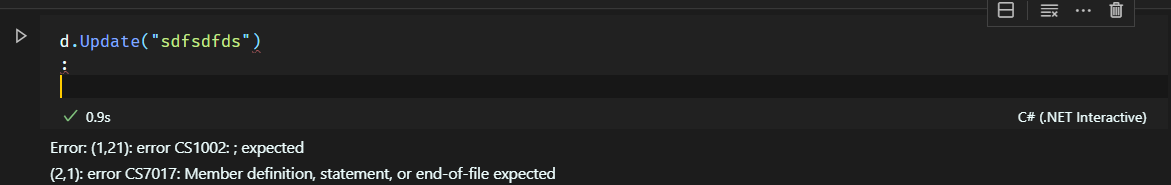

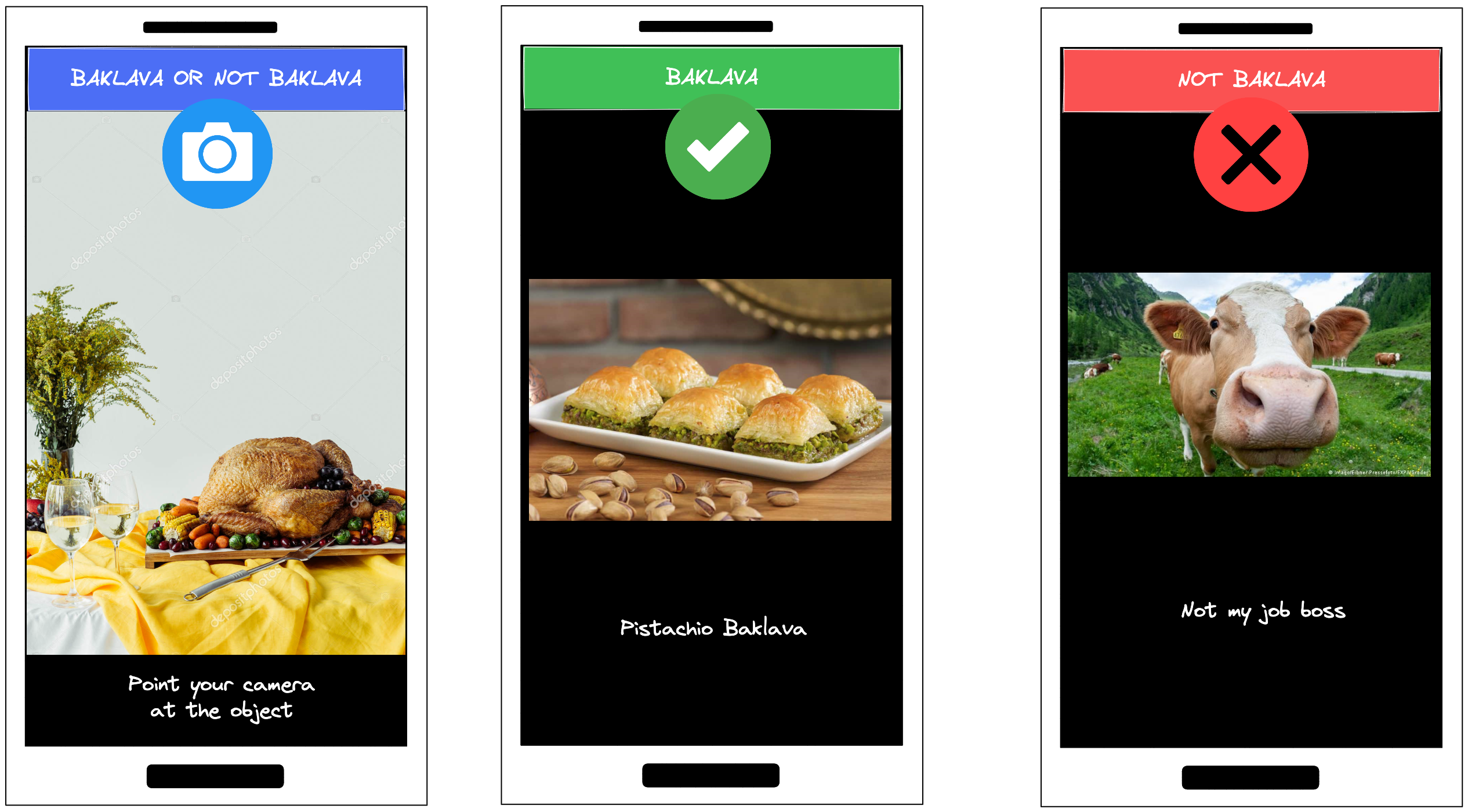

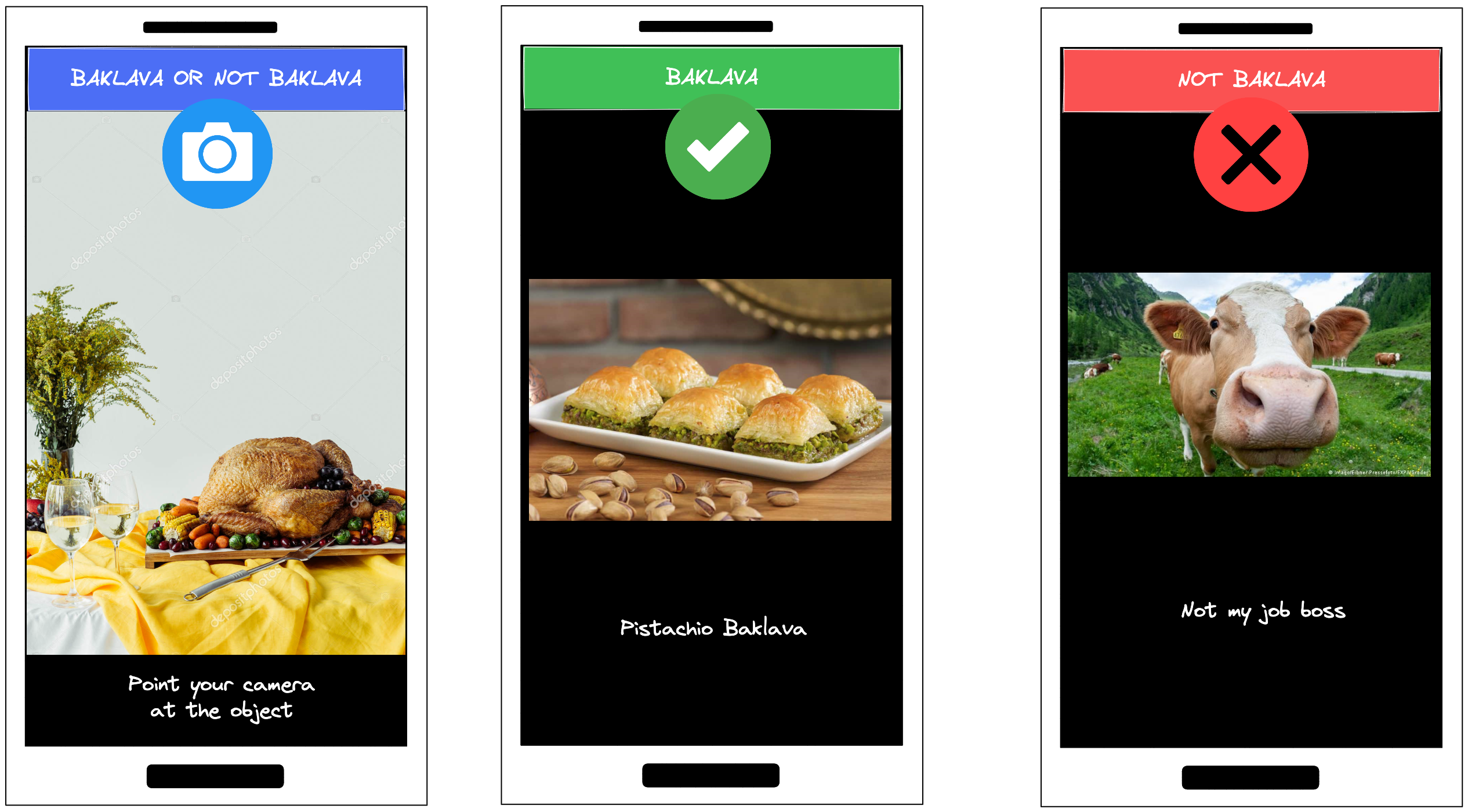

317,545 | 23,678,542,016 | IssuesEvent | 2022-08-28 13:02:32 | rashmi-carol-dsouza/baklava-or-not-baklava | https://api.github.com/repos/rashmi-carol-dsouza/baklava-or-not-baklava | closed | Design Web App Architecture | documentation | # Web App Architecture

## UI

## Local System Design

##... | 1.0 | Design Web App Architecture - # Web App Architecture

## UI

## Local System Design

call

* #7657 Type guards in Array.prototype.filter

* #7738 Execute prop... | 1.0 | Suggestion Backlog Slog, 4/11/2016 -

* #2671 Allow omitted elements in tuple type annotations

* #1579 `nameof` operator

* #3802 Allow merging classes and modules across files

* #2957 Reopen static and instance side of classes

* #7285 Allow subclass constructors without super() call

* #7657 Type guards in Array.p... | non_priority | suggestion backlog slog allow omitted elements in tuple type annotations nameof operator allow merging classes and modules across files reopen static and instance side of classes allow subclass constructors without super call type guards in array prototype filter ... | 0 |

179,529 | 13,885,448,199 | IssuesEvent | 2020-10-18 20:03:23 | daniel-norris/neu_ui | https://api.github.com/repos/daniel-norris/neu_ui | opened | Create a CardText test | good first issue hacktoberfest tests | **Is your feature request related to a problem? Please describe.**

Test coverage across the application is low. We need to build confidence that the components have the expected behaviour that we want and to help mitigate any regression in the future.

**Describe the solution you'd like**

We need to implement bette... | 1.0 | Create a CardText test - **Is your feature request related to a problem? Please describe.**

Test coverage across the application is low. We need to build confidence that the components have the expected behaviour that we want and to help mitigate any regression in the future.

**Describe the solution you'd like**

W... | non_priority | create a cardtext test is your feature request related to a problem please describe test coverage across the application is low we need to build confidence that the components have the expected behaviour that we want and to help mitigate any regression in the future describe the solution you d like w... | 0 |

22,046 | 3,932,373,810 | IssuesEvent | 2016-04-25 15:32:44 | NetsBlox/NetsBlox | https://api.github.com/repos/NetsBlox/NetsBlox | closed | periodically failing tests on travis | bug minor testing under review | Sometimes the tests will fail on travis because it can't get phantomjs. Caching the node_modules directory should help with this | 1.0 | periodically failing tests on travis - Sometimes the tests will fail on travis because it can't get phantomjs. Caching the node_modules directory should help with this | non_priority | periodically failing tests on travis sometimes the tests will fail on travis because it can t get phantomjs caching the node modules directory should help with this | 0 |

286,207 | 24,729,818,020 | IssuesEvent | 2022-10-20 16:36:15 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | reopened | DISABLED test_noncontiguous_samples_nn_functional_conv_transpose2d_cuda_float32 (__main__.TestCommonCUDA) | module: flaky-tests skipped module: unknown | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_noncontiguous_samples_nn_functional_conv_transpose2d_cuda_float32&suite=TestCommonCUDA) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/8751614432).

... | 1.0 | DISABLED test_noncontiguous_samples_nn_functional_conv_transpose2d_cuda_float32 (__main__.TestCommonCUDA) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_noncontiguous_samples_nn_functional_conv_transpose2d_cuda_float32&suite=Test... | non_priority | disabled test noncontiguous samples nn functional conv cuda main testcommoncuda platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes debugging inst... | 0 |

295,920 | 22,283,484,896 | IssuesEvent | 2022-06-11 08:28:36 | a2n-s/scripts | https://api.github.com/repos/a2n-s/scripts | closed | Correct the headers and the doc | documentation enhancement good first issue | As scripts have been renamed recently, the headers of the scripts and the mentions to the old file names are incorrect.

This issue proposes to **correct the headers and to change the mentions to the old names to the new ones**.

**IMPLEMENTATION DETAILS**:

let's take the example of the `battery` script.

1. it ha... | 1.0 | Correct the headers and the doc - As scripts have been renamed recently, the headers of the scripts and the mentions to the old file names are incorrect.

This issue proposes to **correct the headers and to change the mentions to the old names to the new ones**.

**IMPLEMENTATION DETAILS**:

let's take the example ... | non_priority | correct the headers and the doc as scripts have been renamed recently the headers of the scripts and the mentions to the old file names are incorrect this issue proposes to correct the headers and to change the mentions to the old names to the new ones implementation details let s take the example ... | 0 |

79,619 | 10,134,213,761 | IssuesEvent | 2019-08-02 06:49:49 | kyma-project/console | https://api.github.com/repos/kyma-project/console | closed | First header in documentation is ignored | area/console area/documentation bug | <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

<!-- Provide a clear and concise description of the problem.

Describe where it appears, when it occurred, and what it affects. -->

<!... | 1.0 | First header in documentation is ignored - <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

<!-- Provide a clear and concise description of the problem.

Describe where it appears, when i... | non_priority | first header in documentation is ignored thank you for your contribution before you submit the issue search open and closed issues for duplicates read the contributing guidelines description provide a clear and concise description of the problem describe where it appears when i... | 0 |

24,307 | 12,259,119,485 | IssuesEvent | 2020-05-06 16:06:03 | beakerbrowser/beaker | https://api.github.com/repos/beakerbrowser/beaker | closed | High CPU usage on loading a large file | performance | Operation System: Ubuntu 16.04

Beaker Version: 0.7.9

I have a [large TiddlyWiki file](https://www.dropbox.com/s/fzf67dpktmfau0d/notes.html?dl=1) (55MB due to a lot of embedded images) that I want to open using Beaker. Opening the HTML file works as expected, but if I add it as a site's index.html and open the site,... | True | High CPU usage on loading a large file - Operation System: Ubuntu 16.04

Beaker Version: 0.7.9

I have a [large TiddlyWiki file](https://www.dropbox.com/s/fzf67dpktmfau0d/notes.html?dl=1) (55MB due to a lot of embedded images) that I want to open using Beaker. Opening the HTML file works as expected, but if I add it ... | non_priority | high cpu usage on loading a large file operation system ubuntu beaker version i have a due to a lot of embedded images that i want to open using beaker opening the html file works as expected but if i add it as a site s index html and open the site i get some problems the page shows up and... | 0 |

265,491 | 20,100,341,645 | IssuesEvent | 2022-02-07 02:45:39 | tanyaleepr/portfolio-generator | https://api.github.com/repos/tanyaleepr/portfolio-generator | opened | Prompt user for more input | documentation | **Description**

_Profile questions_

- Name

- GitHub account name

- About me

_Project questions_

- Project name

- Project description

- Programming Languages

- Project link | 1.0 | Prompt user for more input - **Description**

_Profile questions_

- Name

- GitHub account name

- About me

_Project questions_

- Project name

- Project description

- Programming Languages

- Project link | non_priority | prompt user for more input description profile questions name github account name about me project questions project name project description programming languages project link | 0 |

209,668 | 23,730,737,894 | IssuesEvent | 2022-08-31 01:18:24 | melsorg/github-scanner-test | https://api.github.com/repos/melsorg/github-scanner-test | closed | WS-2015-0018 (Medium) detected in semver-1.1.4.tgz - autoclosed | security vulnerability | ## WS-2015-0018 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>semver-1.1.4.tgz</b></p></summary>

<p>The semantic version parser used by npm.</p>

<p>Library home page: <a href="http... | True | WS-2015-0018 (Medium) detected in semver-1.1.4.tgz - autoclosed - ## WS-2015-0018 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>semver-1.1.4.tgz</b></p></summary>

<p>The semantic v... | non_priority | ws medium detected in semver tgz autoclosed ws medium severity vulnerability vulnerable library semver tgz the semantic version parser used by npm library home page a href path to dependency file tmp ws scm github scanner test package json path to vulnerable libra... | 0 |

27,897 | 22,587,842,992 | IssuesEvent | 2022-06-28 16:46:23 | LanDinh/khaleesi-ninja | https://api.github.com/repos/LanDinh/khaleesi-ninja | opened | Use named ports for gRPC probes | layer:infrastructure waiting | As per kubernetes 1.24, it's not possible to use named ports for gRPC probes | 1.0 | Use named ports for gRPC probes - As per kubernetes 1.24, it's not possible to use named ports for gRPC probes | non_priority | use named ports for grpc probes as per kubernetes it s not possible to use named ports for grpc probes | 0 |

47,527 | 12,044,043,508 | IssuesEvent | 2020-04-14 13:26:37 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Fix NullPointerExceptions for Linux watchers | @build-cache a:bug from:member | Currently, we receive `NullPointerException`s when trying to update the watchers on Linux. The exception happens here: https://github.com/gradle/gradle/blob/f5d92e72e2c13c82071b199ad44231642febb5b0/subprojects/core/src/main/java/org/gradle/internal/vfs/LinuxFileWatcherRegistry.java#L65

It seems like we don't have th... | 1.0 | Fix NullPointerExceptions for Linux watchers - Currently, we receive `NullPointerException`s when trying to update the watchers on Linux. The exception happens here: https://github.com/gradle/gradle/blob/f5d92e72e2c13c82071b199ad44231642febb5b0/subprojects/core/src/main/java/org/gradle/internal/vfs/LinuxFileWatcherRegi... | non_priority | fix nullpointerexceptions for linux watchers currently we receive nullpointerexception s when trying to update the watchers on linux the exception happens here it seems like we don t have the directories which we used when we added the snapshot so something may be wrong with our bookkeeping this happeni... | 0 |

23,720 | 7,370,586,981 | IssuesEvent | 2018-03-13 09:00:41 | scalacenter/bloop | https://api.github.com/repos/scalacenter/bloop | closed | install.py in release is broken | bug build install | The [install.py](https://github.com/scalacenter/bloop/releases/download/v1.0.0-M6/install.py) that makes it into the release assets on GitHub is broken as it contains python expressions before the almighty import `from __future__`.

The following should just be moved below the imports.

```

NAILGUN_COMMIT = "ebe7ab2... | 1.0 | install.py in release is broken - The [install.py](https://github.com/scalacenter/bloop/releases/download/v1.0.0-M6/install.py) that makes it into the release assets on GitHub is broken as it contains python expressions before the almighty import `from __future__`.

The following should just be moved below the import... | non_priority | install py in release is broken the that makes it into the release assets on github is broken as it contains python expressions before the almighty import from future the following should just be moved below the imports nailgun commit bloop version | 0 |

175,232 | 14,519,514,640 | IssuesEvent | 2020-12-14 03:03:44 | SpencerTSterling/ColorGame | https://api.github.com/repos/SpencerTSterling/ColorGame | closed | Core Game Mechanics | documentation enhancement | These mechanics are necessary for both game modes.

Basic Game Mechanics:

- Buttons need to be randomly generated colors

- A button needs to be randomly chosen to be the "odd one out"

- Points are rewarded when the correct button is pressed (+10 points)

- OPTIONALLY: more points are rewarded if the correct butt... | 1.0 | Core Game Mechanics - These mechanics are necessary for both game modes.

Basic Game Mechanics:

- Buttons need to be randomly generated colors

- A button needs to be randomly chosen to be the "odd one out"

- Points are rewarded when the correct button is pressed (+10 points)

- OPTIONALLY: more points are reward... | non_priority | core game mechanics these mechanics are necessary for both game modes basic game mechanics buttons need to be randomly generated colors a button needs to be randomly chosen to be the odd one out points are rewarded when the correct button is pressed points optionally more points are rewarde... | 0 |

194,085 | 15,396,798,890 | IssuesEvent | 2021-03-03 21:10:19 | antoinezanardi/werewolves-assistant-web | https://api.github.com/repos/antoinezanardi/werewolves-assistant-web | closed | Update README.md | documentation | Update the `README.md` file with:

- Travis explanations for CI.

- New `npm` version on live server. | 1.0 | Update README.md - Update the `README.md` file with:

- Travis explanations for CI.

- New `npm` version on live server. | non_priority | update readme md update the readme md file with travis explanations for ci new npm version on live server | 0 |

220,606 | 17,210,387,021 | IssuesEvent | 2021-07-19 02:50:32 | dapr/dapr | https://api.github.com/repos/dapr/dapr | reopened | E2E test to verify trace export | P2 area/test/e2e kind/observability size/XS triaged/resolved | <!-- If you need to report a security issue with Dapr, send an email to daprct@microsoft.com. -->

## In what area(s)?

<!-- Remove the '> ' to select -->

/area test-and-release

## Describe the feature

As part of #2337 we want to have E2E tests to make sure traces are correctly exported.

## Release Note

<!... | 1.0 | E2E test to verify trace export - <!-- If you need to report a security issue with Dapr, send an email to daprct@microsoft.com. -->

## In what area(s)?

<!-- Remove the '> ' to select -->

/area test-and-release

## Describe the feature

As part of #2337 we want to have E2E tests to make sure traces are correctl... | non_priority | test to verify trace export in what area s to select area test and release describe the feature as part of we want to have tests to make sure traces are correctly exported release note release note add test to verify trace export | 0 |

13,837 | 3,362,943,364 | IssuesEvent | 2015-11-20 09:38:51 | redmatrix/hubzilla | https://api.github.com/repos/redmatrix/hubzilla | closed | Editing a post will remove community tags and saved folders from post | bug retest please UX | Have not investigated further yet. | 1.0 | Editing a post will remove community tags and saved folders from post - Have not investigated further yet. | non_priority | editing a post will remove community tags and saved folders from post have not investigated further yet | 0 |

349,833 | 24,957,583,062 | IssuesEvent | 2022-11-01 13:09:04 | atorus-research/Tplyr | https://api.github.com/repos/atorus-research/Tplyr | closed | Typo in `f_str()` documentation on valid variables | documentation | `f_str()` docs say 'variance' instead of 'var' for the variance summary in desc layers.

https://github.com/atorus-research/Tplyr/blob/03f7650d932fd2fb5b9609c77ac6b220c991a0c7/R/format.R#L83 | 1.0 | Typo in `f_str()` documentation on valid variables - `f_str()` docs say 'variance' instead of 'var' for the variance summary in desc layers.

https://github.com/atorus-research/Tplyr/blob/03f7650d932fd2fb5b9609c77ac6b220c991a0c7/R/format.R#L83 | non_priority | typo in f str documentation on valid variables f str docs say variance instead of var for the variance summary in desc layers | 0 |

124,227 | 10,300,478,575 | IssuesEvent | 2019-08-28 12:58:59 | wsi-cogs/frontend | https://api.github.com/repos/wsi-cogs/frontend | closed | Meaning of the deadlines is unclear to users | UX/UI user-testing | The five deadlines that make up a rotation are only described in a single short phrase each in the application, and there's no documentation of them elsewhere either. Members of the Graduate Office are sometimes unsure of the current state of the rotation, which this won't be helping.

There should be some kind of in... | 1.0 | Meaning of the deadlines is unclear to users - The five deadlines that make up a rotation are only described in a single short phrase each in the application, and there's no documentation of them elsewhere either. Members of the Graduate Office are sometimes unsure of the current state of the rotation, which this won't... | non_priority | meaning of the deadlines is unclear to users the five deadlines that make up a rotation are only described in a single short phrase each in the application and there s no documentation of them elsewhere either members of the graduate office are sometimes unsure of the current state of the rotation which this won t... | 0 |

115,685 | 9,809,131,958 | IssuesEvent | 2019-06-12 17:11:57 | firebase/firebase-ios-sdk | https://api.github.com/repos/firebase/firebase-ios-sdk | closed | ABTExperimentsToSetFromPayloads crash | api: abtesting api: analytics api: remoteconfig | Our most common crash now is a a crash in what seems to be something related to remote config. In crashlytics its title is `ABTExperimentsToSetFromPayloads` and this is the message:

```

Fatal Exception: NSGenericException

*** Collection <__NSArrayM: 0x281ca66d0> was mutated while being enumerated.

ABTExperimentsT... | 1.0 | ABTExperimentsToSetFromPayloads crash - Our most common crash now is a a crash in what seems to be something related to remote config. In crashlytics its title is `ABTExperimentsToSetFromPayloads` and this is the message:

```

Fatal Exception: NSGenericException

*** Collection <__NSArrayM: 0x281ca66d0> was mutated ... | non_priority | abtexperimentstosetfrompayloads crash our most common crash now is a a crash in what seems to be something related to remote config in crashlytics its title is abtexperimentstosetfrompayloads and this is the message fatal exception nsgenericexception collection was mutated while being enumerated ... | 0 |

29,920 | 7,134,600,075 | IssuesEvent | 2018-01-22 21:26:32 | opencode18/Girls-who-code | https://api.github.com/repos/opencode18/Girls-who-code | opened | Change the events only limited to india | Advanced: 30 Points Opencode18 | Collect infromation about events like confernces and events that happen in india and add update the list.

This PR requires decent reasearch, if you are not doing it, we wont merge the PR.

P.S add hack in the north ❤️ | 1.0 | Change the events only limited to india - Collect infromation about events like confernces and events that happen in india and add update the list.

This PR requires decent reasearch, if you are not doing it, we wont merge the PR.

P.S add hack in the north ❤️ | non_priority | change the events only limited to india collect infromation about events like confernces and events that happen in india and add update the list this pr requires decent reasearch if you are not doing it we wont merge the pr p s add hack in the north ❤️ | 0 |

67,876 | 17,094,047,093 | IssuesEvent | 2021-07-08 21:57:51 | typeorm/typeorm | https://api.github.com/repos/typeorm/typeorm | closed | SQL Server error when requesting additional returning columns | bug comp: query builder driver: mssql | ## Issue Description

#5361 changed the way SQL Server uses `OUTPUT` in `INSERT`/`UPDATE` queries, causing an error if additional columns are requested using `InsertQueryBuilder.returning()`.

### Expected Behavior

```typescript

connection.createQueryBuilder(Post, 'post')

.insert()

.values({ title: "TIT... | 1.0 | SQL Server error when requesting additional returning columns - ## Issue Description

#5361 changed the way SQL Server uses `OUTPUT` in `INSERT`/`UPDATE` queries, causing an error if additional columns are requested using `InsertQueryBuilder.returning()`.

### Expected Behavior

```typescript

connection.createQuer... | non_priority | sql server error when requesting additional returning columns issue description changed the way sql server uses output in insert update queries causing an error if additional columns are requested using insertquerybuilder returning expected behavior typescript connection createquerybu... | 0 |

13,545 | 16,088,313,038 | IssuesEvent | 2021-04-26 13:55:52 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | host cell apoplast question | multi-species process quick fix |

host cell apoplast -> host apoplast

(it isn't a cellular thing?) | 1.0 | host cell apoplast question -

host cell apoplast -> host apoplast

(it isn't a cellular thing?) | non_priority | host cell apoplast question host cell apoplast host apoplast it isn t a cellular thing | 0 |

133,727 | 18,948,509,333 | IssuesEvent | 2021-11-18 12:53:42 | medicotary/Medicotary | https://api.github.com/repos/medicotary/Medicotary | closed | Make the vendors page | enhancement Design | it would be awesome if I could see the list of all vendors which supply me some medicines, and use the contact info to contact them

- [x] top search bar

- [x] Vendor table | 1.0 | Make the vendors page - it would be awesome if I could see the list of all vendors which supply me some medicines, and use the contact info to contact them

- [x] top search bar

- [x] Vendor table | non_priority | make the vendors page it would be awesome if i could see the list of all vendors which supply me some medicines and use the contact info to contact them top search bar vendor table | 0 |

4,588 | 3,407,146,884 | IssuesEvent | 2015-12-04 00:46:29 | bokeh/bokeh | https://api.github.com/repos/bokeh/bokeh | opened | CI Build breakdown | tag: build type: task | Some suggestions:

* JS, docs, and flake tests only need to run once, suggest one py3 test for all three

* Consider python + integration tests could be merged into one build, to share "install" cost.

* longest tests (both "examples" builds) should be run first together, so that their start times are not unnecessaril... | 1.0 | CI Build breakdown - Some suggestions:

* JS, docs, and flake tests only need to run once, suggest one py3 test for all three

* Consider python + integration tests could be merged into one build, to share "install" cost.

* longest tests (both "examples" builds) should be run first together, so that their start times... | non_priority | ci build breakdown some suggestions js docs and flake tests only need to run once suggest one test for all three consider python integration tests could be merged into one build to share install cost longest tests both examples builds should be run first together so that their start times a... | 0 |

140,773 | 32,058,364,064 | IssuesEvent | 2023-09-24 11:11:06 | coder/modules | https://api.github.com/repos/coder/modules | closed | VS Code Web | module-idea coder_script | This is different than code-server: `code serve-web`:

- [x] Variable to accept license (user must put true as a variable, default is false)

- [x] coder_app

- [x] coder_script | 1.0 | VS Code Web - This is different than code-server: `code serve-web`:

- [x] Variable to accept license (user must put true as a variable, default is false)

- [x] coder_app

- [x] coder_script | non_priority | vs code web this is different than code server code serve web variable to accept license user must put true as a variable default is false coder app coder script | 0 |

7,312 | 3,535,371,803 | IssuesEvent | 2016-01-16 13:11:52 | itchio/itch | https://api.github.com/repos/itchio/itch | closed | Add opts-checking code and start using it throughout tasks/utils | code quality | It's just preconditions — for example, `util/deploy` shouldn't start if `stage_path` isn't specified, etc. | 1.0 | Add opts-checking code and start using it throughout tasks/utils - It's just preconditions — for example, `util/deploy` shouldn't start if `stage_path` isn't specified, etc. | non_priority | add opts checking code and start using it throughout tasks utils it s just preconditions — for example util deploy shouldn t start if stage path isn t specified etc | 0 |

37,572 | 18,536,389,379 | IssuesEvent | 2021-10-21 12:00:32 | timescale/timescaledb | https://api.github.com/repos/timescale/timescaledb | reopened | Select with a bound on a sequence field in a compressed hypertable with an index is very slow/fails the database | bug performance investigate compression severity-p3 hypertables | ### Tables

```

CREATE TABLE IF NOT EXISTS series_values (

time TIMESTAMPTZ NOT NULL,

value DOUBLE PRECISION NOT NULL,

series_id INTEGER NOT NULL,

seq BIGSERIAL

);

SELECT create_hypertable('series_values', 'time');

ALTER TABLE series_values SET (

timescaledb.compress,

timescaledb.com... | True | Select with a bound on a sequence field in a compressed hypertable with an index is very slow/fails the database - ### Tables

```

CREATE TABLE IF NOT EXISTS series_values (

time TIMESTAMPTZ NOT NULL,

value DOUBLE PRECISION NOT NULL,

series_id INTEGER NOT NULL,

seq BIGSERIAL

);

SELECT create_... | non_priority | select with a bound on a sequence field in a compressed hypertable with an index is very slow fails the database tables create table if not exists series values time timestamptz not null value double precision not null series id integer not null seq bigserial select create ... | 0 |

183,395 | 31,465,208,098 | IssuesEvent | 2023-08-30 01:02:57 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Group block and Row block borders overlapping on content | [Block] Group [Feature] Design Tools | ### Description

Adding borders on group block and row blocks overlap on content

### Step-by-step reproduction instructions

1 Go to FSE,

2 Add a group block, and add a /para block and list block inside the group block

3 Add border to the group block with rounded corners

4 Change the border colour and size (optiona... | 1.0 | Group block and Row block borders overlapping on content - ### Description

Adding borders on group block and row blocks overlap on content

### Step-by-step reproduction instructions

1 Go to FSE,

2 Add a group block, and add a /para block and list block inside the group block

3 Add border to the group block with ro... | non_priority | group block and row block borders overlapping on content description adding borders on group block and row blocks overlap on content step by step reproduction instructions go to fse add a group block and add a para block and list block inside the group block add border to the group block with ro... | 0 |

56,950 | 7,018,437,325 | IssuesEvent | 2017-12-21 13:44:36 | TechnionYearlyProject/Roommates | https://api.github.com/repos/TechnionYearlyProject/Roommates | closed | Login Form properties | Design | Login

These are the proprties we are going to fetch from **POST /users/login** request:

| Field | requirement | comment |

|------------------------|----------------------------------|--------------------------------|

|email|MANDATO... | 1.0 | Login Form properties - Login

These are the proprties we are going to fetch from **POST /users/login** request:

| Field | requirement | comment |

|------------------------|----------------------------------|-------------------------... | non_priority | login form properties login these are the proprties we are going to fetch from post users login request field requirement comment ... | 0 |

139,329 | 12,853,880,096 | IssuesEvent | 2020-07-09 00:06:07 | seanpm2001/horsin-around-in-the-barn | https://api.github.com/repos/seanpm2001/horsin-around-in-the-barn | opened | 3 files couldn't be uploaded | documentation enhancement good first issue wontfix |

***

### 3 files couldn't be uploaded

I couldn't upload the following 3 projects to this repository, due to the GitHub 25 Megabyte file limit:

`//horsingaroundinthebarn.com/Root/Media/WelcomeVideoProjectDraft1.mp4` - Size: 31,717,824 bytes (31.71 Megabytes)

`//horsingaroundinthebarn.com/Horsingaroundintheb... | 1.0 | 3 files couldn't be uploaded -

***

### 3 files couldn't be uploaded

I couldn't upload the following 3 projects to this repository, due to the GitHub 25 Megabyte file limit:

`//horsingaroundinthebarn.com/Root/Media/WelcomeVideoProjectDraft1.mp4` - Size: 31,717,824 bytes (31.71 Megabytes)

`//horsingaroundin... | non_priority | files couldn t be uploaded files couldn t be uploaded i couldn t upload the following projects to this repository due to the github megabyte file limit horsingaroundinthebarn com root media size bytes megabytes horsingaroundinthebarn com horsingaroundinthebarn... | 0 |

210,161 | 23,739,023,575 | IssuesEvent | 2022-08-31 10:41:27 | SacleuxBenoit/test-nodejs | https://api.github.com/repos/SacleuxBenoit/test-nodejs | closed | CVE-2021-3664 (Medium) detected in url-parse-1.4.7.tgz - autoclosed | security vulnerability | ## CVE-2021-3664 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.4.7.tgz</b></p></summary>

<p>Small footprint URL parser that works seamlessly across Node.js and browser ... | True | CVE-2021-3664 (Medium) detected in url-parse-1.4.7.tgz - autoclosed - ## CVE-2021-3664 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.4.7.tgz</b></p></summary>

<p>Small ... | non_priority | cve medium detected in url parse tgz autoclosed cve medium severity vulnerability vulnerable library url parse tgz small footprint url parser that works seamlessly across node js and browser environments library home page a href path to dependency file front package ... | 0 |

113,329 | 9,636,215,401 | IssuesEvent | 2019-05-16 04:56:53 | owncloud/encryption | https://api.github.com/repos/owncloud/encryption | opened | Acceptance test: Check users are required to re-login after recreating master key. | QA-team dev:acceptance-tests | Part of #35207

Acceptance test: Check users are required to re-login after recreating master key.

introduced by https://github.com/owncloud/core/pull/34596 | 1.0 | Acceptance test: Check users are required to re-login after recreating master key. - Part of #35207

Acceptance test: Check users are required to re-login after recreating master key.

introduced by https://github.com/owncloud/core/pull/34596 | non_priority | acceptance test check users are required to re login after recreating master key part of acceptance test check users are required to re login after recreating master key introduced by | 0 |

46,690 | 13,180,992,699 | IssuesEvent | 2020-08-12 13:40:39 | mibo32/fitbit-api-example-java | https://api.github.com/repos/mibo32/fitbit-api-example-java | opened | CVE-2018-8014 (High) detected in tomcat-embed-core-8.5.4.jar | security vulnerability | ## CVE-2018-8014 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-8.5.4.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Library home page: <a href="http://t... | True | CVE-2018-8014 (High) detected in tomcat-embed-core-8.5.4.jar - ## CVE-2018-8014 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-8.5.4.jar</b></p></summary>

<p>Core To... | non_priority | cve high detected in tomcat embed core jar cve high severity vulnerability vulnerable library tomcat embed core jar core tomcat implementation library home page a href path to dependency file tmp ws scm fitbit api example java pom xml path to vulnerable library hom... | 0 |

217,146 | 24,313,218,070 | IssuesEvent | 2022-09-30 02:02:58 | RG4421/ampere-centos-kernel | https://api.github.com/repos/RG4421/ampere-centos-kernel | reopened | CVE-2022-0492 (High) detected in linuxv5.2 | security vulnerability | ## CVE-2022-0492 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torval... | True | CVE-2022-0492 (High) detected in linuxv5.2 - ## CVE-2022-0492 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library... | non_priority | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files kernel cgroup cgroup c kernel cgroup cgroup ... | 0 |

192,310 | 15,343,074,831 | IssuesEvent | 2021-02-27 18:46:05 | ethereum-optimism/community-hub | https://api.github.com/repos/ethereum-optimism/community-hub | opened | L2 FAQ/Noob Questions | documentation good first issue help wanted | Would like to pick out the best questions from this thread and give them answers:

https://twitter.com/AdamScochran/status/1365365202749915136 | 1.0 | L2 FAQ/Noob Questions - Would like to pick out the best questions from this thread and give them answers:

https://twitter.com/AdamScochran/status/1365365202749915136 | non_priority | faq noob questions would like to pick out the best questions from this thread and give them answers | 0 |

196,339 | 14,856,190,876 | IssuesEvent | 2021-01-18 13:49:48 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: "after all" hook for "Creates and activates a new EQL rule" - Detection rules, EQL "after all" hook for "Creates and activates a new EQL rule" | Team: SecuritySolution Team:Detections and Resp failed-test | A test failed on a tracked branch

```