Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

208,903 | 23,665,431,073 | IssuesEvent | 2022-08-26 20:18:17 | JohnDeere/work-tracker-examples | https://api.github.com/repos/JohnDeere/work-tracker-examples | closed | WS-2020-0293 (Medium) detected in spring-security-web-4.2.11.RELEASE.jar - autoclosed | security vulnerability | ## WS-2020-0293 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-security-web-4.2.11.RELEASE.jar</b></p></summary>

<p>spring-security-web</p>

<p>Library home page: <a href="http://spring.io/spring-security">http://spring.io/spring-security</a></p>

<p>Path to dependency file: /spring-boot-example/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/springframework/security/spring-security-web/4.2.11.RELEASE/spring-security-web-4.2.11.RELEASE.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-security-1.5.19.RELEASE.jar (Root Library)

- :x: **spring-security-web-4.2.11.RELEASE.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/JohnDeere/work-tracker-examples/commit/7aa2fa9c80c3d14d7e62f0494ba7edaff8842068">7aa2fa9c80c3d14d7e62f0494ba7edaff8842068</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Spring Security before 5.2.9, 5.3.7, and 5.4.3 is vulnerable to side-channel attacks. Vulnerable versions of Spring Security don't use constant-time comparisons for CSRF tokens.

<p>Publish Date: 2020-12-17

<p>URL: <a href=https://github.com/spring-projects/spring-security/commit/40e027c56d11b9b4c5071360bfc718165c937784>WS-2020-0293</a></p>

</p>

</details>
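
To illustrate what "constant-time comparison" means here, the following is a minimal Python sketch of the general technique. It is not the Spring Security fix itself (that library is Java); the function name `tokens_match` is hypothetical and chosen only for this example. A plain `==` on strings may return as soon as the first differing character is found, which can leak how much of a guessed token was correct; `hmac.compare_digest` from the Python standard library is designed to avoid that content-dependent timing.

```python
import hmac
from typing import Optional


def tokens_match(expected: str, actual: Optional[str]) -> bool:
    """Compare two tokens without short-circuiting on the first mismatch.

    `tokens_match` is a hypothetical helper for illustration only.
    """
    if actual is None:
        # A missing token can never match; reject it before comparing.
        return False
    # Encode both sides to bytes so non-ASCII input is handled uniformly.
    return hmac.compare_digest(expected.encode("utf-8"), actual.encode("utf-8"))
```

A caller would use this in place of `expected == actual` wherever a secret token supplied by a client is checked.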

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2020-12-17</p>

<p>Fix Resolution (org.springframework.security:spring-security-web): 5.2.9.RELEASE</p>

<p>Direct dependency fix Resolution (org.springframework.boot:spring-boot-starter-security): 2.3.0.RELEASE</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | WS-2020-0293 (Medium) detected in spring-security-web-4.2.11.RELEASE.jar - autoclosed - ## WS-2020-0293 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-security-web-4.2.11.RELEASE.jar</b></p></summary>

<p>spring-security-web</p>

<p>Library home page: <a href="http://spring.io/spring-security">http://spring.io/spring-security</a></p>

<p>Path to dependency file: /spring-boot-example/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/springframework/security/spring-security-web/4.2.11.RELEASE/spring-security-web-4.2.11.RELEASE.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-security-1.5.19.RELEASE.jar (Root Library)

- :x: **spring-security-web-4.2.11.RELEASE.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/JohnDeere/work-tracker-examples/commit/7aa2fa9c80c3d14d7e62f0494ba7edaff8842068">7aa2fa9c80c3d14d7e62f0494ba7edaff8842068</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Spring Security before 5.2.9, 5.3.7, and 5.4.3 is vulnerable to side-channel attacks. Vulnerable versions of Spring Security don't use constant-time comparisons for CSRF tokens.

<p>Publish Date: 2020-12-17

<p>URL: <a href=https://github.com/spring-projects/spring-security/commit/40e027c56d11b9b4c5071360bfc718165c937784>WS-2020-0293</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2020-12-17</p>

<p>Fix Resolution (org.springframework.security:spring-security-web): 5.2.9.RELEASE</p>

<p>Direct dependency fix Resolution (org.springframework.boot:spring-boot-starter-security): 2.3.0.RELEASE</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | ws medium detected in spring security web release jar autoclosed ws medium severity vulnerability vulnerable library spring security web release jar spring security web library home page a href path to dependency file spring boot example pom xml path to vulnerable library home wss scanner repository org springframework security spring security web release spring security web release jar dependency hierarchy spring boot starter security release jar root library x spring security web release jar vulnerable library found in head commit a href found in base branch master vulnerability details spring security before and vulnerable to side channel attacks vulnerable versions of spring security don t use constant time comparisons for csrf tokens publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version release date fix resolution org springframework security spring security web release direct dependency fix resolution org springframework boot spring boot starter security release step up your open source security game with mend | 0 |

149,759 | 13,301,491,642 | IssuesEvent | 2020-08-25 13:02:06 | JJguri/bestiapop | https://api.github.com/repos/JJguri/bestiapop | closed | acknowledge the data providers | documentation | We need to update the documentation and provide an acknowledge for the data use (I am not entirely sure if this needs to be in the header of the file).

**SILO**

Under the CC Attribution licence, users are required to acknowledge the data provider, so we ask our clients to:

• cite the Jeffrey et al. 2001 paper in **technical documents**

• acknowledge SILO as the data source in **non-technical documents**, for example:

“These data were obtained from the Queensland Government’s [SILO](https://www.longpaddock.qld.gov.au/silo/) climate database and are licensed under [CC BY 4.0](https://creativecommons.org/licenses/by/4.0/).”

**NASAPOWER**

When POWER data products are used in a **publication**, we request the following acknowledgment be included: “These data were obtained from the NASA Langley Research Center POWER Project funded through the NASA Earth Science Directorate Applied Science Program.” | 1.0 | acknowledge the data providers - We need to update the documentation and provide an acknowledge for the data use (I am not entirely sure if this needs to be in the header of the file).

**SILO**

Under the CC Attribution licence, users are required to acknowledge the data provider, so we ask our clients to:

• cite the Jeffrey et al. 2001 paper in **technical documents**

• acknowledge SILO as the data source in **non-technical documents**, for example:

“These data were obtained from the Queensland Government’s [SILO](https://www.longpaddock.qld.gov.au/silo/) climate database and are licensed under [CC BY 4.0](https://creativecommons.org/licenses/by/4.0/).”

**NASAPOWER**

When POWER data products are used in a **publication**, we request the following acknowledgment be included: “These data were obtained from the NASA Langley Research Center POWER Project funded through the NASA Earth Science Directorate Applied Science Program.” | non_main | acknowledge the data providers we need to update the documentation and provide an acknowledge for the data use i am not entirely sure if this needs to be in the header of the file silo under the cc attribution licence users are required to acknowledge the data provider so we ask our clients to • cite the jeffrey et al paper in technical documents • acknowledge silo as the data source in non technical documents for example these data were obtained from the queensland government’s climate database and are licensed under nasapower when power data products are used in a publication we request the following acknowledgment be included “these data were obtained from the nasa langley research center power project funded through the nasa earth science directorate applied science program ” | 0 |

5,104 | 26,018,850,884 | IssuesEvent | 2022-12-21 10:48:31 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | closed | Hide transformation results in a 500 in /run request | type: bug work: backend status: ready restricted: maintainers | ## Description

Adding a hide transformation results in a 500 with the following error:

```

Environment:

Request Method: POST

Request URL: http://localhost:8000/api/db/v0/queries/run/

Django Version: 3.1.14

Python Version: 3.9.15

Installed Applications:

['django.contrib.admin',

'django.contrib.auth',

'django.contrib.contenttypes',

'django.contrib.sessions',

'django.contrib.messages',

'django.contrib.staticfiles',

'rest_framework',

'django_filters',

'django_property_filter',

'mathesar']

Installed Middleware:

['django.middleware.security.SecurityMiddleware',

'django.contrib.sessions.middleware.SessionMiddleware',

'django.middleware.common.CommonMiddleware',

'django.middleware.csrf.CsrfViewMiddleware',

'django.contrib.auth.middleware.AuthenticationMiddleware',

'django.contrib.messages.middleware.MessageMiddleware',

'django.middleware.clickjacking.XFrameOptionsMiddleware',

'mathesar.middleware.CursorClosedHandlerMiddleware',

'mathesar.middleware.PasswordChangeNeededMiddleware',

'django_userforeignkey.middleware.UserForeignKeyMiddleware',

'django_request_cache.middleware.RequestCacheMiddleware']

Traceback (most recent call last):

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/exception.py", line 47, in inner

response = get_response(request)

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/base.py", line 181, in _get_response

response = wrapped_callback(request, *callback_args, **callback_kwargs)

File "/usr/local/lib/python3.9/site-packages/django/views/decorators/csrf.py", line 54, in wrapped_view

return view_func(*args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/viewsets.py", line 125, in view

return self.dispatch(request, *args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 509, in dispatch

response = self.handle_exception(exc)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 466, in handle_exception

response = exception_handler(exc, context)

File "/code/mathesar/exception_handlers.py", line 59, in mathesar_exception_handler

raise exc

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 506, in dispatch

response = handler(request, *args, **kwargs)

File "/code/mathesar/api/db/viewsets/queries.py", line 123, in run

column_metadata = query.all_columns_description_map

File "/code/mathesar/models/query.py", line 248, in all_columns_description_map

return {

File "/code/mathesar/models/query.py", line 249, in <dictcomp>

alias: self._describe_query_column(sa_col)

File "/code/mathesar/models/query.py", line 217, in _describe_query_column

type=sa_col.db_type.id,

File "/code/db/columns/base.py", line 225, in db_type

return get_db_type_enum_from_class(self.type.__class__)

File "/code/db/types/operations/convert.py", line 37, in get_db_type_enum_from_class

raise UnknownDbTypeId

Exception Type: UnknownDbTypeId at /api/db/v0/queries/run/

Exception Value:

```

The request:

```json

{

"base_table":72,

"initial_columns":[

{

"id":225,

"alias":"Patrons_First Name"

},

{

"id":226,

"alias":"Patrons_Last Name"

},

{

"id":227,

"alias":"Patrons_Email"

}

],

"transformations":[

{

"type":"hide",

"spec":[]

}

],

``` | True | Hide transformation results in a 500 in /run request - ## Description

Adding a hide transformation results in a 500 with the following error:

```

Environment:

Request Method: POST

Request URL: http://localhost:8000/api/db/v0/queries/run/

Django Version: 3.1.14

Python Version: 3.9.15

Installed Applications:

['django.contrib.admin',

'django.contrib.auth',

'django.contrib.contenttypes',

'django.contrib.sessions',

'django.contrib.messages',

'django.contrib.staticfiles',

'rest_framework',

'django_filters',

'django_property_filter',

'mathesar']

Installed Middleware:

['django.middleware.security.SecurityMiddleware',

'django.contrib.sessions.middleware.SessionMiddleware',

'django.middleware.common.CommonMiddleware',

'django.middleware.csrf.CsrfViewMiddleware',

'django.contrib.auth.middleware.AuthenticationMiddleware',

'django.contrib.messages.middleware.MessageMiddleware',

'django.middleware.clickjacking.XFrameOptionsMiddleware',

'mathesar.middleware.CursorClosedHandlerMiddleware',

'mathesar.middleware.PasswordChangeNeededMiddleware',

'django_userforeignkey.middleware.UserForeignKeyMiddleware',

'django_request_cache.middleware.RequestCacheMiddleware']

Traceback (most recent call last):

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/exception.py", line 47, in inner

response = get_response(request)

File "/usr/local/lib/python3.9/site-packages/django/core/handlers/base.py", line 181, in _get_response

response = wrapped_callback(request, *callback_args, **callback_kwargs)

File "/usr/local/lib/python3.9/site-packages/django/views/decorators/csrf.py", line 54, in wrapped_view

return view_func(*args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/viewsets.py", line 125, in view

return self.dispatch(request, *args, **kwargs)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 509, in dispatch

response = self.handle_exception(exc)

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 466, in handle_exception

response = exception_handler(exc, context)

File "/code/mathesar/exception_handlers.py", line 59, in mathesar_exception_handler

raise exc

File "/usr/local/lib/python3.9/site-packages/rest_framework/views.py", line 506, in dispatch

response = handler(request, *args, **kwargs)

File "/code/mathesar/api/db/viewsets/queries.py", line 123, in run

column_metadata = query.all_columns_description_map

File "/code/mathesar/models/query.py", line 248, in all_columns_description_map

return {

File "/code/mathesar/models/query.py", line 249, in <dictcomp>

alias: self._describe_query_column(sa_col)

File "/code/mathesar/models/query.py", line 217, in _describe_query_column

type=sa_col.db_type.id,

File "/code/db/columns/base.py", line 225, in db_type

return get_db_type_enum_from_class(self.type.__class__)

File "/code/db/types/operations/convert.py", line 37, in get_db_type_enum_from_class

raise UnknownDbTypeId

Exception Type: UnknownDbTypeId at /api/db/v0/queries/run/

Exception Value:

```

The request:

```json

{

"base_table":72,

"initial_columns":[

{

"id":225,

"alias":"Patrons_First Name"

},

{

"id":226,

"alias":"Patrons_Last Name"

},

{

"id":227,

"alias":"Patrons_Email"

}

],

"transformations":[

{

"type":"hide",

"spec":[]

}

],

``` | main | hide transformation results in a in run request description adding a hide transformation results in a with the following error environment request method post request url django version python version installed applications django contrib admin django contrib auth django contrib contenttypes django contrib sessions django contrib messages django contrib staticfiles rest framework django filters django property filter mathesar installed middleware django middleware security securitymiddleware django contrib sessions middleware sessionmiddleware django middleware common commonmiddleware django middleware csrf csrfviewmiddleware django contrib auth middleware authenticationmiddleware django contrib messages middleware messagemiddleware django middleware clickjacking xframeoptionsmiddleware mathesar middleware cursorclosedhandlermiddleware mathesar middleware passwordchangeneededmiddleware django userforeignkey middleware userforeignkeymiddleware django request cache middleware requestcachemiddleware traceback most recent call last file usr local lib site packages django core handlers exception py line in inner response get response request file usr local lib site packages django core handlers base py line in get response response wrapped callback request callback args callback kwargs file usr local lib site packages django views decorators csrf py line in wrapped view return view func args kwargs file usr local lib site packages rest framework viewsets py line in view return self dispatch request args kwargs file usr local lib site packages rest framework views py line in dispatch response self handle exception exc file usr local lib site packages rest framework views py line in handle exception response exception handler exc context file code mathesar exception handlers py line in mathesar exception handler raise exc file usr local lib site packages rest framework views py line in dispatch response handler request args kwargs file code mathesar api db 
viewsets queries py line in run column metadata query all columns description map file code mathesar models query py line in all columns description map return file code mathesar models query py line in alias self describe query column sa col file code mathesar models query py line in describe query column type sa col db type id file code db columns base py line in db type return get db type enum from class self type class file code db types operations convert py line in get db type enum from class raise unknowndbtypeid exception type unknowndbtypeid at api db queries run exception value the request json base table initial columns id alias patrons first name id alias patrons last name id alias patrons email transformations type hide spec | 1 |

1,398 | 6,025,396,153 | IssuesEvent | 2017-06-08 08:35:42 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | win_acl error with having LDAP signing enabled | affects_2.2 bug_report waiting_on_maintainer windows | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

win_acl

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0.0

```

##### CONFIGURATION

none / default

##### OS / ENVIRONMENT

managing Windows7 from MacOS 10.11

##### SUMMARY

win_acl is failing if having LDAP signing enabled

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes below -->

```

- name: Give Domain Users the required access rights for folder

win_acl:

path: 'C:\Users\Public\Desktop\somefolder'

user: 'DOMAIN\Domain Users'

rights: 'ReadAndExecute,Write,ListDirectory,CreateDirectories,CreateFiles,DeleteSubdirectoriesAndFiles,Synchronize,Traverse'

type: 'allow'

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

ACL for that folder updated

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

<!--- Paste verbatim command output between quotes below -->

```

FAILED! => {"changed": false, "failed": true, "msg": "exception calling \"FindOne\" with 0 argument(s): \"A more secure authentication method is required for this server.\r\n\""}

```

| True | win_acl error with having LDAP signing enabled - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

win_acl

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0.0

```

##### CONFIGURATION

none / default

##### OS / ENVIRONMENT

managing Windows7 from MacOS 10.11

##### SUMMARY

win_acl is failing if having LDAP signing enabled

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

<!--- Paste example playbooks or commands between quotes below -->

```

- name: Give Domain Users the required access rights for folder

win_acl:

path: 'C:\Users\Public\Desktop\somefolder'

user: 'DOMAIN\Domain Users'

rights: 'ReadAndExecute,Write,ListDirectory,CreateDirectories,CreateFiles,DeleteSubdirectoriesAndFiles,Synchronize,Traverse'

type: 'allow'

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

ACL for that folder updated

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

<!--- Paste verbatim command output between quotes below -->

```

FAILED! => {"changed": false, "failed": true, "msg": "exception calling \"FindOne\" with 0 argument(s): \"A more secure authentication method is required for this server.\r\n\""}

```

| main | win acl error with having ldap signing enabled issue type bug report component name win acl ansible version ansible configuration none default os environment managing from macos summary win acl is failing if having ldap signing enabled steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used name give domain users the required access rights for folder win acl path c users public desktop somefolder user domain domain users rights readandexecute write listdirectory createdirectories createfiles deletesubdirectoriesandfiles synchronize traverse type allow expected results acl for that folder updated actual results failed changed false failed true msg exception calling findone with argument s a more secure authentication method is required for this server r n | 1 |

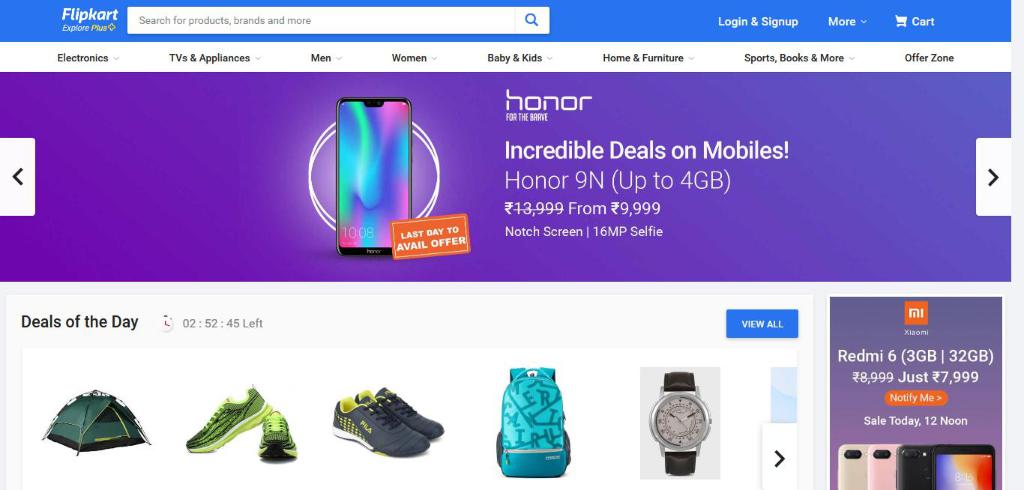

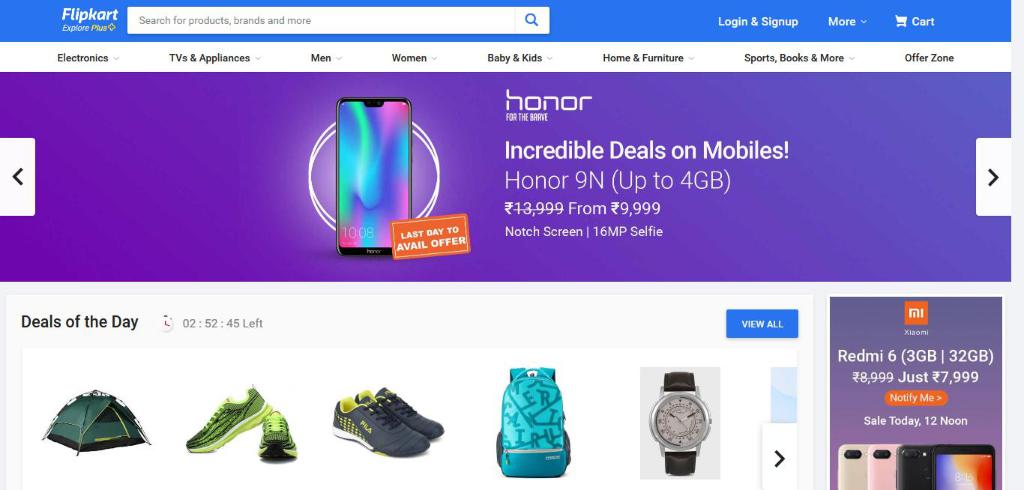

273,951 | 8,555,268,733 | IssuesEvent | 2018-11-08 09:33:33 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.flipkart.com - site is not usable | browser-firefox priority-important | <!-- @browser: Firefox 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; WOW64; rv:64.0) Gecko/20100101 Firefox/64.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.flipkart.com/?affid=galaksion&affExtParam1=54B902A0-E307-11E8-B292-9140F31CBED0&affExtParam2=21997

**Browser / Version**: Firefox 64.0

**Operating System**: Windows 10

**Tested Another Browser**: Unknown

**Problem type**: Site is not usable

**Description**: this site leads to crash of the connection

**Steps to Reproduce**:

this site is spam and not allowing me to use the net

[](https://webcompat.com/uploads/2018/11/430c72f5-a32a-43f4-91b7-452a762c59d2.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>image.mem.shared: true</li><li>buildID: 20181022150107</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>hasTouchScreen: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>gfx.webrender.all: false</li><li>channel: beta</li>

</ul>

<p>Console Messages:</p>

<pre>

[u'[JavaScript Warning: "Content Security Policy: Directive child-src has been deprecated. Please use directive worker-src to control workers, or directive frame-src to control frames respectively."]', u'[console.log(ServiceWorker registration successful with scope: , https://www.flipkart.com/) https://www.flipkart.com/?affid=galaksion&affExtParam1=54B902A0-E307-11E8-B292-9140F31CBED0&affExtParam2=21997:159:4]', u"[console.log(Flipkart 's web) https://img1a.flixcart.com/www/linchpin/fk-cp-zion/js/raven.3.22.3.js:2:1243]"]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.flipkart.com - site is not usable - <!-- @browser: Firefox 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; WOW64; rv:64.0) Gecko/20100101 Firefox/64.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.flipkart.com/?affid=galaksion&affExtParam1=54B902A0-E307-11E8-B292-9140F31CBED0&affExtParam2=21997

**Browser / Version**: Firefox 64.0

**Operating System**: Windows 10

**Tested Another Browser**: Unknown

**Problem type**: Site is not usable

**Description**: this site leads to crash of the connection

**Steps to Reproduce**:

this site is spam and not allowing me to use the net

[](https://webcompat.com/uploads/2018/11/430c72f5-a32a-43f4-91b7-452a762c59d2.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>image.mem.shared: true</li><li>buildID: 20181022150107</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>hasTouchScreen: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>gfx.webrender.all: false</li><li>channel: beta</li>

</ul>

<p>Console Messages:</p>

<pre>

[u'[JavaScript Warning: "Content Security Policy: Directive child-src has been deprecated. Please use directive worker-src to control workers, or directive frame-src to control frames respectively."]', u'[console.log(ServiceWorker registration successful with scope: , https://www.flipkart.com/) https://www.flipkart.com/?affid=galaksion&affExtParam1=54B902A0-E307-11E8-B292-9140F31CBED0&affExtParam2=21997:159:4]', u"[console.log(Flipkart 's web) https://img1a.flixcart.com/www/linchpin/fk-cp-zion/js/raven.3.22.3.js:2:1243]"]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_main | site is not usable url browser version firefox operating system windows tested another browser unknown problem type site is not usable description this site leads to crash of the connection steps to reproduce this site is spam and not allowing me to use the net browser configuration mixed active content blocked false image mem shared true buildid tracking content blocked false gfx webrender blob images true hastouchscreen false mixed passive content blocked false gfx webrender enabled false gfx webrender all false channel beta console messages u u from with ❤️ | 0 |

1,032 | 4,827,588,341 | IssuesEvent | 2016-11-07 14:05:54 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | cloudformation module fails when state:absent and stack does not exist | affects_2.0 aws bug_report cloud waiting_on_maintainer | ##### Issue Type:

- Bug Report

##### Plugin Name:

cloudformation

##### Ansible Version:

```

$ ansible --version

ansible 2.0.1.0

config file = /Users/dcarr/.ansible.cfg

configured module search path = Default w/o overrides

```

##### Ansible Configuration:

None

##### Environment:

N/A; Mac OS X 10.10.5

##### Summary:

I have a playbook that deletes a CloudFormation stack. If I run it when the stack is already absent, I expect it to succeed without error, noting that no changes were needed. What I actually see is that it fails with an error message:

```

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "msg": "Stack with id STACKNAME does not exist"}

```

##### Steps To Reproduce:

```

---

- name: delete stack play

hosts: localhost

connection: local

gather_facts: false

tasks:

- name: delete stack task

cloudformation:

stack_name: "STACKNAME"

state: "absent"

region: "us-east-1"

```

##### Expected Results:

Success with no changes
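
The expected behaviour can be sketched in plain Python as an idempotent delete. This is only an illustration of the idea, not the module's actual code: `StackNotFound`, `FakeCloudFormation`, and `ensure_absent` are hypothetical names, and the in-memory client stands in for a real AWS connection so the sketch runs without credentials.

```python
class StackNotFound(Exception):
    """Hypothetical error raised when the named stack does not exist."""


class FakeCloudFormation:
    """In-memory stand-in for a CloudFormation client (illustration only)."""

    def __init__(self, stacks):
        self.stacks = set(stacks)

    def delete_stack(self, name):
        if name not in self.stacks:
            raise StackNotFound("Stack with id %s does not exist" % name)
        self.stacks.remove(name)


def ensure_absent(client, stack_name):
    """Return an Ansible-style result; a stack that is already gone is not a failure."""
    try:
        client.delete_stack(stack_name)
    except StackNotFound:
        # The desired state (absent) already holds, so report "no change".
        return {"changed": False, "failed": False}
    return {"changed": True, "failed": False}
```

With this shape, the first run against an existing stack reports `changed: True`, and any later run reports `changed: False` instead of failing.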

##### Actual Results:

<!-- What actually happened? If possible run with high verbosity (-vvvv) -->

```

$ ansible-playbook bug.yaml -vvvv

Using /Users/dcarr/.ansible.cfg as config file

Loaded callback default of type stdout, v2.0

1 plays in bug.yaml

PLAY [delete stack play] *******************************************************

TASK [delete stack task] *******************************************************

task path: /private/var/folders/2c/qd7lcfcs5tsctw7tmvyd61v00000gn/T/bug.s12v4I2i/bug.yaml:7

ESTABLISH LOCAL CONNECTION FOR USER: dcarr

127.0.0.1 EXEC /bin/sh -c '( umask 22 && mkdir -p "` echo $HOME/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760 `" && echo "` echo $HOME/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760 `" )'

127.0.0.1 PUT /var/folders/2c/qd7lcfcs5tsctw7tmvyd61v00000gn/T/tmp8h9eEU TO /Users/dcarr/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760/cloudformation

127.0.0.1 EXEC /bin/sh -c 'LANG=en_US.UTF-8 LC_ALL=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8 /usr/bin/python /Users/dcarr/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760/cloudformation; rm -rf "/Users/dcarr/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760/" > /dev/null 2>&1'

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "invocation": {"module_args": {"aws_access_key": null, "aws_secret_key": null, "disable_rollback": false, "ec2_url": null, "notification_arns": null, "profile": null, "region": "us-east-1", "security_token": null, "stack_name": "STACKNAME", "stack_policy": null, "state": "absent", "tags": null, "template": null, "template_format": "json", "template_parameters": {}, "template_url": null, "validate_certs": true}, "module_name": "cloudformation"}, "msg": "Stack with id STACKNAME does not exist"}

NO MORE HOSTS LEFT *************************************************************

to retry, use: --limit @bug.retry

PLAY RECAP *********************************************************************

localhost : ok=0 changed=0 unreachable=0 failed=1

```

| True | cloudformation module fails when state:absent and stack does not exist - ##### Issue Type:

- Bug Report

##### Plugin Name:

cloudformation

##### Ansible Version:

```

$ ansible --version

ansible 2.0.1.0

config file = /Users/dcarr/.ansible.cfg

configured module search path = Default w/o overrides

```

##### Ansible Configuration:

None

##### Environment:

N/A; Mac OS X 10.10.5

##### Summary:

I have a playbook that deletes a CloudFormation stack. If I run it when the stack is already absent, I expect it to succeed without error, noting that no changes were needed. What I actually see is that it fails with an error message:

```

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "msg": "Stack with id STACKNAME does not exist"}

```

##### Steps To Reproduce:

```

---

- name: delete stack play

hosts: localhost

connection: local

gather_facts: false

tasks:

- name: delete stack task

cloudformation:

stack_name: "STACKNAME"

state: "absent"

region: "us-east-1"

```

##### Expected Results:

Success with no changes
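
The expected result amounts to treating a missing stack as "already absent". A minimal sketch of that logic follows. It is not the module's actual code: `delete_stack_idempotent` and the `delete_stack` callable it receives are hypothetical names, and the only detail taken from this report is the "does not exist" error text.

```python
def delete_stack_idempotent(stack_name, delete_stack):
    """Delete a stack, treating "does not exist" as a no-op.

    `delete_stack` is a hypothetical callable standing in for whatever
    client call performs the deletion. It is assumed to raise an
    exception whose message contains "does not exist" when the stack
    is missing, as in the error shown in this report.
    """
    try:
        delete_stack(stack_name)
    except Exception as exc:
        if "does not exist" in str(exc):
            # The stack is already absent: succeed and report no change.
            return {"changed": False, "failed": False}
        # Any other error is a genuine failure.
        return {"changed": False, "failed": True, "msg": str(exc)}
    # The deletion request went through.
    return {"changed": True, "failed": False}
```

Called against a stack that is already gone, this would return `changed: False` without failing, which is the behavior the report asks for.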

##### Actual Results:

<!-- What actually happened? If possible run with high verbosity (-vvvv) -->

```

$ ansible-playbook bug.yaml -vvvv

Using /Users/dcarr/.ansible.cfg as config file

Loaded callback default of type stdout, v2.0

1 plays in bug.yaml

PLAY [delete stack play] *******************************************************

TASK [delete stack task] *******************************************************

task path: /private/var/folders/2c/qd7lcfcs5tsctw7tmvyd61v00000gn/T/bug.s12v4I2i/bug.yaml:7

ESTABLISH LOCAL CONNECTION FOR USER: dcarr

127.0.0.1 EXEC /bin/sh -c '( umask 22 && mkdir -p "` echo $HOME/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760 `" && echo "` echo $HOME/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760 `" )'

127.0.0.1 PUT /var/folders/2c/qd7lcfcs5tsctw7tmvyd61v00000gn/T/tmp8h9eEU TO /Users/dcarr/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760/cloudformation

127.0.0.1 EXEC /bin/sh -c 'LANG=en_US.UTF-8 LC_ALL=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8 /usr/bin/python /Users/dcarr/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760/cloudformation; rm -rf "/Users/dcarr/.ansible/tmp/ansible-tmp-1458161277.5-250244469102760/" > /dev/null 2>&1'

fatal: [localhost]: FAILED! => {"changed": false, "failed": true, "invocation": {"module_args": {"aws_access_key": null, "aws_secret_key": null, "disable_rollback": false, "ec2_url": null, "notification_arns": null, "profile": null, "region": "us-east-1", "security_token": null, "stack_name": "STACKNAME", "stack_policy": null, "state": "absent", "tags": null, "template": null, "template_format": "json", "template_parameters": {}, "template_url": null, "validate_certs": true}, "module_name": "cloudformation"}, "msg": "Stack with id STACKNAME does not exist"}

NO MORE HOSTS LEFT *************************************************************

to retry, use: --limit @bug.retry

PLAY RECAP *********************************************************************

localhost : ok=0 changed=0 unreachable=0 failed=1

```

| main | cloudformation module fails when state absent and stack does not exist issue type bug report plugin name cloudformation ansible version ansible version ansible config file users dcarr ansible cfg configured module search path default w o overrides ansible configuration none environment n a mac os x summary i have a playbook that deletes a cloudformation stack if i run it when the stack is already absent i expect it to succeed without error noting that no changes were needed what i actually see is that it fails with an error message fatal failed changed false failed true msg stack with id stackname does not exist steps to reproduce name delete stack play hosts localhost connection local gather facts false tasks name delete stack task cloudformation stack name stackname state absent region us east expected results success with no changes actual results ansible playbook bug yaml vvvv using users dcarr ansible cfg as config file loaded callback default of type stdout plays in bug yaml play task task path private var folders t bug bug yaml establish local connection for user dcarr exec bin sh c umask mkdir p echo home ansible tmp ansible tmp echo echo home ansible tmp ansible tmp put var folders t to users dcarr ansible tmp ansible tmp cloudformation exec bin sh c lang en us utf lc all en us utf lc messages en us utf usr bin python users dcarr ansible tmp ansible tmp cloudformation rm rf users dcarr ansible tmp ansible tmp dev null fatal failed changed false failed true invocation module args aws access key null aws secret key null disable rollback false url null notification arns null profile null region us east security token null stack name stackname stack policy null state absent tags null template null template format json template parameters template url null validate certs true module name cloudformation msg stack with id stackname does not exist no more hosts left to retry use limit bug retry play recap localhost ok changed unreachable failed | 1 |

434,694 | 30,462,619,888 | IssuesEvent | 2023-07-17 08:08:05 | kubecub/go-project-layout | https://api.github.com/repos/kubecub/go-project-layout | closed | Bug reports for links in kubecub docs | kind/documentation triage/unresolved report lifecycle/stale | ## Summary

| Status | Count |

|---------------|-------|

| 🔍 Total | 171 |

| ✅ Successful | 164 |

| ⏳ Timeouts | 1 |

| 🔀 Redirected | 0 |

| 👻 Excluded | 0 |

| ❓ Unknown | 0 |

| 🚫 Errors | 6 |

## Errors per input

### Errors in CONTRIBUTING.md

* [TIMEOUT] [https://twitter.com/xxw3293172751](https://twitter.com/xxw3293172751) | Timeout

### Errors in .github/CODE_OF_CONDUCT.md

* [404] [https://github.com/kubecub/kubecub/tree/main/.github/ISSUE_TEMPLATE](https://github.com/kubecub/kubecub/tree/main/.github/ISSUE_TEMPLATE) | Failed: Network error: Not Found

* [ERR] [file:///home/runner/work/go-project-layout/go-project-layout/.github/nsddd.top](file:///home/runner/work/go-project-layout/go-project-layout/.github/nsddd.top) | Failed: Cannot find file

* [404] [https://github.com/kubecub/community/blob/main/DEVELOPGUIDE.md](https://github.com/kubecub/community/blob/main/DEVELOPGUIDE.md) | Failed: Network error: Not Found

* [ERR] [file:///home/runner/work/go-project-layout/go-project-layout/.github/google.com/search](file:///home/runner/work/go-project-layout/go-project-layout/.github/google.com/search) | Failed: Cannot find file

### Errors in README.md

* [404] [https://github.com/kubecub/go-project-layout/generate](https://github.com/kubecub/go-project-layout/generate) | Failed: Network error: Not Found

* [400] [https://github.com/issues?q=org%kubecub+is%3Aissue+label%3A%22good+first+issue%22+no%3Aassignee](https://github.com/issues?q=org%kubecub+is%3Aissue+label%3A%22good+first+issue%22+no%3Aassignee) | Failed: Network error: Bad Request
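
The 400 on the last link is consistent with the query containing `org%kubecub`, where `%ku` is not a valid percent-escape (the other colons in the same query are written as `%3A`). A small sketch of how such a query could be encoded, assuming the intended qualifier is `org:kubecub`:

```python
from urllib.parse import quote_plus

# Assumed intent of the README link: a GitHub issue search scoped to the org.
query = 'org:kubecub is:issue label:"good first issue" no:assignee'

# quote_plus percent-encodes ':' and '"' and turns spaces into '+'.
encoded = quote_plus(query)
url = "https://github.com/issues?q=" + encoded
print(url)
```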

[Full Github Actions output](https://github.com/kubecub/go-project-layout/actions/runs/5235294087?check_suite_focus=true)

| 1.0 | Bug reports for links in kubecub docs - ## Summary

| Status | Count |

|---------------|-------|

| 🔍 Total | 171 |

| ✅ Successful | 164 |

| ⏳ Timeouts | 1 |

| 🔀 Redirected | 0 |

| 👻 Excluded | 0 |

| ❓ Unknown | 0 |

| 🚫 Errors | 6 |

## Errors per input

### Errors in CONTRIBUTING.md

* [TIMEOUT] [https://twitter.com/xxw3293172751](https://twitter.com/xxw3293172751) | Timeout

### Errors in .github/CODE_OF_CONDUCT.md

* [404] [https://github.com/kubecub/kubecub/tree/main/.github/ISSUE_TEMPLATE](https://github.com/kubecub/kubecub/tree/main/.github/ISSUE_TEMPLATE) | Failed: Network error: Not Found

* [ERR] [file:///home/runner/work/go-project-layout/go-project-layout/.github/nsddd.top](file:///home/runner/work/go-project-layout/go-project-layout/.github/nsddd.top) | Failed: Cannot find file

* [404] [https://github.com/kubecub/community/blob/main/DEVELOPGUIDE.md](https://github.com/kubecub/community/blob/main/DEVELOPGUIDE.md) | Failed: Network error: Not Found

* [ERR] [file:///home/runner/work/go-project-layout/go-project-layout/.github/google.com/search](file:///home/runner/work/go-project-layout/go-project-layout/.github/google.com/search) | Failed: Cannot find file

### Errors in README.md

* [404] [https://github.com/kubecub/go-project-layout/generate](https://github.com/kubecub/go-project-layout/generate) | Failed: Network error: Not Found

* [400] [https://github.com/issues?q=org%kubecub+is%3Aissue+label%3A%22good+first+issue%22+no%3Aassignee](https://github.com/issues?q=org%kubecub+is%3Aissue+label%3A%22good+first+issue%22+no%3Aassignee) | Failed: Network error: Bad Request

[Full Github Actions output](https://github.com/kubecub/go-project-layout/actions/runs/5235294087?check_suite_focus=true)

| non_main | bug reports for links in kubecub docs summary status count 🔍 total ✅ successful ⏳ timeouts 🔀 redirected 👻 excluded ❓ unknown 🚫 errors errors per input errors in contributing md timeout errors in github code of conduct md failed network error not found file home runner work go project layout go project layout github nsddd top failed cannot find file failed network error not found file home runner work go project layout go project layout github google com search failed cannot find file errors in readme md failed network error not found failed network error bad request | 0 |

393,485 | 11,616,550,872 | IssuesEvent | 2020-02-26 15:53:10 | hms-dbmi/cistrome-higlass-wrapper | https://api.github.com/repos/hms-dbmi/cistrome-higlass-wrapper | closed | Use updated higlass viewport-projection-horizontal track to enable multiple genome interval selections | enhancement high priority | When https://github.com/higlass/higlass/pull/864 is merged this can be completed | 1.0 | Use updated higlass viewport-projection-horizontal track to enable multiple genome interval selections - When https://github.com/higlass/higlass/pull/864 is merged this can be completed | non_main | use updated higlass viewport projection horizontal track to enable multiple genome interval selections when is merged this can be completed | 0 |

63,769 | 12,374,413,646 | IssuesEvent | 2020-05-19 01:30:19 | toebes/ciphers | https://api.github.com/repos/toebes/ciphers | opened | Baconian word generator needs a UI to show letters chosen | CodeBusters enhancement | When generating a word baconian, it needs to have a field for the HINT characters.

With the given Hint characters, it should show in the letter map which letters are covered by the hint.

For example with the sample plain text

SOMETHING

and a HINT of

SOME

With the text chosen as:

BY OUR ERNST ALERT AUDIO --- BE ITS EARTH A BOOK ABBEY

On the mapping, the letters **AB DE I L NO RSTU Y** should be bold or highlighted in a color as well as the A/B letter that they map to

**AB**C**DE**FGH**I**JK**L**M**NO**PQ**RSTU**VWX**Y**Z
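
The set of letters to highlight can be derived from the example above. The sketch below is only an illustration in Python, not the project's code: `letters_covered_by_hint` is a hypothetical helper, and it assumes the hint is the start of the plain text and that each plain-text letter consumes five letters of the chosen text.

```python
def letters_covered_by_hint(cipher_text, hint, group_size=5):
    """Return the distinct cipher letters used to encode the hint.

    Assumes the hint is a prefix of the plain text and that every
    plain-text letter is encoded by `group_size` cipher letters;
    spaces and punctuation in the cipher text are ignored.
    """
    letters = [c for c in cipher_text.upper() if c.isalpha()]
    covered = letters[: len(hint) * group_size]
    return "".join(sorted(set(covered)))


text = "BY OUR ERNST ALERT AUDIO --- BE ITS EARTH A BOOK ABBEY"
print(letters_covered_by_hint(text, "SOME"))  # ABDEILNORSTUY
```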

Ideally the code should also check the question text to make sure that the hint occurs in the question (like the other generators do). Note that the hint field should only be present and checked for the word baconian. | 1.0 | Baconian word generator needs a UI to show letters chosen - When generating a word baconian, it needs to have a field for the HINT characters.

With the given Hint characters, it should show in the letter map which letters are covered by the hint.

For example with the sample plain text

SOMETHING

and a HINT of

SOME

With the text chosen as:

BY OUR ERNST ALERT AUDIO --- BE ITS EARTH A BOOK ABBEY

On the mapping, the letters **AB DE I L NO RSTU Y** should be bold or highlighted in a color as well as the A/B letter that they map to

**AB**C**DE**FGH**I**JK**L**M**NO**PQ**RSTU**VWX**Y**Z

Ideally the code should also check the question text to make sure that the hint occurs in the question (like the other generators do). Note that the hint field should only be present and checked for the word baconian. | non_main | baconian word generator needs a ui to show letters chosen when generating a word baconian it needs to have a field for the hint characters with the given hint characters it should show in the letter map which letters are covered by the hint for example with the sample plain text something and a hint of some with the text chosen as by our ernst alert audio be its earth a book abbey on the mapping the letters ab de i l no rstu y should be bold or highlighted in a color as well as the a b letter that they map to ab c de fgh i jk l m no pq rstu vwx y z ideally the code should also check the question text to make sure that the hint occurs in the question like the other generators do note that the hint field should only be present and checked for the word baconian | 0 |

1,728 | 6,574,824,683 | IssuesEvent | 2017-09-11 14:12:25 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | Passphrase protected private-key require to enter passphrase several times on one task to one host | affects_2.1 docs_report waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Bug Report / Documentation Report

##### COMPONENT NAME

git

##### ANSIBLE VERSION

```

ansible 2.1.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

all default

##### OS / ENVIRONMENT

Use _Putty_ to _Centos 7.x_ via _Vagrant_ on _VM VirtualBox_ at _Windows10_

##### SUMMARY

I have a passphrase-protected SSH private key for accessing a private git repo. I copied this key to the target host, but every time I run the Ansible git task it asks me for the passphrase six (!) times for every single host.

Yes, I know that one Ansible git task translates into several git commands. It was not so obvious, but after some investigation I found that out. So my next step was to try some agent-forwarding practices, and I could not get that to work at all. 8(

None of these helped:

ssh-agent + ssh-add

ansible.cfg with ssh_args = -o ForwardAgent=true

run playbook w/ or w/o sudo

##### STEPS TO REPRODUCE

1. Passphrase-protected private SSH key and a private git repo (for example, on Bitbucket)

2. Create a user (not root!) on the remote host with this protected private key

3. Run the ansible-playbook command from the control machine

Ansible git task example:

```

- name: checkout repo

git: repo=ssh://git@altssh.bitbucket.org:443/user/repo.git version="{{ git_branch }}" dest="{{ dir_app }}" accept_hostkey="yes"

become: yes

become_user: "{{ user.login }}"

tags: ['app-update', 'sandbox']

```

Playbook command example

```

[vagrant@localhost ~]$ ansible-playbook /vagrant/provisioning/sandbox/dd-apps-sandboxes.yml -i /vagrant/provisioning/dd-hosts.txt --limit="brutto.dev" --tags="app-update"

PLAY [brutto.dev] **************************************************************

TASK [setup] *******************************************************************

ok: [brutto.dev]

TASK [../roles/app : checkout repo] ********************************************

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

ok: [brutto.dev]

PLAY RECAP *********************************************************************

brutto.dev : ok=2 changed=0 unreachable=0 failed=0

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

I want to enter the passphrase once, or never if I use some kind of forwarding

##### ACTUAL RESULTS

The passphrase is prompted for six times on every run!

| True | Passphrase protected private-key require to enter passphrase several times on one task to one host - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

- Bug Report / Documentation Report

##### COMPONENT NAME

git

##### ANSIBLE VERSION

```

ansible 2.1.1.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

all default

##### OS / ENVIRONMENT

Use _Putty_ to _Centos 7.x_ via _Vagrant_ on _VM VirtualBox_ at _Windows10_

##### SUMMARY

I have a passphrase-protected SSH private key for accessing a private git repo. I copied this key to the target host, but every time I run the Ansible git task it asks me for the passphrase six (!) times for every single host.

Yes, I know that one Ansible git task translates into several git commands. It was not so obvious, but after some investigation I found that out. So my next step was to try some agent-forwarding practices, and I could not get that to work at all. 8(

None of these helped:

ssh-agent + ssh-add

ansible.cfg with ssh_args = -o ForwardAgent=true

run playbook w/ or w/o sudo

##### STEPS TO REPRODUCE

1. Passphrase-protected private SSH key and a private git repo (for example, on Bitbucket)

2. Create a user (not root!) on the remote host with this protected private key

3. Run the ansible-playbook command from the control machine

Ansible git task example:

```

- name: checkout repo

git: repo=ssh://git@altssh.bitbucket.org:443/user/repo.git version="{{ git_branch }}" dest="{{ dir_app }}" accept_hostkey="yes"

become: yes

become_user: "{{ user.login }}"

tags: ['app-update', 'sandbox']

```

Playbook command example

```

[vagrant@localhost ~]$ ansible-playbook /vagrant/provisioning/sandbox/dd-apps-sandboxes.yml -i /vagrant/provisioning/dd-hosts.txt --limit="brutto.dev" --tags="app-update"

PLAY [brutto.dev] **************************************************************

TASK [setup] *******************************************************************

ok: [brutto.dev]

TASK [../roles/app : checkout repo] ********************************************

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

Enter passphrase for key '/home/brutto/.ssh/id_rsa':

ok: [brutto.dev]

PLAY RECAP *********************************************************************

brutto.dev : ok=2 changed=0 unreachable=0 failed=0

```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

I want to enter the passphrase once, or never if I use some kind of forwarding

##### ACTUAL RESULTS

The passphrase is prompted for six times on every run!

| main | passphrase protected private key require to enter passphrase several times on one task to one host issue type bug report documentation report component name git ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration all default os environment use putty to centos x via vagrant on vm virtualbox at summary i have passphrase protected ssh private key for access the private git repo i copy this key to target host but every time i run ansible git task it s asked me passphrase six times for every single host yes i know that one ansible git command translate into several git commands it was not so obviously but afer some investigation time i found it so my next step was to use some of forwarding practices and cannot do this at all not helped ssh agent ssh add ansible cfg with ssh args o forwardagent true run playbook w or w o sudo steps to reproduce phassphrase private ssh key and private git repo for example on bitbucket create user not root on remote host with this protected private key run ansible playbook command from control machine ansible git task example name checkout repo git repo ssh git altssh bitbucket org user repo git version git branch dest dir app accept hostkey yes become yes become user user login tags playbook command example ansible playbook vagrant provisioning sandbox dd apps sandboxes yml i vagrant provisioning dd hosts txt limit brutto dev tags app update play task ok task enter passphrase for key home brutto ssh id rsa enter passphrase for key home brutto ssh id rsa enter passphrase for key home brutto ssh id rsa enter passphrase for key home brutto ssh id rsa enter passphrase for key home brutto ssh id rsa enter passphrase for key home brutto ssh id rsa ok play recap brutto dev ok changed unreachable failed expected results i want enter passphrase one time or never if i use some forwarding actual results every time passphrase prompted six times | 1 |

359 | 3,298,189,639 | IssuesEvent | 2015-11-02 13:21:53 | Homebrew/homebrew | https://api.github.com/repos/Homebrew/homebrew | opened | Possible way to handle sandbox issues for Postgres's plugins | help wanted maintainer feedback sandbox upstream issue | As we can see in https://github.com/Homebrew/homebrew/pull/41962 and many other PRs, all of Postgres's plugins are broken under the sandbox. Moreover, this means all of them are broken during `upgrade/unlink/link/switch` etc.

Considering the number of plugins for Postgres, vendoring all of them will soon become unscalable. However, until it's fixed/supported by upstream (see https://github.com/Homebrew/homebrew/issues/10247), Postgres is inherently hostile to Homebrew-style sandboxing where several components are symlinked into a common prefix.

Since there isn't any perfect solution, we may just have to accept some hacky middle ground. AFAIK, NixOS handles this by copying all of the binaries directly to the common prefix, hence breaking its symlink sandbox as well. We could take a similar approach:

* Compile Postgres as usual.

* Copy all of the binaries in `prefix/bin` to `prefix/libexec/bin-backup`.

* Hard link the binaries in `prefix/libexec/bin-backup` to `HOMEBREW_PREFIX/bin` during `post_install`.
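
The copy and hard-link steps above can be sketched as follows. This is only an illustration in Python, not formula code: `backup_and_hardlink` and its three directory arguments are hypothetical, and hard links require the backup and shared directories to be on the same filesystem.

```python
import os
import shutil


def backup_and_hardlink(prefix_bin, backup_dir, shared_bin):
    """Copy each binary to a backup dir, then hard link it into a shared bin dir.

    All three paths are hypothetical stand-ins for `prefix/bin`,
    `prefix/libexec/bin-backup` and `HOMEBREW_PREFIX/bin`.
    """
    os.makedirs(backup_dir, exist_ok=True)
    os.makedirs(shared_bin, exist_ok=True)
    linked = []
    for name in sorted(os.listdir(prefix_bin)):
        src = os.path.join(prefix_bin, name)
        if not os.path.isfile(src):
            continue  # skip subdirectories and other non-files
        backup = os.path.join(backup_dir, name)
        shutil.copy2(src, backup)  # real copy, keeps mode and timestamps
        target = os.path.join(shared_bin, name)
        if os.path.lexists(target):
            os.remove(target)  # replace a stale symlink or file
        os.link(backup, target)  # hard link, not a symlink
        linked.append(target)
    return linked
```

Because the entries in the shared directory are hard links rather than symlinks, they still resolve if the keg's own `bin` is unlinked, which is the point of the workaround described above.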

Clearly, this still breaks our symlink system, but at least it can work under the sandbox.

Any objections/suggestions/comments? Or should we just vendor all of them inside one mega formula?

cc @mikemcquaid @DomT4 | True | Possible way to handle sandbox issues for Postgres's plugins - As we can see in https://github.com/Homebrew/homebrew/pull/41962 and many other PRs, all of Postgres's plugins are broken under the sandbox. Moreover, this means all of them are broken during `upgrade/unlink/link/switch` etc.

Considering the number of plugins for Postgres, vendoring all of them will soon become unscalable. However, until it's fixed/supported by upstream (see https://github.com/Homebrew/homebrew/issues/10247), Postgres is inherently hostile to Homebrew-style sandboxing where several components are symlinked into a common prefix.

Since there isn't any perfect solution, we may just have to accept some hacky middle ground. AFAIK, NixOS handles this by copying all of the binaries directly to the common prefix, hence breaking its symlink sandbox as well. We could take a similar approach:

* Compile Postgres as usual.

* Copy all of the binaries in `prefix/bin` to `prefix/libexec/bin-backup`.

* Hard link the binaries in `prefix/libexec/bin-backup` to `HOMEBREW_PREFIX/bin` during `post_install`.

Clearly, this still breaks our symlink system, but at least it can work under the sandbox.

Any objections/suggestions/comments? Or should we just vendor all of them inside one mega formula?

cc @mikemcquaid @DomT4 | main | possible way to handle sandbox issues for postgres s plugins as we can seen in and many others pr all of postgre s plugins are broken under sandbox moreover this means all of them are broken during upgrade unlink link switch etc considering the amount of plugins for postgres vending all of them will soon become unscalable however until it s fixed supported by upstream see postgres is inherently hostile to homebrew style sandboxing where several components are symlinked into a common prefix since there isn t any perfect solution we may will just accept some hacking middle ground afaik nixos handles this by copying all of binaries directly to common prefix hence breaking its symlink sandbox as well we may take some similar approach compile postgres as usual copy all of binaries in prefix bin to prefix libexec bin backup hard link binaries prefix libexec bin backup to homebrew prefix bin during post install clearly it s still breaking our symlink system but at least it can work under sandbox any objection suggestion commments or should we just vendor all of them inside one mega formula cc mikemcquaid | 1 |

220,163 | 17,153,381,185 | IssuesEvent | 2021-07-14 01:21:44 | kworkflow/kworkflow | https://api.github.com/repos/kworkflow/kworkflow | opened | vm_test fail if we have kworkflow.config in the kw main directory | bug tests | **Describe the bug**

When I was running `./run_tests.sh test vm_test`, I got the following error:

```

=========================================================

test_vm_mount

ASSERT:(1) - Expected 125 expected:<125> but was:<0>

test_vm_umount

Ran 2 tests.

FAILED (failures=1)

```

It looks like `vm_test` is sensitive to an external file.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to the main folder of kw (`cd kworkflow`);

2. Use `kw init`. You will see a file named `kworkflow.config`.

3. Run: `./run_tests.sh test vm_test`.

**Expected behavior**

Full pass.

**Desktop (please complete the following information):**

- OS: Ubuntu

- Version: Sid

- Bash Version: 5.0.17

| 1.0 | vm_test fail if we have kworkflow.config in the kw main directory - **Describe the bug**

When I was running `./run_tests.sh test vm_test`, I got the following error:

```

=========================================================

test_vm_mount

ASSERT:(1) - Expected 125 expected:<125> but was:<0>

test_vm_umount

Ran 2 tests.

FAILED (failures=1)

```

It looks like `vm_test` is sensitive to an external file.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to the main folder of kw (`cd kworkflow`);

2. Use `kw init`. You will see a file named `kworkflow.config`.

3. Run: `./run_tests.sh test vm_test`.

**Expected behavior**

Full pass.

**Desktop (please complete the following information):**

- OS: Ubuntu

- Version: Sid

- Bash Version: 5.0.17

| non_main | vm test fail if we have kworkflow config in the kw main directory describe the bug when i was running run test test vm test i got the following error test vm mount assert expected expected but was test vm umount ran tests failed failures it looks like that vm test is sensitive to an external file to reproduce steps to reproduce the behavior go to the main folder from kw cd kworkflow use kw init you will see a file named kworkflow config run run tests sh test vm test expected behavior full pass desktop please complete the following information os ubuntu version sid bash version | 0 |

4,703 | 24,270,821,562 | IssuesEvent | 2022-09-28 10:07:06 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | closed | SEO | Duplicate title tags | engineering Maintain | Off the back of the Grassriots site audit, it has been identified that there are a number of issues with pages that have duplicate title tags.

Detail from Grassriots:

Pages in this set have duplicate / generalized title tags - in some cases English-language / non-translated. Duplicate <title> tags make it difficult for search engines to determine which of a website's pages is relevant for a specific search query, and which one should be prioritized in search results. Pages with duplicate titles have a lower chance of ranking well and are at risk of being banned. Moreover, identical <title> tags confuse users as to which webpage they should follow.
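
As a rough illustration of how such pages could be spotted, the sketch below groups pages by normalized title. It is not part of the audit: `find_duplicate_titles` is a hypothetical helper, and the input is assumed to be a mapping of page URL to its `<title>` text gathered by some crawl.

```python
from collections import defaultdict


def find_duplicate_titles(pages):
    """Group page URLs by title and keep only titles used more than once.

    `pages` is assumed to map each URL to the text of its <title> tag.
    Titles are compared case-insensitively with surrounding whitespace
    stripped.
    """
    by_title = defaultdict(list)
    for url, title in pages.items():
        by_title[title.strip().lower()].append(url)
    return {t: sorted(urls) for t, urls in by_title.items() if len(urls) > 1}
```

Each key of the result is a title shared by several pages, and its value lists the URLs that would need distinct titles.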

Link to the [audit](https://docs.google.com/spreadsheets/d/15HwgpxSYc4Zl809kcebAhLfLYXFuIk8ZP-Qvk3yVV8Q/edit#gid=627737737) | True | SEO | Duplicate title tags - Off the back of the Grassriots site audit, it has been identified that there are a number of issues with pages that have duplicate title tags.

Detail from Grassriots:

Pages in this set have duplicate / generalized title tags - in some cases English-language / non-translated. Duplicate <title> tags make it difficult for search engines to determine which of a website's pages is relevant for a specific search query, and which one should be prioritized in search results. Pages with duplicate titles have a lower chance of ranking well and are at risk of being banned. Moreover, identical <title> tags confuse users as to which webpage they should follow.

Link to the [audit](https://docs.google.com/spreadsheets/d/15HwgpxSYc4Zl809kcebAhLfLYXFuIk8ZP-Qvk3yVV8Q/edit#gid=627737737) | main | seo duplicate title tags off the back of the grassriots site audit it has been identified that there are a number of issues with pages that have duplicate title tags detail from grassriots pages in this set have duplicate generalized title tags in some case english language non translated duplicate tags make it difficult for search engines to determine which of a website s pages is relevant for a specific search query and which one should be prioritized in search results pages with duplicate titles have a lower chance of ranking well and are at risk of being banned moreover identical tags confuse users as to which webpage they should follow link to the | 1 |

549,887 | 16,101,522,798 | IssuesEvent | 2021-04-27 09:53:45 | googleapis/python-spanner | https://api.github.com/repos/googleapis/python-spanner | opened | Synthesis failed for python-spanner | autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate python-spanner. :broken_heart:

Please investigate and fix this issue within 5 business days. While it remains broken,

this library cannot be updated with changes to the python-spanner API, and the library grows

stale.

See https://github.com/googleapis/synthtool/blob/master/autosynth/TroubleShooting.md

for trouble shooting tips.

Here's the output from running `synth.py`:

```

l_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:77:1

DEBUG: Rule 'com_google_protoc_java_resource_names_plugin' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "4b714b35ee04ba90f560ee60e64c7357428efcb6b0f3a298f343f8ec2c6d4a5d"

DEBUG: Call stack for the definition of repository 'com_google_protoc_java_resource_names_plugin' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:234:1

DEBUG: Rule 'protoc_docs_plugin' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "33b387245455775e0de45869c7355cc5a9e98b396a6fc43b02812a63b75fee20"

DEBUG: Call stack for the definition of repository 'protoc_docs_plugin' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:258:1

DEBUG: Rule 'rules_python' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "48f7e716f4098b85296ad93f5a133baf712968c13fbc2fdf3a6136158fe86eac"

DEBUG: Call stack for the definition of repository 'rules_python' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:42:1

DEBUG: Rule 'gapic_generator_python' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "fe995def6873fcbdc2a8764ef4bce96eb971a9d1950fe9db9be442f3c64fb3b6"

DEBUG: Call stack for the definition of repository 'gapic_generator_python' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:278:1

DEBUG: Rule 'com_googleapis_gapic_generator_go' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "c0d0efba86429cee5e52baf838165b0ed7cafae1748d025abec109d25e006628"

DEBUG: Call stack for the definition of repository 'com_googleapis_gapic_generator_go' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:300:1

DEBUG: Rule 'gapic_generator_php' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "3dffc5c34a5f35666843df04b42d6ce1c545b992f9c093a777ec40833b548d86"

DEBUG: Call stack for the definition of repository 'gapic_generator_php' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:364:1

DEBUG: Rule 'gapic_generator_csharp' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "4db430cfb9293e4521ec8e8138f8095faf035d8e752cf332d227710d749939eb"

DEBUG: Call stack for the definition of repository 'gapic_generator_csharp' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:386:1

DEBUG: Rule 'gapic_generator_ruby' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "a14ec475388542f2ea70d16d75579065758acc4b99fdd6d59463d54e1a9e4499"

DEBUG: Call stack for the definition of repository 'gapic_generator_ruby' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:400:1

DEBUG: /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/rules_python/python/pip.bzl:61:5: DEPRECATED: the pip_repositories rule has been replaced with pip_install, please see rules_python 0.1 release notes

DEBUG: Rule 'bazel_skylib' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "1dde365491125a3db70731e25658dfdd3bc5dbdfd11b840b3e987ecf043c7ca0"

DEBUG: Call stack for the definition of repository 'bazel_skylib' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:35:1

Analyzing: target //google/spanner/v1:spanner-v1-py (1 packages loaded, 0 targets configured)

ERROR: /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/upb/bazel/upb_proto_library.bzl:257:29: aspect() got unexpected keyword argument 'incompatible_use_toolchain_transition'

ERROR: Analysis of target '//google/spanner/v1:spanner-v1-py' failed; build aborted: error loading package '@com_github_grpc_grpc//': in /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/com_github_grpc_grpc/bazel/grpc_build_system.bzl: Extension file 'bazel/upb_proto_library.bzl' has errors

INFO: Elapsed time: 0.252s

INFO: 0 processes.

FAILED: Build did NOT complete successfully (2 packages loaded, 13 targets configured)

FAILED: Build did NOT complete successfully (2 packages loaded, 13 targets configured)

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/github/synthtool/synthtool/__main__.py", line 102, in <module>

main()

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 829, in __call__

return self.main(*args, **kwargs)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 782, in main

rv = self.invoke(ctx)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 1066, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 610, in invoke

return callback(*args, **kwargs)

File "/tmpfs/src/github/synthtool/synthtool/__main__.py", line 94, in main

spec.loader.exec_module(synth_module) # type: ignore

File "<frozen importlib._bootstrap_external>", line 678, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "/home/kbuilder/.cache/synthtool/python-spanner/synth.py", line 30, in <module>

include_protos=True,

File "/tmpfs/src/github/synthtool/synthtool/gcp/gapic_bazel.py", line 52, in py_library

return self._generate_code(service, version, "python", False, **kwargs)

File "/tmpfs/src/github/synthtool/synthtool/gcp/gapic_bazel.py", line 204, in _generate_code

shell.run(bazel_run_args)

File "/tmpfs/src/github/synthtool/synthtool/shell.py", line 39, in run

raise exc

File "/tmpfs/src/github/synthtool/synthtool/shell.py", line 33, in run

encoding="utf-8",

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/subprocess.py", line 438, in run

output=stdout, stderr=stderr)

subprocess.CalledProcessError: Command '['bazel', '--max_idle_secs=240', 'build', '//google/spanner/v1:spanner-v1-py']' returned non-zero exit status 1.

2021-04-27 02:53:43,740 autosynth [ERROR] > Synthesis failed

2021-04-27 02:53:43,740 autosynth [DEBUG] > Running: git reset --hard HEAD

HEAD is now at 7bddb81 chore(revert): revert preventing normalization (#318)

2021-04-27 02:53:43,746 autosynth [DEBUG] > Running: git checkout autosynth

Switched to branch 'autosynth'

2021-04-27 02:53:43,751 autosynth [DEBUG] > Running: git clean -fdx

Removing __pycache__/

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 356, in <module>

main()

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 191, in main

return _inner_main(temp_dir)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 336, in _inner_main

commit_count = synthesize_loop(x, multiple_prs, change_pusher, synthesizer)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 68, in synthesize_loop

has_changes = toolbox.synthesize_version_in_new_branch(synthesizer, youngest)

File "/tmpfs/src/github/synthtool/autosynth/synth_toolbox.py", line 259, in synthesize_version_in_new_branch

synthesizer.synthesize(synth_log_path, self.environ)

File "/tmpfs/src/github/synthtool/autosynth/synthesizer.py", line 120, in synthesize

synth_proc.check_returncode() # Raise an exception.

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/subprocess.py", line 389, in check_returncode

self.stderr)

subprocess.CalledProcessError: Command '['/tmpfs/src/github/synthtool/env/bin/python3', '-m', 'synthtool', '--metadata', 'synth.metadata', 'synth.py', '--']' returned non-zero exit status 1.

```

Google internal developers can see the full log [here](http://sponge2/results/invocations/1d11e2cc-303c-4be4-a4bf-414e479e240b/targets/github%2Fsynthtool;config=default/tests;query=python-spanner;failed=false).
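
A log like the one above is mostly `DEBUG:` noise, with the actual failure on a handful of lines. The following is a minimal sketch of a triage helper that keeps only those lines. The function name `extract_errors` and the pattern are assumptions made for illustration; they are not part of synthtool or autosynth.

```python
import re

# Hypothetical helper (not part of synthtool/autosynth): match lines that
# start with Bazel's "ERROR:" / "FAILED:" prefixes, or with a Python
# exception name ending in "Error:" (e.g. subprocess.CalledProcessError:).
ERROR_LINE = re.compile(r"^(ERROR:|FAILED:|\S*Error:)")


def extract_errors(log_text):
    """Return the distinct error lines, in order of first appearance."""
    seen = []
    for line in log_text.splitlines():
        line = line.strip()
        # Skip repeats such as the doubled "FAILED: Build did NOT complete" line.
        if ERROR_LINE.match(line) and line not in seen:
            seen.append(line)
    return seen
```

Run against the log above, this would surface the `aspect() got unexpected keyword argument 'incompatible_use_toolchain_transition'` error and the two `CalledProcessError` lines while dropping the repository call-stack output.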

| 1.0 | Synthesis failed for python-spanner - Hello! Autosynth couldn't regenerate python-spanner. :broken_heart:

Please investigate and fix this issue within 5 business days. While it remains broken, this library cannot be updated with changes to the python-spanner API, and the library grows stale.

See https://github.com/googleapis/synthtool/blob/master/autosynth/TroubleShooting.md for troubleshooting tips.

Here's the output from running `synth.py`:

```

l_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:77:1

DEBUG: Rule 'com_google_protoc_java_resource_names_plugin' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "4b714b35ee04ba90f560ee60e64c7357428efcb6b0f3a298f343f8ec2c6d4a5d"

DEBUG: Call stack for the definition of repository 'com_google_protoc_java_resource_names_plugin' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:234:1

DEBUG: Rule 'protoc_docs_plugin' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "33b387245455775e0de45869c7355cc5a9e98b396a6fc43b02812a63b75fee20"

DEBUG: Call stack for the definition of repository 'protoc_docs_plugin' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:258:1

DEBUG: Rule 'rules_python' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "48f7e716f4098b85296ad93f5a133baf712968c13fbc2fdf3a6136158fe86eac"

DEBUG: Call stack for the definition of repository 'rules_python' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:42:1

DEBUG: Rule 'gapic_generator_python' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "fe995def6873fcbdc2a8764ef4bce96eb971a9d1950fe9db9be442f3c64fb3b6"

DEBUG: Call stack for the definition of repository 'gapic_generator_python' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:278:1

DEBUG: Rule 'com_googleapis_gapic_generator_go' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "c0d0efba86429cee5e52baf838165b0ed7cafae1748d025abec109d25e006628"

DEBUG: Call stack for the definition of repository 'com_googleapis_gapic_generator_go' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>

- /home/kbuilder/.cache/synthtool/googleapis/WORKSPACE:300:1

DEBUG: Rule 'gapic_generator_php' indicated that a canonical reproducible form can be obtained by modifying arguments sha256 = "3dffc5c34a5f35666843df04b42d6ce1c545b992f9c093a777ec40833b548d86"

DEBUG: Call stack for the definition of repository 'gapic_generator_php' which is a http_archive (rule definition at /home/kbuilder/.cache/bazel/_bazel_kbuilder/a732f932c2cbeb7e37e1543f189a2a73/external/bazel_tools/tools/build_defs/repo/http.bzl:296:16):

- <builtin>