Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4,886 | 25,072,177,024 | IssuesEvent | 2022-11-07 13:02:13 | chocolatey-community/chocolatey-package-requests | https://api.github.com/repos/chocolatey-community/chocolatey-package-requests | closed | RFM - aaclr | Status: Available For Maintainer(s) | ## I DON'T Want To Become The Maintainer

- [x] I have followed the Package Triage Process and I do NOT want to become maintainer of the package;

- [x] There is no existing open maintainer request for this package;

## Checklist

- [x] Issue title starts with 'RFM - '

## Existing Package Details

Package URL: https://chocolatey.org/packages/aaclr

Package source URL: https://github.com/dtgm/chocolatey-packages

Date the maintainer was contacted (in YYYY-MM-DD): 2021-12-13

How the maintainer was contacted: Email

| True | RFM - aaclr - ## I DON'T Want To Become The Maintainer

- [x] I have followed the Package Triage Process and I do NOT want to become maintainer of the package;

- [x] There is no existing open maintainer request for this package;

## Checklist

- [x] Issue title starts with 'RFM - '

## Existing Package Details

Package URL: https://chocolatey.org/packages/aaclr

Package source URL: https://github.com/dtgm/chocolatey-packages

Date the maintainer was contacted (in YYYY-MM-DD): 2021-12-13

How the maintainer was contacted: Email

| main | rfm aaclr i don t want to become the maintainer i have followed the package triage process and i do not want to become maintainer of the package there is no existing open maintainer request for this package checklist issue title starts with rfm existing package details package url package source url date the maintainer was contacted in yyyy mm dd how the maintainer was contacted email | 1 |

194,427 | 22,261,983,202 | IssuesEvent | 2022-06-10 01:56:30 | Trinadh465/device_renesas_kernel_AOSP10_r33 | https://api.github.com/repos/Trinadh465/device_renesas_kernel_AOSP10_r33 | reopened | CVE-2021-20292 (Medium) detected in linuxlinux-4.19.239, linuxlinux-4.19.239 | security vulnerability | ## CVE-2021-20292 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.19.239</b>, <b>linuxlinux-4.19.239</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There is a flaw reported in the Linux kernel in versions before 5.9 in drivers/gpu/drm/nouveau/nouveau_sgdma.c in nouveau_sgdma_create_ttm in Nouveau DRM subsystem. The issue results from the lack of validating the existence of an object prior to performing operations on the object. An attacker with a local account with a root privilege, can leverage this vulnerability to escalate privileges and execute code in the context of the kernel.

<p>Publish Date: 2021-05-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-20292>CVE-2021-20292</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2021-20292">https://www.linuxkernelcves.com/cves/CVE-2021-20292</a></p>

<p>Release Date: 2021-05-28</p>

<p>Fix Resolution: v4.19.140, v5.4.59, v5.7.16, v5.8.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-20292 (Medium) detected in linuxlinux-4.19.239, linuxlinux-4.19.239 - ## CVE-2021-20292 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.19.239</b>, <b>linuxlinux-4.19.239</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There is a flaw reported in the Linux kernel in versions before 5.9 in drivers/gpu/drm/nouveau/nouveau_sgdma.c in nouveau_sgdma_create_ttm in Nouveau DRM subsystem. The issue results from the lack of validating the existence of an object prior to performing operations on the object. An attacker with a local account with a root privilege, can leverage this vulnerability to escalate privileges and execute code in the context of the kernel.

<p>Publish Date: 2021-05-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-20292>CVE-2021-20292</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.7</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2021-20292">https://www.linuxkernelcves.com/cves/CVE-2021-20292</a></p>

<p>Release Date: 2021-05-28</p>

<p>Fix Resolution: v4.19.140, v5.4.59, v5.7.16, v5.8.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve medium detected in linuxlinux linuxlinux cve medium severity vulnerability vulnerable libraries linuxlinux linuxlinux vulnerability details there is a flaw reported in the linux kernel in versions before in drivers gpu drm nouveau nouveau sgdma c in nouveau sgdma create ttm in nouveau drm subsystem the issue results from the lack of validating the existence of an object prior to performing operations on the object an attacker with a local account with a root privilege can leverage this vulnerability to escalate privileges and execute code in the context of the kernel publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required high user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

827,297 | 31,765,057,160 | IssuesEvent | 2023-09-12 08:16:30 | filamentphp/filament | https://api.github.com/repos/filamentphp/filament | opened | Test | bug unconfirmed low priority | ### Package

filament/filament

### Package Version

vNothing

### Laravel Version

vNothing

### Livewire Version

vNothing

### PHP Version

vNothing

### Problem description

N/A

### Expected behavior

N/A

### Steps to reproduce

N/A

### Reproduction repository

N/A

### Relevant log output

```shell

N/A

```

| 1.0 | Test - ### Package

filament/filament

### Package Version

vNothing

### Laravel Version

vNothing

### Livewire Version

vNothing

### PHP Version

vNothing

### Problem description

N/A

### Expected behavior

N/A

### Steps to reproduce

N/A

### Reproduction repository

N/A

### Relevant log output

```shell

N/A

```

| non_main | test package filament filament package version vnothing laravel version vnothing livewire version vnothing php version vnothing problem description n a expected behavior n a steps to reproduce n a reproduction repository n a relevant log output shell n a | 0 |

1,630 | 6,572,656,330 | IssuesEvent | 2017-09-11 04:08:02 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | .npm cache gets populated at /root with ownership of sudo user | affects_2.1 bug_report waiting_on_maintainer | ##### ISSUE TYPE

Bug Report

##### COMPONENT NAME

npm module

##### ANSIBLE VERSION

```

ansible 2.1.1.0

```

##### CONFIGURATION

```

vars:

node_branch: '4.x'

node_version: '4.6.2-1nodesource1~trusty1'

tasks:

- name: node | Register NodeSource signing key

become: true

apt_key: url=https://deb.nodesource.com/gpgkey/nodesource.gpg.key state=present

- name: node | Add NodeSource repository

become: true

apt_repository: repo='{{item}}' state=present

with_items:

- deb https://deb.nodesource.com/node_{{node_branch}} trusty main

- deb-src https://deb.nodesource.com/node_{{node_branch}} trusty main

- name: node | Install Node.js

become: true

apt: name='nodejs={{node_version}}' update_cache=yes state=present

- name: node | Install pm2

become: true

npm: name=pm2 global=yes version=2.1.4

```

##### OS / ENVIRONMENT

```

nodejs v4.6.2 LTS shipping with npm v2.5.11

Mac OS X 10.11.6 (local)

Ubuntu 14.04 LTS (provisioning target)

```

##### SUMMARY

If a module is installed globally the ownership of npm's cache (the `.npm` folder) after provisioning is not correct which causes subsequent errors and strange behaviors due to wrong permissions on that folder.

After provisioning with Ansible's npm module the npm cache is populated at `/root/.npm` but with ownership `deploy:deploy` where `deploy` is the user that is used for SSH-login on the remote machine.

As global installation of npm modules requires root privileges `become: true` is set and causes invocation via `sudo`. I found out that in these cases Ansible invokes `npm` via `sudo -H ...` which causes `$HOME` to be changed to `/root`. This might cause population of `.npm` folder at `$HOME/.npm` == `/root/.npm` but with ownership of the user defined by `$SUDO_USER` which is still `deploy`.

Unfortunately there is no proper documentation on how npm handles installs via sudo, so this is only a guess from my side.

Nevertheless this can also be reproduced manually via `deploy:~$ sudo -H npm install -g pm2` which causes the same behavior. If the flag `-H` is omitted everything is fine as the `.npm` folder gets populated at `/home/deploy/.npm` with ownership `deploy:deploy` but I do not see any configuration option for Ansible to influence the parameters for the sudo-invocation.

##### STEPS TO REPRODUCE

see above

##### EXPECTED RESULTS

npm's cache should be populated at `/home/deploy/.npm` with ownership `deploy:deploy`.

##### ACTUAL RESULTS

npm's cache is populated at `/root/.npm` with ownership `deploy:deploy`.

```

root:~# ls -la

-rw------- 1 root root 6893 Nov 11 07:26 .bash_history

-rw-r--r-- 1 root root 3106 Feb 20 2014 .bashrc

drwxr-xr-x 3 deploy deploy 4096 Nov 10 21:01 .npm/

-rw-r--r-- 1 root root 141 Nov 7 19:55 .profile

drwx------ 2 root root 4096 Jul 29 10:00 .ssh/

-rw------- 1 root root 3787 Nov 9 20:42 .viminfo

```

| True | .npm cache gets populated at /root with ownership of sudo user - ##### ISSUE TYPE

Bug Report

##### COMPONENT NAME

npm module

##### ANSIBLE VERSION

```

ansible 2.1.1.0

```

##### CONFIGURATION

```

vars:

node_branch: '4.x'

node_version: '4.6.2-1nodesource1~trusty1'

tasks:

- name: node | Register NodeSource signing key

become: true

apt_key: url=https://deb.nodesource.com/gpgkey/nodesource.gpg.key state=present

- name: node | Add NodeSource repository

become: true

apt_repository: repo='{{item}}' state=present

with_items:

- deb https://deb.nodesource.com/node_{{node_branch}} trusty main

- deb-src https://deb.nodesource.com/node_{{node_branch}} trusty main

- name: node | Install Node.js

become: true

apt: name='nodejs={{node_version}}' update_cache=yes state=present

- name: node | Install pm2

become: true

npm: name=pm2 global=yes version=2.1.4

```

##### OS / ENVIRONMENT

```

nodejs v4.6.2 LTS shipping with npm v2.5.11

Mac OS X 10.11.6 (local)

Ubuntu 14.04 LTS (provisioning target)

```

##### SUMMARY

If a module is installed globally the ownership of npm's cache (the `.npm` folder) after provisioning is not correct which causes subsequent errors and strange behaviors due to wrong permissions on that folder.

After provisioning with Ansible's npm module the npm cache is populated at `/root/.npm` but with ownership `deploy:deploy` where `deploy` is the user that is used for SSH-login on the remote machine.

As global installation of npm modules requires root privileges `become: true` is set and causes invocation via `sudo`. I found out that in these cases Ansible invokes `npm` via `sudo -H ...` which causes `$HOME` to be changed to `/root`. This might cause population of `.npm` folder at `$HOME/.npm` == `/root/.npm` but with ownership of the user defined by `$SUDO_USER` which is still `deploy`.

Unfortunately there is no proper documentation on how npm handles installs via sudo, so this is only a guess from my side.

Nevertheless this can also be reproduced manually via `deploy:~$ sudo -H npm install -g pm2` which causes the same behavior. If the flag `-H` is omitted everything is fine as the `.npm` folder gets populated at `/home/deploy/.npm` with ownership `deploy:deploy` but I do not see any configuration option for Ansible to influence the parameters for the sudo-invocation.

##### STEPS TO REPRODUCE

see above

##### EXPECTED RESULTS

npm's cache should be populated at `/home/deploy/.npm` with ownership `deploy:deploy`.

##### ACTUAL RESULTS

npm's cache is populated at `/root/.npm` with ownership `deploy:deploy`.

```

root:~# ls -la

-rw------- 1 root root 6893 Nov 11 07:26 .bash_history

-rw-r--r-- 1 root root 3106 Feb 20 2014 .bashrc

drwxr-xr-x 3 deploy deploy 4096 Nov 10 21:01 .npm/

-rw-r--r-- 1 root root 141 Nov 7 19:55 .profile

drwx------ 2 root root 4096 Jul 29 10:00 .ssh/

-rw------- 1 root root 3787 Nov 9 20:42 .viminfo

```

| main | npm cache gets populated at root with ownership of sudo user issue type bug report component name npm module ansible version ansible configuration vars node branch x node version tasks name node register nodesource signing key become true apt key url state present name node add nodesource repository become true apt repository repo item state present with items deb trusty main deb src trusty main name node install node js become true apt name nodejs node version update cache yes state present name node install become true npm name global yes version os environment nodejs lts shipping with npm mac os x local ubuntu lts provisioning target summary if a module is installed globally the ownership of npm s cache the npm folder after provisioning is not correct which causes subsequent errors and strange behaviors due to wrong permissions on that folder after provisioning with ansible s npm module the npm cache is populated at root npm but with ownership deploy deploy where deploy is the user that is used for ssh login on the remote machine as global installation of npm modules requires root privileges become true is set and causes invocation via sudo i found out that in these cases ansible invokes npm via sudo h which causes home to be changed to root this might cause population of npm folder at home npm root npm but with ownership of the user defined by sudo user which is still deploy unfortunately there is no proper documentation on how npm handles installs via sudo so this is only a guess from my side nevertheless this can also be reproduced manually via deploy sudo h npm install g which causes the same behavior if the flag h is omitted everything is fine as the npm folder gets populated at home deploy npm with ownership deploy deploy but i do not see any configuration option for ansible to influence the parameters for the sudo invocation steps to reproduce see above expected results npm s cache should be populated at home deploy npm with ownership deploy 
deploy actual results npm s cache is populated at root npm with ownership deploy deploy root ls la rw root root nov bash history rw r r root root feb bashrc drwxr xr x deploy deploy nov npm rw r r root root nov profile drwx root root jul ssh rw root root nov viminfo | 1 |

286,689 | 31,720,941,630 | IssuesEvent | 2023-09-10 12:07:28 | TomasiDeveloping/ExpensesTracker | https://api.github.com/repos/TomasiDeveloping/ExpensesTracker | closed | sweetalert2-11.7.3.tgz: 1 vulnerabilities (highest severity is: 5.3) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sweetalert2-11.7.3.tgz</b></p></summary>

<p></p>

<p>Library home page: <a href="https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz">https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz</a></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/TomasiDeveloping/ExpensesTracker/commit/7671290d086466bd3fe985b9968e287ff7d69ca0">7671290d086466bd3fe985b9968e287ff7d69ca0</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (sweetalert2 version) | Remediation Possible** |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [WS-2023-0250](https://github.com/advisories/GHSA-mrr8-v49w-3333) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 5.3 | sweetalert2-11.7.3.tgz | Direct | N/A | ❌ |

<p>**In some cases, Remediation PR cannot be created automatically for a vulnerability despite the availability of remediation</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> WS-2023-0250</summary>

### Vulnerable Library - <b>sweetalert2-11.7.3.tgz</b></p>

<p></p>

<p>Library home page: <a href="https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz">https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz</a></p>

<p>

Dependency Hierarchy:

- :x: **sweetalert2-11.7.3.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/TomasiDeveloping/ExpensesTracker/commit/7671290d086466bd3fe985b9968e287ff7d69ca0">7671290d086466bd3fe985b9968e287ff7d69ca0</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

sweetalert2 versions 11.6.14 and above have potentially undesirable behavior. The package outputs audio and/or video messages that do not pertain to the functionality of the package when run on specific tlds. This functionality is documented on the project's readme

<p>Publish Date: 2023-07-10

<p>URL: <a href=https://github.com/advisories/GHSA-mrr8-v49w-3333>WS-2023-0250</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>5.3</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details> | True | sweetalert2-11.7.3.tgz: 1 vulnerabilities (highest severity is: 5.3) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sweetalert2-11.7.3.tgz</b></p></summary>

<p></p>

<p>Library home page: <a href="https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz">https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz</a></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/TomasiDeveloping/ExpensesTracker/commit/7671290d086466bd3fe985b9968e287ff7d69ca0">7671290d086466bd3fe985b9968e287ff7d69ca0</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (sweetalert2 version) | Remediation Possible** |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [WS-2023-0250](https://github.com/advisories/GHSA-mrr8-v49w-3333) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 5.3 | sweetalert2-11.7.3.tgz | Direct | N/A | ❌ |

<p>**In some cases, Remediation PR cannot be created automatically for a vulnerability despite the availability of remediation</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> WS-2023-0250</summary>

### Vulnerable Library - <b>sweetalert2-11.7.3.tgz</b></p>

<p></p>

<p>Library home page: <a href="https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz">https://registry.npmjs.org/sweetalert2/-/sweetalert2-11.7.3.tgz</a></p>

<p>

Dependency Hierarchy:

- :x: **sweetalert2-11.7.3.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/TomasiDeveloping/ExpensesTracker/commit/7671290d086466bd3fe985b9968e287ff7d69ca0">7671290d086466bd3fe985b9968e287ff7d69ca0</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

sweetalert2 versions 11.6.14 and above have potentially undesirable behavior. The package outputs audio and/or video messages that do not pertain to the functionality of the package when run on specific tlds. This functionality is documented on the project's readme

<p>Publish Date: 2023-07-10

<p>URL: <a href=https://github.com/advisories/GHSA-mrr8-v49w-3333>WS-2023-0250</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>5.3</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details> | non_main | tgz vulnerabilities highest severity is vulnerable library tgz library home page a href found in head commit a href vulnerabilities cve severity cvss dependency type fixed in version remediation possible medium tgz direct n a in some cases remediation pr cannot be created automatically for a vulnerability despite the availability of remediation details ws vulnerable library tgz library home page a href dependency hierarchy x tgz vulnerable library found in head commit a href found in base branch master vulnerability details versions and above have potentially undesirable behavior the package outputs audio and or video messages that do not pertain to the functionality of the package when run on specific tlds this functionality is documented on the project s readme publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact low availability impact none for more information on scores click a href step up your open source security game with mend | 0 |

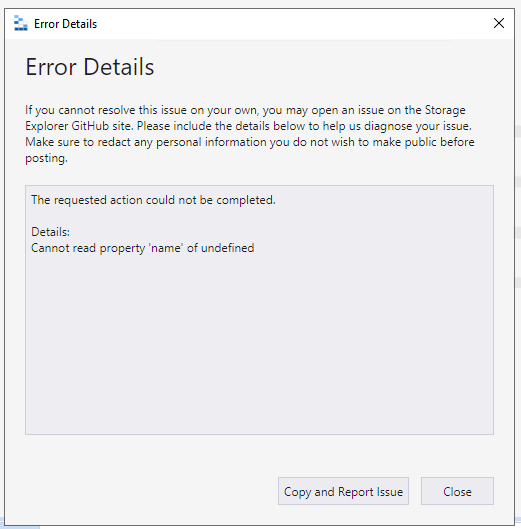

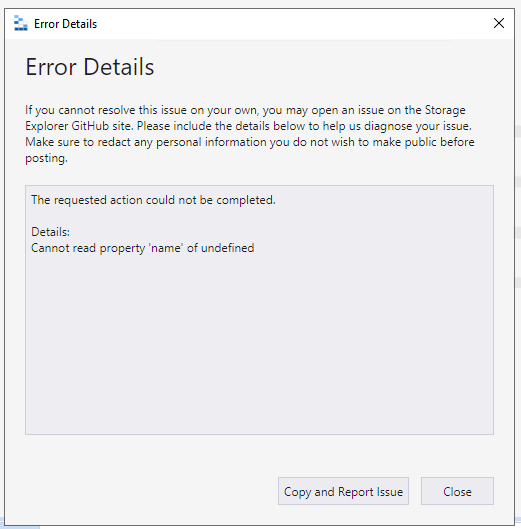

198,341 | 14,974,024,920 | IssuesEvent | 2021-01-28 02:31:16 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | An error dialog pops up when executing 'Propagate Access Control Lists…' for a SAS attached ADLS Gen2 blob container | :beetle: regression :gear: adls gen2 :heavy_check_mark: merged 🧪 testing | **Storage Explorer Version:** 1.17.0

**Build Number:** 20210127.3

**Branch:** main

**Platform/OS:** Windows 10/ Linux Ubuntu 18.04/ MacOS Catalina

**Architecture:** ia32/x64

**Regression From:** Previous build (20210123.6)

**Steps to reproduce:**

1. Expand one ADLS Gen2 storage account -> Blob Containers.

2. Create a blob container -> Attach it via SAS with full permissions.

3. Right click the SAS attached blob container -> Click 'Propagate Access Control Lists...' -> Click 'OK'.

4. Check there no error dialog pops up.

**Expect Experience:**

No error dialog pops up.

**Actual Experience:**

An error dialog pops up.

| 1.0 | An error dialog pops up when executing 'Propagate Access Control Lists…' for a SAS attached ADLS Gen2 blob container - **Storage Explorer Version:** 1.17.0

**Build Number:** 20210127.3

**Branch:** main

**Platform/OS:** Windows 10/ Linux Ubuntu 18.04/ MacOS Catalina

**Architecture:** ia32/x64

**Regression From:** Previous build (20210123.6)

**Steps to reproduce:**

1. Expand one ADLS Gen2 storage account -> Blob Containers.

2. Create a blob container -> Attach it via SAS with full permissions.

3. Right click the SAS attached blob container -> Click 'Propagate Access Control Lists...' -> Click 'OK'.

4. Check there no error dialog pops up.

**Expect Experience:**

No error dialog pops up.

**Actual Experience:**

An error dialog pops up.

| non_main | an error dialog pops up when executing propagate access control lists… for a sas attached adls blob container storage explorer version build number branch main platform os windows linux ubuntu macos catalina architecture regression from previous build steps to reproduce expand one adls storage account blob containers create a blob container attach it via sas with full permissions right click the sas attached blob container click propagate access control lists click ok check there no error dialog pops up expect experience no error dialog pops up actual experience an error dialog pops up | 0 |

314,577 | 23,528,973,224 | IssuesEvent | 2022-08-19 13:37:44 | vegaprotocol/specs | https://api.github.com/repos/vegaprotocol/specs | opened | New spec to detail behaviour of the candles subscription | documentation specs | To help develop and test the candles data, we need to write a spec we can agree on and use as the reference for future work and testing. | 1.0 | New spec to detail behaviour of the candles subscription - To help develop and test the candles data, we need to write a spec we can agree on and use as the reference for future work and testing. | non_main | new spec to detail behaviour of the candles subscription to help develop and test the candles data we need to write a spec we can agree on and use as the reference for future work and testing | 0 |

229,546 | 25,362,277,151 | IssuesEvent | 2022-11-21 01:02:34 | DavidSpek/pipelines | https://api.github.com/repos/DavidSpek/pipelines | opened | CVE-2022-41885 (Medium) detected in tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2022-41885 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: /contrib/components/openvino/ovms-deployer/containers/requirements.txt</p>

<p>Path to vulnerable library: /contrib/components/openvino/ovms-deployer/containers/requirements.txt,/samples/core/ai_platform/training</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/DavidSpek/pipelines/commit/6f7433f006e282c4f25441e7502b80d73751e38f">6f7433f006e282c4f25441e7502b80d73751e38f</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an open source platform for machine learning. When `tf.raw_ops.FusedResizeAndPadConv2D` is given a large tensor shape, it overflows. We have patched the issue in GitHub commit d66e1d568275e6a2947de97dca7a102a211e01ce. The fix will be included in TensorFlow 2.11. We will also cherrypick this commit on TensorFlow 2.10.1, 2.9.3, and TensorFlow 2.8.4, as these are also affected and still in supported range.

<p>Publish Date: 2022-11-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-41885>CVE-2022-41885</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-41885">https://www.cve.org/CVERecord?id=CVE-2022-41885</a></p>

<p>Release Date: 2022-11-18</p>

<p>Fix Resolution: tensorflow - 2.7.4, 2.8.1, 2.9.1, 2.10.0, tensorflow-cpu - 2.7.4, 2.8.1, 2.9.1, 2.10.0, tensorflow-gpu - 2.7.4, 2.8.1, 2.9.1, 2.10.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-41885 (Medium) detected in tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2022-41885 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl">https://files.pythonhosted.org/packages/ec/98/f968caf5f65759e78873b900cbf0ae20b1699fb11268ecc0f892186419a7/tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</a></p>

<p>Path to dependency file: /contrib/components/openvino/ovms-deployer/containers/requirements.txt</p>

<p>Path to vulnerable library: /contrib/components/openvino/ovms-deployer/containers/requirements.txt,/samples/core/ai_platform/training</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/DavidSpek/pipelines/commit/6f7433f006e282c4f25441e7502b80d73751e38f">6f7433f006e282c4f25441e7502b80d73751e38f</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

TensorFlow is an open source platform for machine learning. When `tf.raw_ops.FusedResizeAndPadConv2D` is given a large tensor shape, it overflows. We have patched the issue in GitHub commit d66e1d568275e6a2947de97dca7a102a211e01ce. The fix will be included in TensorFlow 2.11. We will also cherrypick this commit on TensorFlow 2.10.1, 2.9.3, and TensorFlow 2.8.4, as these are also affected and still in supported range.

<p>Publish Date: 2022-11-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-41885>CVE-2022-41885</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-41885">https://www.cve.org/CVERecord?id=CVE-2022-41885</a></p>

<p>Release Date: 2022-11-18</p>

<p>Fix Resolution: tensorflow - 2.7.4, 2.8.1, 2.9.1, 2.10.0, tensorflow-cpu - 2.7.4, 2.8.1, 2.9.1, 2.10.0, tensorflow-gpu - 2.7.4, 2.8.1, 2.9.1, 2.10.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file contrib components openvino ovms deployer containers requirements txt path to vulnerable library contrib components openvino ovms deployer containers requirements txt samples core ai platform training dependency hierarchy x tensorflow whl vulnerable library found in head commit a href found in base branch master vulnerability details tensorflow is an open source platform for machine learning when tf raw ops is given a large tensor shape it overflows we have patched the issue in github commit the fix will be included in tensorflow we will also cherrypick this commit on tensorflow and tensorflow as these are also affected and still in supported range publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required low user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tensorflow tensorflow cpu tensorflow gpu step up your open source security game with mend | 0 |

4,559 | 23,727,632,973 | IssuesEvent | 2022-08-30 21:15:46 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | sam local commands fail for Hello World lambda | area/local/invoke maintainer/need-followup | All `sam local` commands fail for the default HelloWorld function, reporting "No response from invoke container for HelloWorldFunction" / "Invalid lambda response received: Lambda response must be valid json"

Environment:

* aws-cli : SAM CLI, version 1.18.0

* docker: Docker version 19.03.1, build 74b1e89e8a

* python 3.7.9

* Virtualbox 6.1.18

* Windows 7

Set Up:

Create the default HelloWorld application using Python 3.7:

`> sam init`

Template Source Choice 1: AWS Quick Start Templates

Package Choice 1: zip

Runtime Choice 9: Python 3.7

Template choice 1: Hello World example

`> sam build`

`> sam local invoke --debug`

```

2021-02-12 14:57:04,629 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2021-02-12 14:57:04,630 | local invoke command is called

2021-02-12 14:57:04,643 | No Parameters detected in the template

2021-02-12 14:57:04,736 | 2 resources found in the template

2021-02-12 14:57:04,736 | Found Serverless function with name='HelloWorldFunction' and CodeUri='HelloWorldFunction'

2021-02-12 14:57:04,795 | Found one Lambda function with name 'HelloWorldFunction'

2021-02-12 14:57:04,795 | Invoking app.lambda_handler (python3.7)

2021-02-12 14:57:04,796 | No environment variables found for function 'HelloWorldFunction'

2021-02-12 14:57:04,796 | Environment variables overrides data is standard format

2021-02-12 14:57:04,797 | Loading AWS credentials from session with profile 'None'

2021-02-12 14:57:04,829 | Resolving code path. Cwd=F:\projects\sam-app\.aws-sam\build, CodeUri=HelloWorldFunction

2021-02-12 14:57:04,829 | Resolved absolute path to code is F:\projects\sam-app\.aws-sam\build\HelloWorldFunction

2021-02-12 14:57:04,830 | Code F:\projects\sam-app\.aws-sam\build\HelloWorldFunction is not a zip/jar file

2021-02-12 14:57:04,889 | Skip pulling image and use local one: amazon/aws-sam-cli-emulation-image-python3.7:rapid-1.18.0.

2021-02-12 14:57:04,890 | Mounting F:\projects\sam-app\.aws-sam\build\HelloWorldFunction as /var/task:ro,delegated inside runtime container

2021-02-12 14:57:05,375 | Starting a timer for 30 seconds for function 'HelloWorldFunction'

2021-02-12 14:57:09,258 | Cleaning all decompressed code dirs

2021-02-12 14:57:09,267 | No response from invoke container for HelloWorldFunction

2021-02-12 14:57:09,296 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '8ebfedc3-168e-4b85-a900-55cfe5379a91', 'installationId': '124efb15-d1bd-47bb-8b98-e654f948fb6d', 'sessionId': '353b9e74-a108-489e-9083-519c0dce5779', 'executionEnvironment': 'CLI', 'pyversion': '3.8.7', 'samcliVersion': '1.18.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam local invoke', 'duration': 4674, 'exitReason': 'success', 'exitCode': 0}}]}

2021-02-12 14:57:09,876 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

```

Running `sam local start-api` and browsing to `/hello` reports a similar error:

`> sam local start-api --debug`

```

2021-02-12 15:02:01,721 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2021-02-12 15:02:02,327 | local start-api command is called

2021-02-12 15:02:02,339 | No Parameters detected in the template

2021-02-12 15:02:02,418 | 2 resources found in the template

2021-02-12 15:02:02,419 | Found Serverless function with name='HelloWorldFunction' and CodeUri='HelloWorldFunction'

2021-02-12 15:02:02,452 | No Parameters detected in the template

2021-02-12 15:02:02,515 | 2 resources found in the template

2021-02-12 15:02:02,516 | Found '1' API Events in Serverless function with name 'HelloWorldFunction'

2021-02-12 15:02:02,516 | Detected Inline Swagger definition

2021-02-12 15:02:02,517 | Lambda function integration not found in Swagger document at path='/hello' method='get'

2021-02-12 15:02:02,517 | Found '0' APIs in resource 'ServerlessRestApi'

2021-02-12 15:02:02,518 | Removed duplicates from '0' Explicit APIs and '1' Implicit APIs to produce '1' APIs

2021-02-12 15:02:02,518 | 1 APIs found in the template

2021-02-12 15:02:02,547 | Mounting HelloWorldFunction at http://127.0.0.1:3000/hello [GET]

2021-02-12 15:02:02,547 | You can now browse to the above endpoints to invoke your functions. You do not need to restart/reload SAM CLI while working on your functions, changes will be reflected instantly/automatically. You only need to restart SAM CLI if you update your AWS SAM template

2021-02-12 15:02:02,548 | Localhost server is starting up. Multi-threading = True

2021-02-12 15:02:02 * Running on http://127.0.0.1:3000/ (Press CTRL+C to quit)

2021-02-12 15:03:20,948 | Constructed String representation of Event to invoke Lambda. Event: {"body": null, "headers": {"Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9", "Accept-Encoding": "gzip, deflate, br", "Accept-Language": "en-GB,en;q=0.9,en-US;q=0.8", "Connection": "keep-alive", "Host": "127.0.0.1:3000", "Sec-Ch-Ua": "\"Chromium\";v=\"88\", \"Google Chrome\";v=\"88\", \";Not A Brand\";v=\"99\"", "Sec-Ch-Ua-Mobile": "?0", "Sec-Fetch-Dest": "document", "Sec-Fetch-Mode": "navigate", "Sec-Fetch-Site": "none", "Sec-Fetch-User": "?1", "Upgrade-Insecure-Requests": "1", "User-Agent": "Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/88.0.4324.150 Safari/537.36", "X-Forwarded-Port": "3000", "X-Forwarded-Proto": "http"}, "httpMethod": "GET", "isBase64Encoded": false, "multiValueHeaders": {"Accept": ["text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9"], "Accept-Encoding": ["gzip, deflate, br"], "Accept-Language": ["en-GB,en;q=0.9,en-US;q=0.8"], "Connection": ["keep-alive"], "Host": ["127.0.0.1:3000"], "Sec-Ch-Ua": ["\"Chromium\";v=\"88\", \"Google Chrome\";v=\"88\", \";Not A Brand\";v=\"99\""], "Sec-Ch-Ua-Mobile": ["?0"], "Sec-Fetch-Dest": ["document"], "Sec-Fetch-Mode": ["navigate"], "Sec-Fetch-Site": ["none"], "Sec-Fetch-User": ["?1"], "Upgrade-Insecure-Requests": ["1"], "User-Agent": ["Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/88.0.4324.150 Safari/537.36"], "X-Forwarded-Port": ["3000"], "X-Forwarded-Proto": ["http"]}, "multiValueQueryStringParameters": null, "path": "/hello", "pathParameters": null, "queryStringParameters": null, "requestContext": {"accountId": "123456789012", "apiId": "1234567890", "domainName": "127.0.0.1:3000", "extendedRequestId": null, "httpMethod": "GET", "identity": 
{"accountId": null, "apiKey": null, "caller": null, "cognitoAuthenticationProvider": null, "cognitoAuthenticationType": null, "cognitoIdentityPoolId": null, "sourceIp": "127.0.0.1", "user": null, "userAgent": "Custom User Agent String", "userArn": null}, "path": "/hello", "protocol": "HTTP/1.1", "requestId": "d282a32c-7b65-4a39-86ed-472b8dc50915", "requestTime": "12/Feb/2021:15:02:02 +0000", "requestTimeEpoch": 1613142122, "resourceId": "123456", "resourcePath": "/hello", "stage": "Prod"}, "resource": "/hello", "stageVariables": null, "version": "1.0"}

2021-02-12 15:03:20,953 | Found one Lambda function with name 'HelloWorldFunction'

2021-02-12 15:03:20,953 | Invoking app.lambda_handler (python3.7)

2021-02-12 15:03:20,954 | No environment variables found for function 'HelloWorldFunction'

2021-02-12 15:03:20,954 | Environment variables overrides data is standard format

2021-02-12 15:03:20,955 | Loading AWS credentials from session with profile 'None'

2021-02-12 15:03:20,985 | Resolving code path. Cwd=F:\projects\sam-app\.aws-sam\build, CodeUri=HelloWorldFunction

2021-02-12 15:03:20,985 | Resolved absolute path to code is F:\projects\sam-app\.aws-sam\build\HelloWorldFunction

2021-02-12 15:03:20,986 | Code F:\projects\sam-app\.aws-sam\build\HelloWorldFunction is not a zip/jar file

2021-02-12 15:03:21,032 | Skip pulling image and use local one: amazon/aws-sam-cli-emulation-image-python3.7:rapid-1.18.0.

2021-02-12 15:03:21,033 | Mounting F:\projects\sam-app\.aws-sam\build\HelloWorldFunction as /var/task:ro,delegated inside runtime container

2021-02-12 15:03:21,480 | Starting a timer for 30 seconds for function 'HelloWorldFunction'

2021-02-12 15:03:25,359 | Cleaning all decompressed code dirs

2021-02-12 15:03:25,360 | No response from invoke container for HelloWorldFunction

2021-02-12 15:03:25,361 | Invalid lambda response received: Lambda response must be valid json

2021-02-12 15:03:25 127.0.0.1 - - [12/Feb/2021 15:03:25] "GET /hello HTTP/1.1" 502 -

2021-02-12 15:03:25 127.0.0.1 - - [12/Feb/2021 15:03:25] "GET /favicon.ico HTTP/1.1" 403 -

```
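The second error says the response is not valid JSON. For comparison, the Hello World template's handler returns an API Gateway proxy response shaped roughly like the sketch below. Calling it directly, outside Docker, is one way to confirm that the function code itself produces valid JSON. If it does, the failure more likely lies in the container setup. The exact template code may differ slightly between SAM CLI versions, and the event passed in the usage block is a hypothetical stub.

```python
import json


def lambda_handler(event, context):
    """Minimal handler in the style of the SAM Hello World template.

    Returns an API Gateway (REST, proxy integration) response: a dict with
    an integer statusCode and a *string* body containing JSON.
    """
    return {
        "statusCode": 200,
        "body": json.dumps({"message": "hello world"}),
    }


if __name__ == "__main__":
    # Invoke the handler directly, without the SAM emulation container.
    response = lambda_handler({"path": "/hello", "httpMethod": "GET"}, None)
    # The whole response must serialize to JSON for `sam local start-api`.
    print(json.dumps(response))
```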

Lambda can be deployed and run on AWS, but no local debugging or execution appears possible. This makes it very hard to develop functions.

(Note that the timeout was increased to make sure a slow startup was not causing the failure.) | True | sam local commands fail for Hello World lambda - All `sam local` commands fail for the default HelloWorld function, reporting "No response from invoke container for HelloWorldFunction" / "Invalid lambda response received: Lambda response must be valid json"

Environment:

* aws-cli : SAM CLI, version 1.18.0

* docker: Docker version 19.03.1, build 74b1e89e8a

* python 3.7.9

* Virtualbox 6.1.18

* Windows 7

Set Up:

Create the default HelloWorld application using Python 3.7:

`> sam init`

Template Source Choice 1: AWS Quick Start Templates

Package Choice 1: zip

Runtime Choice 9: Python 3.7

Template choice 1: Hello World example

`> sam build`

`> sam local invoke --debug`

```

2021-02-12 14:57:04,629 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2021-02-12 14:57:04,630 | local invoke command is called

2021-02-12 14:57:04,643 | No Parameters detected in the template

2021-02-12 14:57:04,736 | 2 resources found in the template

2021-02-12 14:57:04,736 | Found Serverless function with name='HelloWorldFunction' and CodeUri='HelloWorldFunction'

2021-02-12 14:57:04,795 | Found one Lambda function with name 'HelloWorldFunction'

2021-02-12 14:57:04,795 | Invoking app.lambda_handler (python3.7)

2021-02-12 14:57:04,796 | No environment variables found for function 'HelloWorldFunction'

2021-02-12 14:57:04,796 | Environment variables overrides data is standard format

2021-02-12 14:57:04,797 | Loading AWS credentials from session with profile 'None'

2021-02-12 14:57:04,829 | Resolving code path. Cwd=F:\projects\sam-app\.aws-sam\build, CodeUri=HelloWorldFunction

2021-02-12 14:57:04,829 | Resolved absolute path to code is F:\projects\sam-app\.aws-sam\build\HelloWorldFunction

2021-02-12 14:57:04,830 | Code F:\projects\sam-app\.aws-sam\build\HelloWorldFunction is not a zip/jar file

2021-02-12 14:57:04,889 | Skip pulling image and use local one: amazon/aws-sam-cli-emulation-image-python3.7:rapid-1.18.0.

2021-02-12 14:57:04,890 | Mounting F:\projects\sam-app\.aws-sam\build\HelloWorldFunction as /var/task:ro,delegated inside runtime container

2021-02-12 14:57:05,375 | Starting a timer for 30 seconds for function 'HelloWorldFunction'

2021-02-12 14:57:09,258 | Cleaning all decompressed code dirs

2021-02-12 14:57:09,267 | No response from invoke container for HelloWorldFunction

2021-02-12 14:57:09,296 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '8ebfedc3-168e-4b85-a900-55cfe5379a91', 'installationId': '124efb15-d1bd-47bb-8b98-e654f948fb6d', 'sessionId': '353b9e74-a108-489e-9083-519c0dce5779', 'executionEnvironment': 'CLI', 'pyversion': '3.8.7', 'samcliVersion': '1.18.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam local invoke', 'duration': 4674, 'exitReason': 'success', 'exitCode': 0}}]}

2021-02-12 14:57:09,876 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

```

Running `sam local start-api` and browsing to `/hello` reports a similar error:

`> sam local start-api --debug`

```

2021-02-12 15:02:01,721 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2021-02-12 15:02:02,327 | local start-api command is called

2021-02-12 15:02:02,339 | No Parameters detected in the template

2021-02-12 15:02:02,418 | 2 resources found in the template

2021-02-12 15:02:02,419 | Found Serverless function with name='HelloWorldFunction' and CodeUri='HelloWorldFunction'

2021-02-12 15:02:02,452 | No Parameters detected in the template

2021-02-12 15:02:02,515 | 2 resources found in the template

2021-02-12 15:02:02,516 | Found '1' API Events in Serverless function with name 'HelloWorldFunction'

2021-02-12 15:02:02,516 | Detected Inline Swagger definition

2021-02-12 15:02:02,517 | Lambda function integration not found in Swagger document at path='/hello' method='get'

2021-02-12 15:02:02,517 | Found '0' APIs in resource 'ServerlessRestApi'

2021-02-12 15:02:02,518 | Removed duplicates from '0' Explicit APIs and '1' Implicit APIs to produce '1' APIs

2021-02-12 15:02:02,518 | 1 APIs found in the template

2021-02-12 15:02:02,547 | Mounting HelloWorldFunction at http://127.0.0.1:3000/hello [GET]

2021-02-12 15:02:02,547 | You can now browse to the above endpoints to invoke your functions. You do not need to restart/reload SAM CLI while working on your functions, changes will be reflected instantly/automatically. You only need to restart SAM CLI if you update your AWS SAM template

2021-02-12 15:02:02,548 | Localhost server is starting up. Multi-threading = True

2021-02-12 15:02:02 * Running on http://127.0.0.1:3000/ (Press CTRL+C to quit)

2021-02-12 15:03:20,948 | Constructed String representation of Event to invoke Lambda. Event: {"body": null, "headers": {"Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9", "Accept-Encoding": "gzip, deflate, br", "Accept-Language": "en-GB,en;q=0.9,en-US;q=0.8", "Connection": "keep-alive", "Host": "127.0.0.1:3000", "Sec-Ch-Ua": "\"Chromium\";v=\"88\", \"Google Chrome\";v=\"88\", \";Not A Brand\";v=\"99\"", "Sec-Ch-Ua-Mobile": "?0", "Sec-Fetch-Dest": "document", "Sec-Fetch-Mode": "navigate", "Sec-Fetch-Site": "none", "Sec-Fetch-User": "?1", "Upgrade-Insecure-Requests": "1", "User-Agent": "Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/88.0.4324.150 Safari/537.36", "X-Forwarded-Port": "3000", "X-Forwarded-Proto": "http"}, "httpMethod": "GET", "isBase64Encoded": false, "multiValueHeaders": {"Accept": ["text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9"], "Accept-Encoding": ["gzip, deflate, br"], "Accept-Language": ["en-GB,en;q=0.9,en-US;q=0.8"], "Connection": ["keep-alive"], "Host": ["127.0.0.1:3000"], "Sec-Ch-Ua": ["\"Chromium\";v=\"88\", \"Google Chrome\";v=\"88\", \";Not A Brand\";v=\"99\""], "Sec-Ch-Ua-Mobile": ["?0"], "Sec-Fetch-Dest": ["document"], "Sec-Fetch-Mode": ["navigate"], "Sec-Fetch-Site": ["none"], "Sec-Fetch-User": ["?1"], "Upgrade-Insecure-Requests": ["1"], "User-Agent": ["Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/88.0.4324.150 Safari/537.36"], "X-Forwarded-Port": ["3000"], "X-Forwarded-Proto": ["http"]}, "multiValueQueryStringParameters": null, "path": "/hello", "pathParameters": null, "queryStringParameters": null, "requestContext": {"accountId": "123456789012", "apiId": "1234567890", "domainName": "127.0.0.1:3000", "extendedRequestId": null, "httpMethod": "GET", "identity": 
{"accountId": null, "apiKey": null, "caller": null, "cognitoAuthenticationProvider": null, "cognitoAuthenticationType": null, "cognitoIdentityPoolId": null, "sourceIp": "127.0.0.1", "user": null, "userAgent": "Custom User Agent String", "userArn": null}, "path": "/hello", "protocol": "HTTP/1.1", "requestId": "d282a32c-7b65-4a39-86ed-472b8dc50915", "requestTime": "12/Feb/2021:15:02:02 +0000", "requestTimeEpoch": 1613142122, "resourceId": "123456", "resourcePath": "/hello", "stage": "Prod"}, "resource": "/hello", "stageVariables": null, "version": "1.0"}

2021-02-12 15:03:20,953 | Found one Lambda function with name 'HelloWorldFunction'

2021-02-12 15:03:20,953 | Invoking app.lambda_handler (python3.7)

2021-02-12 15:03:20,954 | No environment variables found for function 'HelloWorldFunction'

2021-02-12 15:03:20,954 | Environment variables overrides data is standard format

2021-02-12 15:03:20,955 | Loading AWS credentials from session with profile 'None'

2021-02-12 15:03:20,985 | Resolving code path. Cwd=F:\projects\sam-app\.aws-sam\build, CodeUri=HelloWorldFunction

2021-02-12 15:03:20,985 | Resolved absolute path to code is F:\projects\sam-app\.aws-sam\build\HelloWorldFunction

2021-02-12 15:03:20,986 | Code F:\projects\sam-app\.aws-sam\build\HelloWorldFunction is not a zip/jar file

2021-02-12 15:03:21,032 | Skip pulling image and use local one: amazon/aws-sam-cli-emulation-image-python3.7:rapid-1.18.0.

2021-02-12 15:03:21,033 | Mounting F:\projects\sam-app\.aws-sam\build\HelloWorldFunction as /var/task:ro,delegated inside runtime container

2021-02-12 15:03:21,480 | Starting a timer for 30 seconds for function 'HelloWorldFunction'

2021-02-12 15:03:25,359 | Cleaning all decompressed code dirs

2021-02-12 15:03:25,360 | No response from invoke container for HelloWorldFunction

2021-02-12 15:03:25,361 | Invalid lambda response received: Lambda response must be valid json

2021-02-12 15:03:25 127.0.0.1 - - [12/Feb/2021 15:03:25] "GET /hello HTTP/1.1" 502 -

2021-02-12 15:03:25 127.0.0.1 - - [12/Feb/2021 15:03:25] "GET /favicon.ico HTTP/1.1" 403 -

```

Lambda can be deployed and run on AWS, but no local debugging or execution appears possible. This makes it very hard to develop functions.

(Note that timeout was increased to ensure any startup delay was not causing problems with execution). | main | sam local commands fail for hello world lambda sam local functions all fail for default helloworld function reporting no response from invoke container for helloworldfunction invalid lambda response received lambda response must be valid json environment aws cli sam cli version docker docker version build python virtualbox windows set up create the default helloworld application using sam init template source choice aws quick start templates package choice zip runtime choice python template choice hello world example sam build sam local invoke debug telemetry endpoint configured to be local invoke command is called no parameters detected in the template resources found in the template found serverless function with name helloworldfunction and codeuri helloworldfunction found one lambda function with name helloworldfunction invoking app lambda handler no environment variables found for function helloworldfunction environment variables overrides data is standard format loading aws credentials from session with profile none resolving code path cwd f projects sam app aws sam build codeuri helloworldfunction resolved absolute path to code is f projects sam app aws sam build helloworldfunction code f projects sam app aws sam build helloworldfunction is not a zip jar file skip pulling image and use local one amazon aws sam cli emulation image rapid mounting f projects sam app aws sam build helloworldfunction as var task ro delegated inside runtime container starting a timer for seconds for function helloworldfunction cleaning all decompressed code dirs no response from invoke container for helloworldfunction sending telemetry metrics commandrun requestid installationid sessionid executionenvironment cl i pyversion samcliversion awsprofileprovided false debugflagprovided true region commandname sam local invoke duration exitreason success exitcode 
httpsconnectionpool host aws serverless tools telemetry us west amazonaws com port read timed out read timeout running start api and using browser to access hello reports similar error sam local start api debug telemetry endpoint configured to be local start api command is called no parameters detected in the template resources found in the template found serverless function with name helloworldfunction and codeuri helloworldfunction no parameters detected in the template resources found in the template found api events in serverless function with name helloworldfunction detected inline swagger definition lambda function integration not found in swagger document at path hello method get found apis in resource serverlessrestapi removed duplicates from explicit apis and implicit apis to produce apis apis found in the template mounting helloworldfunction at you can now browse to the above endpoints to invoke your functions you do not need to restart reload sam cli while working on your functions changes will be reflected instantly automatically you only need to restart sam cli if you update your aws sam template localhost server is starting up multi threading true running on press ctrl c to quit constructed string representation of event to invoke lambda event body null headers accept text html application xhtml xml application xml q image avif image webp image apng q application signed exchange v q accept encoding gzip deflate br accept language en gb en q en us q connection keep alive host sec ch ua chromium v google chrome v not a brand v sec ch ua mobile sec fetch dest document sec fetch mode navigate sec fetch site none sec fetch user upgrade insecure requests user agent mozilla windows nt applewebkit khtml like gecko chrome safari x forwarded port x forwarded proto http httpmethod get false multivalueheaders accept accept encoding accept language connection host sec ch ua sec ch ua mobile sec fetch dest sec fetch mode sec fetch site sec fetch user upgrade 
insecure requests user agent x forwarded port x forwarded proto multivaluequerystringparameters null path hello pathparameters null querystringparameters null requestcontext accountid apiid domainname extendedrequestid null httpmethod get identity accountid null apikey null caller null cognitoauthenticationprovider null cognitoauthenticationtype null cognitoidentitypoolid null sourceip user null useragent custom user agent string userarn null path hello protocol http requestid requesttime feb requesttimeepoch resourceid resourcepath hello stage prod resource hello stagevariables null version found one lambda function with name helloworldfunction invoking app lambda handler no environment variables found for function helloworldfunction environment variables overrides data is standard format loading aws credentials from session with profile none resolving code path cwd f projects sam app aws sam build codeuri helloworldfunction resolved absolute path to code is f projects sam app aws sam build helloworldfunction code f projects sam app aws sam build helloworldfunction is not a zip jar file skip pulling image and use local one amazon aws sam cli emulation image rapid mounting f projects sam app aws sam build helloworldfunction as var task ro delegated inside runtime container starting a timer for seconds for function helloworldfunction cleaning all decompressed code dirs no response from invoke container for helloworldfunction invalid lambda response received lambda response must be valid json get hello http get favicon ico http lambda can be deployed and run on aws but no local debugging or execution appears possible this makes it very hard to develop functions note that timeout was increased to ensure any startup delay was not causing problems with execution | 1 |

745,993 | 26,009,224,250 | IssuesEvent | 2022-12-20 22:57:37 | DanielWestberg/economicalc | https://api.github.com/repos/DanielWestberg/economicalc | closed | Process OCR data to filter out incorrect mappings | bug frontend HIGH PRIORITY | Example of an incorrect item in a receipt:

{

"amount": 5.89,

"category": null,

"description": "Moms 12%",

"flags": "",

"qty": null,

"remarks": null,

"tags": null,

"unitPrice": null

} | 1.0 | Process OCR data to filter out incorrect mappings - Example of an incorrect item in a receipt:

{

"amount": 5.89,

"category": null,

"description": "Moms 12%",

"flags": "",

"qty": null,

"remarks": null,

"tags": null,

"unitPrice": null

} | non_main | process ocr data to filter out incorrect mappings example of incorrect item in a receipt amount category null description moms flags qty null remarks null tags null unitprice null | 0 |

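5,373 | 27,004,502,162 | IssuesEvent | 2023-02-10 10:31:44 | microcai/gentoo-zh | https://api.github.com/repos/microcai/gentoo-zh | closed | drop package: net-proxy/clash-for-windows-bin | maintainer-needed | I no longer use it myself, and it has recently had one vulnerability after another. If nobody else intends to maintain it, should we drop it? | True | drop package: net-proxy/clash-for-windows-bin - I no longer use it myself, and it has recently had one vulnerability after another. If nobody else intends to maintain it, should we drop it? | main | drop package net proxy clash for windows bin i no longer use it myself and it has recently had one vulnerability after another if nobody else intends to maintain it should we drop it | 1 |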

4,120 | 19,539,427,432 | IssuesEvent | 2021-12-31 16:26:47 | asclepias/asclepias-broker | https://api.github.com/repos/asclepias/asclepias-broker | closed | Dev: Create weekly report/slackbot of ingestion/harvesting success/failure | Monitoring and Maintainence | Create monitoring view for weekly ingestion and harvesting. | True | Dev: Create weekly report/slackbot of ingestion/harvesting success/failure - Create monitoring view for weekly ingestion and harvesting. | main | dev create weekly report slackbot of ingestion harvesting success failure create monitoring view for weekly ingestion and harvesting | 1 |

43,123 | 17,410,084,074 | IssuesEvent | 2021-08-03 11:10:00 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | Terraform crashed when creating AzureRM web app | bug crash service/app-service | Code and trace below. This code has run successfully before, so I do not think it is a persistent problem. My guess is that it is a problem with the Azure state.

It has now happened 3 times on CI (Azure DevOps), but not when running locally.

Terraform v0.13.2

+ provider registry.terraform.io/hashicorp/azurerm v2.63.0

# Configure the Microsoft Azure Provider

provider "azurerm" {

features {}

}

#Create Azure Resource Group

resource "azurerm_resource_group" "AutoConference-RG" {

for_each = local.conferences

name = each.key

location = "West Europe"

tags = {

tier = each.value.tier

size = each.value.size

}

}

#Create Azure App Service Plan

resource "azurerm_app_service_plan" "AutoConference-ASP" {

for_each = azurerm_resource_group.AutoConference-RG

name = each.value.name

location = "West Europe"

resource_group_name = each.value.name

kind = "Windows"

sku {

tier = each.value.tags.tier

size = each.value.tags.size

}

}

# Create Azure App Service
resource "azurerm_app_service" "AutoConference-AS" {
  for_each            = azurerm_app_service_plan.AutoConference-ASP
  depends_on          = [azurerm_app_service_plan.AutoConference-ASP]
  name                = each.value.name
  location            = each.value.location
  resource_group_name = each.value.name
  app_service_plan_id = each.value.id

  tags = {
    "Acceptance" = "Test"
  }

  site_config {
    dotnet_framework_version  = "v5.0"
    always_on                 = false
    use_32_bit_worker_process = false
    default_documents         = []
  }

  app_settings = {
    manual_integration = true
  }

  connection_string {
    name  = "AsecConn"
    type  = "SQLServer"
    value = "Server=${each.value.name}.database.windows.net,1433; Database=Asec;User ID=xxxxxxxx;Password=xxxxxxxxxx;Trusted_Connection=False;Encrypt=True;"
  }

  connection_string {
    name  = "GrvRmsConn"
    type  = "SQLServer"
    value = "Server=${each.value.name}.database.windows.net,1433; Database=GrvRms;User ID=xxxxxxx;Password=xxxxxxxxxxx;Trusted_Connection=False;Encrypt=True;"
  }
}

# Create Azure SQL Server
resource "azurerm_sql_server" "test" {
  for_each                     = azurerm_resource_group.AutoConference-RG
  name                         = each.value.name
  resource_group_name          = each.value.name
  location                     = each.value.location
  version                      = "12.0"
  administrator_login          = "xxxxxxxx"
  administrator_login_password = "xxxxxxx"
}

# Create Azure SQL Database
resource "azurerm_sql_database" "Asec" {
  for_each                         = azurerm_sql_server.test
  name                             = "Asec"
  resource_group_name              = each.value.name
  location                         = each.value.location
  server_name                      = each.value.name
  requested_service_objective_name = "S0"

  tags = {
    environment = "production"
  }
}

# Create Azure SQL Database
resource "azurerm_sql_database" "GrvRms" {
  for_each                         = azurerm_sql_server.test
  name                             = "GrvRms"
  resource_group_name              = each.value.name
  location                         = each.value.location
  server_name                      = each.value.name
  requested_service_objective_name = "S0"

  tags = {
    environment = "production"
  }
}

resource "azurerm_app_service_custom_hostname_binding" "appsvc" {
  for_each            = azurerm_app_service.AutoConference-AS
  hostname            = "${each.value.name}.jort.co.uk"
  app_service_name    = each.value.name
  resource_group_name = each.value.name
  # ssl_state  = "SniEnabled"
  # thumbprint = azurerm_app_service_certificate.foo.thumbprint
}

2021-07-15T19:44:57.1769044Z azurerm_resource_group.AutoConference-RG["jortytestyb"]: Creating...
2021-07-15T19:44:57.1771076Z azurerm_resource_group.AutoConference-RG["jortytestya"]: Creating...
2021-07-15T19:44:57.5392440Z azurerm_resource_group.AutoConference-RG["jortytestyb"]: Creation complete after 1s [id=/subscriptions/be4455db-bf41-4de0-9b0c-5703f7bbe8f0/resourceGroups/jortytestyb]
2021-07-15T19:44:57.5438170Z azurerm_resource_group.AutoConference-RG["jortytestya"]: Creation complete after 1s [id=/subscriptions/be4455db-bf41-4de0-9b0c-5703f7bbe8f0/resourceGroups/jortytestya]
2021-07-15T19:44:57.5862888Z azurerm_sql_database.Asec["jortytestyb"]: Creating...
2021-07-15T19:44:57.5864061Z azurerm_sql_database.GrvRms["jortytestya"]: Creating...
2021-07-15T19:44:57.5910498Z azurerm_sql_database.GrvRms["jortytestyb"]: Creating...
2021-07-15T19:44:57.5911112Z azurerm_app_service.AutoConference-AS["jortytestyb"]: Creating...
2021-07-15T19:44:57.5955839Z azurerm_sql_database.Asec["jortytestya"]: Creating...
2021-07-15T19:44:57.5956739Z azurerm_app_service.AutoConference-AS["jortytestya"]: Creating...
2021-07-15T19:44:57.8201861Z Error: rpc error: code = Unavailable desc = transport is closing
2021-07-15T19:44:57.8207100Z Error: rpc error: code = Unavailable desc = transport is closing
2021-07-15T19:44:57.8208579Z Error: rpc error: code = Unavailable desc = transport is closing
2021-07-15T19:44:57.8211556Z Error: rpc error: code = Unavailable desc = transport is closing
2021-07-15T19:44:57.8213633Z Error: rpc error: code = Unavailable desc = transport is closing
2021-07-15T19:44:57.8231521Z Error: rpc error: code = Unavailable desc = transport is closing

2021-07-15T19:44:57.8382267Z panic: runtime error: invalid memory address or nil pointer dereference
2021-07-15T19:44:57.8382870Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: [signal 0xc0000005 code=0x0 addr=0x20 pc=0x5c6e12f]
2021-07-15T19:44:57.8383486Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe:
2021-07-15T19:44:57.8384037Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: goroutine 189 [running]:
2021-07-15T19:44:57.8384832Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: github.com/terraform-providers/terraform-provider-azurerm/azurerm/internal/services/web.resourceAppServiceCreate(0xc0021f1180, 0x603da40, 0xc000ac6e00, 0x0, 0x0)
2021-07-15T19:44:57.8386248Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/azurerm/internal/services/web/app_service_resource.go:242 +0x60f
2021-07-15T19:44:57.8387732Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: github.com/hashicorp/terraform-plugin-sdk/helper/schema.(*Resource).Apply(0xc000a7c5a0, 0xc00230f130, 0xc002330d00, 0x603da40, 0xc000ac6e00, 0x606ae01, 0xc002594e18, 0xc0025a8690)
2021-07-15T19:44:57.8389386Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/github.com/hashicorp/terraform-plugin-sdk/helper/schema/resource.go:320 +0x395
2021-07-15T19:44:57.8390575Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: github.com/hashicorp/terraform-plugin-sdk/helper/schema.(*Provider).Apply(0xc0001c2c80, 0xc001a27a38, 0xc00230f130, 0xc002330d00, 0xc002595380, 0xc002586350, 0x606d3e0)
2021-07-15T19:44:57.8391593Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/github.com/hashicorp/terraform-plugin-sdk/helper/schema/provider.go:294 +0xa5
2021-07-15T19:44:57.8393181Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: github.com/hashicorp/terraform-plugin-sdk/internal/helper/plugin.(*GRPCProviderServer).ApplyResourceChange(0xc000006430, 0x6f38750, 0xc002332990, 0xc0021f0cb0, 0xc000006430, 0xc002332990, 0xc001a1bba0)
2021-07-15T19:44:57.8394389Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/github.com/hashicorp/terraform-plugin-sdk/internal/helper/plugin/grpc_provider.go:895 +0x8c5
2021-07-15T19:44:57.8395661Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: github.com/hashicorp/terraform-plugin-sdk/internal/tfplugin5._Provider_ApplyResourceChange_Handler(0x6524300, 0xc000006430, 0x6f38750, 0xc002332990, 0xc000ac0c00, 0x0, 0x6f38750, 0xc002332990, 0xc002348000, 0xd30)
2021-07-15T19:44:57.8397071Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/github.com/hashicorp/terraform-plugin-sdk/internal/tfplugin5/tfplugin5.pb.go:3305 +0x222
2021-07-15T19:44:57.8398401Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: google.golang.org/grpc.(*Server).processUnaryRPC(0xc0005368c0, 0x6f80338, 0xc000159c80, 0xc000097a00, 0xc000acc960, 0xa392960, 0x0, 0x0, 0x0)
2021-07-15T19:44:57.8399330Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/google.golang.org/grpc/server.go:1194 +0x52b
2021-07-15T19:44:57.8400210Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: google.golang.org/grpc.(*Server).handleStream(0xc0005368c0, 0x6f80338, 0xc000159c80, 0xc000097a00, 0x0)
2021-07-15T19:44:57.8401088Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/google.golang.org/grpc/server.go:1517 +0xd0c
2021-07-15T19:44:57.8401969Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: google.golang.org/grpc.(*Server).serveStreams.func1.2(0xc0010b12c0, 0xc0005368c0, 0x6f80338, 0xc000159c80, 0xc000097a00)
2021-07-15T19:44:57.8402864Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/google.golang.org/grpc/server.go:859 +0xb2
2021-07-15T19:44:57.8403676Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: created by google.golang.org/grpc.(*Server).serveStreams.func1
2021-07-15T19:44:57.8404663Z 2021-07-15T19:44:57.806Z [DEBUG] plugin.terraform-provider-azurerm_v2.63.0_x5.exe: /opt/teamcity-agent/work/5d79fe75d4460a2f/src/github.com/terraform-providers/terraform-provider-azurerm/vendor/google.golang.org/grpc/server.go:857 +0x1fd
2021-07-15T19:44:57.8405661Z 2021/07/15 19:44:57 [DEBUG] azurerm_sql_database.GrvRms["jortytestya"]: apply errored, but we're indicating that via the Error pointer rather than returning it: rpc error: code = Unavailable desc = transport is closing
2021-07-15T19:44:57.8406362Z 2021/07/15 19:44:57 [TRACE] eval: *terraform.EvalMaybeTainted
2021-07-15T19:44:57.8406934Z 2021/07/15 19:44:57 [TRACE] EvalMaybeTainted: azurerm_sql_database.GrvRms["jortytestya"] encountered an error during creation, so it is now marked as tainted
2021-07-15T19:44:57.8407521Z 2021/07/15 19:44:57 [TRACE] eval: *terraform.EvalWriteState
2021-07-15T19:44:57.8408115Z 2021/07/15 19:44:57 [TRACE] EvalWriteState: removing state object for azurerm_sql_database.GrvRms["jortytestya"]
2021-07-15T19:44:57.8408601Z 2021/07/15 19:44:57 [TRACE] eval: *terraform.EvalApplyProvisioners
2021-07-15T19:44:57.8409147Z 2021/07/15 19:44:57 [TRACE] EvalApplyProvisioners: azurerm_sql_database.GrvRms["jortytestya"] has no state, so skipping provisioners
2021-07-15T19:44:57.8409752Z 2021/07/15 19:44:57 [TRACE] eval: *terraform.EvalMaybeTainted
2021-07-15T19:44:57.8410300Z 2021/07/15 19:44:57 [TRACE] EvalMaybeTainted: azurerm_sql_database.GrvRms["jortytestya"] encountered an error during creation, so it is now marked as tainted
2021-07-15T19:44:57.8411977Z 2021/07/15 19:44:57 [TRACE] eval: *terraform.EvalWriteState
2021-07-15T19:44:57.8412575Z 2021/07/15 19:44:57 [TRACE] EvalWriteState: removing state object for azurerm_sql_database.GrvRms["jortytestya"]
2021-07-15T19:44:57.8413094Z 2021/07/15 19:44:57 [TRACE] eval: *terraform.EvalIf
2021-07-15T19:44:57.8413532Z 2021/07/15 19:44:57 [TRACE] eval: *terraform.EvalIf