Unnamed: 0 (int64, 1 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 3 to 438) | labels (string, length 4 to 308) | body (string, length 7 to 254k) | index (string, 7 classes) | text_combine (string, length 96 to 254k) | label (string, 2 classes) | text (string, length 96 to 246k) | binary_label (int64, 0 to 1)

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4,359 | 22,056,591,250 | IssuesEvent | 2022-05-30 13:25:09 | Homebrew/homebrew-core | https://api.github.com/repos/Homebrew/homebrew-core | closed | luajit probably needs to be deprecated | help wanted maintainer feedback | - The latest release is from 2015, and the latest beta is from 2017

- It's heavily patched

- Every new macOS version requires an additional patch

- Upstream's recommendation is to “build from git HEAD”, and they won't apparently ship new releases: https://github.com/LuaJIT/LuaJIT/issues/648#issuecomment-752404043

The reason I'm not doing a pull request directly is that a lot of things depend on luajit, so I want to open a discussion and figure out the best way to handle this. Can some of these be migrated to one of the lua formulas? | True | luajit probably needs to be deprecated - - The latest release is from 2015, and the latest beta is from 2017

- It's heavily patched

- Every new macOS version requires an additional patch

- Upstream's recommendation is to “build from git HEAD”, and they won't apparently ship new releases: https://github.com/LuaJIT/LuaJIT/issues/648#issuecomment-752404043

The reason I'm not doing a pull request directly is that a lot of things depend on luajit, so I want to open a discussion and figure out the best way to handle this. Can some of these be migrated to one of the lua formulas? | main | luajit probably needs to be deprecated the latest release is from and the latest beta is from it s heavily patched every new macos version requires an additional patch upstream s recommendation is to “build from git head” and they won t apparently ship new releases the reason i m not doing a pull request directly is that a lot of things depend on luajit so i want to open a discussion and figure out the best way to handle this can some of these be migrated to one of the lua formulas | 1 |

351 | 3,252,424,356 | IssuesEvent | 2015-10-19 14:49:33 | tethysplatform/tethys | https://api.github.com/repos/tethysplatform/tethys | closed | Upgrade gsconfig dependency of tethys_dataset_services to 1.0.0 | enhancement maintain dependencies | Upgrade the gsconfig library to 1.0.0 and test for bugs. | True | Upgrade gsconfig dependency of tethys_dataset_services to 1.0.0 - Upgrade the gsconfig library to 1.0.0 and test for bugs. | main | upgrade gsconfig dependency of tethys dataset services to upgrade the gsconfig library to and test for bugs | 1 |
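The single-line record above shows how the 15 header columns map onto a row. Below is a minimal sketch of a parser for such a record. `COLUMNS`, `parse_row` and `label_matches_binary` are hypothetical names for illustration, not part of the dataset.

The sketch makes these assumptions:
- Fields are separated by ` | `, and no value contains that separator.
- Multi-line records, such as the issue bodies elsewhere in this dump, are not handled.
- The `main`/1 and `non_main`/0 pairing is inferred from the rows visible here, not taken from any dataset documentation.

```python
# Hypothetical helper (not part of the dataset): split one single-line record
# into the 15 columns named in the header row. Assumes " | " separates fields
# and never appears inside a value; multi-line records are not handled.
COLUMNS = [
    "Unnamed: 0", "id", "type", "created_at", "repo", "repo_url", "action",
    "title", "labels", "body", "index", "text_combine", "label", "text",
    "binary_label",
]


def parse_row(line: str) -> dict:
    # Drop the trailing " |" that closes each record, then split on the separator.
    cells = line.strip().rstrip("|").strip().split(" | ")
    if len(cells) != len(COLUMNS):
        raise ValueError(f"expected {len(COLUMNS)} fields, got {len(cells)}")
    return dict(zip(COLUMNS, cells))


def label_matches_binary(record: dict) -> bool:
    # Assumed pairing, inferred from the rows shown: "main" -> 1, "non_main" -> 0.
    expected = {"main": 1, "non_main": 0}[record["label"]]
    return int(record["binary_label"]) == expected


row = (
    "351 | 3,252,424,356 | IssuesEvent | 2015-10-19 14:49:33 | tethysplatform/tethys | "
    "https://api.github.com/repos/tethysplatform/tethys | closed | "
    "Upgrade gsconfig dependency of tethys_dataset_services to 1.0.0 | "
    "enhancement maintain dependencies | "
    "Upgrade the gsconfig library to 1.0.0 and test for bugs. | True | "
    "Upgrade gsconfig dependency of tethys_dataset_services to 1.0.0 - "
    "Upgrade the gsconfig library to 1.0.0 and test for bugs. | main | "
    "upgrade gsconfig dependency of tethys dataset services to upgrade the "
    "gsconfig library to and test for bugs | 1 |"
)
record = parse_row(row)
print(record["repo"], record["label"], label_matches_binary(record))
```

Splitting on the literal separator keeps the sketch dependency-free. A real loader for this data should read the original source file rather than this flattened view.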

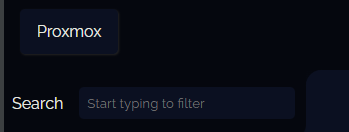

4,435 | 23,049,059,974 | IssuesEvent | 2022-07-24 10:57:29 | Lissy93/dashy | https://api.github.com/repos/Lissy93/dashy | closed | [BUG] Multiple-Pages not working | 👤 Awaiting Maintainer Response | Hi there!

Actuall i'm building my Dashy environment.

I wanted to split dashy with multiple pages.

As i read the docs, section multiple pages,

the following code:

`pages:

- name: Proxmox

path: './conf-proxmox.yml'`

Is not working for me, i must add the yml file is in the public folder, as mentionned in the docs

When i build and restart Dashy, i see the proxmox button

It just end up to https://mydashy.fr/home/Proxmox

Problem is i cant see the monitoring...

Thanks for all help and explaining! | True | [BUG] Multiple-Pages not working - Hi there!

Actuall i'm building my Dashy environment.

I wanted to split dashy with multiple pages.

As i read the docs, section multiple pages,

the following code:

`pages:

- name: Proxmox

path: './conf-proxmox.yml'`

Is not working for me, i must add the yml file is in the public folder, as mentionned in the docs

When i build and restart Dashy, i see the proxmox button

It just end up to https://mydashy.fr/home/Proxmox

Problem is i cant see the monitoring...

Thanks for all help and explaining! | main | multiple pages not working hi there actuall i m building my dashy environment i wanted to split dashy with multiple pages as i read the docs section multiple pages the following code pages name proxmox path conf proxmox yml is not working for me i must add the yml file is in the public folder as mentionned in the docs when i build and restart dashy i see the proxmox button it just end up to problem is i cant see the monitoring thanks for all help and explaining | 1 |

350,024 | 10,477,331,945 | IssuesEvent | 2019-09-23 20:39:19 | avalonmediasystem/avalon | https://api.github.com/repos/avalonmediasystem/avalon | closed | Thumbnail grabbing modal too big for small screens | 6.x abandoned low priority wontfix | Thumbnail grabbing modal buttons appear below the fold for small screen sizes. | 1.0 | Thumbnail grabbing modal too big for small screens - Thumbnail grabbing modal buttons appear below the fold for small screen sizes. | non_main | thumbnail grabbing modal too big for small screens thumbnail grabbing modal buttons appear below the fold for small screen sizes | 0 |

204,249 | 15,896,285,959 | IssuesEvent | 2021-04-11 16:54:11 | mlr-org/mlr3spatiotempcv | https://api.github.com/repos/mlr-org/mlr3spatiotempcv | opened | Re-categorize methods in pkgdown reference | Priority: Medium Status: Pending Type: Documentation | Current:

- Spatial

- Spatiotemporal

Maybe better:

- Spatial

- Spatiotemporal

- Feature space

Also it might be helpful to add an additional grouping identifier into the title of certain methods that rely on the same idea.

Example:

"[Buffering] <method title" | 1.0 | Re-categorize methods in pkgdown reference - Current:

- Spatial

- Spatiotemporal

Maybe better:

- Spatial

- Spatiotemporal

- Feature space

Also it might be helpful to add an additional grouping identifier into the title of certain methods that rely on the same idea.

Example:

"[Buffering] <method title" | non_main | re categorize methods in pkgdown reference current spatial spatiotemporal maybe better spatial spatiotemporal feature space also it might be helpful to add an additional grouping identifier into the title of certain methods that rely on the same idea example method title | 0 |

432,473 | 30,284,846,000 | IssuesEvent | 2023-07-08 14:39:57 | OHDSI/GIS | https://api.github.com/repos/OHDSI/GIS | closed | Restructure the ERD | documentation | Restructure (following suit to the GIS proposal) and rename on the website | 1.0 | Restructure the ERD - Restructure (following suit to the GIS proposal) and rename on the website | non_main | restructure the erd restructure following suit to the gis proposal and rename on the website | 0 |

4,472 | 23,319,942,758 | IssuesEvent | 2022-08-08 15:29:27 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | [Bug]: The overflow menu item is truncated automatically when the text length is more | type: bug 🐛 status: needs triage 🕵️♀️ status: waiting for maintainer response 💬 | ### Package

carbon-components, carbon-components-react

### Browser

Chrome

### Package version

10.50

### React version

17.0.2

### Description

The overflow menu item text is truncated automatically when the text is some what larger text. See image below

Please let me know is there a fix/workaround for this as we wont be able to upgrade the carbon component version now. Thanks

### Reproduction/example

https://codesandbox.io/s/flamboyant-mccarthy-wi9f66?file=/src/index.js

### Steps to reproduce

1. Add overmenu component

2. Add an item with a bigger text

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/carbon-design-system/carbon/blob/f555616971a03fd454c0f4daea184adf41fff05b/.github/CODE_OF_CONDUCT.md)

- [X] I checked the [current issues](https://github.com/carbon-design-system/carbon/issues) for duplicate problems | True | [Bug]: The overflow menu item is truncated automatically when the text length is more - ### Package

carbon-components, carbon-components-react

### Browser

Chrome

### Package version

10.50

### React version

17.0.2

### Description

The overflow menu item text is truncated automatically when the text is some what larger text. See image below

Please let me know is there a fix/workaround for this as we wont be able to upgrade the carbon component version now. Thanks

### Reproduction/example

https://codesandbox.io/s/flamboyant-mccarthy-wi9f66?file=/src/index.js

### Steps to reproduce

1. Add overmenu component

2. Add an item with a bigger text

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/carbon-design-system/carbon/blob/f555616971a03fd454c0f4daea184adf41fff05b/.github/CODE_OF_CONDUCT.md)

- [X] I checked the [current issues](https://github.com/carbon-design-system/carbon/issues) for duplicate problems | main | the overflow menu item is truncated automatically when the text length is more package carbon components carbon components react browser chrome package version react version description the overflow menu item text is truncated automatically when the text is some what larger text see image below please let me know is there a fix workaround for this as we wont be able to upgrade the carbon component version now thanks reproduction example steps to reproduce add overmenu component add an item with a bigger text code of conduct i agree to follow this project s i checked the for duplicate problems | 1 |

127,993 | 27,171,266,709 | IssuesEvent | 2023-02-17 19:40:47 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | opened | [PNI Refactor] Add documentation to pni-sort-dropdown.js | engineering buyer's guide 🛍 code cleanup needs grooming | Add documentation (in [JSDoc style](https://jsdoc.app/)) to `source/js/buyers-guide/search/pni-sort-dropdown.js`.

e.g.,

```js

/**

* Represents a book.

* @constructor

* @param {string} title - The title of the book.

* @param {string} author - The author of the book.

*/

function Book(title, author) {

}

```

| 1.0 | [PNI Refactor] Add documentation to pni-sort-dropdown.js - Add documentation (in [JSDoc style](https://jsdoc.app/)) to `source/js/buyers-guide/search/pni-sort-dropdown.js`.

e.g.,

```js

/**

* Represents a book.

* @constructor

* @param {string} title - The title of the book.

* @param {string} author - The author of the book.

*/

function Book(title, author) {

}

```

| non_main | add documentation to pni sort dropdown js add documentation in to source js buyers guide search pni sort dropdown js e g js represents a book constructor param string title the title of the book param string author the author of the book function book title author | 0 |

271,466 | 29,506,336,787 | IssuesEvent | 2023-06-03 11:05:24 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] Enzo Biochem Ransomware Attack Exposes Information of 2.5M Individuals | SecurityWeek Stale |

Enzo Biochem says the clinical test information of roughly 2.47 million individuals was exposed in a recent ransomware attack.

The post [Enzo Biochem Ransomware Attack Exposes Information of 2.5M Individuals](https://www.securityweek.com/enzo-biochem-ransomware-attack-exposes-information-of-2-5m-individuals/) appeared first on [SecurityWeek](https://www.securityweek.com).

<https://www.securityweek.com/enzo-biochem-ransomware-attack-exposes-information-of-2-5m-individuals/>

| True | [SecurityWeek] Enzo Biochem Ransomware Attack Exposes Information of 2.5M Individuals -

Enzo Biochem says the clinical test information of roughly 2.47 million individuals was exposed in a recent ransomware attack.

The post [Enzo Biochem Ransomware Attack Exposes Information of 2.5M Individuals](https://www.securityweek.com/enzo-biochem-ransomware-attack-exposes-information-of-2-5m-individuals/) appeared first on [SecurityWeek](https://www.securityweek.com).

<https://www.securityweek.com/enzo-biochem-ransomware-attack-exposes-information-of-2-5m-individuals/>

| non_main | enzo biochem ransomware attack exposes information of individuals enzo biochem says the clinical test information of roughly million individuals was exposed in a recent ransomware attack the post appeared first on | 0 |

7,328 | 3,082,726,826 | IssuesEvent | 2015-08-24 00:46:35 | california-civic-data-coalition/django-calaccess-raw-data | https://api.github.com/repos/california-civic-data-coalition/django-calaccess-raw-data | opened | Add documentation for the ``payee_st`` field on the ``LexpCd`` database model | documentation enhancement small |

## Your mission

Add documentation for the ``payee_st`` field on the ``LexpCd`` database model.

## Here's how

**Step 1**: Claim this ticket by leaving a comment below. Tell everyone you're ON IT!

**Step 2**: Open up the file that contains this model. It should be in <a href="https://github.com/california-civic-data-coalition/django-calaccess-raw-data/blob/master/calaccess_raw/models/lobbying.py">calaccess_raw.models.lobbying.py</a>.

**Step 3**: Hit the little pencil button in the upper-right corner of the code box to begin editing the file.

**Step 4**: Find this model and field in the file. (Clicking into the box and searching with CTRL-F can help you here.) Once you find it, we expect the field to lack the ``help_text`` field typically used in Django to explain what a field contains.

```python

effect_dt = fields.DateField(

null=True,

db_column="EFFECT_DT"

)

```

**Step 5**: In a separate tab, open up the <a href="Quilmes">official state documentation</a> and find the page that defines all the fields in this model.

**Step 6**: Find the row in that table's definition table that spells out what this field contains. If it lacks documentation. Note that in the ticket and close it now.

**Step 7**: Return to the GitHub tab.

**Step 8**: Add the state's label explaining what's in the field, to our field definition by inserting it a ``help_text`` argument. That should look something like this:

```python

effect_dt = fields.DateField(

null=True,

db_column="EFFECT_DT",

# Add a help_text argument like the one here, but put your string in instead.

help_text="The other values in record were effective as of this date"

)

```

**Step 9**: Scroll down below the code box and describe the change you've made in the commit message. Press the button below.

**Step 10**: Review your changes and create a pull request submitting them to the core team for inclusion.

That's it! Mission accomplished!

| 1.0 | Add documentation for the ``payee_st`` field on the ``LexpCd`` database model -

## Your mission

Add documentation for the ``payee_st`` field on the ``LexpCd`` database model.

## Here's how

**Step 1**: Claim this ticket by leaving a comment below. Tell everyone you're ON IT!

**Step 2**: Open up the file that contains this model. It should be in <a href="https://github.com/california-civic-data-coalition/django-calaccess-raw-data/blob/master/calaccess_raw/models/lobbying.py">calaccess_raw.models.lobbying.py</a>.

**Step 3**: Hit the little pencil button in the upper-right corner of the code box to begin editing the file.

**Step 4**: Find this model and field in the file. (Clicking into the box and searching with CTRL-F can help you here.) Once you find it, we expect the field to lack the ``help_text`` field typically used in Django to explain what a field contains.

```python

effect_dt = fields.DateField(

null=True,

db_column="EFFECT_DT"

)

```

**Step 5**: In a separate tab, open up the <a href="Quilmes">official state documentation</a> and find the page that defines all the fields in this model.

**Step 6**: Find the row in that table's definition table that spells out what this field contains. If it lacks documentation. Note that in the ticket and close it now.

**Step 7**: Return to the GitHub tab.

**Step 8**: Add the state's label explaining what's in the field, to our field definition by inserting it a ``help_text`` argument. That should look something like this:

```python

effect_dt = fields.DateField(

null=True,

db_column="EFFECT_DT",

# Add a help_text argument like the one here, but put your string in instead.

help_text="The other values in record were effective as of this date"

)

```

**Step 9**: Scroll down below the code box and describe the change you've made in the commit message. Press the button below.

**Step 10**: Review your changes and create a pull request submitting them to the core team for inclusion.

That's it! Mission accomplished!

| non_main | add documentation for the payee st field on the lexpcd database model your mission add documentation for the payee st field on the lexpcd database model here s how step claim this ticket by leaving a comment below tell everyone you re on it step open up the file that contains this model it should be in a href step hit the little pencil button in the upper right corner of the code box to begin editing the file step find this model and field in the file clicking into the box and searching with ctrl f can help you here once you find it we expect the field to lack the help text field typically used in django to explain what a field contains python effect dt fields datefield null true db column effect dt step in a separate tab open up the official state documentation and find the page that defines all the fields in this model step find the row in that table s definition table that spells out what this field contains if it lacks documentation note that in the ticket and close it now step return to the github tab step add the state s label explaining what s in the field to our field definition by inserting it a help text argument that should look something like this python effect dt fields datefield null true db column effect dt add a help text argument like the one here but put your string in instead help text the other values in record were effective as of this date step scroll down below the code box and describe the change you ve made in the commit message press the button below step review your changes and create a pull request submitting them to the core team for inclusion that s it mission accomplished | 0 |

125,021 | 26,577,567,931 | IssuesEvent | 2023-01-22 01:40:49 | hugh-mend/Java-Demo-Log4J | https://api.github.com/repos/hugh-mend/Java-Demo-Log4J | opened | Code Security Report: 37 high severity findings, 88 total findings | code security findings | # Code Security Report

**Latest Scan:** 2023-01-22 01:40am

**Total Findings:** 88

**Tested Project Files:** 102

**Detected Programming Languages:** 1

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCAN-END -->

## Language: Java

| Severity | CWE | Vulnerability Type | Count |

|-|-|-|-|

|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-94](https://cwe.mitre.org/data/definitions/94.html)|Code Injection|1|

|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-22](https://cwe.mitre.org/data/definitions/22.html)|Path/Directory Traversal|9|

|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-73](https://cwe.mitre.org/data/definitions/73.html)|File Manipulation|8|

|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-79](https://cwe.mitre.org/data/definitions/79.html)|Cross-Site Scripting|18|

|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-918](https://cwe.mitre.org/data/definitions/918.html)|Server Side Request Forgery|1|

|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-338](https://cwe.mitre.org/data/definitions/338.html)|Weak Pseudo-Random|2|

|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-244](https://cwe.mitre.org/data/definitions/244.html)|Heap Inspection|5|

|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-501](https://cwe.mitre.org/data/definitions/501.html)|Trust Boundary Violation|5|

|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-209](https://cwe.mitre.org/data/definitions/209.html)|Error Messages Information Exposure|15|

|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-601](https://cwe.mitre.org/data/definitions/601.html)|Unvalidated/Open Redirect|14|

|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-117](https://cwe.mitre.org/data/definitions/117.html)|Log Forging|4|

|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-113](https://cwe.mitre.org/data/definitions/113.html)|HTTP Header Injection|1|

|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-20](https://cwe.mitre.org/data/definitions/20.html)|Session Poisoning|5|

### Details

> The below list presents the 20 most relevant findings that need your attention. To view information on the remaining findings, navigate to the [Mend SAST Application](https://saas.mend.io/sast/#/scans/edceb2f3-0cdd-480d-a378-8ae3450e6707/details).

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>Code Injection (CWE-94) : 1</summary>

#### Findings

<details>

<summary>vulnerabilities/CodeInjectionServlet.java:65</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L60-L65

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L25

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L44

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L45

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L46

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L47

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L61

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L65

</details>

</details>

</details>

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>Path/Directory Traversal (CWE-22) : 9</summary>

#### Findings

<details>

<summary>vulnerabilities/UnrestrictedExtensionUploadServlet.java:84</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L79-L84

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L84

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedSizeUploadServlet.java:84</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L79-L84

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L84

</details>

</details>

<details>

<summary>vulnerabilities/NullByteInjectionServlet.java:46</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L41-L46

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L35

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L40

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L46

</details>

</details>

<details>

<summary>vulnerabilities/MailHeaderInjectionServlet.java:133</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L128-L133

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L125

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L127

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L133

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedExtensionUploadServlet.java:135</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L130-L135

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L106

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L135

</details>

</details>

<details>

<summary>vulnerabilities/XEEandXXEServlet.java:196</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L191-L196

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L141

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L148

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L161

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L192

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L196

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedSizeUploadServlet.java:114</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L109-L114

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L111

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L114

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedSizeUploadServlet.java:127</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L122-L127

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L111

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L127

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedExtensionUploadServlet.java:110</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L105-L110

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L106

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L110

</details>

</details>

</details>
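
Most traces in this category pass an uploaded file name through MultiPartFileUtils.java (lines 57 and 59) before it reaches the flagged line. The report does not prescribe a fix. As a hedged sketch of the standard CWE-22 mitigation, and not the project's own code, the helper below resolves an untrusted name against a fixed upload directory. It rejects anything that normalizes to a path outside that directory. `UploadPathGuard` and `resolveInside` are hypothetical names.

```java
import java.io.IOException;
import java.nio.file.Path;

// Hypothetical helper: confine an untrusted file name to one base directory.
class UploadPathGuard {
    // Resolves untrustedName under baseDir and rejects results that escape it,
    // e.g. "../../etc/passwd" or an absolute path such as "/etc/passwd".
    static Path resolveInside(Path baseDir, String untrustedName) throws IOException {
        Path base = baseDir.toAbsolutePath().normalize();
        Path target = base.resolve(untrustedName).normalize();
        // Path.startsWith compares whole name elements, so a sibling such as
        // "uploads2" does not pass for a base of "uploads".
        if (!target.startsWith(base) || target.equals(base)) {
            // Deliberately does not echo the untrusted name back to the caller.
            throw new IOException("Rejected path outside the upload directory");
        }
        return target;
    }
}
```

This check is purely lexical and does not resolve symbolic links. If links inside the upload directory are a concern, comparing against `toRealPath()` of the existing directory would cover that case.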

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>File Manipulation (CWE-73) : 8</summary>

#### Findings

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>vulnerabilities/MailHeaderInjectionServlet.java:142</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L137-L142

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L141

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L142

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:33</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28-L33

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L81

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:33</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28-L33

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L80

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:33</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28-L33

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L141

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L148

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L157

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33

</details>

</details>

</details>
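
The CWE-73 findings concern file names taken from the request (MultiPartFileUtils.java lines 28 to 38 and MailHeaderInjectionServlet.java line 142). Besides checking where a file ends up, a common control is never to reuse the submitted name as-is. Keep only its last path segment, reject NUL characters, and accept only allowlisted extensions. The sketch below assumes an image-only upload policy. The extension set, the 64-character stem limit, and the class name are illustrative choices, not taken from the project. `Set.of` requires Java 9 or later.

```java
import java.util.Locale;
import java.util.Set;

// Hypothetical upload-name policy; the allowlist is an assumed example.
class UploadNamePolicy {
    private static final Set<String> ALLOWED_EXTENSIONS = Set.of("png", "jpg", "jpeg", "gif");

    // Returns a sanitized "stem.ext" name, or null if the submitted name is unacceptable.
    static String sanitize(String submitted) {
        if (submitted == null || submitted.indexOf('\0') >= 0) {
            return null; // reject NUL bytes outright (null-byte truncation tricks)
        }
        // Keep only the last path segment, treating both '/' and '\' as separators.
        int cut = Math.max(submitted.lastIndexOf('/'), submitted.lastIndexOf('\\'));
        String base = submitted.substring(cut + 1);
        int dot = base.lastIndexOf('.');
        if (dot <= 0 || dot == base.length() - 1) {
            return null; // no stem or no extension
        }
        String ext = base.substring(dot + 1).toLowerCase(Locale.ROOT);
        if (!ALLOWED_EXTENSIONS.contains(ext)) {
            return null;
        }
        // Restrict the stem to a conservative character set and length.
        String stem = base.substring(0, dot);
        if (!stem.matches("[A-Za-z0-9_-]{1,64}")) {
            return null;
        }
        return stem + "." + ext;
    }
}
```

For example, `sanitize("../photo_1.jpg")` returns `photo_1.jpg`, while `shell.jsp` and `../../etc/passwd` are both rejected.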

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>Cross-Site Scripting (CWE-79) : 2</summary>

#### Findings

<details>

<summary>servlets/AbstractServlet.java:94</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L89-L94

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/performance/CreatingUnnecessaryObjectsServlet.java#L21

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/performance/CreatingUnnecessaryObjectsServlet.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/performance/CreatingUnnecessaryObjectsServlet.java#L68

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L31

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L94

</details>

</details>

<details>

<summary>servlets/AbstractServlet.java:94</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L89-L94

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/troubles/TruncationErrorServlet.java#L21

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/troubles/TruncationErrorServlet.java#L30

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/troubles/TruncationErrorServlet.java#L44

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L31

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L94

</details>

</details>

</details>
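
Both traces end at AbstractServlet.java:94. If that line writes request-derived text into an HTML response, the usual fix is to encode the text for its HTML context before writing it. A minimal hand-written encoder is sketched below, but in production a maintained library such as the OWASP Java Encoder is the safer choice. The class name is hypothetical, and the encoder covers HTML element content and quoted attribute values only, not JavaScript or URL contexts.

```java
// Hypothetical helper: encode text for HTML element content or quoted attributes.
class HtmlEscape {
    static String escape(String s) {
        if (s == null) {
            return "";
        }
        StringBuilder out = new StringBuilder(s.length() + 16);
        for (int i = 0; i < s.length(); i++) {
            char c = s.charAt(i);
            switch (c) {
                case '&':  out.append("&amp;");  break;
                case '<':  out.append("&lt;");   break;
                case '>':  out.append("&gt;");   break;
                case '"':  out.append("&quot;"); break;
                case '\'': out.append("&#x27;"); break;
                default:   out.append(c);
            }
        }
        return out.toString();
    }
}
```

With this encoder, `escape("<script>alert('x')</script>")` produces text the browser displays literally instead of executing.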

| 1.0 | Code Security Report: 37 high severity findings, 88 total findings - # Code Security Report

**Latest Scan:** 2023-01-22 01:40am

**Total Findings:** 88

**Tested Project Files:** 102

**Detected Programming Languages:** 1

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCAN-END -->

## Language: Java

| Severity | CWE | Vulnerability Type | Count |
|-|-|-|-|
|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-94](https://cwe.mitre.org/data/definitions/94.html)|Code Injection|1|
|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-22](https://cwe.mitre.org/data/definitions/22.html)|Path/Directory Traversal|9|
|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-73](https://cwe.mitre.org/data/definitions/73.html)|File Manipulation|8|
|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-79](https://cwe.mitre.org/data/definitions/79.html)|Cross-Site Scripting|18|
|<img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High|[CWE-918](https://cwe.mitre.org/data/definitions/918.html)|Server-Side Request Forgery|1|
|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-338](https://cwe.mitre.org/data/definitions/338.html)|Weak Pseudo-Random|2|
|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-244](https://cwe.mitre.org/data/definitions/244.html)|Heap Inspection|5|
|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-501](https://cwe.mitre.org/data/definitions/501.html)|Trust Boundary Violation|5|
|<img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium|[CWE-209](https://cwe.mitre.org/data/definitions/209.html)|Error Messages Information Exposure|15|
|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-601](https://cwe.mitre.org/data/definitions/601.html)|Unvalidated/Open Redirect|14|
|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-117](https://cwe.mitre.org/data/definitions/117.html)|Log Forging|4|
|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-113](https://cwe.mitre.org/data/definitions/113.html)|HTTP Header Injection|1|
|<img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Low|[CWE-20](https://cwe.mitre.org/data/definitions/20.html)|Session Poisoning|5|
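
The two Weak Pseudo-Random (CWE-338) findings are not among the detailed items in this report. If the flagged values protect something, such as a session identifier or a reset token, the usual Java remedy is `java.security.SecureRandom` rather than `java.util.Random`. The sketch below is minimal, and its class and method names are hypothetical.

```java
import java.security.SecureRandom;
import java.util.Base64;

// Hypothetical helper: unguessable tokens from a cryptographically strong RNG.
class TokenGenerator {
    private static final SecureRandom RNG = new SecureRandom();

    // Returns a URL-safe, unpadded Base64 string carrying `bytes` random bytes.
    static String newToken(int bytes) {
        if (bytes <= 0) {
            throw new IllegalArgumentException("bytes must be positive");
        }
        byte[] buf = new byte[bytes];
        RNG.nextBytes(buf);
        return Base64.getUrlEncoder().withoutPadding().encodeToString(buf);
    }
}
```

`newToken(32)` yields 256 bits of randomness encoded as a 43-character string.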

### Details

> The list below presents the 20 most relevant findings that need your attention. To view information on the remaining findings, navigate to the [Mend SAST Application](https://saas.mend.io/sast/#/scans/edceb2f3-0cdd-480d-a378-8ae3450e6707/details).

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>Code Injection (CWE-94) : 1</summary>

#### Findings

<details>

<summary>vulnerabilities/CodeInjectionServlet.java:65</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L60-L65

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L25

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L44

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L45

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L46

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L47

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L61

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/CodeInjectionServlet.java#L65

</details>

</details>

</details>
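
The report flags request data reaching an evaluation sink in CodeInjectionServlet.java but does not prescribe a fix. A common remedy for CWE-94 is to stop evaluating input as code altogether. Instead, the input may only select from a fixed, server-side set of actions. The sketch below illustrates that pattern. The class name `SafeDispatch` and its operations are hypothetical and are not taken from the servlet. `Map.of` requires Java 9 or later.

```java
import java.util.Map;
import java.util.Optional;
import java.util.function.IntBinaryOperator;

// Hypothetical example: the request may only *name* an operation from a fixed
// allowlist; nothing it contains is ever compiled or evaluated.
class SafeDispatch {
    private static final Map<String, IntBinaryOperator> OPS = Map.of(
            "add", (a, b) -> a + b,
            "sub", (a, b) -> a - b,
            "mul", (a, b) -> a * b);

    // Returns the result, or Optional.empty() if the operation is not allowlisted.
    static Optional<Integer> apply(String op, int a, int b) {
        // Map.of rejects null keys on lookup, so guard against a missing parameter.
        IntBinaryOperator f = (op == null) ? null : OPS.get(op);
        return (f == null) ? Optional.empty() : Optional.of(f.applyAsInt(a, b));
    }
}
```

`SafeDispatch.apply("add", 2, 3)` returns `Optional.of(5)`. Any other string, including one that looks like script, returns `Optional.empty()`.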

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>Path/Directory Traversal (CWE-22) : 9</summary>

#### Findings

<details>

<summary>vulnerabilities/UnrestrictedExtensionUploadServlet.java:84</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L79-L84

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L84

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedSizeUploadServlet.java:84</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L79-L84

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L84

</details>

</details>

<details>

<summary>vulnerabilities/NullByteInjectionServlet.java:46</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L41-L46

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L35

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L40

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/NullByteInjectionServlet.java#L46

</details>

</details>

<details>

<summary>vulnerabilities/MailHeaderInjectionServlet.java:133</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L128-L133

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L125

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L127

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L133

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedExtensionUploadServlet.java:135</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L130-L135

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L106

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L135

</details>

</details>

<details>

<summary>vulnerabilities/XEEandXXEServlet.java:196</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L191-L196

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L141

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L148

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L161

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L192

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L196

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedSizeUploadServlet.java:114</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L109-L114

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L111

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L114

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedSizeUploadServlet.java:127</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L122-L127

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L111

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L127

</details>

</details>

<details>

<summary>vulnerabilities/UnrestrictedExtensionUploadServlet.java:110</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L105-L110

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L84

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L106

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L110

</details>

</details>

</details>

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>File Manipulation (CWE-73) : 8</summary>

#### Findings

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>vulnerabilities/MailHeaderInjectionServlet.java:142</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L137-L142

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L141

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/MailHeaderInjectionServlet.java#L142

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:38</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33-L38

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L37

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L38

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:33</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28-L33

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L69

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L76

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedExtensionUploadServlet.java#L81

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:33</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28-L33

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L70

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L71

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/UnrestrictedSizeUploadServlet.java#L80

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33

</details>

</details>

<details>

<summary>utils/MultiPartFileUtils.java:33</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28-L33

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L141

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L57

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L59

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L148

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/vulnerabilities/XEEandXXEServlet.java#L157

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/utils/MultiPartFileUtils.java#L33

</details>

</details>

</details>

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20>Cross-Site Scripting (CWE-79) : 2</summary>

#### Findings

<details>

<summary>servlets/AbstractServlet.java:94</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L89-L94

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/performance/CreatingUnnecessaryObjectsServlet.java#L21

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/performance/CreatingUnnecessaryObjectsServlet.java#L28

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/performance/CreatingUnnecessaryObjectsServlet.java#L68

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L31

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L94

</details>

</details>

<details>

<summary>servlets/AbstractServlet.java:94</summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L89-L94

<details>

<summary> Trace </summary>

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/troubles/TruncationErrorServlet.java#L21

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/troubles/TruncationErrorServlet.java#L30

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/troubles/TruncationErrorServlet.java#L44

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L31

https://github.com/hugh-mend/Java-Demo-Log4J/blob/05dcf189b81da05c2da90ee1d184aa3cf974a4a0/src/main/java/org/t246osslab/easybuggy/core/servlets/AbstractServlet.java#L94

</details>

</details>

</details>