Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

494,564 | 14,260,506,351 | IssuesEvent | 2020-11-20 09:55:51 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | closed | AutoCompleteSelector regex error | Bug: production Effort: small Priority: normal bugsnag production | # Describe the bug

From Bugsnag. It seems some characters typed into the autocomplete selector throw errors, specifically `\`, but others should be checked too.

## Error in mSupply Mobile

**SyntaxError** in **MainActivity**

SyntaxError: Invalid regular expression: \ at end of pattern

This error is located at:

in c

in RCTView

in RCTView

in RCTView

in f

in RCTView

in RCTView

in RCTView

in RCTModalHostView

in n

in ModalBox

in y

in l

in RCTView

in RCTView

in RCTView

in f

in RCTView

in RCTView

in ModalBox

in y

in l

in o

in RCTView

in RCTView

in p

in f

in h

in s

in Connect(s)

in Unknown

in v

in RCTView

in f

in RCTView

in f

in C

in n

in P

in RCTView

in n

in RCTView

in f

in b

in y

in L

in RCTView

in h

in C

in k

in v

in P

in Unknown

in Connect(Component)

in RCTView

in n

in Connect(n)

in s

in c

in v

in RCTView

in RCTView

in c

[View on Bugsnag](https://app.bugsnag.com/sustainable-solutions-nz-ltd/msupply-mobile/errors/5cf930a22b0061001a37cce6?event_id=5cf930a20041fddad3890000&i=gh&m=ci)

## Stacktrace

src/widgets/AutocompleteSelector.js:65 - filterArrayData

src/widgets/AutocompleteSelector.js:82 - getData

src/widgets/AutocompleteSelector.js:101 - value

[View full stacktrace](https://app.bugsnag.com/sustainable-solutions-nz-ltd/msupply-mobile/errors/5cf930a22b0061001a37cce6?event_id=5cf930a20041fddad3890000&i=gh&m=ci)

*Created automatically via Bugsnag* | 1.0 | AutoCompleteSelector regex error - # Describe the bug

From Bugsnag. It seems some characters typed into the autocomplete selector throw errors, specifically `\`, but others should be checked too.

## Error in mSupply Mobile

**SyntaxError** in **MainActivity**

SyntaxError: Invalid regular expression: \ at end of pattern

This error is located at:

in c

in RCTView

in RCTView

in RCTView

in f

in RCTView

in RCTView

in RCTView

in RCTModalHostView

in n

in ModalBox

in y

in l

in RCTView

in RCTView

in RCTView

in f

in RCTView

in RCTView

in ModalBox

in y

in l

in o

in RCTView

in RCTView

in p

in f

in h

in s

in Connect(s)

in Unknown

in v

in RCTView

in f

in RCTView

in f

in C

in n

in P

in RCTView

in n

in RCTView

in f

in b

in y

in L

in RCTView

in h

in C

in k

in v

in P

in Unknown

in Connect(Component)

in RCTView

in n

in Connect(n)

in s

in c

in v

in RCTView

in RCTView

in c

[View on Bugsnag](https://app.bugsnag.com/sustainable-solutions-nz-ltd/msupply-mobile/errors/5cf930a22b0061001a37cce6?event_id=5cf930a20041fddad3890000&i=gh&m=ci)

## Stacktrace

src/widgets/AutocompleteSelector.js:65 - filterArrayData

src/widgets/AutocompleteSelector.js:82 - getData

src/widgets/AutocompleteSelector.js:101 - value

[View full stacktrace](https://app.bugsnag.com/sustainable-solutions-nz-ltd/msupply-mobile/errors/5cf930a22b0061001a37cce6?event_id=5cf930a20041fddad3890000&i=gh&m=ci)

*Created automatically via Bugsnag* | non_main | autocompleteselector regex error describe the bug from bugsnag it seems some characters being type in the autocomplete selector are throwing errors specifically but some others should be checked error in msupply mobile syntaxerror in mainactivity syntaxerror invalid regular expression at end of pattern this error is located at in c in rctview in rctview in rctview in f in rctview in rctview in rctview in rctmodalhostview in n in modalbox in y in l in rctview in rctview in rctview in f in rctview in rctview in modalbox in y in l in o in rctview in rctview in p in f in h in s in connect s in unknown in v in rctview in f in rctview in f in c in n in p in rctview in n in rctview in f in b in y in l in rctview in h in c in k in v in p in unknown in connect component in rctview in n in connect n in s in c in v in rctview in rctview in c stacktrace src widgets autocompleteselector js filterarraydata src widgets autocompleteselector js getdata src widgets autocompleteselector js value created automatically via bugsnag | 0 |
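
The `Invalid regular expression: \ at end of pattern` error reported above is what `new RegExp('\\')` throws when raw user input is used as a pattern. A minimal sketch of one common remedy follows, assuming the filter builds a `RegExp` from the typed query. The names `escapeRegExp` and `filterByQuery` are hypothetical and are not taken from `AutocompleteSelector.js`.

```javascript
// Hypothetical helper: escape every character that has a special meaning in a
// JavaScript regular expression so the user's input is matched literally.
function escapeRegExp(text) {
  return text.replace(/[.*+?^${}()|[\]\\]/g, '\\$&');
}

// Hypothetical stand-in for the filtering step named in the stack trace:
// keep the items that contain the query, ignoring case.
function filterByQuery(items, query) {
  const pattern = new RegExp(escapeRegExp(query), 'i');
  return items.filter((item) => pattern.test(item));
}

const items = ['C:\\stock', 'Paracetamol (500mg)', 'Amoxicillin'];
console.log(filterByQuery(items, '\\'));   // a lone backslash no longer throws
console.log(filterByQuery(items, '(500')); // an unbalanced "(" no longer throws
```

If the filter only needs substring matching, a case-insensitive `includes` check would avoid regular expressions altogether; which option suits the widget is a design decision this sketch does not settle.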

4,022 | 4,835,697,968 | IssuesEvent | 2016-11-08 17:28:10 | vmware/vic | https://api.github.com/repos/vmware/vic | closed | Username/Password authentication for vic-admin | area/security | This issue is the second half of #2342 representing a sign-in page for vic admin whereby a user can sign in with their vSphere credentials. These credentials should be used for actual authentication against vSphere such that commands issued via vicadmin are limited by the permissions applied to the user in question.

| True | Username/Password authentication for vic-admin - This issue is the second half of #2342 representing a sign-in page for vic admin whereby a user can sign in with their vSphere credentials. These credentials should be used for actual authentication against vSphere such that commands issued via vicadmin are limited by the permissions applied to the user in question.

| non_main | username password authentication for vic admin this issue is the second half of representing a sign in page for vic admin whereby a user can sign in with their vsphere credentials these credentials should be used for actual authentication against vsphere such that commands issued via vicadmin are limited by the permissions applied to the user in question | 0 |

450,053 | 31,881,743,780 | IssuesEvent | 2023-09-16 13:04:49 | junit-team/junit5 | https://api.github.com/repos/junit-team/junit5 | closed | Fix implementation of `RandomNumberExtension` in the User Guide | theme: documentation component: Jupiter | ## Steps to reproduce

Copying the relevant parts of the `RandomNumberExtension` example in the documentation [here](https://junit.org/junit5/docs/snapshot/user-guide/index.html#extensions-RandomNumberExtension):

```java
class RandomNumberDemo {

    // Use static randomNumber0 field anywhere in the test class,
    // including @BeforeAll or @AfterEach lifecycle methods.
    @Random
    private static Integer randomNumber0;

    // Use randomNumber1 field in test methods and @BeforeEach
    // or @AfterEach lifecycle methods.
    @Random
    private int randomNumber1;

    ...
}

class RandomNumberExtension
        implements BeforeAllCallback, TestInstancePostProcessor, ParameterResolver {

    ...

    private void injectFields(Class<?> testClass, Object testInstance,
            Predicate<Field> predicate) {

        predicate = predicate.and(field -> isInteger(field.getType()));
        findAnnotatedFields(testClass, Random.class, predicate)
            .forEach(field -> {
                try {
                    field.setAccessible(true);
                    field.set(testInstance, this.random.nextInt());
                }
                catch (Exception ex) {
                    throw new RuntimeException(ex);
                }
            });
    }

    ...
}
```

There are several issues with this code:

1. `randomNumber0` is an Integer, so it's not injected.

2. `findAnnotatedFields` is missing the fourth parameter, so it doesn't compile.

3. If `randomNumber0` is removed completely from `RandomNumberDemo`, then `randomNumber1` is not injected. I believe the root cause is that `TestMethodTestDescriptor` does not invoke `TestInstancePostProcessor` (side note: even though it does invoke `TestInstancePreDestroyCallback`). Meanwhile, `ClassBasedTestDescriptor` does, which is why `randomNumber1` is populated when `randomNumber0` is present. I can't tell which part is wrong here: the documentation or the code.

Note: The code in https://github.com/junit-team/junit5/issues/3004#issuecomment-1215045050 fixes all these issues. The last issue is resolved by using `BeforeEachCallback` instead of `TestInstancePostProcessor`.

## Context

- Used versions (Jupiter/Vintage/Platform): 5.9.3

- Build Tool/IDE: Eclipse 2023-03 (4.27.0)

## Deliverables

- <strike>Remove bugs from documentation</strike>

- <strike>Possibly change `TestMethodTestDescriptor` to start invoking `TestInstancePostProcessor` (if that's desirable)... or switch the example to use `BeforeEachCallback` (and mention the limitations with `TestMethodTestDescriptor` and `TestInstancePostProcessor`)</strike>

- [x] Fix implementation of `isInteger()` in `RandomNumberExtension` in the User Guide.

| 1.0 | Fix implementation of `RandomNumberExtension` in the User Guide - ## Steps to reproduce

Copying the relevant parts of the `RandomNumberExtension` example in the documentation [here](https://junit.org/junit5/docs/snapshot/user-guide/index.html#extensions-RandomNumberExtension):

```java
class RandomNumberDemo {

    // Use static randomNumber0 field anywhere in the test class,
    // including @BeforeAll or @AfterEach lifecycle methods.
    @Random
    private static Integer randomNumber0;

    // Use randomNumber1 field in test methods and @BeforeEach
    // or @AfterEach lifecycle methods.
    @Random
    private int randomNumber1;

    ...
}

class RandomNumberExtension
        implements BeforeAllCallback, TestInstancePostProcessor, ParameterResolver {

    ...

    private void injectFields(Class<?> testClass, Object testInstance,
            Predicate<Field> predicate) {

        predicate = predicate.and(field -> isInteger(field.getType()));
        findAnnotatedFields(testClass, Random.class, predicate)
            .forEach(field -> {
                try {
                    field.setAccessible(true);
                    field.set(testInstance, this.random.nextInt());
                }
                catch (Exception ex) {
                    throw new RuntimeException(ex);
                }
            });
    }

    ...
}
```

There are several issues with this code:

1. `randomNumber0` is an Integer, so it's not injected.

2. `findAnnotatedFields` is missing the fourth parameter, so it doesn't compile.

3. If `randomNumber0` is removed completely from `RandomNumberDemo`, then `randomNumber1` is not injected. I believe the root cause is that `TestMethodTestDescriptor` does not invoke `TestInstancePostProcessor` (side note: even though it does invoke `TestInstancePreDestroyCallback`). Meanwhile, `ClassBasedTestDescriptor` does, which is why `randomNumber1` is populated when `randomNumber0` is present. I can't tell which part is wrong here: the documentation or the code.

Note: The code in https://github.com/junit-team/junit5/issues/3004#issuecomment-1215045050 fixes all these issues. The last issue is resolved by using `BeforeEachCallback` instead of `TestInstancePostProcessor`.

## Context

- Used versions (Jupiter/Vintage/Platform): 5.9.3

- Build Tool/IDE: Eclipse 2023-03 (4.27.0)

## Deliverables

- <strike>Remove bugs from documentation</strike>

- <strike>Possibly change `TestMethodTestDescriptor` to start invoking `TestInstancePostProcessor` (if that's desirable)... or switch the example to use `BeforeEachCallback` (and mention the limitations with `TestMethodTestDescriptor` and `TestInstancePostProcessor`)</strike>

- [x] Fix implementation of `isInteger()` in `RandomNumberExtension` in the User Guide.

| non_main | fix implementation of randomnumberextension in the user guide steps to reproduce copying the relevant parts of the randomnumberextension example in the documentation java class randomnumberdemo use static field anywhere in the test class including beforeall or aftereach lifecycle methods random private static integer use field in test methods and beforeeach or aftereach lifecycle methods random private int class randomnumberextension implements beforeallcallback testinstancepostprocessor parameterresolver private void injectfields class testclass object testinstance predicate predicate predicate predicate and field isinteger field gettype findannotatedfields testclass random class predicate foreach field try field setaccessible true field set testinstance this random nextint catch exception ex throw new runtimeexception ex there are a couple of issues with this code is an integer so it s not injected findannotatedfields is missing the fourth parameter so it doesn t compile if is removed completely from randomnumberdemo then is not injected i believe the root cause is that testmethodtestdescriptor does not invoke testinstancepostprocessor side note even though it does invoke testinstancepredestroycallback meanwhile classbasedtestdescriptor does which is why is populated when is present i can t tell which part is wrong here the documentation or the code note the code in fixes all these issues the last issue is resolved by using beforeeachcallback instead of testinstancepostprocessor context used versions jupiter vintage platform build tool ide eclipse deliverables remove bugs from documentation possibly change testmethodtestdescriptor to start invoking testinstancepostprocessor if that s desirable or switch the example to use beforeeachcallback and mention the limitations with testmethodtestdescriptor and testinstancepostprocessor fix implementation of isinteger in randomnumberextension in the user guide | 0 |

667 | 4,195,335,465 | IssuesEvent | 2016-06-25 17:22:09 | duckduckgo/zeroclickinfo-goodies | https://api.github.com/repos/duckduckgo/zeroclickinfo-goodies | closed | Swift Cheat Sheet: Shows deprecated functionality | CheatSheet Maintainer Approved | Some functions shown are deprecated in the latest release of Xcode, and will be removed completely in Swift 3. For example ++ and -- should be replaced with += and -=.

------

IA Page: http://duck.co/ia/view/swift_cheat_sheet

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @gautamkrishnar | True | Swift Cheat Sheet: Shows deprecated functionality - Some functions shown are deprecated in the latest release of Xcode, and will be removed completely in Swift 3. For example ++ and -- should be replaced with += and -=.

------

IA Page: http://duck.co/ia/view/swift_cheat_sheet

[Maintainer](http://docs.duckduckhack.com/maintaining/guidelines.html): @gautamkrishnar | main | swift cheat sheet shows deprecated functionality some functions shown are deprecated in the latest release of xcode and will be removed completely in swift for example and should be replaced with and ia page gautamkrishnar | 1 |

318,340 | 23,715,684,634 | IssuesEvent | 2022-08-30 11:35:02 | veraison/docs | https://api.github.com/repos/veraison/docs | closed | Introduce a repository introduction document for Project Veraison | documentation | Introduce a repository introduction document for Project Veraison:

The Veraison org has a myriad of repositories. We need a high-level document/README.md that explains which repositories exist in Project Veraison and how to navigate them logically to set the correct context. | 1.0 | Introduce a repository introduction document for Project Veraison - Introduce a repository introduction document for Project Veraison:

The Veraison org has a myriad of repositories. We need a high-level document/README.md that explains which repositories exist in Project Veraison and how to navigate them logically to set the correct context. | non_main | introduce a repository introduction document for project veraison introduce a repository introduction document for project veraison veraison org has myriad of repositories we need a high level document readme md that explains what all repositories exists in project veraison and how they should be logically navigated to set the correct context | 0 |

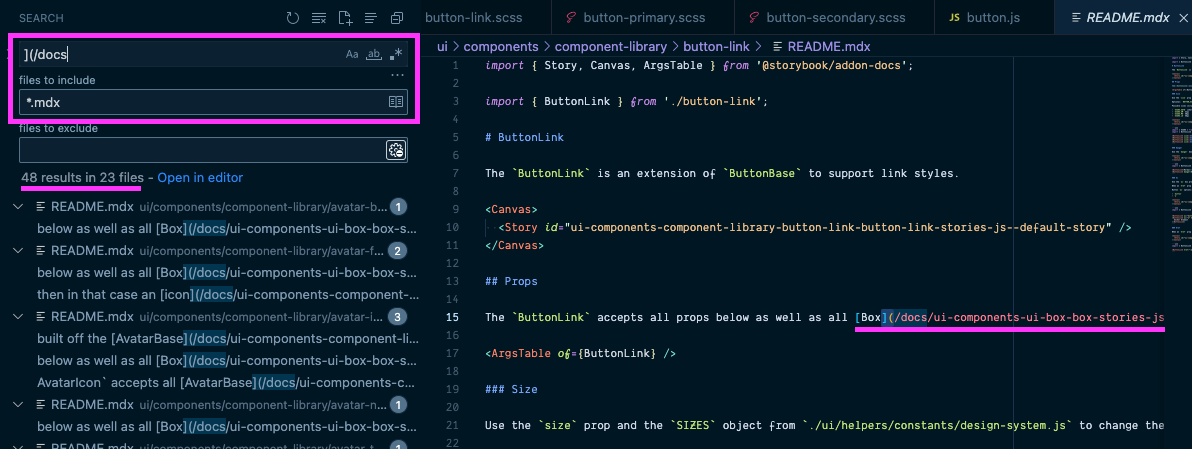

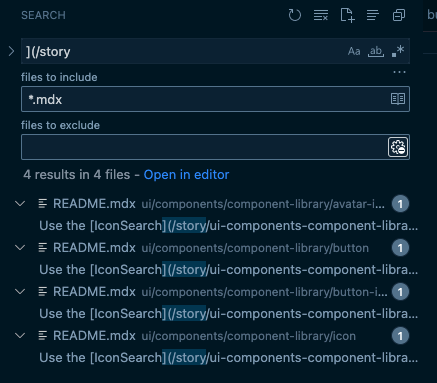

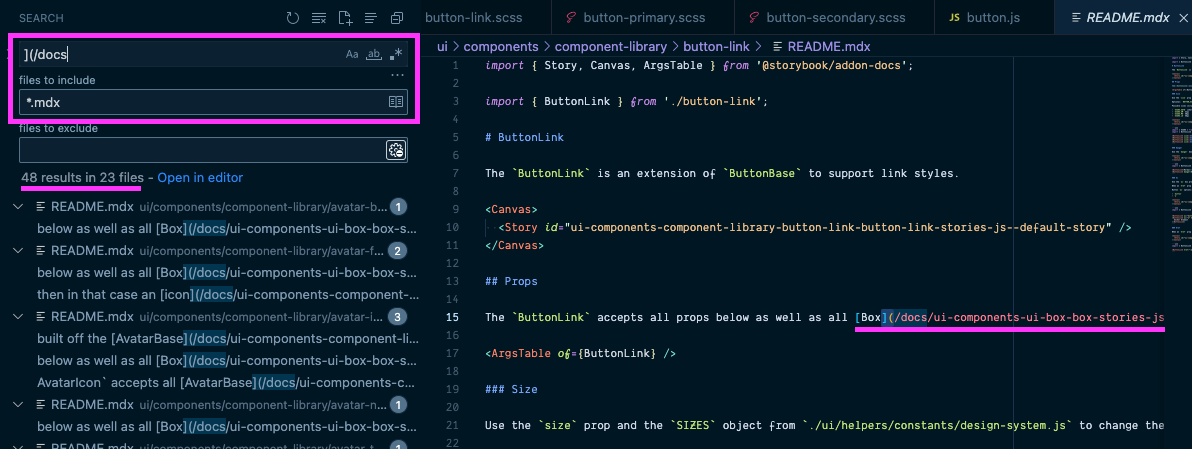

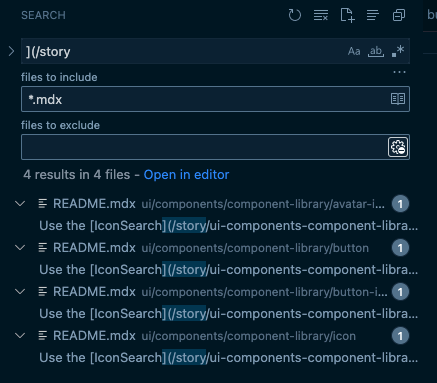

168,180 | 26,611,883,259 | IssuesEvent | 2023-01-24 01:18:00 | MetaMask/metamask-extension | https://api.github.com/repos/MetaMask/metamask-extension | closed | Update story links in storybook documentation | design-system | ### Description

When https://github.com/MetaMask/metamask-extension/pull/17092 is merged it will change all storybook URLs and will essentially break any link references to those stories. We will need to update internal and external links. This ticket is to update all internal links inside of the repo.

A good start would be to search for `](/docs` in `.mdx` files to find links that point to component doc pages.

Then search for `](/story` in `.mdx` files to find links that point to component story pages.

I would also do a very broad search for `](/` and scan for any links that point to storybook pages.

### Technical Details

- Update all URLs to stories in `.mdx` files to use the new URLs

### Acceptance Criteria

- All links to stories in MDX docs work

| 1.0 | Update story links in storybook documentation - ### Description

When https://github.com/MetaMask/metamask-extension/pull/17092 is merged it will change all storybook URLs and will essentially break any link references to those stories. We will need to update internal and external links. This ticket is to update all internal links inside of the repo.

A good start would be to search for `](/docs` in `.mdx` files to find links that point to component doc pages.

Then search for `](/story` in `.mdx` files to find links that point to component story pages.

I would also do a very broad search for `](/` and scan for any links that point to storybook pages.

### Technical Details

- Update all URLs to stories in `.mdx` files to use the new URLs

### Acceptance Criteria

- All links to stories in MDX docs work

| non_main | update story links in storybook documentation description when is merged it will change all storybook urls and will essentially break any link references to those stories we will need to update internal and external links this ticket is to update all internal links inside of the repo a good start would be to search for docs in mdx files that point to component doc pages then searching for story in mdx files that point to component stort pages i would also do a vert broad search and scan for any links that point to storybook pages technical details update all urls to stories in mdx files to use the new urls acceptance criteria all links to stories in mdx docs work | 0 |
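
The search described in this ticket can be sketched as a small script. The following is only an illustration, assuming the links of interest are root-relative markdown targets starting with `/docs` or `/story`. The function name `findStorybookLinks` and the sample story ids are hypothetical.

```javascript
// Hypothetical helper: collect markdown link targets that start with /docs or
// /story from the text of an .mdx file, i.e. what a manual search for
// "](/docs" or "](/story" would turn up.
function findStorybookLinks(mdxSource) {
  const linkPattern = /\]\((\/(?:docs|story)[^)\s]*)\)/g;
  return Array.from(mdxSource.matchAll(linkPattern), (match) => match[1]);
}

// Sample text with made-up story ids, plus one external link that is ignored.
const sample = [
  'See [Button](/docs/components-button--default-story) for usage.',
  'Compare with [the story](/story/components-button--primary).',
  'External [reference](https://example.com/docs) stays untouched.',
].join('\n');

console.log(findStorybookLinks(sample));
```

Running something like this over each `.mdx` file would give a list of internal links to check against the new URLs; the broader `](/` search would still need a manual scan.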

4,868 | 25,020,291,287 | IssuesEvent | 2022-11-03 23:26:37 | aws/serverless-application-model | https://api.github.com/repos/aws/serverless-application-model | closed | Api Path Can't Bet Set from Reference | area/resource/api type/feature contributors/good-first-issue area/intrinsics maintainer/need-response | I want to do a CloudFormation deploy with an Events section that looks like this, where the parameter "ThePath" is set to "/good":

```
Events:
  Api2:
    Type: Api
    Properties:
      Method: ANY
      Path: !Ref ThePath
```

This results in:

```

Failed to create the changeset: Waiter ChangeSetCreateComplete failed: Waiter encountered a terminal failure state Status: FAILED. Reason: Transform AWS::Serverless-2016-10-31 failed with: Internal transform failure.

```

When I modify the exact same file to use an actual path instead of a parameter it works fine:

```
Events:
  Api2:
    Type: Api
    Properties:
      Method: ANY
      Path: /good
```

Perhaps the part of the code that is doing the validation won't let this happen because it can't verify that the parameter begins with a "/"?

| True | Api Path Can't Bet Set from Reference - I want to do a CloudFormation deploy with an Events section that looks like this, where the parameter "ThePath" is set to "/good":

```
Events:
  Api2:
    Type: Api
    Properties:
      Method: ANY
      Path: !Ref ThePath
```

This results in:

```

Failed to create the changeset: Waiter ChangeSetCreateComplete failed: Waiter encountered a terminal failure state Status: FAILED. Reason: Transform AWS::Serverless-2016-10-31 failed with: Internal transform failure.

```

When I modify the exact same file to use an actual path instead of a parameter it works fine:

```
Events:
  Api2:
    Type: Api
    Properties:
      Method: ANY
      Path: /good
```

Perhaps the part of the code that is doing the validation won't let this happen because it can't verify that the parameter begins with a "/"?

| main | api path can t bet set from reference i want to do a cloudformation deploy with an events section that looks like this and the parameter thepath is set to good events type api properties method any path ref thepath this results in failed to create the changeset waiter changesetcreatecomplete failed waiter encountered a terminal failure state status failed reason transform aws serverless failed with internal transform failure when i modify the exact same file to use an actual path instead of a parameter it works fine events type api properties method any path good perhaps the part of the code that is doing the validation won t let this happen because it can t verify that the parameter begins with a | 1 |

4,898 | 25,155,359,957 | IssuesEvent | 2022-11-10 13:10:40 | grafana/k6-docs | https://api.github.com/repos/grafana/k6-docs | opened | Add better information about time series and how to avoid generating too many | Area: OSS Content Type: needsMaintainerHelp | With k6 v0.41.0, some scripts that previously worked fine with millions of different time series will no longer be OK (https://github.com/grafana/k6/issues/2765).

We should add more warnings about that in the [Metrics](https://k6.io/docs/using-k6/metrics/) and [Tags and Groups](https://k6.io/docs/using-k6/tags-and-groups/) sections. Maybe polish a little bit the [URL grouping section](https://k6.io/docs/using-k6/http-requests/#url-grouping) and link it from more places, or move it to its own page? :thinking: | True | Add better information about time series and how to avoid generating too many - With k6 v0.41.0, some scripts that previously worked fine with millions of different time series will no longer be OK (https://github.com/grafana/k6/issues/2765).

We should add more warnings about that in the [Metrics](https://k6.io/docs/using-k6/metrics/) and [Tags and Groups](https://k6.io/docs/using-k6/tags-and-groups/) sections. Maybe polish a little bit the [URL grouping section](https://k6.io/docs/using-k6/http-requests/#url-grouping) and link it from more places, or move it to its own page? :thinking: | main | add better information about time series and how to avoid generating too many with some scripts that previously worked fine with millions of different time series will no longer be ok we should add more warnings about that in the and sections maybe polish a little bit the and link it from more places or move it to its own page thinking | 1 |

147,201 | 19,501,457,509 | IssuesEvent | 2021-12-28 04:29:49 | loftwah/casualcoder.io | https://api.github.com/repos/loftwah/casualcoder.io | closed | CVE-2021-37701 (High) detected in tar-6.1.0.tgz - autoclosed | security vulnerability | ## CVE-2021-37701 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-6.1.0.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/tar-6.1.0.tgz">https://registry.npmjs.org/tar/-/tar-6.1.0.tgz</a></p>

<p>Path to dependency file: /wp-content/themes/twentytwenty/package.json</p>

<p>Path to vulnerable library: /wp-content/themes/twentytwenty/node_modules/tar/package.json,/wp-content/themes/twentynineteen/node_modules/tar/package.json</p>

<p>

Dependency Hierarchy:

- node-sass-6.0.0.tgz (Root Library)

- node-gyp-7.1.2.tgz

- :x: **tar-6.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/loftwah/casualcoder.io/commit/14ae92bd92b84f61737b3ee22fa2cc32e9fbdf03">14ae92bd92b84f61737b3ee22fa2cc32e9fbdf03</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The npm package "tar" (aka node-tar) before versions 4.4.16, 5.0.8, and 6.1.7 has an arbitrary file creation/overwrite and arbitrary code execution vulnerability. node-tar aims to guarantee that any file whose location would be modified by a symbolic link is not extracted. This is, in part, achieved by ensuring that extracted directories are not symlinks. Additionally, in order to prevent unnecessary stat calls to determine whether a given path is a directory, paths are cached when directories are created. This logic was insufficient when extracting tar files that contained both a directory and a symlink with the same name as the directory, where the symlink and directory names in the archive entry used backslashes as a path separator on posix systems. The cache checking logic used both `\` and `/` characters as path separators, however `\` is a valid filename character on posix systems. By first creating a directory, and then replacing that directory with a symlink, it was thus possible to bypass node-tar symlink checks on directories, essentially allowing an untrusted tar file to symlink into an arbitrary location and subsequently extracting arbitrary files into that location, thus allowing arbitrary file creation and overwrite. Additionally, a similar confusion could arise on case-insensitive filesystems. If a tar archive contained a directory at `FOO`, followed by a symbolic link named `foo`, then on case-insensitive file systems, the creation of the symbolic link would remove the directory from the filesystem, but _not_ from the internal directory cache, as it would not be treated as a cache hit. A subsequent file entry within the `FOO` directory would then be placed in the target of the symbolic link, thinking that the directory had already been created. These issues were addressed in releases 4.4.16, 5.0.8 and 6.1.7. The v3 branch of node-tar has been deprecated and did not receive patches for these issues. 
If you are still using a v3 release we recommend you update to a more recent version of node-tar. If this is not possible, a workaround is available in the referenced GHSA-9r2w-394v-53qc.

<p>Publish Date: 2021-08-31

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-37701>CVE-2021-37701</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/npm/node-tar/security/advisories/GHSA-9r2w-394v-53qc">https://github.com/npm/node-tar/security/advisories/GHSA-9r2w-394v-53qc</a></p>

<p>Release Date: 2021-08-31</p>

<p>Fix Resolution (tar): 6.1.7</p>

<p>Direct dependency fix Resolution (node-sass): 6.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-37701 (High) detected in tar-6.1.0.tgz - autoclosed - ## CVE-2021-37701 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-6.1.0.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/tar-6.1.0.tgz">https://registry.npmjs.org/tar/-/tar-6.1.0.tgz</a></p>

<p>Path to dependency file: /wp-content/themes/twentytwenty/package.json</p>

<p>Path to vulnerable library: /wp-content/themes/twentytwenty/node_modules/tar/package.json,/wp-content/themes/twentynineteen/node_modules/tar/package.json</p>

<p>

Dependency Hierarchy:

- node-sass-6.0.0.tgz (Root Library)

- node-gyp-7.1.2.tgz

- :x: **tar-6.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/loftwah/casualcoder.io/commit/14ae92bd92b84f61737b3ee22fa2cc32e9fbdf03">14ae92bd92b84f61737b3ee22fa2cc32e9fbdf03</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The npm package "tar" (aka node-tar) before versions 4.4.16, 5.0.8, and 6.1.7 has an arbitrary file creation/overwrite and arbitrary code execution vulnerability. node-tar aims to guarantee that any file whose location would be modified by a symbolic link is not extracted. This is, in part, achieved by ensuring that extracted directories are not symlinks. Additionally, in order to prevent unnecessary stat calls to determine whether a given path is a directory, paths are cached when directories are created. This logic was insufficient when extracting tar files that contained both a directory and a symlink with the same name as the directory, where the symlink and directory names in the archive entry used backslashes as a path separator on posix systems. The cache checking logic used both `\` and `/` characters as path separators, however `\` is a valid filename character on posix systems. By first creating a directory, and then replacing that directory with a symlink, it was thus possible to bypass node-tar symlink checks on directories, essentially allowing an untrusted tar file to symlink into an arbitrary location and subsequently extracting arbitrary files into that location, thus allowing arbitrary file creation and overwrite. Additionally, a similar confusion could arise on case-insensitive filesystems. If a tar archive contained a directory at `FOO`, followed by a symbolic link named `foo`, then on case-insensitive file systems, the creation of the symbolic link would remove the directory from the filesystem, but _not_ from the internal directory cache, as it would not be treated as a cache hit. A subsequent file entry within the `FOO` directory would then be placed in the target of the symbolic link, thinking that the directory had already been created. These issues were addressed in releases 4.4.16, 5.0.8 and 6.1.7. The v3 branch of node-tar has been deprecated and did not receive patches for these issues. 
If you are still using a v3 release we recommend you update to a more recent version of node-tar. If this is not possible, a workaround is available in the referenced GHSA-9r2w-394v-53qc.

<p>Publish Date: 2021-08-31

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-37701>CVE-2021-37701</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/npm/node-tar/security/advisories/GHSA-9r2w-394v-53qc">https://github.com/npm/node-tar/security/advisories/GHSA-9r2w-394v-53qc</a></p>

<p>Release Date: 2021-08-31</p>

<p>Fix Resolution (tar): 6.1.7</p>

<p>Direct dependency fix Resolution (node-sass): 6.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve high detected in tar tgz autoclosed cve high severity vulnerability vulnerable library tar tgz tar for node library home page a href path to dependency file wp content themes twentytwenty package json path to vulnerable library wp content themes twentytwenty node modules tar package json wp content themes twentynineteen node modules tar package json dependency hierarchy node sass tgz root library node gyp tgz x tar tgz vulnerable library found in head commit a href found in base branch main vulnerability details the npm package tar aka node tar before versions and has an arbitrary file creation overwrite and arbitrary code execution vulnerability node tar aims to guarantee that any file whose location would be modified by a symbolic link is not extracted this is in part achieved by ensuring that extracted directories are not symlinks additionally in order to prevent unnecessary stat calls to determine whether a given path is a directory paths are cached when directories are created this logic was insufficient when extracting tar files that contained both a directory and a symlink with the same name as the directory where the symlink and directory names in the archive entry used backslashes as a path separator on posix systems the cache checking logic used both and characters as path separators however is a valid filename character on posix systems by first creating a directory and then replacing that directory with a symlink it was thus possible to bypass node tar symlink checks on directories essentially allowing an untrusted tar file to symlink into an arbitrary location and subsequently extracting arbitrary files into that location thus allowing arbitrary file creation and overwrite additionally a similar confusion could arise on case insensitive filesystems if a tar archive contained a directory at foo followed by a 
symbolic link named foo then on case insensitive file systems the creation of the symbolic link would remove the directory from the filesystem but not from the internal directory cache as it would not be treated as a cache hit a subsequent file entry within the foo directory would then be placed in the target of the symbolic link thinking that the directory had already been created these issues were addressed in releases and the branch of node tar has been deprecated and did not receive patches for these issues if you are still using a release we recommend you update to a more recent version of node tar if this is not possible a workaround is available in the referenced ghsa publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tar direct dependency fix resolution node sass step up your open source security game with whitesource | 0 |

4,680 | 24,184,086,168 | IssuesEvent | 2022-09-23 11:44:05 | beyarkay/eskom-calendar | https://api.github.com/repos/beyarkay/eskom-calendar | opened | Schedule missing for Nelson Mandela Bay | bug waiting-on-maintainer missing-area-schedule | Schedules available here: https://www.nelsonmandelabay.gov.za/page/loadshedding

Schedule found to be missing by https://github.com/beyarkay/eskom-calendar/issues/78#issuecomment-1256098341

#### Technical details

The Nelson Mandela Bay schedule looks to be less well formatted than those of other areas, so adding schedules accurately might take a while. | True | Schedule missing for Nelson Mandela Bay - Schedules available here: https://www.nelsonmandelabay.gov.za/page/loadshedding

Schedule found to be missing by https://github.com/beyarkay/eskom-calendar/issues/78#issuecomment-1256098341

#### Technical details

The Nelson Mandela Bay schedule looks to be less well formatted than those of other areas, so adding schedules accurately might take a while. | main | schedule missing for nelson mandela bay schedules available here schedule found to be missing by technical details nelson mandela bay looks to be less well formatted than other areas so adding schedules might take a while to get accurate | 1 |

119,077 | 12,014,037,169 | IssuesEvent | 2020-04-10 10:20:05 | GTFB/Altrp | https://api.github.com/repos/GTFB/Altrp | closed | Editor window development | documentation | - [x] Create a new page for previewing the template, to be embedded in an iframe

- [x] Assemble the template data when a widget is dragged

| 1.0 | Editor window development - - [x] Create a new page for previewing the template, to be embedded in an iframe

- [x] Assemble the template data when a widget is dragged

| non_main | editor window development create a new page for previewing the template to be embedded in an iframe assemble the template data when a widget is dragged | 0 |

518 | 3,911,472,251 | IssuesEvent | 2016-04-20 06:05:21 | zendframework/zend-ldap | https://api.github.com/repos/zendframework/zend-ldap | closed | incorrect default value for 'port' option | awaiting maintainer response bug | Hi,

According to the documentation, the [Server Options](http://framework.zend.com/manual/current/en/modules/zend.authentication.adapter.ldap.html#server-options) 'port' parameter says:

>The port on which the LDAP server is listening. If useSsl is TRUE, the default port value is 636. If useSsl is FALSE, the default port value is 389.

with the following code:

```

$options = [

'host' => 's0.foo.net',

// 'port' => '389',

'useStartTls' => 'false',

'accountDomainName' => 'foo.net',

'accountDomainNameShort' => 'FOO',

'accountCanonicalForm' => '4',

'baseDn' => 'CN=user1,DC=foo,DC=net',

'allowEmptyPassword' => false

];

$ldap = new Ldap($options);

$ldap->bind('myuser', 'mypwd');

```

I get the exception: `Failed to connect to LDAP server: s0.foo.net:0`

```

exception 'Zend\Ldap\Exception\LdapException' with message 'Failed to connect to LDAP server: s0.foo.net:0' in /home/dockerdev/app/vendor/zendframework/zend-ldap/src/Ldap.php:748

Stack trace:

#0 /home/dockerdev/app/vendor/zendframework/zend-ldap/src/Ldap.php(812): Zend\Ldap\Ldap->connect()

#1 /home/dockerdev/app/module/DipvvfModule/src/DipvvfModule/Check/LdapServiceCheck.php(57): Zend\Ldap\Ldap->bind('intranet@no.dip...', 'Intr4n3t101177!')

#2 /home/dockerdev/app/vendor/zendframework/zenddiagnostics/src/ZendDiagnostics/Runner/Runner.php(123): DipvvfModule\Check\LdapServiceCheck->check()

#3 /home/dockerdev/app/vendor/zendframework/zftool/src/ZFTool/Diagnostics/Runner.php(43): ZendDiagnostics\Runner\Runner->run(NULL)

#4 /home/dockerdev/app/vendor/zendframework/zftool/src/ZFTool/Controller/DiagnosticsController.php(234): ZFTool\Diagnostics\Runner->run()

#5 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/Controller/AbstractActionController.php(82): ZFTool\Controller\DiagnosticsController->runAction()

#6 [internal function]: Zend\Mvc\Controller\AbstractActionController->onDispatch(Object(Zend\Mvc\MvcEvent))

#7 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(444): call_user_func(Array, Object(Zend\Mvc\MvcEvent))

#8 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(205): Zend\EventManager\EventManager->triggerListeners('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#9 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/Controller/AbstractController.php(118): Zend\EventManager\EventManager->trigger('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#10 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/DispatchListener.php(93): Zend\Mvc\Controller\AbstractController->dispatch(Object(Zend\Console\Request), Object(Zend\Console\Response))

#11 [internal function]: Zend\Mvc\DispatchListener->onDispatch(Object(Zend\Mvc\MvcEvent))

#12 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(444): call_user_func(Array, Object(Zend\Mvc\MvcEvent))

#13 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(205): Zend\EventManager\EventManager->triggerListeners('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#14 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/Application.php(314): Zend\EventManager\EventManager->trigger('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#15 /home/dockerdev/app/tools/zf.php(53): Zend\Mvc\Application->run()

#16 {main}

```

If we uncomment the 'port' option, everything works fine.
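
The defaulting rule quoted from the documentation can be written down as a small sketch. This only restates the documented behaviour; it is not zend-ldap's implementation, and `effective_port` is a hypothetical helper name. It assumes an unset or zero `port` should fall back to 636 when `useSsl` is true and to 389 otherwise.

```python
def effective_port(options):
    """Pick the LDAP port per the documented rule (illustrative only)."""
    # Treat a missing, empty, or zero port as "not configured".
    port = int(options.get("port") or 0)
    if port > 0:
        return port
    # Documented defaults: 636 with SSL, 389 without.
    return 636 if options.get("useSsl") else 389
```

Under this rule the options in the report, which leave `port` commented out and do not enable `useSsl`, would be expected to resolve to 389 rather than 0.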

| True | incorrect default value for 'port' option - Hi,

According to the documentation, the [Server Options](http://framework.zend.com/manual/current/en/modules/zend.authentication.adapter.ldap.html#server-options) 'port' parameter says:

>The port on which the LDAP server is listening. If useSsl is TRUE, the default port value is 636. If useSsl is FALSE, the default port value is 389.

with the following code:

```

$options = [

'host' => 's0.foo.net',

// 'port' => '389',

'useStartTls' => 'false',

'accountDomainName' => 'foo.net',

'accountDomainNameShort' => 'FOO',

'accountCanonicalForm' => '4',

'baseDn' => 'CN=user1,DC=foo,DC=net',

'allowEmptyPassword' => false

];

$ldap = new Ldap($options);

$ldap->bind('myuser', 'mypwd');

```

I get the exception: `Failed to connect to LDAP server: s0.foo.net:0`

```

exception 'Zend\Ldap\Exception\LdapException' with message 'Failed to connect to LDAP server: s0.foo.net:0' in /home/dockerdev/app/vendor/zendframework/zend-ldap/src/Ldap.php:748

Stack trace:

#0 /home/dockerdev/app/vendor/zendframework/zend-ldap/src/Ldap.php(812): Zend\Ldap\Ldap->connect()

#1 /home/dockerdev/app/module/DipvvfModule/src/DipvvfModule/Check/LdapServiceCheck.php(57): Zend\Ldap\Ldap->bind('intranet@no.dip...', 'Intr4n3t101177!')

#2 /home/dockerdev/app/vendor/zendframework/zenddiagnostics/src/ZendDiagnostics/Runner/Runner.php(123): DipvvfModule\Check\LdapServiceCheck->check()

#3 /home/dockerdev/app/vendor/zendframework/zftool/src/ZFTool/Diagnostics/Runner.php(43): ZendDiagnostics\Runner\Runner->run(NULL)

#4 /home/dockerdev/app/vendor/zendframework/zftool/src/ZFTool/Controller/DiagnosticsController.php(234): ZFTool\Diagnostics\Runner->run()

#5 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/Controller/AbstractActionController.php(82): ZFTool\Controller\DiagnosticsController->runAction()

#6 [internal function]: Zend\Mvc\Controller\AbstractActionController->onDispatch(Object(Zend\Mvc\MvcEvent))

#7 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(444): call_user_func(Array, Object(Zend\Mvc\MvcEvent))

#8 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(205): Zend\EventManager\EventManager->triggerListeners('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#9 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/Controller/AbstractController.php(118): Zend\EventManager\EventManager->trigger('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#10 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/DispatchListener.php(93): Zend\Mvc\Controller\AbstractController->dispatch(Object(Zend\Console\Request), Object(Zend\Console\Response))

#11 [internal function]: Zend\Mvc\DispatchListener->onDispatch(Object(Zend\Mvc\MvcEvent))

#12 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(444): call_user_func(Array, Object(Zend\Mvc\MvcEvent))

#13 /home/dockerdev/app/vendor/zendframework/zend-eventmanager/src/EventManager.php(205): Zend\EventManager\EventManager->triggerListeners('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#14 /home/dockerdev/app/vendor/zendframework/zend-mvc/src/Application.php(314): Zend\EventManager\EventManager->trigger('dispatch', Object(Zend\Mvc\MvcEvent), Object(Closure))

#15 /home/dockerdev/app/tools/zf.php(53): Zend\Mvc\Application->run()

#16 {main}

```

If we uncomment the 'port' option, everything works fine.

| main | incorrect default value for port option hi according to the documentation the port parameter say the port on which the ldap server is listening if usessl is true the default port value is if usessl is false the default port value is with the following code options host foo net port usestarttls false accountdomainname foo net accountdomainnameshort foo accountcanonicalform basedn cn dc foo dc net allowemptypassword false ldap new ldap options ldap bind myuser mypwd i get the exception failed to connect to ldap server foo net exception zend ldap exception ldapexception with message failed to connect to ldap server foo net in home dockerdev app vendor zendframework zend ldap src ldap php stack trace home dockerdev app vendor zendframework zend ldap src ldap php zend ldap ldap connect home dockerdev app module dipvvfmodule src dipvvfmodule check ldapservicecheck php zend ldap ldap bind intranet no dip home dockerdev app vendor zendframework zenddiagnostics src zenddiagnostics runner runner php dipvvfmodule check ldapservicecheck check home dockerdev app vendor zendframework zftool src zftool diagnostics runner php zenddiagnostics runner runner run null home dockerdev app vendor zendframework zftool src zftool controller diagnosticscontroller php zftool diagnostics runner run home dockerdev app vendor zendframework zend mvc src controller abstractactioncontroller php zftool controller diagnosticscontroller runaction zend mvc controller abstractactioncontroller ondispatch object zend mvc mvcevent home dockerdev app vendor zendframework zend eventmanager src eventmanager php call user func array object zend mvc mvcevent home dockerdev app vendor zendframework zend eventmanager src eventmanager php zend eventmanager eventmanager triggerlisteners dispatch object zend mvc mvcevent object closure home dockerdev app vendor zendframework zend mvc src controller abstractcontroller php zend eventmanager eventmanager trigger dispatch object zend mvc mvcevent object 
closure home dockerdev app vendor zendframework zend mvc src dispatchlistener php zend mvc controller abstractcontroller dispatch object zend console request object zend console response zend mvc dispatchlistener ondispatch object zend mvc mvcevent home dockerdev app vendor zendframework zend eventmanager src eventmanager php call user func array object zend mvc mvcevent home dockerdev app vendor zendframework zend eventmanager src eventmanager php zend eventmanager eventmanager triggerlisteners dispatch object zend mvc mvcevent object closure home dockerdev app vendor zendframework zend mvc src application php zend eventmanager eventmanager trigger dispatch object zend mvc mvcevent object closure home dockerdev app tools zf php zend mvc application run main if we uncomment the port option everything work fine | 1 |

1,602 | 6,572,385,913 | IssuesEvent | 2017-09-11 01:54:57 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | There are now three ovirt modules. Is there a path forward? | affects_2.3 bug_report cloud waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

cloud/misc/ovirt.py

cloud/misc/rhevm.py (new in 2.2)

cloud/ovirt/ovirt_vms.py (new in 2.2)

##### SUMMARY

There are now three modules for the same thing in extras. Ideally, there's some sort of path forward to a single module.

CC @TimothyVandenbrande @machacekondra @vincentvdk

| True | There are now three ovirt modules. Is there a path forward? - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

cloud/misc/ovirt.py

cloud/misc/rhevm.py (new in 2.2)

cloud/ovirt/ovirt_vms.py (new in 2.2)

##### SUMMARY

There are now three modules for the same thing in extras. Ideally, there's some sort of path forward to a single module.

CC @TimothyVandenbrande @machacekondra @vincentvdk

| main | there are now three ovirt modules is there a path forward issue type bug report component name cloud misc ovirt py cloud misc rhevm py new in cloud ovirt ovirt vms py new in summary there are now three modules for the same thing in extras ideally there s some sort of path forward to a single module cc timothyvandenbrande machacekondra vincentvdk | 1 |

3,710 | 15,210,475,553 | IssuesEvent | 2021-02-17 07:31:45 | skybasedb/skybase | https://api.github.com/repos/skybasedb/skybase | closed | Security: Merge v0.5-hotfix.1 into next | A-independent C-security D-server P-high S-waiting-on-maintainers | **Introduction**

As we notified via [this tweet](https://twitter.com/onskybase/status/1361193440558596097), a security vulnerability has been identified in v0.5.0 and a corresponding patch has been released.

**Patch link**: This patch can be downloaded by users for their platform from this link: https://dl.skybasedb.com/v0.5-hotfix.1/.

**Affected versions**

This vulnerability only affects v0.5.0 of the database server and all such deployments are immediately requested to deploy this hotfix.

**Pending actions**

We will be releasing a full-length security and versioning policy soon. At the same time, we'll be releasing the security advisory and disclosing the bug on the embargo date of 17 Feb 2020, 0630 UTC.

This issue exists to track the status of the successful merging of this hotfix into the primary branch. | True | Security: Merge v0.5-hotfix.1 into next - **Introduction**

As we notified via [this tweet](https://twitter.com/onskybase/status/1361193440558596097), a security vulnerability has been identified in v0.5.0 and a corresponding patch has been released.

**Patch link**: This patch can be downloaded by users for their platform from this link: https://dl.skybasedb.com/v0.5-hotfix.1/.

**Affected versions**

This vulnerability only affects v0.5.0 of the database server and all such deployments are immediately requested to deploy this hotfix.

**Pending actions**

We will be releasing a full-length security and versioning policy soon. At the same time, we'll be releasing the security advisory and disclosing the bug on the embargo date of 17 Feb 2020, 0630 UTC.

This issue exists to track the status of the successful merging of this hotfix into the primary branch. | main | security merge hotfix into next introduction as we notified via a security vulnerability has been identified in and a corresponding patch has been released patch link this patch can be downloaded by users for their platform from this link affected versions this vulnerability only affects of the database server and all such deployments are immediately requested to deploy this hotfix pending actions we will be releasing a full length security and versioning policy soon at the same time we ll be releasing the security advisory and disclose the bug on the embargo date of feb utc this issue exists to track the status of the successful merging of this hotfix into the primary branch | 1 |

4,883 | 25,046,635,289 | IssuesEvent | 2022-11-05 10:35:08 | tgstation/tgstation | https://api.github.com/repos/tgstation/tgstation | closed | gas_transfer_coefficient does nothing | Maintainability/Hinders improvements Oversight | [Round ID]: # (If you discovered this issue from playing tgstation hosted servers:)

[Round ID]: # (**INCLUDE THE ROUND ID**)

[Round ID]: # (It can be found in the Status panel or retrieved from https://atlantaned.space/statbus/round.php ! The round ID lets us look up valuable information and logs for the round in which the bug happened.)

[Testmerges]: # (If you believe the issue to be caused by a test merge [OOC tab -> Show Server Revision], report it in the pull request's comment section instead.)

[Reproduction]: # (Explain your issue in detail, including the steps to reproduce it. Issues without proper reproduction steps or explanation are open to being ignored/closed by maintainers.)

[For Admins]: # (Oddities induced by var-edits and other admin tools are not necessarily bugs. Verify that your issues occur under regular circumstances before reporting them.)

This variable does absolutely nothing. Either we remove it or give it a function; I'm more interested in the latter. | True | gas_transfer_coefficient does nothing - [Round ID]: # (If you discovered this issue from playing tgstation hosted servers:)

[Round ID]: # (**INCLUDE THE ROUND ID**)

[Round ID]: # (It can be found in the Status panel or retrieved from https://atlantaned.space/statbus/round.php ! The round ID lets us look up valuable information and logs for the round in which the bug happened.)

[Testmerges]: # (If you believe the issue to be caused by a test merge [OOC tab -> Show Server Revision], report it in the pull request's comment section instead.)

[Reproduction]: # (Explain your issue in detail, including the steps to reproduce it. Issues without proper reproduction steps or explanation are open to being ignored/closed by maintainers.)

[For Admins]: # (Oddities induced by var-edits and other admin tools are not necessarily bugs. Verify that your issues occur under regular circumstances before reporting them.)

This variable does absolutely nothing. Either we remove it or give it a function; I'm more interested in the latter. | main | gas transfer coefficient does nothing if you discovered this issue from playing tgstation hosted servers include the round id it can be found in the status panel or retrieved from the round id let s us look up valuable information and logs for the round the bug happened if you believe the issue to be caused by a test merge report it in the pull request s comment section instead explain your issue in detail including the steps to reproduce it issues without proper reproduction steps or explanation are open to being ignored closed by maintainers oddities induced by var edits and other admin tools are not necessarily bugs verify that your issues occur under regular circumstances before reporting them this variable does absolutely nothing either we remove it or give a function i m more interested in the latter | 1 |

661 | 4,179,152,437 | IssuesEvent | 2016-06-22 09:42:28 | Particular/NServiceBus.SqlServer | https://api.github.com/repos/Particular/NServiceBus.SqlServer | opened | Create an AWS lab environment for SQL Server testing | State: In Progress - Maintainer Prio | * 3 VMs, each with a SQL Server instance

* DTC configured between all 3 VMs | True | Create an AWS lab environment for SQL Server testing - * 3 VMs, each with a SQL Server instance

* DTC configured between all 3 VMs | main | create a aws lab environment for sql server testing vms each with sql server instance dtc configured between all vms | 1 |

2,468 | 8,639,903,358 | IssuesEvent | 2018-11-23 22:33:07 | F5OEO/rpitx | https://api.github.com/repos/F5OEO/rpitx | closed | cover dcf77 | V1 related (not maintained) | Hi,

Is it possible to extend the frequency range down to 77 kHz?

If yes,

I would like to try something like https://github.com/CodingGhost/DCF77-Transmitter/blob/master/DCF77_Protocoll_.ino

With some hints, I can do the job.

| True | cover dcf77 - Hi,

Is it possible to extend the frequency range down to 77 kHz?

If yes,

I would like to try something like https://github.com/CodingGhost/DCF77-Transmitter/blob/master/DCF77_Protocoll_.ino

With some hints, I can do the job.

| main | cover hi it is possible extend frequency to khz if yes i like to try something like with some hint i can do the job | 1 |

92,681 | 3,872,900,225 | IssuesEvent | 2016-04-11 15:15:54 | jcgregorio/httplib2 | https://api.github.com/repos/jcgregorio/httplib2 | closed | HEAD requests with redirects become GETs with cache | bug imported Priority-Medium | _From [JNR...@gmail.com](https://code.google.com/u/114612663764561724112/) on August 14, 2011 09:34:20_

What steps will reproduce the problem?

1. http = httplib2.Http(cache='SOME_LOCATION')

2. h, r = http.request('http://bit.ly/qpNbiv', method='HEAD')

3. len(r)

186754

What is the expected output? What do you see instead?

I expect a zero-length result.

What version of the product are you using? On what operating system?

0.7.1

Please provide any additional information below.

This looks to me like it may be bug #123 resurfacing. I actually struggled for a few minutes trying to decide whether to comment there, or open a new bug.

I've attached an interpreter session log with debugging enabled.

Thanks,

James

**Attachment:** [test.txt](http://code.google.com/p/httplib2/issues/detail?id=163)
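
The expected behaviour in this report can be stated as a small sketch: a `HEAD` request should still be a `HEAD` request after a redirect is followed, so the final body is empty. This is not httplib2's code and it does not model the cache interaction the report is about; `redirect_method` is a hypothetical helper, and the handling of `POST` on 301/302 is an assumption borrowed from common client behaviour.

```python
def redirect_method(original_method, status):
    """Method a client might use when following a redirect (sketch)."""
    method = original_method.upper()
    # A HEAD request stays a HEAD request across redirects, so the final
    # response still carries no body.
    if method == "HEAD":
        return "HEAD"
    # 303 See Other asks the client to retrieve the target with GET.
    if status == 303:
        return "GET"
    # Assumption: like many clients, rewrite POST to GET on 301/302.
    if status in (301, 302) and method == "POST":
        return "GET"
    # 307/308 and everything else keep the original method.
    return method
```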

_Original issue: http://code.google.com/p/httplib2/issues/detail?id=163_ | 1.0 | HEAD requests with redirects become GETs with cache - _From [JNR...@gmail.com](https://code.google.com/u/114612663764561724112/) on August 14, 2011 09:34:20_

What steps will reproduce the problem?

1. http = httplib2.Http(cache='SOME_LOCATION')

2. h, r = http.request('http://bit.ly/qpNbiv', method='HEAD')

3. len(r)

186754

What is the expected output? What do you see instead?

I expect a zero-length result.

What version of the product are you using? On what operating system?

0.7.1

Please provide any additional information below.

This looks to me like it may be bug #123 resurfacing. I actually struggled for a few minutes trying to decide whether to comment there, or open a new bug.

I've attached an interpreter session log with debugging enabled.

Thanks,

James

**Attachment:** [test.txt](http://code.google.com/p/httplib2/issues/detail?id=163)

_Original issue: http://code.google.com/p/httplib2/issues/detail?id=163_ | non_main | head requests with redirects become gets with cache from on august what steps will reproduce the problem http http cache some location h r http request method head len r what is the expected output what do you see instead i expect a zero length result what version of the product are you using on what operating system please provide any additional information below this looks to me like it may be bug resurfacing i actually struggled for a few minutes trying to decide whether to comment there or open a new bug i ve attached an interpreter session log with debugging enabled thanks james attachment original issue | 0 |

2,677 | 9,215,216,810 | IssuesEvent | 2019-03-11 01:57:56 | coq-community/manifesto | https://api.github.com/repos/coq-community/manifesto | opened | Move CertiCrypt to Coq-community | maintainer-wanted move-project | ## Move a project to coq-community ##

**Project name:** CertiCrypt

**Initial author(s):** Gilles Barthe, Benjamin Grégoire, Federico Olmedo, Santiago Zanella-Béguelin, Daniel Hedin, and Sylvain Heraud.

**Current URL:** https://github.com/EasyCrypt/certicrypt

**Kind:** pure Coq library

**License:** CeCILL-B

**Description:** A framework that enables construction and verification of code-based proofs about cryptographic systems.

**Status:** unmaintained

**New maintainer:** looking for a volunteer

More about the project: http://certicrypt.gforge.inria.fr | True | Move CertiCrypt to Coq-community - ## Move a project to coq-community ##

**Project name:** CertiCrypt

**Initial author(s):** Gilles Barthe, Benjamin Grégoire, Federico Olmedo, Santiago Zanella-Béguelin, Daniel Hedin, and Sylvain Heraud.

**Current URL:** https://github.com/EasyCrypt/certicrypt

**Kind:** pure Coq library

**License:** CeCILL-B

**Description:** A framework that enables construction and verification of code-based proofs about cryptographic systems.

**Status:** unmaintained

**New maintainer:** looking for a volunteer

More about the project: http://certicrypt.gforge.inria.fr | main | move certicrypt to coq community move a project to coq community project name certicrypt initial author s gilles barthe benjamin grégoire federico olmedo santiago zanella béguelin daniel hedin and sylvain heraud current url kind pure coq library license cecill b description a framework that enables construction and verification of code based proofs about cryptographic systems status unmaintained new maintainer looking for a volunteer more about the project | 1 |

4,423 | 22,783,138,119 | IssuesEvent | 2022-07-08 23:03:04 | Clever-ISA/Clever-ISA | https://api.github.com/repos/Clever-ISA/Clever-ISA | closed | Include Vector Shuffle instruction | X-vector S-blocked-on-maintainer I-enhancement V-1.0 | X-vector should include a shuffle instruction to allow both static and dynamic reordering of vector elements. | True | Include Vector Shuffle instruction - X-vector should include a shuffle instruction to allow both static and dynamic reordering of vector elements. | main | include vector shuffle instruction x vector should include a shuffle instruction to allow both static and dynamic reordering of vector elements | 1 |
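
As a rough illustration of what the requested shuffle would compute, the sketch below models a vector as a list and selects elements by index. It says nothing about the Clever-ISA encoding; `shuffle` is a hypothetical name, and wrapping out-of-range indices is an assumption of this sketch only. A "static" shuffle would take `indices` from an immediate known at assembly time, a "dynamic" one from a register at run time; the element selection is the same in both cases.

```python
def shuffle(src, indices):
    """Return a vector whose i-th element is src[indices[i]].

    `src` must be non-empty. Out-of-range indices wrap around, which is
    an assumption made for this sketch, not a documented ISA rule.
    """
    n = len(src)
    return [src[i % n] for i in indices]
```

For instance, `shuffle([10, 20, 30, 40], [3, 2, 1, 0])` reverses the vector, and repeating an index broadcasts one element into several lanes.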

3,771 | 15,835,980,448 | IssuesEvent | 2021-04-06 18:42:04 | MDAnalysis/mdanalysis | https://api.github.com/repos/MDAnalysis/mdanalysis | closed | discontinue Python 2 support | maintainability upstream | Python 2 reaches end of life on **1 January, 2020**, according to [PEP 373](https://www.python.org/dev/peps/pep-0373/) and https://github.com/python/devguide/pull/344 based on https://mail.python.org/pipermail/python-dev/2018-March/152348.html.

Many of our dependencies (notably numpy, see [Plan for dropping Python 2.7 support](https://docs.scipy.org/doc/numpy-1.14.0/neps/dropping-python2.7-proposal.html)) have ceased Python 2.7 support in new releases or will also drop Python 2.7 in 2020.

I know that science is rolling slowly and surely some scientific projects will continue with Python 2.7 beyond 2020. MDAnalysis has been supporting Python 2 and Python 3 now for a while. However, given how precious developer time is, I think **we also need to decide that we will stop caring for 2.7 after the official Python 2.7 drop date.**

We need to decide how to do this. I am opening this issue with the intent that it gets edited into an actionable list of items.

| True | discontinue Python 2 support - Python 2 reaches end of life on **1 January, 2020**, according to [PEP 373](https://www.python.org/dev/peps/pep-0373/) and https://github.com/python/devguide/pull/344 based on https://mail.python.org/pipermail/python-dev/2018-March/152348.html.

Many of our dependencies (notably numpy, see [Plan for dropping Python 2.7 support](https://docs.scipy.org/doc/numpy-1.14.0/neps/dropping-python2.7-proposal.html)) have ceased Python 2.7 support in new releases or will also drop Python 2.7 in 2020.

I know that science is rolling slowly and surely some scientific projects will continue with Python 2.7 beyond 2020. MDAnalysis has been supporting Python 2 and Python 3 now for a while. However, given how precious developer time is, I think **we also need to decide that we will stop caring for 2.7 after the official Python 2.7 drop date.**

We need to decide how to do this. I am opening this issue with the intent that it gets edited into an actionable list of items.

| main | discontinue python support python reaches end of life on january according to and based on many of our dependencies notably numpy see have ceased python support in new releases or will also drop python in i know that science is rolling slowly and surely some scientific projects will continue with python beyond mdanalysis has been supporting python and python now for a while however given how precious developer time is i think we also need to decide that we will stop caring for after the official python drop date we need to decide how to do this i am opening this issue with the intent that it gets edited into an actionable list of items | 1 |

28,623 | 4,424,492,026 | IssuesEvent | 2016-08-16 12:45:54 | centreon/centreon | https://api.github.com/repos/centreon/centreon | closed | [Config Broker] Command file path is not display | BetaTest Kind/Bug Status/Implemented | ---------------------------------------------------

BUG REPORT INFORMATION

---------------------------------------------------

* Centreon web 2.7.x/2.8.x

**Steps to reproduce the issue:**

1. Define command file in "Administration > Parameters > Monitoring" and set value for "Centreon Broker socket path"

2. Edit a Centreon Broker configuration, in "General" tab, command_file field is empty. | 1.0 | [Config Broker] Command file path is not display - ---------------------------------------------------

BUG REPORT INFORMATION

---------------------------------------------------

* Centreon web 2.7.x/2.8.x

**Steps to reproduce the issue:**

1. Define command file in "Administration > Parameters > Monitoring" and set value for "Centreon Broker socket path"

2. Edit a Centreon Broker configuration, in "General" tab, command_file field is empty. | non_main | command file path is not display bug report information centreon web x x steps to reproduce the issue define command file in administration parameters monitoring and set value for centreon broker socket path edit a centreon broker configuration in general tab command file field is empty | 0 |

30,358 | 7,191,215,661 | IssuesEvent | 2018-02-02 20:07:51 | Serrin/Celestra | https://api.github.com/repos/Serrin/Celestra | closed | Add function isElement in v1.18.0 | closed - done or fixed code documentation type - enhancement | ```

function isElement (v) {

  // typeof null is "object", so rule out null before reading nodeType

  return typeof v === "object" && v !== null && v.nodeType === 1;

}

``` | 1.0 | Add function isElement in v1.18.0 - ```

function isElement (v) {

  // typeof null is "object", so rule out null before reading nodeType

  return typeof v === "object" && v !== null && v.nodeType === 1;

}

``` | non_main | add function iselement in function iselement v return typeof v object v nodetype | 0 |

4,244 | 21,040,677,852 | IssuesEvent | 2022-03-31 12:03:12 | aws/serverless-application-model | https://api.github.com/repos/aws/serverless-application-model | closed | Custom Event Bus with Schedule Event Type | type/feature stage/pm-review maintainer/need-followup | <!--

Before reporting a new issue, make sure we don't have any duplicates already open or closed by

searching the issues list. If there is a duplicate, re-open or add a comment to the

existing issue instead of creating a new one. If you are reporting a bug,

make sure to include relevant information asked below to help with debugging.

## GENERAL HELP QUESTIONS ##

Github Issues is for bug reports and feature requests. If you have general support

questions, the following locations are a good place:

- Post a question in StackOverflow with "aws-sam" tag

-->

**Description:**

Schedule event type does not support custom event bus

https://docs.aws.amazon.com/serverless-application-model/latest/developerguide/sam-property-function-schedule.html

**Steps to reproduce the issue:**

1. Tried to set EventBusName property with Schedule event and the transform failed to deploy

**Observed result:**

Deploy fails with 'property EventBusName not defined for resource of type Schedule'

**Expected result:**

Expected to be able to specify custom bus name with Schedule event (similar to the EventBridge rule type)

| True | Custom Event Bus with Schedule Event Type - <!--

Before reporting a new issue, make sure we don't have any duplicates already open or closed by

searching the issues list. If there is a duplicate, re-open or add a comment to the

existing issue instead of creating a new one. If you are reporting a bug,

make sure to include relevant information asked below to help with debugging.

## GENERAL HELP QUESTIONS ##

Github Issues is for bug reports and feature requests. If you have general support

questions, the following locations are a good place:

- Post a question in StackOverflow with "aws-sam" tag

-->

**Description:**

Schedule event type does not support custom event bus

https://docs.aws.amazon.com/serverless-application-model/latest/developerguide/sam-property-function-schedule.html

**Steps to reproduce the issue:**

1. Tried to set EventBusName property with Schedule event and the transform failed to deploy

**Observed result:**

Deploy fails with 'property EventBusName not defined for resource of type Schedule'

**Expected result:**

Expected to be able to specify custom bus name with Schedule event (similar to the EventBridge rule type)

| main | custom event bus with schedule event type before reporting a new issue make sure we don t have any duplicates already open or closed by searching the issues list if there is a duplicate re open or add a comment to the existing issue instead of creating a new one if you are reporting a bug make sure to include relevant information asked below to help with debugging general help questions github issues is for bug reports and feature requests if you have general support questions the following locations are a good place post a question in stackoverflow with aws sam tag description schedule event type does not support custom event bus steps to reproduce the issue tried to set eventbusname property with schedule event and the transform failed to deploy observed result deploy fails with property eventbusname not defined for resource of type schedule expected result expected to be able to specify custom bus name with schedule event similar to the eventbridge rule type | 1 |

5,429 | 27,239,589,497 | IssuesEvent | 2023-02-21 19:09:29 | mozilla/foundation.mozilla.org | https://api.github.com/repos/mozilla/foundation.mozilla.org | closed | Upgrade Wagtail to 3.0 | engineering maintain | ## Description

Goal of this ticket is to upgrade Wagtail to version 3.0. This ticket is a follow up to the Spike #8534.

Before we can upgrade Wagtail, we need to address the following tickets:

- [x] Upgrade `wagtail-localize-git` to 0.13 (#9675)

- [x] Upgrade `wagtail-inventory` to 1.6 (#9676)

- [x] Remove Airtable integration (#9147)

During upgrade we need to address the following:

- Changes to module paths.

- Basically all imports to core wagtail modules need to be updated.

- There is a management command provided to update all import paths.

- Removal of special-purpose field panel types

- All special field panels (like `RichTextFieldPanel`, `StreamFieldPanel`, etc.) can be replaced with the basic `FieldPanel`. The panel type is now detected automatically.

- Use `use_json_field` argument for `StreamField`

- All uses of `StreamField` should be updated to include the argument `use_json_field=True`.

- Running the migrations might take a while, because of some restructuring internally. We will see this on staging, since staging has quite similar data as production.

See also: https://docs.wagtail.org/en/stable/releases/3.0.html

## Dev notes

- [x] Create upgrade branch from main

- [x] Check your project’s console output for any deprecation warnings, and fix them where necessary `python -Wa manage.py check`

- [x] Check the new version’s release notes

- [x] Check the compatible Django / Python versions [table](https://docs.wagtail.io/en/stable/releases/upgrading.html#compatible-django-python-versions), for any dependencies that need upgrading first;

- [x] Upgrade supporting requirements (Python, Django) if necessary

- [x] Upgrade Wagtail

- [x] Make new migration (might result in none).

- [x] Migrate database changes (locally)

- [x] Implement needed changes from upgrade considerations (see above)

- [x] Perform testing

- [x] Run test suites

- [ ] Smoke test site / testing journeys (manually on the site)

- [ ] Smoke test admin (Click around in the admin to see if anything is broken)

- [ ] Check for new deprecations `python -Wa manage.py check` and fix if necessary

## Acceptance criteria

- [ ] Wagtail is upgraded to version 3.0

- [ ] ticket for Wagtail 4.1 upgrade is created | True | Upgrade Wagtail to 3.0 - ## Description

Goal of this ticket is to upgrade Wagtail to version 3.0. This ticket is a follow up to the Spike #8534.

Before we can upgrade Wagtail, we need to address the following tickets:

- [x] Upgrade `wagtail-localize-git` to 0.13 (#9675)

- [x] Upgrade `wagtail-inventory` to 1.6 (#9676)

- [x] Remove Airtable integration (#9147)

During upgrade we need to address the following:

- Changes to module paths.

- Basically all imports to core wagtail modules need to be updated.

- There is a management command provided to update all import paths.

- Removal of special-purpose field panel types

- All special field panels (like `RichTextFieldPanel`, `StreamFieldPanel`, etc.) can be replaced with the basic `FieldPanel`. The panel type is now detected automatically.

- Use `use_json_field` argument for `StreamField`

- All uses of `StreamField` should be updated to include the argument `use_json_field=True`.

- Running the migrations might take a while, because of some restructuring internally. We will see this on staging, since staging has quite similar data as production.

See also: https://docs.wagtail.org/en/stable/releases/3.0.html

## Dev notes

- [x] Create upgrade branch from main

- [x] Check your project’s console output for any deprecation warnings, and fix them where necessary `python -Wa manage.py check`

- [x] Check the new version’s release notes

- [x] Check the compatible Django / Python versions [table](https://docs.wagtail.io/en/stable/releases/upgrading.html#compatible-django-python-versions), for any dependencies that need upgrading first;

- [x] Upgrade supporting requirements (Python, Django) if necessary

- [x] Upgrade Wagtail

- [x] Make new migration (might result in none).

- [x] Migrate database changes (locally)

- [x] Implement needed changes from upgrade considerations (see above)

- [x] Perform testing

- [x] Run test suites

- [ ] Smoke test site / testing journeys (manually on the site)

- [ ] Smoke test admin (Click around in the admin to see if anything is broken)

- [ ] Check for new deprecations `python -Wa manage.py check` and fix if necessary

## Acceptance criteria

- [ ] Wagtail is upgraded to version 3.0

- [ ] ticket for Wagtail 4.1 upgrade is created | main | upgrade wagtail to description goal of this ticket is to upgrade wagtail to version this ticket is a follow up to the spike before we can upgrade wagtail we need to address the following tickets upgrade wagtail localize git to upgrade wagtail inventory to remove airtable integration during upgrade we need to address the following changes to module paths basically all imports to core wagtail modules need to be updated there is a management command provided to update all import paths removal of special purpose field panel types all special field panels like richtextfieldpanel streamfieldpanel etc can be replaced with the basic fieldpanel the detection now happens automatically use use json field argument for streamfield all uses of streamfield should be updated to include the argument use json field true running the migrations might take a while because of some restructuring internally we will see this on staging since staging has quite similar data as production see also dev notes create upgrade branch from main check your project’s console output for any deprecation warnings and fix them where necessary python wa manage py check check the new version’s release notes check the compatible django python versions for any dependencies that need upgrading first upgrade supporting requirements python django if necessary upgrade wagtail make new migration might result in none migrate database changes locally implement needed changes from upgrade considerations see above perform testing run test suites smoke test site testing journeys manually on the site smoke test admin click around in the admin to see if anything is broken check for new deprecations python wa manage py check and fix if necessary acceptance criteria wagtail is upgraded to version ticket for wagtail upgrade is created | 1 |

2,676 | 9,215,137,036 | IssuesEvent | 2019-03-11 01:28:17 | coq-community/manifesto | https://api.github.com/repos/coq-community/manifesto | opened | Move hybrid to Coq-community | maintainer-wanted move-project | ## Move a project to coq-community ##

**Project name:** hybrid

**Initial author(s):** Herman Geuvers, Dan Synek, Adam Koprowski, and Eelis van der Weegen

**Current URL:** https://github.com/Eelis/hybrid

**Kind:** Coq library and extractable program

**License:** unknown

**Description:** A prover for hybrid systems, formalized using CoRN, MathClasses, and CoLoR.

**Status:** unmaintained since 2012

**New maintainer:** looking for a volunteer