Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

69,584 | 22,550,278,395 | IssuesEvent | 2022-06-27 04:19:42 | beefproject/beef | https://api.github.com/repos/beefproject/beef | reopened | Can't hook from within Firefox chrome zone | Defect Core Priority Low medium effort | A BeEF hook injected into a Firefox extension will not hook. Fix this.

Blocked on #875

| 1.0 | Can't hook from within Firefox chrome zone - A BeEF hook injected into a Firefox extension will not hook. Fix this.

Blocked on #875

| non_main | can t hook from within firefox chrome zone a beef hook injected into a firefox extension will not hook fix this blocked on | 0 |

946 | 4,677,105,305 | IssuesEvent | 2016-10-07 14:10:10 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | firewalld fails, if firewall is not started | affects_2.1 feature_idea waiting_on_maintainer | It would be desirable for the Ansible firewalld module to be able to add a rule even if the firewall is down/not started at the moment.

Here is my use case:

```

-bash-4.2# systemctl stop firewalld

-bash-4.2# ansible-playbook ~/provision/test.yml -i ~/provision/hosts --connection=local

PLAY [local] ******************************************************************

GATHERING FACTS ***************************************************************

ok: [localhost]

TASK: [test | Firewall settings] **********************************************

failed: [localhost] => {"failed": true, "parsed": false}

failed=True msg='failed to connect to the firewalld daemon'

FATAL: all hosts have already failed -- aborting

PLAY RECAP ********************************************************************

to retry, use: --limit @/root/test.retry

localhost : ok=1 changed=0 unreachable=0 failed=1

```

The task looks like this:

```

- name: Firewall settings

firewalld: zone=public port=5000/tcp permanent=true state=enabled

``` | True | firewalld fails, if firewall is not started - It would be desirable for the Ansible firewalld module to be able to add a rule even if the firewall is down/not started at the moment.

Here is my use case:

```

-bash-4.2# systemctl stop firewalld

-bash-4.2# ansible-playbook ~/provision/test.yml -i ~/provision/hosts --connection=local

PLAY [local] ******************************************************************

GATHERING FACTS ***************************************************************

ok: [localhost]

TASK: [test | Firewall settings] **********************************************

failed: [localhost] => {"failed": true, "parsed": false}

failed=True msg='failed to connect to the firewalld daemon'

FATAL: all hosts have already failed -- aborting

PLAY RECAP ********************************************************************

to retry, use: --limit @/root/test.retry

localhost : ok=1 changed=0 unreachable=0 failed=1

```

The task looks like this:

```

- name: Firewall settings

firewalld: zone=public port=5000/tcp permanent=true state=enabled

``` | main | firewalld fails if firewall is not started it would be desired that the ansible firewalld module can add a rule even if the firewall is down not started at the moment here is my use case bash systemctl stop firewalld bash ansible playbook provision test yml i provision hosts connection local play gathering facts ok task failed failed true parsed false failed true msg failed to connect to the firewalld daemon fatal all hosts have already failed aborting play recap to retry use limit root test retry localhost ok changed unreachable failed the task looks like this name firewall settings firewalld zone public port tcp permanent true state enabled | 1 |

112,538 | 24,290,842,896 | IssuesEvent | 2022-09-29 05:45:47 | heclak/community-a4e-c | https://api.github.com/repos/heclak/community-a4e-c | closed | S_EVENT_SHOT , event id == 1 does not trigger/work in multiplayer server | Feature Request Code/LUA Need Research Multiplayer good first issue | S_EVENT_SHOT , event id == 1 does not trigger/work in multiplayer server for A-4 only, but works in single player.

**To Reproduce**

Steps to reproduce the behavior:

Hook up an S_EVENT_SHOT or event id == 1 script (MIST required) for event setup.

- Fire any weapon in a multiplayer standalone server and event id == 1 will not trigger/work.

**Expected behavior**

S_EVENT_SHOT or event id == 1 is supposed to trigger and upon event whatever action you set will execute

**Software Information (please complete the following information):**

- DCS Version: [e.g. 2.7.17.29140 Open Beta Dedicated Server]

- A-4E (all versions ... tried multiple versions)

**Additional context**

I tried other events event id == 2, 14 and they all work in multiplayer.

| 1.0 | S_EVENT_SHOT , event id == 1 does not trigger/work in multiplayer server - S_EVENT_SHOT , event id == 1 does not trigger/work in multiplayer server for A-4 only, but works in single player.

**To Reproduce**

Steps to reproduce the behavior:

Hook up an S_EVENT_SHOT or event id == 1 script (MIST required) for event setup.

- Fire any weapon in a multiplayer standalone server and event id == 1 will not trigger/work.

**Expected behavior**

S_EVENT_SHOT or event id == 1 is supposed to trigger and upon event whatever action you set will execute

**Software Information (please complete the following information):**

- DCS Version: [e.g. 2.7.17.29140 Open Beta Dedicated Server]

- A-4E (all versions ... tried multiple versions)

**Additional context**

I tried other events event id == 2, 14 and they all work in multiplayer.

| non_main | s event shot event id does not trigger work in multiplayer server s event shot event id does not trigger work in multiplayer server for a only but works in single player to reproduce steps to reproduce the behavior hookup s event shot or event id script mist required for event setup fire any weapon in multiplayer standalone server and event id will no trigger work expected behavior s event shot or event id is supposed to trigger and upon event whatever action you set will execute software information please complete the following information dcs version a all versions tried multiple versions additional context i tried other events event id and they all work in multiplayer | 0 |

604,614 | 18,715,526,438 | IssuesEvent | 2021-11-03 03:43:47 | dotnet/machinelearning-modelbuilder | https://api.github.com/repos/dotnet/machinelearning-modelbuilder | closed | The JSON-RPC connection with the remote party was lost before the request could complete. | Priority:0 Ship Blocker | **Model Builder Version**: Latest main

**Visual Studio Version**: _In which Visual Studio version was the bug encountered?_

**Bug description**

_A clear and concise description of what the bug is._

**Steps to Reproduce**

1. _List the minimal steps required to reproduce the bug._

2. _Thanks for reporting!_

**Expected Experience**

_A description of what you expected to happen. If applicable, add screenshots or "Machine Learning" Output Logs to help explain what you expected._

**Actual Experience**

_A description of what actually happens. If applicable, add screenshots or "Machine Learning" Output Logs to help explain what actually happened._

**Additional Context**

This issue happens for Image classification, OD scenarios, and Recommendation scenarios

| 1.0 | The JSON-RPC connection with the remote party was lost before the request could complete. - **Model Builder Version**: Latest main

**Visual Studio Version**: _In which Visual Studio version was the bug encountered?_

**Bug description**

_A clear and concise description of what the bug is._

**Steps to Reproduce**

1. _List the minimal steps required to reproduce the bug._

2. _Thanks for reporting!_

**Expected Experience**

_A description of what you expected to happen. If applicable, add screenshots or "Machine Learning" Output Logs to help explain what you expected._

**Actual Experience**

_A description of what actually happens. If applicable, add screenshots or "Machine Learning" Output Logs to help explain what actually happened._

**Additional Context**

This issue happens for Image classification, OD scenarios, and Recommendation scenarios

| non_main | the json rpc connection with the remote party was lost before the request could complete model builder version latest main visual studion version in which visual studio version was the bug encountered bug description a clear and concise description of what the bug is steps to reproduce list the minimal steps required to reproduce the bug thanks for reporting expected experience a description of what you expected to happen if applicable add screenshots or machine learning output logs to help explain what you expected actual experience description of what actually happens if applicable add screenshots or machine learning output logs to help explain what actually happened additional context this issue happens for image classification od scenarios and recommendation scenarios | 0 |

74,613 | 20,253,316,728 | IssuesEvent | 2022-02-14 20:13:09 | bitcoin/bitcoin | https://api.github.com/repos/bitcoin/bitcoin | closed | ARMv8 sha2 support | Build system Android | I assume #13191 makes this less hard, although benefits may be small. Brief [chat on IRC](https://botbot.me/freenode/bitcoin-core-dev/2018-06-05/?msg=100812298&page=2):

Me

> While trying to get bitcoind to run on one of the many *-pi's out there, I wondered: has anyone ever tried to design a system on chip that's optimal for this?

@laanwj:

> provoostenator: you mean secp256k1 specific instructions? people have been thinking about it, could be done on a FPGA, but I don't think it's ever been done

Me:

> echeveria seems to believe sha256 is the bottleneck (see #bitcoin), but also that anything outside the CPU would be too slow I/O to be worth it.

echeveria:

> I looked at the Zynq combination FPGA / ARM devices a long time ago and came to the conclusion that the copy time even on the shared memory bus between the two chips would make it non viable. I'd enjoy being proved wrong though.

laanwj:

> provoostenator: well sha256 extension instructions exist for ARM (supported on newer SoCs), I intend to add support for them at some point. But I would be surprised if that is the biggest bottleneck in validation.

echeveria:

> yes, if there is high-bandwidth communication between two chips that tends to dominate. I was thinking of, say, RiscV extensions for secp256k1 validation so it's in-core.

> for ARM it's somewhat unlikely at this time

I have (at least) three devices to test this on, which all have 4 to 8 ARM Cortex-A53 cores, and 1-4 GB RAM: an Android Xiaomi A1 ([ABCore](https://github.com/greenaddress/abcore) syncs the whole chain in less than a month), a NanoPi Neo Plus and a Khadas VIM2 Max.

Maybe this C++ code is useful: https://github.com/randombit/botan/issues/841 | 1.0 | ARMv8 sha2 support - I assume #13191 makes this less hard, although benefits may be small. Brief [chat on IRC](https://botbot.me/freenode/bitcoin-core-dev/2018-06-05/?msg=100812298&page=2):

Me

> While trying to get bitcoind to run on one of the many *-pi's out there, I wondered: has anyone ever tried to design a system on chip that's optimal for this?

@laanwj:

> provoostenator: you mean secp256k1 specific instructions? people have been thinking about it, could be done on a FPGA, but I don't think it's ever been done

Me:

> echeveria seems to believe sha256 is the bottleneck (see #bitcoin), but also that anything outside the CPU would be too slow I/O to be worth it.

echeveria:

> I looked at the Zynq combination FPGA / ARM devices a long time ago and came to the conclusion that the copy time even on the shared memory bus between the two chips would make it non viable. I'd enjoy being proved wrong though.

laanwj:

> provoostenator: well sha256 extension instructions exist for ARM (supported on newer SoCs), I intend to add support for them at some point. But I would be surprised if that is the biggest bottleneck in validation.

echeveria:

> yes, if there is high-bandwidth communication between two chips that tends to dominate. I was thinking of, say, RiscV extensions for secp256k1 validation so it's in-core.

> for ARM it's somewhat unlikely at this time

I have (at least) three devices to test this on, which all have 4 to 8 ARM Cortex-A53 cores, and 1-4 GB RAM: an Android Xiaomi A1 ([ABCore](https://github.com/greenaddress/abcore) syncs the whole chain in less than a month), a NanoPi Neo Plus and a Khadas VIM2 Max.

Maybe this c++ code is useful: https://github.com/randombit/botan/issues/841 | non_main | support i assume make this less hard although benefits may be small brief me while trying to get bitcoind to run on one the many pi s out there i wondered has anyone ever tried to design a system on chip that s optimal for this laanwj provoostenator you mean specific instructions people have been thingking about it could be done on a fpga but i don t think it s ever been done me echeveria seems to believe is the bottleneck see bitcoin but also that anything outside the cpu would be too slow i o to be worh it echeveria i looked at the zynq combination fpga arm devices a long time ago and came to the conclusion that the copy time even on the shared memory bus between the two chips would make it non viable i d enjoy being proved wrong though laanwj provoostenator well extension instructions exist for arm supported on newer socs i intend to add support for them at some point but i would be surprised if that is the biggest bottleneck in validation echeveria yes if there is high bandwidth communication between two chpis that tends to dominate i was thinking of say riscv extensions for validation so it s in core for arm it s somewhat unlikely at this time i have at least three devices to test this on which all have to arm cortex cores and gb ram an android xiaomi syncs the whole chain in less than a month a nanopi neo plus and a khadas max maybe this c code is useful | 0 |

3,348 | 12,977,722,539 | IssuesEvent | 2020-07-21 21:12:49 | PowerShell/PowerShell | https://api.github.com/repos/PowerShell/PowerShell | closed | RunspaceInvoke is missing from latest release | Area-SDK Issue-Question Review - Maintainer | ILSpy on the latest release:

https://www.nuget.org/packages/System.Management.Automation/

shows RunspaceInvoke missing

References:

https://docs.microsoft.com/en-us/dotnet/api/system.management.automation.runspaceinvoke?view=powershellsdk-1.1.0

https://github.com/PowerShell/PowerShell/blob/master/src/System.Management.Automation/engine/hostifaces/RunspaceInvoke.cs | True | RunspaceInvoke is missing from latest release - ILSpy on the latest release:

https://www.nuget.org/packages/System.Management.Automation/

shows RunspaceInvoke missing

References:

https://docs.microsoft.com/en-us/dotnet/api/system.management.automation.runspaceinvoke?view=powershellsdk-1.1.0

https://github.com/PowerShell/PowerShell/blob/master/src/System.Management.Automation/engine/hostifaces/RunspaceInvoke.cs | main | runspaceinvoke is missing from latest release ilspy on the latest release shows runspaceinvoke missing references | 1 |

5,041 | 25,841,357,763 | IssuesEvent | 2022-12-13 00:47:43 | ElasticPerch/websocket | https://api.github.com/repos/ElasticPerch/websocket | opened | [bug] Manually passed `Cookie` header overrides `http.CookieJar` cookies | waiting on new maintainer feature request | From websocket created by [yauheni-chaburanau](https://github.com/yauheni-chaburanau): gorilla/websocket#597

**Description**

If you manually pass `Cookie` header in `DialContext(..., http.Header)`, cookies from `Dialer.Jar` will be overwritten.

**Steps to Reproduce**

```go

dialer := websocket.Dialer{

Jar: jar,

}

header := http.Header{}

header.Set("Cookie", "some_cookie_name=some_cookie_value")

... = dialer.DialContext(ctx, url, header)

```

**Possible reason**

From the first look I would say that this is happening because [the part of code which is responsible for setting up all the passed headers](https://github.com/gorilla/websocket/blob/master/client.go#L207) ignores [already applied `Cookie` header from `http.CookieJar`](https://github.com/gorilla/websocket/blob/master/client.go#L190). | True | [bug] Manually passed `Cookie` header overrides `http.CookieJar` cookies - From websocket created by [yauheni-chaburanau](https://github.com/yauheni-chaburanau): gorilla/websocket#597

**Description**

If you manually pass `Cookie` header in `DialContext(..., http.Header)`, cookies from `Dialer.Jar` will be overwritten.

**Steps to Reproduce**

```go

dialer := websocket.Dialer{

Jar: jar,

}

header := http.Header{}

header.Set("Cookie", "some_cookie_name=some_cookie_value")

... = dialer.DialContext(ctx, url, header)

```

**Possible reason**

From the first look I would say that this is happening because [the part of code which is responsible for setting up all the passed headers](https://github.com/gorilla/websocket/blob/master/client.go#L207) ignores [already applied `Cookie` header from `http.CookieJar`](https://github.com/gorilla/websocket/blob/master/client.go#L190). | main | manually passed cookie header overrides http cookiejar cookies from websocket created by gorilla websocket description if you manually pass cookie header in dialcontext http header cookies from dialer jar will be overwritten steps to reproduce go dialer websocket dialer jar jar header http header header set cookie some cookie name some cookie value dialer dialcontext ctx url header possible reason from the first look i would say that this is happening because ignores | 1 |
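
The merge behaviour the websocket report above seems to expect can be sketched as follows. This is a language-neutral illustration written in Python, not the Go fix and not code from gorilla/websocket; the function name `merge_cookie_header` and the choice to let the caller-supplied value win on a name collision are assumptions made for the example.

```python
def merge_cookie_header(jar_cookies, header_value):
    """Combine cookies from a jar with a caller-supplied Cookie header value.

    jar_cookies: mapping of cookie name -> value (stands in for the jar).
    header_value: raw Cookie header string such as "a=1; b=2".
    Assumption: on a name collision the caller-supplied value wins.
    """
    merged = dict(jar_cookies)
    for pair in header_value.split(";"):
        name, sep, value = pair.strip().partition("=")
        if sep:  # skip empty or malformed fragments
            merged[name] = value
    # Emit one header value so neither source silently replaces the other.
    return "; ".join(f"{name}={value}" for name, value in merged.items())


print(merge_cookie_header({"session": "abc"}, "some_cookie_name=some_cookie_value"))
```

With a jar holding `session=abc`, the manually passed cookie is appended rather than replacing the jar's entry.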

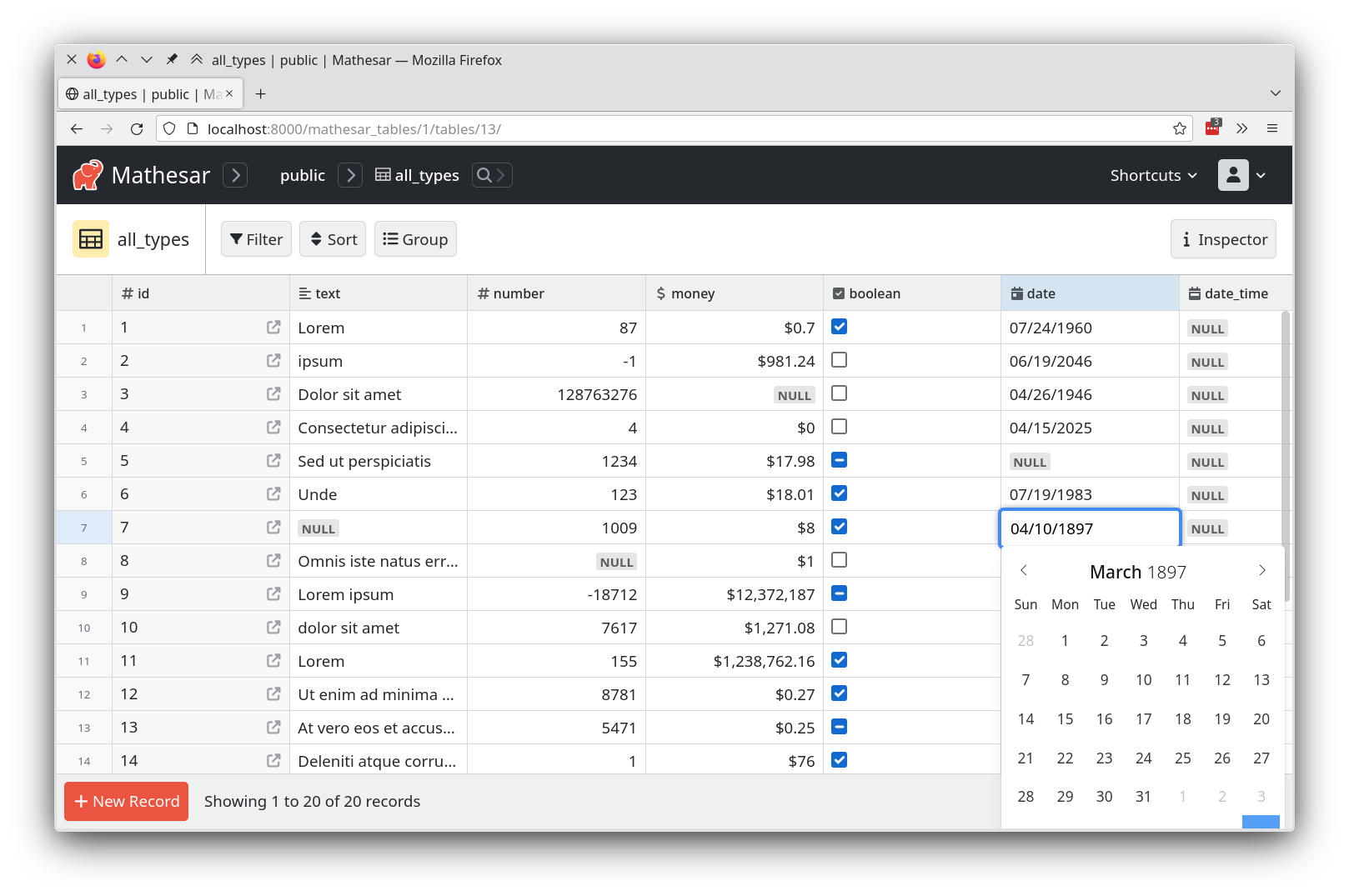

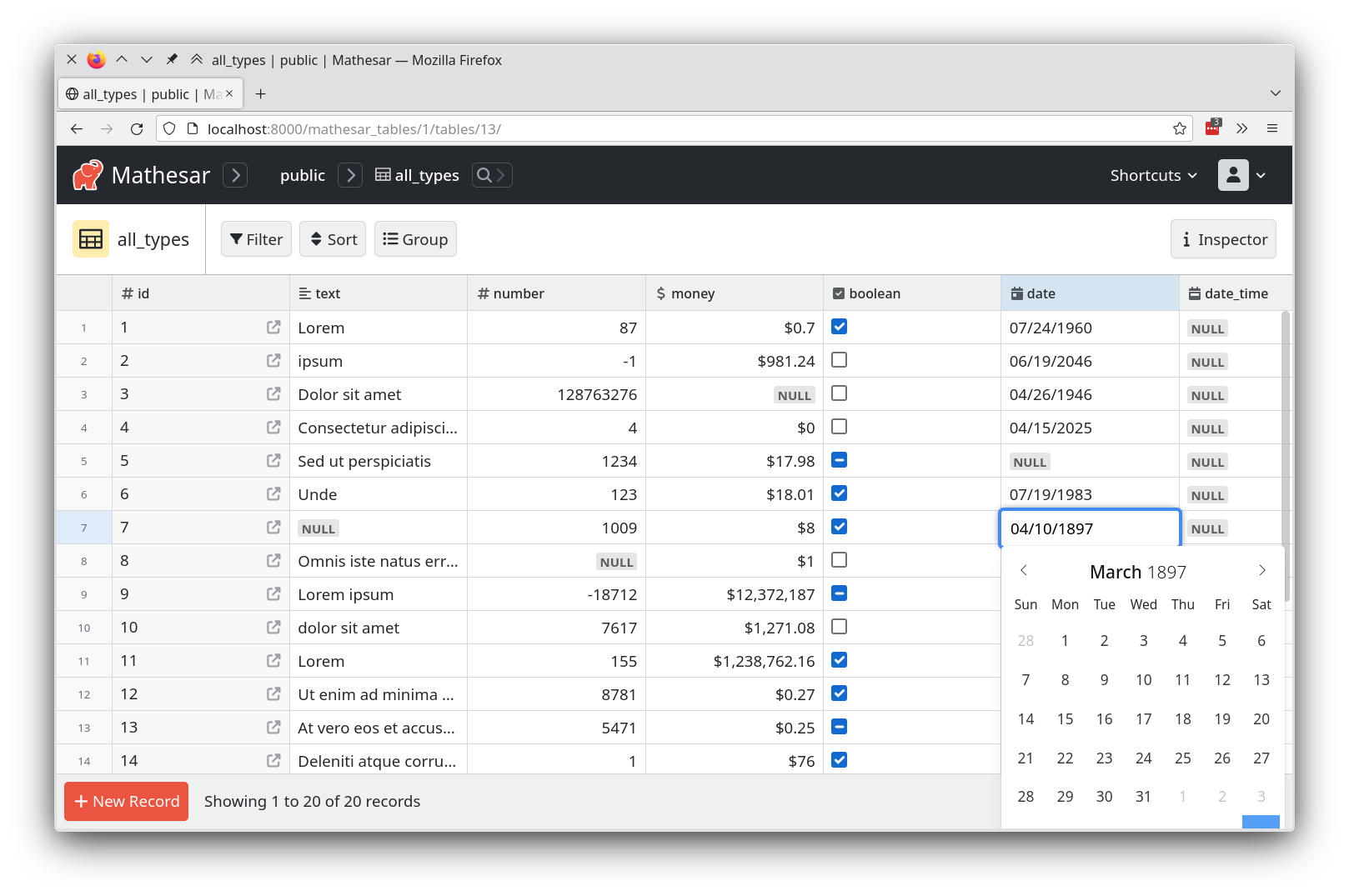

5,733 | 30,314,520,776 | IssuesEvent | 2023-07-10 14:45:50 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | opened | Confusing timezone issue when editing Time cells | type: enhancement work: frontend status: ready restricted: maintainers | Mathesar appears to have some sort of timezone-related logic at play in the screencap below, but it's not immediately clear to me what is happening.

https://github.com/centerofci/mathesar/assets/42411/53b55916-0e8b-4049-92e0-4d6f1ded2b7e

1. I'm currently in timezone UTC-4:00.

1. I've made a brand new Time column and entered a value into a new row, specifying my time as `8:50`.

1. After saving, Mathesar displayed that value back to me as `4:50`.

1. Given that I never told Mathesar about anything related to timezones, and that Mathesar never told me about anything related to timezones, this discrepancy in value is surprising.

I'm not sure what work is required to fix this problem because I don't understand our product design around Time cells deeply enough. Perhaps the behavior I experienced is our expected behavior? If so, then we need to do a better job communicating this behavior to users.

| True | Confusing timezone issue when editing Time cells - Mathesar appears to have some sort of timezone-related logic at play in the screencap below, but it's not immediately clear to me what is happening.

https://github.com/centerofci/mathesar/assets/42411/53b55916-0e8b-4049-92e0-4d6f1ded2b7e

1. I'm currently in timezone UTC-4:00.

1. I've made a brand new Time column and entered a value into a new row, specifying my time as `8:50`.

1. After saving, Mathesar displayed that value back to me as `4:50`.

1. Given that I never told Mathesar about anything related to timezones, and that Mathesar never told me about anything related to timezones, this discrepancy in value is surprising.

I'm not sure what work is required to fix this problem because I don't understand our product design around Time cells deeply enough. Perhaps the behavior I experienced is our expected behavior? If so, then we need to do a better job communicating this behavior to users.

| main | confusing timezone issue when editing time cells mathesar appears so have some sort of timezone related logic at play in the screencap below but it t not immediately clear to me what is happening i m currently in timezone utc i ve made a brand new time column and entered a value into a new row specifying my time as after saving mathesar displayed that value back to me as given that i never told mathesar about anything related to timezones and that mathesar never told me about anything related to timezones this discrepancy in value is surprising i m not sure what work is required to fix this problem because i don t understand our product design around time cells deeply enough perhaps the behavior i experienced is our expected behavior if so then we need to do a better job communicating this behavior to users | 1 |
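
The numbers in the Mathesar report above are consistent with one possible explanation, which the issue itself does not confirm: the entered time is stored as UTC and then displayed in the user's UTC-4:00 zone. A minimal sketch of that arithmetic, where the function name `display_in_zone` and the fixed calendar date are assumptions used only for illustration:

```python
from datetime import datetime, timedelta, timezone


def display_in_zone(entered, offset_hours):
    """Treat an H:MM string as UTC and render it at a fixed UTC offset."""
    hour, minute = map(int, entered.split(":"))
    # The date is arbitrary; only the time of day matters here.
    as_utc = datetime(2023, 7, 10, hour, minute, tzinfo=timezone.utc)
    local = as_utc.astimezone(timezone(timedelta(hours=offset_hours)))
    return f"{local.hour}:{local.minute:02d}"


print(display_in_zone("8:50", -4))  # 4:50
```

If this is what happens, the four-hour shift matches the offset exactly, which would explain why `8:50` comes back as `4:50`.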

33,454 | 7,127,211,889 | IssuesEvent | 2018-01-20 19:07:49 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | opened | FIX THE WAY EXEMPT IVA IS REPORTED ON WEB AND DESKTOP | bug defect | ONLY FOR THE CASE WHERE THE INVOICE INCLUDES ONLY AN EXEMPT IVA TRANSFER (TRASLADO).

THE IMPUESTOS (TAXES) NODE IS REPORTED AT THE CONCEPTO (LINE-ITEM) LEVEL, BUT IT IS NOT REPORTED AT THE DOCUMENT LEVEL.

<cfdi:Emisor Rfc="FOPR681125BQ0" Nombre="RIGOBERTO MISAEL FLORES DE LA PAZ" RegimenFiscal="612"></cfdi:Emisor>

<cfdi:Receptor Rfc="TIA101020BP4" Nombre="Tecnologias de Informacion Aplicada SA de CV" UsoCFDI="G03"></cfdi:Receptor>

<cfdi:Conceptos>

<cfdi:Concepto ClaveProdServ="01010101" NoIdentificacion="001" Cantidad="1" ClaveUnidad="E48" Unidad="servicio" Descripcion="renta de equipo de audio" ValorUnitario="10.00" Importe="10.00">

<cfdi:Impuestos>

<cfdi:Traslados>

<cfdi:Traslado Base="10.00" Impuesto="002" TipoFactor="Exento"></cfdi:Traslado>

</cfdi:Traslados>

</cfdi:Impuestos>

</cfdi:Concepto>

</cfdi:Conceptos>

<cfdi:Complemento>

<tfd:TimbreFiscalDigital xmlns:tfd="http://www.sat.gob.mx/TimbreFiscalDigital" xsi:schemaLocation="http://www.sat.gob.mx/TimbreFiscalDigital http://www.sat.gob.mx/sitio_internet/cfd/TimbreFiscalDigital/TimbreFiscalDigitalv11.xsd" Version="1.1" UUID="CA9BFFA6-5F2C-43B9-B3C5-B8E0374A11E8" FechaTimbrado="2018-01-20T12:36:04" RfcProvCertif="SAT970701NN3" SelloCFD="dWXJJT2R6Q7hHPPCy/tJhzrGnDtpHplcUDiIGQ0dkExSsXNpcIEQ5bbSf4zPZ887xkthKMoDAmyXI0oPCQzdhzYrRMeXIEAvPKoTBJPBEmfcNBDuEEbPel0opJTg4NZavn+LwivvjLVPVIeKNtboUUvJWj/Byzld/skXgV3hdTwBguzRlWz/nvL4f7wVWYwqZP+NokmbYgOYP8/hEDg+Ef4BNTAkV/SlGI/y4+yJDSs+GJf9535yraWkRPBNfI+noxcIN2rvQxXHlsV6SmElXHLX7Erm77camCRqG34DMn7SZTdGLiF7j7PXOIkN9VT9dRfZk58m3/4gjbwFD1Xufg==" NoCertificadoSAT="00001000000403258748" SelloSAT="ABTsiTLkS6gm9nTSFYm9vpDmeSJ7bc6gdbfcjYKd2CA6PNPXmrfNm+V5T6SbAQw3WdjUKZX3qk2BpjCgcILAskXzOIIPJqjlZVE0MGaQ8WZTo4HzwlPnie0UuWbZ9Vu+H2ythzmUvo8FEAQ25ov3PY1EbolepyQpVcGLPE8N+Vl4Z7gFVNsKNruDj3jeF5BZpqbCXlHYuykKmP0gjGU0J86iVUwKLZ+dUYME+GU76tVlmj+C+CsNNpXOB4eJfvGfQdtbJ4vvdjJc3ZULKIdk+VLY0/VfklOIIdaZ5J1lNJYq+AAdXwJzgl+3ffMOYIRnq9iiAFOcqBJHj3Y4QMAivw==" />

</cfdi:Complemento>

</cfdi:Comprobante> | 1.0 | FIX THE WAY EXEMPT IVA IS REPORTED ON WEB AND DESKTOP - ONLY FOR THE CASE WHERE THE INVOICE INCLUDES ONLY AN EXEMPT IVA TRANSFER (TRASLADO).

THE IMPUESTOS (TAXES) NODE IS REPORTED AT THE CONCEPTO (LINE-ITEM) LEVEL, BUT IT IS NOT REPORTED AT THE DOCUMENT LEVEL.

<cfdi:Emisor Rfc="FOPR681125BQ0" Nombre="RIGOBERTO MISAEL FLORES DE LA PAZ" RegimenFiscal="612"></cfdi:Emisor>

<cfdi:Receptor Rfc="TIA101020BP4" Nombre="Tecnologias de Informacion Aplicada SA de CV" UsoCFDI="G03"></cfdi:Receptor>

<cfdi:Conceptos>

<cfdi:Concepto ClaveProdServ="01010101" NoIdentificacion="001" Cantidad="1" ClaveUnidad="E48" Unidad="servicio" Descripcion="renta de equipo de audio" ValorUnitario="10.00" Importe="10.00">

<cfdi:Impuestos>

<cfdi:Traslados>

<cfdi:Traslado Base="10.00" Impuesto="002" TipoFactor="Exento"></cfdi:Traslado>

</cfdi:Traslados>

</cfdi:Impuestos>

</cfdi:Concepto>

</cfdi:Conceptos>

<cfdi:Complemento>

<tfd:TimbreFiscalDigital xmlns:tfd="http://www.sat.gob.mx/TimbreFiscalDigital" xsi:schemaLocation="http://www.sat.gob.mx/TimbreFiscalDigital http://www.sat.gob.mx/sitio_internet/cfd/TimbreFiscalDigital/TimbreFiscalDigitalv11.xsd" Version="1.1" UUID="CA9BFFA6-5F2C-43B9-B3C5-B8E0374A11E8" FechaTimbrado="2018-01-20T12:36:04" RfcProvCertif="SAT970701NN3" SelloCFD="dWXJJT2R6Q7hHPPCy/tJhzrGnDtpHplcUDiIGQ0dkExSsXNpcIEQ5bbSf4zPZ887xkthKMoDAmyXI0oPCQzdhzYrRMeXIEAvPKoTBJPBEmfcNBDuEEbPel0opJTg4NZavn+LwivvjLVPVIeKNtboUUvJWj/Byzld/skXgV3hdTwBguzRlWz/nvL4f7wVWYwqZP+NokmbYgOYP8/hEDg+Ef4BNTAkV/SlGI/y4+yJDSs+GJf9535yraWkRPBNfI+noxcIN2rvQxXHlsV6SmElXHLX7Erm77camCRqG34DMn7SZTdGLiF7j7PXOIkN9VT9dRfZk58m3/4gjbwFD1Xufg==" NoCertificadoSAT="00001000000403258748" SelloSAT="ABTsiTLkS6gm9nTSFYm9vpDmeSJ7bc6gdbfcjYKd2CA6PNPXmrfNm+V5T6SbAQw3WdjUKZX3qk2BpjCgcILAskXzOIIPJqjlZVE0MGaQ8WZTo4HzwlPnie0UuWbZ9Vu+H2ythzmUvo8FEAQ25ov3PY1EbolepyQpVcGLPE8N+Vl4Z7gFVNsKNruDj3jeF5BZpqbCXlHYuykKmP0gjGU0J86iVUwKLZ+dUYME+GU76tVlmj+C+CsNNpXOB4eJfvGfQdtbJ4vvdjJc3ZULKIdk+VLY0/VfklOIIdaZ5J1lNJYq+AAdXwJzgl+3ffMOYIRnq9iiAFOcqBJHj3Y4QMAivw==" />

</cfdi:Complemento>

</cfdi:Comprobante> | non_main | fix the way exempt iva is reported on web and desktop only for the case where the invoice includes only an exempt iva transfer traslado the impuestos taxes node is reported at the concepto line item level but it is not reported at the document level | 0 |

242,955 | 18,674,584,948 | IssuesEvent | 2021-10-31 10:37:34 | codezonediitj/pydatastructs | https://api.github.com/repos/codezonediitj/pydatastructs | closed | Add note in Graph doc string for clarifying adding nodes and edges | documentation enhancement graphs | #### Description of the problem

<!--Please provide a clear and details information of the bug/data structure to be added.-->

The correct way to create a graph is to first create nodes of the right type (`AdjacencyListGraphNode` or `AdjacencyListMatrixNode`) and then add these nodes to the graph. Once done, add edges between these nodes.

1. A note should be added in the class doc string for the above process.

2. In the doc string of `add_edge` it should be clarified that this function assumes the nodes are already present in the graph. If they are not present, this function will not add the new nodes on its own. If someone attempts that, a clear error message should be raised explaining this.

#### Example of the problem

<!--Provide a reproducible example code which is causing the bug to appear. Leave this section if the problem is not a bug.-->

#### References/Other comments

cc: @pratikgl | 1.0 | Add note in Graph doc string for clarifying adding nodes and edges - #### Description of the problem

<!--Please provide a clear and details information of the bug/data structure to be added.-->

The correct way to create a graph is to first create nodes of the right type (`AdjacencyListGraphNode` or `AdjacencyListMatrixNode`) and then add these nodes to the graph. Once done, add edges between these nodes.

1. A note should be added in the class doc string for the above process.

2. In the doc string of `add_edge` it should be clarified that this function assumes the nodes are already present in the graph. If they are not present, this function will not add the new nodes on its own. If someone attempts that, a clear error message should be raised explaining this.

#### Example of the problem

<!--Provide a reproducible example code which is causing the bug to appear. Leave this section if the problem is not a bug.-->

#### References/Other comments

cc: @pratikgl | non_main | add note in graph doc string for clarifying adding nodes and edges description of the problem the correct way to create a graph is to first create nodes of the right type adjacencylistgraphnode or adjacencylistmatrixnode and then add these nodes to the graph once done add edges between these nodes a note should be added in the class doc string for the above process in the doc string of add edge it should be clarified that this function will assume that the nodes are already present in the graph if they are not present then this function will not add the new nodes on it s own in case someone attempts to do that then a nice error message should be raised describing the same example of the problem references other comments cc pratikgl | 0 |

2,284 | 8,132,816,890 | IssuesEvent | 2018-08-18 16:41:06 | openwrt/packages | https://api.github.com/repos/openwrt/packages | closed | wifidog init script executing wifidog too early | waiting for maintainer | I've been testing wifidog with OpenWrt on several Ubiquiti air-routers placed at different locations (cafes, hotels, etc.). I've found that the default init script with procd starts wifidog too early, and this causes problems. Although wifidog still functions and behaves normally, memory is gradually leaked until wifidog crashes because it can't malloc anymore, or the router needs a hard reboot.

Removing procd from the init script and adding "wifidog -c /etc/myconfig.conf" on its own without procd doesn't work at all, and wifidog stops shortly after executing because the VLAN interface it's set to listen on is not up yet. With procd, wifidog is somehow able to start even though the VLAN listen interface is not up.

Starting wifidog manually via ssh after the router boots doesn't exhibit any memory problems when left running for an extended period of time with many clients connecting and logging in.

Changing START=65 to 95 and adding "sleep 20" into the wifidog init script with or without procd fixes the problem with starting too early and also the memory leak.

I don't know if this problem occurs on other hardware running openwrt as I haven't tested any in heavy use environments like Hotels, just the air-router.

| True | wifidog init script executing wifidog too early - I've been testing wifidog with OpenWrt on several Ubiquiti air-routers placed at different locations (cafes, hotels, etc.). I've found that the default init script with procd starts wifidog too early, and this causes problems. Although wifidog still functions and behaves normally, memory is gradually leaked until wifidog crashes because it can't malloc anymore, or the router needs a hard reboot.

Removing procd from the init script and adding "wifidog -c /etc/myconfig.conf" on its own without procd doesn't work at all, and wifidog stops shortly after executing because the VLAN interface it's set to listen on is not up yet. With procd, wifidog is somehow able to start even though the VLAN listen interface is not up.

Starting wifidog manually via ssh after the router boots doesn't exhibit any memory problems when left running for an extended period of time with many clients connecting and logging in.

Changing START=65 to 95 and adding "sleep 20" into the wifidog init script with or without procd fixes the problem with starting too early and also the memory leak.

I don't know if this problem occurs on other hardware running openwrt as I haven't tested any in heavy use environments like Hotels, just the air-router.

| main | wifidog init script executing wifidog too early i ve been testing wifidog with openwrt on several of the ubiquiti air routers placed at different locations cafe s hotels etc i ve found that the default init script with procd starts wifidog too early and this causes problems although wifidog still functions and behaves normally memory is gradually leaked until wifidog crashes because it cant malloc anymore either that or the router needs hard rebooting removing procd from the init script and adding wifidog c etc myconfig conf on its own without procd doesn t work at all and wifidog stops shortly after executing because the vlan interface its set to listen on is not up yet with procd wifidog is somehow able to start even though the vlan listen interface is not up starting wifidog manually via ssh after the router boots doesn t exhibit any memory problems when left running for an extended period of time with many clients connecting and logging in changing start to and adding sleep into the wifidog init script with or without procd fixes the problem with starting too early and also the memory leak i don t know if this problem occurs on other hardware running openwrt as i haven t tested any in heavy use environments like hotels just the air router | 1 |

3,520 | 13,804,304,154 | IssuesEvent | 2020-10-11 08:17:36 | sukritishah15/DS-Algo-Point | https://api.github.com/repos/sukritishah15/DS-Algo-Point | closed | WARNING - MAINTAINERS | maintainers | ## 🚀 We do not appreciate and encourage bossy or rude behaviour with any of our contributors by any maintainer and vice-versa as well.

If there is an issue with any PR, mention it politely. Do not try and act BOSSY.

We will come across a lot of beginners and they will make a lot of mistakes, it is absolutely OKAY. Guide them. Do not YELL or type rude comments.

- MAKING MISTAKES IS NOT SPAMMY.

- Sending repetitive code/content via a PR just to increase the PR count is SPAMMY.

- Making a PR which does not contribute much is SPAMMY.

Also, for the contributors, please be polite with the maintainers under all circumstances.

Let's not forget we are all learners at the end of the day. We all have something to learn.

Keep a learning and growth mindset and exchange healthy, useful and productive conversations.

Enjoy open source at its core and recognize it for what it is.

P.S. - This post comes after I saw rude comments by **one** of the maintainers. **All the rest are doing extremely well.** | True | WARNING - MAINTAINERS - ## 🚀 We do not appreciate and encourage bossy or rude behaviour with any of our contributors by any maintainer and vice-versa as well.

If there is an issue with any PR, mention it politely. Do not try and act BOSSY.

We will come across a lot of beginners and they will make a lot of mistakes, it is absolutely OKAY. Guide them. Do not YELL or type rude comments.

- MAKING MISTAKES IS NOT SPAMMY.

- Sending repetitive code/content via a PR just to increase the PR count is SPAMMY.

- Making a PR which does not contribute much is SPAMMY.

Also, for the contributors, please be polite with the maintainers under all circumstances.

Let's not forget we are all learners at the end of the day. We all have something to learn.

Keep a learning and growth mindset and exchange healthy, useful and productive conversations.

Enjoy open source at its core and recognize it for what it is.

P.S. - This post comes after I saw rude comments by **one** of the maintainers. **All the rest are doing extremely well.** | main | warning maintainers 🚀 we do not appreciate and encourage bossy or rude behaviour with any of our contributors by any maintainer and vice versa as well if there is an issue with any pr mention it politely do not try and act bossy we will come across a lot of beginners and they will make a lot of mistakes it is absolutely okay guide them do not yell or type rude comments making mistakes is not spammy sending repetitive code content via a pr just to increase the pr count is spammy making a pr which does not contribute much is spammy also for the contributors please be polite with the maintainers under all circumstances let s not forget we are all learners at the end of the day we all have something to learn keep a learning and growth mindset and exchange healthy useful and productive conversations enjoy open source at it s core and recognize it for what it is p s this post comes across after i saw rude comments by one of the maintainer rest all are doing extremely well | 1 |

132,393 | 10,745,195,183 | IssuesEvent | 2019-10-30 08:29:20 | DivanteLtd/shopware-pwa | https://api.github.com/repos/DivanteLtd/shopware-pwa | closed | Populate Shopware test instance with pretty data | Test eCommerce SIte | **Context**

The frontend layer does not look good with the default gray images. We want to populate the Shopware 6 Test Instance with data and images that will make the frontend layer look good. That will make the testing process easier. | 1.0 | Populate Shopware test instance with pretty data - **Context**

The frontend layer does not look good with the default gray images. We want to populate the Shopware 6 Test Instance with data and images that will make the frontend layer look good. That will make the testing process easier. | non_main | populate shopware test instance with pretty data context frontend layer does not look well with default gray images we want to populate shopware test instance with data and images that will cause the frontend layer to look good that will make the testing process easier | 0 |

541,005 | 15,820,097,496 | IssuesEvent | 2021-04-05 18:27:57 | litecoin-foundation/loafwallet-ios | https://api.github.com/repos/litecoin-foundation/loafwallet-ios | closed | 🥳[Feature] Reset Litecoin Card password | Priority-Medium enhancement size: 3 | ## Goal

Allow the user to reset their Litecoin Card password from the Login / Card view

## Approach

Use the forgot-password endpoint to let the user reset their Litecoin Card password via the registered email address

- Endpoint: `PATCH /v1/user/:user_id/password`

- Docs: https://docs.getblockcard.com/docs/api-user-change-password/

## Definition of Done

- [ ] Unit Test written to verify the endpoint

- [ ] Reset accepts the registration email in a modal with a text field and `ok` and `cancel` buttons

- [ ] User is able to login with the new password in Litewallet : Card

| 1.0 | 🥳[Feature] Reset Litecoin Card password - ## Goal

Allow the user to reset their Litecoin Card password from the Login / Card view

## Approach

Use the forgot-password endpoint to let the user reset their Litecoin Card password via the registered email address

- Endpoint: `PATCH /v1/user/:user_id/password`

- Docs: https://docs.getblockcard.com/docs/api-user-change-password/

## Definition of Done

- [ ] Unit Test written to verify the endpoint

- [ ] Reset accepts the registration email in a modal with a text field and `ok` and `cancel` buttons

- [ ] User is able to login with the new password in Litewallet : Card

| non_main | 🥳 reset litecoin card password goal allow the user the reset their litecoin card password from the login card view approach using the forgot password endpoint to allow the user to reset their litecoin card password using the registered email address endpoint patch user user id password docs definition of done unit test written to verify the endpoint reset accepts the registration email with a modal with a textfield and includes an ok and cancel button user is able to login with the new password in litewallet card | 0 |

2,264 | 7,961,991,169 | IssuesEvent | 2018-07-13 12:54:47 | RalfKoban/MiKo-Analyzers | https://api.github.com/repos/RalfKoban/MiKo-Analyzers | closed | 'EventArgs' should not have public setters | Area: analyzer Area: maintainability feature in progress | Classes inheriting from `EventArgs` should not provide properties that have publicly visible setters.

Instead, these setters shall be `private` to prevent setting from outside. They shall be neither `protected` nor `internal`.

If these properties need to be set, there should only be (circuit-breaker) methods that allow the property to be set once.

Explanation:

The reason is that events are raised and then handled by event handlers. If the event data changes between handlers, there is effectively a race condition, because not all handlers see the same data.

If the event shall be canceled, then the setting method shall act as circuit-breaker so that the event can be canceled but then no longer be un-canceled. | True | 'EventArgs' should not have public setters - Classes inheriting from `EventArgs` should not provide properties that have publicly visible setters.

Instead, these setters shall be `private` to prevent setting from outside. They shall be neither `protected` nor `internal`.

If these properties need to be set, there should only be (circuit-breaker) methods that allow the property to be set once.

Explanation:

The reason is that events are raised and then handled by event handlers. If the event data changes between handlers, there is effectively a race condition, because not all handlers see the same data.

If the event shall be canceled, then the setting method shall act as circuit-breaker so that the event can be canceled but then no longer be un-canceled. | main | eventargs should not have public setters classes inheriting from eventargs should not provide properties that have publicly visible setters instead these setters shall be private to avoid setting from outside they shall also neither be protected nor internal if these properties shall be set then there should only be circuit breaker methods that allow to set the property once explanation the reason is that events are raised and handled by event handlers if now the event data changes between the handlers then there is a race condition ongoing as not all handlers get the same result if the event shall be canceled then the setting method shall act as circuit breaker so that the event can be canceled but then no longer be un canceled | 1 |
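
The circuit-breaker idea described in this issue can be sketched roughly as follows. This is a Python analogue rather than the C# the analyzer targets, and the names `CancelEventArgs`, `cancel` and `raise_event` are hypothetical, chosen only for illustration.

```python
class CancelEventArgs:
    """Event data whose cancel flag can be set once and never cleared."""

    def __init__(self) -> None:
        self._cancelled = False

    @property
    def cancelled(self) -> bool:
        # Read-only property: no setter is exposed to handlers.
        return self._cancelled

    def cancel(self) -> None:
        # Circuit breaker: once tripped, the flag stays tripped.
        self._cancelled = True


def raise_event(handlers, args):
    """Call every handler with the same args and report whether any cancelled."""
    for handler in handlers:
        handler(args)
    return args.cancelled
```

Because there is no way to un-cancel, a later handler cannot undo what an earlier handler decided, so the final result does not depend on which handler ran last.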

271,290 | 29,418,930,371 | IssuesEvent | 2023-05-31 01:03:25 | MidnightBSD/src | https://api.github.com/repos/MidnightBSD/src | reopened | CVE-2022-30699 (Medium) detected in multiple libraries | Mend: dependency security vulnerability | ## CVE-2022-30699 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

NLnet Labs Unbound, up to and including version 1.16.1, is vulnerable to a novel type of the "ghost domain names" attack. The vulnerability works by targeting an Unbound instance. Unbound is queried for a rogue domain name when the cached delegation information is about to expire. The rogue nameserver delays the response so that the cached delegation information is expired. Upon receiving the delayed answer containing the delegation information, Unbound overwrites the now expired entries. This action can be repeated when the delegation information is about to expire making the rogue delegation information ever-updating. From version 1.16.2 on, Unbound stores the start time for a query and uses that to decide if the cached delegation information can be overwritten.

<p>Publish Date: 2022-08-01

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-30699>CVE-2022-30699</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-30699">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-30699</a></p>

<p>Release Date: 2022-08-01</p>

<p>Fix Resolution: release-1.16.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-30699 (Medium) detected in multiple libraries - ## CVE-2022-30699 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b>, <b>hardenedBSDbbfb1edd70e15241d852d82eb7e1c1049a01b886</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

NLnet Labs Unbound, up to and including version 1.16.1, is vulnerable to a novel type of the "ghost domain names" attack. The vulnerability works by targeting an Unbound instance. Unbound is queried for a rogue domain name when the cached delegation information is about to expire. The rogue nameserver delays the response so that the cached delegation information is expired. Upon receiving the delayed answer containing the delegation information, Unbound overwrites the now expired entries. This action can be repeated when the delegation information is about to expire making the rogue delegation information ever-updating. From version 1.16.2 on, Unbound stores the start time for a query and uses that to decide if the cached delegation information can be overwritten.

<p>Publish Date: 2022-08-01

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-30699>CVE-2022-30699</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-30699">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-30699</a></p>

<p>Release Date: 2022-08-01</p>

<p>Fix Resolution: release-1.16.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries vulnerability details nlnet labs unbound up to and including version is vulnerable to a novel type of the ghost domain names attack the vulnerability works by targeting an unbound instance unbound is queried for a rogue domain name when the cached delegation information is about to expire the rogue nameserver delays the response so that the cached delegation information is expired upon receiving the delayed answer containing the delegation information unbound overwrites the now expired entries this action can be repeated when the delegation information is about to expire making the rogue delegation information ever updating from version on unbound stores the start time for a query and uses that to decide if the cached delegation information can be overwritten publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution release step up your open source security game with mend | 0 |

307,975 | 9,424,670,280 | IssuesEvent | 2019-04-11 14:33:26 | python/mypy | https://api.github.com/repos/python/mypy | closed | segmentation fault on indexing object with __getattr__ | crash priority-0-high | Please provide more information to help us understand the issue:

* Are you reporting a bug, or opening a feature request?

Bug.

* Please insert below the code you are checking with mypy,

or a mock-up repro if the source is private. We would appreciate

if you try to simplify your case to a minimal repro.

```python
class C:
    def __getattr__(self, name: str) -> 'C':
        ...

def f(v: C) -> None:
    v[0]
```

* What is the actual behavior/output?

segfault

* What is the behavior/output you expect?

not crashing

* What are the versions of mypy and Python you are using?

python 3.6.7 on Ubuntu 18.04.2 x86_64

mypy 0.670 installed march 20 2019 via pip

Do you see the same issue after installing mypy from Git master?

yes

0.680+dev.4e0a1583aeb00b248e187054980771f1897a1d31

* What are the mypy flags you are using? (For example --strict-optional)

none

| 1.0 | segmentation fault on indexing object with __getattr__ - Please provide more information to help us understand the issue:

* Are you reporting a bug, or opening a feature request?

Bug.

* Please insert below the code you are checking with mypy,

or a mock-up repro if the source is private. We would appreciate

if you try to simplify your case to a minimal repro.

```python
class C:
    def __getattr__(self, name: str) -> 'C':
        ...

def f(v: C) -> None:
    v[0]
```

* What is the actual behavior/output?

segfault

* What is the behavior/output you expect?

not crashing

* What are the versions of mypy and Python you are using?

python 3.6.7 on Ubuntu 18.04.2 x86_64

mypy 0.670 installed march 20 2019 via pip

Do you see the same issue after installing mypy from Git master?

yes

0.680+dev.4e0a1583aeb00b248e187054980771f1897a1d31

* What are the mypy flags you are using? (For example --strict-optional)

none

| non_main | segmentation fault on indexing object with getattr please provide more information to help us understand the issue are you reporting a bug or opening a feature request bug please insert below the code you are checking with mypy or a mock up repro if the source is private we would appreciate if you try to simplify your case to a minimal repro python class c def getattr self name str c def f v c none v what is the actual behavior output segfault what is the behavior output you expect not crashing what are the versions of mypy and python you are using python on ubuntu mypy installed march via pip do you see the same issue after installing mypy from git master yes dev what are the mypy flags you are using for example strict optional none | 0 |

35,246 | 14,655,666,915 | IssuesEvent | 2020-12-28 11:33:33 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | closed | IntelliSense within remote ssh broken | Language Service more info needed remote | **Type:** IntelliSense

**Describe the bug**

- OS and Version: Mac Mojave 10.14.6, remote with Linux 4.12.14-lp151.28.36-default x86_64

- VS Code Version: 1.42.1

- C/C++ Extension Version: 0.26.3

- Other extensions you installed (and if the issue persists after disabling them): C/C++ GNU Global (0.3.2), Visual Studio IntelliCode (1.2.5), Git History (0.6.0)

When using the [remote ssh extension](https://code.visualstudio.com/blogs/2019/07/25/remote-ssh), the IntelliSense works for the first couple of seconds but then has no suggestions for the rest of the session.

**To Reproduce**

<!-- Steps to reproduce the behavior: -->

<!-- *The most actionable issue reports include a code sample including configuration files such as c_cpp_properties.json* -->

1. Get the remote ssh extension

2. Remote into a Linux server

3. Start writing some C code.

4. See the lack of suggestions and autocomplete after a couple of minutes of usage. (error)

**Expected behavior**

I expect there to be IntelliSense working in full functionality in the remote ssh window.

| 1.0 | IntelliSense within remote ssh broken - **Type:** IntelliSense

**Describe the bug**

- OS and Version: Mac Mojave 10.14.6, remote with Linux 4.12.14-lp151.28.36-default x86_64

- VS Code Version: 1.42.1

- C/C++ Extension Version: 0.26.3

- Other extensions you installed (and if the issue persists after disabling them): C/C++ GNU Global (0.3.2), Visual Studio IntelliCode (1.2.5), Git History (0.6.0)

When using the [remote ssh extension](https://code.visualstudio.com/blogs/2019/07/25/remote-ssh), the IntelliSense works for the first couple of seconds but then has no suggestions for the rest of the session.

**To Reproduce**

<!-- Steps to reproduce the behavior: -->

<!-- *The most actionable issue reports include a code sample including configuration files such as c_cpp_properties.json* -->

1. Get the remote ssh extension

2. Remote into a Linux server

3. Start writing some C code.

4. See the lack of suggestions and autocomplete after a couple of minutes of usage. (error)

**Expected behavior**

I expect there to be IntelliSense working in full functionality in the remote ssh window.

| non_main | intellisense within remote ssh broken type intellisense describe the bug os and version mac mojave remote with linux default vs code version c c extension version other extensions you installed and if the issue persists after disabling them c c gnu global visual studio intellicode git history when using the the intellisense works for the first couple of seconds but then has no suggestions for the rest of the session to reproduce get the remote ssh extension remote into a linus server start writing some c code see the lack of suggestions and autocomplete after a couple of minutes of usage error expected behavior i expect there to be intellisense working in full functionality in the remote ssh window | 0 |

2,906 | 10,327,617,632 | IssuesEvent | 2019-09-02 07:28:51 | varenc/homebrew-ffmpeg | https://api.github.com/repos/varenc/homebrew-ffmpeg | closed | Possible improvements, housekeeping | maintainer-feedback | Based on Lou Logan's comments:

- `--enable-avresample` really needed?

- `--enable-librtmp` can be removed

- `--enable-libxvid` can be removed

- `--enable-libspeex` is obsolete, replaced by Opus

- `--enable-hardcoded-tables` – might be time to drop that if it makes no significant difference if someone wants to test

- Figure out how to deal with `libjack` – should it be optional under Linux?

My suggestion would be to:

- [x] Keep librtmp, but make it optional => #15

- [x] Keep libxvid, but make it optional => #13

- [x] Keep libspeex, but make it optional => #14

- [ ] Check usage of `avresample`

- [ ] Add support for `libjack` => #16

- [x] Keep hardcoded tables, but have to further investigate --> done by Reto. | True | Possible improvements, housekeeping - Based on Lou Logan's comments:

- `--enable-avresample` really needed?

- `--enable-librtmp` can be removed

- `--enable-libxvid` can be removed

- `--enable-libspeex` is obsolete, replaced by Opus

- `--enable-hardcoded-tables` – might be time to drop that if it makes no significant difference if someone wants to test

- Figure out how to deal with `libjack` – should it be optional under Linux?

My suggestion would be to:

- [x] Keep librtmp, but make it optional => #15

- [x] Keep libxvid, but make it optional => #13

- [x] Keep libspeex, but make it optional => #14

- [ ] Check usage of `avresample`

- [ ] Add support for `libjack` => #16

- [x] Keep hardcoded tables, but have to further investigate --> done by Reto. | main | possible improvements housekeeping based on lou logan s comments enable avresample really needed enable librtmp can be removed enable libxvid can be removed enable libspeex is obsolete replaced by opus enable hardcoded tables – might be time to drop that if it makes no significant difference if someone wants to test figure out how to deal with libjack – should it be optional under linux my suggestion would be to keep librtmp but make it optional keep libxvid but make it optional keep libspeex but make it optional check usage of avresample add support for libjack keep hardcoded tables but have to further investigate done by reto | 1 |

4,421 | 22,782,699,690 | IssuesEvent | 2022-07-08 22:09:33 | radical-semiconductor/katsu-board-demo | https://api.github.com/repos/radical-semiconductor/katsu-board-demo | opened | DRY out github workflows (ci + releases) | maintainability | just use explicit include to have multiple variables without adding dimensions

include:

- site: "production"

datacenter: "site-a"

- site: "staging"

datacenter: "site-b" | True | DRY out github workflows (ci + releases) - just use explicit include to have multiple variables without adding dimensions

include:

- site: "production"

datacenter: "site-a"

- site: "staging"

datacenter: "site-b" | main | dry out github workflows ci releases just use explicit include to have multiple variables without adding dimensions include site production datacenter site a site staging datacenter site b | 1 |

3,245 | 12,368,707,071 | IssuesEvent | 2020-05-18 14:13:33 | Kashdeya/Tiny-Progressions | https://api.github.com/repos/Kashdeya/Tiny-Progressions | closed | Berry bush generation blacklist | Version not Maintainted | A configurable feature where we can blacklist dimensions for the berry bush generation etc could be useful. eg: berry bushes spawn in the betweenlands dimension which doesn't fit in the dimension. | True | Berry bush generation blacklist - A configurable feature where we can blacklist dimensions for the berry bush generation etc could be useful. eg: berry bushes spawn in the betweenlands dimension which doesn't fit in the dimension. | main | berry bush generation blacklist a configurable feature where we can blacklist dimensions for the berry bush generation etc could be useful eg berry bushes spawn in the betweenlands dimension which doesn t fit in the dimension | 1 |

5,767 | 30,567,421,172 | IssuesEvent | 2023-07-20 18:55:40 | ocbe-uio/trajpy | https://api.github.com/repos/ocbe-uio/trajpy | closed | Remove plot feature from the GUI to reduce dependencies | good first issue maintainability | Currently we have a plotting feature in the GUI. This feature is unnecessary and increases the number of dependencies.

Code that should be **removed**:

- in _trajpy/gui.py_

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L6-L10

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L34

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L110

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L266-L271

- in _requirements.txt_

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/requirements.txt#L3

After removal we should organise the GUI buttons in a better way. We can reduce the window size and increase the text size for improved accessibility.

| True | Remove plot feature from the GUI to reduce dependencies - Currently we have a plotting feature in the GUI. This feature is unnecessary and increases the number of dependencies.

Code that should be **removed**:

- in _trajpy/gui.py_

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L6-L10

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L34

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L110

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/trajpy/gui.py#L266-L271

- in _requirements.txt_

https://github.com/ocbe-uio/trajpy/blob/2b742349b8f24aab32b39369b47f59302c150913/requirements.txt#L3

After removal we should organise the GUI buttons in a better way. We can reduce the window size and increase the text size for improved accessibility.

| main | remove plot feature from the gui to reduce dependencies currently we have a plotting feature in the gui this feature is unnecessary and increase the number of dependencies code that should be removed in trajpy gui py in requirements txt after removal we should organise the gui buttons in a better way we can reduce the windows size and increase text size for improved accessibility | 1 |

1,752 | 6,574,969,094 | IssuesEvent | 2017-09-11 14:38:47 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | declare a "port" parameter for os_security_group_rule | affects_2.3 cloud feature_idea openstack waiting_on_maintainer | ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

os_security_group_rule

I end up with a lot of "os_security_group_rule:" statements that have:

port_range_max: "{{ item }}"

port_range_min: "{{ item }}"

For rules where only a single port is needed. Is there some reason not to define a "port:" parameter that, when passed, sets both port_range_min and port_range_max to that value?

That should pass the right things down the chain through shade to openstack.

| True | declare a "port" parameter for os_security_group_rule - ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

os_security_group_rule

I end up with a lot of "os_security_group_rule:" statements that have:

port_range_max: "{{ item }}"

port_range_min: "{{ item }}"

For rules where only a single port is needed. Is there some reason not to define a "port:" parameter that, when passed, sets both port_range_min and port_range_max to that value?

That should pass the right things down the chain through shade to openstack.

| main | declare a port parameter for os security group rule issue type feature idea component name os security group rule i end up with a lot of os security group rule statements that have port range max item port range min item for rules where only a single port is needed is there some reason not to define a port parameter that if that has been passed set port range min and port range max to that value that should pass the right things down the chain through shade to openstack | 1 |
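
The requested behaviour could look something like the sketch below. It is not the actual `os_security_group_rule` implementation: the helper name `resolve_port_range` is hypothetical, and treating `port` as mutually exclusive with the two range parameters is an assumption made for this illustration.

```python
def resolve_port_range(port=None, port_range_min=None, port_range_max=None):
    """Return (min, max) for a rule, expanding a single `port` if given."""
    if port is not None:
        # Assumption: mixing `port` with an explicit range is treated as an error.
        if port_range_min is not None or port_range_max is not None:
            raise ValueError(
                "port is mutually exclusive with port_range_min/port_range_max"
            )
        return port, port
    # No shorthand given: pass the explicit range through unchanged.
    return port_range_min, port_range_max
```

With such a helper, a task that only needs one port would pass `port: "{{ item }}"` once instead of repeating the value for both ends of the range.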

383,722 | 26,562,627,931 | IssuesEvent | 2023-01-20 17:06:58 | unibz-core/Scior-Tester | https://api.github.com/repos/unibz-core/Scior-Tester | opened | Review and update execution instruction documentations | documentation | @mozzherina, please review and update the execution instruction documentations:

1) https://github.com/unibz-core/Scior-Tester/blob/main/documentation/Scior-Tester-Build.md#execution-instructions

2) https://github.com/unibz-core/Scior-Tester/blob/main/documentation/Scior-Tester-Test1.md#execution-instructions

3) https://github.com/unibz-core/Scior-Tester/blob/main/documentation/Scior-Tester-Test2.md#execution-instructions | 1.0 | Review and update execution instruction documentations - @mozzherina, please review and update the execution instruction documentations:

1) https://github.com/unibz-core/Scior-Tester/blob/main/documentation/Scior-Tester-Build.md#execution-instructions

2) https://github.com/unibz-core/Scior-Tester/blob/main/documentation/Scior-Tester-Test1.md#execution-instructions

3) https://github.com/unibz-core/Scior-Tester/blob/main/documentation/Scior-Tester-Test2.md#execution-instructions | non_main | review and update execution instruction documentations mozzherina please review and update the execution instruction documentations | 0 |

694,799 | 23,830,352,243 | IssuesEvent | 2022-09-05 19:50:25 | themotte/rDrama | https://api.github.com/repos/themotte/rDrama | opened | 2FA may be malfunctioning via Google Authenticator? | bug P2 priority | So far there's two people I know of who have tried 2FA. One reported it worked, one reported it didn't. I dunno what's going on there. See https://www.themotte.org/post/20/a-writeup-on-the-reason-the/1039?context=8#context | 1.0 | 2FA may be malfunctioning via Google Authenticator? - So far there's two people I know of who have tried 2FA. One reported it worked, one reported it didn't. I dunno what's going on there. See https://www.themotte.org/post/20/a-writeup-on-the-reason-the/1039?context=8#context | non_main | may be malfunctioning via google authenticator so far there s two people i know of who have tried one reported it worked one reported it didn t i dunno what s going on there see | 0 |

2,691 | 9,396,179,707 | IssuesEvent | 2019-04-08 06:20:06 | RalfKoban/MiKo-Analyzers | https://api.github.com/repos/RalfKoban/MiKo-Analyzers | closed | Methods that return IEnumerable should never return null | Area: analyzer Area: maintainability feature review | Methods that return `IEnumerable` are expected to be used in `foreach` loops or `Linq` queries.

As it's completely unexpected for developers to get a `NullReferenceException` or `ArgumentNullException` being thrown at such place, such situation should be avoided.

To avoid those situations, such methods are _**NOT**_ allowed to return `null`. | True | Methods that return IEnumerable should never return null - Methods that return `IEnumerable` are expected to be used in `foreach` loops or `Linq` queries.

As it's completely unexpected for developers to get a `NullReferenceException` or `ArgumentNullException` being thrown at such place, such situation should be avoided.

To avoid those situations, such methods are _**NOT**_ allowed to return `null`. | main | methods that return ienumerable should never return null methods that return ienumerable are expected to be used in foreach loops or linq queries as it s completely unexpected for developers to get a nullreferenceexception or argumentnullexception being thrown at such place such situation should be avoided to avoid those situations such methods are not allowed to return null | 1 |
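
The rule in this record can be illustrated with a small sketch. It is written in Python rather than C#, so it is only an analogy for the idea (return an empty sequence, never a null value) and not the analyzer's implementation. The function names `find_tags_bad`, `find_tags`, and `count_tags` are hypothetical.

```python
from typing import Iterable, Optional


def find_tags_bad(text: str) -> Optional[Iterable[str]]:
    """Anti-pattern: returns None when nothing is found."""
    tags = [word for word in text.split() if word.startswith("#")]
    return tags or None  # callers that iterate the result will fail


def find_tags(text: str) -> Iterable[str]:
    """Preferred: always returns an iterable, possibly an empty one."""
    return [word for word in text.split() if word.startswith("#")]


def count_tags(tags: Iterable[str]) -> int:
    """Typical caller: iterates without checking for None first."""
    return sum(1 for _ in tags)


if __name__ == "__main__":
    # An empty result iterates without any special handling.
    print(count_tags(find_tags("no tags here")))
    try:
        count_tags(find_tags_bad("no tags here"))
    except TypeError as error:
        # Python's counterpart to the unexpected null exception in C#.
        print("iterating None failed:", error)
```

The caller never has to guard against a missing value, which is the same expectation developers have of `foreach` loops and `Linq` queries.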

5,278 | 26,671,726,137 | IssuesEvent | 2023-01-26 10:52:28 | beyarkay/eskom-calendar | https://api.github.com/repos/beyarkay/eskom-calendar | closed | Schedule missing for Mangaung | bug waiting-on-maintainer missing-area-schedule | Power appears to be provided by Centlec: https://www.centlec.co.za/LoadShedding/LoadSheddingDocuments with the schedule available [here](https://www.centlec.co.za/LoadShedding/ViewFile?filePath=wwwroot%2FUploadedFiles%2FLoadShedding%2FCENTLEC%20Load%20Shedding%20Schedule%202022%20Updated_245d.pdf). | True | Schedule missing for Mangaung - Power appears to be provided by Centlec: https://www.centlec.co.za/LoadShedding/LoadSheddingDocuments with the schedule available [here](https://www.centlec.co.za/LoadShedding/ViewFile?filePath=wwwroot%2FUploadedFiles%2FLoadShedding%2FCENTLEC%20Load%20Shedding%20Schedule%202022%20Updated_245d.pdf). | main | schedule missing for mangaung power appears to be provided by centlec with the schedule available | 1 |

419,155 | 12,218,290,662 | IssuesEvent | 2020-05-01 19:00:55 | vz-risk/VCDB | https://api.github.com/repos/vz-risk/VCDB | opened | Nine million logs of Brits' road journeys spill onto the internet from password-less number-plate camera dashboard | Breach Error Priority 2020 | https://www.theregister.co.uk/2020/04/28/anpr_sheffield_council/ | 1.0 | Nine million logs of Brits' road journeys spill onto the internet from password-less number-plate camera dashboard - https://www.theregister.co.uk/2020/04/28/anpr_sheffield_council/ | non_main | nine million logs of brits road journeys spill onto the internet from password less number plate camera dashboard | 0 |

68,857 | 8,357,293,065 | IssuesEvent | 2018-10-02 21:05:53 | AndrewOkonar/5cube | https://api.github.com/repos/AndrewOkonar/5cube | closed | Kasino | Design | <b>Manager Name:</b> Zkladov

<b>Client Name:</b> Carlos

<b>Contact with manager:</b> Slack

<b>Contact with client: Slack</b> (Zkladov) | 1.0 | Kasino - <b>Manager Name:</b> Zkladov

<b>Client Name:</b> Carlos

<b>Contact with manager:</b> Slack

<b>Contact with client: Slack</b> (Zkladov) | non_main | kasino manager name zkladov client name carlos contact with manager slack contact with client slack zkladov | 0 |

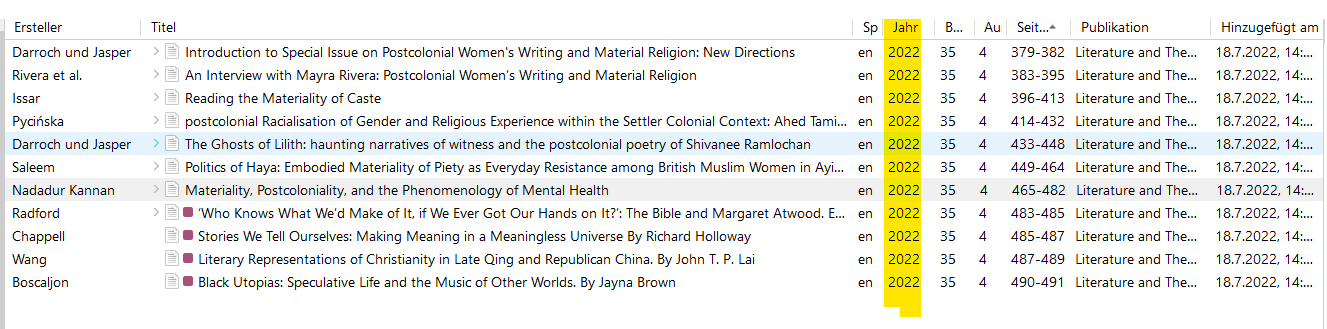

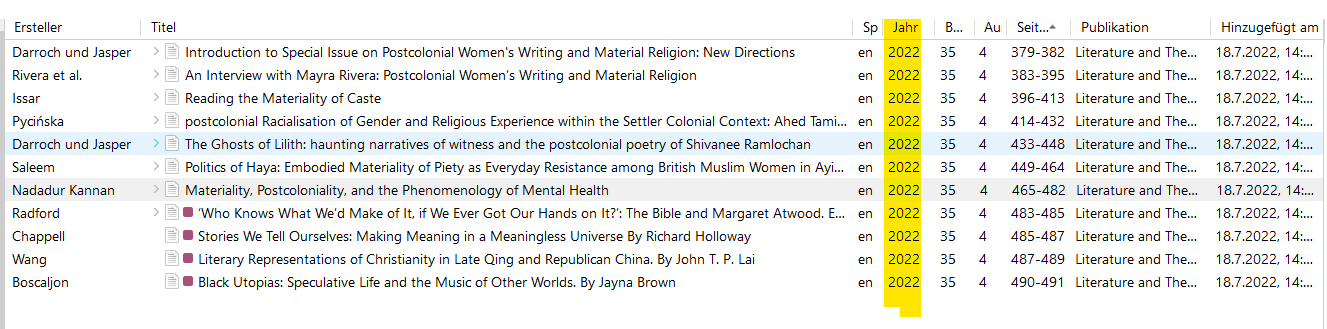

270,448 | 23,509,802,950 | IssuesEvent | 2022-08-18 15:30:14 | ubtue/DatenProbleme | https://api.github.com/repos/ubtue/DatenProbleme | closed | ISSN 0269-1205 | Literature and Theology (Oxford) | Berichtsjahr | ready for testing Zotero_SEMI-AUTO | #### URL

https://academic.oup.com/litthe/issue/35/4

#### Import-Translator

Single and multiple import:

ubtue_Oxford Academic.js

### Problem description

The issue has **2021** as its reporting year. However, the online publication year is imported as the year everywhere:

| 1.0 | ISSN 0269-1205 | Literature and Theology (Oxford) | Berichtsjahr - #### URL

https://academic.oup.com/litthe/issue/35/4

#### Import-Translator

Single and multiple import:

ubtue_Oxford Academic.js

### Problem description

The issue has **2021** as its reporting year. However, the online publication year is imported as the year everywhere:

| non_main | issn literature and theology oxford berichtsjahr url import translator einzel und mehrfachimport ubtue oxford academic js problembeschreibung das heft hat als berichtsjahr allerdings wird überall als jahr das erscheinungsjahr online importiert | 0 |

2,112 | 7,187,125,235 | IssuesEvent | 2018-02-02 03:05:29 | Microsoft/DirectXMath | https://api.github.com/repos/Microsoft/DirectXMath | closed | Remove 17.1 compiler support | maintainence | When the Xbox One XDK platform drops support for the 17.1 compiler, I can remove one remaining adapter:

- Remove `XM_CTOR_DEFAULT` adapter and replace it with `=default`

- The ``XMMatrixMultiply`` and ``XMMatrixMultiplyTranspose`` implementation also have a workaround for compilers prior to VS 2013 | True | Remove 17.1 compiler support - When the Xbox One XDK platform drops support for the 17.1 compiler, I can remove one remaining adapter:

- Remove `XM_CTOR_DEFAULT` adapter and replace it with `=default`

- The ``XMMatrixMultiply`` and ``XMMatrixMultiplyTranspose`` implementation also have a workaround for compilers prior to VS 2013 | main | remove compiler support when the xbox one xdk platform drops support for the compiler i can remove one remaining adapter remove xm ctor default adapter and replace it with default the xmmatrixmultiply and xmmatrixmultiplytranspose implementation also have a workaround for compilers prior to vs | 1 |

475,982 | 13,731,539,624 | IssuesEvent | 2020-10-05 01:25:18 | iragm/fishauctions | https://api.github.com/repos/iragm/fishauctions | closed | Add breederboard showing top breeders | priority | Two I would like to see are most lots posted and greatest diversity of fish posted. | 1.0 | Add breederboard showing top breeders - Two I would like to see are most lots posted and greatest diversity of fish posted. | non_main | add breederboard showing top breeders two i would like to see are most lots posted and greatest diversity of fish posted | 0 |

6,456 | 2,588,156,728 | IssuesEvent | 2015-02-17 23:00:04 | PresConsUIUC/PSAP | https://api.github.com/repos/PresConsUIUC/PSAP | closed | Delete unused FIDG images | priority-low task | Not urgent at all, but FYI:

The code I wrote that imports FIDG content into the application is able to compile a list of unused images. These are images that exist somewhere inside the FormatIDGuide-HTML folder, but are never referenced in img tags, and thus aren't showing up anywhere. Here is the current list.

Feel free to delete them if you know they are no longer needed.

I'm not assigning this to anyone or any milestone in particular, and it's OK if it never gets done. This is a rainy-day, "I'm so bored I could almost do this" task.
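
The check described above (images that exist on disk but are never referenced in an `img` tag) could be sketched roughly as follows. This is not the project's actual import code, which is not shown here. The function name `find_unused_images`, the extension list, and the assumption that each `src` value is a path relative to its HTML file are all illustrative.

```python
import os
from html.parser import HTMLParser
from pathlib import Path
from typing import List, Set

# Assumed set of image extensions; compared case-insensitively.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif"}


class ImgSrcCollector(HTMLParser):
    """Collects the src attribute of every img tag fed to the parser."""

    def __init__(self) -> None:
        super().__init__()
        self.sources: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            for name, value in attrs:
                if name == "src" and value:
                    self.sources.append(value)


def find_unused_images(root: Path) -> List[Path]:
    """Return image files under root that no HTML file under root references."""
    referenced: Set[str] = set()
    for html_file in root.rglob("*.html"):
        collector = ImgSrcCollector()
        collector.feed(html_file.read_text(encoding="utf-8", errors="ignore"))
        for src in collector.sources:
            # Assumption: src is relative to the directory of the HTML file.
            referenced.add(os.path.normpath(html_file.parent / src))

    images = [
        path
        for path in root.rglob("*")
        if path.is_file() and path.suffix.lower() in IMAGE_EXTENSIONS
    ]
    return sorted(p for p in images if os.path.normpath(p) not in referenced)
```

Calling `find_unused_images(Path("FormatIDGuide-HTML"))` would then produce a list comparable to the one in this issue, under the assumptions stated above.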

(Ignore everything before FormatIDGuide-HTML in the paths)

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/2inORAudioLarge.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/audiotape-or-quarter3.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/cylinder-moldy.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/cylinder-wax-brown-damaged1.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/cylinder-wax-brown-damaged1@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/dvd_thumb_150x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/film-16mm-magstock.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/film-16mm-magstock@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/Film-Gauges.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/film-polyester-lightpiping-ucla.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/film-polyester-lightpiping-ucla@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/filmcore-ucla.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/filmcore-ucla@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/filmreel-wcan-ucla.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/filmreel-wcan-ucla@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/large_plasticCyl.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/large_waxCyl.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/magnetictape-shedonguide.JPG

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/magnetictape-shedonhead.JPG

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/magnetictape-shedonhead@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/polyesterfilm-lightpipe-ucla.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/polyesterfilm-lightpipe-ucla@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/quarterInchAudio_Thumb_150.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/record-12in-lacquerdisc.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/record-12in-vinyldisc.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/record-45rpm-slystone.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/record-45rpm-slystone@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/record-edisondiamonddisc.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/record-lacquer-scale1.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/record-lacquer-scale1@2x.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/avmedia/images/UNC_Safetyfilm.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/papersbooks/images/binding-periodical-sidesewn-flatback1a_cropped.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/papersbooks/images/binding-sidesewn-marbling1b_cropped.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/papersbooks/images/binding-sidesewn-marbling1c_cropped.jpg

/Volumes/Data/alexd/Projects/psap/db/seed_data/FormatIDGuide-HTML/profiles/papersbooks/images/book-24310_BT_02_SpineTear_cropped.jpg