Unnamed: 0 (int64, 1 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 3 to 438) | labels (string, length 4 to 308) | body (string, length 7 to 254k) | index (string, 7 classes) | text_combine (string, length 96 to 254k) | label (string, 2 classes) | text (string, length 96 to 246k) | binary_label (int64, 0 or 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

190 | 2,810,555,581 | IssuesEvent | 2015-05-17 00:21:36 | jenkinsci/slack-plugin | https://api.github.com/repos/jenkinsci/slack-plugin | opened | Releasing Milestone 1.8 | maintainer communication | I think a release is overdue. I'm currently working on a release as well as documenting how I do a release. Pull request to follow for release preparation. This pull request will be closed when the release is completed.

This covers all pull requests merged since PR #46. | True | Releasing Milestone 1.8 - I think a release is overdue. I'm currently working on a release as well as documenting how I do a release. Pull request to follow for release preparation. This pull request will be closed when the release is completed.

This covers all pull requests merged since PR #46. | main | releasing milestone i think a release is overdue i m currently working on a release as well as documenting how i do a release pull request to follow for release preparation this pull request will be closed when the release is completed this covers all pull requests merged since pr | 1 |

2,404 | 8,528,807,827 | IssuesEvent | 2018-11-03 03:47:15 | TabbycatDebate/tabbycat | https://api.github.com/repos/TabbycatDebate/tabbycat | opened | Separate feedback questions for different adj types | awaiting maintainer enhancement | As discussed, this separation might be quite light and not involve fundamental changes to the model. Instead, differentiation could be largely handled on the front-end. | True | Separate feedback questions for different adj types - As discussed, this separation might be quite light and not involve fundamental changes to the model. Instead, differentiation could be largely handled on the front-end. | main | separate feedback questions for different adj types as discussed this separation might be quite light and not involve fundamental changes to the model instead differentiation could be largely handled on the front end | 1 |

5,167 | 26,287,624,089 | IssuesEvent | 2023-01-08 01:49:26 | NIAEFEUP/website-niaefeup-backend | https://api.github.com/repos/NIAEFEUP/website-niaefeup-backend | closed | dto: transition to JMapper API | maintainability | As discussed in #20, JMapper should be investigated as to if it is a good option to replace our current Dto code. The objectives for the new implementation would be: fewer reflective operations and a cleaner implementation.

Depending on how JMapper generates mappers, a caching strategy should be considered. | True | dto: transition to JMapper API - As discussed in #20, JMapper should be investigated as to if it is a good option to replace our current Dto code. The objectives for the new implementation would be: fewer reflective operations and a cleaner implementation.

Depending on how JMapper generates mappers, a caching strategy should be considered. | main | dto transition to jmapper api as discussed in jmapper should be investigated as to if it is a good option to replace our current dto code the objectives for the new implementation would be fewer reflective operations and a cleaner implementation depending on how jmapper generates mappers a caching strategy should be considered | 1 |

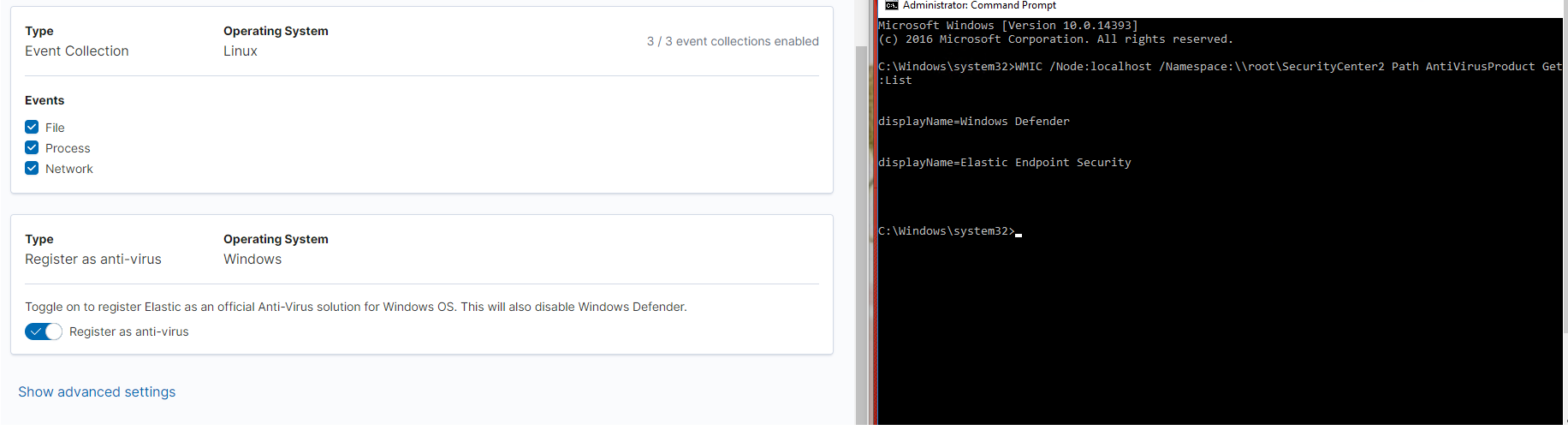

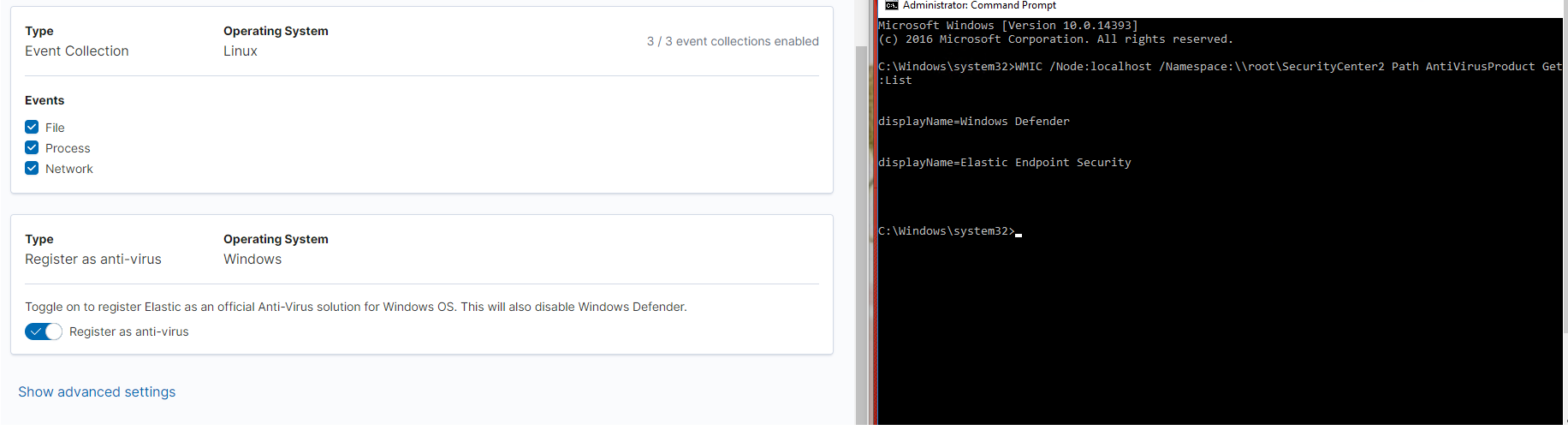

61,089 | 14,614,361,109 | IssuesEvent | 2020-12-22 09:46:40 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [Security Solution] Rebranded of "Elastic Endpoint Security" under security services. | Team: SecuritySolution bug | **Describe the bug**

Rebranded of "Elastic Endpoint Security" under security services

**Build Details:**

```

Platform: Staging

Version: 8.0-SNAPSHOT

Commit: a76c666a6153b062d2f9d97b5c7e87620339a181

Build number: 39157

Artifact: https://artifacts-api.elastic.co/v1/search/8.0-SNAPSHOT

```

**Browser Details**

All

**Preconditions**

1. Cloud environment on staging should exist.

2. Endpoint should be deployed with Security Integration installed.

**Steps to Reproduce**

1. Navigate to Kibana URL on Browser.

2. Click on the "Administration" tab under Security from the left navigation bar.

3. Go to the policy and enable the Register as Antivirus toggle.

4. Observe that instead of Endpoint Security, 'Elastic Endpoint Security' is displaying for the security service.

```

Command used:

WMIC /Node:localhost /Namespace:\\root\SecurityCenter2 Path AntiVirusProduct Get displayName /Format:List

```

**Test data**

N/A

**Impacted Test case(s)**

N/A

**Actual Result**

Rebranded of "Elastic Endpoint Security" under security services is not done.

**Expected Result**

Rebranded of "Elastic Endpoint Security" under security services should be done.

**What's Working**

N/A

**What's not Working**

N/A

**Screenshots**

**Logs**

N/A | True | [Security Solution] Rebranded of "Elastic Endpoint Security" under security services. - **Describe the bug**

Rebranded of "Elastic Endpoint Security" under security services

**Build Details:**

```

Platform: Staging

Version: 8.0-SNAPSHOT

Commit: a76c666a6153b062d2f9d97b5c7e87620339a181

Build number: 39157

Artifact: https://artifacts-api.elastic.co/v1/search/8.0-SNAPSHOT

```

**Browser Details**

All

**Preconditions**

1. Cloud environment on staging should exist.

2. Endpoint should be deployed with Security Integration installed.

**Steps to Reproduce**

1. Navigate to Kibana URL on Browser.

2. Click on the "Administration" tab under Security from the left navigation bar.

3. Go to the policy and enable the Register as Antivirus toggle.

4. Observe that instead of Endpoint Security, 'Elastic Endpoint Security' is displaying for the security service.

```

Command used:

WMIC /Node:localhost /Namespace:\\root\SecurityCenter2 Path AntiVirusProduct Get displayName /Format:List

```

**Test data**

N/A

**Impacted Test case(s)**

N/A

**Actual Result**

Rebranded of "Elastic Endpoint Security" under security services is not done.

**Expected Result**

Rebranded of "Elastic Endpoint Security" under security services should be done.

**What's Working**

N/A

**What's not Working**

N/A

**Screenshots**

**Logs**

N/A | non_main | rebranded of elastic endpoint security under security services describe the bug rebranded of elastic endpoint security under security services build details platform staging version snapshot commit build number artifact browser details all preconditions cloud environment on staging should exist endpoint should be deployed with security integration installed steps to reproduce navigate to kibana url on browser click on the administration tab under security from the left navigation bar go to the policy and enable the register as antivirus toggle observe that instead of endpoint security elastic endpoint security is displaying for the security service command used wmic node localhost namespace root path antivirusproduct get displayname format list test data n a impacted test case s n a actual result rebranded of elastic endpoint security under security services is not done expected result rebranded of elastic endpoint security under security services should be done what s working n a what s not working n a screenshots logs n a | 0 |

242,514 | 18,667,429,511 | IssuesEvent | 2021-10-30 03:43:13 | eraware/dnn-elements | https://api.github.com/repos/eraware/dnn-elements | closed | Add a demo/sample project | documentation | [enhancement request]

For folks (like me) trying to understand a new project and see the effect, it would be nice to at least have screen shots of these components to get a feel for what the base UI/appearance is.

Ideally there would be a sample/test application to see/exercise all these components with a minimum of effort for lazy folks (like me) browsing around.

I understand having a running demo might require hosting fees, but maybe you could have a demo (or one for each component?) run on https://www.typescriptlang.org/play or one of the similar online environments.

Love this idea for sure. I may even try to add some of my own?! | 1.0 | Add a demo/sample project - [enhancement request]

For folks (like me) trying to understand a new project and see the effect, it would be nice to at least have screen shots of these components to get a feel for what the base UI/appearance is.

Ideally there would be a sample/test application to see/exercise all these components with a minimum of effort for lazy folks (like me) browsing around.

I understand having a running demo might require hosting fees, but maybe you could have a demo (or one for each component?) run on https://www.typescriptlang.org/play or one of the similar online environments.

Love this idea for sure. I may even try to add some of my own?! | non_main | add a demo sample project for folks like me trying to understand a new project and see the effect it would be nice to at least have screen shots of these components to get a feel for what the base ui appearance is ideally there would be a sample test application to see exercise all these components with a minimum of effort for lazy folks like me browsing around i understand having a running demo might require hosting fees but maybe you could have a demo or one for each component run on or one of the similar online environments love this idea for sure i may even try to add some of my own | 0 |

3,457 | 13,224,627,048 | IssuesEvent | 2020-08-17 19:30:54 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | Tooltip is not showing text when in modal | status: needs triage 🕵️♀️ status: waiting for maintainer response 💬 type: bug 🐛 | ## Tooltip is not showing text when in modal

## What package(s) are you using?

<!--

Add an x in one of the options below, for example:

- [x] package name

-->

- [x] `carbon-components`

- [x] `carbon-components-react`

## Detailed description

> Describe in detail the issue you're having.

When I'm using a tooltip in a modal, I noticed that the text does not show when I click on the tooltip icon as shown below:

Tooltip shows up fine though when it's not in a modal. I'm thinking that it's possibly a z-index issue.

> Is this issue related to a specific component?

`Tooltip`

> What did you expect to happen? What happened instead? What would you like to

> see changed?

Tooltip text should show when being clicked in modal.

> What browser are you working in?

Google Chrome

> What version of the Carbon Design System are you using?

"carbon-components": "10.16.0",

"carbon-components-react": "7.16.0",

> What offering/product do you work on? Any pressing ship or release dates we

> should be aware of?

`IBM Cloud Catalog`

| True | Tooltip is not showing text when in modal - ## Tooltip is not showing text when in modal

## What package(s) are you using?

<!--

Add an x in one of the options below, for example:

- [x] package name

-->

- [x] `carbon-components`

- [x] `carbon-components-react`

## Detailed description

> Describe in detail the issue you're having.

When I'm using a tooltip in a modal, I noticed that the text does not show when I click on the tooltip icon as shown below:

Tooltip shows up fine though when it's not in a modal. I'm thinking that it's possibly a z-index issue.

> Is this issue related to a specific component?

`Tooltip`

> What did you expect to happen? What happened instead? What would you like to

> see changed?

Tooltip text should show when being clicked in modal.

> What browser are you working in?

Google Chrome

> What version of the Carbon Design System are you using?

"carbon-components": "10.16.0",

"carbon-components-react": "7.16.0",

> What offering/product do you work on? Any pressing ship or release dates we

> should be aware of?

`IBM Cloud Catalog`

| main | tooltip is not showing text when in modal tooltip is not showing text when in modal what package s are you using add an x in one of the options below for example package name carbon components carbon components react detailed description describe in detail the issue you re having when i m using a tooltip in a modal i noticed that the text does not show when i click on the tooltip icon as shown below tooltip shows up fine though when it s not in a modal i m thinking that it s possibly a z index issue is this issue related to a specific component tooltip what did you expect to happen what happened instead what would you like to see changed tooltip text should show when being clicked in modal what browser are you working in google chrome what version of the carbon design system are you using carbon components carbon components react what offering product do you work on any pressing ship or release dates we should be aware of ibm cloud catalog | 1 |

3,774 | 15,864,476,235 | IssuesEvent | 2021-04-08 13:50:14 | heroku/heroku-buildpack-python | https://api.github.com/repos/heroku/heroku-buildpack-python | closed | Add caching to identify new deployments vs existing applications | maintainability-issue | Currently there's no defined way to tell new apps apart from existing ones. Caching this info would enable a lot of refactoring | True | Add caching to identify new deployments vs existing applications - Currently there's no defined way to tell new apps apart from existing ones. Caching this info would enable a lot of refactoring | main | add caching to identify new deployments vs existing applications currently there s no defined way to tell new apps apart from existing ones caching this info would enable a lot of refactoring | 1 |

1,375 | 5,954,778,306 | IssuesEvent | 2017-05-27 21:15:58 | caskroom/homebrew-cask | https://api.github.com/repos/caskroom/homebrew-cask | closed | Outdated cask: Paste | awaiting maintainer feedback | Running `brew cask install paste` fails due to `curl` receiving a 404 Not Found when attempting to fetch the download URL in [the cask](https://github.com/caskroom/homebrew-cask/blob/master/Casks/paste.rb). I even tried to use earlier incarnations of the cask that previously worked for me and they now do not.

I guess for some reason it just disappeared from the [HockeyApp](https://www.hockeyapp.net/) API. I have no idea why that would happen. Not really brew's fault, I know. But am I correct in my understanding that the HockeyApp account being used is something you all control as infrastructure? Never used it before, but that's what I gather from their docs and I see it used in multiple casks.

The newest version of [Paste](http://pasteapp.me/) is "Version 2.2.1 (34)".

#### Debugging info

<details><summary>Output of the command with `--verbose --debug`</summary>

```sh

sholladay$ brew cask install paste --verbose --debug

==> Hbc::Installer#install

==> Printing caveats

==> Hbc::Installer#fetch

==> Downloading

==> Downloading https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip

==> Calling curl with args ["https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip", "-C", "0", "-o", "#<Pathname:/Users/sholladay/Library/Caches/Homebrew/Cask/paste--2.2.1,6.incomplete>"]

/usr/bin/curl --remote-time --location --user-agent Homebrew/1.2.1-92-ge931fee7 (Macintosh; Intel Mac OS X 10.12.4) curl/7.51.0 --fail https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip -C 0 -o /Users/sholladay/Library/Caches/Homebrew/Cask/paste--2.2.1,6.incomplete

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- --:--:-- --:--:-- 0

curl: (22) The requested URL returned error: 404 Not Found

Error: Download failed on Cask 'paste' with message: Download failed: https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip

The incomplete download is cached at /Users/sholladay/Library/Caches/Homebrew/Cask/paste--2.2.1,6.incomplete

Error: nothing to install/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli/install.rb:17:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli/abstract_command.rb:34:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:98:in `run_command'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:149:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:132:in `run'

/usr/local/Homebrew/Library/Homebrew/cmd/cask.rb:8:in `cask'

/usr/local/Homebrew/Library/Homebrew/brew.rb:93:in `<main>'

Error: Kernel.exit

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:154:in `exit'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:154:in `rescue in run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:140:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:132:in `run'

/usr/local/Homebrew/Library/Homebrew/cmd/cask.rb:8:in `cask'

/usr/local/Homebrew/Library/Homebrew/brew.rb:93:in `<main>'

```

</details>

<details><summary>Output of `brew doctor`</summary>

```

Your system is ready to brew.

```

</details>

<details><summary>Output of `brew cask doctor`</summary>

```sh

==> Homebrew-Cask Version

Homebrew-Cask 1.2.1

caskroom/homebrew-cask (git revision 33118; last commit 2017-05-24)

==> Homebrew-Cask Install Location

<NONE>

==> Homebrew-Cask Staging Location

/usr/local/Caskroom

==> Homebrew-Cask Cached Downloads

~/Library/Caches/Homebrew/Cask (13 files, 663.4MB)

==> Homebrew-Cask Taps:

/usr/local/Homebrew/Library/Taps/caskroom/homebrew-cask (3617 casks)

/usr/local/Homebrew/Library/Taps/homebrew/homebrew-core (0 casks)

/usr/local/Homebrew/Library/Taps/homebrew/homebrew-services (0 casks)

==> Contents of $LOAD_PATH

/usr/local/Homebrew/Library/Homebrew/cask/lib

/usr/local/Homebrew/Library/Homebrew

/Library/Ruby/Site/2.0.0

/Library/Ruby/Site/2.0.0/x86_64-darwin16

/Library/Ruby/Site/2.0.0/universal-darwin16

/Library/Ruby/Site

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby/2.0.0

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby/2.0.0/x86_64-darwin16

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby/2.0.0/universal-darwin16

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/2.0.0

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/2.0.0/x86_64-darwin16

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/2.0.0/universal-darwin16

==> Environment Variables

LANG="en_US.UTF-8"

PATH="/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/local/Homebrew/Library/Taps/homebrew/homebrew-services/cmd:/usr/local/Homebrew/Library/Homebrew/shims/scm"

SHELL="/usr/local/bin/bash"

```

</details> | True | Outdated cask: Paste - Running `brew cask install paste` fails due to `curl` receiving a 404 Not Found when attempting to fetch the download URL in [the cask](https://github.com/caskroom/homebrew-cask/blob/master/Casks/paste.rb). I even tried to use earlier incarnations of the cask that previously worked for me and they now do not.

I guess for some reason it just disappeared from the [HockeyApp](https://www.hockeyapp.net/) API. I have no idea why that would happen. Not really brew's fault, I know. But am I correct in my understanding that the HockeyApp account being used is something you all control as infrastructure? Never used it before, but that's what I gather from their docs and I see it used in multiple casks.

The newest version of [Paste](http://pasteapp.me/) is "Version 2.2.1 (34)".

#### Debugging info

<details><summary>Output of the command with `--verbose --debug`</summary>

```sh

sholladay$ brew cask install paste --verbose --debug

==> Hbc::Installer#install

==> Printing caveats

==> Hbc::Installer#fetch

==> Downloading

==> Downloading https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip

==> Calling curl with args ["https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip", "-C", "0", "-o", "#<Pathname:/Users/sholladay/Library/Caches/Homebrew/Cask/paste--2.2.1,6.incomplete>"]

/usr/bin/curl --remote-time --location --user-agent Homebrew/1.2.1-92-ge931fee7 (Macintosh; Intel Mac OS X 10.12.4) curl/7.51.0 --fail https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip -C 0 -o /Users/sholladay/Library/Caches/Homebrew/Cask/paste--2.2.1,6.incomplete

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- --:--:-- --:--:-- 0

curl: (22) The requested URL returned error: 404 Not Found

Error: Download failed on Cask 'paste' with message: Download failed: https://rink.hockeyapp.net/api/2/apps/ee24d1a939cd4ff8b2861eb8c788a995/app_versions/6?format=zip

The incomplete download is cached at /Users/sholladay/Library/Caches/Homebrew/Cask/paste--2.2.1,6.incomplete

Error: nothing to install/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli/install.rb:17:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli/abstract_command.rb:34:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:98:in `run_command'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:149:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:132:in `run'

/usr/local/Homebrew/Library/Homebrew/cmd/cask.rb:8:in `cask'

/usr/local/Homebrew/Library/Homebrew/brew.rb:93:in `<main>'

Error: Kernel.exit

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:154:in `exit'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:154:in `rescue in run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:140:in `run'

/usr/local/Homebrew/Library/Homebrew/cask/lib/hbc/cli.rb:132:in `run'

/usr/local/Homebrew/Library/Homebrew/cmd/cask.rb:8:in `cask'

/usr/local/Homebrew/Library/Homebrew/brew.rb:93:in `<main>'

```

</details>

<details><summary>Output of `brew doctor`</summary>

```

Your system is ready to brew.

```

</details>

<details><summary>Output of `brew cask doctor`</summary>

```sh

==> Homebrew-Cask Version

Homebrew-Cask 1.2.1

caskroom/homebrew-cask (git revision 33118; last commit 2017-05-24)

==> Homebrew-Cask Install Location

<NONE>

==> Homebrew-Cask Staging Location

/usr/local/Caskroom

==> Homebrew-Cask Cached Downloads

~/Library/Caches/Homebrew/Cask (13 files, 663.4MB)

==> Homebrew-Cask Taps:

/usr/local/Homebrew/Library/Taps/caskroom/homebrew-cask (3617 casks)

/usr/local/Homebrew/Library/Taps/homebrew/homebrew-core (0 casks)

/usr/local/Homebrew/Library/Taps/homebrew/homebrew-services (0 casks)

==> Contents of $LOAD_PATH

/usr/local/Homebrew/Library/Homebrew/cask/lib

/usr/local/Homebrew/Library/Homebrew

/Library/Ruby/Site/2.0.0

/Library/Ruby/Site/2.0.0/x86_64-darwin16

/Library/Ruby/Site/2.0.0/universal-darwin16

/Library/Ruby/Site

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby/2.0.0

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby/2.0.0/x86_64-darwin16

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby/2.0.0/universal-darwin16

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/vendor_ruby

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/2.0.0

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/2.0.0/x86_64-darwin16

/System/Library/Frameworks/Ruby.framework/Versions/2.0/usr/lib/ruby/2.0.0/universal-darwin16

==> Environment Variables

LANG="en_US.UTF-8"

PATH="/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/local/Homebrew/Library/Taps/homebrew/homebrew-services/cmd:/usr/local/Homebrew/Library/Homebrew/shims/scm"

SHELL="/usr/local/bin/bash"

```

</details> | main | outdated cask paste running brew cask install paste fails due to curl receiving a not found when attempting to fetch the download url in i even tried to use earlier incarnations of the cask that previously worked for me and they now do not i guess for some reason it just disappeared from the api i have no idea why that would happen not really brew s fault i know but am i correct in my understanding that the hockeyapp account being used is something you all control as infrastructure never used it before but that s what i gather from their docs and i see it used in multiple casks the newest version of is version debugging info output of the command with verbose debug sh sholladay brew cask install paste verbose debug hbc installer install printing caveats hbc installer fetch downloading downloading calling curl with args usr bin curl remote time location user agent homebrew macintosh intel mac os x curl fail c o users sholladay library caches homebrew cask paste incomplete total received xferd average speed time time time current dload upload total spent left speed curl the requested url returned error not found error download failed on cask paste with message download failed the incomplete download is cached at users sholladay library caches homebrew cask paste incomplete error nothing to install usr local homebrew library homebrew cask lib hbc cli install rb in run usr local homebrew library homebrew cask lib hbc cli abstract command rb in run usr local homebrew library homebrew cask lib hbc cli rb in run command usr local homebrew library homebrew cask lib hbc cli rb in run usr local homebrew library homebrew cask lib hbc cli rb in run usr local homebrew library homebrew cmd cask rb in cask usr local homebrew library homebrew brew rb in error kernel exit usr local homebrew library homebrew cask lib hbc cli rb in exit usr local homebrew library homebrew cask lib hbc cli rb in rescue in run usr local homebrew library homebrew cask lib hbc cli rb 
in run usr local homebrew library homebrew cask lib hbc cli rb in run usr local homebrew library homebrew cmd cask rb in cask usr local homebrew library homebrew brew rb in output of brew doctor your system is ready to brew output of brew cask doctor sh homebrew cask version homebrew cask caskroom homebrew cask git revision last commit homebrew cask install location homebrew cask staging location usr local caskroom homebrew cask cached downloads library caches homebrew cask files homebrew cask taps usr local homebrew library taps caskroom homebrew cask casks usr local homebrew library taps homebrew homebrew core casks usr local homebrew library taps homebrew homebrew services casks contents of load path usr local homebrew library homebrew cask lib usr local homebrew library homebrew library ruby site library ruby site library ruby site universal library ruby site system library frameworks ruby framework versions usr lib ruby vendor ruby system library frameworks ruby framework versions usr lib ruby vendor ruby system library frameworks ruby framework versions usr lib ruby vendor ruby universal system library frameworks ruby framework versions usr lib ruby vendor ruby system library frameworks ruby framework versions usr lib ruby system library frameworks ruby framework versions usr lib ruby system library frameworks ruby framework versions usr lib ruby universal environment variables lang en us utf path usr local sbin usr local bin usr sbin usr bin sbin bin usr local homebrew library taps homebrew homebrew services cmd usr local homebrew library homebrew shims scm shell usr local bin bash | 1 |

470,542 | 13,540,153,650 | IssuesEvent | 2020-09-16 14:19:53 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Add completion support for Object constructor expression | Area/LanguageServer Points/1 Priority/High SwanLakeDump Team/Tooling Type/Task | **Description:**

$title, as per related to #25643 | 1.0 | Add completion support for Object constructor expression - **Description:**

$title, as per related to #25643 | non_main | add completion support for object constructor expression description title as per related to | 0 |

1,246 | 5,308,978,080 | IssuesEvent | 2017-02-12 04:03:07 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | vmware_guest.py OS customization | affects_2.2 cloud feature_idea vmware waiting_on_maintainer | ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

vmware_guest.py

##### ANSIBLE VERSION

```

ansible 2.2.0

```

##### CONFIGURATION

Default configuration

##### OS / ENVIRONMENT

N/A

##### SUMMARY

This module should support ip address, subnet, gateway and dns settings.

Network settings should be allowed to set per interface.

```

def deploy_template(self, poweron=False, wait_for_ip=False):

# FIXME:

# - clusters

# - multiple datacenters

# - resource pools

# - multiple templates by the same name

# - use disk config from template by default

# - static IPs

```
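A minimal sketch of what per-interface network settings could look like, assuming IPv4 only. The `InterfaceSettings` class and `validate_interface` function are hypothetical names used for illustration; they are not part of `vmware_guest.py` or of any VMware API.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class InterfaceSettings:
    """Hypothetical container for the settings requested per interface."""
    name: str
    ip: str
    netmask: str
    gateway: Optional[str] = None
    dns_servers: List[str] = field(default_factory=list)


def validate_interface(settings: InterfaceSettings) -> ipaddress.IPv4Interface:
    """Check that the address, netmask, gateway and DNS entries are consistent."""
    # Raises ValueError if the address or netmask is malformed.
    iface = ipaddress.IPv4Interface(f"{settings.ip}/{settings.netmask}")
    if settings.gateway is not None:
        gateway = ipaddress.IPv4Address(settings.gateway)
        # A gateway outside the interface's subnet is almost always a typo.
        if gateway not in iface.network:
            raise ValueError(
                f"{settings.name}: gateway {gateway} is outside {iface.network}"
            )
    for server in settings.dns_servers:
        ipaddress.IPv4Address(server)  # Raises ValueError on a bad entry.
    return iface
```

Validating each interface before the customization spec is built would let the module fail early with a readable message instead of failing during guest customization.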

##### STEPS TO REPRODUCE

N/A

##### EXPECTED RESULTS

N/A

##### ACTUAL RESULTS

```

N/A

```

| True | vmware_guest.py OS customization - ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

vmware_guest.py

##### ANSIBLE VERSION

```

ansible 2.2.0

```

##### CONFIGURATION

Default configuration

##### OS / ENVIRONMENT

N/A

##### SUMMARY

This module should support ip address, subnet, gateway and dns settings.

Network settings should be allowed to set per interface.

```

def deploy_template(self, poweron=False, wait_for_ip=False):

# FIXME:

# - clusters

# - multiple datacenters

# - resource pools

# - multiple templates by the same name

# - use disk config from template by default

# - static IPs

```

##### STEPS TO REPRODUCE

N/A

##### EXPECTED RESULTS

N/A

##### ACTUAL RESULTS

```

N/A

```

| main | vmware guest py os customization issue type feature idea component name vmware guest py ansible version ansible configuration default configuration os environment n a summary this module should support ip address subnet gateway and dns settings network settings should be allowed to set per interface def deploy template self poweron false wait for ip false fixme clusters multiple datacenters resource pools multiple templates by the same name use disk config from template by default static ips steps to reproduce n a expected results n a actual results n a | 1 |

5,781 | 30,635,346,749 | IssuesEvent | 2023-07-24 17:19:25 | microsoft/mu_basecore | https://api.github.com/repos/microsoft/mu_basecore | closed | [Bug]: SMMUv3 IORT HTTU Override flag needs to be expanded | state:needs-triage type:bug state:needs-maintainer-feedback urgency:low | ### Is there an existing issue for this?

- [X] I have searched existing issues

### Current Behavior

The flag for HTTU override in an SMMUv3 node in the IORT table is currently defined in MU_BASECORE/MdePkg/Include/IndustryStandard/IoRemappingTable.h:63 as:

```

#define EFI_ACPI_IORT_SMMUv3_FLAG_HTTU_OVERRIDE BIT1

```

### Expected Behavior

The length of this field is actually 2 bits. Possible values are:

0b0000: Hardware update of the Access flag and dirty state are not supported.

0b0001: Support for hardware update of the Access flag for Block and Page descriptors.

0b0010: As 0b0001, and adds support for hardware update of the Access flag for Block and Page descriptors. Hardware update of dirty state is supported.

For more info see:

- Arm System Memory Management Unit Architecture Specification

- Hardware Updates to Access Flag and Dirty State in the Armv8.1-A architecture
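To illustrate why a single-bit define loses information, here is a small sketch in Python. It assumes the 2-bit field starts at the bit currently covered by `BIT1`, i.e. bits [2:1] of the flags word; the constant and function names are hypothetical and do not come from the header.

```python
# Hypothetical names. Assumption: the HTTU Override field occupies
# bits [2:1] of the SMMUv3 node flags (starting at the current BIT1).
HTTU_OVERRIDE_SHIFT = 1
HTTU_OVERRIDE_MASK = 0b11 << HTTU_OVERRIDE_SHIFT

HTTU_MEANINGS = {
    0b00: "no hardware update of Access flag or dirty state",
    0b01: "hardware update of Access flag",
    0b10: "hardware update of Access flag and dirty state",
}


def decode_httu_override(flags: int) -> str:
    """Extract the 2-bit HTTU Override field and describe its value."""
    value = (flags & HTTU_OVERRIDE_MASK) >> HTTU_OVERRIDE_SHIFT
    return HTTU_MEANINGS.get(value, "reserved")


def single_bit_view(flags: int) -> bool:
    """What a test against BIT1 alone would report."""
    return bool(flags & (1 << 1))
```

With a field value of `0b10`, a check against `BIT1` alone reports the override as unset, while the 2-bit decode reports Access flag and dirty state support.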

### Steps To Reproduce

N/A

### Build Environment

```markdown

N/A

```

### Version Information

```text

v2023020002.1.4

```

### Urgency

Low

### Are you going to fix this?

I will fix it

### Do you need maintainer feedback?

Maintainer feedback requested

### Anything else?

_No response_ | True | [Bug]: SMMUv3 IORT HTTU Override flag needs to be expanded - ### Is there an existing issue for this?

- [X] I have searched existing issues

### Current Behavior

The flag for HTTU override in an SMMUv3 node in the IORT table is currently defined in MU_BASECORE/MdePkg/Include/IndustryStandard/IoRemappingTable.h:63 as:

```

#define EFI_ACPI_IORT_SMMUv3_FLAG_HTTU_OVERRIDE BIT1

```

### Expected Behavior

The length of this field is actually 2 bits. Possible values are:

0b0000: Hardware update of the Access flag and dirty state are not supported.

0b0001: Support for hardware update of the Access flag for Block and Page descriptors.

0b0010: As 0b0001, and adds support for hardware update of dirty state.

For more info see:

- Arm System Memory Management Unit Architecture Specification

- Hardware Updates to Access Flag and Dirty State in the Armv8.1-A architecture

### Steps To Reproduce

N/A

### Build Environment

```markdown

N/A

```

### Version Information

```text

v2023020002.1.4

```

### Urgency

Low

### Are you going to fix this?

I will fix it

### Do you need maintainer feedback?

Maintainer feedback requested

### Anything else?

_No response_ | main | iort httu override flag needs to be expanded is there an existing issue for this i have searched existing issues current behavior the flag for httu override in an node in the iort table is currently define in mu basecore mdepkg include industrystandard ioremappingtable h as define efi acpi iort flag httu override expected behavior the length of this field is actually bits possible values are hardware update of the access flag and dirty state are not supported support for hardware update of the access flag for block and page descriptors as and adds support for hardware update of the access flag for block and page descriptors hardware update of dirty state is supported for more info see arm system memory management unit architecture specification hardware updates to access flag and dirty state in the a architecture steps to reproduce n a build environment markdown n a version information text urgency low are you going to fix this i will fix it do you need maintainer feedback maintainer feedback requested anything else no response | 1 |

4,623 | 23,930,781,364 | IssuesEvent | 2022-09-10 14:07:21 | cncf/glossary | https://api.github.com/repos/cncf/glossary | closed | The custom search uses old indexes | maintainers | Hi, I found that several search index data were old and didn't reflect the actual path format. ref) https://github.com/cncf/glossary/pull/922

For example, currently `Shift Left` page is on `/shift-left`, but the search result links to `/shift_left`. | True | The custom search uses old indexes - Hi, I found that several search index data were old and didn't reflect the actual path format. ref) https://github.com/cncf/glossary/pull/922

For example, currently `Shift Left` page is on `/shift-left`, but the search result links to `/shift_left`. | main | the custom search uses old indexes hi i found that several search index data were old and didn t reflect the actual path format ref for example currently shift left page is on shift left but the search result links to shift left | 1 |

1,856 | 6,577,402,365 | IssuesEvent | 2017-09-12 00:39:53 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | os_router: HA interfaces break os_router module. | affects_2.0 bug_report cloud openstack waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

os_router.py

##### ANSIBLE VERSION

```

ansible 2.0.1.0

```

##### OS / ENVIRONMENT

NA

##### SUMMARY

The HA ports cause issues when deleting a router through this module.

This means that any router updates or deletions through this module will fail.

Currently, for updates, the code retrieves all internal interfaces of a router (including the HA ports), then tries to delete them.

See:

https://github.com/ansible/ansible-modules-core/blob/devel/cloud/openstack/os_router.py#L330

The principle is the same for deletion.

However, neutron does not allow these interfaces to be deleted and will throw an error on any such attempt.
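
One possible direction, sketched here under stated assumptions, is to skip HA ports when collecting the interfaces to remove. The sketch assumes each port is a dict with a `device_owner` key and that Neutron marks L3 HA ports with `network:router_ha_interface`. The helper name `removable_interfaces` is hypothetical and is not part of the os_router module.

```python
# Assumption: Neutron tags L3 HA ports with this device_owner value.
HA_DEVICE_OWNER = "network:router_ha_interface"


def removable_interfaces(ports):
    """Return only the ports that are not HA ports.

    `ports` is a list of dicts shaped like Neutron port records; ports
    without a device_owner key are kept.
    """
    return [p for p in ports if p.get("device_owner") != HA_DEVICE_OWNER]
```

Filtering before the delete loop would leave the HA ports untouched, so the update path would not ask Neutron to remove interfaces it refuses to delete.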

##### STEPS TO REPRODUCE

1. Create a router using the os_router module on an environment running Neutron L3HA using the VRRP protocol (I'm unsure about DVR).

2. Update its configuration.

3. Re-run the playbooks. They will fail when trying to delete the HA ports.

##### EXPECTED RESULTS

The playbooks will fail to run.

| True | os_router: HA interfaces break os_router module. - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

os_router.py

##### ANSIBLE VERSION

```

ansible 2.0.1.0

```

##### OS / ENVIRONMENT

NA

##### SUMMARY

The HA ports cause issues when deleting a router through this module.

This means that any router updates or deletions through this module will fail.

Currently, for updates, the code retrieves all internal interfaces of a router (including the HA ports), then tries to delete them.

See:

https://github.com/ansible/ansible-modules-core/blob/devel/cloud/openstack/os_router.py#L330

The principle is the same for deletion.

However, neutron does not allow these interfaces to be deleted and will throw an error on any such attempt.

##### STEPS TO REPRODUCE

1. Create a router using the os_router module on an environment running Neutron L3HA using the VRRP protocol (I'm unsure about DVR).

2. Update its configuration.

3. Re-run the playbooks. They will fail when trying to delete the HA ports.

##### EXPECTED RESULTS

The playbooks will fail to run.

| main | os router ha interfaces break os router module issue type bug report component name os router py ansible version ansible os environment na summary the ha ports cause issues when deleting a router through this module this means that any router updates or deletions through this module will fail currently for updates the code retrieves all internal interfaces of a router including the ha ports then tries to delete them see the principle is the same for deletion however neutron does not allow these interfaces to be deleted and will throw an error on any such attempt steps to reproduce create a router using the os router module on an environment running neutron using the vrrp protocol i m unsure about dvr update it s configurations re run the playbooks they will fail when trying to delete the ha ports expected results the playbooks will fail to run | 1 |

4,823 | 24,857,939,086 | IssuesEvent | 2022-10-27 05:15:40 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Bug: sam invoke local - Docker 404 Client Error: Not Found | type/question blocked/more-info-needed maintainer/need-followup | ### Description:

I'm having a hard time trying to locally invoke Lambda code with the hello-world templates.

### Steps to reproduce:

`sam init -r python3.8`

`1`

`1`

`N`

`sam-app-py38`

`cd sam-app-py38`

`sam build --debug`

2022-10-19 19:05:12,888 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:05:12,888 | Using config file: samconfig.toml, config environment: default

2022-10-19 19:05:12,888 | Expand command line arguments to:

2022-10-19 19:05:12,888 | --template_file=/PATH_TO_SAM_APP/template.yml --build_dir=.aws-sam/build --cache_dir=.aws-sam/cache

2022-10-19 19:05:13,127 | 'build' command is called

2022-10-19 19:05:13,127 | Template is not provided in context, skip adding project type metric

2022-10-19 19:05:13,154 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '5dab619d-ddc3-435c-a390-8deddd4ff231', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': '109eed1c-a0cf-4170-a22a-0b43307498a9', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam build', 'metricSpecificAttributes': {'gitOrigin': None, 'projectName': '894b4894943a1373a55cb1b7c0a8aa33530234fd6f4de1e562bc37c62c949767', 'initialCommit': None}, 'duration': 265, 'exitReason': 'TemplateNotFoundException', 'exitCode': 1}}]}

2022-10-19 19:05:13,853 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

Error: Template file not found at /PATH_TO_SAM_APP/template.yml

afrigerio@Alessandros-MacBook-Pro lambdas % cd sam-app-py38

afrigerio@Alessandros-MacBook-Pro sam-app-py38 % sam build --debug

2022-10-19 19:06:07,009 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:06:07,009 | Using config file: samconfig.toml, config environment: default

2022-10-19 19:06:07,009 | Expand command line arguments to:

2022-10-19 19:06:07,009 | --template_file=/PATH_TO_SAM_APP/sam-app-py38/template.yaml --build_dir=.aws-sam/build --cache_dir=.aws-sam/cache

2022-10-19 19:06:07,144 | 'build' command is called

2022-10-19 19:06:07,147 | No Parameters detected in the template

2022-10-19 19:06:07,158 | There is no customer defined id or cdk path defined for resource HelloWorldFunction, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,158 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,158 | 0 stacks found in the template

2022-10-19 19:06:07,158 | No Parameters detected in the template

2022-10-19 19:06:07,163 | There is no customer defined id or cdk path defined for resource HelloWorldFunction, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,164 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,164 | 2 resources found in the stack

2022-10-19 19:06:07,164 | Found Serverless function with name='HelloWorldFunction' and CodeUri='hello_world/'

2022-10-19 19:06:07,164 | --base-dir is not presented, adjusting uri hello_world/ relative to /PATH_TO_SAM_APP/sam-app-py38/template.yaml

2022-10-19 19:06:07,166 | Your template contains a resource with logical ID "ServerlessRestApi", which is a reserved logical ID in AWS SAM. It could result in unexpected behaviors and is not recommended.

2022-10-19 19:06:07,166 | 2 resources found in the stack

2022-10-19 19:06:07,166 | Found Serverless function with name='HelloWorldFunction' and CodeUri='hello_world/'

2022-10-19 19:06:07,167 | Instantiating build definitions

2022-10-19 19:06:07,167 | No previous build graph found, generating new one

2022-10-19 19:06:07,167 | Unique function build definition found, adding as new (Function Build Definition: BuildDefinition(python3.8, /PATH_TO_SAM_APP/sam-app-py38/hello_world, Zip, , 4a7df146-0be6-426a-b355-2e43bc6fce03, {}, {}, x86_64, []), Function: Function(function_id='HelloWorldFunction', name='HelloWorldFunction', functionname='HelloWorldFunction', runtime='python3.8', memory=None, timeout=3, handler='app.lambda_handler', imageuri=None, packagetype='Zip', imageconfig=None, codeuri='/PATH_TO_SAM_APP/sam-app-py38/hello_world', environment=None, rolearn=None, layers=[], events={'HelloWorld': {'Type': 'Api', 'Properties': {'Path': '/hello', 'Method': 'get', 'RestApiId': 'ServerlessRestApi'}}}, metadata={'SamResourceId': 'HelloWorldFunction'}, inlinecode=None, codesign_config_arn=None, architectures=['x86_64'], function_url_config=None, stack_path=''))

2022-10-19 19:06:07,168 | Building codeuri: /PATH_TO_SAM_APP/sam-app-py38/hello_world runtime: python3.8 metadata: {} architecture: x86_64 functions: HelloWorldFunction

2022-10-19 19:06:07,168 | Building to following folder /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction

2022-10-19 19:06:07,168 | Loading workflow module 'aws_lambda_builders.workflows'

2022-10-19 19:06:07,172 | Registering workflow 'PythonPipBuilder' with capability 'Capability(language='python', dependency_manager='pip', application_framework=None)'

2022-10-19 19:06:07,173 | Registering workflow 'NodejsNpmBuilder' with capability 'Capability(language='nodejs', dependency_manager='npm', application_framework=None)'

2022-10-19 19:06:07,174 | Registering workflow 'RubyBundlerBuilder' with capability 'Capability(language='ruby', dependency_manager='bundler', application_framework=None)'

2022-10-19 19:06:07,176 | Registering workflow 'GoModulesBuilder' with capability 'Capability(language='go', dependency_manager='modules', application_framework=None)'

2022-10-19 19:06:07,178 | Registering workflow 'JavaGradleWorkflow' with capability 'Capability(language='java', dependency_manager='gradle', application_framework=None)'

2022-10-19 19:06:07,179 | Registering workflow 'JavaMavenWorkflow' with capability 'Capability(language='java', dependency_manager='maven', application_framework=None)'

2022-10-19 19:06:07,181 | Registering workflow 'DotnetCliPackageBuilder' with capability 'Capability(language='dotnet', dependency_manager='cli-package', application_framework=None)'

2022-10-19 19:06:07,182 | Registering workflow 'CustomMakeBuilder' with capability 'Capability(language='provided', dependency_manager=None, application_framework=None)'

2022-10-19 19:06:07,183 | Registering workflow 'NodejsNpmEsbuildBuilder' with capability 'Capability(language='nodejs', dependency_manager='npm-esbuild', application_framework=None)'

2022-10-19 19:06:07,183 | Found workflow 'PythonPipBuilder' to support capabilities 'Capability(language='python', dependency_manager='pip', application_framework=None)'

2022-10-19 19:06:07,198 | Running workflow 'PythonPipBuilder'

2022-10-19 19:06:07,199 | Running PythonPipBuilder:ResolveDependencies

2022-10-19 19:06:07,216 | calling pip download -r /PATH_TO_SAM_APP/sam-app-py38/hello_world/requirements.txt --dest /var/folders/7s/02wlz_dx27q5f_hjqbrsjdsr0000gn/T/tmpm3ffmr1d --exists-action i

2022-10-19 19:06:07,782 | Full dependency closure: {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | initial compatible: {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | initial incompatible: set()

2022-10-19 19:06:07,783 | Downloading missing wheels: set()

2022-10-19 19:06:07,783 | compatible wheels after second download pass: {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Build missing wheels from sdists (C compiling True): set()

2022-10-19 19:06:07,783 | compatible after building wheels (no C compiling): {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Build missing wheels from sdists (C compiling False): set()

2022-10-19 19:06:07,783 | compatible after building wheels (C compiling): {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Final compatible: {idna==3.4(wheel), urllib3==1.26.12(wheel), requests==2.28.1(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Final incompatible: set()

2022-10-19 19:06:07,783 | Final missing wheels: set()

2022-10-19 19:06:07,797 | PythonPipBuilder:ResolveDependencies succeeded

2022-10-19 19:06:07,797 | Running PythonPipBuilder:CopySource

2022-10-19 19:06:07,798 | Copying source file (/PATH_TO_SAM_APP/sam-app-py38/hello_world/requirements.txt) to destination (/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction/requirements.txt)

2022-10-19 19:06:07,798 | Copying source file (/PATH_TO_SAM_APP/sam-app-py38/hello_world/__init__.py) to destination (/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction/__init__.py)

2022-10-19 19:06:07,798 | Copying source file (/PATH_TO_SAM_APP/sam-app-py38/hello_world/app.py) to destination (/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction/app.py)

2022-10-19 19:06:07,798 | PythonPipBuilder:CopySource succeeded

2022-10-19 19:06:07,799 | There is no customer defined id or cdk path defined for resource HelloWorldFunction, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,799 | 2 resources found in the stack

2022-10-19 19:06:07,799 | Found Serverless function with name='HelloWorldFunction' and CodeUri='hello_world/'

Build Succeeded

Built Artifacts : .aws-sam/build

Built Template : .aws-sam/build/template.yaml

Commands you can use next

[*] Validate SAM template: sam validate

[*] Invoke Function: sam local invoke

[*] Test Function in the Cloud: sam sync --stack-name {stack-name} --watch

[*] Deploy: sam deploy --guided

2022-10-19 19:06:07,826 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:06:07,826 | Unable to find Click Context for getting session_id.

2022-10-19 19:06:07,827 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '7ac7183d-5134-44d2-a310-1ee8d30ece10', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': 'b549e766-17a5-4641-b0de-d53095b082cb', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam build', 'metricSpecificAttributes': {'projectType': 'CFN', 'gitOrigin': None, 'projectName': '0fdcacdf0a5a5c982672247f3c17ee797bd2e5c80105099d05b5329699391655', 'initialCommit': None}, 'duration': 816, 'exitReason': 'success', 'exitCode': 0}}]}

2022-10-19 19:06:07,827 | Sending Telemetry: {'metrics': [{'events': {'requestId': 'dd4677f9-d7d4-4ebb-9214-aa1aebc9712c', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': 'b549e766-17a5-4641-b0de-d53095b082cb', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'metricSpecificAttributes': {'events': [{'event_name': 'BuildWorkflowUsed', 'event_value': 'python-pip', 'thread_id': 4376790400, 'time_stamp': '2022-10-19 17:06:07.168'}, {'event_name': 'BuildFunctionRuntime', 'event_value': 'python3.8', 'thread_id': 4376790400, 'time_stamp': '2022-10-19 17:06:07.801'}]}}}]}

2022-10-19 19:06:08,521 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

2022-10-19 19:06:08,522 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

`sam local invoke --debug`

2022-10-19 19:15:55,258 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:15:55,258 | Using config file: samconfig.toml, config environment: default

2022-10-19 19:15:55,258 | Expand command line arguments to:

2022-10-19 19:15:55,258 | --template_file=/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/template.yaml --event=events/event.json --no_event --layer_cache_basedir=/Users/afrigerio/.aws-sam/layers-pkg --container_host=localhost --container_host_interface=127.0.0.1

2022-10-19 19:15:55,258 | local invoke command is called

2022-10-19 19:15:55,261 | No Parameters detected in the template

2022-10-19 19:15:55,270 | Sam customer defined id is more priority than other IDs. Customer defined id for resource HelloWorldFunction is HelloWorldFunction

2022-10-19 19:15:55,270 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:15:55,270 | 0 stacks found in the template

2022-10-19 19:15:55,270 | No Parameters detected in the template

2022-10-19 19:15:55,275 | Sam customer defined id is more priority than other IDs. Customer defined id for resource HelloWorldFunction is HelloWorldFunction

2022-10-19 19:15:55,275 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:15:55,276 | 2 resources found in the stack

2022-10-19 19:15:55,276 | Found Serverless function with name='HelloWorldFunction' and CodeUri='HelloWorldFunction'

2022-10-19 19:15:55,276 | --base-dir is not presented, adjusting uri HelloWorldFunction relative to /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/template.yaml

2022-10-19 19:15:55,299 | Found one Lambda function with name 'HelloWorldFunction'

2022-10-19 19:15:55,299 | Invoking app.lambda_handler (python3.8)

2022-10-19 19:15:55,299 | No environment variables found for function 'HelloWorldFunction'

2022-10-19 19:15:55,299 | Loading AWS credentials from session with profile 'None'

2022-10-19 19:15:55,304 | Resolving code path. Cwd=/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build, CodeUri=/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction

2022-10-19 19:15:55,304 | Resolved absolute path to code is /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction

2022-10-19 19:15:55,305 | Code /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction is not a zip/jar file

2022-10-19 19:15:55,307 | Cleaning all decompressed code dirs

2022-10-19 19:15:55,333 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '94dcccda-e42d-4b06-b446-ad4bcc6fe6c3', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': 'b2d0e00e-d549-46e3-bab5-0cd6a21e9883', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam local invoke', 'metricSpecificAttributes': {'projectType': 'CFN', 'gitOrigin': None, 'projectName': '0fdcacdf0a5a5c982672247f3c17ee797bd2e5c80105099d05b5329699391655', 'initialCommit': None}, 'duration': 75, 'exitReason': 'NotFound', 'exitCode': 255}}]}

2022-10-19 19:15:56,064 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

Traceback (most recent call last):

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/docker/api/client.py", line 261, in _raise_for_status

response.raise_for_status()

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/requests/models.py", line 943, in raise_for_status

raise HTTPError(http_error_msg, response=self)

requests.exceptions.HTTPError: 404 Client Error: Not Found for url: http+docker://localhost/v1.35/images/public.ecr.aws/sam/emulation-python3.8:rapid-1.60.0-x86_64/json

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/opt/homebrew/bin/sam", line 8, in <module>

sys.exit(cli())

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/core.py", line 829, in __call__

return self.main(*args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/core.py", line 782, in main

rv = self.invoke(ctx)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/core.py", line 1259, in invoke

return _process_result(sub_ctx.command.invoke(sub_ctx))

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/core.py", line 1259, in invoke

return _process_result(sub_ctx.command.invoke(sub_ctx))

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/core.py", line 1066, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/core.py", line 610, in invoke

return callback(*args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/decorators.py", line 73, in new_func

return ctx.invoke(f, obj, *args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/click/core.py", line 610, in invoke

return callback(*args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/lib/telemetry/metric.py", line 176, in wrapped

raise exception # pylint: disable=raising-bad-type

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/lib/telemetry/metric.py", line 126, in wrapped

return_value = func(*args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/lib/utils/version_checker.py", line 41, in wrapped

actual_result = func(*args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/cli/main.py", line 86, in wrapper

return func(*args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/commands/local/invoke/cli.py", line 85, in cli

do_cli(

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/commands/local/invoke/cli.py", line 182, in do_cli

context.local_lambda_runner.invoke(

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/commands/local/lib/local_lambda.py", line 137, in invoke

self.local_runtime.invoke(

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/lib/telemetry/metric.py", line 240, in wrapped_func

return_value = func(*args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/local/lambdafn/runtime.py", line 177, in invoke

container = self.create(function_config, debug_context, container_host, container_host_interface)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/local/lambdafn/runtime.py", line 73, in create

container = LambdaContainer(

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/local/docker/lambda_container.py", line 93, in __init__

image = LambdaContainer._get_image(

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/local/docker/lambda_container.py", line 236, in _get_image

return lambda_image.build(runtime, packagetype, image, layers, architecture, function_name=function_name)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/samcli/local/docker/lambda_image.py", line 145, in build

self.docker_client.images.get(image_tag)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/docker/models/images.py", line 316, in get

return self.prepare_model(self.client.api.inspect_image(name))

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/docker/utils/decorators.py", line 19, in wrapped

return f(self, resource_id, *args, **kwargs)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/docker/api/image.py", line 245, in inspect_image

return self._result(

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/docker/api/client.py", line 267, in _result

self._raise_for_status(response)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/docker/api/client.py", line 263, in _raise_for_status

raise create_api_error_from_http_exception(e)

File "/opt/homebrew/Cellar/aws-sam-cli/1.60.0/libexec/lib/python3.8/site-packages/docker/errors.py", line 31, in create_api_error_from_http_exception

raise cls(e, response=response, explanation=explanation)

docker.errors.NotFound: 404 Client Error: Not Found ("id not found")

### Expected result:

I was expecting the result of the Lambda execution. I get similar output if I try

`sam local start-api`

and then

`curl http://127.0.0.1:3000/hello`

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: macOS Monterey (M1)

2. `sam --version`: SAM CLI, version 1.60.0

3. AWS region: eu-central-1

| True | Bug: sam invoke local - Docker 404 Client Error: Not Found - ### Description:

I'm having a hard time trying to locally invoke Lambda code with the hello-world templates.

### Steps to reproduce:

`sam init -r python3.8`

`1`

`1`

`N`

`sam-app-py38`

`cd sam-app-py38`

`sam build --debug`

2022-10-19 19:05:12,888 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:05:12,888 | Using config file: samconfig.toml, config environment: default

2022-10-19 19:05:12,888 | Expand command line arguments to:

2022-10-19 19:05:12,888 | --template_file=/PATH_TO_SAM_APP/template.yml --build_dir=.aws-sam/build --cache_dir=.aws-sam/cache

2022-10-19 19:05:13,127 | 'build' command is called

2022-10-19 19:05:13,127 | Template is not provided in context, skip adding project type metric

2022-10-19 19:05:13,154 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '5dab619d-ddc3-435c-a390-8deddd4ff231', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': '109eed1c-a0cf-4170-a22a-0b43307498a9', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam build', 'metricSpecificAttributes': {'gitOrigin': None, 'projectName': '894b4894943a1373a55cb1b7c0a8aa33530234fd6f4de1e562bc37c62c949767', 'initialCommit': None}, 'duration': 265, 'exitReason': 'TemplateNotFoundException', 'exitCode': 1}}]}

2022-10-19 19:05:13,853 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

Error: Template file not found at /PATH_TO_SAM_APP/template.yml

afrigerio@Alessandros-MacBook-Pro lambdas % cd sam-app-py38

afrigerio@Alessandros-MacBook-Pro sam-app-py38 % sam build --debug

2022-10-19 19:06:07,009 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:06:07,009 | Using config file: samconfig.toml, config environment: default

2022-10-19 19:06:07,009 | Expand command line arguments to:

2022-10-19 19:06:07,009 | --template_file=/PATH_TO_SAM_APP/sam-app-py38/template.yaml --build_dir=.aws-sam/build --cache_dir=.aws-sam/cache

2022-10-19 19:06:07,144 | 'build' command is called

2022-10-19 19:06:07,147 | No Parameters detected in the template

2022-10-19 19:06:07,158 | There is no customer defined id or cdk path defined for resource HelloWorldFunction, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,158 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,158 | 0 stacks found in the template

2022-10-19 19:06:07,158 | No Parameters detected in the template

2022-10-19 19:06:07,163 | There is no customer defined id or cdk path defined for resource HelloWorldFunction, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,164 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,164 | 2 resources found in the stack

2022-10-19 19:06:07,164 | Found Serverless function with name='HelloWorldFunction' and CodeUri='hello_world/'

2022-10-19 19:06:07,164 | --base-dir is not presented, adjusting uri hello_world/ relative to /PATH_TO_SAM_APP/sam-app-py38/template.yaml

2022-10-19 19:06:07,166 | Your template contains a resource with logical ID "ServerlessRestApi", which is a reserved logical ID in AWS SAM. It could result in unexpected behaviors and is not recommended.

2022-10-19 19:06:07,166 | 2 resources found in the stack

2022-10-19 19:06:07,166 | Found Serverless function with name='HelloWorldFunction' and CodeUri='hello_world/'

2022-10-19 19:06:07,167 | Instantiating build definitions

2022-10-19 19:06:07,167 | No previous build graph found, generating new one

2022-10-19 19:06:07,167 | Unique function build definition found, adding as new (Function Build Definition: BuildDefinition(python3.8, /PATH_TO_SAM_APP/sam-app-py38/hello_world, Zip, , 4a7df146-0be6-426a-b355-2e43bc6fce03, {}, {}, x86_64, []), Function: Function(function_id='HelloWorldFunction', name='HelloWorldFunction', functionname='HelloWorldFunction', runtime='python3.8', memory=None, timeout=3, handler='app.lambda_handler', imageuri=None, packagetype='Zip', imageconfig=None, codeuri='/PATH_TO_SAM_APP/sam-app-py38/hello_world', environment=None, rolearn=None, layers=[], events={'HelloWorld': {'Type': 'Api', 'Properties': {'Path': '/hello', 'Method': 'get', 'RestApiId': 'ServerlessRestApi'}}}, metadata={'SamResourceId': 'HelloWorldFunction'}, inlinecode=None, codesign_config_arn=None, architectures=['x86_64'], function_url_config=None, stack_path=''))

2022-10-19 19:06:07,168 | Building codeuri: /PATH_TO_SAM_APP/sam-app-py38/hello_world runtime: python3.8 metadata: {} architecture: x86_64 functions: HelloWorldFunction

2022-10-19 19:06:07,168 | Building to following folder /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction

2022-10-19 19:06:07,168 | Loading workflow module 'aws_lambda_builders.workflows'

2022-10-19 19:06:07,172 | Registering workflow 'PythonPipBuilder' with capability 'Capability(language='python', dependency_manager='pip', application_framework=None)'

2022-10-19 19:06:07,173 | Registering workflow 'NodejsNpmBuilder' with capability 'Capability(language='nodejs', dependency_manager='npm', application_framework=None)'

2022-10-19 19:06:07,174 | Registering workflow 'RubyBundlerBuilder' with capability 'Capability(language='ruby', dependency_manager='bundler', application_framework=None)'

2022-10-19 19:06:07,176 | Registering workflow 'GoModulesBuilder' with capability 'Capability(language='go', dependency_manager='modules', application_framework=None)'

2022-10-19 19:06:07,178 | Registering workflow 'JavaGradleWorkflow' with capability 'Capability(language='java', dependency_manager='gradle', application_framework=None)'

2022-10-19 19:06:07,179 | Registering workflow 'JavaMavenWorkflow' with capability 'Capability(language='java', dependency_manager='maven', application_framework=None)'

2022-10-19 19:06:07,181 | Registering workflow 'DotnetCliPackageBuilder' with capability 'Capability(language='dotnet', dependency_manager='cli-package', application_framework=None)'

2022-10-19 19:06:07,182 | Registering workflow 'CustomMakeBuilder' with capability 'Capability(language='provided', dependency_manager=None, application_framework=None)'

2022-10-19 19:06:07,183 | Registering workflow 'NodejsNpmEsbuildBuilder' with capability 'Capability(language='nodejs', dependency_manager='npm-esbuild', application_framework=None)'

2022-10-19 19:06:07,183 | Found workflow 'PythonPipBuilder' to support capabilities 'Capability(language='python', dependency_manager='pip', application_framework=None)'

2022-10-19 19:06:07,198 | Running workflow 'PythonPipBuilder'

2022-10-19 19:06:07,199 | Running PythonPipBuilder:ResolveDependencies

2022-10-19 19:06:07,216 | calling pip download -r /PATH_TO_SAM_APP/sam-app-py38/hello_world/requirements.txt --dest /var/folders/7s/02wlz_dx27q5f_hjqbrsjdsr0000gn/T/tmpm3ffmr1d --exists-action i

2022-10-19 19:06:07,782 | Full dependency closure: {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | initial compatible: {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | initial incompatible: set()

2022-10-19 19:06:07,783 | Downloading missing wheels: set()

2022-10-19 19:06:07,783 | compatible wheels after second download pass: {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Build missing wheels from sdists (C compiling True): set()

2022-10-19 19:06:07,783 | compatible after building wheels (no C compiling): {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Build missing wheels from sdists (C compiling False): set()

2022-10-19 19:06:07,783 | compatible after building wheels (C compiling): {urllib3==1.26.12(wheel), requests==2.28.1(wheel), idna==3.4(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Final compatible: {idna==3.4(wheel), urllib3==1.26.12(wheel), requests==2.28.1(wheel), certifi==2022.9.24(wheel), charset-normalizer==2.1.1(wheel)}

2022-10-19 19:06:07,783 | Final incompatible: set()

2022-10-19 19:06:07,783 | Final missing wheels: set()

2022-10-19 19:06:07,797 | PythonPipBuilder:ResolveDependencies succeeded

2022-10-19 19:06:07,797 | Running PythonPipBuilder:CopySource

2022-10-19 19:06:07,798 | Copying source file (/PATH_TO_SAM_APP/sam-app-py38/hello_world/requirements.txt) to destination (/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction/requirements.txt)

2022-10-19 19:06:07,798 | Copying source file (/PATH_TO_SAM_APP/sam-app-py38/hello_world/__init__.py) to destination (/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction/__init__.py)

2022-10-19 19:06:07,798 | Copying source file (/PATH_TO_SAM_APP/sam-app-py38/hello_world/app.py) to destination (/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction/app.py)

2022-10-19 19:06:07,798 | PythonPipBuilder:CopySource succeeded

2022-10-19 19:06:07,799 | There is no customer defined id or cdk path defined for resource HelloWorldFunction, so we will use the resource logical id as the resource id

2022-10-19 19:06:07,799 | 2 resources found in the stack

2022-10-19 19:06:07,799 | Found Serverless function with name='HelloWorldFunction' and CodeUri='hello_world/'

Build Succeeded

Built Artifacts : .aws-sam/build

Built Template : .aws-sam/build/template.yaml

Commands you can use next

[*] Validate SAM template: sam validate

[*] Invoke Function: sam local invoke

[*] Test Function in the Cloud: sam sync --stack-name {stack-name} --watch

[*] Deploy: sam deploy --guided

2022-10-19 19:06:07,826 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:06:07,826 | Unable to find Click Context for getting session_id.

2022-10-19 19:06:07,827 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '7ac7183d-5134-44d2-a310-1ee8d30ece10', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': 'b549e766-17a5-4641-b0de-d53095b082cb', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam build', 'metricSpecificAttributes': {'projectType': 'CFN', 'gitOrigin': None, 'projectName': '0fdcacdf0a5a5c982672247f3c17ee797bd2e5c80105099d05b5329699391655', 'initialCommit': None}, 'duration': 816, 'exitReason': 'success', 'exitCode': 0}}]}

2022-10-19 19:06:07,827 | Sending Telemetry: {'metrics': [{'events': {'requestId': 'dd4677f9-d7d4-4ebb-9214-aa1aebc9712c', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': 'b549e766-17a5-4641-b0de-d53095b082cb', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'metricSpecificAttributes': {'events': [{'event_name': 'BuildWorkflowUsed', 'event_value': 'python-pip', 'thread_id': 4376790400, 'time_stamp': '2022-10-19 17:06:07.168'}, {'event_name': 'BuildFunctionRuntime', 'event_value': 'python3.8', 'thread_id': 4376790400, 'time_stamp': '2022-10-19 17:06:07.801'}]}}}]}

2022-10-19 19:06:08,521 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

2022-10-19 19:06:08,522 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

`sam local invoke --debug`

2022-10-19 19:15:55,258 | Telemetry endpoint configured to be https://aws-serverless-tools-telemetry.us-west-2.amazonaws.com/metrics

2022-10-19 19:15:55,258 | Using config file: samconfig.toml, config environment: default

2022-10-19 19:15:55,258 | Expand command line arguments to:

2022-10-19 19:15:55,258 | --template_file=/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/template.yaml --event=events/event.json --no_event --layer_cache_basedir=/Users/afrigerio/.aws-sam/layers-pkg --container_host=localhost --container_host_interface=127.0.0.1

2022-10-19 19:15:55,258 | local invoke command is called

2022-10-19 19:15:55,261 | No Parameters detected in the template

2022-10-19 19:15:55,270 | Sam customer defined id is more priority than other IDs. Customer defined id for resource HelloWorldFunction is HelloWorldFunction

2022-10-19 19:15:55,270 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:15:55,270 | 0 stacks found in the template

2022-10-19 19:15:55,270 | No Parameters detected in the template

2022-10-19 19:15:55,275 | Sam customer defined id is more priority than other IDs. Customer defined id for resource HelloWorldFunction is HelloWorldFunction

2022-10-19 19:15:55,275 | There is no customer defined id or cdk path defined for resource ServerlessRestApi, so we will use the resource logical id as the resource id

2022-10-19 19:15:55,276 | 2 resources found in the stack

2022-10-19 19:15:55,276 | Found Serverless function with name='HelloWorldFunction' and CodeUri='HelloWorldFunction'

2022-10-19 19:15:55,276 | --base-dir is not presented, adjusting uri HelloWorldFunction relative to /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/template.yaml

2022-10-19 19:15:55,299 | Found one Lambda function with name 'HelloWorldFunction'

2022-10-19 19:15:55,299 | Invoking app.lambda_handler (python3.8)

2022-10-19 19:15:55,299 | No environment variables found for function 'HelloWorldFunction'

2022-10-19 19:15:55,299 | Loading AWS credentials from session with profile 'None'

2022-10-19 19:15:55,304 | Resolving code path. Cwd=/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build, CodeUri=/PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction

2022-10-19 19:15:55,304 | Resolved absolute path to code is /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction

2022-10-19 19:15:55,305 | Code /PATH_TO_SAM_APP/sam-app-py38/.aws-sam/build/HelloWorldFunction is not a zip/jar file

2022-10-19 19:15:55,307 | Cleaning all decompressed code dirs

2022-10-19 19:15:55,333 | Sending Telemetry: {'metrics': [{'commandRun': {'requestId': '94dcccda-e42d-4b06-b446-ad4bcc6fe6c3', 'installationId': '8ada282a-332d-4b64-98e1-4f7fb559111a', 'sessionId': 'b2d0e00e-d549-46e3-bab5-0cd6a21e9883', 'executionEnvironment': 'CLI', 'ci': False, 'pyversion': '3.8.15', 'samcliVersion': '1.60.0', 'awsProfileProvided': False, 'debugFlagProvided': True, 'region': '', 'commandName': 'sam local invoke', 'metricSpecificAttributes': {'projectType': 'CFN', 'gitOrigin': None, 'projectName': '0fdcacdf0a5a5c982672247f3c17ee797bd2e5c80105099d05b5329699391655', 'initialCommit': None}, 'duration': 75, 'exitReason': 'NotFound', 'exitCode': 255}}]}

2022-10-19 19:15:56,064 | HTTPSConnectionPool(host='aws-serverless-tools-telemetry.us-west-2.amazonaws.com', port=443): Read timed out. (read timeout=0.1)

Traceback (most recent call last):