Unnamed: 0 int64 1 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 3 438 | labels stringlengths 4 308 | body stringlengths 7 254k | index stringclasses 7 values | text_combine stringlengths 96 254k | label stringclasses 2 values | text stringlengths 96 246k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,311 | 26,810,680,730 | IssuesEvent | 2023-02-01 22:06:16 | MozillaFoundation/donate-wagtail | https://api.github.com/repos/MozillaFoundation/donate-wagtail | closed | Braintree Python SDK Update | engineering maintain | It looks like we'll need to update the Braintree Python SDK one last time. From Braintree:

> This is a reminder that a new version of the SDK was released on October 14, 2022 with new security enhancements. Please upgrade to the Braintree Python SDK v.4.17.1 as soon as possible. Starting February 28, 2023, older versions of the SDK may no longer be supported. Please disregard if you've already updated your SDK following our initial outreach on January 10 but take action now if you haven’t yet done so.

>To make this update:

> If you're currently using v4 of the Python SDK, you need to upgrade the SDK to v4.17.1 or higher. Your integration won't require any other changes.

If you're currently using v3 or lower of the Braintree Python SDK, please upgrade to Braintree Python SDK v3.59.1. You also should plan to upgrade to v4.17.1 or higher in the near future. See our [migration guide](https://developer.paypal.com/braintree/docs/reference/general/server-sdk-migration-guide/python) for details on necessary integration changes. | True | Braintree Python SDK Update - It looks like we'll need to update the Braintree Python SDK one last time. From Braintree:

> This is a reminder that a new version of the SDK was released on October 14, 2022 with new security enhancements. Please upgrade to the Braintree Python SDK v.4.17.1 as soon as possible. Starting February 28, 2023, older versions of the SDK may no longer be supported. Please disregard if you've already updated your SDK following our initial outreach on January 10 but take action now if you haven’t yet done so.

>To make this update:

> If you're currently using v4 of the Python SDK, you need to upgrade the SDK to v4.17.1 or higher. Your integration won't require any other changes.

If you're currently using v3 or lower of the Braintree Python SDK, please upgrade to Braintree Python SDK v3.59.1. You also should plan to upgrade to v4.17.1 or higher in the near future. See our [migration guide](https://developer.paypal.com/braintree/docs/reference/general/server-sdk-migration-guide/python) for details on necessary integration changes. | main | braintree python sdk update it looks like we ll need to update the braintree python sdk one last time from braintree this is a reminder that a new version of the sdk was released on october with new security enhancements please upgrade to the braintree python sdk v as soon as possible starting february older versions of the sdk may no longer be supported please disregard if you ve already updated your sdk following our initial outreach on january but take action now if you haven’t yet done so to make this update if you re currently using of the python sdk you need to upgrade the sdk to or higher your integration won t require any other changes if you re currently using or lower of the braintree python sdk please upgrade to braintree python sdk you also should plan to upgrade to or higher in the near future see our for details on necessary integration changes | 1 |

155 | 2,700,437,339 | IssuesEvent | 2015-04-04 04:59:48 | tgstation/-tg-station | https://api.github.com/repos/tgstation/-tg-station | closed | Large rsc files - TGservers have unsustainably high bandwidth usage. | Maintainability - Hinders improvements Not a bug Sound Sprites | http://ss13.eu/phpbb/viewtopic.php?f=3&t=229

Currently, our codebase has a .rsc filesize of 27.2Mb. This is extremely high.

Bandwidth usage for TGservers is almost 2000Gb a month.

This is something we've been saying is an issue for a long long time. It's not only an issue for hosts, but one for clients having to download almost 30Mb on a throttled byond connection.

As I see it we have some key savings we could make:

*Remove unused icon_states! There are loaaaaads.

*Make a MAPPING define, which would set area icons - this way, these icons would not be compiled into release versions where they are not used.

*Making use of the map-merge tools obligatory

*Reduce the colour pallettes of icon files

*Remove identical repeated frames (copypasted) in animated icons. Instead use the repeat frame option (could be particularly helpful on the titlescreen image)

*Remove/replace/reduce sounds which add nothing to the experience. Note, I'm not saying remove anything like sound effects. I am saying stuff like say, if "put a banging donk on it" was a 6Mb Ogg, possibly reducing that to a more acceptable size....as a hypothetical example

*Marking our more-stable revisions so servers do not have to update as often (not sure how you'd judge which ones are more stable)

*Look into hosting rsc files separately. Unfortunately, byond does not support https, so I am unsure how this would work in a practical sense. However, adding -support- for it should be fairly trivial, and would pretty much eliminate most of the bandwidth use whilst not affecting stability (it reverts to the old behaviour of downloading from the server if the rsc file cannot be fetched from the alternative source) | True | Large rsc files - TGservers have unsustainably high bandwidth usage. - http://ss13.eu/phpbb/viewtopic.php?f=3&t=229

Currently, our codebase has a .rsc filesize of 27.2Mb. This is extremely high.

Bandwidth usage for TGservers is almost 2000Gb a month.

This is something we've been saying is an issue for a long long time. It's not only an issue for hosts, but one for clients having to download almost 30Mb on a throttled byond connection.

As I see it we have some key savings we could make:

*Remove unused icon_states! There are loaaaaads.

*Make a MAPPING define, which would set area icons - this way, these icons would not be compiled into release versions where they are not used.

*Making use of the map-merge tools obligatory

*Reduce the colour pallettes of icon files

*Remove identical repeated frames (copypasted) in animated icons. Instead use the repeat frame option (could be particularly helpful on the titlescreen image)

*Remove/replace/reduce sounds which add nothing to the experience. Note, I'm not saying remove anything like sound effects. I am saying stuff like say, if "put a banging donk on it" was a 6Mb Ogg, possibly reducing that to a more acceptable size....as a hypothetical example

*Marking our more-stable revisions so servers do not have to update as often (not sure how you'd judge which ones are more stable)

*Look into hosting rsc files separately. Unfortunately, byond does not support https, so I am unsure how this would work in a practical sense. However, adding -support- for it should be fairly trivial, and would pretty much eliminate most of the bandwidth use whilst not affecting stability (it reverts to the old behaviour of downloading from the server if the rsc file cannot be fetched from the alternative source) | main | large rsc files tgservers have unsustainably high bandwidth usage currently our codebase has a rsc filesize of this is extremely high bandwidth usage for tgservers is almost a month this is something we ve been saying is an issue for a long long time it s not only an issue for hosts but one for clients having to download almost on a throttled byond connection as i see it we have some key savings we could make remove unused icon states there are loaaaaads make a mapping define which would set area icons this way these icons would not be compiled into release versions where they are not used making use of the map merge tools obligatory reduce the colour pallettes of icon files remove identical repeated frames copypasted in animated icons instead use the repeat frame option could be particularly helpful on the titlescreen image remove replace reduce sounds which add nothing to the experience note i m not saying remove anything like sound effects i am saying stuff like say if put a banging donk on it was a ogg possibly reducing that to a more acceptable size as a hypothetical example marking our more stable revisions so servers do not have to update as often not sure how you d judge which ones are more stable look into hosting rsc files separately unfortunately byond does not support https so i am unsure how this would work in a practical sense however adding support for it should be fairly trivial and would pretty much eliminate most of the bandwidth use whilst not affecting stability it reverts to the old behaviour of downloading from the server if the rsc file cannot be fetched from the alternative source | 1 |

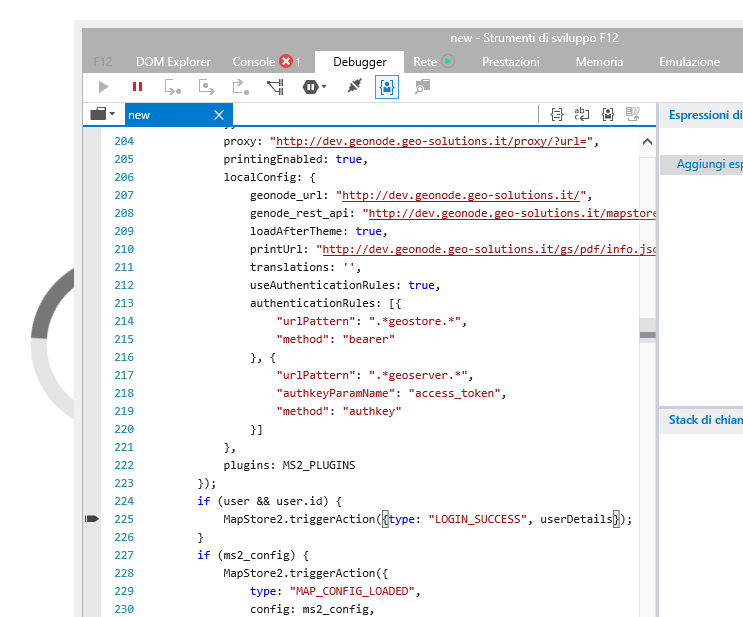

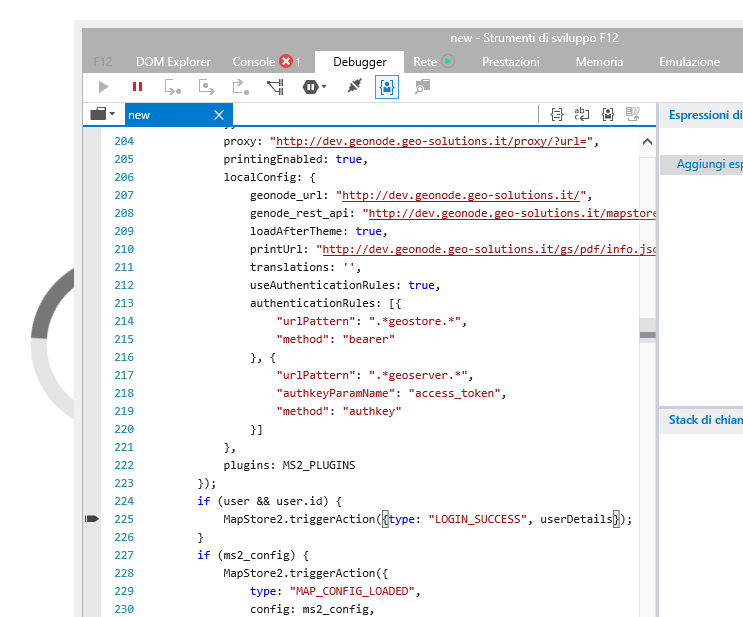

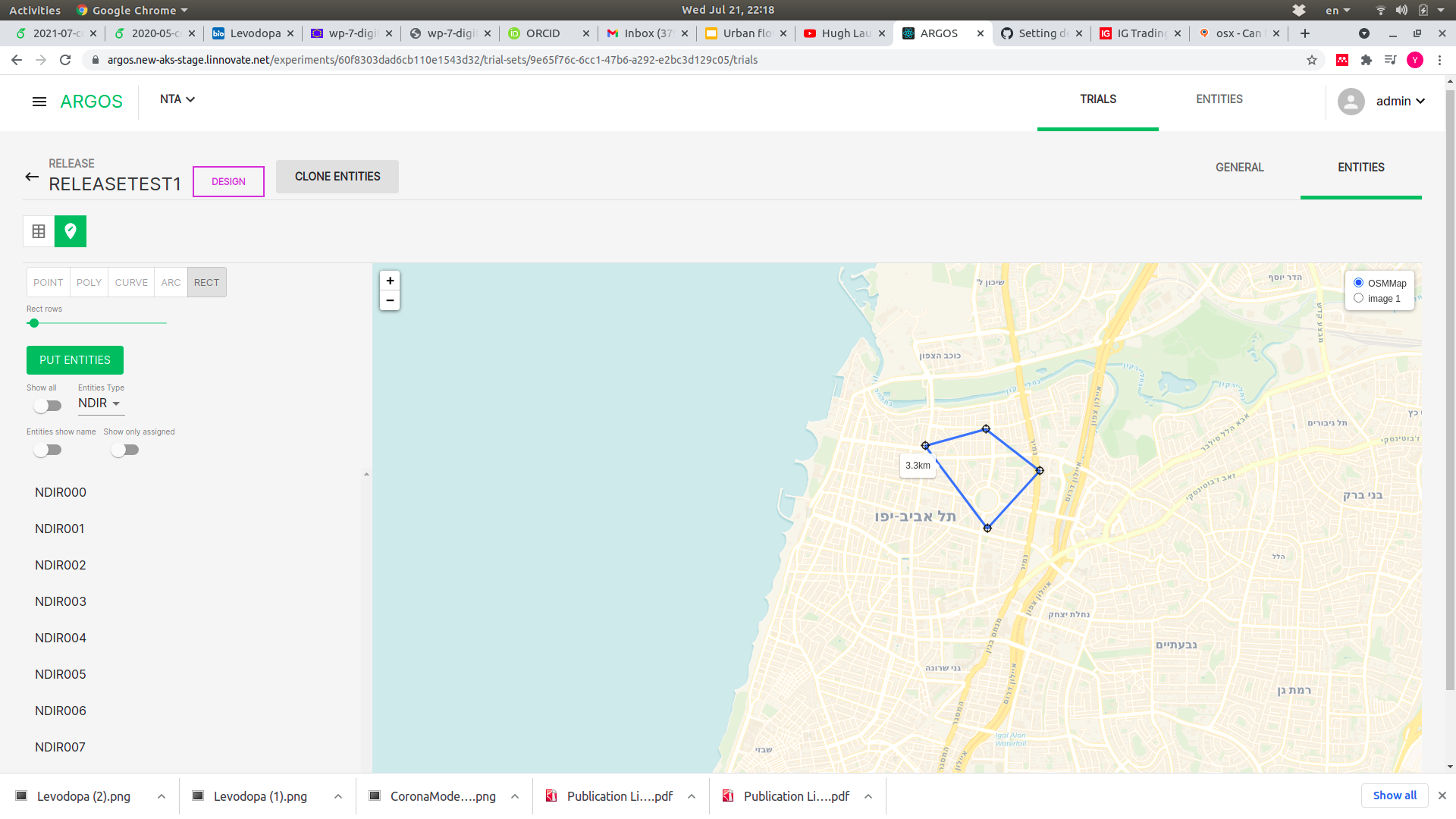

286,342 | 8,786,885,683 | IssuesEvent | 2018-12-20 16:54:58 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Infinite loading with IE v.11 | Priority: Blocker Timeline bug geonode_integration invalid ready | ### Description

Opening a new map or a pre-saved map with IE v.11 you have a infinite loading and the map not opens, this is probally due to a syntax error in configuration, see below:

### In case of Bug

- [x] Internet Explorer

- [ ] Chrome

- [ ] Firefox

- [ ] Safari

*Browser Version Affected*

- version 11

*Steps to reproduce*

- open a new map or a presaved map

*Expected Result*

- you can open maps

*Current Result*

- you can't open maps | 1.0 | Infinite loading with IE v.11 - ### Description

Opening a new map or a pre-saved map with IE v.11 you have a infinite loading and the map not opens, this is probally due to a syntax error in configuration, see below:

### In case of Bug

- [x] Internet Explorer

- [ ] Chrome

- [ ] Firefox

- [ ] Safari

*Browser Version Affected*

- version 11

*Steps to reproduce*

- open a new map or a presaved map

*Expected Result*

- you can open maps

*Current Result*

- you can't open maps | non_main | infinite loading with ie v description opening a new map or a pre saved map with ie v you have a infinite loading and the map not opens this is probally due to a syntax error in configuration see below in case of bug internet explorer chrome firefox safari browser version affected version steps to reproduce open a new map or a presaved map expected result you can open maps current result you can t open maps | 0 |

233,731 | 25,765,820,930 | IssuesEvent | 2022-12-09 01:40:57 | aero-surge/Word_of_the_day_messager | https://api.github.com/repos/aero-surge/Word_of_the_day_messager | opened | CVE-2022-23491 (Medium) detected in certifi-2021.5.30-py2.py3-none-any.whl | security vulnerability | ## CVE-2022-23491 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>certifi-2021.5.30-py2.py3-none-any.whl</b></p></summary>

<p>Python package for providing Mozilla's CA Bundle.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/05/1b/0a0dece0e8aa492a6ec9e4ad2fe366b511558cdc73fd3abc82ba7348e875/certifi-2021.5.30-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/05/1b/0a0dece0e8aa492a6ec9e4ad2fe366b511558cdc73fd3abc82ba7348e875/certifi-2021.5.30-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /requirements.txt</p>

<p>Path to vulnerable library: /requirements.txt</p>

<p>

Dependency Hierarchy:

- PyDictionary-2.0.1-py3-none-any.whl (Root Library)

- requests-2.26.0-py2.py3-none-any.whl

- :x: **certifi-2021.5.30-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Certifi is a curated collection of Root Certificates for validating the trustworthiness of SSL certificates while verifying the identity of TLS hosts. Certifi 2022.12.07 removes root certificates from "TrustCor" from the root store. These are in the process of being removed from Mozilla's trust store. TrustCor's root certificates are being removed pursuant to an investigation prompted by media reporting that TrustCor's ownership also operated a business that produced spyware. Conclusions of Mozilla's investigation can be found in the linked google group discussion.

<p>Publish Date: 2022-12-07

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-23491>CVE-2022-23491</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-23491">https://www.cve.org/CVERecord?id=CVE-2022-23491</a></p>

<p>Release Date: 2022-12-07</p>

<p>Fix Resolution: certifi - 2022.12.07</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-23491 (Medium) detected in certifi-2021.5.30-py2.py3-none-any.whl - ## CVE-2022-23491 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>certifi-2021.5.30-py2.py3-none-any.whl</b></p></summary>

<p>Python package for providing Mozilla's CA Bundle.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/05/1b/0a0dece0e8aa492a6ec9e4ad2fe366b511558cdc73fd3abc82ba7348e875/certifi-2021.5.30-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/05/1b/0a0dece0e8aa492a6ec9e4ad2fe366b511558cdc73fd3abc82ba7348e875/certifi-2021.5.30-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /requirements.txt</p>

<p>Path to vulnerable library: /requirements.txt</p>

<p>

Dependency Hierarchy:

- PyDictionary-2.0.1-py3-none-any.whl (Root Library)

- requests-2.26.0-py2.py3-none-any.whl

- :x: **certifi-2021.5.30-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Certifi is a curated collection of Root Certificates for validating the trustworthiness of SSL certificates while verifying the identity of TLS hosts. Certifi 2022.12.07 removes root certificates from "TrustCor" from the root store. These are in the process of being removed from Mozilla's trust store. TrustCor's root certificates are being removed pursuant to an investigation prompted by media reporting that TrustCor's ownership also operated a business that produced spyware. Conclusions of Mozilla's investigation can be found in the linked google group discussion.

<p>Publish Date: 2022-12-07

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-23491>CVE-2022-23491</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-23491">https://www.cve.org/CVERecord?id=CVE-2022-23491</a></p>

<p>Release Date: 2022-12-07</p>

<p>Fix Resolution: certifi - 2022.12.07</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_main | cve medium detected in certifi none any whl cve medium severity vulnerability vulnerable library certifi none any whl python package for providing mozilla s ca bundle library home page a href path to dependency file requirements txt path to vulnerable library requirements txt dependency hierarchy pydictionary none any whl root library requests none any whl x certifi none any whl vulnerable library found in base branch main vulnerability details certifi is a curated collection of root certificates for validating the trustworthiness of ssl certificates while verifying the identity of tls hosts certifi removes root certificates from trustcor from the root store these are in the process of being removed from mozilla s trust store trustcor s root certificates are being removed pursuant to an investigation prompted by media reporting that trustcor s ownership also operated a business that produced spyware conclusions of mozilla s investigation can be found in the linked google group discussion publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required high user interaction none scope changed impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution certifi step up your open source security game with mend | 0 |

5,725 | 30,270,983,782 | IssuesEvent | 2023-07-07 15:19:30 | carbon-design-system/carbon | https://api.github.com/repos/carbon-design-system/carbon | closed | Discord invite link in `README` not working | status: needs triage 🕵️♀️ status: waiting for maintainer response 💬 | Hi 👋 Love the carbon system!

I wanted to join the Discord to see what's up and whether I could get some informal help with a TypeScript issue, but the one in the readme is not working for me. Maybe the link expired, I don't know?

I only see that the invitation cannot be accepted.

<img width="718" alt="Screenshot 2023-06-26 at 14 28 33" src="https://github.com/carbon-design-system/carbon/assets/2946344/df55d9c2-bb88-4e93-9b76-e0bf8a4ace09">

If this is a non-issue, of course feel free to close this without answering - I won't be offended 😊 | True | Discord invite link in `README` not working - Hi 👋 Love the carbon system!

I wanted to join the Discord to see what's up and whether I could get some informal help with a TypeScript issue, but the one in the readme is not working for me. Maybe the link expired, I don't know?

I only see that the invitation cannot be accepted.

<img width="718" alt="Screenshot 2023-06-26 at 14 28 33" src="https://github.com/carbon-design-system/carbon/assets/2946344/df55d9c2-bb88-4e93-9b76-e0bf8a4ace09">

If this is a non-issue, of course feel free to close this without answering - I won't be offended 😊 | main | discord invite link in readme not working hi 👋 love the carbon system i wanted to join the discord to see what s up and whether i could get some informal help with a typescript issue but the one in the readme is not working for me maybe the link expired i don t know i only see that the invitation cannot be accepted img width alt screenshot at src if this is a non issue of course feel free to close this without answering i won t be offended 😊 | 1 |

299,812 | 9,205,876,503 | IssuesEvent | 2019-03-08 11:57:50 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | QGIS slows down PostgreSQL | Category: Data Provider Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **Paolo Cavallini** (Paolo Cavallini)

Original Redmine Issue: 1174, https://issues.qgis.org/issues/1174

Original Assignee: Jürgen Fischer

---

When qgis (0.10 on deb) queries the db (to load geometries) often postgres takes up a very large amount of CPU, for *many* minutes. I once had even to kill postgres (!!). Does this happens to others? We never noticed it before. Tested on several db.

| 1.0 | QGIS slows down PostgreSQL - ---

Author Name: **Paolo Cavallini** (Paolo Cavallini)

Original Redmine Issue: 1174, https://issues.qgis.org/issues/1174

Original Assignee: Jürgen Fischer

---

When qgis (0.10 on deb) queries the db (to load geometries) often postgres takes up a very large amount of CPU, for *many* minutes. I once had even to kill postgres (!!). Does this happens to others? We never noticed it before. Tested on several db.

| non_main | qgis slows down postgresql author name paolo cavallini paolo cavallini original redmine issue original assignee jürgen fischer when qgis on deb queries the db to load geometries often postgres takes up a very large amount of cpu for many minutes i once had even to kill postgres does this happens to others we never noticed it before tested on several db | 0 |

4,074 | 19,249,909,131 | IssuesEvent | 2021-12-09 03:05:47 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Apple M1: thread 'main' panicked at 'attempt to divide by zero' | blocked/more-info-needed area/local/start-api stage/needs-investigation area/cdk maintainer/need-followup platform/mac/arm | ### Description:

I experience this error: `thread 'main' panicked at 'attempt to divide by zero'` when I try to access an endpoint on the function.

### Steps to reproduce:

Run `sam-beta-cdk local start-api -p <PORT> --skip-pull-image --warm-containers EAGER --debug`

### Observed result:

```

2021-08-20 09:00:27,794 | Found one Lambda function with name '<FUNCTION_NAME>'

2021-08-20 09:00:27,794 | Invoking Container created from <FUNCTION_NAME>

2021-08-20 09:00:27,794 | Environment variables overrides data is standard format

2021-08-20 09:00:27,814 | Reuse the created warm container for Lambda function '<FUNCTION_NAME>'

2021-08-20 09:00:27,821 | Lambda function '<FUNCTION_NAME>' is already running

2021-08-20 09:00:27,822 | Starting a timer for 10 seconds for function '<FUNCTION_NAME>'

START RequestId: <REQUEST_ID> Version: $LATEST

thread 'meter_probe' panicked at 'Unable to read /proc for agent process: InternalError(bug at /local/p4clients/pkgbuild-QP4Aq/workspace/build/AWSLogsLambdaInsights/AWSLogsLambdaInsights-1.0.115.0/AL2_x86_64/DEV.STD.PTHREAD/build/private/cargo-home/registry/src/-c477fb05a7ac3d62/procfs-0.7.9/src/process/stat.rs:300 (please report this procfs bug)

Internal Unwrap Error: Internal error: bug at /local/p4clients/pkgbuild-QP4Aq/workspace/build/AWSLogsLambdaInsights/AWSLogsLambdaInsights-1.0.115.0/AL2_x86_64/DEV.STD.PTHREAD/build/private/cargo-home/registry/src/-c477fb05a7ac3d62/procfs-0.7.9/src/lib.rs:285 (please report this procfs bug)

Internal Unwrap Error: NoneError)', src/inputs/memory.rs:59:39

note: run with `RUST_BACKTRACE=1` environment variable to display a backtrace

thread 'main' panicked at 'attempt to divide by zero', src/inputs/memory.rs:44:32

```

### Expected result:

`Return appropriate result from the endpoint`

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Big Sur v11.5.1

2. `sam --version`: SAM CLI, version 1.22.0.dev202107140310

3. AWS region: eu-central-1

`Add --debug flag to command you are running`

| True | Apple M1: thread 'main' panicked at 'attempt to divide by zero' - ### Description:

I experience this error: `thread 'main' panicked at 'attempt to divide by zero'` when I try to access an endpoint on the function.

### Steps to reproduce:

Run `sam-beta-cdk local start-api -p <PORT> --skip-pull-image --warm-containers EAGER --debug`

### Observed result:

```

2021-08-20 09:00:27,794 | Found one Lambda function with name '<FUNCTION_NAME>'

2021-08-20 09:00:27,794 | Invoking Container created from <FUNCTION_NAME>

2021-08-20 09:00:27,794 | Environment variables overrides data is standard format

2021-08-20 09:00:27,814 | Reuse the created warm container for Lambda function '<FUNCTION_NAME>'

2021-08-20 09:00:27,821 | Lambda function '<FUNCTION_NAME>' is already running

2021-08-20 09:00:27,822 | Starting a timer for 10 seconds for function '<FUNCTION_NAME>'

START RequestId: <REQUEST_ID> Version: $LATEST

thread 'meter_probe' panicked at 'Unable to read /proc for agent process: InternalError(bug at /local/p4clients/pkgbuild-QP4Aq/workspace/build/AWSLogsLambdaInsights/AWSLogsLambdaInsights-1.0.115.0/AL2_x86_64/DEV.STD.PTHREAD/build/private/cargo-home/registry/src/-c477fb05a7ac3d62/procfs-0.7.9/src/process/stat.rs:300 (please report this procfs bug)

Internal Unwrap Error: Internal error: bug at /local/p4clients/pkgbuild-QP4Aq/workspace/build/AWSLogsLambdaInsights/AWSLogsLambdaInsights-1.0.115.0/AL2_x86_64/DEV.STD.PTHREAD/build/private/cargo-home/registry/src/-c477fb05a7ac3d62/procfs-0.7.9/src/lib.rs:285 (please report this procfs bug)

Internal Unwrap Error: NoneError)', src/inputs/memory.rs:59:39

note: run with `RUST_BACKTRACE=1` environment variable to display a backtrace

thread 'main' panicked at 'attempt to divide by zero', src/inputs/memory.rs:44:32

```

### Expected result:

`Return appropriate result from the endpoint`

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Big Sur v11.5.1

2. `sam --version`: SAM CLI, version 1.22.0.dev202107140310

3. AWS region: eu-central-1

`Add --debug flag to command you are running`

| main | apple thread main panicked at attempt to divide by zero description i experience this error thread main panicked at attempt to divide by zero when i try to access an endpoint on the function steps to reproduce run sam beta cdk local start api p skip pull image warm containers eager debug observed result found one lambda function with name invoking container created from environment variables overrides data is standard format reuse the created warm container for lambda function lambda function is already running starting a timer for seconds for function start requestid version latest thread meter probe panicked at unable to read proc for agent process internalerror bug at local pkgbuild workspace build awslogslambdainsights awslogslambdainsights dev std pthread build private cargo home registry src procfs src process stat rs please report this procfs bug internal unwrap error internal error bug at local pkgbuild workspace build awslogslambdainsights awslogslambdainsights dev std pthread build private cargo home registry src procfs src lib rs please report this procfs bug internal unwrap error noneerror src inputs memory rs note run with rust backtrace environment variable to display a backtrace thread main panicked at attempt to divide by zero src inputs memory rs expected result return appropriate result from the endpoint additional environment details ex windows mac amazon linux etc os big sur sam version sam cli version aws region eu central add debug flag to command you are running | 1 |

48,873 | 5,988,917,927 | IssuesEvent | 2017-06-02 06:56:13 | mautic/mautic | https://api.github.com/repos/mautic/mautic | closed | Segment filters doesn't remove contacts which does not fit the filters anymore | Bug Ready To Test | What type of report is this:

| Q | A

| ---| ---

| Bug report? | X

| Feature request? |

| Enhancement? |

## Description:

The more details the better...

## If a bug:

| Q | A

| --- | ---

| Mautic version | 2.8.1

| PHP version | 7

### Steps to reproduce:

1. I create a segment with a condition that corresponds to 3 contacts

2. Cron creates a segment of three contacts

3. Change the segment condition to suit other 4 contacts

4. Cron adds 4 other contacts, but does not delete the original

### Log errors:

_Please check for related errors in the latest log file in [mautic root]/app/log/ and/or the web server's logs and post them here. Be sure to remove sensitive information if applicable._

| 1.0 | Segment filters doesn't remove contacts which does not fit the filters anymore - What type of report is this:

| Q | A

| ---| ---

| Bug report? | X

| Feature request? |

| Enhancement? |

## Description:

The more details the better...

## If a bug:

| Q | A

| --- | ---

| Mautic version | 2.8.1

| PHP version | 7

### Steps to reproduce:

1. I create a segment with a condition that corresponds to 3 contacts

2. Cron creates a segment of three contacts

3. Change the segment condition to suit other 4 contacts

4. Cron adds 4 other contacts, but does not delete the original

### Log errors:

_Please check for related errors in the latest log file in [mautic root]/app/log/ and/or the web server's logs and post them here. Be sure to remove sensitive information if applicable._

| non_main | segment filters doesn t remove contacts which does not fit the filters anymore what type of report is this q a bug report x feature request enhancement description the more details the better if a bug q a mautic version php version steps to reproduce i create a segment with a condition that corresponds to contacts cron creates a segment of three contacts change the segment condition to suit other contacts cron adds other contacts but does not delete the original log errors please check for related errors in the latest log file in app log and or the web server s logs and post them here be sure to remove sensitive information if applicable | 0 |

219,839 | 24,539,336,352 | IssuesEvent | 2022-10-12 01:12:36 | pactflow/example-consumer-cypress | https://api.github.com/repos/pactflow/example-consumer-cypress | opened | CVE-2021-23382 (High) detected in postcss-7.0.21.tgz, postcss-7.0.27.tgz | security vulnerability | ## CVE-2021-23382 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-7.0.21.tgz</b>, <b>postcss-7.0.27.tgz</b></p></summary>

<p>

<details><summary><b>postcss-7.0.21.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/resolve-url-loader/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.0.tgz (Root Library)

- resolve-url-loader-3.1.1.tgz

- :x: **postcss-7.0.21.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-7.0.27.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.27.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.27.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.0.tgz (Root Library)

- postcss-safe-parser-4.0.1.tgz

- :x: **postcss-7.0.27.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/pactflow/example-consumer-cypress/commit/340e24f4064182631ba657b42580dbb0476e3b94">340e24f4064182631ba657b42580dbb0476e3b94</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Versions of the postcss package before 8.2.13 are vulnerable to Regular Expression Denial of Service (ReDoS) via getAnnotationURL() and loadAnnotation() in lib/previous-map.js. The vulnerable regexes are caused mainly by the sub-pattern \/\*\s* sourceMappingURL=(.*).

<p>Publish Date: 2021-04-26

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23382>CVE-2021-23382</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382</a></p>

<p>Release Date: 2021-04-26</p>

<p>Fix Resolution (postcss): 7.0.36</p>

<p>Direct dependency fix Resolution (react-scripts): 4.0.0</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| True | CVE-2021-23382 (High) detected in postcss-7.0.21.tgz, postcss-7.0.27.tgz - ## CVE-2021-23382 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-7.0.21.tgz</b>, <b>postcss-7.0.27.tgz</b></p></summary>

<p>

<details><summary><b>postcss-7.0.21.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/resolve-url-loader/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.0.tgz (Root Library)

- resolve-url-loader-3.1.1.tgz

- :x: **postcss-7.0.21.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-7.0.27.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.27.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.27.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.0.tgz (Root Library)

- postcss-safe-parser-4.0.1.tgz

- :x: **postcss-7.0.27.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/pactflow/example-consumer-cypress/commit/340e24f4064182631ba657b42580dbb0476e3b94">340e24f4064182631ba657b42580dbb0476e3b94</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Versions of the postcss package before 8.2.13 are vulnerable to Regular Expression Denial of Service (ReDoS) via getAnnotationURL() and loadAnnotation() in lib/previous-map.js. The vulnerable regexes are caused mainly by the sub-pattern \/\*\s* sourceMappingURL=(.*).

<p>Publish Date: 2021-04-26

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23382>CVE-2021-23382</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23382</a></p>

<p>Release Date: 2021-04-26</p>

<p>Fix Resolution (postcss): 7.0.36</p>

<p>Direct dependency fix Resolution (react-scripts): 4.0.0</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

| non_main | cve high detected in postcss tgz postcss tgz cve high severity vulnerability vulnerable libraries postcss tgz postcss tgz postcss tgz tool for transforming styles with js plugins library home page a href path to dependency file package json path to vulnerable library node modules resolve url loader node modules postcss package json dependency hierarchy react scripts tgz root library resolve url loader tgz x postcss tgz vulnerable library postcss tgz tool for transforming styles with js plugins library home page a href path to dependency file package json path to vulnerable library node modules postcss package json dependency hierarchy react scripts tgz root library postcss safe parser tgz x postcss tgz vulnerable library found in head commit a href found in base branch master vulnerability details the package postcss before are vulnerable to regular expression denial of service redos via getannotationurl and loadannotation in lib previous map js the vulnerable regexes are caused mainly by the sub pattern s sourcemappingurl publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution postcss direct dependency fix resolution react scripts fix resolution postcss direct dependency fix resolution react scripts check this box to open an automated fix pr | 0 |

375 | 3,385,752,053 | IssuesEvent | 2015-11-27 13:30:05 | Homebrew/homebrew | https://api.github.com/repos/Homebrew/homebrew | closed | Travis CI issues tracking | bug features help wanted maintainer feedback travis usability | I'd like us to be able to use Travis CI for all non-bottle builds in future (and maybe one day all bottle builds too). In the short-term this means fixing Travis so the only failures are legitimate timeouts and the only missing features are bottle uploads.

@Homebrew/owners please add any weird Travis issues you see for **any new builds started after now** as comments in this issue and I'll try and triage them into specific issues and then fix them.

Once these are all fixed I'll switch our `master` build over to Travis CI and then move Jenkins so it requires a `@BrewTestBot test this please` to actually run jobs on Jenkins. This means Jenkins will be far less loaded and all builds that don't rely on a bottle being uploaded (e.g. audit failures, pulls of formulae without bottles) can be done without getting Jenkins involved. It'll also mean, due to Travis's parallel builds, that we can get much quicker feedback to users.

Thanks! | True | Travis CI issues tracking - I'd like us to be able to use Travis CI for all non-bottle builds in future (and maybe one day all bottle builds too). In the short-term this means fixing Travis so the only failures are legitimate timeouts and the only missing features are bottle uploads.

@Homebrew/owners please add any weird Travis issues you see for **any new builds started after now** as comments in this issue and I'll try and triage them into specific issues and then fix them.

Once these are all fixed I'll switch our `master` build over to Travis CI and then move Jenkins so it requires a `@BrewTestBot test this please` to actually run jobs on Jenkins. This means Jenkins will be far less loaded and all builds that don't rely on a bottle being uploaded (e.g. audit failures, pulls of formulae without bottles) can be done without getting Jenkins involved. It'll also mean, due to Travis's parallel builds, that we can get much quicker feedback to users.

Thanks! | main | travis ci issues tracking i d like us to be able to use travis ci for all non bottle builds in future and maybe one day all bottle builds too in the short term this means fixing travis so the only failures are legitimate timeouts and the only missing features are bottle uploads homebrew owners please add any weird travis issues you see for any new builds started after now as comments in this issue and i ll try and triage them into specific issues and then fix them once these are all fixed i ll switch our master build over to travis ci and then move jenkins so it requires a brewtestbot test this please to actually run jobs on jenkins this means jenkins will be far less loaded and all builds that don t rely on a bottle being uploaded e g audit failures pulls of formulae without bottles can be done without getting jenkins involved it ll also mean due to travis s parallel builds that we can get much quicker feedback to users thanks | 1 |

63,156 | 17,397,557,302 | IssuesEvent | 2021-08-02 15:09:29 | snowplow/snowplow-javascript-tracker | https://api.github.com/repos/snowplow/snowplow-javascript-tracker | closed | Check stateStorageStrategy before testing for localStorage | type:defect | **Describe the bug**

When using `stateStorageStrategy: 'none'`, the line below tests for localStorage before checking whether we are allowed to use local storage. We should check `useLocalStorage` before calling `localStorageAccessible()`; there is no point probing it when configuration does not permit its use.

https://github.com/snowplow/snowplow-javascript-tracker/blob/268f6cef26edb87b51fda39126fd7ffbc41dff5b/libraries/browser-tracker-core/src/tracker/out_queue.ts#L109

| 1.0 | Check stateStorageStrategy before testing for localStorage - **Describe the bug**

When using `stateStorageStrategy: 'none'`, the line below tests for localStorage before checking whether we are allowed to use local storage. We should check `useLocalStorage` before calling `localStorageAccessible()`; there is no point probing it when configuration does not permit its use.

https://github.com/snowplow/snowplow-javascript-tracker/blob/268f6cef26edb87b51fda39126fd7ffbc41dff5b/libraries/browser-tracker-core/src/tracker/out_queue.ts#L109

| non_main | check statestoragestrategy before testing for localstorage describe the bug when using statestoragestrategy none the below line means we check for localstorage before checking if we are allowed to use local storage we should check uselocalstorage before calling localstorageaccessible seems pointless to check it if we re not permitted by configuration to use it | 0 |

435,378 | 30,496,977,139 | IssuesEvent | 2023-07-18 11:32:54 | josura/c2c-sepia | https://api.github.com/repos/josura/c2c-sepia | opened | Validation of the model | documentation | Since the model simulates the behavior of the cell and the perturbation of the cell itself, there needs to be some kind of validation (maybe in another branch) to describe and compare the simulation itself to real data (maybe differential expression at two different times). Also to compare the use of a drug and see if the drug itself changes the convergence of the system | 1.0 | Validation of the model - Since the model simulates the behavior of the cell and the perturbation of the cell itself, there needs to be some kind of validation (maybe in another branch) to describe and compare the simulation itself to real data (maybe differential expression at two different times). Also to compare the use of a drug and see if the drug itself changes the convergence of the system | non_main | validation of the model since the model simulates the behavior of the cell and the perturbation of the cell itself there needs to be some kind of validation maybe in another branch to describe and compare the simulation itself to real data maybe differential expression at two different times also to compare the use of a drug and see if the drug itself changes the convergence of the system | 0 |

3,612 | 14,611,240,183 | IssuesEvent | 2020-12-22 02:43:51 | Homebrew/homebrew-cask | https://api.github.com/repos/Homebrew/homebrew-cask | closed | gfortran cask duplicates the gcc formula in homebrew-core | awaiting maintainer feedback | I discovered today that there is a `gfortran` cask, which is distributing the gfortran installers that I build and make available. It's been happening for 3 years and I never knew 😄

I don't think that it fits the homebrew-cask rules, though:

- despite their name, these installers are a full GCC install, not just gfortran

- gfortran is fully part of GCC and cannot be separated, anyway

- this is purely command-line software

- that is already available as the `gcc` formula in homebrew-core

I could understand if the two distributions were different, but as I'm basically maintaining both, I don't think it should be kept that way. I suggest the cask be removed and users redirected to the gcc formula. | True | gfortran cask duplicates the gcc formula in homebrew-core - I discovered today that there is a `gfortran` cask, which is distributing the gfortran installers that I build and make available. It's been happening for 3 years and I never knew 😄

I don't think that it fits the homebrew-cask rules, though:

- despite their name, these installers are a full GCC install, not just gfortran

- gfortran is fully part of GCC and cannot be separated, anyway

- this is purely command-line software

- that is already available as the `gcc` formula in homebrew-core

I could understand if the two distributions were different, but as I'm basically maintaining both, I don't think it should be kept that way. I suggest the cask be removed and users redirected to the gcc formula. | main | gfortran cask duplicates the gcc formula in homebrew core i discovered today that there is a gfortran cask which is distributing the gfortran installers that i build and make available it s been happening for years and i never knew 😄 i don t think that it fits the homebrew cask rules though despite their name these installers are a full gcc install not just gfortran gfortran is fully part of gcc and cannot be separated anyway this is purely command line software that is already available as the gcc formula in homebrew core i could understand if the two distributions were different but as i m basically maintaining both i don t think it should be kept that way i suggest the cask be removed and users redirected to the gcc formula | 1 |

75,999 | 14,546,578,441 | IssuesEvent | 2020-12-15 21:27:52 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | opened | superpmi: problem using arm collections | area-CodeGen-coreclr | I generated asm diffs on Windows x86 using Linux arm collection and clrjit_unix_arm_x86.dll cross-compiler JIT:

```

py -3 C:\gh\runtime\src\coreclr\scripts\superpmi.py asmdiffs -arch x86 -target_arch arm -filter libraries -jit_name clrjit_unix_arm_x86.dll --gcinfo -target_os Linux

```

This fails to replay every MC due to what appears to be an issue with sign extension of pointer types.

The JIT calls `getMethodClass()` with, in my example, 0xe8b8303c (from some previous SPMI call).

This calls the SuperPMI function:

```

CORINFO_CLASS_HANDLE MethodContext::repGetMethodClass(CORINFO_METHOD_HANDLE methodHandle)

```

which calls:

```

int index = GetMethodClass->GetIndex((DWORDLONG)methodHandle);

```

It casts a `CORINFO_METHOD_HANDLE`, which is a (32-bit) pointer, to a `DWORDLONG`, which is `unsigned __int64`, and in doing so sign extends it to 0xffffffffe8b8303c. It looks up in the GetMethodClass map, which includes a non-sign-extended value, and fails to find it.

Does the C++ compiler behave differently on Linux and Windows when casting a 32-bit pointer to a 64-bit unsigned int? Does clang not sign extend? If it did sign extend, I would expect to see the sign-extended values stored in the method context.

We might need to change SuperPMI to specifically cast pointers (and thus handles) to same-sized unsigned ints before extending to larger unsigned ints.
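
The mismatch described above can be reproduced with plain integer arithmetic. The following is a minimal Python sketch, not SuperPMI code: the helper names `sign_extend_32_to_64` and `zero_extend_32_to_64` are made up for illustration, and the handle value is the one quoted in this issue.

```python
MASK32 = 0xFFFFFFFF
MASK64 = 0xFFFFFFFFFFFFFFFF


def sign_extend_32_to_64(value):
    """Widen a 32-bit value to 64 bits by copying bit 31 into the upper half."""
    value &= MASK32
    if value & 0x80000000:
        return (value | ~MASK32) & MASK64
    return value


def zero_extend_32_to_64(value):
    """Widen a 32-bit value to 64 bits, leaving the upper half zero."""
    return value & MASK32


handle = 0xE8B8303C
recorded_key = zero_extend_32_to_64(handle)  # key as stored in the map
lookup_key = sign_extend_32_to_64(handle)    # key produced by the widening cast

print(hex(recorded_key))  # 0xe8b8303c
print(hex(lookup_key))    # 0xffffffffe8b8303c
print(recorded_key == lookup_key)  # False, so the lookup misses
```

Only handles with bit 31 set are affected, which may explain why the problem shows up with some 32-bit collections and not others.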

category:eng-sys

theme:super-pmi

skill-level:intermediate

cost:medium

| 1.0 | superpmi: problem using arm collections - I generated asm diffs on Windows x86 using Linux arm collection and clrjit_unix_arm_x86.dll cross-compiler JIT:

```

py -3 C:\gh\runtime\src\coreclr\scripts\superpmi.py asmdiffs -arch x86 -target_arch arm -filter libraries -jit_name clrjit_unix_arm_x86.dll --gcinfo -target_os Linux

```

This fails to replay every MC due to what appears to be an issue with sign extension of pointer types.

The JIT calls `getMethodClass()` with, in my example, 0xe8b8303c (from some previous SPMI call).

This calls the SuperPMI function:

```

CORINFO_CLASS_HANDLE MethodContext::repGetMethodClass(CORINFO_METHOD_HANDLE methodHandle)

```

which calls:

```

int index = GetMethodClass->GetIndex((DWORDLONG)methodHandle);

```

It casts a `CORINFO_METHOD_HANDLE`, which is a (32-bit) pointer, to a `DWORDLONG`, which is `unsigned __int64`, and in doing so sign extends it to 0xffffffffe8b8303c. It looks up in the GetMethodClass map, which includes a non-sign-extended value, and fails to find it.

Does the C++ compiler behave differently on Linux and Windows when casting a 32-bit pointer to a 64-bit unsigned int? Does clang not sign extend? If it did sign extend, I would expect to see the sign-extended values stored in the method context.

We might need to change SuperPMI to specifically cast pointers (and thus handles) to same-sized unsigned ints before extending to larger unsigned ints.

category:eng-sys

theme:super-pmi

skill-level:intermediate

cost:medium

| non_main | superpmi problem using arm collections i generated asm diffs on windows using linux arm collection and clrjit unix arm dll cross compiler jit py c gh runtime src coreclr scripts superpmi py asmdiffs arch target arch arm filter libraries jit name clrjit unix arm dll gcinfo target os linux this fails to replay every mc due to what appears to be an issue with sign extension of pointer types the jit calls getmethodclass with in my example from some previous spmi call this calls the superpmi function corinfo class handle methodcontext repgetmethodclass corinfo method handle methodhandle which calls int index getmethodclass getindex dwordlong methodhandle it casts a corinfo method handle which is a bit pointer to a dwordlong which is unsigned and in doing so sign extends it to it looks up in the getmethodclass map which includes a non sign extended value and fails to find it is there a difference in behavior between the c compiler behavior on linux and windows w r t casting bit pointer to bit unsigned int does clang not sign extend i would expect if it does sign extend we would see the sign extended values stored in the method context we might need to change superpmi to specifically cast pointers and thus handles to same sized unsigned ints before extending to larger unsigned ints category eng sys theme super pmi skill level intermediate cost medium | 0 |

4,393 | 22,536,669,960 | IssuesEvent | 2022-06-25 10:22:24 | wkentaro/gdown | https://api.github.com/repos/wkentaro/gdown | closed | --folder raises FileNotFoundError if files on Google Drive have slashes in their names | bug status: wip-by-maintainer | My folder has a file named `January/February 2020.csv` (and several others with the same naming pattern). Running `gdown --folder <url> -O /tmp/my-local-dir/` results in `FileNotFoundError: [Errno 2] No such file or directory: '/tmp/my-local-dir/January'` (treating `January/` as a local filesystem subdirectory).

I have fixed this locally by patching `file_name = file.name.replace(osp.sep, '_')` here:

https://github.com/wkentaro/gdown/blob/main/gdown/download_folder.py#L239-L250
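
The idea behind that patch can be sketched in isolation. This is a minimal example, not gdown's actual code: `sanitize_name` is a hypothetical helper, and it replaces `/` in addition to `os.sep` on the assumption that a remote name containing a forward slash is unsafe on every platform.

```python
import os


def sanitize_name(name, replacement="_"):
    """Replace path separators so a remote file name maps to a single local file."""
    for sep in {"/", os.sep}:
        name = name.replace(sep, replacement)
    return name


local_name = sanitize_name("January/February 2020.csv")
print(local_name)  # January_February 2020.csv
print(os.path.join("/tmp/my-local-dir", local_name))
```

Sanitizing only the final path component leaves real subfolders from the Drive folder untouched.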

But I am not sure whether this is the only place, or the right place, to fix this, so I decided to open an issue first rather than a PR. I can create a PR if you tell me which other places this should affect. | True | --folder raises FileNotFoundError if files on Google Drive have slashes in their names - My folder has a file named `January/February 2020.csv` (and several others with the same naming pattern). Running `gdown --folder <url> -O /tmp/my-local-dir/` results in `FileNotFoundError: [Errno 2] No such file or directory: '/tmp/my-local-dir/January'` (treating `January/` as a local filesystem subdirectory).

I have fixed this locally by patching `file_name = file.name.replace(osp.sep, '_')` here:

https://github.com/wkentaro/gdown/blob/main/gdown/download_folder.py#L239-L250

But I am not sure if this is the only and the right place to fix this, so decided to first create an issue and not a PR. I may create a PR if you tell me which places should this affect too. | main | folder raises filenotfounderror if files on google drive have slashes in their names my folder has a file named january february csv and several others with the same naming pattern running gdown folder o tmp my local dir results in filenotfounderror no such file or directory tmp my local dir january treating january as a local filesystem subdirectory i have fixed this locally by patching file name file name replace osp sep here but i am not sure if this is the only and the right place to fix this so decided to first create an issue and not a pr i may create a pr if you tell me which places should this affect too | 1 |

1,649 | 6,572,678,727 | IssuesEvent | 2017-09-11 04:20:36 | ansible/ansible-modules-extras | https://api.github.com/repos/ansible/ansible-modules-extras | closed | IPA: can't set password for ipa_user module | affects_2.3 bug_report waiting_on_maintainer | <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

ipa

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.3.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

Linux Mint 18

##### SUMMARY

<!--- Explain the problem briefly -->

Can't add password for ipa user through ipa_user module - password is always empty in IPA

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

Run the ipa_user module with all required fields and the password field filled in.

<!--- Paste example playbooks or commands between quotes below -->

```
# Task
- name: Ensure user is present
  ipa_user:
    name: "{{ item.0.login }}"
    state: present
    givenname: "{{ item.1.first_name }}"
    sn: "{{ item.1.last_name }}"
    mail: "{{ item.1.mail }}"
    password: 123321
    telephonenumber: "{{ item.1.telnum }}"
    title: "{{ item.1.jobtitle }}"
    ipa_host: "{{ global_host }}"
    ipa_user: "{{ global_user }}"
    ipa_pass: "{{ global_pass }}"
    validate_certs: no
  with_subelements:
    - "{{ users_to_add }}"
    - personal_data
  ignore_errors: true

# Variables
users_to_add:
  - username: Harley Quinn
    login: 90987264
    password: "adasdk212masd"
    cluster_zone: Default
    group: mininform
    group_desc: "Some random data for description"
    personal_data:
      - first_name: Harley
        last_name: Quinn
        mail: harley@gmail.com
        telnum: +79788880132
        jobtitle: Minister
  - username: Vasya Pupkin
    login: 77777777
    password: "adasdk212masd"
    cluster_zone: Default
    group: mininform
    group_desc: "Some random data for description"
    personal_data:
      - first_name: Vasya
        last_name: Pupkin
        mail: vasya@gmail.com
        telnum: +7970000805
        jobtitle: Vice minister
```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

User creation with password expected.

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

The created user has no password set, and the module does not update the user's password if you change it in the playbook.

<!--- Paste verbatim command output between quotes below -->

```

ok: [ipa111.krtech.loc] => (item=({u'username': u'Harley Quinn', u'group': u'mininform', u'cluster_zone': u'Default', u'group_desc': u'Some rando

m data for description', u'login': 90987264, u'password': u'adasdk212masd'}, {u'mail': u'harley@gmail.com', u'first_name': u'Harley', u'last_name

': u'Quinn', u'jobtitle': u'Minister', u'telnum': 79788880132}))

ok: [ipa111.krtech.loc] => (item=({u'username': u'Vasya Pupkin', u'group': u'mininform', u'cluster_zone': u'Default', u'group_desc': u'Some rando

m data for description', u'login': 77777777, u'password': u'adasdk212masd'}, {u'mail': u'vasya@gmail.com', u'first_name': u'Vasya', u'last_name':

u'Pupkin', u'jobtitle': u'Vice minister', u'telnum': 7970000805}))

```

| True | IPA: can't set password for ipa_user module - <!--- Verify first that your issue/request is not already reported in GitHub -->

##### ISSUE TYPE

<!--- Pick one below and delete the rest: -->

- Bug Report

##### COMPONENT NAME

<!--- Name of the plugin/module/task -->

ipa

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.3.0

config file = /etc/ansible/ansible.cfg

configured module search path = Default w/o overrides

```

##### CONFIGURATION

<!---

Mention any settings you have changed/added/removed in ansible.cfg

(or using the ANSIBLE_* environment variables).

-->

##### OS / ENVIRONMENT

<!---

Mention the OS you are running Ansible from, and the OS you are

managing, or say “N/A” for anything that is not platform-specific.

-->

Linux Mint 18

##### SUMMARY

<!--- Explain the problem briefly -->

Can't add password for ipa user through ipa_user module - password is always empty in IPA

##### STEPS TO REPRODUCE

<!---

For bugs, show exactly how to reproduce the problem.

For new features, show how the feature would be used.

-->

Run the ipa_user module with all required fields and the password field filled in.

<!--- Paste example playbooks or commands between quotes below -->

```
# Task
- name: Ensure user is present
  ipa_user:
    name: "{{ item.0.login }}"
    state: present
    givenname: "{{ item.1.first_name }}"
    sn: "{{ item.1.last_name }}"
    mail: "{{ item.1.mail }}"
    password: 123321
    telephonenumber: "{{ item.1.telnum }}"
    title: "{{ item.1.jobtitle }}"
    ipa_host: "{{ global_host }}"
    ipa_user: "{{ global_user }}"
    ipa_pass: "{{ global_pass }}"
    validate_certs: no
  with_subelements:
    - "{{ users_to_add }}"
    - personal_data
  ignore_errors: true

# Variables
users_to_add:
  - username: Harley Quinn
    login: 90987264
    password: "adasdk212masd"
    cluster_zone: Default
    group: mininform
    group_desc: "Some random data for description"
    personal_data:
      - first_name: Harley
        last_name: Quinn
        mail: harley@gmail.com
        telnum: +79788880132
        jobtitle: Minister
  - username: Vasya Pupkin
    login: 77777777
    password: "adasdk212masd"
    cluster_zone: Default
    group: mininform
    group_desc: "Some random data for description"
    personal_data:
      - first_name: Vasya
        last_name: Pupkin
        mail: vasya@gmail.com
        telnum: +7970000805
        jobtitle: Vice minister
```

<!--- You can also paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- What did you expect to happen when running the steps above? -->

User creation with password expected.

##### ACTUAL RESULTS

<!--- What actually happened? If possible run with high verbosity (-vvvv) -->

The created user has no password set, and the module does not update the user's password if you change it in the playbook.

<!--- Paste verbatim command output between quotes below -->

```

ok: [ipa111.krtech.loc] => (item=({u'username': u'Harley Quinn', u'group': u'mininform', u'cluster_zone': u'Default', u'group_desc': u'Some rando

m data for description', u'login': 90987264, u'password': u'adasdk212masd'}, {u'mail': u'harley@gmail.com', u'first_name': u'Harley', u'last_name

': u'Quinn', u'jobtitle': u'Minister', u'telnum': 79788880132}))

ok: [ipa111.krtech.loc] => (item=({u'username': u'Vasya Pupkin', u'group': u'mininform', u'cluster_zone': u'Default', u'group_desc': u'Some rando

m data for description', u'login': 77777777, u'password': u'adasdk212masd'}, {u'mail': u'vasya@gmail.com', u'first_name': u'Vasya', u'last_name':

u'Pupkin', u'jobtitle': u'Vice minister', u'telnum': 7970000805}))

```

| main | ipa can t set password for ipa user module issue type bug report component name ipa ansible version ansible config file etc ansible ansible cfg configured module search path default w o overrides configuration mention any settings you have changed added removed in ansible cfg or using the ansible environment variables os environment mention the os you are running ansible from and the os you are managing or say “n a” for anything that is not platform specific linux mint summary can t add password for ipa user through ipa user module password is always empty in ipa steps to reproduce for bugs show exactly how to reproduce the problem for new features show how the feature would be used run ipa user module with all required fields and password field filled name ensure user is present ipa user name item login state present givenname item first name sn item last name mail item mail password telephonenumber item telnum title item jobtitle ipa host global host ipa user global user ipa pass global pass validate certs no with subelements users to add personal data ignore errors true users to add username harley quinn login password cluster zone default group mininform group desc some random data for description personal data first name harley last name quinn mail harley gmail com telnum jobtitle minister username vasya pupkin login password cluster zone default group mininform group desc some random data for description personal data first name vasya last name pupkin mail vasya gmail com telnum jobtitle vice minister expected results user creation with password expected actual results user created has no password set and module does not change user credentials password if you change it in playbook ok item u username u harley quinn u group u mininform u cluster zone u default u group desc u some rando m data for description u login u password u u mail u harley gmail com u first name u harley u last name u quinn u jobtitle u minister u telnum ok item u username u 
vasya pupkin u group u mininform u cluster zone u default u group desc u some rando m data for description u login u password u u mail u vasya gmail com u first name u vasya u last name u pupkin u jobtitle u vice minister u telnum | 1 |

144,124 | 11,595,731,180 | IssuesEvent | 2020-02-24 17:33:05 | terraform-providers/terraform-provider-google | https://api.github.com/repos/terraform-providers/terraform-provider-google | opened | Fix TestAccAppEngineServiceSplitTraffic_appEngineServiceSplitTrafficExample test | test failure | Missing a mutex I think. | 1.0 | Fix TestAccAppEngineServiceSplitTraffic_appEngineServiceSplitTrafficExample test - Missing a mutex I think. | non_main | fix testaccappengineservicesplittraffic appengineservicesplittrafficexample test missing a mutex i think | 0 |

1,010 | 4,787,260,955 | IssuesEvent | 2016-10-29 22:08:53 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | Return codes for command-module should be configurable | affects_2.2 feature_idea waiting_on_maintainer | ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

command-module

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0.0 (detached HEAD bce9bfce51) last updated 2016/10/24 14:13:42 (GMT +000)

```

##### SUMMARY

The return codes that count as successful should be configurable in the command module.

For example, the command `grep` has three possible return codes: 0, 1, 2,

but only 2 signals an error.

So it should be possible to configure 0 AND 1 as "good" return codes. | True | Return codes for command-module should be configurable - ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

command-module

##### ANSIBLE VERSION

<!--- Paste verbatim output from “ansible --version” between quotes below -->

```

ansible 2.2.0.0 (detached HEAD bce9bfce51) last updated 2016/10/24 14:13:42 (GMT +000)

```

##### SUMMARY

The return codes that count as successful should be configurable in the command module.

For example, the command `grep` has three possible return codes: 0, 1, 2,

but only 2 signals an error.

So it should be possible to configure 0 AND 1 as "good" return codes. | main | return codes for command module whould be configurable issue type feature idea component name command module ansible version ansible detached head last updated gmt summary allowed return codes to be successful should be configurable in commands module e g the command grep has three possible return codes but on only signals an error so it should be possible to configure and as good return codes | 1 |
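
The behaviour requested in the issue above can be sketched outside Ansible as a small Python helper. This is only an illustration of the idea, not the command module's implementation: the names `run_command`, `ok_codes`, and `exit_with` are hypothetical and do not come from the issue or from Ansible. In playbooks, a comparable effect is usually obtained by registering the result and using `failed_when` on its `rc` value.

```python
import subprocess
import sys


def run_command(argv, ok_codes=(0,)):
    """Run argv and mark the result as failed only if the
    exit code is not listed in ok_codes (hypothetical helper)."""
    proc = subprocess.run(argv, capture_output=True, text=True)
    return {
        "rc": proc.returncode,
        "stdout": proc.stdout,
        "stderr": proc.stderr,
        "failed": proc.returncode not in ok_codes,
    }


def exit_with(code):
    """Build a portable command line that exits with the given code.
    Stands in for a tool such as grep so the sketch runs anywhere."""
    return [sys.executable, "-c", f"import sys; sys.exit({code})"]


if __name__ == "__main__":
    # grep-like semantics: 0 = match, 1 = no match, 2 = real error.
    for code in (0, 1, 2):
        result = run_command(exit_with(code), ok_codes=(0, 1))
        print(code, "failed" if result["failed"] else "ok")
```

Passing the accepted codes as a parameter keeps the default strict (only 0 succeeds), so existing callers would see no change unless they opt in.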

44,334 | 23,587,251,250 | IssuesEvent | 2022-08-23 12:39:38 | python/cpython | https://api.github.com/repos/python/cpython | closed | Patch for thread-support in md5module.c | performance extension-modules pending | BPO | [4818](https://bugs.python.org/issue4818)

--- | :---

Nosy | @loewis, @gpshead, @jcea, @tiran

Files | <li>[md5module_small_locks-2.diff](https://bugs.python.org/file12568/md5module_small_locks-2.diff "Uploaded as text/plain at 2009-01-03.14:44:19 by ebfe")</li>

<sup>*Note: these values reflect the state of the issue at the time it was migrated and might not reflect the current state.*</sup>

<details><summary>Show more details</summary><p>

GitHub fields:

```python

assignee = None

closed_at = None

created_at = <Date 2009-01-03.11:23:59.564>

labels = ['extension-modules', 'performance']

title = 'Patch for thread-support in md5module.c'

updated_at = <Date 2012-10-06.23:44:01.385>

user = 'https://bugs.python.org/ebfe'

```

bugs.python.org fields:

```python

activity = <Date 2012-10-06.23:44:01.385>

actor = 'christian.heimes'

assignee = 'none'

closed = False

closed_date = None

closer = None

components = ['Extension Modules']

creation = <Date 2009-01-03.11:23:59.564>

creator = 'ebfe'

dependencies = []

files = ['12568']

hgrepos = []

issue_num = 4818

keywords = ['patch']

message_count = 5.0

messages = ['78947', '78950', '78954', '78963', '81727']

nosy_count = 5.0

nosy_names = ['loewis', 'gregory.p.smith', 'jcea', 'christian.heimes', 'ebfe']

pr_nums = []

priority = 'normal'

resolution = None

stage = 'patch review'

status = 'open'

superseder = None

type = 'performance'

url = 'https://bugs.python.org/issue4818'

versions = ['Python 3.4']

```

</p></details>

| True | Patch for thread-support in md5module.c - BPO | [4818](https://bugs.python.org/issue4818)

--- | :---

Nosy | @loewis, @gpshead, @jcea, @tiran

Files | <li>[md5module_small_locks-2.diff](https://bugs.python.org/file12568/md5module_small_locks-2.diff "Uploaded as text/plain at 2009-01-03.14:44:19 by ebfe")</li>

<sup>*Note: these values reflect the state of the issue at the time it was migrated and might not reflect the current state.*</sup>

<details><summary>Show more details</summary><p>

GitHub fields:

```python

assignee = None

closed_at = None

created_at = <Date 2009-01-03.11:23:59.564>

labels = ['extension-modules', 'performance']

title = 'Patch for thread-support in md5module.c'

updated_at = <Date 2012-10-06.23:44:01.385>

user = 'https://bugs.python.org/ebfe'

```

bugs.python.org fields:

```python

activity = <Date 2012-10-06.23:44:01.385>

actor = 'christian.heimes'

assignee = 'none'

closed = False

closed_date = None

closer = None

components = ['Extension Modules']

creation = <Date 2009-01-03.11:23:59.564>

creator = 'ebfe'

dependencies = []

files = ['12568']

hgrepos = []

issue_num = 4818

keywords = ['patch']

message_count = 5.0

messages = ['78947', '78950', '78954', '78963', '81727']

nosy_count = 5.0

nosy_names = ['loewis', 'gregory.p.smith', 'jcea', 'christian.heimes', 'ebfe']

pr_nums = []

priority = 'normal'

resolution = None

stage = 'patch review'

status = 'open'

superseder = None

type = 'performance'

url = 'https://bugs.python.org/issue4818'

versions = ['Python 3.4']

```

</p></details>

| non_main | patch for thread support in c bpo nosy loewis gpshead jcea tiran files uploaded as text plain at by ebfe note these values reflect the state of the issue at the time it was migrated and might not reflect the current state show more details github fields python assignee none closed at none created at labels title patch for thread support in c updated at user bugs python org fields python activity actor christian heimes assignee none closed false closed date none closer none components creation creator ebfe dependencies files hgrepos issue num keywords message count messages nosy count nosy names pr nums priority normal resolution none stage patch review status open superseder none type performance url versions | 0 |

1,934 | 6,609,879,948 | IssuesEvent | 2017-09-19 15:50:32 | Kristinita/Erics-Green-Room | https://api.github.com/repos/Kristinita/Erics-Green-Room | closed | [Feature request] Adjustable time for reading comments | need-maintainer | ### 1. Request

#### 1. Preferred

It would be good if a room user could adjust the time they need to read a comment. Remove the time for viewing the source.

#### 2. Alternative

Set 3 seconds for viewing the comment. Remove the time for viewing the source.

### 2. Rationale

Currently, 3 seconds are given for viewing the comment and 3 seconds for viewing the source.

1. The time for viewing the source is unnecessary. Studying sources is not a quick process; during an intensive game it is not possible to analyze them properly.

1. Seconds of idle time on each question add up to minutes. Each training run of a question pack drags on for several minutes because of the idle time.

Thank you. | True | [Feature request] Adjustable time for reading comments - ### 1. Request

#### 1. Preferred

It would be good if a room user could adjust the time they need to read a comment. Remove the time for viewing the source.

#### 2. Alternative

Set 3 seconds for viewing the comment. Remove the time for viewing the source.

### 2. Rationale

Currently, 3 seconds are given for viewing the comment and 3 seconds for viewing the source.

1. The time for viewing the source is unnecessary. Studying sources is not a quick process; during an intensive game it is not possible to analyze them properly.

1. Seconds of idle time on each question add up to minutes. Each training run of a question pack drags on for several minutes because of the idle time.

Thank you. | main | adjustable time for reading comments request preferred it would be good if a room user could adjust the time they need to read a comment remove the time for viewing the source alternative set seconds for viewing the comment remove the time for viewing the source rationale currently seconds are given for viewing the comment and seconds for viewing the source the time for viewing the source is unnecessary studying sources is not a quick process during an intensive game it is not possible to analyze them properly seconds of idle time on each question add up to minutes each training run of a question pack drags on for several minutes because of the idle time thank you | 1 |

116,599 | 17,379,814,269 | IssuesEvent | 2021-07-31 13:13:17 | sap-labs-france/ev-server | https://api.github.com/repos/sap-labs-france/ev-server | closed | Logs > Security: Ensure that all user's actions are logged using Logging.logSecurityInfo/Warning/Error | security | To ensure traceability of the user's actions from the UI.

These logs will be kept for one year.

Request a meeting for this issue. | True | Logs > Security: Ensure that all user's actions are logged using Logging.logSecurityInfo/Warning/Error - To ensure traceability of the user's actions from the UI.

These logs will be kept for one year.

Request a meeting for this issue. | non_main | logs security ensure that all user s actions are logged using logging logsecurityinfo warning error to ensure tracability of the user s actions from the ui these logs will be kept one year request a meeting for this issue | 0 |

4,158 | 19,957,807,616 | IssuesEvent | 2022-01-28 02:46:54 | microsoft/DirectXTK | https://api.github.com/repos/microsoft/DirectXTK | opened | Retire VS 2017 support | maintainence | Visual Studio 2017 reaches its [mainstream end-of-life]() in **April 2022**. I should retire these projects at that time:

* DirectXTK_Desktop_2017.vcxproj

* DirectXTK_Desktop_2017_Win10.vcxproj

* DirectXTK_Windows10_2017.vcxproj

> I am not sure when I'll be retiring Xbox One XDK support which is not supported for VS 2019 or later. That means I'm not sure if I'll delete ``DirectXTK_XboxOneXDK_2017.vcxproj`` or not with this change.

| True | Retire VS 2017 support - Visual Studio 2017 reaches its [mainstream end-of-life]() in **April 2022**. I should retire these projects at that time:

* DirectXTK_Desktop_2017.vcxproj

* DirectXTK_Desktop_2017_Win10.vcxproj

* DirectXTK_Windows10_2017.vcxproj

> I am not sure when I'll be retiring Xbox One XDK support which is not supported for VS 2019 or later. That means I'm not sure if I'll delete ``DirectXTK_XboxOneXDK_2017.vcxproj`` or not with this change.