Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

381,589 | 11,276,862,402 | IssuesEvent | 2020-01-15 00:40:32 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | closed | Follow up job messaging work | AMO caseflow-intake foxtrot priority-medium | <!-- The goal of this template is to be a tool to communicate the requirements for a story related task. It is not intended as a mandate, adapt as needed. -->

## User or job story

User story: As an intake user, I need to be able to communicate to veterans when their end product establishment is failing, through uploading a screenshot of their job details page. I would like the job details page to include the inbox messages in notes, because the inbox messages page includes information for other veterans.

## Acceptance criteria

- [x] When we add an inbox message for a job failing after 24 hours, subsequently succeeding, or being canceled, also add a job note (job note messages are already shown)

- [x] Read Inbox messages should be hidden after 120 days

- [x] Update copy (see below for new requested copy)

- [x] Use veteran file numbers when labeling async jobs in Inbox

- [x] Include a screenshot in this GitHub issue of an inbox message appearing on the job details page

## Release notes

Inbox messages related to asyncable jobs will appear as job notes on the job detail page.

### Designs

New copy:

- For messaging a job failure after 24 hours, add:

>No further action is necessary as the (IT) support team has been notified. You will receive a separate message in your inbox when the issue has resolved.

- For messaging a job being manually cancelled, add:

>No further action is necessary. Please see the job details page for more information on why this job has been cancelled.

Sample screenshot of job page with new job notes that get automatically created alongside Inbox messages (for failures and successes after 24 hours):

<img width="401" alt="Screen Shot 2019-12-18 at 10 51 19" src="https://user-images.githubusercontent.com/282869/71101457-bc7e1280-2184-11ea-843a-589221e82a2b.png">

| 1.0 | Follow up job messaging work - <!-- The goal of this template is to be a tool to communicate the requirements for a story related task. It is not intended as a mandate, adapt as needed. -->

## User or job story

User story: As an intake user, I need to be able to communicate to veterans when their end product establishment is failing, through uploading a screenshot of their job details page. I would like the job details page to include the inbox messages in notes, because the inbox messages page includes information for other veterans.

## Acceptance criteria

- [x] When we add an inbox message for a job failing after 24 hours, subsequently succeeding, or being canceled, also add a job note (job note messages are already shown)

- [x] Read Inbox messages should be hidden after 120 days

- [x] Update copy (see below for new requested copy)

- [x] Use veteran file numbers when labeling async jobs in Inbox

- [x] Include a screenshot in this GitHub issue of an inbox message appearing on the job details page

## Release notes

Inbox messages related to asyncable jobs will appear as job notes on the job detail page.

### Designs

New copy:

- For messaging a job failure after 24 hours, add:

>No further action is necessary as the (IT) support team has been notified. You will receive a separate message in your inbox when the issue has resolved.

- For messaging a job being manually cancelled, add:

>No further action is necessary. Please see the job details page for more information on why this job has been cancelled.

Sample screenshot of job page with new job notes that get automatically created alongside Inbox messages (for failures and successes after 24 hours):

<img width="401" alt="Screen Shot 2019-12-18 at 10 51 19" src="https://user-images.githubusercontent.com/282869/71101457-bc7e1280-2184-11ea-843a-589221e82a2b.png">

| priority | follow up job messaging work user or job story user story as an intake user i need to be able to communicate to veterans when their end product establishment is failing through uploading a screenshot of their job details page i would like the job details page to include the inbox messages in notes because the inbox messages page includes information for other veterans acceptance criteria when we add an inbox message for a job failing after hours subsequently succeeding or being canceled also add a job note job note messages are already shown read inbox messages should be hidden after days update copy see below for new requested copy use veteran file numbers when labeling async jobs in inbox include a screenshot in this github issue of an inbox message appearing on the job details page release notes inbox messages related to asyncable jobs will appear as job notes on the job detail page designs new copy for messaging a job failure after hours add no further action is necessary as the it support team has been notified you will receive a separate message in your inbox when the issue has resolved for messaging a job being manually cancelled add no further action is necessary please see the job details page for more information on why this job has been cancelled sample screenshot of job page with new job notes that get automatically created alongside inbox messages for failures and successes after hours img width alt screen shot at src | 1 |
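The "hidden after 120 days" criterion in the issue above can be pictured as a simple filter. This is only a rough sketch under stated assumptions: `visibleInboxMessages`, the `readAt` field, and the choice to measure the 120 days from the time a message was read are all hypothetical, and the issue does not show how Caseflow actually implements it.

```javascript
// Hypothetical sketch; Caseflow's real implementation is not shown in the issue.
const MS_PER_DAY = 24 * 60 * 60 * 1000;
const READ_MESSAGE_TTL_DAYS = 120; // value taken from the acceptance criteria

// Keep unread messages, plus read messages that were read within the window.
// Assumption: the window is measured from the (hypothetical) `readAt` timestamp.
function visibleInboxMessages(messages, now) {
  const cutoff = now.getTime() - READ_MESSAGE_TTL_DAYS * MS_PER_DAY;
  return messages.filter(
    (message) => message.readAt === null || message.readAt.getTime() >= cutoff
  );
}

const now = new Date('2020-01-15T00:00:00Z');
const inbox = [
  { id: 1, readAt: null },                             // unread: always shown
  { id: 2, readAt: new Date('2020-01-01T00:00:00Z') }, // read 14 days ago: shown
  { id: 3, readAt: new Date('2019-08-01T00:00:00Z') }, // read 167 days ago: hidden
];
console.log(visibleInboxMessages(inbox, now).map((message) => message.id));
```

Passing `now` explicitly rather than reading the clock inside the function keeps the cutoff easy to test.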

270,650 | 8,468,165,249 | IssuesEvent | 2018-10-23 18:57:15 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: cross over equator (x = 0) does strange things | Medium Priority |

**Version:** 0.7.7.2 beta

**Steps to Reproduce:**

take a vehicle (steam truck) and slowly drive over the equator.

you can spot the point also by looking at the smoke coming out of the engine. it will disappear shortly when you drive over the point

**Expected behavior:**

nothing happens

**Actual behavior:**

the players drop for a short time (1/2 sec) into the ground and disappear, then (after I drove over the point) they appear again. also nearby vehicles (steam tractor) are launched in the air and bug away.

**Do you have mods installed? Does issue happen when no mods are installed?:**

no | 1.0 | USER ISSUE: cross over equator (x = 0) does strange things -

**Version:** 0.7.7.2 beta

**Steps to Reproduce:**

take a vehicle (steam truck) and slowly drive over the equator.

you can spot the point also by looking at the smoke coming out of the engine. it will disappear shortly when you drive over the point

**Expected behavior:**

nothing happens

**Actual behavior:**

the players drop for a short time (1/2 sec) into the ground and disappear, then (after I drove over the point) they appear again. also nearby vehicles (steam tractor) are launched in the air and bug away.

**Do you have mods installed? Does issue happen when no mods are installed?:**

no | priority | user issue cross over equator x does strange things version beta steps to reproduce take a vehicle steam truck and slowly drive over the equator you can spot the point also by looking at the smoke comming out of the engine it will disappear shortly when you drive over the point expected behavior nothing happens actual behavior the players drop for a short time sec into the ground and disappears then after i drove over the point they appear again also nearby vehicles steam tractor are launched in the air and bug away n do you have mods installed does issue happen when no mods are installed no | 1 |

436,738 | 12,552,561,275 | IssuesEvent | 2020-06-06 18:29:53 | Twin-Cities-Mutual-Aid/twin-cities-aid-distribution-locations | https://api.github.com/repos/Twin-Cities-Mutual-Aid/twin-cities-aid-distribution-locations | closed | Error logging/notification | Priority: Medium Type: Feature | Since our persistence layer (Google Sheets) is pretty error-prone, I think it would be really helpful if we could set up a service to notify us if there are errors in the production app. I know these exist but don't know anything about them ... ideally something we could plug into slack. | 1.0 | Error logging/notification - Since our persistence layer (Google Sheets) is pretty error-prone, I think it would be really helpful if we could set up a service to notify us if there are errors in the production app. I know these exist but don't know anything about them ... ideally something we could plug into slack. | priority | error logging notification since our persistence layer google sheets is pretty error prone i think it would be really helpful if we could set up a service to notify us if there are errors in the production app i know these exist but don t know anything about them ideally something we could plug into slack | 1 |

761,354 | 26,677,007,561 | IssuesEvent | 2023-01-26 14:58:47 | vaticle/intellij-rust | https://api.github.com/repos/vaticle/intellij-rust | opened | When configuring Rust toolchain, set default path as `bazel-out/{toolchain_path}` | priority: medium type: bug | When opening a project and loading a Rust file before running the appropriate Bazel build command - or when loading a Rust file at _any_ time after having ever done the above - you'll see "No Rust toolchain configured".

I'm not sure how we could easily configure it to _always_ try to auto-detect the Bazel-installed Rust toolchain in this case, but what we can definitely do, is modify the behaviour of the "Set up toolchain" option:

Currently it auto-detects the Rust toolchain in `/Users/{user}/.cargo/bin`, installed by `rustup`.

We should modify it to search the same paths that we've defined the Rust toolchain detection (on first load of a Rust file) to search; namely `bazel-out` for the Rust toolchain, and `bazel-{projectName}` for the `stdlib` sources. | 1.0 | When configuring Rust toolchain, set default path as `bazel-out/{toolchain_path}` - When opening a project and loading a Rust file before running the appropriate Bazel build command - or when loading a Rust file at _any_ time after having ever done the above - you'll see "No Rust toolchain configured".

I'm not sure how we could easily configure it to _always_ try to auto-detect the Bazel-installed Rust toolchain in this case, but what we can definitely do, is modify the behaviour of the "Set up toolchain" option:

Currently it auto-detects the Rust toolchain in `/Users/{user}/.cargo/bin`, installed by `rustup`.

We should modify it to search the same paths that we've defined the Rust toolchain detection (on first load of a Rust file) to search; namely `bazel-out` for the Rust toolchain, and `bazel-{projectName}` for the `stdlib` sources. | priority | when configuring rust toolchain set default path as bazel out toolchain path when opening a project and loading a rust file before running the appropriate bazel build command or when loading a rust file at any time after having ever done the above you ll see no rust toolchain configured i m not sure how we could easily configure it to always try to auto detect the bazel installed rust toolchain in this case but what we can definitely do is modify the behaviour of the set up toolchain option currently it auto detects the rust toolchain in users user cargo bin installed by rustup we should modify it to search the same paths that we ve defined the rust toolchain detection on first load of a rust file to search namely bazel out for the rust toolchain and bazel projectname for the stdlib sources | 1 |

41,298 | 2,868,994,804 | IssuesEvent | 2015-06-05 22:26:55 | dart-lang/pub-dartlang | https://api.github.com/repos/dart-lang/pub-dartlang | closed | Pub server should support 3-legged OAuth for 3rd party integration | enhancement MovedToGithub Priority-Medium | <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#6234_

----

Github has post commit hooks. A hook could ping pub server, which then talks to github and checks pubspec.yaml. If version number is different, rebuild pub package.

Neat! | 1.0 | Pub server should support 3-legged OAuth for 3rd party integration - <a href="https://github.com/sethladd"><img src="https://avatars.githubusercontent.com/u/5479?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [sethladd](https://github.com/sethladd)**

_Originally opened as dart-lang/sdk#6234_

----

Github has post commit hooks. A hook could ping pub server, which then talks to github and checks pubspec.yaml. If version number is different, rebuild pub package.

Neat! | priority | pub server should support legged oauth for party integration issue by originally opened as dart lang sdk github has post commit hooks a hook could ping pub server which then talks to github and checks pubspec yaml if version number is different rebuild pub package neat | 1 |

210,541 | 7,190,740,983 | IssuesEvent | 2018-02-02 18:21:55 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | closed | two-handed weapons/shields aren't easily identifiable as such | priority: medium section: Equipment status: issue: in progress type: medium level coding | Most two-handed weapons aren't easily identified as such because they don't have anything in their text to state that they are.

We sometimes see bug reports from mobile app users when equipping a two-handed item unequips a shield. There are issues to give notifications about that when equipping them on the apps (https://github.com/HabitRPG/habitica-ios/issues/433 and https://github.com/HabitRPG/habitica-android/issues/688) but we should also include a message in each two-handed item's description on website and apps - i.e., the message should be in the data returned from the API's `content` route.

For consistency, the message should be automatically added to the end of the item's description when the item has a true value for the `twoHanded` attribute (e.g., the rancherLasso below). I.e., the PR for this change will NOT change any of the `common/locales/en/` json files (with one exception listed below) but instead will modify the API's code to insert the message.

The message should be something like "**Two-handed item.**" We won't use "Two-handed weapon" because future items might have more of a shield-like feel to them.

The PR should change `weaponSpecialCandycaneNotes` in `common/locales/en/gear.json` to remove the hard-coded two-handed description. I.e., change this:

`A powerful mage's staff. Powerfully DELICIOUS, we mean! Two-handed weapon. Increases Intelligence by <%= int %> and Perception by <%= per %>. Limited Edition 2013-2014 Winter Gear.`

to this:

`A powerful mage's staff. Powerfully DELICIOUS, we mean! Increases Intelligence by <%= int %> and Perception by <%= per %>. Limited Edition 2013-2014 Winter Gear.`

Example of a two-handed item:

```

rancherLasso: {

twoHanded: true,

text: t('weaponArmoireRancherLassoText'),

notes: t('weaponArmoireRancherLassoNotes', { str: 5, per: 5, int: 5 }),

value: 100,

str: 5,

per: 5,

int: 5,

set: 'rancher',

canOwn: ownsItem('weapon_armoire_rancherLasso'),

},

``` | 1.0 | two-handed weapons/shields aren't easily identifiable as such - Most two-handed weapons aren't easily identified as such because they don't have anything in their text to state that they are.

We sometimes see bug reports from mobile app users when equipping a two-handed item unequips a shield. There are issues to give notifications about that when equipping them on the apps (https://github.com/HabitRPG/habitica-ios/issues/433 and https://github.com/HabitRPG/habitica-android/issues/688) but we should also include a message in each two-handed item's description on website and apps - i.e., the message should be in the data returned from the API's `content` route.

For consistency, the message should be automatically added to the end of the item's description when the item has a true value for the `twoHanded` attribute (e.g., the rancherLasso below). I.e., the PR for this change will NOT change any of the `common/locales/en/` json files (with one exception listed below) but instead will modify the API's code to insert the message.

The message should be something like "**Two-handed item.**" We won't use "Two-handed weapon" because future items might have more of a shield-like feel to them.

The PR should change `weaponSpecialCandycaneNotes` in `common/locales/en/gear.json` to remove the hard-coded two-handed description. I.e., change this:

`A powerful mage's staff. Powerfully DELICIOUS, we mean! Two-handed weapon. Increases Intelligence by <%= int %> and Perception by <%= per %>. Limited Edition 2013-2014 Winter Gear.`

to this:

`A powerful mage's staff. Powerfully DELICIOUS, we mean! Increases Intelligence by <%= int %> and Perception by <%= per %>. Limited Edition 2013-2014 Winter Gear.`

Example of a two-handed item:

```

rancherLasso: {

twoHanded: true,

text: t('weaponArmoireRancherLassoText'),

notes: t('weaponArmoireRancherLassoNotes', { str: 5, per: 5, int: 5 }),

value: 100,

str: 5,

per: 5,

int: 5,

set: 'rancher',

canOwn: ownsItem('weapon_armoire_rancherLasso'),

},

``` | priority | two handed weapons shields aren t easily identifiable as such most two handed weapons aren t easily identified as such because they don t have anything in their text to state that they are we sometimes see bug reports from mobile app users when equipping a two handed item unequips a shield there are issues to give notifications about that when equipping them on the apps and but we should also include a message in each two handed item s description on website and apps i e the message should be in the data returned from the api s content route for consistency the message should be automatically added to the end of the item s description when the item has a true value for the twohanded attribute e g the rancherlasso below i e the pr for this change will not change any of the common locales en json files with one exception listed below but instead will modify the api s code to insert the message the message should be something like two handed item we won t use two handed weapon because future items might have more of a shield like feel to them the pr should change weaponspecialcandycanenotes in common locales en gear json to remove the hard coded two handed description i e change this a powerful mage s staff powerfully delicious we mean two handed weapon increases intelligence by and perception by limited edition winter gear to this a powerful mage s staff powerfully delicious we mean increases intelligence by and perception by limited edition winter gear example of a two handed item rancherlasso twohanded true text t weaponarmoirerancherlassotext notes t weaponarmoirerancherlassonotes str per int value str per int set rancher canown ownsitem weapon armoire rancherlasso | 1 |
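The automatic suffix proposed in the issue above could be sketched along these lines. This is an illustration only: `withTwoHandedNote` and `TWO_HANDED_SUFFIX` are hypothetical names, not Habitica's code, and the sketch treats `notes` as a plain string, whereas the item definition in the issue builds it with the `t(...)` translation helper.

```javascript
// Hypothetical helper, not Habitica's actual implementation.
const TWO_HANDED_SUFFIX = 'Two-handed item.';

// Returns a copy of the item with the suffix appended to its notes when the
// item is two-handed; other items are returned unchanged.
// Assumption: `notes` is already a resolved string here.
function withTwoHandedNote(item) {
  if (item.twoHanded !== true) return item;
  if (item.notes.endsWith(TWO_HANDED_SUFFIX)) return item; // avoid a double suffix
  return { ...item, notes: `${item.notes} ${TWO_HANDED_SUFFIX}` };
}

const lasso = { twoHanded: true, notes: 'Increases Strength by 5.' };
const dagger = { notes: 'Increases Strength by 1.' };
console.log(withTwoHandedNote(lasso).notes);  // Increases Strength by 5. Two-handed item.
console.log(withTwoHandedNote(dagger).notes); // Increases Strength by 1.
```

Doing this in one place when the content is built, rather than in each locale string, is what lets the hard-coded sentence be removed from `weaponSpecialCandycaneNotes`.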

151,774 | 5,827,350,298 | IssuesEvent | 2017-05-08 08:45:33 | dotkom/onlineweb4 | https://api.github.com/repos/dotkom/onlineweb4 | closed | Add link to subnavbar to "Om interessegrupper" | Priority: Medium Status: Available | Add a link to the subnavbar that points to "Om interessegrupper", after "Om Online" etc. Awaiting merge of #1787 for now. | 1.0 | Add link to subnavbar to "Om interessegrupper" - Add a link to the subnavbar that points to "Om interessegrupper", after "Om Online" etc. Awaiting merge of #1787 for now. | priority | add link to subnavbar to om interessegrupper add a link to the subnavbar that points to om interessegrupper after om online etc awaiting merge of for now | 1 |

781,552 | 27,441,706,144 | IssuesEvent | 2023-03-02 11:29:09 | TetieWasTaken/BobTheBot | https://api.github.com/repos/TetieWasTaken/BobTheBot | opened | (Error) Responses should be standardized | priority: medium type: feature request | Replies to interactions such as "Item not found" or "[This] does not exist" should be standardized using the same format everywhere.

- Same layout

- Similar language/typing style

Achievable by making a custom logger. | 1.0 | (Error) Responses should be standardized - Replies to interactions such as "Item not found" or "[This] does not exist" should be standardized using the same format everywhere.

- Same layout

- Similar language/typing style

Achievable by making a custom logger. | priority | error responses should be standardized replies to interactions such as item not found or does not exist should be standardized using the same format everywhere same layout similar language typing style achievable by making a custom logger | 1 |

44,880 | 2,917,690,758 | IssuesEvent | 2015-06-24 00:26:57 | andresriancho/w3af | https://api.github.com/repos/andresriancho/w3af | closed | Write REST API client | improvement priority:medium rest-api | Write REST API client

- [x] Create a different repo in github

- [x] Initial implementation

- [x] Unittests for basic REST API consumption

- [x] Pypi integration

- [x] Manually push the first version

- [x] Define the required variables in circleci to push to pypi on successful builds

- [x] Integration tests

- [x] Download django-moth (use django moth utils)

- [x] Download latest w3af

- [x] Run `w3af_api`

- [x] Connect to w3af_api using the REST API and run a test scan

- [x] Successful w3af build should trigger build of w3af-api-client (develop to develop, master to master)

- [x] Add link from the w3af documentation to the w3af-api-client pypi / github repo | 1.0 | Write REST API client - Write REST API client

- [x] Create a different repo in github

- [x] Initial implementation

- [x] Unittests for basic REST API consumption

- [x] Pypi integration

- [x] Manually push the first version

- [x] Define the required variables in circleci to push to pypi on successful builds

- [x] Integration tests

- [x] Download django-moth (use django moth utils)

- [x] Download latest w3af

- [x] Run `w3af_api`

- [x] Connect to w3af_api using the REST API and run a test scan

- [x] Successful w3af build should trigger build of w3af-api-client (develop to develop, master to master)

- [x] Add link from the w3af documentation to the w3af-api-client pypi / github repo | priority | write rest api client write rest api client create a different repo in github initial implementation unittests for basic rest api consumption pypi integration manually push the first version define the required variables in circleci to push to pypi on successful builds integration tests download django moth use django moth utils download latest run api connect to api using the rest api and run a test scan successful build should trigger build of api client develop to develop master to master add link from the documentation to the api client pypi github repo | 1 |

284,429 | 8,738,327,503 | IssuesEvent | 2018-12-12 02:35:45 | aowen87/TicketTester | https://api.github.com/repos/aowen87/TicketTester | closed | VisIt VTK reader does not support VTM files. | bug likelihood medium priority reviewed severity medium | VTK has an XML "VTM" file that looks a lot like a .visit file for grouping domains into a single multidomain dataset. The current VTK reader does not support VTM files. This came up when trying to look at some OpenFOAM VTK data in VisIt - a classic "paraview can read this fine" situation.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 2162

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: High

Subject: VisIt VTK reader does not support VTM files.

Assigned to: Kathleen Biagas

Category:

Target version: 2.10

Author: Brad Whitlock

Start: 02/26/2015

Due date:

% Done: 0

Estimated time:

Created: 02/26/2015 03:28 pm

Updated: 08/18/2015 06:48 pm

Likelihood: 3 - Occasional

Severity: 3 - Major Irritation

Found in version: 2.8.2

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

VTK has an XML "VTM" file that looks a lot like a .visit file for grouping domains into a single multidomain dataset. The current VTK reader does not support VTM files. This came up when trying to look at some OpenFOAM VTK data in VisIt - a classic "paraview can read this fine" situation.

Comments:

I attached the wrong file before. Added a parser for .vtm files. Currently only supports vtkMultiBlockDataSet flavor. (vtk has a sample file of vtkHierarchicalDataSet)

M databases/VTK/VTKPluginInfo.C

M databases/VTK/avtVTKFileReader.C

M databases/VTK/avtVTKFileReader.h

M databases/VTK/VTK.xml

A databases/VTK/VTMParser.C

M databases/VTK/avtVTKFileFormat.C

A databases/VTK/VTMParser.h

M databases/VTK/CMakeLists.txt

| 1.0 | VisIt VTK reader does not support VTM files. - VTK has an XML "VTM" file that looks a lot like a .visit file for grouping domains into a single multidomain dataset. The current VTK reader does not support VTM files. This came up when trying to look at some OpenFOAM VTK data in VisIt - a classic "paraview can read this fine" situation.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 2162

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: High

Subject: VisIt VTK reader does not support VTM files.

Assigned to: Kathleen Biagas

Category:

Target version: 2.10

Author: Brad Whitlock

Start: 02/26/2015

Due date:

% Done: 0

Estimated time:

Created: 02/26/2015 03:28 pm

Updated: 08/18/2015 06:48 pm

Likelihood: 3 - Occasional

Severity: 3 - Major Irritation

Found in version: 2.8.2

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

VTK has an XML "VTM" file that looks a lot like a .visit file for grouping domains into a single multidomain dataset. The current VTK reader does not support VTM files. This came up when trying to look at some OpenFOAM VTK data in VisIt - a classic "paraview can read this fine" situation.

Comments:

I attached the wrong file before. Added a parser for .vtm files. Currently only supports vtkMultiBlockDataSet flavor. (vtk has a sample file of vtkHierarchicalDataSet)

M databases/VTK/VTKPluginInfo.C

M databases/VTK/avtVTKFileReader.C

M databases/VTK/avtVTKFileReader.h

M databases/VTK/VTK.xml

A databases/VTK/VTMParser.C

M databases/VTK/avtVTKFileFormat.C

A databases/VTK/VTMParser.h

M databases/VTK/CMakeLists.txt

| priority | visit vtk reader does not support vtm files vtk has an xml vtm file that looks a lot like a visit file for grouping domains into a single multidomain dataset the current vtk reader does not support vtm files this came up when trying to look at some openfoam vtk data in visit a classic paraview can read this fine situation redmine migration this ticket was migrated from redmine as such not all information was able to be captured in the transition below is a complete record of the original redmine ticket ticket number status resolved project visit tracker bug priority high subject visit vtk reader does not support vtm files assigned to kathleen biagas category target version author brad whitlock start due date done estimated time created pm updated pm likelihood occasional severity major irritation found in version impact expected use os all support group any description vtk has an xml vtm file that looks a lot like a visit file for grouping domains into a single multidomain dataset the current vtk reader does not support vtm files this came up when trying to look at some openfoam vtk data in visit a classic paraview can read this fine situation comments i attached the wrong file before added a parser for vtm files currently only supports vtkmultiblockdataset flavor vtk has a sample file of vtkhierarchicaldataset m databases vtk vtkplugininfo cm databases vtk avtvtkfilereader cm databases vtk avtvtkfilereader hm databases vtk vtk xmla databases vtk vtmparser cm databases vtk avtvtkfileformat ca databases vtk vtmparser hm databases vtk cmakelists txt | 1 |
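To make the ".vtm groups domains like a .visit file" description in the issue above concrete, here is a small sketch that lists the per-block files a VTM index refers to. It is not VisIt's `VTMParser`: `listVtmDataSetFiles` is a hypothetical name, the sample document and its file paths are invented for illustration, and a regex is used where a real reader would use an XML parser.

```javascript
// Simplified sketch, not VisIt's VTMParser.
// Collects the `file` attribute of every <DataSet> element in a .vtm document.
// Assumption: attribute values are double-quoted and contain no escaped quotes.
function listVtmDataSetFiles(vtmText) {
  const files = [];
  const pattern = /<DataSet\b[^>]*\bfile="([^"]+)"/g;
  let match;
  while ((match = pattern.exec(vtmText)) !== null) {
    files.push(match[1]);
  }
  return files;
}

// Hypothetical sample index of the vtkMultiBlockDataSet flavor.
const sample = `<VTKFile type="vtkMultiBlockDataSet" version="1.0">
  <vtkMultiBlockDataSet>
    <DataSet index="0" file="case/block0.vtu"/>
    <DataSet index="1" file="case/block1.vtu"/>
  </vtkMultiBlockDataSet>
</VTKFile>`;
console.log(listVtmDataSetFiles(sample));
```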

778,498 | 27,318,638,078 | IssuesEvent | 2023-02-24 17:43:32 | AY2223S2-CS2103T-W11-2/tp | https://api.github.com/repos/AY2223S2-CS2103T-W11-2/tp | opened | List all internships that clash in interview or test dates | type.Story priority.Medium | as an Expert user so that I can try to reschedule some of them | 1.0 | List all internships that clash in interview or test dates - as an Expert user so that I can try to reschedule some of them | priority | list all internships that clash in interview or test dates as an expert user so that i can try to reschedule some of them | 1 |

729,930 | 25,151,208,511 | IssuesEvent | 2022-11-10 10:08:55 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Bluetooth: Application with buffer that cannot unref it in disconnect handler leads to advertising issues | bug priority: medium area: mcumgr | **Describe the bug**

This issue has been found with a peripheral zephyr device which supports 1 connection. The application works by receiving BT data and putting it into a fifo to be processed by a dedicated (non-system) workqueue, which sends responses and then unrefs the connection. An issue arises if the remote device disconnects before that task has finished: the application attempts to restart advertising using the system workqueue from the disconnection handler, but because the other task still holds references to the connection, restarting advertising fails with error -12. The application is therefore unable to restart advertising and ends up in a dead state. Upping the connection count to 2 does work around this issue, but is not an acceptable solution due to the additional RAM usage (which is essentially wasted).

Some operations can also take longer than others, e.g. an image erase command can take upwards of 5 seconds to process.

**To Reproduce**

mcumgr has this issue

**Expected behavior**

To be able to restart advertising, from the system workqueue, triggered in the disconnect handler

**Impact**

Showstopper

**Environment (please complete the following information):**

- Commit SHA or Version used: This is apparent in an nRF connect SDK sample application (samples/matter/lock) using the softdevice, but should be reproducible with any application that uses bluetooth, I did not attempt seeing if this issue was present in the zephyr controller but would imagine it would be given that it is caused by the limit of connection references/handles being reached | 1.0 | Bluetooth: Application with buffer that cannot unref it in disconnect handler leads to advertising issues - **Describe the bug**

This issue has been found with a peripheral zephyr device which supports 1 connection. The application works by receiving BT data and putting it into a fifo to be processed by a dedicated (non-system) workqueue, which sends responses and then unrefs the connection. An issue arises if the remote device disconnects before that task has finished: the application attempts to restart advertising using the system workqueue from the disconnection handler, but because the other task still holds references to the connection, restarting advertising fails with error -12. The application is therefore unable to restart advertising and ends up in a dead state. Upping the connection count to 2 does work around this issue, but is not an acceptable solution due to the additional RAM usage (which is essentially wasted).

Some operations can also take longer than others, e.g. a image erase command can take upwards of 5 seconds to process.

**To Reproduce**

mcumgr has this issue

**Expected behavior**

To be able to restart advertising, from the system workqueue, triggered in the disconnect handler

**Impact**

Showstopper

**Environment (please complete the following information):**

- Commit SHA or Version used: This is apparent in an nRF Connect SDK sample application (samples/matter/lock) using the SoftDevice, but should be reproducible with any application that uses Bluetooth. I did not check whether the issue is present with the Zephyr controller, but I would expect it to be, given that it is caused by the limit of connection references/handles being reached. | priority | bluetooth application with buffer that cannot unref it in disconnect handler leads to advertising issues describe the bug this issue has been found with a peripheral zephyr device which supports connection the application works by receiving bt data and then putting it into a fifo to be processed by a dedicated non system workqueue which will send responses to it and unref the connection an issue arises however in this application if the remote device disconnects prior to the task having finished the application attempts to restart advertising using the system workqueue from the disconnection handler the problem is that because the other task contains references to the connection restarting advertising fails with error therefore the application is unable to restart advertising and then is in a dead state upping the connection count to does work around this issue but is not an acceptable solution due to the additional ram usage which is essentially wasted some operations can also take longer than others e g a image erase command can take upwards of seconds to process to reproduce mcumgr has this issue expected behavior to be able to restart advertising from the system workqueue triggered in the disconnect handler impact showstopper environment please complete the following information commit sha or version used this is apparent in an nrf connect sdk sample application samples matter lock using the softdevice but should be reproducible with any application that uses bluetooth i did not attempt seeing if this issue was present in the zephyr controller but would imagine it 
would be given that it is caused by the limit of connection references handles being reached | 1 |

703,372 | 24,155,879,481 | IssuesEvent | 2022-09-22 07:38:49 | enviroCar/enviroCar-app | https://api.github.com/repos/enviroCar/enviroCar-app | closed | App crashes when we stop the track before the track starts recording | bug 3 - Done Priority - 2 - Medium | ## Issue

In the develop branch, when a user stops the track before the track starts recording or the timer starts, the app crashes.

## Steps to reproduce

1. Go to GPS mode

2. Click on start tracking

3. Immediately after the recording screen comes up, click on the stop button

4. Click yes in the dialog box.

## Possible Solution

1. We can make the stop button unclickable until the recording starts

2. Or we can check before exiting whether recording has started
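
Both options could be driven by one small piece of state. The following is only a sketch under assumptions: `RecordingState` and `TrackRecorder` are hypothetical names that are not taken from the enviroCar code base, and the real app would wire `isStopEnabled()` to the button and call `onRecordingStarted()` from wherever recording actually begins.

```java
/** Hypothetical recording states; the names are illustrative, not from the enviroCar code. */
enum RecordingState { IDLE, STARTING, RECORDING, STOPPED }

/** Tracks whether a stop request is currently safe to honour. */
class TrackRecorder {
    private RecordingState state = RecordingState.IDLE;

    /** User pressed start; recording has been requested but is not running yet. */
    void start() {
        state = RecordingState.STARTING;
    }

    /** Called once the first measurement arrives or the timer starts. */
    void onRecordingStarted() {
        if (state == RecordingState.STARTING) {
            state = RecordingState.RECORDING;
        }
    }

    /** Option 1: the UI can bind the stop button's enabled flag to this value. */
    boolean isStopEnabled() {
        return state == RecordingState.RECORDING;
    }

    /** Option 2: a stop request is ignored (returns false) unless recording is running. */
    boolean stop() {
        if (state != RecordingState.RECORDING) {
            return false;
        }
        state = RecordingState.STOPPED;
        return true;
    }
}
```

Keeping the check inside `stop()` as well as on the button means a stray click that arrives before the button is disabled still cannot reach the code path that crashes.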

https://user-images.githubusercontent.com/85510030/163064806-dcd0faad-7fcb-4e05-a7e8-d09c76ec4764.mov

| 1.0 | App crashes when we stop the track before the track starts recording - ## Issue

In the develop branch, when a user stops the track before the track starts recording or the timer starts, the app crashes.

## Steps to reproduce

1. Go to GPS mode

2. Click on start tracking

3. Immediately after the recording screen comes up, click on the stop button

4. Click yes in the dialog box.

## Possible Solution

1. We can make the stop button unclickable until the recording starts

2. Or we can check before exiting whether recording has started

https://user-images.githubusercontent.com/85510030/163064806-dcd0faad-7fcb-4e05-a7e8-d09c76ec4764.mov

| priority | app crashes when we stop the track before the track starts recording issue in develop branch when a user stops the track before the track starts recording or the timer starts the app crashes step to reproduce go to gps mode click on start tracking immediately after the recording screen comes up click on stop button click yes for the dialog box possible solution we can make the stop button unclickable untill the recording starts or we can check before exiting that recording is started or not | 1 |

55,130 | 3,072,154,116 | IssuesEvent | 2015-08-19 15:36:38 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | waitForText with scroll set to true scrolls only first ListView it finds | bug imported Priority-Medium wontfix | _From [gaz...@gmail.com](https://code.google.com/u/113313170396315103068/) on September 06, 2011 01:43:13_

What steps will reproduce the problem?

1. Create a tabbed activity with a ListView in each tab

2. In the list in the second tab, put "needle" at the end of the list

3. Call solo.waitForText("needle", 1, 2000, true)

What is the expected output?

The list will scroll down until the match is found.

What do you see instead?

The list doesn't scroll, and we're stuck in an infinite loop in searchFor (the timeout is ignored; I opened another bug for that).

What version of the product are you using? On what operating system?

Robotium 2.5, Android 2.2 (Cyanogen 6).

Please provide any additional information below.

The reason is that the function:

public boolean scroll(int direction)

gets a list of all ListViews on screen, but scrolls only the first.

It should enter a for loop which scrolls all ListViews on screen.
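
A rough sketch of the proposed loop, written against a stand-in interface rather than the real classes: `ScrollableList`, `FixedLengthList` and `ListScroller` are hypothetical and do not exist in Robotium or Android. The point is only that every list gets scrolled and that the result reports whether anything moved.

```java
/** Hypothetical stand-in for a scrollable list view; not a Robotium or Android type. */
interface ScrollableList {
    /** Scrolls one step down; returns false if the list is already at its end. */
    boolean scrollDown();
}

/** Simple fake with a fixed number of scroll steps left, used only for illustration. */
class FixedLengthList implements ScrollableList {
    int remaining;

    FixedLengthList(int remaining) {
        this.remaining = remaining;
    }

    public boolean scrollDown() {
        if (remaining == 0) {
            return false;
        }
        remaining--;
        return true;
    }
}

class ListScroller {
    /** Scrolls every list once; returns true if at least one list actually moved. */
    static boolean scrollAll(java.util.List<? extends ScrollableList> lists) {
        boolean anyScrolled = false;
        for (ScrollableList list : lists) {
            // Non-short-circuit OR, so each list is scrolled even after one has reported success.
            anyScrolled |= list.scrollDown();
        }
        return anyScrolled;
    }
}
```

Returning false once no list can move any further would also give the search loop a natural exit condition, independent of the timeout.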

_Original issue: http://code.google.com/p/robotium/issues/detail?id=151_ | 1.0 | waitForText with scroll set to true scrolls only first ListView it finds - _From [gaz...@gmail.com](https://code.google.com/u/113313170396315103068/) on September 06, 2011 01:43:13_

What steps will reproduce the problem?

1. Create a tabbed activity with a ListView in each tab

2. In the list in the second tab, put "needle" at the end of the list

3. Call solo.waitForText("needle", 1, 2000, true)

What is the expected output?

The list will scroll down until the match is found.

What do you see instead?

The list doesn't scroll, and we're stuck in an infinite loop in searchFor (the timeout is ignored; I opened another bug for that).

What version of the product are you using? On what operating system?

Robotium 2.5, Android 2.2 (Cyanogen 6).

Please provide any additional information below.

The reason is that the function:

public boolean scroll(int direction)

gets a list of all ListViews on screen, but scrolls only the first.

It should enter a for loop which scrolls all ListViews on screen.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=151_ | priority | waitfortext with scroll set to true scrolls only first listview it finds from on september what steps will reproduce the problem create a tabbed activity with a listview in each tab in the second tab in the list put needle at the end of the list call solo waitfortext needle true what is the expected output list will scroll down until the match is found what do you see instead list doesn t scroll and we re stuck in an infinite loop in searchfor timeout is ignored opened another bug for that what version of the product are you using on what operating system robotium android cyanogen please provide any additional information below the reason for that is that the function public boolean scroll int direction gets a list of all listviews on screen but scrolls only the first it should enter a for loop which scrolls all listviews on screen original issue | 1 |

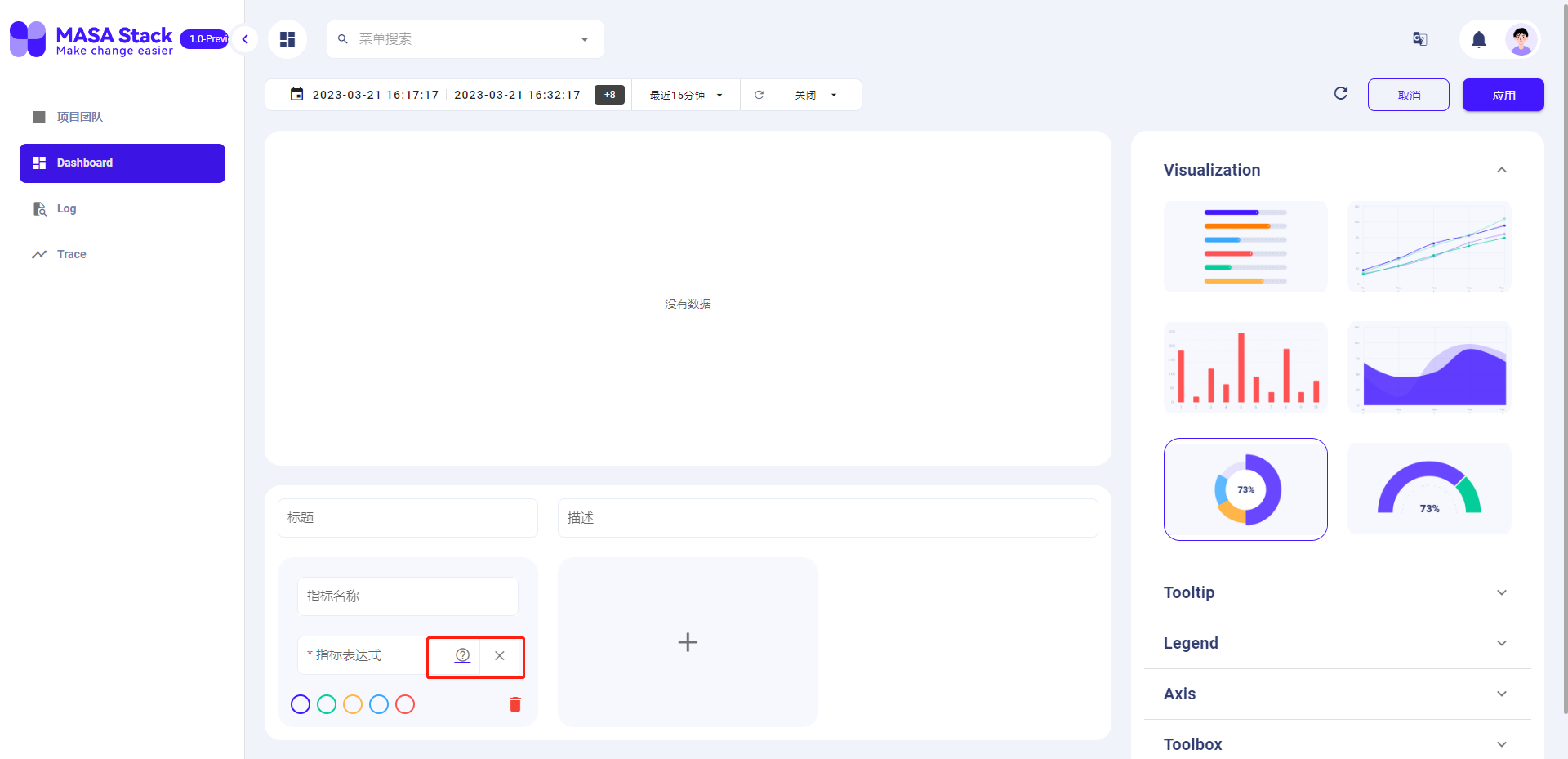

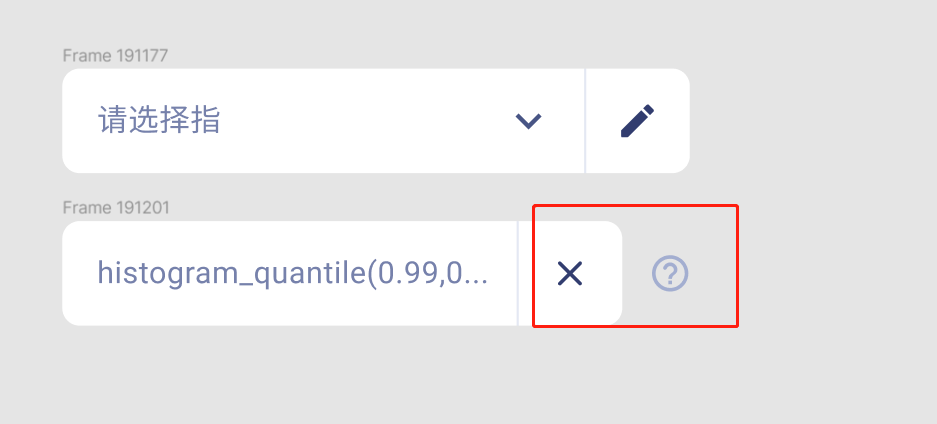

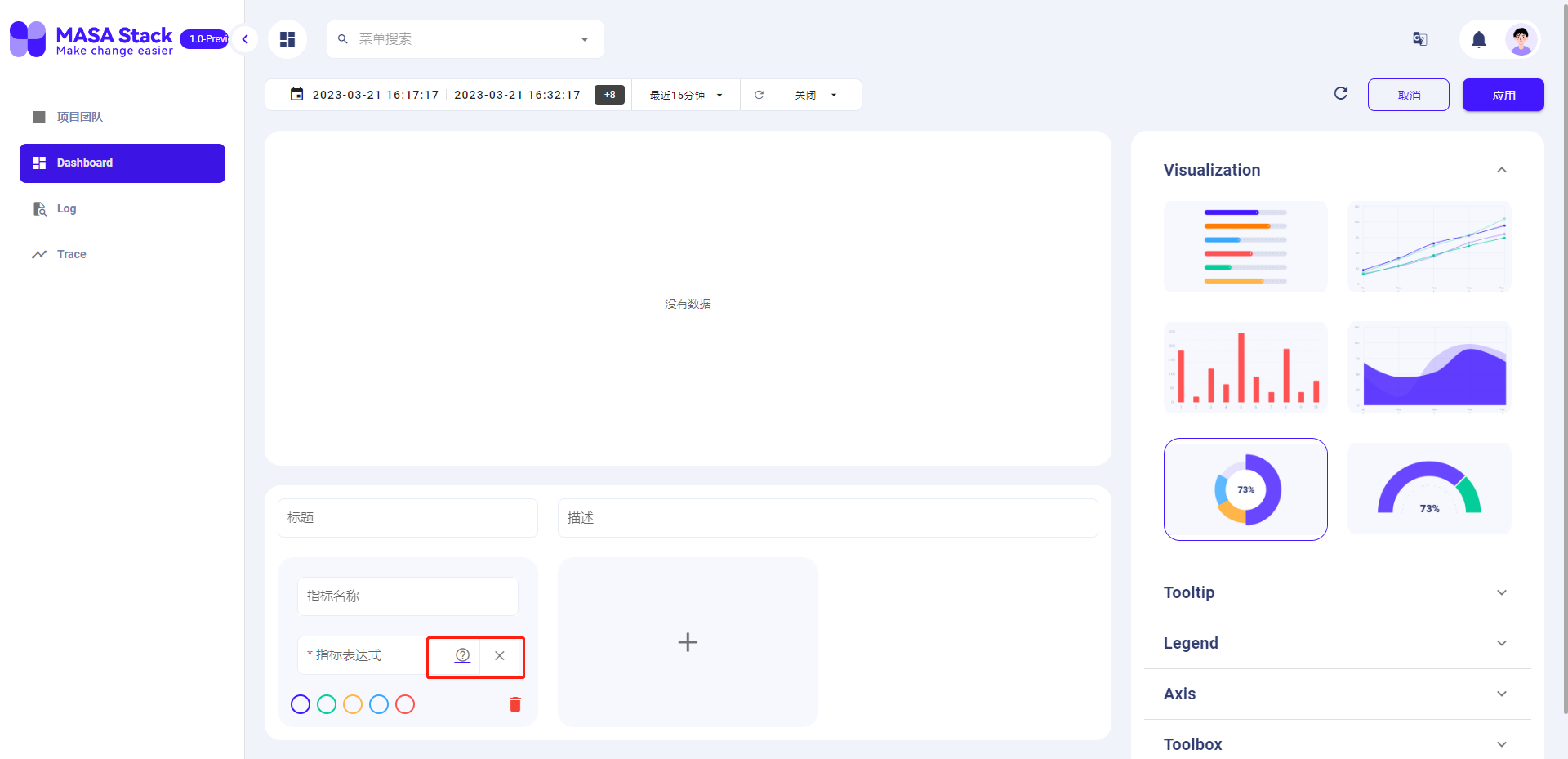

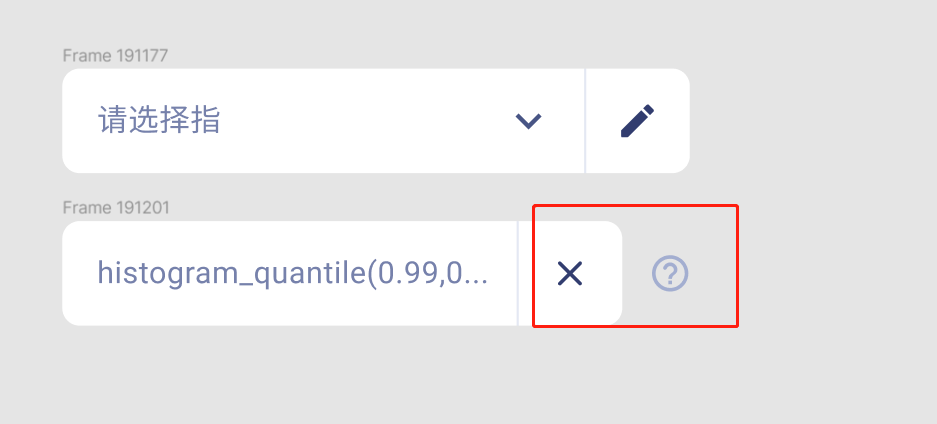

793,987 | 28,018,994,741 | IssuesEvent | 2023-03-28 02:43:58 | masastack/MASA.TSC | https://api.github.com/repos/masastack/MASA.TSC | closed | Indicator expression button position is inconsistent with the design draft | status/resolved type/ui severity/medium site/staging priority/p3 | The indicator expression button position does not match the design draft.

Actual result:

Expected result:

| 1.0 | Indicator expression button position is inconsistent with the design draft - The indicator expression button position does not match the design draft.

Actual result:

Expected result:

| priority | indicator expression button position is inconsistent with the design draft the indicator expression button position does not match the design draft actual result expected result | 1 |

802,455 | 28,963,149,761 | IssuesEvent | 2023-05-10 05:29:31 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [xCluster] Enable consumer-side transactional consistency via setup replication options | kind/bug area/docdb priority/medium | Jira Link: [DB-6117](https://yugabyte.atlassian.net/browse/DB-6117)

### Description

Currently transactional consistency using apply_safe_time is enabled via a GFlag - xcluster_consistent_wal. Switch this to a parameter that is passed in using setup_replication.

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information.

[DB-6117]: https://yugabyte.atlassian.net/browse/DB-6117?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | 1.0 | [xCluster] Enable consumer-side transactional consistency via setup replication options - Jira Link: [DB-6117](https://yugabyte.atlassian.net/browse/DB-6117)

### Description

Currently transactional consistency using apply_safe_time is enabled via a GFlag - xcluster_consistent_wal. Switch this to a parameter that is passed in using setup_replication.

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information.

[DB-6117]: https://yugabyte.atlassian.net/browse/DB-6117?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | priority | enable consumer side transactional consistency via setup replication options jira link description currently transactional consistency using apply safe time is enabled via a gflag xcluster consistent wal switch this to a parameter that is passed in using setup replication warning please confirm that this issue does not contain any sensitive information i confirm this issue does not contain any sensitive information | 1 |

312,173 | 9,544,311,652 | IssuesEvent | 2019-05-01 13:50:28 | ukwa/w3act | https://api.github.com/repos/ukwa/w3act | closed | Report on Key Sites needed. | Enhancement Medium Priority | In order to manage the key sites list we need a report on Targets that are tagged as key sites (this functionality is only available to the Web Archivist using the Key sites checkbox in the Target record). Could the report be added to this page https://www.webarchive.org.uk/act/reportscreation/targets/ ?

The report can be viewed by all Users.

| 1.0 | Report on Key Sites needed. - In order to manage the key sites list we need a report on Targets that are tagged as key sites (this functionality is only available to the Web Archivist using the Key sites checkbox in the Target record). Could the report be added to this page https://www.webarchive.org.uk/act/reportscreation/targets/ ?

The report can be viewed by all Users.

| priority | report on key sites needed in order to manage the key sites list we need a report on targets that are tagged as key sites this functionality is only available to the web archivist using the key sites checkbox in the target record could the report be added to this page the report can be viewed by all users | 1 |

329,204 | 10,013,325,209 | IssuesEvent | 2019-07-15 14:59:47 | conan-io/conan | https://api.github.com/repos/conan-io/conan | opened | Proper error handling with 'conan get <ref> -r <remote>' when reference is not found | complex: low good first issue priority: medium stage: queue type: bug type: ux | Same as https://github.com/conan-io/conan/issues/5397 but for `conan get` command

Running `conan get non-existing/version@user/channel -r conan-center` prints the content of the whole 404 _file not found_ response from Bintray.

| 1.0 | Proper error handling with 'conan get <ref> -r <remote>' when reference is not found - Same as https://github.com/conan-io/conan/issues/5397 but for `conan get` command

Running `conan get non-existing/version@user/channel -r conan-center` prints the content of the whole 404 _file not found_ response from Bintray.

| priority | proper error handling with conan get r when reference is not found same as but for conan get command runnning conan get non existing version user channel r conan center it is printing the content of the whole file not found response from bintray | 1 |

80,525 | 3,563,413,171 | IssuesEvent | 2016-01-25 03:18:13 | HubTurbo/HubTurbo | https://api.github.com/repos/HubTurbo/HubTurbo | opened | Support user-defined lists in filters | feature-filters priority.medium type.enhancement | It would be nice if instead of typing `assignee:abc OR assignee:def OR assignee:xyz` we can type `assignee:in(group1)` or something like that where `group1` is a user defined list. | 1.0 | Support user-defined lists in filters - It would be nice if instead of typing `assignee:abc OR assignee:def OR assignee:xyz` we can type `assignee:in(group1)` or something like that where `group1` is a user defined list. | priority | support user defined lists in filters it would be nice if instead of typing assignee abc or assignee def or assignee xyz we can type assignee in or something like that where is a user defined list | 1 |

58,383 | 3,088,986,304 | IssuesEvent | 2015-08-25 19:18:33 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Client hangs when attacked by many users from a hub | bug imported Priority-Medium | _From [mike.kor...@gmail.com](https://code.google.com/u/101495626515388303633/) on October 14, 2014 22:34:47_

1. Connect to a special hub.

2. Initiate an attack on the client by many users via private messages.

3. The GUI freezes; the client still shows some activity and even transfers some data, but it does not recover after the attack stops. https://yadi.sk/d/2-c1hDAac2Wtn The top 2 threads (after the attack):

thread 224:

ntoskrnl.exe!KeWaitForMultipleObjects+0xc0a

ntoskrnl.exe!KeAcquireSpinLockAtDpcLevel+0x732

ntoskrnl.exe!KeWaitForMultipleObjects+0x26a

ntoskrnl.exe!FsRtlCancellableWaitForMultipleObjects+0xac

fltmgr.sys!FltSendMessage+0x4ea

MpFilter.sys!DllInitialize+0x2b2b5

MpFilter.sys!DllInitialize+0x233a8

fltmgr.sys!FltAcquirePushLockShared+0x907

fltmgr.sys!FltIsCallbackDataDirty+0xa39

fltmgr.sys+0x16c7

ntoskrnl.exe!MmCreateSection+0xbccf

ntoskrnl.exe!NtWaitForSingleObject+0xe04

ntoskrnl.exe!NtWaitForSingleObject+0xbc1

ntoskrnl.exe!NtWaitForSingleObject+0x1184

ntoskrnl.exe!KeSynchronizeExecution+0x3a23

ntdll.dll!ZwClose+0xa

KERNELBASE.dll!CloseHandle+0x13

kernel32.dll!CloseHandle+0x41

FlylinkDC_x64.exe+0x1e0621

FlylinkDC_x64.exe+0x7fe71

FlylinkDC_x64.exe+0x7ce44

FlylinkDC_x64.exe+0x74dec

FlylinkDC_x64.exe+0x532f3

USER32.dll!TranslateMessageEx+0x2a1

USER32.dll!TranslateMessage+0x1ea

FlylinkDC_x64.exe+0x9d03e

FlylinkDC_x64.exe+0x9c087

FlylinkDC_x64.exe+0x9c6f7

FlylinkDC_x64.exe+0x6e3294

kernel32.dll!BaseThreadInitThunk+0xd

ntdll.dll!RtlUserThreadStart+0x21

thread 2772:

ntoskrnl.exe!KeWaitForMultipleObjects+0xc0a

ntoskrnl.exe!KeAcquireSpinLockAtDpcLevel+0x732

ntoskrnl.exe!KeWaitForSingleObject+0x19f

ntoskrnl.exe!NtWaitForSingleObject+0xde

ntoskrnl.exe!KeSynchronizeExecution+0x3a23

ntdll.dll!NtWaitForSingleObject+0xa

mswsock.dll+0x3d28

mswsock.dll!WSPStartup+0x8077

WS2_32.dll!select+0x15c

WS2_32.dll!select+0xdd

FlylinkDC_x64.exe+0x279619

FlylinkDC_x64.exe+0x2cb8d0

FlylinkDC_x64.exe+0x20181f

FlylinkDC_x64.exe+0x6e1caf

FlylinkDC_x64.exe+0x6e1d43

kernel32.dll!BaseThreadInitThunk+0xd

ntdll.dll!RtlUserThreadStart+0x21

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=1506_ | 1.0 | Client hangs when attacked by many users from a hub - _From [mike.kor...@gmail.com](https://code.google.com/u/101495626515388303633/) on October 14, 2014 22:34:47_

1. Connect to a special hub.

2. Initiate an attack on the client by many users via private messages.

3. The GUI freezes; the client still shows some activity and even transfers some data, but it does not recover after the attack stops. https://yadi.sk/d/2-c1hDAac2Wtn The top 2 threads (after the attack):

thread 224:

ntoskrnl.exe!KeWaitForMultipleObjects+0xc0a

ntoskrnl.exe!KeAcquireSpinLockAtDpcLevel+0x732

ntoskrnl.exe!KeWaitForMultipleObjects+0x26a

ntoskrnl.exe!FsRtlCancellableWaitForMultipleObjects+0xac

fltmgr.sys!FltSendMessage+0x4ea

MpFilter.sys!DllInitialize+0x2b2b5

MpFilter.sys!DllInitialize+0x233a8

fltmgr.sys!FltAcquirePushLockShared+0x907

fltmgr.sys!FltIsCallbackDataDirty+0xa39

fltmgr.sys+0x16c7

ntoskrnl.exe!MmCreateSection+0xbccf

ntoskrnl.exe!NtWaitForSingleObject+0xe04

ntoskrnl.exe!NtWaitForSingleObject+0xbc1

ntoskrnl.exe!NtWaitForSingleObject+0x1184

ntoskrnl.exe!KeSynchronizeExecution+0x3a23

ntdll.dll!ZwClose+0xa

KERNELBASE.dll!CloseHandle+0x13

kernel32.dll!CloseHandle+0x41

FlylinkDC_x64.exe+0x1e0621

FlylinkDC_x64.exe+0x7fe71

FlylinkDC_x64.exe+0x7ce44

FlylinkDC_x64.exe+0x74dec

FlylinkDC_x64.exe+0x532f3

USER32.dll!TranslateMessageEx+0x2a1

USER32.dll!TranslateMessage+0x1ea

FlylinkDC_x64.exe+0x9d03e

FlylinkDC_x64.exe+0x9c087

FlylinkDC_x64.exe+0x9c6f7

FlylinkDC_x64.exe+0x6e3294

kernel32.dll!BaseThreadInitThunk+0xd

ntdll.dll!RtlUserThreadStart+0x21

thread 2772:

ntoskrnl.exe!KeWaitForMultipleObjects+0xc0a

ntoskrnl.exe!KeAcquireSpinLockAtDpcLevel+0x732

ntoskrnl.exe!KeWaitForSingleObject+0x19f

ntoskrnl.exe!NtWaitForSingleObject+0xde

ntoskrnl.exe!KeSynchronizeExecution+0x3a23

ntdll.dll!NtWaitForSingleObject+0xa

mswsock.dll+0x3d28

mswsock.dll!WSPStartup+0x8077

WS2_32.dll!select+0x15c

WS2_32.dll!select+0xdd

FlylinkDC_x64.exe+0x279619

FlylinkDC_x64.exe+0x2cb8d0

FlylinkDC_x64.exe+0x20181f

FlylinkDC_x64.exe+0x6e1caf

FlylinkDC_x64.exe+0x6e1d43

kernel32.dll!BaseThreadInitThunk+0xd

ntdll.dll!RtlUserThreadStart+0x21

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=1506_ | priority | client hangs when attacked by many users from a hub from on october connect to a special hub initiate an attack on the client by many users via private messages the gui freezes the client still shows some activity and even transfers some data but it does not recover after the attack stops the top threads after the attack thread ntoskrnl exe kewaitformultipleobjects ntoskrnl exe keacquirespinlockatdpclevel ntoskrnl exe kewaitformultipleobjects ntoskrnl exe fsrtlcancellablewaitformultipleobjects fltmgr sys fltsendmessage mpfilter sys dllinitialize mpfilter sys dllinitialize fltmgr sys fltacquirepushlockshared fltmgr sys fltiscallbackdatadirty fltmgr sys ntoskrnl exe mmcreatesection ntoskrnl exe ntwaitforsingleobject ntoskrnl exe ntwaitforsingleobject ntoskrnl exe ntwaitforsingleobject ntoskrnl exe kesynchronizeexecution ntdll dll zwclose kernelbase dll closehandle dll closehandle flylinkdc exe flylinkdc exe flylinkdc exe flylinkdc exe flylinkdc exe dll translatemessageex dll translatemessage flylinkdc exe flylinkdc exe flylinkdc exe flylinkdc exe dll basethreadinitthunk ntdll dll rtluserthreadstart thread ntoskrnl exe kewaitformultipleobjects ntoskrnl exe keacquirespinlockatdpclevel ntoskrnl exe kewaitforsingleobject ntoskrnl exe ntwaitforsingleobject ntoskrnl exe kesynchronizeexecution ntdll dll ntwaitforsingleobject mswsock dll mswsock dll wspstartup dll select dll select flylinkdc exe flylinkdc exe flylinkdc exe flylinkdc exe flylinkdc exe dll basethreadinitthunk ntdll dll rtluserthreadstart original issue | 1 |

77,093 | 3,506,260,158 | IssuesEvent | 2016-01-08 05:03:20 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | [boss] Reliquary of Souls - Essence of Anger (BB #154) | migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 19.05.2010 20:54:11 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** invalid

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/154

<hr>

I caught the RoS, there was no Seethe, and then, for no apparent reason, I lost aggro and RoS attacked another warrior. This happened 4-5 seconds after the pull; in that time the other warrior had no chance to build aggro with a thrown weapon. Misdirection was on me, so the whole situation was incredibly wrong.

After a wipe we went to kill some trash mobs. As a tank I had a second problem, the same as in Arcatraz: I wasn't generating any aggro. I couldn't do a reload during combat, so there was no way to work around the bug. Moreover, I had the same problem after every pull.

To sum up: the RoS is bugged. | 1.0 | [boss] Reliquary of Souls - Essence of Anger (BB #154) - This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 19.05.2010 20:54:11 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** invalid

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/154

<hr>

I caught the RoS, there was no Seethe, and then, for no apparent reason, I lost aggro and RoS attacked another warrior. This happened 4-5 seconds after the pull; in that time the other warrior had no chance to build aggro with a thrown weapon. Misdirection was on me, so the whole situation was incredibly wrong.

After a wipe we went to kill some trash mobs. As a tank I had a second problem, the same as in Arcatraz: I wasn't generating any aggro. I couldn't do a reload during combat, so there was no way to work around the bug. Moreover, I had the same problem after every pull.

To sum up: the RoS is bugged. | priority | reliquary of souls essence of anger bb this issue was migrated from bitbucket original reporter original date gmt original priority major original type bug original state invalid direct link i cought the ros there was no seeth and than without reason i ve lost aggro ros has attacked an another warrior it was after seconds from pull in this time there was no chance for this warrior to build aggro using thrown weapon misdirect was on me so a whole situation was increadibly wrong after a wipe we went to kill some trash mobs as a tank i had a second problem the same as on arcatraz i wasn t generating any aggro i couldn t make a reload during combat so there was no way to repair a bug moreover after every pull i had the same problem to sum up the ros os bugged | 1 |

828,407 | 31,826,594,858 | IssuesEvent | 2023-09-14 07:56:35 | nickhaf/eatPlot | https://api.github.com/repos/nickhaf/eatPlot | closed | `combine_plots`: Don't adjust the plot widths if only one has a bar - optional argument for the plot_widths | bug medium priority | Also concerns #353 | 1.0 | `combine_plots`: Don't adjust the plot widths if only one has a bar - optional argument for the plot_widths - Also concerns #353 | priority | combine plots don t adjust the plot widths if only one has a bar optional argument for the plot widths also concerncs | 1 |

463,802 | 13,301,073,066 | IssuesEvent | 2020-08-25 12:24:36 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | CW: Disable maximum worlds options for tier 11(dev)+ | Category: Accounts Priority: Medium Status: Fixed | Right now we have a `world_maximum` option in the DB, and it is set to 1 on staging.

Give Dev Tier (11) and up unlimited cloud worlds. | 1.0 | CW: Disable maximum worlds options for tier 11(dev)+ - Right now we have a `world_maximum` option in the DB, and it is set to 1 on staging.

Give Dev Tier (11) and up unlimited cloud worlds. | priority | cw disable maximum worlds options for tier dev right now we have world maximum option in db and it set to on staging make dev tier and up to have unlimited cloud worlds | 1 |

808,141 | 30,035,107,401 | IssuesEvent | 2023-06-27 12:16:15 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID: 321157] Logically dead code in subsys/bluetooth/audio/csip_set_coordinator.c | bug priority: medium area: Bluetooth Coverity area: Bluetooth Audio |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/ce3317d03e46e1a218af3e712383d9216c48a992/subsys/bluetooth/audio/csip_set_coordinator.c

Category: Control flow issues

Function: `verify_members`

Component: Bluetooth

CID: [321157](https://scan9.scan.coverity.com/reports.htm#v29726/p12996/mergedDefectId=321157)

Details:

https://github.com/zephyrproject-rtos/zephyr/blob/ce3317d03e46e1a218af3e712383d9216c48a992/subsys/bluetooth/audio/csip_set_coordinator.c#L1500

Please fix or provide comments in coverity using the link:

https://scan9.scan.coverity.com/reports.htm#v29271/p12996.

For more information about the violation, check the [Coverity Reference](https://scan9.scan.coverity.com/doc/en/cov_checker_ref.html#static_checker_DEADCODE). ([CWE-561](http://cwe.mitre.org/data/definitions/561.html))

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| 1.0 | [Coverity CID: 321157] Logically dead code in subsys/bluetooth/audio/csip_set_coordinator.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/ce3317d03e46e1a218af3e712383d9216c48a992/subsys/bluetooth/audio/csip_set_coordinator.c

Category: Control flow issues

Function: `verify_members`

Component: Bluetooth

CID: [321157](https://scan9.scan.coverity.com/reports.htm#v29726/p12996/mergedDefectId=321157)

Details:

https://github.com/zephyrproject-rtos/zephyr/blob/ce3317d03e46e1a218af3e712383d9216c48a992/subsys/bluetooth/audio/csip_set_coordinator.c#L1500

Please fix or provide comments in coverity using the link:

https://scan9.scan.coverity.com/reports.htm#v29271/p12996.

For more information about the violation, check the [Coverity Reference](https://scan9.scan.coverity.com/doc/en/cov_checker_ref.html#static_checker_DEADCODE). ([CWE-561](http://cwe.mitre.org/data/definitions/561.html))

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| priority | logically dead code in subsys bluetooth audio csip set coordinator c static code scan issues found in file category control flow issues function verify members component bluetooth cid details please fix or provide comments in coverity using the link for more information about the violation check the note this issue was created automatically priority was set based on classification of the file affected and the impact field in coverity assignees were set using the codeowners file | 1 |

782,333 | 27,493,560,878 | IssuesEvent | 2023-03-04 22:41:34 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] ldb manifest_dump fails in 2.12 | kind/bug area/docdb priority/medium | Jira Link: [DB-5657](https://yugabyte.atlassian.net/browse/DB-5657)

### Description

In version 2.12, the `ldb manifest_dump` command fails when accessing a rocksdb MANIFEST file. The same file will open fine in the 2.17.1 version of the tool.

Run on a 2.12.10 cluster using the 2.12.10 version of the tool:

`./ldb manifest_dump --path=/mnt/d0/yb-data/tserver/data/rocksdb/table-8b2ad5af47ef46aabff43d2ad48bde03/tablet-0c19c426a5dc4123b577a319d4d9ba5c/MANIFEST-000013`

> Error in processing file /mnt/d0/yb-data/tserver/data/rocksdb/table-8b2ad5af47ef46aabff43d2ad48bde03/tablet-0c19c426a5dc4123b577a319d4d9ba5c/MANIFEST-000013 Illegal state (yb/rocksdb/db/version_edit.cc:180): Boundary values contains user frontier but extractor is not specified: key: "G\000\000S980a5363-2996-4c58-b5ab-b008f76c4362:1054314\000\000!!J\200#\200\001^\354B:\254\216\200J\0017\256\002\000\000\000\004" seqno: 1125899906842625 user_values { tag: 1 data: "\200\001^\354\206-\242\342\200J" } user_frontier { [type.googleapis.com/yb.docdb.ConsensusFrontierPB] { op_id { term: 1 index: 2 } hybrid_time: 6869422163067260928 history_cutoff: 18446744073709551614 max_value_level_ttl_expiration_time: 18446744073709551614 } }

Same command using the 2.17.1 tool:

> --------------- Column family "default" (ID 0) --------------

> log number: 4

> comparator: leveldb.BytewiseComparator

> --- level 0 --- version# 1 ---

> { number: 10 total_size: 5420804 base_size: 205661 being_compacted: 0 smallest: { seqno: 1125899906842625 user_frontier: 0x000055a8b9b0a2a0 -> { op_id: 1.2 hybrid_time: { physical: 1677105020280093 } history_cutoff: <invalid> hybrid_time_filter: <invalid> max_value_level_ttl_expiration_time: <invalid> primary_schema_version: <NULL> cotable_schema_versions: [] } } largest: { seqno: 1125899907132804 user_frontier: 0x000055a8b9b0aaf0 -> { op_id: 2.145092 hybrid_time: { physical: 1677106773197952 } history_cutoff: <invalid> hybrid_time_filter: <invalid> max_value_level_ttl_expiration_time: <initial> primary_schema_version: <NULL> cotable_schema_versions: [] } } }

> next_file_number 15 last_sequence 1125899907132838 prev_log_number 0 max_column_family 0 flushed_values 0x000055a8b9b0ab60 -> { op_id: 2.145092 hybrid_time: { physical: 1677106773197952 } history_cutoff: <invalid> hybrid_time_filter: <invalid> max_value_level_ttl_expiration_time: <initial> primary_schema_version: <NULL> cotable_schema_versions: [] }

[DB-5657]: https://yugabyte.atlassian.net/browse/DB-5657?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | 1.0 | [DocDB] ldb manifest_dump fails in 2.12 - Jira Link: [DB-5657](https://yugabyte.atlassian.net/browse/DB-5657)

### Description

In version 2.12, the `ldb manifest_dump` command fails when accessing a rocksdb MANIFEST file. The same file will open fine in the 2.17.1 version of the tool.

Run on a 2.12.10 cluster using the 2.12.10 version of the tool:

`./ldb manifest_dump --path=/mnt/d0/yb-data/tserver/data/rocksdb/table-8b2ad5af47ef46aabff43d2ad48bde03/tablet-0c19c426a5dc4123b577a319d4d9ba5c/MANIFEST-000013`

> Error in processing file /mnt/d0/yb-data/tserver/data/rocksdb/table-8b2ad5af47ef46aabff43d2ad48bde03/tablet-0c19c426a5dc4123b577a319d4d9ba5c/MANIFEST-000013 Illegal state (yb/rocksdb/db/version_edit.cc:180): Boundary values contains user frontier but extractor is not specified: key: "G\000\000S980a5363-2996-4c58-b5ab-b008f76c4362:1054314\000\000!!J\200#\200\001^\354B:\254\216\200J\0017\256\002\000\000\000\004" seqno: 1125899906842625 user_values { tag: 1 data: "\200\001^\354\206-\242\342\200J" } user_frontier { [type.googleapis.com/yb.docdb.ConsensusFrontierPB] { op_id { term: 1 index: 2 } hybrid_time: 6869422163067260928 history_cutoff: 18446744073709551614 max_value_level_ttl_expiration_time: 18446744073709551614 } }

Same command using the 2.17.1 tool:

> --------------- Column family "default" (ID 0) --------------

> log number: 4

> comparator: leveldb.BytewiseComparator

> --- level 0 --- version# 1 ---

> { number: 10 total_size: 5420804 base_size: 205661 being_compacted: 0 smallest: { seqno: 1125899906842625 user_frontier: 0x000055a8b9b0a2a0 -> { op_id: 1.2 hybrid_time: { physical: 1677105020280093 } history_cutoff: <invalid> hybrid_time_filter: <invalid> max_value_level_ttl_expiration_time: <invalid> primary_schema_version: <NULL> cotable_schema_versions: [] } } largest: { seqno: 1125899907132804 user_frontier: 0x000055a8b9b0aaf0 -> { op_id: 2.145092 hybrid_time: { physical: 1677106773197952 } history_cutoff: <invalid> hybrid_time_filter: <invalid> max_value_level_ttl_expiration_time: <initial> primary_schema_version: <NULL> cotable_schema_versions: [] } } }

> next_file_number 15 last_sequence 1125899907132838 prev_log_number 0 max_column_family 0 flushed_values 0x000055a8b9b0ab60 -> { op_id: 2.145092 hybrid_time: { physical: 1677106773197952 } history_cutoff: <invalid> hybrid_time_filter: <invalid> max_value_level_ttl_expiration_time: <initial> primary_schema_version: <NULL> cotable_schema_versions: [] }

[DB-5657]: https://yugabyte.atlassian.net/browse/DB-5657?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | priority | ldb manifest dump fails in jira link description in version the ldb manifest dump command fails when accessing a rocksdb manifest file the same file will open fine in the version of the tool run on a cluster using the version of the tool ldb manifest dump path mnt yb data tserver data rocksdb table tablet manifest error in processing file mnt yb data tserver data rocksdb table tablet manifest illegal state yb rocksdb db version edit cc boundary values contains user frontier but extractor is not specified key g j seqno user values tag data user frontier op id term index hybrid time history cutoff max value level ttl expiration time same command using the tool column family default id log number comparator leveldb bytewisecomparator level version number total size base size being compacted smallest seqno user frontier op id hybrid time physical history cutoff hybrid time filter max value level ttl expiration time primary schema version cotable schema versions largest seqno user frontier op id hybrid time physical history cutoff hybrid time filter max value level ttl expiration time primary schema version cotable schema versions next file number last sequence prev log number max column family flushed values op id hybrid time physical history cutoff hybrid time filter max value level ttl expiration time primary schema version cotable schema versions | 1 |

585,595 | 17,501,424,518 | IssuesEvent | 2021-08-10 09:52:39 | nimblehq/nimble-medium-ios | https://api.github.com/repos/nimblehq/nimble-medium-ios | opened | As a user, I can edit my profile briefing from the left menu header when logged in | type : feature category : ui priority: medium | ## Why

Once users have logged in to the application successfully, they can edit their profile from the menu header if needed.

## Acceptance Criteria

- [ ] Add the `Edit Profile` button with no text in the bottom right corner of the header view container.

- [ ] Set the tint of the `Edit Profile` button to white to match our green and white theme.

## Resources

- Sample menu header:

<img width="566" alt="Screen Shot 2021-08-10 at 16 31 18" src="https://user-images.githubusercontent.com/70877098/128844841-c1f48023-17f1-4de1-b222-eca915367375.png">

- Edit Profile button icon:

https://icon-library.com/icon/edit-icon-png-14.html | 1.0 | As a user, I can edit my profile briefing from the left menu header when logged in - ## Why

Once users have logged in to the application successfully, they can edit their profile from the menu header if needed.

## Acceptance Criteria

- [ ] Add the `Edit Profile` button with no text in the bottom right corner of the header view container.

- [ ] Set the tint of the `Edit Profile` button to white to match our green and white theme.

## Resources

- Sample menu header:

<img width="566" alt="Screen Shot 2021-08-10 at 16 31 18" src="https://user-images.githubusercontent.com/70877098/128844841-c1f48023-17f1-4de1-b222-eca915367375.png">

- Edit Profile button icon:

https://icon-library.com/icon/edit-icon-png-14.html | priority | as a user i can edit my profile briefing from the left menu header when logged in why once the users logged in the application successfully they can opt to edit the profile from the menu header if needed acceptance criteria add the edit profile button with no text in the bottom right corner of the header view container set the tint of the edit profile button to white for matching with our green and white theme resources sample menu header img width alt screen shot at src edit profile button icon | 1 |

478,664 | 13,783,089,841 | IssuesEvent | 2020-10-08 18:39:18 | zeoflow/zeobot | https://api.github.com/repos/zeoflow/zeobot | closed | DraftRelease | Content | @bug @priority-medium | DraftRelease content contains the last pr details that was merged in the previous version | 1.0 | DraftRelease | Content - DraftRelease content contains the last pr details that was merged in the previous version | priority | draftrelease content draftrelease content contains the last pr details that was merged in the previous version | 1 |

362,644 | 10,730,129,096 | IssuesEvent | 2019-10-28 16:49:45 | AY1920S1-CS2103T-T12-3/main | https://api.github.com/repos/AY1920S1-CS2103T-T12-3/main | closed | As a coach/captain of male and female teams I want to filter players according to their gender | priority.Medium type.Story | To be able to plan for team/trainings more efficiently. | 1.0 | As a coach/captain of male and female teams I want to filter players according to their gender - To be able to plan for team/trainings more efficiently. | priority | as a coach captain of male and female teams i want to filter players according to their gender to be able to plan for team trainings more efficiently | 1 |

709,255 | 24,371,797,862 | IssuesEvent | 2022-10-03 20:00:08 | mit-cml/appinventor-sources | https://api.github.com/repos/mit-cml/appinventor-sources | opened | Custom label data for Charts | help wanted issue: noted for future Work status: forum feature request affects: ucr priority: medium | **Describe the desired feature**

Rather than trying to infer labels and colors for the legend, allow the user to specify the information using an associative list or dictionary.

**Give an example of how this feature would be used**

For a single data series, the Chart erroneously tries to treat each element in the line as its own entry in the legend, making the feature effectively useless.

**Why doesn't the current App Inventor system address this use case?**

See previous note.

**Why is this feature beneficial to App Inventor's educational mission?**

Explaining one's data is an important skill to learn, and giving students additional control over how their data are interpreted can help them build this skill. | 1.0 | Custom label data for Charts - **Describe the desired feature**

Rather than trying to infer labels and colors for the legend, allow the user to specify the information using an associative list or dictionary.

**Give an example of how this feature would be used**

For a single data series, the Chart erroneously tries to treat each element in the line as its own entry in the legend, making the feature effectively useless.

**Why doesn't the current App Inventor system address this use case?**

See previous note.

**Why is this feature beneficial to App Inventor's educational mission?**

Explaining one's data is an important skill to learn, and giving students additional control over how their data are interpreted can help them build this skill. | priority | custom label data for charts describe the desired feature rather than trying to infer labels and colors for the legend allow the user to specify the information using an associative list or dictionary give an example of how this feature would be used for a single data series the chart erroneously tries to treat each element in the line as its own entry in the legend making the feature effectively useless why doesn t the current app inventor system address this use case see previous note why is this feature beneficial to app inventor s educational mission explaining one s data is an important skill to learn and giving students additional control over how their data are interpreted can help them build this skill | 1 |

72,470 | 3,386,257,735 | IssuesEvent | 2015-11-27 16:22:22 | CosmosOS/Cosmos | https://api.github.com/repos/CosmosOS/Cosmos | closed | Dup tries to pop more stuff from analytical stack than there is! | area_compiler complexity_medium pending_verification priority_high | Log:

```

4> Error: Exception: System.Exception: Error compiling method 'SystemVoidKernelCommandsInputCommand': System.Exception: OpCode IL_014D: Dup tries to pop more stuff from analytical stack than there is!

4> at Cosmos.IL2CPU.ILOpCode.InterpretStackTypes(IDictionary`2 aOpCodes, Stack`1 aStack, Boolean& aSituationChanged, Int32 aMaxRecursionDepth) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILOpCode.cs:line 369

4> at Cosmos.IL2CPU.AppAssembler.InterpretInstructionsToDetermineStackTypes(List`1 aCurrentGroup) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 714

4> at Cosmos.IL2CPU.AppAssembler.EmitInstructions(MethodInfo aMethod, List`1 aCurrentGroup, Boolean& emitINT3) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 557

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 514 ---> System.Exception: OpCode IL_014D: Dup tries to pop more stuff from analytical stack than there is!

4> at Cosmos.IL2CPU.ILOpCode.InterpretStackTypes(IDictionary`2 aOpCodes, Stack`1 aStack, Boolean& aSituationChanged, Int32 aMaxRecursionDepth) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILOpCode.cs:line 369

4> at Cosmos.IL2CPU.AppAssembler.InterpretInstructionsToDetermineStackTypes(List`1 aCurrentGroup) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 714

4> at Cosmos.IL2CPU.AppAssembler.EmitInstructions(MethodInfo aMethod, List`1 aCurrentGroup, Boolean& emitINT3) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 557

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 514

4> --- End of inner exception stack trace ---

4> at Cosmos.IL2CPU.AppAssembler.ProcessMethod(MethodInfo aMethod, List`1 aOpCodes) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\AppAssembler.cs:line 529

4> at Cosmos.IL2CPU.ILScanner.Assemble() in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILScanner.cs:line 944

4> at Cosmos.IL2CPU.ILScanner.Execute(MethodBase aStartMethod) in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\ILScanner.cs:line 256

4> at Cosmos.IL2CPU.CompilerEngine.Execute() in C:\Users\Luka\Desktop\Cosmos-master\source\Cosmos.IL2CPU\CompilerEngine.cs:line 238

```

And here is the code where it happens:

```C#

public static void InputCommand()
{
    Console.Write("D:/command>");
    comd = Console.ReadLine();
    comd = comd.ToLower();
    if (comd == "reboot") h.Power.Restart();
    else if (comd == "shutdown") h.Power.Shutdown();
    else if (comd == "echo")
    {
        Console.Write("Echo>");
        arg = Console.ReadLine();
        Console.WriteLine(arg);
    }
    else if (comd == "notepad")
    {
        System.CLI.Applications.Notepad();
    }
    else if (comd == "cls")
    {
        Console.Clear();
        Console.WriteLine("TriangleOS");
        Console.WriteLine("=============================");
    }
    else if (comd == "soundtest")
    {
        Console.Write("Frequency>");
        arg = Console.ReadLine();
        Console.Write("Duration>");
        optarg = Console.ReadLine();
        Console.Write("Eh, this isn't implemted right now.");
        //h.Multimedia.Speakers.CallSound(int.Parse(arg), int.Parse(optarg));
    }
    else if (comd == "boot")
    {
        Console.WriteLine("Starting TriangleOS.Drivers . . .");
        //ProcessManager.Process Audio = new ProcessManager.Process();
        //ProcessManager.Process Graphics = new ProcessManager.Process();
        //Graphics.ProcessThread = new System.Threading.Thread(
        h.Graphics.LowLevel.init();
        //);
        //ProcessManager.Process Mouse = new ProcessManager.Process();
        //Mouse.ProcessThread = new System.Threading.Thread(
        h.Mouse.InitMouse();
        //);
        //Graphics.Start();
        //Mouse.Start();
        //Audio.ProcessThread = new System.Threading.Thread(
        h.Multimedia.Speakers.IntailizeAudio();
        //);
        Kernel.GUI();
    }
    else if (comd == "cliboot")
    {
        System.CLI.Controls.TextBox Text = new System.CLI.Controls.TextBox();
        Text.x = 1;
        Text.y = 1;
        Text.length = 20;
        Text.DrawTextBox();
        Text.TypeInto();
        System.CLI.Controls.Button OK = new System.CLI.Controls.Button();
        OK.y = 22;
        OK.x = 1;
        OK.width = 6;
        OK.height = 1;
        OK.text = "OK";
        OK.DrawButton();
    }
    else if (comd == "calculator")
    {
        System.CLI.Applications.Calculator();
    }
    else if (comd == "cd")
    {
        h.Graphics.Console.ErrO("Impossible operation performed. Can't request I/O while it isn't running!");
    }
    else if (comd == "dir")
    {
        Console.WriteLine("This isn't folder. you can use <cd> to go up folder.");
    }
    else if (comd == "paint")
    {
        System.CLI.Applications.Paint();
    }
    else if (comd == "changelog")
    {