Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 957) | labels (stringlengths, 4 to 795) | body (stringlengths, 1 to 259k) | index (stringclasses, 12 values) | text_combine (stringlengths, 96 to 259k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

434,213 | 12,515,631,951 | IssuesEvent | 2020-06-03 08:01:52 | canonical-web-and-design/build.snapcraft.io | https://api.github.com/repos/canonical-web-and-design/build.snapcraft.io | closed | the unclickable cells are more colorful overall than the clickable one | Priority: Medium | We need to make sure this is tested when we do testing…

_From Google Docs_

> Look at the penultimate row: there are three cells with ✔, but only the first two are clickable. How can you tell the third is not? …Its … text is green? That’s the only clear difference. The down arrows ⌵ are a good move, but it’s still the case that the unclickable cells are more colorful overall than the clickable ones.

| 1.0 | the unclickable cells are more colorful overall than the clickable one - We need to make sure this is tested when we do testing…

_From Google Docs_

> Look at the penultimate row: there are three cells with ✔, but only the first two are clickable. How can you tell the third is not? …Its … text is green? That’s the only clear difference. The down arrows ⌵ are a good move, but it’s still the case that the unclickable cells are more colorful overall than the clickable ones.

| priority | the unclickable cells are more colorful overall than the clickable one we need to make sure this is tested when we do testing… from google docs look at the penultimate row there are three cells with ✔ but only the first two are clickable how can you tell the third is not …its … text is green that’s the only clear difference the down arrows ⌵ are a good move but it’s still the case that the unclickable cells are more colorful overall than the clickable ones | 1 |

741,195 | 25,783,422,673 | IssuesEvent | 2022-12-09 17:59:49 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Add metrics to the RBS code path | kind/enhancement area/docdb priority/medium | Jira Link: [DB-2776](https://yugabyte.atlassian.net/browse/DB-2776)

### Description

Add metrics for the time spent in RBS as well as the CRC checksum validation part, if the CRC time is a big factor of the total time for RBS, then potentially skip the CRC checksum as the tablet bootstrap will eventually do the validation. | 1.0 | [DocDB] Add metrics to the RBS code path - Jira Link: [DB-2776](https://yugabyte.atlassian.net/browse/DB-2776)

### Description

Add metrics for the time spent in RBS as well as the CRC checksum validation part, if the CRC time is a big factor of the total time for RBS, then potentially skip the CRC checksum as the tablet bootstrap will eventually do the validation. | priority | add metrics to the rbs code path jira link description add metrics for the time spent in rbs as well as the crc checksum validation part if the crc time is a big factor of the total time for rbs then potentially skip the crc checksum as the tablet bootstrap will eventually do the validation | 1 |

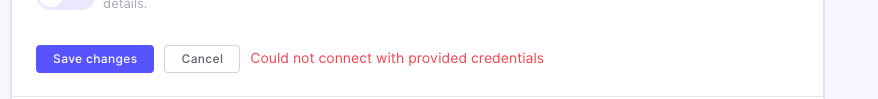

195,787 | 6,918,337,293 | IssuesEvent | 2017-11-29 11:48:03 | vmware/harbor | https://api.github.com/repos/vmware/harbor | closed | Test connection is sending wrong password | area/ui kind/bug priority/medium target/vic-1.3 | In the edit dialog of replication/endpoint.

After setting the password, tested connection and save.

Edit it again, uncheck "verify remote cert" (I'm using a http endpoint).

Click "test connection" again, it will always fail, seems UI is sending the wrong password.

| 1.0 | Test connection is sending wrong password - In the edit dialog of replication/endpoint.

After setting the password, tested connection and save.

Edit it again, uncheck "verify remote cert" (I'm using a http endpoint).

Click "test connection" again, it will always fail, seems UI is sending the wrong password.

| priority | test connection is sending wrong password in the edit dialog of replication endpoint after setting the password tested connection and save edit it again uncheck verify remote cert i m using a http endpoint click test connection again it will always fail seems ui is sending the wrong password | 1 |

727,722 | 25,045,416,304 | IssuesEvent | 2022-11-05 06:57:39 | KendallDoesCoding/mogul-christmas | https://api.github.com/repos/KendallDoesCoding/mogul-christmas | closed | [YOUTUBE API] Standard volume for songs played using the website | enhancement help wanted good first issue javascript EddieHub:good-first-issue 🟨 priority: medium Hacktoberfest-Accepted | Currently, the sound of the video/embed depends on the LAST watched YouTube video volume, however, that could've been on mute, then as mentioned in the README.md, the site will not be able to play the music - "Please ensure that the last YouTube video you watched wasn't on mute, otherwise the songs will not play, and the autoplay for the lyrics' directory will automatically be set on mute too."

So, I was researching and it seems we can add some JavaScript (Youtube API) that the volume of every embed will be standard set amount by us (i hope this is how it works, i'm unsure) we can set a standard volume.

Set the standard volume to 50/50%

**Reference: https://developers.google.com/youtube/iframe_api_reference#setVolume**

@TechStudent10 can you do this pls? | 1.0 | [YOUTUBE API] Standard volume for songs played using the website - Currently, the sound of the video/embed depends on the LAST watched YouTube video volume, however, that could've been on mute, then as mentioned in the README.md, the site will not be able to play the music - "Please ensure that the last YouTube video you watched wasn't on mute, otherwise the songs will not play, and the autoplay for the lyrics' directory will automatically be set on mute too."

So, I was researching and it seems we can add some JavaScript (Youtube API) that the volume of every embed will be standard set amount by us (i hope this is how it works, i'm unsure) we can set a standard volume.

Set the standard volume to 50/50%

**Reference: https://developers.google.com/youtube/iframe_api_reference#setVolume**

@TechStudent10 can you do this pls? | priority | standard volume for songs played using the website currently the sound of the video embed depends on the last watched youtube video volume however that could ve been on mute then as mentioned in the readme md the site will not be able to play the music please ensure that the last youtube video you watched wasn t on mute otherwise the songs will not play and the autoplay for the lyrics directory will automatically be set on mute too so i was researching and it seems we can add some javascript youtube api that the volume of every embed will be standard set amount by us i hope this is how it works i m unsure we can set a standard volume set the standard volume to reference can you do this pls | 1 |

357,020 | 10,600,756,086 | IssuesEvent | 2019-10-10 10:46:02 | pmem/issues | https://api.github.com/repos/pmem/issues | closed | obj: unable to free space when pool is almost full | Exposure: Medium Priority: 4 low Type: Feature | If application requires transaction to free multiple objects to make progress it can get stuck, because pmemobj_tx_free can abort the transaction (freeing more than 8 objects requires allocation).

The prime example is pmemfile - if application creates a file using all available space, unlinking it fails. I can work around some cases (by carefully using atomic free), but some are not that easy. I could preallocate some space at pool creation time, free it when transactional free fails and restart the transaction, but this is racy (another thread could use what we freed before we got to transactional free).

I think pmemobj should expose some API/ctl to reserve space for internal purposes.

| 1.0 | obj: unable to free space when pool is almost full - If application requires transaction to free multiple objects to make progress it can get stuck, because pmemobj_tx_free can abort the transaction (freeing more than 8 objects requires allocation).

The prime example is pmemfile - if application creates a file using all available space, unlinking it fails. I can work around some cases (by carefully using atomic free), but some are not that easy. I could preallocate some space at pool creation time, free it when transactional free fails and restart the transaction, but this is racy (another thread could use what we freed before we got to transactional free).

I think pmemobj should expose some API/ctl to reserve space for internal purposes.

| priority | obj unable to free space when pool is almost full if application requires transaction to free multiple objects to make progress it can get stuck because pmemobj tx free can abort the transaction freeing more than objects requires allocation the prime example is pmemfile if application creates a file using all available space unlinking it fails i can work around some cases by carefully using atomic free but some are not that easy i could preallocate some space at pool creation time free it when transactional free fails and restart the transaction but this is racy another thread could use what we freed before we got to transactional free i think pmemobj should expose some api ctl to reserve space for internal purposes | 1 |

690,347 | 23,654,745,674 | IssuesEvent | 2022-08-26 10:05:19 | projectdiscovery/nuclei | https://api.github.com/repos/projectdiscovery/nuclei | closed | Advanced template filtering | Priority: Medium Status: Completed Type: Enhancement | ### Please describe your feature request:

Allow advanced template filtering for execution with values from **id / info** section

### Describe the use case of this feature:

More control over template execution

### Proposed solutions:

New CLI flag:

```

-tc, -template-condition templates to run based on dsl filter

```

## Examples

```console

nuclei -tc "author=='pdteam' && ('wordpress','xss') in tags"

nuclei -tc "severity=='high' && metadata.verified"

```

```bash

id=='tect-detect' || contains(tags,'wordpress')

contains(author,'pdteam')

tag=='wordpress' || contains(tags,'xss') && !contains(tags,'excluded')

severity=='high'

```

| 1.0 | Advanced template filtering - ### Please describe your feature request:

Allow advanced template filtering for execution with values from **id / info** section

### Describe the use case of this feature:

More control over template execution

### Proposed solutions:

New CLI flag:

```

-tc, -template-condition templates to run based on dsl filter

```

## Examples

```console

nuclei -tc "author=='pdteam' && ('wordpress','xss') in tags"

nuclei -tc "severity=='high' && metadata.verified"

```

```bash

id=='tect-detect' || contains(tags,'wordpress')

contains(author,'pdteam')

tag=='wordpress' || contains(tags,'xss') && !contains(tags,'excluded')

severity=='high'

```

| priority | advanced template filtering please describe your feature request allow advanced template filtering for execution with values from id info section describe the use case of this feature more control over template execution proposed solutions new cli flag tc template condition templates to run based on dsl filter examples console nuclei tc author pdteam wordpress xss in tags nuclei tc severity high metadata verified bash id tect detect contains tags wordpress contains author pdteam tag wordpress contains tags xss contains tags excluded severity high | 1 |

501,383 | 14,527,065,998 | IssuesEvent | 2020-12-14 14:56:21 | carbon-design-system/carbon-for-ibm-dotcom | https://api.github.com/repos/carbon-design-system/carbon-for-ibm-dotcom | closed | Web component: Content block - segmented Prod QA testing | QA dev complete package: web components priority: medium | <!-- Avoid any type of solutions in this user story -->

<!-- replace _{{...}}_ with your own words or remove -->

#### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

developer using the ibm.com Library `Content block - segmented`

> I need to:

have a version of the component that has been tested for accessibility compliance as well as on multiple browsers and platforms

> so that I can:

be confident that my ibm.com web site users will have a good experience

#### Additional information

<!-- {{Please provide any additional information or resources for reference}} -->

- [Browser Stack link](https://ibm.ent.box.com/notes/578734426612)

- [Browser Standard](https://w3.ibm.com/standards/web/browser/)

- Browser versions to be tested: Tier 1 browsers will be tested with defects created as Sev 1 or Sev 2. Tier 2 browser defects will be created as Sev 3 defects.

- Platforms to be tested, by priority: 1) Desktop 2) Mobile 3) Tablet

- Mobile & Tablet iOS versions: 13.1, 13.3 and 14

- Mobile & Tablet Android versions: 9.0 Pie and 8.1 Oreo

- Browsers to be tested: Desktop: Chrome, Firefox, Safari, Edge, Mobile: Chrome, Safari, Samsung Internet, UC Browser, Tablet: Safari, Chrome, Android

- [Accessibility Checklist](https://www.ibm.com/able/guidelines/ci162/accessibility_checklist.html)

- [Creating a QA bug](https://ibm.ent.box.com/notes/603242247385)

- **See the Epic for the Design and Functional specs information**

- Dev issue (#3790)

- Once development is finished the updated code is available in the [**Web Components Canary Environment**](https://ibmdotcom-web-components-canary.mybluemix.net/?path=/story/overview-getting-started--page) for testing.

- [**React canary environment**](https://ibmdotcom-react-canary.mybluemix.net/?path=/story/overview-getting-started--page)

#### Acceptance criteria

- [ ] Accessibility testing is complete. Component is compliant.

- [ ] All browser versions are tested

- [ ] All operating systems are tested

- [ ] All devices are tested

- [ ] Defects are recorded and retested when fixed | 1.0 | Web component: Content block - segmented Prod QA testing - <!-- Avoid any type of solutions in this user story -->

<!-- replace _{{...}}_ with your own words or remove -->

#### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

developer using the ibm.com Library `Content block - segmented`

> I need to:

have a version of the component that has been tested for accessibility compliance as well as on multiple browsers and platforms

> so that I can:

be confident that my ibm.com web site users will have a good experience

#### Additional information

<!-- {{Please provide any additional information or resources for reference}} -->

- [Browser Stack link](https://ibm.ent.box.com/notes/578734426612)

- [Browser Standard](https://w3.ibm.com/standards/web/browser/)

- Browser versions to be tested: Tier 1 browsers will be tested with defects created as Sev 1 or Sev 2. Tier 2 browser defects will be created as Sev 3 defects.

- Platforms to be tested, by priority: 1) Desktop 2) Mobile 3) Tablet

- Mobile & Tablet iOS versions: 13.1, 13.3 and 14

- Mobile & Tablet Android versions: 9.0 Pie and 8.1 Oreo

- Browsers to be tested: Desktop: Chrome, Firefox, Safari, Edge, Mobile: Chrome, Safari, Samsung Internet, UC Browser, Tablet: Safari, Chrome, Android

- [Accessibility Checklist](https://www.ibm.com/able/guidelines/ci162/accessibility_checklist.html)

- [Creating a QA bug](https://ibm.ent.box.com/notes/603242247385)

- **See the Epic for the Design and Functional specs information**

- Dev issue (#3790)

- Once development is finished the updated code is available in the [**Web Components Canary Environment**](https://ibmdotcom-web-components-canary.mybluemix.net/?path=/story/overview-getting-started--page) for testing.

- [**React canary environment**](https://ibmdotcom-react-canary.mybluemix.net/?path=/story/overview-getting-started--page)

#### Acceptance criteria

- [ ] Accessibility testing is complete. Component is compliant.

- [ ] All browser versions are tested

- [ ] All operating systems are tested

- [ ] All devices are tested

- [ ] Defects are recorded and retested when fixed | priority | web component content block segmented prod qa testing user story as a developer using the ibm com library content block segmented i need to have a version of the component that has been tested for accessibility compliance as well as on multiple browsers and platforms so that i can be confident that my ibm com web site users will have a good experience additional information browser versions to be tested tier browsers will be tested with defects created as sev or sev tier browser defects will be created as sev defects platforms to be tested by priority desktop mobile tablet mobile tablet ios versions and mobile tablet android versions pie and oreo browsers to be tested desktop chrome firefox safari edge mobile chrome safari samsung internet uc browser tablet safari chrome android see the epic for the design and functional specs information dev issue once development is finished the updated code is available in the for testing acceptance criteria accessibility testing is complete component is compliant all browser versions are tested all operating systems are tested all devices are tested defects are recorded and retested when fixed | 1 |

277,917 | 8,634,438,031 | IssuesEvent | 2018-11-22 16:50:27 | edenlabllc/ehealth.api | https://api.github.com/repos/edenlabllc/ehealth.api | opened | Download signed (by NHS) contract is not available. Demo, #J537 | kind/support priority/medium | 1. Create a request.

2. Approve the created request.

3. Wait for the NHS signature.

4. From the "urgent" block, download the signed content using the URL of type "SIGNED_CONTENT".

Created request id - dc0f1e3d-b2b0-4fab-ab22-7e2782f716a2

Details here: https://drive.google.com/drive/u/0/folders/1TRp5TpDFEDsClCv-8aIrIQq-EDm_geJL?ogsrc=32

Priority is standard, but this is contract testing.

| 1.0 | Download signed (by NHS) contract is not available. Demo, #J537 - 1. Create a request.

2. Approve the created request.

3. Wait for the NHS signature.

4. From the "urgent" block, download the signed content using the URL of type "SIGNED_CONTENT".

Created request id - dc0f1e3d-b2b0-4fab-ab22-7e2782f716a2

Details here: https://drive.google.com/drive/u/0/folders/1TRp5TpDFEDsClCv-8aIrIQq-EDm_geJL?ogsrc=32

Priority is standard, but this is contract testing.

| priority | download signed by nhs contract is not available demo create a request approve the created request wait for the nhs signature from the urgent block download the signed content using the url of type signed content created request id details here priority is standard but this is contract testing | 1 |

449,120 | 12,963,634,633 | IssuesEvent | 2020-07-20 19:06:51 | ansible/awx | https://api.github.com/repos/ansible/awx | opened | Hide sync icon for smart inventory rows in Inventory List | component:ui_next priority:medium state:needs_devel type:bug | ##### ISSUE TYPE

- Bug Report

##### SUMMARY

<img width="1407" alt="Screen Shot 2020-07-20 at 3 05 48 PM" src="https://user-images.githubusercontent.com/9889020/87976119-901fd100-ca9a-11ea-9fd2-976a80a7f55b.png">

Smart inventories don't have sources so this icon is not relevant

| 1.0 | Hide sync icon for smart inventory rows in Inventory List - ##### ISSUE TYPE

- Bug Report

##### SUMMARY

<img width="1407" alt="Screen Shot 2020-07-20 at 3 05 48 PM" src="https://user-images.githubusercontent.com/9889020/87976119-901fd100-ca9a-11ea-9fd2-976a80a7f55b.png">

Smart inventories don't have sources so this icon is not relevant

| priority | hide sync icon for smart inventory rows in inventory list issue type bug report summary img width alt screen shot at pm src smart inventories don t have sources so this icon is not relevant | 1 |

57,612 | 3,083,124,406 | IssuesEvent | 2015-08-24 06:26:56 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | opened | In calcBlockSize, this assertion fires: dcassert(aFileSize > 0); // [+] IRainman fix. | bug imported Priority-Medium | _From [Pavel.Pimenov@gmail.com](https://code.google.com/u/Pavel.Pimenov@gmail.com/) on May 22, 2013 09:32:52_

To reproduce the crash, share a file whose size is 0.

1. The cause is in HashManager::Hasher::run()

File f(m_fname, File::READ, File::OPEN);

const int64_t bs = TigerTree::getMaxBlockSize(f.getSize());

2. We try to open the file even if its size is 0,

and after opening it we call the API f.getSize() to determine the size,

even though we already obtained the file size before that:

const int64_t l_size = File::getSize(m_fname);

TODO

- If the file is empty, does it need to be opened at all?

- Even if it is opened, should we avoid calling f.getSize()?

- Step through this code in the debugger.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=1043_ | 1.0 | In calcBlockSize, this assertion fires: dcassert(aFileSize > 0); // [+] IRainman fix. - _From [Pavel.Pimenov@gmail.com](https://code.google.com/u/Pavel.Pimenov@gmail.com/) on May 22, 2013 09:32:52_

To reproduce the crash, share a file whose size is 0.

1. The cause is in HashManager::Hasher::run()

File f(m_fname, File::READ, File::OPEN);

const int64_t bs = TigerTree::getMaxBlockSize(f.getSize());

2. We try to open the file even if its size is 0,

and after opening it we call the API f.getSize() to determine the size,

even though we already obtained the file size before that:

const int64_t l_size = File::getSize(m_fname);

TODO

- If the file is empty, does it need to be opened at all?

- Even if it is opened, should we avoid calling f.getSize()?

- Step through this code in the debugger.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=1043_ | priority | in calcblocksize this assertion fires dcassert afilesize irainman fix from on may to reproduce the crash share a file whose size is the cause is in hashmanager hasher run file f m fname file read file open const t bs tigertree getmaxblocksize f getsize we try to open the file even if its size is and after opening it we call the api f getsize to determine the size even though we already obtained the file size before that const t l size file getsize m fname todo if the file is empty does it need to be opened at all even if it is opened should we avoid calling f getsize step through this code in the debugger original issue | 1 |

230,709 | 7,613,018,642 | IssuesEvent | 2018-05-01 19:40:18 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | Remove hello-world from extensions in samples | bug-type: unexpected behavior priority: medium problem: bug | `api/hello-world` was created to show a very basic example of an extension.

It has been added to a number of the sample page `tpl` files.

This extension is intercepting double clicks (which should trigger a zoom) and presents a dialog which is difficult to clear.

`We detected a double click, but you may have just clicked twice slowly. Proceed anyways?`

It should be removed from the main samples. We can make a special sample that uses it if we want to preserve the glory of `hello-world`. | 1.0 | Remove hello-world from extensions in samples - `api/hello-world` was created to show a very basic example of an extension.

It has been added to a number of the sample page `tpl` files.

This extension is intercepting double clicks (which should trigger a zoom) and presents a dialog which is difficult to clear.

`We detected a double click, but you may have just clicked twice slowly. Proceed anyways?`

It should be removed from the main samples. We can make a special sample that uses it if we want to preserve the glory of `hello-world`. | priority | remove hello world from extensions in samples api hello world was created to show a very basic example of an extension it has been added to a number of the sample page tpl files this extension is intercepting double clicks which should trigger a zoom and presents a dialog which is difficult to clear we detected a double click but you may have just clicked twice slowly proceed anyways it should be removed from the main samples we can make a special sample that uses it if we want to preserve the glory of hello world | 1 |

675,458 | 23,095,247,288 | IssuesEvent | 2022-07-26 18:51:41 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | yb-master fails to restart after errors during first run | kind/bug area/docdb priority/medium | Jira Link: [DB-1889](https://yugabyte.atlassian.net/browse/DB-1889)

Repro: Start yb-master with an incorrect master_addresses on first run, see it crash, fix the master_addresses and restart the master, it will not start up properly. This is similar to #4866 but a different failure point.

------------------

During yb-master startup, we create the instance file early in the fs manager init and then use the presence of this marker file to guide initialization of the sys catalog raft metadata. This is kind of broken because there are steps that can fail in between the instance file creation and the sys catalog metadata initialization.

This issue is to track the proper fix to all these kinds of issues. We need to fix the sys catalog Load/Create code to either do a LoadOrCreate or maybe have a marker to make sure it has gotten out of the "first run" phase successfully at least once.

Details

-------------------

```

sanketh@varahi:~/code/yugabyte-db$ ~/yugabyte-2.1.8.2/bin/yb-master --fs_data_dirs=/tmp/testcrash1 --rpc_bind_addresses=127.0.0.1:7100 --master_addresses=127.0.0.2:7100 --replication_factor=1

F0730 20:43:44.338073 17057 master_main.cc:120] Illegal state (yb/master/catalog_manager.cc:1273): Unable to initialize catalog manager: Failed to initialize sys tables async: None of the local addresses are present in master_addresses 127.0.0.2:7100.

Fatal failure details written to /tmp/testcrash1/yb-data/master/logs/yb-master.FATAL.details.2020-07-30T20_43_44.pid17057.txt

F20200730 20:43:44 ../../src/yb/master/master_main.cc:120] Illegal state (yb/master/catalog_manager.cc:1273): Unable to initialize catalog manager: Failed to initialize sys tables async: None of the local addresses are present in master_addresses 127.0.0.2:7100.

@ 0x7fb07a43cc0c yb::LogFatalHandlerSink::send()

@ 0x7fb079624346 google::LogMessage::SendToLog()

@ 0x7fb0796217aa google::LogMessage::Flush()

@ 0x7fb079624879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fb075283825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

*** Check failure stack trace: ***

@ 0x7fb07a43aff1 yb::(anonymous namespace)::DumpStackTraceAndExit()

@ 0x7fb079621c5d google::LogMessage::Fail()

@ 0x7fb079623dcd google::LogMessage::SendToLog()

@ 0x7fb0796217aa google::LogMessage::Flush()

@ 0x7fb079624879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fb075283825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

Aborted (core dumped)

sanketh@varahi:~/code/yugabyte-db$ ~/yugabyte-2.1.8.2/bin/yb-master --fs_data_dirs=/tmp/testcrash1 --rpc_bind_addresses=127.0.0.1:7100 --master_addresses=127.0.0.1:7100 --replication_factor=1

F0730 20:43:48.776080 17094 master_main.cc:120] Not found (yb/util/env_posix.cc:1482): Unable to initialize catalog manager: Failed to initialize sys tables async: Could not load Raft group metadata from /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: No such file or directory (system error 2)

Fatal failure details written to /tmp/testcrash1/yb-data/master/logs/yb-master.FATAL.details.2020-07-30T20_43_48.pid17094.txt

F20200730 20:43:48 ../../src/yb/master/master_main.cc:120] Not found (yb/util/env_posix.cc:1482): Unable to initialize catalog manager: Failed to initialize sys tables async: Could not load Raft group metadata from /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: No such file or directory (system error 2)

@ 0x7fc4c87f9c0c yb::LogFatalHandlerSink::send()

@ 0x7fc4c79e1346 google::LogMessage::SendToLog()

@ 0x7fc4c79de7aa google::LogMessage::Flush()

@ 0x7fc4c79e1879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fc4c3640825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

*** Check failure stack trace: ***

@ 0x7fc4c87f7ff1 yb::(anonymous namespace)::DumpStackTraceAndExit()

@ 0x7fc4c79dec5d google::LogMessage::Fail()

@ 0x7fc4c79e0dcd google::LogMessage::SendToLog()

@ 0x7fc4c79de7aa google::LogMessage::Flush()

@ 0x7fc4c79e1879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fc4c3640825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

Aborted (core dumped)

```

| 1.0 | yb-master fails to restart after errors during first run - Jira Link: [DB-1889](https://yugabyte.atlassian.net/browse/DB-1889)

Repro: Start yb-master with an incorrect master_addresses on first run, see it crash, fix the master_addresses and restart the master, it will not start up properly. This is similar to #4866 but a different failure point.

------------------

During yb-master startup, we create the instance file early in the fs manager init and then use the presence of this marker file to guide initialization of the sys catalog raft metadata. This is kind of broken because there are steps that can fail in between the instance file creation and the sys catalog metadata initialization.

This issue is to track the proper fix to all these kinds of issues. We need to fix the sys catalog Load/Create code to either do a LoadOrCreate or maybe have a marker to make sure it has gotten out of the "first run" phase successfully at least once.

Details

-------------------

```

sanketh@varahi:~/code/yugabyte-db$ ~/yugabyte-2.1.8.2/bin/yb-master --fs_data_dirs=/tmp/testcrash1 --rpc_bind_addresses=127.0.0.1:7100 --master_addresses=127.0.0.2:7100 --replication_factor=1

F0730 20:43:44.338073 17057 master_main.cc:120] Illegal state (yb/master/catalog_manager.cc:1273): Unable to initialize catalog manager: Failed to initialize sys tables async: None of the local addresses are present in master_addresses 127.0.0.2:7100.

Fatal failure details written to /tmp/testcrash1/yb-data/master/logs/yb-master.FATAL.details.2020-07-30T20_43_44.pid17057.txt

F20200730 20:43:44 ../../src/yb/master/master_main.cc:120] Illegal state (yb/master/catalog_manager.cc:1273): Unable to initialize catalog manager: Failed to initialize sys tables async: None of the local addresses are present in master_addresses 127.0.0.2:7100.

@ 0x7fb07a43cc0c yb::LogFatalHandlerSink::send()

@ 0x7fb079624346 google::LogMessage::SendToLog()

@ 0x7fb0796217aa google::LogMessage::Flush()

@ 0x7fb079624879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fb075283825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

*** Check failure stack trace: ***

@ 0x7fb07a43aff1 yb::(anonymous namespace)::DumpStackTraceAndExit()

@ 0x7fb079621c5d google::LogMessage::Fail()

@ 0x7fb079623dcd google::LogMessage::SendToLog()

@ 0x7fb0796217aa google::LogMessage::Flush()

@ 0x7fb079624879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fb075283825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

Aborted (core dumped)

sanketh@varahi:~/code/yugabyte-db$ ~/yugabyte-2.1.8.2/bin/yb-master --fs_data_dirs=/tmp/testcrash1 --rpc_bind_addresses=127.0.0.1:7100 --master_addresses=127.0.0.1:7100 --replication_factor=1

F0730 20:43:48.776080 17094 master_main.cc:120] Not found (yb/util/env_posix.cc:1482): Unable to initialize catalog manager: Failed to initialize sys tables async: Could not load Raft group metadata from /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: No such file or directory (system error 2)

Fatal failure details written to /tmp/testcrash1/yb-data/master/logs/yb-master.FATAL.details.2020-07-30T20_43_48.pid17094.txt

F20200730 20:43:48 ../../src/yb/master/master_main.cc:120] Not found (yb/util/env_posix.cc:1482): Unable to initialize catalog manager: Failed to initialize sys tables async: Could not load Raft group metadata from /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: /tmp/testcrash1/yb-data/master/tablet-meta/00000000000000000000000000000000: No such file or directory (system error 2)

@ 0x7fc4c87f9c0c yb::LogFatalHandlerSink::send()

@ 0x7fc4c79e1346 google::LogMessage::SendToLog()

@ 0x7fc4c79de7aa google::LogMessage::Flush()

@ 0x7fc4c79e1879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fc4c3640825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

*** Check failure stack trace: ***

@ 0x7fc4c87f7ff1 yb::(anonymous namespace)::DumpStackTraceAndExit()

@ 0x7fc4c79dec5d google::LogMessage::Fail()

@ 0x7fc4c79e0dcd google::LogMessage::SendToLog()

@ 0x7fc4c79de7aa google::LogMessage::Flush()

@ 0x7fc4c79e1879 google::LogMessageFatal::~LogMessageFatal()

@ 0x408fb4 yb::master::MasterMain()

@ 0x7fc4c3640825 __libc_start_main

@ 0x408369 _start

@ (nil) (unknown)

Aborted (core dumped)

```

| priority | yb master fails to restart after errors during first run jira link repro start yb master with an incorrect master addresses on first run see it crash fix the master addresses and restart the master it will not start up properly this is similar to but a different failure point during yb master startup we create the instance file early in the fs manager init and then use the presence of this marker file to guide initialization of the sys catalog raft metadata this is kind of broken because there are steps that can fail in between the instance file creation and the sys catalog metadata initialization this issue is to track the proper fix to all these kinds of issues we need to fix the sys catalog load create code to either do a loadorcreate or maybe have a marker to make sure it has gotten out of the first run phase successfully at least once details sanketh varahi code yugabyte db yugabyte bin yb master fs data dirs tmp rpc bind addresses master addresses replication factor master main cc illegal state yb master catalog manager cc unable to initialize catalog manager failed to initialize sys tables async none of the local addresses are present in master addresses fatal failure details written to tmp yb data master logs yb master fatal details txt src yb master master main cc illegal state yb master catalog manager cc unable to initialize catalog manager failed to initialize sys tables async none of the local addresses are present in master addresses yb logfatalhandlersink send google logmessage sendtolog google logmessage flush google logmessagefatal logmessagefatal yb master mastermain libc start main start nil unknown check failure stack trace yb anonymous namespace dumpstacktraceandexit google logmessage fail google logmessage sendtolog google logmessage flush google logmessagefatal logmessagefatal yb master mastermain libc start main start nil unknown aborted core dumped sanketh varahi code yugabyte db yugabyte bin yb master fs data dirs tmp rpc bind 
addresses master addresses replication factor master main cc not found yb util env posix cc unable to initialize catalog manager failed to initialize sys tables async could not load raft group metadata from tmp yb data master tablet meta tmp yb data master tablet meta no such file or directory system error fatal failure details written to tmp yb data master logs yb master fatal details txt src yb master master main cc not found yb util env posix cc unable to initialize catalog manager failed to initialize sys tables async could not load raft group metadata from tmp yb data master tablet meta tmp yb data master tablet meta no such file or directory system error yb logfatalhandlersink send google logmessage sendtolog google logmessage flush google logmessagefatal logmessagefatal yb master mastermain libc start main start nil unknown check failure stack trace yb anonymous namespace dumpstacktraceandexit google logmessage fail google logmessage sendtolog google logmessage flush google logmessagefatal logmessagefatal yb master mastermain libc start main start nil unknown aborted core dumped | 1 |

72,590 | 3,388,399,005 | IssuesEvent | 2015-11-29 08:19:43 | crutchcorn/stagger | https://api.github.com/repos/crutchcorn/stagger | closed | Add a function for deleting tags | enhancement Priority Medium | ```

>>> stagger.delete("test.mp3")

>>> stagger.read("test.mp3")

=> NoTagError

```

Original issue reported on code.google.com by `Karoly.Lorentey` on 13 Jun 2009 at 5:50 | 1.0 | Add a function for deleting tags - ```

>>> stagger.delete("test.mp3")

>>> stagger.read("test.mp3")

=> NoTagError

```

Original issue reported on code.google.com by `Karoly.Lorentey` on 13 Jun 2009 at 5:50 | priority | add a function for deleting tags stagger delete test stagger read test notagerror original issue reported on code google com by karoly lorentey on jun at | 1 |

125,599 | 4,958,505,497 | IssuesEvent | 2016-12-02 10:00:42 | GeographicaGS/gipc | https://api.github.com/repos/GeographicaGS/gipc | closed | Nuevo storymap - no se ve el mapa de la ficha | bug priority:medium | He creado un nuevo storymap para el paisaje_id = '42'. No sé si hay algo que no estoy poniendo bien porque no se ve el mapa.

http://landscapes.geographica.gs/storymap/42

Mapa en Carto (yo incluyo la url_visualización de Carto en la tabla 'paisajes_modos_narrativos):

https://gipc-admin.carto.com/viz/833f2f57-128d-441b-a163-bce3cb71f6fe/public_map

| 1.0 | Nuevo storymap - no se ve el mapa de la ficha - He creado un nuevo storymap para el paisaje_id = '42'. No sé si hay algo que no estoy poniendo bien porque no se ve el mapa.

http://landscapes.geographica.gs/storymap/42

Mapa en Carto (yo incluyo la url_visualización de Carto en la tabla 'paisajes_modos_narrativos):

https://gipc-admin.carto.com/viz/833f2f57-128d-441b-a163-bce3cb71f6fe/public_map

| priority | nuevo storymap no se ve el mapa de la ficha he creado un nuevo storymap para el paisaje id no sé si hay algo que no estoy poniendo bien porque no se ve el mapa mapa en carto yo incluyo la url visualización de carto en la tabla paisajes modos narrativos | 1 |

158,806 | 6,035,689,084 | IssuesEvent | 2017-06-09 14:27:54 | brandon1024/find | https://api.github.com/repos/brandon1024/find | closed | Selected Text Auto Search | feature medium priority | Beta Feature: When something is highlighted in the web page, and the extension is opened, whatever is highlighted in the web page should be automatically entered in the search field. This is common to IDEs. | 1.0 | Selected Text Auto Search - Beta Feature: When something is highlighted in the web page, and the extension is opened, whatever is highlighted in the web page should be automatically entered in the search field. This is common to IDEs. | priority | selected text auto search beta feature when something is highlighted in the web page and the extension is opened whatever is highlighted in the web page should be automatically entered in the search field this is common to ides | 1 |

479,047 | 13,790,434,235 | IssuesEvent | 2020-10-09 10:25:13 | madmachineio/MadMachineIDE | https://api.github.com/repos/madmachineio/MadMachineIDE | closed | Search function in the editor overlap with code content | bug priority: medium | **Describe the bug**

Search function in the editor overlap with code content

**To Reproduce**

Steps to reproduce the behavior:

1. Open any project in MadMachine IDE

2. Open any file in the editor

3. Press `command + f`

4. See error

**Expected behavior**

The content sould avoid to be overlaped with search content

| 1.0 | Search function in the editor overlap with code content - **Describe the bug**

Search function in the editor overlap with code content

**To Reproduce**

Steps to reproduce the behavior:

1. Open any project in MadMachine IDE

2. Open any file in the editor

3. Press `command + f`

4. See error

**Expected behavior**

The content sould avoid to be overlaped with search content

| priority | search function in the editor overlap with code content describe the bug search function in the editor overlap with code content to reproduce steps to reproduce the behavior open any project in madmachine ide open any file in the editor press command f see error expected behavior the content sould avoid to be overlaped with search content | 1 |

323,949 | 9,881,138,401 | IssuesEvent | 2019-06-24 14:07:11 | georchestra/georchestra | https://api.github.com/repos/georchestra/georchestra | closed | Drop epsg_extension in favor of vanilla geoserver user projections support? | priority-medium | It seems that the `epsg-extension` module was added back in [May 2011](https://github.com/georchestra/georchestra/blame/18.06/epsg-extension/src/main/java/org/geotools/referencing/factory/epsg/CustomCodes.java) right before GeoServer added support for custom CRS definitions in `<data dir>/user_projections/epsg.properties` in [June](https://github.com/geoserver/geoserver/blame/44bacafcf1352bfe0b1836262df686fd58036585/src/main/src/main/java/org/vfny/geoserver/crs/GeoserverCustomWKTFactory.java) of the same year.

Neither have changed over the years, so I wonder if we shouldn't just get rid of the custom `epsg_extension` module in favor of vanilla geoserver support for the same functionality.

Only thing to consider would be upgrading the config of anyone using the `-DCUSTOM_EPSG_FILE` system property by `-Duser.projections.file` or just saving the custom projections in `<data dir>/user_projections/epsg.properties`.

@fvanderbiest @pmauduit comments?

| 1.0 | Drop epsg_extension in favor of vanilla geoserver user projections support? - It seems that the `epsg-extension` module was added back in [May 2011](https://github.com/georchestra/georchestra/blame/18.06/epsg-extension/src/main/java/org/geotools/referencing/factory/epsg/CustomCodes.java) right before GeoServer added support for custom CRS definitions in `<data dir>/user_projections/epsg.properties` in [June](https://github.com/geoserver/geoserver/blame/44bacafcf1352bfe0b1836262df686fd58036585/src/main/src/main/java/org/vfny/geoserver/crs/GeoserverCustomWKTFactory.java) of the same year.

Neither have changed over the years, so I wonder if we shouldn't just get rid of the custom `epsg_extension` module in favor of vanilla geoserver support for the same functionality.

Only thing to consider would be upgrading the config of anyone using the `-DCUSTOM_EPSG_FILE` system property by `-Duser.projections.file` or just saving the custom projections in `<data dir>/user_projections/epsg.properties`.

@fvanderbiest @pmauduit comments?

| priority | drop epsg extension in favor of vanilla geoserver user projections support it seems that the epsg extension module was added back in right before geoserver added support for custom crs definitions in user projections epsg properties in of the same year neither have changed over the years so i wonder if we shouldn t just get rid of the custom epsg extension module in favor of vanilla geoserver support for the same functionality only thing to consider would be upgrading the config of anyone using the dcustom epsg file system property by duser projections file or just saving the custom projections in user projections epsg properties fvanderbiest pmauduit comments | 1 |

562,472 | 16,661,662,990 | IssuesEvent | 2021-06-06 12:41:35 | kaushiksk/portfolio-server-api | https://api.github.com/repos/kaushiksk/portfolio-server-api | closed | Migrate to FastAPI | enhancement investigation medium-priority | I am completely sold on FastAPI. It seems like a sleeker replacement for Flask and comes with swagger docs inbuilt. It also provides inbuilt json validation of input through pydactic.

Following changed will be needed:

- No more dependency on Flask-PyMongo - we'll need to use pymongo directly

- Cannot run flask commands anymore - we will replace command scripts with a manage.py file

- Tests drivers need to be updated to use FastAPI test client

- Routes changed to use FastAPI | 1.0 | Migrate to FastAPI - I am completely sold on FastAPI. It seems like a sleeker replacement for Flask and comes with swagger docs inbuilt. It also provides inbuilt json validation of input through pydactic.

Following changed will be needed:

- No more dependency on Flask-PyMongo - we'll need to use pymongo directly

- Cannot run flask commands anymore - we will replace command scripts with a manage.py file

- Tests drivers need to be updated to use FastAPI test client

- Routes changed to use FastAPI | priority | migrate to fastapi i am completely sold on fastapi it seems like a sleeker replacement for flask and comes with swagger docs inbuilt it also provides inbuilt json validation of input through pydactic following changed will be needed no more dependency on flask pymongo we ll need to use pymongo directly cannot run flask commands anymore we will replace command scripts with a manage py file tests drivers need to be updated to use fastapi test client routes changed to use fastapi | 1 |

769,151 | 26,994,649,131 | IssuesEvent | 2023-02-09 23:18:09 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [engine] Setting CORS configurations for accessControlAllowHeaders does not appear to have an effect | bug priority: medium CI can't reproduce | ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [X] The issue is in the latest released 4.0.x

- [ ] The issue is in the latest released 3.1.x

### Describe the issue

_No response_

### Steps to reproduce

Steps:

1. Create a site

2. Configure CORS headers as follows

```

<cors>

<enable>true</enable>

<accessControlMaxAge>3600</accessControlMaxAge>

<accessControlAllowOrigin>*</accessControlAllowOrigin>

<accessControlAllowMethods>*</accessControlAllowMethods>

<accessControlAllowHeaders>X-Custom-Header, Content-Type</accessControlAllowHeaders>

<accessControlAllowCredentials>true</accessControlAllowCredentials>

</cors>

```

3. Attempt to set X-Custom-Heder or Content-Type and note that they are not sent.

### Relevant log output

_No response_

### Screenshots and/or videos

_No response_ | 1.0 | [engine] Setting CORS configurations for accessControlAllowHeaders does not appear to have an effect - ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [X] The issue is in the latest released 4.0.x

- [ ] The issue is in the latest released 3.1.x

### Describe the issue

_No response_

### Steps to reproduce

Steps:

1. Create a site

2. Configure CORS headers as follows

```

<cors>

<enable>true</enable>

<accessControlMaxAge>3600</accessControlMaxAge>

<accessControlAllowOrigin>*</accessControlAllowOrigin>

<accessControlAllowMethods>*</accessControlAllowMethods>

<accessControlAllowHeaders>X-Custom-Header, Content-Type</accessControlAllowHeaders>

<accessControlAllowCredentials>true</accessControlAllowCredentials>

</cors>

```

3. Attempt to set X-Custom-Heder or Content-Type and note that they are not sent.

### Relevant log output

_No response_

### Screenshots and/or videos

_No response_ | priority | setting cors configurations for accesscontrolallowheaders does not appear to have an effect duplicates i have searched the existing issues latest version the issue is in the latest released x the issue is in the latest released x describe the issue no response steps to reproduce steps create a site configure cors headers as follows true x custom header content type true attempt to set x custom heder or content type and note that they are not sent relevant log output no response screenshots and or videos no response | 1 |

405,624 | 11,879,905,326 | IssuesEvent | 2020-03-27 09:41:42 | input-output-hk/jormungandr | https://api.github.com/repos/input-output-hk/jormungandr | closed | When starting gossiping, network does not check for already connected node | Priority - Medium bug jörmungandr subsys-network | Indeed, we call `topology.view(Any)` and quickly we already to `connect_and_propagate` with the node without checking if the node is already connected to us or not:

https://github.com/input-output-hk/jormungandr/blob/19f8eb49e15165e4a50a248e6a19221376f07cf6/jormungandr/src/network/mod.rs#L404-L414

---

instead of doing the pre-filtering of the already connected node it might be easier to do the already connected check in the `connect_and_propagate` function. | 1.0 | When starting gossiping, network does not check for already connected node - Indeed, we call `topology.view(Any)` and quickly we already to `connect_and_propagate` with the node without checking if the node is already connected to us or not:

https://github.com/input-output-hk/jormungandr/blob/19f8eb49e15165e4a50a248e6a19221376f07cf6/jormungandr/src/network/mod.rs#L404-L414

---

instead of doing the pre-filtering of the already connected node it might be easier to do the already connected check in the `connect_and_propagate` function. | priority | when starting gossiping network does not check for already connected node indeed we call topology view any and quickly we already to connect and propagate with the node without checking if the node is already connected to us or not instead of doing the pre filtering of the already connected node it might be easier to do the already connected check in the connect and propagate function | 1 |

54,745 | 3,071,170,783 | IssuesEvent | 2015-08-19 10:16:00 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Сохранение истории поисковых запросов при перезапуске программы | enhancement imported Priority-Medium | _From [a.rain...@gmail.com](https://code.google.com/u/117892482479228821242/) on April 28, 2010 21:43:16_

Во флае надо сделать чтобы история поисковых запросов сохранялась при выходе

из программы.

Вот я каждый день ищу один и тот же сериал и надо заново придумывать строку

поиска

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=84_ | 1.0 | Сохранение истории поисковых запросов при перезапуске программы - _From [a.rain...@gmail.com](https://code.google.com/u/117892482479228821242/) on April 28, 2010 21:43:16_

Во флае надо сделать чтобы история поисковых запросов сохранялась при выходе

из программы.

Вот я каждый день ищу один и тот же сериал и надо заново придумывать строку

поиска

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=84_ | priority | сохранение истории поисковых запросов при перезапуске программы from on april во флае надо сделать чтобы история поисковых запросов сохранялась при выходе из программы вот я каждый день ищу один и тот же сериал и надо заново придумывать строку поиска original issue | 1 |

22,158 | 2,645,695,151 | IssuesEvent | 2015-03-13 01:11:52 | prikhi/evoluspencil | https://api.github.com/repos/prikhi/evoluspencil | opened | bitmap image resizing problem | 2–5 stars bug imported Priority-Medium | _From [moonsha...@gmail.com](https://code.google.com/u/109720614888486048684/) on September 09, 2008 00:51:05_

What steps will reproduce the problem? 1. create new project

2. drag the "bitmap image" from common shapes onto the working panel

3. resize the bitmap image's upper right anchor / lower left anchor What is the expected output? What do you see instead? resizing according to the movement of the mouse, instead reverse resizing

occurs What version of the product are you using? On what operating system? FF addon V1.0.2, OS XP SP3 ,Browser FF 3.0.1 Please provide any additional information below.

_Original issue: http://code.google.com/p/evoluspencil/issues/detail?id=50_ | 1.0 | bitmap image resizing problem - _From [moonsha...@gmail.com](https://code.google.com/u/109720614888486048684/) on September 09, 2008 00:51:05_

What steps will reproduce the problem? 1. create new project

2. drag the "bitmap image" from common shapes onto the working panel

3. resize the bitmap image's upper right anchor / lower left anchor What is the expected output? What do you see instead? resizing according to the movement of the mouse, instead reverse resizing

occurs What version of the product are you using? On what operating system? FF addon V1.0.2, OS XP SP3 ,Browser FF 3.0.1 Please provide any additional information below.

_Original issue: http://code.google.com/p/evoluspencil/issues/detail?id=50_ | priority | bitmap image resizing problem from on september what steps will reproduce the problem create new project drag the bitmap image from common shapes onto the working panel resize the bitmap image s upper right anchor lower left anchor what is the expected output what do you see instead resizing according to the movement of the mouse instead reverse resizing occurs what version of the product are you using on what operating system ff addon os xp browser ff please provide any additional information below original issue | 1 |

223,409 | 7,453,540,191 | IssuesEvent | 2018-03-29 12:22:01 | huridocs/uwazi | https://api.github.com/repos/huridocs/uwazi | closed | URLs with parentesis break markdown links | Bug Priority: Medium Status: Sprint | **How to reproduce it**

Add a filter URL to a link, for example http://localhost:3000/en/library/?q=(order:desc,sort:metadata.date)

`[This will break closing on the first parentesis](http://localhost:3000/en/library/?q=(order:desc,sort:metadata.date))`

| 1.0 | URLs with parentesis break markdown links - **How to reproduce it**

Add a filter URL to a link, for example http://localhost:3000/en/library/?q=(order:desc,sort:metadata.date)

`[This will break closing on the first parentesis](http://localhost:3000/en/library/?q=(order:desc,sort:metadata.date))`

| priority | urls with parentesis break markdown links how to reproduce it add a filter url to a link for example | 1 |

206,300 | 7,111,368,152 | IssuesEvent | 2018-01-17 14:01:48 | hpi-swt2/sport-portal | https://api.github.com/repos/hpi-swt2/sport-portal | closed | Tournament overview table | po-review priority medium team swteam user story | **As a** tournament participant

**I want to** have a page that shows the progress/history of the tournament

**In order to** see how far each team came / its status

**Acceptance Criteria:**

- [x] Table with columns: Team-Name | Platzierung |

- [x] Platzierung should show how far the players is/got in the Tournament (e.g. "ist momentan im Viertelfinale", "ist im Viertelfinale ausgeschieden", "Erster/Zweiter/Dritter/Vierter Platz")

- [x] the table should be in sync with the progression of the Tournament, and be found / linked on the event page (compare to #31, #32, synchronize with Team issue number 5)

prototypical Table:

|Team-name|Platzierung|

|-------|------------|

|Team1 |im Viertelfinale ausgeschieden|

|Team2 |im Finale (gegen bla, falls bekannt)|

|Team3|im Halbfinale ausgeschieden|

|Team4|im Viertelfinale ausgeschieden|

|Team5|im Viertelfinale ausgeschieden|

|Team6|im Halbfinale|

|Team7|im Viertelfinale ausgeschieden|

|Team8|im Halbfinale| | 1.0 | Tournament overview table - **As a** tournament participant

**I want to** have a page that shows the progress/history of the tournament

**In order to** see how far each team came / its status

**Acceptance Criteria:**

- [x] Table with columns: Team-Name | Platzierung |

- [x] Platzierung should show how far the players is/got in the Tournament (e.g. "ist momentan im Viertelfinale", "ist im Viertelfinale ausgeschieden", "Erster/Zweiter/Dritter/Vierter Platz")

- [x] the table should be in sync with the progression of the Tournament, and be found / linked on the event page (compare to #31, #32, synchronize with Team issue number 5)

prototypical Table:

|Team-name|Platzierung|

|-------|------------|

|Team1 |im Viertelfinale ausgeschieden|

|Team2 |im Finale (gegen bla, falls bekannt)|

|Team3|im Halbfinale ausgeschieden|

|Team4|im Viertelfinale ausgeschieden|

|Team5|im Viertelfinale ausgeschieden|

|Team6|im Halbfinale|

|Team7|im Viertelfinale ausgeschieden|

|Team8|im Halbfinale| | priority | tournament overview table as a tournament participant i want to have a page that shows the progress history of the tournament in order to see how far each team came its status acceptance criteria table with columns team name platzierung platzierung should show how far the players is got in the tournament e g ist momentan im viertelfinale ist im viertelfinale ausgeschieden erster zweiter dritter vierter platz the table should be in sync with the progression of the tournament and be found linked on the event page compare to synchronize with team issue number prototypical table team name platzierung im viertelfinale ausgeschieden im finale gegen bla falls bekannt im halbfinale ausgeschieden im viertelfinale ausgeschieden im viertelfinale ausgeschieden im halbfinale im viertelfinale ausgeschieden im halbfinale | 1 |

296,057 | 9,103,890,561 | IssuesEvent | 2019-02-20 16:50:55 | salesagility/SuiteCRM | https://api.github.com/repos/salesagility/SuiteCRM | closed | 7.11.1: Newer version of PHPMailer is not compatible with Email:email2Send method | Emails Fix Proposed Medium Priority Resolved: Next Release bug | SuiteCRM 7.11 comes with newer version of PHPMailer. Method **addAttachment** in PHPMailer object got new condition: **isPermittedPath** which blocks all pathes with ://

Email:emal2Send for $request['documents'] and $request['templateAttachments'] uses pathes:

`$fileLocation = "upload://{$GUID}";`

which will be blocked by isPermittedPath.

`SugarPHPMailer encountered an error: Could not access file: upload://c2a5ebd1-5a6d-0f2f-eafe-5c6286a20e74`

#### Your Environment

* SuiteCRM Version used: 7.11.1

* Environment name and version (e.g. MySQL, PHP 7): PHP 7.1

* Operating System and version (e.g Ubuntu 16.04): Ubuntu 16.04.4 LTS

| 1.0 | 7.11.1: Newer version of PHPMailer is not compatible with Email:email2Send method - SuiteCRM 7.11 comes with newer version of PHPMailer. Method **addAttachment** in PHPMailer object got new condition: **isPermittedPath** which blocks all pathes with ://

Email:emal2Send for $request['documents'] and $request['templateAttachments'] uses pathes:

`$fileLocation = "upload://{$GUID}";`

which will be blocked by isPermittedPath.

`SugarPHPMailer encountered an error: Could not access file: upload://c2a5ebd1-5a6d-0f2f-eafe-5c6286a20e74`

#### Your Environment

* SuiteCRM Version used: 7.11.1

* Environment name and version (e.g. MySQL, PHP 7): PHP 7.1

* Operating System and version (e.g Ubuntu 16.04): Ubuntu 16.04.4 LTS

| priority | newer version of phpmailer is not compatible with email method suitecrm comes with newer version of phpmailer method addattachment in phpmailer object got new condition ispermittedpath which blocks all pathes with email for request and request uses pathes filelocation upload guid which will be blocked by ispermittedpath sugarphpmailer encountered an error could not access file upload eafe your environment suitecrm version used environment name and version e g mysql php php operating system and version e g ubuntu ubuntu lts | 1 |

324,863 | 9,913,629,998 | IssuesEvent | 2019-06-28 12:23:47 | kirbydesign/designsystem | https://api.github.com/repos/kirbydesign/designsystem | closed | [Enhancement] Align on colors and naming + add 1 grey style | effort: hours enhancement medium priority | **Is your enhancement request related to a problem? Please describe.**

UX and Kirby needs some alignment on colors and naming, if its up2date! Also there has been added a semi-light, which is used for disabled style.

- [x] Color:

- [x] Add Semi-Light + Semi-Dark

- [x] Rename:

- [x] base-color => Background-color

- [x] contrast-light => White (hviiiii)

- [x] contrast-dark => Black (sååååårt)

- [x] Update hex codes | 1.0 | [Enhancement] Align on colors and naming + add 1 grey style - **Is your enhancement request related to a problem? Please describe.**

UX and Kirby needs some alignment on colors and naming, if its up2date! Also there has been added a semi-light, which is used for disabled style.

- [x] Color:

- [x] Add Semi-Light + Semi-Dark

- [x] Rename:

- [x] base-color => Background-color

- [x] contrast-light => White (hviiiii)

- [x] contrast-dark => Black (sååååårt)

- [x] Update hex codes | priority | align on colors and naming add grey style is your enhancement request related to a problem please describe ux and kirby needs some alignment on colors and naming if its also there has been added a semi light which is used for disabled style color add semi light semi dark rename base color background color contrast light white hviiiii contrast dark black sååååårt update hex codes | 1 |

734,331 | 25,344,829,342 | IssuesEvent | 2022-11-19 04:26:12 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Disallow packed row for co-located tables | kind/bug area/docdb priority/medium 2.16 Backport Required | Jira Link: [DB-4002](https://yugabyte.atlassian.net/browse/DB-4002)

### Description

Disallow packed row for co-located tables, while we identify and fix the backup-restore and xcluster integration with packed row feature to be stable. | 1.0 | [DocDB] Disallow packed row for co-located tables - Jira Link: [DB-4002](https://yugabyte.atlassian.net/browse/DB-4002)

### Description

Disallow packed row for co-located tables, while we identify and fix the backup-restore and xcluster integration with packed row feature to be stable. | priority | disallow packed row for co located tables jira link description disallow packed row for co located tables while we identify and fix the backup restore and xcluster integration with packed row feature to be stable | 1 |

309,208 | 9,462,992,651 | IssuesEvent | 2019-04-17 16:39:03 | smacademic/project-bdf | https://api.github.com/repos/smacademic/project-bdf | opened | Create connecction to twitter | priority: medium type: missing | **Is your feature request related to a problem? Please describe.**

As a part of our twitter extension, a connection to twitter needs to be set up as a part of our bot

**Describe the solution you'd like**

Create a connection to twitter using `tweepy`, a library used for creating twitter connections in a similar manner that `praw` uses to create connections to reddit.

**Describe alternatives you've considered**

Searching google for tweets. Direct connection cuts out the 'middle-man'.

| 1.0 | Create connecction to twitter - **Is your feature request related to a problem? Please describe.**

As a part of our twitter extension, a connection to twitter needs to be set up as a part of our bot

**Describe the solution you'd like**

Create a connection to twitter using `tweepy`, a library used for creating twitter connections in a similar manner that `praw` uses to create connections to reddit.

**Describe alternatives you've considered**

Searching google for tweets. Direct connection cuts out the 'middle-man'.

| priority | create connecction to twitter is your feature request related to a problem please describe as a part of our twitter extension a connection to twitter needs to be set up as a part of our bot describe the solution you d like create a connection to twitter using tweepy a library used for creating twitter connections in a similar manner that praw uses to create connections to reddit describe alternatives you ve considered searching google for tweets direct connection cuts out the middle man | 1 |

55,396 | 3,073,083,972 | IssuesEvent | 2015-08-19 20:09:00 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | solo.clickLongOnScreen cannot work | bug imported Priority-Medium | _From [onlyfors...@gmail.com](https://code.google.com/u/101589604104493003872/) on October 18, 2012 19:18:26_

What steps will reproduce the problem? 1.Add solo.clickLongOnScreen into test operation What is the expected output? What do you see instead? The item list should be displayed for long clicking on screen. But actually no list is displayed. What version of the product are you using? On what operating system? robotium-solo-3.5.jar on linux operating system Please provide any additional information below. This function work well on robotium-solo-3.4.1.jar and before.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=343_ | 1.0 | solo.clickLongOnScreen cannot work - _From [onlyfors...@gmail.com](https://code.google.com/u/101589604104493003872/) on October 18, 2012 19:18:26_

What steps will reproduce the problem? 1.Add solo.clickLongOnScreen into test operation What is the expected output? What do you see instead? The item list should be displayed for long clicking on screen. But actually no list is displayed. What version of the product are you using? On what operating system? robotium-solo-3.5.jar on linux operating system Please provide any additional information below. This function work well on robotium-solo-3.4.1.jar and before.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=343_ | priority | solo clicklongonscreen cannot work from on october what steps will reproduce the problem add solo clicklongonscreen into test operation what is the expected output what do you see instead the item list should be displayed for long clicking on screen but actually no list is displayed what version of the product are you using on what operating system robotium solo jar on linux operating system please provide any additional information below this function work well on robotium solo jar and before original issue | 1 |

525,891 | 15,268,085,318 | IssuesEvent | 2021-02-22 10:55:27 | truecharts/truecharts | https://api.github.com/repos/truecharts/truecharts | closed | [traefik] acmeDNS CertManager generates error | Priority/Medium bug | When attempting to create the `traefik` app using `acmeDNS` CertManager I got the following error.

<details>

<summary>Installing</summary>

`Error: [EFAULT] Failed to install catalog item: b'W0221 05:24:04.930155 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:04.964322 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:04.988432 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.014405 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.031023 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.052552 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.071766 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.104369 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.119070 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.124455 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, 
unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.129408 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.134605 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.139315 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.144124 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.148737 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.153569 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nError: unable to build kubernetes objects from release manifest: error validating "": error validating data: unknown object type "nil" in Secret.stringData.acmedns-json\n'`

</details>

`*redacted*` is being used to maintain security. All settings are default with the exception of the following.

Email Address: `*redacted*@*redacted*.com`

Wildcard Domain: `*redacted*.*redacted*.com`

CertManager Provider: `acmeDNS`

host: `auth.acme-dns.io`

acmednsjson: `{ "allowfrom": [], "fulldomain": "*redacted*.auth.acme-dns.io", "password": "*redacted*", "subdomain": "*redacted*", "username": "*redacted*" }`

_Originally posted by @whiskerz007 in https://github.com/truecharts/truecharts/issues/150#issuecomment-782859285_ | 1.0 | [traefik] acmeDNS CertManager generates error - When attempting to create the `traefik` app using `acmeDNS` CertManager I got the following error.

<details>

<summary>Installing</summary>

`Error: [EFAULT] Failed to install catalog item: b'W0221 05:24:04.930155 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:04.964322 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:04.988432 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.014405 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.031023 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.052552 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.071766 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:05.104369 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.119070 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.124455 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, 
unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.129408 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.134605 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.139315 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.144124 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.148737 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nW0221 05:24:07.153569 3274797 warnings.go:70] apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition\nError: unable to build kubernetes objects from release manifest: error validating "": error validating data: unknown object type "nil" in Secret.stringData.acmedns-json\n'`

</details>

`*redacted*` is being used to maintain security. All settings are default with the exception of the following.

Email Address: `*redacted*@*redacted*.com`

Wildcard Domain: `*redacted*.*redacted*.com`

CertManager Provider: `acmeDNS`

host: `auth.acme-dns.io`

acmednsjson: `{ "allowfrom": [], "fulldomain": "*redacted*.auth.acme-dns.io", "password": "*redacted*", "subdomain": "*redacted*", "username": "*redacted*" }`