Unnamed: 0 (int64, 0-832k) | id (float64, 2.49B-32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19-19) | repo (stringlengths, 5-112) | repo_url (stringlengths, 34-141) | action (stringclasses, 3 values) | title (stringlengths, 1-957) | labels (stringlengths, 4-795) | body (stringlengths, 1-259k) | index (stringclasses, 12 values) | text_combine (stringlengths, 96-259k) | label (stringclasses, 2 values) | text (stringlengths, 96-252k) | binary_label (int64, 0-1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

85,841 | 3,699,325,679 | IssuesEvent | 2016-02-28 22:05:32 | Chuppy21/projekktor-zwei | https://api.github.com/repos/Chuppy21/projekktor-zwei | closed | add "fast forward" and "backward" buttons | auto-migrated Priority-Medium Type-Enhancement | ```

... and implement a corresponding functionality of course.

```

Original issue reported on code.google.com by `frankygh...@googlemail.com` on 28 May 2010 at 11:02 | 1.0 | add "fast forward" and "backward" buttons - ```

... and implement a corresponding functionality of course.

```

Original issue reported on code.google.com by `frankygh...@googlemail.com` on 28 May 2010 at 11:02 | priority | add fast forward and backward buttons and implement a corresponding functionality of course original issue reported on code google com by frankygh googlemail com on may at | 1 |

31,004 | 2,730,831,472 | IssuesEvent | 2015-04-16 16:53:42 | chummer5a/chummer5a | https://api.github.com/repos/chummer5a/chummer5a | closed | Error in calculations if a piece of gear is allowed when availability is depending on the rating. | auto-migrated bug Priority-Medium | <a href="https://github.com/GoogleCodeExporter"><img src="https://avatars.githubusercontent.com/u/9614759?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [GoogleCodeExporter](https://github.com/GoogleCodeExporter)**

_Tuesday Mar 17, 2015 at 08:56 GMT_

_Originally opened as https://github.com/chummer5a/chummer5/issues/14_

----

```

What steps will reproduce the problem?

1. Create a character.

2. Open tab [Streed Gear]

3. Open subtab [Gear]

4. Push button [Add Gear]

5. Search for 'Fake' and select 'Fake SIN[ID/Credstics]'

6. Push the rating up as far as you can.

What is the expected output? What do you see instead?

The expected output is rating 4.

The reached rating is 6.

What version of the product are you using? On what operating system?

Version 0.0.5.139

Please provide any additional information below.

SR5 94:

Keep in mind... The characters are restricted to a maximum Availability rating

of 12 and a device rating of 6.

SR5 443: Table IDENTIFICATION

Fake SIN (Rating 1-6) AVAIL: (Rating x 3)F

Rating 6 -> AVAIL: (6*3)F = 18F -> forbidden.

Rating 4 -> AVAIL: (4*3)F = 12F -> allowed.

Kind regards.

```

Original issue reported on code.google.com by `a.steenv...@vista-online.nl` on 29 Sep 2014 at 7:34

| 1.0 | Error in calculations if a piece of gear is allowed when availability is depending on the rating. - <a href="https://github.com/GoogleCodeExporter"><img src="https://avatars.githubusercontent.com/u/9614759?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [GoogleCodeExporter](https://github.com/GoogleCodeExporter)**

_Tuesday Mar 17, 2015 at 08:56 GMT_

_Originally opened as https://github.com/chummer5a/chummer5/issues/14_

----

```

What steps will reproduce the problem?

1. Create a character.

2. Open tab [Streed Gear]

3. Open subtab [Gear]

4. Push button [Add Gear]

5. Search for 'Fake' and select 'Fake SIN[ID/Credstics]'

6. Push the rating up as far as you can.

What is the expected output? What do you see instead?

The expected output is rating 4.

The reached rating is 6.

What version of the product are you using? On what operating system?

Version 0.0.5.139

Please provide any additional information below.

SR5 94:

Keep in mind... The characters are restricted to a maximum Availability rating

of 12 and a device rating of 6.

SR5 443: Table IDENTIFICATION

Fake SIN (Rating 1-6) AVAIL: (Rating x 3)F

Rating 6 -> AVAIL: (6*3)F = 18F -> forbidden.

Rating 4 -> AVAIL: (4*3)F = 12F -> allowed.

Kind regards.

```

Original issue reported on code.google.com by `a.steenv...@vista-online.nl` on 29 Sep 2014 at 7:34

| priority | error in calculations if a piece of gear is allowed when availability is depending on the rating issue by tuesday mar at gmt originally opened as what steps will reproduce the problem create a character open tab open subtab push button search for fake and select fake sin push the rating up as far as you can what is the expected output what do you see instead the expected output is raring the reached rating is what version of the product are you using on what operating system version please provide any additional information below keep in mind the characters are restricted to a maximum availability rating of and a device rating of table identification fake sin rating avail rating x f rating avail f forbidden raring avail f allowed kind regards original issue reported on code google com by a steenv vista online nl on sep at | 1 |
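The arithmetic in the report above can be sketched in a few lines of Python. This is only an illustration, assuming the availability formula (Rating x 3) and the character-creation cap of 12 quoted in the issue; the function names are hypothetical and are not taken from Chummer's code.

```python
def availability(rating: int, multiplier: int = 3) -> int:
    """Availability of a rating-dependent item, e.g. Fake SIN: Rating x 3."""
    return rating * multiplier


def max_allowed_rating(max_rating: int = 6, max_avail: int = 12, multiplier: int = 3) -> int:
    """Highest rating whose availability stays within the creation cap (0 if none)."""
    allowed = [r for r in range(1, max_rating + 1)
               if availability(r, multiplier) <= max_avail]
    return max(allowed) if allowed else 0


print(availability(6))       # 18, above the cap of 12
print(max_allowed_rating())  # 4, since 4 * 3 = 12 is still within the cap
```

Under these assumptions the rating selector would stop at 4 rather than at the device-rating limit of 6.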

40,719 | 2,868,938,147 | IssuesEvent | 2015-06-05 22:04:27 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | listDir() can return file paths that are not within the given directory's path | bug Fixed Priority-Medium | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#7346_

----

When you use listDir() to walk a directory, it returns paths that are the real paths of the contents, after symlink traversal. If the directory path itself uses a symlink, this means you can get paths that seem to not be within the directory.

For example:

listDir('/tmp/temp_dir1_pYa9UG/myapp/lib')

returns:

[/private/tmp/temp_dir1_pYa9UG/myapp/lib/src]

This is at least true on Mac. Not sure about other OS's. For M2, I'm going to make a narrowly targeted fix in the one place where this causes a bug (#7330), but we should do something directly in io.dart when we have a little more time.

I think the cleanest fix is to:

1. Have listDir() get the real path of the directory: new File(dir).fullPathSync();

2. Return the resulting paths relative to that.

| 1.0 | listDir() can return file paths that are not within the given directory's path - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#7346_

----

When you use listDir() to walk a directory, it returns paths that are the real paths of the contents, after symlink traversal. If the directory path itself uses a symlink, this means you can get paths that seem to not be within the directory.

For example:

listDir('/tmp/temp_dir1_pYa9UG/myapp/lib')

returns:

[/private/tmp/temp_dir1_pYa9UG/myapp/lib/src]

This is at least true on Mac. Not sure about other OS's. For M2, I'm going to make a narrowly targeted fix in the one place where this causes a bug (#7330), but we should do something directly in io.dart when we have a little more time.

I think the cleanest fix is to:

1. Have listDir() get the real path of the directory: new File(dir).fullPathSync();

2. Return the resulting paths relative to that.

| priority | listdir can return file paths that are not within the given directory s path issue by originally opened as dart lang sdk when you use listdir to walk a directory it returns paths that are the real paths of the contents after symlink traversal if the directory path itself uses a symlink this means you can get paths that seem to not be within the directory for example listdir tmp temp myapp lib returns this is at least true on mac not sure about other os s for i m going to make a narrowly targeted fix in the one place where this causes a bug but we should do something directly in io dart when we have a little more time i think the cleanest fix is to have listdir get the real path of the directory new file dir fullpathsync return the resulting paths relative to that | 1 |
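The two-step fix proposed in the issue can be sketched as follows. This is a Python illustration rather than the Dart code in io.dart; `list_dir` is a hypothetical stand-in for `listDir()`, and re-joining the relative paths onto the directory the caller passed in is one possible reading of step 2.

```python
import os
import tempfile
from typing import List


def list_dir(directory: str) -> List[str]:
    """List entries of `directory`, reported under the path the caller passed in."""
    real_dir = os.path.realpath(directory)  # step 1: resolve symlinks in the directory path
    results = []
    for name in sorted(os.listdir(real_dir)):
        real_entry = os.path.join(real_dir, name)
        relative = os.path.relpath(real_entry, real_dir)  # step 2: make the path relative to the real directory
        results.append(os.path.join(directory, relative))
    return results


# Small demonstration: `link` plays the role of /tmp pointing at /private/tmp.
base = tempfile.mkdtemp()
real = os.path.join(base, "real")
os.makedirs(os.path.join(real, "src"))
link = os.path.join(base, "link")
os.symlink(real, link)
print(list_dir(link) == [os.path.join(link, "src")])  # True
```

With this shape, every returned path starts with the directory argument, even when that argument is itself a symlink.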

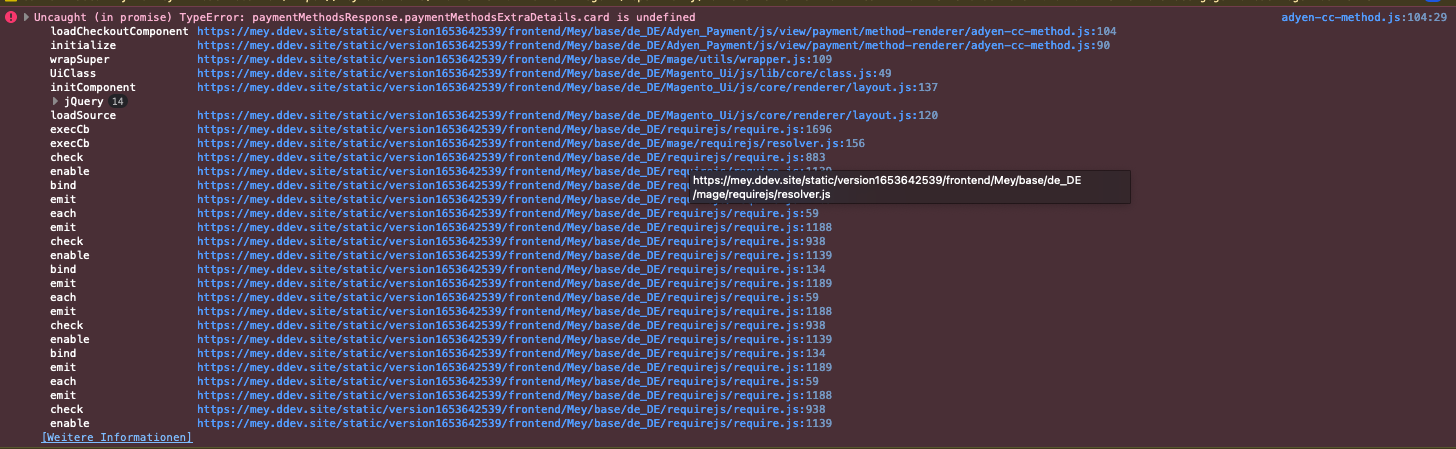

661,870 | 22,092,957,865 | IssuesEvent | 2022-06-01 07:44:00 | Adyen/adyen-magento2 | https://api.github.com/repos/Adyen/adyen-magento2 | closed | Credit card payment error TypeError: paymentMethodsResponse.paymentMethodsExtraDetails.card is undefined | Bug report Priority: medium Confirmed | **Describe the bug**

Credit card payment method is not usable

**To Reproduce**

Steps to reproduce the behavior:

1. Add a product to the cart

2. Go to checkout step 2

3. open dev console

4. see error TypeError: paymentMethodsResponse.paymentMethodsExtraDetails.card is undefined

**Magento version**

Adobe Commerce 2.4.4

**Plugin version**

adyen/module-payment 8.2.3

**Screenshots**

| 1.0 | Credit card payment error TypeError: paymentMethodsResponse.paymentMethodsExtraDetails.card is undefined - **Describe the bug**

Credit card payment method is not usable

**To Reproduce**

Steps to reproduce the behavior:

1. Add a product to the cart

2. Go to checkout step 2

3. open dev console

4. see error TypeError: paymentMethodsResponse.paymentMethodsExtraDetails.card is undefined

**Magento version**

Adobe Commerce 2.4.4

**Plugin version**

adyen/module-payment 8.2.3

**Screenshots**

| priority | credit card payment error typeerror paymentmethodsresponse paymentmethodsextradetails card is undefined describe the bug credit card payment method is not usable to reproduce steps to reproduce the behavior add a product to the card go to checkout stept open dev console see error typeerror paymentmethodsresponse paymentmethodsextradetails card is undefined magento version adobe commerce plugin version adyen module payment screenshots | 1 |

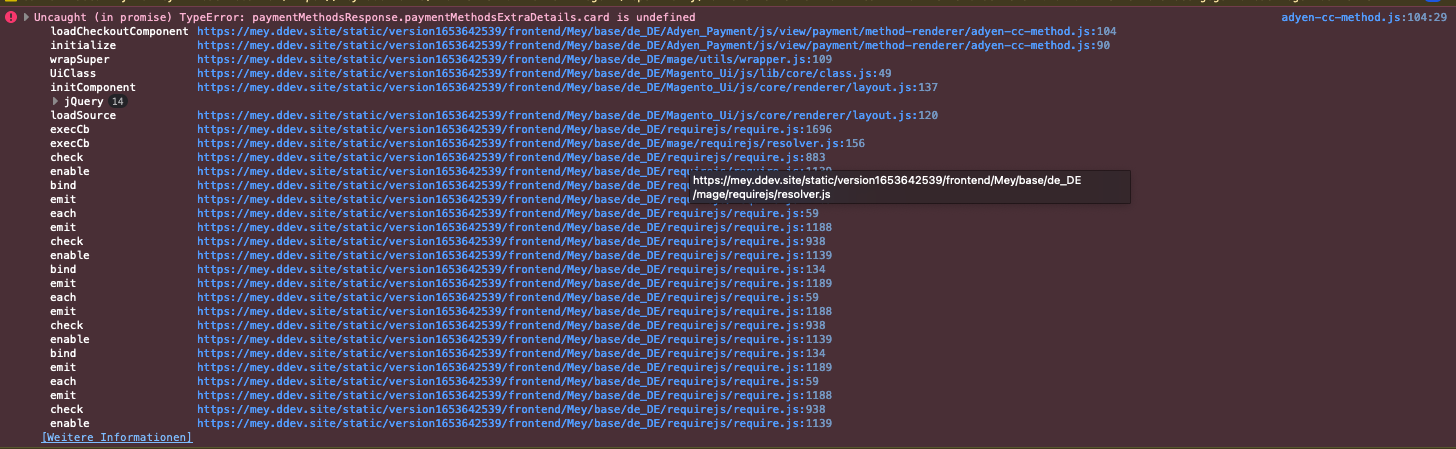

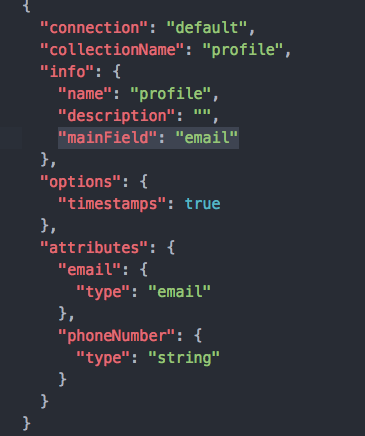

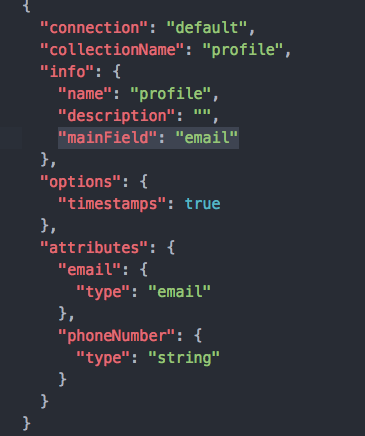

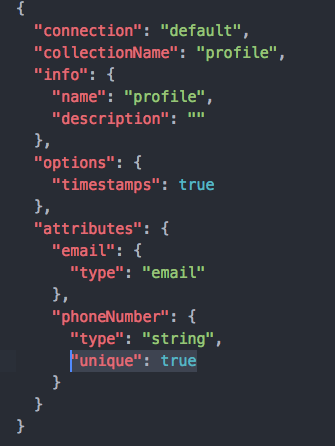

241,578 | 7,817,443,759 | IssuesEvent | 2018-06-13 09:03:38 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | mainField will lost content type builder model update | Good for New Contributors priority: medium status: confirmed type: bug 🐛 | **Informations**

- **Node.js version**: 9.10.1

- **npm version**:5.6.0

- **Strapi version**: 3.0.0-alpha.12.2

- **Database**: mongodb 3.6.4

- **Operating system**: macOS

**What is the current behavior?**

mainField in xxx.settings.json is lost when the admin panel is used to modify the same model

**Steps to reproduce the problem**

1. manually add 'mainField' in models/Profile.settings.json([documentation](https://strapi.io/documentation/guides/models.html#model-information)), since there is no entry to modify on admin panel.

2. modify field 'phoneNumber' in model 'Profile' use admin panel. For example, make 'phoneNumber' unique.

3. Result is: 'phoneNumber' saved, but 'mainField' lost.

**What is the expected behavior?**

new xxx.settings.json should copy from old xxx.settings.json, and merge new changes.

<!-- ⚠️ Make sure to browse the opened and closed issues before submit your issue. -->

| 1.0 | mainField will lost content type builder model update - **Informations**

- **Node.js version**: 9.10.1

- **npm version**:5.6.0

- **Strapi version**: 3.0.0-alpha.12.2

- **Database**: mongodb 3.6.4

- **Operating system**: macOS

**What is the current behavior?**

mainField in xxx.settings.json is lost when the admin panel is used to modify the same model

**Steps to reproduce the problem**

1. manually add 'mainField' in models/Profile.settings.json([documentation](https://strapi.io/documentation/guides/models.html#model-information)), since there is no entry to modify on admin panel.

2. modify field 'phoneNumber' in model 'Profile' use admin panel. For example, make 'phoneNumber' unique.

3. Result is: 'phoneNumber' saved, but 'mainField' lost.

**What is the expected behavior?**

new xxx.settings.json should copy from old xxx.settings.json, and merge new changes.

<!-- ⚠️ Make sure to browse the opened and closed issues before submit your issue. -->

| priority | mainfield will lost content type builder model update informations node js version npm version strapi version alpha database mongodb operating system macos what is the current behavior mainfield in xxx settings json will lost if using admin panel to modify the same model steps to reproduce the problem manually add mainfield in models profile settings json since there is no entry to modify on admin panel modify field phonenumber in model profile use admin panel for example make phonenumber unique result is phonenumber saved but mainfield lost what is the expected behavior new xxx settings json should copy from old xxx settings json and merge new changes | 1 |
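The expected behaviour described above (start from the old settings file and merge the new changes into it) could look roughly like the following. This is a Python sketch, not Strapi's implementation; `merge_settings` is a hypothetical helper, and the placement of `mainField` under `info` is an assumption made for the example.

```python
import copy


def merge_settings(old: dict, changes: dict) -> dict:
    """Recursively overlay `changes` onto a copy of `old`, keeping keys the changes do not mention."""
    merged = copy.deepcopy(old)
    for key, value in changes.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_settings(merged[key], value)  # merge nested sections
        else:
            merged[key] = copy.deepcopy(value)  # new or replaced value
    return merged


# Hand-edited settings containing a key the admin panel does not know about.
old = {"info": {"name": "profile", "mainField": "phoneNumber"},
       "attributes": {"phoneNumber": {"type": "string"}}}
# Change produced by the admin panel: make phoneNumber unique.
changes = {"attributes": {"phoneNumber": {"type": "string", "unique": True}}}

new = merge_settings(old, changes)
print(new["info"]["mainField"])                 # phoneNumber
print(new["attributes"]["phoneNumber"]["unique"])  # True
```

A plain overwrite of the file with the admin panel's view of the model would drop `mainField`; the merge keeps it because the changes never mention it.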

625,229 | 19,722,821,342 | IssuesEvent | 2022-01-13 16:54:53 | carbon-design-system/carbon-for-ibm-dotcom | https://api.github.com/repos/carbon-design-system/carbon-for-ibm-dotcom | closed | [Universal banner] React Wrapper: Prod QA testing | Feature request package: react priority: medium QA adopter: Innovation Team | #### User Story

> As a `[user role below]`:

developer using the Carbon for IBM.com `Universal banner`

> I need to:

have a version of the component that has been tested for accessibility compliance as well as on multiple browsers and platforms

> so that I can:

be confident that my ibm.com web site users will have a good experience

#### Additional information

- [Browser Stack link](https://ibm.ent.box.com/notes/578734426612)

- [Browser Standard](https://w3.ibm.com/standards/web/browser/)

- Sanity test of tier 1 mobile browsers (desktop is covered in e2e tests)

- Sanity test of Accessibility (Voiceover)

- [Accessibility testing guidance](https://pages.github.ibm.com/IBMa/able/Test/verify/)

- [Accessibility Checklist](https://www.ibm.com/able/guidelines/ci162/accessibility_checklist.html)

- [Creating a QA bug](https://ibm.ent.box.com/notes/603242247385)

- **See the Epic (https://github.com/carbon-design-system/carbon-for-ibm-dotcom/issues/6436) for the Design and Functional specs information**

- Web Components Dev issue (https://github.com/carbon-design-system/carbon-for-ibm-dotcom/issues/6815)

- Once development is finished the updated code is available in the [**Web Components Canary Environment**](https://ibmdotcom-web-components-canary.mybluemix.net/?path=/story/overview-getting-started--page) for testing.

- [**Web Components canary storybook**](https://carbon-design-system.github.io/carbon-for-ibm-dotcom/canary/web-components)

- [**React canary storybook**](https://carbon-design-system.github.io/carbon-for-ibm-dotcom/canary/react)

- [**React wrapper storybook**](https://carbon-design-system.github.io/carbon-for-ibm-dotcom/canary/web-components-react)

#### Acceptance criteria

- [ ] Accessibility testing is complete

- [ ] All manual testing is complete

- [ ] Defects are recorded | 1.0 | [Universal banner] React Wrapper: Prod QA testing - #### User Story

> As a `[user role below]`:

developer using the Carbon for IBM.com `Universal banner`

> I need to:

have a version of the component that has been tested for accessibility compliance as well as on multiple browsers and platforms

> so that I can:

be confident that my ibm.com web site users will have a good experience

#### Additional information

- [Browser Stack link](https://ibm.ent.box.com/notes/578734426612)

- [Browser Standard](https://w3.ibm.com/standards/web/browser/)

- Sanity test of tier 1 mobile browsers (desktop is covered in e2e tests)

- Sanity test of Accessibility (Voiceover)

- [Accessibility testing guidance](https://pages.github.ibm.com/IBMa/able/Test/verify/)

- [Accessibility Checklist](https://www.ibm.com/able/guidelines/ci162/accessibility_checklist.html)

- [Creating a QA bug](https://ibm.ent.box.com/notes/603242247385)

- **See the Epic (https://github.com/carbon-design-system/carbon-for-ibm-dotcom/issues/6436) for the Design and Functional specs information**

- Web Components Dev issue (https://github.com/carbon-design-system/carbon-for-ibm-dotcom/issues/6815)

- Once development is finished the updated code is available in the [**Web Components Canary Environment**](https://ibmdotcom-web-components-canary.mybluemix.net/?path=/story/overview-getting-started--page) for testing.

- [**Web Components canary storybook**](https://carbon-design-system.github.io/carbon-for-ibm-dotcom/canary/web-components)

- [**React canary storybook**](https://carbon-design-system.github.io/carbon-for-ibm-dotcom/canary/react)

- [**React wrapper storybook**](https://carbon-design-system.github.io/carbon-for-ibm-dotcom/canary/web-components-react)

#### Acceptance criteria

- [ ] Accessibility testing is complete

- [ ] All manual testing is complete

- [ ] Defects are recorded | priority | react wrapper prod qa testing user story as a developer using the carbon for ibm com universal banner i need to have a version of the component that has been tested for accessibility compliance as well as on multiple browsers and platforms so that i can be confident that my ibm com web site users will have a good experience additional information sanity test of tier mobile browsers desktop is covered in tests sanity test of accessibility voiceover see the epic for the design and functional specs information web components dev issue once development is finished the updated code is available in the for testing acceptance criteria accessibility testing is complete all manual testing is complete defects are recorded | 1 |

55,549 | 3,073,655,152 | IssuesEvent | 2015-08-19 23:24:34 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | ability to wait for activity/view to fully load | bug imported invalid Priority-Medium | _From [ram...@gmail.com](https://code.google.com/u/113328738738961004047/) on May 16, 2013 08:05:55_

Is that possible to have some kind of waiter for view to be fully loaded ? i.e.

solo.clickOnButton(buttonName);

solo.waitForLoad();

// do tests.

What we can see is that on some devices that buttons are not found but they clearly there which tends me to believe there can be some timing issue ?

Thanks.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=457_ | 1.0 | ability to wait for activity/view to fully load - _From [ram...@gmail.com](https://code.google.com/u/113328738738961004047/) on May 16, 2013 08:05:55_

Is that possible to have some kind of waiter for view to be fully loaded ? i.e.

solo.clickOnButton(buttonName);

solo.waitForLoad();

// do tests.

What we can see is that on some devices that buttons are not found but they clearly there which tends me to believe there can be some timing issue ?

Thanks.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=457_ | priority | ability to wait for activity view to fully load from on may is that possible to have some kind of waiter for view to be fully loaded i e solo clickonbutton buttonname solo waitforload do tests what we can see is that on some devices that buttons are not found but they clearly there which tends me to believe there can be some timing issue thanks original issue | 1 |
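The kind of waiter asked for above is usually built as a polling loop with a timeout. The following Python sketch shows the general pattern only; `wait_until` is a hypothetical helper and is not part of the Robotium API, and the timeout and interval values are arbitrary.

```python
import time


def wait_until(condition, timeout=5.0, interval=0.1):
    """Poll `condition` until it returns a truthy value or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True   # the awaited state was reached
        if time.monotonic() >= deadline:
            return False  # gave up: the state never appeared in time
        time.sleep(interval)


# Example: wait for a (simulated) button to become available.
ready_at = time.monotonic() + 0.3
print(wait_until(lambda: time.monotonic() >= ready_at, timeout=2.0))  # True
```

A test would call such a helper after the click and before looking up the next view, so that slower devices get time to finish loading instead of failing immediately.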

218,447 | 7,331,445,291 | IssuesEvent | 2018-03-05 13:33:19 | SmartlyDressedGames/Unturned-4.x-Community | https://api.github.com/repos/SmartlyDressedGames/Unturned-4.x-Community | closed | Ability stats editor | Priority: Medium Status: Complete Type: Optimization | - [x] Default value editor more concise

- [x] Modifier value editor show +/- and color

- [x] Unit test UAbilityStatSet | 1.0 | Ability stats editor - - [x] Default value editor more concise

- [x] Modifier value editor show +/- and color

- [x] Unit test UAbilityStatSet | priority | ability stats editor default value editor more concise modifier value editor show and color unit test uabilitystatset | 1 |

364,642 | 10,771,763,008 | IssuesEvent | 2019-11-02 10:05:42 | bounswe/bounswe2019group4 | https://api.github.com/repos/bounswe/bounswe2019group4 | closed | Add Prediction Feature Backend | Back-End Priority: Medium Type: Development | We need to add a rate showed on user's profile page, calculated by their past predictions. These predictions should be also showed in tradinq equipment page. | 1.0 | Add Prediction Feature Backend - We need to add a rate showed on user's profile page, calculated by their past predictions. These predictions should be also showed in tradinq equipment page. | priority | add prediction feature backend we need to add a rate showed on user s profile page calculated by their past predictions these predictions should be also showed in tradinq equipment page | 1 |

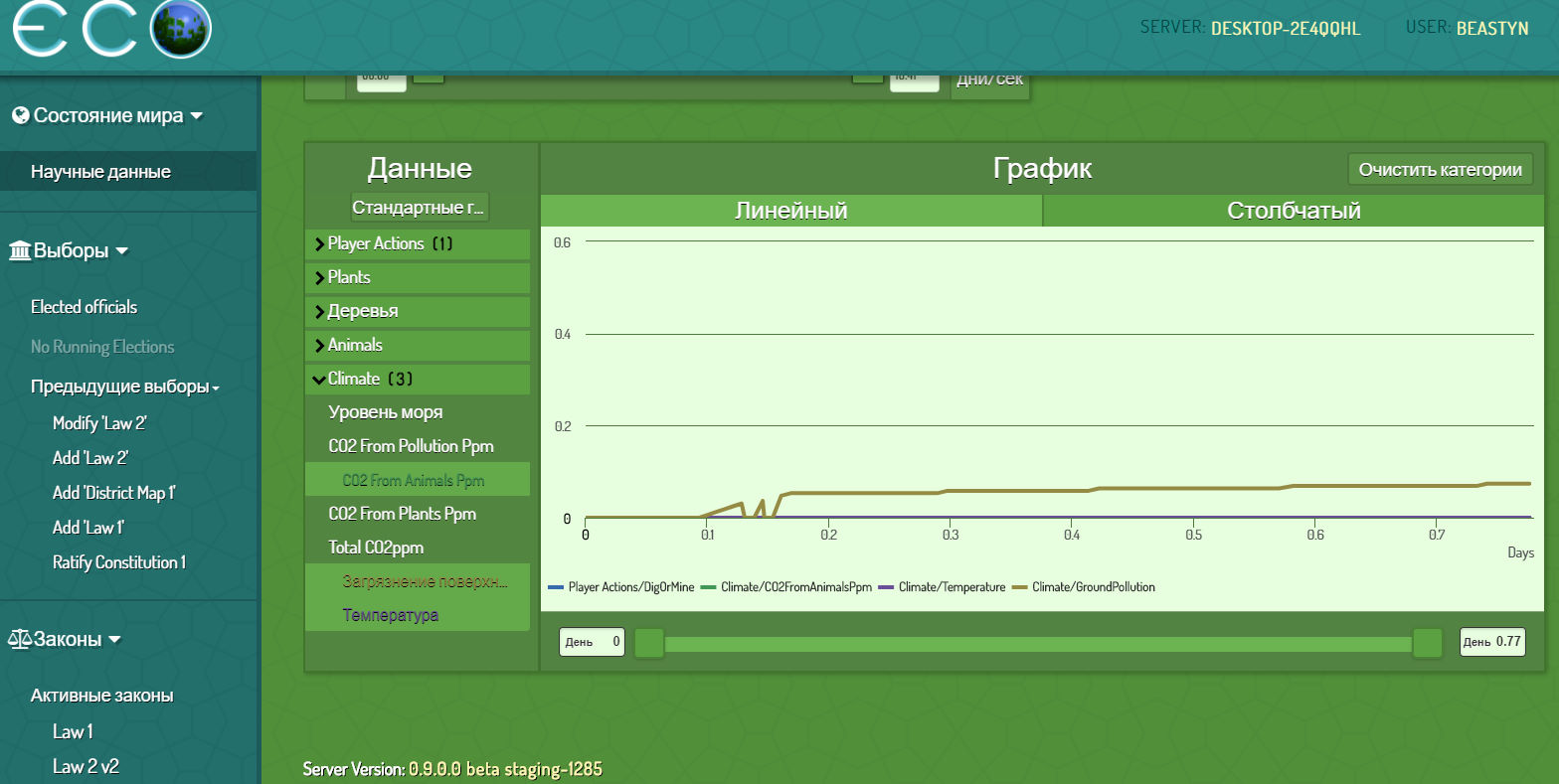

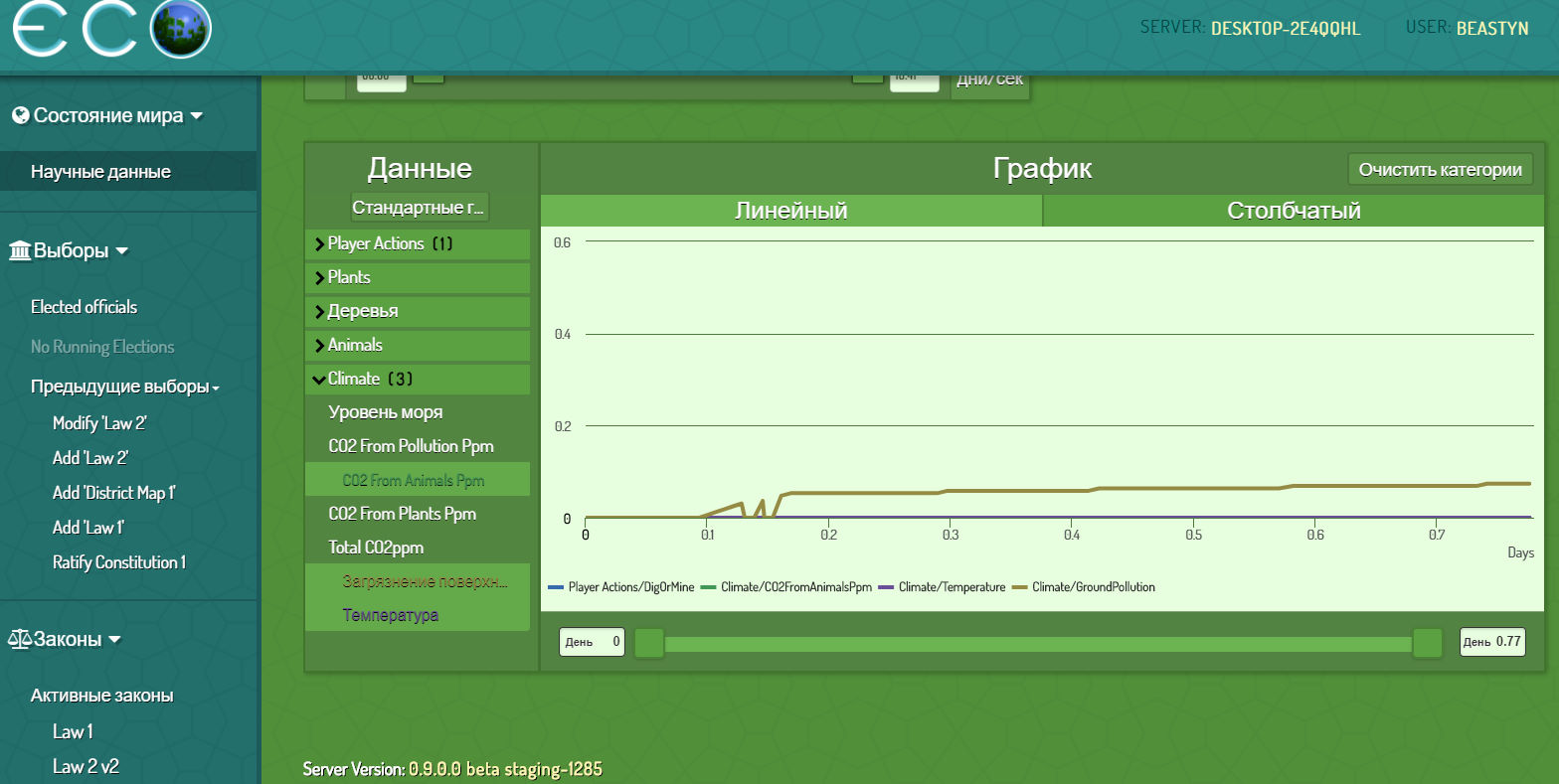

432,397 | 12,492,208,687 | IssuesEvent | 2020-06-01 06:36:02 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1285] Web: action counting for graphs | Category: Web Priority: Medium | I see that the graph filters have changed. For example, I can't see the statistics for a particular person.

Also, please check action counting.

I was digging, placing, and picking up items, and nothing showed up in the stats.

| 1.0 | [0.9.0 staging-1285] Web: action counting for graphs - I see that the graph filters have changed. For example, I can't see the statistics for a particular person.

Also, please check action counting.

I was digging, placing, and picking up items, and nothing showed up in the stats.

| priority | web action counting for graphs i see that graphs filters had changed i can t see particular peopla statistic for example and check action counting please i was digging placing pickuping and nothing on stats | 1 |

57,526 | 3,082,702,914 | IssuesEvent | 2015-08-24 00:18:16 | magro/memcached-session-manager | https://api.github.com/repos/magro/memcached-session-manager | closed | Switch to new CouchbaseClient API | enhancement imported Milestone-1.6.4 Priority-Medium | _From [rka...@gmail.com](https://code.google.com/u/116755208212771764698/) on March 05, 2012 20:00:26_

Couchbase Server 1.8 (formerly known as Membase) is using a slightly different API and it's no longer part of the spymemcached API.

_Original issue: http://code.google.com/p/memcached-session-manager/issues/detail?id=126_ | 1.0 | Switch to new CouchbaseClient API - _From [rka...@gmail.com](https://code.google.com/u/116755208212771764698/) on March 05, 2012 20:00:26_

Couchbase Server 1.8 (formerly known as Membase) is using a slightly different API and it's no longer part of the spymemcached API.

_Original issue: http://code.google.com/p/memcached-session-manager/issues/detail?id=126_ | priority | switch to new couchbaseclient api from on march cpuchbase server formerly known as membase is using a slightly different api and it s no longer part of the spymemcached api original issue | 1 |

109,025 | 4,366,534,043 | IssuesEvent | 2016-08-03 14:36:57 | LearningLocker/learninglocker | https://api.github.com/repos/LearningLocker/learninglocker | closed | Articulate Storyline 2 course doesn't resume | priority:medium status:unconfirmed type:bug | **Version**

1.13.3

**Steps to reproduce the bug**

1. Create working launch link to course

2. Launch course and play through a bit

3. Close course

4. Re-launch course with same launch link

5. The course does not prompt for resume

**Expected behaviour**

Course should prompt for resume when we refresh/reload with same activity and same actor

**Actual behaviour**

Course start over again

**Additional information**

OS: Window 10 x64 with WAMP + Mongodb

Browser: Version 51.0.2704.106 m (64-bit)

Tested the same course with ScormCloud and it is working fine. But couldn't get it work with LearningLocker.

---

The difference I notice is it return {} instead of { data:"xxxx" } in the http://localhost:8081/learninglocker/public/data/xAPI/activities/state?method=GET

I am not sure if the parameter I pass to the server is correct or not.

> story.html?endpoint=http://localhost:8081/learninglocker/public/data/xAPI/&auth=Basic%20M2FjNDAwNWZhNDY4YWQ4M2Y2ZjUxMTZjN2UwMDdmMDIyMjJhZjdkNzozOTE1MzI5ODcxN2QxYjFmZTI3MzY5OGI2NWJjNzBjNzdlODUwNTFj&actor={"mbox":"mailto:xxx@xxx.com",%20"name":"Jason"}&registration=2981c910-6445-11e4-9803-0800200c9a66&activity_id=www.example.com/my-activity

Also, I am not sure where to get or generate the registration and activity_id parameters; I just copied them from the tutorial website. Is that the reason it doesn't work?

---

I suspect the `http://localhost:8081/learninglocker/public/data/xAPI/activities/state?method=GET` data return {} is due to the data in content & registration field in `documentapi ` table is empty.

So that's why it couldn't resume.

```

{

"_id" : ObjectId("57991e7d7f7759ac2600002c"),

"lrs" : ObjectId("57991beb7f7759b02100002d"),

"lrs_id" : ObjectId("57991beb7f7759b02100002d"),

"documentType" : "state",

"identId" : "resume",

"activityId" : "http://5a8SiBHMf0l_course_id",

"agent" : {

"mbox" : "mailto:xxx@xxx.com",

"name" : "Jason"

},

"registration" : null,

"updated_at" : ISODate("2016-07-27T21:31:27.000Z"),

"sha" : "AA7B6DA600F7E471DEEE83DCB06F923C6353FF65",

"content" : null,

"contentType" : "application/json",

"created_at" : ISODate("2016-07-27T20:50:05.000Z")

}

``` | 1.0 | Articulate Storyline 2 course doesn't resume - **Version**

1.13.3

**Steps to reproduce the bug**

1. Create working launch link to course

2. Launch course and play through a bit

3. Close course

4. Re-launch course with same launch link

5. The course does not prompt for resume

**Expected behaviour**

Course should prompt for resume when we refresh/reload with same activity and same actor

**Actual behaviour**

Course start over again

**Additional information**

OS: Window 10 x64 with WAMP + Mongodb

Browser: Version 51.0.2704.106 m (64-bit)

Tested the same course with ScormCloud and it is working fine. But couldn't get it work with LearningLocker.

---

The difference I notice is it return {} instead of { data:"xxxx" } in the http://localhost:8081/learninglocker/public/data/xAPI/activities/state?method=GET

I am not sure if the parameter I pass to the server is correct or not.

> story.html?endpoint=http://localhost:8081/learninglocker/public/data/xAPI/&auth=Basic%20M2FjNDAwNWZhNDY4YWQ4M2Y2ZjUxMTZjN2UwMDdmMDIyMjJhZjdkNzozOTE1MzI5ODcxN2QxYjFmZTI3MzY5OGI2NWJjNzBjNzdlODUwNTFj&actor={"mbox":"mailto:xxx@xxx.com",%20"name":"Jason"}&registration=2981c910-6445-11e4-9803-0800200c9a66&activity_id=www.example.com/my-activity

Also, I am not sure where to get or generate the registration and activity_id parameters; I just copied them from the tutorial website. Is that the reason it doesn't work?

---

I suspect the `http://localhost:8081/learninglocker/public/data/xAPI/activities/state?method=GET` data return {} is due to the data in content & registration field in `documentapi ` table is empty.

So that's why it couldn't resume.

```

{

"_id" : ObjectId("57991e7d7f7759ac2600002c"),

"lrs" : ObjectId("57991beb7f7759b02100002d"),

"lrs_id" : ObjectId("57991beb7f7759b02100002d"),

"documentType" : "state",

"identId" : "resume",

"activityId" : "http://5a8SiBHMf0l_course_id",

"agent" : {

"mbox" : "mailto:xxx@xxx.com",

"name" : "Jason"

},

"registration" : null,

"updated_at" : ISODate("2016-07-27T21:31:27.000Z"),

"sha" : "AA7B6DA600F7E471DEEE83DCB06F923C6353FF65",

"content" : null,

"contentType" : "application/json",

"created_at" : ISODate("2016-07-27T20:50:05.000Z")

}

``` | priority | articulate storyline course doesn t resume version steps to reproduce the bug create working launch link to course launch course and play through a bit close course re launch course with same launch link the course does not prompt for resume expected behaviour course should prompt for resume when we refresh reload with same activity and same actor actual behaviour course start over again additional information os window with wamp mongodb browser version m bit tested the same course with scormcloud and it is working fine but couldn t get it work with learninglocker the difference i notice is it return instead of data xxxx in the i am not sure if the parameter i pass to the server is correct or not story html endpoint also i am not sure where to get generate for the registration and activity id paramenter for i just copy from the tutorial website and use it is that the reason it doesn t work i suspect the data return is due to the data in content registration field in documentapi table is empty so that s why it couldn t resume id objectid lrs objectid lrs id objectid documenttype state identid resume activityid agent mbox mailto xxx xxx com name jason registration null updated at isodate sha content null contenttype application json created at isodate | 1 |
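The launch link quoted in the report passes raw JSON and a URL inside the query string. One way to rule out encoding problems is to build the link with every parameter percent-encoded. The sketch below is in Python and reuses the parameter names and sample values from the issue; `build_launch_url` is a hypothetical helper, the `auth` value is a placeholder, and nothing here claims to be the cause of, or the fix for, the missing resume prompt.

```python
import json
from urllib.parse import urlencode, urlsplit, parse_qs


def build_launch_url(base, endpoint, auth, actor, registration, activity_id):
    """Build a launch link with each query parameter percent-encoded."""
    query = urlencode({
        "endpoint": endpoint,
        "auth": auth,
        "actor": json.dumps(actor),    # the actor is sent as a JSON string
        "registration": registration,  # same value on every launch, so state can be matched
        "activity_id": activity_id,
    })
    return f"{base}?{query}"


url = build_launch_url(
    "story.html",
    "http://localhost:8081/learninglocker/public/data/xAPI/",
    "Basic <credentials>",  # placeholder, not a real credential
    {"mbox": "mailto:xxx@xxx.com", "name": "Jason"},
    "2981c910-6445-11e4-9803-0800200c9a66",
    "www.example.com/my-activity",
)

# Decoding the query string gives back the original values.
params = parse_qs(urlsplit(url).query)
print(params["registration"][0])
print(json.loads(params["actor"][0])["name"])
```

Reusing the same registration and actor values on every launch keeps the state request parameters identical between sessions, which is the condition the reporter expected to trigger the resume prompt.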

40,499 | 2,868,923,625 | IssuesEvent | 2015-06-05 21:59:13 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Pub should gracefully handle a Git dependency's pubspec changing name or going away | bug Fixed Priority-Medium | <a href="https://github.com/nex3"><img src="https://avatars.githubusercontent.com/u/188?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [nex3](https://github.com/nex3)**

_Originally opened as dart-lang/sdk#5241_

----

I'm not sure what it does now, but at the very least there should be tests for this case. | 1.0 | Pub should gracefully handle a Git dependency's pubspec changing name or going away - <a href="https://github.com/nex3"><img src="https://avatars.githubusercontent.com/u/188?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [nex3](https://github.com/nex3)**

_Originally opened as dart-lang/sdk#5241_

----

I'm not sure what it does now, but at the very least there should be tests for this case. | priority | pub should gracefully handle a git dependency s pubspec changing name or going away issue by originally opened as dart lang sdk i m not sure what it does now but at the very least there should be tests for this case | 1 |

830,807 | 32,024,819,551 | IssuesEvent | 2023-09-22 08:05:39 | ImTheCactus/Crow-Get-It-Game | https://api.github.com/repos/ImTheCactus/Crow-Get-It-Game | closed | Oak1 LODs aren't functioning | Priority: Medium Status: In progress Bug | Describe the bug: For the Oak1 tree model, the LOD won't accept a renderer from the model like the other models and LODs have.

Version: Crow Get It 3.5 version 0.2.0 *(To be updated on)

Steps to reproduce the behaviour: (In game) Walk far away from the oak/birch starting forest, for instance to the farm area, and you will see most of the other environmental props will disappear but Oak1 tree models will not.

Expected behaviour: After a certain distance, the Oak1 tree models should disappear for an increase of performance.

Desktop system details: OS: Windows10 | 1.0 | Oak1 LODs aren't functioning - Describe the bug: For the Oak1 tree model, the LOD won't accept a renderer from the model like the other models and LODs have.

Version: Crow Get It 3.5 version 0.2.0 *(To be updated on)

Steps to reproduce the behaviour: (In game) Walk far away from the oak/birch starting forest, for instance to the farm area, and you will see most of the other environmental props will disappear but Oak1 tree models will not.

Expected behaviour: After a certain distance, the Oak1 tree models should disappear for an increase of performance.

Desktop system details: OS: Windows10 | priority | lods aren t functioning describe the bug for the tree model the lod won t accept a renderer from the model like the other models and lods have version crow get it version to be updated on steps to reproduce the behaviour in game walk far away from the oak birch starting forest for instance to the farm area and you will see most of the other environmental props will disappear but tree models will not expected behaviour after a certain distance the tree models should disappear for an increase of performance desktop system details os | 1 |

279,645 | 8,671,455,373 | IssuesEvent | 2018-11-29 19:12:45 | SETI/pds-opus | https://api.github.com/repos/SETI/pds-opus | closed | Social sharing from detail page picks up wrong image | A-Bug B-OPUS Django Effort 2 Medium Priority TBD | Originally reported by: **lisa ballard (Bitbucket: [basilleaf](https://bitbucket.org/basilleaf), GitHub: [basilleaf](https://github.com/basilleaf))**

---

sharing an image to Pinterest from detail page doesn't work, pinterest is picking up entire gallery, probably similar for facebook etc.

---

- Bitbucket: https://bitbucket.org/ringsnode/opus2/issue/116

| 1.0 | Social sharing from detail page picks up wrong image - Originally reported by: **lisa ballard (Bitbucket: [basilleaf](https://bitbucket.org/basilleaf), GitHub: [basilleaf](https://github.com/basilleaf))**

---

sharing an image to Pinterest from detail page doesn't work, pinterest is picking up entire gallery, probably similar for facebook etc.

---

- Bitbucket: https://bitbucket.org/ringsnode/opus2/issue/116

| priority | social sharing from detail page picks up wrong image originally reported by lisa ballard bitbucket github sharing an image to pinterest from detail page doesn t work pinterest is picking up entire gallery probably similar for facebook etc bitbucket | 1 |

147,596 | 5,642,456,949 | IssuesEvent | 2017-04-06 21:10:10 | driftyco/ionic-app-scripts | https://api.github.com/repos/driftyco/ionic-app-scripts | closed | `ionic build` always exits with `0` | priority:medium | Currently, all tslint errors are treated as (kind of) warnings. I reach this conclusion by the fact that the script exits with `0` despite all those tslint errors on screen.

This way, we can't catch these in CI, because the exit code is `0` and there's almost no indication of such an error. We have to run a regular-expression grep on the complete output to determine that.

There should be an option/setting to make the script return with not zero instead.

P.S. I think this applies beyond tslint. Other compilers has command line options to treat "warnings" as "error". | 1.0 | `ionic build` always exits with `0` - Currently, all tslint errors are treated as (kind of) warnings. I reach this conclusion by the fact that the script exits with `0` despite all those tslint errors on screen.

This way, we can't catch these in CI, because the exit code is `0` and there's almost no indication of such an error. We have to run a regular-expression grep on the complete output to determine that.

There should be an option/setting to make the script return with not zero instead.

P.S. I think this applies beyond tslint. Other compilers has command line options to treat "warnings" as "error". | priority | ionic build always exits with currently all tslint errors are treated as kind of warnings i reach this conclusion by the fact that the script exits with despite all those tslint errors on screen this way we can t catch these in ci because of the exit code is and there s nearly no indication of such error we have to do a regular express grep on the complete output to determine that there should be an option setting to make the script return with not zero instead p s i think this applies beyond tslint other compilers has command line options to treat warnings as error | 1 |
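
The grep-based CI workaround described in the issue above could look roughly like the following. This is only a sketch: `ci_exit_code` and the `error_pattern` argument are hypothetical names, and the pattern that matches real tslint output from the build is an assumption the issue does not specify.

```python
import re


def ci_exit_code(build_exit_code: int, build_output: str, error_pattern: str) -> int:
    """Decide the exit code a CI wrapper should return for a finished build.

    build_exit_code: what the build command itself returned.
    build_output:    the complete captured output of the build.
    error_pattern:   a regular expression assumed to match lint errors
                     (hypothetical; adjust to the real output format).
    """
    # A build that already failed keeps its own exit code.
    if build_exit_code != 0:
        return build_exit_code
    # The build claims success: fail anyway if lint errors were printed.
    return 1 if re.search(error_pattern, build_output) else 0
```

A wrapper script would capture the build output, call this function, and pass the result to `sys.exit`. An option in the build script itself, as the issue requests, would make such a wrapper unnecessary.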

452,976 | 13,062,641,871 | IssuesEvent | 2020-07-30 15:26:15 | datavisyn/tdp_core | https://api.github.com/repos/datavisyn/tdp_core | closed | RankingView: Covered tooltip of overview mode warning | priority: medium type: bug | * Release number or git hash: v9.1.0

* Web browser version and OS: Chrome 84

* Environment (local or deployed): both

### Steps to reproduce

1. Open https://ordino-daily.caleydoapp.org/

1. Open list of all genes

2. Activate Overview mode

### Observed behavior

The warning explanation is covered by the Tourdino icon as well as the button tool tip.

### Expected behavior

The tooltip should not be covered.

| 1.0 | RankingView: Covered tooltip of overview mode warning - * Release number or git hash: v9.1.0

* Web browser version and OS: Chrome 84

* Environment (local or deployed): both

### Steps to reproduce

1. Open https://ordino-daily.caleydoapp.org/

1. Open list of all genes

2. Activate Overview mode

### Observed behavior

The warning explanation is covered by the Tourdino icon as well as the button tool tip.

### Expected behavior

The tooltip should not be covered.

| priority | rankingview covered tooltip of overview mode warning release number or git hash web browser version and os chrome environment local or deployed both steps to reproduce open open list of all genes activate overview mode observed behavior the warning explanation is covered by the tourdino icon as well as the button tool tip expected behavior the tooltip should not be covered | 1 |

317,862 | 9,670,100,166 | IssuesEvent | 2019-05-21 19:02:04 | etternagame/etterna | https://api.github.com/repos/etternagame/etterna | closed | Osu beatmaps with a [Colours] section do not load | Priority: Medium Type: Bug | **Describe the bug**

Etterna does not load Osu beatmaps that contain a [Colours] section.

Manually deleting this section in each .osu file allows the beatmap to load ok.

**To Reproduce**

Steps to reproduce the behavior:

1. Attempt to load an Osu beatmap that contains a [Colours] section, e.g. https://osu.ppy.sh/beatmapsets/127305

2. The chart doesn't show up in-game.

**Expected behavior**

The chart loads and is playable in-game.

**Desktop (please complete the following information):**

- OS: Windows 10 x64

- Version 0.65.1 | 1.0 | Osu beatmaps with a [Colours] section do not load - **Describe the bug**

Etterna does not load Osu beatmaps that contain a [Colours] section.

Manually deleting this section in each .osu file allows the beatmap to load ok.

**To Reproduce**

Steps to reproduce the behavior:

1. Attempt to load an Osu beatmap that contains a [Colours] section, e.g. https://osu.ppy.sh/beatmapsets/127305

2. The chart doesn't show up in-game.

**Expected behavior**

The chart loads and is playable in-game.

**Desktop (please complete the following information):**

- OS: Windows 10 x64

- Version 0.65.1 | priority | osu beatmaps with a section do not load describe the bug etterna does not load osu beatmaps that contain a section manually deleting this section in each osu file allows the beatmap to load ok to reproduce steps to reproduce the behavior attempt to load an osu beatmap that contains a section e g the chart doesn t show up in game expected behavior the chart loads and is playable in game desktop please complete the following information os windows version | 1 |
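
The manual workaround from the issue above (deleting the `[Colours]` section from each `.osu` file) could be automated along these lines. This is a sketch, not Etterna code: `strip_section` is a hypothetical helper, and it assumes sections are introduced by a bracketed header on its own line.

```python
def strip_section(osu_text: str, section: str = "Colours") -> str:
    """Return osu_text without the `[section]` block (header plus its lines)."""
    kept = []
    skipping = False
    for line in osu_text.splitlines(keepends=True):
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            # A section header: skip only while inside the section being removed.
            skipping = stripped == f"[{section}]"
        if not skipping:
            kept.append(line)
    return "".join(kept)
```

This only sidesteps the bug for affected files; the fix the issue asks for is for the loader to accept the section.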

256,186 | 8,127,027,629 | IssuesEvent | 2018-08-17 06:14:13 | codephil-columbia/typephil | https://api.github.com/repos/codephil-columbia/typephil | closed | No start next lesson option in learn | High Priority Medium Priority | Steps to reproduce: Click on next available lesson that you haven't started yet. Just add button and sangjun can do ui styling.

| 2.0 | No start next lesson option in learn - Steps to reproduce: Click on next available lesson that you haven't started yet. Just add button and sangjun can do ui styling.

| priority | no start next lesson option in learn steps to reproduce click on next available lesson that you haven t started yet just add button and sangjun can do ui styling | 1 |

343,185 | 10,326,029,733 | IssuesEvent | 2019-09-01 22:38:44 | ESAPI/esapi-java-legacy | https://api.github.com/repos/ESAPI/esapi-java-legacy | closed | exception is java.lang.NoClassDefFoundError: org.owasp.esapi.codecs.Codec | Priority-Medium bug imported | _From [alexj...@gmail.com](https://code.google.com/u/117724374125274417382/) on June 15, 2011 05:02:44_

Hi All,

My web project contains esapi-2.0GA.jar inside WEB-INF/lib folder. but still i am getting the below error. My application is running on websphere 6.1. Could anyone help me to resolve this issue.

exception is java.lang.NoClassDefFoundError: org.owasp.esapi.codecs.Codec

_Original issue: http://code.google.com/p/owasp-esapi-java/issues/detail?id=227_

| 1.0 | exception is java.lang.NoClassDefFoundError: org.owasp.esapi.codecs.Codec - _From [alexj...@gmail.com](https://code.google.com/u/117724374125274417382/) on June 15, 2011 05:02:44_

Hi All,

My web project contains esapi-2.0GA.jar inside WEB-INF/lib folder. but still i am getting the below error. My application is running on websphere 6.1. Could anyone help me to resolve this issue.

exception is java.lang.NoClassDefFoundError: org.owasp.esapi.codecs.Codec

_Original issue: http://code.google.com/p/owasp-esapi-java/issues/detail?id=227_

| priority | exception is java lang noclassdeffounderror org owasp esapi codecs codec from on june hi all my web project contains esapi jar inside web inf lib folder but still i am getting the below error my application is running on websphere could anyone help me to resolve this issue exception is java lang noclassdeffounderror org owasp esapi codecs codec original issue | 1 |

680,554 | 23,276,406,722 | IssuesEvent | 2022-08-05 07:39:50 | canonical/maas-ui | https://api.github.com/repos/canonical/maas-ui | closed | IP Address tooltip on Machines page blocks access to everything underneath and doesnt disappear until mouse-off | Priority: Medium Review: UX needed | Bug originally filed by bladernr at https://bugs.launchpad.net/bugs/1980846

When moving the mouse pointer down a list of machines, every time the pointer moves over the IP listed under a machine name, a tooltip opens that lists all the IPs associated with the machine.

This tooltip opens overtop everything underneath and:

1: opens immediately on mouseover with no delay to account for people moving the pointer down the list

2: does not close automatically *until the mouse is moved off the tooltip which disrupts the flow of movement

3: Depending on the number of IPs assigned can be quite long and can block access to multiple machine hyperlinks that are trapped underneath.

See the attached screenshot.

To resolve this, the tooltip should either

1: open to the side, not directly below

2: open after a short delay (maybe 250 or 500 ms?) to not block someone who is just moving the mouse down the list. (*IOW the behaviour should be on hover, not on mouseover). | 1.0 | IP Address tooltip on Machines page blocks access to everything underneath and doesnt disappear until mouse-off - Bug originally filed by bladernr at https://bugs.launchpad.net/bugs/1980846

When moving the mouse pointer down a list of machines, every time the pointer moves over the IP listed under a machine name, a tooltip opens that lists all the IPs associated with the machine.

This tooltip opens overtop everything underneath and:

1: opens immediately on mouseover with no delay to account for people moving the pointer down the list

2: does not close automatically *until the mouse is moved off the tooltip which disrupts the flow of movement

3: Depending on the number of IPs assigned can be quite long and can block access to multiple machine hyperlinks that are trapped underneath.

See the attached screenshot.

To resolve this, the tooltip should either

1: open to the side, not directly below

2: open after a short delay (maybe 250 or 500 ms?) to not block someone who is just moving the mouse down the list. (*IOW the behaviour should be on hover, not on mouseover). | priority | ip address tooltip on machines page blocks access to everything underneath and doesnt disappear until mouse off bug originally filed by bladernr at when moving the mouse pointer down a list of machines every time the pointer moves over the ip listed under a machine name a tooltip opens that lists all the ips associated with the machine this tooltip opens overtop everything underneath and opens immediately on mouseover with no delay to account for people moving the pointer down the list does not close automatically until the mouse is moved off the tooltip which disrupts the flow of movement depending on the number of ips assigned can be quite long and can block access to multiple machine hyperlinks that are trapped underneath see the attached screenshot to resolve this the tooltip should either open to the side not directly below open after a short delay maybe or ms to not block someone who is just moving the mouse down the list iow the behaviour should be on hover not on mouseover | 1 |

441,169 | 12,708,953,926 | IssuesEvent | 2020-06-23 11:29:07 | graknlabs/workbase | https://api.github.com/repos/graknlabs/workbase | closed | Inferred attributes are drawn multiple times because they have different IDs | priority: medium type: bug | ## Description

When querying for inferred concepts, we get back nodes with randomly generated IDs.

In the case of inferred attributes we might receive 2 nodes that have the same value but different IDs, we should probably just show the attribute once.

## Reproducible Steps

Create a schema with a rule that infers an attribute and try querying for it (you probably need to fetch the same attribute using multiple queries so that the IDs of the inferred attribute will actually be different).

## Expected Output

1 attribute node with a given value

## Actual Output

2 nodes with the same type and value:

| 1.0 | Inferred attributes are drawn multiple times because they have different IDs - ## Description

When querying for inferred concepts, we get back nodes with randomly generated IDs.

In the case of inferred attributes we might receive 2 nodes that have the same value but different IDs, we should probably just show the attribute once.

## Reproducible Steps

Create a schema with a rule that infers an attribute and try querying for it (you probably need to fetch the same attribute using multiple queries so that the IDs of the inferred attribute will actually be different).

## Expected Output

1 attribute node with a given value

## Actual Output

2 nodes with the same type and value:

| priority | inferred attributes are drawn multiple times because they have different ids description when querying for inferred concepts when get back nodes with randomly generated ids in the case of inferred attributes we might receive nodes that have the same value but different ids we should probably just show the attribute once reproducible steps create a schema with a rule which infers an attribute and trying querying for it probably need to fetch the same attribute using multiple queries so that the ids of the inferred attribute will actually be different expected output attribute node with a given value actual output nodes with the same type and value | 1 |
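
The expected behaviour in the issue above (one node per attribute value) amounts to de-duplicating inferred attribute nodes by type and value rather than by ID. A minimal sketch, assuming each node is a dictionary with hypothetical `id`, `type`, and `value` keys (the real Workbase node shape is not shown in the issue):

```python
def dedupe_attributes(nodes):
    """Keep the first node seen for each (type, value) pair.

    Inferred attributes get a fresh random ID per query, so the ID
    cannot be used to tell whether two nodes are the same attribute.
    """
    seen = set()
    unique = []
    for node in nodes:
        key = (node["type"], node["value"])
        if key not in seen:
            seen.add(key)
            unique.append(node)
    return unique
```

Only inferred attribute nodes should go through such a filter; persisted concepts have stable IDs and can still be matched on those.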

472,522 | 13,626,285,005 | IssuesEvent | 2020-09-24 10:47:07 | FAIRsharing/fairsharing.github.io | https://api.github.com/repos/FAIRsharing/fairsharing.github.io | closed | Request ownership button | Medium priority | If logged in but not a maintainer of a record then the "claim ownership" etc. button should be placed there instead.

Clicking the button should post to the maintenance_requests controller and disable the button on a successful request. | 1.0 | Request ownership button - If logged in but not a maintainer of a record then the "claim ownership" etc. button should be placed there instead.

Clicking the button should post to the maintenance_requests controller and disable the button on a successful request. | priority | request ownership button if logged in but not a maintainer of a record then the claim ownership etc button should be placed there instead clicking the button should post to the maintenance requests controller and disable the button on a successful request | 1 |

815,692 | 30,567,718,318 | IssuesEvent | 2023-07-20 19:10:26 | Loony4Logic/jamt | https://api.github.com/repos/Loony4Logic/jamt | closed | sending all logs | priority: medium | Send all logs on get request on `/logs`

it should send realtime data with previously stored logs using server side event.

> Feasibility is up for discussion! | 1.0 | sending all logs - Send all logs on get request on `/logs`

it should send realtime data with previously stored logs using server side event.

> Feasibility is up for discussion! | priority | sending all logs send all logs on get request on logs it should send realtime data with previously stored logs using server side event feasibility is up for discussion | 1 |

43,772 | 2,892,601,400 | IssuesEvent | 2015-06-15 13:55:43 | expath/xspec | https://api.github.com/repos/expath/xspec | closed | Move XSpec to GitHub | auto-migrated Priority-Medium Type-Other | ```

I suggest that XSpec should move from GoogleCode to GitHub before GoogleCode

closes:

http://google-opensource.blogspot.ie/2015/03/farewell-to-google-code.html

There's already two copies of XSpec on GitHub:

https://github.com/search?utf8=%E2%9C%93&q=code.google.com%2Fp%2Fxspec&type=Repositories&ref=searchresults

I suggest that we make an 'XSpec' Organization on GitHub and migrate the code

there. The low overhead of accepting pull requests on GitHub may also mean

that we get more fixes from current non-committers for some of the outstanding

XSpec issues.

Regards,

Tony.

```

Original issue reported on code.google.com by `dev.xspec@menteithconsulting.com` on 6 Jun 2015 at 4:47 | 1.0 | Move XSpec to GitHub - ```

I suggest that XSpec should move from GoogleCode to GitHub before GoogleCode

closes:

http://google-opensource.blogspot.ie/2015/03/farewell-to-google-code.html

There's already two copies of XSpec on GitHub:

https://github.com/search?utf8=%E2%9C%93&q=code.google.com%2Fp%2Fxspec&type=Repositories&ref=searchresults

I suggest that we make an 'XSpec' Organization on GitHub and migrate the code

there. The low overhead of accepting pull requests on GitHub may also mean

that we get more fixes from current non-committers for some of the outstanding

XSpec issues.

Regards,

Tony.

```

Original issue reported on code.google.com by `dev.xspec@menteithconsulting.com` on 6 Jun 2015 at 4:47 | priority | move xspec to github i suggest that xspec should move from googlecode to github before googlecode closes there s already two copies of xspec on github sitories ref searchresults i suggest that we make an xspec organization on github and migrate the code there the low overhead of accepting pull requests on github may also mean that we get more fixes from current non committers for some of the outstanding xspec issues regards tony original issue reported on code google com by dev xspec menteithconsulting com on jun at | 1 |

269,320 | 8,434,607,637 | IssuesEvent | 2018-10-17 10:41:43 | geosolutions-it/smb-app | https://api.github.com/repos/geosolutions-it/smb-app | opened | Notify user about invalid tracks | Priority: Medium ready | The very first FCM notification to be implemented is "track_validated". The payload will be initially used only to inform user that a track was rejected because of some issues (error message).

Later it will also be used to manage session items state, paving the road to session editing.

depends on #50 | 1.0 | Notify user about invalid tracks - The very first FCM notification to be implemented is "track_validated". The payload will be initially used only to inform user that a track was rejected because of some issues (error message).

Later it will also be used to manage session items state, paving the road to session editing.

depends on #50 | priority | notify user about invalid tracks the very first fcm notification to be implemented is track validated the payload will be initially used only to inform user that a track was rejected because of some issues error message later it will also be used to manage session items state paving the road to session editing depends on | 1 |

670,033 | 22,666,746,619 | IssuesEvent | 2022-07-03 02:04:03 | ZeNyfh/gigavibe-java-edition | https://api.github.com/repos/ZeNyfh/gigavibe-java-edition | opened | now playing embed broken with single tracks | bug Priority: High Medium | if the track that started playing has no track after it, it will not send a "now playing" embed.

this isnt an issue while queuing a single track and listening to it, but it is if there is more than 1 track queued.

| 1.0 | now playing embed broken with single tracks - if the track that started playing has no track after it, it will not send a "now playing" embed.

this isnt an issue while queuing a single track and listening to it, but it is if there is more than 1 track queued.

| priority | now playing embed broken with single tracks if the track that started playing has no track after it it will not send a now playing embed this isnt an issue while queuing a single track and listening to it but it is if there is more than track queued | 1 |

247,536 | 7,919,567,976 | IssuesEvent | 2018-07-04 17:35:50 | ubc/compair | https://api.github.com/repos/ubc/compair | closed | Make login text and CAS/SAML login buttons configurable | back end developer suggestion enhancement front end medium priority | Add environment variables to store the html for the login text and CAS/SAML buttons

default value will be what we currently use | 1.0 | Make login text and CAS/SAML login buttons configurable - Add environment variables to store the html for the login text and CAS/SAML buttons

default value will be what we currently use | priority | make login text and cas saml login buttons configurable add environment variables to store the html for for the login text and cas saml buttons default value will be what we currently use | 1 |

782,367 | 27,494,875,158 | IssuesEvent | 2023-03-05 02:32:57 | CreeperMagnet/the-creepers-code | https://api.github.com/repos/CreeperMagnet/the-creepers-code | opened | Positional anchors do not drop ender pearls when broken and filled | priority: medium | This was caused by the fix for #127. Can be fixed by adding an exception for this specific case. | 1.0 | Positional anchors do not drop ender pearls when broken and filled - This was caused by the fix for #127. Can be fixed by adding an exception for this specific case. | priority | positional anchors do not drop ender pearls when broken and filled this was caused by the fix for can be fixed by adding an exception for this specific case | 1 |

545,994 | 15,981,957,047 | IssuesEvent | 2021-04-18 01:06:31 | ProjectSidewalk/SidewalkWebpage | https://api.github.com/repos/ProjectSidewalk/SidewalkWebpage | closed | Human avatar goes missing from the top-down map | Audit Priority: Medium bug in progress potential-intern-assignment | Reported by 4 CMSC434 users and several active users of the deployed app. One reported that it happened towards the end of a mission, but this might not be true for all users.

<img width="203" alt="screen shot 2016-10-07 at 5 44 58 pm" src="https://cloud.githubusercontent.com/assets/2873216/19206289/ce6bb176-8cb5-11e6-9421-dfb3d692ee0f.png">

| 1.0 | Human avatar goes missing from the top-down map - Reported by 4 CMSC434 users and several active users of the deployed app. One reported that it happened towards the end of a mission, but this might not be true for all users.

<img width="203" alt="screen shot 2016-10-07 at 5 44 58 pm" src="https://cloud.githubusercontent.com/assets/2873216/19206289/ce6bb176-8cb5-11e6-9421-dfb3d692ee0f.png">

| priority | human avatar goes missing from the top down map reported by users and several active users of the deployed app one reported that it happened towards the end of a mission but this might not be true for all users img width alt screen shot at pm src | 1 |

433,636 | 12,508,117,701 | IssuesEvent | 2020-06-02 15:05:25 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | closed | RecurrenceEditor does not validate nor updates "End recurrence on" date | Bug C: Scheduler FP: In Development Kendo2 Next LIB Priority 3 SEV: Medium | ### Bug report

The Recurrence editor does not validate the [End recurrence on date](http://screencast.com/t/i4bLFndgAo). When the date in the EndOn DatePicker is invalid, a javascript error is thrown.

### Reproduction of the problem

1. Go to https://demos.telerik.com/kendo-ui/scheduler/index

1. Start creating event;

1. Set recurrence to Daily;

1. Select **End: On** option

### Current behavior

- When you set invalid date in the "End recurrence On" date picker, e.g. 60/11/2013, a javascript error is thrown: `"Uncaught TypeError: Cannot read property 'getFullYear' of null";`

- When you try to save the event with the invalid date for "End recurrence On" date picker, the popup is closed as if the event is created successfully, but it is not created or not created correctly;

Here is a video of the issue: http://screencast.com/t/2uBH4dxLyH

### Expected/desired behavior

The Recurrence date picker should not throw error and should validate as the Start and End DateTime pickers. Similar to this fixed issue: https://github.com/telerik/kendo-ui-core/issues/3846

### Environment

* **Kendo UI version:** 2019.2.619

* **Browser:** all | 1.0 | RecurrenceEditor does not validate nor updates "End recurrence on" date - ### Bug report

The Recurrence editor does not validate the [End recurrence on date](http://screencast.com/t/i4bLFndgAo). When the date in the EndOn DatePicker is invalid, a javascript error is thrown.

### Reproduction of the problem

1. Go to https://demos.telerik.com/kendo-ui/scheduler/index

1. Start creating event;

1. Set recurrence to Daily;

1. Select **End: On** option

### Current behavior

- When you set invalid date in the "End recurrence On" date picker, e.g. 60/11/2013, a javascript error is thrown: `"Uncaught TypeError: Cannot read property 'getFullYear' of null";`

- When you try to save the event with the invalid date for "End recurrence On" date picker, the popup is closed as if the event is created successfully, but it is not created or not created correctly;

Here is a video of the issue: http://screencast.com/t/2uBH4dxLyH

### Expected/desired behavior

The Recurrence date picker should not throw error and should validate as the Start and End DateTime pickers. Similar to this fixed issue: https://github.com/telerik/kendo-ui-core/issues/3846

### Environment

* **Kendo UI version:** 2019.2.619

* **Browser:** all | priority | recurrenceeditor does not validate nor updates end recurrence on date bug report the recurrence editor does not validate the when the date in the endon datepicker is invalid a javascript error is thrown reproduction of the problem go to start creating event set recurrence to daily select end on option current behavior when you set invalid date in the end recurrence on date picker e g a javascript error is thrown uncaught typeerror cannot read property getfullyear of null when you try to save the event with the invalid date for end recurrence on date picker the popup is closed as if the event is created successfully but it is not created or not created correctly here is a video of the issue expected desired behavior the recurrence date picker should not throw error and should validate as the start and end datetime pickers similar to this fixed issue environment kendo ui version browser all | 1 |

143,933 | 5,532,928,569 | IssuesEvent | 2017-03-21 12:00:01 | LikeMyBread/Saylua | https://api.github.com/repos/LikeMyBread/Saylua | closed | Write basic Javascript tests for Dungeons | Medium Priority | Created to help narrow the scope of #12.

Check for basic render / paint issues. | 1.0 | Write basic Javascript tests for Dungeons - Created to help narrow the scope of #12.

Check for basic render / paint issues. | priority | write basic javascript tests for dungeons created to help narrow the scope of check for basic render paint issues | 1 |

461,229 | 13,226,867,296 | IssuesEvent | 2020-08-18 01:18:42 | openshift/odo | https://api.github.com/repos/openshift/odo | closed | odo watch does not tell me the err on devfile validation failure | area/devfile kind/bug priority/Medium | /kind bug

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

**Operating System:**

**Output of `odo version`:** master

## How did you run odo exactly?

`odo watch` but I had two default commands. It errors out but doesn't tell me what the error is and if I am newbie I wouldn't be able to figure out what the issue was

## Any logs, error output, etc?

```

$ odo watch

Waiting for something to change in /Users/maysun/dev/redhat/resources/springboot-ex

File /Users/maysun/dev/redhat/resources/springboot-ex/src/main/java/application/rest/v1/Example.java changed

Pushing files...

Validation

✗ Validating the devfile [54323ns]

Waiting for something to change in /Users/maysun/dev/redhat/resources/springboot-ex

File /Users/maysun/dev/redhat/resources/springboot-ex/src/main/java/application/rest/v1/Example.java changed

Pushing files...

Validation

✗ Validating the devfile [61381ns]

Waiting for something to change in /Users/maysun/dev/redhat/resources/springboot-ex

```

| 1.0 | odo watch does not tell me the err on devfile validation failure - /kind bug

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

**Operating System:**

**Output of `odo version`:** master

## How did you run odo exactly?

`odo watch` but I had two default commands. It errors out but doesn't tell me what the error is and if I am newbie I wouldn't be able to figure out what the issue was

## Any logs, error output, etc?

```

$ odo watch

Waiting for something to change in /Users/maysun/dev/redhat/resources/springboot-ex

File /Users/maysun/dev/redhat/resources/springboot-ex/src/main/java/application/rest/v1/Example.java changed

Pushing files...

Validation

✗ Validating the devfile [54323ns]

Waiting for something to change in /Users/maysun/dev/redhat/resources/springboot-ex

File /Users/maysun/dev/redhat/resources/springboot-ex/src/main/java/application/rest/v1/Example.java changed

Pushing files...

Validation

✗ Validating the devfile [61381ns]

Waiting for something to change in /Users/maysun/dev/redhat/resources/springboot-ex

```

| priority | odo watch does not tell me the err on devfile validation failure kind bug welcome we kindly ask you to fill out the issue template below use the google group if you have a question rather than a bug or feature request the group is at thanks for understanding and for contributing to the project what versions of software are you using operating system output of odo version master how did you run odo exactly odo watch but i had two default commands it errors out but doesn t tell me what the error is and if i am newbie i wouldn t be able to figure out what the issue was any logs error output etc odo watch waiting for something to change in users maysun dev redhat resources springboot ex file users maysun dev redhat resources springboot ex src main java application rest example java changed pushing files validation ✗ validating the devfile waiting for something to change in users maysun dev redhat resources springboot ex file users maysun dev redhat resources springboot ex src main java application rest example java changed pushing files validation ✗ validating the devfile waiting for something to change in users maysun dev redhat resources springboot ex | 1 |

206,444 | 7,112,385,973 | IssuesEvent | 2018-01-17 16:52:18 | marklogic-community/data-explorer | https://api.github.com/repos/marklogic-community/data-explorer | opened | FERR-30 - Pull all of the docTypes from a database to use for creation of queries | Component - UI Config JIRA Migration Priority - Medium Type - Enhancement | **Original Reporter:** Greg Meddles

**Created:** 22/Jun/17 3:22 PM

# Description

As a configuration user, I would like to see a list of all of the document types that are available to me (based on security) so that I can immediately jump into creating queries and views based on the document in my databases | 1.0 | FERR-30 - Pull all of the docTypes from a database to use for creation of queries - **Original Reporter:** Greg Meddles

**Created:** 22/Jun/17 3:22 PM

# Description

As a configuration user, I would like to see a list of all of the document types that are available to me (based on security) so that I can immediately jump into creating queries and views based on the document in my databases | priority | ferr pull all of the doctypes from a database to use for creation of queries original reporter greg meddles created jun pm description as a configuration user i would like to see a list of all of the document types that are available to me based on security so that i can immediately jump into creating queries and views based on the document in my databases | 1 |

56,807 | 3,081,190,896 | IssuesEvent | 2015-08-22 13:25:25 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Double set of asterisks before a private message | bug imported Priority-Medium | _From [toss.Alexey](https://code.google.com/u/toss.Alexey/) on November 14, 2012 22:03:09_

Double set of asterisks before private-message notifications about a user joining/leaving

[00:59:40] *** *** Пользователь ушёл [OpChat - dchub://127.0.0.1:411] ***

[00:59:42] *** *** Пользователь пришёл [OpChat - hub] ***

11947

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=854_ | 1.0 | Double set of asterisks before a private message - _From [toss.Alexey](https://code.google.com/u/toss.Alexey/) on November 14, 2012 22:03:09_

Double set of asterisks before private-message notifications about a user joining/leaving

[00:59:40] *** *** Пользователь ушёл [OpChat - dchub://127.0.0.1:411] ***

[00:59:42] *** *** Пользователь пришёл [OpChat - hub] ***

11947

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=854_ | priority | double set of asterisks before a private message from on november double set of asterisks before private message notifications about a user joining leaving user left user joined original issue | 1 |

25,563 | 2,683,844,301 | IssuesEvent | 2015-03-28 11:28:42 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | PictureView mod15/15a/15b - plugin loading problems when rendering via DirectX | 2–5 stars bug imported Priority-Medium | _From [victo...@mail333.com](https://code.google.com/u/114732384912597087095/) on October 29, 2009 12:17:01_

OS version: XP SP3

FAR version: 2.0.1187

When output is configured via DirectX, the plugin, judging by what Process

Explorer shows, does not load; moreover, it apparently crashes

on the Post Script and DjVu formats. With output via GDI+

the problem does not reproduce. I cannot name the exact cause yet;

I suspect a bug in DX.pdv

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=122_ | 1.0 | PictureView mod15/15a/15b - plugin loading problems when rendering via DirectX - _From [victo...@mail333.com](https://code.google.com/u/114732384912597087095/) on October 29, 2009 12:17:01_

OS version: XP SP3

FAR version: 2.0.1187

When output is configured via DirectX, the plugin, judging by what Process

Explorer shows, does not load; moreover, it apparently crashes

on the Post Script and DjVu formats. With output via GDI+

the problem does not reproduce. I cannot name the exact cause yet;

I suspect a bug in DX.pdv

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=122_ | priority | pictureview plugin loading problems when rendering via directx from on october os version xp far version when output is configured via directx the plugin judging by what process explorer shows does not load moreover it apparently crashes on the post script and djvu formats with output via gdi the problem does not reproduce i cannot name the exact cause yet i suspect a bug in dx pdv original issue | 1 |

613,802 | 19,098,956,179 | IssuesEvent | 2021-11-29 19:59:02 | WordPress/learn | https://api.github.com/repos/WordPress/learn | opened | Add workshop filter for WordPress version | [Type] Enhancement [Component] Learn Theme [Component] Learn Plugin [Priority] Medium | We have the `wporg_wp_version` taxonomy in the dashboard so we can tag content for specific versions of WordPress and it would be great if we could add this to the workshop filters so that people could find content that is version-specific.

If we could have this live before the release of 5.9 then it can be used in the about page and announcement posts and we can link directly to the filtered content. | 1.0 | Add workshop filter for WordPress version - We have the `wporg_wp_version` taxonomy in the dashboard so we can tag content for specific versions of WordPress and it would be great if we could add this to the workshop filters so that people could find content that is version-specific.

If we could have this live before the release of 5.9 then it can be used in the about page and announcement posts and we can link directly to the filtered content. | priority | add workshop filter for wordpress version we have the wporg wp version taxonomy in the dashboard so we can tag content for specific versions of wordpress and it would be great if we could add this to the workshop filters so that people could find content that is version specific if we could have this live before the release of then it can be used in the about page and announcement posts and we can link directly to the filtered content | 1 |

593,861 | 18,018,675,091 | IssuesEvent | 2021-09-16 16:32:41 | CCAFS/MARLO | https://api.github.com/repos/CCAFS/MARLO | opened | [GM-VV] (MARLO) Deliverable Status Adjustments | Priority - Medium Type -Task | Deliverable Status Adjustments

- [ ] Fix deliverableMetadata wrong casting on equals() method.

- [ ] Limit deliverables to only display the ones marked as "Complete".

- [ ] Fix validations for Deliverable dissemination, already disseminated and open access fields.

- [ ] Add validations for Deliverable status, Year of expected completion and New expected year of completion:

- [ ] When a Deliverable's Year of expected completion is less than 2021, the New expected year of completion field will be enabled; otherwise this field will be disabled and not visible.

- [ ] When a Year Phase is less than 2021, the Deliverable status options will be disabled; otherwise all options will be enabled except "Extended".

**Move to Closed when:** Moved to Dev.

| 1.0 | [GM-VV] (MARLO) Deliverable Status Adjustments - Deliverable Status Adjustments

- [ ] Fix deliverableMetadata wrong casting on equals() method.

- [ ] Limit deliverables to only display the ones marked as "Complete".

- [ ] Fix validations for Deliverable dissemination, already disseminated and open access fields.

- [ ] Add validations for Deliverable status, Year of expected completion and New expected year of completion:

- [ ] When a Deliverable's Year of expected completion is less than 2021, the New expected year of completion field will be enabled; otherwise this field will be disabled and not visible.

- [ ] When a Year Phase is less than 2021, the Deliverable status options will be disabled; otherwise all options will be enabled except "Extended".

**Move to Closed when:** Moved to Dev.

| priority | marlo deliverable status adjustments deliverable status adjustments fix deliverablemetadata wrong casting on equals method limit deliverables to only display the ones marked as complete fix validations for deliverable dissemination already disseminated and open access fields add validations for deliverable status year of expected completion and new expected year of completion when a deliverable year of expected completion is less than it will have enabled the field new expected year of completion if not this field will be disabled and not visible when a year phase is less than the deliverable status options will be disabled if not then all options are going to be enabled except extended move to closed when moved to dev | 1 |
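
The two year-based rules in the MARLO issue above can be read as a pair of small predicates. The following is only a minimal sketch of that reading, not MARLO's actual code: the function names and the list of status names are hypothetical (the issue itself only mentions "Complete" and "Extended"), and the 2021 threshold is taken directly from the issue text.

```python
# Threshold year stated in the issue text.
CUTOFF_YEAR = 2021

# Hypothetical status names for illustration; only "Complete" and
# "Extended" are actually named in the issue.
STATUS_OPTIONS = ("On-going", "Complete", "Extended", "Cancelled")


def new_expected_year_enabled(expected_year: int) -> bool:
    """The 'New expected year of completion' field is enabled (and visible)
    only when the year of expected completion is before the cutoff."""
    return expected_year < CUTOFF_YEAR


def enabled_status_options(phase_year: int) -> tuple:
    """Return the status options a user may pick for a given phase year.

    Before the cutoff, every option is disabled; from the cutoff onward,
    every option except "Extended" is enabled.
    """
    if phase_year < CUTOFF_YEAR:
        return ()
    return tuple(s for s in STATUS_OPTIONS if s != "Extended")
```

Keeping the rules as pure functions of the year would let the same checks back both the form's enabled/visible state and any server-side validation, though how MARLO wires this up is not stated in the issue.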

738,047 | 25,543,036,822 | IssuesEvent | 2022-11-29 16:35:51 | Heroic-Games-Launcher/HeroicGamesLauncher | https://api.github.com/repos/Heroic-Games-Launcher/HeroicGamesLauncher | opened | [macOS] Add support for Wineskin and Wine-crossover as well as macOS DXVK installation | feature request macOS medium-priority | ### Problem description

Heroic currently only works with Crossover, which, although it provides great compatibility, is paid software.

After some investigation, it seems that it is possible to use other tools to play Windows games on macOS, like wine-crossover (Catalina+ only though) and WineSkin.

### Feature description

- Auto-detect Wine-crossover and maybe other wine versions installed globally on macOS.

- Auto-detect WineSkin Wrappers (bottles) and make them selectable in Heroic.

- Give the ability to install dxvk-macOS if the Wine type is different from Crossover.

### Alternatives

_No response_

### Additional information

Some of the investigation is recorded in this issue on the WineSkin GitHub, with some information on how to use those tools in Heroic:

https://github.com/Gcenx/WineskinServer/issues/329investigations | 1.0 | [macOS] Add support for Wineskin and Wine-crossover as well as macOS DXVK installation - ### Problem description

Heroic currently only works with Crossover, which, although it provides great compatibility, is paid software.

After some investigation, it seems that it is possible to use other tools to play Windows games on macOS, like wine-crossover (Catalina+ only though) and WineSkin.

### Feature description

- Auto-detect Wine-crossover and maybe other wine versions installed globally on macOS.

- Auto-detect WineSkin Wrappers (bottles) and make them selectable in Heroic.

- Give the ability to install dxvk-macOS if the Wine type is different from Crossover.

### Alternatives

_No response_

### Additional information

Some of the investigation is recorded in this issue on the WineSkin GitHub, with some information on how to use those tools in Heroic:

https://github.com/Gcenx/WineskinServer/issues/329investigations | priority | add support for wineskin and wine crossover as well as macos dxvk installation problem description heroic currently only works with crossover although provides great compatibility is a paid software after some investigation it seems that is possible to use other tools to play windows games on macos like wine crossover catalina only though and wineskin feature description auto detect wine crossover and maybe other wine versions installed globally on macos auto detect wineskin wrappers bottles and make them available to select into heroic gives the ability to install dxvk macos if wine type is different than crossover alternatives no response additional information some of the investigation is registered on this issue on wineskin github with some information on how to use those tools in heroic | 1 |

137,086 | 5,293,752,400 | IssuesEvent | 2017-02-09 08:43:22 | hpi-swt2/workshop-portal | https://api.github.com/repos/hpi-swt2/workshop-portal | closed | Show other coaches' notes on the overview via an icon | Medium Priority needs review team-helene | As an organizer, when selecting participants, I want other coaches' notes, if there are any, to be indicated by an icon on the applicant overview page (simply to the right of "Details"). On mouse hover, 10 words of the note should be shown, followed by "...".

The icon can look similar to this one:

| 1.0 | Show other coaches' notes on the overview via an icon - As an organizer, when selecting participants, I want other coaches' notes, if there are any, to be indicated by an icon on the applicant overview page (simply to the right of "Details"). On mouse hover, 10 words of the note should be shown, followed by "...".

The icon can look similar to this one: