Unnamed: 0 (int64, 0-832k) | id (float64, 2.49B-32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5-112) | repo_url (string, length 34-141) | action (string, 3 classes) | title (string, length 1-957) | labels (string, length 4-795) | body (string, length 1-259k) | index (string, 12 classes) | text_combine (string, length 96-259k) | label (string, 2 classes) | text (string, length 96-252k) | binary_label (int64, 0-1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

804,211 | 29,479,297,472 | IssuesEvent | 2023-06-02 03:06:34 | returntocorp/semgrep | https://api.github.com/repos/returntocorp/semgrep | closed | aliengrep: add an option for caseless matching | priority:medium lang:aliengrep | **Is your feature request related to a problem? Please describe.**

Caseless matching allows matching case-insensitive languages like HTML and derivatives, HTTP headers, English text, etc. It is possible and easy to implement in aliengrep thanks to PCRE having options for case-insensitive matching.

**Describe the solution you'd like**

Support a new option in the `options` section of Semgrep rules:

```

options:

generic_caseless: true

```

| 1.0 | aliengrep: add an option for caseless matching - **Is your feature request related to a problem? Please describe.**

Caseless matching allows matching case-insensitive languages like HTML and derivatives, HTTP headers, English text, etc. It is possible and easy to implement in aliengrep thanks to PCRE having options for case-insensitive matching.

**Describe the solution you'd like**

Support a new option in the `options` section of Semgrep rules:

```

options:

generic_caseless: true

```

| priority | aliengrep add an option for caseless matching is your feature request related to a problem please describe caseless matching allows matching case insensitive languages like html and derivatives http headers english text etc it is possible and easy to implement in aliengrep thanks to pcre having options for case insensitive matching describe the solution you d like support a new option in the options section of semgrep rules options generic caseless true | 1 |

474,598 | 13,672,430,792 | IssuesEvent | 2020-09-29 08:29:43 | bcgov/ols-router | https://api.github.com/repos/bcgov/ols-router | closed | Create a Route Planner Sandbox application | Route Planner Sandbox enhancement estimate needed ferries functional route planner medium priority restriction-aware routing road events task time-dependent routing truck routing turn costs | Route Planner Sandbox is an application that lets you interactively test the Route Planner API. It can be used to create and update test and benchmark routes stored in a web-accessible document.

RPS will also let you study the effects of route planner API changes on the ability to effectively work out commercial vehicle routes. RPS may also be useful as a rapid prototyping environment in permit application requirements analysis.

RPS will have no user authentication or access control and will have a disclaimer that it is not to be used for actual commercial vehicle routing.

Initially, RPS will be used to interactively create and edit test and benchmark routes for the TransLink Commercial Vehicle Route Planner.

Areas of study include:

* ways to use local knowledge of the road network to improve the route planner and/or create tailored, but sub-optimal wrt time/distance, routes.

* effectively assisting permit clerks in search of efficient routes that involve manual intervention such as counterflow maneuvers (e.g., having flag people close a section of road to allow the vehicle to travel down the middle)

* supporting designated truck route and oversize corridor design | 1.0 | Create a Route Planner Sandbox application - Route Planner Sandbox is an application that lets you interactively test the Route Planner API. It can be used to create and update test and benchmark routes stored in a web-accessible document.

RPS will also let you study the effects of route planner API changes on the ability to effectively work out commercial vehicle routes. RPS may also be useful as a rapid prototyping environment in permit application requirements analysis.

RPS will have no user authentication or access control and will have a disclaimer that it is not to be used for actual commercial vehicle routing.

Initially, RPS will be used to interactively create and edit test and benchmark routes for the TransLink Commercial Vehicle Route Planner.

Areas of study include:

* ways to use local knowledge of the road network to improve the route planner and/or create tailored, but sub-optimal wrt time/distance, routes.

* effectively assisting permit clerks in search of efficient routes that involve manual intervention such as counterflow maneuvers (e.g., having flag people close a section of road to allow the vehicle to travel down the middle)

* supporting designated truck route and oversize corridor design | priority | create a route planner sandbox application route planner sandbox is an application that lets you interactively test the route planner api it can be used to create and update test and benchmark routes stored in a web accessible document rps will also let you study the effects of route planner api changes on the ability to effectively work out commercial vehicle routes rps may also be useful as a rapid prototyping environment in permit application requirements analysis rps will have no user authentication or access control and will have a disclaimer that it is not to be used for actual commercial vehicle routing initially rps will be used to interactively create and edit test and benchmark routes for the translink commercial vehicle route planner areas of study include ways to use local knowledge of the road network to improve the route planner and or create tailored but sub optimal wrt time distance routes effectively assisting permit clerks in search of efficient routes that involve manual intervention such as counterflow maneuvers e g having flag people close a section of road to allow the vehicle to travel down the middle supporting designated truck route and oversize corridor design | 1 |

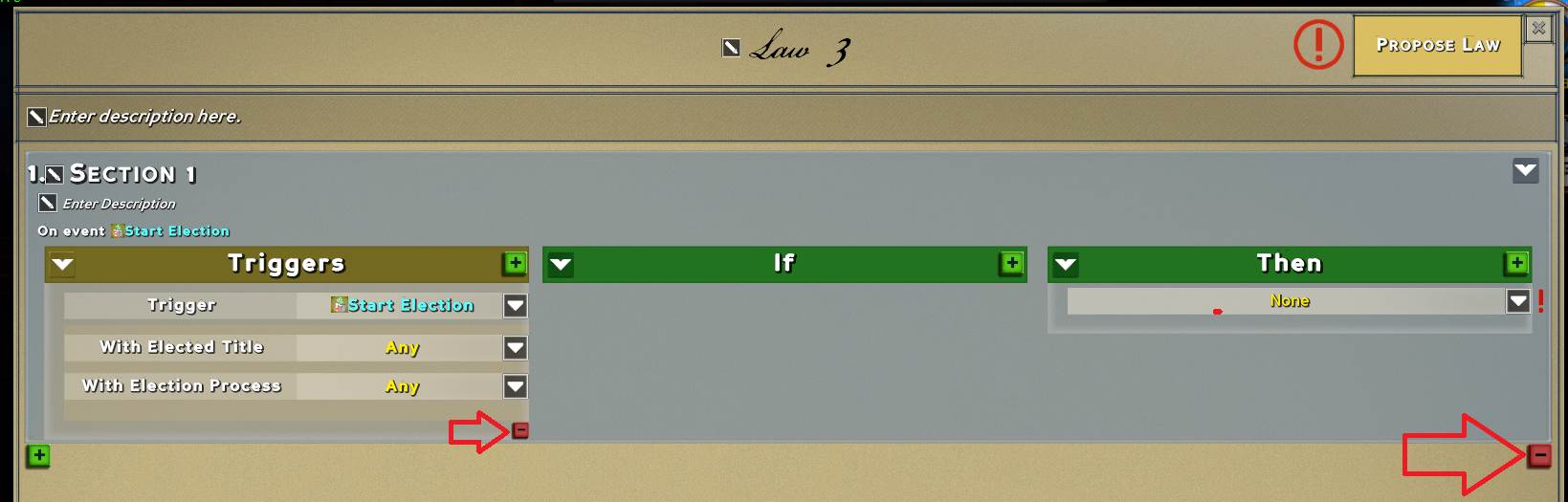

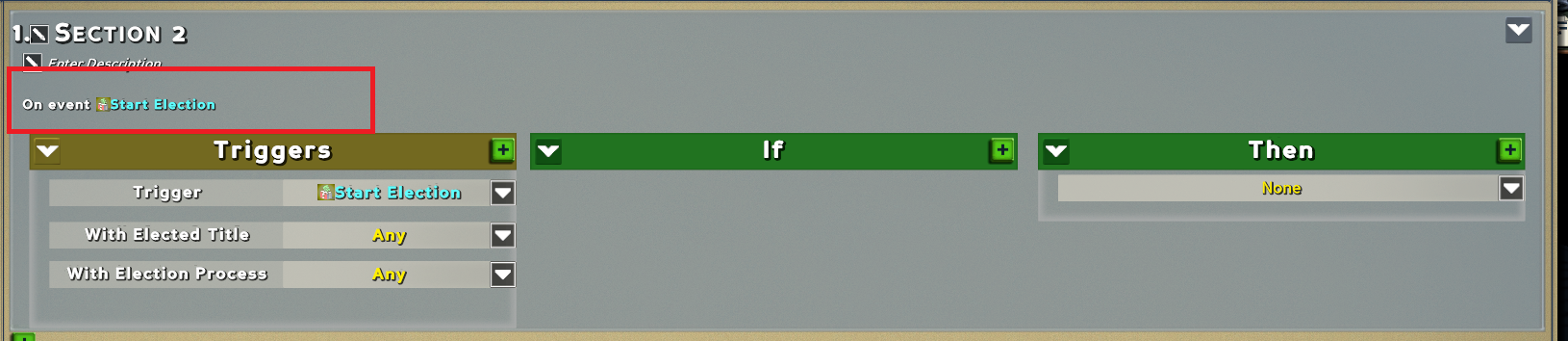

460,763 | 13,217,876,310 | IssuesEvent | 2020-08-17 07:41:37 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1693] Part of UI isn't updated immediately | Category: UI Priority: Medium Status: Fixed |

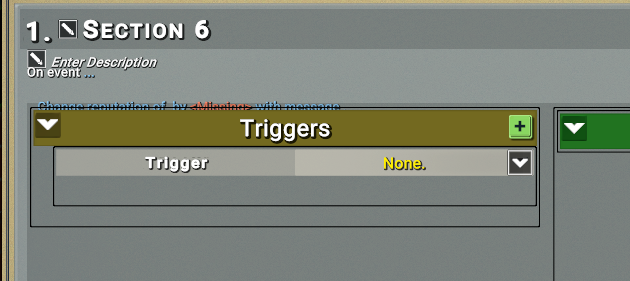

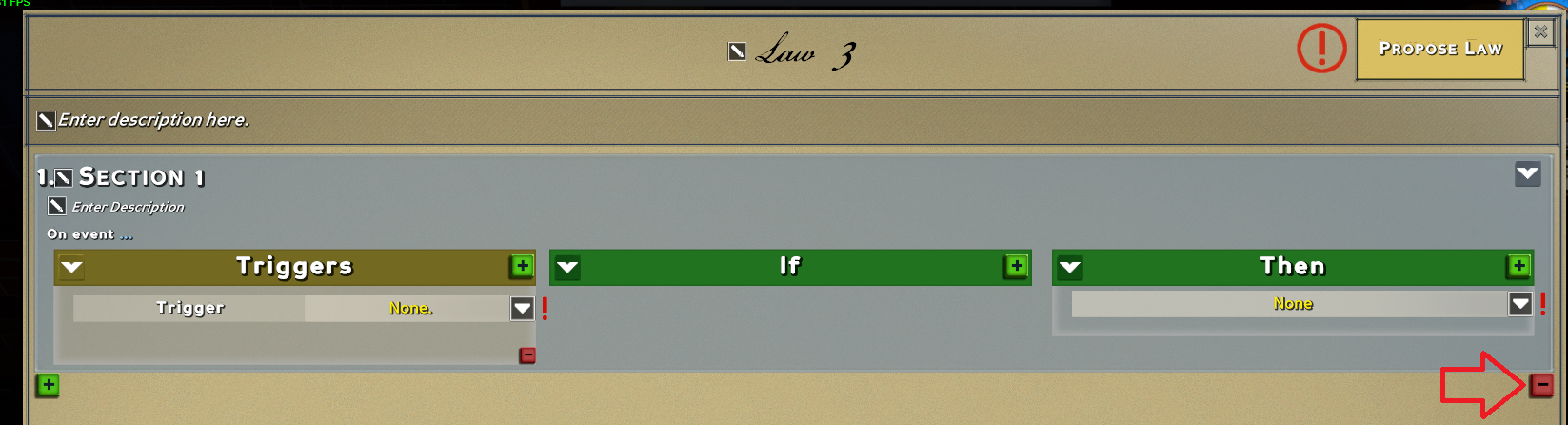

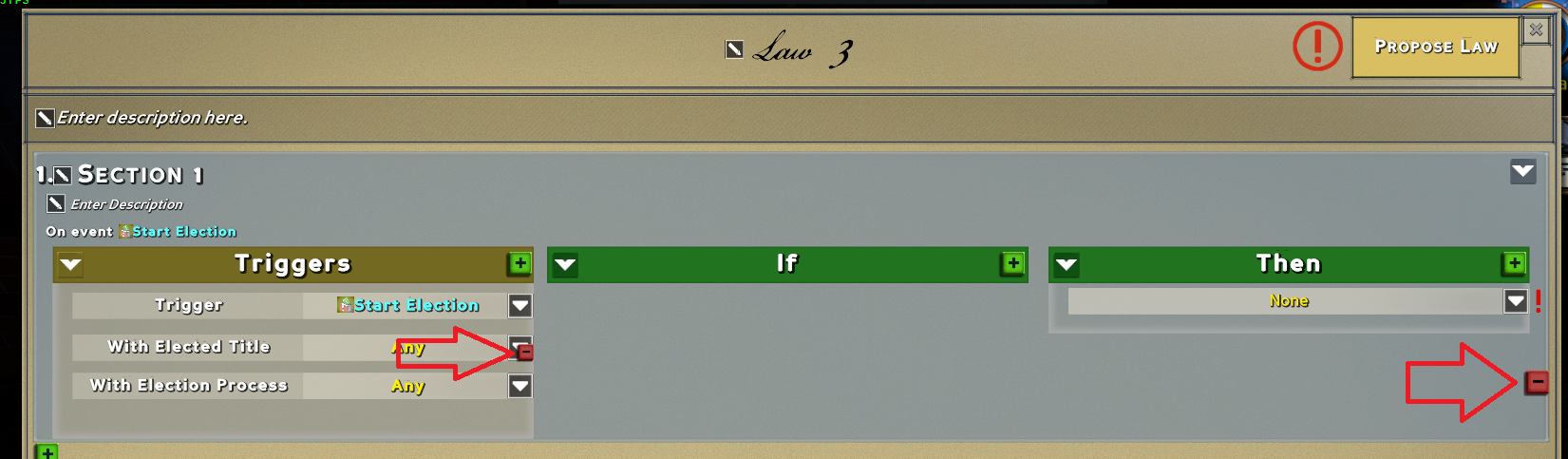

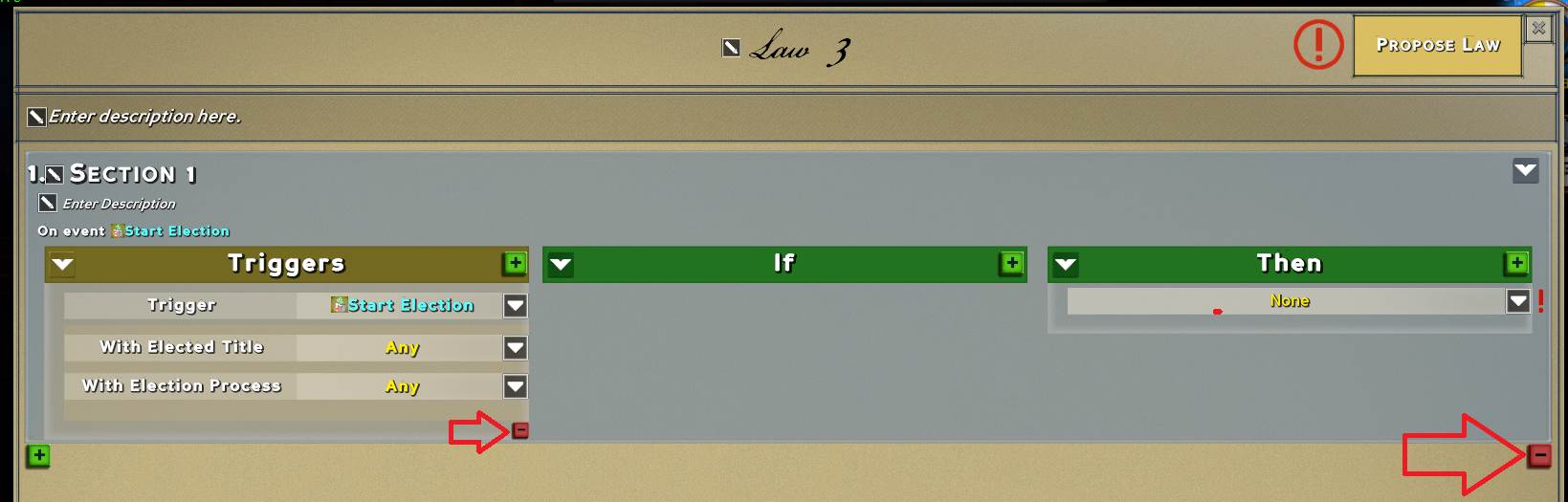

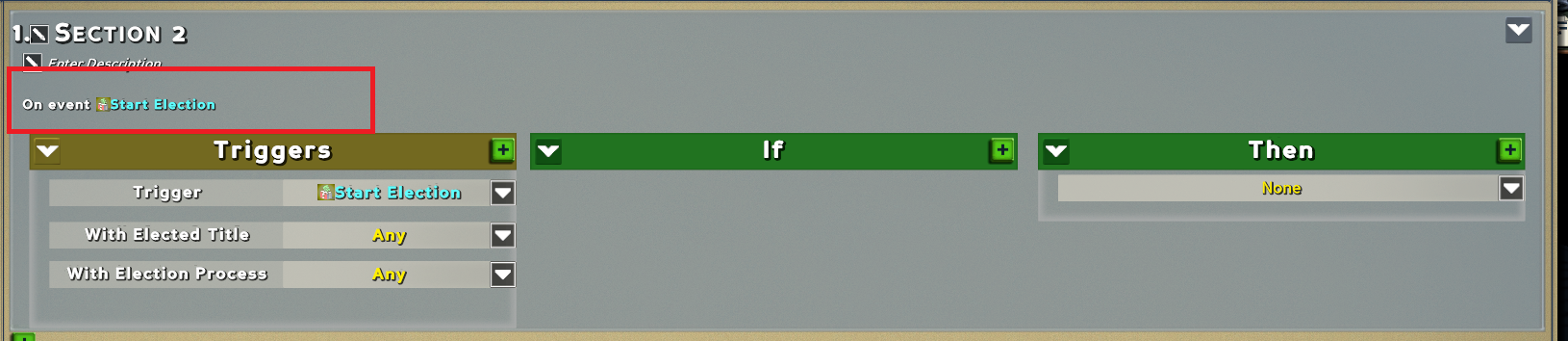

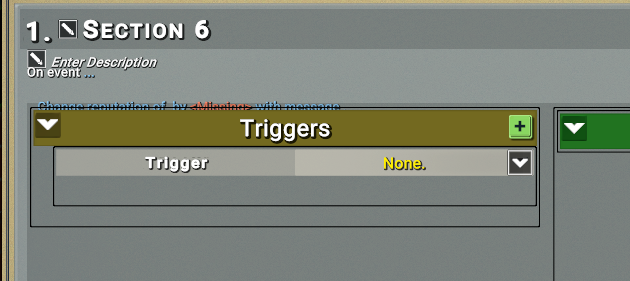

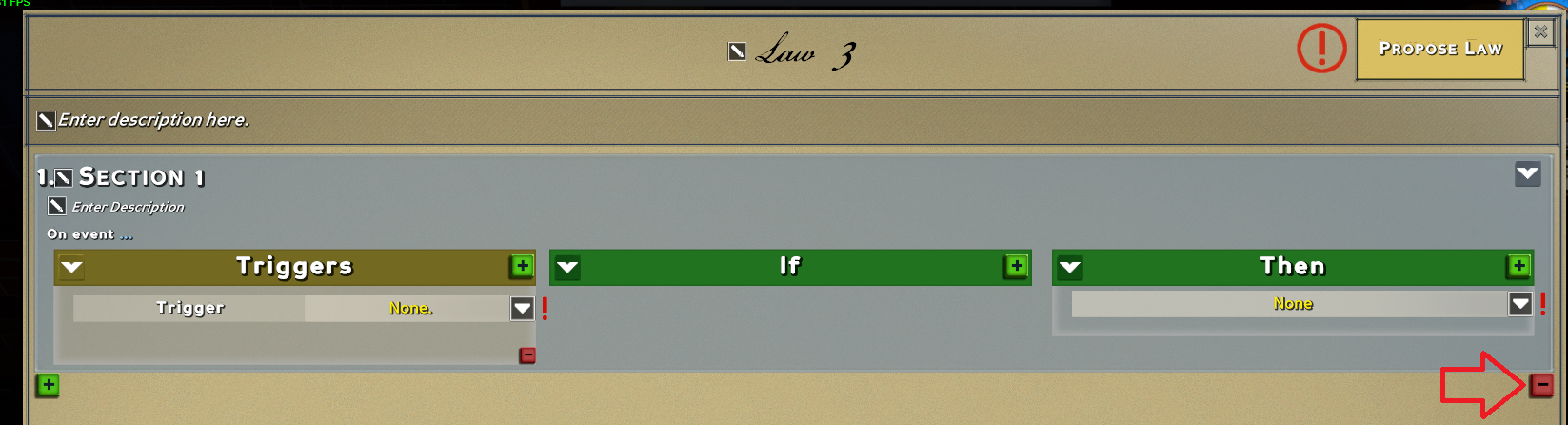

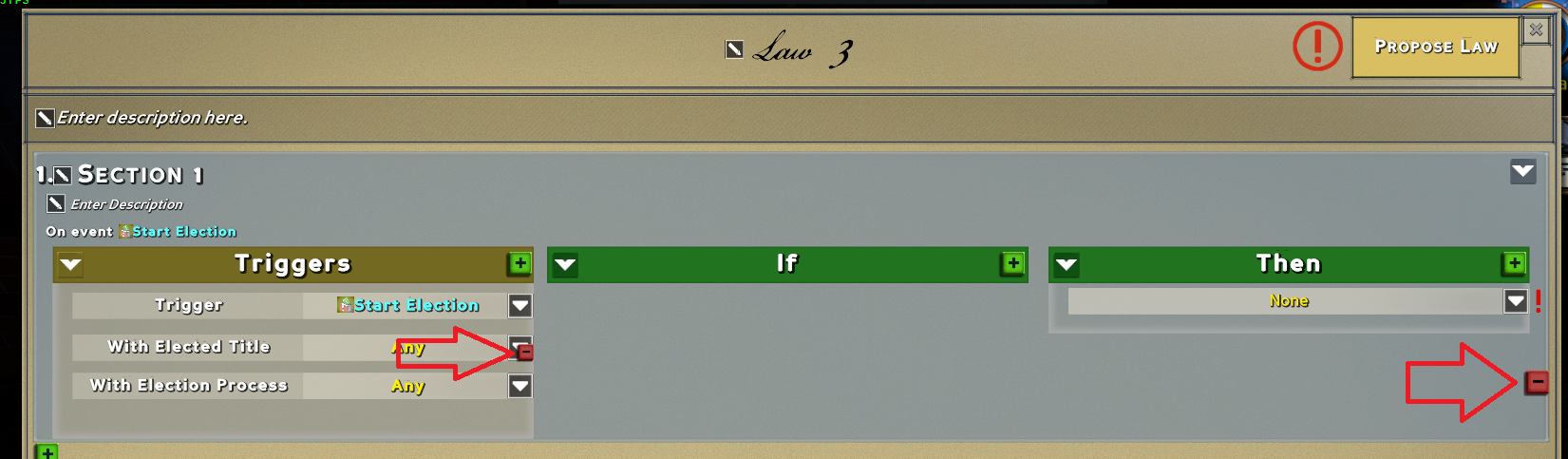

> * 5. some elements in law don't upgrade if you add/delete something. you need to select fields or do something else. Step to reproduce:

> * start a new law:

>

> * add some trigger:

>

> * reselect trigger and this section:

>

> * add some condition, we have overlap here:

>

> * selecting something else will fix it:

>

> * but if you delete this condition then you will have a lot of space here:

>

_Originally posted by @SlayksWood in https://github.com/StrangeLoopGames/EcoIssues/issues/16954#issuecomment-652828277_ | 1.0 | [0.9.0 staging-1693] Part of UI isn't updated immediately -

> * 5. some elements in law don't upgrade if you add/delete something. you need to select fields or do something else. Step to reproduce:

> * start a new law:

>

> * add some trigger:

>

> * reselect trigger and this section:

>

> * add some condition, we have overlap here:

>

> * selecting something else will fix it:

>

> * but if you delete this condition then you will have a lot of space here:

>

_Originally posted by @SlayksWood in https://github.com/StrangeLoopGames/EcoIssues/issues/16954#issuecomment-652828277_ | priority | part of ui isn t updated immediately some elements in law don t upgrade if you add delete something you need to select fields or do something else step to reproduce start a new law add some trigger reselect trigger and this section add some condition we have overlap here selecting something else will fix it but if you delete this condition then you will have a lot of space here originally posted by slaykswood in | 1 |

370,221 | 10,927,010,075 | IssuesEvent | 2019-11-22 15:49:47 | she-code-africa/SCA-Website | https://api.github.com/repos/she-code-africa/SCA-Website | closed | Set up staging environment | priority: medium type: task | This is to deploy the website for testing purposes; it is also the environment we will use for continuous testing.

We are yet to decide on a hosting provider. Maybe Heroku? | 1.0 | Set up staging environment - This is to deploy the website for testing purposes; it is also the environment we will use for continuous testing.

We are yet to decide on a hosting provider. Maybe Heroku? | priority | set up staging environment this is to deploy the website for testing purposes it is also the environment we will use for continuous testing we are yet to decide on a hosting provider maybe heroku | 1 |

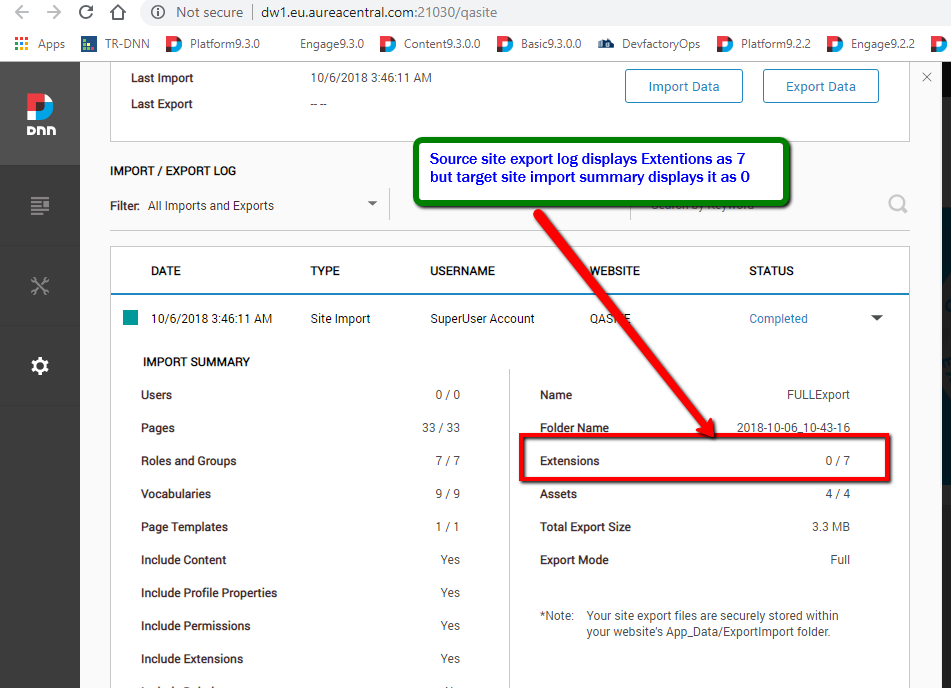

381,923 | 11,297,925,960 | IssuesEvent | 2020-01-17 07:38:45 | dnnsoftware/Dnn.Platform | https://api.github.com/repos/dnnsoftware/Dnn.Platform | closed | Processed count for import extension is showing 0 after import site is completed | Area: AE > PersonaBar Ext > SiteImportExport.Web Effort: Medium Priority: Medium Status: Closed Type: Bug | <!--

If you need community support or would like to solicit a Request for Comments (RFC), please post to the DNN Community forums at https://dnncommunity.org/forums for now. In the future, we are planning to implement a more robust solution for cultivating new ideas and nurturing these from concept to creation. We will update this template when this solution is generally available. In the meantime, we appreciate your patience as we endeavor to streamline our GitHub focus and efforts.

Please read the CONTRIBUTING guidelines at https://github.com/dnnsoftware/Dnn.Platform/blob/development/CONTRIBUTING.md prior to submitting an issue.

Any potential security issues SHOULD NOT be posted on GitHub. Instead, please send an email to security@dnnsoftware.com.

-->

## Description of bug

Processed count for import extension is showing 0 after import site is completed

## Steps to reproduce

1. Login as host user into DNN Platform.

2. Go to Persona Bar > Settings > Import/Export

3. Select the default site and export it with all the options enabled and mode FULL. Check the no of extension, no of Pages & No of Assets displayed on the export summary report.

4. Login to new site, Go to Persona Bar > Settings > Import/Export

5. Import the Exported file into new site.

6. Check the successful import summary.

## Current behavior

Extensions is showing 0/7 after import is completed

## Expected behavior

Extensions should show 7/7 after import is completed.

## Screenshots

## Error information

Provide any error information (console errors, error logs, etc.) related to this bug.

## Additional context

The processed count should always show the number of items processed, even if the extension import did not happen.

## Affected version

<!--

Please add X in at least one of the boxes as appropriate. In order for an issue to be accepted, a developer needs to be able to reproduce the issue on a currently supported version. If you are looking for a workaround for an issue with an older version, please visit the forums at https://dnncommunity.org/forums

-->

* [x] 10.0.0 alpha build

* [x] 9.5.0 alpha build

* [x] 9.4.4 latest supported release

## Affected browser

<!--

Check all that apply, and add more if necessary. As appropriate, please specify the exact version(s) of the browser and operating system.

-->

* [x] Chrome

* [x] Firefox

* [x] Safari

* [x] Internet Explorer 11

* [x] Microsoft Edge (Classic)

* [x] Microsoft Edge Chromium

| 1.0 | Processed count for import extension is showing 0 after import site is completed - <!--

If you need community support or would like to solicit a Request for Comments (RFC), please post to the DNN Community forums at https://dnncommunity.org/forums for now. In the future, we are planning to implement a more robust solution for cultivating new ideas and nurturing these from concept to creation. We will update this template when this solution is generally available. In the meantime, we appreciate your patience as we endeavor to streamline our GitHub focus and efforts.

Please read the CONTRIBUTING guidelines at https://github.com/dnnsoftware/Dnn.Platform/blob/development/CONTRIBUTING.md prior to submitting an issue.

Any potential security issues SHOULD NOT be posted on GitHub. Instead, please send an email to security@dnnsoftware.com.

-->

## Description of bug

Processed count for import extension is showing 0 after import site is completed

## Steps to reproduce

1. Login as host user into DNN Platform.

2. Go to Persona Bar > Settings > Import/Export

3. Select the default site and export it with all the options enabled and mode FULL. Check the no of extension, no of Pages & No of Assets displayed on the export summary report.

4. Login to new site, Go to Persona Bar > Settings > Import/Export

5. Import the Exported file into new site.

6. Check the successful import summary.

## Current behavior

Extensions is showing 0/7 after import is completed

## Expected behavior

Extensions should show 7/7 after import is completed.

## Screenshots

## Error information

Provide any error information (console errors, error logs, etc.) related to this bug.

## Additional context

The processed count should always show the number of items processed, even if the extension import did not happen.

## Affected version

<!--

Please add X in at least one of the boxes as appropriate. In order for an issue to be accepted, a developer needs to be able to reproduce the issue on a currently supported version. If you are looking for a workaround for an issue with an older version, please visit the forums at https://dnncommunity.org/forums

-->

* [x] 10.0.0 alpha build

* [x] 9.5.0 alpha build

* [x] 9.4.4 latest supported release

## Affected browser

<!--

Check all that apply, and add more if necessary. As appropriate, please specify the exact version(s) of the browser and operating system.

-->

* [x] Chrome

* [x] Firefox

* [x] Safari

* [x] Internet Explorer 11

* [x] Microsoft Edge (Classic)

* [x] Microsoft Edge Chromium

| priority | processed count for import extension is showing after import site is completed if you need community support or would like to solicit a request for comments rfc please post to the dnn community forums at for now in the future we are planning to implement a more robust solution for cultivating new ideas and nurturing these from concept to creation we will update this template when this solution is generally available in the meantime we appreciate your patience as we endeavor to streamline our github focus and efforts please read the contributing guidelines at prior to submitting an issue any potential security issues should not be posted on github instead please send an email to security dnnsoftware com description of bug processed count for import extension is showing after import site is completed steps to reproduce login as host user into dnn platform go to persona bar settings import export select the default site and export it with all the options enabled and mode full check the no of extension no of pages no of assets displayed on the export summary report login to new site go to persona bar settings import export import the exported file into new site check the successful import summary current behavior extensions is showing after import is completed expected behavior extensions should show after import is completed screenshots error information provide any error information console errors error logs etc related to this bug additional context the processed count should always show the number of items processed even if the extension import did not happen affected version please add x in at least one of the boxes as appropriate in order for an issue to be accepted a developer needs to be able to reproduce the issue on a currently supported version if you are looking for a workaround for an issue with an older version please visit the forums at alpha build alpha build latest supported release affected browser check all that apply and add more if necessary as appropriate please specify the exact version s of the browser and operating system chrome firefox safari internet explorer microsoft edge classic microsoft edge chromium | 1 |

766,420 | 26,883,091,369 | IssuesEvent | 2023-02-05 21:35:49 | schemathesis/schemathesis | https://api.github.com/repos/schemathesis/schemathesis | closed | [FEATURE] Do not copy schema components that are not referenced | Priority: Medium Type: Enhancement Difficulty: Medium | Now, all components are copied to intermediate schemas passed to `hypothesis-jsonschema` so all references can be resolved. The problem is that if the schema is huge, it might be problematic in terms of performance (a lot of things are serialized to JSON in `hypothesis-jsonschema`) or storage (if the end-user stores all failures). The idea is to copy only components that can be reached from the intermediate schema. | 1.0 | [FEATURE] Do not copy schema components that are not referenced - Now, all components are copied to intermediate schemas passed to `hypothesis-jsonschema` so all references can be resolved. The problem is that if the schema is huge, it might be problematic in terms of performance (a lot of things are serialized to JSON in `hypothesis-jsonschema`) or storage (if the end-user stores all failures). The idea is to copy only components that can be reached from the intermediate schema. | priority | do not copy schema components that are not referenced now all components are copied to intermediate schemas passed to hypothesis jsonschema so all references can be resolved the problem is that if the schema is huge it might be problematic in terms of performance a lot of things are serialized to json in hypothesis jsonschema or storage if the end user stores all failures the idea is to copy only components that can be reached from the intermediate schema | 1 |

469,054 | 13,496,999,422 | IssuesEvent | 2020-09-12 05:44:14 | csesoc/csesoc.unsw.edu.au | https://api.github.com/repos/csesoc/csesoc.unsw.edu.au | opened | Adoption of WEBP format | Priority: Medium Type: Enhancement | We should look into using webp files for our static website images instead of png files. The main reasons for this switch are:

- Smaller size

- Faster loading

Source https://insanelab.com/blog/web-development/webp-web-design-vs-jpeg-gif-png | 1.0 | Adoption of WEBP format - We should look into using webp files for our static website images instead of png files. The main reasons for this switch are:

- Smaller size

- Faster loading

Source https://insanelab.com/blog/web-development/webp-web-design-vs-jpeg-gif-png | priority | adoption of webp format we should look into using webp files for our static website images instead of png files the main reasons for this switch are smaller size faster loading source | 1 |

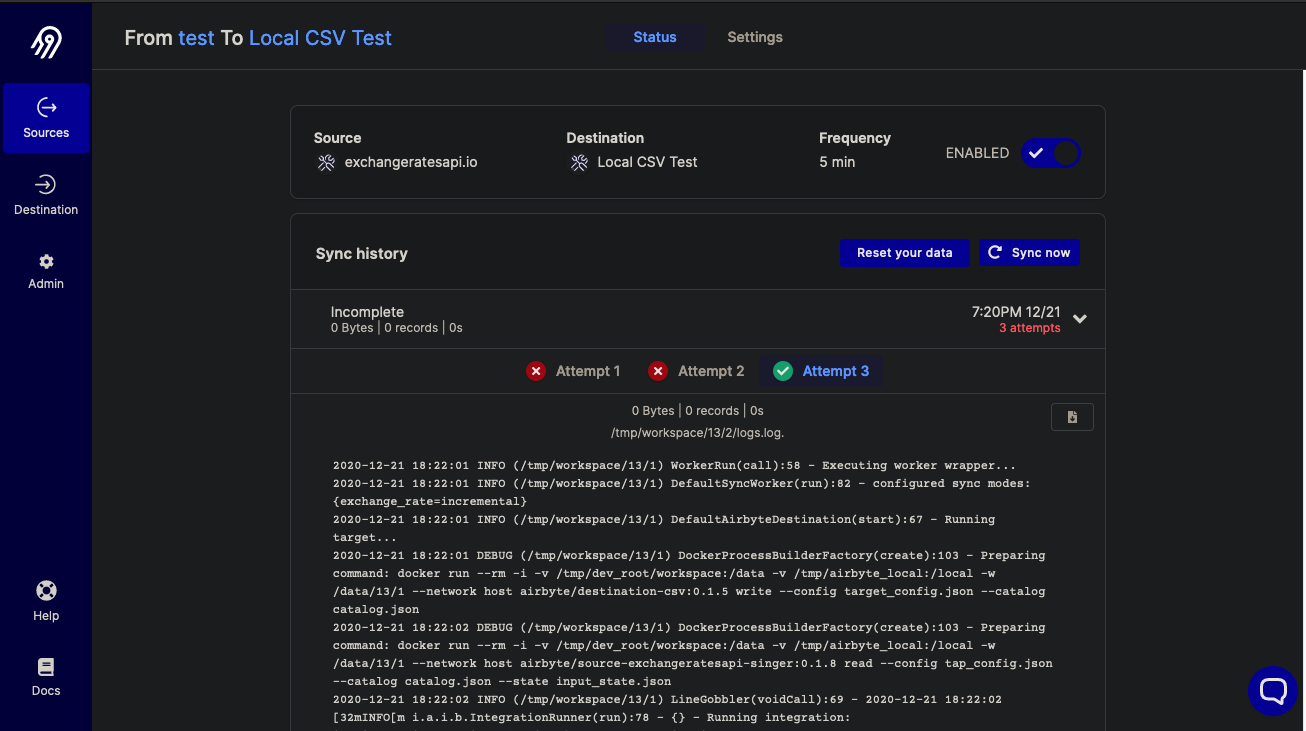

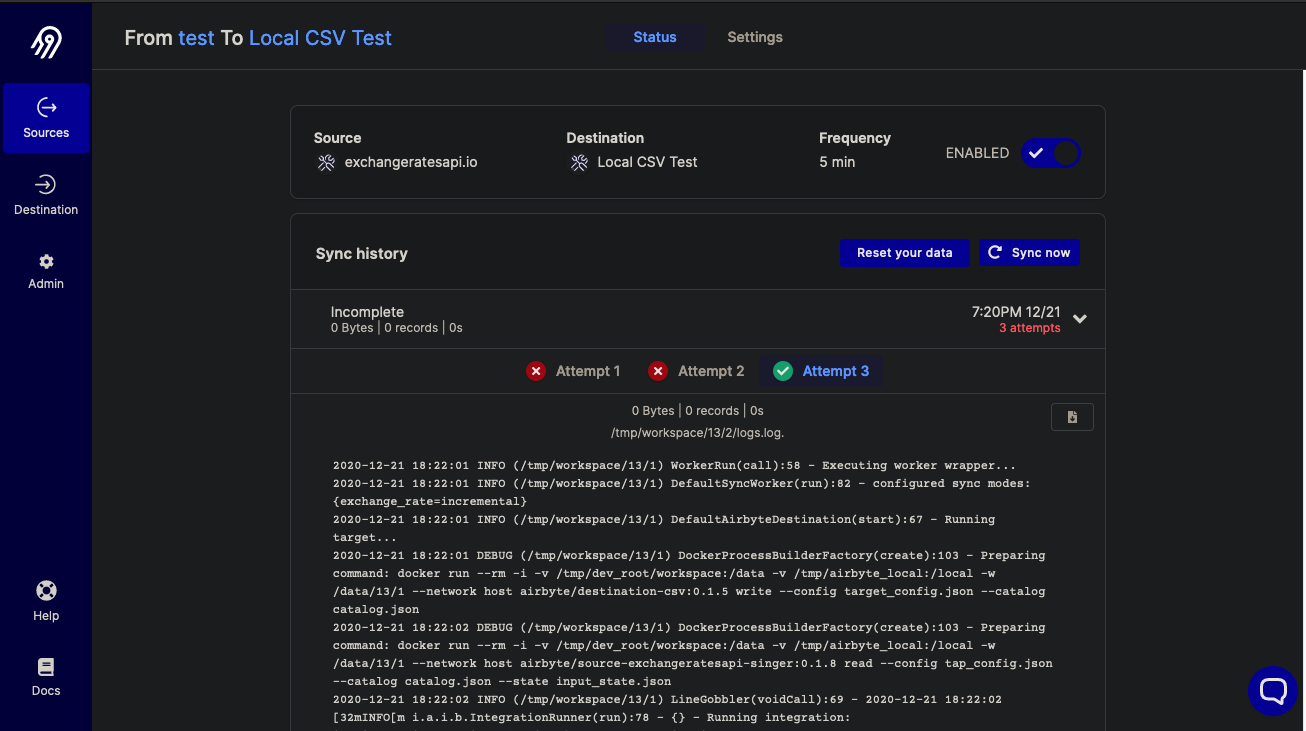

506,321 | 14,662,742,385 | IssuesEvent | 2020-12-29 08:03:06 | airbytehq/airbyte | https://api.github.com/repos/airbytehq/airbyte | closed | Wrong display in attempts status? | area/frontend priority/medium type/bug | ## Expected Behavior

I am testing:

- a source setup with ExchangeRateAPI

- a destination with Local CSV

- a frequency of every 5 mins

After letting the scheduler run successfully a few trials, I changed the permission to remove write access to the local directory and observe how the system behaves with failures...

## Current Behavior

I observe 3 attempts and for some reason, the third one is marked with a green check (even though it is still failing without write permissions):

(Sorry i forgot to turn off my "dark" theme mode in my browser for the screenshot..)

| 1.0 | Wrong display in attempts status? - ## Expected Behavior

I am testing:

- a source setup with ExchangeRateAPI

- a destination with Local CSV

- a frequency of every 5 mins

After letting the scheduler run successfully a few trials, I changed the permission to remove write access to the local directory and observe how the system behaves with failures...

## Current Behavior

I observe 3 attempts and for some reason, the third one is marked with a green check (even though it is still failing without write permissions):

(Sorry i forgot to turn off my "dark" theme mode in my browser for the screenshot..)

| priority | wrong display in attempts status expected behavior i am testing a source setup with exchangerateapi a destination with local csv a frequency of every mins after letting the scheduler run successfully a few trials i changed the permission to remove write access to the local directory and observe how the system behaves with failures current behavior i observe attempts and for some reason the third one is marked with a green check even though it is still failing without write permissions sorry i forgot to turn off my dark theme mode in my browser for the screenshot | 1 |

320,345 | 9,779,634,620 | IssuesEvent | 2019-06-07 14:56:21 | canonical-web-and-design/mir-server.io | https://api.github.com/repos/canonical-web-and-design/mir-server.io | closed | The main image is of Gnome desktop | Priority: Medium | The image should be Mir related, or at least related to digital signage/kiosk.

Even a picture of Ubuntu Touch would be relevant. | 1.0 | The main image is of Gnome desktop - The image should be Mir related, or at least related to digital signage/kiosk.

Even a picture of Ubuntu Touch would be relevant. | priority | the main image is of gnome desktop the image should be mir related or at least related to digital signage kiosk even a picture of ubuntu touch would be relevant | 1 |

289,333 | 8,869,140,312 | IssuesEvent | 2019-01-11 03:31:50 | Hyracan/48532854823523 | https://api.github.com/repos/Hyracan/48532854823523 | closed | Add IP addresses to the network port for rotation (see comments) | Medium priority enchancement | List of IP addresses that must be in rotation in addition to the proxy:

92.53.89.114 - currently used by the site and already in rotation.

92.53.89.115

92.53.89.116

92.53.89.117

92.53.89.118 | 1.0 | Add IP addresses to the network port for rotation (see comments) - List of IP addresses that must be in rotation in addition to the proxy:

92.53.89.114 - currently used by the site and already in rotation.

92.53.89.115

92.53.89.116

92.53.89.117

92.53.89.118 | priority | add ip addresses to the network port for rotation see comments list of ip addresses that must be in rotation in addition to the proxy currently used by the site and already in rotation | 1 |

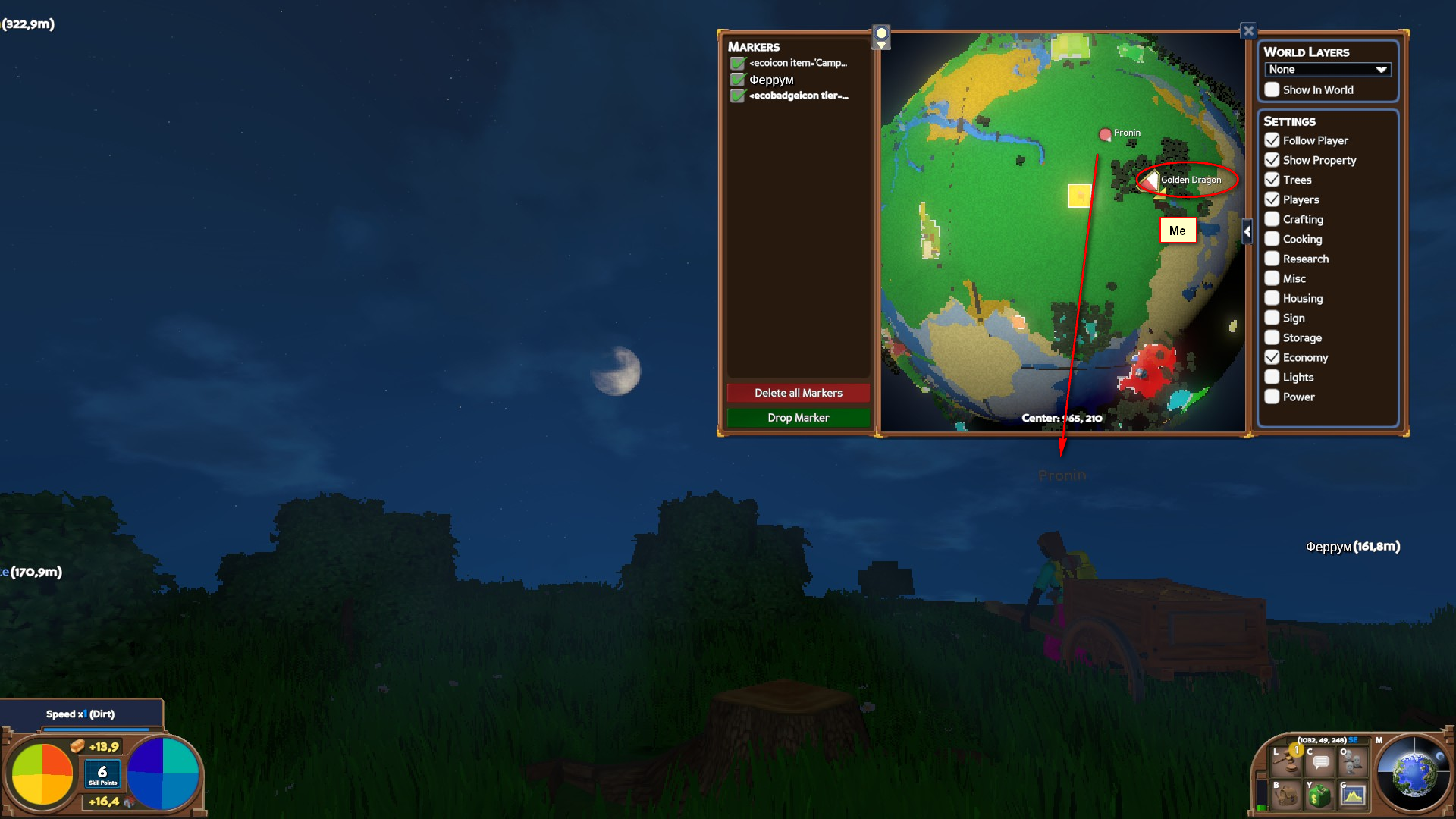

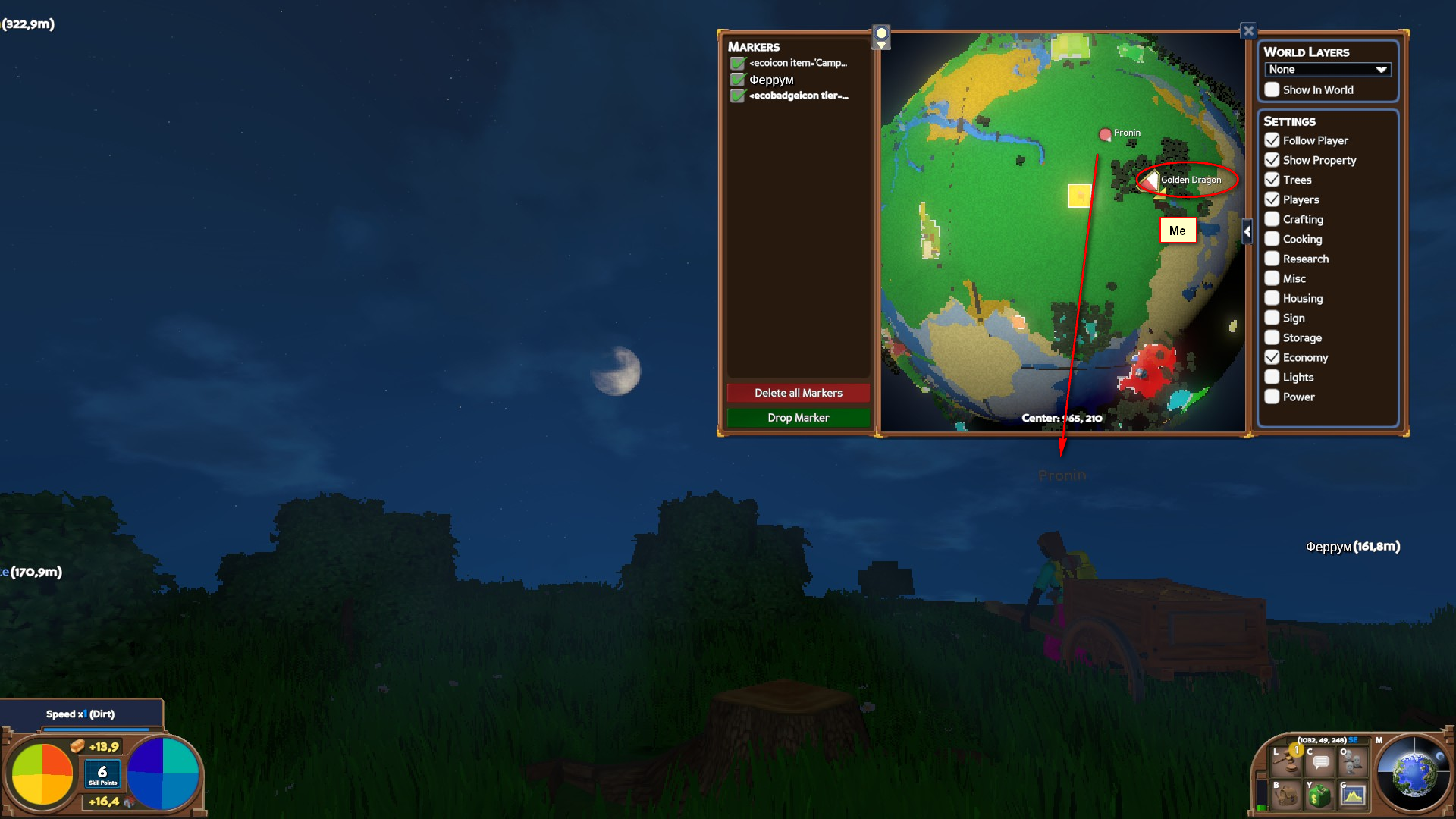

282,123 | 8,703,891,923 | IssuesEvent | 2018-12-05 17:50:07 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: minimap is not refreshed if players use a cart\vehicle. | Medium Priority | **Version:** 0.7.6.1 beta

| 1.0 | USER ISSUE: minimap is not refreshed if players use a cart\vehicle. - **Version:** 0.7.6.1 beta

| priority | user issue minimap is not refreshed if players use a cart vehicle version beta | 1 |

642,759 | 20,912,643,024 | IssuesEvent | 2022-03-24 10:42:32 | ASE-Projekte-WS-2021/ase-ws-21-unser-horsaal | https://api.github.com/repos/ASE-Projekte-WS-2021/ase-ws-21-unser-horsaal | opened | (ONBOARDING) Onboarding on first use of the app | Medium Priority | As a user, I want to be introduced to the app the first time I use it, so that I understand how to use it and am motivated to use its features. | 1.0 | (ONBOARDING) Onboarding on first use of the app - As a user, I want to be introduced to the app the first time I use it, so that I understand how to use it and am motivated to use its features. | priority | onboarding onboarding on first use of the app as a user i want to be introduced to the app the first time i use it so that i understand how to use it and am motivated to use its features | 1 |

664,068 | 22,238,665,919 | IssuesEvent | 2022-06-09 01:06:46 | Cockatrice/Cockatrice | https://api.github.com/repos/Cockatrice/Cockatrice | closed | Sound Do Not Overlap | App - Cockatrice Bug Medium Priority | **System Information:**

Client Version: 2.8.0 (2021-01-26)

Client Operating System: Windows 10 (10.0)

Build Architecture: 64-bit

Qt Version: 5.12.9

System Locale: en_US

Install Mode: Standard

_______________________________________________________________________________________

I have uploaded a custom sound pack, but I've noticed that after I have one sound play, I need to wait until that sound file ends completely before any other sound plays instead of overlapping the sounds.

_______________________________________________________________________________________

**Steps to reproduce:**

- Do any action that produces a sound

- Do a second action that should produce a sound while the previous sound is still playing

| 1.0 | Sound Do Not Overlap - **System Information:**

Client Version: 2.8.0 (2021-01-26)

Client Operating System: Windows 10 (10.0)

Build Architecture: 64-bit

Qt Version: 5.12.9

System Locale: en_US

Install Mode: Standard

_______________________________________________________________________________________

I have uploaded a custom sound pack, but I've noticed that after I have one sound play, I need to wait until that sound file ends completely before any other sound plays instead of overlapping the sounds.

_______________________________________________________________________________________

**Steps to reproduce:**

- Do any action that produces a sound

- Do a second action that should produce a sound while the previous sound is still playing

| priority | sound do not overlap system information client version client operating system windows build architecture bit qt version system locale en us install mode standard i have uploaded a custom sound pack but i ve noticed that after i have one sound play i need to wait until that sound file ends completely before any other sound plays instead of overlapping the sounds steps to reproduce do any action that produces a sound do a second action that should produce a sound while the previous sound is still playing | 1 |

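115,550 | 4,675,789,507 | IssuesEvent | 2016-10-07 09:15:39 | BinPar/PPD | https://api.github.com/repos/BinPar/PPD | opened | PLANNING REPORT: ADD DATE FIELDS TO SCREEN AND EXCEL | Priority: Medium | Add the date fields that are used as filters, both on screen and in Excel.

Currently shown:

Fecha de publicación real en país de impresión (actual publication date in the country of printing)

Fecha prevista de venta en filal (expected sale date at the subsidiary)

Fecha Servicio Novedad (new-release service date)

To add:

- Fecha de publicación inicial (initial publication date):

- Fecha de entrada en Depto. Producción (date of entry into the Production Dept.):

- Fecha estimada país de impresión (estimated date in the country of printing):

- Fecha de entrada en almacén (warehouse entry date):

- Fecha de alta en SAP (date of registration in SAP):

- Fecha de puesta a la venta real en filal (actual on-sale date at the subsidiary):

FIX: replace *filal with filial | 1.0 | PLANNING REPORT: ADD DATE FIELDS TO SCREEN AND EXCEL - Add the date fields that are used as filters, both on screen and in Excel.

Currently shown:

Fecha de publicación real en país de impresión (actual publication date in the country of printing)

Fecha prevista de venta en filal (expected sale date at the subsidiary)

Fecha Servicio Novedad (new-release service date)

To add:

- Fecha de publicación inicial (initial publication date):

- Fecha de entrada en Depto. Producción (date of entry into the Production Dept.):

- Fecha estimada país de impresión (estimated date in the country of printing):

- Fecha de entrada en almacén (warehouse entry date):

- Fecha de alta en SAP (date of registration in SAP):

- Fecha de puesta a la venta real en filal (actual on-sale date at the subsidiary):

FIX: replace *filal with filial | priority | planning report add date fields to screen and excel add the date fields that are used as filters both on screen and in excel currently shown fecha de publicación real en país de impresión actual publication date in the country of printing fecha prevista de venta en filal expected sale date at the subsidiary fecha servicio novedad new release service date to add fecha de publicación inicial initial publication date fecha de entrada en depto producción date of entry into the production dept fecha estimada país de impresión estimated date in the country of printing fecha de entrada en almacén warehouse entry date fecha de alta en sap date of registration in sap fecha de puesta a la venta real en filal actual on sale date at the subsidiary fix replace filal with filial | 1 |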

798,253 | 28,241,173,028 | IssuesEvent | 2023-04-06 07:17:28 | yunki-kim/card-monkey-BE-refactor | https://api.github.com/repos/yunki-kim/card-monkey-BE-refactor | opened | [refactor] Add exception handling for the password change feature | Status: In Progress For: Backend Priority: Medium Type: Feature | ## Description

Add exception handling to the password change method

## Tasks

- [ ] Add exception handling

## Reference | 1.0 | [refactor] Add exception handling for the password change feature - ## Description

Add exception handling to the password change method

## Tasks

- [ ] Add exception handling

## Reference | priority | add exception handling for the password change feature description add exception handling to the password change method tasks add exception handling reference | 1 |

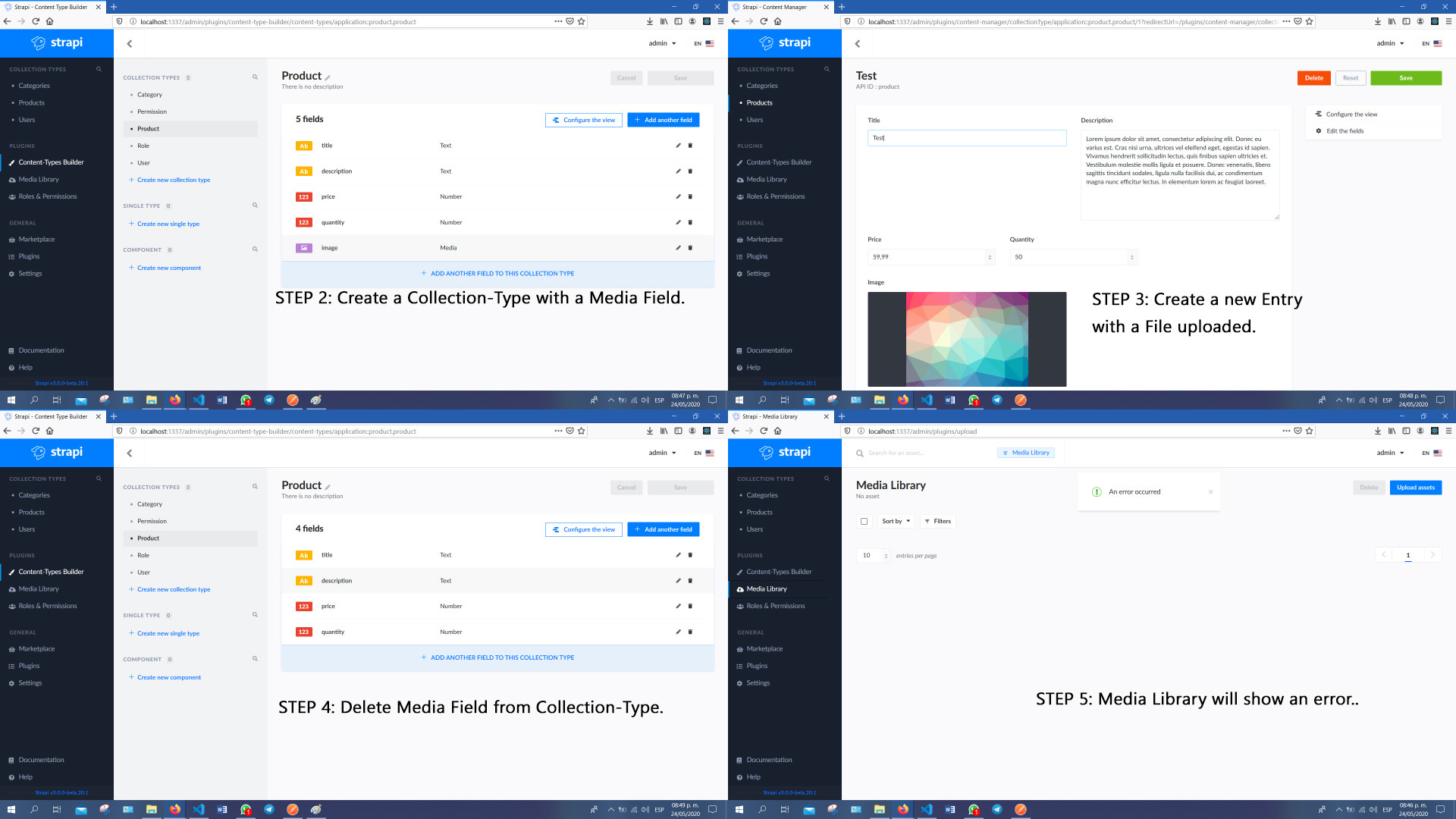

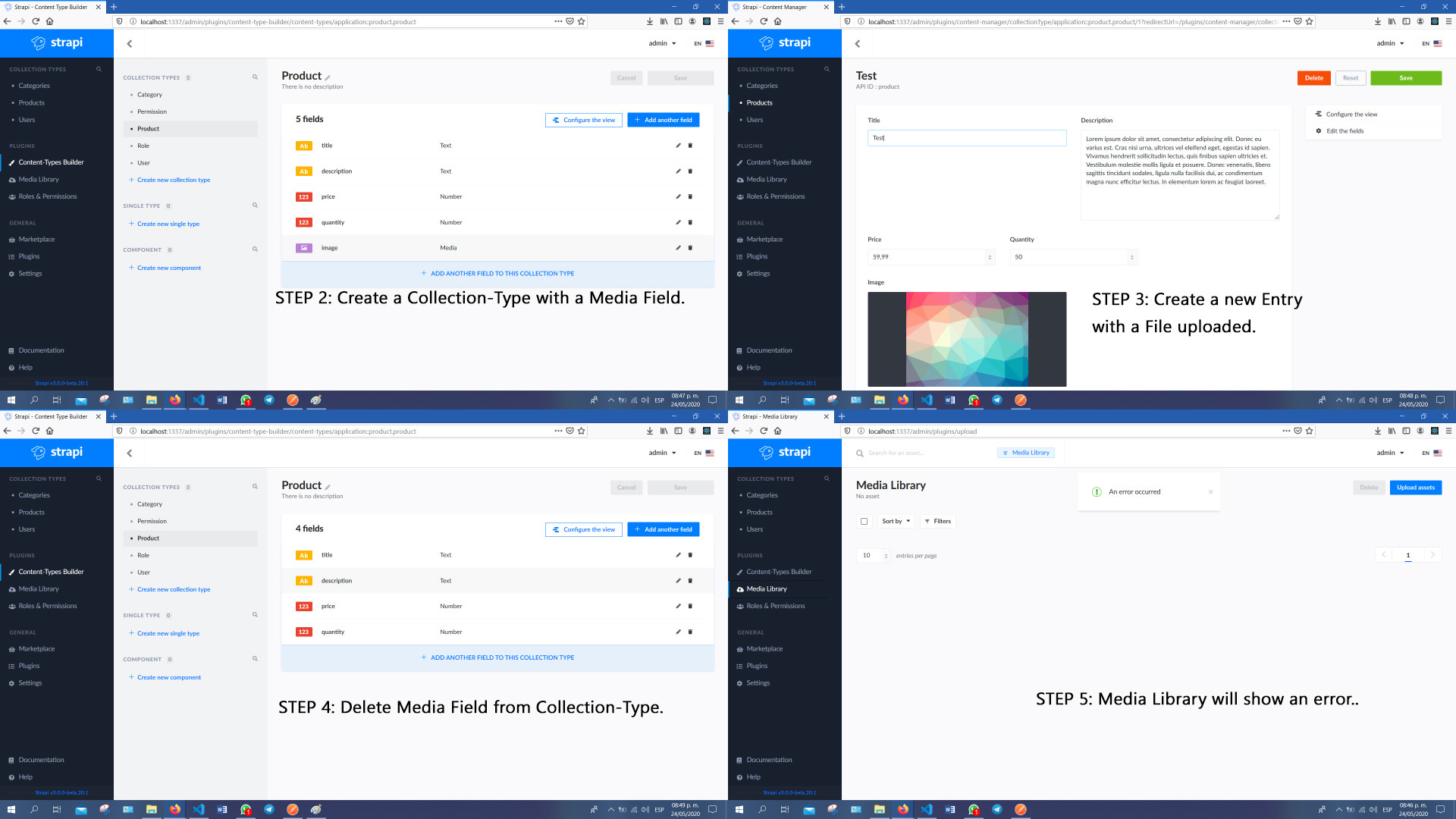

489,377 | 14,105,494,006 | IssuesEvent | 2020-11-06 13:35:38 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | Break Media Lib when deleting a Media field from an Content Type | priority: medium source: plugin:upload status: confirmed type: bug | # **Bug**

In Strapi, after deleting a **Media** Field from a **Collection-Type**, a **GET request to /upload/files** throws an **Error**.

This should not happen.

**Steps to reproduce the behavior**

1. Create a new Strapi App.

2. Create a **Collection-Type** with a **Media** Field.

3. Create a new **Entry** from this **Collection-Type** with a **File** uploaded.

4. Delete the **Media** Field from the **Collection-Type**.

5. Finally going to the Media Library (**GET request to /upload/files**) will result in an **ERROR**.

**Expected behavior**

Deleting a **Media** Field from a **Collection-Type** should remove any relation between the Entries that used **Media** as a Field and the uploaded files.

**Screenshots**

**Code snippets**

This Bug can be achieved **without** using code, this scenario **only uses the Strapi CMS**.

**System**

- Node.js version: v12.16.1.

- NPM version: 6.13.2.

- Strapi version: v3.0.0-beta.20.1

- Database: Default (SQLite I believe).

- Operating system: Windows.

# **FIX**

I did find a "solution" to this issue.

1. Add the Media field back to the Collection-Type.

2. Remove the Media field manually from every Entry.

3. Now you can remove the Media field from the collection-type without triggering this error.

## **Possible solution**

As stated before, deleting the Media field from the Collection-Type should remove the Media attached to every Entry that uses it.

| 1.0 | Break Media Lib when deleting a Media field from an Content Type - # **Bug**

In Strapi, after deleting a **Media** Field from a **Collection-Type**, a **GET request to /upload/files** throws an **Error**.

This should not happen.

**Steps to reproduce the behavior**

1. Create a new Strapi App.

2. Create a **Collection-Type** with a **Media** Field.

3. Create a new **Entry** from this **Collection-Type** with a **File** uploaded.

4. Delete the **Media** Field from the **Collection-Type**.

5. Finally going to the Media Library (**GET request to /upload/files**) will result in an **ERROR**.

**Expected behavior**

Deleting a **Media** Field from a **Collection-Type** should remove any relation between the Entries that used **Media** as a Field and the uploaded files.

**Screenshots**

**Code snippets**

This Bug can be achieved **without** using code, this scenario **only uses the Strapi CMS**.

**System**

- Node.js version: v12.16.1.

- NPM version: 6.13.2.

- Strapi version: v3.0.0-beta.20.1

- Database: Default (SQLite I believe).

- Operating system: Windows.

# **FIX**

I did find a "solution" to this issue.

1. Add the Media field back to the Collection-Type.

2. Remove the Media field manually from every Entry.

3. Now you can remove the Media field from the collection-type without triggering this error.

## **Possible solution**

As stated before, deleting the Media field from the Collection-Type should remove the Media attached to every Entry that uses it.

| priority | break media lib when deleting a media field from an content type bug in strapi after deleting a media field from a collection type a get request to upload files throws an error this should not happened steps to reproduce the behavior create a new strapi app create a collection type with a media field create a new entry from this collection type with a file uploaded delete the media field from the collection type finally going to the media library get request to upload files will result in an error expected behavior when deleting a media field from a collection type should remove any relation between the entries that used media as a fields and the uploaded files screenshots code snippets this bug can be achieved without using code this scenario only uses the strapi cms system node js version npm version strapi version beta database default sqllite i believe operating system windows fix i did find a solution to this issue add the media field back to the collection type remove the media field manually from every entry now you can remove the media field from the collection type without triggering this error possible solution as stated before when deleting the media field from the collection type should remove the media attached to every entry that uses it | 1 |
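A minimal sketch of the behavior the Strapi report above asks for, written with plain Python dictionaries rather than Strapi's real storage layer. The row layout (`file_id`, `related_type`, `related_id`, `field`) and the function name are assumptions made for illustration only; the point is simply that relations pointing at a deleted field get dropped while unrelated ones survive.

```python
def drop_field_relations(file_relations, content_type, field_name):
    """Return only the relations that do NOT point at the deleted field."""
    return [
        rel for rel in file_relations
        if not (rel["related_type"] == content_type and rel["field"] == field_name)
    ]


# Hypothetical relation rows linking uploaded files to entries.
relations = [
    {"file_id": 1, "related_type": "article", "related_id": 10, "field": "cover"},
    {"file_id": 2, "related_type": "article", "related_id": 11, "field": "cover"},
    {"file_id": 3, "related_type": "author", "related_id": 5, "field": "avatar"},
]

# Simulate deleting the "cover" Media field from the "article" Collection-Type.
remaining = drop_field_relations(relations, "article", "cover")
print(remaining)
```

With the dangling relations gone, a listing of uploaded files no longer has to resolve a field that has been removed from the schema.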

351,487 | 10,519,400,623 | IssuesEvent | 2019-09-29 17:44:01 | cuappdev/ithaca-transit-ios | https://api.github.com/repos/cuappdev/ithaca-transit-ios | closed | Take out "Teleportation" alert if start and end location == each other | Priority: Medium Type: Bug | Seems to annoy a lot of ppl | 1.0 | Take out "Teleportation" alert if start and end location == each other - Seems to annoy a lot of ppl | priority | take out teleportation alert if start and end location each other seems to annoy a lot of ppl | 1 |

479,643 | 13,804,164,802 | IssuesEvent | 2020-10-11 07:33:42 | AY2021S1-TIC4001-2/tp | https://api.github.com/repos/AY2021S1-TIC4001-2/tp | closed | Update user guide | priority.Medium type.Task | ... with add income category, add expense category, and delete income/expense category commands. | 1.0 | Update user guide - ... with add income category, add expense category, and delete income/expense category commands. | priority | update user guide with add income category add expense category and delete income expense category commands | 1 |

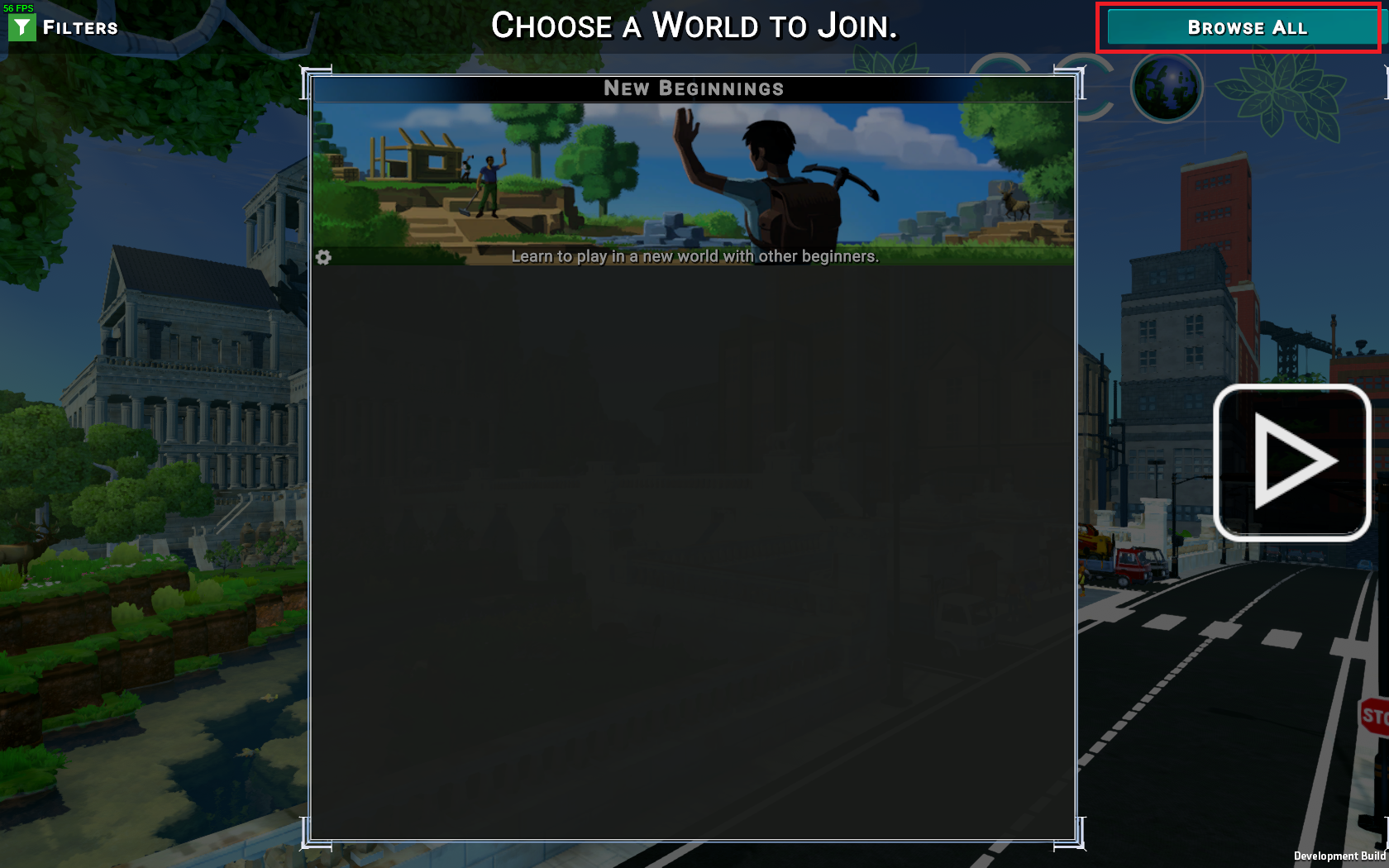

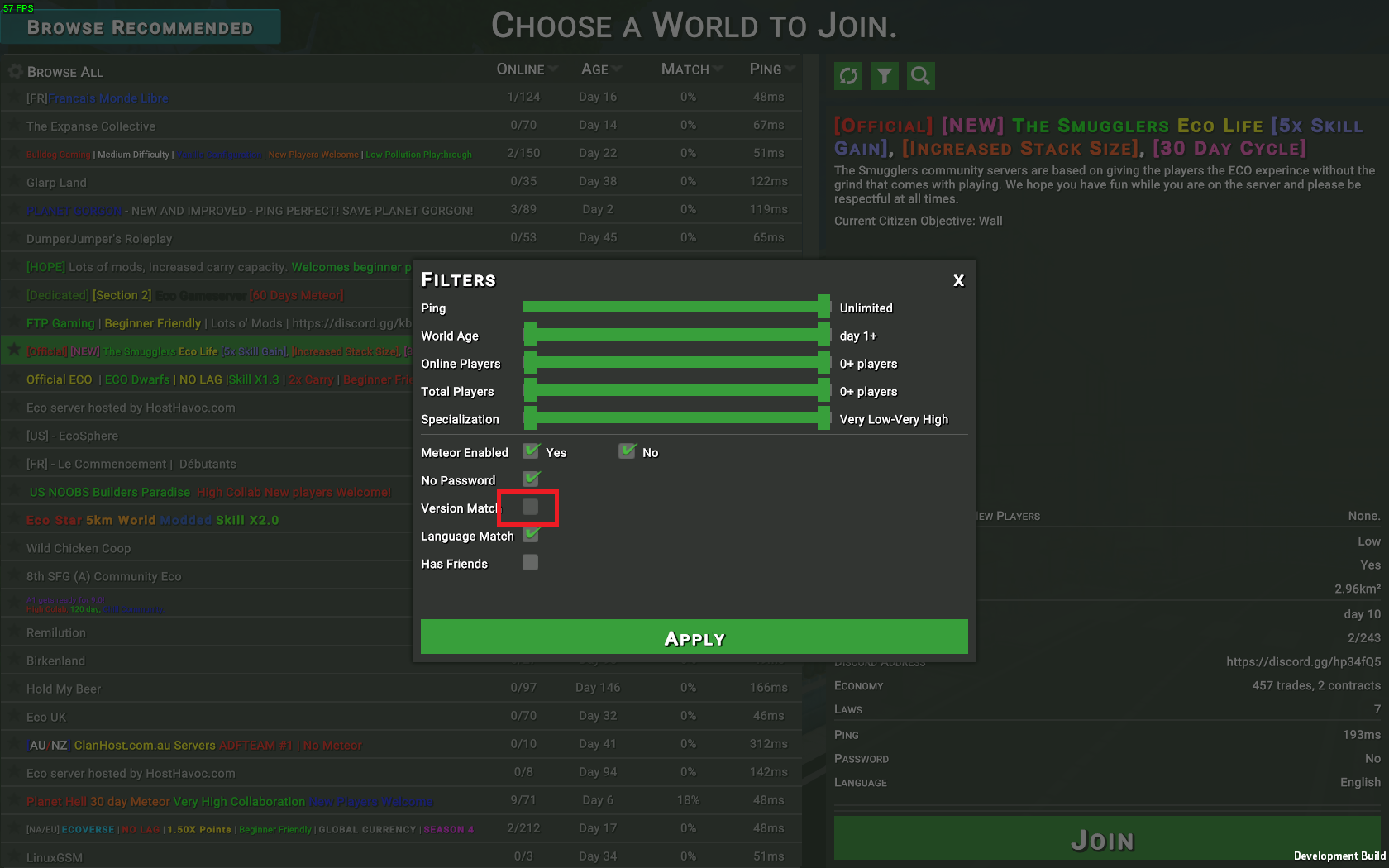

562,129 | 16,638,664,341 | IssuesEvent | 2021-06-04 04:50:42 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.2.x beta] Pending Elections icon always visible | Category: UI Priority: Medium Squad: Mountain Goat Status: Not reproduced Type: Bug | The Pending Elections icon on the right side of the screen is always visible since 0.9.2

Seen on two different servers (launched pre 0.9.2 then upgraded) and a fresh 0.9.2.2 (for testing purpose).

| 1.0 | [0.9.2.x beta] Pending Elections icon always visible - The Pending Elections icon on the right side of the screen is always visible since 0.9.2

Seen on two different servers (launched pre 0.9.2 then upgraded) and a fresh 0.9.2.2 (for testing purpose).

| priority | pending elections icon always visible the pending elections icon on the right side of the screen is always visible since seen on two different servers launched pre then upgraded and a fresh for testing purpose | 1 |

19,871 | 2,622,174,271 | IssuesEvent | 2015-03-04 00:15:56 | byzhang/leveldb | https://api.github.com/repos/byzhang/leveldb | closed | Allow leveldb to be binded to by FFI | auto-migrated OpSys-All Priority-Medium Type-Enhancement | ```

It would be nice if I could bind to leveldb using FFI

(https://github.com/ffi/ffi). To allow FFI bindings, leveldb needs to expose a

C API (extern "C" { ... }) and build a dynamically-linked shared-library

(libleveldb.so).

```

Original issue reported on code.google.com by `postmode...@gmail.com` on 29 Jul 2011 at 11:25 | 1.0 | Allow leveldb to be binded to by FFI - ```

It would be nice if I could bind to leveldb using FFI

(https://github.com/ffi/ffi). To allow FFI bindings, leveldb needs to expose a

C API (extern "C" { ... }) and build a dynamically-linked shared-library

(libleveldb.so).

```

Original issue reported on code.google.com by `postmode...@gmail.com` on 29 Jul 2011 at 11:25 | priority | allow leveldb to be binded to by ffi it would be nice if i could bind to leveldb using ffi to allow ffi bindings leveldb needs to expose a c api extern c and build a dynamically linked shared library libleveldb so original issue reported on code google com by postmode gmail com on jul at | 1 |
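To illustrate why the leveldb request above asks for both an `extern "C"` API and a shared library, here is a hedged sketch of an FFI binding from Python's `ctypes`. It assumes a `libleveldb` build that exports the C functions `leveldb_major_version` and `leveldb_minor_version`; if the library or those symbols are missing, the function returns `None` instead of failing.

```python
import ctypes
import ctypes.util


def leveldb_version():
    """Return (major, minor) from the shared library, or None if unavailable."""
    path = ctypes.util.find_library("leveldb")
    if path is None:
        return None  # libleveldb is not installed on this machine
    try:
        lib = ctypes.CDLL(path)
        major = lib.leveldb_major_version
        minor = lib.leveldb_minor_version
    except (OSError, AttributeError):
        return None  # library failed to load or lacks the assumed C symbols
    # Unmangled C symbols are what make this lookup by name possible.
    major.restype = ctypes.c_int
    minor.restype = ctypes.c_int
    return major(), minor()


print(leveldb_version())
```

A C++-only interface would expose mangled symbol names, which is why a plain C surface is the usual prerequisite for FFI bindings of this kind.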

275,156 | 8,575,062,185 | IssuesEvent | 2018-11-12 16:19:15 | naccyde/yall | https://api.github.com/repos/naccyde/yall | opened | Check log display when receiving SIGSEGV or such signal | Priority: Medium Status: On Hold Type: Enhancement | ## Summary

Check the library's behavior when the application receives a system signal (`SIGSEGV` and such).

## Steps to reproduce

N/A

## What is the current bug behavior?

N/A

## What is the expected correct behavior?

All log messages should be displayed. The current buffer of the writer thread should not be discarded, as it could contain a set of crash-relevant logs.

## Relevant logs and/or screenshots

N/A

## Possible fixes

If some logs are missing it could be interesting to find a way to write them before closing. Catching signals is not the better way... | 1.0 | Check log display when receiving SIGSEGV or such signal - ## Summary

Check the library's behavior when the application receives a system signal (`SIGSEGV` and such).

## Steps to reproduce

N/A

## What is the current bug behavior?

N/A

## What is the expected correct behavior?

All log messages should be displayed. The current buffer of the writer thread should not be discarded, as it could contain a set of crash-relevant logs.

## Relevant logs and/or screenshots

N/A

## Possible fixes

If some logs are missing it could be interesting to find a way to write them before closing. Catching signals is not the better way... | priority | check log display when receiving sigsegv or such signal summary check the library behavior when the application receive a system s signal sigsegv and such steps to reproduce n a what is the current bug behavior n a what is the expected correct behavior all the log message should be displayed the current buffer of the writer thread should not be discarded as is could contains a set of crash relevant logs relevant logs and or screenshots n a possible fixes if some logs are missing it could be interesting to find a way to write them before closing catching signals is not the better way | 1 |
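The idea in the yall report above, draining the writer's buffer before the process goes away, can be sketched in Python. This is an illustration only: yall is a C library, the class and method names here are hypothetical, and `atexit` hooks run on normal interpreter exit, not after a real `SIGSEGV`.

```python
import atexit
import queue


class BufferedLog:
    """Queue messages for a writer and drain whatever is left before exit."""

    def __init__(self, sink):
        self._pending = queue.Queue()
        self._sink = sink  # any object with an append() method
        atexit.register(self.drain)  # runs on normal interpreter exit only

    def log(self, message):
        self._pending.put(message)

    def drain(self):
        """Write every queued message to the sink, oldest first."""
        while True:
            try:
                self._sink.append(self._pending.get_nowait())
            except queue.Empty:
                return


written = []
log = BufferedLog(written)
log.log("before crash 1")
log.log("before crash 2")
log.drain()
print(written)
```

The sketch only shows that no queued message is lost when the drain step runs; how to trigger that step reliably during a crash is the open question the issue raises.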

780,987 | 27,417,609,706 | IssuesEvent | 2023-03-01 14:45:59 | PrefectHQ/prefect | https://api.github.com/repos/PrefectHQ/prefect | closed | Orion - add search functionality in block selection. | enhancement status:accepted ui priority:medium | ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar request and didn't find it.

- [X] I searched the Prefect documentation for this feature.

### Prefect Version

2.x

### Describe the current behavior

If I define a block as an input for a flow or as an attribute of another block, I get a drop-down in Orion. If the list is long, I have to scroll through lots of options.

### Describe the proposed behavior

Add search functionality to the drop-down. If I click a field in Orion that is of any block type, I can search through that list by typing.

The behavior would be similar to how the search for issues here in GitHub works.

<img src="https://user-images.githubusercontent.com/24698503/197032103-1248981b-0436-4ebd-8783-24b1fb01b095.jpg" width="300">

### Example Use

This is especially helpful if one has lots of blocks of the same type. Say I have a custom Block called `ObjectDetectionModel`.

Each of these contains one trained and published model. If I have 100 of these the pure drop-down becomes a pain to use.

### Additional context

_No response_ | 1.0 | Orion - add search functionality in block selection. - ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar request and didn't find it.

- [X] I searched the Prefect documentation for this feature.

### Prefect Version

2.x

### Describe the current behavior

If I define a block as an input for a flow or as an attribute of another block, I get a drop-down in Orion. If the list is long, I have to scroll through lots of options.

### Describe the proposed behavior

Add search functionality to the drop-down. If I click a field in Orion that is of any block type, I can search through that list by typing.

The behavior would be similar to how the search for issues here in GitHub works.

<img src="https://user-images.githubusercontent.com/24698503/197032103-1248981b-0436-4ebd-8783-24b1fb01b095.jpg" width="300">

### Example Use

This is especially helpful if one has lots of blocks of the same type. Say I have a custom Block called `ObjectDetectionModel`.

Each of these contains one trained and published model. If I have 100 of these the pure drop-down becomes a pain to use.

### Additional context

_No response_ | priority | orion add search functionality in block selection first check i added a descriptive title to this issue i used the github search to find a similar request and didn t find it i searched the prefect documentation for this feature prefect version x describe the current behavior if i define a block as an input for a flow or as a attribute of another block i get a drop down in orion if the list is long i have to scroll through lots of options describe the proposed behavior add search functionality to the drop down if i click a field in orion that is of any block type i can search through that list by typing the behavior would be similar how the search for issues here in github works example use this is especially helpful if one has lots of blocks of the same type say i have a custom block called objectdetectionmodel each of these contains one trained and published model if i have of these the pure drop down becomes a pain to use additional context no response | 1 |
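The type-to-search behavior proposed in the Prefect request above could be sketched as a case-insensitive substring filter. This is not Orion's implementation (the UI is not written in Python); the function name and the sample block names are hypothetical.

```python
def filter_blocks(block_names, query):
    """Return block names containing the query, ignoring case."""
    needle = query.strip().lower()
    if not needle:
        return list(block_names)  # an empty search shows everything
    return [name for name in block_names if needle in name.lower()]


# Hypothetical names of saved blocks that share one block type.
blocks = ["yolo-v5-cars", "yolo-v5-people", "ssd-traffic-signs"]
print(filter_blocks(blocks, "YOLO"))
```

With a hundred blocks of the same type, typing a few characters narrows the list instead of forcing a scroll through every option.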

93,872 | 3,912,667,215 | IssuesEvent | 2016-04-20 11:25:03 | parallelus/Plugin-Installer-for-Runway | https://api.github.com/repos/parallelus/Plugin-Installer-for-Runway | opened | Install free plugins from WP repo rather than from included zip files | enhancement Priority 2: Medium | We want to change the way the plugin installer works so that free plugins (such as Sidekick, Ninja Forms and Simple Colorbox for example) are installed from the WordPress plugin repository rather than from zip files included in the theme package.

For free plugins, when you choose them in the Runway admin to set up the plugin installer, currently it goes to the WordPress repository and downloads the zip files into the 'extensions/plugin-installer/plugins' folder. That's what we need to change; what we need now is for it to write references into the JSON file so that it knows which plugins to use, without keeping local copies.

Then, in the standalone theme, when users click Install for any of the free required or recommended plugins it installs it from the WordPress repository.

With regard to updating any of these free plugins, we don’t need to do anything at all with the plugin installer, just let WordPress do its normal update notifications thing. | 1.0 | Install free plugins from WP repo rather than from included zip files - We want to change the way the plugin installer works so that free plugins (such as Sidekick, Ninja Forms and Simple Colorbox for example) are installed from the WordPress plugin repository rather than from zip files included in the theme package.

For free plugins, when you choose them in the Runway admin to set up the plugin installer, currently it goes to the WordPress repository and downloads the zip files into the 'extensions/plugin-installer/plugins' folder. That's what we need to change; what we need now is for it to write references into the JSON file so that it knows which plugins to use, without keeping local copies.

Then, in the standalone theme, when users click Install for any of the free required or recommended plugins it installs it from the WordPress repository.

With regard to updating any of these free plugins, we don’t need to do anything at all with the plugin installer, just let WordPress do its normal update notifications thing. | priority | install free plugins from wp repo rather than from included zip files we want to change the way the plugin installer works so that free plugins such as sidekick ninja forms and simple colorbox for example are installed from the wordpress plugin repository rather than from zip files included in the theme package for free plugins when you choose them in the runway admin to set up the plugin installer currently it goes to the wordpress repository and downloads the zip files into the extensions plugin installer plugins folder that s what we need to change what we need now is for it to write references into the json file so that it knows what plugins to use but not have a local copies then in the standalone theme when users click install for any of the free required or recommended plugins it installs it from the wordpress repository with regard to updating any of these free plugins we don’t need to do anything at all with the plugin installer just let wordpress do its normal update notifications thing | 1 |
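The "write references into the JSON file" step from the plugin installer request above might look roughly like this. The manifest field names (`source`, `required`, `download_url`) and the slugs are assumptions, not the plugin installer's actual schema; the sketch only shows a manifest entry that points at the WordPress repository instead of a bundled zip.

```python
import json


def plugin_reference(slug, name, required=True):
    """Build a manifest entry that points at the WordPress.org repository."""
    return {
        "name": name,
        "slug": slug,
        "source": "wordpress-repo",  # hypothetical marker: no bundled zip
        "required": required,
        "download_url": f"https://downloads.wordpress.org/plugin/{slug}.zip",
    }


# Slugs are assumed for illustration.
manifest = [
    plugin_reference("ninja-forms", "Ninja Forms"),
    plugin_reference("simple-colorbox", "Simple Colorbox", required=False),
]
manifest_json = json.dumps(manifest, indent=2)
print(manifest_json)
```

Because the entry carries only a reference, the theme package stays small and updates are left to WordPress's normal update notifications.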

320,804 | 9,789,338,080 | IssuesEvent | 2019-06-10 09:33:17 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | Tutorial "Forage for food" glitch | Medium Priority QA Staging | 1. After restart tutorials sometimes You need to collect plants very far from your position.

2. Sometimes only 1 plant appears. I think the tutorial needs a minimum of 3 plants.

3. When you are too hungry to work, the "Forage for Food" tutorial appears but the markers don't.

| 1.0 | Tutorial "Forage for food" glitch - 1. After restart tutorials sometimes You need to collect plants very far from your position.

2. Sometimes only 1 plant appears. I think the tutorial needs a minimum of 3 plants.

3. When you are too hungry to work, the "Forage for Food" tutorial appears but the markers don't.

| priority | tutorial forage for food glitch after restart tutorials sometimes you need to collect plants very far from your position sometimes plant appears i think need minimum plants for tutorial when you hungry to work tutorial forage for food appears but markers don t appear | 1 |

594,288 | 18,042,376,639 | IssuesEvent | 2021-09-18 08:59:44 | medic-code/IOL-Assist | https://api.github.com/repos/medic-code/IOL-Assist | closed | Improve individual IOL Page styling | Style issue Medium priority | Currently have a bare-bones styling for this page. May want to think about a design theme across the App and apply changes to the IOL Page | 1.0 | Improve individual IOL Page styling - Currently have a bare-bones styling for this page. May want to think about a design theme across the App and apply changes to the IOL Page | priority | improve individual iol page styling currently have a bare bones styling for this page may want to think about a design theme across the app and apply changes to the iol page | 1 |

814,732 | 30,519,645,557 | IssuesEvent | 2023-07-19 07:08:43 | ArizonaGreenTea05/FinancialOverview | https://api.github.com/repos/ArizonaGreenTea05/FinancialOverview | opened | WinFormsFinance: Enhance sales | Kind: enhancement Module: WinFormsFinance Priority: medium | Depends on #50

- [ ] different pages for sale definition and overview

- [ ] main page contains table with all sales and combo box to choose if it should be calculated up/down to daily, weekly, monthly or yearly (similar to current all-sales)

- [ ] overview page contains multiple tables for daily, weekly, monthly, yearly

- [ ] add button on main and overview page leads to new dialog to define a new sale | 1.0 | WinFormsFinance: Enhance sales - Depends on #50

- [ ] different pages for sale definition and overview

- [ ] main page contains table with all sales and combo box to choose if it should be calculated up/down to daily, weekly, monthly or yearly (similar to current all-sales)

- [ ] overview page contains multiple tables for daily, weekly, monthly, yearly

- [ ] add button on main and overview page leads to new dialog to define a new sale | priority | winformsfinance enhance sales depends on different pages for sale definition and overview main page contains table with all sales and combo box to choose if it should be calculated up down to daily weekly monthly or yearly similar to current all sales overview page contains multiple tables for daily weekly monthly yearly add button on main and overview page leads to new dialog to define a new sale | 1 |
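The "calculated up/down to daily, weekly, monthly or yearly" option in the WinFormsFinance request above comes down to scaling an amount between periods. A minimal sketch, in Python rather than the project's own language, assuming a year of 365 days, 52 weeks, and 12 months; the names are hypothetical.

```python
# Assumed number of repetitions of each period within one year.
PERIODS_PER_YEAR = {"daily": 365, "weekly": 52, "monthly": 12, "yearly": 1}


def convert_amount(amount, from_period, to_period):
    """Scale an amount from one repetition period to another."""
    yearly_total = amount * PERIODS_PER_YEAR[from_period]
    return yearly_total / PERIODS_PER_YEAR[to_period]


print(convert_amount(100.0, "monthly", "yearly"))   # 1200.0
print(convert_amount(1200.0, "yearly", "monthly"))  # 100.0
```

Going through a yearly total keeps the table small: each period needs one factor rather than a conversion for every pair.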

128,292 | 5,052,295,734 | IssuesEvent | 2016-12-21 01:25:05 | JustBru00/RenamePlugin | https://api.github.com/repos/JustBru00/RenamePlugin | closed | Add messages.yml | Addition Request Medium Priority TODO | Requested by @Paras on spigotmc.org. https://www.spigotmc.org/threads/epicrename.51650/page-6#post-1749180

So basically redo the config system. :smile:

| 1.0 | Add messages.yml - Requested by @Paras on spigotmc.org. https://www.spigotmc.org/threads/epicrename.51650/page-6#post-1749180

So basically redo the config system. :smile:

| priority | add messages yml requested by paras on spigotmc org so basically redo the config system smile | 1 |

22,775 | 2,650,921,453 | IssuesEvent | 2015-03-16 06:46:31 | grepper/tovid | https://api.github.com/repos/grepper/tovid | closed | makexml tovid_encoded.mpg was not found; mplex created tovid_encoded.1.mpg | bug imported Priority-Medium wontfix | _From [DaleEMo...@gmail.com](https://code.google.com/u/109909325065118593133/) on October 05, 2007 08:45:02_

Howdy y'all;

When running tovid GUI 0.31 makexml can't find the file created by mplex.

Here's the specifics from my log file:

\- - - - -

mplex -V -f 8 -o /tmp/1/theWarANW.mpg.tovid_encoded.%d.mpg

/home/dalem/theWarANW.mpg.tovid_encoded.0/video.m2v

/home/dalem/theWarANW.mpg.tovid_encoded.0/audio.ac3

Multiplexing finished successfully

Output files:

4.2G /tmp/1/theWarANW.mpg.tovid_encoded.1.mpg

4.2G total

=========================================================

Statistics written to /home/dalem/.tovid/stats.tovid

Cleaning up...

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/video.yuv'

Removing temporary files...

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/tovid.scratch'

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/video.m2v'

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/audio.ac3'

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/tovid.log'

removed directory: `/home/dalem/theWarANW.mpg.tovid_encoded.0'

=========================================================

Done!

=========================================================

Running command: makexml -quiet -overwrite -dvd -menu

"/tmp/1/A_Necessary_War.mpg" "/tmp/1/theWarANW.mpg.tovid_encoded.mpg" -out

"/tmp/1/The_War"

\--------------------------------

makexml

A script to generate XML for authoring a VCD, SVCD, or DVD.

Part of the tovid suite, version 0.31 http://www.tovid.org --------------------------------

Adding a titleset-level menu using file: /tmp/1/A_Necessary_War.mpg

The file /tmp/1/theWarANW.mpg.tovid_encoded.mpg was not found. Exiting.

\- - - - -

Many thanks for any suggestions,

Dale E. Moore

**Attachment:** [bad1.log](http://code.google.com/p/tovid/issues/detail?id=14)

_Original issue: http://code.google.com/p/tovid/issues/detail?id=14_ | 1.0 | makexml tovid_encoded.mpg was not found; mplex created tovid_encoded.1.mpg - _From [DaleEMo...@gmail.com](https://code.google.com/u/109909325065118593133/) on October 05, 2007 08:45:02_

Howdy y'all;

When running tovid GUI 0.31 makexml can't find the file created by mplex.

Here's the specifics from my log file:

\- - - - -

mplex -V -f 8 -o /tmp/1/theWarANW.mpg.tovid_encoded.%d.mpg

/home/dalem/theWarANW.mpg.tovid_encoded.0/video.m2v

/home/dalem/theWarANW.mpg.tovid_encoded.0/audio.ac3

Multiplexing finished successfully

Output files:

4.2G /tmp/1/theWarANW.mpg.tovid_encoded.1.mpg

4.2G total

=========================================================

Statistics written to /home/dalem/.tovid/stats.tovid

Cleaning up...

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/video.yuv'

Removing temporary files...

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/tovid.scratch'

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/video.m2v'

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/audio.ac3'

removed `/home/dalem/theWarANW.mpg.tovid_encoded.0/tovid.log'

removed directory: `/home/dalem/theWarANW.mpg.tovid_encoded.0'

=========================================================

Done!

=========================================================

Running command: makexml -quiet -overwrite -dvd -menu

"/tmp/1/A_Necessary_War.mpg" "/tmp/1/theWarANW.mpg.tovid_encoded.mpg" -out

"/tmp/1/The_War"

\--------------------------------

makexml

A script to generate XML for authoring a VCD, SVCD, or DVD.

Part of the tovid suite, version 0.31 http://www.tovid.org --------------------------------

Adding a titleset-level menu using file: /tmp/1/A_Necessary_War.mpg

The file /tmp/1/theWarANW.mpg.tovid_encoded.mpg was not found. Exiting.

\- - - - -

Many thanks for any suggestions,

Dale E. Moore

**Attachment:** [bad1.log](http://code.google.com/p/tovid/issues/detail?id=14)

_Original issue: http://code.google.com/p/tovid/issues/detail?id=14_ | priority | makexml tovid encoded mpg was not found mplex created tovid encoded mpg from on october howdy y all when running tovid gui makexml can t find the file created by mplex here s the specifics from my log file mplex v f o tmp thewaranw mpg tovid encoded d mpg home dalem thewaranw mpg tovid encoded video home dalem thewaranw mpg tovid encoded audio multiplexing finished successfully output files tmp thewaranw mpg tovid encoded mpg total statistics written to home dalem tovid stats tovid cleaning up removed home dalem thewaranw mpg tovid encoded video yuv removing temporary files removed home dalem thewaranw mpg tovid encoded tovid scratch removed home dalem thewaranw mpg tovid encoded video removed home dalem thewaranw mpg tovid encoded audio removed home dalem thewaranw mpg tovid encoded tovid log removed directory home dalem thewaranw mpg tovid encoded done running command makexml quiet overwrite dvd menu tmp a necessary war mpg tmp thewaranw mpg tovid encoded mpg out tmp the war makexml a script to generate xml for authoring a vcd svcd or dvd part of the tovid suite version adding a titleset level menu using file tmp a necessary war mpg the file tmp thewaranw mpg tovid encoded mpg was not found exiting many thanks for any suggestions dale e moore attachment original issue | 1 |
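In the tovid log above, mplex was given an output name containing `%d` and wrote `tovid_encoded.1.mpg`, while makexml was pointed at `tovid_encoded.mpg`. One hedged way to bridge that gap is to look up the files actually produced for the pattern; the helper names below are hypothetical and this is not tovid's own code.

```python
import glob
import os
import tempfile


def find_mplex_outputs(pattern):
    """List files produced for an mplex-style output pattern containing %d."""
    if "%d" not in pattern:
        return [pattern] if os.path.exists(pattern) else []
    prefix, suffix = pattern.split("%d", 1)

    def slot(path):
        # Text that replaced %d in this particular file name.
        return path[len(prefix):len(path) - len(suffix)]

    candidates = glob.glob(glob.escape(prefix) + "*" + glob.escape(suffix))
    # Keep only names where the %d slot holds digits, in numeric order.
    numbered = [path for path in candidates if slot(path).isdigit()]
    return sorted(numbered, key=lambda path: int(slot(path)))


with tempfile.TemporaryDirectory() as tmp:
    produced = os.path.join(tmp, "theWarANW.mpg.tovid_encoded.1.mpg")
    open(produced, "w").close()
    pattern = os.path.join(tmp, "theWarANW.mpg.tovid_encoded.%d.mpg")
    found = [os.path.basename(p) for p in find_mplex_outputs(pattern)]
    print(found)
```

Passing the discovered names to the authoring step, instead of a name built without the segment number, would avoid the "was not found" exit seen in the log.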

166,374 | 6,303,826,153 | IssuesEvent | 2017-07-21 14:35:59 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Search is not working, some configuration changed | bug Priority: Medium | Seems like the configuration for search changed, so we have to validate the UI. | 1.0 | [studio-ui] Search is not working, some configuration changed - Seems like the configuration for search changed, so we have to validate the UI. | priority | search is not working some configuration changed seems like the configuration for search changed so we have to validate the ui | 1 |

208,347 | 7,153,260,829 | IssuesEvent | 2018-01-26 00:41:48 | vmware/vic-product | https://api.github.com/repos/vmware/vic-product | closed | vm-support on VCH should include Harbor logs as well which are under /var/log/harbor | component/ova priority/medium team/lifecycle triage/proposed-1.4 | @lgayatri commented on [Fri May 26 2017](https://github.com/vmware/vic/issues/5265)

**User Statement:**

The support bundle that gets generated on VCH with vm-support should include Harbor logs

**Details:**

vm-support on VCH contains only vmware-* files from /var/log and does not include log folder harbor which is needed to debug harbor issues

**Acceptance Criteria:**

Extracted vm-support bundle of VCH, and found only below logs

root@vic-st-h2-132 [ /var/log/harbor/2017-05-26/tmp/vm-support.qabKha/var/log ]# ls

vmware-vgauthsvc.log.0 vmware-vmsvc.1.log vmware-vmsvc.2.log vmware-vmsvc.3.log vmware-vmsvc.4.log vmware-vmsvc.log

ls /var/log contains:

root@vic-st-h2-132 [ /var/log ]# ls

btmp harbor installer.log lastlog vmware-vmsvc.1.log vmware-vmsvc.3.log vmware-vmsvc.log

cloud-init.log journal vmware-vgauthsvc.log.0 vmware-vmsvc.2.log vmware-vmsvc.4.log wtmp

Missing log folder is "harbor"

Please include harbor log folder in the support bundle.

---

@anchal-agrawal commented on [Fri May 26 2017](https://github.com/vmware/vic/issues/5265#issuecomment-304334029)

@lgayatri Looks like this is the OVA applianceVM, not the VCH endpointVM. Ping @andrewtchin and @frapposelli for adding an estimate and priority for the work involved.

| 1.0 | vm-support on VCH should include Harbor logs as well which are under /var/log/harbor - @lgayatri commented on [Fri May 26 2017](https://github.com/vmware/vic/issues/5265)

**User Statement:**

The support bundle that gets generated on VCH with vm-support should include Harbor logs

**Details:**

vm-support on VCH contains only vmware-* files from /var/log and does not include log folder harbor which is needed to debug harbor issues

**Acceptance Criteria:**

Extracted vm-support bundle of VCH, and found only below logs

root@vic-st-h2-132 [ /var/log/harbor/2017-05-26/tmp/vm-support.qabKha/var/log ]# ls

vmware-vgauthsvc.log.0 vmware-vmsvc.1.log vmware-vmsvc.2.log vmware-vmsvc.3.log vmware-vmsvc.4.log vmware-vmsvc.log

ls /var/log contains:

root@vic-st-h2-132 [ /var/log ]# ls

btmp harbor installer.log lastlog vmware-vmsvc.1.log vmware-vmsvc.3.log vmware-vmsvc.log

cloud-init.log journal vmware-vgauthsvc.log.0 vmware-vmsvc.2.log vmware-vmsvc.4.log wtmp

Missing log folder is "harbor"

Please include harbor log folder in the support bundle.

---

@anchal-agrawal commented on [Fri May 26 2017](https://github.com/vmware/vic/issues/5265#issuecomment-304334029)

@lgayatri Looks like this is the OVA applianceVM, not the VCH endpointVM. Ping @andrewtchin and @frapposelli for adding an estimate and priority for the work involved.

| priority | vm support on vch should include harbor logs as well which are under var log harbor lgayatri commented on user statement the support bundle that gets generated on vch with vm support should include harbor logs details vm support on vch contains only vmware files from var log and does not include log folder harbor which is needed to debug harbor issues acceptance criteria extracted vm support bundle of vch and found only below logs root vic st ls vmware vgauthsvc log vmware vmsvc log vmware vmsvc log vmware vmsvc log vmware vmsvc log vmware vmsvc log ls var log contains root vic st ls btmp harbor installer log lastlog vmware vmsvc log vmware vmsvc log vmware vmsvc log cloud init log journal vmware vgauthsvc log vmware vmsvc log vmware vmsvc log wtmp missing log folder is harbor please include harbor log folder in the support bundle anchal agrawal commented on lgayatri looks like this is the ova appliancevm not the vch endpointvm ping andrewtchin and frapposelli for adding an estimate and priority for the work involved | 1 |
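The request above is for the support bundle to pick up `/var/log/harbor` in addition to the `vmware-*` files. A minimal sketch of that collection rule, written against a temporary directory instead of the real `/var/log`; the actual vm-support tool is not Python, and `core.log` is a made-up file name.

```python
import glob
import os
import tarfile
import tempfile


def build_support_bundle(log_root, bundle_path):
    """Archive vmware-* logs plus the whole harbor directory under log_root."""
    members = sorted(glob.glob(os.path.join(log_root, "vmware-*")))
    harbor_dir = os.path.join(log_root, "harbor")
    if os.path.isdir(harbor_dir):
        members.append(harbor_dir)  # tarfile.add recurses into directories
    with tarfile.open(bundle_path, "w:gz") as bundle:
        for path in members:
            bundle.add(path, arcname=os.path.relpath(path, log_root))
    with tarfile.open(bundle_path) as bundle:
        return sorted(bundle.getnames())


with tempfile.TemporaryDirectory() as tmp:
    logs = os.path.join(tmp, "log")
    os.makedirs(os.path.join(logs, "harbor"))
    # Stand-in files: one vmware log, one harbor log, one unrelated file.
    for name in ("vmware-vmsvc.log", os.path.join("harbor", "core.log"), "wtmp"):
        open(os.path.join(logs, name), "w").close()
    names = build_support_bundle(logs, os.path.join(tmp, "bundle.tar.gz"))
    print(names)
```

Unrelated files such as `wtmp` stay out of the archive, while everything under `harbor` is included recursively.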

769,098 | 26,993,320,154 | IssuesEvent | 2023-02-09 21:55:19 | rich-iannone/pointblank | https://api.github.com/repos/rich-iannone/pointblank | closed | pointblank simple example fails with a `fmt() unused argument ` error when using {gt} version 0.8.0 | Type: ☹︎ Bug Difficulty: [2] Intermediate Effort: [2] Medium Priority: ♨︎ Critical | ## Prework

* [x] Read and agree to the [code of conduct](https://www.contributor-covenant.org/version/2/0/code_of_conduct/) and [contributing guidelines](https://github.com/rich-iannone/pointblank/blob/main/.github/CONTRIBUTING.md).

* [x] If there is [already a relevant issue](https://github.com/rich-iannone/pointblank/issues), whether open or closed, comment on the existing thread instead of posting a new issue.

* [x] Post a [minimal reproducible example](https://www.tidyverse.org/help/) so the maintainer can troubleshoot the problems you identify. A reproducible example is:

* [x] **Runnable**: post enough R code and data so any onlooker can create the error on their own computer.

* [x] **Minimal**: reduce runtime wherever possible and remove complicated details that are irrelevant to the issue at hand.

* [x] **Readable**: format your code according to the [tidyverse style guide](https://style.tidyverse.org/).

## Foreword

Congratulations on the fantastic package. I'm using it on a daily basis when doing data wrangling, and I've had the honor of presenting it to the r-toulouse user group community with great success.

## Description

{pointblank} README simple example fails to print the agent object with an error

```

Error in fmt(data = data, columns = {: argument unused (prepend = TRUE)

```

when using {gt} version 0.8.0

## Reproducible example

``` r

# pak::pak("rstudio/gt@v0.8.0")

library(pointblank)

agent <-

dplyr::tibble(

a = c(5, 7, 6, 5, NA, 7),

b = c(6, 1, 0, 6, 0, 7)

) %>%

create_agent(

label = "A very *simple* example.",

) %>%

col_vals_between(

vars(a), 1, 9,

na_pass = TRUE

) %>%

col_vals_lt(

vars(c), 12,

preconditions = ~ . %>% dplyr::mutate(c = a + b)

) %>%

col_is_numeric(vars(a, b)) %>%

interrogate()

agent

#> Error in fmt(data = data, columns = {: argument inutilisé (prepend = TRUE)

```

<sup>Created on 2022-12-03 by the [reprex package](https://reprex.tidyverse.org) (v2.0.1)</sup>

<details style="margin-bottom:10px;">

<summary>

Session info

</summary>

``` r

sessioninfo::session_info()

#> ─ Session info ───────────────────────────────────────────────────────────────

#> setting value

#> version R version 4.2.1 (2022-06-23)

#> os Ubuntu 22.04.1 LTS

#> system x86_64, linux-gnu

#> ui X11

#> language fr_FR

#> collate fr_FR.UTF-8

#> ctype fr_FR.UTF-8

#> tz Europe/Paris

#> date 2022-12-03

#> pandoc 2.18 @ /usr/lib/rstudio/bin/quarto/bin/tools/ (via rmarkdown)

#>

#> ─ Packages ───────────────────────────────────────────────────────────────────

#> package * version date (UTC) lib source

#> assertthat 0.2.1 2019-03-21 [1] CRAN (R 4.2.1)

#> base64enc 0.1-3 2015-07-28 [1] CRAN (R 4.2.1)

#> blastula 0.3.2 2020-05-19 [1] CRAN (R 4.2.1)

#> cli 3.4.1 2022-09-23 [1] CRAN (R 4.2.1)

#> colorspace 2.0-3 2022-02-21 [1] CRAN (R 4.2.1)

#> DBI 1.1.3 2022-06-18 [1] CRAN (R 4.2.1)

#> digest 0.6.30 2022-10-18 [1] CRAN (R 4.2.1)

#> dplyr 1.0.10 2022-09-01 [1] CRAN (R 4.2.1)

#> evaluate 0.18 2022-11-07 [1] CRAN (R 4.2.1)

#> fansi 1.0.3 2022-03-24 [1] CRAN (R 4.2.1)

#> fastmap 1.1.0 2021-01-25 [1] CRAN (R 4.2.1)

#> fs 1.5.2 2021-12-08 [1] CRAN (R 4.2.1)

#> generics 0.1.3 2022-07-05 [1] CRAN (R 4.2.1)

#> ggplot2 3.4.0 2022-11-04 [1] CRAN (R 4.2.1)

#> glue 1.6.2 2022-02-24 [1] CRAN (R 4.2.1)

#> gt 0.8.0 2022-12-03 [1] Github (rstudio/gt@0acc7fb)

#> gtable 0.3.1 2022-09-01 [1] CRAN (R 4.2.1)

#> highr 0.9 2021-04-16 [1] CRAN (R 4.2.1)

#> htmltools 0.5.3 2022-07-18 [1] CRAN (R 4.2.1)

#> knitr 1.41 2022-11-18 [1] CRAN (R 4.2.1)

#> lifecycle 1.0.3 2022-10-07 [1] CRAN (R 4.2.1)

#> magrittr 2.0.3 2022-03-30 [1] CRAN (R 4.2.1)

#> munsell 0.5.0 2018-06-12 [1] CRAN (R 4.2.1)

#> pillar 1.8.1 2022-08-19 [1] CRAN (R 4.2.1)

#> pkgconfig 2.0.3 2019-09-22 [1] CRAN (R 4.2.1)

#> pointblank * 0.11.2 2022-10-08 [1] CRAN (R 4.2.1)

#> R6 2.5.1 2021-08-19 [1] CRAN (R 4.2.1)

#> reprex 2.0.1 2021-08-05 [3] CRAN (R 4.1.0)

#> rlang 1.0.6 2022-09-24 [1] CRAN (R 4.2.1)

#> rmarkdown 2.18 2022-11-09 [1] CRAN (R 4.2.1)

#> rstudioapi 0.14 2022-08-22 [1] CRAN (R 4.2.1)

#> scales 1.2.1 2022-08-20 [1] CRAN (R 4.2.1)

#> sessioninfo 1.2.2 2021-12-06 [1] CRAN (R 4.2.1)

#> stringi 1.7.8 2022-07-11 [1] CRAN (R 4.2.1)

#> stringr 1.5.0 2022-12-02 [1] CRAN (R 4.2.1)

#> tibble 3.1.8 2022-07-22 [1] CRAN (R 4.2.1)

#> tidyselect 1.2.0 2022-10-10 [1] CRAN (R 4.2.1)

#> utf8 1.2.2 2021-07-24 [1] CRAN (R 4.2.1)

#> vctrs 0.5.1 2022-11-16 [1] CRAN (R 4.2.1)

#> withr 2.5.0 2022-03-03 [1] CRAN (R 4.2.1)

#> xfun 0.35 2022-11-16 [1] CRAN (R 4.2.1)

#> yaml 2.3.6 2022-10-18 [1] CRAN (R 4.2.1)

#>

#> [1] /home/____/R/x86_64-pc-linux-gnu-library/4.2

#> [2] /usr/local/lib/R/site-library

#> [3] /usr/lib/R/site-library

#> [4] /usr/lib/R/library

#>

#> ──────────────────────────────────────────────────────────────────────────────

```

</details>

## Expected result

Running the simple example should produce no error, and the {gt} table should print correctly.

``` r

# pak::pak("rstudio/gt@v0.7.0")
library(pointblank)

agent <-
  dplyr::tibble(
    a = c(5, 7, 6, 5, NA, 7),
    b = c(6, 1, 0, 6, 0, 7)
  ) %>%
  create_agent(
    label = "A very *simple* example.",
  ) %>%
  col_vals_between(
    vars(a), 1, 9,
    na_pass = TRUE
  ) %>%
  col_vals_lt(
    vars(c), 12,
    preconditions = ~ . %>% dplyr::mutate(c = a + b)
  ) %>%
  col_is_numeric(vars(a, b)) %>%
  interrogate()

agent

```

<div id="pb_agent" style="overflow-x:auto;overflow-y:auto;width:auto;height:auto;">

::: table removed :::

</div>

<sup>Created on 2022-12-03 by the [reprex package](https://reprex.tidyverse.org) (v2.0.1)</sup>

<details style="margin-bottom:10px;">

<summary>

Session info

</summary>

``` r

sessioninfo::session_info()

#> ─ Session info ───────────────────────────────────────────────────────────────

#> setting value

#> version R version 4.2.1 (2022-06-23)

#> os Ubuntu 22.04.1 LTS

#> system x86_64, linux-gnu

#> ui X11

#> language fr_FR

#> collate fr_FR.UTF-8

#> ctype fr_FR.UTF-8

#> tz Europe/Paris

#> date 2022-12-03

#> pandoc 2.18 @ /usr/lib/rstudio/bin/quarto/bin/tools/ (via rmarkdown)

#>

#> ─ Packages ───────────────────────────────────────────────────────────────────

#> package * version date (UTC) lib source

#> assertthat 0.2.1 2019-03-21 [1] CRAN (R 4.2.1)

#> base64enc 0.1-3 2015-07-28 [1] CRAN (R 4.2.1)

#> blastula 0.3.2 2020-05-19 [1] CRAN (R 4.2.1)

#> cli 3.4.1 2022-09-23 [1] CRAN (R 4.2.1)

#> colorspace 2.0-3 2022-02-21 [1] CRAN (R 4.2.1)

#> commonmark 1.8.1 2022-10-14 [1] CRAN (R 4.2.1)

#> DBI 1.1.3 2022-06-18 [1] CRAN (R 4.2.1)

#> digest 0.6.30 2022-10-18 [1] CRAN (R 4.2.1)

#> dplyr 1.0.10 2022-09-01 [1] CRAN (R 4.2.1)

#> evaluate 0.18 2022-11-07 [1] CRAN (R 4.2.1)

#> fansi 1.0.3 2022-03-24 [1] CRAN (R 4.2.1)

#> fastmap 1.1.0 2021-01-25 [1] CRAN (R 4.2.1)

#> fs 1.5.2 2021-12-08 [1] CRAN (R 4.2.1)

#> generics 0.1.3 2022-07-05 [1] CRAN (R 4.2.1)

#> ggplot2 3.4.0 2022-11-04 [1] CRAN (R 4.2.1)

#> glue 1.6.2 2022-02-24 [1] CRAN (R 4.2.1)

#> gt 0.7.0 2022-12-03 [1] Github (rstudio/gt@902c9e9)

#> gtable 0.3.1 2022-09-01 [1] CRAN (R 4.2.1)

#> highr 0.9 2021-04-16 [1] CRAN (R 4.2.1)

#> htmltools 0.5.3 2022-07-18 [1] CRAN (R 4.2.1)

#> knitr 1.41 2022-11-18 [1] CRAN (R 4.2.1)

#> lifecycle 1.0.3 2022-10-07 [1] CRAN (R 4.2.1)

#> magrittr 2.0.3 2022-03-30 [1] CRAN (R 4.2.1)

#> munsell 0.5.0 2018-06-12 [1] CRAN (R 4.2.1)

#> pillar 1.8.1 2022-08-19 [1] CRAN (R 4.2.1)

#> pkgconfig 2.0.3 2019-09-22 [1] CRAN (R 4.2.1)

#> pointblank * 0.11.2 2022-10-08 [1] CRAN (R 4.2.1)

#> R6 2.5.1 2021-08-19 [1] CRAN (R 4.2.1)

#> reprex 2.0.1 2021-08-05 [3] CRAN (R 4.1.0)

#> rlang 1.0.6 2022-09-24 [1] CRAN (R 4.2.1)

#> rmarkdown 2.18 2022-11-09 [1] CRAN (R 4.2.1)

#> rstudioapi 0.14 2022-08-22 [1] CRAN (R 4.2.1)

#> sass 0.4.4 2022-11-24 [1] CRAN (R 4.2.1)

#> scales 1.2.1 2022-08-20 [1] CRAN (R 4.2.1)

#> sessioninfo 1.2.2 2021-12-06 [1] CRAN (R 4.2.1)

#> stringi 1.7.8 2022-07-11 [1] CRAN (R 4.2.1)

#> stringr 1.5.0 2022-12-02 [1] CRAN (R 4.2.1)

#> tibble 3.1.8 2022-07-22 [1] CRAN (R 4.2.1)

#> tidyselect 1.2.0 2022-10-10 [1] CRAN (R 4.2.1)

#> utf8 1.2.2 2021-07-24 [1] CRAN (R 4.2.1)

#> vctrs 0.5.1 2022-11-16 [1] CRAN (R 4.2.1)

#> withr 2.5.0 2022-03-03 [1] CRAN (R 4.2.1)

#> xfun 0.35 2022-11-16 [1] CRAN (R 4.2.1)

#> yaml 2.3.6 2022-10-18 [1] CRAN (R 4.2.1)

#>

#> [1] /home/____/R/x86_64-pc-linux-gnu-library/4.2

#> [2] /usr/local/lib/R/site-library

#> [3] /usr/lib/R/site-library

#> [4] /usr/lib/R/library

#>

#> ──────────────────────────────────────────────────────────────────────────────

```

</details> | 1.0 | pointblank simple example fails with a `fmt() unused argument ` error when using {gt} version 0.8.0 - ## Prework

* [x] Read and agree to the [code of conduct](https://www.contributor-covenant.org/version/2/0/code_of_conduct/) and [contributing guidelines](https://github.com/rich-iannone/pointblank/blob/main/.github/CONTRIBUTING.md).

* [x] If there is [already a relevant issue](https://github.com/rich-iannone/pointblank/issues), whether open or closed, comment on the existing thread instead of posting a new issue.

* [x] Post a [minimal reproducible example](https://www.tidyverse.org/help/) so the maintainer can troubleshoot the problems you identify. A reproducible example is:

* [x] **Runnable**: post enough R code and data so any onlooker can create the error on their own computer.

* [x] **Minimal**: reduce runtime wherever possible and remove complicated details that are irrelevant to the issue at hand.

* [x] **Readable**: format your code according to the [tidyverse style guide](https://style.tidyverse.org/).

## Foreword

Congratulations on the fantastic package. I use it daily for data wrangling, and I had the honor of presenting it to the r-toulouse user group community with great success.

## Description

The simple example from the {pointblank} README fails to print the agent object, with this error:

```

Error in fmt(data = data, columns = {: unused argument (prepend = TRUE)

```

when using {gt} version 0.8.0
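
Before running the reprex, it can help to confirm which {gt} version is actually loaded. The sketch below is only an illustration: `gt_is_affected()` is a hypothetical helper (it exists in neither {pointblank} nor {gt}), and the 0.8.0 threshold is an assumption based solely on the two versions compared in this report (0.8.0 fails, 0.7.0 works).

``` r
# Hypothetical helper, not part of {pointblank} or {gt}.
# Assumption: the breakage starts at {gt} 0.8.0, the only failing
# version observed in this report.
gt_is_affected <- function(version) {
  package_version(version) >= package_version("0.8.0")
}

# Report on the locally installed {gt}, if there is one.
if (requireNamespace("gt", quietly = TRUE)) {
  installed <- as.character(utils::packageVersion("gt"))
  status <- if (gt_is_affected(installed)) "affected" else "not affected"
  message("gt ", installed, " (", status, ")")
}
```

If the installed version is reported as affected, installing the older release with `pak::pak("rstudio/gt@v0.7.0")`, as done in the expected-result section, avoids the error in this setup.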

## Reproducible example

``` r

# pak::pak("rstudio/gt@v0.8.0")
library(pointblank)

agent <-
  dplyr::tibble(
    a = c(5, 7, 6, 5, NA, 7),
    b = c(6, 1, 0, 6, 0, 7)
  ) %>%
  create_agent(
    label = "A very *simple* example.",
  ) %>%
  col_vals_between(
    vars(a), 1, 9,
    na_pass = TRUE
  ) %>%
  col_vals_lt(
    vars(c), 12,
    preconditions = ~ . %>% dplyr::mutate(c = a + b)
  ) %>%
  col_is_numeric(vars(a, b)) %>%
  interrogate()

agent
#> Error in fmt(data = data, columns = {: argument inutilisé (prepend = TRUE)

```

<sup>Created on 2022-12-03 by the [reprex package](https://reprex.tidyverse.org) (v2.0.1)</sup>

<details style="margin-bottom:10px;">

<summary>

Session info

</summary>

``` r

sessioninfo::session_info()

#> ─ Session info ───────────────────────────────────────────────────────────────

#> setting value

#> version R version 4.2.1 (2022-06-23)

#> os Ubuntu 22.04.1 LTS

#> system x86_64, linux-gnu

#> ui X11

#> language fr_FR

#> collate fr_FR.UTF-8

#> ctype fr_FR.UTF-8

#> tz Europe/Paris

#> date 2022-12-03

#> pandoc 2.18 @ /usr/lib/rstudio/bin/quarto/bin/tools/ (via rmarkdown)

#>

#> ─ Packages ───────────────────────────────────────────────────────────────────

#> package * version date (UTC) lib source

#> assertthat 0.2.1 2019-03-21 [1] CRAN (R 4.2.1)

#> base64enc 0.1-3 2015-07-28 [1] CRAN (R 4.2.1)

#> blastula 0.3.2 2020-05-19 [1] CRAN (R 4.2.1)

#> cli 3.4.1 2022-09-23 [1] CRAN (R 4.2.1)

#> colorspace 2.0-3 2022-02-21 [1] CRAN (R 4.2.1)

#> DBI 1.1.3 2022-06-18 [1] CRAN (R 4.2.1)

#> digest 0.6.30 2022-10-18 [1] CRAN (R 4.2.1)

#> dplyr 1.0.10 2022-09-01 [1] CRAN (R 4.2.1)

#> evaluate 0.18 2022-11-07 [1] CRAN (R 4.2.1)

#> fansi 1.0.3 2022-03-24 [1] CRAN (R 4.2.1)

#> fastmap 1.1.0 2021-01-25 [1] CRAN (R 4.2.1)

#> fs 1.5.2 2021-12-08 [1] CRAN (R 4.2.1)

#> generics 0.1.3 2022-07-05 [1] CRAN (R 4.2.1)

#> ggplot2 3.4.0 2022-11-04 [1] CRAN (R 4.2.1)

#> glue 1.6.2 2022-02-24 [1] CRAN (R 4.2.1)

#> gt 0.8.0 2022-12-03 [1] Github (rstudio/gt@0acc7fb)

#> gtable 0.3.1 2022-09-01 [1] CRAN (R 4.2.1)

#> highr 0.9 2021-04-16 [1] CRAN (R 4.2.1)

#> htmltools 0.5.3 2022-07-18 [1] CRAN (R 4.2.1)

#> knitr 1.41 2022-11-18 [1] CRAN (R 4.2.1)

#> lifecycle 1.0.3 2022-10-07 [1] CRAN (R 4.2.1)

#> magrittr 2.0.3 2022-03-30 [1] CRAN (R 4.2.1)

#> munsell 0.5.0 2018-06-12 [1] CRAN (R 4.2.1)

#> pillar 1.8.1 2022-08-19 [1] CRAN (R 4.2.1)

#> pkgconfig 2.0.3 2019-09-22 [1] CRAN (R 4.2.1)

#> pointblank * 0.11.2 2022-10-08 [1] CRAN (R 4.2.1)

#> R6 2.5.1 2021-08-19 [1] CRAN (R 4.2.1)

#> reprex 2.0.1 2021-08-05 [3] CRAN (R 4.1.0)

#> rlang 1.0.6 2022-09-24 [1] CRAN (R 4.2.1)

#> rmarkdown 2.18 2022-11-09 [1] CRAN (R 4.2.1)

#> rstudioapi 0.14 2022-08-22 [1] CRAN (R 4.2.1)

#> scales 1.2.1 2022-08-20 [1] CRAN (R 4.2.1)

#> sessioninfo 1.2.2 2021-12-06 [1] CRAN (R 4.2.1)

#> stringi 1.7.8 2022-07-11 [1] CRAN (R 4.2.1)

#> stringr 1.5.0 2022-12-02 [1] CRAN (R 4.2.1)

#> tibble 3.1.8 2022-07-22 [1] CRAN (R 4.2.1)

#> tidyselect 1.2.0 2022-10-10 [1] CRAN (R 4.2.1)

#> utf8 1.2.2 2021-07-24 [1] CRAN (R 4.2.1)

#> vctrs 0.5.1 2022-11-16 [1] CRAN (R 4.2.1)

#> withr 2.5.0 2022-03-03 [1] CRAN (R 4.2.1)

#> xfun 0.35 2022-11-16 [1] CRAN (R 4.2.1)

#> yaml 2.3.6 2022-10-18 [1] CRAN (R 4.2.1)

#>

#> [1] /home/____/R/x86_64-pc-linux-gnu-library/4.2

#> [2] /usr/local/lib/R/site-library

#> [3] /usr/lib/R/site-library

#> [4] /usr/lib/R/library

#>

#> ──────────────────────────────────────────────────────────────────────────────

```

</details>

## Expected result

Running the simple example should produce no error, and the {gt} table should print correctly.

``` r

# pak::pak("rstudio/gt@v0.7.0")
library(pointblank)

agent <-
  dplyr::tibble(
    a = c(5, 7, 6, 5, NA, 7),
    b = c(6, 1, 0, 6, 0, 7)
  ) %>%
  create_agent(
    label = "A very *simple* example.",
  ) %>%
  col_vals_between(
    vars(a), 1, 9,
    na_pass = TRUE
  ) %>%
  col_vals_lt(
    vars(c), 12,
    preconditions = ~ . %>% dplyr::mutate(c = a + b)
  ) %>%
  col_is_numeric(vars(a, b)) %>%
  interrogate()

agent

```

<div id="pb_agent" style="overflow-x:auto;overflow-y:auto;width:auto;height:auto;">

::: table removed :::

</div>

<sup>Created on 2022-12-03 by the [reprex package](https://reprex.tidyverse.org) (v2.0.1)</sup>

<details style="margin-bottom:10px;">

<summary>

Session info

</summary>

``` r

sessioninfo::session_info()

#> ─ Session info ───────────────────────────────────────────────────────────────

#> setting value

#> version R version 4.2.1 (2022-06-23)

#> os Ubuntu 22.04.1 LTS

#> system x86_64, linux-gnu

#> ui X11

#> language fr_FR

#> collate fr_FR.UTF-8

#> ctype fr_FR.UTF-8

#> tz Europe/Paris

#> date 2022-12-03

#> pandoc 2.18 @ /usr/lib/rstudio/bin/quarto/bin/tools/ (via rmarkdown)

#>

#> ─ Packages ───────────────────────────────────────────────────────────────────

#> package * version date (UTC) lib source

#> assertthat 0.2.1 2019-03-21 [1] CRAN (R 4.2.1)

#> base64enc 0.1-3 2015-07-28 [1] CRAN (R 4.2.1)

#> blastula 0.3.2 2020-05-19 [1] CRAN (R 4.2.1)

#> cli 3.4.1 2022-09-23 [1] CRAN (R 4.2.1)

#> colorspace 2.0-3 2022-02-21 [1] CRAN (R 4.2.1)

#> commonmark 1.8.1 2022-10-14 [1] CRAN (R 4.2.1)

#> DBI 1.1.3 2022-06-18 [1] CRAN (R 4.2.1)

#> digest 0.6.30 2022-10-18 [1] CRAN (R 4.2.1)

#> dplyr 1.0.10 2022-09-01 [1] CRAN (R 4.2.1)

#> evaluate 0.18 2022-11-07 [1] CRAN (R 4.2.1)

#> fansi 1.0.3 2022-03-24 [1] CRAN (R 4.2.1)

#> fastmap 1.1.0 2021-01-25 [1] CRAN (R 4.2.1)

#> fs 1.5.2 2021-12-08 [1] CRAN (R 4.2.1)

#> generics 0.1.3 2022-07-05 [1] CRAN (R 4.2.1)

#> ggplot2 3.4.0 2022-11-04 [1] CRAN (R 4.2.1)

#> glue 1.6.2 2022-02-24 [1] CRAN (R 4.2.1)

#> gt 0.7.0 2022-12-03 [1] Github (rstudio/gt@902c9e9)