Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 957) | labels (stringlengths, 4 to 795) | body (stringlengths, 1 to 259k) | index (stringclasses, 12 values) | text_combine (stringlengths, 96 to 259k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

141,569 | 5,438,276,235 | IssuesEvent | 2017-03-06 10:00:00 | pybel/pybel-tools | https://api.github.com/repos/pybel/pybel-tools | closed | Collapse based on orthology | medium medium priority | Make a function that collapses nodes based on their orthology connections

```python

def collapse_by_orthology(graph, priority_list=None):
    """Collapses a graph based on the orthology between nodes"""
    priority_list = ['HGNC', 'MGI', 'RGD'] if priority_list is None else priority_list
    ...

``` | 1.0 | Collapse based on orthology - Make a function that collapses nodes based on their orthology connections

```python

def collapse_by_orthology(graph, priority_list=None):
    """Collapses a graph based on the orthology between nodes"""
    priority_list = ['HGNC', 'MGI', 'RGD'] if priority_list is None else priority_list
    ...

``` | priority | collapse based on orthology make a function that collapses nodes based on their orthology connections python def collapse by orthology graph priority list none collapses a graph based on the orthology between nodes priority list if priority list is none else priority list | 1 |

162,006 | 6,145,463,706 | IssuesEvent | 2017-06-27 11:35:20 | ressec/thot | https://api.github.com/repos/ressec/thot | opened | Create a command coordinator entity | Domain: Actor Priority: Medium Type: New Feature | ## Description

The purpose of the command coordinator is to allow common commands to be pre-implemented and registered automatically.

When the terminal (see #20 ) is notified on issued commands, it can pass to the command coordinator some pre-defined commands to be automatically executed. | 1.0 | Create a command coordinator entity - ## Description

The purpose of the command coordinator is to allow common commands to be pre-implemented and registered automatically.

When the terminal (see #20 ) is notified on issued commands, it can pass to the command coordinator some pre-defined commands to be automatically executed. | priority | create a command coordinator entity description the purpose of the command coordinator is to have the possibility to have some common commands to be pre implemented and automatically registered by a command coordinator when the terminal see is notified on issued commands it can pass to the command coordinator some pre defined commands to be automatically executed | 1 |

681,100 | 23,297,266,556 | IssuesEvent | 2022-08-06 19:31:40 | ansible-collections/azure | https://api.github.com/repos/ansible-collections/azure | closed | Azure Ansible - Doesn't fetch IPConfiguration Public IP Method | medium_priority work in |

##### SUMMARY

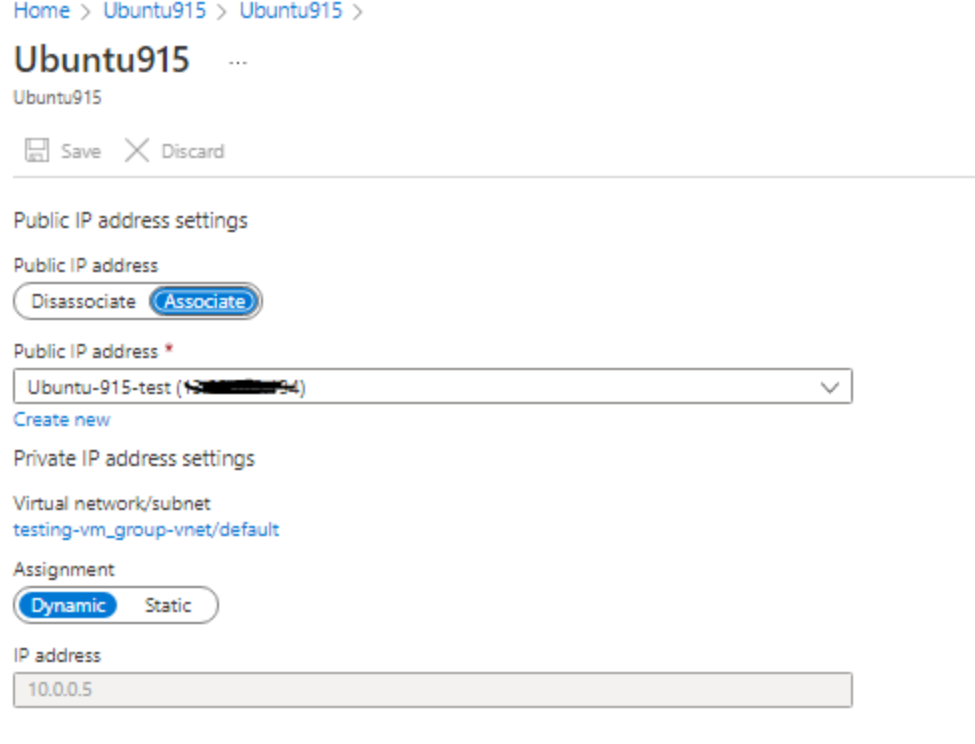

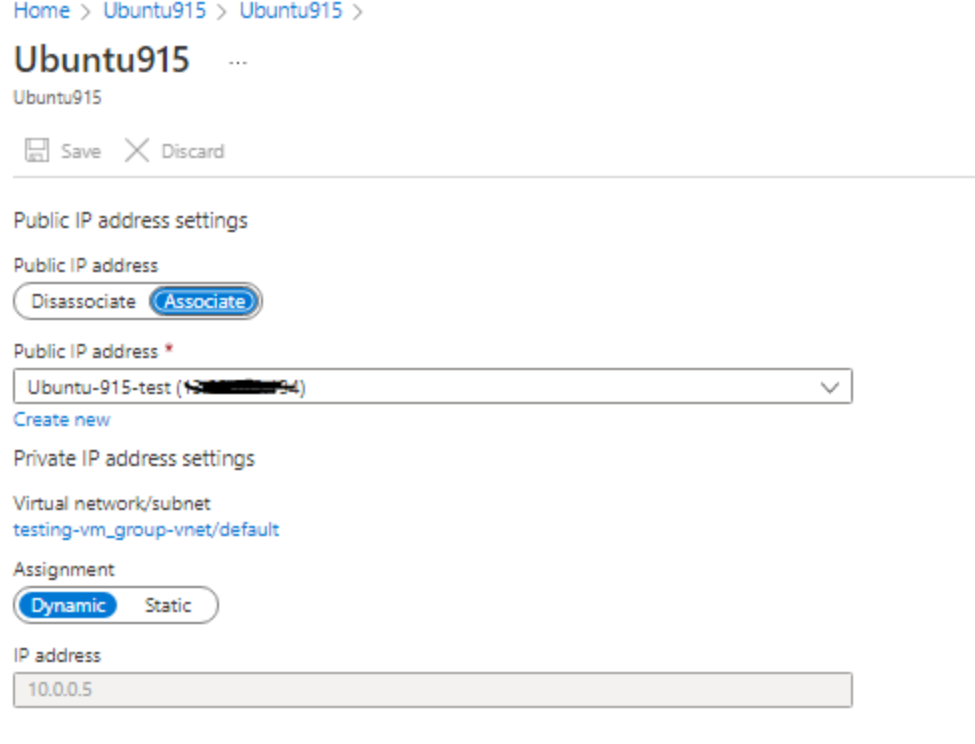

When I try to fetch the IP configuration of the network interface, I see that public_ip_allocation_method is set to NULL even though it is dynamic in Azure portal. I even tested by creating a new IP yet it shows NULL.

As a result, I'm unable to modify network interface settings such as the security group. I get the error: value of public_ip_allocation_method must be one of: Dynamic, Static, got: None found in ip_configurations

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

<!--- Write the short name of the module, plugin, task or feature below, use your best guess if unsure -->

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```

ansible [core 2.13.2]

config file = /root/azure_ansible/ansible.cfg

configured module search path = ['/root/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/local/lib/python3.8/site-packages/ansible

ansible collection location = /root/.ansible/collections:/usr/share/ansible/collections

executable location = /usr/local/bin/ansible

python version = 3.8.12 (default, Sep 16 2021, 10:46:05) [GCC 8.5.0 20210514 (Red Hat 8.5.0-3)]

jinja version = 3.1.2

libyaml = True

```

##### COLLECTION VERSION

<!--- Paste verbatim output from "ansible-galaxy collection list <namespace>.<collection>" between the quotes

for example: ansible-galaxy collection list community.general

-->

```

# /root/.ansible/collections/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.13.0

# /usr/local/lib/python3.8/site-packages/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.13.0

```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```

sh-4.4# ansible-config dump --only-changed

DEFAULT_HOST_LIST(/root/azure_ansible/ansible.cfg) = ['/root/azure_ansible/inventory/test.azure_rm.yml']

DEFAULT_LOAD_CALLBACK_PLUGINS(/root/azure_ansible/ansible.cfg) = True

DEFAULT_STDOUT_CALLBACK(/root/azure_ansible/ansible.cfg) = json

```

##### OS / ENVIRONMENT

<!--- Provide all relevant information below, e.g. target OS versions, network device firmware, etc. -->

Centos 7 Docker container

##### STEPS TO REPRODUCE

<!--- Describe exactly how to reproduce the problem, using a minimal test-case -->

<!--- Paste example playbooks or commands between quotes below -->

```

- name: Get facts for one network interface

azure_rm_networkinterface_info:

resource_group: "{{ resource_group }}"

name: "{{ azure_vm_network_interface }}"

register: azure_network_interface_info

- name: Applying NSG to target NIC

azure_rm_networkinterface:

name: "{{ azure_vm_network_interface }}"

resource_group: "{{ resource_group }}"

subnet_name: "{{ azure_network_interface_info.networkinterfaces[0].subnet }}"

virtual_network: "{{ azure_network_interface_info.networkinterfaces[0].virtual_network.name }}"

ip_configurations: "{{ azure_network_interface_info.networkinterfaces[0].ip_configurations }}"

security_group: "/subscriptions/123456/resourceGroups/test-resource-group/providers/Microsoft.Network/networkSecurityGroups/testing_temp_8"

```

<!--- HINT: You can paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

Fetch the actual `public_ip_allocation_method` value instead of returning NULL

Portal shows that the IP is Dynamic (I tested by creating a new IP but still ansible returns NULL for `public_ip_allocation_method`)

##### ACTUAL RESULTS

"ip_configurations": [

{

"application_gateway_backend_address_pools": null,

"application_security_groups": null,

"load_balancer_backend_address_pools": null,

"name": "Ubuntu915",

"primary": true,

"private_ip_address": "10.0.0.5",

"private_ip_address_version": "IPv4",

"private_ip_allocation_method": "Dynamic",

"public_ip_address": "/subscriptions/123456789/resourceGroups/test-resource-group/providers/Microsoft.Network/publicIPAddresses/Ubuntu-915-test",

"public_ip_address_name": "/subscriptions/123456789/resourceGroups/test-resource-group/providers/Microsoft.Network/publicIPAddresses/Ubuntu-915-test",

"public_ip_allocation_method": null

}

],

When trying to change security group, I get the following error

```

"msg": "value of public_ip_allocation_method must be one of: Dynamic, Static, got: None found in ip_configurations"

```

<!--- Paste verbatim command output between quotes -->

```paste below

```

| 1.0 | Azure Ansible - Doesn't fetch IPConfiguration Public IP Method -

##### SUMMARY

When I try to fetch the IP configuration of the network interface, I see that public_ip_allocation_method is set to NULL even though it is dynamic in Azure portal. I even tested by creating a new IP yet it shows NULL.

As a result, I'm unable to modify network interface settings such as the security group. I get the error: value of public_ip_allocation_method must be one of: Dynamic, Static, got: None found in ip_configurations

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

<!--- Write the short name of the module, plugin, task or feature below, use your best guess if unsure -->

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```

ansible [core 2.13.2]

config file = /root/azure_ansible/ansible.cfg

configured module search path = ['/root/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/local/lib/python3.8/site-packages/ansible

ansible collection location = /root/.ansible/collections:/usr/share/ansible/collections

executable location = /usr/local/bin/ansible

python version = 3.8.12 (default, Sep 16 2021, 10:46:05) [GCC 8.5.0 20210514 (Red Hat 8.5.0-3)]

jinja version = 3.1.2

libyaml = True

```

##### COLLECTION VERSION

<!--- Paste verbatim output from "ansible-galaxy collection list <namespace>.<collection>" between the quotes

for example: ansible-galaxy collection list community.general

-->

```

# /root/.ansible/collections/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.13.0

# /usr/local/lib/python3.8/site-packages/ansible_collections

Collection Version

------------------ -------

azure.azcollection 1.13.0

```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```

sh-4.4# ansible-config dump --only-changed

DEFAULT_HOST_LIST(/root/azure_ansible/ansible.cfg) = ['/root/azure_ansible/inventory/test.azure_rm.yml']

DEFAULT_LOAD_CALLBACK_PLUGINS(/root/azure_ansible/ansible.cfg) = True

DEFAULT_STDOUT_CALLBACK(/root/azure_ansible/ansible.cfg) = json

```

##### OS / ENVIRONMENT

<!--- Provide all relevant information below, e.g. target OS versions, network device firmware, etc. -->

Centos 7 Docker container

##### STEPS TO REPRODUCE

<!--- Describe exactly how to reproduce the problem, using a minimal test-case -->

<!--- Paste example playbooks or commands between quotes below -->

```

- name: Get facts for one network interface

azure_rm_networkinterface_info:

resource_group: "{{ resource_group }}"

name: "{{ azure_vm_network_interface }}"

register: azure_network_interface_info

- name: Applying NSG to target NIC

azure_rm_networkinterface:

name: "{{ azure_vm_network_interface }}"

resource_group: "{{ resource_group }}"

subnet_name: "{{ azure_network_interface_info.networkinterfaces[0].subnet }}"

virtual_network: "{{ azure_network_interface_info.networkinterfaces[0].virtual_network.name }}"

ip_configurations: "{{ azure_network_interface_info.networkinterfaces[0].ip_configurations }}"

security_group: "/subscriptions/123456/resourceGroups/test-resource-group/providers/Microsoft.Network/networkSecurityGroups/testing_temp_8"

```

<!--- HINT: You can paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

Fetch the actual `public_ip_allocation_method` value instead of returning NULL

Portal shows that the IP is Dynamic (I tested by creating a new IP but still ansible returns NULL for `public_ip_allocation_method`)

##### ACTUAL RESULTS

"ip_configurations": [

{

"application_gateway_backend_address_pools": null,

"application_security_groups": null,

"load_balancer_backend_address_pools": null,

"name": "Ubuntu915",

"primary": true,

"private_ip_address": "10.0.0.5",

"private_ip_address_version": "IPv4",

"private_ip_allocation_method": "Dynamic",

"public_ip_address": "/subscriptions/123456789/resourceGroups/test-resource-group/providers/Microsoft.Network/publicIPAddresses/Ubuntu-915-test",

"public_ip_address_name": "/subscriptions/123456789/resourceGroups/test-resource-group/providers/Microsoft.Network/publicIPAddresses/Ubuntu-915-test",

"public_ip_allocation_method": null

}

],

When trying to change security group, I get the following error

```

"msg": "value of public_ip_allocation_method must be one of: Dynamic, Static, got: None found in ip_configurations"

```

<!--- Paste verbatim command output between quotes -->

```paste below

```

| priority | azure ansible doesn t fetch ipconfiguration public ip method summary when i try to fetch the ip configuration of the network interface i see that public ip allocation method is set to null even though it is dynamic in azure portal i even tested by creating a new ip yet it shows null as a result i m unable to modify network interface settings like security group i get the error value of public ip allocation method must be one of dynamic static got none found in ip configurations issue type bug report component name ansible version ansible config file root azure ansible ansible cfg configured module search path ansible python module location usr local lib site packages ansible ansible collection location root ansible collections usr share ansible collections executable location usr local bin ansible python version default sep jinja version libyaml true collection version between the quotes for example ansible galaxy collection list community general root ansible collections ansible collections collection version azure azcollection usr local lib site packages ansible collections collection version azure azcollection configuration sh ansible config dump only changed default host list root azure ansible ansible cfg default load callback plugins root azure ansible ansible cfg true default stdout callback root azure ansible ansible cfg json os environment centos docker container steps to reproduce name get facts for one network interface azure rm networkinterface info resource group resource group name azure vm network interface register azure network interface info name applying nsg to target nic azure rm networkinterface name azure vm network interface resource group resource group subnet name azure network interface info networkinterfaces subnet virtual network azure network interface info networkinterfaces virtual network name ip configurations azure network interface info networkinterfaces ip configurations security group subscriptions resourcegroups 
test resource group providers microsoft network networksecuritygroups testing temp expected results fetch public ip allocation method method instead of return null portal shows that the ip is dynamic i tested by creating a new ip but still ansible returns null for public ip allocation method actual results ip configurations application gateway backend address pools null application security groups null load balancer backend address pools null name primary true private ip address private ip address version private ip allocation method dynamic public ip address subscriptions resourcegroups test resource group providers microsoft network publicipaddresses ubuntu test public ip address name subscriptions resourcegroups test resource group providers microsoft network publicipaddresses ubuntu test public ip allocation method null when trying to change security group i get the following error msg value of public ip allocation method must be one of dynamic static got none found in ip configurations paste below | 1 |

475,131 | 13,687,543,255 | IssuesEvent | 2020-09-30 10:16:11 | ooni/probe-engine | https://api.github.com/repos/ooni/probe-engine | closed | Routine releases in Sprint 23 | effort/M priority/medium | This is about preparing a new stable release of ooni/probe-engine as well as a stable release of ooni/probe-cli. We are going to use such releases as the basic building blocks for upcoming probe-desktop and probe-{ios,android} releases.

- [x] Update dependencies

- [x] Update internal/httpheader/useragent.go

- [x] Update version/version.go

- [x] Update internal/resources/assets.go

- [x] Run go generate ./...

- [x] Tag a new version of ooni/probe-engine

- [x] Update again version.go to be alpha

- [x] Create release at GitHub

- [x] Update ooni/probe-engine mobile-staging branch

- [x] Pin ooni/probe-cli to ooni/probe-engine

- [x] Pin ooni/probe-android to latest mobile-staging

- [x] Pin ooni/probe-ios to latest mobile-staging

| 1.0 | Routine releases in Sprint 23 - This is about preparing a new stable release of ooni/probe-engine as well as a stable release of ooni/probe-cli. We are going to use such releases as the basic building blocks for upcoming probe-desktop and probe-{ios,android} releases.

- [x] Update dependencies

- [x] Update internal/httpheader/useragent.go

- [x] Update version/version.go

- [x] Update internal/resources/assets.go

- [x] Run go generate ./...

- [x] Tag a new version of ooni/probe-engine

- [x] Update again version.go to be alpha

- [x] Create release at GitHub

- [x] Update ooni/probe-engine mobile-staging branch

- [x] Pin ooni/probe-cli to ooni/probe-engine

- [x] Pin ooni/probe-android to latest mobile-staging

- [x] Pin ooni/probe-ios to latest mobile-staging

| priority | routine releases in sprint this is about preparing a new stable release of ooni probe engine as well as a stable release of ooni probe cli we are going to use such releases as the basic building blocks for upcoming probe desktop and probe ios android releases update dependencies update internal httpheader useragent go update version version go update internal resources assets go run go generate tag a new version of ooni probe engine update again version go to be alpha create release at github update ooni probe engine mobile staging branch pin ooni probe cli to ooni probe engine pin ooni probe android to latest mobile staging pin ooni probe ios to latest mobile staging | 1 |

769,547 | 27,011,159,793 | IssuesEvent | 2023-02-10 15:30:21 | authzed/spicedb | https://api.github.com/repos/authzed/spicedb | closed | Some mySQL URIs unable to be parsed by url.Parse | kind/bug priority/2 medium | The changes introduced by https://github.com/authzed/spicedb/pull/1129 cause mySQL URIs generated with https://pkg.go.dev/github.com/go-sql-driver/mysql#Config.FormatDSN to not work. The DSNs generated by the library are not able to be parsed by `url.Parse`. Can this be updated to use https://pkg.go.dev/github.com/go-sql-driver/mysql#ParseDSN instead of `url.Parse` or is the intention that SpiceDB only accepts URI connection strings? | 1.0 | Some mySQL URIs unable to be parsed by url.Parse - The changes introduced by https://github.com/authzed/spicedb/pull/1129 cause mySQL URIs generated with https://pkg.go.dev/github.com/go-sql-driver/mysql#Config.FormatDSN to not work. The DSNs generated by the library are not able to be parsed by `url.Parse`. Can this be updated to use https://pkg.go.dev/github.com/go-sql-driver/mysql#ParseDSN instead of `url.Parse` or is the intention that SpiceDB only accepts URI connection strings? | priority | some mysql uris unable to be parsed by url parse the changes introduced by cause mysql uris generated with to not work the dsns generated by the library are not able to be parsed by url parse can this be updated to use instead of url parse or is the intention that spicedb only accepts uri connection strings | 1 |

68,320 | 3,286,189,932 | IssuesEvent | 2015-10-29 00:35:27 | oshoukry/openpojo | https://api.github.com/repos/oshoukry/openpojo | closed | Adding support for inherited PojoFields similar to PojoClass.getPojoFields()? | auto-migrated Priority-Medium Type-Enhancement | ```

From Luke on wiki FAQ page - Dec 8th:

Is there any support for inheritance?

'PojoFieldFactory?.getPojoFields()' uses 'Class.getDeclaredFields()' , which

does not include inherited fields, which would be nice to have when testing

equals/hashCode and even toString in some cases...

Is there any plans for something along the lines of apache commons

'EqualsBuilder?.appendSuper()' etc?

```

Original issue reported on code.google.com by `oshou...@gmail.com` on 17 Jan 2012 at 6:18 | 1.0 | Adding support for inherited PojoFields similar to PojoClass.getPojoFields()? - ```

From Luke on wiki FAQ page - Dec 8th:

Is there any support for inheritance?

'PojoFieldFactory?.getPojoFields()' uses 'Class.getDeclaredFields()' , which

does not include inherited fields, which would be nice to have when testing

equals/hashCode and even toString in some cases...

Is there any plans for something along the lines of apache commons

'EqualsBuilder?.appendSuper()' etc?

```

Original issue reported on code.google.com by `oshou...@gmail.com` on 17 Jan 2012 at 6:18 | priority | adding support for inherited pojofields similar to pojoclass getpojofields from luke on wiki faq page dec is there any support for inheritance pojofieldfactory getpojofields uses class getdeclaredfields which does not include inherited fields which would be nice to have when testing equals hashcode and even tostring in some cases is there any plans for something along the lines of apache commons equalsbuilder appendsuper etc original issue reported on code google com by oshou gmail com on jan at | 1 |

663,360 | 22,191,603,961 | IssuesEvent | 2022-06-07 00:01:42 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] PITR: RESTORE_SYS_CATALOG operation returns a segmentation fault when replaying during tablet bootstrap | kind/bug area/docdb priority/medium | Jira Link: [DB-565](https://yugabyte.atlassian.net/browse/DB-565)

### Description

During tablet bootstrap if a committed RESTORE_SYS_CATALOG operation replays, then it gives a segmentation fault with the following stack trace:

```

[m-2] PC: @ 0x0 (unknown)

[m-2] *** SIGSEGV (@0x0) received by PID 776151 (TID 0xffff9b867740) from PID 0; stack trace: ***

[m-2] @ 0xffffac4507a0 ([vdso]+0x79f)

[m-2] @ 0xffffab7bbbd7 yb::tablet::Tablet::UnregisterOperationFilter()

[m-2] @ 0xffffab83684b yb::tablet::Operation::Replicated()

[m-2] @ 0xffffab7de763 yb::tablet::TabletBootstrap::PlayTabletSnapshotRequest()

[m-2] @ 0xffffab7dd07f yb::tablet::TabletBootstrap::PlayAnyRequest()

[m-2] @ 0xffffab7dc9c7 yb::tablet::TabletBootstrap::MaybeReplayCommittedEntry()

[m-2] @ 0xffffab7da5b3 yb::tablet::TabletBootstrap::ApplyCommittedPendingReplicates()

[m-2] @ 0xffffab7d562b yb::tablet::TabletBootstrap::PlaySegments()

[m-2] @ 0xffffab7d197f yb::tablet::TabletBootstrap::Bootstrap()

[m-2] @ 0xffffab7d0ef3 yb::tablet::BootstrapTabletImpl()

[m-2] @ 0xffffab7e4783 yb::tablet::BootstrapTablet()

[m-2] @ 0xffffac0d2937 yb::master::SysCatalogTable::OpenTablet()

[m-2] @ 0xffffac0cec3f yb::master::SysCatalogTable::Load()

```

```

yb-master: /opt/yb-build/thirdparty/yugabyte-db-thirdparty-v20220506040442-4cda3bf56c-almalinux8-aarch64-clang12/installed/uninstrumented/include/boost/intrusive/list.hpp:1309: boost::intrusive::list_impl::iterator boost::intrusive::list_impl<boost::intrusive::bhtraits<yb::tablet::OperationFilter, boost::intrusive::list_node_traits<void *>, boost::intrusive::safe_link, boost::intrusive::dft_tag, 1>, unsigned long, true, void>::iterator_to(boost::intrusive::list_impl::reference) [ValueTraits = boost::intrusive::bhtraits<yb::tablet::OperationFilter, boost::intrusive::list_node_traits<void *>, boost::intrusive::safe_link, boost::intrusive::dft_tag, 1>, SizeType = unsigned long, ConstantTimeSize = true, HeaderHolder = void]: Assertion `!node_algorithms::inited(this->priv_value_traits().to_node_ptr(value))' failed.

[m-2] *** Aborted at 1652974512 (unix time) try "date -d @1652974512" if you are using GNU date ***

```

This is due to a null operation filter. We should guard against such a situation. | 1.0 | [DocDB] PITR: RESTORE_SYS_CATALOG operation returns a segmentation fault when replaying during tablet bootstrap - Jira Link: [DB-565](https://yugabyte.atlassian.net/browse/DB-565)

### Description

During tablet bootstrap if a committed RESTORE_SYS_CATALOG operation replays, then it gives a segmentation fault with the following stack trace:

```

[m-2] PC: @ 0x0 (unknown)

[m-2] *** SIGSEGV (@0x0) received by PID 776151 (TID 0xffff9b867740) from PID 0; stack trace: ***

[m-2] @ 0xffffac4507a0 ([vdso]+0x79f)

[m-2] @ 0xffffab7bbbd7 yb::tablet::Tablet::UnregisterOperationFilter()

[m-2] @ 0xffffab83684b yb::tablet::Operation::Replicated()

[m-2] @ 0xffffab7de763 yb::tablet::TabletBootstrap::PlayTabletSnapshotRequest()

[m-2] @ 0xffffab7dd07f yb::tablet::TabletBootstrap::PlayAnyRequest()

[m-2] @ 0xffffab7dc9c7 yb::tablet::TabletBootstrap::MaybeReplayCommittedEntry()

[m-2] @ 0xffffab7da5b3 yb::tablet::TabletBootstrap::ApplyCommittedPendingReplicates()

[m-2] @ 0xffffab7d562b yb::tablet::TabletBootstrap::PlaySegments()

[m-2] @ 0xffffab7d197f yb::tablet::TabletBootstrap::Bootstrap()

[m-2] @ 0xffffab7d0ef3 yb::tablet::BootstrapTabletImpl()

[m-2] @ 0xffffab7e4783 yb::tablet::BootstrapTablet()

[m-2] @ 0xffffac0d2937 yb::master::SysCatalogTable::OpenTablet()

[m-2] @ 0xffffac0cec3f yb::master::SysCatalogTable::Load()

```

```

yb-master: /opt/yb-build/thirdparty/yugabyte-db-thirdparty-v20220506040442-4cda3bf56c-almalinux8-aarch64-clang12/installed/uninstrumented/include/boost/intrusive/list.hpp:1309: boost::intrusive::list_impl::iterator boost::intrusive::list_impl<boost::intrusive::bhtraits<yb::tablet::OperationFilter, boost::intrusive::list_node_traits<void *>, boost::intrusive::safe_link, boost::intrusive::dft_tag, 1>, unsigned long, true, void>::iterator_to(boost::intrusive::list_impl::reference) [ValueTraits = boost::intrusive::bhtraits<yb::tablet::OperationFilter, boost::intrusive::list_node_traits<void *>, boost::intrusive::safe_link, boost::intrusive::dft_tag, 1>, SizeType = unsigned long, ConstantTimeSize = true, HeaderHolder = void]: Assertion `!node_algorithms::inited(this->priv_value_traits().to_node_ptr(value))' failed.

[m-2] *** Aborted at 1652974512 (unix time) try "date -d @1652974512" if you are using GNU date ***

```

This is due to a null operation filter. We should guard against such a situation. | priority | pitr restore sys catalog operation returns a segmentation fault when replaying during tablet bootstrap jira link description during tablet bootstrap if a committed restore sys catalog operation replays then it gives a segmentation fault with the following stack trace pc unknown sigsegv received by pid tid from pid stack trace yb tablet tablet unregisteroperationfilter yb tablet operation replicated yb tablet tabletbootstrap playtabletsnapshotrequest yb tablet tabletbootstrap playanyrequest yb tablet tabletbootstrap maybereplaycommittedentry yb tablet tabletbootstrap applycommittedpendingreplicates yb tablet tabletbootstrap playsegments yb tablet tabletbootstrap bootstrap yb tablet bootstraptabletimpl yb tablet bootstraptablet yb master syscatalogtable opentablet yb master syscatalogtable load yb master opt yb build thirdparty yugabyte db thirdparty installed uninstrumented include boost intrusive list hpp boost intrusive list impl iterator boost intrusive list impl boost intrusive safe link boost intrusive dft tag unsigned long true void iterator to boost intrusive list impl reference assertion node algorithms inited this priv value traits to node ptr value failed aborted at unix time try date d if you are using gnu date this is due to a null operation filter we should guard against such a situation | 1 |

56,563 | 3,080,254,261 | IssuesEvent | 2015-08-21 20:59:17 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | opened | Context menu of actions for magnet links (an alternative to the dialog shown when a link is clicked). | Component-UI enhancement imported Priority-Medium | _From [sc0rpi0n...@gmail.com](https://code.google.com/u/100092996917054333852/) on May 29, 2012 00:26:33_

Would it be possible to add a context menu, opened by right-clicking a magnet link in chat of the form magnet:?xt=urn:tree:tiger:MMNPMRPVVMWULKISXBX6HYO57WEWRG56XLZXDBY&xl=1848125&dn=MediaInfo_GUI_0.7.21_Windows_i386.exe, offering: search, add to download queue, in short the same actions as when the link is clicked?

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=761_ | 1.0 | Context menu of actions for magnet links (an alternative to the dialog shown when a link is clicked). - _From [sc0rpi0n...@gmail.com](https://code.google.com/u/100092996917054333852/) on May 29, 2012 00:26:33_

Would it be possible to add a context menu, opened by right-clicking a magnet link in chat of the form magnet:?xt=urn:tree:tiger:MMNPMRPVVMWULKISXBX6HYO57WEWRG56XLZXDBY&xl=1848125&dn=MediaInfo_GUI_0.7.21_Windows_i386.exe, offering: search, add to download queue, in short the same actions as when the link is clicked?

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=761_ | priority | context menu of actions for magnet links an alternative to the dialog shown when a link is clicked from on may would it be possible to add a context menu opened by right clicking a magnet link in chat of the form magnet xt urn tree tiger xl dn mediainfo gui windows exe offering search add to download queue in short the same actions as when the link is clicked original issue | 1 |

714,013 | 24,547,611,625 | IssuesEvent | 2022-10-12 09:59:45 | IAmTamal/Milan | https://api.github.com/repos/IAmTamal/Milan | closed | [Automations]: Linter using husky pre-commit/pre-push | ✨ goal: improvement 🟨 priority: medium 🤖 aspect: dx 🛠 status : under development hacktoberfest | ### What would you like to share?

Currently eslint is set up but the linting is not automated.

I'd like to use `husky` and `lint-staged` to create a `pre-commit` or `pre-push` hook that runs the linter and fixes auto-fixable issues before a PR can be created

### Additional information

_No response_

### 🥦 Browser

Microsoft Edge

### 👀 Have you checked if this issue has been raised before?

- [X] I checked and didn't find similar issue

### 🏢 Have you read the Contributing Guidelines?

- [X] I have read the [Contributing Guidelines](https://github.com/IAmTamal/Milan/blob/main/CONTRIBUTING.md)

### Are you willing to work on this issue ?

Yes I am willing to submit a PR! | 1.0 | [Automations]: Linter using husky pre-commit/pre-push - ### What would you like to share?

Currently eslint is set up but the linting is not automated.

I'd like to use `husky` and `lint-staged` to create a `pre-commit` or `pre-push` hook that runs the linter and fixes auto-fixable issues before a PR can be created

### Additional information

_No response_

### 🥦 Browser

Microsoft Edge

### 👀 Have you checked if this issue has been raised before?

- [X] I checked and didn't find similar issue

### 🏢 Have you read the Contributing Guidelines?

- [X] I have read the [Contributing Guidelines](https://github.com/IAmTamal/Milan/blob/main/CONTRIBUTING.md)

### Are you willing to work on this issue ?

Yes I am willing to submit a PR! | priority | linter using husky pre commit pre push what would you like to share currently eslint is set up but the linting is not automated i d like to make use of husky and lint staged to create a pre commit or pre push hooks to run linter and fix auto fixable issues before a pr can be created additional information no response 🥦 browser microsoft edge 👀 have you checked if this issue has been raised before i checked and didn t find similar issue 🏢 have you read the contributing guidelines i have read the are you willing to work on this issue yes i am willing to submit a pr | 1 |

800,352 | 28,362,570,621 | IssuesEvent | 2023-04-12 11:51:50 | uhh-cms/columnflow | https://api.github.com/repos/uhh-cms/columnflow | opened | Error due to `combine_uncs` in yields task | bug medium-priority low-priority | There seems to be an issue related to the `combine_uncs` option when transforming the yields into a string representation

https://github.com/uhh-cms/columnflow/blob/master/columnflow/tasks/yields.py#L225 | 2.0 | Error due to `combine_uncs` in yields task - There seems to be an issue related to the `combine_uncs` option when transforming the yields into a string representation

https://github.com/uhh-cms/columnflow/blob/master/columnflow/tasks/yields.py#L225 | priority | error due to combine uncs in yields task there seems to be an issue related to the combine uncs option when transforming the yields into a string representation | 1 |

102,108 | 4,150,882,928 | IssuesEvent | 2016-06-15 18:46:09 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | Can CDash report per-command build time? | priority: medium team: kitware type: feature request | Build time is a pain point. It would be useful to have a prioritized list of targets to improve. | 1.0 | Can CDash report per-command build time? - Build time is a pain point. It would be useful to have a prioritized list of targets to improve. | priority | can cdash report per command build time build time is a pain point it would be useful to have a prioritized list of targets to improve | 1 |

231,800 | 7,643,550,496 | IssuesEvent | 2018-05-08 13:04:27 | learnweb/moodle-mod_ratingallocate | https://api.github.com/repos/learnweb/moodle-mod_ratingallocate | closed | Rank Choices: Wrong description for number of choices | Backlog Effort: Very Low Priority: Medium bug | Within the settings dialog, there is the description for number of ranks:

> Number of fields the user is presented to vote on (smaller than number of choices!)

However, the number has to be smaller or equal to the number of choices. | 1.0 | Rank Choices: Wrong description for number of choices - Within the settings dialog, there is the description for number of ranks:

> Number of fields the user is presented to vote on (smaller than number of choices!)

However, the number has to be smaller or equal to the number of choices. | priority | rank choices wrong description for number of choices within the settings dialog there is the description for number of ranks number of fields the user is presented to vote on smaller than number of choices however the number has to be smaller or equal to the number of choices | 1 |
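
The rank-count rule described in the record above (the number of ranks may be smaller than or equal to the number of choices) could be checked roughly as follows. This is only a sketch: `validate_rank_count` is a hypothetical helper, not part of the plugin, and the lower bound of one rank is an assumption added for illustration.

```python
def validate_rank_count(num_ranks: int, num_choices: int) -> int:
    """Return num_ranks if it is a usable rank count, else raise ValueError."""
    if num_ranks < 1:
        # Assumed lower bound; the issue text only discusses the upper bound.
        raise ValueError("at least one rank is required")
    if num_ranks > num_choices:
        # Equality is allowed: ranking every available choice is valid.
        raise ValueError("number of ranks must not exceed number of choices")
    return num_ranks
```

With this check, three ranks over three choices is accepted, while four ranks over three choices is rejected.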

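829,944 | 31,931,339,699 | IssuesEvent | 2023-09-19 07:38:55 | tooget/tooget.github.io | https://api.github.com/repos/tooget/tooget.github.io | opened | Contacts | Reorder and simplify Resume/CV components | Priority:Medium Task:Enhancement ETR:1W- Domain:UX | ## The goal to achieve

> Briefly describe what goal this issue aims to achieve and what state things should end up in.

- Change the page so that "Resume" is shown immediately when someone scans the tooget.github.io QR code

## Current state

> Briefly describe the problem at the time this issue was created, or the possibility of problems arising later.

- After actually handing out QR-code business cards and collecting feedback, people are interested in the "Resume" and less interested in the "Coffee chat".

## Who can likely resolve this issue

> Mention **only one** Assignee with @.

@tooget

## Sub-problems that need to be solved

> List the detailed items needed to resolve this issue (closing conditions) as a checklist.

- [ ] TBD

## References that may help resolve this issue

> List as much as possible: related issue numbers, documents, wiki pages, screenshots, personal opinions, and anything else that may help.

> If this issue is related to other issues, **be sure to include the issue numbers**

- Related issues: TBD

- Notes: TBD

## Estimated time to resolve this issue

> Select only one estimated duration.

> (If it is not 1W+, please change the label.)

- Estimated duration: **1W-**

## Related details

> Select **only one** Reporter, and **only one each** of Domain, Priority, and Task.

> (If they are not UX, Medium, Enhancement, please change the labels.)

- Reporter: @tooget

- Domain : **UX**

- Priority: **Medium**

- Task : **Enhancement**

## Expected financial impact of resolving this issue

> If resolving this issue is expected to cause a meaningful company-wide change in revenue or cost, enter the figures.

- Expected revenue: 0 KRW/month

- Expected cost: 0 KRW/month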

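| 1.0 | Contacts | Reorder and simplify Resume/CV components - ## The goal to achieve

> Briefly describe what goal this issue aims to achieve and what state things should end up in.

- Change the page so that "Resume" is shown immediately when someone scans the tooget.github.io QR code

## Current state

> Briefly describe the problem at the time this issue was created, or the possibility of problems arising later.

- After actually handing out QR-code business cards and collecting feedback, people are interested in the "Resume" and less interested in the "Coffee chat".

## Who can likely resolve this issue

> Mention **only one** Assignee with @.

@tooget

## Sub-problems that need to be solved

> List the detailed items needed to resolve this issue (closing conditions) as a checklist.

- [ ] TBD

## References that may help resolve this issue

> List as much as possible: related issue numbers, documents, wiki pages, screenshots, personal opinions, and anything else that may help.

> If this issue is related to other issues, **be sure to include the issue numbers**

- Related issues: TBD

- Notes: TBD

## Estimated time to resolve this issue

> Select only one estimated duration.

> (If it is not 1W+, please change the label.)

- Estimated duration: **1W-**

## Related details

> Select **only one** Reporter, and **only one each** of Domain, Priority, and Task.

> (If they are not UX, Medium, Enhancement, please change the labels.)

- Reporter: @tooget

- Domain : **UX**

- Priority: **Medium**

- Task : **Enhancement**

## Expected financial impact of resolving this issue

> If resolving this issue is expected to cause a meaningful company-wide change in revenue or cost, enter the figures.

- Expected revenue: 0 KRW/month

- Expected cost: 0 KRW/month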

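| priority | contacts reorder and simplify resume cv components the goal to achieve briefly describe what goal this issue aims to achieve and what state things should end up in change the page so that resume is shown immediately when someone scans the tooget github io qr code current state briefly describe the problem at the time this issue was created or the possibility of problems arising later after actually handing out qr code business cards and collecting feedback people are interested in the resume and less interested in the coffee chat who can likely resolve this issue mention only one assignee with tooget sub problems that need to be solved list the detailed items needed to resolve this issue closing conditions as a checklist tbd references that may help resolve this issue list as much as possible related issue numbers documents wiki pages screenshots personal opinions and anything else that may help if this issue is related to other issues be sure to include the issue numbers related issues tbd notes tbd estimated time to resolve this issue select only one estimated duration if it is not please change the label estimated duration related details select only one reporter and only one each of domain priority and task if they are not ux medium enhancement please change the labels reporter tooget domain ux priority medium task enhancement expected financial impact of resolving this issue if resolving this issue is expected to cause a meaningful company wide change in revenue or cost enter the figures expected revenue krw month expected cost krw month | 1 |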

534,849 | 15,650,419,786 | IssuesEvent | 2021-03-23 08:58:07 | AY2021S2-CS2113T-T09-4/tp | https://api.github.com/repos/AY2021S2-CS2113T-T09-4/tp | closed | As a user, I can create, save data and load existing data | priority.Medium type.Story | - So that I can work on another device with the saved data.

- I can edit the data file directly if I am an expert user. | 1.0 | As a user, I can create, save data and load existing data - - So that I can work on another device with the saved data.

- I can edit the data file directly if I am an expert user. | priority | as a user i can create save data and load existing data so that i can work on another device with the saved data i can edit the data file directly if i am an expert user | 1 |

111,567 | 4,478,843,468 | IssuesEvent | 2016-08-27 07:45:18 | classilla/tenfourfox | https://api.github.com/repos/classilla/tenfourfox | reopened | Localized versions | auto-migrated Priority-Medium Type-Enhancement | ```

* I read everything above and have demonstrated this bug only occurs on

10.4Fx by testing against this official version of Firefox 4 (not

applicable for startup failure) - specify:

* This is a startup crash or failure to start (Y/N): N

* What steps are necessary to reproduce the bug? These must be reasonably

reliable.

Start tenfourfox on a non english system

* Describe your processor, computer, operating system and any special

things about your environment.

IMac Power PC G4 1,25Ghz

No localization is included into the package. I Try to replace en-en.jar by the

official fr.jar in chrome directory but didn't work. I manage to change search

engines, and dictionaries...

How could we localize tenfourfox ?

```

Original issue reported on code.google.com by `narcoti...@gmail.com` on 22 Mar 2011 at 10:58

* Blocked on: #61 | 1.0 | Localized versions - ```

* I read everything above and have demonstrated this bug only occurs on

10.4Fx by testing against this official version of Firefox 4 (not

applicable for startup failure) - specify:

* This is a startup crash or failure to start (Y/N): N

* What steps are necessary to reproduce the bug? These must be reasonably

reliable.

Start tenfourfox on a non english system

* Describe your processor, computer, operating system and any special

things about your environment.

IMac Power PC G4 1,25Ghz

No localization is included into the package. I Try to replace en-en.jar by the

official fr.jar in chrome directory but didn't work. I manage to change search

engines, and dictionaries...

How could we localize tenfourfox ?

```

Original issue reported on code.google.com by `narcoti...@gmail.com` on 22 Mar 2011 at 10:58

* Blocked on: #61 | priority | localized versions i read everything above and have demonstrated this bug only occurs on by testing against this official version of firefox not applicable for startup failure specify this is a startup crash or failure to start y n n what steps are necessary to reproduce the bug these must be reasonably reliable start tenfourfox on a non english system describe your processor computer operating system and any special things about your environment imac power pc no localization is included into the package i try to replace en en jar by the official fr jar in chrome directory but didn t work i manage to change search engines and dictionaries how could we localize tenfourfox original issue reported on code google com by narcoti gmail com on mar at blocked on | 1 |

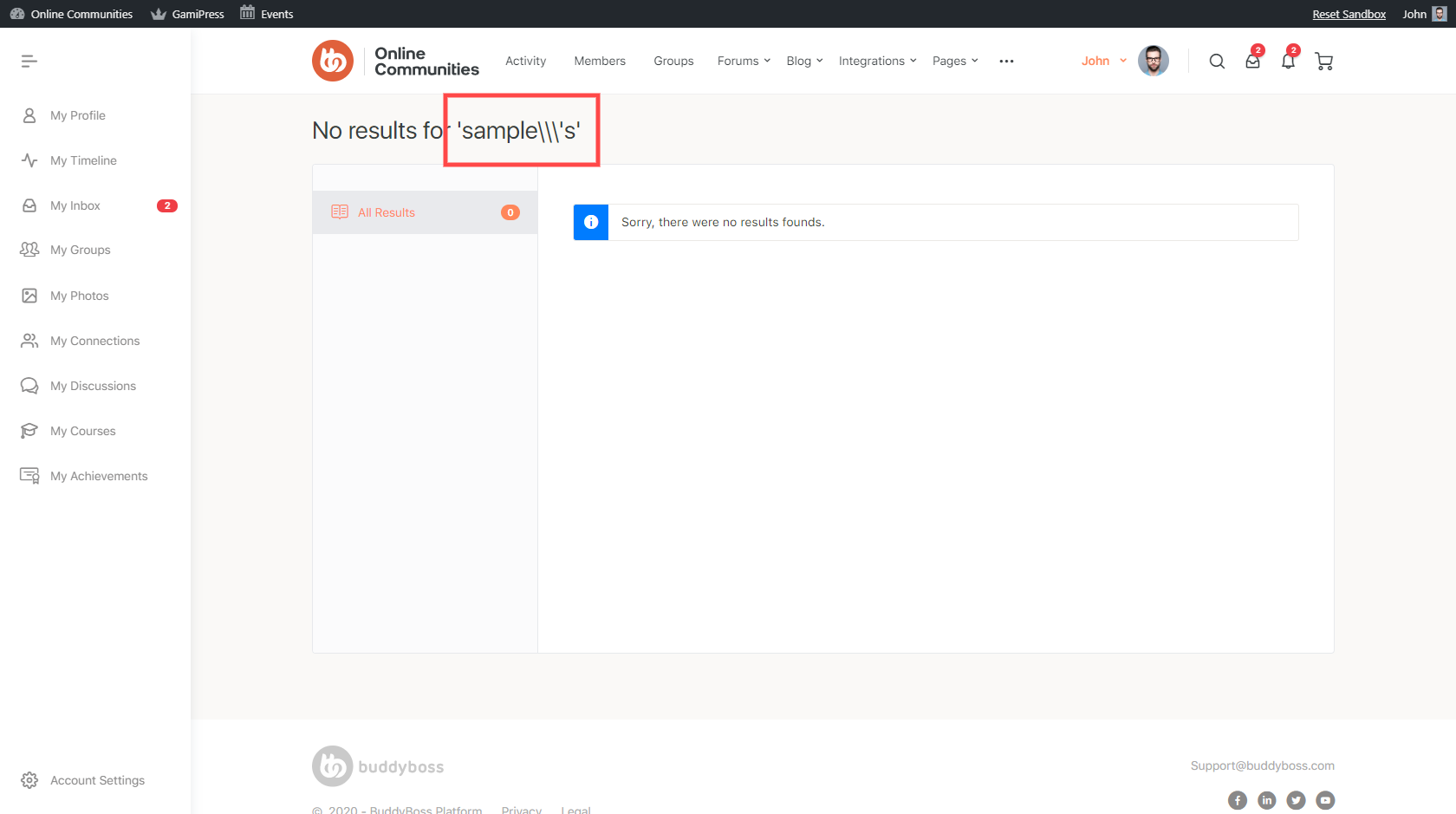

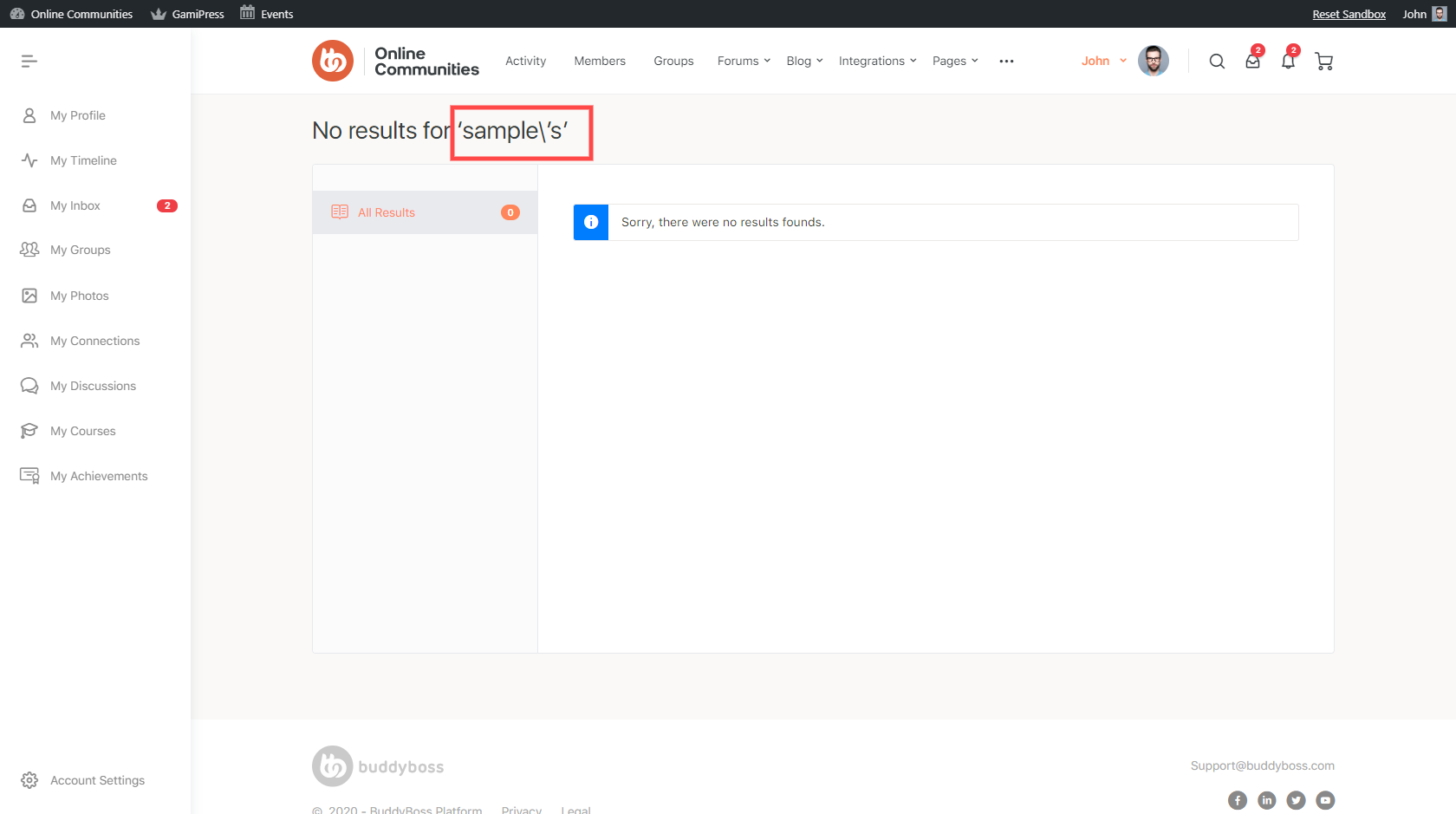

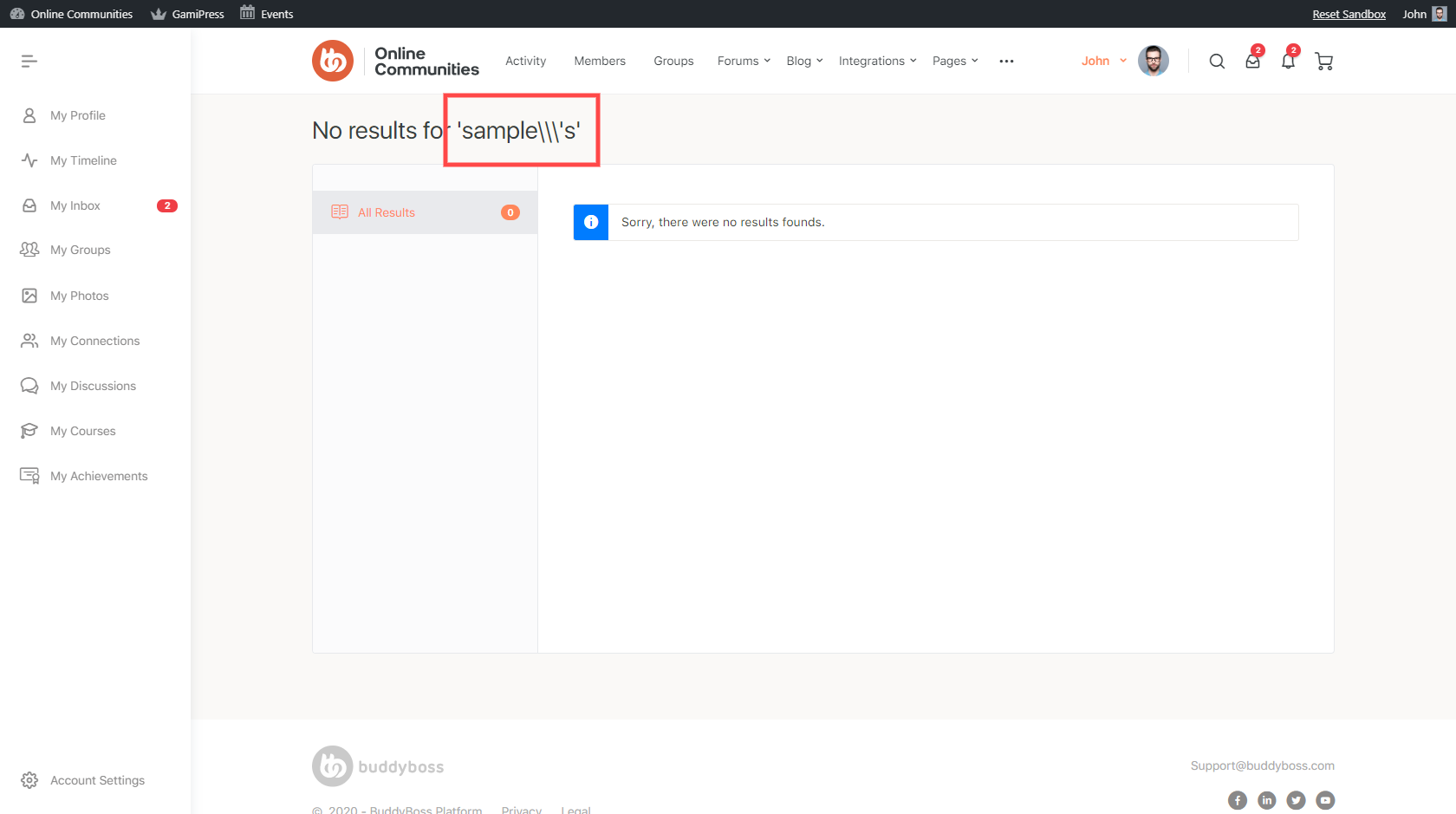

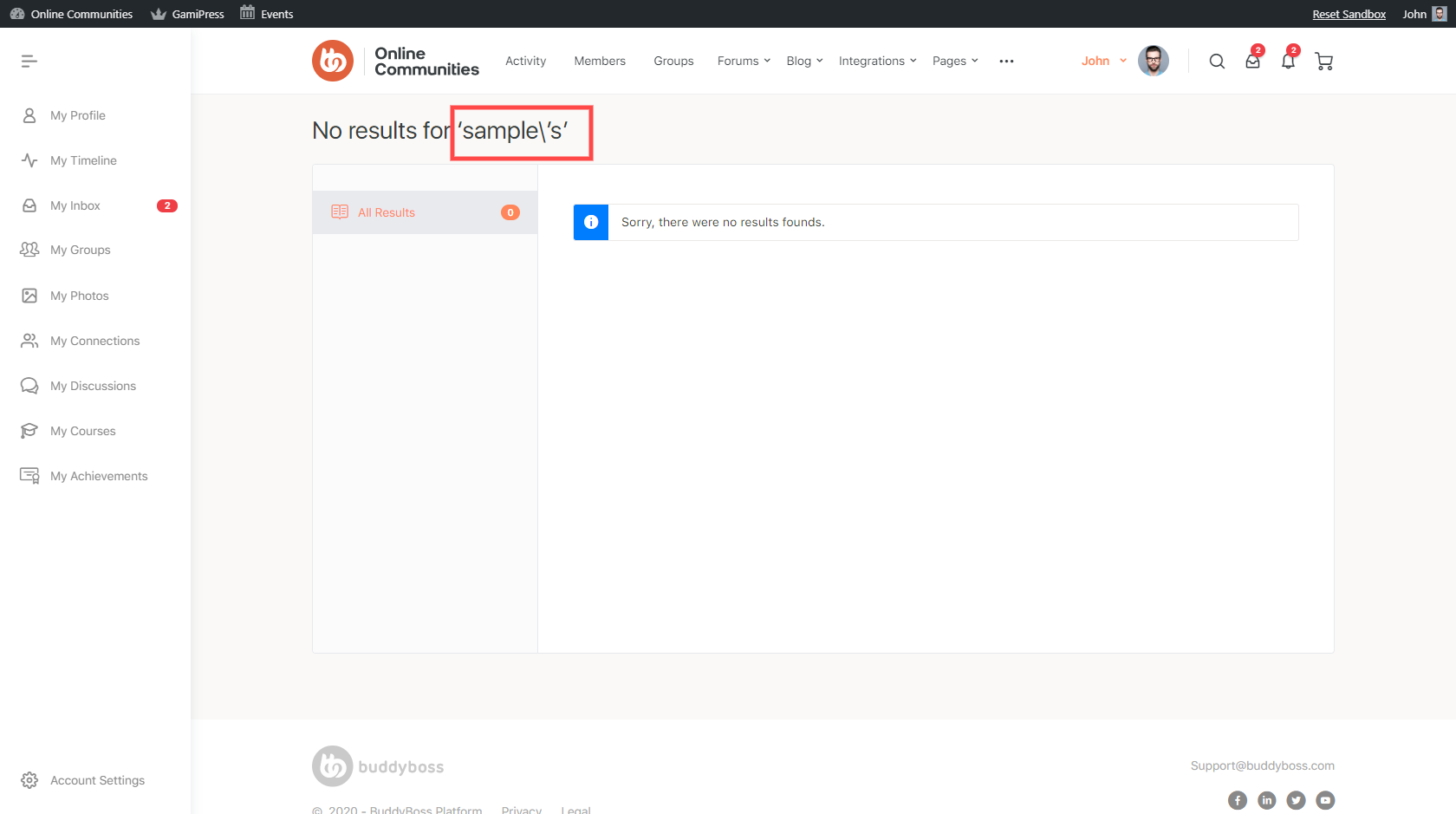

431,999 | 12,487,485,244 | IssuesEvent | 2020-05-31 09:20:27 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | closed | Using apostrophe(') in search displays backslash(\) on the result page | Has-PR bug priority: medium | **Describe the bug**

Using apostrophe(') in search displays backslash(\) on the result page

**To Reproduce**

Steps to reproduce the behavior:

1. Go to front page

2. Search any keyword with apostrophe(')

3. Notice in the result page that there are backslashes added before the apostrophe(').

**Expected behavior**

There should be no backslashes

**Screencast**

https://drive.google.com/file/d/1hv9u05rXvzQcpwrXSUAQ5odSStGFT9_G/view

**Screenshots**

**Support ticket links**

https://secure.helpscout.net/conversation/1163576561/73270?folderId=3701263

| 1.0 | Using apostrophe(') in search displays backslash(\) on the result page - **Describe the bug**

Using apostrophe(') in search displays backslash(\) on the result page

**To Reproduce**

Steps to reproduce the behavior:

1. Go to front page

2. Search any keyword with apostrophe(')

3. Notice in the result page that there are backslashes added before the apostrophe(').

**Expected behavior**

There should be no backslashes

**Screencast**

https://drive.google.com/file/d/1hv9u05rXvzQcpwrXSUAQ5odSStGFT9_G/view

**Screenshots**

**Support ticket links**

https://secure.helpscout.net/conversation/1163576561/73270?folderId=3701263

| priority | using apostrophe in search displays backslash on the result page describe the bug using apostrophe in search displays backslash on the result page to reproduce steps to reproduce the behavior go to front page search any keyword with apostrophe notice in the result page that there are backslashes added before the apostrophe expected behavior there should be no backslashes screencast screenshots support ticket links | 1 |

332,631 | 10,102,053,346 | IssuesEvent | 2019-07-29 10:10:49 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | conan remote remove fails with editable packages | complex: low priority: medium stage: review type: bug | Trying to update the metadata, it fails because the `PackageEditableLayout` has no `update_metadata()`. The main questions are:

- Should the editable packages still have the metadata methods available?

or

- Should we always force retrieval of the `PackageCacheLayout` in some places, e.g. from `remote_registry.py`?

```

Traceback (most recent call last):

File "/home/luism/workspace/conan_sources/conans/client/command.py", line 1832, in run

method(args[0][1:])

File "/home/luism/workspace/conan_sources/conans/client/command.py", line 1423, in remote

return self._conan.remote_remove(remote_name)

File "/home/luism/workspace/conan_sources/conans/client/conan_api.py", line 77, in wrapper

return f(*args, **kwargs)

File "/home/luism/workspace/conan_sources/conans/client/conan_api.py", line 922, in remote_remove

return self._cache.registry.remove(remote_name)

File "/home/luism/workspace/conan_sources/conans/client/cache/remote_registry.py", line 301, in remove

with self._cache.package_layout(ref).update_metadata() as metadata:

AttributeError: 'PackageEditableLayout' object has no attribute 'update_metadata'

``` | 1.0 | conan remote remove fails with editable packages - Trying to update the metadata, it fails because the `PackageEditableLayout` has no `update_metadata()`. The main questions are:

- Should the editable packages still have the metadata methods available?

or

- Should we always force retrieval of the `PackageCacheLayout` in some places, e.g. from `remote_registry.py`?

```

Traceback (most recent call last):

File "/home/luism/workspace/conan_sources/conans/client/command.py", line 1832, in run

method(args[0][1:])

File "/home/luism/workspace/conan_sources/conans/client/command.py", line 1423, in remote

return self._conan.remote_remove(remote_name)

File "/home/luism/workspace/conan_sources/conans/client/conan_api.py", line 77, in wrapper

return f(*args, **kwargs)

File "/home/luism/workspace/conan_sources/conans/client/conan_api.py", line 922, in remote_remove

return self._cache.registry.remove(remote_name)

File "/home/luism/workspace/conan_sources/conans/client/cache/remote_registry.py", line 301, in remove

with self._cache.package_layout(ref).update_metadata() as metadata:

AttributeError: 'PackageEditableLayout' object has no attribute 'update_metadata'

``` | priority | conan remote remove fails with editable packages trying to update the metadata it fails because the packageeditablelayout has no update metadata the main questions are should the editable packages still have the metadata methods available or should we force always to retrieve the packagecachelayout sometimes e g from the remote registry py traceback most recent call last file home luism workspace conan sources conans client command py line in run method args file home luism workspace conan sources conans client command py line in remote return self conan remote remove remote name file home luism workspace conan sources conans client conan api py line in wrapper return f args kwargs file home luism workspace conan sources conans client conan api py line in remote remove return self cache registry remove remote name file home luism workspace conan sources conans client cache remote registry py line in remove with self cache package layout ref update metadata as metadata attributeerror packageeditablelayout object has no attribute update metadata | 1 |
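
The failure mode in the record above (calling `update_metadata()` on a layout object that does not define it) can be illustrated with a small guard. This is a sketch under stated assumptions, not Conan's actual code or fix: both classes below are hypothetical stand-ins that only borrow the names from the traceback, and `clear_remote` is an invented helper.

```python
from contextlib import contextmanager


class PackageCacheLayout:
    """Hypothetical stand-in for a cache layout that stores metadata."""

    def __init__(self):
        self.metadata = {"remote": "origin"}

    @contextmanager
    def update_metadata(self):
        # Yield the mutable metadata so the caller can edit it in place.
        yield self.metadata


class PackageEditableLayout:
    """Hypothetical stand-in for an editable layout with no metadata methods."""


def clear_remote(layout):
    """Clear the remote entry if the layout supports metadata; report whether it did."""
    update = getattr(layout, "update_metadata", None)
    if update is None:
        # Editable packages are skipped instead of raising AttributeError.
        return False
    with update() as metadata:
        metadata["remote"] = None
    return True
```

Skipping editable layouts corresponds to one possible answer to the first question in the issue; giving the editable layout its own metadata methods would be the alternative design.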

278,908 | 8,652,422,404 | IssuesEvent | 2018-11-27 07:59:39 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | samples/subsys/usb/hid/ test hangs on quark_se_c1000_devboard | area: USB bug priority: medium | samples/subsys/usb/hid/ test hangs on quark_se_c1000_devboard

Arch: x86

Board: quark_se_c1000_devboard

Error Console log:

***** Booting Zephyr OS v1.13.0-rc3 *****

[main] [DBG] main: Starting application

[main] [DBG] set_idle_cb: Set Idle callback

[main] [DBG] main: Wrote 2 bytes with ret 0

Steps to reproduce:

cd samples/subsys/usb/hid

rm -rf build && mkdir build && cd build

cmake -D BOARD=quark_se_c1000_devboard ../

make BOARD=quark_se_c1000_devboard flash

| 1.0 | samples/subsys/usb/hid/ test hangs on quark_se_c1000_devboard - samples/subsys/usb/hid/ test hangs on quark_se_c1000_devboard

Arch: x86

Board: quark_se_c1000_devboard

Error Console log:

***** Booting Zephyr OS v1.13.0-rc3 *****

[main] [DBG] main: Starting application

[main] [DBG] set_idle_cb: Set Idle callback

[main] [DBG] main: Wrote 2 bytes with ret 0

Steps to reproduce:

cd samples/subsys/usb/hid

rm -rf build && mkdir build && cd build

cmake -D BOARD=quark_se_c1000_devboard ../

make BOARD=quark_se_c1000_devboard flash

| priority | samples subsys usb hid test hangs on quark se devboard samples subsys usb hid test hangs on quark se devboard arch board quark se devboard error console log booting zephyr os main starting application set idle cb set idle callback main wrote bytes with ret steps to reproduce cd samples subsys usb hid rm rf build mkdir build cd build cmake d board quark se devboard make board quark se devboard flash | 1 |

20,450 | 2,622,849,124 | IssuesEvent | 2015-03-04 08:04:12 | max99x/pagemon-chrome-ext | https://api.github.com/repos/max99x/pagemon-chrome-ext | closed | French translation | auto-migrated Priority-Medium Type-Enhancement | ```

see the attached file.

possible abbreviations if needed :

- "Voir la page d'origine" -> Voir l'original

- "Page mise à jour" -> "Page MàJ"

- "unknown" key : ("inconnu") literal translation but depends on what it's used

for, a more accurate translation can be done if you give me more details.

also is the "test_no_matches" key actually used somewhere ? I can't see it with

a wrong regex or a wrong selector...

some entries are too long to fit in the display, be sure to correct that before

integrate it to the new version.

Please, contact me if you need to change, adapt or reduce anything related to

this translation

```

Original issue reported on code.google.com by `djac...@gmail.com` on 19 Jun 2011 at 1:13

Attachments:

* [messages.json](https://storage.googleapis.com/google-code-attachments/pagemon-chrome-ext/issue-129/comment-0/messages.json)

| 1.0 | French translation - ```

see the attached file.

possible abbreviations if needed :

- "Voir la page d'origine" -> Voir l'original

- "Page mise à jour" -> "Page MàJ"

- "unknown" key : ("inconnu") literal translation but depends on what it's used

for, a more accurate translation can be done if you give me more details.

also is the "test_no_matches" key actually used somewhere ? I can't see it with

a wrong regex or a wrong selector...

some entries are too long to fit in the display, be sure to correct that before

integrate it to the new version.

Please, contact me if you need to change, adapt or reduce anything related to

this translation

```

Original issue reported on code.google.com by `djac...@gmail.com` on 19 Jun 2011 at 1:13

Attachments:

* [messages.json](https://storage.googleapis.com/google-code-attachments/pagemon-chrome-ext/issue-129/comment-0/messages.json)

| priority | french translation see the attached file possible abbreviations if needed voir la page d origine voir l original page mise à jour page màj unknown key inconnu literal translation but depends on what it s used for a more accurate translation can be done if you give me more details also is the test no matches key actually used somewhere i can t see it with a wrong regex or a wrong selector some entries are too long to fit in the display be sure to correct that before integrate it to the new version please contact me if you need to change adapt or reduce anything related to this translation original issue reported on code google com by djac gmail com on jun at attachments | 1 |

467,815 | 13,455,673,104 | IssuesEvent | 2020-09-09 06:38:56 | teamforus/forus | https://api.github.com/repos/teamforus/forus | closed | Provider bug: after email confirmation the signup flow tells me the access token is invalid | Difficulty: Medium Priority: Must have Scope: Medium bug | ## Main assignee: @

**Start from branch** Release v0.14.0

## Context/goal:

1. Was logged out and opened signup flow

2. Created profile and verified email

3. Tried to create an organization and confirmed. The signup flow did not redirect me to the next page because of an invalid access token (see image below)

<img width="437" alt="Screen Shot 2020-08-20 at 11 20 44" src="https://user-images.githubusercontent.com/38419514/90752151-56c7c480-e2d7-11ea-94a4-5174c09b993d.png">

| 1.0 | Provider bug: after email confirmation the signup flow tells me the access token is invalid - ## Main assignee: @

**Start from branch** Release v0.14.0

## Context/goal:

1. Was logged out and opened signup flow

2. Created profile and verified email

3. Tried to create an organization and confirmed. The signup flow did not redirect me to the next page because of an invalid access token (see image below)

<img width="437" alt="Screen Shot 2020-08-20 at 11 20 44" src="https://user-images.githubusercontent.com/38419514/90752151-56c7c480-e2d7-11ea-94a4-5174c09b993d.png">

| priority | provider bug after email confirmation the signup flow tells me the access token is invalid main assignee start from branch release context goal was logged out and opened signup flow created profile and verified email tried to create an organization and confirmed the signup flow did not redirect me to the next page because of an invalid access token see image below img width alt screen shot at src | 1 |

589,776 | 17,761,185,843 | IssuesEvent | 2021-08-29 18:24:05 | ClinGen/clincoded | https://api.github.com/repos/ClinGen/clincoded | closed | UI changes for Evidence Summary linking | GCI EP request priority: medium | Re-arrange UI to make it more obvious how to link to view the Provisional and Approved evidence summaries in the GCI.

| 1.0 | UI changes for Evidence Summary linking - Re-arrange UI to make it more obvious how to link to view the Provisional and Approved evidence summaries in the GCI.

| priority | ui changes for evidence summary linking re arrange ui to make it more obvious how to link to view the provisional and approved evidence summaries in the gci | 1 |

225,705 | 7,494,391,698 | IssuesEvent | 2018-04-07 09:05:28 | andgein/SIStema | https://api.github.com/repos/andgein/SIStema | closed | Move the T-shirt size question to the profile | priority:2:medium type:feature | This information rarely changes from year to year, and we may also want to collect it for instructors. | 1.0 | Move the T-shirt size question to the profile - This information rarely changes from year to year, and we may also want to collect it for instructors. | priority | move the t shirt size question to the profile this information rarely changes from year to year and we may also want to collect it for instructors | 1 |

353,501 | 10,553,315,319 | IssuesEvent | 2019-10-03 16:56:39 | emory-libraries/ezpaarse-platforms | https://api.github.com/repos/emory-libraries/ezpaarse-platforms | closed | Foundation Center (Candid) | Add Parser Medium Priority | ### Example:star::star: :

http://foundationcenter.org.proxy.library.emory.edu/

### Priority:

Medium

### Subscriber (Library):

Woodruff

### ezPAARSE

Analysis: None | 1.0 | Foundation Center (Candid) - ### Example:star::star: :

http://foundationcenter.org.proxy.library.emory.edu/

### Priority:

Medium

### Subscriber (Library):

Woodruff

### ezPAARSE

Analysis: None | priority | foundation center candid example star star priority medium subscriber library woodruff ezpaarse analysis none | 1 |

68,512 | 3,288,950,612 | IssuesEvent | 2015-10-29 17:01:13 | patrickomni/omnimobileserver | https://api.github.com/repos/patrickomni/omnimobileserver | closed | Alerts - low battery alert not being sent | bug Priority MEDIUM | Pearl's Sendum device (id 05671343) delivered a LOWBATTERYCAP alarm to Classic Omni Listener on 2015-09-20 11:42:04 GMT (see below). When looking at the portal on 2015-09-21 @ 11:20 PDT (18:20 GMT), filter was set to "last 3 days" to ensure the alert would be included and there are no active alerts displayed.

I don't know whether/how Sendum alarm messages are processed - do they result in Omni alerts or do we ignore them and look at our own rules? | 1.0 | Alerts - low battery alert not being sent - Pearl's Sendum device (id 05671343) delivered a LOWBATTERYCAP alarm to Classic Omni Listener on 2015-09-20 11:42:04 GMT (see below). When looking at the portal on 2015-09-21 @ 11:20 PDT (18:20 GMT), filter was set to "last 3 days" to ensure the alert would be included and there are no active alerts displayed.

I don't know whether/how Sendum alarm messages are processed - do they result in Omni alerts or do we ignore them and look at our own rules? | priority | alerts low battery alert not being sent pearl s sendum device id delivered a lowbatterycap alarm to classic omni listener on gmt see below when looking at the portal on pdt gmt filter was set to last days to ensure the alert would be included and there are no active alerts displayed i don t know whether how sendum alarm messages are processed do they result in omni alerts or do we ignore them and look at our own rules | 1 |

25,888 | 2,684,026,527 | IssuesEvent | 2015-03-28 15:47:17 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | Resizing tabs with mouse in "panel view" (thumbs/tiles) does not work | 1 star bug imported Priority-Medium | _From [mickem](https://code.google.com/u/mickem/) on October 28, 2011 22:58:55_

Resizing tabs with mouse in "panel view" (thumbs/tiles) does not work

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=449_ | 1.0 | Resizing tabs with mouse in "panel view" (thumbs/tiles) does not work - _From [mickem](https://code.google.com/u/mickem/) on October 28, 2011 22:58:55_

Resizing tabs with mouse in "panel view" (thumbs/tiles) does not work

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=449_ | priority | resizing tabs with mouse in panel view thumbs tiles does not work from on october resizing tabs with mouse in panel view thumbs tiles does not work original issue | 1 |

484,660 | 13,943,412,860 | IssuesEvent | 2020-10-22 23:03:21 | jcr7467/UCLAbookstack | https://api.github.com/repos/jcr7467/UCLAbookstack | opened | Login/signup page still accessible once signed in. | Priority - Medium bug | **Describe the bug**

When we login, we expect the user to not be able to access '/signin' but it still does.

**To Reproduce**

Steps to reproduce the behavior:

1. Sign in

2. manually enter into the url 'localhost:8000/signin' or alternatively, 'uclabookstack.com/signin'

**Expected behavior**

This is an easy issue to fix. We just need to place a middleware function that does not allow access to certain pages if a user is already signed in. (our /signin and /signup routes)

**Screenshots**

In this image, we can see that we are still on the login page, but on the upper right you can also see that I am already logged in. This is also true with the signup page

<img width="1265" alt="Screen Shot 2020-10-22 at 4 01 57 PM" src="https://user-images.githubusercontent.com/25701682/96938692-ec223900-147f-11eb-82ba-4d92ff50cdc9.png">

| 1.0 | Login/signup page still accessible once signed in. - **Describe the bug**

When we login, we expect the user to not be able to access '/signin' but it still does.

**To Reproduce**

Steps to reproduce the behavior:

1. Sign in

2. manually enter into the url 'localhost:8000/signin' or alternatively, 'uclabookstack.com/signin'

**Expected behavior**

This is an easy issue to fix. We just need to place a middleware function that does not allow access to certain pages if a user is already signed in. (our /signin and /signup routes)

**Screenshots**

In this image, we can see that we are still on the login page, but on the upper right you can also see that I am already logged in. This is also true with the signup page

<img width="1265" alt="Screen Shot 2020-10-22 at 4 01 57 PM" src="https://user-images.githubusercontent.com/25701682/96938692-ec223900-147f-11eb-82ba-4d92ff50cdc9.png">

| priority | login signup page still accessible once signed in describe the bug when we login we expect the user to not be able to access signin but it still does to reproduce steps to reproduce the behavior sign in manually enter into the url localhost signin or alternatively uclabookstack com signin expected behavior this is an easy issue to fix we just need to place a middleware function that does not allow access to certain pages if a user is already signed in our signin and signup routes screenshots in this image we can see that we are still on the login page but on the upper right you can also see that i am already logged in this is also true with the signup page img width alt screen shot at pm src | 1 |

597,436 | 18,163,456,243 | IssuesEvent | 2021-09-27 12:20:05 | robbinjanssen/home-assistant-omnik-inverter | https://api.github.com/repos/robbinjanssen/home-assistant-omnik-inverter | closed | Status.html support? | enhancement new-feature priority-medium | I use an Omnik 2500TL. This inverter does not have a JSON or JS page; it just posts its data in a status.html. Is it possible to support this? The web data is directly visible in the HTML code. Any suggestions? | 1.0 | Status.html support? - I use an Omnik 2500TL. This inverter does not have a JSON or JS page; it just posts its data in a status.html. Is it possible to support this? The web data is directly visible in the HTML code. Any suggestions? | priority | status html support i use an omnik this inverter does not have a json or js page it just posts its data in a status html is it possible to support this the web data is directly visible in the html code any suggestions | 1 |

577,183 | 17,104,912,458 | IssuesEvent | 2021-07-09 16:11:48 | conan-io/conan | https://api.github.com/repos/conan-io/conan | closed | [feature] New AutotoolsDeps customization | complex: low priority: medium stage: queue type: feature | The `AutotoolsDeps` generator should precalculate things in the constructor to allow the user to modify them before calling the `generate()` method. | 1.0 | [feature] New AutotoolsDeps customization - The `AutotoolsDeps` generator should precalculate things in the constructor to allow the user to modify them before calling the `generate()` method. | priority | new autotoolsdeps customization the autotoolsdeps generator should precalculate things in the constructor to allow the user to modify them before calling the generate method | 1 |
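
The request in the record above (precalculate values in the constructor so the user can modify them before calling `generate()`) describes a general pattern that might look like the following. This is a sketch only: `DepsFlags` is a hypothetical class written for illustration and does not reproduce the real `AutotoolsDeps` interface; the paths and flag names are assumptions.

```python
class DepsFlags:
    """Hypothetical generator: flags are computed up front and rendered later."""

    def __init__(self, dep_info):
        # Precalculate in the constructor so callers can edit before generate().
        self.cppflags = [f"-I{p}" for p in dep_info.get("includedirs", [])]
        self.ldflags = [f"-L{p}" for p in dep_info.get("libdirs", [])]

    def generate(self):
        # Render whatever the lists hold at call time, including user edits.
        return {
            "CPPFLAGS": " ".join(self.cppflags),
            "LDFLAGS": " ".join(self.ldflags),
        }


deps = DepsFlags({"includedirs": ["/opt/zlib/include"], "libdirs": ["/opt/zlib/lib"]})
deps.cppflags.append("-DNDEBUG")  # user customization before generate()
env = deps.generate()
```

Because the lists are built in `__init__` rather than inside `generate()`, the appended `-DNDEBUG` survives into the rendered `CPPFLAGS` value.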

142,422 | 5,475,177,584 | IssuesEvent | 2017-03-11 08:06:01 | Rsl1122/Plan-PlayerAnalytics | https://api.github.com/repos/Rsl1122/Plan-PlayerAnalytics | closed | ConcurrentModificationException | Bug Priority: MEDIUM | Spigot 1.11.2, Plan 2.8.2, 3:00 after server start:

> [12:03:30 INFO]: [Plan] Analysis | Starting Boot Analysis..

[12:03:30 WARN]: [Plan] Plugin Plan v2.8.2 generated an exception while executing task 842

java.util.ConcurrentModificationException

at java.util.ArrayList$ArrayListSpliterator.forEachRemaining(ArrayList.java:1380) ~[?:1.8.0_121]

at java.util.stream.ReferencePipeline$Head.forEach(ReferencePipeline.java:580) ~[?:1.8.0_121]

at main.java.com.djrapitops.plan.utilities.AnalysisUtils.transformSessionDataToLengths(AnalysisUtils.java:89) ~[?:?]

at main.java.com.djrapitops.plan.utilities.Analysis$1.run(Analysis.java:150) ~[?:?]

at org.bukkit.craftbukkit.v1_11_R1.scheduler.CraftTask.run(CraftTask.java:71) ~[spigot-1.11.2.jar-2017-03-10-0649:git-Spigot-283de8b-eac8591]

at org.bukkit.craftbukkit.v1_11_R1.scheduler.CraftAsyncTask.run(CraftAsyncTask.java:52) [spigot-1.11.2.jar-2017-03-10-0649:git-Spigot-283de8b-eac8591]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142) [?:1.8.0_121]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617) [?:1.8.0_121]

at java.lang.Thread.run(Thread.java:745) [?:1.8.0_121]

[12:04:35 INFO]: [StreetlightsAdvanced] Activated streetlights in world_blackdog

[12:04:35 INFO]: [StreetlightsAdvanced] Activated streetlights in world_city | 1.0 | ConcurrentModificationException - Spigot 1.11.2, Plan 2.8.2, 3:00 after server start:

> [12:03:30 INFO]: [Plan] Analysis | Starting Boot Analysis..

[12:03:30 WARN]: [Plan] Plugin Plan v2.8.2 generated an exception while executing task 842

java.util.ConcurrentModificationException

at java.util.ArrayList$ArrayListSpliterator.forEachRemaining(ArrayList.java:1380) ~[?:1.8.0_121]

at java.util.stream.ReferencePipeline$Head.forEach(ReferencePipeline.java:580) ~[?:1.8.0_121]

at main.java.com.djrapitops.plan.utilities.AnalysisUtils.transformSessionDataToLengths(AnalysisUtils.java:89) ~[?:?]

at main.java.com.djrapitops.plan.utilities.Analysis$1.run(Analysis.java:150) ~[?:?]

at org.bukkit.craftbukkit.v1_11_R1.scheduler.CraftTask.run(CraftTask.java:71) ~[spigot-1.11.2.jar-2017-03-10-0649:git-Spigot-283de8b-eac8591]

at org.bukkit.craftbukkit.v1_11_R1.scheduler.CraftAsyncTask.run(CraftAsyncTask.java:52) [spigot-1.11.2.jar-2017-03-10-0649:git-Spigot-283de8b-eac8591]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142) [?:1.8.0_121]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617) [?:1.8.0_121]

at java.lang.Thread.run(Thread.java:745) [?:1.8.0_121]

[12:04:35 INFO]: [StreetlightsAdvanced] Activated streetlights in world_blackdog

[12:04:35 INFO]: [StreetlightsAdvanced] Activated streetlights in world_city | priority | concurrentmodificationexception spigot plan after server start analysis starting boot analysis plugin plan generated an exception while executing task java util concurrentmodificationexception at java util arraylist arraylistspliterator foreachremaining arraylist java at java util stream referencepipeline head foreach referencepipeline java at main java com djrapitops plan utilities analysisutils transformsessiondatatolengths analysisutils java at main java com djrapitops plan utilities analysis run analysis java at org bukkit craftbukkit scheduler crafttask run crafttask java at org bukkit craftbukkit scheduler craftasynctask run craftasynctask java at java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java lang thread run thread java activated streetlights in world blackdog activated streetlights in world city | 1 |
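
The trace above points at an `ArrayList` being streamed on an async task while some other thread modifies it. One common way to avoid this is to take a snapshot of the list under a lock and stream the copy. The sketch below is only an illustration under stated assumptions: `SessionLengths`, `Session`, and `toLengths` are hypothetical names, not Plan's actual classes, and the lock only helps if every writer synchronizes on the same list object (for example by using `Collections.synchronizedList`).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;

public class SessionLengths {

    /** Hypothetical stand-in for a plugin's session record (not Plan's real type). */
    public static final class Session {
        final long start;
        final long end;

        public Session(long start, long end) {
            this.start = start;
            this.end = end;
        }

        long length() {
            return end - start;
        }
    }

    /**
     * Converts sessions to their lengths without streaming the shared list directly.
     * Assumption: writers also synchronize on the same list object.
     */
    public static List<Long> toLengths(List<Session> sessions) {
        List<Session> snapshot;
        synchronized (sessions) {
            // Copy while holding the lock so no writer can change the list mid-copy.
            snapshot = new ArrayList<>(sessions);
        }
        // The copy is private to this call, so streaming it cannot throw
        // ConcurrentModificationException even if the shared list changes now.
        return snapshot.stream()
                .map(Session::length)
                .collect(Collectors.toList());
    }
}
```

If writers cannot be made to synchronize, `CopyOnWriteArrayList` might be a simpler fit for a list that is read far more often than it is written, at the cost of copying the backing array on every write.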

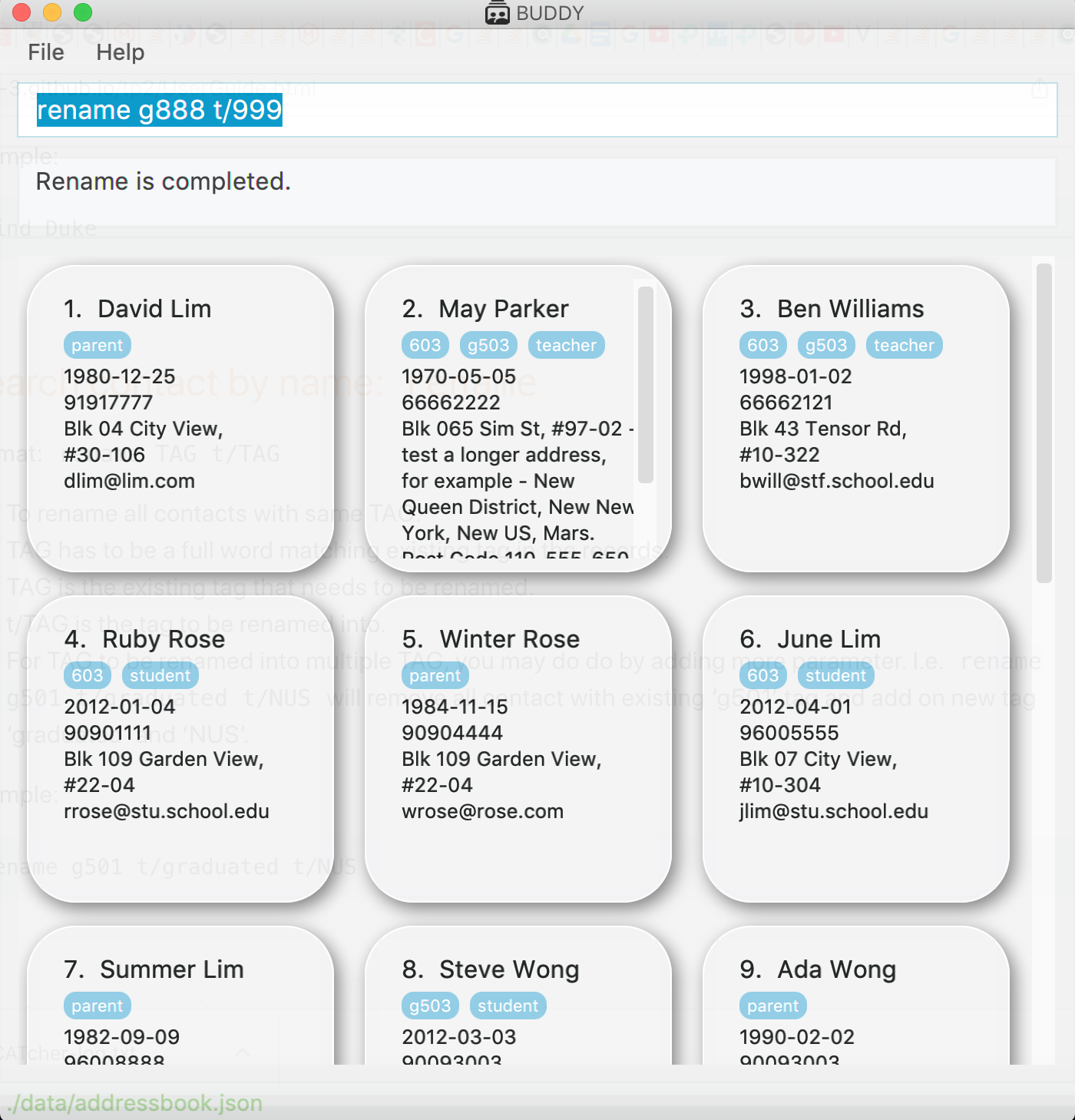

646,416 | 21,047,179,640 | IssuesEvent | 2022-03-31 17:07:07 | AY2122S2-TIC4002-F18-3/tp2 | https://api.github.com/repos/AY2122S2-TIC4002-F18-3/tp2 | closed | [PE-D] Rename Feature | priority.Medium severity.Low | Description: Tag number not available, showing Rename is completed

-------------

Labels: `severity.Low` `type.FeatureFlaw`

original: jr-mojito/ped#3 | 1.0 | [PE-D] Rename Feature - Description: Tag number not available, showing Rename is completed

-------------

Labels: `severity.Low` `type.FeatureFlaw`

original: jr-mojito/ped#3 | priority | rename feature description tag number not available showing rename is completed labels severity low type featureflaw original jr mojito ped | 1 |

670,099 | 22,673,929,374 | IssuesEvent | 2022-07-04 00:54:53 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | You can disarm and pick up items from within disposal units, lockers and cloning tubes. | Issue: Bug Priority: 1-Urgent Difficulty: 2-Medium Bug: Replicated | ## Description

You can hide within disposals and be safe from retribution while disarming people, taking their weapons, and hitting them with them. While being cloned, you can disarm people near your cloning pod and do the same. Picking up items from outside lockers seemed UNRELIABLE. I really couldn't work out when I could and when I couldn't.

To reproduce:

1) Climb into a disposal unit.

2) Have someone with an item in their active hand stand outside.

3) Toggle disarm intent on and click on them.

4) You disarm/shove them.

It makes long-term brigging of people even more unsustainable.

**Screenshots**

I have a two-hour-long video from a round, but it should be easy to reproduce.

**Additional context**

This is a big problem for Security, and is mercilessly exploited by those who are aware of it.

| 1.0 | You can disarm and pick up items from within disposal units, lockers and cloning tubes. - ## Description

You can hide within disposals and be safe from retribution while disarming people, taking their weapons, and hitting them with them. While being cloned, you can disarm people near your cloning pod and do the same. Picking up items from outside lockers seemed UNRELIABLE. I really couldn't work out when I could and when I couldn't.

To reproduce:

1) Climb into a disposal unit.

2) Have someone with an item in their active hand stand outside.

3) Toggle disarm intent on and click on them.

4) You disarm/shove them.

It makes long-term brigging of people even more unsustainable.

**Screenshots**

I have a two-hour-long video from a round, but it should be easy to reproduce.

**Additional context**

This is a big problem for Security, and is mercilessly exploited by those who are aware of it.

| priority | you can disarm and pick up items from within disposal units lockers and cloning tubes description you can hide within disposals and be safe from retribution while disarming people and taking their weapons and hitting them with it while being cloned you can disarm people near your cloning pod and do the same picking up itemsf rom outside lockers seemed unreliable i really couldn t work out when i could and when i couldn t to reproduce climb into a disposals unit have someone with an item in their active hand stasnd outside toggle disarm intent on and click on them you disarm them shove them it makes long term brigging peopel even more unsustainable screenshots i have a two hour long video from a round but it should be easy to reproduce additional context this is a big problem for security and is mercilessly exploited by those who are aware of it | 1 |

40,682 | 2,868,935,955 | IssuesEvent | 2015-06-05 22:03:41 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Make http://pub.dartlang.org/authorized a real page | bug Fixed Priority-Medium | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#7064_

----

After you authenticate with oauth, it bounces you to http://pub.dartlang.org/authorized. That's currently a 404.

I'm guessing there is a fix for this in progress, or maybe already done and just not uploaded. This is a tracking bug for that. :) | 1.0 | Make http://pub.dartlang.org/authorized a real page - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#7064_

----

After you authenticate with oauth, it bounces you to http://pub.dartlang.org/authorized. That's currently a 404.

I'm guessing there is a fix for this in progress, or maybe already done and just not uploaded. This is a tracking bug for that. :) | priority | make a real page issue by originally opened as dart lang sdk after you authenticate with oauth it bounces you to that s currently a i m guessing there is a fix for this in progress or maybe already done and just not uploaded this is a tracking bug for that | 1 |

592,918 | 17,934,027,263 | IssuesEvent | 2021-09-10 13:13:27 | ranking-agent/strider | https://api.github.com/repos/ranking-agent/strider | closed | Add Liveness Probe to Strider | Status: Review Needed Priority: Medium | **Issue:** When a pod is moved or restarted, it doesn’t always come back with the data it needs.

**Solution:** Add a liveness probe to Strider. | 1.0 | Add Liveness Probe to Strider - **Issue:** When a pod is moved or restarted, it doesn’t always come back with the data it needs.

**Solution:** Add a liveness probe to Strider. | priority | add liveness probe to strider issue when a pod is moved or restarted it doesn’t always come back with the data it needs solution add a liveness probe to strider | 1 |

30,421 | 2,723,808,877 | IssuesEvent | 2015-04-14 14:39:06 | CruxFramework/crux-widgets | https://api.github.com/repos/CruxFramework/crux-widgets | closed | Make Wizard widget generic to read and write context data | CruxWidgets enhancement imported Milestone-3.0.0 Priority-Medium | _From [tr_busta...@yahoo.com.br](https://code.google.com/u/115454294030253308352/) on July 18, 2010 13:20:04_

Purpose of enhancement Make Wizard widget generic to read and write context data

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=150_ | 1.0 | Make Wizard widget generic to read and write context data - _From [tr_busta...@yahoo.com.br](https://code.google.com/u/115454294030253308352/) on July 18, 2010 13:20:04_

Purpose of enhancement Make Wizard widget generic to read and write context data

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=150_ | priority | make wizard widget generic to read and write context data from on july purpose of enhancement make wizard widget generic to read and write context data original issue | 1 |

445,035 | 12,825,311,294 | IssuesEvent | 2020-07-06 14:47:05 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID :211039] Out-of-bounds access in drivers/gpio/gpio_nrfx.c | Coverity bug priority: medium |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/8e2c4a475dc375da6691175dd1da87525053ed76/drivers/gpio/gpio_nrfx.c#L105

Category: Memory - corruptions

Function: `gpiote_pin_int_cfg`

Component: Drivers

CID: [211039](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId=211039)

Details:

```

99 {

100 struct gpio_nrfx_data *data = get_port_data(port);

101 const struct gpio_nrfx_cfg *cfg = get_port_cfg(port);

102 uint32_t abs_pin = NRF_GPIO_PIN_MAP(cfg->port_num, pin);

103 int res = 0;

104

>>> CID 211039: Memory - corruptions (ARRAY_VS_SINGLETON)

>>> Passing "&gpiote_alloc_mask" to function "gpiote_pin_cleanup" which uses it as an array. This might corrupt or misinterpret adjacent memory locations.

105 gpiote_pin_cleanup(&gpiote_alloc_mask, abs_pin);

106 nrf_gpio_cfg_sense_set(abs_pin, NRF_GPIO_PIN_NOSENSE);

107

108 /* Pins trigger interrupts only if pin has been configured to do so */

109 if (data->pin_int_en & BIT(pin)) {

110 if (data->trig_edge & BIT(pin)) {

```

Please fix or provide comments in coverity using the link:

https://scan9.coverity.com/reports.htm#v32951/p12996.

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| 1.0 | [Coverity CID :211039] Out-of-bounds access in drivers/gpio/gpio_nrfx.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/8e2c4a475dc375da6691175dd1da87525053ed76/drivers/gpio/gpio_nrfx.c#L105

Category: Memory - corruptions

Function: `gpiote_pin_int_cfg`

Component: Drivers

CID: [211039](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId=211039)

Details:

```

99 {

100 struct gpio_nrfx_data *data = get_port_data(port);

101 const struct gpio_nrfx_cfg *cfg = get_port_cfg(port);

102 uint32_t abs_pin = NRF_GPIO_PIN_MAP(cfg->port_num, pin);

103 int res = 0;

104

>>> CID 211039: Memory - corruptions (ARRAY_VS_SINGLETON)

>>> Passing "&gpiote_alloc_mask" to function "gpiote_pin_cleanup" which uses it as an array. This might corrupt or misinterpret adjacent memory locations.

105 gpiote_pin_cleanup(&gpiote_alloc_mask, abs_pin);

106 nrf_gpio_cfg_sense_set(abs_pin, NRF_GPIO_PIN_NOSENSE);

107

108 /* Pins trigger interrupts only if pin has been configured to do so */

109 if (data->pin_int_en & BIT(pin)) {

110 if (data->trig_edge & BIT(pin)) {

```

Please fix or provide comments in coverity using the link:

https://scan9.coverity.com/reports.htm#v32951/p12996.

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.