Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1

value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3

values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12

values | text_combine stringlengths 96 259k | label stringclasses 2

values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

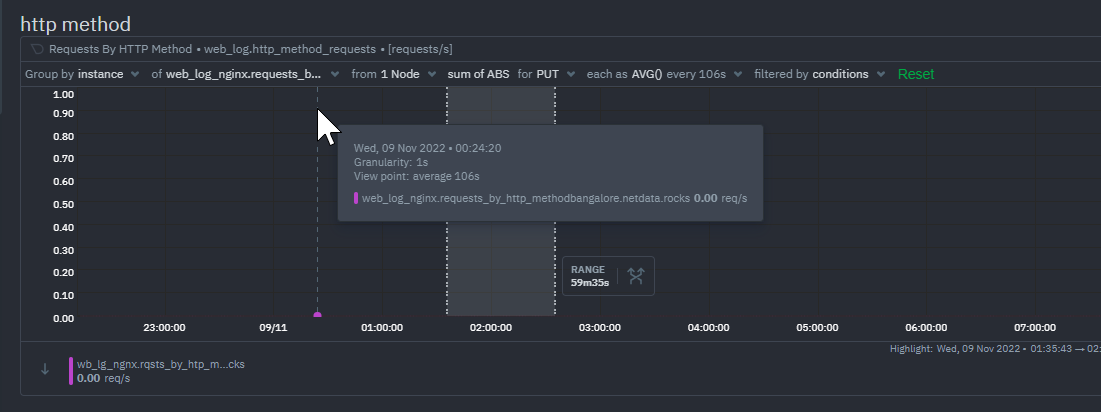

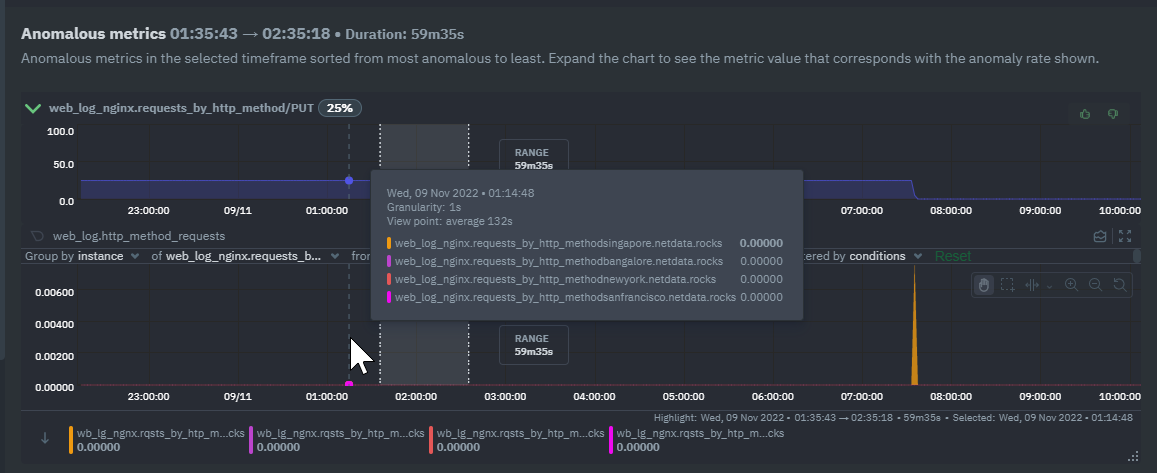

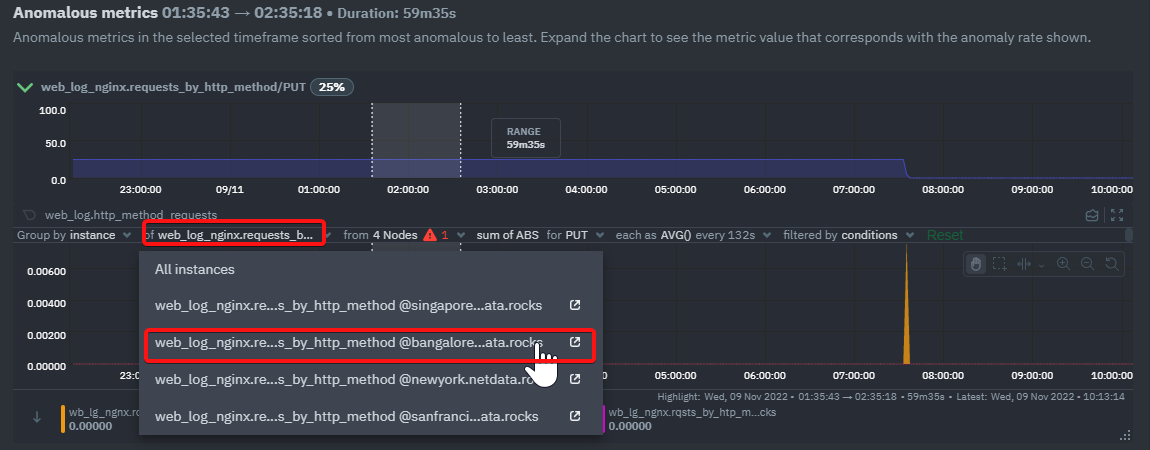

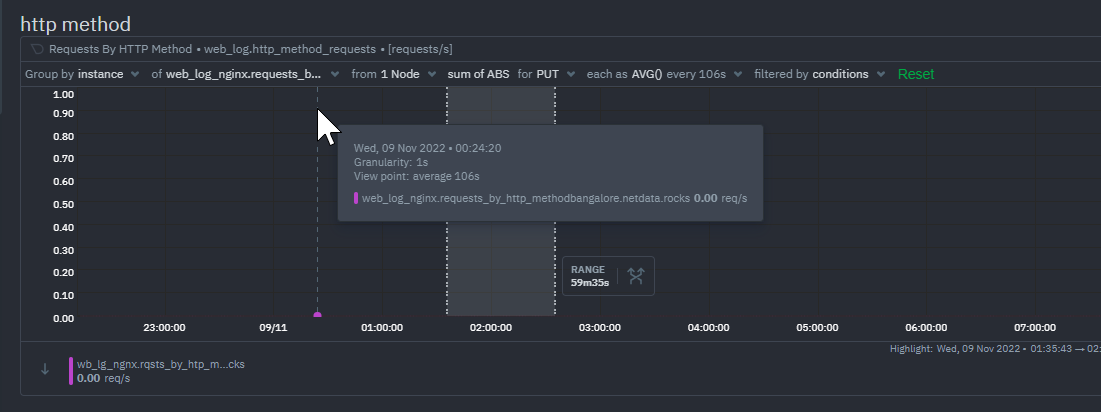

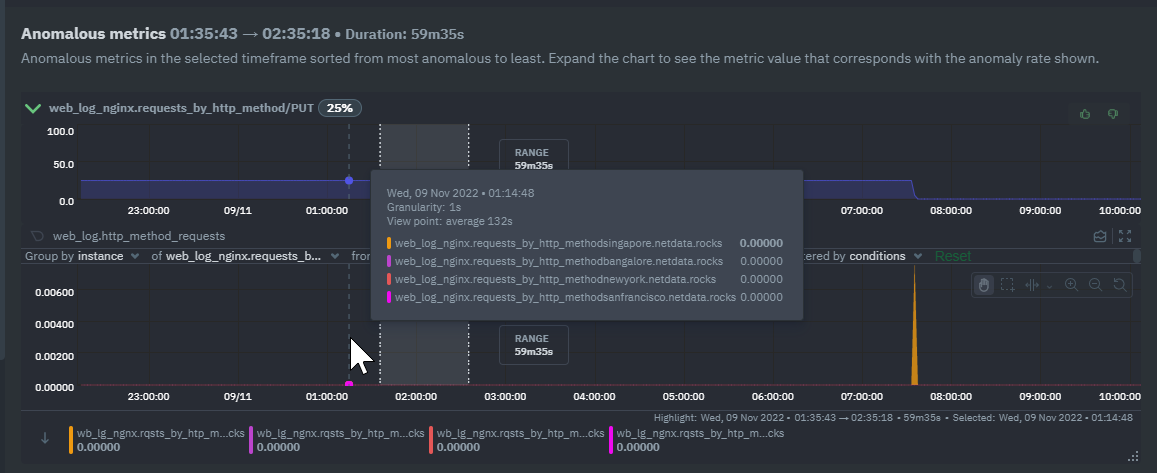

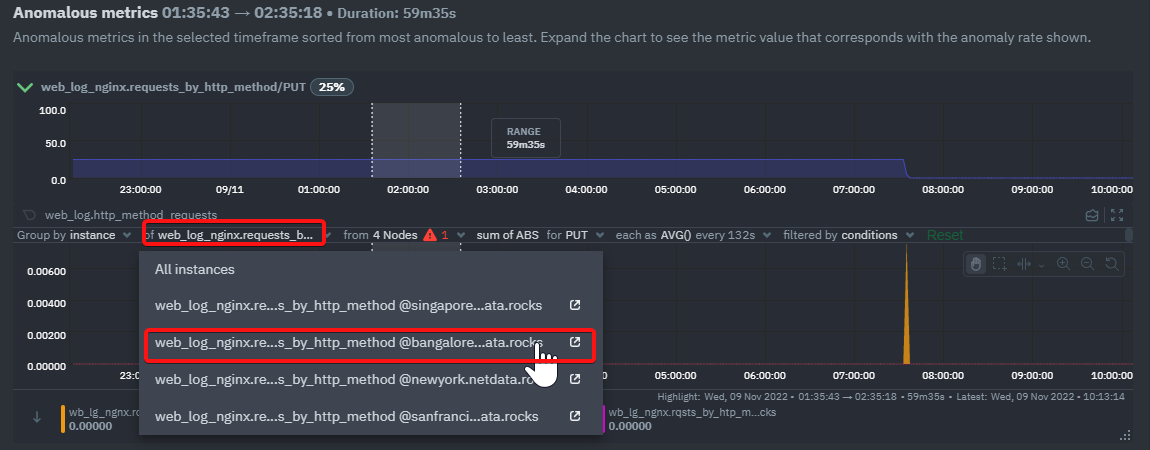

733,851 | 25,325,771,280 | IssuesEvent | 2022-11-18 09:09:44 | netdata/netdata-cloud | https://api.github.com/repos/netdata/netdata-cloud | closed | [Bug]: Anomalies tab doesn't properly show the actual charts with correct filtering | bug internal submit priority/medium cloud-frontend | ### Bug description

Instance filtering isn't correctly applied on the data charts shown together with the anomaly rate charts. The seen issues:

* filtering on one instances is applied but legend and tooltip show all instances

* instance filter dropdown is showing the set filter but when you expand it you don't see which one is selected

### Expected behavior

Same behaviour as on Overview or Single Node view, when I filter on a specific instance we shouldn't see the other instances on the legend and tooltip

Example:

### Steps to reproduce

1. Highlight an area on Anomaly Advisor and see the results

2. Expand one of the anomaly rate charts to see the actual data chart

3. The data chart seems to be filtered by instance but on the legend and tooltip I see all the instances

4. The instance selector is displaying the selected instance but when you expand it you don't see which one is selected

### Screenshots

### Error Logs

_No response_

### Desktop

OS: [e.g. iOS]

Browser [e.g. chrome, safari]

Browser Version [e.g. 22]

### Additional context

_No response_ | 1.0 | [Bug]: Anomalies tab doesn't properly show the actual charts with correct filtering - ### Bug description

Instance filtering isn't correctly applied on the data charts shown together with the anomaly rate charts. The seen issues:

* filtering on one instances is applied but legend and tooltip show all instances

* instance filter dropdown is showing the set filter but when you expand it you don't see which one is selected

### Expected behavior

Same behaviour as on Overview or Single Node view, when I filter on a specific instance we shouldn't see the other instances on the legend and tooltip

Example:

### Steps to reproduce

1. Highlight an area on Anomaly Advisor and see the results

2. Expand one of the anomaly rate charts to see the actual data chart

3. The data chart seems to be filtered by instance but on the legend and tooltip I see all the instances

4. The instance selector is displaying the selected instance but when you expand it you don't see which one is selected

### Screenshots

### Error Logs

_No response_

### Desktop

OS: [e.g. iOS]

Browser [e.g. chrome, safari]

Browser Version [e.g. 22]

### Additional context

_No response_ | priority | anomalies tab doesn t properly show the actual charts with correct filtering bug description instance filtering isn t correctly applied on the data charts shown together with the anomaly rate charts the seen issues filtering on one instances is applied but legend and tooltip show all instances instance filter dropdown is showing the set filter but when you expand it you don t see which one is selected expected behavior same behaviour as on overview or single node view when i filter on a specific instance we shouldn t see the other instances on the legend and tooltip example steps to reproduce highlight an area on anomaly advisor and see the results expand one of the anomaly rate charts to see the actual data chart the data chart seems to be filtered by instance but on the legend and tooltip i see all the instances the instance selector is displaying the selected instance but when you expand it you don t see which one is selected screenshots error logs no response desktop os browser browser version additional context no response | 1 |

358,663 | 10,619,133,173 | IssuesEvent | 2019-10-13 10:59:43 | bounswe/bounswe2019group7 | https://api.github.com/repos/bounswe/bounswe2019group7 | closed | Research on Docker containers | Backend Effort: Few Hours Priority: Medium Status: In-progress Type: Research | Docker containers will be researched and the gathered information will be explained to the backend team. | 1.0 | Research on Docker containers - Docker containers will be researched and the gathered information will be explained to the backend team. | priority | research on docker containers docker containers will be researched and the gathered information will be explained to the backend team | 1 |

2,962 | 2,534,841,350 | IssuesEvent | 2015-01-25 11:49:45 | VisiGod/com_tracker | https://api.github.com/repos/VisiGod/com_tracker | closed | seedbonus point system | enhancement medium priority | The mother of all enhancements.... Currently XBT Tracker doesn't track for how long the user has been seeding the torrent. Some webmaster have requested some addon that would count the time from the first seed until the current time and add some bonus 'put-something-here' to the seeder. | 1.0 | seedbonus point system - The mother of all enhancements.... Currently XBT Tracker doesn't track for how long the user has been seeding the torrent. Some webmaster have requested some addon that would count the time from the first seed until the current time and add some bonus 'put-something-here' to the seeder. | priority | seedbonus point system the mother of all enhancements currently xbt tracker doesn t track for how long the user has been seeding the torrent some webmaster have requested some addon that would count the time from the first seed until the current time and add some bonus put something here to the seeder | 1 |

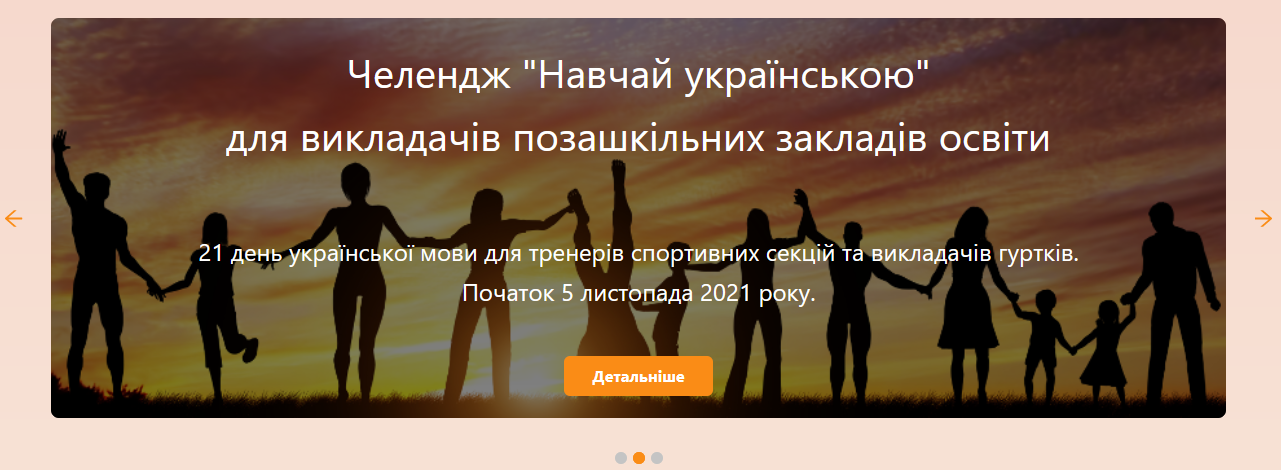

619,455 | 19,526,324,662 | IssuesEvent | 2021-12-30 08:32:46 | ita-social-projects/TeachUA | https://api.github.com/repos/ita-social-projects/TeachUA | closed | Develop banner backend and frontend | User story Backend Frontend Epic UI Priority: Medium API | Name at DB - BannerItems

Model BannerItem (id, title, subtitle, link, picture, sequenceNumber)

subtitle and link can be null

Repository

Sevice

Controller(don't forget to define permist in SecurityConfig and documentation)

+ GET /banners - get all banner items

+ GET /banner/{id} - get one banner item

+ POST /banner - add banner item

+ PUT /banner/{id} - update banner item

+ DELETE /banner/{id} - archive banner item

Validation:

title - min 5, max 150, cant contain russian letters

subtitle - min 5, max 250, cant contain russian letters

link - regex for links

picture - regex for picture folder (you can find it in CreateChallenge DTO)

And also display at frontend, like it is now, and control page for admin.

| 1.0 | Develop banner backend and frontend - Name at DB - BannerItems

Model BannerItem (id, title, subtitle, link, picture, sequenceNumber)

subtitle and link can be null

Repository

Sevice

Controller(don't forget to define permist in SecurityConfig and documentation)

+ GET /banners - get all banner items

+ GET /banner/{id} - get one banner item

+ POST /banner - add banner item

+ PUT /banner/{id} - update banner item

+ DELETE /banner/{id} - archive banner item

Validation:

title - min 5, max 150, cant contain russian letters

subtitle - min 5, max 250, cant contain russian letters

link - regex for links

picture - regex for picture folder (you can find it in CreateChallenge DTO)

And also display at frontend, like it is now, and control page for admin.

| priority | develop banner backend and frontend name at db banneritems model banneritem id title subtitle link picture sequencenumber subtitle and link can be null repository sevice controller don t forget to define permist in securityconfig and documentation get banners get all banner items get banner id get one banner item post banner add banner item put banner id update banner item delete banner id archive banner item validation title min max cant contain russian letters subtitle min max cant contain russian letters link regex for links picture regex for picture folder you can find it in createchallenge dto and also display at frontend like it is now and control page for admin | 1 |

79,255 | 3,525,845,207 | IssuesEvent | 2016-01-14 00:24:42 | gadLinux/hibernate-generic-dao | https://api.github.com/repos/gadLinux/hibernate-generic-dao | closed | Implement MetadataUtil for JPA 2 metadata model | auto-migrated Priority-Medium Type-Enhancement | ```

With this, the framework would theoretically support any JPA 2 container.

```

Original issue reported on code.google.com by `dwolvert` on 29 Apr 2013 at 2:09 | 1.0 | Implement MetadataUtil for JPA 2 metadata model - ```

With this, the framework would theoretically support any JPA 2 container.

```

Original issue reported on code.google.com by `dwolvert` on 29 Apr 2013 at 2:09 | priority | implement metadatautil for jpa metadata model with this the framework would theoretically support any jpa container original issue reported on code google com by dwolvert on apr at | 1 |

458,183 | 13,170,620,661 | IssuesEvent | 2020-08-11 15:24:41 | indianapublicmedia/indianapublicmedia-web | https://api.github.com/repos/indianapublicmedia/indianapublicmedia-web | opened | metadata reconfiguration | enhancement medium priority | New scripts are needed that don't rely on the service provider to use consistent metadata for pages being published | 1.0 | metadata reconfiguration - New scripts are needed that don't rely on the service provider to use consistent metadata for pages being published | priority | metadata reconfiguration new scripts are needed that don t rely on the service provider to use consistent metadata for pages being published | 1 |

316,740 | 9,654,343,931 | IssuesEvent | 2019-05-19 13:22:45 | teambit/bit | https://api.github.com/repos/teambit/bit | closed | getting 'bit failed to load' when tagging component | area/tag priority/medium type/bug | ## Expected Behavior

Should tag, without errors

## Actual Behavior

```bash

❯ bit tag iframe/communicator

successfully installed the bit.envs/compilers/babel@0.0.20 compiler

error: bit failed to load bit.envs/compilers/babel@0.0.20 with the following exception:

Cannot find module '/Users/kutner/Documents/Projects/bitsrc/web/.bit/components/compilers/babel/bit.envs/0.0.20'.

Error: Cannot find module '/Users/kutner/Documents/Projects/bitsrc/web/.bit/components/compilers/babel/bit.envs/0.0.20'

at Function.Module._resolveFilename (internal/modules/cjs/loader.js:655:15)

at Function.Module._load (internal/modules/cjs/loader.js:580:25)

at Module.require (internal/modules/cjs/loader.js:711:19)

at require (/usr/local/lib/node_modules/bit-bin/node_modules/v8-compile-cache/v8-compile-cache.js:159:20)

at Function._callee4$ (/usr/local/lib/node_modules/bit-bin/dist/extensions/base-extension.js:597:27)

at tryCatch (/usr/local/lib/node_modules/bit-bin/node_modules/regenerator-runtime/runtime.js:62:40)

at Generator.invoke [as _invoke] (/usr/local/lib/node_modules/bit-bin/node_modules/regenerator-runtime/runtime.js:296:22)

at Generator.prototype.(anonymous function) [as next] (/usr/local/lib/node_modules/bit-bin/node_modules/regenerator-runtime/runtime.js:114:21)

at Generator.tryCatcher (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/util.js:16:23)

at PromiseSpawn._promiseFulfilled (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/generators.js:97:49)

at Promise._settlePromise (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/promise.js:574:26)

at Promise._settlePromise0 (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/promise.js:614:10)

at Promise._settlePromises (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/promise.js:694:18)

at _drainQueueStep (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/async.js:138:12)

at _drainQueue (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/async.js:131:9)

at Async._drainQueues (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/async.js:147:5)

web git/master* 13s

❯ bit tag iframe/communicator

⡀⠀ importing components(node:92540) [DEP0005] DeprecationWarning: Buffer() is deprecated due to security and usability issues. Please use the Buffer.alloc(), Buffer.allocUnsafe(), or Buffer.from() methods instead.

2 component(s) tagged | 0 added, 1 changed, 1 auto-tagged

changed components: bitsrc.ui/iframe/communicator@0.2.5

auto-tagged components (as a result of tagging their dependencies): playground-client@0.0.2

```

## Steps to Reproduce the Problem

1. import said component ```bitsrc.ui/iframe/communicator```

1. modify component (i.e. add space)

1. run ```bit tag iframe/communicator```

-> error occures

## Specifications

- Bit version: 14.1.0

- Node version: 11.13.0

- npm / yarn version: yarn 1.15.2

- Platform: MacOs

| 1.0 | getting 'bit failed to load' when tagging component - ## Expected Behavior

Should tag, without errors

## Actual Behavior

```bash

❯ bit tag iframe/communicator

successfully installed the bit.envs/compilers/babel@0.0.20 compiler

error: bit failed to load bit.envs/compilers/babel@0.0.20 with the following exception:

Cannot find module '/Users/kutner/Documents/Projects/bitsrc/web/.bit/components/compilers/babel/bit.envs/0.0.20'.

Error: Cannot find module '/Users/kutner/Documents/Projects/bitsrc/web/.bit/components/compilers/babel/bit.envs/0.0.20'

at Function.Module._resolveFilename (internal/modules/cjs/loader.js:655:15)

at Function.Module._load (internal/modules/cjs/loader.js:580:25)

at Module.require (internal/modules/cjs/loader.js:711:19)

at require (/usr/local/lib/node_modules/bit-bin/node_modules/v8-compile-cache/v8-compile-cache.js:159:20)

at Function._callee4$ (/usr/local/lib/node_modules/bit-bin/dist/extensions/base-extension.js:597:27)

at tryCatch (/usr/local/lib/node_modules/bit-bin/node_modules/regenerator-runtime/runtime.js:62:40)

at Generator.invoke [as _invoke] (/usr/local/lib/node_modules/bit-bin/node_modules/regenerator-runtime/runtime.js:296:22)

at Generator.prototype.(anonymous function) [as next] (/usr/local/lib/node_modules/bit-bin/node_modules/regenerator-runtime/runtime.js:114:21)

at Generator.tryCatcher (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/util.js:16:23)

at PromiseSpawn._promiseFulfilled (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/generators.js:97:49)

at Promise._settlePromise (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/promise.js:574:26)

at Promise._settlePromise0 (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/promise.js:614:10)

at Promise._settlePromises (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/promise.js:694:18)

at _drainQueueStep (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/async.js:138:12)

at _drainQueue (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/async.js:131:9)

at Async._drainQueues (/usr/local/lib/node_modules/bit-bin/node_modules/bluebird/js/release/async.js:147:5)

web git/master* 13s

❯ bit tag iframe/communicator

⡀⠀ importing components(node:92540) [DEP0005] DeprecationWarning: Buffer() is deprecated due to security and usability issues. Please use the Buffer.alloc(), Buffer.allocUnsafe(), or Buffer.from() methods instead.

2 component(s) tagged | 0 added, 1 changed, 1 auto-tagged

changed components: bitsrc.ui/iframe/communicator@0.2.5

auto-tagged components (as a result of tagging their dependencies): playground-client@0.0.2

```

## Steps to Reproduce the Problem

1. import said component ```bitsrc.ui/iframe/communicator```

1. modify component (i.e. add space)

1. run ```bit tag iframe/communicator```

-> error occures

## Specifications

- Bit version: 14.1.0

- Node version: 11.13.0

- npm / yarn version: yarn 1.15.2

- Platform: MacOs

| priority | getting bit failed to load when tagging component expected behavior should tag without errors actual behavior bash ❯ bit tag iframe communicator successfully installed the bit envs compilers babel compiler error bit failed to load bit envs compilers babel with the following exception cannot find module users kutner documents projects bitsrc web bit components compilers babel bit envs error cannot find module users kutner documents projects bitsrc web bit components compilers babel bit envs at function module resolvefilename internal modules cjs loader js at function module load internal modules cjs loader js at module require internal modules cjs loader js at require usr local lib node modules bit bin node modules compile cache compile cache js at function usr local lib node modules bit bin dist extensions base extension js at trycatch usr local lib node modules bit bin node modules regenerator runtime runtime js at generator invoke usr local lib node modules bit bin node modules regenerator runtime runtime js at generator prototype anonymous function usr local lib node modules bit bin node modules regenerator runtime runtime js at generator trycatcher usr local lib node modules bit bin node modules bluebird js release util js at promisespawn promisefulfilled usr local lib node modules bit bin node modules bluebird js release generators js at promise settlepromise usr local lib node modules bit bin node modules bluebird js release promise js at promise usr local lib node modules bit bin node modules bluebird js release promise js at promise settlepromises usr local lib node modules bit bin node modules bluebird js release promise js at drainqueuestep usr local lib node modules bit bin node modules bluebird js release async js at drainqueue usr local lib node modules bit bin node modules bluebird js release async js at async drainqueues usr local lib node modules bit bin node modules bluebird js release async js web git master ❯ bit tag iframe communicator ⡀⠀ importing components node deprecationwarning buffer is deprecated due to security and usability issues please use the buffer alloc buffer allocunsafe or buffer from methods instead component s tagged added changed auto tagged changed components bitsrc ui iframe communicator auto tagged components as a result of tagging their dependencies playground client steps to reproduce the problem import said component bitsrc ui iframe communicator modify component i e add space run bit tag iframe communicator error occures specifications bit version node version npm yarn version yarn platform macos | 1 |

59,285 | 3,104,867,407 | IssuesEvent | 2015-08-31 18:03:53 | CrisisTextLine/CTL-Online | https://api.github.com/repos/CrisisTextLine/CTL-Online | closed | In progress Tab is Misleading | Medium Priority | The "inprogress" tab is misleading. All modules that haven't started are also listed in this "in progress" tab.

Relevant Screenshot:

Relevant Trello Card: https://trello.com/c/QUNzvmSR/61-the-inprogress-tab-is-misleading-all-modules-that-haven-t-started-are-also-listed-in-this-in-progress-tab | 1.0 | In progress Tab is Misleading - The "inprogress" tab is misleading. All modules that haven't started are also listed in this "in progress" tab.

Relevant Screenshot:

Relevant Trello Card: https://trello.com/c/QUNzvmSR/61-the-inprogress-tab-is-misleading-all-modules-that-haven-t-started-are-also-listed-in-this-in-progress-tab | priority | in progress tab is misleading the inprogress tab is misleading all modules that haven t started are also listed in this in progress tab relevant screenshot relevant trello card | 1 |

447,397 | 12,888,427,451 | IssuesEvent | 2020-07-13 13:00:58 | medic/cht-core | https://api.github.com/repos/medic/cht-core | closed | Exporting App Settings seems to be adding extra elements for configured sms forms. | Help wanted Priority: 2 - Medium Type: Bug | **Describe the bug**

Uploading the standard config which contains SMS forms. Then using the admin page to backup the app settings. Shows a bunch of extra elements in the config. Starting at 0 and going to the count of number of sms forms configured.

**To Reproduce**

Steps to reproduce the behavior:

1. Upload standard config

2. Go to the admin page > backup app code > Download current settings.

3. Review the JSON for app_Settings.

**Expected behavior**

We should only show sms forms in there expected location.

**Environment**

- Instance: gamma.dev

- Browser: chrome

- Client platform: linux

- App: admin

- Version: 3.9

**Additional context**

See attached app_settings.json for example of the improper output.

[app_settings.txt](https://github.com/medic/cht-core/files/4781106/app_settings.txt)

| 1.0 | Exporting App Settings seems to be adding extra elements for configured sms forms. - **Describe the bug**

Uploading the standard config which contains SMS forms. Then using the admin page to backup the app settings. Shows a bunch of extra elements in the config. Starting at 0 and going to the count of number of sms forms configured.

**To Reproduce**

Steps to reproduce the behavior:

1. Upload standard config

2. Go to the admin page > backup app code > Download current settings.

3. Review the JSON for app_Settings.

**Expected behavior**

We should only show sms forms in there expected location.

**Environment**

- Instance: gamma.dev

- Browser: chrome

- Client platform: linux

- App: admin

- Version: 3.9

**Additional context**

See attached app_settings.json for example of the improper output.

[app_settings.txt](https://github.com/medic/cht-core/files/4781106/app_settings.txt)

| priority | exporting app settings seems to be adding extra elements for configured sms forms describe the bug uploading the standard config which contains sms forms then using the admin page to backup the app settings shows a bunch of extra elements in the config starting at and going to the count of number of sms forms configured to reproduce steps to reproduce the behavior upload standard config go to the admin page backup app code download current settings review the json for app settings expected behavior we should only show sms forms in there expected location environment instance gamma dev browser chrome client platform linux app admin version additional context see attached app settings json for example of the improper output | 1 |

779,784 | 27,366,091,133 | IssuesEvent | 2023-02-27 19:17:27 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Load balancing after tablet splitting in clusters with more than RF # of nodes may cause excessive remote bootstrap | kind/enhancement area/docdb priority/medium | Jira Link: [DB-3201](https://yugabyte.atlassian.net/browse/DB-3201)

In a single region, 3 AZ, 6 node cluster, with automatic tablet splitting enabled, we notice intermittent bursts of remote bootstraps which impact user-facing throughput. We should consider strategies for mitigating the overhead of automatic tablet splitting. For example, maybe we should block the LB from adding/removing new nodes to a raft group for a tablet which was recently split, with the assumption being that raft groups in the same table with non-equal peer groups will split at a roughly similar rate in a hash-partitioned table. | 1.0 | [DocDB] Load balancing after tablet splitting in clusters with more than RF # of nodes may cause excessive remote bootstrap - Jira Link: [DB-3201](https://yugabyte.atlassian.net/browse/DB-3201)

In a single region, 3 AZ, 6 node cluster, with automatic tablet splitting enabled, we notice intermittent bursts of remote bootstraps which impact user-facing throughput. We should consider strategies for mitigating the overhead of automatic tablet splitting. For example, maybe we should block the LB from adding/removing new nodes to a raft group for a tablet which was recently split, with the assumption being that raft groups in the same table with non-equal peer groups will split at a roughly similar rate in a hash-partitioned table. | priority | load balancing after tablet splitting in clusters with more than rf of nodes may cause excessive remote bootstrap jira link in a single region az node cluster with automatic tablet splitting enabled we notice intermittent bursts of remote bootstraps which impact user facing throughput we should consider strategies for mitigating the overhead of automatic tablet splitting for example maybe we should block the lb from adding removing new nodes to a raft group for a tablet which was recently split with the assumption being that raft groups in the same table with non equal peer groups will split at a roughly similar rate in a hash partitioned table | 1 |

780,098 | 27,379,399,542 | IssuesEvent | 2023-02-28 09:00:16 | Saga-sanga/mizo-apologia | https://api.github.com/repos/Saga-sanga/mizo-apologia | opened | Merge Topics and Categories | enhancement medium_priority optimization | Merge topics and categories into topics.

### Current Process

- Topics are for categorising answers.

- Categories are for categorising articles.

- Topics have a one-to-many mapping with answers.

- Categories have a one-to-many mapping with articles.

### Suggested Process

- Articles and answers will share topics.

- Topics will have many to many relations with answers and articles. | 1.0 | Merge Topics and Categories - Merge topics and categories into topics.

### Current Process

- Topics are for categorising answers.

- Categories are for categorising articles.

- Topics have a one-to-many mapping with answers.

- Categories have a one-to-many mapping with articles.

### Suggested Process

- Articles and answers will share topics.

- Topics will have many to many relations with answers and articles. | priority | merge topics and categories merge topics and categories into topics current process topics are for categorising answers categories are for categorising articles topics have a one to many mapping with answers categories have a one to many mapping with articles suggested process articles and answers will share topics topics will have many to many relations with answers and articles | 1 |

321,513 | 9,799,696,599 | IssuesEvent | 2019-06-11 14:53:21 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | opened | Add/create dataset metadata | Category: Core Priority: Medium Status: Defined Type: Feature | Many of the datasets that we import and export use separate layers to hold metadata.

E.g.

* TDSv[4,6,7]: DATASET_S: Dataset (ZI031)

* TDSv[4,6,7]: ENTITY_COLLECTION_METADATA_S: Entity Collection Metadata (ZI039)

* GGDMv3: DATASET_S: Dataset (ZI031)

* GGDMv3: ENTITY_COLLECTION_METADATA_S: Entity Collection Metadata (ZI039)

On export:

* Generate a DATASET_S layer:

+ Build a polygon that is the bounding box for the dataset

+ Assign attributes to the polygon based on the dataset or config variables:

Based on the config variables, If a tag is present in the dataset, use its value or use the value form the config variable.

* It may be possible to generate a ENTITY_COLLECTION_METADATA_S layer. If so, this will be done in a follow-on ticket.

On import:

* Read the DATASET_S layer if present

* Apply selected attributes (from config variables) from this layer to the whole dataset

* Read the ENTITY_COLLECTION_METADATA_S layer if present

* Apply selected attributes (from config variables) from this layer to features that intersec/are contained by polygons from this layer. | 1.0 | Add/create dataset metadata - Many of the datasets that we import and export use separate layers to hold metadata.

E.g.

* TDSv[4,6,7]: DATASET_S: Dataset (ZI031)

* TDSv[4,6,7]: ENTITY_COLLECTION_METADATA_S: Entity Collection Metadata (ZI039)

* GGDMv3: DATASET_S: Dataset (ZI031)

* GGDMv3: ENTITY_COLLECTION_METADATA_S: Entity Collection Metadata (ZI039)

On export:

* Generate a DATASET_S layer:

+ Build a polygon that is the bounding box for the dataset

+ Assign attributes to the polygon based on the dataset or config variables:

Based on the config variables, If a tag is present in the dataset, use its value or use the value form the config variable.

* It may be possible to generate a ENTITY_COLLECTION_METADATA_S layer. If so, this will be done in a follow-on ticket.

On import:

* Read the DATASET_S layer if present

* Apply selected attributes (from config variables) from this layer to the whole dataset

* Read the ENTITY_COLLECTION_METADATA_S layer if present

* Apply selected attributes (from config variables) from this layer to features that intersec/are contained by polygons from this layer. | priority | add create dataset metadata many of the datasets that we import and export use separate layers to hold metadata e g tdsv dataset s dataset tdsv entity collection metadata s entity collection metadata dataset s dataset entity collection metadata s entity collection metadata on export generate a dataset s layer build a polygon that is the bounding box for the dataset assign attributes to the polygon based on the dataset or config variables based on the config variables if a tag is present in the dataset use its value or use the value form the config variable it may be possible to generate a entity collection metadata s layer if so this will be done in a follow on ticket on import read the dataset s layer if present apply selected attributes from config variables from this layer to the whole dataset read the entity collection metadata s layer if present apply selected attributes from config variables from this layer to features that intersec are contained by polygons from this layer | 1 |

283,135 | 8,717,051,700 | IssuesEvent | 2018-12-07 16:03:45 | lbryio/lbry-android | https://api.github.com/repos/lbryio/lbry-android | closed | Utilize notification content text area for notifications | needs: exploration needs: repro priority: medium type: bug | The notification that is added to the status bar while LBRY is running does not set the content text area.

Things that could go here:

- The startup status

- X unwatched subscriptions

- Y LBC unearned rewards

| 1.0 | Utilize notification content text area for notifications - The notification that is added to the status bar while LBRY is running does not set the content text area.

Things that could go here:

- The startup status

- X unwatched subscriptions

- Y LBC unearned rewards

| priority | utilize notification content text area for notifications the notification that is added to the status bar while lbry is running does not set the content text area things that could go here the startup status x unwatched subscriptions y lbc unearned rewards | 1 |

647,300 | 21,098,001,883 | IssuesEvent | 2022-04-04 12:12:32 | disorderedmaterials/dissolve | https://api.github.com/repos/disorderedmaterials/dissolve | opened | Change Input File Format | Priority: Medium | ### Focus

The current input file format requires us to maintain our own code for reading/writing those files. As part of #899 we have decided to replace this file with TOML. #1008 will be the initial PR that will cover quite a bit of the serialization (writing to file) part of the process.

In order to cover this process in an appropriate manner, there will be two main issues that will keep track of progress.

### Tasks

- [ ] Issue 1

- [ ] Issue 2

...

| 1.0 | Change Input File Format - ### Focus

The current input file format requires us to maintain our own code for reading/writing those files. As part of #899 we have decided to replace this file with TOML. #1008 will be the initial PR that will cover quite a bit of the serialization (writing to file) part of the process.

In order to cover this process in an appropriate manner, there will be two main issues that will keep track of progress.

### Tasks

- [ ] Issue 1

- [ ] Issue 2

...

| priority | change input file format focus the current input file format requires us to maintain our own code for reading writing those files as part of we have decided to replace this file with toml will be the initial pr that will cover quite a bit of the serialization writing to file part of the process in order to cover this process in an appropriate manner there will be two main issues that will keep track of progress tasks issue issue | 1 |

334,778 | 10,145,292,497 | IssuesEvent | 2019-08-05 03:30:03 | projectacrn/acrn-hypervisor | https://api.github.com/repos/projectacrn/acrn-hypervisor | closed | UOS occasionally failed to boot | priority: P3-Medium status: Assigned type: bug | **HW/Board**

NUC model nuc7i7dnhe

**ACRN version**

Acre release v0.4

**Clear Linux info**

26760

**Launch script**

```

mem_size=4096M

acrn-dm -A \

-m $mem_size \

-c $2 \

-s 0:0,hostbridge \

-s 1:0,lpc -l com1,stdio \

-s 2,pci-gvt -G "$3" \

-s 5,virtio-console,@pty:pty_port \

-s 6,virtio-hyper_dmabuf \

-s 3,virtio-blk,/home/clear/agl-rse.wic \

-s 4,virtio-net,$tap \

-s 7,xhci,1-5 \

-k /home/clear/bzImage \

-B "root=/dev/vda2 rw rootwait maxcpus=$2 nohpet console=tty0 console=hvc0 \

console=ttyS0 no_timer_check ignore_loglevel log_buf_len=16M \

consoleblank=0 tsc=reliable i915.avail_planes_per_pipe=$4 \

i915.enable_hangcheck=0 i915.nuclear_pageflip=1 i915.enable_guc_loading=0 \

i915.enable_guc_submission=0 i915.enable_guc=0" $vm_name

}

for i in `ls -d /sys/devices/system/cpu/cpu[2-4]`; do

online=`cat $i/online`

idx=`echo $i | tr -cd "[2-4]"`

echo cpu$idx online=$online

if [ "$online" = "1" ]; then

echo 0 > $i/online

echo $idx > /sys/class/vhm/acrn_vhm/offline_cpu

fi done

launch_agl 2 1 "64 448 8" 0x070000 "cluster"

```

**Expected result**

VM launches

**Actual result**

VM sometimes failed to launch. The repetition rate is low. Once or twice a day in a regular basic development.

**Log**

```

clear@clr ~ $

clear@clr ~ $ sudo ./launch_rse.sh

cpu2 online=0

cpu3 online=0

cpu4 online=0

tap device existed, reuse acrn_tap1

passed gvt-g optargs low_gm 64, high_gm 448, fence 8

SW_LOAD: get kernel path /home/clear/bzImage

SW_LOAD: get bootargs root=/dev/vda2 rw rootwait maxcpus=1 nohpet console=tty0 console=hvc0 console=ttyS0 no_timer_check ignore_loglevel log_buf_len=16M consoleblank=0 tsc=reliable i915.avail_planes_per_pipe=0x070000 i915.enable_hangcheck=0 i915.nuclear_pageflip=1 i915.enable_guc_loading=0 i915.enable_guc_submission=0 i915.enable_guc=0

VHM api version 1.0

open hugetlbfs file /run/hugepage/acrn/huge_lv1/D279543825D611E8864ECB7A18B34643

open hugetlbfs file /run/hugepage/acrn/huge_lv2/D279543825D611E8864ECB7A18B34643

level 0 free/need pages:791/0 page size:0x200000

level 1 free/need pages:0/4 page size:0x40000000

to reserve more free pages:

to reserve pages (+orig 10): echo 14 > /sys/kernel/mm/hugepages/hugepages-1048576kB/nr_hugepages

to reserve pages (+orig 1815): echo 3072 > /sys/kernel/mm/hugepages/hugepages-2048kB/nr_hugepages

level 0 pages gap: 4 failed to reserve!

Unable to setup memory (0)

clear@clr ~ $

``` | 1.0 | UOS occasionally failed to boot - **HW/Board**

NUC model nuc7i7dnhe

**ACRN version**

Acre release v0.4

**Clear Linux info**

26760

**Launch script**

```

mem_size=4096M

acrn-dm -A \

-m $mem_size \

-c $2 \

-s 0:0,hostbridge \

-s 1:0,lpc -l com1,stdio \

-s 2,pci-gvt -G "$3" \

-s 5,virtio-console,@pty:pty_port \

-s 6,virtio-hyper_dmabuf \

-s 3,virtio-blk,/home/clear/agl-rse.wic \

-s 4,virtio-net,$tap \

-s 7,xhci,1-5 \

-k /home/clear/bzImage \

-B "root=/dev/vda2 rw rootwait maxcpus=$2 nohpet console=tty0 console=hvc0 \

console=ttyS0 no_timer_check ignore_loglevel log_buf_len=16M \

consoleblank=0 tsc=reliable i915.avail_planes_per_pipe=$4 \

i915.enable_hangcheck=0 i915.nuclear_pageflip=1 i915.enable_guc_loading=0 \

i915.enable_guc_submission=0 i915.enable_guc=0" $vm_name

}

for i in `ls -d /sys/devices/system/cpu/cpu[2-4]`; do

online=`cat $i/online`

idx=`echo $i | tr -cd "[2-4]"`

echo cpu$idx online=$online

if [ "$online" = "1" ]; then

echo 0 > $i/online

echo $idx > /sys/class/vhm/acrn_vhm/offline_cpu

fi done

launch_agl 2 1 "64 448 8" 0x070000 "cluster"

```

**Expected result**

VM launches

**Actual result**

VM sometimes failed to launch. The repetition rate is low. Once or twice a day in a regular basic development.

**Log**

```

clear@clr ~ $

clear@clr ~ $ sudo ./launch_rse.sh

cpu2 online=0

cpu3 online=0

cpu4 online=0

tap device existed, reuse acrn_tap1

passed gvt-g optargs low_gm 64, high_gm 448, fence 8

SW_LOAD: get kernel path /home/clear/bzImage

SW_LOAD: get bootargs root=/dev/vda2 rw rootwait maxcpus=1 nohpet console=tty0 console=hvc0 console=ttyS0 no_timer_check ignore_loglevel log_buf_len=16M consoleblank=0 tsc=reliable i915.avail_planes_per_pipe=0x070000 i915.enable_hangcheck=0 i915.nuclear_pageflip=1 i915.enable_guc_loading=0 i915.enable_guc_submission=0 i915.enable_guc=0

VHM api version 1.0

open hugetlbfs file /run/hugepage/acrn/huge_lv1/D279543825D611E8864ECB7A18B34643

open hugetlbfs file /run/hugepage/acrn/huge_lv2/D279543825D611E8864ECB7A18B34643

level 0 free/need pages:791/0 page size:0x200000

level 1 free/need pages:0/4 page size:0x40000000

to reserve more free pages:

to reserve pages (+orig 10): echo 14 > /sys/kernel/mm/hugepages/hugepages-1048576kB/nr_hugepages

to reserve pages (+orig 1815): echo 3072 > /sys/kernel/mm/hugepages/hugepages-2048kB/nr_hugepages

level 0 pages gap: 4 failed to reserve!

Unable to setup memory (0)

clear@clr ~ $

``` | priority | uos occasionally failed to boot hw board nuc model acrn version acre release clear linux info launch script mem size acrn dm a m mem size c s hostbridge s lpc l stdio s pci gvt g s virtio console pty pty port s virtio hyper dmabuf s virtio blk home clear agl rse wic s virtio net tap s xhci k home clear bzimage b root dev rw rootwait maxcpus nohpet console console console no timer check ignore loglevel log buf len consoleblank tsc reliable avail planes per pipe enable hangcheck nuclear pageflip enable guc loading enable guc submission enable guc vm name for i in ls d sys devices system cpu cpu do online cat i online idx echo i tr cd echo cpu idx online online if then echo i online echo idx sys class vhm acrn vhm offline cpu fi done launch agl cluster expected result vm launches actual result vm sometimes failed to launch the repetition rate is low once or twice a day in a regular basic development log clear clr clear clr sudo launch rse sh online online online tap device existed reuse acrn passed gvt g optargs low gm high gm fence sw load get kernel path home clear bzimage sw load get bootargs root dev rw rootwait maxcpus nohpet console console console no timer check ignore loglevel log buf len consoleblank tsc reliable avail planes per pipe enable hangcheck nuclear pageflip enable guc loading enable guc submission enable guc vhm api version open hugetlbfs file run hugepage acrn huge open hugetlbfs file run hugepage acrn huge level free need pages page size level free need pages page size to reserve more free pages to reserve pages orig echo sys kernel mm hugepages hugepages nr hugepages to reserve pages orig echo sys kernel mm hugepages hugepages nr hugepages level pages gap failed to reserve unable to setup memory clear clr | 1 |

556,031 | 16,473,320,918 | IssuesEvent | 2021-05-23 21:07:38 | sopra-fs21-group-25/sopra-fs21-jass-client | https://api.github.com/repos/sopra-fs21-group-25/sopra-fs21-jass-client | closed | Text chat window, chat with friends in the main menu page | medium priority task | Time estimation: 24h

Part of user story #5 | 1.0 | Text chat window, chat with friends in the main menu page - Time estimation: 24h

Part of user story #5 | priority | text chat window chat with friends in the main menu page time estimation part of user story | 1 |

515,695 | 14,967,545,447 | IssuesEvent | 2021-01-27 15:48:16 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | Collection: generate error if job template survey is rejected | component:awx_collection priority:medium state:needs_devel type:bug | ##### ISSUE TYPE

- Feature Idea

##### SUMMARY

When creating a job template (or workflow job template) via the collection, generate an error if the template's associated survey spec is rejected.

Current behavior is to silently pass ("changed") while the survey is not added. Any malformation in the survey spec will result in the survey not being created along with the template, however, no error is presented to the end user. | 1.0 | Collection: generate error if job template survey is rejected - ##### ISSUE TYPE

- Feature Idea

##### SUMMARY

When creating a job template (or workflow job template) via the collection, generate an error if the template's associated survey spec is rejected.

Current behavior is to silently pass ("changed") while the survey is not added. Any malformation in the survey spec will result in the survey not being created along with the template, however, no error is presented to the end user. | priority | collection generate error if job template survey is rejected issue type feature idea summary when creating a job template or workflow job template via the collection generate an error if the template s associated survey spec is rejected current behavior is to silently pass changed while the survey is not added any malformation in the survey spec will result in the survey not being created along with the template however no error is presented to the end user | 1 |

270,801 | 8,470,385,006 | IssuesEvent | 2018-10-24 03:59:05 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | opened | Have a logout button in the admin app | Configuration Help Wanted Priority: 2 - Medium Status: 1 - Triaged Type: Improvement | Make it easy for admins to do the right thing and end their session. | 1.0 | Have a logout button in the admin app - Make it easy for admins to do the right thing and end their session. | priority | have a logout button in the admin app make it easy for admins to do the right thing and end their session | 1 |

396,888 | 11,715,409,242 | IssuesEvent | 2020-03-09 14:05:58 | vetterh1/frozengem | https://api.github.com/repos/vetterh1/frozengem | closed | [Details] Contextual help | enhancement priority medium | - [x] On code: "write down"

- [ ] On Camera / Category: "change / add ..."

- [x] On completed Tiles: "Click anywhere on a tile to edit / change it"

- [x] On empty Tiles: "Incomplete, please click to edit..."

- [x] On Remove: "Don't forget to remove..."

- [ ] On Help icon: "Get help anytime by clicking here" | 1.0 | [Details] Contextual help - - [x] On code: "write down"

- [ ] On Camera / Category: "change / add ..."

- [x] On completed Tiles: "Click anywhere on a tile to edit / change it"

- [x] On empty Tiles: "Incomplete, please click to edit..."

- [x] On Remove: "Don't forget to remove..."

- [ ] On Help icon: "Get help anytime by clicking here" | priority | contextual help on code write down on camera category change add on completed tiles click anywhere on a tile to edit change it on empty tiles incomplete please click to edit on remove don t forget to remove on help icon get help anytime by clicking here | 1 |

461,848 | 13,237,135,379 | IssuesEvent | 2020-08-18 21:04:02 | canonical-web-and-design/snapcraft.io | https://api.github.com/repos/canonical-web-and-design/snapcraft.io | closed | Snapcraft build is not logged in although the header thinks it is | Priority: Medium | ### Expected behaviour

Going to snapcraft.io/build while logged in should show me the logged in screen with a list of my set-up builds, not the "set up in minutes" screen for new users.

### Steps to reproduce the problem

Sign in to snapcraft.io to see one's existing snaps.

Go to build.snapcraft.io

Observe that the header shows my name (meaning that I'm logged in) but I see the "set up in minutes" screen that's shown to new users. I don't need to set up; I already _am_ set up. But I have to click that "set up in minutes" button in order to log in. This makes it feel like my work has been forgotten; it's strange to click a "set up in minutes" button (which is like "register an account" on other sites) when I already am registered.

(Technical note: I assume this is something to do with how signing in to snapcraft.io is through my Ubuntu One account, and signing in to build.snapcraft.io is through my github account, but this is an implementation detail that I should not have to care about; if I'm logged in, I'm logged in.)

### Specs

- _URL:_ https://build.snapcraft.io

- _Operating system:_ Ubuntu 18.04

- _Browser:_ Firefox

| 1.0 | Snapcraft build is not logged in although the header thinks it is - ### Expected behaviour

Going to snapcraft.io/build while logged in should show me the logged in screen with a list of my set-up builds, not the "set up in minutes" screen for new users.

### Steps to reproduce the problem

Sign in to snapcraft.io to see one's existing snaps.

Go to build.snapcraft.io

Observe that the header shows my name (meaning that I'm logged in) but I see the "set up in minutes" screen that's shown to new users. I don't need to set up; I already _am_ set up. But I have to click that "set up in minutes" button in order to log in. This makes it feel like my work has been forgotten; it's strange to click a "set up in minutes" button (which is like "register an account" on other sites) when I already am registered.

(Technical note: I assume this is something to do with how signing in to snapcraft.io is through my Ubuntu One account, and signing in to build.snapcraft.io is through my github account, but this is an implementation detail that I should not have to care about; if I'm logged in, I'm logged in.)

### Specs

- _URL:_ https://build.snapcraft.io

- _Operating system:_ Ubuntu 18.04

- _Browser:_ Firefox

| priority | snapcraft build is not logged in although the header thinks it is expected behaviour going to snapcraft io build while logged in should show me the logged in screen with a list of my set up builds not the set up in minutes screen for new users steps to reproduce the problem sign in to snapcraft io to see one s existing snaps go to build snapcraft io observe that the header shows my name meaning that i m logged in but i see the set up in minutes screen that s shown to new users i don t need to set up i already am set up but i have to click that set up in minutes button in order to log in this makes it feel like my work has been forgotten it s strange to click a set up in minutes button which is like register an account on other sites when i already am registered technical note i assume this is something to do with how signing in to snapcraft io is through my ubuntu one account and signing in to build snapcraft io is through my github account but this is an implementation detail that i should not have to care about if i m logged in i m logged in specs url operating system ubuntu browser firefox | 1 |

247,439 | 7,918,672,230 | IssuesEvent | 2018-07-04 14:02:59 | esteemapp/esteem-surfer | https://api.github.com/repos/esteemapp/esteem-surfer | closed | Relevant/Recommended posts | medium priority | Use eSync to get 3 to 5 recommended posts right under post body and above comments section, similar to Medium.

can check how busy fetches these posts here: github.com/busyorg/busy/src/client/components/Sidebar/PostRecommendation.js

| 1.0 | Relevant/Recommended posts - Use eSync to get 3 to 5 recommended posts right under post body and above comments section, similar to Medium.

can check how busy fetches these posts here: github.com/busyorg/busy/src/client/components/Sidebar/PostRecommendation.js

| priority | relevant recommended posts use esync to get to recommended posts right under post body and above comments section similar to medium can check how busy fetches these posts here github com busyorg busy src client components sidebar postrecommendation js | 1 |

233,249 | 7,695,722,446 | IssuesEvent | 2018-05-18 13:17:21 | georchestra/georchestra | https://api.github.com/repos/georchestra/georchestra | opened | header - resources have no cache | bug priority-medium | eg:

```

$ curl -I https://my.sdi.org/header/img/logo.png

HTTP/1.1 200 OK

Cache-Control: no-cache, no-store, max-age=0, must-revalidate

Pragma: no-cache

Expires: Thu, 01 Jan 1970 00:00:00 GMT

```

This is clearly sub-optimal. | 1.0 | header - resources have no cache - eg:

```

$ curl -I https://my.sdi.org/header/img/logo.png

HTTP/1.1 200 OK

Cache-Control: no-cache, no-store, max-age=0, must-revalidate

Pragma: no-cache

Expires: Thu, 01 Jan 1970 00:00:00 GMT

```

This is clearly sub-optimal. | priority | header resources have no cache eg curl i http ok cache control no cache no store max age must revalidate pragma no cache expires thu jan gmt this is clearly sub optimal | 1 |

453,705 | 13,087,365,935 | IssuesEvent | 2020-08-02 11:51:52 | SHUReeducation/autoAPI | https://api.github.com/repos/SHUReeducation/autoAPI | closed | CLI flags | feature request good first issue medium priority very very easy | Maybe we need a `--force` flag for our cli tool to control if `rm -rf output` before generate.

And a `-h / --help` for displaying usage. | 1.0 | CLI flags - Maybe we need a `--force` flag for our cli tool to control if `rm -rf output` before generate.

And a `-h / --help` for displaying usage. | priority | cli flags maybe we need a force flag for our cli tool to control if rm rf output before generate and a h help for displaying usage | 1 |

338,754 | 10,237,043,596 | IssuesEvent | 2019-08-19 13:05:37 | gather-community/gather | https://api.github.com/repos/gather-community/gather | closed | Portion factors should be based on formulas | priority:medium type:bug | _Originally created by **Tom Smyth** at **2016-05-04 19:39**, migrated from [redmine-#4495](https://redmine.sassafras.coop/issues/4495)_ | 1.0 | Portion factors should be based on formulas - _Originally created by **Tom Smyth** at **2016-05-04 19:39**, migrated from [redmine-#4495](https://redmine.sassafras.coop/issues/4495)_ | priority | portion factors should be based on formulas originally created by tom smyth at migrated from | 1 |

791,125 | 27,851,790,126 | IssuesEvent | 2023-03-20 19:17:08 | cdk8s-team/cdk8s | https://api.github.com/repos/cdk8s-team/cdk8s | closed | crd example fails with this error message | bug effort/medium priority/p1 | \iac\cdk8s\java\crd>kubectl apply -f dist/ --validate=false

```

unable to decode "dist\\construct-metadata.json": Object 'Kind' is missing in '{"version":"1.0.0","resources":{"crd-java-jenkins-c87fd85f":{"path":"crd-java/jenkins"},"crd-java-mattermost-c87957d0":{"path":"crd-java/mattermost"}}}'

resource mapping not found for name: "crd-java-jenkins-c87fd85f" namespace: "" from "dist\\crd-java.k8s.yaml": no matches for kind "Jenkins" in version "jenkins.io/v1alpha2"

ensure CRDs are installed first

resource mapping not found for name: "crd-java-mattermost-c87957d0" namespace: "" from "dist\\crd-java.k8s.yaml": no matches for kind "ClusterInstallation" in version "mattermost.com/v1alpha1"

ensure CRDs are installed first

``` | 1.0 | crd example fails with this error message - \iac\cdk8s\java\crd>kubectl apply -f dist/ --validate=false

```

unable to decode "dist\\construct-metadata.json": Object 'Kind' is missing in '{"version":"1.0.0","resources":{"crd-java-jenkins-c87fd85f":{"path":"crd-java/jenkins"},"crd-java-mattermost-c87957d0":{"path":"crd-java/mattermost"}}}'

resource mapping not found for name: "crd-java-jenkins-c87fd85f" namespace: "" from "dist\\crd-java.k8s.yaml": no matches for kind "Jenkins" in version "jenkins.io/v1alpha2"

ensure CRDs are installed first

resource mapping not found for name: "crd-java-mattermost-c87957d0" namespace: "" from "dist\\crd-java.k8s.yaml": no matches for kind "ClusterInstallation" in version "mattermost.com/v1alpha1"

ensure CRDs are installed first

``` | priority | crd example fails with this error message iac java crd kubectl apply f dist validate false unable to decode dist construct metadata json object kind is missing in version resources crd java jenkins path crd java jenkins crd java mattermost path crd java mattermost resource mapping not found for name crd java jenkins namespace from dist crd java yaml no matches for kind jenkins in version jenkins io ensure crds are installed first resource mapping not found for name crd java mattermost namespace from dist crd java yaml no matches for kind clusterinstallation in version mattermost com ensure crds are installed first | 1 |

115,467 | 4,674,890,426 | IssuesEvent | 2016-10-07 04:15:36 | ponylang/ponyc | https://api.github.com/repos/ponylang/ponyc | closed | pony_continuation is not thread-safe even in the single producer case | bug: 3 - ready for work difficulty: 1 - easy priority: 2 - medium | The documentation of `pony_continuation` states that the function is thread-safe as long as only one actor pushes a continuation to another actor. This is not true as the producer (`pony_continuation`) doesn't _synchronize-with_ the consumer (`ponyint_actor_run`). Fixing this problem would require a CAS loop, which would be expensive and would remove the wait-free property of message dequeuing (well, wait-free amortized since the cold path of `ponyint_pool_free` is only lock-free).

This function isn't used in the runtime. What are the real-world use cases? Would it be conceivable to change the semantics (e.g. state that an actor should only push a continuation onto itself) or to remove the function? | 1.0 | pony_continuation is not thread-safe even in the single producer case - The documentation of `pony_continuation` states that the function is thread-safe as long as only one actor pushes a continuation to another actor. This is not true as the producer (`pony_continuation`) doesn't _synchronize-with_ the consumer (`ponyint_actor_run`). Fixing this problem would require a CAS loop, which would be expensive and would remove the wait-free property of message dequeuing (well, wait-free amortized since the cold path of `ponyint_pool_free` is only lock-free).

This function isn't used in the runtime. What are the real-world use cases? Would it be conceivable to change the semantics (e.g. state that an actor should only push a continuation onto itself) or to remove the function? | priority | pony continuation is not thread safe even in the single producer case the documentation of pony continuation states that the function is thread safe as long as only one actor pushes a continuation to another actor this is not true as the producer pony continuation doesn t synchronize with the consumer ponyint actor run fixing this problem would require a cas loop which would be expensive and would remove the wait free property of message dequeuing well wait free amortized since the cold path of ponyint pool free is only lock free this function isn t used in the runtime what are the real world use cases would it be conceivable to change the semantics e g state that an actor should only push a continuation onto itself or to remove the function | 1 |

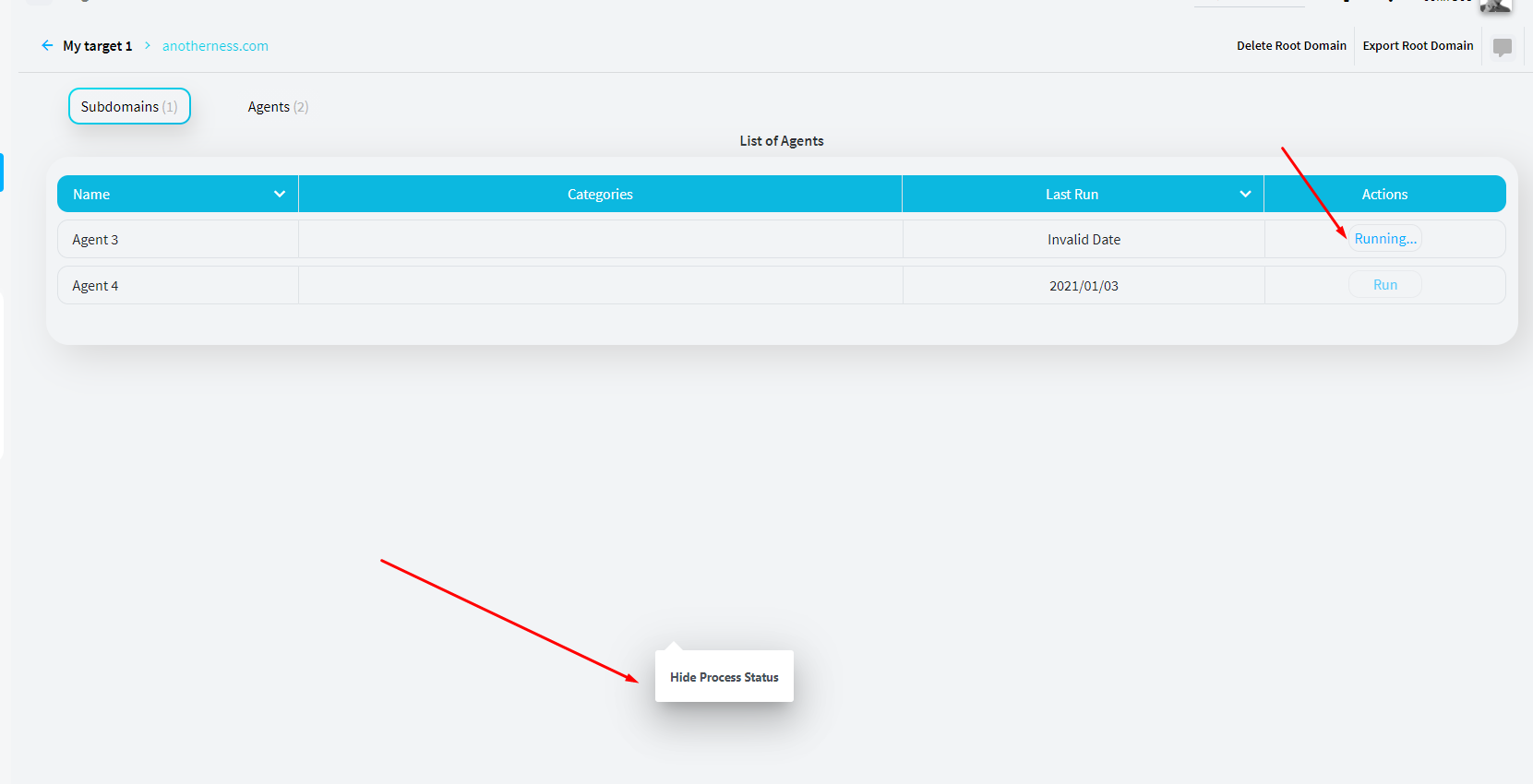

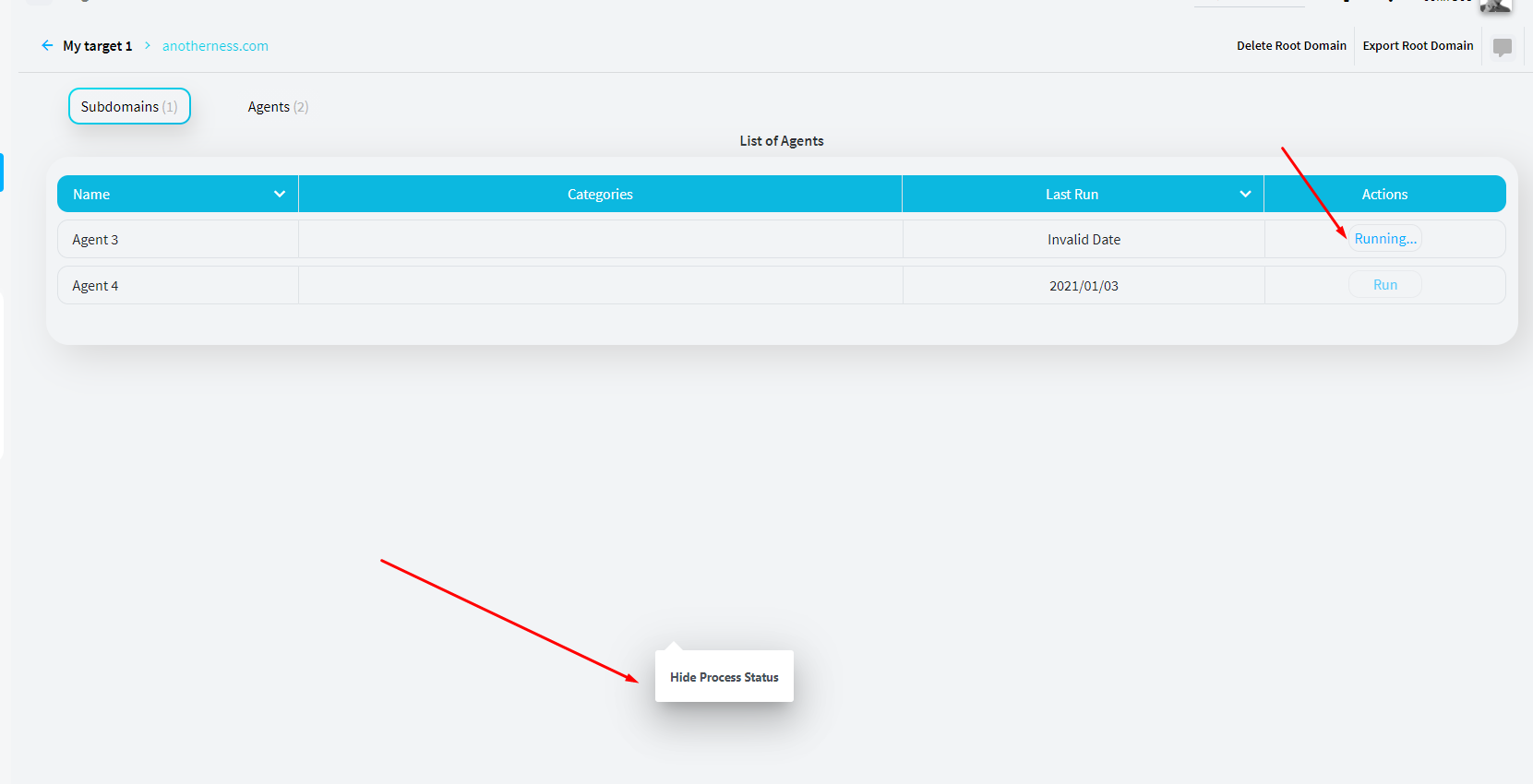

566,128 | 16,812,492,571 | IssuesEvent | 2021-06-17 00:52:39 | reconness/reconness-frontend | https://api.github.com/repos/reconness/reconness-frontend | closed | A tooltip keeps displayed in the middle of the screen when the Running agent popup is closed | bug priority: medium severity: minor | Steps to reproduce

1. Open a Root domain details page or a subdomain details page

2. Click on Agents tab

3. Start running one agent

4. Minimize the running agent popup

Current result: A tooltip keeps displayed in the middle of the screen when the Running agent popup is closed

Expected result: Tooltip will be hidden and the Running agent popup will be closed when user minimize the popup | 1.0 | A tooltip keeps displayed in the middle of the screen when the Running agent popup is closed - Steps to reproduce

1. Open a Root domain details page or a subdomain details page

2. Click on Agents tab

3. Start running one agent

4. Minimize the running agent popup

Current result: A tooltip keeps displayed in the middle of the screen when the Running agent popup is closed

Expected result: Tooltip will be hidden and the Running agent popup will be closed when user minimize the popup | priority | a tooltip keeps displayed in the middle of the screen when the running agent popup is closed steps to reproduce open a root domain details page or a subdomain details page click on agents tab start running one agent minimize the running agent popup current result a tooltip keeps displayed in the middle of the screen when the running agent popup is closed expected result tooltip will be hidden and the running agent popup will be closed when user minimize the popup | 1 |

413,882 | 12,093,201,480 | IssuesEvent | 2020-04-19 18:39:23 | busy-beaver-dev/busy-beaver | https://api.github.com/repos/busy-beaver-dev/busy-beaver | closed | Enable Slack workspace users to toggle features | effort high enhancement priority medium | Busy-Beaver should only post in channels that it belongs to. This allows admins to remove Busy-Beaver by kicking it out of channels.

A front-end with configuration settings for each of the features can also solve the same problem, but front-end resources are scarce. Hopefully, this will change soon; I need to do outreach in a Chicago JavaScript community.

Muting Busy-Beaver by kicking it out of a room is a good workaround for a single tenant use case in a single tenant workspace. | 1.0 | Enable Slack workspace users to toggle features - Busy-Beaver should only post in channels that it belongs to. This allows admins to remove Busy-Beaver by kicking it out of channels.

A front-end with configuration settings for each of the features can also solve the same problem, but front-end resources are scarce. Hopefully, this will change soon; I need to do outreach in a Chicago JavaScript community.

Muting Busy-Beaver by kicking it out of a room is a good workaround for a single tenant use case in a single tenant workspace. | priority | enable slack workspace users to toggle features busy beaver should only post in channels that it belongs to this allows admins to remove busy beaver by kicking it out of channels a front end with configuration settings for each of the features can also solve the same problem but front end resources are scarce hopefully this will change soon i need to do outreach in a chicago javascript community muting busy beaver by kicking it out of a room is a good workaround for a single tenant use case in a single tenant workspace | 1 |

122,574 | 4,837,361,003 | IssuesEvent | 2016-11-08 22:20:58 | kolibox/koli | https://api.github.com/repos/kolibox/koli | closed | Add build version for command line | complexity/medium feature priority/P0 | `koli version` must return the following information:

- The k8s library version (KubernetesVersion)

- The commit linked to the current build (GitCommit)

- The version of the command line (GitVersion)

- The build date (BuildDate)

- The Go version (GoVersion)

- The compiler (Compiler)

- The OS platform (Platform)

| 1.0 | Add build version for command line - `koli version` must return the following information:

- The k8s library version (KubernetesVersion)

- The commit linked to the current build (GitCommit)

- The version of the command line (GitVersion)

- The build date (BuildDate)

- The Go version (GoVersion)

- The compiler (Compiler)

- The OS platform (Platform)

| priority | add build version for command line koli version must return the following information the library version kubernetesversion the commit linked to the current build gitcommit the version of the command line gitversion the build date builddate the go version goversion the compiler compiler the os platform platform | 1 |

101,994 | 4,149,346,965 | IssuesEvent | 2016-06-15 14:13:40 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | Error when loading a HGIS validation layer | Category: UI Priority: Medium Type: Bug | Run through the HGIS workflow to create a validation layer. When you add it to the map the following error occurs:

`Uncaught TypeError: validation.loadFeature is not a function` | 1.0 | Error when loading a HGIS validation layer - Run through the HGIS workflow to create a validation layer. When you add it to the map the following error occurs:

`Uncaught TypeError: validation.loadFeature is not a function` | priority | error when loading a hgis validation layer run through the hgis workflow to create a validation layer when you add it to the map the following error occurs uncaught typeerror validation loadfeature is not a function | 1 |

391,647 | 11,576,579,907 | IssuesEvent | 2020-02-21 12:16:53 | luna/enso | https://api.github.com/repos/luna/enso | opened | Implement the Text Buffer Structure | Category: Tooling Change: Non-Breaking Difficulty: Core Contributor Priority: Medium Type: Enhancement | ### Summary

With a underlying data structure decided on in #544, we now need to implement the underlying structure and use it for open buffers.

### Value

We ensure that we don't have performance problems (memory or time) with editing open buffers.

### Specification

- [ ] Implement the structure descided on in #544.

- [ ] It should provide a simple interface such that the underlying structure can later be swapped out without issue if needed.

- [ ] Use this structure to represent the open buffers.

### Acceptance Criteria & Test Cases

- The structure has been implemented with a clean interface and is well-tested.

- The structure is being used to underlie open buffers.

| 1.0 | Implement the Text Buffer Structure - ### Summary

With a underlying data structure decided on in #544, we now need to implement the underlying structure and use it for open buffers.

### Value

We ensure that we don't have performance problems (memory or time) with editing open buffers.

### Specification

- [ ] Implement the structure descided on in #544.

- [ ] It should provide a simple interface such that the underlying structure can later be swapped out without issue if needed.

- [ ] Use this structure to represent the open buffers.

### Acceptance Criteria & Test Cases

- The structure has been implemented with a clean interface and is well-tested.

- The structure is being used to underlie open buffers.

| priority | implement the text buffer structure summary with a underlying data structure decided on in we now need to implement the underlying structure and use it for open buffers value we ensure that we don t have performance problems memory or time with editing open buffers specification implement the structure descided on in it should provide a simple interface such that the underlying structure can later be swapped out without issue if needed use this structure to represent the open buffers acceptance criteria test cases the structure has been implemented with a clean interface and is well tested the structure is being used to underlie open buffers | 1 |

376,791 | 11,156,682,680 | IssuesEvent | 2019-12-25 08:32:29 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | When I split a room in half, room detection was not happy | Medium Priority | I had a big room, then added a divider wall to divide it in two. At this point, room detection did not seem to detect that there were now two rooms. Then I placed a chair in one of the rooms, and this seemed to cause that half of the room to become the 'real' room, and the other half of the room disappeared and did not count as a room anymore. Triggering a refresh on the other half of the room by placing and picking up a block in a window space over there fixed it again. | 1.0 | When I split a room in half, room detection was not happy - I had a big room, then added a divider wall to divide it in two. At this point, room detection did not seem to detect that there were now two rooms. Then I placed a chair in one of the rooms, and this seemed to cause that half of the room to become the 'real' room, and the other half of the room disappeared and did not count as a room anymore. Triggering a refresh on the other half of the room by placing and picking up a block in a window space over there fixed it again. | priority | when i split a room in half room detection was not happy i had a big room then added a divider wall to divide it in two at this point room detection did not seem to detect that there were now two rooms then i placed a chair in one of the rooms and this seemed to cause that half of the room to become the real room and the other half of the room disappeared and did not count as a room anymore triggering a refresh on the other half of the room by placing and picking up a block in a window space over there fixed it again | 1 |

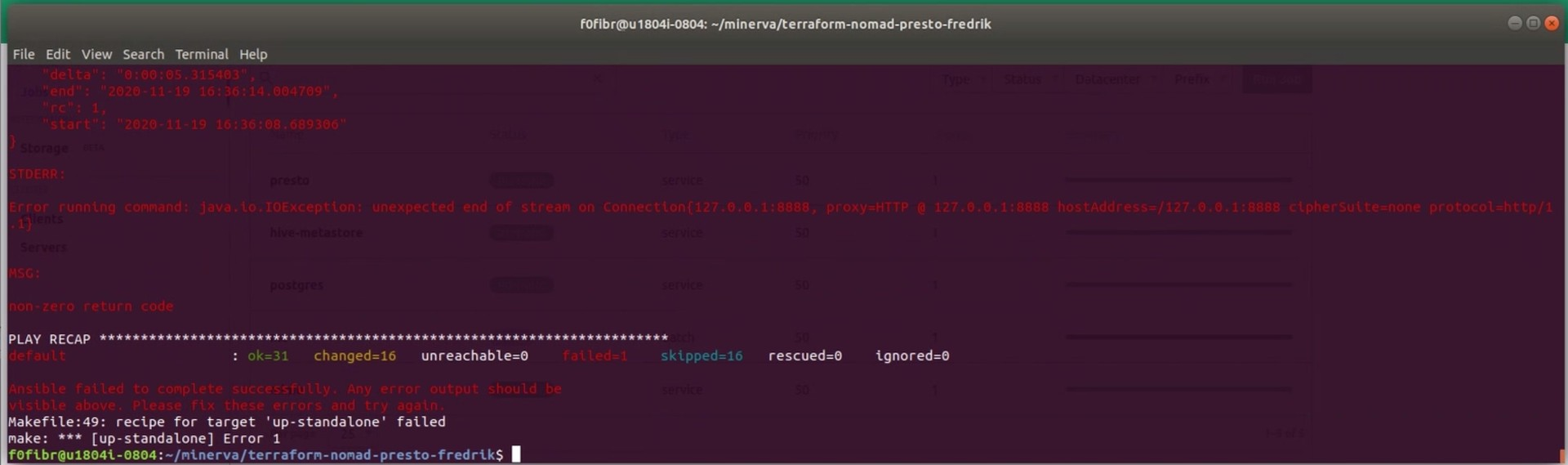

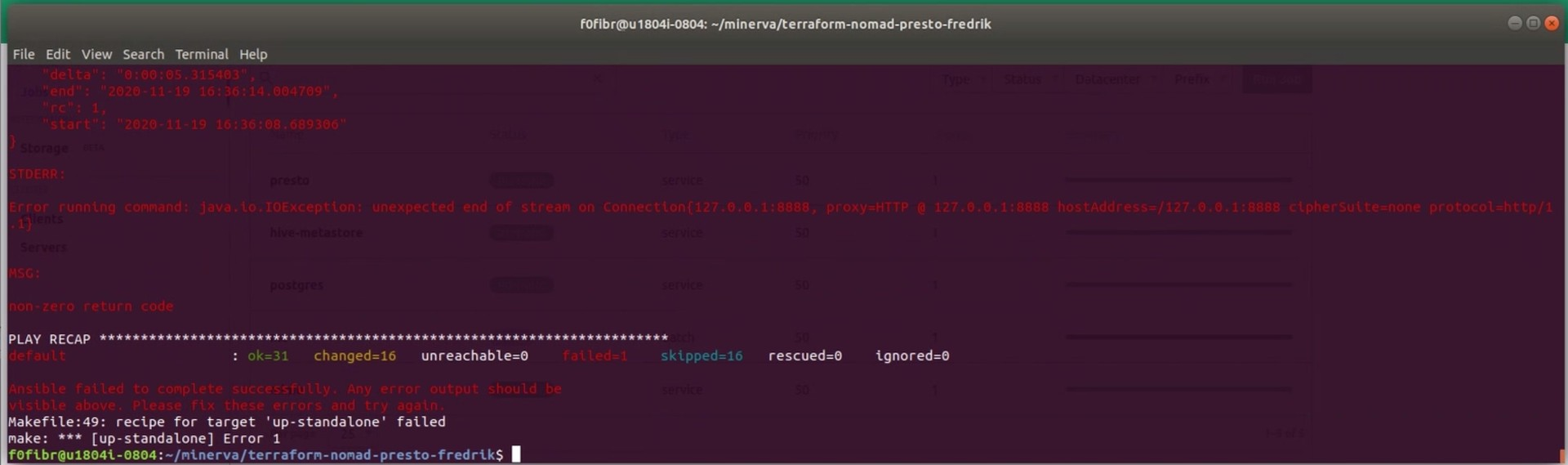

544,968 | 15,932,942,847 | IssuesEvent | 2021-04-14 06:43:09 | Skatteetaten/terraform-nomad-trino | https://api.github.com/repos/Skatteetaten/terraform-nomad-trino | closed | "Unexpected end of stream on connection" | priority/medium stage/research type/bug | ## Current behaviour

Fails when verifying tables.

## Expected behaviour

Successful verification

## How to reproduce?

Run `make up-standalone`

## Suggestion(s)/solution(s) [Optional]

## Checklist (after created issue)

- [x] Added label(s)

- [x] Added to project

- [x] Added to milestone | 1.0 | "Unexpected end of stream on connection" - ## Current behaviour

Fails when verifying tables.

## Expected behaviour

Successful verification

## How to reproduce?

Run `make up-standalone`

## Suggestion(s)/solution(s) [Optional]

## Checklist (after created issue)

- [x] Added label(s)

- [x] Added to project

- [x] Added to milestone | priority | unexpected end of stream on connection current behaviour fails when verifying tables expected behaviour successful verification how to reproduce run make up standalone suggestion s solution s checklist after created issue added label s added to project added to milestone | 1 |

382,221 | 11,302,638,947 | IssuesEvent | 2020-01-17 18:09:21 | etfdevs/ETe | https://api.github.com/repos/etfdevs/ETe | opened | Switch to CMake builder | Priority: Medium Status: Available Type: Maintenance | Switch over to building with CMake and get rid of SCons and VS project files but we need to try and maintain the VS project file support within CMake to ensure compatibility with the flags we currently use. | 1.0 | Switch to CMake builder - Switch over to building with CMake and get rid of SCons and VS project files but we need to try and maintain the VS project file support within CMake to ensure compatibility with the flags we currently use. | priority | switch to cmake builder switch over to building with cmake and get rid of scons and vs project files but we need to try and maintain the vs project file support within cmake to ensure compatibility with the flags we currently use | 1 |

600,628 | 18,346,998,120 | IssuesEvent | 2021-10-08 07:48:15 | owncloud/ocis | https://api.github.com/repos/owncloud/ocis | closed | Enable download as zip/tar service in OCIS | OCIS-Fastlane Priority:p3-medium Early-Adopter:CERN | As a follow-up of https://github.com/cs3org/reva/pull/2066

Can you please add this new service as a core OCIS service that is initialised as part of the frontend? | 1.0 | Enable download as zip/tar service in OCIS - As a follow-up of https://github.com/cs3org/reva/pull/2066

Can you please add this new service as a core OCIS service that is initialised as part of the frontend? | priority | enable download as zip tar service in ocis as a follow up of can you please add this new service as a core ocis service that is initialised as part of the frontend | 1 |

531,280 | 15,444,044,535 | IssuesEvent | 2021-03-08 09:54:22 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Hard to not miss private chat message | Category: UI Needs Task Plan Priority: Medium Squad: Mountain Goat Type: Quality of Life | Maybe it can make a little "beep" or something?

Communcation is vital in ECO, yet you have only that one chat window, that probably most people not watch. | 1.0 | Hard to not miss private chat message - Maybe it can make a little "beep" or something?

Communcation is vital in ECO, yet you have only that one chat window, that probably most people not watch. | priority | hard to not miss private chat message maybe it can make a little beep or something communcation is vital in eco yet you have only that one chat window that probably most people not watch | 1 |

5,076 | 2,571,114,372 | IssuesEvent | 2015-02-10 14:44:27 | jazkarta/edx-platform | https://api.github.com/repos/jazkarta/edx-platform | closed | New POCs don't appear in the Dashboard | Medium Priority | When I create a new POC, I expect to find it in the Dashboard. Currently, new POCs don't appear in the Dashboard, nor do all existing POCs. I have a POC that appears in the dashboard of the user I use as a student, but not in the dashboard of the user who is the POC Coach. | 1.0 | New POCs don't appear in the Dashboard - When I create a new POC, I expect to find it in the Dashboard. Currently, new POCs don't appear in the Dashboard, nor do all existing POCs. I have a POC that appears in the dashboard of the user I use as a student, but not in the dashboard of the user who is the POC Coach. | priority | new pocs don t appear in the dashboard when i create a new poc i expect to find it in the dashboard currently new pocs don t appear in the dashboard nor do all existing pocs i have a poc that appears in the dashboard of the user i use as a student but not in the dashboard of the user who is the poc coach | 1 |

695,822 | 23,873,164,028 | IssuesEvent | 2022-09-07 16:26:27 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Post-split compactions can crash tserver | kind/bug area/docdb priority/medium | Jira Link: [DB-3333](https://yugabyte.atlassian.net/browse/DB-3333)

### Description

Originally crashes were observed by @qvad during stress test.

I was able to reproduce with on local RF=1 cluster with the following settings and workload:

```

RF=1

MASTER_FLAGS='"enable_automatic_tablet_splitting=true","tablet_split_low_phase_size_threshold_bytes=0","tablet_split_high_phase_size_threshold_bytes=0","tablet_split_low_phase_shard_count_per_node=0","tablet_split_high_phase_shard_count_per_node=0","tablet_force_split_threshold_bytes=100000"'

TSERVER_FLAGS="db_write_buffer_size=100000"

./bin/yb-ctl --rf=$RF create --num_shards_per_tserver=1 --ysql_num_shards_per_tserver=1 --master_flags "$MASTER_FLAGS" --tserver_flags "$TSERVER_FLAGS"

java -jar ~/code/yb-sample-apps/target/yb-sample-apps.jar --workload SqlSecondaryIndex --nodes 127.0.0.1:5433 --num_threads_read 2 --num_threads_write 6 --num_unique_keys 1000000000 --nouuid

```

| 1.0 | [DocDB] Post-split compactions can crash tserver - Jira Link: [DB-3333](https://yugabyte.atlassian.net/browse/DB-3333)

### Description

Originally crashes were observed by @qvad during stress test.

I was able to reproduce with on local RF=1 cluster with the following settings and workload:

```

RF=1

MASTER_FLAGS='"enable_automatic_tablet_splitting=true","tablet_split_low_phase_size_threshold_bytes=0","tablet_split_high_phase_size_threshold_bytes=0","tablet_split_low_phase_shard_count_per_node=0","tablet_split_high_phase_shard_count_per_node=0","tablet_force_split_threshold_bytes=100000"'

TSERVER_FLAGS="db_write_buffer_size=100000"

./bin/yb-ctl --rf=$RF create --num_shards_per_tserver=1 --ysql_num_shards_per_tserver=1 --master_flags "$MASTER_FLAGS" --tserver_flags "$TSERVER_FLAGS"

java -jar ~/code/yb-sample-apps/target/yb-sample-apps.jar --workload SqlSecondaryIndex --nodes 127.0.0.1:5433 --num_threads_read 2 --num_threads_write 6 --num_unique_keys 1000000000 --nouuid

```

| priority | post split compactions can crash tserver jira link description originally crashes were observed by qvad during stress test i was able to reproduce with on local rf cluster with the following settings and workload rf master flags enable automatic tablet splitting true tablet split low phase size threshold bytes tablet split high phase size threshold bytes tablet split low phase shard count per node tablet split high phase shard count per node tablet force split threshold bytes tserver flags db write buffer size bin yb ctl rf rf create num shards per tserver ysql num shards per tserver master flags master flags tserver flags tserver flags java jar code yb sample apps target yb sample apps jar workload sqlsecondaryindex nodes num threads read num threads write num unique keys nouuid | 1 |

53,740 | 3,047,320,774 | IssuesEvent | 2015-08-11 03:25:21 | piccolo2d/piccolo2d.java | https://api.github.com/repos/piccolo2d/piccolo2d.java | closed | Prepare the 1.3 release | Milestone-1.3 OpSys-All Priority-Medium Status-Verified Toolkit-Piccolo2D.Java Type-Task | Originally reported on Google Code with ID 43

```

Put the release together as development finishes.

- ReleaseNotes

- prepare and test release candidates

- feature the downloads

- upload to a maven repository - ideally repo1.maven.org

```

Reported by `mr.rohrmoser` on 2008-07-20 12:56:12

- **Blocked on**: #167, #168, #169, #170

| 1.0 | Prepare the 1.3 release - Originally reported on Google Code with ID 43

```

Put the release together as development finishes.

- ReleaseNotes

- prepare and test release candidates

- feature the downloads

- upload to a maven repository - ideally repo1.maven.org

```

Reported by `mr.rohrmoser` on 2008-07-20 12:56:12

- **Blocked on**: #167, #168, #169, #170

| priority | prepare the release originally reported on google code with id put the release together as development finishes releasenotes prepare and test release candidates feature the downloads upload to a maven repository ideally maven org reported by mr rohrmoser on blocked on | 1 |

240,942 | 7,807,527,515 | IssuesEvent | 2018-06-11 17:09:23 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | Migrate over to new Ginkgo CI framework | area/CI kind/meta priority/medium | #1733 merges in the base for a new test framework for Cilium. To ensure that the migration to this new framework runs smoothly, there are a variety of tasks to accomplish:

- [x] [Install new dependencies onto Cilium Jenkins slaves](https://github.com/cilium/cilium/issues/1768)

- [x] [Set up Jenkins job that runs the new tests on a reserved build slave while we are still running with the old bash script framework](https://github.com/cilium/cilium/issues/1840)

- [x] [Validate that existing tests are properly migrated over to have equivalent test coverage](https://github.com/cilium/cilium/issues/1841)

- [x] Deprecate Runtime bash-script test stage

- [x] Deprecate Kubernetes bash-script test stage

- [x] [Add test/ directory to be owned by cilium/CI in CODEOWNERS](https://github.com/cilium/cilium/issues/1842)

- [x] Validate log gathering functionality for each test and ensure that logs are available in Jenkins. There should be parity with what exists currently in the Cilium tests

- [x] #2062

- [x] #2026

- [x] [Update developer documentation that illustrates how to use this new framework](https://github.com/cilium/cilium/issues/1843)

- [x] [Add more logs to each test that illustrate what is going on in (like how `log` function is used in the current bash scripts).](https://github.com/cilium/cilium/issues/1841)

- [x] [Move Vagrant image to a Cilium-managed repository](https://github.com/cilium/cilium/issues/1844)

- [x] [Migrate image `eloycoto/cilium_dependencies:latest` to a Cilium-managed repository](https://github.com/cilium/cilium/issues/2536)

- [x] [Coalesce VM images from existing build / Ginkgo build](https://github.com/cilium/cilium/issues/1956)

- [x] [Add Envoy runtime test stage](https://github.com/cilium/cilium/issues/2252)

- [x] Add GitHub Organization for Cilium that uses ginkgo Jenkinsfile

Discovered issues:

- [x] #1907

- [x] #2067

- [x] #2066

- [x] #2065

- [x] #2068

- [x] #2075

- [x] #2077

After migration is complete:

- [ ] Remove Cilium-Ginkgo-Tests-All job

- [x] Update ginkgo.Jenkinsfile to run all Runtime* and K8s* tests and change `Describe` of each test to remove 'Validated' string.

- [x] Remove Cilium-Bash-Tests job and change GitHub settings to not make it a 'Required' job. This is only doable by an administrator of the Cilium GitHub Organization