Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1

value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3

values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12

values | text_combine stringlengths 96 259k | label stringclasses 2

values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

443,834 | 12,800,123,235 | IssuesEvent | 2020-07-02 16:30:36 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | opened | Move to full-page for convert video to virtual flow | Priority: Medium Product: caseflow-hearings Stakeholder: BVA Team: Tango 💃 | ## Description

Move "Convert to Virtual Hearing" functionality from the modal to a full page.

## Acceptance criteria

- [ ] Feature toggle??

- [ ] This feature should be accessible to the following user groups: Hearing Coordinators

- [ ] Include screenshot(s) in the Github issue

### Detailed AC

([Figma link to start of flow](https://www.figma.com/file/V87TZArfdurCGJiEjQ73ES/Virtual-Hearings?node-id=5649%3A26461))

The major update here is moving "Convert to Virtual Hearing" functionality from the current modal to a full page, in order to maintain consistency with the new Central Office designs. Unlike formerly-Central hearings which now calculate timezone based on veteran address, however, formerly video hearings will continue to calculate timezone based on the Regional Office.

- [ ] The Convert to Virtual Hearing page displays the following info / form fields:

- [ ] Hearing Date - read-only

- [ ] Hearing Time - same radio field that appears for Video hearings on the Daily Docket, except that the option values make the Regional Office timezone explicit, i.e. the option values format is [h:mm am/pm RegionalOfficeTimezone / h:mm am/pm BoardEasternTimezone]. Same formatting applies to values in the Other dropdown.

- [ ] Veteran / Appellant name and mailing address - read-only

- [ ] Veteran / Appellant Email with help text

- required field (no change to current state)

- pre-populated (no change to current state)

- [ ] Power of Attorney (POA) type label, name, and address - read-only

- note the various POA types/labels: Attorney or Service Organization

- `Open Question: for some Service Organizations, a person's name, in addition to service org name, displays on the Daily Docket but not on Case Details. Is this name being pulled from VACOLS or VBMS? Should we try to display it here?`

- [ ] if no POA, display message ([direct link to design applied to Central](https://www.figma.com/file/V87TZArfdurCGJiEjQ73ES/Virtual-Hearings?node-id=5649%3A19281))

- [ ] POA / Representative Email with help text

- optional

- pre-populated (no change to current state)

- [ ] VLJ dropdown

- optional

- [ ] VLJ Email - read-only, based on dropdown selection; if blank, display `None`

- [ ] On pressing the Convert to Virtual Hearing button, the async jobs to create the conference and send emails begin, and the user is taken back to Hearing Details where the In Progress and and Success states/alerts display as appropriate

- No changes to current state alert messaging or form field disabled states

## Background/context/resources

## Technical notes

| 1.0 | Move to full-page for convert video to virtual flow - ## Description

Move "Convert to Virtual Hearing" functionality from the modal to a full page.

## Acceptance criteria

- [ ] Feature toggle??

- [ ] This feature should be accessible to the following user groups: Hearing Coordinators

- [ ] Include screenshot(s) in the Github issue

### Detailed AC

([Figma link to start of flow](https://www.figma.com/file/V87TZArfdurCGJiEjQ73ES/Virtual-Hearings?node-id=5649%3A26461))

The major update here is moving "Convert to Virtual Hearing" functionality from the current modal to a full page, in order to maintain consistency with the new Central Office designs. Unlike formerly-Central hearings which now calculate timezone based on veteran address, however, formerly video hearings will continue to calculate timezone based on the Regional Office.

- [ ] The Convert to Virtual Hearing page displays the following info / form fields:

- [ ] Hearing Date - read-only

- [ ] Hearing Time - same radio field that appears for Video hearings on the Daily Docket, except that the option values make the Regional Office timezone explicit, i.e. the option values format is [h:mm am/pm RegionalOfficeTimezone / h:mm am/pm BoardEasternTimezone]. Same formatting applies to values in the Other dropdown.

- [ ] Veteran / Appellant name and mailing address - read-only

- [ ] Veteran / Appellant Email with help text

- required field (no change to current state)

- pre-populated (no change to current state)

- [ ] Power of Attorney (POA) type label, name, and address - read-only

- note the various POA types/labels: Attorney or Service Organization

- `Open Question: for some Service Organizations, a person's name, in addition to service org name, displays on the Daily Docket but not on Case Details. Is this name being pulled from VACOLS or VBMS? Should we try to display it here?`

- [ ] if no POA, display message ([direct link to design applied to Central](https://www.figma.com/file/V87TZArfdurCGJiEjQ73ES/Virtual-Hearings?node-id=5649%3A19281))

- [ ] POA / Representative Email with help text

- optional

- pre-populated (no change to current state)

- [ ] VLJ dropdown

- optional

- [ ] VLJ Email - read-only, based on dropdown selection; if blank, display `None`

- [ ] On pressing the Convert to Virtual Hearing button, the async jobs to create the conference and send emails begin, and the user is taken back to Hearing Details where the In Progress and and Success states/alerts display as appropriate

- No changes to current state alert messaging or form field disabled states

## Background/context/resources

## Technical notes

| priority | move to full page for convert video to virtual flow description move convert to virtual hearing functionality from the modal to a full page acceptance criteria feature toggle this feature should be accessible to the following user groups hearing coordinators include screenshot s in the github issue detailed ac the major update here is moving convert to virtual hearing functionality from the current modal to a full page in order to maintain consistency with the new central office designs unlike formerly central hearings which now calculate timezone based on veteran address however formerly video hearings will continue to calculate timezone based on the regional office the convert to virtual hearing page displays the following info form fields hearing date read only hearing time same radio field that appears for video hearings on the daily docket except that the option values make the regional office timezone explicit i e the option values format is same formatting applies to values in the other dropdown veteran appellant name and mailing address read only veteran appellant email with help text required field no change to current state pre populated no change to current state power of attorney poa type label name and address read only note the various poa types labels attorney or service organization open question for some service organizations a person s name in addition to service org name displays on the daily docket but not on case details is this name being pulled from vacols or vbms should we try to display it here if no poa display message poa representative email with help text optional pre populated no change to current state vlj dropdown optional vlj email read only based on dropdown selection if blank display none on pressing the convert to virtual hearing button the async jobs to create the conference and send emails begin and the user is taken back to hearing details where the in progress and and success states alerts display as appropriate no changes to current state alert messaging or form field disabled states background context resources technical notes | 1 |

398,204 | 11,739,257,971 | IssuesEvent | 2020-03-11 17:23:55 | sunpy/sunpy | https://api.github.com/repos/sunpy/sunpy | closed | Download data and add entries to database from a HEK query | Effort Medium Feature Request Package Novice Priority Low database | This should also be handled by the `Database.download` method.

Notes:

- add a new method download_from_hek_query_result(query_result, path=None, progress=False) which translates the incoming HEK qr to a VSO qr and then calls download_from_vso_query_result and passes the parameters path and progress on to this method.

| 1.0 | Download data and add entries to database from a HEK query - This should also be handled by the `Database.download` method.

Notes:

- add a new method download_from_hek_query_result(query_result, path=None, progress=False) which translates the incoming HEK qr to a VSO qr and then calls download_from_vso_query_result and passes the parameters path and progress on to this method.

| priority | download data and add entries to database from a hek query this should also be handled by the database download method notes add a new method download from hek query result query result path none progress false which translates the incoming hek qr to a vso qr and then calls download from vso query result and passes the parameters path and progress on to this method | 1 |

809,136 | 30,176,186,730 | IssuesEvent | 2023-07-04 04:59:36 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | opened | Menu popup container closes on hover when scrollable is enabled | Bug C: Menu SEV: Medium jQuery Priority 5 | ### Bug report

Menu popup container closes on hover when `scrollable` is enabled.

This is a regression introduced with v2023.2.606.

### Reproduction of the problem

1. Run this [dojo](https://dojo.telerik.com/@AleksandarEvangelatov/eHeZemIn)

2. Hover a menu item and try to select a subitem

[screencast](https://screenrec.com/share/gyY23R1MSv)

### Current behavior

Popup container closes on hover and subitems cannot be selected.

### Expected/desired behavior

Popup container should not close on hover.

### Environment

* **Kendo UI version:** 2023.2.606

* **Browser:** [all]

| 1.0 | Menu popup container closes on hover when scrollable is enabled - ### Bug report

Menu popup container closes on hover when `scrollable` is enabled.

This is a regression introduced with v2023.2.606.

### Reproduction of the problem

1. Run this [dojo](https://dojo.telerik.com/@AleksandarEvangelatov/eHeZemIn)

2. Hover a menu item and try to select a subitem

[screencast](https://screenrec.com/share/gyY23R1MSv)

### Current behavior

Popup container closes on hover and subitems cannot be selected.

### Expected/desired behavior

Popup container should not close on hover.

### Environment

* **Kendo UI version:** 2023.2.606

* **Browser:** [all]

| priority | menu popup container closes on hover when scrollable is enabled bug report menu popup container closes on hover when scrollable is enabled this is a regression introduced with reproduction of the problem run this hover a menu item and try to select a subitem current behavior popup container closes on hover and subitems cannot be selected expected desired behavior popup container should not close on hover environment kendo ui version browser | 1 |

503,488 | 14,592,962,542 | IssuesEvent | 2020-12-19 20:12:10 | bkenio/tidal | https://api.github.com/repos/bkenio/tidal | opened | Limit videos over 60fps | Priority: Medium Status: Available Type: Enhancement | Tidal should set the ffmpeg video filter to 60fps for videos over the threshold. Tidal will have to parse the fps string and decide if the video is over and then set the filter accordingly.

Tests examples

- "60/1" -> 60

- "90/1" -> 60

- 90 -> 60

- "9000/1" -> 60 | 1.0 | Limit videos over 60fps - Tidal should set the ffmpeg video filter to 60fps for videos over the threshold. Tidal will have to parse the fps string and decide if the video is over and then set the filter accordingly.

Tests examples

- "60/1" -> 60

- "90/1" -> 60

- 90 -> 60

- "9000/1" -> 60 | priority | limit videos over tidal should set the ffmpeg video filter to for videos over the threshold tidal will have to parse the fps string and decide if the video is over and then set the filter accordingly tests examples | 1 |

674,758 | 23,064,980,821 | IssuesEvent | 2022-07-25 13:14:43 | stiftelsen-effekt/effekt-backend | https://api.github.com/repos/stiftelsen-effekt/effekt-backend | closed | [Epost-kvitteringer] Fjern setningen om gjenbruk av KID for betalingstyper hvor det ikke er aktuelt | Medium high priority | Det gjelder setningen "Hvis du ønsker å donere med samme fordeling senere kan du bruke samme KID-nummer igjen. Du står helt fritt til å endre beløpet du donerer."

Dette er bare relevant for giveren om de manuelt har måttet forholde seg til KID-nummeret. Det gjelder kun enkeltdonasjoner via Bank (ikke AvtaleGiro) såvidt jeg kan komme på nå.

Endre epostmalen slik at denne setningen kun vises på kvitteringer som er for enkeltdonasjoner via Bank. | 1.0 | [Epost-kvitteringer] Fjern setningen om gjenbruk av KID for betalingstyper hvor det ikke er aktuelt - Det gjelder setningen "Hvis du ønsker å donere med samme fordeling senere kan du bruke samme KID-nummer igjen. Du står helt fritt til å endre beløpet du donerer."

Dette er bare relevant for giveren om de manuelt har måttet forholde seg til KID-nummeret. Det gjelder kun enkeltdonasjoner via Bank (ikke AvtaleGiro) såvidt jeg kan komme på nå.

Endre epostmalen slik at denne setningen kun vises på kvitteringer som er for enkeltdonasjoner via Bank. | priority | fjern setningen om gjenbruk av kid for betalingstyper hvor det ikke er aktuelt det gjelder setningen hvis du ønsker å donere med samme fordeling senere kan du bruke samme kid nummer igjen du står helt fritt til å endre beløpet du donerer dette er bare relevant for giveren om de manuelt har måttet forholde seg til kid nummeret det gjelder kun enkeltdonasjoner via bank ikke avtalegiro såvidt jeg kan komme på nå endre epostmalen slik at denne setningen kun vises på kvitteringer som er for enkeltdonasjoner via bank | 1 |

46,016 | 2,944,635,838 | IssuesEvent | 2015-07-03 06:44:35 | music-encoding/music-encoding | https://api.github.com/repos/music-encoding/music-encoding | closed | data.ARTICULATIONS is missing 'scoop' | Component: Core Schema Priority: Medium Status: Needs Discussion | _From [andrew.hankinson](https://code.google.com/u/andrew.hankinson/) on September 10, 2014 18:35:27_

I'm not sure if there's an equivalent value in there, but I can't seem to find anything that relates to a scoop.

_Original issue: http://code.google.com/p/music-encoding/issues/detail?id=204_ | 1.0 | data.ARTICULATIONS is missing 'scoop' - _From [andrew.hankinson](https://code.google.com/u/andrew.hankinson/) on September 10, 2014 18:35:27_

I'm not sure if there's an equivalent value in there, but I can't seem to find anything that relates to a scoop.

_Original issue: http://code.google.com/p/music-encoding/issues/detail?id=204_ | priority | data articulations is missing scoop from on september i m not sure if there s an equivalent value in there but i can t seem to find anything that relates to a scoop original issue | 1 |

681,024 | 23,294,717,299 | IssuesEvent | 2022-08-06 11:30:53 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | docs: add warning to platform automerge to configure required status checks | priority-3-medium type:docs status:in-progress | ### Describe the proposed change(s).

We should add a warning to `platformAutomerge`[^1] docs thatt this feature requires madatory status checks, otherwise PR's can get merged with failed status checks.

I know at least github will auto merge if the status checks are delayed.

[^1]: https://docs.renovatebot.com/configuration-options/#platformautomerge | 1.0 | docs: add warning to platform automerge to configure required status checks - ### Describe the proposed change(s).

We should add a warning to `platformAutomerge`[^1] docs thatt this feature requires madatory status checks, otherwise PR's can get merged with failed status checks.

I know at least github will auto merge if the status checks are delayed.

[^1]: https://docs.renovatebot.com/configuration-options/#platformautomerge | priority | docs add warning to platform automerge to configure required status checks describe the proposed change s we should add a warning to platformautomerge docs thatt this feature requires madatory status checks otherwise pr s can get merged with failed status checks i know at least github will auto merge if the status checks are delayed | 1 |

479,265 | 13,793,764,953 | IssuesEvent | 2020-10-09 15:23:59 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Allow configuration of the expiration of the local accounts | Priority: Medium Type: Feature / Enhancement | **Is your feature request related to a problem? Please describe.**

Right now local accounts are always expiring 31 days after their creation (#5892 changes it to follow the validity of the access) and its not flexible

**Describe the solution you'd like**

In the auth source, we should have a field that defines an access duration for the validity of the local account.

We could use a 0 value to follow the validity of the access (#5892 behavior)

Any value other than 0 would be used to determine when the account expires. | 1.0 | Allow configuration of the expiration of the local accounts - **Is your feature request related to a problem? Please describe.**

Right now local accounts are always expiring 31 days after their creation (#5892 changes it to follow the validity of the access) and its not flexible

**Describe the solution you'd like**

In the auth source, we should have a field that defines an access duration for the validity of the local account.

We could use a 0 value to follow the validity of the access (#5892 behavior)

Any value other than 0 would be used to determine when the account expires. | priority | allow configuration of the expiration of the local accounts is your feature request related to a problem please describe right now local accounts are always expiring days after their creation changes it to follow the validity of the access and its not flexible describe the solution you d like in the auth source we should have a field that defines an access duration for the validity of the local account we could use a value to follow the validity of the access behavior any value other than would be used to determine when the account expires | 1 |

636,223 | 20,595,307,942 | IssuesEvent | 2022-03-05 11:53:07 | GrottoCenter/Grottocenter3 | https://api.github.com/repos/GrottoCenter/Grottocenter3 | closed | [TESTS] Add more tests | Priority: Medium Status: Proposal Type: Enhancement | Clément a commencé à mettre en place de nombreux tests.

Il convient de finaliser ce travail afin de disposer de l'ensemble des tests nécessaires | 1.0 | [TESTS] Add more tests - Clément a commencé à mettre en place de nombreux tests.

Il convient de finaliser ce travail afin de disposer de l'ensemble des tests nécessaires | priority | add more tests clément a commencé à mettre en place de nombreux tests il convient de finaliser ce travail afin de disposer de l ensemble des tests nécessaires | 1 |

522,049 | 15,147,814,266 | IssuesEvent | 2021-02-11 09:43:23 | naev/naev | https://api.github.com/repos/naev/naev | closed | Tracker: are trails perfect yet? | Priority-Medium Type-Enhancement | Ideas for improvement post merge: [edited to reflect progress]

1. "add more definitions for capships" - ~~and now rockets (example: dat/outfits/rockets/fury_missile.xml).~~

2. "tune the colours. fire glow and afterburner are too close for example"

3. "different emitter types should have different afterburner and jump colours"

4. Somehow add nebula-specific trails

5. Make the rendering more optimized, without passing the colours as uniforms, but via the VBO [**EDIT** and maybe a bounding-box check before rendering, but only if faster. GL's stencil check may suffice.]

6. See how to make the trails de-activable, and maybe mutually exclusive with engine glow [**EDIT** or not, see comments]

7. ~~Maybe make the thickness variable (like thicker when afterburning)~~

8. ~~Do we want the noise (random()) in the shader, or is it nicer without?~~

Trails for rockets may be half-baked. We'll see when defining them. I made a guess about how to map their behavior to trail styles. In principle they could vary trail style while turning (seekers) or thrusting (mace rockets).

To expand on #7: in some ways it's more natural to pair colour with thickness as a style in whichever situation. On the other hand, for oddball ships like the Za'lek Mephisto/Diablo, it might be nice if `<trail_generator>` could override the trail's thickness (instead of creating trail definitions like red10 or red12). It's probably incoherent to support both features. | 1.0 | Tracker: are trails perfect yet? - Ideas for improvement post merge: [edited to reflect progress]

1. "add more definitions for capships" - ~~and now rockets (example: dat/outfits/rockets/fury_missile.xml).~~

2. "tune the colours. fire glow and afterburner are too close for example"

3. "different emitter types should have different afterburner and jump colours"

4. Somehow add nebula-specific trails

5. Make the rendering more optimized, without passing the colours as uniforms, but via the VBO [**EDIT** and maybe a bounding-box check before rendering, but only if faster. GL's stencil check may suffice.]

6. See how to make the trails de-activable, and maybe mutually exclusive with engine glow [**EDIT** or not, see comments]

7. ~~Maybe make the thickness variable (like thicker when afterburning)~~

8. ~~Do we want the noise (random()) in the shader, or is it nicer without?~~

Trails for rockets may be half-baked. We'll see when defining them. I made a guess about how to map their behavior to trail styles. In principle they could vary trail style while turning (seekers) or thrusting (mace rockets).

To expand on #7: in some ways it's more natural to pair colour with thickness as a style in whichever situation. On the other hand, for oddball ships like the Za'lek Mephisto/Diablo, it might be nice if `<trail_generator>` could override the trail's thickness (instead of creating trail definitions like red10 or red12). It's probably incoherent to support both features. | priority | tracker are trails perfect yet ideas for improvement post merge add more definitions for capships and now rockets example dat outfits rockets fury missile xml tune the colours fire glow and afterburner are too close for example different emitter types should have different afterburner and jump colours somehow add nebula specific trails make the rendering more optimized without passing the colours as uniforms but via the vbo see how to make the trails de activable and maybe mutually exclusive with engine glow maybe make the thickness variable like thicker when afterburning do we want the noise random in the shader or is it nicer without trails for rockets may be half baked we ll see when defining them i made a guess about how to map their behavior to trail styles in principle they could vary trail style while turning seekers or thrusting mace rockets to expand on in some ways it s more natural to pair colour with thickness as a style in whichever situation on the other hand for oddball ships like the za lek mephisto diablo it might be nice if could override the trail s thickness instead of creating trail definitions like or it s probably incoherent to support both features | 1 |

203,442 | 7,064,351,739 | IssuesEvent | 2018-01-06 05:57:59 | honestbleeps/Reddit-Enhancement-Suite | https://api.github.com/repos/honestbleeps/Reddit-Enhancement-Suite | closed | Hide comments which match keywords | Difficulty-3_Hard Difficulty-2_Medium Priority-7_Much Interest RE-Request | https://www.reddit.com/r/Enhancement/comments/2kyvdb/feature_request_why_are_we_not_able_to_filter_out/

This can re-use userTagger's "[this comment is from an ignored user -- show anyway?" comment hider.

This should probably re-use the keywords from #1741. maybe a separate list option that's shared.

| 1.0 | Hide comments which match keywords - https://www.reddit.com/r/Enhancement/comments/2kyvdb/feature_request_why_are_we_not_able_to_filter_out/

This can re-use userTagger's "[this comment is from an ignored user -- show anyway?" comment hider.

This should probably re-use the keywords from #1741. maybe a separate list option that's shared.

| priority | hide comments which match keywords this can re use usertagger s this comment is from an ignored user show anyway comment hider this should probably re use the keywords from maybe a separate list option that s shared | 1 |

60,303 | 3,122,569,948 | IssuesEvent | 2015-09-06 17:19:35 | RedstoneLamp/RedstoneLamp | https://api.github.com/repos/RedstoneLamp/RedstoneLamp | closed | [RFC]: Use ProtocolSessions | 0.12.0 Internal Medium Priority Network RFC v0.11.0 | In BlockServer, we used ProtocolSessions to handle packets and reroute them to a Subprotocol. But, with the system we have now, the Protocol class interacts directly with the Subprotocols. This has raised a few concerns for me, one of them being Chunk sending. MCPE automatically unloads chunks in multiplayer, but the PC version requires the server to tell it to unload a chunk. Since not all protocols require chunk unloading, (some don't even require chunks at all), it has come to my attention to use a ProtocolSession for each session to handle some protocol specific tasks, such as chunk sending and chunk unloading.

##### Please comment your idea(s) below, and remember this is on branch ```rewrite``` | 1.0 | [RFC]: Use ProtocolSessions - In BlockServer, we used ProtocolSessions to handle packets and reroute them to a Subprotocol. But, with the system we have now, the Protocol class interacts directly with the Subprotocols. This has raised a few concerns for me, one of them being Chunk sending. MCPE automatically unloads chunks in multiplayer, but the PC version requires the server to tell it to unload a chunk. Since not all protocols require chunk unloading, (some don't even require chunks at all), it has come to my attention to use a ProtocolSession for each session to handle some protocol specific tasks, such as chunk sending and chunk unloading.

##### Please comment your idea(s) below, and remember this is on branch ```rewrite``` | priority | use protocolsessions in blockserver we used protocolsessions to handle packets and reroute them to a subprotocol but with the system we have now the protocol class interacts directly with the subprotocols this has raised a few concerns for me one of them being chunk sending mcpe automatically unloads chunks in multiplayer but the pc version requires the server to tell it to unload a chunk since not all protocols require chunk unloading some don t even require chunks at all it has come to my attention to use a protocolsession for each session to handle some protocol specific tasks such as chunk sending and chunk unloading please comment your idea s below and remember this is on branch rewrite | 1 |

97,892 | 4,007,699,026 | IssuesEvent | 2016-05-12 19:02:04 | Fermat-ORG/fermat-org | https://api.github.com/repos/Fermat-ORG/fermat-org | reopened | Create a form for TSE permission management | client Priority: MEDIUM | A form its needed is needed to give TSE permission from one user to another. A list of the users missing any of the permissions that a certain user can give is required. | 1.0 | Create a form for TSE permission management - A form its needed is needed to give TSE permission from one user to another. A list of the users missing any of the permissions that a certain user can give is required. | priority | create a form for tse permission management a form its needed is needed to give tse permission from one user to another a list of the users missing any of the permissions that a certain user can give is required | 1 |

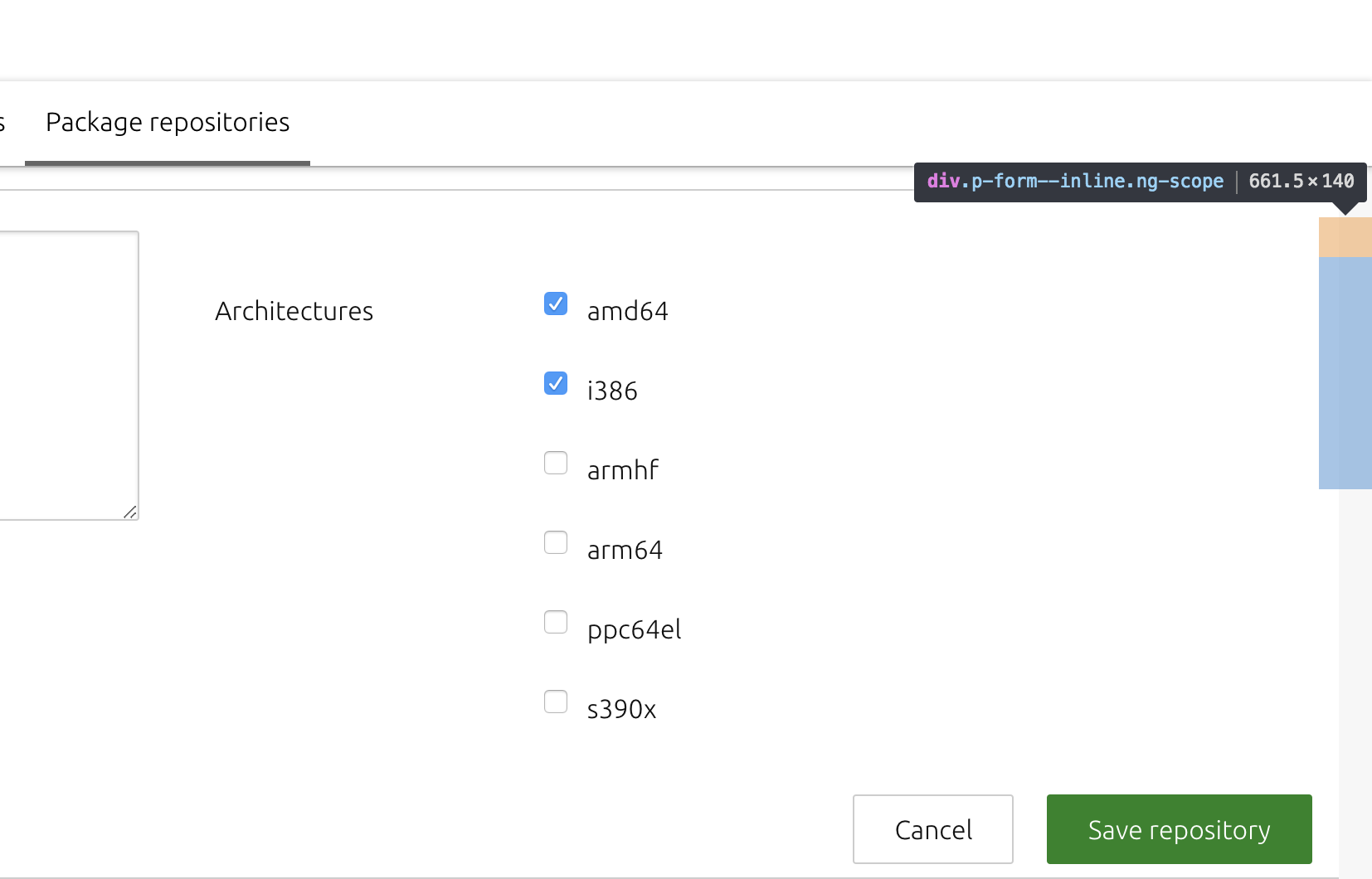

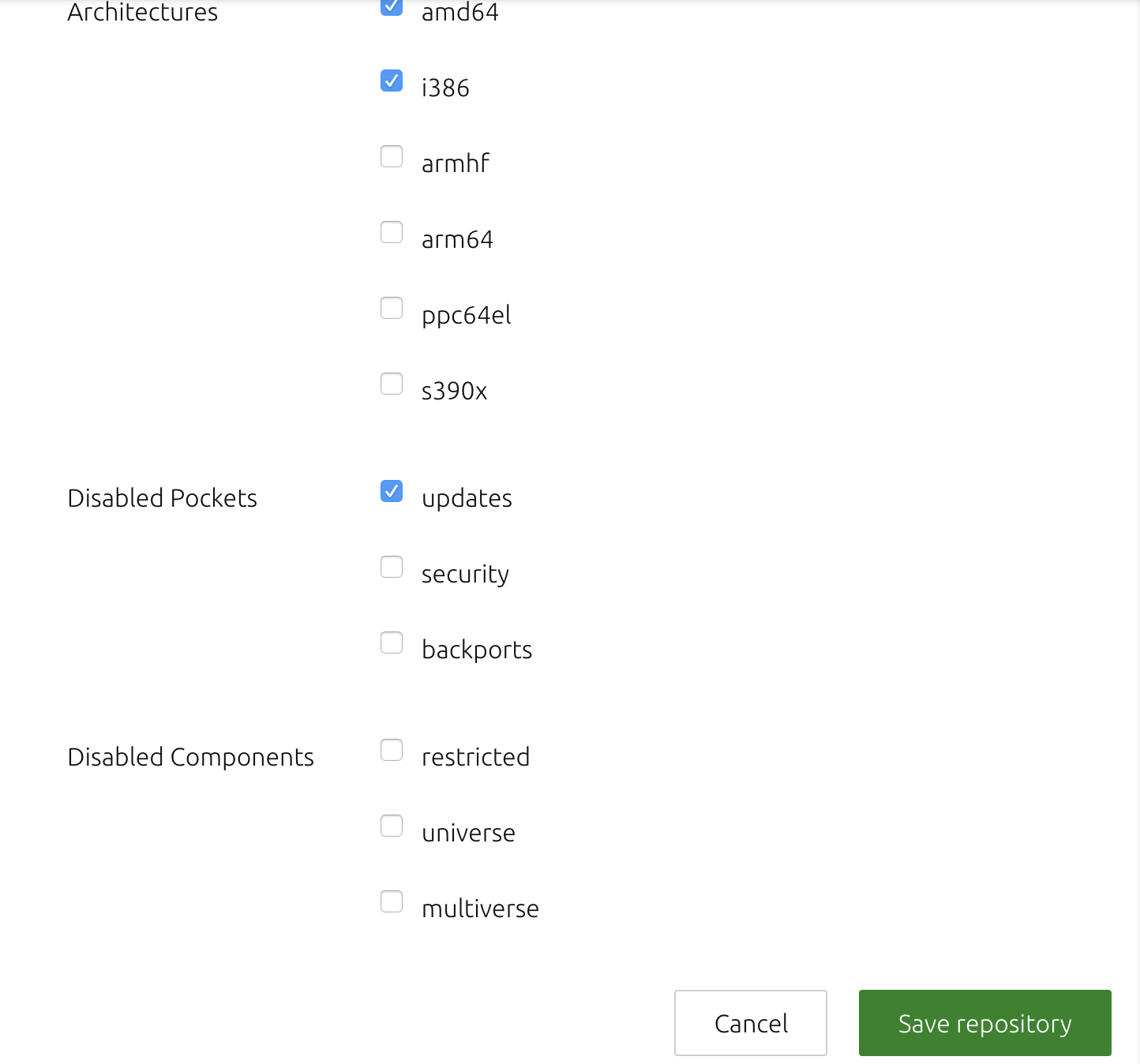

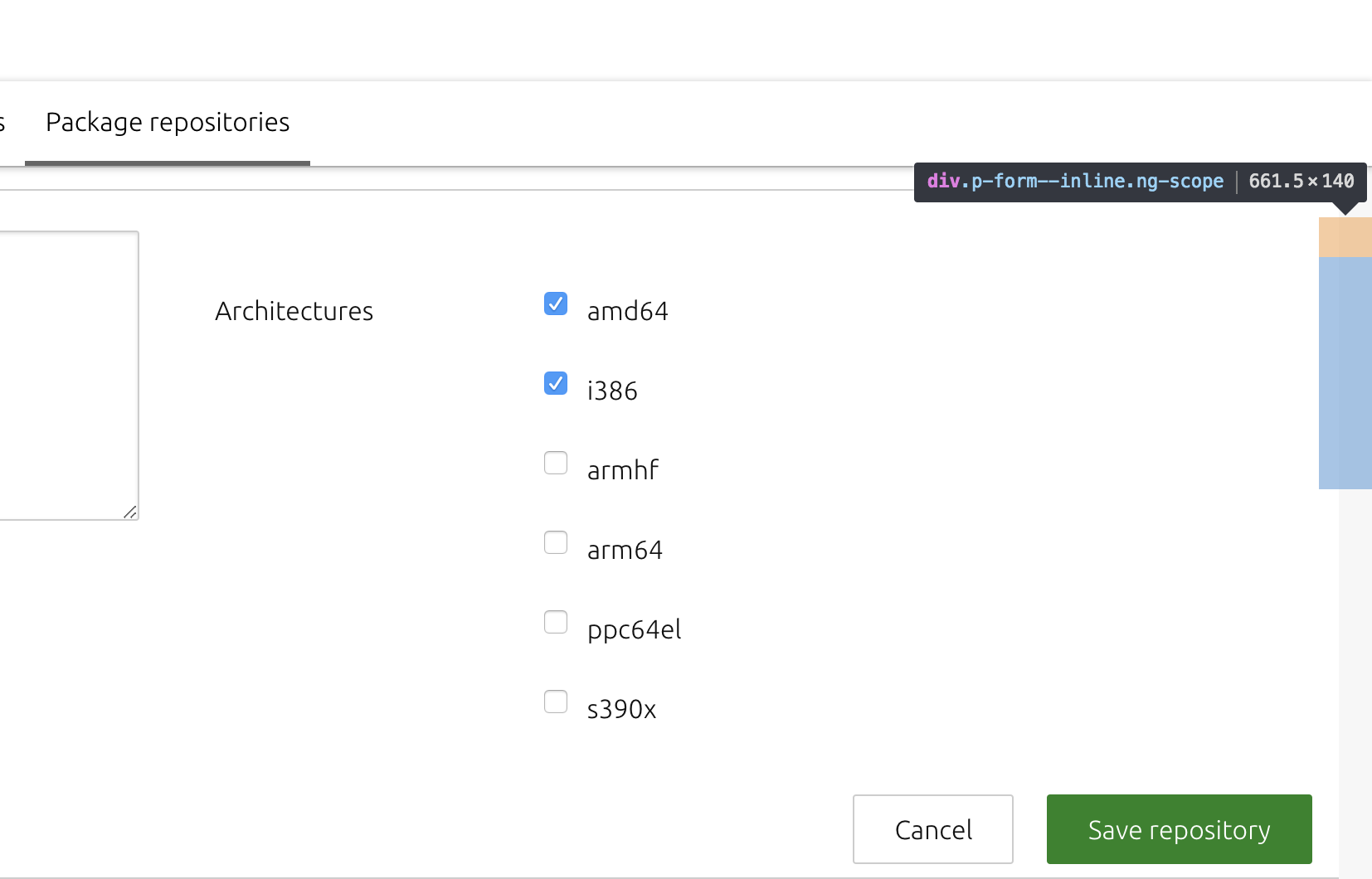

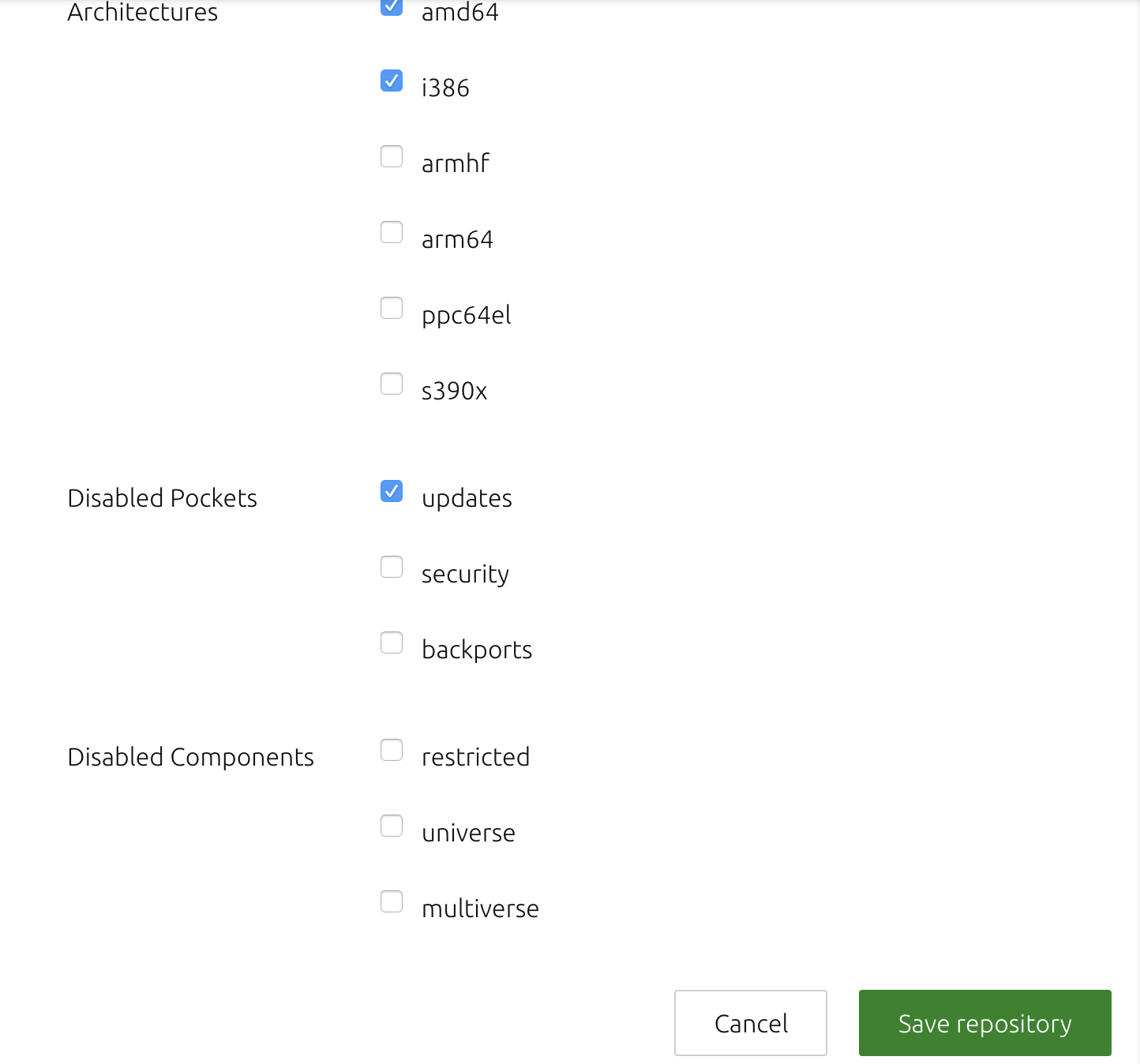

491,621 | 14,167,576,986 | IssuesEvent | 2020-11-12 10:28:56 | canonical-web-and-design/vanilla-framework | https://api.github.com/repos/canonical-web-and-design/vanilla-framework | closed | p-form--inline elements do not wrap | Priority: Medium | The following bug was reported against MAAS recently: https://bugs.launchpad.net/maas/+bug/1782230

It appears that divs styled with _p-form--inline_ on the repository settings page render incorrectly, such that they don't appear to be visible. The divs are present in the markup, but as you can see from the attached screenshots, there appears to be a bug whereby the elements rendering outside the viewport fail to wrap appropriately.

# divs with p-form--inline

The "disabled pockets" div is selected here in the inspector.

# divs without p-form--inline

# Markup

The markup in question is in the form:

```html

<div class="row">

<div class="col-6">

<div class="p-form--inline">

...

</div>

<div class="p-form--inline">

...

</div>

</div>

</div>

```

| 1.0 | p-form--inline elements do not wrap - The following bug was reported against MAAS recently: https://bugs.launchpad.net/maas/+bug/1782230

It appears that divs styled with _p-form--inline_ on the repository settings page render incorrectly, such that they don't appear to be visible. The divs are present in the markup, but as you can see from the attached screenshots, there appears to be a bug whereby the elements rendering outside the viewport fail to wrap appropriately.

# divs with p-form--inline

The "disabled pockets" div is selected here in the inspector.

# divs without p-form--inline

# Markup

The markup in question is in the form:

```html

<div class="row">

<div class="col-6">

<div class="p-form--inline">

...

</div>

<div class="p-form--inline">

...

</div>

</div>

</div>

```

| priority | p form inline elements do not wrap the following bug was reported against maas recently it appears that divs styled with p form inline on the repository settings page render incorrectly such that they don t appear to be visible the divs are present in the markup but as you can see from the attached screenshots there appears to be a bug whereby the elements rendering outside the viewport fail to wrap appropriately divs with p form inline the disabled pockets div is selected here in the inspector divs without p form inline markup the markup in question is in the form html | 1 |

424,310 | 12,309,324,728 | IssuesEvent | 2020-05-12 08:46:50 | geosolutions-it/geonode-afghanistan | https://api.github.com/repos/geosolutions-it/geonode-afghanistan | closed | disasterrisk.af and assess-risk.info domains renewal | Priority: Medium | I would migrate these over to a well-known registrar if possible @simboss. Gandi DNS servers have not been very reliable lately..

Expire dates here below:

| 1.0 | disasterrisk.af and assess-risk.info domains renewal - I would migrate these over to a well-known registrar if possible @simboss. Gandi DNS servers have not been very reliable lately..

Expire dates here below:

| priority | disasterrisk af and assess risk info domains renewal i would migrate these over to a well known registrar if possible simboss gandi dns servers have not been very reliable lately expire dates here below | 1 |

295,899 | 9,102,204,103 | IssuesEvent | 2019-02-20 13:12:46 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | opened | tests/subsys/settings/fcb/base64 fails when write-block-size is 8 | bug priority: medium | **Describe the bug**

tests/subsys/settings/fcb/base64 on SoC with flash write-block-size set to 8.

I get same failed result with disco_l475_iot1 (wbs 8) and reel_board (with flash driver modified with write_block_size = 8).

**To Reproduce**

Steps to reproduce the behavior:

1. mkdir build; cd build

2. cmake -DBOARD=board\_xyz

3. make

4. See error

**Expected behavior**

fcb/base64 should work with all flash drivers with write-block-size <= 8.

**Impact**

Can't use fcb based subystems (settings, ...) with system with write-block-size = 8.

**Screenshots or console output**

```

***** Booting Zephyr OS zephyr-v1.13.0-4076-gc62ba44 *****

Running test suite test_config_fcb

===================================================================

starting test - test_settings_encode

PASS - test_settings_encode

===================================================================

starting test - test_setting_raw_read

PASS - test_setting_raw_read

===================================================================

starting test - test_setting_val_read

PASS - test_setting_val_read

===================================================================

starting test - config_empty_lookups

PASS - config_empty_lookups

===================================================================

starting test - test_config_insert

PASS - test_config_insert

===================================================================

starting test - test_config_getset_unknown

PASS - test_config_getset_unknown

===================================================================

starting test - test_config_getset_int

PASS - test_config_getset_int

===================================================================

starting test - test_config_getset_int64

PASS - test_config_getset_int64

===================================================================

starting test - test_config_commit

PASS - test_config_commit

===================================================================

starting test - test_config_empty_fcb

PASS - test_config_empty_fcb

===================================================================

starting test - test_config_save_1_fcb

PASS - test_config_save_1_fcb

===================================================================

starting test - test_config_insert2

PASS - test_config_insert2

===================================================================

starting test - test_config_save_2_fcb

PASS - test_config_save_2_fcb

===================================================================

starting test - test_config_insert3

PASS - test_config_insert3

===================================================================

starting test - test_config_save_3_fcb

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_fcb.c:285)

bad set-value size

FAIL - test_config_save_3_fcb

===================================================================

starting test - test_config_compress_reset

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_fcb.c:285)

bad set-value size

FAIL - test_config_compress_reset

===================================================================

starting test - test_config_save_one_fcb

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_fcb.c:285)

bad set-value size

FAIL - test_config_save_one_fcb

===================================================================

starting test - test_config_compress_deleted

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_compress_)

The deleted settings shouldn be compressed.

FAIL - test_config_compress_deleted

===================================================================

Test suite test_config_fcb failed.

===================================================================

PROJECT EXECUTION FAILED

```

**Environment (please complete the following information):**

- OS: Linux,

- Toolchain (Zephyr SDK)

- Commit SHA: 020d32dca01b9d8c8278d60e43fd9eb3bf3f2ab0

**Additional context**

Add any other context about the problem here.

| 1.0 | tests/subsys/settings/fcb/base64 fails when write-block-size is 8 - **Describe the bug**

tests/subsys/settings/fcb/base64 on SoC with flash write-block-size set to 8.

I get same failed result with disco_l475_iot1 (wbs 8) and reel_board (with flash driver modified with write_block_size = 8).

**To Reproduce**

Steps to reproduce the behavior:

1. mkdir build; cd build

2. cmake -DBOARD=board\_xyz

3. make

4. See error

**Expected behavior**

fcb/base64 should work with all flash drivers with write-block-size <= 8.

**Impact**

Can't use fcb based subystems (settings, ...) with system with write-block-size = 8.

**Screenshots or console output**

```

***** Booting Zephyr OS zephyr-v1.13.0-4076-gc62ba44 *****

Running test suite test_config_fcb

===================================================================

starting test - test_settings_encode

PASS - test_settings_encode

===================================================================

starting test - test_setting_raw_read

PASS - test_setting_raw_read

===================================================================

starting test - test_setting_val_read

PASS - test_setting_val_read

===================================================================

starting test - config_empty_lookups

PASS - config_empty_lookups

===================================================================

starting test - test_config_insert

PASS - test_config_insert

===================================================================

starting test - test_config_getset_unknown

PASS - test_config_getset_unknown

===================================================================

starting test - test_config_getset_int

PASS - test_config_getset_int

===================================================================

starting test - test_config_getset_int64

PASS - test_config_getset_int64

===================================================================

starting test - test_config_commit

PASS - test_config_commit

===================================================================

starting test - test_config_empty_fcb

PASS - test_config_empty_fcb

===================================================================

starting test - test_config_save_1_fcb

PASS - test_config_save_1_fcb

===================================================================

starting test - test_config_insert2

PASS - test_config_insert2

===================================================================

starting test - test_config_save_2_fcb

PASS - test_config_save_2_fcb

===================================================================

starting test - test_config_insert3

PASS - test_config_insert3

===================================================================

starting test - test_config_save_3_fcb

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_fcb.c:285)

bad set-value size

FAIL - test_config_save_3_fcb

===================================================================

starting test - test_config_compress_reset

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_fcb.c:285)

bad set-value size

FAIL - test_config_compress_reset

===================================================================

starting test - test_config_save_one_fcb

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_fcb.c:285)

bad set-value size

FAIL - test_config_save_one_fcb

===================================================================

starting test - test_config_compress_deleted

Assertion failed at /local/mcu/zephyr/zephyr-stm32wb/tests/subsys/settings/fcb/src/settings_test_compress_)

The deleted settings shouldn be compressed.

FAIL - test_config_compress_deleted

===================================================================

Test suite test_config_fcb failed.

===================================================================

PROJECT EXECUTION FAILED

```

**Environment (please complete the following information):**

- OS: Linux,

- Toolchain (Zephyr SDK)

- Commit SHA: 020d32dca01b9d8c8278d60e43fd9eb3bf3f2ab0

**Additional context**

Add any other context about the problem here.

| priority | tests subsys settings fcb fails when write block size is describe the bug tests subsys settings fcb on soc with flash write block size set to i get same failed result with disco wbs and reel board with flash driver modified with write block size to reproduce steps to reproduce the behavior mkdir build cd build cmake dboard board xyz make see error expected behavior fcb should work with all flash drivers with write block size impact can t use fcb based subystems settings with system with write block size screenshots or console output booting zephyr os zephyr running test suite test config fcb starting test test settings encode pass test settings encode starting test test setting raw read pass test setting raw read starting test test setting val read pass test setting val read starting test config empty lookups pass config empty lookups starting test test config insert pass test config insert starting test test config getset unknown pass test config getset unknown starting test test config getset int pass test config getset int starting test test config getset pass test config getset starting test test config commit pass test config commit starting test test config empty fcb pass test config empty fcb starting test test config save fcb pass test config save fcb starting test test config pass test config starting test test config save fcb pass test config save fcb starting test test config pass test config starting test test config save fcb assertion failed at local mcu zephyr zephyr tests subsys settings fcb src settings test fcb c bad set value size fail test config save fcb starting test test config compress reset assertion failed at local mcu zephyr zephyr tests subsys settings fcb src settings test fcb c bad set value size fail test config compress reset starting test test config save one fcb assertion failed at local mcu zephyr zephyr tests subsys settings fcb src settings test fcb c bad set value size fail test config save one fcb starting test test config compress deleted assertion failed at local mcu zephyr zephyr tests subsys settings fcb src settings test compress the deleted settings shouldn be compressed fail test config compress deleted test suite test config fcb failed project execution failed environment please complete the following information os linux toolchain zephyr sdk commit sha additional context add any other context about the problem here | 1 |

185,891 | 6,731,593,690 | IssuesEvent | 2017-10-18 08:16:43 | Caleydo/lineupjs | https://api.github.com/repos/Caleydo/lineupjs | closed | Replace red-green color map with red-blue colormap | priority: medium type: bug | We use a red-green colormap for ordinal data:

There is no good reason to use this color map. It opens us up to criticism as it's problematic for red-green colorblind users. Replace with red-blue colormap.

| 1.0 | Replace red-green color map with red-blue colormap - We use a red-green colormap for ordinal data:

There is no good reason to use this color map. It opens us up to criticism as it's problematic for red-green colorblind users. Replace with red-blue colormap.

| priority | replace red green color map with red blue colormap we use a red green colormap for ordinal data there is no good reason to use this color map it opens us up to criticism as it s problematic for red green colorblind users replace with red blue colormap | 1 |

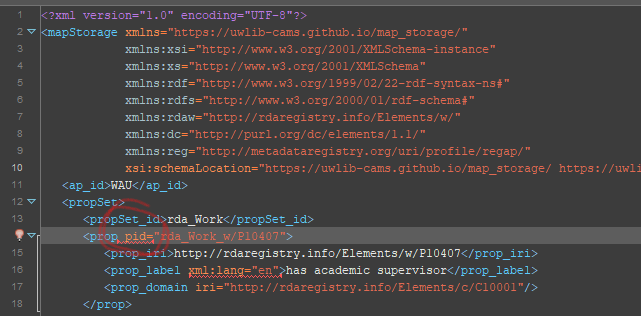

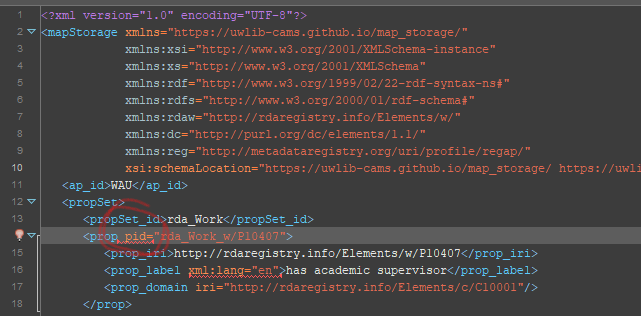

563,366 | 16,681,350,325 | IssuesEvent | 2021-06-08 00:28:57 | uwlib-cams/map_storage | https://api.github.com/repos/uwlib-cams/map_storage | closed | pid attibute validation error | help wanted medium priority xml schema | In the source file, `<prop>` elements will have an `lid` attribute (see [mockup](https://github.com/uwlib-cams/map_storage/blob/28c1daa212cca503e35968ec10f04091293e8063/map_storage_mockup.xml#L17) for details). This needs to be accounted for in the schema; I have been unsuccessful in doing this so far.

My attempt to simply allow a `pid` attribute in the draft source schema is [here](https://github.com/uwlib-cams/map_storage/blob/28c1daa212cca503e35968ec10f04091293e8063/map_storage.xsd#L55-L56).

Validation error is:

```

Attribute 'pid' is not allowed to appear in element 'prop'.

```

| 1.0 | pid attibute validation error - In the source file, `<prop>` elements will have an `lid` attribute (see [mockup](https://github.com/uwlib-cams/map_storage/blob/28c1daa212cca503e35968ec10f04091293e8063/map_storage_mockup.xml#L17) for details). This needs to be accounted for in the schema; I have been unsuccessful in doing this so far.

My attempt to simply allow a `pid` attribute in the draft source schema is [here](https://github.com/uwlib-cams/map_storage/blob/28c1daa212cca503e35968ec10f04091293e8063/map_storage.xsd#L55-L56).

Validation error is:

```

Attribute 'pid' is not allowed to appear in element 'prop'.

```

| priority | pid attibute validation error in the source file elements will have an lid attribute see for details this needs to be accounted for in the schema i have been unsuccessful in doing this so far my attempt to simply allow a pid attribute in the draft source schema is validation error is attribute pid is not allowed to appear in element prop | 1 |

40,838 | 2,868,945,115 | IssuesEvent | 2015-06-05 22:07:03 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | pub doesn't work if executed through a symlink | enhancement Fixed Priority-Medium | <a href="https://github.com/jbdeboer"><img src="https://avatars.githubusercontent.com/u/502633?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [jbdeboer](https://github.com/jbdeboer)**

_Originally opened as dart-lang/sdk#9409_

----

**What steps will reproduce the problem?**

1. ln -s $DART_SDK/bin/pub ~/bin/dart-pub

2. ~/bin/dart-pub install

**What is the expected output? What do you see instead?**

It should work.

Instead it fails with an error: Unable to open file: $HOME/util/pub/pub.dart

**What version of the product are you using? On what operating system?**

http://dart.googlecode.com/svn/branches/bleeding_edge/dart@20353

**Please provide any additional information below.**

On Ubuntu. | 1.0 | pub doesn't work if executed through a symlink - <a href="https://github.com/jbdeboer"><img src="https://avatars.githubusercontent.com/u/502633?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [jbdeboer](https://github.com/jbdeboer)**

_Originally opened as dart-lang/sdk#9409_

----

**What steps will reproduce the problem?**

1. ln -s $DART_SDK/bin/pub ~/bin/dart-pub

2. ~/bin/dart-pub install

**What is the expected output? What do you see instead?**

It should work.

Instead it fails with an error: Unable to open file: $HOME/util/pub/pub.dart

**What version of the product are you using? On what operating system?**

http://dart.googlecode.com/svn/branches/bleeding_edge/dart@20353

**Please provide any additional information below.**

On Ubuntu. | priority | pub doesn t work if executed through a symlink issue by originally opened as dart lang sdk what steps will reproduce the problem ln s dart sdk bin pub bin dart pub bin dart pub install nbsp what is the expected output what do you see instead it should work instead it fails with an error unable to open file home util pub pub dart what version of the product are you using on what operating system please provide any additional information below on ubuntu | 1 |

292,116 | 8,953,295,944 | IssuesEvent | 2019-01-25 19:01:45 | AngelGuerra/la-buena-leche-org | https://api.github.com/repos/AngelGuerra/la-buena-leche-org | closed | Añadir HTML Proofer para testear el sitio construido | Priority: Medium Status: Completed Type: Enhancement | Con esta herramienta se puede testear que el sitio construido no tiene enlaces rotos, etc...

- [Documentación](https://github.com/gjtorikian/html-proofer) | 1.0 | Añadir HTML Proofer para testear el sitio construido - Con esta herramienta se puede testear que el sitio construido no tiene enlaces rotos, etc...

- [Documentación](https://github.com/gjtorikian/html-proofer) | priority | añadir html proofer para testear el sitio construido con esta herramienta se puede testear que el sitio construido no tiene enlaces rotos etc | 1 |

160,356 | 6,087,397,943 | IssuesEvent | 2017-06-18 12:37:51 | diamm/diamm | https://api.github.com/repos/diamm/diamm | closed | Sorting for Anonymous Compositions | Component: Search Priority: Medium Status: Waiting to be addressed Type: Bug | From @juliacmcf

Here's an oddity: I just searched on Anonymous compositions (BRILLIANTLY USEFUL, THANK YOU! I needed numbers of anyonmous works for statistics) but the resulting order was quite odd: I would have expected an alphabetical result (since there were no other search criteria), but the results were clustered by letter, starting with i and ending apparently with f, plus three out of order pieces tacked on the end. Can't quite see what the order is there, though in this case it's not that important, and presumably if I added a letter or two to the work title that would act as a filter. | 1.0 | Sorting for Anonymous Compositions - From @juliacmcf

Here's an oddity: I just searched on Anonymous compositions (BRILLIANTLY USEFUL, THANK YOU! I needed numbers of anyonmous works for statistics) but the resulting order was quite odd: I would have expected an alphabetical result (since there were no other search criteria), but the results were clustered by letter, starting with i and ending apparently with f, plus three out of order pieces tacked on the end. Can't quite see what the order is there, though in this case it's not that important, and presumably if I added a letter or two to the work title that would act as a filter. | priority | sorting for anonymous compositions from juliacmcf here s an oddity i just searched on anonymous compositions brilliantly useful thank you i needed numbers of anyonmous works for statistics but the resulting order was quite odd i would have expected an alphabetical result since there were no other search criteria but the results were clustered by letter starting with i and ending apparently with f plus three out of order pieces tacked on the end can t quite see what the order is there though in this case it s not that important and presumably if i added a letter or two to the work title that would act as a filter | 1 |

235,045 | 7,733,879,808 | IssuesEvent | 2018-05-26 17:06:28 | vinitkumar/googlecl | https://api.github.com/repos/vinitkumar/googlecl | closed | calendar should have an option to sort events from multiple calendars by date | Priority-Medium enhancement imported | _From [rut...@gmail.com](https://code.google.com/u/109754790269413139353/) on July 10, 2010 05:32:11_

Being able to sort by date instead of by calendar (when outputing events from multiple calendars) would be useful, for example:

2010-07-01:

event 1 (Calendar 1)

event 2 (Calendar 1)

event 1 (Calendar 2)

2010-07-02:

...

_Original issue: http://code.google.com/p/googlecl/issues/detail?id=219_

| 1.0 | calendar should have an option to sort events from multiple calendars by date - _From [rut...@gmail.com](https://code.google.com/u/109754790269413139353/) on July 10, 2010 05:32:11_

Being able to sort by date instead of by calendar (when outputing events from multiple calendars) would be useful, for example:

2010-07-01:

event 1 (Calendar 1)

event 2 (Calendar 1)

event 1 (Calendar 2)

2010-07-02:

...

_Original issue: http://code.google.com/p/googlecl/issues/detail?id=219_

| priority | calendar should have an option to sort events from multiple calendars by date from on july being able to sort by date instead of by calendar when outputing events from multiple calendars would be useful for example event calendar event calendar event calendar original issue | 1 |

384,853 | 11,404,913,887 | IssuesEvent | 2020-01-31 10:50:05 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Simultaneous BLE pairings getting the same slot in keys structure | area: Bluetooth bug has-pr priority: medium | **Describe the bug**

When a BLE pairing request comes in while another pairing is in progress, it is sometimes is assigned the same entry in keys structure, resulting in messed up settings being persisted.

This is due to a bad check when looking for a free slot in the key_pool.

See https://github.com/zephyrproject-rtos/zephyr/pull/22234 | 1.0 | Simultaneous BLE pairings getting the same slot in keys structure - **Describe the bug**

When a BLE pairing request comes in while another pairing is in progress, it is sometimes is assigned the same entry in keys structure, resulting in messed up settings being persisted.

This is due to a bad check when looking for a free slot in the key_pool.

See https://github.com/zephyrproject-rtos/zephyr/pull/22234 | priority | simultaneous ble pairings getting the same slot in keys structure describe the bug when a ble pairing request comes in while another pairing is in progress it is sometimes is assigned the same entry in keys structure resulting in messed up settings being persisted this is due to a bad check when looking for a free slot in the key pool see | 1 |

67,421 | 3,273,628,512 | IssuesEvent | 2015-10-26 04:24:42 | npgall/cqengine | https://api.github.com/repos/npgall/cqengine | closed | Add listeners (observers) to IndexedCollection | auto-migrated Priority-Medium Type-Enhancement | ```

From discussion in the forum:

https://groups.google.com/forum/#!topic/cqengine-discuss/8sPccIElN7M

Should add an ObservableIndexedCollection, which can wrap another, and notify a

given listener when objects are added and removed.

This will be purely a wrapper so will not have any impact on applications not

requiring this functionality.

```

Original issue reported on code.google.com by `ni...@npgall.com` on 25 Nov 2013 at 9:49 | 1.0 | Add listeners (observers) to IndexedCollection - ```

From discussion in the forum:

https://groups.google.com/forum/#!topic/cqengine-discuss/8sPccIElN7M

Should add an ObservableIndexedCollection, which can wrap another, and notify a

given listener when objects are added and removed.

This will be purely a wrapper so will not have any impact on applications not

requiring this functionality.

```

Original issue reported on code.google.com by `ni...@npgall.com` on 25 Nov 2013 at 9:49 | priority | add listeners observers to indexedcollection from discussion in the forum should add an observableindexedcollection which can wrap another and notify a given listener when objects are added and removed this will be purely a wrapper so will not have any impact on applications not requiring this functionality original issue reported on code google com by ni npgall com on nov at | 1 |

590,465 | 17,778,344,143 | IssuesEvent | 2021-08-30 22:44:28 | hackforla/design-systems | https://api.github.com/repos/hackforla/design-systems | closed | Create artwork for the HfLA website | Role: UI priority: medium size: small Feature - HfLA Website Awaiting Milestone | ### Overview

In order to place our project on the HfLA website we need to design artwork for the project card and project header.

### Action Items

- [x] Await logo lock up #13

- [x] Design project card 600 x 400 image

- [ ] Design project header 1500 x 700 hero image (please do not put project title on hero image)

### Resources/Instructions

Reference design mock-up designed by Hana Stevenson

<img width="918" alt="Screenshot 2021-07-22 at 14 48 09" src="https://user-images.githubusercontent.com/6236085/126713766-c2790fd0-5758-43a1-9c0c-78a9ee65bcc9.png">

[Figma file](https://www.figma.com/file/ly2kOpJc98oPbSIc181F2l/HfLA-Design-Systems?node-id=915%3A3934)

| 1.0 | Create artwork for the HfLA website - ### Overview

In order to place our project on the HfLA website we need to design artwork for the project card and project header.

### Action Items

- [x] Await logo lock up #13

- [x] Design project card 600 x 400 image

- [ ] Design project header 1500 x 700 hero image (please do not put project title on hero image)

### Resources/Instructions

Reference design mock-up designed by Hana Stevenson

<img width="918" alt="Screenshot 2021-07-22 at 14 48 09" src="https://user-images.githubusercontent.com/6236085/126713766-c2790fd0-5758-43a1-9c0c-78a9ee65bcc9.png">

[Figma file](https://www.figma.com/file/ly2kOpJc98oPbSIc181F2l/HfLA-Design-Systems?node-id=915%3A3934)

| priority | create artwork for the hfla website overview in order to place our project on the hfla website we need to design artwork for the project card and project header action items await logo lock up design project card x image design project header x hero image please do not put project title on hero image resources instructions reference design mock up designed by hana stevenson img width alt screenshot at src | 1 |

291,616 | 8,940,868,976 | IssuesEvent | 2019-01-24 01:38:15 | DancesportSoftware/das | https://api.github.com/repos/DancesportSoftware/das | closed | Admin manage accounts | Priority: Medium enhancement mvp | Current admin user has no easy way managing accounts in the system. During the development stage, many test accounts are created and admin needs a convenient way to search these accounts and roles without requiring developers to log on Firebase or Google Cloud.

Required functions for development:

- [x] Search accounts by first name, last name, phone number, email, and account roles

- [ ] Delete accounts or all accounts

In production, account deletion must be disabled. | 1.0 | Admin manage accounts - Current admin user has no easy way managing accounts in the system. During the development stage, many test accounts are created and admin needs a convenient way to search these accounts and roles without requiring developers to log on Firebase or Google Cloud.

Required functions for development:

- [x] Search accounts by first name, last name, phone number, email, and account roles

- [ ] Delete accounts or all accounts

In production, account deletion must be disabled. | priority | admin manage accounts current admin user has no easy way managing accounts in the system during the development stage many test accounts are created and admin needs a convenient way to search these accounts and roles without requiring developers to log on firebase or google cloud required functions for development search accounts by first name last name phone number email and account roles delete accounts or all accounts in production account deletion must be disabled | 1 |

46,082 | 2,946,606,170 | IssuesEvent | 2015-07-04 03:54:57 | facelessuser/Rummage | https://api.github.com/repos/facelessuser/Rummage | opened | Get the actual inverse of `\L` and `\C`. | Bug Priority - Medium Severity - Minor | Currently we only give the inverse correctly for `\l` and `\c` when `\C` and `\L` are used outside a character class, but we need to the proper unicode property inverse (obviously for unicode), and the ascii equivalent for non unicode. | 1.0 | Get the actual inverse of `\L` and `\C`. - Currently we only give the inverse correctly for `\l` and `\c` when `\C` and `\L` are used outside a character class, but we need to the proper unicode property inverse (obviously for unicode), and the ascii equivalent for non unicode. | priority | get the actual inverse of l and c currently we only give the inverse correctly for l and c when c and l are used outside a character class but we need to the proper unicode property inverse obviously for unicode and the ascii equivalent for non unicode | 1 |

338,136 | 10,224,769,160 | IssuesEvent | 2019-08-16 13:37:28 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Bluetooth: GATT: Write Without Reponse to invalid handle asserts | bug priority: medium | **Describe the bug**

Issuing a Write Without Response command to an invalid handle (i.e. this handle does not exist). This [line of code](https://github.com/zephyrproject-rtos/zephyr/blob/master/subsys/bluetooth/controller/ll_sw/nordic/lll/lll_conn.c#L725) asserts

**To Reproduce**

Steps to reproduce the behavior:

```

u8_t payload[5];

memset(payload, 1, sizeof(payload));

bt_gatt_write_without_response(pConn, 0x1234 payload,

sizeof(payload), FALSE);

```

**Expected behavior**

The previous behavior was that the write command returned 0 and no callback etc. was triggered. In case that the remote (G)ATT is notifying of the error, it would be great to trigger the write callback.

**Impact**

Our Zephyr system is behaving according to remote user input, so we cannot assume that the user passes a valid handle (think typo) hence this issue is crucial to us.

**Screenshots or console output**

```

d_00: @00:00:00.672567 [00:00:00.672,546] <err> bt_ctlr_llsw_nordic_lll_conn: assert: 'link' failed

d_00: @00:00:00.672567 @ /home/ntrnd/subversion/components/zephyr/system/Zephyr/zephyr/subsys/bluetooth/controller/ll_sw/nordic/lll/lll_conn.c:725:

d_00: @00:00:00.672567 [00:00:00.672,546] <err> os: >>> ZEPHYR FATAL ERROR 3: Kernel oops

d_00: @00:00:00.672567 [00:00:00.672,546] <err> os: Current thread: 0x566e0cc0 (idle)

d_00: @00:00:00.672567 [00:00:00.672,546] <err> os: Halting system

d_00: @00:00:00.672567 ERROR: Exiting due to fatal error

```

**Environment (please complete the following information):**

- OS: (e.g. Linux, MacOS, Windows): Kubuntu 18.04

- Toolchain (e.g Zephyr SDK, ...): Zephyr SDK 0.10.1

- Commit SHA or Version used: SHA 386fcf3b53f523398c1d980ab3f691b8ab505607

**Additional context**

Compiled for nrf52_bsim | 1.0 | Bluetooth: GATT: Write Without Reponse to invalid handle asserts - **Describe the bug**

Issuing a Write Without Response command to an invalid handle (i.e. this handle does not exist). This [line of code](https://github.com/zephyrproject-rtos/zephyr/blob/master/subsys/bluetooth/controller/ll_sw/nordic/lll/lll_conn.c#L725) asserts

**To Reproduce**

Steps to reproduce the behavior:

```

u8_t payload[5];

memset(payload, 1, sizeof(payload));

bt_gatt_write_without_response(pConn, 0x1234 payload,

sizeof(payload), FALSE);

```

**Expected behavior**

The previous behavior was that the write command returned 0 and no callback etc. was triggered. In case that the remote (G)ATT is notifying of the error, it would be great to trigger the write callback.

**Impact**

Our Zephyr system is behaving according to remote user input, so we cannot assume that the user passes a valid handle (think typo) hence this issue is crucial to us.

**Screenshots or console output**

```

d_00: @00:00:00.672567 [00:00:00.672,546] <err> bt_ctlr_llsw_nordic_lll_conn: assert: 'link' failed

d_00: @00:00:00.672567 @ /home/ntrnd/subversion/components/zephyr/system/Zephyr/zephyr/subsys/bluetooth/controller/ll_sw/nordic/lll/lll_conn.c:725:

d_00: @00:00:00.672567 [00:00:00.672,546] <err> os: >>> ZEPHYR FATAL ERROR 3: Kernel oops

d_00: @00:00:00.672567 [00:00:00.672,546] <err> os: Current thread: 0x566e0cc0 (idle)

d_00: @00:00:00.672567 [00:00:00.672,546] <err> os: Halting system

d_00: @00:00:00.672567 ERROR: Exiting due to fatal error

```

**Environment (please complete the following information):**

- OS: (e.g. Linux, MacOS, Windows): Kubuntu 18.04

- Toolchain (e.g Zephyr SDK, ...): Zephyr SDK 0.10.1

- Commit SHA or Version used: SHA 386fcf3b53f523398c1d980ab3f691b8ab505607

**Additional context**

Compiled for nrf52_bsim | priority | bluetooth gatt write without reponse to invalid handle asserts describe the bug issuing a write without response command to an invalid handle i e this handle does not exist this asserts to reproduce steps to reproduce the behavior t payload memset payload sizeof payload bt gatt write without response pconn payload sizeof payload false expected behavior the previous behavior was that the write command returned and no callback etc was triggered in case that the remote g att is notifying of the error it would be great to trigger the write callback impact our zephyr system is behaving according to remote user input so we cannot assume that the user passes a valid handle think typo hence this issue is crucial to us screenshots or console output d bt ctlr llsw nordic lll conn assert link failed d home ntrnd subversion components zephyr system zephyr zephyr subsys bluetooth controller ll sw nordic lll lll conn c d os zephyr fatal error kernel oops d os current thread idle d os halting system d error exiting due to fatal error environment please complete the following information os e g linux macos windows kubuntu toolchain e g zephyr sdk zephyr sdk commit sha or version used sha additional context compiled for bsim | 1 |

818,223 | 30,679,333,479 | IssuesEvent | 2023-07-26 08:06:18 | ContinualAI/avalanche | https://api.github.com/repos/ContinualAI/avalanche | closed | Saving models at incremental steps | Feature - Medium Priority core | 🐛 **Describe the bug**

Avalanche is amazing to train models 'from scratch' with multiple incremental learning experiences. For example, imagine MNIST separated with 5 experiences, the training plugins allow to run from the first step (2 classes) up to the final one (10 classes). In order to save some time, I was trying to save the model after each training experiences so if I need to modify the scenario configuration (for example, LwF parametres) I don't need to restart the training from the begging because I previously got the model with the optimal configuration.

🐜 **To Reproduce**

First of all, I was trying to pass the pretrained model (for example, in the first two classes) and start the training in the second experience (next two classes) but this dind't work because LwF doesn't know that the model has been previously trained, so catastrophic forgetting happened.

My idea was to modify the LwF plugging so for each training experience I can pass the model of the previous experience and the previously trained classes. This works in some way because the distillation loss is calculated but the behavior of the training is still different compared to the normal one (starting from the begging).

This is how the code looks like.

```

class LwFPlugin(SupervisedPlugin):

def __init__(self, alpha, temperature, model, prev_classes):

super().__init__()

self.alpha = alpha

self.temperature = temperature

self.prev_model = model

self.prev_classes = prev_classes

#self.prev_model = None

#self.prev_classes = {"0": set()}

```

🐝 **Expected behavior**

I expect the same behavior loading the pretrained model and starting the training from the first experience.

🐞 **Screenshots**

When I get some free time, I'm going to reproduce it again so you can see the difference performance in each case. Hope I have explained myself in a clear way. | 1.0 | Saving models at incremental steps - 🐛 **Describe the bug**

Avalanche is amazing to train models 'from scratch' with multiple incremental learning experiences. For example, imagine MNIST separated with 5 experiences, the training plugins allow to run from the first step (2 classes) up to the final one (10 classes). In order to save some time, I was trying to save the model after each training experiences so if I need to modify the scenario configuration (for example, LwF parametres) I don't need to restart the training from the begging because I previously got the model with the optimal configuration.

🐜 **To Reproduce**

First of all, I was trying to pass the pretrained model (for example, in the first two classes) and start the training in the second experience (next two classes) but this dind't work because LwF doesn't know that the model has been previously trained, so catastrophic forgetting happened.

My idea was to modify the LwF plugging so for each training experience I can pass the model of the previous experience and the previously trained classes. This works in some way because the distillation loss is calculated but the behavior of the training is still different compared to the normal one (starting from the begging).

This is how the code looks like.

```

class LwFPlugin(SupervisedPlugin):

def __init__(self, alpha, temperature, model, prev_classes):

super().__init__()

self.alpha = alpha

self.temperature = temperature

self.prev_model = model

self.prev_classes = prev_classes

#self.prev_model = None

#self.prev_classes = {"0": set()}

```

🐝 **Expected behavior**

I expect the same behavior loading the pretrained model and starting the training from the first experience.

🐞 **Screenshots**

When I get some free time, I'm going to reproduce it again so you can see the difference performance in each case. Hope I have explained myself in a clear way. | priority | saving models at incremental steps 🐛 describe the bug avalanche is amazing to train models from scratch with multiple incremental learning experiences for example imagine mnist separated with experiences the training plugins allow to run from the first step classes up to the final one classes in order to save some time i was trying to save the model after each training experiences so if i need to modify the scenario configuration for example lwf parametres i don t need to restart the training from the begging because i previously got the model with the optimal configuration 🐜 to reproduce first of all i was trying to pass the pretrained model for example in the first two classes and start the training in the second experience next two classes but this dind t work because lwf doesn t know that the model has been previously trained so catastrophic forgetting happened my idea was to modify the lwf plugging so for each training experience i can pass the model of the previous experience and the previously trained classes this works in some way because the distillation loss is calculated but the behavior of the training is still different compared to the normal one starting from the begging this is how the code looks like class lwfplugin supervisedplugin def init self alpha temperature model prev classes super init self alpha alpha self temperature temperature self prev model model self prev classes prev classes self prev model none self prev classes set 🐝 expected behavior i expect the same behavior loading the pretrained model and starting the training from the first experience 🐞 screenshots when i get some free time i m going to reproduce it again so you can see the difference performance in each case hope i have explained myself in a clear way | 1 |

52,192 | 3,022,203,288 | IssuesEvent | 2015-07-31 18:55:24 | aseprite/aseprite | https://api.github.com/repos/aseprite/aseprite | closed | Onion-Skinning Layering | enhancement imported medium priority ui | _From [DragonDe...@gmail.com](https://code.google.com/u/118079522278657757610/) on June 15, 2014 15:19:18_

What do you need to do? Onion-skinning is now overlaid over the current frame, instead of under it. This makes it hard to properly view the colors of the current frame and quickly check the silhouette of previous frames. How would you like to do it? Have the option to draw onion-skinning under the current frame. A checkbox in the onion-skinning settings (below Merge Frames and Red/Blue Tint) or an option in ASEprite.INI would work.

_Original issue: http://code.google.com/p/aseprite/issues/detail?id=412_ | 1.0 | Onion-Skinning Layering - _From [DragonDe...@gmail.com](https://code.google.com/u/118079522278657757610/) on June 15, 2014 15:19:18_

What do you need to do? Onion-skinning is now overlaid over the current frame, instead of under it. This makes it hard to properly view the colors of the current frame and quickly check the silhouette of previous frames. How would you like to do it? Have the option to draw onion-skinning under the current frame. A checkbox in the onion-skinning settings (below Merge Frames and Red/Blue Tint) or an option in ASEprite.INI would work.

_Original issue: http://code.google.com/p/aseprite/issues/detail?id=412_ | priority | onion skinning layering from on june what do you need to do onion skinning is now overlaid over the current frame instead of under it this makes it hard to properly view the colors of the current frame and quickly check the silhouette of previous frames how would you like to do it have the option to draw onion skinning under the current frame a checkbox in the onion skinning settings below merge frames and red blue tint or an option in aseprite ini would work original issue | 1 |

134,476 | 5,227,135,458 | IssuesEvent | 2017-01-28 00:00:27 | gri-is/portal | https://api.github.com/repos/gri-is/portal | closed | split keywords unless quoted | component: advanced search component: core component: filters component: search engine difficulty: complex priority: medium tool: angular type: enhancement | Users would like to be able to delete a single term from a keyword search. However, if multiple keywords were entered, they are grouped in the same search box chip. But sometimes users _do_ want terms grouped together, such as "New York". So,

- [ ] If a user puts a set of keywords in quotes, then keep them together in a single chip

- [ ] Otherwise, split all keyword terms into their own search box chips

| 1.0 | split keywords unless quoted - Users would like to be able to delete a single term from a keyword search. However, if multiple keywords were entered, they are grouped in the same search box chip. But sometimes users _do_ want terms grouped together, such as "New York". So,

- [ ] If a user puts a set of keywords in quotes, then keep them together in a single chip

- [ ] Otherwise, split all keyword terms into their own search box chips

| priority | split keywords unless quoted users would like to be able to delete a single term from a keyword search however if multiple keywords were entered they are grouped in the same search box chip but sometimes users do want terms grouped together such as new york so if a user puts a set of keywords in quotes then keep them together in a single chip otherwise split all keyword terms into their own search box chips | 1 |

181,790 | 6,664,163,058 | IssuesEvent | 2017-10-02 19:05:21 | classifiedz/classifiedz.github.io | https://api.github.com/repos/classifiedz/classifiedz.github.io | opened | [User Story] User profile page | High Priority Low Risk Medium Point | The user should be able to access their own profile page as well as other peoples profile page. When other users view someone else's profile, the profile page should contain general information about the user as well as all of their ads that they've posted and are still active. When a user views their own profile page, they should have the option to edit the information shown. The user should also be able to view all of their valid ads and delete ads that are no longer valid.

Front-end

- [ ] Profile page

- [ ] General Information

- [ ] Form for changing information

- [ ] Active Ads

- [ ] Option to delete ads

Back-end

- [ ] Accessible through navbar

- [ ] Ads specific to user appear in profile page

- [ ] Functioning edit form | 1.0 | [User Story] User profile page - The user should be able to access their own profile page as well as other peoples profile page. When other users view someone else's profile, the profile page should contain general information about the user as well as all of their ads that they've posted and are still active. When a user views their own profile page, they should have the option to edit the information shown. The user should also be able to view all of their valid ads and delete ads that are no longer valid.

Front-end

- [ ] Profile page

- [ ] General Information

- [ ] Form for changing information

- [ ] Active Ads

- [ ] Option to delete ads

Back-end

- [ ] Accessible through navbar

- [ ] Ads specific to user appear in profile page