Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 1k | labels stringlengths 4 1.38k | body stringlengths 1 262k | index stringclasses 16 values | text_combine stringlengths 96 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

307,925 | 23,223,035,032 | IssuesEvent | 2022-08-02 20:14:40 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | closed | Add calls to action with links to the Teleport Cloud signup page | documentation time-to-value | ## Details

Teleport Cloud simplifies a lot of setup work that we describe in the docs, so we can add links to the Teleport Cloud signup page (`https://goteleport.com/signup?source=docs`) in pages within the docs that describe some complex setup process. The `source` param indicates that traffic is coming from the docs so we can gauge the effectiveness of our call to action links.

### Category

- Improve Existing

| 1.0 | Add calls to action with links to the Teleport Cloud signup page - ## Details

Teleport Cloud simplifies a lot of setup work that we describe in the docs, so we can add links to the Teleport Cloud signup page (`https://goteleport.com/signup?source=docs`) in pages within the docs that describe some complex setup process. The `source` param indicates that traffic is coming from the docs so we can gauge the effectiveness of our call to action links.

### Category

- Improve Existing

| non_priority | add calls to action with links to the teleport cloud signup page details teleport cloud simplifies a lot of setup work that we describe in the docs so we can add links to the teleport cloud signup page in pages within the docs that describe some complex setup process the source param indicates that traffic is coming from the docs so we can gauge the effectiveness of our call to action links category improve existing | 0 |
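
The record above describes tagging docs links with a `source` query parameter so that signup traffic can be attributed to the docs. A minimal sketch of building such a link follows, assuming only the URL quoted in the issue; the helper name `signup_link` is hypothetical and is not part of Teleport's docs tooling.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Base signup URL, taken from the issue text (without the query string).
SIGNUP_URL = "https://goteleport.com/signup"


def signup_link(source: str = "docs") -> str:
    """Return the signup URL with a `source` query parameter attached.

    `signup_link` is a hypothetical helper used only for illustration.
    """
    parts = urlsplit(SIGNUP_URL)
    # Preserve any query parameters already present, then set `source`.
    query = dict(parse_qsl(parts.query))
    query["source"] = source
    return urlunsplit(parts._replace(query=urlencode(query)))


print(signup_link())
```

Keeping the parameter in one helper would let every call-to-action link use the same attribution value.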

238,582 | 19,726,479,159 | IssuesEvent | 2022-01-13 20:30:33 | urapadmin/kiosk | https://api.github.com/repos/urapadmin/kiosk | reopened | "Master Control not up" message pops up like a bad penny on arch1900 | bug kiosk test-stage Beset | but I have seen it on other machines, as well. I wondered this time, whether or not it has anything to do with user privileges.

Needs a closer look at the mechanism. | 1.0 | "Master Control not up" message pops up like a bad penny on arch1900 - but I have seen it on other machines, as well. I wondered this time, whether or not it has anything to do with user privileges.

Needs a closer look at the mechanism. | non_priority | master control not up message pops up like a bad penny on but i have seen it on other machines as well i wondered this time whether or not it has anything to do with user privileges needs a closer look at the mechanism | 0 |

114,961 | 9,777,099,463 | IssuesEvent | 2019-06-07 08:08:35 | istio/istio | https://api.github.com/repos/istio/istio | closed | Add golden master tests for istio-iptables.sh | area/networking area/test and release | To ensure that the istio-iptables.sh-script remains stable, add golden master tests to istio.

[ ] Configuration Infrastructure

[ ] Docs

[ ] Installation

[X] Networking

[ ] Performance and Scalability

[ ] Policies and Telemetry

[ ] Security

[X] Test and Release

[ ] User Experience

**Additional context**

These tests can also be used to compare different implementations of istio-iptables, e.g. in golang. Related: #14355 | 1.0 | Add golden master tests for istio-iptables.sh - To ensure that the istio-iptables.sh-script remains stable, add golden master tests to istio.

[ ] Configuration Infrastructure

[ ] Docs

[ ] Installation

[X] Networking

[ ] Performance and Scalability

[ ] Policies and Telemetry

[ ] Security

[X] Test and Release

[ ] User Experience

**Additional context**

These tests can also be used to compare different implementations of istio-iptables, e.g. in golang. Related: #14355 | non_priority | add golden master tests for istio iptables sh to ensure that the istio iptables sh script remains stable add golden master tests to istio configuration infrastructure docs installation networking performance and scalability policies and telemetry security test and release user experience additional context these tests can also be used to compare different implementations of istio iptables e g in golang related | 0 |
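
The istio record above asks for golden master tests: the script's output is captured once, stored, and later runs are compared against that stored copy. A rough sketch of the comparison step is shown here in Python, under the assumption that both outputs are available as strings; the function name `diff_against_golden` is hypothetical and nothing here reflects how istio actually implemented its tests.

```python
import difflib
from typing import List


def diff_against_golden(actual: str, golden: str) -> List[str]:
    """Return a unified diff between the stored golden output and a new run.

    An empty list means the new output matches the golden master.
    The name and signature are illustrative assumptions.
    """
    return list(
        difflib.unified_diff(
            golden.splitlines(),
            actual.splitlines(),
            fromfile="golden",
            tofile="actual",
            lineterm="",
        )
    )


# Example with made-up rule text: identical output yields no diff lines.
print(diff_against_golden("-A OUTPUT -j ACCEPT", "-A OUTPUT -j ACCEPT"))
```

The same comparison could be pointed at the output of a second implementation, which is the use case the issue mentions under "Additional context".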

129,546 | 18,103,311,332 | IssuesEvent | 2021-09-22 16:18:37 | gms-ws-demo/JS-Demo-Sep2021 | https://api.github.com/repos/gms-ws-demo/JS-Demo-Sep2021 | closed | CVE-2016-10540 (High) detected in minimatch-0.3.0.tgz - autoclosed | security vulnerability | ## CVE-2016-10540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimatch-0.3.0.tgz</b></p></summary>

<p>a glob matcher in javascript</p>

<p>Library home page: <a href="https://registry.npmjs.org/minimatch/-/minimatch-0.3.0.tgz">https://registry.npmjs.org/minimatch/-/minimatch-0.3.0.tgz</a></p>

<p>Path to dependency file: JS-Demo-Sep2021/package.json</p>

<p>Path to vulnerable library: JS-Demo-Sep2021/node_modules/mocha/node_modules/minimatch/package.json</p>

<p>

Dependency Hierarchy:

- mocha-2.5.3.tgz (Root Library)

- glob-3.2.11.tgz

- :x: **minimatch-0.3.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/gms-ws-demo/JS-Demo-Sep2021/commit/e8cd219daa23fb09c60a7e7095b13c9e8372f529">e8cd219daa23fb09c60a7e7095b13c9e8372f529</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Minimatch is a minimal matching utility that works by converting glob expressions into JavaScript `RegExp` objects. The primary function, `minimatch(path, pattern)` in Minimatch 3.0.1 and earlier is vulnerable to ReDoS in the `pattern` parameter.

<p>Publish Date: 2018-05-31

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-10540>CVE-2016-10540</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nodesecurity.io/advisories/118">https://nodesecurity.io/advisories/118</a></p>

<p>Release Date: 2016-06-20</p>

<p>Fix Resolution: Update to version 3.0.2 or later.</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"minimatch","packageVersion":"0.3.0","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"mocha:2.5.3;glob:3.2.11;minimatch:0.3.0","isMinimumFixVersionAvailable":false}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2016-10540","vulnerabilityDetails":"Minimatch is a minimal matching utility that works by converting glob expressions into JavaScript `RegExp` objects. The primary function, `minimatch(path, pattern)` in Minimatch 3.0.1 and earlier is vulnerable to ReDoS in the `pattern` parameter.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-10540","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | True | CVE-2016-10540 (High) detected in minimatch-0.3.0.tgz - autoclosed - ## CVE-2016-10540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimatch-0.3.0.tgz</b></p></summary>

<p>a glob matcher in javascript</p>

<p>Library home page: <a href="https://registry.npmjs.org/minimatch/-/minimatch-0.3.0.tgz">https://registry.npmjs.org/minimatch/-/minimatch-0.3.0.tgz</a></p>

<p>Path to dependency file: JS-Demo-Sep2021/package.json</p>

<p>Path to vulnerable library: JS-Demo-Sep2021/node_modules/mocha/node_modules/minimatch/package.json</p>

<p>

Dependency Hierarchy:

- mocha-2.5.3.tgz (Root Library)

- glob-3.2.11.tgz

- :x: **minimatch-0.3.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/gms-ws-demo/JS-Demo-Sep2021/commit/e8cd219daa23fb09c60a7e7095b13c9e8372f529">e8cd219daa23fb09c60a7e7095b13c9e8372f529</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Minimatch is a minimal matching utility that works by converting glob expressions into JavaScript `RegExp` objects. The primary function, `minimatch(path, pattern)` in Minimatch 3.0.1 and earlier is vulnerable to ReDoS in the `pattern` parameter.

<p>Publish Date: 2018-05-31

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-10540>CVE-2016-10540</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nodesecurity.io/advisories/118">https://nodesecurity.io/advisories/118</a></p>

<p>Release Date: 2016-06-20</p>

<p>Fix Resolution: Update to version 3.0.2 or later.</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"minimatch","packageVersion":"0.3.0","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"mocha:2.5.3;glob:3.2.11;minimatch:0.3.0","isMinimumFixVersionAvailable":false}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2016-10540","vulnerabilityDetails":"Minimatch is a minimal matching utility that works by converting glob expressions into JavaScript `RegExp` objects. The primary function, `minimatch(path, pattern)` in Minimatch 3.0.1 and earlier is vulnerable to ReDoS in the `pattern` parameter.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-10540","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | non_priority | cve high detected in minimatch tgz autoclosed cve high severity vulnerability vulnerable library minimatch tgz a glob matcher in javascript library home page a href path to dependency file js demo package json path to vulnerable library js demo node modules mocha node modules minimatch package json dependency hierarchy mocha tgz root library glob tgz x minimatch tgz vulnerable library found in head commit a href found in base branch master vulnerability details minimatch is a minimal matching utility that works by converting glob expressions into javascript regexp objects the primary function minimatch path pattern in minimatch and earlier is vulnerable to redos in the pattern parameter publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on 
scores click a href suggested fix type upgrade version origin a href release date fix resolution update to version or later isopenpronvulnerability false ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree mocha glob minimatch isminimumfixversionavailable false basebranches vulnerabilityidentifier cve vulnerabilitydetails minimatch is a minimal matching utility that works by converting glob expressions into javascript regexp objects the primary function minimatch path pattern in minimatch and earlier is vulnerable to redos in the pattern parameter vulnerabilityurl | 0 |
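
The advisory in the record above states that minimatch 3.0.1 and earlier is affected and that the fix is to update to 3.0.2 or later. A small sketch of that version check follows; it assumes plain dotted numeric versions such as `0.3.0`, and the names `parse_version` and `is_affected` are hypothetical helpers, not part of any scanner.

```python
from typing import Tuple

# First fixed release, per the advisory: "Update to version 3.0.2 or later".
FIRST_FIXED = (3, 0, 2)


def parse_version(version: str) -> Tuple[int, ...]:
    """Split a plain dotted version like '0.3.0' into integers.

    Pre-release or build suffixes are not handled in this sketch.
    """
    return tuple(int(part) for part in version.split("."))


def is_affected(version: str) -> bool:
    """Return True when the version is older than the first fixed release."""
    return parse_version(version) < FIRST_FIXED


# minimatch 0.3.0 is the version pulled in through mocha 2.5.3 and glob 3.2.11.
print(is_affected("0.3.0"))
```

Because minimatch is a transitive dependency here, the practical fix would likely mean upgrading mocha rather than minimatch directly.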

677,078 | 23,149,350,869 | IssuesEvent | 2022-07-29 06:35:35 | trustwallet/wallet-core | https://api.github.com/repos/trustwallet/wallet-core | closed | [Wasm] Can't import module error | bug priority:medium size:small | Reported from https://github.com/robot-ux/wallet-core-example/issues/3

```

Uncaught (in promise) Error: Cannot find module './generated/core_proto'

at webpackEmptyContext (dist|sync:2:1)

at index.js:18:1

at index.js:8:1

at ./node_modules/@trustwallet/wallet-core/dist/index.js (index.js:14:1)

at options.factory (react refresh:6:1)

at __webpack_require__ (bootstrap:24:1)

at fn (hot module replacement:62:1)

at initWasm.ts:15:1

at new Promise (<anonymous>)

at initWasm (initWasm.ts:3:1)

``` | 1.0 | [Wasm] Can't import module error - Reported from https://github.com/robot-ux/wallet-core-example/issues/3

```

Uncaught (in promise) Error: Cannot find module './generated/core_proto'

at webpackEmptyContext (dist|sync:2:1)

at index.js:18:1

at index.js:8:1

at ./node_modules/@trustwallet/wallet-core/dist/index.js (index.js:14:1)

at options.factory (react refresh:6:1)

at __webpack_require__ (bootstrap:24:1)

at fn (hot module replacement:62:1)

at initWasm.ts:15:1

at new Promise (<anonymous>)

at initWasm (initWasm.ts:3:1)

``` | priority | can t import module error reported from uncaught in promise error cannot find module generated core proto at webpackemptycontext dist sync at index js at index js at node modules trustwallet wallet core dist index js index js at options factory react refresh at webpack require bootstrap at fn hot module replacement at initwasm ts at new promise at initwasm initwasm ts | 1 |

302,655 | 9,285,098,806 | IssuesEvent | 2019-03-21 05:18:22 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Regression 1.9 -> 2.0: linkifiers with dashes no longer work | area: markdown bug in progress priority: high | After the upgrade to 2.0, we've noticed that all of our linkifiers with dashes in the regex pattern have stopped working. The `-` might be a red herring, but that's what we've noticed between working linkifiers and non-working linkifiers.

Not working:

```

(?P<issue>[A-Z][A-Z_0-9]*-\d+) # HELP-4568

http://jira.example.com/browse/%(issue)s

(?P<id>[0-9a-f-]{1,64}@[a-zA-Z]{1,32}) # c9f4fb80-a9df-4e36-a202-5a2aa0853681@Thing

http://github.example.com/pages/swallitsch/resourceidentifier?id=%(id)s

```

Working:

```

(?P<subreddit>\/r\/[a-zA-Z]+) # /r/programming

http://www.reddit.com/%(subreddit)s

(?P<word>[tT]hanks|[tT]hank[- ]you|:thx:|thx) # Thanks

https://thx.youearnedit.com/?%(word)s

```

We've tried escaping the dashes in the regex and deleting and re-adding the regexes.

| 1.0 | Regression 1.9 -> 2.0: linkifiers with dashes no longer work - After the upgrade to 2.0, we've noticed that all of our linkifiers with dashes in the regex pattern have stopped working. The `-` might be a red herring, but that's what we've noticed between working linkifiers and non-working linkifiers.

Not working:

```

(?P<issue>[A-Z][A-Z_0-9]*-\d+) # HELP-4568

http://jira.example.com/browse/%(issue)s

(?P<id>[0-9a-f-]{1,64}@[a-zA-Z]{1,32}) # c9f4fb80-a9df-4e36-a202-5a2aa0853681@Thing

http://github.example.com/pages/swallitsch/resourceidentifier?id=%(id)s

```

Working:

```

(?P<subreddit>\/r\/[a-zA-Z]+) # /r/programming

http://www.reddit.com/%(subreddit)s

(?P<word>[tT]hanks|[tT]hank[- ]you|:thx:|thx) # Thanks

https://thx.youearnedit.com/?%(word)s

```

We've tried escaping the dashes in the regex and deleting and re-adding the regexes.

| priority | regression linkifiers with dashes no longer work after the upgrade to we ve noticed that all of our linkifiers with dashes in the regex pattern have stopped working the might be a red herring but that s what we ve noticed between working linkifiers and non working linkifiers not working p d help p thing working p r r programming p hanks hank you thx thx thanks we ve tried escaping the dashes in the regex and deleting and re adding the regexes | 1 |
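
The linkifier pairs quoted in the record above use Python-style named groups and `%(name)s` URL templates. As a way to check the patterns in isolation, the sketch below applies two of them with the standard `re` module. It does not reproduce Zulip's markdown processing; the `linkify` function and `LINKIFIERS` list are assumptions made for illustration.

```python
import re
from typing import List

# (pattern, URL template) pairs copied from the issue text.
LINKIFIERS = [
    (r"(?P<issue>[A-Z][A-Z_0-9]*-\d+)", "http://jira.example.com/browse/%(issue)s"),
    (r"(?P<subreddit>\/r\/[a-zA-Z]+)", "http://www.reddit.com/%(subreddit)s"),
]


def linkify(text: str) -> List[str]:
    """Return the URLs produced by every linkifier match in `text`.

    Illustrative only: a standalone check of the regexes, not Zulip's code.
    """
    links = []
    for pattern, url_template in LINKIFIERS:
        for match in re.finditer(pattern, text):
            # Fill the template from the named groups of the match.
            links.append(url_template % match.groupdict())
    return links


print(linkify("see HELP-4568 for details"))
```

If a pattern matches here but not in the running server, that would point toward how the server wraps or compiles the pattern rather than the pattern itself.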

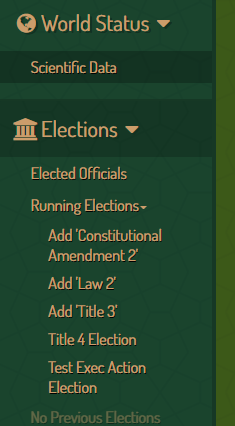

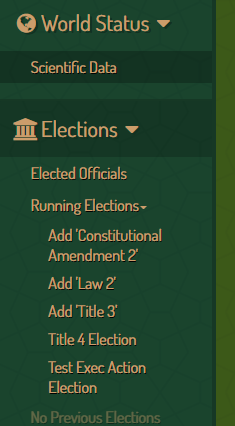

441,864 | 12,733,739,241 | IssuesEvent | 2020-06-25 12:49:46 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Current elections not showing up | Category: Web Priority: Medium Status: Fixed | Run 'generate test elections' in swagger, then this is empty:

Once I click the arrow, they appear:

Check other lists getting displayed here too.

| 1.0 | Current elections not showing up - Run 'generate test elections' in swagger, then this is empty:

Once I click the arrow, they appear:

Check other lists getting displayed here too.

| priority | current elections not showing up run generate test elections in swagger then this is empty once i click the arrow they appear check other lists getting displayed here too | 1 |

455,743 | 13,132,147,687 | IssuesEvent | 2020-08-06 18:21:03 | googleapis/google-auth-library-nodejs | https://api.github.com/repos/googleapis/google-auth-library-nodejs | closed | Synthesis failed for google-auth-library-nodejs | autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate google-auth-library-nodejs. :broken_heart:

Here's the output from running `synth.py`:

```

B, cookie=0, name=docs-devsite.sh>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/publish.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=publish.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/publish.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=publish.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/publish.cfg', wd=41, mask=IN_ATTRIB, cookie=0, name=publish.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/docs.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/docs.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/docs.cfg', wd=41, mask=IN_ATTRIB, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/samples-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=samples-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/samples-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=samples-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/samples-test.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=samples-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/lint.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=lint.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/lint.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=lint.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/lint.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=lint.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/test.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/common.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=common.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/common.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=common.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/common.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=common.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/system-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=system-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/system-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=system-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/system-test.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=system-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/test.cfg', wd=47, mask=IN_MODIFY, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/test.cfg', wd=47, mask=IN_ATTRIB, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/common.cfg', wd=47, mask=IN_MODIFY, cookie=0, name=common.cfg>

2020-08-06 04:09:57,577 synthtool [DEBUG] > Installing dependencies...

DEBUG:synthtool:Installing dependencies...

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/common.cfg', wd=47, mask=IN_ATTRIB, cookie=0, name=common.cfg>

npm WARN deprecated istanbul@0.4.5: This module is no longer maintained, try this instead:

npm WARN deprecated npm i nyc

npm WARN deprecated Visit https://istanbul.js.org/integrations for other alternatives.

npm WARN deprecated chokidar@2.1.8: Chokidar 2 will break on node v14+. Upgrade to chokidar 3 with 15x less dependencies.

npm WARN deprecated resolve-url@0.2.1: https://github.com/lydell/resolve-url#deprecated

npm WARN deprecated urix@0.1.0: Please see https://github.com/lydell/urix#deprecated

npm WARN deprecated fsevents@1.2.13: fsevents 1 will break on node v14+ and could be using insecure binaries. Upgrade to fsevents 2.

npm ERR! code E404

npm ERR! 404 Not Found - GET https://registry.npmjs.org/@compodoc%2fcompodoc - Not found

npm ERR! 404

npm ERR! 404 '@compodoc/compodoc@^1.1.7' is not in the npm registry.

npm ERR! 404 You should bug the author to publish it (or use the name yourself!)

npm ERR! 404 It was specified as a dependency of 'google-auth-library-nodejs'

npm ERR! 404

npm ERR! 404 Note that you can also install from a

npm ERR! 404 tarball, folder, http url, or git url.

npm ERR! A complete log of this run can be found in:

npm ERR! /home/kbuilder/.npm/_logs/2020-08-06T11_10_04_702Z-debug.log

2020-08-06 04:10:04,718 synthtool [ERROR] > Failed executing npm install:

None

ERROR:synthtool:Failed executing npm install:

None

2020-08-06 04:10:04,733 synthtool [DEBUG] > Wrote metadata to synth.metadata.

DEBUG:synthtool:Wrote metadata to synth.metadata.

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/github/synthtool/synthtool/__main__.py", line 102, in <module>

main()

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 829, in __call__

return self.main(*args, **kwargs)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 782, in main

rv = self.invoke(ctx)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 1066, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 610, in invoke

return callback(*args, **kwargs)

File "/tmpfs/src/github/synthtool/synthtool/__main__.py", line 94, in main

spec.loader.exec_module(synth_module) # type: ignore

File "<frozen importlib._bootstrap_external>", line 678, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "/home/kbuilder/.cache/synthtool/google-auth-library-nodejs/synth.py", line 12, in <module>

node.install()

File "/tmpfs/src/github/synthtool/synthtool/languages/node.py", line 167, in install

shell.run(["npm", "install"], hide_output=hide_output)

File "/tmpfs/src/github/synthtool/synthtool/shell.py", line 39, in run

raise exc

File "/tmpfs/src/github/synthtool/synthtool/shell.py", line 33, in run

encoding="utf-8",

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/subprocess.py", line 438, in run

output=stdout, stderr=stderr)

subprocess.CalledProcessError: Command '['npm', 'install']' returned non-zero exit status 1.

2020-08-06 04:10:04,785 autosynth [ERROR] > Synthesis failed

2020-08-06 04:10:04,785 autosynth [DEBUG] > Running: git reset --hard HEAD

HEAD is now at a7e5701 fix: migrate token info API to not pass token in query string (#991)

2020-08-06 04:10:04,795 autosynth [DEBUG] > Running: git checkout autosynth

Switched to branch 'autosynth'

2020-08-06 04:10:04,801 autosynth [DEBUG] > Running: git clean -fdx

Removing __pycache__/

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 690, in <module>

main()

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 539, in main

return _inner_main(temp_dir)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 670, in _inner_main

commit_count = synthesize_loop(x, multiple_prs, change_pusher, synthesizer)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 375, in synthesize_loop

has_changes = toolbox.synthesize_version_in_new_branch(synthesizer, youngest)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 273, in synthesize_version_in_new_branch

synthesizer.synthesize(synth_log_path, self.environ)

File "/tmpfs/src/github/synthtool/autosynth/synthesizer.py", line 120, in synthesize

synth_proc.check_returncode() # Raise an exception.

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/subprocess.py", line 389, in check_returncode

self.stderr)

subprocess.CalledProcessError: Command '['/tmpfs/src/github/synthtool/env/bin/python3', '-m', 'synthtool', '--metadata', 'synth.metadata', 'synth.py', '--']' returned non-zero exit status 1.

```

Google internal developers can see the full log [here](http://sponge2/results/invocations/76bb7f6f-4d47-4888-b0d2-a8761a276cc8/targets/github%2Fsynthtool;config=default/tests;query=google-auth-library-nodejs;failed=false).

| 1.0 | Synthesis failed for google-auth-library-nodejs - Hello! Autosynth couldn't regenerate google-auth-library-nodejs. :broken_heart:

Here's the output from running `synth.py`:

```

B, cookie=0, name=docs-devsite.sh>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/publish.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=publish.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/publish.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=publish.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/publish.cfg', wd=41, mask=IN_ATTRIB, cookie=0, name=publish.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/docs.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/docs.cfg', wd=41, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/release/docs.cfg', wd=41, mask=IN_ATTRIB, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/samples-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=samples-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/samples-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=samples-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/samples-test.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=samples-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/lint.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=lint.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/lint.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=lint.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/lint.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=lint.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/test.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/common.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=common.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/common.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=common.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/common.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=common.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/system-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=system-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/system-test.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=system-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/system-test.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=system-test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_MODIFY, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node10/docs.cfg', wd=45, mask=IN_ATTRIB, cookie=0, name=docs.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/test.cfg', wd=47, mask=IN_MODIFY, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/test.cfg', wd=47, mask=IN_ATTRIB, cookie=0, name=test.cfg>

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/common.cfg', wd=47, mask=IN_MODIFY, cookie=0, name=common.cfg>

2020-08-06 04:09:57,577 synthtool [DEBUG] > Installing dependencies...

DEBUG:synthtool:Installing dependencies...

DEBUG:watchdog.observers.inotify_buffer:in-event <InotifyEvent: src_path=b'./.kokoro/continuous/node12/common.cfg', wd=47, mask=IN_ATTRIB, cookie=0, name=common.cfg>

npm WARN deprecated istanbul@0.4.5: This module is no longer maintained, try this instead:

npm WARN deprecated npm i nyc

npm WARN deprecated Visit https://istanbul.js.org/integrations for other alternatives.

npm WARN deprecated chokidar@2.1.8: Chokidar 2 will break on node v14+. Upgrade to chokidar 3 with 15x less dependencies.

npm WARN deprecated resolve-url@0.2.1: https://github.com/lydell/resolve-url#deprecated

npm WARN deprecated urix@0.1.0: Please see https://github.com/lydell/urix#deprecated

npm WARN deprecated fsevents@1.2.13: fsevents 1 will break on node v14+ and could be using insecure binaries. Upgrade to fsevents 2.

npm ERR! code E404

npm ERR! 404 Not Found - GET https://registry.npmjs.org/@compodoc%2fcompodoc - Not found

npm ERR! 404

npm ERR! 404 '@compodoc/compodoc@^1.1.7' is not in the npm registry.

npm ERR! 404 You should bug the author to publish it (or use the name yourself!)

npm ERR! 404 It was specified as a dependency of 'google-auth-library-nodejs'

npm ERR! 404

npm ERR! 404 Note that you can also install from a

npm ERR! 404 tarball, folder, http url, or git url.

npm ERR! A complete log of this run can be found in:

npm ERR! /home/kbuilder/.npm/_logs/2020-08-06T11_10_04_702Z-debug.log

2020-08-06 04:10:04,718 synthtool [ERROR] > Failed executing npm install:

None

ERROR:synthtool:Failed executing npm install:

None

2020-08-06 04:10:04,733 synthtool [DEBUG] > Wrote metadata to synth.metadata.

DEBUG:synthtool:Wrote metadata to synth.metadata.

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/github/synthtool/synthtool/__main__.py", line 102, in <module>

main()

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 829, in __call__

return self.main(*args, **kwargs)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 782, in main

rv = self.invoke(ctx)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 1066, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/tmpfs/src/github/synthtool/env/lib/python3.6/site-packages/click/core.py", line 610, in invoke

return callback(*args, **kwargs)

File "/tmpfs/src/github/synthtool/synthtool/__main__.py", line 94, in main

spec.loader.exec_module(synth_module) # type: ignore

File "<frozen importlib._bootstrap_external>", line 678, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "/home/kbuilder/.cache/synthtool/google-auth-library-nodejs/synth.py", line 12, in <module>

node.install()

File "/tmpfs/src/github/synthtool/synthtool/languages/node.py", line 167, in install

shell.run(["npm", "install"], hide_output=hide_output)

File "/tmpfs/src/github/synthtool/synthtool/shell.py", line 39, in run

raise exc

File "/tmpfs/src/github/synthtool/synthtool/shell.py", line 33, in run

encoding="utf-8",

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/subprocess.py", line 438, in run

output=stdout, stderr=stderr)

subprocess.CalledProcessError: Command '['npm', 'install']' returned non-zero exit status 1.

2020-08-06 04:10:04,785 autosynth [ERROR] > Synthesis failed

2020-08-06 04:10:04,785 autosynth [DEBUG] > Running: git reset --hard HEAD

HEAD is now at a7e5701 fix: migrate token info API to not pass token in query string (#991)

2020-08-06 04:10:04,795 autosynth [DEBUG] > Running: git checkout autosynth

Switched to branch 'autosynth'

2020-08-06 04:10:04,801 autosynth [DEBUG] > Running: git clean -fdx

Removing __pycache__/

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 690, in <module>

main()

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 539, in main

return _inner_main(temp_dir)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 670, in _inner_main

commit_count = synthesize_loop(x, multiple_prs, change_pusher, synthesizer)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 375, in synthesize_loop

has_changes = toolbox.synthesize_version_in_new_branch(synthesizer, youngest)

File "/tmpfs/src/github/synthtool/autosynth/synth.py", line 273, in synthesize_version_in_new_branch

synthesizer.synthesize(synth_log_path, self.environ)

File "/tmpfs/src/github/synthtool/autosynth/synthesizer.py", line 120, in synthesize

synth_proc.check_returncode() # Raise an exception.

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/subprocess.py", line 389, in check_returncode

self.stderr)

subprocess.CalledProcessError: Command '['/tmpfs/src/github/synthtool/env/bin/python3', '-m', 'synthtool', '--metadata', 'synth.metadata', 'synth.py', '--']' returned non-zero exit status 1.

```

Google internal developers can see the full log [here](http://sponge2/results/invocations/76bb7f6f-4d47-4888-b0d2-a8761a276cc8/targets/github%2Fsynthtool;config=default/tests;query=google-auth-library-nodejs;failed=false).

| priority | synthesis failed for google auth library nodejs hello autosynth couldn t regenerate google auth library nodejs broken heart here s the output from running synth py b cookie name docs devsite sh debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event debug watchdog observers inotify buffer in event synthtool installing dependencies debug synthtool installing dependencies debug watchdog observers inotify buffer in event npm warn deprecated istanbul this module is no longer maintained try this instead npm warn deprecated npm i nyc npm warn deprecated visit for other alternatives npm warn deprecated chokidar chokidar will break on node upgrade to chokidar with less dependencies npm warn deprecated resolve url npm warn deprecated urix please see npm warn 
deprecated fsevents fsevents will break on node and could be using insecure binaries upgrade to fsevents npm err code npm err not found get not found npm err npm err compodoc compodoc is not in the npm registry npm err you should bug the author to publish it or use the name yourself npm err it was specified as a dependency of google auth library nodejs npm err npm err note that you can also install from a npm err tarball folder http url or git url npm err a complete log of this run can be found in npm err home kbuilder npm logs debug log synthtool failed executing npm install none error synthtool failed executing npm install none synthtool wrote metadata to synth metadata debug synthtool wrote metadata to synth metadata traceback most recent call last file home kbuilder pyenv versions lib runpy py line in run module as main main mod spec file home kbuilder pyenv versions lib runpy py line in run code exec code run globals file tmpfs src github synthtool synthtool main py line in main file tmpfs src github synthtool env lib site packages click core py line in call return self main args kwargs file tmpfs src github synthtool env lib site packages click core py line in main rv self invoke ctx file tmpfs src github synthtool env lib site packages click core py line in invoke return ctx invoke self callback ctx params file tmpfs src github synthtool env lib site packages click core py line in invoke return callback args kwargs file tmpfs src github synthtool synthtool main py line in main spec loader exec module synth module type ignore file line in exec module file line in call with frames removed file home kbuilder cache synthtool google auth library nodejs synth py line in node install file tmpfs src github synthtool synthtool languages node py line in install shell run hide output hide output file tmpfs src github synthtool synthtool shell py line in run raise exc file tmpfs src github synthtool synthtool shell py line in run encoding utf file home kbuilder pyenv 
versions lib subprocess py line in run output stdout stderr stderr subprocess calledprocesserror command returned non zero exit status autosynth synthesis failed autosynth running git reset hard head head is now at fix migrate token info api to not pass token in query string autosynth running git checkout autosynth switched to branch autosynth autosynth running git clean fdx removing pycache traceback most recent call last file home kbuilder pyenv versions lib runpy py line in run module as main main mod spec file home kbuilder pyenv versions lib runpy py line in run code exec code run globals file tmpfs src github synthtool autosynth synth py line in main file tmpfs src github synthtool autosynth synth py line in main return inner main temp dir file tmpfs src github synthtool autosynth synth py line in inner main commit count synthesize loop x multiple prs change pusher synthesizer file tmpfs src github synthtool autosynth synth py line in synthesize loop has changes toolbox synthesize version in new branch synthesizer youngest file tmpfs src github synthtool autosynth synth py line in synthesize version in new branch synthesizer synthesize synth log path self environ file tmpfs src github synthtool autosynth synthesizer py line in synthesize synth proc check returncode raise an exception file home kbuilder pyenv versions lib subprocess py line in check returncode self stderr subprocess calledprocesserror command returned non zero exit status google internal developers can see the full log | 1 |
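
The traceback in this row ends in `subprocess.CalledProcessError` because `npm install` exited with status 1. The following is only a minimal sketch of that pattern using the standard library, not synthtool's actual `shell.run`; the helper name `run_and_report` and the stand-in failing command are assumptions made for illustration.

```python
import subprocess
import sys


def run_and_report(args):
    """Run a command; return (returncode, stderr) instead of raising."""
    try:
        proc = subprocess.run(
            args,
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            encoding="utf-8",
            check=True,  # raise CalledProcessError on a non-zero exit status
        )
    except subprocess.CalledProcessError as exc:
        # The exception carries both the exit status and the captured stderr.
        return exc.returncode, exc.stderr
    return proc.returncode, proc.stderr


# Hypothetical stand-in for a failing `npm install`:
# a child process that writes to stderr and exits with status 1.
failing = [sys.executable, "-c", "import sys; sys.stderr.write('E404\\n'); sys.exit(1)"]
code, err = run_and_report(failing)
```

Keeping the captured stderr next to the exit status is one way to avoid a bare `None` where the failure details were expected, though whether that applies to the tool in the log is not confirmed by this row.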

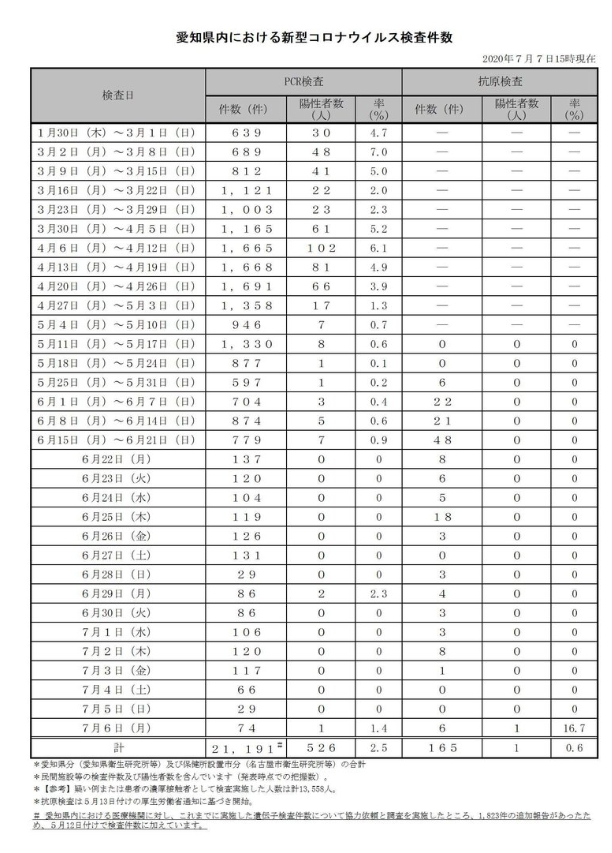

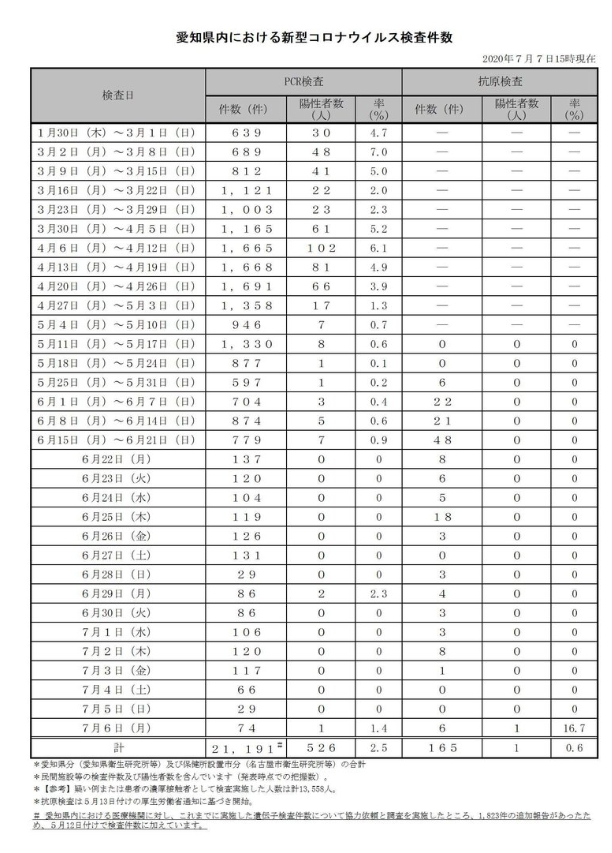

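446,238 | 12,842,866,536 | IssuesEvent | 2020-07-08 03:09:19 | code4nagoya/covid19 | https://api.github.com/repos/code4nagoya/covid19 | opened | Handle the addition of "antigen test" to the test-count table on the Aichi Prefecture website | improve priority high | ## Details of Improvement

An "antigen test" column has been added to [section 3, "Number of tests in Aichi Prefecture", on the Aichi Prefecture website](https://www.pref.aichi.jp/site/covid19-aichi/kansensya-kensa.html).

This has the following effects.

1. [Priority: high] The scheduled automatic scraping is failing, apparently because the table format changed (?) ※1

3. [Priority: low] Show the antigen test counts in the "Number of tests conducted" chart as well (if we treat them as a "count", would a stacked bar chart of PCR and antigen tests do?) ※2

Until now we have only used the "count" figures from the test-count table (the number of positive cases is unused), so I do not expect any other impact.

### ※1

https://github.com/code4nagoya/covid19/pull/712

### ※2

> That is the "antigen test". It is an alternative to the "PCR test" and gives a result quickly, but it seems to be less sensitive (it needs a certain viral load).

> A positive "antigen test" is treated as a confirmed infection; if it is negative but the symptoms still suggest infection, a "PCR test" is apparently carried out afterwards.

> https://news.yahoo.co.jp/byline/kutsunasatoshi/20200517-00178720/

> I do not think the "number of tests" needs to be broken down as information. What matters is the "number of people tested". In the example above, "antigen test" → "PCR test" still counts as one person tested, so my request is: "Aichi Prefecture, please publish the daily number of people tested."

## Screenshot

## Environment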

- macOS / Windows / Linux / iOS / Android

- Chrome / Safari / Firefox / Edge / Internet Explorer

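| 1.0 | Handle the addition of "antigen test" to the test-count table on the Aichi Prefecture website - ## Details of Improvement

An "antigen test" column has been added to [section 3, "Number of tests in Aichi Prefecture", on the Aichi Prefecture website](https://www.pref.aichi.jp/site/covid19-aichi/kansensya-kensa.html).

This has the following effects.

1. [Priority: high] The scheduled automatic scraping is failing, apparently because the table format changed (?) ※1

3. [Priority: low] Show the antigen test counts in the "Number of tests conducted" chart as well (if we treat them as a "count", would a stacked bar chart of PCR and antigen tests do?) ※2

Until now we have only used the "count" figures from the test-count table (the number of positive cases is unused), so I do not expect any other impact.

### ※1

https://github.com/code4nagoya/covid19/pull/712

### ※2

> That is the "antigen test". It is an alternative to the "PCR test" and gives a result quickly, but it seems to be less sensitive (it needs a certain viral load).

> A positive "antigen test" is treated as a confirmed infection; if it is negative but the symptoms still suggest infection, a "PCR test" is apparently carried out afterwards.

> https://news.yahoo.co.jp/byline/kutsunasatoshi/20200517-00178720/

> I do not think the "number of tests" needs to be broken down as information. What matters is the "number of people tested". In the example above, "antigen test" → "PCR test" still counts as one person tested, so my request is: "Aichi Prefecture, please publish the daily number of people tested."

## Screenshot

## Environment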

- macOS / Windows / Linux / iOS / Android

- Chrome / Safari / Firefox / Edge / Internet Explorer

| priority | 愛知県hpの検査件数表に「抗原検査」が追加されたことによる対応 改善詳細 details of improvement に「抗原検査」の列が追加されました。 それにより以下の事象の影響が発生します。 表形式が発生したことにより 、定期自動スクレイピングが失敗している ※ 「検査実施件数」グラフに、抗原検査の検査件数も表現する(「件数」としてなら、pcrと抗原検査の積み上げ防棒グラフでよい?) ※ 元々、検査件数表の情報は「件数」しか使っていませんでした(陽性者数は未使用)。そのため他の影響は無いと思います。 ※ ※ 「抗原検査」ですね。「pcr検査」を代替するもので、短時間で判定出来ますが、感度が劣る 一定のウイルス量が必要 ようです。 「抗原検査」で陽性なら感染確定とし、陰性となっても症状から感染を疑う場合「pcr検査」を引き続き行うようです。 「検査件数」は、情報として特に分けて見る必要は無いと考えます。重要なのは「検査人数」です。前述の例のように「抗原検査」→「pcr検査」とした場合、「検査人数」 、『愛知県は日毎の「検査人数」を公表して下さい』です。 スクリーンショット screenshot 動作環境・ブラウザ environment macos windows linux ios android chrome safari firefox edge internet explorer | 1 |
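
The issue in this row reports that scraping broke once a new column appeared in the source table. The project's real scraper is not shown here, so the following is only a sketch of one common way to tolerate such a change: looking columns up by header name rather than by position. The header names, the sample rows, and the helper names `column_index` and `extract_counts` are all hypothetical.

```python
def column_index(header, name):
    """Return the position of the column whose header equals `name`."""
    try:
        return header.index(name)
    except ValueError:
        raise KeyError(f"column {name!r} not found in header {header!r}")


def extract_counts(rows, wanted):
    """Pick the wanted columns by header name, whatever their position."""
    header, *body = rows
    indexes = {name: column_index(header, name) for name in wanted}
    return [{name: int(row[i]) for name, i in indexes.items()} for row in body]


# Made-up table shaped like the situation described: an "antigen" column
# has been inserted between the existing columns.
rows = [
    ["date", "pcr", "antigen", "positive"],
    ["2020-07-06", "120", "15", "3"],
    ["2020-07-07", "98", "20", "1"],
]
counts = extract_counts(rows, ["pcr", "antigen"])

# Per-day totals, as a stacked bar chart of PCR and antigen tests would show.
totals = [c["pcr"] + c["antigen"] for c in counts]
```

The `totals` list corresponds to the stacked-bar idea raised in the issue; whether a combined count is the right thing to display is the open question the issue itself poses.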

323,591 | 23,955,876,035 | IssuesEvent | 2022-09-12 14:55:25 | Dasharo/dasharo-issues | https://api.github.com/repos/Dasharo/dasharo-issues | closed | Talos II - "Testing firmware images without flashing" instructions are not working | documentation raptor-cs_talos-2 | At https://docs.dasharo.com/variants/talos_2/installation-manual/#testing-firmware-images-without-flashing

3. Mount the file as flash device:

1. mboxctl --backend file:/tmp/flash.pnor

1. root@talos:~# mboxctl --backend file:/tmp/flash.pnor

1. Failed to resolve path: No such file or directory

2. root@talos:~# mboxctl --version

3. Mailbox Control V2.1.1

2. Those instructions are not working.

1. As documented from https://wiki.raptorcs.com/wiki/Compiling_Firmware#Running_the_firmware_temporarily

1. systemctl stop mboxd

2. Point mboxd to prepared talos.pnor image

1. mboxd -f 64M -w 1M -b file:/tmp/talos.pnor -v

3. Open another ssh to BMC

1. mboxctl --lpc-state

1. should show “LPC Bus Maps: BMC Memory”

2. obmcutil poweron

3. When done testing

1. obmcutil poweroff

2. systemctl start mboxd

3. mboxctl --lpc-state

1. Should show: “LPC Bus Maps: Flash Device” | 1.0 | Talos II - "Testing firmware images without flashing" instructions are not working - At https://docs.dasharo.com/variants/talos_2/installation-manual/#testing-firmware-images-without-flashing

3. Mount the file as flash device:

1. mboxctl --backend file:/tmp/flash.pnor

1. root@talos:~# mboxctl --backend file:/tmp/flash.pnor

1. Failed to resolve path: No such file or directory

2. root@talos:~# mboxctl --version

3. Mailbox Control V2.1.1

2. Those instructions are not working.

1. As documented from https://wiki.raptorcs.com/wiki/Compiling_Firmware#Running_the_firmware_temporarily

1. systemctl stop mboxd

2. Point mboxd to prepared talos.pnor image

1. mboxd -f 64M -w 1M -b file:/tmp/talos.pnor -v

3. Open another ssh to BMC

1. mboxctl --lpc-state

1. should show “LPC Bus Maps: BMC Memory”

2. obmcutil poweron

3. When done testing

1. obmcutil poweroff

2. systemctl start mboxd

3. mboxctl --lpc-state

1. Should show: “LPC Bus Maps: Flash Device” | non_priority | talos ii testing firmware images without flashing instructions are not working at mount the file as flash device mboxctl backend file tmp flash pnor root talos mboxctl backend file tmp flash pnor failed to resolve path no such file or directory root talos mboxctl version mailbox control those instructions are not working as documented from systemctl stop mboxd point mboxd to prepared talos pnor image mboxd f w b file tmp talos pnor v open another ssh to bmc mboxctl lpc state should show “lpc bus maps bmc memory” obmcutil poweron when done testing obmcutil poweroff systemctl start mboxd mboxctl lpc state should show “lpc bus maps flash device” | 0 |

31,087 | 4,231,119,215 | IssuesEvent | 2016-07-04 14:43:19 | governmentbg/opendata-cms | https://api.github.com/repos/governmentbg/opendata-cms | closed | Fine-tune the font sizes in the header | design low priority question | We said we are not chasing pixel-perfect matching between this design and that of the open data portal, and that still holds, but the fonts in the header render visibly differently in the two places:

Portal:

<img width="985" alt="screenshot 2016-06-29 17 47 12" src="https://cloud.githubusercontent.com/assets/129307/16456720/8d8ad26e-3e21-11e6-887a-3ab2172b7c77.png">

CMS:

<img width="980" alt="screenshot 2016-06-29 17 47 19" src="https://cloud.githubusercontent.com/assets/129307/16456722/8fa75cca-3e21-11e6-9c32-4bdab1619774.png">

I am open to suggestions on whether there is something easy we can do to bring the two looks a little closer.

Things that occur to me after a quick check:

- `font-weight: 900;` instead of `bold` for the bold part of the title text in the header.

- Fine-tuning `line-height`, `font-size`, and perhaps `font-weight` or the rendering and antialiasing options.

I see that `rem` is used almost everywhere. If I am not mistaken, `rem` is based on the font size of `<html>`, which is set to `110%`. Does that ever change under any conditions? Would it not be better to base it on a fixed font size on `<html>` that is increased or decreased with media queries, depending on the screen size? | 1.0 | Fine-tune the font sizes in the header - We said we are not chasing pixel-perfect matching between this design and that of the open data portal, and that still holds, but the fonts in the header render visibly differently in the two places:

Portal:

<img width="985" alt="screenshot 2016-06-29 17 47 12" src="https://cloud.githubusercontent.com/assets/129307/16456720/8d8ad26e-3e21-11e6-887a-3ab2172b7c77.png">

CMS:

<img width="980" alt="screenshot 2016-06-29 17 47 19" src="https://cloud.githubusercontent.com/assets/129307/16456722/8fa75cca-3e21-11e6-9c32-4bdab1619774.png">

I am open to suggestions on whether there is something easy we can do to bring the two looks a little closer.

Things that occur to me after a quick check:

- `font-weight: 900;` instead of `bold` for the bold part of the title text in the header.

- Fine-tuning `line-height`, `font-size`, and perhaps `font-weight` or the rendering and antialiasing options.

I see that `rem` is used almost everywhere. If I am not mistaken, `rem` is based on the font size of `<html>`, which is set to `110%`. Does that ever change under any conditions? Would it not be better to base it on a fixed font size on `<html>` that is increased or decreased with media queries, depending on the screen size? | non_priority | прецизиране на размера на шрифтовете в хедъра казахме че не гоним pixel perfect matching на дизайна с този на портала за отворени данни и това все още е валидно но шрифтовете в хедъра се изрисуват по видимо различен начин на двете места портал img width alt screenshot src cms img width alt screenshot src приемам предложения дали лесно можем да направим нещо така че да сближим двете визии още малко неща които на мен ми хрумват след бърза проверка font weight вместо bold на почернената част от заглавния текст в хедъра донагласяне на line height font size може би и font weight или опциите за изчертаване и antialiasing виждам че почти навсякъде се ползва rem този rem стъпва на размера на шрифта на ако не се лъжа там е зададено това променя ли се някога при някакви условия дали не е по добре да се стъпи на фиксиран размер на шрифта в който да бъде увеличаван намаляван с media queries в зависимост от размера на екрана | 0 |
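
The question in this row turns on how `rem` relates to the root font size. As a small worked sketch: `1rem` equals the computed font size of `<html>`, so with the `110%` mentioned in the issue the pixel value depends on the browser's default size. The 16px default used below is an assumption (a common browser default, but user-configurable), and the helper name `rem_to_px` is hypothetical.

```python
def rem_to_px(rem, root_percent=110.0, browser_default_px=16.0):
    """Convert a rem value to pixels for a percentage-based root font size.

    browser_default_px is an assumed default; users can change it,
    which is exactly why a percentage root size is not a fixed pixel size.
    """
    root_px = browser_default_px * root_percent / 100.0
    return rem * root_px


one_rem = rem_to_px(1.0)             # root size with the assumed 16px default
larger_default = rem_to_px(1.0, browser_default_px=20.0)  # user raised the default
```

Under these assumptions the root size changes whenever the browser default changes, which is the trade-off between a percentage root and the fixed size with media queries that the issue proposes.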

635,627 | 20,423,849,592 | IssuesEvent | 2022-02-24 00:15:29 | aws/aws-node-termination-handler | https://api.github.com/repos/aws/aws-node-termination-handler | closed | Incorrectly pulls amd64 image on arm64 machine. | Type: Bug Priority: Medium | **Describe the bug**

On an arm machine when you pull the multi-arch image, it pulls the amd64 image instead of the arm64 image.

**Steps to reproduce**

Some weird behavior I'm noticing with upstream amazon/aws-node-termination-handler

On inspecting the manifest it's clearly a multi-arch image and does have an arm64 arch version available.

```

$ docker manifest inspect amazon/aws-node-termination-handler:v1.6.1

{

"schemaVersion": 2,

"mediaType": "application/vnd.docker.distribution.manifest.list.v2+json",

"manifests": [

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 947,

"digest": "sha256:7e91ba3ff76e3c540f8e2f1d3935b61fb05f831a9c36f42961a5d0e878e7c8a4",

"platform": {

"architecture": "amd64",

"os": "linux"

}

},

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 947,

"digest": "sha256:f83038b59db9cebe1b1904fc6f351e82f8aa9e6cdb09bd89d34996998ad5ab16",

"platform": {

"architecture": "arm",

"os": "linux"

}

},

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 947,

"digest": "sha256:c3e1c2d17a05c8ecb661658bd179be3784fe605da58ab7940e1303c0fb8eda70",

"platform": {

"architecture": "arm64",

"os": "linux"

}

},

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 1752,

"digest": "sha256:2a72849bcd7c46518523072f8ad3453c451030a1ddfd2dd9c95bdae3ffdfdc8e",

"platform": {

"architecture": "amd64",

"os": "windows",

"os.version": "10.0.17763.1282"

}

}

]

}

```

But when I pull the image on my arm64 machine, it pulls the amd64 image instead of the arm64 image.

```

$ docker pull amazon/aws-node-termination-handler:v1.6.1

$ docker image inspect amazon/aws-node-termination-handler:v1.6.1

.....

],

"OnBuild": null,

"Labels": null

},

"Architecture": "amd64",

"Os": "linux",

"Size": 36635931,

"VirtualSize": 36635931,

"GraphDriver": {

....

```

**Expected outcome**

Architecture should be arm64 instead of amd64.

**Application Logs**

The log output when experiencing the issue.

**Environment**

* NTH App Version:

* NTH Mode (IMDS/Queue processor):

* OS/Arch: MacOS/arm64

* Kubernetes version:

* Installation method:

| 1.0 | Incorrectly pulls amd64 image on arm64 machine. - **Describe the bug**

On an arm machine when you pull the multi-arch image, it pulls the amd64 image instead of the arm64 image.

**Steps to reproduce**

Some weird behavior I'm noticing with upstream amazon/aws-node-termination-handler

On inspecting the manifest it's clearly a multi-arch image and does have an arm64 arch version available.

```

$ docker manifest inspect amazon/aws-node-termination-handler:v1.6.1

{

"schemaVersion": 2,

"mediaType": "application/vnd.docker.distribution.manifest.list.v2+json",

"manifests": [

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 947,

"digest": "sha256:7e91ba3ff76e3c540f8e2f1d3935b61fb05f831a9c36f42961a5d0e878e7c8a4",

"platform": {

"architecture": "amd64",

"os": "linux"

}

},

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 947,

"digest": "sha256:f83038b59db9cebe1b1904fc6f351e82f8aa9e6cdb09bd89d34996998ad5ab16",

"platform": {

"architecture": "arm",

"os": "linux"

}

},

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 947,

"digest": "sha256:c3e1c2d17a05c8ecb661658bd179be3784fe605da58ab7940e1303c0fb8eda70",

"platform": {

"architecture": "arm64",

"os": "linux"

}

},

{

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"size": 1752,

"digest": "sha256:2a72849bcd7c46518523072f8ad3453c451030a1ddfd2dd9c95bdae3ffdfdc8e",

"platform": {

"architecture": "amd64",

"os": "windows",

"os.version": "10.0.17763.1282"

}

}

]

}

```

But when I pull the image on my arm64 machine, it pulls the amd64 image instead of the arm64 image.

```

$ docker pull amazon/aws-node-termination-handler:v1.6.1

$ docker image inspect amazon/aws-node-termination-handler:v1.6.1

.....

],

"OnBuild": null,

"Labels": null

},

"Architecture": "amd64",

"Os": "linux",

"Size": 36635931,

"VirtualSize": 36635931,

"GraphDriver": {

....

```

**Expected outcome**

Architecture should be arm64 instead of amd64.

**Application Logs**

The log output when experiencing the issue.

**Environment**

* NTH App Version:

* NTH Mode (IMDS/Queue processor):

* OS/Arch: MacOS/arm64

* Kubernetes version:

* Installation method:

| priority | incorrectly pulls image on machine describe the bug on an arm machine when you pull the multi arch image it pulls the image instead of the image steps to reproduce some weird behavior i m noticing with upstream amazon aws node termination handler on inspecting the manifest it s clearly a multi arch image and does have an arch version available docker manifest inspect amazon aws node termination handler schemaversion mediatype application vnd docker distribution manifest list json manifests mediatype application vnd docker distribution manifest json size digest platform architecture os linux mediatype application vnd docker distribution manifest json size digest platform architecture arm os linux mediatype application vnd docker distribution manifest json size digest platform architecture os linux mediatype application vnd docker distribution manifest json size digest platform architecture os windows os version but when i pull the image on my machine it pulls the image instead of the image docker pull amazon aws node termination handler docker image inspect amazon aws node termination handler onbuild null labels null architecture os linux size virtualsize graphdriver expected outcome architecture should be instead of application logs the log output when experiencing the issue environment nth app version nth mode imds queue processor os arch macos kubernetes version installation method | 1 |
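
The report in this row expects the `arm64`/`linux` entry of the manifest list to be chosen. The sketch below only illustrates that expectation by matching on the `platform` fields quoted in the issue; it is not Docker's actual resolution logic and does not explain why the amd64 image was pulled. The function name `digest_for` is hypothetical, and the manifest entries are trimmed to the fields used.

```python
import json

# Trimmed copy of the manifest list quoted in the issue (platform and digest only).
MANIFEST_LIST = json.loads("""
{
  "schemaVersion": 2,
  "manifests": [
    {"digest": "sha256:7e91ba3ff76e3c540f8e2f1d3935b61fb05f831a9c36f42961a5d0e878e7c8a4",
     "platform": {"architecture": "amd64", "os": "linux"}},
    {"digest": "sha256:f83038b59db9cebe1b1904fc6f351e82f8aa9e6cdb09bd89d34996998ad5ab16",
     "platform": {"architecture": "arm", "os": "linux"}},
    {"digest": "sha256:c3e1c2d17a05c8ecb661658bd179be3784fe605da58ab7940e1303c0fb8eda70",
     "platform": {"architecture": "arm64", "os": "linux"}},
    {"digest": "sha256:2a72849bcd7c46518523072f8ad3453c451030a1ddfd2dd9c95bdae3ffdfdc8e",
     "platform": {"architecture": "amd64", "os": "windows", "os.version": "10.0.17763.1282"}}
  ]
}
""")


def digest_for(manifest_list, architecture, os_name):
    """Return the digest of the first entry matching architecture and OS, or None."""
    for entry in manifest_list["manifests"]:
        platform = entry["platform"]
        if platform["architecture"] == architecture and platform["os"] == os_name:
            return entry["digest"]
    return None


expected = digest_for(MANIFEST_LIST, "arm64", "linux")
missing = digest_for(MANIFEST_LIST, "ppc64le", "linux")  # no such entry in the list
```

Comparing `expected` with the digest of the image that was actually pulled would be one way to confirm which manifest entry the client resolved.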

27,715 | 2,695,320,650 | IssuesEvent | 2015-04-02 03:59:36 | cs2103jan2015-t15-4j/main | https://api.github.com/repos/cs2103jan2015-t15-4j/main | closed | A user can view a list of already completed tasks | priority.medium type.story | ...so that the user can review his/her completed tasks when necessary. | 1.0 | A user can view a list of already completed tasks - ...so that the user can review his/her completed tasks when necessary. | priority | a user can view a list of already completed tasks so that the user can review his her completed tasks when necessary | 1 |

283,223 | 8,717,913,612 | IssuesEvent | 2018-12-07 18:42:11 | rubykube/peatio | https://api.github.com/repos/rubykube/peatio | closed | Sessions do not delete when DELETE /api/v2/sessions | Priority: High Type: Bug v1.9 | After sending DELETE /api/v2/sessions, I can still use the old JWToken to create new orders and perform other actions. | 1.0 | Sessions do not delete when DELETE /api/v2/sessions - After sending DELETE /api/v2/sessions, I can still use the old JWToken to create new orders and perform other actions. | priority | sessions do not delete when delete api sessions after send delete api sessions i can use old jwtoken for creating new order and other actions | 1 |

538,187 | 15,764,592,414 | IssuesEvent | 2021-03-31 13:23:12 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID :219484] Out-of-bounds access in tests/drivers/timer/nrf_rtc_timer/src/main.c | Coverity bug priority: low |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/bd97359a5338b2542d19011b6d6aa1d8d1b9cc3f/tests/drivers/timer/nrf_rtc_timer/src/main.c

Category: Memory - corruptions

Function: `test_int_disable_enabled`

Component: Tests

CID: [219484](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId=219484)

Details:

https://github.com/zephyrproject-rtos/zephyr/blob/bd97359a5338b2542d19011b6d6aa1d8d1b9cc3f/tests/drivers/timer/nrf_rtc_timer/src/main.c#L147

Please fix or provide comments in coverity using the link:

https://scan9.coverity.com/reports.htm#v32951/p12996.

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| 1.0 | [Coverity CID :219484] Out-of-bounds access in tests/drivers/timer/nrf_rtc_timer/src/main.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/bd97359a5338b2542d19011b6d6aa1d8d1b9cc3f/tests/drivers/timer/nrf_rtc_timer/src/main.c

Category: Memory - corruptions

Function: `test_int_disable_enabled`

Component: Tests

CID: [219484](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId=219484)

Details:

https://github.com/zephyrproject-rtos/zephyr/blob/bd97359a5338b2542d19011b6d6aa1d8d1b9cc3f/tests/drivers/timer/nrf_rtc_timer/src/main.c#L147

Please fix or provide comments in coverity using the link:

https://scan9.coverity.com/reports.htm#v32951/p12996.

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| priority | out of bounds access in tests drivers timer nrf rtc timer src main c static code scan issues found in file category memory corruptions function test int disable enabled component tests cid details please fix or provide comments in coverity using the link note this issue was created automatically priority was set based on classification of the file affected and the impact field in coverity assignees were set using the codeowners file | 1 |

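742,450 | 25,855,464,409 | IssuesEvent | 2022-12-13 13:27:12 | hengband/hengband | https://api.github.com/repos/hengband/hengband | closed | Improve handling of BasitemInfo::locale/chance | refactor Priority:MIDDLE | Mainly the following:

- Turn them into a struct, taking advantage of the property that both always have the same number of elements

- Convert from raw arrays to std::array

- Tidy up the related code | 1.0 | Improve handling of BasitemInfo::locale/chance - Mainly the following:

- Turn them into a struct, taking advantage of the property that both always have the same number of elements

- Convert from raw arrays to std::array

- Tidy up the related code | priority | basiteminfo locale chance の取り扱い改善 主に以下: ・「常に両方の要素数は同じ」という性質を活かして構造体化 ・生配列からstd array への転換 ・関連コードの整備 | 1 |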

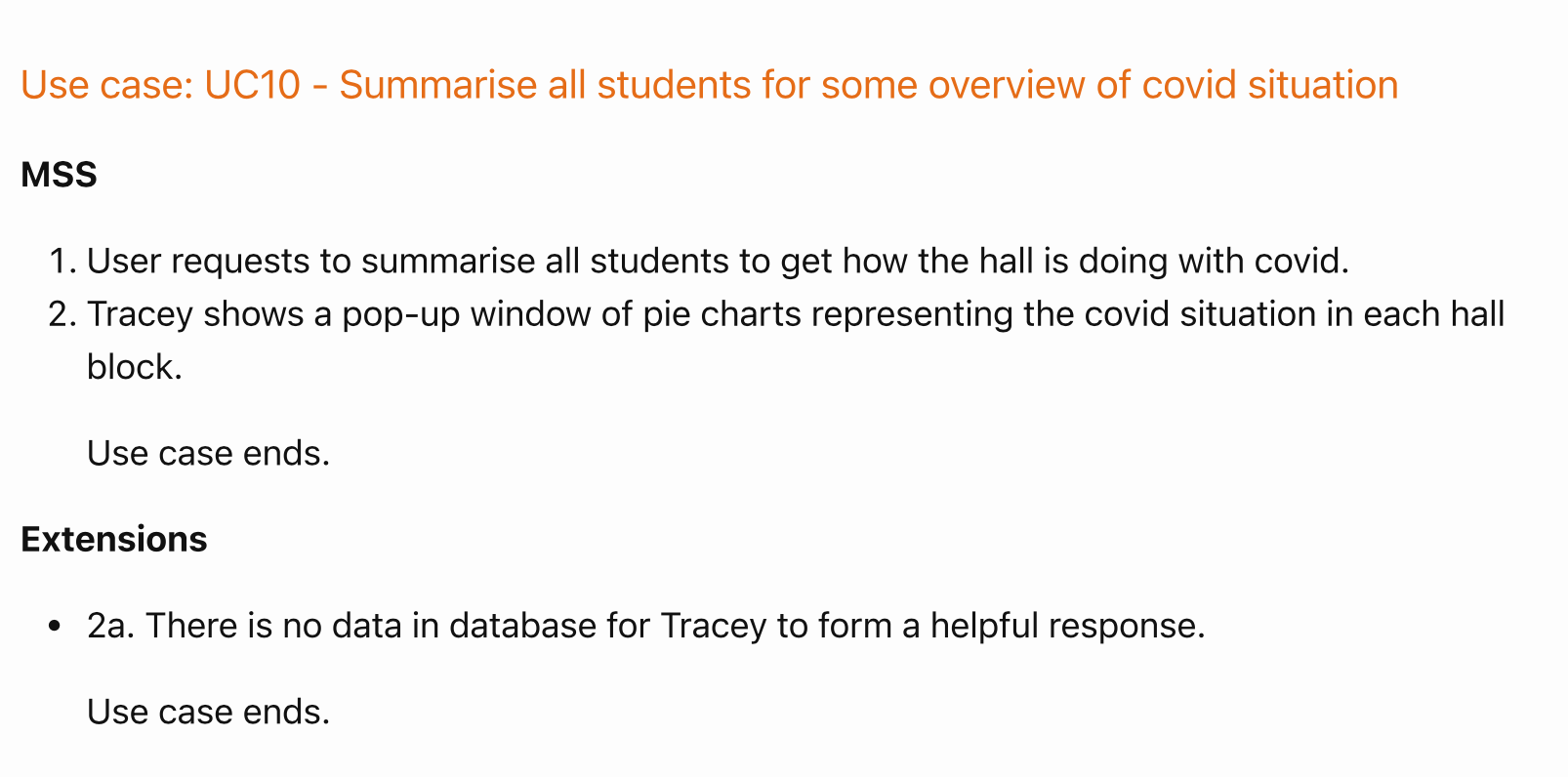

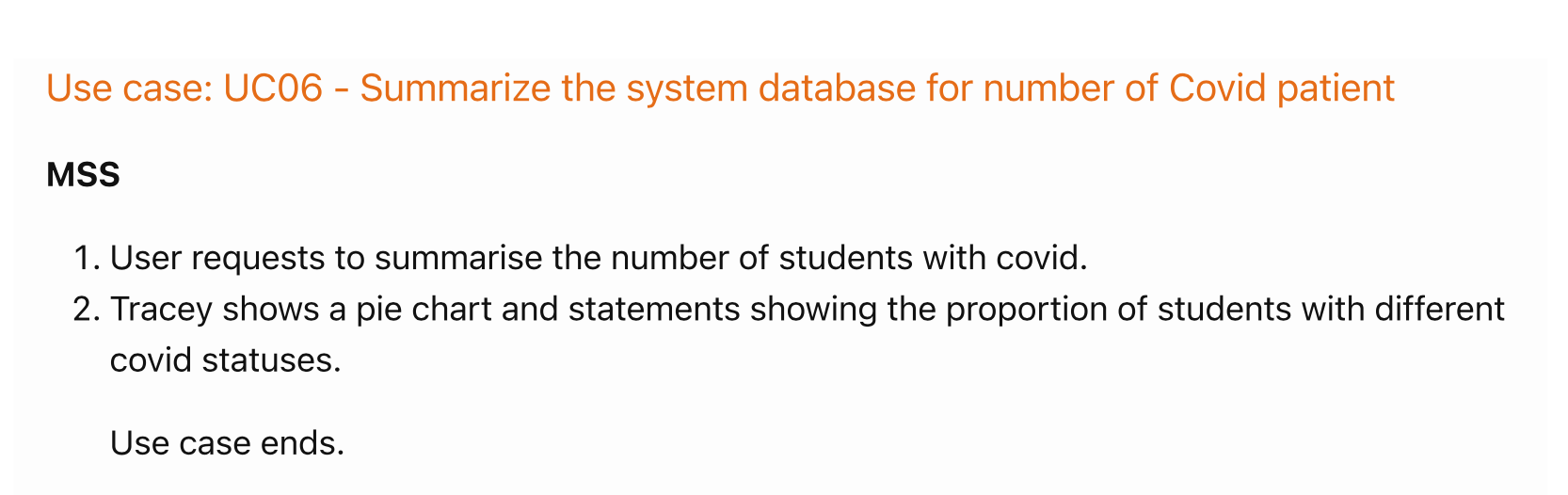

282,028 | 21,315,456,816 | IssuesEvent | 2022-04-16 07:31:43 | channne/pe | https://api.github.com/repos/channne/pe | opened | UC06 and UC10 | severity.Low type.DocumentationBug | UC06 and UC10 seem very similar, but also describe behavior that does not seem to exist - `Tracey` does not summarise over the entire hall, just by block and faculty

<!--session: 1650086993451-197aa2c0-ab17-433e-a4ec-44761c99441f-->

<!--Version: Web v3.4.2--> | 1.0 | UC06 and UC10 - UC06 and UC10 seem very similar, but also describe behavior that does not seem to exist - `Tracey` does not summarise over the entire hall, just by block and faculty

<!--session: 1650086993451-197aa2c0-ab17-433e-a4ec-44761c99441f-->

<!--Version: Web v3.4.2--> | non_priority | and and seem very similar but also describe behavior that does not seem to exist tracey does not summarise over the entire hall just by block and faculty | 0 |

240,773 | 20,073,542,171 | IssuesEvent | 2022-02-04 10:06:17 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: jepsen/g2/start-stop-2 failed | C-test-failure O-robot O-roachtest release-blocker branch-release-20.2 | [(roachtest).jepsen/g2/start-stop-2 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4299094&tab=buildLog) on [release-20.2@09707aabb12e50f6e7345b5c9664c0745bb7d742](https://github.com/cockroachdb/cockroach/commits/09707aabb12e50f6e7345b5c9664c0745bb7d742):

```

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2291

| main.runJepsen.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/jepsen.go:160

| main.runJepsen.func3

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/jepsen.go:195

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1581

Wraps: (2) output in run_100536.122_n6_bash

Wraps: (3) /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-4299094-1643958549-63-n6cpu4:6 -- bash -e -c "\

| cd /mnt/data1/jepsen/cockroachdb && set -eo pipefail && \

| ~/lein run test \

| --tarball file://${PWD}/cockroach.tgz \

| --username ${USER} \

| --ssh-private-key ~/.ssh/id_rsa \

| --os ubuntu \

| --time-limit 300 \

| --concurrency 30 \

| --recovery-time 25 \

| --test-count 1 \

| -n 10.128.0.25 -n 10.128.0.37 -n 10.128.0.23 -n 10.128.0.33 -n 10.128.0.21 \

| --test g2 --nemesis start-stop-2 \

| > invoke.log 2>&1 \

| " returned

| stderr:

| Error: SSH_PROBLEM: exit status 255

| (1) SSH_PROBLEM

| Wraps: (2) Node 6. Command with error:

| | ```

| | bash -e -c "\

| | cd /mnt/data1/jepsen/cockroachdb && set -eo pipefail && \

| | ~/lein run test \

| | --tarball file://${PWD}/cockroach.tgz \

| | --username ${USER} \

| | --ssh-private-key ~/.ssh/id_rsa \

| | --os ubuntu \

| | --time-limit 300 \

| | --concurrency 30 \

| | --recovery-time 25 \

| | --test-count 1 \

| | -n 10.128.0.25 -n 10.128.0.37 -n 10.128.0.23 -n 10.128.0.33 -n 10.128.0.21 \

| | --test g2 --nemesis start-stop-2 \

| | > invoke.log 2>&1 \

| | "

| | ```

| Wraps: (3) exit status 255

| Error types: (1) errors.SSH (2) *hintdetail.withDetail (3) *exec.ExitError

|

| stdout:

Wraps: (4) exit status 10

Error types: (1) *withstack.withStack (2) *errutil.withPrefix (3) *main.withCommandDetails (4) *exec.ExitError

```

<details><summary>More</summary><p>

Artifacts: [/jepsen/g2/start-stop-2](https://teamcity.cockroachdb.com/viewLog.html?buildId=4299094&tab=artifacts#/jepsen/g2/start-stop-2)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Ajepsen%2Fg2%2Fstart-stop-2.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| 2.0 | roachtest: jepsen/g2/start-stop-2 failed - [(roachtest).jepsen/g2/start-stop-2 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4299094&tab=buildLog) on [release-20.2@09707aabb12e50f6e7345b5c9664c0745bb7d742](https://github.com/cockroachdb/cockroach/commits/09707aabb12e50f6e7345b5c9664c0745bb7d742):

```

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2291

| main.runJepsen.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/jepsen.go:160

| main.runJepsen.func3

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/jepsen.go:195

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1581

Wraps: (2) output in run_100536.122_n6_bash

Wraps: (3) /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-4299094-1643958549-63-n6cpu4:6 -- bash -e -c "\

| cd /mnt/data1/jepsen/cockroachdb && set -eo pipefail && \

| ~/lein run test \

| --tarball file://${PWD}/cockroach.tgz \

| --username ${USER} \

| --ssh-private-key ~/.ssh/id_rsa \

| --os ubuntu \

| --time-limit 300 \

| --concurrency 30 \

| --recovery-time 25 \

| --test-count 1 \

| -n 10.128.0.25 -n 10.128.0.37 -n 10.128.0.23 -n 10.128.0.33 -n 10.128.0.21 \

| --test g2 --nemesis start-stop-2 \

| > invoke.log 2>&1 \

| " returned

| stderr:

| Error: SSH_PROBLEM: exit status 255

| (1) SSH_PROBLEM

| Wraps: (2) Node 6. Command with error:

| | ```

| | bash -e -c "\

| | cd /mnt/data1/jepsen/cockroachdb && set -eo pipefail && \

| | ~/lein run test \

| | --tarball file://${PWD}/cockroach.tgz \

| | --username ${USER} \

| | --ssh-private-key ~/.ssh/id_rsa \

| | --os ubuntu \

| | --time-limit 300 \

| | --concurrency 30 \

| | --recovery-time 25 \

| | --test-count 1 \

| | -n 10.128.0.25 -n 10.128.0.37 -n 10.128.0.23 -n 10.128.0.33 -n 10.128.0.21 \

| | --test g2 --nemesis start-stop-2 \

| | > invoke.log 2>&1 \

| | "

| | ```

| Wraps: (3) exit status 255

| Error types: (1) errors.SSH (2) *hintdetail.withDetail (3) *exec.ExitError

|

| stdout:

Wraps: (4) exit status 10

Error types: (1) *withstack.withStack (2) *errutil.withPrefix (3) *main.withCommandDetails (4) *exec.ExitError

```

<details><summary>More</summary><p>

Artifacts: [/jepsen/g2/start-stop-2](https://teamcity.cockroachdb.com/viewLog.html?buildId=4299094&tab=artifacts#/jepsen/g2/start-stop-2)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Ajepsen%2Fg2%2Fstart-stop-2.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| non_priority | roachtest jepsen start stop failed on home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main runjepsen home agent work go src github com cockroachdb cockroach pkg cmd roachtest jepsen go main runjepsen home agent work go src github com cockroachdb cockroach pkg cmd roachtest jepsen go runtime goexit usr local go src runtime asm s wraps output in run bash wraps home agent work go src github com cockroachdb cockroach bin roachprod run teamcity bash e c cd mnt jepsen cockroachdb set eo pipefail lein run test tarball file pwd cockroach tgz username user ssh private key ssh id rsa os ubuntu time limit concurrency recovery time test count n n n n n test nemesis start stop invoke log returned stderr error ssh problem exit status ssh problem wraps node command with error bash e c cd mnt jepsen cockroachdb set eo pipefail lein run test tarball file pwd cockroach tgz username user ssh private key ssh id rsa os ubuntu time limit concurrency recovery time test count n n n n n test nemesis start stop invoke log wraps exit status error types errors ssh hintdetail withdetail exec exiterror stdout wraps exit status error types withstack withstack errutil withprefix main withcommanddetails exec exiterror more artifacts powered by | 0 |

405,895 | 11,884,051,757 | IssuesEvent | 2020-03-27 16:58:30 | OpenFAM/OpenFAM | https://api.github.com/repos/OpenFAM/OpenFAM | closed | Add support to libfabric provider for Infiniband | <PRIORITY>- P0 enhancement | The OpenFAM API needs to support the libfabric verbs provider for InfiniBand. | 1.0 | Add support to libfabric provider for Infiniband - The OpenFAM API needs to support the libfabric verbs provider for InfiniBand. | priority | add support to libfabric provider for infiniband the openfam api needs to support the libfabric verbs provider for infiniband | 1 |

333,519 | 10,127,604,686 | IssuesEvent | 2019-08-01 10:37:36 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.apple.com - site is not usable | browser-firefox engine-gecko priority-critical | <!-- @browser: Firefox 69.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:69.0) Gecko/20100101 Firefox/69.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.apple.com/fr/itunes/download/thank-you/

**Browser / Version**: Firefox 69.0

**Operating System**: Windows 7

**Tested Another Browser**: Unknown

**Problem type**: Site is not usable

**Description**: update doesn't charge

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2019/7/3bd85b88-0186-4faa-8a7d-8644ca7178dd.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>image.mem.shared: true</li><li>buildID: 20190722201635</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>hasTouchScreen: true</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>gfx.webrender.all: false</li><li>channel: aurora</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.apple.com - site is not usable - <!-- @browser: Firefox 69.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:69.0) Gecko/20100101 Firefox/69.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.apple.com/fr/itunes/download/thank-you/

**Browser / Version**: Firefox 69.0

**Operating System**: Windows 7

**Tested Another Browser**: Unknown

**Problem type**: Site is not usable

**Description**: update doesn't charge

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2019/7/3bd85b88-0186-4faa-8a7d-8644ca7178dd.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>image.mem.shared: true</li><li>buildID: 20190722201635</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>hasTouchScreen: true</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>gfx.webrender.all: false</li><li>channel: aurora</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | site is not usable url browser version firefox operating system windows tested another browser unknown problem type site is not usable description update doesn t charge steps to reproduce browser configuration mixed active content blocked false image mem shared true buildid tracking content blocked false gfx webrender blob images true hastouchscreen true mixed passive content blocked false gfx webrender enabled false gfx webrender all false channel aurora from with ❤️ | 1 |