Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

433,630 | 12,508,038,527 | IssuesEvent | 2020-06-02 14:58:49 | canonical-web-and-design/build.snapcraft.io | https://api.github.com/repos/canonical-web-and-design/build.snapcraft.io | closed | Should not build i386 snaps with base: core20 | Priority: High | ## Summary

With the release of Ubuntu 20.04, snaps that specify `base: core20` should not auto-trigger builds on the i386 architecture because that will not be possible since there is not an Ubuntu 20.04 for i386, and thus there is not a core20 base snap for i386.

## Process

Create a snapcraft.yaml with `base... | 1.0 | Should not build i386 snaps with base: core20 - ## Summary

With the release of Ubuntu 20.04, snaps that specify `base: core20` should not auto-trigger builds on the i386 architecture because that will not be possible since there is not an Ubuntu 20.04 for i386, and thus there is not a core20 base snap for i386.

... | priority | should not build snaps with base summary with the release of ubuntu snaps that specify base should not auto trigger builds on the architecture because that will not be possible since there is not an ubuntu for and thus there is not a base snap for process create a snapcraf... | 1 |

61,674 | 25,598,549,355 | IssuesEvent | 2022-12-01 18:05:05 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | closed | [Enhancement]: Add ECR Registry Permissions Resource | enhancement new-resource service/ecr | ### Description

I would like to be able to define the registry permissions JSON, which is necessary for cross account replication on the destination end. So https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/ecr_replication_configuration covers the origin part, but not the destination one.

##... | 1.0 | [Enhancement]: Add ECR Registry Permissions Resource - ### Description

I would like to be able to define the registry permissions JSON, which is necessary for cross account replication on the destination end. So https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/ecr_replication_configuration c... | non_priority | add ecr registry permissions resource description i would like to be able to define the registry permissions json which is necessary for cross account replication on the destination end so covers the origin part but not the destination one affected resource s and or data source s aws ecr repli... | 0 |

83,355 | 3,633,955,882 | IssuesEvent | 2016-02-11 16:21:36 | rsanchez-wsu/sp16-ceg3120 | https://api.github.com/repos/rsanchez-wsu/sp16-ceg3120 | closed | Fix checkstyle issues with team 6 branch. | priority-high state-inprogress team-6 | Code submission works on individual workstations but fails when Jenkins attempts to build it. | 1.0 | Fix checkstyle issues with team 6 branch. - Code submission works on individual workstations but fails when Jenkins attempts to build it. | priority | fix checkstyle issues with team branch code submission works on individual workstations but fails when jenkins attempts to build it | 1 |

6,720 | 6,609,341,277 | IssuesEvent | 2017-09-19 14:15:23 | ekylibre/ekylibre | https://api.github.com/repos/ekylibre/ekylibre | closed | Infinite map = infinite cultivable zone | Bug Cartography Security | When you create a new zone or modify an existing one, there is no limit on the size of it. The problem is the map is not a loop, it's a pattern which repeats as many times as you want.

If the cultivable zone is 2x earth size there is a problem, an error is sent and you have a zone which is at the same place on multiple ... | True | Infinite map = infinite cultivable zone - When you create a new zone or modify an existing one, there is no limit on the size of it. The problem is the map is not a loop, it's a pattern which repeats as many times as you want.

If the cultivable zone is 2x earth size there is a problem, an error is sent and you have a zo... | non_priority | infinite map infinite cultivable zone when you create a new zone or modify an existing one there is no limit on the size of it the problem is the map is not a loop it s a pattern which repeats as many times as you want if the cultivable zone is earth size there is a problem an error is sent and you have a zon... | 0 |

65,988 | 16,518,013,592 | IssuesEvent | 2021-05-26 11:49:44 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | ng e2e does not use --proxy-config for webdriver-manager update | comp: devkit/build-angular type: feature | <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request (mark with an `x`)

```

- [x] bug report -> please search issues before submitting

- [ ] feature request

```

### Versions.

<!--

Output from: `ng --version`.

If nothing,... | 1.0 | ng e2e does not use --proxy-config for webdriver-manager update - <!--

IF YOU DON'T FILL OUT THE FOLLOWING INFORMATION YOUR ISSUE MIGHT BE CLOSED WITHOUT INVESTIGATING

-->

### Bug Report or Feature Request (mark with an `x`)

```

- [x] bug report -> please search issues before submitting

- [ ] feature request

```... | non_priority | ng does not use proxy config for webdriver manager update if you don t fill out the following information your issue might be closed without investigating bug report or feature request mark with an x bug report please search issues before submitting feature request ... | 0 |

31,044 | 2,731,323,356 | IssuesEvent | 2015-04-16 19:41:20 | Theano/Theano | https://api.github.com/repos/Theano/Theano | opened | Sparse-aware addition for combining gradients | Low Priority Sparse | Reported in https://groups.google.com/d/topic/theano-users/aa-Ydpy6_0A/discussion

During gradient computation, when summing the contributions of different gradient paths for the same variable, `tensor.add` can get called with one sparse and one dense input, which leads to a crash.

We could either:

- have an Op for th... | 1.0 | Sparse-aware addition for combining gradients - Reported in https://groups.google.com/d/topic/theano-users/aa-Ydpy6_0A/discussion

During gradient computation, when summing the contributions of different gradient paths for the same variable, `tensor.add` can get called with one sparse and one dense input, which leads to a... | priority | sparse aware addition for combining gradients reported in during gradient computation when summing the contributions of different gradient paths for the same variable tensor add can get called with one sparse and one dense input which leads to a crash we could either have an op for that that then gets o... | 1 |

56,283 | 23,743,020,559 | IssuesEvent | 2022-08-31 13:55:01 | miranda-ng/miranda-ng | https://api.github.com/repos/miranda-ng/miranda-ng | closed | VoiceService: some labels in the call window are not translated | bug VoiceService | "https://github.com/miranda-ng/miranda-ng/blob/master/plugins/VoiceService/src/VoiceCall.cpp#L222\r\n\r\n```\r\n\tcase VOICE_STATE_RINGING:\r\n\t\tincoming = true;\r\n\t\tSetCaption(L\"Incoming call\");\r\n\t\tm_btnAnswer.Enable(true);\r\n\t\tm_lblStatus.SetText(L\"Ringing\");\r\n\t\tSetWindowPos(GetHwnd(), HWND_TOPMOST, 0, 0, 0, 0, SWP_NOMOVE | SWP_NOSIZE | SWP_SHOWWINDOW);\r\n\t\tSetWindowPos(GetHwnd(), HWND_NOTOPMOST, 0, 0, 0, 0, SWP_NOMOVE | SWP_NOSIZE | SWP_SHOWWINDOW);\r\n\t\tbreak;\r\n\tcase VOICE_STATE_CALLING:\r\n\t\tincoming = false;\r\n\t\tSetCaption(L\"Outgoing call\");\r\n\t\tm_lblStatus.SetText(L\"Calling\");\r\n\t\tm_btnAnswer.Enable(false);\r\n\t\tbreak;\r\n\tcase VOICE_STATE_ON_HOLD:\r\n\t\tm_lblStatus.SetText(L\"Holded\");\r\n\t\tm_btnAnswer.Enable(true);\r\n\t\tm_btnAnswer.SetText(L\"Unhold\");\r\n\t\tbreak;\r\n\tcase VOICE_STATE_ENDED:\r\n\t\tm_calltimer.Stop();\r\n\t\tmir_snwprintf(text, _countof(text), L\"Call ended %s\", m_lblStatus.GetText());\r\n\t\tm_lblStatus.SetText(text);\r\n\t\tm_btnAnswer.Enable(false);\r\n\t\tm_btnDrop.SetText(L\"Close\");\r\n\t\tbreak;\r\n\tcase VOICE_STATE_BUSY:\r\n\t\tm_lblStatus.SetText(L\"Busy\");\r\n\t\tm_btnAnswer.Enable(false);\r\n\t\tm_btnDrop.SetText(L\"Close\");\r\n\t\tbreak;\r\n\tdefault:\r\n\t\tm_lblStatus.SetText(L\"Unknown state\");\r\n\t\tbreak;\r\n\t}\r\n```" | 1.0 | VoiceService: some labels in the call window are not translated - https://github.com/miranda-ng/miranda-ng/blob/master/plugins/VoiceService/src/VoiceCall.cpp#L222

```

case VOICE_STATE_RINGING:

incoming = true;

SetCaption(L"Incoming call");

m_btnAnswer.Enable(true);

m_lblStatus.SetText(L"Ringing");

SetW... | non_priority | voiceservice some labels in the call window are not translated case voice state ringing incoming true setcaption l incoming call m btnanswer enable true m lblstatus settext l ringing setwindowpos gethwnd hwnd topmost swp nomove swp nosize swp showwindow set... | 0 |

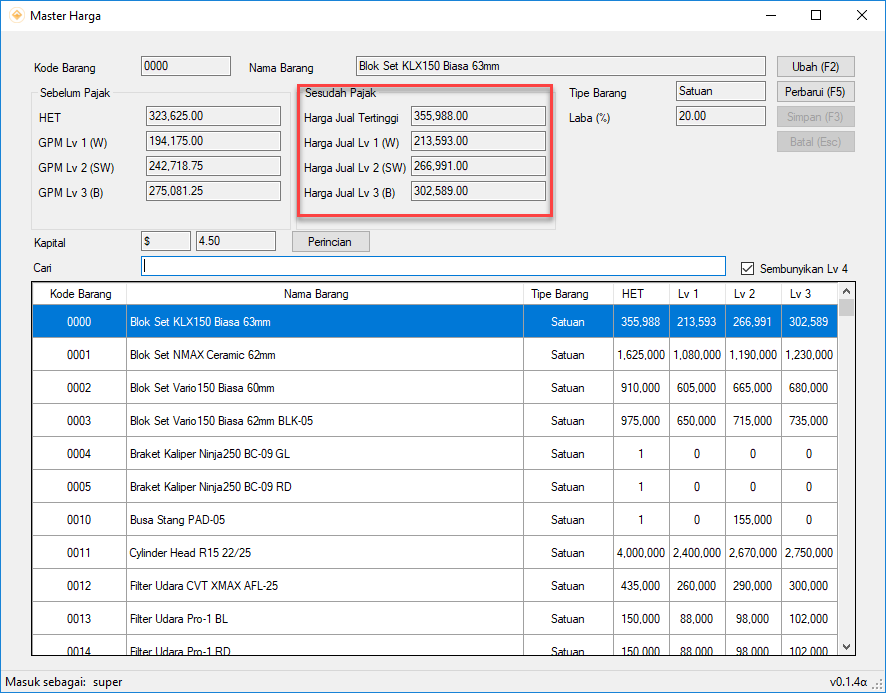

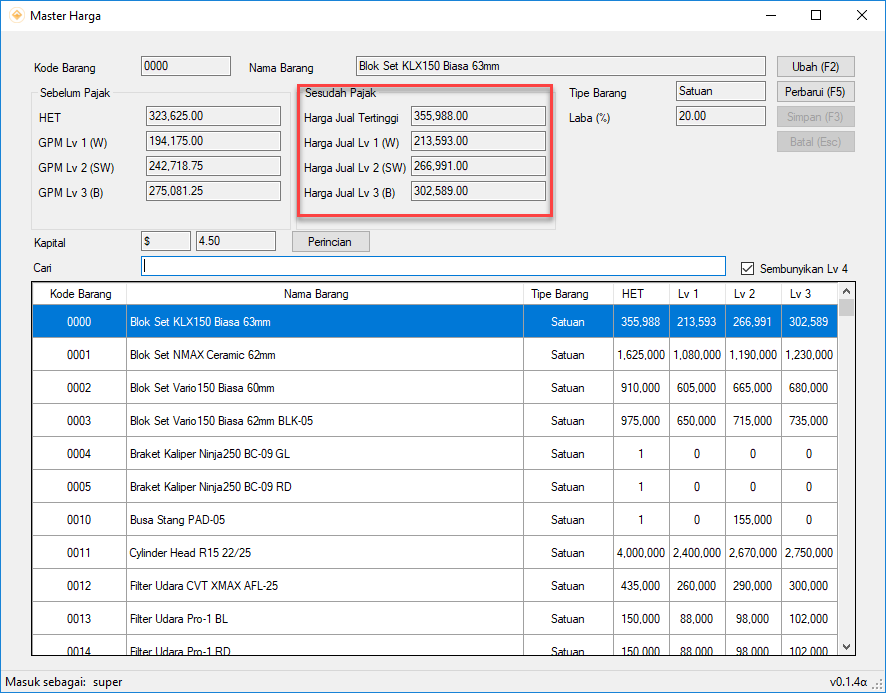

313,548 | 9,564,542,184 | IssuesEvent | 2019-05-05 04:40:06 | sevenzk/SJKTCI | https://api.github.com/repos/sevenzk/SJKTCI | closed | [Price Master] Rounding to 1000, up or down, in Price After Tax | Priority enhancement fixed | There is rounding to 1000, up or down (whichever is nearest), in Price After Tax, as in the image below:

| 1.0 | [Price Master] Rounding to 1000, up or down, in Price After Tax - There is rounding to 1000, up or down (whichever is nearest), in Price After Tax, as in the image below:

| priority | rounding to up or down in price after tax there is rounding to up or down whichever is nearest in price after tax as in the image below | 1 |

118,389 | 4,744,343,449 | IssuesEvent | 2016-10-21 00:29:54 | FeraGroup/FTCVortexScoreCounter | https://api.github.com/repos/FeraGroup/FTCVortexScoreCounter | closed | Large numbers do not fit | enhancement Low Priority | Any score higher than 99 will not fit completely in the box that displays the scores. This is only an issue while looking at the score using the smaller counter. | 1.0 | Large numbers do not fit - Any score higher than 99 will not fit completely in the box that displays the scores. This is only an issue while looking at the score using the smaller counter. | priority | large numbers do not fit any score higher than will not fit completely in the box that displays the scores this is only an issue while looking at the score using the smaller counter | 1 |

580,262 | 17,214,353,905 | IssuesEvent | 2021-07-19 09:33:46 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Pin the GitHub actions we use on this repo to a full length commit SHA | priority-3-normal status:blocked type:refactor | **What would you like Renovate to be able to do?**

<!-- Tell us what requirements you need solving, and be sure to mention too if this is part of any "bigger" problem you're trying to solve. -->

@rarkins and @viceice now that PR #10835 is merged, we can start thinking about pinning our GitHub Actions to the curre... | 1.0 | Pin the GitHub actions we use on this repo to a full length commit SHA - **What would you like Renovate to be able to do?**

<!-- Tell us what requirements you need solving, and be sure to mention too if this is part of any "bigger" problem you're trying to solve. -->

@rarkins and @viceice now that PR #10835 is me... | priority | pin the github actions we use on this repo to a full length commit sha what would you like renovate to be able to do rarkins and viceice now that pr is merged we can start thinking about pinning our github actions to the current full length git commit sha did you already have any implementati... | 1 |

716,982 | 24,656,113,350 | IssuesEvent | 2022-10-17 23:47:14 | ApplETS/Notre-Dame | https://api.github.com/repos/ApplETS/Notre-Dame | closed | Golden files not updated - CI tests failing | bug CI priority: high | **Describe the bug**

Golden files have not been updated correctly in a recent PR and CI tests are failing.

The files that are throwing the error are `gradesDetailsView_1.png`, `gradesDetailsView_2.png`, `gradesDetailsView_evaluation_not_completed.png` (located in `goldenFiles/`)

**To Reproduce**

Steps to reproduc... | 1.0 | Golden files not updated - CI tests failing - **Describe the bug**

Golden files have not been updated correctly in a recent PR and CI tests are failing.

The files that are throwing the error are `gradesDetailsView_1.png`, `gradesDetailsView_2.png`, `gradesDetailsView_evaluation_not_completed.png` (located in `goldenF... | priority | golden files not updated ci tests failing describe the bug golden files have not been updated correctly in a recent pr and ci tests are failing the files that are throwing the error are gradesdetailsview png gradesdetailsview png gradesdetailsview evaluation not completed png located in goldenf... | 1 |

269,463 | 8,435,892,153 | IssuesEvent | 2018-10-17 14:12:52 | smartdevicelink/sdl_core | https://api.github.com/repos/smartdevicelink/sdl_core | closed | Adjust code to accommodate new JsonCPP version | Bug Contributor priority 1: High | ### Bug Report

Adjust code to accommodate new JsonCPP version

##### Expected Behavior

Need to upgrade the third-party JsonCpp library.

The SDL library is currently using an old release candidate version of JsonCpp (0.6.0-rc2). This should be updated to an actually released version.

##### OS & Version Informa... | 1.0 | Adjust code to accommodate new JsonCPP version - ### Bug Report

Adjust code to accommodate new JsonCPP version

##### Expected Behavior

Need to upgrade the third-party JsonCpp library.

The SDL library is currently using an old release candidate version of JsonCpp (0.6.0-rc2). This should be updated to an actual... | priority | adjust code to accommodate new jsoncpp version bug report adjust code to accommodate new jsoncpp version expected behavior need to upgrade the third party jsoncpp library the sdl library is currently using an old release candidate version of jsoncpp this should be updated to an actually... | 1 |

455,448 | 13,127,063,960 | IssuesEvent | 2020-08-06 09:40:16 | phovea/generator-phovea | https://api.github.com/repos/phovea/generator-phovea | opened | Update build.js after moving deploy scripts from app to product | priority: high type: bug | * Release number or git hash: 2feaac301b3ccbaffb9af5bbac124be3832218b1

* OS: Linux

* Environment (local or deployed): both

### Steps to reproduce

1. build a product containing more deployment configurations than web and api

### Observed behavior

* only web and api are accepted options for Dockerfiles (see... | 1.0 | Update build.js after moving deploy scripts from app to product - * Release number or git hash: 2feaac301b3ccbaffb9af5bbac124be3832218b1

* OS: Linux

* Environment (local or deployed): both

### Steps to reproduce

1. build a product containing more deployment configurations than web and api

### Observed behavi... | priority | update build js after moving deploy scripts from app to product release number or git hash os linux environment local or deployed both steps to reproduce build a product containing more deployment configurations than web and api observed behavior only web and api are accepted o... | 1 |

450,454 | 31,925,465,711 | IssuesEvent | 2023-09-19 01:10:23 | ICEI-PUC-Minas-PMV-ADS/pmv-ads-2023-2-e3-proj-mov-t1-entre-time | https://api.github.com/repos/ICEI-PUC-Minas-PMV-ADS/pmv-ads-2023-2-e3-proj-mov-t1-entre-time | closed | Justification and Target Audience (01 - Context Documentation) - H11a-ADS-CST | documentation | H11a-ADS-CST - Understand the users and define a proposed solution: define the problem clearly and objectively, presenting the objectives, the justification, and the motivation for the choice. | 1.0 | Justification and Target Audience (01 - Context Documentation) - H11a-ADS-CST - H11a-ADS-CST - Understand the users and define a proposed solution: define the problem clearly and objectively, presenting the objectives, the justification, and the motivation for the choice. | non_priority | justification and target audience context documentation ads cst ads cst understand the users and define a proposed solution define the problem clearly and objectively presenting the objectives the justification and the motivation for the choice | 0 |

50,728 | 12,549,929,659 | IssuesEvent | 2020-06-06 09:07:38 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | appengine.flexible.tasks.snippets_test: test_pause_queue failed | buildcop: issue priority: p1 type: bug | This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will stop commenting.

---

commit: cc68a07af4cab7b48233680996d2913fb0ba... | 1.0 | appengine.flexible.tasks.snippets_test: test_pause_queue failed - This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will s... | non_priority | appengine flexible tasks snippets test test pause queue failed this test failed to configure my behavior see if i m commenting on this issue too often add the buildcop quiet label and i will stop commenting commit buildurl status failed test output traceback most recent call last... | 0 |

786,415 | 27,645,769,221 | IssuesEvent | 2023-03-10 22:49:58 | briandfoy/cpan-audit | https://api.github.com/repos/briandfoy/cpan-audit | closed | Feature: example drop-in cpan-audit.t file | Type: enhancement Priority: low | I think it would be great to offer a drop-in cpan-audit.t file for users to drop into their t/ directory, which does an audit and reports any security advisories on whatever is in cpanfile.snapshot, for example. | 1.0 | Feature: example drop-in cpan-audit.t file - I think it would be great to offer a drop-in cpan-audit.t file for users to drop into their t/ directory, which does an audit and reports any security advisories on whatever is in cpanfile.snapshot, for example. | priority | feature example drop in cpan audit t file i think it would be great to offer a drop in cpan audit t file for users to drop into their t directory which does an audit and reports any security advisories on whatever is in cpanfile snapshot for example | 1 |

74,821 | 14,346,379,831 | IssuesEvent | 2020-11-29 00:09:40 | Arcanorum/dungeonz | https://api.github.com/repos/Arcanorum/dungeonz | opened | Spatial audio | code | **Task description:**

Need to figure out and implement a way to associate playing certain sounds with an entity on screen, and adjust the volume to be appropriate for the distance from the player.

**References/notes:**

Some discussion around the topic.

https://phaser.discourse.group/t/sound-in-particular-place/2... | 1.0 | Spatial audio - **Task description:**

Need to figure out and implement a way to associate playing certain sounds with an entity on screen, and adjust the volume to be appropriate for the distance from the player.

**References/notes:**

Some discussion around the topic.

https://phaser.discourse.group/t/sound-in-pa... | non_priority | spatial audio task description need to figure out and implement a way to associate playing certain sounds with an entity on screen and adjust the volume to be appropriate for the distance from the player references notes some discussion around the topic acceptance criteria ac a given s... | 0 |

640,842 | 20,810,232,190 | IssuesEvent | 2022-03-18 01:10:45 | monarch-initiative/mondo | https://api.github.com/repos/monarch-initiative/mondo | closed | MONDO:0005755 equine infectious anemia; non-human disease [Revise subclass] | Revise subclass high priority | **Mondo term (ID and Label)**

MONDO:0005755 equine infectious anemia

**Suggested revision and reasons**

I think this belongs under MONDO:0005583 "non-human animal disease"

NCI definition from UMLS CUI C0014661: "A horse disease caused by a retrovirus which is transmitted by biting flies. The acute phase symptom... | 1.0 | MONDO:0005755 equine infectious anemia; non-human disease [Revise subclass] - **Mondo term (ID and Label)**

MONDO:0005755 equine infectious anemia

**Suggested revision and reasons**

I think this belongs under MONDO:0005583 "non-human animal disease"

NCI definition from UMLS CUI C0014661: "A horse disease caused... | priority | mondo equine infectious anemia non human disease mondo term id and label mondo equine infectious anemia suggested revision and reasons i think this belongs under mondo non human animal disease nci definition from umls cui a horse disease caused by a retrovirus which is transmitted by ... | 1 |

49,621 | 3,003,711,772 | IssuesEvent | 2015-07-25 05:58:51 | jayway/powermock | https://api.github.com/repos/jayway/powermock | opened | mocking org.apache.http.impl.client.DefaultHttpClient class | bug imported Priority-Medium | _From [daghana...@gmail.com](https://code.google.com/u/104674216580764072044/) on July 22, 2014 06:55:51_

What steps will reproduce the problem? 1. create a constructor mock of .DefaultHttpClient using Powermock_V1.5.5 Mockito_V1.9.5 and Junit_V4.1 ektorp_V1.4.1

2.run the test call new StdHttpClient.Builder().url(dbu... | 1.0 | mocking org.apache.http.impl.client.DefaultHttpClient class - _From [daghana...@gmail.com](https://code.google.com/u/104674216580764072044/) on July 22, 2014 06:55:51_

What steps will reproduce the problem? 1. create a constructor mock of .DefaultHttpClient using Powermock_V1.5.5 Mockito_V1.9.5 and Junit_V4.1 ektorp_V1... | priority | mocking org apache http impl client defaulthttpclient class from on july what steps will reproduce the problem create a constructor mock of defaulthttpclient using powermock mockito and junit ektorp run the test call new stdhttpclient builder url dburl tostring cach... | 1 |

43,168 | 5,529,972,604 | IssuesEvent | 2017-03-21 00:30:20 | easydigitaldownloads/easy-digital-downloads | https://api.github.com/repos/easydigitaldownloads/easy-digital-downloads | closed | Multiple EDD_Payments_Query's affect each other. | Bug Has PR Needs Testing Payments | If you instantiate 2 objects of EDD_Payments_Query, because the values are hooked to edd_pre_get_payments, any custom values you set up for the first object continue to be hooked for any subsequent objects.

**For example:**

The following code snippet will cause the payment history page in the WordPress dashboard ... | 1.0 | Multiple EDD_Payments_Query's affect each other. - If you instantiate 2 objects of EDD_Payments_Query, because the values are hooked to edd_pre_get_payments, any custom values you set up for the first object continue to be hooked for any subsequent objects.

**For example:**

The following code snippet will cause ... | non_priority | multiple edd payments query s affect each other if you instantiate objects of edd payments query because the values are hooked to edd pre get payments any custom values you set up for the first object continue to be hooked for any subsequent objects for example the following code snippet will cause ... | 0 |

47,853 | 7,354,063,348 | IssuesEvent | 2018-03-09 04:24:51 | Naoghuman/lib-validation | https://api.github.com/repos/Naoghuman/lib-validation | opened | [doc] Update ReadMe.md to 0.3.0. | documentation refactoring | [doc] Update ReadMe.md to 0.3.0.

* New UML image for the section `Intention`.

* Dependencies, Download... | 1.0 | [doc] Update ReadMe.md to 0.3.0. - [doc] Update ReadMe.md to 0.3.0.

* New UML image for the section `Intention`.

* Dependencies, Download... | non_priority | update readme md to update readme md to new uml image for the section intention dependencies download | 0 |

242,138 | 7,838,626,663 | IssuesEvent | 2018-06-18 10:56:19 | minishift/minishift-addons | https://api.github.com/repos/minishift/minishift-addons | opened | Add scenario for removal of Che addon | kind/task priority/major | Since PR #123 for removal of Che was merged, there should also be a test case for the removal to cover the functionality in the future. | 1.0 | Add scenario for removal of Che addon - Since PR #123 for removal of Che was merged, there should also be a test case for the removal to cover the functionality in the future. | priority | add scenario for removal of che addon since pr for removal of che was merged there should also be a test case for the removal to cover the functionality in the future | 1 |

612,926 | 19,059,447,170 | IssuesEvent | 2021-11-26 04:32:14 | tomusborne/generatepress | https://api.github.com/repos/tomusborne/generatepress | opened | Add missing wp_set_script_translations() functions | type: bug priority: medium | We're missing the needed `wp_set_script_translations( 'handle', 'generatepress' )` functions wherever we're adding `wp-i18n` as a dependency right now, which is preventing translations from working. | 1.0 | Add missing wp_set_script_translations() functions - We're missing the needed `wp_set_script_translations( 'handle', 'generatepress' )` functions wherever we're adding `wp-i18n` as a dependency right now, which is preventing translations from working. | priority | add missing wp set script translations functions we re missing the needed wp set script translations handle generatepress functions wherever we re adding wp as a dependency right now which is preventing translations from working | 1 |

830,349 | 32,003,233,796 | IssuesEvent | 2023-09-21 13:28:57 | dag-hammarskjold-library/dlx-rest | https://api.github.com/repos/dag-hammarskjold-library/dlx-rest | closed | Display and sorting in browse indexes by subfield when not in order | type: enhancement priority: high function: search sort | It looks like the sorting in the browse indexes is not by the alphabetical order of the subfields in the record, but rather by the order in which they display in the field? Here is an example:

This... | 1.0 | Display and sorting in browse indexes by subfield when not in order - It looks like the sorting in the browse indexes is not by the alphabetical order of the subfields in the record, but rather by the order in which they display in the field? Here is an example:

.

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: e1c5cd6b5d03afb03911ba9aa685457aa359a602

b... | 1.0 | AI platform get hyperparameter tuning job: should get the specified hyperparameter tuning job failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flak... | priority | ai platform get hyperparameter tuning job should get the specified hyperparameter tuning job failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test outp... | 1 |

73,471 | 7,335,034,949 | IssuesEvent | 2018-03-06 01:44:21 | istio/istio | https://api.github.com/repos/istio/istio | closed | Test Failure: security/tests/integration/certificateRotationTest | kind/fixit kind/test-failure | From: https://k8s-gubernator.appspot.com/build/istio-prow/pull/istio_istio/3913/istio-presubmit/6070/

```

I0302 22:34:20.343] 2018-03-02T22:34:20.341870Z error failed to create test namespace: failed to create a role (error: failed to create role (error: roles.rbac.authorization.k8s.io "istio-ca-role" is forbidden:... | 1.0 | Test Failure: security/tests/integration/certificateRotationTest - From: https://k8s-gubernator.appspot.com/build/istio-prow/pull/istio_istio/3913/istio-presubmit/6070/

```

I0302 22:34:20.343] 2018-03-02T22:34:20.341870Z error failed to create test namespace: failed to create a role (error: failed to create role (e... | non_priority | test failure security tests integration certificaterotationtest from error failed to create test namespace failed to create a role error failed to create role error roles rbac authorization io istio ca role is forbidden attempt to grant extra privileges apigroups ve... | 0 |

44,335 | 12,101,453,469 | IssuesEvent | 2020-04-20 15:14:09 | codesmithtools/Templates | https://api.github.com/repos/codesmithtools/Templates | closed | Join Table w/ Dependent Foreign Key | Framework-NHibernate Type-Defect auto-migrated | ```

Update the IsManyToMany logic and add a constraint on having no dependent

foreign keys.

http://community.codesmithtools.com/forums/t/10071.aspx

```

Original issue reported on code.google.com by `tdupont...@gmail.com` on 24 Aug 2009 at 4:13

| 1.0 | Join Table w/ Dependent Foreign Key - ```

Update the IsManyToMany logic and add a constraint on having no dependent

foreign keys.

http://community.codesmithtools.com/forums/t/10071.aspx

```

Original issue reported on code.google.com by `tdupont...@gmail.com` on 24 Aug 2009 at 4:13

| non_priority | join table w dependent foreign key update the ismanytomany logic and add a constraint on having no dependent foreign keys original issue reported on code google com by tdupont gmail com on aug at | 0 |

29,666 | 2,716,767,477 | IssuesEvent | 2015-04-10 21:15:18 | CruxFramework/crux | https://api.github.com/repos/CruxFramework/crux | closed | DataProvider clearChanges method is not working | bug imported Milestone-M14-C4 Priority-Medium TargetVersion-5.3.0 | _From [trbustam...@gmail.com](https://code.google.com/u/117925048001886933493/) on September 19, 2014 14:54:16_

The clearChanges method is not undoing editions on the dataprovider. It is only cleaning the change logs.

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=537_ | 1.0 | DataProvider clearChanges method is not working - _From [trbustam...@gmail.com](https://code.google.com/u/117925048001886933493/) on September 19, 2014 14:54:16_

The clearChanges method is not undoing editions on the dataprovider. It is only cleaning the change logs.

_Original issue: http://code.google.com/p/crux-fra... | priority | dataprovider clearchanges method is not working from on september the clearchanges method is not undoing editions on the dataprovider it is only cleaning the change logs original issue | 1 |

824,309 | 31,149,247,347 | IssuesEvent | 2023-08-16 08:49:17 | fossasia/open-event-frontend | https://api.github.com/repos/fossasia/open-event-frontend | opened | Speakers getting disconnected from sessions when editing social media profile | bug Priority: Urgent | When a speaker adds social media entries to their account they get disconnected from some sessions if they have several sessions. | 1.0 | Speakers getting disconnected from sessions when editing social media profile - When a speaker adds social media entries to their account they get disconnected from some sessions if they have several sessions. | priority | speakers getting disconnected from sessions when editing social media profile when a speaker adds social media entries to their account they get disconnected from some sessions if they have several sessions | 1 |

657,885 | 21,870,262,182 | IssuesEvent | 2022-05-19 03:59:34 | pytorch/data | https://api.github.com/repos/pytorch/data | closed | Multiprocessing with any DataPipe writing to local file | bug good first issue help wanted high priority | ### 🐛 Describe the bug

We need to take extra care all DataPipe that would write to file system when DataLoader2 triggered multiprocessing. If the file name on the local file system is same across multiple processes, it would be a racing condition.

This is found when TorchText team is using `on_disk_cache` to cache... | 1.0 | Multiprocessing with any DataPipe writing to local file - ### 🐛 Describe the bug

We need to take extra care all DataPipe that would write to file system when DataLoader2 triggered multiprocessing. If the file name on the local file system is same across multiple processes, it would be a racing condition.

This is f... | priority | multiprocessing with any datapipe writing to local file 🐛 describe the bug we need to take extra care all datapipe that would write to file system when triggered multiprocessing if the file name on the local file system is same across multiple processes it would be a racing condition this is found when ... | 1 |

50,182 | 3,006,232,964 | IssuesEvent | 2015-07-27 09:03:14 | Itseez/opencv | https://api.github.com/repos/Itseez/opencv | opened | Incorrect window size on Mac OS X Lion | auto-transferred bug category: highgui-gui priority: normal | Transferred from http://code.opencv.org/issues/2189

```

|| Jan Dlabal on 2012-07-24 17:26

|| Priority: Normal

|| Affected: None

|| Category: highgui-gui

|| Tracker: Bug

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

Incorrect window size on Mac OS X Lion

-----------

```

See http://stackoverflow.com/ques... | 1.0 | Incorrect window size on Mac OS X Lion - Transferred from http://code.opencv.org/issues/2189

```

|| Jan Dlabal on 2012-07-24 17:26

|| Priority: Normal

|| Affected: None

|| Category: highgui-gui

|| Tracker: Bug

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

Incorrect window size on Mac OS X Lion

--------... | priority | incorrect window size on mac os x lion transferred from jan dlabal on priority normal affected none category highgui gui tracker bug difficulty none pr none platform none none incorrect window size on mac os x lion see basically this ... | 1 |

269,879 | 8,444,066,087 | IssuesEvent | 2018-10-18 17:19:44 | poanetwork/metamask-extension | https://api.github.com/repos/poanetwork/metamask-extension | closed | (Bug) Token info isn't displayed if switch the network to localhost and back | high priority logical bug ready for release | Steps:

1. Set Sokol network

2. Add any token

3. Switch network to localhost

4. Switch network back to Sokol

Expected result:

- token info should be loaded and properly displayed

Actual result:

- token info isn't loaded

<img width="1440" alt="screen shot 2018-09-10 at 11 47 41 pm" src="https://user-ima... | 1.0 | (Bug) Token info isn't displayed if switch the network to localhost and back - Steps:

1. Set Sokol network

2. Add any token

3. Switch network to localhost

4. Switch network back to Sokol

Expected result:

- token info should be loaded and properly displayed

Actual result:

- token info isn't loaded

<im... | priority | bug token info isn t displayed if switch the network to localhost and back steps set sokol network add any token switch network to localhost switch network back to sokol expected result token info should be loaded and properly displayed actual result token info isn t loaded im... | 1 |

114,876 | 17,266,880,013 | IssuesEvent | 2021-07-22 14:44:56 | turkdevops/php-src | https://api.github.com/repos/turkdevops/php-src | closed | CVE-2019-11041 (High) detected in php-srcphp-7.1.0RC3 - autoclosed | security vulnerability | ## CVE-2019-11041 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>php-srcphp-7.1.0RC3</b></p></summary>

<p>

<p>The PHP Interpreter</p>

<p>Library home page: <a href=https://github.com/... | True | CVE-2019-11041 (High) detected in php-srcphp-7.1.0RC3 - autoclosed - ## CVE-2019-11041 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>php-srcphp-7.1.0RC3</b></p></summary>

<p>

<p>The ... | non_priority | cve high detected in php srcphp autoclosed cve high severity vulnerability vulnerable library php srcphp the php interpreter library home page a href found in head commit a href found in base branch microseconds vulnerable source files ... | 0 |

125,225 | 26,620,774,968 | IssuesEvent | 2023-01-24 11:03:02 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | insights: use code insight title for data export filename | team/code-insights backend | follow up on https://github.com/sourcegraph/sourcegraph/pull/46662#discussion_r1084634856

escape illegal characters | 1.0 | insights: use code insight title for data export filename - follow up on https://github.com/sourcegraph/sourcegraph/pull/46662#discussion_r1084634856

escape illegal characters | non_priority | insights use code insight title for data export filename follow up on escape illegal characters | 0 |

111,739 | 11,741,181,964 | IssuesEvent | 2020-03-11 21:09:49 | ISPPNightTurn/Clubby | https://api.github.com/repos/ISPPNightTurn/Clubby | closed | Design the presentation for 11/03/2020 | desing documentation | We need to prepare the presentation for next Wednesday's class. | 1.0 | Design the presentation for 11/03/2020 - We need to prepare the presentation for next Wednesday's class. | non_priority | design the presentation for we need to prepare the presentation for next wednesday s class | 0 |

79,357 | 10,120,685,146 | IssuesEvent | 2019-07-31 14:12:42 | kids-first/kf-api-release-coordinator | https://api.github.com/repos/kids-first/kf-api-release-coordinator | closed | Add sphinx docs site | documentation | We should update existing documentation to use a sphinx docs site like most other code bases. | 1.0 | Add sphinx docs site - We should update existing documentation to use a sphinx docs site like most other code bases. | non_priority | add sphinx docs site we should update existing documentation to use a sphinx docs site like most other code bases | 0 |

490,825 | 14,140,593,105 | IssuesEvent | 2020-11-10 11:25:38 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | SHUTDOWN should not disable SHUTDOWN_HARD | Category: Core & System Community Priority: Normal Status: Accepted Type: Feature | **Description**

The most annoying issue of opennebula.

**To Reproduce**

Regarding:

- Shutdown

- Power Off

- Reset

Try to shut down a VM. Find out it won't shut down.

Now sit and wait a few minutes so you can force shutdown it.

Optionally, be educated that you want to do something invalid,

while in reali... | 1.0 | SHUTDOWN should not disable SHUTDOWN_HARD - **Description**

The most annoying issue of opennebula.

**To Reproduce**

Regarding:

- Shutdown

- Power Off

- Reset

Try to shut down a VM. Find out it won't shut down.

Now sit and wait a few minutes so you can force shutdown it.

Optionally, be educated that you w... | priority | shutdown should not disable shutdown hard description the most annoying issue of opennebula to reproduce regarding shutdown power off reset try to shut down a vm find out it won t shut down now sit and wait a few minutes so you can force shutdown it optionally be educated that you w... | 1 |

239,848 | 18,285,901,013 | IssuesEvent | 2021-10-05 10:13:32 | girlscript/winter-of-contributing | https://api.github.com/repos/girlscript/winter-of-contributing | closed | Competitive Programming : : Sliding window maximum (documentation) | documentation GWOC21 Assigned Competitive Programming | <hr>

## Description 📜

I would like to provide documentation about the Sliding window maximum problem.

<hr>

## Domain of Contribution 📊

<!----Please delete options that are not relevant.And in order to tick the check box just but x inside them for example [x] like this----->

- [x] Competitive Program... | 1.0 | Competitive Programming : : Sliding window maximum (documentation) - <hr>

## Description 📜

I would like to provide documentation about the Sliding window maximum problem.

<hr>

## Domain of Contribution 📊

<!----Please delete options that are not relevant.And in order to tick the check box just but x ins... | non_priority | competitive programming sliding window maximum documentation description 📜 i would like to provide documentation about the sliding window maximum problem domain of contribution 📊 competitive programming | 0 |

250,619 | 7,979,201,252 | IssuesEvent | 2018-07-17 20:52:53 | neurosynth/neurosynth-web | https://api.github.com/repos/neurosynth/neurosynth-web | closed | Update code page to include this repo | enhancement priority:med | The Code page needs to add a link to and description of this repository.

| 1.0 | Update code page to include this repo - The Code page needs to add a link to and description of this repository.

| priority | update code page to include this repo the code page needs to add a link to and description of this repository | 1 |

107,692 | 16,762,159,331 | IssuesEvent | 2021-06-14 01:03:34 | ioana-nicolae/first | https://api.github.com/repos/ioana-nicolae/first | closed | WS-2018-0209 (Medium) detected in morgan-1.8.0.tgz - autoclosed | security vulnerability | ## WS-2018-0209 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>morgan-1.8.0.tgz</b></p></summary>

<p>HTTP request logger middleware for node.js</p>

<p>Library home page: <a href="ht... | True | WS-2018-0209 (Medium) detected in morgan-1.8.0.tgz - autoclosed - ## WS-2018-0209 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>morgan-1.8.0.tgz</b></p></summary>

<p>HTTP request l... | non_priority | ws medium detected in morgan tgz autoclosed ws medium severity vulnerability vulnerable library morgan tgz http request logger middleware for node js library home page a href path to dependency file first angular js master angular js master yarn lock path to vulnera... | 0 |

162,834 | 6,176,610,319 | IssuesEvent | 2017-07-01 15:22:03 | Placeholder-Software/Dissonance | https://api.github.com/repos/Placeholder-Software/Dissonance | closed | Reconnection Error | Priority: High Status: Awaiting User Feedback Type: Bug | Hi,

we've bought your asset a couple of days ago and trying out your HLAPI as well as LLAPI integrations. Unfortunately LLAPI has some issues I'm not sure how to resolve them and that seem unusual.

I'm currently working with Unity 5.6.0f3. Empty Project, Dissonance Package imported as well as LLAPI integration.

... | 1.0 | Reconnection Error - Hi,

we've bought your asset a couple of days ago and trying out your HLAPI as well as LLAPI integrations. Unfortunately LLAPI has some issues I'm not sure how to resolve them and that seem unusual.

I'm currently working with Unity 5.6.0f3. Empty Project, Dissonance Package imported as well asL... | priority | reconnection error hi we ve bought your asset a couple of days ago and trying out your hlapi as well as llapi integrations unfortunately llapi has some issues i m not sure how to resolve them and that seem unusual i m currently working with unity empty project dissonance package imported as well aslla... | 1 |

33,935 | 7,302,940,520 | IssuesEvent | 2018-02-27 11:21:05 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | generic HazelcastException thrown, wrapping com.hazelcast.core.MemberLeftException | Team: Core Type: Defect |

exception

```

com.hazelcast.core.HazelcastException: com.hazelcast.core.MemberLeftException: Member [10.0.0.213]:5701 - d437817c-54f7-4631-a243-ac55ef2c7694 has left cluster!

at com.hazelcast.util.ExceptionUtil$1.create(ExceptionUtil.java:40)

at com.hazelcast.util.ExceptionUtil.peel(ExceptionUtil.java:116)

at... | 1.0 | generic HazelcastException thrown, wrapping com.hazelcast.core.MemberLeftException -

exception

```

com.hazelcast.core.HazelcastException: com.hazelcast.core.MemberLeftException: Member [10.0.0.213]:5701 - d437817c-54f7-4631-a243-ac55ef2c7694 has left cluster!

at com.hazelcast.util.ExceptionUtil$1.create(Exception... | non_priority | generic hazelcastexception thrown wrapping com hazelcast core memberleftexception exception com hazelcast core hazelcastexception com hazelcast core memberleftexception member has left cluster at com hazelcast util exceptionutil create exceptionutil java at com hazelcast util exce... | 0 |

240,153 | 26,254,327,648 | IssuesEvent | 2023-01-05 22:33:07 | TreyM-WSS/terra-clinical | https://api.github.com/repos/TreyM-WSS/terra-clinical | opened | CVE-2021-3918 (High) detected in json-schema-0.2.3.tgz | security vulnerability | ## CVE-2021-3918 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-schema-0.2.3.tgz</b></p></summary>

<p>JSON Schema validation and specifications</p>

<p>Library home page: <a href=... | True | CVE-2021-3918 (High) detected in json-schema-0.2.3.tgz - ## CVE-2021-3918 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-schema-0.2.3.tgz</b></p></summary>

<p>JSON Schema validat... | non_priority | cve high detected in json schema tgz cve high severity vulnerability vulnerable library json schema tgz json schema validation and specifications library home page a href path to dependency file package json path to vulnerable library node modules json schema packa... | 0 |

14,859 | 9,546,931,024 | IssuesEvent | 2019-05-01 21:25:23 | mercycorps/TolaActivity | https://api.github.com/repos/mercycorps/TolaActivity | closed | Add meaningful error messages for failed Django login on login page | Deploy Ready Verified usability | Currently, failing to enter a valid u/p combination on the Django login returns me silently to the login page. We need to add:

- [ ] ~link to reset password or other help]~ _(no longer addressing in this ticket)_

- [x] error message(s) | True | Add meaningful error messages for failed Django login on login page - Currently, failing to enter a valid u/p combination on the Django login returns me silently to the login page. We need to add:

- [ ] ~link to reset password or other help]~ _(no longer addressing in this ticket)_

- [x] error message(s) | non_priority | add meaningful error messages for failed django login on login page currently failing to enter a valid u p combination on the django login returns me silently to the login page we need to add link to reset password or other help no longer addressing in this ticket error message s | 0 |

604 | 3,003,590,670 | IssuesEvent | 2015-07-25 02:32:52 | mesosphere/marathon | https://api.github.com/repos/mesosphere/marathon | closed | docker: hostPath in volumes seems to be ignored | bug OKR Usability service | mesos: 0.22.1

marathon: 0.8.1

docker: 1.6.2

I define:

```

"volumes": [{

"containerPath": "/var/log/vimana",

"hostPath": "/var/log/vimana"

}]

```

But the mounts I get are:

```

"Volumes": {

"/mnt/mesos/sandbox": "/tmp/mesos/slaves/20150522-122903-2693333002-5050-7694-S9/frameworks/201... | 1.0 | docker: hostPath in volumes seems to be ignored - mesos: 0.22.1

marathon: 0.8.1

docker: 1.6.2

I define:

```

"volumes": [{

"containerPath": "/var/log/vimana",

"hostPath": "/var/log/vimana"

}]

```

But the mounts I get are:

```

"Volumes": {

"/mnt/mesos/sandbox": "/tmp/mesos/slaves/2015... | non_priority | docker hostpath in volumes seems to be ignored mesos marathon docker i define volumes containerpath var log vimana hostpath var log vimana but the mounts i get are volumes mnt mesos sandbox tmp mesos slaves ... | 0 |

697,530 | 23,942,680,719 | IssuesEvent | 2022-09-12 02:25:29 | jrsteensen/OpenHornet | https://api.github.com/repos/jrsteensen/OpenHornet | closed | Update Native F360 Stick Model | Type: Enhancement Category: MCAD Priority: Normal | - [x] Add new hall sensors to pitch axis

- [x] Add new hall sensor to roll axis

- [x] Mount Electronics to base

- [x] Rename everything to OH PNs

- [x] Update File Properties on all subcomponents.

- [ ] Design an enclosure for the controller PCB to give it some protection. | 1.0 | Update Native F360 Stick Model - - [x] Add new hall sensors to pitch axis

- [x] Add new hall sensor to roll axis

- [x] Mount Electronics to base

- [x] Rename everything to OH PNs

- [x] Update File Properties on all subcomponents.

- [ ] Design an enclosure for the controller PCB to give it some protection. | priority | update native stick model add new hall sensors to pitch axis add new hall sensor to roll axis mount electronics to base rename everything to oh pns update file properties on all subcomponents design an enclosure for the controller pcb to give it some protection | 1 |

243,141 | 7,853,763,521 | IssuesEvent | 2018-06-20 18:29:12 | canmet-energy/btap_tasks | https://api.github.com/repos/canmet-energy/btap_tasks | closed | Merge nrcan into develop | Priority High Standards | The schedule has moved up and we need to get our code into NREL's for the next release

Due Monday the 11th of June. | 1.0 | Merge nrcan into develop - The schedule has moved up and we need to get our code into NREL's for the next release

Due Monday the 11th of June. | priority | merge nrcan into develop the schedule has moved up and we need to get our code into nrel s for the next release due monday the of june | 1 |

263,229 | 28,029,746,253 | IssuesEvent | 2023-03-28 11:35:20 | RG4421/ampere-centos-kernel | https://api.github.com/repos/RG4421/ampere-centos-kernel | reopened | CVE-2022-1974 (Medium) detected in linuxv5.2 | Mend: dependency security vulnerability | ## CVE-2022-1974 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torv... | True | CVE-2022-1974 (Medium) detected in linuxv5.2 - ## CVE-2022-1974 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Lib... | non_priority | cve medium detected in cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files vulnerability details a use a... | 0 |

178,605 | 6,612,727,926 | IssuesEvent | 2017-09-20 06:02:28 | arquillian/smart-testing | https://api.github.com/repos/arquillian/smart-testing | closed | NPE is thrown is strategy is mispelled | Component: Maven Priority: High Type: Bug | ##### Issue Overview

NPE is thrown if you set a strategy incorrectly

##### Expected Behaviour

Throw a meaningful exception instead of NPE.

##### Current Behaviour

NPE

| 1.0 | NPE is thrown is strategy is mispelled - ##### Issue Overview

NPE is thrown if you set a strategy incorrectly

##### Expected Behaviour

Throw a meaningful exception instead of NPE.

##### Current Behaviour

NPE

| priority | npe is thrown is strategy is mispelled issue overview npe is thrown if you set a strategy incorrectly expected behaviour throw a meaningful exception instead of npe current behaviour npe | 1 |

366,200 | 25,572,768,437 | IssuesEvent | 2022-11-30 19:08:57 | Westlake-AI/openmixup | https://api.github.com/repos/Westlake-AI/openmixup | opened | Release Models of Mixups and MogaNet and Update Features in V0.2.6 | documentation enhancement update | Updateing new features:

1. Fix the classification heads and update implementations and config files of [AlexNet](https://dl.acm.org/doi/10.1145/3065386) and [InceptionV3](https://arxiv.org/abs/1512.00567).

Uploading Benchmark Results (release):

1. Release pre-trained models and logs of mixup benchmarks on ImageNet... | 1.0 | Release Models of Mixups and MogaNet and Update Features in V0.2.6 - Updateing new features:

1. Fix the classification heads and update implementations and config files of [AlexNet](https://dl.acm.org/doi/10.1145/3065386) and [InceptionV3](https://arxiv.org/abs/1512.00567).

Uploading Benchmark Results (release):

1... | non_priority | release models of mixups and moganet and update features in updateing new features fix the classification heads and update implementations and config files of and uploading benchmark results release release pre trained models and logs of mixup benchmarks on imagenet as provided in and... | 0 |

587,294 | 17,612,267,406 | IssuesEvent | 2021-08-18 04:08:24 | goplus/gop | https://api.github.com/repos/goplus/gop | closed | repl continueMode bug | bug priority:low |

Cant input multiline code.

go version

go version go1.14.3 darwin/amd64

os: macOS Catalina 10.5.7 | 1.0 | repl continueMode bug -

Cant input multiline code.

go version

go version go1.14.3 darwin/amd64

os: macOS Catalina 10.5.7 | priority | repl continuemode bug cant input multiline code go version go version darwin os macos catalina | 1 |

61,161 | 17,023,621,376 | IssuesEvent | 2021-07-03 02:58:09 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | search from IOS is crashing | Component: nominatim Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 10.44am, Wednesday, 4th August 2010]**

Trying to do a search containing a "special keyword" (POI) like:

http://nominatim.openstreetmap.org/search/airport

from Safari (or any other web browser) on a IOS device (iPhone/iPad) does result in:

string(354) "select... | 1.0 | search from IOS is crashing - **[Submitted to the original trac issue database at 10.44am, Wednesday, 4th August 2010]**

Trying to do a search containing a "special keyword" (POI) like:

http://nominatim.openstreetmap.org/search/airport

from Safari (or any other web browser) on a IOS device (iPhone/iPad) does res... | non_priority | search from ios is crashing trying to do a search containing a special keyword poi like from safari or any other web browser on a ios device iphone ipad does result in string select place id false as in small false as in large from search name where name vector array and st dwithin... | 0 |

50,536 | 13,539,630,516 | IssuesEvent | 2020-09-16 13:42:28 | cniweb/missing-link | https://api.github.com/repos/cniweb/missing-link | opened | CVE-2020-1945 (Medium) detected in ant-1.8.2.jar | security vulnerability | ## CVE-2020-1945 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ant-1.8.2.jar</b></p></summary>

<p>master POM</p>

<p>Path to vulnerable library: missing-link/ant-props/lib/apache-an... | True | CVE-2020-1945 (Medium) detected in ant-1.8.2.jar - ## CVE-2020-1945 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ant-1.8.2.jar</b></p></summary>

<p>master POM</p>

<p>Path to vulne... | non_priority | cve medium detected in ant jar cve medium severity vulnerability vulnerable library ant jar master pom path to vulnerable library missing link ant props lib apache ant apache ant jar dependency hierarchy x ant jar vulnerable library found in head... | 0 |

230,392 | 7,609,805,441 | IssuesEvent | 2018-05-01 03:11:06 | Gamebuster19901/InventoryDecrapifier | https://api.github.com/repos/Gamebuster19901/InventoryDecrapifier | closed | Items are picked up one at a time | Bug Priority - Normal ↓ Side - Client Side - Server | Items are picked up from the ground one at a time at a rate of one per tick, instead of multiple per tick. | 1.0 | Items are picked up one at a time - Items are picked up from the ground one at a time at a rate of one per tick, instead of multiple per tick. | priority | items are picked up one at a time items are picked up from the ground one at a time at a rate of one per tick instead of multiple per tick | 1 |

713,347 | 24,525,461,879 | IssuesEvent | 2022-10-11 12:49:58 | quadratic-funding/mpc-phase2-suite | https://api.github.com/repos/quadratic-funding/mpc-phase2-suite | closed | Get access to cloud resource | DevOps ⚙ High Priority 🔥 | ### Description

We need to get access for GCP and AWS cloud resources in order to switch from personal billing account to EF one. We need access to Firebase and GCP Cloud Functions + Compute Engine, AWS S3 (possibly AWS EC2). | 1.0 | Get access to cloud resource - ### Description

We need to get access for GCP and AWS cloud resources in order to switch from personal billing account to EF one. We need access to Firebase and GCP Cloud Functions + Compute Engine, AWS S3 (possibly AWS EC2). | priority | get access to cloud resource description we need to get access for gcp and aws cloud resources in order to switch from personal billing account to ef one we need access to firebase and gcp cloud functions compute engine aws possibly aws | 1 |

48,885 | 13,184,766,840 | IssuesEvent | 2020-08-12 20:03:21 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | buggy nutau (Trac #383) | Incomplete Migration Migrated from Trac combo simulation defect | <details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/383

, reported by olivas and owned by olivas_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-22T18:26:26",

"description": "it seems to me that in the nutau nugen dataset 6539 the light emission\nfrom muons (if pre... | 1.0 | buggy nutau (Trac #383) - <details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/383

, reported by olivas and owned by olivas_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-22T18:26:26",

"description": "it seems to me that in the nutau nugen dataset 6539 the light em... | non_priority | buggy nutau trac migrated from reported by olivas and owned by olivas json status closed changetime description it seems to me that in the nutau nugen dataset the light emission nfrom muons if present in the event is missing i attach a scatter plot ... | 0 |

142,936 | 11,500,952,658 | IssuesEvent | 2020-02-12 16:21:52 | wazuh/wazuh-qa | https://api.github.com/repos/wazuh/wazuh-qa | opened | FIM System tests: Create common tasks to verify alerts on alerts.json and Elasticsearch | fim-system-tests | ### Objective

The purpose of this issue is to keep track of the progress of tasks that parse the generated file from actions tasks at https://github.com/wazuh/wazuh-qa/issues/444 and ensures that an alert has been created for every file creation/modification/deletion

### Tasks

- [ ] Create tasks to compare gen... | 1.0 | FIM System tests: Create common tasks to verify alerts on alerts.json and Elasticsearch - ### Objective

The purpose of this issue is to keep track of the progress of tasks that parse the generated file from actions tasks at https://github.com/wazuh/wazuh-qa/issues/444 and ensures that an alert has been created for e... | non_priority | fim system tests create common tasks to verify alerts on alerts json and elasticsearch objective the purpose of this issue is to keep track of the progress of tasks that parse the generated file from actions tasks at and ensures that an alert has been created for every file creation modification deletion ... | 0 |

246,106 | 20,822,888,405 | IssuesEvent | 2022-03-18 17:10:32 | Graylog2/graylog2-server | https://api.github.com/repos/Graylog2/graylog2-server | opened | Missing copy shareable link button | bug test-day | Opening the share entity dialog does not always render the copy shareable URL to clipboard button. The input is also showing a different size:

https://user-images.githubusercontent.com/716185/159049927-8b9e5cf6-c7a2-4854-ba88-2e25a018aead.mp4

This is not exclusive of saved searches, although I first noticed it ... | 1.0 | Missing copy shareable link button - Opening the share entity dialog does not always render the copy shareable URL to clipboard button. The input is also showing a different size:

https://user-images.githubusercontent.com/716185/159049927-8b9e5cf6-c7a2-4854-ba88-2e25a018aead.mp4

This is not exclusive of saved s... | non_priority | missing copy shareable link button opening the share entity dialog does not always render the copy shareable url to clipboard button the input is also showing a different size this is not exclusive of saved searches although i first noticed it there there are no errors in the browser console and i could... | 0 |

367,282 | 25,730,921,407 | IssuesEvent | 2022-12-07 20:15:24 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | closed | [Docs]: | documentation service/ec2 needs-triage | ### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/data-sources/ami

### Description

As per documentation,

[public](https://registry.terraform.io/providers/hashicorp/aws/latest/docs/data-sources/ami#public) - true if the image has public launch permissions.

if the public flag... | 1.0 | [Docs]: - ### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/data-sources/ami

### Description

As per documentation,

[public](https://registry.terraform.io/providers/hashicorp/aws/latest/docs/data-sources/ami#public) - true if the image has public launch permissions.

if the ... | non_priority | documentation link description as per documentation true if the image has public launch permissions if the public flag is used as a filter then seeing below error message is public is still valid if i change public to is public then its working as expected code snippet ... | 0 |

304,251 | 9,329,465,887 | IssuesEvent | 2019-03-28 02:30:23 | rubrikinc/use-case-aws-cloudformation-template-cloudcluster | https://api.github.com/repos/rubrikinc/use-case-aws-cloudformation-template-cloudcluster | closed | Link for sharing CF Template | exp-beginner priority-p1 | The template needs to be uploaded and shared out via an AWS link like the rest of the CF templates.

For example: https://s3-us-west-1.amazonaws.com/cloudformation-templates-rubrik-prod/rubrik_cloudon.template | 1.0 | Link for sharing CF Template - The template needs to be uploaded and shared out via an AWS link like the rest of the CF templates.

For example: https://s3-us-west-1.amazonaws.com/cloudformation-templates-rubrik-prod/rubrik_cloudon.template | priority | link for sharing cf template the template needs to be uploaded and shared out via an aws link like the rest of the cf templates for example | 1 |

357,091 | 10,601,825,455 | IssuesEvent | 2019-10-10 13:07:40 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Create a switch/variable for data discovery with PhEDEx x Rucio | Medium Priority New Feature ReqMgr2 Rucio Transition WorkQueue | For central services.

Related to: https://its.cern.ch/jira/browse/CMSRUCIO-104 | 1.0 | Create a switch/variable for data discovery with PhEDEx x Rucio - For central services.

Related to: https://its.cern.ch/jira/browse/CMSRUCIO-104 | priority | create a switch variable for data discovery with phedex x rucio for central services related to | 1 |

84,050 | 7,888,578,955 | IssuesEvent | 2018-06-27 22:45:02 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | translate new entity has "type your text" | 2.0.4 testing week |

translate new entity has "type your text" to "הכנס כותרת"

and design according to original design (see http://root.203.projects.linnovate.net for reference) | 1.0 | translate new entity has "type your text" -

translate new entity has "type your text" to "הכנס כותרת"

and design according to original design (see http://root.203.projects.linnovate.net for reference) | non_priority | translate new entity has type your text translate new entity has type your text to הכנס כותרת and design according to original design see for reference | 0 |

314,163 | 9,593,467,818 | IssuesEvent | 2019-05-09 11:37:59 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.rockstargames.com - site is not usable | browser-firefox-mobile engine-gecko priority-normal | <!-- @browser: Firefox Mobile 67.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:67.0) Gecko/67.0 Firefox/67.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.rockstargames.com/GTAOnline/restricted-content/agegate/form?redirect=https%3A%2F%2Fwww.rockstargames.com%2FGTAOnline%2Fnews&options=... | 1.0 | www.rockstargames.com - site is not usable - <!-- @browser: Firefox Mobile 67.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:67.0) Gecko/67.0 Firefox/67.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.rockstargames.com/GTAOnline/restricted-content/agegate/form?redirect=https%3A%2F%2Fwww.... | priority | site is not usable url browser version firefox mobile operating system android tested another browser no problem type site is not usable description entering birthday to access page gets stuck in loop can t enter page steps to reproduce browser configurati... | 1 |

602,643 | 18,492,048,092 | IssuesEvent | 2021-10-19 02:27:59 | AY2122S1-CS2113T-T12-3/tp | https://api.github.com/repos/AY2122S1-CS2113T-T12-3/tp | closed | Add function to clear all entries being tracked | priority.Medium | So that we can have an easier time testing code. Users might also find clear all function handy if they want to start afresh. | 1.0 | Add function to clear all entries being tracked - So that we can have an easier time testing code. Users might also find clear all function handy if they want to start afresh. | priority | add function to clear all entries being tracked so that we can have an easier time testing code users might also find clear all function handy if they want to start afresh | 1 |

186,103 | 14,394,638,184 | IssuesEvent | 2020-12-03 01:46:13 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | tengteng/Guava: _vendor/src/golang.org/x/tools/go/gccgoimporter/importer_test.go; 3 LoC | fresh test tiny |

Found a possible issue in [tengteng/Guava](https://www.github.com/tengteng/Guava) at [_vendor/src/golang.org/x/tools/go/gccgoimporter/importer_test.go](https://github.com/tengteng/Guava/blob/f44ca584d2dc0fe32182990065cfc4fd0e6cebe8/_vendor/src/golang.org/x/tools/go/gccgoimporter/importer_test.go#L105-L107)

Below is t... | 1.0 | tengteng/Guava: _vendor/src/golang.org/x/tools/go/gccgoimporter/importer_test.go; 3 LoC -

Found a possible issue in [tengteng/Guava](https://www.github.com/tengteng/Guava) at [_vendor/src/golang.org/x/tools/go/gccgoimporter/importer_test.go](https://github.com/tengteng/Guava/blob/f44ca584d2dc0fe32182990065cfc4fd0e6ceb... | non_priority | tengteng guava vendor src golang org x tools go gccgoimporter importer test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the conten... | 0 |

545,022 | 15,934,230,699 | IssuesEvent | 2021-04-14 08:26:14 | NamanhTran/Syntax-Analyzer | https://api.github.com/repos/NamanhTran/Syntax-Analyzer | closed | Create Parsing Table Algorithm | Hard Priority | ## Overview

We need to implement the algorithm to read from the parse table, keep track of all production rules for each lexeme, and handle errors.

## Action Items

- [ ] Implement the algorithm

- [ ] Generate meaningful error message when syntax error identified.

- [ ] Test the table parser algorithm

## Assum... | 1.0 | Create Parsing Table Algorithm - ## Overview

We need to implement the algorithm to read from the parse table, keep track of all production rules for each lexeme, and handle errors.

## Action Items

- [ ] Implement the algorithm

- [ ] Generate meaningful error message when syntax error identified.

- [ ] Test the t... | priority | create parsing table algorithm overview we need to implement the algorithm to read from the parse table keep track of all production rules for each lexeme and handle errors action items implement the algorithm generate meaningful error message when syntax error identified test the table p... | 1 |

11,452 | 4,227,265,273 | IssuesEvent | 2016-07-03 02:40:56 | ac21/sherlock | https://api.github.com/repos/ac21/sherlock | closed | Fix "Rubocop/Lint/UnusedBlockArgument" issue in lib/api/v1/defaults.rb | code_climate | Unused block argument - `e`. You can omit the argument if you don't care about it.

https://codeclimate.com/github/ac21/sherlock/lib/api/v1/defaults.rb#issue_5771abba9591a1000110c0f3 | 1.0 | Fix "Rubocop/Lint/UnusedBlockArgument" issue in lib/api/v1/defaults.rb - Unused block argument - `e`. You can omit the argument if you don't care about it.

https://codeclimate.com/github/ac21/sherlock/lib/api/v1/defaults.rb#issue_5771abba9591a1000110c0f3 | non_priority | fix rubocop lint unusedblockargument issue in lib api defaults rb unused block argument e you can omit the argument if you don t care about it | 0 |

751,717 | 26,254,831,555 | IssuesEvent | 2023-01-05 23:09:13 | Automattic/woocommerce-payments | https://api.github.com/repos/Automattic/woocommerce-payments | closed | Non-prorated synced subscriptions don't result in synced WC Pay Subscription | type: bug priority: low component: wcpay subscriptions category: core | ### Describe the bug

<!-- A clear and concise description of what the bug is. Please be as descriptive as possible. -->

When a merchant selects the **Never (charge the full recurring amount at sign-up)** synchronisation setting, synced subscription products aren't being synced to their anchor date in Stripe.

, and issue #271 deals with showing the severity level in the frontend. The next step is to make it possible to also filter on severity level in the frontend. There is already support for this in the ba... | 1.0 | Filtering incidents by severity level - Severity levels were introduced into the API as part of [Uninett/Argus#70](https://github.com/Uninett/Argus/issues/70), and issue #271 deals with showing the severity level in the frontend. The next step is to make it possible to also filter on severity level in the frontend. The... | priority | filtering incidents by severity level severity levels were introduced into the api as part of and issue deals with showing the severity level in the frontend the next step is to make it possible to also filter on severity level in the frontend there is already support for this in the backend my suggestio... | 1 |

54,475 | 30,198,664,388 | IssuesEvent | 2023-07-05 02:06:10 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | Tunnel mode lowers throughput by a large amount | kind/bug need-more-info sig/datapath kind/performance needs/triage kind/community-report stale | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### What happened?

When using cilium with tunnel mode enabled, throughput drops by a significant amount(30-50%). This was seen in #22898 with the attached logs. This bug is an extension of that to discuss matters related specifically ... | True | Tunnel mode lowers throughput by a large amount - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### What happened?

When using cilium with tunnel mode enabled, throughput drops by a significant amount(30-50%). This was seen in #22898 with the attached logs. This bug is an extensio... | non_priority | tunnel mode lowers throughput by a large amount is there an existing issue for this i have searched the existing issues what happened when using cilium with tunnel mode enabled throughput drops by a significant amount this was seen in with the attached logs this bug is an extension of tha... | 0 |

647,635 | 21,132,750,417 | IssuesEvent | 2022-04-06 01:22:36 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Make OnShowPanel and OnShowWalletOnboarding for Android only | priority/P2 QA/No release-notes/exclude feature/wallet OS/Android | OnShowPanel();

OnShowWalletOnboarding();

observer functions should be visible for Android only | 1.0 | Make OnShowPanel and OnShowWalletOnboarding for Android only - OnShowPanel();

OnShowWalletOnboarding();

observer functions should be visible for Android only | priority | make onshowpanel and onshowwalletonboarding for android only onshowpanel onshowwalletonboarding observer functions should be visible for android only | 1 |

540,084 | 15,800,575,450 | IssuesEvent | 2021-04-03 00:20:11 | JensenJ/EmbargoMC-IssueTracker | https://api.github.com/repos/JensenJ/EmbargoMC-IssueTracker | closed | [BUG] Water / Lava Deleted Source Blocks | bug low-priority | **Describe the bug**

Source blocks get deleted by custom caves from world painter

**Expected behaviour**

Consistent water/lava lakes spawning without holes caused by caves | 1.0 | [BUG] Water / Lava Deleted Source Blocks - **Describe the bug**

Source blocks get deleted by custom caves from world painter

**Expected behaviour**

Consistent water/lava lakes spawning without holes caused by caves | priority | water lava deleted source blocks describe the bug source blocks get deleted by custom caves from world painter expected behaviour consistent water lava lakes spawning without holes caused by caves | 1 |

595,489 | 18,067,595,632 | IssuesEvent | 2021-09-20 21:07:17 | OpenMandrivaAssociation/test2 | https://api.github.com/repos/OpenMandrivaAssociation/test2 | closed | Adding users crashes the program (Bugzilla Bug 138) | bug high priority major | This issue was created automatically with bugzilla2github

# Bugzilla Bug 138

Date: 2013-09-14 15:05:51 +0000

From: @robxu9

To: OpenMandriva QA <<bugs@openmandriva.org>>

CC: @cris-b

Last updated: 2013-09-19 20:05:04 +0000

## Comment 822

Date: 2013-09-14 15:05:51 +0000

From: @robxu9

Theme name: rosa-element... | 1.0 | Adding users crashes the program (Bugzilla Bug 138) - This issue was created automatically with bugzilla2github

# Bugzilla Bug 138

Date: 2013-09-14 15:05:51 +0000

From: @robxu9

To: OpenMandriva QA <<bugs@openmandriva.org>>

CC: @cris-b

Last updated: 2013-09-19 20:05:04 +0000

## Comment 822

Date: 2013-09-14 ... | priority | adding users crashes the program bugzilla bug this issue was created automatically with bugzilla bug date from to openmandriva qa lt gt cc cris b last updated comment date from theme name rosa elementary kernel version nrjq... | 1 |

156,371 | 5,968,203,730 | IssuesEvent | 2017-05-30 17:36:34 | kolihub/koli | https://api.github.com/repos/kolihub/koli | opened | Better error handling when downloading releases | area/slugrunner improvement priority/P2 | The slugrunner doesn't validate any error when downloading releases from the git-server. | 1.0 | Better error handling when downloading releases - The slugrunner doesn't validate any error when downloading releases from the git-server. | priority | better error handling when downloading releases the slugrunner doesn t validate any error when downloading releases from the git server | 1 |

729,955 | 25,152,590,302 | IssuesEvent | 2022-11-10 11:07:35 | rism-digital/rism-online-issues | https://api.github.com/repos/rism-digital/rism-online-issues | closed | Add contour search for incipit | Audience: General public Priority: Moderate Status: Blocked Topic: Incipits Component: Incipit Search | In Muscat BL we used to have contour search enabled. It was the implementation of Themefinder

* http://www.themefinder.org/help/refinedcontour/

* http://www.themefinder.org/help/grosscontour/

Having one type of contour might be useful, especially since we have now highlighting. | 1.0 | Add contour search for incipit - In Muscat BL we used to have contour search enabled. It was the implementation of Themefinder

* http://www.themefinder.org/help/refinedcontour/

* http://www.themefinder.org/help/grosscontour/

Having one type of contour might be useful, especially since we have now highlighting. | priority | add contour search for incipit in muscat bl we used to have contour search enabled it was the implementation of themefinder having one type of contour might be useful especially since we have now highlighting | 1 |