Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

410,102 | 11,983,209,292 | IssuesEvent | 2020-04-07 14:07:21 | mozilla/addons-frontend | https://api.github.com/repos/mozilla/addons-frontend | closed | `ShowMoreCard` button used in developer reply has the wrong background color | component: add-on ratings contrib: welcome priority: p3 | ### Describe the problem and steps to reproduce it:

1. Go to https://addons-dev.allizom.org/en-US/firefox/addon/search_by_image/reviews/?src=recommended_fallback

2. Observe the "developer response" in the middle of the page

### What happened?

The `ShowMoreCard` displays a "read more" button with a white backg... | 1.0 | `ShowMoreCard` button used in developer reply has the wrong background color - ### Describe the problem and steps to reproduce it:

1. Go to https://addons-dev.allizom.org/en-US/firefox/addon/search_by_image/reviews/?src=recommended_fallback

2. Observe the "developer response" in the middle of the page

### What h... | priority | showmorecard button used in developer reply has the wrong background color describe the problem and steps to reproduce it go to observe the developer response in the middle of the page what happened the showmorecard displays a read more button with a white background img width... | 1 |

133,234 | 18,285,818,279 | IssuesEvent | 2021-10-05 10:08:20 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [Security Solution][Timeline]On Clicking + Add field user focus moved from the Field name to the value | bug triage_needed Team: SecuritySolution | **Describe the bug**

Timeline: On Clicking + Add field user focus moved from the Field name to the value

**Build Details**

`Version: 7.15.0`

**Steps**

- Login to kibana

- Create timeline

- Click on + Add field in the timeline drag area

- click on drop down and don't select any value from this

- Observed t... | True | [Security Solution][Timeline]On Clicking + Add field user focus moved from the Field name to the value - **Describe the bug**

Timeline: On Clicking + Add field user focus moved from the Field name to the value

**Build Details**

`Version: 7.15.0`

**Steps**

- Login to kibana

- Create timeline

- Click on + Ad... | non_priority | on clicking add field user focus moved from the field name to the value describe the bug timeline on clicking add field user focus moved from the field name to the value build details version steps login to kibana create timeline click on add field in the timeline drag... | 0 |

562,345 | 16,657,647,556 | IssuesEvent | 2021-06-05 20:31:04 | dodona-edu/dodona | https://api.github.com/repos/dodona-edu/dodona | closed | HTML5 video tag does not support skipping forward/backward | bug low priority student | Video fragments embedded using the HTML5 tag `video` cannot be skipped forward nor backward.

Example: [exercise description](https://dodona.ugent.be/nl/courses/172/series/3251/activities/357352739/)

OS: Mac OS X

Browser: Google Chrome

Observed by: @toonijn @pverscha | 1.0 | HTML5 video tag does not support skipping forward/backward - Video fragments embedded using the HTML5 tag `video` cannot be skipped forward nor backward.

Example: [exercise description](https://dodona.ugent.be/nl/courses/172/series/3251/activities/357352739/)

OS: Mac OS X

Browser: Google Chrome

Observed by: @to... | priority | video tag does not support skipping forward backward video fragments embedded using the tag video cannot be skipped forward nor backward example os mac os x browser google chrome observed by toonijn pverscha | 1 |

52,057 | 3,020,503,464 | IssuesEvent | 2015-07-31 08:25:44 | 52North/SOS | https://api.github.com/repos/52North/SOS | closed | Client problem with feature capabilities in DescribeSensor response with SensorML 1.0.1 encoded procedure | bug enhancement medium priority | The SweText of the feature capabilities of a DescribeSensor response procedure encoded in SensorML 1.0.1 does not contain the definition which is used by the Sensor Web Client/REST-API proxy to identify featureOfInterest.

Problem: The merging process replaces the field name. | 1.0 | Client problem with feature capabilities in DescribeSensor response with SensorML 1.0.1 encoded procedure - The SweText of the feature capabilities of a DescribeSensor response procedure encoded in SensorML 1.0.1 does not contain the definition which is used by the Sensor Web Client/REST-API proxy to identify featureOf... | priority | client problem with feature capabilities in describesensor response with sensorml encoded procedure the swetext of the feature capabilities of a describesensor response procedure encoded in sensorml does not contain the definition which is used by the sensor web client rest api proxy to identify featureof... | 1 |

773,206 | 27,149,702,309 | IssuesEvent | 2023-02-16 23:31:46 | internetarchive/openlibrary | https://api.github.com/repos/internetarchive/openlibrary | opened | Migrate to Vue 3 | Type: Feature Request Priority: 3 1-off tasks Type: Epic Lead: @jimchamp | ### Describe the problem that you'd like solved

<!-- A clear and concise description of what you want to happen. -->

Open Library maintains a small number of web components that were created with Vue 2.

Vue 2 will reach end of life on 31 December 2023, so we'll have to migrate to Vue 3 in the near future.

This al... | 1.0 | Migrate to Vue 3 - ### Describe the problem that you'd like solved

<!-- A clear and concise description of what you want to happen. -->

Open Library maintains a small number of web components that were created with Vue 2.

Vue 2 will reach end of life on 31 December 2023, so we'll have to migrate to Vue 3 in the near... | priority | migrate to vue describe the problem that you d like solved open library maintains a small number of web components that were created with vue vue will reach end of life on december so we ll have to migrate to vue in the near future this also give us the opportunity to try using vite for our... | 1 |

112,988 | 9,607,837,253 | IssuesEvent | 2019-05-11 22:53:01 | dimitri/pgloader | https://api.github.com/repos/dimitri/pgloader | closed | AWS RDS to AWS VM | How To... Question Needs more testing / information | Thanks for contributing to [pgloader](https://pgloader.io) by reporting an

issue! Reporting an issue is the only way we can solve problems, fix bugs,

and improve both the software and its user experience in general.

The best bug reports follow those 3 simple steps:

1. show what you did,

2. show the result ... | 1.0 | AWS RDS to AWS VM - Thanks for contributing to [pgloader](https://pgloader.io) by reporting an

issue! Reporting an issue is the only way we can solve problems, fix bugs,

and improve both the software and its user experience in general.

The best bug reports follow those 3 simple steps:

1. show what you did,

... | non_priority | aws rds to aws vm thanks for contributing to by reporting an issue reporting an issue is the only way we can solve problems fix bugs and improve both the software and its user experience in general the best bug reports follow those simple steps show what you did show the result you got ... | 0 |

751,168 | 26,231,736,536 | IssuesEvent | 2023-01-05 01:14:06 | canonical/cn.ubuntu.com | https://api.github.com/repos/canonical/cn.ubuntu.com | closed | Empty links on Ubuntu Core特点概览 | Priority: High | I found 4 links not working on https://cn.ubuntu.com/internet-of-things/core/features

The current links and correct links are:

- OTA 更新

- https://cn.ubuntu.com/internet-of-things/features/ota-updates >> https://cn.ubuntu.com/internet-of-things/core/features/ota-updates

- 安全启动

- https://cn.ubuntu.com/intern... | 1.0 | Empty links on Ubuntu Core特点概览 - I found 4 links not working on https://cn.ubuntu.com/internet-of-things/core/features

The current links and correct links are:

- OTA 更新

- https://cn.ubuntu.com/internet-of-things/features/ota-updates >> https://cn.ubuntu.com/internet-of-things/core/features/ota-updates

- 安全启动

... | priority | empty links on ubuntu core特点概览 i found links not working on the current links and correct links are ota 更新 安全启动 全盘加密 恢复模式 thanks | 1 |

589,827 | 17,761,599,021 | IssuesEvent | 2021-08-29 20:00:53 | nic547/TauStellwerk | https://api.github.com/repos/nic547/TauStellwerk | closed | Replace "Random User $number" default usernames | priority:medium type:maintenance size:S | Differentiating users based on a number is kinda difficult.

Doing something like adjective + animal name or a similar approach would probably be better. | 1.0 | Replace "Random User $number" default usernames - Differentiating users based on a number is kinda difficult.

Doing something like adjective + animal name or a similar approach would probably be better. | priority | replace random user number default usernames differentiating users based on a number is kinda difficult doing something like adjective animal name or a similar approach would probably be better | 1 |

571,702 | 17,023,349,137 | IssuesEvent | 2021-07-03 01:33:46 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Public Libraries should show in Mapnik | Component: mapnik Priority: minor Resolution: fixed Type: enhancement | **[Submitted to the original trac issue database at 10.53pm, Tuesday, 13th January 2009]**

Libraries (specifically a node with amenity=library) show on osmarender but not mapnik.

Examples:

Forbes Library is the public library of Northampton, MA, USA:

http://openstreetmap.org/?lat=42.31708&lon=-72.63611&zoom=17&... | 1.0 | Public Libraries should show in Mapnik - **[Submitted to the original trac issue database at 10.53pm, Tuesday, 13th January 2009]**

Libraries (specifically a node with amenity=library) show on osmarender but not mapnik.

Examples:

Forbes Library is the public library of Northampton, MA, USA:

http://openstreetmap... | priority | public libraries should show in mapnik libraries specifically a node with amenity library show on osmarender but not mapnik examples forbes library is the public library of northampton ma usa there are many libraries here boston ma usa the reed free library in surry nh usa even h... | 1 |

26,505 | 13,039,424,841 | IssuesEvent | 2020-07-28 16:43:45 | aivivn/d2l-vn | https://api.github.com/repos/aivivn/d2l-vn | closed | Revise "hybridize_vn" - Part 3 | chapter: computational-performance status: phase 2 | This part was translated by: **davidnvq**

If you translated this part, please skip the revision. | True | Revise "hybridize_vn" - Part 3 - This part was translated by: **davidnvq**

If you translated this part, please skip the revision. | non_priority | revise hybridize vn part this part was translated by davidnvq if you translated this part please skip the revision | 0 |

163,377 | 25,803,200,607 | IssuesEvent | 2022-12-11 06:39:31 | jcklpe/research-ops-hackpack | https://api.github.com/repos/jcklpe/research-ops-hackpack | opened | Create new research project: {Project} | design product management research | ### Description

blah blah

### Acceptance Criteria

- [ ] Create a research [Epic](https://github.com/jcklpe/research-ops-hackpack/issues/new?assignees=octocat&labels=research%2Cdesign%2Cproduct+management%2Cepic&template=epic.yaml&title=%7BProject%2FTrial%7D+Epic).

- [ ] Create a research project folder.

- [ ] Crea... | 1.0 | Create new research project: {Project} - ### Description

blah blah

### Acceptance Criteria

- [ ] Create a research [Epic](https://github.com/jcklpe/research-ops-hackpack/issues/new?assignees=octocat&labels=research%2Cdesign%2Cproduct+management%2Cepic&template=epic.yaml&title=%7BProject%2FTrial%7D+Epic).

- [ ] Cre... | non_priority | create new research project project description blah blah acceptance criteria create a research create a research project folder create all tickets have been created and assigned | 0 |

124,829 | 16,668,906,745 | IssuesEvent | 2021-06-07 08:28:12 | blockframes/blockframes | https://api.github.com/repos/blockframes/blockframes | closed | Warning State on Avails Criteria Search Forms | Design - UI | Objective here is to add an intermediary state between hint and error, which would be warning.

First use case for that warning state is for the avails form: as soon as users fill in one of the avails fields, it should be clear that they have to fill in all the other ones as well before applying their search.

Researc... | 1.0 | Warning State on Avails Criteria Search Forms - Objective here is to add an intermediary state between hint and error, which would be warning.

First use case for that warning state is for the avails form: as soon as users fill in one of the avails fields, it should be clear that they have to fill in all the other ones... | non_priority | warning state on avails criteria search forms objective here is to add an intermediary state between hint and error which would be warning first use case for that warning state is for the avails form as soon as users fill in one of the avails fields it should be clear that they have to fill in all the other ones... | 0 |

492,490 | 14,214,384,107 | IssuesEvent | 2020-11-17 05:06:04 | bounswe/bounswe2020group3 | https://api.github.com/repos/bounswe/bounswe2020group3 | closed | [Backend] Create Dockerfile | Backend Priority: Critical Type: Enhancement | * **Project: BACKEND**

* **This is a: FEATURE REQUEST**

* **Description of the issue**

There should be a dockerfile for CI/CD configurations

* **Deadline for resolution:**

ASAP

| 1.0 | [Backend] Create Dockerfile - * **Project: BACKEND**

* **This is a: FEATURE REQUEST**

* **Description of the issue**

There should be a dockerfile for CI/CD configurations

* **Deadline for resolution:**

ASAP

| priority | create dockerfile project backend this is a feature request description of the issue there should be a dockerfile for ci cd configurations deadline for resolution asap | 1 |

59,982 | 3,117,659,987 | IssuesEvent | 2015-09-04 03:51:09 | framingeinstein/issues-test | https://api.github.com/repos/framingeinstein/issues-test | opened | SPK-613: Promo Code Help | priority:normal priority:normal priority:normal priority:normal priority:normal priority:normal resolution:in-progress resolution:in-progress resolution:in-progress | Hi Toby,

See SRP-90:

We entered a new ticket with Magento to request help on a promo code.

1 SKU that should be eligible is the only SKU not allowing a promo code to be used.

Magento reviewed this issue on our front end and believes the custom theme is creating the promo code issue.

Here is their feedback:

Tod... | 6.0 | SPK-613: Promo Code Help - Hi Toby,

See SRP-90:

We entered a new ticket with Magento to request help on a promo code.

1 SKU that should be eligible is the only SKU not allowing a promo code to be used.

Magento reviewed this issue on our front end and believes the custom theme is creating the promo code issue.

H... | priority | spk promo code help hi toby see srp we entered a new ticket with magento to request help on a promo code sku that should be eligible is the only sku not allowing a promo code to be used magento reviewed this issue on our front end and believes the custom theme is creating the promo code issue here... | 1 |

769,778 | 27,018,820,732 | IssuesEvent | 2023-02-10 22:25:12 | googleapis/nodejs-automl | https://api.github.com/repos/googleapis/nodejs-automl | closed | Automl Vision Object Detection Deploy Model Test: should deploy a model with a specified node count failed | type: bug priority: p1 api: automl flakybot: issue flakybot: flaky | Note: #688 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 97411a2bb514b9921bb3932543a2d895c452d5c6

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/407b55a7-9544-44ff-be2c-1f1106f810da), [Sponge](http://sponge2/407b55a7-9544-44ff-b... | 1.0 | Automl Vision Object Detection Deploy Model Test: should deploy a model with a specified node count failed - Note: #688 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 97411a2bb514b9921bb3932543a2d895c452d5c6

buildURL: [Build Status](https://source.cloud.googl... | priority | automl vision object detection deploy model test should deploy a model with a specified node count failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output expected unauthenticated request had inv... | 1 |

304,756 | 9,335,313,785 | IssuesEvent | 2019-03-28 18:15:52 | robotframework/SeleniumLibrary | https://api.github.com/repos/robotframework/SeleniumLibrary | closed | Set Browser Implicit Wait weird behavior | bug priority: medium | * Using the keyword Set Browser Implicit Wait will set the implicit wait but it can not be read with Get Selenium Implicit Wait, which returns only the value set with Set Selenium Implicit Wait or when importing the library.

* When you open a new tab (with js for exemple) and go back to the main tab the implicit wait ... | 1.0 | Set Browser Implicit Wait weird behavior - * Using the keyword Set Browser Implicit Wait will set the implicit wait but it can not be read with Get Selenium Implicit Wait, which returns only the value set with Set Selenium Implicit Wait or when importing the library.

* When you open a new tab (with js for exemple) and... | priority | set browser implicit wait weird behavior using the keyword set browser implicit wait will set the implicit wait but it can not be read with get selenium implicit wait which returns only the value set with set selenium implicit wait or when importing the library when you open a new tab with js for exemple and... | 1 |

282,822 | 30,889,436,543 | IssuesEvent | 2023-08-04 02:43:18 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | reopened | CVE-2023-28772 (Medium) detected in linux-stable-rtv4.1.33 | Mend: dependency security vulnerability | ## CVE-2023-28772 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home p... | True | CVE-2023-28772 (Medium) detected in linux-stable-rtv4.1.33 - ## CVE-2023-28772 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia C... | non_priority | cve medium detected in linux stable cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source f... | 0 |

277,830 | 8,633,238,693 | IssuesEvent | 2018-11-22 13:17:39 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Eco Forum Unsecure Login. | High Priority | The official eco forum (http://ecoforum.strangeloopgames.com) uses insecure http for logging into the forum, Anyone who would be logging into the forum would be sending their username and password combination in cleartext over the internet allowing anyone sniffing traffic to steal an admin password for the forum. You c... | 1.0 | Eco Forum Unsecure Login. - The official eco forum (http://ecoforum.strangeloopgames.com) uses insecure http for logging into the forum, Anyone who would be logging into the forum would be sending their username and password combination in cleartext over the internet allowing anyone sniffing traffic to steal an admin p... | priority | eco forum unsecure login the official eco forum uses insecure http for logging into the forum anyone who would be logging into the forum would be sending their username and password combination in cleartext over the internet allowing anyone sniffing traffic to steal an admin password for the forum you can get a... | 1 |

239,072 | 26,201,215,106 | IssuesEvent | 2023-01-03 17:38:40 | MValle21/circonus-unified-agent | https://api.github.com/repos/MValle21/circonus-unified-agent | opened | CVE-2020-26160 (High) detected in github.com/dgrijalva/jwt-go-v3.2.1-0.20200107013213-dc14462fd587+incompatible | security vulnerability | ## CVE-2020-26160 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/dgrijalva/jwt-go-v3.2.1-0.20200107013213-dc14462fd587+incompatible</b></p></summary>

<p>ARCHIVE - Golang im... | True | CVE-2020-26160 (High) detected in github.com/dgrijalva/jwt-go-v3.2.1-0.20200107013213-dc14462fd587+incompatible - ## CVE-2020-26160 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>githu... | non_priority | cve high detected in github com dgrijalva jwt go incompatible cve high severity vulnerability vulnerable library github com dgrijalva jwt go incompatible archive golang implementation of json web tokens jwt this project is now maintained at library home page ... | 0 |

175,540 | 21,313,848,145 | IssuesEvent | 2022-04-16 01:08:57 | Nivaskumark/kernel_v4.1.15 | https://api.github.com/repos/Nivaskumark/kernel_v4.1.15 | opened | CVE-2019-3459 (Medium) detected in linuxlinux-4.6 | security vulnerability | ## CVE-2019-3459 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kerne... | True | CVE-2019-3459 (Medium) detected in linuxlinux-4.6 - ## CVE-2019-3459 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>L... | non_priority | cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in base branch master vulnerable source files net bluetooth core c net bluetooth core... | 0 |

350,772 | 31,932,305,166 | IssuesEvent | 2023-09-19 08:15:59 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix jax_lax_operators.test_jax_expand_dims | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6223268002/job/16888850206"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6223268002/job/16888850206"><img src=https://img.shields.io/badge/-failure-red></a>

|tensorflo... | 1.0 | Fix jax_lax_operators.test_jax_expand_dims - | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6223268002/job/16888850206"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6223268002/job/16888850206"><img src=https://img.s... | non_priority | fix jax lax operators test jax expand dims numpy a href src jax a href src tensorflow a href src torch a href src paddle a href src | 0 |

474,379 | 13,658,034,303 | IssuesEvent | 2020-09-28 06:56:08 | AY2021S1-CS2103T-T11-3/tp | https://api.github.com/repos/AY2021S1-CS2103T-T11-3/tp | closed | Add feature: add finance records | priority.High type.Story | As a forgetful business owner, I can save my transaction history, so that I can track my financials easily.

As a small business owner, I can view a summary of my finances, so that I can plan the next steps of my business.

| 1.0 | Add feature: add finance records - As a forgetful business owner, I can save my transaction history, so that I can track my financials easily.

As a small business owner, I can view a summary of my finances, so that I can plan the next steps of my business.

| priority | add feature add finance records as a forgetful business owner i can save my transaction history so that i can track my financials easily as a small business owner i can view a summary of my finances so that i can plan the next steps of my business | 1 |

101,684 | 31,394,752,004 | IssuesEvent | 2023-08-26 19:48:06 | expo/expo | https://api.github.com/repos/expo/expo | opened | Android 13 (Media Library Permissions) | needs validation Development Builds | ### Summary

When invoking [`await MediaLibrary.requestPermissionsAsync()`](https://docs.expo.dev/versions/latest/sdk/media-library/#medialibraryrequestpermissionsasyncwriteonly) on Android 13, two permission prompts appear asking for music/audio followed by photos/videos. According to the android developer docs in And... | 1.0 | Android 13 (Media Library Permissions) - ### Summary

When invoking [`await MediaLibrary.requestPermissionsAsync()`](https://docs.expo.dev/versions/latest/sdk/media-library/#medialibraryrequestpermissionsasyncwriteonly) on Android 13, two permission prompts appear asking for music/audio followed by photos/videos. Accor... | non_priority | android media library permissions summary when invoking on android two permission prompts appear asking for music audio followed by photos videos according to the android developer docs in android or higher they have introduced more i m interested in only presenting the user with a single promp... | 0 |

133,685 | 10,855,606,209 | IssuesEvent | 2019-11-13 18:46:14 | rancher/k3s | https://api.github.com/repos/rancher/k3s | closed | Upgrade to v0.10.0-rc2 to use default kubelet directory duplicates pod entry in old as well as in new directories. | [zube]: To Test | **Version:**

Upgrade from v0.9.1 to v0.10.0-rc2

**Describe the bug**

1. Upgrade to v0.10.0-rc2 has all pod entries from /var/lib/rancher/k3s/agent/kubelet/pods/ copied onto /var/lib/kubelet except helm and the pods are restarted.

2. Delete a pod removes in the new default dir and not from the old dir.

3. Helm po... | 1.0 | Upgrade to v0.10.0-rc2 to use default kubelet directory duplicates pod entry in old as well as in new directories. - **Version:**

Upgrade from v0.9.1 to v0.10.0-rc2

**Describe the bug**

1. Upgrade to v0.10.0-rc2 has all pod entries from /var/lib/rancher/k3s/agent/kubelet/pods/ copied onto /var/lib/kubelet except h... | non_priority | upgrade to to use default kubelet directory duplicates pod entry in old as well as in new directories version upgrade from to describe the bug upgrade to has all pod entries from var lib rancher agent kubelet pods copied onto var lib kubelet except helm and the pod... | 0 |

50,997 | 13,188,026,176 | IssuesEvent | 2020-08-13 05:20:36 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | [g4-tankresponse] memory leaks (Trac #1796) | Migrated from Trac combo simulation defect | potential memory leaks found by static analysis:

http://software.icecube.wisc.edu/static_analysis/2016-07-26-030212-26135-1/report-5e3769.html#EndPath

http://software.icecube.wisc.edu/static_analysis/2016-07-26-030212-26135-1/report-314610.html#EndPath

http://software.icecube.wisc.edu/static_analysis/2016-07-26-030212... | 1.0 | [g4-tankresponse] memory leaks (Trac #1796) - potential memory leaks found by static analysis:

http://software.icecube.wisc.edu/static_analysis/2016-07-26-030212-26135-1/report-5e3769.html#EndPath

http://software.icecube.wisc.edu/static_analysis/2016-07-26-030212-26135-1/report-314610.html#EndPath

http://software.icec... | non_priority | memory leaks trac potential memory leaks found by static analysis migrated from json status closed changetime description potential memory leaks found by static analysis n reporter kjmeagher cc resolution ... | 0 |

43,509 | 2,889,826,327 | IssuesEvent | 2015-06-13 20:00:42 | damonkohler/android-scripting | https://api.github.com/repos/damonkohler/android-scripting | closed | Display additional info before installing interpretter | auto-migrated LowHangingFruit Priority-Medium Type-Enhancement | ```

Interpretter APKs should display version and total download size.

```

Original issue reported on code.google.com by `MeanEYE.rcf` on 7 Jul 2010 at 10:51 | 1.0 | Display additional info before installing interpretter - ```

Interpretter APKs should display version and total download size.

```

Original issue reported on code.google.com by `MeanEYE.rcf` on 7 Jul 2010 at 10:51 | priority | display additional info before installing interpretter interpretter apks should display version and total download size original issue reported on code google com by meaneye rcf on jul at | 1 |

187,839 | 6,761,563,228 | IssuesEvent | 2017-10-25 02:35:19 | opencollective/opencollective | https://api.github.com/repos/opencollective/opencollective | closed | Donor can't make a donation: logged in, system prompts her to login again | high priority | Thank you for taking the time to report an issue 🙏

The easier it is for us to reproduce it, the faster we can solve it.

So please try to be as complete as possible when filing your issue.

***

From user:

> The donation form beginning is a bit confusing for her: She’s already logged in, but placing her emai... | 1.0 | Donor can't make a donation: logged in, system prompts her to login again - Thank you for taking the time to report an issue 🙏

The easier it is for us to reproduce it, the faster we can solve it.

So please try to be as complete as possible when filing your issue.

***

From user:

> The donation form beginni... | priority | donor can t make a donation logged in system prompts her to login again thank you for taking the time to report an issue 🙏 the easier it is for us to reproduce it the faster we can solve it so please try to be as complete as possible when filing your issue from user the donation form beginni... | 1 |

3,124 | 8,972,202,604 | IssuesEvent | 2019-01-29 17:39:59 | firecracker-microvm/firecracker | https://api.github.com/repos/firecracker-microvm/firecracker | closed | Add Try_Read Functionality to EventFd Implementation | Contribute: Good First Issue Priority: Low Program: Architecture Quality: Improvement | We are currently using EventFds via the implementation from the sys_util crate, inherited from crosvm. One potentially significant downside is that it only exposes a blocking `read()` API.

There are places in our code where we use `read()` knowing that an event should definitely be available, because the logic is p... | 1.0 | Add Try_Read Functionality to EventFd Implementation - We are currently using EventFds via the implementation from the sys_util crate, inherited from crosvm. One potentially significant downside is that it only exposes a blocking `read()` API.

There are places in our code where we use `read()` knowing that an event... | non_priority | add try read functionality to eventfd implementation we are currently using eventfds via the implementation from the sys util crate inherited from crosvm one potentially significant downside is that it only exposes a blocking read api there are places in our code where we use read knowing that an event... | 0 |

19,773 | 5,932,300,454 | IssuesEvent | 2017-05-24 08:59:48 | HGustavs/LenaSYS | https://api.github.com/repos/HGustavs/LenaSYS | closed | misleading text in code viewer dialog | All CodeViewer Needs Fixing | **In the picture below, should it really say "delete" and not "cancel"?**

 | 1.0 | misleading text in code viewer dialog - **In the picture below, should it really say "delete" and not "cancel"?**

 | non_priority | misleading text in code viewer dialog in the picture below should it really say delete and not cancel | 0 |

57,893 | 16,131,877,731 | IssuesEvent | 2021-04-29 06:39:32 | cython/cython | https://api.github.com/repos/cython/cython | closed | Default arguments in methods are not preserved for introspection | Python Semantics defect | Tried on the latest master:

```

# test.pyx

def run(a, b=1):

return a + b

cdef class A:

def run(self, a, b=1):

return a + b

# app.py

from test import A, run

import inspect

a = A()

print(inspect.signature(run)) # ok - prints (a, b=1)

print(inspect.signature(a.run)) # not ok - prints (a,... | 1.0 | Default arguments in methods are not preserved for introspection - Tried on the latest master:

```

# test.pyx

def run(a, b=1):

return a + b

cdef class A:

def run(self, a, b=1):

return a + b

# app.py

from test import A, run

import inspect

a = A()

print(inspect.signature(run)) # ok - pri... | non_priority | default arguments in methods are not preserved for introspection tried on the latest master test pyx def run a b return a b cdef class a def run self a b return a b app py from test import a run import inspect a a print inspect signature run ok pri... | 0 |
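For comparison, the plain-Python baseline that the report above seems to expect can be sketched like this. It uses an ordinary class rather than a Cython `cdef class`, so it shows only the reference behaviour of `inspect.signature`, not the Cython defect itself.

```python
import inspect


def run(a, b=1):
    return a + b


class A:
    def run(self, a, b=1):
        return a + b


# In pure Python the default value is kept for both the module-level
# function and the bound method (self is dropped for the bound method).
func_sig = str(inspect.signature(run))
method_sig = str(inspect.signature(A().run))
print(func_sig)    # (a, b=1)
print(method_sig)  # (a, b=1)
```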

71,401 | 3,356,465,895 | IssuesEvent | 2015-11-18 20:37:16 | psouza4/mediacentermaster | https://api.github.com/repos/psouza4/mediacentermaster | closed | Create a file blacklist for parser | Affects-General-Usability Component-Functionality Feature-Downloads Priority-Medium Type-FeatureRequest | Create a user editable configuration file (JSON, XML,etc) to be processed for a blacklist of files to be deleted and ignored while parsing movies and tv shows. The blacklist will be a string value, and depending on code implementation, may support .Net regular expressions. | 1.0 | Create a file blacklist for parser - Create a user editable configuration file (JSON, XML,etc) to be processed for a blacklist of files to be deleted and ignored while parsing movies and tv shows. The blacklist will be a string value, and depending on code implementation, may support .Net regular expressions. | priority | create a file blacklist for parser create a user editable configuration file json xml etc to be processed for a blacklist of files to be deleted and ignored while parsing movies and tv shows the blacklist will be a string value and depending on code implementation may support net regular expressions | 1 |

121,229 | 17,648,388,093 | IssuesEvent | 2021-08-20 09:37:23 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [Security Solution]Rule name is clickable and navigated to rule page contradicting TGrid Form AC | bug Team: SecuritySolution | **Describe the bug**

Rule name is clickable and navigated to rule page contradicting TGrid Form AC

**Build Details**

```

Version:7.15.0

Commit:d791226d9385122f33f4a5ca38fa5369012fbec3

Build:43636

```

**Browsers**

all

**Precondition**

- Install the Endpoint Security on the kibana

**Steps to Reprodu... | True | [Security Solution]Rule name is clickable and navigated to rule page contradicting TGrid Form AC - **Describe the bug**

Rule name is clickable and navigated to rule page contradicting TGrid Form AC

**Build Details**

```

Version:7.15.0

Commit:d791226d9385122f33f4a5ca38fa5369012fbec3

Build:43636

```

**Browser... | non_priority | rule name is clickable and navigated to rule page contradicting tgrid form ac describe the bug rule name is clickable and navigated to rule page contradicting tgrid form ac build details version commit build browsers all precondition install the endpoint security ... | 0 |

64,443 | 26,735,622,277 | IssuesEvent | 2023-01-30 09:15:07 | flipperdevices/flipperzero-firmware | https://api.github.com/repos/flipperdevices/flipperzero-firmware | closed | Show SD card CID in SD card info | feature request core+services | ### Describe the enhancement you're suggesting.

The card info shows filesystem type and size but not info on the physical hardware.

When there are troubles with some cards it would be nice to be able to see which card type is used.

### Anything else?

_No response_ | 1.0 | Show SD card CID in SD card info - ### Describe the enhancement you're suggesting.

The card info shows filesystem type and size but not info on the physical hardware.

When there are troubles with some cards it would be nice to be able to see which card type is used.

### Anything else?

_No response_ | non_priority | show sd card cid in sd card info describe the enhancement you re suggesting the card info shows filesystem type and size but not info on the physical hardware when there are troubles with some cards it would be nice to be able to see which card type is used anything else no response | 0 |

119,065 | 15,395,579,778 | IssuesEvent | 2021-03-03 19:25:27 | emory-libraries/blacklight-catalog | https://api.github.com/repos/emory-libraries/blacklight-catalog | opened | Review current bootstrap CSS and recommend changes needed for current theming and branding | UI Design View (Display and Navigation) | As the product owner, I would like to begin exploring what is needed to begin theming and branding the Blacklight application. The current bootstrap used for Emory Digital Collections is based off the pattern library for Emory Libraries website. Based on initial design of wireframes for header, footer, homepage, facets... | 1.0 | Review current bootstrap CSS and recommend changes needed for current theming and branding - As the product owner, I would like to begin exploring what is needed to begin theming and branding the Blacklight application. The current bootstrap used for Emory Digital Collections is based off the pattern library for Emory ... | non_priority | review current bootstrap css and recommend changes needed for current theming and branding as the product owner i would like to begin exploring what is needed to begin theming and branding the blacklight application the current bootstrap used for emory digital collections is based off the pattern library for emory ... | 0 |

228,517 | 7,552,437,773 | IssuesEvent | 2018-04-19 00:17:30 | leo-project/leofs | https://api.github.com/repos/leo-project/leofs | closed | [eleveldb] Make log files less fragmented | Improve Priority-MIDDLE survey v1.4 | As reported on https://github.com/leo-project/leofs/issues/940 by @vstax, eleveldb have a fragmentation problem for its .log files. This can be fixed by calling fallocate() or posix_fallocate() when creating .log file. and also we have to check how this fix would affect the performance of synced writes to .log file for... | 1.0 | [eleveldb] Make log files less fragmented - As reported on https://github.com/leo-project/leofs/issues/940 by @vstax, eleveldb have a fragmentation problem for its .log files. This can be fixed by calling fallocate() or posix_fallocate() when creating .log file. and also we have to check how this fix would affect the p... | priority | make log files less fragmented as reported on by vstax eleveldb have a fragmentation problem for its log files this can be fixed by calling fallocate or posix fallocate when creating log file and also we have to check how this fix would affect the performance of synced writes to log file for safe | 1 |
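The preallocation idea mentioned in this issue can be illustrated with a short sketch. It is not eleveldb code: `create_preallocated`, the file name, and the 1 MiB size are assumptions chosen for illustration, and `os.posix_fallocate` is only available on Unix-like systems.

```python
import os
import tempfile


def create_preallocated(path, size):
    """Create a file and reserve `size` bytes for it up front."""
    fd = os.open(path, os.O_CREAT | os.O_WRONLY, 0o644)
    try:
        # Ask the filesystem to allocate the whole range at once, which
        # gives it the chance to pick contiguous blocks.
        os.posix_fallocate(fd, 0, size)
    finally:
        os.close(fd)


with tempfile.TemporaryDirectory() as tmp_dir:
    log_path = os.path.join(tmp_dir, "000001.log")  # hypothetical name
    create_preallocated(log_path, 1 << 20)
    allocated_size = os.path.getsize(log_path)
```

Whether this helps or hurts synced writes is exactly the open question the issue raises, so the sketch says nothing about performance.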

301,839 | 22,777,115,619 | IssuesEvent | 2022-07-08 15:27:48 | orchest/orchest | https://api.github.com/repos/orchest/orchest | closed | Improve documentation of installation targets | improvement documentation | **Describe the problem this improvement solves**

At the moment, we are documenting two different installation methods:

- minikube

- `kubectl`

and, in addition, our convenience script.

However, it would be good to add more clear separation between all these methods, and add other interesting targets such as EKS... | 1.0 | Improve documentation of installation targets - **Describe the problem this improvement solves**

At the moment, we are documenting two different installation methods:

- minikube

- `kubectl`

and, in addition, our convenience script.

However, it would be good to add more clear separation between all these method... | non_priority | improve documentation of installation targets describe the problem this improvement solves at the moment we are documenting two different installation methods minikube kubectl and in addition our convenience script however it would be good to add more clear separation between all these method... | 0 |

240,645 | 18,363,637,398 | IssuesEvent | 2021-10-09 17:14:49 | KylieScharf/flask_portfolio | https://api.github.com/repos/KylieScharf/flask_portfolio | opened | edit journal | documentation | Final Journals

- [x] kylie and khushi

[journal](https://docs.google.com/document/d/1eyTgQhMv7jFi28SIGlwOs3k95a9KklfJFCS0O9pXCzA/edit?usp=sharing)

- [x] Kevin and Hamza and Daniel

[journal](https://docs.google.com/document/d/1fy-J_PVrvafykD-OTxMm-Pe0L_UKrYUTC4-DP4vGXEw/edit?usp=sharing)

Add 3.5 and 3.6 notes to... | 1.0 | edit journal - Final Journals

- [x] kylie and khushi

[journal](https://docs.google.com/document/d/1eyTgQhMv7jFi28SIGlwOs3k95a9KklfJFCS0O9pXCzA/edit?usp=sharing)

- [x] Kevin and Hamza and Daniel

[journal](https://docs.google.com/document/d/1fy-J_PVrvafykD-OTxMm-Pe0L_UKrYUTC4-DP4vGXEw/edit?usp=sharing)

Add 3.5 a... | non_priority | edit journal final journals kylie and khushi kevin and hamza and daniel add and notes to journal kylie khushi kevin hamza daniel add test corrections to journal kylie khushi kevin hamza daniel add tpt notes to journal ... | 0 |

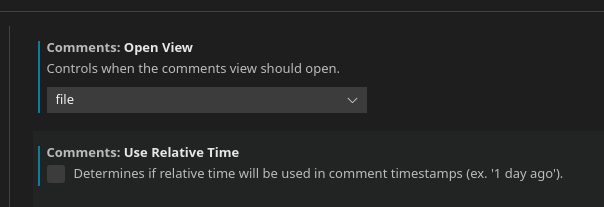

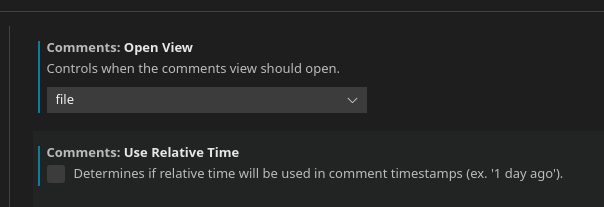

151,617 | 23,848,813,285 | IssuesEvent | 2022-09-06 16:00:04 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | No comments pane | *as-designed | Type: <b>Bug</b>

I cannot find the [comments pane](https://code.visualstudio.com/updates/v1_71#_comments) anywhere. It doesn't matter which setting I use for it to appear.

VS Code version: Code 1.71.0 ... | 1.0 | No comments pane - Type: <b>Bug</b>

I cannot find the [comments pane](https://code.visualstudio.com/updates/v1_71#_comments) anywhere. It doesn't matter which setting I use for it to appear.

VS Code ve... | non_priority | no comments pane type bug i cannot find the anywhere it doesn t matter which setting i use for it to appear vs code version code os version linux manjaro modes sandboxed no system info item value cpus intel r core tm cpu ... | 0 |

204,081 | 15,398,716,972 | IssuesEvent | 2021-03-04 00:37:16 | nucleus-security/Test-repo | https://api.github.com/repos/nucleus-security/Test-repo | opened | Nucleus - Project: Ticketing Rules now apply to all vulnerabilities - [Medium] - CentOS Security Update for kernel(CESA-2017:2863) | Testing | Source: QUALYS

Finding Description: CentOS has released security update for kernel to fix the vulnerabilities.<p>Affected Products:<br /><br />centos 6

Impact: This vulnerability could be exploited to gain complete access to sensitive information. Malicious users could also use this vulnerability to change all the con... | 1.0 | Nucleus - Project: Ticketing Rules now apply to all vulnerabilities - [Medium] - CentOS Security Update for kernel(CESA-2017:2863) - Source: QUALYS

Finding Description: CentOS has released security update for kernel to fix the vulnerabilities.<p>Affected Products:<br /><br />centos 6

Impact: This vulnerability could b... | non_priority | nucleus project ticketing rules now apply to all vulnerabilities centos security update for kernel cesa source qualys finding description centos has released security update for kernel to fix the vulnerabilities affected products centos impact this vulnerability could be exploited to gain compl... | 0 |

148,354 | 23,343,120,061 | IssuesEvent | 2022-08-09 15:30:29 | Atri-Labs/atrilabs-engine | https://api.github.com/repos/Atri-Labs/atrilabs-engine | closed | Change UI design of actions bar | design | 1. Send data

2. Send file - send own or different component

3. Internal navigation

4. External navigation | 1.0 | Change UI design of actions bar - 1. Send data

2. Send file - send own or different component

3. Internal navigation

4. External navigation | non_priority | change ui design of actions bar send data send file send own or different component internal navigation external navigation | 0 |

96,668 | 20,053,529,803 | IssuesEvent | 2022-02-03 09:36:23 | creativecommons/project_creativecommons.org | https://api.github.com/repos/creativecommons/project_creativecommons.org | opened | Determine whether we still (intend to) use Engaging Networks | 🟩 priority: low ✨ goal: improvement 💻 aspect: code 🚦 status: awaiting triage | There is a Gravityforms Engaging Networks addon defined as a dependency of our staging website. However, the legacy website does not contain the same plugin.

## Task

- [ ] determine whether we still use or intend to use the Engaging Networks service

- [ ] decide on whether or not to include the Gravityforms Enga... | 1.0 | Determine whether we still (intend to) use Engaging Networks - There is a Gravityforms Engaging Networks addon defined as a dependency of our staging website. However, the legacy website does not contain the same plugin.

## Task

- [ ] determine whether we still use or intend to use the Engaging Networks service

... | non_priority | determine whether we still intend to use engaging networks there is a gravityforms engaging networks addon defined as a dependency of our staging website however the legacy website does not contain the same plugin task determine whether we still use or intend to use the engaging networks service ... | 0 |

157,626 | 13,697,764,116 | IssuesEvent | 2020-10-01 04:01:24 | SE-Group4/PhotoGallery | https://api.github.com/repos/SE-Group4/PhotoGallery | opened | [1 mark] Create Backlog for All Three Sprints | documentation | [1 mark] Backlog for all three sprints.

Please identify the team member(s) to whom each backlog item has been assigned. Identify the Sprint I backlog items that you were not able to complete by the end of Sprint I.

A backlog is basically a prioritized list of concrete tasks assigned to individual memb... | 1.0 | [1 mark] Create Backlog for All Three Sprints - [1 mark] Backlog for all three sprints.

Please identify the team member(s) to whom each backlog item has been assigned. Identify the Sprint I backlog items that you were not able to complete by the end of Sprint I.

A backlog is basically a prioritized li... | non_priority | create backlog for all three sprints backlog for all three sprints please identify team member s to whom each backlog item has been assigned to identify the sprint i backlog items that you were not been able to complete by the end of the sprint i a backlog is basically a prioritized list of concrete... | 0 |

343,460 | 10,330,819,606 | IssuesEvent | 2019-09-02 15:40:35 | bbc/simorgh | https://api.github.com/repos/bbc/simorgh | closed | Add cookie check to mPulse beacon | high priority simorgh-core-stream | **Is your feature request related to a problem? Please describe.**

If a user has disabled performance cookies, the mPulse beacon will still be shown, therefore not respecting a user's privacy settings.

**Describe the solution you'd like**

Check that the user has enabled cookies (exact preferences/type of cookie to... | 1.0 | Add cookie check to mPulse beacon - **Is your feature request related to a problem? Please describe.**

If a user has disabled performance cookies, the mPulse beacon will still be shown, therefore not respecting a user's privacy settings.

**Describe the solution you'd like**

Check that the user has enabled cookies ... | priority | add cookie check to mpulse beacon is your feature request related to a problem please describe if a user has disabled performance cookies the mpulse beacon will still be shown therefore not respecting a user s privacy settings describe the solution you d like check that the user has enabled cookies ... | 1 |

324,570 | 9,905,480,169 | IssuesEvent | 2019-06-27 11:42:33 | kudobuilder/kudo | https://api.github.com/repos/kudobuilder/kudo | closed | CreateOrUpdate function fix | component/operator kind/bug priority/high | In the plan controller, the line:

```

result, err := controllerutil.CreateOrUpdate(context.TODO(), r.Client, obj, func(runtime.Object) error { return nil })

```

needs to be fixed. The last argument of this function is supposed to capture the modifications to the object pulled from the server. Need to replace it... | 1.0 | CreateOrUpdate function fix - In the plan controller, the line:

```

result, err := controllerutil.CreateOrUpdate(context.TODO(), r.Client, obj, func(runtime.Object) error { return nil })

```

needs to be fixed. The last argument of this function is supposed to capture the modifications to the object pulled from t... | priority | createorupdate function fix in the plan controller the line result err controllerutil createorupdate context todo r client obj func runtime object error return nil needs to be fixed the last argument of this function is supposed to capture the modifications to the object pulled from t... | 1 |
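Why a no-op callback matters here can be shown with a simplified in-memory model. This is a sketch under stated assumptions, not the controller-runtime implementation: `create_or_update`, the `server` dict, and the `replicas` field are all hypothetical, and the model only mirrors the behaviour the issue describes (the object is refreshed from the server, then the callback is expected to apply the desired changes).

```python
def create_or_update(server, name, obj, mutate):
    """Toy model: refresh obj from the server, apply mutate, then save."""
    if name in server:
        # Local contents are replaced by the server's current state.
        obj.clear()
        obj.update(server[name])
        mutate(obj)
        server[name] = dict(obj)
        return "updated"
    mutate(obj)
    server[name] = dict(obj)
    return "created"


server = {"web": {"replicas": 1}}

# With a no-op callback the desired value is overwritten and never applied.
desired = {"replicas": 3}
create_or_update(server, "web", desired, lambda o: None)
after_noop = server["web"]["replicas"]

# Putting the change inside the callback makes it survive the refresh.
create_or_update(server, "web", {}, lambda o: o.update(replicas=3))
after_mutate = server["web"]["replicas"]
```

In this model the first call leaves `replicas` at 1 and the second sets it to 3, which is the difference the issue asks the plan controller to account for.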

499,526 | 14,449,290,741 | IssuesEvent | 2020-12-08 07:51:35 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Incorrect stability check | platform:azure priority-3-normal reproduction needed type:bug | **What Renovate type, platform and version are you using?**

Self Hosted, Azure DevOps

**Describe the bug**

Renovate doesn't create PRs even if the update met stability days requirements.

**Relevant debug logs**

```

DEBUG: Updated 2 package files (repository=MyCompany/ProjectX, branch=renovate/microsoft-applic... | 1.0 | Incorrect stability check - **What Renovate type, platform and version are you using?**

Self Hosted, Azure DevOps

**Describe the bug**

Renovate doesn't create PRs even if the update met stability days requirements.

**Relevant debug logs**

```

DEBUG: Updated 2 package files (repository=MyCompany/ProjectX, bran... | priority | incorrect stability check what renovate type platform and version are you using self hosted azure devops describe the bug renovate doesn t create prs even if the update met stability days requirements relevant debug logs debug updated package files repository mycompany projectx bran... | 1 |

214,558 | 7,274,378,728 | IssuesEvent | 2018-02-21 09:48:24 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | clientauth.jwt jar version mentioned in the doc is different from the actual jar version created | Affected/5.5.0-Alpha Priority/High Type/Docs | clientauth.jwt jar version mentioned in the doc [1] is different from the actual jar version created.

[1] https://docs.wso2.com/display/IS550/Private+Key+JWT+Client+Authentication+for+OIDC

It says,

Place the target/org.wso2.carbon.identity.oauth2.token.handler.clientauth.jwt-1.0.2-SNAPSHOT.jar in the <IS_HOME>/... | 1.0 | clientauth.jwt jar version mentioned in the doc is different from the actual jar version created - clientauth.jwt jar version mentioned in the doc [1] is different from the actual jar version created.

[1] https://docs.wso2.com/display/IS550/Private+Key+JWT+Client+Authentication+for+OIDC

It says,

Place the targe... | priority | clientauth jwt jar version mentioned in the doc is different from the actual jar version created clientauth jwt jar version mentioned in the doc is different from the actual jar version created it says place the target org carbon identity token handler clientauth jwt snapshot jar in the re... | 1 |

372,812 | 11,028,587,565 | IssuesEvent | 2019-12-06 12:03:10 | coder3101/cp-editor2 | https://api.github.com/repos/coder3101/cp-editor2 | closed | Showing compiler warnings | enhancement high_priority linux macOs windows | **Is your feature request related to a problem? Please describe.**

I can't see the compiler warnings.

**Describe the solution you'd like**

Show compiler warnings after compiling.

Maybe add a setting of whether to show the warnings or not. (However, this can be done by adding `-w` in the compile command.)

... | 1.0 | Showing compiler warnings - **Is your feature request related to a problem? Please describe.**

I can't see the compiler warnings.

**Describe the solution you'd like**

Show compiler warnings after compiling.

Maybe add a setting of whether to show the warnings or not. (However, this can be done by adding `-w`... | priority | showing compiler warnings is your feature request related to a problem please describe i can t see the compiler warnings describe the solution you d like show compiler warnings after compiling maybe add a setting of whether to show the warnings or not however this can be done by adding w ... | 1 |

303,193 | 22,958,859,177 | IssuesEvent | 2022-07-19 13:52:23 | fga-eps-mds/UnbFlow | https://api.github.com/repos/fga-eps-mds/UnbFlow | closed | Docs: Write user stories | documentation | # Description

Create the user stories based on the epics, the features, and the medium-fidelity prototype

## Acceptance criteria

- [x] Write the user stories that will be carried out during the issues | 1.0 | Docs: Write user stories - # Description

Create the user stories based on the epics, the features, and the medium-fidelity prototype

## Acceptance criteria

- [x] Write the user stories that will be carried out during the issues | non_priority | docs write user stories description create the user stories based on the epics the features and the medium fidelity prototype acceptance criteria write the user stories that will be carried out during the issues | 0 |

186,220 | 6,734,466,543 | IssuesEvent | 2017-10-18 18:08:56 | octobercms/october | https://api.github.com/repos/octobercms/october | closed | Using Artisan::add in init.php does not work | Priority: Medium Status: Review Needed Type: Unconfirmed Bug | https://octobercms.com/docs/console/development states that you should be able to write `Artisan::add(new Acme\Blog\Console\MyCommand);` in `init.php` and the command will be added

Instead I get an exception

```

[Symfony\Component\Debug\Exception\FatalErrorException]

Call to undefined method October\Rain\Foundati... | 1.0 | Using Artisan::add in init.php does not work - https://octobercms.com/docs/console/development states that you should be able to write `Artisan::add(new Acme\Blog\Console\MyCommand);` in `init.php` and the command will be added

Instead I get an exception

```

[Symfony\Component\Debug\Exception\FatalErrorException]

... | priority | using artisan add in init php does not work states that you should be able to write artisan add new acme blog console mycommand in init php and the command will be added instead i get an exception call to undefined method october rain foundation console kernel add | 1 |

18,777 | 24,678,890,034 | IssuesEvent | 2022-10-18 19:25:58 | dtcenter/MET | https://api.github.com/repos/dtcenter/MET | opened | Investigate `ascii2nc_airnow_hourly` test in unit_ascii2nc.xml | type: bug alert: NEED ACCOUNT KEY requestor: METplus Team MET: PreProcessing Tools (Point) priority: high | ## Describe the Problem ##

During review of #2294 for issue #2276, a problem was discovered in the output of the `ascii2nc_airnow_hourly` test in unit_ascii2nc.xml. The output file created by this test (HourlyData_20220312.nc) contains values of Infinity (`Inf`). While the GHA run for that PR did increase the occurren... | 1.0 | Investigate `ascii2nc_airnow_hourly` test in unit_ascii2nc.xml - ## Describe the Problem ##

During review of #2294 for issue #2276, a problem was discovered in the output of the `ascii2nc_airnow_hourly` test in unit_ascii2nc.xml. The output file created by this test (HourlyData_20220312.nc) contains values of Infinity... | non_priority | investigate airnow hourly test in unit xml describe the problem during review of for issue a problem was discovered in the output of the airnow hourly test in unit xml the output file created by this test hourlydata nc contains values of infinity inf while the gha run for that pr d... | 0 |

566,801 | 16,830,786,287 | IssuesEvent | 2021-06-18 04:14:50 | CertifaiAI/classifai | https://api.github.com/repos/CertifaiAI/classifai | opened | Backend language support | bug low priority | **Describe the bug**

ClassifAI supports multiple languages, but the title of the UI prompted from the backend is still in English.

**Expected behavior**

The language should be dynamic in the backend as well.

**Screenshots**

tag - Orçamento subtag - Legislação | DoD: Run the generalization test of the validator for the tag Orçamento - Legislação for the Municipality of Lagamar. | 1.0 | Generalization test for the tag Orçamento - Legislação - Lagamar - DoD: Run the generalization test of the validator for the tag Orçamento - Legislação for the Municipality of Lagamar. | non_priority | generalization test for the tag orçamento legislação lagamar dod run the generalization test of the validator for the tag orçamento legislação for the municipality of lagamar | 0 |

622,689 | 19,654,052,470 | IssuesEvent | 2022-01-10 10:34:47 | pgfmc/Core | https://api.github.com/repos/pgfmc/Core | closed | Fix when AFK deactivates | bug low priority | What needs to change:

- AFK toggle on teleport

- Don't AFK toggle on Y level descend | 1.0 | Fix when AFK deactivates - What needs to change:

- AFK toggle on teleport

- Don't AFK toggle on Y level descend | priority | fix when afk deactivates what needs to change afk toggle on teleport don t afk toggle on y level descend | 1 |

461,080 | 13,223,211,077 | IssuesEvent | 2020-08-17 16:49:19 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Apps can't be installed in latest master from Dashboard | [zube]: Next Up alpha-priority/0 kind/bug-qa | **What kind of request is this:**

**Steps to reproduce:**

- Install Rancher HA ( k8s `master-head (08/10/2020)` _502b1839e_)

- Navigate to the cluster Dashboard and Apps

**Result:**

` _502b1839e_)

- Navigate to the cluster Dashboard and Apps

**Result:**

as prof:

with to... | 1.0 | record_function decorator in autograd profiler does not work with RPC - ## 🐛 Bug

Nested `RecordFunction`s during profiling do not work with RPC calls, due to mismanagement of some internal variables in `record_function.cpp` caused by handling `RecordFunction` objects completing in different threads.

## To Reprodu... | priority | record function decorator in autograd profiler does not work with rpc 🐛 bug nested recordfunction s during profiling do not work with rpc calls due to mismanagement of some internal variables in record function cpp caused by handling recordfunction objects completing in different threads to reprodu... | 1 |

15,368 | 3,461,414,456 | IssuesEvent | 2015-12-20 02:02:27 | ehmorris/Facebook-Mood | https://api.github.com/repos/ehmorris/Facebook-Mood | closed | Extension tests | extension-related testing | This is actually going to be kinda cool. Writing a testing module for javascript as a chrome extension. | 1.0 | Extension tests - This is actually going to be kinda cool. Writing a testing module for javascript as a chrome extension. | non_priority | extension tests this is actually going to be kinda cool writing a testing module for javascript as a chrome extension | 0 |

187,005 | 21,993,039,292 | IssuesEvent | 2022-05-26 01:22:44 | raindigi/GraphqlType-API-Registration | https://api.github.com/repos/raindigi/GraphqlType-API-Registration | opened | CVE-2022-24434 (High) detected in dicer-0.3.0.tgz | security vulnerability | ## CVE-2022-24434 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dicer-0.3.0.tgz</b></p></summary>

<p>A very fast streaming multipart parser for node.js</p>

<p>Library home page: <a h... | True | CVE-2022-24434 (High) detected in dicer-0.3.0.tgz - ## CVE-2022-24434 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dicer-0.3.0.tgz</b></p></summary>

<p>A very fast streaming multipa... | non_priority | cve high detected in dicer tgz cve high severity vulnerability vulnerable library dicer tgz a very fast streaming multipart parser for node js library home page a href path to dependency file graphqltype api registration package json path to vulnerable library node... | 0 |

50,519 | 13,187,554,369 | IssuesEvent | 2020-08-13 03:47:37 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | test ticket (Trac #869) | Migrated from Trac cmake defect |

<details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/869

, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-02-12T06:31:40",

"description": "",

"reporter": "nega",

"cc": "",

"resolution": "invalid",

"_ts": ... | 1.0 | test ticket (Trac #869) -

<details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/869

, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-02-12T06:31:40",

"description": "",

"reporter": "nega",

"cc": "",

"resolution... | non_priority | test ticket trac migrated from reported by nega and owned by nega json status closed changetime description reporter nega cc resolution invalid ts component cmake summary test ticke... | 0 |

810,198 | 30,229,778,490 | IssuesEvent | 2023-07-06 05:44:42 | glific/mobile | https://api.github.com/repos/glific/mobile | closed | Start a flow for a collection | Priority: High | **Start a flow for a collection**

Implement 'Start a flow' in Collection Chat Screen Option. When selected from options, a dialog box will appear, providing users with the ability to choose a flow and initiate it using the "Start" button or cancel the action with the "Cancel" button.

**Approach**

- Implement d... | 1.0 | Start a flow for a collection - **Start a flow for a collection**

Implement 'Start a flow' in Collection Chat Screen Option. When selected from options, a dialog box will appear, providing users with the ability to choose a flow and initiate it using the "Start" button or cancel the action with the "Cancel" button.

... | priority | start a flow for a collection start a flow for a collection implement start a flow in collection chat screen option when selected from options a dialog box will appear providing users with the ability to choose a flow and initiate it using the start button or cancel the action with the cancel button ... | 1 |

665,016 | 22,296,070,020 | IssuesEvent | 2022-06-13 01:49:26 | ESIPFed/Geoweaver | https://api.github.com/repos/ESIPFed/Geoweaver | closed | Improve the weaver GUI | enhancement high-priority | Some existing problems:

- The words inside the circles are hardly readable

- The process names often go out of bounds

- The status indicators are not easy to understand the color change

- The toolbar is not self explainable

- The process status bars are not indicating anything

- ... | 1.0 | Improve the weaver GUI - Some existing problems:

- The words inside the circles are hardly readable

- The process names often go out of bounds

- The status indicators are not easy to understand the color change

- The toolbar is not self explainable

- The process status bars are not indicating anything

- ... | priority | improve the weaver gui some existing problems the words inside the circles are hardly readable the process names often go out of bounds the status indicators are not easy to understand the color change the toolbar is not self explainable the process status bars are not indicating anything | 1 |

273,400 | 8,530,235,069 | IssuesEvent | 2018-11-03 20:20:27 | projectcalico/calico | https://api.github.com/repos/projectcalico/calico | closed | Document need for `iptables -P FORWARD ACCEPT` for IPVS until K8s fixes are merged | area/docs/content content/needed priority/P3 | Currently, when using kube-proxy in IPVS mode, for NodePorts to work (when workloads are on a host other than the host initially being connected to) it is necessary to run `iptables -P FORWARD ACCEPT` on all nodes. The issue is raised upstream https://github.com/kubernetes/kubernetes/issues/59656 and the fix is up as ... | 1.0 | Document need for `iptables -P FORWARD ACCEPT` for IPVS until K8s fixes are merged - Currently, when using kube-proxy in IPVS mode, for NodePorts to work (when workloads are on a host other than the host initially being connected to) it is necessary to run `iptables -P FORWARD ACCEPT` on all nodes. The issue is raised... | priority | document need for iptables p forward accept for ipvs until fixes are merged currently when using kube proxy in ipvs mode for nodeports to work when workloads are on a host other than the host initially being connected to it is necessary to run iptables p forward accept on all nodes the issue is raised u... | 1 |

702,123 | 24,120,640,480 | IssuesEvent | 2022-09-20 18:22:51 | googleapis/nodejs-analytics-data | https://api.github.com/repos/googleapis/nodejs-analytics-data | closed | Realtime report with multiple dimensions: should run realtime with multiple dimensions failed | type: bug priority: p1 api: analyticsdata flakybot: issue | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: ae646a54bc64abff0cf92625117ffb258e303e8b

b... | 1.0 | Realtime report with multiple dimensions: should run realtime with multiple dimensions failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: q... | priority | realtime report with multiple dimensions should run realtime with multiple dimensions failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output co... | 1 |

121,118 | 15,857,163,455 | IssuesEvent | 2021-04-08 04:07:14 | asoffer/Icarus | https://api.github.com/repos/asoffer/Icarus | closed | Should array types allow implicit integer casts? | design decision | Currently array lengths need to be integral but will be implicitly cast to the underlying array-type's length implementation type. This is currently a `uint64_t` so any natively supported type in Icarus can convert to this losslessly (because negative lengths are prohibited). However, if Icarus starts supporting larger... | 1.0 | Should array types allow implicit integer casts? - Currently array lengths need to be integral but will be implicitly cast to the underlying array-type's length implementation type. This is currently a `uint64_t` so any natively supported type in Icarus can convert to this losslessly (because negative lengths are prohi... | non_priority | should array types allow implicit integer casts currently array lengths need to be integral but will be implicitly cast to the underlying array type s length implementation type this is currently a t so any natively supported type in icarus can convert to this losslessly because negative lengths are prohibited... | 0 |
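
The Icarus record above argues that an implicit cast is lossless as long as every permitted value fits in the `uint64_t` length type. A rough sketch of that range check follows, written in Python rather than Icarus. The names `UINT64_MAX` and `fits_length_type` are illustrative assumptions, and the truncated issue text does not say which wider types might be involved.

```python
UINT64_MAX = 2**64 - 1  # largest value representable in a uint64_t


def fits_length_type(value: int, max_value: int = UINT64_MAX) -> bool:
    """Return True if `value` converts to the length type without loss.

    Negative lengths are rejected, matching the issue's statement that
    they are prohibited.
    """
    return 0 <= value <= max_value


if __name__ == "__main__":
    print(fits_length_type(10))      # within range
    print(fits_length_type(2**64))   # one past the largest uint64_t value
    print(fits_length_type(-1))      # negative, so not a valid length
```

The check shows why the question arises: once a source type can hold values above `UINT64_MAX`, the conversion is no longer guaranteed to be lossless.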

140,427 | 5,408,859,972 | IssuesEvent | 2017-03-01 01:33:53 | google/roboto | https://api.github.com/repos/google/roboto | opened | Roboto Mono 'l' legibility | Priority-High | Requested change:

> Change the lowercase L in Roboto Mono to a reverse S shape, like Andale Mono or Monaco have; Roboto Mono has clearer distinction between lowercase L and 1 than Courier does, but the reverse-S type of L is clearer still.

| 1.0 | Roboto Mono 'l' legibility - Requested change:

> Change the lowercase L in Roboto Mono to a reverse S shape, like Andale Mono or Monaco have; Roboto Mono has clearer distinction between lowercase L and 1 than Courier does, but the reverse-S type of L is clearer still.

| priority | roboto mono l legibility requested change change the lowercase l in roboto mono to a reverse s shape like andale mono or monaco have roboto mono has clearer distinction between lowercase l and than courier does but the reverse s type of l is clearer still | 1 |

817,370 | 30,639,264,360 | IssuesEvent | 2023-07-24 20:24:25 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | speech.snippets.transcribe_gcs_v2_test: test_transcribe_gcs_v2 failed | priority: p2 type: bug api: speech samples flakybot: issue flakybot: flaky | Note: #9423 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 16ab3cf93caddb092710ba507d537945128c884c

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/297c18a8-be85-43ab-92b9-655f63a7de99), [Sponge](http://sponge2/297c18a8-be85-43ab-... | 1.0 | speech.snippets.transcribe_gcs_v2_test: test_transcribe_gcs_v2 failed - Note: #9423 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 16ab3cf93caddb092710ba507d537945128c884c

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/297c18a8-b... | priority | speech snippets transcribe gcs test test transcribe gcs failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output traceback most recent call last file workspace speech snippets nox py l... | 1 |

257,248 | 8,134,897,438 | IssuesEvent | 2018-08-19 21:14:51 | python/mypy | https://api.github.com/repos/python/mypy | opened | Re-work how fine grained targets are processed | needs discussion priority-1-normal refactoring topic-fine-grained-incremental | Currently, fine grained targets are processed per updated module. This can lead to files being processed multiple times (and is also a bit hard to reason about, but this may be subjective). I propose to reorganise them to be processed in topologically sorted order. So the algorithm would be like this:

1. Process all edited fil... | 1.0 | Re-work how fine grained targets are processed - Currently, fine grained targets are processed per updated module. This can lead to files being processed multiple times (and is also a bit hard to reason about, but this may be subjective). I propose to reorganise them to be processed in topologically sorted order. So the algorit... | priority | re work how fine grained targets are processed currently fine grained targets are processed per updated module this can lead to files being processed multiple times and also a bit hard to reason but this may be subjective i propose to reorganise them to be processed in topologically sorted order so the algorit... | 1 |
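
The mypy record above proposes processing targets in topologically sorted order, but its step list is truncated. The sketch below therefore shows only the ordering idea and is not mypy's implementation. It assumes a hypothetical mapping from each module to the modules it depends on, and it uses the standard-library `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter


def processing_order(deps: dict[str, set[str]]) -> list[str]:
    """Return modules so that each one appears after all of its dependencies.

    `deps` maps a module name to the set of modules it depends on.
    """
    return list(TopologicalSorter(deps).static_order())


if __name__ == "__main__":
    # Hypothetical module graph: "a" imports "b", and "b" imports "c".
    deps = {"a": {"b"}, "b": {"c"}, "c": set()}
    print(processing_order(deps))
```

Because each module is emitted exactly once, an ordering like this avoids the repeated processing the issue describes. `graphlib` raises `CycleError` on cyclic input, so import cycles would need to be grouped before sorting.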

56,680 | 14,078,482,024 | IssuesEvent | 2020-11-04 13:38:02 | themagicalmammal/android_kernel_samsung_s5neolte | https://api.github.com/repos/themagicalmammal/android_kernel_samsung_s5neolte | opened | CVE-2019-15926 (High) detected in linuxv3.10 | security vulnerability | ## CVE-2019-15926 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.10</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torv... | True | CVE-2019-15926 (High) detected in linuxv3.10 - ## CVE-2019-15926 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.10</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Lib... | non_priority | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch cosmic experimental vulnerable source files vulne... | 0 |

70,221 | 9,382,912,243 | IssuesEvent | 2019-04-05 00:32:19 | certbot/certbot | https://api.github.com/repos/certbot/certbot | closed | Clarify documentation of configuration file | area: documentation | https://certbot.eff.org/docs/using.html#configuration-file says::

```

# All flags used by the client can be configured here. Run Certbot with

# "--help" to learn more about the available options.

```

This begs the question of whether there are configuration options that are not flags to the client, and it is n... | 1.0 | Clarify documentation of configuration file - https://certbot.eff.org/docs/using.html#configuration-file says::

```

# All flags used by the client can be configured here. Run Certbot with

# "--help" to learn more about the available options.

```

This begs the question of whether there are configuration options... | non_priority | clarify documentation of configuration file says all flags used by the client can be configured here run certbot with help to learn more about the available options this begs the question of whether there are configuration options that are not flags to the client and it is not answered... | 0 |

104,038 | 4,194,219,805 | IssuesEvent | 2016-06-25 00:18:10 | UHMDCmd/DCmd | https://api.github.com/repos/UHMDCmd/DCmd | opened | new Service-Host Assignment does not show. | bug Medium Priority | When editing an application, creating a new service host assignment does not show in the grid once created or updated. Audit log shows that something had happened, but nothing is shown for it.

On the Host page side, if the application has no service and you try to make a new service host assignment, nothing happens. Only ... | 1.0 | new Service-Host Assignment does not show. - When editing an application, creating a new service host assignment does not show in the grid once created or updated. Audit log shows that something had happened, but nothing is shown for it.

On the Host page side, if the application has no service and you try to make a new se... | priority | new service host assignment does not show when editing an application creating a new service host assignment does not show in the grid once created or updated audit log shows that something had happen but nothing is shown for it on the host page side if the application has no service and try to make a new se... | 1 |

129,388 | 18,092,941,060 | IssuesEvent | 2021-09-22 05:16:59 | AlexRogalskiy/typescript-tools | https://api.github.com/repos/AlexRogalskiy/typescript-tools | closed | CVE-2021-32796 (Medium) detected in xmldom-0.6.0.tgz | security vulnerability Status: Invalid | ## CVE-2021-32796 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmldom-0.6.0.tgz</b></p></summary>

<p>A pure JavaScript W3C standard-based (XML DOM Level 2 Core) DOMParser and XMLS... | True | CVE-2021-32796 (Medium) detected in xmldom-0.6.0.tgz - ## CVE-2021-32796 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmldom-0.6.0.tgz</b></p></summary>

<p>A pure JavaScript W3C s... | non_priority | cve medium detected in xmldom tgz cve medium severity vulnerability vulnerable library xmldom tgz a pure javascript standard based xml dom level core domparser and xmlserializer module library home page a href path to dependency file typescript tools package json... | 0 |

526,540 | 15,295,240,829 | IssuesEvent | 2021-02-24 04:21:34 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Missing translations for onboarding modal on first launch | OS/Desktop QA/Yes l10n onboarding priority/P2 release-notes/exclude | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Missing translations for onboarding modal on first launch - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CL... | priority | missing translations for onboarding modal on first launch have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue cl... | 1 |