Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 1k | labels stringlengths 4 1.38k | body stringlengths 1 262k | index stringclasses 16 values | text_combine stringlengths 96 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

269,540 | 20,386,304,327 | IssuesEvent | 2022-02-22 07:21:56 | Azure/PSRule.Rules.Azure | https://api.github.com/repos/Azure/PSRule.Rules.Azure | opened | Improve running locally documentation | documentation | We need to improve documentation for testing a repository locally using VSCode extension including any configuration options that are relevant. | 1.0 | Improve running locally documentation - We need to improve documentation for testing a repository locally using VSCode extension including any configuration options that are relevant. | non_priority | improve running locally documentation we need to improve documentation for testing a repository locally using vscode extension including any configuration options that are relevant | 0 |

720,683 | 24,801,502,006 | IssuesEvent | 2022-10-24 22:14:41 | MSRevive/MSCScripts | https://api.github.com/repos/MSRevive/MSCScripts | closed | Replace bloodstone ring ability | help wanted high priority | As players can see monster's hp by default now, the bloodstone ring is currently useless. We need to replace it to do something useful now. | 1.0 | Replace bloodstone ring ability - As players can see monster's hp by default now, the bloodstone ring is currently useless. We need to replace it to do something useful now. | priority | replace bloodstone ring ability as players can see monster s hp by default now the bloodstone ring is currently useless we need to replace it to do something useful now | 1 |

554,126 | 16,389,597,249 | IssuesEvent | 2021-05-17 14:36:12 | ruuvi/com.ruuvi.station | https://api.github.com/repos/ruuvi/com.ruuvi.station | closed | Starting without wifi access causes crash - 1.5.8 - defect | bug medium priority | If mobile is out of range of wifi or otherwise not connected to wifi app will crash.

May be related to issue #350 | 1.0 | Starting without wifi access causes crash - 1.5.8 - defect - If mobile is out of range of wifi or otherwise not connected to wifi app will crash.

May be related to issue #350 | priority | starting without wifi access causes crash defect if mobile is out of range of wifi or otherwise not connected to wifi app will crash may be related to issue | 1 |

138,633 | 11,209,817,471 | IssuesEvent | 2020-01-06 11:29:25 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Manual test run on Windows x64 for 1.2.x - Beta | OS/Windows QA/Yes release-notes/exclude tests | ## Per release specialty tests

- [x] Devtools "Audit" (Lighthouse) feature causes browser to freeze / lock up.([#3199](https://github.com/brave/brave-browser/issues/3199))

- [x] Maximum daily ads at 21 instead of 20 (follow up to #3849).([#4207](https://github.com/brave/brave-browser/issues/4207))

- [x] Ads grant... | 1.0 | Manual test run on Windows x64 for 1.2.x - Beta - ## Per release specialty tests

- [x] Devtools "Audit" (Lighthouse) feature causes browser to freeze / lock up.([#3199](https://github.com/brave/brave-browser/issues/3199))

- [x] Maximum daily ads at 21 instead of 20 (follow up to #3849).([#4207](https://github.com/b... | non_priority | manual test run on windows for x beta per release specialty tests devtools audit lighthouse feature causes browser to freeze lock up maximum daily ads at instead of follow up to ads grants notification is shown when ads switch was off ads earnings notif... | 0 |

225,523 | 7,482,215,265 | IssuesEvent | 2018-04-04 23:59:47 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | GET /healthz blocks when etcd endpoint is down | kind/bug priority/1.0-blocker priority/insane | The following code blocks with a very long timeout when an etcd endpoint is down:

```

func (e *etcdClient) Status() (string, error) {

eps := e.client.Endpoints()

var err1 error

for i, ep := range eps {

if sr, err := e.client.Status(ctx.Background(), ep); err != nil {

... | 2.0 | GET /healthz blocks when etcd endpoint is down - The following code blocks with a very long timeout when an etcd endpoint is down:

```

func (e *etcdClient) Status() (string, error) {

eps := e.client.Endpoints()

var err1 error

for i, ep := range eps {

if sr, err := e.client.... | priority | get healthz blocks when etcd endpoint is down the following code blocks with a very long timeout when an etcd endpoint is down func e etcdclient status string error eps e client endpoints var error for i ep range eps if sr err e client sta... | 1 |

220,133 | 16,889,073,149 | IssuesEvent | 2021-06-23 06:53:51 | jinseobhong/typescript.reactNative.template | https://api.github.com/repos/jinseobhong/typescript.reactNative.template | closed | [Documentation] Create CODE_OF_CONDUCT.md for repository | documentation task | # Task <a href="#task" id="task">#</a>

1. [What kind to task](#what-kind-to-task)

- [Describe to What you are trying to solve by task](#describe-to-what-you-are-trying-to-solve-by-task)

- [Task goals](#task-goals)

- [Environment for task](#environment-for-task)

- [Tasks of Task](#tasks-of-task)

... | 1.0 | [Documentation] Create CODE_OF_CONDUCT.md for repository - # Task <a href="#task" id="task">#</a>

1. [What kind to task](#what-kind-to-task)

- [Describe to What you are trying to solve by task](#describe-to-what-you-are-trying-to-solve-by-task)

- [Task goals](#task-goals)

- [Environment for task](#env... | non_priority | create code of conduct md for repository task what kind to task describe to what you are trying to solve by task task goals environment for task tasks of task describe alternatives additional context reference what kind to... | 0 |

41,926 | 10,709,353,247 | IssuesEvent | 2019-10-24 21:54:20 | idaholab/moose | https://api.github.com/repos/idaholab/moose | opened | TestHarness isn't handling crashes in --recover tests (part1) correctly | C: TestHarness P: normal T: defect | ## Bug Description

<!--A clear and concise description of the problem (Note: A missing feature is not a bug).-->

It appears that if a test crashes when using the --recover flag during Part1, it still passes from the TestHarness point of view. We are getting away with this since Part2 will definitely fail if that ha... | 1.0 | TestHarness isn't handling crashes in --recover tests (part1) correctly - ## Bug Description

<!--A clear and concise description of the problem (Note: A missing feature is not a bug).-->

It appears that if a test crashes when using the --recover flag during Part1, it still passes from the TestHarness point of view.... | non_priority | testharness isn t handling crashes in recover tests correctly bug description it appears that if a test crashes when using the recover flag during it still passes from the testharness point of view we are getting away with this since will definitely fail if that happens steps to reproduc... | 0 |

146,967 | 5,631,510,808 | IssuesEvent | 2017-04-05 14:42:25 | actor-framework/actor-framework | https://api.github.com/repos/actor-framework/actor-framework | closed | Add Pony benchmark | @benchmarks low priority task | The [Pony](http://ponylang.org) language offers first-class actor support. The authors also compare it against CAF 0.13 in [a set of benchmarks](http://ponylang.org/benchmarks_all.pdf). It would be great to reproduce these numbers.

| 1.0 | Add Pony benchmark - The [Pony](http://ponylang.org) language offers first-class actor support. The authors also compare it against CAF 0.13 in [a set of benchmarks](http://ponylang.org/benchmarks_all.pdf). It would be great to reproduce these numbers.

| priority | add pony benchmark the language offers first class actor support the authors also compare it against caf in it would be great to reproduce these numbers | 1 |

723,338 | 24,893,744,412 | IssuesEvent | 2022-10-28 14:09:01 | wso2/api-manager | https://api.github.com/repos/wso2/api-manager | opened | Error Index 1 out of bounds for length 1 when searching api with name "doc" with publisher rest api | Type/Bug Priority/Normal | ### Description

When using the publisher rest api te search an api with the name "doc" a 500 response is returned. This happens when the api in question exists and when it doesn't. When using any other "query", like "do" or "dot" the 500 is not returned.

The following error is logged in the api manager:

```

[20... | 1.0 | Error Index 1 out of bounds for length 1 when searching api with name "doc" with publisher rest api - ### Description

When using the publisher rest api te search an api with the name "doc" a 500 response is returned. This happens when the api in question exists and when it doesn't. When using any other "query", like "... | priority | error index out of bounds for length when searching api with name doc with publisher rest api description when using the publisher rest api te search an api with the name doc a response is returned this happens when the api in question exists and when it doesn t when using any other query like do... | 1 |

584,685 | 17,461,692,076 | IssuesEvent | 2021-08-06 11:25:32 | turbot/steampipe-plugin-azure | https://api.github.com/repos/turbot/steampipe-plugin-azure | closed | Add table azure_iothub | enhancement priority:high new table | **References**

https://docs.microsoft.com/en-us/rest/api/iothub/iot-hub-resource/get

We need diagnostic settings details also for iothub. | 1.0 | Add table azure_iothub - **References**

https://docs.microsoft.com/en-us/rest/api/iothub/iot-hub-resource/get

We need diagnostic settings details also for iothub. | priority | add table azure iothub references we need diagnostic settings details also for iothub | 1 |

415,861 | 12,136,052,395 | IssuesEvent | 2020-04-23 13:51:20 | wso2/product-microgateway | https://api.github.com/repos/wso2/product-microgateway | closed | Can we unify path for product performance result | Priority/Normal Type/New Feature | **Description:**

Problem: Path for product performance report is not unified across every product. It is hard to find and the path is not descriptive at all.

Some product has performance result in branch root and it just has a number that increases.

**Suggested Labels:**

The suggestion is to have a unique path (... | 1.0 | Can we unify path for product performance result - **Description:**

Problem: Path for product performance report is not unified across every product. It is hard to find and the path is not descriptive at all.

Some product has performance result in branch root and it just has a number that increases.

**Suggested L... | priority | can we unify path for product performance result description problem path for product performance report is not unified across every product it is hard to find and the path is not descriptive at all some product has performance result in branch root and it just has a number that increases suggested l... | 1 |

173,185 | 6,521,363,950 | IssuesEvent | 2017-08-28 20:16:50 | Aubron/scoreshots-templates | https://api.github.com/repos/Aubron/scoreshots-templates | closed | Queens, Multi-Sport Schedule | Priority: Low Status: Needs Finalization / Preview Image | ### Requested by:

Queens University

### Due Date:

2017-08-02

## Template Description:

Original message from Danielle Nicosia of Queens enclosed below:

> I was wondering if there was a way to get a template made up that would allow us to put multiple sports schedules with a cut out of a player from that sport ... | 1.0 | Queens, Multi-Sport Schedule - ### Requested by:

Queens University

### Due Date:

2017-08-02

## Template Description:

Original message from Danielle Nicosia of Queens enclosed below:

> I was wondering if there was a way to get a template made up that would allow us to put multiple sports schedules with a cut o... | priority | queens multi sport schedule requested by queens university due date template description original message from danielle nicosia of queens enclosed below i was wondering if there was a way to get a template made up that would allow us to put multiple sports schedules with a cut out of... | 1 |

194,180 | 22,261,878,191 | IssuesEvent | 2022-06-10 01:47:36 | Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492 | https://api.github.com/repos/Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492 | reopened | CVE-2019-19061 (High) detected in linuxlinux-4.19.88 | security vulnerability | ## CVE-2019-19061 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.88</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.ke... | True | CVE-2019-19061 (High) detected in linuxlinux-4.19.88 - ## CVE-2019-19061 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.88</b></p></summary>

<p>

<p>The Linux Kernel</p... | non_priority | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files linux driv... | 0 |

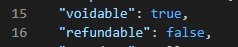

821,072 | 30,803,056,116 | IssuesEvent | 2023-08-01 04:10:46 | rangav/thunder-client-support | https://api.github.com/repos/rangav/thunder-client-support | closed | Set variable based on test result (or) if header/body property exists? | feature request Priority | Example:

I want to set a variable `{{isVoidable}}` or `{{isRefundable}}` based on the data in the response body.

So something like:

`json.voidable -> equals -> true` - if this passes, the immediate ne... | 1.0 | Set variable based on test result (or) if header/body property exists? - Example:

I want to set a variable `{{isVoidable}}` or `{{isRefundable}}` based on the data in the response body.

So something li... | priority | set variable based on test result or if header body property exists example i want to set a variable isvoidable or isrefundable based on the data in the response body so something like json voidable equals true if this passes the immediate next test is run to set the variable... | 1 |

73,740 | 15,281,690,062 | IssuesEvent | 2021-02-23 08:30:57 | raindigi/site-preview | https://api.github.com/repos/raindigi/site-preview | opened | CVE-2020-7608 (Medium) detected in nodev15.5.0 | security vulnerability | ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nodev15.5.0</b></p></summary>

<p>

<p>Node.js JavaScript runtime :sparkles::turtle::rocket::sparkles:</p>

<p>Library h... | True | CVE-2020-7608 (Medium) detected in nodev15.5.0 - ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nodev15.5.0</b></p></summary>

<p>

<p>Node.js JavaScript runtime :spa... | non_priority | cve medium detected in cve medium severity vulnerability vulnerable library node js javascript runtime sparkles turtle rocket sparkles library home page a href found in head commit a href vulnerable source files vulnerab... | 0 |

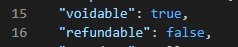

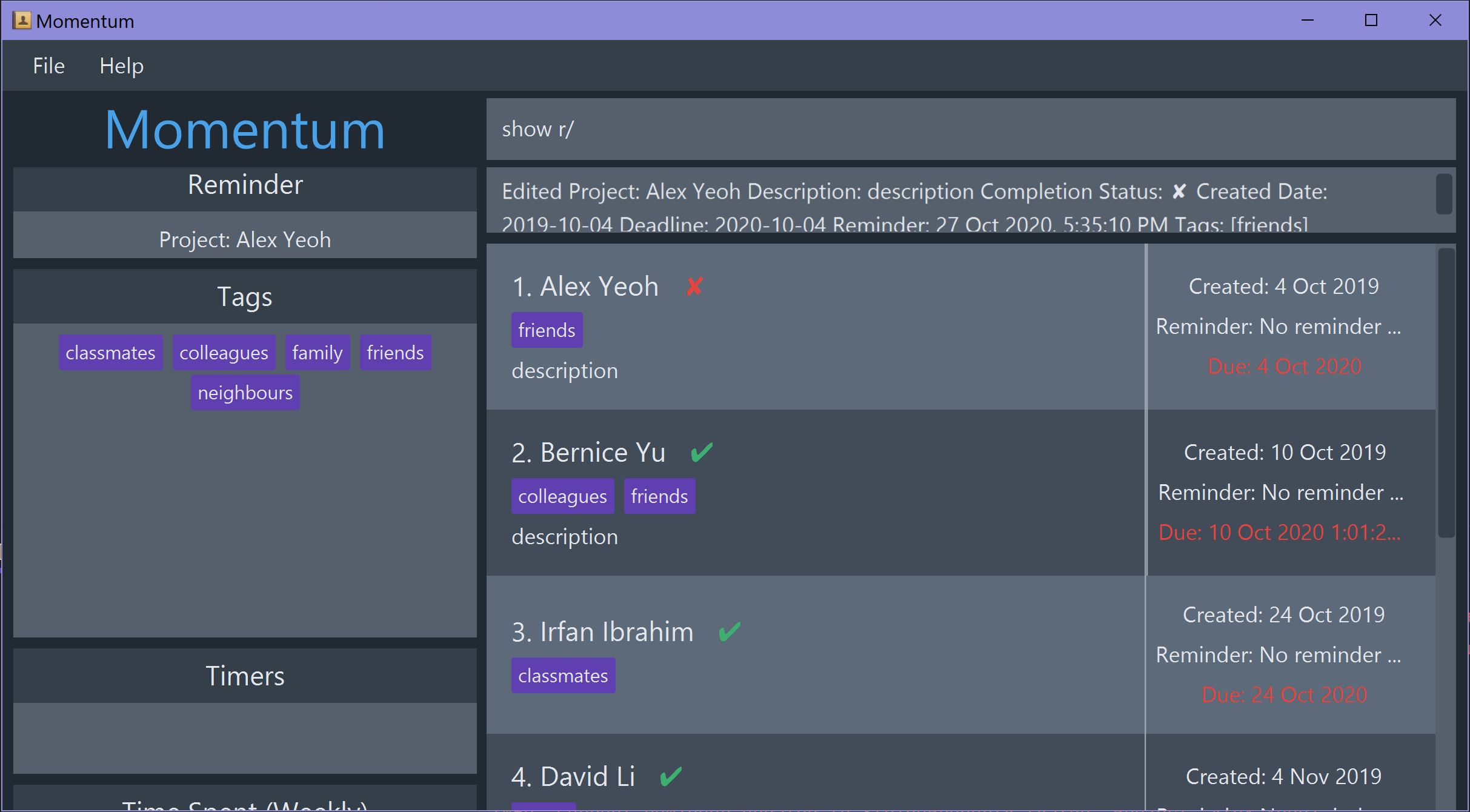

488,924 | 14,099,514,912 | IssuesEvent | 2020-11-06 01:35:50 | AY2021S1-CS2103T-T10-1/tp | https://api.github.com/repos/AY2021S1-CS2103T-T10-1/tp | closed | Tag pane resizes when typing in command box | priority.High severity.High type.Bug |

The command is not yet executed, enter is not pressed, just typing stuff in the command box causes the tags pane increase its height. | 1.0 | Tag pane resizes when typing in command box -

The command is not yet executed, enter is not pressed, just typing stuff in the command box causes the tags pane increase its height. | priority | tag pane resizes when typing in command box the command is not yet executed enter is not pressed just typing stuff in the command box causes the tags pane increase its height | 1 |

118,620 | 4,751,262,790 | IssuesEvent | 2016-10-22 19:50:06 | SuperTux/supertux | https://api.github.com/repos/SuperTux/supertux | reopened | 0.4 crashes on startup on Mac OS X 10.7 | os:macos priority:high type:bug | The new supertux release crashes on startup on my admitedly older 2006 intel iMac. I have not found any requirements for versions, so I do not know whether this is expected.

What can I do to help debug the problem? | 1.0 | 0.4 crashes on startup on Mac OS X 10.7 - The new supertux release crashes on startup on my admitedly older 2006 intel iMac. I have not found any requirements for versions, so I do not know whether this is expected.

What can I do to help debug the problem? | priority | crashes on startup on mac os x the new supertux release crashes on startup on my admitedly older intel imac i have not found any requirements for versions so i do not know whether this is expected what can i do to help debug the problem | 1 |

389,819 | 11,517,594,378 | IssuesEvent | 2020-02-14 08:45:10 | DimensionDev/Maskbook | https://api.github.com/repos/DimensionDev/Maskbook | closed | Rationalise localization | Component: i18n Priority: P4 (Do when free) | Some users reports say that Maskbook is being used in Hong Kong too. Looks like we can't just settle with a single `zh`: we need to add some subdivisions. While we are at it, we should also rationalize our localisation system.

- [ ] Use a proper library that has:

- [ ] Plurals

- [ ] Grammatical Gender

- [ ] P... | 1.0 | Rationalise localization - Some users reports say that Maskbook is being used in Hong Kong too. Looks like we can't just settle with a single `zh`: we need to add some subdivisions. While we are at it, we should also rationalize our localisation system.

- [ ] Use a proper library that has:

- [ ] Plurals

- [ ] ... | priority | rationalise localization some users reports say that maskbook is being used in hong kong too looks like we can t just settle with a single zh we need to add some subdivisions while we are at it we should also rationalize our localisation system use a proper library that has plurals gramma... | 1 |

177,353 | 6,577,606,002 | IssuesEvent | 2017-09-12 02:04:34 | apache/incubator-openwhisk-wskdeploy | https://api.github.com/repos/apache/incubator-openwhisk-wskdeploy | closed | Trigger supports "source" according to the code, yet spec has "feed" as trigger sub element. | bug priority: high | We should support `feed` under `trigger`, mark `source` deprecated.

In the use case `alarmtrigger`, a trigger is described as below.

```

package:

name: helloworld

triggers:

Every12Hours:

source: /whisk.system/alarms/alarm

```

If I change `source` to `feed`, it will create a trigge... | 1.0 | Trigger supports "source" according to the code, yet spec has "feed" as trigger sub element. - We should support `feed` under `trigger`, mark `source` deprecated.

In the use case `alarmtrigger`, a trigger is described as below.

```

package:

name: helloworld

triggers:

Every12Hours:

s... | priority | trigger supports source according to the code yet spec has feed as trigger sub element we should support feed under trigger mark source deprecated in the use case alarmtrigger a trigger is described as below package name helloworld triggers source whi... | 1 |

497,476 | 14,371,366,516 | IssuesEvent | 2020-12-01 12:28:14 | replicate/replicate | https://api.github.com/repos/replicate/replicate | closed | Add development support for Linux | priority/medium type/bug | The development environment outlined in `CONTRIBUTING.md` currently does not support Linux systems. Fixing this would enable more developers to contribute to the project. | 1.0 | Add development support for Linux - The development environment outlined in `CONTRIBUTING.md` currently does not support Linux systems. Fixing this would enable more developers to contribute to the project. | priority | add development support for linux the development environment outlined in contributing md currently does not support linux systems fixing this would enable more developers to contribute to the project | 1 |

446,984 | 12,881,367,641 | IssuesEvent | 2020-07-12 11:40:07 | grpc/grpc | https://api.github.com/repos/grpc/grpc | opened | Status(StatusCode=Cancelled, Detail="No grpc-status found on response.") | kind/question priority/P3 | Grpc.Core.RpcException: Status(StatusCode=Cancelled, Detail="No grpc-status found on response.") .

Always report this error and throw it when it happens at the server side,when i return result, here :static readonly grpc::Marshaller<global::GrpcServer.Web.Protos.GetGoodsByGdsCodeResponse> __Marshaller_GetGoodsByGdsCod... | 1.0 | Status(StatusCode=Cancelled, Detail="No grpc-status found on response.") - Grpc.Core.RpcException: Status(StatusCode=Cancelled, Detail="No grpc-status found on response.") .

Always report this error and throw it when it happens at the server side,when i return result, here :static readonly grpc::Marshaller<global::Grp... | priority | status statuscode cancelled detail no grpc status found on response grpc core rpcexception status statuscode cancelled detail no grpc status found on response always report this error and throw it when it happens at the server side when i return result here static readonly grpc marshaller marshall... | 1 |

19,459 | 4,403,393,879 | IssuesEvent | 2016-08-11 07:42:35 | datagraft/datagraft-portal | https://api.github.com/repos/datagraft/datagraft-portal | opened | Add groups | backend documentation enhancement UI | Add support for specifying groups of users and managing them. Make it possible to support access/modifications of assets by groups. | 1.0 | Add groups - Add support for specifying groups of users and managing them. Make it possible to support access/modifications of assets by groups. | non_priority | add groups add support for specifying groups of users and managing them make it possible to support access modifications of assets by groups | 0 |

73,768 | 3,421,077,913 | IssuesEvent | 2015-12-08 17:14:58 | ccswbs/hjckrrh | https://api.github.com/repos/ccswbs/hjckrrh | closed | G0, PG2 - Any page which adds an existing node as a panel generates an empty h2 with a link. | feature: Custom Content (C) feature: general (G) feature: page (P) priority: normal type: accessibility type: bug type: drupal issue type: enhancement request | An empty h2 (linked) title is being generated by the node.tpl.php file. This affects any site that adds an existing node as a panel on their pages.

**Steps necessary to demonstrate issue:**

1. Go to any panel page (Admin > Structure > Pages)

2. Add a panel to any region (e.g. middle)

3. Select "Existing content" ... | 1.0 | G0, PG2 - Any page which adds an existing node as a panel generates an empty h2 with a link. - An empty h2 (linked) title is being generated by the node.tpl.php file. This affects any site that adds an existing node as a panel on their pages.

**Steps necessary to demonstrate issue:**

1. Go to any panel page (Admin... | priority | any page which adds an existing node as a panel generates an empty with a link an empty linked title is being generated by the node tpl php file this affects any site that adds an existing node as a panel on their pages steps necessary to demonstrate issue go to any panel page admin st... | 1 |

793,934 | 28,017,335,212 | IssuesEvent | 2023-03-28 00:30:33 | matrixorigin/matrixone | https://api.github.com/repos/matrixorigin/matrixone | closed | [Feature Request]: text data type | priority/p0 kind/feature | ### Is there an existing issue for the same feature request?

- [X] I have checked the existing issues.

### Is your feature request related to a problem?

```Markdown

Text is a common data type.

```

### Describe the feature you'd like

MO supports a single TEXT data type instead of tinytext, mediumtext, text, longte... | 1.0 | [Feature Request]: text data type - ### Is there an existing issue for the same feature request?

- [X] I have checked the existing issues.

### Is your feature request related to a problem?

```Markdown

Text is a common data type.

```

### Describe the feature you'd like

MO supports a single TEXT data type instead ... | priority | text data type is there an existing issue for the same feature request i have checked the existing issues is your feature request related to a problem markdown text is a common data type describe the feature you d like mo supports a single text data type instead of tinytext mediu... | 1 |

339,979 | 10,265,228,963 | IssuesEvent | 2019-08-22 18:20:02 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio] Merge conflict results in empty dialog box | bug priority: high | ## Describe the bug

Pulling from a remote repository and causing a conflict opens an empty dialog.

## To Reproduce

Steps to reproduce the behavior:

1. Create a site using Editorial BP called `parent`

2. Create a site called `child` using remote repository, and point it to {PATH_TO_CRAFTER_BUNDLE}/crafter-authori... | 1.0 | [studio] Merge conflict results in empty dialog box - ## Describe the bug

Pulling from a remote repository and causing a conflict opens an empty dialog.

## To Reproduce

Steps to reproduce the behavior:

1. Create a site using Editorial BP called `parent`

2. Create a site called `child` using remote repository, an... | priority | merge conflict results in empty dialog box describe the bug pulling from a remote repository and causing a conflict opens an empty dialog to reproduce steps to reproduce the behavior create a site using editorial bp called parent create a site called child using remote repository and point... | 1 |

247,646 | 7,921,558,598 | IssuesEvent | 2018-07-05 07:57:36 | kubernetes/kubeadm | https://api.github.com/repos/kubernetes/kubeadm | reopened | Kube-dns failed to resolve the services inside the pods after stopping one master node in a multi-master/HA setup | area/HA kind/bug priority/backlog sig/cluster-lifecycle sig/network | ## BUG REPORT

<!--

If this is a BUG REPORT, please:

- Fill in as much of the template below as you can. If you leave out information, we can't help you as well.

If this is a FEATURE REQUEST, please:

- Describe *in detail* the feature/behavior/change you'd like to see.

In both cases, be ready for follow... | 1.0 | Kube-dns failed to resolve the services inside the pods after stopping one master node in a multi-master/HA setup - ## BUG REPORT

<!--

If this is a BUG REPORT, please:

- Fill in as much of the template below as you can. If you leave out information, we can't help you as well.

If this is a FEATURE REQUEST, pl... | priority | kube dns failed to resolve the services inside the pods after stopping one master node in a multi master ha setup bug report if this is a bug report please fill in as much of the template below as you can if you leave out information we can t help you as well if this is a feature request pl... | 1 |

194,774 | 6,898,863,059 | IssuesEvent | 2017-11-24 11:13:28 | xwikisas/application-flashmessages | https://api.github.com/repos/xwikisas/application-flashmessages | closed | Create template provider | Priority: Major Type: Improvement | Since the AWM dependency is removed in #4, a new way to create flash entries is needed. | 1.0 | Create template provider - Since the AWM dependency is removed in #4, a new way to create flash entries is needed. | priority | create template provider since the awm dependency is removed in a new way to create flash entries is needed | 1 |

49,721 | 13,187,256,728 | IssuesEvent | 2020-08-13 02:50:34 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | filterscript trunk: Error: »const class OMKey« has no member named »IsIceTop« (Trac #1930) | Incomplete Migration Migrated from Trac combo reconstruction defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1930">https://code.icecube.wisc.edu/ticket/1930</a>, reported by flauber and owned by </em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2017-01-10T17:17:57",

"description": "Hi,\n\nwhile building the curren... | 1.0 | filterscript trunk: Error: »const class OMKey« has no member named »IsIceTop« (Trac #1930) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1930">https://code.icecube.wisc.edu/ticket/1930</a>, reported by flauber and owned by </em></summary>

<p>

```json

{

"status": "closed",

... | non_priority | filterscript trunk error »const class omkey« has no member named »isicetop« trac migrated from json status closed changetime description hi n nwhile building the current trunk i get this n n n built target filter tools n built target tensor of iner... | 0 |

157,888 | 6,017,838,189 | IssuesEvent | 2017-06-07 10:40:36 | metasfresh/metasfresh | https://api.github.com/repos/metasfresh/metasfresh | opened | Full Test on Translation of en_US in webUI | priority:high type:enhancement | ### Is this a bug or feature request?

feature

### What is the current behavior?

not all is translated

#### Which are the steps to reproduce?

### What is the expected or desired behavior?

everything visible to the user matches his language setting | 1.0 | Full Test on Translation of en_US in webUI - ### Is this a bug or feature request?

feature

### What is the current behavior?

not all is translated

#### Which are the steps to reproduce?

### What is the expected or desired behavior?

everything visible to the user matches his language setting | priority | full test on translation of en us in webui is this a bug or feature request feature what is the current behavior not all is translated which are the steps to reproduce what is the expected or desired behavior everything visible to the user matches his language setting | 1 |

33,366 | 7,700,556,927 | IssuesEvent | 2018-05-20 03:01:22 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.0] Frontend login page eye not styled | J4 Issue No Code Attached Yet | ### Steps to reproduce the issue

install Joomla! 4.0.0-alpha3 Alpha [ Amani ] 12-May-2018 15:23 GMT

install demo data in admin

go to frontend

click login button (without entering user/pass)

you get to the login page at

http://example.com/index.php/author-login

### Expected result

eye is styled

### A... | 1.0 | [4.0] Frontend login page eye not styled - ### Steps to reproduce the issue

install Joomla! 4.0.0-alpha3 Alpha [ Amani ] 12-May-2018 15:23 GMT

install demo data in admin

go to frontend

click login button (without entering user/pass)

you get to the login page at

http://example.com/index.php/author-login

##... | non_priority | frontend login page eye not styled steps to reproduce the issue install joomla alpha may gmt install demo data in admin go to frontend click login button without entering user pass you get to the login page at expected result eye is styled actual result eye ... | 0 |

284,139 | 21,392,220,540 | IssuesEvent | 2022-04-21 08:16:42 | marmelab/react-admin | https://api.github.com/repos/marmelab/react-admin | closed | Format of source returned from `getSource` has changed | documentation | In previous versions of React Admin, when evaluating code like the following:

```

<ArrayInput source="authors">

<SimpleFormIterator>

<FormDataConsumer>

{({ formData, scopedFormData, getSource, ...rest }) => {

return scopedFormData && scopedFormData.user_id ? (

<SelectInput

... | 1.0 | Format of source returned from `getSource` has changed - In previous versions of React Admin, when evaluating code like the following:

```

<ArrayInput source="authors">

<SimpleFormIterator>

<FormDataConsumer>

{({ formData, scopedFormData, getSource, ...rest }) => {

return scopedFormData && s... | non_priority | format of source returned from getsource has changed in previous versions of react admin when evaluating code like the following formdata scopedformdata getsource rest return scopedformdata scopedformdata user id selectinput s... | 0 |

46,600 | 13,055,944,076 | IssuesEvent | 2020-07-30 03:11:34 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | [steamshovel] memory leak (Trac #1545) | Incomplete Migration Migrated from Trac combo core defect | Migrated from https://code.icecube.wisc.edu/ticket/1545

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:15",

"description": "http://software.icecube.wisc.edu/static_analysis/2016-02-10-030213-84904-1/report-2a4604.html#EndPath\nhttp://software.icecube.wisc.edu/static_analysis/2016-02-10-0302... | 1.0 | [steamshovel] memory leak (Trac #1545) - Migrated from https://code.icecube.wisc.edu/ticket/1545

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:15",

"description": "http://software.icecube.wisc.edu/static_analysis/2016-02-10-030213-84904-1/report-2a4604.html#EndPath\nhttp://software.icecube... | non_priority | memory leak trac migrated from json status closed changetime description reporter david schultz cc resolution invalid ts component combo core summary memory leak priority major ... | 0 |

18,000 | 6,537,167,648 | IssuesEvent | 2017-08-31 21:09:51 | craigbarnes/lua-gumbo | https://api.github.com/repos/craigbarnes/lua-gumbo | closed | Separate build targets for each Lua version/ABI | Build | The build system currently outputs the C module to `gumbo/parse.so`, regardless of the target Lua ABI, without any special naming or indication of which Lua interpreter is required to load it. Originally this was to allow `require "gumbo.parse"` to just work as expected within the build directory, for ad-hoc testing an... | 1.0 | Separate build targets for each Lua version/ABI - The build system currently outputs the C module to `gumbo/parse.so`, regardless of the target Lua ABI, without any special naming or indication of which Lua interpreter is required to load it. Originally this was to allow `require "gumbo.parse"` to just work as expected... | non_priority | separate build targets for each lua version abi the build system currently outputs the c module to gumbo parse so regardless of the target lua abi without any special naming or indication of which lua interpreter is required to load it originally this was to allow require gumbo parse to just work as expected... | 0 |

719,940 | 24,774,138,092 | IssuesEvent | 2022-10-23 14:17:57 | bounswe/bounswe2022group5 | https://api.github.com/repos/bounswe/bounswe2022group5 | closed | Deciding on Time and Platform for Backend Team First Meeting | High Priority Type: Communication Status: In Progress | ***Description*:**

As Backend Team (@mehmetemreakbulut , @canberkboun9 , @irfanbozkurt , @oguzhandemirelx), we need to decide the first meeting time and platform. Also, an agenda is crucial for a productive meeting.

Agenda determined during the [Meeting 15.1](https://github.com/bounswe/bounswe2022group5/wiki/Meeti... | 1.0 | Deciding on Time and Platform for Backend Team First Meeting - ***Description*:**

As Backend Team (@mehmetemreakbulut , @canberkboun9 , @irfanbozkurt , @oguzhandemirelx), we need to decide the first meeting time and platform. Also, an agenda is crucial for a productive meeting.

Agenda determined during the [Meetin... | priority | deciding on time and platform for backend team first meeting description as backend team mehmetemreakbulut irfanbozkurt oguzhandemirelx we need to decide the first meeting time and platform also an agenda is crucial for a productive meeting agenda determined during the deciding on... | 1 |

15,973 | 21,047,554,579 | IssuesEvent | 2022-03-31 17:28:32 | bayer-science-for-a-better-life/tiffslide | https://api.github.com/repos/bayer-science-for-a-better-life/tiffslide | closed | Unable to read PNG files | help wanted compatibility | Hi,

My workflow involves extracting patches from WSI and storing them in PNGs. But when I try to read a PNG file, I get a tifffile error:

```python-traceback

>>> from tiffslide.tiffslide import TiffSlide

>>> path=r"C:\Projects\GaNDLF\testing\histo_patches\histo_patches_output\1\image\image_patch_3792-13696... | True | Unable to read PNG files - Hi,

My workflow involves extracting patches from WSI and storing them in PNGs. But when I try to read a PNG file, I get a tifffile error:

```python-traceback

>>> from tiffslide.tiffslide import TiffSlide

>>> path=r"C:\Projects\GaNDLF\testing\histo_patches\histo_patches_output\1\i... | non_priority | unable to read png files hi my workflow involves extracting patches from wsi and storing them in pngs but when i try to read png file i am getting a tifffile error python traceback from tiffslide tiffslide import tiffslide path r c projects gandlf testing histo patches histo patches output i... | 0 |

310,255 | 9,487,705,986 | IssuesEvent | 2019-04-22 17:38:16 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Sync should show warning not to share the code with anyone | feature/sync priority/P2 security | STR:

1. Go to brave://sync

2. Click 'start a new chain'

3. Click either desktop or mobile

4. It shows the sync code (either code words or a QR code) without any type of warning that this is a sensitive encryption key.

Impact: Someone could accidentally share the code with Brave (ex: in a support request) which g... | 1.0 | Sync should show warning not to share the code with anyone - STR:

1. Go to brave://sync

2. Click 'start a new chain'

3. Click either desktop or mobile

4. It shows the sync code (either code words or a QR code) without any type of warning that this is a sensitive encryption key.

Impact: Someone could accidentally... | priority | sync should show warning not to share the code with anyone str go to brave sync click start a new chain click either desktop or mobile it shows the sync code either code words or a qr code without any type of warning that this is a sensitive encryption key impact someone could accidentally... | 1 |

4,670 | 3,875,970,613 | IssuesEvent | 2016-04-12 05:02:15 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 21912095: Autocompleted result in Spotlight Search flickers quickly even when result does not change | classification:ui/usability reproducible:always status:open | #### Description

Summary:

When using Spotlight search, the autocompleted result flickers when continuing to type, even when it has not changed.

Steps to Reproduce:

1. Activate Spotlight with Command+Space.

2. Type “Safari” letter by letter.

3. Notice that the autocompleted result for “Safari” flickers in between keyp... | True | 21912095: Autocompleted result in Spotlight Search flickers quickly even when result does not change - #### Description

Summary:

When using Spotlight search, the autocompleted result flickers when continuing to type, even when it has not changed.

Steps to Reproduce:

1. Activate Spotlight with Command+Space.

2. Type “... | non_priority | autocompleted result in spotlight search flickers quickly even when result does not change description summary when using spotlight search the autocompleted result flickers when continuing to type even when it has not changed steps to reproduce activate spotlight with command space type “safari”... | 0 |

751,839 | 26,260,724,367 | IssuesEvent | 2023-01-06 07:28:06 | harvester/harvester | https://api.github.com/repos/harvester/harvester | closed | [BUG] Havester 1.1.0 upgrade to 1.1.1 is missing image docker.io/longhornio/longhorn-ui:v1.3.2 | kind/bug priority/0 reproduce/needed severity/needed area/airgap-env | **Describe the bug**

After the upgrade from harvester 1.1.0 to 1.1.1 I can see this:

`longhorn-ui-94b465b84-2d2zx 0/1 ImagePullBackOff 0 59m`

and

`Failed to pull image "longhornio/longhorn-ui:v1.3.2`

**To Reproduce**

Upgrade Harvester 1.1.0 to 1.1.1 in air gapped

... | 1.0 | [BUG] Havester 1.1.0 upgrade to 1.1.1 is missing image docker.io/longhornio/longhorn-ui:v1.3.2 - **Describe the bug**

After the upgrade from harvester 1.1.0 to 1.1.1 I can see this:

`longhorn-ui-94b465b84-2d2zx 0/1 ImagePullBackOff 0 59m`

and

`Failed to pull image "longho... | priority | havester upgrade to is missing image docker io longhornio longhorn ui describe the bug after the upgrade from harvester to i can see this longhorn ui imagepullbackoff and failed to pull image longhornio longhorn ui ... | 1 |

30,056 | 2,722,147,107 | IssuesEvent | 2015-04-14 00:24:15 | CruxFramework/crux-smart-faces | https://api.github.com/repos/CruxFramework/crux-smart-faces | closed | DialogBox without close button | bug imported Milestone-M14-C4 Module-CruxWidgets Priority-Medium TargetVersion-5.3.0 | _From [flavia.jesus@triggolabs.com](https://code.google.com/u/flavia.jesus@triggolabs.com/) on March 17, 2015 11:22:45_

The DialogBox used in the showcase project does not have a close button on the small view type.

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=639_ | 1.0 | DialogBox without close button - _From [flavia.jesus@triggolabs.com](https://code.google.com/u/flavia.jesus@triggolabs.com/) on March 17, 2015 11:22:45_

The DialogBox used in the showcase project does not have a close button on the small view type.

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=6... | priority | dialogbox without close button from on march dialogbox used in the showcase project does not have close button on the small view type original issue | 1 |

60,723 | 25,234,777,374 | IssuesEvent | 2022-11-14 23:20:32 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | closed | SSO realm merge planning - vault | ops and shared services | **Describe the issue**

We will be merging the realm that vault uses into another realm in Gold. This ticket is to find out what the impact is and whether the merge is doable for this service.

**What is the plan? How will this get completed?**

Discussion with Service Lead, testing, planning

**Definition of done**

- [x] ident... | 1.0 | SSO realm merge planning - vault - **Describe the issue**

We will be merging the realm that vault uses into another realm in Gold. This ticket is to find out what the impact is and whether the merge is doable for this service.

**What is the plan? How will this get completed?**

Discussion with Service Lead, testing, planning

... | non_priority | sso realm merge planning vault describe the issue we will be merging the realm that vault uses to another realm in gold this ticket is to find out what s the impact and if the merge is doable for this service what is the plan how will this get completed discussion with service lead testing planning ... | 0 |

20,311 | 29,670,777,064 | IssuesEvent | 2023-06-11 11:32:48 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Editing a page that has a Spacer block with an HTML Anchor produces message “This block contains unexpected or invalid content.” | [Type] Bug [Feature] Blocks Backwards Compatibility [Type] Regression [Status] Duplicate | ### Description

Our website has pages that contain Spacer blocks with HTML Anchors defined. The site has been working for months with this design. Last edits were done in October 2022. Now when I try to edit these pages every one of these Spacer blocks shows the error message “This block contains unexpected or invalid... | True | Editing a page that has a Spacer block with an HTML Anchor produces message “This block contains unexpected or invalid content.” - ### Description

Our website has pages that contain Spacer blocks with HTML Anchors defined. The site has been working for months with this design. Last edits were done in October 2022. Now... | non_priority | editing a page that has a spacer block with an html anchor produces message “this block contains unexpected or invalid content ” description our website has pages that contain spacer blocks with html anchors defined the site has been working for months with this design last edits were done in october now wh... | 0 |

236,580 | 7,750,965,311 | IssuesEvent | 2018-05-30 15:40:02 | Flynrod/SpawnShield | https://api.github.com/repos/Flynrod/SpawnShield | opened | Ability to enter protection zone during combat | bug confirmed high priority | **SpawnShield version:** 2.0.8

**Description:**

When a player is tagged, he is able to enter the security zone despite the settings in the configuration file. | 1.0 | Ability to enter protection zone during combat - **SpawnShield version:** 2.0.8

**Description:**

When a player is tagged, he is able to enter the security zone despite the settings in the configuration file. | priority | ability to enter protection zone during combat spawnshield version description when a player is tagged he is able to enter the security zone despite the settings in the configuration file | 1 |

33,604 | 12,216,765,438 | IssuesEvent | 2020-05-01 15:48:21 | habusha/CIOIL | https://api.github.com/repos/habusha/CIOIL | opened | CVE-2020-5405 (Medium) detected in spring-cloud-config-client-2.0.1.RELEASE.jar | security vulnerability | ## CVE-2020-5405 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-cloud-config-client-2.0.1.RELEASE.jar</b></p></summary>

<p>This project is a Spring configuration client.</p>

... | True | CVE-2020-5405 (Medium) detected in spring-cloud-config-client-2.0.1.RELEASE.jar - ## CVE-2020-5405 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-cloud-config-client-2.0.1.REL... | non_priority | cve medium detected in spring cloud config client release jar cve medium severity vulnerability vulnerable library spring cloud config client release jar this project is a spring configuration client library home page a href path to dependency file tmp ws scm cioil in... | 0 |

22,881 | 2,651,012,327 | IssuesEvent | 2015-03-16 07:54:09 | ilgrosso/oldSyncopeIdM | https://api.github.com/repos/ilgrosso/oldSyncopeIdM | closed | Virtual attribute cache | 1 star Component-Logic Component-Persistence duplicate enhancement imported Priority-High Release-Soave-2.1 | _From [fabio.ma...@gmail.com](https://code.google.com/u/109095430973973917901/) on January 18, 2012 11:03:48_

Provide a simple cache for virtual attribute values to avoid querying external resources every time.

_Original issue: http://code.google.com/p/syncope/issues/detail?id=276_ | 1.0 | Virtual attribute cache - _From [fabio.ma...@gmail.com](https://code.google.com/u/109095430973973917901/) on January 18, 2012 11:03:48_

Provide a simple cache for virtual attribute values to avoid querying external resources every time.

_Original issue: http://code.google.com/p/syncope/issues/detail?id=276_ | priority | virtual attribute cache from on january provide a simple cache for virtual attribute values in order to avoid to query external resources every time original issue | 1 |

62,982 | 3,193,774,623 | IssuesEvent | 2015-09-30 08:11:04 | fusioninventory/fusioninventory-for-glpi | https://api.github.com/repos/fusioninventory/fusioninventory-for-glpi | closed | Add header "server-type" for answer of agent | Category: Communication Component: For junior contributor Priority: Normal Status: Closed Tracker: Feature | ---

Author Name: **David Durieux** (@ddurieux)

Original Redmine Issue: 1406, http://forge.fusioninventory.org/issues/1406

Original Date: 2011-12-18

Original Assignee: David Durieux

---

For example, return a header like:

```
server-type: glpi/fusioninventory 0.83+1.0
```

| 1.0 | Add header "server-type" for answer of agent - ---

Author Name: **David Durieux** (@ddurieux)

Original Redmine Issue: 1406, http://forge.fusioninventory.org/issues/1406

Original Date: 2011-12-18

Original Assignee: David Durieux

---

For example, return a header like:

```
server-type: glpi/fusioninventory 0.83+1.0
```

| priority | add header server type for answer of agent author name david durieux ddurieux original redmine issue original date original assignee david durieux like return header server type glpi fusioninventory | 1 |

400,259 | 11,771,273,650 | IssuesEvent | 2020-03-15 23:09:14 | GarkGarcia/icon-pie | https://api.github.com/repos/GarkGarcia/icon-pie | reopened | Freedesktop icon theme support | enhancement priority | If I understand correctly:

- IconPie is currently geared towards generating several icons at into container files ;

- the 'entry' term refers to an icon from within the container file.

Is that so ?

What about icon sets that are stored as individual files in Linux distribution packages and follow the [Freedeskto... | 1.0 | Freedesktop icon theme support - If I understand correctly:

- IconPie is currently geared towards generating several icons at into container files ;

- the 'entry' term refers to an icon from within the container file.

Is that so ?

What about icon sets that are stored as individual files in Linux distribution pa... | priority | freedesktop icon theme support if i understand correctly iconpie is currently geared towards generating several icons at into container files the entry term refers to an icon from within the container file is that so what about icon sets that are stored as individual files in linux distribution pa... | 1 |

2,109 | 2,697,578,893 | IssuesEvent | 2015-04-02 20:49:58 | SleepyTrousers/EnderIO | https://api.github.com/repos/SleepyTrousers/EnderIO | closed | Insert and Export pointers not rendering | bug Code Complete | I am not sure if it is the current version of enderio or cofh updates but the arrows no longer render correctly on the conduits. Must view from weird angles to see them. I noticed the issue on direwolf20 forgecraft video today as well | 1.0 | Insert and Export pointers not rendering - I am not sure if it is the current version of enderio or cofh updates but the arrows no longer render correctly on the conduits. Must view from weird angles to see them. I noticed the issue on direwolf20 forgecraft video today as well | non_priority | insert and export pointers not rendering i am not sure if it is the current version of enderio or cofh updates but the arrows no longer render correctly on the conduits must view from weird angles to see them i noticed the issue on forgecraft video today as well | 0 |

598,710 | 18,250,675,389 | IssuesEvent | 2021-10-02 06:22:23 | FantasticoFox/VerifyPage | https://api.github.com/repos/FantasticoFox/VerifyPage | closed | Only show numbers of revision of the page file in question instead of backend rev_id's | medium priority feature UX |

The backend revision ID's are only important for debugging purpose (so best is to have a debugging option to enable it). Useful for the user is to understand how many revisions the local file has. | 1.0 | Only show numbers of revision of the page file in question instead of backend rev_id's - 

The backend revision ID's are only important for debugging purpose (so best is to have a debugging option to enable i... | priority | only show numbers of revision of the page file in question instead of backend rev id s the backend revision id s are only important for debugging purpose so best is to have a debugging option to enable it useful for the user is to understand how many revisions the local file has | 1 |

32,221 | 12,097,387,710 | IssuesEvent | 2020-04-20 08:32:18 | geea-develop/aurelia-datepicker-range-sample | https://api.github.com/repos/geea-develop/aurelia-datepicker-range-sample | opened | CVE-2016-10540 (High) detected in minimatch-0.3.0.tgz | security vulnerability | ## CVE-2016-10540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimatch-0.3.0.tgz</b></p></summary>

<p>a glob matcher in javascript</p>

<p>Library home page: <a href="https://regis... | True | CVE-2016-10540 (High) detected in minimatch-0.3.0.tgz - ## CVE-2016-10540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimatch-0.3.0.tgz</b></p></summary>

<p>a glob matcher in jav... | non_priority | cve high detected in minimatch tgz cve high severity vulnerability vulnerable library minimatch tgz a glob matcher in javascript library home page a href path to dependency file tmp ws scm aurelia datepicker range sample package json path to vulnerable library tmp ... | 0 |

352,463 | 25,068,877,662 | IssuesEvent | 2022-11-07 10:30:31 | eclipse-dataspaceconnector/docs | https://api.github.com/repos/eclipse-dataspaceconnector/docs | opened | Add Trust Framework Adoption repo to documentation | documentation | # Feature Request

Add Trust Framework Adoption repo as submodule.

## Why Is the Feature Desired?

To also link the provided documentation there.

## Solution Proposal

Add submodule, link it in sidebar.

## Type of Issue

improvement

## Checklist

- [x] assigned appropriate label?

- [x] **Do NOT select ... | 1.0 | Add Trust Framework Adoption repo to documentation - # Feature Request

Add Trust Framework Adoption repo as submodule.

## Why Is the Feature Desired?

To also link the provided documentation there.

## Solution Proposal

Add submodule, link it in sidebar.

## Type of Issue

improvement

## Checklist

- [x... | non_priority | add trust framework adoption repo to documentation feature request add trust framework adoption repo as submodule why is the feature desired to also link the provided documentation there solution proposal add submodule link it in sidebar type of issue improvement checklist ... | 0 |

758,414 | 26,554,525,336 | IssuesEvent | 2023-01-20 10:48:54 | OffchainLabs/arb-token-bridge | https://api.github.com/repos/OffchainLabs/arb-token-bridge | opened | decouple `useTransactions` from `useArbTokenBridge` | Priority: P2 Low Type: Refactoring | At the moment, all of the state and business logic we need for the Bridge UI is kept inside `useArbTokenBridge`. However, we are slowly working towards more modular refactor that will leave us with a couple of stateless methods (like `depositETH`, `withdrawToken`) and other utilities for keeping state when needed (like... | 1.0 | decouple `useTransactions` from `useArbTokenBridge` - At the moment, all of the state and business logic we need for the Bridge UI is kept inside `useArbTokenBridge`. However, we are slowly working towards more modular refactor that will leave us with a couple of stateless methods (like `depositETH`, `withdrawToken`) a... | priority | decouple usetransactions from usearbtokenbridge at the moment all of the state and business logic we need for the bridge ui is kept inside usearbtokenbridge however we are slowly working towards more modular refactor that will leave us with a couple of stateless methods like depositeth withdrawtoken a... | 1 |

671,022 | 22,738,969,675 | IssuesEvent | 2022-07-07 00:26:50 | PolyhedralDev/TerraOverworldConfig | https://api.github.com/repos/PolyhedralDev/TerraOverworldConfig | opened | Global heightmap refactor | enhancement priority=medium major | Rework all terrain to use a global height map, rather than determining general height via biome distribution. This will make height variation look significantly better as terrain won't need to be interpolated so much. Biome specific detailing can be done by different EQs that utilize the heightmap in different ways.

... | 1.0 | Global heightmap refactor - Rework all terrain to use a global height map, rather than determining general height via biome distribution. This will make height variation look significantly better as terrain won't need to be interpolated so much. Biome specific detailing can be done by different EQs that utilize the hei... | priority | global heightmap refactor rework all terrain to use a global height map rather than determining general height via biome distribution this will make height variation look significantly better as terrain won t need to be interpolated so much biome specific detailing can be done by different eqs that utilize the hei... | 1 |

517,587 | 15,016,585,071 | IssuesEvent | 2021-02-01 09:46:35 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | opened | Check and update the TDSv7 schema and translation | Category: Core Category: Translation Priority: High Status: In Progress Type: Bug Type: Maintenance Type: Support | Reconcile the differences between the published schema and the implementation.

Treat the implementation as the "standard" | 1.0 | Check and update the TDSv7 schema and translation - Reconcile the differences between the published schema and the implementation.

Treat the implementation as the "standard" | priority | check and update the schema and translation reconcile the differences between the published schema and the implementation treat the implementation as the standard | 1 |

171,442 | 13,233,560,455 | IssuesEvent | 2020-08-18 14:58:22 | RedHatInsights/tower-analytics-frontend | https://api.github.com/repos/RedHatInsights/tower-analytics-frontend | closed | Upgrade to PatternFly4 | needs_test | # Description

The C.R.C platform team wants all apps to upgrade to PatternFly4 by July 13th so they can upgrade the chrome to PatternFly4 then.

- [x] Test app with PatternFly4

- [x] Determine what changes need to be made

- [x] Make those changes

- [x] Verify changes | 1.0 | Upgrade to PatternFly4 - # Description

The C.R.C platform team wants all apps to upgrade to PatternFly4 by July 13th so they can upgrade the chrome to PatternFly4 then.

- [x] Test app with PatternFly4

- [x] Determine what changes need to be made

- [x] Make those changes

- [x] Verify changes | non_priority | upgrade to description the c r c platform team wants all apps to upgrade to by july so they can upgrade the chrome to then test app with determine what changes need to be made make those changes verify changes | 0 |

717,657 | 24,685,708,851 | IssuesEvent | 2022-10-19 03:09:23 | authelia/authelia | https://api.github.com/repos/authelia/authelia | closed | Add matcher for query arguments in ACL rules | priority/4/normal type/feature | ## Bug Report

### Description

I am attempting to restrict access to a resource which, due to the nature of the application, requires me to match a GET argument. The first ACL ruleset I sketched was the following:

```

- domain: app.example.com

subject: "group:Admins"

resources:

- "^/path/to/... | 1.0 | Add matcher for query arguments in ACL rules - ## Bug Report

### Description

I am attempting to restrict access to a resource which, due to the nature of the application, requires me to match a GET argument. The first ACL ruleset I sketched was the following:

```

- domain: app.example.com

subject: "group... | priority | add matcher for query arguments in acl rules bug report description i am attempting to restrict access to resource which due to the nature of application requires me to match an get argument now the first acl ruleset i sketched was following domain app example com subject group... | 1 |

477,786 | 13,768,462,831 | IssuesEvent | 2020-10-07 17:07:25 | pringyy/Individual-Project | https://api.github.com/repos/pringyy/Individual-Project | opened | Brain storm different story ideas for MMO | Low Priority research | This is not high priority right now, but for this game to have a story I need to brain storm different ideas of how players can progress to different points in the game so they are working towards something. This is key as if players are working to complete something and progress they can communicate and help each othe... | 1.0 | Brain storm different story ideas for MMO - This is not high priority right now, but for this game to have a story I need to brain storm different ideas of how players can progress to different points in the game so they are working towards something. This is key as if players are working to complete something and prog... | priority | brain storm different story ideas for mmo this is not high priority right now but for this game to have a story i need to brain storm different ideas of how players can progress to different points in the game so they are working towards something this is key as if players are working to complete something and prog... | 1 |

121,298 | 12,122,105,364 | IssuesEvent | 2020-04-22 10:23:11 | process-analytics/bpmn-visualization-js | https://api.github.com/repos/process-analytics/bpmn-visualization-js | closed | [TEST] Update the tests of BpmnXmlParser | documentation infra:refactoring | Replace the existing tests of BpmnXmlParser.

For the Xml Parser, we don't want to verify if we can convert all xml objects in json; but, if we can convert the BPMN files from different [vendors](https://github.com/bpmn-miwg/bpmn-miwg-test-suite) & the [BPMN Model Interchange Working Group](https://github.com/bpmn-mi... | 1.0 | [TEST] Update the tests of BpmnXmlParser - Replace the existing tests of BpmnXmlParser.

For the Xml Parser, we don't want to verify if we can convert all xml objects in json; but, if we can convert the BPMN files from different [vendors](https://github.com/bpmn-miwg/bpmn-miwg-test-suite) & the [BPMN Model Interchang... | non_priority | update the tests of bpmnxmlparser replace the existing tests of bpmnxmlparser for the xml parser we don t want to verify if we can convert all xml objects in json but if we can convert the bpmn files from different the we need to verify if we can convert correctly the attributes of the xml o... | 0 |

111,617 | 14,114,126,011 | IssuesEvent | 2020-11-07 14:36:05 | thomasmichaelwallace/another-moonshot | https://api.github.com/repos/thomasmichaelwallace/another-moonshot | closed | Game title and pitch | design | Before getting started, I need to:

- Outline an elevator pitch that'll guide the rest of the game development

- Come up with a working title to match | 1.0 | Game title and pitch - Before getting started, I need to:

- Outline an elevator pitch that'll guide the rest of the game development

- Come up with a working title to match | non_priority | game title and pitch before getting started i need to outline an elevator pitch that ll guide the rest of the game development come up with a working title to match | 0 |

79,273 | 10,115,405,421 | IssuesEvent | 2019-07-30 21:41:32 | magento-research/pwa-studio | https://api.github.com/repos/magento-research/pwa-studio | closed | [doc]: Migration banner | docs documentation pkg:pwa-devdocs | **Describe the request**

Since the pwa-studio repository is moving to another org, the URL for the docs site will change and visitors will get a 404 when visiting any old links.

**Possible solutions**

A banner needs to be put up as soon as possible to inform visitors of this change to mitigate the confusion.

**Scre... | 1.0 | [doc]: Migration banner - **Describe the request**

Since the pwa-studio repository is moving to another org, the URL for the docs site will change and visitors will get a 404 when visiting any old links.

**Possible solutions**

A banner needs to be put up as soon as possible to inform visitors of this change to mitig... | non_priority | migration banner describe the request since the pwa studio repository is moving to another org the url for the docs site will change and visitors will get a when visiting any old links possible solutions a banner needs to be put up as soon as possible to inform visitors of this change to mitigate th... | 0 |

51,584 | 6,180,743,740 | IssuesEvent | 2017-07-03 07:07:15 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | opened | System.IO.Tests.FileInfo_Delete failed with Xunit.Sdk.TrueException in CI | area-System.IO test-run-core | failed test: System.IO.Tests.FileInfo_Delete.Unix_ExistingDirectory_ReadOnlyVolume

detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_netcoreapp_ubuntu14.04_debug/95/testReport/System.IO.Tests/FileInfo_Delete/Unix_ExistingDirectory_ReadOnlyVolume/

MESSAGE:

~~~

Assert.True() Failure\nExpected... | 1.0 | System.IO.Tests.FileInfo_Delete failed with Xunit.Sdk.TrueException in CI - failed test: System.IO.Tests.FileInfo_Delete.Unix_ExistingDirectory_ReadOnlyVolume

detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_netcoreapp_ubuntu14.04_debug/95/testReport/System.IO.Tests/FileInfo_Delete/Unix_Existin... | non_priority | system io tests fileinfo delete failed with xunit sdk trueexception in ci failed test system io tests fileinfo delete unix existingdirectory readonlyvolume detail message assert true failure nexpected true nactual false stack trace at system io tests filesystemtest runassudo string ... | 0 |

30,370 | 2,723,600,757 | IssuesEvent | 2015-04-14 13:36:54 | CruxFramework/crux-widgets | https://api.github.com/repos/CruxFramework/crux-widgets | closed | ClassPathResolver section in UserManual is out of date | bug imported Milestone-3.0.0 Priority-Medium Wiki | _From [brunodep...@gmail.com](https://code.google.com/u/108972312674998482139/) on May 21, 2010 16:20:51_

What steps will reproduce the problem? 1.Go to Wiki/ UserManual 2.Check instructions for creating a WeblogicClassPathResolver

3.Check method public URL findWebBaseDir()

The document says to override method pu... | 1.0 | ClassPathResolver section in UserManual is out of date - _From [brunodep...@gmail.com](https://code.google.com/u/108972312674998482139/) on May 21, 2010 16:20:51_

What steps will reproduce the problem? 1.Go to Wiki/ UserManual 2.Check instructions for creating a WeblogicClassPathResolver

3.Check method public URL fin... | priority | classpathresolver section in usermanual is out of date from on may what steps will reproduce the problem go to wiki usermanual check instructions for creating a weblogicclasspathresolver check method public url findwebbasedir the document says to override method public url findwebbase... | 1 |

70,117 | 13,429,135,142 | IssuesEvent | 2020-09-07 00:49:38 | EKA2L1/Compatibility-List | https://api.github.com/repos/EKA2L1/Compatibility-List | opened | System Rush | - Game Genre: Racing Bootable IO Component Error N-Gage Unimplemented Opcode | # App summary

- App name: System Rush

# EKA2L1 info

- Build name: 1.0.1463

# Test environment summary

- OS: Windows

- CPU: AMD

- GPU: NVIDIA

- RAM: 8 GB

# Issues

it stops working after running into many "opcode" errors

# Log

[EKA2L1.log](https://github.com/EKA2L1/Compatibility-List/files/5180562/EKA... | 1.0 | System Rush - # App summary

- App name: System Rush

# EKA2L1 info

- Build name: 1.0.1463

# Test environment summary

- OS: Windows

- CPU: AMD

- GPU: NVIDIA

- RAM: 8 GB

# Issues

it stops working after running into many "opcode" errors

# Log

[EKA2L1.log](https://github.com/EKA2L1/Compatibility-List/fil... | non_priority | system rush app summary app name system rush info build name test environment summary os windows cpu amd gpu nvidia ram gb issues it stops working after running into many opcode errors log | 0 |

163,502 | 25,828,002,121 | IssuesEvent | 2022-12-12 14:16:33 | canedobox/gymproject | https://api.github.com/repos/canedobox/gymproject | closed | Fix contact form section height bug on small screens | bug design | The contact form section is overflowing on small screens, see screenshot:

| 1.0 | Fix contact form section height bug on small screens - The contact form section is overflowing on small screens, see screenshot:

| non_priority | fix contact form section height bug on small screens the contact form section is overflowing on small screens see screenshot | 0 |

545,098 | 15,936,060,913 | IssuesEvent | 2021-04-14 10:38:39 | googleapis/python-aiplatform | https://api.github.com/repos/googleapis/python-aiplatform | reopened | samples.snippets.create_custom_job_sample_test: test_ucaip_generated_create_custom_job failed | api: aiplatform flakybot: flaky flakybot: issue priority: p1 samples type: bug | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 1b03775c04db8a99c141c69813c42142c077ceef... | 1.0 | samples.snippets.create_custom_job_sample_test: test_ucaip_generated_create_custom_job failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot:... | priority | samples snippets create custom job sample test test ucaip generated create custom job failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output sh... | 1 |

704,759 | 24,207,949,885 | IssuesEvent | 2022-09-25 13:57:35 | elabftw/elabftw | https://api.github.com/repos/elabftw/elabftw | closed | Suport HEIF in PDF generation | feature request priority:low | # Feature request

<!-- Please provide a clear description of what problem you are trying to solve and how would you want it to be solved. -->

The PDF generation does not understand HEIF files. Can the PDF generator be modified to support this file format, since it is now the default standard on iOS devices.

I... | 1.0 | Suport HEIF in PDF generation - # Feature request

<!-- Please provide a clear description of what problem you are trying to solve and how would you want it to be solved. -->

The PDF generation does not understand HEIF files. Can the PDF generator be modified to support this file format, since it is now the defau... | priority | suport heif in pdf generation feature request the pdf generation does not understand heif files can the pdf generator be modified to support this file format since it is now the default standard on ios devices i can t tell anymore where in makepdf the images are generated but imagemagick supports th... | 1 |

236,292 | 18,091,955,228 | IssuesEvent | 2021-09-22 03:22:34 | pythonarcade/arcade | https://api.github.com/repos/pythonarcade/arcade | closed | Dead link in the docs | fix waiting for release documentation | ## Documentation request:

### What documentation needs to change?

[Get Started Here](https://api.arcade.academy/en/latest/get_started.html?highlight=Learn%20arcade%20book%20on%20collisions#arcade-skill-tree)

### Where is it located?

`arcade/doc/get_started.rst`

### What is wrong with it? How can it be improv... | 1.0 | Dead link in the docs - ## Documentation request:

### What documentation needs to change?

[Get Started Here](https://api.arcade.academy/en/latest/get_started.html?highlight=Learn%20arcade%20book%20on%20collisions#arcade-skill-tree)

### Where is it located?

`arcade/doc/get_started.rst`

### What is wrong with ... | non_priority | dead link in the docs documentation request what documentation needs to change where is it located arcade doc get started rst what is wrong with it how can it be improved the link | 0 |

758,628 | 26,562,439,766 | IssuesEvent | 2023-01-20 16:58:20 | strug-hub/LocusFocus | https://api.github.com/repos/strug-hub/LocusFocus | opened | CI/CD: Update version number using tagged releases | low priority | Depends partly on #29

Not important, but nice to have | 1.0 | CI/CD: Update version number using tagged releases - Depends partly on #29

Not important, but nice to have | priority | ci cd update version number using tagged releases depends partly on not important but nice to have | 1 |

607,478 | 18,783,321,935 | IssuesEvent | 2021-11-08 09:31:07 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | unifiedportal-mem.epfindia.gov.in - site is not usable | priority-important browser-fenix engine-gecko | <!-- @browser: Firefox Mobile 95.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.1; Mobile; rv:95.0) Gecko/95.0 Firefox/95.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/92711 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://unifiedpo... | 1.0 | unifiedportal-mem.epfindia.gov.in - site is not usable - <!-- @browser: Firefox Mobile 95.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.1; Mobile; rv:95.0) Gecko/95.0 Firefox/95.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/92711 -->

<!-- @ex... | priority | unifiedportal mem epfindia gov in site is not usable url browser version firefox mobile operating system android tested another browser yes chrome problem type site is not usable description browser unsupported steps to reproduce page lock not support ep... | 1 |

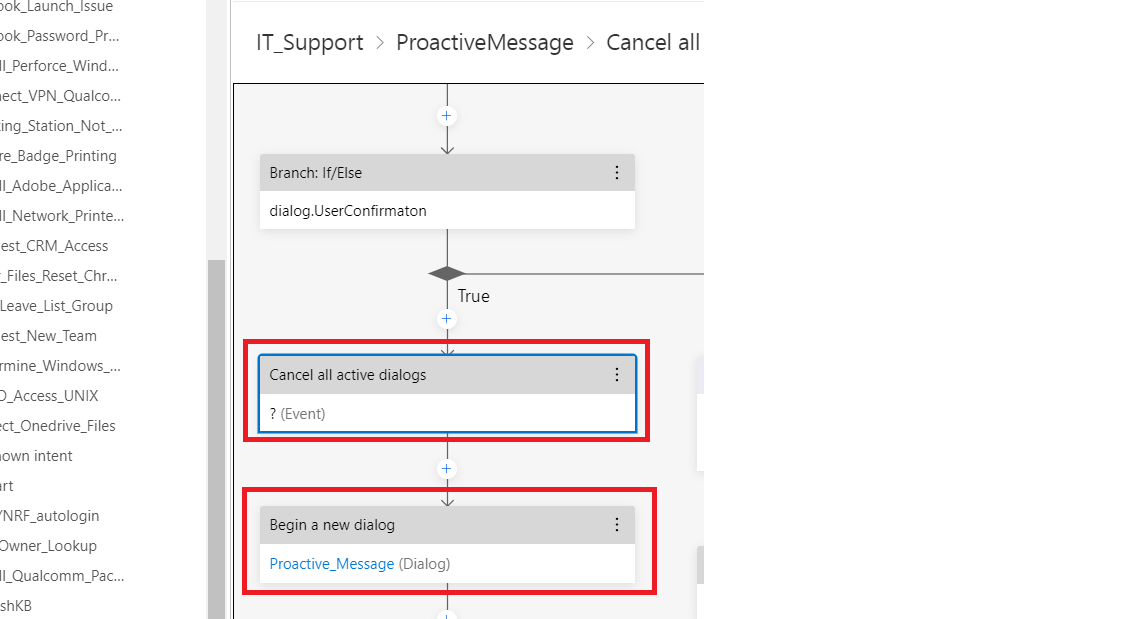

34,697 | 14,492,652,470 | IssuesEvent | 2020-12-11 07:20:47 | microsoft/BotFramework-Composer | https://api.github.com/repos/microsoft/BotFramework-Composer | closed | Bot composer - Unable to Begin new Dialog after Cancel All active dialog | Bot Services Type: Bug customer-reported | I am using Bot composer. My case is simple.

I want to Cancel all active dialog and begin new dialog. I am not sure why this is not happening with the emulator.

| 1.0 | Bot composer - Unable to Begin new Dialog after Cancel All active dialog - I am using Bot composer. My case is simple.

I want to Cancel all active dialog and begin new dialog. I am not sure why this is not happening with the emulator.

** a subtitle file to the output of NVEnc and QSVEnc. Since in Staxrip the output of the encoder is *.h264 or *.h265, this option makes crash... | 1.0 | NVENC and QSVENS Subtitles file option must be removed - **Describe the bug**

The option Other > Subtitle File must be removed from :

- NVEnc : h264, h265

- QSVEnc : h264, h265

because this options allows to **mux (not harcode!)** a subtitle file to the output of NVEnc and QSVEnc. Since in Staxrip the output of... | priority | nvenc and qsvens subtitles file option must be removed describe the bug the option other subtitle file must be removed from nvenc qsvenc because this options allows to mux not harcode a subtitle file to the output of nvenc and qsvenc since in staxrip the output of the encoder... | 1 |

133,544 | 29,298,451,238 | IssuesEvent | 2023-05-25 00:03:11 | microsoft/devhome | https://api.github.com/repos/microsoft/devhome | closed | resource.resw issues | Issue-Bug Area-Code-Health | ### Dev Home version

_No response_

### Windows build number

_No response_

### Other software

_No response_

### Steps to reproduce the bug

* Settings_AboutDescription.Text <- not used in code.

### Expected result

_No response_

### Actual result

_No response_

### Included System Information

_No response_

##... | 1.0 | resource.resw issues - ### Dev Home version

_No response_

### Windows build number

_No response_

### Other software

_No response_

### Steps to reproduce the bug

* Settings_AboutDescription.Text <- not used in code.

### Expected result

_No response_

### Actual result

_No response_

### Included System Informa... | non_priority | resource resw issues dev home version no response windows build number no response other software no response steps to reproduce the bug settings aboutdescription text not used in code expected result no response actual result no response included system informa... | 0 |

166,234 | 14,045,201,065 | IssuesEvent | 2020-11-02 00:36:52 | matplotlib/matplotlib | https://api.github.com/repos/matplotlib/matplotlib | closed | Mostly unused glossary still exists in our docs | Documentation | <!--To help us understand and resolve your issue, please fill out the form to the best of your ability.-->

<!--You can feel free to delete the sections that do not apply.-->

### Problem

Discussed in the GSOD call, this feels like something that we wanted to add at some point but never really got around to using.... | 1.0 | Mostly unused glossary still exists in our docs - <!--To help us understand and resolve your issue, please fill out the form to the best of your ability.-->

<!--You can feel free to delete the sections that do not apply.-->

### Problem