Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 distinct value) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 distinct values) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 distinct values) | text_combine (string, length 96 to 262k) | label (string, 2 distinct values) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
446,524 | 12,865,765,070 | IssuesEvent | 2020-07-10 01:26:31 | PyTorchLightning/pytorch-lightning | https://api.github.com/repos/PyTorchLightning/pytorch-lightning | closed | `model.test()` can fail for `ddp` because `args` in `evaluation_forward` are malformed | Priority bug / fix good first issue help wanted | ## 🐛 Bug<br>`model.test()` can fail while training via `dp` because `TrainerEvaluationLoopMixin.evaluation_forward` doesn't handle an edge case.<br>### To Reproduce<br>Attempt to `model.test()` any lightning model in `dp` mode (I believe it fails in any of the modes at https://github.com/PyTorchLightning/pytorch-light... | 1.0 | `model.test()` can fail for `ddp` because `args` in `evaluation_forward` are malformed - ## 🐛 Bug<br>`model.test()` can fail while training via `dp` because `TrainerEvaluationLoopMixin.evaluation_forward` doesn't handle an edge case.<br>### To Reproduce<br>Attempt to `model.test()` any lightning model in `dp` mode (I ... | priority | model test can fail for ddp because args in evaluation forward are malformed 🐛 bug model test can fail while training via dp because trainerevaluationloopmixin evaluation forward doesn t handle an edge case to reproduce attempt to model test any lightning model in dp mode i ... | 1 |
225,751 | 7,494,838,685 | IssuesEvent | 2018-04-07 14:32:41 | Blockrazor/blockrazor | https://api.github.com/repos/Blockrazor/blockrazor | closed | problem: [/currency/x] cannot add exchanges to coins | Paid-contributor Priority | Problem: it's not possible for users to add exchanges to coins on the currency detail page.<br>possible solution: Allow users to add an exchange to a currency from the existing list of exchanges or add a new exchange. Ideally this would be a typahead list with "add" if what the user types isn't found (e.g. how Github w... | 1.0 | problem: [/currency/x] cannot add exchanges to coins - Problem: it's not possible for users to add exchanges to coins on the currency detail page.<br>possible solution: Allow users to add an exchange to a currency from the existing list of exchanges or add a new exchange. Ideally this would be a typahead list with "add... | priority | problem cannot add exchanges to coins problem it s not possible for users to add exchanges to coins on the currency detail page possible solution allow users to add an exchange to a currency from the existing list of exchanges or add a new exchange ideally this would be a typahead list with add if what th... | 1 |
106,648 | 4,281,658,232 | IssuesEvent | 2016-07-15 04:42:01 | matuella/javaee-clinic | https://api.github.com/repos/matuella/javaee-clinic | closed | Doctor register won't clear up after saving | High Priority | Probably because it's now being injected as a EJB. Need to investigate why. | 1.0 | Doctor register won't clear up after saving - Probably because it's now being injected as a EJB. Need to investigate why. | priority | doctor register won t clear up after saving probably because it s now being injected as a ejb need to investigate why | 1 |
269,753 | 8,443,001,891 | IssuesEvent | 2018-10-18 14:35:04 | CDCgov/WebMicrobeTrace | https://api.github.com/repos/CDCgov/WebMicrobeTrace | opened | Sequence Validator | enhancement epic low priority | Secure HIV-TRACE is implementing some sort of Sequence Validation. We should too! Here are the checks that we know about:<br>1. Presentness - Does the sequence exist and is it non-trivial?<br>2. Distinctness - Is the sequence distinct from the reference sequence?<br>3. Uniqueness - Is the sequence unique, or do any other s... | 1.0 | Sequence Validator - Secure HIV-TRACE is implementing some sort of Sequence Validation. We should too! Here are the checks that we know about:<br>1. Presentness - Does the sequence exist and is it non-trivial?<br>2. Distinctness - Is the sequence distinct from the reference sequence?<br>3. Uniqueness - Is the sequence uniq... | priority | sequence validator secure hiv trace is implementing some sort of sequence validation we should too here are the checks that we know about presentness does the sequence exist and is it non trivial distinctness is the sequence distinct from the reference sequence uniqueness is the sequence uniq... | 1 |
269,711 | 23,460,572,898 | IssuesEvent | 2022-08-16 12:48:26 | cobudget/cobudget | https://api.github.com/repos/cobudget/cobudget | closed | [FEATURE] Add "experimental features" bool to orgs | needs testing | We want to give some orgs access to experimental features.<br>This is toggled by a bool set in the database.<br>Direct funding is the first such feature. | 1.0 | [FEATURE] Add "experimental features" bool to orgs - We want to give some orgs access to experimental features.<br>This is toggled by a bool set in the database.<br>Direct funding is the first such feature. | non_priority | add experimental features bool to orgs we want to give some orgs access to experimental features this is toggled by a bool set in the database direct funding is the first such feature | 0 |
7,283 | 2,891,477,979 | IssuesEvent | 2015-06-15 05:51:55 | brobeson/uml2code | https://api.github.com/repos/brobeson/uml2code | opened | implement unit tests for the UML system | tests | Implement a robust set of unit tests for the UML system. The UML system is implemented by #6. | 1.0 | implement unit tests for the UML system - Implement a robust set of unit tests for the UML system. The UML system is implemented by #6. | non_priority | implement unit tests for the uml system implement a robust set of unit tests for the uml system the uml system is implemented by | 0 |
16,828 | 2,948,319,266 | IssuesEvent | 2015-07-06 01:27:28 | Winetricks/winetricks | https://api.github.com/repos/Winetricks/winetricks | closed | xna31 fails due to "dotnet2 missing" | auto-migrated Priority-Medium Type-Defect | ```<br>What steps will reproduce the problem?<br>1. delete .wine<br>2. ./winetricks.svn870 xna31<br>3.<br>err:msi:ITERATE_Actions Execution halted, action L"NotWinFx2Action" returned<br>1603<br>------------------------------------------------------<br>Note: command 'wine msiexec /quiet /i xnafx31_redist.msi' returned status 67.<br>Aborting.<br>... | 1.0 | xna31 fails due to "dotnet2 missing" - ```<br>What steps will reproduce the problem?<br>1. delete .wine<br>2. ./winetricks.svn870 xna31<br>3.<br>err:msi:ITERATE_Actions Execution halted, action L"NotWinFx2Action" returned<br>1603<br>------------------------------------------------------<br>Note: command 'wine msiexec /quiet /i xnafx31_redist... | non_priority | fails due to missing what steps will reproduce the problem delete wine winetricks err msi iterate actions execution halted action l returned note command wine msiexec quiet i redist msi returned status aborting what... | 0 |
275,498 | 8,576,355,871 | IssuesEvent | 2018-11-12 20:07:14 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | discordapp.com - site is not usable | browser-firefox priority-important | <!-- @browser: Firefox 65.0 --><br><!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:65.0) Gecko/20100101 Firefox/65.0 --><br><!-- @reported_with: desktop-reporter --><br>**URL**: https://discordapp.com/channels/@me<br>**Browser / Version**: Firefox 65.0<br>**Operating System**: Linux<br>**Tested Another Browser**: No<br>**Problem typ... | 1.0 | discordapp.com - site is not usable - <!-- @browser: Firefox 65.0 --><br><!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:65.0) Gecko/20100101 Firefox/65.0 --><br><!-- @reported_with: desktop-reporter --><br>**URL**: https://discordapp.com/channels/@me<br>**Browser / Version**: Firefox 65.0<br>**Operating System**: Linux<br>**Teste... | priority | discordapp com site is not usable url browser version firefox operating system linux tested another browser no problem type site is not usable description can t connect to websocket steps to reproduce just login to discord webpage and it will hang loop forever ... | 1 |
345,226 | 10,354,581,642 | IssuesEvent | 2019-09-05 14:01:20 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | support custom Open SSL library for builds | kind/enhancement lang/c++ priority/P3 | ### What version of gRPC and what language are you using?<br>1.12<br>### What operating system (Linux, Windows, …) and version?<br>Ubuntu 16.04 (WSL)<br>### What runtime / compiler are you using (e.g. python version or version of gcc)<br>clang 5.0<br>### What did you do?<br>`ccmake -DOPENSSL_CRYPTO_LIBRARY=/mnt/... | 1.0 | support custom Open SSL library for builds - ### What version of gRPC and what language are you using?<br>1.12<br>### What operating system (Linux, Windows, …) and version?<br>Ubuntu 16.04 (WSL)<br>### What runtime / compiler are you using (e.g. python version or version of gcc)<br>clang 5.0<br>### What did you ... | priority | support custom open ssl library for builds what version of grpc and what language are you using what operating system linux windows … and version ubuntu wsl what runtime compiler are you using e g python version or version of gcc clang what did you do ... | 1 |
767,375 | 26,921,456,534 | IssuesEvent | 2023-02-07 10:44:45 | AUBGTheHUB/spa-website-2022 | https://api.github.com/repos/AUBGTheHUB/spa-website-2022 | closed | OnClick function for redirecting to Landing page | frontend medium priority SPA | Create an onClick function in React for redirecting users from 'subpages' (e.g. jobs page, or HackAUBG page) to the Landing page (main page) every time the user clicks the HUB logo/name in the Navbar. | 1.0 | OnClick function for redirecting to Landing page - Create an onClick function in React for redirecting users from 'subpages' (e.g. jobs page, or HackAUBG page) to the Landing page (main page) every time the user clicks the HUB logo/name in the Navbar. | priority | onclick function for redirecting to landing page create an onclick function in react for redirecting users from subpages e g jobs page or hackaubg page to the landing page main page every time the user clicks the hub logo name in the navbar | 1 |
128,557 | 10,542,902,024 | IssuesEvent | 2019-10-02 14:03:18 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | web3 prompt/modal appearing for sites that don't have web3 integrated | QA/Test-Plan-Specified QA/Yes bug feature/crypto-wallets | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.<br>PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.<br>INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | web3 prompt/modal appearing for sites that don't have web3 integrated - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.<br>PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.<br>INSUFFICIENT INFO WILL GET ... | non_priority | prompt modal appearing for sites that don t have integrated have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the is... | 0 |
2,833 | 3,900,901,025 | IssuesEvent | 2016-04-18 08:37:48 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | opened | cache-dir on a builder has a 40 GB cache of dart-lang/SDK - how is this possible | area-infrastructure Type: bug | File this as an issue with chrome-infrastructure-team, to get their input.<br>This filled up a 60GB drive, and caused the builder to fail.<br>Here is the result of df -k -d1 in the cache-dir<br>...<br>1013228 cache_dir/chromium.googlesource.com-external-github.com-dart--lang-co19<br>2965224 cache_dir/boringssl.googlesource.com... | 1.0 | cache-dir on a builder has a 40 GB cache of dart-lang/SDK - how is this possible - File this as an issue with chrome-infrastructure-team, to get their input.<br>This filled up a 60GB drive, and caused the builder to fail.<br>Here is the result of df -k -d1 in the cache-dir<br>...<br>1013228 cache_dir/chromium.googlesource.co... | non_priority | cache dir on a builder has a gb cache of dart lang sdk how is this possible file this as an issue with chrome infrastructure team to get their input this filled up a drive and caused the builder to fail here is the result of df k in the cache dir cache dir chromium googlesource com external ... | 0 |
129,946 | 18,152,074,021 | IssuesEvent | 2021-09-26 12:43:13 | anyulled/jbcnconf-react | https://api.github.com/repos/anyulled/jbcnconf-react | closed | CVE-2021-27290 (High) detected in ssri-6.0.1.tgz - autoclosed | security vulnerability | ## CVE-2021-27290 - High Severity Vulnerability<br><details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ssri-6.0.1.tgz</b></p></summary><br><p>Standard Subresource Integrity library -- parses, serializes, generates, and veri... | True | CVE-2021-27290 (High) detected in ssri-6.0.1.tgz - autoclosed - ## CVE-2021-27290 - High Severity Vulnerability<br><details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ssri-6.0.1.tgz</b></p></summary><br><p>Standard Subresour... | non_priority | cve high detected in ssri tgz autoclosed cve high severity vulnerability vulnerable library ssri tgz standard subresource integrity library parses serializes generates and verifies integrity metadata according to the sri spec library home page a href path to de... | 0 |
754,852 | 26,406,149,537 | IssuesEvent | 2023-01-13 08:12:02 | Northeastern-Electric-Racing/shepherd_bms | https://api.github.com/repos/Northeastern-Electric-Racing/shepherd_bms | closed | Implement Charging Logic | Feature High Priority | This will utilize the "Charger" API from the compute board to say when the car should/should not be charging and if we are allowing charging | 1.0 | Implement Charging Logic - This will utilize the "Charger" API from the compute board to say when the car should/should not be charging and if we are allowing charging | priority | implement charging logic this will utilize the charger api from the compute board to say when the car should should not be charging and if we are allowing charging | 1 |
795,784 | 28,086,121,953 | IssuesEvent | 2023-03-30 09:53:12 | robotframework/robotframework | https://api.github.com/repos/robotframework/robotframework | opened | Support type aliases in formats `'list[int]'` and `'int \| float'` in argument conversion | enhancement priority: medium effort: medium | Our argument conversion typically uses based on actual types like `int`, `list[int]` and `int \| float`, but we also support type aliases as strings like `'int'` or `'integer'`. The motivation for type aliases is to support types returned, for example, by dynamic libraries wrapping code using other languages. Such libra... | 1.0 | Support type aliases in formats `'list[int]'` and `'int \| float'` in argument conversion - Our argument conversion typically uses based on actual types like `int`, `list[int]` and `int \| float`, but we also support type aliases as strings like `'int'` or `'integer'`. The motivation for type aliases is to support types ... | priority | support type aliases in formats list and int float in argument conversion our argument conversion typically uses based on actual types like int list and int float but we also support type aliases as strings like int or integer the motivation for type aliases is to support types returned... | 1 |
182,511 | 30,858,454,071 | IssuesEvent | 2023-08-02 23:18:36 | AlaskaAirlines/AuroDesignTokens | https://api.github.com/repos/AlaskaAirlines/AuroDesignTokens | closed | Create new Jet Stream tokens/repo | Type: Feature Type: Documentation design tokens | # General Support Request<br>In order to deliver theming, we need to support a fork of the Auro design tokens to create JetStream Design Tokens.<br>## Possible Solution<br>There are two ways we can do this. One, host all the tokens in a single repo and configure a way to distribute two sets of tokens. Two, we simply c... | 1.0 | Create new Jet Stream tokens/repo - # General Support Request<br>In order to deliver theming, we need to support a fork of the Auro design tokens to create JetStream Design Tokens.<br>## Possible Solution<br>There are two ways we can do this. One, host all the tokens in a single repo and configure a way to distribute ... | non_priority | create new jet stream tokens repo general support request in order to deliver theming we need to support a fork of the auro design tokens to create jetstream design tokens possible solution there are two ways we can do this one host all the tokens in a single repo and configure a way to distribute ... | 0 |
804,297 | 29,483,177,574 | IssuesEvent | 2023-06-02 07:43:19 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | drivers: can: mcp2515: default thread stack size too small | bug priority: low area: CAN | **Describe the bug**<br>The default thread stack size of 512 bytes is too small and causes stack overflows on our `frdm_k64f` reference platform.<br>**To Reproduce**<br>Steps to reproduce the behavior:<br>1. `west build -b frdm_k64f tests/drivers/can/api -- -DSHIELD=keyestudio_can_bus_ks0411`<br>2. `west flash`<br>3. See error:<br>... | 1.0 | drivers: can: mcp2515: default thread stack size too small - **Describe the bug**<br>The default thread stack size of 512 bytes is too small and causes stack overflows on our `frdm_k64f` reference platform.<br>**To Reproduce**<br>Steps to reproduce the behavior:<br>1. `west build -b frdm_k64f tests/drivers/can/api -- -DSHIEL... | priority | drivers can default thread stack size too small describe the bug the default thread stack size of bytes is too small and causes stack overflows on our frdm reference platform to reproduce steps to reproduce the behavior west build b frdm tests drivers can api dshield keyestudio c... | 1 |
451,076 | 32,007,292,531 | IssuesEvent | 2023-09-21 15:34:02 | frostsg/inf2001-p11-2 | https://api.github.com/repos/frostsg/inf2001-p11-2 | closed | 1.8.1: Team Management | Documentation (Report) Meeting | Goal: Complete Team Management<br>Success criteria: Completed Team Management under Project Management<br>Start Date: 21 September 2023<br>End Date: 23 September 2023<br>Owner: Yeo Qing You, Kenrick<br>Status: In Progress | 1.0 | 1.8.1: Team Management - Goal: Complete Team Management<br>Success criteria: Completed Team Management under Project Management<br>Start Date: 21 September 2023<br>End Date: 23 September 2023<br>Owner: Yeo Qing You, Kenrick<br>Status: In Progress | non_priority | team management goal complete team management success criteria completed team management under project management start date september end date september owner yeo qing you kenrick status in progress | 0 |
24,828 | 7,571,398,763 | IssuesEvent | 2018-04-23 12:07:49 | junit-team/junit5 | https://api.github.com/repos/junit-team/junit5 | reopened | Test against JDK 9 modules | theme: Java 9+10+11... theme: build type: task | ## Status Quo<br>JUnit 5 currently builds and runs against JDK 9 early access builds (including Jigsaw builds), but we do not yet have any tests in place that run against user code built with module info.<br>## Related Pull Requests<br>- PR #1061 Part I - Introduce `ModuleUtils` with `ClassFinder` SPI<br>- PR #1057 Part ... | 1.0 | Test against JDK 9 modules - ## Status Quo<br>JUnit 5 currently builds and runs against JDK 9 early access builds (including Jigsaw builds), but we do not yet have any tests in place that run against user code built with module info.<br>## Related Pull Requests<br>- PR #1061 Part I - Introduce `ModuleUtils` with `Class... | non_priority | test against jdk modules status quo junit currently builds and runs against jdk early access builds including jigsaw builds but we do not yet have any tests in place that run against user code built with module info related pull requests pr part i introduce moduleutils with classfin... | 0 |
639,814 | 20,766,761,473 | IssuesEvent | 2022-03-15 21:29:34 | bottlerocket-os/bottlerocket | https://api.github.com/repos/bottlerocket-os/bottlerocket | closed | enable hardening features for service units | type/enhancement security priority/p1 status/notstarted | **What I'd like:**<br>`systemd-analyze security` should turn green where possible.<br>Useful articles:<br>* [systemd service sandboxing and security hardening 101](https://www.ctrl.blog/entry/systemd-service-hardening.html)<br>* [Limit the impact of a security intrusion with systemd security directives](https://www.ctrl.blog... | 1.0 | enable hardening features for service units - **What I'd like:**<br>`systemd-analyze security` should turn green where possible.<br>Useful articles:<br>* [systemd service sandboxing and security hardening 101](https://www.ctrl.blog/entry/systemd-service-hardening.html)<br>* [Limit the impact of a security intrusion with syst... | priority | enable hardening features for service units what i d like any alternatives you ve considered n a | 1 |
13,738 | 3,355,437,129 | IssuesEvent | 2015-11-18 16:25:47 | quantopian/zipline | https://api.github.com/repos/quantopian/zipline | opened | Create a minute-rate futures payout test | Needs Tests | Current test coverage of futures payouts are all at the daily frequency. | 1.0 | Create a minute-rate futures payout test - Current test coverage of futures payouts are all at the daily frequency. | non_priority | create a minute rate futures payout test current test coverage of futures payouts are all at the daily frequency | 0 |
435,711 | 12,539,816,958 | IssuesEvent | 2020-06-05 09:16:17 | scality/metalk8s | https://api.github.com/repos/scality/metalk8s | opened | Flaky during bootstrap restore | complexity:easy kind:bug priority:high topic:flakiness | <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!<br>If the matter is security related, please disclose it privately to moonshot-platform@scality.com<br>--><br>**Component**:<br>'salt', 'restore'<br><... | 1.0 | Flaky during bootstrap restore - <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!<br>If the matter is security related, please disclose it privately to moonshot-platform@scality.com<br>--><br>**Com... | priority | flaky during bootstrap restore please use this template while reporting a bug and provide as much info as possible not doing so may result in your bug not being addressed in a timely manner thanks if the matter is security related please disclose it privately to moonshot platform scality com com... | 1 |
313,253 | 23,465,212,967 | IssuesEvent | 2022-08-16 16:08:32 | vitejs/vite | https://api.github.com/repos/vitejs/vite | closed | [v3] Document type inference with import.meta.glob | documentation | ### Describe the bug<br>When using `import.meta.glob` with vite v3, no generic type is provided anymore. The [JS Doc provides you with this information](https://github.com/vitejs/vite/blob/main/packages/vite/types/importGlob.d.ts#L41), but it would have helped me to have it in the [official docs](https://vitejs.dev/gui... | 1.0 | [v3] Document type inference with import.meta.glob - ### Describe the bug<br>When using `import.meta.glob` with vite v3, no generic type is provided anymore. The [JS Doc provides you with this information](https://github.com/vitejs/vite/blob/main/packages/vite/types/importGlob.d.ts#L41), but it would have helped me to ... | non_priority | document type inference with import meta glob describe the bug when using import meta glob with vite no generic type is provided anymore the but it would have helped me to have it in the easily available also is this not a breaking change for typescript users strictly speaking thanks ... | 0 |
391,509 | 11,574,919,728 | IssuesEvent | 2020-02-21 08:38:14 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Configuring password recovery with reCaptcha for a tenant for not working | Affected/5.10.0-Beta2 Priority/High Severity/Blocker | **Steps to reproduce:**<br>Follow the instructions in [here](https://is.docs.wso2.com/en/next/learn/configuring-recaptcha-for-password-recovery/#configuring-password-recovery-with-recaptcha-for-a-tenant)<br>Recaptcha is not shown. | 1.0 | Configuring password recovery with reCaptcha for a tenant for not working - **Steps to reproduce:**<br>Follow the instructions in [here](https://is.docs.wso2.com/en/next/learn/configuring-recaptcha-for-password-recovery/#configuring-password-recovery-with-recaptcha-for-a-tenant)<br>Recaptcha is not shown. | priority | configuring password recovery with recaptcha for a tenant for not working steps to reproduce follow the instructions in recaptcha is not shown | 1 |
801,999 | 28,565,012,150 | IssuesEvent | 2023-04-21 00:45:40 | microsoft/rushstack | https://api.github.com/repos/microsoft/rushstack | closed | [rush] >O(n^2) performance in `rush version` | repro confirmed priority | ## Summary<br>When running `rush version --bump` in a monorepo with 877 projects, the `semver.satisfies` check in `PublishUtilties._updateDownstreamDependency` was invoked 80450952 times.<br>## Repro steps<br>In a large monorepo with a few hundred pending change files, run `rush version --bump`.<br>## Details<br>From inspect... | 1.0 | [rush] >O(n^2) performance in `rush version` - ## Summary<br>When running `rush version --bump` in a monorepo with 877 projects, the `semver.satisfies` check in `PublishUtilties._updateDownstreamDependency` was invoked 80450952 times.<br>## Repro steps<br>In a large monorepo with a few hundred pending change files, run `ru... | priority | o n performance in rush version summary when running rush version bump in a monorepo with projects the semver satisfies check in publishutilties updatedownstreamdependency was invoked times repro steps in a large monorepo with a few hundred pending change files run rush version b... | 1 |
322,943 | 9,833,845,905 | IssuesEvent | 2019-06-17 08:15:05 | input-output-hk/jormungandr | https://api.github.com/repos/input-output-hk/jormungandr | closed | Allow for better logging strategy | Priority - Low subsys-logging | In the event of a large amount of Logs being emitted the user may see this kind of logs:<br>``` Jun 13 17:27:39.749 ERRO slog-async: logger dropped messages due to channel overflow, count: 97, sub_task: Leader Task, task: leadership ```<br>We can configure the [`Async`](https://crates.io/crates/slog_async) to have a ... | 1.0 | Allow for better logging strategy - In the event of a large amount of Logs being emitted the user may see this kind of logs:<br>``` Jun 13 17:27:39.749 ERRO slog-async: logger dropped messages due to channel overflow, count: 97, sub_task: Leader Task, task: leadership ```<br>We can configure the [`Async`](https://cra... | priority | allow for better logging strategy in the event of a large amount of logs being emitted the user may see this kind of logs jun erro slog async logger dropped messages due to channel overflow count sub task leader task task leadership we can configure the to have a better strateg... | 1 |
161,672 | 13,865,481,375 | IssuesEvent | 2020-10-16 04:27:38 | pyconll/pyconll | https://api.github.com/repos/pyconll/pyconll | closed | Improve documentation via analytics and keeping module information up to date | documentation | Documentation in general for the library is relatively high quality but the next release should focus specifically on this.<br>Some items to improve.<br>* `to_tree` documentation improvement with a better description of what the tree structure is and what order the children are in relative to the sentence if possible.<br>... | 1.0 | Improve documentation via analytics and keeping module information up to date - Documentation in general for the library is relatively high quality but the next release should focus specifically on this.<br>Some items to improve.<br>* `to_tree` documentation improvement with a better description of what the tree struct... | non_priority | improve documentation via analytics and keeping module information up to date documentation in general for the library is relatively high quality but the next release should focus specifically on this some items to improve to tree documentation improvement with a better description of what the tree struct... | 0 |
515,485 | 14,964,029,129 | IssuesEvent | 2021-01-27 11:22:56 | woocommerce/woocommerce-gutenberg-products-block | https://api.github.com/repos/woocommerce/woocommerce-gutenberg-products-block | closed | Hide all filter blocks from Block Widget Editor | priority: high type: enhancement ◼️ block: active product filters ◼️ block: filter products by attribute ◼️ block: filter products by price 🔹 block-type: filter blocks | Currently the filter blocks will not have any impact on the default shop page in WooCommerce core. They also do not have feature parity with the filter widgets that do impact the shop page. To prevent user confusion, for the short term we need to hide the filter blocks from the Block Widget editor (and they'll only be ... | 1.0 | Hide all filter blocks from Block Widget Editor - Currently the filter blocks will not have any impact on the default shop page in WooCommerce core. They also do not have feature parity with the filter widgets that do impact the shop page. To prevent user confusion, for the short term we need to hide the filter blocks ... | priority | hide all filter blocks from block widget editor currently the filter blocks will not have any impact on the default shop page in woocommerce core they also do not have feature parity with the filter widgets that do impact the shop page to prevent user confusion for the short term we need to hide the filter blocks ... | 1 |
122,497 | 10,225,272,334 | IssuesEvent | 2019-08-16 14:46:49 | ValveSoftware/steam-for-linux | https://api.github.com/repos/ValveSoftware/steam-for-linux | closed | Steam Background Viewer Problem | Need Retest reviewed | Your system information<br>- Steam client version:<br>Built: Jul 8 2016 at 21:44:35<br>Steam API: v017<br>Steam Package Versions: 1468023329<br>- Distribution (e.g. Ubuntu): Ubuntu 14.04<br>- Opted into Steam client beta?: No<br>- Have you checked for system updates?: I check it daily, it is up to date<br>#### Please describe your issue... | 1.0 | Steam Background Viewer Problem - Your system information<br>- Steam client version:<br>Built: Jul 8 2016 at 21:44:35<br>Steam API: v017<br>Steam Package Versions: 1468023329<br>- Distribution (e.g. Ubuntu): Ubuntu 14.04<br>- Opted into Steam client beta?: No<br>- Have you checked for system updates?: I check it daily, it is up to da... | non_priority | steam background viewer problem your system information steam client version built jul at steam api steam package versions distribution e g ubuntu ubuntu opted into steam client beta no have you checked for system updates i check it daily it is up to date please descr... | 0 |
9,441 | 2,615,150,267 | IssuesEvent | 2015-03-01 06:27:33 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | iwlwifi driver : WPS transaction failed (code: 0x4) | auto-migrated Priority-Triage Type-Defect | ```<br>i didn't find information for my card with this driver,<br>i am on fedora 16 64 bit, kernel 3.2.1, driver iwlwifi for Intel 6300N<br>in previous kernels the old driver iwlagn was used with this card, not anymore<br>and i have yet to see oher reports with this driver.<br>injection works, tested with aircrack-ng suite.<br>My ... | 1.0 | iwlwifi driver : WPS transaction failed (code: 0x4) - ```<br>i didn't find information for my card with this driver,<br>i am on fedora 16 64 bit, kernel 3.2.1, driver iwlwifi for Intel 6300N<br>in previous kernels the old driver iwlagn was used with this card, not anymore<br>and i have yet to see oher reports with this driver.... | non_priority | iwlwifi driver wps transaction failed code i didn t find information for my card with this driver i am on fedora bit kernel driver iwlwifi for intel in previous kernels the old driver iwlagn was used with this card not anymore and i have yet to see oher reports with this driver inject... | 0 |
319,522 | 9,745,383,883 | IssuesEvent | 2019-06-03 09:29:47 | McStasMcXtrace/ifitlab | https://api.github.com/repos/McStasMcXtrace/ifitlab | closed | "Different arrow" for e.g. rmint method | Priority 0 ui | Suggestion: Horizontal line rather than bent arrow (indicating this is a 'method' rather than a 'function') | 1.0 | "Different arrow" for e.g. rmint method - Suggestion: Horizontal line rather than bent arrow (indicating this is a 'method' rather than a 'function') | priority | different arrow for e g rmint method suggestion horizontal line rather than bent arrow indicating this is a method rather than a function | 1 |

93,508 | 19,254,682,526 | IssuesEvent | 2021-12-09 10:01:07 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | [0.15.9.0] Abandoned Outpost - Hostages will not follow players that has diving suits on | Bug Code | - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Was in Multiplayer. Hostages will not follow players that has diving suits on in an abandoned outpost. Likely that they believe they need a suit themselves.

**Version**

0.15.9.0 | 1.0 | [0.15.9.0] Abandoned Outpost - Hostages will not follow players that has diving suits on - - [x] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Was in Multiplayer. Hostages will not follow players that has diving suits on in an abandoned outpost. Likely that they ... | non_priority | abandoned outpost hostages will not follow players that has diving suits on i have searched the issue tracker to check if the issue has already been reported description was in multiplayer hostages will not follow players that has diving suits on in an abandoned outpost likely that they believe the... | 0 |

768,743 | 26,978,609,184 | IssuesEvent | 2023-02-09 11:23:33 | strusoft/femdesign-api | https://api.github.com/repos/strusoft/femdesign-api | closed | Design parameters | priority:later type:scope | # Design parameters

## Goals

* It should be possible to setup general and element settings for the most common code-checks and design calculations.

## Background

General and element settings for code-check and design calculations were extended to `fdscript` in FEM-Design 21. This feature still needs to be imple... | 1.0 | Design parameters - # Design parameters

## Goals

* It should be possible to setup general and element settings for the most common code-checks and design calculations.

## Background

General and element settings for code-check and design calculations were extended to `fdscript` in FEM-Design 21. This feature sti... | priority | design parameters design parameters goals it should be possible to setup general and element settings for the most common code checks and design calculations background general and element settings for code check and design calculations were extended to fdscript in fem design this feature stil... | 1 |

50,467 | 7,605,224,679 | IssuesEvent | 2018-04-30 07:56:44 | RaRe-Technologies/gensim | https://api.github.com/repos/RaRe-Technologies/gensim | opened | Documentation fixes | documentation | This issue collects PRs related to improving the Gensim documentation.

Merged

--------

https://github.com/RaRe-Technologies/gensim/pull/1633

https://github.com/RaRe-Technologies/gensim/pull/1625

https://github.com/RaRe-Technologies/gensim/pull/1640

https://github.com/RaRe-Technologies/gensim/pull/1702

https:... | 1.0 | Documentation fixes - This issue collects PRs related to improving the Gensim documentation.

Merged

--------

https://github.com/RaRe-Technologies/gensim/pull/1633

https://github.com/RaRe-Technologies/gensim/pull/1625

https://github.com/RaRe-Technologies/gensim/pull/1640

https://github.com/RaRe-Technologies/ge... | non_priority | documentation fixes this issue collects prs related to improving the gensim documentation merged wip | 0 |

828,638 | 31,836,752,913 | IssuesEvent | 2023-09-14 13:57:26 | infor-design/enterprise | https://api.github.com/repos/infor-design/enterprise | closed | Breadcrumb: flex-toolbar icon cut off | type: bug :bug: [1] focus: mobile priority: minor stale | <!-- Please be aware that this is a publicly visible bug report. Do not post any credentials, screenshots with proprietary information, or anything you think shouldn't be visible to the world. If reporting a security issue such as a xss vulnerability. Please use the [security advisories feature](https://github.com/info... | 1.0 | Breadcrumb: flex-toolbar icon cut off - <!-- Please be aware that this is a publicly visible bug report. Do not post any credentials, screenshots with proprietary information, or anything you think shouldn't be visible to the world. If reporting a security issue such as a xss vulnerability. Please use the [security adv... | priority | breadcrumb flex toolbar icon cut off describe the bug btn icon is cut off in mobile when using new theme to reproduce steps to reproduce the behavior go to see error expected behavior should not be cut off version ids enterprise dev screenshots ... | 1 |

332,899 | 29,497,875,243 | IssuesEvent | 2023-06-02 18:39:54 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | DISABLED test_cond_side_effects_dynamic_shapes_static_default (__main__.StaticDefaultDynamicShapesMiscTests) | triaged module: flaky-tests skipped module: dynamo | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_cond_side_effects_dynamic_shapes_static_default&suite=StaticDefaultDynamicShapesMiscTests) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/undefined).... | 1.0 | DISABLED test_cond_side_effects_dynamic_shapes_static_default (__main__.StaticDefaultDynamicShapesMiscTests) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_cond_side_effects_dynamic_shapes_static_default&suite=StaticDefaultDynami... | non_priority | disabled test cond side effects dynamic shapes static default main staticdefaultdynamicshapesmisctests platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes... | 0 |

180,777 | 30,566,644,415 | IssuesEvent | 2023-07-20 18:18:58 | EasyCorp/EasyAdminBundle | https://api.github.com/repos/EasyCorp/EasyAdminBundle | closed | submenu items are barely visible | bug design | **Describe the bug**

submenu items are barely visible in dark mode

**To Reproduce**

1. Create submenu items

2. set theme to dark mode

| 1.0 | submenu items are barely visible - **Describe the bug**

submenu items are barely visible in dark mode

**To Reproduce**

1. Create submenu items

2. set theme to dark mode

| non_priority | submenu items are barely visible describe the bug submenu items are barely visible in dark mode to reproduce create submenu items set theme to dark mode | 0 |

488,899 | 14,099,094,136 | IssuesEvent | 2020-11-06 00:29:23 | drashland/website | https://api.github.com/repos/drashland/website | closed | Write required documentation for deno-drash issue #427 (after_resource middleware hook) | Priority: Medium Remark: Deploy To Production Type: Chore | ## Summary

The following issue requires documentation before it can be closed:

https://github.com/drashland/deno-drash/issues/427

The following pull request is associated with the above issue:

https://github.com/drashland/deno-drash/issues/428 | 1.0 | Write required documentation for deno-drash issue #427 (after_resource middleware hook) - ## Summary

The following issue requires documentation before it can be closed:

https://github.com/drashland/deno-drash/issues/427

The following pull request is associated with the above issue:

https://github.com/drashl... | priority | write required documentation for deno drash issue after resource middleware hook summary the following issue requires documentation before it can be closed the following pull request is associated with the above issue | 1 |

391,526 | 26,896,663,212 | IssuesEvent | 2023-02-06 12:57:22 | cloudflare/cloudflare-docs | https://api.github.com/repos/cloudflare/cloudflare-docs | opened | Add guidance when creating Domain lists via API | documentation content:edit | ### Which Cloudflare product does this pertain to?

Zero Trust

### Existing documentation URL(s)

https://developers.cloudflare.com/cloudflare-one/policies/filtering/lists/

### Section that requires update

[](https://developers.cloudflare.com/cloudflare-one/policies/filtering/lists/#create-a-list-from-a-csv-file)

... | 1.0 | Add guidance when creating Domain lists via API - ### Which Cloudflare product does this pertain to?

Zero Trust

### Existing documentation URL(s)

https://developers.cloudflare.com/cloudflare-one/policies/filtering/lists/

### Section that requires update

[](https://developers.cloudflare.com/cloudflare-one/policie... | non_priority | add guidance when creating domain lists via api which cloudflare product does this pertain to zero trust existing documentation url s section that requires update create a list from a csv file what needs to change the conditions doesn t detail what happens when using duplicate hostnam... | 0 |

567,318 | 16,854,921,736 | IssuesEvent | 2021-06-21 04:35:38 | ballerina-platform/ballerina-standard-library | https://api.github.com/repos/ballerina-platform/ballerina-standard-library | closed | GraphQL Responses with Errors Should Return BAD_REQUEST Status Code | Priority/High Team/PCP Type/Improvement module/graphql | **Description:**

When a GraphQL request is failed in the validation phase, currently the response status code is set to `200`. But it should be `400`. | 1.0 | GraphQL Responses with Errors Should Return BAD_REQUEST Status Code - **Description:**

When a GraphQL request is failed in the validation phase, currently the response status code is set to `200`. But it should be `400`. | priority | graphql responses with errors should return bad request status code description when a graphql request is failed in the validation phase currently the response status code is set to but it should be | 1 |

40,871 | 8,870,550,742 | IssuesEvent | 2019-01-11 09:51:03 | Jigar3/Wall-Street | https://api.github.com/repos/Jigar3/Wall-Street | opened | Set up this project on your local machine | OpenCode'19 Rookie(10 Points) | Share a screenshot/GIF here. 10 points each for the frontend as well as backend setup | 1.0 | Set up this project on your local machine - Share a screenshot/GIF here. 10 points each for the frontend as well as backend setup | non_priority | set up this project on your local machine share a screenshot gif here points each for the frontend as well as backend setup | 0 |

135,388 | 5,247,424,657 | IssuesEvent | 2017-02-01 12:59:41 | moodlepeers/moodle-mod_groupformation | https://api.github.com/repos/moodlepeers/moodle-mod_groupformation | opened | layout issues with clean theme or beuth03 theme | bug FE (frontend) Priority medium | 1. Inside the Group Formation page, the Move block and Actions in every block will disappear, the icons will not appear appropriatly!

2. On the beuth03 Theme (the offical theme for Beuth Hochschule) the navigation header will not appear appropriatly inside the page of the groupformation, the header will navigate to th... | 1.0 | layout issues with clean theme or beuth03 theme - 1. Inside the Group Formation page, the Move block and Actions in every block will disappear, the icons will not appear appropriatly!

2. On the beuth03 Theme (the offical theme for Beuth Hochschule) the navigation header will not appear appropriatly inside the page of ... | priority | layout issues with clean theme or theme inside the group formation page the move block and actions in every block will disappear the icons will not appear appropriatly on the theme the offical theme for beuth hochschule the navigation header will not appear appropriatly inside the page of the groupfor... | 1 |

170,181 | 13,177,100,557 | IssuesEvent | 2020-08-12 06:39:15 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | About normal flush action | >test-failure | In SyncedFlushService.onShardInactive() method,

It notes,

"// A normal flush has the same effect as a synced flush if all nodes are on 7.6 or later."

I use 7.8.1,so it always will execute the NormalFlush(not the SyncedFlush).We know,the SyncedFlush will write the sync id on all shards,

but I find the NormalFlush ... | 1.0 | About normal flush action - In SyncedFlushService.onShardInactive() method,

It notes,

"// A normal flush has the same effect as a synced flush if all nodes are on 7.6 or later."

I use 7.8.1,so it always will execute the NormalFlush(not the SyncedFlush).We know,the SyncedFlush will write the sync id on all shards,

... | non_priority | about normal flush action in syncedflushservice onshardinactive method it notes a normal flush has the same effect as a synced flush if all nodes are on or later i use so it always will execute the normalflush not the syncedflush we know the syncedflush will write the sync id on all shards ... | 0 |

383,609 | 26,558,146,814 | IssuesEvent | 2023-01-20 13:48:30 | PennLINC/xcp_d | https://api.github.com/repos/PennLINC/xcp_d | closed | Offload advanced xcp-d usage examples to separate repository? | documentation question | ## Summary

There are a couple of xcp-d use-cases that require some preparation that we may want to document, including incorporating tedana components (#324) or non-RETROICOR physio regressors (#455) as custom confounds. We can easily write documentation or example code for these scenarios, but I think we would probab... | 1.0 | Offload advanced xcp-d usage examples to separate repository? - ## Summary

There are a couple of xcp-d use-cases that require some preparation that we may want to document, including incorporating tedana components (#324) or non-RETROICOR physio regressors (#455) as custom confounds. We can easily write documentation ... | non_priority | offload advanced xcp d usage examples to separate repository summary there are a couple of xcp d use cases that require some preparation that we may want to document including incorporating tedana components or non retroicor physio regressors as custom confounds we can easily write documentation or e... | 0 |

44,520 | 5,632,193,469 | IssuesEvent | 2017-04-05 16:02:34 | OAButton/discussion | https://api.github.com/repos/OAButton/discussion | closed | Testing citation search | Blocked: Development Blocked: Test enhancement JISC | @svmelton hopefully it should be relatively clear how to go about this (and builds on your work on other similar issues).

Assuming title search testing is happening #111

@markmacgillivray I don't think this is 100% required to ship in a week, if it's buggy we may be better placed to release it with care later. | 1.0 | Testing citation search - @svmelton hopefully it should be relatively clear how to go about this (and builds on your work on other similar issues).

Assuming title search testing is happening #111

@markmacgillivray I don't think this is 100% required to ship in a week, if it's buggy we may be better placed to re... | non_priority | testing citation search svmelton hopefully it should be relatively clear how to go about this and builds on your work on other similar issues assuming title search testing is happening markmacgillivray i don t think this is required to ship in a week if it s buggy we may be better placed to releas... | 0 |

783,444 | 27,531,040,303 | IssuesEvent | 2023-03-06 22:08:37 | dotCMS/core | https://api.github.com/repos/dotCMS/core | reopened | Allow greater configuration of S3Client to target additional endpoints beyond AWS | Type : Enhancement QA : Approved Doc : Needs Doc Merged QA : Passed Internal LTS: Excluded Team : Falcon Next LTS Release Release : 23.02 OKR : Customer Success Priority : 2 High OKR : Customer Support | **Is your feature request related to a problem? Please describe.**

There are multiple different S3 Object stores available beyond AWS. These all still utilize the S3 SDK from AWS, so we should be able to allow configuration of our S3Client to target endpoints that are not in AWS.

Related Ticket: https://dotcms.zend... | 1.0 | Allow greater configuration of S3Client to target additional endpoints beyond AWS - **Is your feature request related to a problem? Please describe.**

There are multiple different S3 Object stores available beyond AWS. These all still utilize the S3 SDK from AWS, so we should be able to allow configuration of our S3Cl... | priority | allow greater configuration of to target additional endpoints beyond aws is your feature request related to a problem please describe there are multiple different object stores available beyond aws these all still utilize the sdk from aws so we should be able to allow configuration of our to target e... | 1 |

566,805 | 16,831,084,074 | IssuesEvent | 2021-06-18 05:01:12 | DeFiCh/jellyfish | https://api.github.com/repos/DeFiCh/jellyfish | closed | flaky test in `accountHistoryCount` | area/jellyfish-api-core kind/bug priority/urgent-now triage/accepted | <!--

Please use this template while reporting a bug and provide as much info as possible.

If the matter is security related, please disclose it privately via security@defichain.com

-->

#### What happened:

https://github.com/DeFiCh/jellyfish/pull/337/checks?check_run_id=2732807319

```txt

Summary of all f... | 1.0 | flaky test in `accountHistoryCount` - <!--

Please use this template while reporting a bug and provide as much info as possible.

If the matter is security related, please disclose it privately via security@defichain.com

-->

#### What happened:

https://github.com/DeFiCh/jellyfish/pull/337/checks?check_run_id=... | priority | flaky test in accounthistorycount please use this template while reporting a bug and provide as much info as possible if the matter is security related please disclose it privately via security defichain com what happened txt summary of all failing tests fail packages jellyfis... | 1 |

235,575 | 25,955,213,571 | IssuesEvent | 2022-12-18 05:34:02 | Dima2022/JS-Demo | https://api.github.com/repos/Dima2022/JS-Demo | closed | CVE-2017-15010 (High) detected in tough-cookie-2.3.1.tgz - autoclosed | security vulnerability | ## CVE-2017-15010 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tough-cookie-2.3.1.tgz</b></p></summary>

<p>RFC6265 Cookies and Cookie Jar for node.js</p>

<p>Library home page: <a hr... | True | CVE-2017-15010 (High) detected in tough-cookie-2.3.1.tgz - autoclosed - ## CVE-2017-15010 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tough-cookie-2.3.1.tgz</b></p></summary>

<p>RF... | non_priority | cve high detected in tough cookie tgz autoclosed cve high severity vulnerability vulnerable library tough cookie tgz cookies and cookie jar for node js library home page a href path to dependency file package json path to vulnerable library node modules npm nod... | 0 |

18,655 | 11,031,774,470 | IssuesEvent | 2019-12-06 18:33:37 | bee-travels/bee-travels | https://api.github.com/repos/bee-travels/bee-travels | opened | Checkout/Cart/Payment Services DevOps Story | Cart Service Checkout Service Payment Service v3 | Create a DevOps Story around the Checkout/Cart/Payment Services | 3.0 | Checkout/Cart/Payment Services DevOps Story - Create a DevOps Story around the Checkout/Cart/Payment Services | non_priority | checkout cart payment services devops story create a devops story around the checkout cart payment services | 0 |

298,784 | 22,572,315,010 | IssuesEvent | 2022-06-28 02:14:37 | dipeshrai123/react-ui-animate-docs | https://api.github.com/repos/dipeshrai123/react-ui-animate-docs | closed | Some changes in `installation` in getting started page | documentation | Issue:

Expected Output:

- Change `you` to `your`, there is a typo in you.

- and change react-ui-animate@next to react-ui-animate in bash command only for v2.0.0 | 1.0 | Some changes in `installation` in getting started page - Issue:

Expected Output:

- Change `you` to `your`, there is a typo in you.

- and change react-ui-animate@next to react-ui-animate in bash command ... | non_priority | some changes in installation in getting started page issue expected output change you to your there is a typo in you and change react ui animate next to react ui animate in bash command only for | 0 |

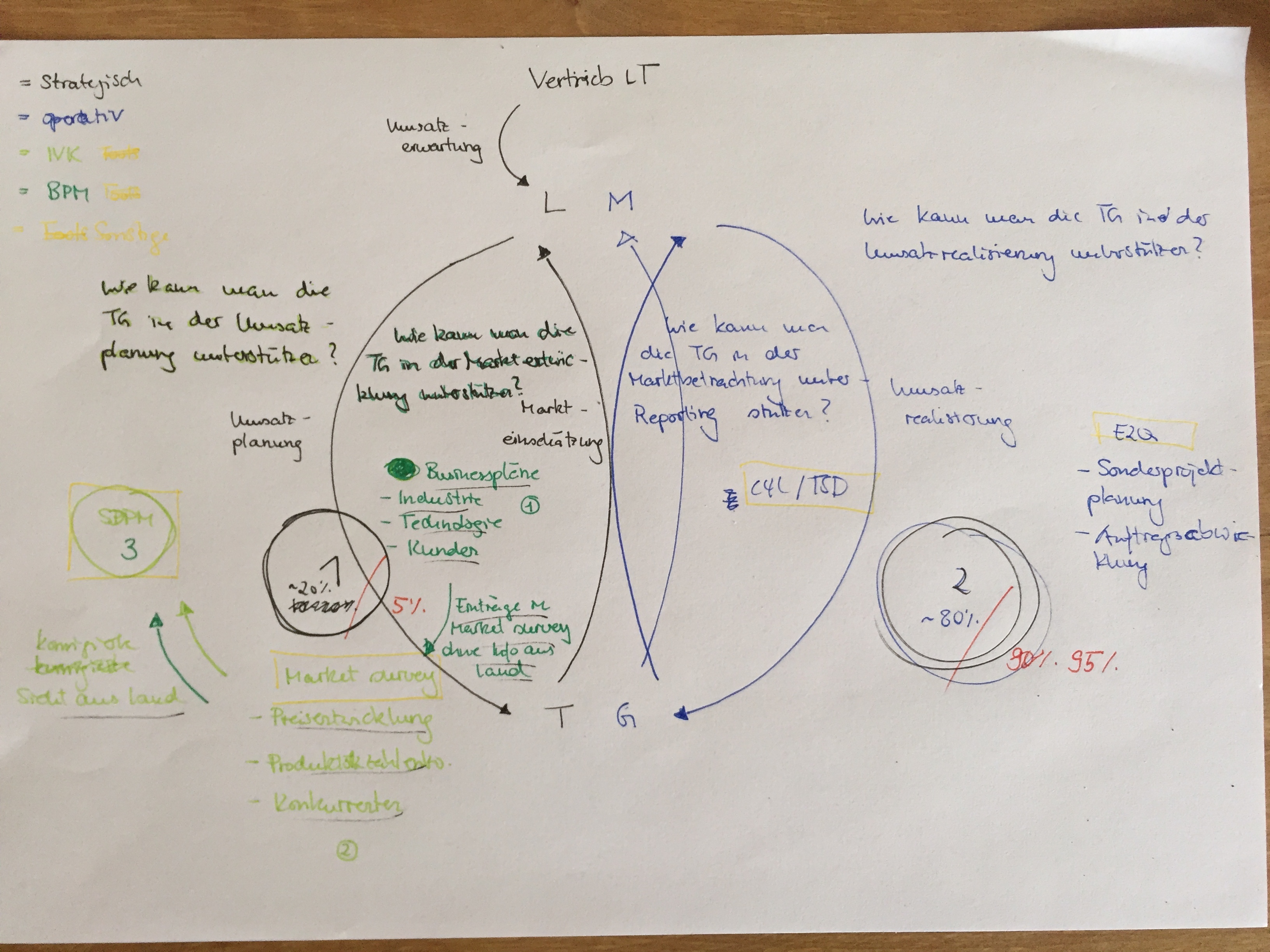

311,178 | 9,529,857,280 | IssuesEvent | 2019-04-29 12:31:18 | JuSpa/Trumpf | https://api.github.com/repos/JuSpa/Trumpf | opened | Feedback LM | Priority: High Status: ToDo Type: Task | ## Description:

After the CS, feedback on country management in general is still outstanding.

## Tasks:

- [ ] What challenges does country management face?

## Elaboration:

| 1.0 | Feedback LM - ## Description:

After the CS, feedback on country management in general is still outstanding.

## Tasks:

- [ ] What challenges does country management face?

## Elaboration:

### Description

yb_enable_expression_pushdown does not seem to work correctly. Refer to the slack thread - https://yugabyte.slack.com/archives/CAR5BCH29/p1677518458483219

The test case to reproduce the error is below:

```

drop table demo;

... | 1.0 | [YSQL] yb_enable_expression_pushdown for GIN index scan can yield incorrect results - Jira Link: [DB-5677](https://yugabyte.atlassian.net/browse/DB-5677)

### Description

yb_enable_expression_pushdown does not seem to work correctly. Refer to the slack thread - https://yugabyte.slack.com/archives/CAR5BCH29/p16775184... | priority | yb enable expression pushdown for gin index scan can yield incorrect results jira link description yb enable expression pushdown does not seem to work correctly refer to the slack thread the test case to reproduce the error is below drop table demo create table demo demo id ... | 1 |

221,255 | 7,375,115,500 | IssuesEvent | 2018-03-13 22:47:44 | Polymer/lit-html | https://api.github.com/repos/Polymer/lit-html | closed | Syntax Highlighting for vim | Priority: Low Status: Available Type: Question | Hello i found syntax highlighters for visual studio code and atom but non for vim does anyone know of any projects? | 1.0 | Syntax Highlighting for vim - Hello i found syntax highlighters for visual studio code and atom but non for vim does anyone know of any projects? | priority | syntax highlighting for vim hello i found syntax highlighters for visual studio code and atom but non for vim does anyone know of any projects | 1 |

264,777 | 8,319,379,672 | IssuesEvent | 2018-09-25 17:03:38 | Coow/cows-hacknslash | https://api.github.com/repos/Coow/cows-hacknslash | opened | SteamID to put into DiscordCtrl | Low Priority | Assigned to: Unassigned

Requires the game to first get into Steam | 1.0 | SteamID to put into DiscordCtrl - Assigned to: Unassigned

Requires the game to first get into Steam | priority | steamid to put into discordctrl assigned to unassigned requires the game to first get into steam | 1 |

691,429 | 23,696,723,171 | IssuesEvent | 2022-08-29 15:13:01 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | app.clipchamp.com - site is not usable | browser-firefox priority-normal severity-critical type-unsupported engine-gecko | <!-- @browser: Firefox 104.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:104.0) Gecko/20100101 Firefox/104.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/109811 -->

**URL**: https://app.clipchamp.com/signup

**Browser / Version**: Firefox 104... | 1.0 | app.clipchamp.com - site is not usable - <!-- @browser: Firefox 104.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:104.0) Gecko/20100101 Firefox/104.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/109811 -->

**URL**: https://app.clipchamp.com/s... | priority | app clipchamp com site is not usable url browser version firefox operating system windows tested another browser yes chrome problem type site is not usable description browser unsupported steps to reproduce app wont load due to browser compatibility check ... | 1 |

140,168 | 12,888,549,375 | IssuesEvent | 2020-07-13 13:12:53 | WizBhoo/OCR_P08_ToDoList | https://api.github.com/repos/WizBhoo/OCR_P08_ToDoList | opened | Lint : phpcs rules definition | documentation | Estimate time duration : 0,10 day.

Purpose : to define more precisely the rules to follow by contributors regarding quality code lint through phpcs.

Bonus : to implement phpcs in the Codacy report with symfony's rules.

Rules to follow : PSR-1 / PSR-2 / PSR-4 - Symfony | 1.0 | Lint : phpcs rules definition - Estimate time duration : 0,10 day.

Purpose : to define more precisely the rules to follow by contributors regarding quality code lint through phpcs.

Bonus : to implement phpcs in the Codacy report with symfony's rules.

Rules to follow : PSR-1 / PSR-2 / PSR-4 - Symfony | non_priority | lint phpcs rules definition estimate time duration day purpose to define more precisely the rules to follow by contributors regarding quality code lint through phpcs bonus to implement phpcs in the codacy report with symfony s rules rules to follow psr psr psr symfony | 0 |

235,533 | 19,377,313,969 | IssuesEvent | 2021-12-17 00:22:29 | kubernetes/test-infra | https://api.github.com/repos/kubernetes/test-infra | closed | crier: invalid memory address or nil pointer dereference on posting a GitHub comment in summary mode | kind/bug sig/testing area/prow/crier | **What happened**:

```

panic: runtime error: invalid memory address or nil pointer dereference

[signal SIGSEGV: segmentation violation code=0x1 addr=0x68 pc=0x1c6025b]

goroutine 1500 [running]:

k8s.io/test-infra/prow/github/report.createEntry(0x1cc0523, 0x7, 0xc0455af152, 0xe, 0xc03217ff20, 0x24, 0x0, 0x0, 0xc... | 1.0 | crier: invalid memory address or nil pointer dereference on posting a GitHub comment in summary mode - **What happened**:

```

panic: runtime error: invalid memory address or nil pointer dereference

[signal SIGSEGV: segmentation violation code=0x1 addr=0x68 pc=0x1c6025b]

goroutine 1500 [running]:

k8s.io/test-in... | non_priority | crier invalid memory address or nil pointer dereference on posting a github comment in summary mode what happened panic runtime error invalid memory address or nil pointer dereference goroutine io test infra prow github report createentry prow github ... | 0 |

466,934 | 13,437,491,614 | IssuesEvent | 2020-09-07 16:01:29 | php-censor/php-censor | https://api.github.com/repos/php-censor/php-censor | closed | [Localization] Improve Spanish localization (lang.es.php) | component:localization other:help-wanted priority:minor type:enhancement | See [lang.es.php](https://github.com/php-censor/php-censor/blob/master/src/Languages/lang.es.php):

**Not present strings:** 'per_page', 'default', 'login', 'remember_me', 'environment_x', 'project_groups', 'build_now_debug', 'delete_old_builds', 'delete_all_builds', 'projects_with_build_errors', 'no_build_errors', '... | 1.0 | [Localization] Improve Spanish localization (lang.es.php) - See [lang.es.php](https://github.com/php-censor/php-censor/blob/master/src/Languages/lang.es.php):

**Not present strings:** 'per_page', 'default', 'login', 'remember_me', 'environment_x', 'project_groups', 'build_now_debug', 'delete_old_builds', 'delete_all... | priority | improve spanish localization lang es php see not present strings per page default login remember me environment x project groups build now debug delete old builds delete all builds projects with build errors no build errors failed allowed error skipped trace ... | 1 |

422,423 | 28,437,384,890 | IssuesEvent | 2023-04-15 13:32:25 | ros2-dotnet/ros2_dotnet | https://api.github.com/repos/ros2-dotnet/ros2_dotnet | closed | Build overlaying Galactic | documentation Galactic | I an trying my luck with building it overlaying galactic and i encounter an error in file path like below

```

Failed <<< rosidl_typesupport_introspection_c [7.89s, exited with code 1]

C:\Program Files (x86)\Microsoft Visual Studio\2017\Community\Common7\IDE\VC\VCTargets\Microsoft.CppBuild.targets(321,5): error... | 1.0 | Build overlaying Galactic - I an trying my luck with building it overlaying galactic and i encounter an error in file path like below

```

Failed <<< rosidl_typesupport_introspection_c [7.89s, exited with code 1]

C:\Program Files (x86)\Microsoft Visual Studio\2017\Community\Common7\IDE\VC\VCTargets\Microsoft.Cp... | non_priority | build overlaying galactic i an trying my luck with building it overlaying galactic and i encounter an error in file path like below failed rosidl typesupport introspection c c program files microsoft visual studio community ide vc vctargets microsoft cppbuild targets error could n... | 0 |

488,013 | 14,073,515,823 | IssuesEvent | 2020-11-04 05:05:00 | MBFVSolutionsLLC/ChiEpsilon | https://api.github.com/repos/MBFVSolutionsLLC/ChiEpsilon | closed | I need to switch out a couple of DC | HIGH PRIORITY bug | We've had two changes of district councilors.

The Great Lakes DC is Gian A. Rassati (GRN: 109838) and the Southern DC is David A. Chin (GRN: 89299)

I got this when I tried to change out Robbie Barns in Southern.

and the Southern DC is David A. Chin (GRN: 89299)

I got this when I tried to change out Robbie Barns in Southern.

detected in multiple libraries | security vulnerability | ## CVE-2019-17531 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.6.jar</b>, <b>jackson-databind-2.9.5.jar</b>, <b>jackson-databind-2.8.10.jar</b>, <b>jackson-dat... | True | CVE-2019-17531 (High) detected in multiple libraries - ## CVE-2019-17531 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.6.jar</b>, <b>jackson-databind-2.9.5.jar<... | non_priority | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar general data bi... | 0 |

101,465 | 16,512,277,707 | IssuesEvent | 2021-05-26 06:27:07 | valtech-ch/microservice-kubernetes-cluster | https://api.github.com/repos/valtech-ch/microservice-kubernetes-cluster | opened | CVE-2019-12086 (High) detected in jackson-databind-2.9.8.jar | security vulnerability | ## CVE-2019-12086 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-12086 (High) detected in jackson-databind-2.9.8.jar - ## CVE-2019-12086 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file microservice kubernetes cluster... | 0 |

66,583 | 3,256,049,459 | IssuesEvent | 2015-10-20 11:59:03 | remkos/rads | https://api.github.com/repos/remkos/rads | opened | Add ALES retracker output | enhancement Priority-Medium | The ALES retracker output for range, sigma0 and SWH is available at PODAAC.

This will cover a 55-km swath along all global coastlines (5 km inland, 50 km off shore).

Requesting just the retracker output (instead of SGDRs) from NOCS. | 1.0 | Add ALES retracker output - The ALES retracker output for range, sigma0 and SWH is available at PODAAC.

This will cover a 55-km swath along all global coastlines (5 km inland, 50 km off shore).

Requesting just the retracker output (instead of SGDRs) from NOCS. | priority | add ales retracker output the ales retracker output for range and swh is available at podaac this will cover a km swath along all global coastlines km inland km off shore requesting just the retracker output instead of sgdrs from nocs | 1 |

225,024 | 7,476,806,297 | IssuesEvent | 2018-04-04 05:34:22 | CS2103JAN2018-W15-B4/main | https://api.github.com/repos/CS2103JAN2018-W15-B4/main | closed | As an Exco member who created a poll, I want to view results of the poll | enhancement priority.high type.UI type.story | So that I can understand the other members' opinions | 1.0 | As an Exco member who created a poll, I want to view results of the poll - So that I can understand the other members' opinions | priority | as an exco member who created a poll i want to view results of the poll so that i can understand the other members opinions | 1 |

422,148 | 12,266,997,745 | IssuesEvent | 2020-05-07 09:54:14 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | accounts.google.com - see bug description | browser-firefox engine-gecko ml-needsdiagnosis-false os-mac priority-critical | <!-- @browser: Firefox 78.0 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.11; rv:78.0) Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/52569 -->

**URL**: https://accounts.google.com/signin/v2/identifier?hl=en&pass... | 1.0 | accounts.google.com - see bug description - <!-- @browser: Firefox 78.0 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.11; rv:78.0) Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/52569 -->

**URL**: https://account... | priority | accounts google com see bug description url browser version firefox operating system mac os x tested another browser yes chrome problem type something else description wont log in steps to reproduce i tried to log in to google but it wont let me browse... | 1 |

24,140 | 4,059,257,746 | IssuesEvent | 2016-05-25 08:56:10 | difi/move-integrasjonspunkt | https://api.github.com/repos/difi/move-integrasjonspunkt | reopened | Leveringskvittering sendes for tidlig | test | Leveringskvittering sendes før sjekk gjøres på om innkommende melding er en kvittering. Dette forårsaker meldingsloop | 1.0 | Leveringskvittering sendes for tidlig - Leveringskvittering sendes før sjekk gjøres på om innkommende melding er en kvittering. Dette forårsaker meldingsloop | non_priority | leveringskvittering sendes for tidlig leveringskvittering sendes før sjekk gjøres på om innkommende melding er en kvittering dette forårsaker meldingsloop | 0 |

426,888 | 12,389,691,183 | IssuesEvent | 2020-05-20 09:25:39 | nativescript-vue/nativescript-vue | https://api.github.com/repos/nativescript-vue/nativescript-vue | closed | OnTouch error trying to Get X and Y | priority:normal | ### Version

2.6.1

### Reproduction link

[https://play.nativescript.org/?template=play-vue&id=Xg7jdt](https://play.nativescript.org/?template=play-vue&id=Xg7jdt)

### Platform and OS info

Android 9 - MIUI 11.0.3, Nativescript-Vue 2.6.10, Windows 10

### Steps to reproduce

I have added a @touch event listener on... | 1.0 | OnTouch error trying to Get X and Y - ### Version

2.6.1

### Reproduction link

[https://play.nativescript.org/?template=play-vue&id=Xg7jdt](https://play.nativescript.org/?template=play-vue&id=Xg7jdt)

### Platform and OS info

Android 9 - MIUI 11.0.3, Nativescript-Vue 2.6.10, Windows 10

### Steps to reproduce

I... | priority | ontouch error trying to get x and y version reproduction link platform and os info android miui nativescript vue windows steps to reproduce i have added a touch event listener on label element like below and it is linked to the following function ... | 1 |

144,162 | 11,596,388,994 | IssuesEvent | 2020-02-24 18:51:33 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | cli: TestCLITimeout failed | C-test-failure O-robot branch-master | [(cli).TestCLITimeout failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1763778&tab=buildLog) on [master@6d541881b9fc71c36175814fb206487d46b87f1a](https://github.com/cockroachdb/cockroach/commits/6d541881b9fc71c36175814fb206487d46b87f1a):

```

/go/src/github.com/cockroachdb/cockroach/pkg/internal/client/db... | 1.0 | cli: TestCLITimeout failed - [(cli).TestCLITimeout failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1763778&tab=buildLog) on [master@6d541881b9fc71c36175814fb206487d46b87f1a](https://github.com/cockroachdb/cockroach/commits/6d541881b9fc71c36175814fb206487d46b87f1a):

```

/go/src/github.com/cockroachdb/coc... | non_priority | cli testclitimeout failed on go src github com cockroachdb cockroach pkg internal client db go github com cockroachdb cockroach pkg ts db storekvs go src github com cockroachdb cockroach pkg ts db go github com cockroachdb cockroach pkg ts db trystoredata ... | 0 |

337,777 | 10,220,160,932 | IssuesEvent | 2019-08-15 20:33:27 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | hanime.tv - video or audio doesn't play | browser-focus-geckoview engine-gecko priority-normal | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://hanime.tv/hentai-videos/hakoiri-shoujo-virgin-territory-1

**Browser / Version**: Firefox Mobile 68.0

*... | 1.0 | hanime.tv - video or audio doesn't play - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://hanime.tv/hentai-videos/hakoiri-shoujo-virgin-territory-1

**... | priority | hanime tv video or audio doesn t play url browser version firefox mobile operating system android tested another browser yes problem type video or audio doesn t play description video doesn t play steps to reproduce browser configuration none ... | 1 |

21,392 | 3,506,160,065 | IssuesEvent | 2016-01-08 04:08:39 | isushao/sundyandroid | https://api.github.com/repos/isushao/sundyandroid | closed | 下载资源在VeryCD上找的,感谢一下。 | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. 课程顺序匪夷所思

2. 讲解逻辑清晰

3. 代码和演示都很好,加油!!

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on code.google.com by `lixue..... | 1.0 | 下载资源在VeryCD上找的,感谢一下。 - ```

What steps will reproduce the problem?

1. 课程顺序匪夷所思

2. 讲解逻辑清晰

3. 代码和演示都很好,加油!!

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on code... | non_priority | 下载资源在verycd上找的,感谢一下。 what steps will reproduce the problem 课程顺序匪夷所思 讲解逻辑清晰 代码和演示都很好,加油!! what is the expected output what do you see instead what version of the product are you using on what operating system please provide any additional information below original issue reported on code... | 0 |

63,183 | 3,194,268,744 | IssuesEvent | 2015-09-30 11:06:03 | fusioninventory/fusioninventory-for-glpi | https://api.github.com/repos/fusioninventory/fusioninventory-for-glpi | closed | PHP Fatal error: Call to undefined function logDebug() in .../fusinvdeploy/hook.php on line 154 | Category: Deploy Component: For junior contributor Component: Found in version Priority: Normal Status: Closed Tracker: Bug | ---

Author Name: **Mathieu Parent** (Mathieu Parent)

Original Redmine Issue: 1752, http://forge.fusioninventory.org/issues/1752

Original Date: 2012-08-02

Original Assignee: David Durieux

---

While deploying a package, I get a truncated page, and I see the above message in the Apache error log.

It seems that the ... | 1.0 | PHP Fatal error: Call to undefined function logDebug() in .../fusinvdeploy/hook.php on line 154 - ---

Author Name: **Mathieu Parent** (Mathieu Parent)

Original Redmine Issue: 1752, http://forge.fusioninventory.org/issues/1752

Original Date: 2012-08-02

Original Assignee: David Durieux

---

While deploying a package,... | priority | php fatal error call to undefined function logdebug in fusinvdeploy hook php on line author name mathieu parent mathieu parent original redmine issue original date original assignee david durieux while deploying a package i get a truncated page and i see the above message ... | 1 |

263,009 | 8,272,954,736 | IssuesEvent | 2018-09-17 01:58:50 | javaee/glassfish | https://api.github.com/repos/javaee/glassfish | closed | EAR deployment fails when OSGi bundle is deployed | Component: OSGi Component: OSGi-JavaEE ERR: Assignee Priority: Critical Type: Bug | We have a JEE application packaged and deployed as EAR. Now we started to develop some OSGi EJB Application Bundles. Both will be deployed in the same Glassfish instance.

The OSGi EJB bundle includes some classes which are packaged in the EAR too. For example the package com.macd.foo is included in the bundle. The pac... | 1.0 | EAR deployment fails when OSGi bundle is deployed - We have a JEE application packaged and deployed as EAR. Now we started to develop some OSGi EJB Application Bundles. Both will be deployed in the same Glassfish instance.

The OSGi EJB bundle includes some classes which are packaged in the EAR too. For example the pac... | priority | ear deployment fails when osgi bundle is deployed we have a jee application packaged and deployed as ear now we started to develop some osgi ejb application bundles both will be deployed in the same glassfish instance the osgi ejb bundle includes some classes which are packaged in the ear too for example the pac... | 1 |

749,836 | 26,181,042,487 | IssuesEvent | 2023-01-02 15:37:22 | projectdiscovery/retryabledns | https://api.github.com/repos/projectdiscovery/retryabledns | closed | Faulty rotate condition in client.queryMultiple | Priority: High Type: Bug | ## Description

The DNS server rotation logic in the client.queryMultiple does not rotate the server correctly. Picking one at the beginning if the variable is nil, and sticking to it for all retries

Ref: https://github.com/projectdiscovery/retryabledns/blob/32c28e9a7cd396d50dc15886f4ca14595bef5b57/client.go#L276 | 1.0 | Faulty rotate condition in client.queryMultiple - ## Description

The DNS server rotation logic in the client.queryMultiple does not rotate the server correctly. Picking one at the beginning if the variable is nil, and sticking to it for all retries

Ref: https://github.com/projectdiscovery/retryabledns/blob/32c28e9a... | priority | faulty rotate condition in client querymultiple description the dns server rotation logic in the client querymultiple does not rotate the server correctly picking one at the beginning if the variable is nil and sticking to it for all retries ref | 1 |

227,978 | 7,544,823,947 | IssuesEvent | 2018-04-17 19:38:52 | WordImpress/Give-Snippet-Library | https://api.github.com/repos/WordImpress/Give-Snippet-Library | closed | feat(donation): allow for splitting donations across multiple causes | 5-reported high-priority | ## Issue overview

This is a much-requested feature. Donors are far more likely to give once than they are 4 or 5 times back-to-back, so giving them a way to support multiple causes in one transaction would be preferable.

The best way to describe it is with this example:

### Use Case: churches