Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

611,838 | 18,982,438,631 | IssuesEvent | 2021-11-21 05:26:48 | phetsims/chipper | https://api.github.com/repos/phetsims/chipper | closed | Make sure all repos pass precommit hooks | priority:2-high dev:typescript | From https://github.com/phetsims/chipper/issues/1134 make sure all repos pass precommit hooks. I already fixed phet-io. Sun was ok. Others need to be checked. | 1.0 | Make sure all repos pass precommit hooks - From https://github.com/phetsims/chipper/issues/1134 make sure all repos pass precommit hooks. I already fixed phet-io. Sun was ok. Others need to be checked. | priority | make sure all repos pass precommit hooks from make sure all repos pass precommit hooks i already fixed phet io sun was ok others need to be checked | 1 |

826,434 | 31,623,764,113 | IssuesEvent | 2023-09-06 02:34:53 | robocupjunioraustralia/RCJA_Registration_System | https://api.github.com/repos/robocupjunioraustralia/RCJA_Registration_System | closed | Cannot create state as both available for registration and global. | priority | Need for this has materialised as National is now running events in its own right and will likely do so again in the future.

Should be able to set national to global, show on website, and registration available. | 1.0 | Cannot create state as both available for registration and global. - Need for this has materialised as National is now running events in its own right and will likely do so again in the future.

Should be able to set national to global, show on website, and registration available. | priority | cannot create state as both available for registration and global need for this has materialised as national is now running events in its own right and will likely do so again in the future should be able to set national to global show on website and registration available | 1 |

10,155 | 31,813,653,857 | IssuesEvent | 2023-09-13 18:43:16 | inbucket/inbucket | https://api.github.com/repos/inbucket/inbucket | closed | goreleaser: archives.rlcp should not be used anymore | automation | Printed by goreleaser 1.20

> DEPRECATED: archives.rlcp should not be used anymore, check https://goreleaser.com/deprecations#archivesrlcp for more info | 1.0 | goreleaser: archives.rlcp should not be used anymore - Printed by goreleaser 1.20

> DEPRECATED: archives.rlcp should not be used anymore, check https://goreleaser.com/deprecations#archivesrlcp for more info | non_priority | goreleaser archives rlcp should not be used anymore printed by goreleaser deprecated archives rlcp should not be used anymore check for more info | 0 |

313,956 | 9,582,715,537 | IssuesEvent | 2019-05-08 01:58:54 | mfractor/mfractor-feedback | https://api.github.com/repos/mfractor/mfractor-feedback | closed | Generate View/Viewmodel in different projects | High Priority User Requested enhancement | It would be nice to use the mvvm wizard when using a separate project for my viewmodels. This is already possible with the navigation between pages/viewmodels | 1.0 | Generate View/Viewmodel in different projects - It would be nice to use the mvvm wizard when using a separate project for my viewmodels. This is already possible with the navigation between pages/viewmodels | priority | generate view viewmodel in different projects it would be nice to use the mvvm wizard when using a separate project for my viewmodels this is already possible with the navigation between pages viewmodels | 1 |

820,116 | 30,760,151,624 | IssuesEvent | 2023-07-29 15:37:16 | OneUptime/oneuptime | https://api.github.com/repos/OneUptime/oneuptime | closed | Push Containers To GHCR.io | enhancement low priority | **Is your feature request related to a problem? Please describe.**

Docker Hub provides a very restrictive rate limit for anonymous users at 100 pulls every 6 hours per ip.

For authenticated users, its 200 pulls every 6 hours per ip.

Paid Users can get up to 5000 pull a day.

We as a community would very much appre... | 1.0 | Push Containers To GHCR.io - **Is your feature request related to a problem? Please describe.**

Docker Hub provides a very restrictive rate limit for anonymous users at 100 pulls every 6 hours per ip.

For authenticated users, its 200 pulls every 6 hours per ip.

Paid Users can get up to 5000 pull a day.

We as a co... | priority | push containers to ghcr io is your feature request related to a problem please describe docker hub provides a very restrictive rate limit for anonymous users at pulls every hours per ip for authenticated users its pulls every hours per ip paid users can get up to pull a day we as a community... | 1 |

65,592 | 3,236,441,690 | IssuesEvent | 2015-10-14 05:19:41 | cs2103aug2015-w14-3j/main | https://api.github.com/repos/cs2103aug2015-w14-3j/main | closed | As a user, I can assign priority levels to a certain task | priority.high type.story | so that know which tasks I need to complete soonest | 1.0 | As a user, I can assign priority levels to a certain task - so that know which tasks I need to complete soonest | priority | as a user i can assign priority levels to a certain task so that know which tasks i need to complete soonest | 1 |

340,821 | 10,278,933,707 | IssuesEvent | 2019-08-25 18:31:04 | RoboJackets/robocup-software | https://api.github.com/repos/RoboJackets/robocup-software | closed | Implement full angle paths with motion/rotation constraints | area / planning-motion priority / high status / need-triage type / bug type / not actionable | I've noticed that fast turning causes Pathing errors even in simulator, and this should be implemented eventually anyways to have accurate shooting and stuff.

Currently, PID is used to control angle on the soccer side with a target Angle or Location in MotionControl.cpp. I've already modified Paths and Pathplanner to ... | 1.0 | Implement full angle paths with motion/rotation constraints - I've noticed that fast turning causes Pathing errors even in simulator, and this should be implemented eventually anyways to have accurate shooting and stuff.

Currently, PID is used to control angle on the soccer side with a target Angle or Location in Moti... | priority | implement full angle paths with motion rotation constraints i ve noticed that fast turning causes pathing errors even in simulator and this should be implemented eventually anyways to have accurate shooting and stuff currently pid is used to control angle on the soccer side with a target angle or location in moti... | 1 |

273,923 | 8,554,956,213 | IssuesEvent | 2018-11-08 08:34:39 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | support.mozilla.org - see bug description | browser-firefox-mobile browser-firefox-reality priority-important | <!-- @browser: Firefox Mobile 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.2; Mobile; rv:64.0) Gecko/64.0 Firefox/64.0 -->

<!-- @reported_with: browser-fxr -->

<!-- @extra_labels: browser-firefox-reality -->

**URL**: https://support.mozilla.org/en-US/kb/install-firefox-reality

**Browser / Version**: Firefox Mob... | 1.0 | support.mozilla.org - see bug description - <!-- @browser: Firefox Mobile 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.1.2; Mobile; rv:64.0) Gecko/64.0 Firefox/64.0 -->

<!-- @reported_with: browser-fxr -->

<!-- @extra_labels: browser-firefox-reality -->

**URL**: https://support.mozilla.org/en-US/kb/install-firefox... | priority | support mozilla org see bug description url browser version firefox mobile operating system android tested another browser no problem type something else description please add tab manage steps to reproduce i accidently click into a ad and not matter how m... | 1 |

43,185 | 23,139,138,147 | IssuesEvent | 2022-07-28 16:42:28 | ampproject/amp-wp | https://api.github.com/repos/ampproject/amp-wp | closed | Preload background images from hero Cover blocks when parallax used | Enhancement P1 Performance Optimizer | ### Feature Description

Content-initial Cover blocks with a selected image will often be identified as a hero image candidate for the AMP Optimizer to apply SSR. When [opt-ing in to preloading responsive images](https://gist.github.com/westonruter/6adb64e6e8c858a40ac1dc51b03f16d8) (which is only supported by Chromium ... | True | Preload background images from hero Cover blocks when parallax used - ### Feature Description

Content-initial Cover blocks with a selected image will often be identified as a hero image candidate for the AMP Optimizer to apply SSR. When [opt-ing in to preloading responsive images](https://gist.github.com/westonruter/6... | non_priority | preload background images from hero cover blocks when parallax used feature description content initial cover blocks with a selected image will often be identified as a hero image candidate for the amp optimizer to apply ssr when which is only supported by chromium at the moment such cover block images w... | 0 |

11,340 | 7,518,705,480 | IssuesEvent | 2018-04-12 09:14:51 | mono/monodevelop | https://api.github.com/repos/mono/monodevelop | opened | Roslyn Full Solution Analysis is always on | Area: Performance Area: Roslyn Integration | http://source.roslyn.io/#Microsoft.CodeAnalysis.Workspaces/Shared/RuntimeOptions.cs,12

See this, we need to have an option for this. Maybe we could surface this in options, similar to Enable Source Analysis.

This causes the diagnostic analyzers to run on the full solution in the background, rather than just open ... | True | Roslyn Full Solution Analysis is always on - http://source.roslyn.io/#Microsoft.CodeAnalysis.Workspaces/Shared/RuntimeOptions.cs,12

See this, we need to have an option for this. Maybe we could surface this in options, similar to Enable Source Analysis.

This causes the diagnostic analyzers to run on the full solut... | non_priority | roslyn full solution analysis is always on see this we need to have an option for this maybe we could surface this in options similar to enable source analysis this causes the diagnostic analyzers to run on the full solution in the background rather than just open files | 0 |

505,933 | 14,654,923,689 | IssuesEvent | 2020-12-28 09:49:25 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | addons.mozilla.org - design is broken | browser-fenix engine-gecko ml-needsdiagnosis-false priority-important | <!-- @browser: Firefox Mobile 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:85.0) Gecko/85.0 Firefox/85.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64314 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://addons.mo... | 1.0 | addons.mozilla.org - design is broken - <!-- @browser: Firefox Mobile 85.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:85.0) Gecko/85.0 Firefox/85.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/64314 -->

<!-- @extra_labels: brows... | priority | addons mozilla org design is broken url browser version firefox mobile operating system android tested another browser yes chrome problem type design is broken description images not loaded steps to reproduce browser configuration gfx webrende... | 1 |

124,796 | 26,540,085,947 | IssuesEvent | 2023-01-19 18:30:46 | vtex/admin-ui | https://api.github.com/repos/vtex/admin-ui | closed | Listing template: adjustments | documentation code enhancement | **[Full blown example](https://97wzs0.csb.app/)**

* Change page width to wide

* On the row Menu, add a divider before the Delete option

* Update the Filter component, so it doesn't display a Clear all button when no filters are applied

**[Basic example](https://cjmzyv.csb.app/)**

* Add pagination | 1.0 | Listing template: adjustments - **[Full blown example](https://97wzs0.csb.app/)**

* Change page width to wide

* On the row Menu, add a divider before the Delete option

* Update the Filter component, so it doesn't display a Clear all button when no filters are applied

**[Basic example](https://cjmzyv.csb.app/)**

* Add ... | non_priority | listing template adjustments change page width to wide on the row menu add a divider before the delete option update the filter component so it doesn t display a clear all button when no filters are applied add pagination | 0 |

67,425 | 7,047,982,557 | IssuesEvent | 2018-01-02 15:51:25 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | ccl/sqlccl: TestBackupRestoreControlJob failed under stress | Robot test-failure | SHA: https://github.com/cockroachdb/cockroach/commits/4fd3e09aaa7974a7fb6f81853d717c25b082d878

Parameters:

```

TAGS=deadlock

GOFLAGS=

```

Stress build found a failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=455324&tab=buildLog

```

I171223 10:12:01.624626 9732186 sql/event_log.go:113 [client=127.0... | 1.0 | ccl/sqlccl: TestBackupRestoreControlJob failed under stress - SHA: https://github.com/cockroachdb/cockroach/commits/4fd3e09aaa7974a7fb6f81853d717c25b082d878

Parameters:

```

TAGS=deadlock

GOFLAGS=

```

Stress build found a failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=455324&tab=buildLog

```

I1712... | non_priority | ccl sqlccl testbackuprestorecontroljob failed under stress sha parameters tags deadlock goflags stress build found a failed test sql event log go event create database target info databasename cancelimport statement create database cancelimport user root ... | 0 |

274,774 | 23,866,542,843 | IssuesEvent | 2022-09-07 11:32:38 | CommunitySolidServer/CommunitySolidServer | https://api.github.com/repos/CommunitySolidServer/CommunitySolidServer | closed | Add conformance tests to PR CI | ➕ test | The latest version of the conformance test harness added an `--ignore-failures` option which we could use to make it so it can be run when doing a PR without blocking the merge. https://github.com/solid/conformance-test-harness/releases/tag/v1.0.10

But even better is that we can now target specific versions, see htt... | 1.0 | Add conformance tests to PR CI - The latest version of the conformance test harness added an `--ignore-failures` option which we could use to make it so it can be run when doing a PR without blocking the merge. https://github.com/solid/conformance-test-harness/releases/tag/v1.0.10

But even better is that we can now ... | non_priority | add conformance tests to pr ci the latest version of the conformance test harness added an ignore failures option which we could use to make it so it can be run when doing a pr without blocking the merge but even better is that we can now target specific versions see we could for example fix to a spec... | 0 |

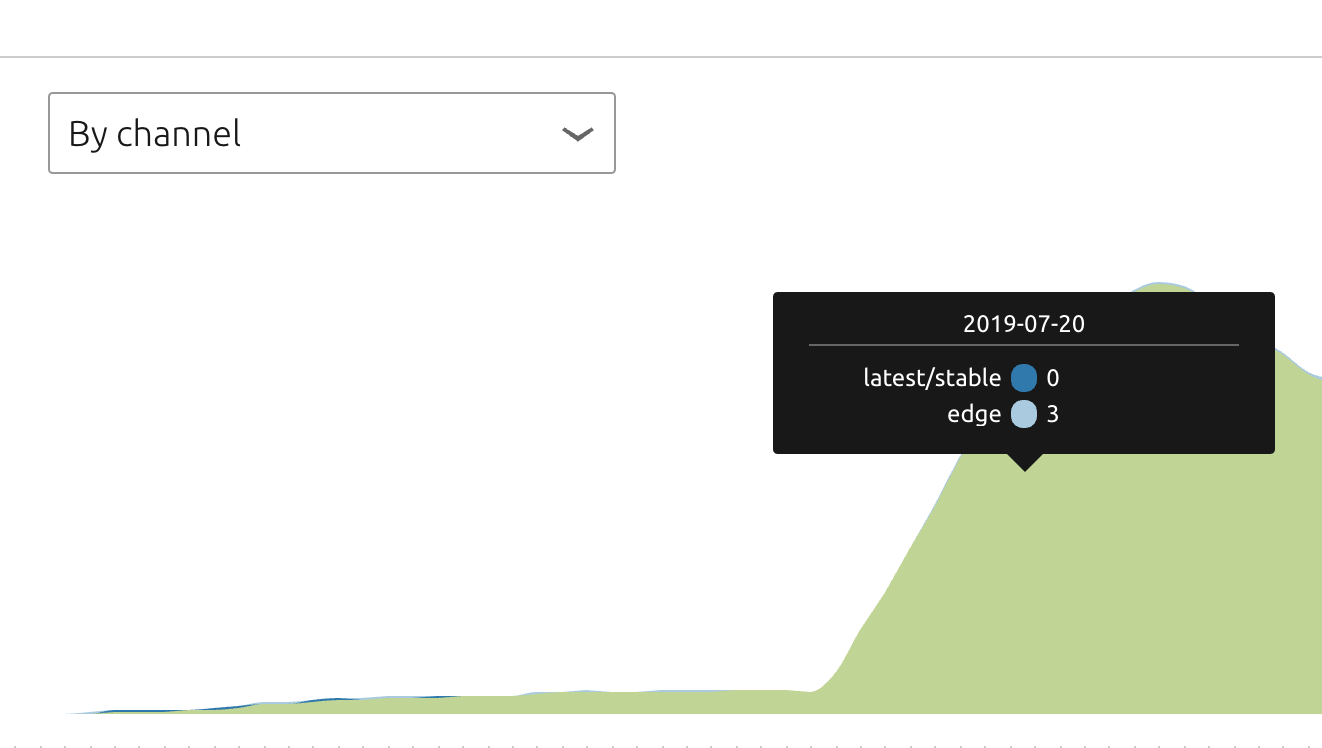

604,898 | 18,720,736,114 | IssuesEvent | 2021-11-03 11:28:29 | canonical-web-and-design/snapcraft.io | https://api.github.com/repos/canonical-web-and-design/snapcraft.io | closed | Metrics showing inaccurate values | Priority: High 🚀 Dev ready |

There are some instances where the tooltip is not showing accurate values on metrics and probably it has to do with some data that has changed or we haven't updated.

[Raised in the forum in here](https://... | 1.0 | Metrics showing inaccurate values -

There are some instances where the tooltip is not showing accurate values on metrics and probably it has to do with some data that has changed or we haven't updated.

[... | priority | metrics showing inaccurate values there are some instances where the tooltip is not showing accurate values on metrics and probably it has to do with some data that has changed or we haven t updated | 1 |

337,944 | 10,221,669,125 | IssuesEvent | 2019-08-16 02:50:22 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | opened | [LOCALIZATION] | old_god_partly_free_effect_desc | :beetle: bug - localization :scroll: :grey_exclamation: priority low | **Mod Version**

458123df

**Please explain your issue in as much detail as possible:**

No loc

**Upload screenshots of the problem localization:**

<details>

</details> | 1.0 | [LOCALIZATION] | old_god_partly_free_effect_desc - **Mod Version**

458123df

**Please explain your issue in as much detail as possible:**

No loc

**Upload screenshots of the problem localization:**

<details>

? | Priority-Medium Type-Enhancement auto-migrated | ```

What steps will reproduce the problem?

1.-

2.

3.

What is the expected output? What do you see instead?

-

What version of the product are you using? On what operating system?

AVRdude / Custom Made board / Win7

Please provide any additional information below.

For develop remote flash by serial link application.I... | 1.0 | Does it possible to call/ jump into boot loader from main loop ( for remote access)? - ```

What steps will reproduce the problem?

1.-

2.

3.

What is the expected output? What do you see instead?

-

What version of the product are you using? On what operating system?

AVRdude / Custom Made board / Win7

Please provide an... | priority | does it possible to call jump into boot loader from main loop for remote access what steps will reproduce the problem what is the expected output what do you see instead what version of the product are you using on what operating system avrdude custom made board please provide any a... | 1 |

50,403 | 21,093,868,907 | IssuesEvent | 2022-04-04 08:28:36 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | Compute Instance naming rule is not identical with Azure ML portal | enhancement service/machine-learning | <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backen... | 1.0 | Compute Instance naming rule is not identical with Azure ML portal - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state... | non_priority | compute instance naming rule is not identical with azure ml portal please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are running into o... | 0 |

136,360 | 5,281,235,541 | IssuesEvent | 2017-02-07 16:03:17 | timberline-secondary/flex-site | https://api.github.com/repos/timberline-secondary/flex-site | opened | Date filter in Add Registration in Admin | 4. Low Priority | Add a date filter in the registration section for registering a student. Right now the drop down list lists ALL the event and you cannot tell by looking at it which event (date) the student will actually be registered for. | 1.0 | Date filter in Add Registration in Admin - Add a date filter in the registration section for registering a student. Right now the drop down list lists ALL the event and you cannot tell by looking at it which event (date) the student will actually be registered for. | priority | date filter in add registration in admin add a date filter in the registration section for registering a student right now the drop down list lists all the event and you cannot tell by looking at it which event date the student will actually be registered for | 1 |

247,051 | 18,857,235,088 | IssuesEvent | 2021-11-12 08:20:48 | Daimler/odxtools | https://api.github.com/repos/Daimler/odxtools | opened | Better API documentation | documentation good first issue help wanted nice to have | The API documentation is generated from the python docstrings and it can be generated and inspected via

```bash

cd $ODXTOOLS_SRC_DIR/doc

make html

firefox _build/html/index.html

```

It IMO already looks decent, but it is sorely lacking in completeness and does not feature any "tutorial style" introductions. thi... | 1.0 | Better API documentation - The API documentation is generated from the python docstrings and it can be generated and inspected via

```bash

cd $ODXTOOLS_SRC_DIR/doc

make html

firefox _build/html/index.html

```

It IMO already looks decent, but it is sorely lacking in completeness and does not feature any "tutoria... | non_priority | better api documentation the api documentation is generated from the python docstrings and it can be generated and inspected via bash cd odxtools src dir doc make html firefox build html index html it imo already looks decent but it is sorely lacking in completeness and does not feature any tutoria... | 0 |

789,877 | 27,808,475,267 | IssuesEvent | 2023-03-17 23:00:31 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] ALTER TABLE fails constraints if executed in the same connection | kind/bug area/ysql priority/medium status/awaiting-triage | Jira Link: [DB-5878](https://yugabyte.atlassian.net/browse/DB-5878)

### Description

In YB master, executing the following sequence of statements will fail

```SQL

yugabyte=# CREATE TABLE nopk ( id int CHECK (id > 0), v1 int CHECK (v1 > 0));

CREATE TABLE

yugabyte=# ALTER TABLE nopk ADD PRIMARY KEY (id);

ERROR:... | 1.0 | [YSQL] ALTER TABLE fails constraints if executed in the same connection - Jira Link: [DB-5878](https://yugabyte.atlassian.net/browse/DB-5878)

### Description

In YB master, executing the following sequence of statements will fail

```SQL

yugabyte=# CREATE TABLE nopk ( id int CHECK (id > 0), v1 int CHECK (v1 > 0))... | priority | alter table fails constraints if executed in the same connection jira link description in yb master executing the following sequence of statements will fail sql yugabyte create table nopk id int check id int check create table yugabyte alter table nopk add primary key... | 1 |

53,501 | 13,261,773,480 | IssuesEvent | 2020-08-20 20:30:29 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | cmake - `make deploy-docs` goes to the wrong destination (Trac #1548) | Migrated from Trac cmake defect | goes to "METAPROJECT_release"

should just go to "METAPROJECT"

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1548">https://code.icecube.wisc.edu/projects/icecube/ticket/1548</a>, reported by negaand owned by nega</em></summary>

<p>

```json

{

"status": "closed"... | 1.0 | cmake - `make deploy-docs` goes to the wrong destination (Trac #1548) - goes to "METAPROJECT_release"

should just go to "METAPROJECT"

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1548">https://code.icecube.wisc.edu/projects/icecube/ticket/1548</a>, reported by ne... | non_priority | cmake make deploy docs goes to the wrong destination trac goes to metaproject release should just go to metaproject migrated from json status closed changetime ts description goes to metaproject release nshould just go to metaproje... | 0 |

244,340 | 7,874,036,230 | IssuesEvent | 2018-06-25 15:49:50 | cms-gem-daq-project/gem-plotting-tools | https://api.github.com/repos/cms-gem-daq-project/gem-plotting-tools | opened | Feature Request: 2D Map of Detector Scurve Width | Priority: Medium Status: Help Wanted Type: Enhancement | <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

To better correlate channel loss with physical location on the detector a new distribution is needed. It should be a `... | 1.0 | Feature Request: 2D Map of Detector Scurve Width - <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

To better correlate channel loss with physical location on the detec... | priority | feature request map of detector scurve width brief summary of issue to better correlate channel loss with physical location on the detector a new distribution is needed it should be a which has on the y axis ieta and on the x axis strip here strip should go as to the z axis should be ... | 1 |

16,495 | 22,333,858,207 | IssuesEvent | 2022-06-14 16:39:06 | ikemen-engine/Ikemen-GO | https://api.github.com/repos/ikemen-engine/Ikemen-GO | closed | Couple of issues with ReversalDef | bug compatibility | Found a few problems with this sctrl compared to how it works in Mugen.

The first one is easy to explain: if you use a ReversalDef without P2stateno, such as for autoguard moves or parrying, a player caught by it will become your target indefinitely. Or until you hit him again anyway. In Mugen the target is dropped ... | True | Couple of issues with ReversalDef - Found a few problems with this sctrl compared to how it works in Mugen.

The first one is easy to explain: if you use a ReversalDef without P2stateno, such as for autoguard moves or parrying, a player caught by it will become your target indefinitely. Or until you hit him again any... | non_priority | couple of issues with reversaldef found a few problems with this sctrl compared to how it works in mugen the first one is easy to explain if you use a reversaldef without such as for autoguard moves or parrying a player caught by it will become your target indefinitely or until you hit him again anyway in ... | 0 |

433,775 | 30,349,528,028 | IssuesEvent | 2023-07-11 17:50:13 | EducationalTestingService/rsmtool | https://api.github.com/repos/EducationalTestingService/rsmtool | closed | Add best practices for sharing reports to documentation. | documentation | It would be useful to add some best practices for sharing reports with other people to the documentation. When to send just the HTML, when to zip up everything, when to include the CSVs etc. | 1.0 | Add best practices for sharing reports to documentation. - It would be useful to add some best practices for sharing reports with other people to the documentation. When to send just the HTML, when to zip up everything, when to include the CSVs etc. | non_priority | add best practices for sharing reports to documentation it would be useful to add some best practices for sharing reports with other people to the documentation when to send just the html when to zip up everything when to include the csvs etc | 0 |

445,671 | 12,834,775,788 | IssuesEvent | 2020-07-07 11:41:54 | radical-cybertools/radical.pilot | https://api.github.com/repos/radical-cybertools/radical.pilot | closed | Get RP running on NCAR Cheyenne | layer:rp priority:critical topic:deployment | Our collaborators want to run their applications on Cheyenne. See ticket https://github.com/radical-collaboration/hpc-workflows/issues/28.

A config file has been added for cheyenne under resource_ncar.json in the feature/cheyenne branch. Pending instructions to create a static VE on cheyenne (if required), need to c... | 1.0 | Get RP running on NCAR Cheyenne - Our collaborators want to run their applications on Cheyenne. See ticket https://github.com/radical-collaboration/hpc-workflows/issues/28.

A config file has been added for cheyenne under resource_ncar.json in the feature/cheyenne branch. Pending instructions to create a static VE on... | priority | get rp running on ncar cheyenne our collaborators want to run their applications on cheyenne see ticket a config file has been added for cheyenne under resource ncar json in the feature cheyenne branch pending instructions to create a static ve on cheyenne if required need to confirm with andre | 1 |

60,147 | 8,406,091,473 | IssuesEvent | 2018-10-11 16:56:11 | GMOD/jbrowse | https://api.github.com/repos/GMOD/jbrowse | closed | need a "embedding" section of documentation | documentation has pullreq in progress | Probably title it "Advanced Configuration -> Embedding JBrowse in another page".

Should tell users how to embed JBrowse without an iframe. | 1.0 | need a "embedding" section of documentation - Probably title it "Advanced Configuration -> Embedding JBrowse in another page".

Should tell users how to embed JBrowse without an iframe. | non_priority | need a embedding section of documentation probably title it advanced configuration embedding jbrowse in another page should tell users how to embed jbrowse without an iframe | 0 |

40,531 | 5,301,545,964 | IssuesEvent | 2017-02-10 10:03:08 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | FAIL: TestTriggers_configChange | area/tests component/deployments kind/test-flake priority/P1 | ```

--- FAIL: TestTriggers_configChange (4.99s)

deploy_trigger_test.go:666: Operation cannot be fulfilled on deploymentconfigs "config": the object has been modified; please apply your changes to the latest version and try again

```

https://ci.openshift.redhat.com/jenkins/job/test_pull_requests_origin_integration/... | 2.0 | FAIL: TestTriggers_configChange - ```

--- FAIL: TestTriggers_configChange (4.99s)

deploy_trigger_test.go:666: Operation cannot be fulfilled on deploymentconfigs "config": the object has been modified; please apply your changes to the latest version and try again

```

https://ci.openshift.redhat.com/jenkins/job/test... | non_priority | fail testtriggers configchange fail testtriggers configchange deploy trigger test go operation cannot be fulfilled on deploymentconfigs config the object has been modified please apply your changes to the latest version and try again from the logs it seems that the test config was... | 0 |

291,337 | 8,923,563,989 | IssuesEvent | 2019-01-21 15:58:40 | mozilla/addons-frontend | https://api.github.com/repos/mozilla/addons-frontend | closed | Score filtering dropdown menu disappears after selecting zero-count rating | component: add-on ratings priority: p2 state: pull request ready | Describe the problem and steps to reproduce it:

1. Load addons-dev.allizom.org

2. go to reviews page (addon or theme without too many reviews..)

3. In score filtering dropdown menu, select rating with 0 review

What happened?

The dropdown disappears.

What did you expect to happen?

The dropdown still be there ... | 1.0 | Score filtering dropdown menu disappears after selecting zero-count rating - Describe the problem and steps to reproduce it:

1. Load addons-dev.allizom.org

2. go to reviews page (addon or theme without too many reviews..)

3. In score filtering dropdown menu, select rating with 0 review

What happened?

The dropdo... | priority | score filtering dropdown menu disappears after selecting zero count rating describe the problem and steps to reproduce it load addons dev allizom org go to reviews page addon or theme without too many reviews in score filtering dropdown menu select rating with review what happened the dropdo... | 1 |

415,417 | 12,129,139,829 | IssuesEvent | 2020-04-22 21:53:28 | kubeflow/kubeflow | https://api.github.com/repos/kubeflow/kubeflow | closed | Notebook auto-scaling | kind/question platform/aws priority/p2 | /kind question

**Question:**

I've installed kubeflow (1.0) on AWS on a EKS cluster with autoscaling. Min number of instances 0, max number of instances 4, and desired 2.

I was under the impression that as I request for more notebooks (and resources for it) Kubeflow would be smart enough to auto-scale by itself u... | 1.0 | Notebook auto-scaling - /kind question

**Question:**

I've installed kubeflow (1.0) on AWS on an EKS cluster with autoscaling. Min number of instances 0, max number of instances 4, and desired 2.

I was under the impression that as I request for more notebooks (and resources for it) Kubeflow would be smart enough t... | priority | notebook auto scaling kind question question i ve installed kubeflow on aws on a eks cluster with autoscaling min number of instances max number of instances and desired i was under the impression that as i request for more notebooks and resources for it kubeflow would be smart enough t... | 1 |

12,140 | 2,685,250,602 | IssuesEvent | 2015-03-29 21:12:11 | IssueMigrationTest/Test5 | https://api.github.com/repos/IssueMigrationTest/Test5 | closed | Support "with" statement | auto-migrated Priority-Medium Type-Defect | **Issue by [Jérémie Roquet](/arkanosis)**

_6 Jan 2010 at 10:25 GMT_

_Originally opened on Google Code_

----

```

Hello,

It would be nice to have support for the "with" statement introduced in

http://www.python.org/dev/peps/pep-0343/

It's available in CPython from version 2.5 (in the __future__

pseudo-module) and fr... | 1.0 | Support "with" statement - **Issue by [Jérémie Roquet](/arkanosis)**

_6 Jan 2010 at 10:25 GMT_

_Originally opened on Google Code_

----

```

Hello,

It would be nice to have support for the "with" statement introduced in

http://www.python.org/dev/peps/pep-0343/

It's available in CPython from version 2.5 (in the __fut... | non_priority | support with statement issue by arkanosis jan at gmt originally opened on google code hello it would be nice to have support for the with statement introduced in it s available in cpython from version in the future pseudo module and from version as a normal ke... | 0 |

107,057 | 23,339,746,857 | IssuesEvent | 2022-08-09 13:08:48 | neovim/neovim | https://api.github.com/repos/neovim/neovim | closed | `set ambiwidth=double` will cause display error | bug tui display unicode 💩 | ### Neovim version (nvim -v)

NVIM v0.7.0 Build type: Release

### Vim (not Nvim) behaves the same?

no, vim8.2

### Operating system/version

manjaro

### Terminal name/version

terminator

### $TERM environment variable

xterm-256color

### Installation

AUR

### How to reproduce the issue

1. nvim --clean

2. paste ... | 1.0 | `set ambiwidth=double` will cause display error - ### Neovim version (nvim -v)

NVIM v0.7.0 Build type: Release

### Vim (not Nvim) behaves the same?

no, vim8.2

### Operating system/version

manjaro

### Terminal name/version

terminator

### $TERM environment variable

xterm-256color

### Installation

AUR

### How ... | non_priority | set ambiwidth double will cause display error neovim version nvim v nvim build type release vim not nvim behaves the same no operating system version manjaro terminal name version terminator term environment variable xterm installation aur how to reproduc... | 0 |

1,820 | 2,574,686,931 | IssuesEvent | 2015-02-11 18:22:47 | ORNL-CEES/DataTransferKit | https://api.github.com/repos/ORNL-CEES/DataTransferKit | opened | Fix Failing/Passing SplineInterpolation test | bug Priority Testing | The SplineInterpolation test fails on my machine but passes on most of the CDash builds. There are CDash builds in which this test passes some nights and not others. The test started failing after Tpetra switched to view semantics as part of the Kokkos refactor. This issue will track the process of finding the bugs. It... | 1.0 | Fix Failing/Passing SplineInterpolation test - The SplineInterpolation test fails on my machine but passes on most of the CDash builds. There are CDash builds in which this test passes some nights and not others. The test started failing after Tpetra switched to view semantics as part of the Kokkos refactor. This issue... | non_priority | fix failing passing splineinterpolation test the splineinterpolation test fails on my machine but passes on most of the cdash builds there are cdash builds in which this test passes some nights and not others the test started failing after tpetra switched to view semantics as part of the kokkos refactor this issue... | 0 |

570,116 | 17,019,208,860 | IssuesEvent | 2021-07-02 16:09:19 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Add Site should probably read Add site in new-tab page's Favorites | OS/Desktop feature/new-tab good first issue needs-text-change polish priority/P4 release-notes/exclude | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Add Site should probably read Add site in new-tab page's Favorites - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE... | priority | add site should probably read add site in new tab page s favorites have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the... | 1 |

597,942 | 18,216,690,592 | IssuesEvent | 2021-09-30 05:50:58 | literakl/mezinamiridici | https://api.github.com/repos/literakl/mezinamiridici | opened | Anchor flag for blogs | type: enhancement priority: P3 | Blog subtype: short text and a link to external article. Such an article must be visually distinguished in the stream. | 1.0 | Anchor flag for blogs - Blog subtype: short text and a link to external article. Such an article must be visually distinguished in the stream. | priority | anchor flag for blogs blog subtype short text and a link to external article such an article must be visually distinguished in the stream | 1 |

18,944 | 13,173,644,220 | IssuesEvent | 2020-08-11 20:43:36 | dotnet/dotnet-docker | https://api.github.com/repos/dotnet/dotnet-docker | closed | Incorrect PR build leg paths for 5.0 Alpine | area:infrastructure bug triaged | The changes from https://github.com/dotnet/dotnet-docker/pull/2064 end up causing the 5.0 Alpine PR build leg in nightly to build a bunch of images that should not be built. This is due to the build matrix generation. This leg ends up having the following build paths defined for it:

```

--path src/runtime-deps/5.... | 1.0 | Incorrect PR build leg paths for 5.0 Alpine - The changes from https://github.com/dotnet/dotnet-docker/pull/2064 end up causing the 5.0 Alpine PR build leg in nightly to build a bunch of images that should not be built. This is due to the build matrix generation. This leg ends up having the following build paths defi... | non_priority | incorrect pr build leg paths for alpine the changes from end up causing the alpine pr build leg in nightly to build a bunch of images that should not be built this is due to the build matrix generation this leg ends up having the following build paths defined for it path src runtime deps ... | 0 |

4,830 | 3,896,897,613 | IssuesEvent | 2016-04-16 03:02:23 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 16284628: Autolayout Constraint Label not updated in IB Tree | classification:ui/usability reproducible:sometimes status:open | #### Description

Summary:

Two similar constraints, one updates in the sidebar the other doesn't.

Steps to Reproduce:

Add a "box" to a view controller in IB with storyboards enabled. Add a leading edge constraint with a value of 60 points. Add a trailing space constraint with the same values.

Then select the constr... | True | 16284628: Autolayout Constraint Label not updated in IB Tree - #### Description

Summary:

Two similar constraints, one updates in the sidebar the other doesn't.

Steps to Reproduce:

Add a "box" to a view controller in IB with storyboards enabled. Add a leading edge constraint with a value of 60 points. Add a trailing... | non_priority | autolayout constraint label not updated in ib tree description summary two similar constraints one updates in the sidebar the other doesn t steps to reproduce add a box to a view controller in ib with storyboards enabled add a leading edge constraint with a value of points add a trailing space c... | 0 |

456,780 | 13,150,997,156 | IssuesEvent | 2020-08-09 14:35:13 | chrisjsewell/docutils | https://api.github.com/repos/chrisjsewell/docutils | closed | The inline markup recognition rule example "2 * x *a **b *.txt" does not validate. [SF:bugs:308] | bugs closed-fixed priority-3 |

author: edauvergne

created: 2017-02-08 21:19:56.282000

assigned: goodger

SF_url: https://sourceforge.net/p/docutils/bugs/308

From the [inline markup recognition rules](http://docutils.sourceforge.net/docs/ref/rst/restructuredtext.html#inline-markup-recognition-rules), the following example is given for not requ... | 1.0 | The inline markup recognition rule example "2 * x *a **b *.txt" does not validate. [SF:bugs:308] -

author: edauvergne

created: 2017-02-08 21:19:56.282000

assigned: goodger

SF_url: https://sourceforge.net/p/docutils/bugs/308

From the [inline markup recognition rules](http://docutils.sourceforge.net/docs/ref/rst/... | priority | the inline markup recognition rule example x a b txt does not validate author edauvergne created assigned goodger sf url from the the following example is given for not requiring escaping due to the breaking of rule x a b txt however i don t see how ru... | 1 |

340,522 | 10,273,144,534 | IssuesEvent | 2019-08-23 18:26:24 | fgpv-vpgf/fgpv-vpgf | https://api.github.com/repos/fgpv-vpgf/fgpv-vpgf | closed | Grid number filters dont allow negative symbol | bug-type: broken use case priority: medium problem: bug type: corrective | A user cannot type the `-` symbol in a min/max filter box in the data grid. However you can compose a negative number in `notepad.exe` and then paste it into the filter box ok.

[This service](http://section917.cloudapp.net/arcgis/rest/services/TestData/Oilsands/MapServer/0) has negative numbers in the `longitude` f... | 1.0 | Grid number filters dont allow negative symbol - A user cannot type the `-` symbol in a min/max filter box in the data grid. However you can compose a negative number in `notepad.exe` and then paste it into the filter box ok.

[This service](http://section917.cloudapp.net/arcgis/rest/services/TestData/Oilsands/MapSe... | priority | grid number filters dont allow negative symbol a user cannot type the symbol in a min max filter box in the data grid however you can compose a negative number in notepad exe and then paste it into the filter box ok has negative numbers in the longitude field | 1 |
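One way to picture the behaviour this report asks for is an input check that accepts a leading minus sign while the user is still typing. The sketch below is only an illustration in Python: the pattern and the function name `accepts_keystrokes` are assumptions made here and are not taken from the fgpv-vpgf code base.

```python
import re

# Hypothetical pattern: optional leading minus, digits, optional decimal part.
# Partial input such as "-" or "-1." is accepted so typing is never blocked.
PARTIAL_NUMBER = re.compile(r"-?\d*\.?\d*")


def accepts_keystrokes(text: str) -> bool:
    """Return True if `text` could still become a valid (possibly negative) number."""
    return PARTIAL_NUMBER.fullmatch(text) is not None
```

A check of this shape lets a lone `-` through as an intermediate state, which is the case the report says typing currently rejects while pasting does not.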

280,626 | 21,313,875,353 | IssuesEvent | 2022-04-16 01:14:13 | uf-mil/mil | https://api.github.com/repos/uf-mil/mil | opened | Document documentation generation process | documentation enhancement software | The documentation process has grown increasingly complex with the addition of `autodoc`, attribute tables, and building in containerized environments.

As a result, this should all be documented. | 1.0 | Document documentation generation process - The documentation process has grown increasingly complex with the addition of `autodoc`, attribute tables, and building in containerized environments.

As a result, this should all be documented. | non_priority | document documentation generation process the documentation process has grown increasingly complex with the addition of autodoc attribute tables and building in containerized environments as a result this should all be documented | 0 |

54,289 | 3,062,998,462 | IssuesEvent | 2015-08-17 02:16:01 | Miniand/brdg.me-issues | https://api.github.com/repos/Miniand/brdg.me-issues | closed | Simplify command backend | priority:medium project:server type:enhancement | It's currently too complex and poorly abstracted, which can complicate commands sitting on top of state machines (limiting the ability to support things like mid-game votes.) Things should also be cleaned up to support more strongly defined parsers which will hopefully pave the way for richer UIs for mobile / web.

... | 1.0 | Simplify command backend - It's currently too complex and poorly abstracted, which can complicate commands sitting on top of state machines (limiting the ability to support things like mid-game votes.) Things should also be cleaned up to support more strongly defined parsers which will hopefully pave the way for riche... | priority | simplify command backend it s currently too complex and poorly abstracted which can complicate commands sitting on top of state machines limiting the ability to support things like mid game votes things should also be cleaned up to support more strongly defined parsers which will hopefully pave the way for riche... | 1 |

531,114 | 15,441,182,200 | IssuesEvent | 2021-03-08 05:21:34 | octobercms/october | https://api.github.com/repos/octobercms/october | closed | Adding multiple images inside rich text editor with a single drag | Priority: Low Status: In Progress Type: Enhancement | ##### Expected behavior

So the richtext editor froala comes with a nice feature, adding images from the gallery, when I multiselect images and click insert ... all the images I selected should be added to my text.

##### Actual behavior

Only one image is added

##### Reproduce steps

add a rich text editor to yo... | 1.0 | Adding multiple images inside rich text editor with a single drag - ##### Expected behavior

So the richtext editor froala comes with a nice feature, adding images from the gallery, when I multiselect images and click insert ... all the images I selected should be added to my text.

##### Actual behavior

Only one i... | priority | adding multiple images inside rich text editor with a single drag expected behavior so the richtext editor froala comes with a nice feature adding images from the gallery when i multiselect images and click insert all the images i selected should be added to my text actual behavior only one i... | 1 |

204,679 | 15,947,324,380 | IssuesEvent | 2021-04-15 03:13:13 | akiradeveloper/lol | https://api.github.com/repos/akiradeveloper/lol | opened | Use term "bootstrap" | documentation | To add a new node, there should be an existing cluster to accept the joining. But how about the first node?

The first node is taken as a special node that forms a single node cluster with only itself.

Other software such as Elasticsearch and Consul seems to do the same thing, and both call it bootstrapping.

- http... | 1.0 | Use term "bootstrap" - To add a new node, there should be an existing cluster to accept the joining. But how about the first node?

The first node is taken as a special node that forms a single node cluster with only itself.

Other software such as Elasticsearch and Consul seems to do the same thing, and both call it b... | non_priority | use term bootstrap to add a new node there should be an existing cluster to accept the joining but how about the first node the first node is taken as a special node that forms a single node cluster with only itself other softwares like elasticsearch and consul seem to do the same thing and both call it b... | 0 |

686,075 | 23,476,050,841 | IssuesEvent | 2022-08-17 06:07:45 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Readonly Service Object doesn't Validate at Compile time | Type/Bug Priority/Blocker Team/CompilerFE | **Description:**

Consider the following record:

```ballerina

public type GraphqlServiceConfig record {|

readonly readonly & Interceptor[] interceptors = [];

|};

public type Interceptor distinct service object {

isolated remote function execute(Context context, Field 'field) returns anydata|error;

};

... | 1.0 | Readonly Service Object doesn't Validate at Compile time - **Description:**

Consider the following record:

```ballerina

public type GraphqlServiceConfig record {|

readonly readonly & Interceptor[] interceptors = [];

|};

public type Interceptor distinct service object {

isolated remote function execute(... | priority | readonly service object doesn t validate at compile time description consider the following record ballerina public type graphqlserviceconfig record readonly readonly interceptor interceptors public type interceptor distinct service object isolated remote function execute co... | 1 |

636,370 | 20,598,476,032 | IssuesEvent | 2022-03-05 22:13:23 | mreishman/Log-Hog | https://api.github.com/repos/mreishman/Log-Hog | closed | Combine whats new and change log pages | enhancement Priority - 3 - Medium | - [x] Move whats new images into change log

- [x] change images into slideshow with click to go full screen | 1.0 | Combine whats new and change log pages - - [x] Move whats new images into change log

- [x] change images into slideshow with click to go full screen | priority | combine whats new and change log pages move whats new images into change log change images into slideshow with click to go full screen | 1 |

271,712 | 8,488,808,431 | IssuesEvent | 2018-10-26 17:50:36 | cyberperspectives/sagacity | https://api.github.com/repos/cyberperspectives/sagacity | closed | Ops Page slow category load - db->get_Finding_Count_By_Status | High Priority bug | The Ops page overall loads quickly, until it gets to a category with a lot of hosts (like the 20-30 Win 10 SCC targets). The attached ste_index_php cachegrind files show that get_Finding_Count_By_Status is taking an inordinate amount of time.

[cachegrind.out.zip](https://github.com/cyberperspectives/sagacity/files/24... | 1.0 | Ops Page slow category load - db->get_Finding_Count_By_Status - The Ops page overall loads quickly, until it gets to a category with a lot of hosts (like the 20-30 Win 10 SCC targets). The attached ste_index_php cachegrind files show that get_Finding_Count_By_Status is taking an inordinate amount of time.

[cachegrind... | priority | ops page slow category load db get finding count by status the ops page overall loads quickly until it gets to a category with a lot of hosts like the win scc targets the attached ste index php cachegrind files show that get finding count by status is taking an inordinate amount of time | 1 |

178,073 | 6,598,694,744 | IssuesEvent | 2017-09-16 09:25:15 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | opened | Need downgrade tests for 1.8 release | kind/bug priority/critical-urgent sig/release | From what I learned on slack we're testing upgrades only - we check if we upgrade 1.7 cluster to 1.8 cluster things keep working. On the other hand we're NOT testing if downgrading 1.8 cluster to 1.7 works at all. Downgrades are extremely important for everyone running kubernetes in production - if it turns out that 1.... | 1.0 | Need downgrade tests for 1.8 release - From what I learned on slack we're testing upgrades only - we check if we upgrade 1.7 cluster to 1.8 cluster things keep working. On the other hand we're NOT testing if downgrading 1.8 cluster to 1.7 works at all. Downgrades are extremely important for everyone running kubernetes ... | priority | need downgrade tests for release from what i learned on slack we re testing upgrades only we check if we upgrade cluster to cluster things keep working on the other hand we re not testing if downgrading cluster to works at all downgrades are extremely important for everyone running kubernetes ... | 1 |

522,397 | 15,159,061,166 | IssuesEvent | 2021-02-12 03:02:57 | apcountryman/picolibrary-microchip-megaavr | https://api.github.com/repos/apcountryman/picolibrary-microchip-megaavr | closed | Add SPI peripheral based SPI basic controller | priority-normal status-awaiting_approval type-feature | Add SPI peripheral based SPI basic controller (`::picolibrary::Microchip::megaAVR::SPI::Basic_Controller<::picolibrary::Microchip::megaAVR::Peripheral::SPI>`) and associated echo interactive test program. | 1.0 | Add SPI peripheral based SPI basic controller - Add SPI peripheral based SPI basic controller (`::picolibrary::Microchip::megaAVR::SPI::Basic_Controller<::picolibrary::Microchip::megaAVR::Peripheral::SPI>`) and associated echo interactive test program. | priority | add spi peripheral based spi basic controller add spi peripheral based spi basic controller picolibrary microchip megaavr spi basic controller and associated echo interactive test program | 1 |

66,183 | 14,767,360,161 | IssuesEvent | 2021-01-10 06:15:53 | shiriivtsan/bebo | https://api.github.com/repos/shiriivtsan/bebo | opened | WS-2019-0103 (Medium) detected in handlebars-1.0.12.tgz | security vulnerability | ## WS-2019-0103 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-1.0.12.tgz</b></p></summary>

<p>Extension of the Mustache logicless template language</p>

<p>Library home p... | True | WS-2019-0103 (Medium) detected in handlebars-1.0.12.tgz - ## WS-2019-0103 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-1.0.12.tgz</b></p></summary>

<p>Extension of the ... | non_priority | ws medium detected in handlebars tgz ws medium severity vulnerability vulnerable library handlebars tgz extension of the mustache logicless template language library home page a href path to dependency file bebo decompress zip package package json path to vulner... | 0 |

71,837 | 23,822,387,482 | IssuesEvent | 2022-09-05 12:24:55 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | closed | Mention pills from partial unsent message disappear when coming back to Room | T-Defect A-Composer A-Room | ### Steps to reproduce

1. Go to any room

2. Use a mention pill

3. Leave the screen OR kill the app

4. Come back to the same room

### Outcome

#### What did you expect?

Mention pill from stored message is restored

#### What happened instead?

Mention pill disappears and get replaced by a whitespace

### Y... | 1.0 | Mention pills from partial unsent message disappear when coming back to Room - ### Steps to reproduce

1. Go to any room

2. Use a mention pill

3. Leave the screen OR kill the app

4. Come back to the same room

### Outcome

#### What did you expect?

Mention pill from stored message is restored

#### What happe... | non_priority | mention pills from partial unsent message disappear when coming back to room steps to reproduce go to any room use a mention pill leave the screen or kill the app come back to the same room outcome what did you expect mention pill from stored message is restored what happe... | 0 |

263,868 | 28,070,715,485 | IssuesEvent | 2023-03-29 18:51:00 | turkdevops/cirrus-ci-web | https://api.github.com/repos/turkdevops/cirrus-ci-web | opened | CVE-2022-25927 (High) detected in ua-parser-js-0.7.31.tgz | Mend: dependency security vulnerability | ## CVE-2022-25927 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ua-parser-js-0.7.31.tgz</b></p></summary>

<p>Detect Browser, Engine, OS, CPU, and Device type/model from User-Agent da... | True | CVE-2022-25927 (High) detected in ua-parser-js-0.7.31.tgz - ## CVE-2022-25927 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ua-parser-js-0.7.31.tgz</b></p></summary>

<p>Detect Browse... | non_priority | cve high detected in ua parser js tgz cve high severity vulnerability vulnerable library ua parser js tgz detect browser engine os cpu and device type model from user agent data supports browser node js environment library home page a href dependency hierarchy ... | 0 |

274,708 | 23,859,360,102 | IssuesEvent | 2022-09-07 05:04:51 | godotengine/godot | https://api.github.com/repos/godotengine/godot | opened | Very random crashes when executing `SubViewport.set_size_2d_override_stretch` | bug topic:rendering needs testing crash | ### Godot version

4.0.alpha.custom_build. 4b164b8e4

### System information

Ubuntu 22.04 - Nvidia GTX 970, Gnome shell 42 X11

### Issue description

When executing random SubViewport function, then after a while(usually after 30min of project running), I have this crash

```

drivers/vulkan/rendering_device_vulkan... | 1.0 | Very random crashes when executing `SubViewport.set_size_2d_override_stretch` - ### Godot version

4.0.alpha.custom_build. 4b164b8e4

### System information

Ubuntu 22.04 - Nvidia GTX 970, Gnome shell 42 X11

### Issue description

When executing random SubViewport function, then after a while(usually after 30min of pr... | non_priority | very random crashes when executing subviewport set size override stretch godot version alpha custom build system information ubuntu nvidia gtx gnome shell issue description when executing random subviewport function then after a while usually after of project running i ha... | 0 |

177,069 | 6,573,906,451 | IssuesEvent | 2017-09-11 10:37:00 | EyeSeeTea/pictureapp | https://api.github.com/repos/EyeSeeTea/pictureapp | closed | Introduce a variable timeout for WS push | complexity - low (1hr) eReferrals priority - high type - feature | WS can spend some variable time to answer a push call. The suggested function that would describe the timeout to be configured in the call is: number_vouchers x 2000ms | 1.0 | Introduce a variable timeout for WS push - WS can spend some variable time to answer a push call. The suggested function that would describe the timeout to be configured in the call is: number_vouchers x 2000ms | priority | introduce a variable timeout for ws push ws can spend some variable time to answer a push call the suggested function that would describe the timeout to be configured in the call is number vouchers x | 1 |
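The timeout rule suggested in this row (number of vouchers times 2000 ms) can be sketched as a small helper. This is a minimal illustration in Python, not the pictureapp implementation: the names `push_timeout_ms` and `MS_PER_VOUCHER` are hypothetical, and rejecting negative counts is an assumption added here.

```python
# Hypothetical helper: names are illustrative, not taken from the pictureapp code base.
MS_PER_VOUCHER = 2000  # per-voucher allowance quoted in the issue


def push_timeout_ms(number_vouchers: int) -> int:
    """Return the push timeout in milliseconds for a batch of vouchers."""
    if number_vouchers < 0:
        # Assumption: a negative count is treated as a caller error.
        raise ValueError("number_vouchers must be non-negative")
    return number_vouchers * MS_PER_VOUCHER
```

Note that the formula as quoted gives a timeout of zero for an empty batch; whether a minimum floor is wanted is not stated in the issue.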

97,655 | 20,370,946,942 | IssuesEvent | 2022-02-21 11:09:07 | GeoNode/geonode | https://api.github.com/repos/GeoNode/geonode | closed | Align importlayers command to the new upload interface | performance code quality 3.3.x master | `importlayers` must be upgraded for both 3.3.x and master in light of the changes to the upload interface, including the support for remote files (https://github.com/GeoNode/geonode/issues/8667).

- **3.3.x:** currently it still uses the old REST API

- **master**: the old upload method was renamed to `upload_legacy`... | 1.0 | Align importlayers command to the new upload interface - `importlayers` must be upgraded for both 3.3.x and master in light of the changes to the upload interface, including the support for remote files (https://github.com/GeoNode/geonode/issues/8667).

- **3.3.x:** currently it still uses the old REST API

- **maste... | non_priority | align importlayers command to the new upload interface importlayers must be upgrade both for x and master in light of the changes to te upload interface including the support for remote files x currently it still uses the old rest api master the old upload method was renamed to uplo... | 0 |

100,577 | 11,199,491,133 | IssuesEvent | 2020-01-03 18:53:31 | sparkdesignsystem/spark-design-system | https://api.github.com/repos/sparkdesignsystem/spark-design-system | closed | Add Dictionary Docs - NDS | Component: Dictionary status: PO approved type: documentation | **User Story:**

As Spark, we created new Dictionary Docs in word that we want to copy into Storybook so that our New Doc Site displays the updated documentation.

**Notes:**

- Docs can be found at shorty/sparkcontent

**AC:**

- I will see updated documentation displaying on the New Doc Site | 1.0 | Add Dictionary Docs - NDS - **User Story:**

As Spark, we created new Dictionary Docs in word that we want to copy into Storybook so that our New Doc Site displays the updated documentation.

**Notes:**

- Docs can be found at shorty/sparkcontent

**AC:**

- I will see updated documentation displaying on the New ... | non_priority | add dictionary docs nds user story as spark we created new dictionary docs in word that we want to copy into storybook so that our new doc site displays the updated documentation notes docs can be found at shorty sparkcontent ac i will see updated documentation displaying on the new ... | 0 |

189,099 | 6,794,112,235 | IssuesEvent | 2017-11-01 10:41:14 | spring-projects/spring-boot | https://api.github.com/repos/spring-projects/spring-boot | closed | Elasticsearch starter forces use of Log4j2, breaking logging in apps that try to use Logback | priority: normal type: bug | I'm trying to run `spring-boot-starter-data-elasticsearch` in latest Milestone 2.0.0.M5.

I've used project template generated from start.spring.io.

Here is the GitHub repo url: https://github.com/staleks/spring-boot-2.0.M5-ES

Run

1. `$ ./gradlew clean build`

2. `$ ./gradlew bootRun`

stale the process of loa... | 1.0 | Elasticsearch starter forces use of Log4j2, breaking logging in apps that try to use Logback - I'm trying to run `spring-boot-starter-data-elasticsearch` in latest Milestone 2.0.0.M5.

I've used project template generated from start.spring.io.

Here is the GitHub repo url: https://github.com/staleks/spring-boot-2.0.M... | priority | elasticsearch starter forces use of breaking logging in apps that try to use logback i m trying to run spring boot starter data elasticsearch in latest milestone i ve used project template generated from start spring io here is the github repo url run gradlew clean build gr... | 1 |

613,434 | 19,090,232,105 | IssuesEvent | 2021-11-29 11:14:35 | golemfactory/ya-provider-winui | https://api.github.com/repos/golemfactory/ya-provider-winui | closed | Send all rolling log files with user feedback | priority: lowest no-issue-activity | Right now I believe that only one log with default name will be sent | 1.0 | Send all rolling log files with user feedback - Right now I believe that only one log with default name will be sent | priority | send all rolling log files with user feedback right now i believe that only one log with default name will be sent | 1 |

25,311 | 4,288,706,068 | IssuesEvent | 2016-07-17 16:51:25 | kraigs-android/kraigsandroid | https://api.github.com/repos/kraigs-android/kraigsandroid | closed | Rotating phone cuts off music preview | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Make sure auto-rotate isn't disabled

2. Select a song in Alarm Klock so the preview starts playing

3. Rotate phone

What is the expected output? What do you see instead?

Expect phone to rotate display, and music to continue to play. Instead, music

is cut off and music selecti... | 1.0 | Rotating phone cuts off music preview - ```

What steps will reproduce the problem?

1. Make sure auto-rotate isn't disabled

2. Select a song in Alarm Klock so the preview starts playing

3. Rotate phone

What is the expected output? What do you see instead?

Expect phone to rotate display, and music to continue to play. Inst... | non_priority | rotating phone cuts off music preview what steps will reproduce the problem make sure auto rotate isn t disabled select song i alarm klock so the preview starts playing rotate phone what is the expected output what do you see instead expect phone to rotate display and music to continue to play inst... | 0 |

236,429 | 7,749,198,104 | IssuesEvent | 2018-05-30 10:38:54 | Gloirin/m2gTest | https://api.github.com/repos/Gloirin/m2gTest | closed | 0003518:

Appointments entered in the calendar are not updated when an invited group is changed afterwards | Calendar bug high priority | **Reported by svenkaths on 16 Dec 2010 12:36**

**Version:** Mialena (2010-03-9)

Trägt man einen neuen Termin in einen gemeinsamen Kalender und fügt eine Gruppe als Attendee hinzu, so erscheint dieser Termin bei allen Mitgliedern der Gruppe im privaten Kalender und wird bei einem Sync mit ActiveSync mit übertragen. Än... | 1.0 | 0003518:

Im Kalender eingetragene Termine werden nicht angepasst wenn sich eine eingeladene Gruppe nachträglich ändert - **Reported by svenkaths on 16 Dec 2010 12:36**

**Version:** Mialena (2010-03-9)

Trägt man einen neuen Termin in einen gemeinsamen Kalender und fügt eine Gruppe als Attendee hinzu, so erscheint dies... | priority | im kalender eingetragene termine werden nicht angepasst wenn sich eine eingeladene gruppe nachträglich ändert reported by svenkaths on dec version mialena trägt man einen neuen termin in einen gemeinsamen kalender und fügt eine gruppe als attendee hinzu so erscheint dieser termin bei al... | 1 |

6,370 | 6,361,319,796 | IssuesEvent | 2017-07-31 12:37:23 | warg-lang/warg | https://api.github.com/repos/warg-lang/warg | opened | Generate accessible static analysis diagnostics | ci enhancement infrastructure | [neovim](https://github.com/neovim/neovim) provides a nice [diagnostics overview](https://neovim.io/doc/reports/clang/) using Clang Static Analysis. While it is not completely transparent how to do that, making a similar page would be great. | 1.0 | Generate accessible static analysis diagnostics - [neovim](https://github.com/neovim/neovim) provides a nice [diagnostics overview](https://neovim.io/doc/reports/clang/) using Clang Static Analysis. While it is not completely transparent how to do that, making a similar page would be great. | non_priority | generate accessible static analysis diagnostics provides a nice using clang static analysis while it is not completely transparent how to do that making a similar page would be great | 0 |

500,638 | 14,503,419,690 | IssuesEvent | 2020-12-11 22:40:36 | phetsims/faradays-law | https://api.github.com/repos/phetsims/faradays-law | closed | Dragging magnet to pan around while zoomed in causes jittery movement | priority:5-deferred type:bug | **Test device**

iPad 6th Gen

**Operating System**

iPadOS 14.1

**Browser**

Safari

**Problem description**

For https://github.com/phetsims/QA/issues/568. Fairly minor. Feel free to close if not an issue. Mostly seen on iPad/touch screen, but reproduced a bit on laptop.

When the sim is zoomed in, you can drag the ... | 1.0 | Dragging magnet to pan around while zoomed in causes jittery movement - **Test device**

iPad 6th Gen

**Operating System**

iPadOS 14.1

**Browser**

Safari

**Problem description**

For https://github.com/phetsims/QA/issues/568. Fairly minor. Feel free to close if not an issue. Mostly seen on iPad/touch screen, but r... | priority | dragging magnet to pan around while zoomed in causes jittery movement test device ipad gen operating system ipados browser safari problem description for fairly minor feel free to close if not an issue mostly seen on ipad touch screen but reproduced a bit on laptop when the sim is ... | 1 |

13,475 | 15,983,888,932 | IssuesEvent | 2021-04-18 11:02:20 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | Theorem title in Jats-XML | bug postprocessing schema | When I convert a theorem to JATS-XML, the title does not appear in the XML.

For a theorem environment defined like

**test.tex**

```tex

\documentclass{article}

\newtheorem{theorem}{Theorem}[section]

\begin{document}

\begin{theorem}

Let f be a function.

\end{theorem}

\end{document}

```

I get with `latex... | 1.0 | Theorem title in Jats-XML - When I convert a theorem to JATS-XML, the title does not appear in the XML.

For a theorem environment defined like

**test.tex**

```tex

\documentclass{article}

\newtheorem{theorem}{Theorem}[section]

\begin{document}

\begin{theorem}

Let f be a function.

\end{theorem}

\end{docum... | non_priority | theorem title in jats xml when i convert a theorem to jats xml the title is not appearing in the xml for a theorem environment defined like test tex tex documentclass article newtheorem theorem theorem begin document begin theorem let f be a function end theorem end document ... | 0 |

551,654 | 16,177,762,784 | IssuesEvent | 2021-05-03 09:44:19 | sopra-fs21-group-4/server | https://api.github.com/repos/sopra-fs21-group-4/server | closed | Joining a lobby via the Lobby URL | high priority removed task | - [x] Joining Request via Lobby URL makes User Entity join Lobby

- [ ] Test

#27 Story 4 | 1.0 | Joining a lobby via the Lobby URL - - [x] Joining Request via Lobby URL makes User Entity join Lobby

- [ ] Test

#27 Story 4 | priority | joining a lobby via the lobby url joining request via lobby url makes user entity join lobby test story | 1 |

18,681 | 4,295,667,561 | IssuesEvent | 2016-07-19 08:09:51 | smartive/giuseppe | https://api.github.com/repos/smartive/giuseppe | opened | Enhance documentation about tsconfig.json settings | documentation | Mentioned in #84:

We should document the minimum settings used for the `tsconfig.json` file.

In addition:

- Create yeoman generator for a full blown app (#81)

- #82 | 1.0 | Enhance documentation about tsconfig.json settings - Mentioned in #84:

We should document the minimum settings used for the `tsconfig.json` file.

In addition:

- Create yeoman generator for a full blown app (#81)

- #82 | non_priority | enhance documentation about tsconfig json settings mentioned in we should document the minimum settings used for the tsconfig json file in addition create yeoman generator for a full blown app | 0 |

162,887 | 20,255,481,067 | IssuesEvent | 2022-02-14 22:37:18 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [Security Solution][Detections] In-memory Rule Management and Monitoring tables | Team:Detections and Resp Team: SecuritySolution Feature:Rule Management v8.1.0 Feature:Rule Monitoring Team:Detection Rules | ## Summary

Previously we considered an option of implementing in-memory filtering, sorting and searching in the browser for our Rule Management and Monitoring tables: https://github.com/elastic/kibana/pull/89877

This PR was abandoned because:

- Our `rules/_find` and `rules/_find_statuses` endpoints were extrem... | True | [Security Solution][Detections] In-memory Rule Management and Monitoring tables - ## Summary

Previously we considered an option of implementing in-memory filtering, sorting and searching in the browser for our Rule Management and Monitoring tables: https://github.com/elastic/kibana/pull/89877

This PR was abandone... | non_priority | in memory rule management and monitoring tables summary previously we considered an option of implementing in memory filtering sorting and searching in the browser for our rule management and monitoring tables this pr was abandoned because our rules find and rules find statuses endpoints w... | 0 |

754,484 | 26,390,473,104 | IssuesEvent | 2023-01-12 15:20:27 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | opened | [NPC] Bleeding Hollow Tormentor | Confirmed Priority-Low 61-64 | Original Issue: https://github.com/chromiecraft/chromiecraft/issues/4708

### What client do you play on?

enUS

### Faction

Both

### Content Phase:

61-64

### Current Behaviour

Mounted Bleeding Hollow Tormentors waddle while walking

They don't cast Mend Pet when their pet gets low

Unmounted ones ... | 1.0 | [NPC] Bleeding Hollow Tormentor - Original Issue: https://github.com/chromiecraft/chromiecraft/issues/4708

### What client do you play on?

enUS

### Faction

Both

### Content Phase:

61-64

### Current Behaviour

Mounted Bleeding Hollow Tormentors waddle while walking

They don't cast Mend Pet when t... | priority | bleeding hollow tormentor original issue what client do you play on enus faction both content phase current behaviour mounted bleeding hollow tormentors waddle while walking they don t cast mend pet when their pet gets low unmounted ones still summon their riding ... | 1 |

462,384 | 13,245,982,374 | IssuesEvent | 2020-08-19 15:05:23 | airshipit/airshipctl | https://api.github.com/repos/airshipit/airshipctl | closed | Add Label phase injections in airshipctl. | enhancement priority/low | **Problem description (if applicable)**

Given the new approach of delivering document bundles that have been grouped or segregated as kustomization sets by the intended phase of airship in which they are delivered.

This feature calls for the injection of an appropriate label to mark the artifacts with. the appropr... | 1.0 | Add Label phase injections in airshipctl. - **Problem description (if applicable)**

Given the new approach of delivering document bundles that have been grouped or segregated as kustomization sets by the intended phase of airship in which they are delivered.

This feature calls for the injection of an appropriate l... | priority | add label phase injections in airshipctl problem description if applicable given the new approach of delivering document bundles that have been grouped or segregated as kustomization sets by the intended phase of airship in which they are delivered this feature calls for the injection of an appropriate l... | 1 |

523,391 | 15,180,770,662 | IssuesEvent | 2021-02-15 01:11:43 | QuantEcon/lecture-python.myst | https://api.github.com/repos/QuantEcon/lecture-python.myst | closed | [lecture_comparison]math_size_in_headings | medium-priority | This is a minor issue: math expressions in headings are too small in ```MyST```. I wonder whether there is any solution for this.

The example is: (Left: ```RST```, Right: ```MyST```.)

detected in linuxv5.2 | security vulnerability | ## CVE-2018-1130 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torv... | True | CVE-2018-1130 (Medium) detected in linuxv5.2 - ## CVE-2018-1130 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Lib... | non_priority | cve medium detected in cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files net dccp output c net dccp output c ... | 0 |

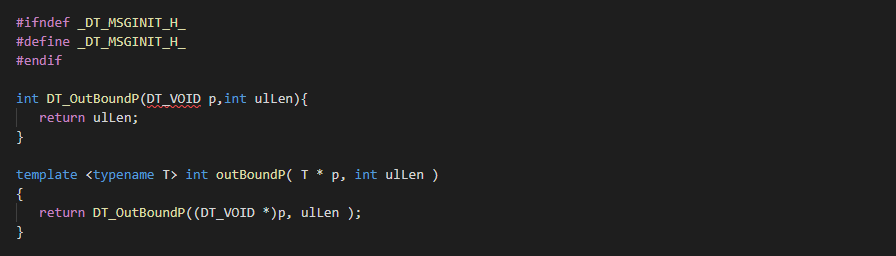

306,633 | 26,485,398,229 | IssuesEvent | 2023-01-17 17:35:16 | AscEmu/AscEmu | https://api.github.com/repos/AscEmu/AscEmu | opened | 👾 [Bug Report] build "NOT USE_PCH" not work (develop) | Issue - Needs retesting | **Description**:

build "NOT USE_PCH" not work

**Steps to reproduce the problem**:

1. build "NOT USE_PCH"

**AscEmu hash/commit**:

latest dev | 1.0 | 👾 [Bug Report] build "NOT USE_PCH" not work (develop) - **Description**:

build "NOT USE_PCH" not work

**Steps to reproduce the problem**:

1. build "NOT USE_PCH"

**AscEmu hash/commit**:

latest dev | non_priority | 👾 build not use pch not work develop description build not use pch not work steps to reproduce the problem build not use pch ascemu hash commit latest dev | 0 |

529,199 | 15,383,158,184 | IssuesEvent | 2021-03-03 02:12:23 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | ConvexPolygon assignable behaviour | Framework Low Priority Stale | As a corollary to fix #14200 I notice that the use-case for _FractionalRebinning_ [here](https://github.com/mantidproject/mantid/blob/master/Framework/DataObjects/src/FractionalRebinning.cpp#L147) has a single ConvexPolygon object, which is global to the loop, and is _cleared_ via `clear` and then inserted into via `in... | 1.0 | ConvexPolygon assignable behaviour - As a corollary to fix #14200 I notice that the use-case for _FractionalRebinning_ [here](https://github.com/mantidproject/mantid/blob/master/Framework/DataObjects/src/FractionalRebinning.cpp#L147) has a single ConvexPolygon object, which is global to the loop, and is _cleared_ via `... | priority | convexpolygon assignable behaviour as a corollary to fix i notice that the use case for fractionalrebinning has a single convexpolygon object which is global to the loop and is cleared via clear and then inserted into via insert upon each iteration it raises a few questions surely you have n ass... | 1 |

395,201 | 11,672,501,991 | IssuesEvent | 2020-03-04 06:48:57 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | closed | Message on ROI is missing when inputting pre-filled stake | Add post v2 launch Priority: Low | Should appear once the user enters an amount:

Design: https://www.figma.com/file/aAzKHh4cA6OT2t7WFv2BQ7fB/Reporting-and-Disputing?node-id=231%3A60628

| 1.0 | Message on ROI is missing when inputting pre-filled stake - Should appear once the user enters an amount: