Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 1k) | labels (string, length 4 to 1.38k) | body (string, length 1 to 262k) | index (string, 16 classes) | text_combine (string, length 96 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

364,417 | 25,490,185,439 | IssuesEvent | 2022-11-27 00:22:42 | projetosala/projetosalabadge | https://api.github.com/repos/projetosala/projetosalabadge | opened | Create project documentation | documentation priority | Add the following points to the documentation:

- [ ] Development environment

- [ ] Workflow

- [ ] How to contribute

- [ ] How to run

- [ ] Application design

- [ ] License used | 1.0 | Create project documentation - Add the following points to the documentation:

- [ ] Development environment

- [ ] Workflow

- [ ] How to contribute

- [ ] How to run

- [ ] Application design

- [ ] License used | non_priority | create project documentation add the following points to the documentation development environment workflow how to contribute how to run application design license used | 0 |

145,398 | 5,575,075,543 | IssuesEvent | 2017-03-28 00:23:51 | projectcalico/calico | https://api.github.com/repos/projectcalico/calico | closed | Remove Docker Vagrant-Ubuntu Guide | area/demo content/out-of-date priority/P1 size/S | I don't think we really need to be maintaining 2 vagrant guides. Let's stick with CoreOS guide to match the k8s guide. | 1.0 | Remove Docker Vagrant-Ubuntu Guide - I don't think we really need to be maintaining 2 vagrant guides. Let's stick with CoreOS guide to match the k8s guide. | priority | remove docker vagrant ubuntu guide i don t think we really need to be maintaining vagrant guides let s stick with coreos guide to match the guide | 1 |

313,214 | 9,558,471,500 | IssuesEvent | 2019-05-03 14:18:39 | entrepreneur-interet-general/gobelins | https://api.github.com/repos/entrepreneur-interet-general/gobelins | closed | On the Beta home screen, launching a search blocks scrolling. | Priority 4 | **Defect description**

On the Beta home screen, if the user launches a search from the bottom of the screen before scrolling down to the objects, the viewport is locked when the results are displayed.

**Expected behavior**

Interacting with the search interface should trigger scrolling and... | 1.0 | On the Beta home screen, launching a search blocks scrolling. - **Defect description**

On the Beta home screen, if the user launches a search from the bottom of the screen before scrolling down to the objects, the viewport is locked when the results are displayed.

**Expected behavior... | priority | on the beta home screen launching a search blocks scrolling defect description on the beta home screen if the user launches a search from the bottom of the screen before scrolling down to the objects the viewport is locked when the results are displayed expected behavior... | 1 |

703,990 | 24,180,409,942 | IssuesEvent | 2022-09-23 08:21:21 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | opened | APC construction doesn't require a cell and will not pass through cell charge | Priority: 2-Before Release Issue: Feature Request Difficulty: 2-Medium | ## Description

<!-- Explain your issue in detail. Issues without proper explanation are liable to be closed by maintainers. -->

So APC construction has been improved but still doesn't require a power cell like it does in SS13. This is effectively the battery component of the APC and allows APCs to be upgraded in game... | 1.0 | APC construction doesn't require a cell and will not pass through cell charge - ## Description

<!-- Explain your issue in detail. Issues without proper explanation are liable to be closed by maintainers. -->

So APC construction has been improved but still doesn't require a power cell like it does in SS13. This is eff... | priority | apc construction doesn t require a cell and will not pass through cell charge description so apc construction has been improved but still doesn t require a power cell like it does in this is effectively the battery component of the apc and allows apcs to be upgraded in game just by swapping the cell or to ... | 1 |

754,279 | 26,380,182,809 | IssuesEvent | 2023-01-12 07:57:34 | Together-Java/TJ-Bot | https://api.github.com/repos/Together-Java/TJ-Bot | closed | Auto-post advice on empty help threads | enhancement priority: normal valid help wanted | Sometimes new users create a help thread and then dont post any details in it.

In such a situation, the bot should (after some waiting time, maybe 5 minutes) post a message where it pings the author and asks them to post some details, shortly explaining how the system works.

This could be setup as scheduled task,... | 1.0 | Auto-post advice on empty help threads - Sometimes new users create a help thread and then dont post any details in it.

In such a situation, the bot should (after some waiting time, maybe 5 minutes) post a message where it pings the author and asks them to post some details, shortly explaining how the system works.

... | priority | auto post advice on empty help threads sometimes new users create a help thread and then dont post any details in it in such a situation the bot should after some waiting time maybe minutes post a message where it pings the author and asks them to post some details shortly explaining how the system works ... | 1 |

296,073 | 9,104,018,432 | IssuesEvent | 2019-02-20 17:07:50 | medic/medic | https://api.github.com/repos/medic/medic | closed | Navigate away from form dialog shown when completing a task | Priority: 1 - High Type: Bug | The dialog that you are navigating away from a un-saved form is shown on submit from a task.

**Steps to reproduce**:

Navigate to a person/place that has a task. Or navigate to the tasks page.

Complete a task

**What should happen**:

The task is completed and user is returned.

**What actually happens**:

The... | 1.0 | Navigate away from form dialog shown when completing a task - The dialog that you are navigating away from a un-saved form is shown on submit from a task.

**Steps to reproduce**:

Navigate to a person/place that has a task. Or navigate to the tasks page.

Complete a task

**What should happen**:

The task is comp... | priority | navigate away from form dialog shown when completing a task the dialog that you are navigating away from a un saved form is shown on submit from a task steps to reproduce navigate to a person place that has a task or navigate to the tasks page complete a task what should happen the task is comp... | 1 |

20,481 | 3,814,422,532 | IssuesEvent | 2016-03-28 13:14:36 | Exa-Networks/exabgp | https://api.github.com/repos/Exa-Networks/exabgp | closed | exabgp ibgp down | duplicate fixed-need-testing | ```

exabgp: 29770 network Peer ip1 ASN asnumber out loop, peer reset, message [] error[]

exabgp: 29770 message Peer ip2 ASN asnumber<< KEEPALIVE

exabgp: 29770 message Peer ip2 ASN asnumber << NOTIFICATION

********************************************************************************

EXA... | 1.0 | exabgp ibgp down - ```

exabgp: 29770 network Peer ip1 ASN asnumber out loop, peer reset, message [] error[]

exabgp: 29770 message Peer ip2 ASN asnumber<< KEEPALIVE

exabgp: 29770 message Peer ip2 ASN asnumber << NOTIFICATION

******************************************************************... | non_priority | exabgp ibgp down exabgp network peer asn asnumber out loop peer reset message error exabgp message peer asn asnumber keepalive exabgp message peer asn asnumber notification exab... | 0 |

95,572 | 27,553,770,128 | IssuesEvent | 2023-03-07 16:30:30 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | opened | AV during System.Net.Http.Functional.Tests on windows x86 | blocking-clean-ci Known Build Error | ## Build Information

Build: https://dev.azure.com/dnceng-public/cbb18261-c48f-4abb-8651-8cdcb5474649/_build/results?buildId=195488

Build error leg or test failing: System.Net.Http.Functional.Tests.WorkItemExecution

Pull request: https://github.com/dotnet/runtime/pull/83068

<!-- Error message template -->

## Error... | 1.0 | AV during System.Net.Http.Functional.Tests on windows x86 - ## Build Information

Build: https://dev.azure.com/dnceng-public/cbb18261-c48f-4abb-8651-8cdcb5474649/_build/results?buildId=195488

Build error leg or test failing: System.Net.Http.Functional.Tests.WorkItemExecution

Pull request: https://github.com/dotnet/ru... | non_priority | av during system net http functional tests on windows build information build build error leg or test failing system net http functional tests workitemexecution pull request error message fill the error message using json errormessage exit code buildretry false ... | 0 |

76,226 | 14,583,065,292 | IssuesEvent | 2020-12-18 13:25:33 | odpi/egeria | https://api.github.com/repos/odpi/egeria | opened | Security Analysis - Poor Logging Practice | code-quality security | Ubrella issue for Code quality improvements identified

OWASP [Poor Logging Practice](https://owasp.org/www-community/vulnerabilities/Poor_Logging_Practice) | 1.0 | Security Analysis - Poor Logging Practice - Ubrella issue for Code quality improvements identified

OWASP [Poor Logging Practice](https://owasp.org/www-community/vulnerabilities/Poor_Logging_Practice) | non_priority | security analysis poor logging practice ubrella issue for code quality improvements identified owasp | 0 |

123,296 | 16,473,849,633 | IssuesEvent | 2021-05-23 23:37:31 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Incorrect Type, expected array with terminal | *as-designed |

Issue Type: <b>Bug</b>

At any time go into settings, edit user settings in settings.json.

Write the following:

"terminal.integrated.profiles.windows": {

//automatically generated code here, remove comma below if necessary

,

"DevPowerShell64": {

"path": "C:\\Windows\\SysWOW64\\W... | 1.0 | Incorrect Type, expected array with terminal -

Issue Type: <b>Bug</b>

At any time go into settings, edit user settings in settings.json.

Write the following:

"terminal.integrated.profiles.windows": {

//automatically generated code here, remove comma below if necessary

,

"DevPowerShell64": {... | non_priority | incorrect type expected array with terminal issue type bug at any time go into settings edit user settings in settings json write the following terminal integrated profiles windows automatically generated code here remove comma below if necessary path... | 0 |

352,817 | 25,083,467,542 | IssuesEvent | 2022-11-07 21:26:59 | SWE-OutOfBounds/Documents | https://api.github.com/repos/SWE-OutOfBounds/Documents | opened | Create hours estimate | documentation | Define a document specifying the hours to adhere to | 1.0 | Create hours estimate - Define a document specifying the hours to adhere to | non_priority | create hours estimate define a document specifying the hours to adhere to | 0 |

80,060 | 29,935,850,321 | IssuesEvent | 2023-06-22 12:43:18 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | MapStore Write-Behind Batching produces too small batches | Type: Defect | **Problem**

Our write-behind queue is growing very large because it is not processed efficiently. We are trying to use batches of size 1000, but we see that most batches are smaller than 10 items.

**Expected behavior**

Since we are using coalescing write-behind queues - and therefore only the latest version of a m... | 1.0 | MapStore Write-Behind Batching produces too small batches - **Problem**

Our write-behind queue is growing very large because it is not processed efficiently. We are trying to use batches of size 1000, but we see that most batches are smaller than 10 items.

**Expected behavior**

Since we are using coalescing write-... | non_priority | mapstore write behind batching produces too small batches problem our write behind queue is growing very large because it is not processed efficiently we are trying to use batches of size but we see that most batches are smaller than items expected behavior since we are using coalescing write behi... | 0 |

545,033 | 15,934,592,489 | IssuesEvent | 2021-04-14 08:51:37 | Automattic/Edit-Flow | https://api.github.com/repos/Automattic/Edit-Flow | opened | Migrating Travis CI to GitHub Actions | [Priority] High enhancement | As the title, we consider moving from Travis CI to GitHub Actions as https://travis-ci.org/ will be moved to http://www.travis-ci.com/ soon.

Good start https://docs.github.com/en/actions/learn-github-actions/migrating-from-travis-ci-to-github-actions | 1.0 | Migrating Travis CI to GitHub Actions - As the title, we consider moving from Travis CI to GitHub Actions as https://travis-ci.org/ will be moved to http://www.travis-ci.com/ soon.

Good start https://docs.github.com/en/actions/learn-github-actions/migrating-from-travis-ci-to-github-actions | priority | migrating travis ci to github actions as the title we consider moving from travis ci to github actions as will be moved to soon good start | 1 |

328,736 | 9,999,337,652 | IssuesEvent | 2019-07-12 10:23:06 | ushahidi/opensourcedesign | https://api.github.com/repos/ushahidi/opensourcedesign | reopened | Square logo to be created | Design Highest Priority In progress | We need a logo that will work on social icons/profiles that is square orientation.

max size 1080x1080px - High res version in .png .jpg .svg

min size 50x50px low res version .png .jpg .svg

Favicon .favi (use website to create this) | 1.0 | Square logo to be created - We need a logo that will work on social icons/profiles that is square orientation.

max size 1080x1080px - High res version in .png .jpg .svg

min size 50x50px low res version .png .jpg .svg

Favicon .favi (use website to create this) | priority | square logo to be created we need a logo that will work on social icons profiles that is square orientation max size high res version in png jpg svg min size low res version png jpg svg favicon favi use website to create this | 1 |

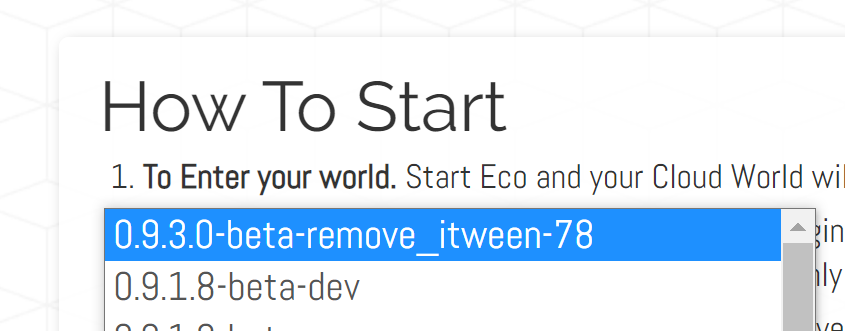

499,363 | 14,445,962,477 | IssuesEvent | 2020-12-08 00:08:44 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Cloud Worlds - auto-updated to version 0.9.3.0 | Category: Cloud Worlds Priority: High Status: Fixed | My Cloud World auto updated to version 0.9.3.0 , I'm guessing other Cloud Worlds might've done the same if they were set to auto-update:

| 1.0 | Cloud Worlds - auto-updated to version 0.9.3.0 - My Cloud World auto updated to version 0.9.3.0 , I'm guessing other Cloud Worlds might've done the same if they were set to auto-update:

| priority | cloud worlds auto updated to version my cloud world auto updated to version i m guessing other cloud worlds might ve done the same if they were set to auto update | 1 |

262,213 | 19,767,688,987 | IssuesEvent | 2022-01-17 06:00:01 | Real-Dev-Squad/mobile-app | https://api.github.com/repos/Real-Dev-Squad/mobile-app | opened | Make a README.md | documentation | Make the README.md file for the repository explaining the file structure and local installation and running details. | 1.0 | Make a README.md - Make the README.md file for the repository explaining the file structure and local installation and running details. | non_priority | make a readme md make the readme md file for the repository explaining the file structure and local installation and running details | 0 |

270,252 | 8,453,646,251 | IssuesEvent | 2018-10-20 17:37:21 | CS2113-AY1819S1-T09-1/main | https://api.github.com/repos/CS2113-AY1819S1-T09-1/main | closed | add : add a print manually | priority.medium type.enhancement type.story | As a student , i want to be able to add a print to a particular queue manually so that i can ensure that my print job will be processed. | 1.0 | add : add a print manually - As a student , i want to be able to add a print to a particular queue manually so that i can ensure that my print job will be processed. | priority | add add a print manually as a student i want to be able to add a print to a particular queue manually so that i can ensure that my print job will be processed | 1 |

416,739 | 28,097,845,128 | IssuesEvent | 2023-03-30 17:04:47 | microsoft/torchgeo | https://api.github.com/repos/microsoft/torchgeo | closed | torchgeo install in google colab | documentation | ### Description

I wanted to check a potential bug with a reproducible example in a colab notebook and found that there is some installation issue.

```

%pip install torchgeo

from torchgeo.trainers import ClassificationTask

```

`ContextualVersionConflict: (Pygments 2.6.1 (/usr/local/lib/python3.8/dist-package... | 1.0 | torchgeo install in google colab - ### Description

I wanted to check a potential bug with a reproducible example in a colab notebook and found that there is some installation issue.

```

%pip install torchgeo

from torchgeo.trainers import ClassificationTask

```

`ContextualVersionConflict: (Pygments 2.6.1 (/u... | non_priority | torchgeo install in google colab description i wanted to check a potential bug with a reproducible example in a colab notebook and found that there is some installation issue pip install torchgeo from torchgeo trainers import classificationtask contextualversionconflict pygments u... | 0 |

307,483 | 9,417,762,153 | IssuesEvent | 2019-04-10 17:31:56 | Esri/maps-app-ios | https://api.github.com/repos/Esri/maps-app-ios | closed | Remove ATS allows arbitrary loads. | Effort - Small Priority - Low Status - Backlog Type - Enhancement | ArcGIS Online has updated it's owning system URL. https://www.arcgis.com/sharing/rest/info?f=pjson

This change should be reflected in

- [ ] XCode

- [ ] README | 1.0 | Remove ATS allows arbitrary loads. - ArcGIS Online has updated it's owning system URL. https://www.arcgis.com/sharing/rest/info?f=pjson

This change should be reflected in

- [ ] XCode

- [ ] README | priority | remove ats allows arbitrary loads arcgis online has updated it s owning system url this change should be reflected in xcode readme | 1 |

49,588 | 3,003,706,314 | IssuesEvent | 2015-07-25 05:49:21 | jayway/powermock | https://api.github.com/repos/jayway/powermock | closed | Add support for Java 8 | bug imported Priority-Medium | _From [iir...@gmail.com](https://code.google.com/u/113896979631297957884/) on January 06, 2014 23:51:28_

Some features may not work. At least PowerMock agent module didn't work.

Here's my fix for that module: https://github.com/iirekm/powermock/commit/339ae4a17ec7e7adde3c78ea5f37843647bbbc3d

_Original issue: http://... | 1.0 | Add support for Java 8 - _From [iir...@gmail.com](https://code.google.com/u/113896979631297957884/) on January 06, 2014 23:51:28_

Some features may not work. At least PowerMock agent module didn't work.

Here's my fix for that module: https://github.com/iirekm/powermock/commit/339ae4a17ec7e7adde3c78ea5f37843647bbbc3d

... | priority | add support for java from on january some features may not work at least powermock agent module didn t work here s my fix for that module original issue | 1 |

67,531 | 20,978,278,355 | IssuesEvent | 2022-03-28 17:13:03 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | opened | auth API: fetching individual RRsets skips disabled records | auth defect | - Program: Authoritative

- Issue type: Bug report

### Short description

Fetching of individual RRsets via the API, as introduced in #11389, skips disabled records. Given that full zone listings do include those records, this is inconsistent and potentially confusing.

### Other notes

Because fixing this looks... | 1.0 | auth API: fetching individual RRsets skips disabled records - - Program: Authoritative

- Issue type: Bug report

### Short description

Fetching of individual RRsets via the API, as introduced in #11389, skips disabled records. Given that full zone listings do include those records, this is inconsistent and potent... | non_priority | auth api fetching individual rrsets skips disabled records program authoritative issue type bug report short description fetching of individual rrsets via the api as introduced in skips disabled records given that full zone listings do include those records this is inconsistent and potentiall... | 0 |

202,237 | 7,045,636,547 | IssuesEvent | 2018-01-01 22:32:46 | pybel/pybel | https://api.github.com/repos/pybel/pybel | closed | Integrate document utilities from pybel-tools | enhancement low priority | Since there's already a feature to make bel scripts, the robust solution might as well be in place here too | 1.0 | Integrate document utilities from pybel-tools - Since there's already a feature to make bel scripts, the robust solution might as well be in place here too | priority | integrate document utilities from pybel tools since there s already a feature to make bel scripts the robust solution might as well be in place here too | 1 |

709,272 | 24,372,602,728 | IssuesEvent | 2022-10-03 20:42:39 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | exporter/loadbalancingexporter: use hashicorp/memberlist instead of DNS to create hashring | enhancement priority:p3 exporter/loadbalancing | **Is your feature request related to a problem? Please describe.**

Doing DNS lookups every 5 seconds seems to put some strain on the infrastructure. Also scaling events that change the number of pods may change where a traceID goes, causing disruption. I've been thinking of adding an HPA for the load-balancer front-en... | 1.0 | exporter/loadbalancingexporter: use hashicorp/memberlist instead of DNS to create hashring - **Is your feature request related to a problem? Please describe.**

Doing DNS lookups every 5 seconds seems to put some strain on the infrastructure. Also scaling events that change the number of pods may change where a traceID... | priority | exporter loadbalancingexporter use hashicorp memberlist instead of dns to create hashring is your feature request related to a problem please describe doing dns lookups every seconds seems to put some strain on the infrastructure also scaling events that change the number of pods may change where a traceid... | 1 |

557,537 | 16,510,987,357 | IssuesEvent | 2021-05-26 04:05:57 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | mcumgr: shell output gets truncated | area: Shell area: mcumgr bug priority: low | **Describe the bug**

The mcumgr output from shell command gets truncated at predefined length, determined by buffer length.

Providing `shell help` as an example but the issue impacts all shell commands that return some output.

**To Reproduce**

Steps to reproduce the behavior:

1. Build

`west build -b nrf52840dk_... | 1.0 | mcumgr: shell output gets truncated - **Describe the bug**

The mcumgr output from shell command gets truncated at predefined length, determined by buffer length.

Providing `shell help` as an example but the issue impacts all shell commands that return some output.

**To Reproduce**

Steps to reproduce the behavior:... | priority | mcumgr shell output gets truncated describe the bug the mcumgr output from shell command gets truncated at predefined length determined by buffer length providing shell help as an example but the issue impacts all shell commands that return some output to reproduce steps to reproduce the behavior ... | 1 |

112,264 | 24,245,494,144 | IssuesEvent | 2022-09-27 10:11:44 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Bug]: Space or newline in action binding causes Action selector not to update properly | Bug Low Low effort Papercut FE Coders Pod Evaluated Value | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

If we add a space after the binding curly braces, then the action selector value is not updated properly.

Refer to the following video:

https://user-images.githubusercontent.com/10436935/142255827-6268bc4f-e53... | 1.0 | [Bug]: Space or newline in action binding causes Action selector not to update properly - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

If we add a space after the binding curly braces, then the action selector value is not updated properly.

Refer to the fol... | non_priority | space or newline in action binding causes action selector not to update properly is there an existing issue for this i have searched the existing issues current behavior if we add a space after the binding curly braces then the action selector value is not updated properly refer to the following... | 0 |

783,901 | 27,550,582,276 | IssuesEvent | 2023-03-07 14:42:20 | NewGraphEnvironment/fish_passage_skeena_2022_reporting | https://api.github.com/repos/NewGraphEnvironment/fish_passage_skeena_2022_reporting | closed | Populate cost estimate objects and add to memos and results | priority low | I labelled as low priority for now because I can get to this later once memos and other stuff is done first. Just wondering if I'm following a similar workflow to how it was done in the bulkley report? Helpful issue from bulkley repo [here](https://github.com/NewGraphEnvironment/fish_passage_bulkley_2022_reporting/issu... | 1.0 | Populate cost estimate objects and add to memos and results - I labelled as low priority for now because I can get to this later once memos and other stuff is done first. Just wondering if I'm following a similar workflow to how it was done in the bulkley report? Helpful issue from bulkley repo [here](https://github.co... | priority | populate cost estimate objects and add to memos and results i labelled as low priority for now because i can get to this later once memos and other stuff is done first just wondering if i m following a similar workflow to how it was done in the bulkley report helpful issue from bulkley repo | 1 |

299,921 | 9,205,971,251 | IssuesEvent | 2019-03-08 12:17:00 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | toobars re-arrange at their will | Category: GUI Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **Maciej Sieczka -** (Maciej Sieczka -)

Original Redmine Issue: 1275, https://issues.qgis.org/issues/1275

Original Assignee: nobody -

---

r9249. See the attached screendumps.

1. On ok.png toolbars are organized the way I want them.

2. Then I quite QGIS and start it again.

3. Toolbars are organi... | 1.0 | toobars re-arrange at their will - ---

Author Name: **Maciej Sieczka -** (Maciej Sieczka -)

Original Redmine Issue: 1275, https://issues.qgis.org/issues/1275

Original Assignee: nobody -

---

r9249. See the attached screendumps.

1. On ok.png toolbars are organized the way I want them.

2. Then I quite QGIS and star... | priority | toobars re arrange at their will author name maciej sieczka maciej sieczka original redmine issue original assignee nobody see the attached screendumps on ok png toolbars are organized the way i want them then i quite qgis and start it again toolbars are organized di... | 1 |

591,814 | 17,862,437,766 | IssuesEvent | 2021-09-06 04:06:06 | ArkEcosystem/exchange-json-rpc | https://api.github.com/repos/ArkEcosystem/exchange-json-rpc | closed | Upgrade `@arkecosystem/crypto` to `3.0.0-next.34` | Priority: Critical | Required to support the new voting transactions. | 1.0 | Upgrade `@arkecosystem/crypto` to `3.0.0-next.34` - Required to support the new voting transactions. | priority | upgrade arkecosystem crypto to next required to support the new voting transactions | 1 |

803,030 | 29,115,577,360 | IssuesEvent | 2023-05-17 00:26:29 | returntocorp/semgrep | https://api.github.com/repos/returntocorp/semgrep | closed | Support `dot dotdotdot dot` ellipsis in swift | priority:medium parsing-pattern lang:swift | **Describe the bug**

Currently the engine does not support `$VALUE. ... .FOO(...)` or `$VALUE. ...` which makes it fairly hard to write certain rules in swift.

**To Reproduce**

Visit https://semgrep.dev/s/JK8W click run

**Expected behavior**

https://semgrep.dev/s/JK8W should pass

`$VALUE. ... .$FOO(...)`

... | 1.0 | Support `dot dotdotdot dot` ellipsis in swift - **Describe the bug**

Currently the engine does not support `$VALUE. ... .FOO(...)` or `$VALUE. ...` which makes it fairly hard to write certain rules in swift.

**To Reproduce**

Visit https://semgrep.dev/s/JK8W click run

**Expected behavior**

https://semgrep.dev/s... | priority | support dot dotdotdot dot ellipsis in swift describe the bug currently the engine does not support value foo or value which makes it fairly hard to write certain rules in swift to reproduce visit click run expected behavior should pass value foo an... | 1 |

70,173 | 13,434,317,551 | IssuesEvent | 2020-09-07 11:10:38 | hackjunction/hackplatform | https://api.github.com/repos/hackjunction/hackplatform | opened | Search all snake_case variables and see if they are possible to convert to camelCase | chore code style | Meta issue to figure out extent of work needed to do this. | 1.0 | Search all snake_case variables and see if they are possible to convert to camelCase - Meta issue to figure out extent of work needed to do this. | non_priority | search all snake case variables and see if they are possible to convert to camelcase meta issue to figure out extent of work needed to do this | 0 |

755,943 | 26,448,574,018 | IssuesEvent | 2023-01-16 09:28:36 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.sonyliv.com - site is not usable | priority-normal browser-focus-geckoview engine-gecko | <!-- @browser: Firefox Mobile 108.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 12; Mobile; rv:108.0) Gecko/108.0 Firefox/108.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/116784 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https... | 1.0 | www.sonyliv.com - site is not usable - <!-- @browser: Firefox Mobile 108.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 12; Mobile; rv:108.0) Gecko/108.0 Firefox/108.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/116784 -->

<!-- @extra_labels: brow... | priority | site is not usable url browser version firefox mobile operating system android tested another browser yes chrome problem type site is not usable description page not loading correctly steps to reproduce the page didn not even load view the sc... | 1 |

100,682 | 8,752,748,083 | IssuesEvent | 2018-12-14 04:59:05 | humera987/FXLabs-Test-Automation | https://api.github.com/repos/humera987/FXLabs-Test-Automation | closed | Testing 14 : ApiV1DashboardCountTimeBetweenGetPathParamTodateMongodbNoSqlInjectionTimebound | Testing 14 | Project : Testing 14

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cookie=... | 1.0 | Testing 14 : ApiV1DashboardCountTimeBetweenGetPathParamTodateMongodbNoSqlInjectionTimebound - Project : Testing 14

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, ma... | non_priority | testing project testing job uat env uat region us west result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type transfer encoding date endpoint request... | 0 |

133,846 | 18,959,496,799 | IssuesEvent | 2021-11-19 01:41:05 | airshipit/airshipctl | https://api.github.com/repos/airshipit/airshipctl | closed | Airship specific implementation of KRM Function Specification | enhancement priority/low design needed | **Problem description (if applicable)**

At the moment Airship uses two types of generic containers: krm (kyaml/runfn) and airship (airship specific docker wrapper). kyaml/runfn has some limitations that do not allow to use it everywhere. For example, sometimes we want a container to be run from a privileged user. Also... | 1.0 | Airship specific implementation of KRM Function Specification - **Problem description (if applicable)**

At the moment Airship uses two types of generic containers: krm (kyaml/runfn) and airship (airship specific docker wrapper). kyaml/runfn has some limitations that do not allow to use it everywhere. For example, some... | non_priority | airship specific implementation of krm function specification problem description if applicable at the moment airship uses two types of generic containers krm kyaml runfn and airship airship specific docker wrapper kyaml runfn has some limitations that do not allow to use it everywhere for example some... | 0 |

268,769 | 8,411,465,752 | IssuesEvent | 2018-10-12 13:58:48 | CS2113-AY1819S1-T16-3/main | https://api.github.com/repos/CS2113-AY1819S1-T16-3/main | closed | As a user, I want to have administrator privileges | priority.high type.story | so that I can use superuser commands and manage employees. | 1.0 | As a user, I want to have administrator privileges - so that I can use superuser commands and manage employees. | priority | as a user i want to have administrator privileges so that i can use superuser commands and manage employees | 1 |

132,882 | 10,772,264,803 | IssuesEvent | 2019-11-02 13:45:55 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | [Failing Test] gce-cos-master-scalability-100 (ci-kubernetes-e2e-gci-gce-scalability) | kind/failing-test priority/critical-urgent sig/scheduling | **Which jobs are failing**:

`gce-cos-master-scalability-100 (ci-kubernetes-e2e-gci-gce-scalability)`

**Since when has it been failing**:

`1st Nov 01:32 PDT`

**Testgrid link**:

https://testgrid.k8s.io/sig-release-master-blocking#gce-cos-master-scalability-100

**Reason for failure**:

```console

W1101 14:14... | 1.0 | [Failing Test] gce-cos-master-scalability-100 (ci-kubernetes-e2e-gci-gce-scalability) - **Which jobs are failing**:

`gce-cos-master-scalability-100 (ci-kubernetes-e2e-gci-gce-scalability)`

**Since when has it been failing**:

`1st Nov 01:32 PDT`

**Testgrid link**:

https://testgrid.k8s.io/sig-release-master-bloc... | non_priority | gce cos master scalability ci kubernetes gci gce scalability which jobs are failing gce cos master scalability ci kubernetes gci gce scalability since when has it been failing nov pdt testgrid link reason for failure console run kubetest ... | 0 |

797,107 | 28,138,039,092 | IssuesEvent | 2023-04-01 16:09:12 | OWASP/threat-dragon | https://api.github.com/repos/OWASP/threat-dragon | closed | BrowserStack End to End Tests failing | bug version-2.0 priority | **Describe the bug**

The workflow action BrowserStack End to End Tests are failing, along with all other pipelines, with:

```

Run npm i -g pnpm

ERR_PNPM_FROZEN_LOCKFILE_WITH_OUTDATED_LOCKFILE Cannot perform a frozen installation because the version of the lockfile is incompatible with this version of pnpm

```

... | 1.0 | BrowserStack End to End Tests failing - **Describe the bug**

The workflow action BrowserStack End to End Tests are failing, along with all other pipelines, with:

```

Run npm i -g pnpm

ERR_PNPM_FROZEN_LOCKFILE_WITH_OUTDATED_LOCKFILE Cannot perform a frozen installation because the version of the lockfile is inco... | priority | browserstack end to end tests failing describe the bug the workflow action browserstack end to end tests are failing along with all other pipelines with run npm i g pnpm err pnpm frozen lockfile with outdated lockfile cannot perform a frozen installation because the version of the lockfile is inco... | 1 |

53,016 | 6,670,390,703 | IssuesEvent | 2017-10-03 23:23:02 | phetsims/ohms-law | https://api.github.com/repos/phetsims/ohms-law | closed | Describing size of the current arrows | design:a11y | From https://github.com/phetsims/ohms-law/issues/80, @emily-phet said

> Current arrows relative description doesn't seem to update (stays at "tiny arrows represent...").

@jessegreenberg said:

> I think that the problem is just that the current arrows grow like 1 / R. The max height of the arrows is 1243 view c... | 1.0 | Describing size of the current arrows - From https://github.com/phetsims/ohms-law/issues/80, @emily-phet said

> Current arrows relative description doesn't seem to update (stays at "tiny arrows represent...").

@jessegreenberg said:

> I think that the problem is just that the current arrows grow like 1 / R. The... | non_priority | describing size of the current arrows from emily phet said current arrows relative description doesn t seem to update stays at tiny arrows represent jessegreenberg said i think that the problem is just that the current arrows grow like r the max height of the arrows is view coordinate... | 0 |

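433,946 | 30,387,583,787 | IssuesEvent | 2023-07-13 03:00:36 | Jeong29Hyeon/jpa-study | https://api.github.com/repos/Jeong29Hyeon/jpa-study | closed | Bulk operations | documentation | # Bulk operations

- How do you raise the price of every product with fewer than 10 items in stock by 10%?

- Doing this with JPA dirty checking requires executing too many SQL statements.

- Query the list of products with fewer than 10 items in stock.

- Increase the price of each product entity by 10%.

- Dirty checking runs at transaction commit time.

- If 100 rows changed, 100 UPDATE SQL statements are executed.

There is a method that solves the problem above: `executeUpdate()`!

A single query updates multiple table rows.

The result of executeUpdate() is the affected... | 1.0 | Bulk operations - # Bulk operations

- How do you raise the price of every product with fewer than 10 items in stock by 10%?

- Doing this with JPA dirty checking requires executing too many SQL statements.

- Query the list of products with fewer than 10 items in stock.

- Increase the price of each product entity by 10%.

- Dirty checking runs at transaction commit time.

- If 100 rows changed, 100 UPDATE SQL statements are executed.

There is a method that solves the problem above: `executeUpdate()`!

A single query updates multiple table rows.

executeUpdate() ... | non_priority | bulk operations bulk operations how do you raise the price of every product with fewer than items in stock doing this with jpa dirty checking requires executing too many sql statements query the list of products with fewer than items in stock increase the price of each product entity dirty checking runs at transaction commit time if rows changed update sql statements are executed there is a method that solves the problem above executeupdate a single query updates multiple table rows the result of executeupdate is the affected entities ... | 0 |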

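758,291 | 26,549,024,526 | IssuesEvent | 2023-01-20 05:05:44 | myalley-project/myalley-be | https://api.github.com/repos/myalley-project/myalley-be | closed | Mate recruitment post creation feature | back-end 1st Priority | **To do**

- [x] Create the package structure skeleton for mate recruitment

- [x] Finalize the domain variable names and variable list

- [x] Also create the repository and domain for deleting mate recruitment posts

- [x] Add a dto for creating posts

- [x] Register posts linked to exhibition posts

- [x] Receive the token and register posts linked to member information

- [x] Implement the service & controller

- [x] Add association mappings | 1.0 | Mate recruitment post creation feature - **To do**

- [x] Create the package structure skeleton for mate recruitment

- [x] Finalize the domain variable names and variable list

- [x] Also create the repository and domain for deleting mate recruitment posts

- [x] Add a dto for creating posts

- [x] Register posts linked to exhibition posts

- [x] Receive the token and register posts linked to member information

- [x] Implement the service & controller

- [x] Add association mappings | priority | mate recruitment post creation feature to do create the package structure skeleton for mate recruitment finalize the domain variable names and variable list also create the repository and domain for deleting mate recruitment posts add a dto for creating posts register posts linked to exhibition posts receive the token and register posts linked to member information implement the service controller add association mappings | 1 |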

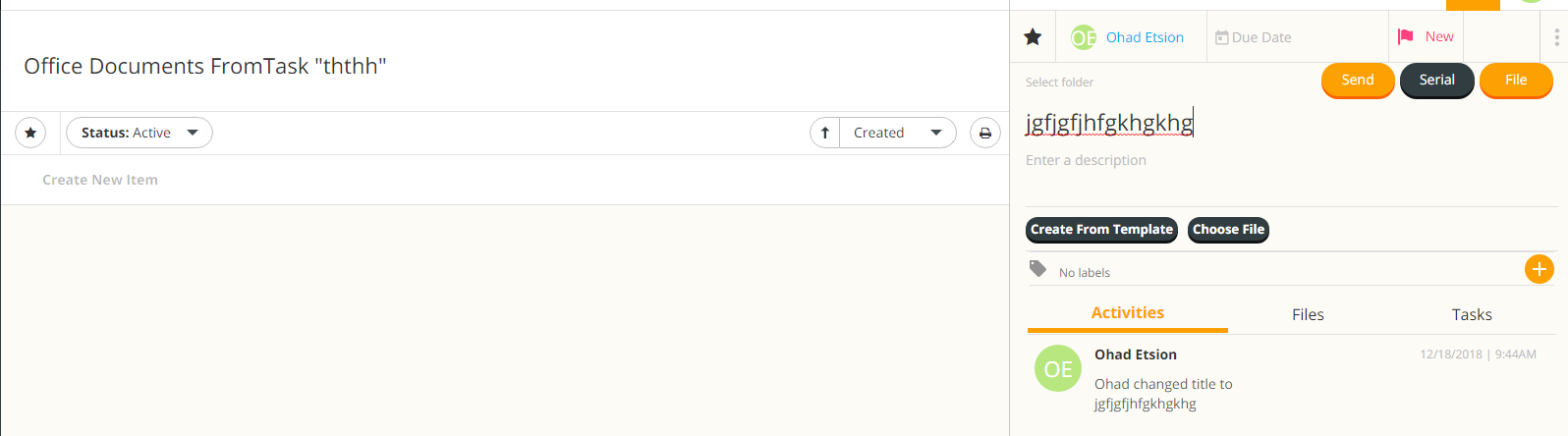

6,001 | 8,808,922,197 | IssuesEvent | 2018-12-27 16:54:37 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | office documents from tasks bug | 2.0.6 Fixed Process bug critical | after creating a task, and then going to the documents tab, clicking on manage documents

create new item doesn't update the list, and after editing the document it isn't saved after refreshing the page

| 1.0 | office documents from tasks bug - after creating a task, and then going to the documents tab, clicking on manage documents

create new item doesn't update the list, and after editing the document it isn't saved after refreshing the page

When I turn on power saving mode (back to 60 Hz), the jank and lag do not happen.

But when I go back to 120 Hz, it happens again.

**The scroll animation is fine. But drag is not.**

(Tip:Drag, instead of sw... | True | [iOS] The drag behavior is laggy and seems to not have 120hz adjustment - As the issue title describes, this issue happens when I use an iPhone with **ProMotion** (e.g. iPhone 13 Pro)

When I turn on power saving mode (back to 60 Hz), the jank and lag do not happen.

But when I go back to 120 Hz, it happens again.

*... | non_priority | the drag behavior is laggy and seems to not have adjustment as the issue title describes this issue happens when i use an iphone with promotion eg when i turn on power saving mode back to the jank and lag do not happen but when i go back to it happens again the scroll animation is ... | 0 |

728,360 | 25,076,163,323 | IssuesEvent | 2022-11-07 15:38:57 | magento/magento2 | https://api.github.com/repos/magento/magento2 | closed | Duplicate orders with 2 parallel GraphQL requests | Issue: Confirmed Reproduced on 2.4.x Priority: P1 Severity: S1 Progress: done Evaluated | #### Precondition

As a Guest user follow steps by GraphQL checkout tutorial to step 10 Place order https://devdocs.magento.com/guides/v2.4/graphql/tutorials/checkout/index.html

#### Steps

Execute Place the order mutation in parallel in 2 browsers (I did it in Postman) .

```

mutation {

placeOrder(input: {cart... | 1.0 | Duplicate orders with 2 parallel GraphQL requests - #### Precondition

As a Guest user follow steps by GraphQL checkout tutorial to step 10 Place order https://devdocs.magento.com/guides/v2.4/graphql/tutorials/checkout/index.html

#### Steps

Execute Place the order mutation in parallel in 2 browsers (I did it in Pos... | priority | duplicate orders with parallel graphql requests precondition as a guest user follow steps by graphql checkout tutorial to step place order steps execute place the order mutation in parallel in browsers i did it in postman mutation placeorder input cart id cart id ... | 1 |

46,217 | 13,152,203,721 | IssuesEvent | 2020-08-09 20:53:20 | Jacksole/Learning-JavaScript | https://api.github.com/repos/Jacksole/Learning-JavaScript | closed | CVE-2019-2391 (Medium) detected in bson-1.1.3.tgz | security vulnerability | ## CVE-2019-2391 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bson-1.1.3.tgz</b></p></summary>

<p>A bson parser for node.js and the browser</p>

<p>Library home page: <a href="http... | True | CVE-2019-2391 (Medium) detected in bson-1.1.3.tgz - ## CVE-2019-2391 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bson-1.1.3.tgz</b></p></summary>

<p>A bson parser for node.js and... | non_priority | cve medium detected in bson tgz cve medium severity vulnerability vulnerable library bson tgz a bson parser for node js and the browser library home page a href path to dependency file tmp ws scm learning javascript meanauthapp package json path to vulnerable library... | 0 |

324,898 | 27,828,203,239 | IssuesEvent | 2023-03-20 00:29:26 | FasterXML/jackson-dataformat-xml | https://api.github.com/repos/FasterXML/jackson-dataformat-xml | closed | Enabled FromXmlParser.Feature.EMPTY_ELEMENT_AS_NULL breaks up old functionality since 2.12.0 | test-needed | I have a class

```

@Data

public class FooView {

private String str;

}

```

and enabled feature **FromXmlParser.Feature.EMPTY_ELEMENT_AS_NULL** for XmlMapper.

Then I try to call

`

FooView fooView = xmlMapper.readValue(content, FooView.class)

`

and set content as:

`

<Content/>

`

As result i'... | 1.0 | Enabled FromXmlParser.Feature.EMPTY_ELEMENT_AS_NULL breaks up old functionality since 2.12.0 - I have a class

```

@Data

public class FooView {

private String str;

}

```

and enabled feature **FromXmlParser.Feature.EMPTY_ELEMENT_AS_NULL** for XmlMapper.

Then I try to call

`

FooView fooView = xmlM... | non_priority | enabled fromxmlparser feature empty element as null breaks up old functionality since i have a class data public class fooview private string str and enabled feature fromxmlparser feature empty element as null for xmlmapper then i trying to call fooview fooview xmlma... | 0 |

6,281 | 5,346,098,181 | IssuesEvent | 2017-02-17 18:45:14 | jruby/jruby | https://api.github.com/repos/jruby/jruby | closed | Improve Bignum string parsing | JRuby 1.7.x JRuby 9000 performance stdlib | JRuby currently uses Java's BigInteger to handle String#to_i when it might need a Bignum. However, this algorithm is quite a bit slower than the one in MRI 2.1.

```

system ~/projects/jruby/jruby-tmp $ rvm ruby-head do ruby -v -rjson

-rbenchmark -e 'p Benchmark.realtime { ("1" * 1000000).to_i }'

ruby 2.1.0dev (2013-09-... | True | Improve Bignum string parsing - JRuby currently uses Java's BigInteger to handle String#to_i when it might need a Bignum. However, this algorithm is quite a bit slower than the one in MRI 2.1.

```

system ~/projects/jruby/jruby-tmp $ rvm ruby-head do ruby -v -rjson

-rbenchmark -e 'p Benchmark.realtime { ("1" * 1000000)... | non_priority | improve bignum string parsing jruby currently uses java s biginteger to handle string to i when it might need a bignum however this algorithm is quite a bit slower than the one in mri system projects jruby jruby tmp rvm ruby head do ruby v rjson rbenchmark e p benchmark realtime to i ... | 0 |

234,375 | 17,952,810,722 | IssuesEvent | 2021-09-13 01:10:50 | UnBArqDsw2021-1/2021.1_G6_Curumim | https://api.github.com/repos/UnBArqDsw2021-1/2021.1_G6_Curumim | closed | Style guide | documentation | ### Description:

Issue for creating the application's style guide.

### Tasks:

- [ ] Create the style guide;

- [ ] All team members assign points to the issue.

### Acceptance criteria:

- [ ] Style guide created;

- [ ] Issue pointed.

 | 1.0 | Style guide - ### Description:

Issue for creating the application's style guide.

### Tasks:

- [ ] Create the style guide;

- [ ] All team members assign points to the issue.

### Acceptance criteria:

- [ ] Style guide created;

- [ ] Issue pointed.

 | non_priority | style guide description issue for creating the application style guide tasks create the style guide all team members assign points to the issue acceptance criteria style guide created issue pointed | 0 |

137,131 | 12,746,260,786 | IssuesEvent | 2020-06-26 15:37:33 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | opened | Document Special Issues Findings | Priority: Medium Product: caseflow-dispatch Product: caseflow-queue Team: Echo 🐬 Type: Documentation Type: Tech-Improvement | After the completion of the Special Issues investigations, we want to capture the information we learned about Special Issues in Queue.

### AC

- [ ] Create a Special Issues page

- Document general special issues information

- Document the disconnection between Special Issues in Queue vs in Caseflow Dispatch... | 1.0 | Document Special Issues Findings - After the completion of the Special Issues investigations, we want to capture the information we learned about Special Issues in Queue.

### AC

- [ ] Create a Special Issues page

- Document general special issues information

- Document the disconnection between Special Issu... | non_priority | document special issues findings after the completion of the special issues investigations we want to capture the information we learned about special issues in queue ac create a special issues page document general special issues information document the disconnection between special issues... | 0 |

720,895 | 24,810,240,431 | IssuesEvent | 2022-10-25 08:51:23 | spidernet-io/spiderpool | https://api.github.com/repos/spidernet-io/spiderpool | closed | Edit subnet is rejected | issue/not-assign priority/important-soon kind/bug | Describe the version

version about:

spiderpool

- v0.2.2

**Describe the bug**

For a Subnet without any IP assigned to an IPPool, it is not possible to change the size of the ips

**Output of the failure**

```

# Please edit the object below. Lines beginning with a '#' will be ignored,

# and an empty file wil... | 1.0 | Edit subnet is rejected - Describe the version

version about:

spiderpool

- v0.2.2

**Describe the bug**

For a Subnet without any IP assigned to an IPPool, it is not possible to change the size of the ips

**Output of the failure**

```

# Please edit the object below. Lines beginning with a '#' will be ignored... | priority | edit subnet is rejected describe the version version about spiderpool describe the bug a subnet without any ip assigned to the ippool,but it is not possible to change the size of the ips output of the failure please edit the object below lines beginning with a will be ignored ... | 1 |

631,370 | 20,151,185,281 | IssuesEvent | 2022-02-09 12:33:45 | ita-social-projects/horondi_admin | https://api.github.com/repos/ita-social-projects/horondi_admin | closed | (SP:1)[Admin page: Категорії] The button 'Зберегти' isn't clickable and error message doesn't appear after creating new category without image | bug priority: medium Admin | Environment: Windows Server 2019 Standart 64-bit, Google Chrome v.88.0.4324.190

Reproducible: always

Build found: the last commit from https://github.com/ita-social-projects/horondi_admin

Pre-conditions:

Go to https://horondi-admin-staging.azurewebsites.net

Log into Administrator page as Administrator: login 'qu... | 1.0 | (SP:1)[Admin page: Категорії] The button 'Зберегти' isn't clickable and error message doesn't appear after creating new category without image - Environment: Windows Server 2019 Standart 64-bit, Google Chrome v.88.0.4324.190

Reproducible: always

Build found: the last commit from https://github.com/ita-social-projects... | priority | sp the button зберегти isn t clickable and error message doesn t appear after creating new category without image environment windows server standart bit google chrome v reproducible always build found the last commit from pre conditions go to log into administrator page as administ... | 1 |

209,226 | 7,166,486,608 | IssuesEvent | 2018-01-29 17:22:23 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | oc cluster up v3.9.0-alpha.3 fails due to panic | kind/bug priority/P1 sig/master | `oc cluster up` fails because the server start panics inside the container.

##### Version

```

$ oc version

oc v3.9.0-alpha.3+4f709b4-198-dirty

kubernetes v1.9.1+a0ce1bc657

features: Basic-Auth GSSAPI Kerberos SPNEGO

```

##### Steps To Reproduce

1. Build from latest master (`4f709b48f8e52e8c6012bd8b91945f... | 1.0 | oc cluster up v3.9.0-alpha.3 fails due to panic - `oc cluster up` fails because the server start panics inside the container.

##### Version

```

$ oc version

oc v3.9.0-alpha.3+4f709b4-198-dirty

kubernetes v1.9.1+a0ce1bc657

features: Basic-Auth GSSAPI Kerberos SPNEGO

```

##### Steps To Reproduce

1. Build f... | priority | oc cluster up alpha fails due to panic oc cluster up fails because the server start panics inside the container version oc version oc alpha dirty kubernetes features basic auth gssapi kerberos spnego steps to reproduce build from latest master ... | 1 |

299,850 | 9,205,911,527 | IssuesEvent | 2019-03-08 12:04:52 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | Relative paths within QGIS project file | Category: Project Loading/Saving Component: Easy fix? Component: Pull Request or Patch supplied Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Feature request | ---

Author Name: **springmeyer -** (springmeyer -)

Original Redmine Issue: 1211, https://issues.qgis.org/issues/1211

Original Assignee: nobody -

---

It would be extremely useful to allow for the use of relative paths to files loaded within a Qgis project file that rest on the filesystem. This would be a user contr... | 1.0 | Relative paths within QGIS project file - ---

Author Name: **springmeyer -** (springmeyer -)

Original Redmine Issue: 1211, https://issues.qgis.org/issues/1211

Original Assignee: nobody -

---

It would be extremely useful to allow for the use of relative paths to files loaded within a Qgis project file that rest on ... | priority | relative paths within qgis project file author name springmeyer springmeyer original redmine issue original assignee nobody it would be extremely useful to allow for the use of relative paths to files loaded within a qgis project file that rest on the filesystem this would be a user ... | 1 |

445,720 | 12,835,441,163 | IssuesEvent | 2020-07-07 12:53:34 | yalla-coop/tempo | https://api.github.com/repos/yalla-coop/tempo | closed | Update get all Spend Activities query | 3-points back-end backlog priority-3 | __Is this part of a User Journey?__

#616

---

### Acceptance Criteria:

- [x] Update DB to ensure spend activity and spend venue profiles are now in line with latest architecture #577

- [x] Query to fetch all spend venue listings (spend venue has marked themselves as public AND the spend activity has been mar... | 1.0 | Update get all Spend Activities query - __Is this part of a User Journey?__

#616

---

### Acceptance Criteria:

- [x] Update DB to ensure spend activity and spend venue profiles are now in line with latest architecture #577

- [x] Query to fetch all spend venue listings (spend venue has marked themselves as pu... | priority | update get all spend activities query is this part of a user journey acceptance criteria update db to ensure spend activity and spend venue profiles are now in line with latest architecture query to fetch all spend venue listings spend venue has marked themselves as public and... | 1 |

178,658 | 14,675,973,062 | IssuesEvent | 2020-12-30 18:57:46 | Andrew-Chen-Wang/cookiecutter-django-ecs-github | https://api.github.com/repos/Andrew-Chen-Wang/cookiecutter-django-ecs-github | opened | Tutorial: Adding custom VPC | documentation enhancement | For [Donate Anything](https://donate-anything.org/) ([GitHub link](https://github.com/Donate-Anything/Donate-Anything/)), I created a custom VPC so that I could still use my default VPC for other tests that required an EC2 instance.

It's also beneficial to do so, so that you can separate your security groups and instances ... | 1.0 | Tutorial: Adding custom VPC - For [Donate Anything](https://donate-anything.org/) ([GitHub link](https://github.com/Donate-Anything/Donate-Anything/)), I created a custom VPC so that I could still use my default VPC for other tests that required an EC2 instance.

It's also beneficial to do so, so that you can separate your ... | non_priority | tutorial adding custom vpc for i created a custom vpc so that i could still use my default vpc for other tests that required an instance it s also beneficial to do so so that you can separate your security groups and instances based on vpc for security measures and better filterability the next time i d... | 0 |

47,392 | 5,890,404,012 | IssuesEvent | 2017-05-17 14:54:14 | RestComm/Restcomm-Connect | https://api.github.com/repos/RestComm/Restcomm-Connect | closed | CallManager properly handle numbers that start with + | 1. Bug Testing unplanned | bug discovered from failing test case: org.restcomm.connect.testsuite.telephony.TestDialVerbPartThree#testDialClientAliceWithPlusSign | 1.0 | CallManager properly handle numbers that start with + - bug discovered from failing test case: org.restcomm.connect.testsuite.telephony.TestDialVerbPartThree#testDialClientAliceWithPlusSign | non_priority | callmanager properly handle numbers that start with bug discovered from failing test case org restcomm connect testsuite telephony testdialverbpartthree testdialclientalicewithplussign | 0 |

279,129 | 24,201,650,836 | IssuesEvent | 2022-09-24 16:57:18 | wearable-learning-cloud-platform/wlcp-issues | https://api.github.com/repos/wearable-learning-cloud-platform/wlcp-issues | closed | 24 Hour Sessions | enhancement game manager game editor high priority game player ready for testing | Sessions in the WLCP should only last 24 hours then you should be automatically logged out. | 1.0 | 24 Hour Sessions - Sessions in the WLCP should only last 24 hours then you should be automatically logged out. | non_priority | hour sessions sessions in the wlcp should only last hours then you should be automatically logged out | 0 |

145,571 | 11,698,990,987 | IssuesEvent | 2020-03-06 14:52:23 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | closed | Test defect persistent timers demo | team:Zombie Apocalypse test bug | A test defect has been found during a build where the AutomaticDatabase timer in com.ibm.ws.concurrent.persistent_fat_demo_timers had its `@PostConstruct` method called a second time. This likely indicates there may have been a race condition issue, or that it was prematurely destroyed. As a stateless bean (and not singleton) t... | 1.0 | Test defect persistent timers demo - A test defect has been found during a build where the AutomaticDatabase timer in com.ibm.ws.concurrent.persistent_fat_demo_timers had its `@PostConstruct` method called a second time. This likely indicates there may have been a race condition issue, or that it was prematurely destroyed. As a... | non_priority | test defect persistent timers demo a test defect has been found during a build where the automaticdatabase timer in com ibm ws concurrent persistent fat demo timers had its postconstruct method called a second time this likely indicates there may have been a race condition issue or that it was prematurely destroyed as a... | 0 |

147,370 | 5,638,436,514 | IssuesEvent | 2017-04-06 11:57:58 | kuzzleio/kuzzle | https://api.github.com/repos/kuzzleio/kuzzle | closed | The Redis cache must be disabled in RoleRepository and ProfileRepository | bug priority-high | The `RoleRepository` and `ProfileRepository` were meant to force the disabling of the Redis cache, as they already have an in-memory cache, but it was somehow re-enabled by mistake. | 1.0 | The Redis cache must be disabled in RoleRepository and ProfileRepository - The `RoleRepository` and `ProfileRepository` were meant to force the disabling of the Redis cache, as they already have an in-memory cache, but it was somehow re-enabled by mistake. | priority | the redis cache must be disabled in rolerepository and profilerepository the rolerepository and profilerepository were meant to force the disabling of the redis cache as they already have an in memory cache but it was somehow re enabled by mistake | 1 |

429,169 | 12,421,737,942 | IssuesEvent | 2020-05-23 18:20:33 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Problem trying to add a text box | Priority:P2 Reporting/Dashboards Type:Bug | Info

---

**OS**

```CentOS release 6.9 (Final)```

**Metabase**

```Metabase version v0.29.0```

**Metabase hosting**

```Docker version 1.7.1, build 786b29d/1.7.1```

**DB**

```MySQL Community Server (GPL) 5.7.20```

----

Hi, I have a problem when I try to add a "text box" in all dashboards, this is the erro... | 1.0 | Problem trying to add a text box - Info

---

**OS**

```CentOS release 6.9 (Final)```

**Metabase**

```Metabase version v0.29.0```

**Metabase hosting**

```Docker version 1.7.1, build 786b29d/1.7.1```

**DB**

```MySQL Community Server (GPL) 5.7.20```

----

Hi, I have a problem when I try to add a "text box" ... | priority | problem trying to add a text box info os centos release final metabase metabase version metabase hosting docker version build db mysql community server gpl hi i have a problem when i try to add a text box in all da... | 1 |

46,132 | 13,150,684,608 | IssuesEvent | 2020-08-09 12:57:35 | shaundmorris/ddf | https://api.github.com/repos/shaundmorris/ddf | closed | CVE-2018-10237 Medium Severity Vulnerability detected by WhiteSource | security vulnerability wontfix | ## CVE-2018-10237 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>guava-18.0.jar</b>, <b>guava-14.0.1.jar</b>, <b>guava-19.0.jar</b>, <b>guava-16.0.1.jar</b>, <b>guava-20.0... | True | CVE-2018-10237 Medium Severity Vulnerability detected by WhiteSource - ## CVE-2018-10237 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>guava-18.0.jar</b>, <b>guava-14.0.1... | non_priority | cve medium severity vulnerability detected by whitesource cve medium severity vulnerability vulnerable libraries guava jar guava jar guava jar guava jar guava jar guava jar guava is a suite of core and expanded libraries that include utilit... | 0 |

22,702 | 15,386,811,982 | IssuesEvent | 2021-03-03 08:44:24 | cividi/spatial-data-package-platform | https://api.github.com/repos/cividi/spatial-data-package-platform | closed | Re-Setup autodeploy to stage | enhancement infrastructure | After migrating to master stage should be reconnected to automatically update on every push / merged pull request to master. | 1.0 | Re-Setup autodeploy to stage - After migrating to master stage should be reconnected to automatically update on every push / merged pull request to master. | non_priority | re setup autodeploy to stage after migrating to master stage should be reconnected to automatically update on every push merged pull request to master | 0 |

394,277 | 11,634,393,722 | IssuesEvent | 2020-02-28 10:17:12 | Seniru/merchant | https://api.github.com/repos/Seniru/merchant | opened | Set a maximum limit of workers a company can have | difficulty: hard good first issue help wanted priority: high status: unassigned type: enhancement type: feature request | This issue is mostly related with the changes planned in #79

The company's growth will be retarded when the maximum salary is set to a certain maximum level - since there's no obvious to invest it anymore. That'd make a bad experience to players also, since they can't improve themselves nor their companies.

Beca... | 1.0 | Set a maximum limit of workers a company can have - This issue is mostly related with the changes planned in #79

The company's growth will be retarded when the maximum salary is set to a certain maximum level - since there's no obvious to invest it anymore. That'd make a bad experience to players also, since they c... | priority | set a maximum limit of workers a company can have this issue is mostly related with the changes planned in the company s growth will be retarded when the maximum salary is set to a certain maximum level since there s no obvious to invest it anymore that d make a bad experience to players also since they ca... | 1 |

300,390 | 9,210,259,390 | IssuesEvent | 2019-03-09 03:09:33 | smacademic/project-bdf | https://api.github.com/repos/smacademic/project-bdf | closed | Create product requirements | priority: high type: missing | In order to stay organized and on task, we will need to create a product requirement document. We may follow the CS305 guidelines for product requirements.

- Every requirement should be verifiable and traceable

- Every requirement should have:

- a unique ID

- a priority to show urgency

- a status t... | 1.0 | Create product requirements - In order to stay organized and on task, we will need to create a product requirement document. We may follow the CS305 guidelines for product requirements.

- Every requirement should be verifiable and traceable

- Every requirement should have:

- a unique ID

- a priority to ... | priority | create product requirements in order to stay organized and on task we will need to create a product requirement document we may follow the guidelines for product requirements every requirement should be verifiable and traceable every requirement should have a unique id a priority to show... | 1 |

124,185 | 26,417,262,025 | IssuesEvent | 2023-01-13 16:55:48 | patternfly/pf-codemods | https://api.github.com/repos/patternfly/pf-codemods | closed | Popover - Remove deprecated props | codemod | Follow up to breaking change PR https://github.com/patternfly/patternfly-react/pull/8201

- Any consumer references to Popover's `boundary` and `tippyProps` props should be removed.

- Any consumers defining and passing Popover a `shouldClose` function with more than one parameter, needs to be given a warning that th... | 1.0 | Popover - Remove deprecated props - Follow up to breaking change PR https://github.com/patternfly/patternfly-react/pull/8201

- Any consumer references to Popover's `boundary` and `tippyProps` props should be removed.

- Any consumers defining and passing Popover a `shouldClose` function with more than one parameter,... | non_priority | popover remove deprecated props follow up to breaking change pr any consumer references to popover s boundary and tippyprops props should be removed any consumers defining and passing popover a shouldclose function with more than one parameter needs to be given a warning that the first parameter h... | 0 |

38,046 | 18,900,944,073 | IssuesEvent | 2021-11-16 00:46:27 | keras-team/keras | https://api.github.com/repos/keras-team/keras | closed | fix Cropping2D layer return empty list if crop is higher than data shape | type:bug/performance | As we did in #14970. This issue solve the `Cropping2D` layer of return and empty list if the `cropping` parameter is higher than data shape.

We solved in the same way that we did in #14970. Please refer to #14970 for more info. | True | fix Cropping2D layer return empty list if crop is higher than data shape - As we did in #14970. This issue solve the `Cropping2D` layer of return and empty list if the `cropping` parameter is higher than data shape.

We solved in the same way that we did in #14970. Please refer to #14970 for more info. | non_priority | fix layer return empty list if crop is higher than data shape as we did in this issue solve the layer of return and empty list if the cropping parameter is higher than data shape we solved in the same way that we did in please refer to for more info | 0 |

22,823 | 6,303,713,992 | IssuesEvent | 2017-07-21 14:21:33 | Microsoft/WindowsTemplateStudio | https://api.github.com/repos/Microsoft/WindowsTemplateStudio | opened | Port existing C# templates to VB | Generated Code | As a continuation of #371 and X-Ref https://github.com/Microsoft/WindowsTemplateStudio/wiki/Visual-Basic

- Port existing C# templates to VB.

- Also, need strategy and automation for the handling of future changes to C# templates and localization. | 1.0 | Port existing C# templates to VB - As a continuation of #371 and X-Ref https://github.com/Microsoft/WindowsTemplateStudio/wiki/Visual-Basic

- Port existing C# templates to VB.

- Also, need strategy and automation for the handling of future changes to C# templates and localization. | non_priority | port existing c templates to vb as a continuation of and x ref port existing c templates to vb also need strategy and automation for the handling of future changes to c templates and localization | 0 |

238,326 | 19,712,245,414 | IssuesEvent | 2022-01-13 07:15:08 | milvus-io/milvus | https://api.github.com/repos/milvus-io/milvus | opened | [Bug]: [benchmark][cluster][performance] 50 million data sets, create ivf_flat index, increase the number of indexnodes, the time reduction of index creation does not meet expectations | kind/bug needs-triage test/benchmark performance tuning | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Environment

```markdown

- Milvus version:

- Deployment mode(standalone or cluster):

- SDK version(e.g. pymilvus v2.0.0rc2):

- OS(Ubuntu or CentOS):

- CPU/Memory:

- GPU:

- Others:

```

### Current Behavior

**50 million d... | 1.0 | [Bug]: [benchmark][cluster][performance] 50 million data sets, create ivf_flat index, increase the number of indexnodes, the time reduction of index creation does not meet expectations - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Environment

```markdown

- Milvus version:

... | non_priority | million data sets create ivf flat index increase the number of indexnodes the time reduction of index creation does not meet expectations is there an existing issue for this i have searched the existing issues environment markdown milvus version deployment mode standalone or clust... | 0 |

710,999 | 24,446,596,026 | IssuesEvent | 2022-10-06 18:31:58 | systemd/systemd | https://api.github.com/repos/systemd/systemd | closed | Support `libbpf` v1.0.0 | RFE 🎁 pid1 priority bpf | ### Component

systemd

### Is your feature request related to a problem? Please describe

systemd does not support the [recently released `libbpf` v1.0.0](https://github.com/libbpf/libbpf/releases/tag/v1.0.0).

### Describe the solution you'd like

- Bump the version of `libbpf.so`: https://github.com/systemd/systemd/... | 1.0 | Support `libbpf` v1.0.0 - ### Component

systemd

### Is your feature request related to a problem? Please describe

systemd does not support the [recently released `libbpf` v1.0.0](https://github.com/libbpf/libbpf/releases/tag/v1.0.0).

### Describe the solution you'd like

- Bump the version of `libbpf.so`: https://g... | priority | support libbpf component systemd is your feature request related to a problem please describe systemd does not support the describe the solution you d like bump the version of libbpf so adapt implementation which currently relies on functions which got removed in bpf cr... | 1 |

382,598 | 11,308,777,867 | IssuesEvent | 2020-01-19 08:33:45 | dirkwhoffmann/vAmiga | https://api.github.com/repos/dirkwhoffmann/vAmiga | closed | Test bltpri3 fails | Blitter Priority-High bug | Amiga 500 8A 🥰:

UAE 👍:

<img width="721" alt="bltpri3_uae" src="https://user-images.githubusercontent.com/12561945/72666308-176b8980-3a11-11ea-9931-41e71f8b7de1.png">

vAmiga: 🙈

<img... | 1.0 | Test bltpri3 fails - Amiga 500 8A 🥰:

UAE 👍:

<img width="721" alt="bltpri3_uae" src="https://user-images.githubusercontent.com/12561945/72666308-176b8980-3a11-11ea-9931-41e71f8b7de1.png">

... | priority | test fails amiga 🥰 uae 👍 img width alt uae src vamiga 🙈 img width alt bildschirmfoto um src if i interpret the images correctly vamiga s cpu is delayed too much here in contrast to test which has all bitplanes disabled this test runs with ... | 1 |

25,726 | 12,728,842,110 | IssuesEvent | 2020-06-25 03:58:24 | yalelibrary/YUL-DC | https://api.github.com/repos/yalelibrary/YUL-DC | opened | Can't Deploy to ECS from efs-mounts branch | bug performance team | ```

bin/build-cluster.sh yul-cd # runs successfully

bin/add-alb.sh yul-dc # runs successfully

bin/deploy-psql.sh yul-cd # runs successfully

bin/deploy-solr.sh yul-cd # runs successfully

bin/deploy-main.sh yul-cd

Target cluster: yul-cd

Using AWS_PROF... | True | Can't Deploy to ECS from efs-mounts branch - ```

bin/build-cluster.sh yul-cd # runs successfully

bin/add-alb.sh yul-dc # runs successfully

bin/deploy-psql.sh yul-cd # runs successfully

bin/deploy-solr.sh yul-cd # runs successfully

bin/deploy-main.sh... | non_priority | can t deploy to ecs from efs mounts branch bin build cluster sh yul cd runs successfully bin add alb sh yul dc runs successfully bin deploy psql sh yul cd runs successfully bin deploy solr sh yul cd runs successfully bin deploy main sh... | 0 |

50,891 | 7,643,206,020 | IssuesEvent | 2018-05-08 11:55:26 | shoebot/shoebot | https://api.github.com/repos/shoebot/shoebot | opened | Our index.html is just an index.. | documentation | Maybe this should be more of a front-page.

I've been using a lot of python projects recently, and my favorites:

- Tell you what they are

- Have small code examples + screenshots demonstrating how awesome they are on their landing page.

The worst offenders, say things like "Frob" is an implementation of "Baa", wh... | 1.0 | Our index.html is just an index.. - Maybe this should be more of a front-page.

I've been using a lot of python projects recently, and my favorites:

- Tell you what they are

- Have small code examples + screenshots demonstrating how awesome they are on their landing page.

The worst offenders, say things like "Fro... | non_priority | our index html is just an index maybe this should be more of a front page i ve been using a lot of python projects recently and my favorites tell you what they are have small code examples screenshots demonstrating how awsome they are on their landing page the worst offenders say things like fro... | 0 |

89,629 | 3,798,156,782 | IssuesEvent | 2016-03-23 11:14:57 | BrcMapsTeam/europe-15-situational-awareness | https://api.github.com/repos/BrcMapsTeam/europe-15-situational-awareness | opened | Add in High Importance Events to Forecasting Graphs to add Context | high priority new feature | Would be good to incorporate the high importance events from the situational awareness dash to the forecasting graphs for better context | 1.0 | Add in High Importance Events to Forecasting Graphs to add Context - Would be good to incorporate the high importance events from the situational awareness dash to the forecasting graphs for better context | priority | add in high importance events to forecasting graphs to add context would be good to incorporate the high importance events from the situational awareness dash to the forecasting graphs for better context | 1 |

134,663 | 18,491,463,097 | IssuesEvent | 2021-10-19 01:14:24 | serhii73/ukrdict | https://api.github.com/repos/serhii73/ukrdict | opened | CVE-2020-7212 (High) detected in urllib3-1.25.3-py2.py3-none-any.whl | security vulnerability | ## CVE-2020-7212 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>urllib3-1.25.3-py2.py3-none-any.whl</b></p></summary>

<p>HTTP library with thread-safe connection pooling, file post, a... | True | CVE-2020-7212 (High) detected in urllib3-1.25.3-py2.py3-none-any.whl - ## CVE-2020-7212 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>urllib3-1.25.3-py2.py3-none-any.whl</b></p></summ... | non_priority | cve high detected in none any whl cve high severity vulnerability vulnerable library none any whl http library with thread safe connection pooling file post and more library home page a href dependency hierarchy requests none any whl root... | 0 |

3,890 | 2,711,123,998 | IssuesEvent | 2015-04-09 02:10:24 | openaustralia/righttoknow | https://api.github.com/repos/openaustralia/righttoknow | closed | 'View as HTML' label is meaningless to the citizen | bug design wording | For the link for citizens to view a document attached to a request in their browser:

Why are we telling them what format it's in? Do they know what it means that it's in HT... | 1.0 | 'View as HTML' label is meaningless to the citizen - For the link for citizens to view a document attached to a request in their browser:

Why are we telling them what forma... | non_priority | view as html label is meaningless to the citizen for the link for citizens to view a document attached to a request in their browser why are we telling them what format it s in do they know what it means that it s in html maybe a label like view online or view in your browser or view text ver... | 0 |

130,476 | 12,429,876,678 | IssuesEvent | 2020-05-25 09:17:37 | enzoampil/fastquant | https://api.github.com/repos/enzoampil/fastquant | closed | Add auto-optimization feature in readme | documentation | The new auto-optimization feature is not yet in the readme. | 1.0 | Add auto-optimization feature in readme - The new auto-optimization feature is not yet in the readme. | non_priority | add auto optimization feature in readme the new auto optimization feature is not yet in the readme | 0 |