Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19–19) | repo (stringlengths, 5–112) | repo_url (stringlengths, 34–141) | action (stringclasses, 3 values) | title (stringlengths, 1–1k) | labels (stringlengths, 4–1.38k) | body (stringlengths, 1–262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96–262k) | label (stringclasses, 2 values) | text (stringlengths, 96–252k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
271,034 | 8,474,834,159 | IssuesEvent | 2018-10-24 17:13:37 | T-Soft/unismev | https://api.github.com/repos/T-Soft/unismev | closed | config + FTP // ключ для ns по принудительному отключению получения FTP | HIGH PRIORITY core-logic enhancement | Нужен ключ в конфиг который отключит для конкретного ns получение FTP данных, даже если они есть. по сути - игнорирование наличия FTP

совершенно аварийный ключ, т.к. по хорошему тянуть надо всё | 1.0 | config + FTP // ключ для ns по принудительному отключению получения FTP - Нужен ключ в конфиг который отключит для конкретного ns получение FTP данных, даже если они есть. по сути - игнорирование наличия FTP

совершенно аварийный ключ, т.к. по хорошему тянуть надо всё | priority | config ftp ключ для ns по принудительному отключению получения ftp нужен ключ в конфиг который отключит для конкретного ns получение ftp данных даже если они есть по сути игнорирование наличия ftp совершенно аварийный ключ т к по хорошему тянуть надо всё | 1 |

132,468 | 5,186,702,140 | IssuesEvent | 2017-01-20 14:52:08 | bioinformatics-ua/dicoogle | https://api.github.com/repos/bioinformatics-ua/dicoogle | closed | Search service does not warn about invalid query provider names | dicoogle-core easy low priority | If I attempt to GET "/search?provider=idontexist&query=CT", I obtain an empty result list. Although it's a somewhat coherent behaviour, I would actually prefer the service to tell me that "idontexist" is not a valid query provider.

The proposal here: if _any_ of the query providers listed does not exist, an error me... | 1.0 | Search service does not warn about invalid query provider names - If I attempt to GET "/search?provider=idontexist&query=CT", I obtain an empty result list. Although it's a somewhat coherent behaviour, I would actually prefer the service to tell me that "idontexist" is not a valid query provider.

The proposal here: ... | priority | search service does not warn about invalid query provider names if i attempt to get search provider idontexist query ct i obtain an empty result list although it s a somewhat coherent behaviour i would actually prefer the service to tell me that idontexist is not a valid query provider the proposal here ... | 1 |

68,550 | 29,040,827,782 | IssuesEvent | 2023-05-13 00:27:17 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | closed | Patroni Testing | Epic *team/ ops and shared services* post upgrade Testing | **Describe the issue**

Creating automated tests to make sure Patroni is working post platform upgrade/update.

**Additional context**

CWU: https://marketplace.digital.gov.bc.ca/opportunities/code-with-us/4bf245cb-8800-4535-8ea1-7d1324c39a55

**How does this benefit the users of our platform?**

It will enable the... | 1.0 | Patroni Testing - **Describe the issue**

Creating automated tests to make sure Patroni is working post platform upgrade/update.

**Additional context**

CWU: https://marketplace.digital.gov.bc.ca/opportunities/code-with-us/4bf245cb-8800-4535-8ea1-7d1324c39a55

**How does this benefit the users of our platform?**

... | non_priority | patroni testing describe the issue creating automated tests to make sure patroni is working post platform upgrade update additional context cwu how does this benefit the users of our platform it will enable the platform team to run a series of tests against certain the applications after a p... | 0 |

578,911 | 17,156,551,520 | IssuesEvent | 2021-07-14 07:41:40 | googleapis/java-bigtable-hbase | https://api.github.com/repos/googleapis/java-bigtable-hbase | closed | bigtable.hbase.TestFilters: testInterleaveNoDuplicateCells failed | api: bigtable flakybot: issue priority: p1 type: bug | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: a891335ce3179c45fade4f3683b7e09d38d0107a... | 1.0 | bigtable.hbase.TestFilters: testInterleaveNoDuplicateCells failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will sto... | priority | bigtable hbase testfilters testinterleavenoduplicatecells failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output org apache hadoop hbase client... | 1 |

126,640 | 4,998,539,185 | IssuesEvent | 2016-12-09 20:10:59 | ProjectSidewalk/SidewalkWebpage | https://api.github.com/repos/ProjectSidewalk/SidewalkWebpage | closed | Change jumping mechanism in the system | Priority: Medium pull-request-submitted | Frequent jumps while auditing is annoying and many users (>4/10 434 students) in our usability studies have that pointed out. Jumps usually occur when the routing algorithm places the user either near the boundary of a neighborhood or when all connected routes around the user's current location are audited and (s)he is... | 1.0 | Change jumping mechanism in the system - Frequent jumps while auditing is annoying and many users (>4/10 434 students) in our usability studies have that pointed out. Jumps usually occur when the routing algorithm places the user either near the boundary of a neighborhood or when all connected routes around the user's ... | priority | change jumping mechanism in the system frequent jumps while auditing is annoying and many users students in our usability studies have that pointed out jumps usually occur when the routing algorithm places the user either near the boundary of a neighborhood or when all connected routes around the user s cur... | 1 |

257,378 | 19,516,086,750 | IssuesEvent | 2021-12-29 10:27:46 | Refemi/refemi_front | https://api.github.com/repos/Refemi/refemi_front | closed | Let's start a documentation! | documentation question | - [x] creation of a mapping of existing components to clarify architecture and provide technical templates of website

- [x] Write presentation of project

- [x] list tech stack | 1.0 | Let's start a documentation! - - [x] creation of a mapping of existing components to clarify architecture and provide technical templates of website

- [x] Write presentation of project

- [x] list tech stack | non_priority | let s start a documentation creation of a mapping of existing components to clarify architecture and provide technical templates of website write presentation of project list tech stack | 0 |

166,018 | 20,711,378,512 | IssuesEvent | 2022-03-12 01:14:15 | snowflakedb/snowflake-jdbc | https://api.github.com/repos/snowflakedb/snowflake-jdbc | closed | SNOW-558866: CVE-2020-11113 (High) detected in jackson-databind-2.9.8.jar - autoclosed | security vulnerability | ## CVE-2020-11113 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | SNOW-558866: CVE-2020-11113 (High) detected in jackson-databind-2.9.8.jar - autoclosed - ## CVE-2020-11113 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b>... | non_priority | snow cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws ua... | 0 |

232,978 | 7,688,355,652 | IssuesEvent | 2018-05-17 09:12:17 | bounswe/bounswe2018group1 | https://api.github.com/repos/bounswe/bounswe2018group1 | closed | Team Logo | Position: Abandoned Priority: Low Type: Suggestion Who: Group-Work | I think we can create a cooler and more beautiful logo. I don't like the current one. | 1.0 | Team Logo - I think we can create a cooler and more beautiful logo. I don't like the current one. | priority | team logo i think we can create a cooler and more beautiful logo i don t like the current one | 1 |

711,445 | 24,464,481,372 | IssuesEvent | 2022-10-07 13:57:10 | AY2223S1-CS2103T-T15-1/tp | https://api.github.com/repos/AY2223S1-CS2103T-T15-1/tp | closed | Change how information is presented in the `PersonCard` | enhancement priority.medium type.ui | Currently the information shown in the `PersonCard` does not contains a field name besides the **Employee ID**.

<img width="1015" alt="image" src="https://user-images.githubusercontent.com/37807290/194542174-dd923a00-d910-4277-a2a6-16058b9d71c3.png">

Hence, I think we can include a field name for every field so that ... | 1.0 | Change how information is presented in the `PersonCard` - Currently the information shown in the `PersonCard` does not contains a field name besides the **Employee ID**.

<img width="1015" alt="image" src="https://user-images.githubusercontent.com/37807290/194542174-dd923a00-d910-4277-a2a6-16058b9d71c3.png">

Hence, I ... | priority | change how information is presented in the personcard currently the information shown in the personcard does not contains a field name besides the employee id img width alt image src hence i think we can include a field name for every field so that the delivery of content is clearer and easier t... | 1 |

458,675 | 13,179,542,844 | IssuesEvent | 2020-08-12 11:08:25 | magento/adobe-stock-integration | https://api.github.com/repos/magento/adobe-stock-integration | closed | Remove adminhtm area emulation from media-content:sync command (if possible) | Backend Complex Priority: P2 Progress: PR created Severity: S2 refactoring | Remove adminhtm area emulation from media-content:sync command (if possible) | 1.0 | Remove adminhtm area emulation from media-content:sync command (if possible) - Remove adminhtm area emulation from media-content:sync command (if possible) | priority | remove adminhtm area emulation from media content sync command if possible remove adminhtm area emulation from media content sync command if possible | 1 |

204,725 | 23,272,169,651 | IssuesEvent | 2022-08-05 01:10:39 | snowdensb/nifi | https://api.github.com/repos/snowdensb/nifi | closed | CVE-2022-2596 (Medium) detected in node-fetch-2.3.0.tgz - autoclosed | security vulnerability | ## CVE-2022-2596 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-fetch-2.3.0.tgz</b></p></summary>

<p>A light-weight module that brings window.fetch to node.js</p>

<p>Library ho... | True | CVE-2022-2596 (Medium) detected in node-fetch-2.3.0.tgz - autoclosed - ## CVE-2022-2596 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-fetch-2.3.0.tgz</b></p></summary>

<p>A li... | non_priority | cve medium detected in node fetch tgz autoclosed cve medium severity vulnerability vulnerable library node fetch tgz a light weight module that brings window fetch to node js library home page a href dependency hierarchy dtsgenerator tgz root library ... | 0 |

214,851 | 16,581,219,061 | IssuesEvent | 2021-05-31 12:07:38 | MernDevOps/MovieProject | https://api.github.com/repos/MernDevOps/MovieProject | opened | Header | documentation | Fatih bey

Öncelikle Branche'nızdaki dosyaları yeni duzene göre update yapmak lazım (git PULL) yani css lerin adlarını değiştirmiştik

Navbar icerisinde yer alan TV SHOWS yazısı alt alta kayma yapıyor

Bell tıklanmasında ortaya çıkan pop-up biraz büyük ve uzak bir yerde zuhur ediyor

responsive 768x530 posizyonunda ... | 1.0 | Header - Fatih bey

Öncelikle Branche'nızdaki dosyaları yeni duzene göre update yapmak lazım (git PULL) yani css lerin adlarını değiştirmiştik

Navbar icerisinde yer alan TV SHOWS yazısı alt alta kayma yapıyor

Bell tıklanmasında ortaya çıkan pop-up biraz büyük ve uzak bir yerde zuhur ediyor

responsive 768x530 posi... | non_priority | header fatih bey öncelikle branche nızdaki dosyaları yeni duzene göre update yapmak lazım git pull yani css lerin adlarını değiştirmiştik navbar icerisinde yer alan tv shows yazısı alt alta kayma yapıyor bell tıklanmasında ortaya çıkan pop up biraz büyük ve uzak bir yerde zuhur ediyor responsive posizyonun... | 0 |

102,345 | 21,950,071,710 | IssuesEvent | 2022-05-24 07:04:03 | google/iree | https://api.github.com/repos/google/iree | opened | Missing vectorization for gather ops | help wanted codegen | We've been hitting issues about vectorizing table lookups. I had an offline discussion with @MaheshRavishankar . The main issue is that we don't handle `tensor.extract` op in Linalg vectorization. There are a couple of approaches to vectorize gather ops.

1. We can add scalar ops support for `tensor.extract` vectoriz... | 1.0 | Missing vectorization for gather ops - We've been hitting issues about vectorizing table lookups. I had an offline discussion with @MaheshRavishankar . The main issue is that we don't handle `tensor.extract` op in Linalg vectorization. There are a couple of approaches to vectorize gather ops.

1. We can add scalar op... | non_priority | missing vectorization for gather ops we ve been hitting issues about vectorizing table lookups i had an offline discussion with maheshravishankar the main issue is that we don t handle tensor extract op in linalg vectorization there are a couple of approaches to vectorize gather ops we can add scalar op... | 0 |

103,166 | 12,867,510,646 | IssuesEvent | 2020-07-10 07:02:43 | nextcloud/server | https://api.github.com/repos/nextcloud/server | closed | Navigation Bar early Dropdown on medium sized screens | 0. Needs triage 18-feedback bug design | <!--

Thanks for reporting issues back to Nextcloud!

Note: This is the **issue tracker of Nextcloud**, please do NOT use this to get answers to your questions or get help for fixing your installation. This is a place to report bugs to developers, after your server has been debugged. You can find help debugging your ... | 1.0 | Navigation Bar early Dropdown on medium sized screens - <!--

Thanks for reporting issues back to Nextcloud!

Note: This is the **issue tracker of Nextcloud**, please do NOT use this to get answers to your questions or get help for fixing your installation. This is a place to report bugs to developers, after your ser... | non_priority | navigation bar early dropdown on medium sized screens thanks for reporting issues back to nextcloud note this is the issue tracker of nextcloud please do not use this to get answers to your questions or get help for fixing your installation this is a place to report bugs to developers after your ser... | 0 |

48,151 | 13,301,679,701 | IssuesEvent | 2020-08-25 13:17:38 | Whizkevina/portfolio | https://api.github.com/repos/Whizkevina/portfolio | opened | CVE-2020-7660 (High) detected in serialize-javascript-1.9.1.tgz | security vulnerability | ## CVE-2020-7660 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>serialize-javascript-1.9.1.tgz</b></p></summary>

<p>Serialize JavaScript to a superset of JSON that includes regular ex... | True | CVE-2020-7660 (High) detected in serialize-javascript-1.9.1.tgz - ## CVE-2020-7660 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>serialize-javascript-1.9.1.tgz</b></p></summary>

<p>S... | non_priority | cve high detected in serialize javascript tgz cve high severity vulnerability vulnerable library serialize javascript tgz serialize javascript to a superset of json that includes regular expressions and functions library home page a href path to dependency file tmp ws... | 0 |

692,697 | 23,746,565,926 | IssuesEvent | 2022-08-31 16:28:01 | yuhwan-park/dail | https://api.github.com/repos/yuhwan-park/dail | closed | Recoil atoms를 명확하고 간결하게 하기 | todo Refactor high-priority | ## 현재 문제점

1. atom과 selector를 의미없이 나눈 것

```ts

// dateState에서 dayjs 객체로 세팅을 하고 dateSelector에서 값을 변환하고 있다.

// 현재 구현된 로직에서 날짜 값을 꺼내 쓸 때 dateSelector 값만 쓰고 있기 때문에

// 나뉘어질 필요가 없고 dateState 내에서 default 값을 selector로 구현할 수 있음

export const dateState = atom<dayjs.Dayjs>({

key: 'date',

default: dayjs(),

});

expor... | 1.0 | Recoil atoms를 명확하고 간결하게 하기 - ## 현재 문제점

1. atom과 selector를 의미없이 나눈 것

```ts

// dateState에서 dayjs 객체로 세팅을 하고 dateSelector에서 값을 변환하고 있다.

// 현재 구현된 로직에서 날짜 값을 꺼내 쓸 때 dateSelector 값만 쓰고 있기 때문에

// 나뉘어질 필요가 없고 dateState 내에서 default 값을 selector로 구현할 수 있음

export const dateState = atom<dayjs.Dayjs>({

key: 'date',

de... | priority | recoil atoms를 명확하고 간결하게 하기 현재 문제점 atom과 selector를 의미없이 나눈 것 ts datestate에서 dayjs 객체로 세팅을 하고 dateselector에서 값을 변환하고 있다 현재 구현된 로직에서 날짜 값을 꺼내 쓸 때 dateselector 값만 쓰고 있기 때문에 나뉘어질 필요가 없고 datestate 내에서 default 값을 selector로 구현할 수 있음 export const datestate atom key date default dayjs... | 1 |

228,319 | 7,549,656,438 | IssuesEvent | 2018-04-18 14:47:06 | threefoldfoundation/tf_app | https://api.github.com/repos/threefoldfoundation/tf_app | closed | Add endpoint for setting tf chain status on node | priority_major state_inprogress type_feature | - timestamp (time when request arrived)

- wallet_status (locked/unlocked)

- block_height (number) | 1.0 | Add endpoint for setting tf chain status on node - - timestamp (time when request arrived)

- wallet_status (locked/unlocked)

- block_height (number) | priority | add endpoint for setting tf chain status on node timestamp time when request arrived wallet status locked unlocked block height number | 1 |

267,374 | 23,296,434,020 | IssuesEvent | 2022-08-06 16:48:30 | systemd/systemd | https://api.github.com/repos/systemd/systemd | reopened | TEST-13-NSPAWN-SMOKE became unstable | tests not-our-bug ci-blocker 🚧 | ### systemd version the issue has been seen with

latest main

### Used distribution

Arch Linux

### Linux kernel version used

_No response_

### CPU architectures issue was seen on

_No response_

### Component

tests

### Expected behaviour you didn't see

TEST-13-NSPAWN-SMOKE should reliably pass.

### Unexpected ... | 1.0 | TEST-13-NSPAWN-SMOKE became unstable - ### systemd version the issue has been seen with

latest main

### Used distribution

Arch Linux

### Linux kernel version used

_No response_

### CPU architectures issue was seen on

_No response_

### Component

tests

### Expected behaviour you didn't see

TEST-13-NSPAWN-SMOKE... | non_priority | test nspawn smoke became unstable systemd version the issue has been seen with latest main used distribution arch linux linux kernel version used no response cpu architectures issue was seen on no response component tests expected behaviour you didn t see test nspawn smoke s... | 0 |

73,435 | 24,625,375,003 | IssuesEvent | 2022-10-16 12:58:52 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | opened | p-treeSelect: selectionMode="checkbox" and [showClear]="true" doesn't work properly | defect | ### Describe the bug

If you use the component <p-treeSelect> with selectionMode="checkbox" and [showClear]="true" the minus icons doesn't get cleared.

### Environment

not relevant

### Reproducer

https://stackblitz.com/edit/github-drcvx7?file=src/assets/files.json

### Angular version

14.0.7

### PrimeNG version

... | 1.0 | p-treeSelect: selectionMode="checkbox" and [showClear]="true" doesn't work properly - ### Describe the bug

If you use the component <p-treeSelect> with selectionMode="checkbox" and [showClear]="true" the minus icons doesn't get cleared.

### Environment

not relevant

### Reproducer

https://stackblitz.com/edit/github... | non_priority | p treeselect selectionmode checkbox and true doesn t work properly describe the bug if you use the component with selectionmode checkbox and true the minus icons doesn t get cleared environment not relevant reproducer angular version primeng version buil... | 0 |

176,915 | 6,569,232,077 | IssuesEvent | 2017-09-09 04:29:45 | ODIQueensland/data-curator | https://api.github.com/repos/ODIQueensland/data-curator | opened | On Quit, don't offer to save unsaved work when all work is saved | priority:Low problem:Bug | > Please provide a general summary of the issue in the Issue Title above

> fill out the headings below as applicable to the issue you are reporting,

> deleting as appropriate but offering us as much detail as you can to help us resolve the issue

### Expected Behaviour

https://relishapp.com/odi-australia/data-cura... | 1.0 | On Quit, don't offer to save unsaved work when all work is saved - > Please provide a general summary of the issue in the Issue Title above

> fill out the headings below as applicable to the issue you are reporting,

> deleting as appropriate but offering us as much detail as you can to help us resolve the issue

##... | priority | on quit don t offer to save unsaved work when all work is saved please provide a general summary of the issue in the issue title above fill out the headings below as applicable to the issue you are reporting deleting as appropriate but offering us as much detail as you can to help us resolve the issue ... | 1 |

165,161 | 6,264,629,170 | IssuesEvent | 2017-07-16 10:03:50 | pmrukot/aion | https://api.github.com/repos/pmrukot/aion | opened | Refactor Elm | Priority: Medium Status: Blocked Type: Question | **Type**

Enhancement

**Current behaviour**

We need to improve our frontend code, the more we add to it, the worse it gets. We should do this as soon as we finish with #34

**Expected behaviour**

My suggestions:

- [ ] `roomId` is an `Int`, not sure why that's the case, as in most of the cases we convert it... | 1.0 | Refactor Elm - **Type**

Enhancement

**Current behaviour**

We need to improve our frontend code, the more we add to it, the worse it gets. We should do this as soon as we finish with #34

**Expected behaviour**

My suggestions:

- [ ] `roomId` is an `Int`, not sure why that's the case, as in most of the case... | priority | refactor elm type enhancement current behaviour we need to improve our frontend code the more we add to it the worse it gets we should do this as soon as we finish with expected behaviour my suggestions roomid is an int not sure why that s the case as in most of the cases w... | 1 |

9,339 | 3,898,280,565 | IssuesEvent | 2016-04-17 00:03:29 | factor/factor | https://api.github.com/repos/factor/factor | closed | Organize unicode vocab better | cleanup internationalization rename unicode | It would be nice if the ``unicode`` vocabulary had most of the API in it that people would use instead of trying to remember ``unicode.case``, ``unicode.categories``, etc. | 1.0 | Organize unicode vocab better - It would be nice if the ``unicode`` vocabulary had most of the API in it that people would use instead of trying to remember ``unicode.case``, ``unicode.categories``, etc. | non_priority | organize unicode vocab better it would be nice if the unicode vocabulary had most of the api in it that people would use instead of trying to remember unicode case unicode categories etc | 0 |

107,218 | 16,751,736,502 | IssuesEvent | 2021-06-12 02:00:34 | turkdevops/graphql-tools | https://api.github.com/repos/turkdevops/graphql-tools | opened | CVE-2019-11358 (Medium) detected in jquery-1.9.1.min.js, jquery-1.9.1.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.9.1.min.js</b>, <b>jquery-1.9.1.js</b></p></summary>

<p>

<details><summary><b>jquery-1.9.1.min.js</b></p>... | True | CVE-2019-11358 (Medium) detected in jquery-1.9.1.min.js, jquery-1.9.1.js - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.9.1.min.js</b>, <b>jquery-1.9.1... | non_priority | cve medium detected in jquery min js jquery js cve medium severity vulnerability vulnerable libraries jquery min js jquery js jquery min js javascript library for dom operations library home page a href path to dependency file graphql tool... | 0 |

367,729 | 10,861,284,637 | IssuesEvent | 2019-11-14 10:45:43 | bobbingwide/oik-shortcodes | https://api.github.com/repos/bobbingwide/oik-shortcodes | opened | API ref for WordPress core 5.3 completely wrong | Priority A Severity 1 bug | As reported in https://github.com/bobbingwide/wp-a2z/issues/16#issuecomment-553830206 the rebuilt API reference for core is completely wrong.

Note: This could be related to #67 | 1.0 | API ref for WordPress core 5.3 completely wrong - As reported in https://github.com/bobbingwide/wp-a2z/issues/16#issuecomment-553830206 the rebuilt API reference for core is completely wrong.

Note: This could be related to #67 | priority | api ref for wordpress core completely wrong as reported in the rebuilt api reference for core is completely wrong note this could be related to | 1 |

44,482 | 2,906,235,636 | IssuesEvent | 2015-06-19 08:40:44 | haskell/cabal | https://api.github.com/repos/haskell/cabal | closed | Hs-Source-Dirs in nested If must be respected by 'sdist' | bug high-priority library | (Imported from [Trac #374](http://hackage.haskell.org/trac/hackage/ticket/374), reported by guest on 2008-10-17)

I have the following part in a Cabal file:

<pre class="wiki"> If flag(executePipe)

Hs-Source-Dirs: execute/pipe

Else

If flag(executeShell)

Hs-Source-Dirs: execute/shell

Else

Hs-S... | 1.0 | Hs-Source-Dirs in nested If must be respected by 'sdist' - (Imported from [Trac #374](http://hackage.haskell.org/trac/hackage/ticket/374), reported by guest on 2008-10-17)

I have the following part in a Cabal file:

<pre class="wiki"> If flag(executePipe)

Hs-Source-Dirs: execute/pipe

Else

If flag(executeShe... | priority | hs source dirs in nested if must be respected by sdist imported from reported by guest on i have the following part in a cabal file if flag executepipe hs source dirs execute pipe else if flag executeshell hs source dirs execute shell else hs source dirs execute tmp ... | 1 |

90,197 | 10,674,945,956 | IssuesEvent | 2019-10-21 10:30:51 | TwoHorus/dbExelExportLaravel | https://api.github.com/repos/TwoHorus/dbExelExportLaravel | opened | Technische Unterlagen | documentation | - [ ] Anleitung für Abteilungsleitung

- [ ] Anleitung für Teamleiter

- [ ] Anleitung für Mitarbeiter

- [ ] Anleitung zur technischen Wartung

- [ ] Kommentierter Quellcode

- [ ] User-Stories

- [ ] Abnahmeprotokoll

- [ ] Prozessorientierter Projektbericht

- [ ] Aufwandsschätzung

- [ ] Pflichtenheft - Projektplan... | 1.0 | Technische Unterlagen - - [ ] Anleitung für Abteilungsleitung

- [ ] Anleitung für Teamleiter

- [ ] Anleitung für Mitarbeiter

- [ ] Anleitung zur technischen Wartung

- [ ] Kommentierter Quellcode

- [ ] User-Stories

- [ ] Abnahmeprotokoll

- [ ] Prozessorientierter Projektbericht

- [ ] Aufwandsschätzung

- [ ] Pfl... | non_priority | technische unterlagen anleitung für abteilungsleitung anleitung für teamleiter anleitung für mitarbeiter anleitung zur technischen wartung kommentierter quellcode user stories abnahmeprotokoll prozessorientierter projektbericht aufwandsschätzung pflichtenheft projekt... | 0 |

650,904 | 21,435,752,849 | IssuesEvent | 2022-04-24 01:02:36 | lokka30/LevelledMobs | https://api.github.com/repos/lokka30/LevelledMobs | opened | Add damage indicator system | type: improvement priority: normal status: unassigned target version status: confirmed | > @UltimaOath

> idk feelings about it, but regarding the 'display damage taken', I wonder if there might be an option where, upon the entity receiving damage from a player, the nametag we display to the player can be temporarily rotated to a 'damage indicator' version of the nametag, then rotated immediately back to ... | 1.0 | Add damage indicator system - > @UltimaOath

> idk feelings about it, but regarding the 'display damage taken', I wonder if there might be an option where, upon the entity receiving damage from a player, the nametag we display to the player can be temporarily rotated to a 'damage indicator' version of the nametag, the... | priority | add damage indicator system ultimaoath idk feelings about it but regarding the display damage taken i wonder if there might be an option where upon the entity receiving damage from a player the nametag we display to the player can be temporarily rotated to a damage indicator version of the nametag the... | 1 |

14,321 | 9,022,698,453 | IssuesEvent | 2019-02-07 02:59:25 | geneontology/go-site | https://api.github.com/repos/geneontology/go-site | closed | Sporadic SSL issues with OpenJDK while loading Ontologies via HTTPS | bug (B: affects usability) upstream | In the TermGenie and Amigo loads, there have been problems loading PATO hosted on Github.

One problem seems to be an SSL implementation issue in the OpenJDK (seen on 7u45):

```

Could not download IRI: http://purl.obolibrary.org/obo/pato.owl

javax.net.ssl.SSLException: bad record MAC

```

The official JDK bug is here:... | True | Sporadic SSL issues with OpenJDK while loading Ontologies via HTTPS - In the TermGenie and Amigo loads, there have been problems loading PATO hosted on Github.

One problem seems to be an SSL implementation issue in the OpenJDK (seen on 7u45):

```

Could not download IRI: http://purl.obolibrary.org/obo/pato.owl

javax.n... | non_priority | sporadic ssl issues with openjdk while loading ontologies via https in the termgenie and amigo loads there have been problems loading pato hosted on github one problem seems to be an ssl implementation issue in the openjdk seen on could not download iri javax net ssl sslexception bad record mac ... | 0 |

210,195 | 16,090,197,344 | IssuesEvent | 2021-04-26 15:47:19 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [CI] IndexingIT fails with legacy index templates deprecation warning | :Core/Features/Indices APIs >test-failure Team:Core/Features | <!--

Please fill out the following information, and ensure you have attempted

to reproduce locally

-->

**Build scan**:

https://gradle-enterprise.elastic.co/s/7tdbwdl5an6ic

**Repro line**:

```shell

./gradlew ':qa:rolling-upgrade:v7.13.0#oldClusterTest' -Dtests.class="org.elasticsearch.upgrades.IndexingIT... | 1.0 | [CI] IndexingIT fails with legacy index templates deprecation warning - <!--

Please fill out the following information, and ensure you have attempted

to reproduce locally

-->

**Build scan**:

https://gradle-enterprise.elastic.co/s/7tdbwdl5an6ic

**Repro line**:

```shell

./gradlew ':qa:rolling-upgrade:v7.1... | non_priority | indexingit fails with legacy index templates deprecation warning please fill out the following information and ensure you have attempted to reproduce locally build scan repro line shell gradlew qa rolling upgrade oldclustertest dtests class org elasticsearch upg... | 0 |

398,619 | 11,742,031,081 | IssuesEvent | 2020-03-11 23:22:47 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | Functie in bestuur in profielpagina | easy and fun priority: low | In GitLab by @joren485 on Mar 25, 2017, 12:43

Op dit moment staat er bij (oud-)bestuursleden alleen in welk jaar ze bestuur zijn (geweest). Ik zie hier graag ook hun functie in dat bestuur bij. | 1.0 | Functie in bestuur in profielpagina - In GitLab by @joren485 on Mar 25, 2017, 12:43

Op dit moment staat er bij (oud-)bestuursleden alleen in welk jaar ze bestuur zijn (geweest). Ik zie hier graag ook hun functie in dat bestuur bij. | priority | functie in bestuur in profielpagina in gitlab by on mar op dit moment staat er bij oud bestuursleden alleen in welk jaar ze bestuur zijn geweest ik zie hier graag ook hun functie in dat bestuur bij | 1 |

91,672 | 26,460,648,423 | IssuesEvent | 2023-01-16 17:15:04 | skypjack/uvw | https://api.github.com/repos/skypjack/uvw | closed | The library targets are not versioned. | build system static-shared-libs portability | Working on the Yocto Project [meta-layer](https://github.com/stefanofiorentino/meta-uvw.git) to natively list `uvw` in the OpenEmbedded Layer index and this [sample application](https://github.com/stefanofiorentino/uvw_static_lib_client.git) (the application delivered by `uvw` inside the test folder, brought in a separ... | 1.0 | The library targets are not versioned. - Working on the Yocto Project [meta-layer](https://github.com/stefanofiorentino/meta-uvw.git) to natively list `uvw` in the OpenEmbedded Layer index and this [sample application](https://github.com/stefanofiorentino/uvw_static_lib_client.git) (the application delivered by `uvw` i... | non_priority | the library targets are not versioned working on the yocto project to natively list uvw in the openembedded layer index and this the application delivered by uvw inside the test folder brought in a separated repo i got the error that the libraries provided and installed by this cmake based project ... | 0 |

20,376 | 3,811,664,795 | IssuesEvent | 2016-03-27 00:30:59 | dimitri/pgloader | https://api.github.com/repos/dimitri/pgloader | closed | fails to separate similar tablenames from mysql | Corner case Must Fix Needs more testing / information | Howdy!

It seems to me that `mysql-schema.lisp:210` doesnt force case-sensitivity, regardless of quote identifiers. This applies to default settings of a current debian-jessie mysql. I'm not sure if forcing to a case-sensitive collation is the best idea though.

==MySQL==

```

Create Table A(id int);

Create Table... | 1.0 | fails to separate similar tablenames from mysql - Howdy!

It seems to me that `mysql-schema.lisp:210` doesnt force case-sensitivity, regardless of quote identifiers. This applies to default settings of a current debian-jessie mysql. I'm not sure if forcing to a case-sensitive collation is the best idea though.

==M... | non_priority | fails to separate similar tablenames from mysql howdy it seems to me that mysql schema lisp doesnt force case sensitivity regardless of quote identifiers this applies to default settings of a current debian jessie mysql i m not sure if forcing to a case sensitive collation is the best idea though mys... | 0 |

796,289 | 28,105,745,605 | IssuesEvent | 2023-03-31 00:21:57 | department-of-veterans-affairs/abd-vro | https://api.github.com/repos/department-of-veterans-affairs/abd-vro | closed | Ensure VRO API requests from MAS are saved to Redis and encrypted disk | Engineer high priority | For v1 API endpoints, VRO API requests are saved to Redis and encrypted disk; do the same for v2 endpoints.

Related v1 PRs:

* https://github.com/department-of-veterans-affairs/abd-vro/pull/651

* https://github.com/department-of-veterans-affairs/abd-vro/pull/751 | 1.0 | Ensure VRO API requests from MAS are saved to Redis and encrypted disk - For v1 API endpoints, VRO API requests are saved to Redis and encrypted disk; do the same for v2 endpoints.

Related v1 PRs:

* https://github.com/department-of-veterans-affairs/abd-vro/pull/651

* https://github.com/department-of-veterans-affairs/a... | priority | ensure vro api requests from mas are saved to redis and encrypted disk for api endpoints vro api requests are saved to redis and encrypted disk do the same for endpoints related prs | 1 |

271,910 | 8,492,030,550 | IssuesEvent | 2018-10-27 18:42:47 | CS2103-AY1819S1-W17-2/main | https://api.github.com/repos/CS2103-AY1819S1-W17-2/main | opened | Add UI for display of module details | enhancement priority.Medium type.UI | The details of module card should be shown inside browser panel when the user clicks on the module card. | 1.0 | Add UI for display of module details - The details of module card should be shown inside browser panel when the user clicks on the module card. | priority | add ui for display of module details the details of module card should be shown inside browser panel when the user clicks on the module card | 1 |

173,597 | 13,432,232,581 | IssuesEvent | 2020-09-07 08:08:56 | rancher/harvester | https://api.github.com/repos/rancher/harvester | closed | Redeploy fails | area/installation bug to-test | **Steps to reproduce:**

1. `kubectl create ns harvester-system`

2. `helm install harvester -n harvester-system deploy/charts/harvester`, wait until everything is ready.

3. `helm delete harvester -n harvester-system`, wait until the release is cleaned up.

4. do step 2 again.

**Result:**

kubevirt pods are not d... | 1.0 | Redeploy fails - **Steps to reproduce:**

1. `kubectl create ns harvester-system`

2. `helm install harvester -n harvester-system deploy/charts/harvester`, wait until everything is ready.

3. `helm delete harvester -n harvester-system`, wait until the release is cleaned up.

4. do step 2 again.

**Result:**

kubevi... | non_priority | redeploy fails steps to reproduce kubectl create ns harvester system helm install harvester n harvester system deploy charts harvester wait until everything is ready helm delete harvester n harvester system wait until the release is cleaned up do step again result kubevi... | 0 |

636,986 | 20,616,629,203 | IssuesEvent | 2022-03-07 13:51:23 | harvester/harvester | https://api.github.com/repos/harvester/harvester | closed | [BUG] "Default version" doesn't work on Modify template page | bug area/ui priority/1 | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

"Default version" doesn't work on Modify template page

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Advanced -> Templates

2. Select a template (e.g. windows-iso-image-base-version), select "Modify template" on the ... | 1.0 | [BUG] "Default version" doesn't work on Modify template page - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

"Default version" doesn't work on Modify template page

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Advanced -> Templates

2. Select a template (e.g. wi... | priority | default version doesn t work on modify template page describe the bug default version doesn t work on modify template page to reproduce steps to reproduce the behavior go to advanced templates select a template e g windows iso image base version select modify template on the ... | 1 |

504,794 | 14,621,120,073 | IssuesEvent | 2020-12-22 20:59:57 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | opened | [YCQL] index update on list after an overwrite has incorrect behavior | kind/bug priority/high | Test Case:

```

ycqlsh:k> create table T(k int primary key, l list<int>);

ycqlsh:k> update T set l=[1, 2, 3] where k=1;

ycqlsh:k> update T set l[0]=100 where k=1;

ycqlsh:k> select * from T;

k | l

---+-------------

1 | [100, 2, 3] --> EXPECTED/CORRECT

(1 rows)

# but after an overwrite to li... | 1.0 | [YCQL] index update on list after an overwrite has incorrect behavior - Test Case:

```

ycqlsh:k> create table T(k int primary key, l list<int>);

ycqlsh:k> update T set l=[1, 2, 3] where k=1;

ycqlsh:k> update T set l[0]=100 where k=1;

ycqlsh:k> select * from T;

k | l

---+-------------

1 | [100, 2, 3] ... | priority | index update on list after an overwrite has incorrect behavior test case ycqlsh k create table t k int primary key l list ycqlsh k update t set l where k ycqlsh k update t set l where k ycqlsh k select from t k l expected correct ... | 1 |

41,838 | 12,842,407,826 | IssuesEvent | 2020-07-08 01:56:33 | Alanwang2015/JsonPath | https://api.github.com/repos/Alanwang2015/JsonPath | opened | CVE-2017-9735 (High) detected in jetty-util-9.3.0.M1.jar | security vulnerability | ## CVE-2017-9735 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jetty-util-9.3.0.M1.jar</b></p></summary>

<p>Utility classes for Jetty</p>

<p>Library home page: <a href="http://www.ec... | True | CVE-2017-9735 (High) detected in jetty-util-9.3.0.M1.jar - ## CVE-2017-9735 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jetty-util-9.3.0.M1.jar</b></p></summary>

<p>Utility classes... | non_priority | cve high detected in jetty util jar cve high severity vulnerability vulnerable library jetty util jar utility classes for jetty library home page a href path to vulnerable library home wss scanner gradle caches modules files org eclipse jetty jetty util ... | 0 |

79,831 | 7,725,536,636 | IssuesEvent | 2018-05-24 18:17:17 | golang/go | https://api.github.com/repos/golang/go | closed | x/net/http2: flake on TestTransportHandlerBodyClose | NeedsFix Testing | One instance here:

https://storage.googleapis.com/go-build-log/1e3f563b/linux-386_95855899.log

```

--- FAIL: TestTransportHandlerBodyClose (4.08s)

transport_test.go:2393: appeared to leak goroutines

``` | 1.0 | x/net/http2: flake on TestTransportHandlerBodyClose - One instance here:

https://storage.googleapis.com/go-build-log/1e3f563b/linux-386_95855899.log

```

--- FAIL: TestTransportHandlerBodyClose (4.08s)

transport_test.go:2393: appeared to leak goroutines

``` | non_priority | x net flake on testtransporthandlerbodyclose one instance here fail testtransporthandlerbodyclose transport test go appeared to leak goroutines | 0 |

570,140 | 17,019,579,945 | IssuesEvent | 2021-07-02 16:41:48 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Game server error: failure to find assembly. cw log bug | Category: Cloud Worlds Priority: High Squad: Pumpkin | cloud world logs are full of this error: failure to find assembly

@thetestgame can you add any extra context to this bug

@WeaselDog this is a game side issue, who to assign? | 1.0 | Game server error: failure to find assembly. cw log bug - cloud world logs are full of this error: failure to find assembly

@thetestgame can you add any extra context to this bug

@WeaselDog this is a game side issue, who to assign? | priority | game server error failure to find assembly cw log bug cloud world logs are full of this error failure to find assembly thetestgame can you add any extra context to this bug weaseldog this is a game side issue who to assign | 1 |

466,372 | 13,400,901,821 | IssuesEvent | 2020-09-03 16:28:35 | easydigitaldownloads/easy-digital-downloads | https://api.github.com/repos/easydigitaldownloads/easy-digital-downloads | closed | Make Chosen.js matches wordpress style | component-administration priority-low type-feature | EDD and other plugins uses this js resources (built-in edd core)

I know this is a really low priority but with a few lines of code we can made this matches same style like wordpress inputs

```css

.chosen-container .chosen-single,

.chosen-container .chosen-drop {

border: 1px solid #ddd;

-webkit-box-sha... | 1.0 | Make Chosen.js matches wordpress style - EDD and other plugins uses this js resources (built-in edd core)

I know this is a really low priority but with a few lines of code we can made this matches same style like wordpress inputs

```css

.chosen-container .chosen-single,

.chosen-container .chosen-drop {

bor... | priority | make chosen js matches wordpress style edd and other plugins uses this js resources built in edd core i know this is a really low priority but with a few lines of code we can made this matches same style like wordpress inputs css chosen container chosen single chosen container chosen drop bor... | 1 |

548,521 | 16,065,904,426 | IssuesEvent | 2021-04-23 19:03:06 | googleapis/java-aiplatform | https://api.github.com/repos/googleapis/java-aiplatform | closed | aiplatform.CreateTrainingPipelineImageObjectDetectionSampleTest: testCreateTrainingPipelineImageObjectDetectionSample failed | api: aiplatform flakybot: flaky flakybot: issue priority: p2 type: bug | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 6759605519c6d4ab8980ec2f7b01d3cbf83158a4... | 1.0 | aiplatform.CreateTrainingPipelineImageObjectDetectionSampleTest: testCreateTrainingPipelineImageObjectDetectionSample failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issu... | priority | aiplatform createtrainingpipelineimageobjectdetectionsampletest testcreatetrainingpipelineimageobjectdetectionsample failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl st... | 1 |

22,670 | 11,774,151,436 | IssuesEvent | 2020-03-16 08:55:06 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | Extend Data factory datasources/datasets, Linked services and triggers | enhancement service/data-factory | ### Description

There are wide variety of datasources/ datasets/linked service types available for Azure Data factory but only very limited small set is available from terraform provider.

### New or Affected Resource(s)

* azurerm_data_factory

* azurerm_data_factory_linked_service

* azurerm_data_factory_t... | 1.0 | Extend Data factory datasources/datasets, Linked services and triggers - ### Description

There are wide variety of datasources/ datasets/linked service types available for Azure Data factory but only very limited small set is available from terraform provider.

### New or Affected Resource(s)

* azurerm_data... | non_priority | extend data factory datasources datasets linked services and triggers description there are wide variety of datasources datasets linked service types available for azure data factory but only very limited small set is available from terraform provider new or affected resource s azurerm data... | 0 |

14,150 | 3,801,342,242 | IssuesEvent | 2016-03-23 22:28:34 | solettaproject/soletta | https://api.github.com/repos/solettaproject/soletta | opened | Packages: Review Soletta casing throughout the text | documentation | <!-- TEMPLATE FOR TASKS -->

#### Task Description

Page: https://github.com/solettaproject/soletta/wiki/Packages

Sometimes Soletta is spelled as Soletta and sometimes as soletta. If we're talking about the package, write it as **soletta** as it's done for **libsoletta-dev** and use Soletta casing for the other case... | 1.0 | Packages: Review Soletta casing throughout the text - <!-- TEMPLATE FOR TASKS -->

#### Task Description

Page: https://github.com/solettaproject/soletta/wiki/Packages

Sometimes Soletta is spelled as Soletta and sometimes as soletta. If we're talking about the package, write it as **soletta** as it's done for **libs... | non_priority | packages review soletta casing throughout the text task description page sometimes soletta is spelled as soletta and sometimes as soletta if we re talking about the package write it as soletta as it s done for libsoletta dev and use soletta casing for the other cases dependencies n... | 0 |

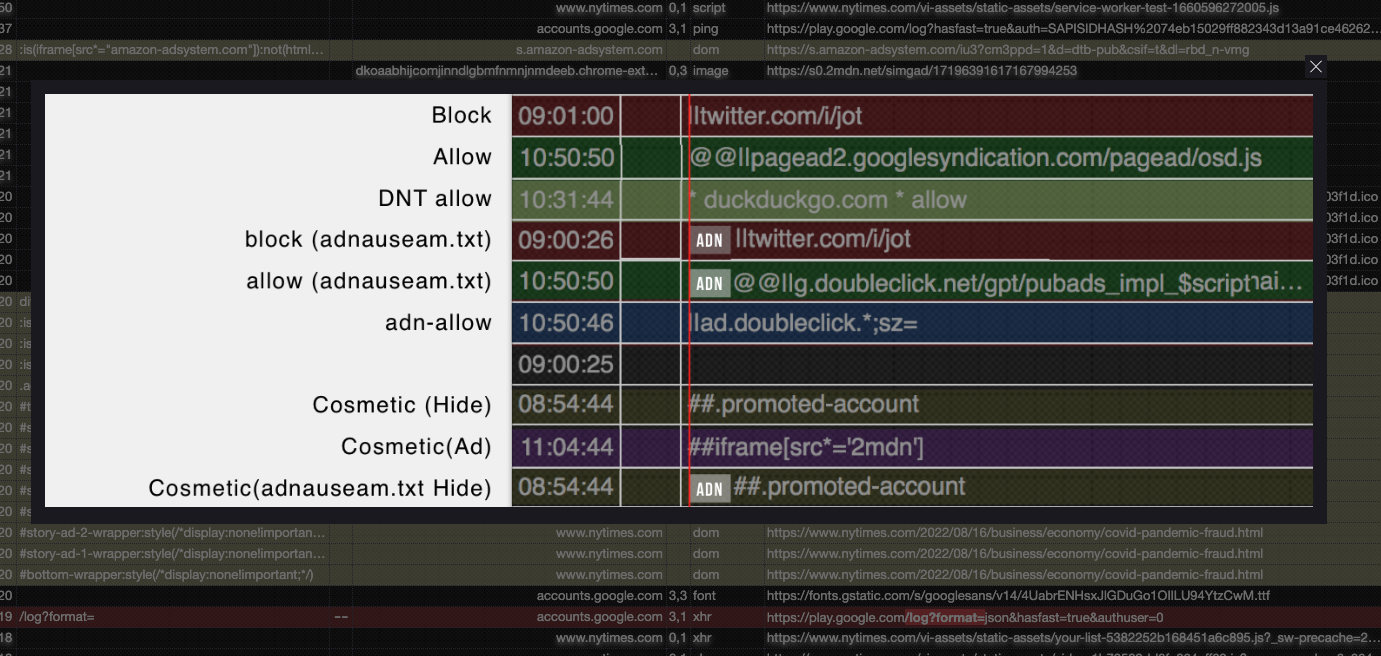

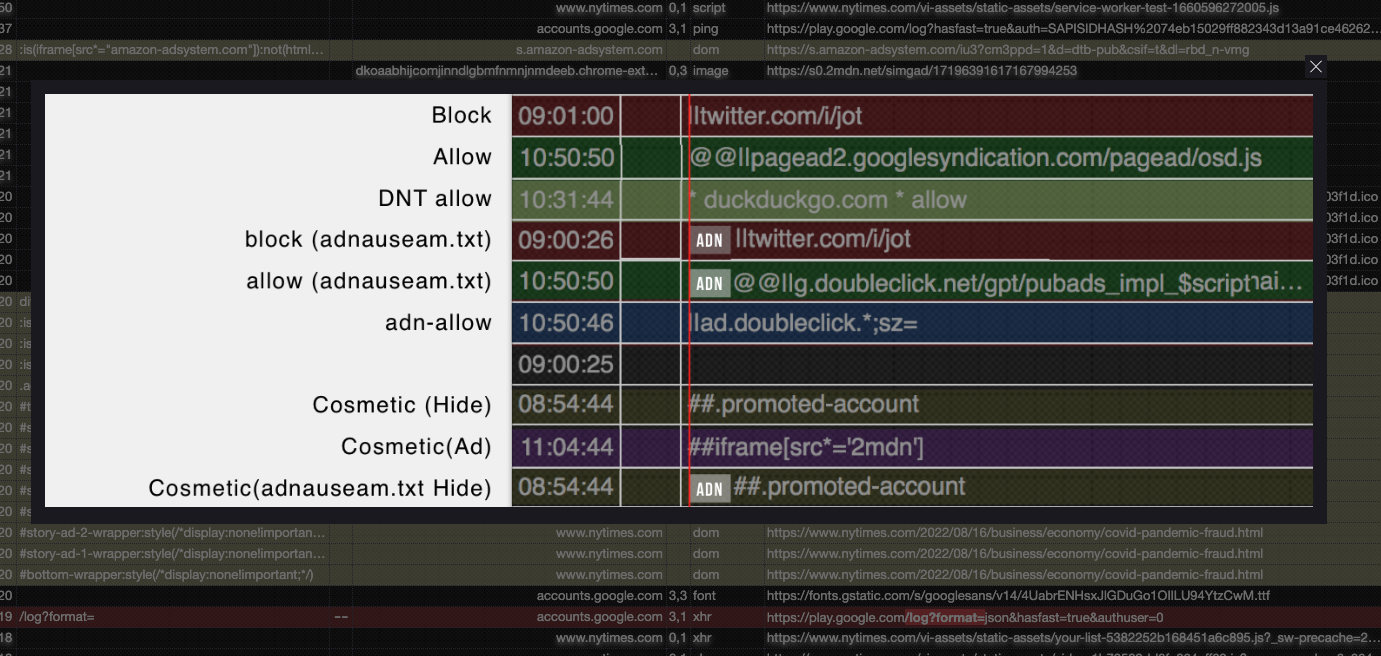

685,874 | 23,470,126,747 | IssuesEvent | 2022-08-16 20:51:41 | dhowe/AdNauseam | https://api.github.com/repos/dhowe/AdNauseam | closed | Update Logger Color Reference. | PRIORITY: Medium | In high resolution screens the image reference for the logger colors is really low quality. We should probably do it in HTML instead.

| 1.0 | Update Logger Color Reference. - In high resolution screens the image reference for the logger colors is really low quality. We should probably do it in HTML instead.

| priority | update logger color reference in high resolution screens the image reference for the logger colors is really low quality we should probably do it in html instead | 1 |

6,456 | 9,403,877,006 | IssuesEvent | 2019-04-09 03:24:41 | helloworldpark/tickle-stock-watcher | https://api.github.com/repos/helloworldpark/tickle-stock-watcher | closed | 크롤러 매니저를 구현한다 | requirement | # 구현사항

- 단위 크롤러들을 전부 관리하는 매니저

- 유저의 관심 대상인 크롤러들만 관리한다

- 새로 관심 대상에 포함되는 종목의 경우, 마지막으로 수집한 가격 정보의 날짜 이후로 새로 수집한다

- 이전에 한 번도 수집한 적이 없다면, 새로 수집한다

- 단위 크롤러들의 가동을 관리

- 크롤러는 두 가지 타입으로 나뉜다

- 과거 가격 정보 수집

- 현재 가격 정보 수집

- 모든 크롤러는 주식시장이 개장한 날의 데이터만 수집한다

- 현재 가격 수집 크롤러... | 1.0 | 크롤러 매니저를 구현한다 - # 구현사항

- 단위 크롤러들을 전부 관리하는 매니저

- 유저의 관심 대상인 크롤러들만 관리한다

- 새로 관심 대상에 포함되는 종목의 경우, 마지막으로 수집한 가격 정보의 날짜 이후로 새로 수집한다

- 이전에 한 번도 수집한 적이 없다면, 새로 수집한다

- 단위 크롤러들의 가동을 관리

- 크롤러는 두 가지 타입으로 나뉜다

- 과거 가격 정보 수집

- 현재 가격 정보 수집

- 모든 크롤러는 주식시장이 개장한 날의 데이터만 수집한다

... | non_priority | 크롤러 매니저를 구현한다 구현사항 단위 크롤러들을 전부 관리하는 매니저 유저의 관심 대상인 크롤러들만 관리한다 새로 관심 대상에 포함되는 종목의 경우 마지막으로 수집한 가격 정보의 날짜 이후로 새로 수집한다 이전에 한 번도 수집한 적이 없다면 새로 수집한다 단위 크롤러들의 가동을 관리 크롤러는 두 가지 타입으로 나뉜다 과거 가격 정보 수집 현재 가격 정보 수집 모든 크롤러는 주식시장이 개장한 날의 데이터만 수집한다 ... | 0 |

672,662 | 22,835,699,891 | IssuesEvent | 2022-07-12 16:26:17 | GoogleCloudPlatform/java-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/java-docs-samples | closed | com.example.containeranalysis.SamplesTest: testFindHighSeverityVulnerabilitiesForImage failed | type: bug priority: p1 api: containeranalysis samples flakybot: issue flakybot: flaky | Note: #6643 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: f5fb24e28e93306e965fa509c627c708ecfbd7d0

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/27bfb200-c79a-47c1-a04a-d56cd3845b2a), [Sponge](http://sponge2/27bfb200-c79a-47c1-... | 1.0 | com.example.containeranalysis.SamplesTest: testFindHighSeverityVulnerabilitiesForImage failed - Note: #6643 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: f5fb24e28e93306e965fa509c627c708ecfbd7d0

buildURL: [Build Status](https://source.cloud.google.com/result... | priority | com example containeranalysis samplestest testfindhighseverityvulnerabilitiesforimage failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output java lang assertionerror expected but was at org ju... | 1 |

120,244 | 17,644,071,426 | IssuesEvent | 2021-08-20 01:37:00 | DavidSpek/pipelines | https://api.github.com/repos/DavidSpek/pipelines | opened | CVE-2021-29559 (High) detected in tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2021-29559 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learn... | True | CVE-2021-29559 (High) detected in tensorflow-1.15.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2021-29559 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.15.0-cp27-cp27m... | non_priority | cve high detected in tensorflow whl cve high severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file pipelines contrib components ... | 0 |

120,715 | 4,793,188,409 | IssuesEvent | 2016-10-31 17:29:20 | leeensminger/DelDOT-NPDES-Field-Tool | https://api.github.com/repos/leeensminger/DelDOT-NPDES-Field-Tool | opened | New Inspection Shows Picture from Old Inspection on Same Conveyance | bug - high priority | Similar to issue #3

I opened a pre-existing swale (migrated data) which already had an inspection on it. I then viewed the inspection and made no changes so I clicked cancel. I then proceeded to create a new inspection and noticed the photo from the pre-existing inspection was showing under the inspection photos of... | 1.0 | New Inspection Shows Picture from Old Inspection on Same Conveyance - Similar to issue #3

I opened a pre-existing swale (migrated data) which already had an inspection on it. I then viewed the inspection and made no changes so I clicked cancel. I then proceeded to create a new inspection and noticed the photo from ... | priority | new inspection shows picture from old inspection on same conveyance similar to issue i opened a pre existing swale migrated data which already had an inspection on it i then viewed the inspection and made no changes so i clicked cancel i then proceeded to create a new inspection and noticed the photo from ... | 1 |

93,282 | 19,178,872,466 | IssuesEvent | 2021-12-04 03:09:17 | Daotin/fe-tips | https://api.github.com/repos/Daotin/fe-tips | closed | vscode配置.json | vscode | ```js

{

"editor.wordWrap": "off",

"editor.mouseWheelZoom": true,

"editor.fontLigatures": true,

"editor.minimap.renderCharacters": false,

"vetur.format.options.tabSize": 4,

"editor.minimap.enabled": false,

"search.followSymlinks": false,

"workbench.startupEditor": "newUntitledFil... | 1.0 | vscode配置.json - ```js

{

"editor.wordWrap": "off",

"editor.mouseWheelZoom": true,

"editor.fontLigatures": true,

"editor.minimap.renderCharacters": false,

"vetur.format.options.tabSize": 4,

"editor.minimap.enabled": false,

"search.followSymlinks": false,

"workbench.startupEditor":... | non_priority | vscode配置 json js editor wordwrap off editor mousewheelzoom true editor fontligatures true editor minimap rendercharacters false vetur format options tabsize editor minimap enabled false search followsymlinks false workbench startupeditor ... | 0 |

252,954 | 8,049,104,178 | IssuesEvent | 2018-08-01 09:04:23 | layersoflondon/application | https://api.github.com/repos/layersoflondon/application | closed | Map: flickering map pins | High priority bug | > When the mouse cursor is placed over a pin it flickers and distorts badly.

[From feedback doc: LoL_Beta_Review_v0.2] | 1.0 | Map: flickering map pins - > When the mouse cursor is placed over a pin it flickers and distorts badly.

[From feedback doc: LoL_Beta_Review_v0.2] | priority | map flickering map pins when the mouse cursor is placed over a pin it flickers and distorts badly | 1 |

240,112 | 26,254,322,181 | IssuesEvent | 2023-01-05 22:32:46 | yaeljacobs67/cncjs | https://api.github.com/repos/yaeljacobs67/cncjs | opened | CVE-2021-3918 (High) detected in json-schema-0.2.3.tgz | security vulnerability | ## CVE-2021-3918 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-schema-0.2.3.tgz</b></p></summary>

<p>JSON Schema validation and specifications</p>

<p>Library home page: <a href=... | True | CVE-2021-3918 (High) detected in json-schema-0.2.3.tgz - ## CVE-2021-3918 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-schema-0.2.3.tgz</b></p></summary>

<p>JSON Schema validat... | non_priority | cve high detected in json schema tgz cve high severity vulnerability vulnerable library json schema tgz json schema validation and specifications library home page a href dependency hierarchy coveralls tgz root library request tgz http ... | 0 |

200,202 | 7,001,408,512 | IssuesEvent | 2017-12-18 10:07:25 | nobt-io/frontend | https://api.github.com/repos/nobt-io/frontend | closed | Reconfigure AppBar | bug priority-high | The AppBar jumps in from the top as soon as you scroll upwards and takes up half the screen.

I think the AppBar should just be part of the feed at the very top. | 1.0 | Reconfigure AppBar - The AppBar jumps in from the top as soon as you scroll upwards and takes up half the screen.

I think the AppBar should just be part of the feed at the very top. | priority | reconfigure appbar the appbar jumps in from the top as soon as you scroll upwards and takes up half the screen i think the appbar should just be part of the feed at the very top | 1 |

78,734 | 10,083,228,838 | IssuesEvent | 2019-07-25 13:14:23 | Pageworks/papertrain | https://api.github.com/repos/Pageworks/papertrain | closed | Update Readme | documentation | When installing Papertrain the `npm run dev` command needs to run before the user can launch the front-end of the website. | 1.0 | Update Readme - When installing Papertrain the `npm run dev` command needs to run before the user can launch the front-end of the website. | non_priority | update readme when installing papertrain the npm run dev command needs to run before the user can launch the front end of the website | 0 |

83,545 | 15,710,734,913 | IssuesEvent | 2021-03-27 03:22:51 | AlexRogalskiy/github-action-json-fields | https://api.github.com/repos/AlexRogalskiy/github-action-json-fields | opened | CVE-2020-28500 (Medium) detected in lodash-4.17.20.tgz | security vulnerability | ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.20.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registr... | True | CVE-2020-28500 (Medium) detected in lodash-4.17.20.tgz - ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.20.tgz</b></p></summary>

<p>Lodash modular util... | non_priority | cve medium detected in lodash tgz cve medium severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file github action json fields package json path to vulnerable library github action json fields no... | 0 |

180,820 | 6,653,719,082 | IssuesEvent | 2017-09-29 09:33:39 | dhowe/AdNauseam | https://api.github.com/repos/dhowe/AdNauseam | closed | Ad is shown despite "Hide ads" being enabled from settings | Ads Visible PRIORITY: High | ### Describe the issue

Ad on the bottom right is shown despite "Hide ads" being enabled from settings

### One or more specific URLs where the issue occurs

https://coinmarketcap.com/

### Screenshot in which the issue can be seen

<img width="1226" alt="screen shot 2017-09-27 at 15 40 11" src="https://user-imag... | 1.0 | Ad is shown despite "Hide ads" being enabled from settings - ### Describe the issue

Ad on the bottom right is shown despite "Hide ads" being enabled from settings

### One or more specific URLs where the issue occurs

https://coinmarketcap.com/

### Screenshot in which the issue can be seen

<img width="1226" al... | priority | ad is shown despite hide ads being enabled from settings describe the issue ad on the bottom right is shown despite hide ads being enabled from settings one or more specific urls where the issue occurs screenshot in which the issue can be seen img width alt screen shot at ... | 1 |

127,835 | 12,340,672,367 | IssuesEvent | 2020-05-14 20:22:27 | thephpleague/commonmark | https://api.github.com/repos/thephpleague/commonmark | opened | [1.5] Release Goals | documentation pinned | Creating this issue to track a few odds and ends

- Minimize BC breaks between 1.5 API and 2.0 API (#475)

- Modify 2.0 docs to show migration path from 1.5 -> 2.0 instead of 1.4 -> 2.0 | 1.0 | [1.5] Release Goals - Creating this issue to track a few odds and ends

- Minimize BC breaks between 1.5 API and 2.0 API (#475)

- Modify 2.0 docs to show migration path from 1.5 -> 2.0 instead of 1.4 -> 2.0 | non_priority | release goals creating this issue to track a few odds and ends minimize bc breaks between api and api modify docs to show migration path from instead of | 0 |

73,561 | 14,103,706,821 | IssuesEvent | 2020-11-06 10:41:25 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Enum literals should be defined by generator strategies | C: Code Generation E: All Editions P: Medium T: Enhancement | Enum literals are currently not defined by generator strategies, but by jOOQ-codegen internals, so users cannot override the logic. | 1.0 | Enum literals should be defined by generator strategies - Enum literals are currently not defined by generator strategies, but by jOOQ-codegen internals, so users cannot override the logic. | non_priority | enum literals should be defined by generator strategies enum literals are currently not defined by generator strategies but by jooq codegen internals so users cannot override the logic | 0 |

210,429 | 16,100,869,717 | IssuesEvent | 2021-04-27 09:07:08 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [test-failed]: Chrome UI Functional Tests1.test/functional/apps/discover/_discover·js - discover app discover test query should modify the time range when the histogram is brushed | :KibanaApp/fix-it-week Team:KibanaApp failed-test test-cloud | **Version: 7.11.1**

**Class: Chrome UI Functional Tests1.test/functional/apps/discover/_discover·js**

**Stack Trace:**

```

Error: expected 1 to equal 26

at Assertion.assert (packages/kbn-expect/expect.js:100:11)

at Assertion.be.Assertion.equal (packages/kbn-expect/expect.js:227:8)

at Assertion.be (packages... | 2.0 | [test-failed]: Chrome UI Functional Tests1.test/functional/apps/discover/_discover·js - discover app discover test query should modify the time range when the histogram is brushed - **Version: 7.11.1**

**Class: Chrome UI Functional Tests1.test/functional/apps/discover/_discover·js**

**Stack Trace:**

```

Error: expecte... | non_priority | chrome ui functional test functional apps discover discover·js discover app discover test query should modify the time range when the histogram is brushed version class chrome ui functional test functional apps discover discover·js stack trace error expected to equal at a... | 0 |

19,735 | 4,442,002,212 | IssuesEvent | 2016-08-19 11:42:30 | coala-analyzer/coala | https://api.github.com/repos/coala-analyzer/coala | closed | Videos for newcomers doc | area/documentation difficulty/low importance/low | In the newcomers docs we should have an ascii cinema or Video tutorial on how to use git for our workflow.

Just makes it so much easier because people get confused about `rebase -i` and so on frequently.

I'm thinking 3 videos:

- How to make a contribution: (Clone, create branch, edit file, commit, push, possibly ... | 1.0 | Videos for newcomers doc - In the newcomers docs we should have an ascii cinema or Video tutorial on how to use git for our workflow.

Just makes it so much easier because people get confused about `rebase -i` and so on frequently.

I'm thinking 3 videos:

- How to make a contribution: (Clone, create branch, edit fi... | non_priority | videos for newcomers doc in the newcomers docs we should have an ascii cinema or video tutorial on how to use git for our workflow just makes it so much easier because people get confused about rebase i and so on frequently i m thinking videos how to make a contribution clone create branch edit fi... | 0 |

219,329 | 24,469,358,940 | IssuesEvent | 2022-10-07 18:08:58 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] Former Uber CISO Joe Sullivan Found Guilty Over Breach Cover-Up | SecurityWeek Stale |

**A San Francisco jury on Wednesday found former Uber security chief Joe Sullivan guilty of covering up a 2016 data breach and concealing information on a felony from law enforcement.**

[read more](https://www.securityweek.com/former-uber-ciso-joe-s... | True | [SecurityWeek] Former Uber CISO Joe Sullivan Found Guilty Over Breach Cover-Up -

**A San Francisco jury on Wednesday found former Uber security chief Joe Sullivan guilty of covering up a 2016 data breach and concealing information on a felony from la... | non_priority | former uber ciso joe sullivan found guilty over breach cover up sites default files uber data breach jpg a san francisco jury on wednesday found former uber security chief joe sullivan guilty of covering up a data breach and concealing information on a felony from law enforcement | 0 |

248,279 | 7,928,801,688 | IssuesEvent | 2018-07-06 13:03:29 | gwu-libraries/lai-libsite | https://api.github.com/repos/gwu-libraries/lai-libsite | opened | Institute captcha on forms to deter spam | low priority (after Primo/AC/Study Spaces) | Definitely need captcha on contact us form :https://library.gwu.edu/contact and maybe all forms. | 1.0 | Institute captcha on forms to deter spam - Definitely need captcha on contact us form :https://library.gwu.edu/contact and maybe all forms. | priority | institute captcha on forms to deter spam definitely need captcha on contact us form and maybe all forms | 1 |

205,570 | 15,648,024,499 | IssuesEvent | 2021-03-23 04:45:29 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: sqlsmith/setup=seed/setting=no-mutations failed | C-test-failure O-roachtest O-robot branch-master release-blocker | [(roachtest).sqlsmith/setup=seed/setting=no-mutations failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2788995&tab=buildLog) on [master@36dea46f8cedf42df31b57dd70db7e0f1fd7a453](https://github.com/cockroachdb/cockroach/commits/36dea46f8cedf42df31b57dd70db7e0f1fd7a453):

```

The test failed on branch=master... | 2.0 | roachtest: sqlsmith/setup=seed/setting=no-mutations failed - [(roachtest).sqlsmith/setup=seed/setting=no-mutations failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2788995&tab=buildLog) on [master@36dea46f8cedf42df31b57dd70db7e0f1fd7a453](https://github.com/cockroachdb/cockroach/commits/36dea46f8cedf42df31... | non_priority | roachtest sqlsmith setup seed setting no mutations failed on the test failed on branch master cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts sqlsmith setup seed setting no mutations run sqlsmith go sqlsmith go test runner go error p... | 0 |

4,645 | 11,491,481,942 | IssuesEvent | 2020-02-11 19:04:37 | ralphg6/david | https://api.github.com/repos/ralphg6/david | closed | Refactory main module to usage CLI mode | architecture | Migrate http serve start to command:

```bash

david run

``` | 1.0 | Refactory main module to usage CLI mode - Migrate http serve start to command:

```bash

david run

``` | non_priority | refactory main module to usage cli mode migrate http serve start to command bash david run | 0 |

312,569 | 9,549,294,018 | IssuesEvent | 2019-05-02 08:44:30 | HGustavs/LenaSYS | https://api.github.com/repos/HGustavs/LenaSYS | closed | Move cursor displayed when it shouldn't be | Diagram gruppA2019 highPriority | Move cursor is displayed when hovering a line and lines shouldn't be able to move. They are "static" between entities. It also displays when a draw tool for line is chosen and this is very confusing. Is the user drawing a line or moving the entity? | 1.0 | Move cursor displayed when it shouldn't be - Move cursor is displayed when hovering a line and lines shouldn't be able to move. They are "static" between entities. It also displays when a draw tool for line is chosen and this is very confusing. Is the user drawing a line or moving the entity? | priority | move cursor displayed when it shouldn t be move cursor is displayed when hovering a line and lines shouldn t be able to move they are static between entities it also displays when a draw tool for line is chosen and this is very confusing is the user drawing a line or moving the entity | 1 |

179,800 | 6,628,718,677 | IssuesEvent | 2017-09-23 21:43:34 | beloitcollegecomputerscience/OED | https://api.github.com/repos/beloitcollegecomputerscience/OED | closed | Client UI options is not responsive on mobile devices | medium priority | We need to display properly on multiple types of screens. | 1.0 | Client UI options is not responsive on mobile devices - We need to display properly on multiple types of screens. | priority | client ui options is not responsive on mobile devices we need to display properly on multiple types of screens | 1 |

10,553 | 13,340,229,945 | IssuesEvent | 2020-08-28 14:08:00 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Need more information on how to get the fully-qualified Id of Marketplace tasks | Pri1 devops-cicd-process/tech devops/prod doc-enhancement |

In the Custom tasks section when you mention Marketplace tasks a more elaborate description on how to refer these tasks would be very helpful. It isn't obvious - at least for me - to get the fully-qualified name of a downloaded task extension. A sentence or two on this topic would be very handy either here or in anot... | 1.0 | Need more information on how to get the fully-qualified Id of Marketplace tasks -

In the Custom tasks section when you mention Marketplace tasks a more elaborate description on how to refer these tasks would be very helpful. It isn't obvious - at least for me - to get the fully-qualified name of a downloaded task ext... | non_priority | need more information on how to get the fully qualified id of marketplace tasks in the custom tasks section when you mention marketplace tasks a more elaborate description on how to refer these tasks would be very helpful it isn t obvious at least for me to get the fully qualified name of a downloaded task ext... | 0 |

322,194 | 23,896,371,039 | IssuesEvent | 2022-09-08 15:03:13 | Requisitos-de-Software/2022.1-TikTok | https://api.github.com/repos/Requisitos-de-Software/2022.1-TikTok | closed | Padronizar documento de priorização | documentation | ## Descrição

Padronizar documento de priorização de requisitos

## Tarefas

- [x] Adicionar fontes às tabelas

- [x] Alterar numeração

## Critérios de aceitação

- [x] Documento atualizado no repositório | 1.0 | Padronizar documento de priorização - ## Descrição

Padronizar documento de priorização de requisitos

## Tarefas

- [x] Adicionar fontes às tabelas

- [x] Alterar numeração

## Critérios de aceitação

- [x] Documento atualizado no repositório | non_priority | padronizar documento de priorização descrição padronizar documento de priorização de requisitos tarefas adicionar fontes às tabelas alterar numeração critérios de aceitação documento atualizado no repositório | 0 |

68,821 | 14,958,285,572 | IssuesEvent | 2021-01-27 00:23:15 | fufunoyu/mall | https://api.github.com/repos/fufunoyu/mall | opened | CVE-2020-35491 (Medium) detected in jackson-databind-2.9.4.jar | security vulnerability | ## CVE-2020-35491 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2020-35491 (Medium) detected in jackson-databind-2.9.4.jar - ## CVE-2020-35491 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.4.jar</b></p></summary>

<p>Gen... | non_priority | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file mall mall manager pom xml ... | 0 |

269,471 | 8,435,929,370 | IssuesEvent | 2018-10-17 14:17:53 | chanzuckerberg/cellxgene | https://api.github.com/repos/chanzuckerberg/cellxgene | closed | clean up f/e lint | Priority Low cleanup frontend | reduce overall lint warnings & errors pre release. Progress:

* [x] src/actions

* [ ] src/components/

* [x] src/middleware

* [x] src/reducers

* [X] src/util

* [X] top-level | 1.0 | clean up f/e lint - reduce overall lint warnings & errors pre release. Progress:

* [x] src/actions

* [ ] src/components/

* [x] src/middleware

* [x] src/reducers

* [X] src/util

* [X] top-level | priority | clean up f e lint reduce overall lint warnings errors pre release progress src actions src components src middleware src reducers src util top level | 1 |

600,138 | 18,290,158,492 | IssuesEvent | 2021-10-05 14:29:26 | AY2122S1-CS2113T-W12-1/tp | https://api.github.com/repos/AY2122S1-CS2113T-W12-1/tp | closed | Remove food from daily intake | priorityHigh | User should be able to remove an entry from his/her daily intake | 1.0 | Remove food from daily intake - User should be able to remove an entry from his/her daily intake | priority | remove food from daily intake user should be able to remove an entry from his her daily intake | 1 |

141,589 | 21,569,562,967 | IssuesEvent | 2022-05-02 06:12:59 | OdyseeTeam/odysee-frontend | https://api.github.com/repos/OdyseeTeam/odysee-frontend | closed | flash of white during page load (before site loads) | design | Someone was asking if that can be made Dark for Dark mode. Not sure if this is possible to support both light and dark, maybe local storage has that quickly enough? | 1.0 | flash of white during page load (before site loads) - Someone was asking if that can be made Dark for Dark mode. Not sure if this is possible to support both light and dark, maybe local storage has that quickly enough? | non_priority | flash of white during page load before site loads someone was asking if that can be made dark for dark mode not sure if this is possible to support both light and dark maybe local storage has that quickly enough | 0 |

16,355 | 2,889,790,156 | IssuesEvent | 2015-06-13 19:16:58 | damonkohler/android-scripting | https://api.github.com/repos/damonkohler/android-scripting | closed | recorderCaptureVideo results in blank video | auto-migrated Priority-Medium Type-Defect | ```

What device(s) are you experiencing the problem on?

Samsung Captivate

What firmware version are you running on the device?

2.1

What steps will reproduce the problem?

1. use recorderCaptureVideo in a python script

2. ???

3. don't profit?

What is the expected output? What do you see instead?

The expected output... | 1.0 | recorderCaptureVideo results in blank video - ```

What device(s) are you experiencing the problem on?

Samsung Captivate

What firmware version are you running on the device?

2.1

What steps will reproduce the problem?

1. use recorderCaptureVideo in a python script

2. ???

3. don't profit?

What is the expected output?... | non_priority | recordercapturevideo results in blank video what device s are you experiencing the problem on samsung captivate what firmware version are you running on the device what steps will reproduce the problem use recordercapturevideo in a python script don t profit what is the expected output ... | 0 |

53,915 | 7,867,099,620 | IssuesEvent | 2018-06-23 03:30:39 | GiselleSerate/myaliases | https://api.github.com/repos/GiselleSerate/myaliases | closed | add guidance about setup/install to wiki | documentation | I mean everyone looks at the readme, but it's important enough that it should also be in the wiki. | 1.0 | add guidance about setup/install to wiki - I mean everyone looks at the readme, but it's important enough that it should also be in the wiki. | non_priority | add guidance about setup install to wiki i mean everyone looks at the readme but it s important enough that it should also be in the wiki | 0 |